Introduction

The incidence of involuntary admissions is on the rise worldwide (Sheridan Rains et al., 2019). Involuntary admissions are used when patients are in urgent need of psychiatric inpatient treatment, but are too ill (typically psychotic) to consent (Salize & Dressing, 2004). Involuntary admissions can be traumatic for patients and are costly for society (Katsakou & Priebe, 2007). Therefore, various interventions to reduce the need for involuntary admissions have been investigated (de Jong et al., 2016), but none are currently systematically applied in the Central Denmark Region. To ensure cost-effectiveness of implementation, these interventions should preferably target patients at high risk of involuntary admission. However, such individual risk assessments are complex.

Several risk factors for involuntary admission have been identified in large patient populations, e.g. prior involuntary admission, and psychotic or bipolar disorders (Walker et al., 2019). However, assessing risk at the level of the individual patient is challenging due to potential interactions between risk factors, waxing and waning of risk factors, and irregular/noisy clinical data on risk factors (Bzdok & Meyer-Lindenberg, 2018). This resonates well with the complexity of patient-level risk assessment in clinical practice. Recently, however, machine learning methods have been demonstrated to handle this level of complexity well in some cases, with notable exceptions (Christodoulou et al., 2019). Unlike standard statistical analyses, machine learning inherently accommodates complex interactions and idiosyncrasies and also handles large numbers of predictors (Cerqueira, Torgo, & Soares, 2019; Song, Mitnitski, Cox, & Rockwood, 2004).

We are aware of two prior machine learning studies that have examined involuntary admission via routine clinical data (Karasch, Schmitz-Buhl, Mennicken, Zielasek, & Gouzoulis-Mayfrank, 2020; Silva, Gholam, Golay, Bonsack, & Morandi, 2021). Both, however, fail to construct a relevant prediction task as they do not issue predictions, which is a prerequisite for clinical relevance, but merely utilize machine learning methods for identification of risk factors for involuntary admission. Additionally, both studies only consider patients with complete data in their primary analysis, which could potentially decrease the generalizability as data from real-world practice are typically not missing at random (Bzdok & Meyer-Lindenberg, 2018). We have previously shown that a machine learning model trained on routine clinical data from electronic health records (EHRs) can accurately predict mechanical restraint (Danielsen, Fenger, Østergaard, Nielbo, & Mors, 2019) and are currently in the process of implementing a decision support (risk reduction) tool based on this model in clinical practice. To our knowledge, no studies have used machine learning to predict involuntary admissions at the level of the individual patient using EHR data. Therefore, the aim of this study was to fill this gap in the literature.

Methods

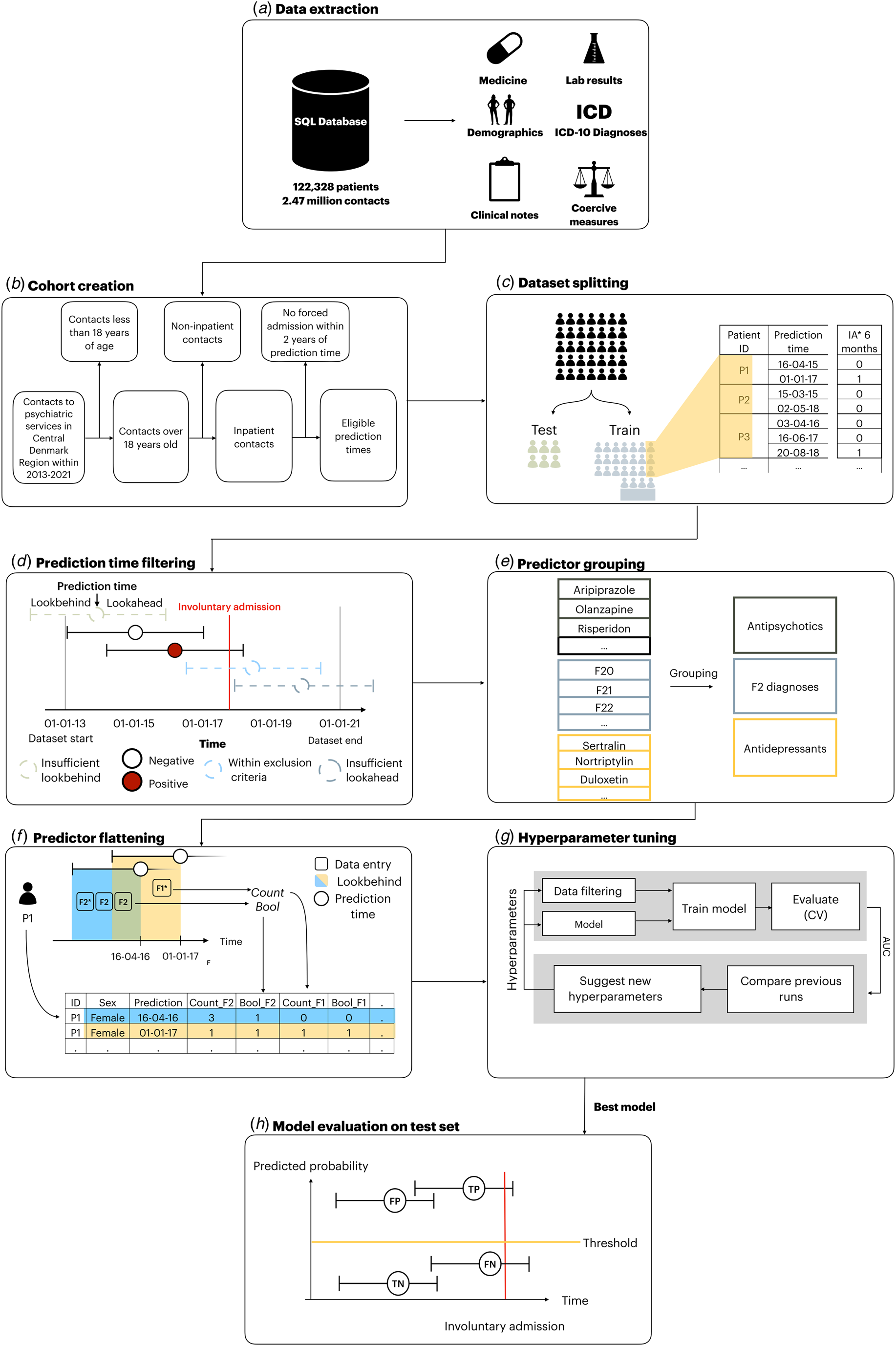

An illustration of the methods used in this study is shown in Fig. 1.

Figure 1. Extraction of data and outcome, dataset splitting, prediction time filtering, specification of predictors and flattening, model training, testing, and evaluation. This figure was adapted for this project from Bernstorff et al. (2024). IA, involuntary admissions; F1 and F2, ICD-10 diagnoses within the group of diagnoses included in F1 and F2 chapters; CV, cross-validation; TP, true positive; FP, false positive; TN, true negative; FN, false negative.

Reporting guidelines

This study adhered to the reporting guidelines of TRIPOD+AI (Collins et al., 2024), and the TRIPOD+AI checklist is available in the online Supplementary materials (Table S7).

Data source

The study is based on data from the PSYchiatric Clinical Outcome Prediction cohort, encompassing routine clinical EHR data from all individuals with at least one contact to the Psychiatric Services of the Central Denmark Region in the period from January 1, 2011 to November 22, 2021 (Hansen et al., 2021). The Central Denmark Region is one of the five Danish Regions and has a catchment area of approximately 1.3 million people. The dataset includes records from all service contacts to the public hospitals in the Central Denmark Region (both psychiatric and general hospitals). A service contact can be either an inpatient admission, outpatient visit, home visit, or consultation by phone, and each is labeled with a timestamp and diagnosis. Due to the universal healthcare system in Denmark, the large majority of hospital contacts are to public hospitals (there are no private psychiatric hospitals in Denmark) and, thus, covered by these data. Importantly, the dataset also includes blood samples from general practitioners, as these are analyzed at public hospitals (Bernstorff et al., 2024).

Data extraction

All EHR data from patients with at least one contact with the Psychiatric Services of the Central Denmark Region in the period from 2013 to 2021 were extracted (Fig. 1a). To ensure the feasibility of subsequent implementation of a predictive machine learning model potentially developed in this study, only data collected routinely as part of standard clinical practice and recorded in the EHR system were used (i.e. there was no data collection for the purpose of this study) (Hansen et al., 2021).

Cohort definition

Figure 1b illustrates the cohort definition. The cohort consisted of all adult patients with at least one contact to the Psychiatric Services of the Central Denmark Region in the time period from 2011 to 2021. Data prior to 2013 were dropped because of data instability, primarily caused by the gradual implementation of a new EHR system in 2011 (Bernstorff, Hansen, Perfalk, Danielsen, & Østergaard, 2022; Hansen et al., 2023). However, data on involuntary admissions from 2012 were used to establish incidence of involuntary admissions since these data were registered via an alternative digital system and, therefore, unaffected by the implementation of the new EHR system (Sundhedsdatastyrelsen: Register over Anvendelse af Tvang i Psykiatrien, 2024).

Dataset splitting

The data were randomly split into a training (85%) and a test (15%) set by sampling unique patients, stratified by whether they had an involuntary admission within the follow-up (see Fig. 1c). This ensured a balanced proportion of patients with involuntary admission in the training and test sets and prevented leakage of learnt subject-specific patterns (due to repeated observations) to the test set. The test set was not examined until the final stage of model evaluation, where no additional changes were made to the model.
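A patient-level stratified split of this kind can be sketched as follows. This is a minimal illustration using only the Python standard library, not the code used in the study; the function name `split_patients`, the seed, and the toy patient IDs are assumptions made for the example.

```python
import random
from collections import defaultdict

def split_patients(patient_has_outcome, test_fraction=0.15, seed=42):
    """Split unique patient IDs into train/test sets, stratified by outcome.

    patient_has_outcome maps patient ID -> bool (any involuntary admission
    within follow-up). Splitting on patients rather than prediction times
    keeps all repeated observations of a patient in the same set.
    """
    rng = random.Random(seed)
    strata = defaultdict(list)
    for pid, has_outcome in patient_has_outcome.items():
        strata[bool(has_outcome)].append(pid)

    train_ids, test_ids = set(), set()
    for ids in strata.values():
        ids = sorted(ids)   # deterministic order before shuffling
        rng.shuffle(ids)
        n_test = round(len(ids) * test_fraction)
        test_ids.update(ids[:n_test])
        train_ids.update(ids[n_test:])
    return train_ids, test_ids

# Toy data (hypothetical): 1000 patients, 40 of whom have the outcome.
patients = {f"p{i}": i < 40 for i in range(1000)}
train_ids, test_ids = split_patients(patients)
```

Because the split is made within each outcome stratum, the share of patients with an involuntary admission is the same in both sets up to rounding, and no patient contributes prediction times to both.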

Prediction times and exclusion criteria

Prediction times were defined as the last day of a voluntary psychiatric admission. A prediction at this timepoint would enable outpatient clinics to initiate targeted intervention/monitoring to reduce the risk of involuntary admission. Additionally, an exclusion criterion stipulating that patients should not have had an involuntary admission in the 2 years prior to the prediction time was implemented. This prevented predictions in cases where clinicians were already aware of the patient's risk of involuntary admission, thus proactively reducing the risk of alert fatigue. Finally, if a prediction time did not have a sufficiently long lookbehind window (for predictors) or lookahead window (for outcomes), that prediction time was dropped (Fig. 1d). For definitions of the lookbehind and lookahead windows, see the following two sections.

Outcome definition and lookahead window

The outcome was defined as the start of an involuntary admission. The lookahead window (the period following the prediction time in which the outcome could occur) was 180 days. Hence, all prediction times for which an involuntary admission occurred within 180 days were deemed to be positive outcomes (Fig. 1d).
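The filtering and labeling rules from this and the preceding section can be sketched in a few lines. This is an illustrative sketch rather than the study's implementation: the function name, the status strings, the 730-day approximation of "2 years", and the inclusive/exclusive handling of window boundaries are all assumptions.

```python
from datetime import date, timedelta

LOOKAHEAD_DAYS = 180
LOOKBEHIND_DAYS = 365    # longest lookbehind window used for predictors
WASHOUT_DAYS = 2 * 365   # assumption: "2 years" taken as 730 days

def label_prediction_time(prediction_date, ia_dates, data_start, data_end):
    """Classify one prediction time as dropped, excluded, positive or negative.

    ia_dates holds the start dates of the patient's involuntary admissions.
    """
    lookbehind_start = prediction_date - timedelta(days=LOOKBEHIND_DAYS)
    lookahead_end = prediction_date + timedelta(days=LOOKAHEAD_DAYS)
    # Drop prediction times whose windows extend beyond the available data.
    if lookbehind_start < data_start or lookahead_end > data_end:
        return "dropped"
    # Exclude if an involuntary admission started in the preceding 2 years.
    washout_start = prediction_date - timedelta(days=WASHOUT_DAYS)
    if any(washout_start <= d <= prediction_date for d in ia_dates):
        return "excluded"
    # Positive if an involuntary admission starts within the lookahead window.
    if any(prediction_date < d <= lookahead_end for d in ia_dates):
        return "positive"
    return "negative"

start, end = date(2013, 1, 1), date(2021, 11, 22)
example = label_prediction_time(date(2020, 1, 1), [date(2020, 3, 1)], start, end)
```

In this example the involuntary admission starts 60 days after discharge, so the prediction time is labeled positive.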

Predictor engineering and lookbehind window

A full list of the predictors (a total of 1828) and their definitions is available in online Supplementary Table S1. The predictors were chosen based on the literature on risk factors for involuntary admissions (Walker et al., 2019) supplemented with clinical domain knowledge. Predictors were engineered by aggregating the values for the variable of interest within a specified lookbehind window (10, 30, 180, and 365 days leading up to a prediction time) using different predictor aggregation functions (mean, max, bool, etc.). The specific aggregation methods for each variable can be found in online Supplementary Table S1. This processing was performed using the timeseriesflattener v2.0.1 package (Fig. 1f) (Bernstorff, Enevoldsen, Damgaard, Danielsen, & Hansen, 2023). If a predictor was not present in the lookbehind period from a prediction time, it was labeled as ‘missing’. However, these instances do not indicate missing values in the conventional sense, as they stem from a genuine lack of data, rather than, e.g. a missed visit in a clinical trial. This absence reflects real-world clinical practice, and, therefore, patients with such missing data should not be excluded, as it aligns with the available data for potential implementation.
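The idea of flattening a time series into lookbehind-window predictors can be illustrated with a small standard-library sketch. It does not use or reproduce the timeseriesflattener API; the function `flatten`, the feature naming scheme, and the choice to return `None` for every aggregation when the window is empty (including `bool`) are assumptions made for illustration.

```python
from datetime import date, timedelta
from statistics import mean

LOOKBEHIND_DAYS = (10, 30, 180, 365)
# Aggregators are only called on non-empty windows, so "bool" returns 1.
AGGREGATORS = {"mean": mean, "max": max, "bool": lambda values: 1}

def flatten(prediction_date, observations, name):
    """Aggregate (date, value) observations into one row of predictors.

    Returns {feature_name: aggregated value}, with None marking a window
    that contains no observations ("missing").
    """
    features = {}
    for days in LOOKBEHIND_DAYS:
        window_start = prediction_date - timedelta(days=days)
        window = [v for d, v in observations
                  if window_start <= d <= prediction_date]
        for agg_name, agg in AGGREGATORS.items():
            key = f"{name}_{agg_name}_{days}d"
            features[key] = agg(window) if window else None
    return features

# Hypothetical rating-scale scores recorded before a discharge on 2020-01-10.
scores = [(date(2020, 1, 5), 2.0), (date(2019, 12, 1), 4.0), (date(2019, 3, 1), 10.0)]
features = flatten(date(2020, 1, 10), scores, "score")
empty = flatten(date(2020, 1, 10), [], "score")
```

Each variable thus yields one predictor per combination of window length and aggregation function, which is how a modest number of clinical variables expands into a large predictor set.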

The predictors can be grouped into nine strata: age and sex, hospital contacts, psychiatric diagnoses, medications, lab results, coercive measures, psychometric rating scales, suicide risk assessment, and free-text predictors from EHR clinical notes (extracted via natural language processing). Specifically, hospital contacts included both inpatient and outpatient contacts with linked diagnoses. Diagnoses included all psychiatric subchapters (F0–F9) from the International Classification of Diseases, Tenth Revision (ICD-10) (World Health Organization, n.d.-b) with specific predictors for schizophrenia (F20), bipolar disorders (F30–F31), and cluster B personality disorders (F60.2–F60.4) (dissocial, borderline, and histrionic personality disorder). Medication predictors were based on structured Anatomical Therapeutic Chemical classification system codes (World Health Organization, n.d.-a) and grouped as follows (Fig. 1e): antipsychotics, first-generation antipsychotics, second-generation antipsychotics, depot antipsychotics, antidepressants, anxiolytics, hypnotics/sedatives, stimulants, analgesics, and drugs for alcohol abstinence/opioid dependence. Finally, lithium, clozapine, and olanzapine were included as individual predictors. Predictors based on laboratory tests included plasma levels of antipsychotics, antidepressants, paracetamol, and ethanol. Coercive measures included involuntary medication, manual restraint, chemical restraint, and mechanical (belt) restraint. Scores from psychometric rating scales included the Brøset violence checklist (Woods & Almvik, 2002), the 17-item Hamilton depression rating scale (HAM-D17) (Hamilton, 1960), and a simplified version of the Bech Rafaelsen mania rating scale (MAS-M) (Bech, Rafaelsen, Kramp, & Bolwig, 1978). Data on suicide risk assessment were based on a scoring system used in the Central Denmark Region with the following risk levels: 1 (no increased risk), 2 (increased risk), and 3 (acutely increased risk).

Predictors from free text stemmed from the subset of EHR clinical note types deemed to be most informative and stable over time, e.g. ‘Subjective Mental State’ and ‘Current Objective Mental State’ (for the full list of clinical note types, see online Supplementary Table S2) (Bernstorff et al., 2022). Two different algorithms were applied to create predictors from the free text: term frequency-inverse document frequency (TF-IDF) (Pedregosa et al., 2011) and sentence transformers (Reimers & Gurevych, 2019). For the TF-IDF model, the unstructured free text was first preprocessed by lower-casing all words and removing stop words and symbols. Subsequently, all uni- and bi-grams were generated. Next, the top 10% by document frequency were removed (due to assumed low predictive value). Lastly, the top 750 uni- or bi-grams were included in the model. For each patient, all clinical notes within the 180-day lookbehind prior to a prediction time were concatenated into a single document from which the TF-IDF predictors were constructed. A pre-trained multilingual sentence transformer model (Reimers & Gurevych, 2019) was applied to extract sentence embeddings (model: ‘paraphrase-multilingual-MiniLM-L12-v2’). This model is bound by a maximum input sequence length of 512 tokens. For each patient, the first 512 tokens from each clinical note within the 180-day lookbehind prior to a prediction time were extracted and input to the model, yielding a contextualized embedding of the text with 384 dimensions. Subsequently, the embeddings from each note within the lookbehind window were averaged to obtain a single aggregated embedding, which was included as a predictor in the model.
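The final aggregation step for the sentence-transformer predictors, averaging per-note embeddings into one vector per prediction time, can be sketched as below. The embedding model itself is not called here; the toy 4-dimensional vectors merely stand in for the 384-dimensional embeddings, and the function name `average_embeddings` is hypothetical.

```python
def average_embeddings(note_embeddings):
    """Element-wise mean of equally sized embedding vectors.

    note_embeddings holds one vector (list of floats) per clinical note in
    the lookbehind window. Returns None when the window contains no notes.
    """
    if not note_embeddings:
        return None
    dim = len(note_embeddings[0])
    if any(len(vec) != dim for vec in note_embeddings):
        raise ValueError("all embeddings must have the same dimension")
    n = len(note_embeddings)
    return [sum(vec[i] for vec in note_embeddings) / n for i in range(dim)]

# Two hypothetical notes within the 180-day lookbehind of one prediction time.
notes = [[1.0, 0.0, 2.0, 4.0], [3.0, 2.0, 2.0, 0.0]]
pooled = average_embeddings(notes)
```

Averaging gives every note equal weight regardless of its length or recency, which keeps the predictor dimension fixed no matter how many notes a patient has.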

Hyperparameter tuning and model training

Two types of machine learning models were trained: XGBoost and elastic net-regularized logistic regression (using Scikit-learn, version 1.2.1) (Pedregosa et al., 2011). XGBoost was chosen because gradient boosting techniques typically excel in predictive accuracy for structured data, offer rapid training, and intrinsically handle missing values (Grinsztajn, Oyallon, & Varoquaux, 2022). Elastic net-regularized logistic regression served as a benchmark model (Desai, Wang, Vaduganathan, Evers, & Schneeweiss, 2020; Nusinovici et al., 2020). Five-fold stratified cross-validation was adopted for training, with no patient occurring in more than one fold. Fine-tuning of hyperparameters (see online Supplementary Table S3 for details) was performed over 300 runs to optimize the area under the receiver operating characteristic curve (AUROC) through the tree-structured Parzen estimator method in Optuna v2.10.1 (see Fig. 1g) (Lundberg & Lee, 2017). All analyses were performed using Python (version 3.10.9).
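The constraint that no patient occurs in more than one fold can be met by assigning folds at the patient level and letting each prediction time inherit its patient's fold. The sketch below shows one way to do this with the standard library; it is not the study's code, and the helper names `assign_folds` and `cv_splits` as well as the toy data are assumptions.

```python
import random

def assign_folds(patient_has_outcome, n_folds=5, seed=0):
    """Assign each patient to one fold, stratified by patient-level outcome."""
    rng = random.Random(seed)
    fold_of = {}
    for label in (True, False):
        ids = sorted(p for p, y in patient_has_outcome.items() if bool(y) == label)
        rng.shuffle(ids)
        # Round-robin assignment spreads each stratum evenly across folds.
        for i, pid in enumerate(ids):
            fold_of[pid] = i % n_folds
    return fold_of

def cv_splits(rows, fold_of, n_folds=5):
    """Yield (train_rows, validation_rows); rows are (patient_id, ...) tuples."""
    for k in range(n_folds):
        train = [r for r in rows if fold_of[r[0]] != k]
        validation = [r for r in rows if fold_of[r[0]] == k]
        yield train, validation

# Toy data (hypothetical): 100 patients, 10 with the outcome, 3 rows each.
patients = {f"p{i}": i < 10 for i in range(100)}
fold_of = assign_folds(patients)
rows = [(pid, visit) for pid in patients for visit in range(3)]
splits = list(cv_splits(rows, fold_of))
```

A hyperparameter search would then evaluate each candidate configuration on these five splits and keep the configuration with the highest mean validation AUROC.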

Model evaluation on test data

The XGBoost and elastic net models that achieved the highest AUROC following cross-validation on the training set were evaluated on the test set (see Fig. 1h). Apart from the AUROC, we also calculated the sensitivity, specificity, positive predictive values (PPVs), and negative predictive values (NPVs) at predicted positive rates (PPRs) of 1%, 2%, 3%, 4%, 5%, 10%, 20%, and 50%, respectively. The PPR is the proportion of all prediction times that are marked as positive. To test the robustness of the best performing model, its performance was examined across sex, age, months since the first visit, month of year, and day of week strata. Furthermore, a time-to-outcome robustness analysis was conducted to assess how the model behaved at different time-to-outcome thresholds.
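Fixing the PPR amounts to flagging the top-ranked share of prediction times and computing the confusion matrix from there. The following sketch illustrates this under stated assumptions: the function name `metrics_at_ppr` is hypothetical, ties in predicted probability are broken by input order, and at least one prediction time is always flagged.

```python
def metrics_at_ppr(y_true, y_prob, ppr):
    """Confusion-matrix metrics when the top `ppr` share of rows is flagged."""
    n = len(y_true)
    n_flagged = max(1, round(n * ppr))
    # Rank prediction times by predicted probability, highest first.
    order = sorted(range(n), key=lambda i: y_prob[i], reverse=True)
    flagged = set(order[:n_flagged])

    tp = sum(1 for i in flagged if y_true[i] == 1)
    fp = n_flagged - tp
    fn = sum(y_true) - tp
    tn = n - tp - fp - fn

    def ratio(num, den):
        return num / den if den else float("nan")

    return {
        "tp": tp, "fp": fp, "fn": fn, "tn": tn,
        "sensitivity": ratio(tp, tp + fn),
        "specificity": ratio(tn, tn + fp),
        "ppv": ratio(tp, tp + fp),
        "npv": ratio(tn, tn + fn),
    }

# Toy data (hypothetical): 20 prediction times, 4 followed by the outcome.
y_true = [1, 0, 1, 0, 0, 1] + [0] * 13 + [1]
y_prob = [(20 - i) / 20 for i in range(20)]
result = metrics_at_ppr(y_true, y_prob, ppr=0.10)
```

With a PPR of 10%, two of the 20 toy prediction times are flagged; one is a true positive, giving a PPV of 0.50 and a sensitivity of 0.25.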

Additionally, model calibration was visualized using calibration plots with adjoining distribution plots of the predicted probabilities for the best XGBoost and elastic net models. Clinical usefulness was assessed by decision curve analysis (Vickers & Elkin, 2006). Plots were limited to an upper bound of 0.20, which represents a one-in-five chance of having an involuntary admission within 6 months should nothing change; it is unlikely that higher risk thresholds would be tolerated. Net benefit is calculated as the additional percentage of cases that could be intervened upon with use of our models with no increase in false positives.
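In decision curve analysis, net benefit at a threshold probability p_t is conventionally computed as TP/N − (FP/N) × p_t/(1 − p_t), and compared with the "treat all" and "treat none" (net benefit 0) strategies. The sketch below implements this standard formula; it is an illustration with hypothetical function names and toy data, not the analysis code of the study.

```python
def net_benefit(y_true, y_prob, threshold):
    """Net benefit of flagging every case with predicted risk >= threshold."""
    n = len(y_true)
    tp = sum(1 for y, p in zip(y_true, y_prob) if p >= threshold and y == 1)
    fp = sum(1 for y, p in zip(y_true, y_prob) if p >= threshold and y == 0)
    # False positives are weighted by the odds of the threshold probability.
    weight = threshold / (1 - threshold)
    return tp / n - (fp / n) * weight

def net_benefit_treat_all(y_true, threshold):
    """Net benefit of intervening on everyone, regardless of predicted risk."""
    prevalence = sum(y_true) / len(y_true)
    return prevalence - (1 - prevalence) * threshold / (1 - threshold)

# Toy data (hypothetical): 10 prediction times, 2 followed by the outcome.
y_true = [1, 1, 0, 0, 0, 0, 0, 0, 0, 0]
y_prob = [0.30, 0.15, 0.12, 0.05, 0.04, 0.03, 0.03, 0.02, 0.02, 0.01]
nb_model = net_benefit(y_true, y_prob, threshold=0.10)
nb_all = net_benefit_treat_all(y_true, threshold=0.10)
```

A model is considered clinically useful at a given threshold when its net benefit exceeds that of both default strategies, as is the case for the toy model here.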

To address the temporal stability of the best performing models, we performed temporal cross-validation using gradually increasing alternating endpoints for the training set (2016–2020) with validation on the available data from the subsequent remaining years after the training set endpoint (2016–2021). This analysis replicates a case where a trained model is implemented at a given timepoint, and the performance of the model is evaluated at a later timepoint.
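The expanding-window scheme can be sketched as a generator of train/validation splits. The exact boundary convention is not stated above, so this sketch assumes that the endpoint year is an exclusive cutoff (training on data before the endpoint year, validation on the endpoint year and later), which matches the stated ranges of 2016–2020 for endpoints and 2016–2021 for validation; the function name and row layout are hypothetical.

```python
def temporal_splits(rows, endpoint_years=range(2016, 2021), last_year=2021):
    """Yield (endpoint, train_rows, validation_rows) for each training endpoint.

    rows are (year, payload) tuples. Assumption: training uses data strictly
    before the endpoint year; validation uses the endpoint year and onward.
    """
    for endpoint in endpoint_years:
        train = [r for r in rows if r[0] < endpoint]
        validation = [r for r in rows if endpoint <= r[0] <= last_year]
        yield endpoint, train, validation

# Toy data (hypothetical): one row per calendar year from 2013 to 2021.
rows = [(year, f"prediction_time_{year}") for year in range(2013, 2022)]
splits = list(temporal_splits(rows))
```

Evaluating each trained model separately on every later validation year then shows how performance changes with time since the end of training.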

Estimation of predictor importance

To interpret which predictors informed the predictions in the models, we calculated predictor importance metrics. For XGBoost models, predictor importance was estimated via information gain (Chen & Guestrin, 2016). In this case, the gain of a predictor is calculated as the average reduction in training loss accomplished by the predictor across all node splits in the model that use the predictor. For elastic net models, predictor importance was analyzed by obtaining the standardized model coefficients (Zou & Hastie, 2005). Standardized coefficients represent the change in log-odds for a one standard deviation increase in the predictor values. Hence, the magnitude of a coefficient specifies the strength of the relationship between the predictor and the outcome while controlling for the other predictors, and this measure, therefore, allows for easy comparison of the relative importance of predictors. Importantly, the elastic net model coefficients are directed, meaning they convey whether an increase in the predictor value pushes the model toward a positive or a negative prediction. This is not the case for the information gain estimates for the XGBoost models, which only inform about general predictive importance regardless of direction.
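One common way to obtain standardized coefficients from a logistic regression fitted on raw predictor values is to multiply each coefficient by the standard deviation of its predictor. The sketch below illustrates this relationship and the signed ranking it enables; whether the study standardized the inputs before fitting or rescaled the coefficients afterwards is not stated, and the predictor names and values are hypothetical.

```python
from statistics import pstdev

def standardized_coefficients(raw_coefs, columns):
    """Change in log-odds per one-SD increase: raw coefficient x predictor SD."""
    return {name: raw_coefs[name] * pstdev(columns[name]) for name in raw_coefs}

def rank_by_magnitude(coefs):
    """Sort predictors by absolute standardized coefficient, keeping the sign."""
    return sorted(coefs.items(), key=lambda item: abs(item[1]), reverse=True)

# Hypothetical raw coefficients and predictor columns.
raw = {"suicide_risk_score": 0.1, "plasma_clozapine": -0.8}
columns = {
    "suicide_risk_score": [0.0, 2.0, 4.0, 6.0],   # SD = sqrt(5)
    "plasma_clozapine": [0.0, 1.0, 0.0, 1.0],     # SD = 0.5
}
standardized = standardized_coefficients(raw, columns)
ranking = rank_by_magnitude(standardized)
```

The sign of each entry gives the direction of the association, while the ordering reflects relative strength on a common per-standard-deviation scale.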

Secondary analyses of alternative model designs

As secondary analyses, we performed cross-validated model training using alternative model designs. First, we removed the implemented exclusion criterion of having an involuntary admission in the 2 years preceding a prediction time. Second, we assessed the importance of the number of predictors, by using only subsets of the full predictor set in the model training. Specifically, three distinct predictor sets were considered (all including sex and age): only diagnoses, only patient descriptors (all predictors except for text predictors), and only text predictors. Third, models with lookahead windows of 90 and 365 days, respectively, were trained. The performance metrics of the alternative model designs are derived from the cross-validation on the training set and were not tested on the hold-out test set.

Ethics

The study was approved by the Legal Office of the Central Denmark Region in accordance with the Danish Health Care Act §46, Section 2. The Danish Committee Act exempts studies based only on EHR data from ethical review board assessment (waiver for this project: 1-10-72-1-22). Handling and storage of data complied with the European Union General Data Protection Regulation. The project is registered on the list of research projects having the Central Denmark Region as data steward. There was no patient nor public involvement in this study.

Results

The full dataset consisted of 52 600 voluntary admissions distributed among 19 252 unique patients. A total of 1672 of the voluntary admissions were followed by an involuntary admission within 180 days after discharge (positive outcome), distributed across 806 unique patients (an involuntary admission can be included in multiple positive outcomes as a patient can have multiple voluntary admissions [prediction times] in the 180 days prior to an involuntary admission [positive outcome]).

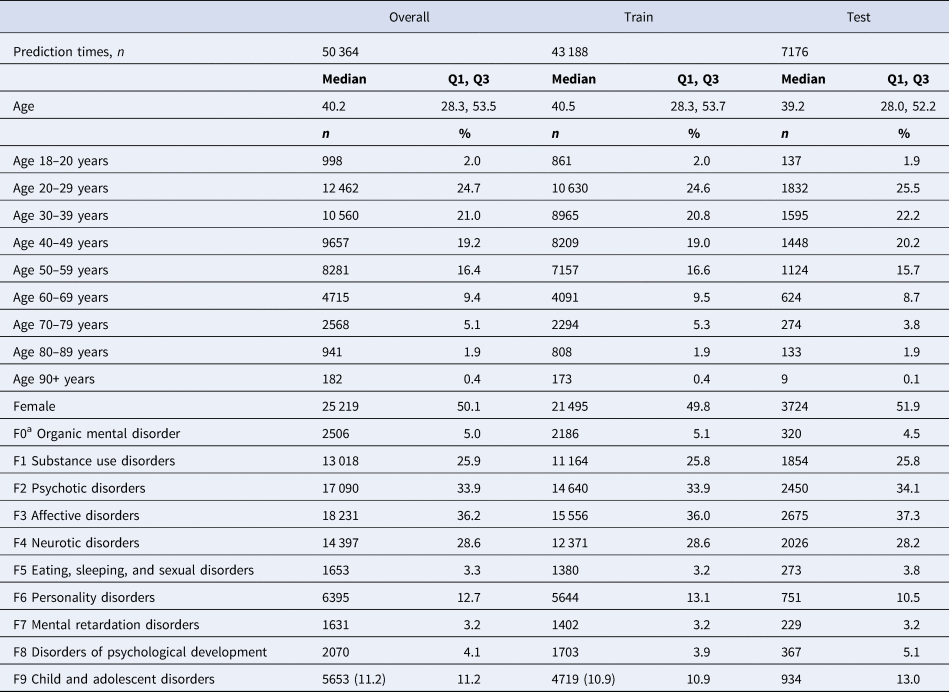

Table 1 lists clinical and demographic patient data for the prediction times included in the training and evaluation of the main model. The main model included predictors with a lookbehind window of up to 365 days. After filtering away all prediction times where the lookbehind or lookahead windows extended beyond the available data for a patient, a total of 50 364 prediction times remained. These prediction times were distributed across 17 968 unique patients (49.4% females [training set = 49.3% and test set = 50.0%], median age = 40.2 years [training set = 40.5 and test set = 39.2]).

Table 1. Descriptive statistics for prediction times

a (F*) indicates the ICD-10 chapter.

Hyperparameters and model training

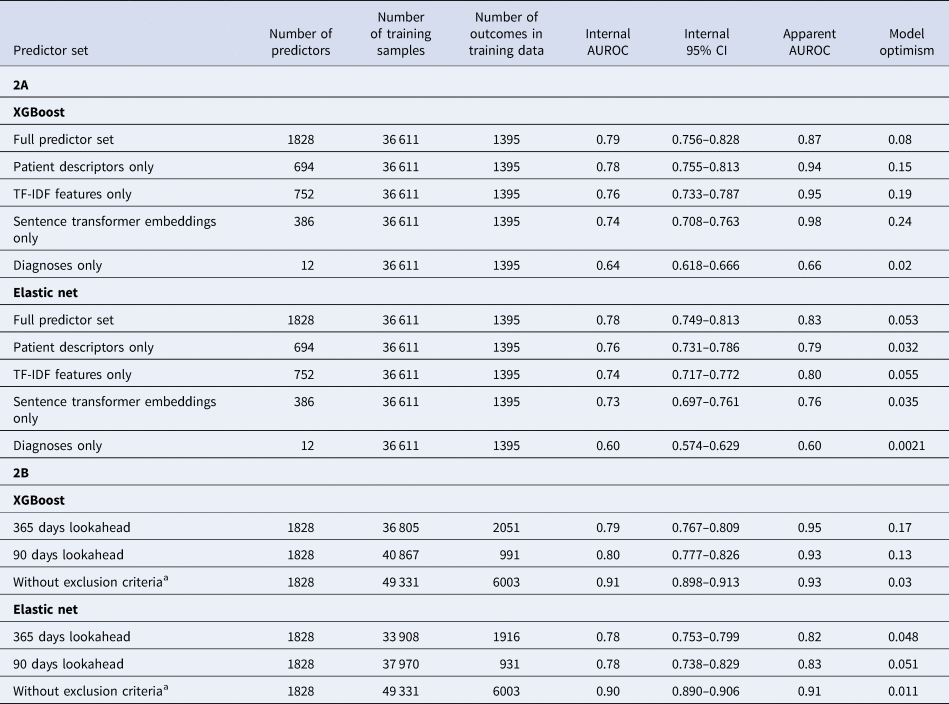

The cross-validation on the training set for model tuning showed that XGBoost (full predictor set AUROC = 0.79) outperformed elastic net (full predictor set AUROC = 0.78) across all model variations (see Table 2). The hyperparameters used for the best XGBoost and elastic net models are listed in online Supplementary Table S4.

Table 2. Model performance after cross-validation hyperparameter tuning for XGBoost and elastic net models trained on different subsets of the predictors (2A) and different lookaheads (2B)

All models included demographics (age/sex). The models with different lookahead windows were trained on the full predictor set. Details on predictor description can be found in online Supplementary Table S1. Apparent AUROC represents the performance on the training data and the internal AUROC represents the performance on the test folds during cross-validation. Model optimism is calculated as the apparent AUROC minus the internal AUROC.

a Models trained without the exclusion criterion of having an involuntary admission in the 2 years preceding the prediction time.

Model evaluation on test data

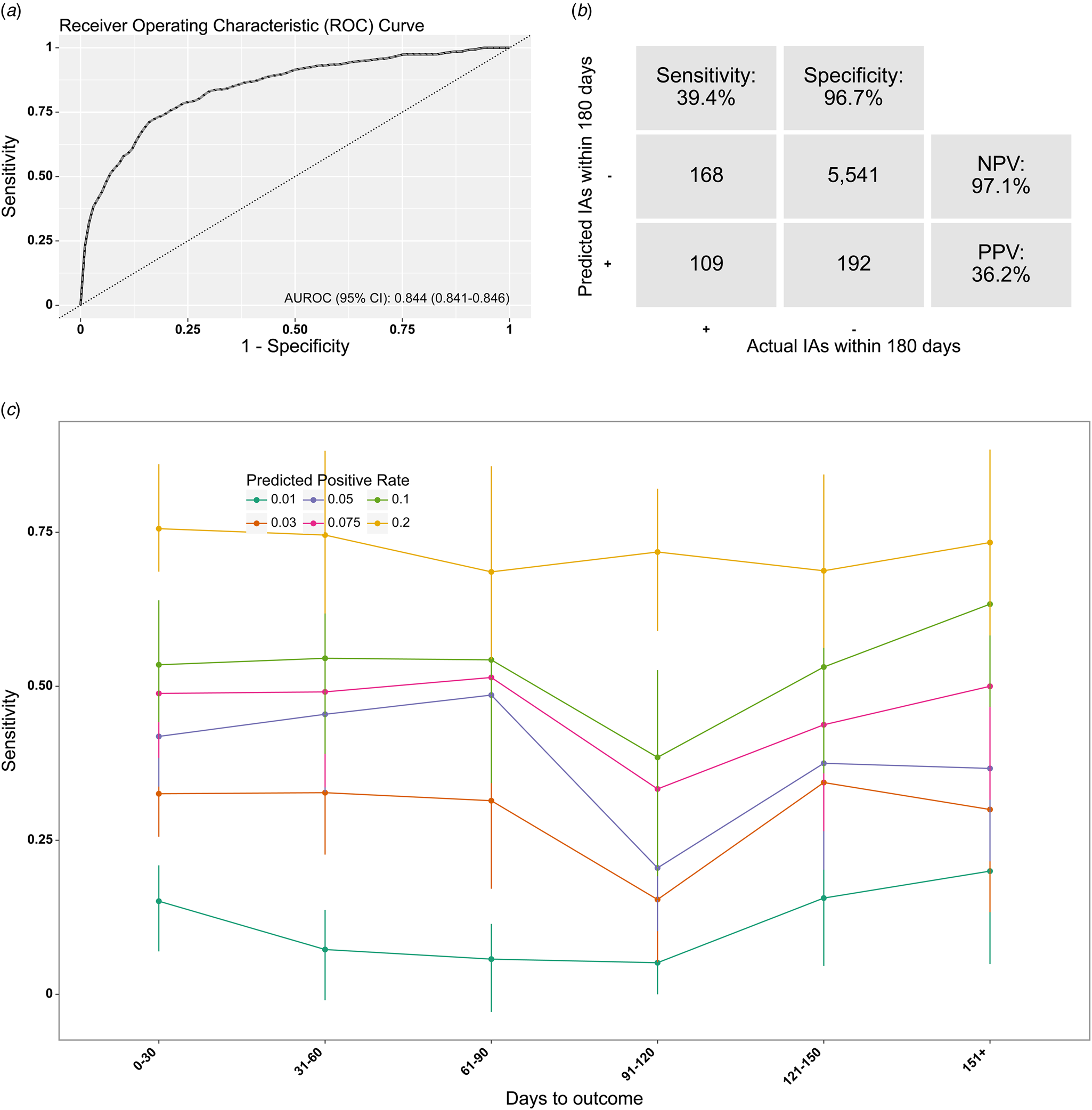

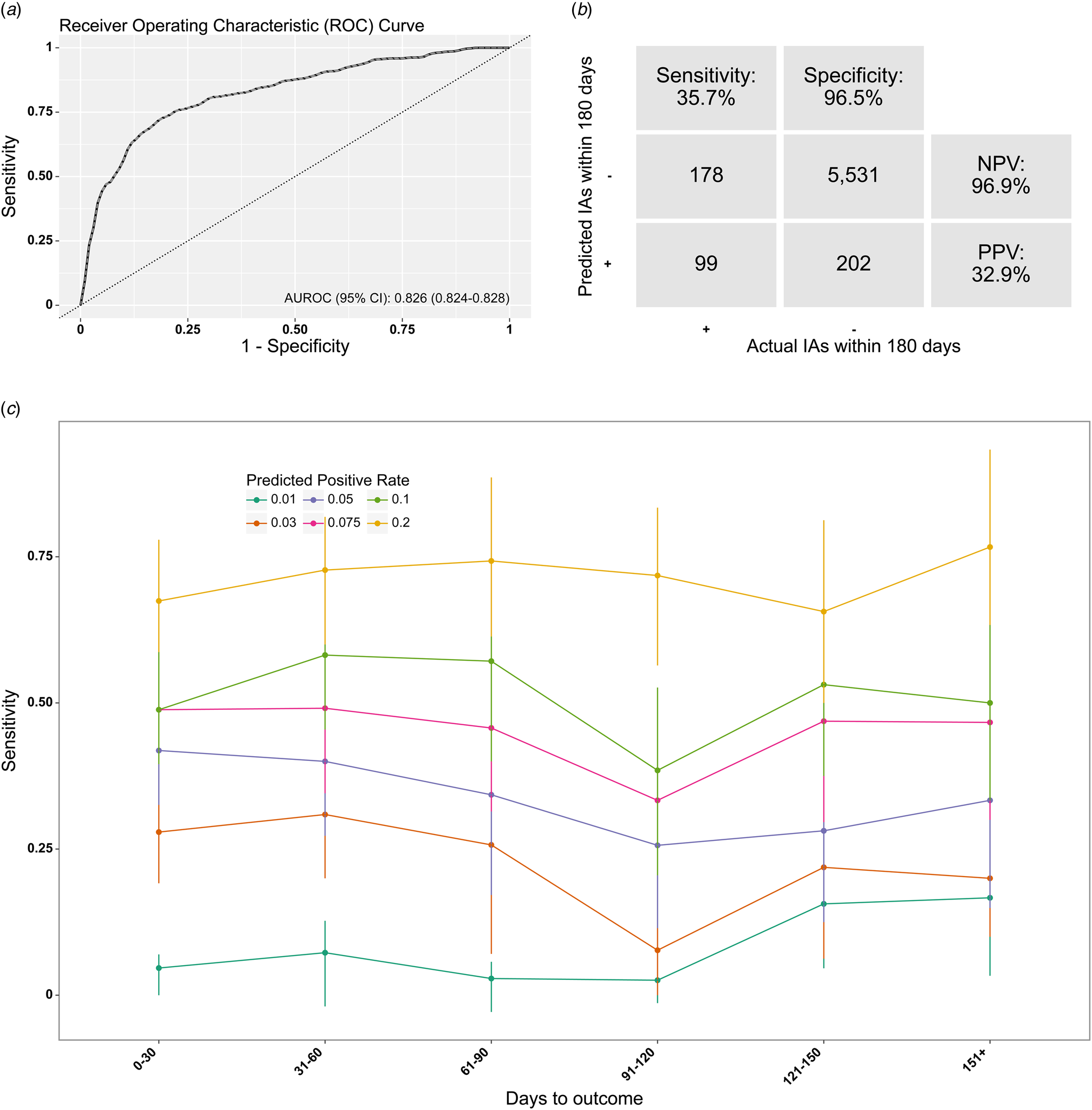

After the model selection in the training phase, the best performing XGBoost and elastic net models yielded an AUROC of 0.84 and 0.83, respectively, on the test set (see Figs 2a and 3a).

Figure 2. Model performance of the XGBoost model in the test set. (a) Receiver operating characteristics curve. AUROC, area under the receiver operating characteristics curve. (b) Confusion matrix. PPR, predicted positive rate; NPV, negative predictive value; IA, involuntary admission. The decision threshold is defined based on a PPR of 5%. (c) Sensitivity (at the same specificity) by months from prediction time to event, stratified by desired PPR.

Figure 3. Model performance of the elastic net model in the test set. (a) Receiver operating characteristics curve. AUROC, area under the receiver operating characteristics curve. (b) Confusion matrix. PPR, predicted positive rate; NPV, negative predictive value; IA, involuntary admission. The decision threshold is defined based on a PPR of 5%. (c) Sensitivity (at the same specificity) by months from prediction time to event, stratified by desired PPR.

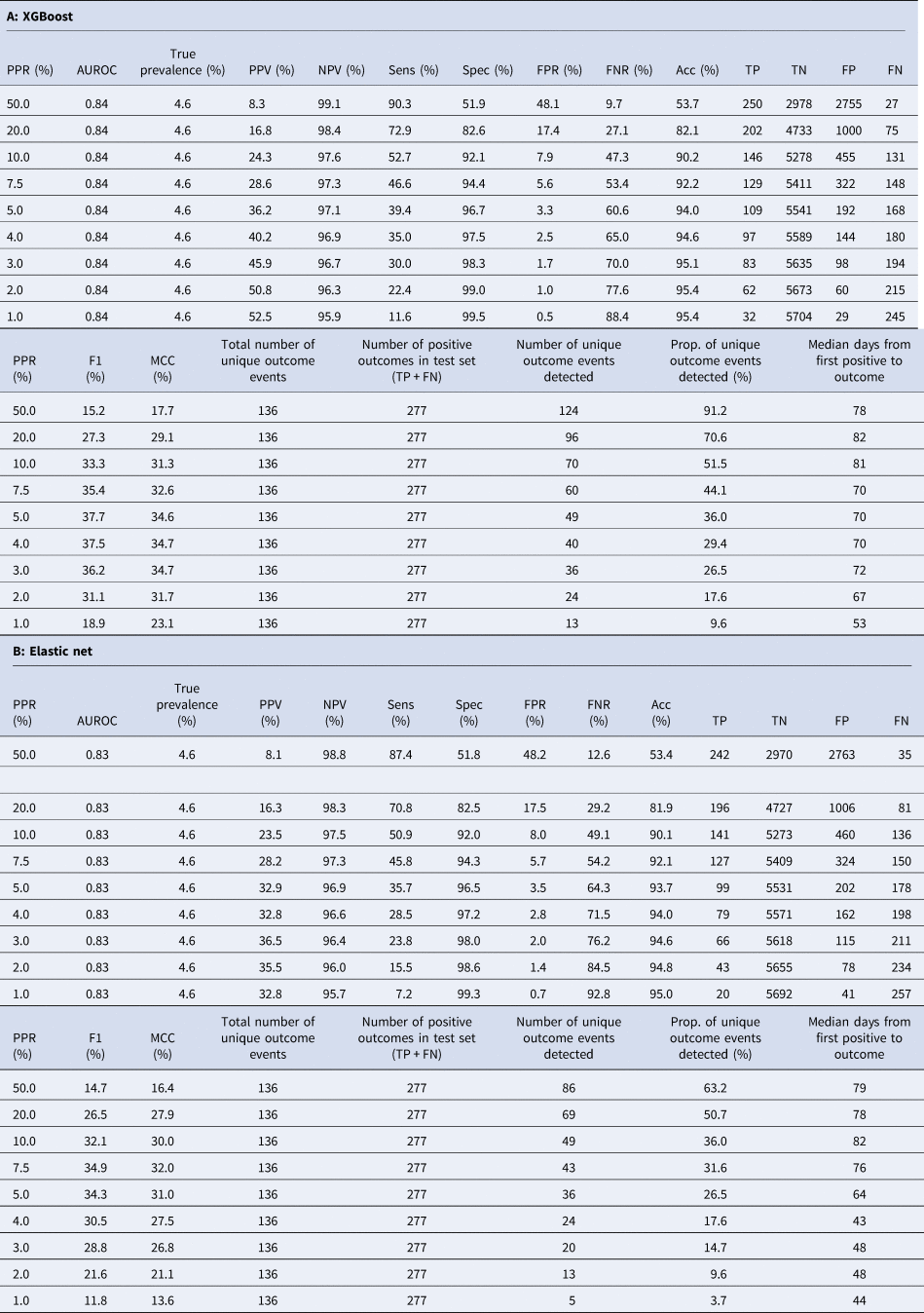

Table 3 lists the performance metrics from the XGBoost (3A) and elastic net (3B) model on the test set based on different PPRs. At a PPR of 5%, the XGBoost model has a sensitivity of 39% and a PPV of 36%. Thus, approximately two out of five of all true positive outcomes are correctly predicted, and for every three positive predictions, more than one prediction time is truly followed by an involuntary admission within 180 days. At this PPR, 36% of the unique involuntary admissions that underlie the positive outcomes are correctly detected (predicted positive) at least once. In comparison, at a PPR of 5%, the elastic net model has a sensitivity of 36% and a PPV of 33%. At this PPR, 27% of the unique involuntary admissions that underlie the positive outcomes are correctly detected (predicted positive) at least once. Decision curve analysis of the models showed that both yield a universally greater net benefit than competing strategies in a sensible threshold probability range of 0.02–0.20 (see online Supplementary Fig. S5).

Table 3. Performance metrics on test set for model trained on full predictor set at varying predicted positive rates

Predicted positive rate (PPR): the proportion of contacts predicted positive by the model. Since the model outputs a predicted probability, this is a threshold set during evaluation. True prevalence: the proportion of admissions that qualified for an outcome within the lookahead window. AUROC: area under the receiver operating characteristic curve. PPV: positive predictive value. NPV: negative predictive value. FPR: false positive rate. FNR: false negative rate. TP: true positives. TN: true negatives. FP: false positives. FN: false negatives. TP, TN, FP, and FN counts are based on prediction times (end of psychiatric admission). F1: the harmonic mean of the precision and recall. MCC: Matthews correlation coefficient. Prop. of unique outcome events detected: proportion of the involuntary admissions that are flagged by at least one true positive prediction. Median days from first positive to outcome: for all involuntary admissions with at least one true positive, the number of days from the first positive prediction to the outcome (involuntary admission).

Figures 2c and 3c show the sensitivity of the models for prediction times with varying time to the outcome at different PPRs. The sensitivity curves appear to remain stable as the time to outcome increases for both models. The median time from the first-positive prediction to the involuntary admission was 70 days for the XGBoost model and 64 days for the elastic net model.

Online Supplementary Figs S1 and S2 show the performance of the models across different patient characteristics and calendar time subgroups. The models appear robust across all characteristics, and the minor fluctuations, such as the variation in performance between the sexes, can likely be attributed to similar minor differences in sample distributions. The calibration curves (see online Supplementary Figs S3 and S4) indicate that both models are sufficiently calibrated, although both slightly undershoot for patients with higher predicted probabilities. The plots are cut off at predicted probabilities above 20% as there are too few patients with higher probabilities to make stable calibration estimates above this threshold.

Online Supplementary Tables S5 and S6 list the 30 predictors with the highest information gain (XGBoost) and standardized coefficients (elastic net). For the best XGBoost model, 14 out of the 30 top predictors were text predictors, represented by both TF-IDF and sentence transformers. The TF-IDF predictors were based on the following terms from free text: ‘ECT’, ‘police’, ‘social psychiatric institution’, ‘self-harm’, and ‘woman’. The 16 remaining predictors were distributed across the following patient descriptors: detention (sectioning during an inpatient stay after being admitted voluntarily), coercion due to danger to self or others, lab test of plasma-paracetamol, Brøset violence checklist score, diagnosis of child and adolescent disorder/unspecified mental disorder (ICD F9-chapter), visit due to a physical disorder, suicide risk assessment score, and diagnosis of personality disorder (ICD F6-chapter). As elastic net coefficients provide direction, the top predictors are divided into 15 positive (increases risk of outcome) and 15 negative coefficients (decreases risk of outcome). For the 15 top predictors with positive coefficients, six were free-text predictors, all of them TF-IDF predictors: ‘Self-harm’, ‘Social Psychiatric Institution’, ‘Contact person’, ‘Eat’, ‘Mother’, and ‘Simultaneously’. The remaining nine top predictors covered the following patient descriptors: suicide risk score, detention, plasma paracetamol, visits due to a physical disorder, involuntary medication, alcohol abstinence medication, diagnosis of child and adolescent disorder/unspecified mental disorder (ICD-10, chapter F9), and Brøset violence checklist score. For the 15 top predictors with negative coefficients, 10 were free-text predictors including both TF-IDF and sentence transformers. The TF-IDF predictors were based on the following terms from free text: ‘Energy’, ‘Looking forward to’, ‘Thursday’, ‘Receive’, ‘Daughter’, and ‘Interest’. The remaining five top predictors covered the following patient descriptors: clozapine, plasma clozapine (occurred twice with different lookbehind), plasma lithium, and coercion due to a physical disease.

The temporal stability of the elastic net and XGBoost models is visualized in online Supplementary Figs S6 and S7. Most model configurations show a gradual decline in performance as a function of time since the end of model training. However, models trained on data from a longer time period (and, thus, more data) display better temporal stability.

Secondary analyses on alternative model designs

Performance of the cross-validated models using different subsets of the full predictor set (Table 2A), different lookaheads (Table 2B), and models without the exclusion criterion of having an involuntary admission in the 2 years preceding the prediction time (Table 2B) are shown in Table 2. Among those trained on different subsets of predictors, the best performing model was the XGBoost model trained on only patient descriptors (no text). In the models trained on different outcome lookaheads, the models with a 90-day lookahead performed better than the ones with 180- and 365-day lookaheads. Both models trained without the exclusion criterion significantly outperformed any of the other model configurations.

Discussion

This study investigated if involuntary admission can be predicted using machine learning models trained on EHR data. When issuing a prediction at the discharge from a voluntary inpatient admission, based on both structured and text predictors, the best model (XGBoost) performed with an AUROC of 0.84. The model was generally stable across different patient characteristics, calendar times, and with varying times from prediction to outcome.

To our knowledge, this is the first study to develop and validate a prediction model for involuntary admission using routine clinical data from EHRs. We can, therefore, not offer a direct comparison of our results to those from other studies. However, a crude comparison to other prediction studies in psychiatry shows that our results are within the performance ranges that have previously been published (Meehan et al., 2022). Many of these studies have, however, not been developed on routine clinical data, but rather on data collected for the purpose of the studies, which complicates clinical implementation.

On the independent test set, both the XGBoost and elastic net models performed with an AUROC above the upper boundary of the confidence interval (CI) estimated from the five-fold cross-validation in the training phase. While this suggests that the models did not overfit to the cross-validation training folds, it does expose a high degree of uncertainty in the precision of the performance measure. The variation in model performance might be attributable to both the limited number of positive cases in the test set and the general heterogeneity of the outcome and its underlying causes. The overall discrimination and calibration of the two models on the hold-out test set were similar. However, a main performance difference is observed in the number of unique predicted outcomes, where the XGBoost model consistently outperformed the elastic net model across varying positive prediction rates. This metric is important when analyzing dynamic prediction models because multiple true positive predictions for the same outcome event do not necessarily lead to multiple interventions and, thus, increased probability of preventing the outcome event; once a patient has already been ‘flagged’ as high-risk, subsequent flaggings are not equally important. At a PPR of 5%, the XGBoost model correctly ‘flagged’ 36% of all unique involuntary admissions at least once. Strikingly, the elastic net model first achieves this rate when the PPR is set to 10%, meaning that this model needs to make twice as many ‘flaggings’ to identify the same number of unique outcome events.
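
The 'unique predicted outcomes' metric discussed above can be illustrated with a minimal sketch. The function names, the toy scores, and the convention of flagging the top-scoring fraction of predictions are assumptions made for illustration; they are not taken from the study code.

```python
def flag_top_fraction(scores, ppr):
    """Mark the top `ppr` fraction of predictions (by score) as positive."""
    n_flag = max(1, round(ppr * len(scores)))
    # Indices ordered from highest to lowest predicted risk
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    flagged = [False] * len(scores)
    for i in order[:n_flag]:
        flagged[i] = True
    return flagged


def unique_outcomes_detected(scores, outcome_ids, ppr):
    """Fraction of distinct outcome events flagged at least once.

    outcome_ids[i] is the (hypothetical) id of the outcome event that follows
    prediction i within the lookahead window, or None if no outcome follows.
    """
    flagged = flag_top_fraction(scores, ppr)
    all_events = {e for e in outcome_ids if e is not None}
    caught = {e for e, f in zip(outcome_ids, flagged) if f and e is not None}
    return len(caught) / len(all_events) if all_events else float("nan")


# Toy data: event "A" is preceded by two predictions, "B" and "C" by one each.
scores = [0.9, 0.8, 0.7, 0.2, 0.1, 0.6, 0.05, 0.3, 0.4, 0.15]
outcome_ids = ["A", "A", None, "B", None, None, None, None, "C", None]

print(unique_outcomes_detected(scores, outcome_ids, ppr=0.2))
print(unique_outcomes_detected(scores, outcome_ids, ppr=0.5))
```

In the toy data, both flags at a PPR of 20% are true positives, yet they point to the same event, so only one of three unique outcomes is detected. This is why the metric can separate two models with similar discrimination.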

When considering additional performance metrics, both models demonstrated relatively stable sensitivity when increasing time from prediction to outcome (up to several months), highlighting that model performance is not merely driven by prediction of cases where an involuntary admission occurs shortly after discharge from a voluntary admission. Indeed, the median time from the first-positive prediction to the involuntary admission of 70 (XGBoost) and 64 (elastic net) days is sufficient to issue a potentially preventive intervention through, e.g. advance statements/crisis plans (de Jong et al., 2016). Both models demonstrated clinical usefulness, showing a positive net benefit at a low threshold probability. This aligns with the fact that potential interventions, such as crisis plans and/or intensified outpatient treatment, would have a low clinical threshold since these interventions carry minimal side effects or risks.

A series of secondary analyses were conducted to explore the impact of various model design decisions. First, the exclusion criterion stipulating that patients could not have had an involuntary admission in the 2 years prior to a prediction time was added to minimize the potential alert fatigue in clinicians. Specifically, this measure aimed to omit scenarios where clinicians are likely already aware of an increased risk of involuntary admission, given that prior involuntary admission is a major risk factor for subsequent involuntary admission (Walker et al., 2019). Indeed, this was confirmed by our results as the model trained without this exclusion criterion performed with an AUROC of 0.91 (XGBoost) and 0.90 (elastic net) (on the cross-validated training set). This highlights the challenging balance between minimizing potential alert fatigue among clinicians and optimizing model performance for prediction models in healthcare. Second, the performance of the models trained on a limited feature set including only age, sex, and diagnoses was tested, resulting in an AUROC of 0.64 (XGBoost) and 0.60 (elastic net) (on the cross-validated training set). This demonstrates that using the full predictor set resulted in substantially better predictive performance, underlining the complexity of risk prediction at the level of the individual patient. Third, a lookahead window of 180 days was chosen for the main model as this leaves a reasonable window of opportunity for prevention of an involuntary admission. Models trained with a lookahead window of 90 days achieved an AUROC of 0.80 (XGBoost) and 0.78 (elastic net), and models trained with a lookahead window of 365 days achieved an AUROC of 0.79 (XGBoost) and 0.78 (elastic net) (on the cross-validated training set).
This further validates the performance-wise stability of the method across different time-to-outcome intervals and justifies determining the optimal lookahead window based on clinical judgment.
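
To make the lookahead window and the 2-year exclusion criterion concrete, the following minimal sketch shows how a single prediction time could be labeled and screened. The function names, the boundary conventions (strictly after the prediction date, inclusive window end), and the approximation of 2 years as 730 days are assumptions for illustration, not the study's implementation.

```python
from datetime import date, timedelta


def label_prediction_time(prediction_date, involuntary_admission_dates, lookahead_days=180):
    """Return 1 if an involuntary admission falls within the lookahead window, else 0."""
    window_end = prediction_date + timedelta(days=lookahead_days)
    return int(any(prediction_date < d <= window_end for d in involuntary_admission_dates))


def is_eligible(prediction_date, involuntary_admission_dates, washout_days=730):
    """Return False if an involuntary admission occurred in the washout period
    (assumed here to be 730 days) up to and including the prediction date."""
    washout_start = prediction_date - timedelta(days=washout_days)
    return not any(washout_start <= d <= prediction_date for d in involuntary_admission_dates)


# Toy example: discharge on 1 January 2020, involuntary admission on 1 May 2020.
discharge = date(2020, 1, 1)
admissions = [date(2020, 5, 1)]

for lookahead in (90, 180, 365):
    print(lookahead, label_prediction_time(discharge, admissions, lookahead))
print(is_eligible(discharge, [date(2019, 6, 1)]))
```

The same prediction time is a negative case under a 90-day lookahead and a positive case under 180 or 365 days, which is why the choice of window changes both the outcome prevalence and the time available for intervention.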

With regard to the predictors driving the discriminative abilities of the model, text features comprised 14 of the top 30 predictors for XGBoost and 16 of the top 30 predictors for the elastic net, showcasing the importance of including text. This might be especially true for the field of psychiatry where the clinical condition of patients is mainly described in natural language in the EHR rather than in structured variables. The inclusion of predictors based on TF-IDF and sentence transformer features/embeddings of the text also overall indicated an increased performance of the model. This is in line with prior results from both our own group (Danielsen et al., 2019) and others (Irving et al., 2021; Tenenbaum & Ranallo, 2021). Among the XGBoost predictors extracted from the free text using TF-IDF, ‘ECT’ (electroconvulsive therapy), ‘police’, and ‘self-harm’ were among the predictors with the highest predictive value. These terms are very likely proxies for severity, as ECT is mainly used for very severe manifestations of unipolar depression, bipolar disorders and schizophrenia (Espinoza & Kellner, 2022), involvement of the police is also suggestive of severe illness (e.g. aggression or suicidality) (Canova Mosele, Chervenski Figueira, Antônio Bertuol Filho, Ferreira De Lima, & Calegaro, 2018; Mortensen, Agerbo, Erikson, Qin, & Westergaard-Nielsen, 2000), and self-harm may refer to a spectrum of behavior from, e.g. superficial cutting to suicide attempts (Skegg, 2005).
For the elastic net model, the positive top predictors (increasing risk of outcome) using TF-IDF included ‘self-harm’ and ‘social psychiatric institution’, and the negative top predictors (decreasing risk of outcome) included ‘energy’, ‘looking forward to’, and ‘interest’, which makes intuitive sense from a clinical perspective as the former are proxies for severity of illness, while the latter reflect psychological well-being. There are also TF-IDF text predictors that are challenging to interpret without the broader context of the clinical notes, such as ‘Thursday’, ‘receive’, and ‘eat’. While sentence transformers can capture the contextual meaning of clinical notes, their embeddings currently lack interpretability (Reimers & Gurevych, 2019). There were some similarities in the structured top predictors of the two models (e.g. prior detention, Brøset violence checklist score, and suicide risk assessment score), although a direct comparison of predictors from different models should be done with caution. Furthermore, a lab test of plasma-paracetamol – another top predictor of both models – likely indicates that a patient has taken a toxic dose of paracetamol in relation to self-harm or a suicide attempt – also a manifestation of severe mental illness (Reuter Morthorst, Soegaard, Nordentoft, & Erlangsen, 2016). Among the negative top predictors (decreasing risk of outcome) of the elastic net model, several were related to clozapine treatment, including a lab test for plasma clozapine levels, suggesting that continuous clozapine treatment, arguably the most effective antipsychotic agent for treatment of schizophrenia (Kane, 1988; McEvoy et al., 2006), may be associated with a lower risk of involuntary admission.

Both information gain estimates and coefficients should be interpreted with caution due to model-dependent handling of predictors in the model training processes. Specifically, due to the structure of a decision tree model, top predictors containing mutual information can be omitted. Similarly, the lasso (L1) component of the elastic net penalty can shrink the coefficients of highly correlated features to zero. Consequently, this may lead to only one of several mutual information predictors appearing in the predictor importance tables (Chen & Guestrin, 2016). The most important insight from the top predictors of both models may be that the model is not informed by a few dominant predictors, but instead relies on a plethora of predictors. In line with this, the models trained on a limited feature set ‘Diagnoses only’ performed with an AUROC of, respectively, 0.64 (XGBoost) and 0.60 (elastic net) on the training set. This demonstrates the complexity of the outcome and supports our approach of processing and including a large and diverse set of predictors, thus, enabling the models to locate the relevant information independently.

There are no set performance thresholds for when a prediction algorithm should be considered for clinical implementation. At a PPR of 5%, the best performing model in the present study had a specificity of 97%, a sensitivity of 39%, an NPV of 97%, and a PPV of 36%. Both models demonstrated clinical usefulness, showing a net benefit even at a low threshold probability in the decision curve analysis. Considering that potential preventive measures informed by the model such as advance statements/crisis plans (de Jong et al., 2016) are both cheap and, presumably, without substantial side effects, we would argue that implementation could, indeed, be considered. Successful implementation would rely on the clinical staff being presented with the ‘at risk’ assessment (flagging) by the model, such that the preventive intervention can be elicited at the right time. In the Psychiatric Services of the Central Denmark Region, our EHR system supports this modality, which is currently being implemented alongside a mechanical restraint prediction model (Danielsen et al., 2019). Furthermore, the clinical staff will have to trust the risk assessment performed by the model. In our experience, this requires targeted information/training of the staff (Perfalk, Bernstorff, Danielsen, & Østergaard, 2024). Investigation of implementation strategies, cost-effectiveness, and clinical utility of the models is beyond the scope of the present study, but should be explored going forward.
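
As a minimal sketch of how the metrics in this paragraph relate to each other, the snippet below computes sensitivity, specificity, PPV, and NPV at a fixed PPR, and the net benefit used in decision curve analysis, TP/n − (FP/n) × p_t/(1 − p_t). The function names and toy data are assumptions for illustration; the numbers are not results from the study.

```python
def confusion_at_ppr(scores, labels, ppr):
    """Flag the top `ppr` fraction of scores and count TP, FP, FN, TN."""
    n_flag = max(1, round(ppr * len(scores)))
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    flagged = order[:n_flag]
    tp = sum(1 for i in flagged if labels[i] == 1)
    fp = n_flag - tp
    fn = sum(labels) - tp
    tn = len(labels) - tp - fp - fn
    return tp, fp, fn, tn


def summary_metrics(tp, fp, fn, tn):
    """Standard metrics; assumes every denominator is non-zero."""
    return {
        "sensitivity": tp / (tp + fn),
        "specificity": tn / (tn + fp),
        "ppv": tp / (tp + fp),
        "npv": tn / (tn + fn),
    }


def net_benefit(probs, labels, threshold):
    """Net benefit of treating everyone with predicted probability >= threshold."""
    n = len(labels)
    tp = sum(1 for p, y in zip(probs, labels) if p >= threshold and y == 1)
    fp = sum(1 for p, y in zip(probs, labels) if p >= threshold and y == 0)
    return tp / n - (fp / n) * threshold / (1 - threshold)


# Toy data: ten predictions, two of which precede an outcome.
toy_scores = [0.9, 0.8, 0.7, 0.6, 0.5, 0.4, 0.3, 0.2, 0.1, 0.05]
toy_labels = [1, 0, 1, 0, 0, 0, 0, 0, 0, 0]

print(summary_metrics(*confusion_at_ppr(toy_scores, toy_labels, ppr=0.2)))
print(net_benefit(toy_scores, toy_labels, threshold=0.25))
```

A low threshold probability weights false positives lightly, which matches the argument above that cheap, low-risk interventions tolerate more false alarms.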

There are limitations to this study, which should be taken into account. First, there is a limited number of outcomes (involuntary admissions) in the dataset, and the main model considered a total of 1828 predictors. If not handled properly, this could result in the ‘curse of dimensionality’ (Berisha et al., 2021) and lead to potential overfitting. Furthermore, the limited number of outcomes introduces uncertainty in the robustness of estimates for specific subgroups, such as age and gender. To mitigate this, we employed several strategies: structured predictors were constructed based on findings from prior research and clinical domain knowledge, we used cross-validation during training, and, during hyperparameter tuning, feature selection was adopted. Finally, we used a hold-out test set to ensure that potential overfitting during the training phase is accounted for in the evaluation. Second, the test set was not independent with regard to time or geographic location, i.e. the generalizability of the model across these boundaries has not been tested. Machine learning models inherently vary in their generalizability and reusing our model 1:1 in another hospital setting would probably result in reduced performance. However, the overall approach is likely to be generalizable and, thus, retraining the model on another EHR dataset, while keeping the same architecture, could enable transferability (Curth et al., 2020). The temporal stability analyses (see online Supplementary Figs S6 and S7) showed, as expected, a slight decline in model performance as a function of time since the end of model training. Some of this decline may be driven by insufficient data (i.e. few outcomes).
Also, the dataset spans several abnormalities, namely a transition to new diagnostic registration practices in March of 2019 (Bernstorff et al., 2022) and the COVID-19 pandemic with onset in 2020. These events likely partially explain the general drops in performance that can be observed in 2019–2021. Ultimately, if implemented in clinical practice, it is crucial to monitor the model's performance over time and continuously recalibrate it if necessary. Third, despite several text predictors demonstrating high predictive value, the methods for obtaining the predictors from the free-text notes were relatively simple. In future studies, we believe it may be possible to unlock vastly more predictive value from the text by applying more advanced language models. Specifically, a future direction could involve a transformer-based model fine-tuned specifically to psychiatric clinical notes and the given prediction task (Huang, Altosaar, & Ranganath, 2020). Fourth, the approach in this project is characterized by fitting a classical binary prediction framework to a task that is inherently sequential in nature. As sequential transformer-based models are gradually adapted from language modeling to the general health care domain, it is likely that such architectures may be better suited to this task and will enhance performance. The adaptation of transformer-based models to the healthcare domain is, however, still in an explorative phase, and, hence, we deem that involving such methods in this study – which was aimed at developing a model for potential clinical implementation – would be premature. Fifth, elastic net and XGBoost do not inherently handle the potential problems with repeated risk predictions on the same individual which could lead to overfitting on individual-specific risk trajectories.
However, we ensured that no patient was present in both the training and the test sets, both during cross-validation and for the independent hold-out test set. Furthermore, if overfitting on individual-specific risk trajectories had occurred, it would have negatively impacted performance on the hold-out test set. However, no such drop in performance was observed.
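
The patient-level separation described above can be sketched as follows: rows are assigned to folds by patient rather than by prediction time, so that repeated predictions for one individual never straddle training and evaluation data. The function names, the round-robin fold assignment, the fixed seed, and the test fraction are assumptions for illustration and may differ from the procedure in the study code.

```python
import random


def grouped_holdout(patient_ids, test_fraction=0.15, seed=0):
    """Split row indices so that each patient is entirely in train or in test.

    `test_fraction` is a hypothetical value chosen for this sketch.
    """
    unique = sorted(set(patient_ids))
    random.Random(seed).shuffle(unique)
    n_test = max(1, round(test_fraction * len(unique)))
    test_patients = set(unique[:n_test])
    train_idx = [i for i, p in enumerate(patient_ids) if p not in test_patients]
    test_idx = [i for i, p in enumerate(patient_ids) if p in test_patients]
    return train_idx, test_idx


def grouped_folds(patient_ids, n_folds=5, seed=0):
    """Yield (train_idx, val_idx) pairs with patients assigned to folds round-robin."""
    unique = sorted(set(patient_ids))
    random.Random(seed).shuffle(unique)
    fold_of = {p: k % n_folds for k, p in enumerate(unique)}
    for fold in range(n_folds):
        val_idx = [i for i, p in enumerate(patient_ids) if fold_of[p] == fold]
        train_idx = [i for i, p in enumerate(patient_ids) if fold_of[p] != fold]
        yield train_idx, val_idx


# Toy data: 20 patients with 3 prediction times each.
toy_patient_ids = [f"patient_{k}" for k in range(20) for _ in range(3)]

train_idx, test_idx = grouped_holdout(toy_patient_ids)
train_patients = {toy_patient_ids[i] for i in train_idx}
test_patients = {toy_patient_ids[i] for i in test_idx}
print(len(train_patients), len(test_patients), train_patients & test_patients)
```

Splitting on rows instead of patients would let near-identical predictions for the same person appear on both sides of the split, which tends to inflate apparent performance.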

Conclusion

A machine learning model using routine clinical data from EHRs can accurately predict involuntary admission. If implemented as a clinical decision support tool, this model may guide interventions aimed at reducing the risk of involuntary admission.

Supplementary material

The supplementary material for this article can be found at https://doi.org/10.1017/S0033291724002642.

Data availability statement

No pre-registration of the study was carried out. According to Danish law, the patient-level data for this study cannot be shared. The code for all analyses is available at: https://github.com/Aarhus-Psychiatry-Research/psycop-common/tree/main/psycop/projects/forced_admission_inpatient.

Acknowledgments

The authors are grateful to Bettina Nørremark for data management.

Author contributions

The study was conceptualized and designed by all authors. Funding was raised by S. D. Ø. The data were procured by S. D. Ø. The statistical analyses were carried out by E. P. and J. G. D. All authors contributed to the interpretation of the results. E. P. wrote the first draft of the manuscript, which was subsequently revised for important intellectual content by the remaining authors. All authors approved the final version of the manuscript prior to submission.

Funding statement

The study is supported by grants to S. D. Ø. from the Lundbeck Foundation (grant number: R344-2020-1073), the Central Denmark Region Fund for Strengthening of Health Science (grant number: 1-36-72-4-20), the Danish Agency for Digitisation Investment Fund for New Technologies (grant number: 2020-6720), and by a private donation to the Department of Affective Disorders at Aarhus University Hospital – Psychiatry to support research into bipolar disorder. S. D. Ø. has received grants for other purposes from the Novo Nordisk Foundation (grant number: NNF20SA0062874), the Danish Cancer Society (grant number: R283-A16461), the Lundbeck Foundation (grant number: R358-2020-2341), and Independent Research Fund Denmark (grant numbers: 7016-00048B and 2096-00055A). These funders had no role in the study design, data analysis, interpretation of data, or writing of the manuscript.

Competing interests

A. A. D. has received a speaker honorarium from Otsuka Pharmaceutical. S. D. Ø. received the 2020 Lundbeck Foundation Young Investigator Prize and S. D. Ø. owns/has owned units of mutual funds with stock tickers DKIGI, IAIMWC, SPIC25KL, and WEKAFKI, and owns/has owned units of exchange traded funds with stock tickers BATE, TRET, QDV5, QDVH, QDVE, SADM, IQQH, IQQJ, USPY, EXH2, 2B76, IS4S, OM3X, and EUNL. The remaining authors declare no competing interests.