Introduction

Protocol analysis (Ericsson and Simon, Reference Ericsson and Simon1980, Reference Ericsson and Simon1993) is a widely used method for capturing and analyzing the cognitive processes of individuals engaged in complex tasks, such as design. The method involves several steps, including the collection of verbal data (e.g., by recording participants as they verbalize their thoughts while working on a design task), transcription of the recorded sessions, and coding of the transcripts. The coding process involves segmenting the transcribed text into meaningful units and assigning codes that represent different cognitive activities, typically according to predefined categories or frameworks (e.g., the Function-Behavior-Structure (FBS) ontology (Gero, Reference Gero1990), design timelines (Atman, Reference Atman2019)). Reviews (e.g., those on conceptual design (Hay et al., Reference Hay, Duffy, McTeague, Pidgeon, Vuletic and Grealy2017), problem framing (Kelly and Gero, Reference Kelly and Gero2022), design learning (Litster and Hurst, Reference Litster and Hurst2021), and expert performance (Cross, Reference Cross2004)) have highlighted the broad application of protocol analysis in studying cognitive processes underlying design activity in a structured and systematic manner.

Although the method offers critical insights into design cognition, it is time-consuming and labor-intensive (Christensen, Reference Christensen2005; Sarkar and Chakrabarti, Reference Sarkar and Chakrabarti2013), requiring extensive manual coding of verbal data. The ability of large language models (LLMs) to interpret and generate human-like text can be leveraged to analyze design protocols more efficiently, enabling researchers to handle larger datasets. This research is thus motivated by this broader goal of determining the extent to which LLMs can support design protocol analysis, specifically within the context of one existing framework: the question-asking taxonomy by Eris (Reference Eris2004).

The taxonomy categorizes questions into low-level, deep reasoning, and generative design questions and has been successfully used in design research, to study, for example, idea generation (Cardoso et al., Reference Cardoso, Badke-Schaub and Eris2016) and feedback in design reviews (Cardoso et al., Reference Cardoso, Eriş, Badke-Schaub and Aurisicchio2014; Hurst et al., Reference Hurst, Duong, Flus, Litster, Nickel and Dai2021). We have previously reported on preliminary research (Sakib et al., Reference Sakib, Hurst, Safayeni and Gero2024) investigating the performance of GPT-4 in the question-classification task, which offered promising results. The present study builds on those findings with experiments that evaluate the performance of two LLMs in this task – GPT-4.1 and Claude Sonnet 4.5 – with particular attention to prompt sensitivity and cross-model and cross-dataset generalizability.

The rest of the paper is organized as follows. Section “Background” reviews the literature, including the role of AI and LLMs in supporting qualitative research like protocol analysis, Eris’ (2004) taxonomy, and its operationalization in design research, key findings from Sakib et al. (Reference Sakib, Hurst, Safayeni and Gero2024), and the research goals guiding the present study. Section “Method” details the research methodology, describing the datasets, tools, and experiments, the results of which are presented in Section “Experiments and results”. We summarize and discuss the findings in Section “Discussion”, providing insights into their implications, limitations, and future research.

Background

Technological supports for efficient protocol analysis

Early attempts at reducing the time and effort required in protocol studies focused on developing software tools to assist with the transcription processes, for example, through speech recognition technologies (Matheson, Reference Matheson2007), and coding software to support organizing and analyzing large volumes of qualitative data. While these tools improved the efficiency of data management, the actual coding still relied on manual input from researchers. Research has demonstrated the growing potential of natural language processing (NLP) and machine learning to automate protocol analysis in design. For example, Chandrasegaran et al. (Reference Chandrasegaran, Lloyd and Salah2022) apply computational linguistic methods such as n-gram analysis to detect problem frames within large design conversation datasets. Gero and Milovanovic (Reference Gero and Milovanovic2023) use the Natural Language Toolkit (NLTK) (Bird et al., Reference Bird, Klein and Loper2009) to track the emergence, recurrence, and connections between concepts, enabling the quantitative measurement of the design situatedness of concepts. More recently, Casakin et al. (Reference Casakin, Sopher, Anidjar and Gero2024) combine standard NLP approaches and the BERT machine learning model to track framing and reframing behavior in design.

While NLP offers promising tools for scaling protocol analysis, its effectiveness is limited by semantic ambiguity, dependence on human interpretation, difficulty in modeling situated design behavior, and challenges in generalizing across contexts. Recent advances in AI, particularly LLMs, have significantly improved the prospects for automating protocol analysis. Pre-trained on vast corpora of diverse language data, LLMs can capture intricate linguistic nuances, making them particularly adept at uncovering underlying patterns, sentiment, and context within qualitative data (Bano et al., Reference Bano, Hoda, Zowghi and Treude2023; Hayes, Reference Hayes2025). A particularly powerful feature of modern LLMs is in-context learning (ICL) (Brown et al., Reference Brown, Mann, Ryder, Subbiah, Kaplan, Dhariwal, Neelakantan, Shyam, Sastry, Askell, Agarwal, Herbert-Voss, Krueger, Henighan, Child, Ramesh, Ziegler, Wu, Winter and Amodei2020), which allows the model to understand and respond to new tasks and contexts based on a few examples (few-shot) provided within a prompt, without requiring retraining. Language models such as GPTs can be guided through ICL to recognize and categorize different cognitive activities based on predefined coding schemes, supporting a more efficient and consistent coding process, as the AI can handle large volumes of data and maintain high levels of accuracy and reliability (OpenAI et al., Reference Achiam, Adler, Agarwal, Ahmad, Akkaya, Aleman, Almeida, Altenschmidt, Altman, Anadkat, Avila, Babuschkin, Balaji, Balcom, Baltescu, Bao, Bavarian, Belgum and Zoph2024a).

Despite this promise, to our knowledge, there are few reports in the literature on the use of LLMs in qualitative analyses (Curry et al., Reference Curry, Baker and Brookes2024; Katz et al., Reference Katz, Fleming and Main2024; Anakok et al., Reference Anakok, Katz, Chew and Matusovich2025; Reeping et al., Reference Reeping, Hampton and Özkan2025) and fewer in protocol analysis. Katz et al. (Reference Katz, Wei, Nanda, Brinton and Ohland2023) evaluate the accuracy of ChatGPT in identifying topics in student comments according to an existing feedback taxonomy. Zhang et al. (Reference Zhang, Borchers, Aleven and Baker2024) analyze student think-aloud transcripts within the context of intelligent tutoring systems by using LLMs to classify verbalizations according to four categories of self-regulated learning. Another study by Li et al. (Reference Li, Huang, Wang, Zheng and Lajoie2025) explores the use of AI in analyzing students’ reasoning activities. In design research, Kelly et al. (Reference Kelly, Greentree, Sosa, Evans and Gero2024) explore semi- and fully automated representations of the design process, including proof-of-concept applications of LLMs (ChatGPT) to generate near real-time insights into framing episodes.

Eris’ question-asking taxonomy

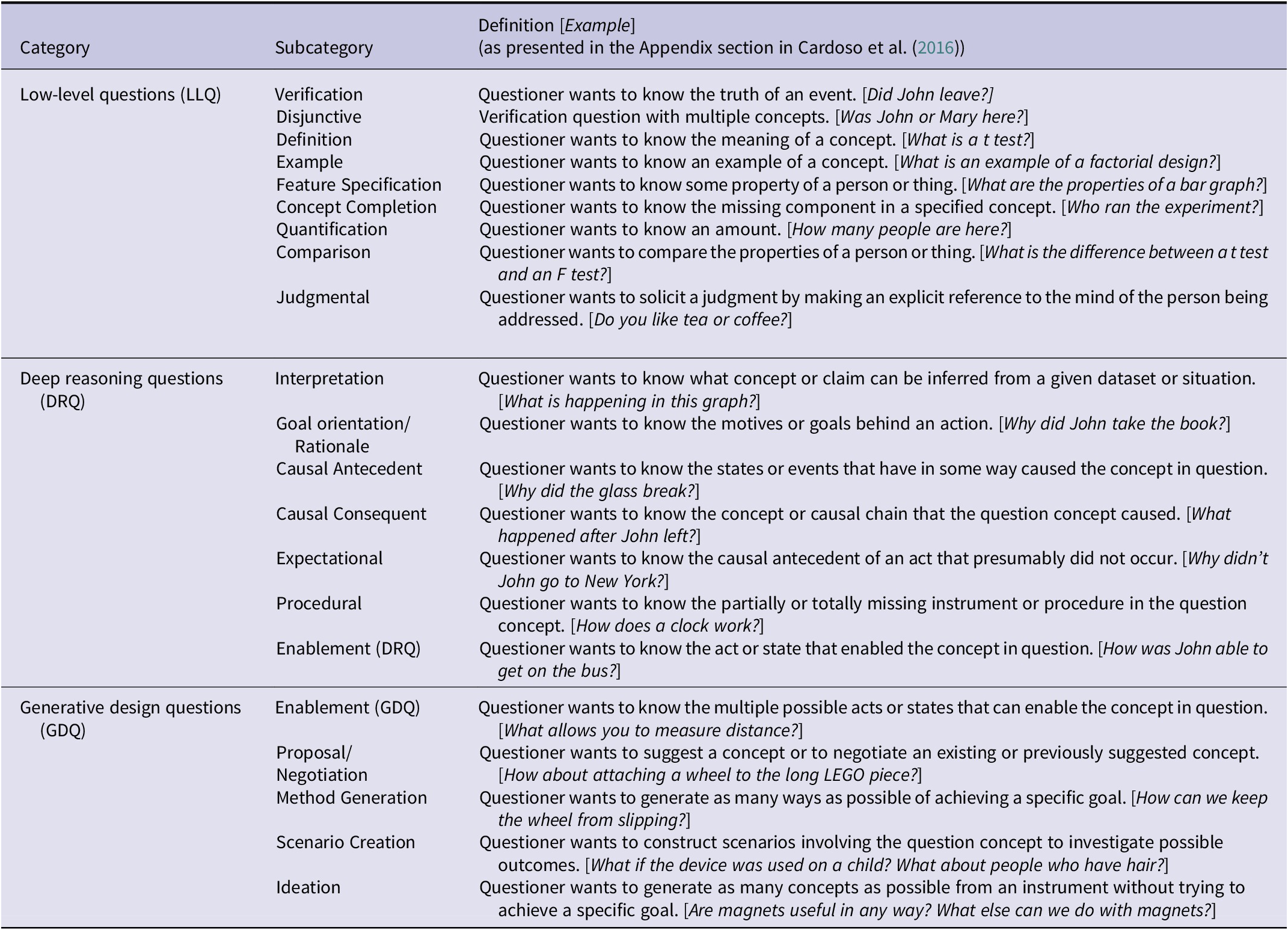

In this research, we explore the potential of LLMs for analyzing verbal protocols of design using Eris’ (Reference Eris2004) question-asking taxonomy. Question asking during designing influences how designers think, including how they think creatively, make decisions, and learn (Eris, Reference Eris2004, p. 11). A question in a design context is “a verbal utterance related to the design tasks at hand that demands an explicit verbal and/or nonverbal response” (p. 36). Building on previous taxonomies of question asking (Graesser and Person, Reference Graesser and Person1994; Lehnert, Reference Lehnert2022), Eris (Reference Eris2004) classifies questions into one of three broad categories. Low-level questions (LLQs) are posed to clarify information gaps in the design project or its processes (e.g., “Is this manual pushing or motor pushing?”). With deep reasoning questions (DRQs), the asker seeks to establish causal explanations of phenomena (e.g., “How was the candy being ejected from your machine?”). LLQs and DRQs are convergent in nature: their premise is that the answer to the question exists and is known by the responder. In contrast, the third type – generative design questions (GDQs) – stimulates divergent thinking by facilitating the generation of many possible answers, regardless of how likely they are to be deemed feasible or true. For instance, when seeking to generate alternative options for addressing a goal or obstacle, a designer might ask, “How do we animate this?” Each of the three question categories also has several subcategories, for a total of 21, as summarized in Table 1.

Table 1. Question categories and subcategories according to Eris’ (Reference Eris2004) taxonomy

Operationalizing question classification in design research

The taxonomy provides a useful lens for uncovering patterns of convergent and divergent design thinking. Operationalized as a coding scheme of verbal protocols, it has been used to study various design phenomena and processes. Table 2 presents seven examples – including the original studies in Eris’ (Reference Eris2004) book – that illustrate a range of uses of this classification scheme in design research.

Table 2. Examples of design research studies using Eris’ (2004) taxonomy

Researchers have used the taxonomy to study how high-level questions prompt creative thinking (Cardoso et al., Reference Cardoso, Badke-Schaub and Eris2016), to understand peer and expert design critique (Cardoso et al., Reference Cardoso, Eriş, Badke-Schaub and Aurisicchio2014, Reference Cardoso, Hurst and Nespoli2020; Hurst and Nespoli, Reference Hurst and Nespoli2019; Hurst et al., Reference Hurst, Duong, Flus, Litster, Nickel and Dai2021), and to examine the role of expertise in feedback (Hurst et al., Reference Hurst, Lin, Treacy, Nespoli and Gero2023). Questions are extracted from a range of contexts: emerging naturally in the process of a group design activity (Eris, Reference Eris2004; Cardoso et al., Reference Cardoso, Badke-Schaub and Eris2016), asked during formal design reviews (Cardoso et al., Reference Cardoso, Eriş, Badke-Schaub and Aurisicchio2014; Hurst and Nespoli, Reference Hurst and Nespoli2019) or in peer-led critiques (Cardoso et al., Reference Cardoso, Hurst and Nespoli2020; Hurst et al., Reference Hurst, Duong, Flus, Litster, Nickel and Dai2021), and arising in regular one-on-one design tutoring sessions (Hurst et al., Reference Hurst, Lin, Treacy, Nespoli and Gero2023). We observe that in general, question distributions are skewed: LLQ is the dominant category (frequency as high as 81%), and DRQ instances are rare (as low as 1%). Distributions seem highly sensitive to the setting (e.g., design activity vs. design review/critiques/tutoring), source of the questions (e.g., student vs. instructor), and process (asynchronous written vs. synchronous verbal feedback).

Of interest is also the analysis workflow. In his book, Eris (Reference Eris2004, p. 99) describes significant practical challenges that arise in conducting the question classification activity. In his study, numerous utterances served the purpose of questions even though they were not grammatically formed as such. Even if an utterance was correctly identified as a question, it was sometimes difficult to assign it to a question category when simply relying on the transcript. Coders needed to review audiovisual data around the question utterance, sometimes repeatedly, to infer the correct question classification from the context. Cardoso et al. (Reference Cardoso, Badke-Schaub and Eris2016) and Hurst et al. (Reference Hurst, Lin, Treacy, Nespoli and Gero2023), too, describe relying on audio/video recordings for added context.

In some cases (Eris, Reference Eris2004; Cardoso et al., Reference Cardoso, Eriş, Badke-Schaub and Aurisicchio2014, Reference Cardoso, Badke-Schaub and Eris2016), one researcher codes all questions, with a second researcher called in to code a sample of the dataset in order to perform a reliability analysis. Sometimes a third, more experienced, researcher is brought in to arbitrate classification disagreements (e.g., Hurst et al., Reference Hurst, Duong, Flus, Litster, Nickel and Dai2021, Reference Hurst, Lin, Treacy, Nespoli and Gero2023). Across studies, inter-rater reliability is reported as either percent agreement (ranging from 70% to 98%) or through Cohen’s kappa (ranging from moderate agreement (κ = 0.41) to substantial (κ = 0.72). The latter measure takes into consideration both agreement between judges and the extent to which agreement is expected by chance (Cohen, Reference Cohen1960), and might be more appropriate given the highly skewed distributions – large frequencies of LLQ and very small frequencies of DRQ – that can lead to high chance agreement between judges (Feinstein and Cicchetti, Reference Feinstein and Cicchetti1990; Byrt et al., Reference Byrt, Bishop and Carlin1993).

Automating question classification – prior research and present study aims

Given the challenges described in the previous section and evidence of the LLMs’ capabilities in interpreting qualitative data (Bano et al., Reference Bano, Hoda, Zowghi and Treude2023; Hayes, Reference Hayes2025), a natural next step is to investigate the efficiency and effectiveness of offloading the classification activity to LLM “assistants.” The advantage of using Eris’ (Reference Eris2004) taxonomy as a test case is the deductive nature of the question classification task, which is well-suited to the inherent strengths of language models in processing structured information and applying predefined categories to textual data (Chew et al., Reference Chew, Bollenbacher, Wenger, Speer and Kim2023; Xiao et al., Reference Xiao, Yuan, Liao, Abdelghani and Oudeyer2023). The model can be guided through ICL to recognize and classify text according to the specific categories (LLQ/DRQ/GDQ) defined by the taxonomy.

We have previously reported on a preliminary study to evaluate the effectiveness of LLMs (specifically GPT-4) in classifying question utterances according to this taxonomy (Sakib et al., Reference Sakib, Hurst, Safayeni and Gero2024). A series of four experiments offered a baseline understanding of performance. Specifically, GPT-4 performed reasonably well on the classification task, reaching up to 83% alignment with human-assigned categories when provided with 60–90 human-labeled examples. While various ICL conditions were tested with increasingly larger sets of pre-labeled examples (up to 300), no further alignment improvements were found. The study also found that GPT-4’s labeling is probabilistic, with some questions being labeled differently on different experimental runs, a problem that is only marginally alleviated by varying ICL conditions. Finally, providing additional context (e.g., utterances before and after a question) degraded performance – a surprising finding given how critical context is to human coders when making these judgments.

While these earlier experiments provided useful insights, they were also significantly limited in their samples and methods, impacting the reliability and generalizability of the findings. For example, all experiments were run on just one 30-question test set. The study also raised questions about prompt sensitivity, classification accuracy at the question subcategory level, and cross-dataset and cross-model generalizability. Thus, the present study aims to more deeply and rigorously investigate the extent to which LLMs – specifically OpenAI’s GPT-4.1 and Anthropic’s Claude Sonnet 4.5 – can be utilized to classify question utterances in verbal protocols of design according to Eris’ (Reference Eris2004) taxonomy, to better understand performance sensitivity to ICL, and to offer guidance to design researchers seeking to integrate LLMs into their protocol analysis workflows.

Method

Datasets

This study utilized two datasets, d 1 and d 2, both available to one of the authors.

Dataset d 1

The data were collected in a study of six small student teams who met with their tutor weekly over six weeks to discuss a term-long graduate-level project to design an enhanced automatic candy-wrapping machine. The dataset consisted of a total of 31 transcribed tutoring sessions, from which 2032 question utterances were extracted and then independently classified by two research assistants according to Eris’ (Reference Eris2004) taxonomy. Any coding disagreements were resolved with the assistance of a third, more experienced researcher. A subset of the data (1491 questions) was previously analyzed in Hurst et al. (Reference Hurst, Lin, Treacy, Nespoli and Gero2023), as already highlighted in Table 2.

Selecting the right ICL examples is crucial for achieving optimal performance (J. Liu et al., Reference Liu, Shen, Zhang, Dolan, Carin and Chen2021; Rubin et al., Reference Rubin, Herzig and Berant2022; Chang and Jia, Reference Chang and Jia2023). LLMs like GPTs are biased toward predicting labels that are frequent in the prompt (Zhao et al., Reference Zhao, Wallace, Feng, Klein and Singh2021). Therefore, 90 questions were selected for ICL through uniform sampling at the category level (30 LLQs, 30 DRQs, and 30 GDQs), to ensure a balanced representation across categories and subcategories. Care was also taken to ensure that the ICL questions selected were concise, avoiding overly elaborate phrasing that could complicate the classification process. The size of the ICL sample was chosen based on Sakib et al. (Reference Sakib, Hurst, Safayeni and Gero2024) who showed that there were no performance gains for ICL samples larger than 60 and that there was a significantly reduced effect of non-determinism when ICL was increased to 90. A total of 1942 remaining questions – 1292 (66.5%) LLQ, 144 (7.4%) DRQ, and 506 (26.1%) GDQ – comprised the held-out test set.

Dataset d 2

Data were collected in a study conducted within an undergraduate engineering capstone design course, where student teams participated in peer design reviews. After each review, teams submitted a reflection documenting the feedback received from other teams, primarily in the form of questions, and their quality rating of that feedback. This dataset was of better quality than dataset d 1: the students had taken note of these questions on their own, and any low-quality questions were likely filtered out before documentation. Moreover, students knew that they would be evaluated on the quality of the feedback/questions posed. Two independent experienced coders categorized the entire dataset – 265 questions – using Eris’ (Reference Eris2004) taxonomy, with any disagreements resolved through discussion between the two. This dataset was previously analyzed in Cardoso et al. (Reference Cardoso, Hurst and Nespoli2020), as also summarized in Table 2.

Dataset d 2 comprised 265 questions: 188 (70.9%) LLQ, 48 (18.1%) DRQ, and 29 (10.9%) GDQ, yielding an imbalanced distribution. To match the size of ICL of d1, 90 questions were sampled from d 2 as in-context examples using deterministic (seeded) stratified sampling to preserve the original LLQ/DRQ/GDQ proportions. Accordingly, the ICL example set contained 64 LLQ (71.1%), 16 DRQ (17.8%), and 10 GDQ (11.1%), while the remaining 175 questions formed the held-out test set with 124 LLQ (70.9%), 32 DRQ (18.3%), and 19 GDQ (10.9%). Because d 2 includes several sparse subcategories, stratification was performed hierarchically: first at the top-level category, then within-category to prioritize subcategory coverage before filling remaining slots proportionally, reflecting findings that in-context example composition, including minority coverage, measurably affects ICL outcomes, and that including minority classes in the prompt examples can improve fairness without hurting accuracy (Hu et al., Reference Hu, Liu, Du, Al-Onaizan, Bansal and Chen2024; Xu et al., Reference Xu, Cohen, Wang, Srikumar, Duh, Gomez and Bethard2024; Yao et al., Reference Yao, Chen, Zou, Lu, Li, Zhang, Sang, Liu, Hendler, Wang, Duh, Gomez and Bethard2024).

To avoid unreliable metrics on ultra-rare strata, and because the model, even with system-level instructions, might consider those ultra-rare cases as exceptions without direct examples, we applied a rarity threshold of 5: subcategories with fewer than five instances were treated as rare and allocated to the in-context examples pool rather than being split across the in-context examples and the test set. This aligns with the common few-shot practice that ICL outcomes can fluctuate substantially under small-support and sampling variability (Fei et al., Reference Fei, Hou, Chen, Bosselut, Rogers, Boyd-Graber and Okazaki2023; Agarwal et al., Reference Agarwal, Singh, Zhang, Bohnet, Rosias, Chan, Zhang, Faust and Larochelle2024; Hu et al., Reference Hu, Liu, Du, Al-Onaizan, Bansal and Chen2024).

Finally, the held-out test set was kept in the original question order to reflect conversational sequencing at inference time, while the in-context example question set was shuffled to reduce unintended order and recency effects known to influence predictions in causal LLM prompting (Agarwal et al., Reference Agarwal, Singh, Zhang, Bohnet, Rosias, Chan, Zhang, Faust and Larochelle2024; Xiang et al., Reference Xiang, Yan, Gui, He, Ku, Martins and Srikumar2024; Xu et al., Reference Xu, Cohen, Wang, Srikumar, Duh, Gomez and Bethard2024; Yu and Ananiadou, Reference Yu, Ananiadou, Al-Onaizan, Bansal and Chen2024; K. Zhang et al., Reference Zhang, Lv, Chen, Ha, Xu, Yan, Ku, Martins and Srikumar2024).

Tools and setup

The primary LLM used in this study was OpenAI’s GPT-4.1, a non-reasoning, low-latency model. We additionally evaluated Anthropic’s Claude Sonnet 4.5. All experiments (runs conducted in November 2025) were executed through hosted model APIs. Each request contained the full task instructions and any in-context examples needed for that condition, and no conversational state or memory was carried across experiments (or across items within an experiment). To support reproducibility, we pinned model snapshots and logged per-request metadata (e.g., response identifiers, model name, and token-usage reports) exposed by each API. Appendix 1 details the LLM configuration and access pathways used in this study, as well as other cost, latency, tracing, and failure logging considerations.

Prompt structure and schema-constrained outputs

Each API request generates text outputs in response to its inputs (“prompts”), which are divided into two segments: (i) a stable instruction layer (functionally equivalent to a system message) and (ii) a variable task input layer (functionally equivalent to a user message). The system message provides high-level context on the user’s intent or the desired outcome. In our experiments, this segment defined the labeling taxonomy, decision rules, and output requirements and was held constant within each experiment. On the other hand, the user messages contained the batch of items to label (e.g., numbered questions) and, where applicable, in-context examples required by the corresponding condition, and therefore varied across experiments.

To reduce formatting failures and support automated scoring, outputs were required to be machine-readable and schema-constrained (validated against a predefined JSON schema rather than free-form text). Responses were programmatically validated to ensure exactly one prediction per input item and to prevent downstream evaluation from being affected by malformed outputs, an important safeguard because structured, list-valued generations can otherwise be brittle without explicit constraints and validation. This design aligns with recent evidence that this approach substantially improves format compliance for LLM pipelines intended for automated evaluation and post-processing (M. X. Liu et al., Reference Liu, Liu, Fiannaca, Koo, Dixon, Terry and Cai2024; Lu et al., Reference Lu, Li, Cong, Zhang, Wu, Lin, Liu, Liu, Sun, Che, Nabende, Shutova and Pilehvar2025).

Decoding configuration and near-reproducibility

To manage cost and runtime, each experimental condition was run once (i.e., a single pass over the test set) under fixed, low-variance decoding settings in both APIs. This design yields point estimates of performance rather than Monte Carlo estimates over repeated samples. Specifically, we set the temperature to 0 for all OpenAI and Anthropic calls. Temperature controls the degree of sampling randomness during generation; lower values make the model’s choices more conservative and typically more consistent for identical inputs. The top_p (nucleus sampling) parameter was kept at its default value of 1.0 in this study, meaning that the full probability distribution was available to the decoder; we did not systematically vary top_p.

In principle, this combination of temperature = 0 and top_p = 1.0 should make outputs highly reproducible for a given model snapshot, although the underlying model remains a probabilistic system and is served via a managed API that is subject to change (OpenAI et al., Reference Hurst, Lerer, Goucher, Perelman, Ramesh, Clark, Ostrow, Welihinda, Hayes, Radford, Mądry, Baker-Whitcomb, Beutel, Borzunov, Carney, Chow, Kirillov, Nichol and Malkov2024b). Repeated runs could yield small discrepancies in a minority of cases – 3% of cases in Sakib et al. (Reference Sakib, Hurst, Safayeni and Gero2024) – even when prompts are unchanged. We therefore view our decoding configuration as providing near-deterministic behavior within our experiments, while recognizing that exact reproducibility over time or across deployments cannot be fully guaranteed (Atil et al., Reference Atil, Aykent, Chittams, Fu, Passonneau, Radcliffe, Rajagopal, Sloan, Tudrej, Ture, Wu, Xu and Baldwin2025).

Token budget, batching, and the batch-size–token relationship

Prompt length interacts directly with both (a) API context limits and (b) the probability of structural failures such as missing items or re-ordered outputs. In preliminary runs on d1 (test set n = 1,942), questions were grouped by team (six teams; 110–473 questions per team) to preserve local terminology and context. However, large per-team batches produced a higher rate of non-labeling errors (e.g., omissions and ordering mismatches) consistent with the well-known sensitivity of long-context generation to prefill overhead, where longer inputs require more up-front processing and make it easier for the model to miss or shuffle items. These errors are also consistent with output-length budget constraints, since fixed limits on how much text the model can produce may cut off long, item-by-item outputs and leave later entries incomplete. Finally, heavy token loads increase the schema-compliance burden: as responses get longer, it becomes harder to keep the required format, include every field, and preserve the expected order, so small formatting slips can accumulate into ordering mismatches or missing fields (Jiang et al., Reference Jiang, Li, Zhang, Wu, Luo, Ahn, Han, Abdi, Li, Lin, Yang and Qiu2024; Nayab et al., Reference Nayab, Rossolini, Simoni, Saracino, Buttazzo, Manes and Giacomelli2025; Sun et al., Reference Sun, Wang, Li, Liu, Li, Wen, Yuan, Zheng, Liang, Li, Liu, Christodoulopoulos, Chakraborty, Rose and Peng2025). To reduce these failure modes and keep each request well within practical token budgets, the pipeline was revised to chunk inputs such that no batch exceeded 50 questions.

Tasks

Prior work (Sakib et al., Reference Sakib, Hurst, Safayeni and Gero2024) reported preliminary results demonstrating the feasibility of using GPT-4 for automated question classification and informed the initial design of prompts, in-context examples, and evaluation procedures. Building on this foundation, the following two experiments were designed in this study:

Experiment 1: baseline performance and prompt sensitivity

This experiment establishes baseline performance for GPT-4.1 on large-scale question classification, at both the category and subcategory levels of Eris’ (Reference Eris2004) taxonomy. Prompt configurations are systematically varied, ranging from zero-shot to information-rich conditions incorporating definitions, examples, and in-context learning, to assess how different forms of task specification affect classification accuracy.

Experiment 2: cross-dataset and cross-model generalizability

This experiment evaluates the robustness and transferability of prompt configurations and in-context examples across datasets and LLMs. GPT-4.1 and Claude Sonnet 4.5 are evaluated on held-out data from the two datasets, while varying only the source of in-context examples. This design enables assessment of whether prompts and examples developed using one dataset and one model remain effective when applied to different data distributions and models.

Experiments and results

Experiment 1

Experiment 1 aimed to determine the benchmark performance of GPT-4.1 in the question classification task. Using an ablation design, we tested multiple prompt conditions to evaluate how variations in prompt structure and content – including ICL – affected labeling accuracy. GPT-4.1’s performance was evaluated across seven prompt configurations (Conditions A–G), summarized in Table 3.

Table 3. GPT-4.1 classification performance across different prompt configurations. Includes references to appendices with full prompts

The conditions varied along three dimensions: (1) system prompt, (2) the use and granularity of ICL examples, and (3) the required labeling level. System prompts ranged from none (Condition A) to taxonomy definitions only (Condition B), to definitions plus one high-quality example per subcategory (Conditions C–G). Three ICL configurations were tested: no in-context examples (Conditions A–C), 90 examples labeled at the category level (Conditions D and F), and 90 examples labeled at the subcategory level (Conditions E and G). Finally, the labeling task was either at the category level (Conditions A–E) or at the subcategory (Conditions F–G) level.

Across all conditions, the same test set of 1942 questions was used, with both in-context examples and test data drawn from dataset d 1 (Section “Dataset d 1”). Performance across all conditions was first evaluated at the category (LLQ/DRQ/GDQ) level, regardless of labeling granularity during prompting (Table 3). Overall alignment increased across conditions, from 57.00% (A) to 69.9% (B), 70.2% (C), 72.7% (D), and approximately 80% for E (79.4%), F (79.8%), and G (80.3%).

Because the same test set of questions (N = 1,942) was evaluated under all seven prompt conditions (A–G), alignment for each question can be represented as a paired binary outcome (correct vs. incorrect). Differences across conditions were thus tested using Cochran’s Q test (α = 0.05), followed by post hoc exact McNemar tests for pairwise comparisons with Holm correction to control the family-wise error rate (α = 0.05). Cochran’s Q indicated a significant difference in alignment across the seven conditions (p < 0.05). Post hoc comparisons showed that Condition A differed significantly from Conditions B–G (Holm-adjusted p < 0.05). Condition B did not differ significantly from Condition C (Holm-adjusted p ≥ 0.05), but both B and C differed significantly from D–G (Holm-adjusted p < 0.05). Condition D differed significantly from Conditions E–G (Holm-adjusted p < 0.05). Finally, no significant pairwise differences were observed among Conditions E, F, and G (Holm-adjusted p ≥ 0.05).

Condition G, which achieved the highest alignment, was selected for all subsequent experiments; however, Conditions E and F performed comparably well. A common feature of Conditions E to G is that the user prompt explicitly framed the task at the subcategory level, through in-context examples, task instructions, or both. A possible explanation for their superior performance is that these prompts more closely mimic the information and process available to human coders (e.g., research assistants), who typically first become familiar with the taxonomy (through subcategory definitions and examples) and then are trained by an experienced researcher to apply the taxonomy to the dataset to be analyzed by working through a few examples together. When conducting the labeling task, human coders label questions at the subcategory level, even though any subsequent analyses or reporting of results is typically done at the broader category levels.

Of note is that in Condition A, where no system message was provided, and the model relied solely on the user prompt without any in-context examples, GPT-4.1 achieved an alignment exceeding 50%, well above the 33.3% expected by chance for a three-class task. This suggests that the model might be leveraging implicit knowledge about typical distributions of questions across LLQ/DRQ/GDQ categories, potentially due to the mention of Eris’ taxonomy in the user message. Even without explicit definitions or examples, this implicit reference may contribute to above-chance classification performance.

The LLM’s performance can be evaluated at two levels: category and subcategory. For example, a question such as “Does this cost $100?” could be misclassified at the subcategory level (e.g., Quantification rather than Verification) while still being correctly classified at the category level (LLQ). Taking the case of the highest-performing prompt configuration (Condition G), we compared GPT-4.1’s alignment with human-assigned labels at both levels and benchmarked its performance against the inter-rater agreement of the original human coders for dataset d1. Table 4 summarizes these results.

Table 4. Alignment metrics for GPT-4.1 computed at the category and subcategory levels, benchmarked against human inter-rater agreement

When computed at the category level, the LLM’s alignment is comparable to the human inter-rater agreement (80.2% vs. 85.7%). Moreover, the LLM’s performance is also generally comparable with (and in some cases exceeds) inter-rater agreement reported in prior studies (Table 2), where agreement has been as low as 70% (Hurst and Nespoli, Reference Hurst and Nespoli2019) or κ = 0.49 (Hurst et al., Reference Hurst, Duong, Flus, Litster, Nickel and Dai2021), depending on the metric used. In contrast, subcategory-level alignment is substantially lower than the inter-rater agreement of the original human coders (52.5% vs. 74.7%). The impact/relevance of this discrepancy is limited. Generally, while the classification is conducted at the subcategory level, any downstream analyses are conducted at the category level, and indeed, only three of the studies surveyed in Table 2 report inter-rater agreement at the subcategory level (as low as κ = 0.41 (Hurst et al., Reference Hurst, Duong, Flus, Litster, Nickel and Dai2021)).

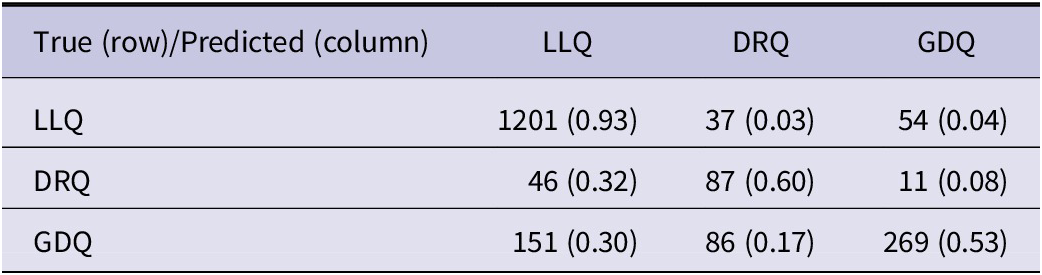

We next examine GPT-4.1’s performance across question categories and subcategories. Table 5 presents the category-level confusion matrix. Classification accuracy was highest for LLQs (93%), with errors evenly split between DRQs (3%) and GDQs (4%). DRQs were correctly labeled 60% of the time and were most often misclassified as LLQs, with relatively few confusions with GDQs (8%). This pattern likely reflects the conceptual similarity between LLQs and DRQs, both of which are convergent in nature and presuppose a known or knowable answer. GDQs exhibited the lowest category-level accuracy (53%), with misclassifications distributed across both LLQs (30%) and DRQs (17%).

Table 5. Confusion matrix for question categorization at the category level – instances (proportion of total). Prompt configuration according to Condition G

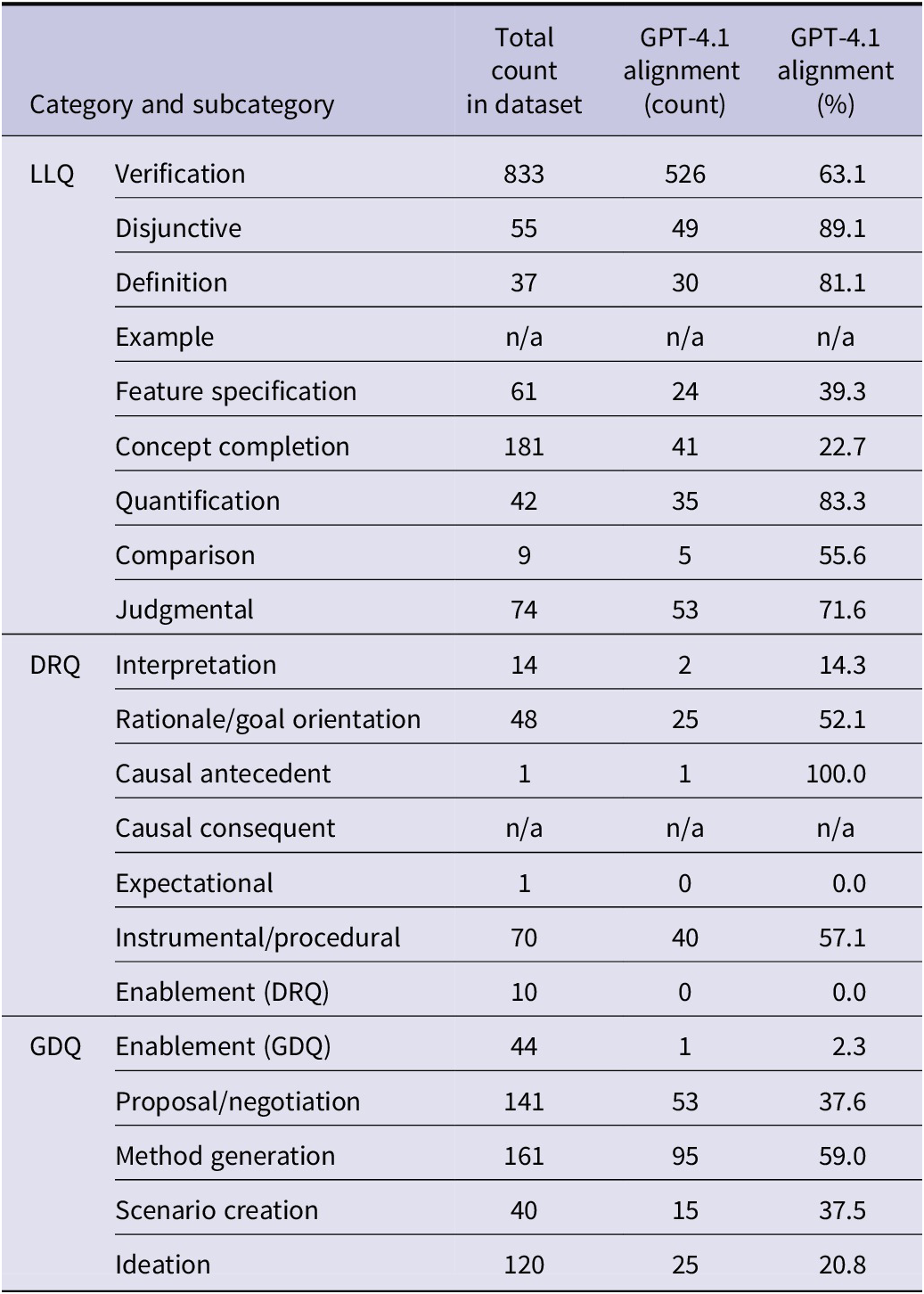

To better understand these patterns, we analyzed performance at the subcategory level. Tables 6 and 7 report subcategory-specific accuracy and the corresponding confusion matrix. Four of the 21 subcategories (Table 6) were represented by one or no instances in the test set.

Table 6. Experiment 2 results: Alignment metrics for GPT-4.1 across each question subcategory

Table 7. Confusion matrix at the subcategory level – dataset d 1, prompt configuration of Condition G

Within LLQs, Disjunctive and Quantification questions achieved the highest accuracy (89.1% and 83.3%, respectively). Verification questions – the most frequent LLQ type – were accurately classified 63.1% of the time, and most frequently misclassified as Judgmental, a confusion consistent with the minimal semantic difference between these two subcategories in the taxonomy. The subcategories that were labeled least accurately – Concept Completion (22.7%) and Feature Specification (39.3%) – were frequently confused with each other, reflecting another example of difficult-to-distinguish subcategories.

Within DRQs, the two most frequent subcategories in the dataset were also the two that were most accurately labeled by GPT-4.1: Rationale (52.1%) and Instrumental/Procedural (57.1%) – the “why” and “how” questions, both most frequently misclassified as Feature Specification (requests for design attributes).

Within GDQs, nearly half of the Proposal/Negotiation questions were misclassified as Verification or Judgmental, likely because these question types often elicit yes/no answers and are difficult to distinguish without additional contextual information. Method Generation questions were frequently confused with Instrumental/Procedural questions, reflecting their shared aim to generate the “how” – whether in what exists (Instrumental/Procedural) or what could be (Method Generation). Finally, Ideation questions exhibited very low accuracy (20.8%), with errors dispersed across multiple subcategories, indicating substantial difficulty in distinguishing open-ended idea generation from other generative or evaluative question types in the absence of discourse context.

Experiment 2

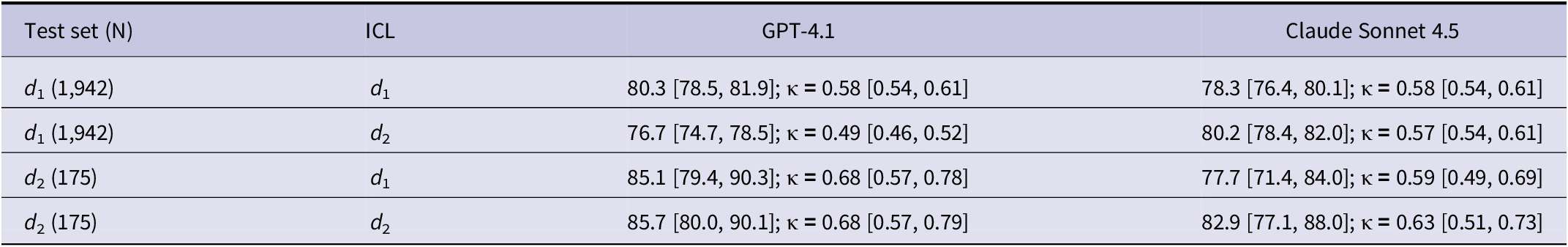

LLMs have demonstrated the ability to adapt to new tasks with relatively few examples and to generalize beyond the specific data on which they are evaluated (Brown et al., Reference Brown, Mann, Ryder, Subbiah, Kaplan, Dhariwal, Neelakantan, Shyam, Sastry, Askell, Agarwal, Herbert-Voss, Krueger, Henighan, Child, Ramesh, Ziegler, Wu, Winter and Amodei2020; Wei et al., Reference Wei, Tay, Bommasani, Raffel, Zoph, Borgeaud, Yogatama, Bosma, Zhou, Metzler, Chi, Hashimoto, Vinyals, Liang, Dean and Fedus2022; Si et al., Reference Si, Gan, Yang, Wang, Wang, Boyd-Graber and Wang2023; Yuan et al., Reference Yuan, Chen, Cui, Gao, Zou, Cheng, Ji, Liu and Sun2023). Experiment 2 aimed to evaluate the robustness and generalizability of LLM-based classification by comparing two models – GPT-4.1 and Claude Sonnet 4.5 – while holding the prompt structure constant (as in Condition G of Experiment 1 for GPT-4.1 and in Appendices 11–12 for Claude Sonnet 4.5) and varying only the source of in-context examples (d 1 vs. d 2). Both models were evaluated on held-out test items from d 1 (N = 1,942) and d 2 (N = 175).

Results are presented in Table 8. Across the various conditions, LLM alignment accuracy (at the category level) ranged from 76.7% (GPT-4.1 tested on d 1 with ICL from d 2) to 85.1% (GPT-4.1 tested on d 2 with ICL from d 1).

Table 8. Human–LLM alignment – Accuracy (%) [95% CI]; κ [95% CI] – across LLMs and ICL/test pair conditions

Model comparisons within each test/ICL pairing were evaluated using exact McNemar tests on paired item-level correctness, with Holm correction applied across the four planned comparisons (α = .05). After correction, three of the four pairings showed no statistically reliable difference between the two models; the only significant difference was observed when testing on d 1 with in-context examples from d 2, which favored Claude Sonnet 4.5 (Holm-adjusted p < .05).

In terms of the influence of the ICL source on performance, the results are mixed. When testing on d1, GPT-4.1 achieved a statistically higher (p < .05) alignment accuracy when using in-context examples from d 1 compared to when using in-context examples from d 2. However, no statistically significant differences were noted based on the ICL source when testing on d 2. In the case of Claude Sonnet 4.5, when testing on both d 1 and d 2, no statistically significant effects were found in terms of the impact of the ICL source on performance.

Across conditions, both LLMs performed consistently better when tested on dataset d 2, regardless of whether they were provided with in-context examples from dataset d 1 or d 2. However, this difference was significant (p < .05) only in the case of GPT-4.1, specifically when using ICL examples from d2 and not when using ICL examples from d1. For Claude Sonnet 4.5, the effects were small and non-significant, indicating no evidence of a reliable d2 advantage across ICL sources. The mixed pattern suggests that the observed accuracy difference may reflect an interaction between dataset characteristics and ICL source rather than a uniform difficulty advantage.

Overall, after controlling for multiple comparisons, GPT-4.1 and Claude Sonnet 4.5 were statistically comparable in most evaluation settings, supporting the experiment’s objective that the prompt configuration transfers reasonably well across LLMs. Taken together, these results reinforce that cross-model robustness largely holds, while also aligning with evidence that in-context learning is sensitive to the choice of in-context examples, making example selection a critical experimental factor when interpreting cross-LLM comparisons (Yuan et al., Reference Yuan, Chen, Cui, Gao, Zou, Cheng, Ji, Liu and Sun2023; Qin et al., Reference Qin, Zhang, Chen, Dagar, Ye, Al-Onaizan, Bansal and Chen2024).

Discussion

As LLMs become increasingly capable and widely available, researchers have rightly begun to ponder how they will shape qualitative research (van Manen, Reference van Manen2023). This study was motivated by the broader aim of exploring how LLMs could be used to analyze verbal protocols of design. Prior research (Sakib et al., Reference Sakib, Hurst, Safayeni and Gero2024) offered promising early tests of GPT-4’s ability to classify questions according to Eris’ (Reference Eris2004) taxonomy, but also presented limitations in its approach, impacting findings’ reliability and raising questions about cross-dataset and cross-model generalizability.

Building on this foundation, in this paper, we have presented the results of a series of experiments that more deeply and rigorously investigated the extent to which LLMs – specifically OpenAI’s GPT-4.1 and Anthropic’s Claude Sonnet 4.5 – could classify question utterances from verbal protocols of design under varying prompt configurations, ICL conditions, datasets, and model choices. Performance was assessed at both the category and subcategory levels and benchmarked against human-coded data. In what follows, we discuss key findings and their implications, and outline limitations and directions for future research.

Key findings

Performance in the classification task

Overall, the experimental results indicate that LLMs can classify questions according to Eris’ (Reference Eris2004) taxonomy with moderate to strong alignment with human coders. Across experiments, GPT-4.1 and Claude 4.5 Sonnet achieved alignment (at the broader LLQ, DRQ, and GDQ categories) in the high-70% to low-80% range, approaching the inter-rater agreement reported for human coders working with the same taxonomy. This suggests that, for analyses conducted at the category level – as is standard in prior studies using the taxonomy – LLM-based classification can function as a useful complement to manual coding.

Performance, however, was not uniform across categories: GPT-4.1 showed the highest accuracy for LLQs, followed by DRQs, with GDQs presenting the greatest challenge. One possible explanation for this pattern could be the inherent differences between the question types: LLQs and DRQs are largely convergent in nature, seeking known facts or rationales, whereas GDQs are divergent, often involving speculative reasoning, ideation, or negotiation among alternatives (Cardoso et al., Reference Cardoso, Badke-Schaub and Eris2016). Accurately distinguishing GDQs may require a more nuanced understanding of design intent and creative exploration, which may be underrepresented or inconsistently expressed in the linguistic surface form of questions. The confusion analyses further support this interpretation, showing GDQs frequently misclassified as LLQs or DRQs, particularly when questions could plausibly elicit a yes/no response or procedural explanation.

This study extended the findings by Sakib et al. (Reference Sakib, Hurst, Safayeni and Gero2024) by also analyzing LLM classification performance at the level of the subcategories of the taxonomy. Overall, performance is significantly degraded when accuracy is computed as alignment at this lower level. An explanation for this finding is that increasing the number of classes in a classification task typically increases complexity, particularly when distinctions between classes are subtle (Edwards and Camacho-Collados, Reference Edwards, Camacho-Collados, Calzolari, Kan, Hoste, Lenci, Sakti and Xue2024). While approaches such as data augmentation (Sahu et al., Reference Sahu, Vechtomova, Bahdanau and Laradji2023) and hierarchical text classification (Y. Zhang et al., Reference Zhang, Li, Zhang, Zhang, Liu, Yao, Xu, Zheng, Chen, Zhang, Yin, Dong, Guo, Song and Liu2025) could improve classification performance at the subcategory level, such efforts are likely unnecessary. In prior studies using the taxonomy, analyses and reporting were conducted at the category level; subcategory coding served only to determine the category and did not otherwise affect the research outcomes, a pattern that would apply to LLM-driven classification.

Beyond accuracy, both LLMs demonstrated notable efficiency gains, completing the labeling task in approximately 15 minutes for d1 and 1.5 minutes for d2, on average. This contrasts sharply with the time-intensive nature of manual protocol analysis, which requires trained human coders and sustained effort, particularly for large datasets.

Taken together, these findings suggest that LLMs can effectively support scalable question classification in verbal protocols of design. At the same time, the observed performance differences across categories and subcategories highlight meaningful limitations that mirror known challenges in human coding. It is thus important to consider LLM outputs as analytic support rather than substitutes for expert judgment.

Sensitivity to prompt configuration, in-context learning (ICL), and cross-data and cross-model generalization

A key question explored in this study was understanding how sensitive LLM performance is to prompt configuration, including the use of ICL. While earlier research (Sakib et al., Reference Sakib, Hurst, Safayeni and Gero2024) had suggested that GPT-4’s classification performance improves considerably when supplied with a minimum of 60 (pre-labeled) in-context examples, our findings offer a more nuanced view. Across a range of prompt conditions, providing additional information to the LLM – such as taxonomy definitions, high-quality examples, or labeled in-context examples – consistently improved performance relative to a minimal baseline of no definitions or examples. Interestingly, the difference was not significant among the more “information-rich” conditions, indicating diminishing returns once a basic level of task structure was provided. Notably, even with minimal input, GPT-4.1’s accuracy exceeded random chance. This suggests that the model may draw on implicit prior knowledge when the task is framed within a recognizable theoretical context (e.g., referencing Eris’ taxonomy), even in the absence of explicit definitions or examples.

The study also explored the extent to which prompt configurations and in-context examples generalize across datasets. Whether provided with examples from the same dataset or a different one, the two LLMs maintained similar levels of alignment with human-coded labels, with no consistent or statistically significant differences. These findings align with prior research showing that large pretrained models can adapt quickly to new tasks and perform robustly on out-of-distribution data due to their exposure to diverse training corpora (Brown et al., Reference Brown, Mann, Ryder, Subbiah, Kaplan, Dhariwal, Neelakantan, Shyam, Sastry, Askell, Agarwal, Herbert-Voss, Krueger, Henighan, Child, Ramesh, Ziegler, Wu, Winter and Amodei2020; Wei et al., Reference Wei, Tay, Bommasani, Raffel, Zoph, Borgeaud, Yogatama, Bosma, Zhou, Metzler, Chi, Hashimoto, Vinyals, Liang, Dean and Fedus2022; Si et al., Reference Si, Gan, Yang, Wang, Wang, Boyd-Graber and Wang2023; Yuan et al., Reference Yuan, Chen, Cui, Gao, Zou, Cheng, Ji, Liu and Sun2023). The two LLMs consistently achieved higher accuracy on dataset d2 than on dataset d1, across conditions, though the difference was not significant. This suggests that model performance may still be influenced by dataset-specific characteristics, even when generalization remains strong.

Finally, findings highlight the robustness and transferability of the classification prompts and in-context examples across different LLMs. The two LLMs exhibit similar performance on both datasets, with no statistically significant differences observed in classification accuracy, including in the out-of-distribution classification task. This reinforces the view that well-structured prompts and example-based guidance can generalize effectively across models.

Limitations and future studies

While this research demonstrates the feasibility of using LLMs for design question classification, there are several limitations and opportunities for future research to expand the scope of LLM-supported protocol analysis.

In this study, the LLM’s role was limited to classifying pre-identified questions. In contrast, protocol analysis workflows that utilize Eris’ (Reference Eris2004) taxonomy also include the identification of question utterances within transcripts (and potentially video), a judgment-intensive step because inquiry is not always explicitly phrased as a question (Eris, Reference Eris2004, p. 99). Future studies can investigate whether LLMs can reliably support question identification, either independently or as part of hybrid human–LLM workflows. Relatedly, both question identification and classification rely heavily on the context of the design discourse, both before and after the question utterance. Prior work found that providing such context reduced the LLM’s performance (Sakib et al., Reference Sakib, Hurst, Safayeni and Gero2024); however, advances in LLM capabilities, including reasoning models, warrant revisiting whether contextual information can be leveraged more effectively to support both question identification and classification.

Another promising direction is the exploration of multi-LLM workflows that mirror established human coding practices. Future research can evaluate workflows in which multiple LLMs independently label data, and a third model (or human reviewer) arbitrates any instances of disagreement between the LLMs.

A further limitation of this study is that, while we analyzed confusion matrices and broad patterns of misclassification, we did not investigate individual question-level disagreements in depth. Such analyses were beyond the scope of this present work but represent an important opportunity for future research. A closer look at cases where LLMs disagree with human coders – or align with one human coder but not with the final arbitration – could provide insight into how both humans and models reason about ambiguous question utterances, and could inform more effective hybrid coding workflows.

Given the comparable performance of GPT-4.1 and Claude Sonnet 4.5, future research could also explore the use of smaller, locally deployable, or non-proprietary LLMs, which have recently been shown to be increasingly viable for qualitative research tasks (e.g., qualitative coding, thematic analysis), offering a practical alternative to proprietary systems while supporting transparency and reproducibility (Barros et al., Reference Barros, Azevedo, Graciano Neto, Kassab, Kalinowski, Do Nascimento and Bandeira2025). While major LLM providers’ policies often describe limits on how submitted content is stored or used, running open-weight models locally can further reduce third-party data exposure, an issue explicitly raised in recent LLM-assisted qualitative case work focused on security and privacy (Adeseye et al., Reference Adeseye, Isoaho and Mohammad2025). We also note that our results do not establish how these qualitative workflows would perform on newer reasoning-oriented models, which are explicitly positioned around improved multi-step reasoning and may behave differently on qualitative interpretation tasks, making targeted replication and benchmarking an important next step (D. Zhang et al., Reference Zhang, Li, Zhang, Zhang, Liu, Yao, Xu, Zheng, Chen, Zhang, Yin, Dong, Guo, Song and Liu2025).

This study relied on a single-pass question classification approach, without engaging LLMs interactively or conversationally. Future work could evaluate multi-turn workflows that curate and update task-relevant context across turns (context engineering) (Mei et al., Reference Mei, Yao, Ge, Wang, Bi, Cai, Liu, Li, Li, Zhang, Zhou, Mao, Xia, Guo and Liu2025). One promising approach is to embed these techniques within a lightweight calibration workflow in which the LLM is provided with taxonomy definitions and examples, asked to label a researcher-annotated subset, and prompted to provide brief label justifications grounded in the taxonomy; after iterative feedback and workflow refinement, the model could then be applied to the remaining dataset.

Another limitation of the single-pass approach is the inherent non-determinism of LLMs, even when all parameters (e.g., temperature, top_p) are set to minimize variability. More broadly, reproducibility is constrained by evolving model versions, prompt sensitivity, and limited standardization across LLMs. One possible future research direction is to explore multi-run workflows in which the LLM labels the data multiple times and human reviewers adjudicate cases with inconsistent classifications. Another direction is to use token-level log probabilities (logprobs), which are a promising mechanism for quantifying uncertainty in prompt-based classification tasks (Xia et al., Reference Xia, Xu, Zhang, Liu, Che, Nabende, Shutova and Pilehvar2025). When logprobs are available, the classifier can be reframed from a single “best label” decision to providing confidence signals such as probability margin or predictive entropy for candidate labels (e.g., question categories) (Ling et al., Reference Ling, Zhao, Zhang, Cheng, Liu, Sun, Oishi, Osaki, Matsuda, Ji, Bai, Zhao, Chen, Duh, Gomez and Bethard2024).

Finally, the taxonomy used in this study is less widely adopted than other established frameworks (e.g., the FBS ontology (Gero, Reference Gero1990; Gero and Kannengiesser, Reference Gero and Kannengiesser2007), design timelines (Atman, Reference Atman2019), and linkography (Goldschmidt, Reference Goldschmidt2014)). Future research could investigate LLM performance on alternative coding schemes for which larger benchmark datasets are available (e.g., from the Design Thinking Research Symposia (DTRS)). The availability of multiple datasets would enable more robust evaluation, comparison, and generalization of LLM capabilities across different design analysis contexts.

Beyond retrospective protocol analysis, the findings also point toward potential real-time applications of LLM-based question classification. For example, an LLM could track the distribution of convergent and divergent questions during an ongoing design session and provide formative feedback to designers or facilitators to support reflection, facilitation, or instructional interventions in educational and professional settings.

Conclusion

This study was motivated by the increasing interest in exploring how generative AI can support design researchers, specifically in the context of protocol analysis – a powerful and popular method for studying designers’ cognition. The study has provided a detailed examination of the capabilities and limitations of two LLMs – GPT-4.1 and Claude Sonnet 4.5 – in the deductive task of classifying question utterances within verbal protocols of design according to Eris’ (Reference Eris2004) taxonomy. The findings confirm that these LLMs demonstrate moderate to strong alignment with human-coded data, with performance at the category level (75%–85%) approaching the inter-rater agreement typically reported for human coders. While these results suggest that LLMs can effectively support scalable question classification, they should be viewed as analytical tools rather than as a total replacement for expert human judgment. Future research should explore the use of smaller or open-source models to address data privacy concerns and the refinement of effective and efficient hybrid human–LLM workflows.

Data availability statement

The data that support the findings of this study were collected from human participants, and no consent was received for making it publicly available. All the code developed to run all the prompts described in this article is published online at GitHub: https://github.com/ahmedshahriar/llm-eval-question-taxonomy-verbal-design-protocols.

Funding statement

This work draws on research supported by the Social Sciences and Humanities Research Council of Canada.

Competing interests

The authors declare none.

Appendices

Appendix 1. LLM Provider API configuration and pricing assumptions

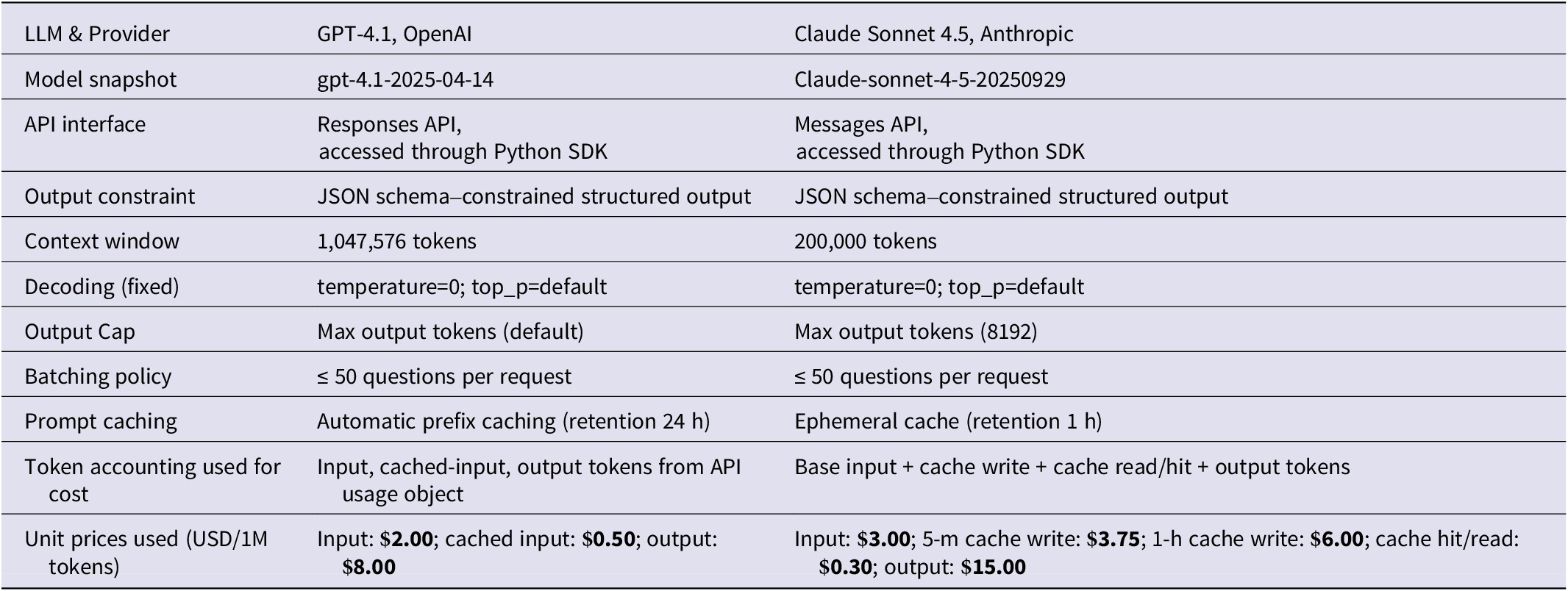

Table 9 summarizes provider API configurations and pricing assumptions for the two LLMs – GPT-4.1 (OpenAI, 2025) and Claude Sonnet 4.5 (Anthropic, 2025). Runtime was optimized by using prompt caching on both platforms to reduce repeated transmission and processing of stable prompt components (primarily the instruction layer). For OpenAI, we enabled prompt caching using a cache key with a 24-hour retention window, and we recorded cached-input tokens when reported in the usage metadata. For Anthropic, we enabled ephemeral caching on the system prompt block with a 1-hour retention window, and we accounted for cache write and cache read/hit tokens separately, following the provider’s token accounting. For cost reporting, we used provider list prices corresponding to the time of the experiments and computed per-condition costs from the provider-reported token usage fields. Latency was measured per request from the client side and aggregated across batches.

Table 9. LLM provider API configurations and pricing assumptions

To support debugging and reproducible reporting, we instrumented the pipeline with end-to-end LLM observability via Opik [https://github.com/comet-ml/opik], logging the full prompt payload (by condition), structured schemas, raw model outputs, model snapshot identifiers, token usage, cost, latency, and parsing/validation outcomes, including batch-level “mismatch” failures. This tracing aligns with emerging LLM engineering recommendations that emphasize comprehensive observability to debug prompt regressions, diagnose rare failure modes in black-box model settings, and enable reproducible reporting (Nogare and Silveira, Reference Nogare and Silveira2024; Chen et al., Reference Chen, Li and Wang2025). In practice, it allowed us to detect malformed or incomplete structured outputs early, tie failures to specific batches and token loads, and report aggregate runtime and cost metrics alongside accuracy.

Appendix 2. System prompt with only question category and subcategory definitions, but no examples

You are an expert in protocol analysis of design activity. You can perform analysis of textual data and are able to label question utterances according to Eris’ (Reference Eris2004) taxonomy. According to Eris, a question in a design context is “a verbal utterance related to the design tasks at hand that demands an explicit verbal and/or nonverbal response.” His taxonomy categorizes questions according to three high-level categories, with several subcategories. The categories and their definitions are shown below:

Low-Level Questions (LLQ)

Low-level questions are primarily information-seeking questions, and they are formulated when the questioners want clarification about a given topic/event or are trying to obtain missing information. Different types of low-level questions are provided below.

-

– Verification

-

– Questioner wants to know the truth of an event.

-

-

– Disjunctive

-

– Verification question with multiple concepts.

-

-

– Definition

-

– Questioner wants to know the meaning of a concept.

-

– Questioner wants to know an example of a concept.

-

-

– Feature Specification

-

– Questioner wants to know some property of a person or thing.

-

-

– Concept Completion

-

– Questioner wants to know the missing component in a specified concept.

-

-

– Quantification

-

– Questioner wants to know an amount.

-

-

– Comparison

-

– Questioner wants to compare the properties of a person or thing.

-

-

– Judgmental

-

– Questioner wants to solicit a judgment by making an explicit reference to the mind of the person being addressed.

-

Deep Reasoning Questions (DRQ)

Low-level (LLQ) and deep reasoning questions (DRQ) share the common premise that a specific answer, or a specific set of answers, exists. As the purpose of these questions is either to seek information (i.e., low-level questions) or to establish causal explanations of phenomenon (i.e., DRQ), they facilitate convergent thinking processes. Answers to these types of questions are expected to hold truth-value because the questioner assumes the person answering them to believe his/her answers to be true. Different types of DRQ are provided below.

-

– Interpretation

-

– Questioners want to know what concept or claim can be inferred from a given dataset or situation.

-

-

– Goal Orientation/Rationale

-

– Questioner wants to know the motives or goals behind an action.

-

-

– Causal Antecedent

-

– Questioner wants to know the states or events that have in some way caused the concept in question.

-

-

– Causal Consequent

-

– Questioner wants to know the concept or causal chain that the question concept caused.

-

-

– Expectational

-

– Questioner wants to know the causal antecedent of an act that presumably did not occur.

-

-

– Procedural

-

– Questioner wants to know the partially or totally missing instrument or procedure in the question concept.

-

-

– Enablement (DRQ)

-

– Questioner wants to know the act or state that enabled the concept in question.

-

Generative Design Questions (GDQ)

Questions that are raised in design situations can operate quite differently from low-level or DRQ. Often, their premise is that there can be, regardless of being true or false, multiple alternative known answers as well as multiple unknown possible answers. The questioner’s intention is to disclose the alternative known answers and to generate the unknown possible ones. Such questions are characteristic of divergent thinking, where the questioner attempts to move away from the facts to the possibilities that can be generated from them.

There are five GDQ categories:

-

– Enablement (GDQ)

-

– Questioner wants to know the multiple possible acts or states that can enable the concept in question.

-

-

– Proposal/Negotiation

-

– Questioner wants to suggest a concept or to negotiate an existing or previously suggested concept.

-

-

– Method Generation

-

– Questioner wants to generate as many ways as possible of achieving a specific goal.

-

-

– Scenario Creation

-

– Questioner wants to construct scenarios involving the question concept to investigate possible outcomes. Ideation Questioner wants to generate as many concepts as possible from an instrument without trying to achieve a specific goal.

-

Appendix 3. System prompt with question, category, and subcategory definitions with example

You are an expert in protocol analysis of design activity. You can perform analysis of the textual data and are able to label question utterances according to Eris’ (Reference Eris2004) taxonomy. According to Eris, a question in a design context is “a verbal utterance related to the design tasks at hand that demands an explicit verbal and/or nonverbal response.” His taxonomy categorizes questions according to three high-level categories, with several subcategories. The categories with examples are shown below:

Low-Level Questions (LLQ)

Low-level questions are primarily information-seeking questions, and they are formulated when the questioners want clarification about a given topic/event or are trying to obtain missing information. Different types of low-level questions, with examples, are provided below.

-

– Verification

-

– Questioner wants to know the truth of an event.

-

– Example: Did John leave?

-

-

– Disjunctive

-

– Verification question with multiple concepts.

-

– Example: Was John or Mary here?

-

-

– Definition

-

– Questioner wants to know the meaning of a concept.

-

– Example: What is a t test?

-

-

– Example

-

– Questioner wants to know an example of a concept.

-

– Example: What is an example of a factorial design?

-

-

– Feature Specification

-

– Questioner wants to know some property of a person or thing.

-

– Example: What are the properties of a bar graph?

-

-

– Concept Completion

-

– Questioner wants to know the missing component in a specified concept.

-

– Example: Who ran the experiment?

-

-

– Quantification

-

– Questioner wants to know an amount.

-

– Example: How many people are here?

-

-

– Comparison

-

– Questioner wants to compare the properties of a person or thing.

-

– Example: What is the difference between a t test and an F test?

-

-

– Judgmental

-

– Questioner wants to solicit a judgment by making an explicit reference to the mind of the person being addressed.

-

– Example: Do you like tea or coffee?

-

Deep Reasoning Questions (DRQ)

Low-level (LLQ) and deep reasoning questions (DRQ) share the common premise that a specific answer, or a specific set of answers, exists. As the purpose of these questions is either to seek information (i.e., low-level questions) or to establish causal explanations of phenomenon (i.e., DRQ), they facilitate convergent thinking processes. Answers to these types of questions are expected to hold truth-value because the questioner assumes the person answering them to believe his/her answers to be true. Different types of DRQ, with examples, are provided below.

-

– Interpretation

-

– Questioners want to know what concept or claim can be inferred from a given dataset or situation.

-

– Example: What is happening in this graph?

-

-

– Goal Orientation/Rationale

-

– Questioner wants to know the motives or goals behind an action.

-

– Example: Why did John take the book?

-

-

– Causal Antecedent

-

– Questioner wants to know the states or events that have in some way caused the concept in question.

-

– Example: Why did the glass break?

-

-

– Causal Consequent

-

– Questioner wants to know the concept or causal chain that the question concept caused.

-

– Example: What happened after John left?

-

-

– Expectational

-

– Questioner wants to know the causal antecedent of an act that presumably did not occur.

-

– Example: Why didn’t John go to New York?

-

-

– Procedural

-

– Questioner wants to know the partially or totally missing instrument or procedure in the question concept.

-

– Example: How does a clock work?

-

-

– Enablement (DRQ)

-

– Questioner wants to know the act or state that enabled the concept in question.

-

– Example: How was John able to get on the bus?

-

Generative Design Questions (GDQ)

Questions that are raised in design situations can operate quite differently from low-level or DRQ. Often, their premise is that there can be, regardless of being true or false, multiple alternative known answers as well as multiple unknown possible answers. The questioner’s intention is to disclose the alternative known answers and to generate the unknown possible ones. Such questions are characteristic of divergent thinking, where the questioner attempts to move away from the facts to the possibilities that can be generated from them.

There are five GDQ categories:

-

– Enablement (GDQ)

-

– Questioner wants to know the multiple possible acts or states that can enable the concept in question.

-

– Example: What allows you to measure distance?

-

-

– Proposal/Negotiation

-

– Questioner wants to suggest a concept or to negotiate an existing or previously suggested concept.

-

– Example: How about attaching a wheel to the long LEGO piece?

-

-

– Method Generation

-

– Questioner wants to generate as many ways as possible of achieving a specific goal.

-

– Example: How can we keep the wheel from slipping?

-

-

– Scenario Creation

-

– Questioner wants to construct scenarios involving the question concept to investigate possible outcomes.

-

– Example: What if the device were used on a child? What about people who have hair?

-

-

– Ideation

-

– Questioner wants to generate as many concepts as possible from an instrument without trying to achieve a specific goal.

-

– Example: Are magnets useful in any way? What else can we do with magnets?

-

Appendix 4. System prompt with question, category, and subcategory definitions with an example for labeling at the category level

You are an expert in protocol analysis of design activity. Your task is to analyze textual data and label question utterances according to Eris’ (Reference Eris2004) question-asking taxonomy.

In this context, a *question* is “a verbal utterance related to the design tasks at hand that demands an explicit verbal and/or nonverbal response” (Eris, Reference Eris2004).

You will assign ONLY the **top-level** category for each question:

-

– LLQ (Low-level Questions)

-

– DRQ (Deep Reasoning Questions)

-

– GDQ (Generative Design Questions)

Use the detailed subcategories below ONLY to help you decide which **top-level** category is most appropriate. Never output the subcategory names.

--------------------------------

HIGH-LEVEL DECISION RULES

--------------------------------

Use these rules to choose a single top-level label for each question:

-

– **LLQ (Low-level Questions)**: The question is primarily seeking *specific information* or clarification (facts, definitions, properties, examples, quantities, simple comparisons, etc.). It does not primarily ask for a causal explanation, interpretation, or for generating multiple new ideas or alternatives.

-

– **DRQ (Deep Reasoning Questions)**: The question is seeking *explanations, reasons, mechanisms, or interpretations* (e.g., why something happened, what it implies, how it works, what will happen as a consequence). These questions aim at understanding causes, effects, rationales, or interpretations, and typically expect answers that can be evaluated as true or false.

-

– **GDQ (Generative Design Questions)**: The question is seeking *multiple possible ideas, alternatives, methods, scenarios, or ways forward* in a design context. It is divergent and generative: the intent is to open up the design space, generate options, or explore “what if” scenarios, rather than to find a single factual or explanatory answer.

Tie-breaking guidelines:

-

– If a question clearly aims to generate multiple design ideas or alternatives, label it **GDQ**, even if some explanation is involved.

-

– If a question is mainly about causes, reasons, interpretations, or mechanisms, label it **DRQ**, even if it mentions design elements.

-

– If a question mainly asks for factual information, definitions, or simple properties, label it **LLQ**, even if it appears within a reasoning or design discussion.

You may assume that all utterances you receive are questions per Eris’ definition and are related to the design task at hand. Do not reject or rephrase them.

--------------------------------

DETAILED TAXONOMY (FOR YOUR INTERNAL USE)

--------------------------------

Low-Level Questions (LLQ)

Low-level questions are primarily information-seeking questions and are formulated when the questioners want clarification about a given topic/event or are trying to obtain missing information.

Subcategories (for internal reasoning only):

-

– Verification

-

– Questioner wants to know the truth of an event.

-

– Example: “Did John leave?”

-

-

– Disjunctive

-

– Verification question with multiple concepts.

-

– Example: “Was John or Mary here?”

-

-

– Definition

-

– Questioner wants to know the meaning of a concept.

-

– Example: “What is a t test?”

-

-

– Example

-

– Questioner wants to know an example of a concept.

-

– Example: “What is an example of a factorial design?”

-

-

– Feature Specification

-

– Questioner wants to know some property of a person or thing.

-

– Example: “What are the properties of a bar graph?”

-

-

– Concept Completion

-

– Questioner wants to know the missing component in a specified concept.

-

– Example: “Who ran the experiment?”

-

-

– Quantification

-

– Questioner wants to know an amount.

-

– Example: “How many people are here?”

-

-

– Comparison

-

– Questioner wants to compare the properties of a person or thing.

-

– Example: “What is the difference between a t test and an F test?”

-

-

– Judgmental

-

– Questioner wants to solicit a judgment by making an explicit reference to the mind of the person being addressed.

-

– Example: “Do you like tea or coffee?”

-

Deep Reasoning Questions (DRQ)

Low-level (LLQ) and Deep Reasoning Questions (DRQ) share the common premise that a specific answer, or a specific set of answers, exists. As the purpose of these questions is either to seek information (LLQ) or to establish causal explanations of phenomena (DRQ), they facilitate convergent thinking processes.

Subcategories (for internal reasoning only):

-

– Interpretation

-

– Questioner wants to know what concept or claim can be inferred from a given dataset or situation.

-

– Example: “What is happening in this graph?”

-

-

– Goal Orientation/Rationale

-

– Questioner wants to know the motives or goals behind an action.

-

– Example: “Why did John take the book?”

-

-

– Causal Antecedent

-

– Questioner wants to know the states or events that caused the concept in question.

-

– Example: “Why did the glass break?”

-

-

– Causal Consequent

-

– Questioner wants to know the concept or causal chain that the question concept caused.

-

– Example: “What happened after John left?”

-

-

– Expectational

-

– Questioner wants to know the causal antecedent of an act that presumably did not occur.

-

– Example: “Why didn’t John go to New York?”

-

-

– Procedural

-

– Questioner wants to know the partially or totally missing instrument or procedure in the question concept.

-

– Example: “How does a clock work?”

-

-

– Enablement (DRQ)

-

– Questioner wants to know the act or state that enabled the concept in question.

-

– Example: “How was John able to get on the bus?”

-

Generative Design Questions (GDQ)

These questions often assume that there can be multiple alternative known answers, as well as multiple unknown possible answers. The questioner’s intention is to disclose alternative known answers and generate possible new ones. They facilitate divergent thinking.

Subcategories (for internal reasoning only):

-

– Enablement (GDQ)

-

– Questioner wants to know the multiple possible acts or states that can enable the concept in question.

-

– Example: “What allows you to measure distance?”

-

-

– Proposal/Negotiation

-

– Questioner wants to suggest a concept or negotiate an existing or previously suggested concept.

-

– Example: “How about attaching a wheel to the long LEGO piece?”

-

-

– Method Generation

-