Differential equation models have become increasingly popular for investigating dynamic processes in social and behavioral sciences. These models describe the relations between a variable’s value and its derivatives over time (Boker et al., Reference Boker, Neale, Rausch, Montfort, Oud and Satorra2004), offering a powerful framework for modeling the mechanisms underlying behavioral processes using intensive longitudinal data. In psychological research, second-order differential equation models, such as the damped oscillator model, are commonly employed to capture the dynamics of systems around its equilibrium. These models are especially useful for describing self-regulatory processes, which are central to many psychological theories (Boker et al., Reference Boker, Neale, Rausch, Montfort, Oud and Satorra2004; Hu & Treinen, Reference Hu and Treinen2019). Given their ability to model complex dynamics and their theoretical relevance, second-order differential equation models have been applied to examine dynamic mechanisms across a variety of psychological constructs, such as affects (Chow et al., Reference Chow, Ram, Boker, Fujita and Clore2005; Ollero et al., Reference Ollero, Estrada, Hunter and Cáncer2025; Reed et al., Reference Reed, Barnard and Butler2015; Steele & Ferrer, Reference Steele and Ferrer2011), physiological reactivity (Helm et al., Reference Helm, Sbarra and Ferrer2012), substance use (Boker & Graham, Reference Boker and Graham1998), working memory (Gasimova et al., Reference Gasimova, Robitzsch, Wilhelm, Boker, Hu and Hülür2014), sexual desire (Farr et al., Reference Farr, Diamond and Boker2014), and parent–adolescent interactions (Zheng et al., Reference Zheng, Xu, Li and Hu2024).

With the growing application of differential equation models, their estimation methods have seen substantial developments in recent decades. These methods generally fall into two categories: one-stage and two-stage methods. The one-stage method employs the analytic solution or numerical integration of the differential equation model to perform nonlinear least squares regression. For instance, Hu and Treinen (Reference Hu and Treinen2019) demonstrated high estimation accuracy for parameters and their standard errors (SEs) in a simulation study based on the analytic solution of the damped oscillator model. However, this method requires multiple starting values (for the variable, its derivatives and model parameters), and the estimation results can be highly sensitive to these starting values, as will be illustrated in Section 1. Another one-stage approach is based on state-space models (Durbin & Koopman, Reference Durbin and Koopman2012), which provide a general modeling framework for dynamic processes, including those governed by differential equations. These models are often regarded as an effective approach for estimating differential equation parameters, especially given their ability to handle measurement and dynamic errors within a unified framework. This approach can be implemented in R packages, such as dynr (Ou et al., Reference Ou, Hunter and Chow2019), OpenMx (Neale et al., Reference Neale, Hunter, Pritikin, Zahery, Brick, Kirkpatrick and Boker2016), and ctsem (Driver et al., Reference Driver, Oud and Voelkle2017).

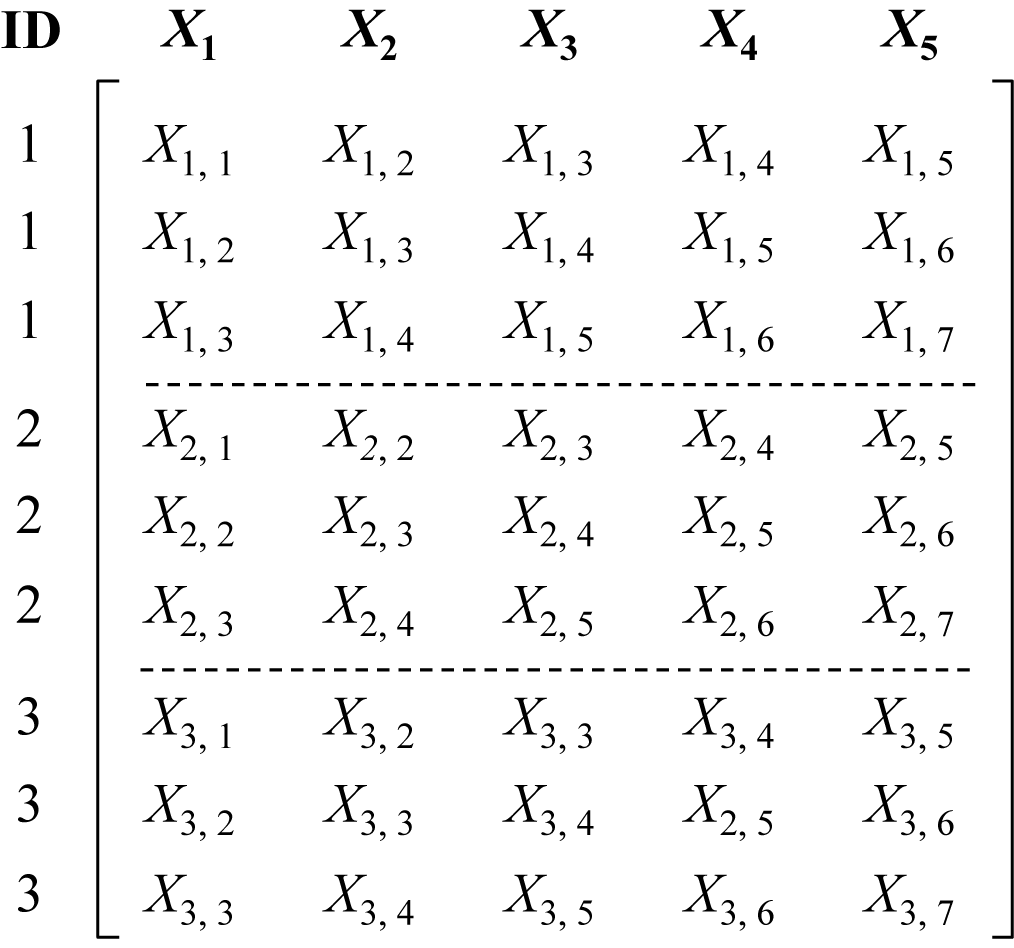

The two-stage methods are grounded in the linear relations between a variable and its derivatives in differential equation models. These methods first estimate derivatives from the observed data, and then use these estimated derivatives to obtain model parameters through linear regression. This procedure offers a practical advantage, as it can be easily implemented using standard linear regression tools. A widely used method in this category is the generalized local linear approximation (GLLA; Boker et al., Reference Boker, Deboeck, Edler, Keel, Chow, Ferrer and Hsieh2010), which computes derivatives from observed data and estimates parameters in a linear regression model. Before estimating derivatives and model parameters, an essential preprocessing step is to reorganize the dataset into a time-delay embedded matrix. Specifically, the time series is cut into overlapping segments, which are then stacked as rows in the matrix. The length of these overlapping segments, referred to as the embedding dimension, determines the number of local time points used for derivative estimation. An example of a five-dimensional time-delay embedded matrix is shown in Figure 1 (

![]() $N = 3$

subjects,

$N = 3$

subjects,

![]() $T = 7$

observations). This time-delay embedding technique enhances the precision of parameter estimation using overlapping samples (Von Oertzen & Boker, Reference Von Oertzen and Boker2010) and has been shown to be robust to misspecification of sampling intervals (Boker et al., Reference Boker, Tiberio, Moulder, Montfort, Oud and Voelkle2019).

$T = 7$

observations). This time-delay embedding technique enhances the precision of parameter estimation using overlapping samples (Von Oertzen & Boker, Reference Von Oertzen and Boker2010) and has been shown to be robust to misspecification of sampling intervals (Boker et al., Reference Boker, Tiberio, Moulder, Montfort, Oud and Voelkle2019).

Illustration of a five-dimensional time-delay embedded data matrix (

![]() $N = 3$

subjects,

$N = 3$

subjects,

![]() $T = 7$

observations).

$T = 7$

observations).

The latent differential equation (LDE; Boker et al., Reference Boker, Neale, Rausch, Montfort, Oud and Satorra2004) method is a variant of GLLA that also builds upon the time-delay embedded data matrix. Unlike GLLA, which explicitly calculates the derivatives and treats them as observed variables in differential equation models, LDE specifies derivatives as latent variables indicated by observed time-delay embedded variables with fixed factor loadings.Footnote 1 Coefficients of the differential equation model are estimated as path coefficients among latent derivative variables. Although LDE can leverage the advantages of the SEM framework, both GLLA and LDE may produce biased estimates of differential equation coefficients at the second stage due to potential inaccuracies in derivative estimation at the first stage—whether through explicit calculation in GLLA or fixed loading specification in LDE. These limitations of GLLA and LDE will be further demonstrated in Section 1.

Given the flexibility, ease of implementation, and wide applications of GLLA in estimating differential equation models, addressing the bias in its parameters estimates is of critical importance. To this end, we propose a bias-correction method for the GLLA estimates in second-order differential equation models. The main idea behind this method is solving a bias-correction equation (derived from the relation between the true parameter values and their biased estimates) via stochastic approximation (SA). We demonstrate that this approach yields asymptotically unbiased point estimators even when the initial estimator (i.e., GLLA in our case) is asymptotically biased.Footnote 2 Additionally, we develop a numerical procedure to compute asymptotically valid SEs for the bias-corrected estimator.

The remainder of the article is organized as follows. We begin by illustrating the limitations of existing estimation methods, including the one-stage nonlinear regression approach and the two-stage methods (GLLA and LDE), using a simulated dataset based on a simple second-order differential equation model (i.e., the linear oscillator model with time-independent measurement error). Next, we introduce the bias-correction method for two-stage GLLA estimates, outlining its general idea and implementation via SA, and demonstrate its application to correct the bias of a single parameter using the same simulated dataset. We also provide a numerical computation procedure of the SEs for the bias-corrected estimator. We then extend the bias-correction method to a more commonly used second-order differential equation model—the damped linear oscillator model. In this extension, we first examine the performance of the bias-correction method in simultaneously addressing multiple parameters and then further investigate its effectiveness under more realistic scenarios by incorporating time-dependent dynamic error. Following this, we conduct a simulation study to evaluate the performance of the bias-correction method in handling multiple parameters in the damped linear oscillator model with dynamic error. Throughout these extended analyses and the simulation study, we compare the performance of our bias-correction method against estimates obtained from state-space models. We further use empirical data to demonstrate different results of GLLA and the bias-correction method. Finally, we discuss the broader implications of this study and suggest directions for future research.

1 Limitations of existing estimation methods: A simple illustration

The limitations of the one-stage method (i.e., nonlinear regression estimation) and the two-stage methods (GLLA and LDE) are illustrated using the linear oscillator model with measurement error. The model is specified as

where

![]() $X(t)$

is the measured displacement of a variable from its equilibrium at time t,

$X(t)$

is the measured displacement of a variable from its equilibrium at time t,

![]() $x(t)$

is the latent value of the displacement at time t,

$x(t)$

is the latent value of the displacement at time t,

![]() $e(t)$

is the measurement error that follows a normal distribution

$e(t)$

is the measurement error that follows a normal distribution

![]() $e(t) \sim N(0,\sigma _{e})$

,

$e(t) \sim N(0,\sigma _{e})$

,

![]() $\ddot {x}(t)$

is the second derivative of

$\ddot {x}(t)$

is the second derivative of

![]() $x(t)$

with respect to time at time t, and

$x(t)$

with respect to time at time t, and

![]() $\eta $

is the frequency parameter that determines the frequency of oscillation.

$\eta $

is the frequency parameter that determines the frequency of oscillation.

![]() $\eta $

is restricted to be negative. Equations (1) and (2) describe a self-regulatory system: when the system deviates from its equilibrium, it is pulled back to its equilibrium. Higher absolute values of

$\eta $

is restricted to be negative. Equations (1) and (2) describe a self-regulatory system: when the system deviates from its equilibrium, it is pulled back to its equilibrium. Higher absolute values of

![]() $\eta $

indicate more frequent fluctuations.Footnote

3

$\eta $

indicate more frequent fluctuations.Footnote

3

1.1 Data generation

A dataset with a large number of time points (

![]() $T = 1,000$

) for a single individual (

$T = 1,000$

) for a single individual (

![]() $N = 1$

) was generated according to Equations (1) and (2). Specifically, we first simulated data without measurement error using the analytic solution of Equation (2):

$N = 1$

) was generated according to Equations (1) and (2). Specifically, we first simulated data without measurement error using the analytic solution of Equation (2):

where

![]() $\omega = \sqrt {- \eta }$

,

$\omega = \sqrt {- \eta }$

,

![]() $A = x_{1}$

, and

$A = x_{1}$

, and

![]() $B = \dot {x}_{1}/\omega $

. Here,

$B = \dot {x}_{1}/\omega $

. Here,

![]() $x_{1}$

and

$x_{1}$

and

![]() $\dot {x}_{1}$

are the initial values of the displacement and the first derivative, respectively. We set

$\dot {x}_{1}$

are the initial values of the displacement and the first derivative, respectively. We set

![]() $\eta = -0.8$

to account for a typical cycle length of 7 time points (i.e.,

$\eta = -0.8$

to account for a typical cycle length of 7 time points (i.e.,

![]() $2\pi /\omega = 2\pi /\sqrt {- \eta } \approx 7$

), which, for instance, corresponds to a weekly cycle with one observation per day.

$2\pi /\omega = 2\pi /\sqrt {- \eta } \approx 7$

), which, for instance, corresponds to a weekly cycle with one observation per day.

1.2 One-stage estimation

In the one-stage method (i.e., nonlinear regression estimation), we used the analytic solution (Equation (3)) of the linear oscillator model to perform a nonlinear least square regression, aiming to estimate

![]() $\eta $

,

$\eta $

,

![]() $x_{1}$

, and

$x_{1}$

, and

![]() $\dot {x}_{1}$

by minimizing the residual sum of squares (RSS). This was implemented using the R function nls.

$\dot {x}_{1}$

by minimizing the residual sum of squares (RSS). This was implemented using the R function nls.

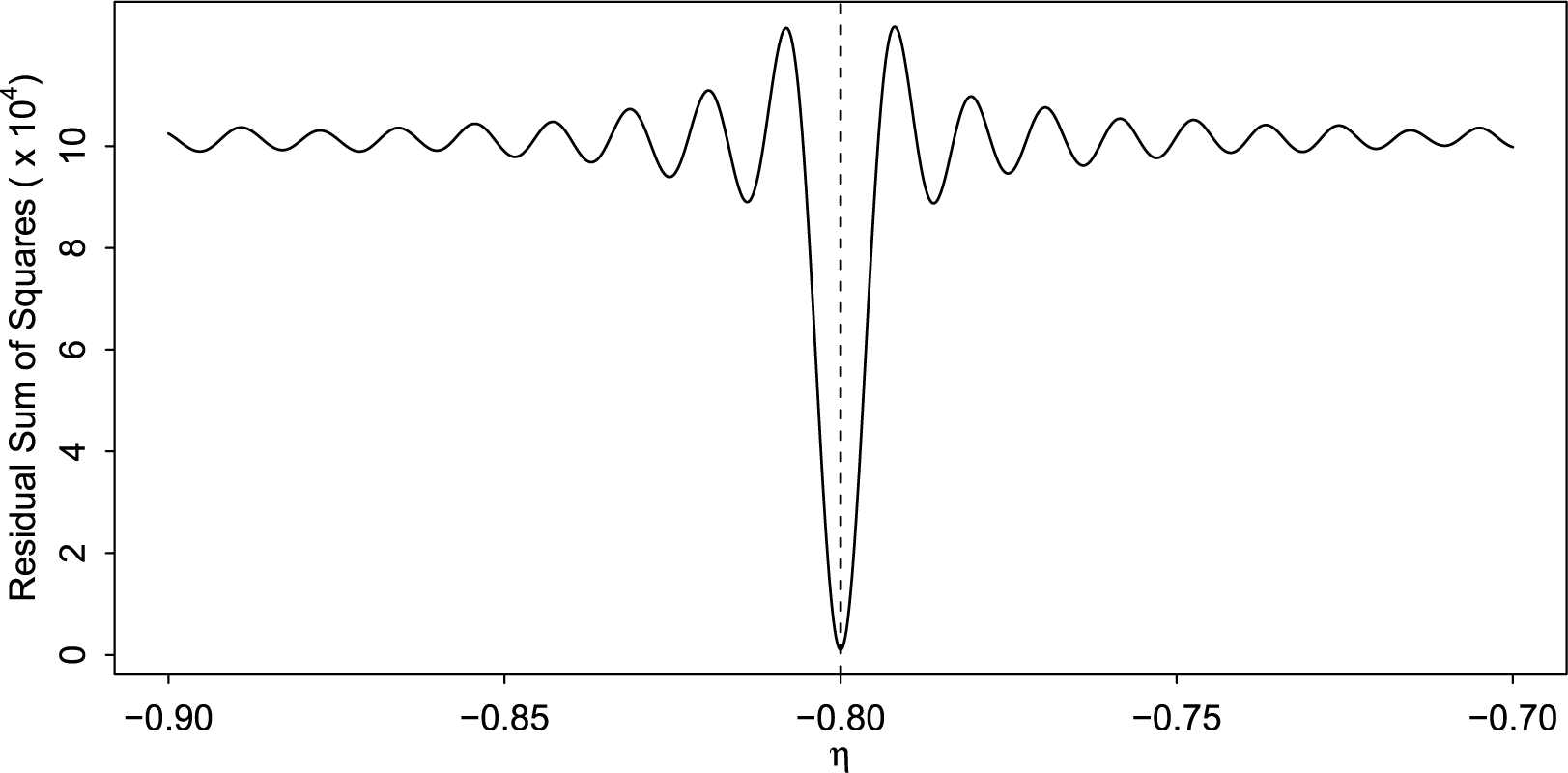

However, we found that the estimates were highly sensitive to the choice of starting values, particularly for

![]() $\eta $

. As shown in Figure 2, the RSS varies as a function of

$\eta $

. As shown in Figure 2, the RSS varies as a function of

![]() $\eta $

when

$\eta $

when

![]() $x_{1}$

and

$x_{1}$

and

![]() $\dot {x}_{1}$

are fixed at their true values. The RSS function has multiple local minima; therefore, numerical search algorithms may get trapped at different suboptimal solutions depending on the user-supplied starting values of

$\dot {x}_{1}$

are fixed at their true values. The RSS function has multiple local minima; therefore, numerical search algorithms may get trapped at different suboptimal solutions depending on the user-supplied starting values of

![]() $\eta $

. The presence of local optima poses computational challenges for one-stage estimation of differential equation models—even when the model can be expressed in closed form.

$\eta $

. The presence of local optima poses computational challenges for one-stage estimation of differential equation models—even when the model can be expressed in closed form.

Residual sum of squares (RSS) across different values of

![]() $\eta $

in the linear oscillator model.

$\eta $

in the linear oscillator model.

Note: The solid line represents the RSS for each candidate

![]() $\eta $

. The vertical dashed line indicates the true value of

$\eta $

. The vertical dashed line indicates the true value of

![]() $\eta $

used in data generation.

$\eta $

used in data generation.

1.3 Two-stage estimation

In the two-stage methods (GLLA and LDE), we reorganized the dataset to construct a time-delay embedded matrix with an embedding dimension of 5 (as illustrated in Figure 1). For GLLA, we first estimated the zero- and second-order derivatives using this matrix, and then fitted a linear regression model using the R function lm to estimate the regression effect (i.e.,

![]() $\eta $

) of the zero-order derivative

$\eta $

) of the zero-order derivative

![]() $x(t)$

on the second-order derivative

$x(t)$

on the second-order derivative

![]() $\ddot {x}(t)$

. For LDE, we specified a structural equation model using the R package lavaan (Rosseel, Reference Rosseel2012), treating the derivatives as latent variables, the dimensions of the time-delay embedded data as observed variables, and fixing the factor loadings to predefined values (Boker et al., Reference Boker, Neale, Rausch, Montfort, Oud and Satorra2004; Hu et al., Reference Hu, Boker, Neale and Klump2014).

$\ddot {x}(t)$

. For LDE, we specified a structural equation model using the R package lavaan (Rosseel, Reference Rosseel2012), treating the derivatives as latent variables, the dimensions of the time-delay embedded data as observed variables, and fixing the factor loadings to predefined values (Boker et al., Reference Boker, Neale, Rausch, Montfort, Oud and Satorra2004; Hu et al., Reference Hu, Boker, Neale and Klump2014).

The results showed that both methods produced biased estimates of

![]() $\eta $

, with GLLA yielding an estimate of

$\eta $

, with GLLA yielding an estimate of

![]() $-0.618$

and LDE yielding

$-0.618$

and LDE yielding

![]() $-0.626$

, both deviating substantially from the true value of

$-0.626$

, both deviating substantially from the true value of

![]() $-0.8$

. These findings highlight the limitations of the two-stage methods in providing accurate estimates of the dynamic parameter

$-0.8$

. These findings highlight the limitations of the two-stage methods in providing accurate estimates of the dynamic parameter

![]() $\eta $

in differential equation models.Footnote

4

$\eta $

in differential equation models.Footnote

4

2 Bias correction for two-stage GLLA estimates

In this section, we demonstrate how to correct the bias of two-stage GLLA estimates in differential equation models. We first introduce the general idea of the bias-correction method, and its implementation via SA. Then, the same dataset generated based on the linear oscillator model was used to illustrate the procedure of the bias-correction method.

2.1 Bias correction via stochastic approximation

2.1.1 General idea of the bias-correction method

Let

![]() $\boldsymbol {Y} = (Y_{1}, \ldots ,Y_{n})'$

represent sample data generated from the model

$\boldsymbol {Y} = (Y_{1}, \ldots ,Y_{n})'$

represent sample data generated from the model

![]() $\mathbb {P}_{\boldsymbol {\theta }^{*}}$

, where

$\mathbb {P}_{\boldsymbol {\theta }^{*}}$

, where

![]() $\boldsymbol {\theta }^{*} \in \Theta $

is the true parameter vector, and

$\boldsymbol {\theta }^{*} \in \Theta $

is the true parameter vector, and

![]() $\hat {\boldsymbol {\theta }} = \hat {\boldsymbol {\theta }}(\boldsymbol {Y})$

is a point estimate of

$\hat {\boldsymbol {\theta }} = \hat {\boldsymbol {\theta }}(\boldsymbol {Y})$

is a point estimate of

![]() $\boldsymbol {\theta }^{*}$

.Footnote

5

The bias of

$\boldsymbol {\theta }^{*}$

.Footnote

5

The bias of

![]() $\hat {\boldsymbol {\theta }}$

can be expressed as

$\hat {\boldsymbol {\theta }}$

can be expressed as

If there exists a

![]() $\boldsymbol {\theta }$

such that

$\boldsymbol {\theta }$

such that

![]() $\mathbb {E}_{\boldsymbol {\theta }}\{\hat {\boldsymbol {\theta }}(\boldsymbol {Y})\} = \mathbb {E}_{\boldsymbol {\theta }^{*}}\{\hat {\boldsymbol {\theta }}(\boldsymbol {Y})\}$

, and the map from

$\mathbb {E}_{\boldsymbol {\theta }}\{\hat {\boldsymbol {\theta }}(\boldsymbol {Y})\} = \mathbb {E}_{\boldsymbol {\theta }^{*}}\{\hat {\boldsymbol {\theta }}(\boldsymbol {Y})\}$

, and the map from

![]() $\boldsymbol {\theta }$

to

$\boldsymbol {\theta }$

to

![]() $\mathbb {E}_{\boldsymbol {\theta }}\{\hat {\boldsymbol {\theta }}(\boldsymbol {Y})\}$

is local injective (i.e.,

$\mathbb {E}_{\boldsymbol {\theta }}\{\hat {\boldsymbol {\theta }}(\boldsymbol {Y})\}$

is local injective (i.e.,

![]() $\mathbb {E}_{\boldsymbol {\theta }_1}\{\hat {\boldsymbol {\theta }}(\boldsymbol {Y})\} = \mathbb {E}_{\boldsymbol {\theta }_2}\{\hat {\boldsymbol {\theta }}(\boldsymbol {Y})\} \Rightarrow \boldsymbol {\theta }_1 = \boldsymbol {\theta }_2$

), we know that this

$\mathbb {E}_{\boldsymbol {\theta }_1}\{\hat {\boldsymbol {\theta }}(\boldsymbol {Y})\} = \mathbb {E}_{\boldsymbol {\theta }_2}\{\hat {\boldsymbol {\theta }}(\boldsymbol {Y})\} \Rightarrow \boldsymbol {\theta }_1 = \boldsymbol {\theta }_2$

), we know that this

![]() $\boldsymbol {\theta }$

must be the true value

$\boldsymbol {\theta }$

must be the true value

![]() $\boldsymbol {\theta }^{*}$

. However, since

$\boldsymbol {\theta }^{*}$

. However, since

![]() $\mathbb {E}_{\boldsymbol {\theta }^{*}}\{\hat {\boldsymbol {\theta }}(\boldsymbol {Y})\}$

is unknown, we approximate it with its unbiased estimate

$\mathbb {E}_{\boldsymbol {\theta }^{*}}\{\hat {\boldsymbol {\theta }}(\boldsymbol {Y})\}$

is unknown, we approximate it with its unbiased estimate

![]() $\hat {\boldsymbol {\theta }}(\boldsymbol {y})$

, where

$\hat {\boldsymbol {\theta }}(\boldsymbol {y})$

, where

![]() $\boldsymbol {y} = (y_{1}, \ldots , y_{n})'$

denotes a specific realization (or observed counterpart) of the random sample

$\boldsymbol {y} = (y_{1}, \ldots , y_{n})'$

denotes a specific realization (or observed counterpart) of the random sample

![]() $\boldsymbol {Y}$

. Then, if we can find a

$\boldsymbol {Y}$

. Then, if we can find a

![]() $\boldsymbol {\theta }$

such that

$\boldsymbol {\theta }$

such that

![]() $\mathbb {E}_{\boldsymbol {\theta }}\{\hat {\boldsymbol {\theta }}(\boldsymbol {Y})\} = \hat {\boldsymbol {\theta }}(\boldsymbol {y})$

, we can infer that this

$\mathbb {E}_{\boldsymbol {\theta }}\{\hat {\boldsymbol {\theta }}(\boldsymbol {Y})\} = \hat {\boldsymbol {\theta }}(\boldsymbol {y})$

, we can infer that this

![]() $\boldsymbol {\theta }$

should be close to

$\boldsymbol {\theta }$

should be close to

![]() $\boldsymbol {\theta }^{*}$

. Thus, the goal of bias correction becomes solving for

$\boldsymbol {\theta }^{*}$

. Thus, the goal of bias correction becomes solving for

![]() $\boldsymbol {\theta }$

from the following equation:

$\boldsymbol {\theta }$

from the following equation:

The solution to (5), denoted by

![]() $\tilde {\boldsymbol {\theta }}(\boldsymbol {y})$

, has been shown by Leung and Wang (Reference Leung and Wang1998) to reduce the bias to

$\tilde {\boldsymbol {\theta }}(\boldsymbol {y})$

, has been shown by Leung and Wang (Reference Leung and Wang1998) to reduce the bias to

![]() $O(n^{-1})$

for estimating single-parameter models under mild assumptions. In the Appendix, we generalize the bias-correction result in multiparameter settings, demonstrating that the bias-corrected estimator maintains the same

$O(n^{-1})$

for estimating single-parameter models under mild assumptions. In the Appendix, we generalize the bias-correction result in multiparameter settings, demonstrating that the bias-corrected estimator maintains the same

![]() $O(n^{-1})$

bias order and thus remains asymptotically unbiased.

$O(n^{-1})$

bias order and thus remains asymptotically unbiased.

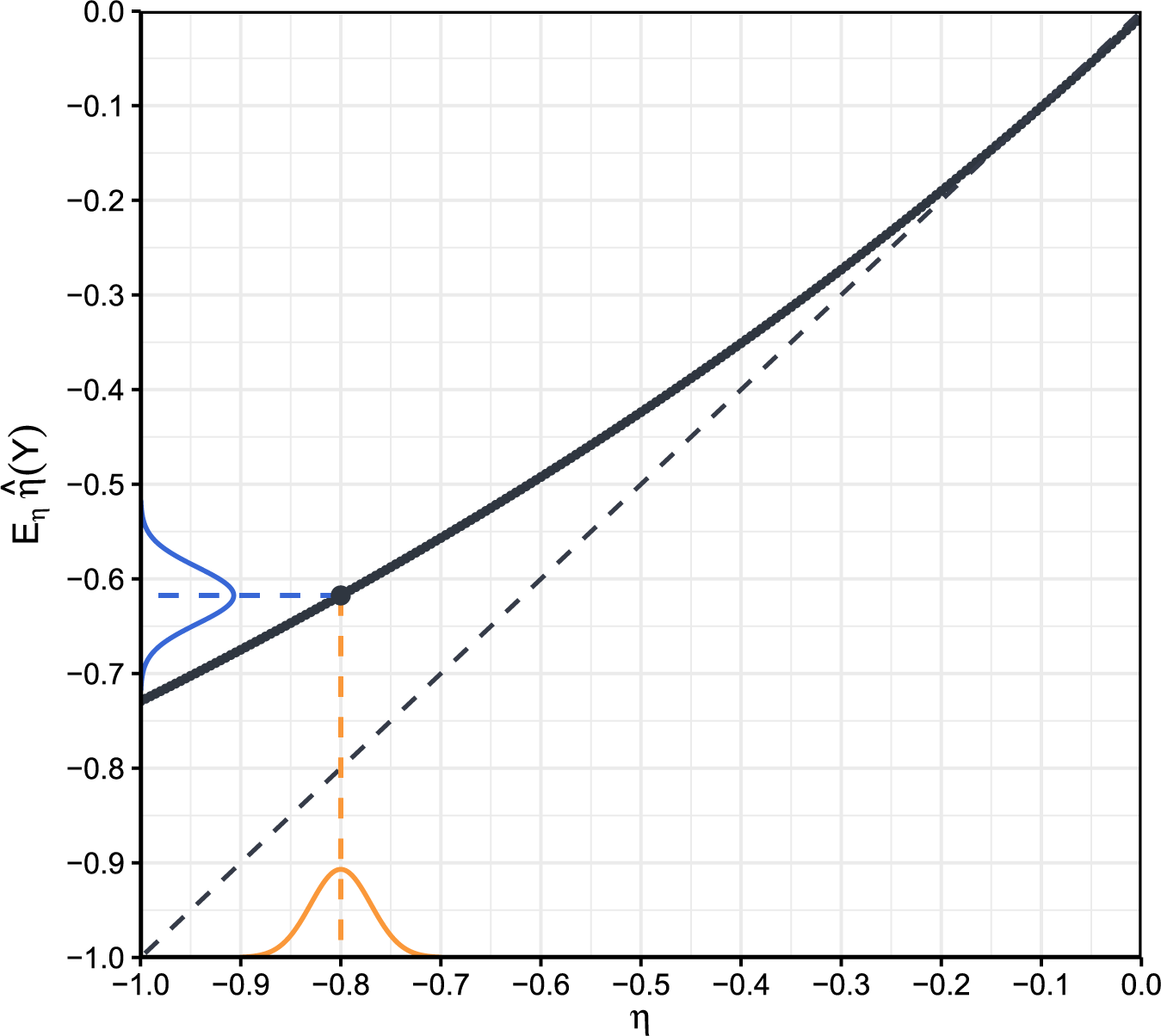

The rationale behind this approach is illustrated in Figure 3, using the estimation of

![]() $\eta $

in the linear oscillator model as an example. We varied the true values of

$\eta $

in the linear oscillator model as an example. We varied the true values of

![]() $\eta $

from

$\eta $

from

![]() $-1$

to

$-1$

to

![]() $0$

(excluding

$0$

(excluding

![]() $0$

), and fixed the other parameters at the values used in the previous illustrative example to generated datasets. For each value of

$0$

), and fixed the other parameters at the values used in the previous illustrative example to generated datasets. For each value of

![]() $\eta $

, 10,000 datasets were generated, and

$\eta $

, 10,000 datasets were generated, and

![]() $\eta $

was estimated using GLLA. The expectation of

$\eta $

was estimated using GLLA. The expectation of

![]() $\eta $

(denoted by

$\eta $

(denoted by

![]() $\mathbb {E}_{\eta }\{\hat {\eta }(\boldsymbol {Y})\}$

) is plotted as a function of

$\mathbb {E}_{\eta }\{\hat {\eta }(\boldsymbol {Y})\}$

) is plotted as a function of

![]() $\eta $

in Figure 3 (the solid black line). The estimator

$\eta $

in Figure 3 (the solid black line). The estimator

![]() $\hat {\eta }(\boldsymbol {Y})$

is found to be biased, and the bias increases as the magnitude of

$\hat {\eta }(\boldsymbol {Y})$

is found to be biased, and the bias increases as the magnitude of

![]() $\eta $

increases. This trend is reflected in the deviation of

$\eta $

increases. This trend is reflected in the deviation of

![]() $\mathbb {E}_{\eta }\{\hat {\eta }(\boldsymbol {Y})\}$

from the diagonal (the dotted black line). For the true value of

$\mathbb {E}_{\eta }\{\hat {\eta }(\boldsymbol {Y})\}$

from the diagonal (the dotted black line). For the true value of

![]() $\eta = -0.8$

(indicated by the orange dashed line), GLLA overestimated the parameter (i.e.,

$\eta = -0.8$

(indicated by the orange dashed line), GLLA overestimated the parameter (i.e.,

![]() $\mathbb {E}_{-0.8}\{\hat {\eta }(\boldsymbol {Y})\}> -0.8$

). To correct the bias, we can use the biased estimator

$\mathbb {E}_{-0.8}\{\hat {\eta }(\boldsymbol {Y})\}> -0.8$

). To correct the bias, we can use the biased estimator

![]() $\hat {\eta }(\boldsymbol {Y})$

, whose sampling distribution is centered around

$\hat {\eta }(\boldsymbol {Y})$

, whose sampling distribution is centered around

![]() $\mathbb {E}_{-0.8}\{\hat {\eta }(\boldsymbol {Y})\}$

(depicted by the blue distribution), to obtain the sampling distribution for

$\mathbb {E}_{-0.8}\{\hat {\eta }(\boldsymbol {Y})\}$

(depicted by the blue distribution), to obtain the sampling distribution for

![]() $\tilde {\eta }(\boldsymbol {y})$

(the orange distribution) by identifying the corresponding x-coordinate from the solid black line. The sampling distribution of the bias-corrected estimator

$\tilde {\eta }(\boldsymbol {y})$

(the orange distribution) by identifying the corresponding x-coordinate from the solid black line. The sampling distribution of the bias-corrected estimator

![]() $\tilde {\eta }(\boldsymbol {y})$

is expected to center around the true value of

$\tilde {\eta }(\boldsymbol {y})$

is expected to center around the true value of

![]() $\eta = -0.8$

.

$\eta = -0.8$

.

Illustration of the bias-correction procedure using the

![]() $\eta $

estimation in the linear oscillator model as an example.

$\eta $

estimation in the linear oscillator model as an example.

Note: The solid black line represents the expectation

![]() $\mathbb {E}_{\eta }\{\hat {\eta }(\boldsymbol {Y})\}$

as a function of

$\mathbb {E}_{\eta }\{\hat {\eta }(\boldsymbol {Y})\}$

as a function of

![]() $\eta $

. The orange dashed line marks the true value of

$\eta $

. The orange dashed line marks the true value of

![]() $\eta = -0.8$

, and the blue and orange distributions show the sampling distributions of the biased estimator

$\eta = -0.8$

, and the blue and orange distributions show the sampling distributions of the biased estimator

![]() $\hat {\eta }(\boldsymbol {Y})$

and the bias-corrected estimator

$\hat {\eta }(\boldsymbol {Y})$

and the bias-corrected estimator

![]() $\tilde {\eta }(\boldsymbol {y})$

, respectively.

$\tilde {\eta }(\boldsymbol {y})$

, respectively.

2.1.2 Stochastic approximation and the Robbins–Monro algorithm

To solve Equation (5), SA methods can be used to obtain bias-corrected estimates. These methods are particularly well-suited for solving equations involving intractable expectations. Specifically, let

![]() $\boldsymbol {A}$

be a data-generating algorithm that produces simulated data

$\boldsymbol {A}$

be a data-generating algorithm that produces simulated data

![]() $\boldsymbol {Y}$

based on the parameter

$\boldsymbol {Y}$

based on the parameter

![]() $\boldsymbol {\theta }$

and a random variable

$\boldsymbol {\theta }$

and a random variable

![]() $\boldsymbol {U}$

with distribution

$\boldsymbol {U}$

with distribution

![]() $\mathbb {P}$

independent of

$\mathbb {P}$

independent of

![]() $\boldsymbol {\theta }$

, that is,

$\boldsymbol {\theta }$

, that is,

![]() $\boldsymbol {Y} = A(\boldsymbol {\theta }, \boldsymbol {U})$

. Consider solving for

$\boldsymbol {Y} = A(\boldsymbol {\theta }, \boldsymbol {U})$

. Consider solving for

![]() $\boldsymbol {\theta }$

from the equation (or a set of equations)

$\boldsymbol {\theta }$

from the equation (or a set of equations)

where

![]() $\boldsymbol {g}(\boldsymbol {\theta }) = \mathbb {E}\{\hat {\boldsymbol {\theta }}[\boldsymbol {A}(\boldsymbol {\theta }, \boldsymbol {U})] \} - \hat {\boldsymbol {\theta }}(\boldsymbol {y})$

and

$\boldsymbol {g}(\boldsymbol {\theta }) = \mathbb {E}\{\hat {\boldsymbol {\theta }}[\boldsymbol {A}(\boldsymbol {\theta }, \boldsymbol {U})] \} - \hat {\boldsymbol {\theta }}(\boldsymbol {y})$

and

![]() $\boldsymbol {G}(\boldsymbol {\theta }, \boldsymbol {U}) = \hat {\boldsymbol {\theta }}[\boldsymbol {A}(\boldsymbol {\theta }, \boldsymbol {U})] - \hat {\boldsymbol {\theta }}(\boldsymbol {y})$

. The expectation is taken over the random variable

$\boldsymbol {G}(\boldsymbol {\theta }, \boldsymbol {U}) = \hat {\boldsymbol {\theta }}[\boldsymbol {A}(\boldsymbol {\theta }, \boldsymbol {U})] - \hat {\boldsymbol {\theta }}(\boldsymbol {y})$

. The expectation is taken over the random variable

![]() $\boldsymbol {U} \sim \mathbb {P}$

while the observed data

$\boldsymbol {U} \sim \mathbb {P}$

while the observed data

![]() $\boldsymbol {y}$

are kept fixed. In the previously discussed example based on the linear oscillator model, the frequency parameter

$\boldsymbol {y}$

are kept fixed. In the previously discussed example based on the linear oscillator model, the frequency parameter

![]() $\eta $

can be viewed as the parameter

$\eta $

can be viewed as the parameter

![]() $\boldsymbol {\theta }$

, and the measurement error

$\boldsymbol {\theta }$

, and the measurement error

![]() $e(t)$

can be interpreted as parameter-free random components

$e(t)$

can be interpreted as parameter-free random components

![]() $\boldsymbol {U}$

.Footnote

6

$\boldsymbol {U}$

.Footnote

6

To solve Equation (6), a fundamental SA method—the Robbins–Monro algorithm (RM algorithm; Robbins & Monro, Reference Robbins and Monro1951)—can be applied. This algorithm iteratively updates the approximation to the root using the following recursive scheme:

where

![]() $\boldsymbol {\theta }_{k}$

denotes the k-th iterate (

$\boldsymbol {\theta }_{k}$

denotes the k-th iterate (

![]() $k = 1, 2, \ldots , K$

; K is the total number of iterations) of

$k = 1, 2, \ldots , K$

; K is the total number of iterations) of

![]() $\boldsymbol {\theta }$

,

$\boldsymbol {\theta }$

,

![]() $\gamma _{k}$

is a sequence of constants converging to zero, and

$\gamma _{k}$

is a sequence of constants converging to zero, and

![]() $\hat {\boldsymbol {g}}_{m}(\boldsymbol {\theta }_{k})$

is an unbiased Monte Carlo (MC) estimate of

$\hat {\boldsymbol {g}}_{m}(\boldsymbol {\theta }_{k})$

is an unbiased Monte Carlo (MC) estimate of

![]() $\boldsymbol {g}(\boldsymbol {\theta }_{k})$

, which is computed as

$\boldsymbol {g}(\boldsymbol {\theta }_{k})$

, which is computed as

$$ \begin{align} \hat{\boldsymbol{g}}_{m}(\boldsymbol{\theta}_{k}) = \frac{1}{m} \sum_{j=1}^{m} \boldsymbol{G}(\boldsymbol{\theta}_{k}, \boldsymbol{U}_{j}). \end{align} $$

$$ \begin{align} \hat{\boldsymbol{g}}_{m}(\boldsymbol{\theta}_{k}) = \frac{1}{m} \sum_{j=1}^{m} \boldsymbol{G}(\boldsymbol{\theta}_{k}, \boldsymbol{U}_{j}). \end{align} $$

Here,

![]() $\boldsymbol {U}_{1}, \ldots , \boldsymbol {U}_{m}$

are independent and identically distributed samples drawn from

$\boldsymbol {U}_{1}, \ldots , \boldsymbol {U}_{m}$

are independent and identically distributed samples drawn from

![]() $\mathbb {P}$

, and m is the total number of MC simulations (which is typically not large in practice). In this study, we set

$\mathbb {P}$

, and m is the total number of MC simulations (which is typically not large in practice). In this study, we set

![]() $m = 5$

for all simulations and the empirical example.

$m = 5$

for all simulations and the empirical example.

The sequence

![]() $\gamma _{k}$

must satisfy the following conditions to ensure convergence of the algorithm:

$\gamma _{k}$

must satisfy the following conditions to ensure convergence of the algorithm:

These conditions ensure that

![]() $\gamma _{k}$

decreases slowly to zero, allowing the algorithm to filter out the noise effect and ensuring that the sequence of estimates converges to the root of

$\gamma _{k}$

decreases slowly to zero, allowing the algorithm to filter out the noise effect and ensuring that the sequence of estimates converges to the root of

![]() $\boldsymbol {g}(\cdot )$

. In this study, we set

$\boldsymbol {g}(\cdot )$

. In this study, we set

![]() $\gamma _{k} = \alpha k^{-\beta }$

with some

$\gamma _{k} = \alpha k^{-\beta }$

with some

![]() $\alpha> 0$

and

$\alpha> 0$

and

![]() $\beta \in (\frac {1}{2}, 1]$

, ensuring that the constants decrease slowly to zero.

$\beta \in (\frac {1}{2}, 1]$

, ensuring that the constants decrease slowly to zero.

2.2 Illustrative example

2.2.1 Procedure of bias correction

The dataset generated based on the linear oscillator model is used to illustrate the bias-correction procedure of the two-stage GLLA estimates. For illustrative purposes, we focus on correcting the biased estimate of the frequency parameter

![]() $\eta $

. The RM algorithm takes the following steps to correct the biased estimates:

$\eta $

. The RM algorithm takes the following steps to correct the biased estimates:

-

Step 0: Initialize by setting

$k = 0$

and obtaining the initial estimates

$k = 0$

and obtaining the initial estimates

$\hat {\boldsymbol {\theta }}_0$

using GLLA. Set

$\hat {\boldsymbol {\theta }}_0$

using GLLA. Set

$\boldsymbol {\theta }_1 = \hat {\boldsymbol {\theta }}_0$

.

$\boldsymbol {\theta }_1 = \hat {\boldsymbol {\theta }}_0$

. -

Step 1: Set

$k = k + 1$

. Generate

$k = k + 1$

. Generate

$\boldsymbol {U}_{1}, \dots , \boldsymbol {U}_{m}$

from

$\boldsymbol {U}_{1}, \dots , \boldsymbol {U}_{m}$

from

$\mathbb {P}$

and compute: (10)

$\mathbb {P}$

and compute: (10) $$ \begin{align} \hat{\boldsymbol{g}}_m(\boldsymbol{\theta}_k) = \frac{1}{m}\sum_{j = 1}^m\hat{\boldsymbol{\theta}}[\boldsymbol{A}(\boldsymbol{\theta}_k,\boldsymbol{U}_j)] - \hat{\boldsymbol{\theta}}(\boldsymbol{y}). \end{align} $$

$$ \begin{align} \hat{\boldsymbol{g}}_m(\boldsymbol{\theta}_k) = \frac{1}{m}\sum_{j = 1}^m\hat{\boldsymbol{\theta}}[\boldsymbol{A}(\boldsymbol{\theta}_k,\boldsymbol{U}_j)] - \hat{\boldsymbol{\theta}}(\boldsymbol{y}). \end{align} $$

When generating datasets, the initial values of the displacement (

$x_{1}$

) and the first derivative (

$x_{1}$

) and the first derivative (

$\dot {x}_{1}$

) are fixed to their values used in the previous simulation illustration for simplicity.

$\dot {x}_{1}$

) are fixed to their values used in the previous simulation illustration for simplicity. -

Step 2: Update

$\boldsymbol {\theta }_{k}$

using Equation (7), with

$\boldsymbol {\theta }_{k}$

using Equation (7), with

$\alpha = 0.3$

,

$\alpha = 0.3$

,

$\beta = 0.6$

for all simulations in this study.

$\beta = 0.6$

for all simulations in this study. -

Step 3: Repeat Steps 1 and 2 until convergence.

The final point estimates after a sufficiently large number of iterations K are obtained using the weighted average

$$ \begin{align} \overline{\boldsymbol{\theta}}_{K} = \frac{\sum_{k=\lfloor K/2 \rfloor + 1}^{K}\gamma_{k}\boldsymbol{\theta}_{k}}{\sum_{k=\lfloor K/2 \rfloor + 1}^{K}\gamma_{k}}, \end{align} $$

$$ \begin{align} \overline{\boldsymbol{\theta}}_{K} = \frac{\sum_{k=\lfloor K/2 \rfloor + 1}^{K}\gamma_{k}\boldsymbol{\theta}_{k}}{\sum_{k=\lfloor K/2 \rfloor + 1}^{K}\gamma_{k}}, \end{align} $$

where

![]() $\lfloor \cdot \rfloor $

denotes the integer part of a real number. In this study, we run a fixed total of 5,000 iterations. Convergence was considered achieved if the relative difference between the point estimates at iterations 4,999 and 5,000 (i.e., their difference divided by the estimate at 4,999) fell below a tolerance threshold of 0.01%.

$\lfloor \cdot \rfloor $

denotes the integer part of a real number. In this study, we run a fixed total of 5,000 iterations. Convergence was considered achieved if the relative difference between the point estimates at iterations 4,999 and 5,000 (i.e., their difference divided by the estimate at 4,999) fell below a tolerance threshold of 0.01%.

Additionally, the SE of the frequency parameter was estimated using the following equation (the derivation of a more general form is provided in the Appendix as Equation (16); Equation (12) is a special case of Equation (16)):

where

![]() $\widehat {\mathrm {Var}}_{\overline {\boldsymbol {\theta }}_{K}}\{\hat {\boldsymbol {\theta }}\}$

was estimated using the MC approximation with 10,000 simulations. The expected value

$\widehat {\mathrm {Var}}_{\overline {\boldsymbol {\theta }}_{K}}\{\hat {\boldsymbol {\theta }}\}$

was estimated using the MC approximation with 10,000 simulations. The expected value

![]() $\mathbb {E}_{\boldsymbol {\theta }}\{\hat {\boldsymbol {\theta }}\}$

is denoted as

$\mathbb {E}_{\boldsymbol {\theta }}\{\hat {\boldsymbol {\theta }}\}$

is denoted as

![]() $\boldsymbol {L}(\boldsymbol {\theta })$

, and

$\boldsymbol {L}(\boldsymbol {\theta })$

, and

![]() $\widehat {\dot {\boldsymbol {L}}}(\overline {\boldsymbol {\theta }}_{K})$

is its derivative evaluated at the final point estimate

$\widehat {\dot {\boldsymbol {L}}}(\overline {\boldsymbol {\theta }}_{K})$

is its derivative evaluated at the final point estimate

![]() $\overline {\boldsymbol {\theta }}_{K}$

. This derivative was computed numerically using the finite differences method with the jacobian function in the R package numDeriv (Gilbert & Varadhan, Reference Gilbert and Varadhan2016), where we fixed the parameter-free random components

$\overline {\boldsymbol {\theta }}_{K}$

. This derivative was computed numerically using the finite differences method with the jacobian function in the R package numDeriv (Gilbert & Varadhan, Reference Gilbert and Varadhan2016), where we fixed the parameter-free random components

![]() $\boldsymbol {U}$

to ensure numerical variability arises solely from parameter perturbations. This approach is also applied in the computation of the more general form of SE described later. For notational simplicity, from Equation (12) onward, we omit explicit dependence notation in estimators.

$\boldsymbol {U}$

to ensure numerical variability arises solely from parameter perturbations. This approach is also applied in the computation of the more general form of SE described later. For notational simplicity, from Equation (12) onward, we omit explicit dependence notation in estimators.

2.2.2 Results

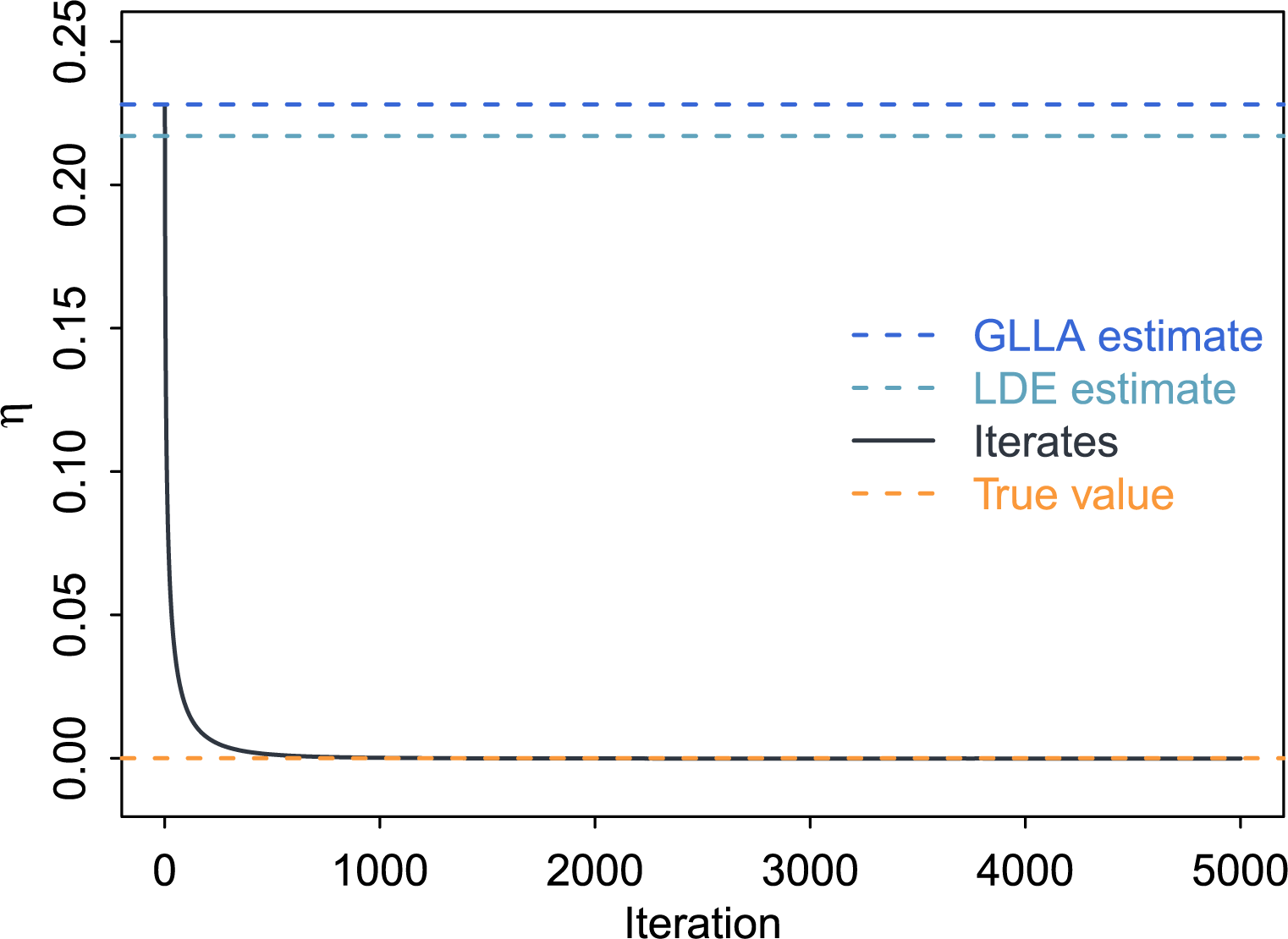

Figure 4 displays the iterates of

![]() $\eta $

using the bias-correction method. Although starting from the highly biased GLLA estimate (

$\eta $

using the bias-correction method. Although starting from the highly biased GLLA estimate (

![]() $\hat {\eta } = -0.618$

), the iterates gradually converged, with the final estimate (

$\hat {\eta } = -0.618$

), the iterates gradually converged, with the final estimate (

![]() $\overline {\eta }_{K} = -0.800$

) after

$\overline {\eta }_{K} = -0.800$

) after

![]() $K = 1,000$

iterations matching the true value to the third decimal place. This result demonstrates the effectiveness of the bias-correction procedure in reducing bias in the two-stage GLLA estimates in differential equation models. In addition, the SE estimate for

$K = 1,000$

iterations matching the true value to the third decimal place. This result demonstrates the effectiveness of the bias-correction procedure in reducing bias in the two-stage GLLA estimates in differential equation models. In addition, the SE estimate for

![]() $\eta $

was

$\eta $

was

![]() $0.169 \times 10^{-3}$

, indicating high precision in the final estimate.

$0.169 \times 10^{-3}$

, indicating high precision in the final estimate.

Iterates and point estimates of

![]() $\eta $

in the linear oscillator model.

$\eta $

in the linear oscillator model.

Note: The solid line represents the iterates of

![]() $\eta $

using the bias-correction method. The dashed lines indicate the true value (orange), the point estimates using GLLA (blue), and the point estimate using LDE (teal).

$\eta $

using the bias-correction method. The dashed lines indicate the true value (orange), the point estimates using GLLA (blue), and the point estimate using LDE (teal).

2.2.3 Summary

This example illustrates the bias-correction procedure in a relatively simple setting, where (a) only one parameter is of focus (i.e., the frequency parameter

![]() $\eta $

in the linear oscillator model) and (b) the model involves only time-independent noise (i.e., measurement error). To assess the performance of the bias-correction method in more general settings, the remainder of this article will extend the approach to the damped linear oscillator model, (a) addressing bias correction and SE estimation for multiple parameters and (b) incorporating time-dependent process noise (i.e., dynamic error) into the model.

$\eta $

in the linear oscillator model) and (b) the model involves only time-independent noise (i.e., measurement error). To assess the performance of the bias-correction method in more general settings, the remainder of this article will extend the approach to the damped linear oscillator model, (a) addressing bias correction and SE estimation for multiple parameters and (b) incorporating time-dependent process noise (i.e., dynamic error) into the model.

3 Extension to the damped linear oscillator model

The damped linear oscillator model is a widely used differential equation model in psychological research (e.g., Chow et al., Reference Chow, Ram, Boker, Fujita and Clore2005; Ollero et al., Reference Ollero, Estrada, Hunter and Cáncer2025; Hu & Treinen, Reference Hu and Treinen2019). In this section, we first demonstrate how the bias-correction procedure can be applied to reduce bias in the estimation of multiple parameters in the damped linear oscillator model. We then further evaluate the effectiveness of the bias-correction method by incorporating dynamic error into the model.

3.1 Bias correction for multiple parameters in the damped linear oscillator model

The damped linear oscillator model with measurement error is specified as follows:

Equation (13) is the same as Equation (1). In Equation (14),

![]() $\dot {x}(t)$

and

$\dot {x}(t)$

and

![]() $\ddot {x}(t)$

represent the first and second derivatives of

$\ddot {x}(t)$

represent the first and second derivatives of

![]() $x(t)$

with respect to time, respectively. The frequency parameter

$x(t)$

with respect to time, respectively. The frequency parameter

![]() $\eta $

describes the frequency of oscillation. The damping parameter

$\eta $

describes the frequency of oscillation. The damping parameter

![]() $\zeta $

governs the amplitude of oscillation.Footnote

7

A negative value of

$\zeta $

governs the amplitude of oscillation.Footnote

7

A negative value of

![]() $\zeta $

indicates a decrease in oscillation amplitude over time, while a positive value of

$\zeta $

indicates a decrease in oscillation amplitude over time, while a positive value of

![]() $\zeta $

indicates an increase in amplitude. When

$\zeta $

indicates an increase in amplitude. When

![]() $\zeta =0$

, the oscillation amplitude remains constant, and the model reduces to the linear oscillator model.

$\zeta =0$

, the oscillation amplitude remains constant, and the model reduces to the linear oscillator model.

3.1.1 Data generation

To preliminarily assess the effectiveness of the bias-correction method for GLLA estimates of multiple parameters, we generated a large dataset based on the damped linear oscillator model, in which the frequency parameter, damping parameter, standard deviation of measurement error, and initial values were all treated as unknown parameters to be estimated. Specifically, individual time-series data (

![]() $T = 100$

) were generated according to Equations (13) and (14) for

$T = 100$

) were generated according to Equations (13) and (14) for

![]() $N = 1,000$

individuals. The measurement error-free data were first simulated using the analytic solution of Equation (14), which is valid when

$N = 1,000$

individuals. The measurement error-free data were first simulated using the analytic solution of Equation (14), which is valid when

![]() $\eta <0$

and

$\eta <0$

and

![]() $\zeta ^2+4\eta <0$

(Hu & Treinen, Reference Hu and Treinen2019):

$\zeta ^2+4\eta <0$

(Hu & Treinen, Reference Hu and Treinen2019):

where

![]() $\omega ^{\prime} = \sqrt {- \eta - \zeta ^{2}/4}$

,

$\omega ^{\prime} = \sqrt {- \eta - \zeta ^{2}/4}$

,

![]() $A = x_{1}$

, and

$A = x_{1}$

, and

![]() $B = {\dot {x}}_{1}/\omega ^{\prime}$

, with

$B = {\dot {x}}_{1}/\omega ^{\prime}$

, with

![]() $x_{1}$

and

$x_{1}$

and

![]() ${\dot {x}}_{1}$

representing the initial values of the displacement and the first derivative, respectively. We set

${\dot {x}}_{1}$

representing the initial values of the displacement and the first derivative, respectively. We set

![]() $\eta = -0.8$

and

$\eta = -0.8$

and

![]() $\zeta = -0.04$

to reflect a typical cycle length of 7 time points (i.e.,

$\zeta = -0.04$

to reflect a typical cycle length of 7 time points (i.e.,

![]() $2\pi /\omega ^{\prime} = 2\pi /\sqrt {- \eta - \zeta ^{2}/4} \approx 7$

) and a commonly small value of

$2\pi /\omega ^{\prime} = 2\pi /\sqrt {- \eta - \zeta ^{2}/4} \approx 7$

) and a commonly small value of

![]() $\zeta $

(Cho et al., Reference Cho, Chow, Marini and Martire2024).

$\zeta $

(Cho et al., Reference Cho, Chow, Marini and Martire2024).

When estimating derivatives using a five-dimensional time-delay embedded matrix via GLLA (see Figure 1), the first row of the resulting matrix represents the derivative estimates at the third time point of the original time series. Therefore, we set the values of the third time point for the zero- and first-order derivatives (

![]() $x_{3} = -10$

and

$x_{3} = -10$

and

![]() ${\dot {x}}_{3} = -10$

) in order to approximate the time series starting near a positive peak of the oscillation while simultaneously obtaining the true initial values for GLLA. We then used these values as initial conditions to generate (

${\dot {x}}_{3} = -10$

) in order to approximate the time series starting near a positive peak of the oscillation while simultaneously obtaining the true initial values for GLLA. We then used these values as initial conditions to generate (

![]() $T - 2$

) time points forward and 2 time points backward according to Equation (15), resulting in T time points for each individual. The observed dataset for each individual was generated by adding measurement error drawn from a normal distribution with mean zero and the standard deviation of

$T - 2$

) time points forward and 2 time points backward according to Equation (15), resulting in T time points for each individual. The observed dataset for each individual was generated by adding measurement error drawn from a normal distribution with mean zero and the standard deviation of

![]() $\sigma _{e} = 1$

. This generating process was repeated N times, yielding a final dataset with N individuals, each having T observations.

$\sigma _{e} = 1$

. This generating process was repeated N times, yielding a final dataset with N individuals, each having T observations.

3.1.2 Estimation and bias correction

To obtain the GLLA estimates of parameters in the damped linear oscillator model, we first estimated the zero-, first-, and second-order derivatives using a five-dimensional time-delay embedded matrix. We then fitted a linear regression model with the zero- and first-order derivatives as predictors and the second-order derivative as the outcome variable to estimate the frequency parameter

![]() $\eta $

and the damping parameter

$\eta $

and the damping parameter

![]() $\zeta $

. To estimate the third time point for the zero-order (

$\zeta $

. To estimate the third time point for the zero-order (

![]() $x_{3}$

) and first-order (

$x_{3}$

) and first-order (

![]() ${\dot {x}}_{3}$

) derivatives, we averaged the first row of the corresponding derivative estimates across individuals. For the standard deviation of measurement error, we used the between-person standard deviation of the first row of the zero-order derivative as an estimate.

${\dot {x}}_{3}$

) derivatives, we averaged the first row of the corresponding derivative estimates across individuals. For the standard deviation of measurement error, we used the between-person standard deviation of the first row of the zero-order derivative as an estimate.

To obtain the bias-corrected estimates, we followed the same procedure as in the linear oscillator model, except that in Step 1, five parameters (

![]() $\eta $

,

$\eta $

,

![]() $\zeta $

,

$\zeta $

,

![]() $x_{3}$

,

$x_{3}$

,

![]() ${\dot {x}}_{3}$

, and

${\dot {x}}_{3}$

, and

![]() $\sigma _{e}$

) were estimated and used to generate datasets during each iteration. The final point estimate for each parameter was calculated using Equation (11). The SE estimates were computed as

$\sigma _{e}$

) were estimated and used to generate datasets during each iteration. The final point estimate for each parameter was calculated using Equation (11). The SE estimates were computed as

where the covariance matrix

![]() $\widehat {\boldsymbol {\Sigma }}$

is approximated by (the derivation is provided in the Appendix)

$\widehat {\boldsymbol {\Sigma }}$

is approximated by (the derivation is provided in the Appendix)

The covariance matrix

![]() ${\widehat {\mathrm {Cov}}}_{{\overline {\boldsymbol {\theta }}}_{K}}\left \{ \widehat {\boldsymbol {\theta }} \right \}$

was estimated using MC approximation with 10,000 simulations, and the Jacobian matrix

${\widehat {\mathrm {Cov}}}_{{\overline {\boldsymbol {\theta }}}_{K}}\left \{ \widehat {\boldsymbol {\theta }} \right \}$

was estimated using MC approximation with 10,000 simulations, and the Jacobian matrix

![]() $\widehat {\dot {\boldsymbol {L}}}\left ( {\overline {\boldsymbol {\theta }}}_{K} \right )$

was computed using the finite differences method.

$\widehat {\dot {\boldsymbol {L}}}\left ( {\overline {\boldsymbol {\theta }}}_{K} \right )$

was computed using the finite differences method.

For comparison, we also estimated the model parameters using state-space modeling (SSM) implemented in the R package dynr (Ou et al., Reference Ou, Hunter and Chow2019). The initial values for the frequency parameter

![]() $\eta $

and damping parameter

$\eta $

and damping parameter

![]() $\zeta $

were set to the GLLA estimates, while the initial value for the measurement error standard deviation

$\zeta $

were set to the GLLA estimates, while the initial value for the measurement error standard deviation

![]() $\sigma _e$

was set to the between-person standard deviation of the first row of the zero-order derivative estimates, consistent with the approach used for GLLA and the bias-correction method. For the initial conditions, which in dynr correspond to the first time point of the latent states (i.e., the zero- and first-order derivatives), we used the sample means across individuals of the first time point values for the zero-order derivative, and the mean of the numerical derivatives between the first and second time points for the first-order derivative. Given that the SSM estimates initial conditions at the first time point, whereas the bias-correction method estimates them at the third time point, a direct comparison of these initial condition parameters is not feasible. We imposed appropriate boundary constraints, restricting

$\sigma _e$

was set to the between-person standard deviation of the first row of the zero-order derivative estimates, consistent with the approach used for GLLA and the bias-correction method. For the initial conditions, which in dynr correspond to the first time point of the latent states (i.e., the zero- and first-order derivatives), we used the sample means across individuals of the first time point values for the zero-order derivative, and the mean of the numerical derivatives between the first and second time points for the first-order derivative. Given that the SSM estimates initial conditions at the first time point, whereas the bias-correction method estimates them at the third time point, a direct comparison of these initial condition parameters is not feasible. We imposed appropriate boundary constraints, restricting

![]() $\sigma _e> 0$

and

$\sigma _e> 0$

and

![]() $\eta < 0$

. All other estimation settings were kept at their default values.

$\eta < 0$

. All other estimation settings were kept at their default values.

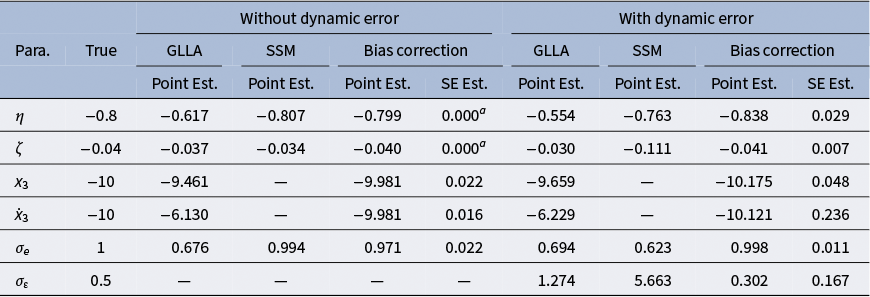

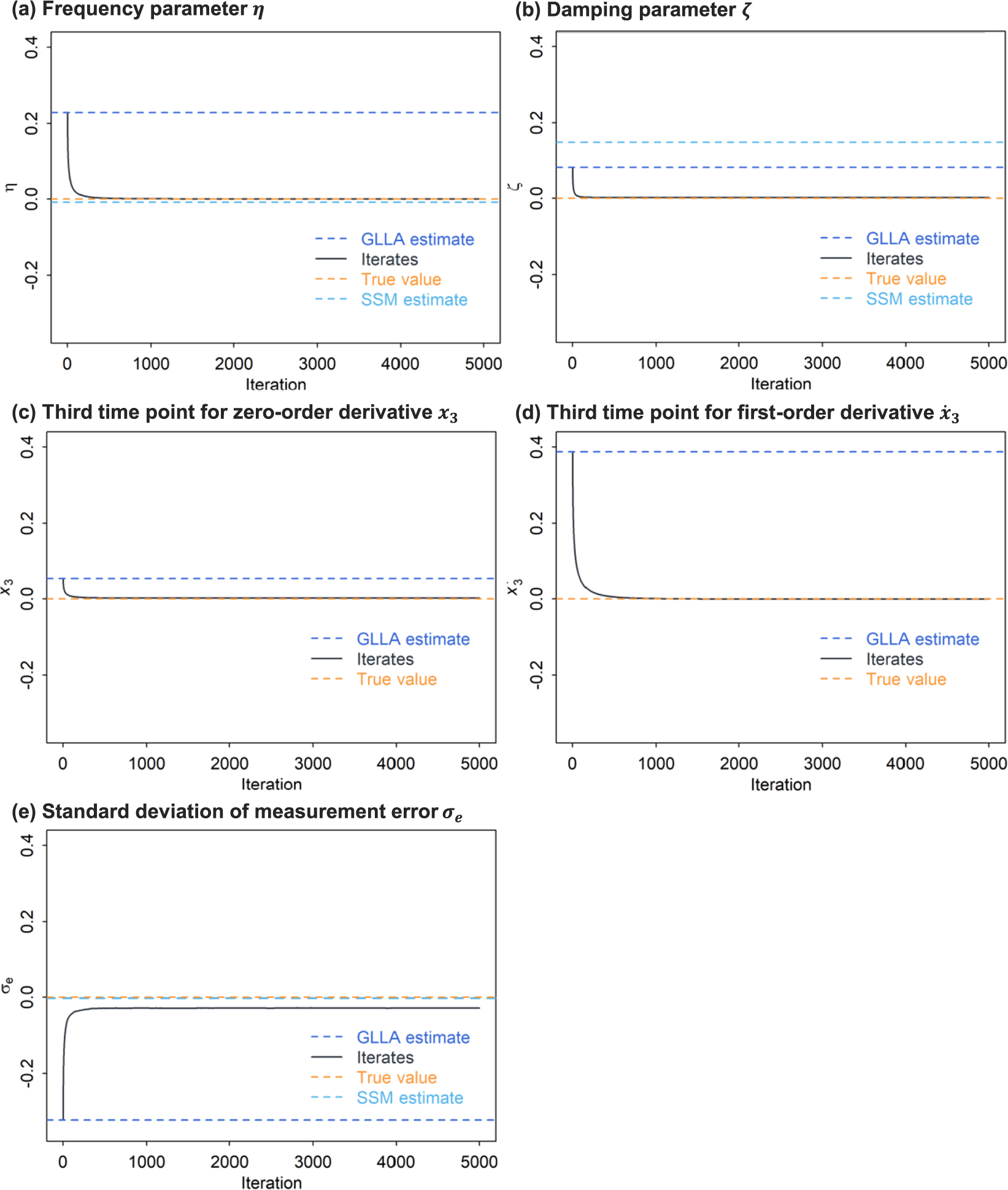

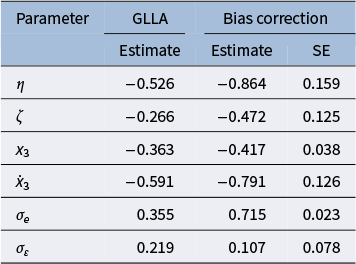

3.1.3 Results

Figure 5 shows the iterates of parameters using the bias-correction method. All parameter iterates converged to equilibrium within 5,000 iterations. Compared to the GLLA estimates, the biased-corrected estimates were much closer to the true values, and exhibited small SE estimates (see Table 1). The SSM estimates for the frequency parameter

![]() $\eta $

and the measurement error standard deviation

$\eta $

and the measurement error standard deviation

![]() $\sigma _e$

were also highly accurate. However, for the damping parameter

$\sigma _e$

were also highly accurate. However, for the damping parameter

![]() $\zeta $

, the SSM estimate exhibited substantial bias, which was even larger than that of the GLLA estimate. This suggests that the bias-correction method effectively reduces the bias of the GLLA estimates for multiple parameters in the damped linear oscillator model with only time-independent noise (i.e., measurement error). This result motivates further investigation into the performance of the bias-correction method when time-dependent dynamic error is introduced into the model.

$\zeta $

, the SSM estimate exhibited substantial bias, which was even larger than that of the GLLA estimate. This suggests that the bias-correction method effectively reduces the bias of the GLLA estimates for multiple parameters in the damped linear oscillator model with only time-independent noise (i.e., measurement error). This result motivates further investigation into the performance of the bias-correction method when time-dependent dynamic error is introduced into the model.

Parameter estimates in the damped linear oscillator model with and without dynamic error using GLLA, state-space modeling (SSM), and the bias-correction method

Note:

![]() $^a$

The values of 0.000 are a result of rounding to three decimal places. When rounded to four decimal places, the values are 0.0001.

$^a$

The values of 0.000 are a result of rounding to three decimal places. When rounded to four decimal places, the values are 0.0001.

Relative bias of the parameter estimates and iterates in the damped linear oscillator model.

Note: The solid line represents the iterates of parameters using the bias-correction method. The dashed lines indicate the true value (orange), the GLLA estimates (dark blue), and the SSM estimates (light blue).

3.2 Bias correction in the damped linear oscillator model with dynamic error

The damped linear oscillator model with both time-independent measurement error and time-dependent dynamic error can be expressed as follows:

Equation (18) is identical to Equations (1) and (13). In Equation (19), the original damped linear oscillator model in Equation (14) is multiplied by

![]() $\Delta t$

with the addition of the change in dynamic error

$\Delta t$

with the addition of the change in dynamic error

![]() $\Delta \varepsilon (t)$

, resulting in a stochastic version of the model.Footnote

8

The change in dynamic error

$\Delta \varepsilon (t)$

, resulting in a stochastic version of the model.Footnote

8

The change in dynamic error

![]() $\Delta \varepsilon (t)$

over a short time interval

$\Delta \varepsilon (t)$

over a short time interval

![]() $[t,t + \Delta t]$

follows a normal distribution with mean zero and variance

$[t,t + \Delta t]$

follows a normal distribution with mean zero and variance

![]() $\sigma _{\varepsilon }^{2}\Delta t$

. In other words,

$\sigma _{\varepsilon }^{2}\Delta t$

. In other words,

![]() $\Delta \varepsilon (t)$

can be modeled as the increment of a univariate standard Wiener process

$\Delta \varepsilon (t)$

can be modeled as the increment of a univariate standard Wiener process

![]() $W(t)$

scaled by the standard deviation

$W(t)$

scaled by the standard deviation

![]() $\sigma _{\varepsilon }$

, such that

$\sigma _{\varepsilon }$

, such that

![]() $\Delta \varepsilon (t) = \sigma _{\varepsilon }\Delta W(t)$

, where

$\Delta \varepsilon (t) = \sigma _{\varepsilon }\Delta W(t)$

, where

![]() $\Delta W(t) = W(t + \Delta t) - W(t)$

follows a normal distribution with mean zero and variance of

$\Delta W(t) = W(t + \Delta t) - W(t)$

follows a normal distribution with mean zero and variance of

![]() $\Delta t$

(i.e.,

$\Delta t$

(i.e.,

![]() $\Delta W(t) \sim N(0,\Delta t)$

).

$\Delta W(t) \sim N(0,\Delta t)$

).

Although both dynamic error

![]() $\varepsilon (t)$

and measurement error

$\varepsilon (t)$

and measurement error

![]() $e(t)$

follow normal distributions, they serve fundamentally different roles in the model. The dynamic error captures stochastic shocks that continuously influence the current and future states of the dynamic system, while the measurement error represents noise that only affects the current state (i.e., the measurement occasion) of the system (Chen et al., Reference Chen, Chow, Hunter, Montfort, Oud and Voelkle2018). In psychological studies that use differential equation models to examine dynamic processes, incorporating dynamic error is a more realistic assumption. This assumption reflects that the current state of the dynamic system cannot be perfectly predicted by its previous state, as random noise can contribute to the dynamic process at any moment (Ollero et al., Reference Ollero, Estrada, Hunter and Cáncer2025). Moreover, it accounts for the impact of unknown or unmodeled factors on the system’s evolution.

$e(t)$

follow normal distributions, they serve fundamentally different roles in the model. The dynamic error captures stochastic shocks that continuously influence the current and future states of the dynamic system, while the measurement error represents noise that only affects the current state (i.e., the measurement occasion) of the system (Chen et al., Reference Chen, Chow, Hunter, Montfort, Oud and Voelkle2018). In psychological studies that use differential equation models to examine dynamic processes, incorporating dynamic error is a more realistic assumption. This assumption reflects that the current state of the dynamic system cannot be perfectly predicted by its previous state, as random noise can contribute to the dynamic process at any moment (Ollero et al., Reference Ollero, Estrada, Hunter and Cáncer2025). Moreover, it accounts for the impact of unknown or unmodeled factors on the system’s evolution.

3.2.1 Data generation

To generate data for the damped linear oscillator model with dynamic error, we first simulate the measurement-error-free time-series data for each individual using the Euler method for numerical integration. This method approximates the state of the system over discrete time steps with the following update rules:

where the time step size is

![]() $\Delta t = 0.01$

. The initial conditions for the zero- and first-order derivatives were set at the third time point (

$\Delta t = 0.01$

. The initial conditions for the zero- and first-order derivatives were set at the third time point (

![]() $x_{3}$

and

$x_{3}$

and

![]() ${\dot {x}}_{3}$

). Numerical integration was used to generate

${\dot {x}}_{3}$

). Numerical integration was used to generate

![]() $(T - 2)/\Delta t$

time points forward and

$(T - 2)/\Delta t$

time points forward and

![]() $2/\Delta t$

time points backward, where

$2/\Delta t$

time points backward, where

![]() $T = 1,000$

, resulting in a total of

$T = 1,000$

, resulting in a total of

![]() $T/\Delta t = 100,000$

states. A thinning interval of

$T/\Delta t = 100,000$

states. A thinning interval of

![]() $1/\Delta t$

was then applied, resulting in

$1/\Delta t$

was then applied, resulting in

![]() $T = 1,000$

data points for each individual. The observed dataset for each individual was generated by adding measurement error drawn from a normal distribution with mean zero and standard deviation

$T = 1,000$

data points for each individual. The observed dataset for each individual was generated by adding measurement error drawn from a normal distribution with mean zero and standard deviation

![]() $\sigma _{e}$

. This process was repeated

$\sigma _{e}$

. This process was repeated

![]() $N = 100$

times, yielding a final dataset with N individuals, each having T observations. The true values of parameters were set to the same values used in the previous simulation (

$N = 100$

times, yielding a final dataset with N individuals, each having T observations. The true values of parameters were set to the same values used in the previous simulation (

![]() $\eta = -0.8$

,

$\eta = -0.8$

,

![]() $\zeta = -0.04$

,

$\zeta = -0.04$

,

![]() $x_{3} = -10$

,

$x_{3} = -10$

,

![]() ${\dot {x}}_{3} = -10$

, and

${\dot {x}}_{3} = -10$

, and

![]() $\sigma _{e} = 1$

), and the standard deviation of the dynamic error was set to

$\sigma _{e} = 1$

), and the standard deviation of the dynamic error was set to

![]() $\sigma _{\varepsilon } = 0.5$

.

$\sigma _{\varepsilon } = 0.5$

.

3.2.2 Estimation and bias correction

The GLLA estimates of the parameters

![]() $\eta $

,

$\eta $

,

![]() $\zeta $

,

$\zeta $

,

![]() $x_{3}$

,

$x_{3}$

,

![]() ${\dot {x}}_{3}$

, and

${\dot {x}}_{3}$

, and

![]() $\sigma _{e}$

were obtained using the same method as in the previous section. Additionally, the standard deviation of dynamic error

$\sigma _{e}$

were obtained using the same method as in the previous section. Additionally, the standard deviation of dynamic error

![]() $\sigma _{\varepsilon }$

was estimated using the residual standard deviation from the linear regression of the zero- and first-order derivatives on the second-order derivative. The bias-corrected estimates were obtained for all six parameters (

$\sigma _{\varepsilon }$

was estimated using the residual standard deviation from the linear regression of the zero- and first-order derivatives on the second-order derivative. The bias-corrected estimates were obtained for all six parameters (

![]() $\eta $

,

$\eta $

,

![]() $\zeta $

,

$\zeta $

,

![]() $x_{3}$

,

$x_{3}$

,

![]() ${\dot {x}}_{3}$

,

${\dot {x}}_{3}$

,

![]() $\sigma _{e}$

, and

$\sigma _{e}$

, and

![]() $\sigma _{\varepsilon }$

). The point estimates and SE estimates computed according to Equations (11) and (16), respectively.

$\sigma _{\varepsilon }$

). The point estimates and SE estimates computed according to Equations (11) and (16), respectively.

We also estimated the model parameters using SSM method. We specified the model following the example provided in the package documentation (https://github.com/mhunter1/dynr/blob/master/demo/LinearSDE.R). The initial values for the five parameters (

![]() $\eta $

,

$\eta $

,

![]() $\zeta $

,

$\zeta $

,

![]() $x_{3}$

,

$x_{3}$

,

![]() ${\dot {x}}_{3}$

, and

${\dot {x}}_{3}$

, and

![]() $\sigma _{e}$

) were set following the same procedure as described in the previous section for the model without dynamic error. For the dynamic error standard deviation

$\sigma _{e}$

) were set following the same procedure as described in the previous section for the model without dynamic error. For the dynamic error standard deviation

![]() $\sigma _{\varepsilon }$

, the initial value was set to the GLLA estimate, consistent with the approach used for the bias-correction method. We imposed boundary constraints, requiring

$\sigma _{\varepsilon }$

, the initial value was set to the GLLA estimate, consistent with the approach used for the bias-correction method. We imposed boundary constraints, requiring

![]() $\sigma _e> 0$

,

$\sigma _e> 0$

,

![]() $\sigma _{\varepsilon }> 0$

, and

$\sigma _{\varepsilon }> 0$

, and

![]() $\eta < 0$

.

$\eta < 0$

.

3.2.3 Results

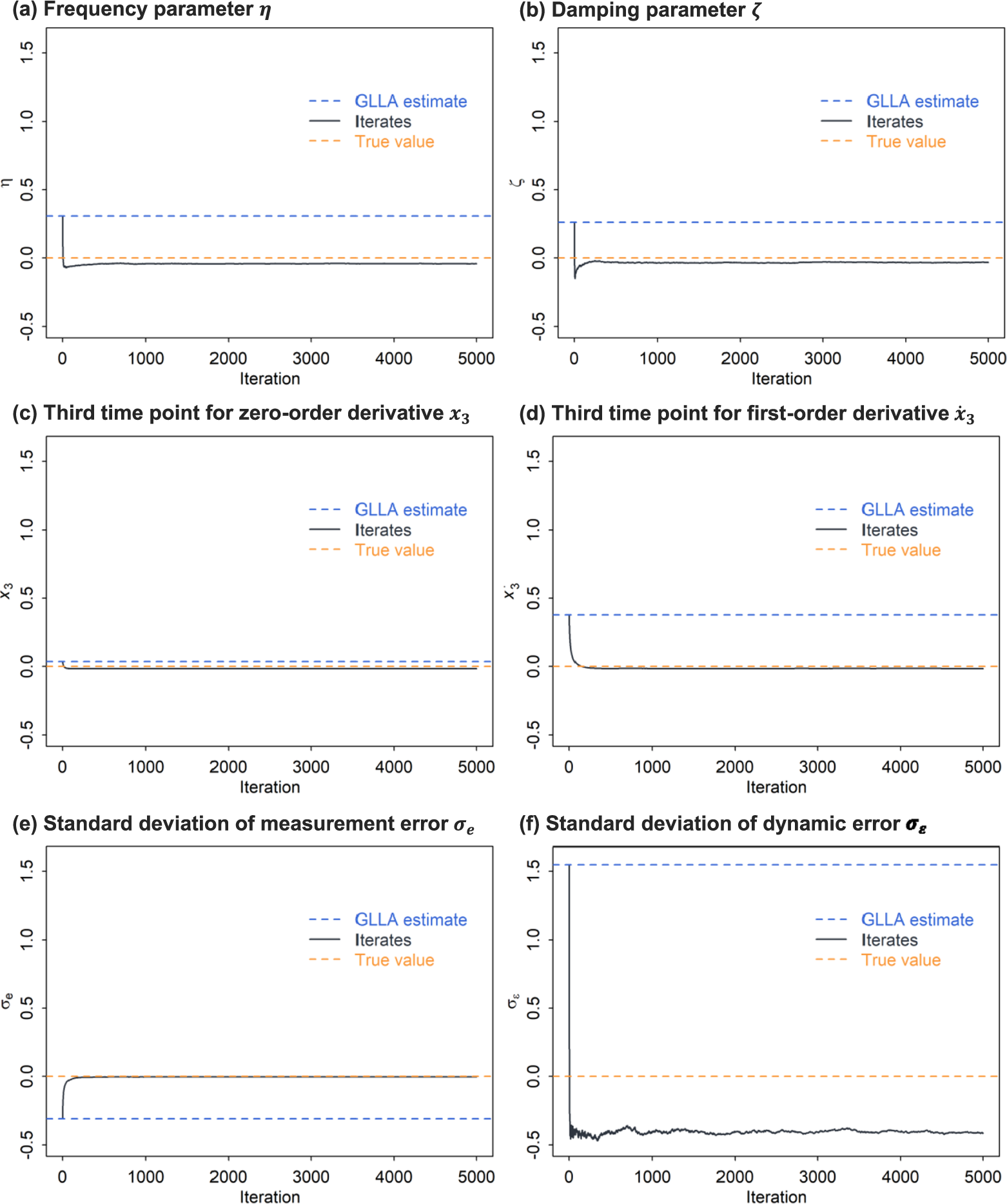

Figure 6 presents the parameter iterates using the bias-correction method in the damped linear oscillator model with dynamic error. All parameters converged to their equilibrium within 5,000 iterations. As shown in Table 1, the GLLA estimates of the frequency parameter

![]() $\eta $

and the damping parameter

$\eta $

and the damping parameter

![]() $\zeta $

in the damped linear oscillator model with dynamic error exhibited larger bias than those in the model without dynamic error. The GLLA estimate of

$\zeta $

in the damped linear oscillator model with dynamic error exhibited larger bias than those in the model without dynamic error. The GLLA estimate of

![]() $\sigma _{\varepsilon }$

in the model with dynamic error also showed a large deviation from its true value. The SSM estimates for the frequency parameter

$\sigma _{\varepsilon }$

in the model with dynamic error also showed a large deviation from its true value. The SSM estimates for the frequency parameter

![]() $\eta $

and the measurement error standard deviation

$\eta $

and the measurement error standard deviation

![]() $\sigma _e$

showed acceptable bias levels. However, consistent with the results from the model without dynamic error, the SSM estimate for the damping parameter

$\sigma _e$

showed acceptable bias levels. However, consistent with the results from the model without dynamic error, the SSM estimate for the damping parameter

![]() $\zeta $

exhibited substantial bias that was larger than that of the GLLA estimate. Furthermore, the SSM estimate for the dynamic error standard deviation

$\zeta $

exhibited substantial bias that was larger than that of the GLLA estimate. Furthermore, the SSM estimate for the dynamic error standard deviation

![]() $\sigma _{\varepsilon }$

showed severe overestimation. For the biased-corrected estimates, although some still deviated slightly from their true values, the bias was largely reduced compared to the initial estimates using GLLA. The SE estimates were relatively larger than those in the damped linear oscillator model without dynamic error (except for the SE estimate of

$\sigma _{\varepsilon }$

showed severe overestimation. For the biased-corrected estimates, although some still deviated slightly from their true values, the bias was largely reduced compared to the initial estimates using GLLA. The SE estimates were relatively larger than those in the damped linear oscillator model without dynamic error (except for the SE estimate of

![]() $\sigma _{e}$

). These findings provide initial evidence for the effectiveness of the bias-correction method in addressing multiple parameters in a differential equation model with dynamic error.

$\sigma _{e}$

). These findings provide initial evidence for the effectiveness of the bias-correction method in addressing multiple parameters in a differential equation model with dynamic error.

Relative bias of the parameter estimates and iterates in the damped linear oscillator model with dynamic error.

Note: The solid line represents the iterates of parameters using the bias-correction method. The dashed lines indicate the true value (orange), the GLLA estimates (blue), and the SSM estimates (purple). The SSM point estimates (see Table 1) are not included in this figure to maintain clarity in visualization, as the large relative bias for

![]() $\sigma _{\varepsilon }$

would disproportionately scale the y-axis.

$\sigma _{\varepsilon }$

would disproportionately scale the y-axis.

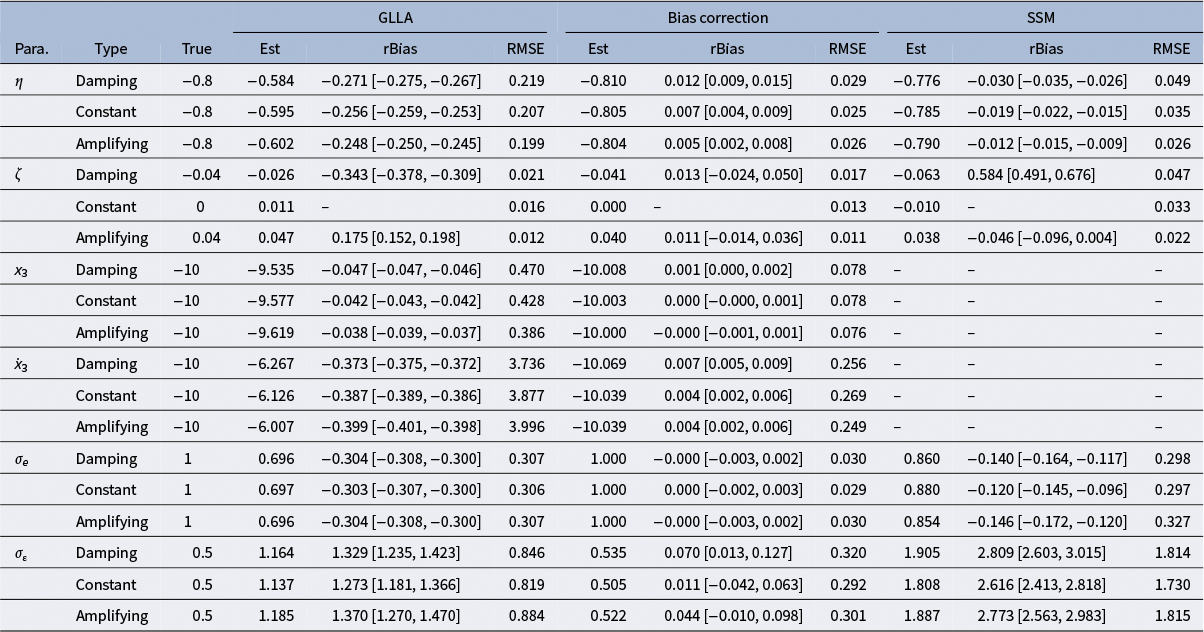

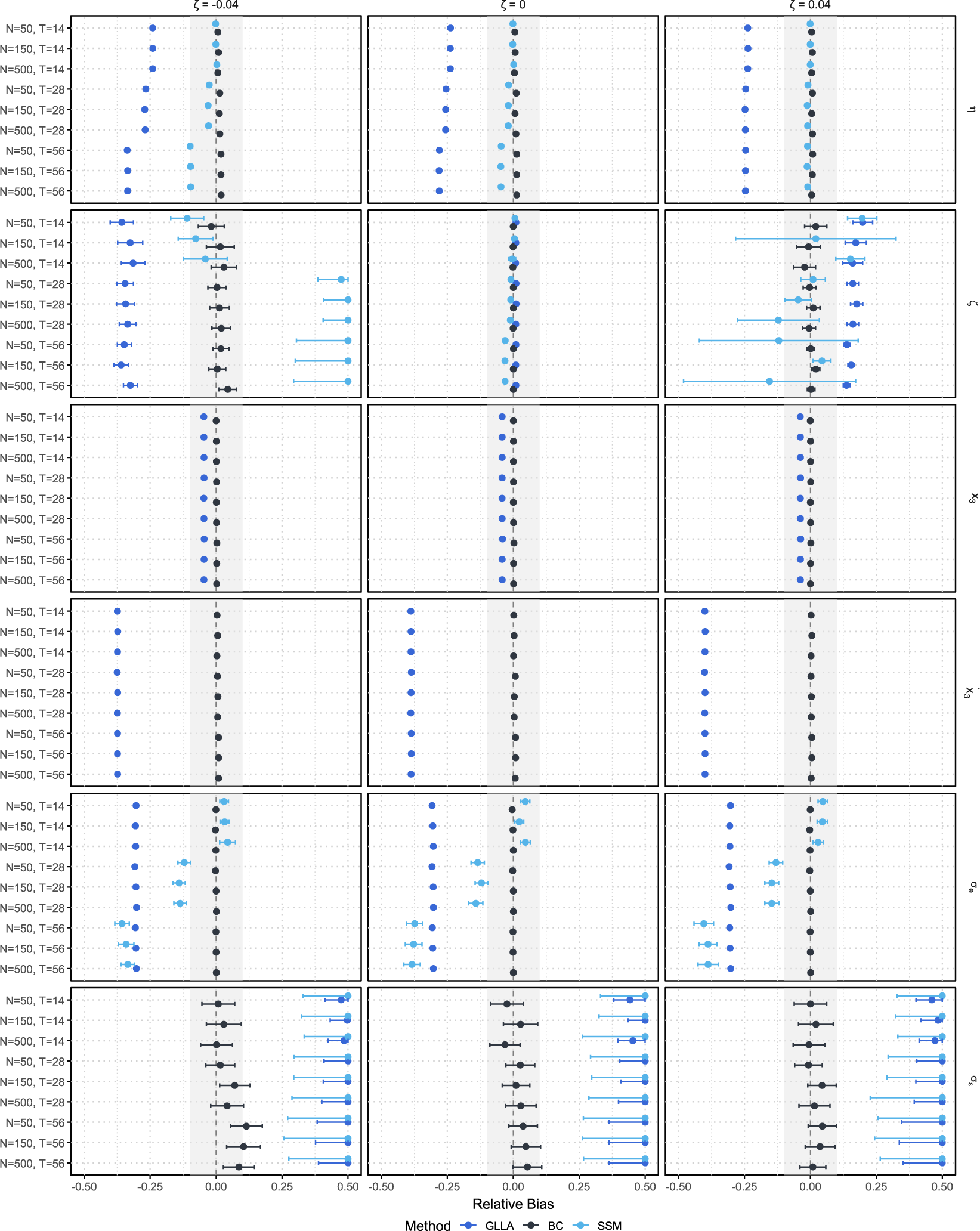

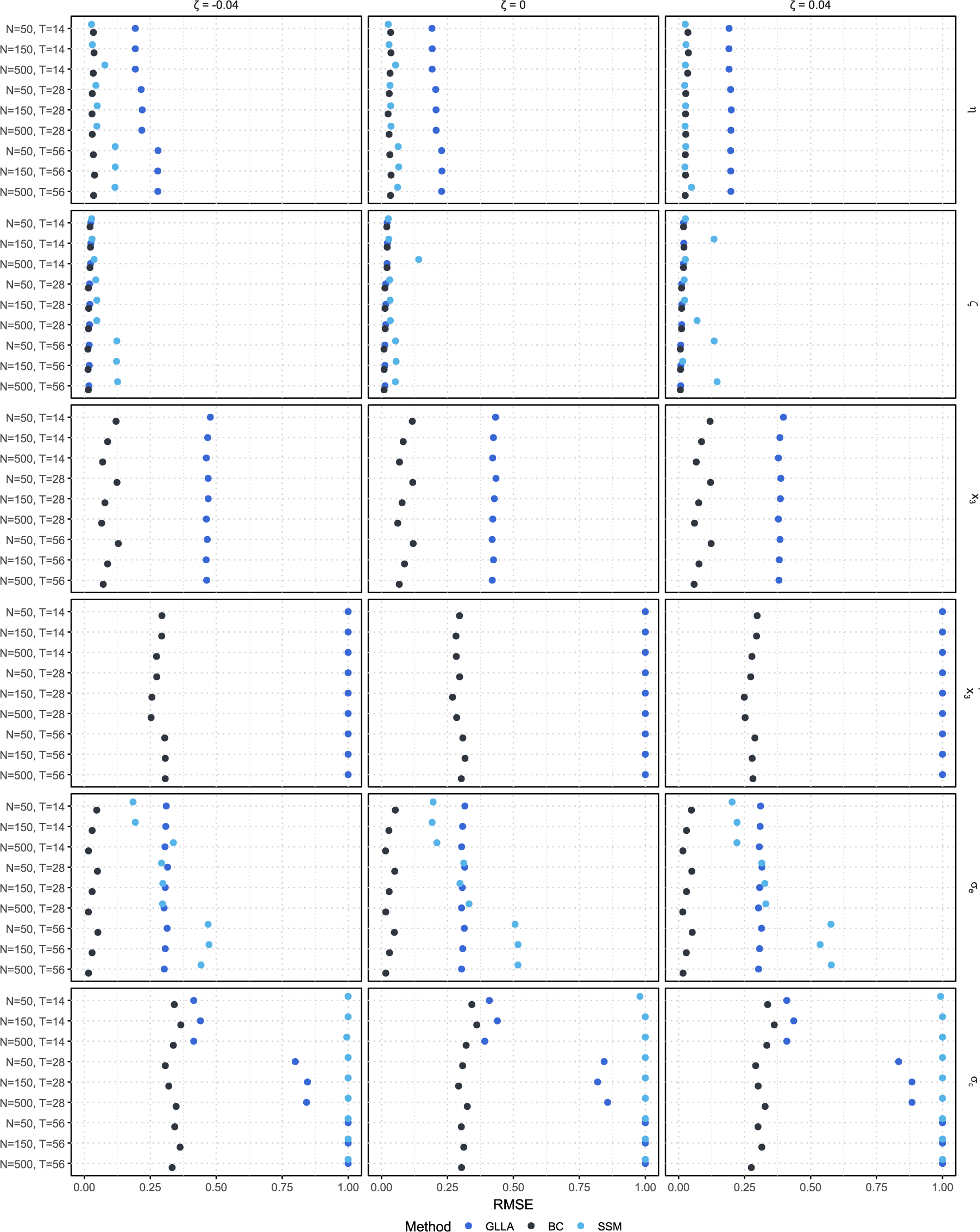

4 Simulation study

Next, we conducted a simulation study to examine the effectiveness of the bias-correction method in the damped linear oscillator model with dynamic error. Specifically, we varied the true values of the damping parameter, the sample size, and the number of time points per individual, and compared the parameter estimates using GLLA, SSM, and the bias-correction method to assess their performance across different scenarios.

4.1 Procedure

We adopted the same procedure of data generation and parameter estimation as described in Section 3.2. In summary, we first simulated time series for each individual using the Euler method for numerical integration, and added measurement error to obtain observed data. This process was repeated for N individuals, each having T observations. For parameter estimation, we first constructed a five-dimensional time-delay embedded matrix and applied GLLA to estimate zero-, first-, and second-order derivatives. Using the estimated derivatives, we obtained the GLLA estimates of the six parameters (

![]() $\eta $

,

$\eta $

,

![]() $\zeta $

,

$\zeta $

,

![]() $x_{3}$

,

$x_{3}$

,

![]() ${\dot {x}}_{3}$

,

${\dot {x}}_{3}$

,

![]() $\sigma _{e}$

, and

$\sigma _{e}$

, and

![]() $\sigma _{\varepsilon }$

) in the damped linear oscillator model with dynamic error. Bias correction was performed using the RM algorithm outlined in Section 2.2.1. The point estimates were computed according to Equation (11), and the SE estimates were obtained using MC approximation and numerical differentiation method according to Equation (16). For comparison, we also estimated the parameters based on state-space models using the dynr package (Ou et al., Reference Ou, Hunter and Chow2019), following the same procedure as described in the previous section. The analysis was conducted in R 4.2.2 (R Core Team, 2020).

$\sigma _{\varepsilon }$

) in the damped linear oscillator model with dynamic error. Bias correction was performed using the RM algorithm outlined in Section 2.2.1. The point estimates were computed according to Equation (11), and the SE estimates were obtained using MC approximation and numerical differentiation method according to Equation (16). For comparison, we also estimated the parameters based on state-space models using the dynr package (Ou et al., Reference Ou, Hunter and Chow2019), following the same procedure as described in the previous section. The analysis was conducted in R 4.2.2 (R Core Team, 2020).

4.2 Simulation conditions

We varied the damping parameter (

![]() $\zeta $

=

$\zeta $

=

![]() $-$

0.04, 0, 0.04), the sample size (N = 50, 150, 500), and the number of time points per individual (T = 14, 28, 56), which were fully crossed, resulting in 27 (= 3

$-$

0.04, 0, 0.04), the sample size (N = 50, 150, 500), and the number of time points per individual (T = 14, 28, 56), which were fully crossed, resulting in 27 (= 3

![]() $\times $

3

$\times $

3

![]() $\times $

3) simulation conditions. In each condition, we replicated the MC simulation 500 times. The other parameters (

$\times $

3) simulation conditions. In each condition, we replicated the MC simulation 500 times. The other parameters (

![]() $\eta = -0.8$

,

$\eta = -0.8$

,

![]() $x_{3} = -10$

,

$x_{3} = -10$

,

![]() ${\dot {x}}_{3} = -10$

,

${\dot {x}}_{3} = -10$

,

![]() $\sigma _{e} = 1$

, and

$\sigma _{e} = 1$

, and

![]() $\sigma _{\varepsilon } = 0.5$

) were held constant across all simulation conditions. The frequency parameter

$\sigma _{\varepsilon } = 0.5$

) were held constant across all simulation conditions. The frequency parameter

![]() $\eta $

and the damping parameter

$\eta $

and the damping parameter

![]() $\zeta $

are of primary interest in the damped linear oscillator model. In the simulation study, we focused on

$\zeta $

are of primary interest in the damped linear oscillator model. In the simulation study, we focused on

![]() $\eta = -0.8$

to account for a typical cycle length of 7 time points (e.g., one week with one measurement per day), and varied

$\eta = -0.8$

to account for a typical cycle length of 7 time points (e.g., one week with one measurement per day), and varied

![]() $\zeta $

to represent a system with a damping process (

$\zeta $

to represent a system with a damping process (

![]() $\zeta < 0$

), an amplification process (

$\zeta < 0$

), an amplification process (

![]() $\zeta> 0$

), or without damping (

$\zeta> 0$

), or without damping (

![]() $\zeta = 0$

). For sample size, we used 50, 150, and 500 to represent small, medium, and large samples, respectively, as in typical intensive longitudinal studies. For the number of time points per individual, we chose 14, 28, and 56 to represent common study durations, covering approximately two, four (about one month), and eight (about two months) cycles.

$\zeta = 0$

). For sample size, we used 50, 150, and 500 to represent small, medium, and large samples, respectively, as in typical intensive longitudinal studies. For the number of time points per individual, we chose 14, 28, and 56 to represent common study durations, covering approximately two, four (about one month), and eight (about two months) cycles.

4.3 Evaluation criteria

4.3.1 Convergence rate

For the bias-correction method, we run a fixed total of 5,000 iterations. Convergence was determined by computing the relative difference between the point estimates at iterations 4,999 and 5,000 (i.e., their difference divided by the estimate at 4,999), with a tolerance threshold set to 0.01%.Footnote

9

For the converged cases of the bias-correction and SSM methods, we further examined the SE estimates to exclude outliers or improper solutions (i.e., SE estimates greater than 10 were considered outliers, as they were typically much less than 10). For the bias-correction method, we identified one and two cases containing outliers in the following two simulation conditions, respectively: (a)

![]() $N = 500$

,

$N = 500$

,

![]() $T = 56$

,

$T = 56$

,

![]() $\zeta = 0.04$

and (b)

$\zeta = 0.04$

and (b)

![]() $N = 50$

,

$N = 50$

,

![]() $T = 56$

,

$T = 56$

,

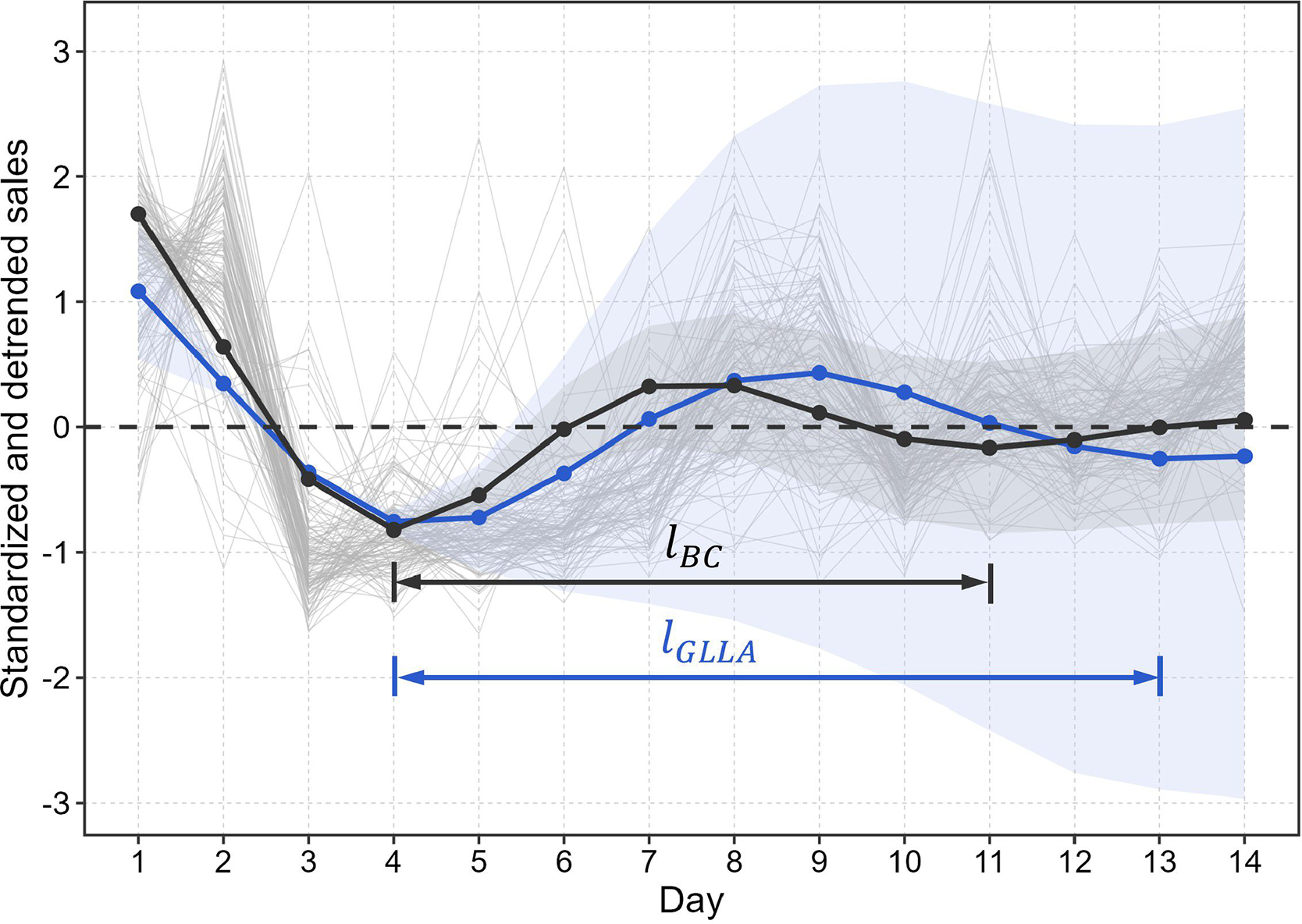

![]() $\zeta = 0$