1. Introduction

Compound Poisson distributions serve as a basis for building risk models with applications in property and casualty insurance, such as risk management, pricing, and reserves. In these contexts, the loss

![]() $X_v$

for a given risk v is often assumed to follow a compound Poisson distribution, and the portfolio’s aggregate loss is thereby defined as

$X_v$

for a given risk v is often assumed to follow a compound Poisson distribution, and the portfolio’s aggregate loss is thereby defined as

where

![]() $N_v \sim \mathrm{Poisson}(\lambda_v)$

is referred to as the risk’s frequency and

$N_v \sim \mathrm{Poisson}(\lambda_v)$

is referred to as the risk’s frequency and

![]() $\{B_{v, j}, j \in \mathbb{N}_1\}$

as its sequence of severities, with the convention

$\{B_{v, j}, j \in \mathbb{N}_1\}$

as its sequence of severities, with the convention

![]() $\sum_{j=1}^0 B_{v,j} = 0$

.

$\sum_{j=1}^0 B_{v,j} = 0$

.

Dependence between risks of a portfolio may arise from their frequencies. The model in (1.1) has the advantage, as discussed in Cummins and Wiltbank (Reference Cummins and Wiltbank1983), of allowing for proper accommodation of this dependence while explicitly accounting for events of different sources, which may have distinct claim amount distributions. Mathematically, this translates into components of the frequency random vector

![]() ${\boldsymbol{{N}}}= (N_v,\, v\in\mathcal{V})$

being dependent in (1.1). In this paper, the sequences of claim amounts

${\boldsymbol{{N}}}= (N_v,\, v\in\mathcal{V})$

being dependent in (1.1). In this paper, the sequences of claim amounts

![]() $\{B_{1,j},\, j\in\mathbb{N}_{1}\},\ldots,\{B_{d,j},\, j\in\mathbb{N}_1\}$

are assumed to be mutually independent and independent of

$\{B_{1,j},\, j\in\mathbb{N}_{1}\},\ldots,\{B_{d,j},\, j\in\mathbb{N}_1\}$

are assumed to be mutually independent and independent of

![]() $\boldsymbol{{N}}$

. Let the random variables within each sequence be identically distributed, and thus we may refer to claim amounts with stand-in random variables

$\boldsymbol{{N}}$

. Let the random variables within each sequence be identically distributed, and thus we may refer to claim amounts with stand-in random variables

![]() $B_1,\ldots, B_d$

for convenience. The portfolio

$B_1,\ldots, B_d$

for convenience. The portfolio

![]() ${\boldsymbol{X}} = (X_v,\, v\in\mathcal{V})$

thus follows a multivariate compound distribution of Type 2 according to the terminology in Sundt and Vernic (Reference Sundt and Vernic2009); see Chapters 19–20. In a risk modeling setting, multivariate compound Poisson distributions of Type 2 have been studied notably in Cossette et al. (Reference Cossette, Mailhot and Marceau2012) and Kim et al. (Reference Kim, Jang and Pyun2019).

${\boldsymbol{X}} = (X_v,\, v\in\mathcal{V})$

thus follows a multivariate compound distribution of Type 2 according to the terminology in Sundt and Vernic (Reference Sundt and Vernic2009); see Chapters 19–20. In a risk modeling setting, multivariate compound Poisson distributions of Type 2 have been studied notably in Cossette et al. (Reference Cossette, Mailhot and Marceau2012) and Kim et al. (Reference Kim, Jang and Pyun2019).

One may rely on three approaches to conceive a multivariate Poisson distribution for the random vector

![]() $\boldsymbol{{N}}$

: copulas, common shocks, and binomial thinning; see for instance Inouye et al. (Reference Inouye, Yang, Allen and Ravikumar2017) and Liu et al. (Reference Liu, Shi, Yao and Yang2024). The copula approach allows separate modeling of marginals and dependence but faces theoretical and computational challenges in a discrete setting (Genest and Nešlehová, Reference Genest and Nešlehová2007; Henn, Reference Henn2022). The common-shock approach, dating back to M’Kendrick (Reference M’Kendrick1925) and later extended to higher dimensions (Krishnamoorthy, Reference Krishnamoorthy1951; Teicher, Reference Teicher1954), offers a clear stochastic interpretation but quickly becomes intractable due to the exponential growth in parameters (Karlis, Reference Karlis2003). The family of common-shock-based models, while widely referred to as multivariate Poisson, does not encompass all Poisson-marginal distributions; see Çekyay et al. (Reference Çekyay, Frenk and Javadi2023) for a historical recap.

$\boldsymbol{{N}}$

: copulas, common shocks, and binomial thinning; see for instance Inouye et al. (Reference Inouye, Yang, Allen and Ravikumar2017) and Liu et al. (Reference Liu, Shi, Yao and Yang2024). The copula approach allows separate modeling of marginals and dependence but faces theoretical and computational challenges in a discrete setting (Genest and Nešlehová, Reference Genest and Nešlehová2007; Henn, Reference Henn2022). The common-shock approach, dating back to M’Kendrick (Reference M’Kendrick1925) and later extended to higher dimensions (Krishnamoorthy, Reference Krishnamoorthy1951; Teicher, Reference Teicher1954), offers a clear stochastic interpretation but quickly becomes intractable due to the exponential growth in parameters (Karlis, Reference Karlis2003). The family of common-shock-based models, while widely referred to as multivariate Poisson, does not encompass all Poisson-marginal distributions; see Çekyay et al. (Reference Çekyay, Frenk and Javadi2023) for a historical recap.

The third approach is to rely on stochastic representations employing binomial thinning. Binomial thinning was introduced in Steutel et al. (Reference Steutel, Vervaat and Wolfe1983) and first employed to incorporate dependence between Poisson random variables in McKenzie (Reference McKenzie1985) and McKenzie (Reference McKenzie1988). Such an approach has been used for risk modeling in Yuen and Wang (Reference Yuen and Wang2002), Lindskog and McNeil (Reference Lindskog and McNeil2003), and Wang and Yuen (Reference Wang and Yuen2005). In Côté et al. (Reference Côté, Cossette and Marceau2025b), the authors encapsulate binomial thinning operations within a tree structure to provide a much wider variety of dependence schemes under this approach. The resulting tree-structured Markov random field (MRF) has explicit probability mass function (pmf) and probability generating function (pgf) expressions, enabling efficient computation in high dimensions.

In a risk modeling context, scalability of computations and estimation methods to high dimensions is of the most important consideration. In particular, to introduce dependence between Poisson risk frequencies, the current methods based on common shocks are either little flexible or computationally intensive. This work draws inspiration from the MRF in Côté et al. (Reference Côté, Cossette and Marceau2025b) to reconcile flexibility and scalability without recourse to copulas. In this paper, the authors’ main focus is the MRF’s distributional properties. Here, we put forth its relevance for risk modeling. To do so, we begin by allowing more flexibility for the modeling of marginals by letting the MRF’s means be heterogeneous, despite a slight trade-off in the disconnect between marginal and dependence. We also show the MRF’s connection to the multivariate Poisson and discuss methods for the model’s estimation.

We put forth the MRF’s relevance for risk modeling through two objectives. One of our objectives is to highlight the computational methods’ practicality and their applicability to actuarial science. We will discuss this through two tasks. First, we aim to evaluate the aggregate risk of the portfolio by studying the distribution of S in (1.1) and developing efficient methods to evaluate its pmf without resorting to approximations. Second, we aim to assess the contribution of every component of the portfolio

![]() $\boldsymbol{{X}}$

to the aggregate claim amount, and we perform this risk allocation twofold. For an allocation ex ante, we resort to the contribution to the Tail Value-at-Risk (TVaR) under Euler’s principle, see Tasche (Reference Tasche2007); for an allocation ex post, we turn to conditional-mean risk sharing, see Denuit and Dhaene (Reference Denuit and Dhaene2012) and subsequent work. Algorithms for its exact computation are developed, inspired from the methods put forth in Blier-Wong et al. (Reference Blier-Wong, Cossette and Marceau2025). We furthermore provide results allowing for a better understanding of the portfolio’s asymptotic behavior by defining the model on infinite-dimensional trees.

$\boldsymbol{{X}}$

to the aggregate claim amount, and we perform this risk allocation twofold. For an allocation ex ante, we resort to the contribution to the Tail Value-at-Risk (TVaR) under Euler’s principle, see Tasche (Reference Tasche2007); for an allocation ex post, we turn to conditional-mean risk sharing, see Denuit and Dhaene (Reference Denuit and Dhaene2012) and subsequent work. Algorithms for its exact computation are developed, inspired from the methods put forth in Blier-Wong et al. (Reference Blier-Wong, Cossette and Marceau2025). We furthermore provide results allowing for a better understanding of the portfolio’s asymptotic behavior by defining the model on infinite-dimensional trees.

Another objective is to illustrate the practical relevance and effectiveness of the proposed risk model in real-world applications. Notably, from Theorem 7.1 of Coles (Reference Coles2001), counts of extreme events follow Poisson distributions. This insight provides a natural avenue for applying our model. We perform a detailed risk management study on extreme rainfall events data, in which we evaluate tail risk measures (e.g., TVaR) and assess the allocation of extreme losses across different station locations. Our study showcases the risk model’s ability to capture dependence structures in multivariate extreme events while maintaining interpretability. This paper contributes to the growing use of graphical models in actuarial science, including Oberoi et al. (Reference Oberoi, Pittea and Tapadar2020), Denuit and Robert (Reference Denuit and Robert2022), and Boucher et al. (Reference Boucher, Crainic, LeBlanc and Masse2024).

The structure of the paper is as follows. In Section 2, we present the tree-structured MRF with Poisson marginal distributions and discuss its connection with the distributions obtained through the common-shock approach. In Section 3, we perform our first risk management task, evaluating the risk associated with S. In Section 4, we perform our second risk management task, allocating that risk to the components of

![]() $\boldsymbol{{X}}$

; we also provide results for asymptotic cases of infinitely large portfolios. In Section 5, we discuss the MRF’s parameters’ estimation. Section 6 comprises the application to extreme rainfall data. Section 7 provides concluding remarks. All proofs are relegated to Appendix A.

$\boldsymbol{{X}}$

; we also provide results for asymptotic cases of infinitely large portfolios. In Section 5, we discuss the MRF’s parameters’ estimation. Section 6 comprises the application to extreme rainfall data. Section 7 provides concluding remarks. All proofs are relegated to Appendix A.

2. Tree-structured MRFs with Poisson marginal distributions

In this section, we present the tree-structured MRF with Poisson marginal distributions, which will be the center of consideration in the following sections. Distributions of this family describe tree-structured MRFs. We recall below the definition of a MRF (Cressie and Wikle, Reference Cressie and Wikle2015, Chapter 4.2) and some elementary notions pertaining to trees. Let

![]() $\mathcal{V} = \{1, 2, \ldots, d\}$

, with

$\mathcal{V} = \{1, 2, \ldots, d\}$

, with

![]() $d \in \mathbb{N}_1$

, represent a set of vertices, and

$d \in \mathbb{N}_1$

, represent a set of vertices, and

![]() $\mathcal{E}\subseteq \mathcal{V} \times \mathcal{V}$

be a set of edges.

$\mathcal{E}\subseteq \mathcal{V} \times \mathcal{V}$

be a set of edges.

Definition 2.1 (MRF). A vector of random variables

![]() $ \boldsymbol{{X}} = (X_v, v \in \mathcal{V}) $

is a MRF if it satisfies the local Markov property with respect to a graph

$ \boldsymbol{{X}} = (X_v, v \in \mathcal{V}) $

is a MRF if it satisfies the local Markov property with respect to a graph

![]() $\mathcal{G}=(\mathcal{V},\mathcal{E})$

; that is, for any two of its components, say

$\mathcal{G}=(\mathcal{V},\mathcal{E})$

; that is, for any two of its components, say

![]() $ X_u $

and

$ X_u $

and

![]() $ X_w $

, such that

$ X_w $

, such that

![]() $(u,w) \notin \mathcal{E} $

,

$(u,w) \notin \mathcal{E} $

,

where

![]() $ \perp\!\!\!\perp $

denotes conditional independence. A MRF is tree-structured if its underlying graph is a tree.

$ \perp\!\!\!\perp $

denotes conditional independence. A MRF is tree-structured if its underlying graph is a tree.

A tree, denoted by

![]() $\mathcal{T}$

, is a simple and connected undirected graph such that no path from a vertex to itself exists. A path from vertex u to vertex v, written

$\mathcal{T}$

, is a simple and connected undirected graph such that no path from a vertex to itself exists. A path from vertex u to vertex v, written

![]() $\mathrm{path}(u,v)$

, is the set of edges

$\mathrm{path}(u,v)$

, is the set of edges

![]() $e \in \mathcal{E}$

such that u and v participate in an edge once and any other involved vertices twice. All graphs considered in this paper are trees. Labeling a specific vertex

$e \in \mathcal{E}$

such that u and v participate in an edge once and any other involved vertices twice. All graphs considered in this paper are trees. Labeling a specific vertex

![]() $r \in \mathcal{V}$

as the root of a tree, we define

$r \in \mathcal{V}$

as the root of a tree, we define

![]() $\mathcal{T}_r$

as the r-rooted version of

$\mathcal{T}_r$

as the r-rooted version of

![]() $\mathcal{T}$

. A root for the tree defines a parent

$\mathcal{T}$

. A root for the tree defines a parent

![]() $\mathrm{pa}(v)$

(except for the root), children

$\mathrm{pa}(v)$

(except for the root), children

![]() $\mathrm{ch}(v),$

and descendants

$\mathrm{ch}(v),$

and descendants

![]() $\mathrm{dsc}(v)$

for each

$\mathrm{dsc}(v)$

for each

![]() $v \in \mathcal{V}$

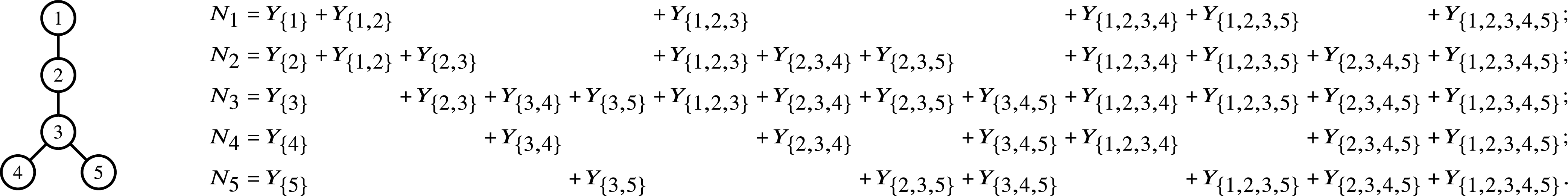

. An example of this notation is provided in Figure 1, where vertex 1 acts as the tree’s root. We refer to Section 3.3 of Saoub (Reference Saoub2021) for further insight on the terminology surrounding rooted trees.

$v \in \mathcal{V}$

. An example of this notation is provided in Figure 1, where vertex 1 acts as the tree’s root. We refer to Section 3.3 of Saoub (Reference Saoub2021) for further insight on the terminology surrounding rooted trees.

Filial relations in a rooted tree.

By encrypting a MRF on a tree, the random vector’s dependence scheme inherits the structural properties of the latter. For instance, the correlation between two of its components decays multiplicatively along the path between them. Although constraining feasible dependence schemes, this comes with the benefit of a greater interpretability: this is the idea underlying probabilistic graphical models.

The construction of tree-structured MRFs with Poisson marginal distributions relies on the binomial thinning operator, denoted by

![]() $\circ$

, defined in terms of a random variable Y taking values in

$\circ$

, defined in terms of a random variable Y taking values in

![]() $\mathbb{N}$

as

$\mathbb{N}$

as

![]() $\theta \circ Y \, :\!= \, \sum_{j=1}^{Y} I_{j}^{(\theta)}, \; \theta \in [0, 1],$

where

$\theta \circ Y \, :\!= \, \sum_{j=1}^{Y} I_{j}^{(\theta)}, \; \theta \in [0, 1],$

where

![]() $ \{I_{j}^{(\theta)}, j \in \mathbb{N}_1 \} $

is a sequence of independent Bernoulli random variables with a probability of success

$ \{I_{j}^{(\theta)}, j \in \mathbb{N}_1 \} $

is a sequence of independent Bernoulli random variables with a probability of success

![]() $\theta$

; see Steutel et al. (Reference Steutel, Vervaat and Wolfe1983). This operation can be interpreted as sampling among Y elements, each with probability

$\theta$

; see Steutel et al. (Reference Steutel, Vervaat and Wolfe1983). This operation can be interpreted as sampling among Y elements, each with probability

![]() $\theta$

of being selected independently. We refer the interested reader to Weiß (Reference Weiß2008) for further insight on the binomial thinning operator.

$\theta$

of being selected independently. We refer the interested reader to Weiß (Reference Weiß2008) for further insight on the binomial thinning operator.

2.1. Main characteristics of tree-structured MRFs with Poisson marginal distributions

To construct a risk model with heterogeneous marginal behaviors in Section 3, we define tree-structured MRFs with Poisson marginals where each component has its own mean parameter

![]() $\lambda_v$

,

$\lambda_v$

,

![]() $v \in \mathcal{V}$

.

$v \in \mathcal{V}$

.

Theorem 2.2. Consider a tree

![]() $ \mathcal{T} = (\mathcal{V}, \mathcal{E})$

, and let

$ \mathcal{T} = (\mathcal{V}, \mathcal{E})$

, and let

![]() $\mathcal{T}_r$

be its rooted version, for some

$\mathcal{T}_r$

be its rooted version, for some

![]() $r \in \mathcal{V}$

. Given a vector of mean parameters

$r \in \mathcal{V}$

. Given a vector of mean parameters

![]() $\boldsymbol{\lambda}=(\lambda_v,\,v\in\mathcal{V})$

where

$\boldsymbol{\lambda}=(\lambda_v,\,v\in\mathcal{V})$

where

![]() $\lambda_v\gt0$

for every

$\lambda_v\gt0$

for every

![]() $v\in\mathcal{V}$

and a vector of dependence parameters

$v\in\mathcal{V}$

and a vector of dependence parameters

![]() $\boldsymbol{\alpha} = (\alpha_e,\, e\in \mathcal{E})$

where

$\boldsymbol{\alpha} = (\alpha_e,\, e\in \mathcal{E})$

where

![]() $\alpha_{(\mathrm{pa}(v), v)} \in (0, \min(\sqrt{{\lambda_{v}}/{\lambda_{\mathrm{pa}(v)}}}, \sqrt{{\lambda_{\mathrm{pa}(v)}}/{\lambda_{v}}})]$

for every

$\alpha_{(\mathrm{pa}(v), v)} \in (0, \min(\sqrt{{\lambda_{v}}/{\lambda_{\mathrm{pa}(v)}}}, \sqrt{{\lambda_{\mathrm{pa}(v)}}/{\lambda_{v}}})]$

for every

![]() $(\mathrm{pa}(v), v)\in \mathcal{E}$

. Let

$(\mathrm{pa}(v), v)\in \mathcal{E}$

. Let

![]() $\boldsymbol{{L}}=(L_v,\,v\in\mathcal{V})$

be a vector of independent random variables such that

$\boldsymbol{{L}}=(L_v,\,v\in\mathcal{V})$

be a vector of independent random variables such that

![]() $L_v\sim \text{Poisson}(\lambda_v ( 1- \alpha_{(\mathrm{pa}(v), v)}\sqrt{\lambda_{\mathrm{pa}(v)}/\lambda_v}))$

for every

$L_v\sim \text{Poisson}(\lambda_v ( 1- \alpha_{(\mathrm{pa}(v), v)}\sqrt{\lambda_{\mathrm{pa}(v)}/\lambda_v}))$

for every

![]() $v\in\mathcal{V}$

, with

$v\in\mathcal{V}$

, with

![]() $\alpha_{(\mathrm{pa}(r), r)}=0$

since the root has no parent. Define

$\alpha_{(\mathrm{pa}(r), r)}=0$

since the root has no parent. Define

![]() $\boldsymbol{{N}} = (N_v,\, v \in \mathcal{V})$

as a vector of random variables such that

$\boldsymbol{{N}} = (N_v,\, v \in \mathcal{V})$

as a vector of random variables such that

\begin{align}N_v = \begin{cases} L_r, & \text{if } v=r \\[3pt] \left(\alpha_{(\mathrm{pa}(v), v)}\sqrt{\frac{\lambda_v}{\lambda_{\mathrm{pa}(v)}}} \right)\circ N_{\mathrm{pa}(v)}+ L_v,& \text{if } v\in\mathrm{dsc}(r) \end{cases},\quad \text{for every } v\in\mathcal{V}.\end{align}

\begin{align}N_v = \begin{cases} L_r, & \text{if } v=r \\[3pt] \left(\alpha_{(\mathrm{pa}(v), v)}\sqrt{\frac{\lambda_v}{\lambda_{\mathrm{pa}(v)}}} \right)\circ N_{\mathrm{pa}(v)}+ L_v,& \text{if } v\in\mathrm{dsc}(r) \end{cases},\quad \text{for every } v\in\mathcal{V}.\end{align}

Then,

![]() $\boldsymbol{{N}}$

is a MRF with a unique joint distribution whichever the chosen root of

$\boldsymbol{{N}}$

is a MRF with a unique joint distribution whichever the chosen root of

![]() $\mathcal{T}$

, where the random variable

$\mathcal{T}$

, where the random variable

![]() $N_v$

follows a Poisson distribution of parameter

$N_v$

follows a Poisson distribution of parameter

![]() $\lambda_v$

, for all

$\lambda_v$

, for all

![]() $v\in \mathcal{V}$

.

$v\in \mathcal{V}$

.

Henceforth, we write

![]() $\boldsymbol{{N}}\sim \text{MPMRF}(\boldsymbol{\lambda}, \boldsymbol{\alpha}, \mathcal{T})$

to signify

$\boldsymbol{{N}}\sim \text{MPMRF}(\boldsymbol{\lambda}, \boldsymbol{\alpha}, \mathcal{T})$

to signify

![]() $\boldsymbol{{N}}$

admits the stochastic representation in (2.2), and we let

$\boldsymbol{{N}}$

admits the stochastic representation in (2.2), and we let

![]() ${\boldsymbol{\Lambda}}$

denote the set of admissible parameters

${\boldsymbol{\Lambda}}$

denote the set of admissible parameters

![]() $(\boldsymbol{\lambda}, \boldsymbol{\alpha})$

. We write

$(\boldsymbol{\lambda}, \boldsymbol{\alpha})$

. We write

![]() $\mathbb{MPMRF}$

the family of all such distributions for

$\mathbb{MPMRF}$

the family of all such distributions for

![]() $\boldsymbol{{N}}$

.

$\boldsymbol{{N}}$

.

The MRF studied in Côté et al. (Reference Côté, Cossette and Marceau2025b) is a special case of the MRF constructed in Theorem 2.2, where the mean parameters are homogeneous. In (2.2), the components of

![]() $\boldsymbol{{N}}$

, except the root, are defined as the sum of two independent random variables. We interpret them as the propagation and the innovation random variables, respectively. The propagation random variable

$\boldsymbol{{N}}$

, except the root, are defined as the sum of two independent random variables. We interpret them as the propagation and the innovation random variables, respectively. The propagation random variable

![]() $(\alpha_{(\mathrm{pa}(v), v)}\sqrt{{\lambda_v}/{\lambda_{\mathrm{pa}(v)}}} )\circ N_{\mathrm{pa}(v)}$

expresses the number of events that have duplicated by binomial thinning from

$(\alpha_{(\mathrm{pa}(v), v)}\sqrt{{\lambda_v}/{\lambda_{\mathrm{pa}(v)}}} )\circ N_{\mathrm{pa}(v)}$

expresses the number of events that have duplicated by binomial thinning from

![]() $N_{\mathrm{pa}(v)}$

to

$N_{\mathrm{pa}(v)}$

to

![]() $N_v$

. The innovation random variable

$N_v$

. The innovation random variable

![]() $L_v$

expresses the number of events occurring at vertex v that have not propagated from vertex

$L_v$

expresses the number of events occurring at vertex v that have not propagated from vertex

![]() $\mathrm{pa}(v)$

. The thinning parameter

$\mathrm{pa}(v)$

. The thinning parameter

![]() $\alpha_{(\mathrm{pa}(v), v)}\sqrt{{\lambda_v}/{\lambda_{\mathrm{pa}(v)}}}$

for the propagation random variable is an adjustment of the dependence parameter

$\alpha_{(\mathrm{pa}(v), v)}\sqrt{{\lambda_v}/{\lambda_{\mathrm{pa}(v)}}}$

for the propagation random variable is an adjustment of the dependence parameter

![]() $\alpha_{(\mathrm{pa}(v), v)}$

taking into account the heterogeneous means, so that the correlation between

$\alpha_{(\mathrm{pa}(v), v)}$

taking into account the heterogeneous means, so that the correlation between

![]() $N_{\mathrm{pa}(v)}$

and

$N_{\mathrm{pa}(v)}$

and

![]() $N_v$

is

$N_v$

is

![]() $\alpha_{(\mathrm{pa}(v), v)}$

. The upper bound

$\alpha_{(\mathrm{pa}(v), v)}$

. The upper bound

![]() $\alpha_{(\mathrm{pa}(v),v)} \lt\min(\sqrt{\lambda_v/\lambda_{\mathrm{pa}(v)}},\sqrt{\lambda_{\mathrm{pa}(v)}/\lambda_v})$

stems from the fact that dependence is characterized by this thinning mechanism. The fraction of events propagating from one random variable to another cannot exceed the root of the ratio of the marginal rates; otherwise, the innovation component would require more inherited events than the number of events possible on the parent. The admissible parameter space therefore reflects a structural feature of event-sharing mechanisms in count data. Note that any vertex can be chosen as the root of the tree; accordingly, the constraint on the dependence parameters takes into account the reversibility of parent–child relationships that may occur, thus preserving the root-free formulation of the distribution; see Remark 2.3. For illustration, if

$\alpha_{(\mathrm{pa}(v),v)} \lt\min(\sqrt{\lambda_v/\lambda_{\mathrm{pa}(v)}},\sqrt{\lambda_{\mathrm{pa}(v)}/\lambda_v})$

stems from the fact that dependence is characterized by this thinning mechanism. The fraction of events propagating from one random variable to another cannot exceed the root of the ratio of the marginal rates; otherwise, the innovation component would require more inherited events than the number of events possible on the parent. The admissible parameter space therefore reflects a structural feature of event-sharing mechanisms in count data. Note that any vertex can be chosen as the root of the tree; accordingly, the constraint on the dependence parameters takes into account the reversibility of parent–child relationships that may occur, thus preserving the root-free formulation of the distribution; see Remark 2.3. For illustration, if

![]() $(\lambda_1,\lambda_2) = (5,5)$

, the upper bound is 1, maximal correlation. If

$(\lambda_1,\lambda_2) = (5,5)$

, the upper bound is 1, maximal correlation. If

![]() $(\lambda_1,\lambda_2) = (3,5)$

, the upper bound is

$(\lambda_1,\lambda_2) = (3,5)$

, the upper bound is

![]() $\sqrt{0.6} \approx\!0.77$

, compared to the Fréchet upper bound of

$\sqrt{0.6} \approx\!0.77$

, compared to the Fréchet upper bound of

![]() $\approx\!0.97$

.

$\approx\!0.97$

.

Remark 2.3. As put forth in the proof of Theorem 2.2, the symmetry of pairwise stochastic dynamics, combined with the local Markov property, yields a root-free distribution of the MRF. The directionality seemingly implied by the necessity to choose a root is illusory: to define a parent–child dynamic is necessary to have a binomial thinning representation, but it has no impact on the joint distribution of

![]() $\boldsymbol{{N}}$

. The model is thus truly undirected; we will provide in Section 2.2 a root-free stochastic construction. The artificial directionality, required for the binomial thinning representation, is what allows an iterative way of proceeding along trees, resulting in algorithmic efficiency. A similar situation occurs for tree-structured Ising models; see Côté et al. (Reference Côté, Cossette and Marceau2025a).

$\boldsymbol{{N}}$

. The model is thus truly undirected; we will provide in Section 2.2 a root-free stochastic construction. The artificial directionality, required for the binomial thinning representation, is what allows an iterative way of proceeding along trees, resulting in algorithmic efficiency. A similar situation occurs for tree-structured Ising models; see Côté et al. (Reference Côté, Cossette and Marceau2025a).

Remark 2.4. Compared to the homogeneous-mean model in Côté et al. (Reference Côté, Cossette and Marceau2025b),

![]() $\boldsymbol{\alpha}$

is not a true dependence parameter, as it is constrained by

$\boldsymbol{\alpha}$

is not a true dependence parameter, as it is constrained by

![]() $\boldsymbol{\lambda}$

. To circumvent this, one could let

$\boldsymbol{\lambda}$

. To circumvent this, one could let

![]() $\theta_v = \alpha_{(\mathrm{pa}(v), v)}\sqrt{{\lambda_v}/{\lambda_{\mathrm{pa}(v)}}} $

, and

$\theta_v = \alpha_{(\mathrm{pa}(v), v)}\sqrt{{\lambda_v}/{\lambda_{\mathrm{pa}(v)}}} $

, and

![]() $\boldsymbol{\theta}$

would be a true dependence parameter, ranging from 0 to 1. The values of

$\boldsymbol{\theta}$

would be a true dependence parameter, ranging from 0 to 1. The values of

![]() $\boldsymbol{\theta}$

would however vary with the root, so this parameter change would come at the cost of the undirectionality of the model. Also note that, because the constraints on

$\boldsymbol{\theta}$

would however vary with the root, so this parameter change would come at the cost of the undirectionality of the model. Also note that, because the constraints on

![]() $\boldsymbol{\alpha}$

come directly from the binomial thinning operations, they should not be limiting if the stochastic dynamics underlying the data are effectively of propagation and innovation.

$\boldsymbol{\alpha}$

come directly from the binomial thinning operations, they should not be limiting if the stochastic dynamics underlying the data are effectively of propagation and innovation.

The following sequence of joint pgfs {

![]() $\eta_{v}^{\mathcal{T}_r},\,v\in\mathcal{V}\}$

will prove useful throughout the paper.

$\eta_{v}^{\mathcal{T}_r},\,v\in\mathcal{V}\}$

will prove useful throughout the paper.

Definition 2.5. Consider a tree

![]() $ \mathcal{T} = (\mathcal{V}, \mathcal{E})$

, and let

$ \mathcal{T} = (\mathcal{V}, \mathcal{E})$

, and let

![]() $\mathcal{T}_r$

be its rooted version,

$\mathcal{T}_r$

be its rooted version,

![]() $r \in \mathcal{V}$

. For any vector

$r \in \mathcal{V}$

. For any vector

![]() $\boldsymbol{\theta}$

of thinning parameters, let

$\boldsymbol{\theta}$

of thinning parameters, let

![]() $\boldsymbol{\theta}_{\mathrm{dsc}(v)} = (\theta_{j },\, j \in \mathrm{dsc}(v))$

for all

$\boldsymbol{\theta}_{\mathrm{dsc}(v)} = (\theta_{j },\, j \in \mathrm{dsc}(v))$

for all

![]() $v\in\mathcal{V}$

. We define

$v\in\mathcal{V}$

. We define

![]() $\{\eta_{v}^{\mathcal{T}_r},\,v\in\mathcal{V}\}$

as a sequence of joint pgfs through the recursive relation

$\{\eta_{v}^{\mathcal{T}_r},\,v\in\mathcal{V}\}$

as a sequence of joint pgfs through the recursive relation

where

![]() $\boldsymbol{{t}}_{v\mathrm{dsc}(v)}$

is a short-hand notation for the vector

$\boldsymbol{{t}}_{v\mathrm{dsc}(v)}$

is a short-hand notation for the vector

![]() $(t_j, \, j \in \{v\} \cup \mathrm{dsc}(v))$

, and with the convention

$(t_j, \, j \in \{v\} \cup \mathrm{dsc}(v))$

, and with the convention

![]() $\eta_{j}^{\mathcal{T}_r}(\boldsymbol{{t}}_{j\mathrm{dsc}(j)};\;\boldsymbol{\theta}_{\mathrm{dsc}(j)}) = t_j$

for vertices j that have no children according to the rooting in r.

$\eta_{j}^{\mathcal{T}_r}(\boldsymbol{{t}}_{j\mathrm{dsc}(j)};\;\boldsymbol{\theta}_{\mathrm{dsc}(j)}) = t_j$

for vertices j that have no children according to the rooting in r.

In the following proposition, we present the joint pmf and joint pgf of

![]() $\boldsymbol{{N}}$

as in (2.2).

$\boldsymbol{{N}}$

as in (2.2).

Proposition 2.6. Let

![]() $\boldsymbol{{N}} \sim \text{MPMRF}(\boldsymbol{\lambda}, \boldsymbol{\alpha}, \mathcal{T})$

, where

$\boldsymbol{{N}} \sim \text{MPMRF}(\boldsymbol{\lambda}, \boldsymbol{\alpha}, \mathcal{T})$

, where

![]() $(\boldsymbol{\lambda},\boldsymbol{\alpha})\in\boldsymbol{\Lambda}$

. For a chosen root

$(\boldsymbol{\lambda},\boldsymbol{\alpha})\in\boldsymbol{\Lambda}$

. For a chosen root

![]() $r \in \mathcal{V}$

, let

$r \in \mathcal{V}$

, let

![]() $\mathcal{T}_r$

be the rooted version of

$\mathcal{T}_r$

be the rooted version of

![]() $\mathcal{T}$

and

$\mathcal{T}$

and

![]() $\zeta_{L_v} = \lambda_v ( 1- \alpha_{(\mathrm{pa}(v), v)}\sqrt{\lambda_{\mathrm{pa}(v)}/\lambda_v})$

for

$\zeta_{L_v} = \lambda_v ( 1- \alpha_{(\mathrm{pa}(v), v)}\sqrt{\lambda_{\mathrm{pa}(v)}/\lambda_v})$

for

![]() $v \in \mathcal{V}\backslash\{r\}$

. Then,

$v \in \mathcal{V}\backslash\{r\}$

. Then,

-

(i) the joint pmf of

$\boldsymbol{{N}}$

is given by (2.4)for

$\boldsymbol{{N}}$

is given by (2.4)for \begin{equation}p_{\boldsymbol{{N}}}(\boldsymbol{{x}}) = \frac{\mathrm{e}^{-\lambda_r}\lambda_r^{x_r}}{x_r!} \prod_{v\in\mathrm{dsc}(r)} \sum_{k=0}^{\min(x_{\mathrm{pa}(v)},x_v)} \frac{{ \mathrm{e}}^{-\zeta_{L_v}} (\zeta_{L_v})^{x_v-k}}{(x_v-k)!} \binom{x_{\mathrm{pa}(v)}}{k} \left( \theta_v \right)^{k} \left(1- \theta_v\right)^{x_{\mathrm{pa}(v)}-k},\end{equation}

\begin{equation}p_{\boldsymbol{{N}}}(\boldsymbol{{x}}) = \frac{\mathrm{e}^{-\lambda_r}\lambda_r^{x_r}}{x_r!} \prod_{v\in\mathrm{dsc}(r)} \sum_{k=0}^{\min(x_{\mathrm{pa}(v)},x_v)} \frac{{ \mathrm{e}}^{-\zeta_{L_v}} (\zeta_{L_v})^{x_v-k}}{(x_v-k)!} \binom{x_{\mathrm{pa}(v)}}{k} \left( \theta_v \right)^{k} \left(1- \theta_v\right)^{x_{\mathrm{pa}(v)}-k},\end{equation}

$\boldsymbol{{x}} \in \mathbb{N}^d$

, where

$\boldsymbol{{x}} \in \mathbb{N}^d$

, where

$\theta_v = \alpha_{(\mathrm{pa}(v),v)}\sqrt{{\lambda_v}/{\lambda_{\mathrm{pa}(v)}}}$

for all

$\theta_v = \alpha_{(\mathrm{pa}(v),v)}\sqrt{{\lambda_v}/{\lambda_{\mathrm{pa}(v)}}}$

for all

$v\in \mathrm{dsc}(r)$

;

$v\in \mathrm{dsc}(r)$

;

-

(ii) the joint pgf of

$\boldsymbol{{N}}$

is given by (2.5)where

$\boldsymbol{{N}}$

is given by (2.5)where \begin{equation}\mathcal{P}_{\boldsymbol{{N}}}(\boldsymbol{{t}}) = \prod_{v\in\mathcal{V}} \mathrm{e}^{\zeta_{L_v}\left(\eta_{v}^{\mathcal{T}_r}\left(\boldsymbol{{t}}_{v\mathrm{dsc}(v)};\,\boldsymbol{\theta}_{\mathrm{dsc}(v)}\right) - 1\right)},\quad \boldsymbol{{t}}\in[\!-1,1]^d,\end{equation}

\begin{equation}\mathcal{P}_{\boldsymbol{{N}}}(\boldsymbol{{t}}) = \prod_{v\in\mathcal{V}} \mathrm{e}^{\zeta_{L_v}\left(\eta_{v}^{\mathcal{T}_r}\left(\boldsymbol{{t}}_{v\mathrm{dsc}(v)};\,\boldsymbol{\theta}_{\mathrm{dsc}(v)}\right) - 1\right)},\quad \boldsymbol{{t}}\in[\!-1,1]^d,\end{equation}

$\boldsymbol{\theta}_{\mathrm{dsc}(v)}= (\alpha_{(\mathrm{pa}(k), k)}\sqrt{{\lambda_k}/{\lambda_{\mathrm{pa}(k)}}}, \, k \in \mathrm{dsc}(v))$

is the vector of thinning parameters for the propagation random variables according to a rooting in r.

$\boldsymbol{\theta}_{\mathrm{dsc}(v)}= (\alpha_{(\mathrm{pa}(k), k)}\sqrt{{\lambda_k}/{\lambda_{\mathrm{pa}(k)}}}, \, k \in \mathrm{dsc}(v))$

is the vector of thinning parameters for the propagation random variables according to a rooting in r.

The joint pmf in (2.4) factorizes along the tree, underscoring that

![]() $\boldsymbol{{N}}$

is a MRF. For further discussions on Gibbs distributions and pmf factorization, we refer to Appendix B, as well as Chapter 4.2 of Cressie and Wikle (Reference Cressie and Wikle2015) and Côté et al. (Reference Côté, Cossette and Marceau2025b). The analytical form of the joint pmf in (2.4) enables efficient numerical evaluation of the likelihood, making it particularly well suited for parameter estimation procedures, as shown in Sections 5 and 6.

$\boldsymbol{{N}}$

is a MRF. For further discussions on Gibbs distributions and pmf factorization, we refer to Appendix B, as well as Chapter 4.2 of Cressie and Wikle (Reference Cressie and Wikle2015) and Côté et al. (Reference Côté, Cossette and Marceau2025b). The analytical form of the joint pmf in (2.4) enables efficient numerical evaluation of the likelihood, making it particularly well suited for parameter estimation procedures, as shown in Sections 5 and 6.

In the upcoming subsection, we show that every distribution of

![]() $\mathbb{MPMRF}$

may be reparameterized such that

$\mathbb{MPMRF}$

may be reparameterized such that

![]() $\boldsymbol{{N}}$

admits an alternative stochastic representation based on common shocks. Whereas models based on the common-shock approach, whose family of distributions we write

$\boldsymbol{{N}}$

admits an alternative stochastic representation based on common shocks. Whereas models based on the common-shock approach, whose family of distributions we write

![]() $\mathbb{MPCS}$

, may become intractable in high dimensions due to the exponential growth in the number of possible shock configurations, the

$\mathbb{MPCS}$

, may become intractable in high dimensions due to the exponential growth in the number of possible shock configurations, the

![]() $\mathbb{MPMRF}$

family scales conveniently to high dimensions.

$\mathbb{MPMRF}$

family scales conveniently to high dimensions.

2.2. A subfamily of the multivariate Poisson distribution based on common shocks

Let

![]() ${\mathcal{V}} = \{1, 2, \ldots, d\}$

be a set of indices and let

${\mathcal{V}} = \{1, 2, \ldots, d\}$

be a set of indices and let

![]() $\mathscr{P}(\mathcal{V})$

be the power set of

$\mathscr{P}(\mathcal{V})$

be the power set of

![]() ${\mathcal{V}}$

, that is, the set of all subsets of

${\mathcal{V}}$

, that is, the set of all subsets of

![]() $\mathcal{V}$

, including the empty set and

$\mathcal{V}$

, including the empty set and

![]() $\mathcal{V}$

itself. For every

$\mathcal{V}$

itself. For every

![]() $v\in\mathcal{V}$

, let

$v\in\mathcal{V}$

, let

![]() $\mathscr{P}(\mathcal{V};\;v) = \{\mathcal{W} \in \mathscr{P}(\mathcal{V})\;:\; v\in \mathcal{W} \}$

, that is,

$\mathscr{P}(\mathcal{V};\;v) = \{\mathcal{W} \in \mathscr{P}(\mathcal{V})\;:\; v\in \mathcal{W} \}$

, that is,

![]() $\mathscr{P}(\mathcal{V};\;v)$

comprises the elements of

$\mathscr{P}(\mathcal{V};\;v)$

comprises the elements of

![]() $\mathscr{P}(\mathcal{V})$

in which v participates. Hence,

$\mathscr{P}(\mathcal{V})$

in which v participates. Hence,

![]() $\bigcup_{v\in\mathcal{V}} \mathscr{P}(\mathcal{V};\;v) = \mathscr{P}(\mathcal{V})$

. We define

$\bigcup_{v\in\mathcal{V}} \mathscr{P}(\mathcal{V};\;v) = \mathscr{P}(\mathcal{V})$

. We define

![]() $\boldsymbol{{Y}} = (Y_{\mathcal{W}}, \mathcal{W} \in\mathscr{P}(\mathcal{V}))$

as a vector of independent Poisson distributed random variables with a corresponding mean-parameter vector

$\boldsymbol{{Y}} = (Y_{\mathcal{W}}, \mathcal{W} \in\mathscr{P}(\mathcal{V}))$

as a vector of independent Poisson distributed random variables with a corresponding mean-parameter vector

![]() $\boldsymbol{\gamma}= (\gamma_{\mathcal{W}}, \, \mathcal{W}\in\mathscr{P}(\mathcal{V}))$

, with

$\boldsymbol{\gamma}= (\gamma_{\mathcal{W}}, \, \mathcal{W}\in\mathscr{P}(\mathcal{V}))$

, with

![]() $\gamma_{\mathcal{W}}\geq 0$

for every

$\gamma_{\mathcal{W}}\geq 0$

for every

![]() $\mathcal{W} \in \mathscr{P}(\mathcal{V})$

. We use the convention

$\mathcal{W} \in \mathscr{P}(\mathcal{V})$

. We use the convention

![]() $Y_{\mathcal{W}} = 0$

whenever

$Y_{\mathcal{W}} = 0$

whenever

![]() $\gamma_{\mathcal{W}} = 0$

. Letting

$\gamma_{\mathcal{W}} = 0$

. Letting

![]() $\boldsymbol{{D}} = (D_v,\,v \in \mathcal{V}) \sim \text{MPCS}(\boldsymbol{\lambda})$

, we have

$\boldsymbol{{D}} = (D_v,\,v \in \mathcal{V}) \sim \text{MPCS}(\boldsymbol{\lambda})$

, we have

![]() $D_v = \sum_{\mathcal{W} \in \mathscr{P}(\mathcal{V};\;v)} Y_{\mathcal{W}}, \; v \in \mathcal{V},$

where, from the closure on convolution of the Poisson distribution, each component of

$D_v = \sum_{\mathcal{W} \in \mathscr{P}(\mathcal{V};\;v)} Y_{\mathcal{W}}, \; v \in \mathcal{V},$

where, from the closure on convolution of the Poisson distribution, each component of

![]() $\boldsymbol{{D}}$

is Poisson distributed with parameter

$\boldsymbol{{D}}$

is Poisson distributed with parameter

![]() $\lambda_v = \sum_{\mathcal{W} \in \mathscr{P}(\mathcal{V};\;v)} \gamma_{\mathcal{W}}$

. The joint pgf of

$\lambda_v = \sum_{\mathcal{W} \in \mathscr{P}(\mathcal{V};\;v)} \gamma_{\mathcal{W}}$

. The joint pgf of

![]() $\boldsymbol{{D}}$

is given by

$\boldsymbol{{D}}$

is given by

with

![]() $\gamma_0 = - \sum_{\mathcal{W}\in \mathscr{P}(\mathcal{V})} \gamma_{\mathcal{W}}$

. We recall that the parameter vector

$\gamma_0 = - \sum_{\mathcal{W}\in \mathscr{P}(\mathcal{V})} \gamma_{\mathcal{W}}$

. We recall that the parameter vector

![]() $\boldsymbol{\gamma}$

is of length

$\boldsymbol{\gamma}$

is of length

![]() $|\mathscr{P}(\mathcal{V})| = 2^d$

. This may make computations regarding the multivariate Poisson distribution cumbersome, as discussed earlier.

$|\mathscr{P}(\mathcal{V})| = 2^d$

. This may make computations regarding the multivariate Poisson distribution cumbersome, as discussed earlier.

The following proposition provides an alternative parameterization and stochastic representation of

![]() $\boldsymbol{{N}}$

in terms of common shocks.

$\boldsymbol{{N}}$

in terms of common shocks.

Proposition 2.7. Consider a tree

![]() $\mathcal{T}= (\mathcal{V},\mathcal{E})$

and, for every

$\mathcal{T}= (\mathcal{V},\mathcal{E})$

and, for every

![]() $v\in\mathcal{V}$

, let

$v\in\mathcal{V}$

, let

![]() $\Theta_v$

be the set of all subtrees of

$\Theta_v$

be the set of all subtrees of

![]() $\mathcal{T}$

in which v participates, meaning

$\mathcal{T}$

in which v participates, meaning

![]() $\Theta_v = \{\mathcal{W} \in \mathscr{P}(\mathcal{V};\;v)$

: for every

$\Theta_v = \{\mathcal{W} \in \mathscr{P}(\mathcal{V};\;v)$

: for every

![]() $i, j \in \!\mathcal{W}, \, k,l \in\! \mathcal{W}$

for every

$i, j \in \!\mathcal{W}, \, k,l \in\! \mathcal{W}$

for every

![]() $(k, l) \in\! \mathrm{path}(i,j)\}$

. If

$(k, l) \in\! \mathrm{path}(i,j)\}$

. If

![]() $\boldsymbol{{N}}\sim\text{MPMRF}(\boldsymbol{\lambda}, \boldsymbol{\alpha}, \mathcal{T})$

, with

$\boldsymbol{{N}}\sim\text{MPMRF}(\boldsymbol{\lambda}, \boldsymbol{\alpha}, \mathcal{T})$

, with

![]() $(\boldsymbol{\lambda},\boldsymbol{\alpha})\in{\boldsymbol{\Lambda}}$

, then

$(\boldsymbol{\lambda},\boldsymbol{\alpha})\in{\boldsymbol{\Lambda}}$

, then

![]() $\boldsymbol{{N}}$

admits the following alternative stochastic representation:

$\boldsymbol{{N}}$

admits the following alternative stochastic representation:

where

![]() $\{Y_{\mathcal{W}},\, \mathcal{W}\in\bigcup_{v\in\mathcal{V}}\Theta_v\}$

are independent Poisson random variables of respective parameters

$\{Y_{\mathcal{W}},\, \mathcal{W}\in\bigcup_{v\in\mathcal{V}}\Theta_v\}$

are independent Poisson random variables of respective parameters

\begin{equation} \gamma_{\mathcal{W}} = \left( \prod_{w\in \mathcal{W}} \lambda_w \right)\left(\prod_{(i,j)\in\mathcal{E}_{\mathcal{W}}} \frac{\alpha_{(i,j)}}{\sqrt{\lambda_i\lambda_j}}\right)\left(\prod_{(i,j)\in\mathcal{E}^{\dagger}_{\mathcal{W}}} \left(1-\alpha_{(i,j)}\sqrt{\frac{\lambda_j}{\lambda_i}}\right)\right)\!, \quad \mathcal{W}\in \bigcup_{v\in\mathcal{V}}\Theta_v, \end{equation}

\begin{equation} \gamma_{\mathcal{W}} = \left( \prod_{w\in \mathcal{W}} \lambda_w \right)\left(\prod_{(i,j)\in\mathcal{E}_{\mathcal{W}}} \frac{\alpha_{(i,j)}}{\sqrt{\lambda_i\lambda_j}}\right)\left(\prod_{(i,j)\in\mathcal{E}^{\dagger}_{\mathcal{W}}} \left(1-\alpha_{(i,j)}\sqrt{\frac{\lambda_j}{\lambda_i}}\right)\right)\!, \quad \mathcal{W}\in \bigcup_{v\in\mathcal{V}}\Theta_v, \end{equation}

with

![]() $\mathcal{E}_{\mathcal{W}} = \{(i,j)\in\mathcal{E} :\, i,j\in \mathcal{W}\}$

and

$\mathcal{E}_{\mathcal{W}} = \{(i,j)\in\mathcal{E} :\, i,j\in \mathcal{W}\}$

and

![]() $\mathcal{E}_{\mathcal{W}}^{\dagger} = \{(i,j)\in\mathcal{E} :\, i\in \mathcal{W}, j\not\in \mathcal{W}\}$

.

$\mathcal{E}_{\mathcal{W}}^{\dagger} = \{(i,j)\in\mathcal{E} :\, i\in \mathcal{W}, j\not\in \mathcal{W}\}$

.

The upper limit for

![]() $\alpha_e$

,

$\alpha_e$

,

![]() $e\in\mathcal{E}$

, for

$e\in\mathcal{E}$

, for

![]() $(\boldsymbol{\lambda},\boldsymbol{\alpha})$

to be in

$(\boldsymbol{\lambda},\boldsymbol{\alpha})$

to be in

![]() ${\boldsymbol{\Lambda}}$

, ensures

${\boldsymbol{\Lambda}}$

, ensures

![]() $\gamma_{\mathcal{W}}\geq0$

for every

$\gamma_{\mathcal{W}}\geq0$

for every

![]() $\mathcal{W}\in\bigcup_{v\in\mathcal{V}}\Theta_v$

.

$\mathcal{W}\in\bigcup_{v\in\mathcal{V}}\Theta_v$

.

Given Proposition 2.7, one easily sees that

![]() $\boldsymbol{{N}}$

follows a multivariate Poisson with vector of parameters

$\boldsymbol{{N}}$

follows a multivariate Poisson with vector of parameters

![]() $\boldsymbol{\gamma}= (\gamma_V, \, V\in\mathscr{P}(\mathcal{V}))$

such that

$\boldsymbol{\gamma}= (\gamma_V, \, V\in\mathscr{P}(\mathcal{V}))$

such that

\begin{equation*} \gamma_{V} =\begin{cases}\gamma_{\mathcal{W}},&\text{if }{\mathcal{W}\in{\bigcup_{v \in \mathcal{V}}\Theta_v}}\\[3pt]0,&\text{else}\end{cases},\quad V \in \mathscr{P}(\mathcal{V}).\end{equation*}

\begin{equation*} \gamma_{V} =\begin{cases}\gamma_{\mathcal{W}},&\text{if }{\mathcal{W}\in{\bigcup_{v \in \mathcal{V}}\Theta_v}}\\[3pt]0,&\text{else}\end{cases},\quad V \in \mathscr{P}(\mathcal{V}).\end{equation*}

Hence, Proposition 2.7 shows

![]() $\mathbb{MPMRF}\subseteq\mathbb{MPCS}$

. For a further discussion on the connection between the thinning and the common-shock approaches for Poisson random variables, see Liu et al. (Reference Liu, Shi, Yao and Yang2024) and their Remark 2.3 in particular. Although the number of non-zero parameters in the common-shock representation of

$\mathbb{MPMRF}\subseteq\mathbb{MPCS}$

. For a further discussion on the connection between the thinning and the common-shock approaches for Poisson random variables, see Liu et al. (Reference Liu, Shi, Yao and Yang2024) and their Remark 2.3 in particular. Although the number of non-zero parameters in the common-shock representation of

![]() $\mathbb{MPMRF}$

is lower than

$\mathbb{MPMRF}$

is lower than

![]() $2^d$

(as for

$2^d$

(as for

![]() $\mathbb{MPCS}$

), the reduction is not substantial enough to overcome computation challenges. Moreover, the parameterization in terms of

$\mathbb{MPCS}$

), the reduction is not substantial enough to overcome computation challenges. Moreover, the parameterization in terms of

![]() $\boldsymbol{\gamma}$

intertwines the dependencies and the marginals, thereby removing their intended parametric disconnection. Theorem 2.2 remains a simpler representation, as put forth in Example 2.8. The family

$\boldsymbol{\gamma}$

intertwines the dependencies and the marginals, thereby removing their intended parametric disconnection. Theorem 2.2 remains a simpler representation, as put forth in Example 2.8. The family

![]() $\mathbb{MPMRF}$

being a subset of

$\mathbb{MPMRF}$

being a subset of

![]() $\mathbb{MPCS}$

, it cannot grow out of the latters’ limitations in terms of achievable dependence structures. The advantage of

$\mathbb{MPCS}$

, it cannot grow out of the latters’ limitations in terms of achievable dependence structures. The advantage of

![]() $\mathbb{MPMRF}$

over generic elements of

$\mathbb{MPMRF}$

over generic elements of

![]() $\mathbb{MPCS}$

comes from its binomial thinning stochastic representation (2.2) and the computational efficiency ensuing.

$\mathbb{MPCS}$

comes from its binomial thinning stochastic representation (2.2) and the computational efficiency ensuing.

Example 2.8. A 5-variate distribution in

![]() $\mathbb{MPCS}$

generally requires

$\mathbb{MPCS}$

generally requires

![]() $2^5 = 32$

parameters. Consider

$2^5 = 32$

parameters. Consider

![]() $\boldsymbol{{N}} \sim \text{MPMRF}(\boldsymbol{\lambda}, \boldsymbol{\alpha}, \mathcal{T})$

where

$\boldsymbol{{N}} \sim \text{MPMRF}(\boldsymbol{\lambda}, \boldsymbol{\alpha}, \mathcal{T})$

where

![]() $\mathcal{T}$

is structured as in Figure 2. Using (2.7), we develop

$\mathcal{T}$

is structured as in Figure 2. Using (2.7), we develop

![]() $\boldsymbol{{N}}$

into its common shock representation in Figure 2. One notices that constructing

$\boldsymbol{{N}}$

into its common shock representation in Figure 2. One notices that constructing

![]() $\boldsymbol{\gamma}$

demands

$\boldsymbol{\gamma}$

demands

![]() $|\bigcup_{v \in \mathcal{V}}\Theta_v| = 17$

non-zero parameters, which is a meaningful diminution, but still much higher than the nine parameters required by the representation in Theorem 2.2. A comparison of

$|\bigcup_{v \in \mathcal{V}}\Theta_v| = 17$

non-zero parameters, which is a meaningful diminution, but still much higher than the nine parameters required by the representation in Theorem 2.2. A comparison of

![]() $N_1$

and

$N_1$

and

![]() $N_2$

in Figure 2 reveals that a change in

$N_2$

in Figure 2 reveals that a change in

![]() $\gamma_{\{1,2\}}$

affects both mean parameters of the random variables

$\gamma_{\{1,2\}}$

affects both mean parameters of the random variables

![]() $N_1$

and

$N_1$

and

![]() $N_2$

. The parameters

$N_2$

. The parameters

![]() $\gamma_{\mathcal{W}}$

associated with each

$\gamma_{\mathcal{W}}$

associated with each

![]() $Y_{\mathcal{W}}$

in Figure 2.8 are given in Table 1. We verify easily that

$Y_{\mathcal{W}}$

in Figure 2.8 are given in Table 1. We verify easily that

![]() $N_v\sim\text{Poisson}(\lambda_v)$

for every

$N_v\sim\text{Poisson}(\lambda_v)$

for every

![]() $v\in\{1,\ldots,5\}$

. In Table 1 of Supplement A, a numerical instance illustrates the equivalence between

$v\in\{1,\ldots,5\}$

. In Table 1 of Supplement A, a numerical instance illustrates the equivalence between

![]() $\mathbb{MRMRF}$

and

$\mathbb{MRMRF}$

and

![]() $\mathbb{MPCS}$

.

$\mathbb{MPCS}$

.

Tree

![]() $\mathcal{T}$

of Example 2.8 and

$\mathcal{T}$

of Example 2.8 and

![]() $\boldsymbol{{N}}$

components’ common shock representations.

$\boldsymbol{{N}}$

components’ common shock representations.

Proposition 2.7 makes clear the difference between

![]() $\mathbb{MPMRF}$

and the tree-structured multivariate Poisson distribution examined in Kızıldemir and Privault (Reference Kzldemir and Privault2017). In the latter, there are only random variables

$\mathbb{MPMRF}$

and the tree-structured multivariate Poisson distribution examined in Kızıldemir and Privault (Reference Kzldemir and Privault2017). In the latter, there are only random variables

![]() $Y_{\mathcal{W}}$

from the representation in (2.7) for

$Y_{\mathcal{W}}$

from the representation in (2.7) for

![]() $\mathcal{W}\in\mathscr{P}(\mathcal{V};\;v)$

comprising two elements, given by the set of edges

$\mathcal{W}\in\mathscr{P}(\mathcal{V};\;v)$

comprising two elements, given by the set of edges

![]() $\mathcal{E}$

of the graph. There are no shock random variables

$\mathcal{E}$

of the graph. There are no shock random variables

![]() $Y_{\mathcal{W}}$

for

$Y_{\mathcal{W}}$

for

![]() $|\mathcal{W}|\geq 3$

. As a consequence, the multivariate distribution does not exhibit the conditional independence relations from Definition 2.1 to render a MRF.

$|\mathcal{W}|\geq 3$

. As a consequence, the multivariate distribution does not exhibit the conditional independence relations from Definition 2.1 to render a MRF.

While previous work has extended the multivariate Poisson distribution based on common shocks to higher dimensions, no method combines minimal parameters with the wide variety of dependence structures achievable by

![]() $\mathbb{MPMRF}$

. For instance, Schulz et al. (Reference Schulz, Genest and Mesfioui2021) generalize the bivariate Poisson model from Genest et al. (Reference Genest, Mesfioui and Schulz2018) to higher dimensions, requiring only

$\mathbb{MPMRF}$

. For instance, Schulz et al. (Reference Schulz, Genest and Mesfioui2021) generalize the bivariate Poisson model from Genest et al. (Reference Genest, Mesfioui and Schulz2018) to higher dimensions, requiring only

![]() $d+1$

parameters, but this approach imposes limitations on the correlation structure by restricting dependence to a single parameter. Murphy and Schulz (Reference Murphy and Schulz2025) address this limitation with the multivariate Poisson distribution based on triangular comonotonic shocks but requires

$d+1$

parameters, but this approach imposes limitations on the correlation structure by restricting dependence to a single parameter. Murphy and Schulz (Reference Murphy and Schulz2025) address this limitation with the multivariate Poisson distribution based on triangular comonotonic shocks but requires

![]() $d+d(d-1)/2 = \mathcal{O}(d^2)$

parameters, still computationally intensive in high-dimensional settings. The

$d+d(d-1)/2 = \mathcal{O}(d^2)$

parameters, still computationally intensive in high-dimensional settings. The

![]() $\mathbb{MPMRF}$

family, by comparison, achieves complex dependence structures with only

$\mathbb{MPMRF}$

family, by comparison, achieves complex dependence structures with only

![]() $2d-1$

parameters, scaling more efficiently at

$2d-1$

parameters, scaling more efficiently at

![]() $\mathcal{O}(d)$

. This allows for convenient estimation in higher dimensions; further discussion is provided in Section 6. The comonotonic shock approach in the above papers accommodates a broader range of correlations, but it cannot be expressed as a thinning operation. One could modify

$\mathcal{O}(d)$

. This allows for convenient estimation in higher dimensions; further discussion is provided in Section 6. The comonotonic shock approach in the above papers accommodates a broader range of correlations, but it cannot be expressed as a thinning operation. One could modify

![]() $\mathbb{MPMRF}$

by letting the construction in (2.7) be in terms of comonotonic shocks but would therefore be unable to reexpress it in terms of an iterative stochastic construction as in (2.2). The latter is what enables efficient computational methods, as we discuss in the upcoming sections.

$\mathbb{MPMRF}$

by letting the construction in (2.7) be in terms of comonotonic shocks but would therefore be unable to reexpress it in terms of an iterative stochastic construction as in (2.2). The latter is what enables efficient computational methods, as we discuss in the upcoming sections.

3. Aggregate analysis of the portfolio

A risk model

![]() $\boldsymbol{{X}} = (X_v =\sum_{j=1}^{N_v} B_{v,j}, \; v\in\mathcal{V})$

as defined in (1.1) with

$\boldsymbol{{X}} = (X_v =\sum_{j=1}^{N_v} B_{v,j}, \; v\in\mathcal{V})$

as defined in (1.1) with

![]() $\boldsymbol{{N}}$

from Theorem 2.2 benefits from analytical and computable expressions, even if the dimension

$\boldsymbol{{N}}$

from Theorem 2.2 benefits from analytical and computable expressions, even if the dimension

![]() $d =|\mathcal{V}|$

is high. The flexibility in choosing parameters

$d =|\mathcal{V}|$

is high. The flexibility in choosing parameters

![]() $(\boldsymbol{\lambda},\boldsymbol{\alpha})\in\boldsymbol{\Lambda}$

and the underlying tree

$(\boldsymbol{\lambda},\boldsymbol{\alpha})\in\boldsymbol{\Lambda}$

and the underlying tree

![]() $\mathcal{T}$

provides a diversity of dependence structures.

$\mathcal{T}$

provides a diversity of dependence structures.

The joint Laplace–Stieltjes transform (LST) of

![]() $\boldsymbol{{X}}$

, denoted

$\boldsymbol{{X}}$

, denoted

![]() $\mathcal{L}_{\boldsymbol{{X}}}$

, used to obtain the distribution of the aggregate claim amount for the portfolio, is given by

$\mathcal{L}_{\boldsymbol{{X}}}$

, used to obtain the distribution of the aggregate claim amount for the portfolio, is given by

\begin{equation}\mathcal{L}_{\boldsymbol{{X}}}(\boldsymbol{{t}}) =\mathbb{E}\left[\prod_{v \in \mathcal{V}} \mathrm{e}^{-t_v X_v} \right] =\mathcal{P}_{\boldsymbol{{N}}}(\mathcal{L}_{B_1}(t_1), \ldots, \mathcal{L}_{B_d}(t_d)) = \prod_{v\in\mathcal{V}} \mathrm{e}^{\zeta_{L_v}\left(\eta_{v}^{\mathcal{T}_r}(\pmb{\mathcal{L}}_{B_v}(\boldsymbol{{t}}_{v\mathrm{dsc}(v)}); \boldsymbol{\theta}_{\mathrm{dsc}(v)}) - 1\right)},\end{equation}

\begin{equation}\mathcal{L}_{\boldsymbol{{X}}}(\boldsymbol{{t}}) =\mathbb{E}\left[\prod_{v \in \mathcal{V}} \mathrm{e}^{-t_v X_v} \right] =\mathcal{P}_{\boldsymbol{{N}}}(\mathcal{L}_{B_1}(t_1), \ldots, \mathcal{L}_{B_d}(t_d)) = \prod_{v\in\mathcal{V}} \mathrm{e}^{\zeta_{L_v}\left(\eta_{v}^{\mathcal{T}_r}(\pmb{\mathcal{L}}_{B_v}(\boldsymbol{{t}}_{v\mathrm{dsc}(v)}); \boldsymbol{\theta}_{\mathrm{dsc}(v)}) - 1\right)},\end{equation}

for

![]() $\boldsymbol{{t}} \in \mathbb{R}_+^d$

, with the sequence of joint pgfs

$\boldsymbol{{t}} \in \mathbb{R}_+^d$

, with the sequence of joint pgfs

![]() $\{\eta_{v}^{\mathcal{T}_r},\,v\in\mathcal{V}\}$

defined by the recursive relation in (2.3), and with the vectors

$\{\eta_{v}^{\mathcal{T}_r},\,v\in\mathcal{V}\}$

defined by the recursive relation in (2.3), and with the vectors

![]() $\pmb{\mathcal{L}}_{B_v}(\boldsymbol{{t}}_{v\mathrm{dsc}(v)}) = (\mathcal{L}_{B_{j}}(t_j), j \in \{v\} \cup \mathrm{dsc}(v))$

and

$\pmb{\mathcal{L}}_{B_v}(\boldsymbol{{t}}_{v\mathrm{dsc}(v)}) = (\mathcal{L}_{B_{j}}(t_j), j \in \{v\} \cup \mathrm{dsc}(v))$

and

![]() $\boldsymbol{\theta}_{\mathrm{dsc}(v)}= (\alpha_{(\mathrm{pa}(k), k)}\sqrt{{\lambda_k}/{\lambda_{\mathrm{pa}(k)}}}, k \in \mathrm{dsc}(v))$

for every

$\boldsymbol{\theta}_{\mathrm{dsc}(v)}= (\alpha_{(\mathrm{pa}(k), k)}\sqrt{{\lambda_k}/{\lambda_{\mathrm{pa}(k)}}}, k \in \mathrm{dsc}(v))$

for every

![]() $v\in\mathcal{V}$

.

$v\in\mathcal{V}$

.

Given

![]() $\mathcal{L}_{S}(t) = \mathcal{L}_{\boldsymbol{{X}}}(t,\ldots,t) = \mathcal{P}_{\boldsymbol{{N}}}(\mathcal{L}_{B_{1}}(t), \ldots, \mathcal{L}_{B_{d}}(t))$

,

$\mathcal{L}_{S}(t) = \mathcal{L}_{\boldsymbol{{X}}}(t,\ldots,t) = \mathcal{P}_{\boldsymbol{{N}}}(\mathcal{L}_{B_{1}}(t), \ldots, \mathcal{L}_{B_{d}}(t))$

,

![]() $t \geq 0$

(Theorem 1 of Wang, Reference Wang1998), the joint LST in (3.1) leads to the following LST of the aggregate claim S:

$t \geq 0$

(Theorem 1 of Wang, Reference Wang1998), the joint LST in (3.1) leads to the following LST of the aggregate claim S:

implying that S follows a compound Poisson distribution with primary mean parameter

![]() $\lambda_S = \sum_{v \in \mathcal{V}} \zeta_{L_v}$

and secondary LST given by

$\lambda_S = \sum_{v \in \mathcal{V}} \zeta_{L_v}$

and secondary LST given by

![]() $\mathcal{L}_{C_S}(t) = \sum_{v \in \mathcal{V}}\left({\zeta_{L_v}}/{\lambda_S}\right) \eta_v^{\mathcal{T}_r} (\pmb{\mathcal{L}}_{B_v}(t \,\boldsymbol{1}_{v\mathrm{dsc}(v)}); \boldsymbol{\theta}_{\mathrm{dsc}(v)})$

,

$\mathcal{L}_{C_S}(t) = \sum_{v \in \mathcal{V}}\left({\zeta_{L_v}}/{\lambda_S}\right) \eta_v^{\mathcal{T}_r} (\pmb{\mathcal{L}}_{B_v}(t \,\boldsymbol{1}_{v\mathrm{dsc}(v)}); \boldsymbol{\theta}_{\mathrm{dsc}(v)})$

,

![]() $t\geq 0$

.

$t\geq 0$

.

Generating realizations of

![]() $\boldsymbol{{X}}$

is straightforward, given that, the stochastic representation of

$\boldsymbol{{X}}$

is straightforward, given that, the stochastic representation of

![]() $\boldsymbol{{N}}$

allows for an easily scalable sampling method. One generates realizations for each component of

$\boldsymbol{{N}}$

allows for an easily scalable sampling method. One generates realizations for each component of

![]() $\boldsymbol{{N}}$

successively; this is well suited for high-dimensional contexts. In this vein, by adapting Algorithm 2 from Côté et al. (Reference Côté, Cossette and Marceau2025b) to accommodate flexible mean parameters, one can efficiently produce a realization of

$\boldsymbol{{N}}$

successively; this is well suited for high-dimensional contexts. In this vein, by adapting Algorithm 2 from Côté et al. (Reference Côté, Cossette and Marceau2025b) to accommodate flexible mean parameters, one can efficiently produce a realization of

![]() $\boldsymbol{{X}}$

by independently producing a realization of

$\boldsymbol{{X}}$

by independently producing a realization of

![]() $\boldsymbol{{N}}$

and of the claim amounts.

$\boldsymbol{{N}}$

and of the claim amounts.

For discrete claim amount random variables, values of the pmf of S can directly be computed using the fast Fourier transform (FFT) algorithm or Panjer’s recursion. Algorithm 1 in Supplement B illustrates a procedure for our context using FFT. Discretization methods or mixed Erlang approximations may be used for continuous claim amounts. Mixed Erlang distributions are known to approximate any continuous positive distribution effectively; see for instance, Tijms (Reference Tijms1994).

Remark 3.1. Let

![]() $\boldsymbol{{X}}$

be a multivariate compound Poisson with

$\boldsymbol{{X}}$

be a multivariate compound Poisson with

![]() $\boldsymbol{{N}} \sim \text{MPMRF}(\boldsymbol{\lambda}, \boldsymbol{\alpha}, \mathcal{T})$

. We assume each

$\boldsymbol{{N}} \sim \text{MPMRF}(\boldsymbol{\lambda}, \boldsymbol{\alpha}, \mathcal{T})$

. We assume each

![]() ${B_v},\,v \in \mathcal{V}$

, follows a mixed Erlang distribution with parameters

${B_v},\,v \in \mathcal{V}$

, follows a mixed Erlang distribution with parameters

![]() $(\boldsymbol{\pi}_v, \beta_v)$

where

$(\boldsymbol{\pi}_v, \beta_v)$

where

![]() $\boldsymbol{\pi}_v = (\pi_{v,k}, k \in \mathbb{N}_1)$

is a vector of non-negative weight parameters,

$\boldsymbol{\pi}_v = (\pi_{v,k}, k \in \mathbb{N}_1)$

is a vector of non-negative weight parameters,

![]() $\sum_{k=1}^{\infty} \pi_{v,k} = 1$

, and

$\sum_{k=1}^{\infty} \pi_{v,k} = 1$

, and

![]() $\beta_v \gt 0$

. The LST of S in (3.2) becomes

$\beta_v \gt 0$

. The LST of S in (3.2) becomes

\begin{equation}\mathcal{L}_{S}(t) = \exp\left\{\lambda_S \left( \sum_{v \in \mathcal{V}} \frac{\zeta_{L_v}}{ \lambda_S} \mathcal{P}_{\boldsymbol{{G}}^{\mathcal{T}_r}_v}\left\{ \left(\mathcal{P}_{\widetilde{K}_j}(\mathcal{L}_{B_{\max}}(t)),\, j\in \{v\}\cup\mathrm{dsc}(v) \right) \right\} \right) \right\} = \mathcal{P}_W(\mathcal{L}_{B_{\max}}(t)),\end{equation}

\begin{equation}\mathcal{L}_{S}(t) = \exp\left\{\lambda_S \left( \sum_{v \in \mathcal{V}} \frac{\zeta_{L_v}}{ \lambda_S} \mathcal{P}_{\boldsymbol{{G}}^{\mathcal{T}_r}_v}\left\{ \left(\mathcal{P}_{\widetilde{K}_j}(\mathcal{L}_{B_{\max}}(t)),\, j\in \{v\}\cup\mathrm{dsc}(v) \right) \right\} \right) \right\} = \mathcal{P}_W(\mathcal{L}_{B_{\max}}(t)),\end{equation}

for

![]() $t \geq 0$

, where

$t \geq 0$

, where

![]() $\widetilde{K}_j$

is as defined in Appendix A.4,

$\widetilde{K}_j$

is as defined in Appendix A.4,

![]() $B_{\max}\sim$

Exp

$B_{\max}\sim$

Exp

![]() $({\max}_{v\in\mathcal{V}} \beta_{v})$

and

$({\max}_{v\in\mathcal{V}} \beta_{v})$

and

![]() $\boldsymbol{{G}}^{\mathcal{T}_r}_v = (G^{\mathcal{T}_r}_{v,j}, j \in \{v\} \cup \mathrm{dsc}(v))$

is a vector of discrete random variables whose joint pgf is given by

$\boldsymbol{{G}}^{\mathcal{T}_r}_v = (G^{\mathcal{T}_r}_{v,j}, j \in \{v\} \cup \mathrm{dsc}(v))$

is a vector of discrete random variables whose joint pgf is given by

![]() $\eta^{\mathcal{T}_r}_v(\boldsymbol{{t}}_{v\mathrm{dsc}(v)};\;\boldsymbol{\theta}_{\mathrm{dsc}(v)})$

,

$\eta^{\mathcal{T}_r}_v(\boldsymbol{{t}}_{v\mathrm{dsc}(v)};\;\boldsymbol{\theta}_{\mathrm{dsc}(v)})$

,

![]() $\boldsymbol{{t}}\in[\!-1,1]^d$

, as in Definition 2.5. We recognize in (3.3) the LST of a mixed Erlang distribution.

$\boldsymbol{{t}}\in[\!-1,1]^d$

, as in Definition 2.5. We recognize in (3.3) the LST of a mixed Erlang distribution.

Hence, to perform computations regarding S, one must simply compute the pmf of W, relying on (3.3) and Algorithm 1. This is at the core of Algorithm 2 in Supplement B, which computes the cumulative distribution function (cdf) of S under mixed Erlang claim amounts. With the distribution of S, one may compute the portfolio’s required capital through different risk measures. This allows to complete our first risk management task regarding the quantification of the portfolio’s risk.

4. Risk sharing

A subsequent risk management task involves the proper allocation of the portfolio’s required capital to each component. This allocation can be performed ex ante; the allocation rule divides the overall portfolio’s risk, quantified by a risk measure, into shares for each component of

![]() $\boldsymbol{{X}}$

based on their respective levels of risk. When dealing with positively homogeneous risk measures, Euler’s principle can be utilized to determine the value of these shares. A well-known example of such risk measures is the TVaR. For a random variable Z, the TVaR at confidence level

$\boldsymbol{{X}}$

based on their respective levels of risk. When dealing with positively homogeneous risk measures, Euler’s principle can be utilized to determine the value of these shares. A well-known example of such risk measures is the TVaR. For a random variable Z, the TVaR at confidence level

![]() $\kappa\in[0,1)$

is given by

$\kappa\in[0,1)$

is given by

![]() $ \mathrm{TVaR}_{\kappa}(Z) = \frac{1}{1 - \kappa} \int_{\kappa}^1 \text{VaR}_u(Z) \, \mathrm{d}u$

, where

$ \mathrm{TVaR}_{\kappa}(Z) = \frac{1}{1 - \kappa} \int_{\kappa}^1 \text{VaR}_u(Z) \, \mathrm{d}u$

, where

![]() $\mathrm{VaR}_u(Z) = \inf\{x\in\mathbb{R} \;:\; F_Z(x)\geq u\}$

, and

$\mathrm{VaR}_u(Z) = \inf\{x\in\mathbb{R} \;:\; F_Z(x)\geq u\}$

, and

![]() $u\in[0,1)$

. Let us recall that mixed Erlang distributions may approximate any continuous claim amount distributions; we showed in Section 3 that the pmf of S can be exactly computed in this case. The results from Cossette et al. (Reference Cossette, Mailhot and Marceau2012) are thus readily applicable for computing the exact contribution to the TVaR based on Euler’s rule. If claim amount distributions are discrete, additional manipulations are required to allocate risk. In such a case, the contribution of

$u\in[0,1)$

. Let us recall that mixed Erlang distributions may approximate any continuous claim amount distributions; we showed in Section 3 that the pmf of S can be exactly computed in this case. The results from Cossette et al. (Reference Cossette, Mailhot and Marceau2012) are thus readily applicable for computing the exact contribution to the TVaR based on Euler’s rule. If claim amount distributions are discrete, additional manipulations are required to allocate risk. In such a case, the contribution of

![]() $X_v$

,

$X_v$

,

![]() $v\in\mathcal{V}$

, to the TVaR of S under Euler’s principle is given by

$v\in\mathcal{V}$

, to the TVaR of S under Euler’s principle is given by

\begin{align} \mathcal{C}^{\mathrm{TVaR}}_{\kappa}(X_v;\, S) &= \frac{1}{1-\kappa}\left( \mathbb{E}[X_v\unicode{x1D7D9}_{\{S\gt\mathrm{VaR}_{\kappa}(S)\}}] + \mathbb{E}[X_v|S=\mathrm{VaR}_{\kappa}(S)](F_S(\mathrm{VaR}_{\kappa}(S))-\kappa)\right) \notag\\[3pt] &= \frac{1}{1-\kappa}\left( \mathbb{E}[X_v] - \sum_{k=0}^{\mathrm{VaR}_{\kappa}(S)}\mathbb{E}[X_v\unicode{x1D7D9}_{\{S=k\}}] + \frac{F_S(\mathrm{VaR}_{\kappa}(S))-\kappa}{p_S(\mathrm{VaR}_{\kappa}(S))} \mathbb{E}[X_v\unicode{x1D7D9}_{\{S=\mathrm{VaR}_{\kappa}(S)\}}]\right)\!,\end{align}

\begin{align} \mathcal{C}^{\mathrm{TVaR}}_{\kappa}(X_v;\, S) &= \frac{1}{1-\kappa}\left( \mathbb{E}[X_v\unicode{x1D7D9}_{\{S\gt\mathrm{VaR}_{\kappa}(S)\}}] + \mathbb{E}[X_v|S=\mathrm{VaR}_{\kappa}(S)](F_S(\mathrm{VaR}_{\kappa}(S))-\kappa)\right) \notag\\[3pt] &= \frac{1}{1-\kappa}\left( \mathbb{E}[X_v] - \sum_{k=0}^{\mathrm{VaR}_{\kappa}(S)}\mathbb{E}[X_v\unicode{x1D7D9}_{\{S=k\}}] + \frac{F_S(\mathrm{VaR}_{\kappa}(S))-\kappa}{p_S(\mathrm{VaR}_{\kappa}(S))} \mathbb{E}[X_v\unicode{x1D7D9}_{\{S=\mathrm{VaR}_{\kappa}(S)\}}]\right)\!,\end{align}

for

![]() $\kappa \in [0,1)$

; see, for instance, Section 2 in Mausser and Romanko (Reference Mausser and Romanko2018).

$\kappa \in [0,1)$

; see, for instance, Section 2 in Mausser and Romanko (Reference Mausser and Romanko2018).

A risk modeler may prefer the covariance-based allocation rule instead of

![]() $C^{\mathrm{TVaR}}_\kappa(X_v, S)$

for

$C^{\mathrm{TVaR}}_\kappa(X_v, S)$

for

![]() $v \in \mathcal{V}$

. The contribution amount of risk

$v \in \mathcal{V}$

. The contribution amount of risk

![]() $X_v$

, denoted by

$X_v$

, denoted by

![]() $C^{\mathrm{Cov}}_\kappa(X_v, S)$

, is given by

$C^{\mathrm{Cov}}_\kappa(X_v, S)$

, is given by

Both allocation rules ensure that the sum of the contributions equals

![]() $\mathrm{TVaR}_\kappa(S)$

, the required capital for the tail risk of the portfolio. Moreover, both rules satisfy Euler’s principle. For detailed discussions on these allocation principles, we refer the reader to Tasche (Reference Tasche1999) and McNeil et al. (Reference McNeil, Frey and Embrechts2015) and to Hesselager and Andersson (Reference Hesselager and Andersson2002) for further information on the covariance-based allocation rule.

$\mathrm{TVaR}_\kappa(S)$

, the required capital for the tail risk of the portfolio. Moreover, both rules satisfy Euler’s principle. For detailed discussions on these allocation principles, we refer the reader to Tasche (Reference Tasche1999) and McNeil et al. (Reference McNeil, Frey and Embrechts2015) and to Hesselager and Andersson (Reference Hesselager and Andersson2002) for further information on the covariance-based allocation rule.

To allocate the aggregate risk ex post, one may choose a fair risk sharing rule. A risk sharing rule is a mapping that assigns to each participant a contribution

![]() $h_{v,d}(S)$

such that

$h_{v,d}(S)$

such that

![]() $\sum_{v=1}^d h_{v,d}(S) = S$

. A rule is said to be fair if it also satisfies

$\sum_{v=1}^d h_{v,d}(S) = S$

. A rule is said to be fair if it also satisfies

![]() $\mathbb{E}[h_{v,d}(S)] = \mathbb{E}[X_v] = \mu_v$

for all v, ensuring participants pay their expected loss on average. In the context of peer-to-peer insurance, for instance, risk sharing rules serve to determine each participant’s contribution to the pool (Denuit et al. Reference Denuit, Dhaene and Robert2022).

$\mathbb{E}[h_{v,d}(S)] = \mathbb{E}[X_v] = \mu_v$

for all v, ensuring participants pay their expected loss on average. In the context of peer-to-peer insurance, for instance, risk sharing rules serve to determine each participant’s contribution to the pool (Denuit et al. Reference Denuit, Dhaene and Robert2022).

Linear fair rules take the form

![]() $h_{v,d}^{\text{lin}}(S) = \mu_v + a_{v,d}(S - \mathbb{E}[S])$

, where the coefficients

$h_{v,d}^{\text{lin}}(S) = \mu_v + a_{v,d}(S - \mathbb{E}[S])$

, where the coefficients

![]() $a_{v,d}$

satisfy

$a_{v,d}$

satisfy

![]() $\sum_{v \in \mathcal{V}} a_{v,d} = 1$

. Two notable examples include the proportional rule, where

$\sum_{v \in \mathcal{V}} a_{v,d} = 1$

. Two notable examples include the proportional rule, where

![]() $a_{v,d} = \mu_v / \mathbb{E}[S]$

, allocating risk in proportion to expected losses; the linear regression rule, where

$a_{v,d} = \mu_v / \mathbb{E}[S]$

, allocating risk in proportion to expected losses; the linear regression rule, where

![]() $a_{v,d} = \mathrm{Cov}(X_v,S)/\mathrm{Var}(S)$

, allocating deviations according to volatility. This rule minimizes the mean squared error

$a_{v,d} = \mathrm{Cov}(X_v,S)/\mathrm{Var}(S)$

, allocating deviations according to volatility. This rule minimizes the mean squared error

![]() $\mathbb{E}[(X_v - h_{v,d}(S))^2]$

among all linear fair rules.

$\mathbb{E}[(X_v - h_{v,d}(S))^2]$

among all linear fair rules.

The (nonlinear) conditional-mean risk sharing rule (Denuit and Dhaene, Reference Denuit and Dhaene2012), defined by

![]() $h_{v,d}^{\star}(S) = \mathbb{E}[X_v|S]$

, minimizes

$h_{v,d}^{\star}(S) = \mathbb{E}[X_v|S]$

, minimizes

![]() $\mathbb{E}[(X_v - h_{v,d}(S))^2]$

over all measurable functions

$\mathbb{E}[(X_v - h_{v,d}(S))^2]$

over all measurable functions

![]() $h_{v,d}$

with finite variance. This rule is Pareto-optimal under risk aversion and does not rely on individual preference inclusion, making it particularly suitable for peer-to-peer insurance frameworks (Denuit and Dhaene, Reference Denuit and Dhaene2012). For discrete distributions, we have

$h_{v,d}$

with finite variance. This rule is Pareto-optimal under risk aversion and does not rely on individual preference inclusion, making it particularly suitable for peer-to-peer insurance frameworks (Denuit and Dhaene, Reference Denuit and Dhaene2012). For discrete distributions, we have

![]() $\mathbb{E}[X_v|S=k] = {\mathbb{E}[X_v\unicode{x1D7D9}_{\{S=k\}}]}/{p_{S}(k)}, \; v\in\mathcal{V}, \; k\in\mathrm{supp}(S)$

.

$\mathbb{E}[X_v|S=k] = {\mathbb{E}[X_v\unicode{x1D7D9}_{\{S=k\}}]}/{p_{S}(k)}, \; v\in\mathcal{V}, \; k\in\mathrm{supp}(S)$

.

4.1. Computation of risk allocations

A crucial component for calculating both

![]() $\mathcal{C}^{\mathrm{TVaR}}_{\kappa}(X_v;\;S)$

and

$\mathcal{C}^{\mathrm{TVaR}}_{\kappa}(X_v;\;S)$

and

![]() $\mathbb{E}[X_v|S=k]$

is the expected allocation:

$\mathbb{E}[X_v|S=k]$

is the expected allocation:

![]() $\mathbb{E}[X_v\unicode{x1D7D9}_{\{S=k\}}]$

, for

$\mathbb{E}[X_v\unicode{x1D7D9}_{\{S=k\}}]$

, for

![]() $k\in\mathrm{supp}(S)$

. The significance of expected allocations in the context of capital allocation is thoroughly discussed in Blier-Wong et al. (Reference Blier-Wong, Cossette and Marceau2025). The authors introduce an ordinary generating function for expected allocations, which is defined as follows.

$k\in\mathrm{supp}(S)$

. The significance of expected allocations in the context of capital allocation is thoroughly discussed in Blier-Wong et al. (Reference Blier-Wong, Cossette and Marceau2025). The authors introduce an ordinary generating function for expected allocations, which is defined as follows.

Definition 4.1 (OGFEA). Consider a vector of discrete random variables

![]() $\boldsymbol{{Z}}=(Z_1,\ldots, Z_d)$

taking values in

$\boldsymbol{{Z}}=(Z_1,\ldots, Z_d)$

taking values in

![]() $\mathbb{N}^{d}$

. The ordinary generating function of expected allocations (OGFEA) of

$\mathbb{N}^{d}$

. The ordinary generating function of expected allocations (OGFEA) of

![]() $Z_v$

,

$Z_v$

,

![]() $v\in\{1,\ldots,d\}$

, to the sum of components

$v\in\{1,\ldots,d\}$

, to the sum of components

![]() $\sum_{v=1}^d Z_v$

is given by

$\sum_{v=1}^d Z_v$

is given by

![]() $\mathcal{P}^{[v]}_{\sum_{v=1}^d Z_v}(t) = \sum_{k=0}^{\infty} \mathbb{E}[Z_v\unicode{x1D7D9}_{\{\sum_{v=1}^d Z_v = k\}}] t^k, \;t\in[\!-1,1].$

$\mathcal{P}^{[v]}_{\sum_{v=1}^d Z_v}(t) = \sum_{k=0}^{\infty} \mathbb{E}[Z_v\unicode{x1D7D9}_{\{\sum_{v=1}^d Z_v = k\}}] t^k, \;t\in[\!-1,1].$

The convenience of OGFEAs lies in the fact that information on expected allocations for all total outcomes is encapsulated within a single power series. We present the OGFEA for our model in the following theorem.

Theorem 4.2. Consider the risk model in (1.1), where

![]() $\boldsymbol{{N}} = (N_v,\,v \in \mathcal{V}) \sim \text{MPMRF}(\boldsymbol{\lambda}, \boldsymbol{\alpha}, \mathcal{T})$

, for

$\boldsymbol{{N}} = (N_v,\,v \in \mathcal{V}) \sim \text{MPMRF}(\boldsymbol{\lambda}, \boldsymbol{\alpha}, \mathcal{T})$

, for

![]() $(\boldsymbol{\lambda},\boldsymbol{\alpha})\in\boldsymbol{\Lambda}$

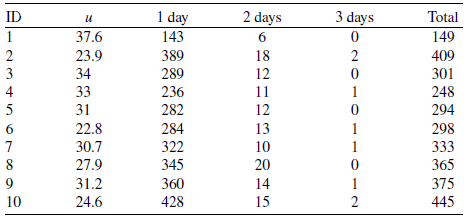

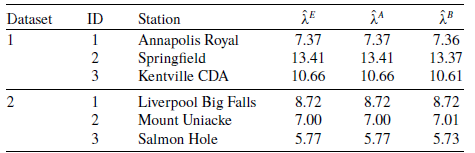

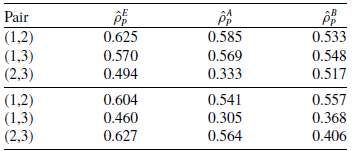

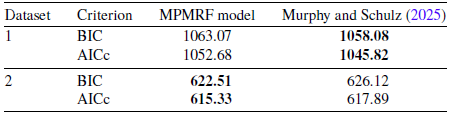

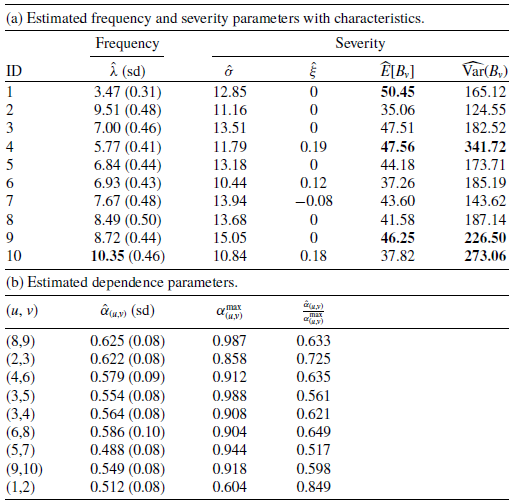

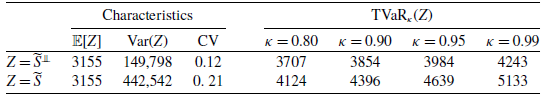

, and a tree