1 Introduction

In educational and psychological measurement, item response times (RTs), as a type of process data, offer valuable insights into examinees’ test-taking behaviors and performance (Lee & Chen, Reference Lee and Chen2011; Ratcliff & Kang, Reference Ratcliff and Kang2021; Schneider et al., Reference Schneider, Junghaenel, Meijer, Stone, Orriens, Jin, Zelinski, Lee, Hernandez and Kapteyn2023; Sinharay, Reference Sinharay2020; Ulitzsch et al., Reference Ulitzsch, von Davier and Pohl2020a, Reference Ulitzsch, von Davier and Pohl2020b, Reference Ulitzsch, Pohl, Khorramdel, Kroehne and von Davier2022; Wang & Xu, Reference Wang and Xu2015; Wang et al., Reference Wang, Zhang, Douglas and Culpepper2018). With the increasing prevalence of computerized testing, the routine collection of RTs has become more feasible, providing researchers with unprecedented opportunities to explore its psychometric properties and applications across diverse assessment settings.

A central methodological task is the development of appropriate statistical models for analyzing RTs. Early work (e.g., Entink, van der Linden, et al., Reference Entink, van der Linden and Fox2009; Rouder et al., Reference Rouder, Sun, Speckman, Lu and Zhou2003; van der Linden, Reference van der Linden2006) focused on modeling RTs independently of item responses, aiming to characterize their statistical properties and infer latent cognitive mechanisms. Several distributional models have been proposed in this context, including the log-normal model (van der Linden, Reference van der Linden2006), the Box–Cox normal model (Entink, van der Linden, et al., Reference Entink, van der Linden and Fox2009), the semiparametric model (Wang et al., Reference Wang, Fan, Chang and Douglas2013), and the shifted Weibull distribution (Rouder et al., Reference Rouder, Sun, Speckman, Lu and Zhou2003), among which the log-normal model has gained wide adoption due to its estimation simplicity and robust empirical performance (Sinharay & van Rijn, Reference Sinharay and van Rijn2020).

To better leverage RTs for psychological and educational measurement, it is crucial to propose the joint model for RTs and item responses. One of the most widely used frameworks is the hierarchical model (HM) proposed by van der Linden (Reference van der Linden2007), which specifies separate submodels for RTs and responses at the first level and captures their correlations at the person and item levels through a second-level structure. This framework focuses on the between-person relationship between ability and speed and has been extended in various directions to accommodate different testing contexts (Entink, Fox, et al., Reference Entink, Fox and van der Linden2009; Klein Entink et al., Reference Klein Entink, Kuhn, Hornke and Fox2009; Ulitzsch et al., Reference Ulitzsch, Pohl, Khorramdel, Kroehne and von Davier2022; Zhan, Jiao, & Liao, Reference Zhan, Jiao and Liao2018; Zhan, Jiao, Wang, et al., Reference Zhan, Jiao, Wang and Man2018). A key limitation of the HM, however, is its assumption of conditional independence between responses and RTs given the latent traits. Empirical studies have questioned this assumption, demonstrating residual dependence not fully explained by the correlation between latent traits (Bolsinova & Tijmstra, Reference Bolsinova and Tijmstra2019; Bolsinova, De Boeck, et al., Reference Bolsinova, De Boeck and Tijmstra2017; Meng et al., Reference Meng, Tao and Chang2015). To address this issue, various conditional joint models for item responses and RTs have been proposed.

One approach is modeling the joint distribution of RTs and responses as a bivariate distribution with a nonzero dependence parameter. For example, Ranger and Ortner (Reference Ranger and Ortner2012) proposed a conditional joint model that incorporates a covariance structure to capture local dependence between speed and accuracy. Extending this approach, Meng et al. (Reference Meng, Tao and Chang2015) allowed the conditional correlation to vary across both items and individuals, thereby improving the model’s flexibility in capturing person- and item-specific dependencies. Another widely used strategy factorizes the joint distribution into the product of a marginal distribution for RTs and a conditional distribution for responses given RTs. This approach provides greater modeling flexibility while maintaining interpretability. For instance, Bolsinova, De Boeck, et al. (Reference Bolsinova, De Boeck and Tijmstra2017) introduced a joint model that incorporates residual RTs into the item response model to account for unexplained dependence. Similarly, Bolsinova, Tijmstra, et al. (Reference Bolsinova, Tijmstra and Molenaar2017) proposed a joint modeling framework in which the conditional distribution of responses given RTs follows a two-parameter normal ogive (2PNO) model, with the intercept expressed as a linear function of log-transformed RTs. In contrast, Bolsinova and Tijmstra (Reference Bolsinova and Tijmstra2019) proposed another strategy by reversing the factorization direction. Specifically, they factorized the joint distribution into the product of a marginal distribution for responses and a conditional distribution for RTs given responses. This formulation allows for modeling RT heterogeneity as a function of response accuracy, explicitly distinguishing between correct and incorrect responses.

In addition, process-based approaches have emerged that seek to model both RTs and responses as outputs of an underlying cognitive process. These models integrate item response theory with decision-making models. For instance, diffusion IRT models embed a diffusion decision process within an IRT framework (Tuerlinckx & De Boeck, Reference Tuerlinckx and De Boeck2005) and were implemented through the diffIRT package (Molenaar et al., Reference Molenaar, Tuerlinckx and van der Maas2015b). Building on these works, Kang et al. (Reference Kang, De Boeck and Ratcliff2022) extended the diffusion IRT framework by allowing person- and item-level variability, thereby allowing the model to capture the conditional dependence between responses and RTs. Further, Kang, Jeon, et al. (Reference Kang, Jeon and Partchev2023) introduced a latent space diffusion IRT model in which both persons and items are embedded in a latent space to capture complex dependency between RTs and responses. These models depart from conventional statistical formulations and instead aim to recover the cognitive mechanisms driving observed RT and response patterns.

Despite these methodological advances, most existing joint models have been developed for dichotomous items, and research on polytomous items remains scarce. Molenaar et al. (Reference Molenaar, Tuerlinckx and van der Maas2015a, Reference Molenaar, Tuerlinckx and van der Maas2015c) demonstrated that, for graded response items, the HM can be reformulated as a generalized linear factor model when parameter dependence is ignored, enabling estimation using Mplus. These studies extended the applications of the HM and provided powerful and efficient computational tools for estimating joint models of item responses and RTs. Nevertheless, their work does not propose a dedicated joint modeling framework for graded-response or mixed-format assessments. More recently, Kang, Molenaar, et al. (Reference Kang, Molenaar and Ratcliff2023) proposed a process-based model for Likert-type items, but their framework is confined to non-cognitive assessments. Consequently, a fully specified joint model for graded responses and RTs in cognitive assessment is still lacking. This gap underscores the need for joint models that can accommodate these item formats and effectively capture the relationship between item responses and RTs.

In this study, drawing on the framework of Bolsinova and Tijmstra (Reference Bolsinova and Tijmstra2019), we develop a joint model for graded responses and RTs. The proposed approach develops a conditional RT model given item responses and integrates it with a marginal response model based on Samejima’s graded response model (GRM; Samejima, Reference Samejima1969). This integration yields a conditional joint model that captures the within-person relationship between item responses and RTs. We further extend this model into a hierarchical formulation that accommodates between-person relationship between ability and speed. A second major contribution of this work is the development of a stochastic approximation EM (SAEM) algorithm for computing the marginal maximum likelihood estimate (MMLE) of the proposed model. The proposed estimation procedure is capable of handling mixed-format assessments that contain both binary and graded items. Finally, the proposed model and estimation procedure are evaluated through two simulation studies and an empirical analysis, demonstrating their practical applicability and strong performance.

The remainder of the article is organized as follows. Section 2 introduces a joint modeling framework for graded item responses and RTs. Section 3 presents an SAEM algorithm for estimating the proposed model. Section 4 reports simulation studies evaluating parameter recovery and comparing the graded response–response time (GR–RT) model with the HM. Section 5 applies the proposed method to empirical data to demonstrate its practical utility. Finally, we conclude with a discussion of the findings and directions for future research.

2 Model and rationale

In this section, we present a joint modeling framework for graded item responses and RTs, beginning with an empirical example that illustrates the dependence between RTs and response categories. Building on these observations, we construct a conditional joint model and further extend it into a two-level hierarchical structure.

2.1 Empirical investigation of the relationship between RTs and response categories

In this empirical analysis, we examine the differences in RTs across students with varying graded responses, using data from the Programme for International Student Assessment (PISA) scientific literacy assessment. The dataset contains responses from 717 Spanish students to 10 items, including six dichotomous and four polytomous items.

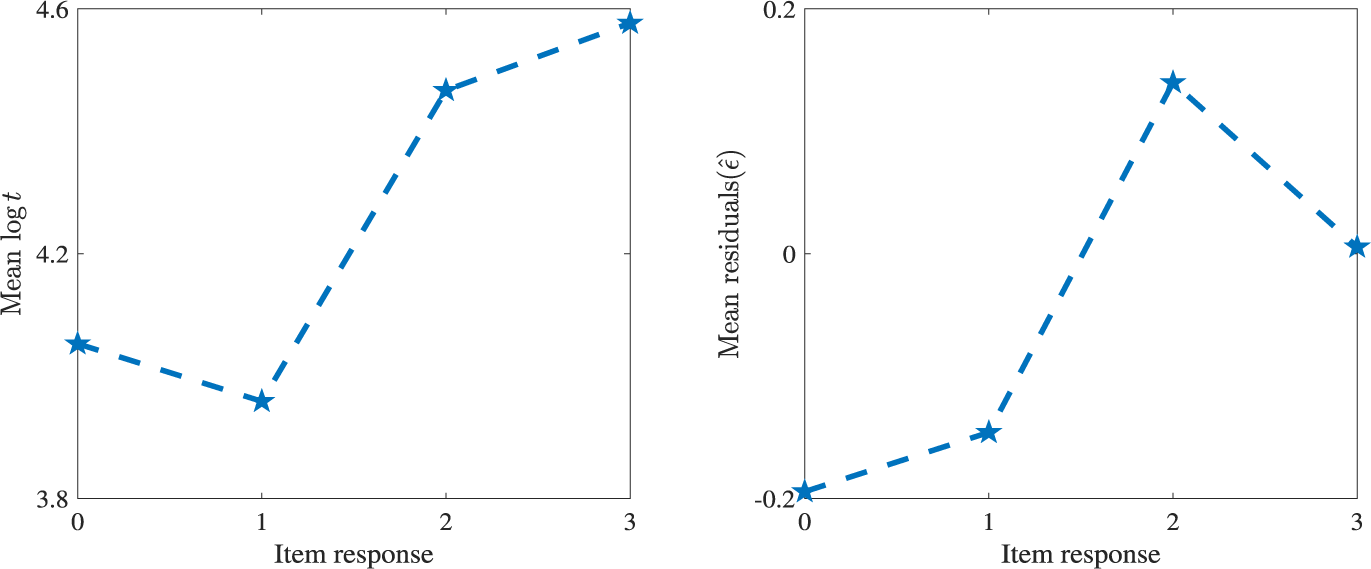

Because RTs are typically modeled on the log scale, we analyze the logarithmic transformation of RTs. Substantial variation in mean log-RTs is observed across response categories. As shown in the left panel of Figure 1, for a four-category item (“DS643Q04R”), the mean log-RTs increases with response category, particularly from category 1 to 2. F tests confirm that these differences are statistically significant at the 0.05 level. This pattern suggests that responses in higher categories tend to be associated with longer RTs. However, part of this relationship may be driven by individual differences in speed. To account for this, we conducted a residual-based analysis.

Figure 1 Line charts of mean log–response times

![]() $(\log t_{ij})$

and mean residuals

$(\log t_{ij})$

and mean residuals

![]() $(\hat {\epsilon }_{ij})$

across different response categories for item “DS643Q04R.”

$(\hat {\epsilon }_{ij})$

across different response categories for item “DS643Q04R.”

Specifically, we modeled the RT data using the log-normal model proposed by Klein Entink et al. (Reference Klein Entink, Kuhn, Hornke and Fox2009), which decomposes log-RT into item-level and person-level components. Let

![]() $T_{ij}$

denote the RT variable of test taker i on item j, with

$T_{ij}$

denote the RT variable of test taker i on item j, with

![]() $t_{ij}$

denoting its realization. This model is given by

$t_{ij}$

denoting its realization. This model is given by

where

![]() $v_{i}\in \left ( -\infty ,+\infty \right )$

is the latent speed trait for examinee i, and

$v_{i}\in \left ( -\infty ,+\infty \right )$

is the latent speed trait for examinee i, and

![]() $\beta _{j}\in \left ( -\infty ,+\infty \right )$

,

$\beta _{j}\in \left ( -\infty ,+\infty \right )$

,

![]() $\varphi _{j}\in \left ( 0,+\infty \right )$

, and

$\varphi _{j}\in \left ( 0,+\infty \right )$

, and

![]() $\sigma _{j}^2\in \left ( 0,+\infty \right )$

denote the time intensity, speed discrimination, and the variance parameters for item j, respectively. After estimating the model, we obtained parameter estimates

$\sigma _{j}^2\in \left ( 0,+\infty \right )$

denote the time intensity, speed discrimination, and the variance parameters for item j, respectively. After estimating the model, we obtained parameter estimates

![]() $\hat {\beta }_j, \hat {\varphi }_j, \hat {\sigma }_j$

, and

$\hat {\beta }_j, \hat {\varphi }_j, \hat {\sigma }_j$

, and

![]() $\hat {v}_i$

, and computed residuals

$\hat {v}_i$

, and computed residuals

which represent log-RTs adjusted for individual speed and item-level effects. These residuals allow for a reassessment of RT differences across response categories without confounding due to person or item variability.

The right panel of Figure 1 presents the mean residuals across response categories. Compared to the raw log-RTs, the residual pattern differs slightly, displaying a peak at category 2 followed by a decline. This indicates that part of the trend in raw log-RTs was attributable to individual speed differences. Nevertheless, an overall upward pattern remains, and F tests confirm that the differences in mean residuals across categories are remain statistically significant at the 0.05 level.

Overall, these findings indicate a clear relationship between RTs and response categories. To more adequately capture this relationship, we propose an RT model that explicitly incorporates category-specific effects, as described in the following section.

2.2 A joint model for graded responses and RTs

We begin by defining some notation. Let test takers be indexed by

![]() $i=1,\ldots ,N$

and items indexed by

$i=1,\ldots ,N$

and items indexed by

![]() $j=1,\ldots ,M$

. Let

$j=1,\ldots ,M$

. Let

![]() $Y_{ij}$

be the response variable of test taker i to item j, with

$Y_{ij}$

be the response variable of test taker i to item j, with

![]() $y_{ij}$

representing its observed value. Without loss of generality, we assume

$y_{ij}$

representing its observed value. Without loss of generality, we assume

![]() $y_{ij}=0,\ldots ,K_j-1$

, where K

j

≥ 2 is the total number of response categories. Finally, let I

(⋅) denote the indicator function.

$y_{ij}=0,\ldots ,K_j-1$

, where K

j

≥ 2 is the total number of response categories. Finally, let I

(⋅) denote the indicator function.

2.2.1 A conditional model for RTs given item responses

To capture the effect of the ordinal response Y

ij

on the RTs, we adopt a dummy coding approach. Specifically, we define a dummy variable vector as

![]() $\mathbf {Z}_{Y_{ij}}$

,

$\mathbf {Z}_{Y_{ij}}$

,

Let

![]() $\mathbf {z}_{y_{ij}}=(z_{ijk})_{1\leq k \leq K_{j}-1}$

denote an observation of

$\mathbf {z}_{y_{ij}}=(z_{ijk})_{1\leq k \leq K_{j}-1}$

denote an observation of

![]() $\mathbf {Z}_{Y_{ij}}$

. Under this encoding scheme, the relationship between y

ij

and

$\mathbf {Z}_{Y_{ij}}$

. Under this encoding scheme, the relationship between y

ij

and

![]() $\mathbf {z}_{y_{ij}}$

is given by

$\mathbf {z}_{y_{ij}}$

is given by

$$ \begin{align} \begin{aligned} y_{ij} = 0 & \Leftrightarrow \mathbf{z}_{y_{ij}} = (0, 0, \dots, 0) \\ y_{ij} = 1 & \Leftrightarrow \mathbf{z}_{y_{ij}} = (1, 0, \dots, 0) \\ &\vdots \\ y_{ij} = K_{j} - 1 & \Leftrightarrow \mathbf{z}_{y_{ij}} = (0, 0, \dots, 1). \end{aligned} \end{align} $$

$$ \begin{align} \begin{aligned} y_{ij} = 0 & \Leftrightarrow \mathbf{z}_{y_{ij}} = (0, 0, \dots, 0) \\ y_{ij} = 1 & \Leftrightarrow \mathbf{z}_{y_{ij}} = (1, 0, \dots, 0) \\ &\vdots \\ y_{ij} = K_{j} - 1 & \Leftrightarrow \mathbf{z}_{y_{ij}} = (0, 0, \dots, 1). \end{aligned} \end{align} $$

As noted above, the log-normal model is widely used for modeling RTs because it fits various data well and allows for reliable inference and future extensions. Therefore, we adopt the log-normal model in this study. To incorporate the influence of response categories on RTs, we extend the log-normal model by introducing category-specific parameters. The resulting conditional model for RTs given the observed response

![]() $Y_{ij}=y_{ij}$

is

$Y_{ij}=y_{ij}$

is

Here,

![]() $v_{i}$

,

$v_{i}$

,

![]() $\beta _{j}$

,

$\beta _{j}$

,

![]() $\varphi _{j}$

, and

$\varphi _{j}$

, and

![]() $\sigma _{j}^2$

are introduced above;

$\sigma _{j}^2$

are introduced above;

![]() ${\boldsymbol {\delta }}_{j}=(\delta _{j1},\delta _{j2},\ldots ,\delta _{j(K_{j}-1)})^\top $

represents the item response-specific effect parameter for item j. The parameter

${\boldsymbol {\delta }}_{j}=(\delta _{j1},\delta _{j2},\ldots ,\delta _{j(K_{j}-1)})^\top $

represents the item response-specific effect parameter for item j. The parameter

![]() ${\boldsymbol {\delta }}_{j}$

quantifies the systematic differences in RTs associated with each response category.

${\boldsymbol {\delta }}_{j}$

quantifies the systematic differences in RTs associated with each response category.

2.2.2 Item response model

In this study, we employ the widely-used GRM proposed by Samejima (Reference Samejima1969) to model the ordered categorical response variable

![]() $Y_{ij}$

. Under the GRM, the probability that test taker i selects response category

$Y_{ij}$

. Under the GRM, the probability that test taker i selects response category

![]() $k~(k=0,\ldots ,K_j-1)$

for item j is given by

$k~(k=0,\ldots ,K_j-1)$

for item j is given by

where

$$ \begin{align*} P(Y_{ij} \geq 0 \mid \theta_i, a_j,b_j,{\pmb{\tau}}_j) &= 1, \nonumber \\\ P(Y_{ij} \geq k \mid \theta_i, a_j,b_j,{\pmb{\tau}}_j) &= F(a_j \theta_i - b_j - \tau_{jk}), \quad k = 1, 2, \dots, K_j - 1, \nonumber \\ \ P(Y_{ij} \geq K_j \mid \theta_i, a_j,b_j,{\pmb{\tau}}_j) &= 0. \nonumber\end{align*} $$

$$ \begin{align*} P(Y_{ij} \geq 0 \mid \theta_i, a_j,b_j,{\pmb{\tau}}_j) &= 1, \nonumber \\\ P(Y_{ij} \geq k \mid \theta_i, a_j,b_j,{\pmb{\tau}}_j) &= F(a_j \theta_i - b_j - \tau_{jk}), \quad k = 1, 2, \dots, K_j - 1, \nonumber \\ \ P(Y_{ij} \geq K_j \mid \theta_i, a_j,b_j,{\pmb{\tau}}_j) &= 0. \nonumber\end{align*} $$

Here,

![]() $\theta _i$

denotes the latent ability of test taker i,

$\theta _i$

denotes the latent ability of test taker i,

![]() $a_j$

is the discrimination parameter,

$a_j$

is the discrimination parameter,

![]() $b_j$

is the difficulty parameter, and

$b_j$

is the difficulty parameter, and

![]() ${\pmb {\tau }}_j=(\tau _{j1},\ldots ,\tau _{j(K_j-1)})$

is the thresholds for item j, which correspond to the cut-off points between the response categories. The threshold parameters must satisfy the monotonicity condition

${\pmb {\tau }}_j=(\tau _{j1},\ldots ,\tau _{j(K_j-1)})$

is the thresholds for item j, which correspond to the cut-off points between the response categories. The threshold parameters must satisfy the monotonicity condition

![]() $\tau _{j1}<\tau _{j2}<\cdots <\tau _{j(K_{j}-1)}$

. In addition, to ensure identifiability between

$\tau _{j1}<\tau _{j2}<\cdots <\tau _{j(K_{j}-1)}$

. In addition, to ensure identifiability between

![]() $b_j$

and

$b_j$

and

![]() $\boldsymbol {\tau }$

, we impose the constraint:

$\boldsymbol {\tau }$

, we impose the constraint:

![]() $\sum _{k=1}^{K_j-1}\tau _{jk}=0 \quad \text {for } j=1,\dots ,M$

. The function

$\sum _{k=1}^{K_j-1}\tau _{jk}=0 \quad \text {for } j=1,\dots ,M$

. The function

![]() $F(\cdot )$

refers to the cumulative distribution function (CDF) of either the logistic or the standard normal distribution. In this study, we use the standard normal CDF for convenience in developing the SAEM algorithm for model estimation.

$F(\cdot )$

refers to the cumulative distribution function (CDF) of either the logistic or the standard normal distribution. In this study, we use the standard normal CDF for convenience in developing the SAEM algorithm for model estimation.

Finally, based on the dummy variable vector

![]() $\mathbf {Z}_{Y_{ij}}$

in Equations (1) and (2), the probability mass function of the item response variable

$\mathbf {Z}_{Y_{ij}}$

in Equations (1) and (2), the probability mass function of the item response variable

![]() $Y_{ij}$

can be expressed as

$Y_{ij}$

can be expressed as

$$ \begin{align} P(Y_{ij}=y_{ij}|\theta_i,a_j,b_j,{\pmb{\tau}}_j)=p_{ij0}^{1-\sum_{k=1}^{K_j-1}z_{ijk}}\prod_{k=1}^{K_j-1} p_{ijk}^{z_{ijk}}, \quad \text{where } y_{ij}=0,\dots,K_j-1. \end{align} $$

$$ \begin{align} P(Y_{ij}=y_{ij}|\theta_i,a_j,b_j,{\pmb{\tau}}_j)=p_{ij0}^{1-\sum_{k=1}^{K_j-1}z_{ijk}}\prod_{k=1}^{K_j-1} p_{ijk}^{z_{ijk}}, \quad \text{where } y_{ij}=0,\dots,K_j-1. \end{align} $$

2.2.3 A joint model for graded responses and RTs

Let

![]() $\boldsymbol {\zeta }_i=(\theta _i,v_i)^\top $

denote the latent trait vector of examinee i. For item j, let the GRM parameters be

$\boldsymbol {\zeta }_i=(\theta _i,v_i)^\top $

denote the latent trait vector of examinee i. For item j, let the GRM parameters be

![]() $\boldsymbol {\eta }_j = (a_j, b_j, \boldsymbol {\tau }_j)^\top $

, and let the RT model parameters be

$\boldsymbol {\eta }_j = (a_j, b_j, \boldsymbol {\tau }_j)^\top $

, and let the RT model parameters be

![]() $\boldsymbol {\alpha }_j = (\beta _j, \varphi _j, \boldsymbol {\delta }_j^\top , \sigma _j)^\top $

. The complete parameter vector for item j is given by

$\boldsymbol {\alpha }_j = (\beta _j, \varphi _j, \boldsymbol {\delta }_j^\top , \sigma _j)^\top $

. The complete parameter vector for item j is given by

![]() $\boldsymbol {\xi }_j = (\boldsymbol {\eta }_j^\top , \boldsymbol {\alpha }_j^\top )^\top $

.

$\boldsymbol {\xi }_j = (\boldsymbol {\eta }_j^\top , \boldsymbol {\alpha }_j^\top )^\top $

.

Based on the conditional RT model in Equation (3) and the GRM in Equation (4), the conditional joint model of

![]() $Y_{ij}$

and

$Y_{ij}$

and

![]() $T_{ij}$

given

$T_{ij}$

given

![]() ${\boldsymbol {\zeta }}_i$

can be written as

${\boldsymbol {\zeta }}_i$

can be written as

$$ \begin{align} f(t_{ij},y_{ij}| {\boldsymbol{\zeta}}_i, {\boldsymbol{\xi}}_{j})=\frac{1}{t_{ij}}\phi\left(\frac{\ln{t_{ij}}-\beta_j+\varphi_jv_i-\mathbf{z}_{y_{ij}}{\boldsymbol{\delta}}_{j}}{\sigma_j}\right) P(Y_{ij}=y_{ij}|\theta_i,a_j,b_j,{\pmb{\tau}}_j), \end{align} $$

$$ \begin{align} f(t_{ij},y_{ij}| {\boldsymbol{\zeta}}_i, {\boldsymbol{\xi}}_{j})=\frac{1}{t_{ij}}\phi\left(\frac{\ln{t_{ij}}-\beta_j+\varphi_jv_i-\mathbf{z}_{y_{ij}}{\boldsymbol{\delta}}_{j}}{\sigma_j}\right) P(Y_{ij}=y_{ij}|\theta_i,a_j,b_j,{\pmb{\tau}}_j), \end{align} $$

where

![]() $\phi (\cdot )$

is the probability density function of the standard normal distribution.

$\phi (\cdot )$

is the probability density function of the standard normal distribution.

Following the hierarchical modeling framework proposed by van der Linden (Reference van der Linden2007), the population-level distribution of the latent variables

![]() ${\boldsymbol {\zeta }}_i$

is assumed to follow a bivariate normal distribution

${\boldsymbol {\zeta }}_i$

is assumed to follow a bivariate normal distribution

where

![]() $\boldsymbol {\mu }_p$

and

$\boldsymbol {\mu }_p$

and

![]() $\boldsymbol {\Sigma }_{p}$

denote the mean vector and covariance matrix, respectively. For identification purposes, these parameters are typically specified as

$\boldsymbol {\Sigma }_{p}$

denote the mean vector and covariance matrix, respectively. For identification purposes, these parameters are typically specified as

$$ \begin{align*}{\boldsymbol{\mu}}_{p} = (0,0)^\top, \quad \boldsymbol{\Sigma}_p=\begin{pmatrix} 1 & \sigma_{\theta v} \\ \sigma_{\theta v}& 1 \end{pmatrix}.\end{align*} $$

$$ \begin{align*}{\boldsymbol{\mu}}_{p} = (0,0)^\top, \quad \boldsymbol{\Sigma}_p=\begin{pmatrix} 1 & \sigma_{\theta v} \\ \sigma_{\theta v}& 1 \end{pmatrix}.\end{align*} $$

Finally, based on Equations (5) and (6), a two-level hierarchical joint model for

![]() $Y_{ij}$

and

$Y_{ij}$

and

![]() $T_{ij}$

is given by

$T_{ij}$

is given by

$$ \begin{align} \left\{ \begin{aligned} f(t_{ij}, y_{ij} | {\boldsymbol{\zeta}}_i, {\boldsymbol{\xi}}_j) &= \frac{1}{t_{ij}} \phi \left( \frac{\ln{t_{ij}}-\beta_j + \varphi_j v_i - \mathbf{z}_{y_{ij}} {\boldsymbol{\delta}}_j}{\sigma_j} \right) P(Y_{ij}=y_{ij}|\theta_i,a_j,b_j,{\pmb{\tau}}_j), \\ f({\boldsymbol{\zeta}}_i|\sigma_{\theta v})&= \frac{1}{2\pi \sqrt{1-\sigma_{\theta v}^{2}}} \exp\left\{ -\frac{\theta_{i}^{2} + v_{i}^2 - 2\sigma_{\theta v}\theta_{i}v_{i}}{2(1-\sigma_{\theta v}^{2})} \right\}. \end{aligned} \right. \end{align} $$

$$ \begin{align} \left\{ \begin{aligned} f(t_{ij}, y_{ij} | {\boldsymbol{\zeta}}_i, {\boldsymbol{\xi}}_j) &= \frac{1}{t_{ij}} \phi \left( \frac{\ln{t_{ij}}-\beta_j + \varphi_j v_i - \mathbf{z}_{y_{ij}} {\boldsymbol{\delta}}_j}{\sigma_j} \right) P(Y_{ij}=y_{ij}|\theta_i,a_j,b_j,{\pmb{\tau}}_j), \\ f({\boldsymbol{\zeta}}_i|\sigma_{\theta v})&= \frac{1}{2\pi \sqrt{1-\sigma_{\theta v}^{2}}} \exp\left\{ -\frac{\theta_{i}^{2} + v_{i}^2 - 2\sigma_{\theta v}\theta_{i}v_{i}}{2(1-\sigma_{\theta v}^{2})} \right\}. \end{aligned} \right. \end{align} $$

In this article, the proposed joint model is referred to as the GR–RT model, as it accounts for both graded responses and response times. Notably, when

![]() ${\boldsymbol {\delta }}_{j}=\mathbf {0}$

, the GR–RT model reduces to the basic HM framework proposed by Klein Entink et al. (Reference Klein Entink, Kuhn, Hornke and Fox2009) for graded scoring items, where responses and RTs are conditionally independent. Moreover, since binary scoring is a special case of graded scoring, the GR–RT model provides a unified framework for analyzing test data that include both dichotomous and polytomous items.

${\boldsymbol {\delta }}_{j}=\mathbf {0}$

, the GR–RT model reduces to the basic HM framework proposed by Klein Entink et al. (Reference Klein Entink, Kuhn, Hornke and Fox2009) for graded scoring items, where responses and RTs are conditionally independent. Moreover, since binary scoring is a special case of graded scoring, the GR–RT model provides a unified framework for analyzing test data that include both dichotomous and polytomous items.

Remark 1. The proposed GR–RT model is not a direct extension of the heterogeneity model introduced by Bolsinova and Tijmstra (Reference Bolsinova and Tijmstra2019), primarily due to certain practical challenges. In their framework, heterogeneity between correct and incorrect responses is modeled through multiple aspects, including the slope parameters (

![]() $\varphi _j$

), residual variance (

$\varphi _j$

), residual variance (

![]() $\sigma _j^2$

), and the joint population distribution of

$\sigma _j^2$

), and the joint population distribution of

![]() $\theta $

and v. For polytomous items, modeling different slope parameters and residual variances for each response category, along with different distributions of

$\theta $

and v. For polytomous items, modeling different slope parameters and residual variances for each response category, along with different distributions of

![]() $\theta $

and v, results in a highly complex model structure. This complexity introduces significant challenges: not only does it make parameter estimation more difficult, but it also reduces the overall credibility of the model due to increased uncertainty in the estimation process. Moreover, real-world data often exhibit sparsity in certain response categories, which magnifies the uncertainty and undermines the reliability of statistical inference. Given these practical challenges, the present study adopts a more parsimonious approach by modeling response category-specific heterogeneity solely through intercept terms. While this approach captures only one aspect of heterogeneity, it provides a robust, manageable framework for analyzing polytomous items in the presence of RT data, and it lays the groundwork for future extensions that could incorporate richer heterogeneity structures as the field advances.

$\theta $

and v, results in a highly complex model structure. This complexity introduces significant challenges: not only does it make parameter estimation more difficult, but it also reduces the overall credibility of the model due to increased uncertainty in the estimation process. Moreover, real-world data often exhibit sparsity in certain response categories, which magnifies the uncertainty and undermines the reliability of statistical inference. Given these practical challenges, the present study adopts a more parsimonious approach by modeling response category-specific heterogeneity solely through intercept terms. While this approach captures only one aspect of heterogeneity, it provides a robust, manageable framework for analyzing polytomous items in the presence of RT data, and it lays the groundwork for future extensions that could incorporate richer heterogeneity structures as the field advances.

The practical applicability of a measurement model depends on the feasibility of accurately estimating its parameters. The complex structure of the GR–RT model presents significant challenges for parameter estimation. Therefore, developing an effective computational method to estimate the parameters of the GR–RT model is a central focus of this study. In the following section, we present an SAEM algorithm designed to compute the MMLE of the GR–RT model.

3 Model estimation

This section presents an SAEM algorithm for estimating the GR–RT model. In Section 3.1, a data augmentation scheme is introduced for the GR–RT model. Building on this data augmentation, Section 3.2 presents an SAEM algorithm for computing the MMLE of the GR–RT model.

3.1 A data augmentation scheme for GR−RT model

Data augmentation is fundamental for developing the SAEM algorithm. Following Camilli and Geis (Reference Camilli and Geis2019), we introduce a latent response vector

![]() ${\mathbf {X}}_{ij}=(X_{ij1},\ldots ,X_{ij(K_j-1)})^\top $

, where each

${\mathbf {X}}_{ij}=(X_{ij1},\ldots ,X_{ij(K_j-1)})^\top $

, where each

![]() $X_{ijk}$

follows a normal distribution:

$X_{ijk}$

follows a normal distribution:

Moreover, the relationship between the observed response

![]() $Y_{ij}$

and the latent response

$Y_{ij}$

and the latent response

![]() $X_{ijk}$

is defined as

$X_{ijk}$

is defined as

$$ \begin{align} X_{ijk} = \left\{ \begin{aligned} &\geq0, \quad \text{if } Y_{ij} \geq k, \\ &<0, \quad \text{otherwise}, \end{aligned} \right. \quad \text{for } k = 1, \dots, K_j - 1. \end{align} $$

$$ \begin{align} X_{ijk} = \left\{ \begin{aligned} &\geq0, \quad \text{if } Y_{ij} \geq k, \\ &<0, \quad \text{otherwise}, \end{aligned} \right. \quad \text{for } k = 1, \dots, K_j - 1. \end{align} $$

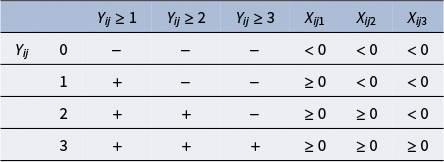

To intuitively illustrate this relationship between

![]() ${\mathbf {X}}_{ij}$

and

${\mathbf {X}}_{ij}$

and

![]() $Y_{ij}$

, consider a four-category graded response item, where

$Y_{ij}$

, consider a four-category graded response item, where

![]() $Y_{ij}\in \{0,1,2,3\}$

and

$Y_{ij}\in \{0,1,2,3\}$

and

![]() ${\mathbf {X}}_{ij}=(X_{ij1}, X_{ij2}, X_{ij3})^\top $

. When

${\mathbf {X}}_{ij}=(X_{ij1}, X_{ij2}, X_{ij3})^\top $

. When

![]() $Y_{ij}$

takes the values

$Y_{ij}$

takes the values

![]() $0, 1, 2$

, and

$0, 1, 2$

, and

![]() $3$

, the corresponding ranges of the elements of

$3$

, the corresponding ranges of the elements of

![]() ${\mathbf {X}}_{ij}$

are determined, as shown in Table 1.

${\mathbf {X}}_{ij}$

are determined, as shown in Table 1.

Table 1 Relationship between

![]() $Y{}_{ij}$

and

$Y{}_{ij}$

and

![]() ${\mathbf {X}}{}_{ij}$

for four-category graded response

${\mathbf {X}}{}_{ij}$

for four-category graded response

Note:

![]() $+$

indicates the condition is satisfied and

$+$

indicates the condition is satisfied and

![]() $-$

indicates not satisfied.

$-$

indicates not satisfied.

We now define some notations. Let

![]() $\mathbf {y}_{\cdot j}= \left (y_{1j},\ldots , y_{Nj}\right )^\top $

,

$\mathbf {y}_{\cdot j}= \left (y_{1j},\ldots , y_{Nj}\right )^\top $

,

![]() $\mathbf {t}_{\cdot j}= \left (t_{1j},\ldots , t_{Nj}\right )^\top $

, and

$\mathbf {t}_{\cdot j}= \left (t_{1j},\ldots , t_{Nj}\right )^\top $

, and

![]() $\ln \mathbf {t}_{.j}=(\ln t_{1j}, \ln t_{2j}, \ldots ,\\ \ln t_{Nj})^\top $

denote the observed response, RT and log-RT vectors of item j, respectively. The observed response and RT data for all items are denoted by

$\ln \mathbf {t}_{.j}=(\ln t_{1j}, \ln t_{2j}, \ldots ,\\ \ln t_{Nj})^\top $

denote the observed response, RT and log-RT vectors of item j, respectively. The observed response and RT data for all items are denoted by

![]() $\mathbf {y}=\left ( \mathbf {y}_{\cdot 1},\ldots ,\mathbf {y}_{\cdot M}\right )$

and

$\mathbf {y}=\left ( \mathbf {y}_{\cdot 1},\ldots ,\mathbf {y}_{\cdot M}\right )$

and

![]() $\mathbf {t}=\left ( \mathbf {t}_{\cdot 1},\ldots ,\mathbf {t}_{\cdot M}\right )$

. Let

$\mathbf {t}=\left ( \mathbf {t}_{\cdot 1},\ldots ,\mathbf {t}_{\cdot M}\right )$

. Let

![]() ${\mathbf {X}}_{j}= ({\mathbf {X}}_{1j}, {\mathbf {X}}_{2j},\ldots ,{\mathbf {X}}_{Nj})$

denote the latent response matrix for item j. Let

${\mathbf {X}}_{j}= ({\mathbf {X}}_{1j}, {\mathbf {X}}_{2j},\ldots ,{\mathbf {X}}_{Nj})$

denote the latent response matrix for item j. Let

![]() $\mathbf {x}_{ij}=(x_{ij1},\ldots ,x_{ij(K_j-1)})^\top $

denote the realization of

$\mathbf {x}_{ij}=(x_{ij1},\ldots ,x_{ij(K_j-1)})^\top $

denote the realization of

![]() ${\mathbf {X}}_{ij}$

, and

${\mathbf {X}}_{ij}$

, and

![]() $\mathbf {x}_{j}= (\mathbf {x}_{1j}, \mathbf {x}_{2j},\ldots ,\mathbf {x}_{Nj})$

denote the realization of

$\mathbf {x}_{j}= (\mathbf {x}_{1j}, \mathbf {x}_{2j},\ldots ,\mathbf {x}_{Nj})$

denote the realization of

![]() ${\mathbf {X}}_{j}$

. Let

${\mathbf {X}}_{j}$

. Let

![]() $\boldsymbol {\theta } = (\theta _1, \dots , \theta _N)^\top $

and

$\boldsymbol {\theta } = (\theta _1, \dots , \theta _N)^\top $

and

![]() $\mathbf {v} = (v_1, \dots , v_N)^\top $

denote the latent ability and speed vectors, respectively. The combined latent traits are represented as

$\mathbf {v} = (v_1, \dots , v_N)^\top $

denote the latent ability and speed vectors, respectively. The combined latent traits are represented as

![]() $\boldsymbol {\zeta } = (\boldsymbol {\theta }, \mathbf {v})$

. The complete data for the test, including observed responses, RTs, latent responses, and latent traits, are denoted by

$\boldsymbol {\zeta } = (\boldsymbol {\theta }, \mathbf {v})$

. The complete data for the test, including observed responses, RTs, latent responses, and latent traits, are denoted by

![]() $\mathbf {u}=(\mathbf {y}, \mathbf {t},\mathbf {x}^\top , {\boldsymbol {\zeta }})$

, while the complete data for item j are denoted by

$\mathbf {u}=(\mathbf {y}, \mathbf {t},\mathbf {x}^\top , {\boldsymbol {\zeta }})$

, while the complete data for item j are denoted by

![]() $\mathbf {u}_{.j}=(\mathbf {y}_{.j}, \mathbf {t}_{.j},\mathbf {x}_j^\top , {\boldsymbol {\zeta }})$

. Let

$\mathbf {u}_{.j}=(\mathbf {y}_{.j}, \mathbf {t}_{.j},\mathbf {x}_j^\top , {\boldsymbol {\zeta }})$

. Let

![]() $\boldsymbol {\eta }=(\boldsymbol {\eta }_1,\ldots ,\boldsymbol {\eta }_M)$

be the collection of GRM parameters and

$\boldsymbol {\eta }=(\boldsymbol {\eta }_1,\ldots ,\boldsymbol {\eta }_M)$

be the collection of GRM parameters and

![]() $\boldsymbol {\alpha }=(\boldsymbol {\alpha }_1,\ldots ,\boldsymbol {\alpha }_M)$

be the collection of RT model parameters. The collection of all item parameters in the GR–RT model, combining of

$\boldsymbol {\alpha }=(\boldsymbol {\alpha }_1,\ldots ,\boldsymbol {\alpha }_M)$

be the collection of RT model parameters. The collection of all item parameters in the GR–RT model, combining of

![]() $\boldsymbol {\eta }$

and

$\boldsymbol {\eta }$

and

![]() ${\boldsymbol {\alpha }}$

, is denoted by

${\boldsymbol {\alpha }}$

, is denoted by

![]() $\boldsymbol {\xi }=(\boldsymbol {\xi }_1,\ldots ,\boldsymbol {\xi }_M)$

. Let

$\boldsymbol {\xi }=(\boldsymbol {\xi }_1,\ldots ,\boldsymbol {\xi }_M)$

. Let

![]() $\boldsymbol {\Omega }=(\boldsymbol {\xi }, \sigma _{\theta v})$

be the set of parameters to be estimated in the GR–RT model.

$\boldsymbol {\Omega }=(\boldsymbol {\xi }, \sigma _{\theta v})$

be the set of parameters to be estimated in the GR–RT model.

Based on Equations (7)–(9), we have

$$ \begin{align} f(y_{ij}, t_{ij}, \mathbf{x}_{ij}, {\boldsymbol{\zeta}}_{i}|{\boldsymbol{\xi}}_{j}, \sigma_{\theta v} ) &= f(y_{ij}, t_{ij}, \mathbf{x}_{ij}| {\boldsymbol{\zeta}}_{i}, {\boldsymbol{\xi}}_{j})f ({\boldsymbol{\zeta}}_{i}| \sigma_{\theta v} ) \nonumber\\&= \prod_{k=1}^{K_{j}-1}[I_{(x_{ijk}< 0)} I_{(y_{ij}< k)}+I_{(x_{ijk}\geq0)} I_{(y_{ij}\geq k)}] \phi(x_{ijk}-a_{j}\theta_{i} +b_{j} +\tau_{jk}) \nonumber\\& \quad \times \frac{1}{t_{ij}}\phi\left(\frac{\ln t_{ij}-\beta_{j}+\varphi_{j}v_{i}-\mathbf{z}_{y_{ij}}{\boldsymbol{\delta}}_{j}}{\sigma_{j}}\right) f({\boldsymbol{\zeta}}_i|\sigma_{\theta v}). \end{align} $$

$$ \begin{align} f(y_{ij}, t_{ij}, \mathbf{x}_{ij}, {\boldsymbol{\zeta}}_{i}|{\boldsymbol{\xi}}_{j}, \sigma_{\theta v} ) &= f(y_{ij}, t_{ij}, \mathbf{x}_{ij}| {\boldsymbol{\zeta}}_{i}, {\boldsymbol{\xi}}_{j})f ({\boldsymbol{\zeta}}_{i}| \sigma_{\theta v} ) \nonumber\\&= \prod_{k=1}^{K_{j}-1}[I_{(x_{ijk}< 0)} I_{(y_{ij}< k)}+I_{(x_{ijk}\geq0)} I_{(y_{ij}\geq k)}] \phi(x_{ijk}-a_{j}\theta_{i} +b_{j} +\tau_{jk}) \nonumber\\& \quad \times \frac{1}{t_{ij}}\phi\left(\frac{\ln t_{ij}-\beta_{j}+\varphi_{j}v_{i}-\mathbf{z}_{y_{ij}}{\boldsymbol{\delta}}_{j}}{\sigma_{j}}\right) f({\boldsymbol{\zeta}}_i|\sigma_{\theta v}). \end{align} $$

Further, the complete-data likelihood of

![]() $\boldsymbol {\xi }_j$

and

$\boldsymbol {\xi }_j$

and

![]() $\sigma _{\theta v}$

can be expressed as

$\sigma _{\theta v}$

can be expressed as

$$ \begin{align*} L(\mathbf{u}_{.j} \mid {\boldsymbol{\xi}}_{j}, \sigma_{\theta v}) = \prod_{i=1}^N f(y_{ij}, t_{ij}, \mathbf{x}_{ij}, {\boldsymbol{\zeta}}_{i}|{\boldsymbol{\xi}}_{j}, \sigma_{\theta v} ) \propto L_j^1\times L_j^2\times L^3, \nonumber \end{align*} $$

$$ \begin{align*} L(\mathbf{u}_{.j} \mid {\boldsymbol{\xi}}_{j}, \sigma_{\theta v}) = \prod_{i=1}^N f(y_{ij}, t_{ij}, \mathbf{x}_{ij}, {\boldsymbol{\zeta}}_{i}|{\boldsymbol{\xi}}_{j}, \sigma_{\theta v} ) \propto L_j^1\times L_j^2\times L^3, \nonumber \end{align*} $$

where

$$ \begin{align} L_j^1&= \exp\left\{-\frac{1}{2} \sum_{i=1}^{N} \sum_{k=1}^{K_{j}-1} (x_{ijk} - a_{j}\theta_{i} + b_{j} + \tau_{jk})^2 \right\}I_{(\tau_{j1}<\cdots<\tau_{j(K_j-1)}, \sum_{k=1}^{K_{j}-1}\tau_{jk}=0)}, \end{align} $$

$$ \begin{align} L_j^1&= \exp\left\{-\frac{1}{2} \sum_{i=1}^{N} \sum_{k=1}^{K_{j}-1} (x_{ijk} - a_{j}\theta_{i} + b_{j} + \tau_{jk})^2 \right\}I_{(\tau_{j1}<\cdots<\tau_{j(K_j-1)}, \sum_{k=1}^{K_{j}-1}\tau_{jk}=0)}, \end{align} $$

$$ \begin{align} L_j^2&= \sigma_{j}^{-N} \exp\left\{-\frac{1}{2\sigma_{j}^2} \sum_{i=1}^{N} ( \ln t_{ij} - \beta_{j} + \varphi_{j}v_{i} - \mathbf{z}_{y_{ij}}{\boldsymbol{\delta}}_{j})^2 \right\}, \end{align} $$

$$ \begin{align} L_j^2&= \sigma_{j}^{-N} \exp\left\{-\frac{1}{2\sigma_{j}^2} \sum_{i=1}^{N} ( \ln t_{ij} - \beta_{j} + \varphi_{j}v_{i} - \mathbf{z}_{y_{ij}}{\boldsymbol{\delta}}_{j})^2 \right\}, \end{align} $$

$$ \begin{align} L^3&=(1-\sigma_{\theta v}^2) ^{-N/2}\exp\left\{ -\frac{1}{2(1-\sigma_{\theta v}^{2})} \sum_{i=1}^{N} (\theta_{i}^{2} + v_{i}^2 - 2\sigma_{\theta v}\theta_{i}v_{i}) \right\}. \end{align} $$

$$ \begin{align} L^3&=(1-\sigma_{\theta v}^2) ^{-N/2}\exp\left\{ -\frac{1}{2(1-\sigma_{\theta v}^{2})} \sum_{i=1}^{N} (\theta_{i}^{2} + v_{i}^2 - 2\sigma_{\theta v}\theta_{i}v_{i}) \right\}. \end{align} $$

It can be observed that

![]() $L(\mathbf {u}_{.j} \mid {\boldsymbol {\xi }}_{j}, \sigma _{\theta v})$

is the product of three normal likelihoods, which places it within the exponential family. When the data likelihood takes the form of an exponential family (Barndorff-Nielsen, Reference Barndorff-Nielsen2014), the implementation of the EM algorithm and its extensions is simplified to working with sufficient statistics (Dempster et al., Reference Dempster, Laird and Rubin1977). Therefore, the exponential family structure of the complete data likelihood is particularly advantageous for developing an SAEM algorithm for the GR–RT model.

$L(\mathbf {u}_{.j} \mid {\boldsymbol {\xi }}_{j}, \sigma _{\theta v})$

is the product of three normal likelihoods, which places it within the exponential family. When the data likelihood takes the form of an exponential family (Barndorff-Nielsen, Reference Barndorff-Nielsen2014), the implementation of the EM algorithm and its extensions is simplified to working with sufficient statistics (Dempster et al., Reference Dempster, Laird and Rubin1977). Therefore, the exponential family structure of the complete data likelihood is particularly advantageous for developing an SAEM algorithm for the GR–RT model.

For brevity, the exponential family form of

![]() $L(\mathbf {u}_{.j} \mid {\boldsymbol {\xi }}_{j}, \sigma _{\theta v})$

is not given here; instead, we present only the sufficient statistics for

$L(\mathbf {u}_{.j} \mid {\boldsymbol {\xi }}_{j}, \sigma _{\theta v})$

is not given here; instead, we present only the sufficient statistics for

![]() ${\boldsymbol {\xi }}_{j}$

and

${\boldsymbol {\xi }}_{j}$

and

![]() $\sigma _{\theta v}$

.

$\sigma _{\theta v}$

.

Specifically, the sufficient statistics for

![]() $\boldsymbol {\eta }_j$

are

$\boldsymbol {\eta }_j$

are

$$ \begin{align} S_{\boldsymbol{\eta}_j}=\left\{\sum_{i=1}^{N} \sum_{k=1}^{K_{j}-1} \theta_{i} x_{ijk}, \sum_{i=1}^N \mathbf{x}_{ij}, \sum_{i=1}^{N}\theta_{i}, \boldsymbol{\theta}^\top\boldsymbol{\theta}\right\}, \end{align} $$

$$ \begin{align} S_{\boldsymbol{\eta}_j}=\left\{\sum_{i=1}^{N} \sum_{k=1}^{K_{j}-1} \theta_{i} x_{ijk}, \sum_{i=1}^N \mathbf{x}_{ij}, \sum_{i=1}^{N}\theta_{i}, \boldsymbol{\theta}^\top\boldsymbol{\theta}\right\}, \end{align} $$

where

![]() $\sum _{i=1}^N \mathbf {x}_{ij}=(\sum _{i=1}^N x_{ij1},\ldots ,\sum _{i=1}^N x_{ij(K_j-1)})^\top $

.

$\sum _{i=1}^N \mathbf {x}_{ij}=(\sum _{i=1}^N x_{ij1},\ldots ,\sum _{i=1}^N x_{ij(K_j-1)})^\top $

.

The sufficient statistics for

![]() $\boldsymbol {\alpha }_j$

are

$\boldsymbol {\alpha }_j$

are

where

$$ \begin{align*} \boldsymbol{\Lambda}_{j} = \left( \begin{array}{cccc} v_{1} & v_{2} & \cdots & v_{N} \\ -1 & -1 & \cdots & -1 \\ -I_{(y_{1j}=1)} & -I_{(y_{2j}=1)} & \cdots & -I_{(y_{Nj}=1)} \\ \vdots & \vdots & \ddots & \vdots \\ -I_{(y_{1j}=K_{j}-1)} & -I_{(y_{2j}=K_{j}-1)} & \cdots & -I_{(y_{Nj}=K_{j}-1)} \end{array} \right).\nonumber \end{align*} $$

$$ \begin{align*} \boldsymbol{\Lambda}_{j} = \left( \begin{array}{cccc} v_{1} & v_{2} & \cdots & v_{N} \\ -1 & -1 & \cdots & -1 \\ -I_{(y_{1j}=1)} & -I_{(y_{2j}=1)} & \cdots & -I_{(y_{Nj}=1)} \\ \vdots & \vdots & \ddots & \vdots \\ -I_{(y_{1j}=K_{j}-1)} & -I_{(y_{2j}=K_{j}-1)} & \cdots & -I_{(y_{Nj}=K_{j}-1)} \end{array} \right).\nonumber \end{align*} $$

Finally, the sufficient statistics for

![]() $\sigma _{\theta v}$

are

$\sigma _{\theta v}$

are

3.2 An SAEM algorithm for the MMLE of GR–RT model

We begin by introducing the EM algorithm for computing the MMLE of the GR–RT model, followed by the proposal of the SAEM algorithm.

3.2.1 EM algorithm

With the introduction of

![]() ${\mathbf {X}}_{ij}$

, the E-step of EM algorithm simplifies to working with sufficient statistics, and the M-step directly updates the estimate of

${\mathbf {X}}_{ij}$

, the E-step of EM algorithm simplifies to working with sufficient statistics, and the M-step directly updates the estimate of

![]() $\boldsymbol {\Omega }$

using the updated values of these sufficient statistics. Below, we present the general procedure of the EM algorithm, with a detailed implementation provided in Appendix A of the Supplementary Material.

$\boldsymbol {\Omega }$

using the updated values of these sufficient statistics. Below, we present the general procedure of the EM algorithm, with a detailed implementation provided in Appendix A of the Supplementary Material.

Let

![]() $\boldsymbol {\Omega }^{0}=(\boldsymbol {\xi }_{1}^{0},\ldots , \boldsymbol {\xi }_{M}^{0}, \sigma _{\theta v}^{0})$

be the initial values of

$\boldsymbol {\Omega }^{0}=(\boldsymbol {\xi }_{1}^{0},\ldots , \boldsymbol {\xi }_{M}^{0}, \sigma _{\theta v}^{0})$

be the initial values of

![]() $\boldsymbol {\Omega }$

, and

$\boldsymbol {\Omega }$

, and

![]() $\boldsymbol {\Omega }^{r-1}=(\boldsymbol {\xi }_{1}^{r-1},\ldots , \boldsymbol {\xi }_{M}^{r-1}, \sigma _{\theta v}^{r-1})$

denote the parameter values updated at the end of the

$\boldsymbol {\Omega }^{r-1}=(\boldsymbol {\xi }_{1}^{r-1},\ldots , \boldsymbol {\xi }_{M}^{r-1}, \sigma _{\theta v}^{r-1})$

denote the parameter values updated at the end of the

![]() $(r-1)$

th iteration. The rth EM iteration consists of the following steps:

$(r-1)$

th iteration. The rth EM iteration consists of the following steps:

-

1. E-step: Compute the conditional expectations of the sufficient statistics

$S_{\boldsymbol {\eta }_{j}}$

,

$S_{\boldsymbol {\eta }_{j}}$

,

$S_{\boldsymbol {\alpha }_{j}}$

, and

$S_{\boldsymbol {\alpha }_{j}}$

, and

$ S_{\sigma _{\theta v}}$

given

$ S_{\sigma _{\theta v}}$

given

$\mathbf {y}$

,

$\mathbf {y}$

,

$\mathbf {t}$

, and

$\mathbf {t}$

, and

$\boldsymbol {\Omega }^{r-1}$

: and

$\boldsymbol {\Omega }^{r-1}$

: and $$ \begin{align*} {s}_{\boldsymbol{\eta}_j}^{r}=E_{(\mathbf{x}_j, \boldsymbol{\theta})}(S_{\boldsymbol{\eta}_{j}}|\mathbf{y}, \mathbf{t},\boldsymbol{\Omega}^{r-1}), s_{\boldsymbol{\alpha}_j}^{r}=E_{\mathbf{v}}(S_{\boldsymbol{\alpha}_{j}}|\mathbf{y}, \mathbf{t},\boldsymbol{\Omega}^{r-1}), \quad \text{for } j=1,\ldots,M, \nonumber \end{align*} $$

$$ \begin{align*} {s}_{\boldsymbol{\eta}_j}^{r}=E_{(\mathbf{x}_j, \boldsymbol{\theta})}(S_{\boldsymbol{\eta}_{j}}|\mathbf{y}, \mathbf{t},\boldsymbol{\Omega}^{r-1}), s_{\boldsymbol{\alpha}_j}^{r}=E_{\mathbf{v}}(S_{\boldsymbol{\alpha}_{j}}|\mathbf{y}, \mathbf{t},\boldsymbol{\Omega}^{r-1}), \quad \text{for } j=1,\ldots,M, \nonumber \end{align*} $$

$$ \begin{align*} s_{\sigma_{\theta v}}^{r}=E_{\boldsymbol{\zeta}}(S_{\sigma_{\theta v}}|\mathbf{y}, \mathbf{t},\boldsymbol{\Omega}^{r-1}).\nonumber \end{align*} $$

$$ \begin{align*} s_{\sigma_{\theta v}}^{r}=E_{\boldsymbol{\zeta}}(S_{\sigma_{\theta v}}|\mathbf{y}, \mathbf{t},\boldsymbol{\Omega}^{r-1}).\nonumber \end{align*} $$

-

2. M-step: Update the parameter estimate of

$\boldsymbol {\Omega }$

by and

$\boldsymbol {\Omega }$

by and $$ \begin{align*}\boldsymbol{\eta}^r_j=\hat{\boldsymbol{\eta}}_j(s_{\boldsymbol{\eta}_j}^{r}), \boldsymbol{\alpha}^r_j=\hat{\boldsymbol{\alpha}}_j(s_{\boldsymbol{\alpha}_j}^{r}),\text{for } j=1,\ldots,M,\end{align*} $$

$$ \begin{align*}\boldsymbol{\eta}^r_j=\hat{\boldsymbol{\eta}}_j(s_{\boldsymbol{\eta}_j}^{r}), \boldsymbol{\alpha}^r_j=\hat{\boldsymbol{\alpha}}_j(s_{\boldsymbol{\alpha}_j}^{r}),\text{for } j=1,\ldots,M,\end{align*} $$

$$ \begin{align*}\sigma_{\theta v}^r=\hat{\sigma}_{\theta v}(s_{\sigma_{\theta v}}^{r}),\end{align*} $$

$$ \begin{align*}\sigma_{\theta v}^r=\hat{\sigma}_{\theta v}(s_{\sigma_{\theta v}}^{r}),\end{align*} $$

where the expressions of

$\hat {\boldsymbol {\eta }}_j(s_{\boldsymbol {\eta }_j}^{r})$

,

$\hat {\boldsymbol {\eta }}_j(s_{\boldsymbol {\eta }_j}^{r})$

,

$\hat {\boldsymbol {\alpha }}_j(s_{\boldsymbol {\alpha }_j}^{r})$

, and

$\hat {\boldsymbol {\alpha }}_j(s_{\boldsymbol {\alpha }_j}^{r})$

, and

$\hat {\sigma }_{\theta v}(s_{\sigma _{\theta v}}^{r})$

are provided in Equations (A10)–(A13) in the Supplementary Material.

$\hat {\sigma }_{\theta v}(s_{\sigma _{\theta v}}^{r})$

are provided in Equations (A10)–(A13) in the Supplementary Material.

While the M-step in the above EM iteration is straightforward and avoids the need for tedious optimization procedures, the E-step involves complex multidimensional integrals. To address this challenge, we propose an SAEM algorithm, inspired by existing studies (Camilli & Geis, Reference Camilli and Geis2019; Lavielle & Mbogning, Reference Lavielle and Mbogning2014; Meng & Xu, Reference Meng and Xu2023), which replaces the E-step computation with stochastic approximation, thereby reducing the computational burden.

3.2.2 A dimensionality reduction computation of

$E_{(\mathbf {x}_j, \boldsymbol {\theta })}(S_{\boldsymbol {\eta }_{j}} \mid \mathbf {y}, \mathbf {t}, \boldsymbol {\Omega }^{r-1})$

$E_{(\mathbf {x}_j, \boldsymbol {\theta })}(S_{\boldsymbol {\eta }_{j}} \mid \mathbf {y}, \mathbf {t}, \boldsymbol {\Omega }^{r-1})$

Using the law of total expectation (Kolmogorov, Reference Kolmogorov2018),

From Equation (14), the inner expectation

![]() $E_{\mathbf {x}_{j}}(S_{\boldsymbol {\eta }_{j}} \mid \boldsymbol {\theta }, \mathbf {y}, \mathbf {t}, \boldsymbol {\Omega }^{r-1})$

is given by

$E_{\mathbf {x}_{j}}(S_{\boldsymbol {\eta }_{j}} \mid \boldsymbol {\theta }, \mathbf {y}, \mathbf {t}, \boldsymbol {\Omega }^{r-1})$

is given by

$$ \begin{align} E_{\mathbf{x}_{j}}(S_{\boldsymbol{\eta}_{j}} \mid \boldsymbol{\theta}, \mathbf{y}, \mathbf{t}, \boldsymbol{\Omega}^{r - 1})=\left\{ \sum_{i = 1}^{N}\sum_{k = 1}^{K_{j}-1}\theta_{i}E(X_{ijk} \mid \boldsymbol{\theta}, \mathbf{y}, \mathbf{t}, \boldsymbol{\Omega}^{r - 1}), \sum_{i = 1}^{N}E({\mathbf{X}}_{ij}\mid \boldsymbol{\theta}, \mathbf{y}, \mathbf{t}, \boldsymbol{\Omega}^{r - 1}), \sum_{i = 1}^{N}\theta_{i}, \boldsymbol{\theta}^\top\boldsymbol{\theta}\right\}, \end{align} $$

$$ \begin{align} E_{\mathbf{x}_{j}}(S_{\boldsymbol{\eta}_{j}} \mid \boldsymbol{\theta}, \mathbf{y}, \mathbf{t}, \boldsymbol{\Omega}^{r - 1})=\left\{ \sum_{i = 1}^{N}\sum_{k = 1}^{K_{j}-1}\theta_{i}E(X_{ijk} \mid \boldsymbol{\theta}, \mathbf{y}, \mathbf{t}, \boldsymbol{\Omega}^{r - 1}), \sum_{i = 1}^{N}E({\mathbf{X}}_{ij}\mid \boldsymbol{\theta}, \mathbf{y}, \mathbf{t}, \boldsymbol{\Omega}^{r - 1}), \sum_{i = 1}^{N}\theta_{i}, \boldsymbol{\theta}^\top\boldsymbol{\theta}\right\}, \end{align} $$

where

$$ \begin{align} \sum_{i=1}^{N}E({\mathbf{X}}_{ij}\mid \boldsymbol{\theta}, \mathbf{y}, \mathbf{t}, \boldsymbol{\Omega}^{r-1})=\left(\sum_{i=1}^N E(X_{ij1}\mid \boldsymbol{\theta}, \mathbf{y}, \mathbf{t}, \boldsymbol{\Omega}^{r-1}),\dots,\sum_{i=1}^N E(X_{ij(K_j-1)}\mid \boldsymbol{\theta}, \mathbf{y}, \mathbf{t}, \boldsymbol{\Omega}^{r-1})\right)^\top. \end{align} $$

$$ \begin{align} \sum_{i=1}^{N}E({\mathbf{X}}_{ij}\mid \boldsymbol{\theta}, \mathbf{y}, \mathbf{t}, \boldsymbol{\Omega}^{r-1})=\left(\sum_{i=1}^N E(X_{ij1}\mid \boldsymbol{\theta}, \mathbf{y}, \mathbf{t}, \boldsymbol{\Omega}^{r-1}),\dots,\sum_{i=1}^N E(X_{ij(K_j-1)}\mid \boldsymbol{\theta}, \mathbf{y}, \mathbf{t}, \boldsymbol{\Omega}^{r-1})\right)^\top. \end{align} $$

According to the relationship between

![]() $X_{ijk}$

and

$X_{ijk}$

and

![]() $Y_{ij}$

defined in Equation (9), the conditional distribution of

$Y_{ij}$

defined in Equation (9), the conditional distribution of

![]() $X_{ijk}$

given

$X_{ijk}$

given

![]() $Y_{ij}=y_{ij}$

is given by

$Y_{ij}=y_{ij}$

is given by

$$ \begin{align*}\nonumber X_{ijk}|y_{ij}\sim \left\{ \begin{aligned} & N(a_j\theta_i-b_j-\tau_{jk},1)I_{x_{ijk}<0}, \quad &\text{if } y_{ij} < k, \\ &N(a_j\theta_i-b_j-\tau_{jk},1)I_{x_{ijk}\geq0}, \quad &\text{if }y_{ij} \geq k. \end{aligned} \right. \end{align*} $$

$$ \begin{align*}\nonumber X_{ijk}|y_{ij}\sim \left\{ \begin{aligned} & N(a_j\theta_i-b_j-\tau_{jk},1)I_{x_{ijk}<0}, \quad &\text{if } y_{ij} < k, \\ &N(a_j\theta_i-b_j-\tau_{jk},1)I_{x_{ijk}\geq0}, \quad &\text{if }y_{ij} \geq k. \end{aligned} \right. \end{align*} $$

Further, it can be derived that the conditional expectation of

![]() $X_{ijk}$

given

$X_{ijk}$

given

![]() $\boldsymbol {\theta }$

,

$\boldsymbol {\theta }$

,

![]() $\mathbf {y}$

,

$\mathbf {y}$

,

![]() $\mathbf {t}$

, and

$\mathbf {t}$

, and

![]() $\boldsymbol {\Omega }^{r-1}$

is

$\boldsymbol {\Omega }^{r-1}$

is

$$ \begin{align} E(X_{ijk} \mid \boldsymbol{\theta}, \mathbf{y}, \mathbf{t}, \boldsymbol{\Omega}^{r-1}) = \begin{cases} a_j^{r-1} \theta_{i} - b_j^{r-1} - \tau_{jk}^{r-1} - \frac{\phi(-a_j^{r-1} \theta_{i} + b_j^{r-1} + \tau_{jk}^{r-1})}{\Phi(-a_j^{r-1} \theta_{i} + b_j^{r-1} + \tau_{jk}^{r-1})}, & \text{if } y_{ij} < k, \\ a_j^{r-1} \theta_{i} - b_j^{r-1} - \tau_{jk}^{r-1} + \frac{\phi(-a_j^{r-1} \theta_{i} + b_j^{r-1} + \tau_{jk}^{r-1})}{1 - \Phi(-a_j^{r-1} \theta_{i} + b_j^{r-1} + \tau_{jk}^{r-1})}, & \text{if }y_{ij} \geq k. \end{cases} \end{align} $$

$$ \begin{align} E(X_{ijk} \mid \boldsymbol{\theta}, \mathbf{y}, \mathbf{t}, \boldsymbol{\Omega}^{r-1}) = \begin{cases} a_j^{r-1} \theta_{i} - b_j^{r-1} - \tau_{jk}^{r-1} - \frac{\phi(-a_j^{r-1} \theta_{i} + b_j^{r-1} + \tau_{jk}^{r-1})}{\Phi(-a_j^{r-1} \theta_{i} + b_j^{r-1} + \tau_{jk}^{r-1})}, & \text{if } y_{ij} < k, \\ a_j^{r-1} \theta_{i} - b_j^{r-1} - \tau_{jk}^{r-1} + \frac{\phi(-a_j^{r-1} \theta_{i} + b_j^{r-1} + \tau_{jk}^{r-1})}{1 - \Phi(-a_j^{r-1} \theta_{i} + b_j^{r-1} + \tau_{jk}^{r-1})}, & \text{if }y_{ij} \geq k. \end{cases} \end{align} $$

Finally, by substituting the expression for

![]() $E(X_{ijk} \mid \boldsymbol {\theta }, \mathbf {y}, \mathbf {t}, \boldsymbol {\Omega }^{r-1})$

in Equation (19) into Equations (17) and (18), the closed form of

$E(X_{ijk} \mid \boldsymbol {\theta }, \mathbf {y}, \mathbf {t}, \boldsymbol {\Omega }^{r-1})$

in Equation (19) into Equations (17) and (18), the closed form of

![]() $E_{\mathbf {x}_{j}}(S_{\boldsymbol {\eta }_{j}} \mid \boldsymbol {\theta }, \mathbf {y}, \mathbf {t}, \boldsymbol {\Omega }^{r - 1})$

is obtained. Let

$E_{\mathbf {x}_{j}}(S_{\boldsymbol {\eta }_{j}} \mid \boldsymbol {\theta }, \mathbf {y}, \mathbf {t}, \boldsymbol {\Omega }^{r - 1})$

is obtained. Let

![]() $h_j^r({\boldsymbol {\theta }})=E_{\mathbf {x}_{j}}(S_{\boldsymbol {\eta }_{j}} \mid \boldsymbol {\theta }, \mathbf {y}, \mathbf {t}, \boldsymbol {\Omega }^{r-1})$

, then we have

$h_j^r({\boldsymbol {\theta }})=E_{\mathbf {x}_{j}}(S_{\boldsymbol {\eta }_{j}} \mid \boldsymbol {\theta }, \mathbf {y}, \mathbf {t}, \boldsymbol {\Omega }^{r-1})$

, then we have

This reduces the expectation of

![]() $S_{\boldsymbol {\eta }_{j}}$

with respect to

$S_{\boldsymbol {\eta }_{j}}$

with respect to

![]() $\boldsymbol {\theta }$

and

$\boldsymbol {\theta }$

and

![]() ${\mathbf {X}}$

to the expectation of

${\mathbf {X}}$

to the expectation of

![]() $h_j^r({\boldsymbol {\theta }})$

with respect to

$h_j^r({\boldsymbol {\theta }})$

with respect to

![]() $\boldsymbol {\theta }$

, thereby achieving dimensionality reduction.

$\boldsymbol {\theta }$

, thereby achieving dimensionality reduction.

3.2.3 General framework of the SAEM algorithm

Based on the E-step in Equation (20), and following Delyon et al. (Reference Delyon, Lavielle and Moulines1999), the rth SAEM iteration consists of the following three steps:

-

1. S-step: Randomly sample

$\boldsymbol {\zeta }_{i}^{l}=(\theta _i^l, v_i^l) (l=1,\ldots ,m_r)$

from

$\boldsymbol {\zeta }_{i}^{l}=(\theta _i^l, v_i^l) (l=1,\ldots ,m_r)$

from

$f(\boldsymbol {\zeta }_{i}|\mathbf {y},\mathbf {t},\boldsymbol {\Omega }^{r-1})$

, for

$f(\boldsymbol {\zeta }_{i}|\mathbf {y},\mathbf {t},\boldsymbol {\Omega }^{r-1})$

, for

$i=1,\ldots ,N$

. The detailed sampling procedure is given in Appendix B of the Supplementary Material.

$i=1,\ldots ,N$

. The detailed sampling procedure is given in Appendix B of the Supplementary Material. -

2. SA-step: Let

$\boldsymbol {\theta }^l=(\theta _1^l,\ldots ,\theta _N^l)^\top $

and

$\boldsymbol {\theta }^l=(\theta _1^l,\ldots ,\theta _N^l)^\top $

and

$\mathbf {v}^l=(v_1^l,\ldots ,v_N^l)^\top $

. Using the generated

$\mathbf {v}^l=(v_1^l,\ldots ,v_N^l)^\top $

. Using the generated

$\boldsymbol {\theta }^l$

and

$\boldsymbol {\theta }^l$

and

$\mathbf {v}^l$

, compute

$\mathbf {v}^l$

, compute

$S_{\boldsymbol {\alpha }_{j}}^{l}$

,

$S_{\boldsymbol {\alpha }_{j}}^{l}$

,

$S_{\sigma _{\theta v}}^{l}$

, and

$S_{\sigma _{\theta v}}^{l}$

, and

$h_j^{r}(\boldsymbol {\theta }^{l})$

according to Equations 15–17, for

$h_j^{r}(\boldsymbol {\theta }^{l})$

according to Equations 15–17, for

$l=1,\ldots ,m_r$

. The sufficient statistics are then updated as follows: and

$l=1,\ldots ,m_r$

. The sufficient statistics are then updated as follows: and $$ \begin{align*} s_{\boldsymbol{\eta}_j}^{r}&=s_{\boldsymbol{\eta}_j}^{r-1}+\gamma_r\left(\frac{\sum_{l=1}^{m_r}h_j^{r}(\boldsymbol{\theta}^{l})}{m_r}-s_{\boldsymbol{\eta}_j}^{r-1}\right),\nonumber\\ s_{\boldsymbol{\alpha}_j}^{r}&= s_{\boldsymbol{\alpha}_j}^{r-1}+\gamma_r\left(\frac{\sum_{l=1}^{m_r}S_{\boldsymbol{\alpha}_j}^{l}}{m_r}-s_{\boldsymbol{\alpha}_j}^{r-1}\right), \text{ for } j=1,\ldots,M, \nonumber \end{align*} $$

where

$$ \begin{align*} s_{\boldsymbol{\eta}_j}^{r}&=s_{\boldsymbol{\eta}_j}^{r-1}+\gamma_r\left(\frac{\sum_{l=1}^{m_r}h_j^{r}(\boldsymbol{\theta}^{l})}{m_r}-s_{\boldsymbol{\eta}_j}^{r-1}\right),\nonumber\\ s_{\boldsymbol{\alpha}_j}^{r}&= s_{\boldsymbol{\alpha}_j}^{r-1}+\gamma_r\left(\frac{\sum_{l=1}^{m_r}S_{\boldsymbol{\alpha}_j}^{l}}{m_r}-s_{\boldsymbol{\alpha}_j}^{r-1}\right), \text{ for } j=1,\ldots,M, \nonumber \end{align*} $$

where $$ \begin{align*} s_{\sigma_{\theta v}}^{r}=s_{\sigma_{\theta v}}^{r-1}+\gamma_r\left(\frac{\sum_{l=1}^{m_r}S_{\sigma_{\theta v}}^{l}}{m_r}-s_{\sigma_{\theta v}}^{r-1}\right), \nonumber \end{align*} $$

$$ \begin{align*} s_{\sigma_{\theta v}}^{r}=s_{\sigma_{\theta v}}^{r-1}+\gamma_r\left(\frac{\sum_{l=1}^{m_r}S_{\sigma_{\theta v}}^{l}}{m_r}-s_{\sigma_{\theta v}}^{r-1}\right), \nonumber \end{align*} $$

$ \gamma _r>0$

,

$ \gamma _r>0$

,

$\sum _{r=1}^{+\infty }\gamma _r=+\infty $

and

$\sum _{r=1}^{+\infty }\gamma _r=+\infty $

and

$\sum _{r=1}^{+\infty }\gamma _r^2<+\infty $

.

$\sum _{r=1}^{+\infty }\gamma _r^2<+\infty $

.

-

3. M-Step: Compute

and $$ \begin{align*}\boldsymbol{\eta}^r_j=\hat{\boldsymbol{\eta}}_j(s_{\boldsymbol{\eta}_j}^{r}),\boldsymbol{\alpha}^r_j=\hat{\boldsymbol{\alpha}}_j(s_{\boldsymbol{\alpha}_j}^{r}), \text{for } j=1,\ldots,M\end{align*} $$

according to Equations (A10)–(A13) in the Supplementary Material.

$$ \begin{align*}\boldsymbol{\eta}^r_j=\hat{\boldsymbol{\eta}}_j(s_{\boldsymbol{\eta}_j}^{r}),\boldsymbol{\alpha}^r_j=\hat{\boldsymbol{\alpha}}_j(s_{\boldsymbol{\alpha}_j}^{r}), \text{for } j=1,\ldots,M\end{align*} $$

according to Equations (A10)–(A13) in the Supplementary Material. $$ \begin{align*}\sigma_{\theta v}^r=\hat{\sigma}_{\theta v}(s_{\sigma_{\theta v}}^{r}),\end{align*} $$

$$ \begin{align*}\sigma_{\theta v}^r=\hat{\sigma}_{\theta v}(s_{\sigma_{\theta v}}^{r}),\end{align*} $$

Remark 2. A crucial element of our SAEM algorithm is the use of

![]() $h_j^r({\boldsymbol {\theta }})=E_{\mathbf {x}_{j}}(S_{\boldsymbol {\eta }_{j}} \mid \boldsymbol {\theta }, \mathbf {y}, \mathbf {t}, \boldsymbol {\Omega }^{r-1})$

, which eliminates the need to sample

$h_j^r({\boldsymbol {\theta }})=E_{\mathbf {x}_{j}}(S_{\boldsymbol {\eta }_{j}} \mid \boldsymbol {\theta }, \mathbf {y}, \mathbf {t}, \boldsymbol {\Omega }^{r-1})$

, which eliminates the need to sample

![]() $\mathbf {x}_j$

during the S-step. This enhances computational efficiency and, more importantly, ensures that the estimates of

$\mathbf {x}_j$

during the S-step. This enhances computational efficiency and, more importantly, ensures that the estimates of

![]() $\tau _{jk}^r (k=1,\ldots ,K_j-1)$

obtained in the M-step reliably preserve the desired ascending order:

$\tau _{jk}^r (k=1,\ldots ,K_j-1)$

obtained in the M-step reliably preserve the desired ascending order:

![]() $\tau ^r_{j1}<\tau ^r_{j2}<\cdots <\tau ^r_{j(K_j-1)}$

. In contrast, if

$\tau ^r_{j1}<\tau ^r_{j2}<\cdots <\tau ^r_{j(K_j-1)}$

. In contrast, if

![]() $\mathbf {x}_j$

were randomly sampled during S-step, this order could be violated, as highlighted by Camilli and Geis (Reference Camilli and Geis2019). To illustrate this advantage, we first define

$\mathbf {x}_j$

were randomly sampled during S-step, this order could be violated, as highlighted by Camilli and Geis (Reference Camilli and Geis2019). To illustrate this advantage, we first define

![]() $\tilde {\tau }_{jk,l}^r$

according to Equation (A11) in the Supplementary Material, as follows:

$\tilde {\tau }_{jk,l}^r$

according to Equation (A11) in the Supplementary Material, as follows:

$$ \begin{align*} \tilde{\tau}_{jk,l}^r=\frac{- \sum_{i=1}^N E(X_{ijk}\mid \boldsymbol{\theta}^l, \mathbf{y}, \mathbf{t}, \boldsymbol{\Omega}^{r-1})}{N}+\frac{\left(\sum_{i=1}^{N}E({\mathbf{X}}_{ij}\mid\boldsymbol{\theta}^l, \mathbf{y},\mathbf{t},\boldsymbol{\Omega}^{r-1})\right)^\top\mathbf{1}_{K_j-1}}{N(K_{j}-1)}, \nonumber \end{align*} $$

$$ \begin{align*} \tilde{\tau}_{jk,l}^r=\frac{- \sum_{i=1}^N E(X_{ijk}\mid \boldsymbol{\theta}^l, \mathbf{y}, \mathbf{t}, \boldsymbol{\Omega}^{r-1})}{N}+\frac{\left(\sum_{i=1}^{N}E({\mathbf{X}}_{ij}\mid\boldsymbol{\theta}^l, \mathbf{y},\mathbf{t},\boldsymbol{\Omega}^{r-1})\right)^\top\mathbf{1}_{K_j-1}}{N(K_{j}-1)}, \nonumber \end{align*} $$

where

![]() $\mathbf {1}_{K_j-1}$

denotes a

$\mathbf {1}_{K_j-1}$

denotes a

![]() $(K_j-1)\times 1$

column vector with all entries equal to

$(K_j-1)\times 1$

column vector with all entries equal to

![]() $1$

. The term

$1$

. The term

![]() $\left (\sum _{i=1}^{N}E({\mathbf {X}}_{ij}\mid \boldsymbol {\theta }^l, \mathbf {y},\mathbf {t},\boldsymbol {\Omega }^{r-1})\right )^\top \mathbf {1}_{K_j-1}$

represents the summation of the elements within the vector

$\left (\sum _{i=1}^{N}E({\mathbf {X}}_{ij}\mid \boldsymbol {\theta }^l, \mathbf {y},\mathbf {t},\boldsymbol {\Omega }^{r-1})\right )^\top \mathbf {1}_{K_j-1}$

represents the summation of the elements within the vector

![]() $\sum _{i=1}^{N}E({\mathbf {X}}_{ij}\mid \boldsymbol {\theta }^l, \mathbf {y},\mathbf {t},\boldsymbol {\Omega }^{r-1})$

. Using

$\sum _{i=1}^{N}E({\mathbf {X}}_{ij}\mid \boldsymbol {\theta }^l, \mathbf {y},\mathbf {t},\boldsymbol {\Omega }^{r-1})$

. Using

![]() $\tilde {\tau }_{jk,l}^r$

, the estimate

$\tilde {\tau }_{jk,l}^r$

, the estimate

![]() $\tau _{jk}^r$

in M-step of our SAEM can be equivalently computed as

$\tau _{jk}^r$

in M-step of our SAEM can be equivalently computed as

$$ \begin{align*}\tau_{jk}^r=\tau_{jk}^{r-1}+\gamma_r \left(\frac{\sum_{l=1}^{m_r}\tilde{\tau}_{jk,l}^r}{m_r}-\tau_{jk}^{r-1}\right).\end{align*} $$

$$ \begin{align*}\tau_{jk}^r=\tau_{jk}^{r-1}+\gamma_r \left(\frac{\sum_{l=1}^{m_r}\tilde{\tau}_{jk,l}^r}{m_r}-\tau_{jk}^{r-1}\right).\end{align*} $$

Moreover, it can be easily shown that if the initial values of

![]() $\boldsymbol {\tau }_j$

satisfy

$\boldsymbol {\tau }_j$

satisfy

![]() $\tau _{j1}^{0}<\cdots <\tau _{j(K_{j}-1)}^{0}$

, the following order holds:

$\tau _{j1}^{0}<\cdots <\tau _{j(K_{j}-1)}^{0}$

, the following order holds:

Thus, the order

![]() $\tilde {\tau }_{j1,l}<\dots <\tilde {\tau }_{j(K_j-1),l}$

is preserved, ensuring that

$\tilde {\tau }_{j1,l}<\dots <\tilde {\tau }_{j(K_j-1),l}$

is preserved, ensuring that

![]() $\tau _{j1}^r<\dots <\tau _{j(K_j-1)}^r$

holds.

$\tau _{j1}^r<\dots <\tau _{j(K_j-1)}^r$

holds.

It is important to note that the SAEM procedure does not require the number of response categories

![]() $K_j$

to be the same across items, which makes it well-suited for estimating the GR–RT model with mixed-scoring items. In addition, because the HM is nested within the GR–RT model, the SAEM algorithm developed here can be directly applied to estimate the HM.

$K_j$

to be the same across items, which makes it well-suited for estimating the GR–RT model with mixed-scoring items. In addition, because the HM is nested within the GR–RT model, the SAEM algorithm developed here can be directly applied to estimate the HM.

Large-scale assessments often employ matrix-sampling designs, leading to sparse response matrices with non-random missing data. This missingness is not a flaw in data collection but rather a natural consequence of the test booklet assignment process. To facilitate the flexible application of the GR–RT model in this context, we extended the SAEM algorithm to accommodate non-random missing data by introducing an indicator matrix

![]() $\mathbf {D} = \{d_{ij}\}_{N \times M}$

, where

$\mathbf {D} = \{d_{ij}\}_{N \times M}$

, where

![]() $d_{ij} = 1$

denotes that item j is administered to respondent i, and

$d_{ij} = 1$

denotes that item j is administered to respondent i, and

![]() $d_{ij} = 0$

otherwise. This extension explicitly accounts for the non-ignorable missingness mechanism, allowing for valid parameter estimation under complex large-scale assessment designs. The detailed procedures of the extended SAEM algorithm are provided in Appendix C of the Supplementary Material.

$d_{ij} = 0$

otherwise. This extension explicitly accounts for the non-ignorable missingness mechanism, allowing for valid parameter estimation under complex large-scale assessment designs. The detailed procedures of the extended SAEM algorithm are provided in Appendix C of the Supplementary Material.

3.2.4 Some specifications for implementing the SAEM algorithm

Initialization: Several studies, including Delyon et al. (Reference Delyon, Lavielle and Moulines1999), Lavielle and Mbogning (Reference Lavielle and Mbogning2014), and Meng and Xu (Reference Meng and Xu2023), have demonstrated that the SAEM algorithm is robust to the selection of initial values. To evaluate this robustness of our SAEM algorithm, we conducted a simulation study comparing parameter estimation performance with three different sets of initial values: a set of randomly selected values, a set of common values, and the true values of

![]() $\boldsymbol {\Omega }$

. The parameter recovery results across these different initial values were nearly identical, providing additional evidence of the SAEM algorithm’s robustness in terms of initial value selection. For the sake of conciseness, the details of this simulation study are not reported here. In both our simulation and empirical studies, we used the set of common values as the initial values.

$\boldsymbol {\Omega }$

. The parameter recovery results across these different initial values were nearly identical, providing additional evidence of the SAEM algorithm’s robustness in terms of initial value selection. For the sake of conciseness, the details of this simulation study are not reported here. In both our simulation and empirical studies, we used the set of common values as the initial values.

The specifications of

![]() $\gamma _r$

: The choice of step size

$\gamma _r$

: The choice of step size

![]() $\gamma _r$

plays an important role in determining the convergence of the SAEM algorithm. Drawing on existing studies (Camilli & Geis, Reference Camilli and Geis2019; Lavielle & Mbogning, Reference Lavielle and Mbogning2014; Meng & Xu, Reference Meng and Xu2023), we adopt a two-stage step size sequence selection approach. Specifically, we set

$\gamma _r$

plays an important role in determining the convergence of the SAEM algorithm. Drawing on existing studies (Camilli & Geis, Reference Camilli and Geis2019; Lavielle & Mbogning, Reference Lavielle and Mbogning2014; Meng & Xu, Reference Meng and Xu2023), we adopt a two-stage step size sequence selection approach. Specifically, we set

![]() $\gamma _r=1$

during the initial R iterations (the first stage) and

$\gamma _r=1$

during the initial R iterations (the first stage) and

![]() $\gamma _r=\frac {1}{(r-R)^\kappa }$

for

$\gamma _r=\frac {1}{(r-R)^\kappa }$

for

![]() $r> R$

(the second stage), where

$r> R$

(the second stage), where

![]() $0.5 < \kappa \leq 1$

. In this study, we use

$0.5 < \kappa \leq 1$

. In this study, we use

![]() $\kappa = 1$

. The step size sequence is thus expressed as

$\kappa = 1$

. The step size sequence is thus expressed as

![]() $\gamma _r = I_{(r \le R)} + I_{(r> R)}\,\frac {1}{r - R}$

. In addition, through simulation studies, we verify that the convergence of the SAEM algorithm is ensured when

$\gamma _r = I_{(r \le R)} + I_{(r> R)}\,\frac {1}{r - R}$

. In addition, through simulation studies, we verify that the convergence of the SAEM algorithm is ensured when

![]() $R = 500$

.

$R = 500$

.

The specifications of

![]() $m_r$

: Delyon et al. (Reference Delyon, Lavielle and Moulines1999) have demonstrated that the convergence of the SAEM algorithm is guaranteed with

$m_r$

: Delyon et al. (Reference Delyon, Lavielle and Moulines1999) have demonstrated that the convergence of the SAEM algorithm is guaranteed with

![]() $m_r = 1$

. Notably, increasing

$m_r = 1$

. Notably, increasing

![]() $m_r$

does not improve computational accuracy but instead adds to the computational burden. Therefore, in practical applications of the SAEM algorithm,

$m_r$

does not improve computational accuracy but instead adds to the computational burden. Therefore, in practical applications of the SAEM algorithm,

![]() $m_r$

is typically set to

$m_r$

is typically set to

![]() $1$

.

$1$

.

Convergence check: The convergence of the SAEM iteration is monitored using

![]() $\Delta ^r=\|\boldsymbol {\Omega }^{r} - \boldsymbol {\Omega }^{r-1}\|_{\max }$

. Here,

$\Delta ^r=\|\boldsymbol {\Omega }^{r} - \boldsymbol {\Omega }^{r-1}\|_{\max }$

. Here,

![]() $\|\cdot \|_{\max }$

denotes the maximum of the absolute values within a vector. In this study, the SAEM iteration is recommended to terminate when

$\|\cdot \|_{\max }$

denotes the maximum of the absolute values within a vector. In this study, the SAEM iteration is recommended to terminate when

![]() $\Delta ^r<10^{-4}$

or the maximum number of iterations exceeds 1,000.

$\Delta ^r<10^{-4}$

or the maximum number of iterations exceeds 1,000.

4 Simulation studies

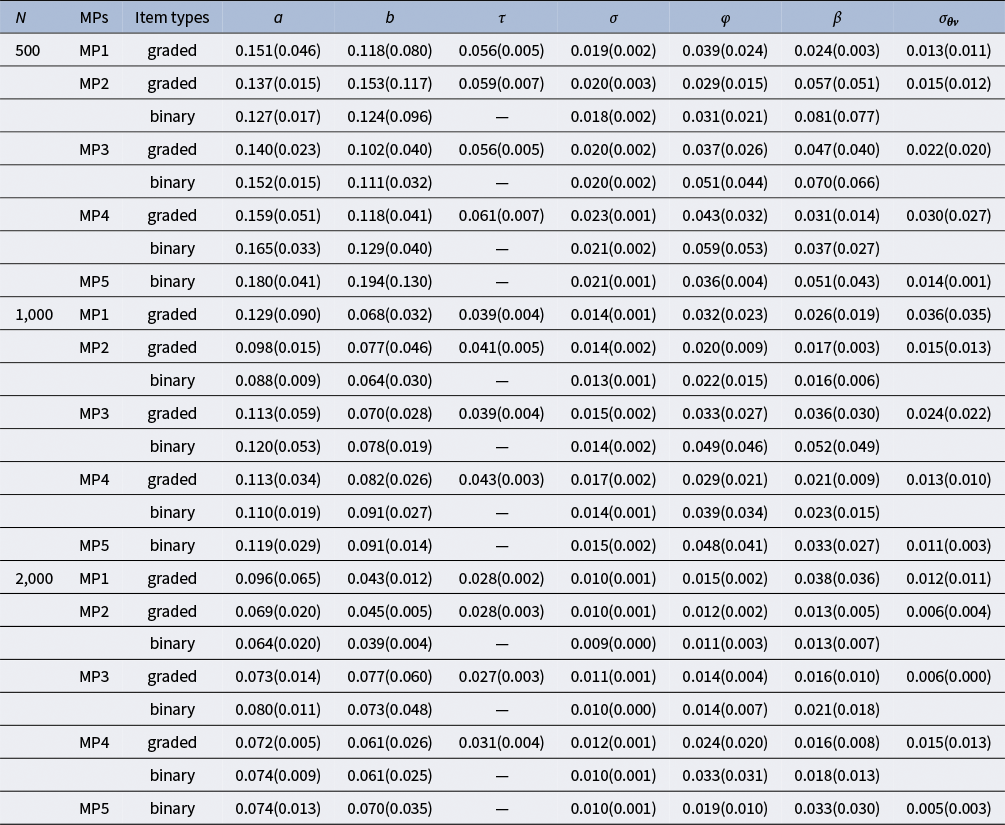

This section presents two simulation studies. Simulation Study 1 evaluated the parameter recovery of the GR–RT model and the HM within the MMLE framework using the SAEM algorithm, and also compared the results obtained from Mplus estimation. Simulation Study 2 compared the performance of the GR–RT model and the HM, and assessed the effectiveness of Akaike information criterion (AIC) and Bayesian information criterion (BIC) in selecting the correct model.

4.1 Simulation study 1

4.1.1 Design

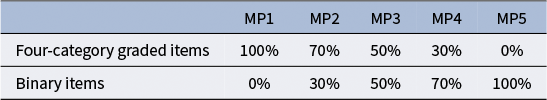

This study investigated the performance of the proposed SAEM algorithm under mixed-format testing conditions that included both dichotomous and graded-response items. Without loss of generality, the graded items were specified to have four response categories. To assess the impact of item-type composition, five mixture proportions (MPs) of binary and graded-scoring items were considered, as shown in Table 2.

Table 2 Five mixture proportion (MP) structures of four-category graded and binary items

Since the sample size (N) and test length (M) are two important factors influencing the accuracy of the parameter estimation, this simulation considered three sample sizes (

![]() $N = 500$

, 1,000, and 2,000) and two test lengths (

$N = 500$

, 1,000, and 2,000) and two test lengths (

![]() $M = 20$

and 40). The three factors (sample size, test length, and MP) were crossed, resulting in

$M = 20$

and 40). The three factors (sample size, test length, and MP) were crossed, resulting in

![]() $ 3 \times 2 \times 5 = 30$

simulation conditions. For each simulation condition, the item response and RT data were generated under two true models: the GR–RT model and the HM. The true parameter values were set as follows.

$ 3 \times 2 \times 5 = 30$

simulation conditions. For each simulation condition, the item response and RT data were generated under two true models: the GR–RT model and the HM. The true parameter values were set as follows.

Let

![]() $M_g$

denote the number of graded-scored items, where

$M_g$

denote the number of graded-scored items, where

![]() $j = 1, \dots , M_g$

indexes the graded items and

$j = 1, \dots , M_g$

indexes the graded items and

![]() $j = M_g + 1, \dots , M$

indexes the binary items. Let

$j = M_g + 1, \dots , M$

indexes the binary items. Let

![]() $U(l_1,l_2)$

denote the uniform distribution between

$U(l_1,l_2)$

denote the uniform distribution between

![]() $l_1$

and

$l_1$

and

![]() $l_2$

, and

$l_2$

, and

![]() $LN(\mu ,\sigma ^2)$

denote the log-normal distribution with parameters

$LN(\mu ,\sigma ^2)$

denote the log-normal distribution with parameters

![]() $\mu $

and

$\mu $

and

![]() $\sigma ^2$

.

$\sigma ^2$

.

-

1. GR–RT model:

-

(a) Graded-scored items:

-

• GRM: For

$j=1, \dots , M_g$

, the discrimination parameter

$j=1, \dots , M_g$

, the discrimination parameter

$a_j$

was generated from a uniform distribution,

$a_j$

was generated from a uniform distribution,

$a_j \sim U(0.5,2.5)$

, allowing for moderate to high discrimination. The location parameter

$a_j \sim U(0.5,2.5)$

, allowing for moderate to high discrimination. The location parameter

$b_j$

was generated from a standard normal distribution,

$b_j$

was generated from a standard normal distribution,

$b_j\sim N(0,1)$

, to center the items on the ability scale. These specifications follow previous studies (e.g., Zhang & Chen, Reference Zhang and Chen2024; Wang & Xu, Reference Wang and Xu2015; Meng & Xu, Reference Meng and Xu2023). The threshold parameters

$b_j\sim N(0,1)$

, to center the items on the ability scale. These specifications follow previous studies (e.g., Zhang & Chen, Reference Zhang and Chen2024; Wang & Xu, Reference Wang and Xu2015; Meng & Xu, Reference Meng and Xu2023). The threshold parameters

$\tau _{j1}, \tau _{j2}$

, and

$\tau _{j1}, \tau _{j2}$

, and

$\tau _{j3}$

were generated as follows:

$\tau _{j3}$

were generated as follows:

$\tau _{j1} \sim U(-1,-0.3)$

,

$\tau _{j1} \sim U(-1,-0.3)$

,

$\tau _{j2} \sim U(-0.25,0.25)$

, and

$\tau _{j2} \sim U(-0.25,0.25)$

, and

$\tau _{j3}=-\tau _{j1}-\tau _{j2}$

, such as

$\tau _{j3}=-\tau _{j1}-\tau _{j2}$

, such as

$\sum _{k=1}^{3}\tau _{jk}=0$

. These specifications based on empirical results from the data of one of the test booklets of the PISA 2022 science literacy assessment.

$\sum _{k=1}^{3}\tau _{jk}=0$

. These specifications based on empirical results from the data of one of the test booklets of the PISA 2022 science literacy assessment. -

• Log-normal RT model: For

$j=1, \dots , M_g$

,

$j=1, \dots , M_g$

,

$\beta _j \sim U(3,5)$

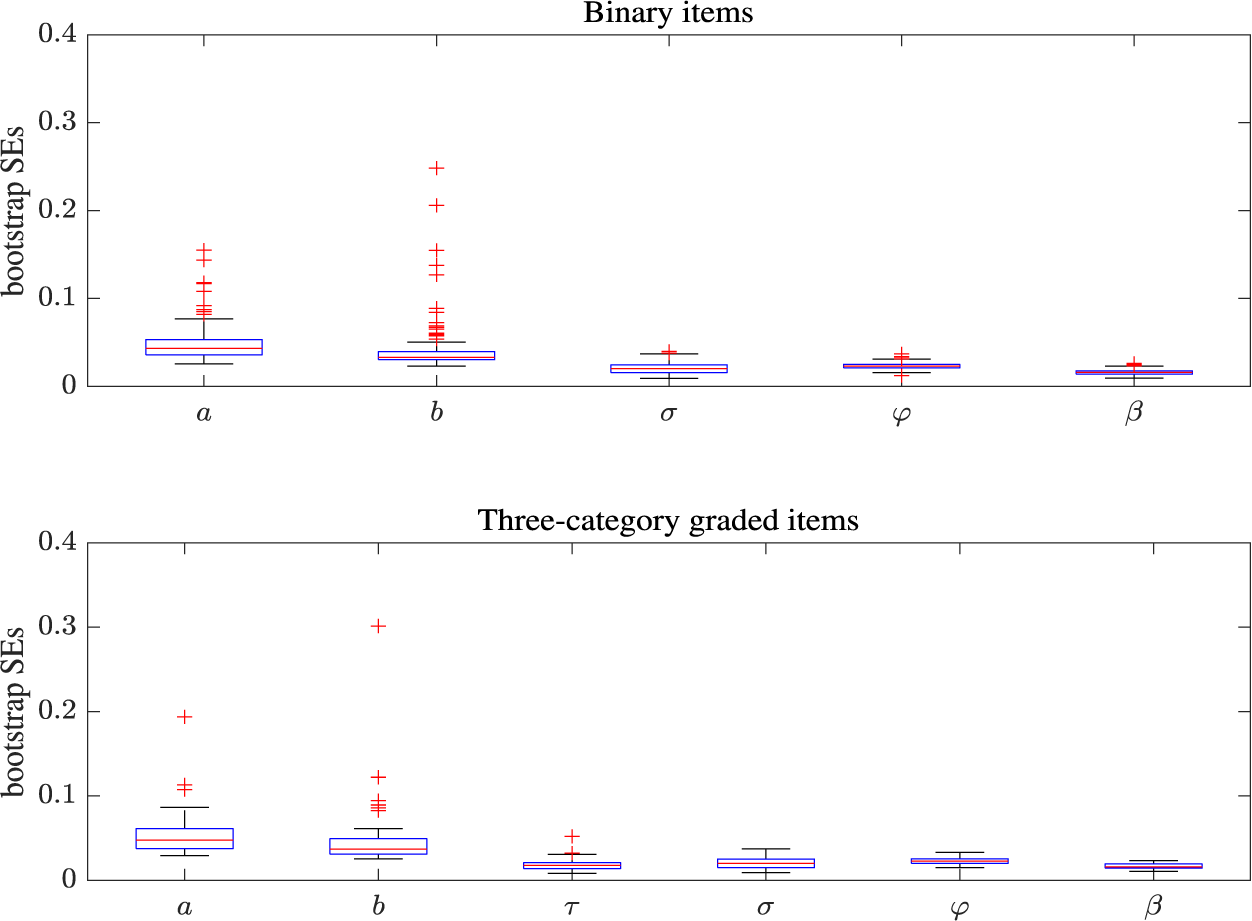

,