Introduction

Background and rationale

The development of intelligent algorithmic systems is one of the main drivers of transformation in contemporary organizations. Artificial intelligence (AI) not only affects technical and logistical processes but also reshapes decision-making, work management, and interactions among companies, employees, and customers (Davenport & Ronanki, 2018; Gerlick & Liozu, 2020; Rai, 2020). From automated marketing to personnel selection, AI is integrated at multiple levels of the business environment, promising efficiency and personalization (Davenport, Guha, Grewal & Bressgott, 2020; Kozodoi, Jacob & Lessmann, 2022; Shrestha, Ben-Menahem & von Krogh, 2019) due to its ability to process large volumes of data and adjust decisions in real time (Kaplan & Haenlein, 2019; Singh, Nanavati, Kar & Gupta, 2023). However, this potential raises ethical and social challenges that require systematic critical reflection (Ashok, Madan, Joha & Sivarajah, 2022; Ettlinger, 2018; Kaur, Uslu, Rittichier & Durresi, 2023; Wright & Schultz, 2018).

AI is not a homogeneous phenomenon but rather a set of technologies (machine learning, neural networks, natural language processing, or expert systems) that emulate human learning and reasoning (Campolo, Sanfilippo, Whittaker & Crawford, 2017; Huang & Rust, 2018; Shankar, 2018; Syam & Sharma, 2018). These systems, lacking self-reflection (Alkhatib & Bernstein, 2019), operate through rules rather than contexts, and their opacity turns many models into ‘black boxes’ that are unintelligible even to their developers (Guidotti et al., 2019). AI-based management can thus erode organizational trust and make error identification more difficult (Ananny & Crawford, 2018; Angwin, Larson, Mattu & Kirchner, 2016; Rai, 2020).

The effects of AI on labor generate divergent views. Some studies highlight productivity gains (Arntz, Gregory & Zierahn, 2017; Seele, Dierksmeier, Hofstetter & Schultz, 2021), whereas others warn of risks of substitution, deskilling, and dehumanization of work (Benlian et al., 2022; Bruun & Duka, 2018; Campolo et al., 2017; Lee, 2018; Schafheitle et al., 2020). Furthermore, the concentration of decision-making power in the hands of algorithm designers exacerbates organizational asymmetries (Curchod, Patriotta, Cohen & Neysen, 2020; Rahman, 2021).

Another critical issue is bias reproduction. Algorithms are not neutral; they tend to reflect and amplify inequalities embedded in data (Akter et al., 2021; Barocas & Selbst, 2016; Ntoutsi et al., 2020). Documented biases related to race, sex, or class have affected decision-making in justice, healthcare, and employment (Caliskan, Bryson & Narayanan, 2017; Fuster, Goldsmith-Pinkham, Walther & Ramadorai, 2022; Mehrabi, Morstatter, Saxena, Lerman & Galstyan, 2021). Even when sensitive variables are not explicitly used, indirect data correlations may produce discriminatory outcomes (Lambrecht & Tucker, 2019), as evidenced by the COMPAS case in the US judicial system (Angwin et al., 2016).

The lack of explainability and transparency deepens ethical dilemmas. There is no consensus on what it means to ‘explain’ an AI system (Carvalho, Pereira & Cardoso, 2019; Confalonieri, Weyde, Besold & Martín, 2021), which hinders supervision and accountability (Arrieta et al., 2020; Meske, Bunde, Schneider & Gersch, 2022). In contexts where fundamental rights are at stake, technological opacity poses additional risks (Alt, 2018; Feuerriegel, Dolata & Schwabe, 2020; Kaminski, 2019; Langer et al., 2021). Excessive reliance on automated systems (the automation bias) leads to uncritical acceptance of algorithmic decisions (Goddard, Roudsari & Wyatt, 2012), while limited understanding of personal data processing restricts user agency (Kozodoi et al., 2022; Selbst & Powles, 2017).

These tensions have spurred the search for robust ethical and regulatory frameworks. Although principles such as responsibility and transparency are widely cited, their implementation still faces technical and organizational barriers (Morley et al., 2023). Stronger regulation is demanded to protect individual and collective rights in the face of algorithmic expansion (Adams-Prassl, Binns & Kelly-Lyth, 2023; Kaminski & Malgieri, 2021; Mökander, Juneja, Floridi & Watson, 2022; Schuett, 2024), as current legal frameworks remain insufficient (Cath, Wachter, Mittelstadt, Taddeo & Floridi, 2018; Mehrabi et al., 2021). The governance of AI requires verifiable mechanisms of oversight, auditing, and social participation (Novelli, Taddeo & Floridi, 2024; Winfield & Jirotka, 2018).

These challenges call for an interdisciplinary approach that integrates sociological, economic, philosophical, and psychological perspectives (Boyd & Holton, 2018; Campolo et al., 2017; Crawford & Calo, 2016; Rahwan et al., 2019). The relationships among algorithms, power, and labor cannot be analyzed apart from their institutional and cultural contexts (Anderson, 2013; Arnold & Scheutz, 2016). Within this framework, concepts such as transparency, agency, epistemic justice, and accountability gain special relevance, as they point to an understanding of algorithmic ethics as a practical field of reflection (Munn, 2023). From this perspective, the present study provides a structured overview of algorithmic ethics in organizational contexts through co-citation bibliometric techniques, with the purpose of articulating dialogue among conceptual analysis, ethical reflection, and empirical evidence.

Although the terms AI ethics, algorithmic ethics, and responsible management are often used interchangeably in the literature, this study adopts the concept of algorithmic ethics to emphasize the computational processes and automated decision-making logics that operate in organizational contexts, as well as their implications in terms of transparency, impartiality, and accountability.

The gap in the review literature

In recent years, the growing incorporation of algorithmic systems into organizations has led to an increasing number of literature reviews focused on AI ethics and its implications for management and work. These reviews reflect the consolidation of the field and have contributed to systematizing its main technical, organizational, and normative concerns. However, a close examination of this body of work reveals that existing knowledge remains fragmented into sector-specific or instrumental approaches, which hinders an integrated understanding of the field’s intellectual structure.

A first stream of reviews has focused on specific organizational contexts, particularly within the domain of human resource management. For example, Taslim, Rosnani and Fauzan (2025) conduct a systematic review of AI-mediated decision-making in human resources, highlighting the role of employee participation and the ethical challenges associated with transparency and fairness. Similarly, Park, Chai, Park and Oh (2025) examine AI adoption from an organizational development perspective, emphasizing its implications for change processes, organizational culture, and internal legitimacy. While these studies offer valuable insights, their sector-specific focus limits their ability to capture the diversity of ethical debates that extend across other areas of management and organizational decision-making.

A second stream of reviews addresses specific ethical issues arising from the use of algorithms in business contexts. Kordzadeh and Ghasemaghaei (2022), for instance, synthesize the literature on algorithmic bias from an information systems perspective, developing a theoretical framework that links algorithm design to perceptions of justice and to organizational behaviors such as the acceptance, use, or rejection of algorithmic systems. Complementarily, Wang, López and Okazaki (2025) provide a bibliometric analysis centered on AI transparency in business settings, identifying thematic clusters such as trust, explainability, and user acceptance. Although these contributions deepen the understanding of key dimensions of algorithmic ethics, their thematically delimited scope prevents a comprehensive view of how the field is structured as a whole.

A third stream of reviews adopts an instrumental and governance-oriented perspective, focusing on the design of practical mechanisms to ensure the responsible use of AI. In this vein, Laine, Minkkinen and Mäntymäki (2024) systematically review the literature on ethical audits of AI systems, highlighting their potential to strengthen accountability and organizational governance. Nevertheless, this type of research tends to concentrate on applied solutions and specific operational frameworks, without examining how such proposals are embedded within the broader intellectual structure of the academic debate on algorithmic ethics.

Taken together, these reviews indicate that algorithmic ethics in organizational contexts has been approached from multiple relevant perspectives, albeit in a partial and fragmented manner. Most existing studies rely on traditional narrative or systematic reviews, or on bibliometric analyses confined to specific subfields. As a result, their capacity to empirically map the intellectual structure of the field, identify cross-cutting thematic clusters, and detect works that act as bridges between technical, managerial, and normative approaches remains limited.

Against this backdrop, a clear gap persists in the literature: the absence of a bibliometric review based on co-citation techniques that systematically examines algorithmic ethics from a broad organizational and management perspective. In particular, there is a lack of studies capable of elucidating how the main research axes have been configured, what conceptual tensions run through them, and which works have contributed to connecting debates on transparency, bias, governance, and automated decision-making in business environments. The present study addresses this gap through a co-citation bibliometric analysis applied to a large corpus of publications indexed in the Web of Science, offering an integrated mapping of the field and laying the groundwork for a more coherent dialogue between algorithmic ethics and organizational management.

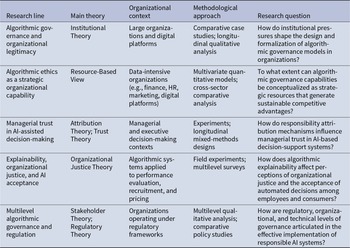

To situate this gap in the literature more precisely, Table 1 summarizes the main characteristics of some of the most influential and recent reviews on algorithmic ethics in organizational contexts. The comparison highlights differences in terms of thematic scope, methodological approach, and level of analysis, showing how prior studies tend to focus on specific functional domains, particular ethical issues, or instrumental governance mechanisms. This comparative perspective makes it possible to systematically visualize the fragmentation of the field and to contextualize the specific contribution of the present study.

Table 1. Major reviews on algorithmic ethics in organizational contexts

As summarized in Table 1, despite the growing number of reviews addressing ethical issues related to AI in organizational contexts, the existing literature exhibits a notable fragmentation in terms of scope, methodological approach, and level of analysis. In particular, prior studies tend to focus on specific functional domains, discrete ethical issues, or delimited subfields, without offering an integrated view of the field’s intellectual structure from a broad organizational and management perspective. In contrast, the present study contributes to the literature in three main ways: methodologically, by applying co-citation techniques to a large corpus of publications indexed in the Web of Science; in terms of scope, by explicitly focusing on organizational and business contexts; and at the level of results, by identifying the main thematic clusters and the bridging works that articulate debates on transparency, bias, governance, and automated decision-making.

Purpose and scope of the study

The purpose of this study is to analyze and map the emerging field of algorithmic ethics in organizational contexts, aiming to clarify its conceptual foundations, main research areas, and relevance for business management and decision-making. To this end, a co-citation bibliometric analysis is conducted to identify the intellectual structure and thematic configuration of the field, as well as the most influential works and authors and the publications that serve as bridges between different disciplinary approaches.

More specifically, the study seeks to (i) delineate a representative corpus of academic publications on algorithmic ethics applied to organizations; (ii) identify the authors, journals, and works with the greatest impact on the consolidation of the field; (iii) detect the main thematic clusters and interpret their ethical and practical significance in the business domain; (iv) highlight the studies that connect previously separated research streams; and (v) examine the current limitations of the field, proposing possible directions for future research.

In line with these objectives, the study addresses five interrelated research questions: How is the academic debate on algorithmic ethics articulated within organizational settings? Which thematic areas structure this field? Which authors, journals, and publications have played a central role in its development? Which studies have acted as bridges between different lines of research? Which key aspects remain insufficiently explored in the current literature on algorithmic ethics in organizations?

The study adopts an exploratory approach based on co-citation bibliometric analysis to identify the main thematic cores of the field. The article is structured as follows: Section 2 describes the methodology employed; Section 3 presents the results of the analysis; Section 4 discusses the findings, limitations, and future research directions; and Section 5 summarizes the main conclusions.

Methodology

This study adopts a hybrid methodological design that combines a systematic literature review with a bibliometric co-citation analysis in order to map the intellectual structure of the field of algorithmic ethics in organizational and management contexts. This approach integrates the rigor of systematic review protocols with the analytical advantages of scientific mapping techniques, providing a structured, objective, and replicable overview of the development of the field.

In line with methodological recommendations for knowledge structuring and research gap identification (Tranfield, Denyer & Smart, 2003), the study is articulated in two complementary phases: (i) a systematic literature review following the PRISMA protocol, aimed at ensuring transparency, traceability, and replicability in corpus selection; and (ii) a bibliometric co-citation network analysis conducted using CiteSpace, designed to identify the main theoretical cores, research streams, and bridging publications that structure the academic debate.

Methodological approach and research design

This study conducts a bibliometric analysis of the field of algorithmic ethics with the aim of describing its conceptual structure and internal dynamics. Co-citation techniques and scientific mapping tools are employed to visually represent relationships among authors and topics and to identify the most influential clusters within the research community.

The study applies the co-citation technique, defined as the joint occurrence of two references in the bibliography of the same document, which is particularly useful for revealing the intellectual structure of a research field (Yan & Zhiping, 2023). This approach enables the detection of consolidated research streams, interdisciplinary connections, and studies that act as conceptual bridges between thematic areas.
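As a minimal illustration of this definition, the following Python sketch counts how often each pair of references appears together in the same bibliography. The function name and the toy reference lists are hypothetical and serve only to make the counting rule concrete; they are not taken from the study's data or software.

```python
from collections import Counter
from itertools import combinations


def cocitation_counts(reference_lists):
    """Count, for each pair of references, the number of citing documents
    whose bibliography contains both of them."""
    counts = Counter()
    for refs in reference_lists:
        # Deduplicate within one bibliography and sort so that every pair
        # is stored in a single canonical order.
        for pair in combinations(sorted(set(refs)), 2):
            counts[pair] += 1
    return counts


# Hypothetical citing documents, each represented by its cited references.
toy_corpus = [
    ["A", "B", "C"],
    ["A", "B"],
    ["B", "C", "D"],
]

pairs = cocitation_counts(toy_corpus)
for pair, n in sorted(pairs.items()):
    print(pair, n)
```

In this toy corpus, references A and B are co-cited twice, whereas A and D never share a bibliography and therefore form no link.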

Recent studies have highlighted the usefulness of bibliometric techniques for analyzing the academic corpus of a field in a rigorous and objective manner (Öztürk, Kocaman & Kanbach, 2024; Yan & Zhiping, 2023). Compared with traditional narrative reviews, bibliometric analysis provides a quantitative and systematic perspective on scientific development (Kraus et al., 2022; Merigó, Gil-Lafuente & Yager, 2015), allowing the identification of influential authors, core journals, and the temporal evolution of a research domain (Cobo, López-Herrera, Herrera-Viedma & Herrera, 2011).

The present study follows the growing adoption of bibliometric methods to examine the evolution of research in management and organization (Bahuguna, Srivastava & Tiwari, 2023; Escamilla-Solano, Díez-Martín, Blanco-González & Fernández-de Las Peñas, 2023). In an increasingly complex knowledge environment, interdisciplinary collaboration and the capacity to process large volumes of information have become essential (Cao, 2017). Systematic and bibliometric reviews make it possible to structure existing knowledge, identify emerging trends, and detect research gaps that guide future inquiry (Kraus, Bouncken & Aránega, 2024; Snyder, 2019), thereby supporting informed decision-making in both academic and professional contexts (Palmatier, Houston & Hulland, 2018).

Data source, search strategy, and inclusion criteria

The data source used in this study was the Web of Science Core Collection, which is widely recognized as a reference database in bibliometric research due to its coverage, quality, and standardization of records (Gaviria-Marin, Merigó & Baier-Fuentes, 2019; Yan & Zhiping, 2023). The following collections were included: Science Citation Index Expanded (SCI-Expanded), Social Sciences Citation Index (SSCI), Arts & Humanities Citation Index (AHCI), and Emerging Sources Citation Index (ESCI), which concentrate the most influential scientific production in the fields of management, social sciences, applied ethics, and AI.

The search was restricted to the period 2015–2025, in order to analyze the recent evolution of a rapidly expanding field. Only research articles and review articles published in peer-reviewed journals and written in English were considered.

The search strategy was designed through a structured combination of three interrelated semantic blocks, connected by Boolean operators:

• A first block related to algorithmic ethics and AI (e.g., algorithmic ethics, AI ethics, responsible AI, explainable AI, algorithmic fairness, algorithmic bias, AI governance).

• A second block linked to organizational and management contexts (e.g., business, management, corporate, human resources, marketing, operations, platform work, gig economy).

• A third block covering general ethical principles and concepts (e.g., responsibility, fairness, accountability, transparency, privacy, human rights, impact assessment).

The search was applied to the Topic (TS) field – which integrates Title, Abstract, Author Keywords, and Keywords Plus – using proximity operators (NEAR/3) and wildcards in order to maximize conceptual precision. In addition, category filters were applied in Web of Science, selecting primarily those related to management and organizations (‘Management’, ‘Business’, ‘Operations Research Management Science’, ‘Industrial Relations Labor’, ‘Business Finance’, and ‘Economics’), together with complementary ethical, legal, and technological areas (‘Ethics’, ‘Law’, ‘Computer Science, Artificial Intelligence’, and ‘Philosophy’).

Based on these three semantic blocks, the following search expression was constructed and applied to the Topic (TS) field:

TS = ((algorithmic* NEAR/3 ethic* OR algorithmic* NEAR/3 fairness OR algorithmic* NEAR/3 bias OR algorithmic* NEAR/3 accountability OR algorithmic* NEAR/3 transparency OR AI NEAR/3 ethic* OR ‘artificial intelligence’ NEAR/3 ethic* OR ‘responsible AI’ OR ‘trustworthy AI’ OR ‘explainable AI’ OR XAI OR interpretab* OR ‘AI governance’ OR ‘algorithmic accountability’ OR ‘algorithmic transparency’ OR ‘algorithmic fairness’ OR ‘algorithmic bias’ OR ‘algorithmic decision making’ OR ‘automated decision making’ OR ‘algorithmic management’) AND (business OR corporat* OR firm* OR enterprise* OR organisation* OR organization* OR ‘corporate governance’ OR management NEAR/3 business OR management NEAR/3 corporate OR management NEAR/3 organizational OR ‘human resource*’ OR marketing OR ‘supply chain’ OR operations OR ‘platform work’ OR ‘gig economy’ OR workplace) AND (ethic* OR responsib* OR fairness OR bias OR discrimination OR accountability OR transparency OR privacy OR ‘data protection’ OR compliance OR audit* OR ‘human rights’ OR ‘impact assessment’ OR ‘risk assessment’ OR ‘bias mitigation’))
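The logic of the expression (terms joined by OR within each block, blocks joined by AND) can be sketched in Python as follows. The helper names are hypothetical, only a small subset of the terms listed above is used, and phrases are written with straight double quotes as an assumption about how they would be typed into the search interface.

```python
def or_block(terms):
    """Join the terms of one semantic block with OR, wrapped in parentheses."""
    return "(" + " OR ".join(terms) + ")"


def build_topic_query(*blocks):
    """Combine the blocks with AND inside a single TS=(...) field tag."""
    return "TS=(" + " AND ".join(or_block(block) for block in blocks) + ")"


# Illustrative subset of each block; the full term lists are in the
# expression reported in the text.
ethics_ai = ["algorithmic* NEAR/3 ethic*", '"responsible AI"', '"AI governance"']
org_context = ["business", "corporat*", '"gig economy"']
principles = ["fairness", "accountability", '"human rights"']

query = build_topic_query(ethics_ai, org_context, principles)
print(query)
```

Keeping each block as a separate list makes it easier to document which terms were added or removed when the search is rerun.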

The application of this strategy, together with the defined temporal, linguistic, and categorical filters, retrieved an initial set of 1,910 records, which constitute the basis of the selection process described in the following section.

Study selection process and PRISMA protocol

The identification, selection, and refinement of the corpus followed the PRISMA protocol, which provides an explicit, sequential, and iterative methodological framework aimed at ensuring transparency, traceability, and replicability in systematic reviews (Haddaway, Page, Pritchard & McGuinness, 2022; Liberati et al., 2009; Moher et al., 2015; Page et al., 2021).

Although PRISMA was originally developed for evidence-based medical research, its application has progressively expanded to other disciplinary fields, including the social sciences and management research (Tranfield et al., 2003), where it has become a methodological benchmark for rigorous literature structuring.

The PRISMA flow diagram comprises four stages:

• Identification. In this phase, potentially relevant studies were identified through a systematic search in Web of Science. The initial search, applied to the period 2015–2025 and the selected categories, retrieved a total of 1,910 records.

• Screening. After removing 134 duplicate records, 1,776 unique publications remained. These records were subjected to an initial screening based on titles and abstracts, applying the predefined inclusion and exclusion criteria. As a result of this phase, 250 articles were excluded for not meeting the thematic focus of the study.

• Eligibility. The remaining 1,526 articles were assessed at the full-text level to determine their relevance to the research question. At this stage, 89 studies were excluded due to insufficient linkage to organizational contexts or the absence of an explicit ethical dimension.

• Inclusion. The final sample consisted of 1,437 publications, which constitute the definitive corpus of the bibliometric analysis.
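The record counts reported in the four stages can be checked with simple arithmetic. The short Python sketch below reproduces the flow using the figures stated above; the function and key names are hypothetical.

```python
def prisma_flow(identified, duplicates, excluded_screening, excluded_eligibility):
    """Return the number of records remaining after each PRISMA stage."""
    unique = identified - duplicates
    assessed = unique - excluded_screening
    included = assessed - excluded_eligibility
    return {
        "identified": identified,
        "unique": unique,      # after duplicate removal
        "assessed": assessed,  # full texts assessed for eligibility
        "included": included,  # final corpus
    }


# Figures as reported in the identification, screening, and eligibility stages.
flow = prisma_flow(
    identified=1910,
    duplicates=134,
    excluded_screening=250,
    excluded_eligibility=89,
)
print(flow)
```

The resulting values (1,776 unique records, 1,526 full texts assessed, and 1,437 included publications) match the counts reported in the text.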

The complete process of identification, screening, eligibility, and inclusion is presented visually in the PRISMA flow diagram (Figure 1), which provides an integrated overview of the selection procedure and transparently illustrates the progressive refinement of the initial records into the final corpus of publications analyzed.

Figure 1. PRISMA flow diagram of the study selection process.

The figure illustrates the identification, screening, eligibility, and inclusion stages applied to construct the final corpus of publications analyzed in the bibliometric study.

Corpus description

The final corpus comprises 1,437 articles published between 2015 and 2025. This body of literature has generated 24,973 citing articles (24,242 excluding self-citations) and has received a total of 33,370 citations (31,333 excluding self-citations), with an average of 23.22 citations per item. The associated h-index of the corpus is 77, indicating a high level of academic impact.
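For readers less familiar with these indicators, the following Python sketch shows how an h-index is obtained from a list of per-article citation counts and recomputes the reported average. The toy citation list is hypothetical; the per-article counts of the actual corpus are not reproduced here, so the reported h-index of 77 cannot be recomputed from this sketch.

```python
def h_index(citations):
    """Largest h such that h items have at least h citations each."""
    h = 0
    for rank, count in enumerate(sorted(citations, reverse=True), start=1):
        if count >= rank:
            h = rank
        else:
            break
    return h


# Hypothetical per-article citation counts (not the study's data).
toy_citations = [10, 8, 5, 4, 3, 0]
toy_h = h_index(toy_citations)

# Average citations per item, from the totals reported in the text.
average = 33370 / 1437
print(toy_h, round(average, 2))
```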

From a structural perspective, the resulting co-citation network consists of 873 nodes and 2,986 links, with a network density of 0.0078. The largest connected component includes 682 nodes, representing approximately 78% of the total network, which indicates a high degree of intellectual interconnection within the field.
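The density and component share follow directly from the node and link counts. The sketch below assumes the standard density formula for an undirected network without self-loops, that is, observed links divided by the N(N − 1)/2 possible links; the function name is hypothetical.

```python
def network_density(nodes, links):
    """Share of all possible undirected links that are actually present."""
    possible_links = nodes * (nodes - 1) / 2
    return links / possible_links


# Counts as reported in the text.
density = network_density(nodes=873, links=2986)
largest_component_share = 682 / 873

print(round(density, 4), round(largest_component_share, 2))
```

Under that assumption, both values agree with the reported density of 0.0078 and the share of approximately 78%.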

Bibliometric analysis and construction of the co-citation network

The bibliometric analysis was conducted using CiteSpace software (version 6.4.R2 Advanced), a tool widely employed in co-citation studies and scientific mapping research (Chen, 2017). The analysis was performed using annual time slices (slice length = 1), and the selected term sources were Title, Abstract, Author Keywords (DE), and Keywords Plus (ID). The selected node type was Reference, and the similarity measure applied was cosine.
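A common way to express cosine similarity for co-citation links is to divide the co-citation count of two references by the square root of the product of their individual citation counts. The Python sketch below uses that formulation; whether the software computes link strength in exactly this way is an assumption, and the function name is hypothetical.

```python
import math


def cosine_link_strength(cocited, cited_i, cited_j):
    """Cosine-normalized co-citation strength between two references.

    cocited: number of documents citing both references
    cited_i, cited_j: number of documents citing each reference
    """
    if cited_i == 0 or cited_j == 0:
        return 0.0
    return cocited / math.sqrt(cited_i * cited_j)


# Two references each cited by 4 documents, 2 of which cite both.
strength = cosine_link_strength(cocited=2, cited_i=4, cited_j=4)
print(strength)
```

The normalization keeps link strengths between 0 and 1, so that heavily cited references do not dominate the network merely because of their volume.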

Node selection was performed using the g-index, which is recommended for generating balanced and structurally consistent networks (Chen & Song, 2019). The k parameter was set to 25. No network pruning procedures (Pathfinder, Minimum Spanning Tree, or pruning of sliced or merged networks) were applied in order to preserve the structural integrity of the field.
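The sketch below illustrates one reading of a scaled g-index criterion: within a time slice, the top g references are retained, where g is the largest rank for which g² does not exceed k times the cumulative citations of the top g items. This formulation, the function name, and the toy counts are assumptions made for illustration; the software's exact implementation may differ.

```python
def g_index_cutoff(citation_counts, k=25):
    """Largest rank g with g**2 <= k * (sum of the top-g citation counts)."""
    g = 0
    cumulative = 0
    for rank, count in enumerate(sorted(citation_counts, reverse=True), start=1):
        cumulative += count
        if rank * rank <= k * cumulative:
            g = rank
    return g


# Hypothetical citation counts of references within one time slice.
toy_counts = [5, 3, 1, 0, 0, 0]

unscaled = g_index_cutoff(toy_counts, k=1)   # classic g-index
scaled = g_index_cutoff(toy_counts, k=25)    # scaled variant, k = 25
print(unscaled, scaled)
```

In this toy slice, the unscaled criterion retains three references, whereas k = 25 retains all six, which shows how a larger k admits more nodes into the network.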

To ensure methodological transparency and replicability, Table 2 summarizes the main configuration parameters used in the construction of the co-citation network.

Table 2. CiteSpace configuration parameters used in the bibliometric analysis

Cluster quality was assessed using the modularity (Q) and silhouette (S) indices, which respectively measure the degree of differentiation between clusters and the internal cohesion of each cluster. High Q values indicate a well-defined map structure, whereas high S values reflect thematic consistency within each cluster (Chen et al., 2009; Chen, Ibekwe-SanJuan & Hou, 2010; Rousseeuw, 1987). In addition, betweenness centrality was calculated as an indicator of intermediary influence, allowing the identification of publications that act as conceptual bridges between research areas or that signal potential paradigm shifts (Chen, 2006).
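To make the modularity index concrete, the following Python sketch computes Q for an unweighted, undirected toy network using the standard definition: for each cluster, the fraction of links that fall inside it minus the fraction expected from the degrees of its nodes. The network, the cluster assignment, and the function name are hypothetical, and the sketch does not cover the silhouette or betweenness calculations.

```python
from collections import defaultdict


def modularity(edges, membership):
    """Modularity Q of a partition of an undirected, unweighted graph.

    edges: list of (u, v) pairs, each undirected link listed once
    membership: dict mapping every node to its cluster label
    """
    m = len(edges)
    intra_links = defaultdict(int)  # links with both ends in the cluster
    degree_sum = defaultdict(int)   # summed degree of the cluster's nodes
    for u, v in edges:
        degree_sum[membership[u]] += 1
        degree_sum[membership[v]] += 1
        if membership[u] == membership[v]:
            intra_links[membership[u]] += 1
    return sum(
        intra_links[c] / m - (degree_sum[c] / (2 * m)) ** 2
        for c in degree_sum
    )


# Two triangles joined by a single bridging link between nodes 3 and 4.
toy_edges = [(1, 2), (2, 3), (1, 3), (4, 5), (5, 6), (4, 6), (3, 4)]
toy_clusters = {1: "left", 2: "left", 3: "left", 4: "right", 5: "right", 6: "right"}

q = modularity(toy_edges, toy_clusters)
print(round(q, 3))
```

In this toy network, nodes 3 and 4 carry the only link between the two clusters, which is the kind of bridging position that betweenness centrality is intended to detect.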

This procedure combines the systematic identification of relevant documents with a replicable structural analysis, providing an integrated view of the thematic cores and emerging trajectories of algorithmic ethics in organizational contexts.

Results of the analysis

This section presents the main results of the bibliometric analysis conducted using CiteSpace, offering an integrated view of the evolution, intellectual structure, and internal dynamics of the field of algorithmic ethics in organizational contexts. Through descriptive indicators, co-citation network analysis, and the identification of key publications, the results make it possible to characterize both well-established research areas and emerging lines of inquiry that are currently shaping the development of the field.

Corpus characterization

The final corpus consists of 1,437 publications, reflecting the progressive consolidation of algorithmic ethics as a relevant research domain at the intersection of AI, ethics, and organizational management. The temporal analysis of scientific output reveals a clearly accelerated expansion over the past decade.

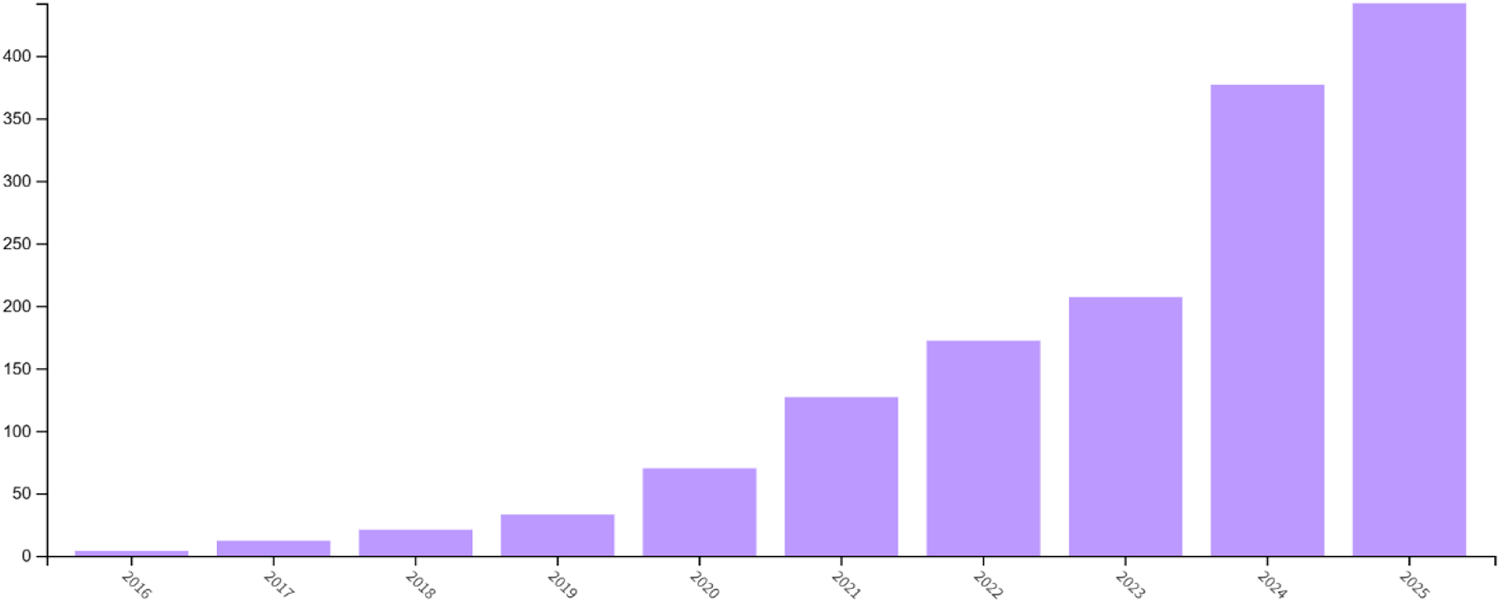

As shown in Figure 2, between 2015 and 2019, annual production remained below 50 articles. From 2020 onwards, a sustained and significant increase can be observed, reaching 477 publications in 2025. This trend highlights the growing academic interest in the ethical implications of AI within organizational settings.

Figure 2. Number of publications per year (2015–2025).

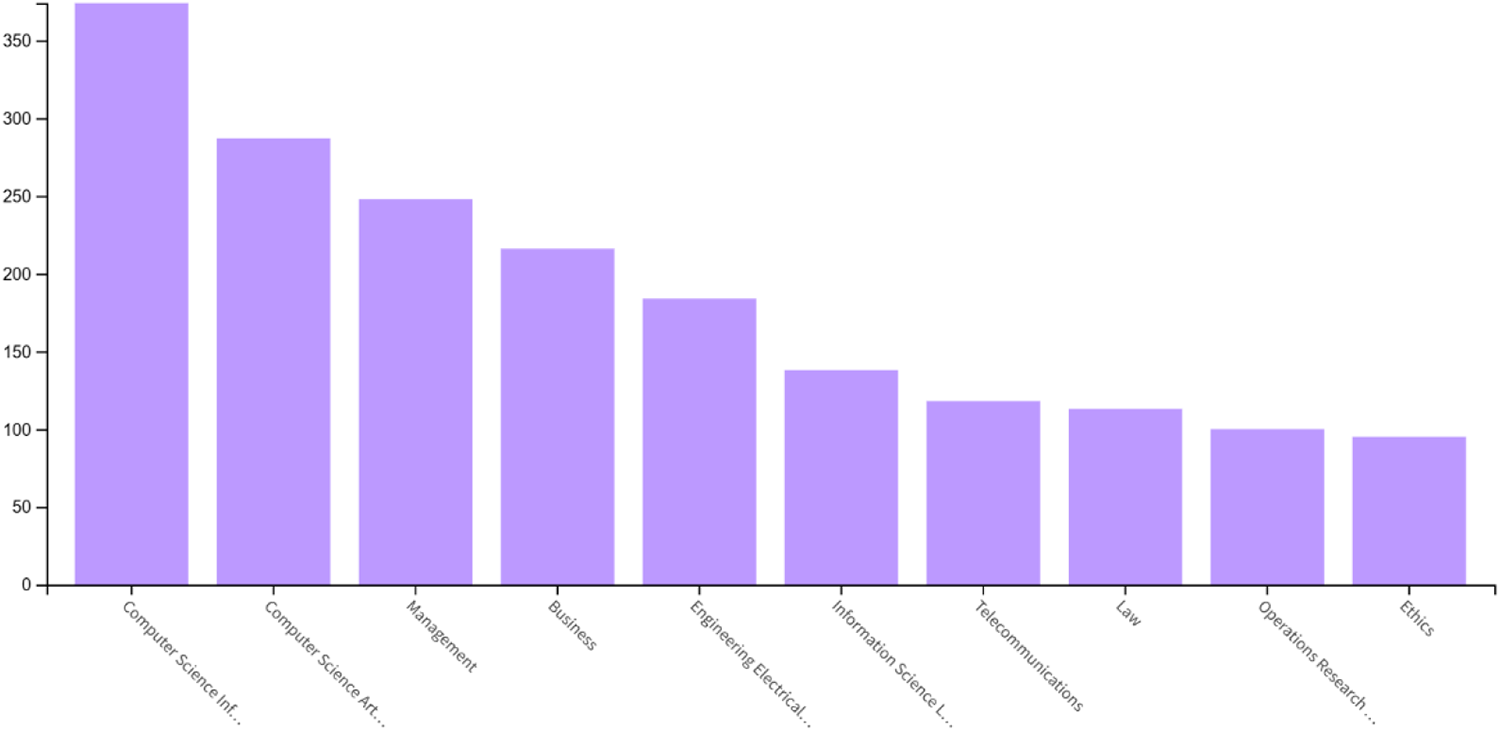

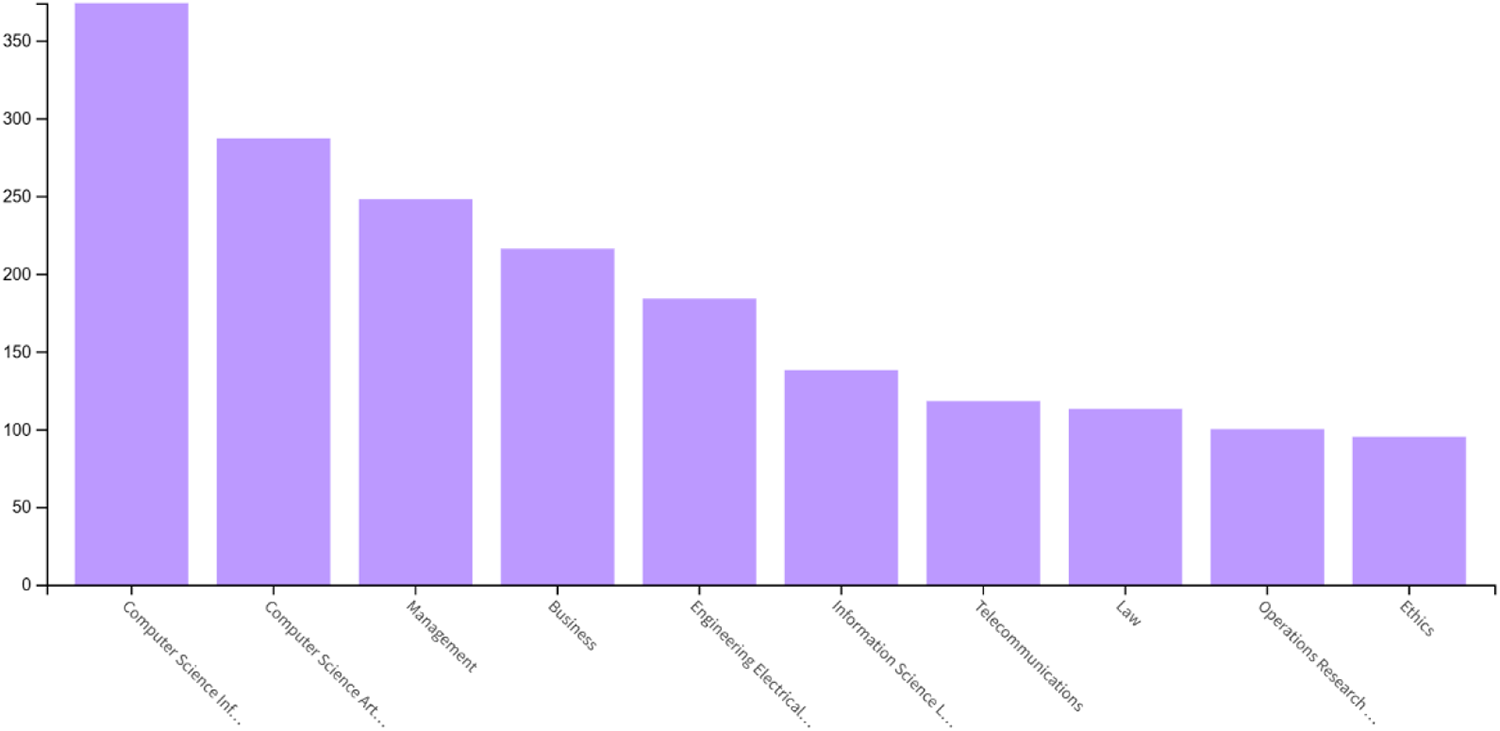

The classification of the corpus by Web of Science categories highlights the distinctly cross-cutting nature of the field. As shown in Figure 3, the most prominent areas are Computer Science, Information Systems; Computer Science, Artificial Intelligence; and Management, followed by Business and Engineering, Electrical & Electronic. This distribution reflects the convergence of technological and organizational perspectives in the analysis of the ethical challenges associated with the use of algorithmic systems in business environments.

Figure 3. Number of publications by Web of Science categories.

The analysis of the main publication sources reinforces this interdisciplinary reading. As reported in Table 3, the journals with the highest volume of articles combine ethical, technical, and managerial perspectives, with IEEE Access, AI & Society, Frontiers in Artificial Intelligence, Journal of Business Ethics, and Expert Systems with Applications standing out. The presence of these journals confirms the progressive institutionalization of the debate on algorithmic ethics within the field of Business & Management.

Table 3. Top 10 journals by number of publications in the selected Web of Science corpus.

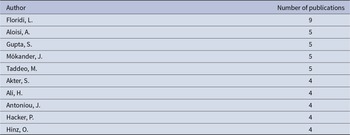

With regard to authorship, Table 4 presents the 10 most productive authors in the analyzed corpus. Scholars such as Luciano Floridi, Mariarosaria Taddeo, Jakob Mökander, and Antonio Aloisi occupy prominent positions, reflecting the centrality of normative, philosophical, and legal–organizational approaches in the development of the field. At the same time, the disciplinary diversity of these authors underscores the hybrid nature of the academic debate on algorithmic ethics.

Table 4. Ten most productive authors in the selected Web of Science corpus.

Finally, the analysis by institutional affiliation shows the consolidation of international research networks characterized by notable geographical diversity. As summarized in Table 5, institutions such as the Alan Turing Institute, the State University System of Florida, and Carnegie Mellon University stand out in terms of publication volume, confirming the global scope of the field and its transdisciplinary character.

Table 5. Leading institutions by number of publications.

These descriptive results highlight the rapid expansion, cross-disciplinary nature, and institutional consolidation of the field of algorithmic ethics in organizational contexts. Building on this foundation, the co-citation analysis makes it possible to further examine the intellectual structure of the field by identifying the main thematic clusters and their interrelationships.

Identification and thematic description of the main clusters

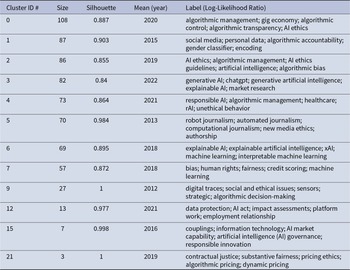

The co-citation analysis conducted using CiteSpace made it possible to identify 12 thematic clusters that shape the intellectual structure of the field of algorithmic ethics in organizational contexts. Each cluster was automatically labeled using the Log-Likelihood Ratio method, which identifies the most representative terms of each group based on their statistical significance. The resulting labels reflect the dominant themes of each cluster and facilitate a first systematic approach to the field’s thematic diversity.
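As a rough illustration of how a log-likelihood ratio can rank candidate label terms, the sketch below computes Dunning's G² statistic for each term inside a cluster versus the rest of the corpus. It is a minimal sketch under stated assumptions, not CiteSpace's internal implementation: the function names and the toy term counts are hypothetical, and restricting the ranking to terms over-represented in the cluster is an illustrative design choice.

```python
import math


def log_likelihood_ratio(k_in, n_in, k_out, n_out):
    """Dunning's G^2 for a term seen k_in times among n_in term occurrences
    inside a cluster and k_out times among n_out occurrences outside it."""
    observed = [[k_in, n_in - k_in], [k_out, n_out - k_out]]
    total = n_in + n_out
    row_sums = [n_in, n_out]
    col_sums = [k_in + k_out, total - k_in - k_out]
    g2 = 0.0
    for i in range(2):
        for j in range(2):
            o = observed[i][j]
            e = row_sums[i] * col_sums[j] / total  # expected count under independence
            if o > 0:  # a zero cell contributes nothing to the sum
                g2 += o * math.log(o / e)
    return 2.0 * g2


def rank_label_terms(cluster_counts, rest_counts):
    """Rank terms that are over-represented in the cluster by their G^2 score."""
    n_in = sum(cluster_counts.values())
    n_out = sum(rest_counts.values())
    scores = {}
    for term, k_in in cluster_counts.items():
        k_out = rest_counts.get(term, 0)
        if k_in / n_in > k_out / n_out:  # keep only terms typical of the cluster
            scores[term] = log_likelihood_ratio(k_in, n_in, k_out, n_out)
    return sorted(scores, key=scores.get, reverse=True)


# Hypothetical term counts, for illustration only (not taken from the corpus).
cluster_counts = {"algorithmic management": 30, "ethics": 20, "platform work": 10}
rest_counts = {"algorithmic management": 5, "ethics": 200, "explainable ai": 95}
ranked = rank_label_terms(cluster_counts, rest_counts)
```

In this toy case a generic term such as "ethics" is frequent inside the cluster but even more frequent elsewhere, so it is not selected as a label, whereas a term concentrated in the cluster ranks first.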

In addition, most clusters are substantial in size, and all display high levels of internal coherence, with silhouette values ranging from 0.84 to 1, indicating strong thematic consistency (Chen et al., Reference Chen, Ibekwe-Sanjuan and Hou2010; Rousseeuw, Reference Rousseeuw1987). Overall, the network exhibits a clearly differentiated structure (modularity Q = 0.726), underscoring the degree of maturity and diversification achieved by the field of study.
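For readers less familiar with these two indicators, the following sketch computes them from their textbook definitions on toy data: the silhouette of Rousseeuw (1987), averaged per cluster, and Newman's modularity Q for an undirected, unweighted network. The function names, the toy graph, and the toy distance matrix are assumptions made for illustration; CiteSpace derives both values internally from the co-citation network, and its exact distance measure is not specified here.

```python
def modularity(edges, community):
    """Newman's Q for an undirected, unweighted graph.
    edges: list of (u, v) pairs; community: dict mapping node -> cluster id."""
    m = len(edges)
    intra = {}   # number of edges falling inside each cluster
    degree = {}  # summed node degrees per cluster
    for u, v in edges:
        cu, cv = community[u], community[v]
        degree[cu] = degree.get(cu, 0) + 1
        degree[cv] = degree.get(cv, 0) + 1
        if cu == cv:
            intra[cu] = intra.get(cu, 0) + 1
    return sum(intra.get(c, 0) / m - (degree[c] / (2 * m)) ** 2 for c in degree)


def mean_silhouette(dist, labels):
    """Mean silhouette per cluster (requires at least two clusters).
    dist: symmetric distance matrix (list of lists); labels: cluster id per item."""
    n = len(labels)
    clusters = set(labels)
    per_cluster = {c: [] for c in clusters}
    for i in range(n):
        own = [dist[i][j] for j in range(n) if j != i and labels[j] == labels[i]]
        if not own:  # singleton cluster: s(i) = 0 by convention
            per_cluster[labels[i]].append(0.0)
            continue
        a = sum(own) / len(own)  # mean distance to the item's own cluster
        b = min(                 # mean distance to the nearest other cluster
            sum(dist[i][j] for j in range(n) if labels[j] == c) / labels.count(c)
            for c in clusters if c != labels[i]
        )
        per_cluster[labels[i]].append((b - a) / max(a, b))
    return {c: sum(v) / len(v) for c, v in per_cluster.items()}


# Toy network: two triangles joined by a single edge (hypothetical data).
edges = [("a", "b"), ("b", "c"), ("a", "c"),
         ("d", "e"), ("e", "f"), ("d", "f"), ("c", "d")]
community = {"a": 0, "b": 0, "c": 0, "d": 1, "e": 1, "f": 1}
q = modularity(edges, community)

# Toy distances: four points on a line forming two tight groups.
points = [0, 1, 10, 11]
dist = [[abs(p - r) for r in points] for p in points]
sil = mean_silhouette(dist, [0, 0, 1, 1])
```

Silhouette values close to 1 mean that items sit much closer to their own cluster than to the nearest alternative, while higher values of Q mean that links concentrate within clusters rather than between them.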

Table 6 summarizes the main characteristics of the identified clusters, including their size, silhouette values, average year of publication, and the thematic labels that define them.

Table 6. Summary of co-citation clusters in CiteSpace.

As shown in Figure 4, the co-citation network exhibits a segmented yet interconnected configuration, in which the proximity between nodes reflects conceptual affinities, while the links between clusters reveal the circulation of ideas across different lines of research. This visualization makes it possible to capture, in relational terms, how ethical, technical, and managerial debates are articulated around the use of AI in organizations.

Figure 4. Cluster map (CiteSpace).

The following subsections describe the most relevant clusters from a thematic and organizational perspective, taking into account their size, internal coherence, and most representative intellectual contributions.

Cluster #0 (algorithmic management)

Cluster #0, comprising 108 publications, is the largest in the network and constitutes the structurally central core of the co-citation analysis. This confirms algorithmic management as one of the backbone themes of algorithmic ethics in organizational contexts. The cluster exhibits high internal cohesion (silhouette = 0.887), reflecting strong conceptual consistency around the progressive assumption by algorithmic systems of functions traditionally performed by humans, including supervision, control, performance evaluation, and decision-making. With a mean publication year around 2020, the cluster reflects the relatively recent consolidation of this debate within the management literature.

The most influential contributions within this cluster are concentrated in leading journals in management, organizational studies, and information systems, such as Academy of Management Review, Management Science, Administrative Science Quarterly, and Computers in Human Behavior. From the perspective of worker experience, Shin and Park (Reference Shin and Park2019) show that trust in algorithmic systems depends on perceptions of fairness, transparency, and traceability, while Lee (Reference Lee2018) finds that algorithmic decisions tend to be perceived as impersonal when compared to the authority of human managers, particularly in contexts requiring subjective judgment.

In the domains of human resource management and organizational strategy, Tambe, Cappelli and Yakubovich (Reference Tambe, Cappelli and Yakubovich2019) identify challenges related to task complexity, data limitations, and fairness in automated decision-making, and argue for participatory approaches in the design and oversight of these systems. Along complementary lines, Benlian et al. (Reference Benlian, Wiener, Cram, Krasnova, Maedche, Möhlmann and Remus2022) emphasize the ambivalent nature of algorithmic management, which combines efficiency gains with risks of opacity, demotivation, and the erosion of organizational justice, thereby calling for explicit accountability mechanisms.

From the standpoint of organizational theory, Schafheitle et al. (Reference Schafheitle, Weibel, Ebert, Kasper, Schank and Leicht-Deobald2020) warn that the datafication of work intensifies surveillance practices and transforms control mechanisms, while Curchod et al. (Reference Curchod, Patriotta, Cohen and Neysen2020) and Rahman (Reference Rahman2021) show how algorithmic evaluation generates anxiety, reshapes power relations, and shifts authority toward opaque technical infrastructures. In turn, Lambrecht and Tucker (Reference Lambrecht and Tucker2019) document the persistence of gender bias in algorithmic optimization systems, and Balasubramanian, Ye and Xu (Reference Balasubramanian, Ye and Xu2022) introduce the notion of ‘algorithmic myopia’ to describe how excessive reliance on automated models can constrain organizational learning and strategic adaptation. In this sense, Cluster #0 positions algorithmic management as a central challenge for contemporary management, shaped by the persistent tension between technological efficiency and labor ethics.

Cluster #1 (social media)

Cluster #1 brings together an early line of research comprising 87 publications, with high internal consistency (silhouette = 0.903) and a mean publication year around 2015. This cluster focuses on the ethical issues arising from the use of algorithms in data-intensive digital environments, addressing concerns such as opacity, bias, and accountability, with direct implications for organizational decision-making, governance arrangements, and regulatory frameworks.

The most influential contributions, published in leading journals such as Science, International Data Privacy Law, Columbia Business Law Review, and Stanford Law Review, examine the normative risks associated with delegating decisions to algorithmic systems. Caliskan et al. (Reference Caliskan, Bryson and Narayanan2017), in Science, demonstrate that language models reproduce racial and sex-based stereotypes, with consequences for organizational processes such as the selection and classification of individuals. Wachter and Mittelstadt (Reference Wachter and Mittelstadt2019), in Columbia Business Law Review, and Selbst and Powles (Reference Selbst and Powles2017), in International Data Privacy Law, emphasize the limits of purely technical notions of transparency and argue for rights to explanation and to reasonable inferences as safeguards against automated decision-making.

From a governance perspective, Kroll et al. (Reference Kroll, Huey, Barocas, Felten, Reidenberg, Robinson and Yu2017), in University of Pennsylvania Law Review, propose technical and institutional mechanisms to ensure accountability without compromising trade secrets, while Kaminski (Reference Kaminski2019), in Southern California Law Review, advances a dual governance approach combining individual rights with collective responsibility. At the institutional level, Cath et al. (Reference Cath, Wachter, Mittelstadt, Taddeo and Floridi2018), in Science and Engineering Ethics, criticize the lack of comprehensive strategies in AI policy initiatives, and Wexler (Reference Wexler2018), in Stanford Law Review, warns of the risks that opaque algorithms pose to fairness and legal legitimacy. Overall, Cluster #1 illustrates a shift in the debate from technical design toward the legal, organizational, and institutional conditions of legitimacy, consolidating algorithmic ethics as a central governance challenge in digital environments.

Cluster #2 (AI ethics)

Cluster #2, comprising 86 publications, represents one of the normative pillars of the field. It exhibits a high level of conceptual coherence (silhouette = 0.855) and a mean publication year around 2019, reflecting the recent consolidation of AI ethics as a cross-cutting domain. This cluster focuses on translating abstract ethical principles – such as justice, autonomy, and responsibility – into operational governance frameworks applicable to concrete technological and organizational contexts.

The most influential contributions, published in leading journals such as Science, Philosophical Transactions of the Royal Society A, and AI & Society, articulate conceptual models that have structured the normative debate. Taddeo and Floridi (Reference Taddeo and Floridi2018), in Science, advocate a translational ethics approach aimed at converting normative values into practical methodologies, while Floridi (Reference Floridi2016), in Philosophical Transactions of the Royal Society A, introduces the notion of distributed moral responsibility, which is central to understanding the attribution of obligations in complex sociotechnical systems. Along similar lines, Winfield and Jirotka (Reference Winfield and Jirotka2018) propose a governance model based on codes of conduct, ethics training, transparency, and institutional engagement as key pillars for strengthening public trust.

More recent contributions extend these frameworks toward concrete mechanisms of accountability and deliberation. Novelli et al. (Reference Novelli, Taddeo and Floridi2024) develop an accountability model incorporating authority, contestability, and limits to power, while Arvan (Reference Arvan2023) argues for the integration of deliberative processes to address context-sensitive moral dilemmas. Baum (Reference Baum2020) emphasizes the need for explicit decisions regarding legitimacy and moral aggregation, and Munn (Reference Munn2023) critiques the largely symbolic nature of many corporate ethics codes, calling for applied ethics with tangible institutional effects. Finally, Tsolakis, Schumacher, Dora and Kumar (Reference Tsolakis, Schumacher, Dora and Kumar2023) highlight the potential of technologies such as AI and blockchain to strengthen digital governance and organizational transparency. As a whole, Cluster #2 provides the normative scaffolding underpinning organizational applications of AI, linking applied ethics, governance, and responsible management.

Cluster #3 (generative AI)

Cluster #3, comprising 82 publications, exhibits high internal coherence (silhouette = 0.84) and a mean publication year around 2022, reflecting the rapid emergence of generative AI as a new focus of ethical and organizational inquiry. Despite its recent development, the cluster’s size and structure indicate that it already represents a consolidated line of research, driven by the widespread adoption of generative models across multiple productive sectors.

The most influential contributions, published in leading journals such as International Journal of Information Management, Journal of Management, and Nature, examine both opportunities and risks. Dwivedi et al. (Reference Dwivedi, Kshetri, Hughes, Slade, Jeyaraj, Kar and Ahuja2023) and Ooi et al. (Reference Ooi, Tan, Al-Emran, Al-Sharafi, Capatina, Chakraborty and Lee2025) analyze the cross-cutting impact of generative AI in areas such as banking, education, and marketing, warning of issues related to bias, misinformation, and loss of organizational control, while emphasizing the need for transparency and accountability frameworks tailored to specific industries. Bockting, van Dis, Bollen, van Rooij and Zuidema (Reference Bockting, van Dis, Bollen, van Rooij and Zuidema2023) highlight the importance of human oversight and traceability in response to the growing reliance on large language models.

From an organizational perspective, Kim, Lee, Kim, Kim and Duhachek (Reference Kim, Lee, Kim, Kim and Duhachek2023) show that human–AI interaction can enhance performance, but may also weaken moral restraint in the absence of auditable interfaces. In the domain of work management, Böhmer and Schinnenburg (Reference Böhmer and Schinnenburg2023) identify critical ambiguities in the adoption of generative AI in human resources, while Choudhary, Marchetti, Shrestha and Puranam (Reference Choudhary, Marchetti, Shrestha and Puranam2025) demonstrate that human–AI complementarity improves performance when errors do not overlap.

Other contributions extend the analysis to emerging risks and conditions of legitimacy. Yao et al. (Reference Yao, Duan, Xu, Cai, Sun and Zhang2024) examine vulnerabilities such as prompt injection and model extraction, proposing security mechanisms and continuous monitoring, while Kaur et al. (Reference Kaur, Uslu, Rittichier and Durresi2023) stress that the public legitimacy of generative AI depends on the establishment of standards for fairness, explainability, and reliability. As a recent yet already consolidated stream, this cluster shows how generative AI intensifies ethical dilemmas while consolidating its role as a strategic vector for contemporary management.

Cluster #4 (responsible AI)

Cluster #4, comprising 73 publications, exhibits high internal coherence (silhouette = 0.864) and a mean publication year around 2021, positioning it as a central node in the debate on responsible AI in business and organizational contexts. This cluster lies at the intersection of applied ethics, organizational design, and strategy, and focuses on how ethical principles can be embedded into the practical implementation of AI systems as part of corporate governance.

The most influential contributions, published in leading management and information systems journals such as Business Horizons, Journal of the Academy of Marketing Science, and Information Systems Management, propose operational frameworks for the responsible management of AI. Kaplan and Haenlein (Reference Kaplan and Haenlein2019) introduce the Three C model (Confidence, Change, and Control), warning of structural risks such as the loss of oversight, while Davenport et al. (Reference Davenport, Guha, Grewal and Bressgott2020) conceptualize AI as a complement to human decision-making, subordinate to organizational and ethical criteria. In the marketing domain, Rai (Reference Rai2020) emphasizes explainability as a key condition for sustaining trust and fairness in algorithmic applications.

Other contributions extend the analysis toward social and institutional dimensions. Wright and Schultz (Reference Wright and Schultz2018), drawing on stakeholder theory, argue for the need for a just transition in the context of automation, while Ashok et al. (Reference Ashok, Madan, Joha and Sivarajah2022) develop an operational digital ethics framework linking technological decisions to impacts across physical, cognitive, informational, and governance domains. Along convergent lines, Meske et al. (Reference Meske, Bunde, Schneider and Gersch2022) call for renewed emphasis on explainability as an organizational requirement, while Morley et al. (Reference Morley, Kinsey, Elhalal, García, Ziosi and Floridi2023) and Anagnostou et al. (Reference Anagnostou, Karvounidou, Katritzidaki, Kechagia, Melidou, Mpeza and Magnisalis2022) stress that responsible AI demands practical, inclusive, and collaborative approaches involving developers, firms, and civil society. Viewed in this light, Cluster #4 captures the shift from abstract ethical principles toward actionable models of responsible AI that are embedded in organizational strategy and governance.

Cluster #5 (robot journalism)

Cluster #5, comprising 70 publications, exhibits exceptionally high internal cohesion (silhouette = 0.984) and a mean publication year around 2013, making it one of the earliest and most conceptually consistent cores identified in the analysis. This cluster examines the impact of automation and algorithmic systems on journalism, anticipating many of the ethical and organizational dilemmas that later extended to other information- and data-intensive sectors.

The most influential contributions are concentrated in leading journals in communication studies and the sociology of technology, such as Digital Journalism, New Media & Society, and Science, Technology, & Human Values. Carlson (Reference Carlson2015) introduces the debate through the case of Narrative Science, highlighting how automation reshapes professional routines and raises risks related to precarization and the loss of autonomy. Dörr (Reference Dörr2016) extends this analysis by examining natural language generation, emphasizing both its operational usefulness and the need for ethical frameworks governing its organizational deployment.

From a sociological perspective, Anderson (Reference Anderson2013) analyzes how algorithms reconfigure professional hierarchies and criteria of legitimacy within newsrooms, while Coddington (Reference Coddington2015) categorizes different forms of data journalism, underscoring their technical and cultural implications. From a more applied standpoint, Broussard (Reference Broussard2015) shows that AI can strengthen investigative journalism when integrated as a collaborative support tool, and Young and Hermida (Reference Young and Hermida2015) demonstrate that the adoption of algorithmic technologies depends on specific resources, professional norms, and organizational structures.

Ananny (Reference Ananny2016) provides a pivotal contribution by arguing that algorithms should be evaluated not only in terms of their outputs but also in terms of how they structure social and organizational interactions. On this basis, Cluster #5 positions journalism as an early testing ground for the ethical dilemmas of automation, with direct implications for knowledge management and organizational governance.

Cluster #6 (explainable AI)

Cluster #6 comprises 69 publications and displays high internal coherence (silhouette = 0.895), with a mean publication year around 2018. This cluster focuses on the development of explainable artificial intelligence (XAI) as a response to the opacity of algorithmic systems, addressing explainability as a technical, ethical, and organizational condition for the legitimacy of automated decision-making.

The most influential contributions appear in leading journals in AI, information systems, and management, such as Artificial Intelligence, ACM Computing Surveys, Journal of Business Research, and IEEE Transactions on Industrial Informatics. Carvalho et al. (Reference Carvalho, Pereira and Cardoso2019) highlight the absence of shared interpretability frameworks and propose context- and user-adapted metrics, while Arrieta et al. (Reference Arrieta, Díaz-Rodríguez, Del Ser, Bennetot, Tabik, Barbado, García, Gil-López, Molina, Poyatos, Ribeiro and Herrera2020) offer an integrative taxonomy of XAI approaches linking explainability, fairness, and transparency.

Guidotti et al. (Reference Guidotti, Monreale, Ruggieri, Turin, Giannotti and Pedreschi2019) provide a systematic classification of explanatory methods that has helped structure the field, and Langer et al. (Reference Langer, Oster, Speith, Hermanns, Kästner, Schmidt and Baum2021) connect social expectations with the technical properties of AI systems, calling for an empirical and interdisciplinary approach. From an organizational perspective, Chowdhury, Budhwar, Dey, Joel-Edgar and Abadie (Reference Chowdhury, Budhwar, Dey, Joel-Edgar and Abadie2022) show that clear explanations enhance human–AI collaboration and strengthen trust in business environments.

At a more technical level, Confalonieri et al. (Reference Confalonieri, Weyde, Besold and Martín2021) introduce explanatory methods capable of detecting bias, while Ahmed, Jeon and Piccialli (Reference Ahmed, Jeon and Piccialli2022) apply XAI to Industry 4.0, underscoring its role in safety, auditability, and the ethical use of AI. In this way, Cluster #6 establishes explainability as a foundational requirement for the responsible governance of algorithmic systems within organizations.

Cluster #7 (bias)

Cluster #7 brings together 57 publications, with high internal consistency (silhouette = 0.872) and a mean publication year around 2018. This cluster addresses the reproduction of inequalities and structural biases in algorithmic systems applied to organizations and markets, highlighting the difficulties involved in ensuring fair and ethically legitimate decision-making.

The most influential contributions are published in leading journals in data science, operations, and management, such as ACM Computing Surveys, European Journal of Operational Research, Journal of Finance, and Business & Information Systems Engineering. Ntoutsi et al. (Reference Ntoutsi, Fafalios, Gadiraju, Iosifidis, Nejdl, Vidal and Staab2020) argue that data-driven models reflect pre-existing social inequalities and propose the use of realistic metrics and datasets to advance toward contextualized algorithmic justice. Mehrabi et al. (Reference Mehrabi, Morstatter, Saxena, Lerman and Galstyan2021) document multiple cases of algorithmic discrimination and call for closer integration between technical rigor and ethical sensitivity.

In the financial domain, Moscato, Picariello and Sperlí (Reference Moscato, Picariello and Sperlí2021) show that the application of XAI in credit decision-making improves transparency and trust, while Kozodoi et al. (Reference Kozodoi, Jacob and Lessmann2022) demonstrate that incorporating neutrality constraints can reduce bias without sacrificing performance. Fuster et al. (Reference Fuster, Goldsmith-Pinkham, Walther and Ramadorai2022) provide evidence of persistent racial disparities in mortgage algorithms, even in the absence of explicit race variables.

From an organizational perspective, Madaio, Egede, Subramonyam, Vaughan and Wallach (Reference Madaio, Egede, Subramonyam, Vaughan and Wallach2022) contend that algorithmic fairness requires internal cultural transformation, while Feuerriegel et al. (Reference Feuerriegel, Dolata and Schwabe2020) suggest that ethical AI governance emerges from the interaction between users, technology, and organizational structures. In this regard, Cluster #7 emphasizes that algorithmic bias constitutes a systemic challenge rather than a purely technical problem.

Cluster #9 (digital traces)

Cluster #9 comprises 27 publications and exhibits perfect internal coherence (silhouette = 1), with a mean publication year around 2012, placing it among the conceptual antecedents of the field. This cluster addresses the ethical dilemmas associated with digital surveillance, data traceability, and information management in organizational contexts.

The most influential contributions appear in journals such as Journal of Strategic Information Systems, Information Technology & People, and Information, Communication & Society. Gerlach, Widjaja and Buxmann (Reference Gerlach, Widjaja and Buxmann2015) show how privacy policies shape users’ willingness to share data, thereby directly affecting organizational strategies of data monetization. Whittington (Reference Whittington2015) argues that technologies such as Big Data are not neutral tools but rather practices that actively shape business strategy.

Abbas, Michael and Michael (Reference Abbas, Michael and Michael2015) analyze the ethical dilemmas surrounding geolocation technologies and call for interdisciplinary regulatory approaches, while Coll (Reference Coll2015) interprets privacy as a mechanism of risk self-management within informational capitalism. In this respect, Cluster #9 uncovers the early foundations of the debate on digital control and data ethics in organizational settings.

Cluster #12 (data protection)

Cluster #12 brings together 13 publications and exhibits high internal coherence (silhouette = 0.977), with a mean publication year around 2021. This cluster focuses on the regulation of automated decision-making systems and data protection, with particular attention to algorithmic accountability in labor and organizational contexts.

The most influential contributions are published in leading legal journals such as Modern Law Review, International Data Privacy Law, and European Journal of Risk Regulation. Adams-Prassl et al. (Reference Adams-Prassl, Binns and Kelly-Lyth2023) criticize the legal treatment of algorithmic bias as merely a form of indirect discrimination, while Kaminski and Malgieri (Reference Kaminski and Malgieri2021) propose reinterpreting the Data Protection Impact Assessments (DPIAs) required under the General Data Protection Regulation (GDPR) as genuine Algorithmic Impact Assessments.

Mökander et al. (Reference Mökander, Juneja, Floridi and Watson2022) compare regulatory frameworks in the United States and the European Union, calling for governance approaches oriented toward social ends, while Schuett (Reference Schuett2024) examines the application of the AI Act to high-risk systems. In this sense, Cluster #12 positions regulation as a key pillar for the democratic legitimacy of organizational AI.

Cluster #15 (couplings)

Cluster #15 comprises seven publications and displays extremely high internal coherence (silhouette = 0.998), with a mean publication year around 2016. This cluster brings together pioneering works that anticipated many of the ethical and political debates that currently surround AI in complex organizational systems.

Key contributions appear in high-impact interdisciplinary journals such as Nature and Ethics and Information Technology. Crawford and Calo (Reference Crawford and Calo2016) call for a systemic approach to analyzing the real-world impacts of AI on institutions, while Arnold and Scheutz (Reference Arnold and Scheutz2016) question the imitation of human judgment as an ethical benchmark. Campolo et al. (Reference Campolo, Sanfilippo, Whittaker and Crawford2017) warn about the lack of oversight in critical sectors, and Amodei et al. (Reference Amodei, Olah, Steinhardt, Christiano, Schulman and Mané2016) examine the risks arising from poorly specified objectives. In this respect, Cluster #15 functions as a conceptual antecedent of contemporary algorithmic ethics.

Cluster #21 (contractual justice)

Cluster #21 is the smallest in the network, comprising three publications with perfect internal coherence (silhouette = 1) and a mean publication year around 2019. It focuses on the ethical and legal dilemmas associated with algorithmic pricing and data-driven personalization.

Key contributions appear in journals such as Journal of Consumer Policy, Journal of Revenue and Pricing Management, and Journal of Business Ethics. Borgesius and Poort (Reference Borgesius and Poort2017) examine price discrimination in e-commerce, highlighting consumer perceptions of unfairness, while Gerlick and Liozu (Reference Gerlick and Liozu2020) propose an integrated ethical–legal framework that combines principles of fairness and social justice with regulatory domains such as data protection and antitrust law. Seele et al. (Reference Seele, Dierksmeier, Hofstetter and Schultz2021) extend this line of inquiry through a systematic review of dynamic pricing practices, calling for the establishment of audits and ethics committees. This cluster illustrates how AI-driven pricing mechanisms reshape firm–consumer relationships, creating new asymmetries that demand coordinated ethical and regulatory responses.

The cluster analysis reveals a highly diversified yet structured field, in which technical, ethical, legal, and organizational approaches converge around a shared concern: the responsible governance of AI in business contexts. The coexistence of well-established clusters, cross-cutting normative cores, and emerging lines of inquiry points to both the growing maturity of the field and its conceptual dynamism. This interconnected thematic structure provides the basis for identifying publications that play a central role as articulation nodes between research streams, an issue addressed in the following subsection through the analysis of turning points and betweenness centrality.

Key publications and turning points: connecting research streams

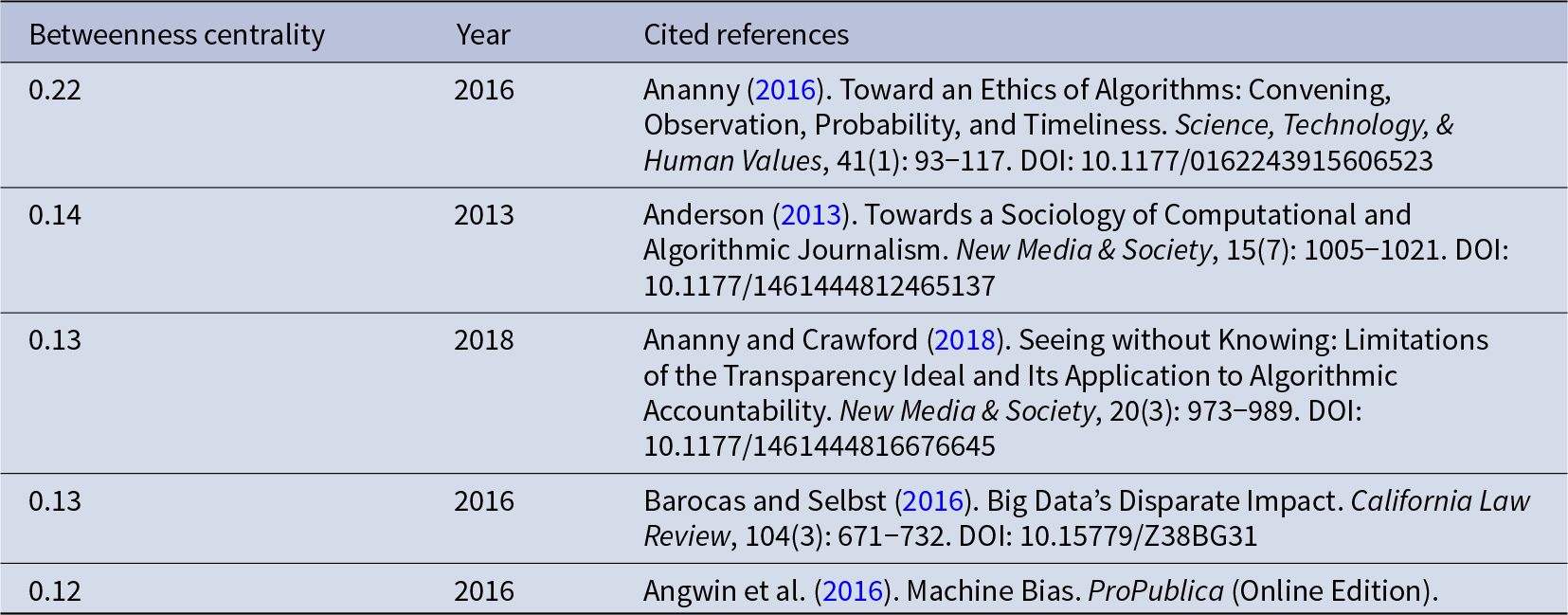

Within the thematic structure outlined in the previous subsection, the co-citation analysis makes it possible to identify publications that play a key structural role as intermediary nodes between different lines of research. These works, referred to as intellectual turning points, act as bridges between clusters and contribute decisively to the integration of knowledge and the direction of the field’s evolution (Chen et al., Reference Chen, Chen, Horowitz, Hou, Liu and Pellegrino2009). The identification of such turning points is based on the indicator of betweenness centrality, which measures the frequency with which a node lies on the shortest paths connecting other nodes in the network. High centrality values indicate a strategic role in the circulation of ideas, facilitating connections between theoretical traditions, disciplinary approaches, and previously fragmented research agendas.

In bibliometric research, nodes with high betweenness centrality are also commonly associated with sustained academic impact and cross-cutting influence on the development of a field (Chen et al., Reference Chen, Chen, Horowitz, Hou, Liu and Pellegrino2009; Shibata, Kajikawa & Matsushima, Reference Shibata, Kajikawa and Matsushima2007). According to Chen et al. (Reference Chen, Chen, Horowitz, Hou, Liu and Pellegrino2009), betweenness centrality values above 0.1 indicate a high capacity for mediation. In the co-citation map of algorithmic ethics in organizational contexts, five publications exceed this threshold. These works, listed in Table 7, constitute the main turning points of the field, as they connect diverse thematic clusters and reinforce the overall coherence of its intellectual structure.
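The indicator and cut-off described above can be made concrete with a short sketch. It computes normalized betweenness centrality for an undirected, unweighted network using Brandes' algorithm and then flags nodes whose value exceeds 0.1. The toy network, node names, and function name are hypothetical, and the code is an illustration of the standard definition rather than a reproduction of CiteSpace's own computation.

```python
from collections import deque


def betweenness_centrality(adj):
    """Normalized betweenness for an undirected, unweighted graph.
    adj: dict mapping each node to the set of its neighbours."""
    bc = {v: 0.0 for v in adj}
    for s in adj:
        # Breadth-first search from s, counting shortest paths (sigma)
        # and recording each node's predecessors on those paths.
        sigma = {v: 0 for v in adj}
        dist = {v: -1 for v in adj}
        preds = {v: [] for v in adj}
        sigma[s], dist[s] = 1, 0
        order = []
        queue = deque([s])
        while queue:
            v = queue.popleft()
            order.append(v)
            for w in adj[v]:
                if dist[w] < 0:
                    dist[w] = dist[v] + 1
                    queue.append(w)
                if dist[w] == dist[v] + 1:
                    sigma[w] += sigma[v]
                    preds[w].append(v)
        # Back-propagate pair dependencies from the farthest nodes inwards.
        delta = {v: 0.0 for v in adj}
        for w in reversed(order):
            for v in preds[w]:
                delta[v] += sigma[v] / sigma[w] * (1 + delta[w])
            if w != s:
                bc[w] += delta[w]
    n = len(adj)
    # Every unordered pair was counted twice, so dividing by (n-1)(n-2)
    # maps the scores onto the interval [0, 1].
    scale = 1.0 / ((n - 1) * (n - 2)) if n > 2 else 1.0
    return {v: b * scale for v, b in bc.items()}


# Toy network: two triangles linked only through the bridging node "X".
edges = [("A", "B"), ("B", "C"), ("A", "C"), ("C", "X"),
         ("X", "D"), ("D", "E"), ("E", "F"), ("D", "F")]
adj = {}
for u, v in edges:
    adj.setdefault(u, set()).add(v)
    adj.setdefault(v, set()).add(u)

bc = betweenness_centrality(adj)
turning_points = sorted(v for v, b in bc.items() if b > 0.1)
```

In this toy case the bridging node and its two immediate neighbours exceed the cut-off, whereas nodes embedded inside a single triangle score zero, which mirrors the idea of turning points as works that connect otherwise separate clusters.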

Table 7. Key publications connecting different research streams (turning points).

The contribution with the highest centrality is Ananny (Reference Ananny2016), with a betweenness centrality value of 0.22, published in Science, Technology, & Human Values. This work represents the primary turning point in the intellectual map by advancing a pragmatic ethical framework that conceptualizes algorithms as sociotechnical systems in which code, norms, organizational practices, and power structures are intertwined. By introducing dimensions such as convening, probability, and temporality, Ananny shifts the analytical focus away from technical transparency toward the social and institutional contexts in which algorithms operate. This perspective directly links debates originating in the robot journalism and social media clusters with normative discussions on governance, accountability, and organizational responsibility, thereby explaining its high intermediary capacity within the network.

The second turning point in terms of centrality is Anderson (2013), published in New Media & Society, with a value of 0.14. Drawing on the sociology of computational journalism, this study extends the analysis of automation beyond technical dimensions by examining how algorithms reconfigure professional roles, organizational hierarchies, and criteria of legitimacy. Its central position stems from its ability to connect early research on informational automation with later debates on algorithmic management, governance, and organizational ethics, thus serving as a bridge between media-oriented clusters and those focused on broader organizational structures.

With a betweenness centrality value of 0.13, Ananny and Crawford (2018), also published in New Media & Society, is another key intermediary node. This work challenges the normative ideal of algorithmic transparency, arguing that technical openness alone does not ensure understanding, control, or accountability. By introducing a relational interpretation of algorithmic power, the authors connect legal, organizational, and technological debates, linking the AI ethics, social media, and algorithmic management clusters. Their contribution is central to explaining why many algorithmic governance initiatives fail when they rely exclusively on decontextualized technical solutions.

Along convergent lines, Barocas and Selbst (2016), published in California Law Review and also with a centrality value of 0.13, play a pivotal role by demonstrating how algorithmic systems can generate discriminatory outcomes even in the absence of explicit intent. Their analysis of disparate impact ties together debates on algorithmic bias, organizational justice, and legal responsibility, connecting legally oriented clusters with those focused on management, human resources, and automated decision-making. This capacity to integrate normative and organizational perspectives accounts for their function as a key turning point in the field’s intellectual structure.

Finally, Angwin et al. (2016), published by ProPublica, with a betweenness centrality of 0.12, stand out as a crucial empirical turning point. Their analysis of the COMPAS system exposes racial biases in algorithms used in high-stakes decision-making, offering a concrete illustration of the ethical risks of automation. The significance of this work lies not only in its journalistic contribution but also in its ability to connect academic debates on bias, transparency, and accountability with real-world organizational and policy practices, acting as a catalyst for subsequent research streams in applied algorithmic ethics.

Taken together, these turning points articulate the core axes of the field – transparency, justice, governance, and responsibility – and help explain how algorithmic ethics in organizational contexts has evolved into an interdisciplinary domain where technological, legal, and management-oriented approaches converge.

Discussion

The results of the bibliometric analysis make it possible to move beyond a purely descriptive mapping of the field and to advance toward a more far-reaching theoretical interpretation. Building on the identified intellectual structure – dominant clusters, emerging lines of research, and bridging works – this section proposes an interpretive shift from empirical patterns to their implications for organizational and management theory. In particular, the discussion seeks to conceptualize how algorithmic ethics is no longer operating primarily as an external set of normative principles, but is increasingly taking shape as an institutional logic of governance embedded within organizations.

From algorithmic ethics to an institutional logic of organizational governance

The findings of the bibliometric analysis suggest that algorithmic ethics in organizational contexts is undergoing a significant transformation. Rather than remaining a set of external or reactive normative concerns, it is progressively becoming an institutional logic of governance integrated into organizational structures, processes, and practices. This evolution is clearly reflected in the centrality of the clusters on Algorithmic Management (#0), Responsible AI (#4), and Explainable AI (#6), which, when considered jointly, articulate a transition from a predominantly technical design focus toward an organizational, strategic, and institutional understanding of the ethical challenges associated with AI.

This transformation can be fruitfully interpreted through Institutional Theory, which offers a robust framework to explain how organizations incorporate new practices and normative frameworks in response to increasing legitimacy pressures in environments characterized by high technological uncertainty (Campbell, 2007; DiMaggio & Powell, 1983). In this regard, the core contributions within the AI Ethics cluster (#2) highlight the emergence of ethical principles and standards that operate as normative pressures, encouraging organizations to adopt algorithmic governance frameworks in order to align with professional, social, and regulatory expectations. Floridi’s (2016) notion of distributed moral responsibility, later developed in organizational terms by Taddeo and Floridi (2018), is particularly useful for understanding this process, as it shifts ethical attribution away from the individual programmer toward the institutional arrangements within which algorithmic systems are designed, implemented, and used.

In a complementary way, the studies associated with the Data Protection cluster (#12) underscore the growing relevance of coercive pressures, as regulatory frameworks such as the GDPR and the AI Act are redefining organizational obligations regarding transparency, risk assessment, and accountability (Kaminski & Malgieri, 2021; Mökander et al., 2022; Schuett, 2024). These regulatory dynamics not only impose compliance requirements but also contribute to the institutionalization of practices such as algorithmic impact assessments, audits, and internal oversight mechanisms as organizationally legitimate responses to automated decision-making.

The Algorithmic Management cluster (#0) illustrates particularly clearly how these institutional pressures translate into concrete organizational tensions. The adoption of algorithmic systems for monitoring, task allocation, or performance evaluation promises efficiency gains while simultaneously generating risks related to opacity and the erosion of internal trust. Influential studies show that acceptance of algorithmic management by employees and managers largely depends on perceptions of fairness, traceability, and contestability (Benlian et al., 2022; Shin & Park, 2019), as well as on how these systems reconfigure power relations and authority within organizations (Curchod et al., 2020; Rahman, 2021). From an institutional perspective, such tensions help explain why organizations cannot merely deploy algorithmic technologies but must accompany them with formal ethical governance structures in order to preserve legitimacy.

Within this context, the Explainable AI cluster (#6) plays a key integrative role. Explainability emerges not only as a technical challenge but as a central institutional mechanism linking normative requirements, social expectations, and organizational practices. The taxonomies and frameworks proposed by Arrieta et al. (2020) and Guidotti et al. (2019) emphasize that explainability should be understood in a contextual and stakeholder-oriented manner, while more recent contributions underline the importance of XAI for sustaining trust, human–AI collaboration, and accountability in business environments (Chowdhury et al., 2022; Langer et al., 2021). From this perspective, explainability acts as a bridge between technological efficiency and organizational legitimacy, thereby reinforcing the institutionalization of algorithmic ethics.

Once algorithmic ethics is understood as an emerging institutional logic, its strategic implications can be further explored through the Resource-Based View, according to which organizations derive enduring advantages from resources and capabilities that are valuable, difficult to imitate, and embedded in specific organizational contexts (Barney, 1991). The results suggest that capabilities related to algorithmic governance (such as the design of explainable systems, bias management, and the implementation of accountability mechanisms) can function as intangible resources of this kind. In this sense, algorithmic ethics ceases to be merely a compliance cost and instead becomes an organizational capability that strengthens trust among employees and customers and helps sustain organizational legitimacy over the long term (Morley et al., 2023; Rai, 2020).

Taken together, an institutional reading of the structuring clusters supports the argument that algorithmic ethics in organizational contexts is evolving toward an institutional ethics of algorithmic governance, in which ethical principles are systematically embedded in organizational design, strategy, and decision-making processes. This shift does not eliminate the tensions between efficiency and business ethics, but it does redefine how organizations manage them, relocating the focus from the isolated algorithm to the institutional frameworks that shape its use and its consequences.

From ethical concerns to organizational architectures of algorithmic governance

Beyond its gradual institutionalization as a logic of legitimacy, the results of the bibliometric analysis indicate that algorithmic ethics is increasingly materializing in concrete organizational architectures of governance, through which organizations pragmatically manage the tensions associated with the use of algorithmic systems. This shift becomes particularly visible when the clusters on Algorithmic Management (#0), Responsible AI (#4), and Explainable AI (#6) are examined jointly, as they illustrate how ethical principles are translated into structures, routines, and control mechanisms embedded in day-to-day management.

In this regard, recent literature shows that algorithmic governance is no longer articulated exclusively through normative statements or codes of conduct, but increasingly through stable organizational devices such as algorithmic oversight committees, impact assessment protocols, human-in-the-loop review procedures, and internal auditing systems. Studies on organizational AI adoption indicate that these structures perform a dual function: on the one hand, they enable the management of ethical risks associated with opacity or bias in automated decision-making; on the other, they operate as internal coordination mechanisms across technical, legal, and managerial domains (Davenport et al., 2020; Feuerriegel et al., 2020; Meske et al., 2022).

From this perspective, algorithmic governance acquires a socio-organizational character, in which technical decisions are embedded within formal processes of deliberation and oversight. The literature on algorithmic management shows that the introduction of algorithmic systems in areas such as human resources, digital platforms, or performance evaluation reconfigures relations of authority and control, making it necessary to redefine organizational roles, channels for contesting decisions, and criteria of internal legitimacy (Benlian et al., 2022; Curchod et al., 2020; Rahman, 2021). In such contexts, algorithmic governance functions as an organizational infrastructure that absorbs tensions between operational efficiency and organizational justice.

A central element of these governance architectures is explainability, which emerges in the literature not merely as a property of the technical system, but as an organizational condition for the legitimate acceptance and use of AI. Contributions within the Explainable AI cluster (#6) show that the effectiveness of explanatory mechanisms depends on their alignment with different organizational audiences (managers, employees, auditors, or regulators) and on their integration into clearly defined decision-making processes (Arrieta et al., 2020; Chowdhury et al., 2022; Langer et al., 2021). In this sense, XAI operates as a structural component of algorithmic governance, facilitating decision traceability and the allocation of responsibility within organizations.

In addition, the clusters related to Bias (#7) and Contractual Justice (#21) highlight that these governance architectures become particularly salient in contexts where the distributive effects of AI on employees and consumers are more visible. Studies in domains such as recruitment, credit allocation, or dynamic pricing suggest that perceptions of fairness and procedural justice depend less on the technical sophistication of the algorithm than on the presence of clear organizational safeguards, including explicit review criteria, periodic audits, and meaningful opportunities for contestation (Fuster et al., 2022; Kozodoi et al., 2022; Seele et al., 2021). These findings reinforce the view that algorithmic ethics is primarily operationalized through organizational decisions, rather than solely through technical adjustments.