Highlights

What is already known?

• Accurate intraclass correlation coefficient (ICC) estimates are needed for power analyses and meta-analytic adjustments to effect sizes from clustered data.

• The sampling distribution of the ICC has neither a known shape nor a known variance.

• Not all meta-analytic pooling techniques are robust when variance formulas are mis-specified or when the effect size estimate’s sampling distribution is asymmetric.

What is new?

• An empirical evaluation of different ICC variance formulas used as weights in meta-analytic pooling.

• Identification of the Fisher transformation-based formula as the most accurate weighting approach across varied conditions.

Potential impact for RSM readers

• Provides guidance on selecting variance formulas when pooling ICC estimates to be used in power analyses and effect size corrections for clustered data.

1 Introduction

The intraclass (or intracluster) correlation coefficient (ICC)Reference Fisher 1 – Reference McGraw and Wong 3 captures the degree of clustering (or similarity) in the data. ICC estimates are necessary for several statistical techniques for handling clustered data arising from contexts, such as cluster-randomized trials (CRTs) or studies assessing the consistency of measurements across subjects. The CRT is an experimental design that involves randomly assigning entire clusters of individuals (such as classrooms of students or hospitals of patients) to treatment conditions.Reference Raudenbush and Bryk 4 – Reference Murray and CotDoEDM 7 In meta-analysis, the estimated ICC is necessary to adjust effect sizes from studies with clustered data.Reference Hedges 8 – 11 However, primary studies with clustered data do not always report ICC estimates, requiring meta-analysts to impute ICC values from other studies with similar design characteristics. Similarly, in an a priori power analysis, reasonable ICC estimates are needed if the experimental design of the planned study involves clustered data such as a CRT.

ICC estimates are necessary when clustered data are encountered both in meta-analysis and a priori power analysis because the standard error (SE) of a treatment effect in clustered data is inflated by the variance inflation factor, which is itself a function of the ICC. Even if the magnitude of the ICC estimate (and thus the degree of cluster-dependence) is small, if the average size of a cluster is large, then the ICC estimate has a major effect on the SE of the treatment effect.Reference Cornfield 12 The accuracy of both types of clustered data analyses depends on the accuracy of the ICC estimates used. Because raw data are not available for prospective power analyses or meta-analyses, researchers typically have to impute reasonable values from ICC estimates reported in related prior studies.

To address the need for accurate ICC values for clustered data, large secondary databases of design parameters have been used to offer reasonable ICC values for a set of research designs, outcomes, and population groups.Reference Hedges and Hedberg 13 – Reference Adams, Gulliford, Ukoumunne, Eldridge, Chinn and Campbell 16 Unfortunately, such databases and, thus, ICC values are not comprehensive for all designs, outcomes, and populations. In addition, even if the ICCs captured in those datasets were based on very similar studies, the sampling distribution of ICC estimates is unknown, making it unclear how best to pool together ICC estimates from such databases.

Beyond the context of clustered individuals’ data, the ICC metric is also used as a measure of reliability for multiple measures of a construct. An ICC in this context is the proportion of the variance due to the objects of a measurement.Reference McGraw and Wong 3 , Reference Chen, Taylor and Haller 17 There are many types of ICCs in this context (see McGraw and WongReference McGraw and Wong 3 for a review), but for this article, we are interested in the ICC from a one-way random effects model, in which unordered measurement observations are nested within objects (e.g., participants). For example, a sample of participants is each rated by the same set of raters, and the ICC provides a measure of inter-rater reliability. Alternatively, multiple measurements are taken for a group of participants across multiple time points as a measure of test–retest reliability.Reference Chen, Taylor and Haller 17 Some meta-analysts correct the standardized mean difference (SMD) effect sizes for measurement artifacts. When unaccounted for, measurement error in the dependent variable can impact the SMD effect size by increasing the magnitude of the within-group variance used to calculate each primary study’s SMD estimate. When the SMD estimates are not corrected for measurement error, the pooled SMD estimate can be under-estimated, heterogeneity in effect size estimates can be inflated, and there can be other negative implications, including erroneous inferences made about moderator effects and publication bias.Reference Wiernik and Dahlke 18 The ICC, as a measure of inter-rater reliability, is among the reliability measures that some meta-analysts use to correct the SMD for rater error.Reference Wiernik and Dahlke 18 Thus, the ICC has multiple important uses.

Several methods have been proposed to provide ICC values for secondary analyses. Some applied researchers have taken the arithmetic mean or median of a set of ICC estimates from a similar population context.Reference Puzio, Colby and Algeo-Nichols 19 , Reference Graham, Kiuhara and MacKay 20 Others have constructed confidence intervals for the ICC parameter and proposed that applied researchers use the upper limit as a conservative estimate of the ICC.Reference Donner and Wells 21 In the case of power analysis, this method would produce a sample size larger than needed and could result in wasted resources.Reference Donner and Wells 21 Finally, some have applied Bayesian methods to create a posterior distribution for the ICC estimates.Reference Spiegelhalter 22 – Reference Turner, Thompson and Spiegelhalter 24 These methods have relied on strong assumptions about the distribution of ICC estimates and its moments. In addition, simple averages of ICCs do not capture the differing precisions of the ICC estimates. ICC estimates based on larger sample sizes will be more precise than those based on smaller sample sizes. More weight should be assigned to more precise ICC estimates when calculating a pooled ICC.

Thus, another alternative for imputing a reasonable ICC estimate can involve the use of principled meta-analytic methods that are more typically used to pool effect sizes. When pooling effect sizes, a meta-analyst can calculate a weighted average effect size where weights are a direct function of precision. Most typically, the inverse of the effect size’s variance is used as the effect size’s weight, such that the more precise the estimate, the smaller its SE and the more weight it is afforded.Reference Tanner-Smith and Tipton 25 Similarly, a researcher could pool ICC estimates from different studies’ datasets and assign more weight in the pooling to more precise ICC estimates. However, accurate use of meta-analytic pooling requires knowledge of the shape and variance of the sampling distribution of the effect size of interest (here, of ICC estimates). While many different formulas for the variance of an ICC estimate have been proposed,Reference Fisher 1 , Reference Smith 2 , Reference Swiger, Harvey, Everson and Gregory 26 – Reference Fisher 29 it is unclear which formulation of the variance of ICC estimates might offer the best weight formulation when pooling ICC estimates to obtain an estimate of the population ICC. Furthermore, ICC estimates have already been used as an outcome in meta-analyses, particularly in psychology, education, and the health sciences,Reference Hedberg and Hedges 15 , Reference Kivlighan, Aloe and Adams 30 – Reference Goerdten, Yuan and Huybrechts 33 although the sampling variance formula used in the weights and the meta-analysis model has varied across these studies, which further justifies the need for a simulation study investigating the performance of these variance formulas and models.
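The inverse-variance weighting described above can be illustrated with a minimal sketch. It uses a simple common-effect (fixed-effect) weighted mean, not the random-effects RVE or REML estimators evaluated in this study, and the ICC estimates and sampling variances are hypothetical values chosen only for illustration; the function name `inverse_variance_pool` is likewise our own.

```python
from math import sqrt


def inverse_variance_pool(estimates, variances):
    """Common-effect pooled estimate: each study is weighted by 1 / variance."""
    if not estimates or len(estimates) != len(variances):
        raise ValueError("estimates and variances must be non-empty and equal in length")
    if any(v <= 0 for v in variances):
        raise ValueError("sampling variances must be positive")
    weights = [1.0 / v for v in variances]
    total_weight = sum(weights)
    pooled = sum(w * e for w, e in zip(weights, estimates)) / total_weight
    pooled_se = sqrt(1.0 / total_weight)  # SE of the weighted mean
    return pooled, pooled_se


# Hypothetical ICC estimates and (assumed known) sampling variances.
icc_estimates = [0.10, 0.20, 0.15]
icc_variances = [0.0004, 0.0016, 0.0008]

pooled_icc, pooled_se = inverse_variance_pool(icc_estimates, icc_variances)
print(f"pooled ICC = {pooled_icc:.4f}, SE = {pooled_se:.4f}")
```

The most precise estimate (0.10) receives the largest weight, so the pooled value is pulled below the unweighted mean of 0.15. Which variance formula should supply the `variances` argument is exactly the open question this study addresses.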

The aim of this study is to compare methods for quantitatively synthesizing ICC values to obtain the best pooled ICC estimate. There are several choices for how to pool effect sizes (for this study, ICCs) using meta-analysis. Two options for random effects pooling of effect sizes include method of moments estimation in the robust variance estimation (RVE) frameworkReference Hedges, Tipton and Johnson 34 and restricted maximum likelihood estimation (REML).Reference Viechtbauer, López-López, Sánchez-Meca and Marín-Martínez 35 RVE is robust to the choice of distributional form of the effect size being pooled and should be less impacted by the effect size weights used in the meta-analytic model.Reference Hedges, Tipton and Johnson 34 Because the distributional form and sampling variance of ICC estimates are unknown, a meta-analytic technique, such as RVE, seems well matched for meta-analysis of ICC estimates. For comparison, we also evaluated REML estimation with random effects pooling, as it remains a commonly used estimation procedure in meta-analysis. In addition to comparing estimation procedures, we also investigated how well different proposed formulas for the variance of the ICC sampling distribution performed in the inverse variance weights used when pooling ICC estimates. This study is intended to provide guidelines for obtaining unbiased pooled ICC estimates that ultimately can be used in multiple scenarios, including a priori power analyses and meta-analysis of effect size estimates for scenarios with clustered individual or measurement data.

1.1 Why do we need estimates for ICCs for secondary analyses?

One goal of this study is to evaluate the synthesis of ICC sample estimates in order to obtain an accurate estimate of an ICC for a secondary analysis when the raw data are not available. There are two common contexts in which this is needed: (1) prospective power analyses when designing a CRT and (2) meta-analysis of effect sizes that need to be corrected for clustering or psychometric artifacts.

1.1.1 Power analysis

When designing an experiment, it is necessary to determine the sample size needed to achieve sufficient statistical power to detect a pre-specified minimal treatment effect. A prospective power analysis for two-level clustered data requires specifying the desired power, the appropriate $\alpha$-level, the expected minimum effect size, a plausible value for the ICC, and either the total sample size (when determining the number of clusters needed) or the total number of clusters (when determining the total sample size).Reference Raudenbush 36 – Reference Hedges and Rhoads 38 The sample size formulas that do not account for clustering will underestimate the number of required individuals in clustered data.

The variance inflation factor (VIF) accounts for clustering, and the power to detect the true effect size is dependent on the ICC estimate. The VIF is equal to $VIF = 1 + (n-1)\rho$, where $\rho$ is the ICC and n is the average number of within-cluster units. The VIF is a multiplicative factor that quantifies the increase in variance due to randomizing clusters into treatment conditions. It shows that a larger $\rho$, a larger n, or both result in a large increase in the variance of the treatment effect estimate. Because CRTs have a larger variance for the treatment effect and fewer degrees of freedom than designs that randomize individuals such as students,Reference Cornfield 12 CRTs also have relatively less power. Thus, if the number of clusters in a study is small, the power analysis is highly dependent on the $\rho$ estimate.
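To make the role of the cluster size concrete, the following sketch evaluates the VIF expression given above over a small grid of ICC values and average cluster sizes. The grid values and the function name are illustrative assumptions, not values taken from this study.

```python
def variance_inflation_factor(icc, avg_cluster_size):
    """VIF = 1 + (n - 1) * rho for average cluster size n and ICC rho."""
    if not 0.0 <= icc <= 1.0:
        raise ValueError("icc must lie in [0, 1]")
    if avg_cluster_size < 1:
        raise ValueError("avg_cluster_size must be at least 1")
    return 1.0 + (avg_cluster_size - 1.0) * icc


# Illustrative grid: even a small ICC matters when clusters are large.
for icc in (0.01, 0.05, 0.20):
    for n in (10, 100):
        vif = variance_inflation_factor(icc, n)
        print(f"ICC = {icc:.2f}, n = {n:3d} -> VIF = {vif:.2f}")
```

For instance, an ICC of 0.05 with 100 units per cluster gives a VIF of about 5.95, meaning that the variance of the treatment effect estimate is nearly six times what it would be under individual randomization.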

It would be ideal to know the ICC prior to conducting a power analysis; however, because it is not possible to know the ICC before collecting the data, researchers must impute an expected ICC from previously conducted studies that are similar. Mis-specifying the ICC in a power analysis can lead to a drastic miscalculation of the sample size necessary to achieve a desired level of power. If the estimate for $\rho$ used in the prospective power analysis’ sample size calculation is lower than the true ICC value, the necessary sample size will be underestimated, and the study will be under-powered. Alternatively, using a conservative $\rho$ estimate (i.e., larger than the true ICC) in a prospective power analysis will inflate the sample size used, thereby unnecessarily wasting resources in the actual study.
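The consequence of mis-specifying the ICC can be sketched with a rough calculation. The function below is a simplified approximation, not the procedure used in dedicated power software: it takes the usual normal-approximation sample size per arm for a two-group SMD, multiplies it by the VIF, and divides by the cluster size. It ignores the reduced degrees of freedom of a CRT, so it will tend to understate the number of clusters needed. All numeric inputs are hypothetical.

```python
from math import ceil
from statistics import NormalDist


def clusters_per_arm(effect_size, icc, cluster_size, alpha=0.05, power=0.80):
    """Approximate clusters per arm for a two-arm CRT (normal approximation)."""
    std_normal = NormalDist()
    z_total = std_normal.inv_cdf(1 - alpha / 2) + std_normal.inv_cdf(power)
    # Per-arm sample size if individuals were randomized independently.
    n_individual = 2 * (z_total / effect_size) ** 2
    # Inflate by the VIF, then convert individuals to clusters.
    vif = 1 + (cluster_size - 1) * icc
    return ceil(n_individual * vif / cluster_size)


# Hypothetical planning scenario: SMD of 0.25 with 20 individuals per cluster.
assumed = clusters_per_arm(effect_size=0.25, icc=0.10, cluster_size=20)
needed = clusters_per_arm(effect_size=0.25, icc=0.20, cluster_size=20)
print(f"clusters per arm if ICC = 0.10 is assumed: {assumed}")
print(f"clusters per arm if the true ICC is 0.20: {needed}")
```

Under these assumptions, planning with an ICC of 0.10 when the true value is 0.20 would recruit substantially fewer clusters than required, which is the under-powering scenario described above.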

Furthermore, while it is advisable to do a sensitivity analysis for the assumed ICC when conducting power analyses, a more accurate ICC value provides a necessarily better starting point for potential sensitivity analyses. Considering the number of design parameters that also need to be considered in a power analysis, the use of the optimal ICC value can assist applied researchers in the initial planning. Ultimately, a researcher must decide on a target sample size, and a suitable ICC value helps in that determination.

1.1.2 Correcting effect sizes for artifacts in meta-analysis

Correcting for clustering: Meta-analysis offers another scenario in which accurate ICC estimation can be critical. Meta-analysis is a collection of statistical procedures that evaluate and synthesize findings from a comprehensive set of primary studies on a similar topic. Meta-analysts summarizing treatment effects across relevant primary studies may need to capture effect sizes from studies with varying experimental designs. These effect sizes must first be standardized using a common metric before they can be included in the meta-analysis. For example, if the treatment effect at the individual level is of interest to the meta-analyst, then effect sizes from CRTs must be adjusted to reflect individual-level effects using the ICC.Reference Hedges 8 , Reference Hedges 9 Often, primary studies do not report an ICC value, nor do they provide sufficient information for a meta-analyst to calculate it manually. Thus, as with power analyses, researchers must impute ICC estimates prior to making the adjustments necessary to include the effect size estimates and associated variances from a primary study’s clustered data. For two-level data, such methods were first introduced in HedgesReference Hedges 8 and later corrected in the Supplement to the What Works Clearinghouse Procedures Handbook, Version 4.1. 39 For three-level data using MLM, the formulas for effect sizes adjusted for clustering are presented in Hedges.Reference Hedges 9

If a meta-analyst wanted to calculate Hedges’ g for a CRT with one level of clustering (i.e., a two-level design), they would use the following formula:

$$ \begin{align} g = \frac{\omega(\overline{Y}_T - \overline{Y}_C)}{s_p}\sqrt{1-\frac{2(n-1)\hat{\rho}}{N-2}}, \end{align} $$

where $\hat{\rho}$ is the ICC estimate, N is the total number of level-1 units, n is the average cluster size, $s_p$ is the pooled within-group standard deviation, $\overline{Y}_T$ is the mean of the individuals in the treatment group, $\overline{Y}_C$ is the mean of the individuals in the control group, $\omega$ is the small sample size adjustment equal to $1-3/(4df-1)$, and $\sqrt{1-\frac{2(n-1)\hat{\rho}}{N-2}}$ corrects the effect size for upward bias when $\hat{\rho}$ is greater than $0$. As can be seen from Equation (1.1), the cluster-adjusted effect size is dependent on the $\hat{\rho}$ estimate. The $df$ is the degrees of freedom for a two-level model and is found using the following expression 11 , Reference Taylor, Pigott and Williams 40 :

$$ \begin{align*} df = \frac{[(N-2)-2(n-1)\hat{\rho}]^2}{(N-2)(1-\hat{\rho})^2+n(N-2n)\hat{\rho}^2+2(N-2n)\hat{\rho}(1-\hat{\rho})}. \end{align*} $$

The equation for the cluster-assignment effect size SE is as follows:

$$ \begin{align*} \begin{aligned} se &= \omega\ \times \\ & \sqrt{\left[\frac{N}{n_Tn_C}\right]\left(1+\left(n-1\right)\hat{\rho}\right) + g^2\frac{(N-2)(1-\hat{\rho})^2 + n\left(N-2n\right)\hat{\rho}^2 + 2\left(N-2n\right)\hat{\rho}(1-\hat{\rho})}{2[(N-2)-2 \left(n-1 \right)\hat{\rho}]^2}}, \end{aligned} \end{align*} $$

where $n_T$ is the number of individuals in the treatment group and $n_C$ is the number of individuals in the control group. This SE is used as weights (by squaring the SE to obtain the associated sampling variance for the adjusted effect size and then taking the inverse of the variance) when combining effect size estimates across studies of different experimental designs. 11 , Reference Taylor, Pigott and Williams 40 These equations highlight the importance of the ICC value used in terms of its contribution to both the adjusted SMD effect size for clustered data and its sampling variance.
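The three expressions above (the cluster-adjusted g, its degrees of freedom, and its SE) can be combined in a short sketch. Two assumptions are made here that should be checked against the cited sources before use: the total sample size is taken as $N = n_T + n_C$, and the small-sample adjustment is taken in its usual form, $\omega = 1 - 3/(4df-1)$. The function name and input values are hypothetical.

```python
from math import sqrt


def cluster_adjusted_g(mean_t, mean_c, s_pooled, n_t, n_c, avg_cluster_size, icc):
    """Return (g, df, se) for a two-level CRT, following the expressions above."""
    N = n_t + n_c          # assumption: total level-1 units = n_T + n_C
    n = avg_cluster_size
    # Shared pieces of the df and SE expressions.
    num = (N - 2) - 2 * (n - 1) * icc
    den = ((N - 2) * (1 - icc) ** 2
           + n * (N - 2 * n) * icc ** 2
           + 2 * (N - 2 * n) * icc * (1 - icc))
    df = num ** 2 / den
    omega = 1 - 3 / (4 * df - 1)  # assumed small-sample adjustment
    g = omega * (mean_t - mean_c) / s_pooled * sqrt(1 - 2 * (n - 1) * icc / (N - 2))
    se = omega * sqrt(N / (n_t * n_c) * (1 + (n - 1) * icc)
                      + g ** 2 * den / (2 * num ** 2))
    return g, df, se


# Hypothetical study: 100 individuals per arm, clusters of 20, raw SMD of 0.5.
for icc in (0.0, 0.1, 0.2):
    g, df, se = cluster_adjusted_g(0.5, 0.0, 1.0, 100, 100, 20, icc)
    weight = 1 / se ** 2  # inverse-variance weight for meta-analytic pooling
    print(f"ICC = {icc:.1f}: g = {g:.3f}, df = {df:.1f}, SE = {se:.3f}, weight = {weight:.1f}")
```

With an ICC of zero the degrees of freedom reduce to N - 2, as in an individually randomized design. As the imputed ICC increases, g shrinks slightly while the SE grows markedly, so the study receives less weight in the pooled estimate.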

The Cochrane Handbook for Systematic Reviews of InterventionsReference Higgins, Thomas, Chandler, Cumpston, Li and Page 10 describes an alternative approach to accounting for CRTs (or even individually randomized trials with clustering) in a meta-analysis: multiplying the SE of the effect size estimate (continuous or dichotomous) by the square root of the VIF, which the Handbook refers to as the design effect.Reference Higgins, Thomas, Chandler, Cumpston, Li and Page 10

Correcting effect sizes for psychometric artifacts: While there are different types of reliability coefficients that account for different sources of measurement error variance, the ICC as an inter-rater reliability coefficient can be used to correct SMD effects for measurement error in observer ratings.Reference Wiernik and Dahlke 18 One way that this artifact correction is obtained is by calculating the ICC as a reliability coefficient for each group (e.g., treatment and control groups). The pooled sample-size-weighted average of the ICC estimates ($\overline{\hat{\rho}}$) is then calculated and used to correct the observed SMD ($d_{obs}$):

$$ \begin{align*} d_c = \frac{d_{obs}}{\sqrt{\overline{\hat{\rho}}}}, \end{align*} $$

where $d_{c}$ is the corrected SMD.Reference Wiernik and Dahlke 18
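As a brief illustration of this correction, the sketch below computes a sample-size-weighted average of group-level ICCs and divides the observed SMD by its square root. The reliabilities, group sizes, and observed SMD are hypothetical, and the function names are our own.

```python
from math import sqrt


def pooled_reliability(iccs, sample_sizes):
    """Sample-size-weighted average of group-level ICC reliability estimates."""
    if not iccs or len(iccs) != len(sample_sizes):
        raise ValueError("iccs and sample_sizes must be non-empty and equal in length")
    return sum(r * n for r, n in zip(iccs, sample_sizes)) / sum(sample_sizes)


def correct_smd(d_obs, mean_reliability):
    """Disattenuate an observed SMD: d_c = d_obs / sqrt(mean reliability)."""
    if not 0.0 < mean_reliability <= 1.0:
        raise ValueError("mean_reliability must lie in (0, 1]")
    return d_obs / sqrt(mean_reliability)


# Hypothetical inter-rater ICCs for the treatment and control groups.
rho_bar = pooled_reliability(iccs=[0.75, 0.87], sample_sizes=[40, 60])
d_corrected = correct_smd(d_obs=0.40, mean_reliability=rho_bar)
print(f"mean reliability = {rho_bar:.3f}, corrected SMD = {d_corrected:.3f}")
```

Because the reliability is at most 1, the corrected SMD is never smaller in magnitude than the observed SMD, and the size of the correction depends directly on the pooled ICC.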

2 Literature review

As emphasized, there are multiple scenarios in which accurate ICC estimates are needed. One potential method for obtaining an accurate ICC estimate consists of pooling reported values from related prior studies. However, there is a lack of consensus about how best to pool ICCs. In the following few sections, we review the research on methodological considerations when determining the best method for pooling ICC estimates. First, we provide a very brief overview of clustered data analysis, which serves as the framework for understanding ICCs and provides a formal definition of the ICC. Next, we explain the importance of good ICC estimates and review past methods for imputing them when conducting power analyses and meta-analyses. We describe what is known about the sampling distribution of ICC estimates, including the associated variance formulations. Last, we summarize the meta-analytic methods relevant to pooling ICC estimates.

2.1 Analyzing clustered data

While several analytic methods have been developed to handle clustered data,Reference Donner and Koval 28 , Reference Liang and Zeger 41 , Reference McNeish and Kelley 42 multilevel modeling (MLM; also referred to as mixed-effects, random-effects, hierarchical linear, and random-coefficients modelingReference Raudenbush and Bryk 4 ) is a common modeling technique used in applied social science research. When the functional form of an MLM is correctly specified for the data structure, MLM has been shown to result in more precise SEs.Reference McNeish and Kelley 42 MLM models cluster dependence by partitioning the overall variance in an outcome into within-cluster and between-cluster components. A common applied data scenario entails lower-order units (like students) that are clustered within higher-order units (like schools). In this study, we focus on two-level data in which lower-level units are nested within a single level of higher-level units. Future research can explore scenarios with additional levels and further complications in clustered data.

In the MLM framework, both the between- and the within-cluster variation must be specified in the model.Reference Raudenbush and Bryk 4 For example, in a dataset with students nested within schools, the nested structure can be modeled using a two-level random-effects model as follows:

$$ \begin{align} \begin{aligned} Level \quad 1: Y_{ij} &= \beta_{0j} + e_{ij} \\ Level \quad 2: \beta_{0j} &= \gamma_{00} + u_{0j}, \end{aligned} \end{align} $$

where $Y_{ij}$ is the outcome score for student i in school j, $\gamma_{00}$ is the grand mean of the outcome $Y_{ij}$, $u_{0j}$ is the random effect for school j, and $e_{ij}$ is the level-1 residual that varies around school j’s mean, $\beta_{0j}$. Both residual terms, $u_{0j}$ and $e_{ij}$, are typically assumed to follow independent normal distributions, each with a mean of zero and variances of $\sigma^2_u$ and $\sigma_e^2$, respectively. In the above specification, we assume errors from different clusters are independent, while errors within a cluster are modeled as correlated. The correlation is commonly assumed to be the same for each pair of outcomes within and across clusters. More complex specifications are available, but for the purpose of this study, we will maintain these assumptions.

2.2 Intraclass correlation coefficient

In the MLM framework, the ICC is used to summarize the degree of dependence in the data by capturing the proportion of total variance in the outcome that is attributable to between-cluster variation. For MLMs, the variance within clusters is typically assumed to be the same across clusters. Formally, the equation for the ICC, denoted as $\rho$, from a two-level unconditional model (a model with no predictors at either level, as depicted in Equation (2.1)) is as follows:

$$ \begin{align} \rho=\frac{\sigma^2_u}{\sigma^2_u+\sigma^2_e}, \end{align} $$

where $\rho$ equals the level-2 variance divided by the level-2 variance plus the level-1 variance, or total variance. The ICC is non-negative, $\rho \geq 0$, because variance components are non-negative. Higher ICC values imply that there is a stronger clustering effect, and an ICC value of zero implies that there is no clustering dependence. Another application of the ICC is as a reliability estimate for the degree of absolute agreement among measurements from a one-way random effects model.Reference McGraw and Wong 3 , Reference Chen, Taylor and Haller 17 , Reference Shoukri, Al-Hassan, DeNiro, El Dali and Al-Mohanna 43 Although the ICC in this context has typically been computed in the ANOVA framework,Reference McGraw and Wong 3 it can also be estimated using the MLM framework.Reference Chen, Taylor and Haller 17 For example, in this context, the level-2 units could be subjects, and each subject is measured by the same instruments (e.g., raters or repeated measurements), which would be the level-1 units.
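To connect the model in Equation (2.1) with the ICC definition, the following sketch simulates balanced two-level data with known variance components and then recovers the ICC with the classical one-way ANOVA estimator, $(MSB - MSW)/(MSB + (n-1)MSW)$. This is only an illustration under assumed values (2,000 clusters of size 10, $\sigma^2_u = 0.2$, $\sigma^2_e = 0.8$); it is not the estimation procedure used in this study, and unlike the population ICC, this sample estimator can take negative values.

```python
import random


def simulate_two_level(n_clusters, cluster_size, var_u, var_e, grand_mean=0.0, seed=0):
    """Draw Y_ij = gamma_00 + u_0j + e_ij with normal u_0j and e_ij."""
    rng = random.Random(seed)
    sd_u, sd_e = var_u ** 0.5, var_e ** 0.5
    data = []
    for _ in range(n_clusters):
        u_j = rng.gauss(0.0, sd_u)  # cluster-level random effect
        data.append([grand_mean + u_j + rng.gauss(0.0, sd_e)
                     for _ in range(cluster_size)])
    return data


def anova_icc(data):
    """One-way ANOVA ICC estimator for balanced clusters."""
    k, n = len(data), len(data[0])
    if any(len(cluster) != n for cluster in data):
        raise ValueError("this sketch assumes equal cluster sizes")
    cluster_means = [sum(cluster) / n for cluster in data]
    grand = sum(cluster_means) / k
    # Mean squares between and within clusters.
    msb = n * sum((m - grand) ** 2 for m in cluster_means) / (k - 1)
    msw = sum((y - m) ** 2
              for cluster, m in zip(data, cluster_means)
              for y in cluster) / (k * (n - 1))
    return (msb - msw) / (msb + (n - 1) * msw)


true_icc = 0.2 / (0.2 + 0.8)
data = simulate_two_level(n_clusters=2000, cluster_size=10, var_u=0.2, var_e=0.8, seed=1)
icc_hat = anova_icc(data)
print(f"true ICC = {true_icc:.2f}, estimated ICC = {icc_hat:.3f}")
```

Rerunning the simulation with far fewer clusters shows how variable a single ICC estimate can be, which motivates pooling estimates across studies.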

The ICC is typically estimated using an unconditional model as shown in Equation (2.1).Reference Hedges 8 , Reference Hedges and Hedberg 14 It is also possible to estimate a conditional ICC that incorporates the change in the variance explained by including covariates in the MLM. In this study, however, we focused on unconditional ICCs because that is what is typically used as the ICC value in meta-analysis and a priori power analyses for clustered data.Reference Taylor, Pigott and Williams 40 Use of unconditional ICCs offers a starting point for this line of research. Future research can begin to consider how best to synthesize conditional ICCs.

The magnitude of an ICC estimate in the context of clustered data is dependent on the study’s experimental design, outcome measure, and population. Despite the full range of ICC values being between 0 and 1, ICC values estimated from real data will have a restricted range depending on the context. For example, the ICC estimates reported by Hedges and HedbergReference Hedges and Hedberg 13 range from 0.045 to 0.271 in educational research settings. Yet ICC values as low as 0.05 and 0.10 can result in severe type-I error biases if clustering is ignored in the associated analyses.Reference Hox and Maas 44 , Reference Muthen and Satorra 45 In the context of measurement and reliability, typical ICC values can be much higher, with a common categorization of ICC values of less than 0.5, 0.5–0.75, 0.75–0.9, and greater than 0.9 being generally interpreted as indicating poor, moderate, good, and excellent reliability, respectively.Reference Koo and Li 46 Essentially, for a measurement to be considered reliable in this context, the level-1 variance needs to be much smaller compared to the level-2 variance. While these classifications are not universal and the context of the study should always be considered, we mention this categorization to contextualize the expected magnitude for ICC estimates when used as a reliability measure.

2.3 Review of past methods to handle missing ICC values

In the following section, we review the methods that have been proposed when an ICC estimate is needed for a prospective power analysis or a meta-analysis of clustered data. These methods are not evaluated in this study due to the limitations that we will present in this section. However, these methods help inform some of the problems that should be considered when tackling this methodological dilemma.

2.3.1 Single-value imputation methods

As stated earlier, an inaccurate ICC value can have negative consequences on the result of either a power analysis or a meta-analysis of CRTs. One possible method used to estimate an ICC in the case of a prospective power analysis is to run a pilot studyReference Rhoads 47 entailing a much smaller dataset than the proposed full study. However, a small pilot study yields imprecise level-1 and level-2 variance estimates and, consequently, an imprecise ICC estimate. In addition, funding and time constraints further limit the practicality of running a pilot study.

Another option is to impute an ICC value from a prior study with a similar design. In educational and medical research, several investigators have compiled typical design parameters, including sample sizes and associated ICC values, for multilevel experimental designs from data collected at the cluster unit or national level for single-value imputation purposes.Reference Hedges and Hedberg 13 , Reference Hedberg and Hedges 15 , Reference Bloom, Richburg-Hayes and Black 48 These authors have found that ICCs varied depending on a number of design characteristics, including, for example, the outcome measure, age, and socioeconomic status.

When researchers are unable to find studies with sufficiently similar design parameters, they employ ad hoc procedures to impute an ICC. For example, Puzio et al.Reference Puzio, Colby and Algeo-Nichols 19 imputed a value of 0.2 for an ICC approximation based on ICC values that Hedges and HedbergReference Hedges and Hedberg 13 reported, where the mean (across grade level) ICC for reading outcomes was 0.224. In another example, Graham et al.Reference Graham, Kiuhara and MacKay 20 took the median of the ICC values across all educational outcomes reported in Hedges and Hedberg.Reference Hedges and Hedberg 13 The What Works Clearinghouse Procedures Handbook v. 5.0 11 recommends imputing an ICC value of 0.20 for achievement outcomes and 0.10 for behavioral outcomes. However, those values may be inaccurate and may not be relevant to all outcome subtypes and study designs more generally. And as noted earlier, inaccurate ICCs can negatively impact results from a priori power and meta-analytic inferences.

2.3.2 Confidence interval estimates of the ICC

Instead of only imputing a value from a previous study of similar design, other researchers have suggested adjusting for potential uncertainty in the ICC parameter by constructing confidence interval estimates and using the upper limit as the imputed value.Reference Donner and Wells 21 Using the variance formulas discussed in detail later, confidence intervals for the ICC value can be constructed. To avoid under-powering a study, applied researchers could use the upper limit of a 95% confidence interval estimate of $\rho $ as a conservative ICC estimate to substitute in a power analysis. However, use of the upper limit value can project a larger than necessary sample sizeReference Donner and Wells 21 and result in less accurate adjustments when meta-analyzing effect sizes from clustered data.
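The upper-limit strategy can be sketched as follows, assuming a normal-theory (Wald) interval built from the large-sample variance attributed to Fisher (Equation (2.7)) for a balanced design. The input values and the function names are hypothetical, and the design effect 1 + (n − 1)ρ is a standard quantity used here only to show how the upper limit inflates the implied sample size.

```python
import math


def fisher_large_sample_var(icc: float, n: float, j: int) -> float:
    """Equation (2.7): large-sample variance of an ICC estimate
    for j clusters of common size n."""
    return (2.0 * (1.0 - icc) ** 2 * (1.0 + (n - 1.0) * icc) ** 2
            / (n * (n - 1.0) * (j - 1.0)))


def wald_upper_limit(icc: float, n: float, j: int, z: float = 1.959964) -> float:
    """Upper limit of a normal-theory 95% interval, truncated at 1."""
    se = math.sqrt(fisher_large_sample_var(icc, n, j))
    return min(1.0, icc + z * se)


# Hypothetical prior study: ICC estimate 0.10 from 30 clusters of 20 units.
icc_hat, n, j = 0.10, 20, 30
upper = wald_upper_limit(icc_hat, n, j)

# Design effect implied by the point estimate versus the upper limit.
deff_point = 1.0 + (n - 1.0) * icc_hat
deff_upper = 1.0 + (n - 1.0) * upper
print(f"upper limit = {upper:.3f}")
print(f"design effect: {deff_point:.2f} (point) vs {deff_upper:.2f} (upper limit)")
```

In this assumed scenario the upper limit is well above the point estimate, so a power analysis based on it would call for a noticeably larger sample than one based on the estimate itself.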

2.3.3 Utilizing Bayesian methods to estimate ICC values

Another proposed method of obtaining ICC estimates utilizes a Bayesian framework, which is not often implemented by applied researchers in social science research. Instead of hypothesizing a single true ICC value, a plausible prior distribution can be specified to derive a posterior distribution of ICC values. To capture reasonable ICC estimates for analyses, SpiegelhalterReference Spiegelhalter 22 discussed the use of eight different priors that could be placed on the multilevel model’s variance components and the ICC estimate (Equation (2.2)). SpiegelhalterReference Spiegelhalter 22 evaluated the priors through a Markov chain Monte Carlo (MCMC) demonstration and found that the inverse gamma priors, the log-uniform prior, and the uniform shrinkage prior all led to substantially smaller estimates of the ICC, which would result in inflated statistical power in the prospective designs. Findings from this study support using the uniform prior for the ICC, or the uniform shrinkage prior, among the uninformative prior options when estimating an ICC. The work of SpiegelhalterReference Spiegelhalter 22 relies on the availability of the raw data in order to specify the likelihood function. However, when conducting power analyses or meta-analyses, previous estimates of ICCs are rarely accompanied by the raw data, and researchers must rely on summary statistics instead.

To develop a method that relies on summary statistics instead of raw data when estimating an ICC, Turner et al.Reference Turner, Toby Prevost and Thompson 23 compared possible forms for the likelihood function of ICC estimates using methods described in Donner and WellsReference Donner and Wells 21 and Ukoumunne.Reference Ukoumunne 49 The proposed likelihood distribution functions do not rely on raw data and only require values for the ICC estimate, the total number of observations, and the total number of clusters. Turner et al.Reference Turner, Toby Prevost and Thompson 23 conducted an empirical MCMC demonstration to construct posterior distributions for the true ICC value using the various forms of the likelihood when paired with a Uniform(0, 1) prior. Then they transformed the resulting posterior distribution into a distribution of power values. As noted earlier and discussed in greater detail later, the distributional form of an ICC is unknown, and the methods of Turner et al.Reference Turner, Toby Prevost and Thompson 23 rely heavily on the assumptions made about the likelihood. Furthermore, their demonstration used only one or six ICC estimates, so the conclusions were entirely dependent on the prior and likelihood choices. Thus, while there are a number of ad hoc suggestions for imputing an ICC estimate, there are challenges identified with each. In the following sections, we describe how a more principled synthesis of prior ICC estimates could be used to impute a reasonable value.
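The general idea of a summary-statistic posterior can be illustrated with a simple grid approximation. This is only a sketch and not the procedure of Turner et al.: it assumes a normal working likelihood for the observed ICC estimate whose variance follows Equation (2.7), a Uniform(0, 1) prior, and a balanced design in which the cluster size is the total sample size divided by the number of clusters. All names and input values are hypothetical.

```python
import math


def fisher_var(rho: float, n: float, j: int) -> float:
    """Equation (2.7) evaluated at a candidate value of rho."""
    return (2.0 * (1.0 - rho) ** 2 * (1.0 + (n - 1.0) * rho) ** 2
            / (n * (n - 1.0) * (j - 1.0)))


def grid_posterior(icc_hat: float, total_n: int, n_clusters: int,
                   grid_size: int = 999):
    """Posterior over rho on an even grid in (0, 1) under a flat prior.

    Assumption: icc_hat ~ Normal(rho, v(rho)) as a working likelihood.
    """
    n = total_n / n_clusters  # average cluster size
    grid = [(g + 1) / (grid_size + 1) for g in range(grid_size)]
    dens = []
    for rho in grid:
        v = fisher_var(rho, n, n_clusters)
        dens.append(math.exp(-0.5 * (icc_hat - rho) ** 2 / v)
                    / math.sqrt(2.0 * math.pi * v))
    total = sum(dens)
    return grid, [d / total for d in dens]


# Hypothetical summary statistics: ICC 0.10, 600 observations, 30 clusters.
grid, post = grid_posterior(0.10, total_n=600, n_clusters=30)
post_mean = sum(r * p for r, p in zip(grid, post))
print(f"posterior mean of rho = {post_mean:.3f}")
```

Because the result depends entirely on the assumed likelihood and prior, the sketch also illustrates the limitation noted above: with a single ICC estimate, different likelihood choices can lead to different posterior distributions.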

2.4 Characteristics of the sampling distribution of ICCs

Pooling ICC estimates requires specifying a sampling variance for the ICC. Despite the sampling distribution of the ICC being unknown due to it being a ratio of variances, several methods have been proposed to construct confidence intervals for the ICC to capture uncertainty in the parameter. In the scenario where there are equal cluster sizes and large samples, there are closed-form approximations for constructing confidence intervals of ICC estimates.Reference Fisher 1 , Reference Donner 50 For designs with unequal cluster sizes, closed-form approximations for confidence intervals of ICCs have been proposed assuming either an F-statisticReference Searle 51 or a large-sample approximation to the variance of an ICC estimate. UkoumunneReference Ukoumunne 49 and Donner and WellsReference Donner and Wells 21 detail several methods for constructing confidence intervals for $\rho $ where the sampling distribution of ICC estimates and transformed ICC estimates are assumed to follow a normal distribution.Reference Fisher 1 , Reference Smith 2 , Reference Swiger, Harvey, Everson and Gregory 26 , Reference Hedges, Hedberg and Kuyper 27 , Reference Donner and Koval 52

There have been studies that explored the empirical ICC distributions based on real data using observed variability and simulated data using assumed models and parameters. The shape of the empirical distribution of ICC estimates depends on the magnitude of the true ICC parameter and data context. For example, Hedberg and HedgesReference Hedberg and Hedges 15 assessed the empirical distribution of 3,555 within-district ICC estimates they collected and found it to be non-normal and highly positively skewed with a median ICC value of $0.056$. But, as they noted, the empirical distribution of ICC estimates in their context accounts for both the sampling variance of a school-level ICC estimate and between-district variance (comparable to a between-study variance in a meta-analytic context), which could impact the shape of the distribution. Furthermore, when ICC estimates are near zero, the estimates would pile up near the lower bound of zero.

Simulation studies that do not assume a distribution of population ICC estimates, but instead assume only a single underlying ICC parameter value, can evaluate the shape of the ICC estimates’ sampling distribution by using an empirical distribution of simulated ICC estimates under specified models or other parameters. Liljequist et al.Reference Liljequist, Elfving and Roaldsen 53 conducted a Monte Carlo simulation to evaluate ICC distributions in the context of ICC as a measure of reliability. They assumed that the level-1 and level-2 residual terms were normally distributed and calculated an ICC value using an ANOVA model. When the true ICC value was 0.8, the empirical distribution was highly left-skewed, and when the true ICC value was 0.5 or 0.64, the resulting empirical distributions of ICC estimates were more symmetric but still skewed to the left. The authors also evaluated the impact of increasing the number of cluster units (participants in this context) and found that the empirical distribution becomes narrower as the number of clusters increases. They also examined the magnitude of within-cluster units (measurements in this context). The values of the number of within-cluster units they evaluated ranged from 2 to 10 measurements per subject, which is small in comparison to the values of the number of within-cluster units in CRT contexts. They found that increasing the number of within-cluster units also decreases the width of the empirical distribution.
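A simulation of this kind can be sketched in a few lines. The code below is a minimal illustration and not a reproduction of the design of Liljequist et al.: it assumes a balanced one-way random effects model with normally distributed level-1 and level-2 terms, total variance fixed at 1, and the one-way ANOVA estimator of the ICC. The function names, the seed, and the condition shown (20 clusters of 5 units, true ICC of 0.8) are hypothetical.

```python
import random
import statistics


def anova_icc(data):
    """One-way ANOVA estimator of the ICC for a balanced list of clusters."""
    j = len(data)
    n = len(data[0])
    cluster_means = [sum(c) / n for c in data]
    grand_mean = sum(cluster_means) / j
    msb = n * sum((m - grand_mean) ** 2 for m in cluster_means) / (j - 1)
    msw = sum((y - m) ** 2
              for c, m in zip(data, cluster_means) for y in c) / (j * (n - 1))
    return (msb - msw) / (msb + (n - 1) * msw)


def simulate_icc_estimates(true_icc, j, n, reps, seed=1):
    """Draw `reps` datasets and return the ICC estimate from each."""
    rng = random.Random(seed)
    sd_u = true_icc ** 0.5          # level-2 SD (total variance fixed at 1)
    sd_e = (1.0 - true_icc) ** 0.5  # level-1 SD
    estimates = []
    for _rep in range(reps):
        data = []
        for _cluster in range(j):
            u = rng.gauss(0.0, sd_u)
            data.append([u + rng.gauss(0.0, sd_e) for _unit in range(n)])
        estimates.append(anova_icc(data))
    return estimates


def sample_skewness(x):
    """Moment-based skewness coefficient."""
    m = statistics.fmean(x)
    s = statistics.pstdev(x)
    return sum(((xi - m) / s) ** 3 for xi in x) / len(x)


estimates = simulate_icc_estimates(true_icc=0.8, j=20, n=5, reps=2000)
print(f"mean = {statistics.fmean(estimates):.3f}, "
      f"skewness = {sample_skewness(estimates):.2f}")
```

Varying `j` and `n` in this sketch is one way to explore how the width and asymmetry of the empirical distribution change with the number of clusters and the number of within-cluster units.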

Assumptions about the ICC sampling distribution following a normal distribution are likely often violated in practice (particularly for smaller samples) and negate the validity of using confidence interval estimates of the ICC, which have traditionally assumed a normal distribution for ICC estimates.

Regarding the sampling variance, $v_{\hat{\rho}}$, of an ICC estimate, researchers have derived different formulas for large samples. SmithReference Smith 2 proposed a large sample approximation to the variance of the ICC estimate by using the weighted mean cluster size ($n_0$):

$$ \begin{align} n_0 = \frac{1}{j-1}\left[N-\sum_{i=1}^j\frac{n_i^2}{N}\right], \end{align} $$

where j is the number of clusters, N is the total sample size, and $n_i$ is the number of units in the ith cluster. The formula derived by SmithReference Smith 2 for the variance of an ICC estimate is as follows:

$$ \begin{align} \begin{aligned} v_{\hat{\rho}}&= \frac{2(1-\hat{\rho})^2}{n_0^2}\Bigg\{\frac{[1+\hat{\rho}(n_0-1)]^2}{(N-j)} \\& \quad + \frac{(j-1)(1-\hat{\rho})[1+\hat{\rho}(2n_0-1)] + \hat{\rho}^2\left[\sum n_i^2 - 2N^{-1}\sum n^3_i + N^{-2}\left(\sum n_i^2\right)^2\right]}{(j-1)^2}\Bigg\}, \end{aligned} \end{align} $$

where $\hat{\rho}$ is an estimate of $\rho $.
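The weighted mean cluster size and Smith's variance formula, Equation (2.4), translate directly into code. The following is a minimal sketch that takes only an ICC estimate and a list of cluster sizes; the function names and the example sizes are hypothetical.

```python
def weighted_mean_cluster_size(sizes):
    """n_0 = [N - sum(n_i^2) / N] / (j - 1) for cluster sizes n_1..n_j."""
    j = len(sizes)
    total = sum(sizes)
    return (total - sum(n * n for n in sizes) / total) / (j - 1)


def smith_var(icc, sizes):
    """Equation (2.4): Smith's large-sample variance of an ICC estimate."""
    j = len(sizes)
    total = sum(sizes)
    n0 = weighted_mean_cluster_size(sizes)
    s2 = sum(n ** 2 for n in sizes)
    s3 = sum(n ** 3 for n in sizes)
    # First term inside the braces: within-cluster contribution.
    term1 = (1 + icc * (n0 - 1)) ** 2 / (total - j)
    # Second term inside the braces: between-cluster contribution.
    term2 = ((j - 1) * (1 - icc) * (1 + icc * (2 * n0 - 1))
             + icc ** 2 * (s2 - 2 * s3 / total + s2 ** 2 / total ** 2)
             ) / (j - 1) ** 2
    return 2 * (1 - icc) ** 2 / n0 ** 2 * (term1 + term2)


# Hypothetical unbalanced design with three clusters.
sizes = [5, 10, 15]
print(weighted_mean_cluster_size(sizes))   # smaller than the simple mean of 10
print(smith_var(0.10, sizes))
```

With equal cluster sizes, `weighted_mean_cluster_size` returns the common cluster size; with unequal sizes it falls below the arithmetic mean, which is why $n_0$ rather than the simple average appears in the formula.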

The formula for $v_{\hat{\rho}}$ proposed by Swiger et al.Reference Swiger, Harvey, Everson and Gregory 26 is a variation of Smith’s approach, Equation (2.4), with a simpler formula for the variance of the ICC estimate:

$$ \begin{align} v_{\hat{\rho}} = \frac{2(N-1)(1-{\hat{\rho}})^2[1+(n_0-1){\hat{\rho}}]^2}{n_0^2(N-j)(j-1)}. \end{align} $$

Using the delta method, Hedges et al.Reference Hedges, Hedberg and Kuyper 27 derived the large sample variance of an ICC estimate for a two-level model in designs with equal cluster sizes as follows:

$$ \begin{align} v_{\hat{\rho}}= \frac{(1-{\hat{\rho}})^2v_2}{(\sigma_e^2+\sigma^2_u)^2}, \end{align} $$

where $v_2$ is the variance of $\sigma ^2_u$. Because the variance of the level-2 variance is commonly not reported in primary research studies, applied meta-analysts will often be unable to use this formula, unlike the other formulas, which do not require it.

Hedges et al.Reference Hedges, Hedberg and Kuyper 27 stated that in balanced designs with equal cluster sizes, Equation (2.6) is equivalent to the formulation derived by FisherReference Fisher 1 for large samples. The formula derived by FisherReference Fisher 1 is as follows:

$$ \begin{align} v_{\hat{\rho}}=\frac{2(1-{\hat{\rho}})^2[1+(n-1){\hat{\rho}}]^2}{n(n-1)(j-1)}, \end{align} $$

where n is the average cluster size.

For designs with unequal cluster sizes, Donner and KovalReference Donner and Koval 52 derived a formula for the large sample variance of $\rho $ estimates as follows:

$$ \begin{align} v_{\hat{\rho}}=\frac{2N(1-{\hat{\rho}})^2}{N\sum_{i=1}^{j}n_i(n_i-1)\hat{V_i}\hat{W_i}^{-2}-{\hat{\rho}}^2\Big[\sum_{i=1}^{j}n_i(n_i-1)\hat{W_i}^{-1}\Big]^2}, \end{align} $$

where $\hat {W_i} = 1 + (n_i-1){\hat{\rho}}$ and $\hat {V_i} = 1 + (n_i-1){\hat{\rho}}^2$. With equal cluster sizes, when $n_1=\cdots =n_j=n$, Equation (2.8) reduces to Fisher’s formulation, Equation (2.7).
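The relationship between Equations (2.7) and (2.8) can be checked numerically. The sketch below implements both formulas as printed and evaluates them for a hypothetical balanced design; the function names and input values are assumptions made for illustration. Under the formulas as written, the two values agree up to the factor j/(j − 1), which is negligible when the number of clusters is large.

```python
def fisher_var(icc, n, j):
    """Equation (2.7): Fisher's large-sample variance, common cluster size n."""
    return (2 * (1 - icc) ** 2 * (1 + (n - 1) * icc) ** 2
            / (n * (n - 1) * (j - 1)))


def donner_koval_var(icc, sizes):
    """Equation (2.8): Donner and Koval's variance for unequal cluster sizes."""
    total = sum(sizes)
    sum_vw2 = 0.0  # sum of n_i (n_i - 1) V_i / W_i^2
    sum_w = 0.0    # sum of n_i (n_i - 1) / W_i
    for n_i in sizes:
        w_i = 1 + (n_i - 1) * icc
        v_i = 1 + (n_i - 1) * icc ** 2
        sum_vw2 += n_i * (n_i - 1) * v_i / w_i ** 2
        sum_w += n_i * (n_i - 1) / w_i
    return 2 * total * (1 - icc) ** 2 / (total * sum_vw2 - icc ** 2 * sum_w ** 2)


# Hypothetical balanced design: 30 clusters of 20 units, ICC estimate 0.10.
icc_hat, n, j = 0.10, 20, 30
v_fisher = fisher_var(icc_hat, n, j)
v_dk = donner_koval_var(icc_hat, [n] * j)
print(v_fisher, v_dk, v_fisher / v_dk)
```

For unbalanced designs, `donner_koval_var` can be called with the actual list of cluster sizes, whereas `fisher_var` needs a single representative cluster size.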

Finally, there is also Fisher’s method using a large sample approximation to the SE of a normalizing transformation of $\rho $.Reference Fisher 29 First, the ICC estimates are assumed to be normally transformed using the following:

and the corresponding variance formula is

In summary, FisherReference Fisher 1 derived a formula for $v_{\hat{\rho}}$ that requires the ICC estimate, the average cluster size, and the number of clusters. Swiger et al.Reference Swiger, Harvey, Everson and Gregory 26 presented a formula that is the same as Fisher’s formula except scaled by a function of the total number of individuals in the sample. Hedges et al.Reference Hedges, Hedberg and Kuyper 27 derived a formula requiring knowledge of the variance of the level-2 random effects variance for a primary study, which is often not reported in primary studies. Donner and KovalReference Donner and Koval 52 and SmithReference Smith 2 derived formulas for unbalanced cluster sizes; the Donner and KovalReference Donner and Koval 52 formula is equivalent to Fisher’s formula when cluster sizes are equal. FisherReference Fisher 29 presented a formula that uses a normalizing transformation of the ICC estimate and requires only the weighted mean cluster size and the ICC estimate. The aim of this study is to compare how well these variance formulas work when quantitatively synthesizing ICC estimates.
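For orientation, one commonly cited form of Fisher’s normalizing transformation for the ICC, together with a large-sample variance used in the confidence-interval literature for unbalanced designs, can be written as below. This is a sketch under stated assumptions: the use of $n_0$ in place of a common cluster size, the symbols $z_{\hat{\rho}}$ and $v_{z}$, and the exact variance expression are assumptions and may differ in detail from the expressions this study relies on.

$$ \begin{align} z_{\hat{\rho}} = \frac{1}{2}\ln\left[\frac{1+(n_0-1)\hat{\rho}}{1-\hat{\rho}}\right], \qquad v_{z} \approx \frac{1}{2}\left[\frac{1}{j-1}+\frac{1}{N-j}\right]. \end{align} $$

Under this form, a value on the transformed scale is mapped back to the ICC scale by inverting the transformation:

$$ \begin{align} \hat{\rho} = \frac{\exp(2z_{\hat{\rho}})-1}{\exp(2z_{\hat{\rho}})+n_0-1}. \end{align} $$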

Many simulation studies have compared confidence interval methods for single (not pooled) ICC estimates, which implicitly evaluate the performance of the different sampling variance formulas. Among the formulas we presented above, Donner and WellsReference Donner and Wells 21 examined those proposed by Swiger et al.,Reference Swiger, Harvey, Everson and Gregory 26 Fisher,Reference Fisher 29 and SmithReference Smith 2 by assessing confidence interval coverage probabilities for $\rho $ when the number of lower-level units within clusters varied across clusters (i.e., an unbalanced design). They found that the Swiger formulaReference Swiger, Harvey, Everson and Gregory 26 had excellent coverage for $\rho $ values less than $0.3$ and adequate coverage up to $\rho = 0.7$. Additionally, the Smith formulaReference Smith 2 performed well across all values of $\rho $, and they recommended its use in practice. In contrast, the Fisher normalizing transformation formula (Fisher TF)Reference Fisher 29 consistently performed conservatively relative to the other variance formulas they evaluated.

In the context of CRTs for values of $\rho \leq 0.3$, UkoumunneReference Ukoumunne 49 compared the performance of the Smith formula, Swiger formula, Fisher large samples formula (Equation (2.7)),Reference Fisher 1 and Fisher TF formula in an unbalanced design. They found that although the Smith estimator provided better coverage than the other formulas based on the untransformed ICC, the Fisher TF formula had substantially higher coverage across all large-sample approximations they evaluated. Coverage for the Fisher TF formula also improved as the number of clusters increased. In contrast, the variance formulas for the untransformed ICC performed particularly poorly in the condition with a small number of clusters ($j=10$) and small average cluster size ($n_0 = 10$), where coverage proportions for all three methods fell below 90%. Overall, UkoumunneReference Ukoumunne 49 also found that the precision of all confidence interval methods improved as the average cluster size and the number of clusters increased, but worsened as the magnitude of the ICC parameter increased.

Demetrashvili et al.Reference Demetrashvili, Wit and van den Heuvel 54 also evaluated confidence intervals for the ICC from a one-way random effects model, where they proposed new approaches (one assuming an F-distribution and using the Satterthwaite approximation, and another assuming a Beta distribution). They compared the method proposed by Searle,Reference Searle 51 the Fisher TF formula, and the Smith formula across a large range of ICC parameter values (0.1–0.9). In conditions where the number of level-1 units was smaller than the number of level-2 units, the authors found that the Smith formula had poor confidence interval coverage rates. They also found that the Fisher TF formula resulted in conservative coverage probabilities across all values of $\rho $ that they evaluated.

Across all of these methods, it is unclear which formulation, when used for meta-analytic pooling, would result in an accurate pooled ICC estimate. Furthermore, it is unknown whether the distributional assumptions made by these methods are accurate, since the form of the sampling distribution of the ICC has not been derived. Methods for pooling ICC estimates that are agnostic to distributional form, therefore, may provide an ideal alternative because they do not require strong assumptions about the distributional shape of the statistic being pooled.

2.5 Meta-analytic methods to pool ICC estimates

In the next section, we review the most commonly used meta-analytic methods of relevance to this study. Ultimately, the hope is that an applied researcher could use these methods to pool ICC estimates from prior studies to be imputed for use in a secondary analysis (such as a power analysis of CRTs or meta-analysis of effects from clustered data), and that the resulting pooled ICC reflects the relevant population and research context.

One of the fundamental applications of meta-analysis entails pooling effect sizes from primary studies to obtain an overall effect size for the set of studies included in the analysis. The type of effect size varies depending on the type of data and the research questions of the primary studies, and most typically includes SMDs, log odds ratios, and correlations.Reference Cooper, Hedges and Valentine 55 Typically, an effect size is assumed to follow a normal distribution (otherwise, it is transformed to follow an approximate normal distribution) with a known sampling SE.Reference Hedges 56 The standardized effect size for study i is generically represented as $T_i$. For this study, the ICC estimate is the “effect size” that is being pooled using meta-analytic techniques.

In meta-analytic pooling, assume that there are k studies included in a meta-analysis and that each study reports one independent effect size (the simplest scenario), $T_i$, for studies $i= 1,\ldots ,k$. The effect sizes are pooled using the following:

$$ \begin{align} \hat{\mu} = \frac{\sum_{i=1}^k w_iT_i}{\sum_{i=1}^k w_i}, \end{align} $$

where $w_i$ are model-dependent weights specified by the analyst. The most typical weight applied is the inverse of the effect size’s variance estimate.
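The weighted mean above is straightforward to compute. The following minimal sketch pools three ICC estimates with inverse-variance weights; the function name, the ICC values, and their variances are hypothetical and chosen only to make the arithmetic easy to follow.

```python
def pooled_estimate(effects, weights):
    """Weighted mean: sum(w_i * T_i) / sum(w_i)."""
    if len(effects) != len(weights) or not effects:
        raise ValueError("effects and weights must be non-empty and equal length")
    return sum(w * t for w, t in zip(weights, effects)) / sum(weights)


# Hypothetical ICC estimates and sampling variances from three studies.
icc_estimates = [0.05, 0.12, 0.20]
variances = [0.0004, 0.0010, 0.0025]

# Inverse-variance weights (no between-study variance component).
weights = [1.0 / v for v in variances]
pooled = pooled_estimate(icc_estimates, weights)
print(f"pooled ICC = {pooled:.4f}")
```

Because the most precise study receives the largest weight, the pooled value sits closer to 0.05 than the unweighted mean of the three estimates would. This is also why the choice of variance formula matters: it determines the weights.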

2.5.1 Using REML estimation to estimate the pooled ICC

While there are many estimation methods for random effects meta-analytic models, REML is the default of the meta-analytic R software package metafor Reference Viechtbauer 57 and is commonly used in most meta-analysis packages. Unlike other maximum likelihood estimators that negatively bias the effect size estimates’ variance component, REML corrects for this and has been adapted for meta-analytic random effects pooling.Reference Raudenbush 58 , Reference Viechtbauer 59
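To show what REML estimation involves in the simplest random effects model, the sketch below iterates the fixed-point form of the REML score equation for the between-study variance. It is an illustration under stated assumptions, not the algorithm implemented in any particular package: the function name, starting value, truncation at zero, convergence rule, and input values are all hypothetical choices.

```python
def reml_random_effects(effects, variances, tol=1e-12, max_iter=1000):
    """Return (pooled mean, tau^2) for a random effects model via REML.

    Fixed-point update:
        tau2 = sum(w^2 * ((T - mu)^2 - v)) / sum(w^2) + 1 / sum(w),
    with w = 1 / (v + tau2), truncated at zero.
    """
    tau2 = 0.0
    for _ in range(max_iter):
        w = [1.0 / (v + tau2) for v in variances]
        sw = sum(w)
        mu = sum(wi * t for wi, t in zip(w, effects)) / sw
        num = sum(wi ** 2 * ((t - mu) ** 2 - v)
                  for wi, t, v in zip(w, effects, variances))
        new_tau2 = max(0.0, num / sum(wi ** 2 for wi in w) + 1.0 / sw)
        converged = abs(new_tau2 - tau2) < tol
        tau2 = new_tau2
        if converged:
            break
    # Recompute the pooled mean with the final weights.
    w = [1.0 / (v + tau2) for v in variances]
    mu = sum(wi * t for wi, t in zip(w, effects)) / sum(w)
    return mu, tau2


# Hypothetical ICC estimates with a common sampling variance.
mu_hat, tau2_hat = reml_random_effects([0.1, 0.2, 0.3, 0.4], [0.005] * 4)
print(f"pooled ICC = {mu_hat:.3f}, tau^2 = {tau2_hat:.5f}")
```

When all sampling variances are equal, the REML estimate of the between-study variance reduces to the sample variance of the estimates minus the common sampling variance, which gives a convenient check on the iteration.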

2.5.2 Using robust variance estimation to estimate the pooled ICC

RVEReference Hedges, Tipton and Johnson 34 is an alternative meta-analysis regression model estimation approach. It is typically employed to account for the dependence among multiple effect sizes within studies when estimating SEs. More generally, RVE uses the weighted least squares (WLS) framework, which incorporates a set of weighting matrices in estimation. It was adapted by Tipton and PustejovskyReference Tipton and Pustejovsky 60 for meta-analyses involving only a small number of studies, using the Satterthwaite degrees-of-freedom correction. When there is no dependence among the effect sizes, as is the case with a random effects model with independent effect sizes, RVE can also be used to obtain robust estimates of the variance of the pooled effect because RVE is robust to the distributional shape of the effect size being pooled.Reference Hedges, Tipton and Johnson 34 RVE estimates parameters using method-of-moment estimators proposed by Hedges et al.Reference Hedges, Tipton and Johnson 34

RVE does not require knowledge of the exact covariance structure between pairs of effect size estimates because the variances of the meta-regression coefficients are estimated with sandwich estimators using the cross-product of observed residuals to estimate the covariance between pairs of effect sizes rather than the known covariances.Reference Hedges, Tipton and Johnson 34 , Reference Tipton and Pustejovsky 60 RVE is used with a variety of data structures, including those that are nested or correlated.

Even though any set of weights can be used, the inverse variance weights are typically specified because if the working model is correct, using inverse variance weights will result in a fully efficient estimator.Reference Hedges, Tipton and Johnson 34 There are different formulations of the inverse variance weights depending on the assumptions made about the working model.Reference Hedges, Tipton and Johnson 34 Because the true covariance structure between effect sizes is typically unknown, exact inverse variance weights cannot be calculated. The approximate inverse variance weights improve efficiency compared to any other type of weights.Reference Hedges, Tipton and Johnson 34

Since we evaluate only one ICC per study in this study, the working model will also be a random-effects meta-regression model, and the weight will be specified as $W_i=\frac {1}{(\hat {v}_{\hat{\rho}i}+\hat {\tau }_\rho ^2)}$, where $\hat{v}_{\hat{\rho}i}$ is the sample variance estimate for $\hat{\rho}$ from study $i$ and $\hat{\tau}_{\rho}^2$ is the between-study variance estimate for the ICC. However, instead of using REML for estimation, Hedges et al.Reference Hedges, Tipton and Johnson 34 used method of moments estimation to estimate the between-study variance $\tau _{\rho }^2$ and the weights in the RVE technique. So, essentially, when the working model is a random effects model with independent effect sizes (as we use in the current study), the comparison between RVE and REML is really a comparison between REML and the method of moments estimation of the between-study variance of the ICC, $\tau _\rho ^2$.
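The weights described above can be sketched with a method of moments estimate of the between-study variance. The code uses a DerSimonian-Laird-type estimator as a stand-in; treating it as the form the method of moments estimator takes for independent effect sizes is an assumption of this sketch, and the function names and input values are hypothetical.

```python
def mom_tau2(effects, variances):
    """Method of moments (DerSimonian-Laird-type) between-study variance."""
    k = len(effects)
    w = [1.0 / v for v in variances]            # fixed-effect weights
    sw = sum(w)
    mu_fixed = sum(wi * t for wi, t in zip(w, effects)) / sw
    q = sum(wi * (t - mu_fixed) ** 2 for wi, t in zip(w, effects))
    c = sw - sum(wi ** 2 for wi in w) / sw
    return max(0.0, (q - (k - 1)) / c)          # truncate at zero


def random_effects_weights(variances, tau2):
    """W_i = 1 / (v_i + tau^2)."""
    return [1.0 / (v + tau2) for v in variances]


# Hypothetical ICC estimates with a common sampling variance.
icc_estimates = [0.1, 0.2, 0.3, 0.4]
variances = [0.005] * 4
tau2_hat = mom_tau2(icc_estimates, variances)
w_re = random_effects_weights(variances, tau2_hat)
print(f"tau^2 = {tau2_hat:.5f}, weights = {[round(x, 2) for x in w_re]}")
```

With equal sampling variances, this estimator and REML give the same between-study variance; the two can differ when sampling variances are unequal, which is the comparison at issue here.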

RVE has two advantages over typical meta-analytic pooling that uses estimation methods like REML. First, the RVE estimate of the variance of the weighted mean effect size is an unbiased estimate regardless of the choice of weights.Reference Hedges, Tipton and Johnson 34 The lack of reliance on the choice of weights means a lack of dependence on the effect size estimate variance specification because the weights are typically a function of those variances. Second, RVE performs well without requiring strict distributional assumptions for analyses of effect size estimates. Because the aim of this study is to pool ICC estimates (as the “effect sizes” being meta-analytically pooled), these advantages are useful because the distributional form and the variance of the sampling distribution of ICC estimates are unknown. For optimal performance, other models or methods of pooling rely on distributional assumptions or weight specification for accurate estimation of parameters.
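The first advantage can be made concrete for the intercept-only case with one effect size per study, where the sandwich variance of the weighted mean is the sum of squared weighted residuals divided by the squared sum of weights. The sketch below is the basic, uncorrected form and omits the small-sample adjustments of Tipton and Pustejovsky; the function name and input values are hypothetical.

```python
def robust_pooled(effects, weights):
    """Weighted mean and its basic sandwich (robust) variance.

    var_robust = sum(w_i^2 * (T_i - mu)^2) / (sum(w_i))^2
    No small-sample correction is applied in this sketch.
    """
    sw = sum(weights)
    mu = sum(w * t for w, t in zip(weights, effects)) / sw
    var_robust = sum(w ** 2 * (t - mu) ** 2
                     for w, t in zip(weights, effects)) / sw ** 2
    return mu, var_robust


# Hypothetical ICC estimates pooled with equal weights.
icc_estimates = [0.1, 0.2, 0.3, 0.4]
mu_hat, v_robust = robust_pooled(icc_estimates, [1.0] * 4)
print(f"pooled ICC = {mu_hat:.3f}, robust SE = {v_robust ** 0.5:.4f}")

# Rescaling all weights by a constant leaves both quantities unchanged.
mu_scaled, v_scaled = robust_pooled(icc_estimates, [2.0] * 4)
```

The variance is built from the observed residuals rather than from the assumed sampling variances, so a mis-specified variance formula affects the efficiency of the pooled estimate through the weights but does not enter the variance estimate directly.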

2.5.3 Applied meta-analyses of ICC estimates

Meta-analytically pooling ICC estimates is not a novel notion. Applied researchers have used these methods to find representative ICC values for specific research designs, outcome types, and populations. The methods used for meta-analyzing ICC estimates in practice vary widely.

For example, as mentioned earlier, Hedberg and HedgesReference Hedberg and Hedges 15 meta-analyzed ICC values collected from districts estimated from raw data. The two-level ICC values in the dataset were estimated for each school within each district using REML. Then, the authors used the random effects model to pool the within-district ICC estimates across districts (the random effects) using a method of moments estimator derived by Hedges and VeveaReference Hedges and Vevea 61 to account for the sampling error and the variance in the district ICC values around the population average ICC. For the variance of the ICC, they used the large sample variance derived by FisherReference Fisher 1 (see Equation (2.7)).
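For reference, a commonly cited form of Fisher's large-sample variance for a balanced design with $k$ clusters of size $n$ is $2(1-\rho)^2[1+(n-1)\rho]^2 / [n(n-1)(k-1)]$. The Python sketch below evaluates it. It assumes this balanced form corresponds to Equation (2.7), which is not reproduced in this section, and the function name and example values are hypothetical.

```python
def fisher_icc_variance(icc, n_clusters, cluster_size):
    """Large-sample variance of an ICC estimate (balanced one-way design)."""
    k, n = n_clusters, cluster_size
    if k < 2 or n < 2:
        raise ValueError("need at least 2 clusters of size 2 or more")
    return (2.0 * (1.0 - icc) ** 2 * (1.0 + (n - 1) * icc) ** 2
            / (n * (n - 1) * (k - 1)))


# Hypothetical district: 12 schools with 60 students each, ICC estimate 0.15
v_hat = fisher_icc_variance(0.15, n_clusters=12, cluster_size=60)
print(f"inverse variance weight = {1.0 / v_hat:.1f}")
```

In practice the unknown $\rho$ is replaced by its estimate, so the variance (and hence the weight) depends on the estimate itself.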

In another applied example of a meta-analysis of ICC estimates, Kivlighan et al.Reference Kivlighan, Aloe and Adams 30 meta-analyzed ICCs from CRTs of group therapy. The meta-analysis summarized 169 ICC estimates from 37 studies using RVE. The researchers used RVE to handle the within-study dependency in ICCs resulting from multiple ICC values being reported per study. For the inverse variance weights, the authors estimated the variance of the ICC, $v_{\hat{\rho}}$, using the formula proposed by Hedges et al.,Reference Hedges, Hedberg and Kuyper 27 which requires the variance of the cluster-level variance. However, there are several limitations in the methods used by Kivlighan et al.,Reference Kivlighan, Aloe and Adams 30 which should be considered when interpreting their results. First, the authors did not provide the exact form of the variance they used in the meta-analysis; they only provided the weights, so we could not verify how they were able to calculate the variances of the ICC estimates. They did not detail any assumptions they made about the variance of the cluster-level variances used when calculating the ICC estimate variances. Furthermore, they pooled level-2 ICC estimates and level-3 ICC estimates together. The range of ICC estimates at different nesting levels has been found to vary,Reference Hedges and Hedberg 13 , Reference Hedges and Hedberg 14 so pooling ICC estimates from different levels of an MLM is not appropriate.

In the context of an ICC as a reliability measure, Noble et al.Reference Noble, Scheinost and Constable 31 meta-analyzed 25 independent ICC estimates of the test–retest reliability of fMRI-based functional connectivity. They used a multilevel model (to account for studies nested within datasets) with REML estimation and, for the sampling variance, a modified version of the Fisher formula (Equation (2.7)) presented in Shoukri et al.Reference Shoukri, Al-Hassan, DeNiro, El Dali and Al-Mohanna 43 However, they also mention that they used this sampling variance formula for other types of ICCs included in their meta-analysis, beyond the one-way random-effects ICC.

Goerdten et al.Reference Goerdten, Yuan and Huybrechts 33 meta-analyzed ICC estimates as a measure of reproducibility over time for a biomarker involved in metabolism (metabolites). They also used a multilevel random-effects model to account for a study reporting multiple ICC estimates (with effects nested within authors). They did not specify the estimation method, but they conducted their analyses in metafor, which uses REML as a default. For the sampling variance for the ICC estimate, they also used the modified version of the Fisher formula presented in Shoukri et al.Reference Shoukri, Al-Hassan, DeNiro, El Dali and Al-Mohanna 43

Nicolaï et al.Reference Nicolaï, Viechtbauer, Kruidenier, Candel, Prins and Teijink 32 also meta-analyzed 29 ICC estimates across eight studies to assess the most reliable treadmill protocol for patients with peripheral arterial disease. The authors used a fixed effects meta-regression model using the Fisher TF formula for the ICC sampling error variance and accounted for the covariance of ICC estimates that were dependent using the equation for covariance given by Donner and Zou.Reference Donner and Zou 62

Overall, applied meta-analyses of ICC estimates use a variety of meta-analytic models and different variance formulas when constructing the weights. More insight is needed on how best to pool ICCs, including which formulations, assumptions, and methods work best.

2.5.4 Methodological research on meta-analyses of ICC estimates

Across two simulation studies, Ahn et al.Reference Ahn, Myers and Jin 63 evaluated the synthesis of SMD effect sizes when the primary studies were a mix of research designs, including 2-level clustered and individual randomized design studies. To compute the ICC needed for correcting effect sizes for clustering, the authors pooled the log-transformed cluster and total standard deviations (separately).Reference Raudenbush and Bryk 4 The first simulation study aimed to see how well the true ICC was captured by pooling the sampled studies’ standard deviation components. The authors fixed the number of clusters and individuals per cluster to 30 (not evaluating other cluster or per-cluster sizes) and varied the population ICC value, mean differences between treatment and control, and the number of cluster-level and individual-level studies in the sample. Across these conditions, they found that the estimated ICC was unbiased and fairly precise using relative bias and mean squared error as performance criteria.

The second simulation that the authors conducted evaluated the use of the estimated ICC in a meta-analysis. They generated a meta-analytic dataset and varied the number of studies with and without clustering, population ICC value, population mean difference, and total number of studies in the meta-analysis. They found that the overall effect size estimates that resulted from using a default ICC of 0.2 or from not correcting for clustering at all were both substantially biased.

In this study, the authors did not detail the estimation procedure they used for obtaining the cluster-level and total variance components used to calculate each study’s ICC. In addition, to pool standard deviations across studies, the scale of the outcome has to be assumed constant across all studies in the meta-analysis. This greatly limits the use of the authors’ method for synthesizing ICCs using the reported cluster and total variances. However, the substantial bias found when a generic rather than a more accurate ICC value is used for correcting effect sizes for clustering is critical and relevant to this study. These findings highlight the importance of determining the optimal procedure for pooling ICCs to obtain an accurate ICC estimate.
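The standard-deviation pooling described above can be sketched as follows. This is only an illustration under stated assumptions: it uses an unweighted average on the log scale (the weighting used by Ahn et al. is not given here), it treats the "cluster" standard deviation as the between-cluster component, and the function name and inputs are hypothetical.

```python
import math


def icc_from_pooled_log_sds(cluster_sds, total_sds):
    """ICC implied by separately pooled log cluster and log total SDs."""
    # Pool each component on the log scale (unweighted mean: an assumption)
    mean_log_cluster = sum(math.log(s) for s in cluster_sds) / len(cluster_sds)
    mean_log_total = sum(math.log(s) for s in total_sds) / len(total_sds)
    # Back-transform, then take the ratio of variances
    pooled_cluster_sd = math.exp(mean_log_cluster)
    pooled_total_sd = math.exp(mean_log_total)
    return (pooled_cluster_sd / pooled_total_sd) ** 2


# Hypothetical SDs from three studies that share the same outcome scale
print(round(icc_from_pooled_log_sds([3.0, 4.0, 3.5], [10.0, 11.0, 9.5]), 3))
```

The sketch makes the scale limitation visible: the log standard deviations are averaged directly, which is only meaningful when every study measures the outcome on the same scale.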

In summary, the methods that have been proposed to estimate an ICC from external sources (primary studies) are less than ideal. Each method has one or more of four limitations: (1) reliance on the availability of raw data, (2) requirement of strong assumptions of distributional form for the sampling distribution of ICC estimates, (3) potentially biased/imprecise ICC estimates, and (4) overly conservative ICC estimates. One potential method for addressing these shortcomings is a pooling technique typically applied to meta-analysis of effect sizes.

Limited work has been done to evaluate meta-analytic estimation methods used when pooling ICCs. In particular, best practice for specifying weights requires additional attention and guidance. Meta-analytic weights are typically a function of the effect size’s sampling error variance. Because multiple formulations for the ICC’s variance have been suggested, research on the optimal formulation for this variance is also needed.

The current study aims to address the following research question: How well does pooling of ICC estimates capture the population ICC value a) under a variety of conditions when assuming a random effects model paired with b) method of moments versus REML estimation and when c) both estimation procedures are used with different ICC estimate variance formulas in the inverse variance weights?

2.6 An application of meta-analyzing ICC estimates

To assess the performance of the various formulas for the sampling variance of an ICC and the estimation methods for the random effects model, we meta-analyzed a public dataset published by the Massachusetts Comprehensive Assessment System (MCAS). The dataset includes raw English Language Arts scores and mathematics test scores for each student. From the raw student data, we estimated a two-level ICC at the school level within each school district with five or more schools using an MLM with REML estimation. Using raw data for this demonstration enables the computation of all the sampling variance formulas. However, when gathering ICC estimates from primary studies, the Smith,Reference Smith 2 Hedges,Reference Hedges, Hedberg and Kuyper 27 and DonnerReference Donner and Koval 52 formulas will be difficult to implement because of what primary studies typically report.
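The ICC estimation step can be illustrated with a simpler stand-in. The sketch below uses the one-way ANOVA estimator for equally sized clusters rather than an MLM fitted with REML; for balanced data the two give the same estimate whenever the ANOVA between-cluster variance estimate is non-negative. The function name and toy scores are hypothetical and are not MCAS data.

```python
def anova_icc(groups):
    """One-way ANOVA estimate of the ICC for equally sized clusters."""
    k = len(groups)
    n = len(groups[0]) if groups else 0
    if k < 2 or n < 2 or any(len(g) != n for g in groups):
        raise ValueError("need at least 2 balanced clusters of size 2 or more")
    grand_mean = sum(sum(g) for g in groups) / (k * n)
    means = [sum(g) / n for g in groups]
    # Mean squares between and within clusters
    msb = n * sum((m - grand_mean) ** 2 for m in means) / (k - 1)
    msw = sum((x - m) ** 2 for g, m in zip(groups, means) for x in g) / (k * (n - 1))
    sigma2_between = max(0.0, (msb - msw) / n)   # truncate negative estimates at zero
    total = sigma2_between + msw
    return sigma2_between / total if total > 0 else 0.0


# Toy district: three schools (clusters) with four student scores each
district = [[240.0, 250.0, 245.0, 255.0],
            [260.0, 262.0, 258.0, 266.0],
            [230.0, 236.0, 241.0, 233.0]]
print(f"school-level ICC = {anova_icc(district):.3f}")
```

Repeating this calculation within each district would yield one ICC estimate per district, which is the unit pooled in the analyses that follow.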

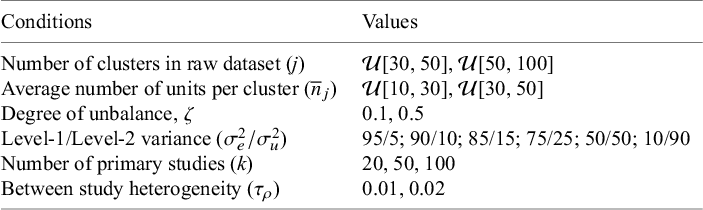

Figure 1 depicts the density distributions of the ICC estimates for each grade. The graph on the left shows the density plots of the ICC estimates when English Language Arts scores were the outcome, and the graph on the right shows the density distributions when mathematics scores were the outcome. As was the case for Hedberg and Hedges,Reference Hedberg and Hedges 15 these distributions have sources of variation from both sampling error and between-district variability. Due to these sources of variation, these empirical distributions may be a good representation of the type of data one might collect for a meta-analysis of ICC estimates from different primary studies, since between-district variability is comparable to between-study variability. The resulting empirical distributions were positively skewed.

Figure 1 Empirical distributions of ICC estimates by grade level for English Language Arts scores (left) and mathematics scores (right).

For each grade (grades 3–8), we meta-analytically pooled the ICC estimates using RVE or REML in combination with the six different inverse variance weight formulations. For this example, we evaluate only the overall pooled ICC estimates to be ultimately used in secondary analyses.

Figure 2 displays the overall pooled ICC estimates for each grade using either RVE or REML in combination with the six variance formulas as weights (left: English Language Arts scores; right: mathematics scores). The dotted red line represents the arithmetic mean of the ICC estimates. The results show that REML and RVE differ slightly in some grades when the distributions of ICC estimates are more spread out, as is the case with the mathematics scores for grades 3, 4, 6, 7, and 8. There were no differences between REML and RVE when the distributions of ICC estimates were more peaked (grade 5 for mathematics scores and grades 4, 6, 7, and 8 for the English Language Arts scores), regardless of the number of ICC estimates pooled.

Figure 2 Pooled ICC estimates by grade level using either REML or RVE estimation in meta-analytic pooling in combination with six different inverse variance weights for English Language Arts scores (left) and mathematics scores (right).

Note: Dotted red line represents the arithmetic mean of the ICC estimates.