Immigration is widely considered one of the most prominent sources of political conflict in contemporary democracies (Hooghe and Marks 2018; de Wilde et al. 2019). This has naturally made the immigration debate a major focus of scholarly attention. Yet while studies have examined how this debate unfolds in news media (van der Brug et al. 2015; Eberl et al. 2018; Helbling 2014; Hovden and Mjelde 2019; Kim and Wanta 2018), campaign materials (Charteris-Black 2006; Dancygier and Margalit 2020; Green-Pedersen and Otjes 2019), and legislative assemblies (Huysmans and Buonfino 2008; Kesting et al. 2018; Wyss et al. 2015), we know little about how citizens themselves perceive it. This is a notable gap, as citizens’ perceptions of the debate shape their sense of what is at stake and their impression of whom they are up against. In particular, whether they recognize legitimate arguments on the other side influences their tolerance of disagreement (Mutz 2002; Verkuyten et al. 2022), their feelings towards opponents (Stanley et al. 2020; Tsfati and Nir 2017), and their willingness to engage in political discussion (Schneider and Weinmann 2023).

The contentiousness of the immigration debate is often traced to a deep value conflict, leaving little room for mutual understanding. According to this view, the two sides operate with fundamentally different standards for what counts as a legitimate argument and so end up talking past one another. This paper advances an alternative account: that mutual understanding is obstructed less by fundamentally different standards of legitimacy than by the appearance of such differences—an appearance shaped and reinforced by distorted perceptions of the debate. On this view, the issue is not that citizens fail to recognize legitimate arguments on the opposing side, but that they mistakenly believe those arguments are not representative of their opponents’ way of thinking.

I test this claim using a survey experiment with open-ended questions. Respondents first formulate an argument supporting their own view, then articulate and rate one of two types of counterarguments: either the argument they believe opponents endorse, or what they consider the strongest argument for their opponents’ position. These responses reflect, respectively, the kind of opposition citizens think they are up against and their sense of what the most legitimate version of that opposition might look like. The analysis compares how respondents rate these two types of counterarguments and assesses which more closely resembles the arguments opponents actually endorse. To this end, I employ two complementary text-analysis methods.

The experiment is conducted in Norway, a low-polarization, consensus-oriented context where relatively accurate and charitable interpretations of opponents’ reasoning might be expected. Still, the findings reveal that while many acknowledge that there are legitimate arguments on the other side, they attribute what they consider much weaker arguments to their opponents. Moreover, their preferred counterarguments align more closely with opponents’ actual reasoning than most respondents realize. This pattern emerges in two ways: first, respondents who accurately portray their opponents’ arguments evaluate those arguments more favorably than those who mischaracterize them; secondly, the arguments respondents identify as the strongest for the opposing side align more closely with opponents’ actual arguments than those they attribute to them.

These findings suggest that failures of mutual understanding in the immigration debate arise less from a lack of appreciation for opponents’ reasoning than from systematic misrepresentation of it. This contributes new evidence to an already substantial literature linking political conflict to misperceptions of opponents’ views. While prior studies have shown that citizens routinely misperceive opponents’ policy positions, exaggerating the extent and prevalence of disagreement (Fernbach and Van Boven 2022; Levendusky and Malhotra 2016; Westfall et al. 2015), the evidence presented here suggests that they also misperceive the reasoning behind those positions, underestimating the scope for mutual understanding within a given disagreement. This distinct form of misrepresentation is particularly relevant when disagreement is genuine, and constructive engagement depends on accurate and fair representation of opponents’ reasoning.

Deep Disagreement or Mutual Misunderstanding?

The contentiousness of immigration debates is often traced to a deeper conflict over so-called cultural values. While this conflict has been described in various terms,Footnote 1 the overarching view is that the broadly economic divisions which once dominated political debates have been increasingly supplanted by a new cleavage centered on questions of identity and belonging in an increasingly globalized world (Hooghe and Marks 2018; de Wilde et al. 2019). On one side of this divide are those who embrace greater interconnectedness, cultural diversity, and openness; on the other, those who long for social cohesion, tradition, and a reaffirmation of national identity. Immigration, perhaps more than any other issue, brings these opposing visions into sharp relief.

Disagreements rooted in conflicting value orientations are often thought to be especially divisive because values serve as the standards by which claims and arguments acquire meaning and legitimacy. When interlocutors operate with fundamentally different values, they lack a shared framework for determining what counts as a legitimate argument and end up talking past one another. In such situations, as Sniderman (2017, 27) put it, ‘what counts as a justification is in dispute’. Thus, what to some is an appeal to patriotism and national interest is interpreted by others as xenophobic and exclusionary. Conversely, what some regard as a defense of openness and inclusion is viewed by others as an attempt to dilute or dismantle cherished traditions and long-standing ways of life. In this sense, the immigration debate, along with the broader value conflict it embodies, is no less than a clash over ‘[m]utually exclusive truths’ (Zürn and de Wilde 2016, 284).

From this, we can construct a straightforward account of what makes immigration debates so contentious. The absence of shared values to which the parties can make common appeal prevents them from recognizing the disagreement as a ‘legitimate controversy with rationales on both sides’ (Mutz 2002, 122). Unmoved or even offended by the counterarguments, each side remains confident in the unassailability of its own position. And believing reason and evidence are firmly on their side, ‘disagreement becomes an indictment of the other [side’s] intelligence, benevolence, or objectivity’ (Minson and Dorison 2022, 186).

But is this an accurate portrayal of immigration debates? Two considerations call for caution. First, value orientations may not be as mutually exclusive as the culture war narrative suggests. A robust finding in psychological research is that people broadly share a common set of core values, and differ primarily in the relative importance they assign to those values when tensions arise (Rokeach 2008; Schwartz 2017). A preference for tradition, for example, does not necessarily entail a failure to appreciate the value of diversity, just as a commitment to openness need not entail outright disregard for cultural continuity. Thus, even if value conflict plays a central role in structuring disagreement over immigration, it does not necessarily follow that participants in the debate fail to recognize the legitimacy or relevance of opposing arguments.Footnote 2

Secondly, the culture war narrative captures only a narrow slice of the concerns relevant to immigration. In practice, immigration policy engages a broad range of considerations, including but not limited to humanitarian obligations, international law, language integration, welfare provision, state capacity, gender equality, crime, terrorism, national security, labor shortages, and labor competition. Few of these concerns map neatly onto the cultural value divide, and some even invert expected alignments. For instance, a number of studies demonstrate that concerns about socially conservative views among immigrant communities can lead otherwise tolerant and cosmopolitan individuals to adopt more restrictive positions on immigration (Ivarsflaten and Sniderman 2022; Lancaster 2022; Sniderman and Hagendoorn 2009).

For both reasons, people may be more susceptible to cross-pressures, and more open to competing considerations, than is often assumed. In line with this, several studies have found that while individuals may appear divided when immigration is discussed in general or abstract terms, they often converge in their views when specific contexts and policy details are taken into account. This ‘hidden consensus’ on immigration comes into view when studies disaggregate the type of immigration (for example, high-skilled v. low-skilled, humanitarian v. economic) (Hainmueller and Hopkins 2015), or examine how people respond to concrete policy features such as language requirements, work permits, or integration measures (Helbling et al. 2023; Helbling 2024; Wright et al. 2016). That citizens on both sides of the debate respond similarly to such considerations suggests that they share a common set of immigration-related concerns.

Why, then, are immigration debates so contentious? One possibility is that it has less to do with a failure to recognize valid concerns on the opposing side than a failure to associate those concerns with opponents. On this account, individuals on both sides acknowledge that there are legitimate arguments on the other side, but mistakenly assume that these arguments are not representative of their opponents’ way of thinking. In other words, mutual understanding is obstructed not by fundamentally different standards of legitimacy, but by the appearance of such differences—an impression shaped by distorted perceptions of the debate. The next section considers how such distortions might arise.

Argument Misattribution in Immigration Debates

Citizens’ perceptions of political debates are shaped both by how those debates unfold in public discourse and by psychological mechanisms that influence how they interpret information about those debates. At both levels, a range of factors can lead to distorted impressions of opponents’ reasoning.

At the level of public discourse, much depends on who sets the terms of the debate. In immigration debates, this is often the most radical actors. Centrist parties have historically been reluctant to engage openly and consistently with the issue, often due to internal divisions or fears of alienating segments of their electoral base. This ‘conspiracy of silence’ (Freeman 1995) creates space for actors with clearer cultural agendas (such as populist right-wing parties on one side and the new left on the other) to define the terms of debate. Even as centrist parties have become more vocal in response to the radical right’s electoral success (Hutter and Kriesi 2022), they frequently struggle to offer a coherent alternative. Some adopt the rhetoric and framing of their challengers, while others issue mixed or contradictory messages (Hertner 2022; Odmalm and Bale 2014).

In this discursive environment, ideologically committed actors continue to dominate. Radical right parties, in particular, have emerged as key agenda-setters (Hutter and Kriesi 2022), helping to mainstream exclusionary rhetoric that was once confined to the political fringes (Mudde 2019; Valentim 2024). More broadly, the diffusion of moralized language, which casts the issue in terms of right and wrong, is common on both sides of the debate (Simonsen and Widmann 2025; Widmann and Simonsen 2025) and has been shown to heighten hostility between camps (Simonsen and Bonikowski 2022). While such cues shape how the public perceives the debate, they do not necessarily map onto how individuals themselves reason about the issue, yielding a perception of the debate that is more antagonistic than it truly is.

Moreover, perceptions of opponents’ arguments depend not only on how opponents themselves frame their position, but also on how that position is portrayed by adversaries. A common strategy in public debates, straw-manning, involves attributing weak or distorted arguments to opponents to delegitimize and undermine their position (Aikin and Talisse 2020, 28). While such practices are a routine feature of adversarial politics, they may be especially pronounced in immigration debates, where the experience of being misrepresented is itself a recurring theme.

Anti-immigration rhetoric, in particular, is often intertwined with a sense of grievance over being vilified or deliberately misunderstood by an ‘out-of-touch’ political and media elite. This frequently includes claims that mainstream institutions caricature restrictionist views as inherently racist, xenophobic, or culturally regressive. While this sense of victimization is sometimes strategically amplified for political gain, such narratives likely resonate because they tap into a genuine experience of misrepresentation (Elad-Strenger and Kessler 2024; Müeller 2019; Rostbøll 2023; Rovamo and Sakki 2024).

At the same time, the rhetoric employed by right-wing populist movements advancing these grievances often seeks to discredit the motives of immigration-friendly actors, portraying them as knowing accomplices in the erosion of national identity or as prioritizing the interests of outsiders over those of the ‘real’ people (Busby et al. 2019; Moffitt 2016). These accusations often take the form of conspiratorial tropes, such as claims about ‘cultural Marxism’ or ‘wokeness’, which frame pro-immigration stances as expressions of fringe ideological agendas (Asen 2024). A contemporary manifestation of this dynamic can be seen in the proliferation of internet memes that caricature and mock political opponents (Hakoköngäs et al. 2020), a phenomenon common across the political spectrum.

In this climate, fostering accurate and fair representations of opponents’ arguments requires deliberate effort. Yet research in political psychology suggests that people are often inclined in the opposite direction. A well-documented perceptual bias, naïve realism, leads individuals to assume that their own perceptions and judgments are objective and grounded in reality, while those who disagree must be uninformed, biased, or irrational (Ross and Ward 1996). Social identity theory further predicts that such tendencies intensify when disagreements are bound up with salient group identities (Tajfel 1974), such as partisan affiliation (Iyengar et al. 2019), or when the issue itself becomes an object of identification in its own right (Hobolt et al. 2021).

Both mechanisms have been linked to citizens’ tendency to misperceive the policy positions of political opponents, seeing them as more extreme and ideologically coherent than they in fact are (Fernbach and Van Boven 2022; Levendusky and Malhotra 2016; Westfall et al. 2015). The same forces likely distort perceptions of the reasoning behind those positions, leading citizens to view opponents’ arguments as more doctrinaire and less broadly appealing than they actually are.

Taken together, the adversarial nature of immigration discourse and the perceptual biases that shape how citizens interpret that discourse may contribute to a distorted impression of the debate. As a result, even citizens who recognize that there are legitimate arguments on the other side may doubt that those arguments reflect their opponents’ way of thinking, instead attributing to them arguments they perceive as weak or ideologically fringe.

Hypotheses

To evaluate these claims, I test four hypotheses about how citizens perceive and evaluate arguments on the opposing side of the immigration debate. First:

H1. Citizens recognize that there are legitimate arguments on the other side but nevertheless attribute weaker arguments to their opponents.

Next, I expect that the arguments citizens attribute to opponents are a poor reflection of what opponents actually argue.

H2. The arguments citizens attribute to opponents differ substantively from the arguments opponents themselves use to defend their position.

Furthermore, I expect that this misrepresentation helps explain the negative evaluations of opponents’ arguments observed under Hypothesis 1. A direct implication is that the subset of respondents who do not misrepresent opponents’ arguments should evaluate them more favorably than those who do.

H3. Citizens who accurately represent opponents’ arguments evaluate them more favorably than citizens who misrepresent them.

Ideally, we would show not only that citizens who accurately portray opponents’ arguments evaluate them more favorably, but also that those who misrepresent opponents’ reasoning would have evaluated them more favorably had they produced more accurate representations. While this counterfactual cannot be observed directly, we can approximate it using respondents’ own statements about what they regard as the strongest argument on the other side. These statements reveal the counterarguments they themselves see as most legitimate, given their own values and priorities, and thus the form of opposition they are most prepared to regard as reasonable. If these strongest counterarguments more closely resemble opponents’ actual reasoning than the arguments respondents attribute to them, it would indicate that respondents underestimate the extent to which they and their opponents agree on what counts as a legitimate argument.

H4. The arguments citizens regard as the strongest for the opposing side more closely resemble opponents’ own arguments than those they attribute to them.

Data and Context

To test these hypotheses, I use data collected through the Norwegian Citizen Panel (NCP), a probability-based online survey of the general population administered by the Digital Social Science Core Facility at the University of Bergen (Ivarsflaten et al. 2023). Panel members are recruited offline through invitations mailed to a random sample drawn from the Norwegian Population Registry. The relevant items were fielded in June 2023 to a sample of 2,007 individuals.

The study focuses specifically on disagreement over non-European immigration for two reasons. First, as a Schengen country, Norway experiences relatively uncontroversial intra-European mobility, making European immigration less politically salient. Second, the questions were fielded amid a large influx of Ukrainian refugees. In contrast to other immigrant groups, these arrivals were generally welcomed by the public (Bjånesøy and Bye 2023). Focusing on non-European immigration therefore centers the analysis on the most contested form of immigration.

Norway’s immigration history is relatively recent, beginning with labor migration in the early 1970s, followed by inflows of refugees, asylum seekers, and family migrants from the mid-1970s onward (Borchmann and Kjeldstadli 2008). While European labor migrants still account for the largest group of immigrants, the share of the population with non-European origin has risen steeply, from roughly 1.8 per cent in 1990 to approximately 7.5 per cent in 2023 (Statistics Norway 2025). Over this period, immigration has become an increasingly prominent and controversial topic in Norwegian public debates (Hovden and Mjelde 2019; Midtbøen et al. 2019).

In many respects, this debate mirrors broader patterns found across Western Europe. As in other countries, the issue was initially politicized by the populist right, most prominently by the Progress Party, which continues to serve as the main advocate for restrictive immigration policies (Jenssen and Ivarsflaten 2019; Jupskås 2016). At the opposite end of the spectrum are parties of the ‘new left’, the Socialist Left and the Greens, whose broadly pro-immigration platforms reflect an ideological commitment to solidarity, inclusion, and humanitarianism. This stance was especially visible during the height of the Syrian refugee crisis, when these parties were the only ones that opposed tightening asylum policies. Situated between these poles are the center-left Labor Party and the center-right Conservative Party, whose internal divisions on immigration have historically led them to avoid taking clear or consistent positions (Jenssen and Ivarsflaten 2019).

In other respects, however, Norway stands apart. The Progress Party, with its neoliberal origins, is sometimes considered more moderate on immigration than many of its populist-right counterparts in Europe. The party participated in government as a junior coalition partner from 2013 to 2020, and there is evidence that this inclusion contributed to a further moderation of its rhetoric (Jupskås 2016). These developments reflect Norway’s broader tradition of power-sharing and consensus-oriented politics, which are frequently credited with sustaining comparatively low levels of partisan hostility (Gidron et al. 2019). Consistent with this picture, recent analyses report little attitudinal polarization on immigration from 2001 to 2019, with the main exception of a modest uptick in polarization in attitudes towards Islam (Wollebæk 2024). Consequently, the immigration debate in Norway has generally been less antagonistic and inflammatory than in more polarized systems, potentially reducing the incentives for political actors and citizens alike to systematically mischaracterize the views of the opposing side.

In this sense, I argue that Norway constitutes a ‘least likely case’ for mutual misperception. While it shares many structural features of European immigration debates, its political culture and more restrained public discourse make systematic misconstrual less expected. If it is nevertheless observed in this context, such patterns are likely to be even more pronounced in more adversarial and polarized contexts.

Survey Design

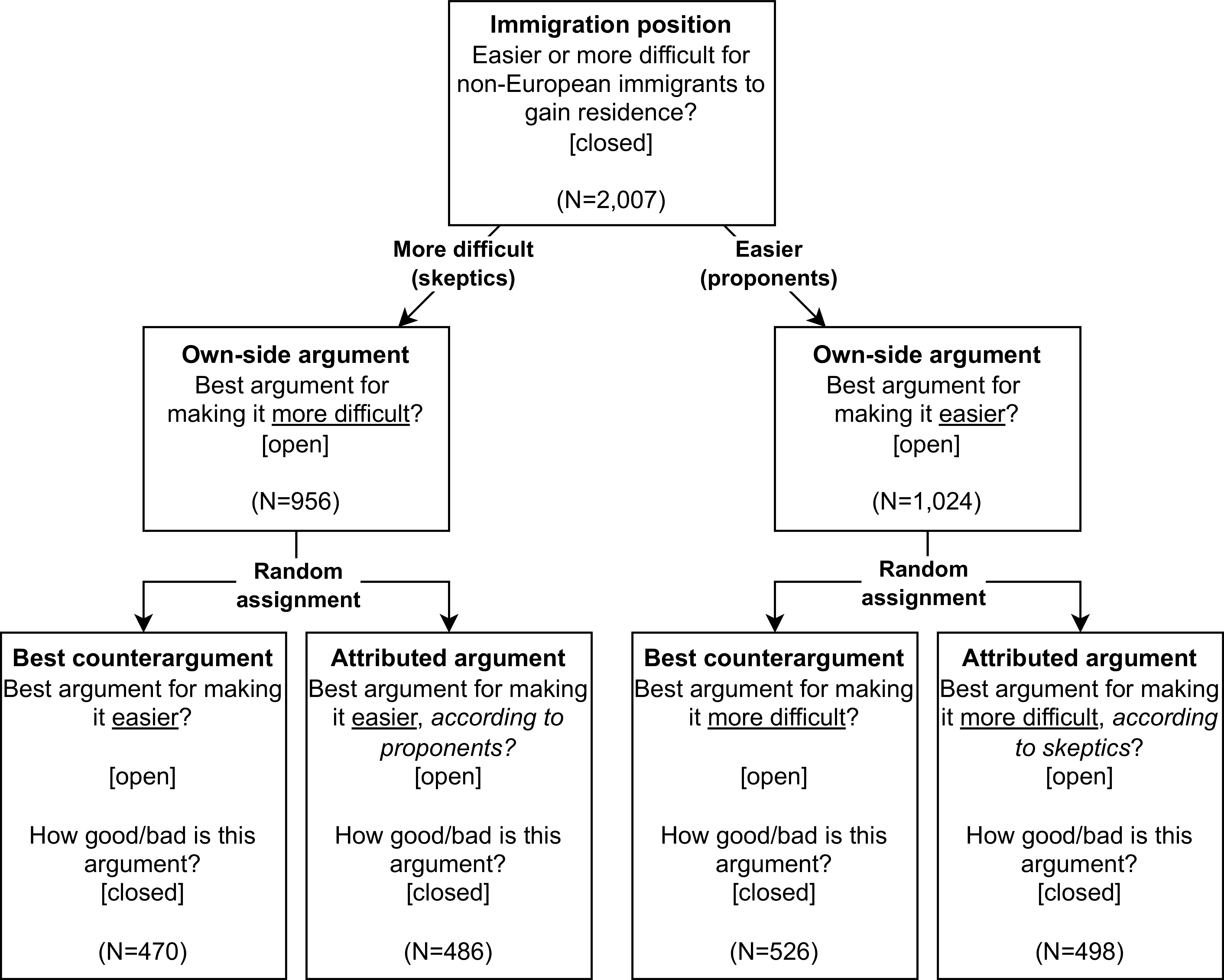

Figure 1 summarizes the experimental design. First, respondents’ positions on immigration were assessed using the question: ‘Do you think it should be easier or more difficult for non-European immigrants to gain residence in Norway?’ Responses were recorded on a 6-point scale, ranging from ‘Much easier’ to ‘Much more difficult’, with no neutral midpoint. Respondents selecting options 1–3 were classified as immigration proponents, and those selecting 4–6 as immigration skeptics.
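The classification rule can be written out in a few lines. The following is a minimal sketch, assuming responses are stored as integers from 1 (‘Much easier’) to 6 (‘Much more difficult’); the function name and the labels `proponent` and `skeptic` are illustrative and not taken from the survey data.

```python
def classify_position(response: int) -> str:
    """Map a 6-point response to a camp (assumed coding: 1 = 'Much easier',
    6 = 'Much more difficult'; there is no neutral midpoint)."""
    if response not in range(1, 7):
        raise ValueError("response must be an integer from 1 to 6")
    # Options 1-3 favor easier residence; options 4-6 favor more difficult.
    return "proponent" if response <= 3 else "skeptic"


positions = [classify_position(r) for r in (1, 3, 4, 6)]
print(positions)  # ['proponent', 'proponent', 'skeptic', 'skeptic']
```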

Respondents then articulated two arguments in open-ended responses. First, they wrote what they regarded as the single best argument in support of their own view. These responses capture the arguments those on each side of the debate actually endorse. Once they had argued their own position, respondents were randomly assigned to one of two counterargument tasks.

In the best counterargument condition, participants were asked to write the single best argument they could think of for the opposing side. For example, respondents who favored making it more difficult for non-European immigrants to gain residence were asked to provide the best argument for making it easier, and vice versa. This prompt made no reference to opponents and was intended to engage ‘self-persuasion’ (Catapano et al. 2019), that is, the reflective weighing of counterattitudinal arguments within respondents’ own decision calculus. Accordingly, it elicited the counterarguments respondents found most compelling in light of their own evaluative framework.

In the argument attribution condition, participants were asked to imagine a person who holds the opposite view, adopt that person’s perspective, and write what they believed that person would see as the best argument for their position. For example, a respondent who thought it should be more difficult for non-European immigrants to gain residence was asked to imagine someone who thinks it should be easier and to state the argument that person would regard as strongest. Framed as a perspective-taking task,Footnote 3 this prompt was designed to elicit arguments respondents believed their opponents would find persuasive, given their evaluative framework.

These responses represent, respectively, the arguments respondents themselves regard as most legitimate for the opposing side and the arguments they believe opponents regard as most legitimate. To capture how they feel about these different arguments, participants then rated the quality of the counterargument they had just written on a 7-point scale ranging from ‘Very bad’ to ‘Very good’ (coded from –3 to 3, with 0 indicating a neutral evaluation). The difference in mean evaluations across conditions provides the empirical test of Hypothesis 1: if respondents rate what they see as the best arguments for the opposing side substantially more favorably than the arguments they attribute to opponents, it suggests a tendency to assign weaker arguments to opponents despite recognizing that stronger ones exist.
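The quantity behind Hypothesis 1 is a simple difference in means. The sketch below illustrates it under two assumptions that are not stated in the text: that raw ratings are stored as integers from 1 to 7, and that the comparison is an unadjusted difference between conditions. The ratings are made up for illustration and are not study data.

```python
from statistics import mean


def recode_rating(raw: int) -> int:
    """Recode an assumed 1-7 rating ('Very bad' .. 'Very good') to -3..3."""
    if raw not in range(1, 8):
        raise ValueError("rating must be an integer from 1 to 7")
    return raw - 4  # 4 becomes 0, the neutral evaluation


def mean_difference(best, attributed):
    """Mean rating in the best counterargument arm minus the attribution arm."""
    return mean(best) - mean(attributed)


# Illustrative (made-up) raw ratings for each experimental arm.
best = [recode_rating(r) for r in (6, 5, 7, 4)]
attributed = [recode_rating(r) for r in (3, 4, 2, 5)]
diff = mean_difference(best, attributed)
print(diff)  # 2.0
```

A positive difference is the pattern Hypothesis 1 predicts; a formal test would add a standard error, which this sketch omits.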

The remaining hypotheses concern the content of the text responses and are evaluated using the text-analysis procedures described below. To examine whether respondents misrepresent opponents’ arguments (Hypothesis 2), I assess whether arguments produced in the attribution arm differ substantively from opponents’ own arguments from the first task. To assess whether accurate portrayals are evaluated more favorably (Hypothesis 3), I test whether attributed arguments that closely resemble opponents’ own receive more favorable evaluations than those that do not. Because representational accuracy is not randomly assigned, this test is correlational only.

To test whether respondents’ best counterarguments resemble opponents’ own more than those they attribute to them (Hypothesis 4), I compare both sets of counterarguments to opponents’ actual arguments. Because assignment to the two counterargument tasks is randomized, any difference in similarity to opponents’ arguments can be attributed to the task rather than to respondent characteristics. Thus, support for Hypothesis 4 would indicate that, when reasoning from their own evaluative framework (best counterarguments), respondents generate counterarguments closer to opponents’ actual reasoning than when explicitly adopting their opponents’ evaluative framework (argument attribution)—suggesting that they underestimate the scope for mutual understanding.

Figure 1. Overview of experimental design.

Text Analytic Strategy

To examine whether respondents misrepresent opponents’ arguments, I train a binary text classification model to distinguish between the arguments they attribute to opponents and the arguments opponents actually use to justify their position. Intuitively, if the classifier easily differentiates these two argument sets (that is, achieves accuracy well above chance), it indicates that they are empirically distinct; if it struggles to make a distinction, the two sets are linguistically similar.Footnote 4 To verify that any detected differences are substantively meaningful, I inspect the features and example texts most associated with each predicted category. If Hypothesis 2 holds and respondents do misrepresent opponents’ reasoning, attributed arguments should be both statistically separable and substantively distinct from the arguments opponents actually use.

To identify the subset of attributed arguments that are representative of opponents’ own, I leverage the model’s misclassifications: an attributed argument is deemed representative if it resembles opponents’ own closely enough to ‘trick’ the classifier into labeling it as such.Footnote 5 Under Hypothesis 3, these misclassified attributed arguments should, on average, receive higher quality ratings than those correctly classified as attributed.

To evaluate Hypothesis 4, I apply the trained classifier to the texts produced in the best counterargument condition. Because the model is trained specifically to distinguish arguments attributed to opponents from opponents’ own, its predictions indicate which of these two argument sets the best counterarguments most closely resemble. The expectation is that these best counterarguments will be classified as opponents’ own more often than as attributed.
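Once the classifier has produced a predicted label for each text, the comparisons for Hypotheses 3 and 4 reduce to two summary statistics. The sketch below assumes the predictions are available as plain labels; the label strings, function names, and example values are hypothetical and serve only to make the logic concrete.

```python
from statistics import mean

OWN, ATTRIBUTED = "own", "attributed"  # hypothetical label names


def rating_gap(predicted, ratings):
    """H3: mean rating of attributed arguments the classifier mistook for
    opponents' own, minus the mean rating of those it classified correctly."""
    fooled = [r for p, r in zip(predicted, ratings) if p == OWN]
    caught = [r for p, r in zip(predicted, ratings) if p == ATTRIBUTED]
    return mean(fooled) - mean(caught)


def share_own(predicted):
    """H4: share of best counterarguments classified as opponents' own."""
    return sum(p == OWN for p in predicted) / len(predicted)


# Made-up predictions for four attributed arguments and their -3..3 ratings.
gap = rating_gap([OWN, ATTRIBUTED, ATTRIBUTED, OWN], [2, -1, 0, 1])
# Made-up predictions for four best counterarguments.
share = share_own([OWN, OWN, ATTRIBUTED, OWN])
print(gap, share)  # 2.0 0.75
```

Under Hypothesis 3 the gap should be positive; under Hypothesis 4 the share should exceed one half.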

I implement these analyses by fine-tuning a Norwegian BERT classifier (Devlin et al. 2019), training separate models for pro- and anti-immigration arguments. Transformer models such as BERT are pre-trained on vast text corpora (here, large web and reference texts together with the Norwegian National Library’s full-text collection; Kummervold et al. 2021), which equips them to learn regularities in syntax and semantics in naturally occurring language. Using this pre-trained knowledge to encode the open-ended responses yields high-dimensional numeric vector representations (embeddings) that capture synonymy and contextual meaning. These embeddings thus provide rich representations of the arguments, capturing not only lexical content but also aspects of argument structure (for example, how claims and qualifiers are sequenced). Fine-tuning then adapts these representations to the specific task of separating arguments attributed to opponents from opponents’ own.

One potential drawback of these rich linguistic representations is that they may inadvertently pick up on cues unrelated to substantive content. In the present application, a particular concern is that respondents might shift grammatical perspective across tasks, writing in the first person when expressing their own view and in the third person when attributing an argument to opponents. This could exaggerate the distinctiveness of attributed arguments (inflating support for Hypothesis 2) and bias the classification of best counterarguments (also first person) towards the ‘own-side’ category, complicating tests of Hypothesis 4. To reduce the influence of such cues, I masked personal pronouns and referents (for example, ‘I’, ‘you’, ‘person’) before training.
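The masking step can be illustrated with a minimal sketch. The word list and the mask token below are hypothetical English stand-ins: the study masks Norwegian pronouns and referents, and its exact list is not given in the text.

```python
import re

# Hypothetical English stand-ins for the masked pronouns and referents.
PERSPECTIVE_TERMS = [
    "i", "me", "my", "we", "our", "you", "your",
    "he", "she", "they", "them", "their", "person",
]
MASK_TOKEN = "[MASK]"  # assumed placeholder, not taken from the paper

# Whole-word, case-insensitive match so that 'the' or 'Integration' is untouched.
_PATTERN = re.compile(
    r"\b(" + "|".join(map(re.escape, PERSPECTIVE_TERMS)) + r")\b",
    re.IGNORECASE,
)

def mask_perspective(text: str) -> str:
    """Replace every perspective-revealing word with the mask token."""
    return _PATTERN.sub(MASK_TOKEN, text)

print(mask_perspective("They think we should help, but I disagree."))
```

After masking, a first-person and a third-person version of the same claim differ less in surface form, which is the purpose of the step; as the text notes, other perspective cues (for example, verb forms) may still remain.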

Because own-side arguments outnumbered attributed arguments by roughly two to one (Figure 1; Table 1), I applied inverse-frequency class weights to upweight errors on the rarer class. To curb overfitting and avoid train–test contamination (Grimmer et al. 2022, 269–71), I generated out-of-sample predictions via five-fold cross-validation. To stabilize estimates against the idiosyncrasies of any particular fold assignment, I repeated this procedure five times (twenty-five folds in total) and averaged the resulting out-of-sample probabilities. An attributed argument was labeled representative if its averaged predicted probability of being opponents’ own exceeded 0.5, and unrepresentative otherwise. If Hypothesis 2 is correct, the model should classify attributed and own-side arguments with accuracy noticeably above chance. The separation should also capture meaningful substantive differences, as explored through inspection of key features and example texts. If Hypothesis 3 is correct, attributed arguments misclassified as opponents’ own should, on average, receive higher quality ratings than those correctly classified as attributed.
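The weighting and repeated cross-validation logic can be sketched as follows. This is an illustration under stated assumptions, not the study's code: `fit` is a hypothetical stand-in for fine-tuning the classifier, the weight formula (n / (classes × class count)) is one common inverse-frequency convention, and the folds here are not stratified.

```python
import random
from collections import Counter
from statistics import mean

def inverse_frequency_weights(labels):
    """Weight each class by n_samples / (n_classes * class_count)."""
    counts = Counter(labels)
    n, k = len(labels), len(counts)
    return {c: n / (k * m) for c, m in counts.items()}

def repeated_kfold_indices(n, k=5, repeats=5, seed=0):
    """Yield (repeat, test_indices) for each of the k * repeats folds."""
    rng = random.Random(seed)
    for r in range(repeats):
        order = list(range(n))
        rng.shuffle(order)  # a fresh fold assignment for every repeat
        for f in range(k):
            yield r, order[f::k]

def averaged_oos_probabilities(texts, labels, fit, k=5, repeats=5, seed=0):
    """Average each item's out-of-sample P(own-side) across repeats.

    `fit(train_texts, train_labels, class_weights)` is hypothetical; it must
    return a function that maps one text to a probability.
    """
    n = len(texts)
    per_item = [[] for _ in range(n)]
    for _, test_idx in repeated_kfold_indices(n, k, repeats, seed):
        held_out = set(test_idx)
        train_idx = [i for i in range(n) if i not in held_out]
        train_labels = [labels[i] for i in train_idx]
        weights = inverse_frequency_weights(train_labels)
        predict = fit([texts[i] for i in train_idx], train_labels, weights)
        for i in test_idx:  # each item is held out once per repeat
            per_item[i].append(predict(texts[i]))
    return [mean(p) for p in per_item]
```

Under this sketch, an attributed argument would be labeled representative when its averaged probability exceeds 0.5, matching the rule described above.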

Table 1. Response rates and mean word count in open-ended responses

To classify the best counterargument texts, I feed them through the same models and average the predicted probabilities across all twenty-five cross-validation folds. Each prediction is the estimated probability that a given best counterargument was written by an opponent justifying their own view, rather than by a respondent attributing an argument to them. To capture not only the final classification but also the certainty and dispersion of these predictions, I plot the full distribution of the resulting probabilities. If Hypothesis 4 is correct, most of this distribution’s mass should lie in the upper half of the scale (above 0.5), indicating that these best counterarguments are more typical of opponents’ own arguments than of the arguments attributed to them. For comparison, I also plot the corresponding probability distributions for attributed arguments and for opponents’ own arguments. By comparing these distributions, we can assess how similar the best counterarguments are to each set, not only relative to the typical case, but in a way that accounts for the fact that attributed and own-side arguments themselves vary in typicality.
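A minimal sketch of this scoring step is given below. It assumes each fold model is available as a callable that returns the probability of ‘opponents’ own’ for one text; the names `models`, `ensemble_probabilities`, and `histogram` are illustrative and not taken from the study.

```python
from statistics import mean

def ensemble_probabilities(models, texts):
    """Average P(opponents' own) over all fold models, per text."""
    return [mean(m(t) for m in models) for t in texts]

def share_classified_own(probs, threshold=0.5):
    """Share of texts whose averaged probability exceeds the threshold."""
    return sum(p > threshold for p in probs) / len(probs)

def histogram(probs, bins=10):
    """Counts per equal-width bin on [0, 1]; a value of 1.0 goes in the last bin."""
    counts = [0] * bins
    for p in probs:
        counts[min(int(p * bins), bins - 1)] += 1
    return counts
```

The histogram is a coarse stand-in for the plotted distributions: two peaks near the ends with little mass in the middle bins would correspond to confident classifications in both directions.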

Complementary Thematic Analysis

The classifier-based approach described above compresses rich variation in the open-ended responses onto a single latent dimension capturing their relative similarity to attributed versus own-side arguments. While this dimension aligns closely with the hypotheses, two caveats motivate an additional check. First, despite masking perspective-related words prior to training, residual stylistic or pronominal cues may remain and could bias the results. Secondly, because the models are trained only on opponents’ own and attributed texts, they may underweight genuinely novel content that appears primarily in the best counterarguments. If such content lies off the learned dimension, the projections could understate or overstate similarity to opponents’ own arguments.

To address both concerns, I pair the classification models with thematic analyses that code responses into broad substantive topics that are independent of style and perspective, and compare topic prevalence across opponents’ own, attributed, and best counterargument corpora. This facilitates comparisons along several substantive dimensions that are independent of stylistic markers while not restricting the analysis to themes present in the training data (attributed and own arguments). If the thematic profiles of best counterarguments more closely resemble opponents’ own than the profile of attributed arguments, this provides convergent evidence for Hypothesis 4 (and simultaneously reinforces Hypothesis 2).

To implement the thematic analysis, I hand-labeled a random subsample of responses into intentionally coarse substantive themes and propagated those labels to the remaining texts using SetFit (Sentence Transformer Fine-tuning; Tunstall et al. 2022). SetFit fine-tunes a sentence-embedding model with contrastive pairs and a lightweight classifier, yielding strong accuracy with small training sets. Because arguments can express multiple themes, I treated the task as multilabel and targeted at least thirty labeled examples per theme. Labeling proceeded in two steps: an initial random batch of 100 responses to refine the codebook, followed by a second batch of an additional 100 responses that oversampled underrepresented themes using embedding-space semantic search to identify candidates. I evaluated performance and calibrated per-theme classification thresholds via five-fold cross-validation (see Figure A.4 in supplementary materials), then applied the calibrated classifier to the remaining unlabeled responses.
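Per-theme threshold calibration can be sketched as a simple grid search over out-of-sample probabilities. The selection criterion is an assumption: the text does not say which metric was optimized, so F1 is used here purely for illustration, and the function names are hypothetical.

```python
def f1_at_threshold(probs, gold, threshold):
    """F1 for one theme when predicting the theme if prob >= threshold."""
    tp = sum(p >= threshold and g == 1 for p, g in zip(probs, gold))
    fp = sum(p >= threshold and g == 0 for p, g in zip(probs, gold))
    fn = sum(p < threshold and g == 1 for p, g in zip(probs, gold))
    return 0.0 if tp == 0 else 2 * tp / (2 * tp + fp + fn)

def calibrate_thresholds(oos_probs, gold, grid=None):
    """Pick, per theme, the grid threshold with the highest F1 (assumed metric).

    oos_probs: dict theme -> list of out-of-sample probabilities
    gold:      dict theme -> list of 0/1 hand-coded labels
    Ties are resolved in favor of the lowest threshold.
    """
    grid = grid or [i / 20 for i in range(1, 20)]
    return {
        theme: max(grid, key=lambda t: f1_at_threshold(oos_probs[theme], gold[theme], t))
        for theme in oos_probs
    }

def assign_themes(probs_by_theme, thresholds):
    """Multilabel assignment: a response gets every theme that clears its threshold."""
    return [t for t, p in probs_by_theme.items() if p >= thresholds[t]]
```

Because each theme has its own threshold, a response can receive several themes or none, which is what a multilabel setup requires.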

Analysis

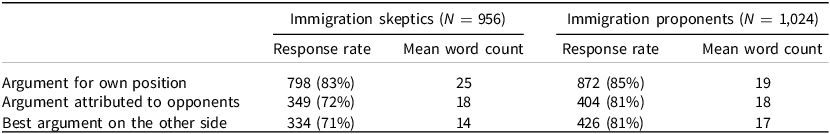

Table 1 summarizes response rates and mean word counts for arguments written by immigration proponents (N = 1,024) and skeptics (N = 956). Responses are coded as missing if no text was provided or if the entry was an explicit non-response (for example, ‘I don’t know’, ‘there are no good arguments for…’), as identified in the thematic coding (footnote 6). All subsequent analyses treat these cases as missing.

Both groups were similarly likely to argue their own side (proponents: 85 per cent; skeptics: 83 per cent). Proponents also frequently generated counterarguments (81 per cent in both conditions), whereas skeptics did so less often (72 per cent attribution; 71 per cent best counterargument). Proponents wrote similar-length texts across tasks (own: 19 words; attribution: 18; best counterargument: 17). Among skeptics, texts were longer on their own side (25) than in counterargument tasks (attribution: 18; best: 14).

While response rates are encouraging overall, skeptics’ lower propensity to generate counterarguments is noteworthy. If this non-response itself signals a lack of recognition of the legitimacy of opposing arguments, treating these responses as missing could inflate skeptics’ average evaluations of opposing arguments relative to proponents’. While we cannot rule this out, Table A.2 in the supplementary materials shows education is the strongest predictor of providing a counterargument, while attitude strength and partisanship are weak or negligible, suggesting an effort/ability gradient rather than motivated avoidance. Still, all results should be interpreted with this caveat in mind.

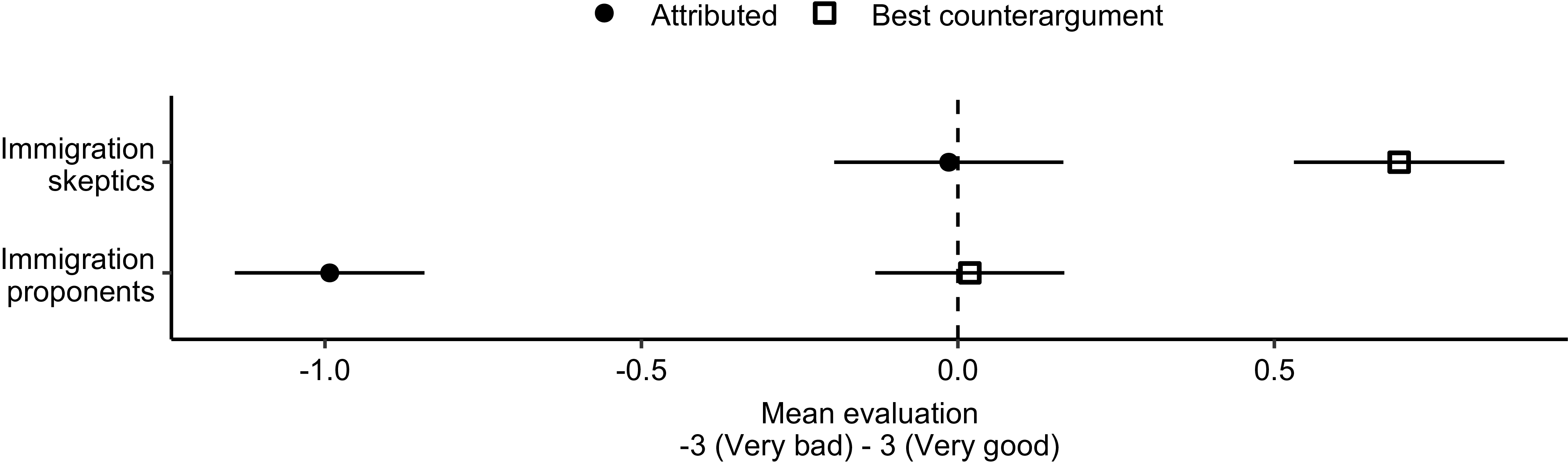

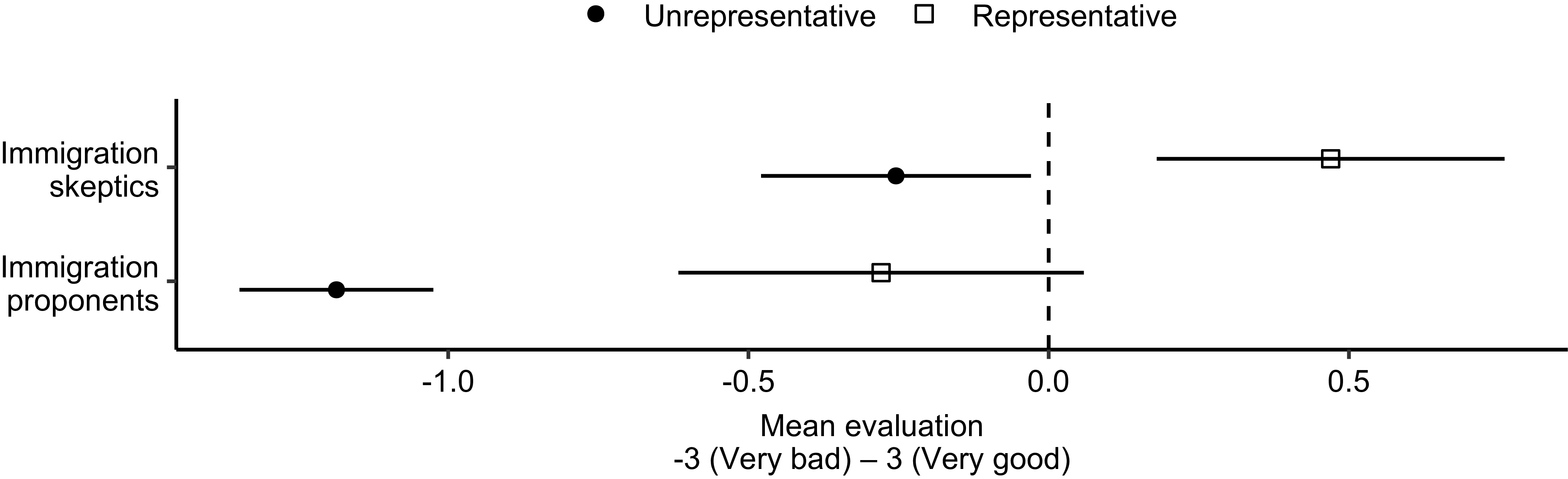

Evaluations of Best and Attributed Counterarguments

Figure 2 shows how respondents evaluated the arguments they attributed to opponents and the best arguments they could think of for the opposing side, separately for immigration proponents and skeptics. Skeptics rated the arguments they attributed to proponents essentially at neutral (−0.01 ± 0.09) but evaluated their strongest pro-immigration counterargument positively (0.71 ± 0.08), a difference of 0.72 points. Proponents evaluated arguments they attributed to skeptics much more negatively (−0.99 ± 0.08), near ‘somewhat bad’ (−1), yet rated their strongest anti-immigration counterargument as essentially neutral (0.02 ± 0.08), a 1.01-point difference. In both groups, the best counterarguments respondents could think of were evaluated much more favorably than the arguments they attributed to opponents, consistent with Hypothesis 1. Notably, the discrepancy is especially pronounced among immigration proponents, who also evaluate opposing arguments more negatively overall than skeptics.

Figure 2. Mean evaluation of argument attributed to opponents versus best argument for their side. Error bars indicate 95 per cent confidence intervals.

Argument Misattribution

Having shown that respondents have a relatively unfavorable perception of the arguments they attribute to opponents, I next examine how closely these attributed arguments capture opponents’ actual reasoning. To do so, I evaluate the performance of the classification models in differentiating between the arguments respondents attributed to opponents and the arguments opponents themselves produced: if the models achieve accuracy well above chance, it implies that respondents’ attributions differ systematically from opponents’ actual reasoning; if they do not, it implies that the two sets of arguments are linguistically indistinguishable.

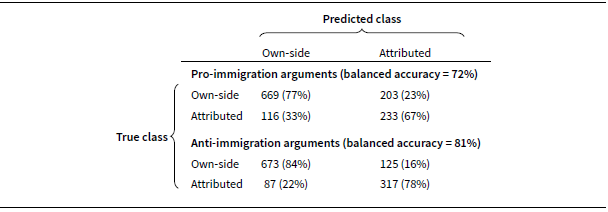

Table 2 reports classification performance of both models (footnote 7). Using a 0.5 threshold, the model for pro-immigration arguments achieved a balanced accuracy of 72 per cent, well above the 50 per cent chance baseline that would be expected if the two sets were linguistically indistinguishable. The model for anti-immigration arguments performed even better, achieving a balanced accuracy of 81 per cent, suggesting that immigration skeptics’ arguments are especially prone to misrepresentation.

Table 2. Classifier predictions for own-side and attributed arguments. Cells show counts with row percentages (share of the true class predicted in each category). Balanced accuracy is the average of the two class-specific correct classification rates (diagonal percentages). Classifications are based on a 0.5 threshold.
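As a brief illustration of the balanced accuracy definition, the sketch below averages the per-class correct classification rates of a confusion matrix. The counts are hypothetical and chosen only to show the calculation; they are not the values in Table 2.

```python
def balanced_accuracy(confusion):
    """confusion[true_class][predicted_class] -> count; mean of per-class recall."""
    recalls = [row[true] / sum(row.values()) for true, row in confusion.items()]
    return sum(recalls) / len(recalls)

# Hypothetical counts with a two-to-one class imbalance.
confusion = {
    "own":        {"own": 80, "attributed": 20},   # 80% correct
    "attributed": {"own": 15, "attributed": 35},   # 70% correct
}

print(balanced_accuracy(confusion))
```

Unlike raw accuracy, this measure gives both classes equal weight, so a model that always predicted the larger own-side class would score 50 per cent rather than benefiting from the imbalance.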

The key quantity of interest is the model’s error rate on attributed arguments, which identifies the subset of attributions that are closest to opponents’ actual arguments. For pro-immigration arguments, 33 per cent (N = 116) of attributed arguments were predicted as opponents’ own; for anti-immigration arguments, 22 per cent (N = 87) were. I interpret these misclassified cases as representative of opponents’ arguments while the remaining, correctly classified attributions are treated as unrepresentative. By this measure, a large majority of attributions are unrepresentative—67 per cent on the pro-immigration side and 78 per cent on the anti-immigration side.
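The shares above can be reproduced from the counts of attributed arguments reported in the text and in the Figure 3 caption. The snippet is only an arithmetic check; the variable and function names are illustrative.

```python
# (predicted as opponents' own, correctly predicted as attributed),
# as reported in the text and the Figure 3 caption.
reported = {
    "pro-immigration": (116, 233),
    "anti-immigration": (87, 317),
}

def representative_share(n_own, n_attributed):
    """Share of attributed arguments that the classifier mistook for opponents' own."""
    return n_own / (n_own + n_attributed)

for side, (n_own, n_attr) in reported.items():
    share = representative_share(n_own, n_attr)
    print(f"{side}: {share:.1%} representative, {1 - share:.1%} unrepresentative")
```

Rounded to whole percentages, these reproduce the 33/67 and 22/78 splits given above.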

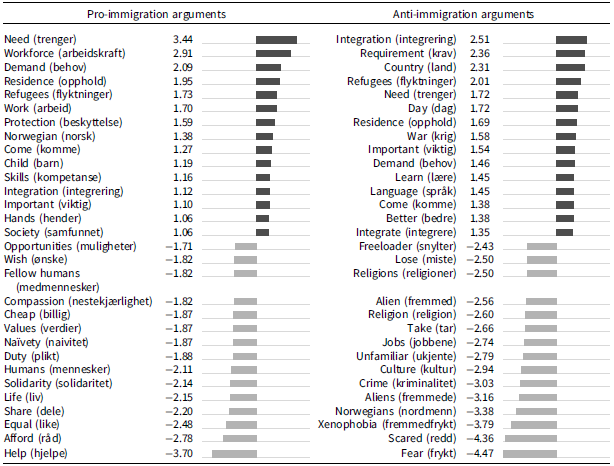

Before concluding that these patterns signal misrepresentation, it is necessary to verify that the observed statistical separation captures substantive differences in the texts. I therefore inspect the vocabulary most strongly associated with texts classified as attributed versus opponents’ own. Table 3 lists the fifteen terms most characteristic of each category, separately for pro- and anti-immigration arguments. All terms are lemmatized (reduced to their dictionary form) and ranked by their relative distinctiveness across the two categories (footnote 8). Positive z values indicate terms more prevalent in texts predicted as own; negative values indicate terms more prevalent in texts predicted as attributed.

Table 3. Words predictive of own-side (+) v. attributed (–) arguments

Note: entries are standardized log-odds (z) from a prior-adjusted comparison of term frequencies (Monroe et al. 2008) between arguments classified as attributed to opponents v. opponents’ own. Positive z indicates a term used more in own-side arguments; negative z indicates a term used more in attributed arguments. Original Norwegian lemmas are shown in brackets.
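The statistic in the table note can be sketched as follows, using the log-odds-ratio with an informative Dirichlet prior described by Monroe et al. (2008). The prior here is an assumption made for illustration: it is proportional to pooled term frequencies with total strength `alpha0`, which may differ from the specification used in the study.

```python
import math
from collections import Counter

def log_odds_z(tokens_own, tokens_attributed, alpha0=10.0):
    """Standardized log-odds per term; positive z means more typical of own-side texts.

    alpha0 is the assumed total prior strength, spread over terms in
    proportion to their pooled frequency.
    """
    y_i, y_j = Counter(tokens_own), Counter(tokens_attributed)
    pooled = y_i + y_j
    n_i, n_j, n = sum(y_i.values()), sum(y_j.values()), sum(pooled.values())
    z = {}
    for w, c in pooled.items():
        a_w = alpha0 * c / n  # prior pseudo-count for term w
        lo_i = math.log((y_i[w] + a_w) / (n_i + alpha0 - y_i[w] - a_w))
        lo_j = math.log((y_j[w] + a_w) / (n_j + alpha0 - y_j[w] - a_w))
        variance = 1.0 / (y_i[w] + a_w) + 1.0 / (y_j[w] + a_w)
        z[w] = (lo_i - lo_j) / math.sqrt(variance)
    return z

# Toy lemmatized tokens, invented for illustration only.
own = ["need", "workforce", "need", "help"]
attributed = ["help", "duty", "help", "need"]
scores = log_odds_z(own, attributed)
for term in sorted(scores, key=scores.get, reverse=True):
    print(term, round(scores[term], 2))
```

The prior shrinks the scores of rare terms toward zero, so a word that appears once in one corpus and never in the other does not dominate the ranking.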

When justifying their own position, immigration proponents tended to emphasize labor-market needs and broader societal capacity. Their arguments frequently invoked terms such as ‘need’, ‘workforce’, ‘demand’, ‘work’, ‘skills’, and ‘hands’ (as in needing more hands on deck). They also employed humanitarian language referring to protection and vulnerability—‘refugees’, ‘protection’, and ‘child’—suggesting a blend of pragmatic and humanitarian considerations. Two responses classified with high probability as own-side illustrate this pattern:

Those who genuinely need residency must be allowed to stay in Norway. We should use common sense in cases that don’t always fit the standard rules. Norway will need large numbers of health-care workers in the near future. Immigrants can help meet part of that need.

Family reunification appears unnecessarily difficult. We also need labor, especially in the health-care sector.

In contrast, the arguments attributed to proponents by skeptics leaned more on moral and universalist language, frequently invoking terms such as ‘help’, ‘afford’ (that is, we can afford to help), ‘equal’, ‘share’, ‘life’, ‘solidarity’, ‘humans’, ‘duty’, ‘values’, ‘compassion’, and ‘fellow humans’. Two responses predicted with high probability as attributed are:

They want everyone to have a good life—which I do as well—and believe that everyone will change their views and adapt to the Norwegian legal system and culture.

Thinks Norway should help the whole world.

This contrast suggests that skeptics tend to mischaracterize proponents’ arguments by emphasizing moral appeals and overlooking the pragmatic and utilitarian justifications that feature prominently in proponents’ own reasoning. It should be noted, however, that while these differences are substantively meaningful, several of the responses with the highest predicted probability of being attributed do include third-person descriptions of the imagined proponent, indicating that perspective cues may contribute to the classifier’s separation.

Immigration skeptics, for their part, tended to justify their views by emphasizing the practical challenges of integration, frequently invoking terms such as ‘integration’, ‘requirement’, ‘learn’, ‘language’, and ‘integrate’. This is illustrated by the following two responses classified with a high probability of being written by skeptics themselves:

Immigration must be adjusted so that genuine integration into Norwegian society is possible. In the municipalities I know, capacity is maxed out when it comes to providing adequate housing, education, employment, and more.

We need to put more effort into integration—to prevent crime and promote self-reliance among immigrants. Processing times for asylum applications must be reduced so applicants receive a decision on residence within a reasonable period. It is unacceptable that people are deported after having established themselves in society and lived here for several years.

In contrast, the arguments attributed to skeptics by proponents emphasized fear and cultural threat, invoking terms such as ‘fear’, ‘afraid’, ‘xenophobia’, ‘foreigner/alien’, ‘culture’, ‘the unfamiliar’, ‘religion/religions’, as well as references to crime and economic competition (for example, ‘jobs’, ‘freeloader’). Two responses predicted with high probability as attributed are:

They are afraid of the unknown and unwilling to share their material prosperity.

Fear of losing/having to change the values we have in Norway.

Taken together, these patterns suggest that proponents tend to overemphasize cultural and identity-based elements in skeptics’ reasoning, whereas skeptics themselves more often articulate their concerns in practical or policy-oriented terms, highlighting integration challenges and the state’s limited capacity to absorb newcomers. Again, these substantive differences do appear alongside perspective cues in the responses most confidently classified as attributed.

This brief content analysis suggests that the classifiers capture thematically coherent, meaningful differences between how people argue their own case and how that case is portrayed by opponents. Combined with strong predictive performance, this indicates that the models are detecting substantive, interpretable contrasts. Consistent with Hypothesis 2, opponents’ own arguments and attributed arguments are both statistically separable and substantively distinct. Notably, the presence of third-person perspective in the responses most confidently classified as attributed underscores the importance of validating the results with the additional thematic analyses presented below.

Evaluations of (In)Accurate Representations of Opponents’ Arguments

Having found strong evidence that respondents on both sides of the immigration debate misconstrue opponents’ arguments, the next question is whether this misconstrual is associated with more negative evaluations of opponents’ reasoning (Hypothesis 3). Figure 3 shows how respondents evaluated arguments they attributed to opponents, distinguishing between those that were representative of opponents’ reasoning (that is, misclassified as opponents’ own) and those that were unrepresentative (that is, correctly classified as attributed).

Figure 3. Mean evaluations of arguments attributed to opponents by representativeness, with 95 per cent confidence intervals. Sample sizes: skeptics rating pro-immigration arguments – representative n = 116, unrepresentative n = 233; proponents rating anti-immigration arguments – representative n = 87, unrepresentative n = 317.

Immigration skeptics rated representative portrayals of pro-immigration arguments positively (0.47 ± 0.15) and unrepresentative ones slightly negatively (−0.25 ± 0.11), corresponding to a 0.72-point gap. Immigration proponents rated representative portrayals of anti-immigration arguments slightly negatively (−0.28 ± 0.17) but were considerably harsher on unrepresentative ones (−1.19 ± 0.08), corresponding to a 0.91-point gap. In both groups, then, representative attributions are evaluated significantly more favorably than unrepresentative ones, consistent with Hypothesis 3. Additional analyses in the supplementary materials show that this association remains robust when controlling for attitude strength, partisanship, and demographic characteristics (Tables A.3–A.4).
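The reported gaps, and a rough check of their uncertainty, can be reproduced from the means above. The sketch assumes that each ± value is a 95 per cent confidence half-width and that the two groups in each comparison are independent; it is an approximation, not the test reported in the supplementary materials.

```python
import math

def gap_with_ci(mean_a, half_a, mean_b, half_b):
    """Difference a - b and its approximate 95% half-width.

    Assumes half_a and half_b are 95% half-widths (1.96 * SE) of independent
    group means, so the half-widths combine in quadrature.
    """
    gap = mean_a - mean_b
    half = math.sqrt(half_a ** 2 + half_b ** 2)
    return gap, half

# Representative v. unrepresentative attributions, values as reported above.
comparisons = {
    "skeptics rating pro-immigration arguments": (0.47, 0.15, -0.25, 0.11),
    "proponents rating anti-immigration arguments": (-0.28, 0.17, -1.19, 0.08),
}

for label, values in comparisons.items():
    gap, half = gap_with_ci(*values)
    print(f"{label}: gap = {gap:.2f}, 95% interval = [{gap - half:.2f}, {gap + half:.2f}]")
```

Under these assumptions both intervals lie well above zero, which agrees with the statement that representative attributions are evaluated significantly more favorably.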

Similarity of Best Counterargument and Opponents’ Actual Arguments

The previous section showed that respondents who accurately portray opponents’ arguments also evaluate them more favorably than those who misrepresent them. What it could not reveal is how those who misrepresent opponents’ arguments would have evaluated those arguments had they produced more accurate representations. While that counterfactual cannot be observed directly, the best counterargument prompt—administered to a separate, randomly assigned group—offers a proxy. If these best counterarguments more closely resemble opponents’ own arguments than the attributed set, it suggests that opponents’ reasoning is more consistent with respondents’ own evaluative standards than they recognize.

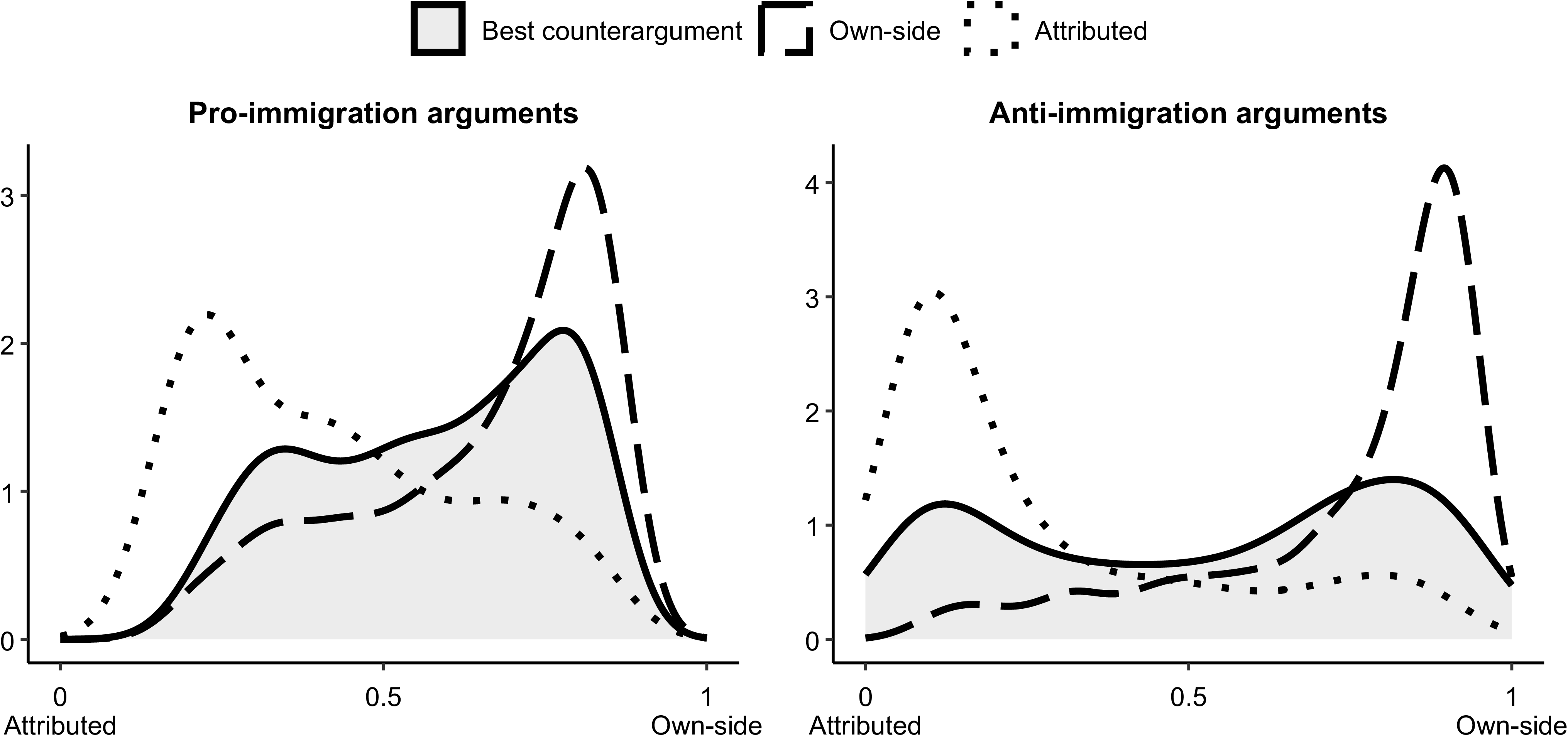

To test this, I apply the classifiers—trained to distinguish arguments attributed to opponents from opponents’ own—to the best counterargument texts and take the predicted probabilities as a proxy for which set they most closely resemble. Figure 4 plots the full distributions of these probabilities, alongside the reference distributions for attributed and opponents’ actual arguments. In both samples, the best counterargument distributions are distinctly bimodal, with peaks on opposing sides of the scale and relatively little mass around the midpoint. This indicates that respondents’ best counterarguments mostly resemble either opponents’ actual arguments or the attributed set, rather than introducing novel, ambiguous content.

Immigration skeptics’ best pro-immigration arguments overlap especially closely with the distribution of proponents’ own arguments, suggesting strong alignment with opponents’ reasoning: using the 0.5 threshold, 65 per cent are classified as opponents’ own. Immigration proponents’ best anti-immigration arguments show a more balanced pattern, with comparable peaks on both sides; nevertheless, a majority of 55 per cent are classified as opponents’ own. Taken together, the best counterarguments cluster nearer to opponents’ own arguments than to the attributed set and, relative to attributed arguments, provide a much closer match to opponents’ actual reasoning. This pattern supports Hypothesis 4: when asked to state the strongest counterargument, respondents on both sides produce texts that more closely reflect opponents’ reasoning than the arguments they attribute to them.

Figure 4. Predicted probability that arguments were written by the opposing side, by argument type.

Thematic Analysis

The text analyses presented so far provide support for Hypotheses 2 to 4. Yet they all rely on the same classification models, which compress variation in the texts onto a single latent dimension capturing their relative similarity to arguments attributed to opponents and those actually used by them. As discussed above, this carries two potential risks.

First, the separation it captures may partly reflect stylistic cues, such as shifts in grammatical perspective, rather than substantive differences. Indeed, inspection of texts most confidently classified as attributed reveals some remaining third-person phrasing, suggesting that such cues may have contributed to separation and, in turn, biased the predicted classifications of the best counterarguments toward the own-side category.

Secondly, because the models were trained exclusively on opponents’ own and attributed texts, they may underweight genuinely novel content that appears primarily in the best counterarguments. This concern is somewhat mitigated by the bimodal distributions in Figure 4, which indicate that most of the best counterarguments were classified with relatively high confidence rather than clustering around the midpoint—making extensive unrecognized content less likely. Nevertheless, to ensure that these results are not artifacts of model-based projection, I complement the classifier results with a thematic analysis that abstracts from stylistic markers and compares arguments across all three sets on their broader substantive content.

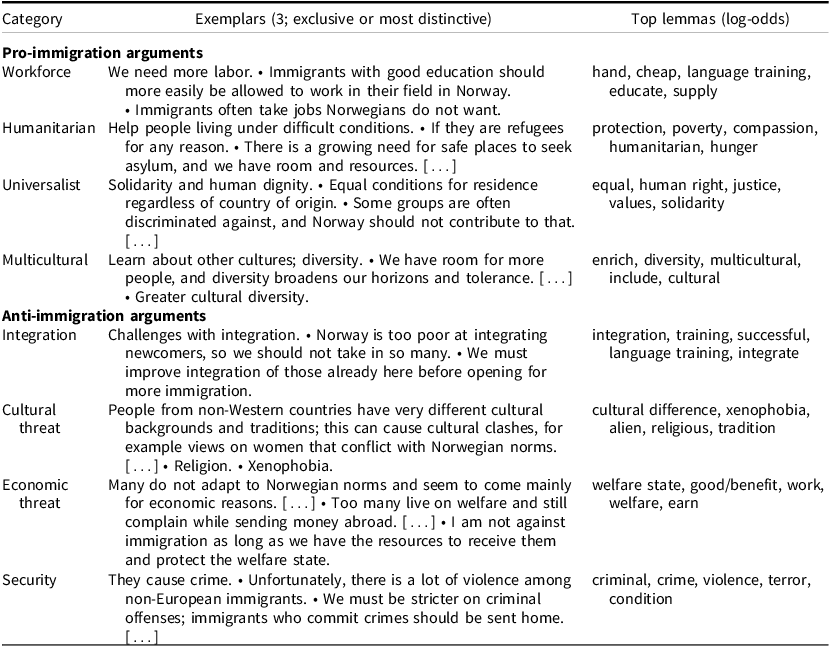

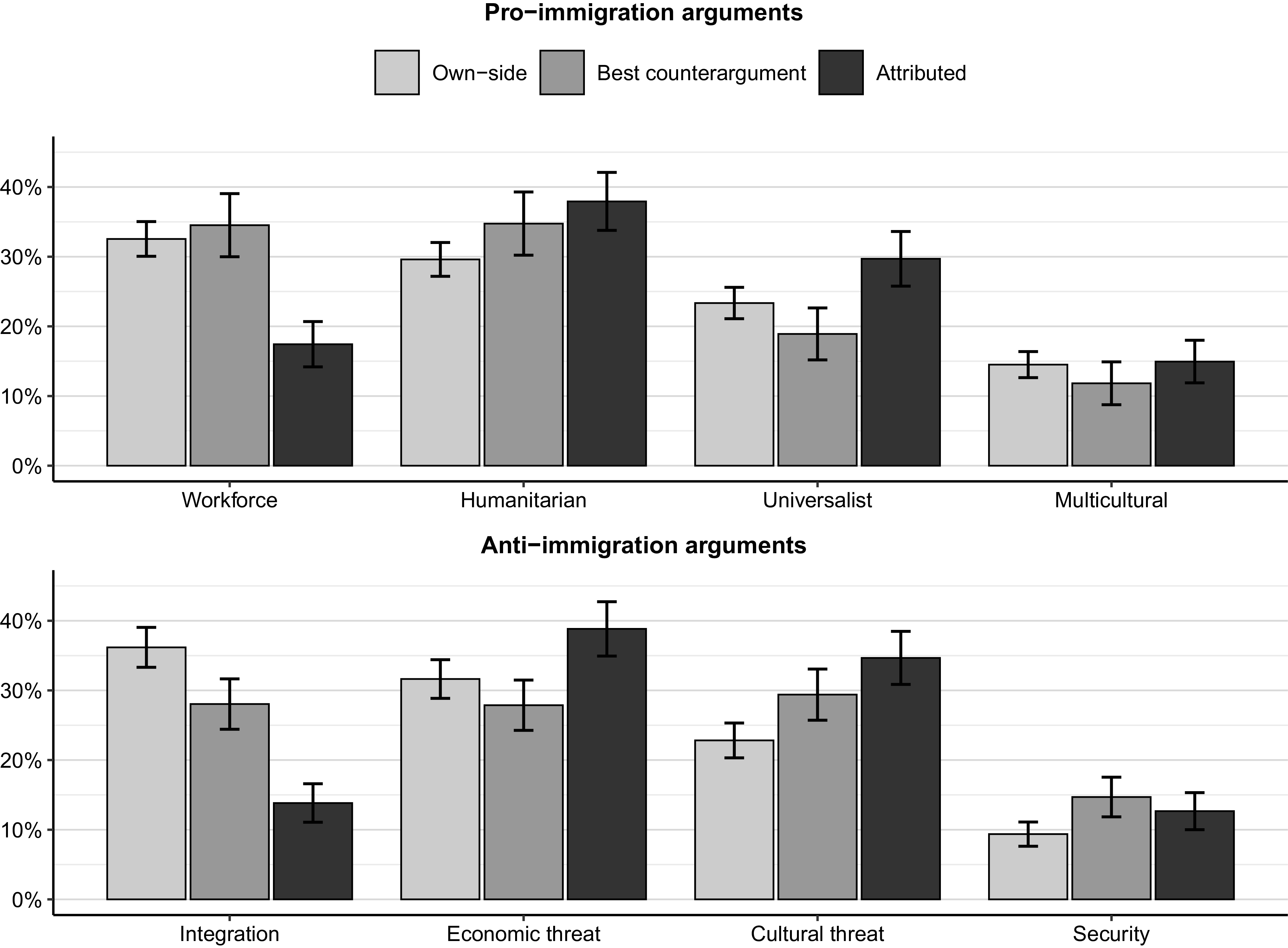

Table 4 reports the themes identified at the hand-coding stage, alongside three representative responses (footnote 9) and the most distinctive lemmas (footnote 10). On both the pro- and anti-immigration sides, four broad themes are observed in addition to the explicit non-response category (for example, ‘I don’t know’), which is treated as missing and omitted. As shown in supplementary Table A.8, the models recovered the hand-coded labels with good accuracy under cross-validation.

Table 4. Representative exemplars and distinctive lemmas for pro- and anti-immigration argument categories

Among pro-immigration arguments, the category labeled Workforce presents immigration as an economic necessity, emphasizing labor shortages, skill gaps, and the need to sustain growth. The Humanitarian topic frames immigration as a duty to protect people in hardship or fleeing danger, invoking compassion and basic protection needs. The Universalist category appeals to equal human rights, dignity, and solidarity across borders, stressing that geography should not limit access to a good life. Finally, the Multicultural category highlights the benefits of diversity—cultural exchange, broadened horizons, and a more vibrant society.

Among anti-immigration arguments, the Integration category emphasizes perceived limits of Norway’s integration capacity—insufficient language acquisition, weak integration policy, and the view that admission should be conditional on effective integration (for example, Norwegian proficiency). The Cultural threat category centers on cultural distance and value conflict, invoking worries about religion, traditions, and social cohesion. The Economic threat category stresses pressures on the welfare state and labor market—claims about benefit dependence, fiscal burden, and a preference to ‘help in place’ rather than via admission. Finally, the Security category frames immigration as a public-safety risk, linking immigration to crime, disorder, and terror.

Figure 5 shows the thematic composition of each side’s own arguments, the arguments attributed to that side by opponents, and opponents’ best counterarguments (shares normalized to 100 per cent). On the pro-immigration side, confidence intervals for proponents’ own arguments and skeptics’ best counterarguments overlap in every category, indicating close alignment. By contrast, skeptics’ attributions understate proponents’ emphasis on Workforce and overstate their emphasis on the Humanitarian and Universalist themes; there is no significant difference on the Multicultural theme. These patterns mirror the classifier results both in separation (own v. attributed are distinct; own v. best counterarguments overlap, Figure 4) and in substance (opponents underweight Workforce and overweight Humanitarian/Universalist, Table 3).

On the anti-immigration side, the picture is more mixed. The figure still shows a clear gap between what immigration skeptics themselves emphasize in their arguments and what pro-immigration respondents attribute to them: attributions downplay Integration and instead inflate more threat-oriented themes (Economic, Cultural, and, to a lesser extent, Security). While proponents’ best counterarguments do not fully replicate skeptics’ profile, they align more closely with it than the attributed ones do: they match skeptics on Economic threat, are much closer on Integration, and are somewhat closer on Cultural threat, but display the same small overemphasis on the Security theme. As on the pro-immigration side, these patterns are consistent with the classifier results: in terms of separation, own and attributed arguments are clearly distinct, while best counterarguments fall in between (consistent with Figure 4); in terms of substance, opponents understate integration concerns and overstate cultural and economic threat content (consistent with Table 3).

Figure 5. Thematic composition for each side’s own arguments, opponents’ attributed arguments, and best counterarguments (shares normalized to 100 per cent). Error bars indicate 95 per cent confidence intervals.
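The normalization behind such a figure can be sketched as below. The theme counts are hypothetical, and the normal-approximation interval is a simplifying assumption: the text does not state how the confidence intervals were computed, and theme assignments in a multilabel setup are not strictly independent draws.

```python
import math

def normalized_shares(theme_counts):
    """Convert theme assignment counts into shares that sum to 1."""
    total = sum(theme_counts.values())
    return {theme: count / total for theme, count in theme_counts.items()}

def share_interval(share, total, z=1.96):
    """Normal-approximation 95% interval for a share (simplifying assumption)."""
    half = z * math.sqrt(share * (1 - share) / total)
    return max(0.0, share - half), min(1.0, share + half)

# Hypothetical counts of theme assignments in one corpus.
counts = {"Workforce": 50, "Humanitarian": 25, "Universalist": 15, "Multicultural": 10}
shares = normalized_shares(counts)
total = sum(counts.values())

for theme, share in shares.items():
    low, high = share_interval(share, total)
    print(f"{theme}: {share:.0%} [{low:.0%}, {high:.0%}]")
```

Normalizing within each corpus makes the three sets comparable even though they differ in size and in the average number of themes per response.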

Taken together, the thematic analysis reinforces the earlier conclusions: respondents systematically misrepresent their opponents’ arguments, whereas the arguments they identify as the best available to opponents more closely resemble what opponents actually say.

Discussion and Conclusion

The immigration debate is often framed as a deep value conflict with little room for mutual understanding. This study challenges that interpretation. Using a survey experiment with open-ended questions, it shows that citizens do recognize legitimate arguments on the opposing side, but that they mistakenly believe those arguments are not representative of their opponents’ way of thinking.

This invites two contrasting interpretations. On an optimistic reading, it suggests that both sides underestimate the potential for mutual understanding, and that this untapped potential could be leveraged for greater co-operation and compromise on immigration policy. On a more pessimistic reading, however, the findings reveal the difficulty of mobilizing this mutual understanding, even under seemingly favorable conditions: respondents were aware of their opponents’ arguments, recognized them as legitimate, yet did not let this alter their perceptions of opponents’ reasoning.

This amounts to a violation of what philosophers know as the principle of charity—the injunction that we should always interpret our opponents’ arguments in their strongest and most reasonable form. While this carries the risk of overly generous or naïve readings, the evidence presented here suggests that, at least in the Norwegian immigration debate, such concerns are unfounded. Had respondents adopted more charitable interpretations of opponents’ reasoning, they would have arrived at both more accurate and more favorable perceptions of their opponents’ arguments.

Their failure to do so poses a distinct challenge to familiar strategies for mitigating political conflict. It shows that merely promoting knowledge of counterarguments, which is often presumed to foster tolerance and improve evaluations of political opponents (Arceneaux and VanderWielen 2017; Mutz 2002; Stanley et al. 2020), may have muted effects, since citizens do not necessarily use their knowledge of legitimate counterarguments to revise their image of opponents’ reasoning. Furthermore, it raises doubts about the promise of perspective-taking interventions: encouraging citizens to imagine the world from their opponents’ point of view may backfire if what it brings to mind is stereotyped and distorted portrayals of opponents’ reasoning (for an extended discussion of this backfire effect, see Sassenrath 2022). Both approaches ultimately presuppose a willingness to interpret opponents in good faith, which may be the primary barrier to mutual understanding.

In addition to these broader insights, one notable pattern in the data is the observed asymmetry between how the two sides view each other’s arguments. Immigration proponents were more prone to misrepresent skeptics’ arguments, and evaluated those arguments more negatively, than skeptics did. This is somewhat surprising in light of evidence that anti-immigration positions typically are held with greater salience than pro-immigration ones (Kustov 2023). At the same time, it is consistent with prior evidence that cosmopolitan, pro-immigration individuals hold more negative views of their more communitarian immigration-skeptic counterparts than vice versa (Helbling and Jungkunz 2020). One possible explanation for this asymmetry is the association of anti-immigration views with the widely disliked populist right (Gidron et al. 2023; Harteveld et al. 2022), whose confrontational and norm-violating rhetorical style may color proponents’ perceptions of anti-immigration arguments (Bjånesøy 2023; Sarsfield 2024).

However, several considerations warrant caution in interpreting this asymmetry. First, skeptics were less likely than proponents to articulate counterarguments (Table 1), which may itself signal a tendency to dismiss opposing arguments not captured in the main analyses. Secondly, the pattern is strongly conditioned by attitude strength: among respondents with stronger immigration attitudes (that is, excluding middle categories), differences in how each side evaluates opposing arguments are much smaller (Figure A.2). Thirdly, strong skeptics’ own arguments align more closely with proponents’ portrayals of skeptics than do the arguments of weaker skeptics (Table A.7). Because immigration attitudes were measured without a neutral option, ‘weak’ positions may contain ambivalent attitudes, in which case the arguments of strong skeptics may provide a cleaner indication of skeptics’ reasoning. If so, proponents’ misrepresentations of skeptics are somewhat less pronounced than the main analysis implies. While the adjustment is too small to overturn the conclusion that proponents misrepresent skeptics’ arguments (Figure A.3), it does call into question whether they do so more than skeptics misrepresent proponents.

Another potential concern relates to how we interpret the arguments respondents used to justify their own views. It is well known that the reasons people give for their views tend to be post-hoc rationalizations rather than accurate causal accounts of attitude formation (Blumenau 2025). This, by itself, does not undercut the conclusions: rationalizations are not random talk but efforts to justify one’s position in light of one’s values and beliefs (Mercier 2011). Accordingly, the fact that individuals on both sides converge on what they regard as valid ways to think about immigration still indicates a meaningful basis for mutual understanding.

A potentially more serious concern, however, is that respondents consciously tailored their arguments to signal socially desirable reasoning rather than to express their own evaluative criteria. If so, what I interpret as misrepresentation of opponents’ reasoning could instead reflect accurate inferences about opponents’ ‘true’ priorities that differ from their public justifications. While social desirability bias is notoriously difficult to rule out, two considerations mitigate this concern. First, strong skeptics (arguably the group most exposed to such pressures) were more, not less, likely to argue in line with proponents’ expectations (Table A.7). Secondly, it is not obvious that producing socially desirable arguments differs from the deliberative ideal of offering publicly justifiable reasons rather than appealing to private motivations (Habermas 1996; Rawls 1997). If that is what respondents were doing, the results suggest that they did so successfully, articulating reasons that resonate with the evaluative standards of the broader public.

As a final note, this paper has focused on citizens’ perceptions of the arguments behind the views of those with whom they disagree, on the premise that these perceptions are central to how they judge the legitimacy of disagreement. Yet how people construe others’ reasoning is relevant to many other relationships in democratic politics. How, for example, do voters interpret the reasoning of elected officials, and vice versa? Such perceptions may shape whether citizens feel that their arguments are represented in political debate and in the policy-making process. On the topic of immigration specifically, this line of inquiry connects with familiar accusations that elected officials are ‘out of touch’ with the public’s concerns and priorities. The experimental approach developed here provides a novel way to examine this and related questions.

Supplementary material

The supplementary material for this article can be found at https://doi.org/10.1017/S0007123426101483.

Data availability statement

Replication data for this article can be found in Harvard Dataverse at: https://doi.org/10.7910/DVN/AD7GJY.

Acknowledgments

I thank the three anonymous reviewers for their thoughtful and constructive feedback. I am also grateful to Elisabeth Ivarsflaten, Marc Helbling, and Rune Slothuus for helpful comments and guidance on this project. I thank participants at the 2025 ECPR Joint Sessions workshop, the 2024 APSA Annual Meeting, the 2024 Nordic Political Science Congress (NoPSA), the 2023 ECPR General Conference, and the 2023 Norwegian Political Science Conference for valuable feedback. All remaining errors are my own.

Financial support

This study is funded by the European Research Council (ERC) under the European Union’s Horizon 2020 research and innovation program. Consolidator grant: INCLUDE #101001133.

Competing interests

None to disclose.