1 Introduction

The (1+1)-dimensional Kardar-Parisi-Zhang (KPZ) equation [KPZ86] is a stochastic partial differential equation (SPDE) given by

$$ \begin{align*}\partial_t H = \tfrac12 \partial_{xx} H + \tfrac12 (\partial_x H)^2 + \gamma\, \xi, \qquad H=H(t,x), \tag{KPZ}\end{align*} $$

where $\gamma>0$ and $\xi (t,x)$ is a standard Gaussian space-time white noise on ${\mathbb {R}}_+\times {\mathbb {R}}$. As an SPDE, (KPZ) is ill-posed due to the presence of the nonlinear term $(\partial _xH)^2$. One way to make sense of the equation is to consider $\mathcal {U}:= e^H$ which formally solves the stochastic heat equation (SHE) with multiplicative noise:

$$ \begin{align*}\partial_t \mathcal{U} = \tfrac12 \partial_{xx} \mathcal{U} + \gamma\, \mathcal{U}\, \xi, \qquad \mathcal{U}=\mathcal{U}(t,x). \tag{SHE}\end{align*} $$

The SHE is known to be well-posed and has a well-developed solution theory based on the Itô integral and chaos expansions [Wal86, BC95, Qua11, Cor12]. In this paper, we will consider the solution to (SHE) started with Dirac delta initial data $\mathcal {U}(0,x) = \delta _0(x)$. For this initial data, [Flo14] established that $\mathcal {U}(t,x)> 0$ for all $t> 0$ and $x \in \mathbb {R}$ almost surely (see also [Mue91]). Thus $H=\log \mathcal {U}$ is well-defined and is called the Hopf-Cole solution of the KPZ equation. This is the notion of solution that we will work with in this paper, and it coincides with other existing notions of solutions using regularity structures [Hai13, Hai14], energy solutions [GJ13, GJ14, GP18], paracontrolled products [GIP15, GP17, PR19], and the Polchinski flow [KM17, Duc25, CF24].

The KPZ equation has been studied intensively over the past three decades in both the mathematics and physics literature. It first gained attention in the physics community in [KPZ86], and since then a precise mathematical understanding of the equation and its well-posedness theory has gradually evolved. In the mathematical physics literature, the relevance of the equation is that it appears as the fluctuation of a number of physically complex systems such as interacting particle systems [BG97] and directed polymers [AKQ14]. We refer to [FS10, Qua11, Cor12, QS15, CW17, CS20] for some surveys of the mathematical studies of the KPZ equation, and some of the models in which it arises.

The present work will be concerned with how the KPZ equation arises in a family of probabilistic models called stochastic flows of kernels. Roughly speaking, a discrete-time stochastic flow of kernels is a family of random probability measures indexed by each site of space-time ($\mathbb N\times {\mathbb {Z}}$ or $\mathbb N\times {\mathbb {R}}$ depending on the desired model), where spatial correlations between the randomized transitions are allowed but temporal correlations are not. The notion of stochastic flows initially gained importance for their relation to geometry and PDEs with random coefficients; see the monograph [Kun97]. A resurgence of interest and a broadening of the scope of stochastic flow theory came with the work of Tsirelson-Vershik on continuous products of probability spaces [TV98, Tsi04], and further work of Le Jan-Raimond on their relation to projective families of Markov chains [LJR02, LJR04b, LJR04a].

In a recent work [DDP24b], we were able to show that (SHE) arises as a continuum limit in the following discrete stochastic flow model. Consider a family of IID $[0,1]$-valued random variables $\omega _{r,y}$ with $r \in \mathbb {Z}_{\ge 0}$ and $y\in {\mathbb {Z}}$, drawn from a common law $\nu $. A random walk $(R(r))_{r\ge 0}$ in the environment $\omega $ starts from $(r,y)=(0,0)$ and at each time step goes from $(r,y)\to (r+1,y+1)$ with probability $\omega _{r,y}$ and from $(r,y)\to (r+1,y-1)$ with probability $1-\omega _{r,y}$. Consider the (quenched) random transition probabilities

A series of physics papers [TLD16, LDT17, BLD20] conjectured that the fluctuations of the random field $P^\omega (r,y)$ should be described by the KPZ equation at some point within the tail of that probability measure at spatial distance of order $r^{3/4}$, when $r$ is very large. We thus define for $t\ge 0$ and $x\in {\mathbb {R}}$ and $N\in \mathbb N$ the random field

The main results of [DDP24b] proved the conjecture of [TLD16, LDT17, BLD20], by showing that the random field $\mathscr U_N(t,x)$ converges in law (in some topology of tempered distributions) to the solution of (SHE) with $\gamma ^2= \frac {8\sigma ^2}{1-4\sigma ^2}$, where $\sigma ^2 = \mathrm {Var}(\omega _{t,x})$. It turns out that the spatial distance of order $N^{3/4}$ is the unique location within the tail of $P^\omega (Nt,\cdot )$ where one expects to see (SHE) appear: at smaller exponents one observes a Gaussian limit and at larger exponents one sees Tracy-Widom limits (see Section 1.2 below). This established a “KPZ crossover” at spatial location $N^{3/4}$ when time is of order $N$, with a somewhat unexpected value for the noise coefficient in the limiting SPDE. Thus we say that the crossover exponent for this model is $3/4$.
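As a rough numerical illustration of the nearest-neighbor model described above, the following sketch computes the quenched transition probabilities of the walk for one sampled environment by pushing mass forward one time step at a time, and evaluates the stated noise coefficient $\gamma^2 = 8\sigma^2/(1-4\sigma^2)$. The function names, the choice of Uniform[0,1] weights, and the number of steps are illustrative assumptions, not taken from [DDP24b].

```python
import random


def quenched_probabilities(steps, sample_omega, seed=0):
    """Return {y: probability} for the walk at time `steps` in one sampled environment.

    `sample_omega(rng)` is a hypothetical sampler for the common law of the
    [0,1]-valued weights omega_{r,y}.
    """
    rng = random.Random(seed)
    dist = {0: 1.0}  # the walker starts from (r, y) = (0, 0)
    for _ in range(steps):
        nxt = {}
        for y, mass in dist.items():
            w = sample_omega(rng)  # omega_{r,y}: drawn once per space-time site
            nxt[y + 1] = nxt.get(y + 1, 0.0) + mass * w          # step up
            nxt[y - 1] = nxt.get(y - 1, 0.0) + mass * (1.0 - w)  # step down
        dist = nxt
    return dist


def noise_coefficient_squared(variance):
    """gamma^2 = 8 sigma^2 / (1 - 4 sigma^2), as stated in the text."""
    return 8.0 * variance / (1.0 - 4.0 * variance)


# Illustrative choice: Uniform[0,1] weights, which have variance 1/12.
probs = quenched_probabilities(50, lambda rng: rng.random())
gamma_sq_uniform = noise_coefficient_squared(1.0 / 12.0)
```

Only sites that carry positive mass need a sampled weight, so the recursion touches on the order of `steps**2` sites rather than the whole lattice.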

A number of follow-up questions may then be asked, such as whether the methods of proving the convergence to (SHE) could be generalized to more complex models in which there is non-nearest-neighbor random walk in a random environment, or correlations between the transition kernels at each lattice site. Numerical work of J. Hass and coauthors in the physics community [HCGCC23, HCC23, HDCC24, Has25] observed that there are non-nearest-neighbor models where one should observe KPZ fluctuations at spatial location $N^{7/8}$ rather than $N^{3/4}$. More precisely, at each lattice site $(r,y) \in {\mathbb {Z}}_{\ge 0}\times {\mathbb {Z}}$ sample IID Uniform[0,1] random variables $U_{r,y}$. Then impose that the random walker goes from $(r,y) \to (r+1,y+1)$ with probability $(1-U_{r,y})/2$, from $(r,y)\to (r+1,y)$ with probability $U_{r,y}$ and from $(r,y) \to (r+1,y-1)$ with probability $(1-U_{r,y})/2$. The physicists observed that imposing this “first-order symmetry” of the transition kernels changes the crossover exponent from $3/4$ to $7/8$, but the KPZ equation is still expected to arise at the new exponent.
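The “first-order symmetry” can be checked directly on the one-step kernels. The sketch below, with hypothetical helper names, compares quenched moments of the three-step kernel above with those of the nearest-neighbor kernel. In the three-step model the first quenched moment is zero for every value of $U_{r,y}$ while the second is random; in the nearest-neighbor model the first moment is already random. In the language of Assumption 1.2 Item (4) below, this appears to correspond to $p=2$ versus $p=1$, although that reading is our own gloss.

```python
import random


def symmetric_lazy_kernel(u):
    """One-step law {displacement: probability} of the three-step model."""
    return {+1: (1.0 - u) / 2.0, 0: u, -1: (1.0 - u) / 2.0}


def nearest_neighbor_kernel(w):
    """One-step law of the nearest-neighbor model with weight w."""
    return {+1: w, -1: 1.0 - w}


def quenched_moment(kernel, k):
    """k-th moment of the displacement under a fixed (quenched) one-step law."""
    return sum((step ** k) * prob for step, prob in kernel.items())


rng = random.Random(1)
weights = [rng.random() for _ in range(5)]

lazy_first = [quenched_moment(symmetric_lazy_kernel(u), 1) for u in weights]
lazy_second = [quenched_moment(symmetric_lazy_kernel(u), 2) for u in weights]
nn_first = [quenched_moment(nearest_neighbor_kernel(u), 1) for u in weights]
```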

A completely different type of model posed to us by I. Corwin is the following. Let $(\omega _{r,x})_{r\in \mathbb N, x\in {\mathbb {Z}}}$ be an IID collection of random variables. Let $b: {\mathbb {Z}}\to [0,1]$ be any deterministic function, nonzero on at least two sites, such that $\sum _{x\in {\mathbb {Z}}} b(x)=1$. Define the kernels

$$ \begin{align*}K_r(x,y):= \frac{b(y-x) e^{\omega_{r,y}}}{\sum_{y'\in {\mathbb{Z}}} b(y'-x) e^{\omega_{r,y'}}}.\end{align*} $$

Consider the “random landscape model” of random walk in a random environment on ${\mathbb {Z}}_{\ge 0}\times {\mathbb {Z}}$ in which one goes from site $(r,x) \to (r+1,y)$ with probability $K_r(x,y)$. This model is substantially different from the random walk models considered above, since the transitions of nearby particles can be strongly correlated through their dependence on the same weights. A natural question is whether one also finds the KPZ equation at location of order $N^{3/4}$ away from the expected displacement, if one probes the quenched transition density of the random walker at time of order $N$.
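To make the random landscape kernel concrete, here is a minimal sketch that evaluates $K_r(x,\cdot)$ for a finitely supported $b$. The particular $b$, the Gaussian weights, and the function name are illustrative assumptions; the text only requires IID weights and a probability function $b$ that is nonzero on at least two sites.

```python
import math
import random


def landscape_kernel(x, b, omega_r):
    """Return K_r(x, .) as {y: probability}.

    b: {displacement: weight}, a hypothetical finitely supported b summing to 1.
    omega_r: {site: weight}, the environment omega_{r, .} at one fixed time r.
    """
    unnormalized = {
        x + d: b_d * math.exp(omega_r[x + d]) for d, b_d in b.items() if b_d > 0
    }
    total = sum(unnormalized.values())
    return {y: w / total for y, w in unnormalized.items()}


rng = random.Random(2)
b = {-1: 0.25, 0: 0.5, 1: 0.25}                           # illustrative choice of b
omega_r = {y: rng.gauss(0.0, 1.0) for y in range(-5, 6)}  # illustrative weight law

row_at_0 = landscape_kernel(0, b, omega_r)
row_at_1 = landscape_kernel(1, b, omega_r)
flat_row = landscape_kernel(0, b, {y: 0.0 for y in range(-5, 6)})
```

Both `row_at_0` and `row_at_1` read the weights at sites 0 and 1, which is the source of the correlation between the transitions of nearby particles mentioned above. With a constant environment the kernel reduces to $b$ itself.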

All of these questions then led to the desire to prove a “super-universality theorem” or “generalized invariance principle” for the equation (SHE) arising in stochastic flows of kernels, that is, a minimal set of conditions for determining when and where (SHE) is expected to arise in any family of stochastic kernels, and moreover to test the robustness of the methods developed in [DDP24a, DDP24b]. This is exactly the subject of the present work. Our main result will be Theorem 1.5 below, where we find a collection of reasonably sharp conditions under which one expects to see (SHE) arise in a family of stochastic kernels. Moreover this theorem will give the precise location where (SHE) can be found (analogous to $N^{3/4}$ above), as well as identify the noise coefficient of the limiting equation (analogous to $\frac {8\sigma ^2}{1-4\sigma ^2}$ above).

1.1 Generalized model and main results

Definition 1.1. Let $I={\mathbb {R}}$ or $I=c {\mathbb {Z}}$ where $c>0$ is fixed henceforth. Let $\mathcal B(I)$ denote the Borel $\sigma $-algebra on $I$. A Markov kernel on $I$ is a continuous function from $I\to \mathcal P(I)$, where $\mathcal P(I)$ is the space of probability measures on $(I,\mathcal B(I))$, equipped with the topology of weak convergence of probability measures.

Any Markov kernel $K$ on $I$ is written as $K(x,A)$, which is identified with the continuous function from $I\to \mathcal P(I)$ given by $x\mapsto K(x,\cdot )$. We denote by $\mathcal M(I)$ the space of all Markov kernels on $I$.

We equip $\mathcal M(I)$ with the weakest topology under which the maps $T_{f,x}:\mathcal M(I)\to {\mathbb {R}}$ given by

$$ \begin{align*}T_{f,x}(K):= \int_I f(y)\, K(x,{\mathrm{d}} y)\end{align*} $$

are continuous, where one varies over all $x\in I$ and all bounded continuous functions $f:I\to {\mathbb {R}}$. This topology endows $\mathcal M(I)$ with a Borel $\sigma $-algebra which allows us to talk about random variables taking values in the space of Markov kernels. For $a\in I$ define the translation operator $\tau _a:\mathcal M(I)\to \mathcal M(I)$ by $(\tau _a K)(x,A):= K(x+a,A+a)$ which is a Borel-measurable map on this space.
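For a kernel on the lattice, the translation operator is easy to write down explicitly. The sketch below assumes $I={\mathbb{Z}}$ (that is, $c=1$) and represents a kernel as a hypothetical Python function `x -> {y: K(x, {y})}`; both example kernels are illustrative and do not come from the paper.

```python
def translate(kernel, a):
    """Return tau_a K, where (tau_a K)(x, {y}) = K(x + a, {y + a})."""
    def shifted(x):
        # kernel(x + a) assigns mass to points z = y + a, so relabel z as y = z - a.
        return {z - a: prob for z, prob in kernel(x + a).items()}
    return shifted


def simple_walk(x):
    """Translation-invariant example: simple symmetric random walk."""
    return {x + 1: 0.5, x - 1: 0.5}


def parity_walk(x):
    """Example that is invariant under even shifts only."""
    up = 0.9 if x % 2 == 0 else 0.1
    return {x + 1: up, x - 1: 1.0 - up}


sites = range(-3, 4)
simple_is_invariant = all(translate(simple_walk, 3)(x) == simple_walk(x) for x in sites)
parity_shift_1_differs = any(translate(parity_walk, 1)(x) != parity_walk(x) for x in sites)
parity_shift_2_matches = all(translate(parity_walk, 2)(x) == parity_walk(x) for x in sites)
```

For a random kernel, spatial translation invariance concerns the law of $K_1$, not each realization; the deterministic kernels here only illustrate how $\tau_a$ acts.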

Assumption 1.2 (Assumptions for the main result)

Assume we have a family $\{K_n\}_{n\ge 1}$ of random variables in the space $\mathcal M(I)$, defined on some probability space $(\boldsymbol \Omega , \mathcal F^\omega , \mathbb P)$ such that the following hold true.

1. (Stochastic flow increments) $K_1,K_2,K_3,...$ are independent and identically distributed under $\mathbb P$.

2. (Spatial translational invariance) $K_1$ has the same law as $\tau _a K_1$ for all $a\in I$, under $\mathbb P$.

3. (Exponential moments for the annealed law) Letting $\mu (A) := {\mathbb {E}}[ K_1(0,A)],$ we have that the moment generating function exists, that is, $\int _I e^{\eta |x|}\mu ({\mathrm {d}} x)<\infty $ for some $\eta>0$. Letting $m_k:= \int _I x^k \mu ({\mathrm {d}} x)$ denote the sequence of moments of $\mu $, we also impose that the variance of $\mu $ is 1, that is, $m_2-m_1^2=1$.

4. (The first $p-1$ moments are deterministic) Define the $k$-point correlation kernel

$$ \begin{align*}\boldsymbol p^{(k)}\big((x_1,...,x_k),({\mathrm{d}} y_1,...,{\mathrm{d}} y_k)\big):= {\mathbb{E}}[ K_1(x_1,{\mathrm{d}} y_1)\cdots K_1(x_k,{\mathrm{d}} y_k)].\end{align*} $$

There exists some $p\in \mathbb N$ such that $\int _I y^kK_1(0,{\mathrm {d}} y)=m_k$ $\mathbb P$-almost surely for $1\leq k \leq p-1$, or equivalently

$$ \begin{align*}\int_{I^2} (x-x_0)^k(y-y_0)^k \; \boldsymbol p^{(2)}((x_0,y_0),({\mathrm{d}} x,{\mathrm{d}} y))=m_k^2,\;\;\;\;\;\; x_0,y_0\in I, \;\;\;1\leq k \leq p-1.\end{align*} $$

Furthermore $p-1$ is the largest such value, that is, $\int _I y^{p}K_1(0,{\mathrm {d}} y)$ is nondeterministic.

5. (Strong decay of correlations up to $(4p)^{th}$ joint moments) Consider a bounded function $\mathrm {F_{decay}}:[0,\infty )\to [0,\infty )$ such that $x\mapsto x\mathrm {F_{decay}}(x)$ is decreasing for large $x$, and $\int _0^\infty x \mathrm {F_{decay}}(x){\mathrm {d}} x<\infty $. Then assume that the following spatial decay of correlations holds for the moments of the Markov kernel $K_1$: for all $k\in \{2p,...,4p\}$ and all $x_1,...,x_k\in I$ we have

$$ \begin{align*}\max_{\substack{0\le r_1,...,r_k\le 4p-1\\2p\leq r_1+...+r_k\leq 4p}}\bigg| \int_{I^k} \prod_{j=1}^k (y_j-x_j)^{r_j} \boldsymbol p^{(k)} \big( {\mathbf{x}}, {\mathrm{d}}{\mathbf{y}}\big)- \int_{I^k} \prod_{j=1}^k y_j^{r_j}\mu^{\otimes k}({\mathrm{d}}{\mathbf{y}})\bigg| \leq \mathrm{F_{decay}} \big(\min_{1\le i<j\le k}|x_i-x_j|\big)\end{align*} $$

where ${\mathbf {x}}:= (x_1,...,x_k)$ and ${\mathbf {y}}=(y_1,...,y_k).$

6. (Nondegeneracy) Define the Markov kernel $\boldsymbol {p}_{\mathbf {dif}}(x,A):=\int _{I^2} \mathbf {1}_{\{y_1-y_2\in A\}} \boldsymbol p^{(2)} \big ((x,0),({\mathrm {d}} y_1,{\mathrm {d}} y_2)\big )$ indexed by $x\in I$ and $A\in \mathcal B(I)$. We impose:

• (Regularity) The family of transition laws $x\mapsto \boldsymbol {p}_{\mathbf {dif}}(x,\bullet )$ is continuous in total variation norm.

• (Irreducibility) For any two points $x,y \in I$ and any $\varepsilon>0$ there exists $m\in \mathbb N$ such that ${\boldsymbol {p}_{\mathbf {dif}}^m\big (x,(y-\varepsilon ,y+\varepsilon )\big )>0,}$ where $\boldsymbol {p}_{\mathbf {dif}}^m$ is the $m^{th}$ power of this Markov kernel.

Condition (4) says that the model exhibits “symmetry up to order p.” This integer p will play an extremely important role in all of the theorems and calculations throughout the rest of the paper.

To clarify an important detail about condition (1), we are not assuming that $K_i(x,\cdot ),K_i(x',\cdot )$ are independent for distinct $x,x'$. Rather we are assuming that the family $\{K_1(x,\cdot )\}_{x\in I}$ is independent of $\{K_2(x,\cdot )\}_{x\in I}$ and has the same distribution as that family, and so on. But this says nothing about how $K_i(x,\cdot ),K_i(x',\cdot )$ might be correlated for different $x,x'$. There are many interesting examples where there are indeed spatial correlations. This question of correlation of distinct spatial locations is covered by condition (5).

Indeed, condition (5) is the most important part of the assumption, whereas the other parts are more definitional. Notice that if $r_1+...+r_k<2p$ then the left side in Item (5) vanishes identically thanks to Item (4), thus the maximum is really over all $0\leq r_1+...+r_k \leq 4p$. We remark that the finite-range models, in which $\mathrm {F_{decay}}$ is compactly supported, already constitute many interesting examples. Additionally, note that all bounds in (5) are automatically satisfied if there exists $\mathrm {C_{len}}>0$ so that for all $x_1,...,x_{4p}\in I$, one has the total variation bound

Here the first measure denotes the translate of $\mu ^{\otimes k}$ by the vector $(x_1,...,x_k)$. Indeed if (1.1) holds, then one may verify using a coupling argument that the inequality of Item (5) holds with $\mathrm {F_{decay}}(x)$ being some multiple of $e^{-\frac {x}{2\mathrm {C_{len}}}}$. The constant $\mathrm {C_{len}}$ can be thought of as the correlation length of the microscopic model of stochastic kernels. In most specific models of interest, it is not difficult to show (1.1) for any fixed $k\in \mathbb {N}$ (see Section 6). Nonetheless, we have stated the more general condition (5) above for interest, as it only requires control on a finite number of observables (which is much weaker than total variation bounds).

Condition (6) is just irreducibility of the Markov kernel $\boldsymbol {p}_{\mathbf {dif}}$ in the usual sense when $I=c \mathbb {Z}$. In particular there is no loss of generality as long as the chain is merely recurrent, because one can always replace $I$ by the communicating class of $0$ throughout all of the assumptions and results. For $I={\mathbb {R}}$, (6) is a technical condition that ensures that the Markov kernel $\boldsymbol {p}_{\mathbf {dif}}$ is sufficiently regularizing. In models of interest, both conditions in Item (6) will be quite easy to check. Thanks to Assumption (6), we will prove the following, see Theorem 3.5 and Proposition 3.8.

Proposition 1.3. Let $(X_k)_{k\ge 0}$ denote the Markov chain on $I$ associated to the Markov kernel $\boldsymbol {p}_{\mathbf {dif}}$ from Assumption 1.2 Item (6). There exists an invariant measure $\pi ^{\mathrm {inv}}$ for $\boldsymbol {p}_{\mathbf {dif}}$, unique up to scalar multiple. This measure $\pi ^{\mathrm {inv}}$ necessarily has full support. For all measurable functions $f:I\to {\mathbb {R}}$ such that $|f(x)| \leq F(|x|)$ for some decreasing $F:[0,\infty )\to [0,\infty )$ such that $\sum _{k=0}^\infty F(k)<\infty $, one has that $\int _I |f|d\pi ^{\mathrm {inv}}<\infty $. Furthermore for all $f,g\in L^1(\pi ^{\mathrm {inv}})$ such that $\int _I g\;d\pi ^{\mathrm {inv}}\ne 0$, we have that

$$ \begin{align*}\lim_{r\to \infty} \frac{\sum_{k=0}^r f(X_k)}{\sum_{k=0}^r g(X_k)} = \frac{\int_I f\;d\pi^{\mathrm{inv}}}{\int_I g\;d\pi^{\mathrm{inv}}}\end{align*} $$

almost surely starting from any point $X_0\in I$.
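For orientation, the invariant measure of $\boldsymbol{p}_{\mathbf{dif}}$ can be computed by hand in the nearest-neighbor model from the introduction. The sketch below works under our own assumptions: the weights $\omega_{r,y}$ have mean $1/2$ and variance $\sigma^2$, and the two walkers step independently given the environment, as in the definition of $\boldsymbol p^{(2)}$. The difference of the two walkers then lives on $2{\mathbb{Z}}$ (the communicating class of $0$), moves by $\pm 2$ with probability $1/4$ each away from the origin, and with probability ${\mathbb{E}}[\omega(1-\omega)] = 1/4-\sigma^2$ each at the origin, where both walkers see the same weight. The function names are hypothetical.

```python
from fractions import Fraction


def p_dif(x, mean, var):
    """One-step law {new_difference: probability} of the difference chain at x."""
    q = mean * (1 - mean)   # walkers at distinct sites use independent weights
    if x == 0:
        q -= var            # same site, same weight: E[omega (1 - omega)]
    return {x + 2: q, x - 2: q, x: 1 - 2 * q}


def pi_inv(x, mean, var):
    """Candidate invariant measure: counting measure with extra mass at 0."""
    q_far = mean * (1 - mean)
    return q_far / (q_far - var) if x == 0 else Fraction(1)


def is_invariant_at(y, mean, var):
    """Check (pi P)(y) == pi(y) exactly, using the three possible predecessors."""
    incoming = sum(
        pi_inv(x, mean, var) * p_dif(x, mean, var).get(y, 0)
        for x in (y - 2, y, y + 2)
    )
    return incoming == pi_inv(y, mean, var)


mean, var = Fraction(1, 2), Fraction(1, 12)   # e.g. Uniform[0,1] weights
mass_at_origin = pi_inv(0, mean, var)
invariant_everywhere = all(is_invariant_at(y, mean, var) for y in range(-10, 12, 2))
```

With mean $1/2$ the mass at the origin equals $1/(1-4\sigma^2)$, the same factor that appears in the noise coefficient $\frac{8\sigma^2}{1-4\sigma^2}$ quoted in the introduction. The total mass is infinite, since the measure is counting measure away from the origin.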

The measure $\pi ^{\mathrm {inv}}$ will always be infinite under Assumption 1.2, typically resembling Lebesgue or counting measure on $I$ but with extra mass near the origin. With these preliminaries in place, we can state our main result. For $r\in \mathbb N$ one may define the probability measure

$$ \begin{align*}P^\omega(r,A):= K_1K_2\cdots K_r(0,A), \tag{1.2}\end{align*} $$

where the product of two Markov kernels is another Markov kernel that is defined by

![]() $K_1K_2(x,A) := \int _I K_2(y,A)K_1(x,{\mathrm {d}} y)$

, and onward by associativity. Intuitively,

$K_1K_2(x,A) := \int _I K_2(y,A)K_1(x,{\mathrm {d}} y)$

, and onward by associativity. Intuitively,

$P^\omega (r,\cdot )$

is the quenched transition probability at time r of a random walker starting from the origin, such that the walker at position x at time

$n-1$

uses the kernel

$K_n(x,\cdot )$

to determine what the position will be at time n, for every

$n\ge 0$

and

$x\in I$

. With these definitions and notations in place, we now define the drift constants

Then define the family of deterministic space-time functions

Definition 1.4. Let

$P^\omega (r,\cdot )$

be as in (1.2) and let

$d_N$

be as in (1.3). Let

$D_{N,t,x}$

be the deterministic sequence of space-time functions defined just above. For

$t\in N^{-1}{\mathbb {Z}}$

we define the rescaled density field

Thus for each

$t\in N^{-1}{\mathbb {Z}}_{\ge 0}$

we note that

$\mathfrak H^N(t,\cdot )$

is a nonnegative Borel measure on

${\mathbb {R}}$

, which is informally equal to the function given by

$x\mapsto N^{1/2}D_{N,t,x} P^\omega (Nt,d_Nt +N^{1/2}x)$

. The definition of

$\mathfrak H^N(t,\cdot )$

is extended to

$t\in {\mathbb {R}}_+$

by linearly interpolating, that is, taking an appropriate convex combination of the two measures at the two nearest points of

$N^{-1}{\mathbb {Z}}_{\ge 0}$

.

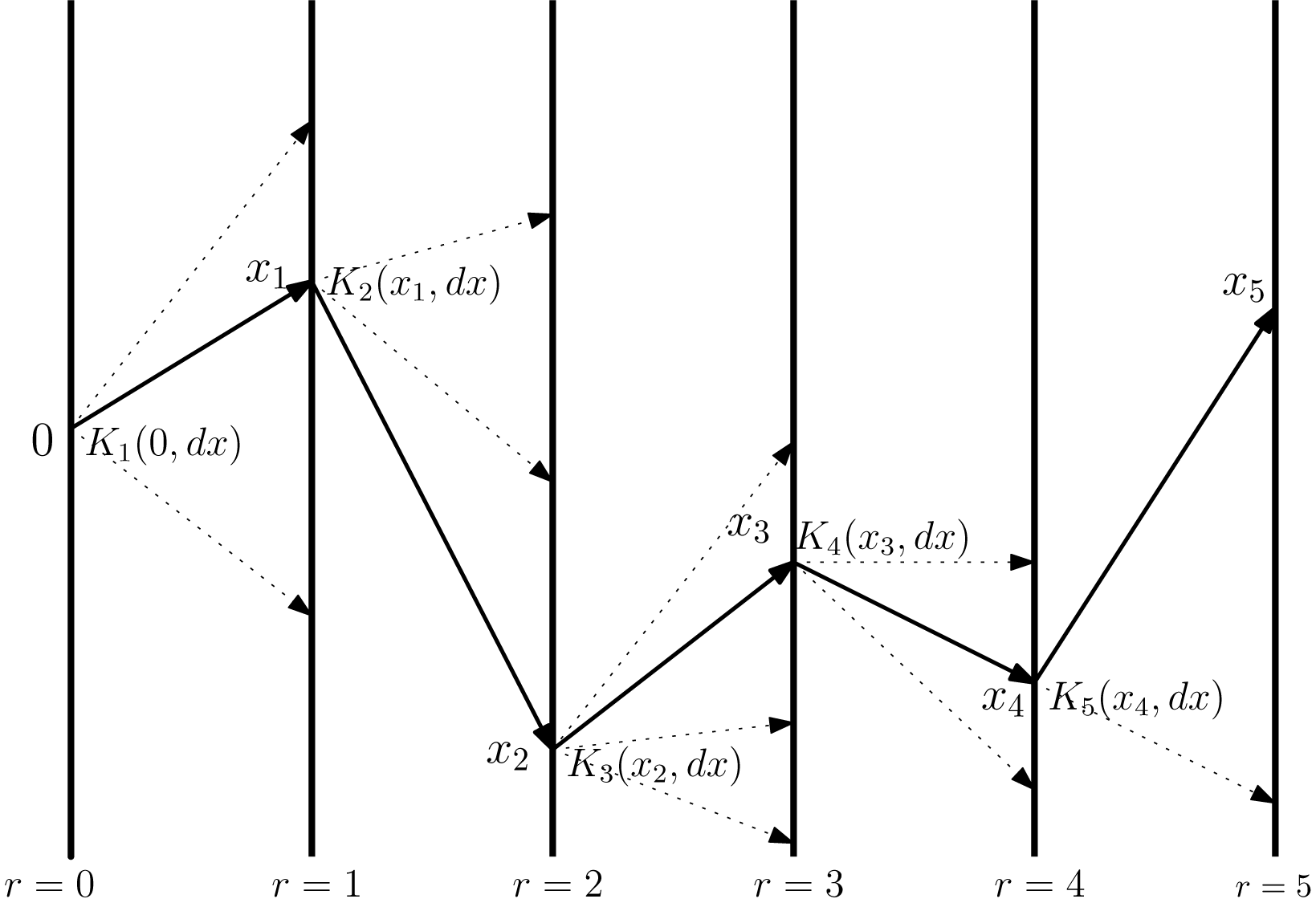

An illustration of our generalized model of random walk in random environment. Throughout the paper,

$r \in {\mathbb {Z}}_{\ge 0}$

will be used to denote the microscopic time variable. The random walker starts at position

$x=0$

at time

$r=0$

, then samples

$x_1$

from the random probability measure

$K_1(0,{\mathrm {d}} x)$

. After landing at position

$x_1$

at time

$r=1$

, the random walker then samples

$x_2$

from the probability measure

$K_2(x_1,{\mathrm {d}} x)$

, and continues in this fashion. Each vertical line represents a copy of the underlying lattice

$I= {\mathbb {Z}}$

or

$I={\mathbb {R}}$

, and the Markov kernel

$K_i$

should be thought of as the entire collection of probability measures

$\{K_i(x,\cdot )\}_{x\in I}$

which lives on the vertical line

$r=i-1$

. The random environment is then the whole collection of Markov kernels

$\{K_i\}_{i=1}^\infty $

which in this paper are assumed to be independent of one another for distinct values of i, translationally invariant in law, and sampled from the same distribution on Markov kernels on I. The dotted arrows in the above picture represent any one of many possible jumps that were not actually executed by the random walker in this random environment, while the solid arrows represent the jumps that were executed.
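
The sampling procedure described in the caption above can be sketched numerically. The following is a minimal illustration only, under loudly stated assumptions: the lattice is truncated to a finite window, and the environment is a hypothetical toy choice (nearest-neighbour jumps with independent uniform weights) that is not claimed to be one of the models treated in this paper. The names `sample_kernel`, `push_forward`, and `quenched_law` are ours. The sketch composes independently sampled kernels to obtain the quenched law of the walker started at the origin.

```python
import random


def sample_kernel(rng, radius):
    """Sample one toy random Markov kernel on the window {-radius, ..., radius}.

    Row i is the probability measure K(x, .) for x = i - radius.
    Toy choice (an assumption, not the paper's model): from x, jump to x+1
    with probability w_x ~ Uniform(0,1) and to x-1 otherwise, with the
    weights w_x independent across sites.
    """
    size = 2 * radius + 1
    K = [[0.0] * size for _ in range(size)]
    for i in range(1, size - 1):
        w = rng.random()
        K[i][i + 1] = w
        K[i][i - 1] = 1.0 - w
    # Boundary sites hold their mass so that every row stays a probability measure.
    K[0][0] = 1.0
    K[size - 1][size - 1] = 1.0
    return K


def push_forward(p, K):
    """Return the measure pK, that is, (pK)(a) = sum_y p(y) K(y, a)."""
    size = len(p)
    return [sum(p[y] * K[y][a] for y in range(size)) for a in range(size)]


def quenched_law(kernels, radius):
    """Compute delta_0 K_1 K_2 ... K_r for the given list of kernels."""
    p = [0.0] * (2 * radius + 1)
    p[radius] = 1.0  # the walker starts at the origin
    for K in kernels:
        p = push_forward(p, K)
    return p


rng = random.Random(0)
r = 20  # microscopic time; the window radius equals r, so the boundary is never used
kernels = [sample_kernel(rng, r) for _ in range(r)]  # independent kernels K_1, ..., K_r
P = quenched_law(kernels, r)  # P[i] is the quenched mass at position i - r
```

Applying the kernels one at a time to the row vector is the same as first forming the product kernel, by associativity. For nearest-neighbour jumps the window of radius r is never exited in r steps, so the truncation does not affect the result in this toy case.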

Theorem 1.5 (Main result)

Let

$\{K_n\}_{n\ge 1}$

denote an environment satisfying Assumption 1.2, fix

${T>0}$

, and let

$\mathfrak H^N$

be as in (1.4). There is an explicit Banach space X of distributions on

${\mathbb {R}}$

, which is continuously embedded in

$\mathcal S'({\mathbb {R}})$

, such that the collection

$\{\mathfrak H^N\}_{N \ge 1}$

is tight with respect to the topology of

$C([0,T], X)$

. Furthermore any limit point as

$N\to \infty $

lies in

$C((0,T],C({\mathbb {R}}))$

and coincides with the law of the Itô solution of the SPDE

started with initial data

$\mathcal U(0,x)=\delta _0(x)$

. Here

$\xi $

is a standard space-time white noise on

${\mathbb {R}}_+\times {\mathbb {R}},$

and

$$ \begin{align*}\gamma_{\mathrm{ext}}^2:= \frac{ \frac{(-1)^{p+1}}{(2p)!}\int_{I} \bigg[ \int_{I^2}(x-y)^{2p}\mu({\mathrm{d}} x)\mu({\mathrm{d}} y)-\int_I (a-z)^{2p} \boldsymbol{p}_{\mathbf{dif}}(z,\mathrm da)\bigg] \pi^{\mathrm{inv}}(\mathrm dz)}{\int_{I} \bigg[- |z|+\int_{I} |a| \; \boldsymbol{p}_{\mathbf{dif}}(z,\mathrm da)\bigg]\pi^{\mathrm{inv}}(\mathrm dz)}.\end{align*} $$

The explicit Banach space X is given by a weighted Hölder space with negative exponent and polynomial weight (neither will be optimized). See Section 5 for more details.

The identification of the coefficient

$\gamma _{\mathrm {ext}}^2$

for this general context is one of the main technical challenges and novelties of this work. As remarked earlier, identifying this constant was a challenge even for very simple examples of models driven by IID weights. We remark that (by using that the first

$p-1$

moments are deterministic, and the binomial formula to expand the

$(2p)^{th}$

power) another way to write the integrand in the numerator is

$$ \begin{align*}\frac{(-1)^{p+1}}{(2p)!}\bigg[ &\int_{I^2}(x-y)^{2p}\mu({\mathrm{d}} x)\mu({\mathrm{d}} y)-\int_I (a-z)^{2p} \boldsymbol{p}_{\mathbf{dif}}(z,\mathrm da)\bigg]\\&= - (p!)^{-2} \bigg[m_{p}^2-\int_{I^2} (x-x_0)^p(y-y_0)^p \; \boldsymbol p^{(2)}((x_0,y_0),({\mathrm{d}} x,{\mathrm{d}} y))\bigg] \\ &= (p!)^{-2} \mathrm{Cov}\bigg( \int_I (x-x_0)^p K_1(x_0,{\mathrm{d}} x), \int_I (y-y_0)^p K_1(y_0,{\mathrm{d}} y)\bigg) ,\;\;\;\;\;\; \text{ where } x_0-y_0=z. \end{align*} $$

In the second line, we use the fact that all terms except the middle terms are zero by the deterministic moments in Assumption 1.2 Item (4). We will give for each

$p\in \mathbb N$

a nontrivial example of a model where Theorem 1.5 is applicable. In [Reference Das, Drillick and ParekhDDP24b] we had coined the term “environmental variance coefficient” for

$\gamma _{\mathrm {ext}}$

, which in that paper was

$\frac {8\sigma ^2}{1-4\sigma ^2}$

for the specific choice of model discussed earlier. In the expression for

$\gamma _{\mathrm {ext}}^2$

, one may show from Assumption 1.2 that the integrands in both the numerator and the denominator are exponentially decaying functions of the variable z, thus by Proposition 1.3 both the numerator and the denominator are finite quantities. Jensen’s inequality and the full support of

$\pi ^{\mathrm {inv}}$

imply that the denominator is nonvanishing, thus

$\gamma _{\mathrm {ext}}^2$

is always a finite value. It will be an artifact of the proof of the above theorem that the numerator of the expression for

$\gamma _{\mathrm {ext}}^2$

cannot be a negative value (hence there is no contradiction); see, for example, Proposition 5.18(4) below. With this said, we are not sure if

$\gamma _{\mathrm {ext}}^2$

can be zero under the above assumptions, though one may directly show

$\gamma _{\mathrm {ext}}^2>0$

for many models of interest.
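
The binomial-expansion step behind the alternative form of the numerator integrand displayed above can be sketched as follows. This is only a sketch under assumptions that are not restated in this passage: we write $m_j:=\int_I x^j\mu({\mathrm{d}} x)$, we assume that the annealed law of a single increment is $\mu$, we read the deterministic-moment condition in Assumption 1.2 Item (4) as saying that $\int_I (x-x_0)^j K_1(x_0,{\mathrm{d}} x)=m_j$ almost surely for $0\le j\le p-1$, and we use that $a-z=(x-x_0)-(y-y_0)$ when $a=x-y$ and $z=x_0-y_0$.

$$ \begin{align*} \int_{I^2}(x-y)^{2p}\mu({\mathrm{d}} x)\mu({\mathrm{d}} y) &= \sum_{j=0}^{2p}\binom{2p}{j}(-1)^{j}\, m_{2p-j}\,m_j, \\ \int_I (a-z)^{2p} \boldsymbol{p}_{\mathbf{dif}}(z,\mathrm da) &= \sum_{j=0}^{2p}\binom{2p}{j}(-1)^{j} \int_{I^2} (x-x_0)^{2p-j}(y-y_0)^{j}\; \boldsymbol p^{(2)}((x_0,y_0),({\mathrm{d}} x,{\mathrm{d}} y)). \end{align*} $$

For every $j\ne p$ one of the two exponents is at most $p-1$, so under the assumptions above the corresponding factor is deterministic and the $j$th terms of the two sums agree. Only the term $j=p$ survives in the difference, and since $\binom{2p}{p}/(2p)!=(p!)^{-2}$ and $(-1)^{p+1}(-1)^{p}=-1$, one arrives at

$$ \begin{align*}\frac{(-1)^{p+1}}{(2p)!}\binom{2p}{p}(-1)^{p}\bigg[m_{p}^2-\int_{I^2} (x-x_0)^p(y-y_0)^p \; \boldsymbol p^{(2)}((x_0,y_0),({\mathrm{d}} x,{\mathrm{d}} y))\bigg] = - (p!)^{-2} \bigg[m_{p}^2-\int_{I^2} (x-x_0)^p(y-y_0)^p \; \boldsymbol p^{(2)}((x_0,y_0),({\mathrm{d}} x,{\mathrm{d}} y))\bigg]. \end{align*} $$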

1.2 Context and discussion about the result

Besides the fact that the limit is given by the multiplicative-noise stochastic heat equation (1.5), there are two other aspects of the above result that are worth noting.

1. As (1.4) suggests, these KPZ fluctuations are obtained by probing the tail of the probability distribution

$P^\omega (r,{\mathrm {d}} y)$

near spatial location

$y= d_N t+N^{1/2}x$

at time

$r = Nt$

, where N is very large. It is not so surprising that the spatial variable x needs a factor

$N^{1/2}$

at time of order N, since this scaling respects the parabolic structure of the limiting equation. However the factor

$d_N$

in front of t is more mysterious and eluded a rigorous understanding until recently. This drift term of course begs the question of whether or not there are other locations in the tail of

$P^\omega (Nt,\cdot )$

where nontrivial fluctuation behavior can be observed.

It is now known that

$d_Nt $

is the unique spatial location at which one expects the KPZ equation to arise. If one were to probe the tail of

$P^\omega (Nt,\cdot )$

at a spatial location of order that is strictly smaller than

$d_Nt$

, one will instead obtain a Gaussian field as the limit, as evidenced by the works [Reference Balázs, Rassoul-Agha and SeppäläinenBRAS06, Reference YuYu16, Reference Joseph, Rassoul-Agha and SeppäläinenJRAS19]. These Gaussian fluctuations can be proved for the present model using techniques similar to the ones developed in the present work; see [Reference Drillick and ParekhDP25].

On the other hand, when

$d_Nt$

is replaced by a larger quantity (i.e., probing even deeper into the tail of

$P^\omega (Nt,\cdot )$

) it was shown in [Reference Barraquand and CorwinBC17, Reference Barraquand and RychnovskyBR20] that under certain exactly solvable stochastic kernels

$K_i$

, one obtains the Tracy-Widom GUE distribution as the one-point fluctuations. The Tracy-Widom GUE distribution is the one-point marginal of a space-time process called the directed landscape [Reference Matetski, Quastel and RemenikMQR21, Reference Dauvergne, Ortmann and ViragDOV22], a recently constructed universal scaling limit of models in the KPZ universality class. We expect that for all models of stochastic kernels as above and all locations in the tail of

$P^\omega (Nt,\cdot )$

that are strictly larger than

$d_N t$

, we have the directed landscape as the scaling limit. However, this is still a difficult open problem and far beyond the scope of the present work.

Consequently, the spatial location

$d_N$

is where one observes the “crossover” from Gaussian to non-Gaussian fluctuation behavior, and the Hopf-Cole solution of the KPZ equation describes this crossover.

2. There is a nontrivial environmental variance coefficient

$\gamma _{\mathrm {ext}}$

appearing in front of the noise, which depends on the weights only through their two-point motions

$\boldsymbol p^{(2)}$

. We shall see later in our proofs that this coefficient arises through the interaction of the two particles of the two-point motion generated by the stochastic kernels. Theorem 1.5 thus implies that under the scaling (1.4), the information about the kernels that survives in the limit is only up to the two-point motion. Even then, only certain specific observables of the two-point motion are detected by the limiting equation (1.5), and any finer information about higher-order particle interactions vanishes in the limit. This question is related to the physics literature on extreme diffusion theory [Reference Le Doussal and ThieryLDT17, Reference Thiery and Le DoussalTLD16, Reference Barraquand and Le DoussalBLD20, Reference Hass, Carroll-Godfrey, Corwin and CorwinHCGCC23, Reference Hartmann, Krajenbrink and Le DoussalHKLD23, Reference Hass, Corwin and CorwinHCC23, Reference Le DoussalLD23, Reference Hass, Drillick, Corwin and CorwinHDCC24, Reference HassHas25].

Works such as [Reference Brockington and WarrenBW22, Reference Hass, Drillick, Corwin and CorwinHDCC24, Reference Das, Drillick and ParekhDDP24b] suggest that for particular models the Markov chain

$\boldsymbol {p}_{\mathbf {dif}}$

is reversible with respect to

$\pi ^{\mathrm {inv}}$

, and that the coefficient

$\gamma _{\mathrm {ext}}^2$

can be simplified further into nicer forms. For example, for the model of [Reference Das, Drillick and ParekhDDP24b] explained earlier, the Markov chain

$\boldsymbol {p}_{\mathbf {dif}}$

just evolves as simple symmetric random walk on

${\mathbb {Z}}$

except that the origin is more attractive, hence

$\pi ^{\mathrm {inv}}$

is counting measure on

${\mathbb {Z}}$

with some extra mass at the origin which can be calculated explicitly by solving the detailed balance equations. In the present work we do not explore the general question of trying to massage the expression for

$\gamma _{\mathrm {ext}}^2$

into alternative forms.

One of the novel aspects of our result is that we have not assumed very strong spatial mixing conditions on the kernel

$K_1$

, only the much weaker condition (5) in Assumption 1.2, which states that fast spatial mixing holds only up to the first

$4p$

joint moments. In particular, we allow the model to have “infinite range of interaction.” As we will see in Section 6, Theorem 1.5 includes as special cases our main results from [Reference Das, Drillick and ParekhDDP24b, Reference Das, Drillick and ParekhDDP24a], with the caveat that we could prove additional interesting results in those papers thanks to the specific structure (see Remark 1.8 below). As noted above, these generalized stochastic kernel models may see a nontrivial interaction among nearby particles, which would include models such as the transport SPDE from [Reference Brockington and WarrenBW22]. The main purpose of the present work is to illustrate the scope and generality of our methods from [Reference Das, Drillick and ParekhDDP24a, Reference Das, Drillick and ParekhDDP24b], showing that they can still be applied in the above setting. It would be difficult to implement, for example, a polynomial chaos in this setting because we do not assume spatial independence, only fast decay of correlations up to

$(4p)^{th}$

-order joint moments of

$K_1(x,\cdot )$

, as in Assumption 1.2 Item (5). But even more crucially, it is unclear what the chaos variables would be under the minimal nature of Assumption 1.2.

Next, we give some further remarks about how to understand the result, and further problems about the quenched density that still remain open.

Remark 1.6. (Drift term and crossover exponents) Let us comment on the drift term

$d_N$

from (1.3). By Taylor expansion at the origin of the function

$\lambda \mapsto \int _I xe^{x\lambda -\log M(\lambda )}\mu ({\mathrm {d}} x)$

we see that to leading order,

The terms in the expansion are just the cumulants of

$\mu $

. In Theorem 1.5 it is actually allowable to replace

$d_N$

by

$\tilde d_N$

, where the latter is given by truncating the above expansion once it reaches scales smaller than

$N^{1/2}$

, as that is the spatial fluctuation scale in Theorem 1.5. If

$p=1$

and

$m_1=0$

, then

$\tilde d_N=N^{3/4}+\frac 12 m_3 N^{1/2},$

because these are the only terms of order

$N^{1/2}$

and higher, and subleading order terms may thus be disregarded. However if

$p=2$

and

$m_1=0$

the leading order term is

$N^{7/8}$

but there are still additional relevant terms of order

$N^{3/4}$

and then

$N^{5/8}$

which cannot be disregarded as these surpass the spatial scaling of order

$N^{1/2}.$

Likewise, for larger values of p, there will be increasingly many subleading terms that contribute to the correct drift term. For instance when

$p=20$

there may be as many as 39 terms of subleading order beyond the leading order term

$N^{79/80}$

that are still relevant in the recentering, and the coefficients of these terms would depend in some complicated way on the first 41 moments of

$\mu $

. This is why we have simply chosen to write the simpler nonexpanded form of

$d_N,$

as opposed to previous special cases [Reference Das, Drillick and ParekhDDP24a, Reference Das, Drillick and ParekhDDP24b]. This idea of going out to larger scales to see the KPZ equation arising is very much reminiscent of [Reference Hairer and QuastelHQ18, Theorem 1.1], where the authors observed a similar hierarchy of nonequivalent scalings leading to the KPZ equation, albeit in a very different context than stochastic flow models. It is also reminiscent of [Reference Hairer and ShenHS17], where the authors similarly observe cumulant-based techniques for certain models of non-Gaussian polymers to obtain the KPZ equation as the scaling limit.
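
The bookkeeping of which terms survive in the truncated drift can be sketched in a few lines. The sketch assumes, consistently with the examples in this remark but not as a statement taken from the displayed expansion, that the successive terms of $d_N-m_1N$ scale like $N^{1-k/(4p)}$ for $k=1,2,\ldots$, and that a term is relevant when its exponent is at least $1/2$. The function name is hypothetical.

```python
from fractions import Fraction


def relevant_drift_exponents(p):
    """List the exponents 1 - k/(4p), k = 1, 2, ..., that are at least 1/2.

    Assumption (ours): the k-th term of the drift expansion is of order
    N^(1 - k/(4p)), and terms of order below N^(1/2) may be dropped.
    """
    exponents = []
    k = 1
    while Fraction(1) - Fraction(k, 4 * p) >= Fraction(1, 2):
        exponents.append(Fraction(1) - Fraction(k, 4 * p))
        k += 1
    return exponents


# Exponents for the cases p = 1, 2, 20 discussed in the remark.
exponents_p1 = relevant_drift_exponents(1)
exponents_p2 = relevant_drift_exponents(2)
exponents_p20 = relevant_drift_exponents(20)
```

Under these assumptions the list for a general p has 2p entries, the first of which is the leading exponent (4p-1)/(4p). Exact rational arithmetic is used so that the borderline exponent 1/2 is compared without rounding error.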

In this sense, Theorem 1.5 shows that

$\frac {4p-1}{4p}$

is the crossover exponent of this model, that is, the unique exponent where one observes the crossover from Gaussian to non-Gaussian behavior (since

$d_N-m_1N$

is given by

$N^{\frac {4p-1}{4p}}$

to leading order). The question of calculating these crossover exponents is physically interesting, and Theorem 1.5 thus provides a fairly complete picture of finding the crossover exponent in one-dimensional random walks in random media, as well as the question of calculating the environmental variance coefficient in full generality. Interestingly, the possible crossover exponents form a discrete set

$\{3/4, 7/8, 11/12, 15/16,...\}$

. While discussing the subject of the drift term and the crossover exponents, let us now acknowledge two concurrent physics works that are strongly related to this work, and provided much of the motivation for it.

While we were initially only aware of the case

$p=1$

, it was observed by J. Hass [Reference HassHas25] that for certain types of models satisfying “symmetry up to order p” one expects different locations than

$N^{3/4}$

at which the KPZ equation appears in the tail, for instance

$N^{7/8}$

or

$N^{11/12}.$

That paper observes that the fluctuations would approach the deterministic heat equation if, for instance,

$p=2$

but one probes into location

$N^{3/4}$

within the tail of the quenched density

$P^\omega (Nt,\cdot )$

rather than

$N^{7/8}$

as in Theorem 1.5. That paper furthermore establishes the conjecture of super-universality of KPZ arising for different values of p, based on a more physical argument (in the spirit of earlier work of [Reference Le Doussal and ThieryLDT17, Reference Barraquand and Le DoussalBLD20] on the 3/4 case). For the 7/8 case, [Reference HassHas25] is also able to numerically validate his predictions and show they hold to reasonable size of N. This paper of Hass provided the inspiration for us to consider the

![]() $p>1$

case in the present work.

$p>1$

case in the present work.
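
The three tail locations quoted above fit a single pattern in $p$. The following is only an observation on those listed values, under the assumption that $N^{11/12}$ corresponds to $p=3$; the symbol $\alpha _p$ is introduced here for illustration and is not notation from the paper of Hass.

$$ \begin{align*} \alpha _p := 1-\frac {1}{4p}, \qquad \alpha _1=\frac 34,\quad \alpha _2=\frac 78,\quad \alpha _3=\frac {11}{12}. \end{align*} $$

If this pattern persists for larger $p$, then $\alpha _p$ increases to $1$, so a higher order of symmetry would push the KPZ location further into the tail.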

Another physics paper [HDCC24] argues, based on convergence of second moments to those of the SHE, for convergence to the KPZ equation in just the 3/4 exponent case $(p=1)$. That paper also includes a very nice interpretation for the coefficient $\gamma _{\mathrm {ext}}$, translates the convergence result into the language of extreme diffusion theory, and numerically validates the asymptotic predictions down to rather small values of $N$.

Remark 1.7 (Optimality questions)

In Condition (3) the normalization condition $m_2-m_1^2=1$ is not crucial: one can always change the lattice spacing to make this condition true (e.g., replace $I={\mathbb {Z}}$ by $I=a{\mathbb {Z}}$ for the appropriate value of $a>0$, and rescale the kernels accordingly). For the limiting equation, this rescaling just corresponds to changing the diffusion coefficient $\frac 12$ to some other value in (1.5).
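
To spell out the rescaling, suppose (as an assumption about the notation of Condition (3)) that $m_1$ and $m_2$ are the first two moments of a step distribution on $I={\mathbb {Z}}$, and write $\sigma ^2:=m_2-m_1^2$, a symbol introduced here only for illustration. On $I=a{\mathbb {Z}}$ every step is multiplied by $a$, so the moments become $am_1$ and $a^2m_2$, and for $a>0$

$$ \begin{align*} a^2m_2-(am_1)^2 = a^2\sigma ^2 = 1 \quad \text{if and only if}\quad a=\sigma ^{-1}. \end{align*} $$

For instance, a step distribution with $\sigma ^2=4$ would be normalized by taking $a=\frac 12$.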

On the other hand, we are not sure if the exponential moments condition on $\mu $ can be relaxed without changing the outcome of Theorem 1.5. For example, in polymers just six moments are enough [AKQ14, DZ16]. However, in our model we rely on the moment generating function more crucially, and we are unsure if there are more optimal conditions. Certainly at least $4p$ moments are necessary, since this many moments appear in all of the cumulant expansions and in the statement of Theorem 1.5, but we have no conjecture about optimality.

Another question is whether it is possible to upgrade the convergence in Theorem 1.5 to the topology of $C((0,T],C(\mathbb {R}))$, since the law of the limiting SPDE is supported on this space. We showed in [DDP24b] that this is impossible. This is intimately related to the failure of the chaos expansion technique for this model and the failure of the noise coefficient of the limiting SPDE to match the noise coefficient of the prelimiting model (both of which were also explained there). See the end of Subsection 6.3 for more discussion on this. Thus, we conclude that weak convergence in a topology of tempered distributions is the best that one may hope for in Theorem 1.5.

Another interesting question is whether the decay of $\mathrm {F_{decay}}$ in Assumption 1.2 Item (5) can be replaced by a weaker condition, such as just being decreasing and integrable, that is, $\int _0^\infty \mathrm {F_{decay}}(x){\mathrm {d}} x<\infty $. Although we have not tried to optimize this assumption, we believe that the latter condition is the truly optimal one. The only place we use the stronger condition is the proof of Proposition 4.9, where it is needed to control one of the error terms coming from the martingale equation.

An even more interesting question would be to introduce some weak temporal correlation between the stochastic kernels $K_i$, rather than assuming they are independent as we do here. This would require a completely different proof than the one given here, as the $k$-point motion would lose the Markov property, which is crucial in all of our calculations. This may be explored in future work.

Remark 1.8 (Quenched tail field)

While we have defined the rescaled field (1.4) as a distribution-valued field (i.e., only well-defined when integrated against spatial test functions), one might ask about function-valued counterparts. This leads us to define the quenched tail field. For $t\in N^{-1}{\mathbb {Z}}_{\ge 0}$ and $x\in {\mathbb {R}}$ we define the quenched tail field by

with $P^\omega $ as in (1.2). Then we may prove the following result.

Fix $m\in \mathbb {N}$, and $\phi _1,\ldots ,\phi _m\in C_c^{\infty }(\mathbb {R})$. Consider sequences $t_{N,1},\ldots ,t_{N,m} \in N^{-1}{\mathbb {Z}}_{\ge 0}$ such that $t_{N,i} \to t_i>0$ for all $1\le i \le m$ as $N\to \infty .$ Then

$$ \begin{align*} \bigg( \int_{\mathbb{R}} \phi_i(x) F_N(t_{N,i},x) {\mathrm{d}} x\bigg)_{i=1}^m \stackrel{d}{\to} \bigg( \int_{\mathbb{R}} \phi_i(x) \mathcal U_{t_i}(x) {\mathrm{d}} x\bigg)_{i=1}^m. \end{align*} $$

Here $(t,x)\mapsto \mathcal U_t(x)$ is the solution of (1.5).

The proof of this follows using exactly the same arguments as in Section 7 of each of [DDP24a] and [DDP24b], first proving that ${\mathbb {E}}[F_N(t,x)] \leq 4(\pi t)^{-1/2}$ and then proceeding exactly as we did in those papers using the result of Theorem 1.5 and integrating by parts.
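
As a sanity check on the constant in this bound, assume (this is an assumption, since (1.5) is not restated here) that (1.5) is the SHE with diffusion coefficient $\frac 12$ started from $\delta _0$. The stochastic integral in its mild formulation has mean zero, so ${\mathbb {E}}[\mathcal U_t(x)]$ is the heat kernel, and

$$ \begin{align*} {\mathbb {E}}[\mathcal U_t(x)] = (2\pi t)^{-1/2}e^{-x^2/(2t)} \le (2\pi t)^{-1/2} < 4(\pi t)^{-1/2}. \end{align*} $$

Under that assumption, a prelimiting bound of the stated form is consistent with the limiting object, with room to spare in the constant.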

While proving convergence with test functions is interesting, one might ask about multi-point convergence in law of $F_N$ at some finite collection of individual points $\{(t_{N,i},x_{N,i})\}_{i=1}^m$ converging as $N\to \infty $ to $\{(t_i,x_i)\}_{i=1}^m$