1 Introduction

Archaeologists have long studied the provenance of cultural materials to answer questions such as which natural deposits were exploited in the past, whether artifacts were of local or foreign origin, which procedures were used to manufacture specific cultural materials, and which exchange routes existed, among others (Baxter et al., 2003; Glascock, 1992). Nowadays, this kind of research usually relies on instrumental analytical techniques such as neutron activation analysis (NAA), X-ray diffraction, and X-ray fluorescence (XRF), and bases its inferences mainly on the search for groups with similar physical or chemical characteristics, so that cultural questions can be answered. With the advancement of modern technology, archaeologists have access to ever larger amounts of information, which means that datasets are of higher dimensionality and more complex. It is therefore necessary to use methods that can process this information and extract the underlying structure of the data.

In the publication A Systematic Approach to Obsidian Source Characterization (Glascock et al., 1998), the authors proposed an ordered set of procedures for determining the provenance of obsidian artifacts, placing special emphasis on (i) the collection of samples, (ii) their chemical analysis, and (iii) the statistical procedure. They stated that if these steps were properly applied, obsidian artifacts could be assigned to their respective geological deposits with a high level of confidence. In the last step, data exploration and classification have historically been addressed, only partially, with bivariate plots of individual chemical elements or element ratios, or with multivariate statistics such as principal component analysis (PCA), cluster analysis (CA), or discriminant analysis (DA). However, it has since been shown that these classical techniques have serious limitations in their ability to recover the true cluster structure, or even to recognize that the data have no real grouping structure. Validating any inference therefore requires more than applying a multivariate technique by following pre-established procedures.

A review of the published literature on provenance shows that, despite the passage of time, there has been no reflection on the ineffectiveness of the traditional procedures used to associate samples with their respective natural deposits. This distances archaeological interpretation from reality, because errors introduced by poor data management propagate through the analysis. For example, we have verified that sample assignments are erroneous in many applications. Even when the original geological sources are known and belong to different geographical regions, the groups of samples overlap, making it almost impossible to assign items to any of the sources with certainty or to differentiate sub-sources. Such results can stem from several factors related to data handling, among them an inadequate transformation of the data, an incorrect or absent diagnosis of the results, or a violation of the theoretical assumptions of the classical statistics used, which causes a lack of fit between the model and the data and produces biased results that are far from reality.

In most cases, an adequate diagnosis of the data is not made: the existence of outliers is ignored, or samples whose behavior is unusual are simply eliminated on the basis of unreliable criteria such as their dispersion in terms of the standard deviation or their Mahalanobis distance. The latter is known to suffer from masking and swamping problems that seriously undermine its reliability as a criterion for outlier detection (Ben-Gal, 2005). Another example concerns validation, which makes it possible to evaluate the accuracy, bias, reproducibility, success, and traceability of results. When validation is omitted, it is impossible to determine whether the studied process or method is suitable for the research, whether the solution of the algorithm is adequate, and whether the logic of the procedures used to evaluate and validate the results is appropriate. Table 1 summarizes several archaeometric provenance studies, indicating where overlap problems are present, that is, what we could consider a lack of fit or poor predictive performance of the models.

Table 1 Examples of publications that use bivariate plots of elements, ratios between elements, or statistical components, and in which overlaps between groups are observed

| Reference | Archaeological site and/or geographical region | n | Analytical instrument | Multivariate technique | Bivariate plots | Detected overlaps between sources |
|---|---|---|---|---|---|---|
| Glascock et al., 1998 | Central Mexico and Guatemala | 712 | NAA | PCA | (log10) PC1 vs. PC2 | Guatemala region and Orizaba with Otumba; Pachuca 1, 2, and 3 |
| Glascock, 2002 | Chichen Itza, Yucatan, Mexico | 421 | NAA | N/A | Mn vs. Na | Otumba with Paredon |
| Smith et al., 2007 | Yautepec, Morelos, Mexico | 390 | XRF and NAA | DA | Na vs. Mn; Dy vs. Nm | Otumba with Paredon; Fuentezuelas with El Paraiso |
| Glascock et al., 2010 | Western and Central Mexico | 596 | Long and short NAA, and XRF | N/A | Cs and Sc; Mn vs. Na; Mn vs. Dy | Ucareo with Altotonga; Ucareo with Zinapecuaro; Sta. Elena with Paredon, Otumba, Malpaís, and Derrumbadas; Zaragoza with Altotonga; Sierra Pachuca 2 with Tulancingo; Sierra Pachuca 3 with Penjamo 1, 2, and Fuentezuelas; Altotonga with Ucareo |
| Glascock et al., 2010 | Jalisco, Nayarit, and Zacatecas, Mexico | >1,000 | XRF | N/A | Rb vs. Zr | Overlaps between several deposits from Nayarit (San Lionel, Ixtlan del Rio), Zacatecas (Huistla), and Jalisco (Llano Grande, San Isidro, Hacienda Guadalupe, La Lobera, Ixtepete, Sta. Teresa, La Providencia, Osotero, San Juan de los Arcos, Boquillas, Navaja, Ahisculco, and La Quemada) |
| Millhauser et al., 2011 | Xaltocan, Tlaxcalla, Mexico | 103 | pXRF | PCA and CA | PC1 vs. PC2 | Otumba with Paredon; Tulancingo and Tepalzingo with Penjamo; Cruz Negra with Zinapecuaro and Ucareo; Magdalena with Tequila and Ixtepeque; Derrumbadas with El Chayal |
| Moholy-Nagy et al., 2013 | Tikal, Guatemala | 2,283 | pXRF | N/A | Ir vs. Mg; Rb vs. Zr | Ixtepeque with Otumba and Paredon; Cerro Varal with Ucareo and San Martin Jilotepeque |
| Pierce, 2015 | Western Mexico | >1,000 | XRF | N/A | Fe vs. Mn | All West Mexican sources |
| Waite, 2020 | Coba, Quintana Roo, and Yucatan | 113 | pXRF | N/A | Sr vs. Zr; Rb vs. Zr; Zr vs. Sr | Ixtepeque with Otumba; Zacualtipan with Zaragoza and Ucareo |

Note: NAA = neutron activation analysis; PCA = principal component analysis; CA = cluster analysis; DA = discriminant analysis; N/A = no multivariate analysis was applied (only biplots); PC1 = first principal component; PC2 = second principal component; XRF = X-ray fluorescence; pXRF = portable X-ray fluorescence.

Nowadays, new, less rigid, and more robust clustering and classification techniques have been implemented; they handle data better and yield more reliable results with a smaller margin of error. However, the search for groups is a much more complex procedure than is usually acknowledged, and it involves several steps, all aimed at giving certainty to the inferences drawn from the data. The steps proposed and described in this Element for processing compositional data acquired from archaeological materials are, in order:

1. the selection of an adequate number of samples to be processed;

2. the imputation of values, when only a few are missing;

3. the choice of the right transformation;

4. an adequate diagnosis of the data, using robust methods for outlier detection;

5. the reduction of dimensionality or the selection of variables when dealing with large datasets;

6. the application of clustering algorithms; and

7. the validation of the resulting models.

To exemplify the proposed procedures and the efficiency of semi-supervised clustering methods with variable selection, Sections 9 and 10 describe two exercises with practical applications to archaeological materials. The scripts for each step of the process, which run in the open-source R environment (R Core Team, 2020), are provided in the Appendix.

2 Sample Size

The provenance postulate explicitly states that in order to determine the sources of origin of archaeological artifacts through compositional analysis, samples extracted from the same deposit of raw material must register a lower chemical variability than the variability observed between different sources of raw material (Weigand et al., 1977). Therefore, when studying a deposit, the internal variability of the site must be considered, as well as how homogeneous its composition is, understanding “variability” as the measure of the dispersion of data in a distribution, whether theoretical or of a sample (Dagnino, 2014). For example, if in a volcanic region there were various eruptive events that caused the formation of sub-sources, it would be necessary to study the variability of each of these and, simultaneously, assess the variability between the deposits of the different regions. Theoretically, the distribution of each particular source or sub-source should be different, and this should be reflected in the chemical variability of its components. It should be noted that variability in the data may also come from measurement errors of the studied features.

A sample is a subset of the total population, so sample size is critical in any inferential study if consistent statistical results are to be obtained. Statistically speaking, as the number of observations (n) increases, the standard error of the estimates decreases (i.e., their precision increases) and, for a fixed significance level, the type II error of statistical tests decreases. In addition, for techniques that assume normality, the central limit theorem ensures that the sampling distribution of the mean approaches normality as n grows. Thus, to estimate variability, a statistically adequate sample size is needed for the estimates to be reliable, and a common recommendation is n ≥ 30. Some authors, like Glascock et al. (1998), recommend sampling at least 12 units of analysis to determine the range of compositional variation of simple obsidian deposits, and at least 100 units where the deposits are more extensive and complex. Ben Dor et al. (2023), by evaluating several statistical techniques, proposed that 10 to 20 specimens are enough to distinguish sources with a certainty of >0.9, depending on the inherent variability of the studied materials. In any case, the larger n is (i.e., as n → ∞), the more precise the parameter estimates will be and the smaller the risk of bias.

For example, Cobean (2002) presented the results of the NAA analysis of samples from 20 obsidian deposits in central Mexico, publishing only descriptive statistics such as the mean, standard deviation, and minimum and maximum values. Although he was able to analyze 30 samples for 14 of these sources, he analyzed far fewer for the other six: only five samples (n = 5) for Derrumbadas, in Puebla; n = 10 for Tepalzingo, in Hidalgo; n = 11 for Pachuca 2, in Hidalgo; n = 15 for Cruz Negra, in Michoacán; n = 12 for Penjamo 1, in Guanajuato; and n = 15 for Penjamo 2. Where n < 30, little can be reliably generalized about variables that vary within the population, so the sample size must be increased to obtain a better picture of the variability of these deposits. Moreover, for these same cases, descriptive statistics and point estimators such as the mean and standard deviation are not sufficient to estimate the unknown population parameters, and the estimates may be biased. For these reasons, results obtained with small sample sizes cannot be fully trusted.
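A short worked calculation shows how much precision is at stake. Assume, for illustration only, that two sources share the same within-source standard deviation s. Because the standard error of a mean is s/√n, the mean estimated for Derrumbadas (n = 5) is about 2.45 times less precise than the mean of a source sampled with n = 30:

$$\frac{\mathrm{SE}_{n=5}}{\mathrm{SE}_{n=30}} = \frac{s/\sqrt{5}}{s/\sqrt{30}} = \sqrt{\frac{30}{5}} = \sqrt{6} \approx 2.45$$

The same relation implies that halving the standard error requires quadrupling n, so gains in precision diminish, but do not vanish, as more samples are added.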

3 Imputation of Missing Values

Missing data, or data below the limit of detection (LOD), are a common problem. A common way to avoid it is to remove variables with zeros, negative values, or missing values. This is considered an aggressive elimination method or, in the words of Michael Baxter and Hilary Cool (2016), a draconian approach, since removing an entire variable also deletes the existing data for that variable, leading to a significant loss of information. Instead, to avoid such loss, we recommend value substitution, also called imputation. For compositional data in which one or more components may fall below the LOD, multiple imputation can be used to produce a single value that replaces each missing datum, reducing bias in the estimates.

In some cases, missing values have been substituted using the Mahalanobis distance, but this distance is not an imputation method, since it only minimizes the distance of the missing data from the centroid of the dataset (Glascock, 2011). Substitutions of this kind can distort the covariance structure of the data and, in general, the covariance structure of the parts involved (Martín-Fernández et al., 2003). In this Element, we recommend two packages written for the R environment for value imputation: multivariate imputation by chained equations (MICE) (van Buuren & Groothuis-Oudshoorn, 2011) and zCompositions (Palarea-Albaladejo & Martín-Fernández, 2015). The former is recommended for missing or zero values, while the latter is useful for values that are below the LOD of the instrument.

Multivariate imputation by chained equations is used to impute multiple replacement values for missing multivariate data. The method specifies the multivariate imputation model on a variable-by-variable basis through a set of conditional densities, one for each incomplete variable. Starting from initial values, MICE obtains imputations by iterating over these conditional densities, which makes it suitable when no adequate joint multivariate distribution for the data can be found. To compare the distributions of the original and imputed data, MICE provides the “stripplot()” function, whose graphs show the distribution of each variable as individual points: observed values are plotted in blue and imputed values in red. The imputed values should follow the pattern of the observed values reasonably closely.
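The chained-equations idea can be illustrated with a deliberately simplified sketch. It is written in Python with NumPy (an assumption; the Element's own scripts use the R mice package), and the function name `chained_regression_impute` is hypothetical. Each incomplete variable is regressed by ordinary least squares on all the other variables, and the fitted values replace its missing entries; the cycle is repeated a fixed number of times. Unlike MICE, this sketch is deterministic and returns a single completed dataset, so it does not draw from predictive distributions and will understate imputation uncertainty.

```python
import numpy as np


def chained_regression_impute(X, n_iter=10):
    """Single, deterministic chained-equations imputation (illustrative only).

    X: 2-D array-like with np.nan marking missing entries.
    """
    X = np.array(X, dtype=float)  # work on a copy
    missing = np.isnan(X)
    # Initial values: fill each gap with the mean of the observed values in its column.
    col_means = np.nanmean(X, axis=0)
    rows, cols = np.where(missing)
    X[rows, cols] = col_means[cols]

    for _ in range(n_iter):
        for j in range(X.shape[1]):
            miss_j = missing[:, j]
            if not miss_j.any():
                continue
            # Design matrix: intercept plus every other (currently completed) variable.
            others = np.delete(X, j, axis=1)
            A = np.column_stack([np.ones(X.shape[0]), others])
            obs = ~miss_j
            # Fit the conditional model on the rows where variable j was observed...
            coef, *_ = np.linalg.lstsq(A[obs], X[obs, j], rcond=None)
            # ...and replace the missing entries of variable j with fitted values.
            X[miss_j, j] = A[miss_j] @ coef
    return X


if __name__ == "__main__":
    data = [[1.0, 3.0], [2.0, 5.0], [3.0, 7.0], [4.0, np.nan]]
    print(chained_regression_impute(data))
```

In practice, mice adds random draws and produces several completed datasets whose analyses are pooled, which is what makes it a multiple-imputation method.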

For its part, zCompositions is a package that follows the compositional approach to imputing left-censored data, that is, data < LOD. Its log-ratio data augmentation (‘log-ratio DA’) algorithm imputes values < LOD with values simulated from their posterior predictive distributions, using a log-ratio coordinate representation that takes the values recorded for the rest of the data as reference. In addition, it uses an iterative Markov chain Monte Carlo procedure, together with maximum likelihood estimation via the expectation-maximization (EM) algorithm, to estimate the parameters of the conjugate normal-inverse-Wishart distribution with a non-informative Jeffreys prior. The method gives special relevance to properties of compositional data such as scale invariance, sub-compositional coherence, and the preservation of the multivariate relative structure of the data.
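The log-ratio DA algorithm is too involved for a short example, but the simpler multiplicative replacement strategy (Martín-Fernández et al., 2003), which zCompositions also offers through its `multRepl()` function, shows the compositional logic of imputing values < LOD. The Python/NumPy sketch below is an illustration, not the zCompositions code; the function name and the coding of censored values as 0 are assumptions. Each censored part is replaced by a fraction of its detection limit (65% is a frequently used choice), and the observed parts of the same sample are shrunk by a common factor, so the ratios among observed parts and the sample total are both preserved.

```python
import numpy as np


def multiplicative_replacement(X, dl, frac=0.65):
    """Replace values below the detection limit (coded as 0) in each row.

    X    : 2-D array of compositions; censored entries are coded as 0.
    dl   : 1-D array with the detection limit of each part (same units as X).
    frac : fraction of the detection limit used as the replacement value.
    """
    X = np.array(X, dtype=float)
    dl = np.asarray(dl, dtype=float)
    out = X.copy()
    for i, row in enumerate(X):
        censored = row == 0
        if not censored.any():
            continue
        total = row.sum()                 # the row total is kept unchanged
        delta = frac * dl[censored]       # imputed values for the censored parts
        # Shrink observed parts by one common factor: their ratios are preserved.
        out[i, ~censored] = row[~censored] * (1.0 - delta.sum() / total)
        out[i, censored] = delta
    return out


if __name__ == "__main__":
    X = [[60.0, 30.0, 10.0],
         [70.0, 30.0, 0.0]]              # third part below detection in sample 2
    dl = [1.0, 1.0, 2.0]
    print(multiplicative_replacement(X, dl))
```

For the second sample, the censored part becomes 0.65 × 2 = 1.3 and the observed parts are multiplied by 0.987, so the row still sums to 100 and the 70:30 ratio is unchanged.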

4 Data Transformation

Once compositional data (expressed in weight percent or parts per million) have been acquired from the archaeological materials with the preferred analytical technique, it must be decided whether or not to transform them. The type of transformation strongly affects the results, since many techniques rely on scale-dependent dissimilarity measures such as the Euclidean distance; similarly, other techniques rely on theoretical assumptions, such as the normality of the data or the homogeneity of variance across groups, that are not always fulfilled. Although multivariate normality is not always required, as in exploratory analyses such as PCA, CA, or partial least squares, when the data behave close to normal, exploratory analyses show clearer separations between groups.

In the published literature, some authors analyze the raw data (Ambrose et al., 2009; Dolan, 2016; Glascock, 2002), some argue in favor of standardizing the data to Z-scores with mean zero and variance one (Baxter, 2001; Baxter, 2015; Baxter & Buck, 2000), some transform the data to log10 (Glascock et al., 1998; Hall, 2004; Hall & Minyaev, 2002; Millhauser et al., 2011), and others recommend log-ratio transformations (Aitchison, 1986; Greenacre, 2017; Martín-Fernández et al., 2015). However, raw data can differ substantially in magnitude, and the variables can have different types of distributions. Since many multivariate techniques use distance calculations to detect patterns in the data, differences in magnitude between the variables will affect the computed distances between observations. That is, if the data are processed as measured, the variables with a greater range or variance will have a greater influence on the model, and variables that may carry some type of structure will be difficult to detect.

Conversely, if the data are measured on different scales, they cannot be compared, so all variables must first be brought to the same scale. That is why Baxter and Buck (2000) recommend standardizing archaeometric data with simple Z-score normalization, which is a linear transformation calculated by

$$z_i = \frac{x_i - \bar{x}}{s} \qquad (1)$$

where $x_i$ is the original value of the variable, $\bar{x}$ is the mean of the variable, and $s$ is the standard deviation. The authors argue that the values obtained through this transformation make up variables with the same weight, so that the components will have a distribution closer to normal. However, standardization is only recommended to eliminate dependence on the units of measurement, that is, when the variables have different units and very different ranges (Wehrens, 2011). Moreover, being linear, this transformation cannot modify more complex features of a distribution such as asymmetry, for which nonlinear transformations are required. In this regard, Reimann et al. (2002) stated that Z standardization makes little sense in geochemistry, since the empirical distributions of the data are known to be strongly skewed.

In the study by Milligan and Cooper (1988), which compared eight standardization methods under several error conditions (error-free data, error-perturbed distances, the inclusion of outliers, and the addition of random noise dimensions), Z-score standardization was shown to be inadvisable for variables measured in the same units, as it can remove important information about the variation between groups. In addition, standardization is less sensitive to observations at the boundaries between groups and to the existence of outliers. The authors' experiments also showed that approaches that standardize by dividing by the range of the variable (i.e., the difference between its maximum and minimum values) yield consistently superior recovery of the group structure. Furthermore, when standardized data are clustered with conventional clustering techniques, outliers are usually forced to merge with existing clusters (Milligan & Cooper, 1988).
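A short Python/NumPy sketch (function names hypothetical) makes two of these points concrete. Z-score standardization and range standardization are both linear rescalings, so neither changes the shape of a distribution; in particular, the sample skewness is unchanged, which is why a nonlinear transformation is needed when asymmetry is the problem. The range variant shown, (x − min)/(max − min), is one of the range-based standardizations of the kind Milligan and Cooper compared.

```python
import numpy as np


def zscore(x):
    """Z-score standardization: (x - mean) / standard deviation."""
    x = np.asarray(x, dtype=float)
    return (x - x.mean()) / x.std(ddof=1)


def range_standardize(x):
    """Range standardization: (x - min) / (max - min), giving values in [0, 1]."""
    x = np.asarray(x, dtype=float)
    return (x - x.min()) / (x.max() - x.min())


def skewness(x):
    """Moment-based sample skewness (third standardized moment)."""
    x = np.asarray(x, dtype=float)
    d = x - x.mean()
    return np.mean(d ** 3) / np.mean(d ** 2) ** 1.5


if __name__ == "__main__":
    # Hypothetical right-skewed concentrations (ppm) of one element.
    ppm = np.array([12.0, 14.0, 15.0, 17.0, 21.0, 30.0, 55.0])
    for name, values in [("raw", ppm), ("z-score", zscore(ppm)),
                         ("range", range_standardize(ppm))]:
        print(f"{name:8s} skewness = {skewness(values):.4f}")
```

All three lines print the same skewness: rescaling changes units and spread, not asymmetry.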

Conversion to log10 is another very common transformation, assumed to prevent some variables from having greater weight and playing a dominant role in the classification. For trace elements, it brings the distribution of the data closer to normal (Glascock, 1992; Neff, 1992). Nonetheless, Reimann et al. (2002) showed that most geochemical datasets do not approach a normal or lognormal distribution and that, even when log10 and other transformations are applied, more than 70% of the components in each dataset do not approximate a normal distribution, because data of this nature present multimodality and outliers. Baxter and Cool (2016) have also shown that geochemical data tend to have longer tails than expected for normally distributed data and that the log10 transformation does not avoid the dominant effect of the variables with greater variance. In our own experiments with lithic and ceramic datasets analyzed by NAA and pXRF, in which we log10-transformed the chemical data and used histograms and normal probability plots to assess deviations from normality, this transformation did not yield normality in most cases.

For any analysis, it is important to first consider the intrinsic nature of the data. Compositional data are vectors of strictly positive values that range from 0 to k, where k is a constant (k = 1, 100%, or 10⁶ parts per million [ppm]). These restrictions cause compositional data to follow a different geometry, called Aitchison geometry (Aitchison, 1986), in which the sample space is called the simplex: any vector x whose components are D parts of a whole is subject to the constraint that its parts sum to the constant k, so it is a closed space. In this way, the simplex allows compositions to be represented in orthogonal coordinates. Recognizing these properties, in this Element we recommend the Aitchison approach, which moves compositional data from the restricted space to multivariate real space through the logarithms of the ratios between parts (Mateu-Figueras et al., 2003). These transformations include the additive log ratio (alr), the centered log ratio (clr), and the isometric log ratio (ilr) (Pawlowsky-Glahn & Buccianti, 2011). They take the constant-sum constraint into account and yield unconstrained data that can take any real value between −∞ and +∞. Working with log ratios also ensures the basic logical principle of sub-compositional coherence (Mateu-Figueras et al., 2003).

Once this transformation is made, the data can be analyzed with any multivariate technique (Aitchison, 2003; Pawlowsky-Glahn & Egozcue, 2006; Pawlowsky-Glahn et al., 2007). To carry out the procedures described here, this Element works with the clr and ilr transformations. The clr transformation uses the geometric mean of the sample vector as reference and has a one-to-one relationship with the original D parts. The ilr coordinates map a composition in the D-part Aitchison simplex isometrically to a (D − 1)-dimensional Euclidean vector (Egozcue et al., 2003; Filzmoser et al., 2009). In this way, an orthonormal basis can be defined, with each element of the simplex identified by its coordinate vector, allowing geometric elements in this space to be handled directly (Egozcue et al., 2003). The advantage of these transformations is that they preserve the metric properties of compositional data (both are isometries); moreover, unlike the clr coordinates, whose covariance matrix is singular because they sum to zero, the ilr coordinates have a non-singular covariance matrix (Egozcue & Pawlowsky-Glahn, 2011).
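The clr and ilr transformations are short enough to write out. The Python/NumPy sketch below is an illustration, not the R compositions package. `clr()` subtracts the log of the geometric mean from each log-part. `ilr_pivot()` uses one particular orthonormal basis, the so-called pivot coordinates, z_i = √((D − i)/(D − i + 1)) · ln(x_i / g(x_{i+1}, …, x_D)), where g is the geometric mean; other bases give different but equally valid ilr coordinates. The usage lines check three properties discussed above: clr coordinates sum to zero (the source of their singular covariance matrix), distances computed from clr and ilr coordinates coincide (isometry), and multiplying a composition by a constant leaves its clr unchanged (scale invariance).

```python
import numpy as np


def clr(x):
    """Centered log ratio: log of each part minus the mean log (log geometric mean)."""
    logx = np.log(np.asarray(x, dtype=float))
    return logx - logx.mean(axis=-1, keepdims=True)


def ilr_pivot(x):
    """Isometric log-ratio coordinates in the pivot (orthonormal) basis.

    Returns D - 1 coordinates; coordinate i contrasts part i with the
    geometric mean of the parts that follow it.
    """
    logx = np.log(np.asarray(x, dtype=float))
    D = logx.shape[-1]
    coords = []
    for i in range(D - 1):                        # 0-based index of the pivot part
        log_gmean_rest = logx[..., i + 1:].mean(axis=-1)
        scale = np.sqrt((D - i - 1) / (D - i))
        coords.append(scale * (logx[..., i] - log_gmean_rest))
    return np.stack(coords, axis=-1)


if __name__ == "__main__":
    # Two hypothetical 4-part compositions (any positive units).
    x = np.array([[10.0, 20.0, 30.0, 40.0],
                  [5.0, 5.0, 80.0, 10.0]])
    print(clr(x).sum(axis=1))                     # zero up to rounding error
    d_clr = np.linalg.norm(clr(x[0]) - clr(x[1]))
    d_ilr = np.linalg.norm(ilr_pivot(x[0]) - ilr_pivot(x[1]))
    print(d_clr, d_ilr)                           # equal: the ilr map is an isometry
    print(np.allclose(clr(x), clr(100 * x)))      # scale invariance
```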

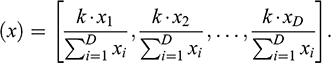

It is important to emphasize that, although compositional data are presented as weight percentages, proportions, or ppm, the parts of each vector rarely add up to the constant k. Strictly speaking, such data are always sub-compositions, since only a subset of the components is measured or recorded. There is thus an additional, implicitly defined component, known as the residual part, which completes the constant k (Pawlowsky-Glahn & Egozcue, 2006). To respect the constant-sum constraint, there are two options: adding the residual part as a variable or closing the data. In the first option, the residual variable is calculated by subtracting the sum of the measured parts of the vector from the constant k (expressed in percentages or ppm) (Baxter et al., 2003; Baxter et al., 2005). In the second, the vector is normalized with the closure operator:

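$$\mathcal{C}(\mathbf{x}) = \left( \frac{k\,x_1}{\sum_{i=1}^{D} x_i}, \frac{k\,x_2}{\sum_{i=1}^{D} x_i}, \ldots, \frac{k\,x_D}{\sum_{i=1}^{D} x_i} \right) \qquad (2)$$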

This transforms each vector of D-parts so that its components sum to a constant k (Baxter et al., 2005; Egozcue & Pawlowsky-Glahn, 2011). The components of the closed vector are called parts and constitute the simplex of D-parts. Either of these two operations yields completely compositional data, which can then be transformed into log ratio coordinates. To transform the data to the centered and/or the isometric log ratio, the “compositions” package for R can be used (van den Boogaart et al., 2023).
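Both options can be sketched in a few lines of Python/NumPy; the function names and the constant `PPM_TOTAL` are illustrative and are not taken from the compositions package. `add_residual()` appends the residual part k − Σx_i, here with k = 10⁶ for data in ppm, and refuses to proceed if the measured parts already reach k. `closure()` applies the closure operator, rescaling every row so that its parts sum to k while leaving the ratios between parts untouched.

```python
import numpy as np

PPM_TOTAL = 1e6  # k for data expressed in parts per million


def add_residual(x, k=PPM_TOTAL):
    """Append the residual part k - sum(x) to each composition."""
    x = np.asarray(x, dtype=float)
    resid = k - x.sum(axis=-1, keepdims=True)
    if np.any(resid <= 0):
        raise ValueError("measured parts already reach or exceed k")
    return np.concatenate([x, resid], axis=-1)


def closure(x, k=1.0):
    """Closure operator: rescale each composition so that its parts sum to k."""
    x = np.asarray(x, dtype=float)
    return k * x / x.sum(axis=-1, keepdims=True)


if __name__ == "__main__":
    # Hypothetical trace-element concentrations (ppm) for two artifacts.
    ppm = np.array([[150.0, 220.0, 35.0],
                    [140.0, 260.0, 30.0]])
    print(add_residual(ppm))        # last column: 1e6 minus the row total
    print(closure(ppm, k=100.0))    # each row now sums to 100
```

Because log-ratio transformations are scale invariant, either result can be passed on to the clr or ilr transformation.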

5 Data Diagnosis

Another important point is the proper diagnosis of the data, as outliers have been shown to have a strong impact on estimates (Filzmoser et al., 2012; Hubert & Van der Veeken, 2008). Outliers are experimental units whose value, or combination of values, in the recorded variables clearly differentiates them from the rest of the observations; in other words, an outlier is an observation that deviates considerably from the other observations in the sample (Hawkins, 1980; van den Boogaart & Tolosana-Delgado, 2013). It is now recommended to use robust methods, which are more resistant to outliers and outperform classical statistics. For example, the classical Mahalanobis distance is subject to two types of classification error in the presence of outliers: the “masking” of an outlier as a non-outlier and the “swamping” of a non-outlier as an outlier. For this reason, outliers must be detected with robust methods so that the soundness of the procedures can be rigorously evaluated.

Given the high-dimensional nature of compositional data, the use of multivariate approaches for outlier detection is recommended, like the robust PCA method called “ROBPCA” (Hubert et al., 2005). This method reduces the dimensionality of data: the robust loadings are computed by applying projection-pursuit techniques, and the minimum covariance determinant method is used to determine the robust center and covariance matrix. The ROBPCA algorithm produces a diagnostic plot that shows and classifies outliers. However, this algorithm runs under the assumption that regular observations are normally distributed. Therefore, given the asymmetric nature of compositional data, it is recommended to use an alternative algorithm, known as skewness-adjusted outlyingness (Hubert & Van der Veeken, 2008).

Adjusted outlyingness is a generalization of the Stahel–Donoho outlyingness that does not require the assumption of normality and is not based on visual inspection. This estimator detects outliers in skewed multivariate data on the basis of how far each data point lies from the data core: observations are projected onto many univariate directions, and a robust center and scale are computed for each projection. When the sample size n is large enough relative to the dimension p (n > 5p), the directions are generated orthogonal to hyperplanes through p randomly selected observations, so that the adjusted outlyingness is affine invariant (Hubert & Van der Veeken, 2008).
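The projection idea can be sketched in Python/NumPy as follows. This is the classical, symmetric Stahel–Donoho outlyingness (robust center = median, robust scale = MAD), not the skewness-adjusted estimator implemented in robustbase; the function name, the number of directions, and the seed are illustrative assumptions. As described above, each direction is the normal to the hyperplane through p randomly chosen observations, and each observation's outlyingness is its largest standardized distance from the median over all projections.

```python
import numpy as np


def sd_outlyingness(X, n_dir=500, seed=0):
    """Classical Stahel-Donoho outlyingness via random projections (sketch)."""
    X = np.asarray(X, dtype=float)
    n, p = X.shape
    rng = np.random.default_rng(seed)
    outlyingness = np.zeros(n)
    for _ in range(n_dir):
        idx = rng.choice(n, size=p, replace=False)
        P = X[idx]                                  # p x p matrix of chosen points
        # a solves P @ a = 1, so a is normal to the hyperplane through the p points.
        a, *_ = np.linalg.lstsq(P, np.ones(p), rcond=None)
        if not np.allclose(P @ a, 1.0):             # degenerate case: skip direction
            continue
        a /= np.linalg.norm(a)
        proj = X @ a
        med = np.median(proj)
        mad = 1.4826 * np.median(np.abs(proj - med))  # MAD, consistent at the normal
        if mad == 0:
            continue
        # Keep, for each observation, the largest standardized distance seen so far.
        outlyingness = np.maximum(outlyingness, np.abs(proj - med) / mad)
    return outlyingness


if __name__ == "__main__":
    rng = np.random.default_rng(1)
    X = np.vstack([rng.normal(size=(50, 2)), [[15.0, 15.0]]])  # row 50: planted outlier
    scores = sd_outlyingness(X)
    print(np.argsort(scores)[-3:])    # indices of the three most outlying rows
```

The adjusted version replaces the symmetric median/MAD standardization with one based on the skewness-adjusted boxplot, so that long tails on one side are not mistaken for outliers.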

To find outliers with the adjusted outlyingness (AO) algorithm, we recommend the function “adjOutlyingness” of the R package robustbase (Maechler, Reference Maechler2023). In the process, the AO algorithm computes the “medcouple”, a robust measure of skewness. The medcouple is defined as a scaled median difference between the left and right halves of a distribution. Unlike classical skewness, it is therefore not based on the third moment. The observations are then weighted according to their outlyingness, and robust Stahel–Donoho estimates are obtained as a weighted mean and a weighted covariance matrix. An observation is labeled as atypical when its adjusted outlyingness is too large, that is, when it exceeds a cutoff value (Hubert & Van der Veeken, Reference Hubert and Van der Veeken2008).
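A naive version of the medcouple can be written directly from its kernel definition. This Python sketch runs in O(n²) time and is for illustration only. It skips the special kernel that the full definition uses for ties at the median. In practice, the optimized implementation in robustbase (function mc) would be the natural choice.

```python
import statistics

def medcouple(values):
    """Naive O(n^2) medcouple (sketch).

    MC is the median of h(xi, xj) = ((xj - m) - (m - xi)) / (xj - xi) over all
    pairs with xi <= m <= xj and xi != xj, where m is the sample median.
    Ties equal to the median need a special kernel in the full definition;
    this sketch simply skips pairs with xi == xj.
    """
    m = statistics.median(values)
    lower = [x for x in values if x <= m]
    upper = [x for x in values if x >= m]
    kernel = [
        ((xj - m) - (m - xi)) / (xj - xi)
        for xi in lower
        for xj in upper
        if xi != xj
    ]
    return statistics.median(kernel)

print(medcouple([1, 2, 3, 4, 5]))    # 0.0 for a symmetric sample
print(medcouple([1, 2, 3, 4, 10]))   # positive: right-skewed sample
```

A positive medcouple indicates a longer right tail, and a negative one indicates a longer left tail. This is the information AO uses to stretch the cutoff on the longer side of each projection.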

6 Dimensionality Reduction

The current literature has shown that not all variables are relevant to the clustering task. Many of them constitute noise: they provide little or no information and hinder the detection of the underlying structure of the data, especially the estimation of the optimal number of groups (Scrucca & Raftery, Reference Scrucca and Raftery2018). Thus, considering all variables simultaneously in an analysis, or taking only a subset without a statistically sound procedure, can have negative effects on the clustering results (Andrews & McNicholas, Reference Andrews and McNicholas2014; Fop & Murphy, Reference Fop and Murphy2018; Scrucca & Raftery, Reference Scrucca and Raftery2018). Provenance studies show three different approaches to the inclusion or exclusion of variables, which are described in Sections 6.1, 6.2, and 6.3.

6.1 First Approach: Subjective Approach

The first approach, which we call the subjective approach, is one in which certain components are claimed, on the basis of personal judgment, to be more effective than others in discriminating compositional groups (Craig et al., Reference Craig, Speakman, Popelka-Filcoff, Glascock, Robertson, Shackley and Aldenderfer2007; Glascock et al., Reference Glascock, Weigand, Esparza López, Ohnersorgen, Garduño Ambriz, Mountjoy, Darling, Kuzmin and Glascock2010; Mendelsohn, Reference Mendelsohn2018; Moholy-Nagy et al., Reference Moholy-Nagy, Meierhoff, Golitko and Kestle2013). In this approach, the criteria behind such claims are not discussed in detail. The most common tactic is to display two of the components, or ratios between components, in bivariate graphs (Iñañez et al., Reference Iñañez, Speakman, Buxeda-i-Garrigós and Glascock2009; Italiano et al., Reference Italiano, Correale, Di Bella, Martin, Martinelli, Sabatino and Spatafora2018; Tykot, Reference Tykot2016; Waite, Reference Waite2020). The first thing to note is that bivariate plotting is a subjective procedure. Choosing the pair of variables that offers the best separation requires examining a total of $\binom{p}{2} = p(p-1)/2$ graphics, which becomes impractical when there are many variables (Salem & Hussein, Reference Salem and Hussein2019). For example, if we obtained p = 28 components when analyzing our samples with NAA, a total of 28 × 27/2 = 378 bivariate plots would have to be inspected to select the one that we thought best fit our hypotheses.
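The number of candidate scatterplots is simply the number of unordered pairs of variables, which can be checked quickly in Python:

```python
from math import comb

def n_bivariate_plots(p):
    """Number of distinct variable pairs, i.e. p(p - 1)/2 scatterplots."""
    return comb(p, 2)

print(n_bivariate_plots(28))  # 378
```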

In most published cases, considerable overlap is observed between the points that make up the assumed groups. In other cases, the number of identified groups differs depending on which components are plotted. Table 1 details examples of publications that use bivariate graphs of elements, ratios between elements, or statistical components (e.g., from PCA), showing the overlap of their groups. Moreover, the concentrations of some minor and trace elements vary considerably from one instrument to another because of the different detection limits and working ranges of each. The calibrations and standards also vary from technique to technique, so element concentrations and the accuracy of results can differ between data recorded with different instruments. Therefore, this approach is not recommended.

6.2 Second Approach: Feature Extraction

The second approach, known as feature extraction, holds that including as many components as possible in the analysis is preferable for the effective discrimination of chemical groups. The rationale is that analyzing only a limited number of components may not give an optimal result, because it is unknown a priori which components will be most effective for discriminating the groups (Glascock, Reference Glascock and Shackley (ed.)2011; Harbottle, Reference Harbottle1976). In this approach, the most common dimensionality reduction methods apply linear transformations to project the data onto a subspace of the original space. Examples of these methods are PCA and linear DA (LDA). Principal component analysis bases its estimates on the mean vector and the covariance matrix. It replaces the original data matrix with a coordinate system in which variance is maximized (Thrun, Reference Thrun2018), so that the greatest amount of information (the greatest variance captured) is concentrated in the fewest possible components.

The percentage of cumulative variance explained by the components is considered a measure of the quality of the projection, that is, of how faithfully it reflects the positions of the experimental units in the high-dimensional space (Varmuza & Filzmoser, Reference Varmuza and Filzmoser2009). It is important to remember that PCA assumes that the variables are highly correlated. If the correlation is not linear, however, the characteristics that differentiate the groups will not be orthogonal, and the principal components (PCs) cannot be adequately determined. In some datasets, the groups are difficult to discriminate or at least are not linearly separable. Examples are the Chainlink data (Ultsch, Reference Ultsch1995), the leukemia data (Haferlach et al., Reference Haferlach, Kohlmann, Wieczorek, Basso, Te Kronnie, Béné, De Vos, Hernández, Hofmann, Mills, Gilkes, Chiaretti, Shurtleff, Kipps, Rassenti, Yeoh, Papenhausen, Liu, Williams and Foà2010), and the iris flower data (Fisher, Reference Fisher1936). Of these, the best known in the pattern recognition literature is the iris dataset. It has three classes (Iris setosa, Iris versicolor, and Iris virginica) of 50 instances each, on which four morphological characteristics were measured. Using unsupervised exploratory techniques, only two of the groups could be discriminated, while the third group was more difficult to separate. Moreover, the groups were not separable without the information from the labels of each flower.
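As an illustration of this quality measure, the following Python sketch computes the cumulative proportion of variance from the eigenvalues of the sample covariance matrix. It assumes NumPy is available. The data are synthetic: three variables are driven by one latent factor, so the first PC is expected to capture nearly all the variance.

```python
import numpy as np

def cumulative_explained_variance(X):
    """Cumulative proportion of variance captured by the principal components.

    The eigenvalues of the sample covariance matrix are the variances of the
    PCs; their running sum divided by the total is the quality measure.
    """
    cov = np.cov(X, rowvar=False)
    eigvals = np.linalg.eigvalsh(cov)[::-1]  # eigvalsh returns ascending order
    return np.cumsum(eigvals) / eigvals.sum()

# Synthetic example: three variables driven by one latent factor plus small noise.
rng = np.random.default_rng(0)
t = rng.normal(size=200)
X = np.column_stack([
    t + 0.1 * rng.normal(size=200),
    2 * t + 0.1 * rng.normal(size=200),
    -t + 0.1 * rng.normal(size=200),
])
print(cumulative_explained_variance(X).round(3))
```

A high value for the first one or two PCs only says that the projection preserves variance. As the text notes, it does not guarantee that groups are separable in that projection.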

For this reason, it is very important to consider which dimensionality reduction method(s) to use. If the data have a structure that does not fit the model, some projection techniques can introduce spurious groups. In archaeology, it is very common to find publications that use PCA or LDA for dimensionality reduction and data processing (Glascock et al., Reference Glascock, Braswell, Cobean and Shackley1998; Millhauser et al., Reference Millhauser, Rodríguez-Alegría and Glascock2011; Smith et al., Reference Smith, Burke, Hare and Glascock2007), and both methods have their pros and cons. PCA, for instance, is sensitive to the measurement scale of the variables. Standardization to mean 0 and variance 1 should therefore be applied only when the variables are recorded in different units. In that case, without standardization, the variables with the larger scale would dominate and contribute more to the overall variance. It should also be remembered that PCA is not robust against outliers, since their presence biases the classical estimates of location and dispersion. This is one of the reasons why a careful diagnosis of the data, using robust methods to detect or remove outliers, is recommended before performing PCA or any other multivariate technique.
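The scale effect can be seen without running a PCA at all, because PCA redistributes the total variance (the trace of the covariance matrix) among the components. This Python sketch shows each variable's share of the total variance before and after standardization. The values are hypothetical, with one variable in ppm and one in weight percent.

```python
import statistics

def variance_shares(columns):
    """Share of the total variance (trace of the covariance matrix) per variable."""
    variances = [statistics.variance(col) for col in columns]
    total = sum(variances)
    return [v / total for v in variances]

def standardize(col):
    """Rescale a variable to mean 0 and variance 1 (z-scores)."""
    mean, sd = statistics.mean(col), statistics.stdev(col)
    return [(x - mean) / sd for x in col]

# Hypothetical measurements of two elements recorded in different units.
var_ppm = [8200.0, 9100.0, 7600.0, 8800.0, 9400.0]   # concentrations in ppm
var_pct = [0.051, 0.047, 0.060, 0.055, 0.049]        # concentrations in wt%

print(variance_shares([var_ppm, var_pct]))  # the ppm variable takes essentially all of it
print(variance_shares([standardize(var_ppm), standardize(var_pct)]))  # about [0.5, 0.5]
```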

In addition to the above, PCA has other limitations. For example, PCA requires a compositional dataset without missing values (no empty cells), so missing values must be imputed using a robust method. Removing a whole component is advisable only when it has a high percentage of missing values. Another limitation is the orthogonality of the PCs, which are orthogonal to each other by design. Depending on the situation, there may be more “appropriate” non-orthogonal basis vectors for reducing the data. A further drawback is that, as a projection method, PCA uses the plane of the two major axes of the ellipsoid. Visualizing structures in high-dimensional data through planar projections has been shown to be difficult to interpret and to favor overlap between groups. It can also cause data points that belong to the same group to be split into several different groups (Ultsch & Thrun, Reference Ultsch and Thrun2017).

LDA, by contrast, finds a linear combination of features that characterizes or separates two or more classes. As a supervised classifier, it assumes that the labels of the learning samples are error-free. This makes LDA sensitive to label noise: if the learning dataset contains some wrong labels, biased results can be obtained (Bouveyron et al., Reference Bouveyron, Celeux, Murphy and Raftery2019). Another limitation derives from two of its essential theoretical assumptions: (1) the input variables must follow a Gaussian distribution, and (2) the variances (covariance matrices) of the groups must be homogeneous. LDA is also very sensitive to outliers. When the number of features exceeds the number of observations, it may not work well, because the within-group covariance matrix cannot be inverted. Finally, as a linear method, LDA is not suitable for nonlinear problems, and it tends to perform poorly on unbalanced datasets.
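The dimensionality problem can be checked numerically. A sample covariance matrix computed from n observations has rank at most n − 1, and a pooled within-group covariance from g groups has rank at most n − g. Such a matrix therefore cannot be inverted when p exceeds that rank. The Python sketch below assumes NumPy and uses random, hypothetical data.

```python
import numpy as np

# Hypothetical setting: fewer samples (n) than measured elements (p).
n, p = 6, 10
rng = np.random.default_rng(42)
X = rng.normal(size=(n, p))

S = np.cov(X, rowvar=False)     # p x p sample covariance matrix
rank = np.linalg.matrix_rank(S)
print(rank, "<", p)             # the rank cannot exceed n - 1 = 5

# Classical LDA needs the inverse of the (pooled) within-group covariance;
# a rank-deficient p x p matrix like S has no inverse.
```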

6.3 Third Approach: Feature or Variable Selection

The third approach seeks the most informative components for clustering or classification, which can be a challenge in fields such as archaeometry. This step is important because a learning model with a larger number of components tends to overfit and lose predictive power (Alelyani et al., Reference Alelyani, Tang, Liu, Aggarwal and Reddy2014). In addition, including redundant components in the model introduces noise, which can prevent the true cluster structure of the data from being discovered (Xie, Pan & Shen, Reference Xie, Pan and Shen2008). Non-informative variables, or “masking variables” (Fowlkes & Mallows, Reference Fowlkes and Mallows1983), have been shown to cause errors in the clustering results (Wang & Zhu, Reference Wang and Zhu2008). Conversely, selecting an optimal subset of features has been shown to facilitate both model fitting and the interpretation of results (Andrews & McNicholas, Reference Andrews and McNicholas2014; Raftery & Dean, Reference Raftery and Dean2006).

Feature selection is the process of choosing a smaller subset of features that preserves only the most important and/or relevant variables in the dataset according to a certain evaluation criterion (Alelyani et al., Reference Alelyani, Tang, Liu, Aggarwal and Reddy2014). A variable is considered relevant if it is useful for discovering groups or classes; otherwise, it is irrelevant. A variable is considered redundant if it is highly correlated with other variables. In practice, high-dimensional datasets typically contain both variables that are relevant for discriminating groups and variables whose information is not meaningful, and using all of them degrades the accuracy of the grouping. Using all available variables increases the complexity of the model and decreases the performance of the classification method. Selecting the set of features that contributes most to the data analysis task, by contrast, can improve classification performance. For this reason, clustering techniques that incorporate variable selection methods have been proposed, such as those discussed later in this Element.

Baxter and Jackson (Reference Baxter and Jackson2001) pointed out that variable selection is unavoidable in archaeometric work. They proposed two methods, based on PCs and on classification trees, to reduce dimensionality and retain only the informative variables; for the reasons already explained, however, these methods proved ineffective. In most archaeometric cases, the variables used to discriminate groups have been selected arbitrarily and without theoretical foundations, on the argument that a few bivariate graphs are enough to relate the samples to their respective sites (Baxter et al., Reference Baxter, Beardah, Cool and Jackson2003; Glascock, Reference Glascock and Shackley (ed.)2011). It should be borne in mind that the properties of the materials differ from study to study, so results cannot be generalized. A good classification of a given material with a certain selection of variables does not mean that comparable results can be obtained in all studies on the subject. In other words, there is no guarantee that selecting the same variables in two different studies will yield similar results.

This can be seen clearly in published references, where overlaps in the data or variations in the number of groups are common when ceramic and lithic materials are classified using combinations of elements in bivariate graphs. In our view, seeking the fastest solution in provenance analysis does not always produce optimal results. Our approach is based on the analysis of log ratios of compositions with D strictly positive parts. The selection of compositional variables can therefore be done in two different ways: selecting parts or selecting log ratios. If you choose to work with parts, you must find a subset of them based on an optimization criterion, then close the data and process this subcomposition with log ratios. If you prefer to work directly with log ratios, a subset of the log ratios is selected for further analysis using a statistical criterion (Greenacre, Reference Greenacre2017). For example, Ben Dor et al. (Reference Ben Dor, Finkel and Ben-Yosef2023) combined the clr transformation with the p-values of the Kruskal–Wallis test and the pairwise correlation structure of the variables.
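For the route based on parts, a minimal Python sketch of closure followed by the clr transformation is shown below; the composition is hypothetical. The sketch also shows why closing a subcomposition is harmless for log-ratio analysis. Log ratios do not change when all parts are multiplied by a constant, so the clr of the closed and the unclosed subcomposition coincide.

```python
import math

def closure(parts, total=1.0):
    """Rescale strictly positive parts so that they sum to `total`."""
    s = sum(parts)
    return [total * x / s for x in parts]

def clr(parts):
    """Centred log-ratio: log of each part divided by the geometric mean."""
    logs = [math.log(x) for x in parts]
    mean_log = sum(logs) / len(logs)
    return [lx - mean_log for lx in logs]

# Hypothetical 4-part composition (for example, four element concentrations).
x = [12.0, 30.0, 5.0, 53.0]

sub = [x[0], x[1], x[3]]   # subcomposition: keep parts 1, 2 and 4
sub_closed = closure(sub)  # close the selected parts again
z = clr(sub_closed)

print([round(v, 4) for v in z])
print(sum(z))              # zero up to rounding error
print(clr(sub))            # same coordinates: closing does not change log ratios
```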

Many feature selection methods have been published (filters, wrappers, and hybrid models). Our goal, however, is not to provide an exhaustive comparison of them, since such comparisons can be found elsewhere (Maugis et al., Reference Maugis, Celeux and Martin-Magniette2009a, Reference Maugis, Celeux and Martin-Magniette2009b; Raftery & Dean, Reference Raftery and Dean2006; Scrucca & Raftery, Reference Scrucca and Raftery2018). Our aim is rather to show that proper preprocessing of the data together with variable selection improves both model performance and interpretation. First, however, the basic concepts must be defined, in particular those of parametric variable selection methods for finite mixture model-based clustering (MBC).

Model-based clustering places the clustering task in a formal modeling framework. The empirical distribution of the data is modeled through a finite mixture of theoretical probability distributions, typically multivariate Gaussian distributions (Fop & Murphy, Reference Fop and Murphy2018). Each component k (group) is modeled by a Gaussian distribution with parameters μk (the mean vector) and Σk (the covariance matrix). Discovering the optimal number of groups and the best cluster model is therefore a statistical model selection problem over a finite number of candidate models with different numbers and types of component distributions (Fraley & Raftery, Reference Fraley and Raftery1998). In this sense, the data are considered to come from different groups or subpopulations that correspond to the components of the mixture. The assumption that the experimental data in the different groups were generated by different probability distributions mirrors the provenance postulate (Weigand et al., Reference Weigand, Harbottle, Sayre, Earle and Ericson1977). This postulate establishes that different chemical groups represent different sources, which can be discriminated because of their particular internal compositional variability.
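The mixture view can be illustrated in one dimension. In this Python sketch, the two components and their weights, means, and standard deviations are hypothetical. The posterior probabilities obtained by Bayes' rule are what MBC uses to assign each observation to a group.

```python
import math

def normal_pdf(x, mu, sigma):
    """Univariate Gaussian density with mean mu and standard deviation sigma."""
    z = (x - mu) / sigma
    return math.exp(-0.5 * z * z) / (sigma * math.sqrt(2 * math.pi))

def mixture_density(x, weights, mus, sigmas):
    """Finite Gaussian mixture: f(x) = sum_k pi_k * N(x; mu_k, sigma_k)."""
    return sum(w * normal_pdf(x, m, s) for w, m, s in zip(weights, mus, sigmas))

def posterior(x, weights, mus, sigmas):
    """Probability that x comes from each component (Bayes' rule)."""
    terms = [w * normal_pdf(x, m, s) for w, m, s in zip(weights, mus, sigmas)]
    total = sum(terms)
    return [t / total for t in terms]

# Hypothetical two-component mixture on a single log-ratio variable.
weights, mus, sigmas = [0.6, 0.4], [0.0, 3.0], [0.5, 0.8]

print(mixture_density(1.0, weights, mus, sigmas))
print(posterior(0.2, weights, mus, sigmas))  # almost all mass on the first component
```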

Variable selection methods stress that it is essential to distinguish between relevant and irrelevant variables (Fop & Murphy, Reference Fop and Murphy2018). Relevant variables are defined in terms of statements of probabilistic dependence (or independence). They carry the information that distinguishes the groups and are therefore fundamental to the clustering. Irrelevant variables, as their name implies, are unimportant, and they are divided into redundant and non-informative variables. The former are called “redundant” because the information they carry is already contained in other variables: they are strongly correlated with them and can be omitted without much loss of information. The latter correspond to noise; they carry no information and therefore contribute nothing to the model. Variable selection methods for MBC make distributional assumptions about both the relevant and the irrelevant variables.

The advantage of these methods is that they do not produce a single output, such as a dendrogram or a two-dimensional projection (e.g., PCA or LDA). Instead, MBC fits m candidate models to the same input data matrix and compares them using a model selection criterion (Fop & Murphy, Reference Fop and Murphy2018). When the best model within this finite family has been chosen, the algorithm has simultaneously and automatically selected the most relevant variables for clustering and the optimal number of clusters. The cluster model retained in the end is the one that best fits the empirical data (Celeux & Govaert, Reference Celeux and Govaert1995).

In the mathematical context, by assuming that the sample components are extracted from a multivariate normal mixture, a model

![]() is obtained where

is obtained where

![]() = (π1, …, πk-1) are the mixing probabilities such that

= (π1, …, πk-1) are the mixing probabilities such that

![]() ≥ 0 and

≥ 0 and

![]() , and MNV

, and MNV

![]() is the q-dimensional Gaussian density function with parameters

is the q-dimensional Gaussian density function with parameters

![]() (which corresponds to the vector of means) and

(which corresponds to the vector of means) and

![]() (which is the matrix of covariances). The mixture is characterized by the constraints imposed on the Σk matrices in terms of their eigenvalue decomposition, which allows the generation of different geometric properties of the candidate models that are obtained through the Gaussian components. These constraints are summarized in the three terms to the right of Equation (3),

(which is the matrix of covariances). The mixture is characterized by the constraints imposed on the Σk matrices in terms of their eigenvalue decomposition, which allows the generation of different geometric properties of the candidate models that are obtained through the Gaussian components. These constraints are summarized in the three terms to the right of Equation (3),

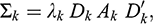

(3)

(3)

where the first term

![]() indicates the constraints on the volume parameter in the kth cluster, the second term

indicates the constraints on the volume parameter in the kth cluster, the second term

![]() indicates the constraints on the orientation of the groups in the matrix, and the third term

indicates the constraints on the orientation of the groups in the matrix, and the third term

![]() indicates the constraints on the array of the group shapes.

indicates the constraints on the array of the group shapes.
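To see how the three terms act, the Python sketch below builds a 2 × 2 covariance matrix from three inputs: a volume λ, a rotation angle that defines D, and a shape matrix A rescaled to determinant 1 (the convention used in mclust). The parameter values are arbitrary. The determinant check shows that, with |A| = 1, the volume is controlled by λ alone.

```python
import math

def matmul(a, b):
    """Product of two 2 x 2 matrices given as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]

def transpose(a):
    """Transpose of a 2 x 2 matrix."""
    return [[a[j][i] for j in range(2)] for i in range(2)]

def covariance_from_decomposition(lam, theta, shape):
    """Sigma = lam * D A D^T for a 2-D cluster (sketch).

    lam   : volume parameter (scalar)
    theta : rotation angle of the principal axes, which defines D (orientation)
    shape : diagonal of A before rescaling; rescaled so that det(A) = 1 (shape)
    """
    a1, a2 = shape
    norm = math.sqrt(a1 * a2)                # enforce det(A) = 1
    A = [[a1 / norm, 0.0], [0.0, a2 / norm]]
    D = [[math.cos(theta), -math.sin(theta)],
         [math.sin(theta), math.cos(theta)]]
    core = matmul(matmul(D, A), transpose(D))
    return [[lam * v for v in row] for row in core]

def det2(m):
    """Determinant of a 2 x 2 matrix."""
    return m[0][0] * m[1][1] - m[0][1] * m[1][0]

sigma = covariance_from_decomposition(lam=2.0, theta=math.pi / 6, shape=(4.0, 1.0))
print(sigma)
print(det2(sigma))  # lam**2 = 4.0 up to rounding: the volume depends only on lam
```

Changing `theta` rotates the ellipse without changing its size or elongation. Changing `shape` elongates it without changing its determinant.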

In the context of MBC, the R package clustvarsel version ≥2.0 (Scrucca & Raftery, Reference Scrucca and Raftery2018) selects subsets of informative variables using a wrapper method. This package works in conjunction with the mclust package (Fraley et al., Reference Fraley, Raftery, Murphy and Scrucca2012). mclust automatically selects the number of mixture components together with the covariance structure from the eigenvalue-decomposition parameterization (see Eq. 3). With mclust, m = 14 potential models with different geometric features can be specified. Raftery and Dean (Reference Raftery and Dean2006) propose dividing the variables X in the model into three disjoint sets:

1. XC = the set of current clustering variables;

2. XP = the variable(s) proposed for inclusion or exclusion from the set of clustering variables;

3. XNC = the set of other variables not significant for clustering.

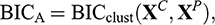

According to Raftery and Dean (Reference Raftery and Dean2006), the variable selection problem can be solved by using the Bayesian information criterion (BIC) to approximate Bayes factors when comparing fitted mixture models on nested subsets of variables (Scrucca & Raftery, Reference Scrucca and Raftery2018). That is, the candidate models are compared using the BIC approximation to their marginal likelihoods:

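$$\mathrm{BIC}_A = \mathrm{BIC}_{\mathrm{clust}}(X_C, X_P) \qquad (4)$$

$$\mathrm{BIC}_B = \mathrm{BIC}_{\mathrm{no\ clust}}(X_C) + \mathrm{BIC}_{\mathrm{reg}}(X_P \mid X_C) \qquad (5)$$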

where BICclust(XC, XP) is the BIC of the Gaussian mixture model in which XP adds relevant information for clustering, BICno clust(XC) is the BIC of the MBC on the current set of clustering variables, and BICreg(XP | XC) is the BIC of the regression of XP on XC. If the difference BICA − BICB is positive, XP is included in the set of clustering variables. To make the selection, variables are added and removed, and the resulting models are compared using a stepwise greedy search algorithm (Fop & Murphy, Reference Fop and Murphy2018).
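The forward half of the stepwise search can be sketched in Python as follows. The BIC difference is supplied as a function. Here it is a toy lookup table that is not computed from data, and the element names are purely illustrative. The actual clustvarsel procedure also performs removal steps and fits the models in Equations (4) and (5) at each step, which this sketch omits.

```python
def greedy_forward_selection(variables, bic_difference):
    """Forward half of a stepwise greedy search (simplified sketch).

    bic_difference(candidate, current) must return BIC_A - BIC_B for adding
    `candidate` to the `current` list of clustering variables. At each step the
    candidate with the largest difference is added; the search stops when no
    remaining candidate has a positive difference. Removal steps are omitted.
    """
    current, remaining = [], list(variables)
    while remaining:
        scores = {v: bic_difference(v, current) for v in remaining}
        best = max(scores, key=scores.get)
        if scores[best] <= 0:
            break
        current.append(best)
        remaining.remove(best)
    return current

# Toy stand-in for the BIC difference: NOT computed from data, purely illustrative.
TOY_GAIN = {"Rb": 40.0, "Sr": 25.0, "Zr": 12.0, "Ba": -3.0, "Nb": -8.0}

def toy_bic_difference(candidate, current):
    """Hypothetical scorer that ignores `current`; a real one would refit models."""
    return TOY_GAIN[candidate]

print(greedy_forward_selection(list(TOY_GAIN), toy_bic_difference))  # ['Rb', 'Sr', 'Zr']
```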

The R package SelvarMix version 1.0 (Sedki et al., Reference Sedki, Celeux and Maugis2014, Reference Sedki, Celeux and Maugis-Rabusseau2017) provides a method based on the approach described by Maugis et al. (Reference Maugis, Celeux and Martin-Magniette2009b). It is preceded by a step in which the variables are ranked using an ℓ1-regularized likelihood procedure, a lasso-type technique originally developed for selecting and estimating linear models. The ℓ1 penalty is placed on the Gaussian mixture mean vectors and on the precision matrices of the mixture components (Sedki et al., Reference Sedki, Celeux and Maugis2014). This model, called SRUW, distinguishes between relevant variables (S) and irrelevant variables (Sc), and divides the latter into two classes. One part (U) of the irrelevant variables may depend on a subset (R) of the relevant variables, and another part (W) is independent of all other variables. The parameters of the mixture are estimated by maximum likelihood using the EM algorithm (Dempster et al., Reference Dempster, Laird and Rubin1977), and both the number of components K and the form of the mixture are chosen using the BIC.
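The roles of the EM algorithm and the BIC can be illustrated with a univariate Gaussian mixture. This Python sketch fits K = 1 and K = 2 components by EM to simulated, clearly bimodal data. It then compares the fits with the BIC in the sign convention used by mclust (2 log L − ν log n, where ν is the number of free parameters; larger is better). It is a teaching sketch with a simple deterministic initialization, not the SelvarMix procedure.

```python
import math
import random

def normal_pdf(x, mu, var):
    """Univariate Gaussian density with mean mu and variance var."""
    return math.exp(-0.5 * (x - mu) ** 2 / var) / math.sqrt(2 * math.pi * var)

def fit_gmm_1d(x, k, n_iter=200):
    """Fit a k-component univariate Gaussian mixture by EM (teaching sketch).

    Returns the final log-likelihood and the number of free parameters.
    """
    n = len(x)
    xs = sorted(x)
    mean = sum(x) / n
    # Deterministic start: means at spread-out order statistics,
    # a common variance equal to the overall variance, equal weights.
    mus = [xs[int((j + 0.5) * n / k)] for j in range(k)]
    variances = [sum((v - mean) ** 2 for v in x) / n] * k
    weights = [1.0 / k] * k
    for _ in range(n_iter):
        # E-step: responsibility of each component for each observation.
        resp = []
        for v in x:
            terms = [w * normal_pdf(v, m, s2) for w, m, s2 in zip(weights, mus, variances)]
            total = sum(terms)
            resp.append([t / total for t in terms])
        # M-step: update weights, means and variances from the responsibilities.
        for j in range(k):
            nj = sum(r[j] for r in resp)
            weights[j] = nj / n
            mus[j] = sum(r[j] * v for r, v in zip(resp, x)) / nj
            variances[j] = sum(r[j] * (v - mus[j]) ** 2 for r, v in zip(resp, x)) / nj
    loglik = sum(
        math.log(sum(w * normal_pdf(v, m, s2) for w, m, s2 in zip(weights, mus, variances)))
        for v in x
    )
    n_params = 3 * k - 1  # k means + k variances + (k - 1) free weights
    return loglik, n_params

def bic(loglik, n_params, n):
    """BIC in the mclust convention: 2 log L - n_params log n (larger is better)."""
    return 2 * loglik - n_params * math.log(n)

# Simulated data: two well-separated groups of 150 observations each.
rng = random.Random(3)
data = [rng.gauss(0.0, 1.0) for _ in range(150)] + [rng.gauss(6.0, 1.0) for _ in range(150)]

for k in (1, 2):
    ll, n_par = fit_gmm_1d(data, k)
    print(k, round(bic(ll, n_par, len(data)), 1))
```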

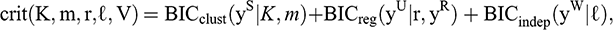

The SRUW model reformulates variable selection for MBC as a model selection problem (Maugis et al., Reference Maugis, Celeux and Martin-Magniette2009b), in which the selected model is the one that maximizes a BIC-type criterion:

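$$\mathrm{crit}(S, R, U, W) = \mathrm{BIC}_{\mathrm{clust}}(X^{S}) + \mathrm{BIC}_{\mathrm{reg}}(X^{U} \mid X^{R}) + \mathrm{BIC}_{\mathrm{indep}}(X^{W}) \qquad (6)$$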

where BICclust is the BIC criterion of the MBC of the relevant variables (S), BICreg represents the BIC criterion of the regression model of the irrelevant variables (U) on the subset of relevant variables (R), and BICindep represents the BIC criterion of the Gaussian model with the variables (W) independent of the other variables (Sedki, Celeux & Maugis, Reference Sedki, Celeux and Maugis2014).

Finally, the VarSelLCM variable selection method (Marbac & Sedki, Reference Marbac and Sedki2017) performs model selection and variable selection, and it also selects the optimal number of clusters. The procedure rests on the assumption of conditional independence. It uses an information criterion based on the integrated complete-data likelihood, named the maximum integrated complete-data likelihood (MICL) criterion, which does not require multiple calls of the EM algorithm. In this framework, a variable is irrelevant for clustering if its one-dimensional marginal distributions are equal across classes. To indicate whether a variable is relevant, the authors introduce a binary indicator vector ω = (ω1, …, ωj, …, ωJ), such that ωj = 1 if the variable Xj is relevant and ωj = 0 otherwise. Candidate models are thus defined both by the number of components and by the vector ω. These methods can be used to select the variables relevant to MBC in Gaussian mixture settings.
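The consequence of the conditional independence assumption can be checked with a small Python sketch. When ωj = 0, variable j has the same distribution in every class. It therefore adds the same term to every class log-likelihood and leaves the posterior class probabilities unchanged. The Gaussian parameters below are hypothetical, and the sketch does not implement the MICL criterion.

```python
import math

def normal_logpdf(x, mu, sd):
    """Log-density of a univariate Gaussian."""
    return -math.log(sd * math.sqrt(2 * math.pi)) - 0.5 * ((x - mu) / sd) ** 2

def class_posteriors(x, weights, class_params, shared_params, omega):
    """Posterior class probabilities under conditional independence (sketch).

    class_params[k][j]: (mean, sd) of variable j in class k, used when omega[j] == 1.
    shared_params[j]:   (mean, sd) common to all classes, used when omega[j] == 0.
    """
    logs = []
    for k, w in enumerate(weights):
        lp = math.log(w)
        for j, xj in enumerate(x):
            mu, sd = class_params[k][j] if omega[j] else shared_params[j]
            lp += normal_logpdf(xj, mu, sd)
        logs.append(lp)
    top = max(logs)  # log-sum-exp trick for numerical stability
    expd = [math.exp(v - top) for v in logs]
    total = sum(expd)
    return [e / total for e in expd]

weights = [0.5, 0.5]
class_params = [[(0.0, 1.0), None], [(3.0, 1.0), None]]  # variable 2 has no class-specific parameters
shared_params = [None, (5.0, 2.0)]
omega = [1, 0]  # variable 1 relevant, variable 2 irrelevant

p_both = class_posteriors([1.0, 7.5], weights, class_params, shared_params, omega)
p_first = class_posteriors([1.0], weights, class_params, shared_params, omega)
print(p_both, p_first)  # the two results agree up to rounding
```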

7 Classification Algorithms

Another important aspect is the careful choice of the algorithm(s) for the clustering and/or classification task. The main goal of this task is to partition the whole dataset into subsets (called clusters) whose members have similar characteristics. In other words, it seeks to uncover meaningful groups in the data by maximizing intra-class similarity and minimizing inter-class similarity (Heller, Reference Heller2007). According to the type of groups obtained, clustering algorithms can be classified as disjoint or fuzzy (Jain & Dubes, Reference Jain and Dubes1988). In disjoint methods, also known as hard (crisp) partitioning techniques, each experimental unit is assigned to one, and only one, compact cluster with well-defined boundaries that is well separated from the other clusters. The samples in a group are assumed to be more similar to each other because they share characteristics of their class, which establishes a relationship of similarity and uniformity among them. In fuzzy clustering, by contrast, cluster boundaries are not sharply defined. Sample items may belong to more than one of the clusters into which the dataset has been divided, each with a certain degree of membership (known as the fuzzy degree of membership), so clusters may overlap.
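The difference between the two kinds of partition can be illustrated with fuzzy c-means, a classic fuzzy clustering method used here only as an example. In this Python sketch, the cluster centers are given and the fuzzifier m = 2 is an assumed, commonly used value. The crisp assignment is obtained by taking the largest membership.

```python
import math

def fuzzy_memberships(point, centers, m=2.0):
    """Fuzzy c-means membership degrees of one point for fixed centers.

    u_k = 1 / sum_j (d_k / d_j) ** (2 / (m - 1)), where d_k is the Euclidean
    distance to center k and m > 1 is the fuzzifier; memberships sum to 1.
    """
    d = [math.dist(point, c) for c in centers]
    if 0.0 in d:
        # The point coincides with a center: split membership among those centers.
        hits = d.count(0.0)
        return [1.0 / hits if dk == 0.0 else 0.0 for dk in d]
    power = 2.0 / (m - 1.0)
    return [1.0 / sum((dk / dj) ** power for dj in d) for dk in d]

centers = [(0.0, 0.0), (4.0, 0.0)]
u = fuzzy_memberships((1.0, 0.0), centers)  # approximately [0.9, 0.1]
hard = u.index(max(u))                      # crisp assignment: cluster 0
print(u, hard)
```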

In archaeometry, cluster analysis is a commonly applied method. It can be considered a dimensionality reduction technique, since it condenses the information into a graph in which the formation of groups can be seen. Unfortunately, this traditional clustering method and its derived techniques have several drawbacks. One problem is that many of these algorithms implicitly impose a fixed geometric model (known as an objective function) on the clusters to be formed, regardless of the underlying distribution of the data in the high-dimensional space (Handl et al., Reference Handl, Knowles and Kell2005; Thrun, Reference Thrun2018). Most of these methods group data according to pairwise similarities determined by a metric, with different criteria for merging or nesting clusters, and their results depend on the transformation applied to the data. As a result, different clustering algorithms typically give very different results, and they can even detect clusters when the data are randomly distributed.

In addition, these analyses are unstable when the researcher adds or deletes cases, because the distances change and so do the cluster configurations. Another disadvantage is that these classical techniques offer no guidance for choosing the “optimal” number of groups, such as the level at which the dendrogram should be cut. Nonhierarchical clustering techniques, for their part, require the number of groups to be specified a priori, although in most cases it is unknown. These techniques are also not robust to outliers. Hierarchical clustering techniques are rigid partitioning methods: once a data point is assigned to a cluster, it is not reconsidered, so hierarchical algorithms cannot correct potential classification errors. Classical clustering techniques thus have a series of disadvantages. In short, it can be stated that “these techniques do not provide a consistent way to predict the probability or membership of data belonging to existing groups” (Heller, Reference Heller2007, p. 12).

Cluster analysis is considered a step in the knowledge discovery process (Thrun & Ultsch, Reference Thrun and Ultsch2021). Current clustering algorithms relate the classification problem to the discovery of natural clusters in the data. Natural clusters are subsets that are clearly defined in the data and do not need to be dissected. Thrun (Reference Thrun2018) defines natural clusters as those characterized by a discontinuity, which can be based on distance or on density. Distance-based natural clusters are compact structures with small intra-cluster distances and large inter-cluster distances. They are continuous regions of the high-dimensional space, occupied by moderately or densely populated point clouds and surrounded by continuous, relatively empty regions. Density-based natural clusters, on the other hand, rest on the idea of neighborhoods between experimental units, which can be one-directional or multi-directional.

Current algorithms have several advantages that allow such clusters to be recognized. They greatly facilitate the understanding of the relationships between all the elements of the research and allow good generalization or prediction of the attributes. If a projection method is used, a nonlinear and parameter-free one is advisable. For this purpose, in this Element we recommend the projection-based clustering method known as databionic swarm, or DBS (Thrun, Reference Thrun2018; Thrun & Ultsch, Reference Thrun and Ultsch2021). DBS projects the high-dimensional points into two dimensions on the basis of their distances or dissimilarities. In other words, the swarm projects high-dimensional data onto a two-dimensional plane using intelligent agents that work on a toroidal, polar grid. The result is a three-dimensional topographic map derived from a self-organized, simplified emergent map. The map uses hypsometric colors, and its relief is derived from the unified distance matrix (U-matrix) of the projected points.
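The unified distance matrix idea can be sketched on a small toroidal grid. In this simplified Python example, each grid node carries a hypothetical prototype vector. Its height is the mean distance to its four neighbors, with indices wrapping around as on a torus. This is not the DatabionicSwarm implementation, which derives its map from the projected points. It does show why low basins correspond to groups and high ridges to boundaries.

```python
import math

def umatrix_torus(prototypes):
    """U-matrix heights on a toroidal grid (simplified sketch).

    prototypes[r][c] is the vector attached to grid node (r, c). The height of
    a node is the mean Euclidean distance to its four neighbors, with indices
    wrapping around the edges as on a torus.
    """
    rows, cols = len(prototypes), len(prototypes[0])
    heights = []
    for r in range(rows):
        row = []
        for c in range(cols):
            neighbors = [
                prototypes[(r - 1) % rows][c], prototypes[(r + 1) % rows][c],
                prototypes[r][(c - 1) % cols], prototypes[r][(c + 1) % cols],
            ]
            row.append(sum(math.dist(prototypes[r][c], nb) for nb in neighbors) / 4)
        heights.append(row)
    return heights

# Hypothetical 4 x 6 grid: columns 0-2 carry group A, columns 3-5 group B.
A, B = (0.0, 0.0), (10.0, 10.0)
grid = [[A, A, A, B, B, B] for _ in range(4)]
for row in umatrix_torus(grid):
    print([round(h, 2) for h in row])  # each row: [3.54, 0.0, 3.54, 3.54, 0.0, 3.54]
```

Because the grid wraps around, column 0 also borders column 5. The map therefore has no artificial edges, which is one reason toroidal grids are used.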

The topographic map can be described as a 3D virtual landscape with a specific color scale that defines the contour lines. The valleys or basins represent the groups, and the hills and mountains surrounding the basins represent the boundaries between groups (López-García et al., Reference López-García, Argote and Thrun2020; Thrun, Reference Thrun2018). The optimal number of clusters can be estimated visually with the help of the map. Moreover, DBS can detect the absence of natural groups in the data (i.e., when the data are not clusterable). The DBS method can be implemented using the R package DatabionicSwarm (Thrun, Reference Thrun2025). For more details on the theory and implementation of the algorithm, see Thrun (Reference Thrun2018) and López-García et al. (Reference López-García, García-Gómez, Acosta-Ochoa and Argote2024). For its application in archaeology, see López-García et al. (Reference López-García, Argote and Thrun2020) and Argote et al. (Reference Argote, López-García, Torres-García and Thrun2024). The package, by the algorithm's developer, Michael Thrun, and its documentation are available at https://cran.r-project.org/web/packages/DatabionicSwarm/index.html.

In general, when investigating whether the data present a group structure, three kinds of classification methods can be distinguished: unsupervised classification, supervised classification, and semi-supervised classification, which lies halfway between the other two. In unsupervised classification, also known as clustering, no a priori classes are established during the classification process. The partitions are established using a distance criterion that quantifies the similarity between observations, so that observations within the same group are similar to each other and different from those in the other groups. In contrast, supervised classification methods start from samples already labeled as belonging to two or more known classes and use them to build a rule that predicts the class of new, unlabeled observations. Linear discriminant analysis is a clear example of this type of classification. However, this method assumes that the covariance matrices of the groups are homogeneous and that the continuous variables follow a multivariate normal distribution, two conditions that are difficult to fulfil.
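To make the pooled-covariance assumption of linear discriminant analysis concrete, the following is a minimal Python/NumPy sketch of an LDA rule. It is an illustration under our own assumptions (Gaussian classes sharing one covariance matrix, class priors estimated from class frequencies, synthetic two-group data); the function names fit_lda and predict_lda are hypothetical and do not belong to any package cited here.

```python
import numpy as np

def fit_lda(X, y):
    """Fit a linear discriminant rule that shares one covariance matrix.

    Using a single pooled covariance for all classes is exactly the
    'homogeneity of covariance matrices' assumption of LDA.
    """
    classes = np.unique(y)
    n, p = X.shape
    means = np.array([X[y == c].mean(axis=0) for c in classes])
    priors = np.array([np.mean(y == c) for c in classes])
    # Pooled within-class covariance with n - K degrees of freedom
    S = np.zeros((p, p))
    for c, m in zip(classes, means):
        D = X[y == c] - m
        S += D.T @ D
    S /= n - len(classes)
    return classes, means, priors, np.linalg.inv(S)

def predict_lda(model, X):
    """Assign each row of X to the class with the largest linear score."""
    classes, means, priors, S_inv = model
    # delta_k(x) = x' S^-1 m_k - 0.5 m_k' S^-1 m_k + log(prior_k)
    scores = (X @ S_inv @ means.T
              - 0.5 * np.sum((means @ S_inv) * means, axis=1)
              + np.log(priors))
    return classes[np.argmax(scores, axis=1)]

# Synthetic example: two labeled groups with the same covariance
rng = np.random.default_rng(0)
X = np.vstack([rng.normal([0.0, 0.0], 1.0, (50, 2)),
               rng.normal([4.0, 4.0], 1.0, (50, 2))])
y = np.array(["A"] * 50 + ["B"] * 50)
model = fit_lda(X, y)
pred = predict_lda(model, np.array([[0.0, 0.0], [4.0, 4.0]]))
acc = np.mean(predict_lda(model, X) == y)
```

If the real groups had clearly different covariance matrices, this shared-covariance rule would place the boundaries in the wrong places, which is why the assumption matters in provenance work.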

Halfway between both methods is semi-supervised classification, a learning model for both labeled and unlabeled data, in which the labeled data are used to train a classifier that allocates the unlabeled samples to pre-established groups. The objective of provenance studies is to associate data of unknown origin with predefined groups or classes, for example, data from raw material deposits (labeled data) and artifacts of unidentified source (unlabeled data). This is a problem of partially labeled data and therefore calls for semi-supervised classification, which is explained in detail in the following section.

7.1 Semi-Supervised Classification

To determine the provenance of artifacts, archaeologists collect samples directly from the presumed sources of raw material to serve as references for the objects of unknown origin, commonly applying bivariate or multivariate analyses as tools. However, as mentioned above, these procedures often leave overlaps between different groups, and assigning samples to deposits becomes harder when the analytical technique is less discriminating. If samples of known origin are available, semi-supervised classification techniques are well suited to building a model that assigns samples of unknown origin to the groups of samples of known origin.

Semi-supervised learning is very useful in machine learning and pattern recognition because it uses unlabeled data (i.e., artifacts recovered from archaeological sites) to improve supervised learning tasks when only a small number of labeled data (raw material source samples) are available; in the mixture-modeling literature this approach is also known as partially supervised mixture modeling (Bouveyron et al., Reference Bouveyron, Celeux, Murphy and Raftery2019; Krijthe, Reference Krijthe, Kerautret, Colom and Monasse2016; McLachlan & Peel, Reference McLachlan and Peel2000). In this paradigm, the unlabeled observations are assumed to outnumber the labeled ones (Zhu & Goldberg, Reference Zhu and Goldberg2009). Instead of fitting a model using only the labeled (training) data, as in DA, semi-supervised methods use both the training data and the unlabeled (test) data in model fitting to predict the class labels of the unlabeled data. A classifier is trained from these two subsets of data, which allows better performance than that of a supervised classifier trained only with labeled data.

Many of these algorithms assume finite Gaussian mixture distributions for clustering; in the case of partial labeling, a semi-supervised analogue of MBC is used (Dang et al., Reference Dang, Gallaugher, Browne and McNicholas2019). When the mixture models are fitted, each observation is assigned the label with the highest posterior probability under the selected model and its estimated parameters (Baudry et al., Reference Baudry, Raftery, Celeux, Lo and Gottardo2010). Several approaches following the semi-supervised classification paradigm have been proposed, most of them generative. For details on fitting this variant of the finite mixture model, see Banfield and Raftery (Reference Banfield and Raftery1993) and Celeux and Govaert (Reference Celeux and Govaert1995). For simplicity, we describe here only some of the packages that are easiest for archaeologists to use.

Rmixmod (Lebret et al., Reference Lebret, Lovleff, Langrognet, Biernacki, Celeux and Govaert2015) is an exploratory data analysis tool for solving cluster analysis and supervised classification problems by fitting Gaussian mixture models. It can also be used in semi-supervised situations, where the dataset is partially labeled. Rmixmod takes as arguments one matrix of labeled data and another matrix of data whose labels are unknown, assuming that each xi arises from a population described by a probability density function. This density is a finite mixture of parametric component densities, each component modeling one of the K groups, and the model is fitted to the data by maximum likelihood. The membership of each observation in one of the K groups can then be estimated with the maximum a posteriori (MAP) rule, which is based on the conditional probability that observation xi arises from group k (Lebret et al., Reference Lebret, Lovleff, Langrognet, Biernacki, Celeux and Govaert2015). Semi-supervised classification in Rmixmod is carried out by calling two functions: mixmodLearn() and mixmodPredict().
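For reference, the mixture model and the MAP rule described above can be written as follows. The notation (mixing proportions p_k, Gaussian densities φ with means μ_k and covariance matrices Σ_k, posterior probabilities t_ik, the set 𝓛 of labeled observations with indicators z_ik, and the set 𝓤 of unlabeled ones) is ours and is assumed here rather than taken from the Rmixmod documentation. The third line is the partially labeled log-likelihood, in which labeled observations contribute only through their known group; the last line is the BIC in its usual form, with ν free parameters and n observations (smaller values are better).

```latex
\begin{align*}
f(\mathbf{x}_i;\theta) &= \sum_{k=1}^{K} p_k\,\varphi(\mathbf{x}_i;\boldsymbol{\mu}_k,\boldsymbol{\Sigma}_k),
  \qquad \sum_{k=1}^{K} p_k = 1,\\
t_{ik} &= \frac{p_k\,\varphi(\mathbf{x}_i;\boldsymbol{\mu}_k,\boldsymbol{\Sigma}_k)}
               {\sum_{l=1}^{K} p_l\,\varphi(\mathbf{x}_i;\boldsymbol{\mu}_l,\boldsymbol{\Sigma}_l)},
  \qquad \hat{z}_i = \arg\max_{k}\, t_{ik},\\
\log L(\theta) &= \sum_{i\in\mathcal{L}} \sum_{k=1}^{K} z_{ik}\,
   \log\big(p_k\,\varphi(\mathbf{x}_i;\boldsymbol{\mu}_k,\boldsymbol{\Sigma}_k)\big)
 + \sum_{i\in\mathcal{U}} \log \sum_{k=1}^{K} p_k\,\varphi(\mathbf{x}_i;\boldsymbol{\mu}_k,\boldsymbol{\Sigma}_k),\\
\mathrm{BIC} &= -2\log L(\hat{\theta}) + \nu\log n.
\end{align*}
```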

The first function, mixmodLearn(), requires two essential arguments: the data matrix X and a vector z containing the known labels. It can be combined with other objects to improve classification performance. For example, creating an instance of the ‘[GaussianModel]’ class with the ‘mixmodGaussianModel’ function defines a list of 28 Gaussian models to test in the classification, from which the most suitable model is selected by a criterion that measures the goodness of fit of a statistical model (i.e., BIC or cross-validation [CV]). The arguments of this function define a family of models (general, diagonal, and spherical):

(7)

Setting the family argument to “all” instructs the program to test all models: those that impose spherical covariance matrices, those that impose diagonal covariance matrices, and the general models, in which proportions, volume, shape, and orientation may vary between groups. Rmixmod uses the EM algorithm to obtain parameter estimates for mixture models (Dempster et al., Reference Dempster, Laird and Rubin1977). The EM algorithm is applied in situations where one wishes to estimate a set of parameters θ that describe an underlying probability distribution, given only an observed part of the complete data produced by that distribution. It consists of two steps, step E and step M. Step E (expectation) computes, for each observation, the conditional probability that it belongs to each Gaussian component given the current parameters; step M (maximization) maximizes the likelihood using the probabilities computed in step E, yielding a new set of parameters that is used to update the conditional expectation of the unknown data in the next iteration. The parameters obtained at convergence provide the posterior probability that observation xi belongs to group k (Cribbin, Reference Cribbin2008). Steps E and M are repeated until the likelihood converges (Lerdo de Tejada Pavón, Reference Lerdo de Tejada Pavón2014).
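The EM logic above, combined with the rule that known labels stay fixed in step E, can be sketched in Python/NumPy. This is a minimal illustration under our own assumptions, not Rmixmod's implementation: it fits a single model with free proportions and full, unconstrained covariance matrices (only one of the parameterizations Rmixmod can test), initializes the parameters from the labeled samples alone, adds a small ridge reg to keep the covariances invertible, and requires at least one labeled sample per group. All function names are hypothetical.

```python
import numpy as np

def gaussian_logpdf(X, mean, cov):
    """Row-wise log density of a multivariate normal distribution."""
    p = X.shape[1]
    L = np.linalg.cholesky(cov)
    # With cov = L L', the squared Mahalanobis distance is ||L^-1 (x - mean)||^2
    sol = np.linalg.solve(L, (X - mean).T)
    maha = np.sum(sol ** 2, axis=0)
    log_det = 2.0 * np.sum(np.log(np.diag(L)))
    return -0.5 * (p * np.log(2.0 * np.pi) + log_det + maha)

def m_step(X, T, reg):
    """Step M: weighted proportions, means and full covariances."""
    Nk = T.sum(axis=0)
    props = Nk / Nk.sum()
    means = (T.T @ X) / Nk[:, None]
    covs = []
    for k in range(T.shape[1]):
        D = X - means[k]
        covs.append((T[:, k, None] * D).T @ D / Nk[k] + reg * np.eye(X.shape[1]))
    return props, means, covs

def log_joint(X, props, means, covs):
    """n x K matrix of log(p_k * phi(x_i; mu_k, Sigma_k))."""
    return np.column_stack([np.log(props[k]) + gaussian_logpdf(X, means[k], covs[k])
                            for k in range(len(props))])

def semi_supervised_em(X_lab, z_lab, X_unl, K, n_iter=200, tol=1e-8, reg=1e-6):
    """EM in which labeled rows keep fixed one-hot memberships."""
    T_lab = np.eye(K)[z_lab]                         # known labels, never updated
    X_all = np.vstack([X_lab, X_unl])
    props, means, covs = m_step(X_lab, T_lab, reg)   # start from labeled data only
    prev_ll = -np.inf
    for _ in range(n_iter):
        # Step E: posterior probabilities for the unlabeled rows only
        A = log_joint(X_unl, props, means, covs)
        m = A.max(axis=1, keepdims=True)
        log_norm = m + np.log(np.exp(A - m).sum(axis=1, keepdims=True))
        T_unl = np.exp(A - log_norm)
        # Partially labeled log-likelihood under the current parameters
        ll = (log_joint(X_lab, props, means, covs)[np.arange(len(z_lab)), z_lab].sum()
              + log_norm.sum())
        # Step M: labeled and unlabeled rows together
        props, means, covs = m_step(X_all, np.vstack([T_lab, T_unl]), reg)
        if ll - prev_ll < tol:
            break
        prev_ll = ll
    return props, means, covs, T_unl

# Illustrative data: two sources (labeled) and artifacts (unlabeled)
rng = np.random.default_rng(1)
X_lab = np.vstack([rng.normal([0, 0], 0.5, (20, 2)), rng.normal([3, 3], 0.5, (20, 2))])
z_lab = np.array([0] * 20 + [1] * 20)
X_unl = np.vstack([rng.normal([0, 0], 0.5, (30, 2)), rng.normal([3, 3], 0.5, (30, 2))])
props, means, covs, T_unl = semi_supervised_em(X_lab, z_lab, X_unl, K=2)
labels = T_unl.argmax(axis=1)                        # MAP assignment of the artifacts
```

Fixing the labeled rows keeps each component tied to its known source, so the unlabeled artifacts can refine the estimates without relabeling the reference samples.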

At the end of the process, the ‘mixmodLearn()’ function returns an instance of the ‘MixmodLearn’ class. Its two main attributes are:

results: a list of MixmodResults objects containing all the results, sorted in ascending order according to the given criterion (descending order for the CV criterion);

bestResult: a MixmodResults object that includes the best results from the model fitting.

The second function, mixmodPredict(), needs only two arguments: a matrix of data from the unlabeled observations and a classification rule, which passes the parameters stored in the ‘bestResult’ attribute to make the prediction. The algorithm returns an instance of the MixmodPredict class containing the predicted partitions and probabilities (Langrognet et al., Reference Langrognet, Lebret, Poli, Lovleff and Auder2025). For the classification of partially labeled data, two criteria are available: BIC and CV. For BIC, the log-likelihood of the partial labeling must be used, in which all the labels known a priori (the known labels z) remain fixed in step E of the EM algorithm (Lebret et al., Reference Lebret, Lovleff, Langrognet, Biernacki, Celeux and Govaert2015). CV, the default criterion, is a technique for evaluating how the results of a statistical analysis generalize to an independent dataset: the model is fitted on one subset of the data (the training set) and validated on another (the test set). By randomly partitioning the data into V blocks of approximately equal size, each block serving in turn as the test set, it obtains approximately unbiased estimates of the error rate (Lebret et al., Reference Lebret, Lovleff, Langrognet, Biernacki, Celeux and Govaert2015). The classification rule obtained from the model fitting includes the number of clusters, the selected model, and the best parameters of the model according to the chosen criterion (BIC or CV); under this scheme, it is possible to select the criterion that gives the best configuration.
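The V-block cross-validation idea can be illustrated with a short Python/NumPy sketch. To keep it self-contained, a simple nearest-centroid classifier stands in for the fitted mixture model; this substitution, the function names, the synthetic data, and V = 10 are our assumptions for illustration, not Rmixmod's internal procedure.

```python
import numpy as np

def fit_centroids(X, y):
    """Stand-in classifier: one mean vector per class."""
    classes = np.unique(y)
    return classes, np.array([X[y == c].mean(axis=0) for c in classes])

def predict_centroids(model, X):
    """Assign each row to the class with the nearest centroid."""
    classes, centroids = model
    d2 = ((X[:, None, :] - centroids[None, :, :]) ** 2).sum(axis=2)
    return classes[np.argmin(d2, axis=1)]

def v_fold_error(X, y, fit, predict, V=10, seed=0):
    """Cross-validated misclassification rate over V random blocks."""
    rng = np.random.default_rng(seed)
    blocks = np.array_split(rng.permutation(len(y)), V)  # ~equal-sized blocks
    n_wrong = 0
    for test_idx in blocks:
        train = np.ones(len(y), dtype=bool)
        train[test_idx] = False                  # hold out one block as the test set
        model = fit(X[train], y[train])          # fit on the remaining V - 1 blocks
        n_wrong += np.sum(predict(model, X[test_idx]) != y[test_idx])
    return n_wrong / len(y)

rng = np.random.default_rng(2)
X = np.vstack([rng.normal([0, 0], 1.0, (60, 2)), rng.normal([5, 5], 1.0, (60, 2))])
y = np.array([0] * 60 + [1] * 60)
err = v_fold_error(X, y, fit_centroids, predict_centroids, V=10)
```

Because every observation is predicted exactly once by a model that did not see it, the resulting error rate is a fairer estimate than the error on the training data.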

Finally, for displaying the estimated classification structure, we recommend using the R package ClusVis, which performs a Gaussian-based visualization using the conditional memberships of the model fitted to the input data. The method is built so that the displayed output, a mixture projected onto the plane R², matches the mixture underlying the input classification; this is justified because both objects are of the same nature (i.e., they are probabilistic objects) (Biernacki et al., Reference Biernacki, Marbac and Vandewalle2021). The output mixture consists of spherical Gaussian components, as many as in the initial clustering mixture. The accuracy of the mapping from the original mixture (f) to the final, projected mixture is evaluated through the normalized entropy difference δE between the two mixtures. δE takes values in [−1, 1], and δE = 0 is the most accurate value, meaning that the overlap between the components of the projected mixture matches the overlap between the components of f. As in other projection methods, the percentage of inertia explained by the axes can also be used as a measure of mapping quality: the closer it is to 100%, the better the fit of the model.

The ClusVis function requires two parameters: the logarithm of the classification probabilities of each specimen and the mixture proportions. By specifying the option add.obs = FALSE, the graph will represent the overlap between the groups. Each group is represented by its center, surrounded by a border at the 95% confidence level. For further details on the implementation of the R package Rmixmod, see Langrognet et al. (Reference Langrognet, Lebret, Poli, Lovleff and Auder2025); for the Gaussian-based visualization (ClusVis), see Biernacki et al. (Reference Biernacki, Marbac and Vandewalle2021).
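ClusVis takes as input the logarithms of the conditional membership probabilities t_ik and the mixture proportions. The Python/NumPy sketch below shows one way to compute log t_ik stably from component log-densities with the log-sum-exp trick, together with a normalized classification entropy that is 1 when all components overlap completely and near 0 when they are well separated. The function names are hypothetical, and the normalization by n log K is a common convention assumed here; it is not necessarily the exact definition of δE used by ClusVis.

```python
import numpy as np

def log_memberships(log_dens, props):
    """log t_ik from log phi_k(x_i) (an n x K array) and mixing proportions p_k."""
    a = log_dens + np.log(props)                 # log(p_k * phi_k(x_i))
    m = a.max(axis=1, keepdims=True)             # log-sum-exp for numerical stability
    return a - (m + np.log(np.exp(a - m).sum(axis=1, keepdims=True)))

def normalized_entropy(logtik):
    """Classification entropy -sum t_ik log t_ik divided by its maximum n log K."""
    n, K = logtik.shape
    t = np.exp(logtik)
    return float(-(t * logtik).sum() / (n * np.log(K)))

# Completely overlapping components: every t_ik equals 1/3
flat = log_memberships(np.zeros((5, 3)), np.full(3, 1 / 3))
# Well separated components: each t_ik is close to 0 or 1
sharp = log_memberships(np.array([[0.0, -50.0], [-50.0, 0.0]]), np.array([0.5, 0.5]))
```

Working on the log scale avoids underflow when some membership probabilities are extremely small, which is common for well-separated sources.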

8 Model Validation

In most archaeometry research, exploratory techniques are used without validating the results. However, validating statistical results is mandatory: it allows the initial research hypotheses to be confirmed and statistically verifies the clustering or classification obtained, since many traditional clustering algorithms generate a partition of the sample space regardless of whether the data have a clusterable structure (Duda et al., Reference Duda, Hart and Stork2001; Xu & Wunsch, Reference Xu and Wunsch2005). These methods impose certain constraints on the behavior of the data; if the actual clusters have a geometric shape that does not match the objective function of the clustering algorithm, the data are forced to fit that objective function, producing misleading results (Thrun, Reference Thrun2018). For example, Ward’s method works efficiently only when clusters are spherical, leads to misclassifications when clusters deviate from this assumption, and is not robust to outliers.
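The point that a partitioning algorithm returns groups whether or not they exist is easy to check. The Python/NumPy sketch below uses plain k-means (Lloyd's algorithm) rather than Ward's method, purely for brevity, on uniformly distributed points that have no cluster structure at all; the function name, the choice k = 3, and the data are illustrative assumptions.

```python
import numpy as np

def kmeans(X, k, n_iter=100, seed=0):
    """Plain Lloyd's k-means: partitions X into (up to) k groups, structure or not."""
    rng = np.random.default_rng(seed)
    centers = X[rng.choice(len(X), size=k, replace=False)]
    for _ in range(n_iter):
        # Assign every point to its nearest center
        d2 = ((X[:, None, :] - centers[None, :, :]) ** 2).sum(axis=2)
        labels = d2.argmin(axis=1)
        # Move each center to the mean of its points (keep it if the group is empty)
        new_centers = np.array([X[labels == j].mean(axis=0) if np.any(labels == j)
                                else centers[j] for j in range(k)])
        if np.allclose(new_centers, centers):
            break
        centers = new_centers
    return labels, centers

rng = np.random.default_rng(42)
X = rng.uniform(0.0, 1.0, size=(300, 2))   # uniform cloud: no natural groups
labels, centers = kmeans(X, k=3)
print(np.bincount(labels, minlength=3))    # three well-populated "groups" anyway
```

Nothing in the output warns that the three groups are artifacts of the algorithm, which is precisely why clustering results need independent validation.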