Introduction

Systematic tracking of participant contacts is essential for maintaining study validity, as incomplete contact documentation and loss to follow-up can introduce bias and undermine generalizability [Reference Hunt and White1]. However, most clinical research management systems document study contacts only after enrollment begins [Reference Shen, Zhai, Pan, Zhao, Zhou and Liu2,Reference Köpcke and Prokosch3]. This pattern likely reflects the absence of reporting requirements for pre-consent recruitment activity, as well as the highly variable nature of recruitment workflows. Indeed, identifying eligible patients often requires significant manual effort, with research teams frequently relying on ad hoc tools such as spreadsheets and email to track recruitment contacts longitudinally [Reference Fitzer, Haeuslschmid and Blasini4]. As a result, few tools support the systematic monitoring of outreach and contact attempts prior to enrollment, despite their importance for understanding recruitment efficiency, yield, and representativeness.

When implemented, recruitment monitoring tools can provide real-time insight into enrollment patterns, facilitate identification of hard-to-recruit subgroups, and enable adaptive strategies (e.g., re-contacting or switching recruitment modes) to enhance participation [Reference Park, Yoon and Koo5,Reference Dowling, Olson, Mish, Kaprakattu and Gleason6]. These capabilities are especially important in rare disease research, where recruitment inefficiencies driven by limited eligible populations, heterogeneous or fragmented care delivery, and variable site engagement constrain recruitment efficiency and yield [Reference Whicher, Philbin and Aronson7,Reference Subbiah, Othus, Palma, Cuglievan and Kurzrock8]. This constrained and fragmented research landscape is also true in studies of neuroendocrine tumors (NETs), where available trials remain relatively few, are often small or early-phase, and are unevenly distributed geographically [Reference Melhorn, Sadeghi, Raderer and Kiesewetter9], underscoring the need for efficient, multi-site approaches to identifying, engaging, and enrolling patients across diverse care settings.

The Neuroendocrine Tumors – Patient Reported Outcomes (NET-PRO) study is a multicenter cohort designed to compare treatment approaches for gastroenteropancreatic (GEP) and lung NETs on patient outcomes [Reference ORorke and Chrischilles10]. To minimize nonparticipation bias, NET-PRO employed both online and postal recruitment and developed a centralized tool (Supplemental Material) to track and manage recruitment efforts across sites. This paper illustrates how recruitment monitoring data can be used to optimize enrollment processes and improve representativeness in rare disease research.

Materials and methods

Study population

NET-PRO is a cohort of 2,538 patients aged ≥18 years diagnosed with a GEP or lung NET between January 1, 2018, and September 30, 2024, identified across 14 medical centers from four PCORnet networks. Eligible patients had ≥1 clinical encounter at the recruiting site during this period; those with a prior NET diagnosis before 2018 were excluded. Additional exclusions included duplicate or ineligible consents and participants with missing or conflicting tumor site reports (Supplementary Figure 1).

Participating centers included Allina Health, Kansas University Medical Center, Mayo Clinic, Medical College of Wisconsin, Medical University of South Carolina, The Ohio State University, University of Florida, University of Iowa, University of Michigan, University of North Carolina, University of Pittsburgh Medical Center, University of Texas Southwestern, University of Utah, and Vanderbilt University Medical Center. The University of Iowa served as the coordinating center for the study and was the Institutional Review Board (IRB) of record for all sites (ID#: 202104599) except Vanderbilt University (ID#: 221155).

Recruitment and enrollment

Enrollment began January 10, 2022, and concluded February 20, 2025. Enrollment was defined as completion of both informed consent and the baseline survey; individuals who consented but did not complete the baseline survey were not considered enrolled for analytic purposes. A validated computable phenotype was used to identify potentially eligible patients via electronic medical records (EMRs), with tailored versions for different strategies: a low-touch, high-specificity phenotype (for email/mail), a high-sensitivity version (for in-person/chart review), and a tumor registry-based approach leveraging structured oncology data. Patients identified via computable phenotypes or tumor registry queries were subsequently entered into the REDCap participation monitoring workflow, which linked case identification with outreach tracking and enrollment management.

Sites could use multiple outreach methods (email, phone, mail, in-clinic), depending on local resources, patient preferences, and regulatory requirements. Most patients were emailed a unique link to the study portal where informed consent was obtained and a baseline survey administered [Reference Hourcade, ORorke and Chrischilles11]. Sites also used mailed packets for patients without email, for those not responding to email or other approaches, or upon patient request.

A centralized participation monitoring project was developed using Research Electronic Data Capture (REDCap) tools hosted at The University of Iowa for tracking recruitment and enrollment events. REDCap is a secure, web-based software platform designed to support data capture for research studies [Reference Harris, Taylor and Minor12,Reference Harris, Taylor, Thielke, Payne, Gonzalez and Conde13]. Each site received a REDCap project template containing reports and a dashboard for case tracking that could be used to log patient contacts, track status (e.g., declines, withdrawals, deceased), and send email invitations. A subset of shared fields enabled sites to share recruitment data with the study coordinating center, while protecting patient personal health information (PHI). Similarly, individual sites could download a report from the study coordinating center containing status updates (e.g., patient enrolled or declined; patient reported as deceased, etc.) for import into their site project. This approach supported sites in near real-time to exclude cases from subsequent recruitment efforts and to monitor recruitment progress.

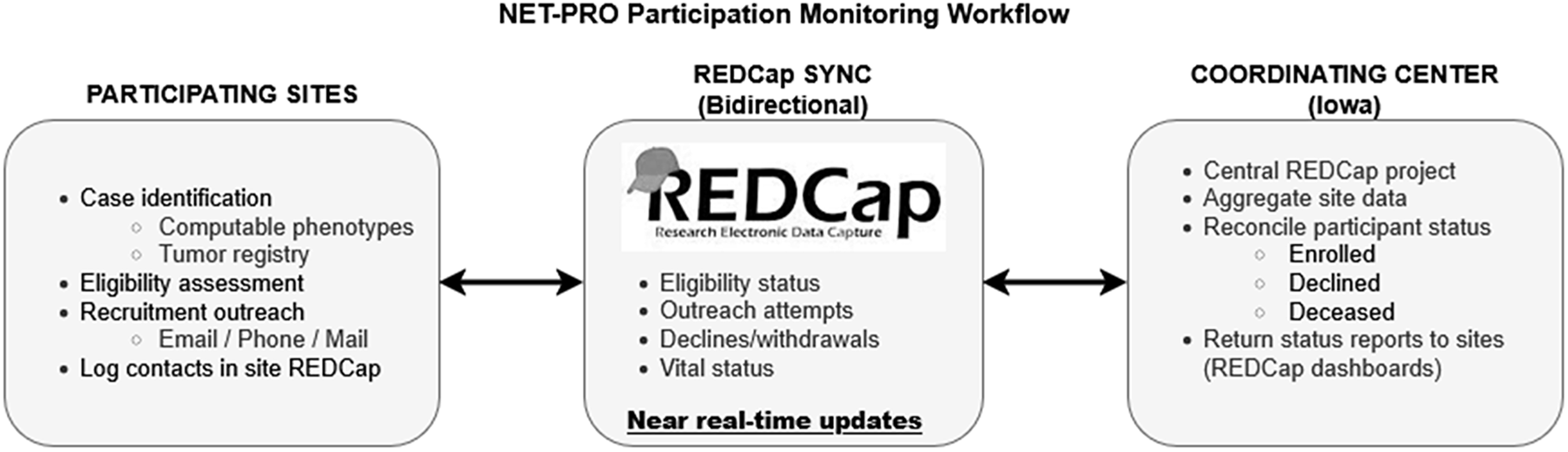

Participating sites were responsible for local case identification, eligibility assessment, and recruitment outreach, including application of computable phenotypes, initiation of contact attempts, and logging of recruitment contacts, while the coordinating center hosted the centralized participation monitoring infrastructure, aggregated recruitment status data across sites, and returned updated enrollment status reports to support coordinated recruitment and prevent redundant outreach. The division of responsibilities and bidirectional flow of recruitment information are illustrated in Figure 1. All sites were encouraged to use the participation monitoring process, although implementation varied by local resources and regulatory constraints. The system was operationally validated through routine cross-site use, including reconciliation of enrollment status and exclusion of ineligible or previously enrolled patients from further outreach. Three sites (Iowa, MUSC, and UTSW) recorded all potentially eligible patients before screening; others logged only those they intended to contact. These three sites maintained comprehensive screening logs of all potentially eligible patients prior to outreach, reflecting local regulatory approvals and staffing capacity to document precontact eligibility; other sites recorded only patients they intended to contact due to institutional policies or resource constraints.

NET-PRO participation monitoring workflow. Participating sites identified potentially eligible patients using validated computable phenotypes, tumor registry queries, or manual chart review. Eligibility assessment and recruitment outreach (email, phone, mail, or in-clinic) were conducted locally and logged in site-level REDCap projects. A centralized REDCap participation monitoring project, hosted by the coordinating center, enabled bidirectional exchange of limited recruitment and status data, including eligibility confirmation, outreach attempts, enrollment, declines, and vital status. Near–real-time synchronization allowed sites to exclude patients who had already enrolled, declined participation, or were deceased, and supported adaptive recruitment strategies across sites. Enrollment was defined by completion of informed consent and baseline survey, either through the online study portal or via mailed materials.

Study variables and analysis

Surveys were completed online or by mail, with enrollment mode defined by the mode of informed consent and baseline survey completion (online study portal versus mailed paper materials), collecting a range of patient-reported data [Reference ORorke and Chrischilles10]. In-person consent was used infrequently in this study; “online” enrollment refers exclusively to consent and survey completion via the study portal. For this analysis, we assessed patterns in enrollment mode and recruitment difficulty (number of contact attempts before consent) across sociodemographic characteristics, tumor stage, and comorbidities. We also examined the mix of contact methods (e.g., email, phone, in-clinic, mail) across recruitment attempts (email, EMR message, phone call, in-clinic, letter, mailed study packet). Because participation-related factors may confound treatment-outcome associations, we explored differences in tumor stage and comorbidities by enrollment mode and recruitment difficulty, both potentially related to treatment choices and patient-reported outcomes.

We used standardized mean differences (SMDs) [Reference Flury and Riedwyl14–Reference Yoshida, Bartel and Chipman17] to compare enrollment mode groups (with a SMD ≥ 0.2, 0.5, and 0.8 indicating small, medium, and large effect sizes, respectively) [Reference Faraone18]. For recruitment difficulty (individuals who required multiple contact attempts before consenting to participate in the study), categorical variables were compared across difficulty groups using Pearson’s chi-square test or Fisher’s exact test, if any cell in the contingency table had fewer than five observations. Continuous variables were compared using one-way ANOVA.

Results

Response and completion rates

Of 9279 eligible patients contacted (range: 1–11 contacts overall; 1–9 among enrollees), 2675 consented (response rate: 28.8%), and 2538 enrolled (27.4%) (Figure 1, Table 1). Most enrolled online (n = 2,111; 83.2%) while 427 (16.8%) enrolled by mail. Among three sites that recorded all potentially eligible patients the proportion deemed eligible after medical record review ranged from 41.6% to 62.3%.

Survey response and completion rates

a Applies only to the three study sites that used the database to load all patients they intended to screen. Eligible proportion is the number contacted divided by the number screened at those sites.

b Response proportion is the number who consented divided by the number contacted.

c Enrolled proportion is the number who completed the baseline survey divided by the number contacted.

Iowa =University of Iowa, MUSC= Medical University of South Carolina, UTSW = University of Texas South Western.

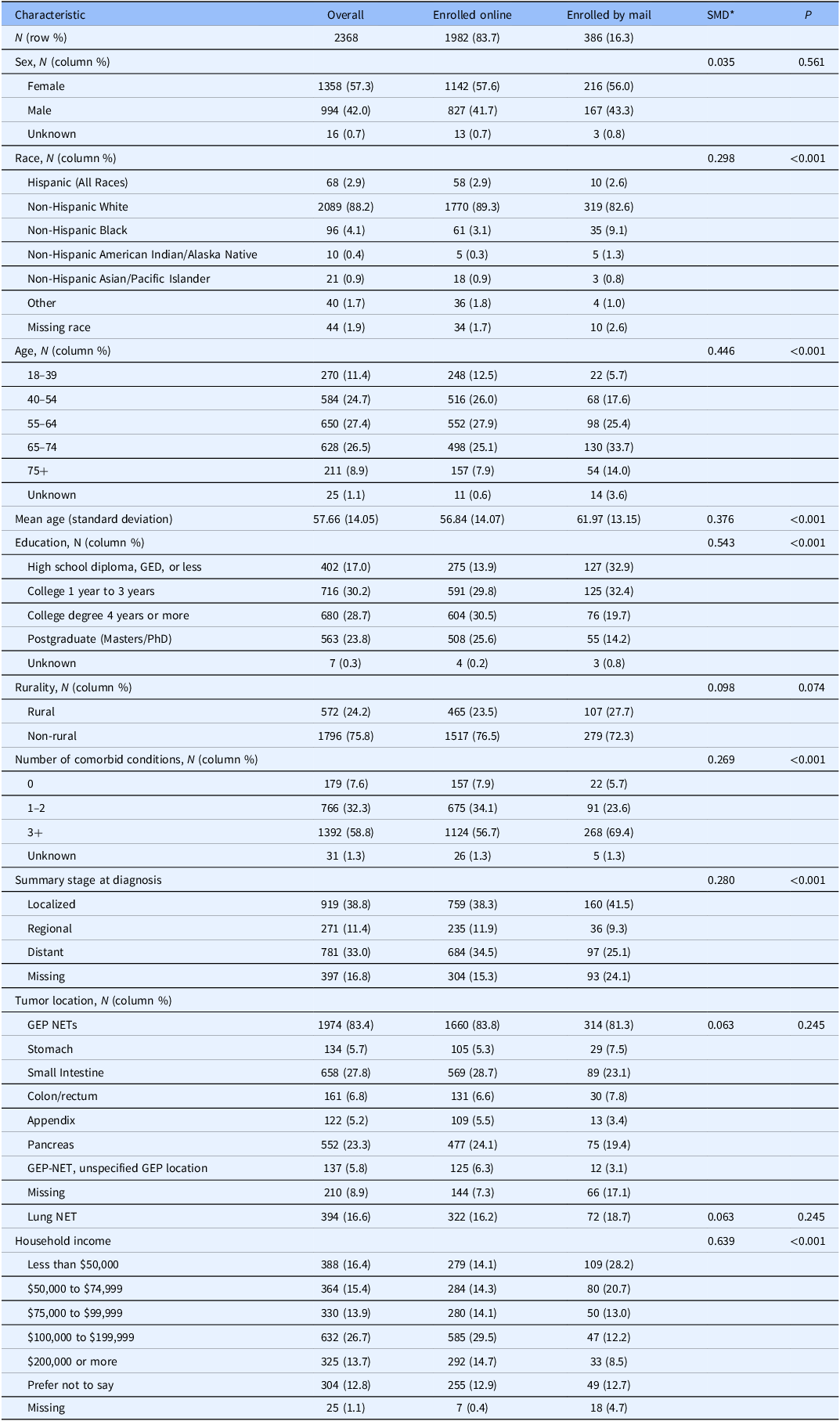

Participant characteristics by enrollment mode

There were substantial differences in sociodemographic and health status characteristics between those who enrolled online versus by mail. Mail enrollees were older (mean difference: 4.7 years; p < 0.001), had lower educational attainment and income (both p < 0.001), and reported more comorbidities (p < 0.001) than online enrollees (Table 2). Non-Hispanic Black participants were more likely to enroll by mail. There were no differences by sex or tumor site, though tumor stage was more often missing among mail respondents.

Participant characteristics by enrollment mode

* Standardized mean differences (SMDs) were computed as the difference in means divided by the pooled standard deviation for continuous variables. For categorical variables, SMDs were calculated based on differences in proportions across groups (9-12).

N = Number of participants, GED = General Educational Development (high school equivalency), PhD = Doctor of Philosophy, NET = Neuroendocrine tumor, GEP NETs / GEP-NET = Gastroenteropancreatic neuroendocrine tumors, SD = Standard deviation, P = P-value, + = Indicates “or more.”

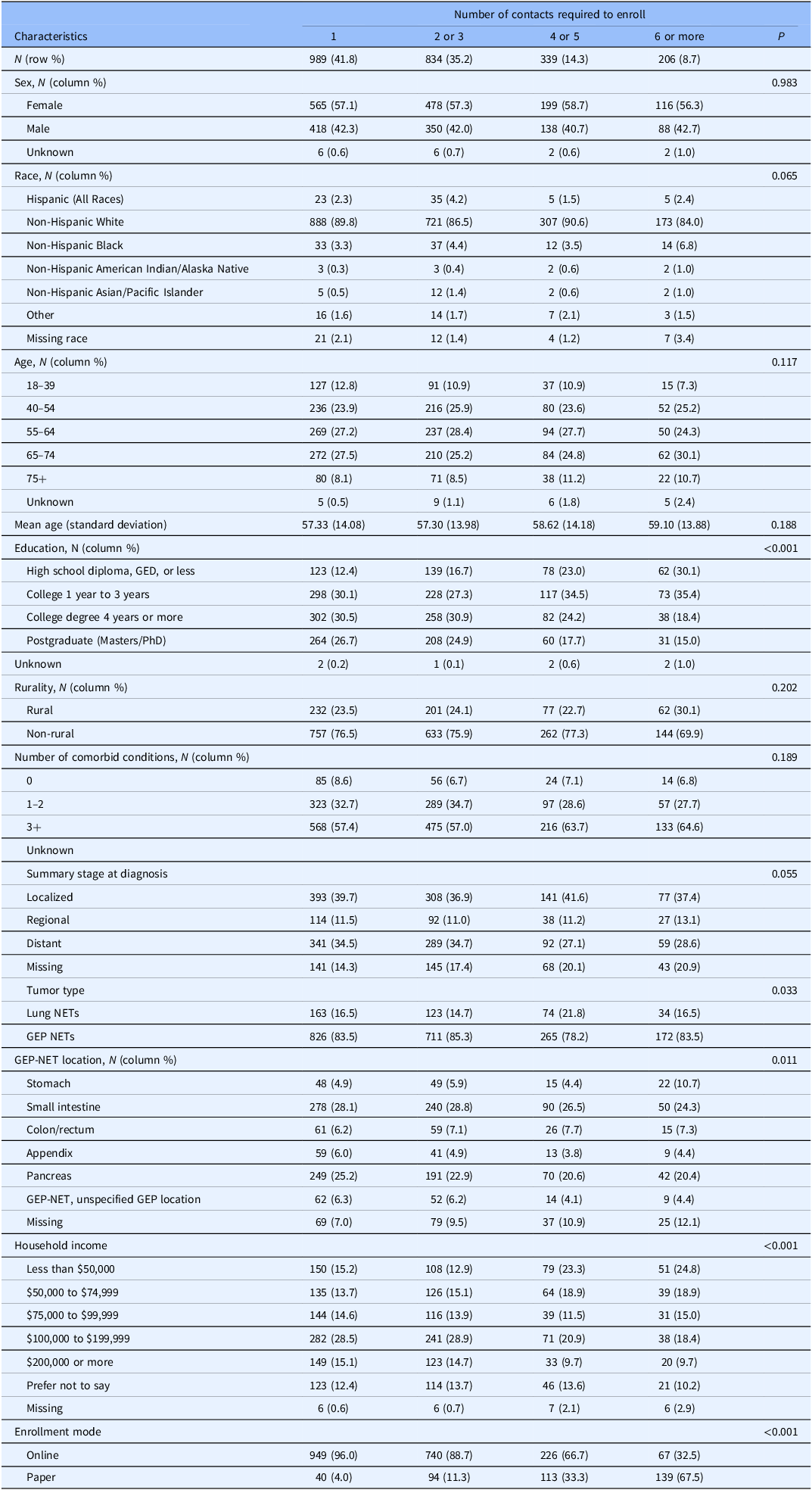

Participant characteristics by recruitment difficulty

Most participants enrolled after one (41.8%) or two to three contacts (35.2%), while 14.3% required four to five, and 8.7% needed six or more contacts (Table 3). Participants who were more difficult to enroll, as measured by a higher number of recruitment contacts prior to enrollment, tended to be older, have lower income and education, and had higher rates of missing tumor site and stage data (Table 3). There was no clear pattern by tumor stage or GEP-NET location. Recruitment difficulty and enrollment mode were related but not synonymous. While harder-to-reach participants, individuals requiring a higher number of contact attempts prior to consenting to participate (reflecting increased recruitment effort rather than lack of interest or disengagement), were more likely to enroll by mail (p = <0.001), the majority of harder-to-reach participants nonetheless completed enrollment online.

Influence of recruitment difficulty on enrolled participant characteristics

*Categorical variables were compared across groups using Pearson’s chi-square test or Fisher’s exact test, if any cell in the contingency table had fewer than five observations. Continuous variables were compared using one-way ANOVA.

N = Number of participants, GED = General Educational Development (high school equivalency), NET = Neuroendocrine tumor, GEP NETs / GEP-NET = Gastroenteropancreatic neuroendocrine tumors, SD = Standard deviation, P = P-value, ANOVA = Analysis of variance, + = Indicates “or more.”

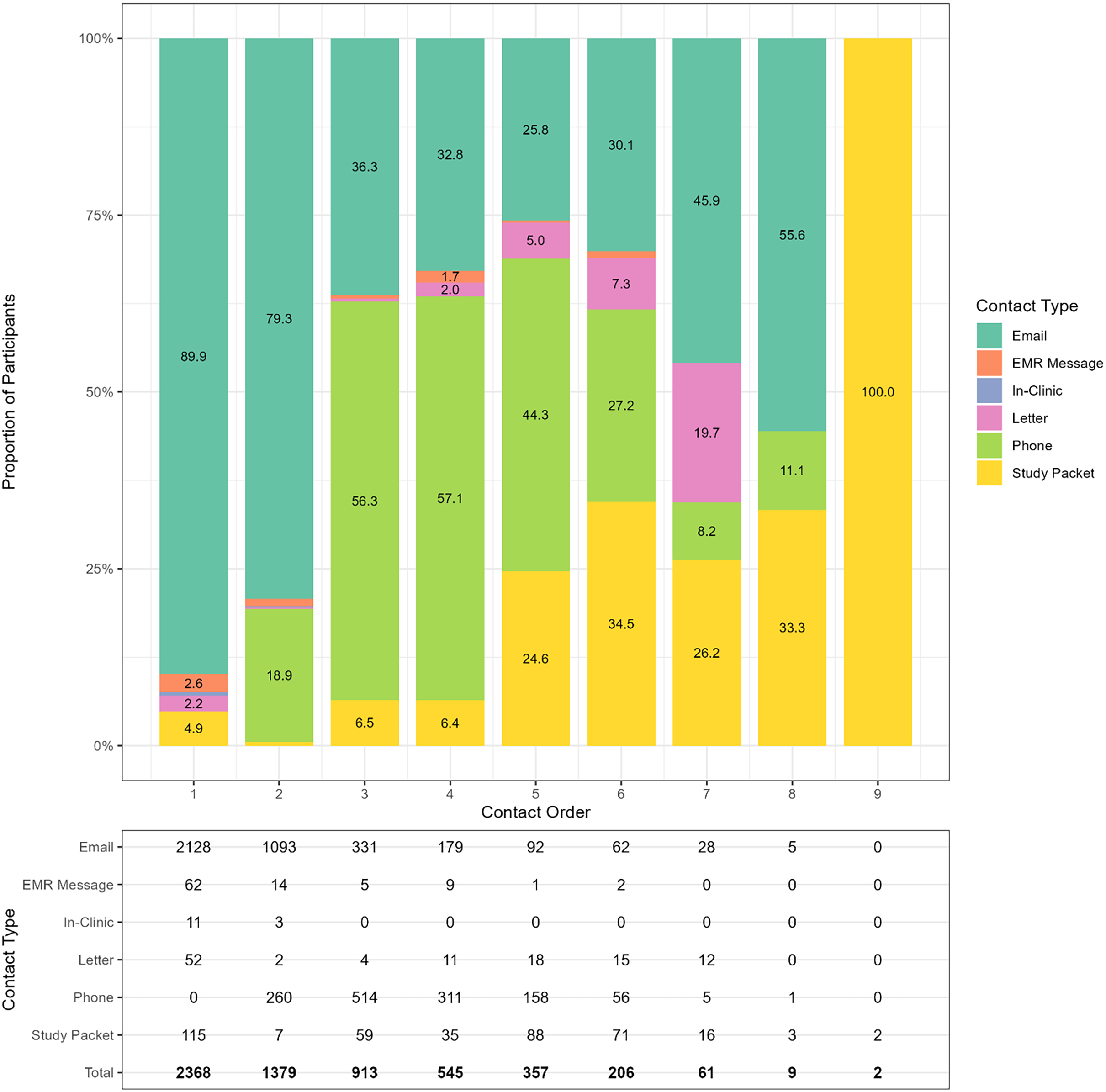

Recruitment mode by contact attempt

Recruitment efforts evolved across contact attempts (Figure 2). While 89.9% of first contacts were via email, this dropped to 25.8% by the fifth attempt, then rose to 55.6% by the eighth. Mailed packets were typically initiated after the fourth attempt. Phone calls began as early as the second contact and accounted for over half of third and fourth contacts, highlighting their importance in engaging harder-to-reach participants and prompting consent among those not responsive to earlier email invitations.

Stacked bar chart showing the distribution of contact types across successive contact attempts. The x-axis represents the contact order (1st attempt, 2nd attempt, etc.), while the y-axis indicates the proportion of each contact type (e.g., email, phone, letter, etc.) used at each attempt. The numbers in the data box indicate the number of participants per contact order by contact type.

Discussion

In this multicenter PCORnet rare disease study, deploying multiple recruitment modes improved participation while supporting broader inclusion. The participation monitoring tool allowed sites to document recruitment contacts, determine when outreach should stop (e.g., after enrollment or decline), and adapt recruitment strategies over time.

Substantial sociodemographic differences were observed by enrollment mode and recruitment difficulty (Tables 2 and 3). Mail enrollees and harder-to-recruit participants were generally older, had lower educational attainment and household income, and were more likely to identify as non-Hispanic Black. These findings indicate that tracking recruitment effort and offering multiple recruitment pathways can facilitate inclusion of individuals who are often underrepresented in electronic-only recruitment strategies. Consistent with prior survey research, reliance on electronic enrollment alone may disproportionately exclude older adults and individuals with lower socioeconomic status [Reference Edwards, Roberts, Clarke, DiGuiseppi, Woolf and Perkins19,Reference Tolonen, Helakorpi, Talala, Helasoja, Martelin and Prättälä20]. While mixed-mode approaches may increase participation, they do not necessarily mitigate sociodemographic disparities [Reference Grønkjær, Elsborg and Lau21]. These patterns are particularly salient in rare disease research, where small eligible populations, fragmented care pathways, and limited trial availability often necessitate sustained, multi-site recruitment efforts and adaptive outreach strategies [Reference Whicher, Philbin and Aronson7,Reference Melhorn, Sadeghi, Raderer and Kiesewetter9,Reference Griggs, Batshaw and Dunkle22].

In contrast, associations between clinical characteristics and enrollment mode or recruitment difficulty were modest. Mail enrollees reported more comorbidities, and participants requiring more contact attempts were more likely to have missing self-reported tumor stage data (Table 2). This is relevant for observational comparative effectiveness research, as factors such as comorbidity and tumor stage influence both treatment selection and outcomes (quality of life or tumor progression). The absence of strong or consistent differences in clinical characteristics between easier and harder-to-recruit participants may therefore be reassuring, suggesting limited selection bias with respect to overall health status (Table 3). The need for repeated contact among certain subgroups aligns with prior recruitment studies showing that varied and sustained outreach is often required to engage individuals who are not responsive to initial electronic invitations [Reference Edwards, Roberts, Clarke, DiGuiseppi, Woolf and Perkins19,Reference Grønkjær, Elsborg and Lau21]. This pattern is clearly illustrated in Figure 2, which depicts the temporal evolution of outreach methods across successive contact attempts.

This study also demonstrates the feasibility of using EMR computer algorithms (also called computable phenotypes) to support recruitment in rare disease research. For the NET-PRO study, all participating sites used one or more computable phenotypes to identify potentially eligible patients, and three sites (Iowa, MUSC, and UTSW) recorded all patients screened for eligibility (Table 1). This allowed an estimation of the eligible proportions for a rare disease computable phenotype approach to recruitment. These proportions ranged from 42% to 62%, consistent with prior frameworks emphasizing that phenotype performance varies with clinical complexity, data availability, and algorithm design choices [Reference Carrell, Floyd and Gruber23]. Thus, differences across sites likely reflect the specific phenotyping strategies employed, including high-touch (low sensitivity) and low-touch (high positive predictive value) EHR-based approaches and tumor registry or other site-specific data queries. Nevertheless, these findings support the feasibility of efficiently identifying eligible participants in a rare disease cohort.

Finally, this analysis also highlights the advantages of using REDCap for participation monitoring rather than developing bespoke software or licensing specialized commercial platforms [Reference Palac, Alam, Kaiser, Ciolino, Lattie and Mohr24]. REDCap is available at no cost to academic, nonprofit, and government organizations [Reference Harris, Taylor, Thielke, Payne, Gonzalez and Conde13]. This widespread availability enabled the University of Iowa coordinating center to deploy a centralized monitoring project and share standardized site-level templates, facilitating harmonized tracking of recruitment and enrollment across sites.

Although assessment of representativeness was not a prespecified study aim, observed differences by enrollment mode and recruitment difficulty provide insight into how recruitment strategies may influence cohort composition. These findings are therefore interpreted cautiously as implications of recruitment processes rather than as formal assessments of population representativeness, particularly given the use of English-only recruitment materials and other pragmatic constraints. Additional limitations include the inability to estimate eligibility proportions at sites that did not track patients prior to eligibility confirmation, and reliance on self-reported tumor stage, though EHR-based stage abstraction is currently underway.

Conclusion

In this multicenter study, a centralized participation monitoring tool supported substantial participation while enabling systematic documentation of recruitment effort and enrollment outcomes. By tracking recruitment contacts across sites and modes, this approach allowed adaptive outreach, reduced redundant contact, and facilitated empirical evaluation of how recruitment effort influenced cohort composition. In rare disease research, where eligible populations are limited and recruitment inefficiencies can substantially constrain study feasibility, this pragmatic, REDCap-based infrastructure offers a scalable solution readily transferable to other multi-site observational studies and pragmatic trials.

Supplementary material

The supplementary material for this article can be found at https://doi.org/10.1017/cts.2026.10703.

Acknowledgements

We gratefully acknowledge the patients who generously contributed their time and experiences to the NET-PRO study. Their participation made this research possible and meaningful. We also extend our sincere thanks to the dedicated site personnel who comprised the NET-PRO study team across the PCORnet CRNs. We thank our stakeholders, patient advocacy organizations (NorCalCarciNET community, Healing NET Foundation, Neuroendocrine Cancer Awareness Network and the Neuroendocrine Tumor Research Foundation) and patient representatives who actively engaged with the study. Their insights and partnership were critical in shaping the study’s design, conduct, and relevance to the broader neuroendocrine tumor community. Finally, we are indebted to the Patient-Centered Outcomes Research Institute (PCORI) for their support, particularly our program officer and the broader PCORI staff, whose guidance and oversight were invaluable throughout the course of this project.

Author contributions

Michael A. O’Rorke: Conceptualization, Data curation, Formal analysis, Funding acquisition, Investigation, Methodology, Project administration, Resources, Supervision, Validation, Visualization, Writing-original draft, Writing-review and editing; Brian M. Gryzlak: Conceptualization, Data curation, Investigation, Methodology, Project administration, Resources, Software, Supervision, Writing-original draft, Writing-review and editing; Tao Xu: Data curation, Investigation, Methodology, Project administration, Resources, Software, Writing-original draft, Writing-review and editing; Bradley D. McDowell: Data curation, Investigation, Methodology, Project administration, Resources, Writing-original draft, Writing-review and editing; Rhonda R. DeCook: Data curation, Formal analysis, Investigation, Methodology, Project administration, Software, Writing-original draft, Writing-review and editing; Nicholas J. Rudzianski: Data curation, Investigation, Methodology, Project administration, Resources, Writing-original draft, Writing-review and editing; Kimberly C. Serrano: Investigation, Project administration, Writing-original draft, Writing-review and editing; Abigayle M. Wehrheim: Investigation, Project administration, Writing-original draft, Writing-review and editing; Udhayvir S. Grewal: Investigation, Writing-original draft, Writing-review and editing; Chandrikha Chandrasekharan: Data curation, Investigation, Writing-original draft, Writing-review and editing; Joseph S. Dillon: Data curation, Investigation, Methodology, Writing-original draft, Writing-review and editing; Thorvardur R. Halfdanarson: Data curation, Investigation, Writing-original draft, Writing-review and editing; Michael J. Schnell: Project administration, Writing-original draft, Writing-review and editing; Carrie L. Witter: Project administration, Writing-original draft, Writing-review and editing; T Gamblin: Data curation, Investigation, Writing-original draft, Writing-review and editing; Syed Kazmi: Data curation, Investigation, Writing-original draft, Writing-review and editing; Lindsay G. Cowell: Data curation, Investigation, Writing-original draft, Writing-review and editing; Tobias Else: Data curation, Investigation, Writing-original draft, Writing-review and editing; Heloisa P. Soares: Data curation, Investigation, Writing-original draft, Writing-review and editing; Vineeth Sukrithan: Data curation, Investigation, Writing-original draft, Writing-review and editing; Sravani Chandaka: Data curation, Investigation, Writing-original draft, Writing-review and editing; Hanna K. Sanoff: Data curation, Investigation, Writing-original draft, Writing-review and editing; Fiona C. He: Data curation, Investigation, Writing-original draft, Writing-review and editing; David A. Geller: Data curation, Investigation, Writing-original draft, Writing-review and editing; Robert A. Ramirez: Data curation, Investigation, Writing-original draft, Writing-review and editing; Mei Liu: Data curation, Investigation, Writing-original draft, Writing-review and editing; William Lancaster: Data curation, Investigation, Writing-original draft, Writing-review and editing; Josh A. Mailman: Conceptualization, Funding acquisition, Investigation, Project administration, Writing-original draft, Writing-review and editing; Heather Moran: Investigation, Project administration, Writing-original draft, Writing-review and editing; Maryann Wahmann: Investigation, Project administration, Writing-original draft, Writing-review and editing; Elyse Gellerman: Investigation, Project administration, Writing-original draft,Writing-review and editing; Elizabeth Chrischilles: Conceptualization, Data curation, Funding acquisition, Investigation, Project administration, Resources, Visualization, Writing-original draft, Writing-review and editing.

Funding statement

Research reported in this paper is funded through a Patient-Centered Outcomes Research Institute (PCORI) Award (RD-2020C2-20329); the National Center for Advancing Translational Sciences of the National Institutes of Health (UM1TR004403); and shared resources administered under a Cancer Center support Grant (P30 CA086862). The content is solely the responsibility of the authors and does not necessarily represent the official views of PCORI, or the National Institutes of Health.

Competing interests

None.