1 Introduction

Cognitive diagnostic models (CDMs) are a family of restricted latent class models that describe how a person’s underlying discrete latent traits, in conjunction with item characteristics, influence their responses to test items. CDMs provide fine-grained diagnostic information about whether an individual has mastered specific skills or attributes. This detailed feedback makes CDMs particularly valuable in a wide range of applications across education, behavioral sciences, and social sciences (Chen et al., Reference Chen, Liu, Xu and Ying2015; De La Torre & Douglas, Reference De La Torre and Douglas2004, Reference De La Torre and Douglas2008; Tatsuoka, Reference Tatsuoka1984; Von Davier, Reference Von Davier2008).

As a class of confirmatory psychometric models, CDMs require several key components to be specified prior to application to a dataset. Two essential latent structures must be defined. The first is the Q-matrix (Tatsuoka, Reference Tatsuoka1983), which specifies the relationship between items and the attributes they measure. The second structure concerns the relationships among the latent attributes themselves. Although many earlier studies did not impose any assumptions about hierarchical structures among attributes, accumulating evidence suggests that hierarchical dependencies are frequently present in practice. For example, in mathematics, multiplication is essentially a simplified form of addition, such as

![]() $2 \times 4 = 2 + 2 + 2 + 2 = 8$

. Therefore, mastering addition is widely regarded as a fundamental prerequisite for mastering multiplication. Recognizing such dependencies, scholars have explored ways to incorporate attribute hierarchies into CDMs. As a result, a growing body of methodological research has aimed to formally integrate hierarchical structures—where mastery of one attribute is required before another—into CDMs (e.g., Leighton et al., Reference Leighton, Gierl and Hunka2004; Ma & Xu, Reference Ma and Xu2022; Templin & Bradshaw, Reference Templin and Bradshaw2014).

$2 \times 4 = 2 + 2 + 2 + 2 = 8$

. Therefore, mastering addition is widely regarded as a fundamental prerequisite for mastering multiplication. Recognizing such dependencies, scholars have explored ways to incorporate attribute hierarchies into CDMs. As a result, a growing body of methodological research has aimed to formally integrate hierarchical structures—where mastery of one attribute is required before another—into CDMs (e.g., Leighton et al., Reference Leighton, Gierl and Hunka2004; Ma & Xu, Reference Ma and Xu2022; Templin & Bradshaw, Reference Templin and Bradshaw2014).

In many CDM-related studies, the Q-matrix and attribute hierarchy are often fully or partially specified by test developers based on cognitive theory or expert judgment. More recently, data-driven approaches have emerged that estimate the Q-matrix, attribute hierarchy, or both directly from examinees’ response data. The literature on Q-matrix estimation can be broadly divided into two categories: validation and refinement of Q-matrix with partial knowledge (Chiu, Reference Chiu2013; DeCarlo, Reference DeCarlo2012; de la Torre & Chiu, Reference de la Torre and Chiu2016; Templin & Henson, Reference Templin and Henson2006; Wang et al., Reference Wang, Cai and Tu2020), and completely data-driven estimation of unknown Q-matrix (Chen et al., Reference Chen, Liu, Xu and Ying2015; Chen et al., Reference Chen, Culpepper, Chen and Douglas2018; Chung, Reference Chung2019; Liu et al., Reference Liu, Xu and Ying2012, Reference Liu, Xu and Ying2013; Xu & Shang, Reference Xu and Shang2018). The estimation methods include both frequentist (Chen et al., Reference Chen, Liu, Xu and Ying2015; Liu et al., Reference Liu, Xu and Ying2012, Reference Liu, Xu and Ying2013; Xiang, Reference Xiang2013) and Bayesian approaches (Chen et al., Reference Chen, Culpepper, Chen and Douglas2018; Chung, Reference Chung2019; Culpepper, Reference Culpepper2019; Templin & Henson, Reference Templin and Henson2006).

For the estimation of attribute hierarchy structures, Wang and Lu (Reference Wang and Lu2021) introduced two exploratory methods using regularization techniques to learn hierarchies directly from data. Liu et al. (Reference Liu, Xin and Jiang2022) proposed a z-statistic approach to assess the significance of structural parameter estimates, and Zhang et al. (Reference Zhang, Jiang, Xin and Liu2024) refined this method for improved accuracy. Moreover, Lee and Gu (Reference Lee and Gu2024) proposed the latent conjunctive Bayesian networks (LCBNs) to unify the attribute hierarchy and the Bayesian network, and developed a two-step EM algorithm to estimate the hierarchy structure and item parameters. Assuming a known Q-matrix, Chen and Wang (Reference Chen and Wang2023) developed a Bayesian framework for estimating attribute hierarchies using a Metropolis-within-Gibbs sampler.

A few studies have sought to estimate both the Q-matrix and the attribute hierarchy jointly. For example, Ma et al. (Reference Ma, Ouyang and Xu2023) proposed a penalized likelihood approach to first select significant latent classes and item parameter structures, then recover the number of attributes, the attribute hierarchy, the Q-matrix, and item-level models. These developments reflect a growing interest in flexible, data-driven frameworks for cognitive diagnosis modeling.

The goal of this study is to address the problem of simultaneously learning the Q-matrix, attribute hierarchy, and CDM parameters from examinees’ item response data. Building on the deterministic inputs, noisy “and” gate (DINA) model, we propose a Bayesian estimation method that jointly estimates the attribute hierarchy, Q-matrix, item parameters, and examinees’ latent attribute profiles—without assuming prior knowledge of either the Q-matrix or the attribute hierarchy. Our approach extends the work of Chen and Wang (Reference Chen and Wang2023) on attribute hierarchy learning and Chung (Reference Chung2019) on Q-matrix estimation by developing a Metropolis–Hastings within Gibbs (MH-Gibbs) algorithm to estimate all parameters in a unified framework. To improve computational efficiency, we further incorporate a mini-batch strategy, enabling faster estimation while maintaining accuracy. Moreover, compared with Ma et al. (Reference Ma, Ouyang and Xu2023), which adopts a two-step procedure to estimate the attribute hierarchy and the Q-matrix, our method allows for the simultaneous estimation of all parameters in a unified framework. Moreover, unlike deterministic methods, our Bayesian approach summarizes the estimation of

![]() $\text {Q}$

and

$\text {Q}$

and

![]() $\text {G}$

based on posterior samples. This enables a more flexible, theory-driven validation process, where experts can evaluate the plausibility of multiple high-probability structures informed by domain knowledge, rather than relying solely on a single point estimate.

$\text {G}$

based on posterior samples. This enables a more flexible, theory-driven validation process, where experts can evaluate the plausibility of multiple high-probability structures informed by domain knowledge, rather than relying solely on a single point estimate.

In the remainder of this article, we first present the problem setup in Section 2. Section 3 introduces our proposed Bayesian estimation framework and details the development of an MH-Gibbs algorithm for jointly estimating the attribute hierarchy, Q-matrix, item parameters, and examinees’ latent attribute profiles under the DINA model. In Section 4, we provide further discussions on several issues of implementing the algorithm to obtain final point estimation of model parameters, including the selection of mini-batch sample size, the potential label-switching issues, and the determination of cut-off values for finalizing the latent structures. In Section 5, we conduct a comprehensive simulation study to evaluate the performance of the proposed algorithm and investigate the impact of various data conditions. Section 6 applies the proposed method to a real dataset to further demonstrate its practical utility. Finally, Section 7 concludes the article with a summary of key findings and outlines directions for future research.

2 Preliminaries: Model setup

2.1 DINA model

To introduce DINA model formulation, let

![]() $i~(i=1,\ldots ,N)$

denote examinees,

$i~(i=1,\ldots ,N)$

denote examinees,

![]() $j~(j=1,\ldots ,J)$

denote items, and

$j~(j=1,\ldots ,J)$

denote items, and

![]() $k~(k=1,\ldots ,K)$

denote attributes. Whether examinee i masters the attribute k is denoted as a binary variable

$k~(k=1,\ldots ,K)$

denote attributes. Whether examinee i masters the attribute k is denoted as a binary variable

![]() $\alpha _{ik}$

, where

$\alpha _{ik}$

, where

![]() $\alpha _{ik}=1$

if examinee i has mastered the attribute k and

$\alpha _{ik}=1$

if examinee i has mastered the attribute k and

![]() $\alpha _{ik}=0$

otherwise. We use a K-dimensional binary vector,

$\alpha _{ik}=0$

otherwise. We use a K-dimensional binary vector,

![]() $\boldsymbol {\alpha }_{i}=(\alpha _{i1},\ldots ,\alpha _{ik},\ldots ,\alpha _{iK})^{\text {T}}$

, to represent the latent attribute profile of examinee i. Therefore, there are

$\boldsymbol {\alpha }_{i}=(\alpha _{i1},\ldots ,\alpha _{ik},\ldots ,\alpha _{iK})^{\text {T}}$

, to represent the latent attribute profile of examinee i. Therefore, there are

![]() $C=2^K$

possible attribute profiles. Let class c denote the bijection index of the attribute profile, where

$C=2^K$

possible attribute profiles. Let class c denote the bijection index of the attribute profile, where

![]() $c=0,\ldots,C-1$

.

$c=0,\ldots,C-1$

.

Furthermore, a

![]() $J \times K $

-dimensional

$J \times K $

-dimensional

![]() $\text {Q}$

-matrix,

$\text {Q}$

-matrix,

![]() $\text {Q}=(\boldsymbol {q}_1,\ldots ,\boldsymbol {q}_j,\ldots ,\boldsymbol {q}_J)^{\text {T}}$

, is introduced to describe which attributes are measured by each item. Here,

$\text {Q}=(\boldsymbol {q}_1,\ldots ,\boldsymbol {q}_j,\ldots ,\boldsymbol {q}_J)^{\text {T}}$

, is introduced to describe which attributes are measured by each item. Here,

![]() $\boldsymbol {q}_j^{\text {T}}=(q_{j1},\ldots ,q_{jk},\ldots ,q_{jK})$

is the j-th row of

$\boldsymbol {q}_j^{\text {T}}=(q_{j1},\ldots ,q_{jk},\ldots ,q_{jK})$

is the j-th row of

![]() $\text {Q}$

-matrix, corresponding to measured attributes by item j with

$\text {Q}$

-matrix, corresponding to measured attributes by item j with

![]() $q_{ik}=1$

if the attribute k is required by item j, and

$q_{ik}=1$

if the attribute k is required by item j, and

![]() $q_{ik}=0$

otherwise. Let

$q_{ik}=0$

otherwise. Let

![]() $Y_{ij}$

denote the binary item response variable for the examinee i to the item j, where

$Y_{ij}$

denote the binary item response variable for the examinee i to the item j, where

![]() $Y_{ij}$

equals 1 if the ith examinee correctly answered the item j, and 0 otherwise.

$Y_{ij}$

equals 1 if the ith examinee correctly answered the item j, and 0 otherwise.

The DINA model assumes an examinee need to master all the required attributes for item j to have a high chance to answer that item correctly. To reflect this assumption, the ideal response variable

![]() $\eta _{ij}=\prod _{k=1}^{K}\alpha _{ik}^{q_{jk}}$

is defined to formulate the item response model. Here,

$\eta _{ij}=\prod _{k=1}^{K}\alpha _{ik}^{q_{jk}}$

is defined to formulate the item response model. Here,

![]() $\eta _{ij}=1$

indicates that examinee i possesses all the required attributes for item j; otherwise,

$\eta _{ij}=1$

indicates that examinee i possesses all the required attributes for item j; otherwise,

![]() $\eta _{ij}=0$

. In addition, two item parameters are introduced for each item j: the slipping parameter

$\eta _{ij}=0$

. In addition, two item parameters are introduced for each item j: the slipping parameter

![]() $s_j$

and the guessing parameter

$s_j$

and the guessing parameter

![]() $g_j$

which can be formally defined by

$g_j$

which can be formally defined by

Then, the probability of a correct response for the DINA can be expressed as

where

![]() $\boldsymbol {\alpha }_{c}$

denotes one possible attribute profile value and

$\boldsymbol {\alpha }_{c}$

denotes one possible attribute profile value and

![]() $\eta _{cj}$

is the corresponding ideal response variable.

$\eta _{cj}$

is the corresponding ideal response variable.

2.2 Attribute hierarchy and

$\text {Q}$

-matrix

$\text {Q}$

-matrix

One type of latent structure with CDM is the attribute hierarchy, which may be commonly considered when defining attributes within many educational and psychological tests. Based on the nature of assessment, attributes may have different types of dependency, where one attribute may be a prerequisite for another attribute. Four types of popular hierarchical structure are discussed by Leighton et al. (Reference Leighton, Gierl and Hunka2004), which are presented as four directed acyclic graphs (DAGs) in Figure 1. For a DAG, each node represents an attribute, and a directed edge between two nodes indicates a prerequisite relationship, where the attribute represented by the source node is required for mastering the attribute represented by the target node. For instance, with the linear hierarchy represented by Figure 1(a), the directed edge

![]() $1\rightarrow 2$

indicates that the mastery of

$1\rightarrow 2$

indicates that the mastery of

![]() $\alpha _1$

is a prerequisite for mastering

$\alpha _1$

is a prerequisite for mastering

![]() $\alpha _2$

.

$\alpha _2$

.

Figure 1 Four attribute hierarchy structures, arranged from left to right: (a)

![]() $\text {G}_1$

Linear; (b)

$\text {G}_1$

Linear; (b)

![]() $\text {G}_2$

Convergent; (c)

$\text {G}_2$

Convergent; (c)

![]() $\text {G}_3$

Divergent; and (d)

$\text {G}_3$

Divergent; and (d)

![]() $\text {G}_4$

Unstructured.

$\text {G}_4$

Unstructured.

In this article, we adopt the same notation for describing the attribute hierarchy as Chen and Wang (Reference Chen and Wang2023). Let

![]() $ V $

and E denote the set of attributes and the directed edges, respectively, and

$ V $

and E denote the set of attributes and the directed edges, respectively, and

![]() $G(V;E)$

denote the attribute hierarchy structure. Mathematically, one

$G(V;E)$

denote the attribute hierarchy structure. Mathematically, one

![]() $G(V;E)$

can be presented by its corresponding adjacency matrix,

$G(V;E)$

can be presented by its corresponding adjacency matrix,

![]() $\text {G}$

. For example, let

$\text {G}$

. For example, let

![]() $G(V;E)$

represent the linear hierarchy in Figure 1(a). With this structure,

$G(V;E)$

represent the linear hierarchy in Figure 1(a). With this structure,

![]() $V=\{1,2,3,4\}$

and

$V=\{1,2,3,4\}$

and

![]() $E=\{1 \rightarrow 2,2 \rightarrow 3, 3 \rightarrow 4\}$

. This particular

$E=\{1 \rightarrow 2,2 \rightarrow 3, 3 \rightarrow 4\}$

. This particular

![]() $G(V;E)$

can be denoted by its adjacency matrix

$G(V;E)$

can be denoted by its adjacency matrix

![]() $\text {G}$

as

$\text {G}$

as

$$ \begin{align*}\text{G}=\begin{bmatrix} 0 & 1 & 0 &0\\ 0& 0 & 1&0\\ 0& 0& 0&1\\ 0& 0& 0&0\\ \end{bmatrix}, \end{align*} $$

$$ \begin{align*}\text{G}=\begin{bmatrix} 0 & 1 & 0 &0\\ 0& 0 & 1&0\\ 0& 0& 0&1\\ 0& 0& 0&0\\ \end{bmatrix}, \end{align*} $$

where

![]() $\text {G}(k,k^{'})=1$

represents there is a directed edge

$\text {G}(k,k^{'})=1$

represents there is a directed edge

![]() $k \rightarrow k'$

and 0 otherwise. When there is no hierarchical structure between any attributes, all elements in

$k \rightarrow k'$

and 0 otherwise. When there is no hierarchical structure between any attributes, all elements in

![]() $\text {G}$

are 0, and we denote this structure as

$\text {G}$

are 0, and we denote this structure as

![]() $\text {G}_{null}$

. Because of this one-to-one correspondence between a DAG and its adjacency matrix

$\text {G}_{null}$

. Because of this one-to-one correspondence between a DAG and its adjacency matrix

![]() $\text {G}$

, we will just use the adjacency matrix

$\text {G}$

, we will just use the adjacency matrix

![]() $\text {G}$

to denote its corresponding hierarchical structure

$\text {G}$

to denote its corresponding hierarchical structure

![]() $G(V,E)$

.

$G(V,E)$

.

In addition to the direct relationships discussed above, there are also indirect dependencies among attributes. To better capture both direct and indirect dependencies between attributes, the reachability matrix

![]() $\text {R}$

is introduced, where

$\text {R}$

is introduced, where

![]() $\text {R}(k,k^{'})=1$

represents that there exists a path from k to

$\text {R}(k,k^{'})=1$

represents that there exists a path from k to

![]() $k'$

, and it can be obtained through Boolean addition and multiplication of the adjacency matrix (Tatsuoka, Reference Tatsuoka2012). The reachability matrix

$k'$

, and it can be obtained through Boolean addition and multiplication of the adjacency matrix (Tatsuoka, Reference Tatsuoka2012). The reachability matrix

![]() $\text {R}$

of Figure 1(a) can be expressed as

$\text {R}$

of Figure 1(a) can be expressed as

$$ \begin{align*}\text{R}=\begin{bmatrix} 1 & 1 & 1 &1\\ 0& 1 & 1&1\\ 0& 0& 1&1\\ 0& 0& 0&1\\ \end{bmatrix}. \end{align*} $$

$$ \begin{align*}\text{R}=\begin{bmatrix} 1 & 1 & 1 &1\\ 0& 1 & 1&1\\ 0& 0& 1&1\\ 0& 0& 0&1\\ \end{bmatrix}. \end{align*} $$

Note that different hierarchical structures may lead to the same reachability matrix. For example, consider the structure

![]() $ \text {G}^* $

, corresponding to

$ \text {G}^* $

, corresponding to

![]() $ V^* = \{1, 2, 3, 4\} $

and

$ V^* = \{1, 2, 3, 4\} $

and

![]() $E^* = \{1 \rightarrow 2, 2 \rightarrow 3, 3 \rightarrow 4, 1 \rightarrow 4\}$

. The reachability matrix of

$E^* = \{1 \rightarrow 2, 2 \rightarrow 3, 3 \rightarrow 4, 1 \rightarrow 4\}$

. The reachability matrix of

![]() $ \text {G}^* $

will be the same as that of

$ \text {G}^* $

will be the same as that of

![]() $ \text {G} $

for Figure 1(a), meaning that both exhibit the same dependencies between attributes. However, it is clear that the structure of

$ \text {G} $

for Figure 1(a), meaning that both exhibit the same dependencies between attributes. However, it is clear that the structure of

![]() $ \text {G} $

in Figure 1(a) is simpler. Therefore, our proposed Bayesian estimation method estimates the transitive reduction of

$ \text {G} $

in Figure 1(a) is simpler. Therefore, our proposed Bayesian estimation method estimates the transitive reduction of

![]() $\text {G}$

, which refers to a subgraph of

$\text {G}$

, which refers to a subgraph of

![]() $ \text {G} $

with the fewest edges that maintains the same reachability as

$ \text {G} $

with the fewest edges that maintains the same reachability as

![]() $\text {G}$

(Chen & Wang, Reference Chen and Wang2023).

$\text {G}$

(Chen & Wang, Reference Chen and Wang2023).

2.2.1 The impact of

$\text {G}$

to latent attribute profile distribution

$\text {G}$

to latent attribute profile distribution

Typically when assuming no attribute hierarchy among K attributes, that is, under

![]() $\text {G}_{null}$

, there are

$\text {G}_{null}$

, there are

![]() $C=2^K$

possible attribute profiles. With a specific attribute hierarchical structure, the number of possible attribute profiles is reduced from

$C=2^K$

possible attribute profiles. With a specific attribute hierarchical structure, the number of possible attribute profiles is reduced from

![]() $2^K$

. For example, consider the linear structure

$2^K$

. For example, consider the linear structure

![]() $\text {G}_1$

in Figure 1(a), the attribute profile

$\text {G}_1$

in Figure 1(a), the attribute profile

![]() $(1,0,1,0)$

violates the relationship that

$(1,0,1,0)$

violates the relationship that

![]() $\alpha _2$

is the prerequisite of

$\alpha _2$

is the prerequisite of

![]() $\alpha _3$

, thus cannot exist with

$\alpha _3$

, thus cannot exist with

![]() $\text {G}_1$

. Therefore, the attribute profile space depends on the specific hierarchical structure

$\text {G}_1$

. Therefore, the attribute profile space depends on the specific hierarchical structure

![]() $\text {G}$

. Denote

$\text {G}$

. Denote

![]() $C_G$

the number of permissible attribute profiles under

$C_G$

the number of permissible attribute profiles under

![]() $\text {G}$

. Here, we provide a definition of an equivalence class. Let

$\text {G}$

. Here, we provide a definition of an equivalence class. Let

![]() $ \boldsymbol {\alpha }_c \stackrel {\text {G}}{=} \boldsymbol {\alpha }_{\tilde {c}} $

indicate that

$ \boldsymbol {\alpha }_c \stackrel {\text {G}}{=} \boldsymbol {\alpha }_{\tilde {c}} $

indicate that

![]() $ \boldsymbol {\alpha }_c $

and

$ \boldsymbol {\alpha }_c $

and

![]() $ \boldsymbol {\alpha }_{\tilde {c}} $

are equivalent under structure

$ \boldsymbol {\alpha }_{\tilde {c}} $

are equivalent under structure

![]() $\text {G}$

. Specifically, if a mastered attribute (i.e., an attribute with a value of 1) has a prerequisite that has not been mastered, it will be reassigned to 0. For example, as mentioned above, the attribute profile

$\text {G}$

. Specifically, if a mastered attribute (i.e., an attribute with a value of 1) has a prerequisite that has not been mastered, it will be reassigned to 0. For example, as mentioned above, the attribute profile

![]() $(1,0,1,0)$

is not permissible under linear structure

$(1,0,1,0)$

is not permissible under linear structure

![]() $\text {G}_1$

and is thus reassigned to (1,0,0,0), that is,

$\text {G}_1$

and is thus reassigned to (1,0,0,0), that is,

![]() $(1,0,1,0) \stackrel {\text {G}_1}{=} (1,0,0,0)$

.

$(1,0,1,0) \stackrel {\text {G}_1}{=} (1,0,0,0)$

.

2.2.2

$\text {Q}$

-matrix under

$\text {Q}$

-matrix under

$\text {G}$

$\text {G}$

Another important latent structure under CDM is the

![]() $\text {Q}$

-matrix. When attribute hierarchy exists, we assume that the hierarchical structure also affects the space of the

$\text {Q}$

-matrix. When attribute hierarchy exists, we assume that the hierarchical structure also affects the space of the

![]() $\text {Q}$

-matrix. In other words, the

$\text {Q}$

-matrix. In other words, the

![]() $\boldsymbol {q}$

-vector needs to reflect the underlying attribute hierarchy as well. For example, considering the linear structure

$\boldsymbol {q}$

-vector needs to reflect the underlying attribute hierarchy as well. For example, considering the linear structure

![]() $\text {G}_1$

, we need to make the notations consistent in Figure 1(a),

$\text {G}_1$

, we need to make the notations consistent in Figure 1(a),

![]() $\mathbf {q} = (0, 1,0,0)$

equivalent to

$\mathbf {q} = (0, 1,0,0)$

equivalent to

![]() $\mathbf {q} = (1, 1,0,0)$

, indicating that an item measuring

$\mathbf {q} = (1, 1,0,0)$

, indicating that an item measuring

![]() $\alpha _2$

also needs to measure

$\alpha _2$

also needs to measure

![]() $\alpha _1$

under this linear structure. Therefore, we introduce reduced

$\alpha _1$

under this linear structure. Therefore, we introduce reduced

![]() $\text {Q}$

-matrix

$\text {Q}$

-matrix

![]() $\text {Q}_r$

(Tatsuoka, Reference Tatsuoka2012), which including all possible

$\text {Q}_r$

(Tatsuoka, Reference Tatsuoka2012), which including all possible

![]() $\boldsymbol {q}$

-vectors under the hierarchical structure. In this case, the total

$\boldsymbol {q}$

-vectors under the hierarchical structure. In this case, the total

![]() $2^K-1$

possible

$2^K-1$

possible

![]() $\boldsymbol {q}$

-vectors under

$\boldsymbol {q}$

-vectors under

![]() $\text {G}_{null}$

are reduced to four possible

$\text {G}_{null}$

are reduced to four possible

![]() $\boldsymbol {q}$

-vectors under the linear structure

$\boldsymbol {q}$

-vectors under the linear structure

![]() $\text {G}_1$

. In this study, a structured

$\text {G}_1$

. In this study, a structured

![]() $\text {Q}$

-matrix constructed from the possible

$\text {Q}$

-matrix constructed from the possible

![]() $\boldsymbol {q}$

-vectors in

$\boldsymbol {q}$

-vectors in

![]() $\text {Q}_r(\text {G})$

is used to reflect the attribute hierarchical structure. The above relationship is shown by Equation (3), where each column in a

$\text {Q}_r(\text {G})$

is used to reflect the attribute hierarchical structure. The above relationship is shown by Equation (3), where each column in a

![]() $\text {Q}$

-matrix represents a possible

$\text {Q}$

-matrix represents a possible

![]() $\boldsymbol {q}$

-vector:

$\boldsymbol {q}$

-vector:

$$ \begin{align} \text{Q}_{all}^{\text{T}} = \left[\begin{array}{lllllllllllllll} 1 & 0 & 0 & 0 & 1 & 1 & 1 & 0 & 0 & 0 & 1 & 1 & 1 & 0 & 1 \\ 0 & 1 & 0 & 0 & 1 & 0 & 0 & 1 & 1 & 0 & 1 & 1 & 0 & 1 & 1 \\ 0 & 0 & 1 & 0 & 0 & 1 & 0 & 1 & 0 & 1 & 1 & 0 & 1 & 1 & 1 \\ 0 & 0 & 0 & 1 & 0 & 0 & 1 & 0 & 1 & 1 & 0 & 1 & 1 & 1 & 1 \end{array}\right] \stackrel{reduced}{\longrightarrow} \left(\begin{array}{lllll} 1 & 1 & 1 & 1 \\ 0 & 1 & 1 & 1 \\ 0 & 0 & 1 & 1 \\ 0 & 0 & 0 & 1 \end{array}\right) \stackrel{\triangle}{=}\text{Q}_r (\text{G}_1)^{\text{T}}. \end{align} $$

$$ \begin{align} \text{Q}_{all}^{\text{T}} = \left[\begin{array}{lllllllllllllll} 1 & 0 & 0 & 0 & 1 & 1 & 1 & 0 & 0 & 0 & 1 & 1 & 1 & 0 & 1 \\ 0 & 1 & 0 & 0 & 1 & 0 & 0 & 1 & 1 & 0 & 1 & 1 & 0 & 1 & 1 \\ 0 & 0 & 1 & 0 & 0 & 1 & 0 & 1 & 0 & 1 & 1 & 0 & 1 & 1 & 1 \\ 0 & 0 & 0 & 1 & 0 & 0 & 1 & 0 & 1 & 1 & 0 & 1 & 1 & 1 & 1 \end{array}\right] \stackrel{reduced}{\longrightarrow} \left(\begin{array}{lllll} 1 & 1 & 1 & 1 \\ 0 & 1 & 1 & 1 \\ 0 & 0 & 1 & 1 \\ 0 & 0 & 0 & 1 \end{array}\right) \stackrel{\triangle}{=}\text{Q}_r (\text{G}_1)^{\text{T}}. \end{align} $$

3 Bayesian estimation

3.1 Bayesian formulation

To start with, denote item response matrix for N examinees across J items as

![]() $\textbf {Y}=(\boldsymbol {Y}_{1},\ldots ,\boldsymbol {Y}_{i},\ldots ,\boldsymbol {Y}_{N})^{\text {T}}$

, where

$\textbf {Y}=(\boldsymbol {Y}_{1},\ldots ,\boldsymbol {Y}_{i},\ldots ,\boldsymbol {Y}_{N})^{\text {T}}$

, where

![]() $\boldsymbol {Y}_{i}=(y_{i1},\ldots ,y_{ij},\ldots ,y_{iJ})^{\text {T}}$

,

$\boldsymbol {Y}_{i}=(y_{i1},\ldots ,y_{ij},\ldots ,y_{iJ})^{\text {T}}$

,

![]() $i=1,\ldots ,N$

, is the response vector of examinee i to J items. In addition, let

$i=1,\ldots ,N$

, is the response vector of examinee i to J items. In addition, let

![]() $\textbf {A}=(\boldsymbol {\alpha }_{1},\ldots ,\boldsymbol {\alpha }_{i},\ldots ,\boldsymbol {\alpha }_{N})^{\text {T}}$

be the

$\textbf {A}=(\boldsymbol {\alpha }_{1},\ldots ,\boldsymbol {\alpha }_{i},\ldots ,\boldsymbol {\alpha }_{N})^{\text {T}}$

be the

![]() $N\times K$

matrix of attribute profiles of all examinees. Denote the parameter

$N\times K$

matrix of attribute profiles of all examinees. Denote the parameter

![]() $\boldsymbol {\pi }=(\pi _1,\ldots ,\pi _c,\ldots ,\pi _{C})$

as the latent class probability vector, that is,

$\boldsymbol {\pi }=(\pi _1,\ldots ,\pi _c,\ldots ,\pi _{C})$

as the latent class probability vector, that is,

![]() $\pi _c=P(\boldsymbol {\alpha }_i=\boldsymbol {\alpha }_c)$

, satisfying

$\pi _c=P(\boldsymbol {\alpha }_i=\boldsymbol {\alpha }_c)$

, satisfying

![]() $\sum \pi _c=1$

. Let

$\sum \pi _c=1$

. Let

![]() $\boldsymbol {s}=(s_1,\dots ,s_J)^{T}$

and

$\boldsymbol {s}=(s_1,\dots ,s_J)^{T}$

and

![]() $\boldsymbol {g}=(g_1,\dots ,g_J)^{T}$

denote the slipping and guessing parameters for all items.

$\boldsymbol {g}=(g_1,\dots ,g_J)^{T}$

denote the slipping and guessing parameters for all items.

Given the conditional independent assumption, the joint distribution of

![]() $\mathbf {Y}$

given parameters

$\mathbf {Y}$

given parameters

![]() $\boldsymbol {s},\boldsymbol {g},\boldsymbol {\pi }, \boldsymbol {A}, \text {Q}$

, and

$\boldsymbol {s},\boldsymbol {g},\boldsymbol {\pi }, \boldsymbol {A}, \text {Q}$

, and

![]() $\text {G}$

can be written as

$\text {G}$

can be written as

$$ \begin{align} p(\mathbf{Y}|\boldsymbol{s},\boldsymbol{g},\boldsymbol{\pi},\boldsymbol{A},\text{Q},\text{G})=\prod_{i=1}^N\prod_{j=1}^J\sum_{c=1}^C \boldsymbol{\pi}_c ~p(Y_{ij}|\boldsymbol{\alpha}_i=\boldsymbol{\alpha}_c,g_j,s_j, \boldsymbol{q}_j,\text{G}), \end{align} $$

$$ \begin{align} p(\mathbf{Y}|\boldsymbol{s},\boldsymbol{g},\boldsymbol{\pi},\boldsymbol{A},\text{Q},\text{G})=\prod_{i=1}^N\prod_{j=1}^J\sum_{c=1}^C \boldsymbol{\pi}_c ~p(Y_{ij}|\boldsymbol{\alpha}_i=\boldsymbol{\alpha}_c,g_j,s_j, \boldsymbol{q}_j,\text{G}), \end{align} $$

where

$$ \begin{align} \begin{aligned} p(Y_{ij}|\boldsymbol{\alpha}_i=\boldsymbol{\alpha}_c,g_j,s_j,\boldsymbol{q}_j, \text{G})&=\left(g_j^{1-\eta_{cj}}(1-s_j)^{\eta_{cj}}\right)^{y_{ij}} \left((1-g_j)^{1-\eta_{cj}}s_j^{\eta_{cj}}\right)^{1-y_{ij}},\\ \eta_{cj}&=\prod_{k=1}^{K}\alpha_{ck}^{q_{jk}}. \end{aligned} \end{align} $$

$$ \begin{align} \begin{aligned} p(Y_{ij}|\boldsymbol{\alpha}_i=\boldsymbol{\alpha}_c,g_j,s_j,\boldsymbol{q}_j, \text{G})&=\left(g_j^{1-\eta_{cj}}(1-s_j)^{\eta_{cj}}\right)^{y_{ij}} \left((1-g_j)^{1-\eta_{cj}}s_j^{\eta_{cj}}\right)^{1-y_{ij}},\\ \eta_{cj}&=\prod_{k=1}^{K}\alpha_{ck}^{q_{jk}}. \end{aligned} \end{align} $$

To estimate the DINA model item parameters

![]() $\boldsymbol {s}$

and

$\boldsymbol {s}$

and

![]() $\boldsymbol {g}$

, the examinee parameters

$\boldsymbol {g}$

, the examinee parameters

![]() $\boldsymbol {A}$

and

$\boldsymbol {A}$

and

![]() $\boldsymbol {\pi }$

, and the two latent structures

$\boldsymbol {\pi }$

, and the two latent structures

![]() $\text {G}$

and

$\text {G}$

and

![]() $\text {Q}$

, we first outline the joint posterior distribution of all model parameters.

$\text {Q}$

, we first outline the joint posterior distribution of all model parameters.

Let

![]() $p(s_j,g_j)$

,

$p(s_j,g_j)$

,

![]() $p(\boldsymbol {\alpha }_i\mid \boldsymbol {\pi },\text {G})$

,

$p(\boldsymbol {\alpha }_i\mid \boldsymbol {\pi },\text {G})$

,

![]() $p(\boldsymbol {\pi }\mid \text {G})$

,

$p(\boldsymbol {\pi }\mid \text {G})$

,

![]() $~p(\text {Q}\mid \text {G})$

, and

$~p(\text {Q}\mid \text {G})$

, and

![]() $p(\text {G})$

be the corresponding prior of

$p(\text {G})$

be the corresponding prior of

![]() $(s_j,g_j)$

,

$(s_j,g_j)$

,

![]() $\boldsymbol {\alpha }_i$

,

$\boldsymbol {\alpha }_i$

,

![]() $\boldsymbol {\pi }$

,

$\boldsymbol {\pi }$

,

![]() $\text {Q}$

, and

$\text {Q}$

, and

![]() $\text {G}$

, respectively. The joint posterior distribution of all model parameters is

$\text {G}$

, respectively. The joint posterior distribution of all model parameters is

$$ \begin{align} \begin{aligned} &p(\boldsymbol{s},\boldsymbol{g},\boldsymbol{\pi},\boldsymbol{A},\text{Q},\text{G}\mid \mathbf{Y}) \\&\quad\propto ~p(\mathbf{Y}\mid \boldsymbol{s},\boldsymbol{g},\boldsymbol{\pi},\boldsymbol{A},\text{Q},\text{G}) p(\boldsymbol{s},\boldsymbol{g})~p(\mathbf{A}\mid \boldsymbol{\pi},\text{G})~p(\boldsymbol{\pi}\mid \text{G})~p(\text{Q}\mid \text{G})~p(\text{G})\\ &\quad\propto ~p(\mathbf{Y}\mid \boldsymbol{s},\boldsymbol{g},\boldsymbol{\pi},\boldsymbol{A},\text{Q},\text{G})\left(\prod_{j=1}^J p(s_j,g_j)\right) \left(\prod_{i=1}^N p(\boldsymbol{\alpha}_i \mid \boldsymbol{\pi},\text{G})\right)~ p(\boldsymbol{\pi}\mid \text{G})~p(\text{Q}\mid \text{G})~p(\text{G}), \end{aligned} \end{align} $$

$$ \begin{align} \begin{aligned} &p(\boldsymbol{s},\boldsymbol{g},\boldsymbol{\pi},\boldsymbol{A},\text{Q},\text{G}\mid \mathbf{Y}) \\&\quad\propto ~p(\mathbf{Y}\mid \boldsymbol{s},\boldsymbol{g},\boldsymbol{\pi},\boldsymbol{A},\text{Q},\text{G}) p(\boldsymbol{s},\boldsymbol{g})~p(\mathbf{A}\mid \boldsymbol{\pi},\text{G})~p(\boldsymbol{\pi}\mid \text{G})~p(\text{Q}\mid \text{G})~p(\text{G})\\ &\quad\propto ~p(\mathbf{Y}\mid \boldsymbol{s},\boldsymbol{g},\boldsymbol{\pi},\boldsymbol{A},\text{Q},\text{G})\left(\prod_{j=1}^J p(s_j,g_j)\right) \left(\prod_{i=1}^N p(\boldsymbol{\alpha}_i \mid \boldsymbol{\pi},\text{G})\right)~ p(\boldsymbol{\pi}\mid \text{G})~p(\text{Q}\mid \text{G})~p(\text{G}), \end{aligned} \end{align} $$

where

![]() $p(\mathbf {Y}\mid \boldsymbol {s},\boldsymbol {g},\boldsymbol {\pi },\boldsymbol {A},\text {Q},\text {G})$

is the joint distribution function (4). In the following section, we specify the prior distributions of each parameter, followed by the inference of their posterior distributions.

$p(\mathbf {Y}\mid \boldsymbol {s},\boldsymbol {g},\boldsymbol {\pi },\boldsymbol {A},\text {Q},\text {G})$

is the joint distribution function (4). In the following section, we specify the prior distributions of each parameter, followed by the inference of their posterior distributions.

3.2 Posterior inference

Firstly, the prior of

![]() $s_j$

and

$s_j$

and

![]() $g_j$

follows the Beta distribution with parameter

$g_j$

follows the Beta distribution with parameter

![]() $a_{s0}$

,

$a_{s0}$

,

![]() $b_{s0}$

,

$b_{s0}$

,

![]() $a_{g0}$

, and

$a_{g0}$

, and

![]() $b_{g0}$

:

$b_{g0}$

:

Then we can derive that posterior distribution of

![]() $s_j$

is a Beta distribution with parameter

$s_j$

is a Beta distribution with parameter

![]() $a_{sj}$

and

$a_{sj}$

and

![]() $b_{sj}$

as following:

$b_{sj}$

as following:

where

$$ \begin{align*} \begin{aligned} a_{sj} = \sum_{i=1}^{N} \eta_{ij}(1-y_{ij}),\quad b_{sj} = \sum_{i=1}^{N} \eta_{ij}y_{ij}. \end{aligned} \end{align*} $$

$$ \begin{align*} \begin{aligned} a_{sj} = \sum_{i=1}^{N} \eta_{ij}(1-y_{ij}),\quad b_{sj} = \sum_{i=1}^{N} \eta_{ij}y_{ij}. \end{aligned} \end{align*} $$

And the posterior distribution of

![]() $g_j$

is a Beta distribution with parameter

$g_j$

is a Beta distribution with parameter

![]() $a_{gj}$

and

$a_{gj}$

and

![]() $b_{gj}$

as following:

$b_{gj}$

as following:

where

$$ \begin{align*} \begin{aligned} a_{gj} = \sum_{i=1}^{N} (1-\eta_{ij})y_{ij},\quad b_{gj} = \sum_{i=1}^{N} (1-\eta_{ij})(1-y_{ij}). \end{aligned} \end{align*} $$

$$ \begin{align*} \begin{aligned} a_{gj} = \sum_{i=1}^{N} (1-\eta_{ij})y_{ij},\quad b_{gj} = \sum_{i=1}^{N} (1-\eta_{ij})(1-y_{ij}). \end{aligned} \end{align*} $$

Following Culpepper (Reference Culpepper2015), we assign Beta(1,1) priors to both

![]() $s_j$

and

$s_j$

and

![]() $g_j$

in this article, that is,

$g_j$

in this article, that is,

![]() $a_{s0} = b_{s0} = a_{g0} = b_{g0} = 1$

.

$a_{s0} = b_{s0} = a_{g0} = b_{g0} = 1$

.

Secondly, the prior of

![]() $\boldsymbol {\pi }$

under structure

$\boldsymbol {\pi }$

under structure

![]() $\text {G}_{null}$

is a Dirichlet distribution with parameter

$\text {G}_{null}$

is a Dirichlet distribution with parameter

![]() $\boldsymbol {\delta }_0$

:

$\boldsymbol {\delta }_0$

:

$$ \begin{align} p(\boldsymbol{\pi} \mid \boldsymbol{\delta}_0) \propto \prod_{c=0}^{C-1} \pi_{c}^{ \delta_{0c}}, 0 \leq \pi_c\leq 1, \sum \pi_c=1. \end{align} $$

$$ \begin{align} p(\boldsymbol{\pi} \mid \boldsymbol{\delta}_0) \propto \prod_{c=0}^{C-1} \pi_{c}^{ \delta_{0c}}, 0 \leq \pi_c\leq 1, \sum \pi_c=1. \end{align} $$

However, as discussed in Section 2.2, the existence of a hierarchical structure among attributes imposes constraints on the attribute profiles. Specifically, some attribute profiles become impermissible under the hierarchy structure

![]() $\text {G}$

. This restriction reduces the set of permissible attribute profiles, thereby influencing the distribution of

$\text {G}$

. This restriction reduces the set of permissible attribute profiles, thereby influencing the distribution of

![]() $\boldsymbol {\pi }$

. Moreover, since

$\boldsymbol {\pi }$

. Moreover, since

![]() $\text {G}$

is allowed to change during the estimation process, the distributions of

$\text {G}$

is allowed to change during the estimation process, the distributions of

![]() $\boldsymbol {\alpha }_i$

also change accordingly. Therefore, when incorporating a hierarchical structure

$\boldsymbol {\alpha }_i$

also change accordingly. Therefore, when incorporating a hierarchical structure

![]() $\text {G}$

, it is necessary to adjust the corresponding priors to reflect the structural constraints imposed by

$\text {G}$

, it is necessary to adjust the corresponding priors to reflect the structural constraints imposed by

![]() $\text {G}$

.

$\text {G}$

.

Let

![]() $L_G$

denote the set of permissible attribute profiles under a specific hierarchical structure

$L_G$

denote the set of permissible attribute profiles under a specific hierarchical structure

![]() $\text {G}$

and

$\text {G}$

and

![]() $C_G=|L_G|$

denote the number of permissible attribute profiles. Define

$C_G=|L_G|$

denote the number of permissible attribute profiles. Define

![]() $\boldsymbol {\pi }_G$

as the current latent class probability vector. Thus, the prior of

$\boldsymbol {\pi }_G$

as the current latent class probability vector. Thus, the prior of

![]() $\boldsymbol {\pi }_G$

now follows an aggregated Dirichlet distribution with parameters

$\boldsymbol {\pi }_G$

now follows an aggregated Dirichlet distribution with parameters

![]() $\boldsymbol {\delta }_0^G$

:

$\boldsymbol {\delta }_0^G$

:

where

Based on the prior in Eq. (11) and the joint posterior distribution in Eq. (6), we can derive that posterior distribution of

![]() $\boldsymbol \pi _G$

is a Dirichlet distribution with parameter

$\boldsymbol \pi _G$

is a Dirichlet distribution with parameter

![]() $\boldsymbol {\delta }_G$

as follows:

$\boldsymbol {\delta }_G$

as follows:

where

$$ \begin{align*} \delta^G_{\tilde{c}}= \sum_{i=1}^{N}\mathcal{I}(\boldsymbol{\alpha}_i=\boldsymbol{\alpha}_{\tilde{c}})+\delta_{0\tilde{c}}^G. \end{align*} $$

$$ \begin{align*} \delta^G_{\tilde{c}}= \sum_{i=1}^{N}\mathcal{I}(\boldsymbol{\alpha}_i=\boldsymbol{\alpha}_{\tilde{c}})+\delta_{0\tilde{c}}^G. \end{align*} $$

We set

![]() $\boldsymbol {\delta }_0 = (1, \ldots , 1)_L$

in our study, following the setting in Culpepper (Reference Culpepper2015).

$\boldsymbol {\delta }_0 = (1, \ldots , 1)_L$

in our study, following the setting in Culpepper (Reference Culpepper2015).

Thirdly, the prior of

![]() $\boldsymbol {\alpha }_i$

follows a categorical distribution with parameter

$\boldsymbol {\alpha }_i$

follows a categorical distribution with parameter

![]() $\boldsymbol {\pi }$

under structure

$\boldsymbol {\pi }$

under structure

![]() $\text {G}_{null}$

:

$\text {G}_{null}$

:

Similar to parameter

![]() $\boldsymbol {\pi }$

, the prior of

$\boldsymbol {\pi }$

, the prior of

![]() $\boldsymbol {\alpha }_i$

also needs to be adjusted according to the hierarchy structure

$\boldsymbol {\alpha }_i$

also needs to be adjusted according to the hierarchy structure

![]() $\text {G}$

. Accordingly, the prior of

$\text {G}$

. Accordingly, the prior of

![]() $\boldsymbol {\alpha }_i$

follows a collapsed categorical distribution with parameter

$\boldsymbol {\alpha }_i$

follows a collapsed categorical distribution with parameter

![]() $\boldsymbol {\pi }_G$

under structure G:

$\boldsymbol {\pi }_G$

under structure G:

Based on the prior in Eq. (14) and the joint posterior distribution in Eq. (6), we can derive that posterior distribution of

![]() $\boldsymbol {\alpha }_i$

is a categorical distribution with parameter

$\boldsymbol {\alpha }_i$

is a categorical distribution with parameter

![]() $\boldsymbol {\rho }_{i}^G=(\rho _{i0}^G,\ldots ,\rho _{iC_G-1}^G)$

as follows:

$\boldsymbol {\rho }_{i}^G=(\rho _{i0}^G,\ldots ,\rho _{iC_G-1}^G)$

as follows:

where

$$ \begin{align*} \rho_{i\tilde{c}}^G \propto \pi_{\tilde{c}}^G \prod_{j=1}^J \left(g_j^{1-\eta_{\tilde{c}j}}(1-s_j)^{\eta_{\tilde{c}j}}\right)^{y_{ij}} \left((1-g_j)^{1-\eta_{\tilde{c}j}}s_j^{\eta_{\tilde{c}j}}\right)^{1-y_{ij}}. \end{align*} $$

$$ \begin{align*} \rho_{i\tilde{c}}^G \propto \pi_{\tilde{c}}^G \prod_{j=1}^J \left(g_j^{1-\eta_{\tilde{c}j}}(1-s_j)^{\eta_{\tilde{c}j}}\right)^{y_{ij}} \left((1-g_j)^{1-\eta_{\tilde{c}j}}s_j^{\eta_{\tilde{c}j}}\right)^{1-y_{ij}}. \end{align*} $$

Fourthly, as for the prior of the

![]() $\text {Q}$

-matrix, we adopted the approach in Chung (Reference Chung2019) by introducing additional parameters for each Q-matrix entry and made corresponding adjustments. With K attributes, there are

$\text {Q}$

-matrix, we adopted the approach in Chung (Reference Chung2019) by introducing additional parameters for each Q-matrix entry and made corresponding adjustments. With K attributes, there are

![]() $H=2^K-1$

possible

$H=2^K-1$

possible

![]() $\boldsymbol {q}$

-vector patterns for each item under structure

$\boldsymbol {q}$

-vector patterns for each item under structure

![]() $\text {G}_{null}$

, which is denoted as

$\text {G}_{null}$

, which is denoted as

![]() $\boldsymbol {\epsilon }_{H \times K}=\left (\epsilon _{h k}\right )_{H \times K}$

. Suppose

$\boldsymbol {\epsilon }_{H \times K}=\left (\epsilon _{h k}\right )_{H \times K}$

. Suppose

![]() $\boldsymbol {q}_j$

following a categorical distribution with parameter

$\boldsymbol {q}_j$

following a categorical distribution with parameter

![]() $\boldsymbol {\theta }_{0j}=(\theta _{0j1},\ldots ,\theta _{0jH})$

:

$\boldsymbol {\theta }_{0j}=(\theta _{0j1},\ldots ,\theta _{0jH})$

:

$$ \begin{align} p(\boldsymbol{q}_j \mid \boldsymbol{\theta}_{0j}) \propto \prod_{h=1}^H \theta_{0jh}^{\mathcal{I}(\boldsymbol{q}_j=\boldsymbol{\epsilon}_{h})}, \end{align} $$

$$ \begin{align} p(\boldsymbol{q}_j \mid \boldsymbol{\theta}_{0j}) \propto \prod_{h=1}^H \theta_{0jh}^{\mathcal{I}(\boldsymbol{q}_j=\boldsymbol{\epsilon}_{h})}, \end{align} $$

where

![]() $\boldsymbol {\phi }_{jh} =(\phi _{jh1},\ldots ,\phi _{jhK}), h\in (1,\ldots ,H)$

. We define

$\boldsymbol {\phi }_{jh} =(\phi _{jh1},\ldots ,\phi _{jhK}), h\in (1,\ldots ,H)$

. We define

![]() $\phi _{jhk}=P(q_{jk}=1)$

and the prior for

$\phi _{jhk}=P(q_{jk}=1)$

and the prior for

![]() $\phi _{jhk}$

is

$\phi _{jhk}$

is

Therefore, the conditional posterior for each element

![]() $\phi _{jhk}$

is distributed as

$\phi _{jhk}$

is distributed as

Moreover, the prior for

![]() $\boldsymbol {\theta }_{0j}$

is then modeled as

$\boldsymbol {\theta }_{0j}$

is then modeled as

$$ \begin{align} \begin{aligned} \boldsymbol{\theta}_{0j}=g( \boldsymbol{\Phi}_j)& =(g(\boldsymbol{\phi}_{j1}),\ldots,g(\boldsymbol{\phi}_{jH})\propto \left(\prod_{k=1}^K \phi_{j1 k}^{\epsilon_{1 k}}\left(1-\phi_{j1 k}\right)^{1-\epsilon_{1 k}},\ldots, \prod_{k=1}^K \phi_{jh k}^{\epsilon_{h k}}\right. \\ &\left.\quad \times\left(1-\phi_{jh k}\right)^{1-\epsilon_{h k}}, \ldots, \prod_{k=1}^K \phi_{jH k}^{\epsilon_{H k}}\left(1-\phi_{jH k}\right)^{1-\epsilon_{H k}}\right). \end{aligned} \end{align} $$

$$ \begin{align} \begin{aligned} \boldsymbol{\theta}_{0j}=g( \boldsymbol{\Phi}_j)& =(g(\boldsymbol{\phi}_{j1}),\ldots,g(\boldsymbol{\phi}_{jH})\propto \left(\prod_{k=1}^K \phi_{j1 k}^{\epsilon_{1 k}}\left(1-\phi_{j1 k}\right)^{1-\epsilon_{1 k}},\ldots, \prod_{k=1}^K \phi_{jh k}^{\epsilon_{h k}}\right. \\ &\left.\quad \times\left(1-\phi_{jh k}\right)^{1-\epsilon_{h k}}, \ldots, \prod_{k=1}^K \phi_{jH k}^{\epsilon_{H k}}\left(1-\phi_{jH k}\right)^{1-\epsilon_{H k}}\right). \end{aligned} \end{align} $$

Considering that in the DINA model, restricting

![]() $\boldsymbol {\alpha }$

is equivalent to restricting the

$\boldsymbol {\alpha }$

is equivalent to restricting the

![]() $ \text {Q} $

-matrix, we do not impose any additional structural constraints on the

$ \text {Q} $

-matrix, we do not impose any additional structural constraints on the

![]() $ \text {Q} $

-matrix during its update process. Therefore, based on the prior in Eq. (18) and the joint posterior distribution in Eq. (6), we can derive that posterior distribution of

$ \text {Q} $

-matrix during its update process. Therefore, based on the prior in Eq. (18) and the joint posterior distribution in Eq. (6), we can derive that posterior distribution of

![]() $\boldsymbol {q}_j$

is a categorical distribution with parameter

$\boldsymbol {q}_j$

is a categorical distribution with parameter

![]() $\boldsymbol {\theta }_j=(\theta _{j1},\ldots ,\theta _{jH})$

as follows:

$\boldsymbol {\theta }_j=(\theta _{j1},\ldots ,\theta _{jH})$

as follows:

where

$$ \begin{align} \gamma_{jh} = p(\boldsymbol{\phi}_{jh}) \prod_{i=1}^N \left(g_j^{1-\eta_{ij}}(1-s_j)^{\eta_{ij}}\right)^{y_{ij}} \left((1-g_j)^{1-\eta_{ij}}s_j^{\eta_{ij}}\right)^{1-y_{ij}}. \end{align} $$

$$ \begin{align} \gamma_{jh} = p(\boldsymbol{\phi}_{jh}) \prod_{i=1}^N \left(g_j^{1-\eta_{ij}}(1-s_j)^{\eta_{ij}}\right)^{y_{ij}} \left((1-g_j)^{1-\eta_{ij}}s_j^{\eta_{ij}}\right)^{1-y_{ij}}. \end{align} $$

Finally, for the hierarchical structure

![]() $\text {G}$

, we adopt a uniform prior, which means for any two possible transitive reduction structure

$\text {G}$

, we adopt a uniform prior, which means for any two possible transitive reduction structure

![]() $\text {G}$

and

$\text {G}$

and

![]() $\text {G}^*$

, we have

$\text {G}^*$

, we have

![]() $p(\text {G})=p(\text {G}^*)$

. As we are not able to get the closed form of the posterior distribution of G, similar to Chen and Wang (Reference Chen and Wang2023), the MH sampling method is utilized to update

$p(\text {G})=p(\text {G}^*)$

. As we are not able to get the closed form of the posterior distribution of G, similar to Chen and Wang (Reference Chen and Wang2023), the MH sampling method is utilized to update

![]() $\text {G}$

. First, we need to sample a new structure

$\text {G}$

. First, we need to sample a new structure

![]() $\text {G}^*$

. Note that the new

$\text {G}^*$

. Note that the new

![]() $\text {G}^*$

can only be obtained by either removing an existing edge or adding an edge to the current structure

$\text {G}^*$

can only be obtained by either removing an existing edge or adding an edge to the current structure

![]() $\text {G}$

. Denote p as the probability of adding an edge, indicating

$\text {G}$

. Denote p as the probability of adding an edge, indicating

![]() $1-p$

as the probability of removing an edge. We first use the probability p to determine whether the new structure

$1-p$

as the probability of removing an edge. We first use the probability p to determine whether the new structure

![]() $\text {G}^*$

involves adding or removing an edge, and then randomly generate a possible

$\text {G}^*$

involves adding or removing an edge, and then randomly generate a possible

![]() $\text {G}^*$

. It is worth to note that this sampling procedure only exists between transitive reduction

$\text {G}^*$

. It is worth to note that this sampling procedure only exists between transitive reduction

![]() $\text {G}$

s, meaning that each time a new

$\text {G}$

s, meaning that each time a new

![]() $\text {G}^*$

is sampled, it must have a different reachability matrix from

$\text {G}^*$

is sampled, it must have a different reachability matrix from

![]() $\text {G}$

. According to this rule, each time an edge is added, it must be selected from the edges between two attributes that do not have any direct or indirect relationship. Such a pair of attributes can be found through

$\text {G}$

. According to this rule, each time an edge is added, it must be selected from the edges between two attributes that do not have any direct or indirect relationship. Such a pair of attributes can be found through

![]() $0$

s in the off-diagonal entries in the reachability matrix. Let

$0$

s in the off-diagonal entries in the reachability matrix. Let

![]() $T\left (\text {G}, \text {G}^*\right )$

denote the transition probability from

$T\left (\text {G}, \text {G}^*\right )$

denote the transition probability from

![]() $\text {G}$

to the proposed new

$\text {G}$

to the proposed new

![]() $\text {G}^*$

. Define

$\text {G}^*$

. Define

when adding an edge, and

when removing an edge. Then the accept ratio of

![]() $\text {G}^*$

is

$\text {G}^*$

is

$$ \begin{align} \begin{aligned} r & =\min \left\{1, \frac{p\left(\text{G}^*\mid \textbf{Y}, \boldsymbol{s},\boldsymbol{g}, \mathbf{A}, \mathbf{Q}\right) T\left(\text{G}^*, \text{G}\right)}{p\left(\text{G}\mid \textbf{Y}, \boldsymbol{s},\boldsymbol{g}, \mathbf{A}, \mathbf{Q}\right) T\left(\text{G}, \text{G}^*\right)}\right\} \\ & =\min \left\{1, \frac{p\left(\textbf{Y} \mid \text{G}^*,\boldsymbol{s},\boldsymbol{g}, \mathbf{A}, \text{Q}\right) p\left(\text{G}^*\right) T\left(\text{G}^*, \text{G}\right)}{p(\textbf{Y} \mid \text{G},\boldsymbol{s},\boldsymbol{g}, \mathbf{A}, \text{Q}) p(G) T\left(\text{G}, \text{G}^*\right)}\right\}\\ &=\min \left\{1, \frac{p\left(\textbf{Y} \mid \text{G}^*,\boldsymbol{s},\boldsymbol{g}, \mathbf{A}, \mathbf{Q}\right) T\left(\text{G}^*, \text{G}\right)}{p(\textbf{Y} \mid \text{G}, \boldsymbol{s},\boldsymbol{g}, \mathbf{A}, \text{Q}) T\left(\text{G}, \text{G}^*\right)}\right\}. \end{aligned} \end{align} $$

$$ \begin{align} \begin{aligned} r & =\min \left\{1, \frac{p\left(\text{G}^*\mid \textbf{Y}, \boldsymbol{s},\boldsymbol{g}, \mathbf{A}, \mathbf{Q}\right) T\left(\text{G}^*, \text{G}\right)}{p\left(\text{G}\mid \textbf{Y}, \boldsymbol{s},\boldsymbol{g}, \mathbf{A}, \mathbf{Q}\right) T\left(\text{G}, \text{G}^*\right)}\right\} \\ & =\min \left\{1, \frac{p\left(\textbf{Y} \mid \text{G}^*,\boldsymbol{s},\boldsymbol{g}, \mathbf{A}, \text{Q}\right) p\left(\text{G}^*\right) T\left(\text{G}^*, \text{G}\right)}{p(\textbf{Y} \mid \text{G},\boldsymbol{s},\boldsymbol{g}, \mathbf{A}, \text{Q}) p(G) T\left(\text{G}, \text{G}^*\right)}\right\}\\ &=\min \left\{1, \frac{p\left(\textbf{Y} \mid \text{G}^*,\boldsymbol{s},\boldsymbol{g}, \mathbf{A}, \mathbf{Q}\right) T\left(\text{G}^*, \text{G}\right)}{p(\textbf{Y} \mid \text{G}, \boldsymbol{s},\boldsymbol{g}, \mathbf{A}, \text{Q}) T\left(\text{G}, \text{G}^*\right)}\right\}. \end{aligned} \end{align} $$

Then, a random number is drawn from a uniform distribution and compared to the acceptance ratio r to determine whether to accept the proposed

![]() $\text {G}^*$

.

$\text {G}^*$

.

Our proposed MH within Gibbs sampling procedure is summarized in Table 1, and T represent the length of the Markov chain. The prespecified probability p can be adjusted to improve the acceptance ratio of the newly proposed structure

![]() $\text {G}^*$

. In this article, we set

$\text {G}^*$

. In this article, we set

![]() $p=0.5$

.

$p=0.5$

.

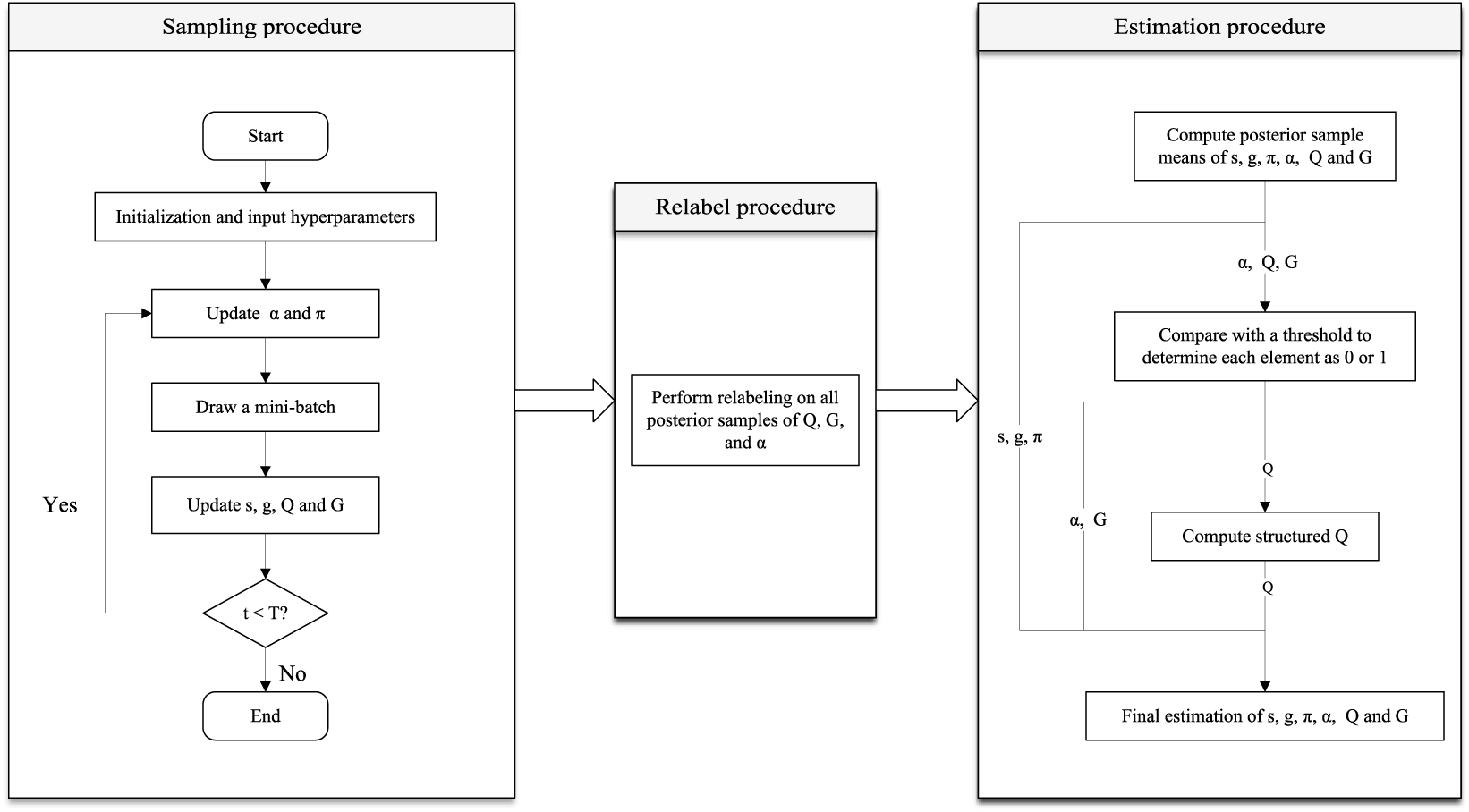

Table 1 MH-Gibbs sampling procedure

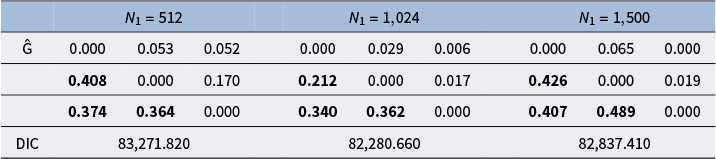

4 Mini-batch and final estimation for model parameters

In this section, we summarize the implementation of the proposed algorithm, including the use of mini-batch method in each iteration, addressing the potential label switch issue, and the way to obtain the final estimation for two latent structures and model parameters after the sampling procedure. The overview of the complete procedure from sampling procedure to final parameter estimation is provided in Figure 2. We provided details in the rest of this section.

Figure 2 The overview of the complete Bayesian estimation procedure, including the sampling procedure, relabeling procedure, and final parameter estimation.

4.1 Mini-batch method in sampling procedure

We propose to use the mini-batch method, where, during each iteration of the sampling algorithm (Table 1), we randomly select a subset of samples to update

![]() $\boldsymbol {s}$

,

$\boldsymbol {s}$

,

![]() $\boldsymbol {g}$

,

$\boldsymbol {g}$

,

![]() $\boldsymbol {Q}$

, and

$\boldsymbol {Q}$

, and

![]() $\text {G}$

. Note that

$\text {G}$

. Note that

![]() $\boldsymbol {\alpha }$

and

$\boldsymbol {\alpha }$

and

![]() $\boldsymbol {\pi }$

cannot be updated using sub-samples, as they are parameters specific to each individual sample. The mini-batch method is a widely used technique in machine learning, commonly applied in stochastic gradient descent. The method works by updating model parameters using only a subset of the samples during each iteration. In recent years, the mini-batch method has also found applications in the Bayesian field, such as in MH (Wu et al., Reference Wu, Rachel Wang and Wong2022), Gibbs sampling (De Sa et al., Reference De Sa, Chen and Wong2018), Hamiltonian Monte Carlo (HMC) (Zou & Gu, Reference Zou and Gu2021), and variational Bayes (Hoffman et al., Reference Hoffman, Blei, Wang and Paisley2013). This approach offers two main advantages. First, it improves the computational efficiency of the algorithm. Second, the mini-batch method introduces some noise, thereby increasing the diversity of the sampling and helping to prevent rapid convergence to local optima.

$\boldsymbol {\pi }$

cannot be updated using sub-samples, as they are parameters specific to each individual sample. The mini-batch method is a widely used technique in machine learning, commonly applied in stochastic gradient descent. The method works by updating model parameters using only a subset of the samples during each iteration. In recent years, the mini-batch method has also found applications in the Bayesian field, such as in MH (Wu et al., Reference Wu, Rachel Wang and Wong2022), Gibbs sampling (De Sa et al., Reference De Sa, Chen and Wong2018), Hamiltonian Monte Carlo (HMC) (Zou & Gu, Reference Zou and Gu2021), and variational Bayes (Hoffman et al., Reference Hoffman, Blei, Wang and Paisley2013). This approach offers two main advantages. First, it improves the computational efficiency of the algorithm. Second, the mini-batch method introduces some noise, thereby increasing the diversity of the sampling and helping to prevent rapid convergence to local optima.

When implementing mini-batch with our proposed algorithm, one needs to determine the mini-batch size. Common practice in machine learning involves selecting batch sizes that are powers of two, such as 32, 64, 128, or 256, due to their compatibility with CPU and GPU memory architectures (Keskar et al., Reference Keskar, Mudigere, Nocedal, Smelyanskiy and Tang2016). Keskar et al. (Reference Keskar, Mudigere, Nocedal, Smelyanskiy and Tang2016) have discussed that large-batch sample size tend to converge to sharp minimizers, while small-batch sample size consistently converge to flatter minimizers. This suggests that using a larger batch size does not necessarily lead to better estimation performance. In contrast, a relatively smaller batch size may introduce more stochasticity, which can help the algorithm escape from local optima. Given these considerations, we will specifically explore the impact of different mini-batch size to the model estimation results in the simulation section.

4.2 Label switch

Before computing the final estimations from the sampling procedure, it is necessary to address the issue of label switching. In the context of Bayesian methods, label switching is a common concern that may arise both within a single Markov chain and across multiple chains. In this article, the unknown Q-matrix may lead to label switching issues among several attributes, which also impacts

![]() $\boldsymbol {\alpha }$

and

$\boldsymbol {\alpha }$

and

![]() $\text {G}$

. Therefore, it is crucial to impose a relabeling process on the iterative data. Denote the

$\text {G}$

. Therefore, it is crucial to impose a relabeling process on the iterative data. Denote the

![]() $J \times K \times T$

-dimension

$J \times K \times T$

-dimension

![]() $\mathcal {Q}$

as the iteration samples of Q. Following the relabeling method in Erosheva and Curtis (Reference Erosheva and Curtis2017), we define

$\mathcal {Q}$

as the iteration samples of Q. Following the relabeling method in Erosheva and Curtis (Reference Erosheva and Curtis2017), we define

![]() $\overline {\mathcal {Q}}$

as the average of the sampled

$\overline {\mathcal {Q}}$

as the average of the sampled

![]() $\mathcal {Q}$

, which serves as the first reference. For each

$\mathcal {Q}$

, which serves as the first reference. For each

![]() $\text {Q}^{(t)} \in \mathcal {Q}$

, we leverage the Hungarian algorithm to address the relabeling issue. Specifically, we construct a

$\text {Q}^{(t)} \in \mathcal {Q}$

, we leverage the Hungarian algorithm to address the relabeling issue. Specifically, we construct a

![]() $K \times K$

cost matrix

$K \times K$

cost matrix

![]() $C^{(t)} = \{c_{kk'}^{(t)}\}$

, where

$C^{(t)} = \{c_{kk'}^{(t)}\}$

, where

Let

![]() $\mathcal {P}$

denote the set of all permutations of

$\mathcal {P}$

denote the set of all permutations of

![]() $\{1,\ldots ,K\}$

. Then, the relabeled

$\{1,\ldots ,K\}$

. Then, the relabeled

![]() $\text {Q}^{(t)}$

, denoted as

$\text {Q}^{(t)}$

, denoted as

![]() $\text {Q}_R^{(t)}$

, is obtained by solving the following assignment problem:

$\text {Q}_R^{(t)}$

, is obtained by solving the following assignment problem:

$$ \begin{align} p^{\ast} = \arg\min_{p \in \mathcal{P}} \sum_{k=1}^K c_{k,p(k)}^{(t)}, \end{align} $$

$$ \begin{align} p^{\ast} = \arg\min_{p \in \mathcal{P}} \sum_{k=1}^K c_{k,p(k)}^{(t)}, \end{align} $$

Note that the above steps need to be repeated. In other words, after obtaining the new relabeled

![]() $\mathcal {Q}_R$

, we need to calculate the new average matrix

$\mathcal {Q}_R$

, we need to calculate the new average matrix

![]() $\overline {\mathcal {Q}}_R$

and repeat the relabel procedure until

$\overline {\mathcal {Q}}_R$

and repeat the relabel procedure until

![]() $\mathcal {Q}_R$

converge. In addition, the same permutation must be applied to both

$\mathcal {Q}_R$

converge. In addition, the same permutation must be applied to both

![]() $\boldsymbol {\alpha }$

and

$\boldsymbol {\alpha }$

and

![]() $\text {G}$

simultaneously, as they are also subject to the same relabel issues involved in Q matrix.

$\text {G}$

simultaneously, as they are also subject to the same relabel issues involved in Q matrix.

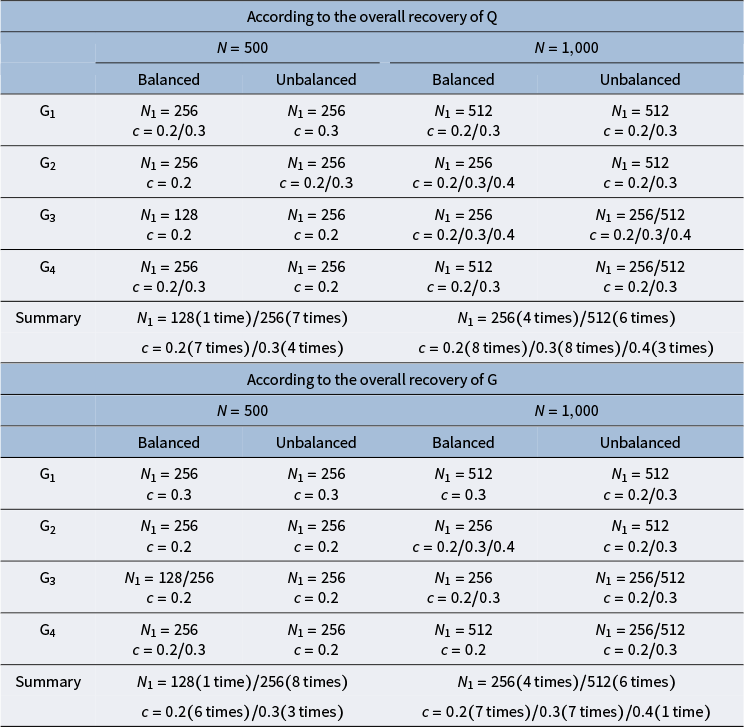

4.3 Final estimation summary

4.3.1 The estimation of

$\text {G}$

$\text {G}$

The estimation of attribute hierarchy is reflected through the estimation of the adjacency matrix

![]() $\text {G}$

. We can use the posterior sample mean as a posterior summary statistic for

$\text {G}$

. We can use the posterior sample mean as a posterior summary statistic for

![]() $\text {G}$

, denoted as

$\text {G}$

, denoted as

![]() $\hat {\text {G}}$

. As a result, each element

$\hat {\text {G}}$

. As a result, each element

![]() $\hat {\text {G}}_{kk'}$

takes a value between 0 and 1, which summarizes the probability for a potential edge from attribute k to

$\hat {\text {G}}_{kk'}$

takes a value between 0 and 1, which summarizes the probability for a potential edge from attribute k to

![]() $k'$

. If an explicit structure is needed for the interpretation of probable hierarchy relationships, a carefully chosen threshold can decide on the final structure. Here, we define a threshold c, referred to as the cut-off value, to make the final estimation of

$k'$

. If an explicit structure is needed for the interpretation of probable hierarchy relationships, a carefully chosen threshold can decide on the final structure. Here, we define a threshold c, referred to as the cut-off value, to make the final estimation of

![]() $\text {G}$

. Specifically, for each element of

$\text {G}$

. Specifically, for each element of

![]() $\hat {\text {G}}$

, if

$\hat {\text {G}}$

, if

![]() $\hat {\text {G}}_{kk'} < c$

, we set

$\hat {\text {G}}_{kk'} < c$

, we set

![]() $\hat {\text {G}}_{kk'} = 0$

; otherwise,

$\hat {\text {G}}_{kk'} = 0$

; otherwise,

![]() $\hat {\text {G}}_{kk'} = 1$

. Chen and Wang (Reference Chen and Wang2023) found that for edges that do not exist and have no indirect relationships between attributes, the estimated values are close to 0. For non-existing edges with indirect relationships between attributes, the estimated values range between 0.2 and 0.4. The specific value of the cut-off value will be explored in the simulation studies.

$\hat {\text {G}}_{kk'} = 1$

. Chen and Wang (Reference Chen and Wang2023) found that for edges that do not exist and have no indirect relationships between attributes, the estimated values are close to 0. For non-existing edges with indirect relationships between attributes, the estimated values range between 0.2 and 0.4. The specific value of the cut-off value will be explored in the simulation studies.

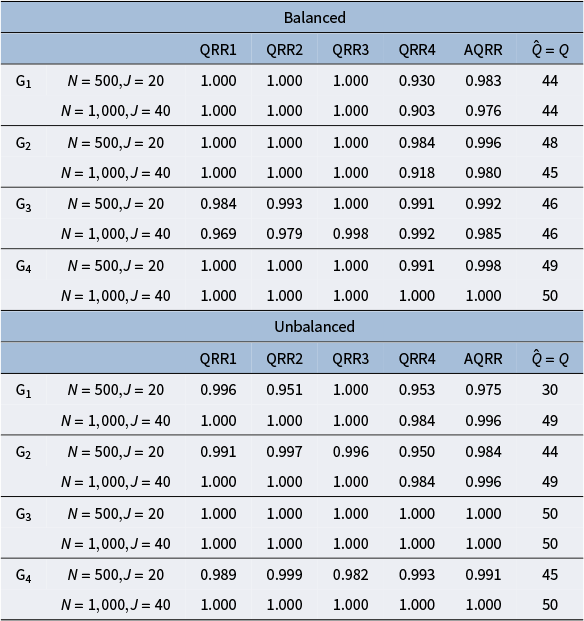

4.3.2 The estimation of

$\text {Q}$

matrix

$\text {Q}$

matrix

After estimating the hierarchical structure, we obtain the estimated Q-matrix through the following two steps. First, similar to the estimation process of

![]() $\hat {\text {G}}$

, we compute the posterior sample mean of the Q-matrix, denoted as

$\hat {\text {G}}$

, we compute the posterior sample mean of the Q-matrix, denoted as

![]() $\hat {\text {Q}}$

, and determine each element as 0 or 1 based on a threshold of 0.5. Second, given the estimated hierarchical structure

$\hat {\text {Q}}$

, and determine each element as 0 or 1 based on a threshold of 0.5. Second, given the estimated hierarchical structure

![]() $\hat {\text {G}}$

, we construct a structured

$\hat {\text {G}}$

, we construct a structured

![]() $\hat {\text {Q}}$

according to the reduced Q-matrix

$\hat {\text {Q}}$

according to the reduced Q-matrix

![]() $\text {Q}_r(\hat {\text {G}})$

, which serves as the final estimation of the Q-matrix.

$\text {Q}_r(\hat {\text {G}})$

, which serves as the final estimation of the Q-matrix.

4.3.3 The other model parameters

For the parameters

![]() $\boldsymbol {s}$

,

$\boldsymbol {s}$

,

![]() $\boldsymbol {g}$

, and

$\boldsymbol {g}$

, and

![]() $\boldsymbol {\pi }$

, we can use their posterior sample means as point estimates. And for the attribute profiles

$\boldsymbol {\pi }$

, we can use their posterior sample means as point estimates. And for the attribute profiles

![]() $\boldsymbol {\alpha }$

, we compute the posterior means and then dichotomize each element using a threshold of 0.5.

$\boldsymbol {\alpha }$

, we compute the posterior means and then dichotomize each element using a threshold of 0.5.

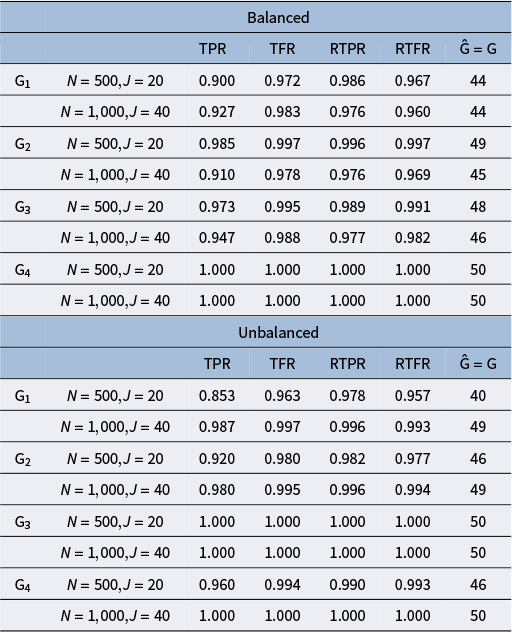

5 Simulation studies

The purpose of this set of simulation studies is to evaluate the performance of the proposed MH-Gibbs algorithm in recovering DINA model parameters across the following factors: 1) sample size and test length, 2) mini-batch size, 3) cutoff values for

![]() $\text {G}$

, 4) attribute profile distribution, and 5) different attribute hierarchy structures.

$\text {G}$

, 4) attribute profile distribution, and 5) different attribute hierarchy structures.

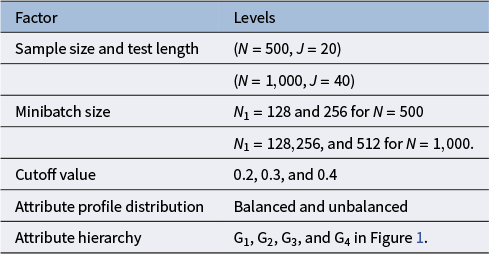

5.1 Design

We consider a set up that a total of

![]() $K=4$

attributes were assessed, and we fix the slipping and guessing parameters in a practical level as

$K=4$

attributes were assessed, and we fix the slipping and guessing parameters in a practical level as

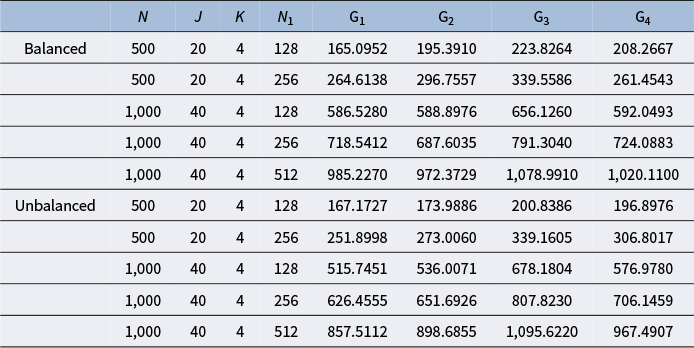

![]() $s_j=g_j=0.2$

. The summary of the five factors considered and their corresponding level is presented in Table 2. These factors were fully crossed, yielding 40 conditions. We conducted four chains in each replication, with the chain length of 20,000 and the burn in value of 10,000, and each simulation repeated 50 times.

$s_j=g_j=0.2$

. The summary of the five factors considered and their corresponding level is presented in Table 2. These factors were fully crossed, yielding 40 conditions. We conducted four chains in each replication, with the chain length of 20,000 and the burn in value of 10,000, and each simulation repeated 50 times.

Table 2 Simulation factors and levels

The two test lengths represent relatively short and medium-length assessments. Recognizing that estimating a high-dimensional, unknown Q-matrix necessitates a larger sample size for accuracy, we increased the sample size paired with the increase of test length in our simulation design. This adjustment reflects both practical considerations and the need for a sufficiently large sample size. The four hierarchical structures of the attributes, as well as studied in previous related work (Chen & Wang, Reference Chen and Wang2023), were therefore also considered in our study.

As for the mini-batch size, as noted in Section 3.3, common practice in machine learning involves selecting batch sizes that are powers of two. To balance convergence speed and computational efficiency, we avoided batch sizes that are excessively small or large. Consequently, we chose batch sizes ranging from one-quarter to one-half of the total sample size. Specifically, for

![]() $N=500$

with

$N=500$

with

![]() $J=20$

, we selected batch sizes of 128 and 256; for

$J=20$

, we selected batch sizes of 128 and 256; for

![]() $N=1,000$

with

$N=1,000$

with

![]() $J=40$

, we chose batch sizes of 128, 256, and 512.

$J=40$

, we chose batch sizes of 128, 256, and 512.

Given the findings in Chen and Wang (Reference Chen and Wang2023), for edges that do not exist and have no indirect relationships between attributes, the estimated values are close to 0. For non-existing edges with indirect relationships between attributes, the estimated values range between 0.2 and 0.4. Therefore, when selecting the cut-off value, we choose 0.2, 0.3, and 0.4.

Finally, following the design in Chen and Wang (Reference Chen and Wang2023), we also considered a balanced and unbalanced attribute profile distribution. For the balanced distribution, the attribute profiles were randomly generated from one of its permissible patterns. For the unbalanced distribution, we first generated

![]() $\boldsymbol {\alpha }^*_{i}=(\alpha ^*_{i1},\ldots ,\alpha ^*_{ik},\ldots ,\alpha ^*_{iK})^{\text {T}}$

from a multivariate normal distribution

$\boldsymbol {\alpha }^*_{i}=(\alpha ^*_{i1},\ldots ,\alpha ^*_{ik},\ldots ,\alpha ^*_{iK})^{\text {T}}$

from a multivariate normal distribution

![]() $\boldsymbol {\alpha }^*_i\sim \text {N}(\boldsymbol {0}_K,\boldsymbol {\Sigma }_{K\times K})$

, where

$\boldsymbol {\alpha }^*_i\sim \text {N}(\boldsymbol {0}_K,\boldsymbol {\Sigma }_{K\times K})$

, where

![]() $\boldsymbol {0_K}=(0,\dots ,0)^{\text {T}}_{K\times 1}$

and

$\boldsymbol {0_K}=(0,\dots ,0)^{\text {T}}_{K\times 1}$

and

$$ \begin{align*}\boldsymbol{\Sigma_{K\times K}}=\begin{bmatrix} 1 & \dots & \sigma\\ \vdots& \ddots & \vdots\\ \sigma & \dots& 1 \end{bmatrix}_{K \times K}, \end{align*} $$

$$ \begin{align*}\boldsymbol{\Sigma_{K\times K}}=\begin{bmatrix} 1 & \dots & \sigma\\ \vdots& \ddots & \vdots\\ \sigma & \dots& 1 \end{bmatrix}_{K \times K}, \end{align*} $$

the off-diagonal elements of

![]() $\Sigma _{K\times K}$

are

$\Sigma _{K\times K}$

are

![]() $\sigma =0.5$

. Then the relationships between the attribute profile

$\sigma =0.5$

. Then the relationships between the attribute profile

![]() $\boldsymbol {\alpha }_i$

and

$\boldsymbol {\alpha }_i$

and

![]() $\boldsymbol {\alpha }^*_i$

can be expressed as

$\boldsymbol {\alpha }^*_i$

can be expressed as

![]() $\alpha _{ik}=1$

if

$\alpha _{ik}=1$

if

![]() $\alpha _{ik}^*>0$

and

$\alpha _{ik}^*>0$

and

![]() $\alpha _{ik}=0$

otherwise.

$\alpha _{ik}=0$

otherwise.

5.2

$\text {Q}$

-matrix design

$\text {Q}$

-matrix design

The establishment of parameter identifiability is a prerequisite for the accurate estimation and reliable interpretation of CDMs. To this end, it is essential to impose a set of constraints on the

![]() $\text {Q}$

-matrix. Previous studies have discussed the identifiability conditions of parameters in CDMs (Chen et al., Reference Chen, Liu, Xu and Ying2015; Chen et al., Reference Chen, Culpepper, Chen and Douglas2018; Gu & Xu, Reference Gu and Xu2023; Ma et al., Reference Ma, Ouyang and Xu2023). In particular, Gu and Xu (Reference Gu and Xu2023) explicitly addressed the identifiability conditions when a hierarchical structure exists among attributes and the

$\text {Q}$

-matrix. Previous studies have discussed the identifiability conditions of parameters in CDMs (Chen et al., Reference Chen, Liu, Xu and Ying2015; Chen et al., Reference Chen, Culpepper, Chen and Douglas2018; Gu & Xu, Reference Gu and Xu2023; Ma et al., Reference Ma, Ouyang and Xu2023). In particular, Gu and Xu (Reference Gu and Xu2023) explicitly addressed the identifiability conditions when a hierarchical structure exists among attributes and the

![]() $\text {Q}$

-matrix is unknown. Therefore, in this study, we followed the identifiability conditions proposed by Gu and Xu (Reference Gu and Xu2023) when generating the

$\text {Q}$

-matrix is unknown. Therefore, in this study, we followed the identifiability conditions proposed by Gu and Xu (Reference Gu and Xu2023) when generating the

![]() $\text {Q}$

-matrix. Specifically, they defined the “densified” version

$\text {Q}$

-matrix. Specifically, they defined the “densified” version

![]() $\mathcal {D}^{\text {G}}(\text {Q})$

and the “sparsified” version

$\mathcal {D}^{\text {G}}(\text {Q})$

and the “sparsified” version

![]() $\mathcal {S}^{\text {G}}(\text {Q})$

of the

$\mathcal {S}^{\text {G}}(\text {Q})$

of the

![]() $\text {Q}$

-matrix. The “densified” version

$\text {Q}$

-matrix. The “densified” version

![]() $\mathcal {D}^{\text {G}}(\text {Q})$

corresponds to what we referred to earlier as the structured

$\mathcal {D}^{\text {G}}(\text {Q})$

corresponds to what we referred to earlier as the structured

![]() $\text {Q}$

-matrix in Section 2.2, which is defined as: for any

$\text {Q}$

-matrix in Section 2.2, which is defined as: for any

![]() $q_{j,k'} = 1$

and

$q_{j,k'} = 1$

and

![]() $k \to k'$

, set

$k \to k'$

, set

![]() $q_{j,k} = 1$

. Conversely, the “sparsified” version

$q_{j,k} = 1$

. Conversely, the “sparsified” version

![]() $\mathcal {S}^{\text {G}}(\text {Q})$

is defined as: for any

$\mathcal {S}^{\text {G}}(\text {Q})$

is defined as: for any

![]() $q_{j,k'} = 1$

and

$q_{j,k'} = 1$

and

![]() $k \to k'$

, set

$k \to k'$

, set

![]() $q_{j,k} = 0$

. For example, under the structure

$q_{j,k} = 0$

. For example, under the structure

![]() $\text {G}_1$

, given a q-vector

$\text {G}_1$

, given a q-vector

![]() $\boldsymbol {q} = (0,1,1,0)$

, the “densified” version

$\boldsymbol {q} = (0,1,1,0)$

, the “densified” version

![]() $\mathcal {D}^{\text {G}}(\boldsymbol {q})$

becomes

$\mathcal {D}^{\text {G}}(\boldsymbol {q})$

becomes

![]() $(1,1,1,0)$

, whereas the “sparsified” version

$(1,1,1,0)$

, whereas the “sparsified” version

![]() $\mathcal {S}^{\text {G}}(\boldsymbol {q})$

becomes

$\mathcal {S}^{\text {G}}(\boldsymbol {q})$

becomes

![]() $(0,0,1,0)$

. Moreover, under

$(0,0,1,0)$

. Moreover, under

![]() $G_1$

, these three versions are equivalent, that is,

$G_1$

, these three versions are equivalent, that is,

Denote the

![]() $\text {Q}$

-matrix contains the following two components:

$\text {Q}$

-matrix contains the following two components:

$$ \begin{align*}\mathbf{Q} = \left( \begin{array}{c} \mathbf{Q}^0_{K\times K} \\ \mathbf{Q}^{\star}_{(J-K)\times K} \end{array} \right). \end{align*} $$

$$ \begin{align*}\mathbf{Q} = \left( \begin{array}{c} \mathbf{Q}^0_{K\times K} \\ \mathbf{Q}^{\star}_{(J-K)\times K} \end{array} \right). \end{align*} $$

Specifically, we generate the Q-matrix satisfying the following three identifiability conditions: (A) the sub-matrix

![]() $\text {Q}^0$

is equivalent to the identity matrix

$\text {Q}^0$

is equivalent to the identity matrix

![]() $I_K$

, that is,

$I_K$

, that is,

![]() $\text {Q}^0 \stackrel {\text {G}}{\sim }I_K$

; (B) the

$\text {Q}^0 \stackrel {\text {G}}{\sim }I_K$

; (B) the

![]() $\mathcal {S}^{\text {G}}(\text {Q})$

has at least three ones in each column; and (C) the

$\mathcal {S}^{\text {G}}(\text {Q})$

has at least three ones in each column; and (C) the

![]() $\mathcal {D}^{\text {G}}\left (\text {Q}^{\star }\right )$

contains K distinct column vectors.

$\mathcal {D}^{\text {G}}\left (\text {Q}^{\star }\right )$

contains K distinct column vectors.

Moreover, once we generated

![]() $\text {Q}$

-matrices that satisfied the identifiability conditions, we uniformly adopted their corresponding “densified” versions, that is, the structured Q-matrices. It is worth noting that during the 50 replications under each simulation condition, we generated 50 distinct Q-matrices. This design may reduce comparability across different conditions. However, it allows us to better evaluate the performance and robustness of our algorithm across different Q-matrices under each condition.