Auditory processing is a critical mechanism for language learning that enables individuals to analyze and interpret auditory input (Bavin et al., 2010; Mueller et al., 2012). It encompasses key components such as perceptual acuity, selective attention, and audio-motor integration, which collectively facilitate the perception and production of language (Kraus & Banai, 2007; Saito et al., 2024). In the context of first language (L1) acquisition, auditory processing plays a crucial role in supporting language development processes from birth, including word segmentation, phonological development, and syntactic parsing (Kuhl, 2004; Saffran et al., 1996a; Thompson & Newport, 2007). While its role in L1 learning has been extensively researched, the contribution of auditory processing to second language (L2) acquisition has begun to be explored only relatively recently, mainly in the domain of L2 speech perception and production (Lengeris & Hazan, 2010). Emerging evidence suggests that auditory statistical learning (SL), the ability to detect and internalize patterns in auditory input, functions as a dimension of auditory processing and may underlie broader linguistic outcomes in language acquisition, including vocabulary and grammar development (Erickson & Thiessen, 2015; Prince et al., 2018). These findings suggest that auditory SL, like other auditory processing abilities (e.g., acuity), may also contribute to L2 acquisition, with the potential to shape learners’ ability to acquire complex linguistic structures (Hamrick et al., 2018).
To extend this line of research and deepen our understanding of the role of auditory SL, the current study examines whether pitch SL (rather than perceptual acuity) predicts L2 morphosyntactic knowledge. Additionally, drawing on research on threshold effects in auditory processing and L2 learning (Ruan & Saito, 2023), we investigate whether learners with lower and higher pitch SL abilities benefit differently from auditory input in L2 morphosyntactic learning. This study provides new empirical evidence on how pitch SL mechanisms shape L2 grammar acquisition, expanding our understanding of auditory processing beyond phonological learning.

The role of auditory processing in second language acquisition

L2 acquisition is a complex phenomenon, and how far and how well one can acquire a language is subject to individual differences such as learners’ L1, amount of L2 exposure and use, and presence of instruction (see Li et al., 2022, for a comprehensive review). Another factor thought to be a key determinant of L2 learning is domain-general auditory processing (Kachlicka et al., 2019; Mueller et al., 2012). It is considered a bottleneck for language learning (Goswami, 2015) and a fundamental and dynamic system critical for understanding and interacting with the auditory environment (Kraus & Banai, 2007). This collection of functions includes perceptual acuity (i.e., the ability to detect subtle differences in the acoustic properties of sound), selective attention (i.e., directing attention to certain acoustic dimensions over others), and audio-motor integration abilities (i.e., remembering sounds and mapping them to motor representations), all of which are involved in speech perception as well as music appreciation (e.g., Bent et al., 2006; Krishnan et al., 2005; for more discussion of the composite and dynamic nature of auditory processing, see Kraus & Banai, 2007; Saito et al., 2024).
Research on L1 acquisition has demonstrated that auditory processing is a source of both lower-level processing skills (e.g., phonological perception; Kuhl, 2000, 2004) and higher-level linguistic skills (e.g., lexical and morphosyntactic knowledge; Anvari et al., 2002; Bavin et al., 2010; Boets et al., 2008; Cutler & Butterfield, 1992; Kalashnikova et al., 2019; Marslen-Wilson et al., 1992; Talcott et al., 2000). For instance, Bavin et al. (2010) showed that frequency discrimination and temporal processing in 4–5-year-old children were associated with their language comprehension (such as comprehending words, sentences, and instructions) as well as their language production (speaking words, forming sentences, and using language to communicate effectively). Furthermore, Tierney et al. (2021) found that children’s ability to perceive the temporal structure of sound was linked to their reading skills. Such evidence collectively suggests that auditory processing may be a fundamental factor for various aspects of language acquisition.

In the context of L2 learning, research has confirmed that auditory processing continues to play a role (Hamrick et al., 2018), and its impact may be even more consequential. With Greek learners of English, Lengeris and Hazan (2010) found that those who were better frequency discriminators achieved higher accuracy in English vowel perception and production. Another study by Saito et al. (2020b) identified the boosting role of auditory processing in L2 speech learning. They conducted a longitudinal investigation of auditory acuity (formant, duration, and pitch) among Mandarin Chinese speakers and examined its relationship with English pronunciation proficiency. The results highlighted the critical role of formant auditory acuity in enhancing learners’ immersion experience, enabling some individuals to achieve greater segmental and word stress accuracy, which in turn led to more advanced L2 phonological skills characterized by greater fluency and accuracy (see Saito et al., 2020a, for sensitivity to different types of formant and L2 pronunciation proficiency; also see Zheng et al., 2020, for evidence that acuity in duration discrimination contributes to better segmental and word stress learning). Saito et al. (2020b) also found that the function of auditory processing becomes more evident when learners spend a longer period of time in an environment where they are exposed to the target language and use it for interaction (i.e., an immersion setting). Furthermore, not only acuity but also audio-motor integration and attention (i.e., the ability to focus on a single acoustic feature while ignoring irrelevant dimensions) predicted successful L2 learning (Saito et al., 2024).

This line of research suggests that, in addition to one’s L1 system as well as experiential, psychological, and cognitive variables, auditory processing plays a crucial role in L2 acquisition, where higher levels of precision, integration, and selective attention significantly enhance the rate of learning and ultimate attainment. However, while the predictive power of domain-general auditory processing has been extensively explored in the context of accurate L2 sound perception and production (e.g., Bakkouche & Saito, 2025; Lengeris & Hazan, 2010; Ruan & Saito, 2023; Saito et al., 2020a, 2020b; Sun et al., 2021; Zheng et al., 2020), its contribution to the learning of other linguistic aspects remains largely underexplored. Limited evidence includes Kachlicka et al.’s (2019) study, which identified a link between auditory processing and morphosyntactic knowledge, and Saito et al.’s (2022c) findings that precise auditory processing contributes to vocabulary and morphosyntactic knowledge (see also Saito et al., 2022a). To deepen our understanding of how auditory processing contributes to L2 learning beyond pronunciation, particularly in contrast to L1 learning (e.g., Saffran et al., 1996b; Thompson & Newport, 2007; Tomasello, 2000), this study aims to extend previous efforts by investigating the impact of auditory processing on L2 morphosyntactic knowledge.
In particular, we focus on the SL of acoustic properties of sound (i.e., an ability to track particular acoustic patterns) as a part of auditory processing abilities.

Auditory statistical learning as a component of auditory processing

Domain-general auditory processing has been identified as a fundamental ability that drives both L1 and L2 learning. From a theoretical perspective, auditory processing encompasses a set of abilities that are dynamic and malleable, shaped by experience, environmental factors, and training (Kraus & Banai, 2007), and most existing literature on auditory processing concerns perceptual acuity (i.e., how accurately listeners discriminate subtle acoustic details of sounds; see Bavin et al., 2010, for tone frequency discrimination; Tierney et al., 2021, for rhythm perception), audio-motor integration (e.g., Flaugnacco et al., 2014, for rhythm reproduction; Pulvermüller et al., 2006, for neurological evidence), and selective attention (Kumar et al., 2023; Stewart, 2017). Building on the current understanding of domain-general auditory processing as a shared system for both L1 and L2 learning, we propose that auditory SL may serve as a crucial yet understudied component of auditory processing in L2 acquisition (see Hamrick et al., 2018, for a discussion of the domain-general learning system’s contributions to both L1 and L2 acquisition). While auditory SL plays a well-established role in L1 learning, its specific impact on L2 learning remains less explored (e.g., Prince et al., 2018). In the L1 literature, auditory SL is recognized as distinct from perceptual acuity, which involves the fine-grained perception of acoustic properties such as pitch, duration, and intensity (e.g., Aslin, 2017; Theeuwes et al., 2022).
While perceptual acuity provides the sensory foundation necessary for recognizing individual sounds, auditory SL transcends this by enabling the detection and utilization of statistical regularities in sequences of auditory input. This ability plays a key role in the development of language, perception, motor skills, and social behavior (Perruchet & Pacton, 2006; Ren et al., 2023; Siegelman et al., 2017). SL has been assessed through various measures, including the serial reaction time (SRT) task (e.g., Nissen & Bullemer, 1987), the two-alternative forced-choice test (e.g., Saffran et al., 1996a), and artificial grammar learning (e.g., Reber, 1989). All of these involve identifying patterns in the statistical structure of input, such as how often certain linguistic or nonlinguistic elements occur, their variability, and how they are distributed or co-occur (see Isbilen & Christiansen, 2022; Erickson & Thiessen, 2015, for details of multiple approaches to SL).
This mechanism of tracking patterns, critical for parsing continuous auditory streams into meaningful units such as words or phrases, is vital for early language acquisition (Saffran et al., 1996a) and for multiple aspects of language learning, including recognizing word boundaries (e.g., Perruchet & Vinter, 1998; Swingley, 2005; Saffran et al., 1996b; Thiessen & Erickson, 2013), acquiring phonological knowledge (e.g., Maye et al., 2002; Thiessen & Saffran, 2003, 2007), and understanding syntactic rules (e.g., Thompson & Newport, 2007; Tomasello, 2000). A well-known study by Saffran et al. (1996a) examined how 8-month-old infants process auditory input by exposing them to a continuous stream of spoken syllables. The findings revealed that, through passive listening, infants were able to discern the underlying structure of the speech stream and segment it into meaningful units, recognizing syllable patterns that consistently co-occurred as novel words. Since this seminal work, research using artificial linguistic input has yielded various insights into how learners detect and utilize patterns in their auditory environment (e.g., Erickson & Thiessen, 2015; Prince et al., 2018). For instance, SL allows infants to identify word boundaries by tracking the likelihood of certain syllables occurring together, thus segmenting continuous streams of speech into discrete words (e.g., Newport & Aslin, 2000).
The consistent pairing of words with objects or events in their surroundings provides infants with clues about word meanings, facilitating the formation of connections between lexical items and their referents (e.g., Vouloumanos, 2008; Yu & Smith, 2007). In addition, by attending to how often certain words or sounds appear together in similar situations, learners also acquire nonadjacent dependencies (i.e., patterns or connections between words or sounds that are separated by other words, such as “is” and “ing” in “Grandma is singing”; example taken from Lammertink et al., 2019). Over time, our brains use this statistical information to figure out the rules of the language, such as which words or endings typically go together, and this learning seems to occur even when the elements are not adjacent (e.g., Creel et al., 2004). This type of learning is a key part of how we understand and use grammar, and these examples underscore that auditory SL ability is a foundational process of language learning (see Erickson & Thiessen, 2015, for a review). The evidence above collectively indicates that auditory SL facilitates language acquisition by enhancing word segmentation and syntax learning in complex auditory environments (Marchetto & Bonatti, 2015).

Beyond these L1 studies, a line of work in SLA and cognitive science has established implicit SL (see Footnote 1) as a fundamental cognitive process that supports grammar acquisition more generally (see Rebuschat & Monaghan, 2019, for a review). Large-scale reviews such as Frost et al. (2019) emphasize that sensitivity to distributional properties in input is a fundamental, domain-general mechanism, although its influence on language learning can be constrained by modality-specific factors, resulting in the development of different types of L2 knowledge (Frost et al., 2015; Walk & Conway, 2015). In particular, Kim and Godfroid (2019) directly compared auditory and written input in an artificial grammar learning paradigm. They found that auditory exposure fostered more implicit sensitivity to underlying grammatical regularities, whereas written exposure encouraged more explicit, metalinguistic knowledge (see also Conway & Christiansen, 2005; Emberson et al., 2011). Similarly, Zhao et al. (2021) demonstrated that modality interacts with awareness: auditory exposure promoted implicit grammatical sensitivity even without awareness, while visual exposure more readily encouraged explicit knowledge when awareness was present. Following this line of research, the current study adopted a modality-specific view (i.e., auditory and visual SL are two related yet independent phenomena), with our main focus on the former as an individual ability rather than a learning process.

Importantly, modality specificity appears to be mirrored at the individual-differences level (i.e., the focus of the current study). Siegelman and Frost (2015) administered a large battery of visual and auditory SL tasks, showing that SL is not a unitary construct but a constellation of partially independent abilities. Learners who were stronger in one modality or stimulus type did not necessarily excel in another. Extending this perspective, Siegelman et al. (2018) reported that auditory SL ability varies by stimulus domain. Comparing auditory–verbal (speech-like items such as lenamo) with auditory–nonverbal conditions (everyday sounds such as whistles, car beeps, and animal calls), they found the former to be susceptible to learners’ L1 knowledge. Despite scholarly interest in individual differences in auditory SL, direct links to L2 development remain limited. Godfroid and Kim (2021) administered an aptitude battery comprising an auditory SL task (exposure to everyday sounds followed by four-alternative forced-choice; offline, reflection-based measure), a visual SL task (complex shapes followed by four-alternative forced-choice; offline, reflection-based measure), the alternating SRT task (RT-based visual SL; online, processing-based measure), the Tower of London, and a set of L2 grammar measures to model implicit versus explicit knowledge (e.g., word monitoring, self-paced reading, timed GJTs, oral production). They found that neither auditory nor visual SL (offline measures) uniquely predicted implicit L2 grammar knowledge (indexed by the grammar tests), whereas the online SL measure (visual ASRT) provided the only reliable unique prediction.
These findings not only suggest that processing-based (online) measures may offer a clearer link to L2 grammar knowledge, but also underscore the need for further investigation of the role of auditory SL beyond everyday sounds or domain-specific stimuli such as syllables. In fact, while this line of research collectively situates auditory SL within a wider theoretical framework in which SL is a core driver of L2 grammatical development, it is crucial to note that, despite ample evidence of sound processing and grammar acquisition in L1 (Thompson & Newport, 2007; Tomasello, 2000) and L2 (Kim & Godfroid, 2019; Zhao et al., 2021), no prior SLA studies have examined SL of acoustic properties (i.e., domain-general, nonverbal) from the perspective of auditory processing (an approach that is clearly distinct from the pattern detection of everyday sounds such as car beeps or animal sounds used in the previous studies). More specifically, given the prominent role of pitch in speech comprehension and syntactic parsing (e.g., Steffman & Jun, 2019), we were particularly interested in how SL of pitch sequences (i.e., pitch SL) relates to the morphosyntactic aspects of L2 learning.

Furthermore, imprecision of auditory processing appears to be linked to delays in language acquisition. Research shows that toddlers with difficulties discriminating certain sound dimensions are more likely to experience slower phonological, lexical, and morphosyntactic development, which may lead to broader language challenges such as dyslexia (e.g., Casini et al., 2018, for duration; Goswami et al., 2011, for amplitude rise time; McArthur & Bishop, 2005, for fundamental frequency). However, some studies challenge the causal relationship between auditory processing and L1 impairments (Rosen, 2003; Schulte-Körne & Bruder, 2010). Findings from L1 acquisition research consistently suggest that imprecise or low levels of auditory processing are associated with delays in language learning (e.g., Hämäläinen et al., 2012, for auditory deficits in dyslexia; Kalashnikova et al., 2019, for longitudinal evidence linking auditory processing to literacy development within the first three years of life). Individual differences in auditory processing have also been linked to challenges in L1 acquisition (Goswami et al., 2011) and literacy development (Gibson et al., 2006). Yet, these delays may be mitigated by other cognitive and perceptual abilities, such as attention, memory, and cognitive control (e.g., Jasmin et al., 2020; McArthur & Bishop, 2005; Snowling et al., 2018; see also Rosen & Manganari, 2001, for further discussion).
Similarly, emerging evidence from L2 speech learning suggests that L2 learners with imprecise auditory processing abilities may struggle to analyze the acoustic properties of L2 input, particularly where these properties differ from their L1 input. As a result, such learners may continue to rely on L1 perception strategies and fail to adopt more native-like L2 perception strategies, even after prolonged exposure to L2 input (Perrachione et al., 2011; see Ruan & Saito, 2023, for the mediating role of auditory processing in L2 pronunciation instruction). Focusing on participants’ auditory acuity, Ruan and Saito (2023) showed that participants with poorer auditory processing abilities did not improve in L2 sound perceptual accuracy, whereas those with better auditory processing made tangible gains from the instruction. These findings suggest that there may be a threshold of auditory processing ability that learners must reach in order to benefit from language instruction. In other words, learners may need a certain level of auditory processing to make the most of the input and convert it into learning. However, whether and to what extent poorer or better pitch SL impacts L2 learning remains unclear (a focus of the current study).

Current study

Research evidence suggests that auditory SL may function as an integral component of auditory processing (e.g., Lammertink et al., 2019). Auditory SL refers to the ability to detect and internalize patterns in auditory input, which is critical for identifying linguistic regularities. Studies indicate that auditory SL abilities might have a facilitative role in language learning, as this skill supports the extraction of structural patterns necessary for linguistic aspects such as vocabulary and grammar acquisition in L1 (de Waard et al., 2025; Marchetto & Bonatti, 2015) and in L2 (e.g., Godfroid & Kim, 2021). However, the extent to which auditory SL affects L2 learning remains understudied. Furthermore, despite the wealth of research on auditory processing and its impact on phonological aspects of L2 learning (both production and perception), relatively few studies have explored its role in higher-order linguistic dimensions, such as morphosyntactic acquisition. Existing evidence, such as that of Kachlicka et al. (2019) and Saito et al. (2022c), highlights small-to-medium associations between auditory processing acuity and morphosyntactic knowledge. However, these findings primarily address the acuity dimension of auditory processing and do not capture the role of SL (i.e., detecting and internalizing patterns in sound sequences rather than discriminating individual acoustic features with precision). This gap underscores the need to investigate how auditory SL, as a part of auditory processing, can predict not only phonological but also higher-order linguistic outcomes in L2 learning.

The current study focuses specifically on pitch SL because pitch, together with duration, is considered to play a fundamental role in perceiving language, as it provides prosodic cues that support the processing of sentence intonation and syntactic parsing (e.g., Braun & Johnson, 2011; Steffman & Jun, 2019). For instance, in English (the L2 in focus in the current study), slight changes in pitch and duration signal phrase boundaries, enabling listeners to distinguish where one phrase ends and another begins within sentences (de Pijper & Sanderman, 1994). Also, linguistic focus is often marked by greater pitch movement within the emphasized phrase or word, highlighting its importance in conveying meaning (Breen et al., 2010). As such, pitch and duration systematically shape the acoustic and articulatory properties of speech segments (see Cho, 2016, for an overview). Difficulties with discerning pitch patterns, therefore, could delay the acquisition of syntax (Shukla et al., 2007; Tremblay et al., 2018) and the ability to detect linguistic structure (Langus et al., 2012; Marslen-Wilson et al., 1992). Thus, this study addresses the following research questions:

1. Does auditory SL ability in pitch, rather than pitch acuity, contribute to L2 morphosyntactic learning?

2. If so, do lower and higher levels of pitch SL ability differentially affect L2 morphosyntactic learning?

Based on the existing studies, the following hypotheses can be developed. First, given that auditory SL is critical for detecting and internalizing linguistic patterns in an auditory stream (Perruchet & Pacton, 2006), and that pitch is a key prosodic feature used in syntactic parsing of languages (Braun & Johnson, 2011; de Pijper & Sanderman, 1994), we hypothesize that learners with stronger auditory SL abilities in pitch will demonstrate greater morphosyntactic proficiency in their L2. Second, while pitch acuity (i.e., the ability to discriminate subtle pitch differences) is fundamental to speech perception and production (e.g., Bakkouche & Saito, 2025; Saito et al., 2020a; Sun et al., 2021; Zheng et al., 2020), auditory SL goes beyond mere perception and enables learners to extract structural patterns that underlie syntax (Shukla et al., 2007; Tremblay et al., 2018). Therefore, we expect auditory SL in pitch to predict L2 morphosyntactic learning more strongly than pitch acuity. Finally, previous research suggests that auditory processing ability may have a threshold effect on L2 learning (Ruan & Saito, 2023; Saito & Tierney, forthcoming), meaning that learners with lower auditory processing abilities may struggle to fully benefit from linguistic input, which may cause a delay in the language acquisition process. Following this line of thought, we predict that learners with lower levels of auditory SL may struggle to acquire morphosyntactic structures relative to those with higher levels of auditory SL.

Methods

Participants

A total of 93 Japanese learners (M age = 21.5, range = 18–32) studying English as a Foreign Language (EFL) participated in the study. At the time of the study, they were university students in Japan, studying in various departments. They had received a formal English education in junior high and high school in Japan. Their exposure to spoken English outside the university was less than 30 minutes per week. Participants’ L2 English proficiency varied considerably, ranging from A2 to C1, according to their TOEIC score.

Auditory statistical learning

Participants’ pitch SL ability was assessed with an auditory SRT task, adapted from the classic visual SRT paradigm developed by Nissen and Bullemer (1987). The original SRT task has been widely employed to measure implicit learning of sequential regularities (e.g., Destrebecqz & Cleeremans, 2001; Kaufman et al., 2010; Stefaniak et al., 2008), and numerous studies have created auditory or domain-specific adaptations of this paradigm to capture SL across different modalities (e.g., Lum & Kidd, 2012; Hamrick, 2015; Tagarelli et al., 2016). Building on this work, the present study introduces a novel pitch-focused auditory SRT task, which has not previously been applied in SLA research. The task utilized four distinct auditory stimuli differing only in pitch to create a specific 10-item sequence (e.g., 2–1–4–3–4–1–3–2–4–1). Following the methodological decisions of Sun et al.’s (2021) pitch sequence reproduction task, the fundamental frequencies of the stimuli were set at 220 Hz, 246.9 Hz, 277.2 Hz, and 311.1 Hz, representing the first four notes of the major scale. These frequencies were chosen for their distinctiveness, ensuring relatively clear perceptual differentiation between the notes. The amplitude was kept constant across all harmonics. The sequences were presented auditorily through headphones connected to a PC running the online platform Gorilla (Anwyl-Irvine et al., 2020). Each pitch was mapped to a specific key on a keyboard connected to the PC (220 Hz mapped to the D key, 246.9 Hz to the F key, 277.2 Hz to the J key, and 311.1 Hz to the K key; see Figure 1).
The key assignments remained consistent across trials for all participants. To help participants associate each pitch with its corresponding key and become familiar with the use of the keyboard while listening to the sounds, they completed a practice session prior to the main task. After confirming that participants had established stable associations between the pitches and their corresponding keys, they proceeded to the main task. The main task consisted of six blocks. The first and sixth blocks presented pseudorandom sequences, while the second to fifth blocks presented a patterned sequence (transitional probability between 0.33 and 1, with the letters corresponding to the keyboard keys). Each block contained 60 trials, resulting in a total of 360 trials (cf. Nissen & Bullemer, Reference Nissen and Bullemer1987). Participants’ SL scores were calculated by subtracting their RTs in the final patterned block (fifth block) from their RTs in the final pseudorandom block (sixth block). Only RTs for correct responses were included in the calculation. To control for individual differences in overall reaction speed, the values were standardized across participants (i.e., converted into z-scores). Importantly, participants were explicitly instructed to react to tones and press keys as accurately and quickly as possible in order to minimize the likelihood that they would exert conscious efforts to learn regularities. In the post-task survey asking whether participants had noticed any sequences or patterns during the task, none reported recognizing the regularities, suggesting that explicit learning was unlikely to have occurred. Following Buffington and Morgan-Short (Reference Buffington, Morgan-Short, Kalish, Rau, Zhu and Rogers2018), the internal reliability of the SRT learning effect was evaluated using the Spearman–Brown split-half coefficient. 
SRT tasks involve long sequences of trials rather than discrete test items, and therefore a simple split-half correlation would underestimate reliability. To address this, the task was divided into two halves, and learning scores (reaction time difference between random and patterned trials) were calculated separately for each half. The correlation between the two halves was then adjusted using the Spearman–Brown prophecy formula (see Kaufman et al., Reference Kaufman, DeYoung, Gray, Jiménez, Brown and Mackintosh2010, for a similar approach for the reliability calculation of SRT tasks). The correlation between first- and second-half learning scores was r = .91 (95% CI [.87, .94], p < .001). A Spearman–Brown corrected reliability was r = .95. This indicates that the SRT in the present study constitutes a robust measure of procedural learning ability, comparable to previous reports (e.g., .99 in Buffington & Morgan-Short, 2018; .96 in Godfroid & Kim, Reference Godfroid and Kim2021; .33 in Kaufman et al., Reference Kaufman, DeYoung, Gray, Jiménez, Brown and Mackintosh2010).
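The scoring and reliability steps described above can be sketched in Python as follows. This is an illustration only: the trial layout (dictionaries with `block`, `rt_ms`, and `correct` fields), the function names, the demo values, and the use of the sample standard deviation for the z-scores are assumptions, not details taken from the study's materials.

```python
from statistics import mean, stdev


def learning_score(trials):
    """Mean correct-response RT in the final pseudorandom block (6)
    minus mean correct-response RT in the final patterned block (5)."""
    rt_random = mean([t["rt_ms"] for t in trials
                      if t["block"] == 6 and t["correct"]])
    rt_pattern = mean([t["rt_ms"] for t in trials
                       if t["block"] == 5 and t["correct"]])
    return rt_random - rt_pattern


def z_scores(values):
    """Standardize scores across participants (sample SD is an assumption)."""
    m, sd = mean(values), stdev(values)
    return [(v - m) / sd for v in values]


def spearman_brown(r_half):
    """Spearman-Brown prophecy formula for a test split into two halves."""
    return 2 * r_half / (1 + r_half)


# Hypothetical trials for one participant; the incorrect trial is ignored.
demo_trials = [
    {"block": 5, "rt_ms": 400, "correct": True},
    {"block": 5, "rt_ms": 420, "correct": True},
    {"block": 5, "rt_ms": 900, "correct": False},
    {"block": 6, "rt_ms": 480, "correct": True},
    {"block": 6, "rt_ms": 500, "correct": True},
]
demo_score = learning_score(demo_trials)           # 490 - 410 ms
demo_z = z_scores([80, 35, 120, -10, 60])          # hypothetical group scores
corrected = spearman_brown(0.91)                   # half-test r reported above
```

Applying `spearman_brown` to the reported half-test correlation of .91 gives roughly .95, which agrees with the corrected reliability stated above.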

Figure 1. Visual image of how participants interact with the task. The participants position their fingers on the D, F, J, and K keys and listen to tones played through the computer. As each tone is played, they press the corresponding key: 220 Hz is mapped to the D key, 246.9 Hz to the F key, 277.2 Hz to the J key, and 311.1 Hz to the K key.

Pitch discrimination ability

Participants completed a pitch discrimination task to measure auditory pitch acuity. The task was adapted from Saito et al. (Reference Saito, Kachlicka, Suzukida, Mora-Plaza, Ruan and Tierney2024) and made available through Gorilla Open Materials (Anwyl-Irvine et al., Reference Anwyl-Irvine, Massonnié, Flitton, Kirkham and Evershed2020; https://app.gorilla.sc/open-materials/497080). The task followed an AXB discrimination format, where three tones were presented with an inter-stimulus interval of 0.5 seconds. Participants identified whether the first or third tone in each trial differed from the others by pressing “1” or “3.” An adaptive threshold procedure (cf. Levitt, Reference Levitt1971) was used, with the size of the difference between tones varying based on participants’ performance. A total of 101 four-harmonic complex tones (500 ms each) were generated for the task, with a fundamental frequency set at 330 Hz and equal amplitude across harmonics. The test began at Level 50. For each incorrect response, the task became easier by 10 steps (widening the difference). Conversely, three consecutive correct responses made the task harder by 10 steps (narrowing the difference). After the second reversal (a shift from increasing to decreasing difficulty, or vice versa), the step size decreased: first to five, then to two, and finally to one. The task concluded after 70 trials or eight reversals.

Pitch acuity scores were calculated as the mean threshold of all reversals from the second onward, with lower scores indicating greater sensitivity to pitch differences. The target acoustic dimension varied in steps of 0.3 Hz in F0 (330.3–360 Hz). In terms of reliability, because the thresholds are derived from the full adaptive trajectory rather than from a set of parallel items, internal-consistency metrics (e.g., split-half and Cronbach’s α) are deemed to be inappropriate. Following existing auditory processing research (e.g., Saito et al., Reference Saito, Sun and Tierney2020b; Saito et al., Reference Saito, Macmillan, Kroeger, Magne, Takizawa, Kachlicka and Tierney2022a), reliability is best evaluated through test–retest stability across sessions. To this end, we conducted a follow-up analysis with a separate group of participants (N = 30 EFL Japanese learners; not included in the present study), who completed the task twice with a 24-hour interval between sessions. The test–retest correlation was r = .62, representing medium strength and satisfactory reliability for this type of perceptual threshold measure.
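To make the adaptive procedure concrete, here is a minimal Python sketch of a staircase with the rules described above (start at Level 50, one incorrect response makes the task easier, three consecutive correct responses make it harder, stop after 70 trials or eight reversals, score as the mean of the reversals from the second onward). Several details are assumptions rather than facts from the task's source: that the step size shrinks by one stage at each reversal from the second onward (10, 5, 2, 1), that levels are bounded at 1 and 100, that a level of k corresponds to an F0 difference of 0.3 × k Hz, and the simulated listener itself.

```python
from statistics import mean


def run_staircase(is_correct, start=50, max_trials=70, max_reversals=8,
                  lowest=1, highest=100):
    """Simulate the staircase; `is_correct(level)` returns True/False.
    Higher levels mean a larger (easier) pitch difference."""
    step_sizes = [10, 5, 2, 1]   # assumed schedule: shrink at reversals 2, 3, 4
    step_idx = 0
    level = start
    streak = 0                   # consecutive correct responses
    last_direction = 0           # +1 = easier, -1 = harder, 0 = no move yet
    reversals = []

    for _ in range(max_trials):
        if is_correct(level):
            streak += 1
            if streak < 3:
                continue         # level changes only after 3 correct in a row
            streak = 0
            direction = -1       # harder: narrow the difference
        else:
            streak = 0
            direction = +1       # easier: widen the difference

        if last_direction and direction != last_direction:
            reversals.append(level)
            if len(reversals) >= 2 and step_idx < len(step_sizes) - 1:
                step_idx += 1
            if len(reversals) >= max_reversals:
                break
        last_direction = direction
        level = min(highest, max(lowest, level + direction * step_sizes[step_idx]))

    # Threshold: mean of all reversals from the second onward.
    threshold = mean(reversals[1:]) if len(reversals) > 1 else None
    return threshold, reversals


def level_to_hz_difference(level, step_hz=0.3):
    """Assumed mapping from level to F0 difference in Hz."""
    return level * step_hz


# Hypothetical deterministic listener: correct whenever level >= 20.
threshold, reversals = run_staircase(lambda level: level >= 20)
```

With this idealized listener the run ends on the eighth reversal well before the 70-trial limit, and the threshold settles just below the listener's cut-off level. A real listener responds probabilistically, so actual trajectories are noisier.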

L2 learning outcome: morphosyntactic knowledge

A timed version of the Grammatical Judgment Test (GJT; Godfroid et al., Reference Godfroid, Loewen, Jung, Park, Gass and Ellis2015) was used to assess the participants’ morphosyntactic knowledge in English. The GJT has been widely used in the field of SLA, and there is a consensus that it serves as a proxy of L2 morphosyntax proficiency (cf. Kachlicka et al., Reference Kachlicka, Saito and Tierney2019). There is ample evidence that the task can tap into the degree of automatization of morphosyntax knowledge that matters for real-life L2 communication (Ellis, Reference Ellis2005) and that the outcomes of the GJT have been linked to a range of key developmental phenomena in SLA such as age of acquisition (Johnson & Newport, Reference Johnson and Newport1989) and length of immersion (Faretta-Stutenberg & Morgan-Short, Reference Faretta-Stutenberg and Morgan-Short2018). The test asks participants to judge the grammatical acceptability of written sentences under time pressure, which encourages quick and intuitive judgments about sentence correctness. Importantly, participants did not need to identify the details of the errors in the sentences or provide the correct form. The test comprised grammatical and ungrammatical versions of 68 English sentences. Including a wide range of examples ensured a robust assessment of grammatical acceptability across all targeted structures, with 8 sentences per grammatical feature. The test covered seventeen grammar aspects that are challenging for L2 learners (see Footnote 2; for the details, such as the selection of the targeted grammatical features, and test validity, please see Godfroid et al., Reference Godfroid, Loewen, Jung, Park, Gass and Ellis2015; Loewen, Reference Loewen, Ellis, Loewen, Elder, Erlam, Philp and Reinders2009). Participants’ final scores were calculated by summing their correct identifications of grammatical sentences and their correct rejections of ungrammatical ones, then converted into a ratio (1–100%). 
Sentences were presented one by one, and participants were required to judge their grammatical acceptability by pressing either a “grammatical” or “ungrammatical” button on the screen. A time limit, determined by the sentence length, was imposed for each item to minimize inspection or overthinking (see Godfroid et al., Reference Godfroid, Loewen, Jung, Park, Gass and Ellis2015, for more details of the time limits and target sentences). The descriptive statistics of all three tasks are summarized in Table 1.

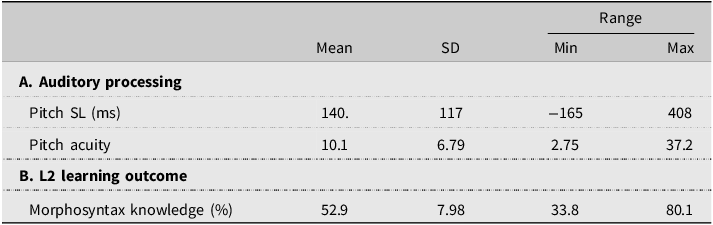

Table 1. Descriptive statistics

Procedure

The entire data collection process took place online using an online experiment builder, Gorilla (Anwyl-Irvine et al., Reference Anwyl-Irvine, Massonnié, Flitton, Kirkham and Evershed2020). After filling in the background questionnaire, the participants first completed the SRT task to measure their auditory SL ability, then moved on to the GJT, and finally completed the pitch discrimination task. Participants’ progress was carefully monitored by the authors to make sure that they engaged in the tasks without any technical issues.

Data analysis

All the variables (pitch SL, pitch acuity, and morphosyntactic knowledge) were converted to z-scores for statistical analyses. The normality of the three variables was assessed using the Shapiro–Wilk test. Pitch SL (W = .99, p = .60) and morphosyntactic knowledge (W = .98, p = .29) did not significantly deviate from normality, while pitch acuity did (W = .73, p < .001). Therefore, pitch acuity was log-transformed before running parametric tests. Subsequently, in order to examine the relationship between pitch acuity and the learning of pitch regularities, a correlation analysis was conducted. We further explored whether better pitch SL may lead to better morphosyntactic knowledge by running a correlation and a piece-wise correlation with a single break point at the median (cf. Chandrasekaran et al., Reference Chandrasekaran, Sampath and Wong2010). The piece-wise correlation was selected to see whether better and poorer auditory SL may show a differential impact on morphosyntactic learning.
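The preprocessing steps above (standardization, log transformation of a skewed variable, and Pearson correlation) can be illustrated with a short Python sketch. The data are hypothetical, the function names are illustrative, and the Shapiro–Wilk test itself is not reproduced here: sample skewness is used only as a simple stand-in to show what the log transformation does to a right-skewed variable.

```python
import math
from statistics import mean, stdev


def zscore(values):
    """Standardize a variable (sample SD is an assumption)."""
    m, sd = mean(values), stdev(values)
    return [(v - m) / sd for v in values]


def skewness(values):
    """Moment-based sample skewness (0 for a symmetric distribution)."""
    m, n = mean(values), len(values)
    m2 = sum((v - m) ** 2 for v in values) / n
    m3 = sum((v - m) ** 3 for v in values) / n
    return m3 / m2 ** 1.5


def pearson_r(x, y):
    """Pearson product-moment correlation coefficient."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


# Hypothetical right-skewed acuity thresholds and GJT percentages.
acuity = [4, 5, 5, 6, 7, 8, 9, 12, 30, 75]
gjt = [71, 64, 80, 58, 69, 75, 62, 66, 60, 73]

log_acuity = [math.log(v) for v in acuity]
skew_raw, skew_log = skewness(acuity), skewness(log_acuity)

# Pearson's r is unchanged by z-scoring either variable.
r_plain = pearson_r(log_acuity, gjt)
r_standardized = pearson_r(zscore(log_acuity), zscore(gjt))
```

Because Pearson's r is invariant to linear rescaling, standardizing the variables changes the units of any plots or regression slopes but not the correlation coefficients themselves.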

Results

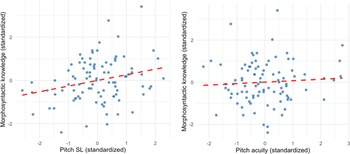

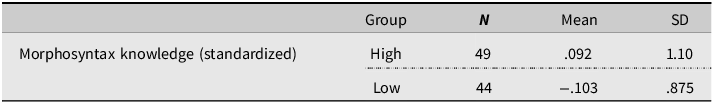

The first research question concerned the roles of auditory SL of pitch and pitch acuity. First, based on inspection of the scatter plots, the participants’ pitch SL scores varied widely and showed great individual differences. On the other hand, the majority of the pitch acuity data points were clustered towards the lower end of the x-axis (pitch acuity values below 20), indicating that most participants demonstrated relatively great sensitivity to subtle differences in pitch (cf. Saito et al., Reference Saito, Suzukida, Tran and Tierney2021). Second, to examine the relationship between the variables, two correlation analyses were conducted. Since morphosyntactic knowledge followed a normal distribution, Pearson’s correlation was used (see Figure 2 for the scatter plots). The results showed that while pitch SL had a weak but positive correlation with morphosyntactic knowledge (r = .26, p = .011), pitch acuity did not show any association with it (r = .07, p = .51). These correlation results suggest that SL of pitch patterns, rather than pitch acuity (i.e., the extent to which one can perceive subtle differences in pitch), facilitates the learning of morphosyntactic aspects of English. Furthermore, pitch SL and pitch acuity did not show any significant correlation (r = .04, p = .74).

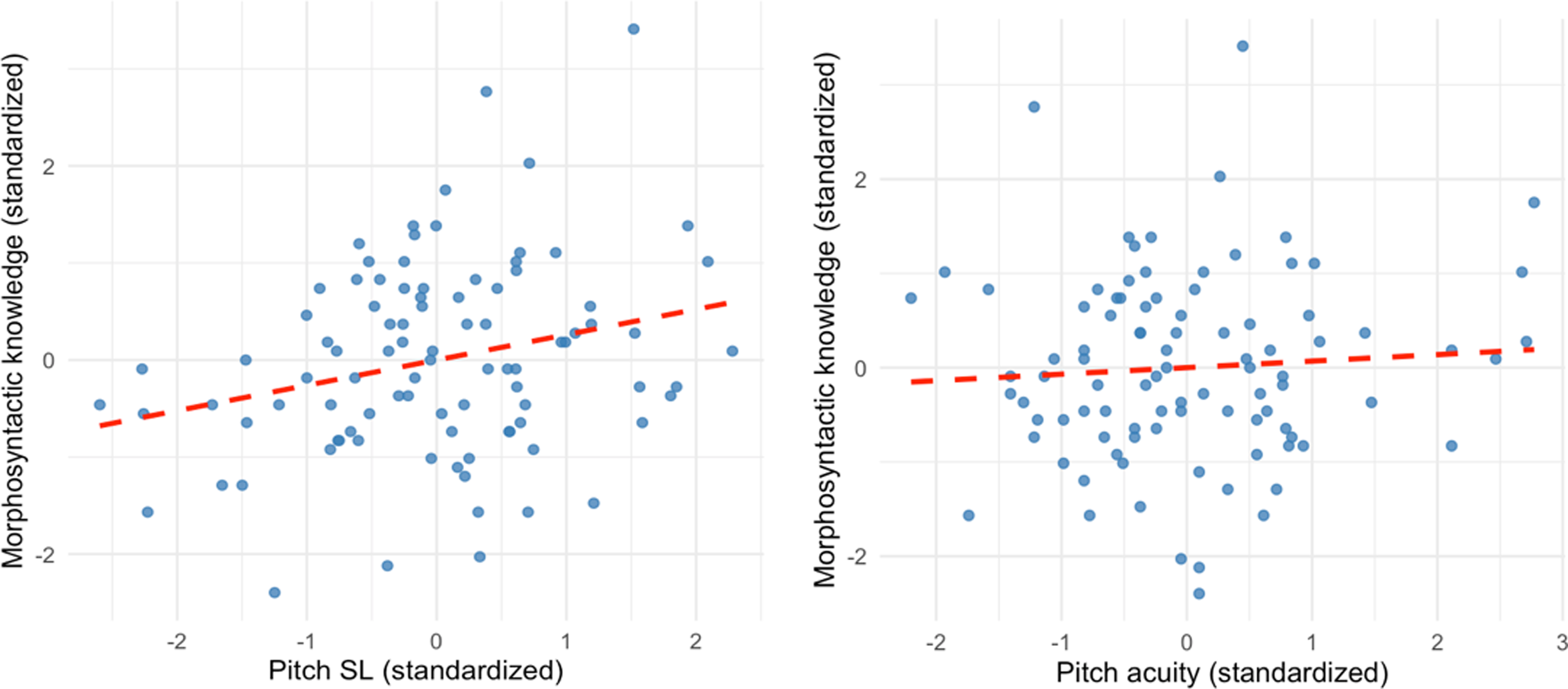

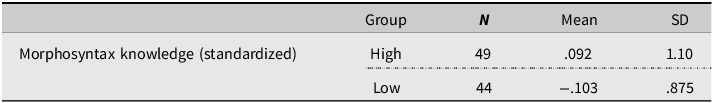

The second research question examined whether limited or superior auditory SL ability differentially affects morphosyntactic learning. To this end, the participants were divided into groups, and then a piece-wise correlation was conducted. To identify the breakpoint(s), the R package “segmented” was used (R Core Team, 2024). The package estimates a breakpoint (or breakpoints) at which the relationship between the variables in question changes significantly (see Muggeo, Reference Muggeo2003, for more details). First, a standard linear regression model was fitted to predict L2 morphosyntactic knowledge from pitch SL scores. The segmented function was then applied to this model to iteratively estimate the most likely breakpoint in the relationship. Based on the output, −.075 was identified as the estimated breakpoint. Therefore, participants were divided into two groups according to their auditory SL levels: Low (i.e., pitch SL score ≤ breakpoint) and High (i.e., pitch SL score > breakpoint). For the Low group, a significant positive correlation was found between pitch SL and morphosyntax knowledge (r = .47, p = .001). This result indicates a moderately positive relationship between pitch SL and morphosyntax knowledge at lower levels of pitch SL. Conversely, for the High group, the correlation was negligible and not statistically significant (r = .17, p = .25). These findings suggest that the strength of the relationship between pitch SL and morphosyntax knowledge diminishes at higher levels of pitch SL (i.e., above −.075). Figure 3 illustrates the relationship between pitch SL and morphosyntax knowledge for the Low and High groups (see also Table 2).
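The grouping step of the piece-wise correlation can be sketched in Python as follows. The sketch takes the breakpoint as given and does not reproduce the iterative estimation carried out by the `segmented` package in R; it only splits standardized scores at that value and computes Pearson's r within each group. The data and function names are hypothetical.

```python
import math
from statistics import mean


def pearson_r(x, y):
    """Pearson product-moment correlation coefficient."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def piecewise_correlations(sl, gjt, break_at):
    """Split participants at `break_at` on pitch SL (Low: <=, High: >)
    and correlate pitch SL with morphosyntax scores within each group."""
    groups = {
        "low": [(s, g) for s, g in zip(sl, gjt) if s <= break_at],
        "high": [(s, g) for s, g in zip(sl, gjt) if s > break_at],
    }
    result = {}
    for name, pairs in groups.items():
        xs = [p[0] for p in pairs]
        ys = [p[1] for p in pairs]
        result[name] = {"n": len(pairs), "r": pearson_r(xs, ys)}
    return result


# Hypothetical z-scored data: a clear trend below the breakpoint, none above.
sl_demo = [-2.0, -1.5, -1.0, -0.5, -0.1, 0.2, 0.5, 1.0, 1.5, 2.0]
gjt_demo = [-2.0, -1.5, -1.0, -0.5, -0.1, 0.3, -0.2, 0.3, -0.2, 0.3]
out = piecewise_correlations(sl_demo, gjt_demo, break_at=-0.075)
```

Note that splitting a sample restricts the range of pitch SL within each group, which by itself tends to lower correlations. Comparing the two within-group coefficients is therefore descriptive rather than a formal test of a difference in slopes.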

Figure 2. Two scatter plots showing the relationship between auditory processing measures and morphosyntactic knowledge scores. The left-hand plot illustrates the relationship between pitch SL and morphosyntax score. The right-hand plot shows the relationship between pitch acuity (log-transformed) and morphosyntax score (note that lower scores reflect better perceptual acuity).

Figure 3. Scatterplot showing the relationship between pitch SL and morphosyntax knowledge (standardized), stratified by low and high groups based on an estimated breakpoint of pitch SL at −.075. The lines represent the fitted trend lines for each group.

Table 2. Mean comparison between morphosyntax scores

Discussion and conclusion

While domain-general auditory processing, encompassing abilities such as auditory acuity, selective attention, and audio-motor integration, has long been recognized as a fundamental driving force for L1 acquisition, increasing research has recently highlighted its critical role in L2 learning (Hamrick et al., Reference Hamrick, Lum and Ullman2018; Saito et al., Reference Saito, Kachlicka, Suzukida, Mora-Plaza, Ruan and Tierney2024). However, SL, which is a key mechanism underpinning L1 acquisition and enabling learners to detect and internalize linguistic regularities, has not been sufficiently investigated in the context of L2 learning. Exploring the role of SL is particularly significant given that SL plays an essential role in facilitating word segmentation, syntactic parsing, and grammar acquisition in L1 settings (e.g., Mandikal Vasuki et al., Reference Mandikal Vasuki, Sharma, Ibrahim and Arciuli2017; Perruchet & Pacton, Reference Perruchet and Pacton2006). Furthermore, while extensive research has linked auditory processing to L2 speech perception and production (e.g., Bakkouche & Saito, Reference Bakkouche and Saito2025; Ruan & Saito, Reference Ruan and Saito2023; Saito et al., Reference Saito, Kachlicka, Sun and Tierney2020a, Reference Saito, Sun and Tierney2020b; Sun et al., Reference Sun, Saito and Tierney2021), emerging evidence suggests its contribution to broader linguistic dimensions, such as vocabulary and morphosyntactic acquisition (Kachlicka et al., Reference Kachlicka, Saito and Tierney2019; Saito et al., Reference Saito, Macmillan, Kroeger, Magne, Takizawa, Kachlicka and Tierney2022a). To address these gaps, the current study focused on 93 Japanese learners of English in an EFL context, examining the role of pitch SL ability in L2 morphosyntax learning. 
Specifically, the study aimed to evaluate the relationship between auditory SL of pitch and morphosyntactic knowledge, contrasting this with the link between pitch sensitivity (i.e., pitch acuity) and morphosyntactic knowledge. By doing so, this research sought to deepen our understanding of how domain-general auditory processing, particularly pitch SL, contributes to L2 grammatical learning.

The role of auditory statistical learning in L2 morphosyntactic knowledge

The first research question examined whether pitch SL, rather than pitch acuity, contributes to L2 morphosyntactic learning. Our findings revealed a weak-to-medium but significant positive correlation between pitch SL and morphosyntactic knowledge (r = .26, p = .011), whereas pitch acuity showed no significant association (r = .07, p = .51; see Footnote 3). These results align with previous research highlighting pitch SL as a mechanism for detecting and internalizing linguistic patterns in auditory input (Perruchet & Pacton, Reference Perruchet and Pacton2006). In addition, the relatively weak association between pitch SL and morphosyntactic knowledge might not be too surprising, as the correlations reported in past studies investigating the association between SL and L2 grammatical knowledge also appear to be small-to-medium (e.g., r = .181 with reflection-based auditory SL, r = .335 with a visual mode of processing-based task in Godfroid & Kim, Reference Godfroid and Kim2021). The weak association might also be due to the nature of the task used to measure auditory SL.

The lack of correlation between pitch acuity and morphosyntactic knowledge suggests that simply being able to discriminate fine-grained pitch differences alone may not contribute to L2 grammar acquisition. Instead, it may function as a part of composite ability (see Saito et al., Reference Saito, Kachlicka, Suzukida, Mora-Plaza, Ruan and Tierney2024 for evidence that a composite of attention, integration, and acuity serves as a stronger predictor of L2 phonological and morphosyntactic proficiency). An alternative explanation can also be made based on the effect of the quality and quantity of L2 input. According to the auditory processing literature, the type of context in which postpubertal L2 learning takes place seems to influence the role of auditory processing abilities (e.g., EFL vs. immersion context; Omote et al., Reference Omote, Jasmin and Tierney2017; Saito et al., Reference Saito, Sun and Tierney2020b; Saito et al., Reference Saito, Suzukida, Tran and Tierney2021; Sun et al., Reference Sun, Saito and Tierney2021). Since the current study focused on participants in an EFL setting, where learners have few hours of formal instruction per week and limited opportunities to interact with different types of native interlocutors in various social settings (Muñoz, Reference Muñoz2014), the relationship between auditory SL, acuity, and L2 morphosyntactic learning may differ from that of participants in an immersion setting.

However, when we examine the current results in the context of EFL, the differences in the association with morphosyntactic knowledge support our hypothesis that pitch SL (which involves pattern detection rather than perceptual discrimination ability) plays a more prominent role in the learning of morphosyntactic structures. The findings are also in line with studies showing that auditory SL supports syntactic and word segmentation abilities (de Waard et al., Reference de Waard, Theeuwes and Bogaerts2025; Marchetto & Bonatti, Reference Marchetto and Bonatti2015), suggesting that learners with stronger auditory SL ability may be better at extracting syntactic regularities from auditory input. In addition, nonsignificant correlation coefficient between pitch SL and pitch acuity (r = .04, p = .74) suggests that tracking pitch patterns (i.e., a process of SL) and identifying detailed acoustic properties (acuity) may be two unique abilities that are collectively part of domain-general auditory processing (Mandikal Vasuki et al., Reference Mandikal Vasuki, Sharma, Demuth and Arciuli2016).

Threshold effect of auditory statistical learning on morphosyntactic learning

The second research question examined whether lower and higher levels of pitch SL differentially affect L2 morphosyntactic learning. To establish a threshold for classifying learners, we used the R package segmented (cf. Muggeo, Reference Muggeo2003; R Core Team, 2024), which iteratively built regression models and estimated −.075 as a breakpoint. This allowed us to divide learners into low pitch SL (≤−.075) and high pitch SL (>−.075) groups. Subsequently, using a piece-wise correlation, we found a moderately strong positive correlation (r = .44, p = .007) between pitch SL and morphosyntactic knowledge among learners with low pitch SL. This suggests that individuals with weaker pitch SL abilities rely more on pitch SL for morphosyntactic learning. In other words, those with poorer pitch SL may struggle to detect morphosyntactic features in incoming L2 input, which leads to delays in acquiring L2 grammar. For learners with high pitch SL, the correlation was negligible (r = .17, p = .25), indicating no clear link between stronger pitch SL abilities and morphosyntactic knowledge.

Although these findings do not fully support our hypothesis (i.e., higher levels of auditory SL in pitch lead to greater L2 morphosyntactic attainment while lower levels of pitch SL may limit learners’ ability to acquire morphosyntactic structures), they partially align with the idea that auditory SL is a critical mechanism for learning linguistic structures, especially for those with less developed auditory pattern extraction skills. Moreover, once learners exceed a certain pitch SL threshold, the contribution of pitch SL ability to morphosyntactic learning appears to become negligible. This pattern mirrors findings in the area of auditory processing and L2 phonological learning, where studies show that learners with lower auditory processing abilities benefit more from improvements in those skills, while those with higher abilities may have already reached a ceiling effect (i.e., they gain fewer benefits; Ruan & Saito, Reference Ruan and Saito2023). Extending this perspective to higher-order linguistic domains, the present study suggests that pitch SL plays a more prominent role in L2 grammar acquisition among learners who struggle to extract statistical patterns.

Implications, limitations, and future directions

The current findings provide new insights into the role of auditory SL (in particular, pitch SL) in L2 morphosyntactic learning and highlight important theoretical and pedagogical implications. Theoretically, the study underscores pitch SL as a distinct yet integral component of auditory processing that plays a unique role in morphosyntactic acquisition, separate from pitch perceptual acuity. These results contribute to the growing body of literature demonstrating that domain-general auditory mechanisms extend beyond phonological processing to support higher-level linguistic learning (e.g., Kachlicka et al., Reference Kachlicka, Saito and Tierney2019; Saito et al., Reference Saito, Sun, Kachlicka, Alayo, Nakata and Tierney2022c). Moreover, the identification of a threshold effect, where learners with low pitch SL ability benefit more from auditory processing interventions while high auditory SL learners show diminishing returns, highlights the nuanced relationship between aptitude and language acquisition (Perrachione et al., Reference Perrachione, Lee, Ha and Wong2011; Ruan & Saito, Reference Ruan and Saito2023). Pedagogically, since auditory processing is malleable (Kraus & Banai, Reference Kraus and Banai2007) and trainable (Saito et al., Reference Saito, Petrova, Suzukida, Kachlicka and Tierney2022b), the interventions aimed at enhancing pitch SL may benefit learners with lower pitch SL abilities, particularly in settings where explicit grammar instruction is less emphasized and learners must extract linguistic patterns from auditory input (e.g., immersion environments). Training methods such as auditory training (Saito et al., Reference Saito, Petrova, Suzukida, Kachlicka and Tierney2022b) and exposure to structured linguistic input (Walenta, Reference Walenta2018) could be explored as ways to facilitate grammar acquisition for learners with weaker pitch SL abilities.

The limitations of this study should be considered in light of several factors affecting the generalizability and interpretation of the findings. First, while the study focused on auditory SL in pitch, a broader range of cognitive and experiential factors (such as memory, attention, and prior linguistic experience) should also be considered and explored for their impacts, as these individual differences are known to influence L2 acquisition (Li et al., Reference Li, Hiver and Papi2022). Second, although pitch was emphasized as a key prosodic cue for syntactic parsing, SL also involves other prosodic elements, such as duration, stress, and rhythmic structure, which play essential roles in speech segmentation and morphosyntactic processing. Future research should examine how these additional auditory cues contribute to L2 grammar acquisition. Third, since the current study focused on the normal-hearing population, pitch acuity scores were naturally skewed (cf. Saito et al., Reference Saito, Kachlicka, Sun and Tierney2020a, Reference Saito, Macmillan, Kroeger, Magne, Takizawa, Kachlicka and Tierney2022). In order to generalize our findings and further examine the relationship between pitch acuity and L2 morphosyntactic learning, future research may consider exploring populations whose first languages are nontonal (e.g., Arabic and Korean). Finally, the study relied on reaction-time-based tasks, which may not fully capture the cognitive processes underlying SL. To obtain a more comprehensive understanding, future studies should incorporate complementary cognitive measures, including recall-based tasks and neurophysiological assessments (e.g., Romberg & Saffran, Reference Romberg and Saffran2010; Saffran et al., Reference Saffran, Aslin and Newport1996a), to better characterize how pitch SL (and SL of other acoustic properties) interacts with broader cognitive mechanisms in L2 acquisition. 
Further research should also investigate the cognitive underpinnings of pitch SL across different L1–L2 combinations, where prosodic and syntactic structures vary, and explore its role in other linguistic domains, such as lexical acquisition and pragmatic competence, to provide a more complete picture of its contribution to second language learning.

Conclusion

This study highlights the significant role of pitch SL in facilitating L2 morphosyntactic acquisition. Results demonstrate that pitch SL is a more robust predictor of morphosyntactic knowledge than pitch perceptual acuity, underscoring the importance of statistical pattern detection in domain-general auditory input for grammar learning. Additionally, findings revealed a threshold effect, whereby participants with low pitch SL abilities benefit more from auditory pattern learning, while the impact diminishes for those with higher pitch SL abilities. This result may contribute to a deeper understanding of auditory processing beyond phonological acquisition and suggests that interventions targeting pitch SL may particularly benefit learners with weaker auditory processing skills. Future studies should explore how pitch SL and SL of other acoustic properties interact with other cognitive and experiential factors and investigate their role across diverse linguistic contexts.

Funding statement

This project was supported by the JSPS KAKENHI Grant Number 21K19995.