1 Introduction

The assumption of local independence (LI) postulates the unsystematic nature of any effect that differentiates the response behavior of individuals above and beyond the constructs assessed by a test. However, a variety of person-, test-, or context-related effects can cause local dependence (LD), which threatens model validity, invalidates the likelihood, and results in biased estimates of both item parameters and construct degrees (see, e.g., Andrich, Reference Andrich and Embretson1985; Chen & Thissen, Reference Chen and Thissen1997; Edwards et al., Reference Edwards, Houts and Cai2018; Junker, Reference Junker1991; Reese, Reference Reese1995; Tuerlinckx & De Boeck, Reference Tuerlinckx and De Boeck2001; Yen, Reference Yen1984; Yen, Reference Yen1993; Zenisky et al., Reference Zenisky, Hambleton and Sired2002). A well-known example is that of artificially inflated slopes of dependent items (see, e.g., Chen & Thissen, Reference Chen and Thissen1997; Edwards et al., Reference Edwards, Houts and Cai2018; Masters, Reference Masters1988), which manifests as positively biased discrimination parameters in item response theory (IRT) models and/or, equivalently, as positively biased loadings in factor analysis models.

The lack of a systematized perspective on the modeling of LD has however led to a fragmented literature with a plethora of indices, statistics, and approaches with different nomenclatures. As an example, Chen and Thissen (Reference Chen and Thissen1997) distinguished between surface LD (SLD), due to item similarity in content or location, and underlying LD (ULD), due to the existence of unmodeled constructs. Similar notions are known as “response dependence (RD)” and “trait dependence” (see, e.g., Marais & Andrich, Reference Marais and Andrich2008). However, while trait dependence is the same as ULD, RD differs from SLD as it models conditional item dependence. Similarly, Hoskens and De Boeck (Reference Hoskens and De Boeck1997) distinguished between “order dependency (OD)” and “combination dependency (CD)” where the former represents a factual (e.g., presentation order) or conceptual (e.g., mastering at different stages of learning) dependence, while the latter focuses on a whole set of items (e.g., gestalt-like effects or shared content). Although these conceptually resemble RD and SLD, they assume different modeling mechanisms.

This fragmentation is also a potential source of confusion. As stressed by Marais and Andrich (Reference Marais and Andrich2008), trait dependence and RD are “generally not distinguished clearly in the literature with the term multidimensionality used for trait dependence and the generic term local dependence used for both trait and response dependence”. Likewise, Edwards et al. (Reference Edwards, Houts and Cai2018) stressed that “there is substantial confusion surrounding the issues of dimensionality and local independence. Many researchers assume IRT models must be unidimensional to satisfy the local independence requirement,” a misbelief attributed to the misuse of early definitions of LI as operative definitions of uni-dimensionality (see, e.g., Hattie, Reference Hattie1985; Henning, Reference Henning1989). However, as one can imagine dependent items in a uni-dimensional context and independent items conditional to several traits, dimensionality is distinct from LI. Even so, while LD can be caused by multidimensionality, it can also occur in its absence if items directly affect each other.

Additional issues further complicate the topic: many available overall goodness-of-fit statistics are general purpose indices that neither are associated with specific sources of LD nor isolate LD from other model assumptions like dimensionality, monotonicity, or the specification of a latent trait density (see, e.g., Houts & Edwards, Reference Houts and Edwards2015; Liu & Maydeu-Olivares, Reference Liu and Maydeu-Olivares2012); multi-dimensional IRT models are empirically indistinguishable from locally dependent uni-dimensional IRT models (Ip, Reference Ip2010); different mechanisms can generate the same amount of LD: extremely high specific-to-general slope ratios for ULD models (based on a bifactor model) can mirror the polychoric correlations generated by models with mid-range to high SLD (Houts & Edwards, Reference Houts and Edwards2015), a result that might explain why some mechanisms like SLD are considered to be more easily detectable than others like ULD (see, e.g., Chen & Thissen, Reference Chen and Thissen1997; Houts & Edwards, Reference Houts and Edwards2013).

The present manuscript attempts a systematization and generalization of some approaches to LD based on a framework suggested by Noventa et al. (Reference Noventa, Heller and Kelava2024, Reference Noventa, Heller, Ye and Kelava2025) that, drawing on the abstract nature of knowledge space theory (KST; see, e.g., Falmagne & Doignon, Reference Falmagne and Doignon2011), unifiesFootnote 1 models from IRT, KST, and cognitive diagnostic assessment (CDA, see, e.g., Rupp et al., Reference Rupp, Templin and Henson2010; von Davier & Lee, Reference Davier and Lee2019). The gist of this framework is the observation that all these theories postulate some conditional relation between item responses and latent knowledge, attributes, skills, or traits. Consider the item “

![]() $2+3=?$

.” In the original KST approach, one wonders if the capability of mastering the item (the latent knowledge) will also allow to solve it (the observed response). In a CDA/competence-based KST approach, one wonders if an individual possesses the (dichotomous) skills/attributes (e.g., basic mental calculation skills) needed to master or solve the item. In an IRT approach, one wonders if an individual can master or solve the item given some continuous measure of the mental ability. Only two primitives are needed to formalize this state of affairs: the KST notion of “structure,” which formalizes the different entities in the theories, and the notion of “process,” which formalizes their relations. Intuitively, given a domain of elements of a given “sort” (e.g., items or attributes), a structure is the collection of all the combinations of these elements (called states) that can exist according to some existing hierarchy between the elements themselves. Consider the items “

$2+3=?$

.” In the original KST approach, one wonders if the capability of mastering the item (the latent knowledge) will also allow to solve it (the observed response). In a CDA/competence-based KST approach, one wonders if an individual possesses the (dichotomous) skills/attributes (e.g., basic mental calculation skills) needed to master or solve the item. In an IRT approach, one wonders if an individual can master or solve the item given some continuous measure of the mental ability. Only two primitives are needed to formalize this state of affairs: the KST notion of “structure,” which formalizes the different entities in the theories, and the notion of “process,” which formalizes their relations. Intuitively, given a domain of elements of a given “sort” (e.g., items or attributes), a structure is the collection of all the combinations of these elements (called states) that can exist according to some existing hierarchy between the elements themselves. Consider the items “

![]() $2+3=?$

” and “

$2+3=?$

” and “

![]() $\frac {1}{2}+\frac {1}{3}=?,$

” if one assumes that an individual capable of mastering the latter must also be able to master the former (but not vice versa) the structure contains three states: a) no items mastered, b) only “

$\frac {1}{2}+\frac {1}{3}=?,$

” if one assumes that an individual capable of mastering the latter must also be able to master the former (but not vice versa) the structure contains three states: a) no items mastered, b) only “

![]() $2+3=?$

” mastered, and c) both items mastered. In other words, if a similar structure is imposed, the responses would form an incomplete pairwise contingency table in which a cell is missing by design. As a consequence, response patterns that are incompatible with the hierarchy (e.g., only “

$2+3=?$

” mastered, and c) both items mastered. In other words, if a similar structure is imposed, the responses would form an incomplete pairwise contingency table in which a cell is missing by design. As a consequence, response patterns that are incompatible with the hierarchy (e.g., only “

![]() $\frac {1}{2}+\frac {1}{3}=?$

” is solved) must be modeled stochastically in order to obtain a complete contingency table for the responses. Structures made of items are called “knowledge structures” and model the latent knowledge (items mastered) and the response patterns (items solved). “Competence structures” are instead used to organize skills, attributes, and traits. The notion of process instead makes explicit the relation between the states of different structures. Following a nomenclature coined by Hutchinson (Reference Hutchinson1991), a process capturing the attempt of individuals to master an item based on their ability (i.e., connecting a competence structure to a knowledge structure) is called a p-process, while a process capturing the effects of pure chance on item solving (i.e., connecting two knowledge structures) is called a g-process. Assessment models are then obtained by connecting processes and by setting assumptions on their conditional probabilities. Two operations are common: “factorization,” which rewrites a probability as a product of different terms (e.g., assuming LI) and “reparameterization,” which provides alternative functional forms to the models (e.g., using a logit or a probit link function). Interestingly, a simple sequence of a p-process and a g-process provides a taxonomy of most KST, CDA, and IRT models. As an example, in a 3-parameter logistic (3PL) model, the p-process is the 2-parameter logistic (2PL) model, while the g-process either adds a constant guessing parameter or some ability-based guessing (San Martin et al., Reference San Martin, del Pino and De Boeck2006).

$\frac {1}{2}+\frac {1}{3}=?$

” is solved) must be modeled stochastically in order to obtain a complete contingency table for the responses. Structures made of items are called “knowledge structures” and model the latent knowledge (items mastered) and the response patterns (items solved). “Competence structures” are instead used to organize skills, attributes, and traits. The notion of process instead makes explicit the relation between the states of different structures. Following a nomenclature coined by Hutchinson (Reference Hutchinson1991), a process capturing the attempt of individuals to master an item based on their ability (i.e., connecting a competence structure to a knowledge structure) is called a p-process, while a process capturing the effects of pure chance on item solving (i.e., connecting two knowledge structures) is called a g-process. Assessment models are then obtained by connecting processes and by setting assumptions on their conditional probabilities. Two operations are common: “factorization,” which rewrites a probability as a product of different terms (e.g., assuming LI) and “reparameterization,” which provides alternative functional forms to the models (e.g., using a logit or a probit link function). Interestingly, a simple sequence of a p-process and a g-process provides a taxonomy of most KST, CDA, and IRT models. As an example, in a 3-parameter logistic (3PL) model, the p-process is the 2-parameter logistic (2PL) model, while the g-process either adds a constant guessing parameter or some ability-based guessing (San Martin et al., Reference San Martin, del Pino and De Boeck2006).

The same abstract approach used to construct a taxonomy of KST, CDA, and IRT models is here applied to systematize the different approaches to LD. As the focus of the present manuscript is to frame LD models from a unified perspective, a greater relevance is given to theoretical rather than applied aspects, since they allow to understand how LD models are systematized, related, and therefore built through the choice of specific assumptions, thus also suggesting new families of models. Moreover, it should be stressed that since the focus of the manuscript is to consider a unified perspective encompassing KST, IRT, and CDA models only categorical response variables and the associated probabilistic models are considered. The manuscript will not cover approaches like factor analysis of continuous response variables and associated important techniques like the study of residuals to model LD. Nonetheless, due to the well-established equivalence between two-parameter IRT models and factor analysis of dichotomous variables (see, e.g., Takane & de Leeuw, Reference Takane and de Leeuw1987), the results also hold for the latter.

As an immediate consequence of considering a top-down approach that moves from general abstract primitives, the existence of two main primitives of assessment models (i.e., structure and process) implies the existence of two distinct but not mutually exclusive mechanisms of modeling LD (i.e., via the structure or via the process). Modeling via the structure is of a deterministic nature, while modeling via the process is of a probabilistic nature. This has direct implications for the approaches used in modeling local LD: two major approaches here called “probabilistic” and “deterministic” LD can be identified. In probabilistic LD, which encompasses most IRT approaches to LD, a power set structure is considered and models with only a p-process are applied. This approach captures situations in which items do not directly affect each other (a power set corresponds to a complete contingency table and therefore allows for all possible response patterns). As in such cases, LD is modeled only by assuming a specific functional form of the p-process, LD alters the likelihood of improbable but possible patterns and it is of a purely stochastic nature. On the converse, in deterministic LD, which encompasses some IRT approaches and the KST-IRT models introduced by Noventa et al. (Reference Noventa, Spoto, Heller and Kelava2019), a structure prohibits certain response patterns by imposing direct effects between the items (i.e., by imposing with a structure an incomplete contingency table of responses). In such a case, the only way to recover those response patterns that are in principle prohibited is to impose a g-process over the deterministic structure. The distinction between probabilistic and deterministic LD should therefore not be conceived as a distinction between probabilistic and non-probabilistic models, but as an indication on whether a deterministic constraint is imposed or not (i.e., the structure) prior to the stochastic modeling of the response patterns (i.e., the processes). A Guttman scalogram of the items “

![]() $2+3=?$

” and “

$2+3=?$

” and “

![]() $\frac {1}{2}+\frac {1}{3}=?$

” illustrates the differences. The IRT approach to Guttman’s scaling is to fit a Rasch model (p-process) under the assumption of LI (which requires a power set, i.e., all possible states). Instead, within a KST-IRT perspective, Guttman’s scaling is captured by a p-process between a competence structure (e.g., skills, attributes, or traits) and a knowledge structure with three states as described above, which is known as a “chain.” A g-process then leads to all four possible response patterns.

$\frac {1}{2}+\frac {1}{3}=?$

” illustrates the differences. The IRT approach to Guttman’s scaling is to fit a Rasch model (p-process) under the assumption of LI (which requires a power set, i.e., all possible states). Instead, within a KST-IRT perspective, Guttman’s scaling is captured by a p-process between a competence structure (e.g., skills, attributes, or traits) and a knowledge structure with three states as described above, which is known as a “chain.” A g-process then leads to all four possible response patterns.

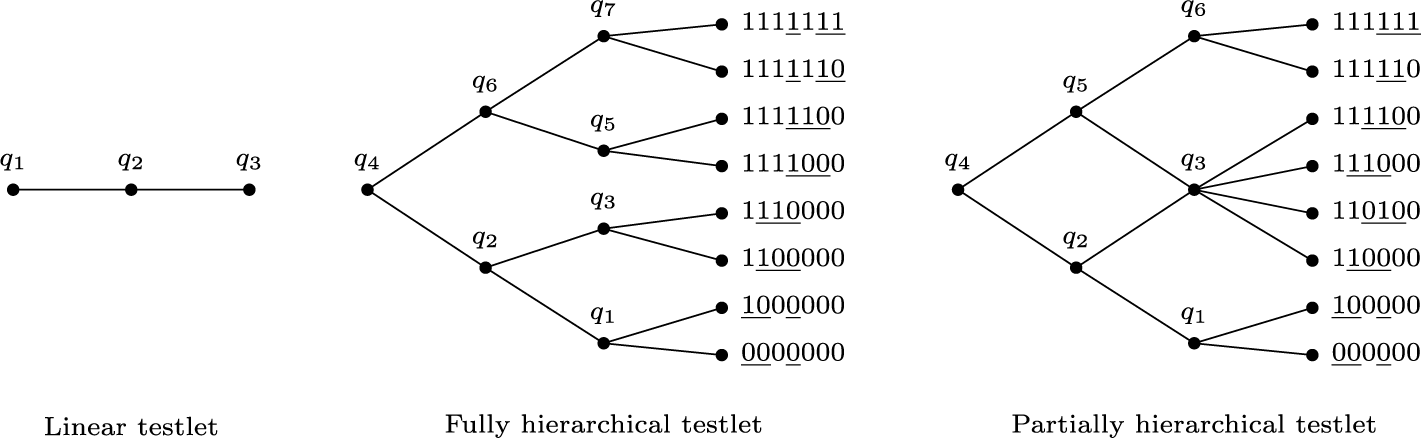

We argue that such a distinction has four main advantages: First, it allows for the modeling of different phenomena within psychological and educational testing that require distinct substantive assumptions. A probabilistic LD approach may be applied in clinical assessment, since many psychological disorders (e.g., major depressive episode) are diagnosed when a certain number of independent criteria are met (e.g., having suicidal thoughts and lack of appetite). Conversely, performance-based assessments (e.g., intelligence or learning disorders tests) are well-suited for a deterministic LD perspective since items are usually administered according to a difficulty order, and specific response patterns are expected. Of course, instances where applying a deterministic LD approach within clinical assessment would be preferable, and vice versa, might manifest (e.g., one might argue that feeling sadness might precede having suicidal thoughts). Second, it might explain why some forms of LD are more easily detected. While probabilistic LD and multi-dimensionality are both modeled within the p-process, deterministic LD is modeled by a separate mechanism. As most IRT approaches to LD appear to be of a probabilistic form, with the exception of SLD and boundary copula functions that are examples of deterministic LD, this might explain why extreme ULD is needed to mirror mid-range to high SLD (Houts & Edwards, Reference Houts and Edwards2015). Third, as deterministic LD can model situations that are too extreme for probabilistic LD, it allows to avoid extreme values of the model parameters (e.g., inflated slopes). Fourth, while some LD sources might be modeled as either deterministic or probabilistic depending on their magnitude and on the substantive nature of the items (e.g., Guttman’s scaling), other sources might be of only a probabilistic or a deterministic nature (e.g., the categories of a polytomous item). Models capturing a polytomous item and models representing multiple dependent dichotomous items appear indeed to be formally equivalent up to the interpretation of the elements of the structure. As a consequence, structures provide a natural approach to testlets, which are often analyzed by summing LD binary items and scoring them as a single polytomous item.

As to the plan of the work. In Section 2, we provide basic notions about LD and introduce the unified framework. In Section 3, probabilistic and deterministic LD are systematized. As the purpose of this work is to frame some approaches to LD, these are not introduced but directly derived. The appendices of the manuscript cover more in detail some technical or ancillary aspects: Appendix A highlights the relation between SLD and the approach of Ackerman and Spray (Reference Ackerman and Spray1987), Appendix B provides the generalization of the results of Huynh (Reference Huynh1994, Reference Huynh1996) to probabilistic and deterministic LD, while Appendix C provides some considerations on the so-called “disordered-threshold controversy.” Finally, the Supplementary Material to the manuscript also provides a brief list of indices, statistics, and approaches to LD.

2 Preliminary notions

In this section, we firstly recall the notions of strong and weak LI and discuss the pairwise contingency tables associated with LD. Secondly, we introduce the primitives of the unified framework.

2.1 Basic notions of LD: Strong and weak LI and contingency tables

Let Q be a set of dichotomous items

![]() $q_i\in Q$

for

$q_i\in Q$

for

![]() $i\in \{1,\dots , |Q|\}$

, and let

$i\in \{1,\dots , |Q|\}$

, and let

![]() $X_i$

be the random variable with realizations

$X_i$

be the random variable with realizations

![]() $x_i\in \{0,1\}$

, expressing if the answer to the item

$x_i\in \{0,1\}$

, expressing if the answer to the item

![]() $q_i\in Q$

is correct or incorrect. LI is often formalized as probabilistic independence of the random variables

$q_i\in Q$

is correct or incorrect. LI is often formalized as probabilistic independence of the random variables

![]() $X_i$

conditional to some latent variable

$X_i$

conditional to some latent variable

![]() $\theta \in \mathbb {R}$

capturing the construct. A common parametric definition is the strong form

$\theta \in \mathbb {R}$

capturing the construct. A common parametric definition is the strong form

$$ \begin{align} P(X = x |\Gamma,\theta) = \prod_{i=1}^{|Q|}P(X_{i} = x_{i}|\Gamma_i,\theta) = \prod_{i=1}^{|Q|} P(X_{i} = 1|\Gamma_i,\theta)^{x_{i}}P(X_{i} = 0|\Gamma_i,\theta)^{1-x_{i}}, \end{align} $$

$$ \begin{align} P(X = x |\Gamma,\theta) = \prod_{i=1}^{|Q|}P(X_{i} = x_{i}|\Gamma_i,\theta) = \prod_{i=1}^{|Q|} P(X_{i} = 1|\Gamma_i,\theta)^{x_{i}}P(X_{i} = 0|\Gamma_i,\theta)^{1-x_{i}}, \end{align} $$

where the joint probability of the random vector

![]() $X = \{X_1,\ldots ,X_n\}$

with realizations

$X = \{X_1,\ldots ,X_n\}$

with realizations

![]() $x\in \{0,1\}^{|Q|}$

is expressed as the product of the marginal probabilities of the random variables

$x\in \{0,1\}^{|Q|}$

is expressed as the product of the marginal probabilities of the random variables

![]() $X_i$

.

$X_i$

.

![]() $\Gamma $

comprises the collections

$\Gamma $

comprises the collections

![]() $\Gamma _i$

of parameters of the item response function (IRF) for the item

$\Gamma _i$

of parameters of the item response function (IRF) for the item

![]() $q_i\in Q$

. In principle, IRFs might be parametric or non-parametric. The 4-parameter logistic model (4PL) is given by

$q_i\in Q$

. In principle, IRFs might be parametric or non-parametric. The 4-parameter logistic model (4PL) is given by

where

![]() $\Gamma _i=\{a_i, b_i, c_i, d_i\}$

contains the discrimination, difficulty, guessing, and slipping parameters. The 3PL model is obtained for

$\Gamma _i=\{a_i, b_i, c_i, d_i\}$

contains the discrimination, difficulty, guessing, and slipping parameters. The 3PL model is obtained for

![]() $d_i=0$

, the 2PL/Birnbaum model for

$d_i=0$

, the 2PL/Birnbaum model for

![]() $d_i=c_i =0$

, the 1PL/Rasch model by also setting

$d_i=c_i =0$

, the 1PL/Rasch model by also setting

![]() $a_i=1$

. If the parameter

$a_i=1$

. If the parameter

![]() $c_i$

is replaced by the function

$c_i$

is replaced by the function

![]() $c_i(\theta )=(1+\exp {(\tilde {c}_i-\theta )})^{-1}$

for some

$c_i(\theta )=(1+\exp {(\tilde {c}_i-\theta )})^{-1}$

for some

![]() $\tilde {c}_i\in \mathbb {R,}$

the model is known as 1PL ability-based guessing (1PLAG; Martin et al., Reference San Martin, del Pino and De Boeck2006). The same principle can be applied to the slipping parameter by considering

$\tilde {c}_i\in \mathbb {R,}$

the model is known as 1PL ability-based guessing (1PLAG; Martin et al., Reference San Martin, del Pino and De Boeck2006). The same principle can be applied to the slipping parameter by considering

![]() $d_i(\theta )=(1+\exp {(\theta - \tilde {d}_i)})^{-1}$

.

$d_i(\theta )=(1+\exp {(\theta - \tilde {d}_i)})^{-1}$

.

In spite of its simplicity, LI (1) is extremely restrictive and in practical applications is often replaced by a null conditional item covariance form, known as pairwise or weak LI (see, e.g., Stout, Reference Stout2002), given by

In principle, for both LI (1) and (3), one can consider a likelihood of the form

![]() $P(X|\Gamma ,\Theta )$

in which

$P(X|\Gamma ,\Theta )$

in which

![]() $\Theta \in \mathbb {R}^d$

and d is the number of latent dimensions. The smallest value of d such that LI is satisfied provides a traditional definition of test dimensionality, which has the strong limitation of assuming that all dimensions have the same relevance (see, e.g., McDonald, Reference McDonald1981; Stout, Reference Stout1990). For the present work, however, any unaccounted trait, irrespective of its major or minor nature, implies a violation of LI (1) or (3).

$\Theta \in \mathbb {R}^d$

and d is the number of latent dimensions. The smallest value of d such that LI is satisfied provides a traditional definition of test dimensionality, which has the strong limitation of assuming that all dimensions have the same relevance (see, e.g., McDonald, Reference McDonald1981; Stout, Reference Stout1990). For the present work, however, any unaccounted trait, irrespective of its major or minor nature, implies a violation of LI (1) or (3).

Weak LI (3) constitutes the backbone of many procedures and indices to detect violations of LI. Excess covariation or association in the pairwise marginal contingency tables of items

![]() $q_i,q_{i'}$

is detected by comparing the observed proportions

$q_i,q_{i'}$

is detected by comparing the observed proportions

![]() $p_{x_i x_{i'}}$

with the expected ones

$p_{x_i x_{i'}}$

with the expected ones

![]() $p^{*}_{x_i x_{i'}}$

for a chosen IRT model, that is,

$p^{*}_{x_i x_{i'}}$

for a chosen IRT model, that is,

where

![]() $f(\theta )$

is the probability density function of the ability. For the present work, we identify two relevant patterns of LD that manifest themselves as pairwise incomplete contingency tables, that is,

$f(\theta )$

is the probability density function of the ability. For the present work, we identify two relevant patterns of LD that manifest themselves as pairwise incomplete contingency tables, that is,

$$ \begin{align} \begin{aligned} \text{Table A:}\quad q_i\rightarrow q_{i'} := \left( \begin{matrix} p_{00} & 0 \\ p_{10} & p_{11} \end{matrix} \right) & \quad \text{Table B:}\quad q_i\leftrightarrow q_{i'} := \left( \begin{matrix} p_{00} & 0 \\ 0 & p_{11} \end{matrix} \right), \end{aligned} \end{align} $$

$$ \begin{align} \begin{aligned} \text{Table A:}\quad q_i\rightarrow q_{i'} := \left( \begin{matrix} p_{00} & 0 \\ p_{10} & p_{11} \end{matrix} \right) & \quad \text{Table B:}\quad q_i\leftrightarrow q_{i'} := \left( \begin{matrix} p_{00} & 0 \\ 0 & p_{11} \end{matrix} \right), \end{aligned} \end{align} $$

where Table A corresponds to a prerequisite relation between items so that one cannot master item

![]() $q_{i'}$

if item

$q_{i'}$

if item

![]() $q_i$

is not mastered (e.g., if a person masters the item “

$q_i$

is not mastered (e.g., if a person masters the item “

![]() $\frac {1}{2}+\frac {1}{3} = ?,$

” then it also masters the item “

$\frac {1}{2}+\frac {1}{3} = ?,$

” then it also masters the item “

![]() $2+3=?$

,” but not vice versa), while Table B captures two items that are either mastered or failed jointly (e.g., if a person masters the item “

$2+3=?$

,” but not vice versa), while Table B captures two items that are either mastered or failed jointly (e.g., if a person masters the item “

![]() $2+3=?$

,” it also masters the item “

$2+3=?$

,” it also masters the item “

![]() $3+2 = ?$

,” and vice versa). The fact that items manifesting dependence show artificially inflated slopes under LI is apparent for a 2PL model (we set

$3+2 = ?$

,” and vice versa). The fact that items manifesting dependence show artificially inflated slopes under LI is apparent for a 2PL model (we set

![]() $c_i=d_i=0$

for what follows), for which both Tables A and B are obtained in the limit

$c_i=d_i=0$

for what follows), for which both Tables A and B are obtained in the limit

![]() $a_i, a_{i'}\rightarrow \infty $

. If

$a_i, a_{i'}\rightarrow \infty $

. If

![]() $b_{i'}> b_i$

, one obtains Table A since individuals with

$b_{i'}> b_i$

, one obtains Table A since individuals with

![]() $\theta> b_{i'}$

,

$\theta> b_{i'}$

,

![]() $b_{i'}> \theta > b_i$

, and

$b_{i'}> \theta > b_i$

, and

![]() $\theta < b_{i}$

, respectively, belong to

$\theta < b_{i}$

, respectively, belong to

![]() $p_{11}$

,

$p_{11}$

,

![]() $p_{10}$

, and

$p_{10}$

, and

![]() $p_{00}$

. Exception is given by individuals with

$p_{00}$

. Exception is given by individuals with

![]() $\theta =b_i$

(

$\theta =b_i$

(

![]() $\theta = b_{i'}$

), which belong to both

$\theta = b_{i'}$

), which belong to both

![]() $p_{00}$

and

$p_{00}$

and

![]() $p_{10}$

(

$p_{10}$

(

![]() $p_{10}$

and

$p_{10}$

and

![]() $p_{11}$

). Similarly, if

$p_{11}$

). Similarly, if

![]() $b_i=b_{i'}$

, then one approximates Table B since individuals with

$b_i=b_{i'}$

, then one approximates Table B since individuals with

![]() $\theta> b_{i}$

(

$\theta> b_{i}$

(

![]() $\theta < b_{i}$

) belong to

$\theta < b_{i}$

) belong to

![]() $p_{11}$

(

$p_{11}$

(

![]() $p_{00}$

), while individuals with

$p_{00}$

), while individuals with

![]() $\theta =b_i$

are distributed among all cells. These behaviors are apparent in the following LI Tables (only the values of the 2PL’s item parameters are reported in parentheses as

$\theta =b_i$

are distributed among all cells. These behaviors are apparent in the following LI Tables (only the values of the 2PL’s item parameters are reported in parentheses as

![]() $c_i=d_i=0$

is set for both items). The density

$c_i=d_i=0$

is set for both items). The density

![]() $f(\theta )$

is a standard normal. Starting from Table LI-1 and moving to the right, the value of

$f(\theta )$

is a standard normal. Starting from Table LI-1 and moving to the right, the value of

![]() $b_2$

increases, and the tables approximate Table A. Moving from top to bottom, the discrimination parameters

$b_2$

increases, and the tables approximate Table A. Moving from top to bottom, the discrimination parameters

![]() $a_i$

increase, and Tables LI-4 and LI-5 approximate Tables B and A. However, under LI,

$a_i$

increase, and Tables LI-4 and LI-5 approximate Tables B and A. However, under LI,

![]() $p_{11}$

also approaches zero as in Table LI-6.

$p_{11}$

also approaches zero as in Table LI-6.

$$ \begin{align*}\nonumber \underset{\substack{(a_1=1,a_2=1,\\ b_1=0,b_2=0)}}{\text{Table LI-1:}} \quad \left( \begin{matrix} 0.293 & 0.207 \\ 0.207 & 0.293 \end{matrix} \right) \quad \underset{\substack{(a_1=1,a_2=1,\\ b_1=0,b_2=2)}}{\text{Table LI-2:}} \quad \left( \begin{matrix} 0.446 & 0.054 \\ 0.398 & 0.102 \end{matrix} \right) \quad \underset{\substack{(a_1=1,a_2=1,\\ b_1=0,b_2=4)}}{\text{Table LI-3:}} \quad \left( \begin{matrix} 0.491 & 0.009 \\ 0.481 & 0.019 \end{matrix} \right) \end{align*} $$

$$ \begin{align*}\nonumber \underset{\substack{(a_1=1,a_2=1,\\ b_1=0,b_2=0)}}{\text{Table LI-1:}} \quad \left( \begin{matrix} 0.293 & 0.207 \\ 0.207 & 0.293 \end{matrix} \right) \quad \underset{\substack{(a_1=1,a_2=1,\\ b_1=0,b_2=2)}}{\text{Table LI-2:}} \quad \left( \begin{matrix} 0.446 & 0.054 \\ 0.398 & 0.102 \end{matrix} \right) \quad \underset{\substack{(a_1=1,a_2=1,\\ b_1=0,b_2=4)}}{\text{Table LI-3:}} \quad \left( \begin{matrix} 0.491 & 0.009 \\ 0.481 & 0.019 \end{matrix} \right) \end{align*} $$

$$ \begin{align*}\nonumber \underset{\substack{(a_1=10,a_2=10,\\ b_1=0,b_2=0)}}{\text{Table LI-4:}} \quad \left( \begin{matrix} 0.461 & 0.039 \\ 0.039 & 0.461 \end{matrix} \right) \quad \underset{\substack{(a_1=10,a_2=10,\\ b_1=0,b_2=2)}}{\text{Table LI-5:}} \quad \left( \begin{matrix} 0.500 & 0.000 \\ 0.475 & 0.025 \end{matrix} \right) \quad \underset{\substack{(a_1=10,a_2=10,\\ b_1=0,b_2=4)}}{\text{Table LI-6:}} \quad \left( \begin{matrix} 0.500 & 0.000 \\ 0.500 & 0.000 \end{matrix} \right) \end{align*} $$

$$ \begin{align*}\nonumber \underset{\substack{(a_1=10,a_2=10,\\ b_1=0,b_2=0)}}{\text{Table LI-4:}} \quad \left( \begin{matrix} 0.461 & 0.039 \\ 0.039 & 0.461 \end{matrix} \right) \quad \underset{\substack{(a_1=10,a_2=10,\\ b_1=0,b_2=2)}}{\text{Table LI-5:}} \quad \left( \begin{matrix} 0.500 & 0.000 \\ 0.475 & 0.025 \end{matrix} \right) \quad \underset{\substack{(a_1=10,a_2=10,\\ b_1=0,b_2=4)}}{\text{Table LI-6:}} \quad \left( \begin{matrix} 0.500 & 0.000 \\ 0.500 & 0.000 \end{matrix} \right) \end{align*} $$

2.2 Unified framework: Taxonomy of CDA, KST, and IRT models

Before formally introducing the primitives of the framework (structure and process) and the main operations (factorization and reparameterization), let us reformulate the 4PL model of Eq. (2) to provide an intuitive understanding of these notions. A little algebra allows to rewrite the 4PL model as

where

![]() $\pi (K_i=1|a_i,b_i,\theta )$

is the IRF of a 2-parameter model in which the random variable

$\pi (K_i=1|a_i,b_i,\theta )$

is the IRF of a 2-parameter model in which the random variable

![]() $K_i$

represents if the i-th item is mastered or not. According to Eq. (6), an item can be solved (

$K_i$

represents if the i-th item is mastered or not. According to Eq. (6), an item can be solved (

![]() $X_i=1$

) either from guessing a non-mastered item (

$X_i=1$

) either from guessing a non-mastered item (

![]() $K_i=0$

) or from not slipping on a mastered item (

$K_i=0$

) or from not slipping on a mastered item (

![]() $K_i = 1$

). By definition, it holds that

$K_i = 1$

). By definition, it holds that

![]() $c_i := P(X_i=1|K_i=0)$

and

$c_i := P(X_i=1|K_i=0)$

and

![]() $d_i := P(X_i=0|K_i=1)$

. The conditional probabilities

$d_i := P(X_i=0|K_i=1)$

. The conditional probabilities

![]() $P(X_i|K_i)$

and

$P(X_i|K_i)$

and

![]() $\pi (K_i|\theta ),$

respectively, characterize the g-process and the p-process. The former captures the relation between the knowledge of the item (

$\pi (K_i|\theta ),$

respectively, characterize the g-process and the p-process. The former captures the relation between the knowledge of the item (

![]() $K_i$

) and the actual response (

$K_i$

) and the actual response (

![]() $X_i$

), and provides the so-called left-side added parameters (Thissen & Steinberg, Reference Thissen and Steinberg1986), while the latter captures the relation between the latent trait (

$X_i$

), and provides the so-called left-side added parameters (Thissen & Steinberg, Reference Thissen and Steinberg1986), while the latter captures the relation between the latent trait (

![]() $\theta $

) and the knowledge of the item (

$\theta $

) and the knowledge of the item (

![]() $K_i$

), and corresponds to the 2-parameter IRF. Eq. (7) is an example of reparameterization by means of a logit link function endowing the 2-parameter model with a specific form. In a traditional latent variable approach, the random variable

$K_i$

), and corresponds to the 2-parameter IRF. Eq. (7) is an example of reparameterization by means of a logit link function endowing the 2-parameter model with a specific form. In a traditional latent variable approach, the random variable

![]() $K_i$

(

$K_i$

(

![]() $X_i$

) represents the latent classes associated with mastering (solving) and not mastering (not solving). A feature of the unified framework is to model the structure of these latent classes. Indeed, although

$X_i$

) represents the latent classes associated with mastering (solving) and not mastering (not solving). A feature of the unified framework is to model the structure of these latent classes. Indeed, although

![]() $X_i$

and

$X_i$

and

![]() $K_i$

are different random variables, they are both defined over the same states (i.e., the same structure): an empty state (no item) and a state containing only one item. More in general, latent classes associated with items, responses, attributes, and traits are captured via the notion of structure: let D be a non-empty domain of elements

$K_i$

are different random variables, they are both defined over the same states (i.e., the same structure): an empty state (no item) and a state containing only one item. More in general, latent classes associated with items, responses, attributes, and traits are captured via the notion of structure: let D be a non-empty domain of elements

![]() $d_i\in D$

. Any pair like

$d_i\in D$

. Any pair like

![]() $(D,\mathcal {Y}),$

where

$(D,\mathcal {Y}),$

where

![]() $\mathcal {Y}$

is a family of subsets

$\mathcal {Y}$

is a family of subsets

![]() $Y\subseteq D$

such that

$Y\subseteq D$

such that

![]() $\{\emptyset , D\}\subseteq \mathcal {Y}\subseteq 2^D$

is called a structure, and any subset like

$\{\emptyset , D\}\subseteq \mathcal {Y}\subseteq 2^D$

is called a structure, and any subset like

![]() $Y\in \mathcal {Y}$

a state. Informally, the domain is omitted. Figure 1 provides examples of structures on

$Y\in \mathcal {Y}$

a state. Informally, the domain is omitted. Figure 1 provides examples of structures on

![]() $D=\{d_1, d_2,d_3\}$

.

$D=\{d_1, d_2,d_3\}$

.

Figure 1 Examples of structures on

![]() $D=\{d_1, d_2, d_3\}$

.

$D=\{d_1, d_2, d_3\}$

.

A structure like

![]() $\mathcal {Y}_1$

, in which the elements form a linearly ordered set, is called a chain, a structure like

$\mathcal {Y}_1$

, in which the elements form a linearly ordered set, is called a chain, a structure like

![]() $\mathcal {Y}_3$

is a power set

$\mathcal {Y}_3$

is a power set

![]() $2^D$

, while

$2^D$

, while

![]() $\mathcal {Y}_2$

is an arbitrary relation. In all these structures, the states are latent classes ordered by set inclusion. Nominal latent classes are instead represented by a collection of singletons of mutually exclusive elements. As an example,

$\mathcal {Y}_2$

is an arbitrary relation. In all these structures, the states are latent classes ordered by set inclusion. Nominal latent classes are instead represented by a collection of singletons of mutually exclusive elements. As an example,

![]() $\mathcal {Y}=\{\{d_1\},\{d_2\}\}$

represents the collection of the latent classes of the mutually exclusive elements

$\mathcal {Y}=\{\{d_1\},\{d_2\}\}$

represents the collection of the latent classes of the mutually exclusive elements

![]() $d_1$

and

$d_1$

and

![]() $d_2$

(e.g., males and females). If a probability distribution

$d_2$

(e.g., males and females). If a probability distribution

![]() $P_{\mathcal {Y}}: \mathcal {Y}\rightarrow [0,1]$

is defined so that

$P_{\mathcal {Y}}: \mathcal {Y}\rightarrow [0,1]$

is defined so that

![]() $P(Y)$

is the probability of the state

$P(Y)$

is the probability of the state

![]() $Y\in \mathcal {Y}$

(the subscript in

$Y\in \mathcal {Y}$

(the subscript in

![]() $P_{\mathcal {Y}}$

is omitted), the triple

$P_{\mathcal {Y}}$

is omitted), the triple

![]() $(D,\mathcal {Y}, P_{\mathcal {Y}})$

is called a probabilistic structure, and provides a set-theoretical recast of a contingency table for cross-classified dichotomous variables. Every state

$(D,\mathcal {Y}, P_{\mathcal {Y}})$

is called a probabilistic structure, and provides a set-theoretical recast of a contingency table for cross-classified dichotomous variables. Every state

![]() $Y\in \mathcal {Y}$

(e.g.,

$Y\in \mathcal {Y}$

(e.g.,

![]() $Y=\{d_1,d_3\}$

) labels a non-empty cell of the table since it can be mapped into the realization

$Y=\{d_1,d_3\}$

) labels a non-empty cell of the table since it can be mapped into the realization

![]() $y\in \{0,1\}^{|D|}$

(e.g.,

$y\in \{0,1\}^{|D|}$

(e.g.,

![]() $y=(1,0,1)$

), of a random vector Y such that

$y=(1,0,1)$

), of a random vector Y such that

![]() $Y_i = 1$

iff

$Y_i = 1$

iff

![]() $d_i\in Y$

. The state probability

$d_i\in Y$

. The state probability

![]() $P(Y)$

captures the associated probability/frequency. If

$P(Y)$

captures the associated probability/frequency. If

![]() $\mathcal {Y}=2^D$

, the table is complete. If

$\mathcal {Y}=2^D$

, the table is complete. If

![]() $\mathcal {Y}\subset 2^D$

, the table is incomplete with structural zeros (missing cells by design) corresponding to the subsets in

$\mathcal {Y}\subset 2^D$

, the table is incomplete with structural zeros (missing cells by design) corresponding to the subsets in

![]() $2^D\setminus \mathcal {Y}$

.

$2^D\setminus \mathcal {Y}$

.

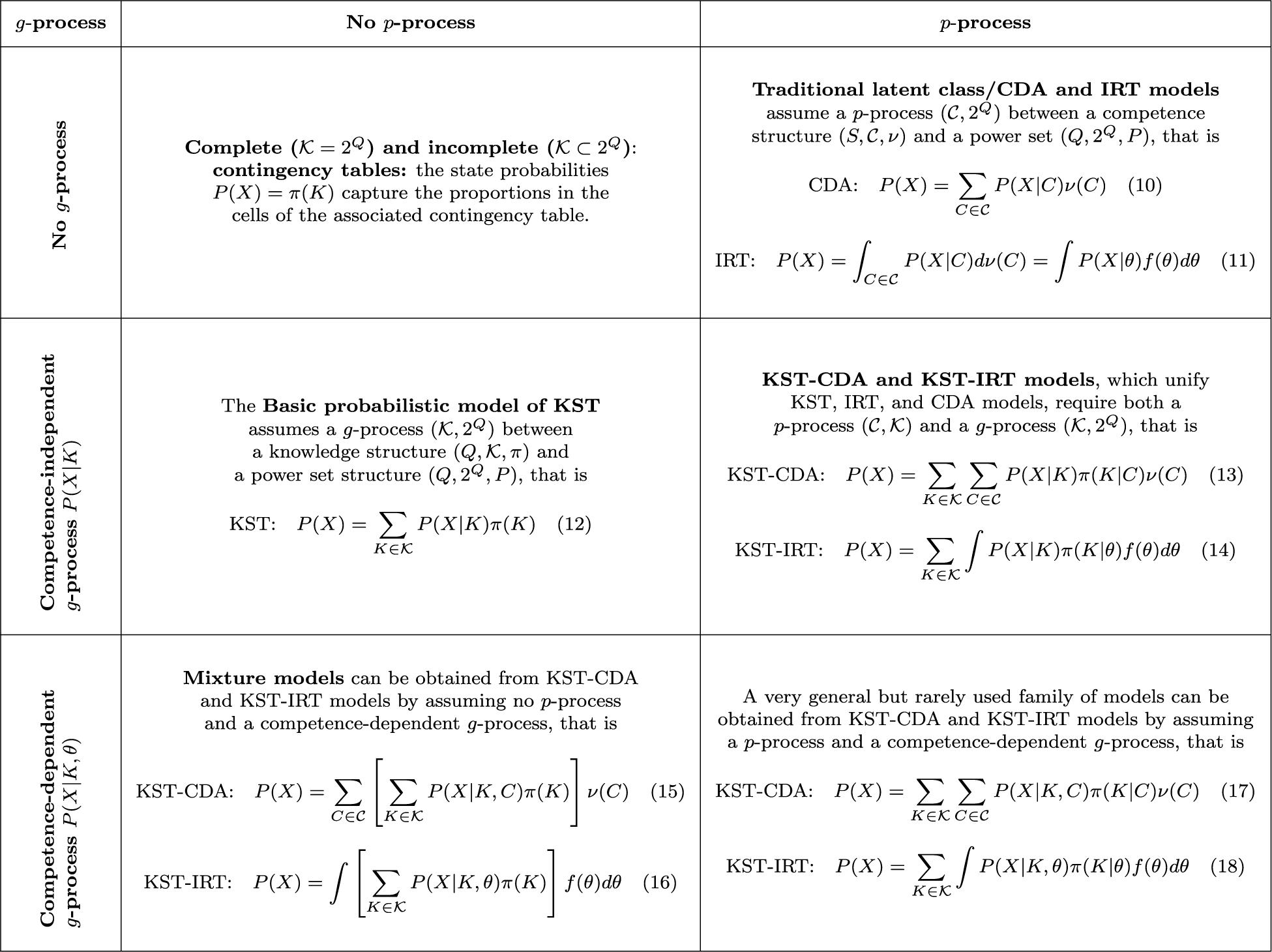

Table 1 Table summarizing the taxonomy of families of KST-CDA-IRT models based on the application of p- and g-processes

Structures can then be given different interpretations, we provide here some examples:

-

• Given a domain Q of dichotomous items

$q_i$

, a (probabilistic) knowledge structure is a triple

$q_i$

, a (probabilistic) knowledge structure is a triple

$(Q,\mathcal {K}, \pi )$

with

$(Q,\mathcal {K}, \pi )$

with

$\mathcal {K}$

a family of knowledge states

$\mathcal {K}$

a family of knowledge states

$K\subseteq Q$

and

$K\subseteq Q$

and

$\pi : \mathcal {K}\rightarrow [0,1]$

a probability distribution. As an example, let

$\pi : \mathcal {K}\rightarrow [0,1]$

a probability distribution. As an example, let

$Q=\{q_1,q_2\}$

, then a chain of the form

$Q=\{q_1,q_2\}$

, then a chain of the form

$\mathcal {K}_A=\{\emptyset , \{q_1\}, \{q_1, q_2\}\}$

as in Table A represents a sequence of items such that

$\mathcal {K}_A=\{\emptyset , \{q_1\}, \{q_1, q_2\}\}$

as in Table A represents a sequence of items such that

$q_2$

cannot be mastered unless

$q_2$

cannot be mastered unless

$q_1$

is mastered (e.g., “

$q_1$

is mastered (e.g., “

$2+3=?$

” and “

$2+3=?$

” and “

$\frac {1}{2}+\frac {1}{3}=?$

” ). A power set

$\frac {1}{2}+\frac {1}{3}=?$

” ). A power set

$2^Q =\{\emptyset , \{q_1\}, \{q_2\}, \{q_1, q_2\}\} $

would capture the independence of two items (e.g., “Having suicidal thoughts” and “Lacking appetite”). The power set will be shown to be necessary for LI. A structure like

$2^Q =\{\emptyset , \{q_1\}, \{q_2\}, \{q_1, q_2\}\} $

would capture the independence of two items (e.g., “Having suicidal thoughts” and “Lacking appetite”). The power set will be shown to be necessary for LI. A structure like

$\mathcal {K}_B=\{\emptyset ,\{q_1, q_2\}\}$

as in Table B is well-suited if items are always mastered or failed jointly (e.g., “

$\mathcal {K}_B=\{\emptyset ,\{q_1, q_2\}\}$

as in Table B is well-suited if items are always mastered or failed jointly (e.g., “

$2+3=?$

” and “

$2+3=?$

” and “

$3+2=?$

”). Items like these are known in KST as “equally informative items.”

$3+2=?$

”). Items like these are known in KST as “equally informative items.” -

• Given a domain S of dichotomous attributes

$s_a$

, a (probabilistic) competence structure is a triple

$s_a$

, a (probabilistic) competence structure is a triple

$(S,\mathcal {C}, \nu )$

with

$(S,\mathcal {C}, \nu )$

with

$\mathcal {C}$

a family of competence states

$\mathcal {C}$

a family of competence states

$C\subseteq S$

and

$C\subseteq S$

and

$\nu : \mathcal {C}\rightarrow [0,1]$

a probability distribution. As an example, let

$\nu : \mathcal {C}\rightarrow [0,1]$

a probability distribution. As an example, let

$S=\{s_1,s_2\},$

where

$S=\{s_1,s_2\},$

where

$s_1$

and

$s_1$

and

$s_2$

are, respectively, the attributes “addition” and “fraction” needed to master items ‘

$s_2$

are, respectively, the attributes “addition” and “fraction” needed to master items ‘

$2+3=?$

’ and ‘

$2+3=?$

’ and ‘

$\frac {1}{2}+\frac {1}{3}=?$

’. Assuming a power set

$\frac {1}{2}+\frac {1}{3}=?$

’. Assuming a power set

$2^S = \{\emptyset , \{s_1\}, \{s_2\}, \{s_1, s_2\}\}\}$

or a chain

$2^S = \{\emptyset , \{s_1\}, \{s_2\}, \{s_1, s_2\}\}\}$

or a chain

$\mathcal {C}=\{\emptyset , \{s_1\}, \{s_1, s_2\}\}$

would imply different claims about the relation between these attributes.

$\mathcal {C}=\{\emptyset , \{s_1\}, \{s_1, s_2\}\}$

would imply different claims about the relation between these attributes. -

• Interpretations of structures are not limited to items and attributes. If the elements

$d_i\in D$

are suitably interpreted so that the state

$d_i\in D$

are suitably interpreted so that the state

$\emptyset $

(D) represents the first (last) category, a chain like

$\emptyset $

(D) represents the first (last) category, a chain like

$\mathcal {Y}_1$

can be used to model a Likert item or a polytomous attribute with four categories. As in IRT, one changes category by exceeding a “threshold,” we call item (attribute) thresholds the elements used to model a polytomous (attribute) item. For simplicity’s sake, we retain for these latter the same formalism of knowledge (competence) structures. We can then reinterpret a chain

$\mathcal {Y}_1$

can be used to model a Likert item or a polytomous attribute with four categories. As in IRT, one changes category by exceeding a “threshold,” we call item (attribute) thresholds the elements used to model a polytomous (attribute) item. For simplicity’s sake, we retain for these latter the same formalism of knowledge (competence) structures. We can then reinterpret a chain

$\mathcal {K}=\{\emptyset , \{q_1\}, \{q_1, q_2\}\}$

as a Likert item, and a chain

$\mathcal {K}=\{\emptyset , \{q_1\}, \{q_1, q_2\}\}$

as a Likert item, and a chain

$\mathcal {C}=\{\emptyset , \{s_1\}, \{s_1, s_2\}\}$

as a polytomous attribute. Moreover, we can use the latter to define latent traits. Intuitively, one needs to generalize a finite chain like

$\mathcal {C}=\{\emptyset , \{s_1\}, \{s_1, s_2\}\}$

as a polytomous attribute. Moreover, we can use the latter to define latent traits. Intuitively, one needs to generalize a finite chain like

$\mathcal {C}$

first to an infinite and then to an uncountable number of states (see Part III of Noventa et al., Reference Noventa, Ye, Kelava and Spoto2024, for details). If the elements

$\mathcal {C}$

first to an infinite and then to an uncountable number of states (see Part III of Noventa et al., Reference Noventa, Ye, Kelava and Spoto2024, for details). If the elements

$s_a\in \mathcal {C}$

are interpreted as attribute thresholds, the resulting competence structure is an uncountable attribute

$s_a\in \mathcal {C}$

are interpreted as attribute thresholds, the resulting competence structure is an uncountable attribute

$\mathcal {C}$

capturing a continuum of competence states. This allows to link IRT to the other theories: like the random variables

$\mathcal {C}$

capturing a continuum of competence states. This allows to link IRT to the other theories: like the random variables

$K_i$

and

$K_i$

and

$X_i$

were defined over the states of a finite structure, one can define a latent trait as a random variable

$X_i$

were defined over the states of a finite structure, one can define a latent trait as a random variable

$\theta : \mathcal {C}\rightarrow \mathbb {R}$

defined over a continuous competence structure

$\theta : \mathcal {C}\rightarrow \mathbb {R}$

defined over a continuous competence structure

$\mathcal {C}$

.

$\mathcal {C}$

.

Given now two probabilistic structures

![]() $(D,\mathcal {Y}, P_{\mathcal {Y}})$

and

$(D,\mathcal {Y}, P_{\mathcal {Y}})$

and

![]() $(R,\mathcal {X}, P_{\mathcal {X}})$

, we can formalize the notion of process as a pair

$(R,\mathcal {X}, P_{\mathcal {X}})$

, we can formalize the notion of process as a pair

![]() $(\mathcal {Y}, \mathcal {X})$

such that the probability distributions

$(\mathcal {Y}, \mathcal {X})$

such that the probability distributions

![]() $P_{\mathcal {Y}}$

and

$P_{\mathcal {Y}}$

and

![]() $P_{\mathcal {X}}$

are related by

$P_{\mathcal {X}}$

are related by

for all

![]() $X\in \mathcal {X}$

. Eq. (8) generalizes latent class models to the relation between two structures. Eq. (9) generalizes latent trait models in presence of an uncountable structure

$X\in \mathcal {X}$

. Eq. (8) generalizes latent class models to the relation between two structures. Eq. (9) generalizes latent trait models in presence of an uncountable structure

![]() $(D,\mathcal {Y}, P_{\mathcal {Y}})$

that allows to replace the summation in Eq. (8) with an integral, and to substitute the integral of

$(D,\mathcal {Y}, P_{\mathcal {Y}})$

that allows to replace the summation in Eq. (8) with an integral, and to substitute the integral of

![]() $P(X|Y)$

w.r.t. the probability measure

$P(X|Y)$

w.r.t. the probability measure

![]() $P_{\mathcal {Y}}$

with the integration w.r.t. a random variable

$P_{\mathcal {Y}}$

with the integration w.r.t. a random variable

![]() $\theta : \mathcal {Y}\rightarrow \mathbb {R}$

, with density function

$\theta : \mathcal {Y}\rightarrow \mathbb {R}$

, with density function

![]() $f(\theta )$

. Using Eqs. (8) and (9), the taxonomy of assessment models represented in Table 1 is obtained by systematizing the processes involved in a model: we consider whether a p-process is absent or present and whether a g-process is absent, competence-independent, or competence-dependent. Since a power set structure

$f(\theta )$

. Using Eqs. (8) and (9), the taxonomy of assessment models represented in Table 1 is obtained by systematizing the processes involved in a model: we consider whether a p-process is absent or present and whether a g-process is absent, competence-independent, or competence-dependent. Since a power set structure

![]() $(Q, 2^Q, P)$

models the responses, the random vector X with realizations

$(Q, 2^Q, P)$

models the responses, the random vector X with realizations

![]() $x\in \{0,1\}^{|Q|}$

is used in place of the state

$x\in \{0,1\}^{|Q|}$

is used in place of the state

![]() $K\in 2^Q$

so that

$K\in 2^Q$

so that

![]() $X_i=1$

if

$X_i=1$

if

![]() $q_i\in K$

, zero otherwise. The p-processes

$q_i\in K$

, zero otherwise. The p-processes

![]() $\pi (K|C)$

and

$\pi (K|C)$

and

![]() $\pi (K|\theta )$

are referred to as state response functions (SRFs) as they generalize the IRFs to a set of items. Families of models in Table 1 therefore grow in generality from top to bottom and from left to right.

$\pi (K|\theta )$

are referred to as state response functions (SRFs) as they generalize the IRFs to a set of items. Families of models in Table 1 therefore grow in generality from top to bottom and from left to right.

Most importantly, the general KST-IRT Eq. (14) is the marginalization of

which is a latent trait-extended version of Eq. (12) and allows to derive left side-added IRT models. As an example, a 4-parameter model follows from Eq. (19) by considering the structure

![]() $\mathcal {K}=\{\emptyset , \{q_i\}\}$

, that is,

$\mathcal {K}=\{\emptyset , \{q_i\}\}$

, that is,

$$ \begin{align*} P(X_i = 1|\theta) & = \sum_{K\in\mathcal{K}}P(X_i = 1|K)\pi(K|\theta)= P(X_i = 1|\emptyset)\pi(\emptyset|\theta)+P(X_i = 1|\{q_i\})\pi(\{q_i\}|\theta)\\ & = c_i\pi(K_i = 0|\theta)+ (1-d_i)\pi(K_i = 1|\theta) = c_i + (1-c_i-d_i)\pi(K_i = 1|\theta), \end{align*} $$

$$ \begin{align*} P(X_i = 1|\theta) & = \sum_{K\in\mathcal{K}}P(X_i = 1|K)\pi(K|\theta)= P(X_i = 1|\emptyset)\pi(\emptyset|\theta)+P(X_i = 1|\{q_i\})\pi(\{q_i\}|\theta)\\ & = c_i\pi(K_i = 0|\theta)+ (1-d_i)\pi(K_i = 1|\theta) = c_i + (1-c_i-d_i)\pi(K_i = 1|\theta), \end{align*} $$

where in the last passages, we have replaced the states

![]() $\emptyset $

and

$\emptyset $

and

![]() $\{q_i\}$

with the realizations of the random variable

$\{q_i\}$

with the realizations of the random variable

![]() $K_i$

and used the definitions

$K_i$

and used the definitions

![]() $c_i := P(X_i = 1|K_i = 0)$

and

$c_i := P(X_i = 1|K_i = 0)$

and

![]() $d_i := P(X_i = 0|K_i = 1) $

. Application of a competence-based g-process yields models like the 1PLAG (Martin et al., Reference San Martin, del Pino and De Boeck2006).

$d_i := P(X_i = 0|K_i = 1) $

. Application of a competence-based g-process yields models like the 1PLAG (Martin et al., Reference San Martin, del Pino and De Boeck2006).

Specific KST, CDA, and IRT models are derived from the models in Table 1 by imposing further assumptions on the p- and g-processes. Two common assumptions are associated with two operations: factorization rewrites a given probability as a product of terms and reparameterization gives new functional forms to the conditional probabilities by means of link functions as in generalized latent variable models.

As to factorization, an example is provided by LI (1). Applications of LI are common: in CDA and IRT, LI is used to factorize the conditional probabilities of Eqs. (10) and (11) into

![]() $P(X|C)=\prod _{i=1}^{|Q|}P(X_i|C)$

and

$P(X|C)=\prod _{i=1}^{|Q|}P(X_i|C)$

and

![]() $P(X|\theta )=\prod _{i=1}^{|Q|}P(X_i|\theta )$

. Similarly, imposing LI on the g-process

$P(X|\theta )=\prod _{i=1}^{|Q|}P(X_i|\theta )$

. Similarly, imposing LI on the g-process

![]() $P(X|K)$

yields:

$P(X|K)$

yields:

$$ \begin{align} \begin{aligned} P(X|K) & = \prod_{i=1}^{|Q|} P(X_i=1|K)^{X_i}P(X_i=0|K)^{1-X_i}\\ \text{with} & \quad P(X_i=1|K) = (1-\beta_{i})^{K_i}\eta_{i}^{1-K_i}, \end{aligned} \end{align} $$

$$ \begin{align} \begin{aligned} P(X|K) & = \prod_{i=1}^{|Q|} P(X_i=1|K)^{X_i}P(X_i=0|K)^{1-X_i}\\ \text{with} & \quad P(X_i=1|K) = (1-\beta_{i})^{K_i}\eta_{i}^{1-K_i}, \end{aligned} \end{align} $$

where

![]() $K_i=1$

if

$K_i=1$

if

![]() $q_i\in K$

, zero otherwise. Eq. (20) in conjunction with Eq. (12) is known as the basic LI model (BLIM, see, e.g., Falmagne & Doignon, Reference Falmagne and Doignon2011). The parameters

$q_i\in K$

, zero otherwise. Eq. (20) in conjunction with Eq. (12) is known as the basic LI model (BLIM, see, e.g., Falmagne & Doignon, Reference Falmagne and Doignon2011). The parameters

![]() $\eta _i$

and

$\eta _i$

and

![]() $\beta _i$

are known as lucky guess and careless error and are equivalent to the IRT guessing and slipping parameters

$\beta _i$

are known as lucky guess and careless error and are equivalent to the IRT guessing and slipping parameters

![]() $c_i$

and

$c_i$

and

![]() $d_i$

under LI (see below). The latent trait-extended version of the BLIM, known as

$d_i$

under LI (see below). The latent trait-extended version of the BLIM, known as

![]() $\Theta $

-BLIM, is given by Eqs. (19) and (20). In the

$\Theta $

-BLIM, is given by Eqs. (19) and (20). In the

![]() $\Theta $

-BLIM, the SRF

$\Theta $

-BLIM, the SRF

![]() $\pi (K|\theta )$

is unspecified. Another example of factorization that will be used in what follows is provided by generalized LI (GLI; Noventa et al., Reference Noventa, Spoto, Heller and Kelava2019), which generalizes LI (1) to certain families of structures (see footnote 2 below). Instead of providing a general definition of GLI, we directly provide its application to factorize a p-process

$\pi (K|\theta )$

is unspecified. Another example of factorization that will be used in what follows is provided by generalized LI (GLI; Noventa et al., Reference Noventa, Spoto, Heller and Kelava2019), which generalizes LI (1) to certain families of structures (see footnote 2 below). Instead of providing a general definition of GLI, we directly provide its application to factorize a p-process

![]() $\pi (K|\theta )$

as

$\pi (K|\theta )$

as

where

![]() $\pi (K_i=1|\theta )$

plays the role of the IRF (e.g., 2PL model) and where the outer fringe of K, defined by

$\pi (K_i=1|\theta )$

plays the role of the IRF (e.g., 2PL model) and where the outer fringe of K, defined by

![]() $K^{\mathcal {O}} = \{q_i\in Q | K\cup \{q_i\}\in \mathcal {K}\}$

, is the set of all items that can be added one at the time to a state K returning another state of the structure.Footnote 2 Most importantly, GLI (21) yields LI (1) if

$K^{\mathcal {O}} = \{q_i\in Q | K\cup \{q_i\}\in \mathcal {K}\}$

, is the set of all items that can be added one at the time to a state K returning another state of the structure.Footnote 2 Most importantly, GLI (21) yields LI (1) if

![]() $\mathcal {K}=2^Q$

, since

$\mathcal {K}=2^Q$

, since

![]() $K^{\mathcal {O}} = Q\setminus K$

thus showing that the power set is a necessary condition to LI. Eq. (21) is also known as the

$K^{\mathcal {O}} = Q\setminus K$

thus showing that the power set is a necessary condition to LI. Eq. (21) is also known as the

![]() $\Theta $

-simple learning model (

$\Theta $

-simple learning model (

![]() $\Theta $

-SLM) since it is a latent trait-extended version of the SLM (see, e.g., Falmagne & Doignon, Reference Falmagne and Doignon2011), which is just Eq. (21) without the conditional dependence on

$\Theta $

-SLM) since it is a latent trait-extended version of the SLM (see, e.g., Falmagne & Doignon, Reference Falmagne and Doignon2011), which is just Eq. (21) without the conditional dependence on

![]() $\theta $

. The

$\theta $

. The

![]() $\Theta $

-SLM is a template for IRT sequential/step models (see, e.g., Tutz, Reference Tutz and Linden2016). Indeed, given an item

$\Theta $

-SLM is a template for IRT sequential/step models (see, e.g., Tutz, Reference Tutz and Linden2016). Indeed, given an item

![]() $q_i$

which is a prerequisite for an item

$q_i$

which is a prerequisite for an item

![]() $q_{i'}$

as in Table A, the IRF

$q_{i'}$

as in Table A, the IRF

![]() $\pi (K_i=1|\theta )$

is a marginal distribution, but the IRF

$\pi (K_i=1|\theta )$

is a marginal distribution, but the IRF

![]() $\pi (K_{i'}=1|\theta )$

is a conditional one (the conditional dependence

$\pi (K_{i'}=1|\theta )$

is a conditional one (the conditional dependence

![]() $\pi (K_{i'}=1|K_i,\theta )$

might be made explicit or not). If the underlying structure is a chain, and

$\pi (K_{i'}=1|K_i,\theta )$

might be made explicit or not). If the underlying structure is a chain, and

![]() $q_i$

is interpreted as an item threshold, then Eq. (21) is a sequential approach to polytomous IRT models as in the graded response model (Samejima, Reference Samejima1969).

$q_i$

is interpreted as an item threshold, then Eq. (21) is a sequential approach to polytomous IRT models as in the graded response model (Samejima, Reference Samejima1969).

As to the reparameterization operation, like in generalized latent variable models, it consists in the transformation of the conditional probabilities

![]() $P(X|Y)$

or

$P(X|Y)$

or

![]() $P(X_i|Y)$

by means of a link function, that is,

$P(X_i|Y)$

by means of a link function, that is,

where the functions

![]() $f_r$

and

$f_r$

and

![]() $f_{r_i}$

are called kernels and are often given a linear form. The indexes r and

$f_{r_i}$

are called kernels and are often given a linear form. The indexes r and

![]() $r_i$

indicate the association with an arbitrary element

$r_i$

indicate the association with an arbitrary element

![]() $r\in R$

or with the i-th element

$r\in R$

or with the i-th element

![]() $r_i$

. A common choice for

$r_i$

. A common choice for

![]() $\ell _r$

is the logarithm, while common choices for

$\ell _r$

is the logarithm, while common choices for

![]() $\ell _{r_i}$

are the identity, the probit, the logit, and the logarithm. Exactly like the logit was used in Eq. (7) to transform a 2-parameter IRF into a 2PL, the reparameterization operation can be used to provide a very general form of the SRF by setting for every

$\ell _{r_i}$

are the identity, the probit, the logit, and the logarithm. Exactly like the logit was used in Eq. (7) to transform a 2-parameter IRF into a 2PL, the reparameterization operation can be used to provide a very general form of the SRF by setting for every

![]() $K\in \mathcal {K}$

that

$K\in \mathcal {K}$

that

where

![]() $\ell _{q}$

is an arbitrary link function. The kernel

$\ell _{q}$

is an arbitrary link function. The kernel

![]() $f_{q}(K,\theta )$

is a linear combination of functional parameters

$f_{q}(K,\theta )$

is a linear combination of functional parameters

![]() $\lambda ^L_K(\theta )$

that for every state

$\lambda ^L_K(\theta )$

that for every state

![]() $K\in \mathcal {K}$

capture main effects (singletons), second-order interaction effects (pairs of items), and so on, associated with subsets

$K\in \mathcal {K}$

capture main effects (singletons), second-order interaction effects (pairs of items), and so on, associated with subsets

![]() $L\subseteq K$

. The intercept

$L\subseteq K$

. The intercept

![]() $\lambda ^{\emptyset }_K(\theta ) = \lambda _{\emptyset }(\theta )$

is typically set equal for all states and provides a normalization term. The families

$\lambda ^{\emptyset }_K(\theta ) = \lambda _{\emptyset }(\theta )$

is typically set equal for all states and provides a normalization term. The families

![]() $\mathcal {L}_K$

establish which coefficients are retained like in log-linear models. If

$\mathcal {L}_K$

establish which coefficients are retained like in log-linear models. If

![]() $\mathcal {L}_K=2^K$

, the model is saturated. Non-saturated, non-hierarchical, and non-standard models are obtained via specific choices of

$\mathcal {L}_K=2^K$

, the model is saturated. Non-saturated, non-hierarchical, and non-standard models are obtained via specific choices of

![]() $\mathcal {L}_K$

and of the signs of the coefficients. As an example, a choice of log-link

$\mathcal {L}_K$

and of the signs of the coefficients. As an example, a choice of log-link

![]() $\ell _{q}$

, main effects

$\ell _{q}$

, main effects

![]() $\lambda ^{\{q_i\}}_K(\theta )=\theta -b_i$

and null interactions

$\lambda ^{\{q_i\}}_K(\theta )=\theta -b_i$

and null interactions

![]() $\lambda ^L_K(\theta )=0$

for

$\lambda ^L_K(\theta )=0$

for

![]() $|L|\geq 2$

yields

$|L|\geq 2$

yields

$$ \begin{align} \pi(K|\theta) = \frac{e^{\sum_{q_i\in K}(\theta-b_i)}}{\sum_{L\in\mathcal{K}}e^{\sum_{q_i\in L}(\theta-b_i)}}. \end{align} $$

$$ \begin{align} \pi(K|\theta) = \frac{e^{\sum_{q_i\in K}(\theta-b_i)}}{\sum_{L\in\mathcal{K}}e^{\sum_{q_i\in L}(\theta-b_i)}}. \end{align} $$

If

![]() $\mathcal {K}=2^Q$

, Eq. (24) yields the likelihood of

$\mathcal {K}=2^Q$

, Eq. (24) yields the likelihood of

![]() $|Q|$

locally independent Rasch models. If

$|Q|$

locally independent Rasch models. If

![]() $\mathcal {K}$

is a chain of item thresholds, the SRF (24) yields a divide-by-total (Thissen & Steinberg, Reference Thissen and Steinberg1986) polytomous IRT model known as partial credit model (PCM; Masters, Reference Masters1982). In KST, a choice of SRF (24) within the

$\mathcal {K}$

is a chain of item thresholds, the SRF (24) yields a divide-by-total (Thissen & Steinberg, Reference Thissen and Steinberg1986) polytomous IRT model known as partial credit model (PCM; Masters, Reference Masters1982). In KST, a choice of SRF (24) within the

![]() $\Theta $

-BLIM (19) is known as the logistic knowledge structure (LKS; Stefanutti, Reference Stefanutti2006).

$\Theta $

-BLIM (19) is known as the logistic knowledge structure (LKS; Stefanutti, Reference Stefanutti2006).

Finally, it is useful to remark that the power set is the condition in which traditional IRT models are defined. If

![]() $\mathcal {K}=2^Q$

, then a GLI assumption on both p- and g-processes in Eq. (14) is an assumption of LI on both processes and it can be shown (see, e.g., Noventa et al., Reference Noventa, Spoto, Heller and Kelava2019, Reference Noventa, Heller and Kelava2024) that it holds

$\mathcal {K}=2^Q$

, then a GLI assumption on both p- and g-processes in Eq. (14) is an assumption of LI on both processes and it can be shown (see, e.g., Noventa et al., Reference Noventa, Spoto, Heller and Kelava2019, Reference Noventa, Heller and Kelava2024) that it holds

$$ \begin{align*}\nonumber P(X) = \sum_{K\in 2^Q} \int \prod_{i=1}^{|Q|}(1-\beta_i)^{K_i}\eta_i^{1-K_i}\pi(K_i|\theta)f(\theta)d\theta = \int \prod_{i=1}^{|Q|}[\eta_i+(1-\eta_i-\beta_i)\pi(X_i=1|\theta)]f(\theta)d\theta, \end{align*} $$

$$ \begin{align*}\nonumber P(X) = \sum_{K\in 2^Q} \int \prod_{i=1}^{|Q|}(1-\beta_i)^{K_i}\eta_i^{1-K_i}\pi(K_i|\theta)f(\theta)d\theta = \int \prod_{i=1}^{|Q|}[\eta_i+(1-\eta_i-\beta_i)\pi(X_i=1|\theta)]f(\theta)d\theta, \end{align*} $$

which is indeed the likelihood of a 4-parameter IRT model as soon as one identifies

![]() $\eta _i=c_i$

and

$\eta _i=c_i$

and

![]() $\beta _i=d_i$

. As an example, application of Eq. (19) to a complete

$\beta _i=d_i$

. As an example, application of Eq. (19) to a complete

![]() $2\times 2$

table

$2\times 2$

table

![]() $2^Q$

with

$2^Q$

with

![]() $Q=\{q_i,q_{i'}\}$

yields

$Q=\{q_i,q_{i'}\}$

yields

$$ \begin{align*} \begin{bmatrix} P(00|\theta)\\ P(01|\theta)\\ P(10|\theta)\\ P(11|\theta) \end{bmatrix}= \begin{bmatrix} (1-\eta_i)(1-\eta_{i'}) & (1-\eta_{i})\beta_{i'} & \beta_{i}(1-\eta_{i'}) & \beta_{i}\beta_{i'}\\ (1-\eta_i)\eta_{i'} & (1-\eta_i)(1-\beta_{i'}) &\beta_i\eta_{i'}& \beta_i(1-\beta_{i'})\\ \eta_{i}(1-\eta_{i'}) & \eta_i\beta_{i'} & (1-\beta_i)(1-\eta_{i'}) & (1-\beta_i)\beta_{i'} \\ \eta_i\eta_{i'} & \eta_i(1-\beta_{i'}) & (1-\beta_i)\eta_{i'} & (1-\beta_i)(1-\beta_{i'}) \end{bmatrix}\begin{bmatrix} \pi(\emptyset|\theta)\\ \pi(\{q_{i'}\}|\theta)\\ \pi(\{q_i\}|\theta)\\ \pi(\{q_i,q_{i'}\}|\theta) \end{bmatrix}, \end{align*} $$

$$ \begin{align*} \begin{bmatrix} P(00|\theta)\\ P(01|\theta)\\ P(10|\theta)\\ P(11|\theta) \end{bmatrix}= \begin{bmatrix} (1-\eta_i)(1-\eta_{i'}) & (1-\eta_{i})\beta_{i'} & \beta_{i}(1-\eta_{i'}) & \beta_{i}\beta_{i'}\\ (1-\eta_i)\eta_{i'} & (1-\eta_i)(1-\beta_{i'}) &\beta_i\eta_{i'}& \beta_i(1-\beta_{i'})\\ \eta_{i}(1-\eta_{i'}) & \eta_i\beta_{i'} & (1-\beta_i)(1-\eta_{i'}) & (1-\beta_i)\beta_{i'} \\ \eta_i\eta_{i'} & \eta_i(1-\beta_{i'}) & (1-\beta_i)\eta_{i'} & (1-\beta_i)(1-\beta_{i'}) \end{bmatrix}\begin{bmatrix} \pi(\emptyset|\theta)\\ \pi(\{q_{i'}\}|\theta)\\ \pi(\{q_i\}|\theta)\\ \pi(\{q_i,q_{i'}\}|\theta) \end{bmatrix}, \end{align*} $$

where the SRF

![]() $\pi (K|\theta )$

captures the p-process

$\pi (K|\theta )$

captures the p-process

![]() $(\mathcal {C}, 2^Q)$

, while the conditional probabilities

$(\mathcal {C}, 2^Q)$

, while the conditional probabilities

![]() $P(X|K)$

capture the g-process

$P(X|K)$

capture the g-process

![]() $(2^Q, 2^Q)$

. The traditional IRT approach is to impose LI (1) to the SRF so that

$(2^Q, 2^Q)$

. The traditional IRT approach is to impose LI (1) to the SRF so that

$$ \begin{align*} P(X_i=1|\theta) & = P(10|\theta)+P(11|\theta) = \begin{cases} \eta_i+(1-\beta_i-\eta_i)\pi(K_i=1|\theta) &\quad\text{for the } \Theta\text{-SLM}\\ \eta_i+(1-\beta_i-\eta_i)\frac{e^{\theta-b_i}}{1+e^{\theta-b_i}} & \quad\text{for the LKS} \end{cases}\\ P(X_{i'}=1|\theta) & = P(01|\theta)+P(11|\theta) =\begin{cases} \eta_{i'}+(1-\beta_{i'}-\eta_{i'})\pi(K_{i'}=1|\theta) &\quad\text{for the } \Theta\text{-SLM}\\ \eta_{i'}+(1-\beta_{i'}-\eta_{i'})\frac{e^{\theta-b_{i'}}}{1+e^{\theta-b_{i'}}} & \quad\text{for the LKS,} \end{cases} \end{align*} $$

$$ \begin{align*} P(X_i=1|\theta) & = P(10|\theta)+P(11|\theta) = \begin{cases} \eta_i+(1-\beta_i-\eta_i)\pi(K_i=1|\theta) &\quad\text{for the } \Theta\text{-SLM}\\ \eta_i+(1-\beta_i-\eta_i)\frac{e^{\theta-b_i}}{1+e^{\theta-b_i}} & \quad\text{for the LKS} \end{cases}\\ P(X_{i'}=1|\theta) & = P(01|\theta)+P(11|\theta) =\begin{cases} \eta_{i'}+(1-\beta_{i'}-\eta_{i'})\pi(K_{i'}=1|\theta) &\quad\text{for the } \Theta\text{-SLM}\\ \eta_{i'}+(1-\beta_{i'}-\eta_{i'})\frac{e^{\theta-b_{i'}}}{1+e^{\theta-b_{i'}}} & \quad\text{for the LKS,} \end{cases} \end{align*} $$

where the LKS marginal probability is the 4PL model (2) and corresponds to a logit-link reparameterization of the IRF

![]() $\pi (K_i=1|\theta )$

with a choice of kernel

$\pi (K_i=1|\theta )$

with a choice of kernel

![]() $f(K_i,\theta )=K_i(\theta -b_i)$

. The 2PL considers

$f(K_i,\theta )=K_i(\theta -b_i)$

. The 2PL considers

![]() $\eta _i=\beta _i=0$

so that there is no g-process. The LKS factorizes into a product of Rasch models only if

$\eta _i=\beta _i=0$

so that there is no g-process. The LKS factorizes into a product of Rasch models only if

![]() $\mathcal {K}=2^Q$

.

$\mathcal {K}=2^Q$

.

3 Probabilistic and deterministic models of local dependence

We consider two approaches to LD referred to as “probabilistic” and “deterministic” LD. In terms of structures, probabilistic LD is based on the power set

![]() $\mathcal {K}=2^Q$

(i.e., a complete contingency table of the responses), while deterministic LD is based on an arbitrary structure

$\mathcal {K}=2^Q$

(i.e., a complete contingency table of the responses), while deterministic LD is based on an arbitrary structure

![]() $\mathcal {K}\subset 2^Q$

(i.e., a proper subset of the power set, an incomplete contingency table of the responses). It is worth repeating that deterministic LD does not refer to a non-probabilistic approach but to the fact that a deterministic constraint is imposed (via the underlying structure) to the system prior to any probabilistic assumption (via the processes): in both cases, the final model is made stochastic by the processes defined over the structures. As in probabilistic LD, a power set structure is considered, a p-process

$\mathcal {K}\subset 2^Q$

(i.e., a proper subset of the power set, an incomplete contingency table of the responses). It is worth repeating that deterministic LD does not refer to a non-probabilistic approach but to the fact that a deterministic constraint is imposed (via the underlying structure) to the system prior to any probabilistic assumption (via the processes): in both cases, the final model is made stochastic by the processes defined over the structures. As in probabilistic LD, a power set structure is considered, a p-process

![]() $(\mathcal {C}, 2^Q)$

is sufficient to obtain all possible response patterns. Hence, LD is modeled only by the functional choice of p-process (i.e., it is purely probabilistic). In deterministic LD, the structure

$(\mathcal {C}, 2^Q)$

is sufficient to obtain all possible response patterns. Hence, LD is modeled only by the functional choice of p-process (i.e., it is purely probabilistic). In deterministic LD, the structure

![]() $\mathcal {K}\subset 2^Q$

introduces a deterministic component. In such a case, a g-process

$\mathcal {K}\subset 2^Q$

introduces a deterministic component. In such a case, a g-process

![]() $(\mathcal {K},2^Q)$

is needed to stochastically recover those response patterns that are in principle prohibited. Table 2 summarizes the taxonomy of traditional and new approaches to model LD according to the number of processes involved and whether a power set structure is considered or not. Table 2 is obtained by further expanding on the taxonomy of Table 1 and by matching the LD models with the associated family. Three conditions for the p-process are considered: a) absent, b) non-factorized, and c) factorized using GLI (21). As to the g-process, three conditions are considered: a) absent, b) competence-independent, and c) competence-dependent. As it can be seen, most of the IRT approaches to LD belong to probabilistic LD, and most of the new approaches to deterministic LD. Approaches discussed in this manuscript are referenced. Families without referenced models have currently not been discussed in the literature. Like in Table 1, families of models in Table 2 grow in generality from top to bottom, with models in the higher rows that are nested within the lower rows. As it can be seen in Table 2, complete and incomplete contingency tables assume no p-process and no g-process. Single process models are either in the first row (no g-process) or in the first column (no p-process). All other families of models are two-process models.

$(\mathcal {K},2^Q)$

is needed to stochastically recover those response patterns that are in principle prohibited. Table 2 summarizes the taxonomy of traditional and new approaches to model LD according to the number of processes involved and whether a power set structure is considered or not. Table 2 is obtained by further expanding on the taxonomy of Table 1 and by matching the LD models with the associated family. Three conditions for the p-process are considered: a) absent, b) non-factorized, and c) factorized using GLI (21). As to the g-process, three conditions are considered: a) absent, b) competence-independent, and c) competence-dependent. As it can be seen, most of the IRT approaches to LD belong to probabilistic LD, and most of the new approaches to deterministic LD. Approaches discussed in this manuscript are referenced. Families without referenced models have currently not been discussed in the literature. Like in Table 1, families of models in Table 2 grow in generality from top to bottom, with models in the higher rows that are nested within the lower rows. As it can be seen in Table 2, complete and incomplete contingency tables assume no p-process and no g-process. Single process models are either in the first row (no g-process) or in the first column (no p-process). All other families of models are two-process models.

Table 2 Table summarizing the taxonomy of families of models for LD based on a) the application of p- and g-processes and b) the choice of a power set (probabilistic LD) or of an arbitrary structure (deterministic LD)

3.1 Systematization of probabilistic LD: Single p-process models (no g-process)

Most traditional IRT models of LD are classified in the first row of Table 2 since they require

![]() $\mathcal {K}=2^Q$

and a p-process

$\mathcal {K}=2^Q$

and a p-process

![]() $(\mathcal {C}, 2^Q)$

. Although in principle, one might add a g-process

$(\mathcal {C}, 2^Q)$

. Although in principle, one might add a g-process

![]() $(2^Q,2^Q)$

, most of the IRT approaches to LD assume no g-process so that

$(2^Q,2^Q)$

, most of the IRT approaches to LD assume no g-process so that

![]() $P(X|\theta )=\pi (K|\theta )$

(or

$P(X|\theta )=\pi (K|\theta )$

(or

![]() $P(X_i,X_{i'}|\theta ) = \pi (K_i, K_{i'}|\theta )$

in the bivariate case). If there is no p-process, the state probabilities

$P(X_i,X_{i'}|\theta ) = \pi (K_i, K_{i'}|\theta )$

in the bivariate case). If there is no p-process, the state probabilities

![]() $\pi (K)$

yield the proportions of individuals in a cell of the complete contingency table (e.g.,

$\pi (K)$

yield the proportions of individuals in a cell of the complete contingency table (e.g.,

![]() $\pi (\emptyset )=p_{00}$

,

$\pi (\emptyset )=p_{00}$

,

![]() $\pi (\{q_i\})=p_{10}$

,

$\pi (\{q_i\})=p_{10}$

,

![]() $\pi (\{q_{i'}\})=p_{01}$

, and

$\pi (\{q_{i'}\})=p_{01}$

, and

![]() $\pi (\{q_i,q_{i'}\})=p_{11}$

). Log-linear models are then a reparameterization with a log link function of said proportions (or of the associated frequencies). If a p-process is present, LI corresponds to a factorized p-process. Traditional IRT models of LD correspond to different assumptions on the SRF

$\pi (\{q_i,q_{i'}\})=p_{11}$