1 Introduction

In educational and psychological testing, pure power tests and pure speed tests aim to measure examinees’ effective abilities and effective speeds (Meijer & Sotaridona, Reference Meijer and Sotaridona2006; van der Linden, Reference van der Linden2007), respectively, under ideal testing environments. However, for the convenience of test administration, time constraints are typically imposed on the tests, resulting in what is known as time-limit tests. The degree to which the time limit of a test affects the performance of examinees is referred to as test speededness. This manifests when examinees, within the time limit, are unable to devote sufficient consideration or effort toward the end of the test (Bejar, Reference Bejar1985). Van der Linden (Reference van der Linden2011) argued that test speededness was the cumulative outcome of the interaction among the cognitive speed of the examinee, the workload of the items, and the test’s time limit. Various definitions of test speededness exist, but they all share a common aspect: Test speededness can inadvertently affect the test scores when the time limit influences the examinee’s performance, even though speed is not a core aspect of the construct that the test seeks to measure (Evans & Reilly, Reference Evans and Reilly1972; Schnipke & Scrams, Reference Schnipke and Scrams1997; Shao et al., Reference Shao, Li and Cheng2016). Such instances of speededness can induce a decrease in response accuracy, lead to missing responses (Goegebeur et al., Reference Goegebeur, De Boeck and Molenberghs2010), bias the item and ability estimates (Oshima, Reference Oshima1994), and potentially compromise the validity of the test scores by distorting or diminishing the correlation between test scores and other interested variables. This ultimately impacts the interpretation and use of test scores in decision-making processes.

Due to the necessity of time limits, it is almost impossible to entirely eliminate test speededness. Consequently, it is essential to identify response patterns affected by speededness to maintain the reliability and validity of tests. More specifically, it becomes critical to pinpoint the locations and magnitudes of test speededness at the individual level. Over several decades, various approaches have been developed to measuring and detecting test speededness, and they can be broadly classified into three categories. The first category is non-model-based approaches, such as rules of thumb and descriptive statistics, which provide basic guidelines and analytic techniques for identifying and assessing speededness. However, these methods have limitations in capturing individual differences in test speededness. To overcome this issue, the second approach known as model-based methods is proposed (e.g., Lu & Sireci, Reference Lu and Sireci2007; Schnipke & Scrams, Reference Schnipke and Scrams1997; Wise & Kong, Reference Wise and Kong2005). One such method is the hybrid modeling framework proposed by Yamamoto (Reference Yamamoto1995). This framework assumes different models before and after the speeded point, with the same speeded location for examinees (i.e., speededness homogeneity). Boughton and Yamamoto (Reference Boughton, Yamamoto, von Davier and Carstensen2007) extended this approach to the hybrid Rasch model, allowing for different speeded locations among examinees (i.e., speededness heterogeneity). Another model-based method is the two-class mixture Rasch model proposed by Bolt et al. (Reference Bolt, Cohen and Wollack2002). This model assumes a single switch point, beyond which examinees engage in rapid guessing behavior. Wang and Xu (Reference Wang and Xu2015) proposed a mixture hierarchical model to distinguish solution behavior from rapid guessing behavior based on responses and RTs, which allows to consider multiple switch points for each examinee. However, these models assumed that the probabilities of correct response after the switch points are fixed or suddenly decrease. More flexible models are needed to model the gradual switch among test-taking behaviors. Wollack and Cohen (Reference Wollack and Cohen2004) addressed this by introducing a gradual change model, where the probabilities of correct responses decline gradually after the switch point.

The third category involves detecting aberrant patterns of responses and/or RTs at individual level. Person-fit indices based on responses (e.g., Meijer & Sijtsma, Reference Meijer and Sijtsma2001) and/or RTs (Marianti et al., Reference Marianti, Fox, Marianna, Veldkamp and Tijmstra2014; van der Linden & van Krimpen-Stoop, Reference van der Linden and van Krimpen-Stoop2003) are introduced. Key to this approach is that regular behavior should be adequately fitted by a psychometric model, otherwise, it will induce false detection rate due to blurred classifications of normal and aberrant behaviors. Another popular method for detecting test speededness is the cumulative sum (CUSUM) (e.g., Armstrong & Shi, Reference Armstrong and Shi2009; Egberink et al., Reference Egberink, Meijer, Veldkamp, Schakel and Smid2010; Meijer, Reference Meijer2002; Tendeiro & Meijer, Reference Tendeiro and Meijer2012; van Krimpen-Stoop & Meijer, Reference van Krimpen-Stoop and Meijer2001). However, the CUSUM procedures have higher power only when the underlying statistical model before and after the change point are known. Change point analysis (CPA) is another approach used to detect test speededness (Sinharay, Reference Sinharay2017). Shao et al. (Reference Shao, Li and Cheng2016) implemented a CPA based on the likelihood ratio test for detecting test speededness using responses only, thereby pinpointing the locations where examinees begin to speed up. Cheng and Shao (Reference Cheng and Shao2022) extended this method, focusing solely on RTs, and utilized both likelihood ratio test and Wald test to detect test speedness. Sinharay (Reference Sinharay2016) introduced three statistics based on CPA using only responses. Subsequently, Yu and Cheng (Reference Yu and Cheng2020) conducted a comprehensive comparison between these three change-point analysis methods and twelve CUSUM methods based solely on responses. However, the above methods primarily consider either response data or RT data, lacking integration of both types of data. Even though several studies focus on responses and RTs to detect aberrancy, they are either limited to identifying cheating behavior from item preknowledge (Demirkaya, Reference Demirkaya2022; Demirkaya et al., Reference Demirkaya, Bezirhan and Zhang2023; Sinharay & Johnson, Reference Sinharay and Johnson2020) or unable to differentiate between specific types of aberrancies (Gorney et al., Reference Gorney, Sinharay and Liu2024). Recently, Lu et al. (Reference Lu, Wang, Zhang and Wang2024) proposed a real-time two-stage detection procedure to detect rapid guessing and cheating behaviors, in which Stage I identifies the aberrant RTs and then Stage II determines the type of aberrant behavior. This approach is optimal for immediate real-time monitoring and swift change detection in computer-based testing.

In contrast, likelihood ratio and Wald tests are more appropriate for post-hoc analysis on existing datasets. They involve testing a single hypothesis between two models, that is, with and without the specific change point, at a time. Specifically, null hypothesis assumes no change point (regarded as one model), and alternative hypothesis assumes a change point at a specific item location (regarded as the other model). These two methods determine whether to reject the null hypothesis based on the ratio between these two models.

In this article, we aim to provide a more effective CPA method compared to the likelihood ratio and Wald tests. The proposed CPA approaches based on Schwarz information criterion (SIC) (abbreviated as SIC-CPA) rely on the idea of model selection in statistics. SIC is preferred over other information criteria such as Akaike information criterion because it introduces a stronger penalty for model complexity, leading to more parsimonious models. This is particularly beneficial for detecting change points in noisy data, where overly complex models might capture random variations instead of true changes. Additionally, SIC has strong asymptotic properties (Chen et al., Reference Chen, Gupta and Gupta2000), ensuring that as the sample size increases, it is more likely to select the true model, thereby offering a robust and reliable framework in detecting structural changes within data.

The principle of the SIC-CPA method is as follows: it fits models to all possible change point locations, calculates the SIC value for each item location, and then the location with the smallest SIC is selected as the most possible change point. This comprehensiveness of the SIC-CPA method ensures identifying true change points more accurately. Moreover, the SIC-CPA method is computationally straightforward, thereby enhancing the computational efficiency of change point detection. More importantly, for test speededness detection with just one change point, the proposed SIC-CPA approaches take the model complexity into account when making change point decision, penalizing the model based on the number of parameters. In contrast, the likelihood ratio and Wald tests depend solely on the model fit without directly considering its complexity. Consequently, in data with subtle variations, the likelihood ratio and Wald tests might falsely pinpoint change points, leading to higher Type-I errors. In such cases, the proposed SIC-CPA approach tends to favor models without a change point, reducing the Type-I errors but possibly increasing Type-II errors. However, if the change point has a significant effect and substantially improves the model fit, the SIC-CPA approach can efficiently detect it, thereby reducing Type-II errors. Furthermore, given that the SIC-CPA approach introduces penalties for model complexity, it is more resistant to random fluctuations in the data, preventing these fluctuations from being mistaken for actual change points. All three approaches are implemented conditional on the available item parameter values, which are needed to evaluate model fit and to obtain the person parameter estimates used in the test statistics. In simulation studies, item parameters are either fixed at their true values or estimated through calibration to reflect applied testing scenarios.

In the context of aberrant behavior detection, the mixture approach often integrates both responses and RTs to differentiate between normal and aberrant behaviors. Such joint mixture models can be estimated using either Bayesian or frequentist strategies, and the computational burden depends on model specifications, estimation algorithms, and whether item parameters are treated as known. In contrast, the main advantage of the SIC-CPA method lies in its relatively simple detection-oriented and lightweight feature: when item parameters are treated as known, change points can be identified via SIC-based model comparison without needing to estimate the additional latent parameters typically involved in joint mixture models (e.g., class membership and/or person-by-item latent indicators indexing normal versus aberrant behavior). Importantly, both the SIC-CPA approach and joint mixture models typically involve estimating examinees’ ability and speed parameters. The key difference is that joint mixture models additionally model latent behavioral structure along with all model parameters simultaneously, which typically requires more complex model specification and estimation and, in some cases, involve high-dimensional data matrices (e.g., test-taking indicators at the person-by-item level). Besides, the mixture model usually requires that both response data and RT data exhibit aberrancies on the same set of items. If aberrant RTs seem normal while response data remains aberrant, the mixture model may fail to accurately identify the aberrant behavior. This is because the assumption of the mixture model, which expects aberrancies to occur in both dimensions at once, is violated in such cases. In contrast, the SIC method is capable of accurately identifying speeded behavior even when there is significant overlap between the aberrant and normal RT distributions, as long as there is a clear difference in the response data (see simulation study III for detailed analysis).

The structure of this article is as follows. First, we introduce three CPA approaches based on SIC, which focus on response data only, RT data only, and the combination of both, respectively. Second, the critical value and significance level of SIC-CPA method are given to determine whether test speededness occurs at the specific item location. Third, we conduct simulation studies to evaluate the effectiveness of the proposed SIC-CPA method in detecting test speededness. Fourth, a real data set is employed to show the application of the proposed SIC-CPA method. Finally, we conclude by discussing the strengths and limitations of this article and outline potential future research directions.

2 Method

2.1 Schwarz information criterion to detect change points

Information criteria have been extensively utilized in model selection for change point problems (Chen & Gupta, Reference Chen and Gupta1997; Yao, Reference Yao1988). SIC is a well-established method for detecting change points, and Chen and Gupta (Reference Chen and Gupta1997) showed that it is a highly effective method for detecting change points and is marginally superior to likelihood ratio procedures and Bayesian methods.

SIC is a statistical method for model selection that was first proposed by Schwarz (Reference Schwarz1978). It is more commonly known as the Bayesian information criterion (BIC) and reflects a trade-off between model fit and complexity, which is typically determined by the number of parameters.

Specifically, SICFootnote 1 is mathematically equivalent to BIC and is calculated as

where

![]() $L\left(\widehat{\theta}\right)$

is the maximum likelihood,

$L\left(\widehat{\theta}\right)$

is the maximum likelihood,

![]() $p\times \log (n)$

is called as the penalty parameter,

$p\times \log (n)$

is called as the penalty parameter,

![]() $p$

is the number of parameters, and

$p$

is the number of parameters, and

![]() $n$

is the sample size. In change point detection, SIC is used to identify the optimal model that fits the data with the fewest number of change points.

$n$

is the sample size. In change point detection, SIC is used to identify the optimal model that fits the data with the fewest number of change points.

2.2 CPA based on SIC for item responses

Throughout Section 2.2, we treat item parameters as given; this is a prerequisite for computing the likelihood and the SIC values, and the setting in which item parameters are unknown is studied in simulation study V. Given a test length of

![]() $J$

items to be answered by the

$J$

items to be answered by the

![]() $i$

th examinee, it is assumed that the

$i$

th examinee, it is assumed that the

![]() $i$

th examinee will answer the

$i$

th examinee will answer the

![]() $j$

th item with latent ability

$j$

th item with latent ability

![]() ${\theta}_{i,j}$

, where

${\theta}_{i,j}$

, where

![]() $j=1,2,\dots, J$

. Therefore, the following hypothesis testing is considered. The null hypothesis is

$j=1,2,\dots, J$

. Therefore, the following hypothesis testing is considered. The null hypothesis is

against the alternative hypothesis

This alternative hypothesis assumes that change point occurs between item

![]() $k$

and

$k$

and

![]() $k+1$

.

$k+1$

.

As an illustration, the traditional two parameter logistic model (2PLM; Birnbaum, Reference Birnbaum, Lord and Novick1968) is used as the item response theory (IRT) model, that is,

$$\begin{align}{P}_j\left({\theta}_i\right)=p\left({y}_{ij}=1\mid {\theta}_i,{a}_j,{b}_j\right)=\frac{\exp \left[{a}_j\left({\theta}_i-{b}_j\right)\right]}{1+\exp \left[{a}_j\left({\theta}_i-{b}_j\right)\right]}.\end{align}$$

$$\begin{align}{P}_j\left({\theta}_i\right)=p\left({y}_{ij}=1\mid {\theta}_i,{a}_j,{b}_j\right)=\frac{\exp \left[{a}_j\left({\theta}_i-{b}_j\right)\right]}{1+\exp \left[{a}_j\left({\theta}_i-{b}_j\right)\right]}.\end{align}$$

In Eq. (4),

![]() ${y}_{ij}$

represents the response of the

${y}_{ij}$

represents the response of the

![]() $i$

th examinee answering the

$i$

th examinee answering the

![]() $j$

th item, where

$j$

th item, where

![]() $i=1,\dots, N$

and

$i=1,\dots, N$

and

![]() $j=1,\dots J$

. The correct response probability is expressed as

$j=1,\dots J$

. The correct response probability is expressed as

![]() ${P}_j\left({\theta}_i\right)$

, and the corresponding incorrect response probability is

${P}_j\left({\theta}_i\right)$

, and the corresponding incorrect response probability is

![]() ${Q}_j\left({\theta}_i\right)=1-{P}_j\left({\theta}_i\right).{a}_j$

is the discrimination parameter of the

${Q}_j\left({\theta}_i\right)=1-{P}_j\left({\theta}_i\right).{a}_j$

is the discrimination parameter of the

![]() $j$

th item, and

$j$

th item, and

![]() ${b}_j$

is the difficulty parameter of the

${b}_j$

is the difficulty parameter of the

![]() $j$

th item.

$j$

th item.

![]() ${\theta}_i$

denotes the latent ability of the

${\theta}_i$

denotes the latent ability of the

![]() $i$

th examinee.

$i$

th examinee.

Therefore, under

![]() ${H}_0^{\theta }$

, the maximum likelihood function based on the 2PL model is

${H}_0^{\theta }$

, the maximum likelihood function based on the 2PL model is

$$\begin{align}{L}_{i0}\left({\boldsymbol{y}}_i\mid {\hat{\theta}}_i\right)=\prod \limits_{j=1}^J\kern0.1em {\left\{\frac{\exp \left[{a}_j\left({\widehat{\theta}}_i-{b}_j\right)\right]}{1+\exp \left[{a}_j\left({\hat{\theta}}_i-{b}_j\right)\right]}\right\}}^{y_{ij}}{\left\{\frac{1}{1+\exp \left[{a}_j\left({\widehat{\theta}}_i-{b}_j\right)\right]}\right\}}^{1-{y}_{ij}},\end{align}$$

$$\begin{align}{L}_{i0}\left({\boldsymbol{y}}_i\mid {\hat{\theta}}_i\right)=\prod \limits_{j=1}^J\kern0.1em {\left\{\frac{\exp \left[{a}_j\left({\widehat{\theta}}_i-{b}_j\right)\right]}{1+\exp \left[{a}_j\left({\hat{\theta}}_i-{b}_j\right)\right]}\right\}}^{y_{ij}}{\left\{\frac{1}{1+\exp \left[{a}_j\left({\widehat{\theta}}_i-{b}_j\right)\right]}\right\}}^{1-{y}_{ij}},\end{align}$$

where

![]() ${\boldsymbol{y}}_i$

denotes the vector of item responses for the

${\boldsymbol{y}}_i$

denotes the vector of item responses for the

![]() $i$

th examinee, that is,

$i$

th examinee, that is,

![]() ${\boldsymbol{y}}_i={\left({y}_{i1},{y}_{i2},\dots, {y}_{iJ}\right)}^{\prime }$

.

${\boldsymbol{y}}_i={\left({y}_{i1},{y}_{i2},\dots, {y}_{iJ}\right)}^{\prime }$

.

![]() ${\widehat{\theta}}_i$

is the maximum likelihood estimate (MLE) of the latent ability obtained from the responses based on all items of the test. Therefore, the SIC (Chen & Gupta, Reference Chen and Gupta1997; Schwarz, Reference Schwarz1978) under

${\widehat{\theta}}_i$

is the maximum likelihood estimate (MLE) of the latent ability obtained from the responses based on all items of the test. Therefore, the SIC (Chen & Gupta, Reference Chen and Gupta1997; Schwarz, Reference Schwarz1978) under

![]() ${H}_0^{\theta }$

, that is,

${H}_0^{\theta }$

, that is,

![]() ${\mathrm{SIC}}_{\theta }(J)$

, can be expressed as

${\mathrm{SIC}}_{\theta }(J)$

, can be expressed as

$$\begin{align}{\mathrm{SIC}}_{\theta_i}(J)&=-2\left[\log {L}_{i0}\left({\boldsymbol{y}}_i\mid {\widehat{\theta}}_i\right)\right]+\log (J)\nonumber\\&=-2\sum \limits_{j=1}^J\kern0.20em \left[{y}_{ij}\log {P}_j\left({\widehat{\theta}}_i\right)+\left(1-{y}_{ij}\right)\log {Q}_j\left({\widehat{\theta}}_i\right)\right]+\log (J),\end{align}$$

$$\begin{align}{\mathrm{SIC}}_{\theta_i}(J)&=-2\left[\log {L}_{i0}\left({\boldsymbol{y}}_i\mid {\widehat{\theta}}_i\right)\right]+\log (J)\nonumber\\&=-2\sum \limits_{j=1}^J\kern0.20em \left[{y}_{ij}\log {P}_j\left({\widehat{\theta}}_i\right)+\left(1-{y}_{ij}\right)\log {Q}_j\left({\widehat{\theta}}_i\right)\right]+\log (J),\end{align}$$

where

![]() ${P}_j{\left(\hat{\theta}\right)}_i)$

and

${P}_j{\left(\hat{\theta}\right)}_i)$

and

![]() ${Q}_j\left({\widehat{\theta}}_i\right)$

are the values of

${Q}_j\left({\widehat{\theta}}_i\right)$

are the values of

![]() ${P}_j\left(\theta \right)$

and

${P}_j\left(\theta \right)$

and

![]() ${Q}_j\left(\theta \right)$

by plugging in

${Q}_j\left(\theta \right)$

by plugging in

![]() ${\widehat{\theta}}_i$

, respectively.

${\widehat{\theta}}_i$

, respectively.

Here, the item parameters are available, and only one latent ability parameter is needed to be estimated for the examinee

![]() $i$

under the null hypothesis. Therefore, the number of parameters included in the penalty term is 1 (i.e.,

$i$

under the null hypothesis. Therefore, the number of parameters included in the penalty term is 1 (i.e.,

![]() $p=1$

), and the number of observations included in the penalty term is

$p=1$

), and the number of observations included in the penalty term is

![]() $J$

(i.e.,

$J$

(i.e.,

![]() $n=J$

). Note that when item parameters are unknown, the penalty term should include both item and person parameters. As a result, the effective penalty becomes substantially larger, which may make the criterion more conservative in selecting a change point model.

$n=J$

). Note that when item parameters are unknown, the penalty term should include both item and person parameters. As a result, the effective penalty becomes substantially larger, which may make the criterion more conservative in selecting a change point model.

Under

![]() ${H}_1^{\theta }$

, the corresponding maximum likelihood function can be obtained as

${H}_1^{\theta }$

, the corresponding maximum likelihood function can be obtained as

$$\begin{align}{L}_{i1}\left({\boldsymbol{y}}_{\boldsymbol{i}}\mid {\hat{\theta}}_i,{\widehat{\theta}}_i^{\prime}\right)&={\prod}_{j=1}^k\kern0.1em {\left\{\frac{\exp \left[{a}_j\left({\hat{\theta}}_i-{b}_j\right)\right]}{1+\exp \left[{a}_j\left({\hat{\theta}}_i-{b}_j\right)\right]}\right\}}^{y_{ij}}{\left\{\frac{1}{1+\exp \left[{a}_j\left({\hat{\theta}}_i-{b}_j\right)\right]}\right\}}^{1-{y}_{ij}} \nonumber\\& \quad \times {\prod}_{j=k+1}^J\kern0.1em {\left\{\frac{\exp \left[{a}_j\left({\hat{\theta}}_i^{\prime }-{b}_j\right)\right]}{1+\exp \left[{a}_j\left({\hat{\theta}}_i^{\prime }-{b}_j\right)\right]}\right\}}^{y_{ij}}{\left\{\frac{1}{1+\exp \left[{a}_j\left({\hat{\theta}}_i^{\prime }-{b}_j\right)\right]}\right\}}^{1-{y}_{ij}},\end{align}$$

$$\begin{align}{L}_{i1}\left({\boldsymbol{y}}_{\boldsymbol{i}}\mid {\hat{\theta}}_i,{\widehat{\theta}}_i^{\prime}\right)&={\prod}_{j=1}^k\kern0.1em {\left\{\frac{\exp \left[{a}_j\left({\hat{\theta}}_i-{b}_j\right)\right]}{1+\exp \left[{a}_j\left({\hat{\theta}}_i-{b}_j\right)\right]}\right\}}^{y_{ij}}{\left\{\frac{1}{1+\exp \left[{a}_j\left({\hat{\theta}}_i-{b}_j\right)\right]}\right\}}^{1-{y}_{ij}} \nonumber\\& \quad \times {\prod}_{j=k+1}^J\kern0.1em {\left\{\frac{\exp \left[{a}_j\left({\hat{\theta}}_i^{\prime }-{b}_j\right)\right]}{1+\exp \left[{a}_j\left({\hat{\theta}}_i^{\prime }-{b}_j\right)\right]}\right\}}^{y_{ij}}{\left\{\frac{1}{1+\exp \left[{a}_j\left({\hat{\theta}}_i^{\prime }-{b}_j\right)\right]}\right\}}^{1-{y}_{ij}},\end{align}$$

where

![]() ${\widehat{\theta}}_i$

and

${\widehat{\theta}}_i$

and

![]() ${\widehat{\theta}}_i^{\prime }$

are the MLEs of the latent ability obtained from the responses based on the

${\widehat{\theta}}_i^{\prime }$

are the MLEs of the latent ability obtained from the responses based on the

![]() $k$

and

$k$

and

![]() $J-k$

items in the test, respectively. Therefore, the

$J-k$

items in the test, respectively. Therefore, the

![]() $\mathrm{SIC}$

under

$\mathrm{SIC}$

under

![]() ${H}_1^{\theta }$

, that is,

${H}_1^{\theta }$

, that is,

![]() ${\mathrm{SIC}}_{\theta }(k)$

, for

${\mathrm{SIC}}_{\theta }(k)$

, for

![]() $k=2,\dots, J-1$

, can be expressed as

$k=2,\dots, J-1$

, can be expressed as

$$\begin{align}{\mathrm{SIC}}_{\theta_i}(k)&=-2\left[\log {L}_{i1}\left({\boldsymbol{y}}_i\mid {\widehat{\theta}}_i,{\widehat{\theta}}_i^{\prime}\right)\right]+2\log (J)\nonumber\\& =-2\sum \limits_{j=1}^k\kern0.20em \left[{y}_{ij}\log {P}_j\left({\widehat{\theta}}_i\right)+\left(1-{y}_{ij}\right)\log {Q}_j\left({\widehat{\theta}}_i\right)\right]\nonumber\\& \quad -2\sum \limits_{j=k+1}^J\kern0.20em \left[{y}_{ij}\log {P}_j\left({\widehat{\theta}}_i^{\prime}\right)+\left(1-{y}_{ij}\right)\log {Q}_j\left({\widehat{\theta}}_i^{\prime}\right)\right]+2\log (J),\end{align}$$

$$\begin{align}{\mathrm{SIC}}_{\theta_i}(k)&=-2\left[\log {L}_{i1}\left({\boldsymbol{y}}_i\mid {\widehat{\theta}}_i,{\widehat{\theta}}_i^{\prime}\right)\right]+2\log (J)\nonumber\\& =-2\sum \limits_{j=1}^k\kern0.20em \left[{y}_{ij}\log {P}_j\left({\widehat{\theta}}_i\right)+\left(1-{y}_{ij}\right)\log {Q}_j\left({\widehat{\theta}}_i\right)\right]\nonumber\\& \quad -2\sum \limits_{j=k+1}^J\kern0.20em \left[{y}_{ij}\log {P}_j\left({\widehat{\theta}}_i^{\prime}\right)+\left(1-{y}_{ij}\right)\log {Q}_j\left({\widehat{\theta}}_i^{\prime}\right)\right]+2\log (J),\end{align}$$

where the number of parameters included in the penalty term is 2 (i.e.,

![]() $p=2$

). According to the minimum information criterion principle,

$p=2$

). According to the minimum information criterion principle,

![]() ${H}_0$

is rejected if

${H}_0$

is rejected if

and the estimated change point position denoted by

![]() $\widehat{k}$

such that

$\widehat{k}$

such that

Remark 1. Although the SIC method can be applied to detect change points at any position in theory, it is suggested to be used when the ability parameters are accurately estimated. In other words, there needs to be a sufficient number of items available both before and after the change point.

Remark 2. The SIC method can be extended to identify multiple change points by performing a series of hypothesis testing for a given response sequence. The process involves the following steps:

Step 1: The null hypothesis, as defined by Eq. (2), is tested against the alternative hypothesis given by Eq. (3) to determine if there is a change point. If

![]() ${H}_0^{\theta }$

is not rejected, then no change point is present, and the process stops. If

${H}_0^{\theta }$

is not rejected, then no change point is present, and the process stops. If

![]() ${H}_0^{\theta }$

is rejected, then the estimated position of the change point, denoted by

${H}_0^{\theta }$

is rejected, then the estimated position of the change point, denoted by

![]() $\widehat{k}$

, is determined by Eq. (10), where

$\widehat{k}$

, is determined by Eq. (10), where

![]() $\widehat{k}$

is the location of the single change point at this stage. The process then moves to Step 2.

$\widehat{k}$

is the location of the single change point at this stage. The process then moves to Step 2.

Step 2: The SIC method is used to test the two subsequences before and after the change point detected by Step 1 separately for a change.

Step 3: Repeat the process until no further subsequences have change points.

Step 4: The collection of change point locations found by Steps 1–3 is denoted by

![]() $\left({\widehat{k}}_1,{\widehat{k}}_2,\dots, {\widehat{k}}_M\right)$

, and the estimated total number of change points is

$\left({\widehat{k}}_1,{\widehat{k}}_2,\dots, {\widehat{k}}_M\right)$

, and the estimated total number of change points is

![]() $M$

. Note that the calculation of SIC-CPA incorporates a penalty for model complexity, effectively preventing the selection of overly complex models with multiple change points.

$M$

. Note that the calculation of SIC-CPA incorporates a penalty for model complexity, effectively preventing the selection of overly complex models with multiple change points.

Although SIC-CPA method can detect multiple change points, this study mainly focuses on detecting test speededness, and in common scenarios, examinees exhibit a single change point at the end of the test due to factors such as test fatigue, motivation less, test disengagement behavior, etc. Further investigation of the SIC-CPA method for detecting two change points can be found in simulation study IV.

2.3 CPA based on SIC for item response times

Similarly, considering a test with

![]() $J$

items, examinee

$J$

items, examinee

![]() $i$

answers item

$i$

answers item

![]() $j$

with a speed parameter of

$j$

with a speed parameter of

![]() ${\tau}_{i,j}$

, where

${\tau}_{i,j}$

, where

![]() $j=1,2,\dots, J$

. The hypothesis testing problem is considered as follows: The null hypothesis is

$j=1,2,\dots, J$

. The hypothesis testing problem is considered as follows: The null hypothesis is

and the alternative hypothesis is

In this study, the traditional log-normal model (van der Linden, Reference van der Linden2006) is used to fit the RT data, that is,

where

![]() ${\alpha}_j$

and

${\alpha}_j$

and

![]() ${\beta}_j$

are time dispersion and time intensity parameters for item

${\beta}_j$

are time dispersion and time intensity parameters for item

![]() $j$

, respectively.

$j$

, respectively.

![]() ${\tau}_i$

is the speed parameter of examinee

${\tau}_i$

is the speed parameter of examinee

![]() $i$

. Therefore, under

$i$

. Therefore, under

![]() ${H}_0^{\tau }$

, the maximum likelihood function of observing a RT pattern

${H}_0^{\tau }$

, the maximum likelihood function of observing a RT pattern

![]() ${\boldsymbol{t}}_i$

for the

${\boldsymbol{t}}_i$

for the

![]() $i$

th examinee can be obtained as

$i$

th examinee can be obtained as

$$\begin{align}{L}_{i0}\left({\boldsymbol{t}}_i\mid {\widehat{\tau}}_i\right)=\prod \limits_{j=1}^J\kern0.1em \frac{\alpha_j}{t_{ij}\sqrt{2\pi }}\exp \left\{-\frac{\alpha_j^2{\left[\log \left({t}_{ij}\right)-\left({\beta}_j-{\widehat{\tau}}_i\right)\right]}^2}{2}\right\},\end{align}$$

$$\begin{align}{L}_{i0}\left({\boldsymbol{t}}_i\mid {\widehat{\tau}}_i\right)=\prod \limits_{j=1}^J\kern0.1em \frac{\alpha_j}{t_{ij}\sqrt{2\pi }}\exp \left\{-\frac{\alpha_j^2{\left[\log \left({t}_{ij}\right)-\left({\beta}_j-{\widehat{\tau}}_i\right)\right]}^2}{2}\right\},\end{align}$$

where

![]() ${\boldsymbol{t}}_i={\left({t}_{i1},{t}_{i2},\dots, {t}_{iJ}\right)}^{\prime }$

is a RT pattern that captures the RTs of examinee

${\boldsymbol{t}}_i={\left({t}_{i1},{t}_{i2},\dots, {t}_{iJ}\right)}^{\prime }$

is a RT pattern that captures the RTs of examinee

![]() $i$

answering all items of the test. Therefore, the

$i$

answering all items of the test. Therefore, the

![]() $\mathrm{SIC}$

under

$\mathrm{SIC}$

under

![]() ${H}_0^{\tau }$

, that is,

${H}_0^{\tau }$

, that is,

![]() ${\mathrm{SIC}}_{\tau }(J)$

, is

${\mathrm{SIC}}_{\tau }(J)$

, is

$$\begin{align}{\mathrm{SIC}}_{\tau_i}(J)&=-2\left[\log {L}_{i0}\left({\boldsymbol{t}}_i\mid {\widehat{\tau}}_i\right)\right]+\log (J)\nonumber\\& =-2\left\{\sum \limits_{j=1}^J\kern0.20em \log \left(\frac{\alpha_j}{t_{ij}\sqrt{2\pi }}\right)-\frac{1}{2}\sum \limits_{j=1}^J\kern0.20em {\alpha}_j^2{\left[\log {t}_{ij}-\left({\beta}_j-{\widehat{\tau}}_i\right)\right]}^2\right\}+\log (J).\end{align}$$

$$\begin{align}{\mathrm{SIC}}_{\tau_i}(J)&=-2\left[\log {L}_{i0}\left({\boldsymbol{t}}_i\mid {\widehat{\tau}}_i\right)\right]+\log (J)\nonumber\\& =-2\left\{\sum \limits_{j=1}^J\kern0.20em \log \left(\frac{\alpha_j}{t_{ij}\sqrt{2\pi }}\right)-\frac{1}{2}\sum \limits_{j=1}^J\kern0.20em {\alpha}_j^2{\left[\log {t}_{ij}-\left({\beta}_j-{\widehat{\tau}}_i\right)\right]}^2\right\}+\log (J).\end{align}$$

Here, we assume that the item parameters are known, and only one latent speed parameter is needed to be estimated for examinee

![]() $i$

under the null hypothesis. Therefore, the number of parameters included in the penalty term is 1 (i.e.,

$i$

under the null hypothesis. Therefore, the number of parameters included in the penalty term is 1 (i.e.,

![]() $p=1$

), and the number of observations is

$p=1$

), and the number of observations is

![]() $J$

(i.e.,

$J$

(i.e.,

![]() $J$

is number of all RT observations for examinee

$J$

is number of all RT observations for examinee

![]() $i$

).

$i$

).

Under

![]() ${H}_1^{\tau }$

, the corresponding maximum likelihood function is

${H}_1^{\tau }$

, the corresponding maximum likelihood function is

$$\begin{align}{L}_{i1}\left({\boldsymbol{y}}_i\mid {\widehat{\tau}}_i,{\hat{\tau}}_i^{\prime}\right)&=\prod \limits_{j=1}^m\kern0.20em \left[\frac{\alpha_j}{t_{ij}\sqrt{2\pi }}\exp \left\{-\frac{\alpha_j^2{\left[\log {t}_{ij}-\left({\beta}_j-{\widehat{\tau}}_i\right)\right]}^2}{2}\right\}\right]\nonumber\\& \quad \times \prod \limits_{j=m+1}^J\kern0.20em \left[\frac{\alpha_j}{t_{ij}\sqrt{2\pi }}\exp \left\{-\frac{\alpha_j^2{\left[\log {t}_{ij}-\left({\beta}_j-{\hat{\tau}}_i^{\prime}\right)\right]}^2}{2}\right\}\right],\end{align}$$

$$\begin{align}{L}_{i1}\left({\boldsymbol{y}}_i\mid {\widehat{\tau}}_i,{\hat{\tau}}_i^{\prime}\right)&=\prod \limits_{j=1}^m\kern0.20em \left[\frac{\alpha_j}{t_{ij}\sqrt{2\pi }}\exp \left\{-\frac{\alpha_j^2{\left[\log {t}_{ij}-\left({\beta}_j-{\widehat{\tau}}_i\right)\right]}^2}{2}\right\}\right]\nonumber\\& \quad \times \prod \limits_{j=m+1}^J\kern0.20em \left[\frac{\alpha_j}{t_{ij}\sqrt{2\pi }}\exp \left\{-\frac{\alpha_j^2{\left[\log {t}_{ij}-\left({\beta}_j-{\hat{\tau}}_i^{\prime}\right)\right]}^2}{2}\right\}\right],\end{align}$$

where

![]() ${\widehat{\tau}}_i$

and

${\widehat{\tau}}_i$

and

![]() ${\hat{\tau}}_i^{\prime }$

are the MLEs of the speed parameter obtained by calculating the

${\hat{\tau}}_i^{\prime }$

are the MLEs of the speed parameter obtained by calculating the

![]() $m$

and

$m$

and

![]() $J-m$

RTs, respectively. Therefore, the SIC under

$J-m$

RTs, respectively. Therefore, the SIC under

![]() ${H}_1^{\tau },{\mathrm{SIC}}_{\tau }(m)$

, for

${H}_1^{\tau },{\mathrm{SIC}}_{\tau }(m)$

, for

![]() $m=2,\dots, J-1$

, is obtained as

$m=2,\dots, J-1$

, is obtained as

$$\begin{align}{\mathrm{SIC}}_{\tau_i}(m)&=-2\left[\log {L}_{i1}\left({\boldsymbol{t}}_i\mid {\widehat{\tau}}_i,{\hat{\tau}}_i^{\prime}\right)\right]+2\;\log (J)\nonumber\\&=-2\left\{\sum \limits_{j=1}^m\kern0.20em \log \left(\frac{\alpha_j}{t_{ij}\sqrt{2\pi }}\right)-\frac{1}{2}\sum \limits_{j=1}^m\kern0.20em {\alpha}_j^2{\left[\log {t}_{ij}-\left({\beta}_j-{\widehat{\tau}}_i\right)\right]}^2\right\}\nonumber\\& \quad -2\left\{\sum \limits_{j=m+1}^J\kern0.20em \log \left(\frac{\alpha_j}{t_{ij}\sqrt{2\pi }}\right)-\frac{1}{2}\sum \limits_{j=m+1}^J\kern0.20em {\alpha}_j^2{\left[\log {t}_{ij}-\left({\beta}_j-{\hat{\tau}}_i^{\prime}\right)\right]}^2\right\}+2\log (J),\end{align}$$

$$\begin{align}{\mathrm{SIC}}_{\tau_i}(m)&=-2\left[\log {L}_{i1}\left({\boldsymbol{t}}_i\mid {\widehat{\tau}}_i,{\hat{\tau}}_i^{\prime}\right)\right]+2\;\log (J)\nonumber\\&=-2\left\{\sum \limits_{j=1}^m\kern0.20em \log \left(\frac{\alpha_j}{t_{ij}\sqrt{2\pi }}\right)-\frac{1}{2}\sum \limits_{j=1}^m\kern0.20em {\alpha}_j^2{\left[\log {t}_{ij}-\left({\beta}_j-{\widehat{\tau}}_i\right)\right]}^2\right\}\nonumber\\& \quad -2\left\{\sum \limits_{j=m+1}^J\kern0.20em \log \left(\frac{\alpha_j}{t_{ij}\sqrt{2\pi }}\right)-\frac{1}{2}\sum \limits_{j=m+1}^J\kern0.20em {\alpha}_j^2{\left[\log {t}_{ij}-\left({\beta}_j-{\hat{\tau}}_i^{\prime}\right)\right]}^2\right\}+2\log (J),\end{align}$$

where the number of parameters included in the penalty term is 2 (i.e.,

![]() $p=2$

). Based on the minimum information criterion principle, we reject

$p=2$

). Based on the minimum information criterion principle, we reject

![]() ${H}_0^{\tau }$

if

${H}_0^{\tau }$

if

and the estimated change point position denoted by

![]() $\widehat{m}$

such that

$\widehat{m}$

such that

2.4 CPA based on SIC for item responses and response times

A modified SIC method is proposed to detect test speededness by combining both response and RT data. The key to the hypothesis testing is to examine whether the ability and speed parameters change simultaneously during the responding process. We consider the following hypothesis testing problem: The null hypothesis is

and the alternative hypothesis is

$$\begin{align}{H}_1:{\theta}_{i,1}&=\cdots ={\theta}_{i,r}\triangleq {\theta}_i\ne {\theta}_{i,r+1}=\cdots ={\theta}_{i,J}\triangleq {\theta}_i^{\prime },\nonumber\\{\tau}_{i,1}&=\cdots ={\tau}_{i,r}\triangleq {\tau}_i\ne {\tau}_{i,r+1}=\cdots ={\tau}_{i,J}\triangleq {\tau}_i^{\prime }.\end{align}$$

$$\begin{align}{H}_1:{\theta}_{i,1}&=\cdots ={\theta}_{i,r}\triangleq {\theta}_i\ne {\theta}_{i,r+1}=\cdots ={\theta}_{i,J}\triangleq {\theta}_i^{\prime },\nonumber\\{\tau}_{i,1}&=\cdots ={\tau}_{i,r}\triangleq {\tau}_i\ne {\tau}_{i,r+1}=\cdots ={\tau}_{i,J}\triangleq {\tau}_i^{\prime }.\end{align}$$

Here, van der Linden’s (Reference van der Linden2006) joint model for responses and RTs is considered. Therefore, under

![]() ${H}_0$

, the maximum likelihood function of observing response and RT pattern

${H}_0$

, the maximum likelihood function of observing response and RT pattern

![]() $\left({\boldsymbol{y}}_{\boldsymbol{i}},{\boldsymbol{t}}_{\boldsymbol{i}}\right)$

for examinee

$\left({\boldsymbol{y}}_{\boldsymbol{i}},{\boldsymbol{t}}_{\boldsymbol{i}}\right)$

for examinee

![]() $i$

is

$i$

is

$$\begin{align}{L}_{i0}\left({\boldsymbol{y}}_{\boldsymbol{i}},{\boldsymbol{t}}_{\boldsymbol{i}}\mid {\hat{\theta}}_i,{\hat{\tau}}_i\right)&=\prod \limits_{j=1}^J\kern0.20em {\left\{\frac{\exp \left[{a}_j\left({\hat{\theta}}_i-{b}_j\right)\right]}{1+\exp \left[{a}_j\left({\hat{\theta}}_i-{b}_j\right)\right]}\right\}}^{y_{ij}}{\left\{\frac{1}{1+\exp \left[{a}_j\left({\hat{\theta}}_i-{b}_j\right)\right]}\right\}}^{1-{y}_{ij}}\nonumber\\& \quad \times \prod \limits_{j=1}^J\kern0.20em \frac{\alpha_j}{t_{ij}\sqrt{2\pi }}\exp \left\{-\frac{\alpha_j^2{\left[\log {t}_{ij}-\left({\beta}_j-{\hat{\tau}}_i\right)\right]}^2}{2}\right\},\end{align}$$

$$\begin{align}{L}_{i0}\left({\boldsymbol{y}}_{\boldsymbol{i}},{\boldsymbol{t}}_{\boldsymbol{i}}\mid {\hat{\theta}}_i,{\hat{\tau}}_i\right)&=\prod \limits_{j=1}^J\kern0.20em {\left\{\frac{\exp \left[{a}_j\left({\hat{\theta}}_i-{b}_j\right)\right]}{1+\exp \left[{a}_j\left({\hat{\theta}}_i-{b}_j\right)\right]}\right\}}^{y_{ij}}{\left\{\frac{1}{1+\exp \left[{a}_j\left({\hat{\theta}}_i-{b}_j\right)\right]}\right\}}^{1-{y}_{ij}}\nonumber\\& \quad \times \prod \limits_{j=1}^J\kern0.20em \frac{\alpha_j}{t_{ij}\sqrt{2\pi }}\exp \left\{-\frac{\alpha_j^2{\left[\log {t}_{ij}-\left({\beta}_j-{\hat{\tau}}_i\right)\right]}^2}{2}\right\},\end{align}$$

where

![]() ${\boldsymbol{y}}_{\boldsymbol{i}}=\left({y}_{i1},{y}_{i2},\dots, {y}_{ij},\dots {y}_{iJ}\right)$

is the response pattern of examinee

${\boldsymbol{y}}_{\boldsymbol{i}}=\left({y}_{i1},{y}_{i2},\dots, {y}_{ij},\dots {y}_{iJ}\right)$

is the response pattern of examinee

![]() $i$

and

$i$

and

![]() ${\boldsymbol{t}}_i={\left({t}_{i1},{t}_{i2},\dots, {t}_{iJ}\right)}^{\prime }$

is the RT pattern of examinee

${\boldsymbol{t}}_i={\left({t}_{i1},{t}_{i2},\dots, {t}_{iJ}\right)}^{\prime }$

is the RT pattern of examinee

![]() $i$

. Therefore, the

$i$

. Therefore, the

![]() $\mathrm{SIC}$

under

$\mathrm{SIC}$

under

![]() ${H}_0$

, that is,

${H}_0$

, that is,

![]() ${\mathrm{SIC}}_{\theta, \tau }(J)$

, is

${\mathrm{SIC}}_{\theta, \tau }(J)$

, is

Under

![]() ${H}_1$

, the corresponding maximum likelihood function is

${H}_1$

, the corresponding maximum likelihood function is

$$\begin{align}{L}_{i1}\left({\boldsymbol{y}}_{\boldsymbol{i}},{\boldsymbol{t}}_{\boldsymbol{i}}\mid {\hat{\theta}}_i,{\hat{\theta}}_i^{\prime },{\hat{\tau}}_i,{\hat{\tau}}_i^{\prime}\right)&=\prod \limits_{j=1}^r\kern0.20em {\left\{\frac{\exp \left[{a}_j\left({\hat{\theta}}_i-{b}_j\right)\right]}{1+\exp \left[{a}_j\left({\hat{\theta}}_i-{b}_j\right)\right]}\right\}}^{y_{ij}}{\left\{\frac{1}{1+\exp \left[{a}_j\left({\hat{\theta}}_i-{b}_j\right)\right]}\right\}}^{1-{y}_{ij}}\nonumber\\& \quad \times \prod \limits_{j=r+1}^J\kern0.20em {\left\{\frac{\exp \left[{a}_j\left({\hat{\theta}}_i^{\prime }-{b}_j\right)\right]}{1+\exp \left[{a}_j\left({\hat{\theta}}_i^{\prime }-{b}_j\right)\right]}\right\}}^{y_{ij}}{\left\{\frac{1}{1+\exp \left[{a}_j\left({\hat{\theta}}_i^{\prime }-{b}_j\right)\right]}\right\}}^{1-{y}_{ij}}\nonumber\\& \quad \times \prod \limits_{j=1}^r\kern0.20em \frac{\alpha_j}{t_{ij}\sqrt{2\pi }}\exp \left\{-\frac{\alpha_j^2{\left[\log {t}_{ij}-\left({\beta}_j-{\hat{\tau}}_i\right)\right]}^2}{2}\right\}\nonumber\\& \quad \times \prod \limits_{j=r+1}^J\kern0.20em \frac{\alpha_j}{t_{ij}\sqrt{2\pi }}\exp \left\{-\frac{\alpha_j^2{\left[\log {t}_{ij}-\left({\beta}_j-{\hat{\tau}}_i^{\prime}\right)\right]}^2}{2}\right\},\end{align}$$

$$\begin{align}{L}_{i1}\left({\boldsymbol{y}}_{\boldsymbol{i}},{\boldsymbol{t}}_{\boldsymbol{i}}\mid {\hat{\theta}}_i,{\hat{\theta}}_i^{\prime },{\hat{\tau}}_i,{\hat{\tau}}_i^{\prime}\right)&=\prod \limits_{j=1}^r\kern0.20em {\left\{\frac{\exp \left[{a}_j\left({\hat{\theta}}_i-{b}_j\right)\right]}{1+\exp \left[{a}_j\left({\hat{\theta}}_i-{b}_j\right)\right]}\right\}}^{y_{ij}}{\left\{\frac{1}{1+\exp \left[{a}_j\left({\hat{\theta}}_i-{b}_j\right)\right]}\right\}}^{1-{y}_{ij}}\nonumber\\& \quad \times \prod \limits_{j=r+1}^J\kern0.20em {\left\{\frac{\exp \left[{a}_j\left({\hat{\theta}}_i^{\prime }-{b}_j\right)\right]}{1+\exp \left[{a}_j\left({\hat{\theta}}_i^{\prime }-{b}_j\right)\right]}\right\}}^{y_{ij}}{\left\{\frac{1}{1+\exp \left[{a}_j\left({\hat{\theta}}_i^{\prime }-{b}_j\right)\right]}\right\}}^{1-{y}_{ij}}\nonumber\\& \quad \times \prod \limits_{j=1}^r\kern0.20em \frac{\alpha_j}{t_{ij}\sqrt{2\pi }}\exp \left\{-\frac{\alpha_j^2{\left[\log {t}_{ij}-\left({\beta}_j-{\hat{\tau}}_i\right)\right]}^2}{2}\right\}\nonumber\\& \quad \times \prod \limits_{j=r+1}^J\kern0.20em \frac{\alpha_j}{t_{ij}\sqrt{2\pi }}\exp \left\{-\frac{\alpha_j^2{\left[\log {t}_{ij}-\left({\beta}_j-{\hat{\tau}}_i^{\prime}\right)\right]}^2}{2}\right\},\end{align}$$

where

![]() ${\widehat{\theta}}_i$

and

${\widehat{\theta}}_i$

and

![]() ${\widehat{\tau}}_i$

are the MLEs of the ability and speed parameters obtained from the first

${\widehat{\tau}}_i$

are the MLEs of the ability and speed parameters obtained from the first

![]() $r$

responses and RTs, respectively,

$r$

responses and RTs, respectively,

![]() ${\hat{\theta}}_i^{\prime }$

and

${\hat{\theta}}_i^{\prime }$

and

![]() ${\hat{\tau}}_i^{\prime }$

are the corresponding MLEs obtained from the remaining

${\hat{\tau}}_i^{\prime }$

are the corresponding MLEs obtained from the remaining

![]() $J-r$

responses and RTs, respectively. Therefore, the SIC under

$J-r$

responses and RTs, respectively. Therefore, the SIC under

![]() ${H}_1,{\mathrm{SIC}}_{\theta, \tau }(r)$

, for

${H}_1,{\mathrm{SIC}}_{\theta, \tau }(r)$

, for

![]() $r=2,\dots, J-1$

, is obtained as

$r=2,\dots, J-1$

, is obtained as

where the number of parameters included in the penalty term is 4 (i.e.,

![]() $p=4$

). Based on the minimum information criterion principle, we reject

$p=4$

). Based on the minimum information criterion principle, we reject

![]() ${H}_0$

if

${H}_0$

if

and the estimated change point position denoted by

![]() $\widehat{r}$

such that

$\widehat{r}$

such that

2.5 Critical value and significance level of CPA based SIC method

According to Gupta and Chen (Reference Gupta and Chen1996), when the difference between SICs is small, it may be difficult to determine whether the change point actually exists or is simply caused by data fluctuation. In our study, the fluctuation of responses and/or RTs may induce subtle differences in SICs, which may result in examinees without change points being incorrectly identified as speeded (i.e., exhibiting changes in ability and/or speed). For example, as verified based solely on responses in our simulation study I, among the examinees without change points, 75% of the

![]() $\mathrm{SI}{\mathrm{C}}_{\theta }(J)-{\min}_{2\le k\le J-1}\kern0.1em \mathrm{SI}{\mathrm{C}}_{\theta }(k)$

is less than critical value, which results in an extremely high Type-I error. To solve this problem, Gupta and Chen (Reference Gupta and Chen1996) introduced the significance level

$\mathrm{SI}{\mathrm{C}}_{\theta }(J)-{\min}_{2\le k\le J-1}\kern0.1em \mathrm{SI}{\mathrm{C}}_{\theta }(k)$

is less than critical value, which results in an extremely high Type-I error. To solve this problem, Gupta and Chen (Reference Gupta and Chen1996) introduced the significance level

![]() $\alpha$

and its associated critical value

$\alpha$

and its associated critical value

![]() ${c}_{\alpha }$

, where

${c}_{\alpha }$

, where

![]() ${c}_{\alpha}\ge 0$

. Therefore, we accept

${c}_{\alpha}\ge 0$

. Therefore, we accept

![]() ${H}_0$

if

${H}_0$

if

where

Unfortunately, as the (joint) distribution of our models (i.e., 2PL model, log-normal RT model, or van der Linden’s (Reference van der Linden2006) joint response and RT model) is not normal, it is not feasible to obtain a closed form of the exact critical value

![]() ${c}_{\alpha }$

. Usually, permutation (Shao et al., Reference Shao, Li and Cheng2016), bootstrap, and simulation (Armstrong & Shi, Reference Armstrong and Shi2009; Cheng & Shao, Reference Cheng and Shao2022) methods are commonly used to determine the critical values in detecting aberrancy. However, permutation and bootstrap methods are computationally expensive. Therefore, in this study, empirical critical values were used via Monte Carlo simulations following the procedure outlined by Worsley (Reference Worsley1979) and further adopted in Cheng and Shao (Reference Cheng and Shao2022). Specifically, we generated 10,000 data under the null hypothesis—each dataset representing individual responses, RTs, or a combination of both, depending on the data type used in the SIC-CPA method. For each dataset, we computed the SIC values using estimated ability and/or speed parameters, while treating the item parameters as known. As a result, these 10,000 datasets consist solely of regular data, without any aberrancies. Note that the resulting critical values are determined by the simulation settings, such as test length, item parameters, and the distribution of person parameters, rather than by the proportion of speeded examinees. For the significance levels of 0.05, 0.01, and 0.001 in a one-sided test, the 500th, 100th, and 10th largest values were selected as critical values, respectively. For each test length condition, 1000 replicated processes were performed, and the average critical value of 1000 times was used as the final critical value for each SIC-CPA approach.

${c}_{\alpha }$

. Usually, permutation (Shao et al., Reference Shao, Li and Cheng2016), bootstrap, and simulation (Armstrong & Shi, Reference Armstrong and Shi2009; Cheng & Shao, Reference Cheng and Shao2022) methods are commonly used to determine the critical values in detecting aberrancy. However, permutation and bootstrap methods are computationally expensive. Therefore, in this study, empirical critical values were used via Monte Carlo simulations following the procedure outlined by Worsley (Reference Worsley1979) and further adopted in Cheng and Shao (Reference Cheng and Shao2022). Specifically, we generated 10,000 data under the null hypothesis—each dataset representing individual responses, RTs, or a combination of both, depending on the data type used in the SIC-CPA method. For each dataset, we computed the SIC values using estimated ability and/or speed parameters, while treating the item parameters as known. As a result, these 10,000 datasets consist solely of regular data, without any aberrancies. Note that the resulting critical values are determined by the simulation settings, such as test length, item parameters, and the distribution of person parameters, rather than by the proportion of speeded examinees. For the significance levels of 0.05, 0.01, and 0.001 in a one-sided test, the 500th, 100th, and 10th largest values were selected as critical values, respectively. For each test length condition, 1000 replicated processes were performed, and the average critical value of 1000 times was used as the final critical value for each SIC-CPA approach.

3 Simulation studies

Six simulation studies were carried out to investigate the performance of the proposed SIC-CPA approaches, which employed distinct data, including responses, RTs, and a combination of response and RT data, respectively. Specifically, simulation study I compared the proposed SIC-CPA method with Shao et al.’s (Reference Shao, Li and Cheng2016) method, based solely on response data. In simulation study II, we compared the proposed SIC-CPA method and Cheng and Shao’s (Reference Cheng and Shao2022) method, based solely on RT data. Simulation study III compared the performance of the proposed three SIC-CPA methods; in this case, the data were generated from the modified gradual change joint model for responses and RTs. To manipulate test speededness, examinees with one change point were considered in simulation studies I, II, and III. Simulation study IV was conducted to evaluate the performance of the SIC-CPA method based on RTs with two change points, simultaneously considering the warm-up effect and test speededness. Simulation study V includes an item parameter estimation stage and considers two cases: (1) non-iterative calibration-detection procedure and (2) iterative detect–clean–recalibrate procedure. Under each case, we compare the SIC-CPA with the likelihood ratio and Wald tests. In addition, simulation study VI was conducted to evaluate the performance of the SIC-CPA, likelihood ratio test, and Wald methods when there are no speeded responses and/or response times (RTs) in the data. Due to page limit, simulation study VI can be found in the Supplementary Material. Each simulation condition was conducted 100 replications.

3.1 Data generation

Concerning the generation models for regular and speeded responses and/or RTs, as claimed in Gorney and Wollack’s (Reference Gorney and Wollack2022) paper, “All response accuracy (RA) models are formulated as mixture extensions of the IRT model. All RT models are formulated as mixture extensions of the lognormal RT model developed by van der Linden (Reference van der Linden2006).” The unique difference between these generation models (for example, van der Linden & van Krimpen-Stoop, Reference van der Linden and van Krimpen-Stoop2003; Wang & Xu, Reference Wang and Xu2015; Wollack & Cohen, Reference Wollack and Cohen2004) is the degree of change in the responses/RTs or ability/speed parameter, and thus they can be classified as abrupt and gradual change models. We adopted the gradual change model proposed by Suh et al., (Reference Suh, Cho and Wollack2012) for responses, along with its extensions for RTs developed by Cheng and Shao (Reference Cheng and Shao2022), as well as our modified gradual change joint model for responses and RTs to generate simulation data. These models were chosen because they offer a more realistic representation and can capture the complexity of real data. Furthermore, employing more sophisticated models for data generation allows for a more comprehensive evaluation of the robustness and flexibility of the proposed approaches (Cheng & Shao, Reference Cheng and Shao2022; Luecht & Ackerman, Reference Luecht and Ackerman2018).

Next, we introduce a modified gradual change joint model for responses and RTs:

$$\begin{align}{P}_j^{\ast}\left({\theta}_i\right)&=p\left({y}_{ij}=1\mid {\theta}_i,{a}_j,{b}_j\right)\times \min {\left(1,\left[1-\left(\frac{j}{J}-{\eta}_i\right)\right]\right)}^{\lambda_i}, \end{align}$$

$$\begin{align}{P}_j^{\ast}\left({\theta}_i\right)&=p\left({y}_{ij}=1\mid {\theta}_i,{a}_j,{b}_j\right)\times \min {\left(1,\left[1-\left(\frac{j}{J}-{\eta}_i\right)\right]\right)}^{\lambda_i}, \end{align}$$

$$\begin{align}\log \left({t}_{ij}\right)&=\left({\beta}_j-{\tau}_i+{\varepsilon}_{ij}\right)\times \min {\left(1,\left[1-\left(\frac{j}{J}-{\eta}_i\right)\right]\right)}^{\lambda_i},{\varepsilon}_{ij}\sim N\left(0,{\alpha}_j^{-2}\right),\end{align}$$

$$\begin{align}\log \left({t}_{ij}\right)&=\left({\beta}_j-{\tau}_i+{\varepsilon}_{ij}\right)\times \min {\left(1,\left[1-\left(\frac{j}{J}-{\eta}_i\right)\right]\right)}^{\lambda_i},{\varepsilon}_{ij}\sim N\left(0,{\alpha}_j^{-2}\right),\end{align}$$

where

$$\begin{align}\left(\begin{array}{l}{\theta}_i\\ {}{\tau}_i\end{array}\right)\sim \mathrm{MVN}\left({\boldsymbol{\mu}}_{\boldsymbol{p}}=\left(\begin{array}{c}{\boldsymbol{\mu}}_{\theta}\\ {}{\boldsymbol{\mu}}_{\tau}\end{array}\right),{\boldsymbol{\Sigma}}_{\boldsymbol{p}}=\left(\begin{array}{cc}{\sigma}_{\theta}^2& {\sigma}_{\theta \tau}\\ {}{\sigma}_{\theta \tau}& {\sigma}_{\tau}^2\end{array}\right)\right),\end{align}$$

$$\begin{align}\left(\begin{array}{l}{\theta}_i\\ {}{\tau}_i\end{array}\right)\sim \mathrm{MVN}\left({\boldsymbol{\mu}}_{\boldsymbol{p}}=\left(\begin{array}{c}{\boldsymbol{\mu}}_{\theta}\\ {}{\boldsymbol{\mu}}_{\tau}\end{array}\right),{\boldsymbol{\Sigma}}_{\boldsymbol{p}}=\left(\begin{array}{cc}{\sigma}_{\theta}^2& {\sigma}_{\theta \tau}\\ {}{\sigma}_{\theta \tau}& {\sigma}_{\tau}^2\end{array}\right)\right),\end{align}$$

$$\begin{align}\left(\begin{array}{l}{b}_j\\ {}{\beta}_j\end{array}\right)\sim \mathrm{MVN}\left({\boldsymbol{\mu}}_{\boldsymbol{I}}=\left(\begin{array}{c}{\boldsymbol{\mu}}_b\\ {}{\boldsymbol{\mu}}_{\beta}\end{array}\right),{\boldsymbol{\Sigma}}_{\boldsymbol{I}}=\left(\begin{array}{cc}{\sigma}_b^2& {\sigma}_{b\beta}\\ {}{\sigma}_{b\beta}& {\sigma}_{\beta}^2\end{array}\right)\right),\end{align}$$

$$\begin{align}\left(\begin{array}{l}{b}_j\\ {}{\beta}_j\end{array}\right)\sim \mathrm{MVN}\left({\boldsymbol{\mu}}_{\boldsymbol{I}}=\left(\begin{array}{c}{\boldsymbol{\mu}}_b\\ {}{\boldsymbol{\mu}}_{\beta}\end{array}\right),{\boldsymbol{\Sigma}}_{\boldsymbol{I}}=\left(\begin{array}{cc}{\sigma}_b^2& {\sigma}_{b\beta}\\ {}{\sigma}_{b\beta}& {\sigma}_{\beta}^2\end{array}\right)\right),\end{align}$$

and

![]() $p\left({y}_{ij}=1\mid {\theta}_i,{a}_j,{b}_j\right)$

follows the 2PL model in Eq. (4),

$p\left({y}_{ij}=1\mid {\theta}_i,{a}_j,{b}_j\right)$

follows the 2PL model in Eq. (4),

![]() ${\eta}_i$

(where

${\eta}_i$

(where

![]() $0<{\eta}_i<1$

) is the stage of the test that test speededness occurs on examinee

$0<{\eta}_i<1$

) is the stage of the test that test speededness occurs on examinee

![]() $i$

, and

$i$

, and

![]() ${\eta}_i\times J$

is the location of change point. For instance, if

${\eta}_i\times J$

is the location of change point. For instance, if

![]() ${\eta}_i=0.7$

, it indicates that test speededness occurs at 70% of a test, and if there are 50 items in the test (i.e.,

${\eta}_i=0.7$

, it indicates that test speededness occurs at 70% of a test, and if there are 50 items in the test (i.e.,

![]() $J=50$

), the change point would be item 35. The parameter

$J=50$

), the change point would be item 35. The parameter

![]() ${\lambda}_i$

(where

${\lambda}_i$

(where

![]() ${\lambda}_i\ge 0$

) is the speededness rate which controls how fast the correct response probability decreases as the test proceeds beyond

${\lambda}_i\ge 0$

) is the speededness rate which controls how fast the correct response probability decreases as the test proceeds beyond

![]() ${\eta}_i$

, at the same time,

${\eta}_i$

, at the same time,

![]() ${\lambda}_i$

determines the rate at which less time is spent on items after

${\lambda}_i$

determines the rate at which less time is spent on items after

![]() ${\eta}_i$

. When

${\eta}_i$

. When

![]() ${\lambda}_i$

increases, it leads to faster declines of

${\lambda}_i$

increases, it leads to faster declines of

![]() ${P}_j^{\ast}\left({\theta}_i\right)$

and

${P}_j^{\ast}\left({\theta}_i\right)$

and

![]() $\log \left({t}_{ij}\right)$

due to test speededness. For example, take two examinees with different

$\log \left({t}_{ij}\right)$

due to test speededness. For example, take two examinees with different

![]() $\lambda$

and the same

$\lambda$

and the same

![]() $\eta$

as an example, when

$\eta$

as an example, when

![]() $\frac{j}{J}>\eta$

we have

$\frac{j}{J}>\eta$

we have

![]() $\min {\left(1,\left[1-\left(\frac{j}{J}-\eta \right)\right]\right)}^{\lambda_i}={\left[1-\left(\frac{j}{J}-\eta \right)\right]}^{\lambda_i}$

, and if

$\min {\left(1,\left[1-\left(\frac{j}{J}-\eta \right)\right]\right)}^{\lambda_i}={\left[1-\left(\frac{j}{J}-\eta \right)\right]}^{\lambda_i}$

, and if

![]() ${\lambda}_1>{\lambda}_2$

, then

${\lambda}_1>{\lambda}_2$

, then

![]() ${\left[1-\left(\frac{j}{J}-{\eta}_i\right)\right]}^{\lambda_1}<{\left[1-\left(\frac{j}{J}-{\eta}_i\right)\right]}^{\lambda_2}$

, which means that

${\left[1-\left(\frac{j}{J}-{\eta}_i\right)\right]}^{\lambda_1}<{\left[1-\left(\frac{j}{J}-{\eta}_i\right)\right]}^{\lambda_2}$

, which means that

![]() ${P}_j^{\ast}\left({\theta}_1\right)<{P}_j^{\ast}\left({\theta}_2\right)$

and

${P}_j^{\ast}\left({\theta}_1\right)<{P}_j^{\ast}\left({\theta}_2\right)$

and

![]() $\log \left({t}_{1j}\right)<\log \left({t}_{2j}\right)$

. This modified gradual change joint model is reduced to van der Linden’s (Reference van der Linden2006) hierarchical response and RT model when

$\log \left({t}_{1j}\right)<\log \left({t}_{2j}\right)$

. This modified gradual change joint model is reduced to van der Linden’s (Reference van der Linden2006) hierarchical response and RT model when

![]() ${\eta}_i=0$

and

${\eta}_i=0$

and

![]() ${\lambda}_i=0$

, which means the test speededness does not happen, and hence the responses and RTs are from the regular behavior. Note that Suh et al.’s (Reference Suh, Cho and Wollack2012) gradual change model for responses and the extended gradual model for RTs used in Cheng and Shao (Reference Cheng and Shao2022) are both special cases of this modified gradual change model for responses and RTs.

${\lambda}_i=0$

, which means the test speededness does not happen, and hence the responses and RTs are from the regular behavior. Note that Suh et al.’s (Reference Suh, Cho and Wollack2012) gradual change model for responses and the extended gradual model for RTs used in Cheng and Shao (Reference Cheng and Shao2022) are both special cases of this modified gradual change model for responses and RTs.

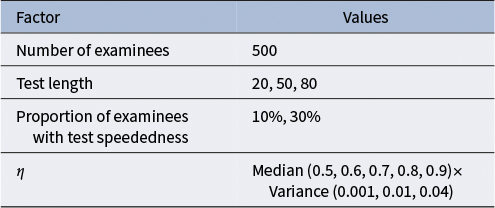

Table 1 displays the manipulated factors and their varied levels used in all four simulation studies. Here, 500 and 1,000 examinees were considered, and the proportions of speeded examinees were 10% and 30%, respectively. Three test lengths, 20 (short test), 50 (moderate test) and 80 (long test), were considered, as used in Zhu et al. (Reference Zhu, Jiao, Gao and Meng2023). Following the data generation scheme in Shao et al. (Reference Shao, Li and Cheng2016) and Cheng and Shao (Reference Cheng and Shao2022),

![]() $\eta$

was simulated from Beta distribution, and we set the median of

$\eta$

was simulated from Beta distribution, and we set the median of

![]() $\eta$

to 0.5, 0.6, 0.7, 0.8 and 0.9, and the variance of

$\eta$

to 0.5, 0.6, 0.7, 0.8 and 0.9, and the variance of

![]() $\eta$

was set to 0.001, 0.01, and 0.04. Because the detection results are consistent across sample sizes, we only show the simulation results for 500 examinees. In general, 90 (i.e., 3 (test lengths)

$\eta$

was set to 0.001, 0.01, and 0.04. Because the detection results are consistent across sample sizes, we only show the simulation results for 500 examinees. In general, 90 (i.e., 3 (test lengths)

![]() $\times$

2 (speededness proportions)

$\times$

2 (speededness proportions)

![]() $\times$

5 (median of

$\times$

5 (median of

![]() $\eta$

)

$\eta$

)

![]() $\times$

3 (variance of

$\times$

3 (variance of

![]() $\eta$

)) baseline simulation conditions were conducted. We implemented the SIC-CPA, Shao et al. (Reference Shao, Li and Cheng2016), and Cheng and Shao (Reference Cheng and Shao2022) approaches using the R programming language (R Core Team, 2022) on a computer equipped with Intel(R) Core(TM) i7-7700HQ CPU at 2.80 GHz, 8 GB RAM. Supplementary Table S1 shows the average values of critical values and the standard deviations (SD) for the SIC-CPA, likelihood ratio test, and Wald statistics across 1,000 replicated processes in simulation studies I, II, and III at the significant level of 0.01.

$\eta$

)) baseline simulation conditions were conducted. We implemented the SIC-CPA, Shao et al. (Reference Shao, Li and Cheng2016), and Cheng and Shao (Reference Cheng and Shao2022) approaches using the R programming language (R Core Team, 2022) on a computer equipped with Intel(R) Core(TM) i7-7700HQ CPU at 2.80 GHz, 8 GB RAM. Supplementary Table S1 shows the average values of critical values and the standard deviations (SD) for the SIC-CPA, likelihood ratio test, and Wald statistics across 1,000 replicated processes in simulation studies I, II, and III at the significant level of 0.01.

Table 1 Fixed factors/parameters in four simulation studies.

3.2 Evaluation criteria

We evaluate the performance of the proposed approaches from three aspects. First, concerning the detection of speeded examinees, we focus on the correct classification rate (CCR), power and Type I errors. CCR is the ratio of correctly classified examinees (including normal and speeded examinees) to the total number of examinees, power is the proportion of correctly identified speeded examinees among all identified speeded examinees, and Type I error rate is the proportion of normal examinees incorrectly classified as speeded examinees. Second, we assess the performance of the proposed method in pinpointing change points. We use “lag,” which is equal to estimated location of change point minus true location of change point, to show the recoveries of estimating change points. Because “positive lags” and “negative lags” offset each other, the “average absolute lag” (AL_mean) and its standard deviation (AL_SD) are used to compare the performance of different methods in locating change points. Third, the computational time is given to show the efficiency of the different approaches.

3.3 Simulation study I

This simulation study evaluates the performance of the proposed SIC-CPA approach based solely on the responses. Meanwhile, we compare the effectiveness of the SIC-CPA approach with Shao et al.’s (Reference Shao, Li and Cheng2016) CPA method, hereafter referred to as Shao-CPA. The speeded responses were generated from Suh et al.’s (Reference Suh, Cho and Wollack2012) gradual change model, that is, Eq. (31), and the non-speeded responses were generated from the 2PL model in Eq. (4). For the model parameters, we simulate

![]() ${a}_j\sim \log N\left(\mathrm{0,0.5}\right)$

,

${a}_j\sim \log N\left(\mathrm{0,0.5}\right)$

,

![]() ${b}_j\sim N\left(0,1\right)$

,

${b}_j\sim N\left(0,1\right)$

,

![]() ${\theta}_i\sim N\left(0,1\right)$

, and

${\theta}_i\sim N\left(0,1\right)$

, and

![]() ${\lambda}_i\sim \log N\left(\mathrm{3.912,1}\right)$

. We sampled

${\lambda}_i\sim \log N\left(\mathrm{3.912,1}\right)$

. We sampled

![]() ${\lambda}_i$

values from

${\lambda}_i$

values from

![]() $\log N\left(\mathrm{3.912,1}\right)$

following the previous studies (Goegebeur et al., Reference Goegebeur, De Boeck, Wollack and Cohen2008; Suh et al, Reference Suh, Cho and Wollack2012), which generated various test speededness patterns. Specifically, this choice represents a reasonable range of performance that accounts for both typical and extreme test speededness, ensuring that the model can simulate the full spectrum of examinee behaviors: the majority of examinees experience a gradual decline in performance, whereas a minority exhibits more extreme speededness near the end of the test. Note that, in Shao et al.’s (Reference Shao, Li and Cheng2016) simulation study, a permutation method was used to generate the null distribution for the Shao-CPA test statistics, with the false discovery rate set to 0.2. To ensure a fair comparison between their approach and our SIC-CPA approach, we used Monte Carlo simulations to determine the critical values for these two methods. Due to consistent trends in the SIC-CPA method with different critical values and page limit, we present the significance level of 0.01 for these two approaches.

$\log N\left(\mathrm{3.912,1}\right)$

following the previous studies (Goegebeur et al., Reference Goegebeur, De Boeck, Wollack and Cohen2008; Suh et al, Reference Suh, Cho and Wollack2012), which generated various test speededness patterns. Specifically, this choice represents a reasonable range of performance that accounts for both typical and extreme test speededness, ensuring that the model can simulate the full spectrum of examinee behaviors: the majority of examinees experience a gradual decline in performance, whereas a minority exhibits more extreme speededness near the end of the test. Note that, in Shao et al.’s (Reference Shao, Li and Cheng2016) simulation study, a permutation method was used to generate the null distribution for the Shao-CPA test statistics, with the false discovery rate set to 0.2. To ensure a fair comparison between their approach and our SIC-CPA approach, we used Monte Carlo simulations to determine the critical values for these two methods. Due to consistent trends in the SIC-CPA method with different critical values and page limit, we present the significance level of 0.01 for these two approaches.

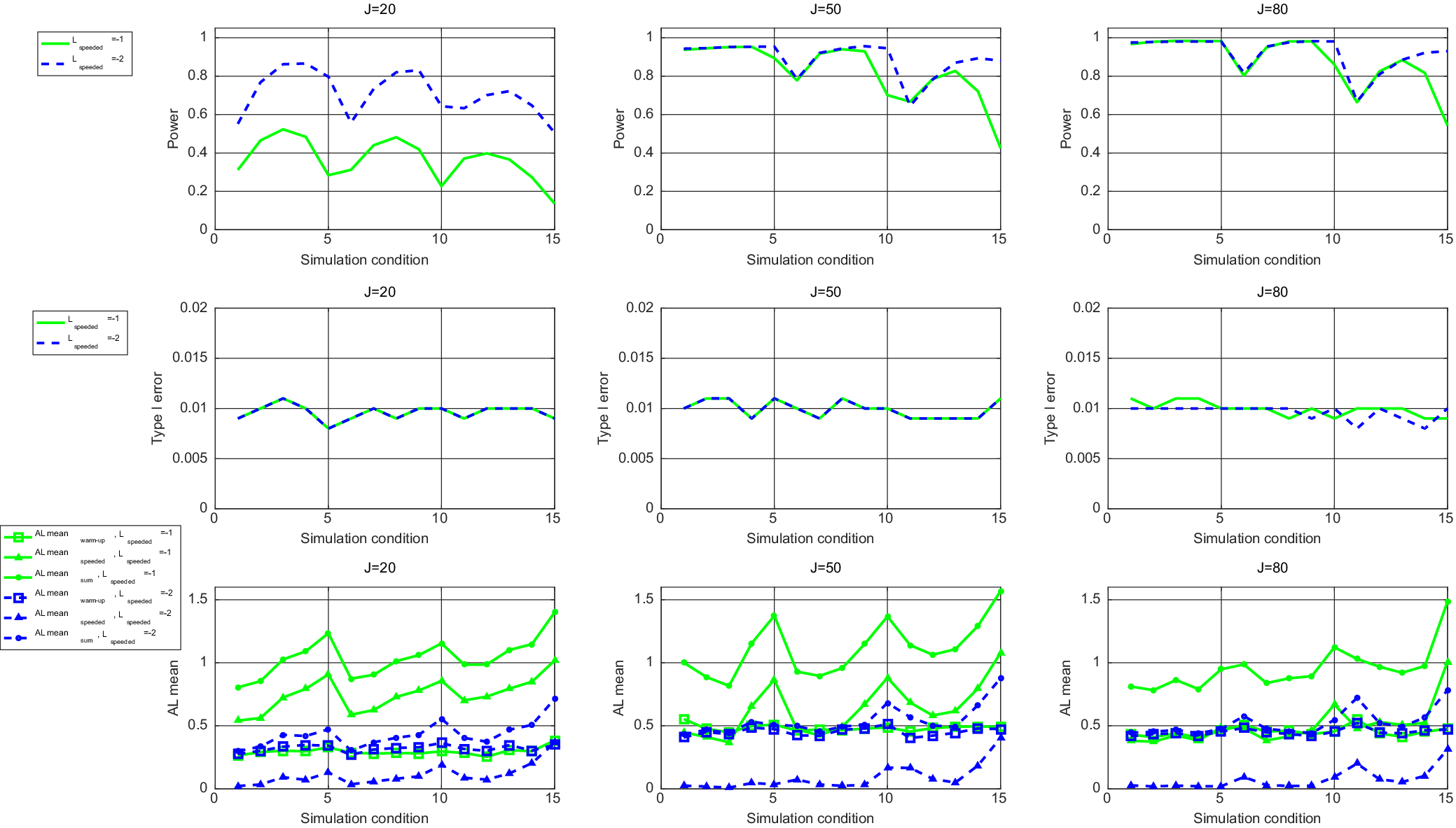

Supplementary Tables S2–S4 present the detection results of Shao-CPA and SIC-CPA methods with test lengths of 20, 50, and 80, respectively. Both methods maintain the type I error rates around 0.01 across all conditions. For the test with 20 items (Supplementary Table S2), the performance of both methods in terms of CCR and power is similar. However, SIC-CPA performs better under high speeded examinee proportions (30%) and larger values of the median and variance of

![]() $\eta$

. Specifically, SIC-CPA achieves lower AL_mean and AL_SD, which indicates more accurate change point estimation. When the median of

$\eta$

. Specifically, SIC-CPA achieves lower AL_mean and AL_SD, which indicates more accurate change point estimation. When the median of

![]() $\eta$

is 0.8, the power of both methods drops below 0.43 when the proportion of speeded examinees is 30%. This decline can be attributed to the fact that the change point locations for speeded examinees approached the end of the test, resulting in fewer speeded responses, which in turn diminishes the performance of identifying speeded examinees and pinpointing the change points.

$\eta$

is 0.8, the power of both methods drops below 0.43 when the proportion of speeded examinees is 30%. This decline can be attributed to the fact that the change point locations for speeded examinees approached the end of the test, resulting in fewer speeded responses, which in turn diminishes the performance of identifying speeded examinees and pinpointing the change points.

For the test with 50 (or 80) items in Supplementary Table S3 (or S4), as the median of

![]() $\eta$

increases, both CCRs and powers of these two methods decrease in most cases. When the variance of

$\eta$

increases, both CCRs and powers of these two methods decrease in most cases. When the variance of

![]() $\eta$

increases, indicating greater variations in change point locations, both AL_mean and AL_SD increase, indicating a decrease in change point estimation accuracy. As the proportion of speeded examinees increases, the performance of these two methods declines, and specifically, CCRs and powers decrease. Meanwhile, it becomes more difficult to accurately identify change point locations, leading to higher AL_mean and AL_SD values.

$\eta$

increases, indicating greater variations in change point locations, both AL_mean and AL_SD increase, indicating a decrease in change point estimation accuracy. As the proportion of speeded examinees increases, the performance of these two methods declines, and specifically, CCRs and powers decrease. Meanwhile, it becomes more difficult to accurately identify change point locations, leading to higher AL_mean and AL_SD values.

As the test length increases, Shao-CPA shows an increase in both AL_mean and AL_SD. This is because Shao-CPA struggles to accurately locate change points with longer tests, which is consistent with the findings of Shao et al. (Reference Shao, Li and Cheng2016). However, as the test length grows, the performance of detecting speeded examinees improves, resulting in consistently higher CCRs and power for both two methods. In particular, when the median of

![]() $\eta$

is 0.8, the power of these two approaches remains above 0.56 under the condition of 30% speeded examinees, as shown in Supplementary Table S4. Furthermore, the advantages of SIC-CPA become more evident, especially under high values of median and variance of

$\eta$

is 0.8, the power of these two approaches remains above 0.56 under the condition of 30% speeded examinees, as shown in Supplementary Table S4. Furthermore, the advantages of SIC-CPA become more evident, especially under high values of median and variance of

![]() $\eta$

. This suggests that SIC-CPA is more robust in handling data variability and it provides more accurate and stable estimates of change points. Therefore, SIC-CPA proves to be a more reliable and efficient approach, particularly in scenarios involving higher speeded examinee proportion and greater variability in the data.

$\eta$

. This suggests that SIC-CPA is more robust in handling data variability and it provides more accurate and stable estimates of change points. Therefore, SIC-CPA proves to be a more reliable and efficient approach, particularly in scenarios involving higher speeded examinee proportion and greater variability in the data.

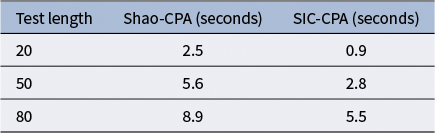

Table 2 shows the average running time of Shao-CPA and SIC-CPA when the sample size is 500. It is evident that the SIC-CPA method operates quickly, indicating a higher computational efficiency. Note that under each simulation condition, the average running time reported in this article indicates the average time for a single replication, and the running time does not include the Monte Carlo simulations used to obtain the empirical critical value.

Table 2 Average running time of Shao-CPA and SIC-CPA approaches when sample size is 500 in simulation study I.

3.4 Simulation study II

Simulation study II was conducted to assess the performance of the proposed SIC-CPA approach utilizing only RTs, and to compare its performance with Cheng and Shao’s (Reference Cheng and Shao2022) CPA method. The speeded RTs were generated from the extended gradual change model for RTs used in Cheng and Shao (Reference Cheng and Shao2022), that is, Eq. (32). For the model parameters,

![]() ${\alpha}_j\sim U\left(\mathrm{1.75,3.25}\right)$

,

${\alpha}_j\sim U\left(\mathrm{1.75,3.25}\right)$

,

![]() ${\beta}_j\sim U\left(\mathrm{3.6,0.11}\right)$

,

${\beta}_j\sim U\left(\mathrm{3.6,0.11}\right)$

,

![]() ${\tau}_i\sim N\left(\mathrm{0,0.25}\right)$

, and

${\tau}_i\sim N\left(\mathrm{0,0.25}\right)$

, and

![]() ${\lambda}_i\sim \log N\left(\mathrm{3.912,1}\right)$

. In addition, the time limits for the test lengths of 20, 50, and 80 were 30, 75, and 120 min, respectively. 90 simulation conditions were conducted, please see Table 1.

${\lambda}_i\sim \log N\left(\mathrm{3.912,1}\right)$

. In addition, the time limits for the test lengths of 20, 50, and 80 were 30, 75, and 120 min, respectively. 90 simulation conditions were conducted, please see Table 1.

Due to the consistency of results obtained from both the Wald test and likelihood ratio test, aligning with the findings from Cheng and Shao (Reference Cheng and Shao2022), this simulation study only presents the results of the Wald test. Supplementary Tables S5–S7 display the detection results of SIC-CPA method and the Wald test under the significance level of 0.01. The last column in these two tables indicates the proportion of examinees who did not complete the test within the time limit, ranging from 4% to 8%. This suggests that the majority of speeded examinees can complete the test on time. Consistent with Cheng and Shao (Reference Cheng and Shao2022), this article labels examinees who did not complete the test as speeded without performing any statistical analysis.

Overall, the results of SIC-CPA and the Wald test are nearly identical, with SIC-CPA having slightly lower Type I errors. Both methods achieve high CCRs exceeding 0.9 except under the condition with 30% speeded examinee proportion and

![]() ${\eta}_{\mathrm{median}}=0.9$

,

${\eta}_{\mathrm{median}}=0.9$

,

![]() ${\eta}_{\mathrm{var}}=0.04$

. However, when the proportion of speeded examinees is 30%, detection becomes more challenging because the change points are located closer to the end of the test. Under the condition of 30% speeded examinee proportion with

${\eta}_{\mathrm{var}}=0.04$

. However, when the proportion of speeded examinees is 30%, detection becomes more challenging because the change points are located closer to the end of the test. Under the condition of 30% speeded examinee proportion with

![]() ${\eta}_{\mathrm{median}}=0.9$

,

${\eta}_{\mathrm{median}}=0.9$

,

![]() ${\eta}_{\mathrm{var}}=0.04$

, the power of both methods decrease substantially.

${\eta}_{\mathrm{var}}=0.04$

, the power of both methods decrease substantially.

Regarding the accuracy of change point estimation, when the median of

![]() $\eta$

is 0.9, both AL_mean and AL_SD are relatively small, indicating precise change point estimation. As

$\eta$

is 0.9, both AL_mean and AL_SD are relatively small, indicating precise change point estimation. As

![]() $\eta$

variance increases, the power decreases, but the accuracy of change point estimation remains high. Furthermore, as the test length increases, the performance of recovering change points for both methods deteriorate, which is consistent with findings of simulation study I. Under the condition of 30% speeded examinee proportion with

$\eta$

variance increases, the power decreases, but the accuracy of change point estimation remains high. Furthermore, as the test length increases, the performance of recovering change points for both methods deteriorate, which is consistent with findings of simulation study I. Under the condition of 30% speeded examinee proportion with

![]() ${\eta}_{\mathrm{median}}=0.9$

and

${\eta}_{\mathrm{median}}=0.9$

and

![]() ${\eta}_{\mathrm{var}}=0.04$

, SIC-CPA outperforms Shao-CPA in change point estimation accuracy, showing lower values of AL_mean and AL_SD.

${\eta}_{\mathrm{var}}=0.04$

, SIC-CPA outperforms Shao-CPA in change point estimation accuracy, showing lower values of AL_mean and AL_SD.

Table 3 shows the average running time for SIC-CPA method, Wald test, and likelihood ratio test under the test lengths of 20, 50, and 80 when sample size is 500. It is observed that both the SIC-CPA method and Wald test exhibit similar running times. In addition, SIC-CPA is more efficient than the likelihood ratio test.

Table 3 Average running time of SIC-CPA approach, Wald test, and likelihood ratio test when sample size is 500 in simulation study II.

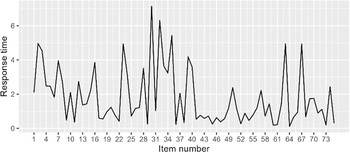

3.5 Simulation study III