Cracks in the canvas

Had Leonard Cohen come from Belfast, and if he had been more cheerful, the lines above might form part of the refrain to his elegiac song ‘Anthem’; they would scan just as well. But he didn’t, and since I can’t afford to republish even two lines from the original, they will have to serve as a placeholder.1

♫ Leonard Cohen, Anthem (1992)

When teaching a course on language and mind – whether it is on language processing, language acquisition or language disorders – the first thing I have students do is watch a BBC Horizon documentary, originally broadcast in 2009, with the title ‘Why Do We Talk?’ As it turns out, this 50-minute programme offers no clear answers at all to why we talk, probably because this is an unanswerable question: philosophers, theologians, poets and other thinkers have been trying for millennia to come up with a viable theory of human action – why we do anything – and the results so far have been less than encouraging. Free will is a troublesome concept, for one thing. What the programme provides instead is an articulate introduction to some core research questions in the area of language and cognition, many of which I aim to re-examine in this book. These include, in no particular order: the question of whether the ability to acquire and use languages appropriately depends on a special kind of mentally represented knowledge – a ‘language faculty’ – or whether this ability rests on more general cognitive capacities; supposing we all possess such a language faculty, where the knowledge it instantiates comes from; the extent to which the environment – more specifically, naturalistic linguistic input – shapes grammatical development; the extent to which language comprehension and language production skills are dissociable; the evidence for a genetic basis to language; the problems of learning artificial languages; and the possible mechanisms of human language evolution. A slew of fascinating topics, then. The broadcast also introduces viewers to some of the key figures in research on language and cognition, and provides a passing glimpse of the experimental methodologies that have been employed to elicit data from language users. All things considered, it is a remarkably useful, engaging and compact piece of television, which is why I have students watch it. You should too, if you can obtain a copy of the programme.

However, to someone who has worked in the field for over twenty years, it is not the information that emerges from the interviews with language researchers that is particularly surprising. If I wasn’t reasonably familiar with the general research questions and the results that have so far been obtained, I shouldn’t be the one writing this book. Instead, the most astonishing feature of the programme is the implied consensus among researchers concerning the answers to these key questions: through careful narration and skilful editing, the programme-makers create the impression that linguists and psycholinguists broadly agree on all the answers to the core issues in language and mind. This is airbrushing on a massive scale, and about as far from the truth as one can get without actually misrepresenting any of the individual contributors to the programme. In the ‘real world’ – that is to say, the academic real world of psycholinguistics – what is mostly observed is not consensus, but ambivalence, controversy, dissent and energetic disagreement about nearly all of these questions, not to mention a fair degree of bitter antagonism and hair pulling. It is often one’s own hair that is pulled, but still: in terms of social and political discourse, linguistics is more saloon than salon, more gladiatorial arena than forum.

This is a positive thing in some respects. A useful analogy here is to seismology. If you want to study earthquakes, two good places are Los Angeles, California, where I attended graduate school, and Kōbe, Japan, the city where I now live. Seismologists don’t spend a lot of time in Belfast, the city where I grew up. In both regions, only just beneath the surface, the ground is fragmented by myriad fissures and thousands of minor fault lines; it is also more obviously fractured along major fault lines, immediately visible to the naked eye. In California, the most significant seismic fault is named after a Catholic saint, San Andreas, whilst in this part of Japan, it is the more prosaically named Japan Median Tectonic Line (中央構造線 Chūō Kōzō Sen), a branch of which – the Nojima fault – was responsible for the Kōbe (Great Hanshin) earthquake in January 1995, which resulted in more than 6,000 deaths and caused over 10 trillion yen in damages.

In psycholinguistics the most significant fault line is anonymous, but if one were to name it, it should rightfully be called the Noam Chomsky fault. Though he is not himself a psycholinguist, still less a saint, Chomsky has had a more profound, and divisive, impact on the field than any other academic researcher. To gloss over the major and minor fault lines in language and mind, as the Horizon programme does, is to ignore much of the volatility and friction that make the subject as treacherous – but also as vital – as it is.

The impression of consensus that the programme conveys also seems to pre-empt further research, which is precisely the opposite of what I want to achieve with this book. By clearly exposing some of the fault-lines of psycholinguistic research – especially some of the smaller cracks, where it is easier to make progress – I hope to encourage readers to develop new experiments of their own. To let the light get in.

Of course this truth, that the field is fractured, should hardly come as a bolt from the blue. Issues in language and mind are probably no more contentious than those in any other area of theoretical or empirical enquiry. Nor is it the case that psycholinguists in general are more vituperative than any other group of academics.2 What’s more, if the issues were as done and dusted as the programme’s narration implies, the field would be intellectually moribund, and researchers with any talent would long since have moved on to something more challenging. As in all the other sciences, progress in psycholinguistics – the development of better theories and models – generally comes about through strong competition between alternative sets of hypotheses and interpretations. In principle, this competition could be dialectical in nature, the opposition of thesis and antithesis leading to a synthesis – a resolution – of the two opposed views. In practice, psycholinguistic arguments too often end in ‘winner takes all’ outcomes, which may better satisfy a journal reviewer or a funding agency but which do less to uncover the truth of the matter.

A direct consequence of the intellectual fragmentation of psycholinguistics is that many textbooks offer a partisan view, variously downplaying or dismissing – more often than not, completely ignoring – relevant research from the other side(s). These biases are particularly stark in discussions of language acquisition, but the problem of bias also extends to books on adult language processing and language disorders. One only need contrast introductory works by Guasti (2004) or Crain and Thornton (1998), for example, with those of Harris and Coltheart (1986) or Tomasello (1992), to observe systematic biases of reporting more typically associated with ministries of propaganda than with reasoned academic discourse.

My aim here is to provide a more balanced, ‘well-tempered’ treatment,3 considering the research questions from several different angles, giving credit to researchers operating from different theoretical viewpoints, and drawing together some of the key experiments that have brought us to present-day conclusions. This approach closely reflects my own education and training. Over the past thirty years I have been fortunate enough to learn from a wide range of teachers, colleagues and students, some committed generativists, others equally passionate functionalists, still others ‘pure psychologists’ with no particular view of linguistic theory. See Acknowledgments, credits and permissions for details. Almost without exception, I have found these people to be intelligent and intellectually honest researchers, academics who respect empirical results whilst nevertheless disagreeing sharply on what counts as empirical, or even on what they consider to be legitimate research questions. What I have not observed is any obvious correlation between deep understanding and ideological commitment: theoretical zeal can occasionally lead to significant insights, but just as often to remarkable blind spots and arrogant bloody-mindedness. The truth is a grey area: only children, zealots – and some formal semanticists4 – believe otherwise.

A core distinction is drawn here between two highly contrastive research perspectives, between what might be called the ‘two souls’ of classical psycholinguistics (to borrow an expression from Gennaro Chierchia 1995):

On one side we find a competence-based perspective, inspired and often directly informed by theoretical developments in Chomskyan grammar; the primary concern of competence-based researchers is with the mental representation of linguistic – especially grammatical, and most especially syntactic – knowledge, as well as with the question of how such knowledge comes to be in the mind of adult native speakers;

On the opposite side of the intellectual fault line lies a process-oriented (‘information processing’) approach, which lays emphasis on how speakers comprehend and produce spoken language in real time, in real situations, how children and adult language learners come to acquire the full array of language processing skills that are necessary for the fluent use of particular languages, and on the ways in which these abilities change throughout our lifespan.

These two souls of psycholinguistics are distinguished in the following quote, from Seidenberg and MacDonald (1999: 570):

Instead of asking how the child acquires competence grammar, we view acquisition in terms of how the child converges on adult-like performance in comprehending and producing utterances. This performance orientation changes the picture considerably with respect to classic issues about language learnability, and provides a unified approach to studying acquisition and processing.

This book is written largely in the spirit of that quotation, while still trying to do justice to the issues that competence-based theorists consider important. It must be for the reader to decide whether I manage to square that particular circle. I also discuss research questions relating to the Mental Lexicon, where issues of abstract representation and information processing are more intimately linked than in the syntactic or phonological domains, and where ideological commitments are less in evidence. Finally, at various points in the discussion I will touch on experimental research at the interface between psycholinguistics and other aspects of human cognition and brain function, as well as on language typology, change and language evolution.

A(n) historical overview: dividing the soul of psycholinguistic theory

Before setting out these parallel tracks, it is worth noting that the field of psycholinguistics was not always so riven. Interest in psychological aspects of language and/or linguistic aspects of psychology predates the latter half of the twentieth century,5 but it was only in the 1930s and 1940s in Europe, and the 1950s in the United States, that experimental psycholinguistics emerged as a discipline in its own right.6 In the immediately preceding decades, the predominance of Behaviourism in psychology, in alliance with the strict empiricism of contemporaneous philosophy (logical positivism) and linguistics, had produced a virtual neglect of language in experimental psychology, as well as of psychology within linguistics (especially mainstream North American linguistics). Prior to the 1950s, language was mostly thought of as something ‘out there’, sometimes exotic, often chaotic and mysterious: linguistic behaviour was regarded as being as unpredictable as it was unstructured. Most American linguists of the period were concerned with the description of the indigenous languages of North America and elsewhere: many of those who worked in this anthropological tradition proceeded from a theoretical assumption that languages ‘... [could] differ from each other without limit and in unpredictable ways’ (a much-cited, now infamous, quote from Martin Joos 1957: 29). Scholars at the time also generally adopted the methodological position that native speakers’ metalinguistic judgments about their own languages were inherently untrustworthy: as Sampson (1980: 64) reports, linguists were minded to ‘Accept everything a native speaker says in his language, and nothing he says about it.’

The conceptual impetus for a radically different, universalist and exclusively internalist approach to language in linguistics and psychology was provided by Noam Chomsky, whose early proposals for transformational generative grammar – see especially Chomsky (1957, 1965) – revolutionised thinking about language in many parts of the academic world, and who retains a pre-eminent influence in the general area of language and mind. McGilvray ([1999] 2014) offers a clear, if partisan, overview.7

The ‘Chomskyan Revolution’ in linguistics came to the attention of psychologists through Chomsky’s (1959) critical review of B. F. Skinner’s Verbal Behavior, which had been published two years earlier, and which had outlined a strictly externalist account of linguistic behaviour, centred around the notion of operant conditioning. In more hagiographical discussions of Chomsky’s work, it is standard to assert that this review article provided clinching arguments that signalled the death-knell of Behaviourism as a viable psychological theory for the study of language (or, indeed, for much else).8 A case of David vs. Goliath – at least before Malcolm Gladwell’s anti-heroic re-interpretation of the Biblical story (Gladwell 2013).

On closer consideration, the issues are considerably less clear-cut, as Palmer (2006) discusses: strip away the polemic, and the arguments against any reasonably nuanced interpretation of Skinner’s proposals are less persuasive than they are often held to be; see also Schlinger (2008). Indeed, as Matthew Saxton (2010) makes clear, Chomsky’s position at the time was in some respects ‘more Behaviourist’ than that of Skinner himself: see also Radick (2016). With respect to the role of imitation, for example, Saxton reminds us that it was Chomsky, not Skinner, who claimed that ‘children acquire a good deal of their verbal and non-verbal behaviour by casual observation and imitation of adults and other children’ (1959: 42), and who later observed, with regard to grammatical intuitions, that ‘a child may pick up a large part of his vocabulary and “feel” for sentence structure from television’ (1959: 42). This latter assertion is highly questionable, as subsequent research has shown; see, for example, Kuhl, Tsao and Liu (2003). Yet such remarks have been lost to revisionist history.

Even supposing that Chomsky’s critique had delivered a fatal blow, Behaviourism was not killed off overnight. Paradigm shifts in science, like historical grammatical changes, can take several decades at least to work through – centuries, in the case of some syntactic changes. This is the case even though they might appear abrupt in retrospect, and even where they have a clearly pinpointable year of origin; 1066, say, in the history of English. See I is for Internalism below. Moreover, whereas Behaviourism in its classic form might have died as a theory, it has survived well as a methodology: contemporary cognitive psychology inherited from the Behaviourists a concern with rigorously controlled experiments, careful quantitative analysis of elicited data, and replicability as essential aspects of good research practice. (It is another matter, of course, whether such concerns are justified: see Bauer 1994.)

I’ve learned from my mistakes and I’m sure I could repeat them exactly.

Viewed in the round, it seems likely that Chomsky’s critique simply nudged Behaviourist psychology in a more internalist direction rather than dislodging it entirely; see also I is for Internalism. Psychologists of language also generally retained the idea that linguistic behaviour was a worthwhile object of study in its own right, something that Chomsky soundly rejected: whereas generative linguistics abstracts away from ‘performance-related’ issues and focuses rigidly on static grammatical competence, cognitive psychology is still fundamentally concerned with the contingencies of language performance, and especially, with the constraints imposed by time. See Eysenck (1984), also C is for Competence~Performance, for discussion.

An awareness of temporal constraints on language is by no means restricted to cognitive psychology. As C. S. Lewis, another Belfast native, observed:

[A] grave limitation of [spoken: NGD] language is that it cannot, like music or gesture, do more than one thing at once. However the words in a great poet’s phrase interinanimate one another and strike the mind as a quasi-instantaneous chord, yet, strictly speaking, each word must be read or heard before the next. That way, language is as unilinear as time. Hence, in narrative, the great difficulty of presenting a very complicated change which happens suddenly. If we do justice to the complexity, the time the reader must take over the passage will destroy the feeling of suddenness. If we get the suddenness we shall not be able to get in the complexity. I am not saying that genius will not find its own way of palliating this defect in the instrument; only that the instrument is in this way defective.

Yet much of what seems crucial about language to other writers, philosophers or/and psychologists is largely ignored by most theoretical linguists, not only generativists.9 See A is for Abstraction, H is for Homogeneity, I is for Internalism, O is for Object of Study (in Part III), for further discussion; cf. Poeppel (2014), amongst others.

Whatever was the true impact of his review of Skinner’s work, Chomsky’s own theoretical proposals (Chomsky 1957, 1965) did much to kick-start the field of competence-based psycholinguistics as a separate discipline, shifting general scientific attention from the more directly observable aspects of linguistic behaviour – spoken and written utterances, and the corpora derived from them – to the (putative) set of implicit grammatical rules that allow native speakers to acquire and use language productively. More generally, Chomsky redirected psychologists’ attention to the ‘tacit knowledge’ that underlies Linguistic Creativity, which enables speakers to use language in ways that project beyond the primary linguistic data which they are exposed to as children; cf. Sampson (2015). Within generative grammar, the theory of this implicit grammatical knowledge has come to be referred to as Universal Grammar (or UG, for short); for clear discussion, see especially Crain and Pietroski (2001). Although the precise characterisation of UG has been revised continuously since Chomsky’s early work, claims about its fundamental nature have remained largely unchanged: by hypothesis, UG is internal, implicit (inaccessible to consciousness), intensional [with an ‘s’, see below] and domain-specific. Most crucially, perhaps, for many researchers in this tradition, UG is also innate:10, 11

UG is used in this sense ... the theory of the genetic component of the language faculty ... that’s what it is.

Consequently, for psychologists and philosophers persuaded by Chomsky’s approach, theoretical interest in language resides not in linguistic behaviour per se, nor in the study of different languages (in any ordinary person’s understanding of that term), but rather in a hypothesised mental organ – the innate language faculty – that is supposed to make linguistic behaviour possible, and whose epistemic content is assumed to set strict formal limits on grammatical variation.

Initially, psychology appears to have greeted Chomsky’s proposals enthusiastically, with many experimentalists of the period setting out to test the ‘psycholinguistic reality’ of the theoretical constructs of Transformational Generative Grammar (TGG). A key construct of early TGG, which received a good deal of attention from psychologists, was the distinction between the ‘surface structure’ of a sentence and its ‘deep structure’, these two levels of representation being related by a set of transformational rules, which moved, inserted or deleted phrasal constituents.

This model can be illustrated by considering the English passive construction. In TGG it was proposed that active and passive paraphrases of a given proposition such as ‘Alice drank the potion/The potion was drunk by Alice’ shared a common deep structure, but differed in the number of transformational rules necessary to derive the two surface variants, with more transformations applying to the (ostensibly more complex) passive structure.12 Early psycholinguists reasoned that if these theoretical constructs were psychologically real, and if they were isomorphic with the processes of language comprehension and production, then grammatically more complex sentences – for instance, those involving more transformations in their derivation – should incur greater processing costs relative to derivationally simpler sentences. An additional premise was that these costs should be directly measurable in terms of increased Response Latencies – informally known as ‘reaction times’ – and/or higher error rates. This reasoning formed the basis of what became known as the Derivational Theory of Complexity (DTC).
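The DTC reasoning can be made concrete with a toy sketch. The Python below is only an illustration of the shape of the prediction, under loudly stated assumptions: the baseline latency, the per-transformation cost, the strictly linear relationship and the transformation counts are all hypothetical values chosen for the example, and none of them comes from the experimental literature.

```python
# Toy illustration of the DTC prediction (all values are hypothetical).
BASE_LATENCY_MS = 500              # assumed baseline response latency
COST_PER_TRANSFORMATION_MS = 100   # assumed fixed cost per transformation

# Hypothetical transformation counts, for illustration only.
TRANSFORMATION_COUNTS = {
    "active": 0,
    "passive": 1,
    "negative passive": 2,
}


def predicted_latency_ms(n_transformations: int) -> int:
    """Linear DTC-style prediction: each transformation adds a fixed cost."""
    return BASE_LATENCY_MS + COST_PER_TRANSFORMATION_MS * n_transformations


def predicted_ranking(counts: dict) -> list:
    """Order sentence types from fastest to slowest predicted response."""
    return sorted(counts, key=lambda sentence_type: counts[sentence_type])


if __name__ == "__main__":
    for sentence_type in predicted_ranking(TRANSFORMATION_COUNTS):
        n = TRANSFORMATION_COUNTS[sentence_type]
        print(sentence_type, predicted_latency_ms(n))
```

On these assumptions the hypothesis is directly testable: observed latencies should preserve the predicted ranking, with passives slower than their active counterparts.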

In spite of some early apparent successes, e.g. Miller and Chomsky (1963), Miller and McKean (1964), the DTC foundered rather quickly, as further empirical work failed to show any transparent relationship between derivational complexity and processing costs.13 A much-cited quote from Fodor, Bever and Garrett (1974: 368) summarises the state of play by the mid-1970s:

Investigations of DTC ... have generally proved equivocal. This argues against the occurrence of grammatical derivations in the computations involved in sentence recognition.

With hindsight – and especially given the crucial distinction between levels of explanation outlined in Marr ([1982] 2010), which I’ll come to in a moment – the absence of any direct correspondence between transformational depth and reaction times or error rates is unsurprising. It’s easy to be clever after the fact. Nevertheless, the alleged failure of the DTC was one of the factors that led many psychologists interested in language to turn away from the grammatical theories offered by formal linguists. Many never turned back.

There were several other reasons. For one thing, generative research was – and generally remains – restricted to the level of the canonical sentence, that which begins with a capital letter and ends with a full stop; see T is for Sentence, v is for von Humboldt. By contrast, experimental psychologists were typically more interested in smaller or larger units of speech: for example, in the problems of real-time word recognition, the role of lexical frequency in acquisition and processing, or the interplay of grammatical and pragmatic information in the interpretation of spoken and written discourse, to cite just a few relevant issues. Two key findings of the period were those of Sachs (1967), whose results suggested that listeners do not retain any conscious memory of the surface syntactic form of an utterance, though they do retain its meaning, and slightly later, the work of Bransford and Franks (1971), whose experiments implied that the final interpretations that listeners derive from sentences involve (inextricable) inferential content that is not represented anywhere in the deep structure of the sentence; in other words, listeners are unable to disentangle assertions from inferences.14 Many psycholinguists came to interpret findings such as these as suggesting that transformational grammar had little empirical – or even heuristic – value.

One final consideration was as much sociological as it was empirical: psychologists turned away from theoretical linguistics because generativist theory was almost wholly unresponsive to their results, supportive or otherwise; see Cutler (2005). Plus ça change. Formal linguistic theory (generative theory, at any rate) has developed considerably since the 1960s, and especially since the mid-1990s, but this has invariably been in reaction to internal theoretical arguments – to a lesser extent, to new intuitional data – rather than to the empirical results from the types of group studies favoured by psychologists. The University of Massachusetts linguist and acquisitionist Tom Roeper summed it up nicely back in 1982 (in a quotation cited by Newmeyer 1983):

When psychological evidence has failed to conform to linguistic theory, psychologists have concluded that linguistic theory was wrong, while linguists have concluded that psychological theory was irrelevant.

It could be argued that generativists’ dismissal of relevant data is a near-inevitable consequence of strictly deductive modes of reasoning. By definition, the inductive mode of enquiry favoured by most psychologists is more responsive to new data than the deductive approach pursued, in its purest form, by Chomsky and others at the vanguard of generative research. This Galilean (hypothetico-deductive) style can be clearly appreciated by watching a recent lecture, recorded at CNRS in Paris in 2010.15 See Box A below for an excerpt from the transcript; for a detailed critique, see Appendix B (website). Over 120 or so minutes, Chomsky develops a logically compelling discussion of I-language and UG – compelling, as long as one grants all of the segues from description to theory, and all of the necessary auxiliary assumptions, very few of which are presented with supporting evidence. More generally, Chomsky is often dismissive of the use of certain kinds of empirical data – particularly quantitative data – in counter-arguments to his theoretical positions. The following comment from another article is typical of his response to evidence-based challenges from non-generativists:

[Galileo] dismissed a lot of data; he was willing to say: ‘Look, if the data refute the theory, the data are probably wrong.’ And the data that he threw out were not minor.

It is hard to see that such a stridently anti-empiricist position is defensible, let alone commendable: see Yngve (1986) for a diametrically opposed view.

Whatever the relative weighting of these various factors may have been, the psycholinguistic paradigms of generative linguists and those of psychologists had largely drifted apart by the mid-1970s, giving rise to the ‘two souls’ situation that persists to the present, and which is reflected in the partisan publications mentioned above.

♫ Leonard Cohen (words), Sharon Robinson, Alexandra Leaving (2001)

Excerpt from Noam Chomsky, Poverty of Stimulus: Some Unfinished Business, lecture delivered at CNRS Paris, 2010. See Appendix B (website) for a critique.

The direct study of the internal capacity of a person is the study of what is sometimes called ‘internal language’ – (ii)whatever you have in your head – I-language, for short ... (iii)So, that concept ... the study of I-language is somehow logically prior ... presupposed by everything. (iv)And if we investigate that topic, we enter into an enquiry, into language as essentially part of biology ... (v)Whatever the linguistic capacity of a person is, it’s something internal to them, (vi)it’s essentially a kind of an organ of the body on a par with the visual system, or the immune system, (vii)or some other sub-system, sometimes called subsystems, or organs of the body ... (viii)So, I-language is such a system ... (ix)What is it? Well, its most elementary property is the property of what is called discrete infinity. (x)So, take sentences: you can have a five word sentence, you can have a six word sentence, but you can’t have a five-and-a-half word sentence. (xi)And it goes on indefinitely ... You can have a hundred-word sentence, a 10,000-word sentence, and so on. (xii)It’s like the numbers, the natural numbers. (xiii)That property of discrete infinity is important, and from a biological point of view it’s quite unusual ... (xiv)You don’t find it in the biological world, above the level of maybe DNA, (xv)but it seems to be a unique property of human language ... (xvi)You find it in the number system, but that’s probably an offshoot of language. (xvii)There’s no other system in the world that’s known to have that property. (xviii)Well, the general theory of discrete infinity is pretty well understood; it has been well-understood since the 1940s, it’s called the theory of algorithms, the theory of recursive function, various other names, (xix)and the theory of language is going to fit in there somewhere. 
(xx)That is, the core of an I-language is going to be some kind of an algorithm, some kind of a generative procedure, (xxi)that constructs an infinite array of hierarchically structured expressions ... all internal to us, (xxii)which are then transmitted to what are called interface systems, to other systems of the cognitive system, the physical system, (xxiii)and there are at least two of these: the one is the sensorimotor system – because it’s externalised somehow – (xxiv)and the other is systems of thought, planning, understanding, perception, interpretation – loosely called the Semantic Interface. (xxv)So there’s a Phonetic Interface and a Semantic Interface: Phonetic interface is with the sensorimotor system, semantic pragmatic interface is with systems of thought, planning, action, and so on. (xxvi)And the core properties of language are [sic] that it has such a generative system, that’s the I-language, which essentially provides instructions to these other systems. (xxvii)Now we’re interested in this generative procedure in what’s technically called ‘in intension’ not ‘extension’ (that’s intension with an ‘s’), (xxviii)meaning we want to know what it actually is, not just what is the class of structures that it generates, so that’s a study of function in intension, (xxix)and that’s what we’re interested in if we want to consider language as part of the biological world, (xxx)because what’s represented in the brain somehow is a particular algorithm, not a class of algorithms, which are equivalent in the sense that they have the same category of structures that they produce ...
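The contrast between ‘intension’ and ‘extension’ drawn in (xxvii)–(xxx) can be illustrated with a small sketch. The two Python functions below are different procedures (they differ in intension) that nevertheless generate exactly the same set of strings (they agree in extension). The toy formal language used here, n copies of ‘a’ followed by n copies of ‘b’, and both function names are assumptions introduced purely for illustration; nothing of the kind appears in the lecture, and no claim is made that either procedure resembles a real I-language.

```python
def generate_recursive(max_n: int) -> list:
    """Build each string by nesting: wrap a smaller string in 'a' ... 'b'."""
    def build(n: int) -> str:
        # Base case: the empty string; otherwise embed the smaller string.
        return "" if n == 0 else "a" + build(n - 1) + "b"
    return [build(n) for n in range(1, max_n + 1)]


def generate_by_concatenation(max_n: int) -> list:
    """Build each string flatly: n copies of 'a', then n copies of 'b'."""
    return ["a" * n + "b" * n for n in range(1, max_n + 1)]


if __name__ == "__main__":
    # Same output (extension), different procedure (intension).
    print(generate_recursive(3))
    print(generate_by_concatenation(3))
    print(generate_recursive(3) == generate_by_concatenation(3))
```

Inspecting the output alone cannot distinguish the two procedures, which is why a theory concerned with the procedure itself needs evidence beyond the class of structures generated.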

There may be said to be two classes of people in the world; those who constantly divide the people of the world into two classes, and those who do not.

No representation without process, no process without representation

A proper understanding of how language is comprehended and produced requires an integrated approach that gets beyond ideology, and which combines theories of representation and process. In his seminal work on computer vision, titled (obviously enough) Vision, David Marr had this to say:

Vision is ... first and foremost an information-processing task, but we cannot think of it as just a process. For if we are capable of knowing what is where in the world, our brains must somehow be capable of representing this information ... The study of vision must therefore include not only the study of how to extract from images the various aspects of the world that are useful to us, but also an inquiry into the nature of the internal representations by which we capture this information and thus make it available as a basis for decisions about our thoughts and actions ...

... This duality – the representation and the processing of information – lies at the heart of most information processing tasks, and will profoundly shape our investigation of the particular problems posed by vision.

Replace the term vision with ‘(spoken) language comprehension and production’, and will with ‘should’ in the penultimate line, and you have – in a fairly large nutshell – what classical psycholinguistics is concerned with, namely: ‘the study of how to extract from [speech and text] the various aspects of the world that are useful to us, [and] an enquiry into the nature of the internal representations by which we capture this information and thus make it available as a basis for our thoughts and actions’.2

Marr’s book contains several other highly relevant ideas concerning the nature of explanation in vision research: the first chapter is required reading for anyone interested in any kind of cognitive process from a computational perspective. I’ll return to these ideas directly, not only because they had a significant influence on the direction of psycholinguistic research through the latter part of the twentieth century, but also because they provide a vital clue to understanding why many theoretical linguists and psychologists so consistently misunderstand one another when it comes to language and cognition.3

On the face of it, integration would seem to be a simple matter: take what competence-based approaches have determined about what is mentally represented and tack on what processing theories have discovered about how this internalised knowledge is put to use in language comprehension and production. Closer scrutiny, however, shows this to be about as practicable as splicing a gene sequence to the front half of a donkey to make a horse, or merging an architectural blueprint with the physical foundations of a house to create a palace.

The problem of incommensurability is compounded by the fact that even if the two theories were immediately compatible at a psychological level of explanation, we have no clear idea, a priori, of which linguistic phenomena should properly be handled in terms of stored representation, and which in terms of process, or even – as some researchers would contend – whether the distinction is a useful one. See also Stone and Davies (2012).

A fuller appreciation of the (in)commensurability problem involves a philosophical journey from Chomsky to Marr and back (to Chomsky). Though I believe this trip is worthwhile, it’s not entirely necessary to appreciating the challenges of integration: if such scientific navel-gazing is not for you, you can skip ahead to Part II.

From Chomsky to Marr ...

My main purpose in this chapter and the next is to develop the claim that Chomskyan grammar and classical psycholinguistics constitute two largely orthogonal approaches to the study of language and mind, each with their own domains of enquiry and research agendas. The answers you get invariably depend on the questions you ask, so different questions lead to different answers, not necessarily better ones:

Most generative linguists are interested in modelling the fundamental formal properties of natural language grammars, and – in some cases – understanding the limits on grammatical variation.4 In more recent years, the emphasis has been on describing the computational properties of the ‘initial state’ of the language faculty, that is to say, the knowledge of language that all typically developing children are assumed to share at birth, prior to exposure to any particular language(s); see, in particular, Chomsky (2005). Such knowledge – supposing it exists as a distinct component of mind – is by definition extremely abstract, very far removed from the kinds of linguistic behaviour that psycholinguistics is mostly concerned with.

A comparison between investigations of the beginnings of the universe and the applied mathematics of downhill skiing is an extreme, but not totally far-fetched, analogy.

By contrast, researchers in (experimental) psycholinguistics are primarily interested in explaining how adult language users are able to understand and produce the particular language(s) they actually speak. No-one ‘speaks UG’, nor does knowing UG help a native English speaker to understand spoken Hungarian, even to the same level of proficiency as a Hungarian speaker’s pet Labrador. (See Andics et al. (2016), also Hoffman (2016), for some balanced commentary on the language ability of our canine companions.) The ability to use a variety of English, or Russian, or Chichewa, or Inuktitut – or any of the thousands of languages that humans speak – may rest in small part on innate epistemic content, but it also depends to a much larger extent on learned knowledge about those particular varieties, as well as on a diverse set of interactions with other cognitive and sensorimotor skills, most of which are not at all specific to language. It follows from this that the kinds of constructs that are postulated by some theoretical linguists as grammatical axioms are only tangentially related to the constructs recruited by psycholinguistics to explain language use. Indeed, the former concepts may not be relevant at all to explaining how we use the languages we know. See especially G is for Grammar, H is for Homogeneity, I is for Internalism below.5

This disconnect between linguistics and psycholinguistics would be clearer were it not for the fact that many of the terms that crop up in psycholinguistics also appear – without scare quotes or other flags – in more abstract grammatical theories. So, for example, it is easy to conflate the debate among generative linguists between representational vs. derivational approaches to (UG/I-language) syntactic analysis with the representational (declarative) vs. processing (procedural) distinction in psycholinguistics. The two may be related, but they are not isomorphic with one another: that is to say, there is no one-to-one correspondence between them. This lack of isomorphism means, for instance, that there is no fundamental incompatibility between the generative assumption that syntactic derivations proceed asynchronously, bottom-up (from ‘right to left’) through recursive application of Merge, or some similar Minimalist operation, and the psycholinguistic proposal that English utterances are parsed incrementally in real time, from ‘left to right’, with listeners making almost instantaneous use of all potentially relevant information to arrive at an appropriate interpretation of a sentence (in a particular context of utterance).6 Likewise, whereas construction-specific rules are anathema to most theoretical accounts of UG, this does not mean that different constructions are not parsed as such by the language processor.

[Take a breath, here.]

The refusal to distinguish these two levels of explanation has generated an enormous amount of heat – and precious little light – in the psycholinguistics world. It has also led to the publication of articles with titles such as ‘Empty categories access their antecedents during comprehension’ (Bever and McElree 1988). This is rhetorical sleight of hand – shorthand, at best – for: ‘[Whatever mental constructs or processes are correlated with the notion of] empty categories [in the version of generative theory we are currently using] access [whatever mental constructs or processes correspond to the notion of grammatically expressed] antecedents during spoken language comprehension.’

Admittedly, the expanded version of the title is hardly slick, but it’s considerably less misleading. The general point here is that whatever turns out to be the best theory of grammar is not necessarily the right theory of language processing or of ‘language in mind’: it needn’t even be close.

In order to better appreciate this argument, it is helpful to consider some very rudimentary problems in arithmetic. This initial take will be fairly quick and mostly painless. The approach taken here is also extremely rudimentary: coming from an absurdly narrow Arts and Humanities background, my last formal brush with mathematics was a mediocre pass at GCE ‘O-level’, circa 1977. For this reason, mathematicians, and the excessively numerate, should look away.

The Joys of Arithmetick

‘Two numbers of like kind’

First, try to figure out as quickly and accurately as possible the answers to the following simple multiplication problems:

| | |
|---|---|
| (1) | What is ... |
| | a. 14 times 5? |
| | b. 21 times 17? |
| | c. 10 times 7? |
| | d. 9 times 13? |
| | e. 9 times 12? |
| | f. 30 times 2? |
| | g. 8 times 7? |

The recursive principle involved in each of these problems is precisely the same, namely, iterative addition: the product of any two numbers is obtained by taking one number and adding it to itself the number of times represented by the other number. Or, as Edward Cocker expressed it in his immodestly titled (albeit posthumously published) Cocker’s Arithmetick (1736):

Multiplication is performed by two Numbers of like Kind, for the Production of a Third, which shall have Reason to the one, as the other hath to the Unit, and in Effect is a most brief and artificial Compound Addition, of many equal Numbers of like kind into one Sum. Or, Multiplication is that by which we multiply two or more Numbers, the one into the other, to the end that their product may come forth, or be delivered. Or, Multiplication is the increasing of any one Number by any other, so often as there are Units in that Number, by which the other is increased; or, by having two Numbers given to find a Third, which shall contain one of the Numbers as many times as there are Units in the other ...

As you might recall from some school mathematics lesson, the property of commutativity means that whichever number you choose to operate on, the result is identical (mn = nm): so, (26 × 378) = (378 × 26), to take a specific pair of values. All of these problems can be described by the same recursive function. Computationally, they are identical kinds of function in intension.
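As a rough illustration (a sketch only: the function name `times` is invented here and forms no part of the original discussion), the recursive principle of iterative addition can be written out in a few lines of Python. The same definition covers every problem in (1), and the commutativity example comes out as expected.

```python
def times(m: int, n: int) -> int:
    """Product of two non-negative whole numbers by iterative addition:
    m times n is m added to itself n times (hypothetical helper name)."""
    if n == 0:
        return 0
    return m + times(m, n - 1)


# The seven problems in (1), all handled by the one recursive definition.
problems = {"a": (14, 5), "b": (21, 17), "c": (10, 7), "d": (9, 13),
            "e": (9, 12), "f": (30, 2), "g": (8, 7)}
answers = {label: times(m, n) for label, (m, n) in problems.items()}

# Commutativity: whichever number is operated on, the result is identical.
assert times(26, 378) == times(378, 26) == 9828
```

Nothing in this definition distinguishes the ‘easy’ problems from the ‘hard’ ones.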

In spite of this equivalence, some sums are markedly easier for the average human (calculator) to solve quickly – unless you are a mathematical prodigy – though the precise ranking from trivial to challenging depends in part upon your age, and where and when you went to school. For almost everyone, (1c) and (1g) are the easiest, closely followed by (1f) and (1e);7 problems (1b) and (1d) are among the most difficult. And for most people, (1a) is significantly harder than (1c), in spite of the fact that they are instances of the ‘same problem’: (14 × 5) = (7 × 10) = 70.

In the simpler cases, no actual calculation is involved, assuming that you’re older than nine or ten years of age. We don’t figure out 8 times 7 (1g); instead, we look it up, something we are able to do in virtue of rote learning of times tables in elementary school. In many countries, times tables go to 9 (10 is assumed to come for free) – in others, to 12; it may be even higher in some educational systems. In my own case, [‘twelve twelves are ...’] 144 constitutes the outer edge of my finite arithmetic universe, at least that part which is stored in declarative memory. As a result, for adults with my education, problem (1e) is trivial compared to problem (1d); indeed, it’s not really a problem, since nothing is computed. For Japanese readers, on the other hand, (1d) and (1e) require approximately the same amount of additional calculation.

Having declarative knowledge of up to 12 times 12 also means that the answer to (1d) is usually derived by going to the edge of the nines and adding one more: (‘twelve nines are one-hundred-and-eight, plus nine, makes one-hundred-and-seventeen’). That at least is my intuition – and intuitional judgments presumably tell us something.

If you consider that this type of intuitional judgment might be unreliable, you are probably in good company. You are not, however, in the company of typical generative linguists, for whom such introspection is an unexceptionable part of data collection. (Validating arguments for this position are offered by Sprouse and Almeida (2012); cf. Gibson, Piantadosi and Fedorenko (2013), also J is for Judgment below, for some opposing views.)

In the case of (1d), there are many other paths (algorithms) that might be taken to arrive at the correct answer, including adding 9 to itself 13 times in incremental steps, but – once again, intuitively – I suspect that this is the last one chosen. There is no reason to suppose that the (intensionally) simplest algorithm is the one selected. Similarly, a moment’s reflection tells us that (1a) and (1c) are equivalent, and that the fastest way to calculate (1a) is to transform it to (1c); in spite of this, it seems that most people tackle problem (1a) procedurally, through a variety of different algorithms (for example, (14 × 5); ((14 × 2) × 2) + 14; (10 × 5) + (4 × 5), etc.), rather than converting it to a look-up problem.

Notice that it is possible that the preferred algorithm in a particular instance might vary according to the individual, or to the relative size of the numbers – for example, larger number times smaller number, or vice versa – or to the presentation order, or even, conceivably, to the time of day, or what the person had for breakfast. As long as all of these paths through the numerical maze converge on the same result, they are equivalent. And no matter which path (algorithm) is chosen, it will take fractionally longer than ‘reading off’ the answer, which is what happens in the case of problem (1c); ‘real problems’ are also more prone to error.8
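The contrast between ‘reading off’ an answer and computing one can be sketched in Python. This is an illustrative toy, not a model of anyone’s actual mental arithmetic: the table size (12 × 12) follows the schooling described above, and all of the names (`TIMES_TABLE`, `look_up`, and so on) are invented for the purpose.

```python
# Rote-learned times tables up to 12 x 12, standing in for declarative memory.
TIMES_TABLE = {(a, b): a * b for a in range(1, 13) for b in range(1, 13)}


def look_up(m, n):
    """Return a stored product, or None if the pair lies outside the table."""
    return TIMES_TABLE.get((m, n))


def nine_times_thirteen():
    """(1d): go to the edge of the nines ('twelve nines are 108'), add one more nine."""
    return look_up(12, 9) + 9


def fourteen_times_five_routes():
    """(1a): three different paths, each converging on the same result."""
    return {
        "double, double, add once": ((14 + 14) + (14 + 14)) + 14,
        "split into tens and units": look_up(10, 5) + look_up(4, 5),
        "convert to (1c) and look up": look_up(7, 10),
    }


print(look_up(8, 7))                  # (1g): stored, nothing is computed
print(look_up(9, 13))                 # (1d): not stored, so some route is needed
print(nine_times_thirteen())
print(fourteen_times_five_routes())
```

All three routes for (1a) yield the same product; they differ only in how much is retrieved and how much is computed.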

Despite the connotations of complexity, there is nothing inherently mysterious or difficult about the term algorithm: an algorithm is no more than a set of steps taken to lead to some outcome; in this case, a path through arithmetic space to a particular destination (or finite range of destinations). The simplest algorithms are deterministic, which is to say, they produce the same results every time. Simple addition and multiplication involve deterministic algorithms: except in George Orwell’s 1984, 2 + 2 always makes 4. Real life, however, almost invariably involves probabilistic algorithms: boil an egg for exactly three minutes and it should be perfectly soft-boiled, but not if it’s really fresh, or has been sitting around too long, or is oversized, or ... Although I adopt the metaphor of multiplication here, language processing is more like boiling an egg – making a soufflé, even – than it resembles simple arithmetic.

Something that is easily overlooked is that we never calculate through pure iterative addition even in those cases where we can’t look up the answer: without applying some declarative knowledge of times tables, most people can’t get off the ground at all. To appreciate this, try working out 43 times 89 using only iterative addition: simply adding 89 to itself three – let alone forty-three – times, is strenuous enough. Once again, Edward Cocker was clear on this point, three hundred years ago:

The Learner ought to have all the Varieties of Single Multiplitation [sic] by Heart, before he can well proceed and farther into this Art, it being of most excellent Use, and none of the following Rules in Arithmetick, but what have a principal Dependance thereupon, which may be learned by the following Table.

The availability of two systems of reckoning leads to the theoretically incoherent yet entirely reasonable conclusion that – in a purely declarative way – it is possible to know the answer to ‘9 × 6 = ?’ while being mistaken about ‘3 × 18 = ?’, or even ‘6 × 9 = ?’9 Anyone who supposes that people ‘reflexively know’ the property of commutativity must not have spent time helping average six- or seven-year-olds with their mathematics homework. (This observation is routinely ignored by many professional linguists, who may themselves have been mathematical prodigies, and whose children may display similar talents. By contrast, arithmetic calculation has always been a challenge for me, as it is for my children. There are times when being stupid helps you to understand things more clearly.)

What’s more, the general concept of abstract number appears to be a very recent cognitive attainment. Devlin (1998: 21), for example, asserts that:

[It] was not recognised, nor were behavioural rules such as those concerning addition and multiplication formulated, until the era of Greek mathematics began around 600 BC.

In other words, in spite of our educated intuitions on the matter, knowledge of the principle of commutativity is more likely to be a deductively discovered fact about the world of numbers than an instinctive, biologically wired construct.

From times tables to grammatical productivity

The parallels with typical second language learning should be obvious. Thanks to rote learning, we can fairly easily learn and record the meaning of some complex grammatical expression such that we are able to use it fluently and appropriately (‘phrase-book French’); see Part IV. But without some further analysis and computation, it is impossible to generalise that knowledge to produce related expressions in the way that an adult native speaker seems to be able to do.

Two examples serve to illustrate this point.

The first case comes from Japanese, a language that has been pestering me on a daily basis for several years now. Specifically, the concern is with the Japanese expression x mitakotonai (desu), usually translated as ‘(I) haven’t seen x (before).’ The corresponding question form is x mitakotoaru? ‘Have you seen x (before)?’ Given this alternation, it doesn’t take much effort to figure out that nai is the negative form of aru (especially if you spend time around Japanese toddlers, nai being heard about as often as English No!). But the analysis of the rest of the string eluded me for nearly a year after I first heard it: it was clear how to use the expression appropriately, and what it meant, but not how that meaning was composed. One day, however, the light went on – or at least, began to flicker fitfully. At a certain moment I understood that there were four morphemes in the string: 見/mi, the root, meaning ‘see’; ta, the perfect morpheme; koto ‘fact’; nai/aru ‘not/is’. Following this stunning breakthrough, I was able to generalise the analysis to other predicates, such as 行ったことない/i.tta.koto.nai ‘(I) haven’t been there before’ (from 行/ik.u ‘go’). Significantly, however, I am still not sure some five years later how to generalise to predicates from different conjugations, or to those of lower token frequency (e.g. 読む yom.u ‘read’, 買う ka.u ‘buy’), nor do I know whether the same syntax can be used with light verb expressions (e.g. benkyou suru ‘study’ ➔ furansugo-o benkyou shita koto nai ‘(I) haven’t studied French before’). As soon as I hear the construction used with another predicate root, I can immediately analyse it, but I have no confidence in spontaneously producing a new form. In short, I can generalise, but only partially. Hence, though the grammatical rules that linguists invoke to describe the process may have an infinite extension in principle, in my Japanese interlanguage this grammatical knowledge is highly restricted. It hardly amounts to a rule at all.
Instead, my knowledge in this syntactic domain consists of what the psychologist Michael Tomasello has termed ‘verb islands’ (Tomasello 1992) – though islets or atolls might be more appropriate metaphors. And this knowledge shows no sign of further development, five years on.

Similarly, when learning German as a teenager, I struggled with the problems of nominal inflection, and in particular, with the interactions between case, number and gender across so-called ‘strong’ vs. ‘weak’ nominal paradigms. The full system of endings, no doubt familiar to readers of any standard teaching grammar (e.g. Durrell 2011), is exemplified in Table 1, which displays the inflectional paradigms for indefinite and definite noun-phrases, respectively.

Table 1 ‘Angry lions, brightly painted horses, [for] a little while’: nominal inflection in German.

| Case/Gender | Masculine ‘an angry lion’ | Feminine ‘a little while’ | Neuter ‘a brightly coloured horse’ | Plural |
|---|---|---|---|---|
| Nominative (default) | ein böser Löwe | eine kleine Weile | ein buntes Pferd | bunte Pferde/Löwen |
| Accusative | einen bösen Löwen | eine kleine Weile | ein buntes Pferd | bunte Pferde/Löwen |
| Genitive | eines bösen Löwens | einer kleinen Weile | eines bunten Pferdes | bunter Pferde/Löwen |
| Dative | einem bösen Löwen | einer kleinen Weile | einem bunten Pferd | bunten Pferden/Löwen |
| Nominative (default) | der böse Löwe | die kleine Weile | das bunte Pferd | die bunten Pferde/Löwen |
| Accusative | den bösen Löwen | die kleine Weile | das bunte Pferd | die bunten Pferde/Löwen |
| Genitive | des bösen Löwens | der kleinen Weile | des bunten Pferdes | der bunten Pferde/Löwen |
| Dative | dem bösen Löwen | der kleinen Weile | dem bunten Pferd | den bunten Pferden/Löwen |

The Rilke poem below (‘Das Karussell’) offers a much more brilliant use of a subset of these forms.

Closer inspection of these tables reveals that the inflectional system of Standard German is less horrendous than it could be, given the many neutralisations across rows and columns. For example, gender distinctions in both ‘weak’ and ‘strong’ paradigms are neutralised in the plural (e.g. der~die~das ➔ die), gender distinctions between masculine and neuter are neutralised in the dative singular (both einem/dem xadj-en); strong forms of inflection are only expressed once (either on the attributive adjective or on the determiner, but not both). So in some sense, it could be a lot worse: in the limit, there could be a separate exponent for each cell in the paradigm, with different phonological endings for determiners and adjectives.
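The extent of these neutralisations can be made concrete with a small Python sketch. It takes only the definite determiners from the lower half of Table 1 and counts how many distinct forms fill the sixteen cells; the variable names are invented for illustration, and nothing here is meant as a claim about how the paradigm is mentally represented.

```python
# Definite determiners from the lower half of Table 1, indexed by case and gender/number.
DEFINITE_ARTICLES = {
    "nominative": {"masculine": "der", "feminine": "die", "neuter": "das", "plural": "die"},
    "accusative": {"masculine": "den", "feminine": "die", "neuter": "das", "plural": "die"},
    "genitive":   {"masculine": "des", "feminine": "der", "neuter": "des", "plural": "der"},
    "dative":     {"masculine": "dem", "feminine": "der", "neuter": "dem", "plural": "den"},
}

# Flatten the paradigm into (case, gender, form) cells.
cells = [(case, gender, form)
         for case, row in DEFINITE_ARTICLES.items()
         for gender, form in row.items()]
distinct_forms = sorted({form for _, _, form in cells})

# Masculine and neuter fall together in the dative singular.
dative = DEFINITE_ARTICLES["dative"]
masc_neut_neutralised = dative["masculine"] == dative["neuter"]

print(len(cells), "cells,", len(distinct_forms), "distinct forms:", distinct_forms)
```

A paradigm with a separate exponent for each cell would need sixteen determiner forms; the actual system makes do with far fewer.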

In spite of these simplifications, many second language learners never achieve full control of the inflectional system: see Lardiere (2016). Some people don’t even try, settling instead for a schwa or zero ending on every adjective, and a near-uniform choice of nominative determiner (either der, die or das, across the board). With a few tweaks, these learners could almost be speaking Dutch, which – in this regard at least – is a much simpler proposition. For learners who persevere, their knowledge of the German paradigms grows, sporadically and partially, driven by both type and token frequency:

In terms of type frequency, most learners acquire the more frequent nominative (default) and accusative singular forms of a (D)-(A)-N sequence before mastering the genitive or dative. Genitive forms (of common nouns) are hardly ever encountered in colloquial German, and so may never be fully acquired by native speakers, let alone second language learners. Indeed, several German dialects have lost their genitive declension forms, except for the possessive -s found with proper names; e.g. Marias Schwester (‘Mary’s sister’); what in English is sometimes termed the ‘Saxon Genitive’.10

The same holds for token frequency. Even a non-German speaker is likely to produce Eine Kleine Nachtmusik correctly, without recognising the case and gender of the noun Musik; yet, in spite of this, an intermediate L2 learner may have serious difficulty in deciding which of the dative alternants in (2) is grammatically appropriate (note that the preposition mit ‘with’ requires the dative case):

(2) a. Mit Eine Kleine Nachtmusik verabschieden wir uns für heute.

b. Mit Einer Kleinen Nachtmusik verabschieden wir uns für heute.

c. Mit Eine Kleinen Nachtmusik verabschieden wir uns für heute.

‘With Eine Kleine Nachtmusik we take our leave for today.’ Bayerischer Rundfunk (Bavarian Radio) sign-off

Somewhat remarkably – remarkably to an English speaker, that is – the answer is (2b): even titles inflect for case.

The acquisition of grammatical knowledge, here and elsewhere, is intimately tied to particular lexical entries, and to usage.11 It’s vanishingly unlikely that anyone – native speaker or second language learner – has internalised the inflectional paradigms as a table, or as a fully autonomous set of inflectional rules, even though evidently their knowledge can be represented that way in a linguistic description. Here once more, rules may be a useful way of describing grammatical knowledge, but one can have internalised grammatical knowledge without ever knowing a rule in the classical sense. For further discussion, see G is for Grammar below, also Part IV; for an enlightening, computational analysis of German inflection, see Cahill and Gazdar (1999), also Kilbury (2001).

In Part IV, I return to the question of partial knowledge in (second and first) language acquisition, respectively. For now, these examples serve to highlight the difficulty in determining the source and generality of grammatical knowledge in the mind of any particular language learner.

Figure 3 Und dann und wann ... [ein blaues Pferd]: carousel at Kobe Zoo.

A little more arithmetic

Before leaving arithmetic, consider the additional problems in (3) and their correspondents (4) below. Computationally, these are nearly identical to one another, but for various reasons – because of the representational format in (4a), the less common sequence of operations (division vs. multiplication (4b)), the number base involved (4c) – the latter problems demand more processing resources, and considerably more time, on the part of the ordinary reader/human calculator.

| | |
|---|---|
| (3) | a. What is 78 times 26? |
| | b. What is 12 times 80? |
| | c. What is 3 times 2? |
| (4) | a. What is LXXVIII times XXVI? |
| | b. What is 960 divided by 12? |
| | c. What is (11)₂ times (10)₂? |

In the following extract, Frank Land provides a clear explication of the contrast between (3a) and (4a):

The difficulties of using other notations for quite simple arithmetical operations may be illustrated by the simple calculation of seventy-eight multiplied by twenty-six, using Roman numerals. We must multiply by one symbol at a time, remembering that multiplying by I simply gives the original number, multiplying by V involves replacing I by V, V times V is XXV, V times X is L, V times L is CCL. The calculation would be:

LXXVIII

XXVI

LXXVIII

CCLLLXXVVVV

DCCLXXX

DCCLXXX

MMXXVIII

where in adding the rows together we replace two Vs by one X, five Xs by L, two Ls by C, five Cs by D, and two Ds by M. The reader can best appreciate the work which is involved by first writing seventy-six and thirty-seven in Roman numerals, and then multiplying them together. If the answer is then checked by ordinary multiplication, the labour required by the two methods may be contrasted.

If multiplication is somewhat laborious, division becomes almost impossible.
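Land’s symbol-by-symbol procedure can be approximated in Python, as a sketch under two stated assumptions: numerals are written purely additively (IIII rather than IV), and the final regrouping of the rows is done by converting to ordinary integers and back, a shortcut that Land’s Roman calculator would not have had. All function names are invented for illustration.

```python
SYMBOLS = [("M", 1000), ("D", 500), ("C", 100), ("L", 50), ("X", 10), ("V", 5), ("I", 1)]
VALUE = dict(SYMBOLS)


def to_additive_roman(n):
    """Write n with additive Roman symbols only (assumption: no subtractive forms)."""
    out = []
    for symbol, value in SYMBOLS:
        count, n = divmod(n, value)
        out.append(symbol * count)
    return "".join(out)


def partial_row(multiplicand, symbol):
    """Multiply every symbol of the multiplicand by one symbol of the multiplier,
    then order the resulting symbols from largest to smallest, without regrouping."""
    pieces = "".join(to_additive_roman(VALUE[s] * VALUE[symbol]) for s in multiplicand)
    return "".join(sorted(pieces, key=lambda s: -VALUE[s]))


def multiply_roman(multiplicand, multiplier):
    """Return the partial rows and the regrouped total."""
    rows = [partial_row(multiplicand, s) for s in reversed(multiplier)]
    total = sum(VALUE[s] for row in rows for s in row)  # shortcut for the regrouping step
    return rows, to_additive_roman(total)


rows, product = multiply_roman("LXXVIII", "XXVI")
print(rows)
print(product)

# Problem (4c) for comparison: the same kind of sum in base 2.
binary_product = int("11", 2) * int("10", 2)
```

The rows produced for LXXVIII times XXVI match those in Land’s worked calculation; with Arabic numerals the same product is a single line, 78 * 26.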

What has this got to do with language(s)? The answer should be reasonably clear: just like mental arithmetic, language (acquisition and) processing also involves some combination of stored, rote-learned knowledge and more general procedural rules – or whatever properties of the processing system allow for productive generalisations (so-called ‘linguistic creativity’). This is what was illustrated by the examples in (1–2) above; the contrast between the examples in (3) and (4) demonstrates, in addition, some ways in which representational format significantly impacts on processing difficulty.

There are 10 kinds of people in the world: those who understand binary, and those who don’t.

Why was 6 afraid of 7? Because 7 8 9.

Why don’t jokes work in base 8? Because 7 10 11.

Hence, the empirical questions of adult (end-state) psycholinguistics boil down to these four: (i) What is actually stored in a speaker’s mind? (ii) What, if anything, is generated ‘by grammatical rule’? (iii) How does the representational format of (i) impact on (ii)? Finally, (iv) to what extent do the answers to (i)–(iii) vary across languages and across individuals, and/or groups of speakers?

In addressing these questions, it is vital to distinguish between the logical answer to a different question – What would an ideal computational system look like (in respect of these questions)? – and the empirical answer (to the questions at hand). The first may be tantalising to the theoretical linguist, but it is almost completely irrelevant from a psycholinguistic point of view.

In the case of inflectional morphology, for example, it is clear that information about irregular or syncretic forms – irregular past-tense forms in English, for example – must be mentally represented in some way, as must some information concerning the form and meaning of lexical roots (see B is for Arbitrariness below). By contrast, predictable, readily calculable information, such as the plural or past-tense forms of regularly inflected nouns and verbs, does not need to be stored since in principle it can always be generated by rule. This redundancy means that stored regular plurals have no place in an elegant theory of word-formation, any more than times tables belong in a pure theory of arithmetic. But this is not to say that storing pre-compiled regular plurals or past-tense forms might not be very useful to the speaker or listener having to process English nouns and verbs in real time. Irrespective of what the theory minimally requires, it is a separate empirical question just how much redundancy is involved in morphological processing, specifically, whether regular inflected forms are represented, or derived by rule, or both, and how much the answer to this question varies from one individual, or subset of speakers, or one language variety to the next. (It is also possible that some kinds of irregular forms are treated as morphologically decomposable: see, for example, Fruchter, Stockall and Marantz (2013). See also Bonet and Harbour (2012) for a particularly lucid discussion of the regular vs. irregular distinction more generally, a contrast that turns out to be more nuanced, intricate and theoretically interesting than most experimentalists have typically assumed.)
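The logical options can be laid out in a toy Python sketch. It is purely illustrative: the tiny lexicon, the choice of ‘walk’ as a stored regular form, and the function names are all hypothetical, and the spelling rule ignores complications such as consonant doubling (stop, stopped). Irregular forms must be listed; regular forms can be generated by rule; and a redundant store of pre-compiled regular forms is an optional extra that the ‘elegant’ theory does not need.

```python
# Hypothetical mini-lexicon: irregular forms must be stored somewhere.
IRREGULAR_PAST = {"go": "went", "sing": "sang", "buy": "bought"}

# Hypothetical redundant store of pre-compiled high-frequency regular forms.
STORED_REGULAR_PAST = {"walk": "walked"}


def regular_rule(stem):
    """Generate a regular past tense by rule (simplified spelling only)."""
    return stem + "d" if stem.endswith("e") else stem + "ed"


def past_tense(stem, use_stored_regulars=True):
    """Return (form, route), where route records how the form was obtained."""
    if stem in IRREGULAR_PAST:
        return IRREGULAR_PAST[stem], "stored (irregular)"
    if use_stored_regulars and stem in STORED_REGULAR_PAST:
        return STORED_REGULAR_PAST[stem], "stored (regular)"
    return regular_rule(stem), "rule"


print(past_tense("go"))
print(past_tense("walk"))                             # retrieved, not computed
print(past_tense("walk", use_stored_regulars=False))  # same form, different route
print(past_tense("paint"))
```

The output form for ‘walk’ is the same on either route, so the form alone cannot show which route was taken; that is why the question has to be settled experimentally.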

Parallel considerations apply to sentence processing. Over the years, mainstream generative syntax has dispensed with a very large number of theoretical notions. The set of discarded constructs, some of which play a significant explanatory role in other grammatical theories, includes the notion of construction. At least since Chomsky (1981) it has been taken for granted in generativist circles that there are no construction-specific rules of grammar, that every grammatical sentence is informed and regulated by a common set of more abstract rules and constraints. Take, for instance, the Passive Transformation of TGG, discussed earlier: this language-particular, construction-specific, rule of the Extended Standard Theory was replaced in the 1980s by a set of interacting constraints and general movement rules (‘Case Filter’, ‘Move-alpha’) that together derive the effects of the Passive Transformation without reference to any specific structural descriptions. For discussion, see Chomsky (1981), Baker, Johnson and Roberts (1989), also Kiparsky (2013); though cf. Pullum (2010), also Part II below. At a theoretical level then, references to particular constructions are generally considered redundant and therefore eliminable. Yet this theoretical modification clearly doesn’t exclude the possibility that constructions are psychologically real and used by the language processor in analysing utterances. Once again, for psycholinguists, this is an empirical question to be decided by experimental evidence, rather than by considerations of formal economy or theoretical elegance.
And there is no shortage of data from a wide variety of sources – from syntactic priming experiments, from production errors, from first and second language acquisition studies, from agrammatism and dementia research – all of which supports the idea that constructions, as well as fully fledged formulaic utterances, are stored as pre-compiled strings in declarative memory. (With respect to dementia research, for example, see Sidtis et al. Reference Sidtis, Kougentakis, Cameron, Falconer and Sidtis2012, Sidtis and Bridges Reference Sidtis and Bridges2013; see also Theakston Reference Theakston2004, Cornips and Corrigan Reference Cornips and Corrigan2005.)

The possibility that (at least high-frequency) constructions and schemata are stored complete, ready to use ‘off the peg’, has significant implications for our capacity to judge grammatical acceptability – traditionally, one of the empirical cornerstones of generative grammar. If we only have ‘words and rules’ in our heads, the ability to judge grammaticality must depend on syntactic computation. If, however, constructions are stored as pre-compiled templates, then it is possible that we could judge the ill-formedness of *Peter buys often books or even of *Who did you say that left? without any syntactic computation, given a sufficiently enriched lexical–grammatical network, just in the same way that we can judge the ill-formedness of *8 × 7 = 66 without doing any arithmetic at all; see Allen and Seidenberg (Reference Allen, Seidenberg and MacWhinney1999), MacWhinney (Reference MacWhinney, Barlow and Kemmer2000); cf. Akhtar and Tomasello (Reference Akhtar and Tomasello1997).12 This issue is taken up again in Part II, Cases #5 and #6, and in various chapters in Part III, further below.
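The arithmetic analogy can be sketched in the same spirit. The following is a hypothetical illustration only: `TIMES_TABLE`, `judge_by_lookup` and `judge_by_computation` are names invented for the example, and the table is filled in programmatically merely as a stand-in for rote learning. The point is that two quite different procedures, one consulting stored facts and one computing the product afresh, deliver the same verdict on *8 × 7 = 66.

```python
# Two ways of rejecting "8 x 7 = 66": retrieval versus computation.
# A hypothetical sketch; the stored table stands in for rote-learned facts.

TIMES_TABLE = {(a, b): a * b for a in range(1, 13) for b in range(1, 13)}


def judge_by_lookup(a, b, claimed):
    """Judge a claimed product against a stored fact, with no arithmetic.

    Returns None when no fact is stored for the pair (a, b).
    """
    stored = TIMES_TABLE.get((a, b))
    if stored is None:
        return None
    return stored == claimed


def judge_by_computation(a, b, claimed):
    """Judge a claimed product by computing it through repeated addition."""
    total = 0
    for _ in range(b):
        total += a
    return total == claimed


if __name__ == "__main__":
    print(judge_by_lookup(8, 7, 66))
    print(judge_by_computation(8, 7, 66))
    print(judge_by_lookup(13, 7, 91))
```

Only the lookup route fails outside the stored range, which parallels the suggestion that template-based judgements might be restricted to sufficiently frequent constructions.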

Before adjourning discussion of declarative vs. procedural knowledge, it is important to mention the seminal contribution of Michael Ullman and his colleagues, e.g. Ullman (Reference Ullman2001b, Reference Ullman2001a, Reference Ullman2004), Ullman and Pierpont (Reference Ullman and Pierpont2005), Hartshorne and Ullman (Reference Hartshorne and Ullman2006). Expert readers may find it remarkable that Ullman’s name has not come up before now, since he is probably more closely associated with the procedural–declarative distinction than any other psychologist. Ullman’s work offers extensive behavioural and neurological evidence of a clear distinction between two brain memory systems, one declarative, the other procedural, which together share responsibility for spoken language comprehension and production in normal speakers, and which have been shown to be selectively impaired in aphasic patients. See also Paradis (Reference Paradis2009) for discussion of the declarative vs. procedural contrast in second language learners.

The reason for omitting this research from the discussion to this point is not that it is unpersuasive – though see Embick and Marantz (Reference Embick and Marantz2005) – but that it massively complicates the picture. For what Ullman’s research demonstrates is the dramatic variability that exists within different sub-populations of speakers of the same language, with respect to how particular kinds of linguistic knowledge are represented and processed: typical native speakers are shown to contrast with typical second language learners, but so do boys and girls before, during and after puberty; women’s language processing has been shown to differ at different points in their menstrual cycle, and so on. Crucially, these are also dynamic continua: one can predict rough probabilities across sub-groups, but it is impossible to say for any individual, on any particular occasion of utterance, which of the two systems they will rely on more.

Interim summary

In this chapter, I have considered a crucial contrast that David Marr’s work helped to highlight: the distinction between representation and (algorithmic) process. My aim has been to demonstrate that the two notions are intimately inter-related, and critically balanced: the nature of the linguistic representations in our heads, in whatever modality, affects and partially determines the class of algorithms that make use of these representations. And vice versa. It has also been suggested that theoretically relevant parts of the overall language processing system are subject to variation, both cross-linguistic and inter-personal. In short, processing French or Japanese or Navajo involves far more than substituting one mental lexicon for another and flipping a few parametric switches to get the word-order to come out right.

This point is elaborated in Part II. Before considering these test cases, however, it is important to examine an equally fundamental distinction, namely, that among levels of explanation. This is the concept for which Marr is probably best remembered, and that forms the basis of the Computational Theory of Mind (CTM). By understanding how this concept works and how it applies to the study of language in mind, we may find a way to reconcile the two souls of psycholinguistic theory with which we began this section. This will be less a true conciliation of theories than a ‘two-state solution’, but that is surely better than unresolved and fruitless conflict.

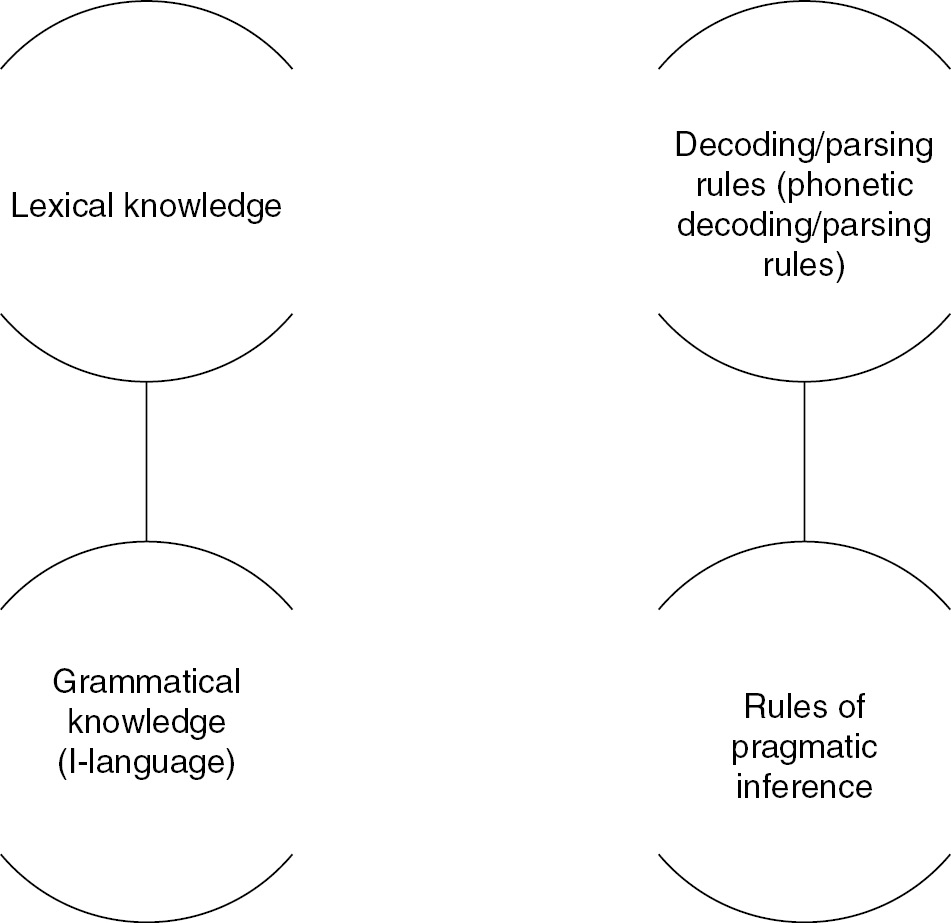

Levels of explanation: Chomsky

The claim that generative theory is only tangentially related to psycholinguistics is hardly original. In fact, it is clearly pre-figured in Chomsky’s own more general writings of the 1980s, including his 1988 monograph Language and Problems of Knowledge, in which a broader framework for understanding the competence-based approach to psycholinguistics is articulated, one that clearly dissociates three distinct levels of explanation. At the outset, we find the questions diagrammed in Figure 4 (Chomsky Reference Chomsky1988: 3).

Figure 4 Chomsky’s questions (Reference Chomsky1988).

As the figure shows, these three sets of theoretical questions are taken to map fairly directly to three separate domains of empirical enquiry: theoretical linguistics, psycholinguistics (especially language acquisition) and neurolinguistics, respectively.

There are several things to observe about this way of framing the problem. First, as has already been mentioned repeatedly, the primary concern of psycholinguistics from a Chomskyan perspective is with the mental representation of grammatical knowledge – and with the acquisition of this knowledge – rather than with developmental or processing mechanisms per se. It is easy to overlook the fact that Chomsky’s question of how knowledge of language is put to use is not the broader question of how we use language. Notice further that with respect to language acquisition theory (‘How does knowledge of language arise?’), many competence-based researchers believe that there is effectively no interesting grammatical development beyond a very early stage of parameter-setting: virtually all core grammatical knowledge is taken to be innate; see below, also Wexler (Reference Wexler1998), Chomsky (Reference Chomsky2005).

A separate point to bear in mind about this hierarchy is that Chomsky’s answers to the first question have focused almost exclusively on formal syntax: most other types of putative linguistic knowledge have been given short shrift – typically, no shrift whatsoever. The set of dismissed topics includes knowledge of phonetics, supra-segmental phonology, intonation, language-particular morphology, word-level semantics, pragmatics and discourse structure, as well as the structure and organisation of the entire ‘mental lexicon’ (except for abstract formal features relating to so-called ‘functional categories’). As a result, most competence-based psycholinguists have devoted the lion’s share of their research efforts to questions of syntax. This has meant a preoccupation with syntactic parsing – how the human language processor assigns syntactic analyses (structural descriptions) to the incoming speech signal – and/or with the psychological reality of specific theoretical constructs, especially empty categories and anaphoric dependencies, which are supposedly involved in syntactic computation. For language acquisition researchers taking their lead from Chomsky, the narrow focus on syntax has inspired work on young children’s knowledge of abstract syntactic principles, such as the principles of Binding Theory and Locality Constraints on Movement, as well as on the mechanisms of syntactic parameter-setting in different languages. See Cases #4–#6, in Part II below.1

Indeed, Chomsky’s answers to the Level 1 question have restricted the domain of enquiry of psycholinguistics not just to sentence grammar, but to the ‘narrow syntax’ of UG. All but the most zealous generativist will concede that this type of abstract knowledge constitutes a minuscule proportion of all the grammatical knowledge that is involved in understanding and producing spoken language. Hence, even allowing for the kinds of idealisations and abstractions discussed in Part III below, most of the knowledge relevant to processing any particular language must be non-UG-related. This problem of relevance has become especially acute post-‘Principles and Parameters’ theory – that is to say, after 1995, when many generative syntacticians moved away from any serious examination of end-state grammars (I-language), preferring to concentrate on properties of the initial state. As a result, Chomsky’s questions have encouraged an excessive regard for what are comparatively minor issues, when considered from a psychological perspective.

To draw a medical analogy: it may very well be the case that genetics plays a significant role in heart disease or congenital cardiac abnormalities – it undoubtedly plays a crucial role in embryology, which gives us a heart in the first place – but to the physiologist concerned with the functional modelling of cardiovascular behaviour in mature adults, as well as to the cardiac surgeon, genetics is largely irrelevant.2

A final point to observe about Chomsky’s framing of research questions in Language and Problems of Knowledge is that they establish a priority of linguistic theory over questions of psychology or neurophysiology. At first glance, this appears to reflect a real logical priority: after all, there would seem to be little point in examining how something is acquired if you can’t state what that something is. However, there is also the strong implication that constraints only work from the top down; conversely, that the answers to the psychological and neurophysiological questions do not also constrain what knowledge of language can look like. The latter implication is at least questionable: at all events, quite a different epistemological picture emerges if the question order is inverted:

1 What are the physical substrates and mechanisms that serve as the material basis for the processing (comprehension and production) of language; how do these constrain the set of physiologically plausible models of language use?

2 What are the logical and contingent external constraints on comprehension and production that must be incorporated into any psychologically plausible model of language processing (e.g. frequency, constraints on working memory and executive function, lexical size, fluency, etc.)? To what extent is the shape of the language processor conditioned by variation in end-state grammars? (Are all languages processed in essentially the same way?) How does the ability to comprehend and produce particular languages develop in children’s minds?

3 (Given the answers to questions 1 and 2), what is in the mind/brain of the adult speaker of English? In what ways – if any – does this knowledge overlap with the knowledge of language represented in a speaker of Spanish, or Japanese or Fula?

The notion that higher-level theories are also constrained from the bottom up is developed in radically different ways by Christiansen and Chater (Reference Christiansen and Chater2008) and Phillips and Lewis (Reference Phillips and Lewis2013). Although these authors sharply disagree on most points of content, both suggest that UG is probably not what Phillips and Lewis term ‘implementation independent’. That is to say, at least some researchers from both intellectual traditions are agreed that theories of grammar and of processing may well be shaped in crucial respects by the fact that they are implemented in the human brain. In the final analysis this is an empirical question, albeit an extremely complex one. See also Elman et al. (Reference Elman, Bates, Johnson, Karmiloff-Smith, Parisi and Plunkett1996).

The question is also one that can only be investigated once the two levels are initially teased apart. Looking at things from Both Sides, Now, it becomes clear that what counts as a satisfactory explanation to researchers investigating language and/in mind depends not just on how these more general questions are framed, but also on how they are ordered.

♫ Joni Mitchell, Both Sides, Now (2000)

Levels of explanation: Marr

This brings us back to David Marr. About a decade before Chomsky published Language and Problems of Knowledge, Marr had also developed a set of theoretical maxims for understanding vision, or any other cognitive process, in computational terms.3 These maxims, as much as the particular theory of vision he advanced, changed the way many researchers in the cognitive science community went about their business. Marr’s insight was that a real understanding of cognitive processes was impossible unless one adopted a functionalist framework with at least three (intensional) levels of explanation, each with its own primitives and relational networks. He proposed the following levels:

1 The Computational Level (⇒ ‘What is computed, and why?’)

2 The Representational Level (⇒ ‘What representations, primitive and derived, and algorithms are involved in that computation?’)