1 Introduction

My first investigations into how visible bodily action functions as utterance (with speech or without) were inspired by Ray Birdwhistell and William Condon.Footnote 1 Their work had aroused my interest in investigating in detail their claims that the bodily movements of speakers when speaking are highly patterned in relation to the phrasing of speech at different levels as well as being expressively coherent with it. My early studies verified these claims, reinforcing the idea that kinesic expression and spoken expression are products of a common process. This prompted me to consider how meaning is achieved in the kinesic medium. In 1978, in the course of fieldwork in the highlands of Papua New Guinea (gathering material for comparative studies of communication conduct), I chanced to film a young deaf woman who used a local sign language. Because I had contact with speaking locals who knew this signing, I was able to make a study of it.

Subsequent to this study, I embarked upon research on sign languages used among indigenous Australians, especially in the central desert regions. These are alternate sign languages, providing for linguistic communication when, for ritual, practical, or environmental reasons, speech is avoided or is inconvenient. They are interesting because, in contrast to deaf sign languages – or primary sign languages, as we may call them – we may expect some kind of direct relationship between the spoken language of their users and any sign language they might develop. Since verbal expression and kinesic expression have different affordances, to what extent and in what ways would or could a kinesic system serving all the functions of speech, developed by speakers as an alternative to speaking, draw upon the spoken language already immediately available to the creators of the kinesic alternative? What might this teach us about the relationship between the modality used and what it can express?

After finishing my Australian work (Reference KendonKendon, 1988), I turned to southern Italy. For the next fifteen years I was based in Naples, attempting an ecologically oriented study of the use of kinesic actions in this region. I went to Naples initially because there were reputed to be communities there in which kinesic actions were used almost as if they were an alternate sign language.Footnote 2 I wanted to know more about this and to understand the circumstances supporting it.

My Neapolitan investigations included a study of a nineteenth-century work on gesture in Naples by a Neapolitan archaeologist, Andrea De Jorio. He had proposed that because of cultural continuities between ordinary Neapolitans and their Ancient Greek predecessors, an understanding of their gesturings could throw useful light upon the various kinds of kinesic actions depicted in the mosaics, wall paintings, and vases unearthed at Pompeii and Herculaneum. De Jorio provided detailed descriptions of the kinesic expressions used by Neapolitans and of how they were used. Effectively, his was an ethnographic study of gesture in Naples unlike anything done before (Reference KendonKendon, 1995a). I published an English translation and a critical study of it in 2000 (Reference De JorioDe Jorio, 2000).

Much of the empirical work I did in Naples, along with similar work in the United States and Great Britain, was brought together in Gesture: Visible action as utterance (Reference KendonKendon, 2004). This book, semiotic and pragmatic in approach, included chapters on the history of the study of gesture, detailed descriptions of gesture use from field recordings exemplifying the diverse use of kinesic expressions by speakers, and a discussion of signing, both as it may be seen in speaker-hearers as well as among the deaf.

The subtitle of the book, “visible action as utterance,” intended to make clear the range of phenomena to be discussed. This would be (to quote definition No. 4 of “gesture” from the Oxford English Dictionary, 1989) “any movement of the body or a part of it that is expressive of thought or feeling” but with the proviso that the expressiveness of these actions is assumed to be in some way under the actor’s voluntary control (not necessarily implying that the actor is aware of them, although it might mean that the actor could become so). An action perceived as a wilful expression is perceived as “giving,” rather than “giving off,” information to use Goffman’s distinction (Reference GoffmanGoffman, 1963, p. 13). It is part of how something is “told” or “said” to another. If we adopt the wider meaning of “utterance,” that of “putting something out” by whatever means (see Reference TylorTylor, 1878, p. 14), a visible action deemed wilfully expressive is, thus, an “utterance visible action.”

How can one decide when a visible action is an utterance visible action? As I will explain below, judging whether a visible bodily action is wilfully expressive or not depends upon how many of a cluster of certain features an action is deemed to have (Reference KendonKendon, 1978; Reference KendonKendon, 2004, Chs. 1–2). A consequence of this is that the boundary between what is and what is not an “utterance visible action” cannot always be sharply drawn.

In treating this domain I have lately preferred, as far as possible, to avoid the term “gesture.” This is because “gesture,” at least in English, has many different senses. It does not always just mean visibly observable bodily actions. In common use the word “gesture” is also used to refer to actions and their outcomes at various levels of abstraction. For example, sending flowers to someone can be called a “gesture of affection,” paying a visit to someone who has been bereaved might be a fine “gesture of sympathy,” a politician attending a controversial public ceremony might be interpreted as a “gesture of support” for a given political doctrine, and so on. Further, in the scholarly literature, “gesture” has been defined and used in several different ways. It can mean any unit of purposive action; a meaningful action where the meaning is encoded analogically as opposed to digitally as language is said to be; it may refer just to actions of the hands; there are gestures in music; there are vocal gestures, and so on. Some authors slide from one of these meanings to another without indicating that they are doing so, which can be confusing (Reference KendonKendon 2017b). Since my focus here is on visible bodily actions that have “utterance” characteristics, I think it better to use an expression that is restricted to this (Reference KendonKendon, 2008, Reference Kendon2012).

In what follows, after describing my early work on speech and “gesticulation,” I consider semantic interaction between speech-concurrent visible bodily action and speech. Then I discuss my work on signing in New Guinea and indigenous Australia. I close with some remarks on the implications of utterance multimodality for the problem of language origins.

2 Speech and Body Motion in Interrelation

I was in Oxford with a project on “social skills” directed by Michael Argyle and E. R. W. F. Crossman when I first became acquainted with the work of Birdwhistell. I learned by way of an article by Albert Scheflen (Reference ScheflenScheflen, 1965) in which he, then collaborating with Birdwhistell, working on communication in psychotherapy interactions, provided a lucid summary of the findings so far developed in their work in kinesics. I was much impressed with what Scheflen described and was determined to follow it up. I was able to visit Scheflen in Philadelphia in 1965. Later he facilitated a Research Fellowship for me at the Western Pennsylvania Psychiatric Institute in Pittsburgh where I would be able to work with some of his colleagues. There I worked with William Condon, who was part of a team doing detailed analyses of sound-film recordings of social interaction to verify and extend the findings of the so-called “Natural History of an Interview” project. This project began in 1955–56 at the Center for the Advanced Study in the Behavioral Sciences in Palo Alto, California. It was probably the first project to use sound-synchronized films of conversations so that a multimodal approach to the analysis of human communication could be undertaken. Reference Leeds-HurwitzLeeds-Hurwitz (1967) gives a detailed account. The participants in this project included psychiatrists Frieda Fromm-Reichman and Henry Brosin, anthropologists Gregory Bateson and Ray Birdwhistell, and linguists Norman McQuown and Charles Hockett. One outcome of the project was the development of what came to be known as “context analysis” (Reference KendonKendon, 1990, pp. 15–48), a precursor to the so-called embodied studies of human interaction which have since been so much further expanded (see Reference Streeck, Goodwin and LeBaronStreeck, Goodwin, & Le Baron, 2011).

Working with William Condon, I became acquainted with his method for identifying movement phrase boundaries using a sound-synchronized hand-operated 16 mm projector. I also became familiar with his ideas on the synchronization of body motion with speech as well as with interactional synchrony. While in Pittsburgh, I used part of a film Birdwhistell had made in a London pub where people compared ideas about British and American national character and I selected a segment where I could study interactional synchrony (Reference KendonKendon, 1970).

After my year in Pittsburgh, I moved to Cornell and while there I worked with another part of Birdwhistell’s pub film, this time studying speech and body motion interrelationships. I used a segment in which there was an extended discourse by an Englishman, expounding on English national character. In my analysis, having divided the speaker’s spoken discourse into tone units, following Reference CrystalCrystal (1969), I showed how they could be linked into higher order groupings. The tone units were grouped into “locutions” which, in turn, were grouped into “locution groups,” which themselves grouped into “clusters,” one or more of which comprised a discourse unit. Then, using the methods of movement phrase boundary analysis learned from Condon, I mapped out the speaker’s movement phrases at several levels of organization and set this mapping against the nested hierarchical organization established for the speech. This showed a good match. Each prosodic phrase was associated with a contrasting pattern of movement in the speaker’s hands; his arm position or head position changed in concordance with each successive locution, and he shifted his whole posture at each locution group or cluster boundary. Thus, the body movements concurrent with speech had to be regarded as integral to the utterance. Uttering involves simultaneous vocal and kinesic expression. The publication of this was Reference Kendon, Siegman and PopeKendon (1972).

A second paper on speech–body movement relations was published with the title “Gesticulation and speech: Two aspects of the process of utterance” (Reference Kendon and KeyKendon, 1980), thus emphasizing the point that body movement is part of the process of utterance. The findings from my earlier paper were restated, and new analyses added in support. The paper also provided a terminology for the different “phases” into which speech accompanying arm-hand movements could be analyzed. The expressive significance of these movements was discussed, which had been little considered in my previous paper (Reference Kendon, Siegman and PopeKendon, 1972).

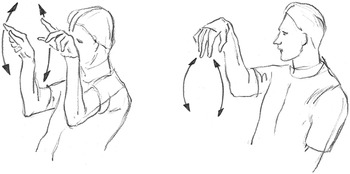

In my paper of 1980, I proposed the notion of “gesticular phrase” (in Reference KendonKendon, 2004: “gesture phrase”). The hand that is performing a gesticular phrase is moved toward a space where the movement shows a “peaking of effort” (“effort” as in Reference Laban and LawrenceLaban and Lawrence, 1947). The phase showing “effort peaking” was termed the “stroke,” the phase of movement leading to it was termed “preparation.” Thereafter, if the hand was held still, this was termed a “hold.” The phase in which the hand was relaxed and moved toward some position of rest was the “recovery.” A distinction was drawn between a “gesticular unit” (later “gesture unit”) and a “gesticular phrase” (later “gesture phrase”). A “gesture unit” extended from the beginning of any movement leading to a stroke, all the way until the hand or hands reached a rest position. The “gesture phrase” comprised “preparation,” “stroke,” and “hold” – if there was one. This distinction is necessary to allow for the fact that a hand could perform a succession of two or more “strokes” before it is again at rest. That is, a “gesture unit” could contain more than one “gesture phrase.” This terminology, slightly modified (revised and clarified in Reference KendonKendon, 2004, Ch. 7), was adopted by Reference McNeillMcNeill (1992) and adopted and modified by Sotaro Kita (see Reference Kita, van Gijn, van der Hulst, Wachsmuth and FrölichKita, Van Gijn, & van der Hulst, 1998). Since then, many others have used it.

Looking upon these manual movements that speakers make when speaking as movement phrases, and recognizing the various phases these have, allows for a precise examination of how these actions are organized in relation to speech. It is the “stroke” in which the expressive import of the phrase is manifested and which, typically, either just precedes or coincides with the nuclear tone of the tone unit with which it is associated. Taking account of the “preparation” allows one to notice how the stroke is prepared for in advance of the segment of a spoken phrase with which it is semantically coherent. It was this observation that indicated that the kinesic expression and the speech phrase must be generated together and thus are to be seen as two aspects of a common expressive process.

In 1980, I said that what the movements of gesticulation are seen to express covers a wide range, relating meaningfully to spoken discourse in many different ways. Objects, actions, or behavior styles of animate beings mentioned in the verbal discourse may be depicted, and features of the structure of a speaker’s discourse may also be expressed. Hand movements can make visible how successive parts of a discourse are related to one another, often with actions that appear to designate contrasting spaces for contrasting points in the discourse, or that successively indicate a series of points in an argument or successive items in a list.

Some of these different ways in which gesticulation is related semantically to speech within an utterance, mentioned in 1980, had, of course, been mentioned before (notably in Reference EfronEfron, 1941/1972). They have since received treatments by many other gesture scholars, as will be acknowledged in later parts of this chapter.

3 Recognizing Kinesic Expressions

The visible bodily movements made when speaking, especially of the hands, are readily recognized as being done as part of an effort to express meanings. They are not usually mistaken for practical movements, such as picking things up or manipulating them, nor as self-grooming movements or postural adjustments. In Reference KendonKendon (1978) and Reference KendonKendon (2004, pp. 10–15), I tried to clarify what features make movements recognizable as kinesic actions. I suggested that actions are more likely to be deemed expressive if they are seen as “excursions” and do not lead to any sustained change in bodily position, if they are dynamically symmetrical (they look similar whether viewed in forward or reverse), if there are clear boundaries of onset and offset, and if the movement is not made solely under the guidance of gravity. Actions that are judged as being done for practical purposes may be “infused” with features of expressiveness, however: Think of how “elegant ladies” display their fingers when holding a teacup, for example. On the other hand, a conventional kinesic expression may be intentionally disguised so that it may be mistaken for a comfort movement or some other kind of nonexpressive movement; see Reference Morris, Peter, Marsh and O’ShaughnessyMorris, Peter, Marsh, and O’Shaughnessy (1979, pp. 88–89) for how the “Forearm Jerk” can be modified to look as though one is merely rubbing one’s arm. People can manipulate how they perform actions to make them more or less expressive, according to whether they want to make the expressiveness obvious or inconspicuous. People, evidently, have a good understanding of the expressive qualities of movements and can control how they do things, determining whether and how their actions may be expressive or not (see examples in Reference De Joriode Jorio, 2000, pp. 179–180, 185, 188, 260–261).

4 Contributions of Kinesic Actions to Semantic and Pragmatic Meanings

Regarding kinesic actions made in conjunction with speech, what contributions do these make to the semantic and pragmatic meanings of the utterances of which they are a part? First, we discuss actions that contribute to utterance referential content, then those that contribute meta-discursive and pragmatic meanings. There are also kinesic expressions that serve in the management of interaction and interpersonal relations.

4.1 Referential

Kinesic actions contribute to utterance referential content in three ways: by pointing, depiction, and by conventionalized expressions (commonly, “emblems” or “quotable gestures”).

Pointing: In pointing at something, an actor can establish what is being referred to when a deictic expression is used. When, for example, the speaker GB, giving a tour of a church, says “There is Gill Crestwood,” only because he points to the statue of this person as he says this can his recipients interpret this utterance (Reference KendonKendon, 2004, p. 199).

In my work with Laura Versante on pointing (Reference Kendon, Versante and KitaKendon & Versante, 2003), we looked at the different hand shapes used to see if there are consistent differences in the contexts in which these occur. The material studied included video recordings at street stalls in Naples and nearby and a guide conducting a walking tour of a small town in the British Midlands. Six different hand shapes were observed in common use. Comparing the contexts in which they were used suggested that these different hand shapes related to how the speaker was using the object pointed at within his discourse. For example, if it was important for the speaker that recipients distinguish one specific object from another, the extended index finger was common. If the speaker referred to something as an example of a category (“Here you see a fine example of a war memorial”), if he is making a comment about the object indicated, or if there are features of it the guide’s recipients are to take note of (“You can see again the quality of this building”), a palm up or palm vertical open hand is used (Reference KendonKendon, 2004, p. 213).

Such observations suggest that, in pointing to an object (real or notional), the actor does not merely indicate it, but also, according to hand shape, shows how the object indicated is to be treated.Footnote 3 The pointing hand shapes employed are similar to those used in other expressions. Just as “palm-up-open-hand” may be used when a speaker gives an example of something, it is used in pointing when the object indicated is something the speaker wants the recipients to view as an example. In this case, “palm-up-open hand” is used as if to “present” the object to recipients as something to be contemplated. When a recipient only needs to distinguish one object from another, an extended index finger is used, as if the speaker would only touch the object rather than present it.

Depiction: Depiction (“performed depiction” [Reference ClarkClark, 2016]) is when someone acts as if showing what something looks like, how things are disposed in space, the manner in which an object or animate being moves, a pattern of action, to represent an emotional expression, to sketch the size and shape of something, to show how an object is handled, or to simulate using an instrument or tool, to “play at” doing something, or to act out a scene within imagined space. Whether fleeting or elaborate, depictions are widespread in live discourse of all sorts, in speakers and in signers alike.Footnote 4

Kinesic depictions are used by speakers in many ways. An enactment, used in conjunction with a verb phrase, can make the meaning of the verb more specific. For example, a speaker describes how someone used to “throw ground rice” over ripening cheeses to dry them off. As he says “throw” he shapes his hand as if holding a powder and does a double wrist extension as if distributing powder over a surface. The actions referred to by the verb “throw” are thereby given a more specific meaning – “scatter” might be an appropriate word (Reference KendonKendon, 2004, pp. 185–90). The same individual, in another recording, is talking about American soldiers, stationed near a village in England during the Second World War; toward the end of that war, when driving through the village, they sometimes “used to throw oranges and chewing gum (…) all off the lorries to us kids in the streets.” Here, saying “throw,” the speaker twice moves his hand rapidly backward, as if throwing things over his shoulder – a quite different sort of throwing than in the first case (Reference KendonKendon, 2004, p.186, Fig. 10.6).

Kinesic depiction allows a speaker to conjure objects into interactional space. For example, a speaker can indicate features of an object and then act as if it is actually present, holding it up exhibiting it or manipulating it, illustrating its properties: in discussing a new building, a speaker is explaining how a security arrangement will include “a bar across the double doors on the inside.” As he says “bar,” he lifts up his two hands and moves them away from each other, the hands held as if he was sliding them along a horizontally disposed elongate object. In moving apart his hands so posed, his hands seem to create the bar, making it present (Reference KendonKendon, 2004, p. 192, Fig. 10.12). A speaker, recalling lemon curd tarts and jam tarts from her childhood, moves her hands forward, fingers arranged as if holding out a little circular object in front of her (Reference KendonKendon, 2004, p. 192, Fig. 10.11).

The shape, size, and spatial characteristics of something can be shown kinesically. A speaker, explaining an archaeological excavation of an underwater village, describes finding a bronze spearhead underneath a large log. He says: “and underneath that they found a huge bronze spearhead.” When saying “huge bronze spearhead” he lifts up both hands, holding them apart with index fingers extended, depicting the spearhead’s length (also showing how “huge” was to be interpreted) (Reference KendonKendon, 2014a, Fig. 2). Again, a speaker describing how his father, a grocery store owner, received the cheeses he was to sell, said “an’ the cheeses used to come in big crates about as long as that,” holding out both hands as if holding a large elongate object in front of him, making present an imaginary crate (Reference KendonKendon, 2014a, Fig. 1b).

Spatial relationships of objects within a space can be shown, as can how objects move in relation to one another. A girl describing her aquarium refers to the two rocks inside it. As she does so verbally, she holds up her two fists side by side, modeling the rocks and showing how they are spatially disposed within the tank. Then, describing the pipe that provides aeration for the water, she draws her two hands apart, thumb and index finger of each hand extended and held with their tips separated, thus depicting a long thin object, horizontally disposed. Then she moves her two index fingers, directed vertically upwards, alternately up and down, depicting the motions of the air bubbles that come out of the pipe (Reference KendonKendon, 2004, pp. 191–194).

Objects, if depicted as if they are in front of the speaker, can then be acted upon as if still there. A speaker described a Christmas cake placed on a grocery shop counter to be sold in pieces. As he said, “the cake was this sort of size,” he sketched out a rectangular area over the table in front of him with two extended index fingers. Thereafter, as he explains how a customer can request a piece of a given size to be cut off, using a flat hand held vertically to model a knife blade, he acted as he might if cutting into the cake as placed on the table in front of him (Reference KendonKendon, 2004, pp. 194–197).

By means of such depictions, speakers provide cues, often conventionalized, which enable recipients to build in their imagination versions of what the speaker is showing. As Daniel Reference DorDor (2015) might say, they instruct the other’s imagination; the bodily movements themselves creating an imagined space within which movements and objects are shown and their spatial relations established. Verbal descriptions also enable imagined things to be present, but they do this by way of conceptual configurations designated by words. Performed depictions provide cues for percepts which allow for the building of images (Reference ClarkClark, 2016; Reference Huttenlocher, Kavanagh and CuttingHuttenlocher, 1975).

Symbolic or conventionalized kinesic actions Kinesic actions, typically manual, which have a socially shared, conventional (symbolic) meaning (“emblem,” “quotable gesture,” “symbolic gesture”) may be used in alternation with speech, but they are also often used together with speech, sometimes simultaneously with a word that has the same meaning, the speaker thus expressing the same meaning in verbal and kinesic form simultaneously. Such actions are often used to complement or to add to spoken meaning.

In my Neapolitan recordings, using kinesic and verbal forms of a lexical item simultaneously could often be observed. For example, a speaker (a shopkeeper) was explaining that nowadays in Naples there are too many thieves. As she uttered the word ladri (thieves) she used a manual expression which is always glossed with this word. Again, as a speaker said soldi (money), he rubbed the tip of his index finger against the tip of his thumb, an action always glossed as “money.” This practice of pronouncing equivalent verbal and kinesic expressions simultaneously is not seen only in Naples. A British English speaker, describing her job, says “I do everything from the accounts, to the typing, to the telephone, to the cleaning.” As she says “typing” and “telephone” and “cleaning” she does an action, in each case a conventional form, typically glossed with the same word that she utters.

In such cases the hand action seems just to convey the same meaning as the word. However, studying the contexts in which this occurs, taking into consideration how the action is performed, might suggest different effects speakers can achieve by using kinesic expressions with a fixed verbal gloss in this way. For example, duplicating a verbal meaning with a kinesic expression can ensure the meaning is present in conditions where it might not be heard or understood; it can draw the recipient’s attention to the speaker and thus assure the speaker that the recipient will have seen the meaning as well heard it. In many situations in the proverbially noisy circumstances of the Neapolitan street, speakers often find it useful to enhance their performances to facilitate the attention of addressees (Reference KendonKendon, 2004, pp. 349–354). A kinesic action can also be held beyond the duration of its verbal counterpart, thereby giving its meaning a continuing presence. This is another way in which its import can be foregrounded (see Reference KendonKendon, 2004, pp. 177–181 for examples given above).

Kinesic symbolic actions may also be used in parallel with verbal expressions, adding to the speaker’s spoken content. One illustration (Reference KendonKendon, 2004, p. 185, example [32], Fig. 10.5) must suffice: a city bus driver (in Salerno) describes the disgraceful things boys write on bus seat backs. They do this in front of girls, who are not upset but think it fun. As he says this, he holds both hands out, index fingers extended, positioned so that the two index fingers are held parallel to one another. In this way he adds the comment that boys and girls are equal participants in this activity, using here an expression glossed as “same” or “equal,” among other meanings given it (Reference De Joriode Jorio, 2000, p. 90).

4.2 Metadiscursive and Pragmatic Functions

Four ways can be distinguished in which kinesic actions operate metadiscursively in relation to spoken discourse. These are: operational, performative, modal, and parsing or punctuational (Reference KendonKendon, 2017a).

Operational: Head actions that function as an operator in relation to the speaker’s spoken meaning may be exemplified in the use of the head shake negating a verbal expression (Reference KendonKendon, 2002). A widely used hand action also may operate like this. Here the hand, with all fingers extended and adducted (so-called “open hand”), and palm facing downwards, is rapidly moved horizontally and laterally. Such an action is common in relation to negative statements or statements that imply a negative circumstance. For example, a Northampton UK shopkeeper uses this action when saying to a customer “That’s the finish of that particular brie” (Reference KendonKendon, 2004, p. 256); it may also be used with positive absolute statements, as if the hand action serves to forestall any attempts to deny what is being said: As a Neapolitan declares “La cucina di Napoli è la piú bella cucina” (“Neapolitan cooking is the most beautiful cooking”), the horizontal hand action used when saying this acts as if to say that any contrary claim will be denied (Reference KendonKendon, 2004, pp. 255–264).

Modal: Manual actions can provide an interpretative frame for a stretch of speech. The use of the “quotation marks” gesture (“air quotes”) showing that what is being said is “in quotes” is a common example. In a recording made in Salerno in 1991 (AK recording: Bocce I), someone discussing a robbery puts forward a speculation about the robber’s actions. As he does so, he touches the side of his forehead with the fingertips of a “finger bunch” hand and then moves his hand away and upward while spreading his fingers. This action, in Southern Italy, indicates something imagined. Here it serves to frame the speaker’s statement as an hypothesis.

Performative: Hand actions may be used to show the illocutionary force of an utterance. Quintilian described examples, for instance, the hand shape suited to the exordium (Quintilian, Book XI, iii, line 93 et seq. in Reference ButlerButler, 1922). Other examples include “Praying hands” (mani giunte) and “finger bunch” (grappolo), which is used for marking certain questions in Neapolitan speakers (Reference KendonKendon, 1995b; Reference Poggi, Attili and Ricci-BittiPoggi, 1983; and Reference Seyfeddinipur, Müller and PosnerSeyfeddinipur, 2004, for analogous expressions in Iran); Some of the uses of the palm-up-open-hand also serve in this manner, as when such a hand is proffered as a speaker gives an example, or when a speaker asks a question, holding out the palm-up-open-hand as if wanting something to be put in it (Reference KendonKendon, 2004, pp. 264–281; Reference Müller, Müller and PosnerMüller, 2004).

Parsing: A speaker’s actions, especially of hand or head, can serve to parse or punctuate ongoing speech. For example, as a speaker gives an account of a Christmas dinner, she lists what was served: “And then came the mince pies, the bowls of nuts and oranges” (Reference KendonKendon, 2004, p. 269). She repeats with each item an outward wrist rotation with palm up open hand as if “presenting” each item as she utters it. A speaker states four things that he supposes a burglar could have done, extending four fingers in succession, starting with the thumb, a new finger for each possible burglar action (AK recording: Bocce I). Among Neapolitan speakers, when announcing a topic, an associated “finger bunch” (grappolo) is presented, the hand is then moved outward, with the fingers spreading, as the speaker makes his comment. A thumb-tip-to-index-finger-tip “precision grip” is used by Neapolitans to mark a stretch speech of central importance, as when something specific is being said (for descriptions see Reference KendonKendon, 1995b; Reference KendonKendon, 2004, pp. 238–247; for German and American English examples of “precison grip” see Reference Neumann, Müller and PosnerNeumann, 2004 and Reference LempertLempert, 2011, respectively).

A further pragmatic function is interactional relational. This includes waving, embracing or hugging, inviting someone to sit down, offering, withdrawing, beckoning, requesting or halting turns at speaking, and so on. As far as I know, actions with these functions have received little in the way of systematic treatment, but see Reference Bavelas, Chovil, Coates and RoeBavelas, Chovil, Coates and Roe (1995).

4.3 Discussion

When we talk about things or ideas, with our words we conjure them up as objects in a virtual presence and with our kinesic actions, as noted when treating depiction, we may use various strategies to bring aspects of these objects into virtual presence, much as we do in drawing or modeling them, or in using our hands to demonstrate their functions.

These virtual objects are never produced in isolation but always in configurations with other virtual objects. These configurations are established through resources such as syntax, word choice, topic organization, and so on, but may also be established kinesically. Hands used when talking may act as if they are pushing objects into position, touching them or pointing to them, disposing them in relation to one another in space, and other operations of this sort. Many manual actions that serve in these ways are actions that can be understood as being versions of manual manipulations or manual actions upon or in relation to objects of whatever kind. The manual involvements of speaking are often schematic versions of object manipulations, as if, in speaking, objects have been conjured into a virtual space, the speaker’s hands being used to manage them (Reference Kendon, Gambarara and GiviglianoKendon, 2009; Reference StreeckStreeck, 2009).

It should be stressed that a given action can serve in more than one way simultaneously, and a given form may contribute in one way in one context and in a different way in another. The semantic functions of kinesic hand actions cannot be sorted into mutually exclusive categories. Further, the different ways we have outlined in which kinesic actions can contribute to utterance meaning have been arrived at by observers or analysts, after they have reflected upon how the form of a visible action, regarded as in some way intended or meant as part of the speaker’s expression, can be related to the semantic or pragmatic content apprehended from the speech. Our ability to do this is based upon our ability to grasp how these actions are intelligible. How do we do this? Involved here is the question of the intelligibility of kinesic expressions and how this interacts with the intelligibility of the associated spoken expressions. Reference Lascarides and StoneLascarides and Stone (2009) is a recent discussion, but this question deserves further exploration.

Finally, how can we be sure whether these kinesic actions make a difference to how recipients grasp or understand the propositional or pragmatic meanings of the utterances they are a part of? We do know, both from everyday experience and from numerous experimental studies (Reference KendonKendon, 1994 and Reference HostetterHostetter, 2011 are reviews), that these actions do make a difference for recipients, but whether they always do so, and whether they do so in the same way, we cannot say, nor do we have a good understanding of the circumstances in which they may or may not do so (Reference Rimé, Schiaratura, Feldman and RimèRimé & Schiaratura, 1991, pp. 272–275 suggest a start in how this might be investigated).

This brief survey should make clear the diverse ways in which speakers employ kinesic actions. A simple statement cannot be made about what kinesic actions do or what they are for. For me, a consideration of these different kinds of use supports the view that these actions are to be regarded as components of a speaker’s final product. That is, they are not symptoms of processes that lead to verbal expression nor are they actions brought in by the speaker as aids to the task of creating a verbal formulation. Rather, they are integral components of a person’s expression as this is achieved in the immediate circumstances of an utterance’s production. Speakers monitor their outputs and match output against some expression target. Often they follow with revisions of what was just said, and the role of kinesic action in these revisions may differ from its previous role. So although kinesic action in a current utterance can provide something that the speaker can use if a revised version is produced, and in that way “help the speaker,” kinesic expression within a current utterance is part of that utterance. It does not help the speaker’s speaking in that utterance. This is so because it is produced at the same time as the verbal expressions, not beforehand.

5 When Kinesic Action Is the Sole Vehicle for Utterance

I now turn to the work I have done with people who use kinesic action as their sole resource for utterance, either for physiological reasons, as in deafness, or for reasons of choice or convenience, whether for ritual or because of environmental or other social circumstances. As mentioned in Section 1, I first worked on a primary sign language encountered by chance in the Enga province of Papua New Guinea. Then I worked on alternate sign languages used in indigenous communities in central Australia.

5.1 Papua New Guinea

My work on a sign language in Papua New Guinea, published in 1980 as three papers in Semiotica, has since been republished as a monograph (Reference KendonKendon, 2020).Footnote 5 This work begins with a description of the nature of the units of behavior that function as signs and how contrasts between them are established. Then follows an examination of how the form of a sign may be shaped by features of its referent. This includes a comparative study, comparing signs from American Sign Language (ASL) (Reference Stokoe, Casterline and CronebergStokoe, Casterline, & Croneberg, 1965), from an alternate sign language used by the Pitta-Pitta of Northwest Queensland (Reference RothRoth, 1897), and from one used by workers in a sawmill in British Columbia (Reference Meissner and PhilpottMeissner & Philpott, 1975). This was to test a hypothesis that the “iconic device” (Reference Mandel and FriedmanMandel, 1977) a sign language uses is related to the semantic domain of the sign’s signified. A study of how pointing was used in the Enga corpus follows, and after this there is an analysis of utterance construction. Here, actions in the face and eyes concurrent with the production of manual signs are examined for how they contribute to bringing off complex sign combinations. Sign order, subject–object relations in verbal expressions, temporal reference and interrogation are also examined. In this work I used what was then available on ASL by Reference StokoeStokoe (1960), Reference Stokoe, Casterline and CronebergStokoe, Casterline, & Croneberg (1965), Ursula Bellugi and associates (Reference Bellugi, Klima, Kavanagh and CuttingBellugi & Klima, 1975, Reference Bellugi, Klima, Harnard, Steklis and Lancaster1976; Reference Fischer and GoughFischer & Gough, 1978), and by authors in Reference FriedmanFriedman (1977).

I found Enga signers signed at a rate comparable to that reported for ASL signers; they made use of a similar repertoire of locations and movement patterns as Stokoe had described for ASL. The repertoire of contrasting hand shapes was much smaller, however. A comparative analysis of the different “iconic devices” used (using Reference Mandel and FriedmanMandel, 1977, for ASL) showed that the Enga signs and those in the three unrelated sign languages all included enactment, modeling, or sketching and these were related to the semantic domains of the referents in similar ways. Enactment, the most common device, is used when signing bodily actions of all sorts: many animals are signed by enacting a movement pattern selected as characteristic of them, and objects, when they can be designated, by a fragment of an action pattern used in manipulating or using them. Modeling, often combined with enactment, is commonly used for large animals which are not handled or for objects such as tools. Sketching (as in outlining the shape of something) was found to be the least common. These findings suggest that the processes by which signs in a sign language are generated are quite general. This is fully compatible with signs in different sign languages being diverse, not only because features selected for representation can be quite different but also because the processes by which signs are shaped as they become part of a shared system ensure that signs for similar referents in different sign languages are also likely to be different from one another.

Pointing was used by Enga signers in ways very similar to what has been described for this in ASL. Facial, postural, and gaze direction activity in relation to manual signing was shown to serve as a bracketing device, marking out distinct segments of discourse, and providing context for the manual signs, so adding directly to discourse meanings. In a conclusion, I remarked “the functions of concurrent activity in respect to signing are no different from their functions in respect to speech in users of oral language. This points out quite clearly that signing, despite the fact that it makes use of quite different expressive devices, as a functional system, is the exact analogue of speech” (Reference KendonKendon, 2020, p. 112). In the light of what we know today about the functions of mouth actions in sign languages (see Reference Boyes-Braem and Sutton-SpenceBoyes-Braem & Sutton-Spence, 2001), this statement needs some modification. Nevertheless, this foreshadows a point I elaborated much later (e.g. Reference KendonKendon, 2014a), which is that, if sign language and spoken language are to be compared, they should be compared as uttering activities, not as fixed texts. Such comparison reveals how signing and speaking are variations of a fundamentally similar activity, but it also emphasizes the point that is crucial to understanding speaking as a process: Kinesic as well as vocal actions must be taken fully into account. It points to the multimodal character of uttering and suggests that the division between “paralanguage” and “language” cannot be sustained categorically. As we remark below, this has implications for how we conceive of “language” and for how we are to approach the problem of its evolutionary origins.

5.2 Sign Languages in Aboriginal Australia

In a much larger investigation, I examined sign languages in use among Australian Aborigines. In an area of central Australia that stretches northwards from above Alice Springs in the Northern Territory as far as the border with Arnhem Land, a practice is followed in which, when a woman is widowed, for an extended period thereafter, she and certain of her classificatory relatives forego the use of speech. Where this practice is observed, complex sign languages have developed. These are used among women in mourning, but they may also be used in other circumstances of everyday life as a matter of convenience or suitability. There are many interesting aspects of these sign languages, from cultural and semiotic points of view, and when comparing them to other alternate sign languages (such as those reported from North America or in Christian monastic communities – see Reference DavisDavis, 2010; Reference Umiker-Sebeok and SebeokUmiker-Sebeok & Sebeok, 1987) as well as to primary sign languages. It is also useful to compare them with other language codes, such as writing or drum and whistle languages (Reference KendonKendon, 1988, Ch. 13; Reference MeyerMeyer, 2015 is a recent study). Here I comment on just one issue, which was central in my work, and that is how these central Australian sign languages are structurally related to the spoken languages of their users.

A comparison of signing among women of different ages that I undertook at the Warlpiri settlement, Yuendumu (Reference KendonKendon, 1984), suggested that, as users grew more proficient at using their sign language, they came to use, more and more, signs that represent the semantic units expressed by the semantic morphemes of the spoken language. A notable feature is that it appears common for signs to develop which represent the meanings of the morphemes of the spoken language. In consequence, concepts expressed in spoken language by compound morphemes get expressed by compound signs that are the equivalent of these compounds. One example must suffice (for this and other examples, see Reference KendonKendon, 1988, pp. 369–372). In Warlpiri, “scorpion” is kana-parnta, a compound of kana “digging stick” and -parnta, a possessive suffix. Thus “scorpion” in Warlpiri is, literally, “digging stick-having.” In a language of a neighboring community, Warlmanpa, the same creature is known as jalangartata, a compound of jala “mouth” and ngartata “crab” or perhaps “claw.” Note that, in creating a sign for this creature, we do not find a sign for “scorpion” derived from some feature of the animal (its action of raising its tail comes to mind) but signs based on representations of the meanings of the verbal components which make up the verbal expression. Thus, in sign languages of neighboring language communities in this part of Australia, there can be differences in signs for similar things, these differences deriving from the fact that signing in part develops as kinesic representations of the semantic units of their respective spoken languages. It is interesting to compare this with writing (Reference KendonKendon, 1988, Ch. 13).

Detailed information on other alternate sign languages is scant, and there are few studies that have investigated how they might relate to the spoken languages of the communities where they developed. However, surveying such evidence as is available shows that we need not expect other alternate sign languages to be as closely guided by an associated spoken language as the Australian central desert sign languages tend to be (see Reference KendonKendon, 1988, Ch. 13). The so-called Plains Indian Sign Language of North America (in use in the nineteenth century) studied by Reference WestWest (1960) shares many features found in primary sign languages but not found in the Australian central desert sign languages. For example, exploiting space for expressing grammatical relations is found in Plains Indian Sign Language but not in Warlpiri Sign Language. Since Plains Indian Sign Language was used as a lingua franca by people with several different spoken languages, we might not expect its users to structure it according to one specific spoken language. This is what Anastasia Bauer reports in her study of signing used at a multilingual settlement in Arnhem Land (Australia), where it is also shared by deaf persons (Reference BauerBauer, 2015). Further, many of the indigenous spoken languages of North America have “polysynthetic” morphologies. This would make the creation of signs as equivalents of recombinable semantic morphemes (possible in Warlpiri) hard to achieve.

Several aspects of these alternate sign languages are of interest. At least in the central desert sign languages, there are many signs that are used to signify several different semantic morphemes, rather than one only. Studying this throws light on how users cluster meanings into broader categories (see Reference MeggittMeggit, 1954). Examining sign formation processes can also show what gets selected as the bases of iconic signs within the culture. More broadly, perhaps, it is interesting how readily kinesic equivalents of word meanings can be created. This seems to support the idea that many spoken words are closely related to schematic units of action (Reference Gullberg, Pederson and BohnemeyerGullberg, 2011; Reference PulvermullerPulvermuller, 2005). This fits in with the idea that language begins as a form of action; its transformation into a system of abstract symbols is a later development.

6 A General Conclusion

In Section 4, we saw how kinesic action enters into the construction of utterances in which speech is also used. Pointing and kinesic depiction can be done while speaking, adding to utterance content. A speaker’s utterance can thus “escape” the linearity constraints of verbal expression, at least to a degree. Objects and their features, spatial and dynamic, can be depicted directly along with spoken discourse. In sign languages, simultaneous as well as linear constructions must be envisaged as a part of their grammar (Reference Vermeerbergen, Leeson and CrasbornVermeerbergen, Leeson, & Crasborne, 2007). As far as I know, though, it is a ubiquitous speaker practice; combining speech and kinesic action in simultaneous constructions has not become established in any speaker community as a part of the formal grammar of a spoken language.Footnote 6 Nevertheless, it is clearly a feature of speaker uttering which can exploit the same syntactic dimensionality as sign languages do (Reference HockettHockett, 1976), and although this is done differently in speakers than it is in signers, this shows a fundamental continuity between uttering, whatever the modality. Further, this means that in constructing utterances we are not restricted to discrete units. Rather, various elements may be used, fashioned in whatever modalities are available, including highly conventionalized elements (words or lexical signs), elements which may be variable or flexible in form, including “nonce” elements, created in the moment to fill an expression slot with whatever resource is to hand (Reference Lepic, Occhino and BooijLepic & Occhino, 2018).

7 Utterance Visible Action, Uttering, and Language Origins

The expression “utterance visible action” or “utterance dedicated visible action” (or sometimes “kinesic action”) is a term intended to cover the phenomena that are the concern of the work here surveyed. It emphasizes our concern with the visible bodily actions with referential, metadiscursive, or pragmatic functions that humans engage in when uttering. In signing, these actions are the sole means by which all uttering is done. When speakers use them when uttering, they are integral to the project of the utterance of which they are a part.

Uttering refers to the activity of producing the complex ensembles of actions which constitute utterances.Footnote 7 It is these complex ensembles, shaped in the current communicative moment, which must be understood if we want an explanation of how utterances mean. Language refers to the abstracted system that utterers commonly make use of.Footnote 8 (Languageless utterances are common also, however.)Footnote 9 Over the course of several millennia, beginning, perhaps, with the first development of writing, we can see the growth of the idea that language can be regarded as a system existing “out there,” as if it were an external object, a “thing” in the environment, a tool of human invention which has to be learnt if it is to be used. This view of language is a product of human reflection and has given rise to the artifact studied by those who, by the end of the nineteenth century, were known as “linguists.”Footnote 10

Following a number of historical events and processes, including the rise to dominance of the printed word, “language” as it could be written down came to be regarded as the default mode of human communication and thought, all other modalities being marginalized, even ignored.Footnote 11 At least since the 1950s, however, what has become ever clearer is that this “language” is only a part of what has communicative consequence in interaction and is only a part of how humans think. It is more and more recognized that “language” viewed in this way cannot account for human communication. We must also study and understand the orchestrated deployment of multiple expressive modes and the diverse semiotic processes always involved whenever people engage one another with utterances (cf. Reference Ferrara and HodgeFerrara & Hodge, 2018)

Taking this view seriously brings important implications for the problem of language origins. Hitherto, a great deal of thought on this problem begins with the idea that “language” can be thought of as a self-sufficient entity, contained in a separable module of the mind. Thinking in this way, however, makes it difficult to understand how it could have arisen since it seems to have no connections to any other system of expression. This has led some to suppose that there must have been a genetic mutation altering the brain so that, all of a sudden, language was possible.Footnote 12 Although many reject this idea, for it is at odds with how biological evolution happens, even those advocating a more gradualist approach often seem to be wedded to the idea that it is “language” as an abstracted system that should be the target of their theorizing. Advocates of the “gesture first” hypothesis, for example, are faced with the problem of accounting for why the spoken modality is the dominant vehicle for language, which they still seem to think of as discontinuous with other systems. Many “gesture first” theorists think there was a “switch” (or a “transition”) from “gesture” into “speech” whereupon, and only then, we have language proper (Reference Kendon, Napoli and MathurKendon, 2011). This kind of thinking is even found among some of those who take sign languages into account. Those who want to maintain that there is a “cataclysmic break” between “gesture” and “sign” (Reference Goldin-Meadow and BrentariGoldin-Meadow & Brentari 2017; Reference Singleton, Goldin-Meadow, McNeill, Emmorey and ReillySingleton, Goldin-Meadow, & McNeill, 1995) seem to rely on the idea that “language” is something that excludes forms of expression that do not conform to the criteria of discreteness, and arbitrariness in the relation between the signifier and the signified.

Notwithstanding, it is the expansion of our understanding of sign languages from the beginning of the 2000s which is providing some of the best grounds for advocating that a categorical division between what is “linguistic” and what is not, is not sustainable. It is now more widely agreed that in signing there are modes of expression, important to the very working of a sign language, which do not fit a structural linguistic morphosyntactic framework. In depictive modifications of lexical signs, classifier constructions, phenomena of simultaneous construction, and so-called “constructed action,” we have modes of expression that are not categorial but analog, yet integral to the functioning of a sign language as such. Sign languages are, unavoidably, semiotically hybrid systems (see, inter alia, Reference Johnston, Vermeerbergen, Schembri, Leeson, Perniss, Pfau and SteinbachJohnston, Vermeerbergen, Schembri, & Leeson, 2007; Reference KendonKendon, 2014a; Reference LiddellLiddell, 2003).

This is just as true of spoken languages. At least, as soon as we look at uttering speakers we see that they, just like uttering signers, make much use of forms of expression that do not fit with what are commonly understood to be properly “linguistic.” The arbitrary nature of the distinction between “linguistic” and “non-linguistic” was already recognized in 1946 by Dwight Bolinger (Reference BolingerBolinger, 1946), and in 1987 Charles Hockett came to agree that between “language proper” and paralanguage and kinesics “there aren’t any boundaries, just zones of gradual transition” (Reference HockettHockett, 1987, p. 27). Regarding body movement, at first always excluded from “language,” after Birdwhistell had begun a systematic investigation of it, and onward with the work here surveyed, as well as work by Reference McNeillMcNeill (1992), Reference StreeckStreeck (2009), Reference EnfieldEnfield (2009) and Reference CalbrisCalbris (2011), among many others, it now must be seen as integral to utterance. All this supports the conclusion that uttering by people who use speech is just as semiotically “hybrid” as it is when people are uttering by signing (see Reference Lepic, Occhino and BooijLepic & Occhino, 2018).

Uttering, in other words, is multimodal. Whenever an actor engages in uttering, they will always mobilize – albeit to lesser or greater extents – whatever expressive resources they have available, adapting them and orchestrating them in relation one to another, as suits their rhetorical aim within whatever interaction situation they may be in. This, it seems to me, invites the conclusion that, so far as the question of language origins is concerned, it is fruitless to suppose that language first emerged in one modality, switched, eventually, to another (as “gesture firsters” have supposed) or somehow added in other modalities later. The shift to being able to employ and to recognize expressive actions of whatever kind in wilfully deictic and depictive ways was a shift in the perception and production of any sort of action, regardless of the modality employed (Reference KendonKendon, 2016). This shift was the first step that made any sort of language possible. How it took place has not yet been accounted for in any comprehensive theory. We do see hints of it, however, in the behavior of many animals – especially in monkeys and apes and also in many species of birds (in corvids, parrots, and artamidids, such as the Australian magpie [Reference KaplanKaplan, 2015]). A feature of this sort of behavior is that it has characteristics of “try-out” actions and “intention movements” – actions that are purposeful but which do not fully consummate their purpose even though their purpose is recognizable (cf. Reference KendonKendon, 1991). This suggests that in trying to understand the origins of symbolic action, it might be fruitful to look carefully across species at play, ritualized agonistic encounters, and courtship interactions.

After this shift had occurred, and once shared repertoires of symbolic acts began to be established, a system which eventually emerged as “language” would begin to differentiate into an increasingly specialized kind of activity (Reference Kendon, Dor, Lewis and KnightKendon, 2014b). Important for any account of language origins is to understand how this differentiation and specialization into socially shared repertoires of symbolic actions came about. It seems likely that the more complex, stable, and widespread a shared repertoire of symbolic actions becomes, the more it will tend to be modality specific. Space precludes any elaboration here but, in hominins,Footnote 13 that this specialization has favored the modality of articulated vocalization should not surprise us. Evolution builds on what is already there, and the vocal apparatus, already part of the mammalian plan and specialized for communication, would surely not have been left out of the evolutionary processes by which socially shared symbolic systems came to be established (see Reference DarwinDarwin, 1871, pp. 58–59). Nevertheless, the socially shared symbolic systems that have emerged – “languages” – not only could not function as they do today, much less could they have emerged in evolution, unless the way they are connected with the diverse range of expressive actions is fully taken into account.

1 Introduction: Aims and Challenges in Gesture Coding and Annotation

Any analysis of verbo-gestural utterances requires the processing of audiovisual material in usually at least two steps: transcribing spoken utterancesFootnote 1 and coding and annotating gestures. Coding and annotation are usually considered to be analogous and are not clearly separated. However, typically, two aspects are distinguished. The encoding scheme includes linguistic phenomena and determines the categories and the members that are necessary for the description and the analysis. The annotation structure scheme defines structural aspects, such as number of tiers, temporal, and/or hierarchical relations (see e.g. Reference Brugman, Wittenburg, Levinson and KitaBrugman, Wittenburg, Levinson, & Kita, 2002). Annotation is thus understood as the association of descriptive or analytic notation with data and can include many different kinds, such as syntactic labels, part of speech tagging, semantic role labels as well as different layers and levels (Reference Ide, Ide and PustejovskyIde, 2017, p. 2).

In this process, coding and annotation systems for verbo-gestural data are faced with particular challenges. They must reproduce both sensory modalities in their specifics and independent of each other. For gestures, categories are to be found that adequately capture the nature of the gestural sign as precisely as possible in written form. Categories have to be chosen that enrich the raw material sufficiently for the particular research question to be addressed without tampering with the raw material too much. Furthermore, the dynamics of the relation between speech and gestures, along with their simultaneous and successive nature, needs to be preserved. Technical possibilities, such as motion tracking, open up even more questions: How, and to what extent, can and should manual annotation be combined with such data? How reliably can the annotation of motion, for instance, be performed by human coders relying solely on the visual input, or is it necessary to add quantitative tracking to allow for the extraction of particular kinematic features of gestures (Reference MittelbergMittelberg, 2018; Reference Pouw, Trujillo and DixonPouw, Trujillo, & Dixon, 2019)? On another level, requirements such as searchability, browsability, and the extraction of automatic information play a role in the coding and annotation process (Reference Bird and LibermanBird & Liberman, 2001, p. 54). A tagged and searchable verbo-gestural database opens up the possibility of new research questions that rely on larger corpora and a quantitative and corpus-linguistic perspective (see e.g. Reference Steen, Hougaard, Joo, Olza, Cánovas, Pleshakova and WoźnySteen et al., 2018). The questions “How much does one abstract?” (Reference Kipp, Neff and AlbrechtKipp, Neff, & Albrecht, 2007, p. 327) and “How many and what kinds of classes and categories are needed?” thus remain key ones in coding and annotating verbo-gestural data.

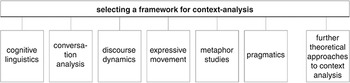

This chapter gives a selective overview of the current state of the art on gesture coding and annotation systems. It touches upon the aspects mentioned above in different levels of detail and from various perspectives by reflecting on the interrelation between subject, research question, and coding and annotation systems. Section 2 opens up the discussion by emphasizing that coding and annotations systems are always influenced by the particular theoretical framework in which they are situated. Accordingly, similar to the situation in the analysis of language, a theory-neutral analysis of gestures is not possible. This will be illustrated by consideration of some representative fields of research in gesture studies: language use, language development, cognition, interaction, and human–machine interaction. Section 3 of the chapter discusses different coding and annotation schemes addressing research questions in these fields. Rather than giving an extensive discussion of the individual systems, the section focuses on their general logic for answering a research question from a particular field. Here, differences between systems addressing the same research topic (see e.g. language use) as well as differences across research topics (see e.g. language use vs. interaction) will be explored. Section 4 of the chapter closes with some considerations on the current state of automatic gesture recognition and recording practices and possible future developments in coding and annotating verbo-gestural data.

2 Framework, Subject, and Analysis: On the Interrelation between Theory and Method in Gesture Studies

Looking at the particular chapters included in this Handbook, the ways in which gestures are considered as being part of communication, and how the role of the body in language (use) is looked upon, differ greatly: This is true not only in terms of theoretical perspectives but also in the methodological approaches taken. Hence, there is no single method or approach to coding and annotating gesture. Rather, there are many different ways, and as such, both the theoretical and methodological frameworks provide important orientation points for a study’s scope of explanation. Analyses of linguistic constructions with gestures, for instance, call for a detailed analysis of the verbal construction, its temporal relation with gestures, along with differences in form, meaning, and type of the gesture as well as a quantitative, corpus-linguistic analysis (Reference Zima and BergsZima & Bergs, 2017). Research that focuses on gestures’ relation with the material world needs to put particular emphasis on the environmental setting and the dynamics of the interaction (Reference StreeckStreeck, 2017) and follow a more qualitative perspective on the relation between speaking and gesturing. These two examples show that the particular research question determines the aspects and the level of detail of gesture–speech relations that need to be coded or not. Thus, each interest leads to a specific view on verbo-gestural data that has theoretical and practical implications for coding and annotation. Consequently, no single coding or annotation schema exists with which all possible research questions could be addressed. Rather, an adequate description of specific phenomena always calls for a particular focus and, thus, a specific coding and annotation procedure. Leaving aside for now the particularities of the individual systems and their relation to specific research interests (see Section 3 for a detailed discussion of the systems), Section 2.1 briefly discusses four general differences in current systems: (1) the relation between the verbal and gestural modalities, (2) facets and specifics of coding and annotation, (3) qualitative versus quantitative perspectives, and (4) procedures. All these aspects are influenced by theoretical assumptions about the nature of gesture–speech relations, lead to particular methodological and practical implications in the coding and annotation process, and thus influence the potential outcome of verbo-gestural analyses.

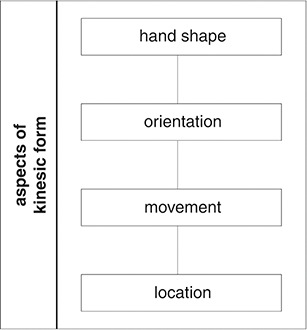

2.1 Relation between Verbal and Gestural Modalities

Speech and gesture are tightly connected on the temporal, semantic, and pragmatic levels (Reference McNeillMcNeill, 1992). For analyzing gesture–speech relations and the design of coding and annotation systems, this close connection of both modalities results in rather practical consequences, because systems have to reproduce this link on different levels. Existing systems, also depending on their technical implementation, solve this problem in different ways. The majority of systems use the transcription of speech as the center for the analysis of gesture–speech relations. As a result, gestures are placed in a position that is secondary to speech, and first steps in coding and annotation concentrate on the analysis of speech (see e.g. Reference McNeillMcNeill, 1992). Gestures are added either by annotating them into speech transcription or by making them hierarchically dependent on the speech transcription. Gestures are thus viewed in relation to speech from the beginning of the analytical process. Other systems allow for a first review and analysis of the data without an instant inclusion of speech and even allow for coding and annotating gestures without sound and, initially, independently of speech. As a result, these systems initially concentrate on the form of the gestures before bringing together speech and gestures in the analytical process (Reference Bressem, Ladewig, Müller, Müller, Cienki, Fricke, Ladewig, McNeill and TeßendorfBressem, Ladewig, & Müller, 2013; Reference Lausberg and SloetjesLausberg & Sloetjes, 2009) and thus separate speech and gestures into different parts of the analysis to allow for a preferably nonbiased coding and annotation of gestures.

2.2 Facets and Specifics of Coding and Annotation

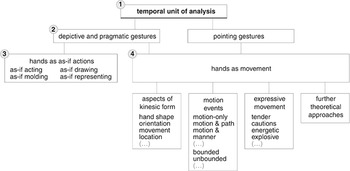

Tied to the different possible relations between, and orders of, both modalities in the coding and annotation process are differences in the type and the specificity of the aspects to be coded and annotated. As already mentioned, some systems put particular emphasis on detailed descriptions of gestural forms because this allows one, for instance, to discover regularities and structures on the level of form and meaning, along with gestures’ potential for combinatorics and hierarchical structures,Footnote 2 or the best possible reproduction of gestures in technical systems, such as avatars (Reference Kipp, Neff and AlbrechtKipp et al., 2007; Reference Pouw, Trujillo and DixonPouw et al., 2019) or robots (Jokinen, this volume; Reference Kopp, Church, Alibali and KellyKopp, 2017). Connected to this approach is often the development of particular categories and a separate description of gestural form parameters to make the kinesics and semiotic processes in gestures visible (Reference BoutetBoutet, 2015; Reference Bressem, Müller, Cienki, Fricke, Ladewig, McNeill and TeßendorfBressem, 2013a). In contrast to these approaches, other systems carry out, for instance, a selective description of gestural forms that is also dependent on the meaning expressed in speech. The focus of these systems is on a functional analysis of gestures in relation to speech, such that “only the bodily movements maintaining a relation to speech – co-speech gesture – as well as the function of the gesture are of interest to us” (Reference Colletta, Kunene, Venouil, Kaufmann and SimonColletta, Kunene, Venouil, Kaufmann, & Simon, 2008, p. 59). Categories for the description of gestural forms are taken, for instance, from sign language studies and gestures are matched to the sign language alphabet (see e.g. Reference McNeillMcNeill 1992). The differences in describing and categorizing gestural forms discussed above are only meant to exemplify how the specificity of individual systems may vary; they are meant to underline the type of consequences that may be connected to different possible conceptions of, and views on hierarchies in, the relations between speech and gestures. (Further differences in the specificity and details of the particular systems along with the underlying research foci that are decisive for the structure of the systems will be discussed in Section 3.) In general, regardless of the particular approach and focus taken, the basics of current coding and annotation systems are gesture phases, gestural forms, gesture type, a transcription of speech, and the relation between gestures and speech.

2.3 Qualitative Versus Quantitative Perspectives

A further essential difference in existing systems is found in the research perspective. A qualitative perspective is characterized by more openness and flexibility, as well as often more naturalistic data from more ecologically valid settings, by which the dynamics of gesture–speech relations can be captured and hypotheses can be developed from, and based on, the material. By contrast, quantitative research aims at the validation of given hypotheses, replicable data and larger amounts of data, that are statistically evaluable. These two different positions influence the conception and design of coding and annotation systems. Systems with a stronger qualitative emphasis might show greater variability and flexibility (see e.g. Reference Müller, Müller and PosnerMüller, 2004) that is usually not included in systems aiming for a quantitative approach. Connected to this is also the use of larger corpora as data, as well as reliability or intercoder agreement measures (see e.g. Reference Lausberg and SloetjesLausberg & Sloetjes, 2009).

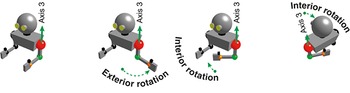

2.4 Procedures for Coding and Annotation

Annotation programs have become the standard for analyzing gesture–speech relations in recent years, both for qualitative and quantitative studies. Through the use of such programs, coding and annotation have become faster and more reliable, in terms of both technical practicability and plausibility. While the majority of studies use ELAN, a program developed by the Max Plank Institute in Nijmegen (Reference Wittenburg, Brugman, Russal, Klassmann and SloetjesWittenburg, Brugman, Russal, Klassmann, & Sloetjes, 2006), researchers also have the option of choosing other programs, such as EXMARaLDA (Reference Schmidt, Mehler and LobinSchmidt, 2004) or ANVIL (Reference KippKipp, 2001). Although the basic setup of these programs is similar, differences in their functionality may lead to differences in the coding and annotation systems. Whereas all kinds of annotation software offer the possibility of including three-dimensional motion data, ANVIL, in addition, allows “spatial annotation” where two points can be marked directly on the video screen so that the distance between two hands can be more accurately annotated (Reference Kipp, Neff and AlbrechtKipp et al., 2007, p. 331). The three annotation programs thus offer fine-grained possibilities for combining visual information only with automatic analyses of movement patterns (see e.g. Reference Ripperda, Drijvers and HollerRipperda, Drijvers, & Holler, 2020) and consequently allow for an analysis of gestural forms based on more material, on larger corpora, and at a more abstract level (Reference MittelbergMittelberg, 2018; Reference Trujillo, Vaitonyte, Simanova and ÖzyürekTrujillo, Vaitonyte, Simanova, & Özyürek, 2019). (For an overview and discussion of the different methods in multimodal motion tracking, see Reference Pouw, Trujillo and DixonPouw et al., 2019, and Trujillo, this volume.)

3 Systems of Gesture Coding and Annotation

This section will first consider some general aspects of practices of coding and annotation of gestures, highlighting the fact that theoretical assumptions influence subjects, aspects, and levels of analysis and as such also make themselves visible in annotation systems. We will then turn to an illustration of this in more detail by discussing existing coding and annotation systems from a thematic point of view. Using several research domains in gesture studies as examples, namely language (use), language development, cognition, interaction, and human–machine interaction, the section focuses on the general logic of existing systems for answering the particular research questions at hand. Because only few explicit coding and annotation systems for gestures exist, the following sections address systems as well as procedures and methods followed in studies analyzing gesture–speech relations. We will focus on a selection of systems and of coding and annotations practices that illustrate the link of theory and method for particular thematic areas and/or research questions.

3.1 Words and Gestures: Coding and Annotation for Exploring Language (Use)

Most studies exploring the relation of words and gestures in language (use) assume that usage events are dynamic and multimodal in nature, yet the degree to which gestures are part of language is variable (Reference CienkiCienki, 2015). The relation between speech and gesture is understood to be “reciprocal” such that the “gestural component and the spoken component interact with one another to create a precise and vivid understanding” (Reference KendonKendon, 2004, p. 174, emphasis in original). Spoken words and/or phrases have a close relation with gestures on different linguistic levels (see e.g. phonology, semantics, syntax, pragmatics), but, depending, for instance, on the type of gesture, the communicative context or genre, or the language community, this connection may vary. Starting from this assumption, studies have aimed to discover the tight relation between speech and gestures on these different levels and relations. Depending on the object of investigation, different aspects of coding and annotation become relevant, both regarding the temporal coordination and as the functional relation between the two sensory modalities.

Early on, research showed that the temporal relation between gestures and body movement in general shows a “precise correlation between changes of body motion and the articulated patterns of the speech stream” (Reference Condon and OgstonCondon & Ogston, 1967, p. 227). Accordingly, it is assumed that “the pattern of movement that co-occurs with the speech has a hierarchic organization which appears to match that of the speech units” (Reference Kendon, Seigman and PopeKendon, 1972, p. 190). Research addressing this relation thus requires a coding and annotation system that captures the link of both modalities regarding intonation phrases, stress, and syllables, for instance. In addition, particular movement characteristics, such as the velocity profile, may be of interest (Reference Karpiński, Jarmołowicz-Nowikow and MaliszKarpiński, Jarmołowicz-Nowikow, & Malisz, 2008). Likewise, data from programs such as Praat, a software package for phonetic speech analysis (Reference Boersma and van HeuvenBoersma & van Heuven, 2001), should be integrable. If, for instance, in addition to other factors, the connection of gestures with the narrative structure of speech is to be investigated, gestures’ relation with discursive elements has to be included. For instance, in a study on the Palm Up Open Hand (PUOH) and the type of prosodic and discourse units which are marked by this type of gesture, Reference FerréFerré (2011) transcribes, annotates, and segments speech by using a range of different programs, such as Praat and EasyAlign, and annotates speech for speech acts, intonation phrases, words, syllables, and stress. For gesture annotations, the study uses the annotation programs ELAN and ANVIL, and using the typology of gestures proposed by Reference McNeillMcNeill (1992), codes beat gestures, their phases, and their semantic and discursive functions in discourse. With this, Reference FerréFerré (2011), for instance, shows that beat gestures accompany emphatic stress in the verbal mode and that the PUOH, in particular the hand flick, fulfills different pragmatic functions in discourse and can acquire “a judgmental or epistemic value” (Reference FerréFerré, 2011, p. 16).