Discovering Mislocalizations with Moving Stimuli

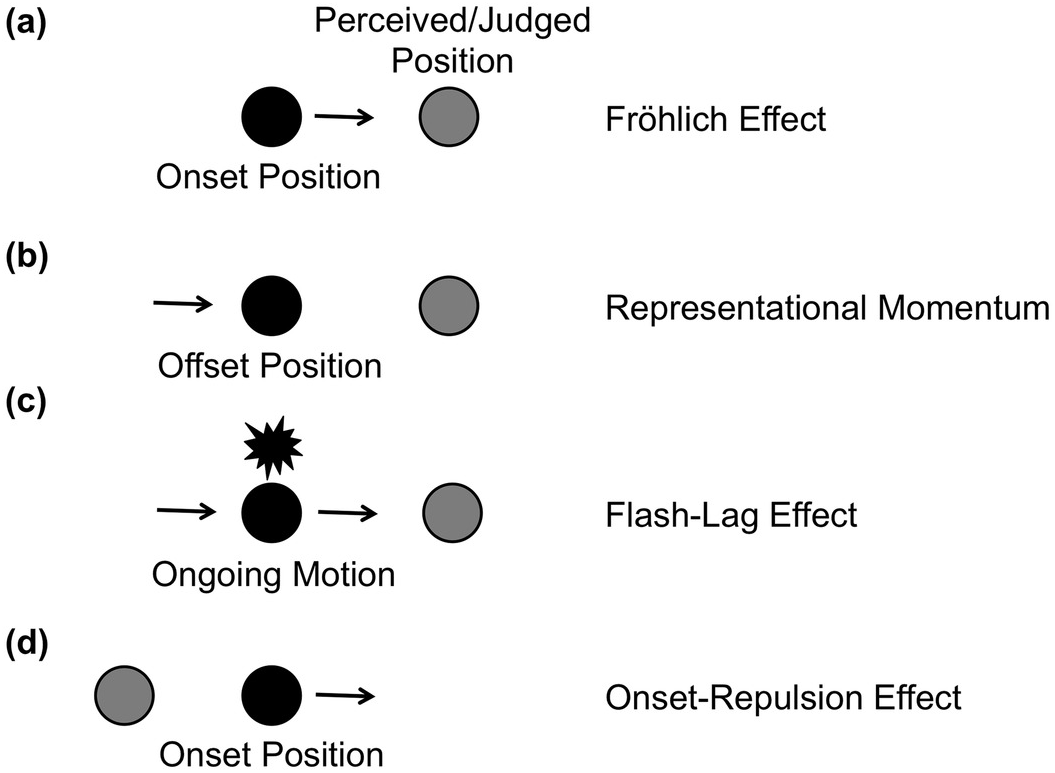

A note in a book by Friedrich W. Fröhlich (Reference Fröhlich1929) stated that in 1894 the Norwegian astronomer O. Pihl noticed a perceptual illusion when localizing the onset position of a moving target: Typically an observer did not notice the target at its physical onset position, but rather at some later position on its motion trajectory. In other words, a localization error in the direction of motion occurred (Figure 7.1a).

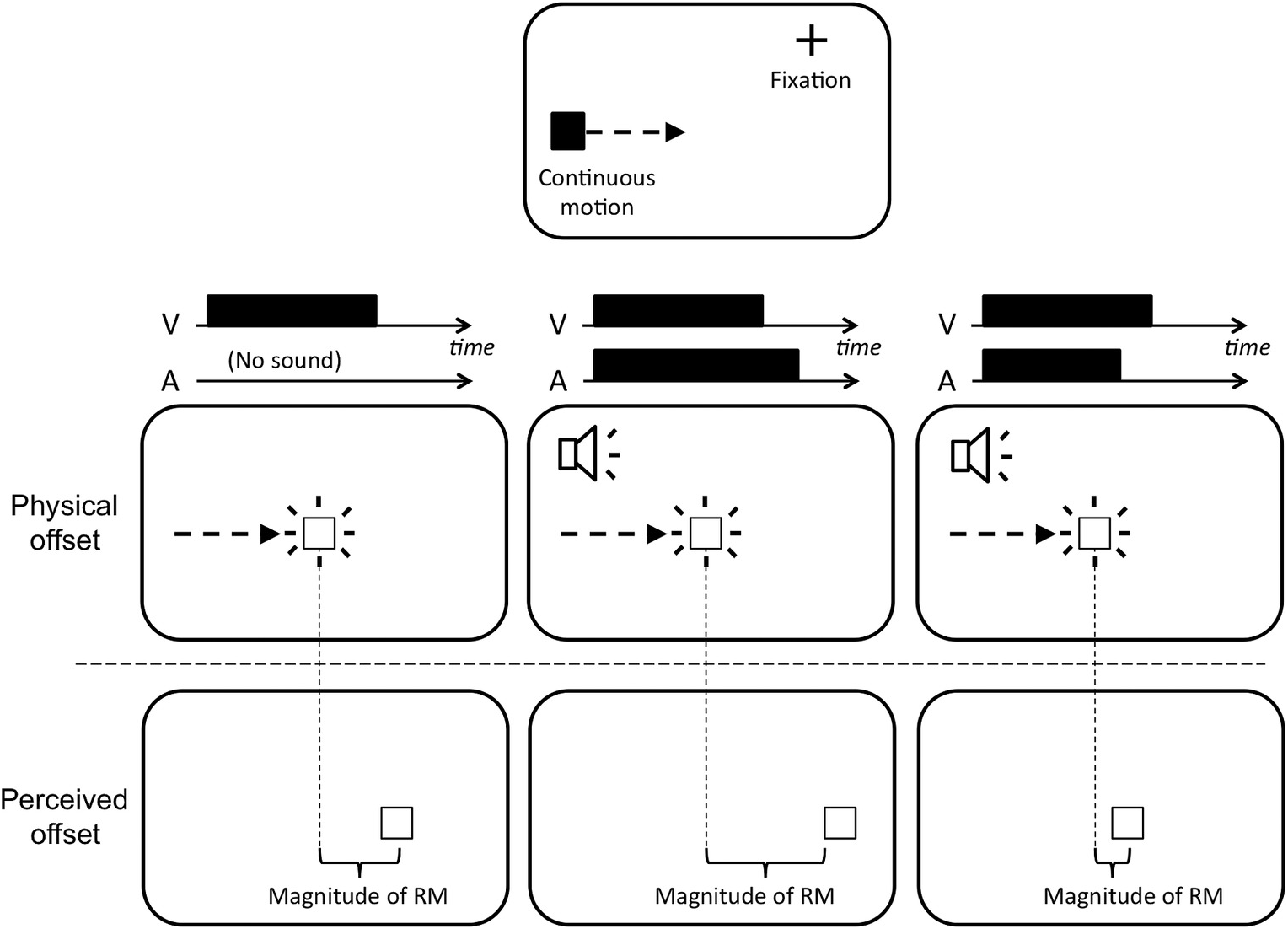

Figure 7.1 Four localization errors with moving stimuli. When the onset position (a) or the offset position (b) of a moving target is localized, observers typically make localization errors in the direction of movement. Similarly, when they judge a moving target that is presented in alignment with a flash, the target appears to lead the flash (c). These errors are known as the Fröhlich effect (a), representational momentum (b), and the flash-lag effect (c). In the onset-repulsion effect (d), the onset position is judged opposite to the direction of motion.

It took some time before the scientific community dealt with this phenomenon. Almost 30 years later, the same author (Fröhlich, Reference Fröhlich1923) was the first to publish systematic experiments on the mislocalization, and in the subsequent scientific debate the illusion was termed the Fröhlich effect. Nowadays, the Fröhlich effect is typically observed on a computer display, on which the moving target appears suddenly out of nowhere. At the time of Fröhlich (Reference Fröhlich1923), such an experimental setup was difficult to implement. Instead, he used a mechanical device with a moving bar entering a window. In this case the target is not perceived at the position adjacent to the edge of the window, but at a later position within that window.

In fact, Fröhlich (Reference Fröhlich1923) reported not only the mislocalization but also other phenomena. For instance, he reported that the perceived width of the moving bar appeared larger than that of the physical stimulus and that the bar looked brighter at its leading than at its trailing edge (for details, see Fröhlich, Reference Fröhlich1923; Kerzel, Reference Kerzel, Nijhawan and Khurana2010). The mislocalization and its first explanations were amply discussed in the 1920s and 1930s (e.g., by Fröhlich, Reference Fröhlich1925, Reference Fröhlich1929, Reference Fröhlich1930, Reference Fröhlich1932; Metzger, Reference Metzger1932; Müller, Reference Müller1931; Rubin, Reference Rubin1930), but were neglected after World War II.

Interest in the Fröhlich effect was revived in the 1990s together with two further mislocalizations in motion direction. First, Nijhawan (Reference Nijhawan1994) presented a moving target in alignment with a stationary flash and observed that the target appears to lead the flash (flash-lag effect, Figure 7.1c; for an overview, see Hubbard, Chapter 9 in this volume). In fact, Nijhawan rediscovered an observation made in follow-up studies of the Fröhlich effect (cf. Metzger, Reference Metzger1932; Rubin, Reference Rubin1930; for details, see Kerzel, Reference Kerzel, Nijhawan and Khurana2010). Second, when observers localize the offset point of a moving target, they also tend to judge it to be ahead of the target’s motion trajectory. Unlike in the previous illusions, the target never reached the judged position. This observation was termed representational momentum (Figure 7.1b), as it was seen as evidence for a mental analogue to the momentum of moving physical objects (Freyd & Finke, Reference Freyd and Finke1984; for an overview, see Hubbard, Chapter 8 in this volume).Footnote 1

Besides these errors in motion direction, another error was reported at the beginning of the present century that was opposite to motion direction: In several studies, the target’s onset was found to be consistently mislocalized away from the physical onset position opposite to the direction of motion (onset-repulsion effect, Figure 7.1d; Thornton, Reference Thornton2002; see also Actis-Grosso & Stucchi, Reference Actis-Grosso and Stucchi2003; Hubbard & Motes, Reference Hubbard and Motes2002; Hubbard & Ruppel, Reference Hubbard and Ruppel2011; Kerzel, Reference Kerzel2002; Kerzel & Gegenfurtner, Reference Kerzel and Gegenfurtner2004). As in representational momentum, the target was never presented at the judged position, and this mislocalization has also been explained with reference to mental analogues of physical laws (Thornton, Reference Thornton2002).

The present chapter deals mainly with the observation of displacements of the perceived onset position. We will first give an overview of the interpretations and findings of the Fröhlich effect. Then, we take into account the conditions and findings that resulted in the error opposite to motion direction (the onset-repulsion effect), and show how the conflict between the illusions might be resolved. We end the chapter with an outlook on the possible contributions of these phenomena to our understanding of perceptual processes in general.

The Mislocalization in Motion Direction: The Fröhlich Effect

Explanations of the Fröhlich effect can be roughly divided into four accounts: the sensation-time account, the metacontrast or lateral-inhibition account, the attentional account, and the mental extrapolation account.

Sensation Time

One main finding of Fröhlich was that the size of the mislocalization f increased with movement speed v, and that the ratio f/v proved to be fairly constant (Fröhlich, Reference Fröhlich1923). The fixed ratio f/v turns the spatial error into a temporal error, and Fröhlich interpreted the time constancy as an expression of the sensation time (“Empfindungszeit”). The sensation time was understood as the time between the retinal impact of light and the corresponding visual sensation (see also Kreegipuu & Allik, Reference Kreegipuu and Allik2003). Fröhlich saw the finding that the sensation time varied with the brightness of stimuli as plausible evidence for his explanation. It was, as expected, on the order of 100 msec with faint stimuli and about 50 msec with bright stimuli.
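The conversion between the spatial error and the sensation time can be sketched with a few lines of arithmetic. This is only an illustration of the relation f = v · t implied by a constant ratio f/v; the function names and the example speeds are hypothetical, and only the 50 and 100 msec values come from the text.

```python
def sensation_time_ms(displacement_deg: float, speed_deg_per_s: float) -> float:
    """Turn a spatial onset error f (deg) at speed v (deg/s) into a time t = f / v (msec)."""
    if speed_deg_per_s <= 0:
        raise ValueError("speed must be positive")
    return 1000.0 * displacement_deg / speed_deg_per_s


def predicted_displacement_deg(sensation_ms: float, speed_deg_per_s: float) -> float:
    """Spatial error predicted by a fixed sensation time: f = v * t."""
    return speed_deg_per_s * sensation_ms / 1000.0


if __name__ == "__main__":
    speeds = [5.0, 10.0, 20.0]  # hypothetical target speeds in deg/s
    for label, t_ms in (("bright", 50.0), ("faint", 100.0)):
        for v in speeds:
            f = predicted_displacement_deg(t_ms, v)
            print(f"{label} stimulus, v = {v} deg/s: f = {f} deg")
```

Under this reading, doubling the speed doubles the predicted error while the ratio f/v stays fixed, which is the pattern Fröhlich interpreted as a constant sensation time.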

At first glance, the idea of sensation time appeared attractive to explain the localization error. However, the explanation was criticized early by the contemporaries of Fröhlich, both empirically and theoretically. Rubin (Reference Rubin1930), for instance, noted that reducing the size of the window (i.e., reducing the trajectory length) shortened the Fröhlich effect (see also Müsseler & Neumann, Reference Müsseler and Neumann1992) and thereby should also shorten the sensation time. Also, Metzger (Reference Metzger1932) proposed that the sensation time might be longer at motion onset than at positions further along the trajectory (similar to the latency differences discussed to explain other spatiotemporal phenomena; e.g., see Bachmann, Reference Bachmann1999; Hubbard, Reference Hubbard and Ruppel2014; Whitney, Reference Whitney2002). However, these variations are not consistent with the basic idea of sensation time and are therefore theoretically problematic. Metzger (Reference Metzger1932) pointed out correctly that the concept of sensation time has to be applied not only to the onset of motion but to the entire motion trajectory. In this case, an observer should perceive the moving stimulus with a corresponding temporal delay, but at all positions of the trajectory.

Thus, the concept of sensation time could not provide a satisfactory answer to the question of why only the first positions were excluded from perception. Furthermore, at the time of Fröhlich it was not clear at all whether the first positions were perceptually missed or whether the first positions were simply displaced in the direction of motion.Footnote 2 Only later studies revealed that observers have indeed no access to the first positions: In experiments by Müsseler and coworkers (Müsseler & Aschersleben, Reference Müsseler and Aschersleben1998, Exp. 5; Müsseler & Tiggelbeck, Reference Müsseler and Tiggelbeck2013, Exp. 1), observers failed to detect brief pattern changes at motion onset but were better at detecting the change at positions further along the trajectory (see also Ansorge, Carbone, Becker, & Turatto, Reference Ansorge, Carbone, Becker and Turatto2010 for evidence from reaction time studies). Thus, a mechanism is called for that prevents the very first positions from being perceived.

Metacontrast Masking and Lateral Inhibition

At first sight, accounts of the Fröhlich effect based on metacontrast masking and lateral inhibition seemed to explain the low visibility of the initial part of the trajectory (cf. Carbone & Ansorge, Reference Carbone and Ansorge2008; Geer & Schmidt, Reference Geer and Schmidt2006; Kirschfeld & Kammer, Reference Kirschfeld and Kammer1999; Piéron, Reference Piéron1935). Metacontrast masking was first described by Stigler (Reference Stigler1910) and refers to the observation that the visibility of a stationary, flashed target is reduced when it is followed by a mask in its spatial vicinity (visual backward masking). Backward masking is optimal when target and mask share common stimulus boundaries, for instance, when the target is a disk and the mask is a surrounding ring. Optimal stimulus onset asynchronies (SOAs) between target and mask are between 40 msec and 100 msec and are thus within the range of the temporal error in the Fröhlich effect. Piéron (Reference Piéron1935) was the first to hypothesize the Fröhlich effect to be caused by masking mechanisms.

Lateral inhibition has been used to interpret metacontrast masking (e.g., Bridgeman, Reference Bridgeman2006). The basic assumption is that the presentation of a stimulus elicits excitatory and inhibitory neuronal activity in a retinotopic (cortical) map, for instance in the form of a simplified Mexican-hat function (Figure 7.2a). The inhibitory parts could be crucial for the Fröhlich effect, and Geer and Schmidt (Reference Geer and Schmidt2006) proposed that multiple inhibitory connections from neighboring space have a cumulative masking effect on early parts of the target trajectory (Figure 7.2b). The authors interpreted the effect of trajectory length accordingly by assuming that inhibition from adjacent stimulus positions accumulates across the trajectory. Therefore, the Fröhlich effect increases with trajectory length.
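The idea of accumulating inhibition can be made concrete with a toy calculation. The sketch below is not Geer and Schmidt's model: it assumes a difference-of-Gaussians form for the Mexican-hat function, arbitrary widths and gains, and a simple sum of the inhibitory contributions that later target positions send back to the onset position. All names and parameter values are hypothetical.

```python
import math


def mexican_hat(distance, sigma_exc=1.0, sigma_inh=3.0, gain_exc=1.0, gain_inh=0.5):
    """Net influence of a stimulus on a map location at the given distance.

    Assumed form: a narrow excitatory Gaussian minus a broad inhibitory Gaussian.
    """
    excitation = gain_exc * math.exp(-distance ** 2 / (2 * sigma_exc ** 2))
    inhibition = gain_inh * math.exp(-distance ** 2 / (2 * sigma_inh ** 2))
    return excitation - inhibition


def cumulative_inhibition_at_onset(n_positions, step=1.0):
    """Sum only the inhibitory (negative) influence that the next
    n_positions of the trajectory exert on the onset position."""
    total = 0.0
    for k in range(1, n_positions + 1):
        total += min(0.0, mexican_hat(k * step))
    return total


if __name__ == "__main__":
    for length in (3, 6, 10):  # trajectory length in steps (hypothetical)
        print(length, round(cumulative_inhibition_at_onset(length), 3))
```

With these assumed parameters the summed inhibition at the onset position grows with trajectory length and then levels off once later positions fall outside the inhibitory surround.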

Figure 7.2 Simplified assumptions (a) of lateral inhibition with stationary stimuli, (b) cumulative lateral inhibition with moving stimuli with regard to Geer and Schmidt (Reference Geer and Schmidt2006), and (c) metacontrast and visual focal attention with regard to Kirschfeld and Kammer (Reference Kirschfeld and Kammer1999, fig. 6). The latter figure illustrates only the additional excitatory and inhibitory neuronal activity, which is elicited by the motion of the stimulus.

However, if the area of lateral inhibition or any other masking mechanism just moves across a retinotopic map (cf. Figure 7.2b), there is no way to explain why only the first positions are excluded from the perception. This explanatory gap was already encountered by the sensation time account. As a matter of fact, the masking account predicts that most of the trajectory (except for the last position) should become invisible, because each stimulus presentation is masked by the subsequent stimulus presentations. Clearly, that is not the case. Therefore, an additional component is needed, which explains why the target becomes visible at all. In the subsequent accounts, this function was assigned to visual attention.

Visual Attention and Its Neuronal Implementation

At the end of the 1990s, two independently developed accounts referred to attentional mechanisms to explain the Fröhlich effect. Kirschfeld and Kammer’s (Reference Kirschfeld and Kammer1999) masking-plus-focal-attention account assumed that positions on the trajectory behind the target are masked (cf. Figure 7.2c), similar to Piéron’s (Reference Piéron1935) ideas and to the more recent proposal of Geer and Schmidt (Reference Geer and Schmidt2006). The new assumption was that positions on the trajectory ahead of the target are pre-activated by the target itself (cf. Figure 7.2c). The authors associated this pre-activation with mechanisms similar to cue-induced visual focal attention. Thus, the approach combines mechanisms of metacontrast masking and visual focal attention.

According to Kirschfeld and Kammer (Reference Kirschfeld and Kammer1999), focal attention ensures that masking does not occur along the entire trajectory. However, focal attention must first be shifted to the moving stimulus, and that is why the first positions of the trajectory are excluded from perception (the Fröhlich effect). Once attention has reached the moving stimulus, it becomes visible because masking is counteracted and overcome.

To support their view, Kirschfeld and Kammer (Reference Kirschfeld and Kammer1999) presented a rotating rod that was continuously illuminated and was additionally flashed with far higher energy when it first appeared. The resulting percept was actually of two bars: a flashed bar at the correct initial position, and a moving bar that was displaced in the direction of motion (the Fröhlich effect). The interpretation was that the transient, flashed illumination of the initial orientation was strong enough to overcome masking, while the initial portion of the moving bar’s trajectory was suppressed until the pre-activation (focal attention) was established. Further, Kirschfeld and Kammer concluded that perception of the moving bar had a shorter latency than perception of the stationary flashed bar, because the moving bar appeared ahead of the flashed bar and both bars appeared simultaneously (cf. the flash-lag effect; Metzger, Reference Metzger1932; Nijhawan, Reference Nijhawan1994; Rubin, Reference Rubin1930). The conclusion that moving stimuli have shorter latencies than flashed stimuli has also been confirmed in reaction-time experiments (Aschersleben & Müsseler, Reference Aschersleben and Müsseler1999).

The other attentional account was originally developed without reference to masking mechanisms.Footnote 3 Müsseler and coworkers (Müsseler & Aschersleben, Reference Müsseler and Aschersleben1998; Müsseler & Neumann, Reference Müsseler and Neumann1992, Exp. 6) simply started from three well-accepted attentional mechanisms used to explain effects with stationary stimuli (e.g., spatial cuing effects), but which should be equally applicable to situations in which stimuli are in motion (see also Ansorge et al., Reference Ansorge, Carbone, Becker and Turatto2010; Carbone & Pomplun, Reference Carbone and Pomplun2007; Kerzel & Gegenfurtner, Reference Kerzel and Gegenfurtner2004; Müsseler, Stork, & Kerzel, Reference Müsseler, Stork and Kerzel2002): (1) the presentation of a stimulus in the visual field elicits an attentional shift toward that stimulus; (2) an attention shift takes time; (3) a phenomenal representation of a stimulus is not available before the end of the attention shift. Applied to the Fröhlich-effect situation, this means that with the presentation of a moving stimulus, a visual focus shift is initiated, and while this shift is under way, the stimulus continues to move. The first phenomenal representation of the stimulus is available at the end of the focus shift, and this is what is observed in the Fröhlich effect.
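The three assumptions can be turned into a minimal frame-by-frame sketch. It is an illustration only: the frame duration, the shift durations, and the function name are hypothetical, and the attention shift is simply assumed to start at stimulus onset and to last a fixed time.

```python
def first_perceived_position(onset_deg, speed_deg_per_s, shift_ms,
                             frame_ms=10.0, n_frames=50):
    """Position of the target on the first frame shown at or after the end
    of the attention shift.

    (1) stimulus onset at t = 0 triggers the shift;
    (2) the shift takes shift_ms;
    (3) no phenomenal representation exists before the shift has ended.
    Returns None if the target has disappeared before the shift ends.
    """
    for k in range(n_frames):
        t_ms = k * frame_ms
        if t_ms >= shift_ms:
            return onset_deg + speed_deg_per_s * t_ms / 1000.0
    return None


if __name__ == "__main__":
    # Hypothetical values: 20 deg/s target, 100 msec uncued shift, 50 msec cued shift.
    print(first_perceived_position(0.0, 20.0, shift_ms=100.0))
    print(first_perceived_position(0.0, 20.0, shift_ms=50.0))
    # A very short trajectory (3 frames) ends before the shift is complete.
    print(first_perceived_position(0.0, 20.0, shift_ms=100.0, n_frames=3))
```

In this sketch a shorter shift, for example after a cue at the onset position, yields a smaller displacement, and a trajectory that ends before the shift is complete yields no percept at all under a literal reading of the third assumption.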

Both attentional accounts were able to explain the main findings observed with the Fröhlich effect, for instance, that the Fröhlich effect increases with increasing target velocity. The effect of stimulus brightness (or stimulus contrast; Fröhlich, Reference Fröhlich1923) can be plausibly addressed by assuming that establishing focal attention or eliciting an attentional shift is more effective with bright than with faint stimuli. Further, both accounts predicted that cuing the onset position with a stationary stimulus reduced the Fröhlich effect. The observed reduction effect was small but reliable (Adamian & Cavanagh, Reference Adamian and Cavanagh2017; Kerzel & Müsseler, Reference Kerzel and Müsseler2002; Müsseler & Aschersleben, Reference Müsseler and Aschersleben1998; Whitney & Cavanagh, Reference Whitney and Cavanagh2000a). Finally, they explain why mislocalizations are more pronounced in the Fröhlich effect than in the flash-lag effect: At the beginning of the movement, attention is far from the moving object and a large mislocalization results. As the motion progresses, attention catches up with the moving object and the mislocalization is reduced (Müsseler et al., Reference Müsseler, Stork and Kerzel2002).

However, the attentional accounts also have serious problems with some findings, for instance, that an increase in trajectory length led to an increase in the Fröhlich effect (Rubin, Reference Rubin1930; Müsseler & Neumann, Reference Müsseler and Neumann1992, Exp. 6). The authors of the masking-plus-focal-attention account (Kirschfeld & Kammer, Reference Kirschfeld and Kammer1999) might integrate Geer and Schmidt’s (Reference Geer and Schmidt2006) assumption that inhibition from adjacent stimulus positions accumulates across the trajectory, which leads to larger Fröhlich effects with longer trajectories. The attention-shifting account of Müsseler and Aschersleben (Reference Müsseler and Aschersleben1998) has more difficulty accommodating this finding. The solution could be a modification of their third assumption, which claims that a phenomenal representation of a stimulus is not available before the end of the attention shift. This claim may be too strong. Note that shifting attention toward a stimulus implies at least coarse knowledge of the stimulus location prior to the start of the shift. What happens when an attention shift cannot be successfully completed, as would be the case when the moving target has a short trajectory and has already disappeared from the screen? Taking the third assumption literally, no stimulus should be perceived at all. It is more likely that the coarse representation of the stimulus at the beginning of the attention shift together with the incoming position information during the shift establishes what is seen. In any case, the perceived position should be closer to the starting position, i.e., the Fröhlich effect would be decreased.

Another point is that the two attentional approaches use different levels of description. While Kirschfeld and Kammer (Reference Kirschfeld and Kammer1999) selected a neuronal level of description to address masking and attentional processes, the attention-shifting account of Müsseler and coworkers (Müsseler & Aschersleben, Reference Müsseler and Aschersleben1998; Müsseler & Neumann, Reference Müsseler and Neumann1992) was framed in a functional, formal way, which leaves the neuronal processes unspecified. Therefore, Müsseler et al. (Reference Müsseler, Stork and Kerzel2002; see also Müsseler & Tiggelbeck, Reference Müsseler and Tiggelbeck2013) attempted to identify the attention-shifting component in the neuronal models of Kirschfeld and Kammer (Reference Kirschfeld and Kammer1999) and particularly in the dynamic-field account of Jancke and Erlhagen (Jancke et al., Reference Jancke, Erlhagen, Dinse, Akhavan, Giese and Steinhage1999; Jancke & Erlhagen, Reference Jancke, Erlhagen, Nijhawan and Khurana2010). The dynamic-field account, originally developed to explain the activity of neuronal populations in the primary visual cortex of the cat (Jancke et al., Reference Jancke, Erlhagen, Dinse, Akhavan, Giese and Steinhage1999) and then successfully applied to perceptual mislocalizations with moving stimuli (for an overview, see Jancke & Erlhagen, Reference Jancke, Erlhagen, Nijhawan and Khurana2010), assumes that the presentation of a stimulus forms an activation pattern that is not restricted to the area covered by the stimulus (see also Hubbard, Reference Hubbard1994). Rather, it spreads its activation to and integrates contextual information from the adjacent parts of the visual field. Therefore, in response to an afferent input, the activation is assumed to interact with new incoming information and, thus, to modify suprathreshold activity.

When a stimulus moves through the visual field, it can be assumed that the incoming information contributes to and modifies the activation pattern in such a way that a stimulus-driven bow wave of activity occurs, which moves continuously across the visual scene (Müsseler et al., Reference Müsseler, Stork and Kerzel2002). Depending on velocity, it peaks at or even ahead of the leading edge of the stimulus. Since the Fröhlich effect emerges in the buildup phase of the bow wave, activation is accumulated starting from the resting level. The movement results in a skewed wave that exceeds the perceptual threshold (distance between resting level and supraliminal activation), and the Fröhlich effect is observed. It has been suggested that spreading subthreshold activation constitutes a neuronal correlate of a cue-induced attentional mechanism that alters the processing of spatial information (Bocianski, Müsseler, & Erlhagen, Reference Bocianski, Müsseler and Erlhagen2008, Reference Bocianski, Müsseler and Erlhagen2010; Kirschfeld & Kammer, Reference Kirschfeld and Kammer1999; Müsseler & Tiggelbeck, Reference Müsseler and Tiggelbeck2013; Steinman, Steinman, & Lehmkuhle, Reference Steinman, Steinman and Lehmkuhle1995). We will return to this point later in the chapter.
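The buildup from resting level to threshold can be illustrated with a deliberately reduced simulation. The sketch below is not the dynamic-field model of Jancke and Erlhagen: it assumes a one-dimensional map of independent leaky integrators without lateral interactions, a Gaussian input that travels with the target, and arbitrary values for the time constant, input width, and threshold. It reports how far the target has moved when activity anywhere in the map first exceeds the threshold.

```python
import math


def onset_displacement_deg(speed_deg_per_s, threshold=0.3, tau_ms=50.0,
                           sigma_deg=1.0, dt_ms=1.0, max_ms=500.0):
    """Distance travelled by the target (onset at 0 deg) when the field
    first exceeds the perceptual threshold, or None if it never does."""
    xs = [-5.0 + 0.05 * i for i in range(401)]  # map positions in deg
    u = [0.0] * len(xs)                          # activation starts at resting level
    steps = int(max_ms / dt_ms)
    for k in range(1, steps + 1):
        stim = speed_deg_per_s * (k * dt_ms) / 1000.0  # current target position
        for i, x in enumerate(xs):
            drive = math.exp(-(x - stim) ** 2 / (2.0 * sigma_deg ** 2))
            # Euler step of tau * du/dt = -u + drive
            u[i] += (dt_ms / tau_ms) * (drive - u[i])
        if max(u) > threshold:
            return stim
    return None


if __name__ == "__main__":
    for v in (10.0, 20.0):  # hypothetical speeds in deg/s
        print(v, onset_displacement_deg(v))
```

Even in this reduced form, the target has travelled farther at the higher speed by the time the threshold is crossed, which mirrors the increase of the Fröhlich effect with velocity. Capturing a peak ahead of the leading edge would require the lateral interactions that this sketch omits.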

Mental Extrapolation (Visual Prediction)

Mental extrapolations often occur in everyday life. When, for instance, a tennis ball flies through the visual scene, a spatial lag between the ball’s position in the real world and its perceived position should emerge from neuronal transmission latencies. In order to hit the ball with a racket successfully, there must be some form of compensation, and this compensation might be in the motor system (e.g., Kerzel & Gegenfurtner, Reference Kerzel and Gegenfurtner2004). It overcomes the lag by predicting the position of the moving target forward.

However, in Nijhawan’s view (Reference Nijhawan1994, Reference Nijhawan2008; see also Hubbard, Chapter 9 in this volume), the lag is compensated not only by motor predictions but also by visual predictions. In this view, the flash-lag effect can be understood as the visualized percept of this prediction. The flash lags because the visual system extrapolates the position of the moving target, and this is what is seen. Thus, in the strong version of the extrapolation assumption, stimuli in motion are perceived at their real-time positions and do not lag behind. Alternative accounts assume different perceptual latencies for the flash and the moving target (e.g., Baldo & Klein, Reference Baldo and Klein1995; Whitney & Murakami, Reference Whitney and Murakami1998).

The extrapolation assumption is controversially debated (see, e.g., the discussion in Baldo & Klein, Reference Baldo and Klein2008; Krekelberg, Reference Krekelberg2008; Nijhawan, Reference Nijhawan2008; Whitney, Reference Whitney2008). Although also seen as an explanation for the Fröhlich effect (e.g., Maus, Weigelt, Nijhawan, & Muckli, Reference Maus, Weigelt, Nijhawan and Muckli2010), it is especially difficult to see how an extrapolation mechanism works at the onset position of moving stimuli. Predicting future positions of a target requires some knowledge about the target’s motion direction and velocity. As there is no preceding motion trajectory at motion onset, it is unclear how extrapolation could account for the Fröhlich effect. Here one must probably recruit mechanisms that have been introduced in the previous sections.

As a last remark, it should be noted here that a predictive component also exists in the masking-plus-focal-attention account (Kirschfeld & Kammer, Reference Kirschfeld and Kammer1999) and in the bow-wave account or dynamic field model, respectively (Jancke & Erlhagen, Reference Jancke, Erlhagen, Nijhawan and Khurana2010; Müsseler et al., Reference Müsseler, Stork and Kerzel2002). The difference is that visual prediction determines the percept while a pre-activation only prepares an area for the target to be seen.

Taking into Account the Mislocalization Opposite to the Direction of Motion: The Onset-Repulsion Effect

As already noted in the Introduction, some studies also found mislocalization opposite to the direction of motion. Note that this error is contrary to the Fröhlich effect: In the onset-repulsion effect (Thornton, Reference Thornton2002), the judged onset position of the target was found to be consistently mislocalized opposite to the direction of motion (Figure 7.1d; see also Actis-Grosso & Stucchi, Reference Actis-Grosso and Stucchi2003; Hubbard & Motes, Reference Hubbard and Motes2002; Hubbard & Ruppel, Reference Hubbard and Ruppel2011; Kerzel, Reference Kerzel2002; Kerzel & Gegenfurtner, Reference Kerzel and Gegenfurtner2004).

Studies concerned with the onset-repulsion effect sometimes revealed contradictory findings. For instance, some studies found that an increment in velocity shifted the judged onset further opposite to motion direction (Kerzel, Reference Kerzel2002; Thornton, Reference Thornton2002), whereas other studies did not find any effect of velocity (Actis-Grosso & Stucchi, Reference Actis-Grosso and Stucchi2003; Hubbard & Motes, Reference Hubbard and Motes2002; Kerzel, Reference Kerzel2002; Müsseler & Kerzel, Reference Müsseler and Kerzel2004). Some authors found the onset-repulsion effect only with a relative judgment task (Kerzel, Reference Kerzel2002), whereas others did so also with an absolute positioning task (Müsseler & Kerzel, Reference Müsseler and Kerzel2004; Thornton, Reference Thornton2002). Further, the onset-repulsion effect seems to depend on motion type and motion direction. It is largest with smooth, continuous motions and decreases with implied motions (Kerzel, Reference Kerzel2004; Thornton, Reference Thornton2002). Finally, upward motion or right-to-left motion resulted in stronger onset-repulsion effects than downward or left-to-right motion (Thornton, Reference Thornton2002).

It is obvious that accounts in terms of sensation time, metacontrast, or attention simply do not apply to these findings, most obviously because perceptual processes could never have been triggered at positions where the target was never presented (opposite to target motion). Instead, explanations of the onset-repulsion effect often refer to non-perceptual mechanisms. For instance, it is possible that the onset position is accurately perceived but is distorted during the delay before the judgment is made. In this case the onset-repulsion effect would originate from a memory failure, similar to the proposed mechanisms underlying representational momentum (Freyd & Finke, Reference Freyd and Finke1984; for an overview, see Hubbard, Chapter 8 in this volume). It is also possible that in this case observers have an imprecise percept of the onset position and tend to estimate the origin of the motion post hoc, which is subject to biases (see discussion below).

However, mislocalizations of the type of representational momentum are in the direction of motion, and a mechanism is needed to explain why the onset-repulsion effect is in the opposite direction. Therefore, it has been discussed that estimations of the onset position run backward along the observed trajectory, as this reflects more natural, physical tendencies (Thornton, Reference Thornton2002). In the same vein, Runeson (Reference Runeson1974) reported that observers perceive an illusory deceleration at the onset of a motion even when the physical velocity is constant. This may result in an opposite error if the post hoc estimation of the onset position is calculated on the basis of constant motion along the entire trajectory.

The most critical point, however, is how to explain the contrary findings of the Fröhlich and onset-repulsion effects. It turned out that the experimental procedures used to measure the mislocalizations were quite different. In reports of the Fröhlich effect, observers were able to predict where the moving target would appear. For instance, the moving target always appeared at the fixed edge of a window (Fröhlich, Reference Fröhlich1923) or at two fixed eccentricities to the left or right of fixation (Müsseler & Aschersleben, Reference Müsseler and Aschersleben1998). In reports of the onset-repulsion effect, however, the moving target appeared randomly in a relatively large area (Hubbard & Motes, Reference Hubbard and Motes2002; Thornton, Reference Thornton2002), and observers were unable to predict the onset positions.

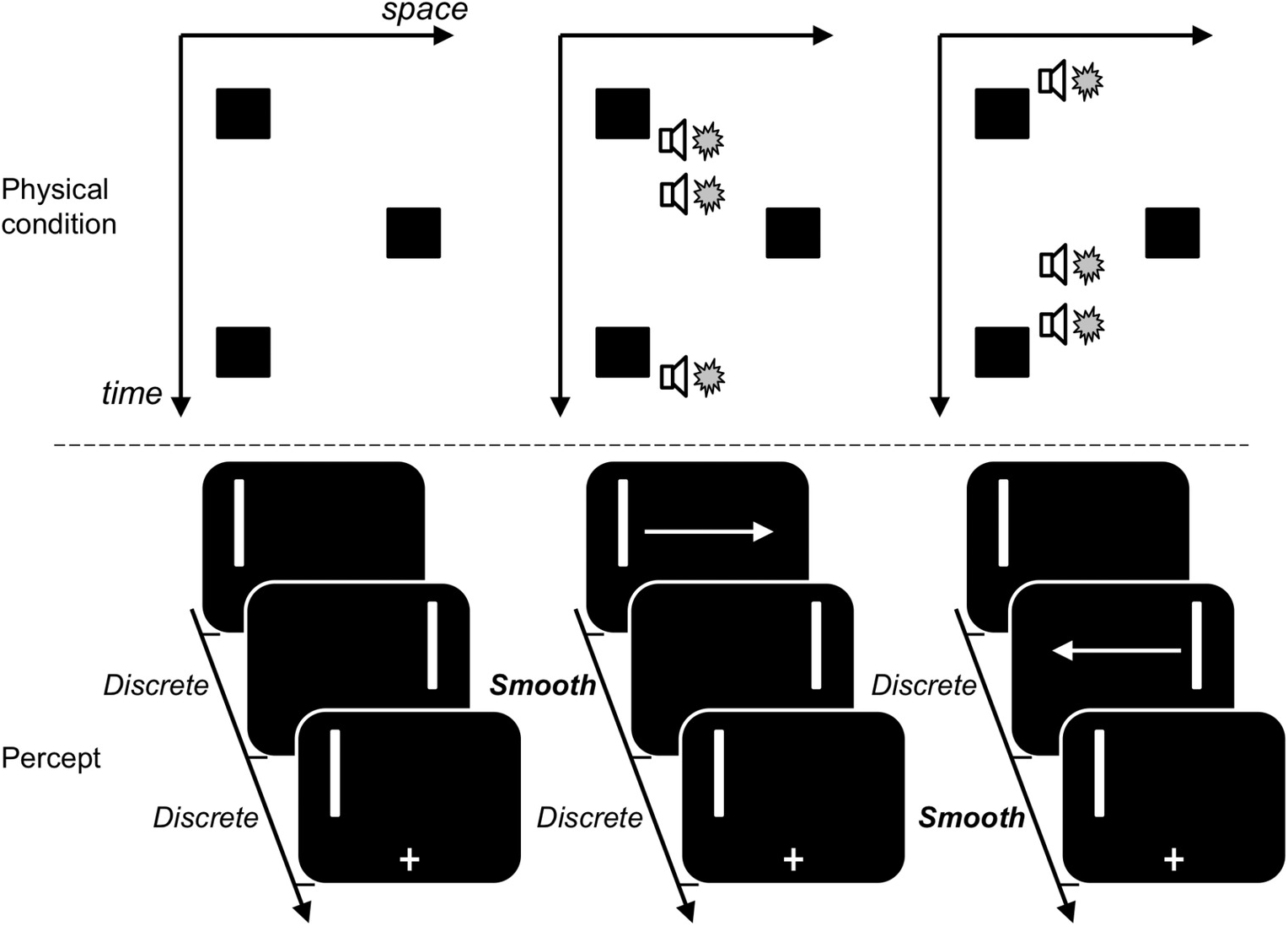

To examine the hypothesis that the error in motion direction (the Fröhlich effect) and the error opposite to motion direction (the onset-repulsion effect) originated from the predictability of onset positions, Müsseler and Kerzel (Reference Müsseler and Kerzel2004; see also Müsseler et al., Reference Müsseler, Stork and Kerzel2008) conducted experiments in which two different trial contexts were used. In the random context, the target appeared mostly at a random onset position in a large area of the computer screen (similar to Thornton, Reference Thornton2002), but in one-sixth of the trials the target appeared about 6.6° to the left or right of fixation (Figure 7.3). Only these trials of the random-context condition were compared with the trials of the constant-context condition, in which the target always appeared at the onset positions to the left or right of fixation (similar to Müsseler & Aschersleben, Reference Müsseler and Aschersleben1998). The judgments showed a huge difference between context conditions: The onset was localized 0.5° opposite to the direction of motion in the random-context condition (a localization error of –0.5°; the onset-repulsion effect) and 1.5° in the direction of motion in the constant-context condition (the Fröhlich effect). Thus, low predictability of onset positions led to the error opposite to the direction of motion, while high predictability of onset positions led to the error in the direction of motion.Footnote 4

Figure 7.3 Trial contexts and findings of Müsseler and Kerzel (Reference Müsseler and Kerzel2004, Exp. 1). In the constant-trial context, the target always appeared at constant onset positions (OP, black dots) to the left or right from fixation. In the random-trial context, the target appeared mostly at random OPs (grey dots) in a 30 x 30° field of the computer screen, but in one-sixth of the trials also at the constant OPs. In the data analysis, only these trials were compared with the trials of the constant context. The results showed that the onset was localized opposite to the direction of motion with the random context (negative localization error of –0.5°; the onset-repulsion effect) and in the direction of motion with the constant context (positive localization error of 1.5°; the Fröhlich effect).
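The two trial contexts can be sketched as a small trial-list generator. The 6.6° eccentricity, the one-sixth proportion, and the 30 x 30° field come from the text and caption; everything else is an assumption made for illustration, including the function name, the field being centered on fixation, the constant onset positions lying on the horizontal meridian, and the balanced left/right assignment.

```python
import random

CONSTANT_ECC_DEG = 6.6  # constant onset positions left/right of fixation
FIELD_DEG = 30.0        # side length of the random-onset field


def make_trials(context, n_trials, rng):
    """Return a shuffled list of (x_deg, y_deg, is_constant_position) onsets.

    In the "constant" context every trial uses a constant onset position.
    In the "random" context one-sixth of the trials do, and only those
    trials would enter the comparison with the constant context.
    """
    if context == "constant":
        n_constant = n_trials
    elif context == "random":
        n_constant = n_trials // 6
    else:
        raise ValueError("context must be 'constant' or 'random'")

    trials = []
    for i in range(n_constant):
        side = -1.0 if i % 2 == 0 else 1.0  # alternate left and right
        trials.append((side * CONSTANT_ECC_DEG, 0.0, True))

    half = FIELD_DEG / 2.0  # assumption: field centered on fixation
    for _ in range(n_trials - n_constant):
        trials.append((rng.uniform(-half, half), rng.uniform(-half, half), False))

    rng.shuffle(trials)
    return trials


if __name__ == "__main__":
    rng = random.Random(0)
    random_context = make_trials("random", 60, rng)
    print(sum(1 for t in random_context if t[2]), "of", len(random_context))
```

Keeping a flag for the constant-position trials makes it straightforward to restrict the analysis of the random context to the trials that are directly comparable with the constant context.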

Like other authors (Actis-Grosso & Stucchi, Reference Actis-Grosso and Stucchi2003; Kerzel, Reference Kerzel2002; Kerzel & Gegenfurtner, Reference Kerzel and Gegenfurtner2004; Thornton, Reference Thornton2002), we assumed that the difference between context conditions originates from an error in the judgment phase. When positional predictability is low, as is the case in the random-context condition, observers may notice a target relatively late, and with every new trial they might become aware of a possible localization error. To avoid this error, they may overcompensate and point to positions opposite to motion. Consistent with strategic adjustments, differences between the random-context and constant-context conditions were visible after about 15–35 trials (Müsseler & Kerzel, Reference Müsseler and Kerzel2004, Exp. 4).

However, further experiments by Müsseler and Tiggelbeck (Reference Müsseler and Tiggelbeck2013) cast doubts on the overcompensation explanation. According to the overcompensation explanation, the error opposite to motion direction is assumed not to be a perceptual one, but to result from the tendency in the judgment phase to correct for the possible spatial error. Consequently, an overcompensation mechanism should mainly affect a localization task, but not a discrimination task. In an experiment by Müsseler and Tiggelbeck (Reference Müsseler and Tiggelbeck2013, Exp. 1), moving targets either started out as squares and changed to circles at different positions on the trajectory, or appeared as circles and did not change (cf. also Ansorge et al., Reference Ansorge, Carbone, Becker and Turatto2010). Observers’ task was to discriminate whether or not they perceived a square during the motion of the target. The overcompensation account predicted equal or worse discrimination performance in the random-context condition than in the constant-context condition. This result would point to a response bias, which compensates for a possible localization error in the judgment phase. However, the contrary finding was observed: When the squares appeared in the very first positions of the motion, better discrimination performance was found in the random-context condition than in the constant-context condition. Thereafter, the difference between context conditions vanished.

This finding was surprising, as it indicated worse perceptual performance in the constant-context condition. The disadvantage is counterintuitive: When stimuli always appeared at predictable left/right positions, as is the case in the constant-context condition, observers could direct their attention to both positions in advance (parallel allocation of visual attention to two positions; cf. Awh & Pashler, Reference Awh and Pashler2000; Cave, Bush, & Taylor, Reference Cave, Bush and Taylor2010; Franconeri, Alvarez, & Enns, Reference Franconeri, Alvarez and Enns2007; but see also Jans, Peters, & De Weerd, Reference Jans, Peters and DeWeerd2010). Directing attention to a position usually improves spatial localization in this area, as was found in several studies with stationary targets (e.g., Bocianski et al., Reference Bocianski, Müsseler and Erlhagen2008, Reference Bocianski, Müsseler and Erlhagen2010; Tsal & Bareket, Reference Tsal and Bareket1999, Reference Tsal and Bareket2005; Tsal, Meiran, & Lamy, Reference Tsal, Meiran and Lamy1995; Yeshurun & Carrasco, Reference Yeshurun and Carrasco1999). Therefore, either observers in the constant-context condition of Müsseler and Tiggelbeck (Reference Müsseler and Tiggelbeck2013) had not directed their attention to the left/right onset positions, or directing attention to these positions produced worse localization performance.

To examine the last possibility, Müsseler and Tiggelbeck (Reference Müsseler and Tiggelbeck2013, Exp. 3) used an exogenous cue to direct attention in the random-context condition. The cue was presented at the onset positions, 280 msec before the moving target appeared. If, with moving stimuli, directing attention results in worse localization performance, presenting the cue should result in comparable mislocalizations in both context conditions. And this was what the results actually showed. When the cue preceded the motion onset, the localization error of the random-context condition increased in size relative to the localization error of the constant context.Footnote 5 Thus, Müsseler and Tiggelbeck’s experiments delivered consistent results. When observers allocated their attention to the onset position, worse discrimination performance (Exp. 1) went hand in hand with worse localization precision (Exp. 3).

An issue that remains unexplained is why attention improves discrimination and localization performance with stationary stimuli while it seems to impair discrimination and localization precision at the onset position of moving stimuli. We speculated that, contrary to stationary stimuli, moving stimuli require fast spatial disengagement (Petersen & Posner, Reference Petersen and Posner2012) from the previously attended position in order to follow the stimulus, especially at the onset position. It seems plausible that this disengagement could impair processing.

How can this idea be implemented in the neuronal dynamic field model discussed in the previous section? Bocianski et al. (Reference Bocianski, Müsseler and Erlhagen2008, Reference Bocianski, Müsseler and Erlhagen2010) already applied the model to an illusion with stationary stimuli and extended it by integrating a top–down attentional mechanism. In the empirical part of their paper, observers were confronted with blockwise presentations, similar to the random and constant context used by Müsseler and Kerzel (Reference Müsseler and Kerzel2004). The authors assumed that the blockwise presentation of a target at constant positions modulates the attentional baseline by arousing a peak at attended locations and by suppressing all other locations (for neuronal evidence see, e.g., Bestmann, Ruff, Blakemore, Driver, & Thilo, Reference Bestmann, Ruff, Blakemore, Driver and Thilo2007; Smith, Singh, & Greenlee, Reference Smith, Singh and Greenlee2000). Empirical and modeling data showed that localization precision was improved when the static target was presented in the attended area (Bocianski et al., Reference Bocianski, Müsseler and Erlhagen2010).

When, instead of a stationary stimulus, a moving target is presented at the attended area, the only assumption to add is that the target might already have left the region of the attentional peak before suprathreshold activity is reached. Moreover, the new incoming information about the target may interact with the previous activation pattern, which may additionally impair localization performance. In a sense, the postulated mechanism is similar to that accounting for effects of spatial disengagement from previously attended positions.

Note that this extension of the neuronal dynamic field model can only account for the observed differences between the random and constant context – that is, for the clear mislocalizations in motion direction in the constant-context condition and the more or less precise localizations in the random-context condition. It cannot account for the onset-repulsion effect per se – that is, for the error opposite to motion direction.

Conclusions

The present chapter focused initially on the Fröhlich effect – the localization error at the onset position in motion direction. In the nearly century-old scientific debate on this illusion, different accounts were considered and discarded, among them the sensation-time account and the masking account. But it is worth emphasizing that these accounts did not simply disappear, but have been modified by additional findings. For instance, the sensation-time assumption is still discussed in the context of the flash-lag effect, with different latencies for the flash and the moving target (e.g., Baldo & Klein, Reference Baldo and Klein2008; Krekelberg, Reference Krekelberg2008; Whitney, Reference Whitney2008), or in the context of the Fröhlich effect, with longer latencies at the onset position than at later positions on the trajectory (e.g., Aschersleben & Müsseler, Reference Aschersleben and Müsseler1999; Kirschfeld & Kammer, Reference Kirschfeld and Kammer1999).

The underlying processing mechanisms in localizing the onset position of moving stimuli were further clarified by the discovery of the onset-repulsion effect, that is, the error opposite to motion direction. As it turned out in several studies, the localization judgments varied strongly with trial context. Perceived starting positions were in the direction of motion in constant-context conditions and opposite to motion direction (or at least substantially reduced) in random-context conditions (Müsseler & Kerzel, Reference Müsseler and Kerzel2004; Müsseler & Tiggelbeck, Reference Müsseler and Tiggelbeck2013; Müsseler et al., 2008). It is likely that when stimuli always appear at predictable positions, as is the case in the constant-context condition, observers direct their attention to the positions in advance. One would therefore expect localization precision to improve, but the opposite was found: Localization precision and discrimination performance were worse with constant than with random context.

Trial context was also found to affect localization judgments with stationary stimuli (Bocianski et al., Reference Bocianski, Müsseler and Erlhagen2008, Reference Bocianski, Müsseler and Erlhagen2010), but here the findings were as expected (see also Tsal & Bareket, Reference Tsal and Bareket1999, Reference Tsal and Bareket2005; Tsal et al., Reference Tsal, Meiran and Lamy1995; Yeshurun & Carrasco, Reference Yeshurun and Carrasco1999). Localization precision was better with constant-context conditions than with random-context conditions. To account for these differences in the findings between stationary and moving stimuli, we speculated that moving stimuli require a spatial disengagement from the previously attended onset position in order to follow the target. It seems plausible that this attentional disengagement could impair processing. Certainly, this idea needs further confirmation, but if this conclusion proves to be true, attentional disengagement should be at the heart of explanations of the Fröhlich effect.

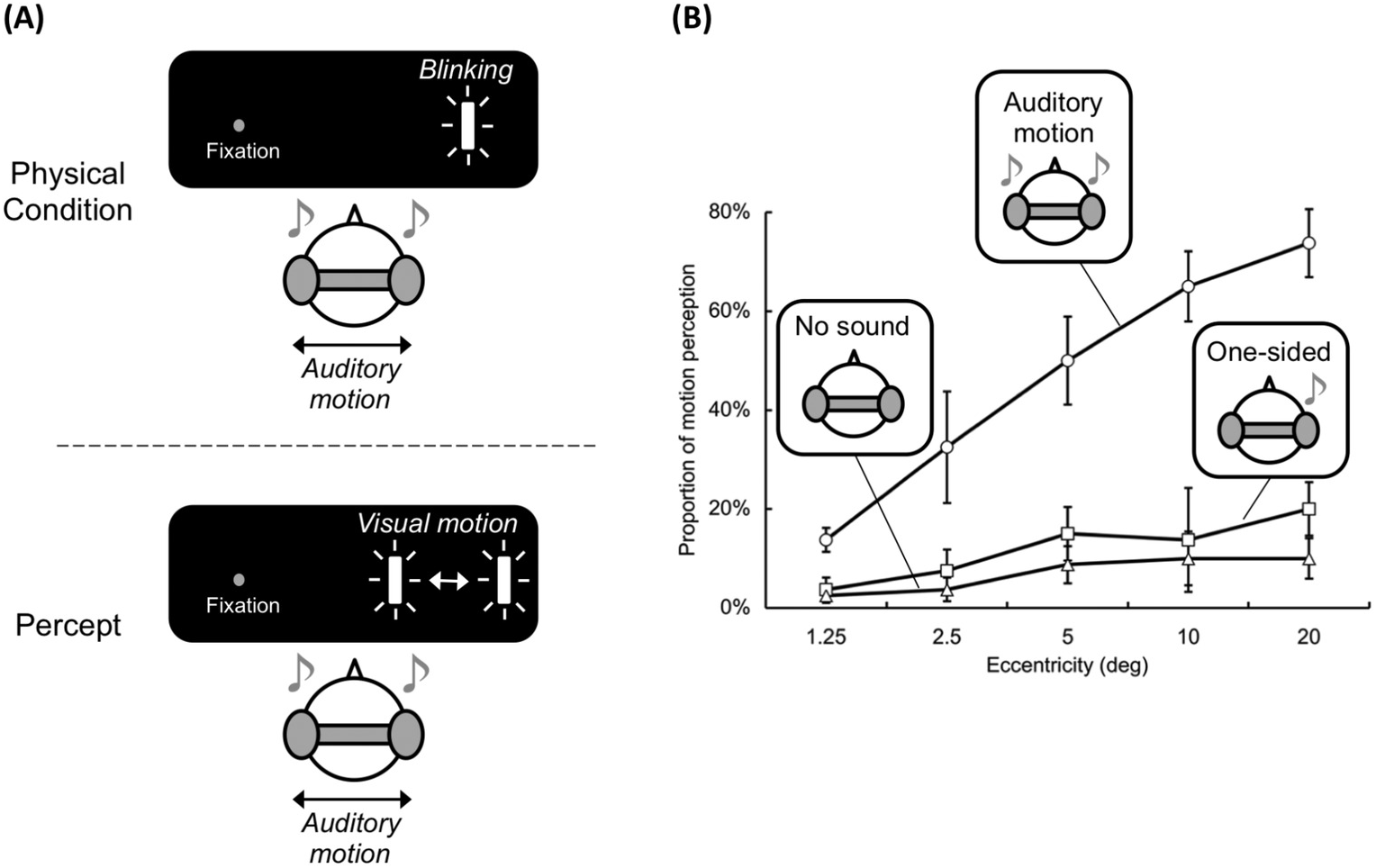

Memory for the final location of a moving target is often displaced in the direction of anticipated motion, and this is referred to as representational momentum (Freyd & Finke, Reference Freyd and Finke1984). Overviews of variables that influence representational momentum were presented in Hubbard (Reference Hubbard1995c, Reference Hubbard2005b, Reference Hubbard2014a), and comparisons of representational momentum with other types of momentum-like effects were presented in Hubbard (Reference Hubbard2014a, Reference Hubbard2015b, Reference Hubbard2017). This chapter consolidates earlier lists of variables that influence representational momentum into a single comprehensive catalog. Such a consolidation should be useful for future studies of representational momentum and other momentum-like effects (e.g., variables that influence representational momentum might be hypothesized to have analogous effects on other momentum-like effects). In addition, suggestions regarding a few properties of representational momentum, and the relationship of representational momentum with other spatial biases, are provided. As theories of representational momentum were addressed in Hubbard (Reference Hubbard, Nijhawan and Khurana2010), and discussion linking representational momentum with other momentum-like effects was provided in Hubbard (Reference Hubbard2014a, Reference Hubbard2015b, Reference Hubbard2017), those issues are not discussed here.

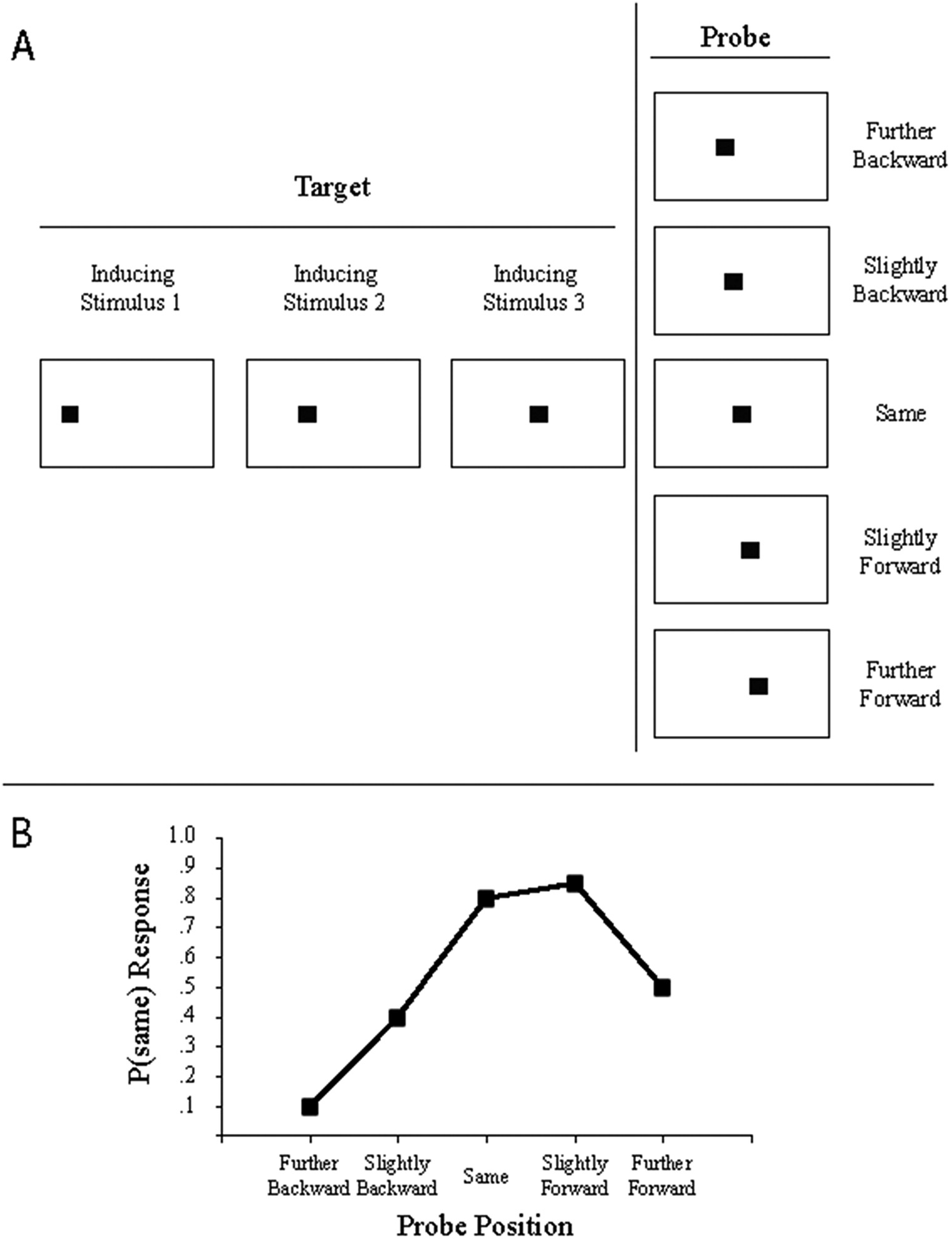

A common stimulus presentation method (implied motion) and response measure (probe judgment) used in studies of representational momentum are illustrated in Figure 8.1. In the top panel, three sequential presentations of a target (inducing) stimulus implying motion from left to right are shown. A fourth (probe) stimulus is shown, and the probe is located behind the final location of the target (i.e., shifted in the direction opposite to target motion), at the same location as the final location of the target, or beyond the final location of the target (i.e., shifted in the direction of target motion). Participants judge whether the probe is at the same location where the target vanished or at a different location. In the bottom panel, probability of a same response is plotted as a function of probe location, and representational momentum is indicated by the greater likelihood of a same response to a probe slightly beyond the final location than to a probe slightly behind the final location. Other methods of stimulus presentation include smooth continuous motion of a target or presentation of a single static target drawn from a longer motion sequence (e.g., a photograph of a dancer in mid-leap). Other methods of response measurement include using a computer mouse to position the cursor at the judged location or touching the display at the judged location.

Figure 8.1 An illustration of a typical methodology and results for an experiment assessing representational momentum. In Panel A, the large rectangles indicate the outlines of the display, and the small black squares indicate the target (left) or probe (right). There are three consecutive appearances of inducing stimuli that comprise the target. In this example, the target exhibits implied rightward motion (typically, each inducing stimulus is presented for 250 msec, and there is a 250-msec interstimulus interval between successive inducing stimuli and between the final inducing stimulus and probe). A probe is presented, and position of the probe relative to the actual final position of the target varies across trials (five potential probe positions are shown in the column on the right). In Panel B, a hypothetical but typical distribution of same responses as a function of probe position is illustrated. The presence of representational momentum is indicated by the higher probability of same responses to probes forward of the final actual target location than to probes backward of the actual final target location.
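One common way to summarize such a distribution of same responses as a single number is a weighted mean of probe positions, with each position weighted by the proportion of same responses it received. The sketch below illustrates this under stated assumptions: the function name, the probe offsets (arbitrary units, positive values forward of the final target location), and the response proportions are all hypothetical and are not data from any study cited here.

```python
def weighted_mean_displacement(probe_offsets, p_same):
    """Weighted mean of probe offsets, weighted by the proportion of
    'same' responses at each offset (hypothetical helper, for illustration).

    probe_offsets: signed probe positions relative to the final target
                   location (negative = behind, positive = beyond).
    p_same:        proportion of 'same' responses at each probe position.
    """
    if len(probe_offsets) != len(p_same):
        raise ValueError("probe_offsets and p_same must have the same length")
    total = sum(p_same)
    if total == 0:
        raise ValueError("no 'same' responses; weighted mean is undefined")
    return sum(o * p for o, p in zip(probe_offsets, p_same)) / total


# Hypothetical distribution resembling Panel B: 'same' responses are more
# likely for probes slightly beyond the final location than slightly behind it.
offsets = [-2, -1, 0, 1, 2]
p_same = [0.10, 0.40, 0.80, 0.60, 0.20]
shift = weighted_mean_displacement(offsets, p_same)
print(f"weighted mean displacement: {shift:.3f}")
```

A positive value indicates that same responses are skewed toward probes beyond the final location, the pattern taken to indicate representational momentum; a value near zero indicates no systematic shift.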

Variables That Influence Representational Momentum

Hubbard (Reference Hubbard2005b) organized variables that influence representational momentum into categories related to the target, display, context, or observer. A similar scheme is followed here. Some variables have been manipulated in numerous studies (e.g., target velocity), but discussion for each variable is limited to studies that initially demonstrated the effect or an important constraint of that variable.

Characteristics of the Target

Variables influencing representational momentum that are related to the target include (a) velocity, (b) distance traveled, (c) direction, (d) shape, (e) identity and semantic category, (f) size, (g) eccentricity, (h) visual field, (i) involvement of a human form, and (j) nonvisual stimuli.

Velocity. Faster target velocity generally leads to larger forward displacement (e.g., Freyd & Finke, Reference Freyd and Finke1985; Hubbard & Bharucha, Reference Hubbard and Bharucha1988; de sá Teixeira, Hecht, & Oliveira, Reference de sá Teixeira, Hecht and Oliveira2013). If final instantaneous velocity is constant, then a previously accelerating or decelerating target exhibits larger or smaller, respectively, forward displacement (Actis-Grosso, Bastianelli, & Stucchi, Reference Actis-Grosso, Bastianelli and Stucchi2008; Finke, Freyd, & Shyi, Reference Finke, Freyd and Shyi1986). Visual targets or auditory targets with an irregular velocity exhibit less representational momentum, presumably due to decreased predictability of the target (Getzmann & Lewald, Reference Getzmann and Lewald2009). Effects of velocity are diminished (a) with increases in implied friction (Hubbard, Reference Hubbard1995b), (b) if a target is initially stationary and subsequent motion is attributed to contact from another object (Hubbard & Ruppel, Reference Hubbard and Ruppel2002), (c) at very high velocities (Munger & Minchew, Reference Munger and Minchew2002), and (d) for single targets exhibiting continuous motion if observers cannot visually track that target (Kerzel, Jordan, & Müsseler, Reference Kerzel, Jordan and Müsseler2001). A velocity effect has been found for changes in auditory pitch (Freyd, Kelly, & DeKay, Reference Freyd, Kelly and DeKay1990; see also Hubbard, Reference Hubbard1995a).

Distance. In most studies of representational momentum, distance traveled by the target is not a factor. Targets travel a fixed distance on every trial (e.g., 34 degrees of rotation, Freyd & Finke, Reference Freyd and Finke1984) or different distances of travel are counterbalanced or randomized across trials (e.g., Hubbard & Bharucha, Reference Hubbard and Bharucha1988). However, representational momentum decreases with increasing distance traveled by a launched target (Choi & Scholl, Reference Choi and Scholl2006; Hubbard & Ruppel, Reference Hubbard and Ruppel2002).Footnote 1 Forward displacement decreases with increases in distance traveled by the target for patients with neglect or patients with right hemisphere damage, but not for control participants (McGeorge, Beschin, Colnaghi, Rusconi, & Della Sala, Reference McGeorge, Beschin and Della Sala2006). Relatedly, forward displacement decreases as a target approaches a boundary (Hubbard & Motes, Reference Hubbard and Motes2005) or edge of the display (de sá Teixeira & Oliveira, Reference de sá Teixeira and Oliveira2011); however, this latter finding probably reflects expected change in target motion rather than distance traveled.

Direction. Horizontal motion in the picture plane leads to larger forward displacement than does vertical motion, and downward motion leads to larger forward displacement than does upward motion (Hubbard, Reference Hubbard1990; Hubbard & Bharucha, Reference Hubbard and Bharucha1988). Differences between leftward motion and rightward motion are usually not found (e.g., Cooper & Munger, Reference Cooper, Munger, Eilan, McCarthy and Brewer1993; Hubbard, Reference Hubbard1990), but when a difference is found, displacement is larger for rightward motion (e.g., Halpern & Kelly, Reference Halpern and Kelly1993). Forward displacement occurs for target motion in depth (e.g., Hayes, Sacher, Thornton, Sereno, & Freyd, Reference Hayes, Sacher, Thornton, Sereno and Freyd1996) and is larger when motion is away from the observer (Hubbard, Reference Hubbard1996a; Nagai, Kazai, & Yagi, Reference Nagai, Kazai and Yagi2002). Forward displacement along the line of sight occurs with motion of an observer’s viewpoint through a scene (Munger, Owens, & Conway, Reference Munger, Owens and Conway2005; Thornton & Hayes, Reference Thornton and Hayes2004). Forward displacement occurs for objects rotating in depth, and displacement is larger when rotation is around an axis that corresponds to observers’ and objects’ coordinate systems (Munger, Solberg, Horrocks, & Preston, Reference Munger, Solberg, Horrocks and Preston1999). Differences in displacement for rotation within the picture plane are usually not reported, although one study found that displacement for a target moving along a rectangular clock frame was larger for clockwise motion than for counterclockwise motion (Joordens, Spalek, Razmy, & van Duijn, 2004), and displacement is larger for targets rotating downward than for targets rotating upward (Munger & Minchew, Reference Munger and Minchew2002; Munger & Owens, Reference Munger and Owens2004). Displacement for a target following a circular path is forward along the tangent and inward toward the center (Hubbard, Reference Hubbard1996b).

Shape. The majority of studies of representational momentum presented stimuli consisting of geometric shapes. If each inducing stimulus within a trial is a radically different shape, forward displacement does not occur; however, if inducing stimuli imply a consistent change of shape of a single object (e.g., growing consistently thinner, taller, larger, etc.), then forward displacement in the direction of implied change occurs (Kelly & Freyd, Reference Kelly and Freyd1987; White, Minor, Merrell, & Smith, Reference White, Minor, Merrell and Smith1993). Implied drag does not influence forward displacement of square or rectangular translating targets or rotating pyramid shapes (Cooper & Munger, Reference Cooper, Munger, Eilan, McCarthy and Brewer1993), although in a more extreme case, a line moving parallel to its major axis (i.e., its smaller edge facing the direction of motion) exhibited larger forward displacement than did a line moving parallel to the direction of its minor axis (Hubbard, Reference Hubbard2005a). Displacement is larger if an object moves in the direction it points (Freyd & Pantzer, Reference Freyd, Pantzer, Smith, Ward and Finke1995) or faces (Nagai & Yagi, Reference Nagai and Yagi2001; Vinson & Reed, Reference Vinson and Reed2002).

Identity. If a target is given a verbal label suggesting a specific direction of motion, displacement is larger in that direction. For example, an ambiguous shape exhibited larger forward displacement for upward motion if that shape was labeled “rocket” than if labeled “steeple” (Reed & Vinson, Reference Reed and Vinson1996). This suggests displacement is influenced by object-specific information, and such information is more likely to influence displacement if the target is prototypical of its category and has a typical direction of motion (Nagai & Yagi, Reference Nagai and Yagi2001; Vinson & Reed, Reference Vinson and Reed2002). Forward displacement is larger if an object moves in the direction of its typical (forward) motion (e.g., an airplane moving forward) than if that object moves in an atypical (backward) direction (Nagai & Yagi, Reference Nagai and Yagi2001).

Size. Implied mass of pyramid-shaped objects that rotate in depth does not influence forward displacement (Cooper & Munger, Reference Cooper, Munger, Eilan, McCarthy and Brewer1993). Larger horizontally moving targets exhibit greater downward displacement than do smaller targets, larger downward-moving targets exhibit larger downward displacement than do smaller targets, and larger upward-moving targets exhibit smaller forward displacement than do smaller targets (Hubbard, Reference Hubbard1997, Reference Hubbard1998); this suggests effects of size (mass) are represented by effects of weight, as weight is experienced in the direction of gravitational attraction (i.e., the vertical axis) and not in the direction of motion. More generally, represented location of a target is influenced by implied gravitational attraction (Hubbard, Reference Hubbard1990, Reference Hubbard1997), and this has been referred to as representational gravity (see de sá Teixeira, Reference de sá Teixeira2014; de sá Teixeira & Hecht, 2014; Hubbard, Reference Hubbard1995c, Reference Hubbard2005b; Motes, Hubbard, Courtney, & Rypma, 2008; Zago, Chapter 10 in this volume). If participants estimate how long it would take to stop a moving target, more dense (massive) targets are estimated to take more effort, but not more time, to stop; this has been interpreted as suggesting mass and velocity might interact in representational momentum (de sá Teixeira, Oliveira, & Amorim, 2010).

Eccentricity. Some studies reported that representational momentum for a continuously moving target did not occur if participants maintained central fixation and could not track the target (e.g., de sá Teixeira, Hecht, & Oliveira, Reference de sá Teixeira, Hecht and Oliveira2013; Kerzel, Reference Kerzel2000), and so there was little initial incentive to look for effects of eccentricity on representational momentum. However, one study reported that representational momentum for visual targets and auditory targets increased as final target location moved from central to paracentral regions of the visual field and decreased as the final target location moved from paralateral to lateral regions of the visual field (Schmiedchen, Freigang, Rübsamen, & Richter, 2013). For visual targets, the initial increase probably reflects decreases in resolution acuity in paracentral and paralateral regions (cf. the increase in forward displacement of blurred targets, Fu, Shen, & Dan, Reference Fu, Shen and Dan2001). The reason for the pattern with auditory targets is less clear.

Visual Field. If a stimulus changing in size is presented on the midline of the visual field, and probes are presented to the left or right visual field, representational momentum for size is larger for probes in the left visual field with a retention interval of 500 msec, but this difference vanishes with longer retention intervals (White et al., Reference White, Minor, Merrell and Smith1993). Similarly, representational momentum for location in the picture plane is larger if targets are in the left than in the right visual field (Gottwald, Lawrence, Hayes, & Khan, Reference Gottwald, Lawrence, Hayes and Khan2015; Halpern & Kelly, Reference Halpern and Kelly1993). When targets are lower in the picture plane, representational momentum is larger for vertically moving objects (Hubbard, Reference Hubbard2001) and horizontally moving objects in the left visual field (Gottwald et al., Reference Gottwald, Lawrence, Hayes and Khan2015).

Human Form. Presentation of animated human figures (Verfaillie & Daems, Reference Verfaillie and Daems2002) or point-light walkers (Verfaillie, De Troy, & Van Rensbergen, Reference Verfaillie, De Troy and Van Rensbergen1994) leads to forward displacement for postures of those figures; however, one study found that displacement for point-light characters was limited to characters presented on a textured surface or from a static viewpoint (Jarraya, Amorim, & Bardy, Reference Jarraya, Amorim and Bardy2005). Representational momentum occurs for faces changing from a neutral to a more extreme expression (Uono, Sato, & Toichi, 2010, 2014; Yoshikawa & Sato, Reference Yoshikawa and Sato2006, Reference Yoshikawa and Sato2008; but see Thornton, Reference Thornton2014). Representational momentum occurs for changes in the direction in which a face is oriented, but is reduced when gaze direction of that face differs from the direction in which the face is oriented (Hudson, Liu, & Jellema, 2009) or when emotional expression is inconsistent with approach toward the observer (Hudson & Jellema, Reference Hudson and Jellema2011). Representational momentum occurs for hand gestures in sign language; displacement is larger for gestures in the typical direction of motion than in the reversed direction, but this might reflect differences in awkwardness of motion rather than semantic meaningfulness (Wilson, Lancaster, & Emmorey, 2010). Such an awkwardness effect does not occur with a static stimulus (Munger, Reference Munger2015). Representational momentum for animation of a human hand is influenced by expectations regarding whether that hand would reach toward or withdraw from an object (Hudson, Nicholson, Ellis, & Bach, Reference Hudson, Nicholson, Ellis and Bach2016; Hudson, Nicholson, Simpson, Ellis, & Bach, Reference Hudson, Nicholson, Simpson, Ellis and Bach2016).Footnote 2

Nonvisual Stimuli. Remembered final pitch of a stimulus changing in auditory frequency exhibits forward displacement in pitch (Freyd et al., Reference Freyd, Kelly and DeKay1990; Hubbard, Reference Hubbard1995a; Johnston & Jones, Reference Johnston and Jones2006; Kelly & Freyd, Reference Kelly and Freyd1987). Remembered location in physical space of a sound source translating in the picture plane is displaced in the direction of motion (Getzmann & Lewald, Reference Getzmann and Lewald2007; Getzmann, Lewald, & Guski, 2004). Forward displacement for location of a moving sound source occurs at the beginning and end, but not middle, of the trajectory (Getzmann & Lewald, Reference Getzmann and Lewald2007). Oculomotor behavior does not influence auditory representational momentum for a moving sound source (Getzmann, Reference Getzmann2005). When pitch rises and falls in a predictable manner, displacement in final pitch is backward when listeners expect direction of pitch motion to reverse (Johnston & Jones, Reference Johnston and Jones2006). Representational momentum for a moving sound source increases as vanishing point moves from central to paracentral regions and decreases as vanishing point moves from paralateral to lateral regions (Schmiedchen et al., 2013). Changes in grasp aperture consistent with representational momentum occur when participants grasp opening or closing visual pliers (Brouwer, Thornton, & Franz, Reference Brouwer, Thornton and Franz2005) or two visual spheres moving toward or away from each other (Brouwer, Franz, & Thornton, 2004).

Characteristics of the Display

Variables influencing representational momentum that are related to the display include (a) surface form, (b) whether the participant controls target motion, (c) retention interval, (d) prior probability a same response to a probe would be correct, (e) response measure, and (f) contrast between target and background.

Surface Form. The term “surface form” refers to the format in which the target is presented, and three formats have been used. The first is frozen-action photographs, and these are single static images drawn from a larger motion sequence (e.g., a person walking or jumping; e.g., Freyd, Reference Freyd1983; Futterweit & Beilin, Reference Futterweit and Beilin1994).Footnote 3 The second is implied motion (as in Figure 8.1), and this involves a series of separate static stimuli that imply motion in a specific direction (e.g., a series of rectangles at different orientations; Freyd & Finke, Reference Freyd and Finke1984). The third is continuous motion, and this involves a target that appears to exhibit smooth and uninterrupted motion (e.g., Hubbard & Bharucha, Reference Hubbard and Bharucha1988). No difference between implied motion and continuous motion has been found for displacement in orientation of a rotating rod (Munger & Owens, Reference Munger and Owens2004) or for a change in auditory frequency (Hubbard, Reference Hubbard1995a). Kerzel (Reference Kerzel2003c) reported that forward displacement for a target moving along a circular trajectory is less with continuous than with implied motion, and Faust (Reference Faust1990) found that displacement for a horizontally moving target is less with implied than with continuous motion. Comparison of displacement across different surface forms is difficult, as direction and other characteristics of motion depicted with each type of surface form are typically different.

Control. If observers control velocity and direction (turning points) of a target, then forward displacement when that target vanishes without warning is smaller than if observers do not have such control (Jordan & Knoblich, Reference Jordan and Knoblich2004). If observers have control over when the target vanishes, then forward displacement decreases with increases in the latency between when observers indicate the target should vanish and when the target actually vanishes (Jordan, Stork, Knuf, Kerzel, & Müsseler, Reference Jordan, Stork, Knuf, Kerzel, Müsseler, Prinz and Hommel2002; but see Hubbard, Reference Hubbard2005b). Control over when the target vanishes might interact with oculomotor behavior (Stork & Müsseler, Reference Stork and Müsseler2004). If participants have previous experience controlling a target or observing another person controlling the target, then forward displacement is larger than if participants do not have such experience (Jordan & Hunsinger, Reference Jordan and Hunsinger2008).

Retention Interval. Representational momentum increases during the first few hundred milliseconds after the target vanishes (e.g., Freyd & Johnson, Reference Freyd and Johnson1987; Halpern & Kelly, Reference Halpern and Kelly1993). This increase occurs with implied motion and continuous motion targets (but has not been examined for frozen-action photographs). Some studies report that representational momentum asymptotes (e.g., Finke & Freyd, Reference Finke and Freyd1985; Kerzel, Reference Kerzel2000), and other studies report declines in representational momentum with subsequent increases in retention interval (e.g., Freyd & Johnson, Reference Freyd and Johnson1987; de sá Teixeira, Hecht, & Oliveira, Reference de sá Teixeira, Hecht and Oliveira2013). However, many studies claiming that representational momentum asymptotes did not examine representational momentum for extended retention intervals (e.g., Kerzel, Reference Kerzel2000, did not examine retention intervals longer than 500 msec). Studies of retention interval typically use probe response measures (see below), as such measures do not confound time to locate and move a cursor with retention interval per se (although see de sá Teixeira, Hecht, & Oliveira, Reference de sá Teixeira, Hecht and Oliveira2013).

Prior Probabilities. If error feedback suggests that the prior probability that a same response is correct is relatively small, then the overall likelihood of a same response, but not representational momentum, is decreased (Ruppel, Fleming, & Hubbard, 2009). If actual or believed prior probability that a same response is correct decreases, the likelihood of a same response, but not the magnitude of representational momentum, decreases (Hubbard & Lange, Reference Hubbard and Lange2010). In other words, decreases in prior probability that a same response is correct on any given trial decrease the height of the distribution of same responses but do not influence the skew (shift) of that distribution. Decreases in actual or believed prior probability decrease false alarm rates, and decreases in believed prior probability decrease hit rates and beta but not d’ (Hubbard & Lange, Reference Hubbard and Lange2010). Prior probability is most relevant to studies using probe judgment, but it is not clear how prior probability is relevant to studies using cursor positioning or reaching response measures.
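For readers less familiar with the signal detection measures mentioned here, the following minimal sketch shows how d’, the criterion c, and beta are conventionally computed from a hit rate and a false alarm rate under the equal-variance Gaussian model. It assumes, for illustration only, that a hit is a same response to a probe at the actual final location and a false alarm is a same response to a displaced probe; the function name and the rates are hypothetical, and the sketch is not a reconstruction of the analysis in the cited study.

```python
import math
from statistics import NormalDist


def sdt_measures(hit_rate, fa_rate):
    """Equal-variance Gaussian signal detection measures.

    Returns (d_prime, criterion_c, beta). Rates must lie strictly
    between 0 and 1, because the z-transform is undefined at 0 and 1.
    """
    if not (0 < hit_rate < 1 and 0 < fa_rate < 1):
        raise ValueError("rates must be strictly between 0 and 1")
    z = NormalDist().inv_cdf          # inverse of the standard normal CDF
    z_hit, z_fa = z(hit_rate), z(fa_rate)
    d_prime = z_hit - z_fa            # sensitivity
    criterion_c = -(z_hit + z_fa) / 2 # response criterion
    beta = math.exp(d_prime * criterion_c)  # likelihood ratio at criterion
    return d_prime, criterion_c, beta


# Hypothetical rates, not taken from any cited experiment.
d_prime, criterion_c, beta = sdt_measures(hit_rate=0.80, fa_rate=0.40)
print(f"d' = {d_prime:.3f}, c = {criterion_c:.3f}, beta = {beta:.3f}")
```

Separating sensitivity from response bias in this way is what allows a change in the overall willingness to respond same to be distinguished from a change in how well probe positions are discriminated.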

Response Measure. Three response measures have been used. The first is probe judgment, in which a stimulus similar or identical to the target is presented after the target vanishes and observers judge whether the probe is the same as or different from the target (e.g., Finke et al., Reference Finke, Freyd and Shyi1986), or, less commonly, whether the probe is to the left or right of final target position (e.g., Kerzel, Reference Kerzel2000). A disadvantage of probe methods is that high numbers of probes and replications of each probe type are required, and this leads to higher numbers of trials (typically 5–9 probe positions per target position). The second type is cursor positioning (referred to as mouse pointing by Kerzel, Reference Kerzel2003c), in which observers use a computer mouse to position the cursor at the location in a display corresponding to the final location of the target. When the observer clicks a button on the mouse, display coordinates of the cursor are recorded and can be compared with display coordinates of the target (e.g., Hubbard, Reference Hubbard1990; Hubbard & Bharucha, Reference Hubbard and Bharucha1988). Cursor positioning typically requires fewer trials than does probe judgment, but some stimulus dimensions are not easily adapted to such a measure (e.g., visual brightness, auditory pitch). The third method is reaching, in which participants touch (with a finger) the location in the display where the target vanished (e.g., Ashida, Reference Ashida2004; Kerzel & Gegenfurtner, Reference Kerzel and Gegenfurtner2003). A disadvantage of reaching is that spatial resolution is typically not as precise as with probe judgment or cursor positioning. Few studies have compared response measures, but one study found that probe judgments resulted in smaller estimates of representational momentum than did reaching (Kerzel, Reference Kerzel2003c).
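
The comparison of cursor and target coordinates described for cursor positioning can be sketched as follows. This is a minimal illustration, assuming displacement is scored by projecting the click error onto the direction of target motion. The function name, argument names, and pixel values are hypothetical, and the text does not specify the scoring procedure of any cited study.

```python
from math import hypot


def displacement(target_xy, cursor_xy, motion_xy):
    """Split the click error into components relative to the direction of motion.

    target_xy: display coordinates where the target vanished
    cursor_xy: display coordinates recorded at the mouse click
    motion_xy: any nonzero vector pointing in the direction of target motion

    Returns (along, orthogonal). A positive `along` value means the click lies
    beyond the vanishing point in the direction of motion (forward displacement).
    The sign of `orthogonal` depends on the display's coordinate convention
    (e.g., y increasing downward on many screens).
    """
    dx = cursor_xy[0] - target_xy[0]
    dy = cursor_xy[1] - target_xy[1]

    length = hypot(motion_xy[0], motion_xy[1])
    if length == 0:
        raise ValueError("motion_xy must be a nonzero vector")
    ux, uy = motion_xy[0] / length, motion_xy[1] / length

    along = dx * ux + dy * uy        # projection onto the motion direction
    orthogonal = -dx * uy + dy * ux  # projection onto the direction (-uy, ux)
    return along, orthogonal


# Hypothetical trial: target moving rightward vanishes at (400, 300),
# and the observer clicks at (412, 305).
along, orthogonal = displacement((400, 300), (412, 305), (1, 0))
print(f"along motion: {along:.1f} px, orthogonal: {orthogonal:.1f} px")
```

Expressing the error relative to the direction of motion allows trials with different motion directions to be averaged on a common scale, which is one reason a projection was chosen for this sketch.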

Contrast. Displacement in remembered luminance of a target changing in luminance is backward (in the direction opposite to representational momentum; Brehaut & Tipper, Reference Brehaut and Tipper1996) and interacts with a bias toward the level of background luminance (Favretto, Reference Favretto2002). If a moving target on a dark background gradually decreases in luminance (decreases in contrast), forward displacement is larger than if a target travels a shorter distance before abruptly vanishing (Maus & Nijhawan, Reference Maus and Nijhawan2006). Weaker motion signals resulting from lower contrast between target and background have been suggested to result in smaller displacement (Maus & Nijhawan, Reference Maus and Nijhawan2009), although this does not appear consistent with suggestions that weaker motion signals resulting from less continuous motion result in larger displacement (Kerzel, Reference Kerzel2003c). If a light or dark target is presented on a white or black background, representational momentum for target location is larger if contrast of target and background is high or increasing and smaller if contrast of target and background is low or decreasing (Hubbard & Ruppel, Reference Hubbard and Ruppel2014).

Characteristics of the Context

The context includes physical and cognitive elements. Variables influencing representational momentum that are related to the context include (a) physical surroundings, (b) landmarks, (c) shadows and shading, (d) interactions with nontarget stimuli, (e) expectations regarding future target motion, and (f) attributions regarding the source of target motion.

Physical Surroundings. If a rotating target is embedded within a larger surrounding frame, representational momentum for the target is increased if the frame rotates in the same direction or is oriented slightly beyond the final target orientation, and representational momentum for the target is decreased if the frame rotates in the opposite direction or is oriented slightly behind the final target orientation (Hubbard, Reference Hubbard1993). Similarly, if a moving target is near a larger translating grating, representational momentum of the target is increased or decreased if the grating moves in the same or opposite direction, respectively, as the target (e.g., Whitney & Cavanagh, Reference Whitney and Cavanagh2002). If a target moves between stationary distractors (Gray & Thornton, Reference Gray and Thornton2001) or toward a stationary barrier (Hubbard & Motes, Reference Hubbard and Motes2005), forward displacement is decreased. If the target is expected to bounce off a barrier, forward displacement decreases as the target approaches the barrier and reverses at the moment of contact (Hubbard, Reference Hubbard1994; Hubbard & Bharucha, Reference Hubbard and Bharucha1988).

If observers view video clips from real-world scenes (e.g., train station, shopping mall), representational momentum for those scenes occurs even if different stimuli in a scene move at different velocities and in different directions (Thornton & Hayes, Reference Thornton and Hayes2004). Forward displacement of an observer’s viewpoint for motion into animated scenes (pyramid landscapes) occurs if probes test specifically for representational momentum (Munger et al., Reference Munger, Owens and Conway2005). However, if probes are more general, then responses are more consistent with boundary extension (see also DeLucia & Maldia, Reference DeLucia and Maldia2006). Forward displacement of the viewpoint occurs for observers in driving simulations (Thornton & Hayes, Reference Thornton and Hayes2004). If the viewpoint of a scene rotates to the left or right, then representational momentum for objects occurs and is larger for objects entering the scene than for objects exiting the scene (Munger et al., Reference Munger, Dellinger, Lloyd, Johnson-Reid, Tonelli, Wolf and Scott2006), even though the objects are stationary and it is the viewpoint that moves. Representational momentum for objects in a scene decreases if viewpoint motion involves rotation and translation rather than just rotation (Brown & Munger, Reference Brown and Munger2010).

Landmarks. Examples of context considered above were global in the sense of surrounding a target or occupying a large portion of the surrounding environment. However, context can be local in the sense of an object or landmark providing a single point of reference. If a target moves toward or away from a landmark, forward displacement of that target increases or decreases, respectively (Hubbard & Ruppel, Reference Hubbard and Ruppel1999). This probably reflects a combination of representational momentum with a landmark attraction effect (cf. Bryant & Subbiah, Reference Bryant and Subbiah1994); when representational momentum and landmark attraction operate in the same direction (motion toward the landmark), they sum, and forward displacement is large, whereas when representational momentum and landmark attraction operate in opposite directions (motion away from the landmark), they partially cancel, and forward displacement is small. A target is displaced toward a distractor that is flashed at the moment the target vanishes or during the retention interval. Although this is consistent with a landmark attraction effect, it has been argued that a briefly presented nontarget stimulus should not be considered a landmark (Kerzel, Reference Kerzel2002a).
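
The combination of representational momentum and landmark attraction described above can be written as a simple additive sketch. The symbols are introduced here for illustration only and do not come from the cited work: $D$ is the net forward displacement, $M$ is the contribution of representational momentum, and $A$ is the contribution of landmark attraction. Strict linear additivity is an assumption.

```latex
D_{\text{toward}} = M + A, \qquad
D_{\text{away}} = M - A, \qquad
M > A > 0
```

Under these assumptions, displacement remains forward in both cases but is larger for motion toward the landmark, and the difference between the two conditions, $D_{\text{toward}} - D_{\text{away}} = 2A$, isolates the landmark contribution.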

Shadows and Shading. Information regarding shape or direction of motion provided by shading and shadows influences representational momentum. If the first and third (of three) inducing stimuli are convex, forward displacement is larger if the second inducing stimulus is not concave (cf. coherent motion; Kelly & Freyd, Reference Kelly and Freyd1987), but if inducing stimuli are replaced by luminance-polarized circles (white top-half and black bottom-half for convex; black top-half and white bottom-half for concave), there is no difference in displacement (Hidaka, Kawachi, & Gyoba, Reference Hidaka, Kawachi and Gyoba2009); thus, effects of shape and shading were due to differences in perceived depth rather than luminance. If the apparent cast shadow of a horizontally moving target diverges from the path of the target, representational momentum is larger than if the cast shadow parallels or converges with the path of the target, which suggests the diverging shadow is interpreted as indicating motion toward the observer (Taya & Miura, Reference Taya and Miura2010). If paths of the target and shadow converge, downward displacement of the target is larger, and this was interpreted as indicating downward motion. Information from shape and shading influences perceived direction of motion, which in turn influences representational momentum.

Interactions with Nontarget Stimuli. A tone that increases or decreases in auditory frequency does not influence forward displacement of a vertically moving visual target, but downward displacement of a horizontally moving visual target is larger if the tone descends than if the tone ascends (Hubbard & Courtney, Reference Hubbard and Courtney2010). If onset of a horizontally moving visual target is accompanied by onset of a tone, representational momentum for the visual target is decreased or increased if the tone terminates slightly before or after, respectively, the visual target vanishes (Teramoto, Hidaka, Gyoba, & Suzuki, Reference Teramoto, Hidaka, Gyoba and Suzuki2010). A tone presented just before a visual target vanishes leads to backward displacement of that target (Chien, Ono, & Watanabe, Reference Chien, Ono and Watanabe2013; but see Teramoto et al., Reference Teramoto, Hidaka, Gyoba and Suzuki2010). If a tone is presented only to the left or right ear, then backward displacement is larger if the tone is on the same side of space where visual motion originated. If observers are presented with separate visual and auditory targets moving across space, then if the nonjudged modality target vanishes before or after the judged modality target vanishes, displacement of the judged modality target is biased toward the vanishing position of the nonjudged modality target (Schmiedchen et al., Reference Schmiedchen, Freigang, Nitsche and Rübsamen2012). However, if the difference in vanishing times was greater than 2,000 msec, then effects of the nonjudged modality target on the judged modality target only occurred if participants judged auditory targets.

Examples in the preceding paragraph involved target stimuli and nontarget stimuli in different modalities. However, effects of cast shadows, barriers, and landmarks accompanying visual targets suggest nontarget stimuli in the same modality as a target influence representational momentum for that target. In these latter cases, there is no contact between targets and nontarget stimuli (e.g., Hubbard, Reference Hubbard1993), but contact between targets and nontarget stimuli can also influence representational momentum for the target. If a target slides along a stationary surface, forward displacement of that target is decreased relative to forward displacement of an otherwise identical target presented in isolation or clearly separated from the larger stationary surface (Hubbard, Reference Hubbard1995b, Reference Hubbard1998). This decrease is referred to as representational friction (Hubbard, Reference Hubbard1995b, Reference Hubbard1995c), and the decrease in forward displacement grows as implied friction on the moving target increases (Hubbard, Reference Hubbard1995b, Reference Hubbard1998). Illusory motion of a visual nontarget stimulus might (e.g., Hubbard et al., Reference Hubbard, Piazza, Pinel and Dehaene2005) or might not (e.g., Nagai & Yagi, Reference Nagai and Saiki2005) influence displacement of a visual target. Additional examples of nontarget influences are discussed in the section below on Attributions of the Source of Target Motion (Causality).

Expectations of Future Target Motion. If a target is expected to bounce off a barrier the target is approaching, then forward displacement decreases as the target approaches the barrier, and at the moment of contact, displacement is in the expected (new) direction rather than in the previous direction of motion (Hubbard & Bharucha, Reference Hubbard and Bharucha1988). A physical reason for a direction change does not seem to be necessary, as similar patterns occur when visual targets changing in location (Verfaillie & d’Ydewalle, Reference Verfaillie and d’Ydewalle1991), and auditory targets changing in frequency (Johnston & Jones, Reference Johnston and Jones2006), exhibit oscillatory motion in an otherwise blank display. Similarly, forward displacement is larger if a target bounces off rather than crashes through a barrier, as if elastic bouncing off preserved more momentum and rigid crashing-through depleted more momentum (Hubbard, Reference Hubbard1994); interestingly, this difference is stronger if observers received a valid cue (e.g., a cue of “bounce” and the target then “bounced”) than if observers received an invalid cue (e.g., a cue of “bounce” and the target then “crashed”). A similar combination of effects of expected target direction and actual target direction on displacement was reported by Hudson, Nicholson, Ellis et al. (Reference Hudson, Nicholson, Ellis and Bach2016) and Hudson, Nicholson, Simpson et al. (Reference Hudson, Nicholson, Simpson, Ellis and Bach2016). A spoken mimetic word that describes possible target behavior and is presented during target motion influences displacement (Gobara, Yamada, & Miura, Reference Gobara, Yamada and Miura2016).