2 The language module: architecture and representations

2.1 Chapter outline

This chapter elaborates on what was introduced in Section 1.7 of the first chapter. That is, it provides a modular account of language largely based on Jackendoff's version of modularity and, within that version, Carroll's view of input processing (Jackendoff 1987, 1997a, 2002; Carroll 1999, 2001, 2007). We will also make reference to aspects of Principles and Parameters (P&P) theory (Chomsky 1986; Haegeman 1994) and Chomsky's (1995) minimalist idea that crosslinguistic variation regarding the module is restricted to the lexicon, to see to what extent they are compatible with, and can contribute to, the broader account that we envisage (see also Boeckx 2006). Since we are providing a theoretical framework, rather than a comprehensive theory, the kind of processing account we will be developing within MOGUL will draw on concepts such as functional categories, indexing and the like in a manner that should be familiar from different strands of contemporary generative linguistics but without pursuing particular theoretical analyses of phonological, syntactic, semantic, and pragmatic structure.1

As for which of the many versions of modularity we espouse, we will briefly situate our account within the broader, ongoing discussion in the literature. Since ‘the’ language module is in fact itself modular, we will also refer to it as ‘the core language system’. In this system phonology and syntax are, again following Jackendoff and as touched upon in the first chapter, equal partners. We will then go on to specify the general architecture of this system, after which we will focus on the nature and role of representations. These will be necessary first steps to explaining later, in terms of the MOGUL framework, how cognitive structures are processed and how they develop over time in both first and second language acquisition.

The following chapter (Chapter 3) will elaborate on the processing dimension of the language module as we conceive it, and so the final section of this second chapter will prepare for this topic by briefly considering the question of how representations are retained in memory both in the very short term and in the long term.

Although this chapter and the next will be concerned with specifically linguistic cognitive structures, later chapters will focus on cognitive structures and cognitive processing that are not specifically linguistic in nature but are an integral part of the MOGUL framework.

2.2 Modularity

2.2.1 Modularity in general

There is a great deal of evidence in support of the view, now quite popular in psychology, philosophy, and neuroscience, that the mind is composed to a considerable extent of functionally specialized processors, or modules. Considerable disagreement exists about which, if any, modules are genetically determined, to what extent linguistic ability can be conceived in terms of a specialized, genetically determined ‘language module’, how fine-grained the modular system is and in what way there might be relatively non-specialised parts of the mind where information from very different sources can be integrated (e.g., Fodor 1983, 2000; Jackendoff 1987, 1997a; Karmiloff-Smith 1992; Pinker 1994, 1997; Barrett and Kurzban 2006).

The kinds of modules we are interested in are as follows. They are innate and develop for the most part on their own terms. Each carries out its own function, using its own distinctive encoding system, in a largely autonomous manner. They can be composite, one large module consisting of several smaller ones which in turn are made up of still smaller ones and so on. Perhaps the best example of this composite structure is the system responsible for visual perception, which consists of distinct sub-systems specialising in various aspects of the process, such as colour and motion. A complex module will have a number of distinct codes, one for each of its submodules. Modules of (more or less) this sort have been described by a great many authors (e.g., Barkow, Cosmides, and Tooby 1992; Gardner 1993, 1999; Hirschfeld and Gelman 1994; Pinker 1994, 1997; Gazzaniga 1998).

Some of the functions served by modules have played a very prominent and very general role in species survival. Examples are language, face recognition, and visual processing in general. Given these characteristics, it is quite natural to hypothesise that they have become innate parts of the cognitive system, via natural selection. As a result, modules of this sort have relatively fixed architectures and can be found in essentially all humans, with only limited variation.

The relatively fixed architectures evolved to ensure not only that the modules are present in all people, but also that they will carry out their crucial functions in a highly efficient manner. A system that deals with only a very narrow, pre-specified range of inputs and manipulates them only in very specific, pre-determined ways can carry out its functions very quickly and very accurately, in contrast to a more general system, which necessarily makes sacrifices in efficiency for the sake of increased flexibility. When there is a need for construction of phonological representations of linguistic input, for example, a system that exists solely for this purpose should do a better job of it than one that processes many types of input in many different ways. In addition to universality and efficiency, encapsulation has the desirable effect of shielding the expert systems from non-expert tampering. Conscious processes, in particular, are not equipped to understand the workings of the specialised modules; encapsulation ensures that they will not be able to get inside them and make a mess of things.

Another product of the need for efficiency is the existence of a specialised code for each module. The representational system most efficient for any one function is unlikely to be identical to that which is most efficient for a different function. It would be quite surprising, for example, if phonological processing made use of the same code as colour processing in the visual system. Thus, there is a natural tendency, over evolutionary time, for each module to develop its own special code, not shared with any other module. This development then reinforces encapsulation, as the various modules become unable to directly read one another's representations, and therefore strengthens the relatively autonomous status of the various modules.

2.2.2 Modularity in language

Discussion of modularity in language is complicated considerably by the fact that ‘language’ is a pretheoretical notion, too inherently complex and fuzzy to be captured straightforwardly in terms of ‘language module’. As will have been clear from the preliminary sketch of MOGUL in Chapter 1, we find it important to take account of the fact that language comprises many different types of knowledge and ability, including phonetics, phonology, syntax, morphology, semantics, pragmatics, orthography and writing principles, lexical knowledge of various interrelated types, and often a great deal of metalinguistic knowledge. The sum of all these cannot be expected to neatly show the distinctive characteristics of modules described above. Drawing lines around a single language module is difficult at best. Moreover, some aspects of language, such as syntax, show modular characteristics in themselves and therefore should be considered modules, perhaps submodules of the hypothesised language module. We will return to these issues shortly.

Modularity became a major part of modern linguistic thinking as a result of Noam Chomsky's influence. Chomsky's view of the mind is in fact centred around the idea of modularity, and he has explicitly promoted this view in various places (e.g. Chomsky 1972, 1980; Piattelli-Palmarini 1980). A particularly interesting aspect of Chomsky's version of modularity is his concept of the language organ (see also Anderson and Lightfoot 1999, 2002). The language module is seen as analogous to physical organs such as the heart or liver in that it is innately specified, serves a particular function, and develops naturally. This view challenges the very notion of language learning/acquisition, suggesting instead that the development of language, like that of a physical organ, is best seen as a matter of natural growth. This growth idea is in fact a key part of MOGUL, as we will explain below.

The notion of a language organ is inseparable from the more widely used idea of Universal Grammar (UG), which has inspired enormous amounts of theoretical and empirical work and of course enormous controversy. UG is not, as the name might suggest, a grammar in the more usual sense of the word. It is not, for instance, a set of rules common to all the world's languages: it is rather a set of constraints on the shape in which natural grammars may grow in the individual. UG does also contain a set of primitives but grammars of particular languages make specific selections from this set and organise them in different ways. The exact nature of these primitives and the organising principles of UG has, of course, been the object of considerable theoretical linguistic debate ever since Chomsky first advanced the idea (Chomsky 1965). Universal Grammar in MOGUL is represented by the structural categories and principles in the syntax and phonology modules, and also by the interfaces that mediate between these two and between them and external systems. UG will therefore also be reflected in the particular (linguistic) conceptual and auditory structures that these interfaces create, that is alongside features that reflect general auditory and conceptual principles.

The primary argument for the existence of UG has always been the logical problem of language acquisition: how is it possible for children to consistently succeed in acquiring something as complex as language, given the lack of instruction and correction (in morphosyntax) and the limits in the input with which they work? The difficulty of the task is demonstrated by the limited success achieved by generations of linguists attempting to reach an explicit understanding of natural language grammar, and it is compounded by the limited and often flawed input that children receive and by the lack of instruction and feedback on grammar. And of course small children are generally quite limited in their ability to carry out complex learning tasks. When these factors are juxtaposed with the success achieved by virtually every child in virtually every setting, it is difficult to escape the conclusion that acquisition is guided by extensive innate knowledge, specifically of language.

A number of additional arguments have also been offered for the existence of UG. One is the lack of any observable relation between success in first language learning and general intelligence (as measured by IQ), suggesting again that language is not learned in any standard sense of the term but rather develops in accordance with its own principles. This dissociation is especially striking in extreme cases in which either general intelligence or language ability is severely impaired but the other remains largely intact and can even be above average. Another line of evidence involves the development of creoles from pidgins. Children exposed to a syntactically impoverished pidgin do not simply acquire it as is but rather transform it into a fully fledged language reflecting all the grammatical complexities of natural language (a creole). The implication is that acquiring (or creating) a grammatically complex language is human nature; in other words, language learning is an instinct (Pinker 1994).

A final area that has produced evidence for UG is sign language. Morgan (2005), for example, argued that its development in children shows such ‘remarkable similarities’ to the development of spoken language that both must be based on specifically linguistic mechanisms. Research has also found the same phenomenon observed with the creation of creoles (see the summary provided by Lust 2006). Deaf children with no model of a sign language develop one on their own. Those with a pidgin-like model (a hearing adult who has only a limited mastery of the sign language) go far beyond their model, developing a rich syntax. Evidence of this sort has convinced a great many people of the reality of UG, though by no means everyone (see, for example, Bates, Bretherton, and Snyder 1988; Edelman 1992; Tomasello 1998).

Fodor (1983) developed the type of modularity offered by Chomsky in some detail, though differing from Chomsky in crucial respects. (See Schwartz 1999, for an attempt to minimise the differences between Fodor's and Chomsky's positions.) At the heart of his approach was the distinction between central processes, concerned with belief fixation, and input systems that have the function of getting information to the central processes in a form that they can use. The input systems are modular, based on a number of characteristics that Fodor discussed in some detail. We will focus on three such characteristics. The first is that they are innately specified. For the case of language, Universal Grammar is the innate specification. The second is informational encapsulation, according to which a module deals only with very limited information, coming to it through specific channels. Most potentially relevant information in the overall cognitive system is not available to it. Third, and most important for present purposes, is that each module has its own unique encoding system or ‘language’; its representations cannot be read by other processors. This feature can be seen as a natural consequence of the need for efficiency. The representational system most efficient for a given function is unlikely to be so well-suited to other functions.

In SLA, Fodor's (1983) version of modularity was adopted by Schwartz in order to reformulate the conceptual model proposed by Krashen in terms compatible with generative linguistics and learnability theory (see for example Schwartz 1986, 1999; also Smith and Tsimpli 1995; Herschensohn 2000). What Krashen (1982) defined as learning, crucially involving conscious, metalinguistic reflection, corresponds to processes outside the language module, i.e. part of Fodor's central processes, and as such produces knowledge representations that are different in kind from those in the L1 or L2 grammars and have no direct influence on them. However, as already indicated, we will adopt a more recent conceptualisation of modules, that of Jackendoff (1987, 1997a, 1999, 2002), and we will now develop it in somewhat more detail. Our views on the nature of those processes and knowledge outside the language module that relate to the role of conscious awareness will be taken up in later chapters of the book, beginning with Chapter 5.

2.2.3 Jackendoff's version of modularity

One great advantage of approaches such as Jackendoff's is that discussion of the architecture of the language faculty can be carried on either in terms of language structure per se, in abstract ‘competence’ terms as it were, or it can be conducted with reference to real-time processing but still employing very much the same concepts and terminology adding on, naturally, those terms and concepts specific to processing itself. Transitions between these two modes of discussing language structure are accordingly smooth and this is certainly true when dealing with the modular aspects of the system. Since MOGUL is a processing-oriented framework, we will accordingly continue the discussion in the second, ‘processing’ mode. Although Jackendoff's and Fodor's approaches are very broadly in agreement, in Jackendoff's model, the modularity is more fine-grained. The language processing system is also bi-directional for Jackendoff, accounting for both receptive and productive language use, whereas Fodor talks mainly in terms of input systems.

Jackendoff's system includes two major types of processor, namely integrative processors and interface processors. The former build complex structures from the input they receive during processing, while the latter are responsible for relating the workings of adjacent modules in a chain. This chain consists of phonological, syntactic, and conceptual/semantic structure, each level having an integrative processor and connected to the adjacent level by means of an interface processor. For example, the integrative syntactic processor simply builds syntactic structure within the syntax module. The same goes for phonological and conceptual structures within their respective modules. Each integrative processor can only recognise and manipulate representations in its own particular code and is, at least in this sense, an encapsulated module. It is important to keep in mind the fact that there is no information flow via the interfaces between different modules. The job of an interface is to match up representations in the modules that it links. Information from syntax is simply not readable by either the phonological module or the conceptual module. The traffic between modules is therefore restricted to a matching procedure that links particular representations together but does not convert a representation in one module into the code of the next one along.
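The division of labour between the two processor types can be illustrated with a minimal sketch. It is not drawn from Jackendoff or Carroll: the class names, the use of an integer index as the matching device, and the sample forms (`/lamp/`, `N[count]`) are all assumptions made purely for illustration. The point it tries to show is that an integrative processor rejects anything not written in its own code, while an interface only pairs items across modules and never translates one into the other.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Item:
    code: str    # module whose code the item is written in: "PS", "SS" or "CS"
    form: str    # content, meaningful only inside that module (placeholder strings)
    index: int   # hypothetical shared index used only for cross-module matching


class IntegrativeProcessor:
    """Builds structures from items in its own code and rejects all others."""

    def __init__(self, code: str) -> None:
        self.code = code

    def build(self, items: list[Item]) -> tuple[Item, ...]:
        for item in items:
            if item.code != self.code:
                raise ValueError(
                    f"{self.code} processor cannot read {item.code} items"
                )
        # The 'structure' is just a tuple here; real structure building is
        # theory-dependent and deliberately left out of the sketch.
        return tuple(items)


class Interface:
    """Pairs co-indexed items in two adjacent modules without translating them."""

    def __init__(self, left: str, right: str) -> None:
        self.left, self.right = left, right

    def match(self, source: Item, target_store: list[Item]) -> list[Item]:
        if source.code not in (self.left, self.right):
            raise ValueError("interface does not serve this module")
        other = self.right if source.code == self.left else self.left
        # Only the index is compared; the source's form is never inspected.
        return [
            t for t in target_store
            if t.code == other and t.index == source.index
        ]


ps_item = Item("PS", "/lamp/", 7)
ss_store = [Item("SS", "N[count]", 7), Item("SS", "V", 8)]

ps_ss = Interface("PS", "SS")
matched = ps_ss.match(ps_item, ss_store)
print(matched)
```

Note that `match` returns the syntactic item already present in the adjacent store; nothing phonological crosses into syntax, which is the sense in which traffic between modules is restricted to matching.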

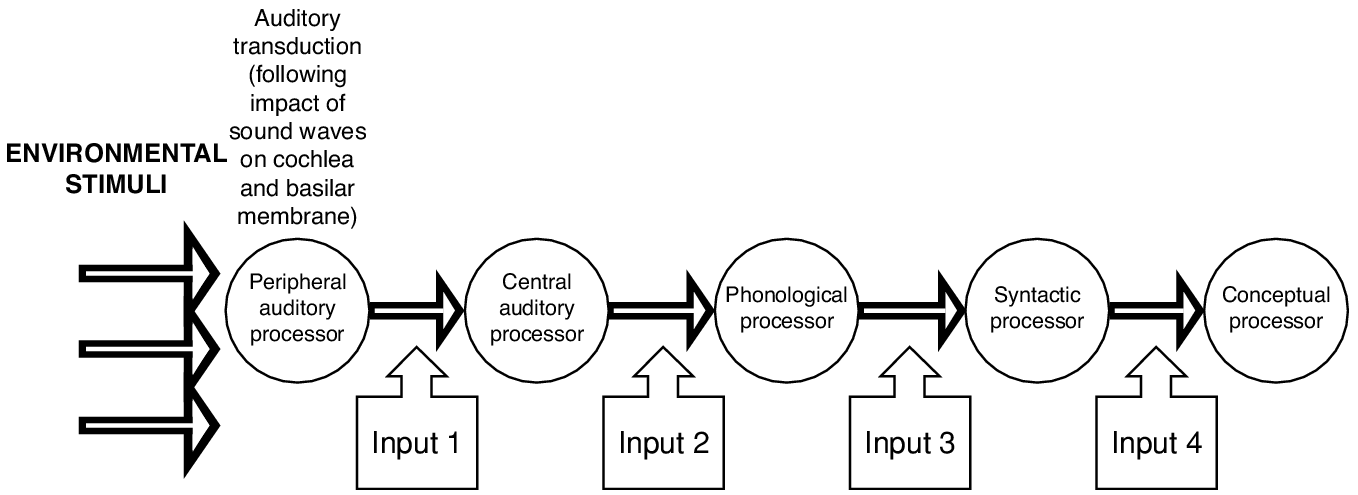

A recent application of Jackendoff's version of modularity – one that will take on great significance in the following discussion of MOGUL – is that of Carroll (1999, 2001, 2007). Carroll made a convincing case that input (and therefore acquisition) must be seen in conjunction with a theory of language processing. Working within Jackendoff's (1987, 1997a) theory of modularity, she defined ‘input’ as the representation that is received by one processor in the chain from the adjacent processor (see Fig. 2.1 for just one example of such a chain of inputs; cf. Carroll 1999: 350). It is therefore not the standard ‘input from outside’, but rather a multiple phenomenon; each processor has its own input. Carroll underlined this point by using the word ‘stimulus’ to denote the standard sense of input. The stimulus becomes input for the first time as the result of processing, not before processing has taken place. We will retain the familiar ‘external’ use of the term here, alongside ‘output’, but suitably qualify it where necessary to clarify the difference between traffic between modular processors and traffic between the organism and the external environment.

Figure 2.1 Language input as a multiple phenomenon: an example with four separate inputs triggered by environmental stimuli.2

We will take Jackendovian modularity as our starting point and make use of the leading insight of Carroll's application of it, while greatly diverging from both of these authors (especially Carroll) in many ways. One major reason for adopting Jackendoff's model as a starting point is the attention it pays to the ways in which language interfaces with other aspects of cognition. Another is its suitability for explaining language processing, and its compatibility with current findings in the psycholinguistic literature. The strength of Carroll's (1999, 2001) approach is that, by placing acquisition within the context of language processing, it offers a more fine-grained view of input and of acquisition. It thereby allows new questions to be asked and familiar questions to be posed in new and potentially more productive forms.

2.3 The language module(s) in MOGUL

Linguistic knowledge is not limited to the language module, a point that we will develop in considerable detail below. But the module is the heart of this knowledge and therefore the focus of the discussion. We will first discuss the overall structure of the module and the nature of its components – processors and information stores.

2.3.1 The general architecture

MOGUL is an information processing approach, in the literal sense of the term. In this approach, the cognitive system consists of processors and the information stores (memory systems) with which they work. All activity in the system is to be interpreted as processors manipulating the contents of these information stores. This approach offers an explicit, parsimonious way of understanding the language module and its workings.

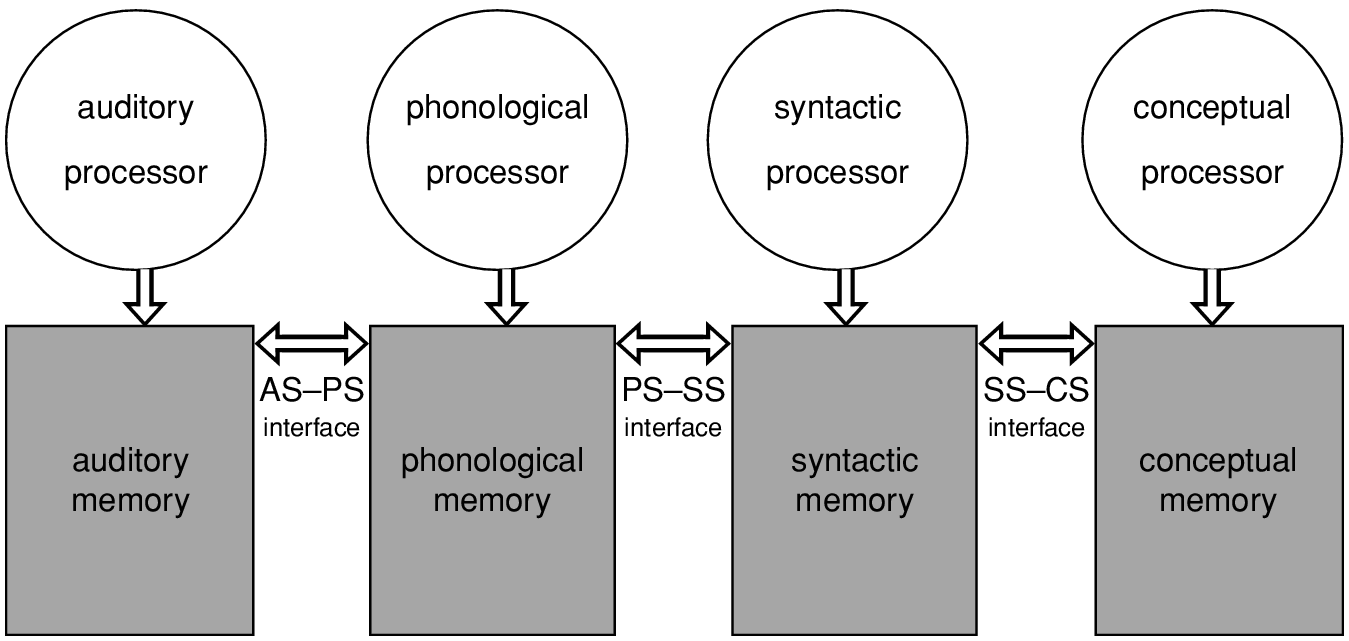

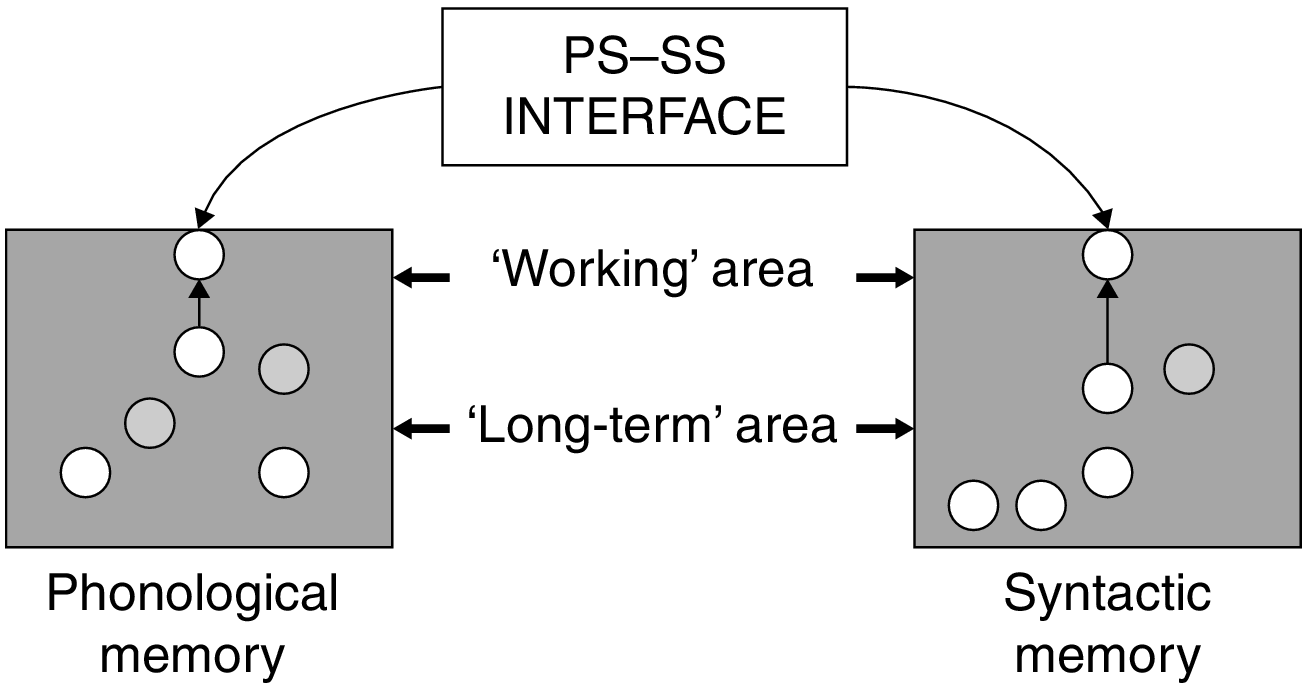

In Chapter 1 we gave an overview of MOGUL architecture, which will be developed more fully in this chapter. The architecture is presented in Fig. 2.2, an elaboration of Fig. 1.2 presented in Chapter 1, except that perception is confined here to perception in the auditory mode. The different memory systems depicted in this figure constitute what we will refer to in this chapter as information stores. Also, in describing the architecture we will focus, here and below, on the syntactic portion of the system. Any structural element or combination of elements stored in one or other of these memories will be referred to as a representation. The interfaces link representations in adjacent stores. In this way, chains of representations are formed stretching across different modules.

Figure 2.2 MOGUL architecture.

Setting articulation and perception aside for the time being, the language system consists of three levels, as in Jackendoff's model: phonological, syntactic, and conceptual. Each level is made up of an information store, represented as a rectangle, and a processor, shown as a circle. Again, following Jackendoff, the levels are connected by interfaces. The arrows connecting processors and information stores indicate the flow of information. They are bidirectional because information flows both from a store to a processor (the processor reads representations in the store) and from the processor to the store (it manipulates symbols on the store).

In language comprehension, processing begins with input from auditory structures (AS) to the phonology, mediated by the auditory–phonological (AS–PS) interface, which is also part of the core language faculty. AS is the general (i.e. not specifically linguistic) output of auditory processing and therefore supports auditory representations of all sorts, such as representations of glass breaking or dogs barking, as well as language sounds. It is the end product of a chain of auditory processing, analogous to the linguistic processing chain shown in Fig. 2.1, which is carried out unconsciously but eventually yields the potentially conscious sound representations of AS (see Chapter 8). For simplicity's sake we will deal here only with speech processing on the understanding that analogous systems exist for the processing of language in other (written and signed) modes, topics that will be taken up later.

When an auditory representation is created, the interface activates items in PS corresponding to those items that make up the AS representation, or at least those elements of AS that are relevant for PS, since auditory structure will also involve features that have no linguistic significance. The AS–PS interface processor would therefore appear to have a selective function, which implies that it is somehow ‘smart’. It is, however, more accurate to say that this interface simply works with whatever it gets. So, not only the sound of a door slamming in the background but also the breathiness of the speaker would in principle provide input for the activation of some phonological structure, but the AS–PS interface may simply not manage to do anything with them. At the same time both these accompanying sounds, although not receiving any kind of representation in PS, may well acquire meaning, that is, they may well provide material for the conceptual processor. Some of this meaning (conceptual structure), say that triggered by the breathiness, may also be interpreted as relevant for the full interpretation of the message currently being communicated by the speaker, because of its association with some emotion or with symptoms of the speaker's current state of health that might also have a bearing on the message.

Returning to what actually becomes input to the phonological system, i.e. has the effect of activating items in the phonological store, the activated items then become the raw material for construction of a PS representation.3 When the auditory/phonological (AS–PS) interface activates elements in PS, the phonological processor then produces, on the basis of this input, a combined (complex) phonological representation, which then serves as input to syntax. The PS–SS interface activates SS items corresponding to the items that make up the PS representation, and the syntactic processor builds a representation in its own code, which then serves as input for conceptual processing, via the SS–CS interface. The final product of linguistic processing is its contribution to the message, a conceptual representation that synthesises the language module's output with information from non-linguistic sources, for example the sources that were just mentioned above (door slamming and breathiness).

Language production, we hypothesise, is the same process operating in the other direction. It begins with a conceptual message, which stimulates activity in SS (syntactic structure) and then PS. Note that ‘message’ is simply a label of convenience for whatever CS representation is ultimately produced from the combination of input from SS and any other influences operating at the level of CS (conceptual structure) at the time. Thus, it is not an entity of the model. Also, it is important to keep in mind the fact that the processing of what begins as input from the environment or what begins as input from conceptual structure will typically involve many changes backwards and forwards as provisional representations are formed and then discarded in the search for the best fit. This will become clearer later.
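The comprehension and production chains just described can be sketched as a single routine run in two directions. This is a toy illustration under strong simplifying assumptions, not part of the framework itself: the store contents, the integer indexes, and the function names are all hypothetical, integration is reduced to collecting active items into a tuple, and the backwards-and-forwards revision of provisional representations is ignored entirely.

```python
# Hypothetical toy stores: each maps a shared index to an item written in
# that store's own code. The forms are placeholders, not analyses.
STORES = {
    "PS": {1: "/the/", 2: "/lamp/"},
    "SS": {1: "Det", 2: "N"},
    "CS": {1: "DEFINITE", 2: "LAMP"},
}


def activate(store_name, indexes):
    """Interface step: activate the items in a store that carry these indexes."""
    store = STORES[store_name]
    return [(i, store[i]) for i in indexes if i in store]


def integrate(active_items):
    """Processor step: combine the active items into one representation."""
    return tuple(form for _, form in active_items)


def run_chain(indexes, order):
    """Pass activation along the chain, recording each level's representation."""
    trace = {}
    live = list(indexes)
    for level in order:
        active = activate(level, live)
        trace[level] = integrate(active)
        # Only indexes that found a match are passed on to the next level.
        live = [i for i, _ in active]
    return trace


# Comprehension: index 99 stands for a non-linguistic sound (e.g. a door
# slamming) for which the AS-PS interface finds nothing in PS.
comprehension = run_chain([1, 2, 99], ["PS", "SS", "CS"])

# Production: the same routine with the order of levels reversed.
production = run_chain([1, 2], ["CS", "SS", "PS"])

print(comprehension)
print(production)
```

Each entry in the returned trace corresponds to what one level receives and builds, which is one way of picturing input as a multiple phenomenon rather than a single event at the edge of the system.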

UG has no distinct place in the picture. It is, rather, the genetic basis for the language processing system as a whole, specifying the overall architecture, the nature of the processors, and the initial state of each information store. The processors are best seen as the embodiment of UG principles. This means that we can continue to refer to the imposition of UG constraints but without implying that there is a separate system (UG) that monitors processing operations.

2.3.2 Processors

As described above, Jackendoff distinguishes two major types of processors, integrative and interface (represented by, respectively, circles and bidirectional arrows). The function of the former is to manipulate symbols in the information store it is associated with; more specifically, to construct a coherent representation from the currently active contents of the store. We treat these processors as innate and invariant. For the syntactic processing unit (i.e. the processor–store combination), on which we will focus throughout this discussion, this means that morphosyntactic acquisition occurs in SS and in the connections of items there to those in PS and CS, all done within the constraints imposed by UG. (For related ideas, see Pritchett 1988, 1992; Weinberg 1993, 1999; Crocker 1996; Dekydtspotter 2001.) The syntax processor is best seen as an organised collection of subprocessors, each responsible for a specific aspect of the syntactic representation being constructed. The exact nature of these subprocessors will, as we indicated in the previous chapter, depend on the particular linguistic theory one adopts. In a Principles and Parameters approach, for example, they would correspond to the ‘modules’ of P&P theory, one being responsible, say, for X-bar structure, another for movement, a third for Case theory, and so on. The choices would be somewhat different for Minimalist approaches and considerably different for Lexical Functional Grammar or Construction Grammar or any of the many other approaches available including Jackendoff's own preference, Simpler Syntax (Culicover and Jackendoff 2005).

Turning to the other major type of processor, we tentatively assign the interfaces a much more limited function than that which Jackendoff attributes to them. Specifically, their function is to match activation levels of adjacent modules and assign indexes to new or existing items as a necessary preliminary to activation matching. This makes them a relatively impoverished sort of processor, so much so that the term ‘processor’ might better be reserved for integrative processors. Henceforth we will adopt this convention, referring to interface processors simply as ‘interfaces’. Questions remain about the possible need to assign richer, more complex functions to them, and about the implications of such an approach for modularity. We return to these topics in Chapter 5. Another issue we will not consider here, because it has no apparent implications for other aspects of the framework, is whether the connection between two modules is better seen as a single bidirectional interface or as a pair of interfaces, each operating in only a single direction (Jackendoff 1997a).
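The two functions tentatively assigned to interfaces, index assignment followed by activation matching, can be sketched as follows. Everything in the sketch is an illustrative assumption rather than a claim about the framework: activation is modelled as a single number, each item carries at most one index, and ‘matching’ is taken literally as setting the co-indexed item to the same level. The class and method names are hypothetical.

```python
from dataclasses import dataclass
from itertools import count
from typing import Optional


@dataclass
class StoredItem:
    form: str                     # placeholder content in the store's own code
    activation: float = 0.0       # assumed scalar activation level
    index: Optional[int] = None   # assumed: at most one index per item


class ActivationInterface:
    """A deliberately impoverished processor: it indexes and matches, no more."""

    def __init__(self) -> None:
        self._fresh = count(1)  # source of new index values

    def assign_index(self, a: StoredItem, b: StoredItem) -> int:
        """Co-index two items, reusing an existing index where there is one."""
        if a.index is not None and b.index is not None and a.index != b.index:
            raise ValueError("items already carry different indexes")
        shared = a.index if a.index is not None else b.index
        if shared is None:
            shared = next(self._fresh)
        a.index = b.index = shared
        return shared

    def match_activation(self, source: StoredItem, target_store: list) -> None:
        """Set every co-indexed item in the adjacent store to the source's level."""
        if source.index is None:
            return  # nothing to match until an index has been assigned
        for item in target_store:
            if item.index == source.index:
                item.activation = source.activation


ps_lamp = StoredItem("/lamp/", activation=0.8)
ss_noun = StoredItem("N")
ss_verb = StoredItem("V")

interface = ActivationInterface()
interface.assign_index(ps_lamp, ss_noun)             # preliminary step
interface.match_activation(ps_lamp, [ss_noun, ss_verb])

print(ss_noun, ss_verb)
```

The interface never reads `form`; it touches only `index` and `activation`, which is what makes it a much thinner device than an integrative processor.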

Jackendoff (2002) briefly discussed an additional type of processor, the inferential processor, which has the function of taking a complete existing structure and deriving from it another structure of the same sort, as in the process of drawing inferences. But the functions he assigned to it do not appear to be fundamentally different from those of standard MOGUL processors, i.e. manipulating symbols on a blackboard for the purpose of constructing a complete representation. So we do not hypothesise any distinct inferential processors.

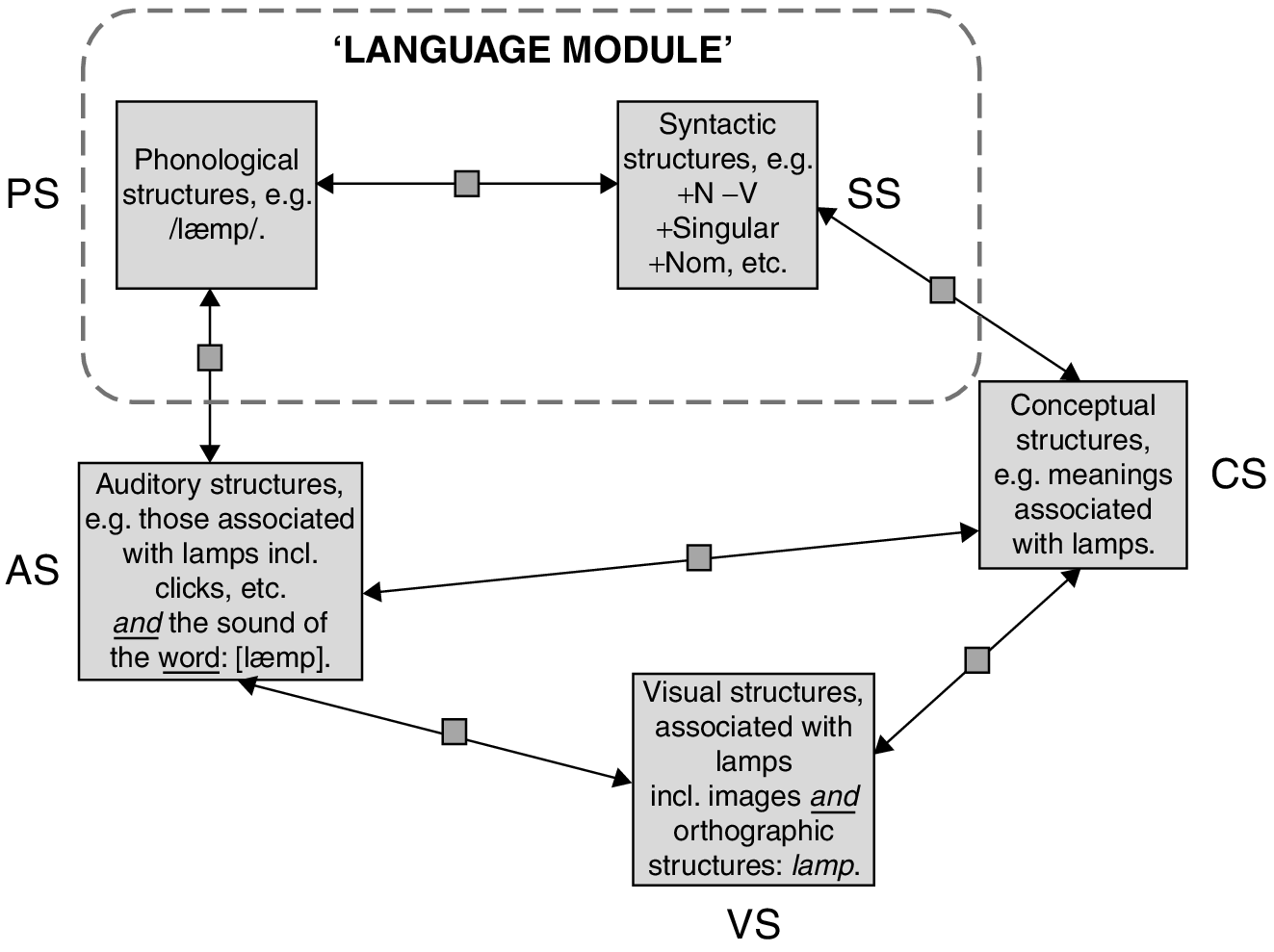

2.3.3 Lexical stores

The ‘information’ part of information processing is realised in the individual lexical stores (memories), which contain all the representations that make up modular linguistic knowledge (and some extra-modular knowledge; see below). These are what were referred to in the previous chapter as sublexicons since they each contain a component of what is conventionally thought of as a lexical item. They are also the site of the activity carried out by the processors and can therefore be thought of as both information stores and blackboards (or, alternatively, long-term and working memory respectively) where the processors write their representations; we will further explore this dual identity below. A conventional lexical entry is, then, a linking via interfaces of structures in the different sublexicons (together referred to here as ‘linguistic memory’), as in the example of lamp in Fig. 2.3, which also shows some of the many other connections beyond linguistic memory, that is, extending outside the language module. The interfaces in this figure are now each represented by a bidirectional arrow equipped with a square box to indicate its status as a simple processor. We will adopt this convention in all the figures that follow.

Figure 2.3 The word lamp as a linking of a variety of representations.

The PS consists of the entry's phonological form, while the SS contains its syntactic category and any additional features relevant to its use by the syntactic processor. The CS represents its meaning in terms of conceptual structure. Each store consists originally of innate primitives appropriate for the particular store (phonological features for PS, etc.). These primitives become combined into larger units (representations) through processing experience involving the innately specified processors, as will be described below. All types of linguistic elements are to be found in the linguistic stores, as PS–SS–CS chains. These include simple words, derivational affixes, inflections, compounds, other multi-word units such as idioms and collocations, and constructions.

Examples of basic elements in SS are features underlying a word's syntactic category and the count–mass distinction. Most important for the discussion below are the functional categories, which establish the framework for a syntactic representation (see, for example, Ouhalla 1991; Chomsky 1995). Examples are tense, inflection, determiner, agreement, complementiser, and negation. They are language-specific instantiations of universal properties.

The conceptual (sub)lexicon contains universal properties written in terms of innate conceptual primitives. (For Jackendoff's theory of conceptual structures, see Jackendoff 1990.) CS, while crucial to language, is not part of the language module, though the SS–CS interface is (see below). It is best seen as chunks of conceptual knowledge connected to an SS and a PS. The connection with CS may be relatively simple, as in the case of a small morphological chunk, or it may be much less simple, involving much larger chunks, as in the case of relationships obtaining between structures in a complex sentence. Certainly, if we consider the smaller chunks, this removal of CS from the language module fits in with the extensive evidence that lexical meaning has a very different status from other aspects of lexical knowledge, especially that it is acquired much more explicitly (Ellis 1994). It also fits well with findings that word meanings are not found specifically in linguistic areas of the brain but are instead distributed in more or less predictable ways: visual aspects of a word's meaning are represented in visual areas, action aspects in motor areas, and so on (see, for example, Linden 2007; Martin 2007; Goldberg 2009; Rissman and Wagner 2012).

All relations between items in a module and those outside are mediated by an interface, because encapsulation does not allow direct connections. Following Jackendoff's notation, we capture this mediation in terms of indexes. When there is no module boundary between the two items (i.e. when they are in the same store), they can be directly connected, i.e. combined into complex representations; so there is no point in speaking of coindexing. It should be stressed that these indexes are not the indexes commonly used in linguistic theory to mark, for example, coreference or the relation between a moved element and its trace. The latter belong to the processors and are used exclusively by them, while the indexes we are referring to are in the domain of the interfaces and have no function other than to connect items across stores. The development of these indexes will be discussed in Chapter 4.

Figure 2.4 shows, in simplified form, the basic tripartite structure of a word, consisting of three items, a PS, an SS, and a CS, coindexed by interfaces across three stores and using an arbitrarily chosen index number (22). Each of the three items in this figure could, in principle, consist of a single element. Otherwise, it would stand for a complex representation, that is to say, one that is composed of a set of primitives combined according to the in-house principles of its own particular processor. For example PS22 would be a complex phonological representation composed of phonological primitives combined according to the principles of the phonological processor.

Figure 2.4 Indexes.
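The tripartite arrangement in Fig. 2.4 can be mimicked with a very small data structure. The following is only an illustrative sketch, not part of the MOGUL framework: the names `Store` and `coindexed` are our own inventions, the strings stand in for what would really be complex representations built from primitives, and keying each store by index (one item per index per store) is a simplifying assumption.

```python
from dataclasses import dataclass, field


@dataclass
class Store:
    """One sublexicon (PS, SS, or CS), holding representations keyed by index."""
    name: str
    items: dict = field(default_factory=dict)  # index -> representation

    def add(self, index, representation):
        self.items[index] = representation


def coindexed(index, *stores):
    """Collect, store by store, every representation carrying the given index."""
    return {s.name: s.items[index] for s in stores if index in s.items}


ps, ss, cs = Store("PS"), Store("SS"), Store("CS")
ps.add(22, "/lamp/")  # placeholder for a complex phonological representation
ss.add(22, "N")       # placeholder for the syntactic category and its features
cs.add(22, "LAMP")    # placeholder for the conceptual structure

# The "word" is nothing more than the set of items sharing index 22.
word = coindexed(22, ps, ss, cs)
print(word)
```

Note that nothing in the sketch stores the word lamp as a single object; it exists only as the result of following a shared index across the three stores.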

2.3.4 MOGUL and the nature of modularity

This discussion raises the question of what exactly constitutes the language module, and whether such a module even exists. One could reasonably take the position that there is in fact no such module, but simply a set of smaller, more specialised modules that interact with one another. This appears to be the view of Carroll (2001), for example. The lack of a shared code between syntax and phonology is perhaps the strongest reason to adopt such a position. On the other hand, one reason not to adopt it is that the various parts are tightly integrated in such a way that they can serve a single function (language use), which presumably provides the reason for their existence. Another is that, given the assumption of UG, this system is innate. Finally, the combination of the syntax and the phonology is informationally encapsulated, using information from outside sources only in very limited ways. Outside influences on syntactic processing are restricted to conceptual information, and this only in the form of matching in current activation levels carried out by the interface. Outside influences on phonological processing are similarly restricted to activation levels of coindexed items in auditory structures.

So we will continue to speak of ‘the language module’ (or ‘the core language system’), with the understanding that in important respects it is not a prototypical Fodorian module but has the ‘molecular’ structure specified by Jackendoff. We also note that some aspects of language use are subserved by processors linked to, but not part of the module (see Chapter 5).

A natural view is that the language module consists of those elements that are directly attributable to UG and which came into existence (in the phylogenetic sense) primarily because they contribute to the function of language use. Included are the phonological and syntactic processors, as well as the interface linking them together and the interfaces linking the module to the auditory and conceptual processors. Also on the inside are PS and SS. Excluded from the module are auditory and acoustic processors, conceptual processors, and CS, the latter including any grammatical, pragmatic, or other knowledge obtained through processing that is not specifically linguistic, in other words what has variously been called learned knowledge (Krashen 1982), learned linguistic knowledge or LLK (Schwartz 1986), or metalinguistic knowledge (e.g. Sharwood Smith 1993; Truscott 1998b).

In discussions of modularity, syntax is commonly seen as a prototypical example of a module, showing all the standard characteristics, including those just described. Thus, if one accepts the notion of modularity, the syntactic component of the MOGUL framework must be considered a module. Syntactic structure (SS) must be seen as a part of this module. It contains exactly those features of lexical items that are used in syntactic representations, presumably using the same code as the syntax processor. The processor has constant, direct access to SS, responding to information (any information) found there. In addition, SS exists specifically for the purpose of doing syntactic processing; it has no other function. The apparent conclusion is that the syntactic processor and SS comprise a single module. Thus the definition of ‘module’ must include not only the processor itself but also the domain-specific store of information that it works with. The same sorts of considerations qualify the phonological processor and phonological structure as modular. In contrast, the conceptual processors and conceptual structure are not so clearly modular, a topic we will return to in Chapter 5. One could reasonably argue as well for the existence of an overall language module, as described above.

2.3.5 Representations: the locus of language development

The contents of the linguistic stores are what we have been calling representations (see our qualifications in 1.7). For our purposes, a representation is just this, any item in a store regardless of its exact character or its durability in the store. In this context we will use the terms representation, item, and element interchangeably. Each consists of combinations of the primitive items of its store, which we take to be innately given. The most basic type of example is that considered in the previous section, involving a simple word such as lamp, which consists primarily of three distinct representations, at PS, SS, and CS, each composed of more basic elements of its store. The word lamp is thus not a representation but rather an interconnected set of representations. This set acts as a functional unit because its components share an index and for this reason are necessarily coactivated during processing. Other types of linguistic representations share the basic characteristics of this example but vary in interesting respects.

In the following sections we will briefly survey the variety, considering SS representations and then CS representations, each in some detail, and then turn to issues involving connections among items at the various levels. As our focus continues to be syntax and (to a somewhat lesser extent) semantics, PS will receive only limited attention.

2.4 Representations at SS

We will consider two sets of SS representations. The first consists of the syntactic categories of words, the features underlying them, and combinations of those categories. The second is the functional categories and the feature values associated with them.

2.4.1 Syntactic categories and combinations of syntactic categories

Basic category features such as [+N] and [+V] are innate primitives in SS. A lexical category, such as noun or verb, is a combination of these features; in other words, it is a complex representation made up of simpler representations, the latter being the maximally simple ones in this case. All these categories are established during processing and as direct consequences of processing, as we will describe in Chapter 4. There has been some disagreement in the literature as to how many possible categories there are and the limits on the establishment of new categories. The traditional view in generative grammar is that only a very small number of features are involved and that the set of possible categories is quite limited. But others (see especially Culicover 1999) have argued that the set is necessarily open-ended. We will not take a position here on this question. Again, the MOGUL framework is compatible with a variety of specific theories and, as elsewhere, this fact should be kept in mind when examining our examples.

Syntactic categories, being representations, can be combined with one another to produce new representations. This observation is the beginning of an account for a wide assortment of linguistic entities, beginning with that which we consider perhaps the most fundamental, the subcategorisation frame. Subcategorisation frames specify the number and types of arguments of a word, and they are thought of as part of a speaker's knowledge of the word in the lexicon of the language. For instance, to take an English verb with two arguments, drink requires a subject noun phrase (NP) and one object NP. A so-called ‘double object’ verb, like tell, requires a subject NP, one indirect object NP, and one direct object NP. In standard generative accounts the subject argument is not included in subcategorisation frames and is accounted for independently by the Extended Projection Principle (Chomsky 1982: 10). We will follow this account in our various examples. In MOGUL, following Jackendoff, there is no independent system or unit called ‘the lexicon’: subcategorisation is entirely a syntactic affair. A subcategorisation frame is the combination of the most basic syntactic structure (SS) of a word with one or more additional categories (SS representations4). The combination of the SS representation of kick with [NP]5 is the frame of kick, for example. A subcategorisation frame as such is in essence an SS item, having for all practical purposes no coindexed PS or CS counterparts; i.e., there is no standard meaning or pronunciation for it. More precisely, it has a very large number of coindexed PS and CS representations but the indexes connecting them have very low resting activation levels and are often transient; see Chapter 4. The subcategorisation frame of kick, for instance, has the following form:

(1) [Vi NP]

where i is the index of the PS and CS representations of kick and NP is approximately a generic representation of noun phrase. More precisely, it is weakly coindexed with a wide assortment of specific PS and CS representations, corresponding to noun phrases that co-occur with kick, for example the ball, a table, that annoying dog, etc.
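The contrast between the specifically indexed head and the only weakly coindexed NP can be made concrete in a short sketch. Everything here is hypothetical and chosen for illustration only: the class names `FrameSlot` and `SubcatFrame`, the index value 7 standing in for i, and the numerical resting activation levels, which the text does not quantify.

```python
from dataclasses import dataclass, field


@dataclass
class FrameSlot:
    """A generic category in a frame, weakly coindexed with many specific forms."""
    category: str
    weak_links: dict = field(default_factory=dict)  # form -> resting activation


@dataclass
class SubcatFrame:
    """An SS item: a specific head plus one or more generic category slots."""
    head_category: str
    head_index: int  # index shared with the head's PS and CS representations
    slots: list

    def __str__(self):
        inner = " ".join(slot.category for slot in self.slots)
        return f"[{self.head_category}{self.head_index} {inner}]"


# Invented activation values: all low, reflecting weak and often transient links.
np_slot = FrameSlot("NP", {"the ball": 0.05, "a table": 0.02, "that annoying dog": 0.01})
kick_frame = SubcatFrame("V", 7, [np_slot])

print(kick_frame)
strongest = max(np_slot.weak_links, key=np_slot.weak_links.get)
print(strongest)
```

The subject is deliberately absent from `slots`, in keeping with the convention adopted above that subcategorisation frames do not list the subject argument.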

Combinations of categories at SS can take a number of other forms as well, depending especially on how the categories are connected to PS and CS items. We will return to this topic below, in the context of interactions among representations at the various levels of the processing chain.

2.4.2 Functional categories and their feature values

In linguistics, functional categories refer to the small set of structural elements that make grammars work, as opposed to lexical categories. Lexical categories classify elements that make up the lexical store of a given language, specifically those categories of words that belong to an open, as opposed to a fixed or closed, class. Hence adjective (a traditional part of speech) is a lexical category and is used to classify words like ‘gentle’ and ‘hot’, thereby determining where they can appear in sentences and what they can combine with (for example before or after nouns depending on the language). In principle, there is no limit to the words that can be classified in this way, so adding, say, ten new adjectives to a language does not change the grammar, but just expands its lexical repertoire. If a functional category can be associated with a word at all, it is one of those words that belong to the fixed repertoire of a language (like the determiner the in English). In generative linguistics, functional categories are abstract features that reflect distinctions like tense, agreement, and case. In MOGUL, which assumes some version of generative grammar but is not committed in detail to any particular one, the essence of a functional category is an innately specified syntactic (SS) representation, which the syntax processor will insert in the overall representation it is constructing for its current input. Here, using a particular version of generative theory for the purposes of illustration, we will briefly consider two examples, Inflection (I) and Case items, in order to show how they might be re-expressed in terms of the MOGUL framework.

2.4.3 I and its features

Probably the most developed example of a functional category is Inflection (I). More recent work in linguistic theory has tended to assume Pollock's (1989) Split Inflection Hypothesis (see Radford 2004), according to which I is decomposed into (at least) Tense and Agreement nodes. For the purposes of this discussion, we explore the traditional version (using I) here, because this is the way in which many discussions of functional categories in the second language acquisition literature have been framed. Considerable research has focused, in particular, on the strength feature of I, which determines whether verbs move from their canonical position in the VP to I (one of the parameters of P&P; see Lasnik 1999). If the feature value is [strong], when I becomes part of a representation it will trigger this movement, with the consequences shown in (2).

(2) *We finished quickly ___ our meal.

Movement of the verb finished to the left of the adverb quickly results in a sentence that is ungrammatical in English, in contrast to French, for example. Thus, the value for English is [weak], blocking the movement, while for French it is [strong], forcing the movement.

For MOGUL, the [strong] and [weak] feature values are representations in SS. The category I can be said to have a strength value when it has been combined with one of these to form a more complex representation, I+[strong] or I+[weak]. If only one of these exists, say I+[strong], we can say that I has the value [strong]. If both of the complex representations exist, I's value is a matter of degree – which representation is more highly active. The difference in degree can be so small that both values routinely appear in processing, in which case it makes little sense to say that I has one specific value, or so dramatic that for all practical purposes the weaker value does not exist. We will explore this subject in detail in subsequent chapters.
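The idea that I's value is a matter of degree can be illustrated with a toy function. This is a sketch under our own assumptions rather than a claim about how the syntax processor operates: the function name `strength_profile`, the numerical activation levels, and the `negligible` cut-off below which the weaker value is treated as non-existent "for all practical purposes" are all invented for the example.

```python
def strength_profile(activation, negligible=0.05):
    """Describe I's strength value from the activation of I+[strong] and I+[weak].

    `activation` maps "strong" and/or "weak" to a non-negative level; a missing
    key means that the corresponding complex representation does not exist.
    """
    strong = activation.get("strong", 0.0)
    weak = activation.get("weak", 0.0)
    total = strong + weak
    if total == 0:
        return "unset"  # neither complex representation has been formed
    share = strong / total
    if share >= 1 - negligible:
        return "strong"  # the weak value is absent or practically absent
    if share <= negligible:
        return "weak"    # the strong value is absent or practically absent
    return f"graded (strong share {share:.2f})"


print(strength_profile({"strong": 1.0}))               # only I+[strong] exists
print(strength_profile({"strong": 0.02, "weak": 0.98}))
print(strength_profile({"strong": 0.5, "weak": 0.5}))  # both routinely appear
```

The three calls correspond to the three situations described above: a single existing value, a dramatic difference in activation, and a difference so small that no one value can sensibly be attributed to I.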

I's features also provide an account of what has been called the pro-drop parameter or the null subject parameter, exemplified by Spanish, Greek, and all Slavic languages, which allow subject pronouns to be omitted where the subject can be identified from the context. In the case of Spanish, Greek, and Polish, but not all pro-drop languages, the grammar possesses a rich morphology providing information that more morphologically impoverished languages like English cannot show. Hence, in English the ungrammatical second sentence in (3), with its missing subject, might not be incomprehensible in this context but, without the context provided by the first sentence, does not tell you what thing(s) or person(s) left. In the equivalent Polish example in (4), however, where there is no preceding sentence providing context, the second word poszły (went) not only says that something or someone left, it also identifies them as feminine and plural. This additional information may form part of the disambiguating context to help identify the referent of the missing subject.

(3) The two women seemed to have come to a decision.

*left quickly.

(4) Szybko poszły.

Quickly leave+Past Feminine 3rd person Plural

In pro-drop languages like Chinese, however, there is no such supplementary support from inflectional morphology so identifying the missing subject has to be done using other types of context.

Principles and Parameters accounts of missing (null) subjects hypothesise an empty category, pro, which can appear in the subject position only if it is licensed.6 The licenser has typically been associated with Inflection (though Chinese-type languages probably require a distinct account). In line with the account of I's strength feature, we thus hypothesise that SS contains a [+pro] representation and a [−pro] counterpart, each of which can combine with I to form a more complex representation. I+[+pro] licenses pro, permitting pro-drop, while I+[−pro] blocks it.

2.4.4 Case items

In P&P, overt case marking is an expression of underlying abstract Case, which is assigned to each NP (see Chomsky 1986). English is relatively weak in overt Case, the distinctions being found mainly in pronouns, as in the following sentence:

(5) She kissed him.

The subject, she, has nominative form, in contrast to the accusative form her, while the object, him, shows accusative Case. The theoretical claim is that Case is present, abstractly, on all noun phrases, even when no overt distinctions are present. Thus, the subject and object in the following sentence, Mary and Bill, again have nominative and accusative Case, respectively, despite the absence of any visible markings.

(6) Mary kissed Bill.

The particular Case (nominative, accusative, etc.) is assigned by the head that is in a particular structural relation with the NP. An NP governed by I, for example, receives nominative Case. We assume that Cases in this sense are innately present, as is the principle that every noun phrase must receive Case, possibly as an explicit principle of the syntax processor and possibly more indirectly, because Case is necessary for assignment of conceptual roles (see below). More specifically, we hypothesise that there is a functional category [Case] innately present in SS. This item does not in itself distinguish the various possible Cases; these are more complex representations combining this item with the individual heads that assign Case. For example, I+[Case] carries nominative Case and V+[Case] accusative. We will henceforth use the term Case to refer to these combinations of the Case item with a head.

2.5 Representations at CS

CS representations are not actually part of the language module, but discussion of SS representations and their development is not possible without some reference to CS representations and their development. So here and in the following two chapters we will discuss aspects of CS that are most closely related to syntax, and then return to a more in-depth discussion of word meaning in Chapter 5, in the context of extramodular knowledge and its development. In this section the focus will be on conceptual roles.

2.5.1 Conceptual role items

Staying with a more or less standard generative terminology for a moment, we come now to thematic (theta/θ) roles and thematic (theta/θ) grids. This needs some elaboration so that we can more clearly explain the MOGUL version where we use the term ‘conceptual’ rather than ‘thematic’. In mainstream generative theory, theta roles can be seen as the syntactic counterparts of, and indeed are associated with, familiar semantic roles like agent and patient, the latter obviously being relevant to conceptual structure. Somewhat confusingly, theta roles use semantic terminology. In any case, a theta role just defines the number, type, and placement of obligatory arguments (see the earlier discussion of subcategorisation frames). With a sentence like Max threw the ball, the subject NP (also called the external argument) must relate to something that has volition, can do something like throwing. That is part of its theta role. Because it is restricted to defining number, type, and placement of the verb's argument, a theta role in this type of theoretical framework is not considered to be a semantic category but rather a purely syntactic one, and the use of the Greek θ (theta) is presumably supposed to act as a reminder of that fact. The subsequent spelling out of the meaning of a verb and its arguments is the job of semantics.

Theta roles in such standard approaches are stored in a verb's theta grid. The simplest form that a theta grid comes in is an ordered list between angle brackets. The theta roles are named by the most prominent semantic relation that they contain. In this notation, the theta grid for a verb such as throw is <agent, theme, goal>. From now on, we shall be talking of ‘conceptual’ grids.

In MOGUL, the interpretation of a sentence necessarily involves assignment of a conceptual role to each of the verb's arguments (as listed in its subcategorisation frame). Here, however, conceptual roles are part of conceptual and not syntactic structure. These conceptual roles in MOGUL are conceptual (CS) representations, not differing in any fundamental way from others. What is special about them is that they are those CS representations that are best suited to express the relations that participants have to the action expressed by the verb: agent, patient, recipient, and the other conceptual roles familiar from the semantic literature.

Conceptual roles are strongly, if imperfectly, associated with (syntactic) Cases; the agent role, for example, tends to go with NPs that are marked nominative. Returning to an example considered above,

(7) She kissed him.

the subject, she, with its nominative marking, is naturally interpreted as the actor, or agent in the sentence, while the object, him, with its accusative form, plays the role of patient, receiving the action of the verb kiss. Within the MOGUL framework, the implication is that Case items in syntactic structures (SS) are coindexed with CS conceptual role items. This coindexing establishes the relation, while the activation levels associated with the indexes make it probabilistic: a single Case item can be coindexed with more than one role, and the relative activation levels of the indexes determine, probabilistically, which role will be assigned to a particular NP in a particular processing episode.
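The probabilistic character of this coindexing can be sketched as weighted sampling. The sketch below is illustrative only: the function name `assign_role`, the activation values, and the extra roles listed beside agent and patient are invented, and sampling in direct proportion to activation is merely one simple way of reading ‘determine, probabilistically’.

```python
import random
from collections import Counter


def assign_role(case_item, index_activation, rng=random):
    """Pick a conceptual role for an NP bearing `case_item`.

    Each candidate role is chosen with probability proportional to the
    activation level of the index linking it to the Case item (an assumption).
    """
    roles = index_activation[case_item]
    names = list(roles)
    weights = [roles[name] for name in names]
    return rng.choices(names, weights=weights, k=1)[0]


# Hypothetical activation levels of the indexes linking Case items to CS roles.
index_activation = {
    "I+[Case]": {"AGENT": 0.80, "EXPERIENCER": 0.15, "PATIENT": 0.05},  # nominative
    "V+[Case]": {"PATIENT": 0.85, "THEME": 0.15},                       # accusative
}

rng = random.Random(0)  # seeded so that a run can be repeated
tally = Counter(assign_role("I+[Case]", index_activation, rng) for _ in range(1000))
print(tally.most_common())
```

Over many simulated processing episodes the nominative NP is mostly, though not always, assigned the agent role, which is the sense in which the association between Case and role is strong but imperfect.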

2.5.2 Conceptual grids

A head is associated with a particular set of arguments. Syntactically, this association takes the form of a subcategorisation frame, as described above. The semantic (i.e. conceptual) version is a conceptual grid. The difference between the two (apart from the fact that one is syntactic and the other semantic) is that the presence of a subject is taken as a background assumption in treatments of subcategorisation frames but is explicitly included in conceptual grids. In MOGUL terms, a grid is a complex item at CS composed of the representation of the head plus one or more conceptual role items. In this way MOGUL differs from the standard Chomskyan model in its use of the terms ‘role’ and ‘grid’ by using them as part of conceptual, not syntactic structure. The conceptual grid of the verb kick, for example, includes an agent and a patient, so the complex CS item representing its grid will take the form AGENT+KICK+PATIENT.
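The difference between a frame and a grid can be shown side by side in a brief sketch. The class `ConceptualGrid` and its field names are hypothetical; only the notation AGENT+KICK+PATIENT and the omission of the subject from the frame are taken from the discussion above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConceptualGrid:
    """A complex CS item: the head's CS representation plus its role items."""
    head: str              # e.g. "KICK"
    external_role: str     # role of the subject, stated explicitly in the grid
    internal_roles: tuple  # roles of the remaining arguments

    def roles(self):
        return (self.external_role, *self.internal_roles)

    def __str__(self):
        return "+".join([self.external_role, self.head, *self.internal_roles])


kick_grid = ConceptualGrid("KICK", "AGENT", ("PATIENT",))
kick_frame_args = ("NP",)  # the SS frame of kick lists only the object NP

print(kick_grid)
# The grid has one more member than the frame: the subject's role.
print(len(kick_grid.roles()) - len(kick_frame_args))
```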

2.6 Connections among SS, CS, and PS items

We have noted previously that in our Jackendovian framework a word is not a single entity but consists rather of (at least) three different representations, joined by a shared index. The same is true of various other common objects of linguistic study. These objects are not straightforward entities in MOGUL but are instead defined by the interaction of two or more representations at different levels. These more abstract entities are the subject of this section.

We will begin with words, focusing on an additional aspect of their representation that has been prominent in the psycholinguistics literature for some time: the question of whether a complex word is stored and accessed as a unit or in terms of its component parts. The final topic is functional categories.

2.6.1 Words: whole-form vs. decompositional storage/access

We gave a general description of the MOGUL treatment of words above, focusing on simple cases. Complexities arise, though, in the case of forms such as happiness, tractor, and trees that might be syntactically and/or semantically analysable. Three possibilities can be imagined for such words. First, each might be stored simply as an unanalysed whole. This seems quite plausible for tractor, since a typical English speaker is unlikely to have tract as an item in itself (except with unrelated meanings such as ‘piece of land’). Agentive -or, while it might well have a place in the stores, is thus unlikely to be a component of tractor.7 But this treatment seems less plausible for happiness and much less so for trees, because the composite nature of these items is obvious.

A second possibility is that complex forms are not stored at all, as such, but rather constructed from their simpler components by means of a rule whenever they are to be used. This treatment seems quite natural for trees and perhaps happiness, but not at all natural for tractor. A third possibility combines the first two: both the complex form and its component parts exist and the former can be processed either as a whole or as an on-line creation from the simpler parts. Such a treatment is intuitively appealing for happiness, which is naturally seen as a single meaningful word in itself but is clearly made up of two parts, each with its own clear meaning. The opacity of tractor makes such a treatment much less plausible for it. One might also question the idea of trees being stored as such, given that it represents nothing more than a combination of the plural affix and a word.

Not surprisingly, research suggests that all three possibilities are realised, depending on the individual item that is being studied (see Colé, Beauvillain, and Segui 1989; Laudanna, Burani, and Cermele 1994; Sereno and Jongman 1997; Wurm 1997; Bertram, Schreuder, and Baayen 2000; Niswander, Pollatsek, and Rayner 2000; Nooteboom, Weerman, and Wijnen 2002; Bertram and Hyönä 2003). Various authors have explained the variation in somewhat differing ways, but there seems to be widespread agreement on the importance of transparency, both semantic and phonological (Colé, Beauvillain, and Segui 1989; Marslen-Wilson et al. 1994; Wurm 1997; Vannest and Boland 1999; Sánchez-Casas, Igoa, and García-Albea 2003). In essence, a word that is more easily recognised by the processor as composite is more likely to be stored and accessed compositionally. This summary also fits with Laudanna, Burani, and Cermele's (1994) finding that a prefix is less likely to act as a visual processing unit if it is orthographically identical to a non-morphemic string that frequently appears at the beginning of words. This situation apparently makes it difficult for the processing system to treat that string as an independent unit. Another way to conceptualise this point is in terms of the salience of the component parts (Bertram, Schreuder, and Baayen 2000; Järvikivi, Bertram, and Niemi 2006; Kuperman, Bertram, and Baayen 2010; Bertram, Hyönä, and Laine 2011).

Some authors have also pointed to the productivity of an affix as a key factor in whether that affix is stored and processed as a distinct unit (Bertram, Laine, and Karvinen 1999; Bertram, Schreuder, and Baayen 2000). Others have suggested a role for frequency (Baayen, Dijkstra, and Schreuder 1997; Colé, Segui, and Taft 1997; Baayen, Feldman, and Schreuder 2006). In fact, the significance of frequency is often taken as a background assumption in this research. Experimenters manipulate the frequency of the whole form and/or its component parts to determine whether each is used in processing. If the frequency of the whole form influences the speed with which it is accessed, this finding constitutes evidence for whole-word processing; if such an influence is found for the frequency of the component parts, this suggests that the form is processed compositionally. The underlying assumption is that frequency is a factor in storage and retrieval of complex forms.

If productivity is seen as an indicator of how frequently an affix appears, as seems reasonable, these two factors can be brought together: The more frequently an element appears in the input, the more likely it is to be stored as an independent item and to be processed as such. Putting this factor together with transparency/salience,8 a reasonable conclusion is that the balance between whole-form storage/access and decompositional storage/access is determined to a large extent by how easily the system can identify an element as a meaningful unit and how many opportunities it has to do so. We will return to this point in Chapter 3 and develop it further in Chapter 4.

2.6.2 Beyond subcategorisation frames

Above, we discussed the nature of subcategorisation frames as composite representations at SS consisting of a specific head and one or more generic category representations. The example of kick was used in that discussion and is repeated here as (8).

(8) [Vi NP]

The essential point is that the head, Vi in this case, represents a specific word (kick) – meaning that it is coindexed with specific PS and CS representations – while the rest of the item, the NP in this case, has no particular phonological or conceptual form but is instead weakly coindexed with a great assortment of PS and CS representations, corresponding to any noun phrase that has been encountered with kick. A natural extension of this discussion comes from asking what happens when the secondary parts of the frame do acquire strong associations with particular PS and/or CS items. This is the topic of this section.

One possibility is that the NP in the frame can be coindexed with the PS representation /the bucket/ and then the entire subcategorisation frame can be coindexed with /kick the bucket/ at PS and with DIE at CS. The result is a fixed expression, kick the bucket. The essence of a fixed expression, then, is the presence of well-established complex representations at each level and strong connections among them, i.e. shared indexes with high resting activation levels. In contrast, a subcategorisation frame is an established SS representation without PS or CS counterparts, apart from those of the lexical head that the frame belongs to.

Another type of linguistic entity falls between the two extremes represented by kick+generic NP and kick the bucket. In this case, the NP in the SS frame is coindexed with a particular PS–CS combination, but the CS that is coindexed with the frame as a whole is purely compositional. An example is kick the ball: if there is a stored CS representation for the entire unit, it simply equals the sum of its parts, contrasting sharply with the CS for kick the bucket. This is the MOGUL characterisation of collocation. Note that a great many PS–CS combinations other than the ball can be coindexed with the NP in the SS frame.
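The three cases just described can be pictured with a toy data structure. The Python sketch below is purely illustrative and is not part of the MOGUL framework: the class `Frame`, its fields, and the function `classify` are hypothetical names introduced here. It also makes two simplifying assumptions: a "strong" coindexing is reduced to the mere presence of a value, and a compositional whole-frame CS is modelled as the absence of a stored one.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Frame:
    """Toy stand-in for an SS subcategorisation frame such as [V NP]."""
    head: str                       # lexical head, e.g. "kick"
    np_ps: Optional[str] = None     # PS strongly coindexed with the NP slot, if any
    whole_cs: Optional[str] = None  # non-compositional CS coindexed with the whole frame, if any


def classify(frame: Frame) -> str:
    """Label a frame according to which of its parts carry strong coindexing."""
    if frame.np_ps is None:
        # Only the head has specific PS and CS counterparts.
        return "subcategorisation frame"
    if frame.whole_cs is None:
        # The NP slot is tied to a particular PS, but the meaning of the
        # whole is simply the sum of its parts.
        return "collocation"
    # The whole frame has its own PS and a non-compositional CS.
    return "fixed expression"


examples = [
    Frame("kick"),
    Frame("kick", np_ps="/the ball/"),
    Frame("kick", np_ps="/the bucket/", whole_cs="DIE"),
]
labels = [classify(f) for f in examples]
```

The discrete labels are a convenience of the sketch only; a fuller model would replace the presence or absence of a value with graded resting activation levels.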

The SS in each of these cases is the subcategorisation frame of a lexical head. But this is by no means a requirement: the SS could be any legitimate syntactic unit. Consider the following example from Jackendoff (1997b) (his 98).

(9) [VP V [bound pronoun]'s way PP]

‘go PP (by) V-ing’

Phrases having this structure include felt his way through the dark room and danced her way to the top. In this example only one element in the frame is entirely fixed; i.e. one (non-head) part of the SS, way, is coindexed with just a single PS. (And in other cases there is no fixed element at all; see Jackendoff for examples.) The other elements are each restricted to a limited set of PS–CS representations. The pronoun SS is coindexed with only a very small set of possible PS and CS elements. Elements coindexed with V tend to be verbs that include the GO CS, though this meaning could come instead from the overall representation rather than the verb itself, as in burped their way right out of the fashion show. The PP is loosely associated with prepositional phrases that make suitable destinations. The syntactic frame as a whole is coindexed with many composite CS representations, each expressing the meaning of an entire instantiation of the frame, the meaning of danced her way to the top, for example. This CS reflects the meanings of its component words to some extent, but also includes additional CS elements. The meanings of the various CS representations coindexed with the frame thus reflect the combination of this common conceptual element and the meanings of the component words.

Thus the various types of linguistic units are best seen not as discrete, qualitatively differing entities but rather as various instantiations of the possibilities allowed by continua reflecting the compositionality of the CS representations and the complexity of PS–SS–CS mappings. The possibilities range from a simple 1–1–1 relation at one extreme to almost arbitrarily complex mappings at the other. We will show in Chapter 4 that this situation arises naturally from the nature of processing and acquisition in MOGUL.

This discussion is at a relatively high level of abstraction in that it says little about the details of the complex representations or the linguistic principles that allow some and disallow others. Thus, a variety of instantiations are feasible, underlining one of the central points of our proposal: again, MOGUL is not a specific theory but rather a framework within which specific theories of particular areas can be formulated and related to one another.

2.6.3 Functional categories: form and meaning

In MOGUL the essence of a functional category, again, is an innately present SS representation, forming the heart of syntactic processing. But functional categories are commonly, if inconsistently, associated with meanings (CS representations) and pronunciations (PS representations), often in complex ways. These cases often fall under the category of ‘inflection’ – tense, or agreement in number, person, and gender – but determiners and auxiliary verbs are also realisations of functional categories. We now briefly survey the associations of these SS items with PS and CS items.

The meanings of functional categories are CS representations, not differing in any fundamental way from the meanings of words and other items. Given the centrality of functional categories in language, there may well be a bias built into the SS–CS interface pushing it to connect functional categories to certain types of meanings, such as those involved in time or number. But the CS items representing these meanings are simply CS items and once the connection is established (indexes are assigned) the relation between them and their SS counterparts is no different from that between word meanings and their SS counterparts.

Similarly, the pronunciations of functional categories, where overtly realised, are standard PS representations, not differing in any fundamental way from those of words. They tend to be bound affixes, but this is by no means a necessary characteristic. The English determiners this and that, for example, are free morphemes associated with a functional category, D. In contrast to the fairly limited set of meanings that appears to be associated with functional categories, there do not appear to be any constraints on their possible phonological representations.

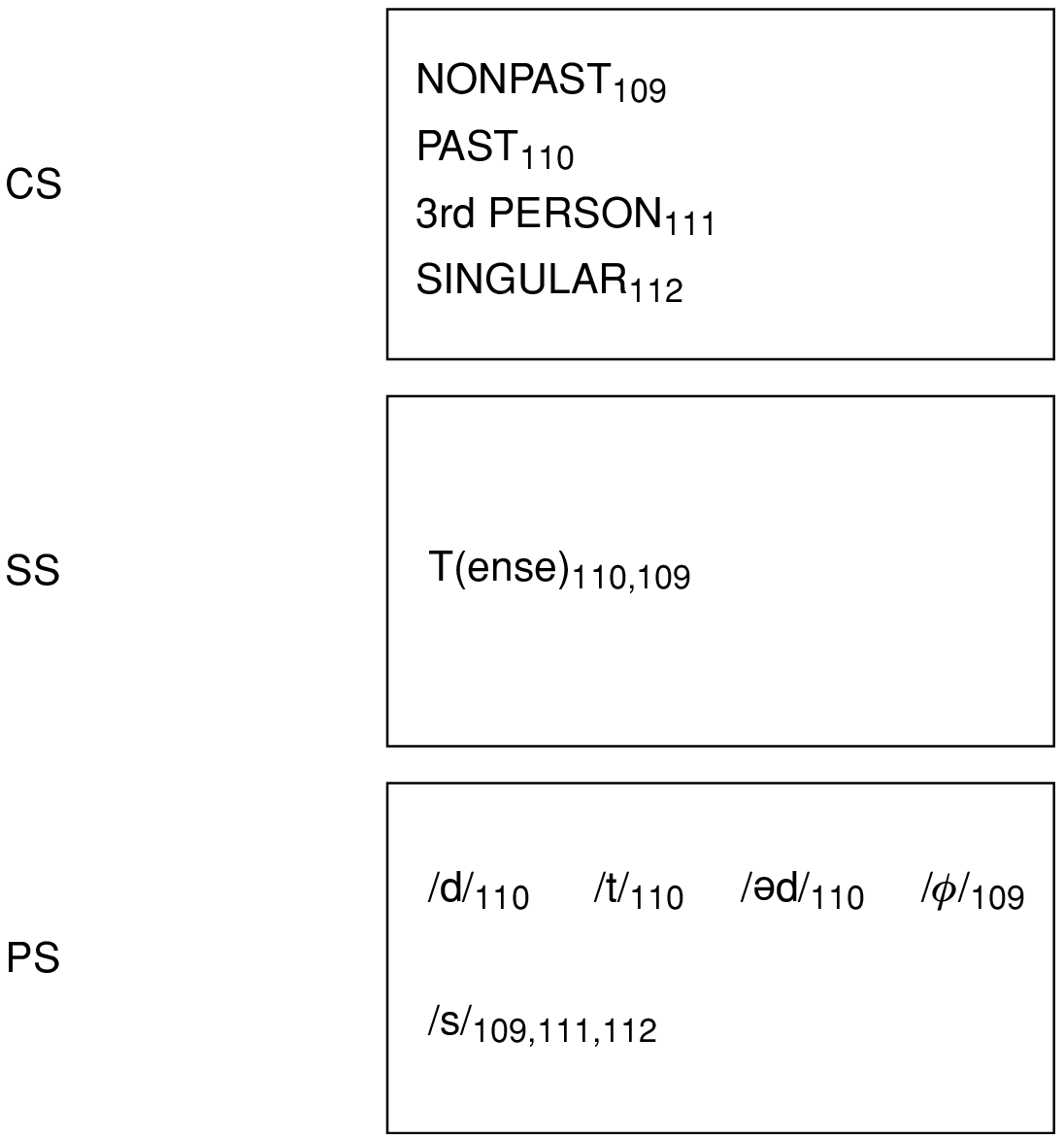

Consider first the tense (T) category. Its essence, again, is the functional category at SS. In languages in which it is overtly realised it is likely to involve a complex set of mappings across the levels of the processing chain as well. English provides a relatively simple example, illustrated in Fig. 2.5. The single representation at SS is coindexed with two CS representations, best characterised as PAST and NONPAST.9 At PS, three different representations are coindexed with PAST: /d/, /t/, and /əd/. And of course their common index is found on the single T representation at SS, which also bears the index of NONPAST. The indexing of NONPAST brings in further complications. No PS representation exists specifically for this CS, except perhaps a null representation. The verbal –s affix is coindexed with a complex CS item, composed of 3rd PERSON, SINGULAR, and NONPAST. It is also coindexed with an additional SS representation, I or Agr. These mappings become considerably more complex in languages that have rich overt inflection.

Figure 2.5 The representation of tense forms.
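The PAST and NONPAST mappings for English T can also be written out as a small lookup structure. This is a minimal sketch under stated assumptions, not a claim about how the stores are implemented: the index labels `"past"` and `"nonpast"` and the helper functions are hypothetical, and the –s affix with its Agr associations is deliberately left out.

```python
from typing import Dict, List

# Hypothetical index labels; each groups the representations that bear that index.
INDEXES: Dict[str, Dict[str, List[str]]] = {
    "past": {"SS": ["T"], "CS": ["PAST"], "PS": ["/d/", "/t/", "/əd/"]},
    "nonpast": {"SS": ["T"], "CS": ["NONPAST"], "PS": []},  # no dedicated PS form
}


def indexes_on(store: str, item: str) -> List[str]:
    """Return the indexes borne by a given item in a given store."""
    return [idx for idx, reps in INDEXES.items() if item in reps[store]]


def ps_forms(cs_item: str) -> List[str]:
    """Return every PS representation coindexed with a CS item."""
    forms: List[str] = []
    for idx in indexes_on("CS", cs_item):
        forms.extend(INDEXES[idx]["PS"])
    return forms


t_indexes = indexes_on("SS", "T")    # the single T item bears both indexes
past_forms = ps_forms("PAST")        # three PS forms share the PAST index
nonpast_forms = ps_forms("NONPAST")  # empty: no PS form specific to NONPAST
```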

The other example we will consider of a functional category is that which underlies the passive construction. It is particularly relevant here for two reasons. First, it is the heart of a construction that is defined by the coindexing of different representations across the various levels of the processing chain. Second, at CS the essence of the construction is changes in the assignment of conceptual roles, which we have already discussed and will explore further in Chapter 4. For the purpose of accounting for passive within the MOGUL framework, we will adopt the most straightforward approach to the construction, leaving open the possibility (likelihood) that this relatively simple account should eventually be replaced by a more linguistically sophisticated version.

In this simple account, the central role is played by a functional category at SS, which we will simply refer to as the ‘passive’ item. Its effect is to deny Case to the NP in the object position and thereby force that NP to appear in the subject position, where it can receive Case. This functional category is innately present in the same sense that other functional categories are. Its PS counterpart consists of the verbal forms associated with passive; be –en in English. These PS representations are therefore coindexed with it. At CS, the essence of passive is a reversal of role assignments. In comprehension of the following utterance,

(10) Pat was hit by Chris.

the conceptual processor must combine PAT with the CS semantic role item that would otherwise be combined with the item following HIT. The cue for this reversal is the presence in the CS representation of an item that serves exactly this purpose, the CS counterpart of the ‘passive’ item at SS. It is coindexed with the SS passive item and so whenever the latter is active the CS item will also be active. The implication is that when input is analysed as passive at SS the appropriate reversal of conceptual roles will be carried out at CS. In Chapter 4 we will take up the question of how these items could become established in the stores.
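The role reversal can be made concrete with a toy function. The sketch is an illustration only: `assign_roles` is a hypothetical name, the labels AGENT and PATIENT are assumed stand-ins for the CS semantic role items, and the activation of the CS passive item is reduced to a Boolean flag.

```python
from typing import Dict


def assign_roles(first: str, second: str, passive_active: bool) -> Dict[str, str]:
    """Map two CS role labels onto the two participants.

    `first` is the CS item for the NP in subject position and `second` the
    CS item for the other NP. AGENT and PATIENT are illustrative labels only.
    """
    if passive_active:
        # The CS passive item is active: reverse the default assignment.
        return {"AGENT": second, "PATIENT": first}
    return {"AGENT": first, "PATIENT": second}


active = assign_roles("CHRIS", "PAT", passive_active=False)   # Chris hit Pat.
passive = assign_roles("PAT", "CHRIS", passive_active=True)   # Pat was hit by Chris.
```

Both calls yield the same role assignment, which is the point of the construction: the passive item lets a different surface order map onto the same conceptual roles.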

2.6.4 A note on indexes

A final type of representation is one that is not commonly seen as such but for the sake of parsimony should be. This is the index. Like any other representation, it is an item contained in a store that can be combined with other items to form complex representations. It is different from others in that it is not composed of the primitives of its store; i.e., it is not in the code of that module and therefore cannot be read or manipulated by the associated processor. It belongs, instead, to the domain of the interfaces, the function of which is simply to read and manipulate indexes. Thus, it is necessarily the interface that combines an index with other representations and alters its current activation level during processing.

2.7 Representations and the notion of knowledge

To summarise, the notion of representation that we are suggesting is the following. Each linguistic store – phonological, syntactic, and conceptual (PS, SS, CS) – contains a set of primitives specific to the particular store and a large number of combinations of these primitives, combinations of the combinations, and so on. The various linguistic entities we have discussed, ranging from simple words and bound morphemes to complex constructions, all have their independent existence in the stores, but a great deal of on-line construction also goes on during processing, putting the existing representations together in structured ways to produce more complex representations that are appropriate for the system's current input. In Chapter 4 we will examine the mechanisms by which all these representations come into existence and become (or fail to become) established parts of processing.

Knowledge is a highly abstract notion and has no specific location or identity in the MOGUL framework. In other words, it is not an entity in this approach. Representations are of course the heart of the concept of knowledge. But because of the interconnectedness of the system a single representation cannot be considered the instantiation of knowledge. A word, for example, is an abstraction, actually made up of multiple representations in different stores. Knowledge of a word is thus distributed across stores, consisting primarily of a chain of representations, PS–SS–CS, or sound, structural information, and meaning. We have hypothesised that the processors embody the principles of Universal Grammar; they therefore constitute innate knowledge of language (UG). The interaction between these two knowledge types, processors and representations, is also an aspect of knowledge.

The treatment of processors and representations as distinct but interacting knowledge types captures the ‘words and rules’ nature of language discussed by Pinker (1999; Pinker and Ullman 2002; see also Clahsen 1999). Pinker offered very extensive evidence and argument that there is a pervasive, fundamental distinction between stored items on the one hand and computation on the other, each making up an essential half of language and its use. In the MOGUL framework, rules are the workings of the processors, specifically the way they construct complex representations on-line. A stored item is simply a representation that is present in one of the stores. We will return to this distinction in the following chapter, after some additional groundwork has been laid in regard to the nature of processing in MOGUL.

2.8 Working memory

Working memory plays a large role in Jackendoff's (1987, 1997a, 2002) model and an even larger role in ours, so it requires some discussion at this point. We will first selectively review the literature on this topic and then describe the place of working memory in MOGUL. This will also serve as a lead-in to the next chapter, which focuses on processing.

2.8.1 Research and theory on working memory