Introduction

Simply put, agency refers to the capacity to act, and an agent is one who has this capacity and manifests it in acting. This, however, is not very informative unless we can also say what an action is and what this capacity to act consists in. This is where difficulties begin. We cannot fruitfully investigate what having the capacity to act might involve unless we have some conception, even if preliminary, of what an action is. Our first order of business is thus to examine what counts as an action.

The distinction between activity and passivity is often taken as a starting point. To be active is to make things happen or bring about changes in the world, including in oneself. In contrast, to be passive is to have things happen to one. My punching you in the nose is an instance of my activity. Having your nose punched is something that happens to you rather than something you do. Yet many doings – the wind blowing the leaves, acids corroding metals, enzymes breaking down food molecules – would not count as actions in the sense philosophers are typically interested in. Simply characterizing agency as a capacity for activity, understood as the capacity to cause changes, yields a notion of agency that appears much too broad and permissive, given that most entities in the universe enter into causal relationships and thus would count as agents, patients or both. This raises the question: What more is needed for activity to qualify as an exercise of agency?

One important difference between my opening the window and a gust of wind pushing the window open is that the former but not the latter appears to have a point, a purpose, or an aim. This idea has led philosophers from Aristotle onwards to construe action in terms of intentionality, to understand agency as the capacity to act intentionally, and to take human intentional action as their paradigm case. Intentional actions are important not just because they provide clear-cut cases of actions with a point or purpose but also because the notion of intentional action has tight connections with notions of responsibility, both moral and legal, of rationality, justification, and autonomy. This approach to agency thus opens a whole new set of questions: What does it mean to act intentionally? What is the relationship between acting intentionally and acting for a reason? What is the relationship between intentional action and intention? What is the nature of intentions and how do they relate to beliefs and desires? How should we think of actions that are not intentional? As we shall see, there is intense debate among proponents of agency as intentional action on how these questions should be answered.

Some, myself included, also worry that the agency as intentional action approach might be too restrictive. Many would agree that a cat chasing butterflies, a spider spinning its web, a pride of lions hunting an antelope, or bees collecting pollen are all acting purposefully. Yet their actions may fail to meet the criteria for intentional actions, especially if the latter are characterized in terms of notions such as reasons, justification, intentions, practical reasoning, or conscious awareness. These examples suggest that there is room for at least one further form of agency that is more demanding than agency as mere activity, in that it manifests purposiveness, and yet less demanding than full-blooded intentional agency.

In addition, a simple distinction between animal purposive agency and human intentional agency would itself be much too crude for a number of reasons. First, within the animal realm, some species are capable of much more complex agentive feats than others: The agentive capacities of cockroaches, moths, or toads are, one may suppose, much more limited than those of dolphins, elephants, or blue jays. In other words, animal purposive agency is not a homogeneous category but exhibits a variety of forms and levels. Second, while some agentive capacities may be distinctively human, such as rational, reflective intentional agency, many human actions are habitual, impulsive, spontaneous, or arational. This in turn suggests that human agency is not entirely discontinuous with animal agency. After all, humans are animals too. Third, it is unclear whether the ascription of agency should be restricted to living organisms or can apply as well to artifacts such as robots or artificial intelligence (AI) systems. The rapid progress in AI and robotics in recent years makes the issue of their agentive status more and more pressing. Finally, all the cases considered so far involve individuals, but what about joint actions where individuals coordinate their actions in pursuit of a shared goal? What exactly does it take for the actions of two or more individuals to constitute a joint action?

All these questions point to the variety of forms agency may take. This in turn raises the issue of whether there is at least some unity behind this diversity. Is there a basic set of conditions or criteria an entity or organism should satisfy to be attributed agency, and can these conditions be realized in different ways? Are there additional criteria or dimensions of agency, such as a capacity for practical reasoning or self-consciousness, that mark off more sophisticated forms of agency? Should we think of these various kinds of agency as forming a single hierarchy culminating, with typical human hubris, in full-blown human agency? Or should we rather conceive of them in terms of family resemblances, with some kinds of agency ranking higher on some dimensions of agency but not necessarily on all, and some possibly ranking higher than human agency on one or several dimensions?

This Element does not aim to be exhaustive, to propose answers to all the questions I have raised so far, or to discuss all the answers that have been proposed by others. There are many philosophical approaches to agency and it would be impossible to discuss them all in a way that does justice to them. Rather, the approach I will take is meant to readjust the focus of mainstream contemporary analytic philosophy of action in three main ways. First, it aims to offer a corrective to its traditional focus on human intentional agency at the expense of other kinds of agency, and to do so by examining the various forms these other kinds of agency take and their relation to human agency. Second, it brings to the fore a topic curiously neglected by traditional analytic action theory, namely the conscious dimension of (human) agency, and considers the role of the subjective experience of agency in shaping agency, raising a number of key issues that have recently animated the philosophical community. Third, while joint action and shared agency have not escaped philosophers’ attention, their treatment has usually suffered from the same biases as the treatment of individual agency, with an over-emphasis on the elucidation of the notion of shared or collective intention and a near complete neglect of the phenomenology of joint agency. I will thus attempt to redress the balance.

In Section 1, I introduce the classical philosophical conception of agency as intentional action, the standard causal theory of action, and the account it offers of what counts as intentional action. I present some internal debates within this approach and discuss some of its limitations. In Section 2, I change tack, attempting to identify the basic building blocks of agency, to characterize elementary forms of agency, and to show how more sophisticated forms of agency build on them. Section 3 of this Element is concerned with conscious agency. It discusses the nature and sources of our agentive awareness, its reliability or lack thereof as a source of knowledge of our own agency, and the causal role conscious agentive states may play in shaping our actions. Section 4 extends the approach to joint action. I examine the different forms of coordination among agents on which the success of joint action depends and the various mechanisms and processes that facilitate coordination among agents. I also discuss the sense of agency in joint action and the different factors on which the emergence of a sense of shared agency depends.

1 Intentional Agency

1.1 Acting for Reasons

From Aristotle onwards, most philosophical work on agency has focused on human intentional agency. In contemporary analytic philosophy, Anscombe (1957) and Davidson (1963) have been very influential in promoting the view that agency is best understood as a capacity for intentional action. Both agreed that (a) to act intentionally is to act for a reason and that (b) the notion of intentional action is more fundamental than the notion of action.

The following often-quoted passage from Anscombe’s Intention (1957) illustrates the first claim:

What distinguishes actions which are intentional from those which are not? The answer that I shall suggest is that they are the actions to which a certain sense of the question “Why?” is given application; the sense is of course that in which the answer, if positive, gives a reason for acting.

Intentional action is thus behavior whose correct explanation cannot be purely mechanistic but requires us to make reference to the reason the agent has for so acting, a reason that identifies the goal or aim of the action and thus makes it intelligible in the agent’s eyes. For instance, if you ask a pedestrian why they are crossing a street and they answer that they are on their way to the shops on the other side, the pedestrian has given you a reason for their action. The reason given here may be taken as shorthand for a more complete reason explanation that takes the form of a practical syllogism with a desire and a belief as premises and the action they rationalize as the conclusion. In Davidson’s own words:

Whenever someone does something for a reason, therefore, he can be characterized as (a) having some sort of pro attitude toward actions of a certain kind, and (b) believing (or knowing, perceiving, noticing, remembering) that his action is of that kind.

In our example, (a) would be the desire to go to the shops and (b) the belief that crossing the street is a way to go to the shops.

The second point of agreement between Anscombe and Davidson is that the notion of intentional action is more fundamental than the notion of action, or, in other words, that the latter is derivative from the former. Let us go back to our pedestrian attempting to cross the street and imagine this time that as he is doing so, he jumps backward and bumps into the woman walking behind him. Asked why he jumped backward, he may answer that it was to avoid being hit by a car that had suddenly turned the corner at high speed. Asked why he bumped into the woman behind him, he might answer instead that this was involuntary or unintentional. According to Anscombe and Davidson, one and the same event admits of a number of different descriptions. Described as jumping backward, the event is an intentional action insofar as the pedestrian had a reason to do so. Under the description bumping into the woman behind him, the event is not an intentional action, yet it qualifies as an action insofar as this description refers to the same event that can also be described intentionally. We can contrast this with the following scenario: Our pedestrian is once again attempting to cross the street, but this time he is the victim of a dizzy spell, loses his balance, falls backward, and bumps into the woman behind him. This time, his falling backward and his bumping into the woman cannot be given intentional descriptions; rather, they are mere happenings.

So far, we have seen that Anscombe and Davidson agree on the fundamental character of the notion of intentional action and agree that an action is intentional to the extent that it is explainable by reasons the agent has for so acting. But we still have to answer a third question, namely what is the nature of this explanation relation between reasons and actions. This is where Anscombe and Davidson clearly part ways.

In the 1950s and early 1960s, an important debate was sparked on whether agents’ reasons for their actions were also the causes of these actions. Influenced by Wittgenstein (1952), some philosophers (e.g., Melden, 1961; Kenny, 1963; R. Taylor, 1966) argued that the connections between reasons and actions were logical, conceptual, and normative and as such could not be causal. Others (C. Taylor, 1964; Wilson, 1980) argued that explanations of actions are teleological explanations – in other words, explanations in terms of goals – and as such not analyzable as causal explanations. Anscombe herself considered that the relation between reasons and action was best approached in epistemological terms and involved a special form of practical knowledge. In contrast, Davidson (1963) argued that reason explanations are causal explanations and did much to rebut the anti-causalist arguments that purported to show that reasons couldn’t be causes. Under his impetus, the causalist approach was revived and refined and became, in the eyes of many, the dominant position in philosophical action theory.

I will come back in Section 3 to the notion of practical knowledge central to Anscombe’s theory of action. For now, I shall concentrate on causal theories of action (CTA, for short), their evolution and variants, and the limitations and shortcomings they face.

1.2 Varieties of Causal Theories of Action (CTA)

Broadly speaking, causal theories consider that action is behavior that can be characterized in terms of a certain sort of psychological causes. Yet, versions of the causal approach can take widely different forms depending on (a) what they take the elements of the action-relevant causal sequence to be and (b) what part of the sequence they identify as the action.

On the basis of the second criterion, one can distinguish three broad types of causal theories. On one view, one should characterize actions in terms of their causal power to bring about certain effects, typically bodily movements and their consequences. Accordingly, proponents of this view will tend to identify an action with mental events belonging to the earlier part of a causal sequence, such as tryings (Hornsby, 1980).

Conversely, one may hold that what distinguishes actions from other kinds of happenings is the nature of their causal antecedents. Actions will then be taken to be events (typically again, bodily movements and their consequences) with a distinctive mental cause. This second type of causal theory was made popular most notably by Davidson (1963) and Goldman (1970). When the label “causal theory of action” is used in its narrower sense, it usually refers to approaches of this second type.

A third possibility is to consider actions as causal processes rather than just causes or effects and to identify them with, if not the entire causal sequence, at least a large chunk of it. On this third view, actions are characterized, one may say, in terms of their distinctive causal structure, rather than relationally in terms of their causes or effects.

Each of these three types of theories can be made to look more or less plausible depending on what the elements of the causal chain are taken to be and on how they are characterized. For instance, some theories countenance only beliefs and desires, while others view intentions, volitions or tryings as essential elements of the action-relevant causal sequence.

1.3 Belief-Desire Versions of CTA and Their Limitations

The earlier belief-desire versions of the causal theory, made popular most notably by Davidson (1963) and Goldman (1970), claimed that what distinguishes an action from a mere happening is the nature of its causal antecedent, conceived as a complex of some of the agent’s beliefs and desires. This complex was held to both rationalize the action and cause it. According to Davidson (1963), for instance, the causal antecedent of an action, what he calls a primary reason, is a combination of a pro-attitude toward actions of a certain kind and a belief that this action is of that kind. Pro-attitudes include “desires, wantings, urges, promptings, and a great variety of moral views, aesthetic principles, economic prejudices, social conventions, and public and private goals and values insofar as these can be interpreted as attitudes of an agent directed toward actions of a certain kind” (1963: 4).

The elegant simplicity of the belief-desire theory may have contributed to the attraction it has exerted. First, it brings into line the justificatory and the explanatory role of reasons by insisting that in cases where reasons genuinely explain, the reason-providing intentional states cause the actions for which they provide reasons. For instance, I might have two reasons to go to my office this morning: I have an appointment with a student and a technician is coming to fix the heating system. Yet, if I have forgotten about the technician, the only reason that explains my going to my office is my appointment with the student. The theory thus fosters the hope of narrowing the gap between the normative realm of explanations by reasons and the natural realm of causal explanation. A second attraction of the theory is its ontological parsimony. On this view, to say that somebody acted with a certain intention is just to say that his actions stood in the appropriate relations to his desires and beliefs. Neither a distinct state of intending nor any special type of mental event such as willings, volitions, acts of will, settings of oneself to act, or tryings is postulated, and thus no embarrassing entity is added to the world’s furniture.

However, it soon appeared that simple belief-desire versions of the causal theory were faced with several difficulties. These difficulties make it doubtful whether it is either a necessary or a sufficient condition for an event to qualify as an action that it have as an antecedent a belief-desire complex. They also highlight important limitations in what the belief-desire approach can explain.

1.3.1 Future-Directed Intentions

First, as several philosophers pointed out, including Davidson himself (Davidson, 1978; Bratman, 1987), the relational analysis of intentions is inapplicable to intentions concerning the future, intentions which we may now have, but which are not yet acted upon, and, indeed, may never be acted upon. Acknowledging the existence of future-directed intentions forces one to admit that intentions can be states separate from the intended actions or from the reasons prompting the action. But, as Davidson himself notes, once this is admitted, there seems to be no reason not to allow that intentions of the same kind are also present in all or at least most cases of intentional actions.

Once intentions are acknowledged as separate mental states and not just relational constructs, a new issue arises. Can these states be given a reductive analysis, by being assimilated to complexes of desires and beliefs or to special kinds of beliefs or judgments, or do they form a sui generis and irreducible class of mental states?

1.3.2 Commitments

A second objection to the belief-desire version of the causal theory is that it does not account for the commitment to action that seems characteristic of intending to A as opposed to merely desiring that one As. One may have beliefs and desires that would rationalize acting in a certain way and yet they may fail to cause one to act in that way. As Davidson (1973) puts it: “It might happen as simply as this: the agent wants φ, and he believes that x-ing is the best way to bring about φ, and yet he fails to put these two things together; the practical reasoning that would lead him to conclude that x is worth doing may simply fail to occur” (1973: 77). Thus, it seems that having the relevant beliefs and desires is not sufficient to lead us to act accordingly. This suggests that an intermediate step is needed between belief-desire complexes and actions, typically the formation of an intention to act on the basis of these beliefs and desires.

1.3.3 Causal Deviance

Perhaps the most notorious problem confronted by belief-desire theories is the problem of causal deviance: An event may be caused by a belief-desire complex and yet not qualify as an action or an intentional action because the manner of causation is aberrant. In a nutshell, the objection is that the theory doesn’t have the resources to exclude such aberrant manners of causation and, thus, fails to provide sufficient conditions for action or intentional action. Many philosophers distinguish between two forms of causal deviance, antecedential and consequential, respectively putting into question the status of an event as an action or as an intentional action. The following two examples illustrate the first form of deviance:

The climber: A climber might want to rid himself of the weight and danger of holding another man on a rope, and he might know that by loosening his hold on the rope he could rid himself of the weight and danger. This belief and want might so unnerve him as to cause him to loosen his hold. Yet it might be the case that he never chose to loosen his hold, nor did he do it intentionally.

The marriage proposal: Suppose I want and intend to get down on my knees to propose marriage. Contemplating my plan, I am so overcome with emotion that I suddenly feel weak and sink to my knees. Here, my sinking to my knees was not an action even though it was caused by my desire and intention to get down on my knees.

As these two examples illustrate, not every causal relation between seemingly appropriate mental antecedents and resultant events qualifies the latter as actions. The challenge then is to specify what causal connection must hold between the antecedent mental event and the resultant behavior for the latter to be considered an action.

The second form of deviance concerns the unfolding of an action once it has started and can be illustrated with the following two examples:

The murderous nephew: Carl wants to kill his rich uncle because he wants to inherit his fortune. He believes that his uncle is home and drives towards his house. His desire to kill his uncle agitates him and he drives recklessly. On the way he hits and kills a pedestrian, who happens to be his uncle.

A rude awakening: Ann wants to awaken her husband and she believes that she may do so by making a loud noise. Motivated (causally) by this desire and belief, Ann searches in the dark for a suitable noise-maker. In her search, she accidentally knocks over a lamp, producing a loud crash and startling her husband awake.

In these examples, Carl and Ann each perform a number of actions, but it is by mere accident that they end up producing the intended outcome. Described as killing his uncle by hitting him with his car, Carl’s action was not intentional; similarly, described as awakening her husband by knocking over the lamp, Ann’s action was not intentional.

The challenge raised by causal deviance is thus to specify the causal connection that must hold between the antecedent mental events and the resultant behavior for the latter to qualify as an action (antecedential deviance) or as an intentional action (consequential deviance). The conviction that this challenge was insurmountable led Davidson (1973) to give up the program of producing a reductive analysis of intentional action and to propose instead that the belief-desire theory provides only necessary conditions for intentional agency.

1.3.4 Action Guidance and Control

This problem has close ties to the problem of consequential deviance. The objection to the belief-desire approach is that it accounts at best for how an action is initiated but not for how it unfolds once started. As Bach points out, “there is more to the causation of an action than its initiation, namely, how it is carried out” (1978: 365). It is not enough to say that the continuation of the action can be accounted for by the persistence of the motivating belief-desire complex. This in itself does not explain why the action is performed in this way rather than another, with some particular degree of skill, control, effort or attention. To explain an action, it is not enough to explain how it gets triggered; one must also explain its manner of execution, how once triggered it is guided, and to some degree controlled or monitored until completion. These aspects of action explanation are overlooked by belief-desire theorists.

1.3.5 Failed Actions and Wrong Movements

Some actions fail because some of the agent’s beliefs are false. Thus, John may fail to turn on the light because he has a false belief about which switch commands the light. The causal theory can account for failures of this kind, for it claims that the non-accidental success of an action depends on the truth of the beliefs figuring in the motivating belief-desire complex. Yet, as Israel, Perry and Tutiya (1993) point out, the failure of an action cannot always be traced back to the falsity of a motivating belief. What they call the “problem of the wrong movement” points to the fact that the truth of the beliefs figuring in the belief-desire complex does not guarantee that the bodily movements performed by the agent are appropriate. They illustrate it with the following example:

Brutus: Brutus intends to kill Caesar by stabbing him. He correctly believes that Caesar is to his left and that stabbing Caesar in the chest would kill him. Yet, Brutus fails to kill Caesar because he makes the wrong movement and misses him completely.

Israel and colleagues point out that something is missing in the traditional account: “Brutus’ motivating complex needs to reflect which movement he is trying to make, and what he thinks its effect will be” (1993: 528). In other words, if we consider that a theory of action explanation should aim at explaining the actual action, not just the attempt to act, we should be ready to include in the motivating complex cognitions pertaining to movements. The motivating complex as it is conceived in the standard account is thus fundamentally incomplete, leaving a gap to be filled between the motivating cognitions and the act itself.

1.3.6 Action Awareness

Yet another objection to the belief-desire approach is that it fails to account for the specific features of our awareness of our own actions (Frankfurt, 1978; Ginet, 1990; Wakefield & Dreyfus, 1991). Since belief-desire versions of the causal theory claim that the main difference between actions and other events lies in their causal antecedents, they imply that actions and non-action events are not intrinsically different. To put it otherwise, as far as the account goes, the phenomenology of bodily motion could be exactly the same in bodily movements that are caused by belief-desire complexes, and thus qualify as actions, and in bodily movements that are not actions (such as Penfield motions, that is, movements caused by artificial electrical stimulation of the motor cortex). As Frankfurt puts it, the theory is:

committed to supposing that a person who knows he is in the midst of performing an action cannot have derived this knowledge from any awareness of what is currently happening, but that he must have derived it instead from his understanding of how what is happening was caused to happen by certain earlier conditions.

Thus, the belief-desire approach cannot envisage, as a criterion of action, that the agent may stand in a specific relation to her bodily movements during the time when she is presumed to be acting.

1.3.7 Spontaneous and Arational Actions

Finally, as a number of philosophers (Bach, 1978; Davis, 1979; Searle, 1983; Brand, 1984; Ginet, 1990) have remarked, it may be doubted whether being caused by a belief-desire complex is a necessary condition for an event to qualify as an action. Many actions are performed routinely, automatically, impulsively or unthinkingly. It seems quite unlikely that they are the result of some form of deliberation or practical reasoning, whether conscious or unconscious. To borrow an example from Searle (1983), suppose I am sitting in a chair reflecting on a philosophical problem, and I suddenly get up and start pacing about the room; my getting up and pacing about are actions of mine even though I was not prompted to do so by any antecedent conscious desire or purpose.

In addition, it may also be doubted whether being caused by a belief-desire complex is a necessary condition for an event to qualify as an intentional action. Hursthouse (1991) argues that there is a class of emotional actions that cannot be accommodated by the belief-desire model. Examples of such actions include rumpling the hair of a child out of affection, shouting at a crashing computer out of frustration, jumping for joy or tearing one’s clothes out of grief. Hursthouse claims that such actions are intentional, yet are not done for a reason, as construed by the belief-desire theory. In actions of that kind, there is no end such that the agent has some pro-attitude toward it and believes the action will promote it. For instance, my shouting at my computer won’t stop it from malfunctioning. At the same time, she claims that the agent would not have done the action had they not been in the grip of some appropriate emotion and that their being in the grip of that emotion is a perfectly good explanation of why the agent acted as they did (out of love, out of anger, out of grief, and so on). This is why, according to Hursthouse, such actions should count as arational rather than irrational.

To recap, the two cardinal tenets of causal theories of action are that (a) reason-explanations are causal explanations and (b) behavior qualifies as action just in case it has a certain sort of psychological cause or involves a certain sort of psychological causal process. As the objections just reviewed make clear, a theory such as the belief-desire theory can be faithful to these two tenets and yet inadequate, or at least largely incomplete, as a theory of action. On the basis of these objections, we can define further conditions of adequacy a causal theory of action should meet:

1. Intentions: A theory of action should acknowledge intentions as separate mental states and not just relational constructs.

2. Commitment to action: A theory of action should account for the commitment to action characteristic of intending as opposed to merely desiring.

3. Causal deviance: A theory of action should exclude aberrant manners of causation in a principled way.

4. Guidance and monitoring: A theory of action should explain not just how actions are triggered but also how they are carried out.

5. Bodily movements: A theory of action should explain why in carrying out an action, the agent performs the bodily movements that he does.

6. Action awareness: A theory of action should do justice to the phenomenology of action.

7. Spontaneous and arational actions: A theory of action should be in a position to explain why spontaneous actions still count as actions and why arational actions count as intentional actions.

The various revisions and refinements the causal theory of action has undergone in the last decades can be seen as attempts to meet one or several of these further conditions of adequacy. I now concentrate on some of the main developments of causal approaches, indicating both what progress I think has been made and what problems remain to be solved.

1.4 Intentions as Distinctive States

Acknowledging the existence of future-directed intentions forces one to admit that intentions can be states separate from the intended actions or from the reasons that prompted the action, and, as Davidson (1978) notes, once this is admitted, there seems to be no reason not to allow that intentions of the same kind are also present in all or at least most cases of intentional action. Once intentions are acknowledged as separate mental states and not just relational constructs, a new issue arises. Can these states be given a reductive analysis? In other words, can they be assimilated to complexes of desires and beliefs, to special kinds of beliefs or judgments, or do they form a sui generis and irreducible class of mental states?

Davidson himself (1978) saw that the commitment-to-action feature of states of intending created a problem for the kind of reductive analysis that took intentions to be reducible to combinations of beliefs and desires. He argued that an intention should be seen as a special kind of evaluation of conduct. In his view, although both desires to act and intentions are evaluative judgments, they are different kinds of judgments. Davidson distinguishes between prima facie judgments, all things considered judgments, and all-out judgments. Prima facie judgments are of the form “A is better than B in light of consideration C.” All things considered judgments constitute a subclass of prima facie judgments that assess the relative merits of potential courses of action in light of all the considerations deemed relevant by the agent. “A is the best course of action in the light of all relevant considerations” is an all things considered judgment. Both prima facie and all things considered judgments are conditional or relational judgments. In contrast, all-out judgments are absolute or unconditional judgments of the form “A is the best course of action.” According to Davidson, the transition from an all things considered judgment to an all-out judgment is governed by a substantial principle of practical rationality, which he calls “the principle of continence.” This principle enjoins agents to perform the action judged best on the basis of all available reasons, and it is all-out judgments that bridge the gap between practical reasoning and intentional action. Desires to act correspond to prima facie judgments, judgments that actions of a certain kind are desirable in the light of certain considerations. By contrast, an intention to act corresponds to an all-out judgment. In making an all-out judgment as opposed to a prima facie judgment, we settle on a course of action.
Intentions are thus associated with actions in a way that mere desires are not. By acknowledging the existence of intentions as separate states, Davidson makes a fair attempt at coming to grips with the commitment-to-action feature that appears to be characteristic of intentions as opposed to mere desires. By analyzing intentions as a special kind of evaluative judgment, namely all-out judgments, he avoids having to postulate a sui generis kind of mental entity. Intentions, together with other pro-attitudes, are deemed to belong to the general class of evaluative judgments.

Despite its merits, Davidson’s revised position fails to capture the full import of the commitment-to-action feature of intentions. As Bratman (1987) argues, two dimensions of this commitment should be distinguished. The first, which he calls the volitional dimension, can be characterized by saying that “Intentions are, whereas ordinary desires are not, conduct-controlling pro-attitudes. Ordinary desires, in contrast, are merely potential influencers of action” (1987: 16). According to Bratman, there is also a second dimension, which he calls the reasoning-centered dimension of commitment. What is at stake here are the roles played by intentions in the period between their initial formation and their eventual execution. First, intentions have what Bratman calls a characteristic stability or inertia: Once we have formed an intention to A, we will not normally continue to deliberate whether to A or not; in the absence of relevant new information, the intention will resist reconsideration, and we will see the matter as settled and continue so to intend until the time of action. Second, during this period between the formation of an intention and action, we will frequently reason from such an intention to further intentions, reasoning for instance from intended ends to intended means or preliminary steps. Third, this intention will constrain the other intentions one may form. For instance, if I intend to go see a movie tonight, I cannot consistently intend to go to a concert at the same time.

One may think that Davidson’s analysis of intentions as all-out judgments is meant to capture the volitional dimension of commitment and that it may perhaps also account for the stability of intentions once formed. Yet the other aspects of the reasoning-centered dimension of commitment seem to fall beyond its scope. As Bratman suggests, rather than attempting to give reductive analyses of intentions, it may be more illuminating to take seriously the idea that intentions are distinctive states of mind and that they should be characterized in terms of their own complex network of dispositions and functional roles. To put it another way, Davidson’s approach is too exclusively backward-looking: it focuses on how intentions are arrived at and, in so doing, fails to appreciate important aspects of their roles.

Advocates of intentions as distinctive states tend to emphasize a number of functions plausibly attributed to intentions. They argue that intentions have their own complex and distinctive functional role, which warrants considering them an irreducible kind of psychological state, on a par with beliefs and desires. As we have just seen, Bratman stresses three functions of intentions. First, intentions are terminators of practical reasoning in the sense that once we have formed an intention to A, we will not normally continue to deliberate whether to A or not. In the absence of relevant new information, the intention will resist reconsideration. Second, intentions are also prompters of practical reasoning, where the reasoning in question concerns not whether one should A but the means of A-ing. This function of intentions thus involves devising specific plans for A-ing. Third, intentions have a coordinative function: they serve to coordinate the activities of the agent over time and to coordinate them with the activities of other agents.

Philosophers also typically point out further motivational and cognitive functions of intentions (e.g., Brand, 1984; Mele, 1992). Intentions are also responsible for triggering or initiating the intended action (initiating function) and for sustaining it until completion (sustaining function). An intention to A incorporates a plan for A-ing, a representation or set of representations specifying the goal of the action and how it is to be arrived at. It is this component of the intention that is relevant to its guiding function. Finally, intentions have also been assigned a monitoring function, involving a capacity to monitor progress toward the goal and to detect and correct deviations from the course of action as laid out in the guiding representation. We may call the initiating and sustaining functions motivational functions and the guiding and monitoring functions control functions. Although there seems to be some consensus as to what the initiating and sustaining functions amount to, the situation is much less clear where the control functions are concerned.

The first three functions of intentions stressed by Bratman – their roles as terminators of practical reasoning about ends, as prompters of practical reasoning about means, and as coordinators – are typically played by intentions in the period between their initial formation and the initiation of the action. By contrast, the last four functions (initiating, sustaining, guiding, and monitoring) are played in the period between the initiation of the action and its completion. Attention to these differences has led a number of philosophers to develop dual-intention theories of action. For instance, Searle (1983) distinguishes between prior intentions and intentions-in-action, Bratman (1987) between future-directed and present-directed intentions, and Mele (1992) between distal and proximal intentions. In all cases, an intention of the former type will only eventuate in action by first yielding an intention of the latter type. For the sake of brevity, in what follows, I shall use Mele’s terminology of distal and proximal intentions.

Dual-intention theories make available new strategies for dealing with the difficulties listed earlier. To begin with, they seem to provide at least a partial answer to the problem of causal deviance. First, they suggest that for intentions to cause actions in the right way for those actions to count as intentional, the intended effect must be brought about in the way specified by the plan component of the intention. In cases of consequential deviance, this condition is not satisfied. For instance, it was not part of Carl’s plan to kill his uncle by hitting him with his car. Second, for intentions to cause behavior in the right way for it to count as an action, the causal chain linking the distal intention to the resultant bodily behavior must also include relevant proximal intentions. Cases of antecedential deviance do not satisfy this condition. For instance, in the marriage proposal example introduced earlier, the young man had formed the distal intention to get down on his knees to propose marriage, yet he did not get a chance to form the corresponding proximal intention before sinking to his knees.

Dual-intention theories also open up new prospects for solving the problem of spontaneous and arational actions. According to dual-intention theories, all actions have proximal intentions, but they need not always be preceded by distal intentions. For instance, when, reflecting on a philosophical problem, I start pacing about the room, I do not first engage in a deliberative process that concludes with a distal intention to pace; rather, my pacing is initiated and guided by a proximal intention formed on the spot. Automatic, spontaneous, or impulsive actions may then be said to be those actions that are caused by proximal intentions but are not planned ahead of time.

Whether distinguishing between distal and proximal intentions also helps with the problems of bodily movement and action awareness is more controversial and depends on what different authors take the exact contents and functions of proximal intentions (also called present-directed intentions or intentions-in-action) to be. There are disagreements among dual-intention theorists regarding how much of what is going on representationally in the preparation and execution of an action should be included in the content of intentions.

However, in many versions of dual-intention theories, proximal intentions are conceived as having the function of guiding and controlling the course of an ongoing action. Dual-intention theories thus seem in a position to provide some explanation of the distinctive character of our awareness of our own actions. Insofar as a proximal intention has a sustaining function and is involved in the guiding and monitoring of the action, it does not terminate with the onset of action but continues as long as the guiding and monitoring continue. As a consequence, it seems possible to reconcile the view that the main difference between actions and simple events lies in their causes with the idea that we are immediately aware that we are acting and that this awareness has a non-perceptual source. Dual-intention theorists may claim that this immediate awareness is in fact an awareness of the content of the proximal intention that is sustaining the action as it unfolds.

Some versions of the dual-intention approach may also have the resources needed to address the problem of bodily movements. For instance, Searle takes the content of intentions-in-action to differ from the content of prior intentions in more respects than just tense. In his view, a prior intention is an intention that is formed prior to the action and that represents and causes it as a whole. In contrast, an intention-in-action is the mental component of the action itself; it presents, causes, and is contemporaneous with bodily movements. The intention-in-action together with the bodily movements it causes constitute the action. Thus, while the content of my prior intention may simply be that I raise my arm at some point in the future, the content of an intention-in-action includes a detailed representation of the movement of my arm, its speed and trajectory. Insofar as intentions-in-action include detailed representations pertaining to movement, Searle’s version of the dual-intention theory is in a position to address the problem of the wrong movement. Whether this is also the case for other versions of dual-intention theories depends in part on how they construe the contents of proximal intentions.

1.5 A Dynamic Theory of Intentions: The DPM Model

As we have just seen, dual-intention theories offer ways of addressing at least some of the difficulties that plague early belief-desire versions of CTA. Yet they typically do not say much about how action guidance and control operate, and they do not always clearly characterize the different forms of action guidance and control associated with different types of intentions. In addition, different versions of dual-intention theories offer divergent views of the contents of proximal intentions and of their exact functions.

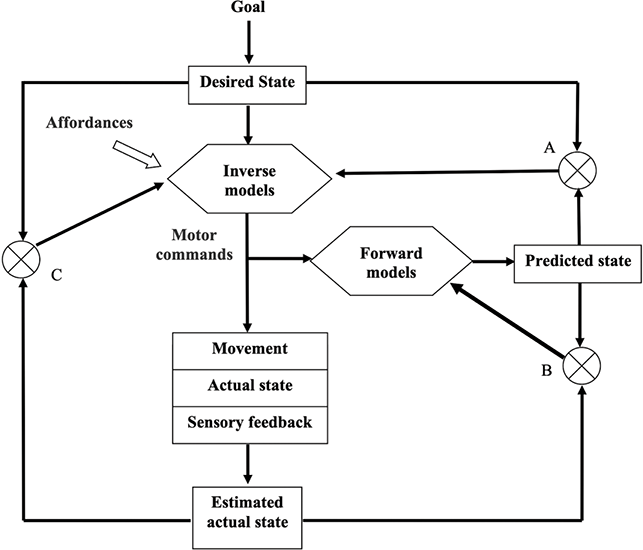

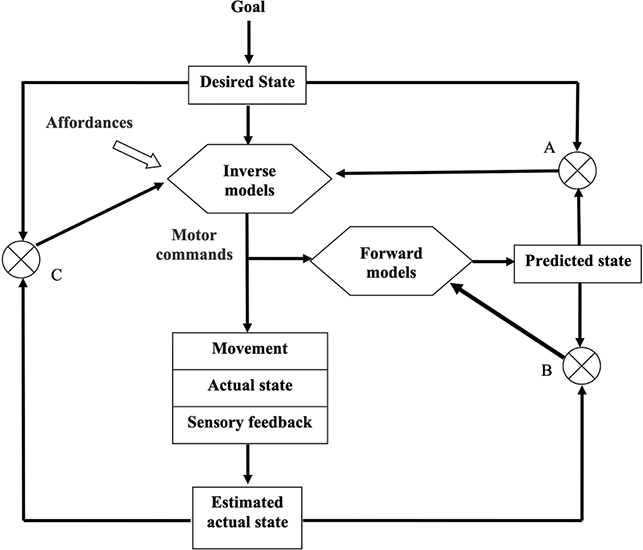

I have proposed (Pacherie, 2008; Mylopoulos & Pacherie, 2018) a dynamic hierarchical model of intentions that brings together philosophical work on intentions and empirical work on motor representations and motor control. This model distinguishes three main stages in the process of action specification, each corresponding to a different level of intention and each level of intention having a distinctive role to play in the guidance and monitoring of the action. It thus proposes a threefold distinction among distal intentions (D-intentions), proximal intentions (P-intentions), and motor intentions (M-intentions), hence the DPM model for short. The DPM model specifies the representational and functional profiles of each type of intention, as well as their local and global dynamics, and the ways in which they interact. One of its core tenets is that action control is the result of integrated, coordinated activity across these levels of intention.

In the DPM model, the distinction between D-intentions, P-intentions, and M-intentions is motivated by an analysis of their different and complementary functional roles, of the different types of contents they involve, and of their respective temporal scales. D-intentions are in certain respects close kin to the distal intentions of dual-intention theories. Like them, D-intentions function as terminators of practical reasoning about ends, as prompters of practical reasoning about means and plans, and as intra- and interpersonal coordinators. As such, they are subject to norms of practical rationality. An agent’s intentions should be mutually consistent and they should be consistent with all of the agent’s beliefs (strong consistency requirement). The practical rationality constraints that bear on them require the presence of a network of inferential relations among intentions, beliefs, and desires to ensure internal, external, and global consistency. It is generally admitted that concepts are the inferentially relevant constituents of intentional states and that their sharing a common conceptual representational format is what makes possible a form of global consistency, at the personal level, of our desires, beliefs, intentions and other propositional attitudes. If we accept this common view, it follows that in order to satisfy the rationality constraints they are subject to, D-intentions must have a propositional format and conceptual content. The main difference from earlier conceptions of distal intentions is that the DPM model assigns a specific control function to D-intentions: they are in charge of the rational control of the action. Their role is to ensure that the way the action unfolds – in particular, adjustments of the action plan in the face of unanticipated side effects or difficulties – doesn’t flout the reasons the agent had for their action in the first place or violate norms of practical rationality.

A P-intention often, though not always, inherits an action plan from a D-intention. One of its functions is to anchor this plan in the situation of action. Temporal anchoring, the decision to start acting now, is but one aspect of this process. Once the agent has established a perceptual information-link to the situation of action, she must generate a more detailed representation of the action that fits the specification inherited from the D-intention while anchoring it to the situation at hand. The formation of a P-intention depends on the integration of conceptual information about the intended action inherited from the D-intention with perceptual information about the current situation and memory information about actions in one’s motor repertoire to yield a more definite representation of the action to be performed. For instance, whether I use my right or my left hand to reach for a bottle of water may depend on the position of the bottle relative to me or on the presence of obstacles on the path. Once formed, P-intentions are in charge of the situational monitoring and control of the action as it unfolds. This control is more local than the rational control exerted by D-intentions and is concerned with the immediate goal and the situation as currently perceived.

The main novelty of the DPM model is perhaps the introduction of M-intentions. M-intentions involve what neuroscientists call motor representations. Motor representations are not just representations of movements. They encode action goals together with the motoric means for achieving them and do so in a motoric format directly suitable to action execution. The way they encode movements is normally sensitive not just to the immediate motor goal but to the hierarchy of motor goals in which they are embedded. For instance, the same cup will be grasped in different ways depending on whether one wants to bring it to one’s lips or to turn it upside down. Thus, motor representations appear to be themselves hierarchically organized, with a decomposition of more general into more specific motor goals (Jeannerod, 2006; van Elk et al., 2014). According to the DPM model, M-intentions are also responsible for a distinctive form of monitoring and control of an ongoing action. They are responsible for the precision and smoothness of action execution, and motor control typically operates automatically and at a very fine time-scale.

The introduction of M-intentions is motivated in part by the need to distinguish this automatic, unconscious, and very fast form of action control from the typically conscious and slower forms of action control exerted at the level of P-intentions and D-intentions. It is also motivated by the failure of dual-intention accounts to offer more than partial solutions to the problem of antecedential causal deviance and the problem of the wrong movement. For instance, consider the marriage proposal example again. Imagine this time that the young man, having already formed the distal intention to get down on his knees to propose marriage, forms the proximal intention to do so upon entering the room where the woman of his heart finds herself. As he approaches her, their eyes meet and he is so overcome by emotion that he suddenly feels weak and sinks to his knees. In this variation on the marriage proposal example, both distal and proximal intentions are present, but the young man’s sinking to his knees was not caused by a motor intention to do so. Thus, causing behavior in the right way for it to count as an action may be a matter of intentions causing behavior via the instantiation of appropriate motor intentions. Similarly, to explain why Brutus failed to stab Caesar in the chest, we may have to say that there was something wrong with the content of his motor intentions.

Why talk of M-intentions rather than simply motor representations? M-intentions have the functional role of bringing about the actions they represent, whereas motor representations need not have such a functional role. For instance, motor representations are also formed when we observe someone else acting or when we imagine acting (Jeannerod, 1995; Filimon et al., 2007). Thus, talk of M-intentions emphasizes the role of such states in action production and the fact that M-intentions share certain core functional characteristics of D-intentions and P-intentions. It may still be objected that M-intentions fail to meet three constraints that genuine intentions should satisfy, namely conscious accessibility, integration with other propositional attitudes, and strong consistency, where conscious accessibility and integration are taken as preconditions of strong consistency (Brozzo, 2017). M-intentions’ failure to meet these constraints – insofar as their contents are not always consciously accessible and their integration with other propositional attitudes is limited – would be problematic if it led to systematic inconsistencies between an agent’s D-intentions or P-intentions and the behavior for which M-intentions are immediately responsible. However, the architecture of the DPM model precludes such systematic inconsistencies. On the one hand, which M-intentions are formed and what contents they have is constrained, although not fully determined, by P-intentions and their contents, so that, initially at least, M-intentions will not be inconsistent with higher-level intentions. On the other hand, the existence of three levels of monitoring and control normally ensures that discrepancies emerging during action execution are detected and reduced.
Thus, while M-intentions may not directly meet strong consistency constraints, the architecture in which they are embedded ensures that they meet them indirectly.

The DPM model proposes to explicate action production and control in terms of a dynamic hierarchy of intentions. As we shall see, the model also provides a useful framework for thinking about the phenomenology of agency (Section 3) and about joint action (Section 4). It is, however, like the other versions of CTA discussed so far, mainly aimed at explaining human intentional agency and as such cognitively demanding. The models discussed so far, including the DPM model, thus appear ill-suited to account for minimal forms of agency of the kind exhibited by creatures and possibly artifacts with more modest cognitive endowments. This is why in the next section, I change tack, attempting to identify minimal forms of agency and their basic building blocks and to understand how they relate to the more sophisticated forms of agency considered so far.

2 Minimal Forms of Agency and Their Building Blocks

As we have seen, one main feature distinguishing activity that counts as action from mere activity (my shutting the door vs. the wind shutting the door) is purposiveness or goal-directedness. As we have also seen, philosophers have tended to construe action in terms of intentionality, and acting with a purpose or goal as acting for a reason. However, the agency-as-intentional-action approach might be too restrictive, especially if intentional actions are characterized in terms of notions such as reasons, justification, intentions, practical reasoning, or conscious awareness. Many living organisms appear to engage in purposeful, goal-directed behavior. Yet it is doubtful whether they have the sophisticated cognitive processing and representational abilities presupposed by CTA in its various guises. This raises the issue of what representational abilities may support less demanding notions of purposiveness and goal-directedness.

In addition, in analyzing acting intentionally as acting for a reason that identifies the aim or goal pursued by the agent and makes the action intelligible in their eyes, theories of agency as intentional action also make it clear that what they are concerned with are personal-level reasons, goals or aims of the agent as a whole. But what about creatures who, for obvious reasons, cannot answer the question “Why?” This raises the second issue of what, for these creatures, counts as goal-directedness and how we determine what actually drives their behavior.

Third, creatures that act purposively have some control over their behavior, adapt what they do to environmental circumstances, and may correct the course of their action to ensure they reach their goal. In other words, some of the functions assigned to intentions in theories of intentional action have at least partial and more limited counterparts in other creatures. How can we describe the forms of control these creatures are or are not capable of?

In what follows, I consider the three issues of representational abilities, goal-directedness, and control in turn. I conclude this section with some remarks on artificial agency.

2.1 Representations Revisited

Let me start with two preliminary remarks. First, non-human agents do not form a homogeneous category. It is more than doubtful that all non-human animals, from chimpanzees to earthworms, not to mention paramecia and other unicellular organisms, have the same agential capacities and are capable of a similar range of purposive activities. Thus, we should not expect a one-size-fits-all story about (non-intentional) purposive agency. Second, humans are part of the animal realm. On the one hand, young children have yet to develop some of the capacities that will allow them to become full-blown intentional agents. On the other, as we already discussed in Section 1, many of the actions performed by human adults – habitual, impulsive, spontaneous, or arational actions – may fail to meet the stringent criteria imposed by CTA. Thus, while humans might have or develop agential capacities non-human agents lack, there is no radical discontinuity between human and non-human agency. Indeed, the more basic agential capacities shared by humans and (some) animal species may serve as a necessary scaffold for full-blown human intentional agency.

There are two main routes one may take to account for the purposiveness of non-human agency. The first is representational and aims at showing that one can ascribe belief-like, desire-like, or intention-like states to non-human agents by appealing to more basic mental representations than those needed for full-blown beliefs, desires, and intentions. The second route is more radical and contends that an explication of non-human forms of agency can dispense with representations altogether. The first route is thus deflationary in matters of representational resources, the second is eliminativist. Let us explore the first route first.

2.1.1 Deflationary Representational Views of Agency

CTA explains agency in terms of the agent’s beliefs, desires, and intentions. Beliefs, desires, and intentions are standardly understood as propositional attitudes, that is, as mental states with representational content that is propositional and has concepts as its constituents. To go back to one of our earlier examples, when we explain a pedestrian’s action of crossing the street in terms of their desire to go to the shops and their belief that crossing the street is a way to go to the shops, we ascribe to them two propositional attitudes: a desire with the propositional content “I go to the shops,” and a belief with the propositional content “crossing the street is a way to go to the shops.” Among the constituents of these propositional contents are concepts such as those of shops, streets, crossing, and so on.

In addition, Davidson (1975, 1982) famously argued that the ascription of propositional attitudes to a creature only makes sense if that creature has concepts of the corresponding attitudes, and, first and foremost, a concept of belief, the possession of which in turn requires language and a capacity for higher-order thought. He concluded that, having neither language nor a capacity for higher-order thought, animals could not be ascribed propositional attitudes and did not have the capacity to act intentionally. Many philosophers have objected that Davidson’s position over-intellectualizes propositional attitude ascription. They have argued, against Davidson, that you don’t need to possess the concept of belief or to represent that you have beliefs to actually have beliefs (for recent critical discussions, see, e.g., Roskies, 2016 and Newen & Starzak, 2022).

Whether language is required for concept possession more generally remains a matter of debate. If one takes the view that it is, one may still deny the ascription of propositional attitudes to animals, on the ground, this time, not that animals do not possess concepts of belief and other attitudes, but that they do not possess the concepts that are the constituents of the contents of propositional attitudes. If it takes language to have the concept of a cat, then we cannot ascribe to the dog looking at a tree the belief that the cat is in the tree. Proponents of the language of thought hypothesis (Fodor, 1975; see also Quilty-Dunn, Porot, & Mandelbaum, 2023 for a recent defense of this approach) may contend that (some) animals have a language of thought, hence concepts, and thus can be ascribed propositional attitudes. The issue of whether the language of thought hypothesis for animals is true is far from settled, although, as Beck (2018) points out, the results of ongoing research programs into the logical abilities of animals may get us closer to an answer. Note that one reason, and perhaps the main reason, why many philosophers insist that the content of propositional attitudes is conceptual is that concepts are held to be the inferentially relevant constituents of propositional states. In particular, their sharing a common conceptual representational format is what makes possible a form of global consistency, at the personal level, of our desires, beliefs, intentions, and other propositional attitudes. In other words, this is what accounts for what has been called their inferential promiscuity. If one accepts this common view, then a capacity for rational behavior presupposes a capacity for conceptual thought.

Rather than insisting that animals have beliefs, desires, or intentions understood as propositional attitudes with conceptual contents, some philosophers have argued that (some) animals have representational mental states that are less sophisticated but can still cause and explain their actions. As Steward puts it:

Perhaps if animals can, for example, see certain objects, want certain things, and try to get them (as might seem much more plausible than that they have beliefs or intentions), these capacities would be sufficient to constitute appropriate precursors for exercises of something we could recognise as agency?

In recent years, a number of philosophers have rejected two assumptions: first, that the only possible format for mental representation is language-like and propositional; second, that the whole range of possible formats is exhausted by the dichotomy between digital representations with a propositional format, on the one hand, and analog representations with an iconic or image-like format (that is, representations that represent in virtue of a direct isomorphism between the representation and what it represents), on the other. These philosophers have pointed out the existence of a number of other representational formats, such as mental maps and diagrams (Bermúdez, 2003; Camp, 2007; Rescorla, 2009; Shea, 2018), structured perceptual representations (for instance, the non-conceptual yet proto-propositional content that, according to Peacocke (1992, 1994), perceptual states possess), mental models (Johnson-Laird, 2006), motor representations and motor schemas (Pacherie, 2018; Pacherie & Mylopoulos, 2021), directed graphs or Bayes nets for representing causal relations (Camp & Shupe, 2017), and tree-like representations that can be used to represent social hierarchies (Cheney & Seyfarth, 2007; Camp, 2009).

Representations in these various representational formats may include both digital and analog elements; they are structured and obey certain combinatorial rules. While these features may not be sufficient to support unrestricted inferential transitions, they may support inference in specific domains. For instance, suppose an animal has a mental map of its territory, representing geographical features, such as the location of bodies of water, open and wooded areas, valleys, hills, and promontories, as well as the location of food sources (e.g., berry bushes), potential hiding places, favorite haunts of its predators, and so on. Given its current goals or needs (e.g., drinking, feeding, finding a safe resting place) and its mental map of its territory, the animal may compute where to go and what route to take to get there.
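The following minimal Python sketch makes the idea of domain-specific inference over a map concrete, under stated assumptions. The territory is modeled as a hypothetical weighted graph of places (`TERRAIN`), and each place is tagged with what it affords (`FEATURES`). Route selection uses Dijkstra's shortest-path algorithm, with an assumed extra cost for passing through a predator's haunt. This is not a claim about how animals actually compute routes. The point is only that a map-like format, combined with a current need, is enough to fix both a destination and a route, without any sentence-like premises.

```python
import heapq

# Hypothetical territory: places linked by paths with travel costs (arbitrary units).
TERRAIN = {
    "den": {"meadow": 2, "woods": 3},
    "meadow": {"den": 2, "stream": 4, "ridge": 5},
    "woods": {"den": 3, "berries": 2, "ridge": 2},
    "berries": {"woods": 2, "stream": 3},
    "stream": {"meadow": 4, "berries": 3},
    "ridge": {"meadow": 5, "woods": 2},
}

# Hypothetical labels for what each place affords or threatens.
FEATURES = {
    "stream": {"water"},
    "berries": {"food"},
    "ridge": {"shelter"},
    "meadow": {"predator"},
}

PREDATOR_PENALTY = 10  # assumed extra cost for entering a predator's haunt


def plan_route(start, need):
    """Dijkstra search for the cheapest route from `start` to any place offering `need`."""
    frontier = [(0, start, [start])]
    visited = set()
    while frontier:
        cost, place, path = heapq.heappop(frontier)
        if place in visited:
            continue
        visited.add(place)
        if need in FEATURES.get(place, set()):
            return cost, path
        for neighbour, step in TERRAIN[place].items():
            if neighbour not in visited:
                risky = "predator" in FEATURES.get(neighbour, set())
                extra = PREDATOR_PENALTY if risky else 0
                heapq.heappush(frontier, (cost + step + extra, neighbour, path + [neighbour]))
    return None  # no reachable place satisfies the need


print(plan_route("den", "water"))  # (8, ['den', 'woods', 'berries', 'stream'])
```

The predator penalty leads the search to route the thirsty animal through the woods rather than across the shorter but dangerous meadow. A single map thus supports different inferences depending on which need is currently active.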

To take another example, borrowed from Cheney and Seyfarth (2007) and discussed by Camp (2009), baboons are social animals living in troops whose organization is highly hierarchical. How a baboon interacts with other members of the troop is determined by the social rank of its family relative to other families and by its own rank within its family. Baboons behave in ways that indicate their awareness of this hierarchy and of their position in it. Cheney and Seyfarth propose that baboons represent their social knowledge of dominance relations in the form of a two-tiered hierarchical tree, with the first tier representing the dominance relations among families and the second the dominance relations among members of the same family. They also argue that this representational format is compositional: Baboons represent each member of their troop as a distinct entity; the representation of dominance relations is updated in a rule-governed way (for instance, when a dominant loses a fight to a subordinate); the hierarchical relation is transitive, with individuals from different families represented as ranked in virtue of the represented ranking between their respective families; and the representation supports inferences about social behavior.
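The two-tiered format described by Cheney and Seyfarth can be sketched as a small data structure. In this hypothetical Python illustration, `DominanceTree`, the family and individual names, and the update rules are all assumptions. In particular, the rule that a between-family upset moves the winner's whole family up one notch is a simplification made for illustration. The sketch shows how transitivity comes for free: any two individuals from different families can be compared through their families' ranks, without a stored fact for every pair.

```python
class DominanceTree:
    """Toy two-tier hierarchy: families ranked (tier 1), kin ranked within each family (tier 2)."""

    def __init__(self, families):
        # `families`: list of (family, [members, most to least dominant]),
        # itself ordered from most to least dominant family.
        self.family_order = [name for name, _ in families]
        self.kin = {name: list(members) for name, members in families}

    def family_of(self, individual):
        for name, members in self.kin.items():
            if individual in members:
                return name
        raise KeyError(individual)

    def outranks(self, x, y):
        fx, fy = self.family_of(x), self.family_of(y)
        if fx != fy:
            # Between families: rank is inherited from the family ranking.
            return self.family_order.index(fx) < self.family_order.index(fy)
        # Within a family: use the within-family order.
        members = self.kin[fx]
        return members.index(x) < members.index(y)

    def record_fight(self, winner, loser):
        """Rule-governed update when a subordinate defeats a dominant."""
        if self.outranks(winner, loser):
            return  # expected outcome: nothing changes
        fw, fl = self.family_of(winner), self.family_of(loser)
        if fw == fl:
            # Within-family reversal: the winner moves just above the loser.
            members = self.kin[fw]
            members.remove(winner)
            members.insert(members.index(loser), winner)
        else:
            # Between-family reversal (simplifying assumption): the winner's
            # whole family moves just above the loser's family.
            self.family_order.remove(fw)
            self.family_order.insert(self.family_order.index(fl), fw)


troop = DominanceTree([("A", ["Ada", "Abe"]), ("B", ["Bea", "Bo"]), ("C", ["Cy", "Cleo"])])
print(troop.outranks("Abe", "Bea"))  # True: family A outranks family B
troop.record_fight("Bo", "Bea")
print(troop.kin["B"])  # ['Bo', 'Bea']
```

Abe outranks Bea even though Abe is the lowest-ranked member of family A, because the comparison goes through the family tier. After Bo defeats Bea, only the within-family order of B changes, and every other comparison is left untouched.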

However, as Camp (2009) points out, even if one accepts Cheney and Seyfarth’s claim that the social knowledge of baboons constitutes a discrete, combinatorial system sharing several features with human language, one should not hasten to conclude that baboons think in a mental language. First, language is not the only type of compositional system: diagrammatic systems and many types of maps are also compositional. Second, compositionality may be more or less general and systematic. Take hierarchical organization: baboons’ hierarchical representations of dominance relations have only two layers, whereas languages can generate structures of potentially infinite depth (e.g., the dog chased the cat who was stalking the mouse who had stolen the cheese that had been left on the table by the woman who left the kitchen to answer a call from the delivery man who …). Third, there is no evidence that baboons use a similar hierarchical representational system to represent relations other than dominance relations, and it may be that baboon social cognition is modular, with only dominance cognition making use of this type of hierarchical representation.

This contrasts with the productivity and generative capacity of both human public languages and the putative language of thought, which allow us to represent a potentially infinite number of ideas on the basis of a limited set of representational units and rules. Yet more basic representational formats may be better tailored to representing some domains and may allow for faster, more efficient inferential processes within these domains. Think, for instance, of finding your way around Paris using a tourist map showing all the landmarks, metro lines, and bus routes, instead of having only a linguistic description of the city, its attractions, and its public transportation system. Just by looking at the map, you will probably readily infer that it may not be a good idea to try to walk from Montmartre to the Eiffel Tower unless you have plenty of time and energy and are equipped with comfortable shoes. Drawing this inference from a linguistic description of the city may be much more cumbersome and time-consuming.

Thus, although animals may lack a domain-general, fully compositional representational system of the kind afforded by language, they may make effective use of more basic representational formats adapted to their needs. While the representational formats they use may not exhibit the productivity, expressive power and inferential promiscuity of linguistic representations, they may support more limited forms of inference in particular domains, and these inferential islands may be sufficient to allow them to engage in some forms of means-end reasoning.

In addition to instrumental reasoning, associative learning, which is pervasive throughout the animal realm, offers an efficient and more frugal way to work out the relationships between means and ends. One form of associative learning, operant or instrumental conditioning, is key here. Through trial and error, animals build associations between actions and their outcomes, and as these actions are repeated, the associations are reinforced as long as the contingency between action and outcome remains stable. For instance, rats can be trained to press a lever to obtain a pellet of food. Moreover, in the psychology of associative learning, one canonical manipulation for distinguishing between goal-directed and goal-independent behavior in animals is the outcome-devaluation procedure (Dickinson, 1985). Once the rats have learned the association between their action (lever pressing) and its outcome (delivery of food), the outcome is devalued (e.g., the rats are satiated and no longer find the food rewarding, or the appetizing food is replaced by foul-tasting food). If their behavior is goal-directed, the rats will rapidly stop pressing the lever once the outcome is devalued. If they have been overtrained and their behavior has become habitual or automatic, they will persist in pressing the lever for much longer. This suggests that their responses are directly triggered by the context, via stimulus-response associations, independently of the current value of the outcome.
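The logic of the outcome-devaluation test can be illustrated with a deliberately crude Python model. Everything in it is an assumption made for illustration: the `Rat` class, the learning rate, the decision threshold, and especially `HABIT_THRESHOLD_TRIALS`, a hypothetical cutoff after which control passes from an action-outcome computation to a cached stimulus-response strength. The test phase is modeled as involving no further training. Extinction, the gradual weakening of unrewarded responding, is not simulated, so the habitual rat here persists indefinitely rather than merely for longer.

```python
# Hypothetical cutoff: after this many training trials, control is assumed to become habitual.
HABIT_THRESHOLD_TRIALS = 200


class Rat:
    """Toy dual-control learner for a lever-press task (illustrative assumptions only)."""

    def __init__(self):
        self.food_value = 1.0  # current incentive value of the outcome
        self.p_food = 0.0      # learned action-outcome contingency: P(food | press)
        self.habit = 0.0       # cached stimulus-response strength for pressing
        self.trials = 0

    def train(self, trials, rate=0.05):
        """Rewarded lever-press trials with a stable press-food contingency."""
        for _ in range(trials):
            # Action-outcome learning: the estimate moves toward the true contingency (1.0).
            self.p_food += rate * (1.0 - self.p_food)
            # Stimulus-response learning: each rewarded press strengthens the habit.
            self.habit += rate * (self.food_value - self.habit)
            self.trials += 1

    def devalue(self):
        """Outcome devaluation, e.g. satiation or substituting foul-tasting food."""
        self.food_value = 0.0

    def presses(self, threshold=0.5):
        if self.trials >= HABIT_THRESHOLD_TRIALS:
            # Habitual control: consults only the cached S-R strength, blind to outcome value.
            return self.habit > threshold
        # Goal-directed control: recomputes expected value from the outcome's current value.
        return self.p_food * self.food_value > threshold


moderate, overtrained = Rat(), Rat()
moderate.train(50)
overtrained.train(300)
for rat in (moderate, overtrained):
    rat.devalue()
print(moderate.presses(), overtrained.presses())  # False True
```

Both rats have learned the same contingency. Only the moderately trained rat's choice is routed through the outcome's current value, and that routing is what the devaluation procedure is designed to detect.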

Animals can also learn about means-end or action-reward relationships through observation. For instance, in a remarkable set of studies with bumblebees, Chittka and colleagues (Alem et al., 2016; Chittka, 2022) first trained bees on a non-natural object-manipulation task that involved pulling a string to access a reward in an artificial flower placed under a plexiglass table. They then let naïve bees interact with the trained bees or watch them pulling the string from an observation chamber. The naïve bees were able to learn the task both through interaction and by observation. They in turn served as demonstrators for a new set of naïve bees, and the process repeated over several generations, thus starting a new cultural tradition.

At this point, though, one might well wonder whether associationism is a representational theory. As Dickinson (2012) points out, the learning processes through which associations are formed, reinforced, or weakened bring them into correspondence with reality. On the one hand, insofar as associations track how things are, they may be said to serve at least one of the functional roles of representations. On the other hand, they may well fail to serve other important functions of representations. As Dickinson puts it:

… it is far from clear that the processes that deploy the content of association, excitation and inhibition are in any sense rational. At a psychological level, excitation and inhibition gain their explanatory power by analogy to physical processes and for this reason, associationism is often characterized as mechanistic.

Dickinson’s own suggestion is that it makes little sense to ask whether associative processes on their own are representational. Rather, the answer to this question depends on the processing architecture within which they are embedded and the constraints thus exerted on them. When embedded within the appropriate kind of processing architecture, associative processes may implement, or operate as reliable proxies for, aspects of practical inference.

To recap, even if they lack a capacity for conceptual, propositional thought, non-human animals may still be able to form representations in a variety of formats that support at least some forms of inference and means-end reasoning. This in turn may be sufficient to explain their capacity to act in a purposive or goal-directed manner and in a way that is sensitive to environmental conditions. As we also saw, associations may be a borderline case in that their representational status is more contentious. Let us now turn to more radical views that contend that an explication of some forms of purposive agency can dispense with representations altogether.

2.1.2 Non-Representational Views of Agency

One can distinguish two rather different lines of argument for the view that we need not appeal to representations to account for agency. The first comes from enactive and embodied approaches in philosophy and cognitive science. Its scope is typically broad, and the more radical enactivist views are anti-representationalist across the board. The second, put forward by Burge (2009), is more limited in scope, arguing solely for the existence of a primitive form of non-representational agency, the only form of agency enjoyed by the simplest organisms.

Let me start with the first line of argument. It draws notably on the work of Merleau-Ponty and Heidegger in the phenomenological tradition (e.g., Dreyfus, 1991, 2002), on work in robotics and dynamical systems theory (Brooks, 1991), on Maturana and Varela’s work on the self-organization of living beings (Maturana & Varela, 1980), and on Gibson’s ecological psychology (Gibson, 1966). In very general terms, this approach emphasizes the role of bodily mediated interactions with the environment in shaping cognition. It is also highly critical of the way orthodox computational cognitive science conceives of cognition, namely as the rule-governed manipulation of symbolic representations in the brain. Beyond this, there is little consensus among its advocates on how embodied cognition should be understood. They disagree on the exact nature of the role played by the body in cognition, and on whether, or to what extent, embodied cognition renders explanations in terms of mental representations superfluous (Gallagher, 2018, 2023).

With regard to action and purposive agency, proponents of embodied cognition have tended to focus on skilled action. For instance, according to Dreyfus (2005, 2007), skilled action (or, in his terms, “skilled coping”) is a form of engagement with the world in which agents respond to relevant features of their situation. This response neither involves conscious deliberation, reasoning, or planning nor depends on any mental representation of goals or of features of the world relevant to these goals. Instead, skilled coping consists in a direct, absorbed, and self-forgetful responsiveness that depends on our embodied capacities. While Dreyfus himself was mostly interested in human skilled action, others have seen his characterization of skilled coping as an apt description of the practical, embodied skills exhibited in the purposive agency of animals.

Two types of considerations have been adduced in favor of Dreyfus’ characterization of skilled coping as “mindless” (Dreyfus, 2007). First, Dreyfus himself often appeals to phenomenological evidence that experts perform best when they do not reflect on what they are doing, do not engage in conscious monitoring, and perform automatically. Second, an account of skilled action in terms of mental representations is held to be implausible. Such an account would, it is argued, force us to posit an extravagant profusion of highly specific representations and would severely tax the agent’s information-processing resources.