1. Introduction

We fix $r \in \mathbb{N}_{\geq 2}$ and let $H = \left(V_1, \dotsc, V_r; E\right)$ be an r-partite and r-uniform hypergraph (or just an r-hypergraph for brevity) with vertex sets $V_1, \dotsc, V_r$ having $\lvert V_i\rvert = n_i$, (hyper-)edge set E and a total number $n = \sum_{i=1}^r n_i$ of vertices.

Zarankiewicz’s problem asks for the maximum number of edges in such a hypergraph H (as a function of $n_1, \dotsc, n_r$) assuming that it does not contain the complete r-hypergraph $K_{k, \dotsc, k}$ with $k > 0$ a fixed number of vertices in each part. The following classical upper bound is due to Kővári, Sós and Turán [Reference Kővári, Sós and Turán14] for $r=2$ and Erdős [Reference Erdős9] for a general r: if H is $K_{k, \dotsc, k}$-free, then $\lvert E\rvert = O_{r,k}\left(n^{r - \frac{1}{k^{r-1}}}\right)$. A probabilistic construction in [Reference Erdős9] also shows that the exponent cannot be substantially improved.
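The following Python sketch makes the forbidden configuration concrete. It is our illustration, not taken from the paper, and tests by brute force whether a finite r-partite r-hypergraph contains $K_{k, \dotsc, k}$. It assumes the edge set is given as a set of r-tuples $(v_1, \dotsc, v_r)$ with $v_i \in V_i$. The function name `contains_complete_hypergraph` is hypothetical. The search is exponential, so it is meant only for tiny examples.

```python
from itertools import combinations, product
from typing import Hashable, Sequence, Set, Tuple


def contains_complete_hypergraph(
    parts: Sequence[Sequence[Hashable]],
    edges: Set[Tuple[Hashable, ...]],
    k: int,
) -> bool:
    """Return True if the r-partite r-hypergraph (parts; edges) contains K_{k,...,k}.

    parts[i] lists the vertices of V_{i+1}; edges is a set of r-tuples
    (v_1, ..., v_r) with v_i in V_i. Brute force: exponential in k and r.
    """
    # Try every choice of a k-subset from each vertex class ...
    for choice in product(*(combinations(part, k) for part in parts)):
        # ... and test whether all k^r transversal r-tuples are edges.
        if all(e in edges for e in product(*choice)):
            return True
    return False
```

For instance, take `square = {(0, 0), (0, 1), (1, 0), (1, 1)}`. The call `contains_complete_hypergraph([[0, 1, 2], [0, 1]], square, 2)` finds a copy of $K_{2,2}$, and removing the edge `(1, 1)` destroys it.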

However, stronger bounds are known for restricted families of hypergraphs arising in geometric settings. For example, if H is the incidence graph of a set of $n_1$ points and $n_2$ lines in $\mathbb{R}^2$, then H is $K_{2,2}$-free, and the Kővári–Sós–Turán Theorem implies $\lvert E\rvert = O\left(n^{3/2}\right)$. The Szemerédi–Trotter Theorem [Reference Szemerédi and Trotter20] improves this and gives the optimal bound $\lvert E\rvert = O\left(n^{4/3}\right)$. More generally, [Reference Fox, Pach, Sheffer, Suk and Zahl12] gives improved bounds for semialgebraic graphs of bounded description complexity. This is generalised to semialgebraic hypergraphs in [Reference Do8]. In a different direction, the results in [Reference Fox, Pach, Sheffer, Suk and Zahl12] are generalised to graphs definable in o-minimal structures in [Reference Basu and Raz2] and, more generally, in distal structures in [Reference Chernikov, Galvin and Starchenko4].

A related, highly nontrivial problem is to understand when the bounds offered by the results in the preceding paragraph are sharp. When H is the incidence graph of $n_1$ points and $n_2$ circles of unit radius in $\mathbb{R}^2$, the best known upper bound is $\lvert E\rvert = O\left(n^{4/3}\right)$. This bound is proven in [Reference Spencer, Szemerédi and Trotter19] and is also implied by the general bound for semialgebraic graphs. Any improvement to this bound would be a step toward resolving the long-standing unit-distance conjecture of Erdős; an almost-linear bound of the form $\lvert E\rvert = O\left(n^{1+c/\log\log n}\right)$ would positively resolve it.

This paper was originally motivated by the following incidence problem. Let H be the incidence graph of a set of $n_1$ points and a set of $n_2$ solid rectangles with axis-parallel sides (which we refer to as boxes) in $\mathbb{R}^2$. Assuming that H is $K_{2,2}$-free – that is, no two points belong to two rectangles simultaneously – what is the maximum number of incidences $\lvert E\rvert$? In the following theorem, we obtain an almost-linear bound (which is much stronger than the bound implied by the aforementioned general result for semialgebraic graphs) and demonstrate that it is close to optimal:

Theorem (A).

-

1. For any set P of

$n_1$

points in

$n_1$

points in

$\mathbb {R}^2$

and any set R of

$\mathbb {R}^2$

and any set R of

$n_2$

boxes in

$n_2$

boxes in

$\mathbb {R}^2$

, if the incidence graph on

$\mathbb {R}^2$

, if the incidence graph on

$P \times R$

is

$P \times R$

is

$K_{k,k}$

-free, then it contains at most

$K_{k,k}$

-free, then it contains at most

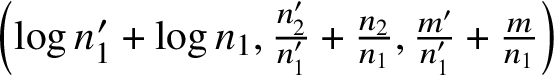

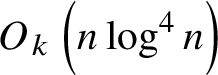

$O_k \left (n \log ^{4}(n) \right )$

incidences (Corollary 2.38 with

$O_k \left (n \log ^{4}(n) \right )$

incidences (Corollary 2.38 with

$d=2$

).

$d=2$

). -

2. If all boxes in R are dyadic (i.e., direct products of intervals of the form

$\left [s2^t, (s+1)2^t\right )$

for some integers

$\left [s2^t, (s+1)2^t\right )$

for some integers

$s,t$

), then the number of incidences is at most

$s,t$

), then the number of incidences is at most

$O_k \left ( n \frac {\log \left (100+n_1\right )}{\log \log \left (100+n_1\right )} \right )$

(Theorem 4.7).

$O_k \left ( n \frac {\log \left (100+n_1\right )}{\log \log \left (100+n_1\right )} \right )$

(Theorem 4.7). -

3. For an arbitrarily large n, there exists a set of n points and n dyadic boxes in

$\mathbb {R}^2$

so that the incidence graph is

$\mathbb {R}^2$

so that the incidence graph is

$K_{2,2}$

-free and the number of incidences is

$K_{2,2}$

-free and the number of incidences is

$\Omega \left (n \frac {\log (n)}{\log \log (n)} \right )$

(Proposition 3.5).

$\Omega \left (n \frac {\log (n)}{\log \log (n)} \right )$

(Proposition 3.5).
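The objects in Theorem (A) can be set up concretely as follows. This is a minimal sketch under our own assumptions. Boxes in $\mathbb{R}^2$ are encoded as pairs of half-open intervals, matching the half-open intervals in the definition of dyadic boxes, and the helper names are hypothetical. The sketch computes the edge set of the point-box incidence graph. It also checks whether a box is dyadic, that is, a product of intervals $\left[s2^t, (s+1)2^t\right)$ with $s, t$ integers. Exact `Fraction` arithmetic is used so that negative $t$ is handled correctly.

```python
from fractions import Fraction
from typing import Sequence, Set, Tuple

# Assumed encoding (ours): a box in R^2 is a pair of half-open intervals
# ((x_lo, x_hi), (y_lo, y_hi)), i.e. [x_lo, x_hi) x [y_lo, y_hi).
Interval = Tuple[float, float]
Box = Tuple[Interval, Interval]
Point = Tuple[float, float]


def in_box(point: Point, box: Box) -> bool:
    return all(lo <= c < hi for c, (lo, hi) in zip(point, box))


def incidence_edges(points: Sequence[Point], boxes: Sequence[Box]) -> Set[Tuple[int, int]]:
    """Edges (i, j) of the incidence graph: point i lies in box j."""
    return {(i, j) for i, p in enumerate(points) for j, b in enumerate(boxes) if in_box(p, b)}


def is_dyadic_interval(lo, hi) -> bool:
    """True iff [lo, hi) = [s*2^t, (s+1)*2^t) for some integers s, t."""
    lo, hi = Fraction(lo), Fraction(hi)
    length = hi - lo
    if length <= 0:
        return False
    # A positive reduced fraction is an integer power of 2 iff its numerator
    # and denominator are both powers of 2.
    num, den = length.numerator, length.denominator
    if num & (num - 1) or den & (den - 1):
        return False
    return (lo / length).denominator == 1  # s = lo / 2^t must be an integer


def is_dyadic_box(box: Box) -> bool:
    return all(is_dyadic_interval(lo, hi) for lo, hi in box)
```

For example, `incidence_edges([(0, 0), (1, 1), (2, 2)], [((0, 2), (0, 2)), ((1, 3), (1, 3))])` returns the four incidences `{(0, 0), (1, 0), (1, 1), (2, 1)}`. No two of these points share two boxes, so this small incidence graph is $K_{2,2}$-free.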

Problem 1.1. While the bound for dyadic boxes is tight, we leave it as an open problem to close the gap between the upper and lower bounds for arbitrary boxes.

Remark 1.2. A related result in [Reference Fox and Pach11] demonstrates that every $K_{k,k}$-free intersection graph of n convex sets in the plane satisfies $\lvert E\rvert = O_{k}(n)$. Note that in Theorem (A) we consider a $K_{k,k}$-free bipartite graph, so in particular there is no restriction on the intersection graph of the boxes in R.

Theorem (A.1) admits the following generalisation to higher dimensions and more general polytopes:

Theorem (B).

1. For any set P of $n_1$ points and any set B of $n_2$ boxes in $\mathbb{R}^d$, if the incidence graph on $P \times B$ is $K_{k,k}$-free, then it contains at most $O_{d,k}\left(n \log^{2d} n\right)$ incidences (Corollary 2.38).

2. More generally, given finitely many half-spaces $H_1, \dotsc, H_s$ in $\mathbb{R}^d$, let $\mathcal{F}$ be the family of all possible polytopes in $\mathbb{R}^d$ cut out by arbitrary translates of $H_1, \dotsc, H_s$. Then for any set P of $n_1$ points in $\mathbb{R}^d$ and any set F of $n_2$ polytopes in $\mathcal{F}$, if the incidence graph on $P \times F$ is $K_{k,k}$-free, then it contains at most $O_{k,s}\left(n \log^{s} n\right)$ incidences (Corollary 2.37).

Problem 1.3. What is the optimal bound on the power of $\log n$ in Theorem (B)? In particular, does it actually have to grow with the dimension d?

Remark 1.4. A bound similar to Theorem (B.1) and an improved bound for Theorem (A.1) in the $K_{2,2}$-free case are established independently by Tomon and Zakharov in [Reference Tomon and Zakharov22]. There they also use our Theorem (A.3) to provide a counterexample to a conjecture of Alon et al. [Reference Alon, Basavaraju, Chandran, Mathew and Rajendraprasad1] about the number of edges in a graph of bounded separation dimension, as well as to a conjecture of Kostochka from [Reference Kostochka13]. Some further Ramsey properties of semilinear graphs are demonstrated by Tomon in [Reference Tomon21].

The upper bounds in Theorems (A.1) and (B) are obtained as immediate applications of a general upper bound for Zarankiewicz’s problem for semilinear hypergraphs of bounded description complexity.

Definition 1.5. Let V be an ordered vector space over an ordered division ring R (e.g., $\mathbb{R}$ viewed as a vector space over itself). A set $X \subseteq V^d$ is semilinear, of description complexity $(s,t)$, if X is a union of at most t sets of the form

$$ \begin{align*} \left\{ \bar{x}\in V^{d}:f_{1}\left(\bar{x}\right) \leq 0, \dotsc, f_{p} \left(\bar{x}\right) \leq 0,f_{p+1}\left(\bar{x}\right)<0,\dotsc,f_{s}\left(\bar{x}\right)<0\right\}, \end{align*} $$

where $p \leq s \in \mathbb{N}$ and each $f_i: V^d \to V$ is a linear function – that is, of the form $f_i(x_1, \dotsc, x_d) = \lambda_1 x_1 + \dotsb + \lambda_d x_d + a$ for some $\lambda_1, \dotsc, \lambda_d \in R$ and $a \in V$.

We focus on the case $V = R = \mathbb{R}$ in the introduction, when these are precisely the semialgebraic sets that can be defined using only linear polynomials.
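Definition 1.5 with $V = R = \mathbb{R}$ translates directly into a membership test. The sketch below is ours, and the class names are hypothetical. A linear function is stored as its coefficients $\lambda_j$ and constant $a$. A basic piece is stored as the non-strict inequalities $f_1, \dotsc, f_p \leq 0$ and the strict ones $f_{p+1}, \dotsc, f_s < 0$, and a semilinear set as a union of pieces. As an example, it encodes the relation "the point $(x_1, x_2)$ lies in the box $[a_1, b_1) \times [a_2, b_2)$" as a subset of $\mathbb{R}^2 \times \mathbb{R}^4$ of description complexity $(4, 1)$.

```python
from dataclasses import dataclass
from typing import Sequence, Tuple


@dataclass(frozen=True)
class LinearFunction:
    """f(x_1, ..., x_d) = lambda_1 x_1 + ... + lambda_d x_d + a over the reals."""
    coeffs: Tuple[float, ...]
    const: float = 0.0

    def __call__(self, x: Sequence[float]) -> float:
        return sum(c * xi for c, xi in zip(self.coeffs, x)) + self.const


@dataclass(frozen=True)
class BasicPiece:
    """The set {x : f(x) <= 0 for f in weak, and f(x) < 0 for f in strict}."""
    weak: Tuple[LinearFunction, ...] = ()
    strict: Tuple[LinearFunction, ...] = ()

    def contains(self, x: Sequence[float]) -> bool:
        return all(f(x) <= 0 for f in self.weak) and all(f(x) < 0 for f in self.strict)


@dataclass(frozen=True)
class SemilinearSet:
    """A finite union of basic pieces."""
    pieces: Tuple[BasicPiece, ...]

    def contains(self, x: Sequence[float]) -> bool:
        return any(p.contains(x) for p in self.pieces)

    def complexity(self) -> Tuple[int, int]:
        """(s, t): most inequalities used by one piece, and number of pieces."""
        s = max((len(p.weak) + len(p.strict) for p in self.pieces), default=0)
        return s, len(self.pieces)


def point_in_box_relation() -> SemilinearSet:
    """Hypothetical example: (x1, x2) in [a1, b1) x [a2, b2),
    over the coordinates (x1, x2, a1, b1, a2, b2)."""
    weak = (
        LinearFunction((-1, 0, 1, 0, 0, 0)),  # a1 - x1 <= 0
        LinearFunction((0, -1, 0, 0, 1, 0)),  # a2 - x2 <= 0
    )
    strict = (
        LinearFunction((1, 0, 0, -1, 0, 0)),  # x1 - b1 < 0
        LinearFunction((0, 1, 0, 0, 0, -1)),  # x2 - b2 < 0
    )
    return SemilinearSet((BasicPiece(weak=weak, strict=strict),))
```

Each inequality mixes variables from both factors of $\mathbb{R}^2 \times \mathbb{R}^4$. This is exactly the kind of relation that induces a bipartite hypergraph in the sense of Definition 1.7 with $r = 2$.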

Remark 1.6. By a standard quantifier elimination result [Reference Van den Dries23, §7], every set definable in an ordered vector space over an ordered division ring, in the sense of model theory, is semilinear (equivalently, a projection of a semilinear set is a finite union of semilinear sets).

Definition 1.7. We say that an r-hypergraph H is semilinear, of description complexity $(s,t)$, if there exist some $d_i \in \mathbb{N}$, $V_i \subseteq \mathbb{R}^{d_i}$ and a semilinear set $X \subseteq \mathbb{R}^d = \prod_{i \in [r]} \mathbb{R}^{d_i}$ of description complexity $(s,t)$ such that H is isomorphic to the r-hypergraph $\left(V_1, \dotsc, V_r; X \cap \prod_{i \in [r]} V_i\right)$.

We stress that there is no restriction on the dimensions $d_i$ in this definition. We obtain the following general upper bound:

Theorem (C). If H is a semilinear r-hypergraph of description complexity $(s,t)$ and H is $K_{k, \dotsc, k}$-free, then

$$ \begin{align*}\lvert E\rvert = O_{r,s,t,k} \left( n^{r-1} \log^{s\left(2^{r-1}-1\right)}(n) \right).\end{align*} $$

In particular, $\lvert E\rvert = O_{r,s,t,k,\varepsilon}\left(n^{r-1+\varepsilon}\right)$ for any $\varepsilon > 0$ in this case. For a more precise statement, see Corollary 2.36 (in particular, the dependence of the constant in $O_{r,s,t,k}$ on k is at most linear).
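As a consistency check, specialising Theorem (C) to $r = 2$ recovers the exponents in Theorems (A.1) and (B). The check assumes a box in $\mathbb{R}^d$ is encoded by its two opposite corners $\bar{a}, \bar{b} \in \mathbb{R}^d$. This is our own arithmetic; the paper's actual deductions are Corollaries 2.37 and 2.38.

$$ \begin{align*} r=2: &\quad n^{r-1}\log^{s\left(2^{r-1}-1\right)}(n) = n\log^{s}(n),\\ \text{boxes in } \mathbb{R}^d: &\quad X = \left\{(\bar{x};\bar{a},\bar{b}) \in \mathbb{R}^{d}\times\mathbb{R}^{2d} : a_j - x_j \leq 0,\ x_j - b_j \leq 0 \text{ for all } j\in[d]\right\} \text{ has complexity } (2d,1),\\ \text{hence:} &\quad \lvert E\rvert = O_{d,k}\left(n\log^{2d}(n)\right), \text{ which for } d=2 \text{ is } O_k\left(n\log^{4}(n)\right). \end{align*} $$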

Remark 1.8. It is demonstrated in [Reference Mustafa and Pach17] that a similar bound holds when H is the intersection hypergraph of $(d-1)$-dimensional simplices in $\mathbb{R}^d$.

One can get rid of the logarithmic factor entirely by restricting to the family of all finite r-hypergraphs induced by a given $K_{k, \dotsc, k}$-free semilinear relation (as opposed to all $K_{k, \dotsc, k}$-free r-hypergraphs induced by a given arbitrary semilinear relation, as in Theorem (C)).

Theorem (D). Assume that $X \subseteq \mathbb{R}^d = \prod_{i \in [r]} \mathbb{R}^{d_i}$ is semilinear and X does not contain the direct product of r infinite sets (e.g., if X is $K_{k, \dotsc, k}$-free for some k). Then for any r-hypergraph H of the form $\left(V_1, \dotsc, V_r; X \cap \prod_{i \in [r]} V_i\right)$ for some finite $V_i \subseteq \mathbb{R}^{d_i}$, we have $\lvert E\rvert = O_X\left(n^{r-1}\right)$.

This is Corollary 5.12 and follows from a more general Theorem 5.6 connecting linear Zarankiewicz bounds to a model-theoretic notion of linearity of a first-order structure (in the sense that the matroid given by the algebraic closure operator behaves like the linear span in a vector space, as opposed to the algebraic closure in an algebraically closed field – see Definition 5.3).

In particular, let $X \subseteq \mathbb{R}^{d_1} \times \mathbb{R}^{d_2}$ be any $K_{k,k}$-free semilinear relation (equivalently, by Remark 1.6, X is definable with parameters in the first-order structure $(\mathbb{R}, <, +)$). Then $\lvert X \cap (V_1 \times V_2)\rvert = O(n)$ for all finite $V_i \subseteq \mathbb{R}^{d_i}$ with $\lvert V_i\rvert = n_i$ and $n = n_1 + n_2$. On the other hand, consider the semialgebraic $K_{2,2}$-free point-line incidence graph $X = \left\{(x_1,x_2; y_1, y_2) \in \mathbb{R}^4 : x_2 = y_1 x_1 + y_2\right\} \subseteq \mathbb{R}^2 \times \mathbb{R}^2$. By optimality of the Szemerédi–Trotter bound, it satisfies $\lvert X \cap (V_1 \times V_2)\rvert = \Omega\left(n^{\frac{4}{3}}\right)$ for suitable choices of $V_1, V_2$. Note that in order to define it we use both addition and multiplication – that is, the field structure. This is not coincidental. As a consequence of the trichotomy theorem in o-minimal structures [Reference Peterzil and Starchenko18], we observe that the failure of a linear Zarankiewicz bound always allows us to recover the field in a definable way (Corollary 5.11). In the semialgebraic case, we have the following corollary, which is easy to state (Corollary 5.14):

Theorem (E). Assume that $X \subseteq \mathbb{R}^d = \prod_{i \in [r]} \mathbb{R}^{d_i}$ for some $r, d_i \in \mathbb{N}$ is semialgebraic and $K_{k, \dotsc, k}$-free, but $\lvert X \cap \prod_{i \in [r]} V_i\rvert \neq O\left(n^{r-1}\right)$. Then the graph of multiplication $\times\restriction_{[0,1]}$ restricted to the unit box is definable in $(\mathbb{R}, <, +, X)$.
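The lower bound $\Omega\left(n^{4/3}\right)$ for the point-line relation $X = \left\{x_2 = y_1 x_1 + y_2\right\}$, discussed before Theorem (E), can be checked numerically on a standard grid example. The choice $V_1 = [k] \times [2k^2]$ and $V_2 = [k] \times [k^2]$ is our assumption for illustration; the text does not fix a construction. The helper `grid_incidences` is hypothetical. Each line $x_2 = y_1 x_1 + y_2$ with $y_1 \in [k]$, $y_2 \in [k^2]$ passes through the $k$ grid points with $x_1 \in [k]$. Two distinct lines share at most one point, so the incidence graph is $K_{2,2}$-free.

```python
from itertools import product
from typing import Tuple


def grid_incidences(k: int) -> Tuple[int, int]:
    """Return (n, |E|) for V_1 = [k] x [2k^2] and V_2 = [k] x [k^2], where
    (x1, x2; y1, y2) is an edge iff x2 = y1 * x1 + y2 (the relation X)."""
    points = set(product(range(1, k + 1), range(1, 2 * k * k + 1)))  # V_1
    lines = list(product(range(1, k + 1), range(1, k * k + 1)))       # V_2
    edges = 0
    for y1, y2 in lines:
        # Every point of V_1 has first coordinate in [k], so scanning x1 in [k] suffices.
        for x1 in range(1, k + 1):
            if (x1, y1 * x1 + y2) in points:
                edges += 1
    return len(points) + len(lines), edges
```

Here $n = 3k^3$ and $\lvert E\rvert = k^4$, so this family satisfies $\lvert E\rvert = (n/3)^{4/3}$. For instance, `grid_incidences(4)` returns `(192, 256)`.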

We conclude with a brief overview of the paper.

In Section 2 we introduce a more general class of hypergraphs definable in terms of coordinate-wise monotone functions (Definition 2.1) and prove an upper Zarankiewicz bound for it (Theorem 2.17). Theorems (A.1), (B) and (C) are then deduced from it in Section 2.5.

In Section 3 we prove Theorem (A.3) by establishing a lower bound on the number of incidences between points and dyadic boxes on the plane, demonstrating that the logarithmic factor is unavoidable (Proposition 3.5).

In Section 4, we establish Theorem (A.2) by obtaining a stronger bound on the number of incidences with dyadic boxes on the plane (Theorem 4.7). We use a different argument, relying on a certain partial order specific to the dyadic case, to reduce from $\log^4(n)$ given by the general theorem to $\log(n)$. Up to a constant factor, this implies the same bound for incidences with general boxes when one counts only incidences that are bounded away from the border (Remark 4.8).

Finally, in Section 5 we prove a general Zarankiewicz bound for definable relations in weakly locally modular geometric first-order structures (Theorem 5.6), deduce Theorem (D) from it (Corollary 5.12) and observe how to recover a real closed field from the failure of Theorem (D) in the o-minimal case (Corollary 5.11).

2. Upper bounds

2.1. Coordinate-wise monotone functions and basic sets

For an integer $r \in \mathbb{N}_{>0}$, by an r-grid (or a grid, if r is clear from the context) we mean a Cartesian product $B = B_1 \times \dotsb \times B_r$ of some sets $B_1, \dotsc, B_r$. As usual, $[r]$ denotes the set $\left\{1, 2, \dotsc, r\right\}$.

If $B = B_1 \times \dotsb \times B_r$ is a grid, then by a subgrid we mean a subset $C \subseteq B$ of the form $C = C_1 \times \dotsb \times C_r$ for some $C_i \subseteq B_i$.

Let B be an r-grid, S an arbitrary set and $f: B \to S$ a function. For $i \in [r]$, set $B^i := B_1 \times \dotsb \times B_{i-1} \times B_{i+1} \times \dotsb \times B_r$ and let $\pi_i: B \to B_i$ and $\pi^i: B \to B^i$ be the projection maps.

For $a \in B^i$ and $b \in B_i$, we write $a \oplus_i b$ for the element $c \in B$ with $\pi^i(c) = a$ and $\pi_i(c) = b$. In particular, when $i = r$, $a \oplus_r b = (a, b)$.

Definition 2.1. Let B be an r-grid and $(S,<)$ a linearly ordered set. A function $f\colon B \to S$ is coordinate-wise monotone if for any $i \in [r]$, $a, a' \in B^i$ and $b, b' \in B_i$, we have

$$ \begin{align*} f(a \oplus_i b) \leq f(a \oplus_i b') \Rightarrow f(a' \oplus_i b) \leq f(a' \oplus_i b'). \end{align*} $$

Remark 2.2. Let $B = B_1 \times \dotsb \times B_r$ be an r-grid and $\Gamma$ an ordered abelian group. We say that a function $f\colon B \to \Gamma$ is quasi-linear if there exist some functions $f_i\colon B_i \to \Gamma$, $i \in [r]$, such that

$$ \begin{align*} f(b_1, \dotsc, b_r) = f_1(b_1) + \dotsb + f_r(b_r) \text{ for all } (b_1, \dotsc, b_r) \in B. \end{align*} $$

Then every quasi-linear function is coordinate-wise monotone (as $f(a \oplus_i b) \leq f(a \oplus_i b') \Leftrightarrow f_i(b) \leq f_i(b')$ for any $a \in B^i$).

Example 2.3. Suppose that V is an ordered vector space over an ordered division ring R, $d_i \in \mathbb{N}$ for $i \in [r]$, and $f: V^{d_1} \times \dotsb \times V^{d_r} \to V$ is a linear function. Then f is obviously quasi-linear, and hence coordinate-wise monotone.
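On a finite grid, coordinate-wise monotonicity can be tested by brute force. The sketch below reads Definition 2.1 as follows: for all $i \in [r]$, $a, a' \in B^i$ and $b, b' \in B_i$, the inequality $f(a \oplus_i b) \leq f(a \oplus_i b')$ implies $f(a' \oplus_i b) \leq f(a' \oplus_i b')$. This is our reading, consistent with Remark 2.2 and with the preorders $\leq_i$ used in the proof of Proposition 2.8. Grid points are Python tuples, and `oplus` implements $a \oplus_i b$ with 1-based $i$. Both function names are hypothetical.

```python
from itertools import product
from typing import Callable, Sequence, Tuple


def oplus(a: Tuple, i: int, b) -> Tuple:
    """a (+)_i b: insert b as the i-th coordinate (1-based) of a in B^i."""
    return a[: i - 1] + (b,) + a[i - 1 :]


def is_coordinatewise_monotone(f: Callable[[Tuple], object], grid: Sequence[Sequence]) -> bool:
    """Brute-force check on the finite grid B = grid[0] x ... x grid[r-1]."""
    r = len(grid)
    for i in range(1, r + 1):
        B_i = grid[i - 1]
        # B^i: the product of all factors except the i-th one.
        B_upper_i = list(product(*(grid[j] for j in range(r) if j != i - 1)))
        for a, a2 in product(B_upper_i, repeat=2):
            for b, b2 in product(B_i, repeat=2):
                # The comparison of b, b2 seen from a must persist when seen from a2.
                if f(oplus(a, i, b)) <= f(oplus(a, i, b2)) and not (
                    f(oplus(a2, i, b)) <= f(oplus(a2, i, b2))
                ):
                    return False
    return True
```

For instance, on the grid $\{-2, \dotsc, 2\}^2$ the linear function $2x - 3y$ and the quasi-linear function $x^3 + y$ pass the test. The function $xy$ fails it, since $f(-2, 1) \leq f(2, 1)$ but $f(-2, -1) > f(2, -1)$.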

Remark 2.4. Let B be a grid and $C \subseteq B$ a subgrid. If $f\colon B \to S$ is a coordinate-wise monotone function, then the restriction $f{\restriction C}$ is a coordinate-wise monotone function on C.

Definition 2.5. Let B be an r-grid. A subset $X \subseteq B$ is a basic set if there exist a linearly ordered set $(S,<)$, a coordinate-wise monotone function $f\colon B \to S$ and $l \in S$ such that $X = \left\{b \in B \colon f(b) < l\right\}$.

Remark 2.6. If $r=1$, then every subset of $B = B_1$ is basic.

Remark 2.7. If $X \subseteq B$ is given by $X = \left\{b \in B \colon f(b) \leq l\right\}$ for some coordinate-wise monotone function $f\colon B \to S$, then X is a basic set as well. Indeed, we can just add a new element $l'$ to S so that it is the successor of l, and then $X = \left\{b \in B : f(b) < l'\right\}$.

Similarly, the sets $\left\{b \in B \colon f(b) > l\right\}$ and $\left\{b \in B \colon f(b) \geq l\right\}$ are basic, by inverting the order on S.

We have the following ‘coordinate-splitting’ presentation for basic sets:

Proposition 2.8. Let $B = B_1 \times \dotsb \times B_r$ be an r-grid and $X \subseteq B$ a basic set. Then there is a linearly ordered set $(S,<)$, a coordinate-wise monotone function $f^r\colon B^r \to S$ and a function $f_r\colon B_r \to S$ such that $X = \left\{b^r \oplus_r b_r \colon f^r(b^r) < f_r(b_r)\right\}$.

Remark 2.9. The converse of this proposition is also true. An arbitrary linear order $(S,<)$ can be realised as a subset of some ordered abelian group $(G, +, <)$ with the induced ordering; we can take $G := \mathbb{Q}$ when S is at most countable. Then define $f\colon B \to G$ by setting $f(b^r \oplus_r b_r) := f^r(b^r) - f_r(b_r)$. This f is coordinate-wise monotone and $X = \left\{b \in B \colon f(b) < 0\right\}$.

Proof of Proposition 2.8. Assume that we are given a coordinate-wise monotone function $f\colon B \to S$ and $l \in S$ with $X = \left\{b \in B \colon f(b) < l\right\}$.

For $i \in [r]$, let $\leq_i$ be the preorder on $B_i$ induced by f – namely, for $b, b' \in B_i$ we set $b \leq_i b'$ if and only if for some (equivalently, any) $a \in B^i$ we have $f(a \oplus_i b) \leq f(a \oplus_i b')$.

Quotienting $B_i$ by the equivalence relation corresponding to the preorder $\leq_i$ if needed, we may assume that each $\leq_i$ is actually a linear order; we write $<_i$ for the corresponding strict order.

Let $<^r$ be the partial order on $B^r$ with $(b_1, \dotsc, b_{r-1}) <^r \left(b'_1, \dotsc, b'_{r-1}\right)$ if and only if

$$ \begin{align*} (b_1,\dotsc,b_{r-1}) \neq \left(b^{\prime}_1,\dotsc,b^{\prime}_{r-1}\right) \text{ and } b_j\leq_j b^{\prime}_j \text{ for all } j\in[r-1]. \end{align*} $$

Define $T := B^r \dot\cup B_r$, where $\dot\cup$ denotes the disjoint union. Clearly $<^r$ is a strict partial order on T – that is, a transitive and asymmetric (hence irreflexive) relation.

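For any $b^r \in B^r$ and $b_r \in B_r$, we define

$$ \begin{align*} b^r \triangleleft b_r &:\Leftrightarrow f(b^r \oplus_r b_r) < l,\\ b_r \triangleleft b^r &:\Leftrightarrow f(b^r \oplus_r b_r) \geq l. \end{align*} $$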

Claim 2.10. Let $a_1, a_2 \in B^r$ and $b_1, b_2 \in B_r$.

1. If $a_1 \triangleleft b_1 \triangleleft a_2 \triangleleft b_2$, then $b_2 <_r b_1$ and $a_1 \triangleleft b_2$.

2. If $b_1 \triangleleft a_1 \triangleleft b_2 \triangleleft a_2$, then $b_2 <_r b_1$ and $b_1 \triangleleft a_2$.

Proof. (1) We have $f(a_2 \oplus _r b_1) \geq l$ and $f(a_2 \oplus _{r} b_2) < l$, hence $b_2 <_r b_1$ (otherwise $b_1 \leq_r b_2$, and monotonicity of f in the last coordinate would give $f(a_2 \oplus_r b_1) \leq f(a_2 \oplus_r b_2) < l$). Since $f(a_1 \oplus _r b_1)<l$ and $b_2 <_r b_1$, we also have $f(a_1 \oplus _r b_2) \leq f(a_1 \oplus_r b_1) < l$, that is, $a_1\triangleleft b_2$.

(2) is similar.

Let $\triangleleft ^t$ be the transitive closure of $\triangleleft $. It follows from the preceding claim that $\triangleleft ^t=\triangleleft \cup \triangleleft {\circ }\triangleleft $. More explicitly, for $b_1,b_2 \in B_r$, we have $b_1 \triangleleft ^t b_2$ only if $b_2 <_r b_1$, and for $a_1,a_2 \in B^r$, we have $a_1 \triangleleft ^t a_2$ if and only if $f(a_1 \oplus_r b) < l \leq f(a_2 \oplus_r b)$ for some $b \in B_r$. It is then not hard to see that $\triangleleft ^t$ is asymmetric, and hence it is a strict partial order on T.

Claim 2.11. The union $<^r \cup \triangleleft ^t$ is a strict partial order on T.

Proof. We first show transitivity. Note that $<^r$ and $\triangleleft ^t$ are both transitive, so it suffices to show for $x, y, z \in T$ that if either $x <^r y \triangleleft ^t z$ or $x \triangleleft ^t y <^r z$, then $x \triangleleft ^t z$. Furthermore, since $\triangleleft ^t=\triangleleft \cup \triangleleft {\circ }\triangleleft $, we may restrict our attention to the following cases. If $a_1 <^r a_2\triangleleft b$ with $a_1,a_2\in B^r$ and $b\in B_r$, then $f(a_1 \oplus _r b)\leq f(a_2 \oplus _r b)<l$, and so $a_1\triangleleft b$. If $b\triangleleft a_1 <^r a_2$ with $a_1,a_2\in B^r$ and $b\in B_r$, then $f(a_2 \oplus _r b)\geq f(a_1 \oplus _r b)\geq l$, and so $b\triangleleft a_2$.

To check asymmetry, assume $a_1 <^r a_2$ and $a_2 \triangleleft ^t a_1$. Since $a_1,a_2\in B^r$, we have $a_2\triangleleft b \triangleleft a_1$ for some $b\in B_r$. Then $f(a_1 \oplus _r b)\geq l> f(a_2 \oplus _r b)$, contradicting $a_1<^r a_2$ (by monotonicity of f, $a_1 <^r a_2$ implies $f(a_1 \oplus_r b) \leq f(a_2 \oplus_r b)$).

Finally, let $\prec $ be an arbitrary linear order on $T=B^r\dot \cup B_r$ extending $<^r \cup \triangleleft ^t$. Since $\prec $ extends $\triangleleft $, for $a\in B^r$ and $b\in B_r$ we have $(a,b)\in X$ if and only if $a\prec b$.
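The construction of $\prec$ can be sanity-checked on a toy example. The following Python sketch is only an illustration under stated assumptions: the grid $B_1\times B_2\times B_3$ with $B_i=\{0,1,2\}$, the function $f(x)=x_1+x_2+x_3$ and the threshold $l=4$ are hypothetical choices, and $\triangleleft$ is encoded as $a\triangleleft b$ iff $f(a\oplus_r b)<l$ and $b\triangleleft a$ otherwise, which is how the proof of Claim 2.10 uses it. The sketch computes $\triangleleft^t$, checks that it equals $\triangleleft\cup\triangleleft\circ\triangleleft$, checks that $<^r\cup\triangleleft^t$ is a strict partial order, and obtains one linear extension by a topological sort with the standard-library `graphlib.TopologicalSorter` (Python 3.9+).

```python
from graphlib import TopologicalSorter
from itertools import product

# Hypothetical toy instance (not from the text): r = 3, B_1 = B_2 = B_3 = {0, 1, 2},
# f(x) = x_1 + x_2 + x_3, which is coordinate-wise monotone, and threshold l = 4.
VALUES = range(3)
THRESHOLD = 4


def f(a, b):
    """f(a (+)_r b) for a in B^r = B_1 x B_2 and b in B_r = B_3."""
    return a[0] + a[1] + b


UPPER = [("a", a) for a in product(VALUES, repeat=2)]  # elements of B^r, tagged "a"
LOWER = [("b", b) for b in VALUES]                     # elements of B_r, tagged "b"
T = UPPER + LOWER                                      # the disjoint union T


def transitive_closure(nodes, rel):
    """Warshall-style closure of a relation given as a set of ordered pairs."""
    reach = {x: {y for (u, y) in rel if u == x} for x in nodes}
    for k in nodes:
        for i in nodes:
            if k in reach[i]:
                reach[i] |= reach[k]
    return {(x, y) for x in nodes for y in reach[x]}


def is_strict_partial_order(rel):
    """Irreflexive and transitive (hence asymmetric)."""
    succ = {}
    for x, y in rel:
        succ.setdefault(x, set()).add(y)
    irreflexive = all(x != y for x, y in rel)
    transitive = all((x, z) in rel for x, y in rel for z in succ.get(y, ()))
    return irreflexive and transitive


# The product order <^r on B^r: coordinate-wise <= and not equal.
lt_r = {(x, y) for x in UPPER for y in UPPER
        if x != y and all(p <= q for p, q in zip(x[1], y[1]))}

# The relation triangleleft: a before b if f(a (+)_r b) < l, otherwise b before a.
tri = {(x, y) if f(x[1], y[1]) < THRESHOLD else (y, x) for x in UPPER for y in LOWER}

tri_t = transitive_closure(T, tri)
tri_tri = {(x, z) for (x, y) in tri for (y2, z) in tri if y == y2}
order = lt_r | tri_t

# One linear extension of <^r union triangleleft^t, via a topological sort.
sorter = TopologicalSorter({node: set() for node in T})
for x, y in order:
    sorter.add(y, x)  # y has x as a predecessor, so x comes first
position = {node: i for i, node in enumerate(sorter.static_order())}
```

Any topological order would do here: the argument only needs some linear extension, and for a finite strict partial order a topological sort is the standard way to produce one.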

We take $f^r\colon B^r\to T$ and $f_r\colon B_r\to T$ to be the identity maps. Since $\prec $ extends $<^r$, the map $f^r$ is coordinate-wise monotone.

2.2. Main theorem

Definition 2.12. Let $B=B_1{{\times }\dotsb {\times }} B_r$ be an r-grid.

1. Given $s \in \mathbb {N}$, we say that a set $X\subseteq B$ has grid-complexity s (in B) if X is the intersection of B with at most s basic subsets of B. We say that X has finite grid-complexity if it has grid-complexity s for some $s \in \mathbb {N}$.
2. For integers $k_1,\dotsc , k_r$, we say that $X\subseteq B$ is $K_{k_1,\dotsc ,k_r}$-free if there is no subgrid $C_1{{\times }\dotsb {\times }} C_r\subseteq X$ with $\lvert C_i\rvert =k_i$ for all $i\in[r]$.

In particular, B itself is the only subset of B of grid-complexity $0$.
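For very small grids, Definition 2.12(2) can be checked by brute force. The Python sketch below is exponential in the grid size and meant only for toy examples; the function names and the sample sets are hypothetical. It tests whether a finite set $X\subseteq B_1\times\dotsb\times B_r$ contains a subgrid $C_1\times\dotsb\times C_r$ with $\lvert C_i\rvert=k_i$.

```python
from itertools import combinations, product


def contains_subgrid(X, grid, ks):
    """Return True if the finite set X (a set of r-tuples) contains a subgrid
    C_1 x ... x C_r with C_i a subset of grid[i] and |C_i| = ks[i]."""
    X = set(X)
    # One iterable of candidate sets C_i per coordinate.
    candidates = [combinations(sorted(B_i), k_i) for B_i, k_i in zip(grid, ks)]
    for Cs in product(*candidates):
        if all(point in X for point in product(*Cs)):
            return True
    return False


def is_K_free(X, grid, ks):
    """Definition 2.12(2): X is K_{k_1,...,k_r}-free."""
    return not contains_subgrid(X, grid, ks)


grid = [range(3), range(3)]
strict = {(i, j) for i in range(3) for j in range(3) if i < j}
weak = {(i, j) for i in range(3) for j in range(3) if i <= j}
```

For instance, on $\{0,1,2\}^2$ the set `strict` $=\{(i,j): i<j\}$ is $K_{2,2}$-free (a copy of $K_{2,2}$ would need $i_1<i_2<j_1<j_2$, that is, four distinct values), whereas `weak` $=\{(i,j): i\leq j\}$ contains $\{0,1\}\times\{1,2\}$.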

Example 2.13. Suppose that V is an ordered vector space over an ordered division ring, $d = d_1 + \dotsb + d_r \in \mathbb {N}$ and

$$ \begin{align*} X = \left\{ \bar{x} \in V^{d}:f_{1}\left(\bar{x}\right) \leq 0, \dotsc, f_{p}\left(\bar{x}\right) \leq 0,f_{p+1}\left(\bar{x}\right)<0,\dotsc,f_{s}\left(\bar{x}\right)<0\right\}, \end{align*} $$

for some linear functions $f_i\colon V^d \to V$, $i \in [s]$. Then each $f_i$ is coordinate-wise monotone (Example 2.3), and hence each of the sets

$$ \begin{align*}\left\{\bar{x} \in V^d : f_i(\bar{x}) <0 \right\}, \left\{\bar{x} \in V^d : f_i(\bar{x}) \leq 0 \right\}\end{align*} $$

is a basic subset of the grid $V^{d_1} {{\times }\dotsb {\times }} V^{d_r}$ (the latter by Remark 2.7), and $X \subseteq V^{d_1} {{\times }\dotsb {\times }} V^{d_r}$, as an intersection of these s basic sets, has grid-complexity s.

Remark 2.14.

1. Let B be an r-grid and $A\subseteq B$ a subset of B of grid-complexity s. If $C \subseteq B$ is a subgrid containing A, then A is also a subset of C of grid-complexity s.
2. In particular, if $A\subseteq B$ is a subset of grid-complexity s, then A is a subset of grid-complexity $s$ of the grid $A_1{{\times }\dotsb {\times }} A_r$, where $A_i :=\pi _i(A)$ is the projection of A on $B_i$ (this grid is the smallest subgrid of B containing A).

Definition 2.15. Let $B=B_1{{\times }\dotsb {\times }} B_r$ be a finite r-grid and set $n_i :=\lvert B_i\rvert $. For $j\in \{0,\dotsc , r\}$, we will denote by $\delta _j^r(B)$ the integer

$$ \begin{align*} \delta_j^r(B) := \sum_{ i_1<i_2<\dotsb< i_j \in [r]} n_{i_1} \cdot n_{i_2} \cdot \dotsb \cdot n_{i_j}.\end{align*} $$

Example 2.16. We have $\delta ^r_0(B)=1$, $\delta ^r_1(B)=n_1+\dotsb +n_r$ and $\delta _r^r(B)=n_1n_2\dotsb n_r$.
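Since $\delta_j^r(B)$ is the j-th elementary symmetric polynomial in $n_1,\dotsc,n_r$, it is straightforward to compute. A minimal Python sketch (function names are hypothetical), which also includes the projection-based extension to finite subsets that appears in Definition 2.21:

```python
from itertools import combinations
from math import prod


def delta(j, sizes):
    """delta_j^r(B) for a finite r-grid with |B_i| = sizes[i - 1]:
    the sum over all i_1 < ... < i_j of n_{i_1} * ... * n_{i_j}."""
    return sum(prod(c) for c in combinations(sizes, j))


def delta_of_subset(j, A, r):
    """Extension to a finite set A of r-tuples via its projections pi_i(A)."""
    sizes = [len({a[i] for a in A}) for i in range(r)]
    return delta(j, sizes)
```

For example, with $(n_1,n_2,n_3)=(2,3,5)$ one gets $\delta^3_0=1$, $\delta^3_1=10$, $\delta^3_2=6+10+15=31$ and $\delta^3_3=30$, in line with Example 2.16.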

We can now state the main theorem:

Theorem 2.17. For all integers $r\geq 2, s\geq 0, k\geq 2$, there are $\alpha =\alpha (r,s,k)\in \mathbb {R}$ and $\beta =\beta (r,s)\in \mathbb N$ such that for any finite r-grid B and $K_{k,\dotsc ,k}$-free subset $A \subseteq B$ of grid-complexity s, we have

$$ \begin{align*} |A| \leq \alpha \delta^r_{r-1}(B) \log^\beta \left( \delta^r_{r-1}(B)+1 \right). \end{align*} $$

Moreover, we can take $\beta (r,s) := s\left (2^{r-1}-1\right )$.

Remark 2.18. Inspecting the proof in Sections 2.3 and 2.4, it can be verified that the dependence of $\alpha $ on k in Theorem 2.17 is at most linear.

Remark 2.19. We use $\log ^\beta \left ( \delta ^r_{r-1}(B)+1 \right )$ instead of $\log ^\beta \left ( \delta ^r_{r-1}(B) \right )$ to include the case $\delta ^r_{r-1}(B)\leq 1$.

Remark 2.20. If, in Theorem 2.17, A is only assumed to be a union of at most t sets of grid-complexity s, then the same bound holds with $\alpha ' := t \cdot \alpha $ (if $A = \bigcup _{i \in [t]} A_i$ is $K_{k,\dotsc ,k}$-free, then each $A_i$ is also $K_{k,\dotsc ,k}$-free, so we can apply Theorem 2.17 to each $A_i$ and bound $\lvert A\rvert $ by the sum of their bounds).

Definition 2.21. Let $B=B_1{{\times }\dotsb {\times }} B_r$ be a grid. We extend the definition of $\delta ^r_j$ to arbitrary finite subsets of B as follows: let $A\subseteq B$ be a finite subset, and let $A_i :=\pi _i(A)$, $i\in [r]$, be the projections of A. We define $\delta ^r_j(A) :=\delta ^r_j(A_1{{\times }\dotsb {\times }} A_r)$.

If B is a finite r-grid and $A\subseteq B$, then obviously $\delta ^r_j(A)\leq \delta ^r_j(B)$. Thus Theorem 2.17 is equivalent to the following:

Proposition 2.22. For all integers $r\geq 2, s\geq 0, k\geq 2$, there are $\alpha =\alpha (r,s,k)\in \mathbb {R}$ and $\beta =s\left (2^{r-1}-1\right ) \in \mathbb N$ such that for any r-grid B and $K_{k,\dotsc ,k}$-free finite subset $A \subseteq B$ of grid-complexity $\leq s$, we have

$$ \begin{align*} \lvert A\rvert \leq \alpha \delta^r_{r-1}(A) \log^\beta\left(\delta^r_{r-1}(A)+1\right). \end{align*} $$

Definition 2.23. For $r\geq 1, s\geq 0, k\geq 2$ and $n\in \mathbb N$, let $F_{r,k}(s,n)$ be the maximal size of a $K_{k,\dotsc ,k}$-free subset A of grid-complexity s of some r-grid B with $\delta _{r-1}^r(B)\leq n$.

Then Proposition 2.22 can be restated as follows:

Proposition 2.24. For all integers $r\geq 2, s\geq 0, k\geq 2$, there are $\alpha =\alpha (r,s,k)\in \mathbb {R}$ and $\beta =\beta (r,s)\in \mathbb N$ such that

$$ \begin{align*} F_{r,k}(s,n) \leq \alpha n \log^\beta (n+1) \text{ for all } n\in\mathbb N. \end{align*} $$

Remark 2.25. Notice that $F_{r,k}(s,0)=0$.

In the rest of the section we prove Proposition 2.24 by induction on r, where for each r it is proved by induction on s. We will use the following simple recurrence bound:

Fact 2.26. Let $\mu \colon \mathbb N \to \mathbb N$ be a function satisfying $\mu (0)=0$ and $\mu (n)\leq 2\mu (\lfloor n/2\rfloor ) + \alpha n \log ^\beta (n+1)$ for some $\alpha \in \mathbb {R}$ and $\beta \in \mathbb N$. Then $\mu (n)\leq \alpha ' n \log ^{\beta +1} (n+1)$ for some $\alpha '=\alpha '(\alpha ,\beta )\in \mathbb {R}$.
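Fact 2.26 is the standard divide-and-conquer estimate: unrolling the recurrence gives at most $\lfloor\log_2 n\rfloor+1$ levels, and each level contributes at most $\alpha n\log^\beta(n+1)$. The Python sketch below evaluates the extremal case (equality in the recurrence, real-valued) for the hypothetical values $\alpha=3$, $\beta=2$ with natural logarithms, and compares it with $n\log^{\beta+1}(n+1)$; by the level count, the ratio stays below $2\alpha/\ln 2$.

```python
import math
from functools import lru_cache

# Hypothetical constants; natural logarithm throughout.
ALPHA, BETA = 3.0, 2


@lru_cache(maxsize=None)
def mu(n):
    """Extremal solution: mu(0) = 0 and equality in the recurrence of Fact 2.26."""
    if n == 0:
        return 0.0
    return 2 * mu(n // 2) + ALPHA * n * math.log(n + 1) ** BETA


def ratio(n):
    """mu(n) divided by n * log^(beta+1)(n+1), for n >= 1."""
    return mu(n) / (n * math.log(n + 1) ** (BETA + 1))


# At most floor(log2 n) + 1 levels, each contributing <= alpha n log^beta(n+1),
# and floor(log2 n) + 1 <= 2 log(n+1) / log 2 for all n >= 1.
BOUND = 2 * ALPHA / math.log(2)
```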

2.3. The base case $r=2$

Let $B=B_1{\times } B_2$ be a finite grid and $A\subseteq B$ a subset of grid-complexity s. We will proceed by induction on s.

If $s=0$, then $A=B_1\times B_2$. If A is $K_{k,k}$-free, then one of the sets $B_1, B_2$ must have fewer than k elements. Hence $\lvert A\rvert \leq k(\lvert B_1\rvert +\lvert B_2\rvert )=k\delta ^2_1(B)$.

Thus $F_{2,k}(0,n) \leq kn$ for all $n \in \mathbb N$.

Remark 2.27. The same argument shows that $F_{r,k}(0,n) \leq k n$ for all $r \geq 2$.

Assume now that the theorem is proved for $r=2$ and all $s'<s$. Define $n_1 :=\lvert B_1\rvert $, $n_2 :=\lvert B_2\rvert $ and $n :=\delta ^2_1(B)=n_1+n_2$.

We choose basic sets $X_1,\dotsc , X_s \subseteq B$ such that $A=B \cap \bigcap _{j \in [s]} X_j$.

By Proposition 2.8, we can choose a finite linear order $(S,<)$ and functions $f_1\colon B_1\to S$ and $f_2\colon B_2\to S$ so that

For $l\in S$, $i\in \{1,2\}$ and $\square \in \{ <,=,>, \leq , \geq \}$, let

$$ \begin{align*} B_i^{\square l} = \left\{ b\in B_i \colon f_i(b) \square l \right\}. \end{align*} $$

We choose $h\in S$ such that

$$ \begin{align*} \left\lvert B_1^{<h}\right\rvert+\left\lvert B_2^{<h}\right\rvert \leq n/2 \text{ and } \left\lvert B_1^{>h}\right\rvert+\left\lvert B_2^{>h}\right\rvert \leq n/2. \end{align*} $$

For example, we can take h to be the minimal element in $f_1(B_1)\cup f_2(B_2)$ with $ \left \lvert B_1^{\leq h}\right \rvert +\left \lvert B_2^{ \leq h}\right \rvert \geq n/2$. Then

$$ \begin{align*} X_s = \left[\left(B_1^{<h}\times B_2^{<h}\right) \cap X_s \right] \cup \left[ \left(B_1^{>h}\times B_2^{>h} \right) \cap X_s \right] \cup \left(B_1^{<h}\times B_2^{\geq h} \right) \cup \left(B_1^{=h}\times B_2^{>h}\right). \end{align*} $$

Hence we conclude

Applying the induction hypothesis on s and using Fact 2.26 and Remark 2.25, we obtain $F_{2,k}(s,n)\leq \alpha n \log^\beta (n+1)$ for some $\alpha =\alpha (s,k)\in \mathbb {R}$ and $\beta =\beta (s)\in \mathbb N$.

This finishes the base case $r=2$.
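The four-part decomposition of $X_s$ used above does not depend on the particular choice of h, and it can be checked mechanically. The Python sketch below does this under an assumption that this section does not state explicitly: that $X_s=\{(b_1,b_2)\in B: f_1(b_1)<f_2(b_2)\}$, which is the form the decomposition suggests. The dictionaries encoding $f_1$, $f_2$ and the helper names are hypothetical.

```python
from itertools import product


def part(values, op, h):
    """B_i^{op h} = {b in B_i : f_i(b) op h}; `values` maps b to f_i(b)."""
    tests = {
        "<": lambda v: v < h,
        "=": lambda v: v == h,
        ">": lambda v: v > h,
        ">=": lambda v: v >= h,
    }
    return {b for b, v in values.items() if tests[op](v)}


def decomposition_holds(f1, f2, h):
    """Check the four-part decomposition of X_s for one h, assuming
    X_s = {(b_1, b_2) : f_1(b_1) < f_2(b_2)} (an assumption, see above)."""
    X = {(b1, b2) for b1, b2 in product(f1, f2) if f1[b1] < f2[b2]}

    def box(op1, op2):
        return set(product(part(f1, op1, h), part(f2, op2, h)))

    rhs = (box("<", "<") & X) | (box(">", ">") & X) | box("<", ">=") | box("=", ">")
    return X == rhs
```

The last two boxes lie entirely inside $X_s$, which is why they are not intersected with $X_s$; this is what lets the recursion drop one basic set on those pieces.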

2.4. Induction step

We fix $r \in \mathbb {N}_{\geq 3}$ and assume that Proposition 2.24 holds for all pairs $(r',s)$ with $r'<r$ and $s \in \mathbb {N}$.

Definition 2.28. Let $B=B_1{{\times }\dotsb {\times }} B_r$ be a finite r-grid.

1. For integers $t, u\in \mathbb N$, we say that a subset $A\subseteq B$ is of split grid-complexity $(t, u)$ if there are basic sets $X_1,\dotsc , X_{u} \subseteq B$, a subset $A^r\subseteq B_1{{\times }\dotsb {\times }} B_{r-1}$ of grid-complexity t and a subset $A_r\subseteq B_{r}$ such that $A=(A^r\times A_r)\cap \bigcap _{i \in [u]} X_i$.
2. For $t, u \geq 0$, $k\geq 2$ and $n\in \mathbb N$, let $G_{k}(t,u,n)$ be the maximal size of a $K_{k,\dotsc ,k}$-free subset A of an r-grid B of split grid-complexity $(t,u)$ with $\delta _{r-1}^r(B)\leq n$.

Remark 2.29.

1. Note that $A_r$ has grid-complexity at most $1$, which is the reason we do not include a parameter for the grid-complexity of $A_r$ in the split grid-complexity of A.
2. If $A\subseteq B$ is of grid-complexity s, then it is of split grid-complexity $(0,s)$.
3. If $A\subseteq B$ is of split grid-complexity $(t, u)$, then it is of grid-complexity $t + u$.

For the rest of the proof, we abuse notation slightly and refer to the split grid-complexity of a set as simply the grid-complexity. To complete the induction step we will prove the following proposition:

Proposition 2.30. For any integers $t,u\geq 0, k\geq 2, r \geq 3$, there are $\alpha ' = \alpha '(r,k,t,u)\in \mathbb {R}$ and $\beta ' = \beta '(r,k,t,u)\in \mathbb N$ such that

$$ \begin{align*} G_k(t,u,n) \leq \alpha' n \log^{\beta'}(n+1) \text{ for all } n\in\mathbb N. \end{align*} $$

We will use the following notations throughout the section:

- $B=B_1{{\times }\dotsb {\times }} B_r$ is a finite grid with $n=\delta ^r_{r-1}(B)$;
- $A\subseteq B$ is a subset of grid-complexity $(t,u)$;
- $B^r$ is the $(r-1)$-grid $B^r :=B_1{{\times }\dotsb {\times }} B_{r-1}$;
- $A^r \subseteq B^r$ is a subset of grid-complexity t, $A_r \subseteq B_r$, and $X_1,\dotsc, X_{u} \subseteq B$ are basic subsets such that $A= (A^r{\times } A_r)\cap \bigcap _{i \in [u]} X_i$.

We proceed by induction on u.

The base case $u=0$ of Proposition 2.30.

In this case, $A=A^r\times A_r$. If A is $K_{k,\dotsc ,k}$-free, then either $A^r$ is $K_{k,\dotsc ,k}$-free or $\lvert A_r\rvert <k$.

In the first case, by the induction hypothesis on r, there are $\alpha =\alpha (r-1, t,k)$ and $\beta =\beta (r-1, t)$ such that $\lvert A^r\rvert \leq \alpha \delta ^{r-1}_{r-2}(B^r)\log ^\beta \left ( \delta ^{r-1}_{r-2}(B^r)+1\right )$, and hence $\lvert A\rvert \leq \lvert A^r\rvert \, \lvert B_r\rvert$. In the second case, we have $\lvert A\rvert \leq \lvert B^r\rvert k=\delta ^{r-1}_{r-1}(B^r)k$.

Since $n=\delta ^r_{r-1}(B)=\delta ^{r-1}_{r-1}(B^r)+\delta ^{r-1}_{r-2}(B^r) \lvert B_r\rvert $, the conclusion of the proposition follows with $\alpha ' := \alpha$, $\beta ' := \beta $ (enlarging $\alpha$ if necessary so that $\alpha \geq k$).
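The identity $\delta^r_{r-1}(B)=\delta^{r-1}_{r-1}(B^r)+\delta^{r-1}_{r-2}(B^r)\lvert B_r\rvert$ used above is the usual recursion $e_{r-1}(n_1,\dotsc,n_r)=e_{r-1}(n_1,\dotsc,n_{r-1})+n_r\,e_{r-2}(n_1,\dotsc,n_{r-1})$ for elementary symmetric polynomials. A short Python check over sample side lengths (helper names are hypothetical):

```python
from itertools import combinations
from math import prod


def delta(j, sizes):
    """delta_j of a finite grid with side lengths `sizes` (Definition 2.15);
    returns 0 when j < 0 or j > len(sizes)."""
    if j < 0:
        return 0
    return sum(prod(c) for c in combinations(sizes, j))


def split_identity_holds(sizes):
    """n = delta^r_{r-1}(B) equals |B^r| + delta^{r-1}_{r-2}(B^r) * |B_r|,
    where |B^r| = delta^{r-1}_{r-1}(B^r) is the product of the first r - 1 sizes."""
    r = len(sizes)
    head, last = sizes[:-1], sizes[-1]
    return delta(r - 1, sizes) == delta(r - 1, head) + delta(r - 2, head) * last
```

In particular, both summands are bounded by n, which is what the two cases above need.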

Induction step of Proposition 2.30.

We assume now that the proposition holds for all pairs $(t,u')$ with $u'<u$ and $t \in \mathbb {N}$.

Given a tuple $x = (x_1, \dotsc , x_r) \in B$, we set $x^r := (x_1, \dotsc , x_{r-1})$. By Proposition 2.8, we can choose a finite linear order $(S,<)$, a coordinate-wise monotone function $f^r\colon B^r\to S$ and a function $f_r\colon B_r\to S$ so that

Moreover, by Remark 2.9 we may assume without loss of generality that the coordinate-wise monotone function defining $X_u$ is given by

Definition 2.31. Given an arbitrary set $C^r \subseteq B^r$, we say that a set $H^r \subseteq C^r$ is an $f^r$-strip in $C^r$ if

$$ \begin{align*} H^r = \left\{ x \in C^r \colon l_1 \triangleleft_1 f^r(x) \triangleleft_2 l_2 \right\} \end{align*} $$

for some $l_1,l_2\in S$, $\triangleleft _1, \triangleleft _2\in \{ <,\leq \}$. Likewise, given an arbitrary set $C_r \subseteq B_r$, we say that $H_r \subseteq C_r$ is an $f_r$-strip in $C_r$ if

$$ \begin{align*} H_r = \left\{ b \in C_r \colon l_1 \triangleleft_1 f_r(b) \triangleleft_2 l_2 \right\} \end{align*} $$

for some $l_1,l_2\in S$, $\triangleleft _1, \triangleleft _2\in \{ <,\leq \}$. If $C^r = A^r$ or $C_r = A_r$, we simply say an $f^r$-strip or $f_r$-strip, respectively.

Remark 2.32. Note the following:

1. $A^r$ is an $f^r$-strip, and $A_r$ is an $f_r$-strip.
2. Every $f^r$-strip is a subset of the $(r-1)$-grid $B^r$ of grid-complexity $t+2$ (using Remark 2.7).
3. The intersection of any two $f^r$-strips is an $f^r$-strip; the same conclusion holds for $f_r$-strips.
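Remark 2.32(3) reflects the fact that the intersection of two intervals in a linear order is again an interval. The Python sketch below assumes strips of the form $\{b : l_1\triangleleft_1 f(b)\triangleleft_2 l_2\}$ with $\triangleleft_1,\triangleleft_2\in\{<,\leq\}$, as the parameters in Definition 2.31 indicate, and represents a strip only by its bounds; the `Strip` class is a hypothetical encoding, with integers standing in for elements of S.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Strip:
    """Hypothetical encoding of {b : l1 <|1 f(b) <|2 l2} with <|i in {<, <=}."""
    l1: int
    strict1: bool  # True encodes "l1 < f(b)", False encodes "l1 <= f(b)"
    l2: int
    strict2: bool  # True encodes "f(b) < l2", False encodes "f(b) <= l2"

    def contains_value(self, v):
        lower_ok = self.l1 < v if self.strict1 else self.l1 <= v
        upper_ok = v < self.l2 if self.strict2 else v <= self.l2
        return lower_ok and upper_ok


def intersect(s, t):
    """Intersection of two strips for the same f: keep the tighter bound on each side."""
    if s.l1 != t.l1:
        l1, strict1 = max((s.l1, s.strict1), (t.l1, t.strict1))
    else:
        l1, strict1 = s.l1, s.strict1 or t.strict1
    if s.l2 != t.l2:
        l2, strict2 = min((s.l2, s.strict2), (t.l2, t.strict2))
    else:
        l2, strict2 = s.l2, s.strict2 or t.strict2
    return Strip(l1, strict1, l2, strict2)
```

When the two lower bounds differ, the larger one implies the smaller one whatever the strictness, so only equal bounds need the strictness flags to be combined; the upper bounds are handled symmetrically.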

Definition 2.33.

1. We say that a subset $H\subseteq B$ is an f-grid if $H=H^r\times H_r$, where $H^r\subseteq B^r$ is an $f^r$-strip in $B^r$ and $H_r \subseteq B_r$ is an $f_r$-strip in $B_r$.
2. If $H=H^r\times H_r$ is an f-grid, we set

$$ \begin{align*} \Delta(H) :=\lvert H^r\rvert + \delta^{r-1}_{r-2}(H^r)\lvert H_r\rvert \text{ (see Definition~2.21 for } \delta^{r-1}_{r-2}). \end{align*} $$

Note that if H is a subgrid of B, then $\Delta (H)=\delta ^r_{r-1}(H)$.
3. For an f-grid H, we will denote by $A_H$ the set $A\cap H$.

The induction step for Proposition 2.30 will follow from the following proposition:

Proposition 2.34. For all integers $k\geq 2, r \geq 3$, there are $\alpha ' = \alpha '(r,k,t,u)\in \mathbb {R}$ and $\beta '=\beta '(r,t,u) \in \mathbb N$ such that, for any f-grid H, if the set $A_H$ is $K_{k,\dotsc ,k}$-free then

$$ \begin{align*} \lvert A_H\rvert \leq \alpha' \Delta(H) \log^{\beta'}\left(\Delta(H)+1\right). \end{align*} $$

We should stress that in this proposition, $\alpha '$ and $\beta '$ do not depend on $f^r, f_r$, B, $A^r$ and $A_r$, but they may depend on our fixed t and u.

Given Proposition 2.34, we can apply it to the f-grid $H := A^r\times A_r$ (so $A_H = A$) and get

$$ \begin{align*} \lvert A\rvert \leq \alpha' \Delta(A^r\times A_r) \log^{\beta'}\left(\Delta(A^r\times A_r)+1\right). \end{align*} $$

It is easy to see that $\Delta (A^r\times A_r)\leq \delta ^r_{r-1}(B)$ (since $\lvert A^r\rvert \leq \lvert B^r\rvert = \delta^{r-1}_{r-1}(B^r)$, $\delta^{r-1}_{r-2}(A^r) \leq \delta^{r-1}_{r-2}(B^r)$ and $\lvert A_r\rvert \leq \lvert B_r\rvert$), and hence Proposition 2.30 follows with the same $\alpha '$ and $\beta '$.

We proceed with the proof of Proposition 2.34:

Proof of Proposition 2.34. Fix $m\in \mathbb N$, and let $L(m)$ be the maximal size of a $K_{k,\dotsc ,k}$-free set $A_H$ among all f-grids $H \subseteq B$ with $\Delta (H)\leq m$. We need to show that for some $\alpha '=\alpha '(k)\in \mathbb {R}$ and $\beta ' \in \mathbb N$ we have

$$ \begin{align*} L(m) \leq \alpha' m \log^{\beta'}(m+1). \end{align*} $$

Let $H=H^r\times H_r$ be an f-grid with $\Delta (H)\leq m$.

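For $l\in S$ and $\square \in \{ <,=,>, \leq , \geq \}$, define

$$ \begin{align*} H^{r,\square l} := \left\{ x\in H^r \colon f^r(x) \square l \right\} \end{align*} $$

and

$$ \begin{align*} H_r^{\square l} := \left\{ b\in H_r \colon f_r(b) \square l \right\}. \end{align*} $$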

Note that for every $l \in S$, $H^{r,\square l}$ is an $f^r$-strip in $H^r$, $H_r^{\square l}$ is an $f_r$-strip in $H_r$, and their product is an f-grid.

Claim 2.35. There is $h\in S$ such that

$$ \begin{align*} \Delta\left(H^{r,<h}\times H_r^{<h}\right) \leq m/2 \text{ and } \Delta\left(H^{r,>h}\times H_r^{>h}\right) \leq m/2.\end{align*} $$

Proof. Set

$\delta :=\delta ^{r-1}_{r-2}(H^r)$

.

$\delta :=\delta ^{r-1}_{r-2}(H^r)$

.

Let h be the minimal element in

![]() $f^r(H^r)\cup f_r(H_r)$

with

$f^r(H^r)\cup f_r(H_r)$

with

$$ \begin{align*} \left\lvert H^{r,\leq h }\right\rvert+\delta \left\lvert H_r^{\leq h}\right\rvert \geq m/2.\end{align*} $$

$$ \begin{align*} \left\lvert H^{r,\leq h }\right\rvert+\delta \left\lvert H_r^{\leq h}\right\rvert \geq m/2.\end{align*} $$

Then $ \left \lvert H^{r,< h }\right \rvert +\delta \left \lvert H_r^{< h}\right \rvert \leq m/2$ and $ \left \lvert H^{r,> h }\right \rvert +\delta \left \lvert H_r^{> h}\right \rvert \leq m/2$. Since $H^{r,< h}, H^{r,> h} \subseteq H^r$, we have $\delta ^{r-1}_{r-2}\left (H^{r,< h}\right ), \delta ^{r-1}_{r-2}\left (H^{r,> h}\right ) \leq \delta $. The claim follows.
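The choice of h in the proof of Claim 2.35 is a weighted-median selection. The Python sketch below illustrates it under explicit assumptions and is a hypothetical model, not the paper's construction. It assumes that $H^r$ and $H_r$ are partitioned by level $l \in S$, that the inputs `a[l]` and `b[l]` stand for $\lvert H^{r,=l}\rvert$ and $\lvert H_r^{=l}\rvert$, so that prefix sums give $\lvert H^{r,\leq h}\rvert + \delta\lvert H_r^{\leq h}\rvert$, and that the total weight lies between m/2 and m. The helper names `level_weight`, `choose_split` and `side_weights` are illustrative only.

```python
def level_weight(a, b, delta, l):
    # Hypothetical per-level weight: a[l] stands for |H^{r,=l}|, b[l] for |H_r^{=l}|.
    return a.get(l, 0) + delta * b.get(l, 0)


def choose_split(levels, a, b, delta, m):
    """Least h in `levels` whose prefix weight (levels <= h) is at least m/2."""
    prefix = 0
    for h in sorted(levels):
        prefix += level_weight(a, b, delta, h)
        if 2 * prefix >= m:  # integer-friendly form of prefix >= m/2
            return h
    raise ValueError("total weight is below m/2, so no such h exists")


def side_weights(levels, a, b, delta, h):
    """Total weight of the levels strictly below h and strictly above h."""
    below = sum(level_weight(a, b, delta, l) for l in levels if l < h)
    above = sum(level_weight(a, b, delta, l) for l in levels if l > h)
    return below, above


if __name__ == "__main__":
    levels = [1, 2, 3, 4]
    a = {1: 2, 2: 1, 3: 3, 4: 1}  # stand-in for |H^{r,=l}|
    b = {2: 1, 4: 1}              # stand-in for |H_r^{=l}|
    delta, m = 1, 9               # assumption: m/2 <= total weight (here 9) <= m
    h = choose_split(levels, a, b, delta, m)
    below, above = side_weights(levels, a, b, delta, h)
    print(h, below, above)  # 3 4 2
    assert 2 * below <= m and 2 * above <= m
```

Under these assumptions, minimality of h makes the weight strictly below h less than m/2. The weight strictly above h is the total minus the prefix weight at h, so it is at most m/2 whenever the total is at most m.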

Let h be as in the claim. It is not hard to see that the following hold:

$$ \begin{gather*} \left( H^{r, \leq h} \times H_r^{\geq h} \right) \cap X_u = \left( H^{r, < h} \times H_{r}^{\geq h} \right) \cup \left( H^{r, =h} \times H_r^{>h} \right),\\ \left( H^{r, \geq h} \times H_r^{\leq h} \right) \cap X_u = \emptyset. \end{gather*} $$


It follows that

$$ \begin{align*} A_H \cap X_u = \left[\left(H^{r,<h}\times H_r^{<h}\right) \cap X_u \right] \cup \left[\left(H^{r,>h}\times H_r^{>h}\right) \cap X_u \right] \cup \left(H^{r,<h}\times H_r^{\geq h}\right) \cup \left(H^{r,=h}\times H_r^{>h}\right). \end{align*} $$

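The two displayed identities and the resulting decomposition of $A_H \cap X_u$ are purely order-theoretic in the levels. The brute-force sketch below checks them in a toy model, which rests on assumptions not stated in this section. Both factors are represented only by their levels in a finite $S \subseteq \mathbb{Z}$. $X_u$ restricted to the grid is taken to be the set of pairs $(e,e')$ with $f^r(e) < f_r(e')$. $A_H$ is taken to be the whole product $H^r \times H_r$. The actual definitions of $X_u$ and $A_H$ in the paper may differ, and the functions `product` and `check_decomposition` are hypothetical.

```python
def product(xs, ys):
    """Cartesian product of two lists of levels, as a set of pairs."""
    return {(x, y) for x in xs for y in ys}


def check_decomposition(levels, h):
    """Brute-force check of the displayed identities in a hypothetical toy model."""
    below = [l for l in levels if l < h]   # toy H^{r,<h} (and H_r^{<h})
    equal = [l for l in levels if l == h]  # toy H^{r,=h} (and H_r^{=h})
    above = [l for l in levels if l > h]   # toy H^{r,>h} (and H_r^{>h})
    le, ge = below + equal, equal + above  # toy <=h and >=h strips
    X = {(x, y) for x in levels for y in levels if x < y}  # assumed shape of X_u
    A = product(levels, levels)            # assumption: A_H is the whole grid

    # (<=h x >=h) cap X equals (<h x >=h) cup (=h x >h)
    first = (product(le, ge) & X) == (product(below, ge) | product(equal, above))
    # (>=h x <=h) cap X is empty
    second = not (product(ge, le) & X)
    # the four-part decomposition of A_H cap X_u
    third = (A & X) == ((product(below, below) & X) | (product(above, above) & X)
                        | product(below, ge) | product(equal, above))
    return first and second and third


if __name__ == "__main__":
    levels = list(range(6))
    print(all(check_decomposition(levels, h) for h in range(-1, 7)))  # True
```

In this model the check succeeds for every h, including values of h that are not levels, in which case the $=h$ strips are empty.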

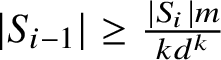

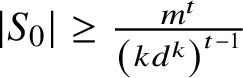

Hence, by the choice of h and using Remark 2.32(2),

Applying the induction hypothesis on u and using Fact 2.26, we obtain

$L(m)\leq \alpha ' m \log ^{\beta '}(m+1)$ for some $\alpha '=\alpha '(k)\in \mathbb {R}$ and $\beta '\in \mathbb N$.

This finishes the proof of Proposition 2.34, and hence of the induction step of Proposition 2.24.

Finally, inspecting the proof, we have shown the following:

1. $\beta (2,s) \leq s$ for all $s \in \mathbb {N}$.
2. $\beta '(r,t, 0) \leq \beta (r-1,t)$ for all $r \geq 3$ and $t \in \mathbb {N}$.
3. $\beta '(r,t,u) \leq \beta '(r, t+2, u-1) + 1$ for all $r \geq 3$, $t \geq 0$ and $u \geq 1$.
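Taking each of the inequalities (1), (2) and (3) with equality gives the largest values they allow. The short Python sketch below computes these extremal values recursively and compares them with the closed form $s\left(2^{r-1}-1\right)$. The functions `beta` and `beta_prime` are hypothetical stand-ins for $\beta$ and $\beta'$ under this equality assumption. The sketch also takes $\beta(r,s) = \beta'(r,0,s)$, which is the first inequality used when iterating (3).

```python
from functools import lru_cache


@lru_cache(maxsize=None)
def beta(r, s):
    """Extremal value allowed by (1)-(3) when every inequality is an equality."""
    if r == 2:
        return s  # (1): beta(2, s) <= s
    return beta_prime(r, 0, s)  # beta(r, s) <= beta'(r, 0, s)


@lru_cache(maxsize=None)
def beta_prime(r, t, u):
    if u == 0:
        return beta(r - 1, t)  # (2): beta'(r, t, 0) <= beta(r - 1, t)
    return beta_prime(r, t + 2, u - 1) + 1  # (3)


def closed_form(r, s):
    return s * (2 ** (r - 1) - 1)


if __name__ == "__main__":
    for r in range(2, 7):
        print(r, [beta(r, s) for s in range(1, 5)])
    # Small ranges keep the recursion depth (roughly s * 2**(r - 2)) far below Python's limit.
    assert all(beta(r, s) == closed_form(r, s) for r in range(2, 7) for s in range(1, 9))
```

The recursion mirrors the unrolling in the text. Each application of (3) doubles nothing but adds 1, and each application of (2) lowers r by one while the accumulated $t = 2s$ becomes the new s.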

Iterating (3), for every $r \geq 3$ and $s \geq 1$ we have $\beta (r,s) \leq \beta '(r,0,s) \leq \beta '(r, 2s, 0) + s$. Hence, by (2), $\beta (r,s) \leq \beta (r-1, 2s) + s$ for every $r \geq 3$ and $s \geq 1$. Iterating this, we get $\beta (r,s) \leq \beta \left (2, 2^{r-2}s\right ) + s \sum _{i=0}^{r-3}2^i$. Using (1), this implies $\beta (r,s) \leq s \sum _{i=0}^{r-2}2^i = s\left (2^{r-1}-1\right )$ for all