Implementation science is becoming increasingly important in the nutrition field. Over the past decades, a number of innovative nutrition interventions have been developed and tested that can contribute to reduce the high burden of malnutrition and human development, particularly when they are scaled-up through the use of a comprehensive implementation science framework. Globally, about 2 billion people are suffering from anaemia mainly due to micronutrient malnutrition(Reference Kassebaum1). A high burden of malnutrition imposes adverse consequences on overall development, which impacts on individuals, families, communities and nations. Globally, it is estimated that all forms of malnutrition cost up to US$3·5 trillion per year(2).

Recently, several initiatives have been taken at the global level to address all forms of malnutrition including locating nutrition targets within the sustainable development goals (SDG). The SDG 2 goals aim to end hunger, achieve food security and improve nutrition and promote sustainable agriculture by 2030(Reference Hawkes and Fanzo3). Another initiative by the Scaling-Up Nutrition Movement focuses on translating momentum into results for people who suffer due to malnutrition(4). The application of implementation science to nutrition is important to expedite the use of effective nutrition interventions. Over the last couple of decades, the number of randomised controlled trials (RCTs) has increased five to tenfold to test the efficacy of innovative health and nutrition interventions(Reference Balas and Boren5), although there is little evidence that these trials transfer into real-world practice(Reference Oldenburg, Sallis and French6).

Implementation researchers have proposed a number of conceptual frameworks and theories which have diverse implications and usefulness for a systematic uptake of evidence-based interventions into the complex real world. These theories, models and frameworks have been categorised into three groups(Reference Nilsen7): (i) process models – guiding the process of translating research into practice; (ii) determinants frameworks, classic theories and implementation theories – explaining what influences implementation outcomes and (iii) evaluation frameworks evaluating the implementation(Reference Nilsen7). However, the scaling-up and sustainability of an implementation have not been included in these groups, even though they are essential components of implementation science(Reference Dintrans, Bossert and Sherry8,Reference Moucheraud, Schwitters and Boudreaux9) . Scaling-up and sustainability focus on specific processes that help expand the impact of innovative interventions across populations to ensure continuation of the impact for a longer term or until the problem is resolved.

Recently in the nutrition field, several scholars have used implementation science to support scaling-up of evidence-based nutrition interventions(Reference Tumilowicz, Ruel and Pelto10– Reference Gillespie, Menon and Kennedy 12 ). In 2016, the Society for Implementation Science in Nutrition (SISN) was formed to promote implementation science for addressing malnutrition burdens in low- and middle-income countries(13). In 2018, researchers in SISN defined implementation science in nutrition, ‘as an interdisciplinary body of theory, knowledge, frameworks, tools and approaches whose purpose is to strengthen implementation quality and impact’(Reference Gillespie, Menon and Kennedy12). The same article also proposed an integrated framework for implementation science in nutrition(Reference Gillespie, Menon and Kennedy12). This framework addressed several elements of implementation science including recognising the quality of an implementation, developing a culture of evaluation and learning among program implementers and resolving inherent tensions between program implementation and research(Reference Gillespie, Menon and Kennedy12). These initiatives may be further strengthened by developing a comprehensive conceptual framework for nutrition implementation science that includes how an effective intervention can be identified for scaling-up and sustained until malnutrition is improved at the population level.

The aim of this paper is to develop a more comprehensive conceptual framework that can be applied to a real-world nutrition intervention from an efficacy or effectiveness trial through scaling-up to its sustainability. This work was motivated by the need to implement a package of nutrition interventions under the platform of Maternal, Infant and Young Child Nutrition in a low-income setting. The first author of this paper developed this framework to evaluate the implementation of home fortification of food with micronutrient powder (MNP) in Bangladesh.

Methods

Search strategy

To perform a narrative review of peer-reviewed published literature on implementation science frameworks or theory, we used PubMed and Scopus databases, and Google and Google Scholar as search engines and performed manual searches (e.g. reference searching, personal communication) to identify relevant literature and documents. The final round of searching finished on 31 December 2018, and we did not limit publication date while searching.

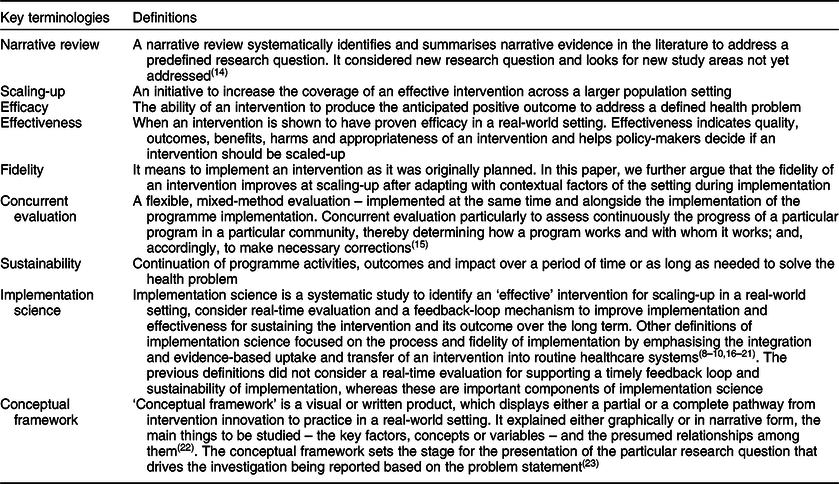

Functional definitions

We used a range of key terms and terminologies in this paper, including Narrative review, Scaling-up, Efficacy, Effectiveness, Fidelity, Concurrent evaluation, Sustainability, Implementation science and Conceptual framework. Table 1 shows the functional definitions of these terms/terminologies for this paper.

Table 1 Definition of key terminologies

Search terms

For PubMed, we used (‘implementation science’[Title/Abstract] OR ‘implementation research’[Title/Abstract]) AND (‘framework’[Title/Abstract] OR ‘theory’[Title/Abstract] OR ‘model’[Title/Abstract]) AND English[lang] as search terms and search limit. For Scopus, we used [(TITLE-ABS-KEY (‘implementation science’) OR TITLE-ABS-KEY (‘implementation research’) AND TITLE-ABS-KEY (‘framework’) OR TITLE-ABS-KEY (‘theory’) OR TITLE-ABS-KEY (‘model’)) AND (LIMIT-TO (SUBJAREA, ‘MEDI’) OR LIMIT-TO (SUBJAREA, ‘SOCI’) OR LIMIT-TO (SUBJAREA, ‘PSYC’) OR LIMIT-TO (SUBJAREA, ‘NURS’)) AND (EXCLUDE (SUBJAREA, ‘BUSI’) OR EXCLUDE (SUBJAREA, ‘COMP’) AND (LIMIT-TO (LANGUAGE, ‘English’)) as search terms and search limit. However, when we used Google and Google Scholar, we used combination of these search terms or used the title of the article.

Exclusion criteria

We excluded literature that was not relevant to the objectives of this paper; for example, if it did not propose a unique framework, but instead critically appraised existing frameworks. We also excluded literature that dealt with the same framework in multiple papers. In this case, we included the paper that proposed the original framework or the paper that modified the framework later.

The process of selecting literature

We used EndNote version X8.2 for organising and managing the literature review. First, we removed the duplicate articles identified through both the search databases: Scopus and PubMed. Then we screened the titles of all identified articles and excluded those which were not relevant to the study objective. We read the abstracts of all remaining articles and selected the literature which contained a framework, model or theory pertaining to implementation science. We downloaded full texts of the selected articles and excluded the articles if full texts were not available. We read the full texts of the selected articles and excluded those which fallen under the exclusion criteria. We included and reviewed articles identified in the earlier full-text review to produce the final list of articles discussed below.

Analysis and synthesis to identify the components of conceptual framework

We performed a qualitative thematic analysis on the identified articles containing implementation science frameworks. We reviewed the articles, highlighting the text that explained the frameworks and then coded the text to build initial themes. At first, we performed a matrix analysis to display the reviewed findings. We later grouped initial themes under five major themes, then summarised them in tabulation forms (Tables 2 and 3). This process helped us to identify which specific components of implementation science have been included in the reviewed articles. We have summarised the reviewed findings and used them to develop a comprehensive conceptual framework with additional evidence from the literature to clarify the domains and components of the framework.

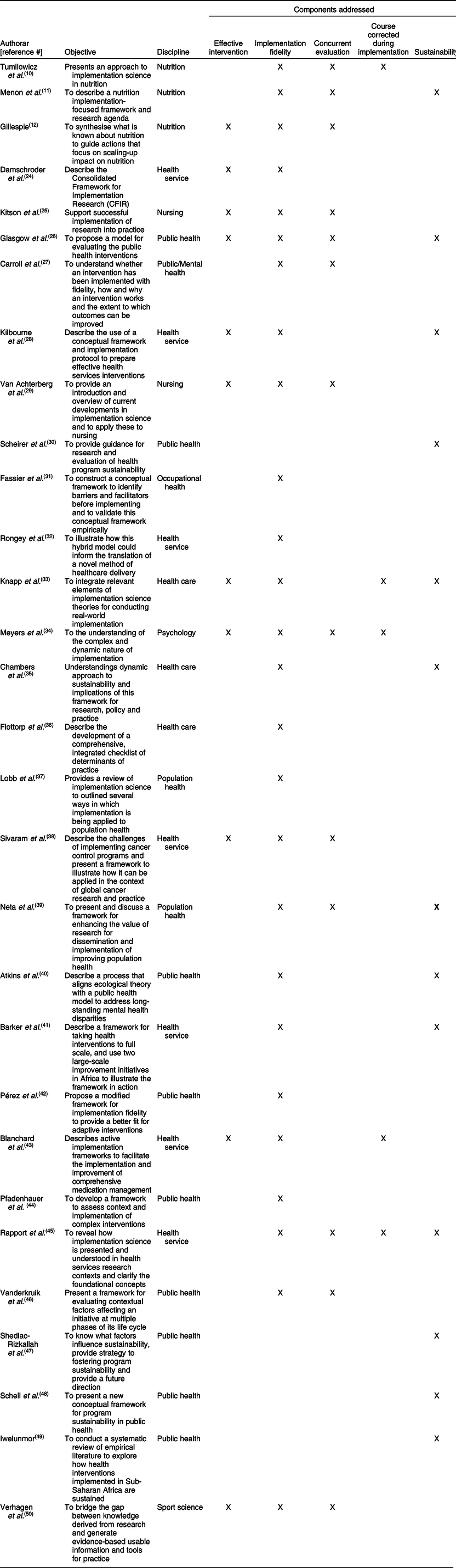

Table 2 A summary of the literature review

Table 3 Synthesis of review findings

Results

Review results

Using both search databases we identified 1772 articles published between 1998, when papers first appeared on implementation science and 2018 when we conducted the search. We excluded 80 duplicate articles. Through title screening, we excluded 1365 articles, which were not relevant to the study’s objectives, and identified 327 articles for abstract screening. We excluded 196 abstracts that were not related to the study objective and four articles due to unavailability of the full text. After reading 127 full-text articles, we excluded 102 because they did not include a framework or they provided duplicates of existing frameworks in the literature. We added five papers through reviewing the references of selected articles. Finally, we identified thirty articles (Fig. 1), which provided a framework, theory or a model for implementation science(Reference Tumilowicz, Ruel and Pelto10– Reference Gillespie, Menon and Kennedy 12 , Reference Damschroder, Aron and Keith 24 – Reference Verhagen, Voogt and Bruinsma 50 ).

Fig. 1 Flow diagram of the literature search and identifying full-text literature

Most of the frameworks covered broad public health disciplines, including population health, health services, health care and a few referred to nursing, psychology/mental health, sports science and occupational health. Three frameworks focused on nutrition but did not include all the key components recommended by implementation science. For example, two papers on nutrition implementation science focused on scaling-up a nutrition intervention, but did not consider sustainability in their conceptual frameworks, and the other framework considered sustainability that did not consider how to identify an effective intervention (Table 2).

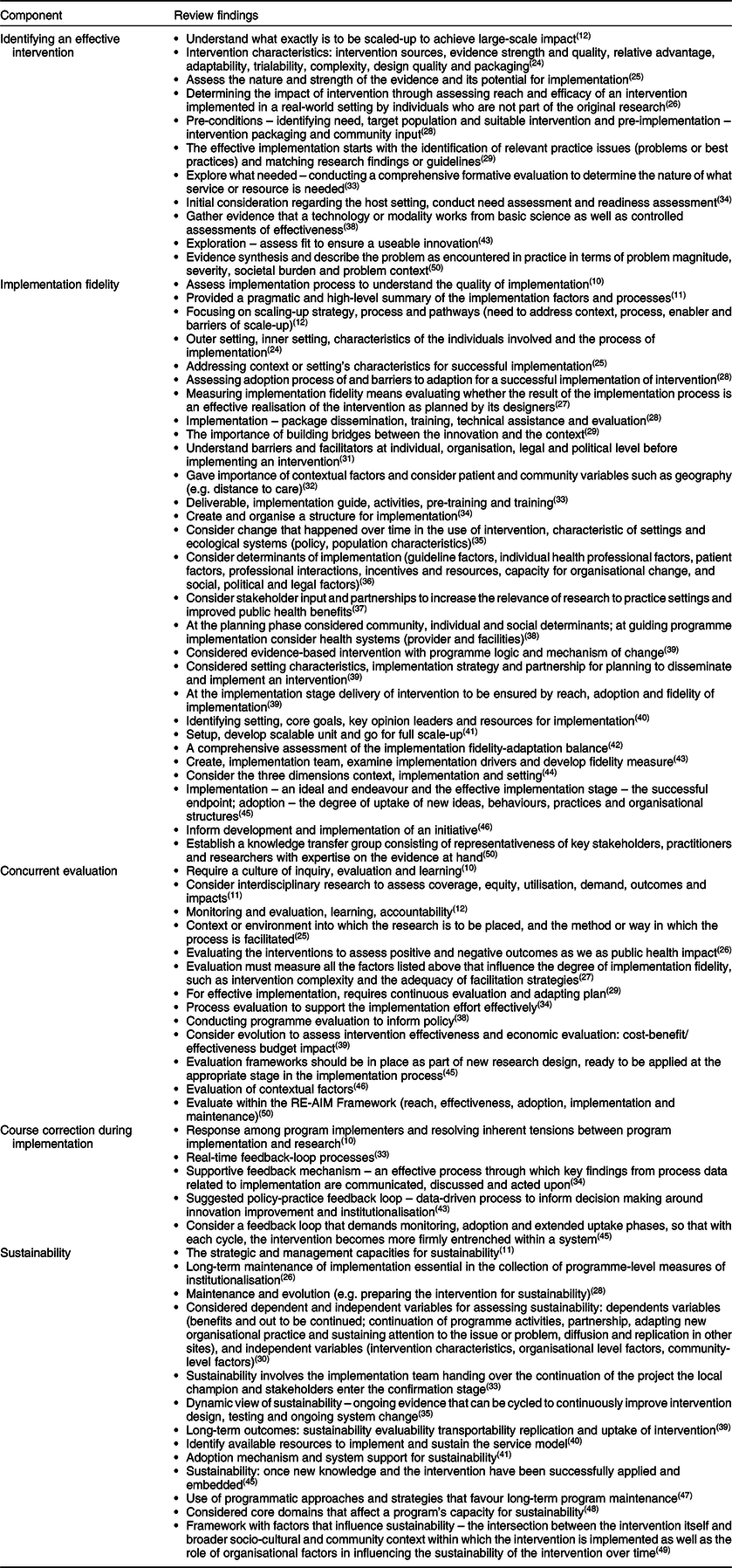

Table 3 synthesises the key findings of literature review under five broad themes. Eleven frameworks considered how to identify effective interventions(Reference Gillespie, Menon and Kennedy12,Reference Damschroder, Aron and Keith24– Reference Glasgow, Vogt and Boles 26 , Reference Kilbourne, Neumann and Pincus 28 , Reference Van Achterberg, Schoonhoven and Grol 29 , Reference Meyers, Durlak and Wandersman 34 , Reference Sivaram, Sanchez and Rimer 38 , Reference Blanchard, Livet and Ward 43 , Reference Verhagen, Voogt and Bruinsma 50 ). The nature of the implementation settings, intervention characteristics, evidence strength and intervention contexts were used to identify effective interventions for scaling-up (Table 3). Most of the frameworks (n 26) emphasise the importance of implementation fidelity while scaling-up the intervention in a real-world setting (Table 2). Some frameworks suggested that it was important to identify implementation drivers (e.g. performance of health workers) during scaling-up to measure and improve implementation fidelity. The range of implementation drivers influencing fidelity were found to operate at the health-systems level, the socio-cultural and political level and the individual level. These frameworks also emphasised the importance of guidelines, strategies or structure of the implementation to monitor fidelity, adapting it to local contexts (Table 3).

More than one-third of implementation science frameworks (n 13, Table 2) considered real-time evaluation. These evaluations assessed the implementation process, outcome, effectiveness (including cost-effectiveness) and impact of the implementation(Reference Tumilowicz, Ruel and Pelto10– Reference Gillespie, Menon and Kennedy 12 , Reference Kitson, Harvey and McCormack 25 – Reference Carroll, Patterson and Wood 27 , Reference Van Achterberg, Schoonhoven and Grol 29 , Reference Meyers, Durlak and Wandersman 34 , Reference Sivaram, Sanchez and Rimer 38 , Reference Neta, Glasgow and Carpenter 39 , Reference Rapport, Clay-Williams and Churruca 45 , Reference Vanderkruik and McPherson 46 , Reference Verhagen, Voogt and Bruinsma 50 ). It was considered vital that a culture of inquiry, interdisciplinary research and an evaluation framework should be included at the beginning of the implementation strategy (Table 3). Five frameworks included a planned evaluation to improve programme implementation(Reference Tumilowicz, Ruel and Pelto10,Reference Knapp and Anaya33,Reference Meyers, Durlak and Wandersman34,Reference Blanchard, Livet and Ward43,Reference Rapport, Clay-Williams and Churruca45) . Evaluations containing a real-time feedback loop generated key findings. If the data from this was acted upon quickly, it could resolve inherent tensions between program implementation and research (Table 3).

Thirteen frameworks(Reference Menon, Covic and Harrigan11,Reference Glasgow, Vogt and Boles26,Reference Kilbourne, Neumann and Pincus28,Reference Scheirer and Dearing30,Reference Knapp and Anaya33,Reference Chambers, Glasgow and Stange35,Reference Atkins, Rusch and Mehta40,Reference Barker, Reid and Schall41,Reference Rapport, Clay-Williams and Churruca45,Reference Shediac-Rizkallah and Bone47– Reference Iwelunmor, Blackstone and Veira 49 ) included sustainability as part of a implementation science framework. The determinants of sustainability covered institutionalisation, adoption mechanisms, system support, involvement of a local champion and consideration of programmatic approaches and strategies (Table 3).

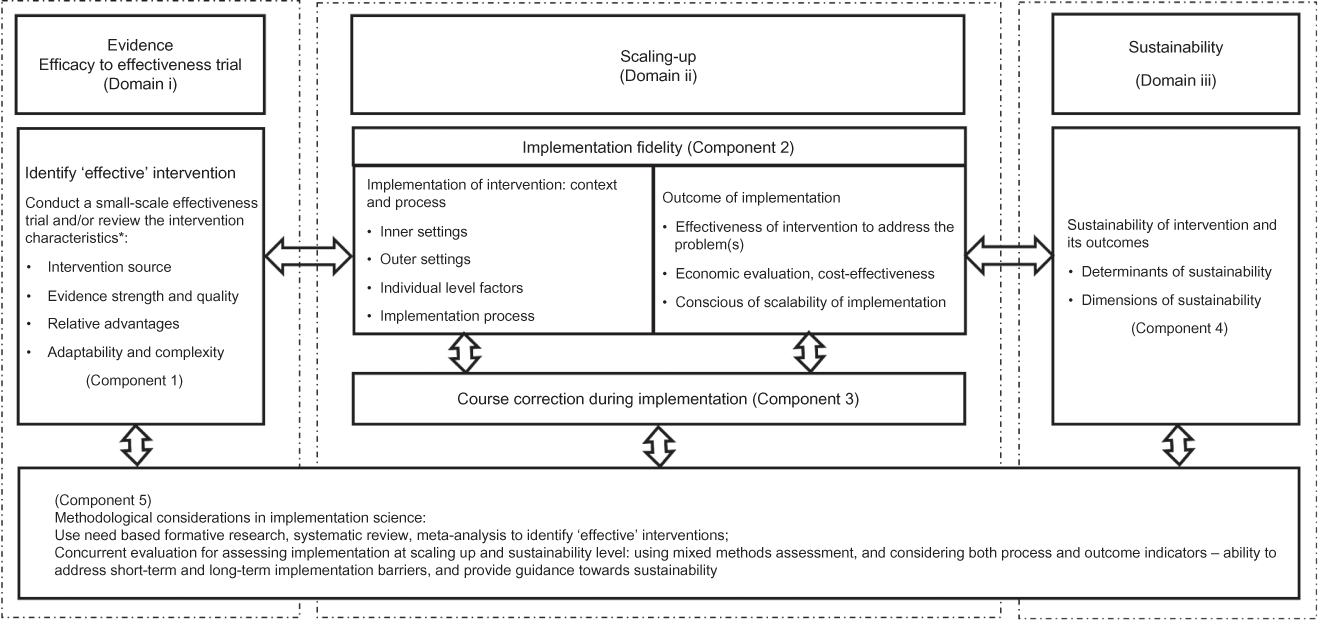

A comprehensive framework for implementation science

We developed a comprehensive framework which we have conceptualised as an implementation science process that describes the transition from the use of scientific evidence through to scaling-up with the aim of making an intervention sustainable. We grouped the five themes (Tables 2 and 3) under three domains: the Evidence Domain i – efficacy to effectiveness trials, Domain ii – scaling-up and Domain iii – sustainability. (see Fig. 2). The evidence-based intervention should be generated through a scientific process and tested rigorously (such as through an RCT). If the intervention is identified as ‘effective’, it then moves to the phase of scaling-up taking into account the larger setting. When the intervention has demonstrated effectiveness in this larger setting, it has the capacity to be sustainable in a real-world practice setting. The double-headed arrows in the framework indicate the bi-directional interaction between the domains and components (Fig. 2). The process may move from identifying an effective intervention to scaling-up and then to sustainability and in the opposite direction indicating that the intervention or a part of intervention may move back for further assessment or piloting in a small-scale setting. For example, during scaling-up, a researcher may identify an additional intervention characteristic which needs further assessment, or may identify a new problem at scaling-up that requires further experiment.

Fig. 2 A comprehensive conceptual framework for implementation science

Domain i: evidence – efficacy to effectiveness

Domain i deals with how a new evidence-based intervention become an ‘effective’ intervention to qualify for scaling-up in a larger real-world setting.

Component 1: identifying an ‘effective’ intervention

Implementation science requires an effective and ‘innovative’ intervention. An intervention can be identified as ‘effective’ through review of the essential intervention characteristics(Reference Verhagen, Voogt and Bruinsma50), which may influence its success(Reference Greenhalgh, Robert and Macfarlane51– 53 ) and determine whether it will be adopted or will ‘fit’ the local health system. The four main characteristics relate to the intervention source, evidence strength and quality, relative advantages and adaptability and complexity of intervention(Reference Damschroder, Aron and Keith24) (Fig. 2). The intervention source refers its original setting which can affect its adaptability for scaling-up(Reference Chambers, Glasgow and Stange35) and whether the intervention was piloted internally and externally. Internal testing is considered better(Reference Damschroder, Aron and Keith24). Strong, quality evidence supports the implementation of an intervention(Reference Atkins, Best and Briss54). There are a range of published guidelines or checklists(55,Reference Balshem, Helfand and Schünemann56) that can help researchers assess the evidence quality and strength of an effective intervention.

The relative advantage of one intervention over a similar one is another important characteristic of its ‘effectiveness’(Reference Gustafson, Sainfort and Eichler57). For example, an evaluation of a preventive intervention may suggest a relative advantage by finding that ‘reducing a risk factor by a small amount in the overall population is more effective than reducing it by a large amount in high-risk individuals’(Reference Zulman, Vijan and Omenn58). Assessing existing evidence of the cost of an intervention can help to understand its relative advantages(Reference Greenhalgh, Robert and Macfarlane51). If users perceive a clear, unambiguous advantage in effectiveness or efficiency of the intervention, it is more likely that the implementation will be successful(Reference Damschroder, Aron and Keith24).

The fourth characteristic relates to adaptability and complexity. Implementation researchers should aim to understand how an intervention can be adapted, tailored, refined or reinvented to meet local needs(Reference Damschroder, Aron and Keith24). This includes considering (i) the essential elements on an intervention and (ii) adaptable elements in the community or the health systems in which it is being implemented(Reference Damschroder, Aron and Keith24). Take for example, an intervention to create awareness of a healthy diet in a rural community using text messages on mobile phones. The mobile phone is an essential element and the language of text messages is an adaptable element which may need to be translated into languages used by rural residents. The intervention may not be adaptable if the community is located in a remote location without a mobile network. The complexity relates to perceived difficulties of scaling-up on an intervention. Implementation scientists suggest that complexity could be addressed successfully through simple, clear and detailed implementation plans, schedules and task assignments(Reference Gustafson, Sainfort and Eichler57).

Domain ii: Scaling-up

Under this domain, there are two components: implementation fidelity (Component 2) and course corrections during implementation (Component 3).

Component 2: implementation fidelity

Fidelity or the degree to which an intervention adheres to the planned process generally produces expected/positive outcomes(Reference Baldwin, Chandran and Gusic59,Reference Carroll, Patterson and Wood60) such as effectiveness and population-level benefits. Fidelity is based on three levels of factors adapted from a previous framework(Reference Kitson, Harvey and McCormack25): (i) outer settings, for example, socio-cultural factors, geographical settings, political context; (ii) inner settings, for example, factors within the organisation or health systems such as organisational policy, structure, human resources, monitoring and supervision and financial management and (iii) individual-level factors such as age, gender, education, skill, worldview, self-efficacy and whether this individual could benefit from or provide an intervention (Fig. 3). The success of scaling-up mostly depends on close consideration of all three levels of contextual factors in tandem with concurrent evaluation and course correction during implementation(Reference Kitson, Harvey and McCormack25,Reference Vamos, Thompson and Cantor61) . These have been discussed under Components 3 and 5.

Fig. 3 Conceptual framework for implementation fidelity

The outcomes of an intervention relate to its effectiveness, its economic viability, including cost-effectiveness, and consensus among the stakeholders around scalability of implementation. The effectiveness of the intervention is vital to scaling-up in a real-world setting. Because scaled-up settings often include an entire community, there is limited opportunity to consider a comparison community. Therefore, we proposed a concurrent evaluation (discussed under Component 5). Consideration of economic viability of the intervention, such as the economic capacity of the implementers and a program’s financial sustainability, in this phase is also crucial for its scalability and sustainability(Reference Scheirer and Dearing30,Reference Samuel and Allen62,Reference De Savigny and Adam63) . If necessary, researchers can develop an advocacy strategy to encourage the beneficiaries of the intervention to embrace it as well as respective policy-makers and other stakeholders in government departments.

Component 3: course corrections during implementation

Assessing the implementation process while it is occurring is crucial to success. Timely course correction creates opportunities for the implementer to revise the implementation plan before it produces an outcome. A concurrent evaluation (discussed under Component 5) allows implementers to assess short-term output/outcomes and implementation gaps. Concurrent evaluations have provided useful lessons for improving the quality of implementation through correction of implementation gaps(Reference Demby, Gregory and Broussard64,Reference Sarma65) . An implementation plan should be flexible enough to include timely course correction, which will be of benefit from the implementers’ perspective and to the beneficiaries and donors(Reference Sarma65).

An implementation coordination team may involve key members from the implementing organisation, the funding agency, beneficiary groups and the research organisation. This team should meet regularly to analyse the evaluation data, outcomes, implementation gaps and evaluators’ recommendations(Reference Sarma65). Team members should jointly decide how to undertake course correction if required. They might also consider a number of issues including strategies to address the implementation gaps, identification of the individuals to perform course corrections and suggestions to the evaluators about whether any modification is required, for example, adding or dropping the indicators for future assessments of the intervention.

Domain iii: sustainability

This domain includes the sustainability of an effective intervention and how to assess it. If an evidence-based intervention demonstrated effectiveness and is economically viable when scaled-up, then it is likely to be sustainable in an even larger setting.

Component 4: sustainability of interventions and its outcomes

Sustainability should be considered from the beginning of the implementation as it requires planning to define it and to determine the operational indicators to monitor it over time(Reference Shediac-Rizkallah and Bone47). The dimensions of sustainability relate to the amount of investment and inputs required, its spatial coverage over time and its fidelity and adaptability to new real-world contexts(66). The determinants of sustainability mostly relate to programme or intervention-specific factors, organisational and other contextual factors(66). Sustainability is difficult in low-income settings, as it is influenced by economic, political(Reference Shediac-Rizkallah and Bone47,Reference Bossert67) and other contextual factors(68,Reference Shediac-Rizkallah and Bone47,Reference Schell, Luke and Schooley48) that are beyond the control of the programme. Reliable funding is critical for maintaining and sustaining the intervention for a long time(Reference Kilbourne, Neumann and Pincus28).

Several studies have proposed conceptual frameworks that could be used to assess the sustainability of an intervention(Reference Scheirer and Dearing30,Reference Chambers, Glasgow and Stange35,Reference Shediac-Rizkallah and Bone47– Reference Greenhalgh, Robert and Macfarlane 51 ). These frameworks considered sustainability as a standalone component of implementation, whereas we argue that sustainability is closely linked with other components of implementation science. Moreover, the published sustainability frameworks mostly deal with programmes which were implemented in developed country settings. Interventions in low-income settings are generally supported by external funders such as international donor agencies. The determinants, including barriers and opportunities of sustainability, are very context specific in low-income settings. To assess sustainability in low-income settings, it is important to consider following key questions:

-

1. What would be the appropriate time point to measure sustainability? (e.g. after how many years of implementation, what would be the starting point of implementation, after ending the external supports or initial inception of the intervention?)

-

2. Would sustainability be measured retrospectively or prospectively?

-

3. What are the dimensions of sustainability, how would they be measured, should we consider them from the very beginning of intervention innovation?

-

4. What are the determinants of sustainability?

The answer to these questions will differ depending on the characteristics of the intervention. An implementation scientist may design a conceptual framework including sustainability or may adapt an existing framework taking into account the evidence at the scaling-up phase, the above questions, the implementation setting and the characteristics of the intervention.

Component 5: methodological consideration in implementation science

The Component 5 of our framework spans the three domains of our conceptual framework. From the very beginning of implementation research, priority should be given to using an appropriate methodology to identify an ‘effective’ intervention as well as to evaluating it. The proposed framework is likely to be more effective if used in conjunction with a range of appropriate methods suited to each domain.

In Domain i, a review of evidence using need-based formative research, a systematic review or meta-analysis provides a methodologically rigorous approach to systematically synthesising the scientific evidence(68– Reference Bastian, Glasziou and Chalmers 71 ). Recently, the number of systematic reviews has increased, with an average of eleven systematic reviews of efficacy level trials published every day in various health and nutrition fields(Reference Bastian, Glasziou and Chalmers71). Therefore, it is not always necessary to start a comprehensive investigation from the beginning. In some cases, relevant available information could be sufficient to identify the effective intervention. An RCT is considered to be a gold standard experimental research design for evaluating the efficacy or effectiveness of an intervention. However, an RCT might not be always be appropriate because it is not easy to adjust during implementation.

For assessing scalability and sustainability in Domains ii and iii, a concurrent evaluation strategy is used to continuously assess the progress of a particular program in a particular community, to determine how it works and with whom it works and accordingly to make necessary corrections(Reference Moss15,Reference Sarma65) . This uses multi-methods evaluation – such as qualitative investigations, quantitative assessment, operations research and economic evaluation and may also include other innovative methods to investigate the implementation and its outcome rigorously(Reference Sarma65). The scaling-up of an implementation should include a comprehensive evaluation plan containing a range of evaluation methods. For example, the implementation scientist can use process evaluation to assess the implementation process, an impact evaluation to assess outcomes and impact of the implementation and an economic evaluation to assess the cost-effectiveness of the interventions in a real-world setting. Additionally, evaluation at this level should have the provision to generate policy recommendations not only based on the direct outcomes of the interventions but also on the awareness of policy experts about the scalability of the intervention in the wider practice level. Our framework includes a ‘concurrent evaluation’ and considers both process and outcome indicators, addresses short-term and long-term implementation barriers and assesses the determinants and dimensions of sustainability.

Applying the framework to a real-world nutrition intervention

The above conceptual framework has been tested and validated in a program that distributes MNP widely to address childhood anaemia in Bangladesh. MNP was first developed in the laboratory in 1996 by a group of researchers in the Hospital for Sick Children in Toronto(Reference Zlotkin, Schauer and Christofides72,Reference Dewey, Yang and Boy73). Its use was trialled through a series of RCTs conducted in different geographical regions (in seven settings in Ghana, China, Bangladesh and Canada) to assess how effectively it reduced the prevalence of childhood anaemia(Reference Dewey, Yang and Boy73). Following positive results, several trials were then conducted to assess its effectiveness, acceptability and feasibility in small-scale community settings in Bangladesh, Ghana and China(Reference Dewey, Yang and Boy73). In addition, systematic reviews and meta-analyses were used to assess the relevant empirical evidence before recommending that this intervention be scaled-up for use in real-world settings(Reference Salam, MacPhail and Das74). BRAC, formerly known as Bangladesh Rural Advancement Committee – an international development organisation, adopted the intervention to implement a population-based home-fortification program reducing childhood anaemia across Bangladesh. The BRAC model relies on volunteer CHWs(Reference Sarma, Uddin and Harbour75) to distribute and sell MNP at cost price to carers in their homes who apply it to children’s food.

As noted, BRAC’s CHWs play a critical role in implementing the intervention in communities. Before they were enrolled, it was important to assess their skills, capacity, motivation and willingness – all individual level factors. Similarly, we considered organisational level factors related to BRAC’s implementation process including its use of incentives for CHWs. We have used it to identify key contextual elements such as Bangladesh’s regulations policies, politics and socio-cultural contexts so that the intervention has been successfully scaled-up. The framework is currently being used by the first author and other researchers at icddr,b to guide an ongoing evaluation including whether the program successfully addresses malnutrition, disease prevention or curation and economic feasibility. Empirical evidence of success will create further demand for the intervention among the stakeholders and beneficiaries. Based on that evaluation findings and experiences, the following papers are under review:

-

1. Home Fortification of Food with Micronutrient Powder: Critical Considerations to Implement It in a Real-World Setting

-

2. Factors Associated with Home-visits by Volunteer Community Health Workers to Implement a Home-fortification Intervention in Bangladesh: A Multilevel Analysis

-

3. Performance of Volunteer Community Health Workers in Implementing Home-fortification Interventions in Bangladesh: A Qualitative Investigation

-

4. Role of Home-visit by Volunteer Community Health Workers in Implementation of Home-fortification of Foods with Micronutrient Powder in Rural Bangladesh

-

5. Use of Concurrent Evaluation to Improve Implementation of a Home-fortification Programme in Bangladesh: A Methodological Innovation

-

6. Cost-effectiveness of a Market-Based Home-fortification of Food with Micronutrient Powder Programme in Bangladesh

In nutrition implementation science, choosing an appropriate methodology is another critical task(68). Scholars propose a range of methodologies to evaluate an implementation(Reference Craig, Dieppe and Macintyre76), or to measure the outcomes or impacts(Reference Rogers, Hawkins and McDonald77) in general but they rarely focus specifically on the most appropriate methods for identifying, testing and improving an innovative nutrition intervention. When a nutrition intervention is implemented in a real-world setting, appropriate methodology is required to carefully assess the contexts, process, outcome, cost and effectiveness of the implementation. Our framework proposes concurrent evalution with a range of appropriate methods proposed for each phase, that is, to identify an effective intervention; explore barriers and facilitators; address gaps during implementation and to show a pathway to sustain the implementation and its outcomes over time.

Discussion

Our framework offers some advances on existing frameworks in implementation sciences. It specifically captures all the main elements of implementation science. This has created an opportunity to understand implementation science holistically and comprehensively – from the beginning to the end including identifying an effective intervention, understanding the process of implementation and effectiveness and finally the sustainability of intervention. Combining the three domains will help implementation researchers to visualise the whole process of implementation science in a one frame.

A unique characteristic of our framework is the inclusion of course correction during implementation. In the implementation literature, most papers focus on how implementation fidelity could be achieved(Reference Carroll, Patterson and Wood27,Reference Pérez, Van der Stuyft and del Carmen Zabala42,Reference Verhagen, Voogt and Bruinsma50,Reference Carroll, Patterson and Wood60) . However, few reviewed frameworks considered how to make course corrections. Concurrent evaluation may generate evidence around the implementation process that is adjustable and the short-term and long-term outcomes. This has improved implementation fidelity and coverage of a home-fortification intervention in Bangladesh(Reference Sarma65). The combination of concurrent evaluation and course correction creates an opportunity to improve implementation fidelity as well as reducing the uncertainty, which ultimately contributes to implementation sustainability.

Sustainability of an intervention and its outcomes are another key implementation science concern. Issues related to sustainability are disruption and discontinuous financial supports, poor support from local stakeholders and local implementers’ lack of decision-making capacity. During decision making, local authorities commonly question why they should invest in the intervention, how they should invest, what are the expected challenges and outcomes. Our framework is particularly helpful for answering these questions, for engaging key stakeholders and generating shared lessons learned through documenting a complete pathway of implementation.

This framework also shows how an intervention can be made more sustainable and how it may be assessed. It is important that sustainability should be considered from the beginning(Reference Schell, Luke and Schooley48) of the intervention because when an effective intervention is scaled-up, it is often changed to adapt within new real-world contexts. Such adaptation ultimately strengthens implementation fidelity so that it is flexible enough to cope with contextual barriers and facilitators. Once the scaled-up intervention generates effective outcomes, then it is more likely to be sustained within that real-world setting.

The framework is holistic. However, this raises the question of whether a single project can implement such a broad framework. Our argument is that it is possible. For example, a single project may not have to start with innovative scientific evidence generation, if a proven effective intervention already exists. If this is the case, the project should follow the pathway for how the intervention can be scaled-up and made sustainable.

Limitations

The proposed framework was developed based on a review of peer-reviewed journal articles and may have excluded useful frameworks available elsewhere such as the grey literature. Most of the reviewed frameworks were originally developed for non-nutrition fields as there is limited literature on the application of implementation science to nutrition interventions. Our framework also derives, in part, from the first author’s hands-on experience in implementing and evaluating a nutrition intervention in Bangladesh. This informed the development of the framework and methodological considerations.

Conclusions

This review provides a foundational and holistic understanding of key components of a conceptual framework and aims to make a valuable addition to implementation science. The framework provides guidance for implementation researchers, policy-makers and programme managers on: reviewing key characteristics of an effective intervention, opportunities to strengthen the fidelity and outcomes, and on sustainability. It may be used in many fields but it aims to assist the development of nutrition interventions for use, particularly in low-income settings taking into account the contexts and characteristics of the intervention. Researchers can use it to plan an implementation roadmap and as a tool for negotiating with funders and other key stakeholders. We encourage future researchers to use and validate this conceptual framework for other public health interventions in various settings and propose improvements.

Acknowledgements

Acknowledgements: The authors thank Professor Gabriele Bammer, Research School of Population Health, The Australian National University and Dr. Mduduzi Mbuya, The Global Aliance for Improved Nutrition for their review of the initial draft and for providing valuable comments to improve the conceptual framework. Financial support: No direct funding supports received for this research. Conflict of interest: The authors declare that they have no competing interests. Authorship: H.S. conceived and designed the study. H.S. and C.B. analysed the data and were involved throughout the study, conducting literature searches, identifying the studies for inclusion and writing the manuscript. C.D., T.J.B. and T.A. were involved in finalising the manuscript and provided technical and scientific inputs in preparing the final draft. All the authors reviewed the final draft before submission. Ethics of human subject participation: Not applicable, as it is a systematic narrative review. Consent for publication: Not applicable, as the paper does not contain personal information of any individual. Availability of data and material: No primary data available.