As consumers interface with the food system, food environments drive their decisions about what to eat, where to get it from and with whom to eat it(Reference Neve, Hawkes and Brock1,Reference Downs, Ahmed and Fanzo2) . Changes to food environments in low-and middle-income countries (LMIC) over the past few decades have been attributed to shifts in dietary intakes that have led to an increase in the prevalence of overweight and obesity and diet-related non-communicable diseases(Reference Downs, Ahmed and Warne3–Reference Popkin and Ng5). In some cases, changes in food environments have also coincided with reductions in the prevalence of undernutrition among children(Reference Debela, Demmler and Klasen6,Reference Huelsen, Khonje and Qaim7) , but not that of micronutrient deficiencies(Reference Rahman, de Silva and Sassa8,Reference Hawkes, Ruel and Salm9) .

Characterising the dimensions of diverse food environments in LMIC, including availability, affordability, convenience, quality and promotion and sustainability of foods(Reference Downs, Ahmed and Fanzo2), can facilitate better design of interventions and policies that are tailored to the local context and people’s lived experiences. However, most of the tools that were designed to measure food environments were developed for high-income contexts(Reference Downs, Ahmed and Fanzo2,Reference Toure, Herforth and Pelto10,Reference Herforth and Ahmed11) . In recent years, there has been a significant amount of progress in terms of developing assessments, tools and methodological approaches that are designed to capture objective measures of food environments in LMIC(Reference Downs, Warne and McClung12–Reference Ahmed, Kennedy and Crum14), including in wild, cultivated and built spaces. This includes mapping vendors, collecting price data, observational checklists and other static measures about the food environment(Reference Turner, Kalamatianou and Drewnowski15).

Yet, it is also important to gain insight into how people navigate their personal food environments (i.e. ‘a set of individual-level dimensions’) and how they make food-related decisions within the contexts of their lived experiences(Reference Turner, Aggarwal and Walls16). This involves subjective measures since not everyone experiences a given food environment in the same way. Each individual has subjective interactions with food environments based on how much income or time a person has, their cultural identity, their physical capacity, whether they have a vehicle to access food sources and their personal preferences, among many others(Reference Neve, Hawkes and Brock1,Reference Turner, Aggarwal and Walls16) . These lived experiences filter people’s engagement with objective measures in the food environment. Although a significant amount of qualitative research, including interviews, focus groups, photo elicitation techniques, etc., has been conducted to document how people’s lived experiences influence the way they navigate their food environments(Reference Turner, Kalamatianou and Drewnowski15–Reference Wanjohi, Pradeilles and Asiki19), capturing this information through structured surveys could help provide more representative and comparable data of populations’ lived experiences complementing the findings of qualitative research in this space.

Existing surveys and questionnaires to capture the interface between the food environment and people’s lived experiences(Reference Green and Glanz20,Reference Steptoe, Pollard and Wardle21) have been designed largely for high-income country contexts, without consideration of the multiple dimensions of diverse food environments in LMIC. Data that have measured food environments and people’s lived experiences in LMIC show that the way consumers interact with their food environments is markedly different than high-income settings(Reference Toure, Herforth and Pelto10,Reference Turner, Kalamatianou and Drewnowski15,Reference Turner, Aggarwal and Walls16,Reference Constantinides, Turner and Frongillo22–Reference Downs, Manohar and Staromiejska25) . Consumers in LMIC access different types of food environments; they produce or collect foods from natural sources, engage with vendors from informal settings, have different purchasing patterns due to a variety of reasons such as a lack of cold storage and emphasise food safety and vendor trustworthiness, among many other differences(Reference Constantinides, Turner and Frongillo22). For these reasons, developing survey instruments that are tailored to the experiences of people living in LMIC is needed(Reference Choo26,Reference Cooper, Zahra and Konapur27) .

The objective of this paper is to present the process of developing, implementing and refining a food environment survey to capture people’s perceptions of their food environments in LMIC: the Food Environment Perceptions Survey (FEPS). By describing the process of developing the FEPS, we provide transparency into the survey development and the steps taken to arrive at the finalised version.

Methods

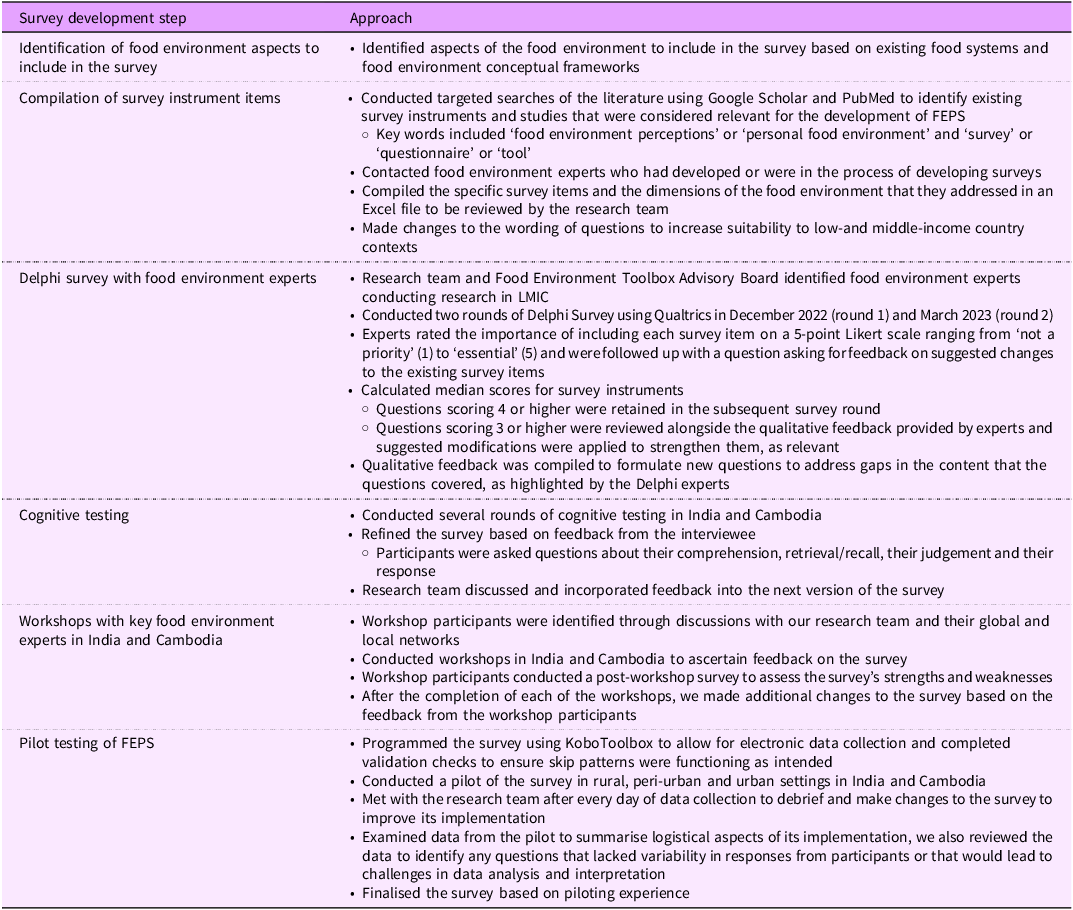

FEPS was co-created using an iterative process; Table 1 provides an overview of the steps taken as part of the survey development process. We (i) identified relevant aspects of food environments to include in the survey, (ii) drew from existing survey instruments and modified questions for suitability in diverse LMIC contexts, (iii) evaluated suitability and modified survey questions through a robust process of internal review and expert feedback and (iv) conducted cognitive and pilot testing in the field in diverse contexts in India and Cambodia and finalised the tool. The FEPS is one component of the Food Environment Toolbox that includes a suite of tools aimed at characterising food environments in LMIC settings.

Table 1. An overview of the steps taken as part of the Food Environment Perceptions Survey

FEPS, Food Environment Perceptions Survey; LMIC, low-and middle-income countries.

We obtained ethical approval from the Rutgers University Institutional Review Board and the institutional ethics committee of The George Institute for Global Health for this work. We also obtained ethical approval from the National Ethics Committee for Health Research in Cambodia and local authorities in Cambodia.

Identification of aspects of food environments to include in the survey

The first step in our approach to developing the FEPS was to identify which aspects of how consumers interface with their food environments to include in the survey. We pulled from food environment and food system frameworks with a particular focus on LMIC to identify the constructs and food environment dimensions that interacted with individual factors(Reference Downs, Ahmed and Fanzo2,Reference Turner, Aggarwal and Walls16,28–Reference Fanzo, Haddad and Schneider30) . More specifically, we used the following food environment dimensions to guide the survey development: accessibility (i.e. physical distance, time, space, place, etc.) and availability (i.e. the presence or absence of food sources and products)(Reference Turner, Aggarwal and Walls16), affordability, convenience, quality and promotion and sustainability of foods. The ways in which these dimensions influence food acquisition are influenced by individual factors, including economic (income and purchasing power), cognitive (information and knowledge), aspirational (desires, values, preferences) and situational (home and work environment, mobility, location, time) factors(Reference Herforth and Ahmed11,Reference Fanzo, Haddad and Schneider30) . The survey content was designed to be flexible to capture consumers’ perceptions of their food environments and how they influence food acquisition within different types of food environments, including natural (i.e. wild and cultivated), built (i.e. formal and informal), supplemental food assistance and kin and community(Reference Downs, Ahmed and Fanzo2,Reference Bogard, Andrew and Farrell29) .

Compilation and initial modification of survey instrument items

We conducted a search and compiled existing survey instruments and studies that were considered relevant for the development of FEPS; relevant instruments and studies were determined as those that elicited people’s perceptions, experiences and knowledge of their food environments. We modified the wording and response options of existing items to be more relevant to diverse LMIC contexts and added new survey items when we perceived gaps in the information that was being collected for different food environment dimensions (e.g. accessibility and availability, affordability, convenience, quality and promotion and sustainability of foods). The adaptation of existing questions and the development of new ones were guided by key principles of survey development(Reference Tsang, Royse and Terkawi31,Reference van de Vijver, Mohler and Harkness32) .

Delphi survey with food environment experts

The compiled survey items were then used in a Delphi survey with food environment experts. The Delphi method is a consensus-generating method of systematically gathering input from experts on a topic(Reference Turoff and Linstone33); our goal was to reach consensus on survey items to include in the FEPS instrument. With the support of our Food Environment Toolbox Advisory Board, we identified experts with diverse backgrounds and experiences in evaluating food environments in LMIC. We sent the initial invitation of the Delphi survey to fifty-one food environment experts; a total of twenty-four experts participated in the first round of the survey. We sent the second-round survey to a total of twenty-nine experts (twenty-four from the first round plus five additional, noted below); a total of seventeen experts participated in the second round of the survey. We made a modification to the typical Delphi approach by inviting five additional experts who were unable to contribute to the first Delphi survey round due to time constraints to participate in the second round. While this is atypical of a standard Delphi method, we anticipated that the feedback that those experts would provide merited the modified approach and would still allow for the goals of the Delphi survey to be achieved (i.e. reaching consensus on survey items).

Descriptive statistics were used to calculate the median score for each of the items included in the survey(Reference Hallowell and Gambatese34). We also used qualitative feedback to modify the wording of questions based on expert feedback. Survey questions that had sufficient support from experts and that maintained a consistent flow of the survey items were retained in the survey for cognitive testing of the draft survey tool. In addition to ascertaining feedback related to the different survey items, we also sought feedback on specific food groups to include in the FEPS as part of the Delphi survey. The food groups went through many iterations over the course of the project, which is described in additional detail elsewhere, given that we used the same food groups for the Toolbox overall(Reference Downs, Staromiejska and Bakshi35).

Refining the tools based on cognitive testing and expert workshops

We conducted several rounds of cognitive testing of FEPS in India (in Hindi) and Cambodia (in Khmer). The first round of cognitive testing in India was conducted by members of the research team with people living in urban (n 5) and rural (n 5) areas between October and December 2023. After each round of cognitive testing, the feedback from the participants and the interviewer was summarised for each survey section. The rounds continued until no (or few) new insights were being revealed (i.e. saturation). The process was iterative, with each interview informing subsequent changes to the survey instrument, which were updated prior to the next round of cognitive testing.

In addition to feedback from participants of the cognitive testing interviews, we also elicited feedback from local food environment researchers and practitioners through a workshop in India (November 2023). Nineteen experts affiliated with universities (n 6), local and national research institutes (n 9), foundations (n 2) and UN agencies (n 2) attended the workshop in India. Members of our research team who conducted the cognitive testing presented their initial findings to workshop participants, which informed the discussion on changes that needed to be made to the survey. In addition to providing real-time feedback on the survey, workshop participants rated the FEPS in a post-workshop survey (rating range 1–3). Subsequent rounds of cognitive testing incorporated workshop participants’ feedback into a revised survey.

We conducted all rounds of cognitive testing and the workshop in India prior to conducting the testing in Cambodia; in Cambodia, our research team conducted cognitive testing with fifteen participants living in rural (n 5), peri-urban (n 3) and urban (n 7) settings between March and April 2024, following a similar process to what was described above for India cognitive testing interviews. We also conducted a workshop with key food environment researchers and practitioners in Cambodia (March 2024) to ascertain their feedback on the FEPS tool. In Cambodia, twenty-eight experts representing local universities (n 9), local and national research institutes (n 2), non-governmental organisations and civil society organisations (n 12), government agencies (n 3) and UN agencies (n 2) participated in the workshop. We shortened the workshop survey in Cambodia to reduce respondent burden and included a rating scale from 1–5 (strongly disagree to strongly agree), rather than the smaller scale (1–3) used in India, to capture more variation in workshop participants’ ratings. Any additional feedback was incorporated into the revised survey.

Pilot testing of the Food Environment Perceptions Survey

After the cognitive testing and workshops with experts, we piloted the survey with a convenience sample of participants in rural (India n 21; Cambodia n 15), peri-urban (Narayanganj, a transitional area in Madhya Pradesh, India n 10; town on the outskirts of Phnom Penh, Cambodia n 15) and urban (Delhi, India n 13; Phnom Penh, Cambodia n 30) settings. The piloting took place between March and June 2024. We aimed to pilot the survey with fifteen people from each of the settings. This sample size is aligned with sample sizes for feasibility assessments(Reference Moore, Carter and Nietert36), given that the piloting was designed to assess the feasibility of implementing the survey instrument prior to implementing the survey with a larger sample size in future work and was not intended for hypothesis testing. In India, households in each community were approached by foot at random and asked if they would be willing to participate; in Cambodia, participants were identified through community focal points. In the rural and peri-urban settings, participants were generally low income; in the urban settings, we randomly selected participants regardless of income in Delhi and purposively selected low-and high-income participants in Phnom Penh.

Analysis

We used descriptive statistics, using Microsoft Excel (16·87, 2024) to summarise results from the Delphi survey, the workshops and the pilot study. Using our pilot data and SPSS (Version 29), we conducted ANOVA for continuous variables and χ 2 tests (for categorical variables) to examine statistical differences in food environments accessed across rural, peri-urban and urban transects. A P-value of < 0·05 was considered statistically significant. In addition, we examined detailed notes from cognitive testing interviews and the workshop notes to thematically summarise the information related to the challenges of implementing the survey and experts’ perceptions of the survey, respectively.

Results

Compilation of survey instrument items

Online supplementary material, Supplemental Table 1 provides an overview of the manuscripts, reports and unpublished survey instruments that were reviewed to inform the development of the FEPS. Overall, we reviewed eighteen published manuscripts, some of which referred to several different survey instruments within them. In addition to these, we obtained three survey instruments(Reference Choo26,Reference Cooper, Zahra and Konapur27,37,Reference Grude38) from food environment researchers and practitioners working in LMIC that had not yet been published at the time we were compiling study instruments. We included 113 items from these surveys to be included in the Delphi survey.

Delphi survey with food environment experts

Overall, twenty-four of the invited participants (47 % response rate) completed the survey in round one. We sent the round two survey to twenty-nine participants, seventeen of whom completed the survey (59 % response rate). Delphi participants were mostly female (92 %), early or mid-career researchers (71 %), working in universities (58 %) and with nutrition as their primary discipline (63 %). Food environments and food systems were the primary specialties that participants used to describe themselves. All survey participants conducted food environment work in LMIC. Figure 1 depicts the specific countries (n 33) they reported working in.

Figure 1. Countries in which Delphi participants conduct food environment work.

Of the 113 survey items that were included in the first Delphi survey round, eighty-eight had a median score of 4 or above (78 %) and were included in the next round of the Delphi survey. Twenty-five survey items (22 %) had a median score below 4; all but one of these low-rated questions were removed in the survey’s next iteration. The survey item that had a score below 4 but was included in round 2 was modified based on its specific qualitative feedback from the Delphi participants and retained. We refined the remaining questions and added additional questions to address feedback from the Delphi participants about the gaps in the survey items that had been compiled.

In round 2, survey participants were asked to rate 102 survey items; this round determined which survey items would be included for cognitive testing. Survey items scoring 4 and 5 were automatically included (n 64, 63 %); one survey item (1 %) scored 2 and was automatically removed. Survey items scoring 3 (n 37; 36 %) were further reviewed for inclusion in the cognitive testing stage. Of the survey items that scored 3, 30 (81 %) were included in the subsequent version of the survey based on support from experts through qualitative feedback; the remaining questions (n 7) were removed from the survey after the second Delphi round due to their median score being three or below and their lack of support from experts. This resulted in a total of ninety-four survey items to be included in the survey for cognitive testing. In addition to the rating of survey items, we reviewed and incorporated qualitative feedback from round 2 for the retained questions prior to beginning cognitive testing of FEPS in India and Cambodia.

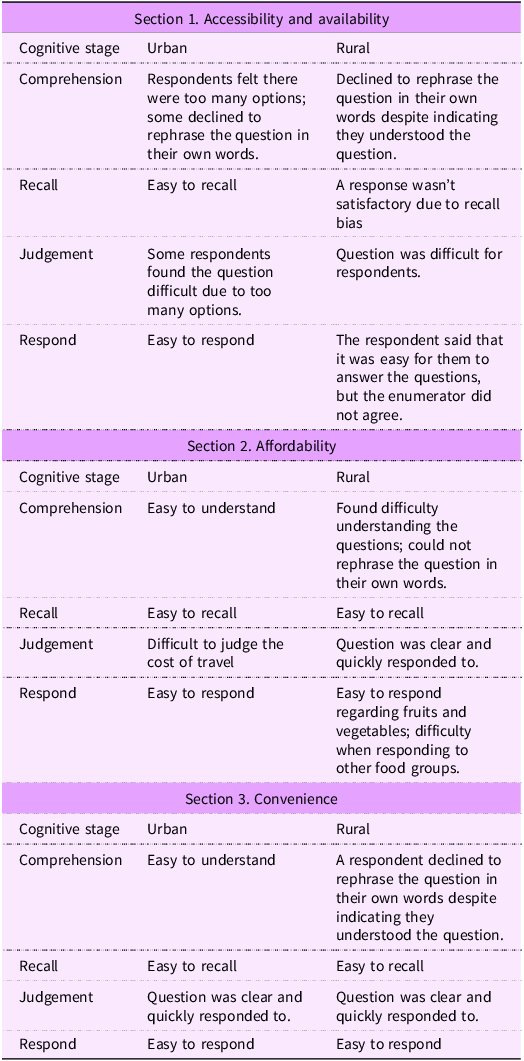

Cognitive testing

Table 2 provides a summary of the responses to the cognitive testing questions for each of the survey sections in India. In the first round of the cognitive testing of FEPS in India, the first section of the survey that focused on food accessibility and availability took 90 min to complete. This led the team to reconfigure this section of the survey prior to conducting subsequent cognitive testing rounds. During the subsequent rounds of cognitive testing in India, the survey took an average of 74 min to complete with urban respondents and 135 min with rural respondents.

Table 2. Summary of cognitive testing findings of administering the Food Environment Perceptions Survey in India

Additional changes to the survey were made based on feedback from workshop participants and input from the research team to further reduce the amount of time the survey took to complete. In the second round of cognitive testing, most sections of the survey required tweaks to wording and terms used, rather than complete overhauls of the survey sections, except for the food sovereignty section, which participants had difficulties responding to.

The cognitive testing in Cambodia was conducted several months after India. Between the India and Cambodia cognitive testing, we conducted workshops with experts in both countries and completed the rural piloting in India. For this reason, the survey instrument had undergone many changes, leading to the identification of fewer additional changes after the cognitive testing in Cambodia. In Cambodia, the survey took an average of 46 (rural setting) to 57 min (peri-urban setting) to complete. The amount of time taken to administer and complete the survey, particularly with participants from rural areas (i.e. the average time was reduced by 89 min between the countries). Overall, we identified small changes that improved survey delivery.

Workshops with key food environment experts

During both the workshops in India and in Cambodia, we received extensive feedback from experts on how to further strengthen the survey. In India, over half of the workshop participants rated the overall presentation (n 9, 90 %), language (n 6, 60 %), ease of use (n 6, 60 %), relevancy to LMIC (n 7, 70 %) and relevant dimensions (n 10, 100 %) positively (i.e. 3 out of 3). However, the respondents felt the tool was somewhat lengthy or lengthy (n 6, 60 %) (see Figure 2, Panel A). In Cambodia, which elicited feedback on a more finalised version of the survey, workshop participants rated the survey positively (i.e. strongly agreed or agreed with statements) across all attributes (Figure 2, Panel B). The overwhelming majority gave favourable ratings for the presentation (n 13, 100 %), language (n 12, 92 %), ease of use (n 12, 92 %), relevancy to LMIC (n 13, 100 %), length (n 12, 92 %) and relevant dimensions (n 13, 100 %).

Figure 2. India1 and Cambodia2 workshop participants’ feedback on different attributes of the Food Environment Perceptions Survey. LMIC, low-and middle-income countries. 1The scores from the workshop in India were rated from 1 (viewed negatively) to 3 (viewed positively). 2The scores for tool length in India ranged from 1 (very lengthy/somewhat lengthy) to 3 (short). The lengthy and somewhat lengthy response options were collapsed to enable their display on a 3-point scale. 3The scores from the workshop in Cambodia were rated from 1 (viewed negatively) to 5 (viewed positively).

Table 3 summarises the workshop participants’ ratings of each of the sections of the survey instrument. In India, 20 % of participants felt it would be difficult for survey respondents to recollect information about the food labels they see and/or read when purchasing food. In Cambodia, the only question that had fewer than 90 % of participants responding that they agreed or strongly agreed was related to the sufficiency of the information captured on labelling and promotion. We made additional changes to the survey questions, such as refining the wording to improve comprehension and readability, splitting questions into two parts to elicit more specific information, adding/removing response options, etc., after the workshops and prior to piloting the tools.

Table 3. Summary of workshop participants’ ratings of each survey section in India and Cambodia

* The scores from the workshop in India were rated from 1 (viewed negatively) to 3 (viewed positively).

† The scores from the workshop in Cambodia were rated from 1 (viewed negatively) to 5 (viewed positively).

There was extensive feedback about survey food groupings at each stage of survey development. The development and refinement of the food groups underwent numerous iterations. There were differing points of view that made it difficult to reach a consensus. For example, workshop participants in India suggested sub-categories of foods and breaking out starchy staples into their own groups (n 3, 23 %). One participant suggested breaking out dark green leafy vegetables into their own food group. During group discussions, participants provided similar feedback and suggested the use of previously established food group classifications (see online supplementary material, Supplemental Table 2 for a description of the final food groups included in the survey). The food groups presented in the workshop in Cambodia were more finalised, and workshop participants provided minimal input on their refinement.

Pilot testing of the Food Environment Perceptions Survey

Overall, the FEPS survey that was piloted took 50–70 min in rural and peri-urban India and 30–40 min in urban India, whereas in Cambodia, it took an average of 35 min in rural, 32 min in peri-urban and 35 min in the urban settings. In India, we found that respondents were still experiencing challenges responding to the food sovereignty section of the survey; for that reason, we removed the section from the survey shortly after beginning piloting in India. While piloting the tool in India, we also found that the questions related to the food environment dimension of promotion did not seem to be applicable to the rural population as many participants in rural settings did not report being exposed to any food or beverage promotions. However, the section was relevant for all the other populations we piloted and tested the survey with, including rural Cambodia. For that reason, these questions were retained.

Enumerators encountered challenges when implementing the survey in both countries. Questions related to convenience were more difficult for rural respondents to conceptualise, much like the experience with the food promotions module described above. Moreover, questions related to the aspirational aspects of food affordability (e.g. ‘which foods do you wish were cheaper?’) were sometimes difficult for respondents to comprehend.

While conducting preliminary analyses of the pilot data in rural India, we identified issues with the use of the option ‘not applicable’ in several of the questions. This created challenges for data interpretation and analysis. We therefore made changes to the response options included in the programming of the online data collection form. We also made changes to parameters for responses (e.g. only responding in minutes rather than having the option of minutes or hours) to reduce the amount of data cleaning that would be required while also improving the interpretability.

Description of the finalised version of the Food Environment Perceptions Survey

The final version of the FEPS can be found on the Toolbox website (https://sites.rutgers.edu/food-environment-toolbox/food-environment-perceptions-survey-feps/). The survey consists of 109 questions covering six sections: accessibility and availability (forty-eight questions); affordability (five questions), convenience (seventeen questions); quality and safety (three questions); information, promotion and labelling (sixteen questions); and an optional sustainability section (twenty questions). We included twenty-seven food groups in the final survey instrument; however, four food groups are considered optional.

Based on our piloting data, the survey captured the diversity of food environments that participants were accessing and how those differed across transects. In both India and Cambodia, the number of food environment types accessed was significantly higher in rural (mean 2 (sd 0·85) in Cambodia; mean 3·95 (sd 0·92) in India) compared with peri-urban (mean 1·7 (sd 0·81) in Cambodia; mean 2·6 (sd 0·97) in India) and urban (mean 1·2 (sd 0·43) in Cambodia; mean 2·08 (sd 0·76) in India) settings (P-value < 0·001 in India; P-value = 0·02 in Cambodia). Moreover, in India, urban (mean 5·2 (sd 2·4)) and peri-urban (mean 5·8 (sd 3·0)) populations accessed more types of food outlets/vendors in the built environment compared with rural participants (mean 2·7 (sd 1·3)) (P-value < 0·001). We observed a similar gradient in Cambodia; however, it was not statistically significant (P = 0·360). Of note, all (100 %) of the urban participants in India reported accessing food from the online food environment compared with 0 % of the rural participants and 40 % of the peri-urban participants (P < 0·001). In Cambodia, only three pilot participants (5 %), from peri-urban/urban settings, reported accessing food from online sources. In India, there were no differences in accessing mobile vendors across the rural, peri-urban and urban transects (P = 0·130). However, in Cambodia, all rural participants (100 %) and none (0 %) of the urban participants accessed food from mobile vendors (P < 0·001).

In addition to capturing the diversity of food environments that participants were accessing, the survey captured participants’ perceptions of food availability and affordability, including information about which of the food groups they considered to be expensive, which they wished were cheaper and which fluctuated seasonally. The survey also captured participants’ perceptions related to convenience, including which food groups they consider convenient and in which ways (e.g. ‘because it’s easy to prepare’); their exposure to food and beverage-related information, labelling and promotion, including which food groups they were for; and their perceptions related to sustainability and the food groups that they perceive have been impacted by climate variability over the past 10 years. Online supplementary material, Supplemental Table 3 provides an overview of narrative takeaways from our pilot about different dimensions of the food environment by location. Given that we were still making iterative changes to the survey during the piloting in India, we report more data from Cambodia.

Discussion

This paper describes the co-design, refinement and finalisation of a survey instrument to capture perceptions of food environments among populations living in LMIC, specifically in rural and urban contexts in India and Cambodia. This iterative process of developing, refining and finalising the survey took place over the course of two and a half years in two countries and involved multiple iterative steps and input from research team members, food environment experts and individuals interacting with food environments in the study areas. Given the diversity of food environments accessed in both India and Cambodia, we anticipate that the experiences implementing the survey across transects in these diverse contexts will be relevant to other LMIC settings, with minimal adaptations.

Overall, the FEPS was rated favourably by workshop participants in India and Cambodia. Moreover, our findings suggest that the survey was strengthened over the course of the process of refining and finalising, with each iteration leading to reductions in the amount of time it took to complete, improvements in the flow and improved wording of specific questions. We made numerous changes to the survey throughout the process including both minor changes to wording and terms used and major changes such as removing entire sections of the survey or reconfiguring the way the questions in a specific section were asked.

FEPS was designed with the aim of capturing a wide array of food environments accessed by participants in rural, peri-urban and urban populations in LMIC, given that the food environments that populations access across these gradients of urbanicity can differ substantially(Reference Merchant, Fatima and Fatima39–Reference Westbury, Ghosh and Jones41). The finalised survey provided helpful insight into the different food environments that people accessed and how those differed across contexts. Moreover, it provided insight into how the people’s perceptions of different food environment dimensions (e.g. affordability, safety, convenience, etc.) were related to specific food groups, which can inform interventions. As communities are exposed to different food environments and as populations migrate from rural to urban settings, these dimensions may play a more prominent role in their food choices. For example, we found that convenience was difficult for rural populations in India to conceptualise. However, in urban settings, it was relatively easy for respondents to articulate how convenience was influencing their food choices. This likely reflects different time constraints and responsibility within and outside the household for these different population groups, given the time use changes that typically occur when people migrate from rural to more urban settings(Reference Kenney, Rampalli and Samin42). It might also reflect a wider array of foods to choose from, including more ready-to-eat and snack foods in these settings(Reference Pries, Filteau and Ferguson43).

We faced many challenges during the process of developing the survey. First, we needed to strike the right balance of covering a sufficient breadth of key dimensions of the food environment, while being time sensitive to respondent burden. Second, we had to refine the most appropriate food groups to include in the survey. Finally, we had to ensure that the questions and their corresponding response options were applicable across settings. One way to overcome the potential time burden challenge for effectively using the instrument in different research is to select the specific sections of the survey that are relevant to the research question being examined, rather than conducting the survey in its entirety. Alternatively, selecting only specific food groups of interest could also help to reduce the amount of time that the survey takes to complete. Lastly, a bigger sample size is needed to conduct more comprehensive analyses; however, this was beyond the scope of this study.

Conclusion

The finalised version of the survey provides researchers and practitioners with a newly developed survey that provides insight into how consumers’ perceptions of their food environments influence their food choices in LMIC. While it has solely been piloted in two countries to date, we anticipate that it will be relevant to other settings with minimal adaptations. Future piloting in different contexts can continue to refine the survey based on field experiences. Moreover, future work is needed to create corresponding indicators for the survey and to assess the validity of FEPS and its relationship with dietary intakes. Ultimately, it is expected that the knowledge gained from the application of FEPS in diverse contexts will be beneficial to develop solutions to improve food environments for delivering healthy and sustainable diets for all.

Supplementary material

For supplementary material accompanying this paper, visit https://doi.org/10.1017/S1368980026102043.

Acknowledgements

We thank the participants of our workshops with experts in India and Cambodia and our Advisory Board members (Drs. Chris Turner, Khris Nicholas, Chris Vogliano, Gina Kennedy and Amos Laar) for their instrumental feedback on the survey.

Authorship

S.D., S.S., S.A., E.F., A.H. and S.G.J. were responsible for conceptualisation and funding acquisition; S.D. and W.S. conducted formal analyses; S.D. and S.G.J. developed the methodology; S.D. drafted the original manuscript; W.S. completed the visualisation; and S.S. and S.G.J. collected data. All authors reviewed and approved the final version of the manuscript.

Financial support

This work is funded through the Innovative Methods and Metrics for Agriculture and Nutrition Action (IMMANA) programme, led by the London School of Hygiene & Tropical Medicine, in partnership with Tufts University and the University of Sheffield. IMMANA is co-funded with UK Aid from the UK government and by the Bill & Melinda Gates Foundation (INV-002962/OPP1211308). Under the grant conditions of the Foundation, a Creative Commons Attribution 4.0 Generic License has already been assigned to the Author Accepted Manuscript version that might arise from this submission. This funding source had no role in the design, analysis or writing of this article.

Competing interests

There are no conflicts of interest.

Ethics of human subject participation

This study was conducted according to the guidelines laid down in the Declaration of Helsinki, and all procedures involving research study participants were approved by the Rutgers University Institutional Review Board. Written informed consent was obtained from all subjects/patients.