1. Introduction

Flow reconstruction from sparse data has received considerable attention and the literature on the subject is vast; see, for example, Brunton & Noack (2015), Sipp & Schmid (2016), Callaham, Maeda & Brunton (2019), Karniadakis et al. (2021), Zaki (2025) and references therein. In contrast, forecasting the future evolution of a flow has received considerably less attention in fluid dynamics research, especially for turbulence. The ability to estimate the future state from current data, known as forecasting (Box et al. 2015), has many applications. Examples include weather forecasting, urban safety (predicting the trajectory of a toxic pollutant to guide evacuation measures) and the prediction of extreme events (such as heat waves) ahead of time.

The forecasting time window of turbulent flows is limited by a fundamental physical constraint: such flows are chaotic and therefore extremely sensitive to initial conditions. This is popularly known as the 'butterfly effect' (Lorenz 1963) and can be understood as follows. Consider two turbulent flows, originating from the same physical process, whose initial conditions differ by an infinitesimally small amount. Their trajectories in phase space will diverge at a rate determined by the maximum Lyapunov exponent, $\lambda _1$, that is $|\delta x (t)| \sim {\rm e}^{\lambda _1 t}$, where $|\delta x (t)|$ is the norm of the distance between two points in phase space. The corresponding time scale is $1/\lambda _1$.
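As a worked illustration of this time scale, consider an idealised estimate that assumes purely exponential growth of an initial error $\delta _0$ until it reaches a tolerance $\varDelta$. Both symbols, and the horizon $t_p$, are introduced here only for illustration. Under this assumption

\begin{equation*} \delta _0 \, {\rm e}^{\lambda _1 t_p} = \varDelta \quad \Longrightarrow \quad t_p = \frac {1}{\lambda _1} \ln \frac {\varDelta }{\delta _0} . \end{equation*}

The horizon therefore grows only logarithmically with the accuracy of the initial condition: reducing $\delta _0$ by a factor of 10 extends $t_p$ by $\ln (10)/\lambda _1 \approx 2.3/\lambda _1$.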

There are theoretical arguments (Ruelle 1979; Crisanti et al. 1993; Ge, Rolland & Vassilicos 2023) and computational evidence (Mohan, Fitzsimmons & Moser 2017; Hassanaly & Raman 2019) that in turbulent flows $\lambda _1$ scales with the inverse of the Kolmogorov time scale, $\tau$ (with a correction factor that depends on Reynolds number). The future evolution of turbulent flows can therefore be forecast only over a time window that is a few times larger than $\tau$, say $(6{-}10) \tau$. This has been confirmed repeatedly, irrespective of the method used for forecasting; see Pathak et al. (2017), Vlachas et al. (2020), Dubois et al. (2020), Eivazi et al. (2021), Khodkar & Hassanzadeh (2021) and Constante-Amores, Linot & Graham (2025) for a very small sample of the extensive literature on the subject. For high-Reynolds-number flows this time window is therefore too short to be useful in practice. In other applications, however, the window is much longer: in medium-range weather forecasting, current lead times are 10 days and can reach up to 15 days if the uncertainty in the initial conditions is reduced by an order of magnitude (Zhang et al. 2019; Allen, Markou & Tebbutt 2025). Such a window is large enough to bring about enormous socioeconomic benefits, for example the protection of lives and property.

Is it possible to enlarge the forecasting window for turbulent flows and obtain estimates that are useful in engineering practice? The aforementioned scaling of $\lambda _1$ with $\tau$ indicates that the former is determined by the smallest scales of the flow. However, a turbulent flow consists of a wide range of spatio-temporal structures, and it is well known that large-scale structures are slower and more organised (Pope 2000). This opens the possibility that they are more amenable to forecasting. These structures are important because they determine momentum transfer (and therefore forces) and scalar dispersion, so the ability to forecast their future evolution can bring many practical benefits. Most existing work on forecasting has focused on low-dimensional dynamical systems (such as the Lorenz 63/96 systems, the Kuramoto–Sivashinsky equation and the nine-equation model of Moehlis, Faisst & Eckhardt (2004)) or two-dimensional flows (such as the flow in a two-dimensional lid-driven cavity). These dynamical systems, however, do not exhibit the large separation of length and time scales that is necessary to answer the question posed at the beginning of this paragraph. To fill this gap, the present paper considers the three-dimensional turbulent recirculating flow around a surface-mounted prism. As shown later, this flow contains a wide range of scales, including large organised structures and a clear inertial regime.

Naive application of existing dimensionality reduction methods, such as dynamic mode decomposition (DMD), for forecasting will fail. The DMD method constructs a linear model of the form $\boldsymbol{x}[k+1]=A \boldsymbol{x}[k]$ (see Schmid 2010), where $\boldsymbol{x}[k]$ is the state vector at time instant $k$. Recursive application of this relation results in exponentially increasing or decreasing state values, depending on whether the eigenvalues of $A$ are outside or inside the unit circle, respectively. In the case of exponentially increasing values, the growth rate is not related to $\lambda _1$. The same problem appears for higher-order DMD, which can be put in the same linear form as the standard DMD, but now $\boldsymbol{x}[k]$ contains time-delayed variables (Le Clainche & Vega 2017).
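The behaviour of the unforced linear recursion can be illustrated with a minimal sketch. The example below is not taken from the paper: the $2 \times 2$ matrix (a rotation scaled by a factor `rho`, so that both eigenvalues have modulus `rho`) and the helper names `iterate_linear_model` and `rotation_scaled` are assumptions chosen only to make the growth or decay easy to check.

```python
import numpy as np

def iterate_linear_model(A, x0, n_steps):
    """Apply x[k+1] = A x[k] recursively and return the norm of x at every step."""
    x = np.asarray(x0, dtype=float)
    norms = [np.linalg.norm(x)]
    for _ in range(n_steps):
        x = A @ x
        norms.append(np.linalg.norm(x))
    return np.array(norms)

def rotation_scaled(rho, theta):
    """2x2 matrix whose eigenvalues are rho * exp(+/- i*theta) (illustrative choice)."""
    c, s = np.cos(theta), np.sin(theta)
    return rho * np.array([[c, -s], [s, c]])

x0 = np.array([1.0, 0.0])
# Eigenvalues outside the unit circle: the state norm grows like 1.05**k.
growing = iterate_linear_model(rotation_scaled(1.05, 0.3), x0, 100)
# Eigenvalues inside the unit circle: the state norm decays like 0.95**k.
decaying = iterate_linear_model(rotation_scaled(0.95, 0.3), x0, 100)

print(growing[-1])   # equals 1.05**100 up to round-off
print(decaying[-1])  # equals 0.95**100 up to round-off
```

In both cases the long-time behaviour is set entirely by the eigenvalue moduli of the fitted matrix, which is why such a model cannot remain on a bounded chaotic attractor without an additional forcing term.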

More advanced approaches are therefore necessary. In particular, decomposing chaotic dynamics into a linear model with forcing is very attractive because of the large number of tools available for linear systems. Recently, Chu & Schmidt (2025) derived a linear, time-invariant model for the coefficients of spectral proper orthogonal decomposition (POD) modes. The authors retained the forcing term and included its dynamics in the model formulation. The presence of a stochastic forcing term results in a probabilistic representation of the future evolution of dominant structures. Brunton et al. (2017) combined time-delay embedding and Koopman theory to derive a linear model in the leading time-delay coordinates which is forced by low-energy variables. The authors call this the Hankel alternative view of Koopman (HAVOK). The underlying idea is the following. Koopman theory (Mezić 2013; Otto & Rowley 2021; Brunton et al. 2022) seeks a linear representation of strongly nonlinear dynamics in the space of all observables $\boldsymbol {y}$, which are functions of the state $\boldsymbol {x}$ of the system, i.e. $\boldsymbol {y}=\boldsymbol {g}(\boldsymbol {x})$. It therefore trades the finite-dimensional nonlinear dynamics for infinite-dimensional linear dynamics. In DMD, $\boldsymbol {y}=\boldsymbol {x}$, but this selection of observables may not be rich enough for many nonlinear systems. The main idea of HAVOK is to construct a forced linear model using as states not $\boldsymbol {x}$, but instead time-delayed coordinates that contain more information about the system. This is in accordance with the Takens (1981) embedding theorem; the theorem allows the construction of an attractor from a time series of a single measurement that is diffeomorphic to the original chaotic attractor (diffeomorphic means that a smooth, invertible transformation exists between the original attractor and its representation). A linear model cannot capture multiple fixed points, so the model is forced by an (unclosed) term. The model states are obtained from the right singular vectors of the Hankel matrix, which is assembled by stacking time-delayed values of observables column by column, with each column advanced one time step ahead of the previous one. It is found that the forcing is active only in the regions of the chaotic attractor with strong nonlinearity (e.g. when switching between the two lobes of the Lorenz attractor), and its statistics are non-Gaussian. In Khodkar & Hassanzadeh (2021) the forcing is found from vector-valued observables in a physics-informed way or in a purely data-driven fashion, depending on whether knowledge of the governing dynamics is available. In Dylewsky et al. (2022) the forcing is obtained in a two-step process in a fully unsupervised manner.

The combination of Koopman theory with time-delayed embeddings has opened new directions for the representation of a chaotic system as a forced linear model and has spawned important theoretical work (Kamb et al. 2020; Arbabi & Mezić 2017; Pan & Duraisamy 2019, 2020; Giannakis 2019; Das & Giannakis 2019). The connection between the left singular vectors of the time-delayed Hankel matrix and the space–time POD, space-only POD and spectral POD modes was established in Frame & Towne (2023).

The contribution of the present paper is to add one more piece to the aforementioned time-delayed embedding/Koopman framework. More specifically, we combine this framework with linear optimal estimation theory (Kailath, Hassibi & Sayed 2000), which allows us to derive a mapping from sparse measurements at the current time instant to the velocity field at future time instants. This approach is useful because it provides an estimate of the forcing term and thereby closes the system. Schmidt (2026) proposed a method that does not require a forcing term; this was achieved by leveraging the correlation between hindcast and forecast datasets with the aid of extended POD. To forecast the future evolution of the flow with that method, data are required for the full flow, whereas in our method, once the mapping has been established, only data from a few sensors are required. A nonlinear mapping that employs machine learning techniques (instead of Koopman theory) was proposed recently for weather forecasting by Allen et al. (2025).

Our approach is applied to the flow around a surface-mounted cube, as already mentioned. A scalar is released from a source placed upstream of the cube. We derive the mapping from current to future states using not only velocity data but also scalar data; scalar sensors are usually cheaper to acquire. The proposed estimator is scalable and physically interpretable, and it provides sequential forecasting on a rolling time window as streaming data come in. Of particular interest is the quality of forecasting and how it varies with the size of the time window.

The paper is structured as follows. Sections 2 and 3 describe the forecasting methodology from sparse velocity and scalar data, respectively. Results are presented and discussed in § 4. Section 5 summarises the main findings of the paper and outlines some future research directions.

2. Flow forecasting from sparse velocity measurements

In the following, $u,v,w$ (interchangeably used with $u_1,u_2,u_3$) are the three velocity components in the Cartesian directions $x,y,z$, respectively. Time averages are designated with angular brackets and fluctuations with a prime; for example, $\langle u \rangle$ and $u^\prime$ are the mean and fluctuating velocities, respectively, in the $x$ direction.

We first describe how to forecast the future evolution of a turbulent flow from a set of sparse velocity measurements that record current information. In the following section, we extend the idea to sparse scalar measurements.

The method comprises three steps.

2.1. Step 1: dimensionality reduction

To make the method applicable to three-dimensional turbulent flows, we first need to reduce the number of degrees of freedom. In this paper we use POD (Sirovich 1987). This method provides a linear mapping between the velocity field and the POD coefficients; we exploit this linearity later in step 3.

To get the POD modes, the snapshot matrix $\boldsymbol{Y}(\boldsymbol{x},t_{1}:t_{K})$ for the velocity fluctuations $u^{\prime }$, $v^{\prime }$ and $w^{\prime }$ is assembled:

\begin{equation} \boldsymbol{Y}(\boldsymbol{x},t_{1}:t_{K}) = \left [ \begin{array}{cccc} u^{\prime }(\boldsymbol{x}_{1}, t_{1})& u^{\prime }(\boldsymbol{x}_{1}, t_{2})& \ldots & u^{\prime }(\boldsymbol{x}_{1}, t_{K})\\ \vdots & \vdots & \ldots & \vdots \\ u^{\prime }(\boldsymbol{x}_{N}, t_{1})& u^{\prime }(\boldsymbol{x}_{N}, t_{2})& \ldots & u^{\prime }(\boldsymbol{x}_{N}, t_{K})\\ v^{\prime }(\boldsymbol{x}_{1}, t_{1})& v^{\prime }(\boldsymbol{x}_{1}, t_{2})& \ldots & v^{\prime }(\boldsymbol{x}_{1}, t_{K})\\ \vdots & \vdots & \ldots & \vdots \\ v^{\prime }(\boldsymbol{x}_{N}, t_{1})& v^{\prime }(\boldsymbol{x}_{N}, t_{2})& \ldots & v^{\prime }(\boldsymbol{x}_{N}, t_{K})\\ w^{\prime }(\boldsymbol{x}_{1}, t_{1})& w^{\prime }(\boldsymbol{x}_{1}, t_{2})& \ldots & w^{\prime }(\boldsymbol{x}_{1}, t_{K})\\ \vdots & \vdots & \ldots & \vdots \\ w^{\prime }(\boldsymbol{x}_{N}, t_{1})& w^{\prime }(\boldsymbol{x}_{N}, t_{2})& \ldots & w^{\prime }(\boldsymbol{x}_{N}, t_{K}) \end{array} \right ] , \end{equation}

where $\boldsymbol{Y} \in \mathbb{R}^{3N \times K}$, $\boldsymbol{x}_{i}= [x_{i}, y_{i}, z_{i} ]\ (i=1,2,\ldots ,N)$ is the location vector for the $i$th spatial location, $N$ is the number of cells and $K$ is the total number of snapshots. The spacing between successive snapshots is $\Delta t$. Singular value decomposition (SVD) is performed on the weighted matrix $\mathcal{V}^{1/2} \boldsymbol{Y}$:

\begin{equation} \mathcal{V}^{1/2} \boldsymbol{Y} = \boldsymbol{U}_{\!Y} \boldsymbol{ \mathit{\varSigma } }_{Y} \boldsymbol{V}_{Y}^{\top }, \end{equation}

where $\mathcal{V}={\rm diag} (V_1, V_2, \ldots , V_N, V_1, V_2, \ldots , V_N, V_1, V_2, \ldots , V_N )$ is a diagonal matrix with the cell volumes $V_i$ on the main diagonal, $\boldsymbol{U}_{\!Y} \in \mathbb{R}^{3N \times K}$ contains the left singular vectors, $\boldsymbol{ \mathit{\varSigma } }_{Y} \in \mathbb{R}^{K \times K}$ is a diagonal matrix that stores the $K$ singular values (we assume $K \ll 3N$) and $\boldsymbol{V}_{Y} \in \mathbb{R}^{K \times K}$ contains the right singular vectors. The scaled POD eigenmodes $U_{Y, k}(\boldsymbol{x})$ are extracted from the columns of $\boldsymbol{U}_{\!Y}$ from

The singular values $\sigma _{Y, k}$ are ranked in descending order along the diagonal of matrix $\boldsymbol{ \mathit{\varSigma } }_{Y}$. The energy content of each mode is computed using

and the time coefficients from

The fluctuating velocity field can be written as

\begin{equation} u^{\prime }_{i}(x,y,z,t) = \sum ^{ K }_{k=1} a_{k}(t) {U}^{(i)}_{Y, k}(x,y,z) \approx \sum ^{m_{u}}_{k=1} a_{k}(t) {U}^{(i)}_{Y, k}(x,y,z) \quad (i=1, 2, 3), \end{equation}

where $m_{u}$ is the number of retained POD modes, $a_{k}(t)$ is the time coefficient of the $k$th POD mode and ${U}^{(i)}_{Y, k}$ is the $k$th POD eigenvector of the $i$th velocity component. This expression can be written in matrix form as

\begin{equation} \underbrace {\left [ \begin{array}{c} u_1^{\prime } \\[5pt] u_2^{\prime }\\[5pt] u_3^{\prime }\end{array} \right ]} _{\equiv \boldsymbol{u}^{\prime }} = \underbrace {\left [ \begin{array}{cccc} {U}^{(1)}_{Y, 1} & {U}^{(1)}_{Y, 2} & \ldots & {U}^{(1)}_{Y, m_u} \\[5pt] {U}^{(2)}_{Y, 1} & {U}^{(2)}_{Y, 2} & \ldots & {U}^{(2)}_{Y, m_u} \\[5pt] {U}^{(3)}_{Y, 1} & {U}^{(3)}_{Y, 2} & \ldots & {U}^{(3)}_{Y, m_u} \end{array} \right ]}_{\equiv \boldsymbol{U}_{\!Y}} \underbrace {\left [ \begin{array}{c} a_1 \\[5pt] a_2\\[5pt] \vdots \\[5pt] a_{m_u}\end{array} \right ]}_{\equiv \boldsymbol{a}} \end{equation}

or more compactly

\begin{equation} \boldsymbol{u}^{\prime } = \boldsymbol{U}_{\!Y} \boldsymbol{a} . \end{equation}
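Step 1 can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the authors' implementation: the function name `weighted_pod` is hypothetical, the data are random, and the expressions used for the scaled modes ($\mathcal{V}^{-1/2}\boldsymbol{U}_{\!Y}$), the time coefficients ($\boldsymbol{ \mathit{\varSigma } }_{Y}\boldsymbol{V}_{Y}^{\top }$) and the energy fraction ($\sigma _{Y,k}^2/\sum _j \sigma _{Y,j}^2$) are common conventions assumed here so that the expansion of $\boldsymbol{u}^{\prime }$ in terms of modes and coefficients holds.

```python
import numpy as np

def weighted_pod(Y, cell_volumes, m_u):
    """Snapshot POD of Y (3N x K) with cell-volume weighting.

    Returns modes (3N x m_u), singular values (m_u,) and time
    coefficients a (m_u x K) such that Y is approximated by modes @ a.
    """
    # The weights repeat once per velocity component, matching the row layout of Y.
    w_sqrt = np.sqrt(np.tile(cell_volumes, 3))[:, None]
    U, s, Vt = np.linalg.svd(w_sqrt * Y, full_matrices=False)
    modes = U[:, :m_u] / w_sqrt        # assumed scaling: V^{-1/2} U_Y
    a = s[:m_u, None] * Vt[:m_u, :]    # assumed coefficients: Sigma_Y V_Y^T
    return modes, s[:m_u], a

# Synthetic stand-in for velocity fluctuations on N cells and K snapshots.
rng = np.random.default_rng(0)
N, K = 50, 12
volumes = rng.uniform(0.5, 1.5, size=N)
Y = rng.standard_normal((3 * N, K))
Y -= Y.mean(axis=1, keepdims=True)     # fluctuations: remove the time average

modes, sigma, a = weighted_pod(Y, volumes, m_u=K)
energy = sigma**2 / np.sum(sigma**2)   # assumed definition of the energy fraction
```

Keeping all $K$ modes reproduces the snapshot matrix to round-off; in practice only the leading $m_u$ modes would be retained, and the same linear map `modes @ a` then gives the truncated velocity field.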

2.2. Step 2: construction of a dynamical system for current and future POD coefficients

The next step is the construction of a model for the dynamic evolution of POD coefficients. This model should be able to forecast the future time signal from current information. To this end, we assemble the time-delayed Hankel matrix $\boldsymbol{H}$ that consists of the POD coefficients of the retained $m_{u}$ velocity modes:

\begin{equation} \boldsymbol{H} = \left [ \begin{array}{cccc} \boldsymbol{a}(t_{1})& \boldsymbol{a}(t_{2})& \ldots & \boldsymbol{a}(t_{p})\\ \boldsymbol{a}(t_{2})& \boldsymbol{a}(t_{3})& \ldots & \boldsymbol{a}(t_{p+1})\\ \vdots & \vdots & \ddots & \vdots \\ \boldsymbol{a}(t_{q})& \boldsymbol{a}(t_{q+1})& \ldots & \boldsymbol{a}(t_{K_{\textit{train}}}) \end{array} \right ] , \end{equation}

where $ \boldsymbol{a}(t_{j})= [a_1(t_j)\ldots a_{m_u}(t_j) ]^\top$ and we use $q$ vectors in each column. The number of columns is $p=K_{\textit{train}}-q+1$, where $K_{\textit{train}}$ is the number of snapshots for the training dataset and $\boldsymbol{H} \in \mathbb{R}^{ ( m_{u} \times q ) \times p}$. Performing SVD on $\boldsymbol{H}$, we obtain

\begin{equation} \boldsymbol{H} \approx \boldsymbol{U}_{\!H} \boldsymbol{\varSigma }_{H} \boldsymbol{V}_{H}^{\top }, \end{equation}

where $\boldsymbol{U}_{\!H} \in \mathbb{R}^{ ( m_{u} \times q ) \times r}$, $\boldsymbol{\varSigma }_{H} \in \mathbb{R}^{r \times r}$, $\boldsymbol{V}_{H} \in \mathbb{R}^{p \times r}$ and $r$ is the number of retained singular values. The matrix of the left singular vectors $\boldsymbol{U}_{\!H}$ can be explicitly written as

\begin{equation} \boldsymbol{U}_{\!H} = \left [ \begin{array}{cccc} \boldsymbol{U}^{(u,v,w)}_{H, 1}(t_{1})& \boldsymbol{U}^{(u,v,w)}_{H, 2}(t_{1})& \ldots & \boldsymbol{U}^{(u,v,w)}_{H, r}(t_{1})\\[5pt] \boldsymbol{U}^{(u,v,w)}_{H, 1}(t_{2})& \boldsymbol{U}^{(u,v,w)}_{H, 2}(t_{2})& \ldots & \boldsymbol{U}^{(u,v,w)}_{H, r}(t_{2})\\[5pt] \vdots & \vdots & \ddots & \vdots \\[5pt] \boldsymbol{U}^{(u,v,w)}_{H, 1}(t_{q})& \boldsymbol{U}^{(u,v,w)}_{H, 2}(t_{q})& \ldots & \boldsymbol{U}^{(u,v,w)}_{H, r}(t_{q}) \end{array} \right ] , \end{equation}

where each column $\boldsymbol{U}^{(u,v,w)}_{H,i}(t_{1} \colon t_{q}) \in \mathbb{R}^{m_{u} \times q }$ is the $i$th time-delayed singular mode of the Hankel matrix.

The diagonal matrix of singular values $\boldsymbol{\varSigma }_{H}$ is

\begin{equation} \boldsymbol{\varSigma }_{H} = \left [ \begin{array}{cccc} \sigma _{H, 1}& 0& \ldots & 0\\ 0& \sigma _{H, 2}& \ldots & 0\\ \vdots & \vdots & \ddots & \vdots \\ 0& 0& \ldots & \sigma _{H, r} \end{array} \right ] , \end{equation}

and the matrix of the right singular vectors $\boldsymbol{V}_{H} \in \mathbb{R}^{p \times r}$ can be explicitly written, in transposed form, as

\begin{equation} \boldsymbol{V}_{H}^{\top } = \left [ \begin{array}{cccc} v_{H,1}(t_{1})& v_{H,1}(t_{2})& \ldots & v_{H,1}(t_{p})\\[3pt] v_{H,2}(t_{1})& v_{H,2}(t_{2})& \ldots & v_{H,2}(t_{p})\\ \vdots & \vdots & \ddots & \vdots \\ v_{H,r}(t_{1})& v_{H,r}(t_{2})& \ldots & v_{H,r}(t_{p}) \end{array} \right ] . \end{equation}
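The assembly of the Hankel matrix and its truncated SVD can be sketched as follows. The helper names `build_hankel` and `truncated_svd`, the sinusoidal test signal and the parameter values are assumptions made for illustration; only the block layout of $\boldsymbol{H}$ and the shapes of $\boldsymbol{U}_{\!H}$, $\boldsymbol{\varSigma }_{H}$ and $\boldsymbol{V}_{H}$ follow the text.

```python
import numpy as np

def build_hankel(a, q):
    """Stack q time-delayed copies of a (m_u x K_train) into H ((m_u*q) x p)."""
    m_u, K_train = a.shape
    p = K_train - q + 1
    # Block row i (zero-based) holds a(t_{i+1}), ..., a(t_{i+p}).
    return np.vstack([a[:, i:i + p] for i in range(q)])

def truncated_svd(H, r):
    """Rank-r SVD, so that H is approximated by U_H @ diag(s_H) @ V_H.T."""
    U, s, Vt = np.linalg.svd(H, full_matrices=False)
    return U[:, :r], s[:r], Vt[:r, :].T

# Illustrative coefficients: two frequencies, so the delay embedding has rank 4.
m_u, K_train, q, r = 3, 40, 5, 4
t = np.arange(K_train)
a = np.vstack([np.sin(0.2 * t), np.cos(0.2 * t), np.sin(0.5 * t)])

H = build_hankel(a, q)
U_H, s_H, V_H = truncated_svd(H, r)
print(H.shape, U_H.shape, V_H.shape)  # (15, 36) (15, 4) (36, 4)
```

Each row of `V_H` is one sample of the $r$-dimensional state, ordered in time, which is the form needed for fitting a discrete-time model.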

We now define the vector $\boldsymbol{v}_{H}(t_j)=[v_{H, 1}(t_j), v_{H, 2}(t_j), \ldots , v_{H, r}(t_j)]^{\top } \in \mathbb{R}^{r}$ $(j=1 \ldots p)$, extracted from the rows of matrix $\boldsymbol{V}_{H} \in \mathbb{R}^{p \times r}$ (equivalently, the columns of $\boldsymbol{V}_{H}^{\top }$). We consider $\boldsymbol{v}_{H}$ as the state variable of the following discrete-in-time, forced, linear dynamical system:

\begin{equation} \boldsymbol{v}_{H}[k+1] = \boldsymbol{A} \boldsymbol{v}_{H}[k] + \boldsymbol{w}_{2}[k], \end{equation}

where

![]() $\boldsymbol{v}_{H}[k]=\boldsymbol{v}_{H}[t_k]$

,

$\boldsymbol{v}_{H}[k]=\boldsymbol{v}_{H}[t_k]$

,

![]() $k=1 \ldots p-1$

and

$k=1 \ldots p-1$

and

![]() $\boldsymbol{A} \in \mathbb{R}^{r \times r}$

.

$\boldsymbol{A} \in \mathbb{R}^{r \times r}$

.

To obtain matrix $\boldsymbol{A}$, we write (2.14) explicitly for $k=1 \ldots p-1$:

\begin{align} \boldsymbol{v}_{H}[2] & \approx \boldsymbol{A}\boldsymbol{v}_{H}[1],\nonumber \\ \boldsymbol{v}_{H}[3] & \approx \boldsymbol{A}\boldsymbol{v}_{H}[2],\nonumber \\ & \vdots \nonumber \\ \boldsymbol{v}_{H}[p] & \approx \boldsymbol{A}\boldsymbol{v}_{H}[p-1]. \end{align}

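In matrix form, this becomes

\begin{equation} \boldsymbol{V}^{\prime } \approx \boldsymbol{A}\boldsymbol{V}, \end{equation}

where $\boldsymbol{V}^{\prime }, \boldsymbol{V} \in \mathbb{R}^{r \times (p-1)}$:

\begin{equation} \boldsymbol{V}^{\prime } = \left [ \begin{array}{cccc} \boldsymbol{v}_{H}[2] & \boldsymbol{v}_{H}[3] & \ldots & \boldsymbol{v}_{H}[p] \end{array} \right ], \quad \boldsymbol{V} = \left [ \begin{array}{cccc} \boldsymbol{v}_{H}[1] & \boldsymbol{v}_{H}[2] & \ldots & \boldsymbol{v}_{H}[p-1] \end{array} \right ]. \end{equation}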

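Then the system matrix $\boldsymbol{A}$ can be calculated from

\begin{equation} \boldsymbol{A} = \boldsymbol{V}^{\prime }\boldsymbol{V}^{\dagger }, \end{equation}

where $\boldsymbol{V}^{\dagger }$ denotes the Moore-Penrose pseudo-inverse of $\boldsymbol{V}$.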

This formulation essentially applies the DMD algorithm directly to $\boldsymbol{v}_{H}[k]$.

Once $\boldsymbol{A}$ is known, the forcing $\boldsymbol{w}_{2}[k]$ can be easily computed from the training dataset, $\boldsymbol{w}_{2}[k]=\boldsymbol{v}_{H}[k+1]-\boldsymbol{A} \boldsymbol{v}_{H}[k]$. The covariance matrix of the forcing $\boldsymbol{Q} \in \mathbb{R}^{r \times r}$ can be obtained from

\begin{equation} \boldsymbol{Q} = \mathbb{E} \big ( \boldsymbol{w}_{2}\boldsymbol{w}^{\top }_{2} \big ) = \frac {1}{p-1} \sum ^{k=p-1}_{k=1} \boldsymbol{w}_{2}[k] \boldsymbol{w}^{\top }_{2}[k]. \end{equation}
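The fit of the system matrix and of the forcing covariance can be illustrated with a few lines of NumPy. This is a minimal sketch, not the implementation used in the paper: the function name `fit_linear_model`, the use of the pseudo-inverse for the least-squares fit and the synthetic test data are all assumptions made here for illustration.

```python
import numpy as np

def fit_linear_model(V_H):
    """Fit v[k+1] ~ A v[k] by least squares and estimate the forcing covariance.

    V_H : array of shape (r, p); column k holds the state v_H at instant k
          (assumed layout, matching the matrix of right singular vectors).
    Returns A (r x r), the residual forcing W (r x (p-1)) and Q (r x r).
    """
    V = V_H[:, :-1]                    # states at instants 1 ... p-1
    V_prime = V_H[:, 1:]               # states at instants 2 ... p
    A = V_prime @ np.linalg.pinv(V)    # least-squares solution (assumed pseudo-inverse)
    W = V_prime - A @ V                # forcing w_2[k] = v[k+1] - A v[k]
    Q = (W @ W.T) / W.shape[1]         # sample covariance, 1/(p-1) * sum of w w^T
    return A, W, Q

# Synthetic check (hypothetical data): a known stable system driven by small noise.
rng = np.random.default_rng(0)
r, p = 3, 2000
A_true = np.array([[0.9, 0.1, 0.0],
                   [-0.1, 0.9, 0.0],
                   [0.0, 0.0, 0.5]])
states = np.zeros((r, p))
states[:, 0] = rng.standard_normal(r)
for k in range(p - 1):
    states[:, k + 1] = A_true @ states[:, k] + 0.01 * rng.standard_normal(r)

A_fit, W, Q = fit_linear_model(states)
print(np.round(A_fit, 2))
```

By construction the residual forcing is orthogonal to the states used in the fit, so whatever the linear model cannot explain ends up in $\boldsymbol{Q}$.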

It is important to notice that using $\boldsymbol{v}_{H}[k]$ we can obtain the current (at instant $k$) and forecast the future (at instants $k+1 \ldots k+q$) time coefficients as follows:

\begin{eqnarray} \left [ \begin{array}{c} \boldsymbol{a}[k] \\[3pt] \boldsymbol{a}[k+1] \\ \vdots \\ \boldsymbol{a} [k+q] \\ \end{array} \right ] & = & \boldsymbol{U}_{\!H} \boldsymbol{\varSigma }_{H} \boldsymbol{v}_{H}[k]. \end{eqnarray}

In particular $\boldsymbol{a}[k]$ can be obtained from

\begin{equation} \boldsymbol{a}[k] = \underbrace {\boldsymbol{U}_{\!H}(t_{1}) \boldsymbol{\varSigma }_{H}} _{=\boldsymbol{C}} \boldsymbol{v}_{H}[k], \end{equation}

where $\boldsymbol{C} \in \mathbb{R}^{m_{u} \times r}$ and $\boldsymbol{U}_{\!H}(t_{1})= [ \begin{array}{cccc} \boldsymbol{U}^{(u,v,w)}_{H, 1}(t_{1})& \boldsymbol{U}^{(u,v,w)}_{H, 2}(t_{1})& \ldots & \boldsymbol{U}^{(u,v,w)}_{H, r}(t_{1}) \end{array} ] \in \mathbb{R}^{m_{u} \times r}$ is the top row of matrix $\boldsymbol{U}_{\!H}$; see (2.11).
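The read-out of the current and future coefficients from a single state vector can be sketched as follows. The function names, the time-major ordering of the block rows and the small random dataset are assumptions made for this illustration. All singular values are retained here, so the read-out reproduces the Hankel column exactly; with truncation to $r$ modes it would only be approximate.

```python
import numpy as np

def forecast_coefficients(U_H, sigma_H, v_k, m_u):
    """Map the state v_H[k] to the stacked current and future POD coefficients.

    U_H     : (m_u * n_blocks, r) left singular vectors, block rows ordered in time
    sigma_H : (r,) singular values
    v_k     : (r,) state vector v_H[k]
    Returns an array of shape (n_blocks, m_u); row 0 is the current instant.
    """
    stacked = U_H @ (sigma_H * v_k)        # U_H Sigma_H v_H[k]
    return stacked.reshape(-1, m_u)        # one row of POD coefficients per instant

def output_matrix(U_H, sigma_H, m_u):
    """C = U_H(t_1) Sigma_H, built from the top block row of U_H."""
    return U_H[:m_u, :] * sigma_H          # scales column i by sigma_i

# Hypothetical example: build a small Hankel matrix and verify the read-out.
rng = np.random.default_rng(1)
m_u, n_blocks, p = 2, 4, 30
a = rng.standard_normal((m_u, p + n_blocks - 1))          # synthetic coefficients a(t_j)
H = np.vstack([a[:, i:i + p] for i in range(n_blocks)])   # block row i holds a(t_{j+i})
U_H, sigma_H, Vt_H = np.linalg.svd(H, full_matrices=False)

k = 5
window = forecast_coefficients(U_H, sigma_H, Vt_H[:, k], m_u)
C = output_matrix(U_H, sigma_H, m_u)
```

Row `i` of `window` holds the coefficients `i` instants ahead of column `k`, and `C @ Vt_H[:, k]` returns the coefficients at the current instant only.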

The previous two expressions demonstrate the fundamental importance of $\boldsymbol{U}_{\!H}$. This matrix has a very clear physical interpretation, which can be understood as follows. Each column of $\boldsymbol{H}$ contains $q$ snapshots, i.e. short time-varying sequences of fixed time duration (instead of a single snapshot as in standard POD). There are $p$ such short sequences in total. The left singular vectors $\boldsymbol{U}^{(u,v,w)}_{H,i}$ are obtained by performing SVD on $\boldsymbol{H}$. These vectors capture the most important time-dependent patterns, in exactly the same way as the POD modes capture the most important (static) patterns from a series of snapshots. These time-varying patterns are arranged column by column in descending order of importance, as quantified by the singular values $\sigma _{H,k}$, and can be exploited to predict the future evolution from current information. They resemble Legendre polynomials for short $q \times \Delta t$ and become sinusoids for large $q \times \Delta t$ (see Dylewsky et al. 2022; Frame & Towne 2023). We visualise these patterns for the flow around a surface-mounted cube in §§ 4.4 and 4.5.

Note that this step is independent of the dimensionality reduction method selected in step 1. The question now is how to obtain $\boldsymbol{v}_{H}[k]$ since the forcing term $\boldsymbol{w}_{2}[k]$ in (2.14) is not known. We can get a closure if measurements at some sparse sensor points are available.

2.3. Step 3: closure of the system using sensor measurements

Let us assume that we have a set of $l$ velocity measurements $\boldsymbol{s}[k] \in \mathbb{R}^{l}$ at a number of sensor points. At each sensor, one or more velocity components are recorded. We can express $\boldsymbol{s}[k]$ in terms of $\boldsymbol{a}[k]$ as follows:

\begin{equation} \boldsymbol{s}[k] = \boldsymbol{S} \boldsymbol{U}_{\!Y} \boldsymbol{a}[k] + \boldsymbol{g}[k], \end{equation}

where matrix $\boldsymbol{S}$ selects the rows of the POD mode matrix $\boldsymbol{U}_{\!Y}$ corresponding to the sensor location and the velocity component being measured (it is $0$ everywhere except for those points and components where the corresponding element is equal to $1$). Vector $\boldsymbol{g} \in \mathbb{R}^{l}$ includes measurement errors as well as errors due to POD mode truncation (because only $m_u$ modes are retained). The elements of $\boldsymbol{g}[k]$ can be obtained from the training dataset ($\boldsymbol{g}[k]=\boldsymbol{s}[k]-\boldsymbol{S} \boldsymbol{U}_{\!Y} \boldsymbol{a}[k]$), and its covariance $\boldsymbol{R} \in \mathbb{R}^{l \times l}$ can be easily calculated from

\begin{equation} \boldsymbol{R} = \mathbb{E} \big ( \boldsymbol{g}\boldsymbol{g}^{\top } \big ) = \frac {1}{p-1} \sum ^{k=p-1}_{k=1} \boldsymbol{g}[k] \boldsymbol{g}^{\top }[k]. \end{equation}
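A small NumPy sketch may help to make the roles of $\boldsymbol{S}$, $\boldsymbol{g}$ and $\boldsymbol{R}$ concrete. The helper names, the sensor indices and the synthetic field are hypothetical. The sensor data in this example are noise-free, so $\boldsymbol{g}$ contains only the error due to mode truncation.

```python
import numpy as np

def selection_matrix(sensor_rows, n_rows):
    """Binary matrix S (l x n_rows) with a single 1 per row at the measured entry."""
    S = np.zeros((len(sensor_rows), n_rows))
    S[np.arange(len(sensor_rows)), sensor_rows] = 1.0
    return S

def measurement_noise_covariance(s, S, U_Y, a):
    """Estimate R from training data.

    s   : (l, n) sensor signals
    U_Y : (n_rows, m_u) retained POD modes
    a   : (m_u, n) POD time coefficients
    """
    g = s - S @ U_Y @ a                   # g[k] = s[k] - S U_Y a[k]
    return (g @ g.T) / g.shape[1], g      # sample covariance over the n instants

# Hypothetical data: 50 field entries, 3 retained modes out of 6, 2 sensors.
rng = np.random.default_rng(2)
n_rows, n_modes, m_u, n = 50, 6, 3, 400
modes, _ = np.linalg.qr(rng.standard_normal((n_rows, n_modes)))   # orthonormal columns
amplitudes = np.array([5.0, 4.0, 3.0, 0.3, 0.2, 0.1])
coeffs = rng.standard_normal((n_modes, n)) * amplitudes[:, None]
field = modes @ coeffs                                            # "full" fluctuation field

sensor_rows = [7, 31]
S = selection_matrix(sensor_rows, n_rows)
s = S @ field                                                     # noise-free sensor data
R, g = measurement_noise_covariance(s, S, modes[:, :m_u], coeffs[:m_u])
```

With real sensors, measurement noise would add to the truncation error in `g`, and both would be absorbed into `R`.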

We are now ready to design a Kalman filter to estimate $\boldsymbol{v}_{H}[k]$ from the sparse measurements $\boldsymbol{s}[k]$. The filter takes the form

where a hat $\hat {()}$ denotes an estimated quantity and $\mathcal{L} \in \mathbb{R}^{r \times l}$ is the Kalman filter gain. The latter is obtained from the solution of the following Riccati equation:

From $\hat {\boldsymbol{v}}_{H}[k]$ one can estimate the current and future POD coefficients from (2.20), and of course the instantaneous velocity field from (2.8).
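For readers who want to experiment, a steady-state Kalman estimator for a system of this type can be sketched as below. This is an illustration under stated assumptions and not necessarily the formulation used in the paper: it assumes the one-step predictor form of the filter, obtains the gain by iterating the discrete Riccati recursion to a fixed point, and uses a hypothetical measurement matrix `M` that stands for the product $\boldsymbol{S} \boldsymbol{U}_{\!Y} \boldsymbol{C}$. The two-state test system is invented for the example.

```python
import numpy as np

def steady_state_kalman_gain(A, M, Q, R, n_iter=500, tol=1e-12):
    """Iterate the discrete Riccati recursion to a fixed point.

    A : (r, r) state matrix, M : (l, r) measurement matrix (stands for S U_Y C),
    Q : (r, r) process-noise covariance, R : (l, l) measurement-noise covariance.
    Returns the predictor gain L (r, l) and the error covariance P (r, r).
    """
    P = Q.copy()
    for _ in range(n_iter):
        G = A @ P @ M.T @ np.linalg.inv(M @ P @ M.T + R)   # predictor-form gain
        P_next = A @ P @ A.T - G @ M @ P @ A.T + Q
        converged = np.max(np.abs(P_next - P)) < tol
        P = P_next
        if converged:
            break
    L = A @ P @ M.T @ np.linalg.inv(M @ P @ M.T + R)
    return L, P

def kalman_step(v_hat, s_k, A, M, L):
    """One predictor update: v_hat[k+1] = A v_hat[k] + L (s[k] - M v_hat[k])."""
    return A @ v_hat + L @ (s_k - M @ v_hat)

# Hypothetical 2-state example: a lightly damped rotation seen through its first component.
theta = 0.3
A = 0.98 * np.array([[np.cos(theta), -np.sin(theta)],
                     [np.sin(theta),  np.cos(theta)]])
M = np.array([[1.0, 0.0]])
Q = 0.01 * np.eye(2)
R = np.array([[0.01]])
L, P = steady_state_kalman_gain(A, M, Q, R)

rng = np.random.default_rng(3)
v, v_hat = np.zeros(2), np.zeros(2)
errors = []
for k in range(300):
    s_k = M @ v + 0.1 * rng.standard_normal(1)     # noisy sensor reading at instant k
    v_hat = kalman_step(v_hat, s_k, A, M, L)       # estimate of the state at k+1
    v = A @ v + 0.1 * rng.standard_normal(2)       # true state advances to k+1
    errors.append(np.linalg.norm(v - v_hat))
```

A constant gain is adequate here because the system matrices do not change in time; a time-dependent measurement matrix would require the recursion to be advanced together with the filter.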

The linear mapping between the velocity and POD coefficients, (2.22), has allowed us to synthesise a Kalman filter. This type of filter is suitable only for linear dynamical systems and linear mappings between the measurement and state variables. For nonlinear mappings, one can use an extended Kalman filter or an ensemble Kalman filter (Evensen 2003). Note also that the formulation can accommodate data from moving sensor locations (e.g. data from drones); this is achieved by using a time-dependent selection matrix $\boldsymbol{S}[k]$ in (2.22). In this case, the filter gain $\mathcal{L}[k]$ will also be time-dependent. A machine learning technique (instead of Koopman/Kalman filter theory) was proposed recently by Allen et al. (2025); it maps measurements $\boldsymbol{s}[k]$ directly to the future states for weather forecasting.

Steps 1 and 2 are performed offline using a training dataset. The Kalman estimator in step 3 runs online and requires only the streaming measurement data $\boldsymbol{s}[k]$. The arriving data are then mapped to the future velocity field on a rolling time window through (2.24), then (2.20) and finally (2.8). Depending on the number of retained modes $m_u$, it may be possible to perform such a sequential forecasting in real time, thereby making the approach also useful to experimentalists.

3. Flow forecasting from sparse scalar measurements

We follow the same approach to forecast the flow from sparse scalar measurements. First we assemble the snapshot matrix $\boldsymbol{ \mathit{Z} }(\boldsymbol{x},t_{1}:t_{K})$ of the scalar fluctuations $c^{\prime }$:

\begin{equation} \boldsymbol{ \mathit{Z} }(\boldsymbol{x},t_{1}:t_{K}) = \left [ \begin{array}{cccc} c^{\prime }(\boldsymbol{x}_{1}, t_{1})& c^{\prime }(\boldsymbol{x}_{1}, t_{2})& \ldots & c^{\prime }(\boldsymbol{x}_{1}, t_{K})\\ \vdots & \vdots & \ddots & \vdots \\ c^{\prime }(\boldsymbol{x}_{N}, t_{1})& c^{\prime }(\boldsymbol{x}_{N}, t_{2})& \ldots & c^{\prime }(\boldsymbol{x}_{N}, t_{K}) \end{array} \right ] , \end{equation}

where $\boldsymbol{ \mathit{Z} } \in \mathbb{R}^{N \times K}$. Note that the scalar data are synchronised with the velocity data, that is, the time instants $t_i \ (i=1 \ldots K)$ in (2.1) and (3.1) are the same. As before, we apply SVD to the weighted matrix $\mathcal{V}^{1/2} \boldsymbol{Z}$ (where now $\mathcal{V}=\mathrm{diag} (V_1, V_2 \ldots V_N )$) and obtain the scalar POD modes, $U_{Z, k}(x,y,z)$, and time coefficients, $b_{k}(t)$. Thus we can write

\begin{equation} c^{\prime }(x,y,z,t) = \sum ^{ K }_{k=1} b_{k}(t)U_{Z, k}(x,y,z) \approx \sum ^{m_{c}}_{k=1} b_{k}(t)U_{Z, k}(x,y,z), \end{equation}

where $m_{c}$ is the number of retained scalar POD modes, or in more compact form,

\begin{equation} \boldsymbol{c}^{\prime }(x,y,z,t) = \boldsymbol{U}_{Z} \boldsymbol{b}(t). \end{equation}

Equations (2.8) and (3.3) can be written together as

\begin{equation} \left [ \begin{array}{c} \boldsymbol{u}^{\prime } \\ \boldsymbol{c}^{\prime } \end{array} \right ](x,y,z,t)= \underbrace {\left [ \begin{array}{cc} \boldsymbol{U}_{\!Y} & \boldsymbol{0} \\ \boldsymbol{0} & \boldsymbol{U}_{Z} \end{array} \right ]}_{\equiv \boldsymbol{U}_{YZ}}\ \left [ \begin{array}{c} \boldsymbol{a}(t) \\ \boldsymbol{b}(t) \end{array} \right ]. \end{equation}

We then build the time-delayed Hankel matrix with the POD coefficients of the most dominant $m_{u}$ velocity and $m_{c}$ scalar modes:

\begin{equation} \boldsymbol{H} = \left [ \begin{array}{cccc} \boldsymbol{a}(t_{1})& \boldsymbol{a}(t_{2})& \ldots & \boldsymbol{a}(t_{p})\\ \boldsymbol{b}(t_{1})& \boldsymbol{b}(t_{2})& \ldots & \boldsymbol{b}(t_{p})\\ \boldsymbol{a}(t_{2})& \boldsymbol{a}(t_{3})& \ldots & \boldsymbol{a}(t_{p+1})\\ \boldsymbol{b}(t_{2})& \boldsymbol{b}(t_{3})& \ldots & \boldsymbol{b}(t_{p+1})\\ \vdots & \vdots & \ddots & \vdots \\ \boldsymbol{a}(t_{q})& \boldsymbol{a}(t_{q+1})& \ldots & \boldsymbol{a}(t_{K_{\textit{train}}})\\ \boldsymbol{b}(t_{q})& \boldsymbol{b}(t_{q+1})& \ldots & \boldsymbol{b}(t_{K_{\textit{train}}}) \end{array} \right ] , \end{equation}
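The block structure of this matrix can be assembled with a short NumPy routine. The sketch below is illustrative only: the function name `build_hankel` and the integer test data are assumptions, and the relation $p = K_{\textit{train}} - q + 1$ is inferred from the first and last block rows of the matrix above.

```python
import numpy as np

def build_hankel(a, b, q):
    """Stack time-delayed velocity (a) and scalar (b) POD coefficients.

    a : (m_u, K_train), b : (m_c, K_train), q : number of block rows (delays).
    Returns H of shape ((m_u + m_c) * q, p) with p = K_train - q + 1.
    """
    ab = np.vstack([a, b])                    # [a; b] at each instant
    K_train = ab.shape[1]
    p = K_train - q + 1
    # Block row i holds the instants t_{1+i} ... t_{p+i}.
    return np.vstack([ab[:, i:i + p] for i in range(q)])

# Hypothetical data: entries encode (mode, instant) so the layout is easy to inspect.
m_u, m_c, K_train, q = 2, 1, 10, 4
instants = np.arange(1, K_train + 1)
a = np.vstack([100 + instants, 200 + instants]).astype(float)   # two velocity modes
b = (300 + instants).astype(float)[None, :]                     # one scalar mode
H = build_hankel(a, b, q)

# Truncated SVD of H; r is a free parameter chosen by the user.
r = 3
U_H, sigma_H, Vt_H = np.linalg.svd(H, full_matrices=False)
U_H, sigma_H, Vt_H = U_H[:, :r], sigma_H[:r], Vt_H[:r, :]
```

Because velocity and scalar coefficients are stacked without rescaling, both enter the SVD with the same weight.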

where $\boldsymbol{H} \in \mathbb{R}^{ ((m_{u}+m_{c})\times q ) \times p}$. Performing SVD on $\boldsymbol{H}$ we obtain the matrices $\boldsymbol{\varSigma }_{H}$, $\boldsymbol{V}_{H}$, $\boldsymbol{U}_{\!H}$ as before. Note that this means that we use the same weights in the velocity and scalar coefficients; this warrants further investigation, which we leave as part of future work. The matrix of the left singular vectors $\boldsymbol{U}_{\!H} \in \mathbb{R}^{ ((m_{u}+m_{c})\times q ) \times r}$ can be written explicitly as

\begin{equation} \boldsymbol{U}_{\!H} = \left [ \begin{array}{cccc} \boldsymbol{U}^{(u,v,w)}_{H, 1}(t_{1})& \boldsymbol{U}^{(u,v,w)}_{H, 2}(t_{1})& \ldots & \boldsymbol{U}^{(u,v,w)}_{H, r}(t_{1})\\[3pt] \boldsymbol{U}^{(c)}_{H, 1}(t_{1})& \boldsymbol{U}^{(c)}_{H, 2}(t_{1})& \ldots & \boldsymbol{U}^{(c)}_{H, r}(t_{1})\\[3pt] \boldsymbol{U}^{(u,v,w)}_{H, 1}(t_{2})& \boldsymbol{U}^{(u,v,w)}_{H, 2}(t_{2})& \ldots & \boldsymbol{U}^{(u,v,w)}_{H, r}(t_{2})\\[3pt] \boldsymbol{U}^{(c)}_{H, 1}(t_{2})& \boldsymbol{U}^{(c)}_{H, 2}(t_{2})& \ldots & \boldsymbol{U}^{(c)}_{H, r}(t_{2})\\[3pt] \vdots & \vdots & \ddots & \vdots \\[3pt] \boldsymbol{U}^{(u,v,w)}_{H, 1}(t_{q})& \boldsymbol{U}^{(u,v,w)}_{H, 2}(t_{q})& \ldots & \boldsymbol{U}^{(u,v,w)}_{H, r}(t_{q})\\[3pt] \boldsymbol{U}^{(c)}_{H, 1}(t_{q})& \boldsymbol{U}^{(c)}_{H, 2}(t_{q})& \ldots & \boldsymbol{U}^{(c)}_{H, r}(t_{q}) \end{array} \right ] . \end{equation}

A dynamical system for $\boldsymbol{v}_{H}$,

\begin{equation} \boldsymbol{v}_{H}[k+1] = \boldsymbol{A}\boldsymbol{v}_{H}[k] + \boldsymbol{w}_{2}[k], \end{equation}

can be derived as before. Also, the process noise covariance $\boldsymbol{Q} \in \mathbb{R}^{r \times r}$ can be calculated in the same way as in § 2.

Vector $\boldsymbol{v}_{H}[k]$ can be used to obtain the current and future POD coefficients of the velocity and scalar fields as

\begin{eqnarray} \left [ \begin{array}{c} \boldsymbol{a}[k] \\[3pt] \boldsymbol{b}[k] \\[3pt] \boldsymbol{a}[k+1] \\[3pt] \boldsymbol{b}[k+1] \\[3pt] \vdots \\[3pt] \boldsymbol{a} [k+q] \\[3pt] \boldsymbol{b}[k+q] \\[3pt] \end{array} \right ] & = & \boldsymbol{U}_{\!H} \boldsymbol{\varSigma }_{H} \boldsymbol{v}_{H}[k]. \end{eqnarray}

In particular, for the $k$th instant we have

\begin{equation} \left [ \begin{array}{c} \boldsymbol{a}\\ \boldsymbol{b} \end{array} \right ][k] = \underbrace {\boldsymbol{U}_{\!H}(t_{1}) \boldsymbol{\varSigma }_{H}} _{=\boldsymbol{C}} \boldsymbol{v}_{H}[k], \end{equation}

where $\boldsymbol{C} \in \mathbb{R}^{(m_{u}+m_{c}) \times r}$ and matrix $\boldsymbol{U}_{\!H}(t_{1})$ represents the top two rows of $\boldsymbol{U}_{\!H}$, i.e.

\begin{equation} \boldsymbol{U}_{\!H}(t_{1}) = \left [ \begin{array}{cccc} \boldsymbol{U}^{(u,v,w)}_{H, 1}(t_{1})& \boldsymbol{U}^{(u,v,w)}_{H, 2}(t_{1})& \ldots & \boldsymbol{U}^{(u,v,w)}_{H, r}(t_{1})\\[3pt] \boldsymbol{U}^{(c)}_{H, 1}(t_{1})& \boldsymbol{U}^{(c)}_{H, 2}(t_{1})& \ldots & \boldsymbol{U}^{(c)}_{H, r}(t_{1}) \end{array} \right ] . \end{equation}

Let us assume that we now have $l$ scalar measurements $\boldsymbol{s}[k]$; they can be written as

\begin{equation} \boldsymbol{s}[k] = \boldsymbol{S} \boldsymbol{U}_{Z} \boldsymbol{b}[k] + \boldsymbol{g}[k], \end{equation}

where now matrix $\boldsymbol{S}$ selects the rows of the POD mode matrix $\boldsymbol{U}_{Z}$ corresponding to the scalar sensor locations. Note that it is possible to mix velocity and scalar measurements; in this case $\boldsymbol{S}$ will act on the compound matrix $\boldsymbol{U}_{YZ}$ (see (3.4)). In the following we assume that we have scalar measurements only. The covariance $\boldsymbol{R}$ of vector $\boldsymbol{g}$ can be obtained as explained in the previous section.

The Kalman filter takes the form

where $(\boldsymbol{C})_2$ indicates the second-row block of matrix $\boldsymbol{C}$, and the Kalman gain matrix $\mathcal{L}$ is obtained by solving a Riccati equation similar to (2.25) where $\boldsymbol{U}_{\!Y}$ is replaced by $\boldsymbol{U}_{Z}$.

From $\hat {\boldsymbol{v}}_{H}[k]$ we can estimate the current and future POD coefficients from (3.8) and the instantaneous velocity and scalar fields from (3.4).

4. Application to the flow around a surface-mounted cube

4.1. Computational set-up and numerical methodology

We consider the three-dimensional flow around a surface-mounted cube of height $h$. The computational domain, shown in figure 1, has dimensions $L_{x} \times L_{y} \times L_{z}= 19h \times 10h \times 10h$. The origin of the coordinate system is located at the bottom midpoint of the upstream face of the cube. The inlet is located at $x/h=-6$ in the streamwise direction and the outlet at $x/h=13$. The domain extends over $-5 \leqslant z/h \leqslant 5$ in the spanwise direction and up to $y/h=10$ in the wall-normal direction. Uniform velocity $U_{\infty }=1$ is prescribed at the inlet and a convective boundary condition at the outlet. No-slip conditions are imposed on the cube surfaces and bottom wall, while symmetry conditions are applied on the top and spanwise boundaries (Krajnovic & Davidson 2002).

Figure 1. Computational domain and boundary conditions (BC). The red lines mark the boundaries of the region where snapshot data are collected.

A scalar is released from a source with elliptical cross-section centred at $(x_{s}, y_{s}, z_{s})=(-2, 0.1, -0.5:+0.5)h$. The axis of the source is along the $z$ direction and at the same elevation as the core of the horseshoe vortex forming in front of the cube (see later). The source strength $\hat {m}_{T}(\boldsymbol{x})$ (amount of scalar released per unit volume) is steady with a spatial distribution given by

with

\begin{equation} \gamma = \frac {1}{4r_{x}r_{y}(\pi -2/{\pi }) }, \quad r = \sqrt { \bigg ( \frac {x-x_{s}}{r_{x}} \bigg )^{2} + \bigg ( \frac {y-y_{s}}{r_{y}} \bigg )^{2} }, \end{equation}


where $(x_{s}, y_{s}, z_{s})$ are the coordinates of the centre of the source, $r_{x}=0.1h$ is the semi-major axis and $r_{y}=0.08h$ is the semi-minor axis (smaller than $r_x$ due to the presence of the bottom wall). Equation (4.1) represents a source distribution that vanishes at the boundary of the elliptical cross-section. The normalisation parameter $\gamma$ ensures that the volume integral of $\hat {m}_{T}$ is unity.

The flow is simulated with the in-house code PANTARHEI. The incompressible Navier–Stokes equations are discretised in space using the finite-volume method, with a second-order central discretisation scheme (for both convection and viscous terms) and marched in time with a third-order backward scheme. The fractional step method is employed to obtain pressure and correct the velocities to satisfy the continuity equation. The code has been used extensively to simulate transitional and turbulent flows (Thomareis & Papadakis 2017, 2018; Yao & Papadakis 2023; Schlander, Rigopoulos & Papadakis 2024).

The Reynolds number, defined as $Re_{h}=U_{\infty }h/\nu$, is set to $5000$. The flow domain is discretised using $N_{x} \times N_{y} \times N_{z}=576 \times 268 \times 406$ cells in a Cartesian arrangement; the total number around the cube is 61 612 200. The cells are clustered close to the cube surfaces and bottom wall. Each cube edge is discretised with 102 cells. The first layer of cells near the walls has a thickness of $0.006h$. A time step $\delta t U_{\infty }/h =0.001$ is selected to satisfy $\text{CFL}<0.5$. The maximum ratio of the cell size (taken as the cubic root of the cell volume) to the Kolmogorov length scale was equal to 4.6 in the recirculation zone behind the cube. This indicates almost direct numerical simulation (DNS)-quality resolution, which is sufficient for the purposes of the present investigation.
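The cell counts quoted above can be cross-checked with a few lines of arithmetic. The sketch below assumes (this is an inference, not something stated explicitly in the text) that the quoted total of 61 612 200 is the full Cartesian box minus the $102^3$ cells that would fall inside the cube. The variable names are illustrative only.

```python
# Mesh bookkeeping check; all lengths are in units of the cube height h.
nx, ny, nz = 576, 268, 406        # cells per direction, from the text
cells_per_edge = 102              # cells along each cube edge, from the text
first_layer_thickness = 0.006     # first cell layer near the walls, from the text

box_cells = nx * ny * nz                  # full Cartesian box
cube_cells = cells_per_edge ** 3          # assumed: cells removed by the solid cube
fluid_cells = box_cells - cube_cells      # cells around the cube

# Centroid height of the first cell above the bottom wall.
first_centroid = first_layer_thickness / 2

# Mean spacing along a cube edge, to compare with the clustered wall layer.
mean_edge_spacing = 1.0 / cells_per_edge

print(f"box cells      : {box_cells}")
print(f"cube cells     : {cube_cells}")
print(f"fluid cells    : {fluid_cells}")
print(f"first centroid : {first_centroid} h")
print(f"mean edge dx   : {mean_edge_spacing:.4f} h")
```

Under this assumption the difference reproduces the quoted total exactly, and the first-cell centroid height of $0.003h$ matches the wall-parallel plane used for the near-wall streamlines.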

Snapshots of velocities and scalar fields are collected within a subregion (defined by the red lines of figure 1) that contains $N=38.69 \times 10^{6}$ cells. The flow is first advanced for $2$ flow-through times; the simulation is then restarted and advanced for $6$ more flow-through times. In total $K=6000$ flow and scalar field snapshots are recorded synchronously during the last $6$ flow-through times. The time separation between snapshots is $\Delta t=0.019{h}/{U_{\infty }}$.
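As a rough consistency check on the sampling parameters, the sketch below derives the snapshot stride and the total sampling duration from the quoted values. The implied flow-through time is an inference that assumes the $K$ snapshots span the final 6 flow-through times exactly; it is not a figure given in the text. Exact rational arithmetic is used to avoid floating-point round-off, and the variable names are illustrative.

```python
from fractions import Fraction

# Quoted values, in units of h / U_inf.
solver_dt = Fraction("0.001")     # solver time step
snapshot_dt = Fraction("0.019")   # separation between stored snapshots
K = 6000                          # number of snapshots
N = 38_690_000                    # cells in the sampling subregion (38.69e6)
flow_throughs_sampled = 6

# Number of solver steps between consecutive snapshots.
stride = snapshot_dt / solver_dt

# Total sampled duration and the flow-through time this would imply
# (assumption: the snapshots cover the last 6 flow-through times exactly).
sampled_duration = K * snapshot_dt
implied_flow_through = sampled_duration / flow_throughs_sampled

# Stored values per scalar variable (one value per cell per snapshot).
values_per_variable = N * K

print(f"stride               : {stride} solver steps")
print(f"sampled duration     : {sampled_duration} h/U_inf")
print(f"implied flow-through : {implied_flow_through} h/U_inf")
print(f"values per variable  : {values_per_variable:.4e}")
```

The size of `values_per_variable` illustrates why the snapshot data set is expensive to store and process for each recorded variable.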

4.2. Time-averaged flow and scalar fields

Figure 2 shows time-averaged streamlines superimposed on the mean pressure fields on the symmetry $xy$ plane and the $xz$ plane at the height of the first cell centroid away from the bottom wall. The reference pressure is the mean static pressure at the inlet plane. In figure 2($a$), three main separation regions can be seen, at the front ($F$), at the leading edge ($T$) and downstream of the cube ($R$). There is a secondary recirculation region ($N$) at the bottom corner of the leeward face.

Time-averaged streamlines superimposed on contours of mean pressure field: ($a$) symmetry $xy$ plane at $z/h=0$; ($b$) $xz$ plane at distance $y/h=0.003$ from the bottom wall.

In figure 2($b$), it can be seen that the flow separates upstream of the cube, at the saddle point $S_s$ located at $(-1.8, 0, 0)h$. The flow reattaches upstream of the cube at the nodal point $N_{a}$ at $(-0.015, 0, 0)h$. The streamline passing through $S_{s}$ bends around the cube and forms a ‘line of separation’ ($LS$) close to the bottom wall. The ‘line of attachment’ ($LA$) is formed by streamlines passing through $N_{a}$. The horseshoe vortex centre forms a line between $LS$ and $LA$ and is deflected around the cube to form two legs $B$ and $B^{\prime }$. The shear layers separating from the two vertical edges of the front face generate the two lateral vortices $E$ and $E^{\prime }$. The two vortices and the shear layer over the cube join at a higher elevation to form an arc-shaped vortex tube. Downstream of the cube, two symmetrically located points $D_{1}$ and $D_{2}$ indicate two vortices on the bottom wall. Further downstream, the flow reattaches at $S_r$, located at $(3.31, 0, 0)h$. The reattachment length is $L_{R}/h=2.31$.

Contour plots of the Reynolds stresses and the turbulent kinetic energy (TKE) at the symmetry plane $z/h=0$ are shown in figure 3. High levels of $\langle u^{\prime }u^{\prime } \rangle$ are found inside the separating shear layer emanating from the leading edge. The peak value is located above the cube at $(x,y)=(0.9, 1.36)h$, while $\langle v^{\prime }v^{\prime } \rangle$ peaks downstream of the cube at $(x,y)=(2.85, 0.71)h$. The peak of $\langle u^{\prime }v^{\prime } \rangle$ is located at $(x,y)=(0.95, 1.36)h$, which is close to the peak of $\langle u^{\prime }u^{\prime } \rangle$. Finally, the TKE peak is located at $(x,y)=(2.6, 0.86)h$, closer to the peak location of $\langle v^{\prime }v^{\prime } \rangle$. Small patches of Reynolds stresses can be seen upstream of the cube, around the horseshoe vortex centre.
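For readers less familiar with these statistics, the following minimal sketch shows how single-point Reynolds stresses and the TKE can be estimated from a time series of velocity samples. It uses the standard definitions, with the TKE taken as half the sum of the three normal stresses. The function name, the toy data and the absence of any normalisation are assumptions made for illustration; this is not the post-processing used with PANTARHEI.

```python
from statistics import fmean


def reynolds_stresses(u, v, w):
    """Estimate single-point Reynolds stresses and TKE from velocity samples.

    u, v, w are equal-length sequences of instantaneous velocity components
    at one location. Fluctuations are taken about the sample (time) mean.
    """
    u_mean, v_mean, w_mean = fmean(u), fmean(v), fmean(w)
    up = [x - u_mean for x in u]   # u' = u - <u>
    vp = [x - v_mean for x in v]   # v' = v - <v>
    wp = [x - w_mean for x in w]   # w' = w - <w>

    stresses = {
        "uu": fmean([a * a for a in up]),
        "vv": fmean([b * b for b in vp]),
        "ww": fmean([c * c for c in wp]),
        "uv": fmean([a * b for a, b in zip(up, vp)]),
        "uw": fmean([a * c for a, c in zip(up, wp)]),
    }
    # TKE is half the trace of the Reynolds stress tensor.
    stresses["tke"] = 0.5 * (stresses["uu"] + stresses["vv"] + stresses["ww"])
    return stresses


# Toy two-sample series, purely illustrative.
result = reynolds_stresses(u=[1.0, 3.0], v=[0.0, 0.0], w=[2.0, 0.0])
print(result)
```

Because the TKE sums the three normal stresses, its peak tends to sit near the peak of whichever normal stress dominates in the plane considered.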

Contours of the (a–c) Reynolds stresses and (d) TKE at the symmetry $xy$ plane at $z/h=0$.

Figure 4 shows contours of Reynolds stresses and TKE in the $xz$ plane at mid-height $y/h=0.5$. High levels of $\langle u^{\prime }u^{\prime } \rangle$ and $\langle u^{\prime }w^{\prime } \rangle$ are found inside the shear layers separating from the front vertical edges and downstream of the cube. The peak value of $\langle u^{\prime }u^{\prime } \rangle$ is found at $(x,z)=(0.8, \pm 0.85)h$. Notice the high values of the normal stresses in the spanwise direction $\langle w^{\prime }w^{\prime } \rangle$ that peak further downstream at $(x,z)=(3.15, 0)h$. Such high values indicate strong symmetry-breaking motions. The TKE combines features of $\langle u^{\prime }u^{\prime } \rangle$ and $\langle w^{\prime }w^{\prime } \rangle$ but is more influenced by the spanwise stresses.

Contours of the (a–c) Reynolds stresses and (d) TKE in the $xz$ plane at mid-height $y/h=0.5$.

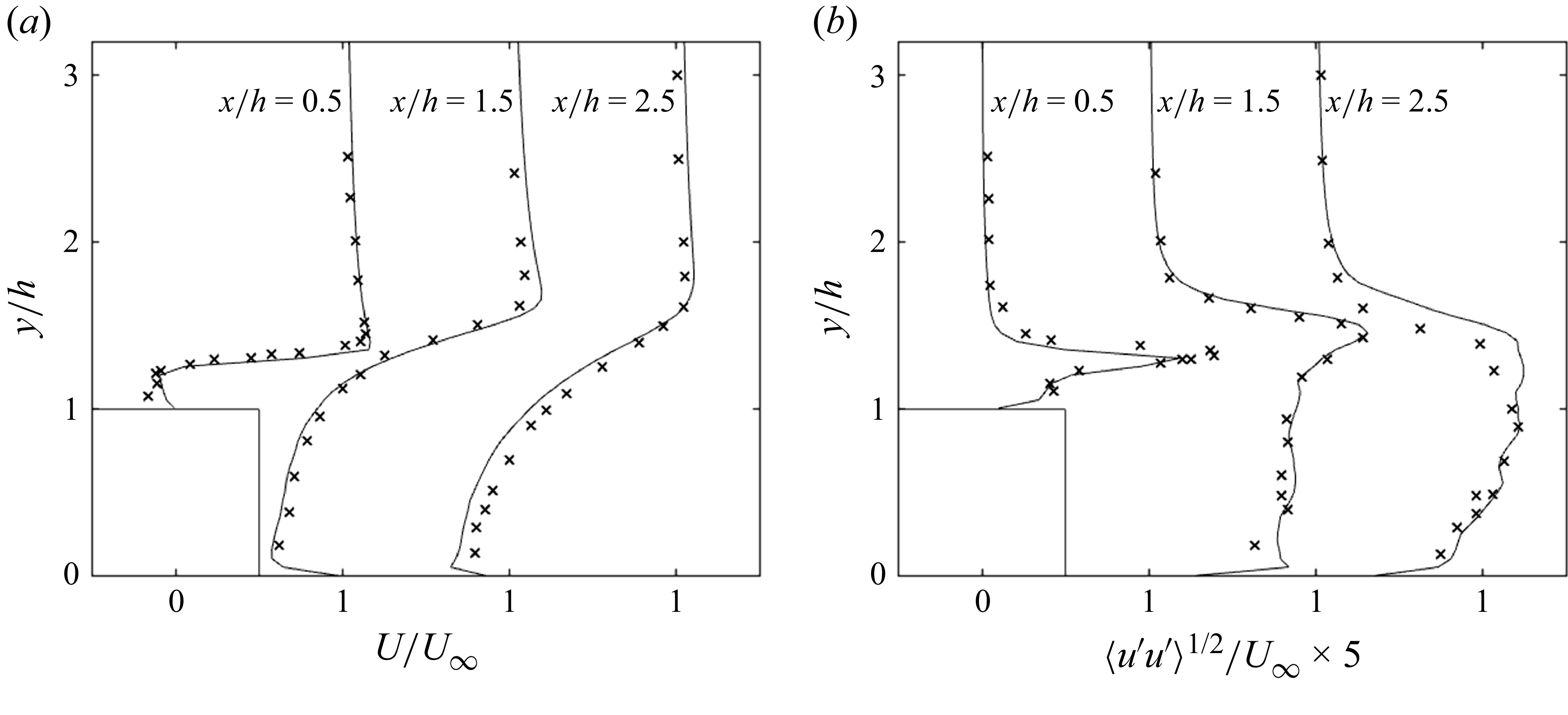

Mean and root-mean-square (r.m.s.) profiles of the streamwise velocity are compared against the experimental data of Castro & Robins (Reference Castro and Robins1977) in figure 5. At

![]() $x/h=0.5$

and

$x/h=0.5$

and

![]() $1.5$

the DNS solution follows the experimental results quite well. At

$1.5$

the DNS solution follows the experimental results quite well. At

![]() $x/h=2.5$

, the numerical results are also good, but slightly overestimate the backflow streamwise velocity within the recirculation zone in

$x/h=2.5$

, the numerical results are also good, but slightly overestimate the backflow streamwise velocity within the recirculation zone in

![]() $y/h=0{-}1$

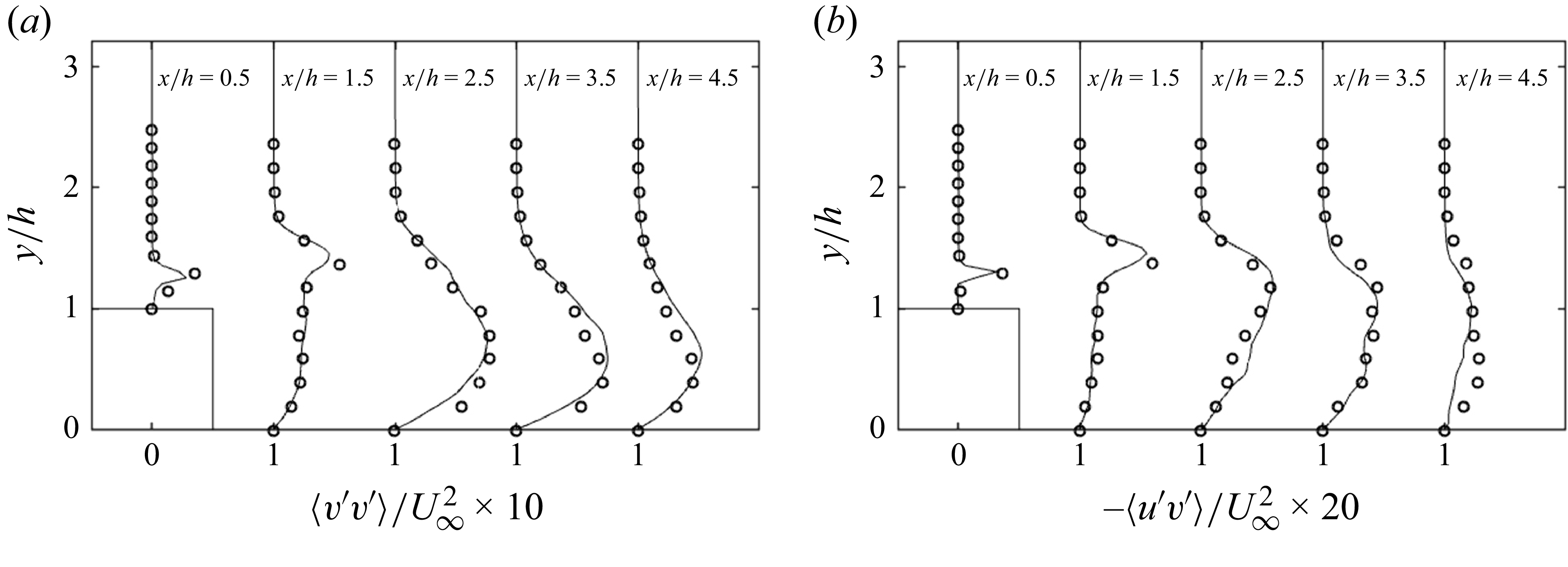

. For the r.m.s. of streamwise velocity, the numerical results give quantitatively good predictions at all streamwise positions. Small differences can be attributed to different inlet velocity profiles. We have used a constant profile at the inlet, but for the experiments a fully developed boundary-layer profile was employed. We also compare our predictions with the DNS of Rossi, Philips & Iaccarino (Reference Rossi, Philips and Iaccarino2010). Figure 6 presents profiles of normal stresses

$y/h=0{-}1$