1. Introduction

It is an open question if and to what extent language and music share a mechanism for perceiving and analyzing pitch accent (e.g. Bautista & Ciampetti, Reference Bautista and Ciampetti2003; Bidelman et al., Reference Bidelman, Gandour and Krishnan2011, Reference Bidelman, Hutka and Moreno2013; Confavreux et al., Reference Confavreux, Croisile, Garassus, Aimard and Trillet1992; Drakoulaki et al., Reference Drakoulaki, Anagnostopoulou, Guasti, Tillmann and Varlokosta2024; Jansen et al., Reference Jansen, Harding, Loerts, Başkent and Lowie2022, Reference Jansen, Harding, Loerts, Başkent and Lowie2023; Knickerbocker, Reference Knickerbocker2007; Liu et al., Reference Liu, Patel, Fourcin and Stewart2010; Lochy et al., Reference Lochy, Hyde, Parisel, Van Hyfte and Peretz2004; Loui et al., Reference Loui, Wu, Wessel and Knight2009; Lu et al., Reference Lu, Ho, Liu, Wu and Thompson2015; Patel, Reference Patel2010; Patel et al., Reference Patel, Peretz, Tramo and Labreque1998b; Peretz, Reference Peretz1993, Reference Peretz2008; Peretz & Coltheart, Reference Peretz and Coltheart2003; Peretz et al., Reference Peretz, Kolinsky, Tramo, Labrecque, Hublet, Demeurisse and Belleville1994, Reference Peretz, Ayotte, Zatorre, Mehler, Ahad, Penhune and Jutras2002; Patel et al., Reference Patel, Foxton and Griffiths2005, Reference Patel, Wong, Foxton, Lochy and Peretz2008; Perrachione et al., Reference Perrachione, Fedorenko, Vinke, Gibson and Dilley2013; Pfordresher & Brown, Reference Pfordresher and Brown2009; Sadakata et al., Reference Sadakata, Weidema, Roncaglia-Denissen and Honing2023; Sammler, Reference Sammler2020; Slevc & Miyake, Reference Slevc and Miyake2006; Tillmann, Reference Tillmann2014). Some theories relate music pitch processing with right-hemisphere brain areas and linguistic prosody with the analogous left-hemisphere brain homologues (Chen et al., Reference Chen, Zhao, Zhong, Cui, Li, Gong, Dong and Nan2018; Peretz, Reference Peretz2008, i.a.). Others have claimed that music processing affects language-associated brain structures (that is, inferior frontal gyrus, cerebellum and primary motor cortex) and that there is a shared frequency response in a subcortical neural pitch network for both language and music (Besson et al., Reference Besson, Schön, Moreno, Santos and Magne2007; Wong et al., Reference Wong, Skoe, Russo, Dees and Kraus2007).

Within current trends in cognitive sciences, a dominant question concerns the investigation of cross-domain correlation transfer phenomena to substantiate the relation of language and music (e.g. see the meta-analysis by Jansen et al., Reference Jansen, Harding, Loerts, Başkent and Lowie2023), while less emphasis is given to the exploration of whether these effects reflect potential shared underlying pitch cognitive mechanisms.

The current study offers a new psycholinguistic approach to real-time processing where music pitch potentially substitutes the function of intonation in temporal structural ambiguous sentences. We thus address the following research question: Does music pitch influence the resolution of temporal structural ambiguity in Greek garden-pathed sentences?

A possible linguistic domain for examining such an interaction between intonation and music pitch is the environments causing the garden-path effect Footnote 1 (Frazier, Reference Frazier1978; Pickering & van Gompel, Reference Pickering and Van Gompel2006), that is, sentences like (1):

In such instances (1), the reader encounters a temporal structural ambiguity: The constituent the violins is initially interpreted as the object of the optionally transitive verb record. Immediately afterwards, once the reader analyses the second Verbal Phrase (VP) were sounding, they realize that the preceding element (the violins) is eventually the subject of that VP and the sentence is disambiguated. It has been observed that if the violins were attached to the second VP, extra processing cost is required (Papadopoulou & Tsimpli, Reference Papadopoulou and Tsimpli2005 for Greek). It has been proposed that the parsing analysis of such sentences is achieved via the late closure principle (that is, parsing preference for attaching upcoming constituents; Frazier, Reference Frazier1978; Papangeli & Marinis, Reference Papangeli and Marinis2010 for Greek).

However, the ambiguity associated with these sentences can be resolved with the use of intonation: a rising intonational emphasis on the first VP (were recording), leads the hearer to directly interpret the violins as the subject of were sounding. Previous research on this hypothesis (Martzoukou & Papadopoulou, Reference Martzoukou and Papadopoulou2020), has found that rising intonation before the closing of the first VP (were recording) indeed shapes the word boundaries, eliminating such ambiguities in Greek.

The purpose of our study is to investigate whether the use of rising music pitch at the loci where the linguistic intonation would rise facilitates the disambiguation in Greek garden-path sentences. If the answer to this question turns out to be positive, we could have evidence from real-time processing that non-linguistic acoustic cues can contribute to language disambiguation, which, in turn, would point toward the direction that linguistic and music pitch share/make use of similar identifying processes.

We will first discuss the theoretical and experimental background, which, more or less, addresses the question regarding shared properties and mechanisms between linguistic and music cognition and we will then outline our hypothesis that music pitch cues may signal a similar function to linguistic intonation as far as disambiguation is concerned.

1.1. Theoretical and experimental background: the relation between language and music and the prosody–pitch interaction

Both language and music systems (specific only to humans, Patel, Reference Patel2010; cf. Cross, Reference Cross, Wallin, Björn and Steven2001; Hauser & McDermott, Reference Hauser and McDermott2003) use small units, which are physical sound entities that result from the sound processing information system. In both cases, there is a common physical process of pairing sound information, which involves pitch (Patel, Reference Patel2010; Randel, Reference Randel1978; Tomasello et al., Reference Tomasello, Call and Hare2003). Moreover, within certain generative and related theoretical models, previous research on language (Chomsky, Reference Chomsky1957, Reference Chomsky1965, Reference Chomsky and Aka1991; Jackendoff, Reference Jackendoff1997; Pinker, Reference Pinker1994; Ullman et al., Reference Ullman, Corkin, Coppola, Hickok, Growdon, Koroshetz and Pinker1997) and music (Lerdahl & Jackendoff, Reference Lerdahl and Jackendoff1977; Reference Lerdahl and Jackendoff1983, see Patel, Reference Patel2010 for a general overview) suggests that both systems have analogous operational systems in the sense that both use a storage mental place (equivalent to lexicon), where lexical information is listed, and a mental, combinatorial, rule-generative system (Chomsky, Reference Chomsky1957, Reference Chomsky1965, Reference Chomsky and Aka1991; Jackendoff, Reference Jackendoff1997; Pinker, Reference Pinker1994; Ullman et al., Reference Ullman, Corkin, Coppola, Hickok, Growdon, Koroshetz and Pinker1997), which enables humans with the capacity to create a(n) infinite number of linguistic and musical progressions respectively.

In addition, prior research on artificial implicit music learning provides empirical evidence regarding the relationship between language and music (Loui, Reference Loui2010, Reference Loui2012; Loui & Wessel, Reference Loui, Wessel and Kam2006, Reference Loui and Wessel2008). These studies have shown that after low input exposure, musically untrained learners are able to recognize familiar notes, but their generalization rules are insignificant. However, once the exposed musical sequences increase, the generalization rates become significantly higher. Similar findings regarding the impact of low or high input complexity come from the Radulescu et al. (Reference Radulescu, Wijnen and Avrutin2018) study. Radulescu et al. showed that artificial language learning is directly affected by the input complexity and the encoding capacity: low input capacity triggers memorization, while high input capacity activates the generalization process. Further extensions on Radulescu’s et al. and Loui’s et al. work suggest that language and music share certain information learning processes (see also Kargakis, Reference Kargakis2019).

Finally, research on structural integration has shown that music affects syntactic processing (Fedorenko et al., Reference Fedorenko, Patel, Casasanto, Winawer and Gibson2009; Slevc et al., Reference Slevc, Rosenberg and Patel2009). In the Slevc et al. (Reference Slevc, Rosenberg and Patel2009) study, it was shown that musical syntactic violations (that is, out-of-key notes) accompanying the segments of sentences like “After the trial the attorney advised (that) the defendant was likely to commit more crimes” led to longer reaction time in the critical region of ambiguity (was). Such an effect was not found when no musical syntactic violation appeared. Similarly, Fedorenko et al. (Reference Fedorenko, Patel, Casasanto, Winawer and Gibson2009), testing the simultaneous structural integration demands on language and music in sentences with local and non-local dependencies showed that out of key musical stimuli led to significantly lower accuracy judgments in non-local dependencies. This effect was not found for acoustically anomalous stimuli (increasing loudness). Both studies explain their findings within the Shared Syntactic Integration Resource Hypothesis (SSIRH) (Patel, Reference Patel2003), which states that the cognitive and neural resources of a shared syntactic mechanism among distinct domains will lead to competition for the available resources. SSIRH finds experimental support in a number of neuroscientific studies (Koelsch et al., Reference Koelsch, Gunter, von Cramon, Zysset, Lohmann and Friederici2002; Levitin & Menon, Reference Levitin and Menon2003; Maess et al., Reference Maess, Koelsch, Gunter and Friederici2001; Patel et al., Reference Patel, Gibson, Ratner, Besson and Holcomb1998a; Sammler et al., Reference Sammler, Koelsch, Ball, Brandt, Elger, Friederici and Schulze-Bonhage2009; Stromswold et al., Reference Stromswold, Caplan, Alpert and Rauch1996; Tillmann et al., Reference Tillmann, Janata and Bharucha2003).

Turning to the question as to whether language shares any underlying process with pitch specifically, the findings up to date are inconclusive. Some neuropsychological studies suggest that individuals with congenital amusia, a disorder in the perception and production of musical pitch, display relatively intact linguistic prosodic perception (e.g. Peretz et al., Reference Peretz, Ayotte, Zatorre, Mehler, Ahad, Penhune and Jutras2002). These studies find further support from some more recent neuroimaging studies, which suggest dissociations for linguistic and music pitch (Chen et al., Reference Chen, Zhao, Zhong, Cui, Li, Gong, Dong and Nan2018, Reference Chen, Affourtit, Ryskin, Regev, Norman-Haignere, Jouravlev, Malik-Moraleda, Kean, Varley and Fedorenko2023; Maggu et al., Reference Maggu, Wong, Antoniou, Bones, Liu and Wong2018; Peretz, Reference Peretz2008). However, other neuropsychological studies on a variety of linguistic phenomena, such as question–statement pairs and lexical tone distinction, have found that pitch-processing deficits associated with congenital amusia (or tone-deafness) can extend to speech prosody, particularly when prosodic intervals involve small semitone differences and are, therefore, less perceptually discrete (see Liu et al., Reference Liu, Patel, Fourcin and Stewart2010; Lu et al., Reference Lu, Ho, Liu, Wu and Thompson2015; Patel et al., Reference Patel, Peretz, Tramo and Labreque1998b, Reference Patel, Foxton and Griffiths2005, Reference Patel, Wong, Foxton, Lochy and Peretz2008; Pfeifer et al., Reference Pfeifer, Hamann and Exter2014; Tillmann et al., Reference Tillmann, Rusconi, Traube, Butterworth, Umilta and Peretz2011 for congenital amusia; Bautista & Ciampetti, Reference Bautista and Ciampetti2003; Confavreux et al., Reference Confavreux, Croisile, Garassus, Aimard and Trillet1992 for degenerative amusia). Within this approach, prior research’s inability to detect those general pitch deficits can be attributed to easy linguistic pitch processing tasks (Ayotte et al., Reference Ayotte, Peretz and Hyde2002; Foxton et al., Reference Foxton, Dean, Gee, Peretz and Griffiths2004; Jiang et al., Reference Jiang, Hamm, Lim, Kirk and Yang2010; Liu et al., Reference Liu, Jiang, Thompson, Xu, Yang and Stewart2012; Nguyen et al., Reference Nguyen, Tillmann, Gosselin and Peretz2009; Peretz, Reference Peretz2006, Reference Peretz2008; Peretz & Coltheart, Reference Peretz and Coltheart2003; Peretz et al., Reference Peretz, Ayotte, Zatorre, Mehler, Ahad, Penhune and Jutras2002). This view is supported by evidence from various domains. For instance, clinical studies investigating cross-domain treatment interventions in speech and language disorders (e.g. aphasia, dyslexia) suggest that training in one domain can have benefits in another (Besson et al., Reference Besson, Schön, Moreno, Santos and Magne2007; Zumbansen et al., Reference Zumbansen, Peretz and Hébert2014). Νeuroimaging research shows that the brainstem, which is involved in the encoding of frequency-related stimuli, is highly sensitive to both speech and music (Krishnan et al., Reference Krishnan, Bidelman, Smalt, Ananthakrishnan and Gandour2012; Musacchia et al., Reference Musacchia, Sams, Skoe and Kraus2007; Wong et al., Reference Wong, Skoe, Russo, Dees and Kraus2007), providing a neural basis for general cross-domain auditory effects.

In line with these findings, a number of behavioral and neuroscientific studies suggest that there might be cross-domain transfer effects from language to music and vice versa. For instance, Wong et al. (Reference Wong, Skoe, Russo, Dees and Kraus2007) found that speakers of tonal languages display enhanced music tone perception and Bidelman et al. (Reference Bidelman, Gandour and Krishnan2011) revealed that trained musicians show better linguistic tone perception. Additional evidence comes from second language learning, showing that music abilities may lead to a general phonological advantage in second language learning (Knickerbocker, Reference Knickerbocker2007; Slevc & Miyake, Reference Slevc and Miyake2006) and, that native speakers of tonal languages (e.g. Mandarin, Cantonese and Vietnamese) have better performance under imitation and discrimination of interval music pitch demands compared to speakers of intonation languages (e.g. English) (Pfordresher & Brown, Reference Pfordresher and Brown2009). A recent meta-analysis by Jansen et al. (Reference Jansen, Harding, Loerts, Başkent and Lowie2023) supports such cross-domain transfer effects, revealing a positive correlation between prosodic perception and musical abilities across 109 relevant studies.

These results also relate to work examining the neural basis of pitch processing. Past studies report a link between low-level pitch processing and left language-associated brain areas, and higher-order pitch processing is strongly associated with the right auditory neural circuitries (Hyde et al., Reference Hyde, Lerch, Zatorre, Griffiths, Evans and Peretz2007, Reference Hyde, Zatorre and Peretz2011; Zatorre & Gandour, Reference Zatorre and Gandour2008; Zatorre et al., Reference Zatorre, Belin and Penhune2002). However, other studies (e.g. Patel et al., Reference Patel, Oishi, Wright, Sutherland-Foggio, Saxena, Sheppard and Hillis2018; Sammler et al., Reference Sammler, Grosbras, Anwander, Bestelmeyer and Belin2015; Shapiro & Danly, Reference Shapiro and Danly1985) have found right hemisphere dominance for prosodic processing, suggesting that prosodic processing might overlap in music-related auditory circuits. Furthermore, a functional magnetic adaptation study by Sammler et al. (Reference Sammler, Baird, Valabrègue, Clément, Dupont, Belin and Samson2010) showed pre-lexical, phonemic integrated representation for lyrics and tunes in unfamiliar songs in the left dorsal precentral gyrus. This evidence reveals a potential fusion of linguistic and music acoustic features at early processing stages; however, the semantic and structural interpretation of lyrics was processed independently in the superior temporal sulcus, suggesting separate integration-level processes for the linguistic and music interpretations.

These studies indicate that cross-domain effects between language and music are evident not only behaviorally, but also at multiple stages of neural processing. However, findings from previous studies call for further examination before drawing a definite conclusion concerning the shared pitch processes and, even more, regarding the ways pitch is organized in cross-domain contexts such as language and music.

2. The hypothesis: high music pitch replacing rising intonation

It has been shown that rising intonation resolves (temporal) ambiguity (evidence from Greek; Arvaniti & Baltazani, Reference Arvaniti and Baltazani2005; Martzoukou & Papadopoulou, Reference Martzoukou and Papadopoulou2020; Papangeli & Marinis, Reference Papangeli and Marinis2010). Martzoukou and Papadopoulou (Reference Martzoukou and Papadopoulou2020), through a production study measuring comprehension accuracies, examined structural ambiguities in garden-path sentences like (2):

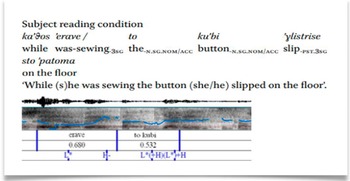

In (2), the Noun Phrase (NP) to kubi (the button) can either be interpreted as the subject of the second VP glistrise (slipped) (reading 2a) or as the object of the first VP erave (was sewing) (reading 2b). They present two different intonation patterns (Figures 1 and 2; based on Arvaniti & Baltazani, Reference Arvaniti and Baltazani2005), annotated in Praat (Boersma, Reference Boersma2011) corresponding to reading (2a) and (2b) respectively. Thus, they found that when the participants interpret the NP to kubi (the button) as the subject of the second VP (that is, reading (2a)), they place rising intonation on the first VP erave (was sewing) (see Figure 1). In contrast, when the participants interpret the NP to kubi as the object of the first VP (that is, reading (2b)), the rising intonation is placed on the NP to kubi (see Figure 2).

Spectrogram and F0 contour for the subject interpretation (from Martzoukou & Papadopoulou, Reference Martzoukou and Papadopoulou2020). The rising phrase accent H- marks the boundary of the first phrase (kathos erave).

Spectrogram and F0 contour for the object interpretation (from Martzoukou & Papadopoulou, Reference Martzoukou and Papadopoulou2020). The rising phrase accent H- marks the boundary of the first phrase (kathos erave to kubi).

Given the effect of the intonation in ambiguous sentences like (2), we aim to investigate whether music melodic patterns, analogous to the intonation patterns, can exhibit similar effects. More specifically, we examine whether high music pitch facilitates the resolution of temporal structural ambiguity. In our stimuli, instead of rising intonation, we use high music pitch within the same phrase boundaries as in Figure 1. Through a self-paced reading-listening experiment, we compare reading times across word segments in ambiguous and non-ambiguous conditions, under high, neutral and low music pitch processing demands.

2.1. Hypothesis

If high music pitch in the critical regionFootnote 2 facilitates the processing of temporal ambiguous sentences and there is no similar effect from low or neutral pitch, then high music pitch functions similarly to rising intonation in the language domain.

2.2. Predictions

Our hypothesis makes certain predictions regarding the patterns we anticipate to observe:

-

i) High music pitch may function as a substitute for the rising intonation in terms of acoustical processing, facilitating the direct interpretation of the sentence. In such a case, we should be able to observe shorter reading times in the critical region, compared to the conditions with neutral and low music pitch.

-

ii) Prediction (i) should be confirmed across critical and control conditions. That is, reading times in high music pitch exposure in the ambiguous condition should be similar to the corresponding control condition.

-

iii) Moreover, it is expected that the non-ambiguous neutral and low (control) conditions will display shorter reading times, compared to the corresponding critical conditions.

-

iv) If there is an effect of low music pitch, it could mean that the interaction of linguistic and non-linguistic acoustic information may extend to a more general acoustic/sound level.

-

v) If low music pitch exhibits longer reading times compared to neutral pitch, delaying processing in the ambiguous sentence, it would mean that the deviant pitch functions as a distracting acoustic cue. In such an outcome, it becomes clearer that the high-pitched effect is not simply due to an accidental manipulation in pitch.

If our hypothesis and predictions are on the right track, understanding the role of music pitch in prosodic perception may shed light on potential shared mechanisms in real-time processing between music and language, which in turn may reveal fundamental principles about how the brain organizes complex auditory processing.

3. Methodology

3.1. Linguistic and music stimuli

In a temporal (local) ambiguous context like (1) (repeated below), rising linguistic pitch in the first VP (were recording) would normally lead to the interpretation that the violins are the subject of the second VP (were sounding).

Greek is a non-tonal language and speech intonation contrasts are continuous (Arvaniti & Baltazani, Reference Arvaniti and Baltazani2005). In contrast, the high music pitch scales replacing the rising intonation demand a more fine-grained processing. Employing a more fine-grained process, the pitch direction (high/neutral/low) may show similar processing effects, assuming that the activation of a higher-order processing mechanism of pitch (Patel, Reference Patel2010) can affect the processing of the ambiguous sentences.

The present experiment is a self-paced reading non-cumulative task in a stationary-window combined with music (listening). Therefore, reading times are not affected by eye-movements. Word segments are accompanied by the simultaneous onset of musical notes, leading to a complete sentence and melody, respectively (Figure 3). After each sentence was accomplished, a comprehension question followed to ensure participants’ constant attention. The task measures reading times (in ms) across segments (as presented in Figure 3) and evaluates comprehension judgments through their response times and rates.

Schematic representation of the self-paced reading-listening task. Word segments were accompanied by music notes of various pitch. The high music pitch is on the third segment (emphatic region).

Syntactically, the NP ta vjolia (the violins) (see Figure 3) can be attached either in the preceding or in the following VP. If it is attached to the first VP ixografusan (were recording), it is (temporally) given the role of the object, while if it is attached to the second VP akugodan (were sounding), it is interpreted directly as its subject. In terms of processing, if the reader treats the violins as the object of the first VP, they tend to get confused once they reach the second VP (that is, the critical segment) and start looking for an alternative re-interpretation. They eventually re-interpret the NP ta vjolia (the violins) as the subject of the second VP (were sounding), and the sentence is disambiguated (see also Papadopoulou & Tsimpli, Reference Papadopoulou and Tsimpli2005; Papangeli & Marinis, Reference Papangeli and Marinis2010).

Throughout the linguistic stimuli, the task involves six-word segments (as in Figure 3), which are accompanied by piano syncopated music notes (one note per segment). Every time a participant pressed the button, the next segment would appear in the middle of the screen simultaneously with music (listening), while the previous segment would disappear. This gives the reader the opportunity to process segments at their own pace. Nevertheless, participants must pay attention to the word segments in order to retain them in memory until the sentence is completed. Once they reach the third segment (emphatic region), depending on the condition, there are three possible note variations: participants would hear either a high pitch-527 Hz note, a neutral pitch-394 Hz note, or a low pitch-295 Hz note. The remaining segments displayed stable notes across items, regardless of the condition. The fifth segment (second VP of the sentence) is the critical region where participants are expected to be garden-pathed and look for the alternative re-interpretation. Thus, reaction time on the fifth segment is expected to be shorter, if participants perceive the high music pitch on the third segment as an indication for closing the phrase boundaries (similarly to how the listener perceives the rising intonation in Figure 1).

Regarding the music stimuli, they were syncopated piano notes in wav.file format, which were created for the purposes of the current experiment to accompany the word segments, following the intonational pattern of Martzoukou and Papadopoulou (Reference Martzoukou and Papadopoulou2020) where the rising pitch accent determines the phrase boundary in the subject interpretation of the garden path sentence (Figure 1).

More specifically, there is a first C major note that functions as a context to shape the perceptual tonal center and create pitch expectancies (Patel, Reference Patel2010). It has the largest duration among the musical notes (1.08 seconds). That note is always attached to the word segment Xtes (yesterday) that appeared in all items. The note G4 is also present across stimuli and it accompanies the word segment eno (while). The reason that these segments were the same across items is to ensure that high pitch will not be displayed too early in the sentence. The duration of eno (while) and the rest notes was equal (0.641 ms) across music stimuli. Thus, if there are selective differences across segments, it cannot be attributed to the duration of music. The third segment was the emphatic region where there is a music pitch variation depending on the condition (high pitch C5, neutral pitch G4, lower pitch D4). The musical notes that followed the third region were G4, E4 and C4 for the remaining three segments, respectively.

While the music stimuli are built on segmental acoustic cues, they are not expected to be perceived as discrete acoustic cues; by the completion of the sequence of segments, a complete melody is shaped and we are dealing with music progressions (C2, G4, C5/G4/D4, G4, E4, C4; bold letters represent the high/neutral/low note of the emphatic region) (see Figures 3–6). Houtsma and Goldstein (Reference Houtsma and Goldstein1970) have shown that the hearer is perfectly able to identify a melody, even when the musical sounds have no energy at the F0. Moreover, it has been suggested that both musicians and non-musicians with music knowledge of their culture automatically develop music expectations while processing discrete acoustic events differing in pitch (Bigand & Poulin-Charronnat, Reference Bigand and Poulin-Charronnat2006; Tillmann, Reference Tillmann2014). We, therefore, expect the participants to perceive our stimuli as music. This sequence, despite the fact that it displays a variation in the third note, does not violate any music structure expectancy, as all options are in key. This way, any potential effect on the processing of the sentence is expected to be simply due to pitch manipulations and not due to music syntax.

3.1.1. Conditions and items

The experimental conditions consist of three critical and three control conditions. The three critical conditions (1)–(3), that is, garden-path syntactically temporal ambiguous sentences, are accompanied by the simultaneous onset of musical notes as depicted in Figures 4–6.

Music spectrogram per segment with high music pitch (527 Hz) on the third segment.

Music spectrogram per segment with neutral music pitch (394 Hz) on the third segment.

Music spectrogram per segment with low music pitch (295 Hz) on the third segment.

Critical condition 1: Ambiguous + high music pitch.

Critical condition 2: Ambiguous + neutral music pitch.

Critical condition 3: Ambiguous + low music pitch.

In the first VP (third segment), a high, neutral or low music pitch was employed depending on the condition (Figures 4–6 respectively), while musical cues were identical across the rest of the other segments.

-

1. Ambiguity + high music pitch (527 Hz)

-

2. Ambiguity + neutral music pitch (394 Hz)

-

3. Ambiguity + low music pitch (295 Hz)

In the three control conditions, the music cue was kept identical to the critical conditions (that is, high/ neutral/ low) and the difference concerned only the linguistic stimuli in the sense that there was no garden path ambiguity (that is, Xtes eno ixografuse ta vjolja akuge paratonies/Yesterday while (he/she) was recording the violins, (he/she) was listening to mistunes).

Control condition 1: Non-Ambiguous + high music pitch.

Control condition 2: Non-Ambiguous + neutral music pitch.

Control condition 3: Non-Ambiguous + low music pitch.

Overall, participant responses were evaluated on 24 pseudo-randomized items to distract participants from any association of specific notes with specific segments (see Appendix 1 linguistic stimuli). Half of those items (12/24) were temporal ambiguous sentences (critical items) and the remaining 12 correspond to non-ambiguous sentences (control items). The control items were morphologically manipulated in such a way that the constituent ta vjolia (the violins) does not agree in number with the verb of the second VP (hence, no garden-path effect). Moreover, there were two practice items at the beginning of the task, identical to the experimental material and 3 fillers, randomly pseudo-randomized, in order to give the participants short breaks during the task and further distract them from developing a response strategy.Footnote 3

3.2. Participants, setting and procedure

3.2.1. Participants and setting

The study has been approved by the Ethics Assessment Committee of the Utrecht Institute of Linguistics (UiL OTS), which is part of Utrecht University.

Seventy-two Greek monolingual participants were recruited privately through a post on social media, acquaintances and students from the foreign language center Paneuropia, where the experiment took place, in Heraklion City in the Crete region. Before the experiment, participants were informed about their rights and the type of study and they had to fill in a questionnaire with all the demographic and background information (such as age, education and music experience familiarity and hearing/visual issues) and sign the consent form. Twenty-two subjects were males and 50 were females. Their age ranged from 20 to 31 years old (mean age: 25;6). All the participants were undergraduate, graduate and postgraduate students with fully developed reading skills. Three out of the initial 72 participants reported that they have been diagnosed with dyslexia and they were replaced by new participants. None of the included participants reported any hearing/visual loss, reading difficulties and language or other diagnosed disorders. Eight of the 72 participants were left-handed and the computer mouse was adjusted accordingly.

Twelve out of 72 participants had music expertise (that is, active musicians with more than 3 years of systematic training). For this group, a separate analysis took place to test whether music expertise leads to enhanced linguistic perception. Although there may be a tonal perceptual advantage from music to language (Jansen et al., Reference Jansen, Harding, Loerts, Başkent and Lowie2023), it was not expected that musicians process music pitch differently for two reasons: (a) It has been reported that non-musicians detect pitch contrasts in music and they process it automatically, even if they are instructed to attend to language and ignore music in simultaneous onset contexts (Koelsch et al., Reference Koelsch, Gunter, Wittfoth and Sammler2005), and, (b) in most cultures, pitch changes of one octave can be detected even by novice listeners (Dowling & Harwood, Reference Dowling and Harwood1986 as reported in Patel, Reference Patel2010). A separate statistical analysis was run for this group (details in Section 4) and confirmed this prediction. The factors of age, along with the educational and music background, serve as control for the processing capabilities of the participants and their cognitive executive functions (e.g. memory).

The experiment took place in a studio that was not soundproof, as, due to the Covid-19 measures, access to soundproof booths was forbidden. However, the studio has been designed and used for listening training purposes, constructed to abolish external noises (double-glazed windows and door). None of the participants reported any external noise or distraction when they were asked. For the experiment, a Samsung laptop with Zep 1.17.1 software (Veenker, Reference Veenker2012), two Edifier speakers (R1855DB, 70 W RMS power) and one adjustable computer mouse were used. In similar self-paced experiments (Fedorenko et al., Reference Fedorenko, Patel, Casasanto, Winawer and Gibson2009; Slevc et al., Reference Slevc, Rosenberg and Patel2009), headphones have been used instead of speakers. However, this was avoided for two reasons. First, due to Covid-19 measures and secondly, because, during the pilot testing, it was observed that speakers made the task more natural and that both stimulus types (language and music) had perfect synchronization onset. The volume was approximately 100 db across participants. None reported that the loudness of the speakers distracted them.

3.2.2. Procedure

Before the beginning of the task, participants were introduced to the equipment and the mode of the experiment and were requested to turn their mobiles to flight mode. It was made clear that it was of great importance to complete the experiment without external distractions. They were also instructed to read the sentences carefully, even the ones that might appear several times, as there was always a comprehension question that needed to be answered at the end. Participants had to answer the follow-up comprehension question (e.g. Were the violins sounding out of tune?) by choosing between the options Yes/ No. No feedback was given to their answers. Moreover, they were not advised to pay attention to the music. However, it was made clear that they are going to read segments word by word, which are accompanied by the simultaneous onset of a music note (presented over speakers). Participants had to press the spacebar in order to proceed to the next segment. In the beginning, there was a practice phase to ensure that they understood how the task works and immediately after the practice phase, there was a statement encouraging them to ask any questions before the experimental phase. We also had a short evaluation questionnaire at the end of the task, asking participants to report any difficulties/issues they faced regarding the setup of the experiment (e.g. noise, concentration difficulties, tiredness). The total duration of the experimental task was approximately 10 minutes. There was no financial compensation for the participants.

3.3. Experimental design model

The design of the task is a Latin square with respect to 12 experimental items and a within-subject design with respect to 12 additional experimental items. Thus, 12 experimental items (2 different items × 6 conditions) showed up once across participants and 12 experimental items (2 identical items × 6 conditions) showed up within participants.

The reason for this manipulation in the design was to increase the reliability of reading times compared to when participants would go through only different items in every condition. The within-subject items were used to exclude the possibility that reading time differences stem from lexical differences and to ensure that potential effects are only due to music pitch changes. However, in this case, the risk was that participants might process those sentences faster since they had encountered them under a different condition and their interpretations might be affected. Participants reported that when they read an item more than once, they were still processing it as a novel instance, as they were instructed. The within-subject items analysis did not show any substantial difference from the overall results.

3.4. Analysis

The dependent variables of the study are reading times in milliseconds (ms) and comprehension judgments (that is, percentage accuracy of sentence ambiguity resolution). The independent variables are high, neutral and low pitch at two levels (ambiguous/non-ambiguous sentences). Overall, the answers to the comprehension questions were 84% correct, ensuring that participants paid attention to the task.

The statistical analysis of the reading times took place separately for each word segment (Figure 7). Recall that our focus is on the critical region (fifth segment), as it represents the moment of the realization of the ambiguity (green bars in Figure 7). We considered reading times equal to or more than 5000 ms outside the normal distribution and we excluded the outliers that fell within this reaction time range. Moreover, the statistical analysis for items which showed less than 60% correct responses did not reveal any significant difference from the overall results and were not excluded. We attributed low accuracy to individual difficulties in interpreting specific comprehension questions.

Mean reading times in milliseconds across conditions per region.

In the primary data analysis across segments, we observed that in the last segment, there was a large distribution of the reading times. That could be attributed to individual different pauses at the end of the sentence (Figure 7). Therefore, we excluded the post-critical region from our analysis.

For the analyses, we used two statistical tests: a one-way F-test (ANOVA) for the comparison of the means of the three experimental ambiguous conditions (high/neutral/low pitch) and a two-sample Welch t-test for the comparison of each experimental condition (high/neutral/low ambiguous) with the corresponding control condition (high/neutral/low non-ambiguous). Further post hoc tests using Tukey’s HSD detected precise effects among the three-group interaction. The statistical analysis was conducted in Python 3.12.4 (Van Rossum & Drake, Reference Van Rossum and Drake2009) in Visual Studio Code (Microsoft Corporation, 2024). The reading and manipulation of the data were done with the package Pandas (McKinney et al., Reference McKinney2010) and the visualization through matplotlib.pyplot (Hunter, Reference Hunter2007). For the general statistical analysis, the packages that were used is SciPy (Jones et al., Reference Jones, Oliphant and Peterson2001; Virtanen et al., Reference Virtanen, Gommers, Oliphant, Haberland, Reddy and Cournapeau2020), NumPy (Harris et al., Reference Harris, Millman, van der Walt, Gommers, Virtanen, Cournapeau, Wieser, Taylor, Berg, Smith, Kern, Picus, Hoyer, van Kerkwijk, Brett, Haldane, Fernández del Río, Wiebe, Peterson and Oliphant2020) and more specifically, scikit_posthocs (Pedregosa et al., Reference Pedregosa, Varoquaux, Gramfort, Michel, Thirion, Grisel, Blondel, Prettenhofer, Weiss, Dubourg, Vanderplas, Passos, Cournapeau, Brucher, Perrot and Duchesnay2011) within a three-group interaction. All data and analysis code necessary to reproduce the findings of this study are available in an anonymized Open Science Framework (OSF) repository at https://doi.org/10.17605/OSF.IO/DKJ4N (Supplementary Materials).

4. Results

Mean reading times are presented in milliseconds (ms) per condition in the critical region (see Table 1, Figure 8), as shown through the overall data analysis. For the comparison across ambiguous conditions (high 1169 ms, neutral 1305 ms and low pitch 1158 ms), our one-way F-test ANOVA showed significance of the reading times and the effect is small F(2, 849) = 3.98, p = .01, η 2 = .009. Further post hoc tests using Tukey’s HSD revealed that this effect is significant while comparing high and low with neutral pitch (p = .048, p = .036), but not between low versus high pitch (p = .85). These tendencies from the low and high music pitch are supported through the comparisons of the experimental low and high pitch conditions with the analogous non-ambiguous conditions; the Welch two-sample t-tests revealed that in low music pitch, reading times (1158 ms vs 1065 ms) did not reach significant differences t(574) = 1.73, p = .08, d = .14. Similarly, in high music pitch, there was no significant difference between experimental and control conditions (that is 1169 ms vs. 1097 ms) t(574) = 1.26, p = .21, d = .10, both reflecting a potential facilitation effect from music. In contrast, in neutral pitch conditions, the responses are significantly dissimilar with a moderate effect (that is, 1305 ms vs 1073 ms) t(574) = 4.16, p < .001, d = .35, indicating that music pitch in this case did not influence the resolution of ambiguity and led to eliminated garden-path effects. We further conducted a post hoc power analysis by using G*Power (Faul et al., Reference Faul, Erdfelder, Lang and Buchner2007); with an effect size of dz = 0.35, α = 0.05 and N = 72 (participants), the paired-samples t-test showed that the achieved power was 0.90. The calculation indicates a 90% probability of detecting an effect of this magnitude, which is above the suggested threshold of 0.80 (Cohen, Reference Cohen1988). This validates that the effect is meaningful.

Descriptive statistics of the mean reading times for the critical region across conditions

Overall results: Mean of reading times per condition on the critical region.

A separate analysis was conducted to test whether identical items across participants and conditions (within-subject experimental items) led to results similar to Latin-square results. Our one-way F-test (ANOVA) revealed that reading times displayed a marginal effect among experimental conditions F(2, 429) = 2.45, p = .08, η 2 = .01. Further post hoc analysis using Tukey’s HSD test revealed that low pitch leads to marginal significance in the reading times while comparing it with neutral pitch (p = .07). This was not the case in the comparisons between low vs high pitch (p = .53) and high versus neutral pitch (p = .53). Similarly to the overall data analysis, the comparison of high and low pitch exposure between ambiguous and the corresponding control conditions, revealed similar reading times t(286) = 1.63, p = .10, d = 19 and t(286) = 1.27, p = .21, d = 15 respectively. This was not the case for neutral pitch, which showed significantly longer reading times in the experimental condition, compared to the analogous control condition with a moderate effect t(286) = 3.11, p = .002, d = 37.

In sum, the overall results are in favor of our hypothesis, revealing a potential facilitation effect, not only from high music pitch, as expected, but also from low music pitch, supporting the idea that a random acoustic deviant manipulation in pitch may signal the resolution of ambiguity. We return to this issue in the discussion section.

Regarding the group of musicians, we performed a second analysis to test whether they display enhanced linguistic processing of the garden-path sentences that could be attributed to music transfer effects. They did not reveal any significant effect across experimental conditions F(2, 141) = 0.50, p = .60, η 2 = 007. No significant pitch effect was also found across the comparisons of the experimental to the control conditions under high (t(94) = 0.65, p = 0.51, d = .13, neutral t(94) = 1.28, p = 0.20, d = .26, and low pitch t(94) = 1.12, p = 0.26, d = 23. Thus, musicians, compared to non-musicians, do not reveal any music pitch perceptual advantage while processing garden-pathed sentences (unlike prior findings on tonal perceptual advantages for musicians, e.g., Jansen et al., Reference Jansen, Harding, Loerts, Başkent and Lowie2023).

5. Discussion

Our findings show that the initial hypothesis is only partially confirmed: high music pitch does function similarly to the rising intonation and eliminates structural ambiguity; however, the unexpected finding was that it is not only high music pitch that shows this effect, but low music pitch as well. This was evident through the comparison of reading times on the critical region across conditions, which revealed a facilitation effect for both the high and low music pitch. Crucially, this was not the case for the neutral pitch condition, which did not show any impact on the resolution of the garden-path effect, as reading times were significantly longer in the ambiguous condition, compared to the corresponding control condition. This pattern was confirmed through separate analyses, which excluded items with low percentage scores, repeated items analysis, and high comprehension accuracies of the participants.

The facilitation effect found on low music pitch can be accounted for within the concept of processing uncertain/surprising information (see Friston, Reference Friston2010). In our study, it could be that the low pitch displayed more uncertainty (a surprising cue) compared to neutral music pitch (where pitch distances were closer to the melody and thus more expected). Within Friston’s, (Reference Friston2010) view, the acoustic surprisal of low music pitch may be interpreted as a highly informative event; the processing of this information calls for reducing uncertainty, facilitating the avoidance of a garden-path in the ambiguous sentences.

These findings appear to be in line with our prediction (iv) that an accidental, deviant manipulation of pitch functions as a non-linguistic acoustic cue capable of signaling a sound emphasis which could result in the resolution of ambiguity. Since the effect does not come only from the expected high music pitch cue, future research should focus on a potential shared underlying general sound process to test whether a simple non-music/non-linguistic acoustic cue can substitute prosodic signaling for the purposes of disambiguating. Moreover, follow-up studies on listening tasks, with advanced control regarding the variability and the complexity of analogous stimuli between linguistic and (non-) music sound pitch (e.g. identical psychoacoustics), may reveal more on the nature of the language-music interaction. Understanding the role of a more general process for analyzing pitch beyond language and music could contribute to a growing body of studies that focus on the potentially shared processes in these domains (e.g. Confavreux et al., Reference Confavreux, Croisile, Garassus, Aimard and Trillet1992; Fedorenko et al., Reference Fedorenko, Patel, Casasanto, Winawer and Gibson2009; Harding et al., Reference Harding, Sammler, Henry, Large and Kotz2019; Jackendoff, Reference Jackendoff2009; Koelsch et al., Reference Koelsch, Gunter, von Cramon, Zysset, Lohmann and Friederici2002; Krishnan et al., Reference Krishnan, Bidelman, Smalt, Ananthakrishnan and Gandour2012; Lu et al., Reference Lu, Ho, Liu, Wu and Thompson2015; Patel, Reference Patel2003; Patel et al., Reference Patel, Foxton and Griffiths2005, Reference Patel, Wong, Foxton, Lochy and Peretz2008; Patel et al., Reference Patel, Peretz, Tramo and Labreque1998b; Perrachione et al., Reference Perrachione, Fedorenko, Vinke, Gibson and Dilley2013; Sadakata et al., Reference Sadakata, Weidema, Roncaglia-Denissen and Honing2023; Sammler, Reference Sammler2020; Sammler et al., Reference Sammler, Koelsch, Ball, Brandt, Elger, Friederici and Schulze-Bonhage2009; Slevc et al., Reference Slevc, Rosenberg and Patel2009; Tillmann et al., Reference Tillmann, Rusconi, Traube, Butterworth, Umilta and Peretz2011).

Our findings showing that music pitch affects linguistic processing are in line with existing behavioral and neural evidence supporting cross-domain effects between language and music (e.g. Bidelman et al., Reference Bidelman, Gandour and Krishnan2011; Patel et al., Reference Patel, Oishi, Wright, Sutherland-Foggio, Saxena, Sheppard and Hillis2018; Sammler et al., Reference Sammler, Baird, Valabrègue, Clément, Dupont, Belin and Samson2010, Reference Sammler, Grosbras, Anwander, Bestelmeyer and Belin2015; Shapiro & Danly, Reference Shapiro and Danly1985; Wong et al., Reference Wong, Skoe, Russo, Dees and Kraus2007; Zatorre et al., Reference Zatorre, Belin and Penhune2002). More specifically, the observed effect of high and low pitch would not take place if music pitch information was not accessible within the same processing channel and (possibly) via shared processing resources within the general auditory/sound channel. Prior hypotheses on the shared underlying computational processes support the idea that language and music share processing resources during the integration of structural information (Patel, Reference Patel2003; Slevc et al., Reference Slevc, Rosenberg and Patel2009). The results of the present study reveal that this interaction may be extended not only to the syntactic processing level between language and music, but also to the general auditory channel. This assumption is supported by the Shared Sound Category Learning Mechanism Hypothesis (SSCLMH) (Patel, Reference Patel2010), which states that there must be a distinction between the shared developmental processes among domains such as language and music, and the outcomes of those processes that lead to domain-specific conceptualizations. In line with this hypothesis, Loui et al. (Reference Loui, Wu, Wessel and Knight2009) further suggest a general acoustic mechanism for analyzing pitch, while a significant amount of evidence has reported correlation phenomena related to pitch processing and prosodic perception between language and music (see Jansen et al., Reference Jansen, Harding, Loerts, Başkent and Lowie2023 for a recent meta-analysis), pointing toward the idea of a more general conceptual mechanism for processing pitch.

Returning to the concept of uncertainty, the brain is in a constant intrinsic effort to minimize surprise as a natural tendency to resist disorder (Predictive Coding Theory-PCT; Friston, Reference Friston2005, Reference Friston2010; Friston & Kiebel, Reference Friston and Kiebel2009). PCT suggests that the brain functions with probabilistic error calculations to reduce the uncertainty of the input. Under this view, one could further explore whether employing a melody, which represents the language-specific intonation pattern, can facilitate the resolution of a temporal syntactic ambiguity. In such a case, would the brain try to minimize syntactic uncertainty by eliminating temporal ambiguity on the basis of the provided melody? Future research would benefit from taking into account more general cognitive mechanisms, like predictive coding (Drakoulaki et al., Reference Drakoulaki, Anagnostopoulou, Guasti, Tillmann and Varlokosta2024; Fiveash, Tillmann & Sammler, Reference Fiveash, Tillmann and Sammler2024 for a general review), when testing domain-general versus domain-specific hypotheses in language and music (see also Kargakis et al., Reference Kargakis, Harding, Coler and Lowieunder review, for further exploration on the predicative role of music under uncertain linguistic phenomena).

In conclusion, the concept of shared processing resources among distinct cognitive domains may be linked to the general principles of the organization of the human brain, such as preservation of available processing resources/energy (Bullmore & Sporns, Reference Bullmore and Sporns2012). Thus, if linguistic pitch shares common acoustic properties with music and the general auditory channel, it would be logical to hypothesize that, for energy cost reduction, there might be processes among different functions allocated by shared computational resources, often assumed to be separated (see Patel, Reference Patel2010 for an overview). Within such a hypothesis, pitch would be a shared mechanism, at least during the early acoustical decoding information (lower) level and before the topological transition of shaping domain-specific representations.

Supplementary material

The supplementary material for this article can be found at http://doi.org/10.1017/langcog.2026.10064.

Data availability statement

The data that support the findings of this study are openly available in Open Science Framework (OSF) at https://doi.org/10.17605/OSF.IO/DKJ4N.

Funding statement

This research did not receive any specific grant from funding agencies in the public, commercial or not-for-profit sectors. Open access funding provided by University of Groningen.

A. Appendix – Linguistic stimuli

https://docs.google.com/document/d/1nK2opn4Vnm8vL8BdAu1qv9ppU8qu-SrIPHcqGN077xo/edit?usp=sharing