Mentoring is widely believed to be among the most effective interventions to improve the status of women in academic political science (Committee for the Status of Women in the Profession 2016; McClain and Mealy 2022). But, as with most proposed interventions, there is a dearth of causal research on its efficacy (Argyle and Mendelberg 2020).

To the best of our knowledge, this study reports the most comprehensive long-term randomized evaluation of an intervention to improve women’s outcomes in political science. We designed a women’s mentoring program in coordination with the American Political Science Association (APSA). We implemented it with women who received their PhD between 2014 and 2023. We evaluated the program using surveys and curriculum vitae (CV) information collected pretreatment, immediately afterwards, and two to seven years post-treatment.

We build on a handful of randomized interventions designed to improve outcomes for female political science faculty members (Barnes and Beaulieu 2017; Bonneau et al. 2021; Unkovic, Sen, and Quinn 2016). This study goes beyond previous research by using a longer intervention; evaluating it over a much longer period; and assessing a much wider set of outcomes, including key career metrics such as publications as well as attitudes and behaviors. It builds most directly on a study of a women’s mentoring program in economics that found positive effects on career advancement (Blau et al. 2010; Ginther et al. 2020). We replicated the main features of that study and added two important improvements. First, while the economics study randomized applicants only within subfield, we implemented a more precise matched randomization procedure that produces less biased estimates by more thoroughly accounting for pretreatment differences. Second, while the economics study measured only end-result outcomes (e.g., publications), we also measured attitudes and behaviors, including those identified as contributing to the gender gap (Farris, Key, and Sumner 2024; Mitchell and Hesli 2013; Teele and Thelen 2017).

Although participants gave the program high ratings, it had almost no meaningful causal effects in the long term. The null results held even when we accounted for meeting frequency, feedback received, mentoring and peer relationships, and participants’ satisfaction with the workshop. The only meaningful effect was an increased sense of belonging in the profession two years later. By implication, more intensive programs, or more structural changes, may be needed to advance women’s status in the profession.

Although these null results may be disappointing, we believe they are meaningful. Our sample was sufficiently powered to detect the expected moderate effect size, and our analysis accounts for common threats to validity. Nevertheless, this study should not be the final word. We conclude our study by underscoring the importance of subjecting popular interventions to rigorous evaluation (Argyle and Mendelberg 2020).

DATA AND METHODS

We recruited three cohorts to participate in a mentoring workshop in 2018, 2021, and 2023 (see online appendix D). The goal was to help junior scholars obtain tenure. The first workshop was in person; the second and third were online due to the COVID-19 pandemic and funding shortages. The workshop included panels where mentors spoke and answered questions, and small-group sessions assembled by subfield where participants received feedback on their research.

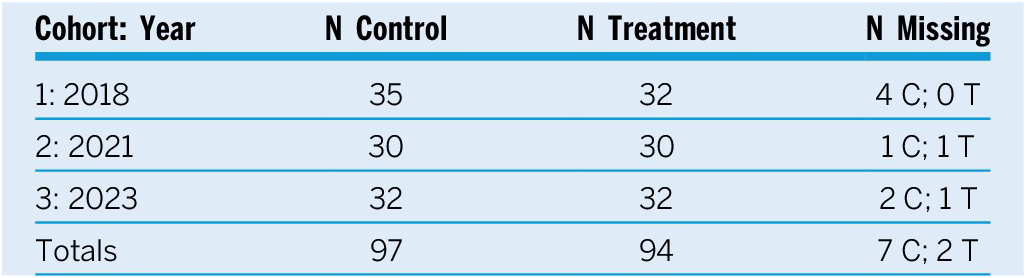

To apply, applicants filled out a “wave 1” survey and attached a CV and research statement (see online appendix B). We then manually constructed blocks of two to four eligible applicants using five variables that should predict career advancement: academic position, subfield or research area, time since PhD, publication score, and departmental ranking (see online appendix C). Table 1 indicates that the sample is well balanced.

Table 1. Balance on Pretreatment Covariates

Notes: Means in the control and treatment groups, the difference in means, and the p-value on that difference (two-sided t-test). N is 191 observations (no missing values).

We randomly assigned applicants from each block to participate in the workshop or to the control group. The treatment and control groups were given the same post-treatment survey. We assembled small groups by subfield and assigned each a mentor from that subfield. After the workshop, we asked mentors to meet periodically with their mentees.
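The within-block assignment described above can be illustrated with a minimal sketch. It assumes that blocks have already been constructed and that roughly half of each block is treated; the function name `assign_within_blocks`, the split rule, and the applicant identifiers are hypothetical and are not taken from the study's replication code.

```python
import random
from collections import defaultdict


def assign_within_blocks(block_of, seed=0):
    """Randomly assign units to treatment (1) or control (0) within blocks.

    block_of maps a unit id to its block label. In each block, half of the
    units (rounded down, but at least one) are treated. This split rule is
    an illustrative assumption, not the study's documented procedure.
    """
    rng = random.Random(seed)
    blocks = defaultdict(list)
    for unit, block in block_of.items():
        blocks[block].append(unit)

    assignment = {}
    for block in sorted(blocks):
        units = sorted(blocks[block])  # fixed order so the seed is reproducible
        rng.shuffle(units)
        n_treated = max(1, len(units) // 2)
        for position, unit in enumerate(units):
            assignment[unit] = 1 if position < n_treated else 0
    return assignment


# Hypothetical applicants grouped into blocks of two to four.
block_of = {
    "a1": "A", "a2": "A",
    "b1": "B", "b2": "B", "b3": "B",
    "c1": "C", "c2": "C", "c3": "C", "c4": "C",
}
assignment = assign_within_blocks(block_of, seed=42)
```

Because assignment is random only within blocks, every block contributes at least one treated and one control unit, which is what allows comparisons among applicants with similar pretreatment profiles.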

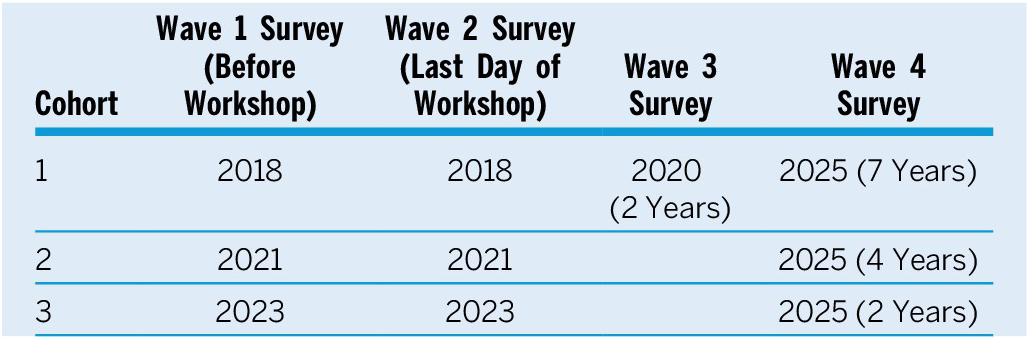

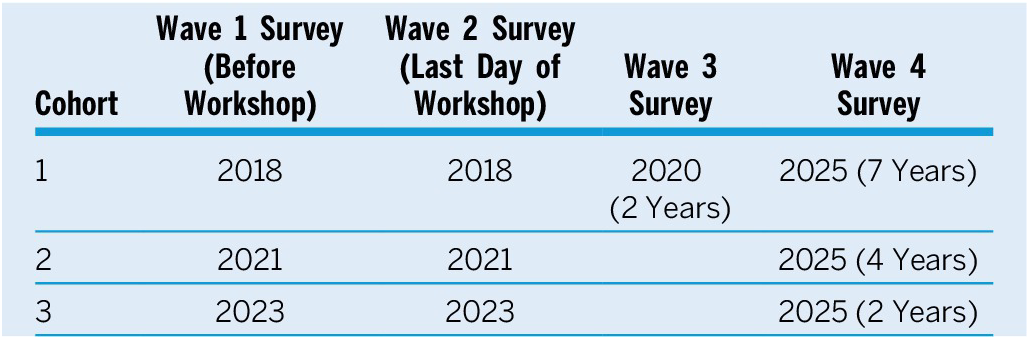

In each survey, we measured career-advancement outcomes and behaviors. The primary measures were number of grants, top-tier journal publications, and peer-reviewed publications; publication score; and departmental rank. The behavioral measures included seeking peer or mentor feedback on research, being the lead or sole author on research projects, presenting projects at professional workshops and conferences, and submitting articles to top journals (see online appendix E). Table 2 describes the timing of the survey waves. Coding and analysis details are in online appendix F and appendix table A.20.

Table 2. Data Collection

We focus on intent-to-treat (ITT) estimates. To improve precision, each regression includes, when possible, a pretreatment measure of the dependent variable (or a close proxy) as well as covariates that predict career achievements: pretreatment publication score, institutional rank, and years since PhD. To ensure that the estimates are not model dependent, we replicate the ITT results with stratified average treatment effects (SATE) that assume only random assignment.
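As an illustration of a covariate-adjusted ITT estimate, the following sketch regresses an outcome on the assignment indicator and a pretreatment measure using ordinary least squares. It is a simplified stand-in under stated assumptions: the data are invented and noise free, only one covariate is used, and the helper names `solve` and `ols` are hypothetical. The study's actual specification is documented in its online appendix.

```python
def solve(a, b):
    """Solve the linear system a x = b by Gaussian elimination with pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - tail) / m[i][i]
    return x


def ols(rows, y):
    """Least-squares coefficients of y on the columns of rows, plus an intercept."""
    design = [[1.0] + list(r) for r in rows]
    k = len(design[0])
    xtx = [[sum(d[i] * d[j] for d in design) for j in range(k)] for i in range(k)]
    xty = [sum(d[i] * yi for d, yi in zip(design, y)) for i in range(k)]
    return solve(xtx, xty)


# Hypothetical data: each row is (assigned_to_treatment, pretreatment_outcome).
rows = [(1, 2.0), (0, 2.0), (1, 4.0), (0, 3.0), (1, 1.0), (0, 5.0)]
# Outcome constructed as 0.5 + 0.3 * assignment + 1.0 * pretreatment, no noise.
y = [0.5 + 0.3 * z + 1.0 * x for z, x in rows]

intercept, itt, pre_slope = ols(rows, y)
```

The coefficient on the assignment indicator is the ITT estimate. It compares units by the group to which they were randomized, regardless of whether they actually took part.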

The main concern about our null findings is that they may be due to a relatively small sample. To ensure that the null results do not arise from a lack of statistical power and are instead due to small effect sizes, we conduct equivalence tests (Titiunik and Feher 2018). To ensure our results are not due to poor treatment uptake and to account for noncompliance, we estimate complier average causal effects (CACE) using various measures of compliance.

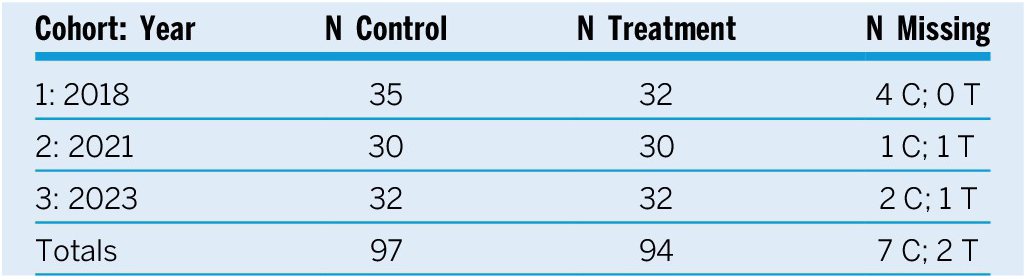

As shown in table 3, each cohort had 60 to 65 participants, and the total is 191. The attrition rate of 4.7% is low, and our results are robust to various approaches for addressing nonrandom attrition (see online appendix G).

Table 3. Data Structure

ANALYSIS

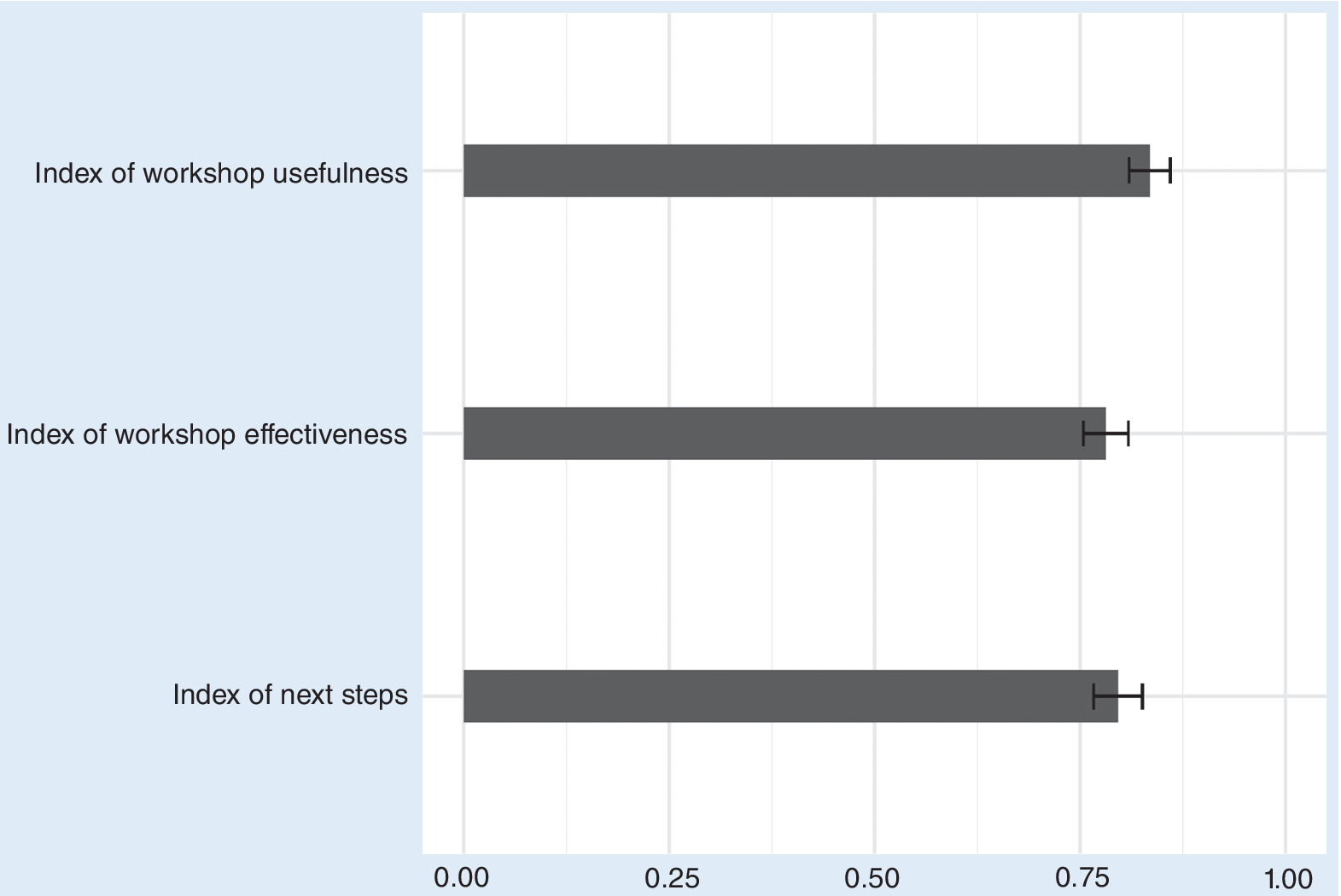

At the conclusion of the workshop, we administered a “wave 2” survey to the participants. The survey evaluated how beneficial participants perceived the workshop to be and their intention to submit articles to top journals, apply for grants, and stay in contact with workshop peers and mentors. The results of this manipulation check reveal that the intervention was received as intended. Almost all participants perceived the workshop as useful and effective, and the workshop improved their confidence and produced the intent to engage in productive behaviors (figure 1).

Figure 1. Means and 95% Confidence Intervals for Three Measures of Workshop Benefits

Figure 2 presents ITT estimates (see online appendix tables A.1–A.4 for the regression output). Count variables are shown in the top left of the figure; variables that range from 0 to 1 are in the top right (including binary, ordinal, and continuous variables); and variables with a large range are at the bottom. The program had almost no statistically significant positive effects. There are only two apparent exceptions: (1) the number of times a participant sent a manuscript to a mentor for feedback after the workshop (7 percentage points, p<0.05); and (2) a sense of belonging in the profession (7 percentage points, p<0.01). However, neither exception survives the false discovery rate adjustment for multiple comparisons, and both are substantively small. Effects are similar for SATE estimates, which guard against model-dependent results (see online appendix tables A.8–A.11).
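To show how a nominally significant result can fail a false discovery rate adjustment, the sketch below applies the Benjamini-Hochberg step-up procedure. The choice of Benjamini-Hochberg is an assumption, since the text does not name the specific procedure, and the p-values are invented for illustration rather than taken from the study.

```python
def benjamini_hochberg(p_values):
    """Return Benjamini-Hochberg adjusted p-values in the original order."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value to the smallest so that adjusted values
    # never increase as the raw p-values decrease.
    for rank in range(m, 0, -1):
        index = order[rank - 1]
        running_min = min(running_min, p_values[index] * m / rank)
        adjusted[index] = running_min
    return adjusted


# Hypothetical p-values for ten outcomes; two are below 0.05 before adjustment.
p_values = [0.04, 0.008, 0.30, 0.55, 0.20, 0.70, 0.12, 0.90, 0.45, 0.65]
adjusted = benjamini_hochberg(p_values)
survivors = [p for p in adjusted if p < 0.05]
```

In this invented example, the two raw p-values below 0.05 rise to 0.20 and 0.08 after adjustment, so neither remains significant at the 0.05 level.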

Figure 2. ITT Effects

Although these tests do not reject the null hypothesis, they cannot provide affirmative evidence for the absence of an effect. We therefore performed equivalence tests using the Two One-Sided Tests procedure (Titiunik and Feher 2018). We set the smallest effect size of interest to 15% of the variable’s range and tested the null hypothesis that the true effect size is at least that large in either direction. For 33 of the 35 outcomes, this test was statistically significant (p<0.05). That is, the true effect is smaller than 15% of the variable’s range on almost all outcomes (see online appendix table A.21). In sum, the program had no meaningful effects.
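The logic of the Two One-Sided Tests procedure can be sketched as follows. This is a minimal illustration that assumes a large-sample normal approximation for the test statistics (the study may rely on a t distribution instead); the function name `tost_p_value`, the estimate, and the standard error are hypothetical.

```python
from statistics import NormalDist


def tost_p_value(estimate, std_error, bound):
    """Two One-Sided Tests p-value under a normal approximation.

    Null hypothesis: the absolute true effect is at least `bound`.
    Rejecting it supports the claim that the effect lies in (-bound, +bound).
    """
    standard_normal = NormalDist()
    # Test 1: null of effect <= -bound against effect > -bound.
    p_lower = 1.0 - standard_normal.cdf((estimate + bound) / std_error)
    # Test 2: null of effect >= +bound against effect < +bound.
    p_upper = standard_normal.cdf((estimate - bound) / std_error)
    # Equivalence requires rejecting both nulls, so report the larger p-value.
    return max(p_lower, p_upper)


# Hypothetical outcome on a 0-1 scale: the bound is 15% of the range.
outcome_range = 1.0
bound = 0.15 * outcome_range
p_equiv = tost_p_value(estimate=0.02, std_error=0.04, bound=bound)
```

A small `p_equiv` indicates that effects as large as the bound can be ruled out in both directions. With the invented inputs above, the estimate sits well inside the bound relative to its standard error, so the test rejects at the 0.05 level; an estimate close to the bound with the same standard error would not.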

The ITT effects are unchanged when we examine different cohorts and time lags. We tested for effects: (1) two years post-workshop (see online appendix tables A.5–A.7); (2) four and seven years post-workshop (see replication file tables B.75–B.78); and (3) by cohort (see replication file tables B.32–B.43). The only positive effect is on sense of belonging in the profession in year 2 (10 percentage points, p<0.05; see online appendix table A.7, column 8), which survives adjustment for multiple comparisons. Potential lack of variation in outcomes such as publications also does not explain the null findings (see online appendix table A.20 for the standard deviations and means).

A key concern is whether these null findings are driven by poor compliance or weak uptake. We therefore computed four sets of CACE estimates. We use a two-sided compliance measure to account for those participants who were assigned to treatment but did not participate and, importantly, for those assigned to the control group who received mentoring elsewhere (see online appendix tables A.12–A.15). We also use three one-sided measures of compliance that account for varying treatment intensity: (1) the frequency of post-workshop meetings with the small group; (2) whether they received feedback on manuscripts or established mentoring or peer relationships; and (3) whether they were satisfied with the workshop (see online appendix tables A.16–A.19 and replication file tables B.21–B.24 and B.25–B.28). The results did not change meaningfully. Although we find precise effects on sending manuscripts to mentors and on sense of belonging, they do not survive adjustment for multiple comparisons. This means that the null results are not due to the control group self-treating (i.e., obtaining mentoring elsewhere) or to the treated group failing to take up the program.
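For readers unfamiliar with CACE estimation under two-sided noncompliance, a minimal sketch of the standard Wald (instrumental-variable) estimator follows. It divides the ITT effect on the outcome by the ITT effect on treatment receipt and relies on the usual monotonicity and exclusion-restriction assumptions. The function name `cace_wald` and the data are hypothetical, and the study's own estimator may include covariates and block adjustments not shown here.

```python
def mean(values):
    """Arithmetic mean of a non-empty list."""
    return sum(values) / len(values)


def cace_wald(assigned, received, outcome):
    """Wald estimate of the complier average causal effect.

    assigned: 1 if randomized to the program, else 0
    received: 1 if the unit actually received mentoring, else 0
    outcome:  observed outcome for each unit
    """
    y_treat = [y for z, y in zip(assigned, outcome) if z == 1]
    y_ctrl = [y for z, y in zip(assigned, outcome) if z == 0]
    d_treat = [d for z, d in zip(assigned, received) if z == 1]
    d_ctrl = [d for z, d in zip(assigned, received) if z == 0]

    itt_outcome = mean(y_treat) - mean(y_ctrl)  # effect of assignment on outcome
    itt_uptake = mean(d_treat) - mean(d_ctrl)   # effect of assignment on receipt
    if itt_uptake == 0:
        raise ValueError("assignment did not change treatment receipt")
    return itt_outcome / itt_uptake


# Hypothetical data: one assigned unit did not participate, and one control
# unit obtained mentoring elsewhere (two-sided noncompliance).
assigned = [1, 1, 1, 1, 0, 0, 0, 0]
received = [1, 1, 1, 0, 1, 0, 0, 0]
outcome = [3, 4, 5, 2, 4, 2, 3, 1]
cace = cace_wald(assigned, received, outcome)
```

In this invented example, assignment raises the mean outcome by 1.0 and raises treatment receipt by 0.5, so the estimated effect among compliers is 2.0. When uptake differs little between arms, the denominator shrinks and the estimate becomes unstable, which is one reason to report several compliance measures.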

COMPARISON TO OTHER INTERVENTIONS

Why do our results appear to diverge from existing studies? This section presents a comparison with the main relevant mentoring interventions. First, Brutger (2024) reported limited effects from a seven-session course to mentor college students in applying to graduate school. Only the feeling of being prepared to apply to a graduate program increased, and only immediately post-treatment. That finding is similar to our immediate post-workshop effects. That is, we replicated the positive effect of this study.

Second, Barnes and Beaulieu (2017) found a positive association between attending a political methodology women’s workshop and article submissions relative to a women’s comparison group. This finding is similar to our finding of limited short-term effects. Thus, the results are not necessarily incompatible with ours.

Third, the most relevant comparison is with the successful economics program on which we based our study. There are several differences from our study, but they do not explain our divergent findings. The economics workshops were in person and were completed by 2014. Ours were in person (in 2018) and online (afterwards) and occurred mostly during the COVID-19 pandemic, which may have made the targeted behaviors and post-workshop exchanges less likely for all three cohorts. However, our null results hold for both the in-person and online workshops and, notably, even for those who engaged in the targeted behaviors, met with their small group frequently post-workshop, and found the workshop to be useful. It may be that our more tightly matched randomization reduced spurious effects, and the true effects from the economics study are closer to null. Further research is needed to test the possible explanations.

CONCLUSION

We had reason to expect that women’s mentoring programs increase participants’ professional achievements. We designed and implemented our program with robust support from and coordination with APSA, and we followed best practices from existing studies. But while highly successful in the immediate aftermath, with high praise from participants, the program in the long term did not make a meaningful difference. Equivalence tests confirm that statistical power is not the explanation; nor does treatment quality explain the null effects. The COVID-19 pandemic may be to blame, perhaps by eroding uptake, but the null results hold for the pre-pandemic workshop and even for those with good treatment uptake (e.g., frequent small-group meetings). These findings suggest that the pandemic did not override any effects. Perhaps the benefits take longer to attain than the post-treatment periods we studied, but a slow-moving effect exceeding four years seems unlikely. Finally, the results are not due to the control group self-treating.

Common mentoring programs therefore may not be as effective a solution as is widely believed. Nonrandomized studies concluding that workplace mentoring generally boosts representation in fact do not show evidence of consistent effects (Dobbin and Kalev 2022, 93, figure 4.2). Mentoring programs are appealing because they require less restructuring of department cultures and university hierarchies. They derive from a human capital approach to diversity, which carries less potential backlash than an affirmative action approach. However, as often implemented, such programs may not increase women’s advancement in the long term.

We see two paths forward. One approach requires deeper structural reforms, which might include reexamining norms or closing resource gaps. The second approach doubles down on mentoring but with more intensive, regular interactions with mentors and peers. In our program, even those participants who met at least once a year did not experience substantial improvement, but perhaps still more frequent, deeper engagement is needed (Cassese and Holman 2018). Advocates of mentoring programs favor embedding them within an individual’s institution (Dobbin and Kalev 2022), which may promote intensive mentoring. Future research should examine these programs.

Widely recommended policies, including clock-stopping, diversity-hiring programs, and minimum women’s representation on promotion boards, have null, mixed, or backfire effects (Argyle and Mendelberg 2020). All of these policies require more concerted evaluation. Their longer-term target outcomes should be rigorously measured. To improve career outcomes, it is not enough to measure short-term perceptions and attitudes.

SUPPLEMENTARY MATERIAL

To view supplementary material for this article, please visit http://doi.org/10.1017/S1049096526102145.

ACKNOWLEDGMENTS

This article was made possible by a grant from APSA. The statements made and views expressed are the sole responsibility of the authors. The authors thank Rocio Titiunik and Gustavo Novoa for valuable feedback on previous versions of this article.

DATA AVAILABILITY STATEMENT

The editors have issued an exception to the Data Availability Policy for this manuscript. In compliance with the authors’ IRB protocol, the possibility of identifying participants in the study justified depositing the data and replication code as a restricted access file in the ICPSR archive instead of the typical open access deposit in Dataverse. The DOI for this file is http://doi.org/10.3886/ICPSR39704.v1.

CONFLICTS OF INTEREST

The authors declare that there are no ethical issues or conflicts of interest in this research.