A common refrain in popular discourse is that law is fated to lag behind technological innovation. Politicians and pundits sometimes refer to this as the “pacing problem,” which Wallach defines as “the gap between the introduction of a new technology and the establishment of laws.”Footnote 2 Rooted in folk wisdom, the pacing problem has long been viewed as “a simple but unavoidable principle of modern life,” and even a “law of disruption.”Footnote 3 This perception is not new. Speaking to the National Academy of Sciences in 1963, President Kennedy lamented that “every time you scientists make a major invention, we politicians have to invent a new institution to cope with it.”Footnote 4 In recent years, however, many have voiced concern that the pacing problem is “breaking” our most cherished international legal institutions,Footnote 5 sparking debate about whether to amend, overhaul, or abandon them.Footnote 6

Despite these challenges, the pacing problem is neither ubiquitous nor inevitable. In practice, legal rules are often remarkably resilient to technological change, even when written in ignorance about what the future might bring.Footnote 7 Examples include the Geneva Protocol (1925) and the Chemical Weapons Convention (1993), cases I examine later. The Chemical Weapons Convention, a landmark treaty over 200 pages in length, was disrupted in its second decade by the invention of novichok nerve agents. Extending coverage to novichok necessitated a costly formal amendment process that demanded buy-in from novichok’s inventors. In contrast, the Geneva Protocol—consisting of just 350 words—weathered multiple technological shocks over five decades, yet was always interpreted expansively. As a result, technological first-movers faced a choice between acquiescing to restrictions or appearing noncompliant, without a need for formal amendment.

Since formal amendments in international law are known to be costly and infrequent,Footnote 8 and abrogation is common,Footnote 9 resilience merits close attention. Consistent with how the term is used in organization science and ecology, Percy and Sandholtz define “resilience” as “the ability of a norm to recover, or retain its viability, in the wake of non-compliance or challenge.”Footnote 10 What distinguishes resilience from simple longevity is the capacity to withstand or recover quickly from disruption.Footnote 11 If a legal rule is interpreted inclusively downstream, it is resilient.Footnote 12 Under this definition, even international law—long derided as “the vanishing point of jurisprudence”Footnote 13 because it lacks an authoritative judiciary—can exhibit a high degree of resilience.

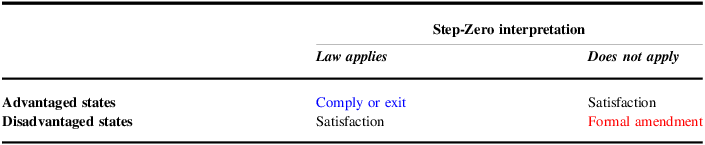

When new technologies prompt questions about the applicability of existing law,Footnote 14 the underlying assumption is that it might already apply.Footnote 15 Legal scholars refer to this initial inquiry—whether existing legal rules even apply at all to novel situations—as the Step-Zero question.Footnote 16 Because the day-to-day business of any legal system is about applying rules and principles to individual events, Step-Zero questions become explicit only in edge cases, foregrounding moments when law is at risk of disruption.Footnote 17 More often, the question is implicit, and law’s quiet adaptivity goes largely unnoticed. That Step-Zero questions exist at all in international relations (IR) underscores a puzzle for scholars: why are some legal rules resilient to technological challenges, while others become subject to contestation, renegotiation, or fragmentation? That is, how do sovereign states with divergent preferences ever reach consensus about whether old laws govern new phenomena?

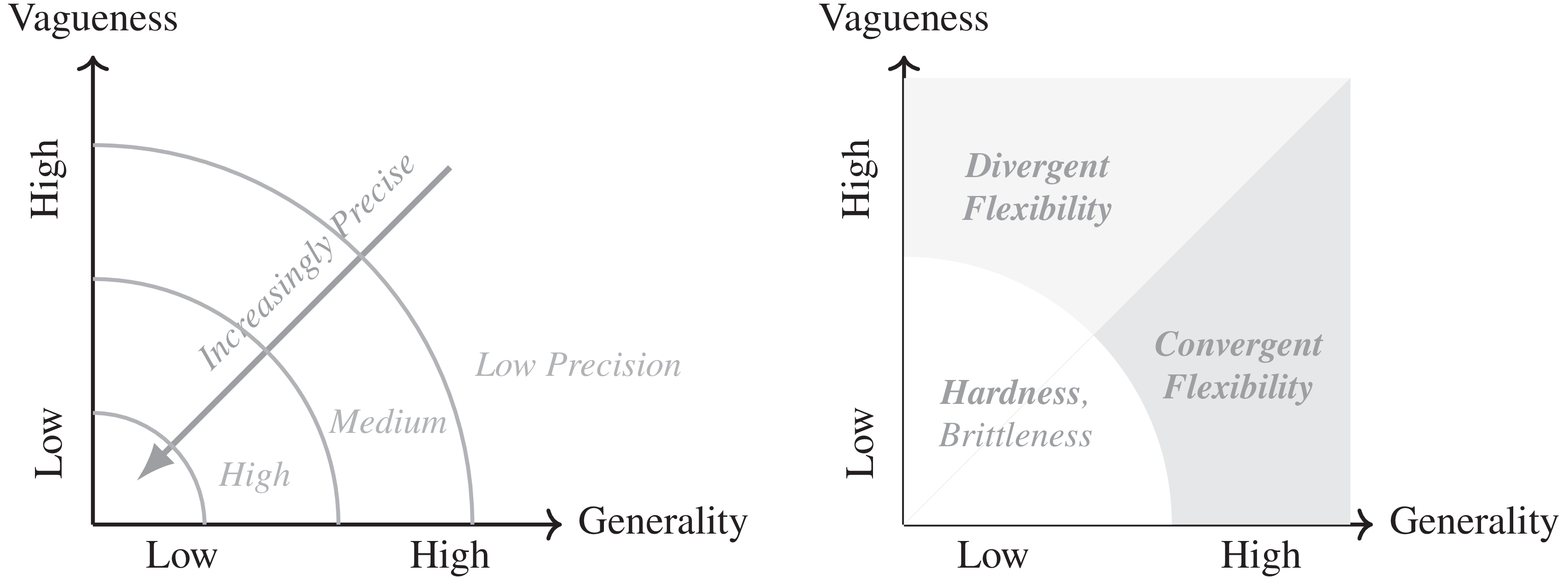

In this article, I argue that legal resilience turns not only on technological novelty, but also on technology’s interaction with different forms of legal precision. Drawing on insights from psychology and linguistics, I distinguish between what I call convergent and divergent flexibility in legal rules. I argue that vagueness contributes to divergence in legal interpretation, whereas generality promotes convergence and therefore resilience.Footnote 18 Whether adaptability translates to resilience depends on the type of flexibility a rule employs. There are several empirical implications. First, highly precise legal rules are “hard”Footnote 19 but also brittle. While precision can foster compliance in static environments, it struggles to accommodate novel challenges as conditions change. Second, the effects of imprecision depend on its form. Vagueness may enhance flexibility by widening the range of plausible interpretations, but that same flexibility invites divergent interpretations, prompting contestation. In contrast, generality systematically upweights the plausibility of restrictive interpretations, which fosters resilience by closing off emergent loopholes.

To test the theory, I recruit $N = 450$ national security law professionals to participate in a randomized controlled trial (RCT) embedded in a USD 25,000 writing contest. Participants were commissioned to write long-form advisory briefs analyzing the legality of a hypothetical new technology. The experimental design employed a $2^3$ factorial structure that independently varied treaty precision and technological novelty. To generate objective measures of compliance from participants’ subjective interpretations, I further varied whether the client was a government with a strong interest in a permissive policy outcome. With prizes tied to persuasiveness, participants formulated recommendations they believed would best advance the national interest while also persuading the client to adopt the recommendation. Text analysis of their essay-length responses identifies two distinct causal pathways to convergence: the psychological pull of generality and the perceived need to protect clients from being viewed as noncompliant. Finally, to show how convergent flexibility applies to foreign policy, I complement the RCT with research into two historical controversies surrounding chemical weapons technology: “super tear gas” and novichok.
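To make the $2^3$ structure concrete, the following minimal sketch enumerates the eight cells of a three-factor binary design. The factor names and level labels are assumptions chosen for illustration; the text specifies only that treaty precision, technological novelty, and client type were varied.

```python
from itertools import product

# Hypothetical factor names and level labels (assumptions, not the study's
# actual treatment labels). Each factor has two levels, giving 2^3 = 8 cells.
FACTORS = {
    "rule_precision": ("specific", "general"),
    "technology": ("mundane", "novel"),
    "client": ("neutral", "politicized"),
}


def factorial_cells(factors):
    """Return every combination of factor levels as a list of dicts."""
    names = list(factors)
    return [dict(zip(names, levels)) for levels in product(*factors.values())]


cells = factorial_cells(FACTORS)
for index, cell in enumerate(cells, start=1):
    print(index, cell)
```

Because every level of each factor is crossed with every level of the others, the effect of any one factor can be estimated while averaging over the remaining two.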

The findings advance our understanding of law and technology in several ways. In extant scholarship, there are two dominant accounts of international law’s relationship with technology. Both offer competing explanations for technological disruption, but neither can account for resilience. At one end is the conventional wisdom, the pacing problem. The pacing problem offers a deceptively simple explanation: since the law cannot regulate what it cannot foresee,Footnote 20 every disruption is simply a failure of legislative imagination. Yet because this logic implies that disruption is the universal condition, it cannot explain variation. On the other end is the literature on institutional design, which has only recently begun to incorporate theories of change.Footnote 21 Foundational scholarship focused on the relationship between formal flexibility and participation.Footnote 22 More recent research has highlighted the relationship between informal, or “emergent,” flexibility and compliance by pointing to the role of legal precision.Footnote 23 My theory builds on this research.

Political scientists have long viewed legal precision—classically defined by Abbott and colleagues as the degree to which rules “unambiguously define the conduct they require, authorize, or proscribe”—as integral to compliance.Footnote 24 In canonical models, precision and compliance are positively related: rules that are less precise shift agency away from framers and toward adherents, leading to agent drift and evasive noncompliance.Footnote 25 This logic has also been extended beyond treaty making to other political domains, such as legislation,Footnote 26 bureaucracy,Footnote 27 judicial politics,Footnote 28 public policy,Footnote 29 and alliances.Footnote 30 Some scholars have explained why framers might accept this tradeoff,Footnote 31 while others emphasize its unintended consequences.Footnote 32 The underlying logic—that precision strictly improves compliance—is rarely questioned.

Building on the conventional view, emergent flexibility theory has extended this logic as a partial explanation of legal change. Emergent flexibility differs from convergent flexibility, the model advanced here. Its proponents contend that because imprecision is thought to be “inherently permissive,”Footnote 33 states have flexibility to adopt new interpretations as the world changes.Footnote 34 Yet if imprecision is strictly permissive, this would imply divergent reinterpretations whenever regulatory incentives conflict. By this logic, we can hardly call imprecise laws “resilient” if they invariably lead to contestation or fragmentation.Footnote 35 Wider IR theory might explain that, in such situations, resilience can still be enforced, either through coercive powerFootnote 36 or strong norms.Footnote 37 But these explanations are incomplete, since even powerful states sometimes acquiesce to disadvantageous interpretations,Footnote 38 even in the absence of norms.Footnote 39 Moreover, because norms take time to develop,Footnote 40 technological novelty should preclude their existence.

The question of legal resilience strikes at core theories in political science regarding institutional design, compliance, and the vitality of legal norms. Scholars have long sought to understand compliance with international law,Footnote 41 but little is known about its microfoundationsFootnote 42 —how states actually distinguish between legality and illegality. Compliance assessment has two components: monitoring and verification. Monitoring is simply fact collection.Footnote 43 Although facts can sometimes be obfuscated,Footnote 44 they are grounded in fundamentally objective reality. Verification, in turn, entails analysis—a mapping process between facts and the law’s underlying meaning. Although law is ordinarily embodied in commonly observable text, judgment about how it maps onto individual cases is communal and inherently subjective.Footnote 45 When states share common interests, ambiguity can be constructive; but when a case is divisive, and no single party can dictate interpretations, new technologies should shatter international consensus.

Although resilience is equally important for domestic law, international law’s response to technological novelty presents an especially hard test. In the model I advance, conditions are stacked against the law: yesterday’s lawmakers could not have anticipated the technology, interpretation is decentralized, the law is imprecise, and states have maximal incentives to deviate. According to existing theory, it is under these conditions that we should most expect divergence. Yet even under hostile conditions, generality fosters convergence. These findings suggest that the ability of states to manipulate legal meaning is bounded, complementing existing research on the limits of rhetorical maneuver.Footnote 46 Especially in a changing world, generality shapes how states understand what it means to be “compliant.”

Despite the centrality of lawyers in this process, lawyers are an understudied population in political science.Footnote 47 Significant attention in IR has been devoted to the role of judges,Footnote 48 diplomats,Footnote 49 and other bureaucrats,Footnote 50 but few studies—and perhaps no RCTs—have focused on legal advisors. Given their ubiquity in drafting policies, negotiating agreements, verifying compliance, and justifying interpretations, the dearth of research on this group is surprising. Legal norms are thought to be distinctly powerfulFootnote 51 in part because political decisionmakers in all regimes rely on competent legal advice.Footnote 52 For these reasons, legal scholars have long argued that a general theory of law and technological change is needed,Footnote 53 but extant research has predominantly focused on the legality of particular technologies.

This article is an attempt to close that gap. In the following pages, I first introduce key concepts and derive a theory of law and technology. I then describe the identification strategy, consisting of the RCT, topic models, and case studies. Results are discussed in detail, with additional tests in the appendix. I conclude by discussing limitations and theoretical implications.

A Theory of Law and Technological Change

For international law, technology is the ultimate stress test.Footnote 54 International law is designed to institutionalize a particular configuration of state interests,Footnote 55 but technological change generates misalignment between legal rules and the interests they were intended to reflect.Footnote 56 When states can anticipate change, accommodations are often built in at the design stage.Footnote 57 The problem, of course, is that forecasting the future is inherently difficult. For example, should the United States double down on artificial general intelligence (AGI), or is AI a bubble? At present, US government officials continue to debate this question, because nobody really knows. Unpredictability is inherent to technological innovation, especially in the long run.Footnote 58

The element of surprise effectively severs the relationship between institutional design and desire to comply. Even the most technologically advanced states get it wrong; in fact, the innovator’s dilemma—a cornerstone of innovation theory, cited 40,000 times—holds that technology leaders are even more prone to miscalculation.Footnote 59 Occasionally, the omission of future technologies is not accidental but deliberateFootnote 60 —driven by prioritization of the short-run, political obstacles, contract costs, or technological skepticism.Footnote 61 Technological surprise can also be one sided, since research and development (R&D) is inherently secretive.Footnote 62 States with private information may withhold what they know or advocate language deliberately crafted to exclude technologies with expected advantages, as the United States did during Cold War arms control talksFootnote 63 and Russia did in the case of novichok. Law itself can even encourage technological surprise by creating tacit incentives to innovate in unexpected directions—for example, to circumvent restrictions.Footnote 64 The key point is that whenever at least one party fails to anticipate the direction of technological innovation, misalignment is exogenous.Footnote 65

At best, the design of international law is akin to sending a “message in a bottle”Footnote 66 to future generations, since shocks are eventually guaranteed. Innovation theorists distinguish between mundane and novel technologies. Mundane innovations—classically termed “recombinant” or “incremental” technologies in this literature—reconfigure existing phenomena in new ways. Novel innovations, by contrast, introduce entirely new properties, for example, by harnessing previously unknown physical phenomena. Although “novelty” exists along a continuum, innovation theory has classically treated these categories as binary ideals.Footnote 67 Within this framework, a minimally novel technology introduces exactly one novel property. Because novelty is defined by similarity to existing technologies, expansion of the technological frontier eventually renders formal anticipation impossible at the outset.Footnote 68

Reactions to technological shocks vary;Footnote 69 not all technologies become subject to contestation.Footnote 70 Table 1 summarizes state interests in formal versus informal resolution mechanisms. When existing restrictions apply automatically, disadvantaged states can rely on “arguing” as well as “bargaining” strategies to enforce compliance.Footnote 71 When no such restrictions apply, advantaged states must be enticed back to the bargaining table, and law must be updated manually. Therefore, all else equal, disadvantaged states prefer interpretations that bring new technologies under existing law, whereas advantaged states prefer to avoid such application. These dynamics are relevant wherever states value the appearance of compliance, but contestation over application should be most pronounced in institutions deemed too important to exit, since exiting means forgoing the residual benefits of cooperation.

Table 1. Strategic incentives under technological shocks

Notes: Automatic (judicial) solutions put technologically advantaged states on the defensive, since disadvantaged states can rely on “arguing” as well as “bargaining” strategies. Manual (legislative) solutions shift the burden of action onto disadvantaged states, since without the option to credibly allege violations, bargaining is the only realistic option.

The feasibility of reinterpretation is thought to be a function of legal imprecision,Footnote 72 broadly understood as semantic indeterminacy.Footnote 73 While political scientists use terms like ambiguity, vagueness, and imprecision interchangeably, linguists draw careful distinctions among them. Ambiguity means that the same word conveys multiple plausible meanings, as in polysemy (a single word with multiple related senses) or homonymy (a single word with multiple unrelated meanings).Footnote 74 The difference is a matter of degree.Footnote 75 Simple ambiguity entails two or more discrete, disjoint meanings, whereas vagueness—an extreme form of ambiguity—implies continuous, graded meaning.Footnote 76 Because vagueness has been used to explain both evasionFootnote 77 and emergent flexibility,Footnote 78 existing theory implies that emergent flexibility is strictly beneficial for technology adopters because of its permissive properties.

If vagueness were the only component of imprecision, contestation would be ubiquitous. Yet linguists have also identified an equally important dimension of imprecision—generality Footnote 79 —which, unlike vagueness, can promote convergence. Whereas vagueness concerns the sharpness of category boundaries, generality concerns category breadth. Both generate imprecision and may co-occur in legal text, but they are independent semantic properties with distinct effects. Accordingly, low precision may result from high vagueness, high generality, or both; throughout the article, I use specificity to denote low generality per se. Figure 1 visualizes this conceptual space and its implications for legal interpretation.

Figure 1. Semantic topology of “precision”

Notes: Mapping of generality versus vagueness as orthogonal subcomponents of precision. The balance of these properties shapes interpretation.

Although generality is undertheorized in political science, psycholinguistics has extensively studied its influence on cognition.Footnote 80 Linguists formally refer to word-level generality as hypernymy, the hierarchical relationship between general terms (hypernyms) and their more specific instances (hyponyms).Footnote 81 Hypernymy is a cornerstone of semantic linguistics, common to all natural languages.Footnote 82 Hypernymy follows a simple “is-a” heuristic. If one can answer affirmatively to the question “Is X a type of Y?” then X is a hyponym of Y (and Y is X’s hypernym). The relationship is transitive, meaning that if X is a hyponym of Y, and Y is a hyponym of Z, then X is also a hyponym of Z. Because it is transitive, hypernymy structures meaning by producing taxonomic hierarchies that order concepts from specific to general.Footnote 83 Unlike vagueness, which is indeterminate, generality is inclusive: the more overlapping qualities two examples share, the more likely interpreters are to place them in the same hypernymic bucket.Footnote 84 As reference words increase in generality, so does salient category size, and associations become clearer, resulting in analogical convergence.
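The “is-a” heuristic and its transitivity can be illustrated with a short sketch. The toy taxonomy below is hypothetical, and it assumes that each term has a single direct hypernym, which is a simplification: real taxonomies allow a term to fall under several hypernyms at once.

```python
# Hypothetical toy taxonomy: each term maps to its direct hypernym.
# Assumes one parent per term, a simplification of real lexical hierarchies.
HYPERNYM_OF = {
    "horse-drawn carriage": "terrestrial vehicle",
    "automobile": "terrestrial vehicle",
    "airplane": "aerial vehicle",
    "terrestrial vehicle": "vehicle",
    "aerial vehicle": "vehicle",
}


def is_a(term, category, parents=HYPERNYM_OF):
    """Return True if `term` is a hyponym of `category` through any chain
    of direct is-a links (that is, under the transitive closure)."""
    seen = set()
    current = parents.get(term)
    while current is not None and current not in seen:
        if current == category:
            return True
        seen.add(current)  # guard against accidental cycles in the mapping
        current = parents.get(current)
    return False


print(is_a("automobile", "terrestrial vehicle"))  # direct link
print(is_a("automobile", "vehicle"))              # inferred by transitivity
print(is_a("automobile", "aerial vehicle"))       # no chain exists
```

The second call succeeds only because the relation is transitive: no entry links “automobile” to “vehicle” directly, yet the chain through “terrestrial vehicle” places it in the broader category.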

Having introduced the concept of generality, we can now derive its theoretical implications. For law to function as a “gapless” system,Footnote 85 whatever is not explicitly prohibited is implicitly permitted. In international law, this logic is formalized in the Lotus principle.Footnote 86 As new cases become salient, Lotus implies that they must be assigned to one of these two categories by reference to the existing corpus.Footnote 87 Vagueness permits pluralistic interpretations: the same language can support both expansive and restrictive readings without loss of interpretive credibility, since all interpretations are uniformly plausible. Generality operates differently. Because general terms hierarchically subsume more specific examples, increased generality systematically upweights more expansive interpretations. Under the closed logic imposed by Lotus, category expansion is zero-sum. Broadening the scope of explicit prohibitions correspondingly contracts the residual domain of implicit permissions. Thus when legal text defines prohibitions in highly general terms, the scope of permissible conduct is necessarily reduced.Footnote 88
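The zero-sum logic can be stated compactly. As a sketch under assumed notation (the symbols $U$, $P$, and $Q$ are introduced here for illustration only), let $U$ be the universe of conduct, $P \subseteq U$ the set of explicitly prohibited conduct, and $Q$ the residual set of implicitly permitted conduct:

$$
Q = U \setminus P, \qquad P \subseteq P' \;\Longrightarrow\; Q' = U \setminus P' \subseteq Q .
$$

Any reading that widens the prohibited category from $P$ to $P'$ therefore shrinks the permitted residual, or at best leaves it unchanged.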

Figure 2 illustrates how generality affects legal interpretation. Each ray represents a rule’s reach across a universe of six hyponyms: five known at the time of drafting ($x_1 \ldots x_5$) and one unanticipated ($x_{*}$). Ray width denotes generality, while the dashed boundary captures vagueness at the margin of coverage. A specific rule (left) enumerates prohibited hyponyms individually, maximizing selectivity but excluding unforeseen cases by construction. Specificity increases determinacy: as the scope of prohibitions becomes clearer, so too does the scope of what is not prohibited. A general rule (right) encompasses all analogous hyponyms, regardless of whether these were explicitly anticipated. Because a law that might apply to $x_{*}$ is at least weakly more constraining than a law that definitely cannot, generality should promote interpretive convergence. States desiring $x_{*}$ must contend with the community view that permissive interpretations are less plausible.

Figure 2. Specificity versus generality in rule design

Notes: Rays map the coverage of a legal rule over technologies $x_1 \ldots x_5$. Narrower rays denote higher specificity. The outlier $x_{*}$ represents novel attributes associated with technologies that emerged after the rules were drafted. High-specificity rules cannot apply to phenomena outside their domain, while general rules may apply by analogy.

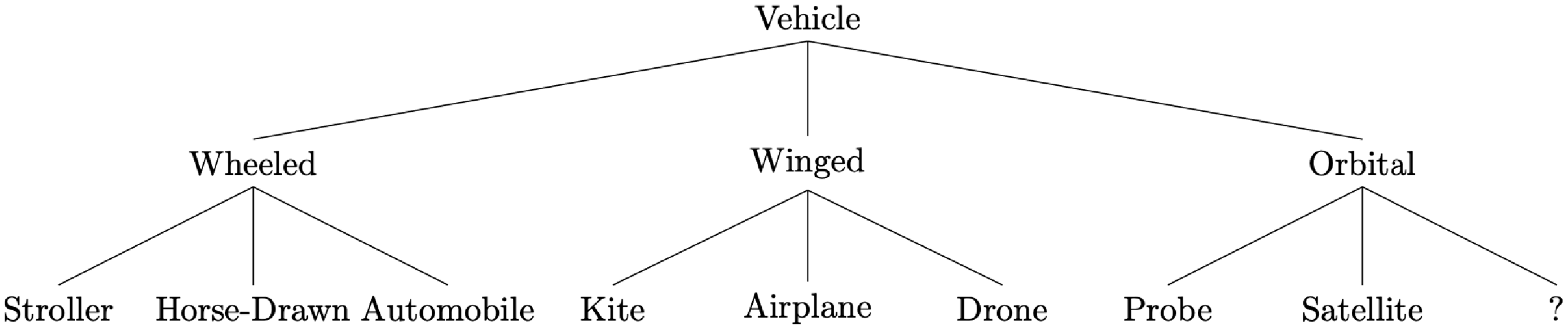

Figure 3 offers a concrete example, using H.L.A. Hart’s canonical example of a rule prohibiting “vehicles in the park.”Footnote 89 Application turns on how the term “vehicle” is interpreted, while resilience depends on whether that interpretation remains stable over time. In 1885, a specific ban on “horse-drawn carriages” would have clearly covered horsecarts while excluding baby carriages, a reasonable intention. But after the invention of the first “horseless carriage” in 1886, such a specific rule would have become self-undermining: unless formally restated, it would no longer obviously apply to all analogous vehicles, opening the door to evasion by automobile owners. In the counterfactual, Hart’s blanket prohibition on “vehicles” would be far more resilient, since most reasonable interpreters would agree that automobiles—however novel—are merely another type of vehicle. In this example, the resilience of the rule across time necessarily turns on whether it was framed in general or specific terms.

Figure 3. Semantic relations between “vehicle” and its hyponyms

Notes: Example hyponyms only. Boundaries between categories need not be mutually exclusive, but they are always hierarchical. For example, NASA’s X-15 exoatmospheric rocket plane could be categorized as a terrestrial vehicle, an aerial vehicle, and/or a space vehicle depending on how interpreters weight its different attributes. But a generic rule against “vehicles” would simply cover all three possibilities, eliminating the need for weighting.
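The contrast between a specific and a general rule can be sketched in a few lines. This is an illustrative toy model, not the article's empirical method: the taxonomy, rule sets, and function names are hypothetical, and the sketch captures only determinate coverage, not vagueness or graded plausibility.

```python
def hypernyms(term, parents):
    """Return the chain of hypernyms above `term`, nearest first."""
    chain = []
    current = parents.get(term)
    while current is not None and current not in chain:
        chain.append(current)
        current = parents.get(current)
    return chain


def classify(item, prohibited_terms, parents):
    """Residual classification: an item is prohibited if it, or any of its
    hypernyms, is named in the rule; everything else is permitted."""
    if item in prohibited_terms:
        return "prohibited"
    if any(term in prohibited_terms for term in hypernyms(item, parents)):
        return "prohibited"
    return "permitted"


# Hypothetical taxonomy and rules, loosely following the park example.
PARENTS = {"horse-drawn carriage": "vehicle", "automobile": "vehicle"}
SPECIFIC_RULE = {"horse-drawn carriage"}  # enumerates known cases only
GENERAL_RULE = {"vehicle"}                # names the hypernym

for rule_name, rule in (("specific", SPECIFIC_RULE), ("general", GENERAL_RULE)):
    print(rule_name, classify("automobile", rule, PARENTS))
```

Under the specific rule the automobile falls into the permitted residual, whereas the general rule covers it without any redrafting.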

The logic of convergent flexibility implies five testable hypotheses. When legal rules are highly specific, the contents of the prohibitive category are tightly fixed. This rigidity constrains analogical reasoning: genuinely novel technologies lack antecedents in that category. The higher the specificity, the less elastic the mental classification process, and the more permissive the law will seem for unanticipated technological shocks. Therefore:

H1 Specificity versus mundane technologies: the propensity to advance restrictive arguments should be highest when legal rules are specific and technology is mundane.

H2 Specificity versus novel technologies: the propensity to advance restrictive arguments should be lowest when legal rules are specific and technology is novel.

In contrast, imprecision reverses the logic of inelasticity by increasing the plausibility of restrictive interpretations by analogy. Crucially, the effects of imprecision depend on whether it stems from vagueness or generality. Vagueness makes opposing interpretations equally plausible, increasing discretion and therefore generating divergence. As new cases emerge, generality—which reduces space for permissive interpretations—makes inclusion by analogy likelier. Therefore:

H3 Generality versus either technology: the propensity to advance restrictive arguments should be moderately high when legal rules contain at least as much generality as they do vagueness.

While H1 to H3 hypothesize about legal interpretations, how do interpretations influence actual foreign policy outcomes? In foreign policy contexts, legal advisors act as “counsel” and political principals as “clients.”Footnote 90 Political principals may desire particular outcomes, but it is the role of legal experts—trained to operate within the closed system of formally rational law—to identify the boundaries of “legitimate” discourse.Footnote 91 This norm of legitimacy functions not merely as a professional ethic but as a strategic constraint: legal claims acquire persuasive force only when third parties—including one’s opponents—recognize them as credible.Footnote 92 Legal discourse depends on shared interpretive standards, and lawyers cannot advance arbitrary arguments. Success depends on aligning arguments with prevailing legal understandings. An argument that best serves the principal’s interest is one that lies within the bounds of legitimacy, balancing the pursuit of advantage against the risk of reputational harm.

Legitimacy plays an important role in any highly legalized dispute settlement process, including domestic law. However, its role is arguably even more important in international law, where interpretation is decentralizedFootnote 93 —mainly through the mechanism of reputation.Footnote 94 Arguments that fall within accepted bounds of credibility have a better chance of gaining traction,Footnote 95 while those that stretch the bounds of credibility are better off being concealed.Footnote 96 Accordingly, we should not expect systematic deviation in the direction of interpretation, even when principals are motivated to pursue permissive policies and seek a green light from their advisors. Under highly precise rules, this is because legal interpreters either cannot credibly evade (mundane technologies) or have no need to do so (novel technologies). Similarly, general rules constrain rhetorical maneuverability while systematically biasing it toward restriction.

H4 Resistance to politicization: the relationships posited in H1, H2, H3 should hold in equilibrium, without systematic deviation under politicized client instructions, when legal rules contain at least as much generality as they do vagueness.

While H4 predicts no change in interpretative direction, the rationale embedded in legal advice may vary with the principal’s goals. Political principals may be motivated less by formal legality than by reputational or strategic considerations. In such cases, the justificatory content of legal advice should reflect the advisor’s expectations about what kinds of arguments the principal will find persuasive. Advisors tasked with providing impartial assessments to a neutral principal are more likely to emphasize the plain meaning of the law, whereas those advising political clients who have an interest in a more permissive outcome may instead frame their advice around reputational risk and potential blowback as a more effective mode of persuasion.

H5 Two pathways to the same interpretation: politicization should shift argumentative emphasis from textual to reputational grounds without reversing the direction of interpretation.

To summarize the empirical implications of the theory, precision (specificity) maximally constrains interpretive flexibility. While precision promotes compliance in the near term, it limits the law’s capacity to apply automatically to unforeseen problems. Imprecision, conversely, has conditional effects. Vagueness increases the plausibility of both permissive and prohibitive interpretations, prompting divergence in politically charged environments. Generality, in contrast, promotes convergence, since it is weakly more likely than specificity to apply to emergent phenomena by chance. Anticipating that other parties will view policies based on permissive interpretations as bad faith and noncompliant, legal advisors—trained to strategically navigate the language of law, and tasked with protecting their clients—should counsel restraint.

Research Design

These theoretical predictions are tested using a mixed-method approach. A randomized controlled trial (RCT) is first used to test the effect of generality and vagueness on legal interpretations regarding a novel satellite technology. The RCT findings are then complemented with comparative case studies designed to show how interpretations affect foreign policy decisions, culminating in disruption or resilience in practice. To offset the possibility that the results are somehow specific to satellite technology or space law, the case studies involve two different technologies in an entirely unrelated domain (that is, chemistry).

Recruitment Procedure

For realism, I target the population of interest: trained legal professionals with top US law school affiliations. Past experimental research has often relied on open-ended answers to assess participant rationale.Footnote 97 The writing task in this experiment diverges from typical open-ended survey formats by requiring long-form qualitative responses with no upper space limit. Long-form responses provide much richer insight into the participants’ thought processes. Participants were specifically asked to structure their arguments as follows:

• State a position (for/against restriction).
• Identify the legal issue(s).
• Make reference to any and all provisions you think are applicable.
• Clearly explain your reasoning, taking into account all information provided.
• Make no other assumptions other than what is provided (no outside information).

To boost participation, the RCT was embedded in a writing contest worth approximately USD 25,000 in prizes. The contest received public endorsements from several prominent legal scholars, and a well-known senior law professor with high-level policy experience volunteered to be named as a judge. Contestants were informed at the outset that their submissions would be evaluated based on clarity, issue-spotting, logical consistency, and overall persuasiveness. Under these evaluation criteria, written responses provide a window into the themes participants believed (1) would offer the best possible representation of their client, and (2) would be most likely to convince the client to adopt the recommendation.

Recruitment initially focused on an American law school ranked in the top three by US News (name withheld to protect institutional anonymity). This was expanded to include four additional institutions, two of which are consistently ranked in the top fourteen (widely considered elite status). The remaining two are specifically recognized for channeling graduates into the US policymaking community. The contest was advertised through university-sanctioned national security law societies (NSLSs) at each institution. These societies are valuable networking and employment opportunity vehicles for students and alumni. Each NSLS maintains an email listserv for affiliates who practice, or have signaled interest in practicing, international law, foreign relations law, and/or national security law as a career. Participants were informed that, in addition to cash prizes, the names of contest winners would be published in a newsletter or on the website of the relevant NSLS.

Case materials were sent to any affiliates who registered their interest by completing an initial pretreatment questionnaire. Over 1,000 individuals responded. Of those, $N = 450$ authenticated by proving their status and proceeding to treatment assignment. Despite instructions to devote only thirty minutes to the exercise, the median participant spent more than two hours meticulously crafting a legal brief and wrote in excess of 3,500 characters (600 words). The longest brief was 2,300 words (over ten pages). As especially well-written briefs stood a chance to win prizes and acclaim from the NSLS, participant alacrity can be attributed to the complexity of the exercise as well as their motivation to excel in the contest by writing intelligently and persuasively.

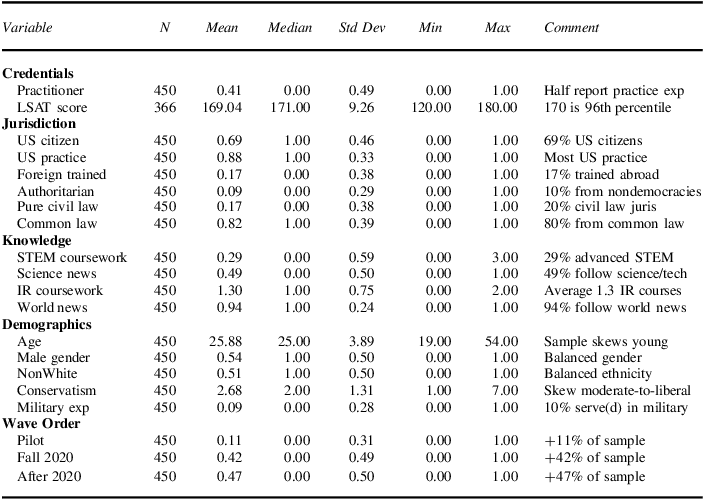

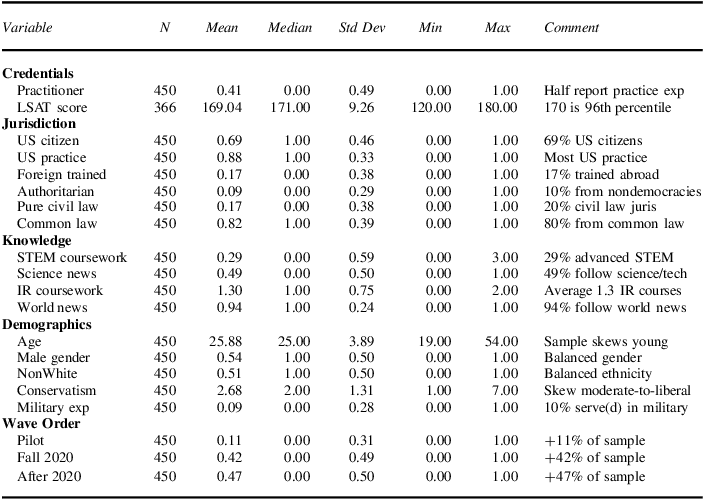

Sample Characteristics

The sample, which included a mix of advanced law students and professional practitioners, is as representative as possible for this difficult-to-target population. In total, individuals from sixty-two different countries participated. Because recruitment occurred through American law schools, the sample includes both US participants and internationally trained or internationally bound participants who received at least part of their training at a US institution. One-third of the sample was non-American, and 13 percent reported professional practice experience (or intention to practice) in countries other than the United States. Across the entire sample, one in ten originated from an autocratic country, and one in five had received prior legal training abroad. Approximately 10 percent reported military experience, which includes veterans who later applied to law school as well as judge advocates general who may have returned to study in graduate programs or as visiting fellows.

The participant pool exhibited the expected degree of covariate balance (see appendix). Table 2 summarizes participant characteristics. The median Law School Admission Test (LSAT) score was 170 out of 180 (96th percentile). Roughly two in five (41 percent) indicated practice experience. The median participant was a recent or expectant graduate who had taken at least two seminars in International Law. All participants had a minimum of one year of graduate legal training (JD or LLM) and had completed core courses such as Legal Writing. Four out of five had earned, or were close to earning, a JD degree, with the remainder holding LLM, LLD, or SJD degrees. Although the sample expectedly skews young, nonparametric tests (see appendix) found no association between the primary outcomes and age, experience level, legal tradition, practice specialty, coursework, regime type, or a variable proxying for home country job opportunities, demonstrating consistency across the sample.

Basic information about participants

Notes: Treated participants only. For information on individuals who registered but did not participate, see appendix. Not all participants were required to take the LSAT.

Scenario

The experimental scenario uses satellite technology as a test case because satellites can have both market and security implications. Dual-use technologies are thought to be more difficult to govern,Footnote 98 offering a stricter test of the theory. Participants who were assigned to see a novel version of the satellite were given the vignette shown in Figure 4. Mundane and novel satellites in this design differ only in their orbital capabilities (discussed later). An important objective of the design was to balance realism against perceived novelty. Therefore, an actual but relatively rare type of orbit, a Lissajous orbit, was chosen to increase perceptions of “novelty.”Footnote 99 Although used on several occasions by spacefaring nations in the past, Lissajous orbits are largely unknown except to specialists. As of 30 August 2020, close to the date the experiment was first fielded, a Google search for “Lissajous orbit” or “Lagrangian orbit” yielded a minuscule 14,200 results.Footnote 100

Example vignette for T1: Novel $\times$ T2: Specific

Notes: Vignette contains four components. Left: Copy of the relevant legal text with four (4) randomly varied Article II provisions (T2). Middle: Intelligence report describing technological characteristics on 4+ dimensions (T1). Top right: List of previous satellite classifications. Bottom right: Client instructions (T3). Variation is summarized in Figure 5.

The first page of the materials introduced background information: participants are told to imagine the year is 2039. Most countries now have an active space program, and orbital lanes are increasingly overcrowded. To ensure fair access, a binding Orbital Convention had entered into force after being universally ratified ten years prior. Participants are informed that the Convention is lex specialis (supersedes other relevant law), and contains no withdrawal or dispute settlement provisions. Under the Convention, all satellites must be registered with a United Nations (UN) Satellite Authority. The Convention distinguishes between “restricted satellites,” which count toward a 1,000-satellite limit for each country, and “permitted satellites,” which do not count toward the limit. Participants who confirmed they had at least thirty minutes to complete the exercise were shown one of eight (${2^3} = 8$) vignettes using simple random assignment.
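The assignment step described above can be sketched in a few lines. This is an illustration under assumptions, not the study's actual procedure: the factor names, the seed, and the function names are hypothetical, and only the ${2^3} = 8$ cell structure and the use of simple random assignment come from the text.

```python
import itertools
import random

# Hypothetical labels for the three binary treatments named in the text.
FACTORS = ("T1_novel", "T2_specific", "T3_government")


def all_cells():
    """Enumerate every cell of the 2 x 2 x 2 design as a dict of 0/1 levels."""
    return [
        dict(zip(FACTORS, levels))
        for levels in itertools.product((0, 1), repeat=len(FACTORS))
    ]


def assign(participant_ids, seed=0):
    """Simple random assignment: each participant independently draws one of
    the eight cells with equal probability, so cell sizes are not forced to
    be equal (unlike complete or blocked randomization)."""
    rng = random.Random(seed)  # assumed seed, used only for reproducibility
    cells = all_cells()
    return {pid: rng.choice(cells) for pid in participant_ids}


assignment = assign(range(450))
print(len(all_cells()))  # 8
print(assignment[0])
```

Because each draw is independent, realized cell sizes will vary around 450 / 8; covariate balance therefore has to be checked after the fact, as the text reports doing in the appendix.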

Vignettes consisted of three components. The first component was a report describing a device incorporating the new technology, called “XNS,” as well as its operating capabilities (T1). The report was accompanied by a schedule of past satellite classifications, compared across five technical dimensions. The second component was the full text of the Orbital Convention (T2). The third component consisted of specific instructions from a client (T3), included in the technical report. Treatment conditions, described later, centered on variation in these components. The legal issues, as subjects correctly inferred from the request, are whether XNS technology should (1) be classified as a satellite at all and, if so, (2) be classified as “restricted” or “permitted” under the rules of the Convention. Deploying a “restricted” satellite would exceed the inventing country’s limit and therefore violate the terms of the Convention.

The exercise was deliberately complex given the population of interest. Unlike other types of survey respondents for whom abstract scenarios have been deemed appropriate,Footnote 101 lawyers are trained to identify loopholes and inconsistencies. This necessitated an extremely thorough and cognitively intensive scenario. The vignette design ensured that any “loopholes” participants might discover (that is, through imprecise definitions) were intentionally embedded as part of a rigorous test of the hypotheses. For convenience, Figure 5 summarizes the variation, theoretical priors, and theoretical predictions.

Summary of treatment/control conditions

T1: Technological novelty

We previously defined a “minimally novel” technology as one that introduces exactly one new design feature—here, a feature based on previously unknown physical principles. The technology shown in T1 is compared to past satellites along five dimensions listed in Schedule 1 in Figure 4. For past satellites, all of which are mundane, one feature has been implicitly held constant: orbital periodicity. Because their flight paths are regular and repeating, these satellites eventually intersect their point of origin. The Lissajous orbit of XNS alters this feature. Its quasi-periodic trajectory introduces the previously unconsidered dimension of periodicity itself. Moreover, achieving quasi-periodicity requires XNS to orbit the combined gravity well of the Earth, Sun, and Moon rather than the Earth alone. Even if the Article might technically permit non-Earth orbits, questions may arise as to whether a non-repeating trajectory is an orbit at all for the purposes of Article II. Thus a single design change disrupts established classification practices by giving salience to two unprecedented, higher-order dimensions.

The mundane version of XNS is still new, but differs from past satellites only in incremental ways, such as intersecting its origin on odd intervals rather than on every pass. Participants who reason carefully should be inclined to restrict the mundane satellite, especially under the specific version of Article II (${T_2} = 1$), which very precisely restricts certain past satellites on previously understood dimensions. The mundane $\times$ specific cell therefore offers a baseline for comparison, since restrictive interpretations are expected.

The crux of T1 is that observable satellite attributes (eccentricity, inclination, and apoapsis) implicitly depend on the location of the gravitational centerpoint. Participants in both conditions must compare these observed attributes to the Orbital Convention reference rule in T2, described below. In the “novel” version of XNS, the relationship between these features and the centerpoint is made explicit. Suppose the centerpoint is determined by three celestial barycenters, only one of which is unambiguously planetary, rather than two. A highly precise version of the ten-year-old Article II, whose framers do not seem to have explicitly anticipated more than two barycenters, might then implicitly exclude technologies based on nonplanetary foci, regardless of how similar those technologies are to past technologies on other, explicit dimensions.

T2: Reference rule specificity

The Convention holds constant a number of provisions designed to control for a variety of potential unrelated objections, such as preambular language, ratification, entry into force, conflicts of law, and right to withdraw. Holding these conditions constant forces participants to focus on the actual variation of interest: precision in the definition of a “restricted satellite.” The variation centered on four operative provisions in Article II. These vary from imprecise (${T_2} = 0$) to precise (${T_2} = 1$). For a harder test of the theory, only Subprovisions 1 and 2 contained generalities, while Subprovisions 3 and 4 contained vagueness. This was intended to ensure a double-barreled, countervailing effect. Since vagueness has been shown by prior political science research to encourage evasion, any convergence we do see should be taken as evidence that generality predominates.

How has imprecision or ambiguity been operationalized in past research? Much of the foundational political science literature on precision was formal-theoretic.Footnote 102 As such, measurement strategies were not required. Early empirical models used document length as a proxy for specificity,Footnote 103 though this risked inadvertently conflating detail with omnibus regulatory approaches. Subsequent efforts alternated between manually codedFootnote 104 and crowdsourcedFootnote 105 measures, typically using undergraduate coders lacking domain expertise. More recent scholarship draws on computational, composite, or dictionary methods,Footnote 106 which, although innovative, are vocabulary dependent and do not distinguish between vagueness and generality, a distinction central to the theory.Footnote 107 To compensate for these shortcomings, Convention language (specifically, Article II) is constructed using workhorse measures from structural linguistics.

Generality is operationalized in Provisions 1 and 2 using linked hypernym–hyponym pairs drawn from Princeton’s WordNet, the most comprehensive English-language database of semantic relations.Footnote 108 Each pair consists of a more abstract term (the hypernym) and a more specific instantiation (the hyponym). For example, the specific version (${T_2} = 1$) uses the modifier planetary barycenter to describe a satellite’s gravitational focus, whereas the general version (${T_2} = 0$) refers more abstractly to a gravitational centerpoint, without invoking any planetary criterion. In the WordNet hierarchy, planetary barycenter is a direct hyponym of gravitational centerpoint. Under the theory, generality in the term “centerpoint” should reduce the interpretive salience of emergent (that is, nonplanetary-bound) technological attributes.
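The logic of the hypernym–hyponym manipulation can be illustrated with a toy hierarchy. The sketch below is hand-built for illustration and does not query WordNet: the dictionary entries, the term "nonplanetary barycenter", and the helper names are all hypothetical. It shows only why a rule phrased with the more abstract term also reaches attributes that the more specific term leaves out.

```python
# Hypothetical child -> parent links, written by hand for illustration.
# They mimic the shape of a hypernym hierarchy; they are not WordNet data.
HYPERNYM_OF = {
    "planetary barycenter": "gravitational centerpoint",
    "nonplanetary barycenter": "gravitational centerpoint",
}


def ancestors(term):
    """Return every term above `term` in the toy hierarchy, nearest first."""
    chain = []
    while term in HYPERNYM_OF:
        term = HYPERNYM_OF[term]
        chain.append(term)
    return chain


def covers(rule_term, attribute):
    """A rule phrased with `rule_term` reaches `attribute` if the two are
    identical or if `rule_term` is a hypernym (ancestor) of `attribute`."""
    return rule_term == attribute or rule_term in ancestors(attribute)


# Specific wording does not reach the emergent attribute.
print(covers("planetary barycenter", "nonplanetary barycenter"))  # False
# General wording does.
print(covers("gravitational centerpoint", "nonplanetary barycenter"))  # True
```

This is a purely lexical picture. In the experiment, whether participants actually read the general term this way is the empirical question the design tests.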

Note that the Convention is completely silent on precedent, apoapsis (distance from Earth), and visual cues. Information on these dimensions is included in the vignette in Figure 4 partly for immersion, partly as an attention check, and partly as a “red herring” to bait evasion. Although research has found that visual cues tend not to affect experimental outcomes in ordinary survey-experimental research,Footnote 109 participants in the present design might strategically cite extralegal content in an attempt to excuse evasive policies.

T3: Client interests

Efforts to measure “noncompliance” or “evasion” in the study of international law have long been hindered by pervasive selection issues.Footnote 110 Another challenge is that compliance ultimately depends on capacity and intent (subjective factors).Footnote 111 The RCT is designed to address both issues. To test whether generality can be divergent in politically charged situations (H4 and H5), T3 varies the identity of the client whose interests participants are being tasked to advance. Because only one group had incentives to deviate, the average interpretative distance between these two groups allows for a direct quantification of compliance pull.

As a benchmark of “true” compliance, participants in the control condition (${T_3} = 0$) were instructed to render an impartial judgment on behalf of a standing “registration authority” with no vested interest in the outcome. Interpretations in this condition are assured to be good faith, since there is no incentive to deviate. Specifically, participants who work for the registration authority are told only that “the legitimacy of our office depends on an unbiased assessment.” The remaining participants were instructed to advise the government of the country that invented XNS (${T_3} = 1$). Participants in this condition are specifically informed by the client that “XNS is expected to convey substantial advantages for our country’s economic and national security, and we regard its development as top national priority.” Results reveal how participants in the treatment condition balance between competing professional imperatives: their fiduciary duty to clients, and their duty to uphold legal legitimacy.
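The "average interpretative distance" between the impartial and politicized groups is a difference in means. The sketch below shows one way to compute it with an approximate 95 percent confidence interval. It assumes a Welch-style standard error and a normal critical value of 1.96, neither of which is stated in the text, and the scores are invented placeholders rather than study data.

```python
import math
import statistics


def compliance_pull(impartial, politicized, z=1.96):
    """Difference in mean scores (politicized minus impartial) with a
    normal-approximation confidence interval based on a Welch standard
    error. A difference near zero means politicization moved the direction
    of interpretation very little."""
    diff = statistics.mean(politicized) - statistics.mean(impartial)
    se = math.sqrt(
        statistics.variance(impartial) / len(impartial)
        + statistics.variance(politicized) / len(politicized)
    )
    return diff, (diff - z * se, diff + z * se)


# Invented placeholder scores on a -3..3 scale, for illustration only.
impartial_scores = [2, 3, 1, 2, 3, 2]
politicized_scores = [2, 2, 1, 3, 2, 2]

diff, (low, high) = compliance_pull(impartial_scores, politicized_scores)
print(round(diff, 3), (round(low, 3), round(high, 3)))
```

An interval that spans zero, as in this toy input, is what a null effect of client identity on interpretive direction would look like.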

Outcomes

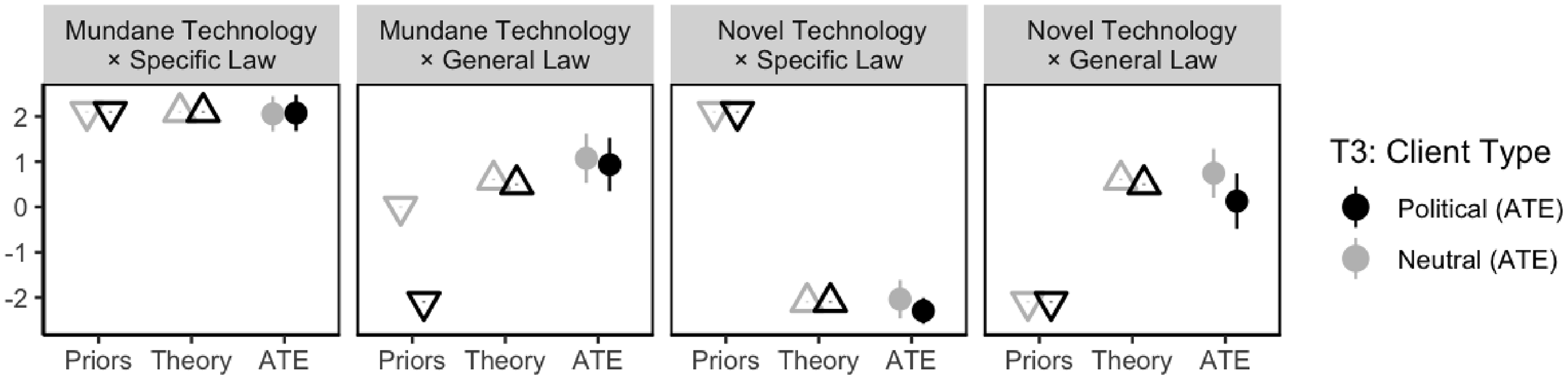

The primary dependent variable is a seven-point Likert score measuring the direction and confidence of interpretations, Y_Likert. Positive values indicate more restrictive classifications—in other words, more confidence that XNS is indisputably prohibited by the Convention. Following convention in previous psycholinguistics experiments,Footnote 112 this variable is a composite of two questions. The first question asks whether XNS would count as a “permitted satellite or a restricted satellite under the Orbital Convention” (Yes/No/Don’t Know). The second asks participants to rate their level of confidence about the answer. Both questions are multiplied to form a seven-point composite. An advantage of asking the first question separately is that it reinforces the idea embedded in Lotus that there can only be one correct answer. Another advantage is that, because refusal to classify and uncertainty are measured independently, we can (in principle) distinguish high-confidence refusals to classify from genuine classification uncertainty. Results for Y_Likert are shown in Figure 6 and discussed in the next section. After making their selections, participants are tasked with writing a legal brief, Y_Essay, in which they summarize the case facts and then argue for or against the proposition that XNS is “restricted” under the Convention.
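The construction of the composite can be sketched as follows. The coding is an assumption made for illustration: the text says only that the two answers are multiplied to yield a seven-point score with positive values indicating restriction, so the direction codes (-1, 0, +1), the three-level confidence rating, and the function name are hypothetical.

```python
# Assumed direction codes: the sign convention (positive = restrictive)
# follows the text; the specific values are hypothetical.
DIRECTION = {"permitted": -1, "dont_know": 0, "restricted": 1}


def y_likert(classification, confidence):
    """Multiply a signed classification by a 1-3 confidence rating to get a
    composite ranging from -3 (confidently permitted) to +3 (confidently
    restricted), seven points in total."""
    if confidence not in (1, 2, 3):
        raise ValueError("confidence must be 1, 2, or 3")
    return DIRECTION[classification] * confidence


print(y_likert("restricted", 3))  # 3
print(y_likert("permitted", 2))   # -2
print(y_likert("dont_know", 3))   # 0
```

Under this coding every "don't know" maps to zero regardless of stated confidence, which is why the two questions need to be recorded separately if refusals to classify are to be told apart from low-confidence classifications.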

Main results: confidence that existing law applies

Results

Main Effects: Direction of Interpretation

To test hypotheses H1–H4, I estimate how interpretations about the applicability of the Convention become more restrictive as T1 (technological novelty), T2 (rule specificity), and T3 (client instructions) vary. Suppose Y is a vector of outcomes, $\alpha$ is the intercept, $\beta$ is the effect associated with each treatment vector T, and $\zeta$ is an interaction coefficient. ${\bf{X}}$ is a covariate matrix with $\gamma$ coefficients, which is empty in the base model. In the appendix, LASSO is used to select the optimal set of predictive covariates ${X^{\rm{*}}} \in {\bf{X}}$:
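One linear specification consistent with the symbols defined above is given below. It is a sketch under assumptions rather than the exact estimating equation: the single $T_1 \times T_2$ interaction and the error term $\varepsilon$ are not stated in the text and are introduced here for illustration.

```latex
Y = \alpha + \beta_1 T_1 + \beta_2 T_2 + \beta_3 T_3
    + \zeta \,(T_1 \times T_2) + {\bf X}\gamma + \varepsilon
```

In the base model ${\bf{X}}$ is empty, so the $\gamma$ term drops out. Under H1 and H2, the interaction coefficient $\zeta$ would be expected to be negative.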

Figure 6 plots the main results in Y_Likert based on all $N = 450$ responses. The y-axis is the direction of interpretation, from permissive (negative) to restrictive (positive). Figure 6 is designed to aid in comparing prior theoretical expectations (“priors”), hypotheses H1–H3 (“theory”), and actual findings (“ATE,” represented with 95 percent confidence intervals). The effect of generality can be observed by comparing Panes 1 $\to$ 2 and 3 $\to$ 4. The effect of novelty is represented by Panes 1 $\to$ 3 and 2 $\to$ 4. Impartial assessments are plotted in grayscale, and politicized interpretations are shaded black. For convenience, points represent the following:

• Circles ($\bullet$) denote the results (ATE) for each cell (95% confidence intervals).
• Triangles ($\Delta$) denote the theoretical predictions H1–H4 (illustration).
• Inverted triangles ($\nabla$) denote prior expectations from the literature (illustration).

To preview the results, within each pane, note the close correspondence between circles (average treatment effects) and triangles (hypotheses). Compare that to large differences between the ATE and the inverted triangles (prior expectations in the literature). The results show a powerful interaction between novelty and generality. The results are robust to specification. Even in the base model with no covariates, nearly half the variation is explained by the treatments. There are three key takeaways from the main results in Figure 6.

The first finding is that H1 and H2, which hypothesized a negative relationship between legal specificity and technological novelty, are strongly supported. Interpretations about novel technologies become much more permissive when Article II is specific (Panes 1 and 3). As predicted, participants readily applied the specific text to a version of XNS based on mundane technology ($1.659$, $p < 0.001$). However, they were reluctant to apply the specific text to the version of XNS based on novel technologies ($-4.837$, $p < 0.001$). Because responses were on a seven-point scale, this is a substantial effect size. From the standpoint of existing theory, this finding alone would appear to refute classical institutions literature and confirm the existence of a pacing problem: even the most precise law, it seems, is no match for technological change. The second finding, however, directly contradicts conventional wisdom about the pacing problem. As H3 predicted, when Article II employs more general language (Panes 2 and 4), interpretations tended to be restrictive. This pattern held despite the fact that participants saw very different technologies, one mundane and one that the Convention’s framers were unlikely to have anticipated.

The last finding considers the implications of generality for emergent flexibility. After all, since interpretations in the imprecise condition (${T_2} = 0$) are slightly depressed, we cannot conclusively rule out the possibility that vagueness has caused divided agreement, or simple uncertainty, about how the Convention should be applied. The design leverages politicization (T3) to ensure separation on H4 if either (a) generality fails to produce convergent effects or (b) vagueness dominates generality. As H4 predicted, government priorities (black shade) had little effect on the direction of interpretation, even when Article II was imprecise ($-0.272$, $p > 0.1$). Nor was any statistically significant difference observed for ${T_3}$ in subgroup analyses (${T_2} = 0$, $p > 0.1$) or three-way interaction tests (${T_2} \times {T_3}$, $p > 0.1$).

That a politically charged instruction set had little effect on the direction of interpretation is particularly striking, as role-play salience has been shown to be a powerful moderator in past experimental research.Footnote 113 Because participants had clear monetary incentives to gain client acceptance, and clients are presumably more accepting of sympathetic advice, the null findings on T3 effectively quantify the reluctance of participants, in dollar terms, to advance politically convenient but illegitimate recommendations.

Causal Mechanisms: Justificatory Strategy

Together, the findings in the previous section offer strong support for the theory that generality is a powerful cause of interpretive convergence, helping to promote resilience against technological disruption. However, while Figure 6 obtains the causal effect of general language on interpretive direction, it does not explain why legal professionals reach these conclusions. The direction of interpretation may not have varied, but how seriously did participants take their roles as advisors—of particular importance to the null findings on T3?

In this section, I investigate causal mechanisms. The results identify two unique justificatory pathways to the same restrictive interpretation. That is, the identity of the client (T3) significantly determined whether participants who recommended restraint tended to emphasize the plain-text meaning of Article II or reputation and credibility in their advisory briefs. Since the psychological effects of vagueness should be universal, but only one group received a politicized instruction set, we can infer two stages. In the first (cognitive or “communicative”) stage, generality operates psychologically: interpreters reason by analogy and conclude that the Convention applies. In the second (reputational or “strategic”) stage, participants form expectations about how legally implausible arguments would be received by external audiences. Both pathways produce restraint, but through distinct mechanisms.

To probe justificatory content, I rely on a Structural Topic Model (STM) approachFootnote 114 to estimate how treatment assignment affects topical emphasis. Now widely used in political science, STMs combine the strengths of unsupervised topic models with the ability to use randomized experimental treatments as statistical predictors. I estimate three STMs to study subjects’ written legal analyses. Results from each model are plotted in Figure 7, with model validation and hyperparameter choices discussed in the appendix. The x-axis of Figure 7 describes the probability of a given topic appearing in a document as treatment assignments varied. A mathematically optimal number of highly prevalent argumentative themes is listed along the y-axis. The findings are supported by qualitative excerpts in Figure 8, with more examples in the appendix.

Structural topic model results: emphasis plots

Notes: Model 1 (left): effect of novelty on specific laws. Model 2 (middle): effect of generality on interpretations about the more novel technology. Model 3 (right): effect of politicization on reasons why a restrictive policy should be adopted. Statistically significant topics are marked with an asterisk (“${\rm{*}}$”).

Excerpted arguments by T2 condition (T1 = 1 fixed)

Notes: Arguments about the more novel technology under different conditions. See appendix for additional examples.

Model 1 (left) provides another test of the classical institutions literature, which assumed a strictly positive relationship between precision and compliance. Consistent with H1 and H2, model 1 provides higher-dimensional evidence of a negative interaction between specificity and novelty. When the Convention was fixed in its more specific form ($T_2 = 1$), participants placed greater emphasis on perceived novelty when the technology introduced new properties and were less willing to argue that the Convention clearly applied. This pattern held for both groups of advisors, regardless of their T3 assignment.

Model 2 (middle) tests conventional wisdom about the pacing problem. It shows that when technological novelty was fixed ($T_1 = 1$), generality exerted a powerful psychological effect on interpretation, again irrespective of T3. Participants working with a more general version of the Convention were far more likely to apply Article II by analogy, reasoning that this interpretation was both fair and consistent with the framers’ intent, despite their lack of foresight. In contrast, participants exposed to the more specific version typically argued that XNS was permitted under Article II and that only a formal amendment could restrict it. Since both groups in model 2 saw an identical (novel) technology ($T_1 = 1$), this constitutes strong evidence that not only can language alone shape legal interpretations, but also that language may even shape underlying perceptions of technological “novelty.”Footnote 115

Model 3 contains the most important takeaway. Figure 6 has already shown that politicized advice tended to converge with impartial advice, and that advice concerning the interpretation of general text was, on average, more restrictive. Model 3 analyzes the justifications participants provided for adopting policy based on a more restrictive reading. Participants asked to provide an impartial assessment tended to offer justifications to the left, whereas participants advising a potentially evasive government tended to offer justifications to the right. As shown, impartial advisors emphasized pure reason and the plain meaning of the text, consistent with the findings from model 2. Government advisors, conversely, placed greater emphasis on words like “credibility” and “legitimacy.”

Taken together, the STM and qualitative results strongly support H5. When the law is highly precise, advocates have little room (or need, depending on their interests) to argue that new technologies are exempt. But when the law is more general, restrictive interpretations appear more legitimate. Anticipating that illegitimate policies could invite reputational backlash, government advisors framed credibility as a matter of national interest, emphasizing that compliance safeguards the state’s reputation. Rather than exploiting legal ambiguity, they overwhelmingly advised against opportunistic policies likely to draw fire from the international community.

These results are consistent with psycholinguistic research showing that hypernymy anchors categorization.Footnote 116 Language provides an initial focal point for legal reasoning, shaping what interpreters perceive as plausible applications of a rule. When political interests are salient, reputational considerations then supply a justificatory logic for advancing recommendations that remain credible to external audiences. Although participants could, in principle, depart from this linguistic anchor, they were generally unwilling to do so, even when advising evasive clients. Without guidance about a “correct” answer, participants in both conditions nevertheless converged on a shared, text-based understanding of Article II, treating the Convention itself as a source of epistemic constraint.

Often overlooked in the study of compliance, shared understandings are central to the verification process. Only through a “meeting of the minds” can states anticipate when contested behavior is likely to be judged noncompliant, and when enforcement or reputational costs may follow. In this sense, participants were advancing their clients’ interests—specifically, by minimizing exposure to noncompliance allegations. Because the policy choice in this case was dichotomous (that is, deploy or not deploy XNS), no interpretation supporting deployment fell within the bounds of credibility, and restraint followed. Where policy choices are more continuous, by contrast, legal advisors are likely to select the most advantageous interpretation that remains defensible within the prevailing legal consensus.

Linking Legal Interpretation to Foreign Policy

The RCT provides causal evidence for how technological change impacts legal interpretations. As a test of how legal interpretations influence actual foreign policy outcomes, this section examines two real-world cases involving two very different types of technology. The first concerns the impact of novichok nerve agents on the Chemical Weapons Convention (CWC) in 2018.Footnote 117 The second considers the impact of advanced Riot Control Agents (RCAs) on the Geneva Protocol (GP) in the late 1960s.Footnote 118 The CWC, designed as the GP’s successor, is roughly 400 times longer and far more detailed. Moreover, the GP was nearly twice as old as the CWC when each faced its respective technological shock. Conventional wisdom would therefore predict greater resilience for the CWC. Yet this was not the case. Consistent with H2, novichok’s opponents could not allege a violation under the highly specific CWC due to an emergent loophole. Conversely, as H3 predicts, the GP’s more general prohibition was regarded by states as applicable to RCAs by analogy. The inventors of RCAs ultimately abandoned their technological ambitions rather than face accusations of violating the GP, whereas novichok eluded the CWC on a technicality, compelling its opponents to seek a formal amendment.

Failure Case: The 1993 Chemical Weapons Convention

Often lauded as “the world’s most successful disarmament treaty,”Footnote 119 the landmark Chemical Weapons Convention (CWC) entered into force in April 1997 after more than twenty years of hard-fought negotiations. All but four countries (Egypt, North Korea, South Sudan, and Israel) have ratified it. Celebrated for its precision,Footnote 120 the CWC exhaustively enumerates all banned substances on the basis of their specific chemical structure.Footnote 121 These are listed individually in CWC Schedule 1, down to their preferred IUPAC name, or PIN. Such precision was intended to prevent any confusion about which chemicals are weapons. Within ten years, however, one state—Russia—had found a way to use the CWC’s specificity against it. Because the exact chemical structure of Russia’s new chemical weapon—called novichok, or “newcomer,” in Russian—was accidentally omitted from the CWC, affected states could not allege a violation. Novichok’s discovery spurred “months of wrangling” over how to formally amend the CWC.Footnote 122

Novichok consists of a family of A-series nerve agents developed secretly by the Soviet Union under the so-called Foliant Program during the Cold War. Nerve agents are highly toxic chemicals, similar to powerful insecticides, that cause death by disrupting the human nervous system. Novichok, however, was based on an entirely new family of chemical precursors. Whereas the Soviets developed an organophosphorus version, the United States had also experimented with its own A-series technologies, based on carbamates. Both novichok and carbamate weapons are similar to past chemical weapons such as Sarin or VX in that they work by blocking the enzyme acetylcholinesterase. However, novichok was chemically unrelated to anything listed in the CWC.Footnote 123 The Soviet Union, observing that the CWC was likely to contain loopholes, intentionally kept its plans to experiment with new compounds secret during negotiations.Footnote 124 An explicitly stated goal of the novichok program was to find a legal means of circumvention.Footnote 125

Between 2009 and 2018, Russia’s interest in novichok was just a rumor.Footnote 126 In March 2018, however, Russian defector Sergei Skripal, his daughter, and four other people were mysteriously poisoned after coming into contact with an unknown substance in a public park in the United Kingdom.Footnote 127 The facts of the case were never in question: everyone agreed Russia was behind the attack, and there was conclusive proof that the agent in question was novichok.Footnote 128 However, CWC members could not find Russia in breach of the CWC, since novel organophosphorus agents like novichok were nowhere to be found in the 200-page document, just as the Kremlin intended.

Unable to condemn Russia under the CWC, states raced to incorporate novichok into the CWC’s text during the 24th Session. Negotiations were difficult. It took more than two years to update Schedule 1. States were challenged to formulate a precise prohibition without exact knowledge of novichok’s specific chemical structure. The United States, Canada, and the Netherlands collectively submitted a proposal to ban two new families of chemical structures, phosphono and phosphoro analogues. This proposal expanded on Schedule 2.B.04’s three-carbon atom limitation by incorporating three linear alkyl chains of ten carbon atoms each, plus a fourth chain of nine carbon atoms attached to a specific part of the molecule.Footnote 129 Russia immediately objected, thwarting progress.Footnote 130

The Western proposal was surprisingly broad. According to analysts, “based on the total number of possible non-cycloalkyl variations alone” the joint proposal’s lower bound would proscribe “$3 \times 10^{11}$ theoretically possible molecular structures,” including many “substances with low toxicity.”Footnote 131 Russia’s five counterproposals were more specific. Russia conceded phosphono- and phosphoroamidine structures, but sought to limit the ban to one or two carbon atoms. This would result in a “total coverage of six phosphoro analogues and a single phosphono analogue”Footnote 132 as opposed to billions of combinations in the Western formulation. Russia also proposed including fourteen formaldoxime structures plus two families of carbamates, which the US Army is known to have explored. Formaldoxime in particular led to a breakdown in negotiations until Russia was finally induced to drop it.Footnote 133

A compromise was eventually reached in June 2020, more than two years after Skripal’s poisoning.Footnote 134 Director General Fernando Arias celebrated the accomplishment, noting in a speech that Schedule 1 had been updated for “the first time in its history,” albeit at great cost.Footnote 135 Yet only two months after the changes came into effect, novichok was again used, this time against Russian dissident Alexei Navalny. Forensic analyses later determined that, despite containing “structural elements of [some prohibited substances on] both Schedules 1.A.14 and 1.A.15.80,” the weapon used against Navalny was also technically “unscheduled,” even under the updated CWC.Footnote 136 Noting the impossibility of “trying to identify and list [all] unscheduled chemicals” exhaustively in advance,Footnote 137 experts worry that states will continue to exploit emergent technological loopholes in the CWC.Footnote 138 Had the CWC imposed a general ban on “all weaponizable nerve agents” (for instance), Russia might still have opted to develop novichok, but could not have persuasively claimed that doing so was, strictly speaking, compliant with the treaty.

Success Case: The 1925 Geneva Protocol