The introduction of research methods in political science emphasizes the teaching of quantitative methods, even as publications using qualitative methods outnumber those using quantitative methods (Emmons and Moravcsik 2020). Interviewing is a widely used qualitative method, employed either as the sole methodological approach or as a component of mixed-method studies (Ellinas 2023; Martin 2013; Mosley 2013). It can deceptively appear to be an easy method to master and use in research (Knott et al. 2022; Potter and Hepburn 2012). This misunderstanding can be exacerbated if students are not introduced early to the complexities of qualitative research and interview techniques, as well as the importance of foregrounding ethical considerations in the process.

This article contributes to the literature on teaching and learning by introducing a rubric for question development in undergraduate courses and discussing its development and implementation. It is structured as follows. We first discuss the methodological and ethical considerations surrounding teaching interview techniques. Second, we discuss the development of a rubric for question development, drawing on our experiences in different educational settings. Third, we examine the application of the rubric in the three educational institutions. We conclude by exploring possible uses for the rubric in different contexts.

METHODOLOGICAL AND ETHICAL CONSIDERATIONS

Despite the foundational role of qualitative methods in political science, qualitative research training is often limited. Scholars have consistently pointed to a disciplinary bias favoring quantitative methods, resulting in students’ uneven exposure to qualitative techniques. Emmons and Moravcsik (2020) found that only 40% of top-ranked political science PhD programs offered qualitative methods courses, with only two of those programs requiring students to take those courses. Qualitative training is even more limited at the undergraduate level. Large-N curricular surveys show that between 70% and 85% of US political science programs require a single “scope & methods” course, and these often prioritize statistics (Thies and Hogan 2005; Turner and Thies 2009). Recent updates confirm this pattern. Siver, Greenfest, and Haeg (2016) find that fewer than 15% of departments list a stand-alone qualitative methods course in their undergraduate catalogs, and Szarejko and Carnes (2018) state that qualitative components are most often offered as electives or capstones, not as part of required coursework.

Interviewing is a qualitative method designed to develop a deep understanding of subjects’ opinions, positions, reasoning, and comprehension. It is a widely used method in political science, but the evidence just presented implies that teaching interview techniques has not been a focus in methods courses, possibly generating a skills gap in students’ development of sound methodological approaches. Recent pedagogy research proposes innovative tools and alternative models of instruction. Becker, Graham, and Zvobgo (2021) introduce a stewardship model emphasizing hands-on experience in designing interview protocols, and Arikan and Milosav (2024) promote the integration of qualitative methods such as interviewing into thematic electives to contextualize methods and increase student engagement. Elman, Kapiszewski, and Lupia (2018) propose engaging students with real interview data such as transcripts and simulating research practice when fieldwork is not feasible.

Teaching interview research methods demands attention to core features of qualitative inquiry. Hsiung (2008) argues that explicit and continuous reflexivity is required to examine the researcher’s own assumptions, positions, beliefs, and emotions throughout the process. Hosein and Rao (2016) encourage the use of reflective writing, peer review, and ethical case studies to scaffold the development of positionality. Jacob and Furgerson (2012) note the importance of breaking down the process of designing interview protocols into smaller, more manageable steps for students as a way to increase their confidence and provide ethical grounding to novice researchers. Buys et al. (2022) highlight the challenges of insider–outsider dynamics in student-led interviews and suggest classroom-based reflection and guided protocol development as an approach. Political science interviews often target elites or hard-to-reach groups, which highlights the need to help students develop strategies for gaining entry, developing rapport, and ensuring safety. Students can benefit from role-play exercises modeling snowballing techniques, as well as case illustrations for interviewing diverse research populations (Ellinas 2023; Esselment and Marland 2019; Goldstein 2002; Van Puyvelde 2018; Wu and Savić 2010).

Human subject ethics and cultural competency are two key aspects of proper interview techniques. Although interviewing may seem like a simple process to undergraduates, the complexity of human relations and hierarchies of power mean that developing proper interview questions is an essential ethical step (Pasque and alexander 2022). Instructors have a responsibility to ensure that students understand their obligation to research subjects. To fully understand this obligation, students must complete human subjects training. These considerations need to be reinforced in a manner that is clear to students. One way to do that is through the question rubric we introduce.

From a practical perspective, students can benefit from structural tools that guide the design of interview questions. The remainder of this article discusses the development and implementation of a rubric for question development to help students in this process. The rubric complements, rather than replaces, ethics protocols by foregrounding question design features that maximize data quality and minimize potential distress.

DEVELOPING THE RUBRIC

The rubric’s goals are to guide students in their development of interview questions and to provide instructors with a tool for structured feedback (table 1). To those ends, the rubric is an additional tool in the development of scaffolded protocols (Jacob and Furgerson 2012). Working in three distinct educational institutions, we developed a rubric that could be adapted to courses in various settings.

Table 1 Four Criteria for Interview Development Assessment Rubric

The rubric emphasizes four criteria for ethically sound and research-oriented question development. If shared early in the question development process, it can help students understand what is expected of them: questions that are aligned with their research and ethically sound.

The first criterion in the rubric, “Complexity of questions is sufficient to discourage one-word replies; follow-up questions are included,” was designed to help students develop questions allowing for reflectivity. Single-word answers can hinder depth; open-ended prompts encourage narrative detail and enable the interviewer to probe causal mechanisms (Knott et al. 2022). By creating questions that encourage the interview subject to elaborate, students can develop an appreciation for the coproduction of knowledge in qualitative research. The requirement that students be ready with follow-up questions encourages them to engage in a conversation with their interviewees. In so doing, students further acknowledge the value of qualitative research in understanding subjects’ experiences, beliefs, and biases.

The second criterion, “Organization of questions is logical,” is meant to encourage control over the process. Organization will encourage an easier flow of the conversation, the basis of the interview. Sequencing questions from descriptive to evaluative mirrors best practices for elite and semistructured interviews because it helps sustain cognitive flow and minimizes topic-switch fatigue (Silverman 2011). An organized interview plan ensures that topics flow smoothly and allows for elaboration on earlier points. This encourages students to understand the interview as a reflective venture, facilitating a growing understanding of the subject’s perspective.

The third criterion, “Tone of questions is designed to put interview subjects at ease,” reinforces students’ awareness of their ethical responsibility to interviewees. Following ethics training, students will know intellectually their responsibility to protect their subjects. Ensuring that questions are designed to keep interviewees from experiencing unnecessary stress reinforces ethics in a practical sense and provides an additional opportunity to examine the students’ own biases. Asking a question in a way that reflects disapproval or enthusiasm can skew the responses of the subject, as well as cause discomfort. A neutral, empathetic tone is also key to ethical interviewing. Reflexivity training in the classroom helps students check how word choice projects judgment, whereas the “catastrophic encounter,” the unsettling interaction with others that can challenge students’ understanding of power relations, highlights the emotional stakes of interview interactions (Gallagher 2016; Hsiung 2008). Instructors may create classroom simulations to showcase the importance of building rapport (Buys et al. 2022).

The final criterion, “Interview questions are appropriate for the research question,” is practical and designed to help students focus on how their questions will further their own research. It is part of the process of mentoring students through practical hands-on work (Becker, Graham, and Zvobgo 2021). Linking prompt questions to the overarching research questions also models the approach of designing backward from inference (Martin 2013). Teaching students to design questions appropriate to their topic guides them in the process of defining and narrowing their research questions, as well as preparing for interviews.

APPLYING THE RUBRIC

Three courses in distinct educational settings used the rubric in different ways to help students develop ethically and methodologically sound interview questions. At California State University San Marcos (CSUSM), a four-year public regional comprehensive university, the rubric was used in the course, Practice of Political Research. At State University of New York at Cortland (SUNY Cortland), a medium-sized public institution, it was used in the course, The Politics of Education Policy. At the College of Saint Benedict and Saint John’s University (CSB and SJU), a small liberal arts college, the course was Race, Gender, and Inequality in Brazil.

Students at CSUSM participated in an interview research workshop pairing short mock interviews with rubric-based peer feedback. The workshop synthesizes best practice in experiential methods training, including “practice-then-perform” scaffolding (Jacob and Furgerson 2012; Mosley 2013) and peer assessment for feedback literacy and self-regulation (Nicol and Macfarlane-Dick 2006). Each stage explicitly reinforces one or more rubric dimensions to help students translate abstract criteria into concrete revisions.

Table 2 illustrates the rubric-based simulation and peer review cycle as a replicable template. Before class, students upload a version 1 (v-1) packet containing draft interview questions with planned follow-ups and supplementary materials. This ensures that students arrive with materials ready for the simulation and peer feedback. In class, we allocated roughly 50 minutes for the paired simulation and peer review cycles. In the first 20-minute block, student A interviews student B, who plays the role of the target respondent. After the mock interview, B scores A’s questions against the rubric. The students then switch roles for a second 20-minute block. The instructor then leads a 10-minute debriefing session, guiding students to reflect on their co-learning experiences, identify recurrent issues, and consider how they may address these challenges. After class, students submit a v-2 packet of their interview questions and a short reflective statement on how they revised their interview questions.

Table 2 Mock Interview Structure

Many students indicated that the rubric made problems more obvious when they had to score a peer’s questions. Several students noted that hearing their questions read aloud helped them simplify language and reorder prompts to smooth narrative flow. Others reported feeling more confident about approaching interviewees outside the classroom. By asking students to score “complexity,” “logical flow,” “tone,” and “alignment” immediately after the mock interview, the workshop helps them capture many issues in interview design, such as double-barreled prompts, topic-switch fatigue, and participant well-being (Gallagher 2016; Leech 2002; Silverman 2011). This helps close the loop between theory and practice, allowing instructors to focus on deeper methodological issues, such as linking prompts to the causal puzzle, calibrating tone to positionality and power dynamics, and anticipating participant well-being. The research workshop experience therefore provides students with an opportunity to practice diagnosing issues while maintaining the instructor’s guidance on ethical and analytical issues.

Students at SUNY Cortland prepared a set of questions designed for interviews with experts in education policy related to their policy research projects. The initial draft questions were written after an in-class discussion of interview techniques. The instructor evaluated the drafts using the rubric and then met with each student to discuss feedback. After those one-on-one meetings, students completed Collaborative Institutional Training Initiative (CITI n.d.) training and read Silverman’s (2011) chapter, “Interview Data.” They then completed their final questions.

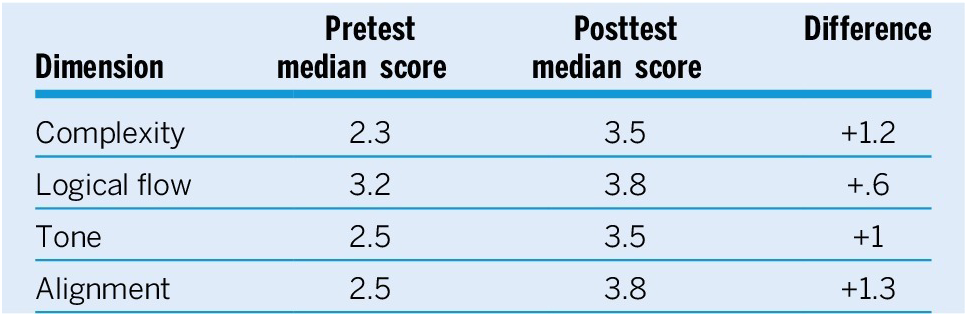

The instructor used the rubric to rate students’ draft and final questions. The draft served as the pretest, and the final questions served as the posttest. As a group and individually, students showed improvement in all four dimensions (table 3).

Table 3 SUNY Cortland Rubric Scores

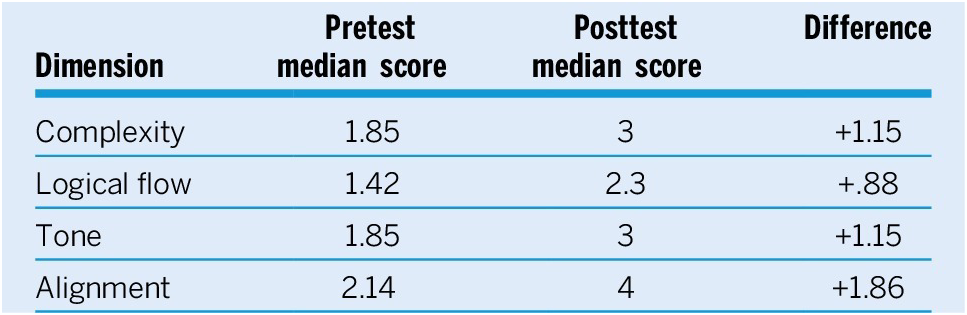
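For instructors who wish to tabulate a comparable pretest and posttest comparison in their own courses, the arithmetic can be sketched in a few lines of Python. This is only an illustrative sketch, not the procedure used in the courses described here. The function name `mean_change`, the 1 to 4 scoring scale, and the two students' scores are all hypothetical assumptions; only the four dimension labels are taken from the rubric.

```python
from statistics import mean

# The four rubric dimensions, using the short labels from the text.
CRITERIA = ("complexity", "logical_flow", "tone", "alignment")


def mean_change(pre, post):
    """Return the mean posttest-minus-pretest change per rubric dimension.

    `pre` and `post` are lists of dicts, one dict per student, listed in
    the same student order, mapping each dimension to a numeric score.
    """
    if len(pre) != len(post):
        raise ValueError("pre and post must cover the same students")
    return {
        c: mean(after[c] - before[c] for before, after in zip(pre, post))
        for c in CRITERIA
    }


# Hypothetical scores for two students on an assumed 1-4 scale.
# These are NOT the scores reported in this article.
pre = [
    {"complexity": 2, "logical_flow": 2, "tone": 3, "alignment": 1},
    {"complexity": 1, "logical_flow": 3, "tone": 2, "alignment": 2},
]
post = [
    {"complexity": 3, "logical_flow": 3, "tone": 3, "alignment": 3},
    {"complexity": 3, "logical_flow": 4, "tone": 4, "alignment": 3},
]

changes = mean_change(pre, post)
for criterion, delta in changes.items():
    print(f"{criterion}: {delta:+.1f}")
```

Keeping one record per student, rather than only class averages, makes it possible to check improvement both for the group and for each individual.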

Students at CSB and SJU were asked, in the first week of classes, to develop interview questions based on their understanding of the course and their expectations of the study-abroad ethnographic portion of the class. The professor then introduced the research proposal assignment and began a series of scaffolding assignments to help students become more acquainted with ethnography, interview techniques, and the ethics of asking good questions while conducting research abroad. These assignments included the completion of CITI training. Students were then able to work in groups to develop their individual research proposals and refine their interview questions. As at SUNY Cortland, the first assignment served as a pretest and the questions in the research proposal served as a posttest. Students showed improvement in all four dimensions. They showed marked improvement in the alignment between interview questions and their research questions (see table 4), which we expected because the pretest was conducted very early in the course.

Table 4 CSB and SJU Rubric Scores

These examples show different ways to use the rubric as a tool for teaching interview techniques. In all three experiences, students were introduced to the rubric as part of their question development process and received feedback based on its criteria from peers, faculty, or both. This feedback reinforced the elements present in the rubric and helped students focus their interview questions while considering the ethical consequences of their questions and research.

DISCUSSION

The three courses highlighted used approaches that varied in the amount of class time dedicated to interview question development and the type of interview. The rubric provides a baseline of criteria that can assist in course development and in students’ understanding of interview question development. It can be used in different ways and in courses with distinct needs and time constraints related to teaching interview techniques.

All three examples emphasized the importance of ethics training and in-class discussions about developing interview questions that are both methodologically and ethically sound. Especially at the undergraduate level, instruction and discussion about hierarchies of power, researcher positionality, and human research ethics are essential to reinforce to students their responsibilities in the development of interview questions.

The rubric can also be used to assess the technical progress of students. Preliminary pretest and posttest rubric assessment showed improvement in all four categories, and future research focusing on pre- and posttest rubric assessment of a larger number of courses and students can provide a more nuanced understanding of the interventions that can best support student success in developing interview questions for qualitative analysis.

ACKNOWLEDGMENTS

The authors would like to thank the editors of this special issue, Shamira Gelbman and Sebastian Karcher, for their leadership and continuous support, as well as the anonymous reviewers for their thoughtful and productive feedback. We would also like to thank all the participants in the APSA Teaching Qualitative Methods in Political Science Symposium in 2023. Finally, none of this work would be possible without our students, and we cannot thank them enough for their contributions to our work.

CONFLICTS OF INTEREST

The authors declare no ethical issues or conflicts of interest in this research.