There may, indeed, be other applications of the system than its use as a logic.

——————————————————

A. Church, Postulates for the foundation of logic

1. Introduction

The sequent calculus and the lambda calculus were both invented in the context of logical investigations, the former by Gentzen as a language of proofs (Gentzen, Reference Gentzen1935) and the latter by Church as a language of functions (Church, Reference Church1932). The computational content of these calculi emerged at different times, with the relevance of

![]() $\beta$

-reduction of lambda terms to the emerging theory of computation being more quickly realised than the relevance of cut-elimination. By now, it is clear that both calculi have logical and computational aspects and that the two calculi are deeply related to one another. In this paper, we revisit this relationship in the form of an isomorphism of categories (Theorem 4.15)

$\beta$

-reduction of lambda terms to the emerging theory of computation being more quickly realised than the relevance of cut-elimination. By now, it is clear that both calculi have logical and computational aspects and that the two calculi are deeply related to one another. In this paper, we revisit this relationship in the form of an isomorphism of categories (Theorem 4.15)

for each sequence

![]() $\Gamma$

of formulas (née types) where

$\Gamma$

of formulas (née types) where

![]() $\mathcal{S}_\Gamma$

is a category of proofs in intuitionistic sequent calculus (defined in Section 2) and

$\mathcal{S}_\Gamma$

is a category of proofs in intuitionistic sequent calculus (defined in Section 2) and

![]() $\mathcal{L}_\Gamma$

is a category of simply-typed lambda terms (defined in Section 3). Both categories have the same set of objects, viewed either as the formulas of intuitionistic propositional logic or simple types. The set of morphisms

$\mathcal{L}_\Gamma$

is a category of simply-typed lambda terms (defined in Section 3). Both categories have the same set of objects, viewed either as the formulas of intuitionistic propositional logic or simple types. The set of morphisms

![]() $\mathcal{S}_\Gamma (p,q)$

is the set of proofs of

$\mathcal{S}_\Gamma (p,q)$

is the set of proofs of

![]() $\Gamma \vdash p \supset q$

up to an equivalence relation

$\Gamma \vdash p \supset q$

up to an equivalence relation

![]() $\sim _p$

generated by cut-elimination transformations and commuting conversions together with a small number of additional natural relations, while

$\sim _p$

generated by cut-elimination transformations and commuting conversions together with a small number of additional natural relations, while

![]() $\mathcal{L}_\Gamma (p,q)$

is the set of simply-typed lambda terms of type

$\mathcal{L}_\Gamma (p,q)$

is the set of simply-typed lambda terms of type

![]() $p \rightarrow q$

whose free variables have types taken from

$p \rightarrow q$

whose free variables have types taken from

![]() $\Gamma$

, taken up to

$\Gamma$

, taken up to

![]() $\beta \eta$

-equivalence. We refer to this isomorphism of categories and the normal form theorem which refines it (Theorem 4.49) as the Gentzen–Mints–Zucker duality between sequent calculus and lambda calculus. The name reflects work by Zucker (Reference Zucker1974) and Mints (Reference Mints and Odifreddi1996), elaborated below.

$\beta \eta$

-equivalence. We refer to this isomorphism of categories and the normal form theorem which refines it (Theorem 4.49) as the Gentzen–Mints–Zucker duality between sequent calculus and lambda calculus. The name reflects work by Zucker (Reference Zucker1974) and Mints (Reference Mints and Odifreddi1996), elaborated below.

A duality consists of two different points of view on the same object (Atiyah, Reference Atiyah2007). The greater the difference between the two points of view, the more informative is the duality which relates them. Such correspondences are important because two independent discoveries of the same structure are strong evidence that the structure is natural. The above duality is interesting precisely because sequent calculus proofs and lambda terms are not tautologically the same thing: for example, the cut-elimination relations are fundamentally local while the

![]() $\beta$

-equivalence relation is global (see Section 4.3). In this sense, sequent calculus and lambda calculus are, respectively, local and global points of view on a common logico-computational mathematical structure.

$\beta$

-equivalence relation is global (see Section 4.3). In this sense, sequent calculus and lambda calculus are, respectively, local and global points of view on a common logico-computational mathematical structure.

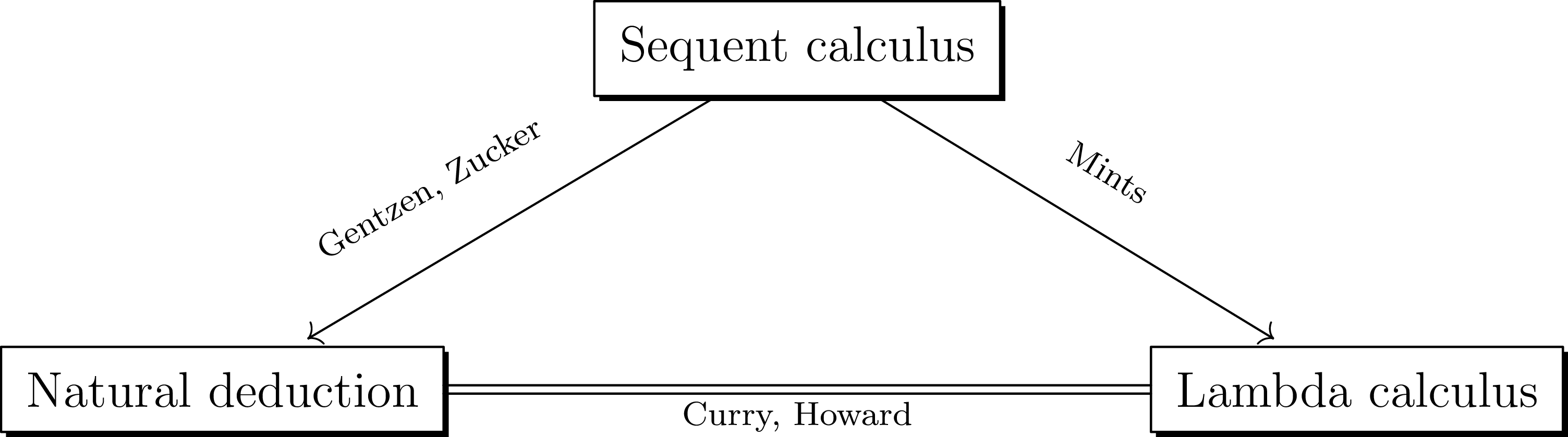

This duality is related to, but distinct from, the Curry–Howard correspondence. The precise relationship is elaborated in Section 1.1 below, but broadly speaking, it is captured by the conceptual diagram of Figure 1. The Curry–Howard correspondence gives a bijection between natural deduction proofs and lambda terms, while Gentzen–Mints–Zucker duality reveals that

![]() $\beta \eta$

-normal lambda terms are normal forms for sequent calculus proofs modulo an equivalence relation generated by pairs that are well-motivated from the point of view of the Brouwer-Heyting-Kolmogorov interpretation of intuitionistic proof.

$\beta \eta$

-normal lambda terms are normal forms for sequent calculus proofs modulo an equivalence relation generated by pairs that are well-motivated from the point of view of the Brouwer-Heyting-Kolmogorov interpretation of intuitionistic proof.

The relationship between three logico-computational calculi.

1.1 The Curry–Howard correspondence

The relationship between proofs and lambda terms (or “programs”) has become widely known as the Curry–Howard correspondence following Howard’s work (Howard, Reference Howard1980), although an informal understanding of the computational content of intuitionistic proofs has older roots in the Brouwer–Heyting–Kolmogorov interpretation (Troelstra and van Dalen, Reference Troelstra and van Dalen1988). The correspondence has been so influential in logic and computer science that the Curry–Howard correspondence as philosophy now overshadows the Curry–Howard correspondence as a theorem.

As a theorem, the Curry–Howard correspondence is the observation that the formulas of implicational propositional logic are the same as the types of simply-typed lambda calculus, and that there is a surjective map from the set of all proofs of a sequent

![]() $\Gamma \vdash \alpha$

in “sequent calculus” to the set of all lambda terms of type

$\Gamma \vdash \alpha$

in “sequent calculus” to the set of all lambda terms of type

![]() $\alpha$

with free variables in

$\alpha$

with free variables in

![]() $\Gamma$

(due to Howard (Reference Howard1980, Section 3) building on ideas of Curry and Tait). This map is not a bijection, and as such does not represent the best possible statement about the relationship between proofs and lambda terms. The problem is that there are two natural continuations of Howard’s work, depending on how one interprets the somewhat vague notion of proof in Howard (Reference Howard1980, Section 1).

$\Gamma$

(due to Howard (Reference Howard1980, Section 3) building on ideas of Curry and Tait). This map is not a bijection, and as such does not represent the best possible statement about the relationship between proofs and lambda terms. The problem is that there are two natural continuations of Howard’s work, depending on how one interprets the somewhat vague notion of proof in Howard (Reference Howard1980, Section 1).

The vagueness is due to the fact that the system called “sequent calculus” by Howard is actually a hybrid of intuitionistic sequent calculus in the sense of Gentzen’s LJ (there are weakening, exchange and contraction rules in Howard’s system) and natural deduction in the sense of Gentzen (Reference Gentzen1935) and Prawitz (Reference Prawitz1965) (Howard has an elimination rule for implication rather than the left introduction rule of sequent calculus). The use of an elimination rule makes the connection to lambda terms straightforward, and the inclusion of structural rules places the correspondence in its proper context as a relationship between proofs with hypotheses and lambda terms with free variables (Howard, Reference Howard1980, Section 3).

In resolving this ambiguity, subsequent authors writing about the correspondence have almost universally decided that proof means natural deduction proof; see, for example, (Sørensen and Urzyczyn, Reference Sørensen and Urzyczyn2006, Sections 4.8, 7.4, 7.6) and Wadler (Reference Wadler2000). The correspondence may then be interpreted as a bijection between lambda terms and natural deduction proofs (Selinger, Reference Selinger2008, Section 6.5). This bijection represents the natural conclusion of one line of development starting with Howard (Reference Howard1980), and henceforth we refer to this bijection as the Curry–Howard correspondence. Despite its philosophical importance, this correspondence is not mathematically of great interest, because natural deduction and lambda calculus are so similar that the bijection is close to tautological (in the case of closed terms, it is left as an exercise in one standard text (Sørensen and Urzyczyn, Reference Sørensen and Urzyczyn2006, Ex. 4.8)).

In the present work, we investigate the second natural continuation of Howard (Reference Howard1980), which takes the structural rules in Howard’s “sequent calculus” seriously and seeks to give a bijection between sequent calculus proofs and lambda terms. As soon as explicit structural rules are introduced into proofs, however, there will be multiple proofs that map to the same lambda term, and so for there to be a bijection between proofs and lambda terms, proof must mean equivalence class of preproofs modulo some relation. If this relation is simply “maps to the same lambda term,” then what we have constructed is merely a surjective map from proofs to lambda terms, which is hardly more than what is in Howard (Reference Howard1980). Hence, in this second line of thought, the identity of proofs becomes a central concern.

Consequently one of the contributions of this paper is to give explicit generating relations for a relation

![]() $\sim _p$

on preproofs such that

$\sim _p$

on preproofs such that

![]() $\pi _1 \sim _p \pi _2$

if and only if

$\pi _1 \sim _p \pi _2$

if and only if

![]() $F_\Gamma (\pi _1) = F_\Gamma (\pi _2)$

, and to give a logical justification of these relations independent of the translation to lambda terms. This establishes

$F_\Gamma (\pi _1) = F_\Gamma (\pi _2)$

, and to give a logical justification of these relations independent of the translation to lambda terms. This establishes

![]() $\mathcal{S}_\Gamma$

as a mathematical structure in its own right, so that the comparison to

$\mathcal{S}_\Gamma$

as a mathematical structure in its own right, so that the comparison to

![]() $\mathcal{L}_\Gamma$

may be meaningfully referred to as a duality.

$\mathcal{L}_\Gamma$

may be meaningfully referred to as a duality.

Given the Curry–Howard correspondence, the identity of proofs is closely related to the old problem of when two sequent calculus proofs map to the same natural deduction under the translation defined by Gentzen (Reference Gentzen1935) (see Remark 4.5). This has been studied by various authors, most notably (Zucker, Reference Zucker1974; Pottinger, Reference Pottinger1977; Dyckhoff and Pinto, Reference Dyckhoff and Pinto1999; Mints, Reference Mints and Odifreddi1996; Kleene, Reference Kleene1952). The most important results are those obtained by Zucker and Mints, and we restrict ourselves here to comments on their work; see also Section 4.4.

There is substantial overlap between our main results and those of Zucker and Mints, which we became aware of after this paper had been completed. In Zucker (Reference Zucker1974), Zucker gives a set of generating relations characterising when two sequent calculus proofs map to the same natural deduction, for a calculus that does not contain weakening and exchange. The main content of Theorem 4.15 also lies in identifying an explicit set of generating relations on preproofs, for the map from sequent calculus proofs to lambda terms in a system of sequent calculus that is (as far as is possible for a system that must be translated unambiguously to lambda terms) as close as possible to Gentzen’s LJ. As far as we know, this paper is the first place where the generating relations have been established for Gentzen’s LJ with all structural rules.

Mints, using ideas of Kleene (Reference Kleene1952), identifies a set of normal forms of sequent calculus proofs and studies them using the map from proofs to lambda terms. Our proof of the main theorem (Theorem 4.15) relies on the identification of normal forms, which differ slightly from those of Mints (see also Theorem 4.49). Again, we treat a standard form of LJ, whereas Mints (Reference Mints and Odifreddi1996) follows Kleene’s system G (Kleene, Reference Kleene1952) in the form of its

![]() $(L \supset\!)$

rule.

$(L \supset\!)$

rule.

Finally, since we must argue that

![]() $\mathcal{S}_\Gamma$

has an independent existence in order for the duality to be a relationship between equals, we are committed to mounting a purely logical defence of all the generating relations of

$\mathcal{S}_\Gamma$

has an independent existence in order for the duality to be a relationship between equals, we are committed to mounting a purely logical defence of all the generating relations of

![]() $\sim _p$

. This is not a concern shared by Zucker, Mints or Kleene. The most interesting generating relations are those that we call

$\sim _p$

. This is not a concern shared by Zucker, Mints or Kleene. The most interesting generating relations are those that we call

![]() $\lambda$

-equivalences (Definition 2.21) which, are justified on the grounds that they represent an internal Brouwer-Heyting-Kolmogorov interpretation, see the discussion preceding Definition 2.21 and Section 4.2.

$\lambda$

-equivalences (Definition 2.21) which, are justified on the grounds that they represent an internal Brouwer-Heyting-Kolmogorov interpretation, see the discussion preceding Definition 2.21 and Section 4.2.

The connection between syntactic presentations of proofs and their categorical semantics, as developed in foundational work by Lambek (Reference Lambek1958, 1969), Scott (Reference Scott1976), Scott et al. (1980), Seely (Reference Seely1984, Reference Seely1989), and others, is indeed central to our perspective. In particular, the categories

![]() $\mathcal{L}_\Gamma$

and

$\mathcal{L}_\Gamma$

and

![]() $\mathcal{S}_\Gamma$

can be understood as presentations of the free cartesian closed category generated by

$\mathcal{S}_\Gamma$

can be understood as presentations of the free cartesian closed category generated by

![]() $\Gamma$

, and our main equivalence theorem reflects the fact that the simply-typed lambda calculus and the intuitionistic sequent calculus provide distinct but equivalent syntactic lenses on the same semantic object. While we focus on a syntactic account of proof equivalence, the internal BHK semantics developed in Section 4.2 is closely aligned with categorical models of proofs: for instance, our treatment echoes the coherence conditions familiar from cartesian closed categories. We believe these connections offer promising directions for future work, particularly in relating our equivalence results to existing coherence theorems in categorical proof theory.

$\Gamma$

, and our main equivalence theorem reflects the fact that the simply-typed lambda calculus and the intuitionistic sequent calculus provide distinct but equivalent syntactic lenses on the same semantic object. While we focus on a syntactic account of proof equivalence, the internal BHK semantics developed in Section 4.2 is closely aligned with categorical models of proofs: for instance, our treatment echoes the coherence conditions familiar from cartesian closed categories. We believe these connections offer promising directions for future work, particularly in relating our equivalence results to existing coherence theorems in categorical proof theory.

2. Sequent Calculus

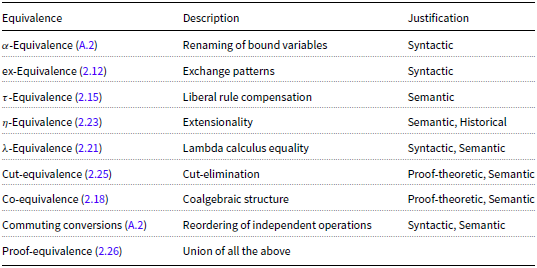

We introduce the Sequent Calculus system (Gentzen’s LJ) and define a family of equivalence relations on proofs within this system. These equivalence relations are summarised in Table 1.

Summary of equivalence relations and their justifications

In this paper, we restrict our attention to the intuitionistic sequent calculus with implication, for simplicity. The inclusion of additional connectives, such as conjunctions and disjunctions, is left for future work.

There is an infinite set of atomic formulas, and if

![]() $p$

and

$p$

and

![]() $q$

are formulas, then so is

$q$

are formulas, then so is

![]() $p \supset q$

. Let

$p \supset q$

. Let

![]() $\Psi _\supset$

denote the set of all formulas. For each formula

$\Psi _\supset$

denote the set of all formulas. For each formula

![]() $p$

, let

$p$

, let

![]() $Y_p$

be an infinite set of variables associated with

$Y_p$

be an infinite set of variables associated with

![]() $p$

. For distinct formulas

$p$

. For distinct formulas

![]() $p,q$

, the sets

$p,q$

, the sets

![]() $Y_p, Y_q$

are disjoint. We write

$Y_p, Y_q$

are disjoint. We write

![]() $x : p$

for

$x : p$

for

![]() $x \in Y_p$

and say

$x \in Y_p$

and say

![]() $x$

has type

$x$

has type

![]() $p$

. Let

$p$

. Let

![]() $\mathcal{P}^n$

be the set of all length

$\mathcal{P}^n$

be the set of all length

![]() $n$

sequences of variables with

$n$

sequences of variables with

![]() $\mathcal{P}^0 := \lbrace \varnothing \rbrace$

, and

$\mathcal{P}^0 := \lbrace \varnothing \rbrace$

, and

![]() $\mathcal{P} := \cup _{n = 0}^\infty \mathcal{P}^n$

. A sequent is a pair

$\mathcal{P} := \cup _{n = 0}^\infty \mathcal{P}^n$

. A sequent is a pair

![]() $(\Gamma ,p)$

where

$(\Gamma ,p)$

where

![]() $\Gamma \in \mathcal{P}$

and

$\Gamma \in \mathcal{P}$

and

![]() $p \in \Psi _\supset$

, written

$p \in \Psi _\supset$

, written

![]() $\Gamma \vdash p$

. We call

$\Gamma \vdash p$

. We call

![]() $\Gamma$

the antecedent and

$\Gamma$

the antecedent and

![]() $p$

the succedent of the sequent. Given

$p$

the succedent of the sequent. Given

![]() $\Gamma$

and a variable

$\Gamma$

and a variable

![]() $x:p$

, we write

$x:p$

, we write

![]() $\Gamma , x:p$

for the element of

$\Gamma , x:p$

for the element of

![]() $\mathcal{P}$

given by appending

$\mathcal{P}$

given by appending

![]() $x:p$

to the end of

$x:p$

to the end of

![]() $\Gamma$

. A variable

$\Gamma$

. A variable

![]() $x:p$

may occur more than once in a sequent.

$x:p$

may occur more than once in a sequent.

Our intuitionistic sequent calculus is the system LJ of Gentzen (Reference Gentzen1935, Section III) restricted to implication, with formulas in the antecedent tagged with variables. We follow the convention of Girard et al. (Reference Girard, Lafont and Taylor1989, Section 5.1) in grouping

![]() $(\!\operatorname {ax}\!)$

and

$(\!\operatorname {ax}\!)$

and

![]() $(\!\operatorname {cut}\!)$

together rather than including the latter in the structural rules.

$(\!\operatorname {cut}\!)$

together rather than including the latter in the structural rules.

Definition 2.1.

A deduction rule results from one of the schemata below by a substitution of the following kind: replace

![]() $p,q,r$

by arbitrary formulas,

$p,q,r$

by arbitrary formulas,

![]() $x,y$

by arbitrary variables, and

$x,y$

by arbitrary variables, and

![]() $\Gamma , \Delta , \Theta$

by arbitrary (possibly empty) sequences of formulas separated by commas:

$\Gamma , \Delta , \Theta$

by arbitrary (possibly empty) sequences of formulas separated by commas:

-

• the identity group :

-

– Axiom :

-

– Cut :

-

-

• the structural rules :

-

– Contraction :

-

– Weakening :

-

– Exchange :

-

-

• the logical rules :

-

– Right introduction :

-

– Left introduction :

-

Definition 2.2.

A preproof is a finite rooted planar tree where each edge is labelled by a sequent and each node except for the root is labelled by a valid deduction rule. If the edge connected to the root is labelled by the sequent

![]() $\Gamma \vdash p$

then we call the preproof a preproof of

$\Gamma \vdash p$

then we call the preproof a preproof of

![]() $\Gamma \vdash p$

.

$\Gamma \vdash p$

.

Observe that the only valid label for a leaf node is an axiom rule, so a preproof reads from the leaves to the root as a deduction of

![]() $\Gamma \vdash p$

from axiom rules.

$\Gamma \vdash p$

from axiom rules.

Example 2.3.

Here is the Church numeral

![]() $\underline {2}$

in our sequent calculus

$\underline {2}$

in our sequent calculus

Remark 2.4. Multiple occurrences of a deduction rule are represented using doubled horizontal lines. For example if

![]() $\Gamma = x_1: p_1, \ldots , x_n: p_n$

then the preproof

$\Gamma = x_1: p_1, \ldots , x_n: p_n$

then the preproof

weakens in every formula in the sequence. The doubled horizontal line therefore stands for

![]() $n$

occurrences of the rule

$n$

occurrences of the rule

![]() $(\!\operatorname {weak}\!)$

. The preproofs which perform these weakenings in a different order are, of course, not equal as preproofs, so the notation is an abuse. We will only use it below in the context of defining generating pairs of equivalence relations in cases where any reading of this notation leads to the same equivalence relation.

$(\!\operatorname {weak}\!)$

. The preproofs which perform these weakenings in a different order are, of course, not equal as preproofs, so the notation is an abuse. We will only use it below in the context of defining generating pairs of equivalence relations in cases where any reading of this notation leads to the same equivalence relation.

Remark 2.5. We follow Gentzen (Reference Gentzen1935, Section III) in putting the variable introduced by a

![]() $(L \supset\!)$

rule at the first position in the antecedent. This choice is correct from the point of view of the relationship between sequent calculus proofs and lambda terms, as may be seen in Lemma 4.29 and Section 4.1.

$(L \supset\!)$

rule at the first position in the antecedent. This choice is correct from the point of view of the relationship between sequent calculus proofs and lambda terms, as may be seen in Lemma 4.29 and Section 4.1.

When should two preproofs be considered to be the same proof? Clearly some of the structure of a preproof is logically insignificant, but it is by no means trivial to identify a precise notion of proof as separate from preproof. Historically, logic has concerned itself primarily with the provability of sequents

![]() $\Gamma \vdash p$

rather than the structure of the set of all preproofs, but as proof theory has developed the question of the identity of proofs has acquired increasing importance; see Ungar (1992) and Prawitz (Reference Prawitz1981, Section 4.3). We also note the foundational contributions of G. Kreisel, who in his influential works Kreisel (Reference Kreisel1962, Reference Kreisel and Saaty1965) suggested to distinguish the “Theory of Proofs” (that studies the proofs as individual entities, close to the approach of this paper) and “Proof Theory”.

$\Gamma \vdash p$

rather than the structure of the set of all preproofs, but as proof theory has developed the question of the identity of proofs has acquired increasing importance; see Ungar (1992) and Prawitz (Reference Prawitz1981, Section 4.3). We also note the foundational contributions of G. Kreisel, who in his influential works Kreisel (Reference Kreisel1962, Reference Kreisel and Saaty1965) suggested to distinguish the “Theory of Proofs” (that studies the proofs as individual entities, close to the approach of this paper) and “Proof Theory”.

We say that a relation

![]() $\sim$

on the set of preproofs satisfies condition (C0) if

$\sim$

on the set of preproofs satisfies condition (C0) if

![]() $\pi _1 \sim \pi _2$

implies

$\pi _1 \sim \pi _2$

implies

![]() $\pi _1, \pi _2$

are preproofs of the same sequent. The relation satisfies condition (C1) if it satisfies (C0) and

$\pi _1, \pi _2$

are preproofs of the same sequent. The relation satisfies condition (C1) if it satisfies (C0) and

![]() $\pi _1 \sim \pi _2$

implies

$\pi _1 \sim \pi _2$

implies

![]() $\pi _1' \sim \pi _2'$

where

$\pi _1' \sim \pi _2'$

where

![]() $\pi _1', \pi _2'$

are the result of applying the same deduction rule to

$\pi _1', \pi _2'$

are the result of applying the same deduction rule to

![]() $\pi _1, \pi _2$

, respectively. For example, if

$\pi _1, \pi _2$

, respectively. For example, if

![]() $\sim$

satisfies (C1) and

$\sim$

satisfies (C1) and

![]() $\pi _1 \sim \pi _2$

then

$\pi _1 \sim \pi _2$

then

Condition (C2) is defined using the following schematics:

We say that a relation

![]() $\sim$

on the set of preproofs satisfies condition (C2) if it satisfies (C0) and whenever

$\sim$

on the set of preproofs satisfies condition (C2) if it satisfies (C0) and whenever

![]() $\pi _1 \sim \pi _2$

and

$\pi _1 \sim \pi _2$

and

![]() $\rho _1 \sim \rho _2$

then also

$\rho _1 \sim \rho _2$

then also

![]() $\kappa _1 \sim \kappa _2$

where

$\kappa _1 \sim \kappa _2$

where

![]() $\kappa _i$

for

$\kappa _i$

for

![]() $i \in \{1,2\}$

is obtained from the pair

$i \in \{1,2\}$

is obtained from the pair

![]() $(\pi _i, \rho _i)$

by application of one of the deduction rules in (2.1).

$(\pi _i, \rho _i)$

by application of one of the deduction rules in (2.1).

Definition 2.6.

A relation

![]() $\sim$

on preproofs is compatible if it satisfies (C0),(C1),(C2).

$\sim$

on preproofs is compatible if it satisfies (C0),(C1),(C2).

An occurrence of

![]() $x:p$

in a preproof

$x:p$

in a preproof

![]() $\pi$

is an occurrence in the antecedent

$\pi$

is an occurrence in the antecedent

![]() $\Gamma$

of a sequent labelling some edge of

$\Gamma$

of a sequent labelling some edge of

![]() $\pi$

. Some occurrences are related by the flow of information in the preproof, and some are not. More precisely:

$\pi$

. Some occurrences are related by the flow of information in the preproof, and some are not. More precisely:

Definition 2.7 (Ancestors). An occurrence of

![]() $z_1:s$

in a preproof

$z_1:s$

in a preproof

![]() $\pi$

is an immediate strong ancestor (resp. immediate weak ancestor) of an occurrence

$\pi$

is an immediate strong ancestor (resp. immediate weak ancestor) of an occurrence

![]() $z_2:s$

if there is a deduction rule in

$z_2:s$

if there is a deduction rule in

![]() $\pi$

where

$\pi$

where

![]() $z_1:s$

is in the numerator and

$z_1:s$

is in the numerator and

![]() $z_2:s$

is in the denominator, and one of the following holds (referring to the schemata in Definition 2.1):

$z_2:s$

is in the denominator, and one of the following holds (referring to the schemata in Definition 2.1):

-

(i)

$z_1:s, z_2:s$

are in the same position of

$z_1:s, z_2:s$

are in the same position of

$\Gamma , \Delta , \Theta$

in the numerator and denominator.

$\Gamma , \Delta , \Theta$

in the numerator and denominator.

-

(ii) the rule is

$(\!\operatorname {ctr}\!)$

,

$(\!\operatorname {ctr}\!)$

,

$z_1:s$

is the first of the two variables being contracted (resp.

$z_1:s$

is the first of the two variables being contracted (resp.

$z_1:s$

is either of the variables being contracted) and

$z_1:s$

is either of the variables being contracted) and

$z_2:s$

is the result of that contraction.

$z_2:s$

is the result of that contraction.

-

(iii) the rule is

$(\!\operatorname {ex}\!)$

and either

$(\!\operatorname {ex}\!)$

and either

$z_1:s = x:p, z_2:s = x:p$

or

$z_1:s = x:p, z_2:s = x:p$

or

$z_1:s = y:p, z_2:s = y:p$

.

$z_1:s = y:p, z_2:s = y:p$

.

One occurrence

![]() $z:s$

is a strong ancestor (resp. weak ancestor) of another

$z:s$

is a strong ancestor (resp. weak ancestor) of another

![]() $z':s$

if there is a sequence

$z':s$

if there is a sequence

![]() $z:s = z_1:s, \ldots , z_n:s = z':s$

of occurrences in

$z:s = z_1:s, \ldots , z_n:s = z':s$

of occurrences in

![]() $\pi$

with

$\pi$

with

![]() $z_i:s$

an immediate strong (resp. weak) ancestor of

$z_i:s$

an immediate strong (resp. weak) ancestor of

![]() $z_{i+1}:s$

for

$z_{i+1}:s$

for

![]() $1 \le i \lt n$

.

$1 \le i \lt n$

.

Note that if

![]() $z_1:s$

is a strong ancestor of

$z_1:s$

is a strong ancestor of

![]() $z_2:s$

then

$z_2:s$

then

![]() $z_1 = z_2$

but this is not necessarily true for weak ancestors.

$z_1 = z_2$

but this is not necessarily true for weak ancestors.

Definition 2.8.

Let

![]() $\approx _{str}$

(resp.

$\approx _{str}$

(resp.

![]() $\approx _{wk}$

) denote the equivalence relation on the set of variable occurrences generated by the strong (resp. weak) ancestor relation.

$\approx _{wk}$

) denote the equivalence relation on the set of variable occurrences generated by the strong (resp. weak) ancestor relation.

Definition 2.9 (Ancestor substitution). Let

![]() $x:p$

be an occurrence of a variable in a preproof

$x:p$

be an occurrence of a variable in a preproof

![]() $\pi$

and

$\pi$

and

![]() $y:p$

another variable. We denote by

$y:p$

another variable. We denote by

![]() $\operatorname {subst}^{str}(\pi , x, y)$

the preproof obtained from

$\operatorname {subst}^{str}(\pi , x, y)$

the preproof obtained from

![]() $\pi$

by replacing the occurrence

$\pi$

by replacing the occurrence

![]() $x:p$

and all its strong ancestors by

$x:p$

and all its strong ancestors by

![]() $y$

.

$y$

.

Example 2.10.

In the preproof

![]() $\underline {2}$

of Example 2.3 the partition of variable occurrences according to the equivalence relation

$\underline {2}$

of Example 2.3 the partition of variable occurrences according to the equivalence relation

![]() $\approx _{str}$

is shown by colours in

$\approx _{str}$

is shown by colours in

and the partition according to

![]() $\approx _{wk}$

in

$\approx _{wk}$

in

In our preproofs, we have tags, in the form of variables, for hypotheses. Since the precise nature of these tags is immaterial, if the variable is eliminated in a

![]() $(R \supset\!)$

,

$(R \supset\!)$

,

![]() $(L \supset\!)$

,

$(L \supset\!)$

,

![]() $(\!\operatorname {ctr}\!)$

or

$(\!\operatorname {ctr}\!)$

or

![]() $(\!\operatorname {cut}\!)$

rule the identity of the proof should be independent of the tag. Thus we define

$(\!\operatorname {cut}\!)$

rule the identity of the proof should be independent of the tag. Thus we define

![]() $\alpha$

-equivalence to formalise this. See Definition A.1.

$\alpha$

-equivalence to formalise this. See Definition A.1.

Remark 2.11. The generating relation (A.4) of Definiton A.1 is to be read as a pair of preproofs

![]() $(\psi ,\psi ') \in \; \sim _\alpha$

where both preproofs have final sequent

$(\psi ,\psi ') \in \; \sim _\alpha$

where both preproofs have final sequent

![]() $z: p \supset q, \Gamma , \Delta , \Theta \vdash r$

and the branch ending in

$z: p \supset q, \Gamma , \Delta , \Theta \vdash r$

and the branch ending in

![]() $\Gamma \vdash p$

is any preproof (but it is the same preproof in both

$\Gamma \vdash p$

is any preproof (but it is the same preproof in both

![]() $\psi$

and

$\psi$

and

![]() $\psi '$

). To avoid clutter, we will not label branches, here or elsewhere, if it is clear how to match up the branches in the two preproofs involved in the relation.

$\psi '$

). To avoid clutter, we will not label branches, here or elsewhere, if it is clear how to match up the branches in the two preproofs involved in the relation.

Multiple distinct sequences of applications of

![]() $(\!\operatorname {ex}\!)$

rules can yield the same permutation of formulas within a hypothesis. We establish an equivalence relation to identify these.

$(\!\operatorname {ex}\!)$

rules can yield the same permutation of formulas within a hypothesis. We establish an equivalence relation to identify these.

Definition 2.12 (

![]() $\operatorname {ex}$

-Equivalence). We define

$\operatorname {ex}$

-Equivalence). We define

![]() $\sim _{(\!\operatorname {ex}\!)}$

to be the smallest compatible equivalence relation on preproofs satisfying the following (recall our conventions with double horizontal lines of Remark 2.4)

$\sim _{(\!\operatorname {ex}\!)}$

to be the smallest compatible equivalence relation on preproofs satisfying the following (recall our conventions with double horizontal lines of Remark 2.4)

where

![]() $\tau$

is a permutation and

$\tau$

is a permutation and

![]() $\sigma _1, \ldots , \sigma _r$

and

$\sigma _1, \ldots , \sigma _r$

and

![]() $\rho _1,\ldots ,\rho _s$

are sequences of transpositions of consecutive positions (one or both lists may be empty) with the property that

$\rho _1,\ldots ,\rho _s$

are sequences of transpositions of consecutive positions (one or both lists may be empty) with the property that

![]() $\sigma _1 \cdots \sigma _r = \tau = \rho _1 \cdots \rho _s$

in the permutation group. The two preproofs in (

2.2

) are, respectively, the sequences of exchanges corresponding to the

$\sigma _1 \cdots \sigma _r = \tau = \rho _1 \cdots \rho _s$

in the permutation group. The two preproofs in (

2.2

) are, respectively, the sequences of exchanges corresponding to the

![]() $\sigma _i$

and

$\sigma _i$

and

![]() $\rho _j$

.

$\rho _j$

.

In this paper, we present many conversion rules relating preproofs which only differ by logically insignificant rearrangements of deduction rules. The system we have adopted holds a strict set of rules which only allow

![]() $(\!\operatorname {cut}\!), (\!\operatorname {ctr}\!), (\!\operatorname {weak}\!), (L\supset\!), (L\supset\!)$

rules where the relevant formula is on the far left of the sequent. Upholding this for the remainder of the paper will make the logical content of the conversion rules less transparent than if we work with a more liberal system involving derived rules. Thus, we introduce the following.

$(\!\operatorname {cut}\!), (\!\operatorname {ctr}\!), (\!\operatorname {weak}\!), (L\supset\!), (L\supset\!)$

rules where the relevant formula is on the far left of the sequent. Upholding this for the remainder of the paper will make the logical content of the conversion rules less transparent than if we work with a more liberal system involving derived rules. Thus, we introduce the following.

Definition 2.13. The following derived rules are the extended deduction rules, where by a slight abuse of notation, we give the same names as the strict counterparts given in Definition 2.1 :

-

• the identity group :

-

– Cut :

-

-

• the structural rules :

-

– Contraction :

-

– Weakening :

-

-

• the logical rules :

-

– Right introduction :

-

– Left introduction :

-

Example 2.14. As an example of how these derived rules are derived, consider the Contraction rule, which stands for the following deduction:

Notice that any two such preproof fragments are equivalent under

![]() $(\!\operatorname {ex}\!)$

-equivalence (Definition 2.12).

$(\!\operatorname {ex}\!)$

-equivalence (Definition 2.12).

We will work with these derived rules for the remainder of this paper. More precisely, we replace the deduction rules of Definition 2.1 with the same name as those of Definition 2.13 with those of Definition 2.13. This is a more liberal system than the strict version of Gentzen’s LJ, but the payoff is that the local nature of sequent calculus is made significantly more transparent, which is a perspective we wish to emphasise. We include the following equivalences which allow the purist to relate our more liberal system to the more strict one, see Lemma 2.17.

The price for our more liberal deduction rules is the inclusion of

![]() $\tau$

-equivalences below, which express that two instances of the same deduction rule, operating in different places, are essentially the same.

$\tau$

-equivalences below, which express that two instances of the same deduction rule, operating in different places, are essentially the same.

Definition 2.15 (

![]() $\tau$

-Equivalence). We define

$\tau$

-Equivalence). We define

![]() $\sim _{\tau }$

to be the smallest compatible equivalence relation on preproofs satisfying

$\sim _{\tau }$

to be the smallest compatible equivalence relation on preproofs satisfying

Definition 2.16.

A deduction rule is strict if it is an arbitrary

![]() $(\!\operatorname {ax}\!)$

or

$(\!\operatorname {ax}\!)$

or

![]() $(\!\operatorname {ex}\!)$

rule, or it is one of the other rules and the occurrence of

$(\!\operatorname {ex}\!)$

rule, or it is one of the other rules and the occurrence of

![]() $x:p$

in the rule is leftmost in the antecedent. A strict preproof is a preproof in which every deduction rule is strict.

$x:p$

in the rule is leftmost in the antecedent. A strict preproof is a preproof in which every deduction rule is strict.

Lemma 2.17.

Every preproof is equivalent under

![]() $\sim _\tau$

to a strict preproof.

$\sim _\tau$

to a strict preproof.

We have a strong intuition about the structure of logical arguments which leads to the expectation that the antecedent of a sequent is an extended “space” disjoint subsets of which may be the locus of independent operations. This independence is formalised by commuting conversions, which identify preproofs that differ only by “insignificant” rearranging of deduction rules. These are given in full in Definition A.2.

Should the order of two variables

![]() $x:p,y:p$

that are contracted be logically significant? We are prevented from identifying contraction on

$x:p,y:p$

that are contracted be logically significant? We are prevented from identifying contraction on

![]() $x:p,y:p$

with contraction on

$x:p,y:p$

with contraction on

![]() $y:p,x:p$

because the former leaves

$y:p,x:p$

because the former leaves

![]() $x:p$

and the latter

$x:p$

and the latter

![]() $y:p$

, but a sufficient cocommutativity principle is expressed by (2.9). Similarly (2.8) expresses that contraction is coassociative. The rule (2.10) is counitality, which says that we attach no logical meaning to contraction with a variable which has been weakened in. These principles assert that contraction is coalgebraic, a point of view further ramified in linear logic.

$y:p$

, but a sufficient cocommutativity principle is expressed by (2.9). Similarly (2.8) expresses that contraction is coassociative. The rule (2.10) is counitality, which says that we attach no logical meaning to contraction with a variable which has been weakened in. These principles assert that contraction is coalgebraic, a point of view further ramified in linear logic.

Definition 2.18 (

![]() $co$

-Equivalence). We define

$co$

-Equivalence). We define

![]() $\sim _{co}$

to be the smallest compatible equivalence relation on preproofs satisfying

$\sim _{co}$

to be the smallest compatible equivalence relation on preproofs satisfying

Remark 2.19. The relation (2.8) appears as the contraction conversion (Zucker, Reference Zucker1974, Section 3.1.2 (b)(i)) of Zucker. The sequent calculus of Zucker (Reference Zucker1974) does not contain explicit weakening or exchange so the relations there do not include (2.9) or (2.10). This omission obscures the coalgebraic structure, which we believe to be an important logical principle.

Principles (2.11) and (2.12) below are of profound importance, as they are the internal manifestation in our system of the Brouwer-Heyting-Kolmogorov interpretation of proofs in intuitionistic logic (Troelstra and van Dalen, Reference Troelstra and van Dalen1988). Under that interpretation, a proof of a hypothesis

![]() $y: p \supset q$

reads as a transformation of proofs of

$y: p \supset q$

reads as a transformation of proofs of

![]() $p$

to proofs of

$p$

to proofs of

![]() $q$

. Rule (2.11) expresses that if the output proof of

$q$

. Rule (2.11) expresses that if the output proof of

![]() $q$

is to be used multiple times the transformation must be employed once for each copy. Rule (2.12) expresses that if the output is not needed, neither is the transformation nor any of its inputs. Note that these principles mirror (2.19) and (2.20) and therefore in some sense realise

$q$

is to be used multiple times the transformation must be employed once for each copy. Rule (2.12) expresses that if the output is not needed, neither is the transformation nor any of its inputs. Note that these principles mirror (2.19) and (2.20) and therefore in some sense realise

![]() $(L \supset\!)$

as an internalised cut. We develop this point of view more systematically in Section 4.2.

$(L \supset\!)$

as an internalised cut. We develop this point of view more systematically in Section 4.2.

Definition 2.21 (

![]() $\lambda$

-equivalence). We define

$\lambda$

-equivalence). We define

![]() $\sim _{\lambda }$

to be the smallest compatible equivalence relation on preproofs satisfying

$\sim _{\lambda }$

to be the smallest compatible equivalence relation on preproofs satisfying

Remark 2.22. The relation (2.11) appears as contraction conversion (Zucker, Reference Zucker1974, Section 3.1.2 (b)(iii)) of Zucker. The sequent calculus of Zucker (Reference Zucker1974) does not contain explicit weakening so the relations there do not include (2.12). The relation (2.11) is also implicit in Kleene (Reference Kleene1952, Lemma 12) and Mints (Reference Mints and Odifreddi1996, Lemma 2) and (2.12) is implicit in Kleene (Reference Kleene1952, Lemma 4) and Mints (Reference Mints and Odifreddi1996, Lemma 1).

Let us consider the assertion that

![]() $(\!\operatorname {ax}\!)$

, which, in our system, is available for any formula

$(\!\operatorname {ax}\!)$

, which, in our system, is available for any formula

![]() $p$

, should be restricted to atomic formulas. Let

$p$

, should be restricted to atomic formulas. Let

![]() $\Sigma ^\Gamma _q$

denote the set of preproofs of

$\Sigma ^\Gamma _q$

denote the set of preproofs of

![]() $\Gamma \vdash q$

under our system and

$\Gamma \vdash q$

under our system and

![]() $\Pi ^\Gamma _q$

the set of preproofs under this system with a restricted axiom rule. Clearly

$\Pi ^\Gamma _q$

the set of preproofs under this system with a restricted axiom rule. Clearly

![]() $\Pi ^\Gamma _q \subseteq \Sigma ^\Gamma _q$

and if

$\Pi ^\Gamma _q \subseteq \Sigma ^\Gamma _q$

and if

![]() $\Sigma ^\Gamma _q$

is nonempty then so is

$\Sigma ^\Gamma _q$

is nonempty then so is

![]() $\Pi ^\Gamma _q$

. Since the restriction on the axiom rule does not affect provability, we are free to adopt it, either directly by changing the deduction rules, or indirectly by keeping the deduction rules as given but adopting an equivalence relation on preproofs which effectively makes the axiom rule on compound formulas a derived rule:

$\Pi ^\Gamma _q$

. Since the restriction on the axiom rule does not affect provability, we are free to adopt it, either directly by changing the deduction rules, or indirectly by keeping the deduction rules as given but adopting an equivalence relation on preproofs which effectively makes the axiom rule on compound formulas a derived rule:

Definition 2.23 (

![]() $\eta$

-Equivalence). We define

$\eta$

-Equivalence). We define

![]() $\sim _\eta$

to be the smallest compatible equivalence relation on preproofs such that for arbitrary formulas

$\sim _\eta$

to be the smallest compatible equivalence relation on preproofs such that for arbitrary formulas

![]() $p,q$

$p,q$

The logical justification of the cut-elimination transformations is the inversion principle (Prawitz, Reference Prawitz1965, Section II) which states that the left introduction rule

![]() $(L \supset\!)$

is, in a sense, the inverse of the right introduction rule

$(L \supset\!)$

is, in a sense, the inverse of the right introduction rule

![]() $(R \supset\!)$

. This principle is made manifest in the cut-elimination theorem of Gentzen (Theorem 2.29). To make the point in a slightly different way, note that the

$(R \supset\!)$

. This principle is made manifest in the cut-elimination theorem of Gentzen (Theorem 2.29). To make the point in a slightly different way, note that the

![]() $(\!\operatorname {cut}\!)$

rule asserts that an occurrence of

$(\!\operatorname {cut}\!)$

rule asserts that an occurrence of

![]() $A$

on the left of the turnstile is precisely as strong as an occurrence on the right; see (Girard, Reference Girard2011, Sections 3.2.1, 3.3.3). The cut-elimination theorem says that this balance of strength is implicit already in the rules without

$A$

on the left of the turnstile is precisely as strong as an occurrence on the right; see (Girard, Reference Girard2011, Sections 3.2.1, 3.3.3). The cut-elimination theorem says that this balance of strength is implicit already in the rules without

![]() $(\!\operatorname {cut}\!)$

.

$(\!\operatorname {cut}\!)$

.

Note that rule (2.14) below uses ancestor substitution (Definition 2.9). It is convenient to call a deduction rule proper if it is not

![]() $\operatorname {(cut)}$

.

$\operatorname {(cut)}$

.

Definition 2.24 (Single step cut reduction). We define

![]() $\to _{\operatorname {cut}}$

to be the smallest compatible relation (not necessarily an equivalence relation) on preproofs containing:

$\to _{\operatorname {cut}}$

to be the smallest compatible relation (not necessarily an equivalence relation) on preproofs containing:

-

• For any proper deduction rule

$(r)$

(2.14)

$(r)$

(2.14) (2.15)

(2.15)

-

• Let

$(r_0)$

be a structural rule,

$(r_0)$

be a structural rule,

$(r)$

any proper deduction rule. Then

(2.16)

$(r)$

any proper deduction rule. Then

(2.16)

-

•

$(L \supset\!)$

on the left and

$(L \supset\!)$

on the left and

$(r)$

any proper deduction rule

(2.17)

$(r)$

any proper deduction rule

(2.17)

-

• For any logical rule

$(r_1)$

and structural rule

$(r_1)$

and structural rule

$(r_0)$

, where the cut variable

$(r_0)$

, where the cut variable

$x:p$

was not manipulated by

$x:p$

was not manipulated by

$(r_0)$

:(2.18)

$(r_0)$

:(2.18)

-

• For any logical rule

$(r_1)$

:(2.19)

$(r_1)$

:(2.19) (2.20)

(2.20)

The remaining cases correspond to having a logical rule on both the left and right:

-

•

$(R\supset\!)$

on the left and

$(R\supset\!)$

on the left and

$(R\supset\!)$

on the right:

(2.21)

$(R\supset\!)$

on the right:

(2.21)

-

•

$(R\supset\!)$

on the left and

$(R\supset\!)$

on the left and

$(L\supset\!)$

on the right:

(2.22)

$(L\supset\!)$

on the right:

(2.22)

-

•

$(R \supset\!)$

on the left and

$(R \supset\!)$

on the left and

$(L \supset\!)$

on the right but

$(L \supset\!)$

on the right but

$(L \supset\!)$

does not introduce the variable which is involved in the

$(L \supset\!)$

does not introduce the variable which is involved in the

$(\!\operatorname {cut}\!)$

(2.23)

$(\!\operatorname {cut}\!)$

(2.23)

and

(2.24)

Definition 2.25.

We define

![]() $\sim _{\operatorname {cut}}$

to be the smallest equivalence relation on preproofs containing the relation

$\sim _{\operatorname {cut}}$

to be the smallest equivalence relation on preproofs containing the relation

![]() $\to _{\operatorname {cut}}$

.

$\to _{\operatorname {cut}}$

.

Definition 2.26 introduces a unified notion of proof equivalence that consolidates the various equivalence relations discussed in preceding sections. It captures the core structural principles underlying normalisation and cut-elimination. It also reflects a contemporary consensus on when two proofs should be identified. This definition serves as the conceptual culmination of this section.

Definition 2.26 (Proof equivalence). We define

![]() $\sim _p$

to be the smallest compatible equivalence relation on preproofs containing the union of

$\sim _p$

to be the smallest compatible equivalence relation on preproofs containing the union of

-

•

$\alpha$

-equivalence (Definition A.1),

$\alpha$

-equivalence (Definition A.1),

-

•

$(\!\operatorname {ex}\!)$

-equivalence (Definition 2.12),

$(\!\operatorname {ex}\!)$

-equivalence (Definition 2.12),

-

• Commuting conversions (Definition A.2),

-

•

$co$

-equivalence (Definition 2.18),

$co$

-equivalence (Definition 2.18),

-

•

$\lambda$

-equivalence (Definition 2.21),

$\lambda$

-equivalence (Definition 2.21),

-

•

$\eta$

-equivalence (Definition 2.23),

$\eta$

-equivalence (Definition 2.23),

-

• cut equivalence (Definition 2.25).

A proof is an equivalence class of preproofs under proof equivalence. We say that two preproofs are equivalent if they are equivalent under

![]() $\sim _p$

.

$\sim _p$

.

2.1 Cut-elimination

Why give yet another proof of cut-elimination? The structure of our proof is similar to Gentzen (Reference Gentzen1935), but we avoid the “mix” rule by making use of commuting conversions. We include the details so as to make clear which conversions are used. The treatment in the literature most similar to ours is Borisavljevic et al.(n.d.); however, there the induction is structured differently and the focus is on weakening rather than contraction trees.

At a conceptual level, in order to justify the generating rules for proof equivalence, particularly the

![]() $\lambda$

-equivalence rules, we have chosen our cut-elimination transformations (Definition 2.24) to bring out as clearly as possible the parallels between

$\lambda$

-equivalence rules, we have chosen our cut-elimination transformations (Definition 2.24) to bring out as clearly as possible the parallels between

![]() $(\!\operatorname {cut}\!)$

and

$(\!\operatorname {cut}\!)$

and

![]() $(L \supset\!)$

(see Remark 4.54). Our proof of cut-elimination reinforces this connection, with some of the key steps in eliminating

$(L \supset\!)$

(see Remark 4.54). Our proof of cut-elimination reinforces this connection, with some of the key steps in eliminating

![]() $(\!\operatorname {cut}\!)$

repeated below to eliminate a subset of

$(\!\operatorname {cut}\!)$

repeated below to eliminate a subset of

![]() $(L \supset\!)$

rules in Section 4 (see Lemmas 4.18 and 4.29).

$(L \supset\!)$

rules in Section 4 (see Lemmas 4.18 and 4.29).

Definition 2.27.

The width

![]() $w(q)$

of a formula

$w(q)$

of a formula

![]() $q$

is the number of occurrences of

$q$

is the number of occurrences of

![]() $\supset$

.

$\supset$

.

Definition 2.28.

The height of a preproof

![]() $\pi$

, denoted

$\pi$

, denoted

![]() $h(\pi )$

, is one less than the number of deduction rules encountered on the longest path in the underlying tree of the preproof.

$h(\pi )$

, is one less than the number of deduction rules encountered on the longest path in the underlying tree of the preproof.

Note that two preproofs can be equivalent under

![]() $\sim _p$

but have different heights. A proof consisting of an axiom rule has height zero. A preproof which does not contain an occurrence of the

$\sim _p$

but have different heights. A proof consisting of an axiom rule has height zero. A preproof which does not contain an occurrence of the

![]() $(\!\operatorname {cut}\!)$

rule is called cut-free.

$(\!\operatorname {cut}\!)$

rule is called cut-free.

Theorem 2.29.

Every preproof is equivalent under

![]() $\sim _p$

to a cut-free preproof.

$\sim _p$

to a cut-free preproof.

Proof.

Given any preproof

![]() $\pi$

, we can choose an instance of the

$\pi$

, we can choose an instance of the

![]() $(\!\operatorname {cut}\!)$

rule in

$(\!\operatorname {cut}\!)$

rule in

![]() $\pi$

which is at the greatest possible height, and apply Proposition 2.32 below to the subproof given by taking this as the root. Iterating this finitely many times yields the result.

$\pi$

which is at the greatest possible height, and apply Proposition 2.32 below to the subproof given by taking this as the root. Iterating this finitely many times yields the result.

With reference to the prototype contraction in Definition 2.1, we say that the variables

![]() $x:p, y:p$

are involved in that deduction rule.

$x:p, y:p$

are involved in that deduction rule.

Definition 2.30.

Let

![]() $\pi$

be a preproof. We say that a particular instance of

$\pi$

be a preproof. We say that a particular instance of

![]() $(\!\operatorname {ctr}\!)$

in the proof tree is active with respect to an occurrence of a variable

$(\!\operatorname {ctr}\!)$

in the proof tree is active with respect to an occurrence of a variable

![]() $x:p$

in the preproof if the involved variables in the contraction are weak ancestors of that occurrence.

$x:p$

in the preproof if the involved variables in the contraction are weak ancestors of that occurrence.

We begin with an easy special case:

Lemma 2.31.

Suppose given a preproof

![]() $\pi$

of the form

$\pi$

of the form

where

![]() $\pi _1$

and

$\pi _1$

and

![]() $\pi _2$

are both cut-free, the cut variable

$\pi _2$

are both cut-free, the cut variable

![]() $x:p$

is introduced in

$x:p$

is introduced in

![]() $\pi _2$

by an axiom rule and

$\pi _2$

by an axiom rule and

![]() $\pi _2$

contains no active contractions with respect to the displayed occurrence of

$\pi _2$

contains no active contractions with respect to the displayed occurrence of

![]() $x:p$

. Then

$x:p$

. Then

![]() $\pi$

is equivalent under

$\pi$

is equivalent under

![]() $\sim _p$

to a cut-free preproof.

$\sim _p$

to a cut-free preproof.

Proof.

By induction on the height of

![]() $\pi _2$

. In the base case

$\pi _2$

. In the base case

![]() $\pi$

isZ

$\pi$

isZ

which is equivalent by (2.15) to

![]() $\pi _1$

. For the inductive step where

$\pi _1$

. For the inductive step where

![]() $\pi _2$

has height

$\pi _2$

has height

![]() $\gt 0$

, we break into cases depending on the rule

$\gt 0$

, we break into cases depending on the rule

![]() $(r)$

:

$(r)$

:

-

•

$(r)$

is a structural rule. Since

$(r)$

is a structural rule. Since

$x:p$

is introduced by

$x:p$

is introduced by

$(\!\operatorname {ax}\!)$

and there are no active contractions in

$(\!\operatorname {ax}\!)$

and there are no active contractions in

$\pi _2$

, the cut variable

$\pi _2$

, the cut variable

$x:p$

is not manipulated by

$x:p$

is not manipulated by

$(r)$

and so this case follows by the inductive hypothesis and (2.18).

$(r)$

and so this case follows by the inductive hypothesis and (2.18). -

•

$(r) = (R \supset\!)$

by (2.21) and the inductive hypothesis.

$(r) = (R \supset\!)$

by (2.21) and the inductive hypothesis. -

•

$(r) = (L \supset\!)$

by (2.23) and (2.24) and the inductive hypothesis, using that

$(r) = (L \supset\!)$

by (2.23) and (2.24) and the inductive hypothesis, using that

$x:p$

is not introduced by

$x:p$

is not introduced by

$(L \supset\!)$

.

$(L \supset\!)$

.

This completes the inductive step and the proof of the lemma.

Proposition 2.32.

Any preproof

![]() $\pi$

of the form

$\pi$

of the form

where

![]() $\pi _1$

and

$\pi _1$

and

![]() $\pi _2$

are both cut-free, is equivalent under

$\pi _2$

are both cut-free, is equivalent under

![]() $\sim _p$

to a cut-free preproof.

$\sim _p$

to a cut-free preproof.

Proof.

Let

![]() $P(w, n)$

denote the following statement: any preproof

$P(w, n)$

denote the following statement: any preproof

![]() $\pi$

with cut-free branches

$\pi$

with cut-free branches

![]() $\pi _1, \pi _2$

and final cut variable

$\pi _1, \pi _2$

and final cut variable

![]() $x:p$

(as above) satisfying

$x:p$

(as above) satisfying

![]() $w(p) = w$

and

$w(p) = w$

and

![]() $n = h(\pi _1) + h(\pi _2)$

is equivalent under

$n = h(\pi _1) + h(\pi _2)$

is equivalent under

![]() $\sim _p$

to a cut-free preproof. Let

$\sim _p$

to a cut-free preproof. Let

![]() $P(w)$

denote

$P(w)$

denote

![]() $\forall n P(w,n)$

. We will prove

$\forall n P(w,n)$

. We will prove

![]() $\forall w P(w)$

by induction on

$\forall w P(w)$

by induction on

![]() $w$

. Thus, we must show

$w$

. Thus, we must show

![]() $P(0)$

and that if for all

$P(0)$

and that if for all

![]() $v \lt w$

$v \lt w$

![]() $P(v)$

then

$P(v)$

then

![]() $P(w)$

. We refer to this as the outer induction.

$P(w)$

. We refer to this as the outer induction.

Base case of the outer induction: to prove

![]() $P(0)$

(i.e.,,

$P(0)$

(i.e.,,

![]() $\forall n P(0,n)$

) we proceed by induction on

$\forall n P(0,n)$

) we proceed by induction on

![]() $n$

, which we refer to as the inner induction. In the base case

$n$

, which we refer to as the inner induction. In the base case

![]() $P(0,0)$

of the inner induction, both

$P(0,0)$

of the inner induction, both

![]() $(r_1),(r_2)$

are axiom rules, so the claim follows from (2.14), (2.15). Now, assume

$(r_1),(r_2)$

are axiom rules, so the claim follows from (2.14), (2.15). Now, assume

![]() $n \gt 0$

and that

$n \gt 0$

and that

![]() $P(0,k)$

holds for all

$P(0,k)$

holds for all

![]() $k \lt n$

. If

$k \lt n$

. If

![]() $(r_1)$

is

$(r_1)$

is

![]() $(\!\operatorname {ax}\!)$

, then we are done by (2.14). If

$(\!\operatorname {ax}\!)$

, then we are done by (2.14). If

![]() $(r_1)$

is a structural rule, then the claim follows by applying the inner inductive hypothesis and (2.16). If

$(r_1)$

is a structural rule, then the claim follows by applying the inner inductive hypothesis and (2.16). If

![]() $(r_1)$

is a logical rule, then since

$(r_1)$

is a logical rule, then since

![]() $w(x:p) = 0$

it must be

$w(x:p) = 0$

it must be

![]() $(L \supset\!)$

and the claim follows from (2.17) and the inner inductive hypothesis.

$(L \supset\!)$

and the claim follows from (2.17) and the inner inductive hypothesis.

Inductive step of the outer induction: now, suppose that

![]() $w \gt 0$

is fixed and

$w \gt 0$

is fixed and

![]() $P(v)$

holds for all

$P(v)$

holds for all

![]() $v \lt w$

. To prove

$v \lt w$

. To prove

![]() $P(w)$

(i.e.,,

$P(w)$

(i.e.,,

![]() $\forall n P(w,n)$

), we proceed by induction on

$\forall n P(w,n)$

), we proceed by induction on

![]() $n$

, which we again refer to as the inner induction. If

$n$

, which we again refer to as the inner induction. If

![]() $n \le 1$

, then one of

$n \le 1$

, then one of

![]() $(r_1),(r_2)$

is

$(r_1),(r_2)$

is

![]() $(\!\operatorname {ax}\!)$

so the claim follows from (2.14), (2.15). Suppose now that

$(\!\operatorname {ax}\!)$

so the claim follows from (2.14), (2.15). Suppose now that

![]() $n \gt 1$

and that

$n \gt 1$

and that

![]() $P(w,k)$

holds for all

$P(w,k)$

holds for all

![]() $k \lt n$

. We again divide into cases depending on the final deduction rules

$k \lt n$

. We again divide into cases depending on the final deduction rules

![]() $(r_1),(r_2)$

. Some cases follow from the inner inductive hypothesis as in the proof of the base case of the outer induction above, and we will not repeat them. The new cases that are easily dispensed with:

$(r_1),(r_2)$

. Some cases follow from the inner inductive hypothesis as in the proof of the base case of the outer induction above, and we will not repeat them. The new cases that are easily dispensed with:

-

•

$(r_1) = (R \supset\!), (r_2) = (R \supset\!)$

follows by (2.21) and the inner inductive hypothesis.

$(r_1) = (R \supset\!), (r_2) = (R \supset\!)$

follows by (2.21) and the inner inductive hypothesis. -

•

$(r_1) = (R \supset\!), (r_2) = (L \supset\!)$

may be divided into two subcases. Either the

$(r_1) = (R \supset\!), (r_2) = (L \supset\!)$

may be divided into two subcases. Either the

$(L \supset\!)$

does not introduce the cut variable

$(L \supset\!)$

does not introduce the cut variable

$x:p$

, in which case the claim follows by (2.23) and the inner inductive hypothesis, or the

$x:p$

, in which case the claim follows by (2.23) and the inner inductive hypothesis, or the

$(L \supset\!)$

does introduce the cut variable

$(L \supset\!)$

does introduce the cut variable

$x$

of type

$x$

of type

$p = r \supset s$

, in which case

$p = r \supset s$

, in which case

$\pi$

is by (2.22) equivalent to a proof of the form

$\pi$

is by (2.22) equivalent to a proof of the form

where

$\Gamma = \Gamma ', \Gamma ''$

and

$\Gamma = \Gamma ', \Gamma ''$

and

$\Delta = \Theta , \Lambda , \Omega$

. Since both of these cuts involve types of lower width than

$\Delta = \Theta , \Lambda , \Omega$

. Since both of these cuts involve types of lower width than

$p$

, the claim follows from the outer inductive hypothesis.

$p$

, the claim follows from the outer inductive hypothesis. -

•

$(r_1)$

is logical and

$(r_1)$

is logical and

$(r_2)$

is one of

$(r_2)$

is one of

$(\!\operatorname {weak}\!), (\!\operatorname {ex}\!)$

follow from the inner inductive hypothesis and (2.20), (2.3), respectively.

$(\!\operatorname {weak}\!), (\!\operatorname {ex}\!)$

follow from the inner inductive hypothesis and (2.20), (2.3), respectively. -

•

$(r_1)$

is

$(r_1)$

is

$(L \supset\!)$

and

$(L \supset\!)$

and

$(r_2)$

is

$(r_2)$

is

$(\!\operatorname {ctr}\!)$

follows as above in the proof of the base case of the outer induction, by the inner inductive hypothesis and (2.17).

$(\!\operatorname {ctr}\!)$

follows as above in the proof of the base case of the outer induction, by the inner inductive hypothesis and (2.17).

The only remaining case is where

![]() $(r_1) = (R \supset\!)$

and

$(r_1) = (R \supset\!)$

and

![]() $(r_2) = (\!\operatorname {ctr}\!)$

, which will occupy the rest of the proof. In this case

$(r_2) = (\!\operatorname {ctr}\!)$

, which will occupy the rest of the proof. In this case

![]() $\pi$

is of the form

$\pi$

is of the form

where we set

![]() $x_1 = x$

. Using

$x_1 = x$

. Using

![]() $\tau$

-equivalence, commuting conversions and

$\tau$

-equivalence, commuting conversions and

![]() $co$

-equivalence, we can manipulate

$co$

-equivalence, we can manipulate

![]() $\pi _2$

(meaning

$\pi _2$

(meaning

![]() $\pi _2'$

plus the final contraction) so that all the active contractions with respect to the cut variable

$\pi _2'$

plus the final contraction) so that all the active contractions with respect to the cut variable

![]() $x_1:p$

occur at the bottom of the proof tree (see Lemma 2.36 and Remark 2.37 below). Note that the final deduction rule of

$x_1:p$

occur at the bottom of the proof tree (see Lemma 2.36 and Remark 2.37 below). Note that the final deduction rule of

![]() $\pi _2$

is, by hypothesis, an active contraction.Footnote

1

After this step, we see that

$\pi _2$

is, by hypothesis, an active contraction.Footnote

1

After this step, we see that

![]() $\pi$

is equivalent to

$\pi$

is equivalent to

where

![]() $\pi _2''$

is cut-free and contains no active contractions with respect to

$\pi _2''$

is cut-free and contains no active contractions with respect to

![]() $x_1$

. To reduce clutter, we have dropped the types from the variables

$x_1$

. To reduce clutter, we have dropped the types from the variables

![]() $x_i:p$

. By repeated applications of (2.19), we obtain the following preproof equivalent to

$x_i:p$

. By repeated applications of (2.19), we obtain the following preproof equivalent to

![]() $\pi$

:

$\pi$

:

where

![]() $r \Gamma$

denotes the concatenation of

$r \Gamma$

denotes the concatenation of

![]() $r$

copies of the sequence

$r$

copies of the sequence

![]() $\Gamma$

. Note that this proof contains no active contractions for the final cut variable

$\Gamma$

. Note that this proof contains no active contractions for the final cut variable

![]() $x:p = x_1:p$

.

$x:p = x_1:p$

.

The variable

![]() $x_i$

is introduced inside

$x_i$

is introduced inside

![]() $\pi _2''$

by an instance

$\pi _2''$

by an instance

![]() $(r_i)$

of a deduction rule which is

$(r_i)$

of a deduction rule which is

![]() $(\!\operatorname {weak}\!), (L \supset\!)$

or

$(\!\operatorname {weak}\!), (L \supset\!)$

or

![]() $(\!\operatorname {ax}\!)$

. Possibly using (2.9) to rearrange the ordering, we may assume that there is an integer

$(\!\operatorname {ax}\!)$

. Possibly using (2.9) to rearrange the ordering, we may assume that there is an integer

![]() $1 \le m \le l$

such that for all

$1 \le m \le l$

such that for all

![]() $1 \le i \le m$

, the variable

$1 \le i \le m$

, the variable

![]() $x_i$

is introduced by either

$x_i$

is introduced by either

![]() $(\!\operatorname {weak}\!)$

or

$(\!\operatorname {weak}\!)$

or

![]() $(L \supset\!)$

and for

$(L \supset\!)$

and for

![]() $i \gt m$

it is introduced by

$i \gt m$

it is introduced by

![]() $(\!\operatorname {ax}\!)$

.Footnote

2

First, we deal with the cases

$(\!\operatorname {ax}\!)$

.Footnote

2

First, we deal with the cases

![]() $1 \le i \le m$

. Using commuting conversions,

$1 \le i \le m$

. Using commuting conversions,

![]() $(r_i)$

may be commuted downwards in

$(r_i)$

may be commuted downwards in

![]() $\pi _2''$

past the rule

$\pi _2''$

past the rule

![]() $(r)$

. Further by (2.18), (2.23), the rule

$(r)$

. Further by (2.18), (2.23), the rule

![]() $(r_i)$

may be commuted past not only the

$(r_i)$

may be commuted past not only the

![]() $(\!\operatorname {cut}\!)$

directly below

$(\!\operatorname {cut}\!)$

directly below

![]() $(r)$

but every cut down to the one that is actually against the variable

$(r)$

but every cut down to the one that is actually against the variable

![]() $x_i:p$

introduced by

$x_i:p$

introduced by

![]() $(r_i)$

. Here, we use in an essential way that the active contractions have been accounted for in the previous step.

$(r_i)$

. Here, we use in an essential way that the active contractions have been accounted for in the previous step.

At the end of this process, we see that

![]() $\pi$

is equivalent to a preproof, roughly of the same shape as above, with

$\pi$

is equivalent to a preproof, roughly of the same shape as above, with

![]() $l$

copies of

$l$

copies of

![]() $\pi _1$

being cut against the “trunk” of the tree at the “crown” of which is a preproof

$\pi _1$

being cut against the “trunk” of the tree at the “crown” of which is a preproof

![]() $\pi _2'''$

of

$\pi _2'''$

of

![]() $x_{m+1},\ldots ,x_l, \Delta \vdash q$

derived from

$x_{m+1},\ldots ,x_l, \Delta \vdash q$

derived from

![]() $\pi _2''$

. The first

$\pi _2''$

. The first

![]() $m$

of these copies of

$m$

of these copies of

![]() $\pi _1$

are cut against variables

$\pi _1$

are cut against variables

![]() $x_1,\ldots ,x_m$

introduced immediately before the cut, and the final

$x_1,\ldots ,x_m$

introduced immediately before the cut, and the final

![]() $l-m$

copies of

$l-m$

copies of

![]() $\pi _1$

are cut against a proof of

$\pi _1$

are cut against a proof of

![]() $x_{m+1},\ldots ,x_i,\Delta \vdash q$

for some

$x_{m+1},\ldots ,x_i,\Delta \vdash q$

for some

![]() $m+1 \le i \le l$

. These final

$m+1 \le i \le l$

. These final

![]() $l-m$

cuts may be eliminated using Lemma 2.31 (this does not use either the inner or outer inductive hypothesis) noting that in the notation of that lemma, any variable in

$l-m$

cuts may be eliminated using Lemma 2.31 (this does not use either the inner or outer inductive hypothesis) noting that in the notation of that lemma, any variable in

![]() $\Delta$

introduced by an

$\Delta$

introduced by an

![]() $(\!\operatorname {ax}\!)$

in

$(\!\operatorname {ax}\!)$

in

![]() $\pi _2$

is still introduced by an

$\pi _2$

is still introduced by an

![]() $(\!\operatorname {ax}\!)$

in the cut-free proof produced which is equivalent to

$(\!\operatorname {ax}\!)$

in the cut-free proof produced which is equivalent to

![]() $\pi$

, so that the lemma may be applied multiple times. The remaining cuts on

$\pi$

, so that the lemma may be applied multiple times. The remaining cuts on

![]() $x_1,\ldots ,x_m$

may then be sequentially eliminated using either (2.20) or (2.22) and the outer inductive hypothesis. The end result is a cut-free preproof equivalent to

$x_1,\ldots ,x_m$

may then be sequentially eliminated using either (2.20) or (2.22) and the outer inductive hypothesis. The end result is a cut-free preproof equivalent to

![]() $\pi$

.

$\pi$

.

Remark 2.33. Note that the proof of cut-elimination (including the proof of the existence of contraction normal from in Lemma 2.36) only uses

![]() $\tau$

-equivalence,

$\tau$

-equivalence,

![]() $co$

-equivalence, commuting conversions and the cut-elimination transformations (2.14)–(2.24) of Definition 2.24 (note that all of these cut-elimination transformations are used). So the cut-elimination theorem holds without

$co$

-equivalence, commuting conversions and the cut-elimination transformations (2.14)–(2.24) of Definition 2.24 (note that all of these cut-elimination transformations are used). So the cut-elimination theorem holds without

![]() $\lambda$

-equivalence or

$\lambda$

-equivalence or

![]() $\eta$

-equivalence.

$\eta$

-equivalence.

In the rest of the section, we develop the notion of a contraction normal form, which was used in the proof of cut-elimination. To avoid conflicting with the notation for the preproof

![]() $\pi _2$

, there we denote the subject of following by

$\pi _2$

, there we denote the subject of following by

![]() $\varphi$

.

$\varphi$

.

Definition 2.34.

Let

![]() $\varphi$

be a cut-free preproof of

$\varphi$

be a cut-free preproof of

![]() $x:p, \Delta \vdash q$

. The contraction tree of

$x:p, \Delta \vdash q$

. The contraction tree of