Recent audits of qualitative-methods syllabi and curricula highlighted that few students are being taught data analysis skills (Emmons and Moravcsik 2020; Turner and Thies 2009). There may be multiple reasons for this, including that in comparison to quantitative methods, qualitative-methods curricula must encompass a wider range of topics and skills. A pedagogical neglect of qualitative data analysis is not a new concern (Miles 1979); however, redressing it has become increasingly urgent in the face of disciplinary calls for greater research transparency (Elman and Kapiszewski 2014). Qualitative political scientists are routinely criticized by other members of the profession for failing to adhere to methodological rules regarding data analysis (Moravcsik 2010) and giving insufficient attention to how data are analyzed (Goodman and Cyr 2024). Unless emerging scholars are taught how to provide a clear account of the analysis they undertook to arrive at their relevant inferences and conclusions (Buthe and Jacobs 2015, 2), qualitative methods will continue to be criticized for a lack of rigor.

The literature suggests that three key barriers exist to teaching students how to analyze qualitative data. First, as Elman and Kapiszewski (2014, 43) noted, qualitative scholars do not consistently share the evidentiary basis of their conclusions and explain how those conclusions were reached. This means that there are relatively few examples of “model” qualitative scholarship that contain an explicit discussion of analytic methods. Second, as Jacobs, Kapiszewski, and Karcher (2022) observed, qualitative analytic methods textbooks tend to focus more on the epistemological underpinnings of those methods than on the mechanics of using them. Third, as discussed by Elman, Kapiszewski, and Kirilova (2015), the reluctance of qualitative political scientists to share their material means that there is a dearth of data on which students can practice analytical skills. Despite initiatives to increase the availability of qualitative data—such as Syracuse University’s Qualitative Data Repository—there is little in the way of a stock of data that can easily be repurposed for classroom use.

This article discusses how we sought to overcome these challenges when teaching deductive coding—a method in which theoretical concepts provide a lens to analyze data—to more than 100 undergraduate students. We first explain what is meant by deductive coding before describing the broader active learning framework—which required students to conduct an interview with a faculty member to generate data—within which this qualitative-analysis task was embedded. We then outline how we taught our students the analytical steps of deductive coding and describe the different data on which students practiced their new skills. Finally, we reflect on the pros and cons of our teaching approach; explain how we managed to orchestrate a relatively complex series of stages in a large and compulsory undergraduate class; and conclude by providing suggestions to other faculty members who want to replicate our pedagogical approach when teaching their students how to link theory with data.

DEDUCTIVE CODING AND QUALITATIVE ANALYSIS

What role does masculinity play in climate-change discourse? Is Boris Johnson a populist? Do menstrual-leave policy debates bolster or undermine the status of women? One way in which these questions—adapted from recent student dissertations that used qualitative methods—can be answered persuasively is by a researcher (1) translating key theoretical concepts into a coding framework; (2) applying that framework to their data; and (3) discussing the evidence that supports or undermines their framework (Fereday and Muir-Cochrane 2006; Fife and Gossner 2024).

What we are describing here is a type of deductive qualitative analysis (Fife and Gossner 2024) that can operationalize, test, and refine existing theories using a coding framework and a corpus of data. Hypothesis testing is the goal of deductive analysis; however, before researchers can reach that final point to evaluate whether H1 is stronger than H2 (for example), they first must be able to classify the evidence as supporting H1 or H2. Deductive coding facilitates the intermediary step of classifying evidence as being more for H1 or H2 and enables the linking of concepts central to political science theory to actual data.

Deductive-coding approaches are evident in recent examples of qualitative and mixed-methods political science published in prominent journals such as American Political Science Review and British Journal of Political Science. A key aspect of Bailard et al.’s (2024) study of the relationship between the Proud Boys’ online and offline activities involved analyzing Telegram messages through the lens of the collective-action framing literature. These authors clarified the three main types of collective-action frames (i.e., diagnosis, prognosis, and motivation); defined what was meant by each frame; and suggested which words and phrases they considered an indicator of that frame’s presence in Proud Boy communications. Thijssen and Verheyen (2022) used a similar approach in their analysis of solidarity in party manifestos. They drew on a four-part typology to identify different manifestations of solidarity. After distilling key concepts from the literature (e.g., compassionate solidarity), Thijssen and Verheyen (2022) suggested how they would ascertain the relevant concept’s presence in a party manifesto (e.g., by a party claiming that it is a victim or has been marginalized).

A core component of deductive qualitative analysis is the creation of a coding framework in which theoretical concepts provide a lens through which data can be analyzed. A strong deductive-coding framework should list each theoretical concept for which a researcher proposes to code the data, define that concept, and suggest an “exemplary instance” of data that would warrant the application of the code (Fife and Gossner 2024, 6). A deductive-coding framework clearly links theory to empirics, requiring that scholars and students define theoretical concepts and suggest how they will practically identify them within their data.
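
For readers who keep their frameworks in software rather than in a document template, the three-part structure described above (code, description, exemplar) can be sketched as a small set of records. The following Python sketch is illustrative only: the class name `Code`, the helper `incomplete_rows`, and the example rows are hypothetical and are not taken from any of the cited frameworks. The exemplar field holds a placeholder rather than real interview data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Code:
    """One row of a deductive-coding framework (hypothetical structure)."""
    label: str        # short code word for the theoretical concept
    description: str  # what the concept means and how it may appear in the data
    exemplar: str     # an exemplary instance of data that warrants the code


def incomplete_rows(framework):
    """Return the labels of rows that still lack a description or an exemplar."""
    return [
        code.label
        for code in framework
        if not code.description.strip() or not code.exemplar.strip()
    ]


# Illustrative rows only; the wording is an assumption, not a published framework.
framework = [
    Code(
        label="risk aversion",
        description=(
            "Reluctance to pursue uncertain outcomes; in an academic setting "
            "this might appear as avoiding highly selective journals."
        ),
        exemplar="PLACEHOLDER: interview excerpt that shows this reluctance",
    ),
    # A row that a student has named but not yet defined or illustrated.
    Code(label="coauthorship exclusion", description="", exemplar=""),
]

print(incomplete_rows(framework))
```

A check of this kind only flags empty cells. Whether a description is detailed enough for an outsider to connect the concept to the data remains a matter of judgment.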

We understand that qualitative analysis and coding often are equated with inductive approaches (Ezzy 2002; Saldaña 2021). Nevertheless, we believe that it is important for political science students to master the mechanics of deductive coding. A deductive-coding framework provides an important first step in making explicit “the interpretive processes linking observations to conclusions or understandings” (Buthe and Jacobs 2015, 3). Moreover, by requiring scholars to define theoretical concepts, deductive coding guards against criticism that qualitative political science is conceptually opaque (Buggs and Sims 2024).

AN ACTIVE LEARNING APPROACH TO TEACHING QUALITATIVE DEDUCTIVE ANALYSIS

We taught deductive coding to our students as part of their compulsory undergraduate training in qualitative methods. There are more than 100 students enrolled on our introductory qualitative methods course who are undertaking degrees in either politics and international relations or politics, philosophy, and economics. The course runs for 10 weeks with paired one-hour lectures and one-hour seminars, and it is divided into three parts: preparing for data collection, collecting data, and analyzing data. Deductive coding was taught explicitly during a one-week period as part of the data-analysis component. However, students were given additional opportunities to discuss their coding frameworks in subsequent seminars and during a writing retreat that took place shortly before their assessments were due.

In comparison to other subjects that may be taught as part of a political science degree, methods learning is particularly focused on skill development, with research showing that students master methods better when they are required to practice (Allen and Baughman 2016; Duncan and Brown 2021). Therefore, we advised students on the first day of class that they would be required in week 5 to conduct a 10-minute-long interview with a faculty member on the question: Why are women academics underrepresented in our university’s political science department? We also informed students about the requirements of their final assessment: write a 2,500-word paper that identifies the ethical and positionality issues arising from the faculty–student interview; draft a semi-structured question guide; and design a coding framework that could be used to analyze interview data. Asking our students to learn by doing and giving them a concrete experience in which to test abstract concepts meant that both our assessment and approach to teaching qualitative methods aligned with the principles of active and experiential learning (Bonwell and Eison 1991; Kolb 2014).

Before undertaking their interview, students were tasked with designing an interview guide that tested a theory that sought to explain why women are underrepresented in our political science department. To assist them with this task, we gave a lecture on interviewing that provided guidance on how to construct interview questions that are theoretically motivated but colloquially expressed (Kapiszewski, MacLean, and Read 2015, 216). We also gave students an opportunity in the seminars to practice their interview questions and to receive feedback on their question guides. To help our students narrow their theoretical focus when designing their question guide, we provided articles that sought to explain the gender gap in political science. These articles included Brown et al. (2020), who suggested that gender differences in risk aversion fuel publication disparities, and Teele and Thelen (2017), who speculated that women are excluded from coauthored teams. We encouraged our students to test these authors’ ideas in interviews.

After students conducted their interview, they were taught to deductively analyze their data during a one-hour lecture and a one-hour seminar. The lecture and seminar aimed to surmount common pedagogical obstacles by providing students with (1) examples of qualitative data analysis that clarify how conclusions were reached; (2) an understanding of the mechanics of undertaking qualitative data analysis; and (3) original data on which to practice.

To scaffold the students’ learning of the analytical process without influencing their subsequent interpretation of their own data, we intentionally chose readings and data from topics unrelated to the faculty–student interview question. Thus, before attending the first lecture and seminar for this part of the course, students were instructed to read March’s (2017) comparison of different types of populism. This article was selected because it is accessible, focuses on a topic of likely interest to students, and—important for our purposes—explains how the concept of populism was operationalized to code party manifestos. To further meet our objective of providing instances of qualitative data analysis that specify how conclusions were reached, students were asked to read Fife and Gossner (2024), whose study illustrated the steps of qualitative deductive analysis using two worked examples.

During our lecture, we used multiple strategies to impart an understanding of the mechanics of undertaking qualitative deductive analysis. First, we walked students through the analytical steps taken by March (2017) when he constructed his coding framework: dividing a definition of populism into smaller conceptual components, elaborating on the meaning of each component, and suggesting an exemplar of each component. After we reconstructed March’s (2017) coding framework, we asked students to apply it to an excerpt of Reform UK’s manifesto. Students were encouraged to explain to the class how they coded the data before the lecturer displayed a slide showing her own approach to the task.

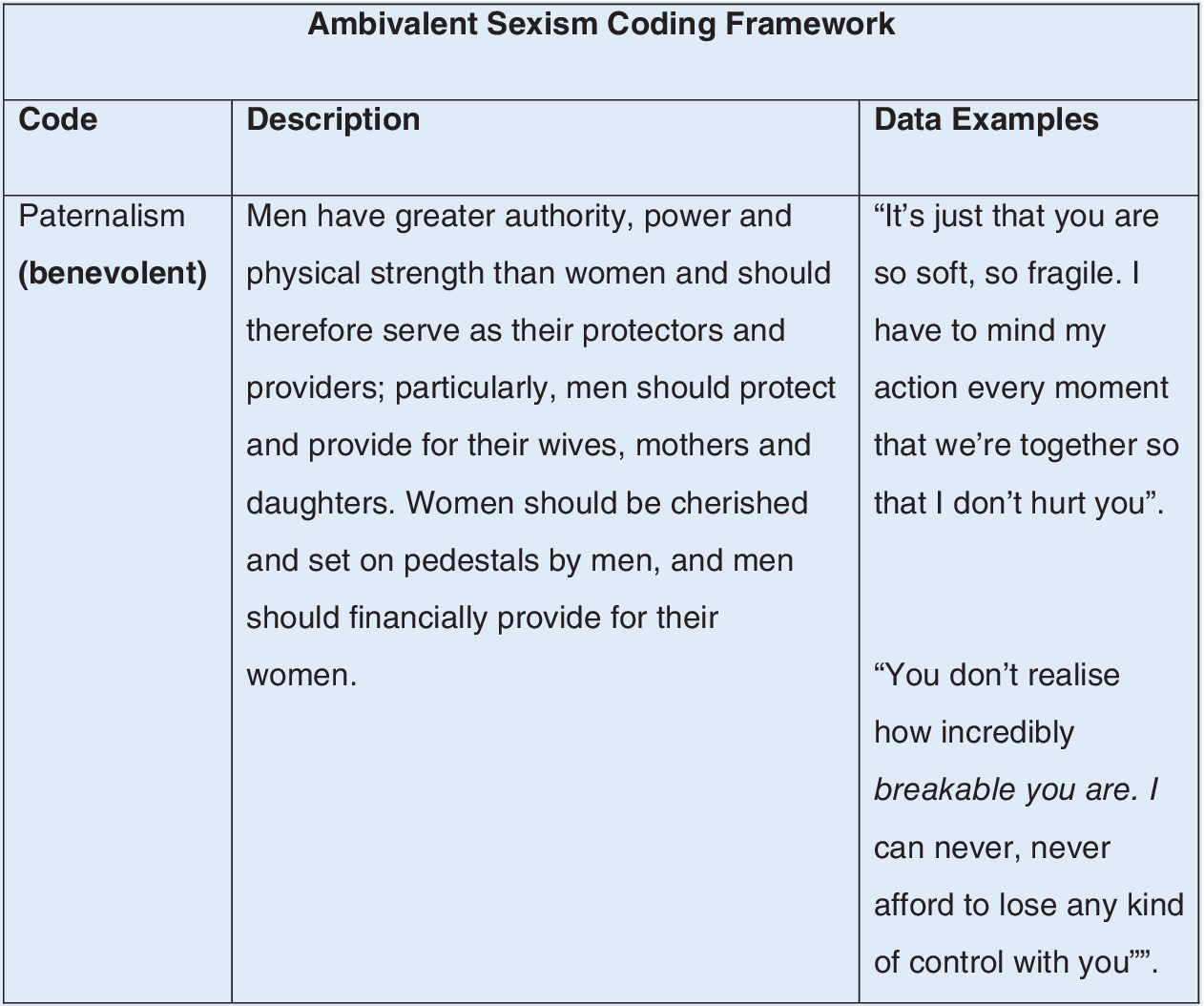

To further consolidate understanding of the mechanics of qualitative deductive analysis, we provided every student with a partially completed coding framework that had begun to operationalize Glick and Fiske’s (1996) theory of ambivalent sexism. The framework that students were given had a column for the code, a column for a description of the code’s meaning, and a column to provide an exemplary instance of data that warranted the application of the code. Students were instructed to complete the first two columns (i.e., code word and description) and then to apply their coding framework to data—in this case, an excerpt from Stephenie Meyer’s young-adult novel Twilight, which is notable for its patriarchal themes. (Figure 1 is an excerpt of the completed framework; the full template and data extract are in the online appendix.)

Figure 1. Ambivalent Sexism Coding Framework

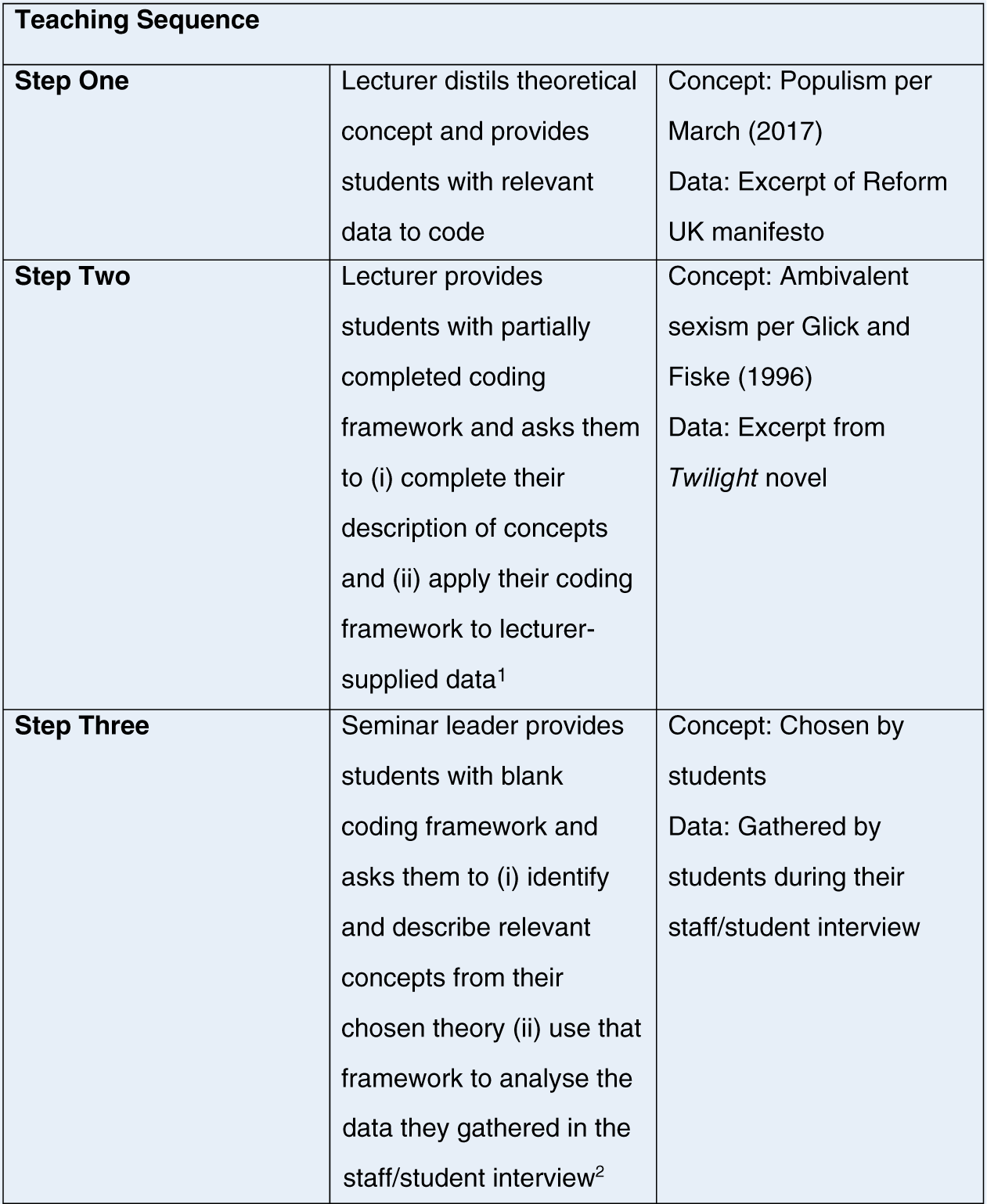

Finally, in the seminars, students were tasked with replicating these steps to construct a deductive-coding framework that could be used to analyze their own data—that is, the material generated during their interview with a faculty member. This time, they were given a blank coding template (see the online appendix) and asked to complete the first two columns—code and description—before applying it to their interview data. Figure 2 outlines the steps of our teaching sequence for those instructors who want to replicate our approach in their own course.

Figure 2. Teaching Sequence

Notes: (1) A partially completed coding framework and data are available in the Supplementary Materials. (2) A blank coding framework is available in the Supplementary Materials.

After students completed a section of their coding framework and identified data exemplars, they were encouraged to share their progress with the class. During this discussion, we observed that students’ code descriptions often were brief and superficial, lacking the level of detail that an outsider would need to understand the connection between theoretical concepts and data. For example, some students who had focused on Brown et al.’s (2020) notion of risk aversion defined it as a person who dislikes taking chances. We urged these students instead to identify the attributes of their relevant concept (in this case, risk aversion) and suggest how it may be manifested in the context under consideration (in this case, an academic environment). To provide all students with a model of an appropriate level of detail, we also drew their attention to Bailard et al.’s (2024) coding framework, in which the authors provide a brief definition of their codes as well as suggest how these theoretical concepts may be manifested empirically.

As part of their course assessment, students were required to submit the coding framework used to analyze their interview data. When we appraised our students’ work, we expected a good framework to contain a list of codes with rich and detailed descriptions, as well as a data exemplar that warranted the application of each code. Most of our students performed well on their assessment, and we were pleased to see many coding frameworks that successfully operationalized theories by providing codes, comprehensive descriptions, and a discernible link between codes and data. Students who performed less well typically submitted frameworks that either provided brief definitions of relevant concepts without furnishing the detail necessary to identify those concepts in real-world data or included data exemplars that did not appear to correspond to the relevant codes.

DEBRIEFING WITH STUDENTS

After our module concluded and all assessments had been graded, we issued an open call for our students to participate in an interview with a faculty member at our university to reflect on their experiences of undertaking qualitative research and deductive coding. To encourage student candor, the interviews were conducted by a faculty member who had not taught the module.

The interviewer asked students how they had approached mastering the steps of deductive coding and drafting their own coding framework. Their answers suggested that our approach to teaching deductive coding had been successful: the combination of templates, data, and opportunities to practice helped them to master the fundamentals of the exercise. One student stated:

[T]he coding part for me was the most intimidating…. In the lecture, we had examples of how your data should be organized and also those slides were given to us after so we could access those at any time and replicate that for our own data. That was very useful.

Another student also described practice and examples as having been helpful in overcoming her initial trepidation:

[Coding] was a bit scary or intimidating at first just because we’re not used to doing this….We went through a lot of examples in the lecture that were useful and in seminar again we got to talk through it as in just go around the room and share what is your piece of data, what is the code you would give it, and so on. And just to get the feedback from your seminar leader….So that was really useful.

It also was apparent that student engagement—that is, the investment and effort directed toward mastering academic skills (Newmann 1992, 12)—was bolstered by asking students to code their own data. When asked about the experiences of coding her own data, one student stated:

I think one of the advantages was that because it was data I collected, I had a strong interest in it, so I was extra keen on presenting it accurately and as convincingly as I could.

Another student recalled:

Psychologically, I felt a lot more motivated to do this because…I thought of all the [interview] questions…it was me throughout the entire process. It was all me, and analyzing my own data is much more interesting….

Some students also observed that the fact that they had collected the data themselves meant that additional and valuable information—such as participant body language, emotions, and tone of voice—became available to them as part of the analytical process. One student recalled how the physical reaction of her participant following a question about departmental hiring processes had factored into her assessment of the data:

…he kind of leant back for a minute and he really thought about it, which kind of signalled to me that he was remembering something, and that this might not have been widely known…that meant that the body language would kind of feed into this coding process as well.

Another student similarly noted that words on a page are not the only data relevant to the analytical process:

I get to see the body language and like, part of what I was saying, that the interview was combatant….It was how he was saying it. I feel like I wouldn’t have been able to catch that if I was just reading the transcript.

SUGGESTIONS FOR REPLICATING AND IMPROVING THIS EXERCISE

Faculty members who want to replicate this exercise should weigh three key considerations. First, there are the logistical hurdles of recruiting interview subjects and potentially securing ethical approval. Extensive forward planning is necessary for an undertaking that involves many human participants. Before the teaching term began, we asked faculty members in our department to provide a two-hour window of their time when they would be available to participate in 10 interviews with our students, each lasting 10 minutes. After we secured the commitment of a sufficient number of participants, we created an online booking system that could be used by students to reserve an interview slot with a faculty member. The booking system and full assessment instructions were provided to students on their first day of class. Early planning for this exercise was critical to ensuring its success, and our recruitment efforts were helped by offering our faculty participants a small incentive in the form of a reduced grading load. Although we think it is valuable for students to obtain interview experience, we also recognize that in certain contexts, this course component could raise insurmountable logistical or ethical challenges. In that case, we recommend that students be tasked with gathering original data from other sources (e.g., an archive).

Second, faculty members should consider whether they will dictate the research question that students gather data in response to and/or the theory that students are trying to test. On the one hand, the pedagogical literature suggests that allowing students to pursue their own line of inquiry gives them an opportunity to conduct “real” research (as opposed to merely studying) and bolsters their sense of ownership over the learning process (Levy and Petrulis 2012). On the other hand, our experience suggests that it was easier to teach deductive coding when students were focusing on the same research question and had brought only limited theoretical perspectives to bear on it. If our students had set their own unique research questions (with accompanying unique theoretical perspectives), then faculty members might have faced the difficult task of working with them to distill hundreds of different theories into a coding framework.

Third, although we did not require students to discuss intercoder reliability as part of this exercise, it is both beneficial and relatively straightforward to incorporate lecture and seminar activities to meet that goal. Campbell et al. (2013) provided a useful guide to paired intercoder reliability exercises that could be conducted in a classroom setting. For example, two students could develop a coding framework and explain it to their partner; both students would then analyze a portion of the data, compare results, and—in the event of discrepancies—delete unreliable codes and clarify coding definitions before repeating the exercise. If this analysis were carried out on student-generated data, then we anticipate that the exercise would prompt particularly animated discussion—given our previous observation that some students felt that words on a page did not capture the full meaning of their data.
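
Instructors who want to put a number on the "compare results" step of such a paired exercise could compute simple percent agreement and Cohen's kappa for two coders. The Python sketch below is a generic calculation and is not a procedure taken from Campbell et al. (2013). It assumes that both students code the same segments and assign exactly one code per segment. The function names and the toy labels "A", "B", and "C" are hypothetical.

```python
from collections import Counter


def percent_agreement(coder_a, coder_b):
    """Share of segments to which both coders assigned the same code."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("Both coders must label the same non-empty set of segments.")
    matches = sum(a == b for a, b in zip(coder_a, coder_b))
    return matches / len(coder_a)


def cohens_kappa(coder_a, coder_b):
    """Cohen's kappa: observed agreement corrected for chance agreement."""
    n = len(coder_a)
    observed = percent_agreement(coder_a, coder_b)
    freq_a, freq_b = Counter(coder_a), Counter(coder_b)
    # Chance agreement: probability that both coders pick the same code at random,
    # given how often each coder used each code.
    expected = sum(freq_a[code] * freq_b[code] for code in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both coders used one identical code throughout
    return (observed - expected) / (1 - expected)


def disputed_codes(coder_a, coder_b):
    """Codes involved in at least one disagreement (candidates for clarification)."""
    return sorted({code for a, b in zip(coder_a, coder_b) if a != b for code in (a, b)})


# Toy labels for six data segments; not real student data.
student_1 = ["A", "A", "B", "B", "C", "A"]
student_2 = ["A", "B", "B", "B", "C", "A"]

print(percent_agreement(student_1, student_2))
print(cohens_kappa(student_1, student_2))
print(disputed_codes(student_1, student_2))
```

The list of disputed codes gives each pair a starting point for the discussion described above: which definitions to clarify, and which codes to drop, before repeating the exercise.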

CONCLUSION

This article focuses on identifying the common barriers to teaching qualitative data analysis and explaining how we surmounted them when teaching a large and compulsory undergraduate qualitative-methods course. The study outlines a three-step process that we used to help students master the mechanics of undertaking deductive coding, explains how we enabled each student to acquire original data on which to practice, and documents the benefits of this approach. By setting an assessment that encouraged students to gather data, make discoveries, and evidence their own ideas, we gave our students the experience of conducting original research (Levy and Petrulis 2012)—which is likely to benefit them as they progress through their academic and professional careers. We hope that the techniques described in this article will encourage other faculty members to draw on experiential learning techniques when teaching their students how to analyze—as well as gather—qualitative data.

SUPPLEMENTARY MATERIAL

To view supplementary material for this article, please visit http://doi.org/10.1017/S1049096525101686.

CONFLICTS OF INTEREST

The authors declare that there are no ethical issues or conflicts of interest in this research.