1 Introduction

Explanations are a central object of study in a range of fields. In particular, in the last ten years or so, explanations that display the reasons why a certain conclusion is true, or why a certain phenomenon occurs, have become a flourishing and thriving object of research in several disciplines. In philosophy, for example, there is a long and illustrious tradition—starting with Aristotle and passing by scholars such as Leibniz, Bolzano or FregeFootnote 1 —that, although often neglected for several decades, is now witnessing a renewed interest. This tradition gives pride of place to explanations that play the same role in conceptual sciences, like mathematics, as causal explanations do in the empirical sciences: as the latter explain by displaying the causes, the former explain via the reasons.Footnote 2 Recently, Poggiolesi [Reference Poggiolesi25] has formalized ideas coming from this tradition using logical tools from proof theory. She has thus introduced a sequent calculus with explanatory rules, namely, rules where not only the conclusion follows from the premisse(s), but where the premisse(s) represent the reasons why the conclusion is true.

Another notable field where explanations with reasons have been receiving an increasing amount of attention and interest is computer science, and in particular machine learning classifiers. A recent research direction has aimed to show that some common machine learning classifiers can be represented by propositional formulas and classifications of instances can be analyzed in terms of classical logical consequence. In this context, Darwiche and Hirth [Reference Darwiche and Hirth7] define the idea of sufficient reasons for a decision, which correspond to its potential explicanda,Footnote 3 and relate it to prime implicants, as well as a range of kindred concepts.

The notion of explanation with reasons thus emerges as a central topic in two distinct fields, linked to different literatures, and using different techniques to develop different approaches. Is there a connection, beyond the apparent similarity in object? The current paper provides an answer to this question, by mapping notions used in machine learning into the framework for understanding explanations developed in philosophy. More precisely, we show that the explanatory tools introduced by Poggiolesi [Reference Poggiolesi25] naturally provide all and only the sufficient reasons for a decision, in the sense of Darwiche and Hirth [Reference Darwiche and Hirth7]. This result is of double conceptual significance. On the one hand, it shows that the philosophical approach to explanation via explanatory rules has applications in practical and significant cases, where it naturally captures pre-philosophical intuitions. On the other hand, it connects the notion of sufficient reason introduced in AI to a long philosophical tradition, suggesting that there exists a sort of fil rouge going from Aristotle to the present day, confirming the deepness and interest of the concepts at issue. Our result is also of formal import, insofar as the computation of the set of sufficient reasons for a decision has long been an open problem [Reference Coudert and Madre5, Reference Shih, Choi, Darwiche and Lang29]. The result shows that the explanatory framework developed in philosophy naturally provides a novel and simple solution to this problem, which may be of use in the study of as-yet open generalisations.

The paper is structured as follows. In §2 we introduce the concepts of sufficient and complete reason as defined by Shih et al. [Reference Shih, Choi, Darwiche and Lang30], whilst in §3 we clarify the main ideas behind the explanatory sequent calculus of Poggiolesi [Reference Poggiolesi25] and a generalized version of it. §4 will be used to illustrate how we can use the explanatory calculus to compute sufficient reasons behind decisions made by boolean classifiers, whilst in §5 we will prove that explanatory calculi can compute the complete reasons behind decisions made by classifiers. We finally close with some concluding remarks in §6.

2 Sufficient and complete reasons

We use this section to introduce and clarify the key notions proper to the account developed by Darwiche and Hirth [Reference Darwiche and Hirth7], Shih et al. [Reference Shih, Choi, Darwiche and Lang30]. To do so, we proceed by means of the following example. Consider a classifier

![]() $\mathcal {C}$

which admits an applicant just in case she has passed the entrance exam and either she is not a first time applicant, or she has work experience, or she has a high GPA. Consider the language of classical propositional language defined as follows.

$\mathcal {C}$

which admits an applicant just in case she has passed the entrance exam and either she is not a first time applicant, or she has work experience, or she has a high GPA. Consider the language of classical propositional language defined as follows.

Definition 2.1. The language of classical propositional logic,

![]() $\mathcal {L}$

, is composed by: atomic formulas (

$\mathcal {L}$

, is composed by: atomic formulas (

![]() $p_{0}$

,

$p_{0}$

,

![]() $p_{1}$

,

$p_{1}$

,

![]() $p_{2}$

, …), logical connectives (

$p_{2}$

, …), logical connectives (

![]() $\neg $

,

$\neg $

,

![]() $\wedge $

,

$\wedge $

,

![]() $\vee $

), and parentheses: (,). Literals are either atomic formulas or their negations, whilst extended literals are formulas of the form:

$\vee $

), and parentheses: (,). Literals are either atomic formulas or their negations, whilst extended literals are formulas of the form:

![]() $\overbrace {\neg \dots \neg }^n p$

for

$\overbrace {\neg \dots \neg }^n p$

for

![]() $n\geq 0$

and atomic formula p. Extended literals will be denoted by

$n\geq 0$

and atomic formula p. Extended literals will be denoted by

![]() $l_{1}, l_{2}, \ldots $

. We take the symbols

$l_{1}, l_{2}, \ldots $

. We take the symbols

![]() $\top , \bot $

and

$\top , \bot $

and

![]() $\rightarrow $

to be defined as usual. The set of well-defined formulas,

$\rightarrow $

to be defined as usual. The set of well-defined formulas,

![]() ${\mathcal {WF}}$

, is constructed in the standard way. Finally, for the sake of brevity, the symbol

${\mathcal {WF}}$

, is constructed in the standard way. Finally, for the sake of brevity, the symbol

![]() $\circ $

will be used to denote either a conjunction or a disjunction.

$\circ $

will be used to denote either a conjunction or a disjunction.

As it will become clear later on, it is convenient to use the metalinguistic symbol of converse of a formula, which is defined as follows.

Definition 2.2. The converse of a formula A, written

![]() $A^{\bot }$

, is defined as follows:

$A^{\bot }$

, is defined as follows:

$$ \begin{align*}A^{\bot } = \bigg \{ \begin {array}{@{}ll} \neg ^{n-1} E , & \mathit{if}\; A = \neg ^{n} B\; and\; n\; is\; odd\\ \neg ^{n+1} E, & \mathit{if}\; A = \neg ^{n} B\; and\; n\; is\; even,\\ \end {array}\end{align*} $$

$$ \begin{align*}A^{\bot } = \bigg \{ \begin {array}{@{}ll} \neg ^{n-1} E , & \mathit{if}\; A = \neg ^{n} B\; and\; n\; is\; odd\\ \neg ^{n+1} E, & \mathit{if}\; A = \neg ^{n} B\; and\; n\; is\; even,\\ \end {array}\end{align*} $$

where the main connective in B is not a negation,

![]() $n \geqslant $

0 and 0 is taken to be an even number.

$n \geqslant $

0 and 0 is taken to be an even number.

Suppose to use the following denotations:

-

- e denotes “applicant has passed the entrance exam;”

-

- f denotes “applicant is at her the first attempt;”

-

- w denotes “applicant has work experience;”

-

- g denotes “applicant has a high GPA.”

Following Darwiche and Hirth [Reference Darwiche and Hirth7], we can represent classifier

![]() $\mathcal {C}$

by the following formula A of the classical propositional language,

$\mathcal {C}$

by the following formula A of the classical propositional language,

![]() $A= e\wedge (\neg f\vee w\vee g)$

, where

$A= e\wedge (\neg f\vee w\vee g)$

, where

![]() $e, f, w, g$

are literals.

$e, f, w, g$

are literals.

Consider now the two applicants Greg and Susan. Greg and Susan have both passed the entrance exam, but whilst Greg has already applied and has work experience, Susan has work experience and a high GPA. Their features can be gathered in the following two sets (of literals)

![]() $\alpha _{g}$

and

$\alpha _{g}$

and

![]() $\alpha _{s}$

, respectively, and can be formalized as follows

$\alpha _{s}$

, respectively, and can be formalized as follows

![]() $\alpha _{g}=\{e, \neg f, w, \neg g\}$

and

$\alpha _{g}=\{e, \neg f, w, \neg g\}$

and

![]() $\alpha _{s}=\{e, f, w, g\}$

. We call both

$\alpha _{s}=\{e, f, w, g\}$

. We call both

![]() $\alpha _{g}$

and

$\alpha _{g}$

and

![]() $\alpha _{s}$

instances, where instances are defined through terms as follows.

$\alpha _{s}$

instances, where instances are defined through terms as follows.

Definition 2.3. A term is a consistent set of literals. Terms will be denoted by

![]() $\tau _{1}, \tau _{2}, \tau _{3}, \ldots $

. A term

$\tau _{1}, \tau _{2}, \tau _{3}, \ldots $

. A term

![]() $\tau _{i}$

subsumes a term

$\tau _{i}$

subsumes a term

![]() $\tau _{j}$

if, and only if,

$\tau _{j}$

if, and only if,

![]() $\tau _{i}\subseteq \tau _{j}$

.

$\tau _{i}\subseteq \tau _{j}$

.

Definition 2.4. An instance

![]() $\alpha $

of a formula A is a term such that, for every literal

$\alpha $

of a formula A is a term such that, for every literal

![]() $l$

appearing in A, either

$l$

appearing in A, either

![]() $l$

or

$l$

or

![]() $l^{\bot }$

is in

$l^{\bot }$

is in

![]() $\alpha $

. We sometimes refer to the literals in

$\alpha $

. We sometimes refer to the literals in

![]() $\alpha $

as the characteristics of the instance.

$\alpha $

as the characteristics of the instance.

Grege and Susan are both admitted by classifier

![]() $\mathcal {C}$

, as the admission is granted in case the formula which represents the classifier logically follows from the instance that corresponds to the applicant. It is easy to verify that we have both

$\mathcal {C}$

, as the admission is granted in case the formula which represents the classifier logically follows from the instance that corresponds to the applicant. It is easy to verify that we have both

![]() $\alpha _{g}\models A$

, as well as

$\alpha _{g}\models A$

, as well as

![]() $\alpha _{s}\models A$

.

$\alpha _{s}\models A$

.

More generally speaking, we use

![]() $A(\alpha )$

to denote the decision (0 or 1) of classifier A on instance

$A(\alpha )$

to denote the decision (0 or 1) of classifier A on instance

![]() $\alpha $

: this corresponds to

$\alpha $

: this corresponds to

![]() $\alpha \models A$

if, and only if,

$\alpha \models A$

if, and only if,

![]() $A(\alpha )$

= 1, and

$A(\alpha )$

= 1, and

![]() $\alpha \models \neg A$

if, and only if,

$\alpha \models \neg A$

if, and only if,

![]() $A(\alpha )$

= 0. We also define

$A(\alpha )$

= 0. We also define

![]() $A_{\alpha }$

in the following way:

$A_{\alpha }$

in the following way:

$$ \begin{align*}A_{\alpha} = \bigg\{ \begin{array}{@{}ll} A, & \textrm{if}\; A(\alpha) = 1 \\ \neg A, & \textrm{if}\; A(\alpha) = 0. \\ \end{array} \end{align*} $$

$$ \begin{align*}A_{\alpha} = \bigg\{ \begin{array}{@{}ll} A, & \textrm{if}\; A(\alpha) = 1 \\ \neg A, & \textrm{if}\; A(\alpha) = 0. \\ \end{array} \end{align*} $$

Note that

![]() $\alpha \models A_{\alpha }$

and

$\alpha \models A_{\alpha }$

and

![]() $A(\alpha )=A(\beta )$

if, and only if,

$A(\alpha )=A(\beta )$

if, and only if,

![]() $A_{\alpha } = A_{\beta }$

.

$A_{\alpha } = A_{\beta }$

.

Hence, both Greg and Susan have been admitted by classifier

![]() $\mathcal {C}$

; however, it seems that it is so for different reasons. The main goal of Darwiche and Hirth [Reference Darwiche and Hirth7], Shih et al. [Reference Shih, Choi, Darwiche and Lang30] is to explain the decisions made by a classifier on specific instances by way of providing various insights into what motivated these decisions. In particular, they propose the captivating notion of sufficient reason.

$\mathcal {C}$

; however, it seems that it is so for different reasons. The main goal of Darwiche and Hirth [Reference Darwiche and Hirth7], Shih et al. [Reference Shih, Choi, Darwiche and Lang30] is to explain the decisions made by a classifier on specific instances by way of providing various insights into what motivated these decisions. In particular, they propose the captivating notion of sufficient reason.

Definition 2.5. A sufficient reason for decision

![]() $A(\alpha )$

is a term

$A(\alpha )$

is a term

![]() $\tau $

that satisfies A, namely,

$\tau $

that satisfies A, namely,

![]() $\tau \models A$

, and

$\tau \models A$

, and

![]() $\tau $

is not subsumed by any other term that satisfies A.Footnote

4

$\tau $

is not subsumed by any other term that satisfies A.Footnote

4

A sufficient reason identifies characteristics of an instance that justify the decision: the decision will remain the same even if other characteristics of the instance were different. Moreover, a sufficient reason is minimal as none of its strict subsets can justify the decision.

Let us go back to the classifier

![]() $\mathcal {C}$

which admits an applicant just in case she has passed the entrance exam and either she has already applied or she has work experience or she has a high GPA. According to what has just been said, classifier

$\mathcal {C}$

which admits an applicant just in case she has passed the entrance exam and either she has already applied or she has work experience or she has a high GPA. According to what has just been said, classifier

![]() $\mathcal {C}$

admits Greg for the following sufficient reasons:

$\mathcal {C}$

admits Greg for the following sufficient reasons:

-

• Passed the entrance exam and is not a first time applicant,

$\tau _{1}=\{e, \neg f\}$

.

$\tau _{1}=\{e, \neg f\}$

. -

• Passed the entrance exam and has work experience,

$\tau _{2}=\{e, w\}$

.

$\tau _{2}=\{e, w\}$

.

Since Greg passed the entrance exam and has applied before, he will be admitted even if his other characteristics were different. Similarly, since Greg passed the entrance exam and has work experience, he will be admitted even if his other characteristics were different. Moreover, in both cases reasons are minimal: if any of their features is omitted, the decision is not longer the same.

Note that classifier

![]() $\mathcal {C}$

also admits Susan for the following sufficient reasons:

$\mathcal {C}$

also admits Susan for the following sufficient reasons:

-

• Passed the entrance exam and has work experience,

$\tau _{1}=\{e, w\}$

.

$\tau _{1}=\{e, w\}$

. -

• Passed the entrance exam and has a high GPA,

$\tau _{2}=\{e, g\}$

.

$\tau _{2}=\{e, g\}$

.

Since Susan passed the entrance exam and has work experience, or since Susan passed the entrance exam and she has a high GPA, she will be admitted. This is so even if her other characteristics were different and again these sets are minimal.

Definition 2.6. The complete reason for a decision is the set of all sufficient reasons.

A decision may have multiple sufficient reasons, sometimes an exponential number of them (see, e.g., [Reference Darwiche and Lang6]). As a result the enumeration of all sufficient reasons, i.e., the enumeration of the complete reasons, is a problem that has been treated in several papers (see, e.g., Coudert and Madre [Reference Coudert and Madre5] and Shih et al. [Reference Shih, Choi, Darwiche and Lang29]). The algorithms proposed over the time also had to face another difficulty which can be easily explained by means of the following example. Consider the classifier

![]() $\mathcal {C^{\prime }}$

represented by the formula

$\mathcal {C^{\prime }}$

represented by the formula

![]() $A^{\prime }=(p\wedge q)\vee (r\wedge \neg q)$

and the instance

$A^{\prime }=(p\wedge q)\vee (r\wedge \neg q)$

and the instance

![]() $\alpha ^{\prime }=\{p, q, r\}$

. It can be straightforwardly checked that the decision

$\alpha ^{\prime }=\{p, q, r\}$

. It can be straightforwardly checked that the decision

![]() $A^{\prime }(\alpha ^{\prime })$

has two sufficient reasons, namely,

$A^{\prime }(\alpha ^{\prime })$

has two sufficient reasons, namely,

![]() $\tau =\{p, q\}$

, but also

$\tau =\{p, q\}$

, but also

![]() $\tau ^{\prime }=\{p, r\}$

(because of the law of excluded middle). Algorithms proposed in the above mentioned literature may miss the sufficient reason

$\tau ^{\prime }=\{p, r\}$

(because of the law of excluded middle). Algorithms proposed in the above mentioned literature may miss the sufficient reason

![]() $\tau ^{\prime }=\{p, r\}$

and therefore be incomplete. Darwiche and Hirth [Reference Darwiche and Hirth7] and Shih et al. [Reference Shih, Choi, Darwiche and Lang30] have recently fixed this problem by constructing algorithms that provide in linear time the set of complete reasons; however, as the discussion shows, this is no trivial solution.

$\tau ^{\prime }=\{p, r\}$

and therefore be incomplete. Darwiche and Hirth [Reference Darwiche and Hirth7] and Shih et al. [Reference Shih, Choi, Darwiche and Lang30] have recently fixed this problem by constructing algorithms that provide in linear time the set of complete reasons; however, as the discussion shows, this is no trivial solution.

3 Explanatory sequent calculus

Extending ideas introduced in Genco [Reference Genco10] and Poggiolesi [Reference Poggiolesi22], Poggiolesi [Reference Poggiolesi25] mobilizes the resources of proof theory to formalize the notion of explanation from reasons. Two main ideas motivate this formalization. The first relates to a remark that is both ancient and central in the philosophical literature on explanations, and which consists at looking at explanations as deductive arguments that, starting from true premisses—be they the causes or the reasons—explain a certain conclusion.Footnote 5 Of course not any deductive argument constitutes an explanation, but some of them do, namely, those which have an explanatory power. The perspective that is adopted in Poggiolesi [Reference Poggiolesi25] consists in a formalization of this central idea along the following lines: explanations can be seen as proofs which, starting from true premisses, the reasons, not only prove that a certain conclusion is true, but also explain why it is such. This perspective naturally arises from the observation that proofs are deductive arguments; moreover, it is supported by the fact that notable explanations from reasons that can be found in mathematics actually are proofs of mathematical theorems, which show why those theorems are true.Footnote 6 Since proofs are standardly formalized in logic by means of derivations, explanations from reasons will be formalized as a special type of derivation, namely, a metalinguistic relation called formal explanation. As derivations are introduced via inferential rules, formal explanations will be introduced via explanatory rules, namely, rules where not only is the conclusion inferable from their premisse(s), but also such that the premisses are the reasons why the conclusion is true.

Let us now move to the second main idea linked to the formalization of explanations proposed by Poggiolesi [Reference Poggiolesi25]. The second idea relates to the observation that in many examples of explanation from reasons, reasons and conclusions, once formalized in a formal language, correspond to formulas related to each other by elements that occur inside the formulas themselves.Footnote

7

Consider the sentence “any bachelor is an unmarried man;” the intuitive reasons which explain this truth are “any bachelor is unmarried” and “any bachelor is a man.” Formalizing them we obtain

![]() $\forall x(Bx\rightarrow Ux\wedge Mx)$

, and

$\forall x(Bx\rightarrow Ux\wedge Mx)$

, and

![]() $\forall x(Bx\rightarrow Ux)$

,

$\forall x(Bx\rightarrow Ux)$

,

![]() $\forall x(Bx\rightarrow Mx)$

, respectively. The link between these formulas occurs deep inside the formulas themselves: in particular, the connective

$\forall x(Bx\rightarrow Mx)$

, respectively. The link between these formulas occurs deep inside the formulas themselves: in particular, the connective

![]() $\wedge $

inside

$\wedge $

inside

![]() $\forall x(Bx\rightarrow Ux\wedge Mx)$

is broken into two and thus give rise to both

$\forall x(Bx\rightarrow Ux\wedge Mx)$

is broken into two and thus give rise to both

![]() $\forall x(Bx\rightarrow Ux)$

and

$\forall x(Bx\rightarrow Ux)$

and

![]() $\forall x(Bx\rightarrow Mx)$

. However, as already said, this is no isolated case. Another example is obtained by considering the sentence“zero or the successor of any natural number is itself a natural number.” The reasons which explain this truth are “zero is a natural number” and “the successor of any natural number is a natural number.” Formalizing them we obtain

$\forall x(Bx\rightarrow Mx)$

. However, as already said, this is no isolated case. Another example is obtained by considering the sentence“zero or the successor of any natural number is itself a natural number.” The reasons which explain this truth are “zero is a natural number” and “the successor of any natural number is a natural number.” Formalizing them we obtain

![]() $\forall x((Zx\vee SNx)\rightarrow Nx)$

, and

$\forall x((Zx\vee SNx)\rightarrow Nx)$

, and

![]() $\forall x(Zx\rightarrow Nx)$

,

$\forall x(Zx\rightarrow Nx)$

,

![]() $\forall x(SNx\rightarrow Nx)$

. Once again, the link between these formulas occurs deep inside the formulas themselves: in particular, this time it is the connective

$\forall x(SNx\rightarrow Nx)$

. Once again, the link between these formulas occurs deep inside the formulas themselves: in particular, this time it is the connective

![]() $\vee $

which is broken and gives rise to the reasons of the conclusion.Footnote

8

Similar examples also arise at the propositional level. Consider the sentence “if it is a triangle, then it has three sides and the sum of its angles is 180

$\vee $

which is broken and gives rise to the reasons of the conclusion.Footnote

8

Similar examples also arise at the propositional level. Consider the sentence “if it is a triangle, then it has three sides and the sum of its angles is 180

![]() $^{\circ }$

;” the reasons which explain its truth are “if it is a triangle, then it has three sides” and “if it is a triangle, then the sum of its angles is 180

$^{\circ }$

;” the reasons which explain its truth are “if it is a triangle, then it has three sides” and “if it is a triangle, then the sum of its angles is 180

![]() $^{\circ }$

.” Formalizing them we obtain

$^{\circ }$

.” Formalizing them we obtain

![]() $t\rightarrow s\wedge a$

, and

$t\rightarrow s\wedge a$

, and

![]() $t\rightarrow s$

,

$t\rightarrow s$

,

![]() $t\rightarrow a$

, respectively. Once more the link between these formulas occurs deep inside the formulas themselves.

$t\rightarrow a$

, respectively. Once more the link between these formulas occurs deep inside the formulas themselves.

To deal with this kind of case, Poggiolesi introduces the notions of context and formula in a context that allow to focus on a particular part of the formula at issue.Footnote

9

For example consider again the formula

![]() $\forall x((Zx\vee SNx)\rightarrow Nx)$

and suppose we want to focus on a particular part of it, say

$\forall x((Zx\vee SNx)\rightarrow Nx)$

and suppose we want to focus on a particular part of it, say

![]() $Zx\vee SNx$

. We denote this fact by rewriting

$Zx\vee SNx$

. We denote this fact by rewriting

![]() $\forall x((Zx\vee SNx)\rightarrow Nx)$

as

$\forall x((Zx\vee SNx)\rightarrow Nx)$

as

![]() $C[Zx\vee SNx]$

, where

$C[Zx\vee SNx]$

, where

![]() $C[.]$

is the context and

$C[.]$

is the context and

![]() $Zx\vee SNx$

is the formula in the context

$Zx\vee SNx$

is the formula in the context

![]() $C[.]$

. Following what has been said above, the reasons why the formula

$C[.]$

. Following what has been said above, the reasons why the formula

![]() $C[Zx\vee SNx]$

is true will be

$C[Zx\vee SNx]$

is true will be

![]() $C[Zx]$

and

$C[Zx]$

and

![]() $C[SNx]$

.

$C[SNx]$

.

Before introducing the formal notion of context, and formula in a context, let us dwell on an important feature of explanations. When working in an explanatory framework, negation needs to be treated with special attention: indeed, as shown in Poggiolesi [Reference Poggiolesi, Boccuni and Sereni21, Reference Poggiolesi23] and Wilhelm [Reference Wilhelm32], otherwise inconsistencies might arise. In order to avoid them (even in the simple case where the context is empty), one needs to distinguish, for instance, between the reasons of formulas of the form

![]() $\neg (p\vee q)$

and the reasons of formulas of the form

$\neg (p\vee q)$

and the reasons of formulas of the form

![]() $\neg (\neg p\vee \neg q)$

. In the former case, formulas

$\neg (\neg p\vee \neg q)$

. In the former case, formulas

![]() $\neg p, \neg q$

explain why

$\neg p, \neg q$

explain why

![]() $\neg (p\vee q)$

is true, whilst in the latter case, formulas

$\neg (p\vee q)$

is true, whilst in the latter case, formulas

![]() $p, q$

explain why

$p, q$

explain why

![]() $\neg (\neg p\vee \neg q)$

is true. Thanks to the notion of converse of a formula, which we have introduced in the §2, we can treat both cases uniformly by simply saying that

$\neg (\neg p\vee \neg q)$

is true. Thanks to the notion of converse of a formula, which we have introduced in the §2, we can treat both cases uniformly by simply saying that

![]() $A^{\bot }, B^{^{\bot }}$

explain why

$A^{\bot }, B^{^{\bot }}$

explain why

![]() $\neg (A\vee B)$

is true. Indeed whilst the converse of p (or q) corresponds to

$\neg (A\vee B)$

is true. Indeed whilst the converse of p (or q) corresponds to

![]() $\neg p$

(

$\neg p$

(

![]() $\neg q$

), the converse of

$\neg q$

), the converse of

![]() $\neg p$

(or

$\neg p$

(or

![]() $\neg q$

) corresponds to p (q).

$\neg q$

) corresponds to p (q).

For contexts, similar care is needed in dealing with negations. Suppose, for instance, that one ignores the issue and, focussing on

![]() $p\vee q$

and

$p\vee q$

and

![]() $\neg p\vee \neg q$

, treated the formulas

$\neg p\vee \neg q$

, treated the formulas

![]() $\neg (p\vee q)$

and

$\neg (p\vee q)$

and

![]() $\neg (\neg p\vee \neg q)$

as

$\neg (\neg p\vee \neg q)$

as

![]() $C[p\vee q]$

and

$C[p\vee q]$

and

![]() $C[\neg p\vee \neg q]$

respectively, where

$C[\neg p\vee \neg q]$

respectively, where

![]() $C= \neg $

. Then, by breaking the

$C= \neg $

. Then, by breaking the

![]() $\vee $

, we would get

$\vee $

, we would get

![]() $C[p], C[q]$

in the former case, and

$C[p], C[q]$

in the former case, and

![]() $C[\neg p], C[\neg q]$

in the latter. But this corresponds to

$C[\neg p], C[\neg q]$

in the latter. But this corresponds to

![]() $\neg p$

and

$\neg p$

and

![]() $\neg q$

, but also to

$\neg q$

, but also to

![]() $\neg \neg p$

and

$\neg \neg p$

and

![]() $\neg \neg q$

. In this latter case, the result differs from that obtained when using the empty context, namely,

$\neg \neg q$

. In this latter case, the result differs from that obtained when using the empty context, namely,

![]() $p, q$

. To avoid these kinds of inconsistencies, contexts are defined so as to exclude substituting a formula into the immediate scope of an odd number of negations.

$p, q$

. To avoid these kinds of inconsistencies, contexts are defined so as to exclude substituting a formula into the immediate scope of an odd number of negations.

Definition 3.1. The set

![]() $Co$

of contexts is inductively defined in the following way:

$Co$

of contexts is inductively defined in the following way:

-

-

$[.]\in Co$

;

$[.]\in Co$

; -

- if

$C [.]\in Co$

, then

$C [.]\in Co$

, then

$\neg \neg C [.]$

,

$\neg \neg C [.]$

,

$D\circ C [.]$

,

$D\circ C [.]$

,

$C[.]\circ D \in Co$

;

$C[.]\circ D \in Co$

; -

- if

$C [.]\in Co$

and

$C [.]\in Co$

and

$C [.]\neq \overbrace {\neg ...\neg }^{2n} [.]$

, where

$C [.]\neq \overbrace {\neg ...\neg }^{2n} [.]$

, where

$n \geq $

0, then

$n \geq $

0, then

$\neg C[.] \in Co$

.

$\neg C[.] \in Co$

.

Definition 3.2. For all contexts

![]() $C[.]$

, and formulas A, we define

$C[.]$

, and formulas A, we define

![]() $C[A]$

, a formula in a context, as follows:

$C[A]$

, a formula in a context, as follows:

-

- if

$C[.] = [.]$

, then

$C[.] = [.]$

, then

$C[A]=A$

;

$C[A]=A$

; -

- if

$C[.] = \neg \neg D[.]$

, then

$C[.] = \neg \neg D[.]$

, then

$C[A]= \neg \neg D[A]$

;

$C[A]= \neg \neg D[A]$

; -

- if

$C [.]$

=

$C [.]$

=

$D^{\prime }\circ D[.]$

,

$D^{\prime }\circ D[.]$

,

$D[.]\circ D^{\prime }$

,

$D[.]\circ D^{\prime }$

,

$\neg D [.]$

, then

$\neg D [.]$

, then

$C[A]$

=

$C[A]$

=

$D^{\prime }\circ D[A]$

,

$D^{\prime }\circ D[A]$

,

$D[A]\circ D^{\prime }$

,

$D[A]\circ D^{\prime }$

,

$\neg D[A]$

, respectively.

$\neg D[A]$

, respectively.

Once formulas are considered in contexts, they will naturally have a polarity which is either positive or negative and that is defined as standard (see, e.g., Troelstra and Schwichtenberg [Reference Troelstra and Schwichtenberg31].

Definition 3.3. We define the set of contexts with positive

![]() $\mathcal {P}$

and negative polarities

$\mathcal {P}$

and negative polarities

![]() $\mathcal {N}$

simultaneously by an inductive definition given by the three clauses (i)–(iii) below.

$\mathcal {N}$

simultaneously by an inductive definition given by the three clauses (i)–(iii) below.

-

(i)

$[.] \in \mathcal {P}$

;

$[.] \in \mathcal {P}$

;

if

![]() $B^{+} \in \mathcal {P}$

,

$B^{+} \in \mathcal {P}$

,

![]() $B^{-} \in \mathcal {N}$

, and A is any formula, then:

$B^{-} \in \mathcal {N}$

, and A is any formula, then:

-

(ii)

$\neg B^{-}$

,

$\neg B^{-}$

,

$A\wedge B^{+}$

,

$A\wedge B^{+}$

,

$B^{+}\wedge A$

,

$B^{+}\wedge A$

,

$A\vee B^{+}$

,

$A\vee B^{+}$

,

$B^{+}\vee A \in \mathcal {P}$

.

$B^{+}\vee A \in \mathcal {P}$

. -

(iii)

$\neg B^{+}$

,

$\neg B^{+}$

,

$A\wedge B^{-}$

,

$A\wedge B^{-}$

,

$B^{-}\wedge A$

,

$B^{-}\wedge A$

,

$A\vee B^{-}$

,

$A\vee B^{-}$

,

$B^{-}\vee A \in \mathcal {N}$

$B^{-}\vee A \in \mathcal {N}$

whenever these objects are in

![]() $Co$

. We say that a formula A is positive (resp. negative) in a context

$Co$

. We say that a formula A is positive (resp. negative) in a context

![]() $C[A]$

if

$C[A]$

if

![]() $C[.] \in \mathcal {P}$

(resp.

$C[.] \in \mathcal {P}$

(resp.

![]() $C[.] \in \mathcal {N}$

).

$C[.] \in \mathcal {N}$

).

Summing up, we have introduced the two main ideas that characterize Poggiolesi’s formalism. The first idea amounts at looking at explanations as special types of proofs, whilst the second amounts to the requirement of working deep inside formula. Putting them together, we have that Poggiolesi [Reference Poggiolesi25] introduces explanatory rules that deal with formulas in contexts. In other words, explanatory rules will have the form of deep inferences, namely, a recently introduced variation of the sequents calculus (see, e.g., Brünnler [Reference Brünnler4, Reference Guglielmi and Bruscoli11, Reference Pimentel, Ramayanake and Lellmann19]) where rules operate deep inside formulas. Although the literature on deep inferences has been motivated by cornerstone results of structural proof theory, in this context they reveal a profound philosophical significance.

Explanatory propositional calculus (EC).

As already stated, explanatory rules are such that their premisses are the reasons why their conclusion is true. More specifically, explanatory rules provide the total reasons why the conclusion is true, where total reasons typically amount to the multiset of all, and only, those formulas, each of which contributes to explaining another (see also Schaffer [Reference Schaffer28]). Note that the notion of total reasons motivates a distinction that we illustrate with the following example. Jane has a brother, Billy, and a sister, Suzy. Jane also has a niece. Thus the reason why Jane has a niece is that her sister has a girl. Indeed a niece is the girl of someone’s brother or someone’s sister and Suzy, Jane’s sister, has a girl. Jane’s brother could have had a girl, but he does not. Hence Jane’s brother having a girl is merely a potential reason of why Jane has a niece. Potential reasons are also central for total explanations: if Jane’s brother had a girl, this would have been part of the total explanation of why Jane has a niece. We rephrase this distinction between reasons and potential reasons as the one between reasons and conditions. So, for example, we will say that under the condition that Jane’s brother does not have a girl, the total reason why Jane has a niece is that her sister has a daughter. We will indicate the distinction between conditions and reasons with a vertical bar: formulas lying on the left of the vertical bar are conditions, whilst formulas on the right of the vertical bar are reasons. Since every formula on the left side of the bar is actually the converse of a formula, to lighten the presentation, we do not write the converse symbol: we just understand that being on the left of the bar indicates this converse reading, as specified in Definition 3.5.

We now have all the elements needed to introduce sequents for the explanatory calculus EC.

Definition 3.4. For any

![]() $\alpha $

, A, and multiset

$\alpha $

, A, and multiset

![]() $X= \{B_{1}, \ldots , B_{n}\}$

, such that

$X= \{B_{1}, \ldots , B_{n}\}$

, such that

![]() $\alpha $

is an instance of A, but also an instance of each

$\alpha $

is an instance of A, but also an instance of each

![]() $B_{i}$

, 1

$B_{i}$

, 1

![]() $\leq i\leq n$

, we have that:

$\leq i\leq n$

, we have that:

-

-

$\alpha \Rightarrow A$

is a sequent;

$\alpha \Rightarrow A$

is a sequent; -

-

$X\mid \alpha \Rightarrow A$

is a sequent.

$X\mid \alpha \Rightarrow A$

is a sequent.

Definition 3.5. The interpretation

![]() $\tau $

of a sequent is the following:

$\tau $

of a sequent is the following:

-

-

$(\alpha \Rightarrow A)^{\tau }:= \bigwedge \alpha \rightarrow A$

;

$(\alpha \Rightarrow A)^{\tau }:= \bigwedge \alpha \rightarrow A$

; -

-

$(X\mid \alpha \Rightarrow A)^{\tau }:= (\bigwedge X^{\bot }\wedge \bigwedge \alpha )\rightarrow A$

.

$(X\mid \alpha \Rightarrow A)^{\tau }:= (\bigwedge X^{\bot }\wedge \bigwedge \alpha )\rightarrow A$

.

where if

![]() $X=\{B_{1}, ..., B_{n}\}$

, then

$X=\{B_{1}, ..., B_{n}\}$

, then

![]() $\bigwedge X^{\bot }= B^{\bot }_{1}\wedge ...\wedge B^{\bot }_{n}$

.

$\bigwedge X^{\bot }= B^{\bot }_{1}\wedge ...\wedge B^{\bot }_{n}$

.

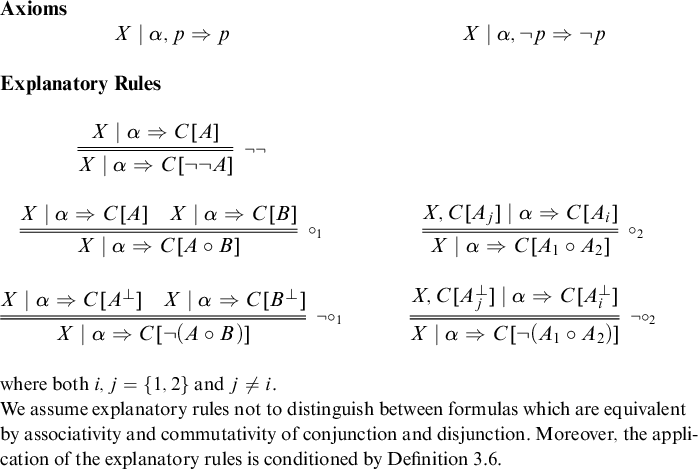

By using sequents as defined above, we introduce the explanatory calculus EC in Figure 1. Note that EC results from a modification, but also a generalization, of the ideas conveyed in Poggiolesi [Reference Poggiolesi25]. In particular, (i) whilst Poggiolesi introduces explanatory rules for first-order formulas, here we limit ourselves to propositional formulas; (ii) secondly, whilst in the sequents used by Poggiolesi, antecedents and consequents of sequents are multisets of formulas, in the present case, the antecedent is an instance, and the consequent is a single formula; (iii) thirdly, whilst Poggiolesi added explanatory rules to the standard sequent calculus for first-order classical logic, here, in a way similar to that proposed by Genco [Reference Genco10], explanatory rules plus axioms constitute a calculus on its own. (iv) Finally, in the present work conditions are generalized to multisets of formulas. Following the notation introduced in Poggiolesi [Reference Poggiolesi25], we write explanatory rules with a double line; the double line signals that not only is the conclusion inferable from the premisses, but also the premisses are the (total) reasons why the conclusion is true.Footnote 10

Definition 3.6. We assume the application of explanatory propositional rulesFootnote 11 to obey the following restrictions:

-

- rule

$\circ _2$

can be applied on a formula of the form

$\circ _2$

can be applied on a formula of the form

$C[A\circ B]$

if:

$C[A\circ B]$

if:

$\bigg \{ \begin {array}{@{}ll} \scriptstyle C\in \mathcal {P}\;and\; \circ =\vee , or\\ \scriptstyle C\in \mathcal {N}\;and\;\circ =\wedge .\\ \end {array} $

$\bigg \{ \begin {array}{@{}ll} \scriptstyle C\in \mathcal {P}\;and\; \circ =\vee , or\\ \scriptstyle C\in \mathcal {N}\;and\;\circ =\wedge .\\ \end {array} $

-

- rule

$\neg \circ _2$

can be applied on a formula of the form

$\neg \circ _2$

can be applied on a formula of the form

$C[\neg (A\circ B)]$

if:

$C[\neg (A\circ B)]$

if:

$\bigg \{ \begin {array}{@{}ll} \scriptstyle C\in \mathcal {P}\;and\;\circ =\wedge , or\\ \scriptstyle C\in \mathcal {N}\;and\;\circ =\vee .\\ \end {array}$

$\bigg \{ \begin {array}{@{}ll} \scriptstyle C\in \mathcal {P}\;and\;\circ =\wedge , or\\ \scriptstyle C\in \mathcal {N}\;and\;\circ =\vee .\\ \end {array}$

We now comment on the rules of the explanatory sequent calculus. Each of these rules is supposed to capture cases where the premisses are the total reasons for the conclusions.Footnote

12

Some examples are clear: for instance p and q are clearly the reasons for

![]() $p \wedge q$

; and rule

$p \wedge q$

; and rule

![]() $\circ _1$

reflects this. Let us then dwell on the less obvious and more novel cases. First of all, note that there is no rule for single negation. This is because explanations notoriously go from (potentially) true formulas to (potentially) true formulas; there can thus be no rule which acts, as in the case of the rule for negation in the standard sequent calculus, by shifting formulas from one side of the sequent to another. In other words, one cannot explain the truth of

$\circ _1$

reflects this. Let us then dwell on the less obvious and more novel cases. First of all, note that there is no rule for single negation. This is because explanations notoriously go from (potentially) true formulas to (potentially) true formulas; there can thus be no rule which acts, as in the case of the rule for negation in the standard sequent calculus, by shifting formulas from one side of the sequent to another. In other words, one cannot explain the truth of

![]() $\neg A$

, from the falsity of A. Instead negation is spread over the other connectives: either it is analyzed when it is double, or when it is in front of conjunction and disjunction. Note that, for reasons mentioned above (when introducing contexts) and which are discussed in Poggiolesi [Reference Poggiolesi20], the connective of negation must be carefully treated in an explanatory context; this is why the converse of a formula (see Definition 2.2) is used in the rules

$\neg A$

, from the falsity of A. Instead negation is spread over the other connectives: either it is analyzed when it is double, or when it is in front of conjunction and disjunction. Note that, for reasons mentioned above (when introducing contexts) and which are discussed in Poggiolesi [Reference Poggiolesi20], the connective of negation must be carefully treated in an explanatory context; this is why the converse of a formula (see Definition 2.2) is used in the rules

![]() $\neg \circ _{1}$

and

$\neg \circ _{1}$

and

![]() $\neg \circ _{2}$

.Footnote

13

$\neg \circ _{2}$

.Footnote

13

Let us now turn to those rules that do not involve conditions: i.e.,

![]() $\neg \neg , \circ _{1}$

and

$\neg \neg , \circ _{1}$

and

![]() $\neg \circ _{1}$

. Each of them stands as a straightforward generalization of standard rules concerning classical connectives, allowing them to apply deep inside formulas. This is so because these rules are not merely intended to be simple inferential rules but explanatory rules, i.e., rules that provide the (total) reason(s) why their conclusion is true. The relation between reason(s) and conclusion might hold in virtue of elements that lie inside formulas, so the rules need to reflect this possibility.

$\neg \circ _{1}$

. Each of them stands as a straightforward generalization of standard rules concerning classical connectives, allowing them to apply deep inside formulas. This is so because these rules are not merely intended to be simple inferential rules but explanatory rules, i.e., rules that provide the (total) reason(s) why their conclusion is true. The relation between reason(s) and conclusion might hold in virtue of elements that lie inside formulas, so the rules need to reflect this possibility.

Let us now move to the rules which involves conditions, namely, the rules

![]() $\circ _{2}$

,

$\circ _{2}$

,

![]() $\neg \circ _{2}$

. These rules naturally emerge for total explanations, i.e., explanations where all the reasons why a conclusion is true need to be evoked. In this setting, conditions need to be mentioned to prevent equivocation between total and partial explanations (see, e.g., Poggiolesi [Reference Poggiolesi20]). Consider the example: John got into the University, and he is rich or he passed the entrance exam. Suppose that in fact John got into the University, he is rich, but he did not pass the entrance exam. In this example, the explanation why it is true that John got into the University, and he is rich or he passed the entrance exam is that John got into University and he is rich. However, if nothing is said about the passing exam, the explanation remains ambiguous: it is indeed unclear whether the explanandum is true also because John got into University and passed the entrance exam. Conditions allow disambiguation of the explanation. Thus we say that, under the condition that it is not the case that John got into University and passed the entrance exam, it is true that John got into the University, and he is rich or he passed the entrance exam, because John got into University and he is rich. On formal terms, let us denote the sentence “John gets into the University, and he is either rich or it has passed the entrance exam,” with the formula

$\neg \circ _{2}$

. These rules naturally emerge for total explanations, i.e., explanations where all the reasons why a conclusion is true need to be evoked. In this setting, conditions need to be mentioned to prevent equivocation between total and partial explanations (see, e.g., Poggiolesi [Reference Poggiolesi20]). Consider the example: John got into the University, and he is rich or he passed the entrance exam. Suppose that in fact John got into the University, he is rich, but he did not pass the entrance exam. In this example, the explanation why it is true that John got into the University, and he is rich or he passed the entrance exam is that John got into University and he is rich. However, if nothing is said about the passing exam, the explanation remains ambiguous: it is indeed unclear whether the explanandum is true also because John got into University and passed the entrance exam. Conditions allow disambiguation of the explanation. Thus we say that, under the condition that it is not the case that John got into University and passed the entrance exam, it is true that John got into the University, and he is rich or he passed the entrance exam, because John got into University and he is rich. On formal terms, let us denote the sentence “John gets into the University, and he is either rich or it has passed the entrance exam,” with the formula

![]() $p\wedge (q\vee r)$

. Let us apply on this formula, focussing on the disjunction, the following instance of the rule

$p\wedge (q\vee r)$

. Let us apply on this formula, focussing on the disjunction, the following instance of the rule

![]() $\circ _{2}$

, we get:

$\circ _{2}$

, we get:

The rule can be applied since

![]() $\circ $

is a disjunction with a positive polarity (see Definition 3.6). Thanks to the rule

$\circ $

is a disjunction with a positive polarity (see Definition 3.6). Thanks to the rule

![]() $\circ _{2}$

, we can explain the formula

$\circ _{2}$

, we can explain the formula

![]() $p\wedge (q\vee r)$

by the formula

$p\wedge (q\vee r)$

by the formula

![]() $p\wedge r$

, which represents the total reason why it is true under the condition that the formula

$p\wedge r$

, which represents the total reason why it is true under the condition that the formula

![]() $p\wedge q$

does not hold. The rule matches what we have just been discussing and thus stands as an adequate instance of the rule.

$p\wedge q$

does not hold. The rule matches what we have just been discussing and thus stands as an adequate instance of the rule.

Applications of rules deep inside formulas with conditions involve some limitations: these limitations serve to preserve an adequate notion of explanation. For example, consider the formula

![]() $p\rightarrow (q\wedge r)$

. One cannot apply the rule

$p\rightarrow (q\wedge r)$

. One cannot apply the rule

![]() $\circ _{2}$

on the conjunction of this formula, since the conjunction occurs with positive polarity. On the other hand, this limitation is recommended. Indeed, the rule might have the following instance:

$\circ _{2}$

on the conjunction of this formula, since the conjunction occurs with positive polarity. On the other hand, this limitation is recommended. Indeed, the rule might have the following instance:

and it is easy to check that the formula

![]() $(\Rightarrow p\rightarrow (q\wedge r))^{\tau }$

is not even derivable from the formula

$(\Rightarrow p\rightarrow (q\wedge r))^{\tau }$

is not even derivable from the formula

![]() $(p\rightarrow q\mid \Rightarrow p\rightarrow r)^{\tau }$

(see Definition 3.5 for the interpretation of sequent).

$(p\rightarrow q\mid \Rightarrow p\rightarrow r)^{\tau }$

(see Definition 3.5 for the interpretation of sequent).

Finally note that explanatory rules do not distinguish between formulas that are equivalent by associativity and commutativity of conjunction and disjunction. This is an hyperintensional feature of the calculus, in line with the fact that explanation is an hyperintensional notion (see, e.g., Berto and Nolan [Reference Berto, Nolan, Zalta and Nodelman2] and Leitgeb [Reference Leitgeb15]).

Definition 3.7. Let

![]() $\vdash _{EC}X\mid \alpha \Rightarrow A$

denote that there exists a derivation of the sequent

$\vdash _{EC}X\mid \alpha \Rightarrow A$

denote that there exists a derivation of the sequent

![]() $X\mid \alpha \Rightarrow A$

in the explanatory calculus

$X\mid \alpha \Rightarrow A$

in the explanatory calculus

![]() $EC$

.

$EC$

.

Theorem 3.8. For any X,

![]() $\alpha $

and A, if

$\alpha $

and A, if

![]() $\vdash _{EC}X\mid \alpha \Rightarrow A$

, then

$\vdash _{EC}X\mid \alpha \Rightarrow A$

, then

![]() $\models (X\mid \alpha \Rightarrow A)^{\tau }$

.

$\models (X\mid \alpha \Rightarrow A)^{\tau }$

.

Proof. Straightforward, given Theorem 5.7 in [Reference Poggiolesi25].

Theorem 3.9. For any

![]() $\alpha $

and A, if

$\alpha $

and A, if

![]() $\alpha \models A$

, then

$\alpha \models A$

, then

![]() $\vdash _{EC} \alpha \Rightarrow A$

.

$\vdash _{EC} \alpha \Rightarrow A$

.

Proof. Suppose

![]() $\alpha \Rightarrow A$

is not a derivable sequent. This means that whatever sequence of the explanatory rules

$\alpha \Rightarrow A$

is not a derivable sequent. This means that whatever sequence of the explanatory rules

![]() $\neg \neg , \circ _{1}, \neg \circ _{1}$

we apply on A, we will end up with leafs of the form

$\neg \neg , \circ _{1}, \neg \circ _{1}$

we apply on A, we will end up with leafs of the form

![]() $\alpha , p\Rightarrow \neg p$

, or

$\alpha , p\Rightarrow \neg p$

, or

![]() $\alpha , \neg p\Rightarrow p$

. This means that if we assign evaluation 1 to the literals on the left side of the sequent, the literals on the right side of the sequents will have evaluation 0. Since, as it has been shown in Theorem 5.7 in [Reference Poggiolesi25], explanatory rules preserve the evaluation 0, then A will have evaluation 0 as well. Hence we have found an evaluation witnessing that A is not a logical consequence of

$\alpha , \neg p\Rightarrow p$

. This means that if we assign evaluation 1 to the literals on the left side of the sequent, the literals on the right side of the sequents will have evaluation 0. Since, as it has been shown in Theorem 5.7 in [Reference Poggiolesi25], explanatory rules preserve the evaluation 0, then A will have evaluation 0 as well. Hence we have found an evaluation witnessing that A is not a logical consequence of

![]() $\alpha $

.

$\alpha $

.

4 From the sequent calculus to sufficient reasons

We now describe how to use the explanatory calculus to extract sufficient (but also, as we will show, complete) reasons out of a decision. To do so, we first of all give instructions on how to define a comprehensive derivation of a sequent

![]() $\alpha \Rightarrow A$

, where the notion of comprehensive derivation is a crucial ingredient for extracting sufficient reasons out of decisions. Indeed, the main novelty of the calculus

$\alpha \Rightarrow A$

, where the notion of comprehensive derivation is a crucial ingredient for extracting sufficient reasons out of decisions. Indeed, the main novelty of the calculus

![]() $EC$

is the following. In any standard sequent calculus, given a formula A, say of the form

$EC$

is the following. In any standard sequent calculus, given a formula A, say of the form

![]() $s\wedge ((p\wedge q)\vee r)$

, the calculus will operate first on the main connective

$s\wedge ((p\wedge q)\vee r)$

, the calculus will operate first on the main connective

![]() $\wedge $

, then on the connective

$\wedge $

, then on the connective

![]() $\vee $

, finally on

$\vee $

, finally on

![]() $\wedge $

again. In the calculus

$\wedge $

again. In the calculus

![]() $EC$

, instead, one can start from whatever connective one prefers, i.e., either

$EC$

, instead, one can start from whatever connective one prefers, i.e., either

![]() $\wedge $

, or

$\wedge $

, or

![]() $\vee $

. In a comprehensive derivation it is demanded that the rules of

$\vee $

. In a comprehensive derivation it is demanded that the rules of

![]() $EC$

canonically apply to the innermost connective,Footnote

14

e.g., in the A above, the

$EC$

canonically apply to the innermost connective,Footnote

14

e.g., in the A above, the

![]() $\wedge $

which links p and q. This feature is crucial because working with the innermost connective uncovers potential analytic subformulas that do not contribute to the collection of the complete reasons of a decision.

$\wedge $

which links p and q. This feature is crucial because working with the innermost connective uncovers potential analytic subformulas that do not contribute to the collection of the complete reasons of a decision.

The second feature of comprehensive derivations concern rules

![]() $\circ _{2}$

or

$\circ _{2}$

or

![]() $\neg \circ _{2}$

. Typically, these rules keep one formula in the sequent, whilst they put the other in the conditions. In comprehensive derivations, any formula

$\neg \circ _{2}$

. Typically, these rules keep one formula in the sequent, whilst they put the other in the conditions. In comprehensive derivations, any formula

![]() $B_i$

in the conditions X is such that

$B_i$

in the conditions X is such that

![]() $\alpha \Rightarrow B_{i}$

is neither an axiom nor derivable via explanatory rules. In terms of the example of the classifier

$\alpha \Rightarrow B_{i}$

is neither an axiom nor derivable via explanatory rules. In terms of the example of the classifier

![]() $\mathcal {C}$

and applicants Greg or Susan, in comprehensive derivations, rules

$\mathcal {C}$

and applicants Greg or Susan, in comprehensive derivations, rules

![]() $\circ _{2}$

or

$\circ _{2}$

or

![]() $\neg \circ _{2}$

serve to separate those characteristics of the applicant that contribute to the success of the application—these characteristics are kept in the sequents—from those characteristics that do not contribute to the success of the application—these characteristics are put in the conditions.

$\neg \circ _{2}$

serve to separate those characteristics of the applicant that contribute to the success of the application—these characteristics are kept in the sequents—from those characteristics that do not contribute to the success of the application—these characteristics are put in the conditions.

Comprehensive derivations can easily be constructed using the algorithm provided in Appendix A, which makes clearer how comprehensive derivations work (see also the examples after Definition 4.6).

Definition 4.1. The depth of a subformula in a formula is the number of connectives it is nested into. So, for the example, the depth of

![]() $B\vee C$

in the formula

$B\vee C$

in the formula

![]() $E\wedge (B\vee C)$

is 1; whilst the depth of

$E\wedge (B\vee C)$

is 1; whilst the depth of

![]() $B\vee C$

in the formula

$B\vee C$

in the formula

![]() $(E\vee ((F\wedge (B\vee C))\wedge (G\wedge H)))\wedge (G\vee H\vee R)$

is 4.

$(E\vee ((F\wedge (B\vee C))\wedge (G\wedge H)))\wedge (G\vee H\vee R)$

is 4.

Definition 4.2. Consider the sequent

![]() $\alpha \Rightarrow A$

. A derivation d of

$\alpha \Rightarrow A$

. A derivation d of

![]() $\alpha \Rightarrow A$

is comprehensive if (reading the derivation top-down):

$\alpha \Rightarrow A$

is comprehensive if (reading the derivation top-down):

-

1. For every sequent of the form

$X \mid \alpha \Rightarrow A'$

appearing in d and every

$X \mid \alpha \Rightarrow A'$

appearing in d and every

$B_i \in X$

,

$B_i \in X$

,

$\alpha \Rightarrow B_{i}$

is neither an axiom nor derivable via explanatory rules.

$\alpha \Rightarrow B_{i}$

is neither an axiom nor derivable via explanatory rules. -

2. If

$R \circ ' \overbrace {\neg \cdots \neg }^{m}(B_1 \circ " B_2)$

is a subformula of

$R \circ ' \overbrace {\neg \cdots \neg }^{m}(B_1 \circ " B_2)$

is a subformula of

$A'$

with

$A'$

with

$\alpha \Rightarrow A'$

appearing in d, for

$\alpha \Rightarrow A'$

appearing in d, for

$\circ ', \circ " \in \{\vee , \wedge \}$

, with

$\circ ', \circ " \in \{\vee , \wedge \}$

, with

$\circ ' \neq \circ "$

if

$\circ ' \neq \circ "$

if

$m = 2n$

, and

$m = 2n$

, and

$\circ ' = \circ "$

if

$\circ ' = \circ "$

if

$m = 2n + 1$

, then:

$m = 2n + 1$

, then:-

(a) if

$(B_1 \circ " B_2)\neq (l \vee \neg l), (l \wedge \neg l)$

for any extended literal

$(B_1 \circ " B_2)\neq (l \vee \neg l), (l \wedge \neg l)$

for any extended literal

$l$

, then any rule applied on

$l$

, then any rule applied on

$\circ '$

in this subformula is higher in d than every rule applied on

$\circ '$

in this subformula is higher in d than every rule applied on

$\circ "$

;

$\circ "$

; -

(b) if

$(B_1 \circ " B_2) = (l \vee \neg l), (l \wedge \neg l),$

for any extended literal

$(B_1 \circ " B_2) = (l \vee \neg l), (l \wedge \neg l),$

for any extended literal

$l$

, then any rule applied on

$l$

, then any rule applied on

$\circ "$

in this subformula is higher in d than every rule applied on

$\circ "$

in this subformula is higher in d than every rule applied on

$\circ '$

.

$\circ '$

.

-

-

3. If

$B_{1}\circ ' \ldots \circ ' B_{r}$

is a subformula of

$B_{1}\circ ' \ldots \circ ' B_{r}$

is a subformula of

$A'$

with

$A'$

with

$\alpha \Rightarrow A'$

appearing in d, where each

$\alpha \Rightarrow A'$

appearing in d, where each

$B_i = \overbrace {\neg \cdots \neg }^{2n}(l_{1}\circ " \ldots \circ " l_{r_i})$

or

$B_i = \overbrace {\neg \cdots \neg }^{2n}(l_{1}\circ " \ldots \circ " l_{r_i})$

or

$\overbrace {\neg \cdots \neg }^{2n + 1}(l^{\prime }_{1}\circ " \ldots \circ " l^{\prime }_{r^{\prime }_i})$

for

$\overbrace {\neg \cdots \neg }^{2n + 1}(l^{\prime }_{1}\circ " \ldots \circ " l^{\prime }_{r^{\prime }_i})$

for

$\circ ', \circ " \in \{\vee , \wedge \}$

,

$\circ ', \circ " \in \{\vee , \wedge \}$

,

$\circ ' \neq \circ "$

, then any application of rules

$\circ ' \neq \circ "$

, then any application of rules

$\circ _2$

,

$\circ _2$

,

$\neg \circ _2$

in d with main connective in this subformula occurs below applications of other rules with main connective in this subformula.

$\neg \circ _2$

in d with main connective in this subformula occurs below applications of other rules with main connective in this subformula.

Comprehensive derivations are derivations with (i) specifications on the order of application of rules, and (ii) restrictions on application of rules

![]() $\circ _{2}$

and

$\circ _{2}$

and

![]() $\neg \circ _{2}$

. A quick reflection is enough to see that (ii) is an essential feature to reach axioms, reading derivations bottom-up; so it is not restrictive. As for (i), it is straightforward to check that, in the calculus

$\neg \circ _{2}$

. A quick reflection is enough to see that (ii) is an essential feature to reach axioms, reading derivations bottom-up; so it is not restrictive. As for (i), it is straightforward to check that, in the calculus

![]() $EC$

, whenever rules are applied on the

$EC$

, whenever rules are applied on the

![]() $\vee $

and

$\vee $

and

![]() $\wedge $

connectives in a derivation of a sequent with no conditions, then there exists a derivation of the same sequent with the order of these rules inverted.Footnote

15

Hence it follows from Theorem 3.9 that, whenever

$\wedge $

connectives in a derivation of a sequent with no conditions, then there exists a derivation of the same sequent with the order of these rules inverted.Footnote

15

Hence it follows from Theorem 3.9 that, whenever

![]() $\alpha \models A$

, there exists a comprehensive derivation of

$\alpha \models A$

, there exists a comprehensive derivation of

![]() $\alpha \Rightarrow A$

. Moreover, comprehensive derivations can easily be constructed using a version of a standard proof search algorithm: details are provided in Appendix A. The constructed derivation is guaranteed to have proof height (maximal length of a branch from root to leaf) at most the complexity of the classifier A. Finally, it is worth noticing that in comprehensive derivations, conditions end up containing subformulas of A that do not logically follow from the instance

$\alpha \Rightarrow A$

. Moreover, comprehensive derivations can easily be constructed using a version of a standard proof search algorithm: details are provided in Appendix A. The constructed derivation is guaranteed to have proof height (maximal length of a branch from root to leaf) at most the complexity of the classifier A. Finally, it is worth noticing that in comprehensive derivations, conditions end up containing subformulas of A that do not logically follow from the instance

![]() $\alpha $

.

$\alpha $

.

Definition 4.3. Let

![]() $\tau _{1}, \ldots , \tau _{n}$

be terms. We use the notation

$\tau _{1}, \ldots , \tau _{n}$

be terms. We use the notation

![]() $\tau _{1}- \cdots -\tau _{n}$

, for

$\tau _{1}- \cdots -\tau _{n}$

, for

![]() $n\geq 1$

, to indicate a list of separated terms.

$n\geq 1$

, to indicate a list of separated terms.

Definition 4.4. We define two sets of applications of explanatory rules:

-

-

$S_{1}$

contains: rule

$S_{1}$

contains: rule

$\circ _{1}$

applied on formulas of the form

$\circ _{1}$

applied on formulas of the form

$C[A\wedge B]$

, where

$C[A\wedge B]$

, where

$C[.]\in \mathcal {P}$

, or on formulas of the form

$C[.]\in \mathcal {P}$

, or on formulas of the form

$C[A\vee B]$

, where

$C[A\vee B]$

, where

$C[.]\in \mathcal {N}$

, as well as rule

$C[.]\in \mathcal {N}$

, as well as rule

$\neg \circ _{1}$

applied on formulas of the form

$\neg \circ _{1}$

applied on formulas of the form

$C[\neg (A\vee B)]$

, where

$C[\neg (A\vee B)]$

, where

$C[.]\in \mathcal {P}$

or on formulas of the form

$C[.]\in \mathcal {P}$

or on formulas of the form

$C[\neg (A\wedge B)]$

, where

$C[\neg (A\wedge B)]$

, where

$C[.]\in \mathcal {N}$

.

$C[.]\in \mathcal {N}$

. -

-

$S_{2}$

contains: rule

$S_{2}$

contains: rule

$\circ _1$

applied on formulas of the form

$\circ _1$

applied on formulas of the form

$C[A\vee B]$

, where

$C[A\vee B]$

, where

$C[.]\in \mathcal {P}$

or on formulas of the form

$C[.]\in \mathcal {P}$

or on formulas of the form

$C[A\wedge B]$

, where

$C[A\wedge B]$

, where

$C[.]\in \mathcal {N}$

, as well as rule

$C[.]\in \mathcal {N}$

, as well as rule

$\neg \circ _{1}$

applied on formulas of the form

$\neg \circ _{1}$

applied on formulas of the form

$C[\neg (A\wedge B)]$

, where

$C[\neg (A\wedge B)]$

, where

$C[.]\in \mathcal {N}$

or on formulas of the form

$C[.]\in \mathcal {N}$

or on formulas of the form

$C[\neg (A\vee B)]$

, where

$C[\neg (A\vee B)]$

, where

$C[.]\in \mathcal {P}$

.

$C[.]\in \mathcal {P}$

.

To obtain sufficient reasons from comprehensive derivations, we employ the following labelling of derivations.

Definition 4.5. Let d be a comprehensive derivation of

![]() $\alpha \Rightarrow A$

. The labelling of (the sequents in) d by lists of separated terms is defined inductively as follows:

$\alpha \Rightarrow A$

. The labelling of (the sequents in) d by lists of separated terms is defined inductively as follows:

-

1. For each leaf of d, if it is of one of the two following forms:

-

(i)

$X, \overbrace {\neg \cdots \neg }^{2n +1}p\mid \alpha \Rightarrow p;$

$X, \overbrace {\neg \cdots \neg }^{2n +1}p\mid \alpha \Rightarrow p;$

-

(ii)

$X, \overbrace {\neg \cdots \neg }^{2n}p\mid \alpha \Rightarrow \neg p,$

$X, \overbrace {\neg \cdots \neg }^{2n}p\mid \alpha \Rightarrow \neg p,$

for some integer n then associate with the leaf the empty set. Otherwise, associate with the leaf the set

$\{p\}$

or

$\{p\}$

or

$\{\neg p\}$

, where p or

$\{\neg p\}$

, where p or

$\neg p$

, respectively, are the literals on the right side of the sequent.

$\neg p$

, respectively, are the literals on the right side of the sequent. -

-

2. For any sequent in d, if one of the rules

$\neg \neg $

,

$\neg \neg $

,

$\circ _{2}, \neg \circ _{2}$

has been applied to obtain this sequent, and

$\circ _{2}, \neg \circ _{2}$

has been applied to obtain this sequent, and

$\tau _{1}-\cdots - \tau _{n}$

is the label associated with the premisse of the rule, then

$\tau _{1}-\cdots - \tau _{n}$

is the label associated with the premisse of the rule, then

$\tau _{1}-\cdots - \tau _{n}$

is label associated with the sequent.

$\tau _{1}-\cdots - \tau _{n}$

is label associated with the sequent. -

3. For any sequent in d, if any of the rules belonging to

$S_{1}$

have been applied to obtain this sequent, and the labels associated with the premisses of the rule are

$S_{1}$

have been applied to obtain this sequent, and the labels associated with the premisses of the rule are

$\tau _{n_1} -\cdots - \tau _{n_i}$

and

$\tau _{n_1} -\cdots - \tau _{n_i}$

and

$\tau _{m_1}-\cdots - \tau _{m_j}$

, then the label associated with the sequent is the minimal sublist of

$\tau _{m_1}-\cdots - \tau _{m_j}$

, then the label associated with the sequent is the minimal sublist of

$\tau _{n1}\cup \tau _{m1} -\tau _{n1}\cup \tau _{m2} - \cdots - \tau _{nk}\cup \tau _{ml}$

.

$\tau _{n1}\cup \tau _{m1} -\tau _{n1}\cup \tau _{m2} - \cdots - \tau _{nk}\cup \tau _{ml}$

. -

4. For any sequent in d, if any of the rules belonging to

$S_{2}$

have been applied to obtain this sequent, and the labels associated with the premisses of the node are

$S_{2}$

have been applied to obtain this sequent, and the labels associated with the premisses of the node are

$\tau _{n1}-\cdots - \tau _{nk}$

and

$\tau _{n1}-\cdots - \tau _{nk}$

and

$\tau _{m1}-\cdots - \tau _{mj}$

, then the label associated with the sequent is the minimal sublist of

$\tau _{m1}-\cdots - \tau _{mj}$

, then the label associated with the sequent is the minimal sublist of

$\tau _{n1}-\cdots - \tau _{nk}-\tau _{m1}-\cdots - \tau _{mj}$

.

$\tau _{n1}-\cdots - \tau _{nk}-\tau _{m1}-\cdots - \tau _{mj}$

. -

5. Consider the list of separated terms associated with the conclusion

$\alpha \Rightarrow A$

of the derivation d. For any two sets

$\alpha \Rightarrow A$

of the derivation d. For any two sets

$\tau _{i}$

and

$\tau _{i}$

and

$\tau _{j}$

belonging to the list, it should never be the case that

$\tau _{j}$

belonging to the list, it should never be the case that

$\tau _{i}\subseteq \tau _{j}$

. If so, eliminate the bigger set. Filtering this way the list, we obtain

$\tau _{i}\subseteq \tau _{j}$

. If so, eliminate the bigger set. Filtering this way the list, we obtain

$SR$

the set of all sets belonging to the (possibly filtered) list,

$SR$

the set of all sets belonging to the (possibly filtered) list,

where the minimal sublist of a list of separated terms

![]() $\tau _{1}-\cdots - \tau _{n}$

contains all and only

$\tau _{1}-\cdots - \tau _{n}$

contains all and only

![]() $\tau _i$

such that

$\tau _i$

such that

![]() $\forall j \neq i,\ \tau _i \not \subset \tau _j$

.

$\forall j \neq i,\ \tau _i \not \subset \tau _j$

.

Definition 4.6. Let d be a comprehensive derivation of

![]() $\alpha \Rightarrow A$

, equipped with the labelling defined above, and let

$\alpha \Rightarrow A$

, equipped with the labelling defined above, and let

![]() $\tau _{1}-\cdots - \tau _{n}$

be the label of the conclusion.

$\tau _{1}-\cdots - \tau _{n}$

be the label of the conclusion.

![]() $SR = \{\tau _i: i = 1, \ldots , n\}$

.

$SR = \{\tau _i: i = 1, \ldots , n\}$

.

The labelling in Definition 4.5 specifies inductively reasons for each sequent in the derivation. Since sufficient reasons have to be minimal, the definition works in such a way that all those reasons labelling the conclusion that are not minimal, i.e., such that they are subsumed by another reason labelling the conclusion, are filtered. Below we shall show that

![]() $SR$

corresponds to the set of sufficient reasons for a decision

$SR$

corresponds to the set of sufficient reasons for a decision

![]() $A(\alpha )$

; it follows that the

$A(\alpha )$

; it follows that the

![]() $SR$

for

$SR$

for

![]() $\alpha \Rightarrow A$

only depends on the sequent and is independent of the comprehensive derivation of this sequent used.

$\alpha \Rightarrow A$

only depends on the sequent and is independent of the comprehensive derivation of this sequent used.

As an example, consider again classifier

![]() $\mathcal {C}$

represented by the formula

$\mathcal {C}$

represented by the formula

![]() $A= e\wedge (\neg f\vee w\vee g)$

, and the case of Greg (the case of Susan can be treated analogously), which can be summed up by the following instance

$A= e\wedge (\neg f\vee w\vee g)$

, and the case of Greg (the case of Susan can be treated analogously), which can be summed up by the following instance

![]() $\alpha = (e, \neg f, w, \neg g)$

. Greg is admitted by classifier C:

$\alpha = (e, \neg f, w, \neg g)$

. Greg is admitted by classifier C:

![]() $\alpha \models A$

. It is easy to check that the following is a comprehensive derivation d of

$\alpha \models A$

. It is easy to check that the following is a comprehensive derivation d of

![]() $\alpha \Rightarrow A$

:

$\alpha \Rightarrow A$

:

Terms associated with d. The two top-left leafs are associated with the two lists of separated terms

![]() $\{e\}$

and

$\{e\}$

and

![]() $\{\neg f\}$

, whilst the two top-right leafs are associated with the two lists of separated terms

$\{\neg f\}$

, whilst the two top-right leafs are associated with the two lists of separated terms

![]() $\{e\}$

and

$\{e\}$

and

![]() $\{w\}$

. Since for each pair of sequents associated with the lists of separated terms mentioned above a rule belonging to

$\{w\}$

. Since for each pair of sequents associated with the lists of separated terms mentioned above a rule belonging to

![]() $S_{1}$

has been applied, we get on the one side

$S_{1}$

has been applied, we get on the one side

![]() $\{e, \neg f\}$

and on the other side

$\{e, \neg f\}$

and on the other side

![]() $\{e, w\}$

. In each case, we then have the rule

$\{e, w\}$

. In each case, we then have the rule

![]() $\circ _{2}$

followed by a rule belonging to

$\circ _{2}$

followed by a rule belonging to

![]() $S_{2}$

, hence we get the list of separated terms

$S_{2}$

, hence we get the list of separated terms

![]() $\{e, \neg f\}$

-

$\{e, \neg f\}$

-

![]() $\{e, w\}$

. Following the procedure of Definition 4.5, we conclude that

$\{e, w\}$

. Following the procedure of Definition 4.5, we conclude that

![]() $SR=\{\{e, \neg f\}$

,

$SR=\{\{e, \neg f\}$

,

![]() $\{e, w\}\}$

is the set of two terms associated with derivation d of the sequent

$\{e, w\}\}$

is the set of two terms associated with derivation d of the sequent

![]() $e, \neg f, w, \neg g\Rightarrow e\wedge ((\neg f\vee w)\vee g)$

.

$e, \neg f, w, \neg g\Rightarrow e\wedge ((\neg f\vee w)\vee g)$

.

As further illustration, consider now the classifier

![]() $C^{\prime }$

, which is represented by the formula

$C^{\prime }$

, which is represented by the formula

![]() $A^{\prime }=(p\wedge q)\vee (r\wedge \neg q)$

, and which was mentioned in §2 as a more difficult example to treat. The sufficient reasons of, for instance,

$A^{\prime }=(p\wedge q)\vee (r\wedge \neg q)$

, and which was mentioned in §2 as a more difficult example to treat. The sufficient reasons of, for instance,

![]() $\alpha ^{\prime } = \{p, q, r\}$

are

$\alpha ^{\prime } = \{p, q, r\}$

are

![]() $\{p, q\}$

and

$\{p, q\}$

and

![]() $\{p, r\}$

; we now show how our procedure is able to generate them. First of all, it is easy to check that the following is a comprehensive derivation d of

$\{p, r\}$

; we now show how our procedure is able to generate them. First of all, it is easy to check that the following is a comprehensive derivation d of

![]() $\alpha ^{\prime }\Rightarrow A^{\prime }$

:

$\alpha ^{\prime }\Rightarrow A^{\prime }$

:

Terms associated with d. The four top-left leafs are associated with the four lists of separated terms

![]() $\{p\}$

,

$\{p\}$

,

![]() $\{r\}$

,

$\{r\}$

,

![]() $\{q\}$

and

$\{q\}$

and

![]() $\{r\}$

. Since for each pair of lists of separated terms,

$\{r\}$

. Since for each pair of lists of separated terms,

![]() $\{p\}$

and

$\{p\}$

and

![]() $\{r\}$

on the one side, and

$\{r\}$

on the one side, and

![]() $\{q\}$

and

$\{q\}$

and

![]() $\{r\}$

on the other side, a rule belonging to the set

$\{r\}$

on the other side, a rule belonging to the set

![]() $S_{2}$

has been applied, we get

$S_{2}$

has been applied, we get

![]() $\{p\}$