As artificial intelligence (AI) becomes increasingly capable of performing nonroutine tasks that were, until recently, believed to require distinctly human intelligence, few occupations now appear entirely immune to automation (Felten, Raj, and Seamans 2023; Frey and Osborne 2023; Zarifhonarvar 2023). Recent research suggests that generative AI programs, like ChatGPT, could already fully replace 33% of all jobs (Zarifhonarvar 2023), with high-skilled workers facing the greatest threat (Autor 2024; Felten, Raj, and Seamans 2023). These transformations are not merely theoretical speculations about the future; they are already taking place: studies have shown that occupation profiles are rapidly changing, and high-skilled workers are increasingly afraid of losing their jobs (Demirci, Hannane, and Zhu 2025; Knotz et al. 2024; Xue et al. 2022). Large language models like ChatGPT have hence thrust into the spotlight an impending wave of labor market transformation, which would appear to pose an unprecedented challenge for contemporary welfare states.

Scholars disagree on exactly how AI will impact the labor market: pessimists warn of impending mass unemployment and widespread job displacement across sectors, whereas more optimistic perspectives argue that AI’s impact will resemble that of the technological revolutions of the twentieth century, in which new jobs replaced those lost (Willcocks 2020). However, there is broad agreement that we stand before a period of intensified labor market challenges, including job displacement and potential deterioration of job quality due to deskilling, skill mismatch, and increased digital surveillance (Autor 2022; Goos and Savona 2024). Likewise, several studies argue that existing welfare institutions may be insufficiently equipped to meet these socioeconomic challenges (Acemoglu, Autor, and Johnson 2023; Ludden 2024; Spencer 2018; Susskind 2020).

The contemporary welfare states were shaped by the pressures of globalization and technological innovation of the 1990s, leading to welfare institutions structured around work: social benefits have been increasingly tied to labor market participation and activation measures (Knotz 2018), which may be poorly suited to meet AI’s labor market transformations. After decades of welfare retrenchment, liberalization, and labor market dualization, welfare state systems have moreover lost much of their redistributive capacity and become increasingly permissive to precarious employment arrangements (Armingeon and Weisstanner 2023; Pontusson and Weisstanner 2018; Scruggs and Ramalho Tafoya 2022; Wang and Van Vliet 2016). Skill formation schemes are largely designed for a context of skill-biased technological advancement (Goldin and Katz 2009), a model that may not fully respond to workers’ needs in an era in which technology replaces high-skill occupations. These conditions suggest an impending crisis of existing welfare systems, with renewed political support for welfare state transformation.

This paper situates the impact of AI on the welfare state in the longer history of technology-driven welfare transformations. It draws on an analysis of the 2024 OECD Risks that Matter survey (OECD 2025a), the first multicountry survey to specifically address the impact of AI on welfare preferences, to theorize this potential shift in the politics of welfare states. As we will see, the OECD data reveal growing fears of AI automation, and broad support for policies aimed at protecting workers from the labor market disruptions caused by AI. Results also show that preferences go beyond dominant social policy paradigms.

Three findings stand out. First, although low- and middle-skilled workers have historically been most vulnerable to automation, the new data suggest that fears of AI-driven automation now cut across educational lines. Second, whereas perceived automation risks have traditionally been associated with increased support for social consumption policies—and to a lesser extent, social investment—this pattern appears less consistent today. While labor market insecurity remains positively linked to support for unemployment benefits, the relationship is weaker than for emerging policy proposals such as robot taxes and universal basic income (UBI). Fear of being replaced by AI is moreover negatively associated with support for retraining programs. The rise of AI thus appears to mean that fear of automation is now translated into demand for new forms of protection. Third, the results indicate a negative feedback pattern: in countries with more generous welfare states, individuals who fear losing their jobs to AI are less likely to support the expansion of a given policy. Notably, this pattern does not appear in the case of robot taxes; support for this measure remains high among those who feel threatened by AI, regardless of the generosity of existing welfare arrangements.

On this basis, I argue that the shift in welfare preferences will be characterized by intensified demand for two distinct policy directions for addressing the disruptive effect of AI on the labor market. The first seeks to promote a society with less reliance on work by reducing the dependence of livelihoods on paid employment, detaching welfare benefits and services from work requirements, and decoupling skill formation from labor market demands. Examples include negative income tax, UBI, and generous welfare benefits and services without conditionalities (Noguera 2019; Spencer 2018; Susskind 2020; M. Thompson 2022). The second direction focuses instead on protecting workers by preserving the central role of employment in resource distribution. This involves direct state intervention to limit job-replacing technologies through measures such as robot taxes, “use” taxes, and job guarantee schemes (Abbott and Bogenschneider 2018; Acemoglu, Autor, and Johnson 2023; Acemoglu and Restrepo 2020).

These results point to the increasing relevance of a political conflict centered on the future role of work itself. The OECD data suggest that constituencies are already aligning along this divide: lower-income individuals are more likely to support decommodifying policies that reduce dependence on employment, while those who view welfare recipients as undeserving tend to favor interventions that uphold work as the cornerstone of social distribution. These tensions reflect a nascent but consequential reconfiguration of distributive conflict—one centered not just on social protection and skill development, but on the legitimacy of work as the primary mechanism of livelihood security.

We begin by situating AI’s impact on the labor market in the longer history of welfare transformations.

Technology and the Welfare State

The relationship between work and the welfare state has gone through several fundamental historical shifts, driven in part by technological and economic transformations. In the early phases of industrialization, the welfare states were developed to protect workers from the social dislocation and labor market insecurities caused by rapid technological advancements (Polanyi [1944] 2001). Following World War II, many countries expanded their welfare systems, introducing more comprehensive social programs aimed at enhancing social protection and reducing inequality. Esping-Andersen’s (1990) seminal work on welfare state regimes characterizes these institutions as designed to promote decommodification, providing services and benefits (such as unemployment benefits, pensions, healthcare, and housing) that enable citizens to secure their livelihoods independently of the labor market.

Since the 1980s, however, the welfare states have undergone significant transformations. The postindustrial landscape, characterized by rapid technological change and globalization, reshaped the labor markets of advanced economies, bringing a decline in manufacturing employment and a growing demand for a highly skilled workforce (Iversen and Wren 1998). Successive economic crises and intensified competitive pressures resulting from globalization strained existing welfare systems, leading to increased scrutiny of welfare expenditures (Swank 2005) and motivating retrenchment, liberalization, and dualization of labor market policies (Armingeon and Weisstanner 2023; Scruggs and Ramalho Tafoya 2022; Wang and Van Vliet 2016).

In response to these pressures, welfare state benefits became increasingly conditional, aiming to enhance international competitiveness while balancing growing demands for government resources with a shrinking pool of public funding. Benefits were linked to previous contributions and increasingly demanded labor market participation. Reforms introduced sanctions and conditionalities, tying benefits to a rapid return to the labor market (Bonoli 2011; Knotz 2018; 2020). The austerity measures that followed the 2008 financial crisis intensified these policies, with further cuts to welfare programs and stricter eligibility criteria. As benefits have diminished in generosity and universality, welfare states have lost part of their redistributive capacity, and hence their ability to protect citizens from labor market risks.

Some welfare states furthermore underwent recalibration reforms, shifting the focus from social protection to social investment (Bonoli 1997; 2005). This approach focuses on developing and mobilizing skills through education, adult training, and childcare support, with the goal of promoting social inclusion through labor market participation. In the context of skill-biased technological change, where investments in technology are increasing the demand for high-skilled workers (Goldin and Katz 2009), scholars have praised the social investment paradigm as well suited to addressing social inequalities by promoting the “upskilling” of the workforce (Garritzmann, Häusermann, and Palier 2022; Häusermann and Palier 2008).

In short, as a result of welfare reforms since the 1980s, current welfare states are centered on two key tenets: (1) work is the only pathway to securing a livelihood, meaning that welfare benefits are primarily compensatory and linked to labor market participation; and (2) technological innovation will automate “low-skilled” jobs and replace them with “high-skilled” work, motivating social investments in skills.

AI and the Welfare State

While the term artificial intelligence has been in use since the 1950s, its meaning and technological foundations have evolved significantly over time: from symbolic logic and rule-based systems in its early decades, to statistical learning and data-driven models, and more recently to large-scale neural networks capable of general-purpose reasoning (Törnberg et al. 2025). A commonly cited turning point occurred around 2012, driven by advances in computational power, the proliferation of digital data, and increased investment in AI research. This enabled the rise of deep learning and data-intensive machine learning, which allowed for powerful applications in tasks such as pattern recognition, classification, forecasting, and process optimization (Haenlein and Kaplan 2019; Lee et al. 2018; Oztemel and Gursev 2020; Ribeiro et al. 2021). These systems, however, were often narrow in scope, required substantial task-specific training, and remained relatively costly to deploy across domains.

A new milestone was reached in 2022 with the emergence of generative AI, particularly large language models fine-tuned through instruction learning. These models brought a qualitative shift in AI capabilities: they could perform a broad range of tasks without domain-specific training, simply through natural language prompting. In many cases, they not only matched but outperformed traditional approaches across a variety of knowledge and communication-intensive tasks (Törnberg 2024). This shift dramatically lowered the cost and broadened the scope of automation, making AI a general-purpose technology with widespread applicability across sectors.

As AI systems increasingly permeate the economy, they pose a growing challenge to the two tenets upon which the contemporary welfare state paradigm rests: that work is the main pathway to securing a livelihood, and that technological innovation will replace “low-skilled” jobs with new “high-skilled” ones. Whereas previous waves of automation targeted low- and middle-skilled jobs, AI is expected also to impact high-skilled occupations (Felten, Raj, and Seamans 2023; Zarifhonarvar 2023). As AI reduces the advantages humans traditionally hold over machines in cognitive tasks, it can automate nonroutine occupations or simplify complex tasks, leading to the deskilling of certain jobs (Autor 2024). Moreover, it is no longer safe to assume that investment in technology will be associated with an increase in job opportunities: AI technologies can be used to substitute human labor without a corresponding increase in productivity and demand for work (Acemoglu and Restrepo 2020; Susskind 2023). Welfare institutions predicated on work requirements, activation policies, and upskilling are thus poorly equipped to meet such labor market transitions. These contradictions suggest growing internal tensions, with political calls for reforms and possibly even a crisis of the current welfare state paradigm in prospect.

While this crisis does not necessarily signal a decline in public support for traditional welfare policies, it is likely to open space for new policy paradigms aimed at addressing the challenges AI poses to the labor market (Hall 1993). The core argument advanced here is that these paradigms revolve around a new axis of distributive conflict: the role of work as the principal mechanism for distributing resources, social rights, and meaning in society.

Existing scholarship on AI and the future of work suggests two broad responses to this evolving conflict. The first seeks to preserve the centrality of work by protecting employment and resisting labor-substituting technological change. This approach emphasizes that work is not only a means of securing income but also a key source of identity, dignity, and social inclusion. Advocates argue that accelerating automation undermines these functions and propose measures to slow or regulate AI adoption to ensure that the social value of work is maintained (Acemoglu, Autor, and Johnson 2023; Spencer 2018; Susskind 2020; M. Thompson 2022).

Earlier technological shifts also provoked such reactions, most famously the Luddite machine breaking of the early nineteenth century. Despite their political significance, such events were typically concentrated in specific sectors (Mokyr 1992; E. Thompson 2016). By contrast, the pervasive, cross-sectoral reach of AI generates a qualitatively different landscape of conflict. Recent survey evidence shows that nearly half of respondents in both the United Kingdom and the United States express concern about AI’s negative impact on the labor market, signaling a far broader and more diffuse societal reaction (Dreksler et al. 2025).

Concrete policies aligned with the “preserve work” direction include robot taxes, automation-use levies, stricter regulations on AI deployment, and employment guarantee schemes (Abbott and Bogenschneider 2018; Acemoglu, Autor, and Johnson 2023; Acemoglu and Restrepo 2020; Lehner and Schwarz 2025). Robot taxes, for example, are designed to counteract current tax asymmetries that favor capital over labor. Because firms typically pay payroll taxes but not equivalent levies on automation technologies, they are incentivized to automate even when doing so is neither efficient nor socially beneficial (Abbott and Bogenschneider 2018). Robot taxes thus aim to correct this distortion and, in some proposals, to fund programs that offset the social costs of automation. Others, like Acemoglu and Lensman (2024), advocate a more precautionary approach, arguing that slowing the pace of AI adoption allows policy makers time to assess its broader societal impacts. From this perspective, they propose adoption taxes (e.g., Pigouvian taxes) or stricter regulations, including the prohibition of AI in certain sectors.

Employment guarantee schemes offer another mechanism for preserving the role of work. These programs promise state-organized, full-time employment at a livable wage to anyone unable to find work in the private sector. While program designs vary in scope, duration, and sectoral involvement, they share a commitment to reinforcing employment as a social right, aligning salaries with collective bargaining standards, and ensuring participation in socially useful work (Lehner 2024).

The second approach, by contrast, calls for reducing the dependence of livelihoods on labor market participation, a process often described as a more radical form of decommodification. Here, work is understood not as an inherent source of meaning but as a historically contingent structure that has become increasingly dysfunctional under contemporary economic conditions. Drawing on thinkers such as Hannah Arendt (1958), proponents argue that human flourishing has historically been tied more to public engagement and creative contribution (what Arendt called action and work) than to remunerated labor. In this view, AI presents an opportunity to reimagine welfare institutions in ways that loosen the grip of paid work over social rights and human value.

These ideas are not new. Early industrial-era debates already envisioned welfare arrangements that would buffer individuals from the vagaries of the labor market. Their most far-reaching implementation arguably occurred in Sweden in the postwar period, though even there, reforms over time shifted the system closer to continental Europe’s contribution-based insurance model (Pontusson 1997). More recently, the COVID-19 pandemic reignited demands for deeper decommodification, yet empirical evidence shows that support for UBI remains contingent, with European publics still divided (Weisstanner 2022). The widespread perception of AI as a labor market threat may, however, create a more favorable environment for such policies, potentially broadening their appeal beyond that attained during previous moments of crisis.

One pathway toward greater decommodification involves substantially reforming core social insurance programs, such as unemployment benefits, by raising generosity, loosening work-related eligibility conditions, and decoupling access from continuous employment histories, allowing them to respond to long-term unemployment and declining job quality. An alternative trajectory centers on proposals such as UBI and negative income tax schemes, which depart more clearly from the traditional compensatory model of the welfare state, a model that ties social rights to labor market participation and prior contributions (Garritzmann, Häusermann, and Palier 2022). These approaches acknowledge that stable employment may no longer offer a viable or universal foundation for securing income and welfare in the AI era.

Automation Fears, Welfare Preferences

While both policy approaches—preserving work and decommodification—are actively promoted by various stakeholders as responses to AI-induced labor market disruption, little is known about how these ideas resonate with the broader public.

A well-established body of research links fears of technological displacement to increased support for welfare spending (Thewissen and Rueda 2019). Individuals concerned about automation tend to favor compensatory policies, such as unemployment benefits, over social investment measures focused on education or retraining (Busemeyer et al. 2023; Busemeyer and Tober 2023; Kurer and Häusermann 2022). However, only a limited number of studies have examined public attitudes toward alternative policy responses that go beyond the established paradigms of social compensation and investment to address the specific disruptions caused by AI-driven automation.

Although these studies capture only the early stages of public opinion formation, emerging evidence suggests that fears surrounding generative AI are already broadening public support for nontraditional interventions (Bicchi, Kuo, and Gallego 2024; Borwein et al. 2024; Busemeyer et al. 2023; Gallego et al. 2022). In particular, such concerns are associated not only with support for compensatory measures but also with demands for job-preserving interventions, including efforts to slow technological adoption and protect employment (Bicchi, Kuo, and Gallego 2024; Borwein et al. 2024).

By contrast, support for decommodifying policies such as UBI remains more mixed. The literature suggests that attitudes toward UBI and similar proposals are often shaped less by socioeconomic vulnerability than by cultural orientations, including beliefs about work, responsibility, and deservingness (Dermont and Weisstanner 2020; Gallego and Kurer 2022). From this perspective, support for decommodification may be constrained by the persistence of authoritarian welfare values (Busemeyer, Rathgeb, and Sahm 2022; Chueri et al. 2024), including a strong work ethic and concerns over the misuse of benefits.

Since the public release of ChatGPT on November 30, 2022, both the visibility of AI and public awareness of its potential to affect job opportunities have expanded considerably. Surveys accordingly show widespread concern about the potential negative impact of this technology on the labor market (Dreksler et al. 2025). As AI technologies become more accessible and their labor market implications become easier to grasp, it becomes increasingly important to systematically examine how they shape public policy preferences and welfare politics.

This study offers, to the best of my knowledge, the first multicountry survey evidence on how fears of AI-driven automation influence welfare preferences. Focusing exclusively on the demand side, the analysis provides empirical evidence that AI is already reshaping public attitudes toward the welfare state. It draws on the 2024 OECD Risks that Matter survey, conducted in 27 countries with more than 27,000 respondents. The survey is nationally representative with respect to gender, age, income, and education. The 2024 wave includes, for the first time, a dedicated question on fears of automation due to AI, along with policy preferences for addressing its labor market impacts (for replication data, see Chueri 2026).

AI-Driven Automation Fears and Welfare Preferences

We begin the empirical analysis with a descriptive overview of how fear of AI automation is distributed across education levels and countries of employment. Fear of AI automation is captured with the survey item, “How likely is it that your job (or job opportunities) will be taken over by an artificial-intelligence (AI) tool such as ChatGPT within the next five years?” Responses were given on a five-point scale (“Very unlikely,” “Unlikely,” “Likely,” “Very likely,” and “Can’t choose”). For the analyses, we recode this into a binary variable that takes a value of one when respondents report “Likely” or “Very likely” and zero when they report “Unlikely” or “Very unlikely”; “Can’t choose” is treated as missing. Educational attainment is collapsed into three groups: low covers no schooling through lower secondary; medium includes upper secondary and postsecondary nontertiary; and high comprises undergraduate and postgraduate degrees.
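The recoding described above can be sketched in a few lines of Python. This is a minimal illustration, not the replication code: the fear response labels follow the survey item as quoted, while the function names and the education category labels (including "Primary") are hypothetical stand-ins for whatever coding the OECD data file actually uses.

```python
from typing import Optional

# Response labels follow the survey item quoted in the text.
FEAR_CODES = {
    "Very likely": 1,
    "Likely": 1,
    "Unlikely": 0,
    "Very unlikely": 0,
}

# Hypothetical education labels; the actual survey categories may be worded differently.
EDUCATION_GROUPS = {
    "No schooling": "low",
    "Primary": "low",
    "Lower secondary": "low",
    "Upper secondary": "medium",
    "Postsecondary nontertiary": "medium",
    "Undergraduate degree": "high",
    "Postgraduate degree": "high",
}


def recode_fear(response: str) -> Optional[int]:
    """Return 1 for likely/very likely, 0 for unlikely/very unlikely.

    Any other answer (e.g. "Can't choose") is treated as missing (None).
    """
    return FEAR_CODES.get(response)


def recode_education(level: str) -> Optional[str]:
    """Collapse detailed attainment into low / medium / high (None if unknown)."""
    return EDUCATION_GROUPS.get(level)
```

Returning `None` for nonsubstantive answers keeps them out of both the numerator and the denominator in later calculations, which matches the treatment of “Can’t choose” as missing.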

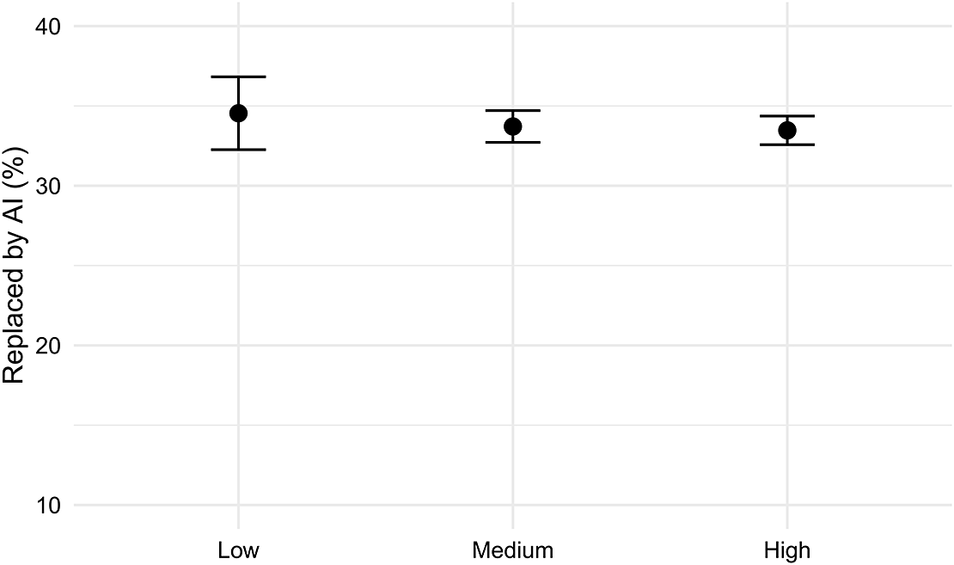

In line with previous studies (Knotz et al. 2024), figure 1 shows that, unlike earlier waves of automation, AI is also perceived as a significant threat by high-skilled workers. Specifically, it shows that around 35% of respondents across all education levels consider it likely or very likely that their jobs will be replaced by AI.

Figure 1. Perception of AI-Driven Automation Fear across Education Levels in OECD Countries

Source: Descriptive statistics are derived from OECD (2025a), incorporating survey weights.
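As a rough illustration of what incorporating survey weights means for a descriptive share like the ones reported here, the sketch below computes a weighted proportion of a 0/1 indicator. The function name and the example values are hypothetical, and the actual OECD weighting procedure may involve further steps not shown.

```python
def weighted_share(values, weights):
    """Weighted mean of a 0/1 indicator, skipping missing (None) values."""
    numerator = 0.0
    denominator = 0.0
    for value, weight in zip(values, weights):
        if value is None:
            continue  # missing responses contribute to neither sum
        numerator += weight * value
        denominator += weight
    if denominator == 0:
        raise ValueError("no non-missing observations")
    return numerator / denominator


# Hypothetical respondents: binary fear indicator and survey weight.
fear = [1, 0, None, 1]
weight = [2.0, 1.0, 5.0, 1.0]
share = weighted_share(fear, weight)  # (2.0 + 1.0) / (2.0 + 1.0 + 1.0)
```

Applying the same function within each education group or country would give group-level shares of the kind plotted in the figures.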

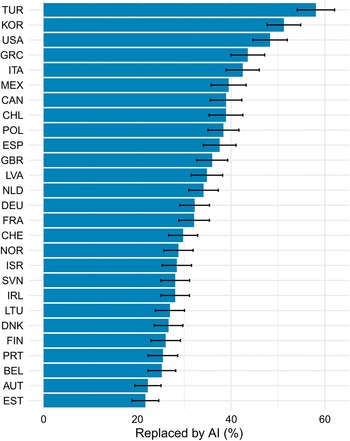

Figure 2 displays the average of AI-driven automation fear by country. It reveals considerable variability across nations. While more than 50% of respondents in Turkey and South Korea express concern about job displacement due to AI, in Estonia and Austria this proportion is approximately 20%. These results highlight the importance of considering the national context—such as industries, existing labor relations, and social institutional context—when studying perceived AI risks.

Figure 2. Fear of AI-Driven Automation, by Country

Source: Descriptive statistics are derived from OECD (2025a), incorporating survey weights.

Figure 3 presents the predicted support for various policies addressing technological disruption in the labor market, based on the perceived threat of AI-driven automation. The figure illustrates the results of four distinct binomial regressions, each modeling the support for one of the following policies: basic income, robot taxes, retraining, and unemployment benefits. In all models, support for the policy is the dependent variable, and country random effects are included to account for country-level differences. Additionally, the models control for key factors such as education, household disposable income, security of employment contract, sex, and age. In the plots, these control variables are held at their mean or modal values. For detailed information on the distribution of these variables, see table S1 in the supplementary material, and for the full regression output, refer to table S2.

Figure 3. Predicted Support for Welfare Responses to Technological Change in OECD Countries, by Perceived Risk of Job Replacement by AI

Note: Plots report predicted probability and are the result of linear multilevel regression using data from OECD (2025a).
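The models behind figure 3 can be written compactly. The following is a sketch under stated assumptions rather than the exact specification reported in table S2: the text refers to binomial regressions, so a logit link is assumed here, whereas the figure note mentions a linear multilevel regression, in which case the inverse logit would be replaced by the identity function. All symbols are notation introduced for illustration.

```latex
\Pr(y_{ij} = 1) = \operatorname{logit}^{-1}\!\bigl(\beta_0 + \beta_1\,\mathrm{Fear}_{ij}
  + \boldsymbol{\gamma}^{\top}\mathbf{x}_{ij} + u_j\bigr),
\qquad u_j \sim \mathcal{N}(0, \sigma_u^2)
```

Here $y_{ij}$ indicates whether respondent $i$ in country $j$ supports the policy, $\mathrm{Fear}_{ij}$ is the binary fear indicator, $\mathbf{x}_{ij}$ collects the controls (education, household disposable income, security of employment contract, sex, and age), and $u_j$ is the country random effect. Predicted support is obtained by evaluating the right-hand side at $\mathrm{Fear}_{ij} = 0$ and $\mathrm{Fear}_{ij} = 1$ with $\mathbf{x}_{ij}$ held at mean or modal values.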

The analysis shows that individuals who fear being replaced by AI are only slightly more likely to support the expansion of unemployment benefit programs compared with those who do not perceive such a threat. Support for this measure is found among nearly half of the population, regardless of their perceived risk from AI. Notably, fear of AI-driven automation is negatively associated with support for the introduction or expansion of adult training programs aimed at addressing the challenges posed by technological change in the labor market. While more than 60% of those who do not believe they will be negatively affected by AI support this measure, only about 53% of those who feel threatened by AI support adult training programs. The interaction between perceived AI vulnerability, education, and age in shaping support for adult training indicates that the effect is consistent across groups and not driven by any specific age cohort or education level (see figure S1 in the supplementary material).

Taken together, these findings suggest a relatively high societal acceptability and legitimacy of retraining programs as a solution to address technological disruptions in the labor market, particularly when compared with the expansion of unemployment benefits. Yet support remains comparatively modest among workers who face these risks directly. This aligns with research showing that support for adult training among individuals who do not feel threatened often stems from altruistic or sociotropic considerations (Haslberger, Gingrich, and Bhatia 2024). For those confronting immediate labor market risks, however, retraining is less attractive because benefits accrue only in the longer term (Busemeyer et al. 2023; Kurer and Häusermann 2022).

At the same time, the results add nuance to earlier work on automation fears and policy preferences. While prior studies have consistently found that concerns about automation primarily drive demands for compensatory measures, such as unemployment benefits (Busemeyer et al. 2023; Busemeyer and Tober 2023; Kurer and Häusermann 2022), the association between AI-related fears and support for this measure appears considerably weaker. Moreover, and in line with the argument of this piece, subjective fear of AI-driven automation is associated with support for alternative policy interventions aimed more directly at addressing the impact of AI technology on job availability. Robot taxes serve as an example of a policy designed to preserve the role of work in society, whereas UBI initiatives aim to sever the link between employment and livelihood. Figure 3 shows that support for those policy initiatives among those who fear being replaced by AI is around 60% in the case of robot taxes and 55% in the case of UBI, levels that exceed the support for unemployment benefits, a well-established policy for protecting workers’ incomes against labor market risks.

Support for UBI is also substantial among individuals who do not feel threatened by AI, at roughly 50%. By contrast, only about 47% of those who do not feel at risk support robot taxes, revealing a clear gap between individuals who do and do not feel threatened by AI. This pattern is intuitive: policies that slow or constrain technological change are less appealing to those who expect to benefit from it.

To understand how contextual factors, in particular the level of welfare effort, influence the relationship between fears of AI automation and support for different policies, I run the same models and add a cross-level interaction term between a country’s public social expenditure (as a share of gross domestic product), obtained from the OECD Social Expenditure Database (OECD 2025b), and vulnerability to AI. Following the literature’s recommendation (Heisig and Schaeffer 2019), I also include fear of AI replacement as a random slope in the models. Results are available in table S3 in the supplementary material. Figure 4 shows that context moderates this relationship: support for policy expansion is higher where social expenditure is lower. This negative feedback effect is comparatively stronger for unemployment benefits and UBI than for adult training. However, the earlier result that found lower support for adult training among those who feel threatened persists across different levels of welfare expenditure. Notably, welfare effort does not affect support for robot taxes among those who feel threatened by AI replacement, suggesting consistently high levels of support for this policy across OECD countries, regardless of existing welfare institutions.
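One way to write down a model of this kind is sketched here. The notation is an assumption introduced for illustration and does not come from the article or its supplementary tables: $y_{ij}$ is support for a given policy by respondent $i$ in country $j$, $\mathrm{Fear}_{ij}$ is the fear-of-replacement indicator, $\mathrm{SocExp}_{j}$ is public social expenditure as a share of GDP, and $\mathbf{x}_{ij}$ stands for whatever individual-level covariates the baseline models contain. A logit link is also assumed.

```latex
\begin{aligned}
\operatorname{logit}\,\Pr(y_{ij}=1)
  &= \beta_0 + \beta_1\,\mathrm{Fear}_{ij} + \beta_2\,\mathrm{SocExp}_{j}
     + \beta_3\,\bigl(\mathrm{Fear}_{ij}\times\mathrm{SocExp}_{j}\bigr)
     + \boldsymbol{\gamma}^{\top}\mathbf{x}_{ij}
     + u_{0j} + u_{1j}\,\mathrm{Fear}_{ij}, \\
(u_{0j},\,u_{1j})^{\top} &\sim \mathcal{N}(\mathbf{0},\,\boldsymbol{\Sigma}).
\end{aligned}
```

In this sketch, $\beta_3$ is the cross-level interaction, $u_{0j}$ is the country random intercept, and $u_{1j}$ is the random slope on fear. Heisig and Schaeffer (2019) recommend including that slope whenever a cross-level interaction involving the same lower-level variable is estimated.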

I now turn to an exploratory analysis of individual-level differences among those who prefer each alternative. I estimate four binomial multilevel regressions, one for each dependent variable: support for the expansion or introduction of adult training, unemployment benefits, robot taxes, or basic income to deal with AI-driven labor market disruption. In all models, I incorporate the main independent variable (fear of being replaced by AI) along with socioeconomic controls: sex, education, age, income, and security of employment contract. In addition, two binary variables are included to capture welfare state attitudes. The first measures perceptions of deservingness, based on agreement with the statement that many people receive welfare benefits without deserving them. Responses were collected on a six-point scale and recoded such that respondents who agree or strongly agree are coded as one, and all other substantive responses as zero. The second variable captures beliefs about the role of the state in guaranteeing economic security, based on agreement with the statement that the government should do more to guarantee economic security and well-being. Responses were collected on a six-point scale and recoded as one for those who believe the government should be doing more or much more, and zero for all other substantive responses. In both items, responses of “Can’t choose” are coded as missing. All models also include country random effects, with full results provided in table S4 in the supplementary material.
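The recoding rule for the two attitude items can be illustrated with a minimal Python sketch. The response labels, the set names, and the function `recode_binary` are hypothetical and chosen only for illustration; the survey's actual category wording and numeric coding may differ.

```python
from typing import Optional

# Hypothetical label for the nonsubstantive answer; treated as missing.
MISSING_LABELS = {"Can't choose"}

# Hypothetical labels for the categories that are coded as one.
DESERVINGNESS_POSITIVE = {"Agree", "Strongly agree"}
STATE_ROLE_POSITIVE = {"More", "Much more"}


def recode_binary(response: str, positive_labels: set[str]) -> Optional[int]:
    """Return 1 for positive labels, 0 for any other substantive answer,
    and None (missing) for nonsubstantive answers."""
    if response in MISSING_LABELS:
        return None
    return 1 if response in positive_labels else 0


# Illustrative responses, not survey data.
responses = ["Strongly agree", "Somewhat disagree", "Can't choose", "Agree"]
undeserving = [recode_binary(r, DESERVINGNESS_POSITIVE) for r in responses]
print(undeserving)  # [1, 0, None, 1]
```

Returning `None` rather than zero for “Can’t choose” keeps those respondents out of the estimation sample instead of counting them as disagreement.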

Figure 5 presents the regression results as odds ratios. Values greater than one indicate a higher likelihood of supporting the respective policy, while values below one indicate a lower likelihood. An odds ratio of one implies no association between the independent variable and the outcome. In the figure, coefficients that are statistically significant at the 0.05 level are highlighted, whereas nonsignificant estimates are displayed in light gray. In line with the results presented in figure 3, fear of AI-driven automation is associated with support for expanding or introducing robot taxes, unemployment benefits, and basic income, but not adult training. Fear of automation is most strongly linked to support for robot taxes, followed by basic income. This aligns with the theoretical discussion, suggesting that AI-driven transformations increase demand for policy interventions beyond existing paradigms.
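For readers less familiar with odds ratios, the following sketch shows how a log-odds coefficient from a logit-type model is typically converted into an odds ratio with a Wald confidence interval. The function names and the numeric inputs are hypothetical and are not taken from table S4; the interval assumes a normal approximation with a critical value of 1.96.

```python
import math


def odds_ratio_with_ci(coef: float, se: float, z: float = 1.96):
    """Convert a log-odds coefficient and its standard error into
    (odds ratio, lower bound, upper bound) using a Wald interval."""
    return math.exp(coef), math.exp(coef - z * se), math.exp(coef + z * se)


def is_significant(lower: float, upper: float) -> bool:
    """An estimate differs from 'no association' at the chosen level
    when its interval excludes an odds ratio of one."""
    return not (lower <= 1.0 <= upper)


# Hypothetical coefficient and standard error, for illustration only.
ratio, lower, upper = odds_ratio_with_ci(coef=0.40, se=0.10)
print(f"OR = {ratio:.2f}, 95% CI = [{lower:.2f}, {upper:.2f}], "
      f"significant = {is_significant(lower, upper)}")
```

A coefficient of zero maps to an odds ratio of exactly one, which is why one serves as the reference line in plots of this kind.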

Figure 5. Regression Results for Support for Adult Training, Robot Taxes, Unemployment Benefits, and Basic Income under Technological Change

Source: Regressions rely on OECD (2025a).

Note: Coefficients are estimated using binomial multilevel models and reported as odds ratios, with those having a significance level below 0.05 rendered in black.

The view that the government should do more to ensure the economic and social well-being of the population is strongly associated with support for all policy interventions. This effect is particularly pronounced in the case of unemployment benefits, where the belief in state responsibility is linked to 2.5 times higher odds of supporting the expansion of this policy. By contrast, concerns about welfare misuse have a differentiated impact. Respondents who think benefits are abused show higher support for robot taxes and adult training but lower support for unemployment benefits and UBI. A clear pattern thus emerges: those who perceive widespread misuse tend to oppose decommodification measures and instead advocate for maintaining employment as the primary mechanism of redistribution by slowing the pace of technological change and promoting workforce retraining to meet new market demands. Conversely, those with a more positive view of welfare recipients support measures that promote decommodification as a response to AI-driven labor market disruptions.
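If the 2.5 figure is read as an odds ratio, as the presentation in figure 5 suggests, it multiplies the odds of support rather than the probability. The sketch below makes the difference concrete. The baseline support level of 40% is purely hypothetical and chosen for arithmetic convenience; it is not an estimate from the survey.

```python
def apply_odds_ratio(baseline_prob: float, odds_ratio: float) -> float:
    """Return the probability implied by multiplying the baseline odds
    by the given odds ratio."""
    if not 0.0 < baseline_prob < 1.0:
        raise ValueError("baseline_prob must lie strictly between 0 and 1")
    baseline_odds = baseline_prob / (1.0 - baseline_prob)
    new_odds = baseline_odds * odds_ratio
    return new_odds / (1.0 + new_odds)


# Hypothetical baseline: 40% support among those who do not hold the belief.
implied = apply_odds_ratio(baseline_prob=0.40, odds_ratio=2.5)
print(round(implied, 3))  # 0.625
```

Under this assumed baseline, an odds ratio of 2.5 corresponds to support rising from 40% to 62.5%, not to a 2.5-fold increase in the share of supporters. The implied change in probability depends on the baseline, which is why odds ratios are best compared across predictors within the same model.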

Income is negatively associated with support for decommodification policies such as basic income and expanded unemployment benefits, and positively associated with support for adult training. These results suggest that individuals in more advantaged labor market positions are more likely to favor social investment over social consumption. No significant relationship is observed between income and support for robot taxes.

Moreover, consistent with previous studies, support for adult training is strongly associated with education, with more highly educated individuals demonstrating greater support for this policy. Somewhat unexpectedly, a similar pattern emerges in relation to unemployment benefits, although the effect is weaker. Education, however, is not significantly associated with support for nontraditional policy interventions, potentially revealing that the perceived threat of automation exists across education levels.

Age emerges as a strong predictor of support for adult training. Compared with young adults aged 18 to 24, individuals in all other age groups exhibit greater support for this policy, with the effect increasing across age groups. Perhaps counterintuitively, this pattern suggests that those approaching retirement perceive a greater need for training and are more willing to undergo it. Older individuals are also significantly more supportive of unemployment benefits than young adults. However, for this policy, the effect remains relatively stable across age groups and is weaker overall. Finally, women are more likely to support adult training and less likely to support robot taxes.

Conclusions and Research Agenda

AI represents a profound challenge to the labor market. As AI technology becomes capable of performing tasks that until recently required human intelligence, few occupations remain entirely unaffected. Although it remains an open question whether automated jobs will be replaced by new ones in the medium and long run, the short-term trajectory points toward labor displacement and declining job quality in some sectors—driven by deskilling, skill mismatches, and increased digital surveillance. Existing welfare institutions are not well positioned to meet these challenges. The dominant welfare paradigms—social investment and social consumption—are centered around work, creating both incentives and opportunities for a quick return to the labor market. These models are likely to become insufficient in a context where work is either scarce or degraded.

This piece has situated AI-driven challenges to welfare within a longer history of technological change triggering welfare transformation. As in past episodes, AI is likely to generate institutional strain, triggering a political moment for reform. Yet, unlike previous episodes, the current crisis revolves not only around how to protect workers but also around the broader question of the role of work as the primary mechanism of social distribution and individual worth. This emerging conflict over the future role of work is reflected in two alternative policy directions. One seeks to preserve work by slowing the pace of automation and ensuring access to employment through direct intervention. The other promotes decommodification by reducing the dependence of livelihood on paid labor, whether through basic income, negative income taxes, or relaxed eligibility for unemployment benefits.

These two directions are not mutually exclusive; individuals who fear job loss may support both. However, they reflect fundamentally different normative views about the value of work and are likely to generate political and societal divisions.

To provide empirical evidence for these claims, this study has drawn on the 2024 OECD Risks that Matter survey. The survey features a battery of questions on AI-related fears and support for policy interventions to address AI-driven labor market disruptions.

The findings reveal that fears of AI-driven automation are widespread and cut across educational groups. However, these concerns do not translate straightforwardly into increased support for traditional welfare programs. Rather, they are more strongly linked to support for nontraditional interventions, primarily job-preserving measures (such as robot taxes), but also policies aimed at preparing for a future where work may be less central, such as UBI. Notably, support for robot taxes remains high across contexts, even in countries with generous welfare systems, suggesting that these interventions resonate beyond the traditional contours of welfare politics.

Thus, these results indicate that automation fears are not primarily associated with support for social consumption, contrary to earlier findings. Instead, they reveal an axis of welfare conflict centered on the role of work itself. Given the lack of historical data on support for these nontraditional policy interventions, it is not possible to make a causal claim that AI is driving this shift in preferences. However, the growing prominence of AI-related threats to job quality and employment prospects suggests that these attitudes may be linked to broader transformations in the labor market. As the labor market implications of AI become clearer, future research should more systematically examine how automation-related concerns are linked to support for policies that either sustain or reconfigure the role of work.

Moreover, the data indicate a clear societal division over which path to take. Wealthier individuals and those who view welfare recipients as undeserving tend to support policies that reinforce work’s central role. In contrast, lower-income individuals and those with positive views of welfare claimants are more supportive of decommodification.

This study advances our theoretical understanding of how AI-related labor market transformations intersect with welfare politics. Whether these tensions translate into party agendas and government actions, however, will depend on their political salience and the degree to which political systems remain responsive to public preferences. AI-related concerns must also compete with long-standing issues, such as immigration or the cost of living, that shape party strategies in many democracies. Thus, while this paper has examined the demand side, future work should investigate how political actors act upon these preferences, and how partisanship, ideology, and values condition support for preserving or reconfiguring the role of work in the welfare state.

Previous research has shown that elite framing of technological change shapes how citizens perceive and respond to labor market risks (Marenco and Seidl 2021). Yet welfare state research has largely overlooked how political actors mediate and respond to the distributive tensions generated by AI. Future work should examine how key stakeholders (parties, unions, and governments) shape the discourse and political conflict over AI’s implications. In doing so, scholars should build on the institutional perspective advanced here, moving beyond crude measures of welfare effort to examine how specific arrangements mediate both the impact of, and responses to, technological change.

Finally, this approach highlights a broader point: AI’s labor market consequences are not technologically determined but politically contested. Understanding how societies respond to automation thus requires sustained engagement with the political, institutional, and normative dimensions of welfare state transformation.

Supplementary material

To view supplementary material for this article, please visit http://doi.org/10.1017/S1537592726104538.

Data Replication

Data replication sets are available in Harvard Dataverse at: https://doi.org/10.7910/DVN/JFMUH9.

Acknowledgments

I thank Annatina Aerne, Jonas Pontusson, and Petter Törnberg for their careful reading of and generous feedback on this work.