1. Introduction

1.1. General introduction

Markov decision problems, central to decision theory, constitute a fundamental mathematical framework for modeling sequential decision-making under uncertainty. These models find widespread applications across diverse fields, including dynamic programming, quantitative finance, artificial intelligence, and robotics. Typically, these problems involve identifying an optimal policy—a decision strategy that maximizes a given performance criterion.

Often, this criterion is the so-called “gamma-weighted” criterion, which balances immediate and future rewards, and appears in disciplines such as econometrics (utility discounting models), finance (discounted cash flows), robotics, and reinforcement learning. However, the framework considered herein diverges from the classical optimization viewpoint. Instead of seeking an optimal policy, we focus on analyzing the structural properties of stochastic games—specifically, whether such games exhibit bias or balanced outcomes according to formal properties. In other words, our goal is not so much to optimize a given strategy, but rather to understand the intrinsic behavior of the game and identify potential biases that may advantage or disadvantage the player.

To illustrate this concept, we present three examples related to Bitcoin. First, by showing that a specific stochastic game is arbitrable, we resolve a concrete problem: determining the relative hash power threshold beyond which an otherwise honest miner has an incentive to mine on an accidental fork. Our analysis estimates this threshold at approximately 42.91%, a figure not clearly documented in existing Bitcoin literature. Second, we analyze a biased variation of the classical Heads or Tails game that sheds light on the vulnerability of the Bitcoin difficulty adjustment formula to certain attacks on Nakamoto’s consensus protocol. We derive, in a straightforward manner—without recourse to Markov decision process solvers—the threshold beyond which a rational miner with connectivity constraints benefits from deviating from honest mining behavior. Third, we prove that a certain stochastic game is non-arbitrable, demonstrating that the aforementioned problem can be addressed by modifying Bitcoin’s difficulty adjustment formula to factor in orphan blocks.

It is unsurprising that game theory has been employed to analyze the Bitcoin protocol. Indeed, Satoshi Nakamoto himself was the first to do so in his seminal paper, where he computed the success probability of a double-spending attack using the classical gambler’s ruin formula, which is a simple example of a casino game. Since then, numerous studies have advanced the understanding of such attacks [6, 11, 12]. For a broader context, readers are referred to a recent comprehensive book by M. Warren on Bitcoin and game theory [26]. An alternative approach applying mean field games to Bitcoin mining also exists [3]. The remainder of this paper begins by recalling the formal definition of stochastic games.

1.2. Arbitrable stochastic game

A non-competitive stochastic game, also called a Markov decision problem (MDP), is a single-player, discrete-time game with full observability. It can be described as a set of Markovian transitions (the actions) on a set of states, which we assume here to be countable. Below, we recall the definition of a Markov decision problem and introduce the notation used throughout this article. We also provide elementary proofs of general results.

Definition 1.1 (Non-competitive stochastic game)

A non-competitive stochastic game is defined by a quadruple $(S, A, P, R)$ and an initial state $s \in S$ where $S$ is a (countable) set of states, $A$ is a set of actions, $P$ is a transition probability, and $R$ is a reward function taking values in the real numbers $\mathbb{R}$.

Note that every transition gives a reward that can be positive or negative: $R\in\mathbb{R}$. More precisely, for each $s \in S$, the player has a subset $A(s)$ of the set $A$ of all possible actions. In what follows, actions will be denoted by Greek letters to distinguish them from states, which are denoted by Latin letters. When the player is in state $s \in S$ and chooses action $\alpha \in A(s)$, they reach state $s' \in S$ with probability $P(s' \mid s, \alpha)$ and receive a deterministic reward $R(s' \mid s, \alpha)$. Note that the reward function is slightly different from that found, for example, in [10] since it depends not only on the state $s$ and the choice of action $\alpha$ but also on the outcome $s'$ of the action $\alpha$.

We assume that $A(s)$ is measurable for every $s \in S$, and define a strategy $\mathfrak{f}$ as a family $(\mathfrak{f}_s)_{s \in S}$ where each $\mathfrak{f}_s$ is a probability measure on $A(s)$. We denote by $\mathcal{F}$ the set of all possible strategies. In other words, according to the strategy $\mathfrak{f} \in \mathcal{F}$, if the player is in state $s$, they randomly choose an action in $A(s)$ according to the probability measure $\mathfrak{f}_s$. For $\alpha \in A(s)$, we write $\mathfrak{f}_s(\alpha)$ for the probability of choosing action $\alpha$ when in state $s$ following strategy $\mathfrak{f}$.
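As an illustration only, the objects introduced so far can be sketched in Python. The class `Game`, the function `step`, and the two-state toy game below are hypothetical names and data chosen for this sketch; they do not come from the paper. The sketch assumes finite action sets and finitely supported transitions, so that $\mathfrak{f}_s$ and $P(\cdot \mid s, \alpha)$ can be stored as dictionaries.

```python
import random
from dataclasses import dataclass
from typing import Callable, Dict, Hashable, List, Tuple

State = Hashable
Action = str


@dataclass
class Game:
    """Hypothetical container for the data (A, P, R) of a game on a state set S."""
    actions: Callable[[State], List[Action]]                      # s -> A(s)
    transitions: Callable[[State, Action], Dict[State, float]]    # (s, alpha) -> {s': P(s' | s, alpha)}
    reward: Callable[[State, State, Action], float]               # (s', s, alpha) -> R(s' | s, alpha)


def step(game: Game, strategy: Callable[[State], Dict[Action, float]],
         s: State, rng: random.Random) -> Tuple[Action, State, float]:
    """Draw alpha from f_s, then s' from P(. | s, alpha); return (alpha, s', reward)."""
    f_s = strategy(s)
    alpha = rng.choices(list(f_s), weights=list(f_s.values()))[0]
    dist = game.transitions(s, alpha)
    s_next = rng.choices(list(dist), weights=list(dist.values()))[0]
    return alpha, s_next, game.reward(s_next, s, alpha)


def toy_reward(s_next: State, s: State, alpha: Action) -> float:
    # The reward depends on the outcome s', not only on (s, alpha).
    if alpha == "move":
        return 1.0 if s_next == "y" else -0.25
    return 0.0


# Toy game with states "x" and "y" (an assumption made for this example).
toy = Game(
    actions=lambda s: ["stay", "move"],
    transitions=lambda s, a: {s: 1.0} if a == "stay" else {"x": 0.5, "y": 0.5},
    reward=toy_reward,
)


def uniform(s: State) -> Dict[Action, float]:
    """Strategy f with f_s uniform on A(s)."""
    acts = toy.actions(s)
    return {a: 1.0 / len(acts) for a in acts}
```

Calling `step(toy, uniform, "x", random.Random(0))` repeatedly, feeding each returned state back in, produces one trajectory of the Markov chain induced by the strategy together with its rewards.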

The choice of a strategy $\mathfrak{f}$ defines a Markov chain $\mathfrak{X}$ on $S$. The sequence $(\tilde{R}_t)$ denotes the sequence of rewards obtained by the player as a result of state transitions: for each $t \in \mathbb{N}$, $\tilde{R}_t$ is the reward earned during the period $[t, t+1)$, and we write

\begin{equation*}
\mathbb{E}_{s, \mathfrak{f}}[\tilde{R}_t] = \mathbb{E}_{\mathfrak{f}}[\tilde{R}_t \mid \mathfrak{X}_0 = s].
\end{equation*}

For every integer $n \in \mathbb{N}$, we denote by

\begin{equation*}
G_n = \sum_{t=0}^{n-1} \tilde{R}_t
\end{equation*}

the cumulative gain of the player, corresponding to the wealth at step $n$.

A classical problem consists in finding a strategy $\mathfrak{f}$ that maximizes a certain utility function depending on the rewards $(\tilde{R}_t)$, such as

\begin{equation*}
\sum_{t=0}^{\infty} \beta^t \mathbb{E}_{s,\mathfrak{f}}[\tilde{R}_t] \quad \text{with } \beta \in (0,1).
\end{equation*}

However, for the problems that interest us, we consider another reward criterion associated with any strategy $\mathfrak{f} \in \mathcal{F}$.
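To make the difference between the cumulative gain $G_n$ and the classical discounted criterion concrete, here is a minimal sketch on a fixed, finite reward sequence. The function names and the sample rewards are assumptions made for this example; in the text the discounted sum is taken over expected rewards and an infinite horizon.

```python
from typing import Sequence


def cumulative_gain(rewards: Sequence[float], n: int) -> float:
    """G_n: undiscounted sum of the first n rewards."""
    return sum(rewards[:n])


def discounted_sum(rewards: Sequence[float], beta: float) -> float:
    """Finite truncation of sum_t beta^t * R_t with beta in (0, 1)."""
    if not 0.0 < beta < 1.0:
        raise ValueError("beta must lie in (0, 1)")
    return sum(beta ** t * r for t, r in enumerate(rewards))


# Hypothetical realized rewards for t = 0, 1, 2.
rewards = [1.0, -0.5, 2.0]
```

With these rewards, `cumulative_gain(rewards, 3)` weighs the late reward of 2 fully, whereas `discounted_sum(rewards, 0.5)` counts it with weight 0.25.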

Definition 1.2. Let $\mathcal{T}$ denote the set of stopping times and $\mathcal{T} \cap L^1$ the set of integrable stopping times. Let $s \in S$ and $n \in \mathbb{N}$. For any strategy $\mathfrak{f} \in \mathcal{F}$, define:

\begin{equation*}
E_{\mathfrak{f}}(s) = \sup_{\tau \in \mathcal{T} \cap L^1} \left\{\sum_{t=0}^{\tau-1} \mathbb{E}_{s,\mathfrak{f}}[\tilde{R}_t] \right\} \in \overline{\mathbb{R}},
\end{equation*}

\begin{equation*}
E_{\mathfrak{f}, n}(s) = \sup_{\substack{\tau \leq n \\ \tau \in \mathcal{T}}} \left\{\sum_{t=0}^{\tau-1} \mathbb{E}_{s,\mathfrak{f}}[\tilde{R}_t] \right\} \in \mathbb{R}.
\end{equation*}

In the first case, the supremum is taken over all integrable stopping times $\tau$, and in the second case, over all stopping times $\tau$ such that $\tau \leq n$.

Definition 1.3. Let $s \in S$ and $(\mathfrak{f},\mathfrak{g}) \in \mathcal{F}^2$. We say that $\mathfrak{f}$ is less profitable than $\mathfrak{g}$, and we write $\mathfrak{f} \prec \mathfrak{g}$, if $E_{\mathfrak{f}}(s)\leq E_{\mathfrak{g}}(s)$.

The goal is to maximize $E_{\mathfrak{f}}(s)$ or sometimes $E_{\mathfrak{f}, n}(s)$.

Definition 1.4. Let $s \in S$ and $n \in \mathbb{N}$. We set:

\begin{equation*}
E(s) = \sup_{\mathfrak{f} \in \mathcal{F}} E_{\mathfrak{f}}(s) \in \overline{\mathbb{R}},
\end{equation*}

\begin{equation*}
E_n(s) = \sup_{\mathfrak{f} \in \mathcal{F}} E_{\mathfrak{f}, n}(s) \in \mathbb{R}.
\end{equation*}

It is clear that

\begin{equation*}
E_n(s) \leq E_{n+1}(s) \leq E(s)
\end{equation*}

for every state $s$. Moreover, if the player chooses to quit immediately and stop playing ($\tau=0$), their payoff is 0. So,

\begin{equation*}
E(s) \geq 0.
\end{equation*}

The value $E(s)$ represents the maximum expected gain achievable when playing the stochastic game, where the game terminates at a random time $\tau$ that depends on the system’s positions before the final time. In contrast, $E_n(s)$ denotes the maximum expected gain when the game is constrained to end after at most $n$ actions. Another way of looking at it is to say that $E(s)$ is the fair price to pay for playing the game, starting at state $s \in S$. Note that $E(s)$ can be infinite. A classical situation in which this happens is the following.

Proposition 1.1. If the starting state $s \in S$ of a non-competitive stochastic game is a recurrent state for the Markov chain $\mathfrak{X}$ associated with a certain strategy $\mathfrak{f} \in \mathcal{F}$, and if for a return time $\tau$ to $s$ (i.e., $\mathfrak{X}(\tau) = s$) we have

\begin{equation*}
\sum_{t=0}^{\tau-1} \mathbb{E}_{s,\mathfrak{f}}[\tilde{R}_t] > 0,
\end{equation*}

then $E(s) = +\infty$.

Proof. For any $n \in \mathbb{N}$, it suffices to consider the stopping time

\begin{equation*}
\tau^{(n)} = \sum_{i=1}^n \tau_i
\end{equation*}

where the $\tau_i$ are i.i.d. with the same distribution as $\tau$. Then,

\begin{equation*}
\sum_{t=0}^{\tau^{(n)} -1} \mathbb{E}_{s,\mathfrak{f}}[\tilde{R}_t] = n \cdot \sum_{t=0}^{\tau -1} \mathbb{E}_{s,\mathfrak{f}}[\tilde{R}_t],
\end{equation*}

which tends to infinity as $n \to \infty$. Hence, we get the result.

We now give the definition of an arbitrable stochastic game.

Definition 1.5. A non-competitive stochastic game starting from state $s \in S$ is said to be arbitrable or biased if

\begin{equation*}
E(s) > 0.
\end{equation*}

It is generally difficult to express $E(s)$ explicitly, but $E_n(s)$ can sometimes be computed straightforwardly by induction on $n \in \mathbb{N}$.

Proposition 1.2. Let $s \in S$ and $n \in \mathbb{N}^*$. Assume that $|A(s')|$ is finite for every state $s' \in S$. Then,

\begin{equation*}
E_n(s) = \max_{\alpha \in A(s)} \left\{\sum_{s' \in S} (E_{n-1}(s') + R(s' \mid s, \alpha)) \cdot P(s' \mid s, \alpha) \right\}.
\end{equation*}

Proof. Starting from state $s$, if the player chooses action $\alpha \in A(s)$, then they transition to state $s' \in S$ with probability $P(s' \mid s, \alpha)$ and receive a reward of $R(s' \mid s, \alpha)$. Therefore, the maximal expected wealth when choosing action $\alpha$ is

\begin{equation*}
\sum_{s' \in S} \left( E_{n-1}(s') + R(s' \mid s,\alpha) \right) \cdot P(s' \mid s,\alpha).
\end{equation*}

Since $|A(s)|$ is finite, if the action $\alpha$ is chosen randomly with probability $\mathfrak{f}_s(\alpha)$, the player obtains on average at most

\begin{equation*}
\sum_{\alpha \in A(s)} \mathfrak{f}_s(\alpha) \cdot \left( \sum_{s' \in S} \left( E_{n-1}(s') + R(s' \mid s,\alpha) \right) \cdot P(s' \mid s,\alpha) \right).
\end{equation*}

We thus have finitely many real numbers, explicitly given by $\sum_{s' \in S} \left( E_{n-1}(s') + R(s' \mid s,\alpha)\right) \cdot P(s' \mid s,\alpha)$ for $\alpha\in A(s)$, and we are looking for the supremum of their convex combinations. This supremum is necessarily attained at one of these real numbers.

Thus,

\begin{align*}
E_n(s) &= \sup_{\mathfrak{f}_s} \left\{\sum_{\alpha \in A(s)} \mathfrak{f}_s(\alpha) \cdot \left( \sum_{s' \in S} \left( E_{n-1}(s') + R(s' \mid s,\alpha) \right) \cdot P(s' \mid s,\alpha) \right) \right\} \\
&= \max_{\alpha \in A(s)} \left\{\sum_{s' \in S} \left( E_{n-1}(s') + R(s' \mid s, \alpha) \right) \cdot P(s' \mid s, \alpha) \right\}.
\end{align*}
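The recursion of Proposition 1.2 translates directly into a memoized function. The following is a minimal sketch on a hypothetical two-state game invented for this example (the states `"low"` and `"high"`, the action names, and all numerical values are assumptions, not taken from the paper), with the convention $E_0 = 0$.

```python
from functools import lru_cache
from typing import List, Tuple

# Hypothetical toy game: action sets A(s) for the two states.
ACTIONS = {"low": ("wait", "risk"), "high": ("wait", "cash")}


def outcomes(s: str, alpha: str) -> List[Tuple[str, float, float]]:
    """Triples (s', P(s' | s, alpha), R(s' | s, alpha)) for the toy game."""
    if alpha == "wait":
        return [(s, 1.0, 0.0)]
    if alpha == "risk":
        return [("high", 0.5, 0.0), ("low", 0.5, -1.0)]
    if alpha == "cash":
        return [("low", 1.0, 3.0)]
    raise ValueError(f"unknown action {alpha!r}")


@lru_cache(maxsize=None)
def E_n(n: int, s: str) -> float:
    """Finite-horizon value: max over alpha of sum_{s'} (E_{n-1}(s') + R) * P."""
    if n == 0:
        return 0.0
    return max(
        sum(p * (E_n(n - 1, s_next) + r) for s_next, p, r in outcomes(s, alpha))
        for alpha in ACTIONS[s]
    )
```

In this toy game `E_n(1, "low")` is 0 (taking the risk costs 0.5 on average and nothing can be cashed within one step), while `E_n(2, "low")` is 1.0 because the second step allows cashing in after a lucky first step.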

2. Three examples of the coin toss game

We illustrate the previous section with three variations on the classical coin toss game, each played with chips. The set $S$ of all possible states is always $\mathbb{N}^2$, and for every $s \in S$, the cardinality of $A(s)$ equals $2$. The $i$-th game starting in the state $s$ is denoted by $HT^i(s)$ (HT stands for “Heads-or-Tails”). During these games, both the player and the casino accumulate chips that can be converted into real money under certain conditions. In each case, following the notation of the previous section, we denote by $E^i(s)$ the maximum expected gain of the game $HT^i(s)$ starting from state $s$, and by $E_n^i(s)$ the maximum expected gain under the constraint that the game ends after at most $n$ actions, with $n \in \mathbb{N}$.

2.1. Classic coin toss game with chips

Let $q \in [0, 1/2)$ and $p = 1 - q$. The stochastic game is defined as follows. We have $S = \mathbb{N} \times \mathbb{N}$ and for all $s \in S$, $|A(s)| = 2$. If $s = (a,h)$ with $a \le h$, then $A(s) = \{\text{abandon}, \text{toss}\}$ and if $a > h$, $A(s) = \{\text{crush}, \text{toss}\}$. The functions $P$ and $R$ are defined by:

• For all $s' \in S$ and $s = (a,h)$,

\begin{equation*}
P(s' \mid s, \text{toss}) = \begin{cases}
p &\text{if } s' = (a, h+1)\\
q &\text{if } s' = (a+1, h)\\
0 &\text{otherwise}
\end{cases}
\quad \text{and} \quad R(s' \mid s, \text{toss}) = -q.
\end{equation*}

• For all $s' \in S$ and $s = (a,h)$ with $a \le h$,

\begin{equation*}
P(s' \mid s, \text{abandon}) = \begin{cases}
1 &\text{if } s' = (0,0)\\
0 &\text{otherwise}
\end{cases}
\quad \text{and} \quad R(s' \mid s, \text{abandon}) = 0.
\end{equation*}

• For all $s' \in S$ and $s = (a,h)$ with $a > h$,

\begin{equation*}
P(s' \mid s, \text{crush}) = \begin{cases}
1 &\text{if } s' = (a - h - 1, 0)\\
0 &\text{otherwise}
\end{cases}
\quad \text{and} \quad R(s' \mid s, \text{crush}) = h + 1.
\end{equation*}

Definition 2.1. We denote by $HT^1(a,h)$ the game described above, which begins with the initial state $(a,h)$.

This is a classic version of the game of heads or tails, but with chips. At any given moment, the player has two of the following actions available:

Toss: A croupier tosses a coin rigged in favor of the casino. The probability of getting Tails is $q$. This action costs the player $q$ euros whatever the result.

• If the result is Heads, the casino wins a chip.

• If the result is Tails, the player wins a chip.

Crush: This action is only possible if the player (resp. the casino) has $a$ (resp. $h$) chips with $a > h$. In this case, the casino loses all its chips, the player loses $h+1$ chips but gains $h+1$ euros. This action costs the player nothing.

Abandon: The casino and the player lose all their chips. This action costs the player nothing.
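The rules of $HT^1$ described above can be encoded as a small transition function. This is a sketch for illustration; the function names `actions` and `outcomes` and the dictionary-based representation are assumptions of this example, not part of the paper.

```python
from typing import Dict, Tuple

State = Tuple[int, int]  # (a, h): chips held by the player and by the casino


def actions(s: State) -> Tuple[str, str]:
    """A(s): abandon/toss when a <= h, crush/toss when a > h."""
    a, h = s
    return ("abandon", "toss") if a <= h else ("crush", "toss")


def outcomes(s: State, alpha: str, q: float) -> Dict[State, Tuple[float, float]]:
    """Map each reachable s' to the pair (P(s' | s, alpha), R(s' | s, alpha))."""
    a, h = s
    if alpha not in actions(s):
        raise ValueError(f"action {alpha!r} is not available in state {s}")
    if alpha == "toss":
        # Heads (probability p = 1 - q): the casino wins a chip.
        # Tails (probability q): the player wins a chip.
        # Either way the toss costs q euros.
        return {(a, h + 1): (1 - q, -q), (a + 1, h): (q, -q)}
    if alpha == "abandon":
        # Both sides lose all their chips, at no cost.
        return {(0, 0): (1.0, 0.0)}
    # Crush: the player trades h + 1 chips for h + 1 euros; the casino is reset to 0.
    return {(a - h - 1, 0): (1.0, float(h + 1))}
```

For example, `outcomes((3, 1), "crush", 0.4)` leads to state `(1, 0)` with a reward of 2 euros, and requesting `"crush"` in state `(1, 2)` raises an error because the player does not hold more chips than the casino.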

Theorem 2.1. Let $(a,h) \in \mathbb{N}^2$. Then,

\begin{equation*}
E^1(a,h) \leq a.
\end{equation*}

Proof. The initial state is $(a,h)$. Let $\mathfrak{f}$ be an arbitrary strategy and $\tau$ an integrable stopping time. The strategy $\mathfrak{f}$ defines a Markov chain $\mathfrak{X}$ on $\mathbb{N}^2$. For $n \in \mathbb{N}$, let $A_n$ denote the first coordinate of $\mathfrak{X}_n \in \mathbb{N}^2$, which represents the number of chips the player holds at step $n$, and let

\begin{equation*}
G_n = \sum_{t=0}^{n-1} \tilde{R}_t
\end{equation*}

be the cumulative gains in fiat money collected by the player throughout the game.

Note that, regardless of the strategy, the player’s gain is at most equal to the total number of chips received over the course of the game, including the chips the player has at the beginning of the game. This number increases by at most one at each step; thus,

The same argument shows that

\begin{equation*}

\sum_{t=0}^{n-1} \max(0, \tilde{R}_t) \leq n + a.

\end{equation*}

\begin{equation*}

\sum_{t=0}^{n-1} \max(0, \tilde{R}_t) \leq n + a.

\end{equation*} Similarly, the player loses at most ![]() $q$ at each step, so

$q$ at each step, so

\begin{equation*}

\sum_{t=0}^{n-1} \min(0, \tilde{R}_t) \geq -q n.

\end{equation*}

\begin{equation*}

\sum_{t=0}^{n-1} \min(0, \tilde{R}_t) \geq -q n.

\end{equation*}It follows that

\begin{equation*}

\sum_{t=0}^{n-1} |\tilde{R}_t| = \sum_{t=0}^{n-1} \max(0, \tilde{R}_t) - \sum_{t=0}^{n-1} \min(0, \tilde{R}_t) + a \leq (1 + q) n + a.

\end{equation*}

\begin{equation*}

\sum_{t=0}^{n-1} |\tilde{R}_t| = \sum_{t=0}^{n-1} \max(0, \tilde{R}_t) - \sum_{t=0}^{n-1} \min(0, \tilde{R}_t) + a \leq (1 + q) n + a.

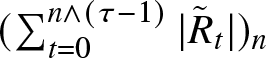

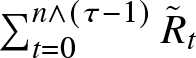

\end{equation*} By assumption, ![]() $\tau$ is integrable. This ensures that the sequence

$\tau$ is integrable. This ensures that the sequence  $(\sum_{t=0}^{n \wedge (\tau - 1)} |\tilde{R}_t|)_n$ is bounded by

$(\sum_{t=0}^{n \wedge (\tau - 1)} |\tilde{R}_t|)_n$ is bounded by ![]() $(1+q)\tau + 1 + q + a$ and so converges in

$(1+q)\tau + 1 + q + a$ and so converges in ![]() $L^1$ as

$L^1$ as ![]() $n \to \infty$ as does

$n \to \infty$ as does  $\sum_{t=0}^{n \wedge (\tau - 1)} \tilde{R}_t$. Therefore,

$\sum_{t=0}^{n \wedge (\tau - 1)} \tilde{R}_t$. Therefore,

\begin{equation}

\mathbb{E}_{s,\mathfrak{f}} \left[ \sum_{t=0}^{\tau -1} \tilde{R}_t \right] = \sum_{t=0}^{\tau -1} \mathbb{E}_{s,\mathfrak{f}} [\tilde{R}_t].\end{equation}

\begin{equation}

\mathbb{E}_{s,\mathfrak{f}} \left[ \sum_{t=0}^{\tau -1} \tilde{R}_t \right] = \sum_{t=0}^{\tau -1} \mathbb{E}_{s,\mathfrak{f}} [\tilde{R}_t].\end{equation} Let ![]() $n \in \mathbb{N}$ and

$n \in \mathbb{N}$ and ![]() $X \in \{\text{Abandon}, \text{Toss}, \text{Crush}\}$. By definition, the event

$X \in \{\text{Abandon}, \text{Toss}, \text{Crush}\}$. By definition, the event ![]() $\{f_n = X\}$ means that action

$\{f_n = X\}$ means that action ![]() $X$ was chosen at step

$X$ was chosen at step ![]() $n$. If Toss is chosen at step

$n$. If Toss is chosen at step ![]() $n$, then necessarily

$n$, then necessarily ![]() $G_{n+1} = G_n - q$, and

$G_{n+1} = G_n - q$, and

First, suppose $\mathfrak{X}_n = (a,h)$ with $a \leq h$. If Abandon is chosen at step $n$, then $A_{n+1} = 0$ and $G_{n+1} = G_n$. Thus,

\begin{align*}
\mathbb{E}_{s,\mathfrak{f}}[A_{n+1} + G_{n+1}] &= \mathbb{E}_{s,\mathfrak{f}}[A_{n+1} + G_{n+1} \mid f_n = \text{Toss}] \mathbb{P}[f_n = \text{Toss}] \\
&\quad + \mathbb{E}_{s,\mathfrak{f}}[A_{n+1} + G_{n+1} \mid f_n = \text{Abandon}] \mathbb{P}[f_n = \text{Abandon}] \\
&= (p A_n + q (A_n + 1) + G_n - q) \mathbb{P}[f_n = \text{Toss}] + G_n \mathbb{P}[f_n = \text{Abandon}] \\
&\leq (A_n + G_n)(\mathbb{P}[f_n = \text{Toss}] + \mathbb{P}[f_n = \text{Abandon}]) \\
&\leq A_n + G_n.
\end{align*}

Similarly, in the case $a > h$, if Crush is chosen at step $n$, then $A_{n+1} = A_n - h - 1$ and $G_{n+1} = G_n + h + 1$, so

\begin{equation*}
A_{n+1} + G_{n+1} = A_n + G_n,
\end{equation*}

and

\begin{align*}
\mathbb{E}_{s,\mathfrak{f}}[A_{n+1} + G_{n+1}] &= \mathbb{E}_{s,\mathfrak{f}}[A_{n+1} + G_{n+1} \mid f_n = \text{Toss}] \mathbb{P}[f_n=\text{Toss}] \\
&\quad + \mathbb{E}_{s,\mathfrak{f}}[A_{n+1}+G_{n+1} \mid f_n = \text{Crush}] \mathbb{P}[f_n=\text{Crush}] \\
&= (p A_n + q (A_n + 1) + G_n - q) \mathbb{P}[f_n=\text{Toss}] + (A_n + G_n) \mathbb{P}[f_n=\text{Crush}] \\
&= (A_n + G_n)(\mathbb{P}[f_n=\text{Toss}] + \mathbb{P}[f_n=\text{Crush}]) = A_n + G_n.
\end{align*}

It follows that, regardless of the chosen strategy, $(A_n + G_n)$ is a supermartingale. The stopping time $\tau \wedge n$ is bounded, thus by Doob’s optional stopping theorem,

\begin{equation*}
\mathbb{E}_{s,\mathfrak{f}}[A_{\tau \wedge n} + G_{\tau \wedge n}] \leq \mathbb{E}_{s,\mathfrak{f}}[A_0 + G_0].
\end{equation*}

Therefore,

\begin{equation*}
\mathbb{E}_{s,\mathfrak{f}}[G_{\tau \wedge n}] \leq a
\end{equation*}

since $A_{\tau \wedge n} \geq 0$, the initial state is $(a,h)$ and $G_0 = 0$. By (1), $\mathbb{E}_{s,\mathfrak{f}}[G_{\tau \wedge n}]$ converges to $\sum_{t=0}^{\tau - 1} \mathbb{E}_{s,\mathfrak{f}}[\tilde{R}_t]$. Therefore,

\begin{equation*}
\sum_{t=0}^{\tau -1} \mathbb{E}_{s,\mathfrak{f}}[\tilde{R}_t] \leq a
\end{equation*}

for any strategy $\mathfrak{f}$ and any integrable stopping time $\tau$. So,

\begin{equation*}
E^1(a,h) \leq a.
\end{equation*}
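The one-step computation behind the supermartingale property can be checked mechanically. The sketch below computes, for each action of $HT^1$, the expected change of $A_n + G_n$ over one step, using exact rational arithmetic. The helper names `one_step` and `drift` are hypothetical and introduced only for this check.

```python
from fractions import Fraction
from typing import List, Tuple


def one_step(a: int, h: int, alpha: str, q: Fraction) -> List[Tuple[Fraction, int, Fraction]]:
    """Triples (probability, change in the player's chips, reward in euros)."""
    if alpha == "toss":
        # Heads: no new chip for the player; Tails: one chip. The toss always costs q.
        return [(1 - q, 0, -q), (q, 1, -q)]
    if alpha == "abandon" and a <= h:
        return [(Fraction(1), -a, Fraction(0))]
    if alpha == "crush" and a > h:
        return [(Fraction(1), -(h + 1), Fraction(h + 1))]
    raise ValueError(f"action {alpha!r} is not available in state {(a, h)}")


def drift(a: int, h: int, alpha: str, q: Fraction) -> Fraction:
    """Expected one-step change of (chips held) + (cumulative gain)."""
    return sum(p * (da + r) for p, da, r in one_step(a, h, alpha, q))
```

Under these rules the drift is 0 for Toss and Crush and equals $-a$ for Abandon, so it is never positive, which is the inequality used in the proof.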

Corollary 2.1. For all $h \geq 0$, the stochastic game $HT^1(0,h)$ is non-arbitrable.

Proof. By Theorem 2.1, $E^1(0,h) \leq 0$. On the other hand, if the player chooses Abandon immediately and leaves the game, their payoff is 0, so $E^1(0,h) \geq 0$. Hence, $E^1(0,h) = 0$ and $HT^1(0,h)$ is non-arbitrable.

Corollary 2.2. For all $n \in \mathbb{N}$, we have $E_n^1(0,0)=0$.

Corollary 2.3. Let $(a,h) \in \mathbb{N}^2$ with $a > h$. Then, $E^1(a,h) = a$.

Proof. Starting from $(a,h) \in \mathbb{N}^2$ with $a > h$, if the player repeatedly chooses the Crush action until they have used up all their chips, then they get $a$. So, $E^1(a,h) \geq a$, and also $E^1(a,h) \leq a$ by Theorem 2.1. Hence, we get the result.

This last result can be interpreted as meaning that if, during the game, the player manages to obtain more chips than the casino, then their best strategy is to use the Crush action and end the game.

Note, however, that for $(a,h) \in \mathbb{N}^2$, the game $HT^1(a,h)$ may be arbitrable when $0 \lt a \lt h$.

Proposition 2.1. The stochastic game $HT^1(1,2)$ is arbitrable for $q \gt 0.429056$.

Proof. By Proposition 1.2 and Corollary 2.2, for ![]() $0 \leq a \leq h$, we have:

$0 \leq a \leq h$, we have:

\begin{align*}

E_n^1(a,h) &= \max \left\{E_{n-1}^1(0,0), \ p E_{n-1}^1(a,h+1) + q E_{n-1}^1(a+1,h) - q \right\} \\

&= \max \left\{0, \ p E_{n-1}^1(a,h+1) + q E_{n-1}^1(a+1,h) - q \right\}

\end{align*}

\begin{align*}

E_n^1(a,h) &= \max \left\{E_{n-1}^1(0,0), \ p E_{n-1}^1(a,h+1) + q E_{n-1}^1(a+1,h) - q \right\} \\

&= \max \left\{0, \ p E_{n-1}^1(a,h+1) + q E_{n-1}^1(a+1,h) - q \right\}

\end{align*} Moreover, by Proposition 1.2, for ![]() $a \gt h$,

$a \gt h$,

\begin{equation*}

E_n^1(a,h) = \max \{h+1 + E_{n-1}^1(a - h-1, 0), \ p E_{n-1}^1(a,h+1) + q E_{n-1}^1(a+1,h) - q \}.

\end{equation*}

\begin{equation*}

E_n^1(a,h) = \max \{h+1 + E_{n-1}^1(a - h-1, 0), \ p E_{n-1}^1(a,h+1) + q E_{n-1}^1(a+1,h) - q \}.

\end{equation*} On the other hand, for ![]() $(a,h) \in \mathbb{N}^2$,

$(a,h) \in \mathbb{N}^2$,  $E_0^1(a,h) = 0$. Previous formulas allow

$E_0^1(a,h) = 0$. Previous formulas allow ![]() $E_n^1(a,h)$ to be calculated by induction on

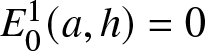

$E_n^1(a,h)$ to be calculated by induction on ![]() $n$. Below is a simple pseudo-code that accurately gives the average maximum gain

$n$. Below is a simple pseudo-code that accurately gives the average maximum gain ![]() $E_n^1(a,h)$. We use the memoization principle for the sake of efficiency [Reference Cormen, Leiserson, Rivest and Stein7].

$E_n^1(a,h)$. We use the memoization principle for the sake of efficiency [Reference Cormen, Leiserson, Rivest and Stein7].
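The recursion above can be sketched in Python as follows. This is a minimal illustration under stated assumptions, not the authors' pseudo-code: the names `make_e1` and `e1` are hypothetical, $p = 1 - q$ is assumed, and the Abandon branch uses the value $0$ given by Corollary 2.2.

```python
from functools import lru_cache


def make_e1(q):
    """Return a memoized evaluator of E_n^1(a, h) for a fixed q (hypothetical helper)."""
    p = 1.0 - q  # assumption: Heads has probability p = 1 - q

    @lru_cache(maxsize=None)
    def e1(n, a, h):
        # Base case: E_0^1(a, h) = 0 for every state.
        if n == 0:
            return 0.0
        # Toss: the player always pays q, then moves to (a, h+1) or (a+1, h).
        toss = p * e1(n - 1, a, h + 1) + q * e1(n - 1, a + 1, h) - q
        if a > h:
            # Crush: collect h + 1 and continue from (a - h - 1, 0).
            stop = (h + 1) + e1(n - 1, a - h - 1, 0)
        else:
            # Abandon: move to (0, 0), whose value is 0 by Corollary 2.2.
            stop = 0.0
        return max(stop, toss)

    return e1


if __name__ == "__main__":
    e1 = make_e1(0.429056)
    print(e1(75, 1, 2))  # value of E_75^1(1, 2) in double precision
```

The value is computed in floating-point arithmetic, so the last digits depend on rounding; near the threshold the quantity of interest is very small, and an exact check would require rational arithmetic.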

We observe that $E^1_n(1, 2) = 4.050134694288943 \times 10^{-8} \gt 0$ for $q=0.429056$ and $n=75$ (recall that $E^1(1,2) \leq 1$ by Theorem 2.1). In other words, if $q \gt 42.91\%$, the game $HT^1(1, 2)$ is arbitrable.

Note, however, that we were unable to find $n\in\mathbb{N}$ such that $E^1_n(1, 2) \gt 0$ for $q\leq 0.429055$.

2.2. Optimal strategy

As noted after Corollary 2.3, the optimal action is Crush whenever ![]() $a \gt h$. Furthermore, when

$a \gt h$. Furthermore, when ![]() $E(a,h) \gt 0$ and

$E(a,h) \gt 0$ and ![]() $a \leq h$, the optimal action must be Toss rather than Abandon, since Abandon leads to the state

$a \leq h$, the optimal action must be Toss rather than Abandon, since Abandon leads to the state ![]() $(0,0)$, and

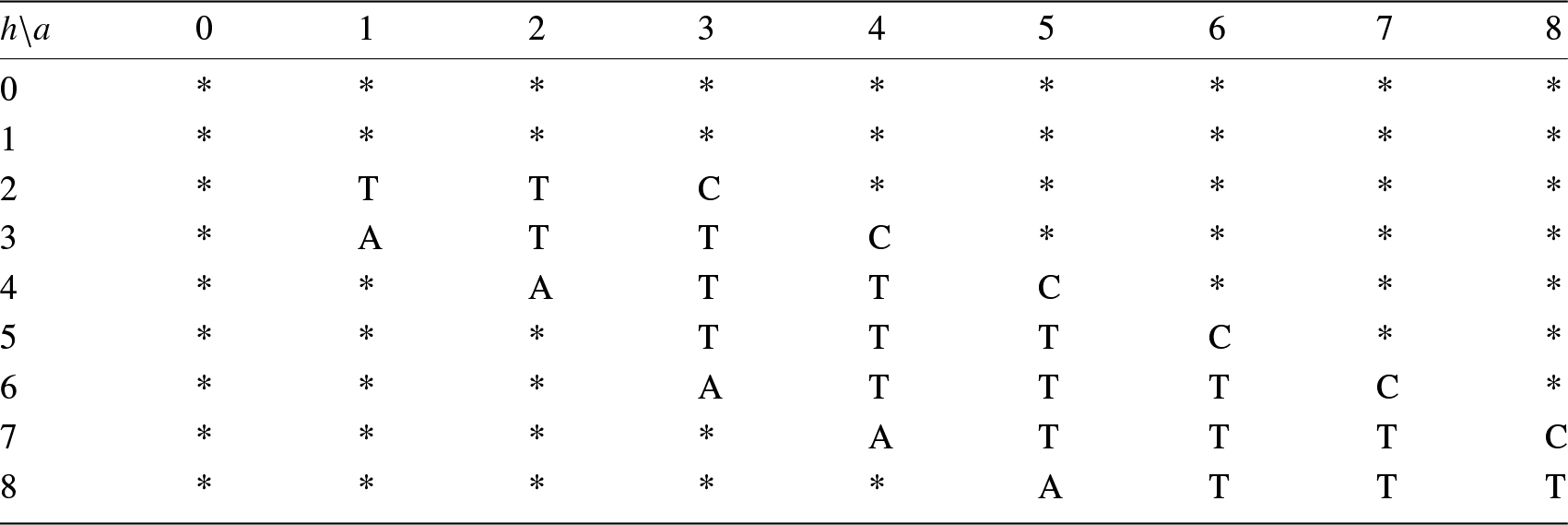

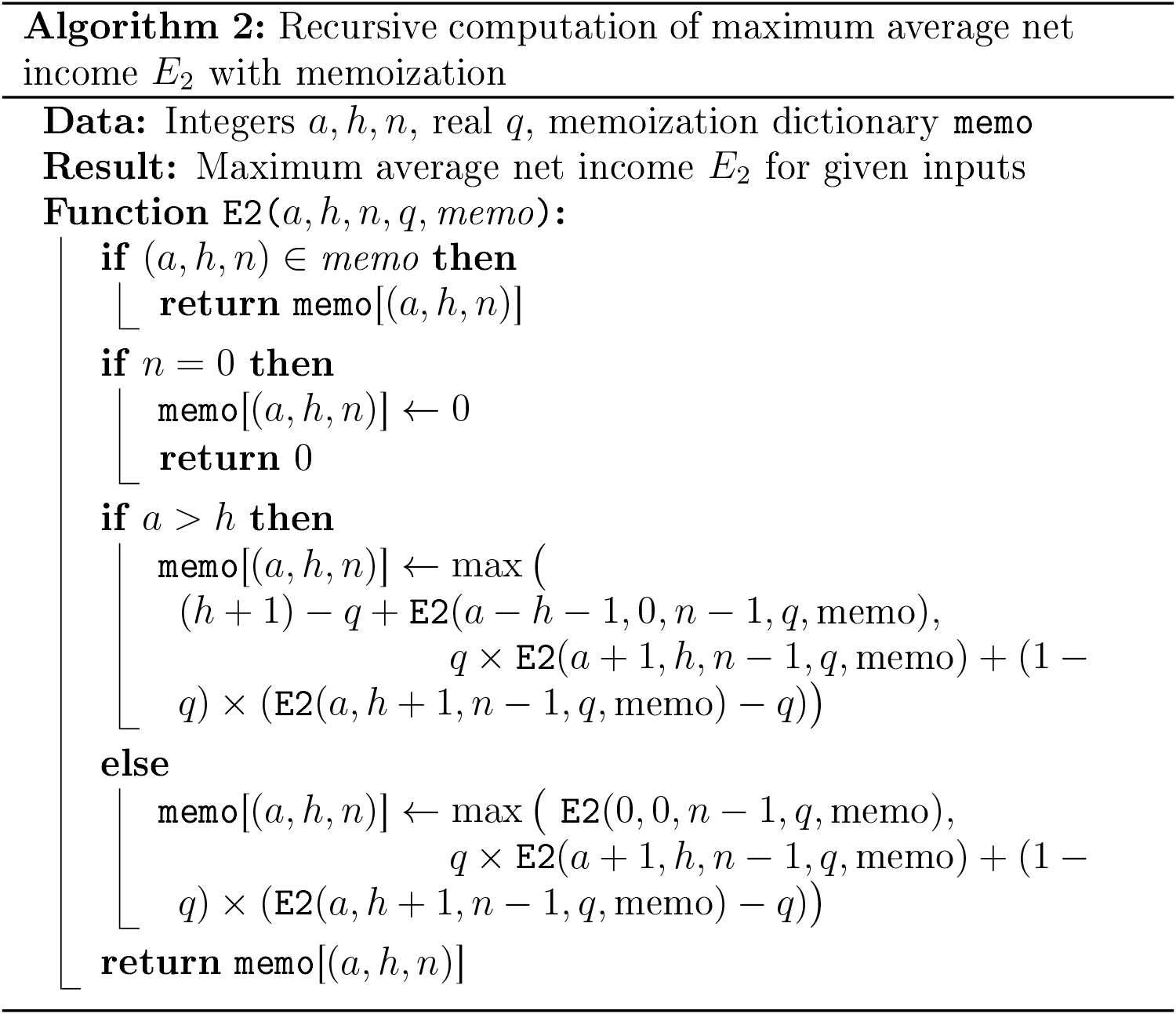

$(0,0)$, and ![]() $E^1(0,0) = 0$ by Corollary 2.2. The table below details the optimal action when the game begins in state

$E^1(0,0) = 0$ by Corollary 2.2. The table below details the optimal action when the game begins in state ![]() $(1,2)$. Each time the player selects the Crush action, the game terminates because the next state is

$(1,2)$. Each time the player selects the Crush action, the game terminates because the next state is ![]() $(0,0)$ and

$(0,0)$ and ![]() $E^1(0,0) = 0$, as stated in Corollary 2.2. The function

$E^1(0,0) = 0$, as stated in Corollary 2.2. The function ![]() $E^1(a,h)$ was computed using the preceding algorithm with

$E^1(a,h)$ was computed using the preceding algorithm with ![]() $n = 500$ and

$n = 500$ and ![]() $q=0.45$. See Table 1.

$q=0.45$. See Table 1.

Optimal strategy for the game ![]() $E^1(1,2)$ when

$E^1(1,2)$ when ![]() $q=0.45$.

$q=0.45$.

The player leaves the game as soon as he chooses the Abandon action, denoted by A, or the Crush action, denoted by C. The Toss action is denoted by T. An asterisk (*) indicates that the state is unreachable.

We observe that when the player is two chips behind the casino but has at least three chips, continuing to play is advantageous (with ![]() $q=0.45$ as before).

$q=0.45$ as before).

2.3. Modified coin toss game with chips. Second version

The game is similar to the previous one, except that the player does not pay $q$ every time they use the Toss action. They pay only when the result of this action is unfavorable to them (when the result is Heads and the casino wins a chip). As compensation, the Crush action yields slightly less, because each time the player uses it, they must pay $q$. We will see that this is not sufficient and that the game is biased if $q$ is large enough. To be concrete, the stochastic game is defined as follows. We have $S = \mathbb{N} \times \mathbb{N}$ and for all $s \in S$, $|A(s)| = 2$. If $s = (a,h)$ with $a \leq h$, $A(s) = \{\text{abandon}, \text{toss}\}$ and if $a \gt h$, $A(s) = \{\text{crush}, \text{toss}\}$.

The functions $P$ and $R$ are defined by:

• For all $s' \in S$ and $s = (a,h)$,

\begin{equation*}
P(s' \mid s, \text{toss}) = \begin{cases}
p & \text{if } s' = (a,h+1), \\
q & \text{if } s' = (a+1,h), \\
0 & \text{otherwise},
\end{cases} \quad
R(s' \mid s, \text{toss}) = \begin{cases}
-q & \text{if } s' = (a,h+1), \\
0 & \text{otherwise}.
\end{cases}
\end{equation*}

• For all $s' \in S$ and $s = (a,h)$ with $a \leq h$,

\begin{equation*}
P(s' \mid s, \text{abandon}) = \begin{cases}
1 & \text{if } s' = (0,0), \\
0 & \text{otherwise},
\end{cases} \quad
R(s' \mid s, \text{abandon}) = 0.
\end{equation*}

• For all $s' \in S$ and $s = (a,h)$ with $a \gt h$,

\begin{equation*}
P(s' \mid s, \text{crush}) = \begin{cases}
1 & \text{if } s' = (a - h - 1, 0), \\
0 & \text{otherwise},
\end{cases} \quad
R(s' \mid s, \text{crush}) = h + 1-q.
\end{equation*}

We denote by $HT^2(a,h)$ the game described above, which begins with the initial state $(a,h)$. In other words, during the course of the game, the player regularly accumulates chips and can, under certain constraints, convert them into cash (euros, let’s say). At any given moment, the player has at most three possible actions.

Toss: A croupier tosses a coin rigged in favor of the casino. The probability of getting Tails is $q$.

• If the result is Tails, the player wins a chip and pays nothing.

• If the result is Heads, the casino wins a chip and the player pays $q$ to the dealer.

Crush: This action is only possible if the player (resp. the casino) has $a$ (resp. $h$) chips with $a \gt h$. In this case, the casino loses all its chips, the player loses $h+1$ chips but wins $h+1$ euros and also gives $q$ euros to the dealer. His net result is therefore $h+1-q$ euros.

Abandon: The casino and the player lose all their chips. This action costs the player nothing.

Theorem 2.2. The stochastic game $HT^2(0,0)$ is arbitrable for $q \gt 0.329393$.

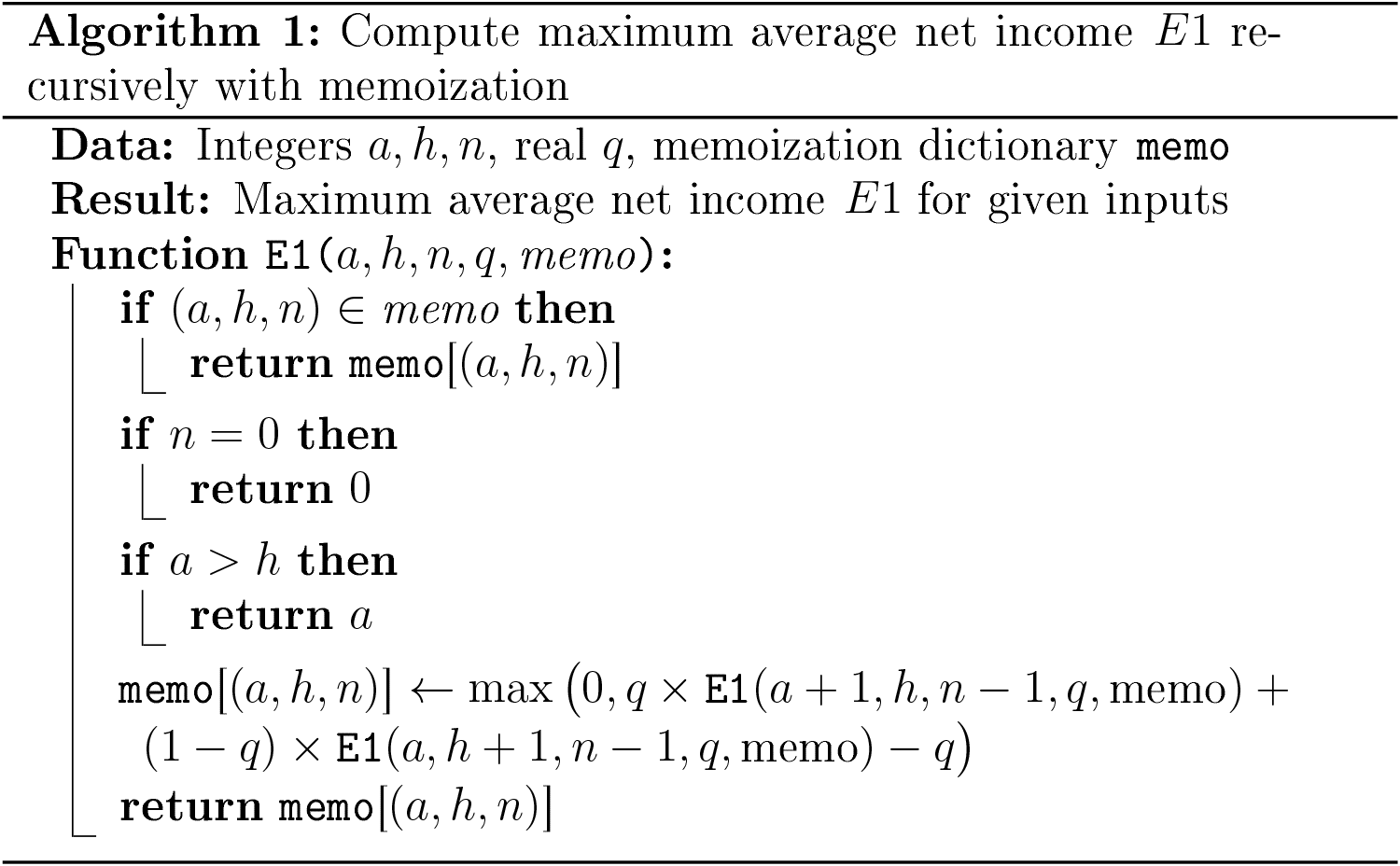

Proof. We use Proposition 1.2 to calculate $E_n^2(a,h)$ by induction on $n$. For any $n \in \mathbb{N}$ and $a \gt h$, if the player decides to use the Crush action, then the state of the game changes from $(a,h)$ to $(a - h - 1, 0)$ and the game continues. Thus, for $a \gt h$, we have

\begin{equation*}
E_n^2(a,h) = \max \big\{h + 1 - q + E_{n-1}^2(a - h - 1, 0), q E_{n-1}^2(a + 1, h) + p \big( E_{n-1}^2(a, h + 1) - q \big) \big\}.
\end{equation*}

In the case where $a \leq h$, the player has the choice between Toss and Abandon. Therefore,

\begin{equation*}
E_n^2(a,h) = \max \big\{E_{n-1}^2(0,0), q E_{n-1}^2(a + 1, h) + p \big( E_{n-1}^2(a, h + 1) - q \big) \big\}.
\end{equation*}

Below is a very simple pseudo-code that computes the maximal expected gain $E_n^2(a,h)$ through memoization.
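As an illustration, the two recurrences above can be sketched in Python. This is a sketch under assumptions rather than the authors' pseudo-code: the names `make_e2` and `e2` are hypothetical, $p = 1 - q$ is assumed, and the base case $E_0^2(a,h) = 0$ is assumed by analogy with the first game.

```python
from functools import lru_cache


def make_e2(q):
    """Return a memoized evaluator of E_n^2(a, h) for a fixed q (hypothetical helper)."""
    p = 1.0 - q  # assumption: Heads has probability p = 1 - q

    @lru_cache(maxsize=None)
    def e2(n, a, h):
        # Assumed base case: no steps left, no further gain.
        if n == 0:
            return 0.0
        # Toss: the player pays q only when Heads occurs (probability p).
        toss = q * e2(n - 1, a + 1, h) + p * (e2(n - 1, a, h + 1) - q)
        if a > h:
            # Crush: net reward h + 1 - q, then continue from (a - h - 1, 0).
            stop = (h + 1 - q) + e2(n - 1, a - h - 1, 0)
        else:
            # Abandon: free of charge, the game restarts from (0, 0).
            stop = e2(n - 1, 0, 0)
        return max(stop, toss)

    return e2


if __name__ == "__main__":
    e2 = make_e2(0.329393)
    print(e2(146, 0, 0))  # value of E_146^2(0, 0) in double precision
```

Unlike in the first game, the value of $(0,0)$ is not known in advance here, so the Abandon branch calls `e2(n - 1, 0, 0)` instead of returning $0$.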

We note that for $q = 0.329393$ and $n=146$, we have $E_n^2(0,0) \gt 0$, so the game $HT^2(0,0)$ is biased.

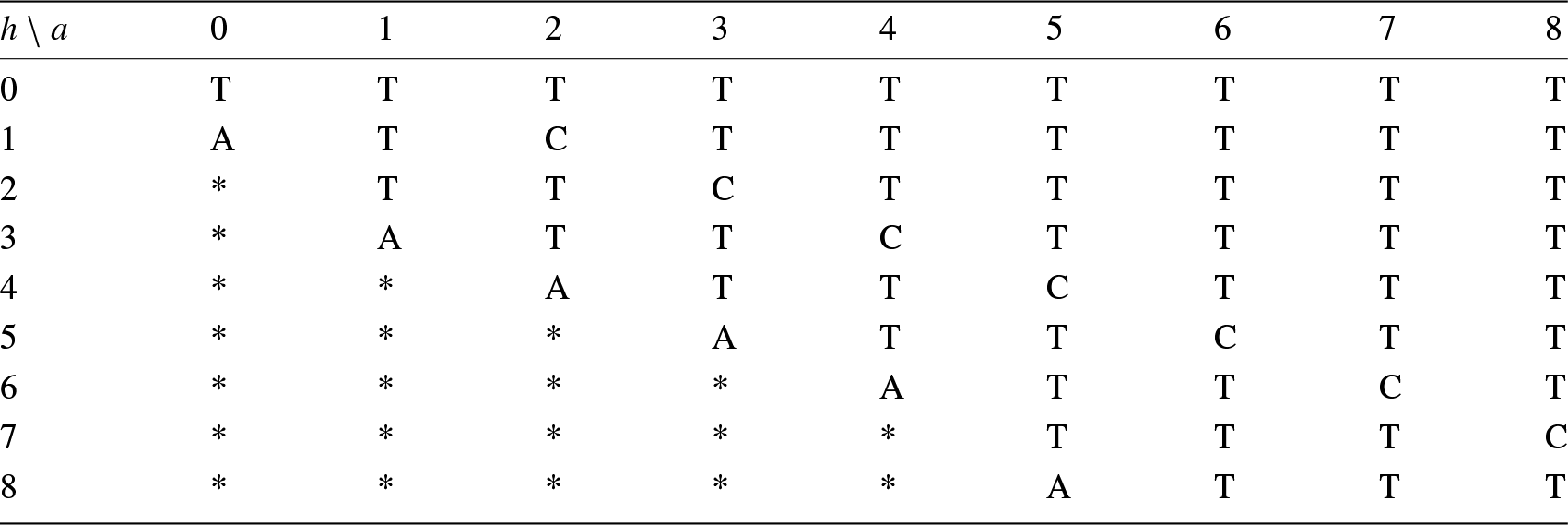

2.4. The optimal strategy

By modifying the previous code and imposing certain conditions for different values of $a$ and $h$, we can obtain the optimal strategy. It is given in Table 2.
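One possible way to extract an action from the recursion for $E_n^2$ is to record which branch of the maximum is attained. The sketch below is an assumption about how such a modification could look, not the authors' code: `make_policy2`, `value` and `action` are hypothetical names, $p = 1 - q$ and $E_0^2 = 0$ are assumed, and ties are broken in favor of stopping.

```python
from functools import lru_cache


def make_policy2(q):
    """Return (value, action) helpers for HT^2 at a fixed q (hypothetical names)."""
    p = 1.0 - q  # assumption: Heads has probability p = 1 - q

    @lru_cache(maxsize=None)
    def branches(n, a, h):
        """Label and value of the stopping action, and value of Toss, for n >= 1."""
        toss = q * value(n - 1, a + 1, h) + p * (value(n - 1, a, h + 1) - q)
        if a > h:
            return "C", (h + 1 - q) + value(n - 1, a - h - 1, 0), toss
        return "A", value(n - 1, 0, 0), toss

    def value(n, a, h):
        # E_n^2(a, h), with the assumed base case E_0^2 = 0.
        if n == 0:
            return 0.0
        _, stop, toss = branches(n, a, h)
        return max(stop, toss)

    def action(n, a, h):
        # Action attaining the maximum with n >= 1 steps left.
        label, stop, toss = branches(n, a, h)
        # Ties are broken in favor of stopping (an arbitrary convention).
        return "T" if toss > stop else label

    return value, action


if __name__ == "__main__":
    _, action = make_policy2(0.35)
    # a runs horizontally and h vertically, both from 0 to 8.
    for h in range(9):
        print(" ".join(action(60, a, h) for a in range(9)))
```

The printed grid depends on the horizon `n` and on the tie-breaking rule, and it does not mark unreachable states, so it should be read as an approximation of the table rather than a reproduction of it.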

Table 2. Optimal strategy for $q \gt 0.329393$. The parameter $a$ (resp. $h$) is represented horizontally (resp. vertically) from $0$ to $8$.

For each value of $a$ and $h$ an action is selected from $\{A,T,C\}$ ($A=$ Abandon, $T=$ Toss, $C=$ Crush). The * symbol means that the action is irrelevant.

In comparison, the honest strategy is just  (all other states $(a,h)$ for $a\geq 2$ or $h\geq 2$ are irrelevant)

We observe that the optimal strategy is both conservative and aggressive. It is conservative because in a situation which is favorable to the player, he does not take the risk of being caught up and losing all his advantage, except in the case where $a=1$ and $h=0$. In all other cases where $a=h+1$, he uses the Crush action. The optimal strategy is also aggressive because, in an unfavorable situation where $a \lt h$, the more chips the player has, the less likely he is to give up. For example, in a situation where $a\geq 5$, the player does not give up if $h\leq a+2$, and similarly if $a\geq 10$, he continues to play (Toss action) if $h\leq a+3$.

2.5. A third coin toss game

During this game, the player regularly accumulates chips that, under certain constraints, they can convert into cold hard cash (let’s say euros). At any given moment, the player has at most three possible actions:

Toss: A croupier tosses a coin rigged in favor of the casino. The probability of getting Tails is $q$.

• If the result is Tails, the player wins a chip and pays nothing.

• If the result is Heads, the casino wins a chip and the player pays $q$ to the dealer.

Crush: This action is only possible if the player (resp. the casino) has $a$ (resp. $h$) chips with $a \gt h$. In this case, the casino loses $h$ chips, the player loses $h+1$ chips and wins $h+1$ euros, but he must also give the dealer $q\,(h+1)$ euros. Hence, his net result is $p\,(h+1)$ euros.

Abandon: The casino and the player lose all their chips. This action costs the player nothing.

The only difference between $HT^2$ and $HT^3$ is the consequence of the Crush action. In $HT^3$, the player earns no more than in $HT^2$, and strictly less when $h \geq 1$, since $p\,(h+1) = h+1-q\,(h+1) \leq h+1-q$. To be concrete, $HT^3$ is defined as follows. Let $S = \mathbb{N} \times \mathbb{N}$ and for all $s \in S$, $|A(s)| = 2$. If $s = (a,h)$ with $a \leq h$, then $A(s) = \{\text{abandon}, \text{toss}\}$, and if $a \gt h$, then $A(s) = \{\text{crush}, \text{toss}\}$. The functions $P$ and $R$ are defined by:

• For all $s' \in S$ and $s = (a,h)$,

\begin{equation*}
P(s' \mid s, \text{toss}) = \begin{cases}
p & \text{if } s' = (a, h+1) \\
q & \text{if } s' = (a+1, h) \\
0 & \text{otherwise}
\end{cases}, \quad
R(s' \mid s, \text{toss}) = \begin{cases}
-q & \text{if } s' = (a, h+1) \\
0 & \text{otherwise}
\end{cases}
\end{equation*}

• For all $s' \in S$ and $s = (a,h)$ with $a \leq h$,

\begin{equation*}
P(s' \mid s, \text{abandon}) = \begin{cases}
1 & \text{if } s' = (0,0) \\
0 & \text{otherwise}
\end{cases}, \quad
R(s' \mid s, \text{abandon}) = 0.
\end{equation*}

• For all $s' \in S$ and $s = (a,h)$ with $a \gt h$,

\begin{equation*}
P(s' \mid s, \text{crush}) = \begin{cases}
1 & \text{if } s' = (a - h - 1, 0) \\
0 & \text{otherwise}
\end{cases}, \quad
R(s' \mid s, \text{crush}) = p (h+1).
\end{equation*}

Definition 2.2. We denote by $HT^3(a,h)$ the game described above, where the player starts from a position with $a$ chips against $h$ chips for the casino, that is, $(a,h)\in\mathbb{N}^2$.

We prove that $HT^3(0,0)$ is not arbitrable.

Theorem 2.3. Let $(a,h)\in\mathbb{N}^2$. Then, $E^3(a,h)\leq p\, a$.

Proof. The proof is analogous to that of Theorem 2.1. In particular, Equation (1) holds. This time, the sequence $(p A_n + G_n)_n$ is always a supermartingale regardless of the player’s strategy. Indeed, denoting by $\{f_n = X\}$ the event that action $X$ was chosen at step $n$, for an integer $n$, we have

\begin{equation*}
\mathbb{E}_{s,\mathfrak{f}}[A_{n+1} \mid f_n = \text{Toss}] = A_n + q, \qquad \mathbb{E}_{s,\mathfrak{f}}[G_{n+1} \mid f_n = \text{Toss}] = G_n - p q.
\end{equation*}

Hence,

\begin{equation*}
\mathbb{E}_{s,\mathfrak{f}}[p A_{n+1} + G_{n+1} \mid f_n = \text{Toss}] = p (A_n + q) + G_n - p q = p A_n + G_n.
\end{equation*}

Suppose first that $\mathfrak{X}_n = (a,h)$ with $a \leq h$. If Abandon was chosen at step $n$, then $A_{n+1} = 0$ and $G_{n+1} = G_n$. Thus,

\begin{align*}
\mathbb{E}_{s,\mathfrak{f}}[p A_{n+1} + G_{n+1}]
&= \mathbb{E}_{s,\mathfrak{f}}[p A_{n+1} + G_{n+1} \mid f_n = \text{Toss}] \, \mathbb{P}[f_n = \text{Toss}] \\
&\quad + \mathbb{E}_{s,\mathfrak{f}}[p A_{n+1} + G_{n+1} \mid f_n = \text{Abandon}] \, \mathbb{P}[f_n = \text{Abandon}] \\
&= (p A_n + G_n) \, \mathbb{P}[f_n = \text{Toss}] + G_n \, \mathbb{P}[f_n = \text{Abandon}] \\
&\leq (p A_n + G_n) \left(\mathbb{P}[f_n = \text{Toss}] + \mathbb{P}[f_n = \text{Abandon}]\right) \\
&= p A_n + G_n.
\end{align*}

Similarly, in the case $a \gt h$, the available actions are Toss and Crush. If Crush was chosen at step $n$, then $A_{n+1} = A_n - h - 1$ and $G_{n+1} = G_n + p (h+1)$. Hence,

\begin{equation*}
\mathbb{E}_{s,\mathfrak{f}}[p A_{n+1} + G_{n+1} \mid f_n = \text{Crush}] = p (A_n - h - 1) + G_n + p (h+1) = p A_n + G_n
\end{equation*}

and

\begin{equation*}
\begin{aligned}
\mathbb{E}_{s,\mathfrak{f}}[p A_{n+1} + G_{n+1}]
&= \mathbb{E}_{s,\mathfrak{f}}[p A_{n+1} + G_{n+1} \mid f_n = \text{Toss}] \mathbb{P}[f_n = \text{Toss}] \\
&\quad + \mathbb{E}_{s,\mathfrak{f}}[p A_{n+1} + G_{n+1} \mid f_n = \text{Crush}] \mathbb{P}[f_n = \text{Crush}] \\
&= (p A_n + G_n) \bigl(\mathbb{P}[f_n = \text{Toss}] + \mathbb{P}[f_n = \text{Crush}]\bigr) \\
&= p A_n + G_n.
\end{aligned}
\end{equation*}

It follows that, whatever the strategy chosen, $(p A_n + G_n)$ is a supermartingale. The stopping time $\tau \wedge n$ is finite, so by Doob’s theorem,

\begin{equation*}
\mathbb{E}_{s,\mathfrak{f}}[p A_{\tau \wedge n} + G_{\tau \wedge n}] \leq \mathbb{E}_{s,\mathfrak{f}}[p A_0 + G_0].
\end{equation*}

Hence, because $A_{\tau \wedge n} \geq 0$,

\begin{equation*}
\mathbb{E}_{s,\mathfrak{f}}[G_{\tau \wedge n}] \leq p\, a
\end{equation*}

since the initial state is $(a,h)$ by hypothesis and $G_0=0$. Moreover, by Equation (1), $\mathbb{E}_{s,\mathfrak{f}}[G_{\tau \wedge n}]$ converges to $\sum_{t=0}^{\tau - 1} \mathbb{E}_{s,\mathfrak{f}}[\tilde{R}_t]$. Therefore,

\begin{equation*}
\sum_{t=0}^{\tau - 1} \mathbb{E}_{s,\mathfrak{f}}[\tilde{R}_t] \leq p\, a
\end{equation*}

which completes the proof.

By taking $(a,h)=(0,0)$, we deduce the following corollary.

Corollary 2.4. The game $HT^3(0,0)$ is not arbitrable.
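The bound of Theorem 2.3 can be checked numerically on finite horizons. The sketch below assumes that $E_n^3$ satisfies the same recursion as $E_n^2$ with the Crush reward replaced by $p\,(h+1)$, that $p = 1 - q$, and that $E_0^3 = 0$; the names `make_e3` and `e3` are hypothetical.

```python
from functools import lru_cache


def make_e3(q):
    """Return a memoized evaluator of E_n^3(a, h) for a fixed q (hypothetical helper)."""
    p = 1.0 - q  # assumption: Heads has probability p = 1 - q

    @lru_cache(maxsize=None)
    def e3(n, a, h):
        # Assumed base case: no steps left, no further gain.
        if n == 0:
            return 0.0
        # Toss: same cost structure as in HT^2 (pay q only on Heads).
        toss = q * e3(n - 1, a + 1, h) + p * (e3(n - 1, a, h + 1) - q)
        if a > h:
            # Crush: reward p (h + 1), then continue from (a - h - 1, 0).
            stop = p * (h + 1) + e3(n - 1, a - h - 1, 0)
        else:
            # Abandon: free of charge, the game restarts from (0, 0).
            stop = e3(n - 1, 0, 0)
        return max(stop, toss)

    return e3


if __name__ == "__main__":
    q = 0.45
    e3 = make_e3(q)
    for a, h in [(0, 0), (1, 0), (3, 1), (2, 4)]:
        # Each value should not exceed p * a, up to rounding error.
        print((a, h), e3(40, a, h), "bound:", (1 - q) * a)
```

Up to floating-point rounding, the computed value at $(0,0)$ should stay at zero for every horizon, in line with Corollary 2.4.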

3. Application to Bitcoin

We now turn to applications to the Bitcoin protocol [1, 21]. We present three mining problems on the Bitcoin network, all of which we solve using the stochastic games studied previously. The first variant models temporary Bitcoin forks, determining the threshold at which an honest but temporarily “Byzantine” miner continues mining on their fork to recover orphaned blocks. The second, biased variant explains vulnerabilities in the difficulty adjustment formula, providing a simple derivation, without Markov decision solvers, of when a miner lacking connectivity benefits from a deviant strategy. The unbiased third variant shows that this difficulty adjustment issue can, in theory, be fully corrected. Our results align with the literature and clarify it quantitatively and qualitatively. In each case, the random event corresponding to the creation of a block is modeled as a coin toss: if Heads occurs, the honest miners find a block; if Tails occurs, the attacker does.

It is the intrinsic nature of the game—namely, the fact that it can be biased in favor of the player according to the definition given in the previous section—that allows us to understand why the honest strategy is not always optimal.

In the first case (Sections 2.1 and 3.1), it is in the miner-player’s best interest to maintain their fork because if they succeed, they stand to gain significantly; the risk is therefore justified. Therefore, if the miner-player starts with a slight advantage ($a \gt 0$), the game $HT^1(a,h)$ can be arbitrable even when $h \gt a$. See Proposition 2.1.

In the second case (Sections 2.3 and 3.2), the effect of the difficulty adjustment is reflected in the cost incurred by the miner-player when new blocks are added. Only the height of the official blockchain is considered in the difficulty adjustment, meaning only unfavorable outcomes for the miner (i.e., when Heads occurs) impose a cost. When the miner discovers a block (i.e., when Tails occurs), the official blockchain height does not increase. From this, we can see that the game is biased in favor of the miner-player even when $a=0$ (no blocks at the start). See Theorem 2.2. The fact that the game $HT^2(0,0)$ is arbitrable highlights a flaw in Bitcoin’s difficulty adjustment mechanism.

Finally, in the third case (Sections 2.5 and 3.3), when the miner overwrites the official blockchain, they orphan all the honest miners’ blocks that they replace. As a result, their net gain is reduced, since these orphaned blocks are publicly known and the miner now incurs a cost for each orphaned block created. The fact that the $HT^3(0,0)$ game is not arbitrable reflects the correction made to the difficulty adjustment formula.

3.1. Temporarily Byzantine by “force of circumstance”

When analyzing the security of certain systems, it is common practice in computer science to consider two very distinct categories of actors: honest participants, who faithfully follow the rules of the protocol, and attackers. Following the terminology introduced in [19] in the study of distributed systems, the latter are called “Byzantines.” In general, an actor does not change categories. Nevertheless, depending on the circumstances, an actor may occasionally be led to do so, like a person who is fundamentally honest but finds a large sum of money on the street and decides to keep it, without making any effort to find the rightful owner.

We consider a simple situation where an honest miner on the Bitcoin network can be led into not respecting the rules of the protocol: the creation of a temporary fork. This is a relatively rare occurrence, but not an extremely rare one. According to statistical analyses carried out between 18/03/2014 and 14/06/2017, the rate of orphan block creation was 0.31% for this period, and it is likely that this rate is even lower today thanks to the new versions of Bitcoin Core [4, 8]. We consider the case where two “honest” miners, each mining on the official blockchain, find a block at almost the same time. “Honest” means here, as elsewhere in the article, that the miner always mines on the last block of the official blockchain and always immediately makes his discoveries public. In general, the first block discovered takes precedence and the second is considered “orphaned,” although this terminology is imprecise. The Bitcoin wiki prefers to speak of a “stale block” [5]. The miner who mined the second block is then drawn into a deviant posture. It is clearly in his interest to mine on top of his orphan block rather than the last official block, because if he manages to mine a new block before the rest of the network, he will earn the reward contained in two blocks rather than just one.

But then imagine that the other miners discover a block before he does. He must now not only catch up with the official blockchain, but also mine an additional block to gain the upper hand. Should he continue mining on his fork, or return to mining on the last block of the official blockchain, as stipulated by the Bitcoin protocol? This is an unprecedented situation for the miner, who becomes “Byzantine” by “force of circumstance.” The situation is indeed unprecedented, since it is perhaps the first time that it has been imposed on the miner, and it is unlikely that he will find himself in the same situation twice in a row in the course of his activity. Furthermore, being fundamentally “honest,” the miner, if he manages to catch up with and surpass the official blockchain, will claim his reward immediately. In particular, he will not engage in a block-withholding attack.

The miner may thus become temporarily Byzantine. His attack starts when he is one block behind the official blockchain and ends as soon as he gives up or manages to catch up and exceed the official blockchain by one block. In both cases, he resumes his position as an honest miner. The natural question is: Is it in his interest to continue mining on his fork, or should he abandon it and return to mining on the official blockchain? What is the threshold in terms of relative hash power at which an a priori “honest” miner has an interest in stubbornly mining on his fork when he is one block behind the official blockchain? This question can be resolved using the classic coin toss game seen in Section 2.1.

• The Toss action corresponds to the fact that the miner persists in mining on his fork despite a delay on the official blockchain.

• The Crush action corresponds to the fact that the miner has a secret fork enabling him to gain an advantage over the official blockchain. He then decides to make it public and pocket all the rewards it contains; he then naturally resumes his position as an honest miner.

• The Abandon action means that the miner returns to mining on the last block of the official blockchain, like any honest miner.

Remark 3.1. In fact, given that the miner overwrites the official blockchain whenever they have the opportunity, the associated game is actually only a sub-game of the first game described in Section 2.1, in the sense of Definition 3.1 below. For $a \gt h$, we have $A(s) = \{\text{Crush}\} \subset \{\text{Crush}, \text{Toss}\}$. However, it was also shown in that section that the optimal strategy for this game is to stop playing as soon as the Abandon or Crush action is used once.

Definition 3.1 (Sub-game)

We say that a non-competitive stochastic game $(S, A, P, R)$ is a sub-game of another non-competitive stochastic game $(S', A', P', R')$ if the following conditions are satisfied: $S\subset S'$; for any $s\in S$, $A(s)\subset A'(s)$; and for any $(s,s')\in S^2$ and any action $\alpha\in A(s)$, we have $P(s' \mid s, \alpha)=P'(s' \mid s, \alpha)$ and $R(s' \mid s, \alpha)=R'(s' \mid s, \alpha)$.
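For finite games, the three conditions of Definition 3.1 can be checked mechanically. The following is only an illustrative sketch: the `Game` container, the dictionary encoding of $P$ and $R$ (missing entries are read as zero), and the two-state toy example are assumptions made for this illustration and do not come from the games of Section 2.1.

```python
from dataclasses import dataclass
import math


@dataclass
class Game:
    """Hypothetical finite encoding of a non-competitive stochastic game (S, A, P, R)."""
    states: set
    actions: dict  # state -> set of admissible actions A(s)
    P: dict        # (s, alpha, s_next) -> transition probability (missing = 0)
    R: dict        # (s, alpha, s_next) -> reward (missing = 0)


def is_subgame(g: Game, big: Game, tol: float = 1e-12) -> bool:
    """Return True if g satisfies the three conditions of Definition 3.1 w.r.t. big."""
    # Condition 1: S is a subset of S'.
    if not g.states <= big.states:
        return False
    for s in g.states:
        acts = g.actions.get(s, set())
        # Condition 2: A(s) is a subset of A'(s).
        if not acts <= big.actions.get(s, set()):
            return False
        # Condition 3: P and R agree on S x S for every action of the smaller game.
        for alpha in acts:
            for s_next in g.states:
                key = (s, alpha, s_next)
                if not math.isclose(g.P.get(key, 0.0), big.P.get(key, 0.0), abs_tol=tol):
                    return False
                if not math.isclose(g.R.get(key, 0.0), big.R.get(key, 0.0), abs_tol=tol):
                    return False
    return True


# Toy example (assumed): in state "ahead" the larger game allows Crush or Toss,
# while the smaller game only allows Crush, mirroring A(s) = {Crush} in Remark 3.1.
big = Game(
    states={"ahead", "end"},
    actions={"ahead": {"Crush", "Toss"}, "end": set()},
    P={("ahead", "Crush", "end"): 1.0, ("ahead", "Toss", "ahead"): 1.0},
    R={("ahead", "Crush", "end"): 2.0},
)
small = Game(
    states={"ahead", "end"},
    actions={"ahead": {"Crush"}, "end": set()},
    P={("ahead", "Crush", "end"): 1.0},
    R={("ahead", "Crush", "end"): 2.0},
)

if __name__ == "__main__":
    print(is_subgame(small, big))  # restricting the action set gives a sub-game
    print(is_subgame(big, small))  # the converse fails: Toss is not available in `small`
```

Restricting the action sets while leaving transitions and rewards untouched, as in the toy example, is exactly the situation of Remark 3.1.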

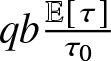

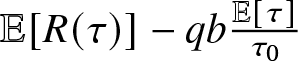

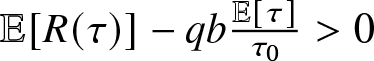

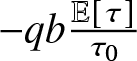

In the temporary fork situation we are considering, a mining strategy is just the stopping time $\tau$ that specifies the first instant when the miner returns to mine on the official blockchain. We denote by $R(\tau)$ the income earned by the miner following this strategy. We need to compare $R(\tau)$ with the income the miner would have earned over the duration $\tau$ if he had mined honestly all along. Given that the miner’s relative hashing power is $q$, the latter quantity is worth on average $q b\frac{\mathbb{E}[\tau]}{\tau_0}$ with ${\tau_0}=10$ minutes and $b=3.125$ BTC (current value of a coinbase) plus the average value of transaction fees present in a block. So the key quantity for choosing the $\tau$ strategy over the honest one is $\mathbb{E}[R(\tau)]-q b \frac{\mathbb{E}[\tau]}{\tau_0}$. The $\tau$ strategy is preferable to the honest strategy if $\mathbb{E}[R(\tau)]-q b \frac{\mathbb{E}[\tau]}{\tau_0} \gt 0$. The second term $-q b \frac{\mathbb{E}[\tau]}{\tau_0}$ is then interpreted as a cost. In this expression, everything happens as if the miner were paying $q b$ every time a block is discovered. This is why the player-miner pays the croupier a fixed cost of $q$ whatever the result of the coin toss (the unit of wealth is $b$). The parameter $q$ is the probability that the coin will land on Tails, which corresponds to the miner finding a block before the honest miners.
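The passage from a time-based cost to a per-block cost can be made explicit. The following is a sketch under two assumptions that are not stated in the text above: block inter-arrival times are i.i.d. with mean $\tau_0$, and $N$ (a symbol introduced here for illustration) denotes the number of blocks discovered by the whole network up to $\tau$, with $\mathbb{E}[N] \lt \infty$. Wald's identity then gives:

```latex
\mathbb{E}[\tau] = \tau_0\,\mathbb{E}[N]
\quad\Longrightarrow\quad
\mathbb{E}[R(\tau)] - q b\,\frac{\mathbb{E}[\tau]}{\tau_0}
  = \mathbb{E}[R(\tau)] - q b\,\mathbb{E}[N].
```

In units of $b$, the miner is thus charged $q$ for each of the $N$ blocks discovered, that is, for each coin toss.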

As observed in Section 2.1, for $q=0.429056$, we have $E^1_{75} (1, 2) \gt 0$. However, we are unable to find $n$ such that $E^1_{n} (1, 2) \gt 0$ for $q\leq 0.429055$. So, we can state the following.

Proposition 3.1. In the case of a temporary fork, the minimum threshold beyond which an a priori honest miner has an interest in persisting in mining on his fork, even though he is one block behind the official blockchain, is about $42.91$%.

The result is in line with the $36.1$% and $45.5$% bounds obtained in [Reference Kiayias, Koutsoupias, Kyropoulou and Tselekounis17] with calculus in their section “The Immediate-Release Game.” Moreover, in a presentation of this article given at the University of Crete in $2019$, the speaker (who is also one of the authors of the article) is more precise and states that the threshold lies between $42$% and $43$% (the lower bound $42$% is also in the paper) [Reference Kiayias, Koutsoupias, Kyropoulou and Tselekounis17, Reference Koutsoupias18]. An a priori honest miner therefore has a temporary interest in holding on to his fork as soon as he has more than $42.91\%$ of relative hash power. Note, however, that if the honest miners find a second block again (so that $a=1$ and $h=3$) then the miner has an interest in giving up and returning to mine on the last block of the official blockchain. Indeed, we are unable to find a value of $n\in\mathbb{N}$ and $q \lt \frac{1}{2}$ such that $E^1_{n}(1,3) \gt 0$.
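The kind of finite-horizon computation behind the quantities $E^1_n(a,h)$ can be sketched by backward induction. The rules encoded below are assumptions reconstructed from the description in this section, not a transcription of Section 2.1: each Toss costs $q$ (in units of $b$); the coin lands on Tails with probability $q$, taking $(a,h)$ to $(a+1,h)$, and otherwise to $(a,h+1)$; Crush is the only action when $a \gt h$ and is assumed to pay $a$; Abandon pays $0$ and is also forced once the horizon is exhausted. The function name `fork_value` is hypothetical.

```python
from functools import lru_cache


def fork_value(a: int, h: int, n: int, q: float) -> float:
    """Best expected net gain from state (a, h) with at most n tosses left.

    Assumed rules (see lead-in): Toss costs q, Crush pays a when a > h,
    Abandon pays 0. Gains are expressed in units of the block reward b.
    """

    @lru_cache(maxsize=None)
    def value(a: int, h: int, n: int) -> float:
        if a > h:
            return float(a)  # Crush: publish the fork and collect its a blocks
        if n == 0:
            return 0.0       # horizon exhausted: the miner abandons his fork
        toss = (
            -q                               # fixed cost paid at every toss
            + q * value(a + 1, h, n - 1)     # Tails: the miner finds the block
            + (1 - q) * value(a, h + 1, n - 1)  # Heads: the honest miners do
        )
        return max(0.0, toss)  # Abandon (gain 0) is always available when a <= h

    return value(a, h, n)


if __name__ == "__main__":
    # Scan a few hash powers from the state "one block behind", (a, h) = (1, 2).
    for q in (0.40, 0.42, 0.43, 0.45, 0.48):
        print(q, fork_value(1, 2, 75, q))
```

A strictly positive value at $(1,2)$ means that, under these assumed rules, persisting on the fork beats abandoning it. Whether a scan over $q$ recovers the threshold of Proposition 3.1 depends on how closely these rules match the game of Section 2.1; the same function can be called from $(1,3)$ to explore the second situation discussed above.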

3.2. To be or not to be totally Byzantine?

We now consider another mining problem. At what relative hash power $q$ does it no longer make sense for a miner to be honest? For a miner, being honest means always mining on the last block of the official blockchain and always making any blocks discovered public. Not being honest means the opposite: keeping discovered blocks secret or not mining on the last block of the official blockchain. The problem under consideration is fundamentally different from the one previously considered. The miner is not an honest miner who momentarily becomes “Byzantine” by force of circumstances. On the contrary, he chooses his camp from the outset (honest or Byzantine) and never leaves it [Reference Rosenfeld23]. What is more, his mining strategy is not limited in time. On the contrary, it is unlimited and repetitive. Admittedly, this problem has already been solved in the general case [Reference Sapirshtein, Sompolinsky and Zohar24]. The authors recognize a Markov decision problem, which they solve using a solver. They are then confronted with technical issues since, a priori, such a solver can only be used when the number of system states is finite. We show that it is possible to simplify this problem in the case where the connectivity of the miner is zero, and that it then resembles the second non-competitive stochastic game $HT^2$. Recall that miner connectivity is a parameter introduced by [Reference Eyal and Sirer9] and adopted by many authors since then. This parameter, often denoted by the Greek letter $\gamma$, measures the attacker’s ability to react in the case where it possesses a hidden block on one of its computers that has the same height as the latest block in the official blockchain. If it is well-connected, it will quickly learn of the existence of a new block before the others and announce the existence of its own hidden block to the rest of the network. This is a measure of its ability to create confusion in the network.

Since the network is constantly evolving, it is an illusion to believe that $\gamma$ remains constant over time. However, this is an assumption often made when assessing the profitability of mining strategies. In itself, connectivity is an attack vector that was not imagined by Satoshi Nakamoto, since it does not feature in his founding paper. With $\gamma=1$, a miner has no incentive to be honest. He has no interest in publishing a block he has just discovered. He can simply wait for another block to be discovered and react then. It is interesting to set $\gamma=0$ to understand how Nakamoto’s consensus can naturally be faulted without adding this attack vector. We therefore formulate this hypothesis (