1. Introduction

Artificial Intelligence (AI) emerged approximately 70 years ago as a result of humanity’s enduring ambition to model and compute reasoning and logic. The Dartmouth Summer Research Project on Artificial Intelligence, in 1956, marked a pivotal moment, operating under the conjecture that ‘every aspect of learning or any other feature of intelligence can in principle be so precisely described that a machine can be made to simulate it’ (McCarthy et al. Reference McCarthy, Minsky, Rochester and Shannon1956). This project catalysed a prolific wave of research aimed at replicating human capabilities, such as reasoning, vision (Russakovsky et al. Reference Russakovsky2015) and speech recognition (Graves, Mohamed & Hinton Reference Graves, Mohamed and Hinton2013), among many others, through numerical algorithms, giving rise to what we now recognise as AI.

However, a paradigm shift has emerged in recent years: rather than replacing human abilities, AI is increasingly being used to augment or even replace traditional mathematical algorithms, as it is the case in Computational Science (CS) (Tompson et al. Reference Tompson, Schlachter, Sprechmann and Perlin2017; Raissi, Perdikaris & Karniadakis Reference Raissi, Perdikaris and Karniadakis2019; Cranmer et al. Reference Cranmer, Greydanus, Hoyer, Battaglia, Spergel and Ho2020a ; Lemos et al. Reference Lemos, Jeffrey, Cranmer, Ho and Battaglia2023). This shift has profound implications, especially in the context of improving well-established numerical solvers and algorithms. However, are these same AI methods, designed to mimic human perception or language, also relevant when the task is to approximate mathematical operators? A typical illustration of this change in task nature lies in the visual metrics or simple mean square errors (MSEs) employed in the AI community, in contrast with the machine-precision accuracy and physics-based metrics usually adopted in CS. This raises the critical question: what modifications or safeguards are necessary to adapt off-the-shelf AI algorithms for physically constrained or computationally rigorous tasks? Thus, while most recent reviews on AI for computational physics (Cai et al. Reference Cai, Mao, Wang, Yin and Karniadakis2021; Herrmann & Kollmannsberger Reference Herrmann and Kollmannsberger2024), engineering (Zhou et al. Reference Zhou, Cui, hu, Zhang, Yang, Liu, Wang, Li and Sun2020; Azar et al. Reference Azar2021) or CFD (Garnier et al. Reference Garnier, Viquerat, Rabault, Larcher, Kuhnle and Hachem2021; Lino et al. Reference Lino, Fotiadis, Bharath and Cantwell2023) emphasised on the various techniques and their improvements to tackle increasingly more complex situations, this work investigates this transformation from an epistemological perspective, addressing a critical question: is AI for computational science a tangible reality or merely a transient hype?

From the early ages of AI, researchers have studied the epistemological aspects of this new discipline. For instance, McCarthy (Reference McCarthy1981) considered that the epistemological part of AI, which studies what kind of tasks AI can address in principle, must be separate from the heuristic part of AI, which focuses on how, in practice, one can devise algorithms to solve those tasks. One benefit of relying on epistemological analysis is that a single solution of an epistemological problem can often admit multiple technical implementations. In other words, it would be wise to elucidate a few foundational principles required for AI in CS and CFD from an epistemological perspective, rather than enumerating the numerous technological solutions possible to address these challenges. For instance, Ganascia (Reference Ganascia2010) stresses the importance of distinguishing between the shortcomings of specific algorithms and the adequacy of the fundamental principles underlying AI. In his view, most early criticisms of ‘old-fashioned AI’ focused on algorithmic immaturity, without addressing whether the core principles of AI were inherently limited for these tasks. As a consequence, this present review will follow this epistemological perspective, by first identifying in § 3 the pillars of effectiveness in science through an epistemological approach, before exploring in § 4 how these fundamental aspects can actually be technically integrated into AI frameworks.

Note that some essays have already adopted such an epistemological perspective on particular aspects of AI, for example, its social inadequacy (Ganascia Reference Ganascia2010) or the philosophical challenges when using intelligent systems for health (Sa, Carvalho & Naves Reference Sa, Carvalho and Naves2023). However, works focusing on the epistemological foundation of AI in science, and more specifically into CS or CFD, are still rare. In that context, Duan (Reference Duan2023) explored the challenges to build artificial scientist. Velthoven & Marcus (Reference Velthoven and Marcus2024) analysed the implications of AI in science through the philosophical ideas of Popper (Reference Popper1962). Their main conclusion is that AI, alone, cannot advance science as it cannot explain why certain theories should be preferred over others because of its underlying inductive approach of knowledge, an idea also reported by Anderson & Abrahams (Reference Anderson and Abrahams2009). Note however that Velthoven & Marcus (Reference Velthoven and Marcus2024) employed systematically ‘current AI’ in their conclusions, opening the door to future AI systems, for example, combining both deductive and inductive approaches. This perspective is also shared by Krenn et al. (Reference Krenn2022) and Kaiser et al. (Reference Kaiser, Wu, Sonnewald, Thackray and Callis2024), who argued that an AI could, in principle, generate revolutionary theories, but only under specific conditions. For instance, they introduced the notion of ‘agent of understanding’, a condition defined as the capability of an AI system to conceptualise new scientific insights and, crucially, to transfer this understanding to human researchers. While these conditions are not fulfilled nowadays, authors envisioned the possibility that future intelligent systems may indeed both generate novel theories and render them intelligible to humans. In contrast, Alvarado (Reference Alvarado2023) considered AI in science as a simple epistemic tool, that is to say, without revolutionising the way science is conducted. As a summary, the epistemology of AI for science is still recent but bourgeoning, often debating on the ability of AI to generate novel scientific discovery. Nevertheless, to my knowledge, no work investigates the epistemology associated with AI algorithms replacing existing mathematical-based algorithms and solvers in computational science, which is a different task than the only theoretical discovery. In other words, the main objective of these perspectives is to extract what foundational principles are required for AI to be useful in CS and CFD, where mathematical algorithms are currently massively employed.

To approach this question of the potential effectiveness of AI in CS and CFD, I first provide a general background on deep learning in § 2. Then, I explore in § 3 why mathematics has been so effective in science, with a specific focus on fluid mechanics, particularly in the development of mathematical-based algorithms for solving fluid equations in CFD. This analysis identifies four foundational pillars of mathematical effectiveness (PoEs), namely: (i) symmetries, which impose internal structure and coherence; (ii) scale separation, allowing distinct treatments for the different scales and their interactions; (iii) sparsity, which simplifies complexity and enhances explicability; and (iv) semantic significance, which fosters abstraction, reasoning, and interpretability. Next, I examine how these PoEs align with advancements in AI in § 4. Each aspect is analysed to determine how recent AI methods integrate these principles, particularly in the domain of CFD. This examination is supported by a review of recent applications of AI in fluid mechanics, showcasing its potential to complement, or even surpass, traditional solvers in specific contexts. Beyond these PoEs, I also examine a fifth pillar, credibility (Jebeile Reference Jebeile2024), which is essential for translating effectiveness into the widespread adoption of AI within the fluid mechanics community. The findings suggest that AI does not inherently lack the capacity to incorporate these principles, so that they can be used as guidelines for future AI developments in CFD and, more generally, in computational science.

2. General background on deep learning

2.1. Neuron and neural architectures

This perspective focuses primarily on deep learning approaches in CFD. Deep learning is a branch of AI that leverages neural networks to approximate a function

![]() $f{\kern-1pt}(\boldsymbol{\cdot })$

mapping an input

$f{\kern-1pt}(\boldsymbol{\cdot })$

mapping an input

![]() $x$

and an output

$x$

and an output

![]() $y$

. This neural network, denoted

$y$

. This neural network, denoted

![]() $f_\theta$

, is parametrised by a user-defined set of weights

$f_\theta$

, is parametrised by a user-defined set of weights

![]() $\theta = \{\theta _1, \ldots , \theta _N \}$

, where

$\theta = \{\theta _1, \ldots , \theta _N \}$

, where

![]() $N$

can be very large. As such, neural networks belong to the broader family of parametric models.

$N$

can be very large. As such, neural networks belong to the broader family of parametric models.

From the early models (Rosenblatt Reference Rosenblatt1958), the core idea behind neural networks has remained: decompose the complex function

![]() $f_\theta$

into a composition of simple building blocks called neurons. Each neuron receives an input

$f_\theta$

into a composition of simple building blocks called neurons. Each neuron receives an input

![]() $x_n$

and produces an output

$x_n$

and produces an output

![]() $y_n$

, where

$y_n$

, where

![]() $x_n$

and

$x_n$

and

![]() $y_n$

can represent either the actual inputs or outputs of the target function

$y_n$

can represent either the actual inputs or outputs of the target function

![]() $f{\kern-1pt}(\boldsymbol{\cdot })$

, or intermediate values, often referred to as hidden variables.

$f{\kern-1pt}(\boldsymbol{\cdot })$

, or intermediate values, often referred to as hidden variables.

Most modern network architectures still follow the seminal principle introduced by McCulloch & Pitts (Reference McCulloch and Pitts1943), inspired by biological neurons: each artificial neuron is composed of: (i) a linear parametrised aggregation function

![]() $h_\theta$

, followed by (ii) a fixed nonlinear activation function

$h_\theta$

, followed by (ii) a fixed nonlinear activation function

![]() $\sigma$

. This leads to the fundamental equation of a neuron:

$\sigma$

. This leads to the fundamental equation of a neuron:

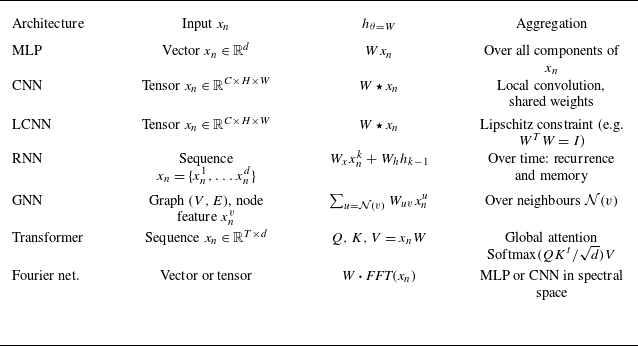

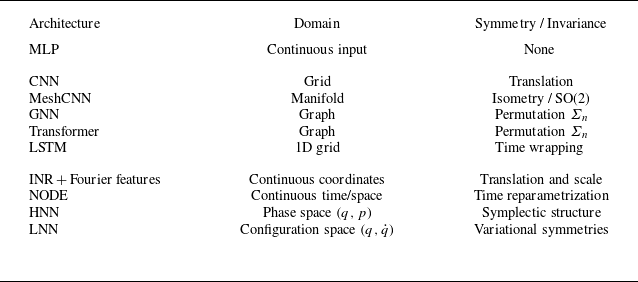

Despite its simplicity, (2.1) offers a flexible framework to construct a wide range of neural network architectures. The form of the aggregation function

![]() $h_\theta$

can be tailored to the input structure, as illustrated in table 1. Moreover, the choice of aggregation function directly impacts the properties of the resulting network, as further detailed in § 4.1.2. For instance, employing convolutional operations in convolutional neural networks (CNNs) allows weights sharing across the whole image, significantly reducing the number of learnable parameters (i.e. smaller

$h_\theta$

can be tailored to the input structure, as illustrated in table 1. Moreover, the choice of aggregation function directly impacts the properties of the resulting network, as further detailed in § 4.1.2. For instance, employing convolutional operations in convolutional neural networks (CNNs) allows weights sharing across the whole image, significantly reducing the number of learnable parameters (i.e. smaller

![]() $W$

). In addition, since convolution is translational equivariant, this symmetry naturally extends to the convolutional layers of the network.

$W$

). In addition, since convolution is translational equivariant, this symmetry naturally extends to the convolutional layers of the network.

Various well-known neural architectures, and their respective input format

![]() $x_n$

and associated aggregation function

$x_n$

and associated aggregation function

![]() $h_\theta$

parametrised by

$h_\theta$

parametrised by

![]() $\theta = W$

(bias is omitted for brevity). Architectures considered include the multilayer perceptron (MLP), convolutional neural network (CNN), Lipschitz-constrained neural network (LCNN), recurrent neural network (RNN) or graph neural network (GNN).

$\theta = W$

(bias is omitted for brevity). Architectures considered include the multilayer perceptron (MLP), convolutional neural network (CNN), Lipschitz-constrained neural network (LCNN), recurrent neural network (RNN) or graph neural network (GNN).

While most aggregation functions are linear, it is worth noting that attention mechanisms (Vaswany et al. Reference Vaswany, Shazeer, Parmar, Uszkoreit, Jones, Gomez and Kaiser2017) in transformer architecture (table 1) behave differently. Although the values

![]() $V$

are obtained through a linear transformation of the input (

$V$

are obtained through a linear transformation of the input (

![]() $V = x_n W_V$

), the global attention weights depend on the inputs: they are computed as

$V = x_n W_V$

), the global attention weights depend on the inputs: they are computed as

where the query is

![]() $Q = x_n W_Q$

, the key is

$Q = x_n W_Q$

, the key is

![]() $K = x_n W_K$

and

$K = x_n W_K$

and

![]() $d$

is the dimensionality of the query/key vectors. Since both

$d$

is the dimensionality of the query/key vectors. Since both

![]() $Q$

and

$Q$

and

![]() $K$

are linear in

$K$

are linear in

![]() $x_n$

, the dot product

$x_n$

, the dot product

![]() $QK^t$

introduced a quadratic dependency of the input, resulting in a nonlinear data-dependent aggregation weights

$QK^t$

introduced a quadratic dependency of the input, resulting in a nonlinear data-dependent aggregation weights

![]() $W(x_n)$

. This makes attention mechanisms fundamentally different and powerful, compared with fixed or shared-weight linear aggregations like convolutions.

$W(x_n)$

. This makes attention mechanisms fundamentally different and powerful, compared with fixed or shared-weight linear aggregations like convolutions.

Once the aggregation and activation functions are chosen, several neurons can be interconnected to form a network. Neurons are typically organised into layers, where all neurons in a layer share the same inputs, but produce different outputs. These outputs then serve as inputs for the neurons in the next layer. Stacking multiple layers introduces additional nonlinearities into the model, thereby increasing its capacity to represent complex functions. When a network contains many layers, it is called a deep neural network. The way neurons are interconnected across layers defines the network architecture. Various connection patterns can be employed, such as skip connections or residual blocks, which often improve training stability and convergence. Additional modules, such as normalisation layers, or downsampling and upsampling operations can also be included to enhance performance or efficiency. Overall, the flexibility in defining both the neuron internal structure and the network architecture makes neural networks an extremely versatile framework, capable of addressing a wide range of tasks and data modalities.

2.2. Training procedure: learning is optimising

Training a neural network consists in optimising its weights

![]() $\theta$

to minimise a metric, often called loss function, denoted

$\theta$

to minimise a metric, often called loss function, denoted

![]() $\mathcal{L}(\boldsymbol{\cdot })$

. In supervised learning, this process relies on a training dataset

$\mathcal{L}(\boldsymbol{\cdot })$

. In supervised learning, this process relies on a training dataset

![]() $\mathcal{D}_T = \{ x^{(i)}, y^{(i)} \}_{i=1..M}$

composed of input–output pairs, where

$\mathcal{D}_T = \{ x^{(i)}, y^{(i)} \}_{i=1..M}$

composed of input–output pairs, where

![]() $x^{(i)}$

(respectively

$x^{(i)}$

(respectively

![]() $y^{(i)}$

) is the ith input (respectively target output) sample. The goal of training is therefore to find the optimal set of weights

$y^{(i)}$

) is the ith input (respectively target output) sample. The goal of training is therefore to find the optimal set of weights

![]() $\theta ^*$

that minimises the loss over all samples in the dataset, which can be expressed generically as

$\theta ^*$

that minimises the loss over all samples in the dataset, which can be expressed generically as

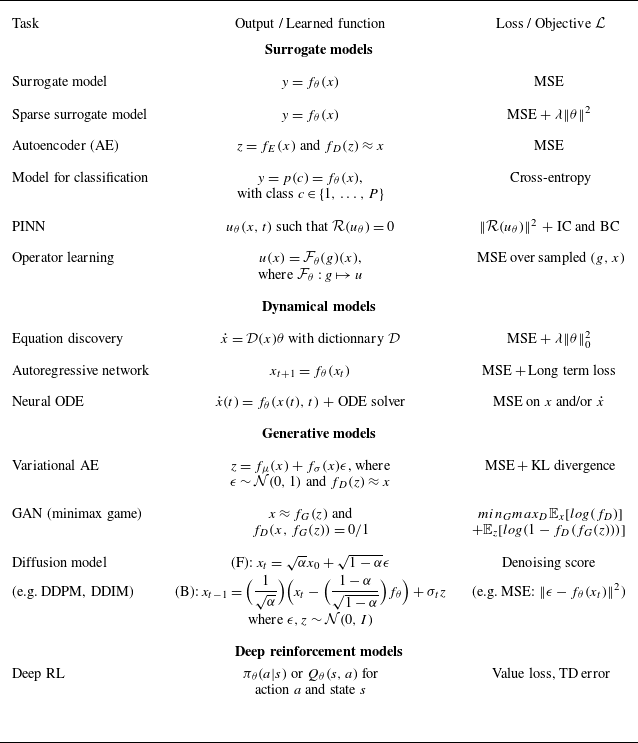

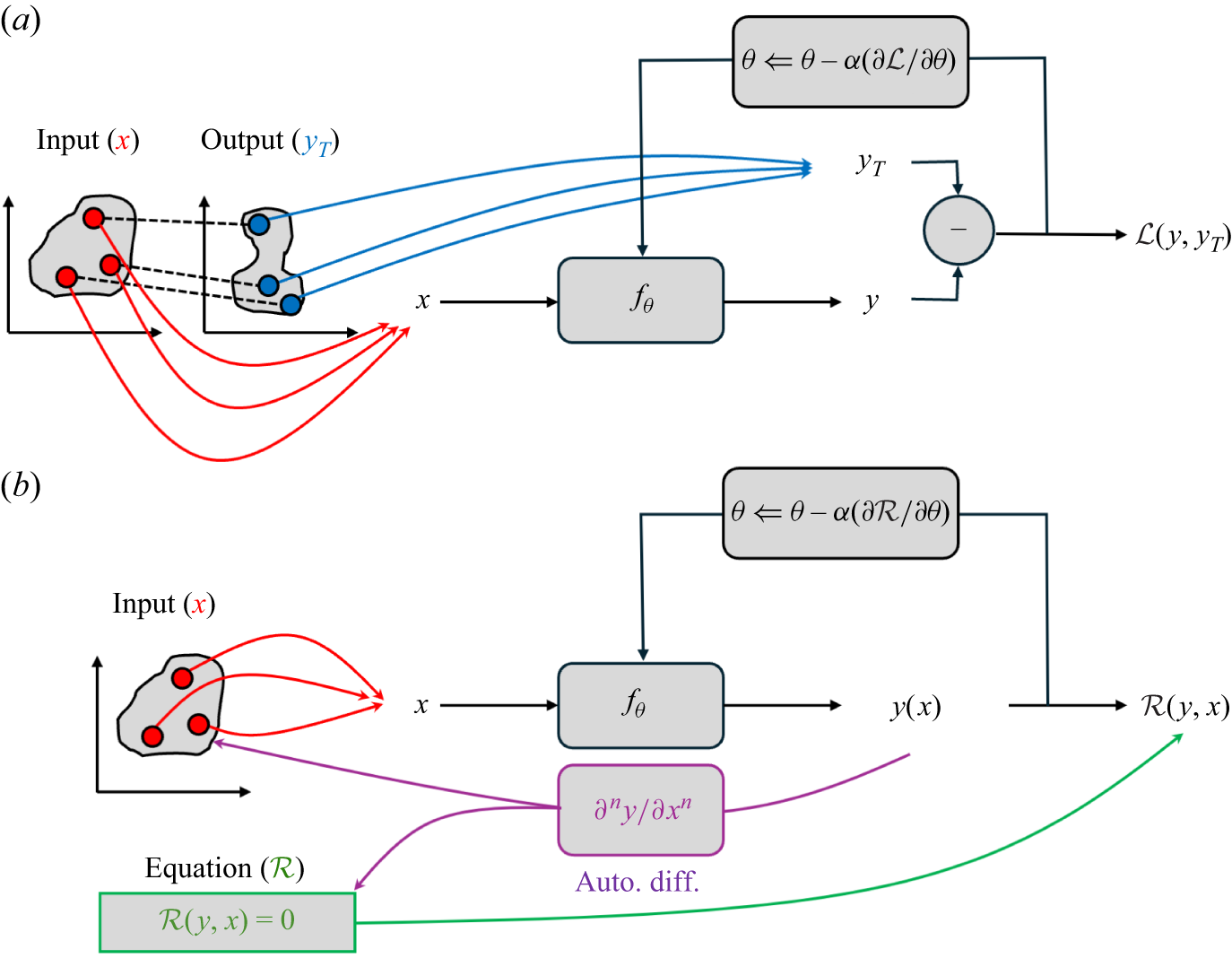

Equation (2.3) provides a general and flexible formulation of the learning process, where the choice of the target definition

![]() $y$

and the loss function

$y$

and the loss function

![]() $\mathcal{L}$

determines the type of neural network and the nature of the task it can address. Depending on these choices, one can design models for surrogate modelling, generative modelling, classification or even deep reinforcement learning (see table 2). As a simple example, a standard supervised training set-up using the mean squared error (MSE) loss is defined as

$\mathcal{L}$

determines the type of neural network and the nature of the task it can address. Depending on these choices, one can design models for surrogate modelling, generative modelling, classification or even deep reinforcement learning (see table 2). As a simple example, a standard supervised training set-up using the mean squared error (MSE) loss is defined as

In contrast, incorporating L2 regularisation (also known as weight decay) modifies the objective as

where

![]() $\lambda$

is a user-defined hyperparameter controlling the strength of the regularisation term and

$\lambda$

is a user-defined hyperparameter controlling the strength of the regularisation term and

![]() $\| \boldsymbol{\cdot }\|$

denotes the L2 norm. Note that classical least square method and Ridge regression correspond to (2.4)–(2.5) where the function

$\| \boldsymbol{\cdot }\|$

denotes the L2 norm. Note that classical least square method and Ridge regression correspond to (2.4)–(2.5) where the function

![]() $f_\theta$

is linear, i.e. where the activation function is

$f_\theta$

is linear, i.e. where the activation function is

![]() $\sigma = Id$

.

$\sigma = Id$

.

These surrogate models trained with an MSE loss require both the input

![]() $x$

and the corresponding output

$x$

and the corresponding output

![]() $y$

to compute the reconstruction error

$y$

to compute the reconstruction error

![]() $\| y - f_\theta (x) \|^2$

. When the true output is unavailable, a self-supervised strategy can still be employed by training the network to reconstruct its own input, enforcing

$\| y - f_\theta (x) \|^2$

. When the true output is unavailable, a self-supervised strategy can still be employed by training the network to reconstruct its own input, enforcing

![]() $x \approx f_\theta (x)$

. This approach becomes useful when the reconstruction is performed through a low-dimensional latent code

$x \approx f_\theta (x)$

. This approach becomes useful when the reconstruction is performed through a low-dimensional latent code

![]() $z$

, which enables an efficient compression of the input representation. This task is typically achieved through an autoencoder (AE) architecture, composed of two networks: an encoder

$z$

, which enables an efficient compression of the input representation. This task is typically achieved through an autoencoder (AE) architecture, composed of two networks: an encoder

![]() $f_E$

that compresses the input into a latent code

$f_E$

that compresses the input into a latent code

![]() $z = f_E(x)$

and a decoder

$z = f_E(x)$

and a decoder

![]() $f_D$

that reconstructs the original sample as

$f_D$

that reconstructs the original sample as

![]() $x \approx f_D(z)$

. Once trained, the latent code

$x \approx f_D(z)$

. Once trained, the latent code

![]() $z$

can be sampled (e.g.

$z$

can be sampled (e.g.

![]() $z \sim \mathcal{N}(0, I)$

), turning the model into a generative network capable of producing new data. To explicitly enforce this sampling property during training, one can constrain the latent variables to follow a prescribed distribution, typically a standard Gaussian law, by minimising the Kullback–Leibler (KL) divergence between the learned distribution

$z \sim \mathcal{N}(0, I)$

), turning the model into a generative network capable of producing new data. To explicitly enforce this sampling property during training, one can constrain the latent variables to follow a prescribed distribution, typically a standard Gaussian law, by minimising the Kullback–Leibler (KL) divergence between the learned distribution

![]() $p(z)$

and the target prior

$p(z)$

and the target prior

![]() $\mathcal{N}(0, I)$

. Combining this regularisation term with the reconstruction loss yields the well-known variational autoencoder (VAE) formulation.

$\mathcal{N}(0, I)$

. Combining this regularisation term with the reconstruction loss yields the well-known variational autoencoder (VAE) formulation.

Following this idea, other techniques have been developed for enhanced generative modelling. For example, generative adversarial networks (GANs) proposed by Goodfellow et al. (Reference Goodfellow, Pouget-Abadie, Mirza, Xu, Warde-Farley, Ozair, Courville and Bengio2014) also rely on a low-dimensional latent code

![]() $z$

to generate synthetic samples

$z$

to generate synthetic samples

![]() $x \approx f_G(z)$

. In addition, a second network, the discriminator

$x \approx f_G(z)$

. In addition, a second network, the discriminator

![]() $f_D$

, is trained to distinguish whether an image is real (taken from the dataset) or fake (produced by

$f_D$

, is trained to distinguish whether an image is real (taken from the dataset) or fake (produced by

![]() $f_G$

). This set-up defines a minimax optimisation problem, in which the loss is simultaneously minimised to improve the generator

$f_G$

). This set-up defines a minimax optimisation problem, in which the loss is simultaneously minimised to improve the generator

![]() $f_G$

and maximised to enhance the discriminator’s ability to detect fake images. Although GANs are mathematically well posed, they are notoriously difficult to train in practice and often suffer from mode collapse, a phenomenon where the generator produces limited diversity in the generated outputs. To address these challenges, diffusion-based models (Ho et al. Reference Ho, Jain, Abbeel, Larochelle, Ranzato, Hadsell, Balcan and Lin2020; Song, Meng & Ermon Reference Song, Meng and Ermon2020) have recently emerged. In their forward process (F), random noise

$f_G$

and maximised to enhance the discriminator’s ability to detect fake images. Although GANs are mathematically well posed, they are notoriously difficult to train in practice and often suffer from mode collapse, a phenomenon where the generator produces limited diversity in the generated outputs. To address these challenges, diffusion-based models (Ho et al. Reference Ho, Jain, Abbeel, Larochelle, Ranzato, Hadsell, Balcan and Lin2020; Song, Meng & Ermon Reference Song, Meng and Ermon2020) have recently emerged. In their forward process (F), random noise

![]() $\epsilon$

is gradually added to an initial image

$\epsilon$

is gradually added to an initial image

![]() $x_0$

, generating a sequence of increasingly noisy images

$x_0$

, generating a sequence of increasingly noisy images

![]() $x_t$

. Since the noise

$x_t$

. Since the noise

![]() $\epsilon$

and the corresponding noisy image

$\epsilon$

and the corresponding noisy image

![]() $x_t$

are both known, the task can be formulated as a supervised learning problem, where the network is trained to predict the noise using a standard MSE loss:

$x_t$

are both known, the task can be formulated as a supervised learning problem, where the network is trained to predict the noise using a standard MSE loss:

During the reverse process (B), the model starts from a pure Gaussian noise sample and progressively denoises it by subtracting the learned noise prediction

![]() $f_\theta$

at each step, yielding increasingly realistic reconstructions. To preserve stochasticity and allow for diversity in the generated samples, an additional noise term

$f_\theta$

at each step, yielding increasingly realistic reconstructions. To preserve stochasticity and allow for diversity in the generated samples, an additional noise term

![]() $\sigma _t z$

, with

$\sigma _t z$

, with

![]() $z \sim \mathcal{N}(0, I)$

, is injected during the backward diffusion. These generative models for physics are further detailed in § 4.6.1.

$z \sim \mathcal{N}(0, I)$

, is injected during the backward diffusion. These generative models for physics are further detailed in § 4.6.1.

Note that classification tasks also fit naturally within this general framework by predicting probability distributions rather than deterministic outputs. In such cases, the objective is to identify the most likely class

![]() $c$

(an integer label), which is commonly reformulated as predicting the corresponding class probabilities

$c$

(an integer label), which is commonly reformulated as predicting the corresponding class probabilities

![]() $p(c)$

. To properly train the network on these probabilistic targets, the loss function must therefore measure discrepancies between probability distributions rather than pointwise errors. This is precisely the role of the cross-entropy and KL divergence losses, which quantify the distance between the predicted probability distribution and the true one.

$p(c)$

. To properly train the network on these probabilistic targets, the loss function must therefore measure discrepancies between probability distributions rather than pointwise errors. This is precisely the role of the cross-entropy and KL divergence losses, which quantify the distance between the predicted probability distribution and the true one.

Beyond purely data-driven tasks such as classification or generative modelling, neural networks can also leverage prior physical knowledge to guide learning. In physics-informed neural networks (PINNs) proposed by Raissi et al. (Reference Raissi, Perdikaris and Karniadakis2019), for instance, the solution field

![]() $u_\theta (x, t)$

is parametrised by a neural network and trained by minimising the residual of the governing equations,

$u_\theta (x, t)$

is parametrised by a neural network and trained by minimising the residual of the governing equations,

![]() $\mathcal{R}(u_\theta (x, t))$

. This approach effectively relaxes the need for labelled data, as the network is constrained by the underlying physics. Initial (IC) and boundary (BC) conditions can be naturally incorporated into the loss function as additional terms. While PINNs focus on learning solution fields, more recent methods aim to directly learn operators that map inputs to outputs. Such operator learning approaches, including the Fourier neural operator (FNO), DeepONet and implicit neural representation (INR), are particularly useful for multi-scale or parametric problems and are discussed in detail in § 4.2.3.

$\mathcal{R}(u_\theta (x, t))$

. This approach effectively relaxes the need for labelled data, as the network is constrained by the underlying physics. Initial (IC) and boundary (BC) conditions can be naturally incorporated into the loss function as additional terms. While PINNs focus on learning solution fields, more recent methods aim to directly learn operators that map inputs to outputs. Such operator learning approaches, including the Fourier neural operator (FNO), DeepONet and implicit neural representation (INR), are particularly useful for multi-scale or parametric problems and are discussed in detail in § 4.2.3.

Finally, deep reinforcement learning (DRL), often used for optimal control of fluid flows (Verma, Novati & Koumoutsakos Reference Verma, Novati and Koumoutsakos2018; Rabault et al. Reference Rabault, Kuchta, Jensen, Réglade and Cerardi2019; Berger et al. Reference Berger, Arroyo-Ramo, Guillet, Lahire, Martin, Jardin, Rachelson and Bauerheim2024; Koumoutsakos Reference Koumoutsakos2024), can also be framed within this general neural network perspective. In DRL, a network is trained to approximate either the optimal policy

![]() $\pi _\theta (a | s)$

or the action-value function

$\pi _\theta (a | s)$

or the action-value function

![]() $Q_\theta (s, a)$

, as a function of the system state

$Q_\theta (s, a)$

, as a function of the system state

![]() $s$

and action

$s$

and action

![]() $a$

. The main challenge is that the true target is not known a priori, since it would require the knowledge of the optimal control law. Consequently, the target must be iteratively estimated during training, yielding a loss function similar to MSE, but with self-consistent updated targets instead of fixed labels. This formulation naturally fits within the general neural network framework, although it typically results in more unstable training dynamics compared with standard supervised learning.

$a$

. The main challenge is that the true target is not known a priori, since it would require the knowledge of the optimal control law. Consequently, the target must be iteratively estimated during training, yielding a loss function similar to MSE, but with self-consistent updated targets instead of fixed labels. This formulation naturally fits within the general neural network framework, although it typically results in more unstable training dynamics compared with standard supervised learning.

Beyond the task-specific formulations summarised in table 2, recent advances have introduced a new class of broadly trained models known as foundation models (Bommasani et al. Reference Bommasani2022). Typical examples are large language models (LLM) such as the well-known ChatGPT (Brown et al. Reference Brown, Larochelle, Ranzato, Hadsell, Balcan and Lin2020). Instead of optimising a network for a single objective, they are rather pretrained on massive heterogeneous datasets using self-supervised objectives, with the goal of learning general-purpose representations of data. After this large-scale pretraining, the resulting representations can be adapted to a wide variety of downstream tasks (regression, classification, generation or control), often with minimal additional training.

Overview of various neural network tasks with their associated learned function and training objectives.

In conclusion, neural networks offer a flexible and generic framework capable of tackling a wide range of complex tasks. The natural next question is how effective this framework truly is, particularly when compared with the well-established and highly optimised mathematical algorithms commonly used in computational physics, and especially in CFD. This comparison will allow the identification of key principles from classical methods that could guide neural networks to achieve comparable, or even superior, performance.

3. Effectiveness of mathematics throughout the history of science

Since Galileo Galilei, physicians intensively employ mathematics to describe natural phenomena, including gravitation, electro-magnetic fields as well as fluid mechanics among many others, exhibiting remarkable precision and generalisation capabilities. According to Feynman, ‘If you want to learn about nature, to appreciate nature, it is necessary to understand the language that she speaks in’. This statement is in line with the Galilei’s conjecture that the language of nature is mathematics. While this viewpoint implies the straightforward effectiveness of mathematics, it relies solely on Galileo’s conjecture, which cannot be demonstrated or rejected, yielding endless circular debates with no resolution in sight. Thus, let us consider another perspective, where mathematics in physics is useful because we have no choice. For instance, Kepler posed the question ‘What else can the human mind hold besides numbers and magnitudes?’. In other words, the real world is too complex and mathematics is our sole means to make this inherent complexity of nature intelligible to human understanding. Let us consider the situation where only the mathematical properties of the physical world are accessible to humans. Here, mathematics is not considered as the language of nature, but rather a tool for humans to apprehend its complexity. In that situation, physics would be effective only because its use is limited to mathematisable questions. This is evident in fields like medicine, where very few equations exist to describe the fundamental interactions between living cells or biological systems, making it less amenable to mathematical treatments. Note that mathematics are still used for specific problems, like understanding the microbial population dynamics (Quedeville Reference Quedeville2020) or the DNA replication and repair using knot theory (Robic & Jungck Reference Robic and Jungck2011), among others, including the pioneer works to create mathematical models of biological neurons, yielding the emergence of AI (McCulloch & Pitts Reference McCulloch and Pitts1943). Interestingly, this limitation had led to the early widespread adoption of AI in medical research, such as cancer detection (Tekkesin Reference Tekkesin2019) and protein discovery (Madani et al. Reference Madani2023), since no other efficient algorithm was already successful for such tasks. Nevertheless, does it imply that AI cannot play a crucial role in disciplines already massively based on mathematics, as it is the case for fluid mechanics and CFD, and more generally in computational physics? How can mathematics and AI complement each other in such domains? Can the fundamental properties of mathematics be transposed into AI to enhance both its effectiveness and adoption within the scientific community? In my opinion, these questions are rarely addressed and, therefore, this section is dedicated to explore and to answer them, at least partially.

3.1. Definitions of effectiveness

Before diving into the epistemology of mathematics, the notion of the effectiveness of a scientific method should be defined and clarified. According to Wigner (Reference Wigner1960), the effectiveness of mathematics in natural science is a miracle, out of reach of the human understanding. Nevertheless, he defined three levels of effectiveness in physics.

First, the prediction capability. As a main objective throughout history, scientific models and theories have been developed to predict behaviours of physical phenomena. In that view, a naïve definition of effectiveness is the capability of a model to predict accurately unseen behaviours, i.e. to generalise to new conditions, at least in a pre-defined range of operating points. On one hand, it is worth noting that prediction effectiveness should not be confused with the sole accuracy, but should be also related to its inherent cost to provide such a prediction. For instance, is Reynolds-averaged Navier–Stokes more or less effective than large eddy simulation (LES) or even DNS in the context of CFD? Similarly, is a linear regression model less effective than a deep neural network? Consequently, a proper metric defining the trade-off between accuracy and cost (or any measure of the complexity of a model) has to be proposed when such effectiveness is invoked. An alternative is to set an accuracy threshold and evaluate the cost of the method to obtain this user-defined accuracy (Ajuria-Illaramendi, Bauerheim & Cuenot Reference Ajuria-Illaramendi, Bauerheim and Cuenot2022). On the other hand, unseen behaviour is usually not clearly defined. This is due to the difficulty to properly construct a metric to assess how far a new behaviour is, compared with previous seen phenomena. For instance, this difficulty typically arises in very high dimension, as usually encountered with deep learning (Xia et al. Reference Xia, Xiong, Luo, Xu and Zhang2015), so that identifying ‘unseen’ data to evaluate generalisation becomes illusive. This question is attracting attention recently, in particular by finding methods to identify these out-of-distribution data (Durasov et al. Reference Durasov, Oner, Donier, Le and Fua2024; Yang et al. Reference Yang, Zhou, Li and Liu2024).

Second, the capability to provide explainable structures. Mathematics in physics is not limited to reproduce results provided by experiments, but allows us to understand the underlying mechanisms and structures encompassing, and thus explaining, multiple similar situations. It constitutes a conceptual generalisation. As an illustration, the general relativity proposed by Einstein (Reference Einstein1920) goes beyond its simple validation, such as the recent tests on the weak equivalence principle provided by the Microscope mission (Touboul Reference Touboul2022). Indeed, this theory offered to see gravitation as the curvature of the four-dimensional space–time, rather than considering it as a mere force. This epistemological rupture allowed the explanation of the previously insoluble anomaly of Mercury’s orbit, but also the description of new phenomena as surprising as gravitational lenses, black holes or gravitational waves. As a consequence, this theory goes beyond predictions, bringing also explanation, which echoes the ‘Predicting is not explaining’ written by Thom, Tsatsanis & Lisker (Reference Thom, Tsatsanis and Lisker2016). In addition, he underlined the need of unification in physics, which stipulates that explaining means reducing the large diversity of phenomena to a few general principles. It is worth noting that in this viewpoint, CFD is effective in predicting flows, but not directly to explain their behaviour: to do so, post-processing and analysis are always required. For example, while compressible CFD allows the simulation of aero-acoustic phenomena, determining the type and localisation of acoustic sources in a simulated flow still constitutes a challenge. This requires state-of-the-art post-processing, like Helmholtz’s or Doak’s decompositions, to separate acoustic perturbations from turbulent and hydrodynamic ones (D’Aniello et al. Reference D’Aniello, Gövert, Knobloch and Kissner2023). Similarly, finding the correlation between flow structures, like vortices, and quantities of interest, like lift, also necessitate the development of novel post-processing, like the force partitioning method (Menon & Mittal Reference Menon and Mittal2021), to extract meaningful information from the CFD simulations.

Third, the conceptual generative. Effectiveness can also be associated with another capability, the one called ‘generative’ by Wigner (Reference Wigner1960). This notion translates the ability of a theory, not to predict or to explain, but to fertilise novel ideas and concepts, often based on new representations. Bachelard (Reference Bachelard1936) emphasised this notion by stating that ‘mathematical information gives us more than reality; it gives us the plan of the possible; it goes beyond the actual experience of coherence’. In essence, the objective of mathematics in physics extends beyond merely providing theories consistent with experimental measurements. Rather, it provides a systematic framework that, starting from a small set of foundational principles, generates the full range of possible theories consistent with those principles. This approach allows physicists to generalise existing concepts, but also to envision novel ones, even in the absence of empirical validation or refutation (Maronne & Patras Reference Maronne and Patras2022): ‘To think about reality is to construct it mathematically’. Consider two examples from the history of scientific discoveries. On one hand, in 1846, Le Verrier predicted the existence of Neptune using only mathematical calculations. Its presence was soon confirmed by Galle’s observations. This was an impressive achievement, but this was just one more planet among those already known. On the other hand, the theoretical predictions of the Higgs boson introduced an entirely new kind of entity into physics, while providing a conceptual shift where mass is not an intrinsic property of particles. This idea was experimentally confirmed only 48 years later at the Large Hadron Collider (LHC), revealing that conceptual generative can appear prior to any prediction capabilities of a theory. It is worth noting that mathematical theories can exhibit conceptual generative without necessarily producing significant new explanations or predictions, as seen in string theory. In that case, the new physical concept serves as a source of new ideas for pure mathematics. Such theories with conceptual generative but not yet experimentally verified are often denoted as ‘tentative theories’ (Penrose Reference Penrose1990). This illustrates that no inclusion relationship exists between these three levels of effectiveness: predictive, explanatory and conceptual generative.

Nevertheless, AI for CFD remains an emerging approach and to be considered truly effective, it must consistently prove its predictive power by surpassing traditional mathematical tools. This is a prerequisite for broader adoption within the research and engineering communities. However, while AI has demonstrated superior performance in specific tasks and configurations, a major challenge remains: extending its applicability to a wider range of problems with minimal or no retraining. Interestingly, the rapid advancements in AI to achieve this objective have sparked a wave of innovative ideas, particularly in the modelling and understanding of these algorithms. In fluid mechanics, novel concepts bridging AI and CFD have emerged, showcasing its potential for conceptual generative effectiveness. Examples include physics-informed neural networks (PINNs) introduced by Raissi et al. (Reference Raissi, Perdikaris and Karniadakis2019), which approximate partial differential equation (PDE) solutions with continuous functions without relying on gradient approximations, and hybrid differentiable solvers (Um et al. Reference Um, Brand, Fei, Holl and Thuerey2020), which seamlessly integrate AI with classical numerical methods. Thus, the next crucial challenge for AI lies in explanatory effectiveness. First, AI models and training strategies must be interpretable, providing clear guidelines for practical implementation and facilitating adoption in research and engineering. Second, beyond just predictive accuracy, AI systems should be capable of understanding and explaining the underlying physics, similar to the ‘agent of understanding’ proposed by Krenn et al. (Reference Krenn2022), a challenge rarely addressed in the literature. While AI has shown promise in identifying complex nonlinear physical phenomena, its ability to provide physical insights remains limited, in particular on unknown and complex problems. This lack of interpretability represents a key area for future improvement, and I believe this is an essential step for AI to evolve into a reliable tool for scientific discovery and engineering applications.

That being said, note that Wigner (Reference Wigner1960) also insists on the fact that a new formalism, tool or concept is rarely directly applicable in the domain for which it was thought. As a consequence, evaluating the effectiveness of a theory a priori has no significant meaning or, at the very least, necessitates a careful consideration. This is why the following sections do not investigate the effectiveness of mathematics and AI based on their respective current results, but rather on their epistemological potential. Let us begin by delving into the origins of mathematical effectiveness to extract its foundational principles.

3.2. A reasonable explanation of the effectiveness of mathematics

3.2.1. Symmetries and invariants

Lambert (Reference Lambert1997) has examined the possible reasons behind the effectiveness in mathematics in his book ‘Is the efficiency of mathematics unreasonable?’, inspiring the title of this very subsection. Within this work, he explores various philosophical theories, such as Pythagoreanism, Platonism, empiricism etc. intended to explain the effectiveness of mathematics and, in general, to every scientific methods. Among them, he mentioned formalism, which stipulates that mathematics is effective because it relies on symbols and rules to manipulate them. Nevertheless, Lambert (Reference Lambert1997) argues that this point of view can explain the effectiveness of mathematics only as a kind of miracle, as it is difficult to see how a ‘game’ governed solely by constraints of internal coherence could correspond to a reality external to thought, such as physical phenomena. However, this theory gave birth to relationism, which considers mathematics as the formalised representation of all possible conceptual relationships, including numerical ones. For instance, according to Bohr (Reference Bohr1958), ‘we shall not consider pure mathematics as a separate branch of knowledge, but rather as a refinement of general language, supplementing it with appropriate tools to represent relations for which ordinary verbal expression is imprecise or cumbersome’. Here, mathematics is not just a language with symbols and rules, but serves as a means of formally translating and elucidating the relationships between concepts. This might explain why physics is well mathematised, since most results can be expressed through structured relationships between the fundamental quantities of the system, structures that mathematics can faithfully capture and elaborate. According to Vandamme (Reference Vandamme1976), ‘The knowledge in general, and even more so the scientific knowledge, is characterised by its structures’. Nevertheless, it is worth noting that not all relationships are useful. Corry (Reference Corry1996) notes that mathematicians are not interested in cataloguing all possible structures, but rather to investigate ones that possess rich invariants. This is consistent with physics, where an ‘element of reality’ cannot be described properly without recourse to invariants preserved under the symmetries of the system. This need of invariance is motivated by our partial observation of reality that requires invariants to conclude without exhaustive observations, which is often impractical or cumbersome. In other words, reality in physics must be robust to a change of point of view. This is exactly what research in physics is doing, but more generally how humans and animals perceive the surrounding world. For instance, Florack et al. (Reference Florack, Romeny, Koenderink and Viergever1994) and Lindeberg (Reference Lindeberg2013) have demonstrated that human vision and cognitive functions rely on some invariants and symmetries: ‘It is apparently the case that, at least up to some approximation, many visual tasks can be solved on the basis of such invariants only. This observation should affect the way computer-vision and image-analysis tasks are handled as well’ (Florack et al. Reference Florack, Romeny, Koenderink and Viergever1994).

Modern science, and mathematics, now heavily rely on the study of symmetry, as illustrated by Anderson (Reference Anderson1972) who noted that ‘it is only slightly overstating the case to say that physics is the study of symmetry’. Early on, Curie (Reference Curie1984) proposed his principle of symmetry, based on ideas from F. Neumann and B. Minnigerode, where ‘the symmetries of the causes are to be found in the effects’. This highlights the profound relevance of symmetries to understand the surrounding world. Interestingly, symmetries in physics are intricately related to invariants, i.e. conserved quantities, also known as first integrals, as elegantly elucidated by Noether’s theorem (Noether Reference Noether1918). As an example, rotational symmetry yields the conservation of angular momentum, and invariance under a translation in time leads to the conservation of energy. Moreover, symmetries and invariances are much more intricate and influential in modern physics.

In the realm of fluid mechanics, the rigorous investigation of symmetry and invariance has also been studied for a long time. For instance, Golubitsky & Stewart (Reference Golubitsky and Stewart1986) studied the specific symmetries acting on the stability of Taylor–Couette flows. They demonstrate that symmetries inherent in bifurcating systems have a strong influence on their behaviour, which motivates their study. The effect of symmetries on flow instability has been recently revisited by Sierra-Ausin, Fabre & Knobloch (Reference Sierra-Ausin, Fabre and Knobloch2024), focusing on the

![]() $O(2)$

symmetry applied to wake instability. Similarly, combustion instabilities in annular combustion chambers are governed by the azimuthal symmetry. When this symmetry is broken because of different flame types (Bauerheim et al. Reference Bauerheim, Salas, Nicoud and Poinsot2014b

), azimuthal mean flow (Bauerheim et al. Reference Bauerheim, Cazalens and Poinsot2014a

) or stochasticity (Bauerheim et al. Reference Bauerheim, Ndiaye, Constantine, Moreau and Nicoud2016a

), modal splitting occurs which affects the whole stability of the system. Recent works in thermoacoustics still involves this notion of symmetry breaking (Aguilar et al. Reference Aguilar, Dawson, Schuller, Durox, Prieur and Candel2021; Faure-Beaulieu et al. Reference Faure-Beaulieu, Indlekofer, Dawson and Noiray2021). Symmetries have also been exploited in fluid mechanics to build turbulence models. Indeed, Grebenev & Oberlack (Reference Grebenev and Oberlack2007) and Fakhar et al. (Reference Fakhar, Hayat, Yi and Zhao2009) studied the symmetry of the incompressible Navier–Stokes equations (Cantwell Reference Cantwell1978). Based on these symmetries, Razafindralandy, Hamdouni & Oberlack (Reference Razafindralandy, Hamdouni and Oberlack2007) and later Sayed et al. (Reference Sayed, Hamdouni, Liberge and Razafindralandy2010) derived new turbulence modelling which preserve those symmetries. They also analysed the existing turbulence models available at that time, including the Smagorinsky model (Smagorinsky Reference Smagorinsky1963), showing that only its dynamic version (Germano et al. Reference Germano, Piomelli, Moin and Cabot1991) allows symmetry preservation.

$O(2)$

symmetry applied to wake instability. Similarly, combustion instabilities in annular combustion chambers are governed by the azimuthal symmetry. When this symmetry is broken because of different flame types (Bauerheim et al. Reference Bauerheim, Salas, Nicoud and Poinsot2014b

), azimuthal mean flow (Bauerheim et al. Reference Bauerheim, Cazalens and Poinsot2014a

) or stochasticity (Bauerheim et al. Reference Bauerheim, Ndiaye, Constantine, Moreau and Nicoud2016a

), modal splitting occurs which affects the whole stability of the system. Recent works in thermoacoustics still involves this notion of symmetry breaking (Aguilar et al. Reference Aguilar, Dawson, Schuller, Durox, Prieur and Candel2021; Faure-Beaulieu et al. Reference Faure-Beaulieu, Indlekofer, Dawson and Noiray2021). Symmetries have also been exploited in fluid mechanics to build turbulence models. Indeed, Grebenev & Oberlack (Reference Grebenev and Oberlack2007) and Fakhar et al. (Reference Fakhar, Hayat, Yi and Zhao2009) studied the symmetry of the incompressible Navier–Stokes equations (Cantwell Reference Cantwell1978). Based on these symmetries, Razafindralandy, Hamdouni & Oberlack (Reference Razafindralandy, Hamdouni and Oberlack2007) and later Sayed et al. (Reference Sayed, Hamdouni, Liberge and Razafindralandy2010) derived new turbulence modelling which preserve those symmetries. They also analysed the existing turbulence models available at that time, including the Smagorinsky model (Smagorinsky Reference Smagorinsky1963), showing that only its dynamic version (Germano et al. Reference Germano, Piomelli, Moin and Cabot1991) allows symmetry preservation.

These examples reveal the uncanny effectiveness of mathematics because of symmetry and invariance, highlighting its capacity to shape our understanding of reality while existing purely within the realm of abstract ideas. This fundamental concept of symmetries and invariance is also embedded in the very construction of modern AI methods for classification and regression tasks (Gens & Domingos Reference Gens and Domingos2014; Weiler et al. Reference Weiler, Geiger, Welling, Boomsma and Cohen2018; Weiler & Cesa Reference Weiler and Cesa2019; Wang, Walters & Yu Reference Wang, Walters and Yu2021). Typical examples are CNN built upon the translation equivariance principle or graph networks based on permutation invariance. Recently, the community devoted to AI for science has been exploring the necessity of incorporating symmetries or structures directly into neural network architectures (Önder & Liu Reference Önder and Liu2023; Otto et al. Reference Otto, Zolman, Kutz and Brunton2023), not only as a design principle, but also to align with the intrinsic demands of science, which fundamentally relies on these principles. Similarly to the Erlangen programme proposed by F. Klein in 1972 to unify geometry as the study of invariants or symmetries using the language of group theory, a recent objective in AI is to unify the various network architectures into a general framework, known as geometric deep learning, based also on symmetries or invariants (Bronstein et al. Reference Bronstein, Bruna, Cohen and Velickovic2021). As a consequence, symmetry and invariants constitute the first pillar to make AI effective in CFD, as further discussed technically in § 4.1.

3.2.2. Scale separation

Furthermore, while investigating invariants for image recognition, Florack et al. (Reference Florack, Romeny, Koenderink and Viergever1992) also emphasised the scale dependence of these invariants. This notion of scale is crucial in physics, where the physical laws governing complex systems exhibit a sort of separation between the various levels of structures associated with spatial and temporal scales. For instance, while the classical Euclidean space is intuitive at spatial scales we encounter in our everyday life, this framework fails to explain phenomena at very low (quantum mechanics) and very large (gravitation) scales. Einstein (Reference Einstein1920) challenged this view by introducing the concept of curved space–time, effective at large scales, but still failing to explain the transition between the continuous world we experience and the quantised energy realm of quantum mechanics. Similarly in fluid mechanics, working at the macroscopic (Navier–Stokes equations) or mesoscopic (lattice Boltzmann method) scales does not require a detailed knowledge of the fine microscopic scales, such as the interactions between the fundamental particles. This scale separation, when available, allows dedicated physical analyses and methodologies to be performed, introducing its own abstraction. For instance, the notion of pressure at the macroscopic scale in a fluid is intuitive, while being much more complex, or even meaningless, at much lower scales.

These considerations are essential not only in physics, but also in the development of AI, as well as across a broad spectrum of disciplines, including sociology, history etc. This idea aligns closely with the epistemological perspective emphasised by Bachelard (Reference Bachelard1938), who considered that the main challenge of science is to push humans beyond their intuitive sense of scale. Additionally, Simondon (Reference Simondon1970) named scale rupture this transition between ordinary scales, where familiar schemes like particles or Euclidean space remain valid, and the extreme scales studied by quantum mechanics and astrophysics, where these schemes inherited from ordinary perception are no longer adequate. It suggests that the effectiveness of mathematics in physics derives from its ability to conceptualise and study phenomena occurring at very different scales compared with those the researcher, as a human being, is used to in a daily-life.

This principle is fundamental in CFD, where applications span an enormous range of scales, from microscopic flows around biological cells (O’Connor et al. Reference O’Connor, Day, Mandal and Revell2016) to astrophysical phenomena, such as supernova-induced turbulence and other large-scale flows in the universe (Müller Reference Müller2020). Early on, engineers and researchers recognised the necessity of classifying these flows based on characteristic non-dimensional parameters, such as the Reynolds or Mach numbers, among others. It is well established that flow behaviour changes drastically as these parameters vary, leading to the emergence of complex phenomena like shock waves or turbulence. These flow regimes can be highly localised, coexist within the same system, interact nonlinearly or even exhibit fractal characteristics (Meneveau & Sreenivasan Reference Meneveau and Sreenivasan1987), making them inherently multi-scale and challenging to capture and analyse.

Consequently, scale separation is central to many advancements in fluid mechanics. For instance, separation between the slowly varying large-scale perturbations from the rapidly varying small-scale of the flow is essential to derive a simplified effective description of the dynamics at large scales, and justify the concept of eddy viscosity (Dubrulle & Frisch Reference Dubrulle and Frisch1991). Similar multiple scale approaches are employed by Ovenden (Reference Ovenden2005) to model sound transmission through a slowly varying duct of arbitrary cross-section, by Faure-Beaulieu & Noiray (Reference Faure-Beaulieu and Noiray2022) to extract the dynamics of the slow-flow variables in an annular thermo-acoustic system, and by Magri et al. (Reference Magri, See, Tammisola, Ihme and Juniper2016) to formulate a set of equations in the low-Mach number limit for linear stability analysis.

Moreover, this multi-scale character of fluid flows lies at the core of many challenges in CFD. To address these, high-order numerical schemes (Bassi & Rebay Reference Bassi and Rebay1997; Nicoud Reference Nicoud2004) and lattice Boltzmann methods (LBMs) (Marié et al. Reference Marié, Ricot and Sagaut2009; Coratger et al. Reference Coratger, Farag, Zhao, Boivin and Sagaut2021) have been developed, specifically to preserve the propagation of fine-scale turbulent structures over long distances and to efficiently capture interactions across a wide range of scales. Similarly, identifying regions with extreme length scales, such as thin shock waves, flames or boundary layers, has led to the development of specialised numerical treatments (Colin et al. Reference Colin, Ducros, Veynante and Poinsot2000; Nicoud et al. Reference Nicoud, Baggett, Moin and Cabot2001). The critical role of scales in CFD is further highlighted by the widespread use of post-processing techniques, such as Fourier and wavelet transforms (Grizzi & Camussi Reference Grizzi and Camussi2012), proper orthogonal decomposition (POD), and other spectral analysis tools. These methods help to extract spatial and temporal scales where energy is generated or dissipated, providing deeper insight into the underlying flow physics.

This inherent multi-scale nature of fluid flows presents a significant challenge for AI, which is not initially specifically designed to handle such drastic scale separation with order of magnitudes. A clear example lies in denoising filters (Pratt Reference Pratt1972), used in some generative models, which often treat high-frequency components as noise and discard them (e.g. Wiener’s filter). In CFD, however, small-scale structures play a crucial role in accurately capturing overall flow behaviour and cannot simply be removed. Recognising this limitation, recent research has increasingly focused on equipping deep networks with multi-scale (Nabian Reference Nabian2014; Mathieu, Couprie & LeCun Reference Mathieu, Couprie and LeCun2016) and multi-resolution (Catalani et al. Reference Catalani, Agarwal, Bertrand, Tost, Bauerheim and Morlier2024; Cheng et al. Reference Cheng, Morel, Allys, Ménard and Mallat2024) capabilities, particularly for flow applications. These advancements open new avenues for improving deep learning methods in fluid mechanics by enforcing specialised treatments at different scales, as further discussed in § 4.2.

3.2.3. Sparsity

Pertaining directly to the second form of effectiveness as defined by Wigner (Reference Wigner1960), i.e. the capability to provide explainable structures, mathematics is based on concise equations (sparsity), facilitating explicability: sparsity requires abstraction, generating a semantics for physics. Semantics refers to the branch of linguistics and logic concerned with meaning. Sparsity and parsimony, as suggested by William of Occam, are of true interest since all models are wrong: ‘Pluralitas non est ponenda sine necessitate’ (Plurality is not to be posited without necessity). Among models with equivalent accuracy, the most effective are those that are sparse. For instance, the compactness of the expressions describing the Navier–Stokes equations is truly remarkable when considering the numerous physical fluid phenomena they govern: these applications range from the dynamics of blood circulation within the human heart (Chnafa et al. Reference Chnafa, Mendez, Moreno and Nicoud2015) to the airflow patterns around an aircraft, and even extend to the turbulent storms on Jupiter or the cataclysmic events of supernovae (Müller Reference Müller2020). How feasible are such compact expressions encapsulating so much physics? Occam’s razor suggests that sparsity is at the root of explicability, but nothing yet allows us to justify that these expressions will be so general and so effective in physics. This fundamental concept of sparsity is central to many approaches in recent AI for physics, such as discovering physical laws by neural networks. This is often achieved through sparsity-promoting algorithms (Brunton et al. Reference Brunton, Proctor and Kutz2016a , Reference Brunton, Proctor and Kutzb ; Silva et al. Reference Silva, Higdon, Brunton and Kutz2020) allowing to focus the explainability of the model in a few terms, and hence be more general by construction.

This explainability induced by sparsity can be illustrated with one remarkable example: the evolution of Maxwell’s equations. Initially, Maxwell (Reference Maxwell1861) formulated a set of

![]() $20$

equations to describe the behaviour of electric and magnetic fields. The quest for sparsity and elegance in Maxwell’s equations culminated in the realisation that the equations could be unified and simplified through the introduction of vector and tensor calculus. This reformulation has lead to a compact set of the four well-known equations, which simplifies interpretability through the divergence and curl operators, and manipulation such as deriving Poynting’s theorem for the energy conservation. However, electric (

$20$

equations to describe the behaviour of electric and magnetic fields. The quest for sparsity and elegance in Maxwell’s equations culminated in the realisation that the equations could be unified and simplified through the introduction of vector and tensor calculus. This reformulation has lead to a compact set of the four well-known equations, which simplifies interpretability through the divergence and curl operators, and manipulation such as deriving Poynting’s theorem for the energy conservation. However, electric (

![]() $\boldsymbol E$

) and magnetic (

$\boldsymbol E$

) and magnetic (

![]() $\boldsymbol B$

) fields still appear as separate entities, while in fact they can be unified into the same compact theory. This paradigm shift occurred when the equations were again reformulated, but now using the language of differential geometry. This leads to only two compact equations, where the electric and magnetic fields appear as components of a single electromagnetic tensor (

$\boldsymbol B$

) fields still appear as separate entities, while in fact they can be unified into the same compact theory. This paradigm shift occurred when the equations were again reformulated, but now using the language of differential geometry. This leads to only two compact equations, where the electric and magnetic fields appear as components of a single electromagnetic tensor (

![]() $F_{\mu \nu }$

), where

$F_{\mu \nu }$

), where

![]() $F_{0 i} = -E_i/c$

and

$F_{0 i} = -E_i/c$

and

![]() $F_{\textit{ij}} =- \epsilon _{\textit{ijk}} B_k$

. This unification of electric and magnetic fields is only possible because space and time are themselves combined into a single four-dimensional space–time, so that this sparse formalism naturally incorporates the principles of relativity. Beyond its compactness and elegance, this tensorial representation makes the fundamental Lorentz invariants of the electromagnetic field emerging directly from simple tensor contractions, highlighting the deep geometric structure underlying electromagnetism.

$F_{\textit{ij}} =- \epsilon _{\textit{ijk}} B_k$

. This unification of electric and magnetic fields is only possible because space and time are themselves combined into a single four-dimensional space–time, so that this sparse formalism naturally incorporates the principles of relativity. Beyond its compactness and elegance, this tensorial representation makes the fundamental Lorentz invariants of the electromagnetic field emerging directly from simple tensor contractions, highlighting the deep geometric structure underlying electromagnetism.

Similar illustrations of sparsity also arise in fluid dynamics, for instance, from the Boltzmann equation. Instead of solving directly the full set of nonlinear Navier–Stokes equations for mass, momentum and energy conservation, LBM reformulates fluid dynamics in terms of a single mesoscopic transport equation for the particle distribution function

![]() $F(x, t, v)$

. This sparse formulation makes conservation laws not imposed explicitly, but emerging solely from the moments of this distribution function. This is governed by the symmetries of the velocity space that ensures that only a handful of velocity moments are conserved, while the rest decay. This explains why mass, momentum and energy are the universal conservation laws in fluid flows. In addition, while the velocity space is continuous, its discretisation into a small set of representative velocities (e.g. D2Q9, D3Q19) is sufficient to preserve the essential hydrodynamic moments. This reduction transforms the complexity of the full PDE system into a simple stream-and-collide process, which is computationally effective. In this way, sparsity provides: (i) a compact representation; (ii) a clear physical interpretation; and (iii) a computational effective method.

$F(x, t, v)$

. This sparse formulation makes conservation laws not imposed explicitly, but emerging solely from the moments of this distribution function. This is governed by the symmetries of the velocity space that ensures that only a handful of velocity moments are conserved, while the rest decay. This explains why mass, momentum and energy are the universal conservation laws in fluid flows. In addition, while the velocity space is continuous, its discretisation into a small set of representative velocities (e.g. D2Q9, D3Q19) is sufficient to preserve the essential hydrodynamic moments. This reduction transforms the complexity of the full PDE system into a simple stream-and-collide process, which is computationally effective. In this way, sparsity provides: (i) a compact representation; (ii) a clear physical interpretation; and (iii) a computational effective method.

Another example of sparsity is provided by turbulence modelling. While DNS resolves all turbulent scales, a task that rapidly becomes intractable at high Reynolds numbers, RANS circumvents this by replacing the fluctuating motions with the Reynolds stress tensor, which contains only six independent unknowns. On one hand, Reynolds stress models (RSMs) solve transport equations for each component, offering high fidelity but little interpretability. On the other hand, eddy-viscosity models collapse the entire tensor to a single scalar coefficient, capturing turbulence as an effective viscosity. Despite its simplicity, it provides valuable physical insight by revealing turbulence’s essential role as a mechanism for momentum diffusion and enhanced mixing.

As a consequence, much like the compact form of Maxwell’s equations revealed the underlying structure of electromagnetism, sparse formulations and models of fluid motion provide deeper physical insight by exposing the fundamental role of key quantities in organising flow behaviour. In other words, sparsity promotes explicability. While sparsity often requires new assumptions, sometimes at the expense of predictive accuracy, these models typically retain a remarkable degree of expressive power despite being very low-parametric. However, what about the whole CFD pipeline? In practice, CFD employs high-dimensional inputs such as meshes, yielding algorithms that are not sparse in a formal sense, but nevertheless remain interpretable. I argue this interpretability arises in part because CFD is not monolithic: it is built from an aggregation of models and numerical schemes, each designed for specific objectives (e.g. turbulence closure, discretisation, boundary conditions). Such modularity allows a ‘divide and conquer’ strategy when diagnosing errors, debugging or analysing sensitivities of particular components of the modelling chain. This notion is referred as decomposability by Lipton (Reference Lipton2016), where ‘each part of the model […] admits an intuitive explanation’. In contrast, modern deep learning models tend to be monolithic, highly over-parametrised and opaque: predictive success is achieved only through massive parametrisation, but with interpretability being sacrificed (Doshi-Velez & Kim Reference Doshi-Velez and Kim2017). It highlights that the pursuit of sparsity in AI may involve trade-offs distinct from those in traditional mathematical tools. This also suggests that, while sparsity is indeed a critical pillar of effectiveness in scientific modelling, it may not be sufficient on its own: not all sparse methods are necessarily either effective or explicable, as further discussed in the AI context in § 4.3.

3.2.4. Semantic significance

In science, explicability is crucial, as it constitutes a necessary condition for constructive critical thinking. However, critical thinking can only be exercised in relation to an informed opinion, exhibiting the path and reasoning behind it. This process is often slower and more laborious than the rapid predictions of AI models. Yet, would we be willing to adhere to a result or a decision produced by an unexplainable AI, either in biology and medicine, but also in engineering? Maybe if the gap between AI and humans is substantial. This difference between slow reasoning and ultrafast predictions is characteristic of the distinction between conscious reasoning versus intuition, where the latter is ‘the ability to acquire knowledge, without recourse to conscious reasoning or needing an explanation’ (Patterson & Eggleston Reference Patterson and Eggleston2017).

A similar distinction is often invoked in artificial intelligence: on one hand, symbolic learning methods attempt to mimic conscious reasoning by manipulating explicit rules, logical relations or symbolic representations; on the other hand, statistical learning methods, such as neural networks, rely on detecting patterns in large datasets to provide rapid predictions without explicit reasoning. Mathematics aligns closely with the symbolic side, where logic and reasoning are the angular stones. In the realm of mathematics, we want to reason in an argumentative way to uncover truths. Therefore, mathematicians not only construct reality, but the mathematical notions created directly speak to reason (Bachelard Reference Bachelard1934). This forces mathematics, and thus physics, to be more than just a language with syntax, but also to possess semantics, where the structures and the relationships between mathematical objects are essential: ‘The knowledge, indeed, does not consist in a mere collection of data. On the contrary, it is well known that something can be considered as a data precisely thanks to its relationships with the structure, more or less abstract, of knowledge’ (Vandamme Reference Vandamme1976). In this context, semantics allows the reduction of information to manageable quantities with underlying structure and relationships, presented in a format or symbols (i.e. using the syntax) which facilitates their evaluations. This is precisely what mathematics accomplishes.

A typical example of semantic significance emerges in the construction of the various mathematical sets of real numbers (

![]() $\mathbb{R}$

), complex numbers (

$\mathbb{R}$

), complex numbers (

![]() $\mathbb{C}$

) and quaternions (

$\mathbb{C}$

) and quaternions (

![]() $\mathbb{H}$

). Consider the case of a real squared number being negative, which has no meaning in

$\mathbb{H}$

). Consider the case of a real squared number being negative, which has no meaning in

![]() $\mathbb{R}$

. By expanding our realm of exploration to include not only

$\mathbb{R}$

. By expanding our realm of exploration to include not only

![]() $\mathbb{R}$

, but also a new set

$\mathbb{R}$

, but also a new set

![]() $\mathbb{C}$

, we invent a new concept (‘i’, the pure imaginary number), which restores the validity of the initial proposition. However, what does this extension bring in terms of semantics? Is the transition from

$\mathbb{C}$

, we invent a new concept (‘i’, the pure imaginary number), which restores the validity of the initial proposition. However, what does this extension bring in terms of semantics? Is the transition from

![]() $\mathbb{R}$

to

$\mathbb{R}$

to

![]() $\mathbb{C}$

merely an artefact to enforce the validity of otherwise meaningless expressions or does

$\mathbb{C}$

merely an artefact to enforce the validity of otherwise meaningless expressions or does

![]() $\mathbb{C}$

introduce a new layer of meaning? Additionally, critically, does this meaningful transition only exist as an abstract idea or does it translate into tangible objects when analysing physical phenomena? To answer these questions, we can observed that expanding these sets inevitably alters their underlying syntactic rules. For instance,

$\mathbb{C}$

introduce a new layer of meaning? Additionally, critically, does this meaningful transition only exist as an abstract idea or does it translate into tangible objects when analysing physical phenomena? To answer these questions, we can observed that expanding these sets inevitably alters their underlying syntactic rules. For instance,

![]() $\mathbb{R}$

is a complete ordered field, whereas in

$\mathbb{R}$

is a complete ordered field, whereas in

![]() $\mathbb{C}$

, we lose ordering and in

$\mathbb{C}$

, we lose ordering and in

![]() $\mathbb{H}$

, commutativity. These syntactic rules, i.e. constraints governing how mathematical operations are performed, are central to the structure of these sets (Matsumoto & Nakai Reference Matsumoto and Nakai2023), which yields profound semantics much broader than their initial simple definitions. Indeed, each set provides a model for mathematical and physical systems where analogous rules are present or absent. Rotations offer a relevant example: while rotations in two dimensions are commutative, those in three dimensions are not. Consequently, unitary complex numbers can represent two-dimensional (2-D) rotations, and unitary quaternions rotations in three dimensions, since possessing the same structure. This connection between these sets and physical rotations can be formalised through the more general Lie theory, where complex numbers naturally encode planar rotations, while quaternions provide the algebraic structure underlying three-dimensional (3-D) rotations. In other words, syntactic rules and semantics are intertwined. This interconnection stems from the ability of mathematics to provide structures and relationships among diverse objects, either abstract entities as in pure mathematics (e.g. set of numbers) or tangible entities as in physics (e.g. rotations). These mathematical structures serve as a bridge between abstract ideas and real-world physical phenomena, thereby generating profound semantics to mathematics.

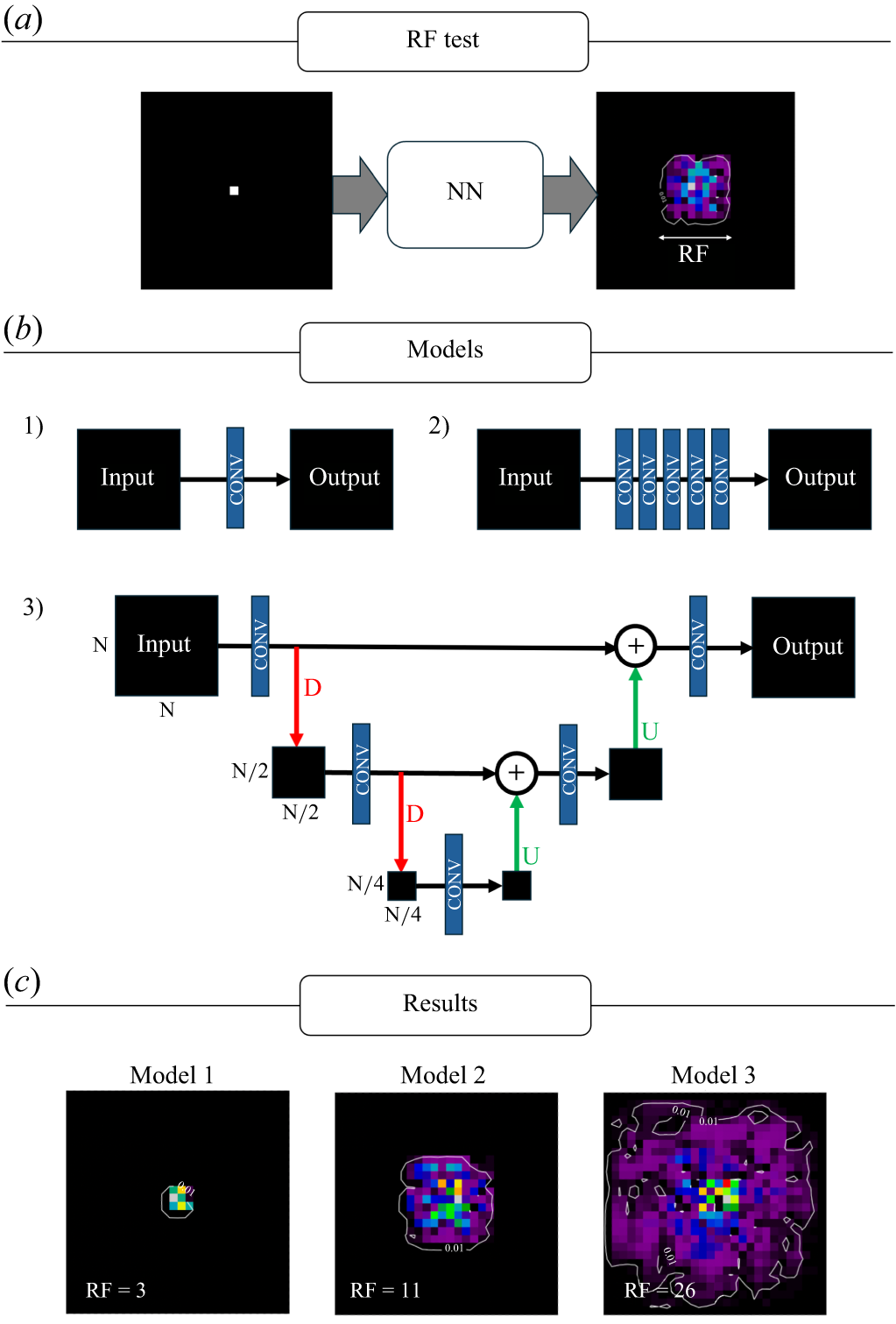

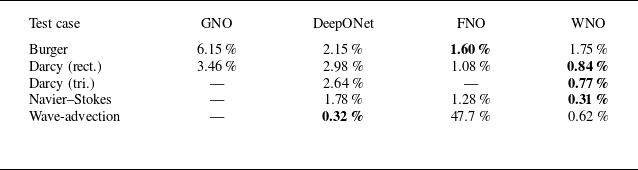

$\mathbb{H}$