1 Introduction

Let $m, n, c \in \mathbb{Z}$ with $c \ge 1$, and consider the classical Kloosterman sums

$$ \begin{align} S(m, n; c) := \sum_{x \in (\mathbb{Z}/c\mathbb{Z})^\times} e\left(\frac{mx + n\bar{x}}{c}\right), \end{align} $$


where $e(\alpha) := \exp(2\pi i \alpha)$ and $x\bar{x} \equiv 1 \pmod{c}$. A great number of results in analytic number theory, particularly on the distribution of primes [2, 34, 35, 36, 9, 8, 37, 32] and properties of Dirichlet L-functions [11, 12, 46, 48, 15, 45], rely on bounding exponential sums of the form

$$ \begin{align} \sum_{m \sim M} a_m \sum_{n \sim N} b_n \sum_{(c, r) = 1} g\left(\frac{c}{C}\right) S(m\bar{r}, \pm n; sc), \end{align} $$


where $(a_m)$ and $(b_n)$ are rough sequences of complex numbers, $g$ is a compactly supported smooth function, and $r, s$ are coprime positive integers. One can often (but not always [37, 8, 34]) leverage some additional averaging over $r$ and $s$, if one of the sequences $(a_m), (b_n)$ is independent of $r, s$.
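As a concrete illustration of the definition (1.1), the following Python sketch evaluates $S(m, n; c)$ by brute force over the units modulo $c$. The function name `kloosterman` is ours and purely illustrative; the approach costs $O(c)$ operations per sum and is only meant for experimenting with small moduli, not for handling the averaged sums in (1.2).

```python
import cmath
from math import gcd, sqrt


def kloosterman(m: int, n: int, c: int) -> complex:
    """Brute-force evaluation of S(m, n; c) from (1.1).

    Illustrative helper (not from the paper): sums e((m*x + n*x_bar)/c)
    over x in (Z/cZ)^*, where x_bar is the inverse of x modulo c.
    """
    if c < 1:
        raise ValueError("the modulus c must be a positive integer")
    if c == 1:
        return 1 + 0j  # the only residue class contributes e(0) = 1
    total = 0j
    for x in range(1, c):
        if gcd(x, c) != 1:
            continue  # only units modulo c appear in the sum
        x_bar = pow(x, -1, c)  # modular inverse, available in Python 3.8+
        phase = (m * x + n * x_bar) % c
        total += cmath.exp(2j * cmath.pi * phase / c)
    return total


if __name__ == "__main__":
    p = 101  # a small prime modulus, chosen arbitrarily
    value = kloosterman(1, 1, p)
    # The sum is real up to rounding, since x -> -x pairs conjugate terms.
    print(value.real, abs(value.imag) < 1e-9)
    # Compare against the Weil bound |S(m, n; p)| <= 2*sqrt(p) for p not dividing mn.
    print(abs(value) <= 2 * sqrt(p))
```

For a prime $p \nmid mn$, the output can be compared against the Weil bound $|S(m, n; p)| \le 2\sqrt{p}$, which is the pointwise input that the spectral methods discussed below aim to improve on average.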

Estimates for sums like (1.2) are typically obtained via the spectral theory of automorphic forms [25, 24], following Deshouillers–Iwaniec [10]; this allows one to bound (1.2) by certain averages of the sequences $(a_m)$, $(b_n)$ with the Fourier coefficients of automorphic forms for $\Gamma_0(rs)$. Often in applications, the limitation in these bounds comes from our inability to rule out the existence of exceptional Maass cusp forms, corresponding to exceptional eigenvalues $\lambda \in (0, \tfrac{1}{4})$ of the hyperbolic Laplacian. This is measured by a parameter $\theta = \max_\lambda \max(0, \tfrac{1}{4} - \lambda)^{1/2}$; under Selberg’s eigenvalue conjecture there would be no exceptional eigenvalues [41], so one could take $\theta = 0$. But unconditionally, the record is Kim–Sarnak’s bound $\theta \le \tfrac{7}{64}$, based on the automorphy of symmetric fourth power L-functions [28, Appendix 2].

This creates a power-saving gap between the best conditional and unconditional results in various arithmetic problems, for example, on the prime factors of quadratic polynomials [8, 37], the exponents of distribution of primes [32] and smooth numbers [39] in arithmetic progressions, and low-lying zeros of Dirichlet L-functions [15]. Improvements to the dependency on $\theta$, which help narrow this gap, come from large sieve inequalities for the Fourier coefficients of exceptional Maass cusp forms (see [10, Theorems 5, 6, 7] and their optimizations in [14, 1, 32, 39]), which function as weak on-average substitutes for Selberg’s eigenvalue conjecture. However, in the key setting of fixed $r, s$ and sequences $(a_n)$ of length $N \approx rs$, no such savings were previously available.

Luckily, for many of the most important applications, we do not need to handle (1.2) for completely arbitrary sequences, but only for those arising from variations of Linnik’s dispersion method [33, 18, 2, 3, 4]; these often have the rough form

$$ \begin{align} a_m = e(m\alpha) \qquad\qquad \text{and} \qquad\qquad b_n = \sum_{\substack{h_1, h_2 \sim H \\ h_1\ell_1 - h_2\ell_2 = n}} 1, \end{align} $$


for $\alpha \in \mathbb{R}/\mathbb{Z}$ and $\ell_1 \asymp \ell_2 \gg H$ with $(\ell_1, \ell_2) = 1$. Our main results in this paper are new large sieve inequalities for such sequences, with Fourier transforms that obey strong concentration conditions. These are obtained by combining the framework of Deshouillers–Iwaniec with combinatorial ideas, specifically with new estimates for bilinear sums of Kloosterman sums, stemming from a counting argument of Cilleruelo–Garaev [7]. The resulting improved bounds for (1.2) can then feed through to the strongest results on several well-studied arithmetic problems.

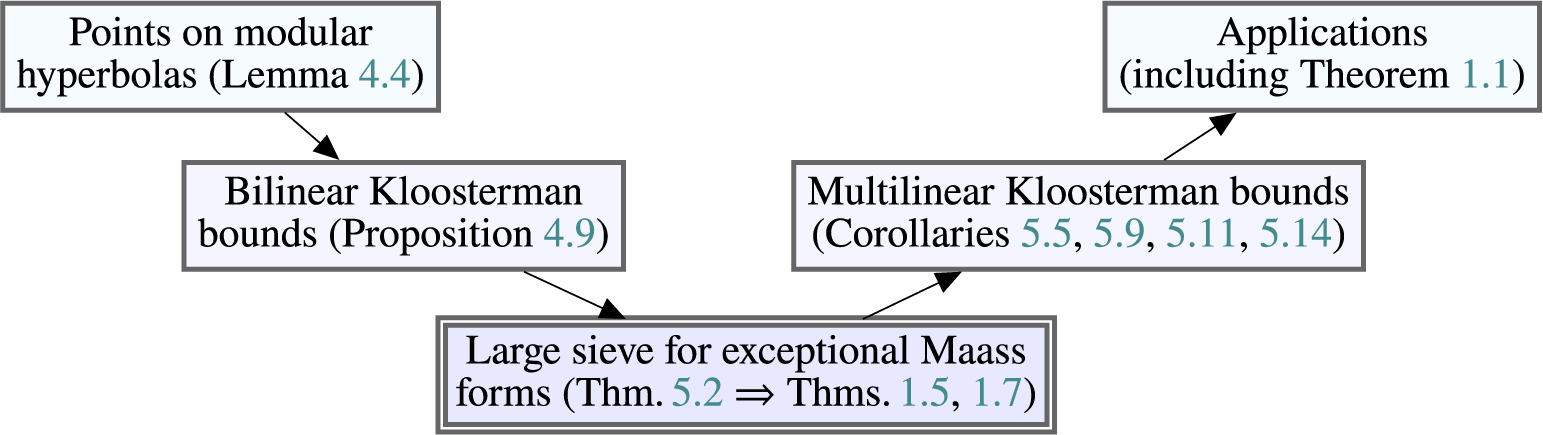

Figure 1 Structure of paper (arrows signify logical implications).

Figure 1 summarizes the results outlined above, which go from counting problems (on the top row), to exponential sums (middle row), to automorphic forms (bottom row), and then backwards. The transition between the first two rows is mostly elementary (using successive applications of Poisson summation, Cauchy–Schwarz, combinatorial decompositions, and/or sieve methods), while the transition between the last two rows uses the Kuznetsov trace formula [31, 10].

Before we dive into the large sieve inequalities, let us motivate our discussion with applications.

Theorem 1.1. For infinitely many $n \in \mathbb{Z}_+$, the greatest prime factor of $n^2+1$ is larger than $n^{1.3}$.

This result makes progress on a longstanding problem, approximating the famous conjecture that there exist infinitely many primes of the form $n^2+1$. Back in 1967, Hooley [23] proved the same result with an exponent of $1.1001$, using the Weil bound for Kloosterman sums. In 1982, Deshouillers–Iwaniec [9] used their bounds on multilinear forms of Kloosterman sums [10] to improve this substantially, up to an exponent of $1.2024$. More recently, using Kim–Sarnak’s bound $\theta \le \tfrac{7}{64}$ [28, Appendix 2], de la Bretèche and Drappeau [8] optimized the exponent to $1.2182$. Finally, Merikoski [37] proved a new bilinear estimate (still relying on the bounds of Deshouillers–Iwaniec [10]), and used Harman’s sieve to reach the exponent $1.279$; assuming Selberg’s eigenvalue conjecture, Merikoski also reached the conditional exponent $1.312$. With our new large sieve inequalities (Theorems 1.5 and 1.7), we can improve the arithmetic information due to both Merikoski [37] and de la Bretèche–Drappeau [8], leading to the unconditional result in Theorem 1.1. As in [37, 8], by adapting our proof, it should be possible to obtain similar results for other irreducible quadratic polynomials.

Additionally, we announce applications to the distribution of primes and smooth numbers in arithmetic progressions to large moduli. In [38], the author will show that the primes have exponent of distribution $5/8-\varepsilon$ using “triply-well-factorable” weights $(\lambda_q)$ [35], in the sense that

$$\begin{align*}\sum_{\substack{q \le x^{5/8-\varepsilon} \\ (q, a) = 1}} \lambda_q \left(\pi(x; q, a) - \frac{\pi(x)}{\varphi(q)}\right) \ll_{\varepsilon,A,a} \frac{x}{(\log x)^A}, \end{align*}$$


where $\pi(x; q, a)$ denotes the number of primes up to $x$ which are congruent to $a$ mod $q$. A similar result, with the same exponent of $5/8-\varepsilon$, will be established for smooth numbers, using arbitrary $1$-bounded weights $(\lambda_q)$. These will improve results of Maynard [35] and Lichtman [32], respectively Drappeau [13] and the author [39]. Notably, our large sieve inequalities will suffice to completely eliminate the dependency on Selberg’s eigenvalue conjecture in these cases.

We also note that an extension of our large sieve inequalities to Maass forms with a general nebentypus should have consequences for counting smooth values of irreducible quadratic polynomials [8, 21, 22] (by improving de la Bretèche–Drappeau’s [8, Théorème 5.2]), and for enlarging the Fourier support in one-level density estimates for Dirichlet L-functions [15].

1.1 The large sieve inequalities

We now turn to our main technical results. The sums of Kloosterman sums from (1.2) are related to the Fourier coefficients of $\mathrm{GL}_2$ automorphic forms of level $q = rs$ by the Kuznetsov trace formula [31, 10] for the congruence group $\Gamma_0(q)$.

More precisely, the spectral side of the Kuznetsov formula contains three terms, corresponding to the contribution of holomorphic forms, Maass forms, and Eisenstein series. The exceptional Maass forms are eigenfunctions of the hyperbolic Laplacian on $L^2(\Gamma_0(q) \backslash \mathbb{H})$ with eigenvalues $0 < \lambda < 1/4$; this (conjecturally empty) exceptional spectrum typically produces losses of the form $X^{\theta(q)}$, where $X$ is a large parameter and $\theta(q) := \max_\lambda \sqrt{\max(0, \tfrac{1}{4} - \lambda)}$. The aforementioned large sieve inequalities for exceptional Maass forms can help alleviate this loss, by incorporating factors of $X^{\theta}$. Below we state a known result for general sequences $(a_n)$ (the values $X \in \{1, q/N\}$ corresponding to [10, Theorems 2 and 5]), which we aim to improve; we detail our notation in Section 3.

Theorem 1.2 (Large sieve with general sequences [10])

Let $\varepsilon > 0$, $X > 0$, $N \ge 1/2$, and $(a_n)_{n \sim N}$ be a complex sequence. Let $q \in \mathbb{Z}_+$, $\mathfrak{a}$ be a cusp of $\Gamma_0(q)$ with $\mu(\mathfrak{a}) = q^{-1}$, and $\sigma_{\mathfrak{a}} \in \mathrm{PSL}_2(\mathbb{R})$ be a scaling matrix for $\mathfrak{a}$. Consider an orthonormal basis of Maass cusp forms for $\Gamma_0(q)$, with eigenvalues $\lambda_j$ and Fourier coefficients $\rho_{j\mathfrak{a}}(n)$ around the cusp $\mathfrak{a}$ (via $\sigma_{\mathfrak{a}}$). Then with $\theta_j := \sqrt{\tfrac{1}{4} - \lambda_j}$, one has

$$ \begin{align} \sum_{\lambda_j < 1/4} X^{2\theta_j} \left\vert \sum_{n \sim N} a_n\, \rho_{j\mathfrak{a}}(n) \right\vert^2 \ll_\varepsilon (qN)^\varepsilon \left(1 + \frac{N}{q}\right) \|a_n\|_2^2, \end{align} $$


for any

$$ \begin{align} X \ll \max\left(1, \frac{q}{N}, \frac{q^2}{N^3}\right). \end{align} $$


Remark 1.3. As in [34, 39, 32], we use Deshouillers–Iwaniec’s normalization [10] for the Fourier coefficients $\rho_{j\mathfrak{a}}(n)$ of Maass forms. In various other works [45, 14, 8, 37], the coefficients $\rho_{j\mathfrak{a}}(n)$ are rescaled by $n^{-1/2}$.

Remark 1.4. An equivalent (and more common [10, 14]) way to phrase results like Theorem 1.2 is that

$$\begin{align*}\sum_{\lambda_j < 1/4} X^{2\theta_j} \left\vert \sum_{n \sim N} a_n\, \rho_{j\mathfrak{a}}(n) \right\vert^2 \ll_\varepsilon (qN)^\varepsilon \left(1 + \frac{X}{X_0}\right)^{2\theta(q)} \left(1 + \frac{N}{q}\right) \|a_n\|_2^2, \end{align*}$$


for any $X > 0$, and $X_0 = X_0(N, q)$ given by the right-hand side of (1.5). We prefer to state our large sieve inequalities in terms of the maximal value of $X$ which does not produce any losses in the right-hand side, compared to the regular spectrum (i.e., $X \ll X_0$). We note that in applications, one usually has $\sqrt{q} \ll N \ll q$, and the best choice in (1.5) for this range is $X \asymp q/N$. But in the critical range $N \asymp q$, Theorem 1.2 is only as good as the large sieve inequalities for the full spectrum [10, Theorem 2], since the limitation $X \ll 1$ forestalls any savings in the $\theta$-aspect.
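To make the ranges in Remark 1.4 concrete, here is a small Python sketch that evaluates the right-hand side of (1.5) with all implied constants set to $1$ (an assumption made only for illustration). The helper name `x0` is ours; exact rational arithmetic is used so that the comparisons are not affected by rounding.

```python
from fractions import Fraction


def x0(N: int, q: int) -> Fraction:
    """Right-hand side of (1.5) with implied constants ignored (illustrative)."""
    return max(Fraction(1), Fraction(q, N), Fraction(q * q, N ** 3))


if __name__ == "__main__":
    q = 10 ** 6  # sample level, chosen arbitrarily; sqrt(q) = 1000
    for N in (10 ** 2, 10 ** 3, 10 ** 4, 10 ** 5, 10 ** 6):
        dominant = "q/N" if x0(N, q) == Fraction(q, N) else "other term"
        print(N, x0(N, q), dominant)
```

The term $q^2/N^3$ is at most $q/N$ exactly when $N \ge \sqrt{q}$, which is why $q/N$ dominates throughout $\sqrt{q} \ll N \ll q$, and why the admissible range collapses to $X \ll 1$ at $N \asymp q$.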

When some averaging over levels $q \sim Q$ is available, $\mathfrak{a} = \infty$, and $(a_n)$, $\sigma_\infty$ are independent of $q$, Deshouillers–Iwaniec [10, Theorem 6] improved the admissible range to $X \ll \max(1, (Q/N)^2)$; Lichtman [32] recently refined this to $X \ll \max(1, \min((Q/N)^{32/7}, Q^2/N))$, by making $\theta$-dependencies explicit in [10, §8.2]. We note that these results are still limited at $X \ll 1$ when $N \asymp Q$.

Although it seems difficult to improve Theorem 1.2 in general (see Section 2.1), one can hope to do better for special sequences $(a_n)$; for instance, the last term in (1.5) can be improved if the sequence $(a_n)$ is sparse. In this paper, we consider the “dual” setting when $(a_n)$ is sparse in frequency space, that is, when the Fourier transform $\hat{a}(\xi) := \sum_n a_n\, e(-n\xi)$ is concentrated on a subset of $\mathbb{R}/\mathbb{Z}$. We give a general result of this sort in Theorem 5.2, which also depends on rational approximations to the support of $\hat{a}$. Below we state the two main cases of interest, corresponding to the sequences in (1.3) (we also incorporate a scalar $a$ in the Fourier coefficients, but on a first read one should take $a = 1$).

Theorem 1.5 (Large sieve with exponential phases)

Let $\varepsilon, X > 0$, $N \ge 1/2$, $\alpha \in \mathbb{R}/\mathbb{Z}$, and $q, a \in \mathbb{Z}_+$. Then with the notation of Theorem 1.2 and the choice of scaling matrix in (3.9), the bound

$$ \begin{align} \sum_{\lambda_j < 1/4} X^{2\theta_j} \left\vert \sum_{n \sim N} e(n\alpha)\, \rho_{j\mathfrak{a}}(an) \right\vert^2 \ll_\varepsilon (qaN)^\varepsilon \left(1 + \frac{aN}{q}\right) N \end{align} $$


holds for all

$$ \begin{align} X \ll \frac{\max\left(N, \frac{q}{a}\right)}{\min_{t \in \mathbb{Z}_+} \left(t + N\|t\alpha\| \right)}. \end{align} $$


In particular, this implies the range $X \ll \max(\sqrt{N}, \tfrac{q}{a\sqrt{N}})$, uniformly in $\alpha$ and $\sigma_{\mathfrak{a}}$. The same result holds if $e(n\alpha)$ is multiplied by $\Phi(n/N)$, for any smooth function $\Phi : (0, 4) \to \mathbb{C}$ with $\Phi^{(j)} \ll_j 1$.

Here, $\|\alpha\|$ denotes the distance from $\alpha$ to $0$ inside $\mathbb{R}/\mathbb{Z}$; the fact that the worst (“minor-arc”) range covered by (1.7) is $X \ll \max(\sqrt{N}, \tfrac{q}{a\sqrt{N}})$ follows from a pigeonhole argument. The best range, $X \ll \max(N, \tfrac{q}{a})$, is achieved when $\alpha$ is $O(N^{-1})$ away from a rational number with bounded denominator. In particular, Theorem 1.5 obtains significant savings in the $\theta$-aspect in the critical case $N \asymp q$, for an individual level $q$, which was previously impossible to the best of our knowledge.
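The denominator in (1.7) can be explored numerically. The sketch below is a hypothetical helper, not part of the paper: it computes $\min_{t \in \mathbb{Z}_+} (t + N\|t\alpha\|)$ for a rational phase $\alpha$ with exact arithmetic, and then forms the ratio in (1.7) with the implied constant taken to be $1$ (an assumption made only for illustration). The search stops once $t$ reaches the current minimum, since every later term is at least $t$.

```python
from fractions import Fraction


def dist_to_nearest_int(x: Fraction) -> Fraction:
    """The quantity ||x||: distance from x to the nearest integer."""
    frac = x % 1
    return min(frac, 1 - frac)


def denominator_cost(alpha: Fraction, N: int) -> Fraction:
    """min over t >= 1 of t + N * ||t * alpha||, as in the denominator of (1.7)."""
    best = None
    t = 1
    # Terms with t >= best cannot improve the minimum, because t + ... >= t.
    while best is None or t < best:
        value = t + N * dist_to_nearest_int(t * alpha)
        if best is None or value < best:
            best = value
        t += 1
    return best


def admissible_range(N: int, q: int, a: int, alpha: Fraction) -> Fraction:
    """Right-hand side of (1.7), ignoring the implied constant (illustrative)."""
    return max(Fraction(N), Fraction(q, a)) / denominator_cost(alpha, N)


if __name__ == "__main__":
    N = q = 10 ** 4  # the critical case N = q, with a = 1
    for alpha in (Fraction(0), Fraction(1, 3), Fraction(610, 987)):
        cost = denominator_cost(alpha, N)
        print(alpha, float(cost), float(admissible_range(N, q, 1, alpha)))
```

By Dirichlet's approximation theorem, some $t \le \sqrt{N}$ has $\|t\alpha\| \le 1/(\lfloor \sqrt{N} \rfloor + 1)$, so the computed minimum never exceeds $2\sqrt{N}$; this is the pigeonhole argument behind the uniform range $X \ll \max(\sqrt{N}, \tfrac{q}{a\sqrt{N}})$. For $\alpha$ equal to a rational with small denominator $b$, the choice $t = b$ shows that the minimum is at most $b$.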

Remark 1.6. As detailed in Section 3.2, altering the scaling matrix $\sigma_{\mathfrak{a}}$ in bounds like (1.6) is equivalent to altering the phase $\alpha$; the canonical choice in (3.9) leads to several simplifications in practice.

When $a = 1$, $\mathfrak{a} = \infty$, and $\alpha$ is independent of $q$, Deshouillers–Iwaniec [10, Theorem 7] showed that the bound in (1.6) holds on average over levels $q \sim Q$ in the larger range $X \ll \max(N, Q^2/N)$. In this on-average setting, we also mention the large sieve inequality of Watt [46, Theorem 2], which saves roughly $X = Q^2/N^{3/2}$ when $a_n$ is a smoothed divisor-type function.

For the second sequence in (1.3), we state a bound which also incorporates exponential phases $e(h_i\alpha_i)$. The reader should keep in mind the case of parameter sizes $N \asymp HL$, $H \asymp L$, and $\alpha_i = 0$, when the $X$-factor saved below can be as large as $\max(\sqrt{N}, \tfrac{q}{a\sqrt{N}})$.

Theorem 1.7 (Large sieve with dispersion coefficients)

Let $\varepsilon, X > 0$, $N \ge 1/2$, $L, H \gg 1$, $\alpha_1, \alpha_2 \in \mathbb{R}/\mathbb{Z}$, and $q, a, \ell_1, \ell_2 \in \mathbb{Z}_+$ satisfy $\ell_1, \ell_2 \asymp L$, $(\ell_1, \ell_2) = 1$. Consider the sequence $(a_n)_{n \sim N}$ given by

$$\begin{align*}a_n := \sum_{\substack{h_1, h_2 \in \mathbb{Z} \\ h_1 \ell_1 - h_2 \ell_2 = n}} \Phi_1\left(\frac{h_1}{H}\right) \Phi_2\left(\frac{h_2}{H}\right) e(h_1 \alpha_1 + h_2 \alpha_2), \end{align*}$$


where $\Phi_i : (-\infty, \infty) \to \mathbb{C}$ are smooth functions supported in $(-O(1), O(1))$, with $\Phi_i^{(j)} \ll_j 1$ for all $j \ge 0$. Then with the notation of Theorem 1.2 and the choice of scaling matrix in (3.9), if $q \gg L^2$, one has

$$ \begin{align} \sum_{\lambda_j < 1/4} X^{2\theta_j} \left\vert \sum_{n \sim N} a_n\, \rho_{j\mathfrak{a}}(an) \right\vert^2 \ll_\varepsilon (qaH)^{\varepsilon} \left(1 + \frac{aN}{q}\right) \left(\|a_n\|_2^2 + \gcd(a, q) N \left(\frac{H}{L} + \frac{H^2}{L^2}\right)\right), \end{align} $$


whenever

$$ \begin{align} X \ll \max\left(1, \frac{q}{aN}\right) \max\left(1, \frac{NH}{(H+L)LM}\right), \qquad\quad M := \min_{\substack{t \in \mathbb{Z}_+ \\ i \in \{1, 2\}}} \left(t + \frac{N}{L} \|t\alpha_i\|\right). \end{align} $$

$$ \begin{align} X \ll \max\left(1, \frac{q}{aN}\right) \max\left(1, \frac{NH}{(H+L)LM}\right), \qquad\quad M := \min_{\substack{t \in \mathbb{Z}_+ \\ i \in \{1, 2\}}} \left(t + \frac{N}{L} \|t\alpha_i\|\right). \end{align} $$
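The quantity $M$ in the range above is a minimum over all positive integers $t$, but it can be evaluated by a finite search, since each candidate value is at least $t$. The following Python sketch illustrates this; the function names `dist_to_nearest_int` and `compute_M` are hypothetical, and exact rational inputs (`Fraction`) are assumed purely to avoid rounding issues.

```python
from fractions import Fraction
import math


def dist_to_nearest_int(x):
    """Return ||x||, the distance from x to the nearest integer."""
    frac = x - math.floor(x)
    return min(frac, 1 - frac)


def compute_M(alphas, N, L):
    """Brute-force M = min over t >= 1 and i of t + (N/L) * ||t * alpha_i||.

    Illustrative sketch only: alphas is a list of Fractions (hypothetical
    input format), and N, L are positive integers.
    """
    ratio = Fraction(N, L)
    # Start from the candidate t = 1.
    best = min(1 + ratio * dist_to_nearest_int(a) for a in alphas)
    t = 2
    # Every candidate with t >= best is at least t >= best, so we can stop.
    while t < best:
        candidate = min(t + ratio * dist_to_nearest_int(t * a) for a in alphas)
        best = min(best, candidate)
        t += 1
    return best
```

For instance, when $\alpha_1 = \alpha_2 = 0$ the search stops immediately at $t = 1$ and returns $M = 1$, while for $\alpha_i$ close to a rational with small denominator $t$, the minimum is attained near that $t$.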

Remark 1.8. In Theorem 1.7, when $N \asymp HL$ and $\alpha _i = 0$, the norm $\|a_n\|_2^2$ is on the order of $N(\frac {H}{L} + \frac {H^2}{L^2})$. So in this setting, which is the limiting case for our applications, the right-hand side of (1.8) produces no important losses over the regular-spectrum bound of $(qN)^\varepsilon (1 + \tfrac {aN}{q})\, \|a_n\|_2^2$.

Remark 1.9. Some instances of the dispersion method [Reference Drappeau, Pratt and Radziwiłł15, Reference Drappeau14, Reference Assing, Blomer and Li1] use coefficients roughly of the shape

$$ \begin{align} b_n = \sum_{\substack{h \sim H \\ h(\ell_1-\ell_2) = n}} 1, \end{align} $$

where $\ell _1 \asymp \ell _2 \gg H$, $\ell _1 \neq \ell _2$, and the level is $q = \ell _1 \ell _2$. Although these resemble the second sequence from (1.3) (treated by Theorem 1.7), one should actually handle this case using Theorem 1.5, with $\alpha = 0$, $N = H$, and $a = |\ell _1 - \ell _2|$. In particular, for these ranges we have $aN = |\ell _1-\ell _2|H \ll \ell _1\ell _2 = q$, so the $1$-term in the right-hand side of (1.6) is dominant, and the range in (1.7) becomes $X \ll \ell _1\ell _2/|\ell _1 - \ell _2|$.

Remark 1.10. For simplicity, we state and prove our results in the setting of arbitrary bases of classical Maass forms, following the original work of Deshouillers–Iwaniec [Reference Deshouillers and Iwaniec10]. However, our work should admit two independent extensions, which are relevant for some applications. The first is handling Maass forms with a nontrivial nebentypus, following Drappeau [Reference Drappeau14]; this leads to bounds for sums like (1.2) with $c$ restricted to an arithmetic progression. The second is considering Hecke–Maass forms which are exceptional with respect to the Ramanujan–Petersson conjecture at finite places, the non-Archimedean analogue of Selberg’s conjecture; this should improve the dependency on the scalar $a$ when $aN> q$. One could either follow Assing–Blomer–Li [Reference Assing, Blomer and Li1] to ‘factor out’ $a$ from $\rho _{j\mathfrak {a}}(an)$ (and apply Kim–Sarnak’s bound [Reference Kim28] at places dividing $a$ before using our large sieve inequalities), or treat the exceptional forms at places dividing $a$ similarly to the Archimedean case, to match the regular-spectrum bound whenever $aX$ is at most a function of $q$ and $N$ (this option should work better when $a$ is well-factorable).

2 Informal overview

Let us summarize the key ideas behind our work, ignoring a handful of technical details such as smooth weights, GCD constraints, or keeping track of $x^{o(1)}$ factors.

2.1 Large sieve with general sequences

Let $q \in \mathbb {Z}_+$ and consider the simplified version

$$ \begin{align} \sum_{\lambda_j < 1/4} X^{2\theta_j} \left\vert \sum_{n \sim N} a_n\, \rho_{j\infty}(n) \right\vert^2 \lesssim \left(1 + \frac{N}{q}\right) \|a_n\|_2^2 \end{align} $$

of the large sieve inequality from Theorem 1.2, for $\mathfrak {a} = \infty $, ignoring $(qN)^{o(1)}$ factors. Here $(a_n)$ are arbitrary complex coefficients, and the reader may pretend that $|a_n| \approx 1$ for each $n$, so that $\|a_n\|_2^2 \approx N$. Such an inequality follows from [Reference Deshouillers and Iwaniec10, Theorem 2] when $X = 1$, but we need larger values of $X$ to temper the contribution of exceptional eigenvalues. The Kuznetsov trace formula [Reference Kuznetsov31] in Proposition 3.5, combined with large sieve inequalities for the regular spectrum [Reference Deshouillers and Iwaniec10, Theorem 2], essentially reduces the problem to bounding (a smoothed variant of) the sum

$$ \begin{align} \sum_{\substack{c \sim NX \\ c \equiv 0 \pmod{q}}} \frac{1}{c} \sum_{m \sim N} \bar{a_m} \sum_{n \sim N} a_n\, S(m, n; c) \end{align} $$

by the same amount as in the right-hand side of (2.1); see Corollary 3.10 for a formal statement in this direction. The sum in (2.2) is empty for $X < q/(2N)$, since then no multiple of $q$ lies in the range $c \sim NX$, so we immediately obtain (2.1) for $X \ll q/N$, which is the content of [Reference Deshouillers and Iwaniec10, Theorem 5]. Alternatively, we can plug in the pointwise Weil bound for $S(m, n; c)$ and apply Cauchy–Schwarz, to obtain an upper bound of roughly

$$ \begin{align} \frac{NX}{q} \frac{1}{NX} N \|a_n\|_2^2 \sqrt{NX} = \frac{N^{3/2} X^{1/2}}{q} \|a_n\|_2^2. \end{align} $$

This is acceptable in (2.1) provided that $X \le q^2/N^3$, which completes the range from Theorem 1.2.
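The pointwise Weil bound invoked above can be checked numerically for small moduli. The following Python sketch is illustrative only: the function names are hypothetical, and the bound is taken in the standard form $|S(m, n; c)| \le \tau(c)\, \gcd(m, n, c)^{1/2} c^{1/2}$, which is an assumption about the version of Weil's bound intended here.

```python
import cmath
from math import gcd, sqrt


def kloosterman(m, n, c):
    """S(m, n; c): sum over units x mod c of e((m*x + n*xbar)/c)."""
    total = 0j
    for x in range(1, c + 1):
        if gcd(x, c) == 1:
            xbar = pow(x, -1, c)  # modular inverse (Python 3.8+)
            phase = (m * x + n * xbar) % c
            total += cmath.exp(2j * cmath.pi * phase / c)
    return total


def num_divisors(c):
    """tau(c), by trial division (fine for small c)."""
    return sum(1 for d in range(1, c + 1) if c % d == 0)


def weil_bound(m, n, c):
    """tau(c) * gcd(m, n, c)^(1/2) * c^(1/2), the assumed form of the bound."""
    return num_divisors(c) * sqrt(gcd(gcd(m, n), c)) * sqrt(c)
```

Comparing `abs(kloosterman(m, n, c))` with `weil_bound(m, n, c)` over a small grid of $(m, n, c)$ gives a quick sanity check of the square-root cancellation that produces the factor $\sqrt{NX}$ in (2.3).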

Improving the range $X \le \max (1, q/N, q^2/N^3)$ turns out to be quite difficult. Indeed, it is not clear how to exploit the averaging over $c$ without the Kuznetsov formula, so any savings are more likely to come from bounding bilinear forms of Kloosterman sums $\sum _{m \sim N} a_m \sum _{n \sim N} b_n\, S(m, n; c)$; this is a notoriously hard problem for general sequences $(a_m), (b_n)$ [Reference Kowalski, Michel and Sawin29, Reference Kowalski, Michel and Sawin30, Reference Kerr, Shparlinski, Wu and Xi27, Reference Xi47]. For example, an extension of the work of Kowalski–Michel–Sawin [Reference Kowalski, Michel and Sawin29] to general moduli should improve Theorem 1.2 in the critical range $q \approx N^2$, but even then the final numerical savings would be relatively small.

The other critical case encountered in applications is $q \approx N$, where Theorem 1.2 gives no nontrivial savings in the $\theta $-aspect (i.e., $X \ll 1$), and where such savings should in fact be impossible for general sequences $(a_n)$. Indeed, we expect $|\rho _j(n)|$ to typically be of size $\approx q^{-1/2}$, so by picking $a_n = q\, \bar {\rho _1(n)}$, the left-hand side of (2.1) is at least $X^{2\theta (q)} N^2$, while the right-hand side is $(1 + \frac {N}{q}) qN$; this limits the most optimistic savings for general sequences at $X = (1 + \frac {q}{N})^{1/(2\theta (q))}$.

The key idea in our work is to make use of the special structure of the sequences $(a_n)$ which show up in variations of the dispersion method [Reference Linnik33]. Often, such sequences have sparse Fourier transforms, and using Fourier analysis on the corresponding exponential sums leads to a combinatorial problem.

2.2 Exponential phases and a counting problem

Let us focus on the case $a_n = e(n\alpha )$, for some $\alpha \in [0, 1)$. Expanding the Kloosterman sums from (2.2) and Fourier-completing in $m, n$ leads to a variant of the identity

Taking absolute values and ignoring the outer averaging over c, we are left with the task of bounding

for $c \sim NX$, which is just a count of points on a modular hyperbola in short intervals (as considered in [Reference Cilleruelo and Garaev7]). When $\alpha = 0$, one can directly use the divisor bound to write

up to a factor of $X^{o(1)}$, which leads to a variant of

(This type of bound was also observed by Shparlinski and Zhang [Reference Shparlinski and Zhang43].) Overall, we roughly obtain

$$ \begin{align} \sum_{\substack{c \sim NX \\ c \equiv 0 \pmod{q}}} \frac{1}{c} \sum_{m, n \sim N} S(m, n; c) \lesssim \frac{NX + N^2}{q}, \end{align} $$

which is at most $(1 + \frac {N}{q}) N$, as required in (2.1), provided that

This gives the best-case range from (1.7) (when $a = 1$). The analogue of this argument for other values of $\alpha \in \mathbb {R}/\mathbb {Z}$ depends on the quality of the best rational approximations to $\alpha $, due to a rescaling trick of Cilleruelo–Garaev [Reference Cilleruelo and Garaev7]. For an arbitrary value of $\alpha $, a pigeonhole argument (Dirichlet approximation) leads to a bound of the shape

$$ \begin{align} \sum_{\substack{c \sim NX \\ c \equiv 0 \pmod{q}}} \frac{1}{c} \sum_{m \sim N} e(-m\alpha) \sum_{n \sim N} e(n\alpha)\, S(m, n; c) \lesssim \frac{N^{3/2} X + N^2}{q}, \end{align} $$

and ultimately to the range $X \le \max (\sqrt {N}, q/\sqrt {N})$, which is the worst (and average) case in (1.7) when $a = 1$. Incorporating a scalar $a$ inside $\rho _{j\infty }(an)$ is not too difficult, since a similar argument handles the analogous bilinear sums of $S(am, an; c)$, up to a loss of $\gcd (a, c)$.
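As a sanity check, the ranges of $X$ can be read off by comparing the right-hand sides of (2.6) and (2.7) with the target $(1 + \frac{N}{q}) N$ from (2.1). The sketch below ignores absolute constants and $(qN)^{o(1)}$ factors; the shape $X \lesssim \max(N, q)$ in the first line is inferred from this computation rather than quoted from the text, and should be treated as an assumption.

$$\begin{align*} \frac{NX + N^2}{q} \lesssim \left(1 + \frac{N}{q}\right) N \quad &\text{if } NX \le \max(qN, N^2), \text{ i.e., } X \le \max(N, q), \\ \frac{N^{3/2}X + N^2}{q} \lesssim \left(1 + \frac{N}{q}\right) N \quad &\text{if } N^{3/2}X \le \max(qN, N^2), \text{ i.e., } X \le \max\left(\sqrt{N}, \frac{q}{\sqrt{N}}\right). \end{align*}$$

In both lines, the term $N^2/q$ is already part of the target, so only the term that grows with $X$ imposes a constraint.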

Remark 2.1. A consequence of not leveraging the exponential phases in the right-hand side of (2.4) is that the same argument extends to sums over $|m|, |n| \le N$. In particular, the term $m = n = 0$ already gives a contribution of about $c \asymp NX$, which produces a term of $NX/q$ in (2.6) with a linear growth in $X$ (as opposed to the square-root growth from (2.3), coming from the Weil bound).
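The counting problem described in this subsection (points on a modular hyperbola $xy \equiv 1 \pmod{c}$ in short intervals) is easy to explore by brute force. The Python sketch below is only an illustration under stated assumptions: the function name `hyperbola_points` and its interval parametrization are hypothetical, and both interval lengths are assumed to be at most $c$.

```python
from math import gcd


def hyperbola_points(c, x_start, x_len, y_start, y_len):
    """Count x in [x_start, x_start + x_len) with gcd(x, c) = 1 whose inverse
    modulo c has a representative in [y_start, y_start + y_len).

    Assumes c >= 2 and 0 < x_len, y_len <= c (hypothetical conventions).
    """
    count = 0
    for x in range(x_start, x_start + x_len):
        if gcd(x, c) != 1:
            continue
        xbar = pow(x % c, -1, c)  # inverse of x modulo c (Python 3.8+)
        # xbar has a representative in the y-interval iff this offset is small.
        if (xbar - y_start) % c < y_len:
            count += 1
    return count
```

When both intervals are centered at $0$ and have length about $K$, the solutions satisfy $xy = 1 + kc$ with $|k| \lesssim K^2/c$, so the divisor bound controls the count by roughly $1 + K^2/c$ up to small factors; this is the mechanism behind the $\alpha = 0$ case mentioned above.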

2.3 Sequences with frequency concentration

It will probably not come as a surprise that one can extend the preceding discussion by Fourier-expanding other sequences $(a_n)$, given a strong-enough concentration condition for their Fourier transforms, but there are some subtleties in how to do this optimally. If $a_n = \check {\mu }(n) = \int _{\mathbb {R}/\mathbb {Z}} e(n\alpha )\, d\mu (\alpha )$ for all $n \sim N$ and some bounded-variation complex measure $\mu $, then there are at least two ways to proceed, depending on whether the integral over $\alpha $ is kept inside or outside of the square.

Indeed, by applying Cauchy–Schwarz in $\alpha $ and our Theorem 1.5 for exponential phases as a black-box, one can directly obtain a bound like

$$ \begin{align} \sum_{\lambda_j < 1/4} X^{2\theta_j} \left\vert \sum_{n \sim N} a_n\, \rho_{j\mathfrak{a}}(n) \right\vert^2 \lesssim \left(1 + \frac{N}{q}\right) N |\mu|(\mathbb{R}/\mathbb{Z})^2, \end{align} $$

for all $X \le \max (\sqrt {N}, q/\sqrt {N})$ (and this range can be slightly improved given more information about the support of $\mu $ near rational numbers of small denominators). Unfortunately, this replaces the norm $\|a_n\|_2$ from Theorem 1.2 with $\sqrt {N} |\mu |(\mathbb {R}/\mathbb {Z})$, which produces a significant loss unless $\mu $ is very highly concentrated, and it is difficult to make up for this loss through gains of $X^{2\theta }$.

The alternative approach is to expand the square in the left-hand side of (2.8), pass to a sum of Kloosterman sums as in (2.2) by Kuznetsov, and only then Fourier-expand (two instances of) the sequence $(a_n)$. Using similar combinatorial ideas as for (2.7), we can then essentially bound

$$ \begin{align} \sum_{\substack{c \sim NX \\ c \equiv 0 \pmod{q}}} \frac{1}{c} \sum_{m \sim N} e(m\alpha) \sum_{n \sim N} e(n\beta)\, S(m, n; c) \lesssim \frac{N^{5/3} X + N^2}{q}, \end{align} $$

for arbitrary values of $\alpha , \beta \in \mathbb {R}/\mathbb {Z}$. With no further information about the support of $\mu $, this ultimately gives a bound like

$$\begin{align*}\sum_{\lambda_j < 1/4} X^{2\theta_j} \left\vert \sum_{n \sim N} a_n\, \rho_{j\mathfrak{a}}(n) \right\vert^2 \lesssim \left(1 + \frac{N}{q}\right) \|a_n\|_2^2 + \frac{N^{5/3}X + N^2}{q} |\mu|(\mathbb{R}/\mathbb{Z})^2, \end{align*}$$

which is acceptable in (2.1), in particular, whenever $X < N^{1/3}$ and $\sqrt {N} |\mu |(\mathbb {R}/\mathbb {Z}) \le \sqrt {q/N} \|a_n\|_2$. Compared to the first approach, this generally gains less in the $X$-aspect, but it relaxes the concentration condition on $\mu $ if $N < q$. This second approach turns out to be better for our applications; the resulting large sieve inequality is Theorem 5.2, which particularizes to Theorems 1.5 and 1.7.

What is perhaps more surprising, though, is that strong-enough frequency concentration (i.e., $\sqrt {N} |\mu |(\mathbb {R}/\mathbb {Z}) \le \sqrt {q/N} \|a_n\|_2$) arises in applications, beyond the case of exponential sequences. A key observation is that the aforementioned dispersion coefficients

$$ \begin{align} a_n = \sum_{\substack{h_1, h_2 \sim H \\ h_1 \ell_1 - h_2 \ell_2 = n}} 1, \end{align} $$

with $\ell _1 \asymp \ell _2 \asymp L$, come from a convolution of two “arithmetic progressions” ![]() . The Fourier transform of each of these two sequences has $\ell _i$ periodic peaks of height $H$ and width $(H \ell _i)^{-1}$, supported around multiples of $1/\ell _i$. When $(\ell _1, \ell _2) = 1$, multiplying these two Fourier transforms results in cancellation everywhere away from a small number ($\le 1 + \frac {L}{H}$) of rational points (and thus, in frequency concentration on a set of size $\frac {1}{HL} + \frac {1}{H^2}$); see Lemma 4.11.
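To get a feel for the dispersion coefficients in (2.10), one can tabulate them directly for small parameters. The Python sketch below is a toy illustration, not part of the argument: the function names are hypothetical, sharp cutoffs $H < h_i \le 2H$ are used in place of smooth weights, and all integers $n$ are kept rather than only $n \sim N$.

```python
from collections import Counter


def dispersion_coefficients(H, l1, l2):
    """a_n = #{(h1, h2) : H < h1, h2 <= 2H and h1*l1 - h2*l2 = n}.

    Returns a Counter mapping n to a_n (sharp cutoffs, hypothetical helper).
    """
    a = Counter()
    for h1 in range(H + 1, 2 * H + 1):
        for h2 in range(H + 1, 2 * H + 1):
            a[h1 * l1 - h2 * l2] += 1
    return a


def l2_norm_squared(a):
    """Sum of a_n^2 over all n in the support."""
    return sum(v * v for v in a.values())
```

When $(\ell_1, \ell_2) = 1$, two pairs $(h_1, h_2)$ give the same $n$ only if they differ by a multiple of $(\ell_2, \ell_1)$, so for $H \le \min(\ell_1, \ell_2)$ every $a_n$ is $0$ or $1$ and the squared norm equals $H^2$; for larger $H$ the collisions account for the extra $H^3/L$ seen in Remark 1.8 when $N \asymp HL$.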

2.4 Multilinear forms of Kloosterman sums

Consider once again the sums (1.2), in the ranges

$$\begin{align*}M, N \le rs, \qquad\qquad X := \frac{s\sqrt{r} C}{\sqrt{MN}} \ge 1, \end{align*}$$

which are relevant for most applications. An additional use of the Kuznetsov formula, for the level $q = rs$ and the cusps $\infty , 1/s$ (with suitable scaling matrices), gives a variant of the bound

$$\begin{align*}\begin{aligned} &\sum_{m \sim M} a_m \sum_{n \sim N} b_n \sum_{(c, r) = 1} g\left(\frac{c}{C}\right) S(m\bar{r}, \pm n; sc) \\ &\quad\lesssim s\sqrt{r}C \sum_{\lambda_j < 1/4} X^{2\theta_j} \left\vert \sum_{m \sim M} a_m\, \rho_{j\infty}(m) \right\vert \left\vert \sum_{n \sim N} b_n\, \rho_{j\, 1/s}(n)\right\vert \ + \ \ldots \end{aligned} \end{align*}$$

Here we omitted the contribution of the regular Maass forms, Eisenstein series and holomorphic forms (which will not be dominant). A priori, this arrangement introduces a factor of $X^{2\theta (q)}$ in our bounds, recalling that $\theta (q) = \max _{\lambda _j(q) < 1/4} \theta _j(q)$ (if there are any exceptional eigenvalues, and $\theta (q) = 0$ otherwise). However, the value of $X$ in this loss can be decreased through the large sieve inequalities for exceptional Maass forms. Indeed, after splitting $X = X_0 \sqrt {X_1 X_2}$, taking out a factor of only $(1 + X_0)^{2\theta (q)}$, and applying Cauchy–Schwarz, we reach

$$\begin{align*}s\sqrt{r}C\, (1 + X_0)^{2\theta(q)} \left(\sum_{\lambda_j < 1/4} X_1^{2\theta_j} \left\vert \sum_{m \sim M} a_m\, \rho_{j\infty}(m) \right\vert^2 \right)^{1/2} \left(\sum_{\lambda_j < 1/4} X_2^{2\theta_j} \left\vert \sum_{n \sim N} b_n\, \rho_{j\, 1/s}(n) \right\vert^2 \right)^{1/2}. \end{align*}$$

Above, we can choose $X_1$ and $X_2$ as the maximal values that can be fully incorporated in large sieve inequalities like (2.1) without producing losses in the right-hand side, for the specific sequences $(a_m)$ and $(b_n)$. In this case, we roughly obtain a final bound of

$$\begin{align*}s\sqrt{r} C \left(1 + \frac{s\sqrt{r}C}{\sqrt{MN X_1 X_2}}\right)^{2\theta(q)} \|a_m\|_2\, \|b_n\|_2. \end{align*}$$
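The splitting step behind this bound can be spelled out in a few lines. In the sketch below, $A_j$ and $B_j$ are shorthand introduced only for this illustration (they denote the inner sums $\sum_{m \sim M} a_m\, \rho_{j\infty}(m)$ and $\sum_{n \sim N} b_n\, \rho_{j\, 1/s}(n)$), and we use $0 \le \theta_j \le \theta(q)$ for exceptional forms.

$$\begin{align*} \sum_{\lambda_j < 1/4} X^{2\theta_j} |A_j|\, |B_j| &= \sum_{\lambda_j < 1/4} X_0^{2\theta_j} \left(X_1^{\theta_j} |A_j|\right) \left(X_2^{\theta_j} |B_j|\right) \\ &\le (1 + X_0)^{2\theta(q)} \left(\sum_{\lambda_j < 1/4} X_1^{2\theta_j} |A_j|^2\right)^{1/2} \left(\sum_{\lambda_j < 1/4} X_2^{2\theta_j} |B_j|^2\right)^{1/2}, \end{align*}$$

where $X_0 = X/\sqrt{X_1 X_2} = s\sqrt{r}C/\sqrt{MN X_1 X_2}$. Bounding each of the two factors by a large sieve inequality of the shape (2.1), in the ranges where the $(1 + \cdot/q)$ factors are harmless, then gives the product $\|a_m\|_2\, \|b_n\|_2$.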

For example, if $a_m = e(m\alpha _{r,s})$ for some $\alpha _{r,s} \in \mathbb {R}/\mathbb {Z}$, then we may take $X_1 = \max (\sqrt {N}, q/\sqrt {N})$ by Theorem 1.5, which ultimately saves a factor of $N^{\theta /2}$. Similarly, if $(b_n)$ are of the form in (2.10), where $H \asymp L \asymp \sqrt {N}$, then by Theorem 1.7 we may also take $X_2 = \max (\sqrt {N}, q/\sqrt {N})$.

If some averaging over $r \sim R, s \sim S$ is available and the sequence $(a_m)$ does not depend on $r, s$, then larger values of $X_1$ are available due to Deshouillers–Iwaniec [Reference Deshouillers and Iwaniec10, Theorems 6, 7]. In this setting, if $a_m = e(m\omega )$ for a fixed $\omega \in \mathbb {R}/\mathbb {Z}$, one can combine the essentially-optimal value $X_1 = Q^2/N$ (see Theorem 3.11 below) with our savings in the $X_2$-aspect. Following [Reference Deshouillers and Iwaniec10, Theorem 12], similar estimates can be deduced for multilinear forms of incomplete Kloosterman sums, simply by Fourier-completing them and appealing to the estimates for complete sums; see our Corollary 5.14. Such bounds feed directly into the dispersion method and its applications, as we shall see in Section 6.

2.5 Layout of paper

In Section 3, we cover notation and preliminary results, including several key lemmas from the spectral theory of automorphic forms. Section 4 only contains elementary arguments, from counting points on modular hyperbolas in Lemma 4.4 (following Cilleruelo–Garaev [Reference Cilleruelo and Garaev7]), to the bilinear Kloosterman bounds in Proposition 4.9 (which may be of independent interest to the reader). In Section 5.1, we combine these combinatorial inputs with the Deshouillers–Iwaniec setup [Reference Deshouillers and Iwaniec10] to prove a general large sieve inequality in Theorem 5.2, which can be viewed as our main technical result; we then deduce Theorems 1.5 and 1.7 from it. Section 5.3 contains the corollaries of these large sieve inequalities: various bounds for multilinear forms of Kloosterman sums, with improved dependencies on the $\theta $ parameter. Finally, in Section 6 we will use these bounds to prove Theorem 1.1, building on the work of Merikoski [Reference Merikoski37] and de la Bretèche–Drappeau [Reference de La Bretèche and Drappeau8].

3 Notation and preliminaries

3.1 Standard analytic notation

We write $\mathbb {Z}, \mathbb {Q}, \mathbb {R}, \mathbb {C}, {\mathbb {H}}$ for the sets of integers, rational numbers, real numbers, complex numbers, and complex numbers with positive imaginary part, respectively. We may scale these sets by constants, and may add the subscript $+$ to restrict to positive numbers; so for example $2\mathbb {Z}_+$ denotes the set of even positive integers, while $i\mathbb {R}$ is the imaginary line. For $\alpha \in \mathbb {R}$ (or $\mathbb {R}/\mathbb {Z}$), we denote $e(\alpha ) := \exp (2 \pi i \alpha )$, and set $\|\alpha \| := \min _{n \in \mathbb {Z}} |\alpha - n|$, which induces a metric on $\mathbb {R}/\mathbb {Z}$. We write $\mathbb {Z}/c\mathbb {Z}$ for the ring of residue classes modulo a positive integer $c$, $(\mathbb {Z}/c\mathbb {Z})^\times $ for its multiplicative group of units, and $\bar {x}$ for the inverse of $x \in (\mathbb {Z}/c\mathbb {Z})^\times $. We may use the latter notation inside congruences, with $x \equiv y\bar {z} \pmod {c}$ meaning that $xz \equiv y \pmod {c}$ (for $\gcd (z, c) = 1$). We may also use the notation $(a, b)$ for $\gcd (a, b)$, and $[a, b]$ for $\mathrm {lcm}(a, b)$, when it is clear from context that these are not pairs or intervals. We write ![]() for the indicator function of a set $S$ (or for the truth value of a statement $S$), $n \sim N$ for the statement that $N < n \le 2N$ (so, e.g., ![]() ), and interpret sums like $\sum _{n \sim N}$, $\sum _{n \equiv 0 \pmod {q}}$, or

![]() $\sum _{d \mid n}$

with the implied restrictions that

$\sum _{d \mid n}$

with the implied restrictions that

![]() $n, d \in \mathbb {Z}_+$

. For

$n, d \in \mathbb {Z}_+$

. For

![]() $n \in \mathbb {Z}_+$

, we define the divisor-counting function by

$n \in \mathbb {Z}_+$

, we define the divisor-counting function by

![]() $\tau (n) := \sum _{d \mid n} 1$

, and Euler’s totient function by

$\tau (n) := \sum _{d \mid n} 1$

, and Euler’s totient function by ![]() . We say that a complex sequence

. We say that a complex sequence

![]() $(a_n)$

is divisor-bounded iff

$(a_n)$

is divisor-bounded iff

![]() $|a_n| \ll \tau (n)^{O(1)}$

. We also write

$|a_n| \ll \tau (n)^{O(1)}$

. We also write

![]() $P^+(n)$

and

$P^+(n)$

and

![]() $P^-(n)$

for the largest and smallest prime factors of a positive integer n, and recall that n is called y-smooth iff

$P^-(n)$

for the largest and smallest prime factors of a positive integer n, and recall that n is called y-smooth iff

![]() $P^+(n) \le y$

.

$P^+(n) \le y$

.
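The arithmetic notation above can be made concrete with a minimal Python sketch (assuming Python 3.9+). All function names here are hypothetical helpers chosen for illustration, not part of the paper; the totient uses the standard definition as the number of units in $\mathbb {Z}/n\mathbb {Z}$, and the prime-factor helpers are restricted to $n \ge 2$ as a simplifying assumption.

```python
import cmath
from math import gcd


def e(alpha: float) -> complex:
    """e(alpha) := exp(2*pi*i*alpha)."""
    return cmath.exp(2j * cmath.pi * alpha)


def dist_to_nearest_integer(alpha: float) -> float:
    """||alpha|| := min over integers n of |alpha - n|."""
    return abs(alpha - round(alpha))


def inverse_mod(x: int, c: int) -> int:
    """x-bar: inverse of x in (Z/cZ)^x; raises ValueError if gcd(x, c) != 1."""
    return pow(x, -1, c)


def in_dyadic_range(n: int, N: float) -> bool:
    """The statement n ~ N, i.e. N < n <= 2N."""
    return N < n <= 2 * N


def tau(n: int) -> int:
    """Divisor-counting function: tau(n) = sum over d | n of 1."""
    return sum(1 for d in range(1, n + 1) if n % d == 0)


def totient(n: int) -> int:
    """Euler's totient, via the standard definition #(Z/nZ)^x."""
    return sum(1 for m in range(1, n + 1) if gcd(m, n) == 1)


def prime_factors(n: int) -> list[int]:
    """Distinct prime factors of n >= 2, increasing, by trial division."""
    if n < 2:
        raise ValueError("n must be at least 2 (assumption of this sketch)")
    factors, p = [], 2
    while p * p <= n:
        if n % p == 0:
            factors.append(p)
            while n % p == 0:
                n //= p
        p += 1
    if n > 1:
        factors.append(n)
    return factors


def P_plus(n: int) -> int:
    """Largest prime factor P^+(n)."""
    return prime_factors(n)[-1]


def P_minus(n: int) -> int:
    """Smallest prime factor P^-(n)."""
    return prime_factors(n)[0]


def is_smooth(n: int, y: float) -> bool:
    """n is y-smooth iff P^+(n) <= y."""
    return P_plus(n) <= y
```

For instance, `tau(12)` returns 6, `P_plus(84)` returns 7, and `4 * inverse_mod(3, 7) % 7` gives the residue x with $x \equiv 4 \cdot \bar {3} \pmod {7}$. The brute-force loops are meant for readability on small inputs only.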

We use the standard asymptotic notation $f \ll g$, $f \asymp g$, $f = O(g)$, $f = o_{x \to \infty }(g)$ from analytic number theory, and indicate that the implicit constants depend on some parameter $\varepsilon $ through subscripts (e.g., $f \ll _\varepsilon g$, $f = O_\varepsilon (g)$). In particular, one should read bounds like $f(x) \ll x^{o(1)}$ as $\forall \varepsilon> 0, f(x) \ll _\varepsilon x^\varepsilon $. Given $\ell \in \mathbb {Z}_+$, we write $f^{(\ell )}$ for the $\ell $th derivative of a function $f : \mathbb {R} \to \mathbb {C}$, and $f^{(0)} = f$. For $q \in [1, \infty ]$, we denote by $\|f\|_{L^q}$ the $L^q$-norm of a function $f : \mathbb {R} \to \mathbb {C}$ (or $f : \mathbb {R}/\mathbb {Z} \to \mathbb {C}$), and by $\|a\|_q$ (or $\|a_n\|_q$) the $\ell ^q$ norm of a sequence $(a_n)$.
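As a quick illustration of the $x^{o(1)}$ convention (a standard fact, included here only as an example), the classical divisor bound and its consequence for a divisor-bounded sequence $(a_n)$ can be written as

$$ \tau(n) \ll n^{o(1)}, \quad \text{that is,} \quad \forall \varepsilon > 0,\ \tau(n) \ll_\varepsilon n^\varepsilon, \qquad \text{and hence} \qquad |a_n| \ll \tau(n)^{O(1)} \ll n^{o(1)}. $$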

We require multiple notations for the Fourier transforms of $L^1$ functions $f, \Phi : \mathbb {R} \to \mathbb {C}$, $\varphi : \mathbb {R}/\mathbb {Z} \to \mathbb {C}$, and $a : \mathbb {Z} \to \mathbb {C}$ (the latter could be, e.g., a finite sequence $(a_n)_{n \sim N}$ extended with zeroes elsewhere). These are given by

$$ \begin{align} \begin{aligned} f : \mathbb{R} \to \mathbb{C} \qquad \leadsto \qquad\,\, \hat{f} : \mathbb{C} \to \mathbb{C}, \qquad\quad &\hat{f}(\xi) := \int_{\mathbb{R}} f(t)\, e(-\xi t)\ dt, \\ \Phi : \mathbb{R} \to \mathbb{C} \qquad \leadsto \qquad\, \check{\Phi} : \mathbb{C} \to \mathbb{C}, \qquad\quad &\check{\Phi}(t) := \int_{\mathbb{R}} \Phi(\xi)\, e(\xi t)\ d\xi, \\ a : \mathbb{Z} \to \mathbb{C} \quad\ \ \, \, \leadsto \ \ \, \, \hat{a} : \mathbb{R}/\mathbb{Z} \to \mathbb{C}, \qquad\quad &\hat{a}(\alpha) := \sum_{n \in \mathbb{Z}} a_n\, e(-n\alpha), \qquad \\ \varphi : \mathbb{R}/\mathbb{Z} \to \mathbb{C} \qquad \leadsto \qquad \ \check{\varphi} : \mathbb{Z} \to \mathbb{C}, \qquad\quad &\check{\varphi}(n) := \int_{\mathbb{R}/\mathbb{Z}} \varphi(\alpha)\, e(n\alpha)\, d\alpha. \end{aligned} \end{align} $$
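As a numerical illustration of the last two transforms, the following Python sketch computes $\hat {a}(\alpha )$ for a finitely supported sequence and then applies the $\check {\ }$ transform to recover the coefficients. The names `hat_transform`, `check_transform`, and `steps`, and the sample sequence `a`, are assumptions made for this example. The integral over $\mathbb {R}/\mathbb {Z}$ is replaced by a uniform Riemann sum, which is exact (up to rounding) for trigonometric polynomials as long as all frequency differences involved are smaller than `steps` in absolute value.

```python
import cmath
import math


def e(alpha: float) -> complex:
    """e(alpha) := exp(2*pi*i*alpha)."""
    return cmath.exp(2j * math.pi * alpha)


def hat_transform(a: dict, alpha: float) -> complex:
    """hat a(alpha) := sum_n a_n e(-n*alpha), for a finitely supported a."""
    return sum(a_n * e(-n * alpha) for n, a_n in a.items())


def check_transform(phi, n: int, steps: int = 64) -> complex:
    """check phi(n) := integral over R/Z of phi(alpha) e(n*alpha) d alpha,
    approximated by a Riemann sum on the grid k/steps, 0 <= k < steps."""
    return sum(phi(k / steps) * e(n * k / steps) for k in range(steps)) / steps


# Hypothetical sample: a sequence supported on n ~ 4, i.e. 4 < n <= 8.
a = {5: 1.0, 6: -2.0, 7: 0.5j, 8: 3.0}

# Apply the check transform to hat a; coefficients outside the support vanish.
recovered = {
    n: check_transform(lambda alpha: hat_transform(a, alpha), n)
    for n in range(3, 11)
}
```

Here `recovered[n]` agrees with `a[n]` on the support and is numerically zero elsewhere, reflecting that these two transforms are inverse to each other for such sequences.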


Note that the first two and the last two of these transforms are inverse operations under suitable conditions; in particular, if $\Phi $ is Schwartz, a is $L^1$, and $\varphi $ is smooth (so $\check {\varphi }(n)$ decays rapidly as $|n| \to \infty $), one has

We also denote the Fourier transform of a bounded-variation complex Borel measure $\mu $ on $\mathbb {R}/\mathbb {Z}$ by $\check {\mu }(n) := \int _{\mathbb {R}/\mathbb {Z}} e(n\alpha )\, d\mu (\alpha )$. For instance, one has ![]() for the Lebesgue measure $\lambda $, and

![]() $\check {\delta }_{A}(n) = \sum _{\alpha \in A} e(n\alpha )$

for the Dirac delta measure on a finite set