During an interview with scholars in 2022, a Russian pensioner in his early 70s suddenly diverted the discussion from ordinary life to the execution of civilians by the Russian military in the Ukrainian town of Bucha, the best-known episode of war crimes committed during Russia’s full-scale invasion of Ukraine. He confidently claimed that the evidence of war crimes was fabricated: “I can spot fakes easily. Take Busha or Bucha or wherever it might be. The way they filmed it, the way the bodies were arranged—it was clearly a fake!” In this paradoxical response, he revealed that he knew little about the town, even mispronouncing its name, yet he was adamant that the evidence was fabricated. Uninterested in factual details, he simply borrowed claims, widely distributed by pseudo fact checking channels aligned with the Russian regime, that the bodies were actors “arranged on the ground to produce the most dramatic effect,” and used them to shield Russia from accusations (Alyukov 2024b). Used by a supporter of an authoritarian regime as a rhetorical shield to deflect unwelcome information, this reaction illustrates the potential of pseudo fact checking as a propaganda tool.

Pseudo fact checking is increasingly used by authoritarian governments around the world, and its rise represents a shift in authoritarian propaganda strategies. However, while authoritarian regimes in China (Fang 2022), Southeast Asia (Neo 2022; Schuldt 2021), Russia (Montaña-Niño et al. 2024), and other countries have adopted the format to reinforce their stability, little is known about how pseudo fact checking affects individuals.

To fill this gap, this article focuses on wartime Russia. We define pseudo fact checking as the use of fact checking formats by authoritarian governments to discredit criticism and reinforce official narratives rather than to verify factual claims independently. Mimicking the style and language of independent fact checking, it labels inconvenient information as “fake” or “disinformation.” Russia is a particularly illustrative example of an authoritarian regime that relies on pseudo fact checking as a key propaganda strategy. While the practice of mirroring accusations of spreading disinformation dates back to the Cold War (Tolz and Hutchings 2021), pseudo fact checking represents a new stage in the evolution of authoritarian propaganda. With the rise of digital and social media, discrediting alternative information as “fake news” and “disinformation” through pro-regime websites and TV shows that mimic fact checking practices has become an extremely common strategy (Alyukov, Kunilovskaya, and Semenov 2022).

The language of pseudo fact checking helps die-hard regime supporters discard information that challenges their views (Alyukov 2025), while confusing citizens with less settled attitudes and allowing them to avoid taking a stance vis-à-vis the regime. Amid omnipresent suspicion toward any information, the cliché “Everything is complicated; we do not know the whole truth” (Ne vse tak odnoznachno, vsei pravdy my ne znaem) has become a common rhetorical device that Russian citizens use to avoid taking a stance for or against the government’s policies (Public Sociology Laboratory 2023). Because politicians routinely seek to avoid blame for unpopular decisions (Weaver 1986), blame attribution is an essential mechanism shaping overall political support in democracies and autocracies alike (Sirotkina and Zavadskaya 2020). Focusing on this intersection between new propaganda strategies and blame attribution, we ask the following research question: How does pseudo fact checking affect citizens’ perceptions of politics in an authoritarian context?

Access to samples in authoritarian countries is extremely limited, especially during military conflicts, when regimes may abandon any pretense of being “free” and deny access to the general population under the guise of military censorship. Although restrictions on data access have tightened rapidly in wartime Russia, the country’s large polling and consumer research market would take considerable time to censor and control fully. This provides unique opportunities to explore public opinion in a highly repressive environment during war. To examine the effects of pseudo fact checking, we rely on a preregistered online experiment (N = 2,949) conducted in Russia. All respondents received realistic news stories, attributed to a Telegram channel, about a Russian missile attack in Ukraine or the inflation rate in Russia, framed in either a pro-regime or an anti-regime way. Treatment groups also received matching debunking stories (i.e., a pro-regime debunking story for an anti-regime news story, and vice versa) that discredited the original stories as disinformation. We measure the effect of pseudo fact checking on the credibility of news stories, media trust, and the attribution of responsibility for the war to Ukraine, Russia, and the West, or the failure to attribute responsibility to any party. We introduce a scale of uncertainty to measure the degree of fuzziness in attributing responsibility, capturing the sentiment expressed in the infamous phrase “Everything is complicated; we do not know the whole truth.” By examining how pseudo fact checking affects the attribution of responsibility, we seek to shed light on the interplay between propaganda and political blame attribution in an authoritarian context.

We argue that pseudo fact checking is an effective response to the threat that saturated media environments pose to authoritarian rule. While the regime can effectively control major national television networks and key online media outlets (Gehlbach and Sonin 2014), citizens still encounter information that challenges regime narratives via social media or smaller outlets. In predigital environments, credible alternative accounts of events could erode trust in state-censored reporting (Gläßel and Paula 2020). However, in saturated media spaces, propaganda cannot win the hearts and minds of citizens through false information or censorship alone. Pseudo fact checking allows the autocrat to meet this challenge by preemptively debunking narratives that challenge the regime.

First, we demonstrate that both pro-regime and oppositional fact checking, ceteris paribus, decrease the perceived credibility of the information being discredited. Second, we show that exposure to both news and fact checks hinders the attribution of responsibility for regime policies among regime critics. While this interpretation is tentative, we argue that the process may be underpinned by an emotional mechanism. Taking a critical stance in an authoritarian context can lead to disagreements with others, pose personal risks, and be emotionally difficult, as the regime frames the cause of the war as intertwined with national identity, making criticism feel like a betrayal of one’s country. This dynamic may create additional incentives to refrain from blaming the regime despite one’s opposition. Fact checks can contribute to this tendency by generating uncertainty and providing regime critics with additional justifications for avoiding judgment. The results both illustrate how authoritarian propaganda adapts to new media environments and highlight the need to rethink current approaches to countering authoritarian propaganda.

The article is structured as follows. We first review existing literature on the rise and effects of fact checking, as well as the appropriation of fact checking formats by authoritarian regimes, and formulate hypotheses. We then present the design of the experiment. The analysis section presents the results of our statistical analysis. Finally, we conclude by discussing the implications of our findings for research on authoritarian propaganda in the context of saturated media environments and the limits of current approaches to countering propaganda.

The Origins and Effects of Pseudo Fact Checking

The Rise and Effects of Fact Checking

The global fact checking movement has grown explosively over the past two decades (Graves 2016). The number of fact checking projects worldwide increased from 11 in 2008 to 443 in 2025 (Ryan 2025). As a vital democratic practice for countering untrustworthy information, fact checking has attracted strong scholarly interest. Researchers have examined how the source, topic, and format of fact checks affect their effectiveness, how fact checking interacts with citizens’ partisan identities, and whether it produces backfire effects.
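As a rough illustration of what “explosive” growth means here, the two endpoints imply an average compound annual growth rate $g$. This is a back-of-the-envelope calculation that assumes smooth compound growth over the 17 years from 2008 to 2025, which the actual year-by-year counts need not follow:

```latex
g = \left(\frac{443}{11}\right)^{1/17} - 1 \approx 40.27^{0.0588} - 1 \approx 0.24
```

In other words, the number of projects grew by roughly 24% per year on average.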

Research suggests that the effectiveness of fact checking varies with the perceived credibility of the fact checker and with its format. For instance, professional fact checkers are seen as more credible than media outlets doing fact checking, which in turn translates into different levels of effectiveness (Liu et al. 2023). Format matters as well. Many studies suggest that effectiveness depends on clarity: compared with long-form printed fact checks, videos and multimedia formats are more effective because they can foster attention and reduce confusion (Bowles et al. 2025; Young et al. 2018), and they override partisan biases more effectively (Dan and Coleman 2025). Design features such as “false” or “disputed” tags (Clayton et al. 2020) and “truth scales” or other visual indicators of falsity (Amazeen et al. 2018) can also enhance the effectiveness of fact checks. Although the effectiveness of a fact check does not depend on whether it refutes or confirms a story (Wouters et al. 2025), confirmations are shared more often and reduce negative emotions and polarization (Aruguete, Bachmann, et al. 2023; Aruguete, Batista, et al. 2024). However, even though fact checking is generally effective in reducing misperceptions (Wood and Porter 2019), it has very limited effects on general attitudes (Nyhan et al. 2020) and on behaviors such as verification efforts or engaging with misinformation in the first place (Bowles et al. 2025).

More recently, some scholars have raised concerns about the potentially adverse effects of fact checking and literacy interventions. Although a growing body of research demonstrates that fact checking can effectively reduce misperceptions (Pennycook et al. 2021), other studies indicate that such interventions may induce skepticism, leading individuals to perceive true news stories as false (Hoes et al. 2024; Modirrousta-Galian and Higham 2023), and that warnings about disinformation can increase support for repressive regulation of speech (Jungherr and Rauchfleisch 2024).

Another well-established finding is that the effectiveness and perceived credibility of fact checking are tied to existing patterns of motivated reasoning. Studies find that it is easier to fact check information on neutral topics than on political issues, because attitudes toward the latter are polarized (Amazeen et al. 2018). Perceived political bias reduces engagement with a fact checker (Shen, Jiang, and Yu 2025; Solovev and Pröllochs 2025), and partisanship dampens the effects of fact checking on congenial misinformation (Amazeen et al. 2018; Van Erkel et al. 2024). Moreover, encountering fact checks that contradict one’s partisan views can increase polarization (Boukes and Hameleers 2022) and make individuals perceive the media as more biased (Aruguete, Bachmann, et al. 2023). Partisans generally approach fact checks selectively, engaging with congenial fact checks while avoiding uncongenial ones (Hameleers and Van der Meer 2019) and twisting the information to denigrate the opposing side (Chia, Lu, and Gunther 2022). Although the backfire effect is rare (Wood and Porter 2019), individuals exposed to unwelcome information may engage in counterarguing and ultimately shift their views in the direction opposite to the one the message suggests (Nyhan and Reifler 2010).

Pseudo Fact Checking

The effectiveness of fact checking may have led many authoritarian leaders around the world to appropriate elements of it as a propaganda tool. Following the explosive growth of fact checking, populist and authoritarian governments have begun creating their own replicas of fact checking services. Using similar techniques, such as providing images, videos, and links that seemingly corroborate their account, they mimic professional fact checking to debunk allegations made against them as fake (Funke 2017; Funke and Benkelman 2019). Although there is little research on this phenomenon and few attempts to conceptualize it, some scholars categorize this type of fact checking as “fringe fact checking,” connoting its distance from the normative standards of the field (Montaña-Niño et al. 2024), or simply as “pro-government fact checking,” reflecting its commitment to a particular political agenda rather than to facts (Feng 2024).

Scholars have documented the growing prevalence of this technique in autocracies. For instance, the Chinese government co-opted the fact checking format to debunk criticism of the regime’s COVID-19 policies as fake (Fang 2022). Autocrats increasingly present fake news as an existential problem to justify repressive legislation that undermines freedom of speech, using pseudo fact checking websites to spread pro-regime propaganda in Cambodia, Malaysia, the Philippines, Singapore, Thailand, and Vietnam (Cho 2025; Neo 2022; Schuldt 2021), as well as in Brazil, India, China, and Russia (Montaña-Niño et al. 2024).

Disinformation Accusations

Although there is no research on the effects of pseudo fact checking yet, they are plausibly similar to the effects of a related strategy: disinformation accusations. Using accusations of spreading disinformation for political ends is not new. The term Lügenpresse (“lying press”) was used extensively to discredit journalism during World War I and the Nazi period in Germany (Koliska and Assmann 2021). Following Donald Trump’s infamous ridicule of the mainstream media as “fake media” and “fake news,” the strategy has become popular among populist leaders (Ross and Rivers 2018) and right-wing parties (Hameleers and Minihold 2022). In the United States and the European Union, several coalitions with distinct institutional and ideological profiles have emerged, each taking a different stance on what constitutes legitimate authority to identify untrustworthy information (Radnitz 2025). This dynamic reflects a broader transformation of the public sphere, in which accusations of bias fuel polarization and erode generalized media trust (Štětka and Mihelj 2024).

The widespread use of the terms “fake news” and “disinformation” by populist and right-wing leaders has concerned scholars. Although the literature on this issue is limited, studies suggest that exposure to elite disinformation discourse can have harmful effects. First, disinformation accusations can be an efficient propaganda strategy when weaponized by a populist or authoritarian leader, reducing audiences’ trust in the accused news outlet and the perceived accuracy of its message (Egelhofer and Lecheler 2019), although dissatisfaction with politics can render politicians’ use of disinformation accusations ineffective (Radnitz, Karceski, and Hsaio 2025). Beyond these direct effects, priming can make ideas about disinformation more salient in memory, increasing the likelihood that individuals will use them to assess the credibility of any information. Exposure to disinformation accusations propagated by populist leaders can lead individuals to question the reliability of information in general and to trust the media as an institution less (Egelhofer et al. 2022; Van Duyn and Collier 2019).

Finally, in addition to discrediting criticism of the government, disinformation accusations can have a disorienting and demobilizing effect. For instance, Wedeen (2015) documents how exposure to news and disinformation accusations during the Syrian civil war generated a sense of uncertainty that demobilized potential opposition members. As pseudo fact checking essentially combines disinformation accusations with elements of the fact checking format, reduced credibility of the attacked source, lower media trust, and confusion may well be among its effects too.

Pseudo Fact Checking, Blame Attribution, and War in Authoritarian Russia

A large body of research examines the reception and effects of state media in Russia, focusing on television (Mickiewicz 2008) and social media (Bryanov et al. 2023). This research has uncovered various factors mediating propaganda effects, such as preexisting political views (Shirikov 2024a), personal experience (Mickiewicz 2005), political knowledge (Toepfl 2013), political engagement (Alyukov 2022; 2024a), and media (dis)trust (Alyukov 2023; Szostek 2018). In particular, scholars emphasize that individuals can read regime propaganda critically, especially when alternative information can be extracted directly from personal experience, as with information about the economy (Rosenfeld 2018). However, authoritarian propaganda is never solely about credibility. State media can present support for the regime as patriotic and stigmatize disagreement with its policies as deviant, creating social incentives to conform as dissent becomes costly (Greene and Robertson 2017).

Regime propaganda in Russia also interacts with national identity in complex ways, a dynamic underpinned by feelings of national pride and humiliation. Surveys show substantial growth in Russian national pride over the past 20 years, reflecting a need for positive social identity (Fabrykant and Magun 2019). Pride related to World War II and its commemoration, and to the victory over fascism, elicits strong positive emotions among a majority of Russians (Soroka and Krawatzek 2021). Sharafutdinova (2020) argues that the success of Kremlin propaganda is partly explained by how Russian state media engage with pride and handle national identity: the anti-Western narratives pushed by propaganda resonate with national identity because they transform grievances rooted in the widespread sense of shame and humiliation caused by the economic and political chaos of the 1990s into self-esteem and pride. Shirikov (2024b) calls this type of propaganda “affirmation propaganda”: it offers citizens emotional comfort, acknowledging their concerns and validating the identities that underpin regime support. These themes target regime supporters but are also often shared by citizens holding oppositional attitudes (Tolz and Hutchings 2023).

At the same time, the use of pseudo fact checking as a propaganda tool in Russia has evolved rapidly. Governments and media organizations can quickly mirror each other’s techniques when they are seen as effective (Tolz et al. 2021). While the practice of mirroring accusations of spreading disinformation dates back to the Cold War (Tolz and Hutchings 2021), its scale has increased dramatically in recent years. With the rise of digital and social media, pro-government pseudo fact checking in Russia has gained new intensity, forged through an ongoing exchange of accusations between Russia and Western governments (Chernobrov and Briant 2022).

Initial explorations began in 2017, when Russia’s international broadcaster RT introduced Factcheck, a website section debunking claims critical of Russia. However, the mass adoption of pseudo fact checking occurred during the COVID-19 pandemic, when the regime launched multiple websites and social media channels to label criticism of its pandemic response as fake (Asmolov 2022). After a decade in which reporting on Ukraine in Russian state media became gradually more salient and negative (La Lova 2025), the invasion of Ukraine amplified this strategy further. As Russia faced intense criticism for attacking civilian infrastructure and committing war crimes in Ukraine, discrediting these accusations as “fake news” and “disinformation” through pseudo fact checking has become an extremely common strategy across the Kremlin-controlled media sphere (Alyukov, Kunilovskaya, and Semenov 2022). Several TV shows, such as Anti-Fake (Footnote 1), Fake Control (Footnote 2), and Stop Fake (Footnote 3), were launched to debunk accusations against Russia. This TV industry is complemented by the War on Fakes network (Footnote 4), which includes websites and Telegram channels targeting Russian and international audiences in multiple languages (Tuters and Noordenbos 2024).

Often run by the same Kremlin-affiliated individuals (Walter and Backovic 2023), these simultaneously launched projects form a coherent segment of the propaganda industry. Recently, ANO Dialog, a Kremlin-affiliated nonprofit associated with War on Fakes and other online disinformation activities, established the Global Fact Checking Network, an international network intended to unite Kremlin-friendly organizations around the world that produce alternatives to Western fact checkers (Footnote 5). In other words, the regime has not only embraced elements of pseudo fact checking strategically but also plans to facilitate its adoption by friendly authoritarian regimes.

Qualitative studies show that the rhetoric of pseudo fact checking has deeply influenced citizen discourse in Russia, with many citizens using the confusion generated by contradictory information to justify not taking a stance on regime policies (Public Sociology Laboratory 2023). This dynamic adds a new dimension to political blame avoidance. In democracies, blame attribution is influenced by institutional clarity (Tilley and Hobolt 2011), party cues (Healy, Kuo, and Malhotra 2014), perceived political control over services (Beazer and Reuter 2019), and news framing (Iyengar 1994). In autocracies, these factors are often overridden by the “division of labor” among the executive, institutions, and propaganda. In Russia, the regime benefits from international confrontations by attributing policy failures to the government and legislature, while the president gains popularity through the “rally-around-the-flag” effect (Sirotkina and Zavadskaya 2020). Propaganda mentions the leader only in positive stories (La Lova 2024) and blames economic problems on external factors (Rochlitz, Kazun, and Yakovlev 2020). Selective blame attribution intensifies when the regime is vulnerable or enjoys high popular support (Rozenas and Stukal 2019). However, pseudo fact checking introduces a new mode of blame avoidance by preventing citizens from attributing blame altogether. In Russia’s environment of widespread media distrust (Alyukov 2023), the pervasive rhetoric of pseudo fact checking leads Russians to avoid attributing responsibility for the war or to claim that all parties are equally to blame. The aforementioned phrase “Everything is complicated; we do not know the whole truth” has become a cultural cliché representing this process (Public Sociology Laboratory 2023).

Mechanisms and Hypotheses

To explain the mechanisms underlying the effects of pseudo fact checking, we draw on the literature on motivated reasoning and priming. First, we assume that political cognition is organized by the accessibility of information. To form a perception of an issue or an opinion, individuals sample multiple relevant considerations from memory, and considerations that were activated more recently than others are more likely to be sampled (Zaller 1992). The media can prime specific considerations, making them more likely to be used as cues for interpreting information (Iyengar and Kinder [1987] 2010). Second, we assume that political cognition is driven by motivated reasoning: when strong political loyalties are at stake, the motivation to reach an accurate conclusion about reality can be outweighed by the motivation to reach a preferred conclusion, leading individuals to accept belief-consistent information and discard belief-inconsistent information (Taber and Lodge 2006).
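The accessibility mechanism can be made concrete with a toy model. The sketch below is a hypothetical illustration, not the authors’ model: it assumes that a consideration activated `age` steps ago is sampled with weight `decay ** age` (an exponential recency decay chosen only for simplicity) and that an expressed opinion is the average valence of the sampled considerations, coded +1 for pro-regime and -1 for anti-regime. The function names are likewise our own.

```python
from __future__ import annotations

import random

# Toy illustration of recency-weighted sampling of considerations, after the
# logic attributed to Zaller (1992) above. The exponential decay and the
# +1 (pro-regime) / -1 (anti-regime) valence coding are assumptions.


def accessibility_weights(ages: list[int], decay: float = 0.5) -> list[float]:
    """A consideration activated `age` steps ago gets weight decay**age."""
    return [decay ** age for age in ages]


def expected_opinion(valences: list[float], ages: list[int], decay: float = 0.5) -> float:
    """Expected valence of one sampled consideration (accessibility-weighted mean)."""
    weights = accessibility_weights(ages, decay)
    return sum(w * v for w, v in zip(weights, valences)) / sum(weights)


def sample_opinion(valences: list[float], ages: list[int], k: int = 3,
                   decay: float = 0.5, seed: int | None = None) -> float:
    """Draw k considerations with replacement in proportion to accessibility
    and return the mean valence of the draws."""
    rng = random.Random(seed)
    weights = accessibility_weights(ages, decay)
    draws = rng.choices(valences, weights=weights, k=k)
    return sum(draws) / k


if __name__ == "__main__":
    # Anti-regime news seen one step ago (-1), pro-regime debunk seen just now (+1).
    valences, ages = [-1.0, 1.0], [1, 0]
    print(expected_opinion(valences, ages))
    print(sample_opinion(valences, ages, k=1000, seed=42))
```

With `decay=0.5`, the more recent debunking story carries twice the weight of the news story, so the expected opinion tilts toward it (1/3 rather than 0); with `decay=1.0` recency plays no role and the two considerations cancel out.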

Based on these mechanisms, we formulate the following hypotheses. First, we assume that individuals tend to perceive information consistent with their political beliefs as more credible (Baum and Gussin 2008) and that pseudo fact checking, like real fact checking, is likely to face resistance from ideologically distant audiences (Amazeen et al. 2018; Van Erkel et al. 2024):

H1: respondents will perceive news and debunking stories framed in line with their political views as more credible.

Second, we assume that exposure to pseudo fact checking effectively undermines the credibility of the targeted news:

H2: exposure to pseudo fact checking will decrease the perceived credibility of news stories that are being debunked.

Third, under certain circumstances, exposure to pseudo fact checking can prime the idea of disinformation, making it a more accessible criterion for evaluating the credibility of the media in general, similar to the effects of disinformation accusations (Egelhofer et al. 2022). Hence, we expect that exposure to pseudo fact checking will decrease general media trust:

H3: exposure to pseudo fact checking will decrease general media trust.

Fourth, we assume that attributing responsibility for politically sensitive policies in an authoritarian context may involve disagreements with others or even put one at risk (Greene and Robertson 2017). Under these circumstances, simultaneous exposure to news and to pseudo fact checks debunking that news can create a feeling of uncertainty, making it easier for respondents to justify avoiding the attribution of responsibility (Wedeen 2015):

H4: exposure to both news stories and debunking stories will make the refusal to attribute responsibility for the invasion to any party involved in the conflict more likely.

Fifth, we assume that individuals attach greater weight to information that can be extracted directly from personal experience, such as information about the economy, which can be used to evaluate media messages independently (Rosenfeld 2018). We therefore expect information about the war to have a stronger effect than information about events within reach of personal experience:

H5: economy-focused fact checks will have a weaker effect on the perceived credibility of a story, general media trust, and the attribution of responsibility than war-focused fact checks.

Research Design

Experiment Design

To test the hypotheses, we rely on a preregistered online experiment based on a 2 × 2 × 2 factorial design that varies exposure to fact checks, the topic of the stories, and their political framing. Our main treatment variable is exposure to fact checks: participants in the treatment groups were exposed to a news story and a debunking story, while participants in the control groups were exposed to the news story only. Because we expect policy realms to affect the results, we vary participants’ ability to benchmark information independently through personal experience: each news and debunking story focuses either on a Russian missile attack in Ukraine (less likely to be experienced directly) or on inflation in Russia (more likely to be experienced directly). Because we expect prior political attitudes to affect the results, we also vary the political framing of news and debunking stories. Stories with a pro-regime framing attribute responsibility for the devastation in Ukraine to Ukrainian air defense and downplay the scale of inflation. Stories with an anti-regime framing attribute responsibility for the devastation to the Russian government and emphasize the scale of inflation.
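The cell structure of the design can be enumerated mechanically. The sketch below is illustrative only: the factor labels, the function names, and the complete-randomization scheme (shuffle respondents, then deal them into cells in turn) are our assumptions, since the text does not say how respondents were allocated. It does encode the one allocation rule the text states: a debunking story, when shown, always takes the side opposite to the news story.

```python
from __future__ import annotations

import itertools
import random
from collections import Counter

# Illustrative factor levels; labels are hypothetical, not the authors' variable names.
EXPOSURE = ("news_only", "news_and_debunk")  # control vs. treatment
TOPIC = ("missile_attack", "inflation")      # war (remote) vs. economy (experienced)
FRAMING = ("pro_regime", "anti_regime")      # political framing of the news story


def debunk_framing(news_framing: str) -> str:
    """A matching debunking story takes the side opposite to the news story."""
    return "anti_regime" if news_framing == "pro_regime" else "pro_regime"


def design_cells() -> list[dict]:
    """Enumerate the 2 x 2 x 2 = 8 cells of the factorial design."""
    cells = []
    for exposure, topic, framing in itertools.product(EXPOSURE, TOPIC, FRAMING):
        treated = exposure == "news_and_debunk"
        cells.append({
            "exposure": exposure,
            "topic": topic,
            "news_framing": framing,
            # Control cells see no debunking story at all.
            "debunk_framing": debunk_framing(framing) if treated else None,
        })
    return cells


def assign_balanced(n_respondents: int, seed: int = 0) -> list[int]:
    """Complete randomization (assumed scheme): shuffle respondents, then deal
    them into cells in turn, so cell sizes differ by at most one.
    Returns the cell index assigned to each respondent."""
    rng = random.Random(seed)
    order = list(range(n_respondents))
    rng.shuffle(order)
    n_cells = len(design_cells())
    assignment = [0] * n_respondents
    for position, respondent in enumerate(order):
        assignment[respondent] = position % n_cells
    return assignment


if __name__ == "__main__":
    cells = design_cells()
    sizes = Counter(assign_balanced(2949))
    for index, cell in enumerate(cells):
        print(index, cell, sizes[index])
```

Dealing shuffled respondents into cells in rotation keeps cell sizes as equal as possible, which simple independent draws per respondent would not guarantee; the real study may have used a different scheme.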

Treatments and Outcomes

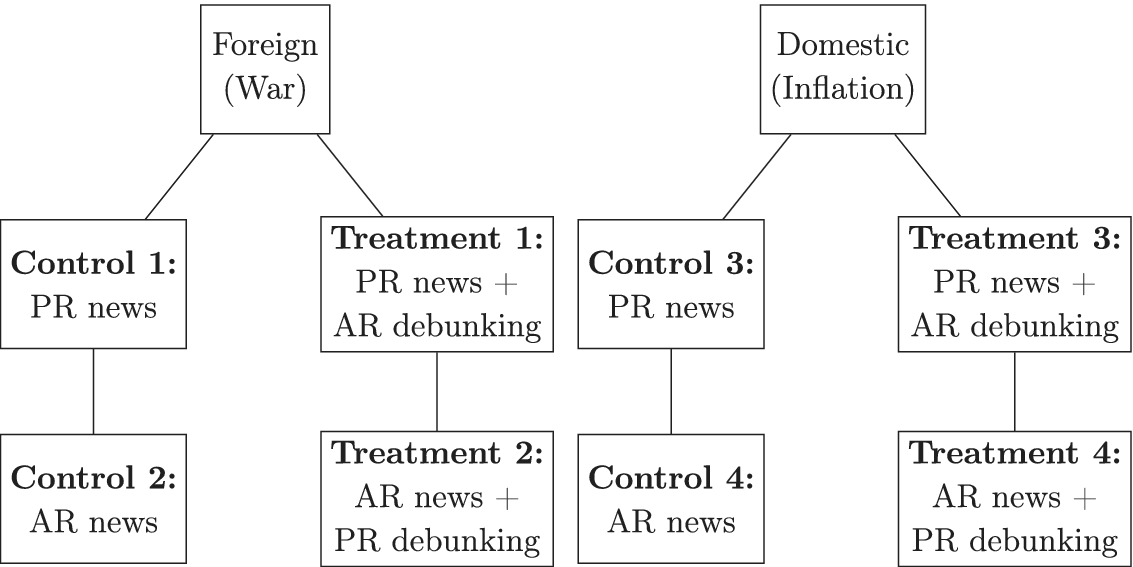

News stories are attributed to a fictional Telegram channel with a generic name, Nash Gorod (“Our Town”), with no perceived partisan affiliation. Debunking stories are attributed to two debunking channels mimicking the style of independent fact checking and pro-regime pseudo-debunking. Figure 1 summarizes the design.

Experiment Flow

Note: PR and AR stand for pro-regime and anti-regime, respectively.

Pro-regime and anti-regime debunking stories use the vocabulary of fact checking, such as “fake” and “truth,” and also rely on visual elements common to both journalistic and pro-regime pseudo fact checking, such as supporting images and multiple embedded links. Pro-regime debunking stories are similar in style to the “anti-fake” Telegram channel Voyna s Feykami (“War on Fakes”). Anti-regime debunking stories are similar in style to the independent outlet Provereno.Media (“Verified Media”).

The stimuli are carefully designed to resemble real messages one would encounter on Russian Telegram in the context of the war. The number of followers, time of posting, number of views, and emoji are held constant within news stories and within debunking stories but differ between news and debunking stories, so that respondents do not become suspicious on seeing identical elements. The debunking stories were shown with higher engagement metrics, specifically more “thumbs up” approval indicators and more views than the news stories. View counts are unlikely to be interpreted as a sign of social endorsement, since they simply indicate how many times a post has been seen at a given moment and naturally increase over time. “Thumbs up” indicators, by contrast, might have slightly enhanced the perceived credibility of debunking messages, although given the low absolute numbers, this effect is likely to be negligible.

All the treatments, factorial design, and vignette population can be found in online appendix B. Figures 2 and 3 are examples of an anti-regime news story on a Russian missile attack in Ukraine and a pro-regime debunking story.

Missile Attack: An Oppositional News Story (Control 1)

Missile Attack: A Pro-Regime Debunking Story (Treatment 1)

Our main outcomes of interest are the perceived credibility of a news story, general media trust, and the clarity of attribution of responsibility. The first two outcomes are measured via items asking respondents to rate the credibility of the news story presented, the credibility of the debunking story (if in a treatment condition), and their trust in traditional and new media. Clarity of attribution of responsibility is measured via scales asking respondents to rate their agreement with statements attributing responsibility for the war to Russia, Ukraine, and the West, as well as with a statement claiming that the conflict between Russia and Ukraine is too complicated to identify the culprit unambiguously. The scales capture a graded view of responsibility: respondents assess the degree of responsibility of each actor rather than making an either/or judgment. The survey questionnaire can be found in online appendix C.

Recruitment

We used Qualtrics to create the survey, which was distributed by Syno International, a market research company specializing in online research, through Cint, an online marketplace with survey panels worldwide. The survey was fielded between September 26 and October 2, 2023 (N = 2,949). The balance check suggests that there are no statistically significant differences between groups in key covariates. News and debunking stories were each followed by a simple factual question to ensure that respondents processed the stimuli. More than 80% of respondents in each group answered these questions correctly, suggesting that the stimuli were cognitively processed. See sections C.1 and C.2 of online appendix C for more details on recruitment and ethical issues. In online appendix B, see sections B.3 and B.4 for the balance check and processing task, and section B.6 for details on preregistration, data access, and replication.
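The balance check is reported here only in summary form. One common way to run such a check, shown as a hedged sketch and not necessarily the procedure used in the appendix, is to compute a standardized mean difference (SMD) for each covariate between a treatment group and a control group. The function names, the row format, and the 0.1 flagging threshold are illustrative assumptions.

```python
from math import sqrt
from statistics import mean, variance


def standardized_mean_difference(treated, control):
    """Difference in group means divided by the pooled standard deviation."""
    pooled_sd = sqrt((variance(treated) + variance(control)) / 2)
    if pooled_sd == 0:
        # Both groups are constant: identical values mean perfect balance.
        return 0.0 if mean(treated) == mean(control) else float("inf")
    return (mean(treated) - mean(control)) / pooled_sd


def balance_report(covariates, treated_rows, control_rows, threshold=0.1):
    """Map each covariate to (SMD, flagged), flagging |SMD| above the threshold."""
    report = {}
    for name in covariates:
        t = [row[name] for row in treated_rows]
        c = [row[name] for row in control_rows]
        smd = standardized_mean_difference(t, c)
        report[name] = (round(smd, 3), abs(smd) > threshold)
    return report
```

Unlike a significance test, the SMD does not depend on sample size, which is why it is often reported alongside, or instead of, p-values in balance tables.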

Ethical Issues

The project received ethical approval from both the Law, Arts and Humanities Research Ethics Subcommittee at King’s College London (HR-23/24-38969), and the Research Ethics Committee in the Humanities and Social and Behavioural Sciences at the University of Helsinki. The study followed stringent ethical protocols: participants were informed about its nature, funding, and the institutions involved; they provided informed consent, could withdraw at any time (including at the end), and received compensation in the form of vouchers. Nevertheless, several ethical issues were associated with the study.

First, exposure to real or fictional fake news might influence beliefs. However, such treatments are commonly used in research on the effects of misinformation (e.g., Egelhofer et al. Reference Egelhofer, Boyer, Lecheler and Aaldering2022; Van Duyn and Collier Reference Van Duyn and Collier2019). Prior studies show that single exposures to information have negligible effects on attitudes: political attitudes result from long-term, cumulative exposure to information (Zaller Reference Zaller1992). In the case of political information, belief updating is also strongly motivated by prior partisanship and political engagement, further reducing the likelihood that brief experimental exposure could meaningfully affect attitudes compared with the broader informational environment (Taber and Lodge Reference Taber and Lodge2006).

Second, the experiment involved partial deception: the news stories were presented as real, although they were based on genuine events reframed for research purposes. To mitigate potential harm, participants received a detailed debriefing explaining the modifications and providing links to the original sources. Overall, the societal benefits of studying misinformation outweigh these minimal risks. Further details on ethical considerations can be found in section C.2 of online appendix C.

Limitations

There are several methodological aspects of the research design that limit potential inferences related to internal and external validity. First, debunking stories used to discredit news stories cannot be isolated from the news stories themselves. Hence, one can imagine that the causal effect of a pro-regime debunking story originates in some susceptibility of an anti-regime news story to debunking, and vice versa. However, as debunking stories without original news stories would not make sense to respondents, such an approach is common in research on disinformation accusations (Egelhofer et al. Reference Egelhofer, Boyer, Lecheler and Aaldering2022; Van Duyn and Collier Reference Van Duyn and Collier2019). Second, unlike typical vignette experiments based on textual material, we opted for more realistic stimuli. These stimuli are carefully designed to look like real messages one would encounter on Russian Telegram in the context of the war and include lengthy descriptions and multiple visual elements. While this choice ensures ecological validity, the additional material can dilute the observed effects, making the design less sensitive than it would be with simple text vignettes.

Additionally, as the experiment is implemented via a survey, there are several challenges to validity. In recent years, scholars have raised concerns about the validity of surveys as a method in authoritarian contexts. Using list experiments designed to identify untruthful responses, some scholars have found that citizens may falsify their preferences in response to sensitive political questions. These findings are context dependent. For instance, some research suggests that Russian citizens do not falsify their support for the president (Frye, Gehlbach, et al. Reference Frye, Gehlbach, Marquardt and Reuter2017) but do falsify their support for the invasion of Ukraine (Chapkovski and Schaub Reference Chapkovski and Schaub2022). There are also concerns related to self-selection bias. While regime supporters may be more inclined to participate in surveys because they feel safer, regime skeptics may also be more inclined to participate to make their opinions heard, potentially making survey results nonrepresentative (Frye, Hale, et al. Reference Frye, Hale, Reuter and Rosenfeld2024; Vyrskaia, Tkachenko, and Martynova Reference Vyrskaia, Tkachenko and Martynova2025). As the goal of this study is to estimate treatment effects, these concerns are less critical. Even if participants provide misleading information or self-select based on the survey’s topic, randomization distributes these tendencies evenly across experimental conditions in expectation, preserving the internal validity of the treatment effect estimates. However, one should still interpret the results with caution, as some groups may be over- or underrepresented in the sample.

Data Analysis

Sample Characteristics

As our experiment is based on an online survey, the sample is close to the population in terms of gender but younger, considerably more educated, and more urbanized (53% female, mean age 42.4, 61% with some university education, 92.6% living in a city) than the population recorded in the 2020 Russian census (55% female, mean age 48, 25.8% with some university education, 75% living in a city). However, the regional composition of the sample closely matches that of the population: respondents from all regions are represented, and regional shares in the sample differ only minimally from those in the population (mean difference 0.4%, standard deviation 0.52%). In sections C.3 and C.4 of online appendix C, we provide a detailed comparison of our sample with census data and with a representative survey conducted via computer-assisted telephone interviewing.
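The regional comparison reported above can be outlined as follows. This is a hedged sketch: the function name and input format are hypothetical, and we assume that the reported mean and standard deviation refer to absolute gaps between sample and population shares in percentage points (signed gaps would average to zero by construction when all regions are included, because both sets of shares sum to 100).

```python
from statistics import mean, stdev


def regional_share_gaps(sample_counts, population_shares):
    """
    Mean and standard deviation of absolute gaps (percentage points) between
    each region's share of the sample and its share of the population.
    `sample_counts`: region -> number of respondents (hypothetical input).
    `population_shares`: region -> census share in percent (sums to 100).
    """
    total = sum(sample_counts.values())
    gaps = []
    for region, pop_share in population_shares.items():
        sample_share = 100 * sample_counts.get(region, 0) / total
        gaps.append(abs(sample_share - pop_share))
    return mean(gaps), stdev(gaps)
```

With invented inputs for three regions, `regional_share_gaps({"A": 50, "B": 30, "C": 20}, {"A": 48.0, "B": 32.0, "C": 20.0})` returns gaps of 2, 2, and 0 points, summarized as a mean of about 1.33 and a standard deviation of about 1.15.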

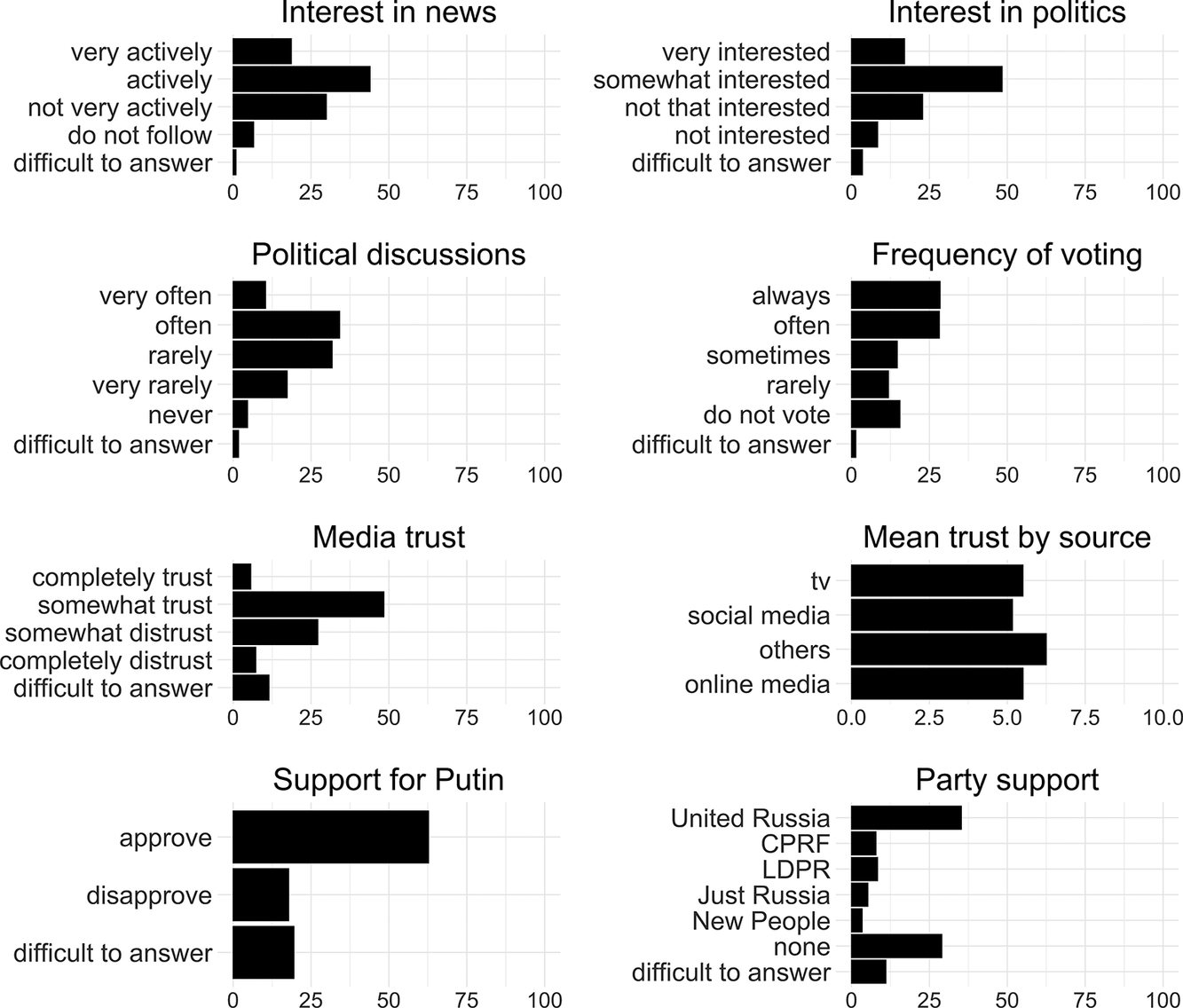

As figure 4 illustrates, our respondents exhibit relatively high levels of political engagement. Substantial shares of respondents report following the news “actively” (43.6%), being “somewhat interested in politics” (48.2%), engaging in political discussions with others “often” (33.8%), and voting in elections “always” (28.2%). They also display a moderate level of trust in the media, with 48.1% reporting that they “somewhat trust” the media. Finally, our respondents appear to have predominantly pro-regime political preferences. More than 62% express support for President Putin. By contrast, 35.1% of respondents report supporting United Russia, the ruling party, while 28.9% do not support any of the parties currently represented in the State Duma. This gap between support for the president and support for the ruling party aligns with prior research suggesting that successful policies are credited to the president while other policies are attributed to the legislature, which tends to make parliamentary parties unpopular (Sirotkina and Zavadskaya Reference Sirotkina and Zavadskaya2020).

Respondents’ Political Engagement and Regime Support

Note: CPRF and LDPR stand for Communist Party of the Russian Federation and Liberal Democratic Party of Russia, respectively.

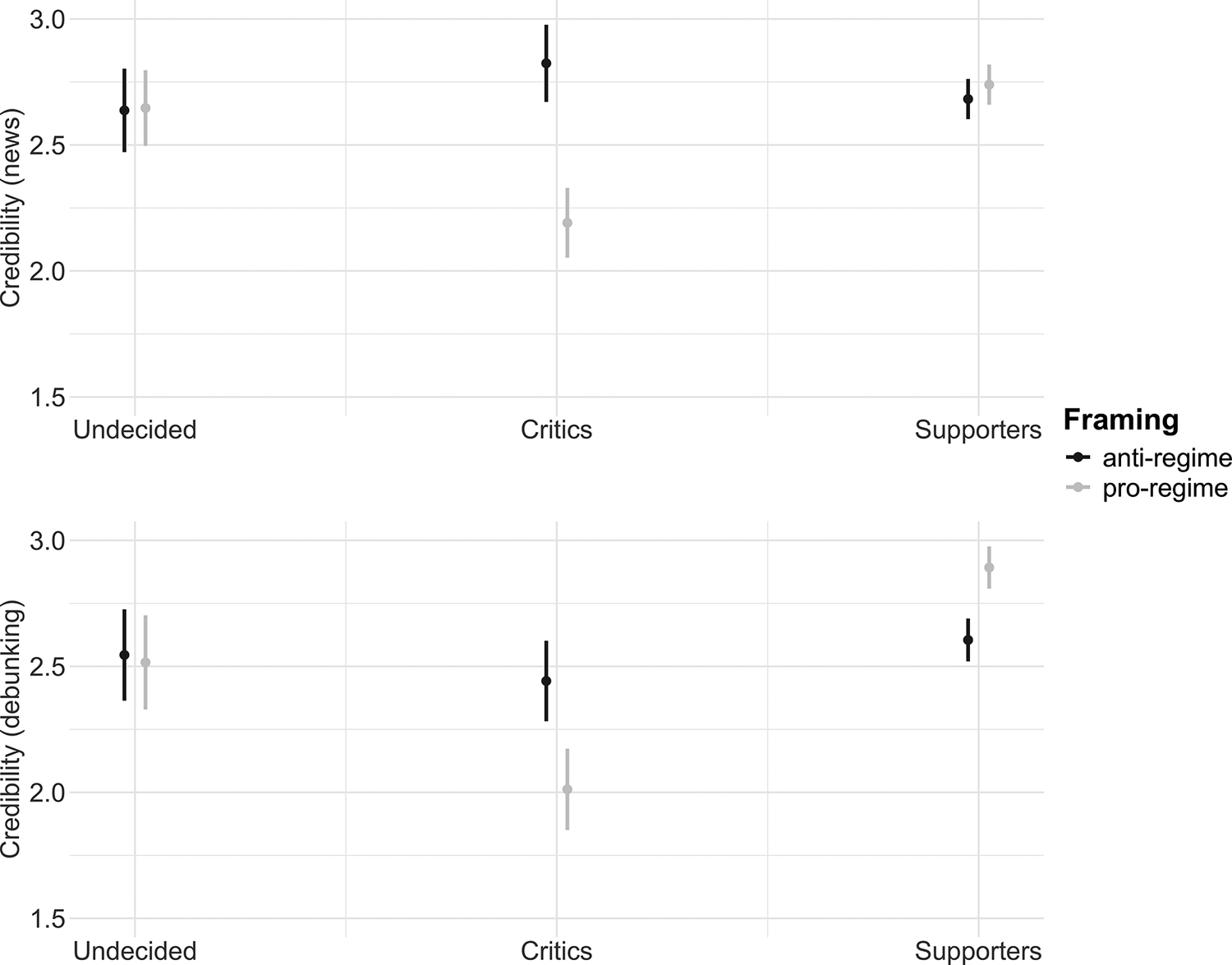

Pseudo Fact Checking and Credibility

According to our hypotheses, we expect news stories with a pro-regime framing to be more credible for respondents with pro-regime attitudes and less credible for respondents with anti-regime attitudes, and vice versa. Fact checks should also face more resistance from ideologically distant audiences. We employ regression models to examine the connection between respondents’ views and the credibility of news stories with different framings. As the existing party system in Russia’s authoritarian context does little to represent actual ideological preferences, scholars routinely rely on support for Putin as a simplistic but robust predictor of regime support (Frye, Gehlbach, et al. Reference Frye, Gehlbach, Marquardt and Reuter2017; Sirotkina and Zavadskaya Reference Sirotkina and Zavadskaya2020). Figure 5 presents the disparities in the perceived credibility of news stories and debunking stories with anti-regime or pro-regime frames among those who support Putin, are critical of Putin, or are undecided.

Credibility, by Regime Support

Despite the simplistic nature of the distinction, the graph reveals a clear pattern. While those who are undecided perceive information with pro-regime and anti-regime framing as equally credible, individuals with pro-regime views perceive news stories and debunking stories with pro-regime framing as more credible and anti-regime framing as less credible. Conversely, those with anti-regime views find news stories and debunking stories with anti-regime framing more credible and view news stories and debunking stories with pro-regime framing as less credible. Table A.1.1 in online appendix A provides the full results of the regression analysis.

The interactions between anti-regime views and pro-regime framing have a negative effect on the credibility of news ($p < 0.001$) and debunking stories ($p < 0.05$). The interactions between pro-regime views and pro-regime framing have a positive effect on the credibility of debunking stories ($p < 0.05$), but the effect on the credibility of news stories is not significant. The effects are substantial, representing 0.3–0.6 shifts in average credibility ratings on a scale from one to four. The effects are in expected directions and significant in three out of four combinations, providing overall support for H1, which postulates that the credibility of information increases if it matches individuals’ views. Notably, the effects are stronger for regime critics, who are more distrustful of pro-regime messages relative to anti-regime messages than regime supporters are of anti-regime messages relative to pro-regime messages. This asymmetry likely arises because the higher stakes associated with opposition in a repressive context result in more crystallized views.
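The interaction terms discussed above have a simple reading in terms of cell means. In a saturated linear model with regime-support dummies, a framing dummy, and their interactions, and with no covariates, each interaction coefficient equals a difference-in-differences of mean credibility ratings. The sketch below computes that quantity; it assumes “undecided” as the reference group and anti-regime framing as the base category, and the function name and data layout are hypothetical. The models in table A.1.1 may include covariates, in which case the estimates will not match this simplified calculation exactly.

```python
from statistics import mean


def interaction_as_did(cells, group, reference_group,
                       framing="pro_regime", base_framing="anti_regime"):
    """
    Difference-in-differences of cell means for credibility ratings.

    In a saturated OLS model with regime-support dummies, a framing dummy,
    and their interactions (and no covariates), the interaction coefficient
    for `group` equals this quantity, with `reference_group` and
    `base_framing` as the omitted categories.
    `cells` maps (group, framing) -> list of ratings on the 1-4 scale.
    """
    gap_group = mean(cells[(group, framing)]) - mean(cells[(group, base_framing)])
    gap_reference = (mean(cells[(reference_group, framing)])
                     - mean(cells[(reference_group, base_framing)]))
    return gap_group - gap_reference
```

A negative value for regime critics means that, relative to undecided respondents, critics rate pro-regime stories as less credible than anti-regime stories by that many scale points.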

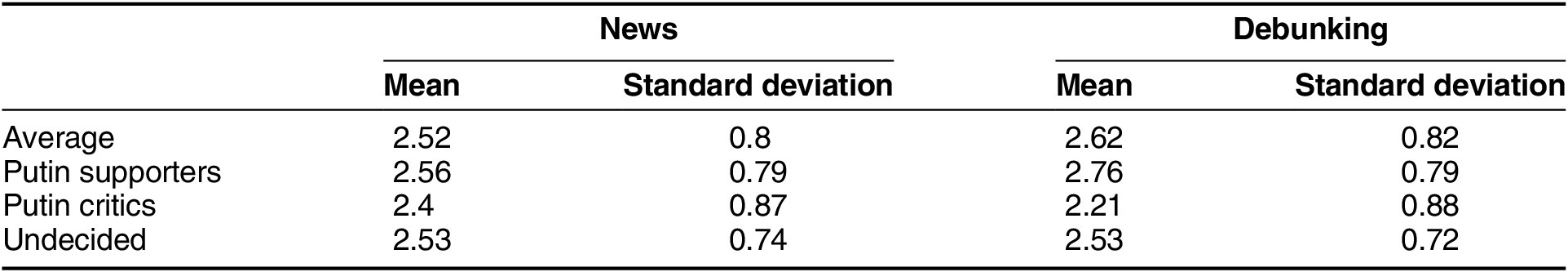

The second hypothesis posits that fact checks will decrease the perceived credibility of a news story being debunked. Table 1 reports the means and standard deviations of the credibility of stories. On average, participants rate the credibility of news and debunking stories slightly above the scale midpoint (2.52 and 2.62 out of four), with regime critics being the most skeptical.

Credibility of News and Debunking Stories

Note: Scaled from one to four.

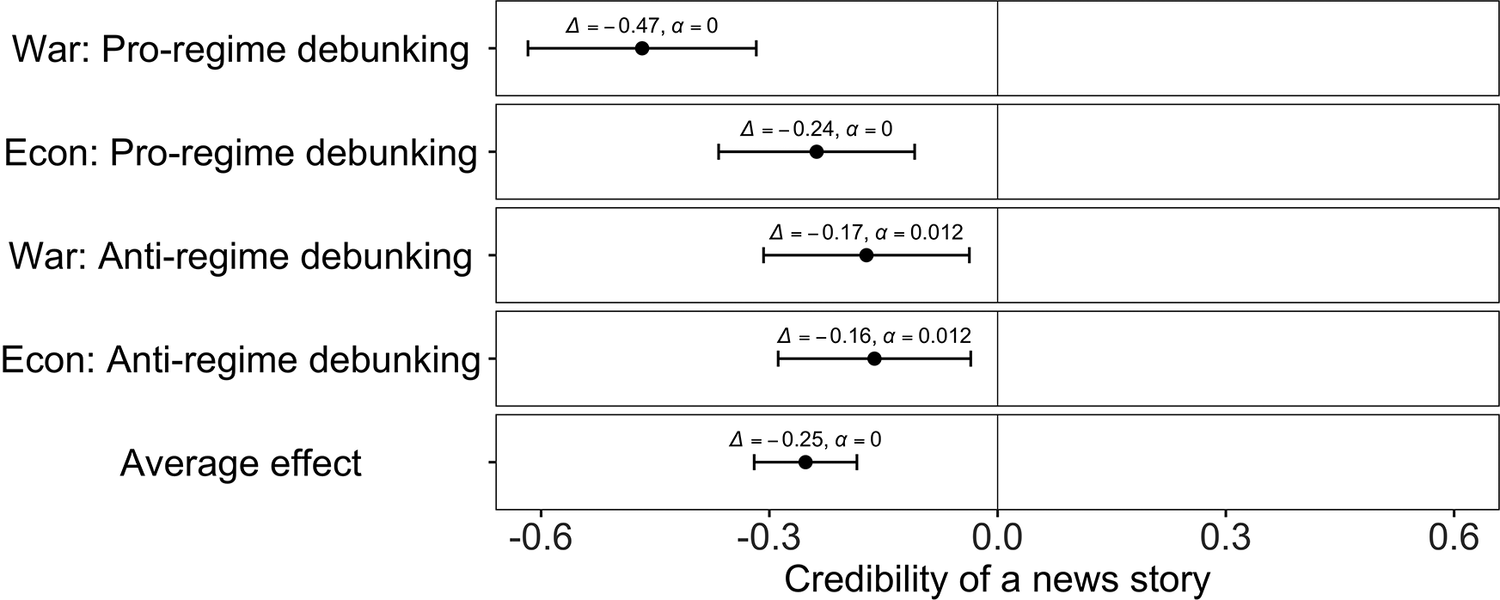

Figure 6 presents estimates of average marginal component effects (AMCEs; see also table A.1.2 in online appendix A). We analyze both the effect of each debunking condition compared with the respective news-only condition (e.g., an anti-regime news story alone versus an anti-regime news story followed by a pro-regime debunking story labeling it disinformation) and the average effect of all debunking conditions compared with all news-only conditions on the credibility of news stories.

The Effect of Fact Checks on the Credibility of News Stories

Note: α < 0.05.

As evident from the graph, compared with news-only conditions, exposure to both pro-regime and anti-regime debunking decreases the credibility of news stories. The effects of individual debunking stories are moderate, ranging from 0.16 to 0.47, with the average effect representing a 0.25 shift in credibility ratings on a scale from one to four. The results provide clear support for H2.
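In its simplest form, the effect of a debunking condition relative to its matching news-only condition is a difference in mean credibility ratings. The sketch below computes that difference with an unpooled (Welch-type) standard error and a normal-approximation 95% confidence interval. It is an illustrative assumption, not the estimator behind figure 6; the function name is hypothetical, and regression-based AMCE estimates with covariates can differ slightly.

```python
from math import sqrt
from statistics import mean, variance


def debunking_effect(treated, control, z_crit=1.96):
    """
    Difference in mean news credibility between a debunking condition
    (`treated`) and its matching news-only condition (`control`), with an
    unpooled (Welch-type) standard error and a normal-approximation 95% CI.
    """
    diff = mean(treated) - mean(control)
    se = sqrt(variance(treated) / len(treated) + variance(control) / len(control))
    return diff, se, (diff - z_crit * se, diff + z_crit * se)
```

Passing pooled lists of all debunking and all news-only observations gives a simple average effect, which implicitly weights conditions by their sample sizes.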

Of particular interest is the effect of the war-focused pro-regime debunking story, which is roughly twice as strong as the effects of the other stories (0.47 versus 0.24, 0.17, and 0.16). As the experiment featured only two topics (war and the economy), we lack sufficient variation in treatments to explore differences in resonance across topics systematically. The strong effect of the war-focused pro-regime debunking story may partly be explained by its appeal to feelings of national pride. Multiple scholars emphasize that the resonance of Russian propaganda is partly rooted in its ability to draw upon sentiments of national pride and humiliation shared across the political spectrum (e.g., Sharafutdinova Reference Sharafutdinova2020; Shirikov Reference Shirikov2024b). The framing of the war-focused pro-regime stories used in the experiment taps directly into these sentiments, presenting criticism of attacks on civilians as an unjust assault on Russia’s dignity and moral standing and transforming defensive reactions into a sense of collective grievance.

Pseudo Fact Checking and Media Trust

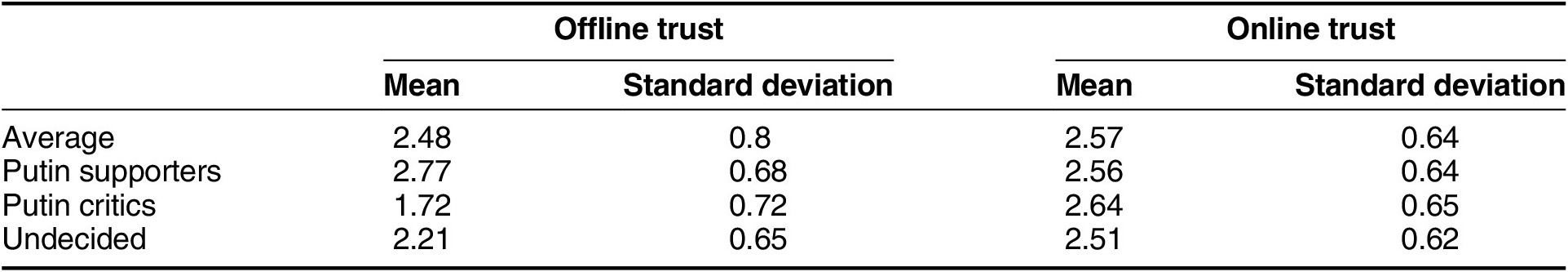

The third hypothesis posits that fact checks will decrease general media trust. Table 2 demonstrates the means and standard deviations of offline and online media trust.

Offline and Online Media Trust

Note: Scaled from one to four.

On average, participants report moderate trust in offline and online media, close to the scale midpoint (2.48 and 2.57 out of four, respectively), with expected differences in terms of regime support. As offline media in Russia are fully controlled by the regime, reporting trust in offline sources is closely associated with regime support. However, as both pro-regime and anti-regime narratives compete in the online sphere, trust in online sources does not unambiguously reflect anti-regime views. Accordingly, regime supporters report significantly higher trust in offline media than regime critics (2.77 versus 1.72), while trust in online sources is only marginally higher among regime critics (2.64 among critics versus 2.56 among supporters). Figure 7 shows the effects of debunking on both offline and online media trust (see also table A.1.2 in online appendix A).

The Effect of Fact Checks on Media Trust

Note: α < 0.05.

Contrary to our hypothesis, we do not find support for H3. Neither the average effect of all debunking stories nor the separate effects of each debunking story compared with a respective news story are statistically significant. While the absence of the effect may suggest that fact checks do not affect media trust, it could also be a result of the nature of our research design. In fact, this finding points to a discrepancy between perceptions of specific issues and more general attitudes, which is well documented in prior research. General attitudes, such as media trust, are a product of continuous exposure to vast amounts of information from different sources daily. Hence, they are more stable than perceptions of narrower issues and more difficult to change with one-time exposure. For instance, similar to our results, scholars find that exposure to fact checks can shift perceptions of the accuracy of specific issues, but these perceptions are difficult to translate into general attitudes. The effects on general attitudes increase with repeated exposure, though even multiple fact checks do not consistently generate a strong effect (Bailard, Porter, and Gross Reference Bailard, Porter and Gross2022; Coppock et al. Reference Coppock, Gross, Porter, Thorson and Wood2023). This discrepancy is also documented in the context of Russia’s invasion of Ukraine, where fact checks correct beliefs in false pro-Kremlin claims about the war but do not affect support for the war (Porter et al. Reference Porter, Scott, Wood and Zhandayeva2024). Fact checks can affect general attitudes, but only if individuals are already inclined to generalize them—for example, if they hold populist attitudes (Egelhofer et al. Reference Egelhofer, Boyer, Lecheler and Aaldering2022). 
In contrast, experimental manipulations that directly target general attitudes rather than perceptions of specific issues can bypass this gap and have stronger effects on general attitudes (Jungherr and Rauchfleisch Reference Jungherr and Rauchfleisch2024).

To demonstrate this difference, we compare our results with those of an experiment embedded in one of the waves of the Panel Study of Russian Public Opinion and Attitudes (PROPA) (Zavadskaya et al. Reference Zavadskaya, Vyrskaia, Gilev, Alyukov and Rumiantseva2024). The results suggest that as opposed to specific fact checks, exposing individuals to more general statements raising awareness of disinformation decreases media trust. See section A.2.1 of online appendix A for a full analysis of PROPA data.

Pseudo Fact Checking and Attribution of Responsibility

The fourth hypothesis suggests that exposure to fact checks will make it less likely for participants to attribute responsibility for the invasion to Russia, Ukraine, or the West, and more likely to refuse to attribute responsibility.

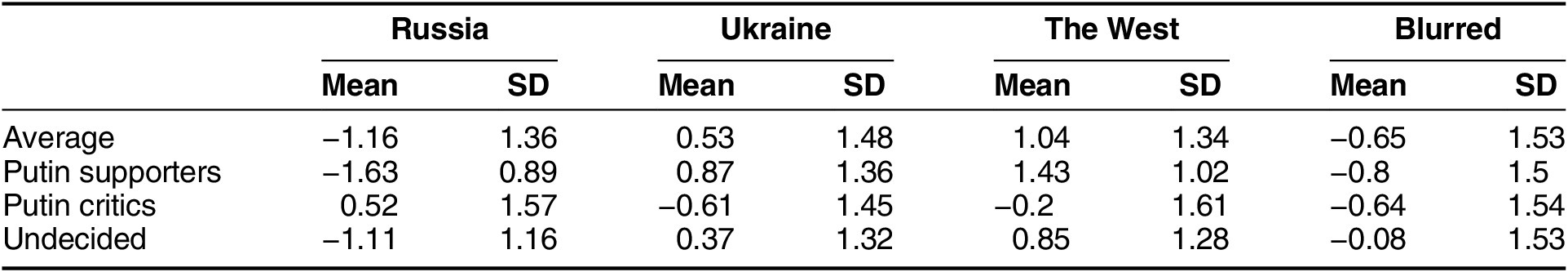

Table 3 presents the means and standard deviations for the attribution of responsibility. On average, respondents identify the West as the primary culprit, followed closely by Ukraine. In contrast, attributing responsibility to Russia is the least popular option. Notably, the refusal to assign blame is also less common, although it is still more prevalent than attributing responsibility to Russia. Expected differences emerge: regime supporters are significantly more likely to blame the West and Ukraine and significantly less likely to blame Russia. Conversely, regime critics predominantly blame Russia and are less likely to blame Ukraine and the West. Undecided respondents fall somewhere in between, attributing moderate blame to Ukraine and the West, while being less likely to blame Russia.

Attribution of Responsibility

Note: Scaled from minus two to two. SD = standard deviation.

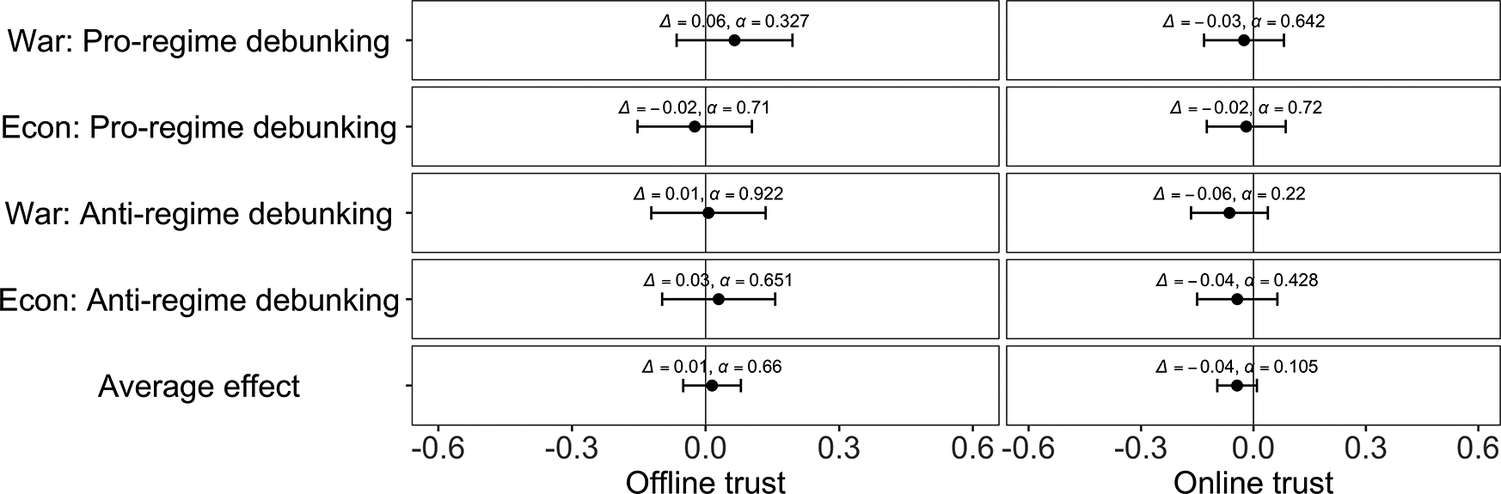

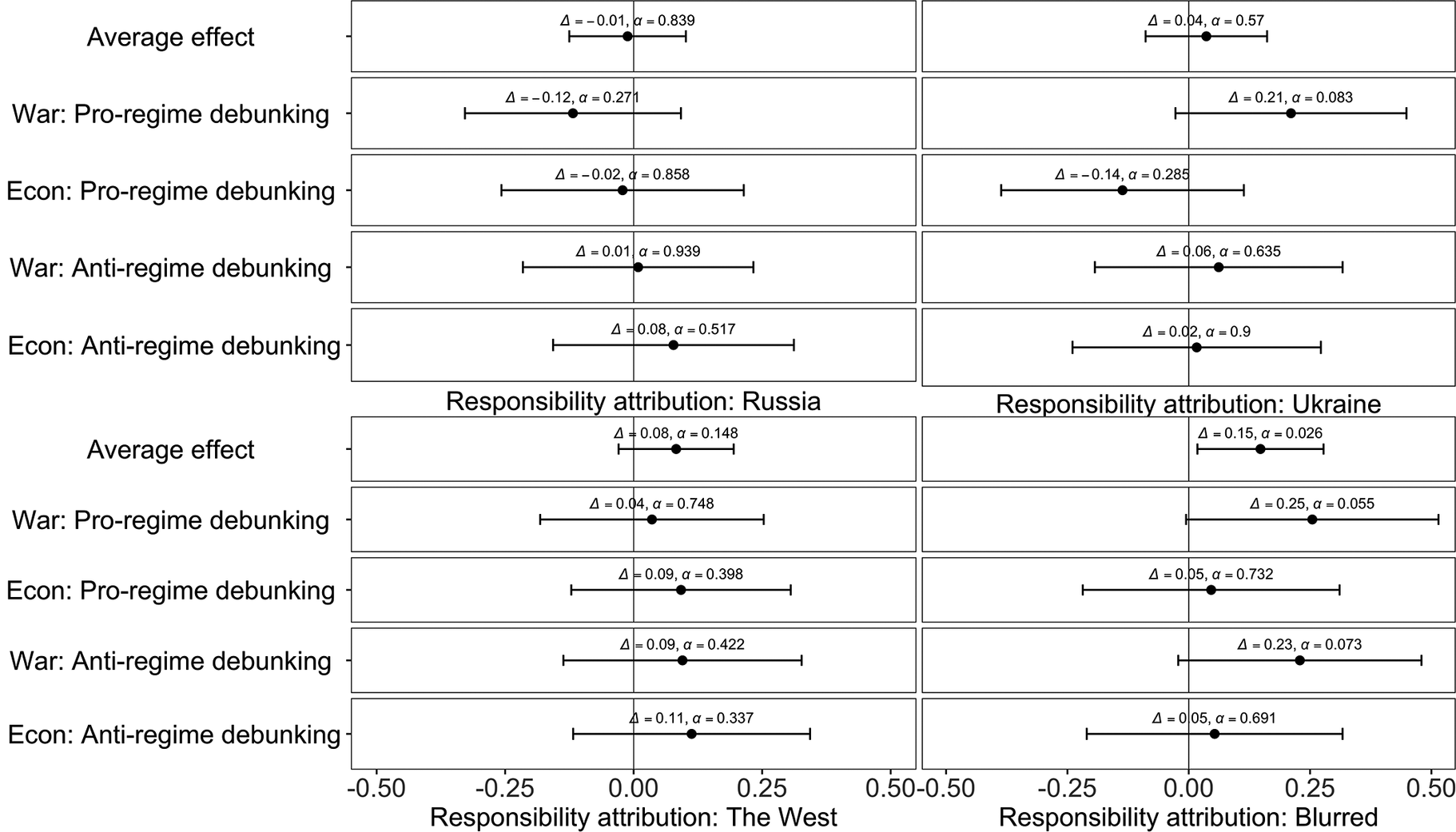

Figure 8 presents both the average effect of fact checks and the separate effects of each debunking condition on the attribution of responsibility to the main parties involved in the conflict and refusal to attribute responsibility (see also table A.1.2 in online appendix A).

The Effect of Fact Checks on Attribution of Responsibility

Note: α < 0.05.

The results lend partial support to H4. Compared with news-only conditions, we do not detect an effect of fact checks on the attribution of responsibility for the war to Russia, Ukraine, or the West. However, fact checks do make participants more likely to characterize the conflict between Russia and Ukraine as too complicated to identify the culprit unambiguously. The effect is statistically significant and represents a 0.15 shift in average attribution of responsibility on a scale from minus two to two.

Compared with the effect of fact checks on credibility (0.25), their effect on the refusal to attribute responsibility is weaker. This aligns with previous research suggesting that information has a stronger impact on knowledge but a much smaller effect on attitudes (Coppock et al. Reference Coppock, Gross, Porter, Thorson and Wood2023). Attitudes are difficult to change through one-time exposure to information because they result from accumulated exposure, especially when the issue at stake is perceived as existential. As can be seen from the bottom right panel of the graph, the average effect of fact checks on refusal to attribute responsibility results from the effects of the pro-regime and anti-regime war-focused debunking stories. Individually, both of these debunking stories just miss the conventional 0.05 level of statistical significance but are significant at the 0.1 level ($p = 0.055$ and $p = 0.073$); taken together, they produce an effect that is significant at the 0.05 level ($p = 0.026$).

As in the case of the impact of debunking stories on credibility, war-focused stories stand out, which can be partly explained by their appeal to feelings of national pride. When anti-regime news or debunking stories highlight Russia’s role in civilian casualties, acknowledging this responsibility may conflict with such feelings, prompting avoidance rather than explicit attribution of blame.

There are potential competing explanations for the observed effects. First, in strongly polarized contexts, respondents might perceive surveys as affiliated with the regime and thus become less willing to express anti-regime attitudes (Bischoping and Schuman Reference Bischoping and Schuman1992). Most techniques used to test for potential preference falsification suggest little to no sensitivity bias in responses to questions about support for the regime and the invasion (Frye, Hale, et al. Reference Frye, Hale, Reuter and Rosenfeld2024). However, the possibility that the observed increase in refusal to attribute responsibility results from social desirability bias cannot be fully ruled out. To probe this possibility, we run two additional checks reported in section A.3.1 of online appendix A. The first check tests whether respondents exposed to anti-regime messages systematically opt for politically safer answers. If participants were engaging in self-censorship, we would expect anti-regime messages to produce stronger effects on refusal rates than pro-regime messages, as respondents would avoid expressing agreement with regime-critical claims. We find no such pattern. The second check tests whether exposure to anti-regime messages leads respondents to signal loyalty to the regime more broadly. If respondents were trying to appear loyal to the regime after being exposed to politically sensitive content, we would expect an uptick in pro-regime responses, including support for the ruling party. We find no such uptick either.

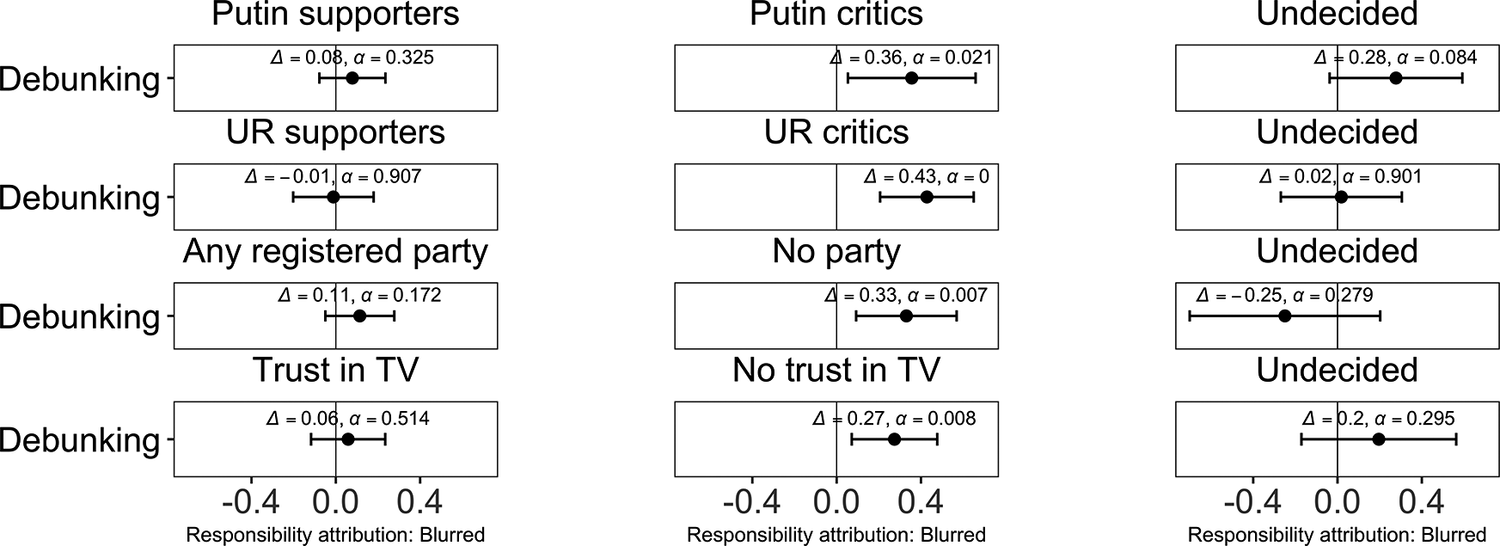

Pseudo Fact Checking and Regime Critics

Who are the respondents who become more likely to refuse to attribute responsibility after exposure to news stories and fact checks? Below we explore the effect of fact checks on refusal to attribute responsibility across three groups: regime supporters, regime critics, and the undecided. To ensure the robustness of this analysis, we use a variety of survey questions tapping into anti-regime attitudes. The first and second questions asked participants whether they are supportive of, critical of, or unsure about President Putin and United Russia. The third question asked participants about their support for registered parties in the parliament. Importantly, this question includes an option that translates as “none” but reads as “I don’t support any parties in the parliament” (nikakuiu), which appears to tap into disgust toward any “systemic” registered parties, seen as the flesh and blood of the regime-orchestrated political system. Almost two-thirds of those critical of Putin selected this option, with the second-closest choice, the Liberal Democratic Party of Russia, not reaching 9%. The fourth question focused on trust in television, which is widely seen as a mouthpiece of the government. Figure 9 demonstrates the effect of fact checks on refusal to attribute responsibility across subgroups (see also table A.1.3 in online appendix A).

The Effect of Fact Checks on Attribution of Responsibility, by Regime Support

Note: α < 0.05. UR stands for United Russia.

As can be seen from the graphs, fact checks make refusal to attribute responsibility more likely, but only among regime critics. The effect is moderate and significant for all items indicating opposition to the regime, with fact checks increasing refusal to attribute responsibility by 0.36 for those critical of Putin ($p < 0.05$), by 0.43 for those critical of United Russia ($p < 0.001$), by 0.33 for those supporting none of the registered parties ($p < 0.01$), and by 0.27 for those not trusting television ($p < 0.01$), on a scale from minus two to two. The consistency of the effect across multiple items suggests that it is robust, highlighting that it is primarily regime critics who react to exposure to news and debunking stories with refusal to attribute responsibility.
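The subgroup estimates above amount to computing the treatment effect separately within each category of a regime-support item. The sketch below does this as a plain difference in mean refusal scores between treatment (news plus debunking) and control (news only) respondents within each subgroup. It is a simplified illustration under assumptions: the field names (`putin`, `debunked`, `refusal`) and the function name are hypothetical, and the estimates in table A.1.3 come from the authors’ own models.

```python
from collections import defaultdict
from statistics import mean


def subgroup_effects(rows, subgroup_key, outcome_key="refusal", treat_key="debunked"):
    """
    Difference in mean outcome between treatment (news + debunking) and
    control (news only) respondents, computed separately within each subgroup.
    `rows` is a list of dicts; subgroups lacking either arm are skipped.
    """
    arms = defaultdict(lambda: {True: [], False: []})
    for row in rows:
        arms[row[subgroup_key]][bool(row[treat_key])].append(row[outcome_key])
    return {
        group: mean(a[True]) - mean(a[False])
        for group, a in arms.items()
        if a[True] and a[False]
    }
```

Running the same function with different `subgroup_key` values (e.g., one item for Putin, one for United Russia) mirrors the idea of checking robustness across several indicators of regime opposition.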

What are the mechanisms behind the effect of fact checks on the refusal to attribute responsibility among regime critics? As our experiment was not designed to explore causal mechanisms behind this effect, we cannot answer this question conclusively. However, we can propose two plausible pathways, each with its own set of observable expectations.

On the one hand, encountering multiple directly contradicting claims, participants may become less certain about who to blame. As confusion is primarily a cognitive effect, it should operate independently of political stance but depend on how strongly people hold their beliefs. In this case, we would expect to see similar effects across groups or the absence of an effect among regime supporters and critics and its presence among the undecided. On the other hand, uncertainty may also provide a psychological rationale for avoiding uncomfortable or risky judgments. As Wedeen (Reference Wedeen2015, 79–80) shows in her study of wartime Syria, an “avalanche of claims and counterclaims” from the regime, civil society, and foreign media can lead to “information oversaturation,” which, combined with “feelings of present danger,” can make it “easy for regime critics to find alibis for avoiding commitment to judgment at all,” offering “a seemingly potent rationale for inaction in contexts in which action might otherwise have seemed morally incumbent.” In other words, individuals critical of the regime may refrain from expressing blame not because they support it, but because uncertainty allows them to justify inaction when action seems too risky. As this mechanism involves managing emotional and political tension, it is likely to depend on political stance. In this case, we would expect the effect of fact checks on refusal to attribute responsibility to emerge primarily among those with anti-regime attitudes, but not among other groups.

These mechanisms are not mutually exclusive, and our experimental design cannot adjudicate between them decisively as the experiment did not include items tapping into psychological differences between regime supporters and regime critics. Nevertheless, as exposure to news stories and fact checks increases the tendency to refuse to attribute responsibility only among regime critics, the results are consistent with the expectations implied by the second mechanism, which suggests that uncertainty generated by fact checks provides them with a psychological rationale for inaction.

A second competing explanation concerns the framing of the stimuli. We are conscious of the fact that the direct juxtaposition of pro-regime and anti-regime framing might not be fully adequate. While pro-regime media mechanically blame the West and Ukraine for the war, oppositional media often provide a more nuanced picture. For instance, some Russian oppositional outlets regularly report on misconduct by Ukrainian forces. Therefore, one might argue that the observed effect results from a structural difference between regime supporters, who hold onto their preexisting views, and regime critics, who update their beliefs in a Bayesian manner. There are two arguments against this explanation, one deductive and one empirical (see also the additional checks in section A.3.2 of online appendix A). Deductively, while oppositional media indeed offer a more complex and multifaceted account of the war, they nonetheless share a clear consensus on Russia’s and Putin’s responsibility for the invasion. Although such outlets may differ in how they report on the scale of destruction or instances of misconduct by Ukrainian forces, they do not question who initiated the conflict. Therefore, regime critics are unlikely to encounter narratives implying ambiguity about blame, making Bayesian belief updating an implausible explanation for the observed effect. Empirically, if regime critics were genuinely more inclined to update their beliefs than regime supporters, this tendency should manifest in other domains as well, including their perceptions of credibility in response to debunking. However, the results suggest that regime supporters, not critics, exhibit a greater number of significant shifts in perceptions of news credibility after exposure to debunking messages, indicating that the observed pattern cannot be attributed to critics’ higher responsiveness to realistic or nuanced information.

In a post hoc subgroup analysis, we also observed an unexpected pattern that runs contrary to our preregistered expectations: exposure to a war-focused anti-regime debunking story increased, rather than decreased, the likelihood of anti-regime participants attributing responsibility for the invasion to the West. This reaction may reflect a backfire effect, whereby exposure to unwelcome information triggers defensive reasoning and counterarguing among some respondents (Nyhan and Reifler Reference Nyhan and Reifler2010). Given the limited robustness of this result and the multiple comparisons involved, we do not report it in the main text. However, as it may still be relevant for future research, we discuss it in detail in section A.2.2 of online appendix A.

War and Economy

The fifth hypothesis posits that the effects of war-focused fact checks will be stronger than the effects of economy-focused fact checks. Earlier, we demonstrated that fact checks decrease credibility (H2) and make participants more likely to refuse to attribute responsibility (H4). In what follows, we compare the average effects of war-focused and economy-focused debunking stories, as well as the effects of each pair of individual debunking stories, on credibility and on refusal to attribute responsibility. Full results can be found in table A4 in online appendix A.

As the previous subsection suggested, war-focused items appear to drive the overall effect on refusal to attribute responsibility. However, z-tests indicate that the evidence for a significant difference from economy-focused stories is insufficient. There is no statistically significant difference between the effects of war-focused and economy-focused items on refusal to attribute responsibility on average ($z = 1.45$, $p = 0.145$). The results therefore point to a topical pattern consistent with stronger effects of war-related content, though this difference should be interpreted as indicative rather than definitive.

Turning to credibility, while there is no statistically significant difference between the average effects of war-focused and economy-focused items ($z = -1.68$, $p = 0.093$), the effect of the war-focused pro-regime debunking story stands out in comparison with other debunking stories, reflecting the pattern observed in figure 6. The effect of the war-focused pro-regime debunking story is stronger than that of the war-focused anti-regime debunking story ($z = 2.86$, $p < 0.01$), the economy-focused pro-regime debunking story ($z = 2.27$, $p < 0.05$), and the economy-focused anti-regime debunking story ($z = 3.05$, $p < 0.01$). Thus, H5 is only partially supported.
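The z-statistics above compare pairs of estimated effects. A minimal sketch of one common form of this test is shown below, assuming that the two effect estimates are independent, so that the standard error of their difference is $\sqrt{SE_1^2 + SE_2^2}$. The article does not state the exact formula used, the helper names `z_diff` and `two_sided_p` are hypothetical, and the coefficients passed in are illustrative placeholders rather than values from table A4.

```python
import math


def two_sided_p(z: float) -> float:
    """Two-sided p-value for a standard normal test statistic.

    Uses the identity 2 * (1 - Phi(|z|)) = erfc(|z| / sqrt(2)).
    """
    return math.erfc(abs(z) / math.sqrt(2))


def z_diff(b1: float, se1: float, b2: float, se2: float) -> tuple[float, float]:
    """z-test for the difference between two effect estimates.

    Assumes the estimates are independent (zero covariance), so the
    standard error of the difference is sqrt(se1**2 + se2**2).
    Returns (z, two-sided p).
    """
    z = (b1 - b2) / math.sqrt(se1 ** 2 + se2 ** 2)
    return z, two_sided_p(z)


if __name__ == "__main__":
    # Hypothetical inputs, for illustration only (not taken from the article).
    z, p = z_diff(b1=0.30, se1=0.10, b2=0.10, se2=0.10)
    print(f"z = {z:.2f}, p = {p:.3f}")

    # Map the z-values reported in the text to two-sided p-values.
    for reported_z in (1.45, -1.68, 2.86, 2.27, 3.05):
        print(f"z = {reported_z:+.2f} -> p = {two_sided_p(reported_z):.3f}")
```

For example, the reported $z = -1.68$ corresponds to a two-sided $p \approx 0.093$, matching the value in the text. When both effects are estimated from the same model, their covariance is generally nonzero, and the denominator would instead be $\sqrt{SE_1^2 + SE_2^2 - 2\,\mathrm{Cov}(b_1, b_2)}$.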

This finding speaks to several debates. Some studies find that individuals in Russia attach greater weight to information that can be extracted directly from personal experience and use it to resist propaganda claims (Mickiewicz Reference Mickiewicz2008), particularly about the economy (Rosenfeld Reference Rosenfeld2018). Other studies suggest that personal experience is not an unambiguous benchmark that can be used to verify or falsify propaganda; rather, it is remembered and reinterpreted in line with political views or frames offered by propaganda (Alyukov Reference Alyukov2023). In the context of the Russia–Ukraine war, messages in public discourse do not contain neutral information about the economy. Rather, the state of the economy is a politicized topic, intertwined with information about the war and embedded in competing pro-regime or anti-regime narratives. As the absence of significant average differences across topics suggests, when economy-focused messages are part of a politicized discursive struggle rather than neutral information, personal experience with the economy does not appear to serve as an independent resource for evaluating them.

However, while there are no significant average differences across topics, the war-focused pro-regime debunking story stands out with a stronger effect on credibility than all other debunking stories, suggesting that more than one mechanism may be at play. Although the limited number of topics in the experiment does not allow us to explore variation in resonance across topics, we hypothesize that an additional explanation lies in the sensitive nature of this story: it may resonate more strongly because it engages emotions tied to national identity and pride, which may in turn make it appear more credible to audiences.

Conclusion

How does pseudo fact checking, used as a tool of state propaganda, affect citizens’ perceptions of politics in an authoritarian context? Using the results of a preregistered online experiment conducted in authoritarian Russia in the wake of the invasion of Ukraine, we propose several answers to this question. Contrary to our preregistered expectations, we do not find consistent evidence that fact checks decrease general media trust or that citizens are better at independently benchmarking messages about the economy than messages about the war based on direct access to issues via personal experience. In line with our preregistered expectations, we find that the use of fact checks by both regime propaganda and oppositional media decreases the perceived credibility of the news stories being debunked and induces uncertainty, preventing citizens from attributing responsibility for the regime’s policies. Interestingly, the effect on attributing responsibility is present only among regime critics, pointing to a complex cognitive-emotional interplay between regime support, repression, and information.

We argue that pseudo fact checking represents an effective response to the threat that high-choice media environments pose to authoritarian rule. While an authoritarian regime can effectively control major national television networks and key online media outlets, citizens in saturated media environments still encounter information that challenges regime narratives. In such media spaces, authoritarian propaganda cannot be effective if it relies solely on disseminating false information or on censorship. Pseudo fact checking is the next step in the evolution of propaganda, allowing autocrats to respond to this challenge. By preemptively debunking alternative information, authoritarian propaganda can decrease its credibility. While this interpretation remains tentative, pseudo fact checking may also induce uncertainty, providing justifications for avoiding judgment to those who might have blamed the regime for its policies under different circumstances. Taking a critical stance in an authoritarian context is risky, which creates incentives to refrain from blaming the regime; pseudo fact checking may exacerbate this process by supplying regime critics with a rationale for avoiding judgment. As regime supporters already back the regime, from the regime’s perspective inducing judgment avoidance among critics is a more valuable outcome than reinforcing certainty among loyalists.

Our results contribute to several fields. First, they advance research on pseudo fact checking, a new authoritarian propaganda strategy. Autocrats increasingly adopt elements of fact checking and use them to spread pro-regime propaganda. However, while there is some research on how the format is weaponized by autocrats (e.g., Cho Reference Cho2025; Neo Reference Neo2022; Schuldt Reference Schuldt2021) as well as preliminary conceptualizations of the technique vis-à-vis professional journalistic fact checking projects (e.g., Montaña-Niño et al. Reference Montaña-Niño, Vziatysheva, Dehghan, Badola, Zhu, Vinhas, Riedlinger and Glazunova2024), to our knowledge no studies have explored the effects of this strategy on citizens. We demonstrate that, just like professional fact checking, pseudo fact checking can reduce the credibility of debunked information, thus providing autocrats with an effective tool to shield themselves from criticism and reinforce authoritarian stability. Moreover, it can help the regime induce uncertainty and hinder attribution of responsibility among those who might have blamed the regime under different circumstances. This effect provides the regime with new opportunities for manipulation and survival.

Second, our findings contribute to research on the nature of contemporary authoritarian regimes. Since Linz’s ([Reference Linz1975] 2000) seminal work on authoritarian regimes, political scientists have demonstrated that many autocracies have transitioned to a more demobilizational form. Instead of relying on ideological legitimation and large-scale repression, contemporary autocrats often try to convince citizens that the regime is competent enough to satisfy their needs, depoliticize contentious issues, and restrict political participation (Gerschewski Reference Gerschewski2023; Guriev and Treisman Reference Guriev and Treisman2022). We identify a new strategy that autocrats use to reinforce the authoritarian equilibrium: instead of making autocrats seem more competent, authoritarian propaganda can make citizens passive by confusing them and preventing them from attributing responsibility for policies.

In addition, our findings have important implications for policies implemented by democratic governments toward authoritarian regimes and fact checking. Since the beginning of Russia’s full-scale invasion of Ukraine in 2022, Western governments and civil societies have invested considerable resources in puncturing Russia’s “disinformation bubble” (Financial Times 2022). Major European newspapers began translating their coverage of the war into Russian to provide credible information to the Russian public (Helsingin Sanomat Reference Sanomat2022), while governments (Malnick Reference Malnick2022) and citizens (Hsu Reference Hsu2022) have used targeted advertising in attempts to change Russian citizens’ opinions about the war. Our findings both provide reasons for optimism and demonstrate the potential limits of such counterpropaganda campaigns.

On the one hand, when it comes to credibility, we show that fact checking can work effectively even in nonfree environments, reducing the credibility of authoritarian propaganda without lowering general media trust, as long as the messages themselves do not seek to induce skepticism on a more general level. On the other hand, more does not mean better. Some research suggests that even professional fact checking in democracies can decrease confidence in one’s ability to discern political reality by exposing individuals to incongruous claims (York et al. Reference York, Ponder, Humphries, Goodall, Beam and Winters2020). In politically sensitive authoritarian contexts, where the costs of taking a stance against the regime are high, such contradictions are even more likely to foster uncertainty, offering individuals a convenient psychological rationale for inaction. Because independent political information circulating in the Russian media sphere contradicts what regime media circulate, the net effect can be demobilizational. While these results do not suggest that attempts to counter propaganda are generally counterproductive, they emphasize the need to empirically pretest counterpropaganda messages to ensure that they do not lead to unintended consequences.