1. Introduction

Design teams are motivated to thoroughly explore the design space, which is believed to increase the likelihood of developing an effective design. Shah, Smith, & Vargas-Hernandez (Reference Shah, Smith and Vargas-Hernandez2003) developed outcome-based evaluation metrics that can be used to determine how well the method (a) explores the design space and (b) expands the design space. After reviewing the literature and considering the fact that domain experts can recognize “good” ideas, they propose four separate effectiveness measures: the novelty, variety, quantity and quality of the ideas generated using the method. For convenience in the rest of this paper, we will refer to Shah et al. by the first letters of each of their last names (SVS), an abbreviation that is often used in the literature.

These terms are defined by SVS:

-

• “Novelty is a measure of how unusual or unexpected an idea is as compared to other ideas.”

-

• “Variety is a measure of the explored solution space during the idea generation process.”

-

• “Quantity is the total number of ideas generated.”

-

• “Quality, in this context, is the measure of the feasibility of an idea and how close it comes to meet[ing] the design specifications.”

Two of these measures, novelty and quality, are explicitly listed as characteristics of an individual idea, although there is also a measure of set quality. The other two, variety and quantity, are measures of the set. If we are to evaluate the effectiveness of an ideation method, we must have measures of the set, rather than just measures of the individual ideas in the set, as the output of an ideation method is a set of ideas. As will be described later, set-specific measures help to indicate how well a given ideation method facilitates the design team’s exploration of the design space.

Both novelty and variety refer to a reference solution space – the actual or potential design space. As described above, novelty is evaluated by comparing the idea to the solution space; novel ideas expand the solution space. Variety is evaluated by determining how much solution space is explored.

SVS’s metrics have been widely used to identify excellent ideation practices. Idea sets with high variety, novelty, quality and quantity are regularly used as examples of, or goals for, good design outcomes (Fu, Sylcott, & Das Reference Fu, Sylcott and Das2019; Blösch-Paidosh & Shea Reference Blösch-Paidosh and Shea2021; Deo et al. Reference Deo, Blej, Kirjavainen and Hölttä-Otto2021; Miller et al. Reference Miller, Hunter, Starkey, Ramachandran, Ahmed and Fuge2021; Song et al. Reference Song, Soria Zurita, Nolte, Singh, Cagan and McComb2021; Schauer, Fillingim, & Fu Reference Schauer, Fillingim and Fu2022; Lee et al. Reference Lee, Daly, Vadakumcherry and Rodriguez2023; Ma et al. Reference Ma, Grandi, McComb and Goucher-Lambert2023; Yuan, Marion, & Moghaddam Reference Yuan, Marion and Moghaddam2023; Das et al. Reference Das, Huang, Xu and Yang2024).

As we have used SVS’s metrics in our design education and research (Anderson, Anderson, & Jensen Reference Anderson, Anderson and Jensen2019; Stapleton et al. Reference Stapleton, Owens, Mattson, Sorensen and Anderson2019; Anderson et al. Reference Anderson, Chanthavane, Broshkevitch, Braden, Bassford, Kim, Fantini, Konig, Owens and Sorensen2022; Mattson et al. Reference Mattson, Geilman, Cook-Wright, Mabey, Dahlin and Salmon2024), we have found some practical limitations to the specific metrics proposed by SVS. These limitations include the following:

-

• The lack of a method to calculate a novelty score for a set.

-

• The lack of a method for determining when two ideas are insufficiently different to be counted separately.

-

• The inability to determine how much an individual idea adds to the variety of the set.

-

• The calculated variety is divided by the number of ideas in the set, so in some sense it is the “average” variety.

-

• The novelty calculation requires a consistent functional decomposition for all the ideas under consideration, which makes SVS difficult to apply to idea sets containing highly divergent ideas.

This paper proposes axioms for metrics for each of these set attributes that reflect best practices in ideation and appropriately reward preferred ideation behavior. We then propose evaluation methods and aggregation functions that satisfy the axioms when applied to ideas placed in a design tree (defined in “Design Trees”). Broadly, these accomplish the following:

-

• Calculate the novelty of an idea.

-

• Calculate the novelty of an idea set based on the novelties of the individual ideas and the design tree relationships.

-

• Calculate the variety of an idea set.

-

• Determine how much an individual idea contributes to the set variety.

-

• Calculate the quality of an idea.

-

• Calculate the quality of an idea set based on the qualities of the individual ideas.

-

• Calculate the quantity of ideas in a set, accounting for their relationships with other ideas.

Note that for each of SVS’s four metrics, we address both the individual ideas and the set of ideas.

The evaluation of novelty, variety and quality generally involves subjective decisions. We propose specific questions that evaluators answer in order to provide as much consistency as possible to these subjective evaluations.

The aggregation functions presented in this paper are used to calculate set properties based on the individual idea properties and the tree relationships were developed to match axioms for ideal behavior of the aggregation functions. The axioms were created consistent with the principles that (a) it is always better to have more ideas, greater variety, more novelty and more quality, and (b) one should not be able to artificially influence the score of the idea set by either adding or removing ideas that are low in variety, novelty or quality.

1.1. Aims

There are four related aims of this work. The first aim is to define axioms about the evaluation of idea sets that describe the behavior of set metrics that will encourage good ideation practices. The second aim is to define evaluation methods for quality, quantity, novelty and variety that can be easily performed on early-stage ideas and have clear operational definitions that lend themselves to future AI evaluation. The third aim is to develop aggregation functions for set quantity, quality, novelty and variety that ensure the axioms are met. The final aim is to present software tools that support the evaluation methods and implement the aggregation functions.

1.2. Significance

As a result of this work, the software tools can be used to repeatably evaluate large idea sets for variety, novelty, quality and quantity. The ability to perform this evaluation helps design researchers as they seek tools to improve ideation. These evaluations can also help designers consider whether their idea set is of sufficient quality to move forward to further design stages.

2. Nomenclature

-

$ \alpha $

$ \alpha $

-

Basic design space fraction for an idea; as

$ i $

goes to

$ i $

goes to

$ \infty $

,

$ \infty $

,

$ {f}_i $

goes to

$ {f}_i $

goes to

$ \alpha $

$ \alpha $

-

$ \beta $

$ \beta $

-

Parameter for reducing

$ {f}_i $

based on the number of family members.

$ {f}_i $

based on the number of family members.

$ \beta $

governs the rate at which

$ \beta $

governs the rate at which

$ {f}_i $

goes to

$ {f}_i $

goes to

$ \alpha $

$ \alpha $

-

$ {\epsilon}_i $

$ {\epsilon}_i $

-

Bonus fraction added to

$ \alpha $

for family member

$ \alpha $

for family member

$ i $

;

$ i $

;

$ {\epsilon}_i $

is

$ {\epsilon}_i $

is

$ 1-\alpha $

for family member 1 and decreases to zero for family member

$ 1-\alpha $

for family member 1 and decreases to zero for family member

$ \infty $

$ \infty $

-

$ {f}_a $

$ {f}_a $

-

Average fraction of

$ {\Omega}_l $

explored by each idea in a family will be less than 1

$ {\Omega}_l $

explored by each idea in a family will be less than 1 -

$ {f}_i $

$ {f}_i $

-

Fraction of

$ {\Omega}_l $

uniquely explored by idea

$ {\Omega}_l $

uniquely explored by idea

$ i $

; will be less than or equal to 1

$ i $

; will be less than or equal to 1 -

$ {f}_t $

$ {f}_t $

-

Total fraction of

$ {\Omega}_l $

explored ideas in a family will be greater than or equal to 1

$ {\Omega}_l $

explored ideas in a family will be greater than or equal to 1 -

$ l $

$ l $

-

Tree level number

-

$ m $

$ m $

-

Number of family members

-

$ {m}_c $

$ {m}_c $

-

Number of children for an element

-

$ n $

$ n $

-

Novelty for an idea, without respect to the level or the number of siblings in the design tree

-

$ N $

$ N $

-

Total novelty for a tree

-

$ {N}_B $

$ {N}_B $

-

Branch novelty: the total novelty of a branch whose head is the element under consideration

-

$ {N}_{B_c} $

$ {N}_{B_c} $

-

Branch novelty for a branch whose head is a child of the element under consideration

-

$ {N}_D $

$ {N}_D $

-

Descendant novelty: the sum of the branch novelties for all children of the element under consideration

-

$ {N}_{D_c} $

$ {N}_{D_c} $

-

Descendant novelty for a child of the element under consideration

-

$ {N}_E $

$ {N}_E $

-

Element novelty for an idea placed in the tree. It is a function of the idea novelty

$ n $

and the level and number of siblings in the design tree

$ n $

and the level and number of siblings in the design tree -

$ {N}_{E_c} $

$ {N}_{E_c} $

-

Element novelty for a child of the element under consideration

-

$ {\Omega}_l $

$ {\Omega}_l $

-

The nominal amount of design space occupied by an idea at level

$ l $

, neglecting any overlap with closely related ideas at that level

$ l $

, neglecting any overlap with closely related ideas at that level -

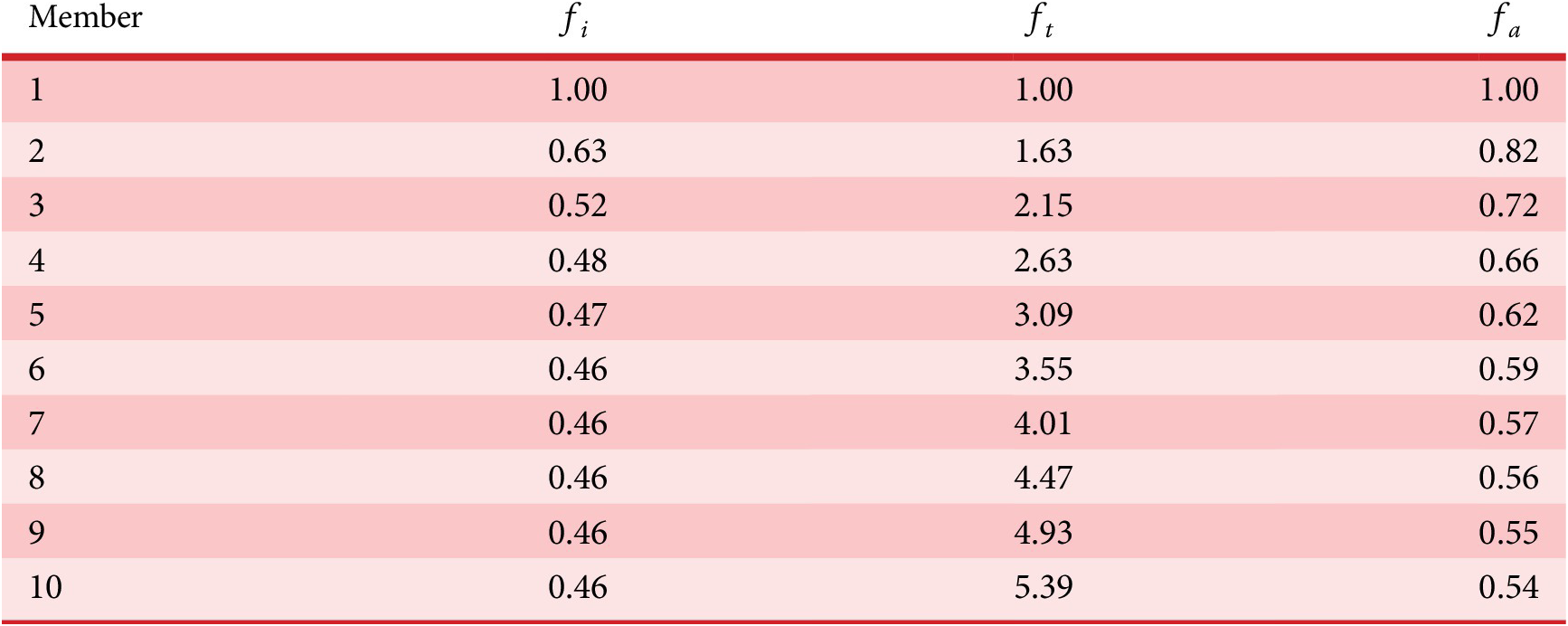

$ P $

$ P $

-

Composite scoring points for novelty of an idea, considering its scarcity in both the current design context and another context where the idea is commonly used. Novel ideas have high values of

$ P $

$ P $

-

$ q $

$ q $

-

Quality of an idea

-

$ {q}_{th} $

$ {q}_{th} $

-

Quality threshold for quality ideas. Ideas with

$ q\le {q}_{th} $

are considered low-quality ideas

$ q\le {q}_{th} $

are considered low-quality ideas -

$ Q $

$ Q $

-

Quality of a set of elements

-

$ {Q}_B $

$ {Q}_B $

-

Total quality of a branch of the tree whose head is the element under consideration

-

$ {Q}_{B_c} $

$ {Q}_{B_c} $

-

Total quality of a branch of the tree whose head is a child of the element under consideration

-

$ {Q}_E $

$ {Q}_E $

-

Set quality of an element

-

$ {S}_c $

$ {S}_c $

-

Scarcity of an idea in the current design context. Common ideas have low scarcity, rare ideas have high scarcity

-

$ {S}_f $

$ {S}_f $

-

Scarcity of an idea in a context other than the current design context, where the idea is most commonly used. Common ideas have low scarcity, rare ideas have high scarcity

-

$ u $

$ u $

-

Idea uniqueness

-

$ U $

$ U $

-

Set quantity: the number of unique ideas in the set

-

$ {U}_B $

$ {U}_B $

-

Set quantity of a branch: the number of unique ideas in a branch whose head is the element under consideration

-

$ {U}_{B_c} $

$ {U}_{B_c} $

-

The number of unique ideas in a branch whose head is a child of the element under consideration

-

$ V $

$ V $

-

Total variety for a tree

-

$ {V}_B $

$ {V}_B $

-

Branch variety: the total variety created by a branch whose head is the element under consideration.

-

$ {V}_{B_c} $

$ {V}_{B_c} $

-

Branch variety for a branch whose head is a child of the element under consideration

-

$ {V}_D $

$ {V}_D $

-

Descendant variety: the sum of the branch varieties for the children of the element under consideration

-

$ {V}_E $

$ {V}_E $

-

Element variety: The variety created by the presence of some element in a design tree.

-

$ {V}_{E_c} $

$ {V}_{E_c} $

-

Element variety for a child of the element under consideration

-

$ {X}_{i,l} $

$ {X}_{i,l} $

-

Amount of design space uniquely explored by family member

$ i $

on tree level

$ i $

on tree level

$ l $

.

$ l $

.

3. Literature review

Shah et al. (Reference Shah, Smith and Vargas-Hernandez2003) laid the groundwork for quantitatively measuring ideation effectiveness as part of the ideation research process. They clearly identified four factors that are important to effective ideation: novelty, quality, quantity and variety. They also proposed specific methods for evaluating novelty for each idea in the set, variety in a group of ideas, quality of a group of ideas and quantity of a set. Many authors have built on SVS’s groundbreaking work to propose improvements in implemented ideation metrics, as described in this section.

3.1. Novelty

Sarkar & Chakrabarti (Reference Sarkar and Chakrabarti2011) focus on novelty. They consider the novelty of a product to be evaluated relative to existing products that meet the same need. They evaluate both functions and structures to determine the level of novelty for the product. They focus on identifying the novelty of an idea, rather than of a set of ideas. They also recognize that design creativity involves both novelty and usefulness.

Sluis-Thiescheffer et al. (Reference Sluis-Thiescheffer, Bekker, Eggen, Vermeeren and De Ridder2016) applied SVS’s novelty calculations to actual data resulting from young children’s design activities. They found some problems with novelty scores, including trivial solutions that have high novelty scores, solutions that have different novelty scores depending on their method of generation, and that there is a relatively narrow distribution of novelty scores, which means we know very little about actual novelty. Instead of a continuous novelty rating for each idea, they propose a binary score (novel, not novel).

Hernandez, Shah, & Smith (Reference Hernandez, Shah and Smith2010) introduce two possible metrics for set novelty: the average novelty of the ideas in the set (average novelty) and the novelty of the most novel idea in the set (best novelty). They make no recommendation as to which is best, and consider that each of the set-based novelty metrics contributes a different view of the set novelty.

Jagtap et al. (Reference Jagtap, Larsson, Hiort, Olander and Warell2015) introduce individual average novelty (IAN), which evaluates ideas only relative to a specific individual idea set, as compared with SVS’s average novelty (AN), which evaluates ideas relative to all available idea sets for a given design challenge. They study the interdependency between novelty and variety for both of these novelty metrics.

Ahmed et al. (Reference Ahmed, Ramachandran, Fuge, Hunter and Miller2018) use pairwise comparisons of ideas to place a small set of ideas on an idea map. They then combine the ratings of multiple raters to identify the ideas with the highest novelty.

3.2. Variety

Nelson et al. (Reference Nelson, Wilson, Rosen and Yen2009) found SVS’s variety metric to be flawed. They claim that, using the SVS variety metric and evaluation approach, it was possible for an idea set with subjectively low variety to receive an erroneously high variety score. They also found that average variety scores are flawed, and therefore propose to use total, rather than normalized variety. They attempt to resolve the problems with SVS’s measure by using different weights for the various tree levels, but provided limited justification that the selected weights were correct.

Verhaegen et al. (Reference Verhaegen, Vandevenne, Peeters and Duflou2015) noted that when idea distributions (trees) were unbalanced, SVS’s and Nelson’s methods both led to problems. They propose the use of a Shannon entropy measure at each level of the tree to calculate variety and provide an online software tool for testing new metrics.

Ahmed et al. (Reference Ahmed, Ramachandran, Fuge, Hunter and Miller2020) suggest that tree-based methods of variety evaluation are unreliable and recommend that entropy-based methods be used instead. They estimated the ground truth variety by creating subsets from a ground set of design items, having human raters make pairwise comparisons of the subsets, and calculating tree-based metrics for the subsets. They find that the Herfindahl–Hirschman Index for Design (HHID) shows better accuracy and discrimination and can be used to identify high-variety subsets.

Ahmed & Fuge (Reference Ahmed and Fuge2017) seek to obtain ranked sets of ideas for diversity and quality, with a goal of obtaining a relatively small set of ideas with high diversity and quality that can serve as a base for future ideation. They demonstrate three methods of numerically calculating similarity and show that these similarity ratings can form the basis of optimal searches for high-diversity, high-quality idea sets.

Henderson et al. (Reference Henderson, Helm, Jablokow, McKilligan, Daly and Silk2017) define a variety metric that evaluates the amount of design space covered by looking at the scores for ideas on other metrics as coordinates in an

![]() $ n $

-dimensional space. This provides a quantitative measure for variety, but makes variety a dependent parameter of the other metrics evaluated for the set.

$ n $

-dimensional space. This provides a quantitative measure for variety, but makes variety a dependent parameter of the other metrics evaluated for the set.

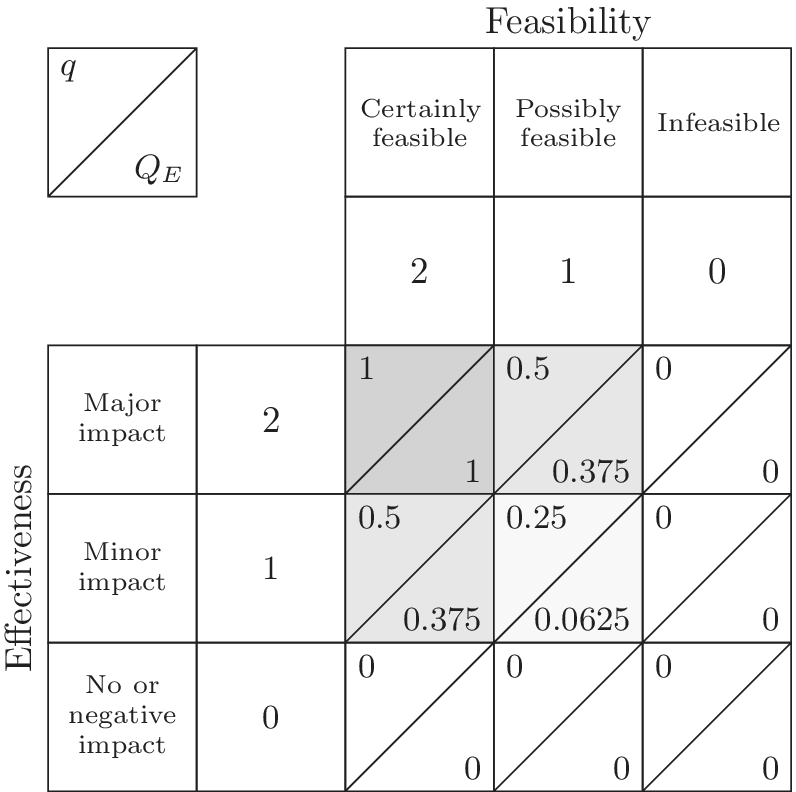

3.3. Quality

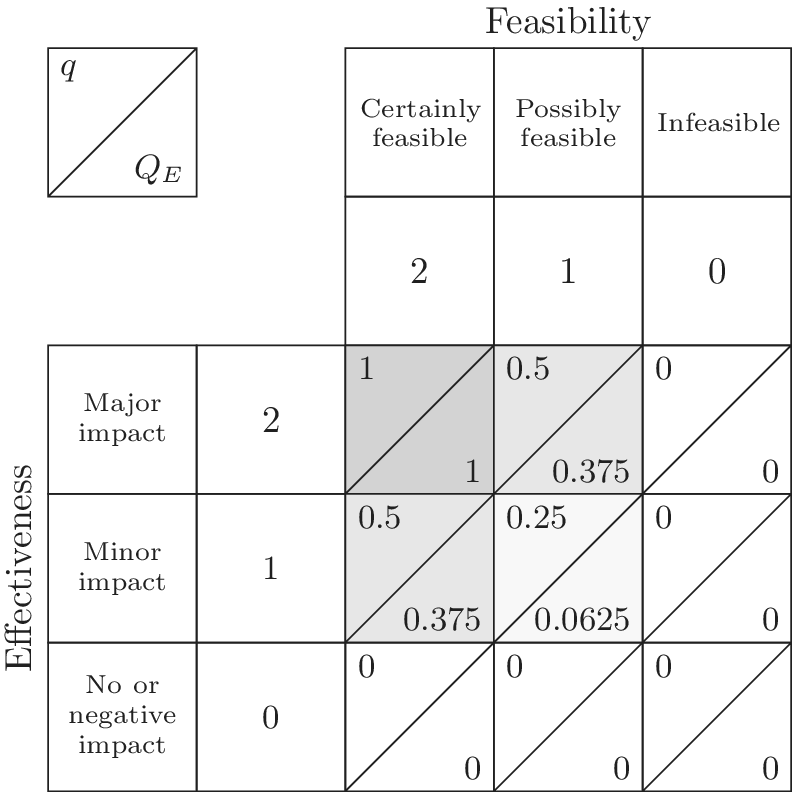

Cheeley et al. (Reference Cheeley, Weaver, Bennetts, Caldwell and Green2018) focused on quality scores and attempted to match ratings to ground truth. They identified two fundamental elements of quality: effectiveness and feasibility. They performed a statistical test to select relative weightings for effectiveness and quality that would allow quality rankings using their formula to match intuitive quality rankings by expert raters. This study showed that quality could be reliably evaluated by evaluating effectiveness and feasibility.

Kudrowitz & Wallace (Reference Kudrowitz and Wallace2013) identified three attributes of quality early-stage ideas: novel, feasible and useful. They evaluated individual product ideas, rather than the complete set of ideas. They considered this evaluation to be a first-pass evaluation.

Reinig, Briggs, & Nunamaker (Reference Reinig, Briggs and Nunamaker2007) focus on the measurement of set quality. They address the importance of identifying unique ideas, and then describe a few known methods for evaluating idea quality, and then discuss three possible set quality metrics: sum-of-quality, average-quality and good-idea-count. They demonstrate that good-idea-count is superior to the other two set quality metrics.

Toh & Miller (Reference Toh and Miller2014) assess idea quality on a five-point scale: does it achieve the desired outcome, is it technically feasible, is it easy to execute, and is it a significant improvement over the existing design. They use the average quality of the ideas for the quality of the set. They assess novelty in two classes: form-based and function-based. They use the average novelty of the ideas for the quality of the set. They evaluated the effect of physical examples and interactions on the novelty and quality of the resulting ideas.

Some researchers – including Patel et al. (Reference Patel, Summers, Morkos and Karmakar2024) – have acknowledged the challenge of predicting how well an early-stage concept will meet design requirements. Given this challenge, Patel et al. (Reference Patel, Summers, Morkos and Karmakar2024) have used addressment as a surrogate for quality, where addressment is the quantity of design requirements the concept addresses.

3.4. Quantity

All the reviewed papers that measured quantity simply counted the number of ideas listed in the set, with no adjustments for nearly identical ideas. We believe that when two ideas are close enough to one another, they should not be counted as distinct ideas. We found no references in the literature that attempted to adjust quantity measures for closely related ideas.

3.5. Weaknesses of literature metrics

Some methods for evaluating idea sets for SVS’s attributes display weaknesses in their use for evaluating idea sets. For example, using the average idea quality as a measure of the set quality means that the number of low-quality ideas will affect the set quality measure. Similarly, using the average quality of the top Y% of the ideas as the set quality measure means that a high-quality idea that is not in the top Y% will have no effect on the set quality measure. Thus, these methods reward discarding low-quality ideas from the set.

Using the count-of-quality proposed by Reinig et al. makes the quality evaluation binary – high-quality ideas get a count of 1; other ideas get a count of 0. This draws no distinction between extremely high-quality ideas and moderately high-quality ideas. This lack of distinction can be problematic.

The average novelty and individual average novelty scores described above will both drop as low-novelty ideas are added to the set. But low-novelty ideas do not decrease the exploration of the design space; they just do not increase the exploration.

When average or other normalized variety scores are used, the number of low-variety ideas is important to the overall variety score. However, the presence of low-variety ideas should not detract from the contribution to variety provided by other ideas in the set. Further, as Nelson et al. have observed, when level weightings are arbitrarily chosen, variety calculations can be inconsistent with subjective variety evaluations.

If overlap between closely related ideas is not considered, quantity can be artificially inflated by making many ideas that are virtually, but not exactly, identical.

3.6. Summary of literature

Many authors have provided methods for evaluating the SVS attributes. However, none have all of the attributes desired for our work, namely:

-

• The ability to evaluate both individual ideas and the set of ideas for all metrics

-

• An a priori description of how the aggregate scoring of an idea set should vary as the individual ideas vary

-

• The ability to quickly evaluate a large number of ideas that have a relatively low level of detail

-

• A well-defined procedure for making subjective judgments, thus helping to increase consistency between raters

-

• Set metrics that encourage the inclusion of all ideas, whether good or bad, in the set to be evaluated

Therefore, we define both simple idea evaluation tools and axiom-based aggregation functions that achieve these goals.

4. Ideas and aggregation functions

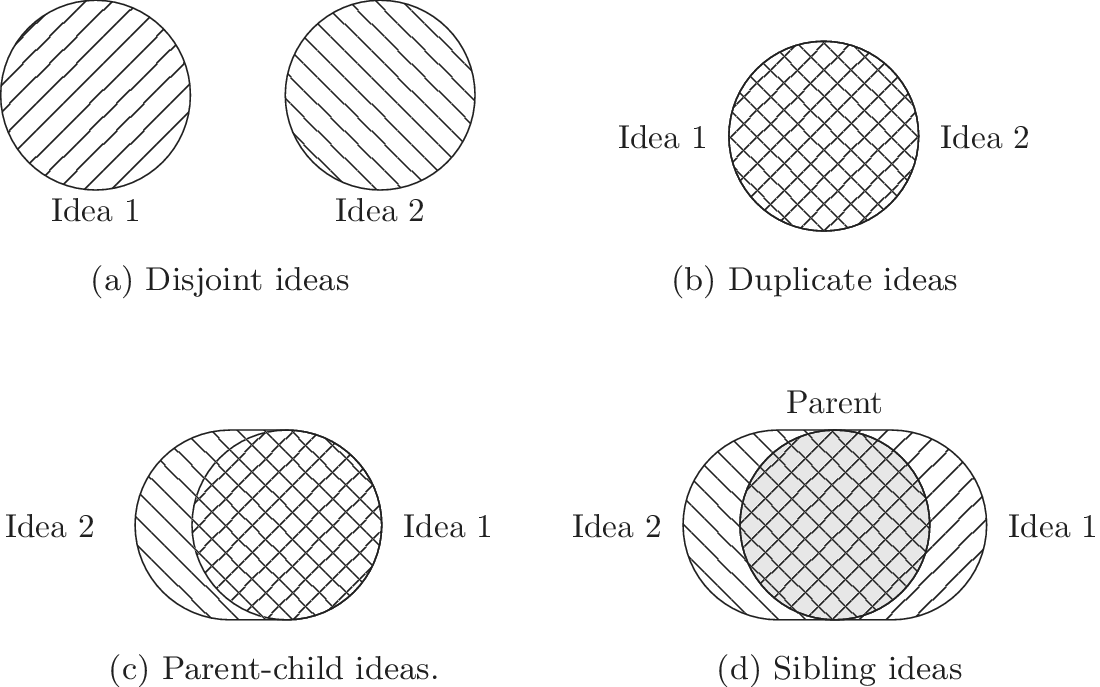

We begin by defining ideas, kinds of aggregation functions and axioms. These definitions are general; they apply to any set of ideas that can be classified by level of abstraction of ideas and similarity (or distance) between ideas.

For purposes of this paper, we define a design idea to be any idea that contributes to the definition of a solution for a design problem. A fully defined conceptual design could be a design idea. A principle that may be used to develop many conceptual designs could be a design idea. A single detail that could be added to a conceptual design could be a design idea.

Design teams can communicate their ideas in many different ways. The most common ways we have found are through words and/or sketches. We allow the team to define the boundaries of a design idea. The content of a single sketch or a single written description is considered a design idea for purposes of our analysis. For brevity, in the rest of this paper, we use the term idea as a synonym for design idea.

An idea set is the complete set of ideas produced during ideation activities intended to develop solutions to a design problem. When multiple teams are working on a particular design problem, each team will have a team idea set, and we can also consider idea sets resulting from combining two or more team idea sets.

For purposes of evaluating variety and novelty, ideas are placed into an idea space. The idea space reflects two important characteristics of design ideas: the level of abstraction of the idea and the similarity of or distance between different ideas. Any means of organizing ideas that reflect these two characteristics can be used to evaluate individual ideas for novelty, variety, quality and quantity. Further, aggregation functions can be developed to combine the individual evaluations into evaluations of set novelty, variety, quality and quantity.

4.1. Aggregation functions

Aggregation functions are used to calculate the properties of a set of ideas based on the characteristics of the individual ideas. Higher scores on the aggregation functions correspond to higher quality, quantity, novelty and variety. Aggregation functions should be designed so that the scores encourage good ideation practices.

For the best possible ideation, the free flow of ideas is encouraged. During ideation, ideas should not be filtered for quality or novelty, because even objectively bad ideas can serve as triggers for better ideas.

Consider a hypothetical situation where design ideation is a formal competition between teams. The teams will be judged on a numeric score for novelty, variety, quality and quantity. The numeric score will be calculated from the novelty, variety, quality and quantity of the individual ideas using aggregation functions. The goal is to find aggregation functions that will encourage desired ideation behaviors and ignore undesired ideation behaviors. Poor ideas should not be penalized, because teams should not be filtering ideas during ideation. The desired behavior of aggregation functions is that they will always reward adding additional ideas and never reward filtering or removing ideas.

Desirable idea evaluation and aggregation functions share the following characteristics:

-

• For any set of ideas, the aggregate score should never be increased by removing low-scoring ideas. This characteristic is necessary to discourage idea filtering either during or after ideation.

-

• Duplicate ideas should not affect the aggregate score for any metrics.

-

• Adding ideas should never reduce the aggregate score for a metric.

-

• Ideas at higher levels of abstraction explore (and if novel, expand) the design space more than ideas at lower levels of abstraction. This is because an idea at a high level of abstraction contains within it the seeds of many less abstract ideas.

-

• Ideas that are closely related contribute less to the exploration and expansion of design space than ideas that are distantly related.

-

• Novelty and quality of an idea can be evaluated independently of the location of the idea in the idea space.

-

• When considering the novelty of a set, both the novelty of an idea and the idea’s location in the idea space should be considered.

5. Axioms for ideation metrics

Based on the desirable characteristics of aggregation functions described above, axioms for set-based metrics of novelty, variety, quality and quantity are presented in this section. As axioms, they cannot be proven, but they are believed to be self-evident. In all cases, the axioms describe how the set-based metrics should change as ideas are added to the set. These axioms should apply to any set of ideas that can be classified by level of abstraction and similarity. They are not limited to tree-based set organization.

5.1. Axioms of variety

We propose the following axioms to describe how variety should change when adding new elements to an idea set:

-

Axiom V-1: Adding a unique entry at any level of abstraction should increase the total variety of the idea set. As a corollary, removing a unique entry at any level of abstraction should reduce the total variety of the set.

-

Axiom V-2: Adding a unique entry at a higher level of abstraction should increase the total variety more than adding a unique entry at a lower level.

-

Axiom V-3: Adding a unique entry at a given level of abstraction that is similar to many other ideas should increase the total variety less than adding a unique entry at the same abstraction level that is different from other ideas.

5.2. Axioms of novelty

We propose four axioms that describe how novelty should change when adding new elements to an idea set. These axioms recognize that novelty describes the expansion of design space, so both the amount of space occupied by an idea and the novelty of the idea will affect the set novelty.

-

Axiom N-1: At a given location in the idea space, an added idea with high novelty adds more to the set novelty than an added idea with low novelty.

-

Axiom N-2: A novel idea at a high level of abstraction adds more to the set novelty than an idea of equivalent novelty at a low level of abstraction.

-

Axiom N-3: An idea with a given novelty located far from other ideas in the idea space adds more to the set novelty than an idea with the same novelty located close to other ideas in the idea space.

-

Axiom N-4: The set novelty should be increased by adding a novel idea. It should be unchanged by adding an idea with zero novelty. As a corollary, the set novelty should never be increased by removing an idea.

5.3. Axioms of quantity

The following axioms are proposed for set quantity scores:

-

Axiom U-1: Duplicates of previously counted ideas should not be counted again when determining set quantity.

-

Axiom U-2: A unique idea added to the tree at a low abstraction level should add the same quantity as a unique idea added at a high abstraction level.

-

Axiom U-3: Only ideas generated by members of the team should be included in the set quantity. Ideas that may be generated by those evaluating the quantity should not add to the set quantity.

5.4. Axioms of set quality

The following axioms are proposed for set quality scores:

-

Axiom Q-1: High-quality ideas should increase the set quality more than moderate- or low-quality ideas

-

Axiom Q-2: Removing low-quality ideas from the set should not increase the set quality; adding low-quality ideas to the set should not decrease the set quality.

-

Axiom Q-3: Regardless of the number of high-quality ideas in the set, adding a high-quality idea should increase the set quality.

When these axioms are met by appropriate aggregation functions, the desired behaviors from “Aggregation Functions” will be achieved.

5.5. Axiom violations with legacy methods

To demonstrate the value of our methodology, we will now show the conditions under which the Axioms are not met with certain legacy methods, particularly SVS, because it is so well-known.

5.5.1. Variety

In Shah et al. (Reference Shah, Smith and Vargas-Hernandez2003), the SVS method is used to evaluate variety for a set with 11 ideas that result in two branches at the physical principle level, five branches at the working principle level, six branches at the embodiment level and four branches at the detail level. With SVS, these abstraction levels are scored as 10, 6, 3 and 1, respectively. Thus, the total Variety score is calculated as:

If one of the branches is removed from the detail level, the calculation becomes:

The SVS variety score has increased as a result of removing an idea, which violates Axiom V-1.

5.5.2. Novelty

SVS does not explicitly include a method for calculating a set novelty score, so there is no basis for evaluating SVS against these axioms. We believe it is useful to have a set novelty score, and necessary if researchers are going to use metrics to compare ideation methods. Later work (Nelson et al. (Reference Nelson, Wilson, Rosen and Yen2009), Hernandez et al. (Reference Hernandez, Shah and Smith2010) and Jagtap et al. (Reference Jagtap, Larsson, Hiort, Olander and Warell2015)) suggests average novelty as an idea set metric. Average novelty would fail to meet Axiom N-2 and Axiom N-3 because it would not account for location in the idea space. Further, it is trivial to show that adding a low-novelty idea would reduce the average novelty score, which would violate Axiom N-4. Hernandez et al. (Reference Hernandez, Shah and Smith2010) propose Best Novelty as a complementary metric, but it also fails to meet Axiom N-4, because any novel ideas that are less novel than the most novel idea add nothing to the score.

5.5.3. Quantity

SVS considers an idea to include a leaf element from the tree along with all of its parent elements. Only ideas that differ in at least one level are considered distinct. Thus, SVS meets all of the axioms. We wish to have the possibility of considering a single element at any level of the tree as an idea, which requires a different algorithm for determining idea quantity.

5.5.4. Quality

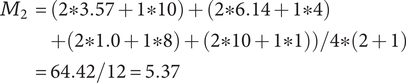

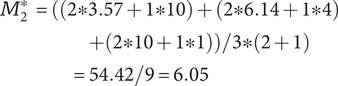

The SVS method calculates set quality through a weighted sum of component scores that reflect how well ideas meet certain functions or characteristics. In Shah et al. (Reference Shah, Smith and Vargas-Hernandez2003), an example is presented of four ideas (A-D) that are evaluated for manufacturability and minimum weight, which have a relative weighting of 2 and 1, respectively. The minimum weight scores for ideas A-D are 3.57, 6.14, 1.0 and 10, respectively. The manufacturability scores are 10, 8, 4 and 1, respectively. Thus, the total Quality score is calculated as:

$$ {\displaystyle \begin{array}{c}{M}_2=\left(2\ast 3.57+1\ast 10\right)+\left(2\ast 6.14+1\ast 4\right)\\ {}\hskip1.5em +\left(2\ast 1.0+1\ast 8\right)+\left(2\ast 10+1\ast 1\right)\Big)/4\ast \left(2+1\right)\\ {}=64.42/12=5.37\end{array}} $$

$$ {\displaystyle \begin{array}{c}{M}_2=\left(2\ast 3.57+1\ast 10\right)+\left(2\ast 6.14+1\ast 4\right)\\ {}\hskip1.5em +\left(2\ast 1.0+1\ast 8\right)+\left(2\ast 10+1\ast 1\right)\Big)/4\ast \left(2+1\right)\\ {}=64.42/12=5.37\end{array}} $$

If idea C is removed from the set, the calculation becomes:

$$ {\displaystyle \begin{array}{c}{M}_2^{\ast }=\Big(\left(2\ast 3.57+1\ast 10\right)+\left(2\ast 6.14+1\ast 4\right)\\ {}\hskip17em +\left(2\ast 10+1\ast 1\right)\Big)/3\ast \left(2+1\right)\\ {}\hskip15.5em =54.42/9=6.05\end{array}} $$

$$ {\displaystyle \begin{array}{c}{M}_2^{\ast }=\Big(\left(2\ast 3.57+1\ast 10\right)+\left(2\ast 6.14+1\ast 4\right)\\ {}\hskip17em +\left(2\ast 10+1\ast 1\right)\Big)/3\ast \left(2+1\right)\\ {}\hskip15.5em =54.42/9=6.05\end{array}} $$

The SVS quality score has increased as a result of removing an idea. It is similarly trivial to show that adding a 5th idea that is low quality would decrease the set quality score (a 5th idea with a weighted quality score of 10, like idea C, would result in a set quality score of 4.96). This violates Axiom Q-2. Because the SVS quality calculation is essentially a weighted average, it is also easy to show that it does not meet Axiom Q-3.

6. Design trees, exploration of design space and aggregation functions

6.1. Design trees

A common way of representing the structure in an idea set is to use a tree structure (sometimes called a “genealogy tree”) (Shah et al. Reference Shah, Smith and Vargas-Hernandez2003; Nelson et al. Reference Nelson, Wilson, Rosen and Yen2009; Verhaegen et al. Reference Verhaegen, Vandevenne, Peeters and Duflou2015; Ahmed et al. Reference Ahmed, Ramachandran, Fuge, Hunter and Miller2020).

A tree is a structure consisting of elements and edges. Elements are nodes in the design tree. There are two different types of elements in a design tree. Idea elements are ideas generated by the design team that have been placed as nodes in the tree. Organizational elements are nodes that have been placed in the tree during the analysis of an idea set to ensure that each element has a parent element. Edges connect elements at different levels of the tree, indicating a relationship between the elements. Levels in the tree range from the lowest level, which contains the most detailed information, to the highest level, which contains the most general information. When an edge connects two elements, the element at the higher level is considered a parent in the relationship, while the element at the lower level is considered a child in the relationship. A parent can have multiple children, but a child can have only one parent. A family of elements is defined as a set of elements sharing a common parent. An element with no children is considered a leaf element of the tree. A branch of the tree consists of an element and all of its descendants.

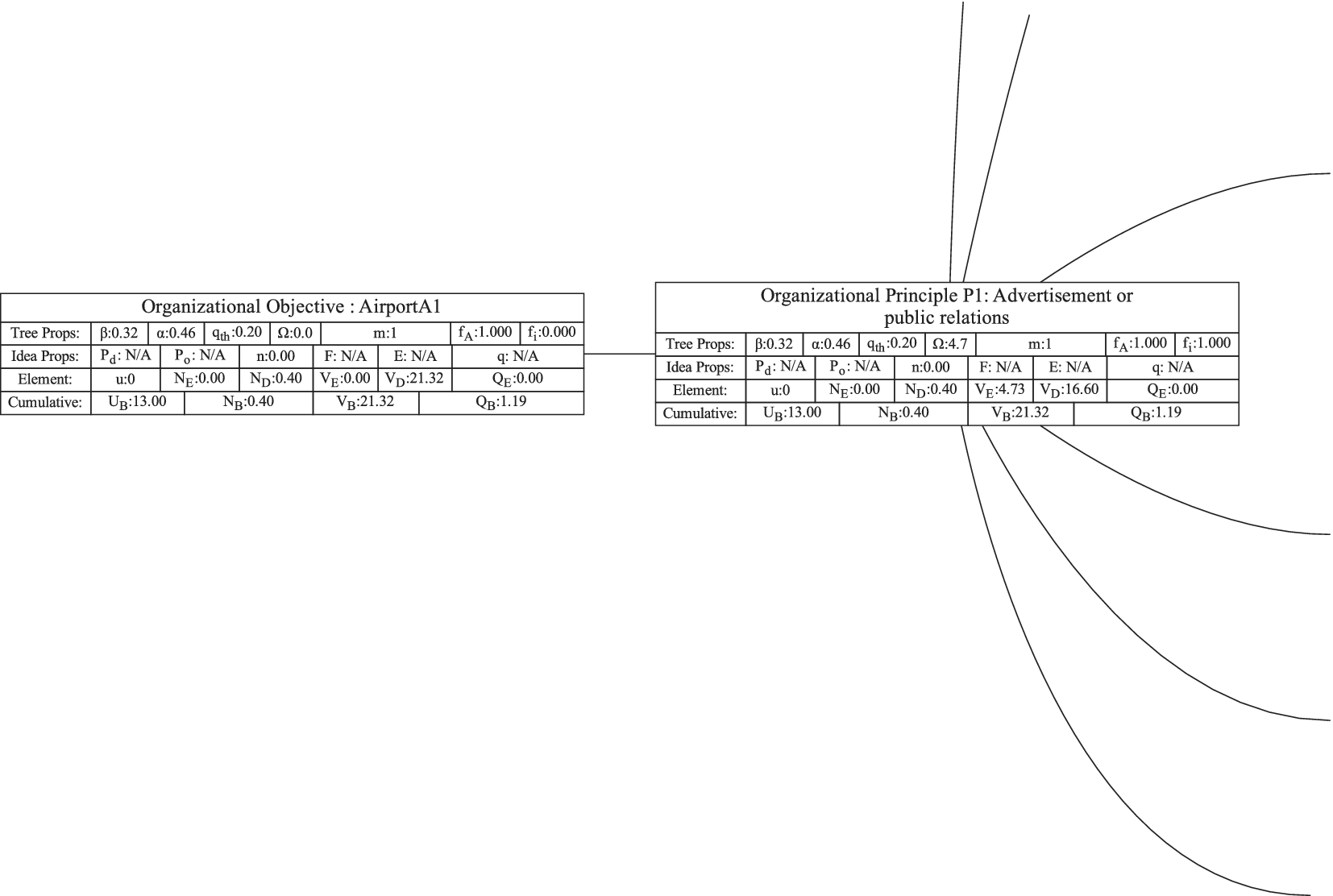

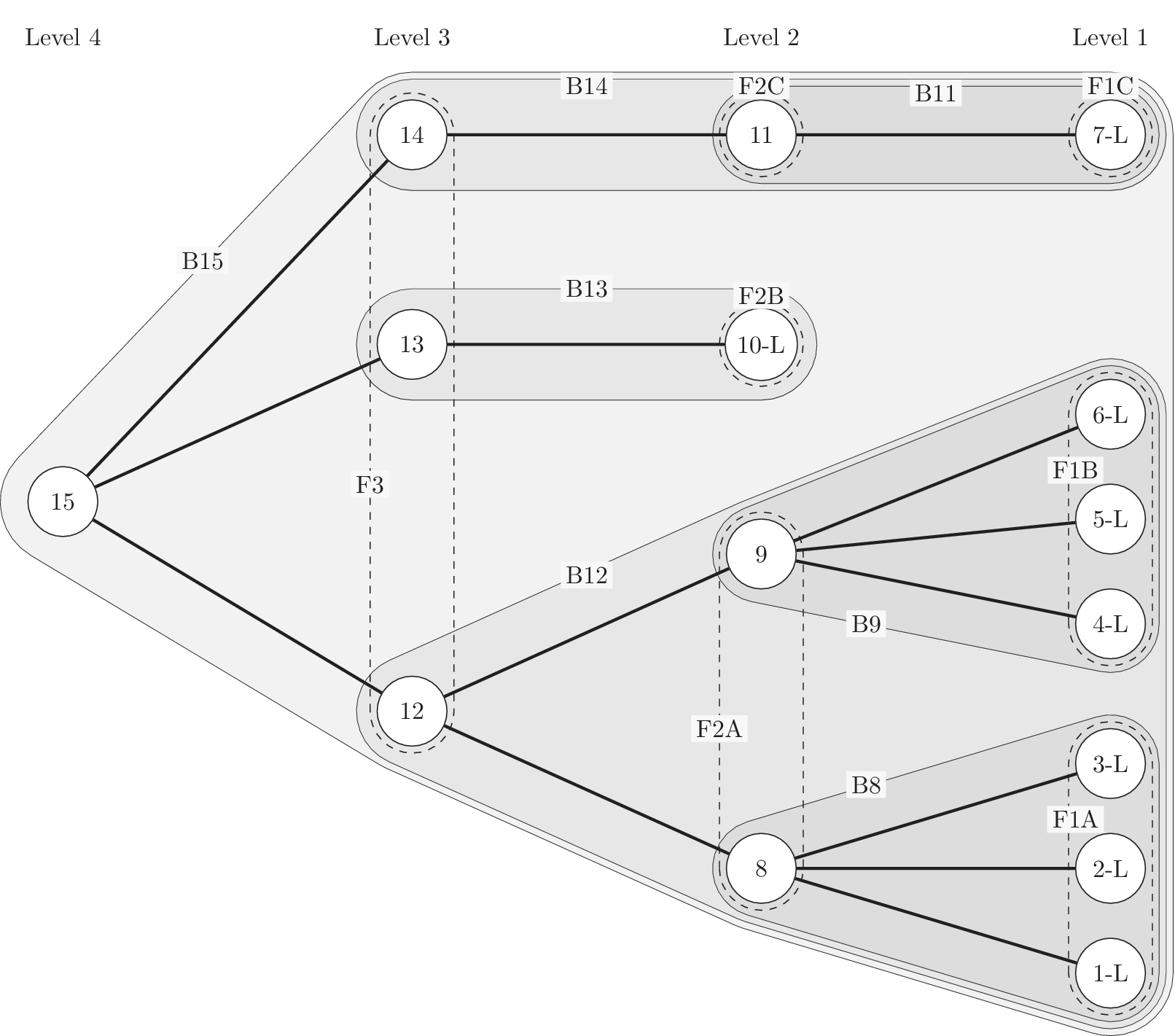

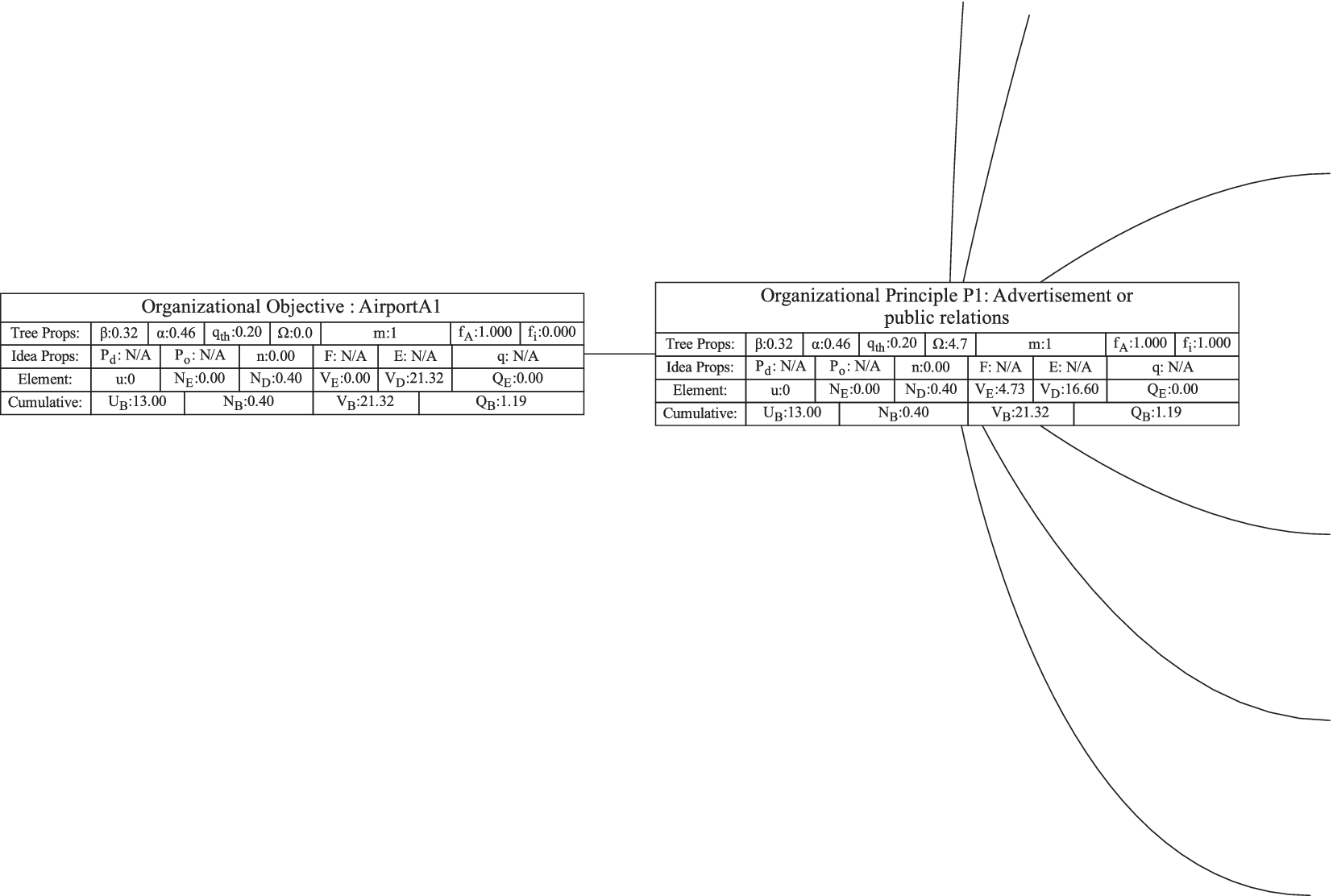

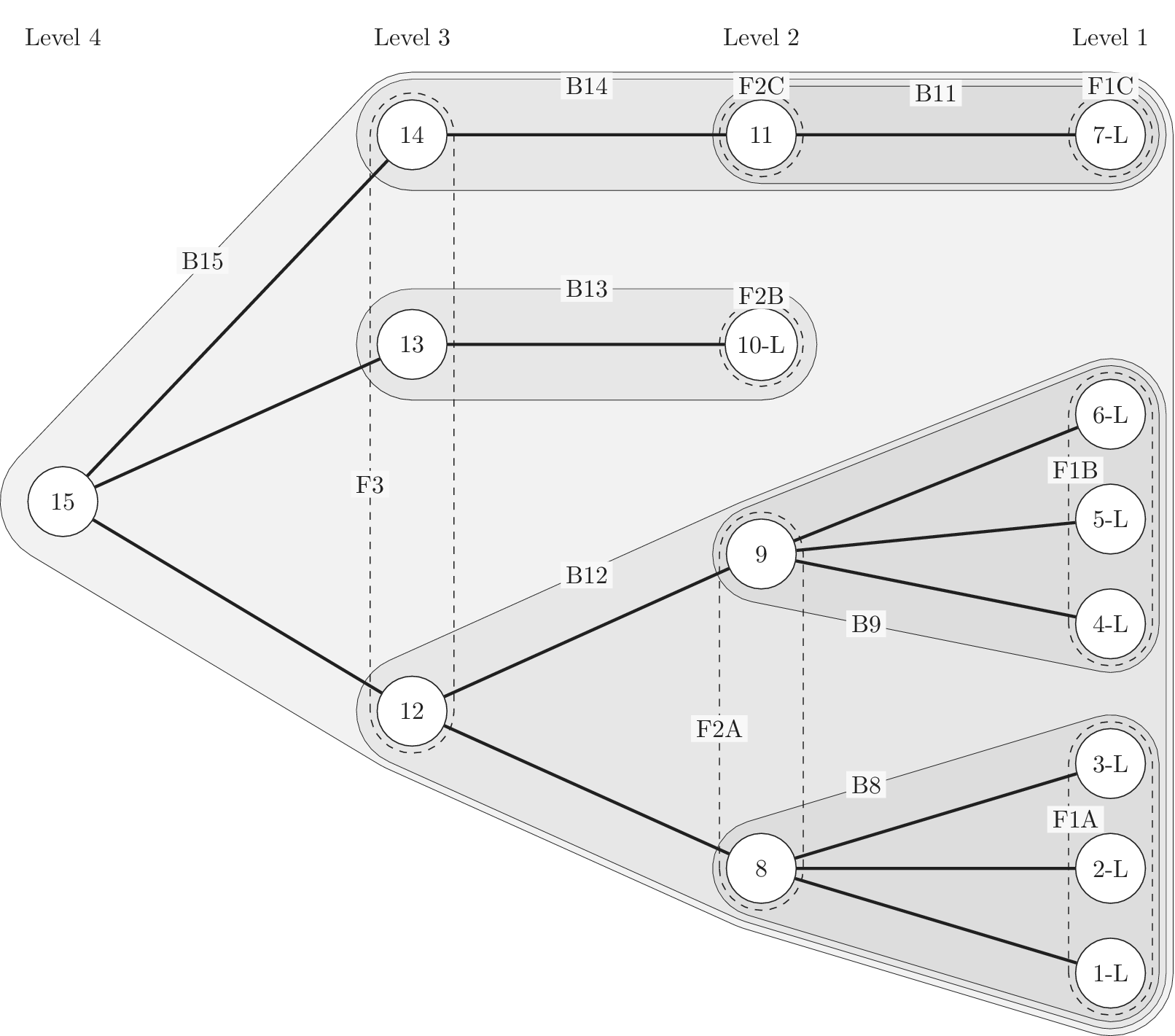

Figure 1 shows a simple genealogy tree. Elements are shown as circles. Leaf elements are labeled with the letter L. Families are enclosed in vertical dashed ovals. Branches are enclosed in polygons with rounded corners.

A sample four-level design tree. The level increases from right to left. Elements are shown by circles; their numbering is arbitrary. Leaf elements have an L following the element number. Families (groups of elements sharing a common parent, and which may have only a single member) are enclosed by dashed lines. They are labeled with F plus the level of the family. Branches are shown by shaded polygons with rounded corners. They are labeled with B plus the element number of the root element in the branch. There are eight leaf elements, seven families and seven branches in this tree.

A design tree is created by placing design ideas into a tree as elements of the tree. In this paper, when we evaluate the characteristics of an idea independent of its location in the tree, we calculate the idea properties. In contrast, when we evaluate the characteristics of an idea considering its relationships as an element of the tree, we calculate the element properties.

In order to evaluate the exploration and expansion of the design space (variety and novelty), we organize the idea set into a hierarchical tree structure. The structure we use is called an objective–principle–embodiment–detail (OPED) tree. Note that any tree structure would work in place of the OPED tree. For example, the physical principle – working principle – embodiment – detail tree used by Shah et al. (Reference Shah, Smith and Vargas-Hernandez2003) would work just as well. Every idea in the set is placed in the tree as an idea element at a particular tree level with an edge connecting it to a parent element at the next-higher level of the tree. Organizing the ideas in this fashion may require the evaluator to add organizational elements to the tree to ensure that each idea element in the tree has a parent. This is necessary to implement the scoring algorithm that follows, but does not bias the scoring, as will be demonstrated below.

For this paper, we define the levels in the OPED tree as follows:

Objective level: The desired outcome driving the ideation, which, on its own, describes the benefit provided by any idea in the set to the user or customer. This should be common to all ideas in the set. The objective describes what is to be done, not how it is to be done.

If we were doing a design project for removing ice, snow, and frost from a car windshield, an appropriate objective might be “Obtain an ice-free windshield.”

Principle level: A top-level statement of how the objective is to be achieved. A principle must be general enough that there can be multiple ways of embodying the principle. It often contains a verb as part of the principle description. One way of identifying a principle is to use the following statement and fill in the blank: “An idea could achieve the objective by __________________.”

For the objective “Obtain an ice-free windshield,” principles might include “Remove ice,” “Melt ice,” “Prevent ice formation,” and “Prevent ice adhesion.”

Embodiment level: A solution idea expressed in tangible or visible form. A way to think about an embodiment is that it has sufficient detail that it can be communicated in a concept sketch (which could apply to product, process or software design). The embodiment generally consists of a noun and one or more adjectives. The embodiment must represent a specific object.

For the example design, an embodiment might be “Ice scraper,” or “Windshield cover.”

Detail level: A solution idea that adds to, but does not fundamentally change, the embodiment. If the detail is removed, the embodiment remains.

From the example, consider a design idea for “Ice scraper with hand-warming mitten attached.” This would be a detail under the embodiment “Ice scraper.”

The decision between embodiment and detail is partly subjective, because both the embodiment and the detail are expressed in tangible or visible form. However, the tangible form of a detail adds something that is not required to be part of the embodiment.

As described above, the Objective level contains the desired outcome, not ideas for achieving the desired outcome. Thus, the Objective is not part of the generated ideas and serves only as an organizational element. The trees in this paper have only a single objective, but if desired, a tree can be created with multiple objectives.

It is anticipated that during ideation, there will often be ideas generated at the principle, embodiment and detail levels. However, if the team has not explicitly placed the ideas into a tree structure, it is unlikely that the idea elements will properly complete the design tree. For a well-formed tree, every element must ultimately be linked to the objective through a series of ancestors. Thus, every detail needs a parent embodiment, every embodiment needs a parent principle, and every principle needs a parent objective. During the analysis of the idea set, organizational elements will be added to the tree as needed to provide a parent for each idea element of the set. Guidelines for evaluating the relationships between ideas are given in “Idea Uniqueness.”

6.2. Design trees and design space exploration

Recall that SVS indicates that variety is a measure of how well the ideas explore the design space and novelty is a measure of how well the ideas expand the design space. The OPED tree is a representation of the explored region of design space. If novelty scores are assigned to individual ideas, the novelty can be used to estimate how much the design space is expanded. The detailed framework for doing this is described in “Evaluating and Aggregating Variety (Exploration of Design Space)” and “Evaluating and Aggregating Novelty (Expansion of Design Space).”

We recognize that the OPED tree cannot describe the total design space. It can only describe the explored space (because the ideas that have been generated represent exploration). Given this limitation, the OPED trees can only produce relative measures, but we believe that even the relative measures are useful.

The fundamental basis of this method is the recognition that every idea, regardless of its tree level, explores a finite amount of design space. Closely related ideas will overlap one another in the design space, so the amount of space explored per idea is less than for distantly related ideas. The total amount of explored space can be calculated from the sum of the individual spaces minus the overlapping spaces. The amount of explored design space is used in the calculation of set variety and set novelty.

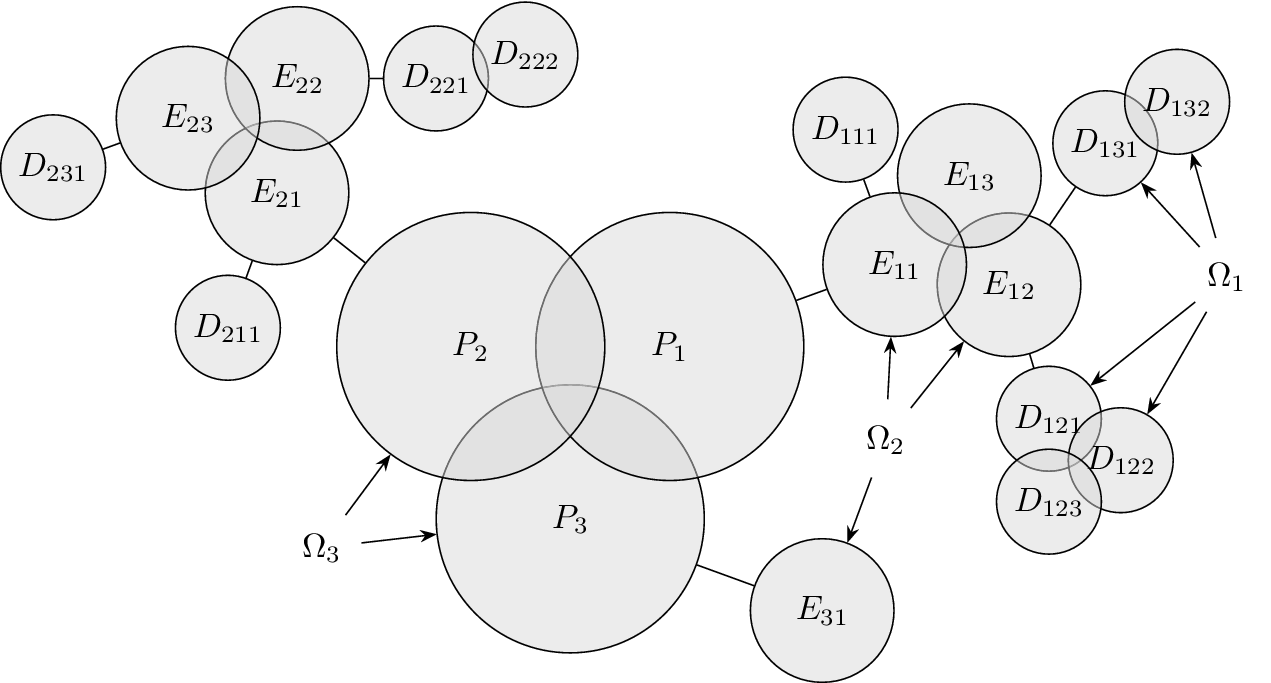

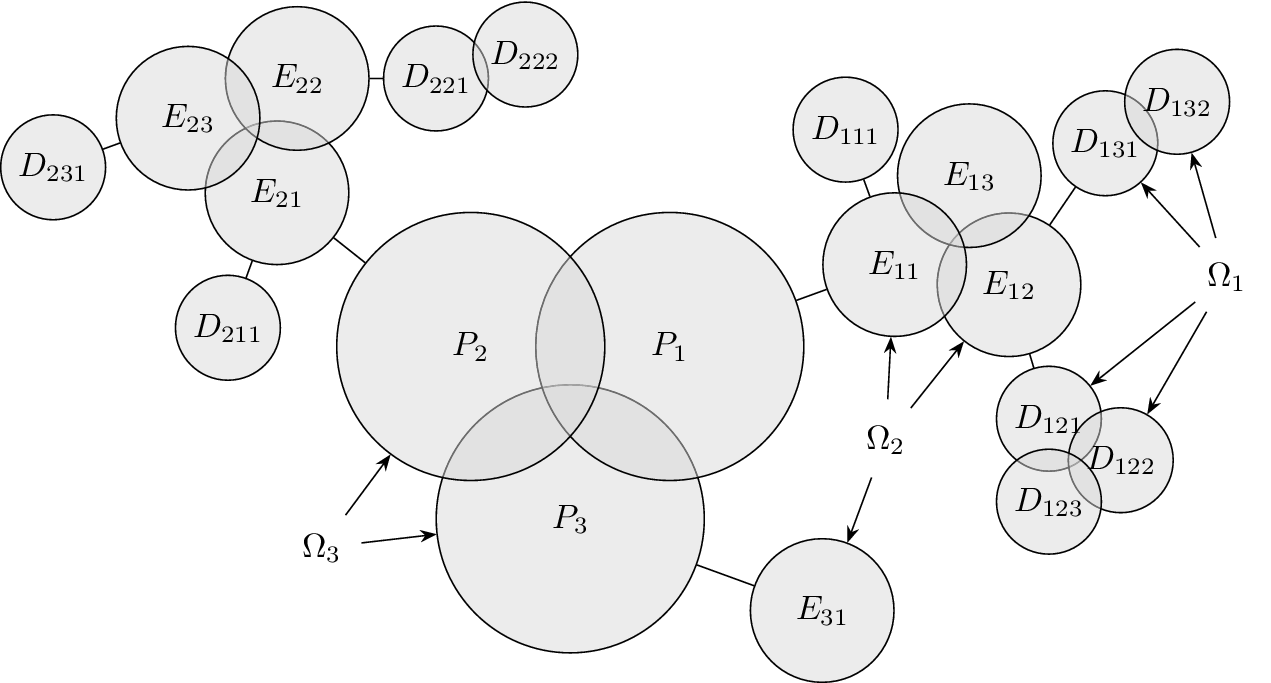

Figure 2 shows a schematic representation of the design space captured by a design tree. Circles in the figure represent the amount of design space explored by an idea. In this figure, ideas at the principle level (

![]() $ l=3 $

) are labeled

$ l=3 $

) are labeled

![]() $ {P}_i $

, ideas at the embodiment level (

$ {P}_i $

, ideas at the embodiment level (

![]() $ l=2 $

) are labeled

$ l=2 $

) are labeled

![]() $ {E}_{ij} $

(where index

$ {E}_{ij} $

(where index

![]() $ i $

is the index of the principle that is the parent of the embodiment) and ideas at the detail level (

$ i $

is the index of the principle that is the parent of the embodiment) and ideas at the detail level (

![]() $ l=3 $

) are labeled

$ l=3 $

) are labeled

![]() $ {D}_{ijk} $

(where index

$ {D}_{ijk} $

(where index

![]() $ ij $

is the index of the embodiment, i.e., the parent of the detail). To help make the parent/child relationships clearer, a line connects each parent to the cluster of ideas that are its children.

$ ij $

is the index of the embodiment, i.e., the parent of the detail). To help make the parent/child relationships clearer, a line connects each parent to the cluster of ideas that are its children.

Schematic representation of the design space explored by ideas in a tree structure. Two important concepts are illustrated: (1) individual ideas at higher levels of the tree explore more space than individual ideas at lower levels of the tree, and (2) closely related ideas overlap one another in the design space. The circles for principles, embodiments and details represent the amount of design space explored by an element at the principle (

![]() $ l=3 $

), embodiment (

$ l=3 $

), embodiment (

![]() $ l=2 $

) and detail (

$ l=2 $

) and detail (

![]() $ l=1 $

) levels, respectively. This figure does not indicate the absolute location of any of the ideas in the design space.

$ l=1 $

) levels, respectively. This figure does not indicate the absolute location of any of the ideas in the design space.

Although drawn as a two-dimensional space, the design tree space has no axes. The absolute location of an idea is arbitrary. However, the relative locations of two ideas are not arbitrary. Closely related ideas (siblings in the design tree) overlap one another. Distantly related ideas (those that are not siblings) do not overlap. The only conclusion we can draw about the distance between non-overlapping ideas is that their separation is greater than the characteristic size of the explored space for an idea at a given level.

The area of the rectangle in the figure represents the amount of design space available for meeting the objective. Each idea explores a part of the area of the design space shown by a circle in the figure. The size of the circle represents

![]() $ {\Omega}_l $

, which is the amount of design space explored by an idea at level

$ {\Omega}_l $

, which is the amount of design space explored by an idea at level

![]() $ l $

. Principles (

$ l $

. Principles (

![]() $ l=3 $

) explore more design space (and thus have larger circles representing

$ l=3 $

) explore more design space (and thus have larger circles representing

![]() $ {\Omega}_3 $

) than embodiments (

$ {\Omega}_3 $

) than embodiments (

![]() $ l=2 $

, circle of

$ l=2 $

, circle of

![]() $ {\Omega}_2 $

), which explore more design space than details (

$ {\Omega}_2 $

), which explore more design space than details (

![]() $ l=1 $

, circle of

$ l=1 $

, circle of

![]() $ {\Omega}_1 $

). When ideas are closely related, their circles overlap. When they are distantly related, their circles will not overlap. When circles overlap, the amount of design space uniquely explored by a given idea is reduced by the overlap with closely related ideas.

$ {\Omega}_1 $

). When ideas are closely related, their circles overlap. When they are distantly related, their circles will not overlap. When circles overlap, the amount of design space uniquely explored by a given idea is reduced by the overlap with closely related ideas.

6.3. Design space for family members

When a first child is added to an element in the tree, a family consisting of that child is created. Each member of a family can be considered to explore an amount of design space (including overlaps) of

![]() $ {\Omega}_l $

, which depends on the level of the family. However, due to overlaps with family members, the amount of design space uniquely explored by an idea (called

$ {\Omega}_l $

, which depends on the level of the family. However, due to overlaps with family members, the amount of design space uniquely explored by an idea (called

![]() $ {X}_{i,l} $

) may be less than

$ {X}_{i,l} $

) may be less than

![]() $ {\Omega}_l $

. We define the member fraction

$ {\Omega}_l $

. We define the member fraction

![]() $ {f}_i $

to be

$ {f}_i $

to be

![]() $ {X}_{i,l}/{\Omega}_l $

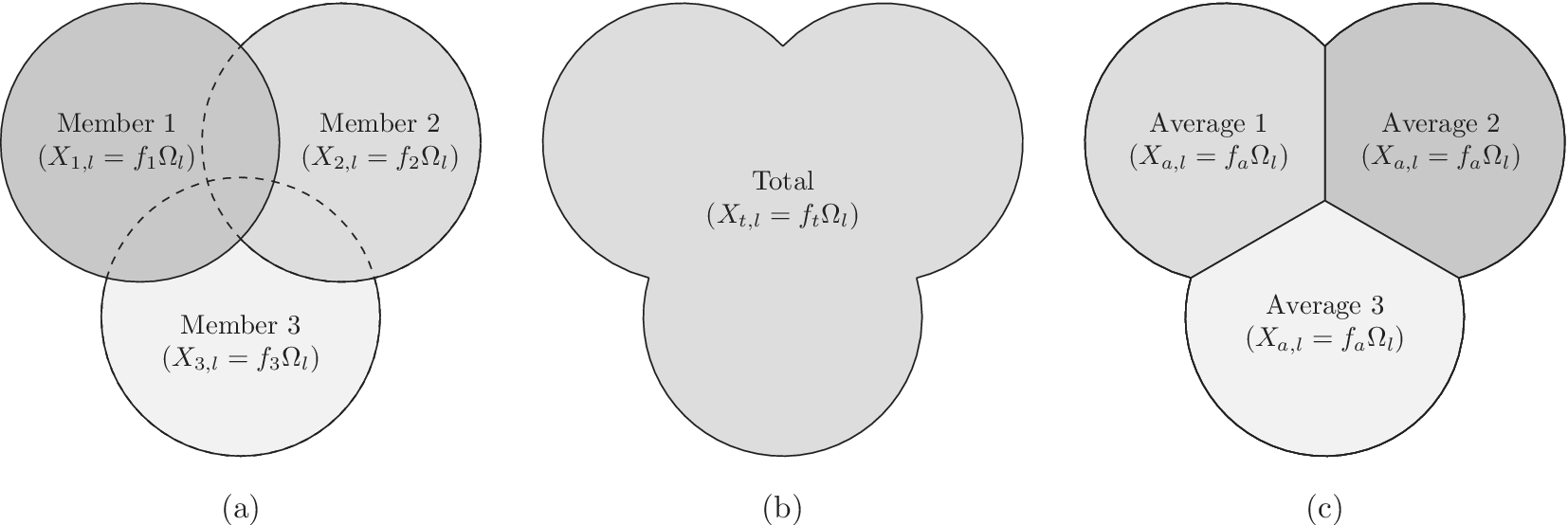

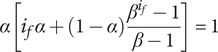

. As shown schematically in Figure 3, the member fraction for the first member of the family (

$ {X}_{i,l}/{\Omega}_l $

. As shown schematically in Figure 3, the member fraction for the first member of the family (

![]() $ {f}_1 $

) is 1. The member fraction for family member

$ {f}_1 $

) is 1. The member fraction for family member

![]() $ 2 $

will be less than 1 and is denoted

$ 2 $

will be less than 1 and is denoted

![]() $ {f}_2 $

.

$ {f}_2 $

.

![]() $ {f}_3 $

, the member fraction for family member

$ {f}_3 $

, the member fraction for family member

![]() $ 3 $

, is less than

$ 3 $

, is less than

![]() $ {f}_2 $

. Exact formulas for

$ {f}_2 $

. Exact formulas for

![]() $ {f}_i $

are provided later. Note that the total design space uniquely allocated to family member

$ {f}_i $

are provided later. Note that the total design space uniquely allocated to family member

![]() $ i $

at level

$ i $

at level

![]() $ l $

is given by

$ l $

is given by

![]() $ {X}_{i,l}={f}_i{\Omega}_l $

.

$ {X}_{i,l}={f}_i{\Omega}_l $

.

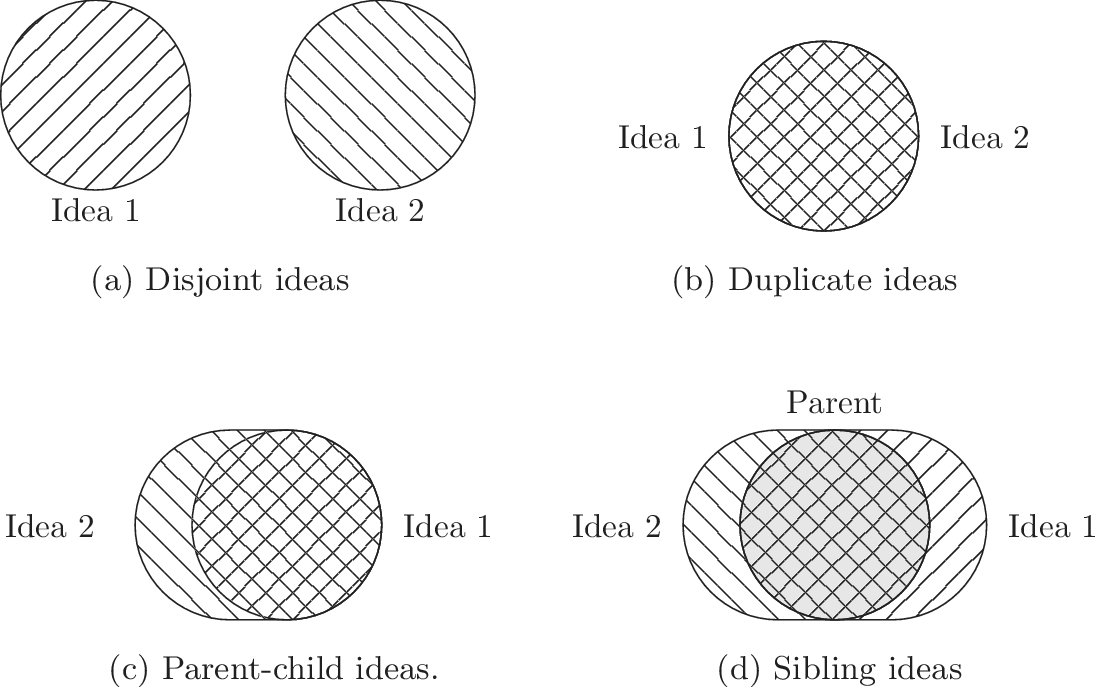

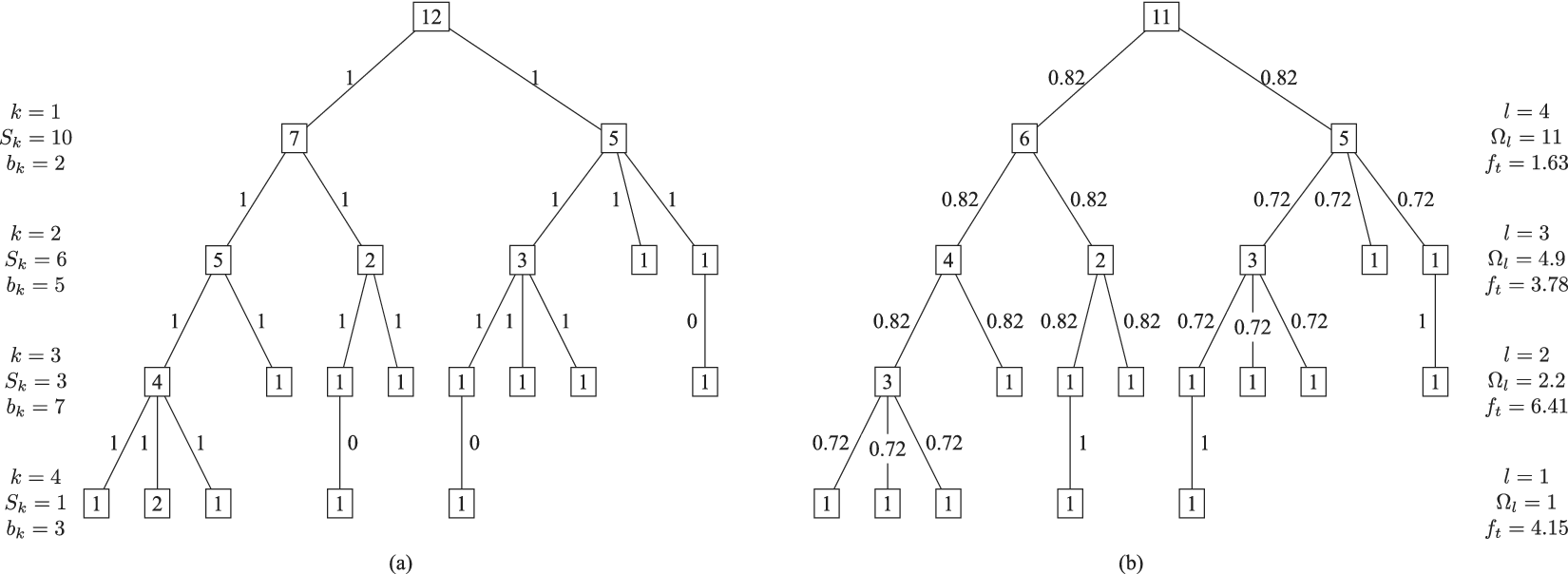

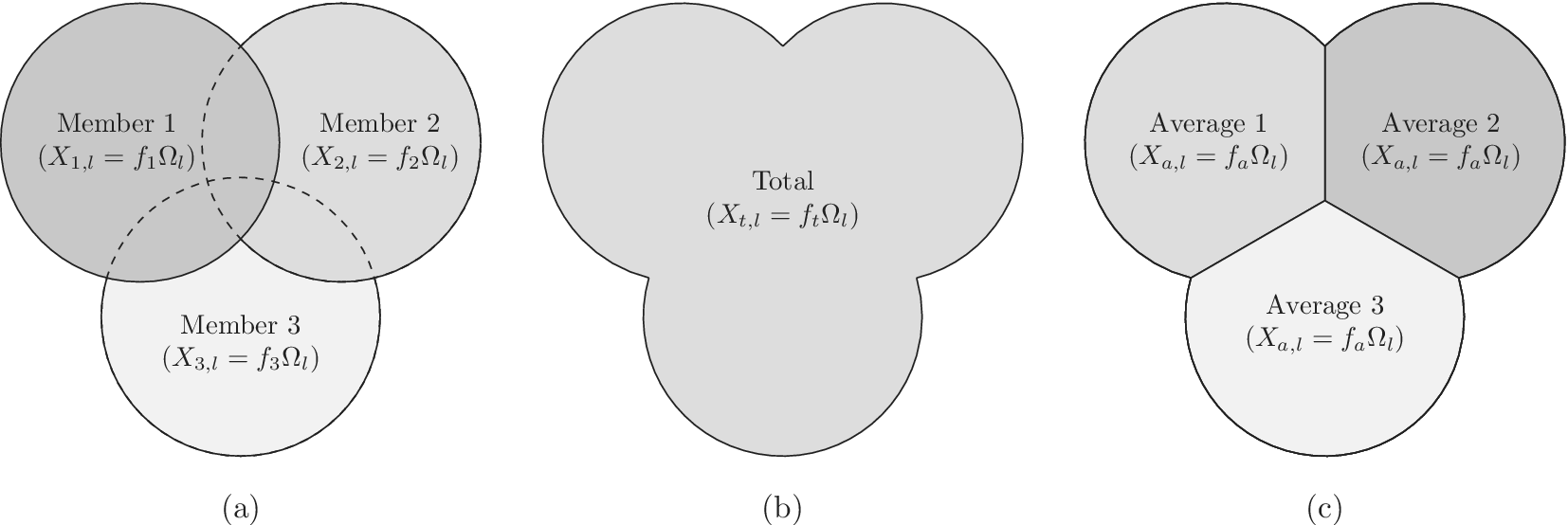

Schematic representation of member fraction, total fraction and average fraction for a set of three ideas in a family at level

![]() $ l $

. For this representation, the area of the circle is

$ l $

. For this representation, the area of the circle is

![]() $ {\Omega}_l $

, and the fraction is a part of the circle. In part (a), we see the three overlapping ideas on the same level. All three circles have the same explored area of

$ {\Omega}_l $

, and the fraction is a part of the circle. In part (a), we see the three overlapping ideas on the same level. All three circles have the same explored area of

![]() $ {\Omega}_l $

. Member 1 has a member fraction

$ {\Omega}_l $

. Member 1 has a member fraction

![]() $ {f}_1 $

of 1, as all the space is assumed to be uniquely explored by Member 1. Member 2 has a member fraction

$ {f}_1 $

of 1, as all the space is assumed to be uniquely explored by Member 1. Member 2 has a member fraction

![]() $ {f}_2 $

less than 1 due to its overlap with member 1, so its uniquely explored design space is less. Member 3 has a member fraction

$ {f}_2 $

less than 1 due to its overlap with member 1, so its uniquely explored design space is less. Member 3 has a member fraction

![]() $ {f}_3 $

less than

$ {f}_3 $

less than

![]() $ {f}_2 $

due to overlap with both members 1 and 2. Part (b) shows the total design space explored by the family, which is a multiple of

$ {f}_2 $

due to overlap with both members 1 and 2. Part (b) shows the total design space explored by the family, which is a multiple of

![]() $ {\Omega}_l $

called

$ {\Omega}_l $

called

![]() $ {f}_t $

. Part (c) shows the average fraction

$ {f}_t $

. Part (c) shows the average fraction

![]() $ {f}_a $

for each member when we have no basis for determining which idea explores the most design space.

$ {f}_a $

for each member when we have no basis for determining which idea explores the most design space.

As shown in Figure 3(b), the total design space explored by a family will be greater than the space explored by any single idea in the family. The total family space is a multiple of

![]() $ {\Omega}_l $

called the total fraction

$ {\Omega}_l $

called the total fraction

![]() $ {f}_t $

.

$ {f}_t $

.

![]() $ {f}_t $

is the sum of the member fractions for all family members.

$ {f}_t $

is the sum of the member fractions for all family members.

$$ {f}_t=\sum \limits_{i=1}^m{f}_i $$

$$ {f}_t=\sum \limits_{i=1}^m{f}_i $$

where

![]() $ m $

is the number of family members.

$ m $

is the number of family members.

As we add members to the family, the total fraction

![]() $ {f}_t $

increases, but the member fraction for each additional member

$ {f}_t $

increases, but the member fraction for each additional member

![]() $ {f}_i $

decreases, due to greater overlap of ideas in the family. This is shown schematically in Figure 3.

$ {f}_i $

decreases, due to greater overlap of ideas in the family. This is shown schematically in Figure 3.

When evaluating the novelty of design ideas, we number the ideas according to decreasing novelty. The most novel idea then explores the most design space, and as the novelty of ideas decreases, the amount of design space explored by the less-novel idea decreases as well.

When evaluating variety, the numbering scheme for members is arbitrary (i.e., there is no reason for calling one idea member 1 and another idea member 2). This makes it inappropriate to assume that one idea explores more design space than another. In this case, we calculate an average fraction

![]() $ {f}_a $

to assign to every member of the family, as shown in Figure 3(c).

$ {f}_a $

to assign to every member of the family, as shown in Figure 3(c).

![]() $ {f}_a $

is the total fraction divided by the number of members.

$ {f}_a $

is the total fraction divided by the number of members.

7. Applying the axioms to design trees

As previously described, design trees contain levels and families. Tree levels are measures of the level of abstraction for an idea. A higher level in the tree corresponds to a higher level of abstraction. The number of family members in the design tree is used as the number of closely related ideas. In the design tree analysis, we have no independent measure of the distance between families, so our distances are relative, rather than absolute.

When applying these tree elements to the axioms, we obtain the axioms for use with design trees.

7.1. Tree variety

-

Axiom TV-1: Adding a unique entry at any level of the tree should increase the total variety of the idea set.

-

Axiom TV-2: Adding a unique entry at a higher level of the tree should increase the total variety more than adding a unique entry at a lower level.

-

Axiom TV-3: Adding a unique entry at a given level of the tree that has many family members should increase the total variety less than adding a unique entry at the same level with few family members.

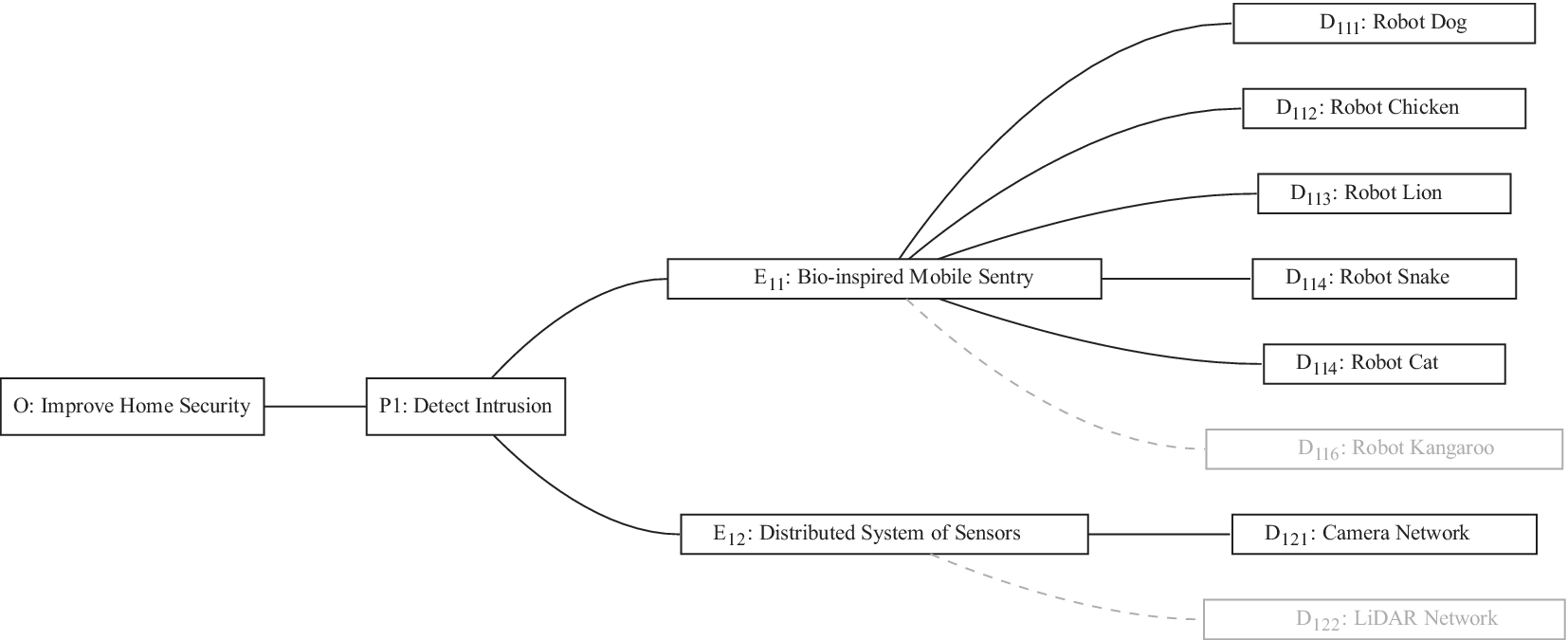

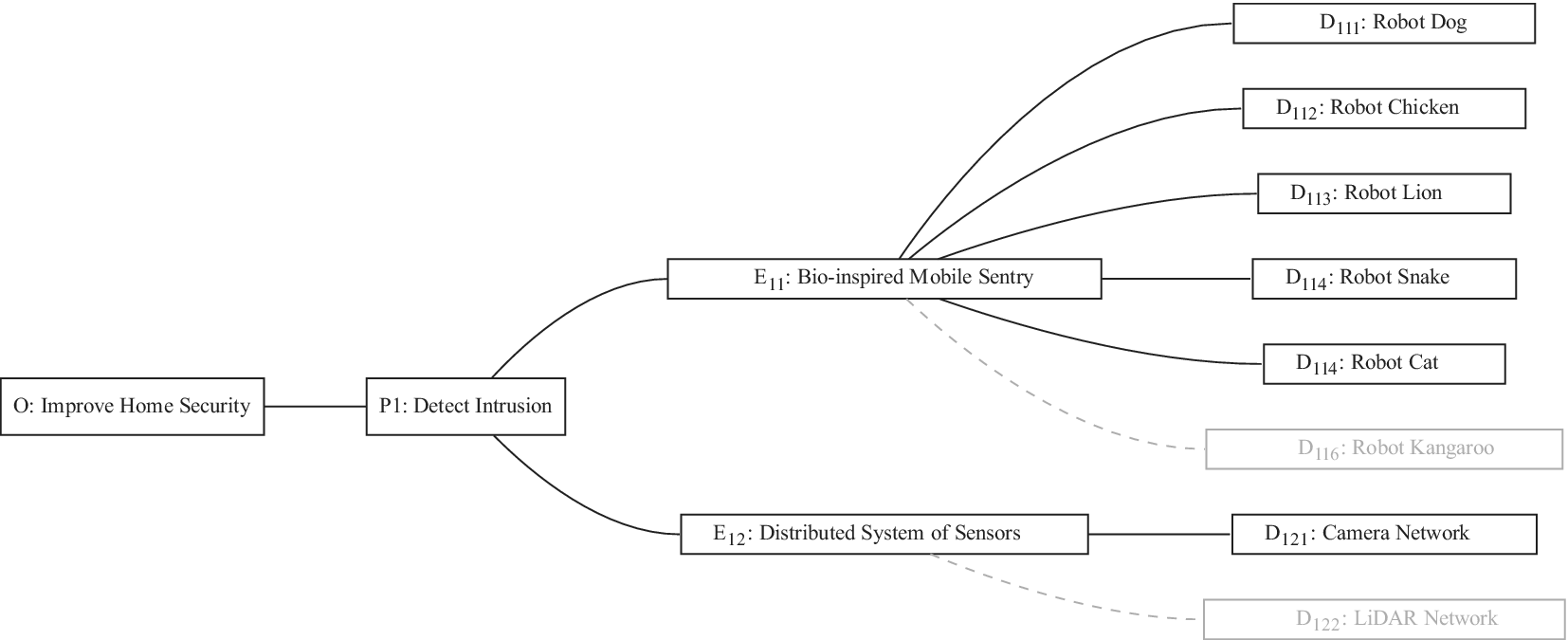

An example may help explain the meaning of the last axiom. Figure 4 shows partial results of an ideation session focused on improving home security. As shown, an embodiment idea of a bio-inspired mobile robot sentry was generated (

![]() $ {E}_{11} $

). Detailed ideas for this embodiment included robot dog (

$ {E}_{11} $

). Detailed ideas for this embodiment included robot dog (

![]() $ {D}_{111} $

), robot chicken (

$ {D}_{111} $

), robot chicken (

![]() $ {D}_{112} $

), robot lion (

$ {D}_{112} $

), robot lion (

![]() $ {D}_{113} $

), robot snake (

$ {D}_{113} $

), robot snake (

![]() $ {D}_{114} $

) and robot cat (

$ {D}_{114} $

) and robot cat (

![]() $ {D}_{115} $

), for a total of five details.

$ {D}_{115} $

), for a total of five details.

A sample design tree showing a subset of the ideas generated. The objective is to improve home security. The only principle shown in the figure is detecting intrusion. There are two embodiments shown:

![]() $ {E}_{11} $

and

$ {E}_{11} $

and

![]() $ {E}_{12} $

.

$ {E}_{12} $

.

![]() $ {E}_{11} $

has four details, with a potential fifth shown in gray.

$ {E}_{11} $

has four details, with a potential fifth shown in gray.

![]() $ {E}_{12} $

has one detail, with a potential second shown in gray. As discussed in the text, adding

$ {E}_{12} $

has one detail, with a potential second shown in gray. As discussed in the text, adding

![]() $ {D}_{122} $

should add more variety to the set than adding

$ {D}_{122} $

should add more variety to the set than adding

![]() $ {D}_{116} $

. Also, if

$ {D}_{116} $

. Also, if

![]() $ {D}_{122} $

and

$ {D}_{122} $

and

![]() $ {D}_{116} $

are equally novel ideas, adding

$ {D}_{116} $

are equally novel ideas, adding

![]() $ {D}_{122} $

should add more novelty to the set than adding

$ {D}_{122} $

should add more novelty to the set than adding

![]() $ {D}_{116} $

.

$ {D}_{116} $

.

An alternative embodiment idea is a system of stationary sensors scattered throughout the house (

![]() $ {E}_{12} $

). The only detailed idea for this embodiment is a camera network (

$ {E}_{12} $

). The only detailed idea for this embodiment is a camera network (

![]() $ {D}_{121} $

).

$ {D}_{121} $

).

An alternative idea at the detail level for a robot kangaroo (

![]() $ {D}_{116} $

) should add less variety to the set than an idea at the detail level for a stationary LiDAR detection system (

$ {D}_{116} $

) should add less variety to the set than an idea at the detail level for a stationary LiDAR detection system (

![]() $ {D}_{122} $

).

$ {D}_{122} $

).

7.2. Tree novelty

-

Axiom TN-1: At a given location in the design tree, an idea with high novelty adds more to the set novelty than an idea with low novelty.

-

Axiom TN-2: A novel idea at a high level of the tree adds more to the set novelty than an equivalent idea at a low level of the tree.

-

Axiom TN-3: An idea with a given novelty in a small family adds more to the set novelty than an idea with the same novelty in a large family.

-

Axiom TN-4: The set novelty should be increased by adding a novel idea. It should be unchanged by adding an idea with zero novelty. As a corollary, the set novelty should never be increased by removing an idea.

7.3. Tree quantity

-

Axiom TU-1: Duplicates of previously counted ideas should not be counted again when determining set quantity.

-

Axiom TU-2: The set quantity should not depend on the tree level at which the ideas are expressed.

-

Axiom TU-3: Organizational elements added to the tree by evaluators should not affect the set quantity.

7.4. Tree quality

-

Axiom TQ-1: High-quality ideas should increase the set quality more than moderate-quality ideas.

-

Axiom TQ-2: Removing low-quality ideas from the set should not increase the set quality; adding low-quality ideas to the set should not decrease the set quality.

-

Axiom TQ-3: Regardless of the number of high-quality ideas in the set, adding a high-quality idea should increase the set quality.

Note that the tree quality axioms are identical to the general quality axioms, as the quality axioms refer to neither the level of abstraction nor the distance from adjacent ideas. However, we list them here for convenience.

8. Evaluating and aggregating variety (exploration of design space)

In this section, we discuss how variety is evaluated and aggregated in the context of a design tree. If some other organization of the idea space is used, new evaluation and aggregation functions will need to be defined and used.

SVS explain that variety is a measure of the explored design space. Each idea that is developed adds to the exploration of design space. The assessment of the explored design space cannot be completed without considering the relationships of the different ideas. Thus, we cannot calculate an idea variety (variety that ignores the location in the tree). Instead, we first calculate the element variety (variety of the idea in its location in the tree). We then calculate the branch varieties for successively higher branches of the tree (elements with all their descendants), culminating in the total variety for the set. In addition, because each idea increases the explored design space, the variety of an idea set increases as the number of principles, embodiments and details in the idea set increases.

8.1. Element variety

As described in “Design Space for Family Members,” each idea placed in the tree to form an element explores a certain region of design space, the amount of which depends on the level of the element and the number of siblings. We call the amount of design space explored by an element the explored space

![]() $ {X}_{i,l} $

.

$ {X}_{i,l} $

.

where

![]() $ {\Omega}_l $

is the amount of design space explored by each idea at level

$ {\Omega}_l $

is the amount of design space explored by each idea at level

![]() $ l $

, and

$ l $

, and

![]() $ {f}_i $

is the fraction of

$ {f}_i $

is the fraction of

![]() $ {\Omega}_l $

uniquely explored by member element

$ {\Omega}_l $

uniquely explored by member element

![]() $ i $

in the family.

$ i $

in the family.

In this section, we develop functions for

![]() $ {\Omega}_l $

and

$ {\Omega}_l $

and

![]() $ {f}_i $

that can be used with simple aggregation functions to meet the variety axioms. We will start with

$ {f}_i $

that can be used with simple aggregation functions to meet the variety axioms. We will start with

![]() $ {f}_i $

.

$ {f}_i $

.

The function

![]() $ {f}_i $

should have a value of 1 for the first family member, and decrease with increasing value of

$ {f}_i $

should have a value of 1 for the first family member, and decrease with increasing value of

![]() $ i $

. However, it should have a non-zero asymptote, because regardless of the number of family members, an additional unique idea will increase the amount of design space explored.

$ i $

. However, it should have a non-zero asymptote, because regardless of the number of family members, an additional unique idea will increase the amount of design space explored.

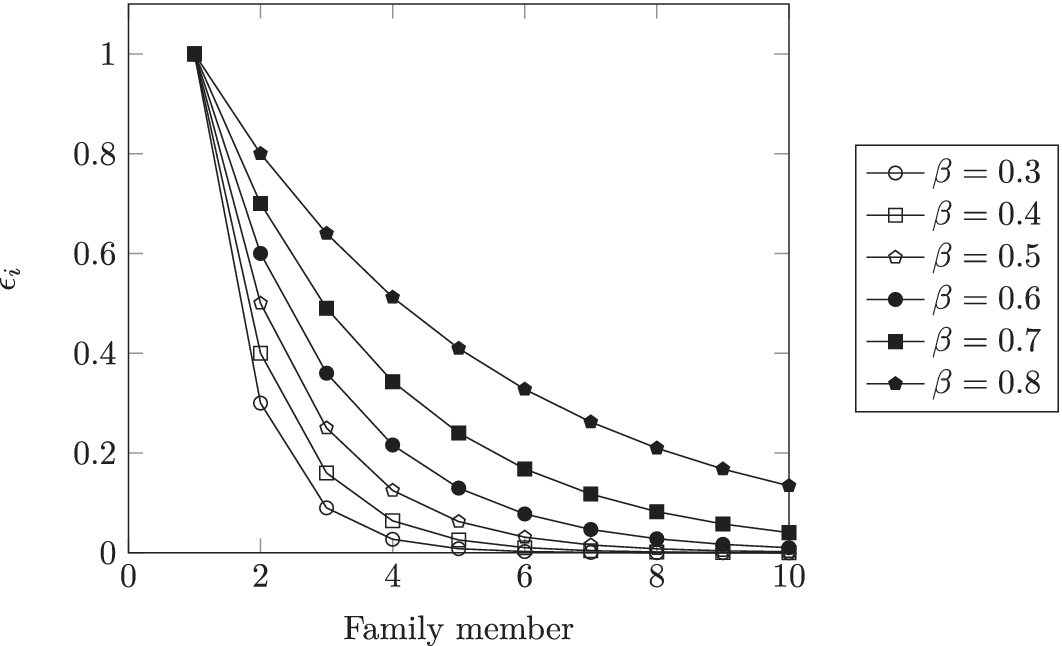

The function chosen to meet these criteria

![]() $ {f}_i $

is:

$ {f}_i $

is:

where

![]() $ \alpha $

and

$ \alpha $

and

![]() $ \beta $

are parameters with values between 0 and 1 that are used to adjust the amount of design space allocated to members of a family.

$ \beta $

are parameters with values between 0 and 1 that are used to adjust the amount of design space allocated to members of a family.

Note that for

![]() $ i=1 $

,

$ i=1 $

,

![]() $ {f}_i=1 $

; for

$ {f}_i=1 $

; for

![]() $ i=\infty $

,

$ i=\infty $

,

![]() $ {f}_i $

has the limiting value

$ {f}_i $

has the limiting value

![]() $ \alpha $

. Thus

$ \alpha $

. Thus

![]() $ \alpha $

describes the lower limit of the design space a single idea can occupy.

$ \alpha $

describes the lower limit of the design space a single idea can occupy.

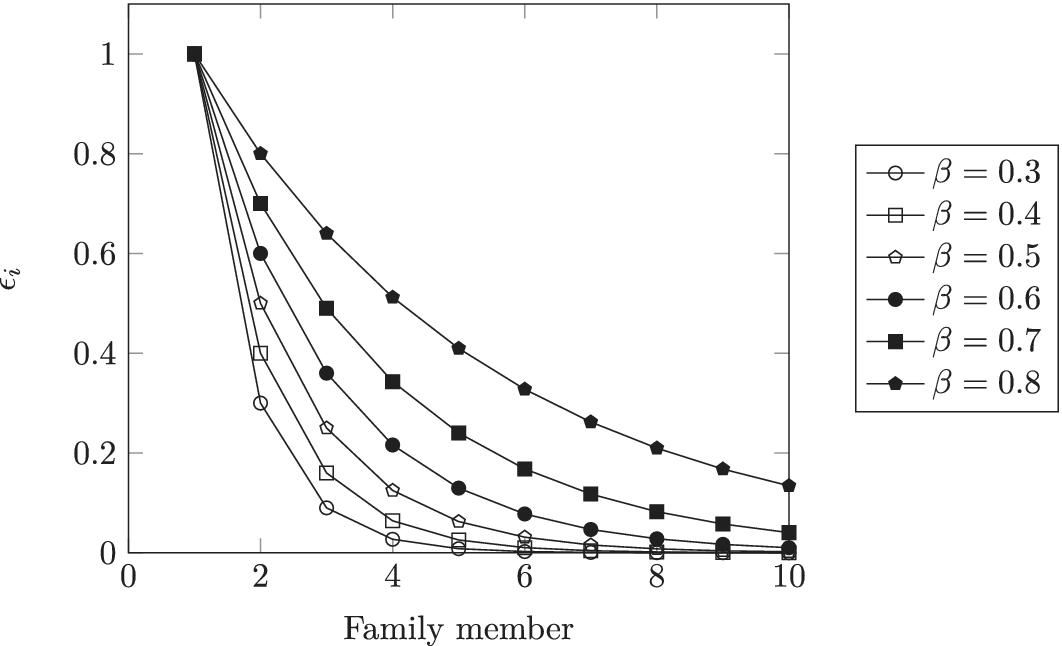

![]() $ \beta $

is a shape parameter that defines how rapidly

$ \beta $

is a shape parameter that defines how rapidly

![]() $ {f}_i $

falls toward its limiting value of

$ {f}_i $

falls toward its limiting value of

![]() $ \alpha $

. The choice of specific values for

$ \alpha $

. The choice of specific values for

![]() $ \alpha $

and

$ \alpha $

and

![]() $ \beta $

is discussed in “Choosing Values for Evaluation Parameters.”

$ \beta $

is discussed in “Choosing Values for Evaluation Parameters.”

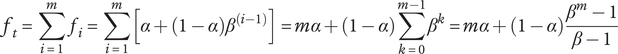

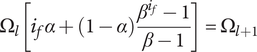

The total fraction for the family is given by the sum of the member fractions in the family:

$$ {f}_t=\sum \limits_{i=1}^m{f}_i=\sum \limits_{i=1}^m\left[\alpha +\left(1-\alpha \right){\beta}^{\left(i-1\right)}\right]= m\alpha +\left(1-\alpha \right)\sum \limits_{k=0}^{m-1}{\beta}^k= m\alpha +\left(1-\alpha \right)\frac{\beta^m-1}{\beta -1} $$

$$ {f}_t=\sum \limits_{i=1}^m{f}_i=\sum \limits_{i=1}^m\left[\alpha +\left(1-\alpha \right){\beta}^{\left(i-1\right)}\right]= m\alpha +\left(1-\alpha \right)\sum \limits_{k=0}^{m-1}{\beta}^k= m\alpha +\left(1-\alpha \right)\frac{\beta^m-1}{\beta -1} $$

where

![]() $ m $

is the number of family members (the identity for this finite series is found in Abramowitz & Stegun (Reference Abramowitz and Stegun1972)).

$ m $

is the number of family members (the identity for this finite series is found in Abramowitz & Stegun (Reference Abramowitz and Stegun1972)).

In order to meet Axiom TV-2,

![]() $ {\Omega}_l $

is chosen to ensure that the minimum possible explored design space for an idea at level

$ {\Omega}_l $

is chosen to ensure that the minimum possible explored design space for an idea at level

![]() $ l+1 $

is greater than the maximum possible explored space for an element at level

$ l+1 $

is greater than the maximum possible explored space for an element at level

![]() $ l $

. The minimum explored design space at level

$ l $

. The minimum explored design space at level

![]() $ l+1 $

is for an idea with infinite family members, where the member fraction is

$ l+1 $

is for an idea with infinite family members, where the member fraction is

![]() $ \alpha $

. Thus the minimum value of explored design space on level

$ \alpha $

. Thus the minimum value of explored design space on level

![]() $ l+1 $

is

$ l+1 $

is

![]() $ {X}_{\infty, l+1}=\alpha {\Omega}_{l+1} $

. The maximum explored design space at level

$ {X}_{\infty, l+1}=\alpha {\Omega}_{l+1} $

. The maximum explored design space at level

![]() $ l $

is for the first idea in a family

$ l $

is for the first idea in a family

![]() $ {X}_{1,l}={\Omega}_l $

. Setting

$ {X}_{1,l}={\Omega}_l $

. Setting

![]() $ {X}_{\infty, l+1}={X}_{1,l} $

we obtain a recurrence relation for

$ {X}_{\infty, l+1}={X}_{1,l} $

we obtain a recurrence relation for

![]() $ \Omega $

:

$ \Omega $

:

Without loss of generality, we can define

![]() $ {\Omega}_1=1 $

, then

$ {\Omega}_1=1 $

, then

With this substitution, equation (7) becomes

When calculating variety, there is no basis for deciding which idea is the first, and which is the last, so we do not use a different value

![]() $ {f}_i $

for each family member. Instead, we use the average fraction for the family

$ {f}_i $

for each family member. Instead, we use the average fraction for the family

![]() $ {f}_a $

, given by

$ {f}_a $

, given by

$$ {f}_a=\frac{f_t}{m}=\frac{m\alpha +\left(1-\alpha \right)\frac{\beta^m-1}{\beta -1}}{m} $$

$$ {f}_a=\frac{f_t}{m}=\frac{m\alpha +\left(1-\alpha \right)\frac{\beta^m-1}{\beta -1}}{m} $$

We then define the variety for each idea in the family to be an average amount of explored design space per family member

![]() $ {X}_{a,l} $

$ {X}_{a,l} $

$$ {V}_E={X}_{a,l}={f}_a{\Omega}_l=\frac{m\alpha +\left(1-\alpha \right)\frac{\beta^m-1}{\beta -1}}{\alpha^{l-1}m} $$

$$ {V}_E={X}_{a,l}={f}_a{\Omega}_l=\frac{m\alpha +\left(1-\alpha \right)\frac{\beta^m-1}{\beta -1}}{\alpha^{l-1}m} $$

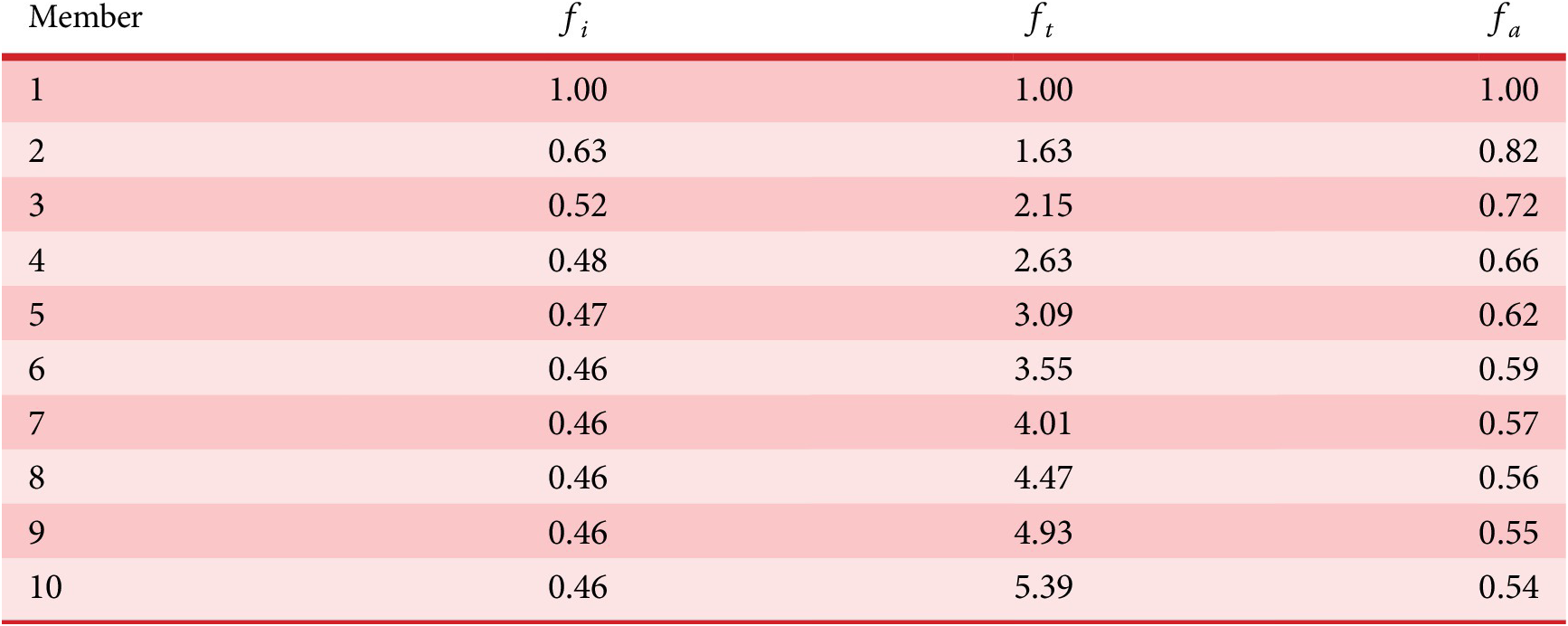

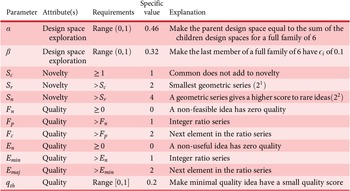

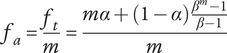

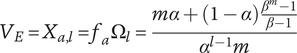

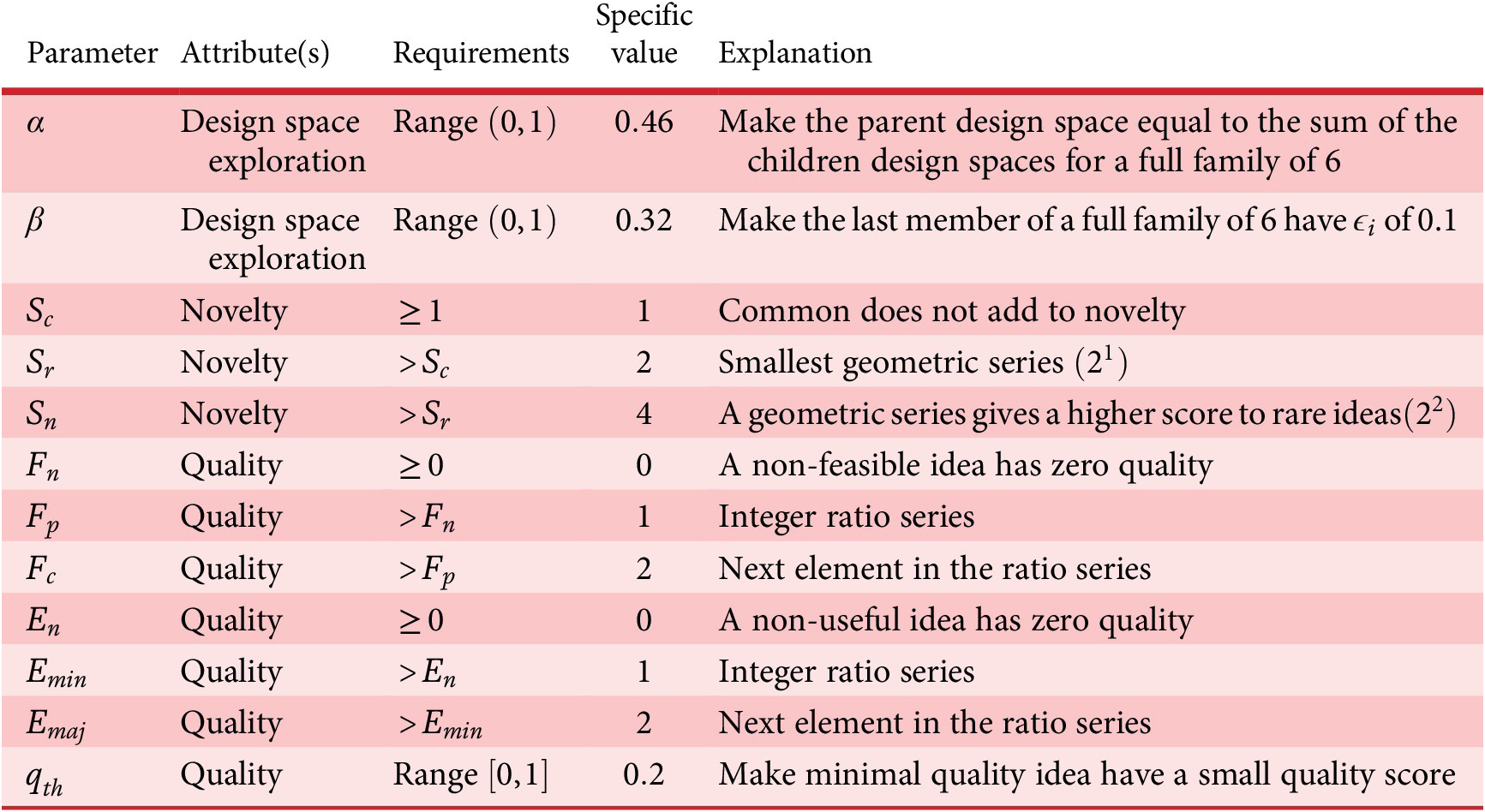

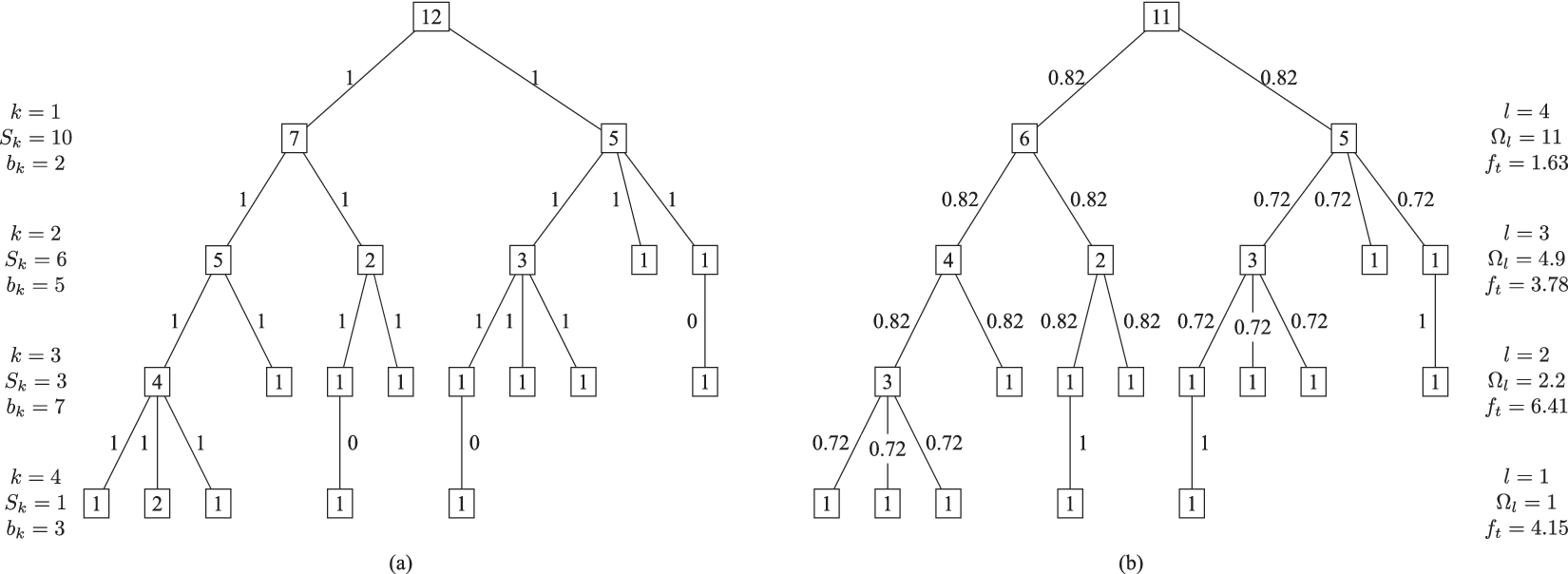

As described in “Choosing Values for Evaluation Parameters,” for this paper

![]() $ \beta =0.32 $

and

$ \beta =0.32 $

and

![]() $ \alpha =0.46 $

. Table 1 lists the member fraction, total fraction and average fraction for members 1–10 given these values of

$ \alpha =0.46 $

. Table 1 lists the member fraction, total fraction and average fraction for members 1–10 given these values of

![]() $ \beta $

and

$ \beta $

and

![]() $ \alpha $

.

$ \alpha $

.

Member fraction, total fraction and average fraction for family sizes up to 10 with

![]() $ \alpha =0.46 $

and

$ \alpha =0.46 $

and

![]() $ \beta =0.32 $

$ \beta =0.32 $

8.2. Branch variety

Starting at the leaf elements and working up to higher levels of the tree, the element variety can be aggregated into branch variety. For any element, we can calculate a branch variety, which is the sum of the element variety and the branch varieties for all children of the element.

The branch variety of an element is given by

where

![]() $ {V}_B $

is the branch variety of an element, and

$ {V}_B $

is the branch variety of an element, and

![]() $ {V}_{B_c} $

is the branch variety of a child of the element.

$ {V}_{B_c} $

is the branch variety of a child of the element.

For convenience, we can show the descendant variety

![]() $ {V}_D $

for an element, which is the sum of the branch varieties for all children of the element.

$ {V}_D $

for an element, which is the sum of the branch varieties for all children of the element.

where

![]() $ {V}_{E_c} $

and

$ {V}_{E_c} $

and

![]() $ {V}_{D_c} $

are the element variety and descendant variety, respectively, for a child of the element.

$ {V}_{D_c} $

are the element variety and descendant variety, respectively, for a child of the element.

8.3. Total variety

The total variety for a tree (

![]() $ V $

) is equal to the sum of the branch varieties for all elements at the highest level of the tree.

$ V $

) is equal to the sum of the branch varieties for all elements at the highest level of the tree.

8.4. Meeting the variety axioms

We can demonstrate that all three axioms are met by the given aggregation function.

Axiom TV-1: Adding a unique entry to any level of the tree should increase the total variety of the tree.

This axiom is met because each idea (regardless of its level) has a positive element variety, and the total variety is the sum of the element varieties for all elements. Thus, adding an element will increase the total variety.

Axiom TV-2: Adding a unique entry to a higher level of the tree should increase the total variety more than adding a unique entry to a lower level.

This axiom is met because the maximum element variety added by an idea at level

![]() $ l $

is

$ l $

is

The minimum variety added by a new element

![]() $ i $

at level

$ i $

at level

![]() $ l+1 $

is given by

$ l+1 $

is given by

For a finite design tree (and all design trees are finite)

![]() $ i<\infty $

, so

$ i<\infty $

, so

![]() $ {V}_{E_{i_{{\mathit{\min}}_{l+1}}}}>{V}_{E_{{\mathit{\max}}_l}} $

.

$ {V}_{E_{i_{{\mathit{\min}}_{l+1}}}}>{V}_{E_{{\mathit{\max}}_l}} $

.

Axiom TV-3 Adding a unique entry at a given level to a family with many members should increase the total variety less than adding a unique entry at the same level to a family with few members.

To show that this axiom is met, consider the effect of adding an idea

![]() $ A $

at level

$ A $

at level

![]() $ l $

in a family with

$ l $

in a family with

![]() $ {m}_1 $

existing members. The added variety will be

$ {m}_1 $

existing members. The added variety will be

![]() $ {V}_E $

for this new idea, which is

$ {V}_E $

for this new idea, which is

![]() $ {V}_{E_A}=\left(\alpha +\left(1-\alpha \right){\beta}^{\left({m}_1+1-1\right)}\right){\Omega}_l $

. Now consider adding a different idea

$ {V}_{E_A}=\left(\alpha +\left(1-\alpha \right){\beta}^{\left({m}_1+1-1\right)}\right){\Omega}_l $

. Now consider adding a different idea

![]() $ B $

also at level

$ B $

also at level

![]() $ l $

in a family with

$ l $

in a family with

![]() $ {m}_2 $

members, where

$ {m}_2 $

members, where

![]() $ {m}_2<{m}_1 $

. The added variety will be

$ {m}_2<{m}_1 $

. The added variety will be

![]() $ {V}_{E_B}=\left(\alpha +\left(1-\alpha \right){\beta}^{\left({m}_2+1-1\right)}\right){\Omega}_l $

. If

$ {V}_{E_B}=\left(\alpha +\left(1-\alpha \right){\beta}^{\left({m}_2+1-1\right)}\right){\Omega}_l $

. If

![]() $ \Delta V={V}_{E_B}-{V}_{E_A} $

is positive, the axiom is met.

$ \Delta V={V}_{E_B}-{V}_{E_A} $

is positive, the axiom is met.

As all three terms in the product above are positive,

![]() $ \Delta V $

is positive. Thus, Axiom V-3 is met.

$ \Delta V $

is positive. Thus, Axiom V-3 is met.

Note that the variety axioms are all met regardless of the specific values chosen for

![]() $ \alpha $

and

$ \alpha $

and

![]() $ \beta $

. However, the actual numerical value for the variety of a set of ideas will vary with

$ \beta $

. However, the actual numerical value for the variety of a set of ideas will vary with

![]() $ \alpha $

and

$ \alpha $

and

![]() $ \beta $

. The same is true for other parameters in our evaluation for novelty and quality. The rationale for the specific values chosen for these evaluation parameters is discussed in “Choosing Values for Evaluation Parameters.”

$ \beta $

. The same is true for other parameters in our evaluation for novelty and quality. The rationale for the specific values chosen for these evaluation parameters is discussed in “Choosing Values for Evaluation Parameters.”

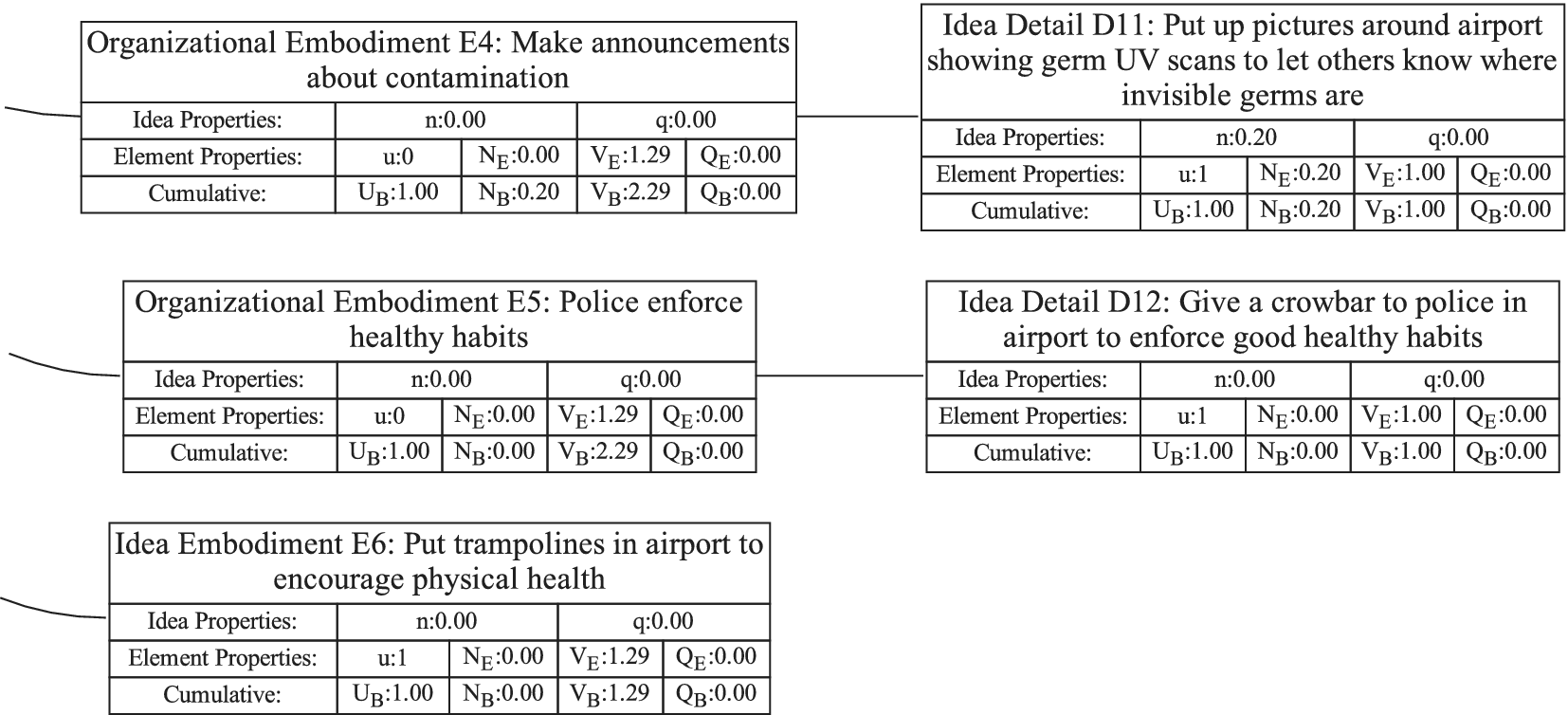

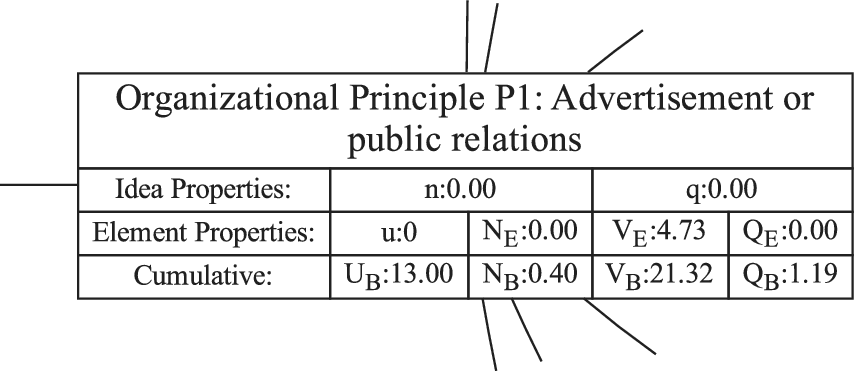

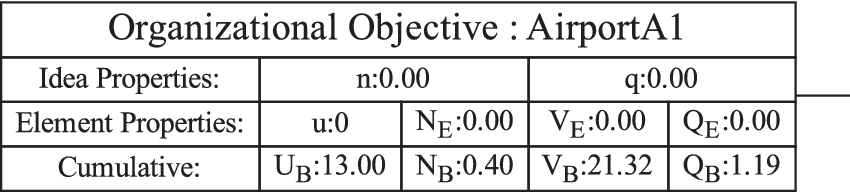

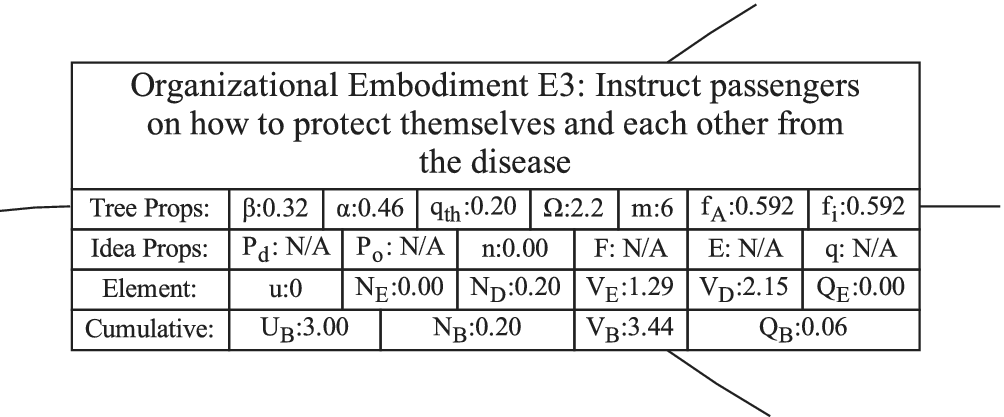

9. Evaluating and aggregating novelty (expansion of design space)

In order to evaluate novelty, we need to see how well the design space is expanded by the ideas. There are two important aspects of novelty. The first, called the idea novelty (

![]() $ n $

), is the novelty of an idea, irrespective of where the idea sits in the design tree. The second aspect is the explored design space for each idea, which takes into account the location of the idea in the tree. The element novelty for an idea placed in the context of the tree is the product of the idea novelty and the explored design space for the idea. The total novelty of a set is the sum of the element novelties for each of the elements in the set.

$ n $

), is the novelty of an idea, irrespective of where the idea sits in the design tree. The second aspect is the explored design space for each idea, which takes into account the location of the idea in the tree. The element novelty for an idea placed in the context of the tree is the product of the idea novelty and the explored design space for the idea. The total novelty of a set is the sum of the element novelties for each of the elements in the set.

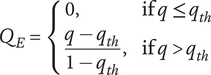

SVS provide two methods for measuring novelty of individual ideas. The first method evaluates a particular idea for commonality with other known ideas in the same problem space. Ideas that are infrequently seen in the reference set of ideas have a higher novelty score. This method is an effective method when comparing the results of different individuals, teams or ideation methods, and when a broad set of reference ideas for a given problem space is available. If there is no set of reference ideas, this method cannot be used. The novelty scores will depend on the reference set of ideas. If the reference set changes, the score will change. Furthermore, if the reference set is narrow compared with the universe of possible ideas, the novelty scores will be artificially inflated.