5 Brain bases for seeing speech: fMRI studies of speechreading

5.1 Introduction

This volume confirms that the ability to extract linguistic information from viewing the talker’s face is a fundamental aspect of speech processing. In this chapter we explore the cortical substrates that support these processes. There are a number of reasons why the identification of these visual speech circuits in the brain is important. One practical reason is that it may help determine suitability for hearing aids, especially cochlear implants (Okazawa et al. 1996; Giraud and Truy 2002; Lazard et al. 2010). Another is that there are brain correlates of good or poorer speechreading which may offer insights into individual differences in speechreading ability (see Ludman et al. 2000). There are also theoretical reasons to explore these brain bases. Cortical architecture reflects our evolutionary inheritance: Ape brains resemble human ones in very many respects, including the cortical organization of acoustic and visual sensation. Neurophysiological responses in macaque cortex show mutual modulation of vision and audition within key temporal regions formerly thought to be “purely” auditory. In turn, this suggests a broad-based, multimodal processing capacity for communication within lateral temporal regions, which could be the bedrock upon which a dedicated human speech processing system has evolved (see Ghazanfar et al. 2005; Ghazanfar and Schroeder 2006; Ghazanfar 2009).

However, our interest was initially driven by other concerns. There had been suggestive evidence from patients with discrete lesions of the brain that watching faces speaking might call on distinct brain mechanisms, and that these could be different from those used for identifying other facial actions, such as facial expressions (see Campbell 1996). Nevertheless, little was known about the precise brain networks involved in speechreading. Watching speech was known to be more similar to hearing speech than to reading written words; for example, in terms of its representations in immediate memory (Campbell and Dodd 1980). But did this extend to cortical circuits? In our first studies, we wanted to know to what extent speech that is seen but not heard might engage the networks used for hearing speech, and which other brain networks might be engaged. These questions are related to further issues. How do the circuits for audiovisual speech relate to those for visual or auditory speech alone? How might the cortical bases for silent speechreading reflect the individual’s experience with heard language? That is, do people who are born deaf show similar or different patterns of activation than hearing people when (silently) speechreading? The technique that we have exploited to address these questions is functional magnetic resonance imaging (fMRI). This brain mapping technique is non-invasive, unlike positron emission tomography (PET), which requires the participant to ingest small amounts of radioactive substances.1 fMRI offers reasonably high spatial resolution (i.e., on the order of millimeters) of most brain regions. It can give a “cumulative snapshot” of those areas that are active during the period of scanning (typically several seconds).
It does not lend itself to showing the discrete temporal sequence of brain events that are set in train when viewing a speaking face, although recent developments in analytic methods can model the fMRI data with respect to stages of information flow (e.g. von Kriegstein et al. 2008). For good temporal resolution, dynamic techniques, using, for example, scalp measurements of electrochemical brain potentials (event-related potentials, ERPs; see e.g. Callan et al. 2001; Allison et al. 2000), or magnetoencephalography (MEG – see Levänen 1999) are preferred, although spatial resolution is less good using these techniques. One compelling strategy is to combine inferences from spatial analysis using fMRI or PET, alongside analysis of the fine-grain temporal sequences of brain events using MEG or scalp electroencephalography (EEG), and this is where much current research activity is focused (e.g. Calvert and Thesen 2004; Hertrich et al. 2007; Hertrich et al. 2009; Arnal et al. 2009; Hertrich et al. 2010). A further imaging technique which has been used to explore audiovisual speech processing is TMS – transcranial magnetic stimulation. To date, this has been used to explore contributions of primary motor cortex (M1 – see below) to audiovisual speech processing (Watkins et al. 2003; Sato et al. 2010).

5.2 Route maps and guidelines

5.2.1 Brain regions

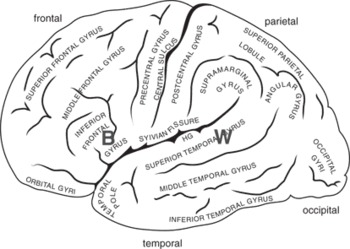

The cerebral cortex shows local specialization. The visual areas of the human brain are located at the back of the brain in the occipital lobes. Acoustic sensation is processed initially just behind the ears, in the left and right temporal lobe, within Heschl’s gyrus on the temporal plane (planum temporale) below the lateral surface of the temporal lobe (see Figure 5.1 to Figure 5.4 for these locations).

Figure 5.1 A schematic view of the left hemisphere, showing its major folds (sulci) and convolutions (gyri). Two language areas are also shown: “B” is Broca’s area; “W” is Wernicke’s area. These are general (functional) regions, not specific cortical locales (adapted from Damasio and Damasio 1989).

The left temporal lobe is preferentially involved in understanding language, and the superior part of the left temporal lobe is named Wernicke’s region, after the nineteenth-century neurologist and psychiatrist Karl Wernicke, who has been credited with the suggestion that these regions support language comprehension. Another important brain region for language is in the inferior part of the left prefrontal lobe. Here lies Broca’s area, named after the French clinician Paul Broca who first described a case with damage in just this region in 1861. Patients with lesions in Broca’s area have difficulty in verbal production. The region is also implicated in syntactic processing, especially function words and verbs, and in processing inflectional morphology.

One mode of understanding cortical organization that has stood the test of time was indicated by the Russian neurologist, A. R. Luria (1973). He suggested a three-part functional hierarchy reflected in brain topography. Primary regions are where sensation and action are implemented at the most fine-grained level. For sensation, these regions are those that receive projections from the afferent sensory neurons via subcortical relays. For action, they are the regions that project to the efferent neural system, also via subcortical structures. For vision, this means that primary cortex in the calcarine fissure of the occipital lobes (visual areas V1, V2) is organized so that the visual features and spatial organization of the visual field are retained in the organization of cortical cell responses. Because of this, damage to parts of the occipital lobe leads to blindness as dense and as localized to specific parts of the visual field as that caused by retinal damage.

As for perception, so too for action. The primary motor region, which lies on the anterior surface of the central sulcus, maps the human body topographically (somatotopic organization). If there is damage to the motor strip, immobility will be as acute as that suffered if the spinal nerve to that set of muscles is severed, and as specific to those muscle groups.

So, to summarize, in addition to a primary motor area, at least three discrete and cortically widely separated primary sensory regions can be identified: (1) vision in occipital regions (V1/2), (2) audition in Heschl’s gyrus, a deep “fold” in the superior lateral temporal surface (A1), (3) somatosensory perception in the sensory strip on the posterior surface of the central sulcus (S1). These locations are indicated in Figure 5.2. They are, by definition, modality-specific.

Figure 5.2 Functional organization of the cortex, lateral view, adapted from Luria (1973). Primary regions are shaded darker, secondary areas are shaded lighter. Unshaded regions can be considered tertiary. V = vision, A = audition, S = somatosensory, M = motor.

Regions proximate to these primary sites show higher-level organization with less strict “point-for-point” organization. Such secondary areas for vision, for example, include V5/MT, a visual motion sensitive region, as well as some parts of the inferior temporal lobe that specialize for face or written-word or object processing. Within the parietal lobes, various spatial coding relations are mapped, so that cells here may be sensitive to spatial location but relatively indifferent to the type of object in view.

Finally, Luria suggested that tertiary areas are multimodal in their sensitivity and can support abstract representations. The tertiary zones are proximate to the secondary ones, but are distant from the primary ones. One good example of this is in the frontal lobe. The tertiary region here is the most anterior, prefrontal part. This is concerned with the highest level of executive function: general plans, monitoring long-term outcomes. This can be thought of as the strategic centre of the brain, involved in planning (“I want to make a cup of tea”). Moving in a posterior direction, the secondary motor areas effect more tactical aspects of action preparation (“I need to find the kettle, fill it with water and set it to boil”), while the most posterior part of the frontal lobe includes M1 where voluntary actions of specific muscle groups are initiated (Figure 5.2).

This general organizational landscape suggests that Broca’s area, lying within secondary (motor) regions, has become specialized for the realization of specialized language functions including speech planning and (relatively abstract) aspects of production. A recent perspective emphasizes the role of Broca’s area in the selection of word and action (Thompson-Schill 2005). Wernicke’s area, on one account, specializes in processing speech-like and language-like features of the auditory input signal (Wise et al. 2001), but the role of these regions in relation to visible speech and audiovisual speech processing had not been addressed.

5.2.2 Networks and connections

The organization of cortical grey matter, as sketched here, is not the only aspect of brain organization that should guide us. The brain is a dynamic system across which neural events unfold that support the various functional analyses and action plans that comprise behavior. In addition to connections between neighboring neurons that allow the development of functionally distinct cortical regions to emerge as sketched above, long fiber tracts (white matter) connect posterior and anterior regions (uncinate and arcuate tracts), as well as homologous regions in each hemisphere (commissural tracts). White matter tracts are now imageable, using diffusion tensor techniques (DTI, see Appendix). The neural pathways for the analysis of the signal from its registration in primary sensory regions for vision and audition right up to the conscious awareness and representation of a speech act require many different levels of analysis, supported by multiple neural connections, including white matter connections between different brain regions. These levels of analysis can be described in terms of different information processing stages, with bi-directional qualities. That is, the direction of information flow can be top-down, as well as bottom-up. Thus, a network approach can be used to describe how different functional processes may be supported by multiple, interrelated patterns of activation across discrete brain regions.

5.2.3 Processing streams

The acoustic qualities of the auditory signal are processed hierarchically according to spectrographic characteristics within Heschl’s gyrus and surrounding auditory belt (roughly Brodmann area 41) and parabelt (including Brodmann area 42) regions of the planum temporale and very superior parts of the lateral surface of the temporal lobes (Binder et al. 2000; Hackett 2003; Binder et al. 2004). From these sites, which constitute, essentially, primary auditory cortex in Luria’s terms (A1), emerge two distinct processing streams, first identified in animal models (for a review, see Scott and Johnsrude 2003; Rauschecker and Scott 2009; Hickok and Poeppel 2004; Hickok and Poeppel 2007). One, the postero-dorsal stream, projecting to the temporo-parietal junction, on to inferior parietal sites, and thence to dorsolateral pre-frontal cortex (DLPFC), supports the localization of sounds. This is conceptualized as a “where” stream (Scott and Johnsrude 2003). The other, the “what” stream, comprises an anterior projection along the superior temporal gyrus towards the temporal pole, and thence via uncinate tracts to ventrolateral prefrontal cortex including Broca’s area (Scott and Johnsrude 2003; Hickok and Poeppel 2004). This route allows the identification of discrete sounds as events/objects, and also supports the identification of individual voices (Warren et al. 2006). Figure 5.3 shows the approximate directions of these streams, overlaid on a schematic of the “opened” superior temporal regions.

Figure 5.3 This schematic view “opens out” the superior surface of the temporal lobe, as if the frontal lobe has been drawn away from the temporal lobe on which it normally lies. This reveals the superior temporal plane (STP) including the planum temporale (PT), and Heschl’s gyrus (HG). Within this extensive region are critical sites for acoustic analysis. Primary auditory cortex (A1) occupies HG. On the lateral surface of the temporal lobe, and visible in lateral view, the uppermost gyrus is the superior temporal gyrus (STG), with the superior temporal sulcus (STS) along its ventral parts (after Wise et al. 2001). This comprises region A2. The lower shaded arrow shows the approximate direction of forward flow of the anterior, ventral auditory stream (“what”); the upper shaded arrow shows the posterior, dorsal (“where/how”) stream.

Can this model, based originally on animal data, accommodate speech processing? PET studies using systematically degraded speech signals show a gradient of activation reflecting the intelligibility of the sound signal. Higher intelligibility correlates with more anterior superior temporal activation, irrespective of the complexity of the speech sound (Scott et al. 2006). Lesions of anterior temporal regions lead to impaired comprehension of speech (Scott and Johnsrude 2003; Hickok and Poeppel 2004; Hickok and Poeppel 2007). The anterior stream appears to constitute a “what” stream, supporting the progressive identification of phonological, lexical, and semantic features of the auditory signal. What, then, is the role of the posterior stream in speech processing? One compelling suggestion is that this constitutes a “how” pathway for speech, integrating articulatory and acoustic plans online, to enable not only fluent speech production, but also its accurate perception in terms of the intended speech act. The sensorimotor characteristics of speech may be better captured by this stream (Scott and Johnsrude 2003; Rauschecker and Scott 2009). Hickok and Poeppel (2007) suggest that it is this dorsal route that shows very strong (left-) lateralization, in contrast to the ventral stream which may involve a greater degree of bilateral processing of the speech signal.

Why should the speech processing system require the “belt-and-braces” of two speech processing streams? One reason lies in the variability of speech. We are surprisingly good at identifying an utterance whether it is spoken by a man or child, slowly or quickly, over a poor phone line or by a casual speaker. Speech processing takes account of coarticulatory processes used by the talker. It manages wide variation in talker style (accent, register). All of these affect the acoustic properties of the signal in such varied ways that there are rarely observable regularities in the spectrographic record. Speech constancy is a non-trivial issue for speech perception. This was recognized by motor theorists of speech perception, who proposed a critical role for the activation of articulatory representations in the listener in order for speech to be effectively heard and understood (Liberman and Mattingly 1985; Liberman and Whalen 2000). Recent models of sensorimotor control offer more detailed outlines of how motor representations and sensory inputs may interact dynamically to shape ongoing perceptual processes as speech is perceived (Rauschecker and Scott 2009). Hickok and Poeppel (2007) suggest that tasks that require the recognition of a spoken utterance may rely more on the ventral stream, while speech perception itself makes more use of the dorsal stream.

Finally, it would be a mistake to conceive of these streams as solely forward-flowing. Neuroanatomical studies suggest that there can be modulation of “earlier” regions by “later” processing stages. That is, information could, for some processing, flow “back” to primary sensory regions as well as “forward” to tertiary sites. This is discussed in more detail below. For the dorsal stream, especially, it seems necessary to invoke both forward and inverse models to match the action plan for a speech gesture (frontal, fronto-parietal), with the acoustic properties of its realization (temporal, temporo-parietal).

5.2.4 Implications for speechreading and audiovisual speech

We have provided a broad brushstroke picture of the brain areas implicated in cognitive function, and of speech processing cortex in particular. We have outlined the characteristics of the cortical flow of the auditory speech stream(s) from primary auditory regions within Heschl’s gyrus, through secondary, neighboring regions in the planum temporale and on the lateral surface of the mid-superior temporal gyrus, firstly, to tertiary regions in anterior parts of the superior temporal lobe (“what” – a “ventral route”) and, secondly, to posterior, temporo-parietal regions (“how” – a dorsal route), with each stream involving frontal activation as a putative processing “endpoint” of forward information flow from the sensorium. This was clarified in order to set the groundwork for analyzing speechreading, since speechreading by eye must lead to a representation that is speech-based (Campbell 2011). We are now in a better position to pose specific questions about speechreading in terms of cortical activation. To what extent will watching silent speech activate well-established hearing and speech regions including Wernicke’s and Broca’s areas? How are primary and secondary auditory areas implicated? Does seen speech impact more on the dorsal than the ventral stream, or vice versa? Are there indications of distinct processing streams for seen silent speech, echoing those that have been demonstrated for heard speech? Most importantly, how might circuits specialized for visual processing interact with those that engage the language and hearing regions?

5.3 Silent speechreading and auditory cortex

Our initial study (Calvert et al. 1997) asked to what extent listening to and viewing the same speech event activate similar brain regions. We used a videotape of a speaker speaking numbers, and in order to be sure that the participant actually speechread the material we asked them to “mentally (silently) rehearse” the lipread numbers in the scanner. The results are summarized, schematically, in Figure 5.4, which is based on Calvert et al.’s (1997) findings.

Figure 5.4 Lateral views of the left and right hemispheres, with areas of activation indicated schematically as bounded ellipses. Speechreading and hearing activate primary and secondary auditory cortex (small grey textured ellipse, white border). Speechreading alone (open ellipses, black border) activates other lateral temporal and posterior, visual processing regions. The ellipses correspond approximately to the regions illustrated in figure 1 of Calvert et al. (1997).

In our study, as in many others, auditory speech activation was confined to temporal regions, and was focused on Heschl’s gyrus (HG) extending throughout much of the superior temporal gyrus (STG). These areas are indicated in Figure 5.4. Watching silent speech activated visual regions in posterior temporo-occipital cortex. Many of these had been identified by other studies as active when watching any sort of moving event. More interestingly, we found that in some regions, the activation patterns for heard and for seen speech were coincident. These included the lateral parts of Heschl’s gyrus (primary auditory cortex (A1)), and secondary auditory cortex in superior temporal regions (A2) bilaterally. Previous studies had suggested that only acoustic stimuli activated these regions; they were believed to be dedicated solely to auditory sensory processing.

The fact that seeing speech could generate activation in acoustically specialized regions immediately suggested further questions: Was seen speech the only type of visual event that could activate these auditory sites? What caused this pattern? Would we see it in deaf people?

5.3.1 How specific is the activation?

In one of Calvert et al.’s (1997) experiments (Experiment 3) we attempted to see if all face actions generated similar activation patterns. In the scanner, participants counted closed-mouth twitching gestures (gurns) that could not be interpreted as speech. The patterns of activation for watching speech and for watching gurning actions were then compared. Speechreading activated lateral superior temporal regions, including auditory cortex, to a greater extent than counting the number of closed-mouth facial actions (gurning). This suggested that speechreading, rather than other forms of facial action, “reached the part others could not reach,” namely auditory cortex. However, this may have been a premature conclusion. Because speechreading and gurning were contrasted directly, rather than in relation to a control (baseline) condition, we could not determine what the common regions of activation were. The contrast analysis only allowed us to conclude that there were differences between the conditions.
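The limitation of a direct contrast can be made concrete with a small numerical sketch. The condition means below are invented for illustration (they are not data from any study cited here), and the `contrast` helper is a hypothetical name; the point is only that two regions can show the same speech-minus-gurn difference while differing in whether gurning activates them at all relative to a still-face baseline.

```python
# Illustrative only: the condition means are made-up numbers in arbitrary
# units, not measurements from Calvert et al. (1997) or any other study.

def contrast(means, weights):
    """Weighted sum of condition means, i.e. the quantity a contrast tests against zero."""
    return sum(means[condition] * w for condition, w in weights.items())

# Two hypothetical regions with an identical speech-minus-gurn difference.
region_a = {"still": 1.0, "gurn": 1.0, "speech": 2.0}  # only speech exceeds baseline
region_b = {"still": 1.0, "gurn": 3.0, "speech": 4.0}  # both actions exceed baseline

speech_vs_gurn = {"speech": 1, "gurn": -1}    # direct contrast, no baseline
speech_vs_still = {"speech": 1, "still": -1}  # baseline contrasts
gurn_vs_still = {"gurn": 1, "still": -1}

for name, region in (("A", region_a), ("B", region_b)):
    print(
        name,
        "speech-gurn:", contrast(region, speech_vs_gurn),
        "speech-still:", contrast(region, speech_vs_still),
        "gurn-still:", contrast(region, gurn_vs_still),
    )
```

In this toy case the direct contrast cannot distinguish region A from region B; only the two baseline contrasts reveal that region B responds to both kinds of face action.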

We therefore returned to this question, incorporating appropriate baseline conditions and more powerful image acquisition techniques. Our volunteers this time were a new population of fourteen right-handed English speakers (Campbell et al. 2001). We replicated the original findings (see below), but additionally were able to specify those areas activated by watching gurns and those activated by seeing speech, when both these face action conditions were contrasted with watching a still face.

Watching gurns activated visual movement cortex (V5/MT) – a posterior (occipito-temporal) region activated by any kind of visual movement. But activation extended beyond V5/MT into the temporal lobes, including the temporal gyri on the lateral surface of the temporal lobe (inferior, middle, and superior). Activation tended to be right-sided. The peak activation locus was in inferior-middle temporal regions, extending into superior temporal gyrus. However activation did not extend into primary/secondary auditory cortex (A1/A2).

Compared with watching a still face, watching speech also generated activation in lateral posterior regions including V5/MT. However, the speech task generated activation that showed a peak within the superior temporal gyrus, bilaterally. Thus the sites that were activated both by watching speech and by watching gurning movements included areas specialized for visual movement processing of any sort (V5/MT), parts of inferior temporal lobes and (posterior parts of) the superior temporal gyrus. When the gurn and speechreading conditions were contrasted directly, the pattern was as shown in Figure 5.5, and closely replicated the original findings.

Figure 5.5 Schematic showing differential activation in the lateral temporal lobes when watching non-speech actions (white-bordered grey ellipse) and watching speech (black-bordered ellipse). Renderings based on data from Campbell et al. (2001). A peak activation site in right STS is activated by both types of face action. Activation for speech observation, but not for gurn observation, extended into auditory cortex.

This study confirmed that watching speech – not watching gurns – activates auditory cortex, where we define auditory cortex as those regions hitherto shown to be specific for the processing of acoustic input (Heschl’s gyrus at its lateral junction with superior temporal gyrus). Speech was more left-lateralized than gurn watching. More recent results from our own labs (e.g. Calvert and Campbell 2003; Capek et al. 2008b; Capek et al. 2008a), and from others (e.g. Sadato et al. 2005; Paulesu et al. 2003), confirm this general picture.

5.3.2 Controversy: which parts of auditory cortex are activated by silent seen speech in hearing people?

That seeing speech could activate auditory cortex in hearing people has been a controversial finding, since it implies that dedicated acoustic processing regions may be accessible by a specific form of visual stimulation. One possibility was that the concurrent noise of the scanner may have contributed to auditory cortex activation when viewers observed silent speech, by giving an illusion of (heard) speech in noise. In the gurn condition, the “listener” would be unlikely to be subject to such an illusion. We were able to test this by taking advantage of one of the principles of functional magnetic resonance imaging. fMRI gives an indirect measure of blood flow in brain regions. Blood flow lags the electrophysiological event by several seconds. Peak dynamic blood flow changes, measured by the scanner, can occur three to eight seconds after the stimulus event and its immediate neurochemical localized response. The scanner is noisy only during the period when the brain activation pattern is being measured (“image collection”). By presenting a silent speechreading task, and then waiting for five seconds before image collection, it was possible to measure the activation generated by the single, silent speechreading event. Under these sparse-scanning conditions, where noise during speechreading was absent, we still found activation in auditory cortex in the lateral parts of Heschl’s gyrus (A1), and its junction with the superior temporal gyrus – BA 41, 42 (MacSweeney et al. 2000).
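The timing logic of sparse scanning can be sketched numerically. The example below assumes a single-gamma response curve with shape parameter 6 and scale 1 s, which places the peak at 5 s; this curve, its parameters, and the function name `gamma_hrf` are illustrative assumptions and not the model used in the studies described here. The stimulus is treated as a brief event at time zero.

```python
import math

def gamma_hrf(t, shape=6.0, scale=1.0):
    """Assumed single-gamma response curve; it peaks at (shape - 1) * scale seconds."""
    if t <= 0:
        return 0.0
    return (t ** (shape - 1) * math.exp(-t / scale)) / (scale ** shape * math.gamma(shape))

# Sample the curve every 0.1 s over the 20 s following a brief stimulus at t = 0.
times = [i / 10 for i in range(0, 201)]
peak_time = max(times, key=gamma_hrf)

# Silent wait between the stimulus and image collection, as described in the text.
DELAY_S = 5.0

# Fraction of the peak response still present when the (noisy) acquisition begins.
relative_signal = gamma_hrf(DELAY_S) / gamma_hrf(peak_time)

print("peak at", peak_time, "s; relative signal at acquisition:", relative_signal)
```

Under these assumptions the silent five-second wait places image collection at or near the peak of the lagged response, so the stimulus itself is viewed in quiet while the measurement still captures the response it evoked.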

Nevertheless, not all investigators find activation in these regions when people are speechreading. In particular, Bernstein et al. (2002) claim that auditory cortex is not activated by silent speechreading. Their studies required participants to match speechread words presented in sequence (a recognition task) in one condition, while in another they measured activation induced by hearing words. They then mapped the two activation patterns, seeking the regions of overlap on a person-by-person basis. They found activation by the seen-speech matching task in superior temporal gyri, including STS, but they report no activation in primary auditory cortex as such. By contrast, in our studies, participants were required to generate a language-level representation of the lipread stimulus by covert rehearsal. A further, independent study by Sadato et al. (2005) used yet another task. They required respondents to match the first and last of a series of four images of vowel actions, where the mouth patterns were selected from four point vowel mouthshapes. For a group of nineteen hearing respondents, they reported activation within the planum temporale for this task, compared to a similar task requiring matching closed (gurning) mouths. In a further study specifically designed to address the issue of whether primary auditory cortex is activated during silent speech perception, Pekkola et al. (2005), using more powerful fMRI (3T) scanning procedures, found clear evidence, on a person-by-person basis, for activation in A1 by seen vowel actions in six out of nine participants.

It is possible that A1 activation by visible speech is task-specific. Speech imagery may be critical. Speech imagery appears to be an important aspect of the degree of activation in Heschl’s gyrus when other visual material, such as written words, is presented (Haist et al. 2001). A dual processing stream account could be invoked to address these discrepant findings across different processing tasks and individuals. Activation within A1 may be more likely when the dorsal stream is involved, since this involves sensorimotor aspects of processing, where the viewer is engaged in active auditory speech imagery.

5.3.3 Still and moving speech: dual routes for silent speechreading?

Visible speech has some unique characteristics as a visual information source. While it is dynamic, and the temporally varying trace of the visible actions of the face corresponds closely to a range of acoustic parameters of speech (Yehia et al. 2002; Munhall and Vatikiotis-Bateson 1998; Jiang et al. 2002; Jiang et al. 2007; Chandrasekaran et al. 2009), the stilled facial image itself can afford some speech information. For instance the shape of an open mouth can (to some extent) indicate vowel identity (compare “ee”, “ah”, and “oo”). Sight of tongue between teeth (“th”) or top teeth on lower lip (“ff”) is also indicative of a speech gesture (a dental or labiodental “sound”). Lesion studies suggest that speech seen as a still image and speech seen in natural movement can dissociate. A neuropsychological patient has been described who accurately reported the speech sound visible from a still photograph, but not from a movie clip of speech (Campbell et al. 1997). Other patients show the opposite dissociation (Campbell 1996; Munhall et al. 2002). Do these doubly dissociable characteristics of seen speech have implications for the neural networks that support speechreading in normal hearing populations?

Calvert and Campbell (Reference Calvert and Campbell2003) compared fMRI activation in response to natural visible (silent) speech and to a visual display comprising sequences of still photo images digitally captured from the natural speech sequence. Spoken disyllables (vowel-consonant-vowel) were seen. The still images were captured at the apex of the gesture – so for ‘th’, the image clearly showed the tongue between the teeth, and for the vowels, the image captured was that which best showed the vowel’s identity in terms of mouth shape. The series of stills thus comprised just three images: vowel, consonant, and vowel again. However, the video sequence was built up so that the natural onset and offset times of the vowel and consonant were preserved (i.e., multiple frames of vowel, then consonant, then vowel again). The overall duration of the still lip series was identical to that for the normal speech sample, and care was taken to avoid illusory movement effects. The visual impression was of a still image of a vowel (about 0.5 s), followed by a consonant (about 0.25 s) followed by a vowel. Participants in the scanner were asked to detect a dental consonantal target (“v”) among the disyllables seen. Although the posterior superior temporal sulcus (STSp) was activated in both natural and still conditions, it was activated more strongly by natural movement than by the still image series.

STSp seems particularly sensitive to natural movement in visible speech processing. In a complementary finding, Santi et al. (Reference Santi, Servos, Vatikiotis-Bateson, Kuratate and Munhall2003) found that pointlight illuminated speaking faces which carried no information about visual form generated activation in STSp. Finally, a recent study (Capek et al.Reference Capek, Campbell, MacSweeney, Woll, Seal, Waters, Davis, McGuire and Brammer2005; Campbell Reference Campbell2008) used only stilled photo-images of lip actions, each presented for one second, for participants in the scanner to classify as vowels or consonants. Under these conditions, no activation of STSp was detectable. So, images of lips and their possible actions are not always sufficient to generate activation of this region; STSp activation requires either that visual motion be available in the stimulus, or that the task requires access to a dynamic representation of heard or seen speech.

It may be no accident that two routes for speechreading echo those that have been described for acoustic speech processing. Similar principles may underlie each. Thus, the anterior visible speech stream may be essentially associative, reflecting, for example, (learned) co-incidence of a particular speaker’s auditory and visual characteristics, and likely to be activated in the context of a task that need not require on line speech processing. By contrast, tasks that recruit more “lifelike” representations may be invoked by natural movement, involving sensorimotor processes within the dorsal stream.

5.3.4 The role of the superior temporal sulcus

The superior temporal gyrus (STG) runs the length of the upper part of the temporal lobe along the superior plane of the sylvian fissure. We have already noted that this structure supports, in its anterior parts, the “what” stream, and, posteriorly, the “how” stream for auditory speech processing. The underside or ventral part of the gyrus is the superior temporal sulcus (STS; see Figure 5.6). Outwith speech processing, neurophysiological studies have long suggested that the posterior half of STS (STSp) appears to be a crucial “hub” region in relation to the processing of communicative acts – whatever their modality. The STSp, extending to the temporoparietal junction at the inferior parietal lobule, is a tertiary region in Luria’s terminology. It is highly multimodal, with projections from not only auditory and visual cortex, but also somatosensory cortex. It can be activated by written, heard, or seen language (including sign language – see, for example, Sadato et al.Reference Sadato, Okada, Honda, Matsuki, Yoshida, Kashikura, Takei, Sato, Kochiyama and Yonekura2005; Söderfeldt et al.Reference Söderfeldt, Ingvar, Ronnberg, Eriksson, Serrander and Stone-Elander1997; Pettito et al.Reference Pettito, Zatorre, Gauna, Nikelski, Dostie and Evans2000; MacSweeney et al.Reference MacSweeney, Woll, Campbell, McGuire, David, Williams, Suckling, Calvert and Brammer2002b; MacSweeney et al.Reference MacSweeney, Campbell, Woll, Giampietro, David, McGuire, Calvert and Brammer2004; MacSweeney et al.Reference MacSweeney, Campbell, Woll, Brammer, Giampietro, David, Calvert and McGuire2006; Newman et al.Reference Newman, Supalla, Hauser, Newport and Bavelier2010). It is also a key region for the perception of socially relevant events and supports theory-of-mind processing (Brothers Reference Brothers1990; Saxe and Kanwisher Reference Saxe and Kanwisher2003).

Figure 5.6 Schematic lateral view of left hemisphere highlighting superior temporal regions. Superior temporal sulcus (STS) is the ventral (under-) part of superior temporal gyrus (STG). The directions of the (auditory) ventral, anterior (“what”) and dorsal posterior (“how”) speech streams are schematically indicated as shaded arrows – the lighter arrow indicates the dorsal stream, the darker arrow the ventral stream.

As we have seen, STSp is especially sensitive to mouth movement (see Figure 5.5), as also reported by other authors (Puce et al.Reference Puce, Allison, Bentin, Gore and McCarthy1998; Pelphrey et al.Reference Pelphrey, Morris, Michelich, Allison and McCarthy2005). To what extent could its role in speechreading reflect a more profound specialization – that of integrating speech across modalities? How might activation in STSp be related to activation in primary auditory cortex (A1)?

5.3.5 STSp and audiovisual speech: a binding function?

An early indication that lateral superior temporal regions were implicated in seeing speech was the demonstration by Sams and colleagues (Sams et al.Reference Sams, Aulanko, Hamalainen, Hari, Lounasmaa, Lu and Simola1991; Sams and Levänen Reference Sams, Levänen, Stork and Hennecke1996; Levänen Reference Levänen1999) that a physiological correlate of auditory speech matching could be induced by an audiovisual token. The localization of this function, which used magnetoencephalography (MEG) to measure the response, appeared to be in auditory cortex, extending into superior temporal cortex. However, MEG has relatively poor spatial localization, making it difficult to be certain just which lateral superior temporal regions were differentially activated by audiovisual speech compared with heard speech. Calvert et al. (Reference Calvert, Brammer, Bullmore, Campbell, Iversen and David1999; Reference Calvert, Campbell and Brammer2000) used fMRI to explore the pattern of activation generated by audiovisual speech in relation to that for heard or seen speech alone. Bimodal speech is much easier to process than speech that is seen silently, because many phonetic contrasts that are hard to distinguish by eye are available readily to the ear. Perhaps less obviously, seeing the speaker improves speech perception even when the acoustic input is sufficient for identification (Reisberg et al.Reference Reisberg, McLean, Goldfield, Dodd and Campbell1987). When the acoustic and visual displays are congruent, the integrated percept is more readily identified than would be predicted from a simple additive model based on performance in each modality alone.

Calvert et al.’s idea was that this superadditive characteristic of audiovisual speech with respect to the combining modalities might be related to STSp activation. Furthermore, it was predicted that STSp, receiving input from both visual and auditory sensory regions, might then enhance activation in each of the primary sensory regions, by recursive or back-projecting neural connections. Activation of both primary visual movement (V5/MT) and primary auditory (A1) regions in the bimodal condition was compared with that in each of the unimodal conditions. Under bimodal stimulation, there was greater activation in both V5 and A1. This enhancement of the unimodal regions was linked directly to STSp activation, co-occurring with it in the bimodal condition. Moreover, it only occurred when congruent, synchronized audiovisual stimuli were presented. Thus, STSp appears to offer a cortical mechanism for the modulation of the influence of one speech modality on another, in particular for the superadditive properties of congruent audiovisual speech (see Figure 5.7). Many further independent studies now confirm a special role for STSp in audiovisual speech processing compared with unimodal seen or heard speech (e.g. Wright et al.Reference Wright, Pelphrey, Allison, McKeown and McCarthy2003; Capek et al.Reference Capek, Bavelier, Corina, Newman, Jezzard and Neville2004; Sekiyama et al.Reference Sekiyama, Kanno, Miura and Sugita2003; Callan et al.Reference Callan, Jones, Munhall, Callan, Kroos and Vatikiotis-Bateson2003; Callan et al.Reference Callan, Jones, Munhall, Kroos, Callan and Vatikiotis-Bateson2004; Miller and D’Esposito Reference Miller and D’Esposito2005; Hertrich et al.Reference Hertrich, Dietrich and Ackermann2010).
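The notion of superadditivity can be made concrete with a minimal sketch. It assumes the commonly used formalization in which the bimodal response is compared against the sum of the two unimodal responses; the function names and the response values are hypothetical illustrations and are not taken from the studies cited here.

```python
def superadditivity_index(av: float, a: float, v: float) -> float:
    """Bimodal response minus the additive prediction (A + V).

    Positive values indicate a superadditive response; negative values
    indicate a subadditive one. Units are whatever the responses are
    measured in (for example, percent signal change).
    """
    return av - (a + v)


def is_superadditive(av: float, a: float, v: float) -> bool:
    """True when the bimodal response exceeds the sum of the unimodal responses."""
    return superadditivity_index(av, a, v) > 0


# Hypothetical response values for one region (illustration only).
auditory_only = 0.40
visual_only = 0.25
congruent_av = 0.90
incongruent_av = 0.50

print(is_superadditive(congruent_av, auditory_only, visual_only))
print(is_superadditive(incongruent_av, auditory_only, visual_only))
```

Under this assumed criterion, only the congruent audiovisual response in the illustration counts as superadditive; other criteria (for example, exceeding the larger unimodal response) are also used in the literature and would give different answers.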

Figure 5.7 Audiovisual binding: A role for STS. Activation in STS (audiovisual speech) can enhance activation in primary auditory sensory cortex (A) and visual movement cortex (V5/MT) (from Calvert et al.Reference Calvert, Campbell and Brammer2000).

The acoustic trace does not need to be well specified for audiovisual integration to occur, and for STSp to be implicated. Thus sinewave speech, comprising time-varying pure tone analogues of acoustic speech formants, is typically initially heard as a non-speech sound, such as a series of tweets. However, when it is synchronized to coherent visible speech, some listeners perceive the signal as speech (Remez et al.Reference Remez, Rubin, Berns, Pardo and Lang1994). Möttönen et al. (Reference Möttönen, Calvert, Jääskeläinen, Matthews, Thesen, Tuomainen and Sams2006) showed that STSp is specifically activated when sinewave speech is accompanied by coherent natural visible speech – but only when this is “heard” as speech, and not when there is no (integrated) speech perception.2

Increasingly sensitive fMRI methods are revealing further organizational principles in relation to STSp function in audiovisual speech. Van Atteveldt et al. (Reference Van Atteveldt, Blau, Blomert and Goebel2010) used fMRI adaptation to explore the effects of audiovisual speech congruence. Neural populations that are sensitive to a specific signal can adapt readily to repetitions of that signal, and this is detected as a reduction in fMRI activation for repeated compared with single exposures. Adaptation to congruent audiovisual syllables was restricted to regions within STS. It did not extend back to primary cortices. Benoît et al. (2010) report similar findings in relation to audiovisual illusory syllables (McGurk stimuli, McGurk and MacDonald, Reference McGurk and MacDonald1976). However, in this case, sensitivity to McGurk illusions correlated with activation in primary cortices, underlining the relationship between primary sensory cortex activation, via binding sites, and perceptual experience of audiovisual congruence.
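The logic of fMRI adaptation described above, a reduced response to repeated compared with single exposures, can be sketched as a simple index. This is an illustrative assumption about how such a reduction might be quantified, not the analysis used by Van Atteveldt et al.; the function name and values are hypothetical.

```python
def adaptation_index(single: float, repeated: float) -> float:
    """Proportional reduction in response for repeated versus single exposures.

    0.0 means no adaptation; values approaching 1.0 mean the repeated
    response is almost abolished; negative values mean enhancement.
    """
    if single == 0:
        raise ValueError("single-exposure response must be non-zero")
    return (single - repeated) / single


# Hypothetical responses (arbitrary units) for two regions.
sts_index = adaptation_index(single=1.0, repeated=0.6)      # adapting region
primary_index = adaptation_index(single=1.0, repeated=1.0)  # non-adapting region
print(sts_index, primary_index)
```

A region whose index is reliably above zero for repeated congruent syllables would, on this reading, contain neural populations sensitive to that specific audiovisual signal.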

More direct imaging methods also illustrate the special role of STSp in audiovisual speech integration. Reale et al. (Reference Reale, Calvert, Thesen, Jenison, Kawasaki, Oya, Howard and Brugge2007) made use of a surgical technique to explore the role of STSp in audiovisual speech integration. Ten patients awaiting surgery for intractable epilepsy were implanted with electrode nets inserted subdurally, on the pial covering of the temporo-parietal cortical surface. The patients were then shown auditory, visual, and a variety of audiovisual speech tokens (CV syllables). STSp, and only this region (compared with other lateral temporo-parietal fields covered by the net) showed an evoked auditory response to a spoken syllable that was sensitive to the congruence of a synchronized visual mouth pattern. While this study could not explore further the impact of this activation on primary visual or auditory regions, other electrophysiological studies using scalp and deep electrodes have attempted to do this, and these are discussed further, below.

5.4 Audiovisual integration: timing

We have noted that, in principle, connections within speech processing streams can sometimes allow back as well as forward information flow, and have invoked this as a principle to account for activation in A1 and V5/MT by seen speech, consequent on activation in STSp. In fact, it is likely that such “back-activation” can be even more radical, with the sight of speech or music affecting (subcortical) auditory brainstem responses (Musacchia et al.Reference Musacchia, Sams, Nicol and Kraus2006; Musacchia et al.Reference Musacchia, Sams, Skoe and Kraus2007). But at what stage in processing do such multisensory interactions occur? Is STSp the only brain site that effects cross-talk between input modalities for speech that is seen, heard, and seen-and-heard? In order to explore this, we need a model of the time-course of neural events in audiovisual speech processing.

fMRI is not a sensitive technique to explore when in processing the visible and auditory speech signals affect each other. Its temporal resolution cannot track the speed of synaptic transmission to a specific event. For that, an electrophysiological signature of such an event is needed to trace (forward) information flow between different regions. While this review is focused on fMRI work, at this point we should consider how other techniques, especially EEG and MEG, may clarify the time-course and locales of audiovisual speech processing.

Until recently it appeared that a simple sequential analysis model sufficed. This assumed that integration in STSp occurred after the auditory and the visual speech signals had been independently analyzed (e.g. Calvert and Campbell Reference Calvert and Campbell2003; Colin et al.Reference Colin, Radeau, Soquet, Demolin, Colin and Deltenre2002). However, evidence is accumulating that there can be more direct, early influences of vision on auditory speech processing. In scalp EEG studies using natural audiovisual speech, auditory event-related potentials (ERPs) were found to be affected by visual speech cues as early as around 100 ms from sight of a mouth gesture (the acoustic N1 stage – Besle et al.Reference Besle, Fort, Delpuech and Giard2004; Möttönen et al.Reference Möttönen, Schürmann and Sams2004; van Wassenhove et al.Reference van Wassenhove, Grant and Peoppel2005; Pilling Reference Pilling2009). Since visible mouth gestures can precede the acoustic onset of an utterance by up to 100 ms, it would appear that vision can “prime” auditory cortex. Direct pathways from visual to auditory cortex could be implicated, since neural events affecting auditory regions but originating within STSp (back projection) would generate much longer latencies, reflecting the recruitment of “higher-level” functions.

However, such studies give no direct information concerning the location source of the critical events. A deep electrode study with ten patients awaiting surgery for epilepsy (see the description above, on Reale et al.Reference Reale, Calvert, Thesen, Jenison, Kawasaki, Oya, Howard and Brugge2007), supported the direct cortical activation inference more clearly (Besle et al.Reference Besle, Fischer, Bidet-Caulet, Lecaignard, Bertrand and Giard2008). Patients were shown audiovisual clips of a single speaker uttering CV syllables (“pi, pa, po, py. . .”), as well as auditory-alone and visual-alone versions, and were asked to respond to a specific target syllable. Electrodes on the brain surface were positioned to track excitation at Heschl’s gyrus and other parts of the planum temporale. More electrodes, lying over inferior and middle temporal regions could track neural events in (visual) movement cortex and other visual regions. The recorded EEG events were locked to the onset of both the auditory and the visual signal in natural audiovisual speech tokens. In addition to superior temporal activation indicating late integration, neural events were recorded at both MT/V5 and in A1/2 “well before visual activations in other parts of the brain” (Besle et al.Reference Besle, Fischer, Bidet-Caulet, Lecaignard, Bertrand and Giard2008). Such feed forward activation need not imply that visual movement cortex analyzes speech qualities exhaustively, but rather that some more general aspect of speech – possibly an alerting signal that a speech sound might occur – allows the visual system to affect processing of the upcoming auditory signal.3

Another way to explore such direct feed forward modulation of vision on audition is to combine MEG or EEG with fMRI and behavioral data. A study by Arnal et al. (Reference Arnal, Morillon, Kell and Giraud2009) used audiovisually congruent and incongruent syllables as stimulus material. MEG established an early, possibly direct, effect of excitation in V5/MT on A1/2 by coherent audiovisual speech. Here is another piece of evidence that events in relatively low level visual regions can impact quickly on auditory cortex. In an fMRI study, this team then showed that correlations between activation in visual motion regions and auditory regions reflected the extent to which the visual event predicted the auditory stimulus. By contrast, audiovisual congruity (i.e. detecting whether a particular syllable was composed of congruent or incongruent audiovisual tracks) was the factor which characterized connections between STSp and primary regions.

The picture that is emerging from these studies is of at least two mechanisms that support audiovisual speech processing. One involves secondary auditory regions, focused on STSp, which can not only integrate acoustic and visual signals, but coordinate back projections to sensory regions. This route is likely to be implicated in identifying (linguistic) components in the audiovisual speech stream. The other is a fast route that allows visual events to affect an auditory speech response before STSp has been activated. However, the precise functional characteristics of the two routes, and their interactions, await further clarification.

5.5 Speechreading: other cortical regions

The discussion so far has focused on lateral temporal cortical regions and the interaction of auditory and visual signal streams. Yet other cortical regions are strongly implicated in visual and audiovisual speech processing. Activation in insular regions and in frontal regions (dorsolateral as well as inferior frontal – i.e. Broca’s area) is consistent with the dual-path model of speech processing, where pathways extending from posterior to anterior brain regions allow for speech processing to be fully effected. Following the implication that different cortical regions show functional differentiation within the speech streams, we should predict different patterns of stimulus sensitivity in frontal (“later”) than in more posterior (“earlier”) processing regions. Skipper et al. (Reference Skipper, van Wassenhove, Nusbaum and Small2007) showed that “illusory” McGurk stimuli (“ta” experienced when seen “ga” and heard “pa” were coincident) more closely resembled auditory “ta” representations within frontal regions, while posterior sensory regions showed more distinctive responses reflecting the characteristics of each sensory modality.

Often, watching speech generates greater frontal activation than observing other actions, or listening to speech (e.g. Campbell et al.Reference Campbell, MacSweeney, Surguladze, Calvert, McGuire, Suckling, Brammer and David2001; Buccino et al.Reference Buccino, Binkofski, Fink, Fadiga, Fogassi, Gallese, Seitz, Zilles, Rizzolatti and Freund2001; Santi et al.Reference Santi, Servos, Vatikiotis-Bateson, Kuratate and Munhall2003; Watkins et al.Reference Watkins, Strafella and Paus2003; Callan et al.Reference Callan, Jones, Munhall, Callan, Kroos and Vatikiotis-Bateson2003; Callan et al.Reference Callan, Jones, Munhall, Kroos, Callan and Vatikiotis-Bateson2004; Skipper et al.Reference Skipper, Nusbaum and Small2005; Ojanen Reference Ojanen2005; Fridriksson et al.Reference Fridriksson, Moser, Ryalls, Bonilha, Rorden and Baylis2008; Okada and Hickok Reference Okada and Hickok2009). This suggests that speechreading can make particular demands on the speech processing system. However, to date there are no clear indications of the extent to which distinctive activation in different frontal regions may relate to the specific speechreading or audiovisual task, or to one or other speech processing stream (but see Skipper et al.Reference Skipper, van Wassenhove, Nusbaum and Small2007; Fridriksson et al.Reference Fridriksson, Moser, Ryalls, Bonilha, Rorden and Baylis2008; Okada and Hickok Reference Okada and Hickok2009; Szycik et al.Reference Szycik, Jansma and Münte2009, for speculations). Nor is there any compelling evidence for direct, unmoderated connections between frontal and inferior temporal regions – for instance, for visual motion detection. Such a scheme might be implied by strong versions of mirror-neuron theory, which give primacy in neural processing to prefrontal regions that specialize in matching observed actions with those that can be produced by the observer (see Appendix). A study by Sato et al. (Reference Sato, Buccino, Gentilucci and Cattaneo2010), using single pulse TMS, noted (fast – around 100 ms) changes in excitability in the region of tongue representation in primary motor cortex, M1, consequent on observing audiovisual stimuli that involved bilabial syllables (“ba”). Such studies need to be replicated and extended, since it is not clear to what extent these effects were specific to the observed syllables – or even to M1. The tongue and mouth area of primary somatosensory cortex, S1, which is close to the homologous regions in M1, is activated specifically when watching speech compared to listening to it (Möttönen et al.Reference Möttönen, Järveläinen, Sams and Hari2005).

The coordinated activation of cortical networks that involve frontal systems with multiple sensory systems seems to be a hallmark of visual speech processing, not just of audiovisual speech processing. Both the anterior and the posterior speech stream can be implicated (and see Campbell Reference Campbell2008). The broader picture is of a cortical system that is inherently sensitive to the multimodal correspondence of coherent speech events.

5.6 Speechreading in people born deaf

For many deaf people a spoken language, perceived by watching the speaker’s face, is the first language to which they are exposed, since fewer than 5 percent of deaf children are born to deaf, signing parents (Mitchell and Karchmer Reference Mitchell and Karchmer2004). Deaf speechreaders often outperform hearing people on tasks of speechreading (Mohammed et al.Reference Mohammed, Campbell, MacSweeney, Barry and Coleman2006; Rönnberg et al.Reference Rönnberg, Andersson, Samuelsson, Soderfeldt, Lyxell and Risberg1999; Andersson and Lidestam Reference Andersson and Lidestam2005) and visual speech discrimination (Bernstein et al.Reference Bernstein, Demorest and Tucker2000b). Skilled deaf speechreaders appear to process seen speech in ways that look very similar to the processes used by hearing people (Woodhouse et al.Reference Woodhouse, Hickson and Dodd2009).

The cortical circuits activated when deaf people speechread offer unique insights into how sensory loss, combined with “special” experience, may shape the developing brain. Which brain regions might be activated by speechreading in individuals who have never experienced useable heard speech, but who understand it entirely by eye? If speech processing is inherently multimodal, and seen speech processing in hearing people calls on auditory, somatosensory, and action systems, how do deaf brains become configured to process speech that is not heard? The first question that we asked was would STSp be less – or more – implicated in deaf than hearing speechreading? Thirteen profoundly congenitally deaf volunteers, all of whom were native signers of British Sign Language, and thirteen hearing non-signers were asked to speechread a list of unrelated words (Capek et al.Reference Capek, Macsweeney, Woll, Waters, McGuire, David, Brammer and Campbell2008a). Their task was to identify the target word ‘yes’. Both groups showed extensive bilateral activation of fronto-temporal regions for this task. There was significantly greater activation in the deaf than hearing group in the left and right superior temporal cortices. Was this because deaf participants were just better speechreaders? Performance on the Test of Adult Speechreading (TAS: Mohammed et al.Reference Mohammed, Campbell, MacSweeney, Barry and Coleman2006), performed outside the scanner, showed that the deaf group were superior speechreaders. Moreover, individual TAS scores correlated positively with activation in left STSp. However, when these scores were included as a covariate in the analyses, the left superior temporal region was still the only region showing a significant group difference (deaf > hearing). That is, the activation in this region was not only related to speechreading skill, but also to some other factor distinguishing deaf and hearing participants.4
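The covariate analysis described above asks whether a group difference in activation survives once speechreading skill is accounted for. A minimal sketch of that logic is an ordinary least-squares fit with a group term and a skill term. Everything here is an illustrative assumption: the function names, the tiny noise-free data set, and the use of a plain linear model are hypothetical and do not reproduce the analysis pipeline of Capek et al.

```python
def solve_linear_system(matrix, rhs):
    """Solve matrix @ x = rhs by Gauss-Jordan elimination with partial pivoting."""
    n = len(rhs)
    aug = [row[:] + [rhs[i]] for i, row in enumerate(matrix)]
    for col in range(n):
        # Pick the row with the largest entry in this column as the pivot.
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        pivot_value = aug[col][col]
        aug[col] = [value / pivot_value for value in aug[col]]
        # Eliminate this column from every other row.
        for r in range(n):
            if r != col:
                factor = aug[r][col]
                aug[r] = [x - factor * y for x, y in zip(aug[r], aug[col])]
    return [aug[i][n] for i in range(n)]


def fit_group_effect_with_covariate(activation, is_deaf, skill_score):
    """Least-squares fit of activation = b0 + b_group * is_deaf + b_skill * skill_score.

    Returns [b0, b_group, b_skill]; b_group is the group difference
    adjusted for the skill covariate.
    """
    design = [[1.0, float(g), float(s)] for g, s in zip(is_deaf, skill_score)]
    k = 3
    xtx = [[sum(row[i] * row[j] for row in design) for j in range(k)] for i in range(k)]
    xty = [sum(row[i] * y for row, y in zip(design, activation)) for i in range(k)]
    return solve_linear_system(xtx, xty)


# Hypothetical, noise-free data: four deaf (1) and four hearing (0) participants.
group = [1, 1, 1, 1, 0, 0, 0, 0]
skill = [30, 34, 38, 42, 22, 26, 30, 34]
signal = [0.2 + 0.3 * g + 0.01 * s for g, s in zip(group, skill)]

b0, b_group, b_skill = fit_group_effect_with_covariate(signal, group, skill)
print(round(b_group, 3), round(b_skill, 3))
```

In this toy example the adjusted group coefficient remains positive after the skill term is included, which is the pattern the text describes for left superior temporal cortex: activation related to skill, but also to some further factor distinguishing the groups.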

These findings suggest, firstly, that “auditory” cortical regions in deaf native signers may be relatively more susceptible to activation by a visual language than would be the case for hearing people or for deaf people who do not use a sign language (also see Sadato et al.Reference Sadato, Okada, Honda, Matsuki, Yoshida, Kashikura, Takei, Sato, Kochiyama and Yonekura2005). It is also likely that the word detection task (Capek et al.Reference Capek, Macsweeney, Woll, Waters, McGuire, David, Brammer and Campbell2008a) may preferentially activate the “how” (dorsal) stream, compared with rehearsal of overlearned (lipread) digit sequences (MacSweeney et al., Reference MacSweeney, Campbell, Calvert, McGuire, David, Suckling, Andrew, Woll and Brammer2001, Reference MacSweeney, Woll, Campbell, McGuire, David, Williams, Suckling, Calvert and Brammer2002b – at least in deaf speechreaders).

But where does this leave the binding hypothesis – that activation in STSp reflects audiovisual experience in which visual and acoustic signals become bound together in a coherent experience of audiovisual speech? While this region is engaged for audiovisual binding in hearing people, its extensive activation in deaf speechreading (Capek et al.Reference Capek, Macsweeney, Woll, Waters, McGuire, David, Brammer and Campbell2008a) suggests a further functional specialization. The positive correlation of activation of (left) STSp with speechreading skill in both deaf (Capek et al.Reference Capek, Macsweeney, Woll, Waters, McGuire, David, Brammer and Campbell2008a) and hearing people (Hall et al.Reference Hall, Fussell and Summerfield2005) indicates that this region is specialized for analyzing speech from facial actions – irrespective of hearing status. However, the further finding, of greater activation of this region in deaf people, supports an interpretation in terms of cross-modal plasticity. That is, when auditory input is absent, auditory cortex is relatively more sensitive to projections from other sensory modalities, especially vision (see MacSweeney et al.Reference MacSweeney, Calvert, Campbell, McGuire, David, Williams, Woll and Brammer2002a; MacSweeney et al.Reference MacSweeney, Campbell, Woll, Giampietro, David, McGuire, Calvert and Brammer2004; Bavelier and Neville Reference Bavelier and Neville2002; Sadato et al.Reference Sadato, Okada, Honda, Matsuki, Yoshida, Kashikura, Takei, Sato, Kochiyama and Yonekura2005).

How specific is the activation in superior temporal regions in relation to language, speech, and hearing status? Capek et al. (Reference Capek, Woll, MacSweeney, Waters, McGuire, David, Brammer and Campbell2010) directly compared deaf people (who knew British Sign Language – BSL), and hearing participants (who did not know BSL). They were scanned in two experimental conditions. In one, they observed lists of signs and were asked to respond when a particular target gesture occurred. This was a task that both deaf and hearing respondents could do readily and accurately. In the other they speechread word lists. Left STSp showed a particular pattern of activation that was sensitive both to task and to group. This region was strongly activated by silent speech in the deaf and the hearing participants. However, activation in STSp for sign lists was seen only in the deaf group, who knew BSL. We interpreted this pattern as suggesting that STSp has a specific role in (visible) language processing: both language modes were available to deaf, while only one (speechreading) was accessible to hearing participants. Sadato et al. (Reference Sadato, Okada, Honda, Matsuki, Yoshida, Kashikura, Takei, Sato, Kochiyama and Yonekura2005) drew rather different conclusions from their study of deaf and hearing participants performing recognition matching tasks on seen speech and videoclips of Japanese sign language. That study also included a further task of dot detection. Focusing on planum temporale rather than STSp5, they found greater activation in deaf than hearing participants – irrespective of task. Only in the speechreading condition did they observe significant activation in this region in hearing participants. They argue that the involvement of the planum temporale in speechreading in deaf participants reflects general colonization of auditory cortex by visual projections, and is specific neither to speech nor language processing. Further studies are needed to resolve this issue.

The long-term experience of deafness may also re-set the relative salience and neural activation patterns of regions other than superior temporal ones in relation to speechreading. For example, in people who had been deaf, but who experienced hearing anew through cochlear implantation, the pattern of audiovisual and visual activation looks different than that observed in people with an uninterrupted developmental history of normal hearing (Giraud and Truy Reference Giraud and Truy2002). People with CI can show distinctive activation in (inferior temporal) visual areas when hearing speech through their implants. Since these regions include face processing regions within the fusiform gyrus, it has been suggested that representations based on seen speech are activated when speech is newly heard via an underspecified acoustic signal (Giraud and Truy Reference Giraud and Truy2002). Such re-setting of the relative activation of visual and auditory signals in relation to audiovisual exposure can occur on a short time-scale, too. Thus, for people with normal hearing, some short-term exposure to the sight of someone speaking improves later perceptual processing when that talker is heard. Visual face-movement regions were implicated in this skill (von Kriegstein et al.Reference von Kriegstein, Dogan, Grüter, Giraud, Kell, Grüter, Kleinschmidt and Kiebel2008; and see Campbell Reference Campbell, Calder, Rhodes and Haxby2011, for further discussion). Similarly, Lee et al. (Reference Lee, Truy, Mamou, Sappey-Marinier and Giraud2007) suggest that the (more efficient) specialization of superior temporal regions for speechreading in deaf compared with hearing people may be a relatively fast-acting process: they reported superior temporal activation in a small group of deaf adults which was independent of the duration of their deafness.

Recent MEG studies (Suh et al.Reference Suh, Lee, Kim, Chung and Oh2009) show that latency of responses from superior temporal regions varied with the duration of deafness in adults. Two groups were studied: adults who were born deaf, and those who became deaf later. Neural responses were fastest in the late deaf, slowest in people born deaf. People with normal hearing showed intermediate latency for the auditory dipole event. The implications of this study are as yet unclear, since latency of the neural response did not correlate with the amplitude of the response (number of dipoles activated), nor with speechreading skill.

To summarize, recent studies suggest that speechreading engages a fronto-temporal network in born-deaf and in hearing people alike. However, the extent to which the planum temporale and adjacent STSp play a language-specific role in deaf people, and the impact of early hearing experience in people who become deaf, or of duration of deafness itself, are not yet clear. It is likely that some non-specific activation of putative auditory regions by vision can occur in people born deaf when these regions have not experienced coherent acoustic stimulation, while in people who become deaf, some “resetting” of the activation patterns in different brain regions is likely. Cochlear implantation can “reset” patterns of activation – especially for speech processing. It should also be noted that the resting brain (i.e. when no specific task is performed during neuroimaging) shows a more widespread pattern of activation – including visual regions in the inferior temporal lobe – following CI than before CI (Strelnikov et al. 2010).

5.7 Conclusions, directions

The studies reported here cover a relatively short time span of around fifteen years. Yet, in this period, the use of neuroimaging techniques to map brain function has expanded at an enormous rate. The first ten years of human fMRI studies were largely concerned with defining cortical specializations in terms that could relate to lesion studies in neuropsychology, such as those of Luria (1973). That was the direction that we came from, since our earlier work showed how speechreading could be implicated in various ways in people with discrete brain lesions (Campbell 1996). Those studies showed us clearly that watching speech activates auditory speech processing regions, albeit via biological movement signals. In this respect, speechreading may enjoy a special status compared with other types of visual material such as written words, or compared to other types of facial or manual communication (Capek et al. 2010). Speechreading may engage multisensory and sensori-motor speech processing mechanisms to a greater degree than simply listening to speech. This is not surprising: speechreading cannot deliver all the acoustically available speech contrasts – it must make do with a systematically degraded signal that may require relatively greater input from “higher” processing stages (Calvert and Campbell 2003; Campbell 2008). In deaf people, speechreading seems to involve similar, primarily superior temporal (i.e. auditory) processing regions as in hearing people – but to a greater extent.

However, these pioneering fMRI studies presented a rather static view of brain function, focusing on specializations as relatively fixed and discrete. More recently, with the technical developments in imaging neural connections and in aligning fMRI with event-locked electrophysiological recordings, as well as with longitudinal and developmental studies, a more dynamic picture of brain function is starting to emerge. Firstly, on the behavioral front, work with atypical populations, such as deaf people, offers unique insights into long-term brain plasticity (see, for instance, Strelnikov et al. 2010). We have noted that cross-modal plasticity plays a role in the recruitment of superior temporal regions for non-auditory processing in the brains of people born deaf. Long-term studies with deaf children learning to master visual speech, and ongoing studies with cochlear implant patients should clarify the extent to which (for instance) particular visual regions, especially in V5/MT, may become recruited for speech processing, as well as the role of STSp in moderating such activation, if present. So, too, the involvement of frontal parts of the speech processing system can be addressed in deaf, as in hearing people, to offer insights into the extent to which hearing speech moderates the cortical bases of speech representations and speech processes (MacSweeney et al. 2009).

Audiovisual speech processing offers a natural model system for addressing key questions in neural function, such as how sensory events are analyzed by dedicated, sensory-specific mechanisms and then “aligned” to deliver multimodal and, indeed, amodal percepts. Do signal characteristics that contribute to the unified percept, based on correspondences between the visual and auditory speech signals, play a key role (Bernstein et al. 2008; Loh et al. 2010)? To date, while two processing mechanisms – a fast forward flow from visual to auditory cortex, and a slow integrative mechanism utilizing STSp – have been described, much work remains to be done to clarify how these systems function and interact.

Despite the many recent advances in neuroimaging methods, lesion studies can still offer important clues to the organization of seen speech. Hamilton et al. (2006) describe a patient with parietal damage who lost the ability to experience audiovisual speech coherently, with no apparent difficulty in processing unimodal speech. No such patient had been described before, and no parietal regions (other than inferior parietal regions bordering on STSp) had been implicated in seeing speech. Nowadays, we have tools and techniques that could probe the possible bases for such anomalous perceptions – and relatively well formulated models of signal processing and information flow to guide interpretation. They will lead us in interesting directions.

5.8 Acknowledgments

Both authors are members of the Deafness Cognition and Language Centre (DCAL), established with funding from the ESRC (UK), at University College, London. Mairéad MacSweeney is supported by the Wellcome Trust (UK). The work described here by Campbell, MacSweeney and colleagues was supported by the Medical Research Council, UK (“Functional Cortical Imaging of Language in Deafness”, MRC Grant G702921N) and by the Wellcome Trust (“Imaging the Deaf Brain”, see www.ucl.ac.uk/HCS/deafbrain/). We thank all participants and colleagues for their contribution to this work, especially Cheryl Capek, Bencie Woll, Gemma Calvert, Dafydd Waters, Tony David, Phil McGuire, and Mick Brammer.

5.9 Appendix: Glossary of acronyms and terms

5.9.1 Functional imaging techniques (and see Cabeza and Kingstone 2006 for further details)

fMRI – functional Magnetic Resonance Imaging. A brain imaging technique that uses the changes in local electromagnetic fields due to blood-flow changes following neural activity, to map cortical activation. To measure these changes, the participant is placed in a high-intensity magnetic field (1.5 to 4 Tesla), and images are acquired based on computerized tomography. Because it uses a high magnetic field to track brain activation it is currently unsuitable for testing people wearing hearing aids (including cochlear implants). It also requires relatively rigid head position to be maintained throughout scanning. A certain number of repeated scans are not thought to be harmful to health. Spatial resolution is, at best, about five millimeters; temporal resolution half to three-quarters of a second. More recent developments in magnetic resonance imaging include Diffusion Tensor Imaging (DTI) – a technique that enables the measurement of the restricted diffusion of water in tissue in order to produce neural tract (white matter) images as opposed to images based on grey matter regions of activation (Jones in press). This is a form of structural imaging, especially useful for analyzing long-distance connections between brain regions. In order to analyze functional connections between brain regions, correlational analysis (principal component analysis – see Friston et al. 1993) can be used to explore BOLD signal similarity between a seed region (ROI) and other brain regions.
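
The idea of comparing BOLD signal similarity between a seed region and other regions can be illustrated with a minimal sketch. It uses a plain Pearson correlation between time series rather than the principal component approach cited above, and it runs on synthetic signals, not on data from any study described in this chapter. The function name seed_correlation and all variable names are hypothetical, chosen only for illustration.

```python
import numpy as np


def seed_correlation(seed_ts, region_ts):
    """Pearson correlation between a seed time series and each region.

    seed_ts:   array of shape (n_timepoints,), mean signal in the seed ROI
    region_ts: array of shape (n_regions, n_timepoints), one row per region
    Returns an array of shape (n_regions,) with one r value per region.
    Assumes no time series is constant (zero variance would divide by zero).
    """
    seed = np.asarray(seed_ts, dtype=float)
    regions = np.asarray(region_ts, dtype=float)

    # Remove the mean from every time series.
    seed_c = seed - seed.mean()
    regions_c = regions - regions.mean(axis=1, keepdims=True)

    # Covariance with the seed, normalized by the product of the norms.
    num = regions_c @ seed_c
    den = np.sqrt((regions_c ** 2).sum(axis=1) * (seed_c ** 2).sum())
    return num / den


# Synthetic illustration only: random signals standing in for BOLD data.
rng = np.random.default_rng(0)
n_timepoints = 200
seed = rng.standard_normal(n_timepoints)

coupled = seed + 0.5 * rng.standard_normal(n_timepoints)  # follows the seed
unrelated = rng.standard_normal(n_timepoints)             # independent noise
anti = -seed                                              # inverted copy

r = seed_correlation(seed, np.vstack([coupled, unrelated, anti]))
print(r)  # one correlation per region: coupled, unrelated, anti
```

In this toy case the region that follows the seed yields a high positive r, the independent region an r near zero, and the inverted copy an r of minus one. Real analyses would add steps not shown here, such as motion correction, filtering, and correction for multiple comparisons.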

PET – Positron Emission Tomography. A brain imaging technique that maps activation by tracing changes in cortical metabolism associated with neural activity. These are tracked by scanning for local changes in uptake of radioactive tracer metabolites. Because of the ingestion of radioactive materials, PET scanning should not be undertaken more than once over several years unless clinical conditions dictate it. PET scanning can be used to measure brain activation with relative freedom of movement and can also be used with people wearing hearing aids. Spatial resolution is similar to that for fMRI.

MEG and EEG – magnetoencephalography and electroencephalography. Both track changes in cortical activity across the brain by mapping changes in scalp fields. These are local magnetic and electrical fields respectively, and can be measured non-invasively. The idea is that these scalp waveforms reflect underlying neural sources. As functional mapping techniques, they tend to use waveform signatures associated with specific cognitive events and explore their distribution in time and space. The most immediate such waveforms are pre-attentive ones such as the visual/auditory evoked responses to a non-specific visual or acoustic event. Higher-level cognitive events can include a range of “mismatch” phenomena, when an unexpected cognitive event occurs following a series of expected ones. For example, a semantic mismatch negativity wave may occur when, after listening to spoken examples from one category, a category switch is introduced.

While temporal resolution is extremely fine, spatial localization to the level achieved by fMRI and PET is currently difficult to achieve, since it relies on mathematical modeling of the source electromagnetic properties of deep brain structures as surface (scalp) waveforms. The complexity of these resultant waveforms may set insoluble limits on the precision of localization of some brain structures.

TMS – Transcranial magnetic stimulation offers a form of “reversible brain lesion.” The application of localized magnetic induction to particular regions of the scalp can temporarily block neural transmission in the region lying below the induction wand. If used over primary motor cortex (M1), it produces muscle activity which can be recorded as a motor-evoked potential (MEP). The extent to which this is, in turn, disrupted by performing an experimental task can indicate the specialization of that region for the task.

5.9.2 Anatomical regions

STG – superior temporal gyrus. The uppermost of the three gyri (folds) on the lateral surface of each temporal lobe. It extends from the temporal pole (anterior) to the supramarginal gyrus (posterior).

STS – superior temporal sulcus. The sulcus bounding the ventral side (underside) of the superior temporal gyrus. It extends for the length of the gyrus.

HG – Heschl’s gyrus. A deep fold within the Planum Temporale on the upper (hidden) surface of the temporal lobe. It is the first cortical processing site for acoustic input analysis.

PT – Planum Temporale (temporal plane). The upper or superior surface of the temporal lobe, normally lying under the posterior inferior parts of the frontal lobe. The infold between the two lobes is the Sylvian fissure. Most of the PT is associated with acoustic processing.

FG – Fusiform gyrus. A long gyrus which lies within Brodmann area 37, within the inferior temporal lobe. Bordered medially by the collateral sulcus and laterally by the occipitotemporal sulcus, it can be considered a secondary visual region with several “specializations” along its length. In its midregions it is reliably activated in observing faces, including speechreading. Other parts of FG appear specialized for recognizing written words and word-parts (left hemisphere).

Insular cortex (insula) lies medially between frontal and temporal lobes. The anterior insula, with many connections to the limbic system, is functionally specialized for “own body awareness.” It is often implicated in speech processing, both in perception and in production. Insular lesions can give rise to progressive aphasia.

DLPFC – Dorsolateral prefrontal cortex (Brodmann areas 9, 46) can be considered a secondary motor area, responsible for motor planning and organization. It is implicated in working memory and is part of the dorsal speech processing stream.

5.9.3 Functionally defined regions

A1 – primary auditory cortex. Regions within HG, in each temporal lobe, that support a number of basic analyses of acoustic information. It receives major projections from the inferior colliculus, a subcortical relay from the auditory nerve. Its internal organization is tonotopic (high spectral frequencies in specific locations, low ones distant from them). Further principles of organization within A1 remain to be determined.

A2 – secondary auditory cortex. Those regions, contiguous to primary auditory cortex, within the PT, that receive most of their input from primary auditory cortex and support higher-order acoustic analyses. A2 extends laterally to the upper part of STG.