6.1 Introduction

This chapter discusses the role of frequency for Construction Grammar, especially concerning usage-based models of language (Bybee Reference Bybee2010). In doing so, the chapter addresses issues of language processing and production, but also concerns that are methodological, relating to the corpus-based study of constructions through various statistical techniques (Yoon & Gries Reference Yoon and Gries2016). The importance of frequency measurements in constructional research has grown over recent years, reflecting the continuing evolution of the field. This development can be illustrated by comparing three definitions of the term construction that have been proposed by Adele Goldberg (Reference Goldberg1995, Reference Goldberg2006, Reference Goldberg2019), and have had a considerable impact in the field:

(1) C is a CONSTRUCTION iffdef C is a form–meaning pair <Fi, Si> such that some aspect of Fi or some aspect of Si is not strictly predictable from C’s component parts or from other previously established constructions (Goldberg Reference Goldberg1995: 4).

(2) Any linguistic pattern is recognized as a construction as long as some aspect of its form or function is not strictly predictable from its component parts or from other constructions recognized to exist. In addition, patterns are stored as constructions even if they are fully predictable as long as they occur with sufficient frequency (Goldberg Reference Goldberg2006: 5).

(3) [C]onstructions are understood to be emergent clusters of lossy memory traces that are aligned within our high- (hyper!) dimensional conceptual space on the basis of shared form, function, and contextual dimensions (Goldberg Reference Goldberg2019: 7).

If the three definitions are compared side by side, several differences become apparent. The first definition places non-compositional meanings and idiosyncratic formal characteristics at its center. Constructions such as the Xer the Yer (Kay & Fillmore Reference Kay and Fillmore1999) or the way-construction (Goldberg Reference Goldberg1995) are well-known illustrations of these characteristics, which constitute a fundamental motivation for viewing constructions as conventionalized form–meaning pairings.

The second definition builds on the first one but explicitly includes frequency, which is understood in this context as token frequency, that is, the repeated occurrence of a linguistic pattern in language use. The definition claims that sufficient exposure to such a pattern will result in the mental representations of a construction, even if the pattern itself is transparent and rule-governed. To take an example, a string such as I don’t know conforms to the canonical syntax of English negated declarative sentences, and it conveys a meaning that is fully compositional. Still, given its high frequency of use, speakers of English are likely to store it redundantly in memory, as a construction. Besides semantic or formal idiosyncrasy, high frequency of use thus provides a second important criterion for constructionhood. The definition further suggests that there is a threshold that distinguishes entrenched constructions from patterns that occur more rarely and that are not mentally represented as such. Such a threshold presupposes a continuum of entrenchment between highly frequent, conventionalized constructions and rare patterns that represent chance co-occurrences of linguistic elements. Whereas I don’t know is highly entrenched, sentences such as I don’t row or I don’t sow are not. With its emphasis on frequency, Goldberg’s second definition reflects the increasingly close ties between Construction Grammar and both psycholinguistic work and corpus-based research.

The third definition further expands on frequency-related characteristics of constructions. Goldberg (Reference Goldberg2019: 7) states that this definition is more aligned with what is currently known about human memory, learning, and categorization. It is useful to unpack three aspects of the definition. First, constructions are viewed as clusters of memory traces, which means that repeated exposure to similar linguistic patterns eventually leads speakers to form generalizations. This can be illustrated with the ditransitive construction, which combines a subject, a verb, and two objects, as in Mary handed me an envelope or We sent them a message. These examples contain different words but instantiate the same structural pattern. The ensuing generalization is characterized as ‘lossy’, so that the mental representation of the construction is more abstract than the actual instances of language use that form its basis. At the same time, the generalization is anchored in usage, so that linguistic elements that appear especially frequently represent the prototype of the construction (Bybee Reference Bybee2010: 79). Second, Goldberg situates constructions in a high-dimensional conceptual space. Instances of constructions vary along several dimensions of form and meaning. A speaker who is exposed to such variation will form generalizations on the basis of that experience. For example, a speaker of English would register that in ordinary language use most instances of the ditransitive construction involve animate recipients, but that there are occasional exceptions, as in We gave the bathroom a makeover. Knowledge of constructions thus involves knowledge of variation, specifically including knowledge of the relative frequency of variants. A third aspect in which the definition breaks new ground concerns the role of context. Language use is sensitive to context, which means that frequency of use has to be understood relative to speech situations. The constructions that a speaker is likely to encounter in one context may be near-absent in another, depending for example on the formality of the situation or the social distance between the communicators. An example for this is offered by Hay and Foulkes (Reference Hay and Foulkes2016) in a study of pronunciation variation in New Zealand English. In words such as city, speakers may realize the intervocalic /t/ either as a voiceless alveolar stop [t] or as a voiced or flapped variant [d/ɾ]. The extralinguistic context influences speaker behavior, so that when speakers verbalize events that lie in the more distant past, they are relatively more likely to use the more traditional, voiceless variant (Hay & Foulkes Reference Hay and Foulkes2016: 322). The example shows that constructions are sensitive to subtle characteristics of the speech situation. In the light of this, what Goldberg’s third definition accomplishes is thus a more realistic, but ultimately also more complex, account of how frequencies of use shape speakers’ mental representations of constructions.

To summarize what has been said so far, frequency has become an increasingly important notion in constructional studies. Whereas initially the focus was centered on token frequency, current research takes into account a much broader range of frequency-related measures that often pertain to variation in language use. It is the purpose of this chapter to present an overview of the measures and techniques that have been used in constructional analyses, to outline what results can be obtained through their use, and to discuss how these results inform constructional theories of language.

The remainder of this chapter is structured as follows. Section 6.2 will offer definitions of frequency and will discuss the way frequency manifests itself in the use of constructions. The section will differentiate between token frequency, type frequency, relative frequency, frequency of co-occurrence, and dispersion. It will be explained how these aspects of frequency can be measured on the basis of corpus data, and how these measurements allow the observation of different frequency effects (Bybee Reference Bybee2010; Pfänder & Behrens Reference Pfänder, Behrens, Behrens and Pfänder2016) that relate to phenomena such as entrenchment, ease of processing, productivity, phonological reduction, and resistance to regularization, among others. These frequency effects will be illustrated on the basis of experimental and corpus-based analyses of lexical, morphological, and syntactic constructions. It will be discussed how the insights from these empirical studies feed back into the development of constructional theories. Throughout the section, the discussion will showcase corpus-based methods that draw on frequency as a means to analyze constructions. For each method that is featured it will be clarified what kind of frequency data enters the analysis, how that data is processed, and what can be learned from the results. Case studies from the corpus-based constructional literature will be used to illustrate relevant concepts.

Section 6.3 will turn to frontiers and open questions regarding the role of frequency in constructionist research. Not only is the relation between corpus frequencies and theoretical notions such as entrenchment far from trivial (Blumenthal-Dramé Reference Blumenthal-Dramé and Schmid2017), it is also important not to attribute effects to token frequency that can in fact be explained by other, correlating variables. Gries (Reference Gries2022a, Reference Gries and Boas2022b) suggests a number of avenues that should prompt constructional research to go beyond the use of frequency values, taking into account paradigmatic and syntagmatic variability as well as dispersion and contingency. These suggestions will be taken up in order to flesh out their implications for the future development of Construction Grammar.

6.2 Frequency Measures and Frequency Effects

This section discusses five different ways in which frequency manifests itself in language use and language processing. Token frequency, type frequency, relative frequency, frequency of co-occurrence, and dispersion will be presented individually, focusing on their respective effects and their relevance for constructional analyses. The discussion will also point towards interrelations between these aspects of frequency.

6.2.1 Token Frequency

Token frequency captures how often an element or structural pattern appears in language use. It is measured through counts of elements in linguistic corpora. For example, in the British National Corpus, the word time has a token frequency of more than 150,000, whereas the word chronology appears a mere 300 times. Token frequencies are commonly normalized, for example to instances per million words, in order to make them comparable across corpora. High token frequency is known to impact language processing in several ways (Ellis Reference Ellis2002). Five effects of high token frequency that have direct implications for constructionist theories of language are known as chunking, entrenchment, reduction, conservation, and conservatism.

The first of these, chunking, refers to the phenomenon that speakers cognitively process a string of linguistic elements as a holistic unit (Bybee & Scheibman Reference Bybee and Scheibman1999). This is the case for strings such as by the way or you know, which serve as discourse markers and thus convey meanings that go beyond the meaning of their component parts. By the way signals that the speaker temporarily deviates from the discourse topic (Fraser Reference Fraser2009). With you know the speaker can invite the hearer to draw an inference (Jucker & Smith Reference Jucker, Smith, Jucker and Ziv1998). Such non-compositional meanings, which constitute an important definitional criterion of constructionhood, are directly related to holistic processing, which in turn is driven by high token frequency. Chunking is further relevant for the processing of complex syntactic constructions. For example, speakers of English holistically represent constructions such as the Xer the Yer (Kay & Fillmore Reference Kay and Fillmore1999; Hoffmann Reference Hoffmann2019), so that when they hear an utterance that begins with The more I think about it, the first part of the construction will trigger expectations about how the utterance will be continued, thereby facilitating language processing. Another effect of chunking relates to syntactic constituency (Bybee Reference Bybee2010: 136). If a string of elements is frequently processed, speakers will treat it as a syntactic unit. Hilpert (Reference Hilpert, Dąbrowska and Divjak2015: 348) offers the example of the string sitting and waiting, arguing that its syntactic behavior in questions such as What are you sitting and waiting for? is that of a single verbal unit. Questioning the prepositional object of the verb waiting is only possible because sitting and waiting is processed as a chunk. By comparison, a question such as What are you walking and waiting for? sounds unidiomatic, since the string walking and waiting is not processed as a chunk and does not function as a single verbal constituent.

A second important effect of high token frequency is entrenchment. Repeated exposure to a linguistic unit increases the strength with which that unit is mentally represented. Elements and patterns that are highly entrenched can be retrieved from memory more quickly and more accurately (Ellis Reference Ellis2002: 152). A finding that is particularly relevant for Construction Grammar has been reported by Arnon and Snider (Reference Arnon and Snider2010), who conducted an experiment in which the participants had to indicate whether English n-grams like don’t have to worry and don’t have to wait are possible parts of grammatically formed sentences. Both fragments are indeed grammatically well-formed, but there is a difference in that the first one is more frequent than the other. The participants were quicker to confirm the grammaticality of the more frequent n-gram. Crucially, this is not due to the token frequency of the component words and word pairs, which were controlled for in such a way that they are actually identical in frequency. Entrenchment can thus be shown to facilitate language processing even at the level of multi-word units.

Another common effect of high token frequency concerns language production. Linguistic units that are used frequently tend to become phonologically reduced. Bybee and Thompson (Reference Bybee and Thompson1997: 576) give the example of BE supposed to, which can be reduced to [spostə] in utterances such as That’s not supposed to happen. Phonological reduction of this kind is common in cases of grammaticalization (Hopper & Traugott Reference Hopper and Traugott2003: 69), as for instance in the case of be going to and its reduction to gonna, or want to and wanna. The phonologically reduced forms can be shown to establish themselves as independent constructions that differ in terms of meaning and structure from their respective sources (Lorenz Reference Lorenz2013).

What the effects of chunking, entrenchment, and reduction show is that high token frequency is a powerful driver of language change. At the same time, high token frequency also has a conserving effect (Bybee & Thompson Reference Bybee and Thompson1997: 577). Linguistic elements that are frequently used are relatively more resistant to analogical change, which is apparent for example in irregular past tense formations such as keep – kept. Whereas keep has retained its irregular past by virtue of its high token frequency, less frequent verbs such as weep or leap have succumbed to analogical pressure and have adopted regularized past tense forms. The conserving effect of high token frequency is also evident in syntax, for example in English expressions that maintain older forms of sentential negation (Tottie Reference Tottie and Kastovsky1991; Bybee Reference Bybee2006). Whereas the canonical pattern for an English negated sentence involves do-support, as in I don’t believe that, post-verbal negation is preserved with modal auxiliaries (You should not believe that) and in fixed expressions that involve lexical verbs (make no mistake, it gives me no joy). In these cases, high frequency of use motivates the maintenance of the older syntactic patterns.

A speaker who experiences a linguistic unit with high token frequency forms increasingly clear expectations about its distributional behavior. This is even true for children who are still acquiring their first language. Children are known to produce overgeneralization errors such as *bringed for brought or *breaked for broke (Clark Reference Clark and MacWhinney1987: 19). Brooks et al. (Reference Brooks, Tomasello, Dodson and Lewis1999) showed that this phenomenon is sensitive to token frequency. Specifically, it was shown that children are less open towards new combinations of words and constructions if the words in question are highly frequent. In the study, children were encouraged to use intransitive verbs in a transitive way. Existing intransitive verbs were extended more readily if they were infrequent. That is, while children were reluctant to transitivize frequent verbs such as come (e.g., He came the car), they showed a greater tendency to transitivize infrequent intransitive verbs with the same meaning, as for example arrive (e.g., He arrived the car).

With regard to Goldberg’s (Reference Goldberg2006: 5) second definition of constructions, the effects discussed in this section motivate why token frequency is important to consider in constructional analyses. The effects also suggest that high token frequency tends to bring about non-predictable characteristics in constructions. An entrenched multi-word unit such as I don’t know is syntactically canonical and semantically transparent, but in actual usage that string will often be used in its phonologically reduced form dunno and with a non-compositional meaning. As a response to the question Would you like to watch Netflix?, the string I dunno would indicate that the speaker actually has a different preference. The inclusion of high token frequency as a definitional criterion of constructionhood is therefore fully compatible with a view of constructions as idiosyncratic linguistic units that need to be learned.

Token frequency is further relevant with regard to the influence of linguistic context on speakers’ choices between alternative options. Rivas and Brown (Reference Rivas and Brown2012) show this in a study of Spanish presentational constructions with haber ‘have’, as in Hubo problemas ‘There are problems’ (lit. haveSG problems). Prescriptively, the presentational construction calls for a singular form of the verb, regardless of whether the noun phrase that is presented is in the singular or the plural. That said, in many varieties of Spanish plural noun phrases may actually trigger plural agreement in the verb. Rivas and Brown (Reference Rivas and Brown2012) demonstrate that this phenomenon is sensitive to token frequency, more specifically the frequency with which a plural noun appears as a grammatical subject in other clause-level constructions. A plural noun such as estudiantes ‘students’, which frequently appears as a grammatical subject, exhibits a stronger tendency to trigger plural agreement than a plural noun such as fraternidades ‘fraternities’, which is used less often with the function of a grammatical subject.

6.2.2 Type Frequency

Type frequency represents the number of different variants of a construction in language use. More specifically, type frequency can be determined through the use of corpora by exhaustively extracting the instances of a given construction, so that the different elements that can fill a slot of that construction can be counted. The concept can be illustrated with the English regular past tense construction, which is a pattern with very high type frequency (Bybee Reference Bybee1995). The suffix -ed appears with a large number of verbs, yielding forms such as walked, painted, or opened. By comparison, the type frequency of forming the past tense through a vowel contrast, such as drink – drank, sing – sang, or swim – swam, is much lower. Type frequencies can not only be determined for morphological constructions but also for syntactic ones. For example, Israel (Reference Israel and Goldberg1996) investigated the diachronic growth in type frequency of the English way-construction by examining the main verbs that appeared in that construction during different historical periods.

Goldberg (Reference Goldberg2006: 99) points out that high type frequency is connected to productivity, that is, the ease with which speakers produce and process new instances of a construction. A construction that accommodates a broad range of elements as slot-fillers is more likely to be extended further to new elements, as opposed to a construction that occurs only with a select few. Corpus-based operationalizations of productivity often combine type counts with other measures, including token frequency and the relative prevalence of hapax legomena, that is, types that appear only once. For example, the measure of ‘potential productivity’ is calculated by taking the hapax legomena of a construction and dividing that number by the overall token frequency of the construction (Baayen Reference Baayen, Lüdeling and Kytö2009: 902). If a construction is used with high token frequency and a large ratio of its types occurs just once, this constitutes strong evidence that speakers regularly use that construction in creative ways.

Barðdal (Reference Barðdal2008: 34) argues that assessments of the productivity of a construction need to take into account the coherence between its types. Coherence can be defined both in terms of meaning and phonological similarity. Type frequency and coherence are seen as inversely correlated, so that if a construction occurs with many types, their coherence is likely to be low. Suttle and Goldberg (Reference Suttle and Goldberg2011: 1239) make a similar point when they relate the productivity of constructions to type frequency, variability, and similarity. If a speaker observes a pattern with a large number of types that are variable in terms of meaning, a new instance that is similar to any one of those types will have a good chance of being acceptable.

An effect of type frequency can be seen in a study by Brooks and Tomasello (Reference Brooks and Tomasello1999), who presented children between 2 and 4 years of age with nonce verbs such as meeking and tamming. The respective verbs were presented exclusively in either the active voice or the passive voice. After the training phase, the children were encouraged to use the verbs themselves, and they were provided with contexts that would favor either the use of the active or the passive. Brooks and Tomasello (Reference Brooks and Tomasello1999: 34) observe that the children are generally reluctant to generalize the verbs from one construction to the other, but they further note an asymmetry between the two constructions, namely that generalizations towards the passive, which is characterized by a relatively lower type frequency, are especially scarce.

Dąbrowska (Reference 168Dąbrowska2008) tests the respective effects of type frequency and phonological similarity in an experiment that prompts speakers of Polish to add dative endings to nonce nouns. In the Polish case system, the masculine dative ending -owi has a higher type frequency than the feminine dative endings -’e and -i, which in turn have higher type frequencies than the neuter dative ending -u. Dąbrowska (Reference 168Dąbrowska2008: 937) designed stimuli in such a way that the nonce words differed systematically in their phonological neighborhood density. Items with high neighborhood density were similar to a large number of existing words, whereas items with low neighborhood density were dissimilar to other elements in the Polish lexicon. The results indicate that performance is better if the type frequency of the case inflection is higher and if the phonological neighborhood is densely populated (Dąbrowska Reference 168Dąbrowska2008: 947).

Like high token frequency, high type frequency drives phonological reduction effects. Bybee (Reference Bybee2002) examined final t/d-deletion in a corpus of spoken English, comparing pre-vocalic deletion rates across auxiliaries with contracted negation (don’t), lexical items ending in an unstressed syllable with final nt (government, different), and regular past tense forms (kissed, burned). Bybee (Reference Bybee2002: 276) finds that the highest rates of pre-vocalic t/d-deletion are observed with regular past tenses, which can be motivated in terms of the high type frequency of that construction. This result is corroborated by Díaz-Campos and Gradoville (Reference Díaz-Campos, Gradoville and Ortiz-López2011), who study intervocalic d-deletion in Spanish participles with -ado and -ido. Deletion rates are higher with -ado, which is characterized by a relatively higher type frequency.

To summarize this section, type frequency is central for measures of constructional productivity, in which it interacts with both semantic coherence between types and phonological neighborhood density. High type frequency is furthermore correlated with rates of phonological reduction in forms that instantiate a pattern.

6.2.3 Relative Frequency

Relative frequency measurements compare the token frequency of one linguistic unit to that of one or several related linguistic units and express the result in terms of a ratio. This is often done with constructions that serve as alternative expressions of similar or near-identical concepts. For example, the English dative alternation comprises two constructions, the ditransitive construction (Mary gave John the book) and the prepositional dative construction (Mary gave the book to John), which both serve to express the transfer of an object between an agent and a recipient. The constructions differ in terms of the sequence with which the arguments of the verb are presented, which means that speakers’ choices between the two are commonly rooted in principles of information structure, such as the principle of end-focus (Hilpert Reference Hilpert, Aarts, MacMahon and Hinrichs2021). In naturally occurring language use, the ditransitive construction has higher token frequency than the prepositional dative construction (Bresnan et al. Reference Bresnan, Cueni, Nikitina, Baayen, Bouma, Krämer and Zwarts2007). Another pair of constructions with similar functions and a marked frequency asymmetry comprises the English active and passive. Sentences such as The dog bit the mailman or The mailman was bitten by the dog serve to express the same state of affairs with a difference in focus on either the agent or the patient. Passive sentences are much less frequent than active transitive sentences. As a third example, the English comparative can be formed morphologically and periphrastically, as in prouder or more proud (Hilpert Reference Hilpert2008). In general English usage, morphological comparatives vastly outnumber periphrastic comparatives.

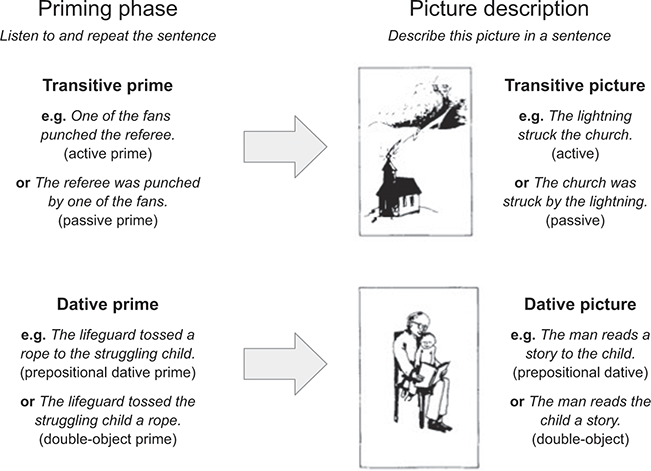

Asymmetries in relative frequency influence language use and language processing in several ways. One such effect can be observed in structural priming (see also Chapters 8 and 23). When speakers process a syntactic construction, this increases the likelihood that they will produce that construction in subsequent language use (Bock Reference Bock1986). This tendency is frequency-sensitive. The so-called inverse-preference effect (Ferreira & Bock Reference Ferreira and Bock2006) is the phenomenon that in a pair of related constructions, structural priming is stronger for the construction with the lower relative frequency. Jaeger and Snider (Reference Jaeger and Snider2007) demonstrate this for the English dative alternation, in which prepositional datives yield a stronger priming effect, and for the English voice alternation, in which passives have a more pronounced impact. The inverse-preference effect has been widely replicated. For example, Rosemeyer and Schwenter (Reference Rosemeyer and Schwenter2019) document it for the Spanish past subjunctive forms -se vs. -ra in historical corpus data. Torres Cacoullos (Reference Torres Cacoullos, Adli, García García and Kaufmann2015) further documents a relation between priming and the analyzability of constructions. In a study that addresses the Spanish progressive with the copula estar ‘be’ and a gerund on the basis of historical corpus data, Torres Cacoullos tests whether speakers are more likely to use the progressive construction if they have been primed with other constructions that contain the verb estar, such as locative, resultative, and predicate adjective constructions. The data reveal that such priming effects exist and that they become increasingly weaker over time (Torres Cacoullos Reference Torres Cacoullos, Adli, García García and Kaufmann2015: 278). This suggests that the holistic unit status of the progressive construction has become strengthened over time.

Relative frequencies are also relevant for an effect that is known as statistical preemption (Goldberg Reference Goldberg2019). The basic premise of statistical preemption is that speakers form generalizations over pairs of constructions that serve comparable functions, such as the dative alternation or the morphological comparative and the periphrastic comparative. It is further assumed that speakers’ mental representations of these construction pairs comprise knowledge of the lexical items that they encounter in each construction. For example, speakers’ knowledge of the dative alternation would include the fact that verbs such as give, send, or promise occur both in the ditransitive construction and in the prepositional dative construction. Importantly, the relative frequency distribution of some verbs is highly asymmetric, so that for example the verb explain regularly occurs in the prepositional dative construction (She explained the problem to me), but not at all in the ditransitive construction (*She explained me the problem). Speakers subconsciously take note of such statistical asymmetries and interpret them as grammatical constraints. In more concrete terms, this reasoning process can be spelled out as follows. Speakers know that the ditransitive construction and the prepositional dative construction convey similar meanings and occur with similar sets of verbs. They register that the ditransitive construction is overall more frequent. Yet, they encounter the verb explain frequently in the prepositional dative construction, but not at all in the ditransitive construction. Since this imbalance could not be due to chance, it has to be the case that explain cannot be used in the ditransitive construction. In a study that approaches this issue experimentally, Boyd and Goldberg (Reference Boyd and Goldberg2011) expose participants to novel adjectives such as adax and ablim. Those forms phonologically resemble English adjectives such as awake or afraid, which can be used predicatively (The child is awake, The passenger is afraid) but not attributively (*the awake child, *the afraid passenger). In other words, their relative frequency distribution across predicative and attributive contexts is maximally asymmetrical. Boyd and Goldberg (Reference Boyd and Goldberg2011: 69) observe that the participants of their study avoided using forms like adax and ablim in attributive contexts. Statistical preemption can thus account for the fact that speakers do not produce certain expressions and find them ungrammatical, when they are asked about them. Entrenchment as such can only explain why certain expressions sound familiar to speakers, but it cannot explain the difference between previously unseen structures that are fully acceptable and unseen structures that are completely unacceptable.

Another effect of relative frequencies has been documented by Hilpert (Reference Hilpert2008) in a study of English comparative constructions. Adjectives such as proud or healthy can form the comparative either morphologically (prouder, happier) or periphrastically (more proud, more healthy). The variation between the two variants is influenced by a broad range of factors including the length of the adjective, its stress pattern, its final phonological segment, and its syntactic context (Hilpert Reference Hilpert2008: 407). Frequency of use impacts speakers’ choices in two ways. First, there is an effect of high token frequency. A highly frequent adjective such as easy has a stronger tendency to form the morphological comparative than a less frequent adjective with similar phonological characteristics, such as queasy. A second frequency effect concerns the relative frequency of adjectives in the positive form and its comparative formations. An adjective such as tall is frequently used comparatively, whereas this is not the case for adjectives such as red or square, which are less gradable. Highly gradable adjectives show a greater tendency to be used with the morphological comparative.

The main point to take away from this section is the following. Effects such as statistical preemption and the inverse-preference effect in structural priming reveal that speakers are sensitive to relative frequencies in language use, and that this sensitivity impacts their choices between constructions that function as mutual alternatives.

6.2.4 Frequency of Co-occurrence

Measuring frequencies of co-occurrence is one of the fundamental analytical techniques of corpus linguistics, which has a long tradition of studying collocations (Gries Reference Gries2013). Collocations can be broadly defined as multi-word units that exhibit varying degrees of fixedness. They are exemplified by word pairs with non-compositional, conventionalized meanings such as fast food, word combinations with elements that co-occur much more frequently than would be expected by chance, such as unmitigated disaster, and multi-word idiomatic expressions such as make hay while the sun shines. The term collocation has further been used to describe the regular co-occurrence of lexical items in close proximity, which is observed for example for the words refugee and crisis.

Whereas token frequency, type frequency, and relative frequency are measured through basic counts of instances in a corpus, frequencies of co-occurrence are studied in terms of various association measures, which include for example mutual information, log likelihood, or Delta P (Brezina Reference Brezina2018: 72). These measures take into account how often two linguistic elements appear together and how often the same elements appear on their own in other contexts. A comparison of the co-occurrence frequencies with the individual token frequencies shows how strongly the elements of a collocation are mutually associated. A collocation such as sustainable development can serve as an example. Table 6.1, which uses data from the British National Corpus, shows the token frequency of the collocation, the token frequencies of the individual elements that make up the collocation, and the total number of words in the corpus.

Table 6.1 A contingency table of sustainable development and its frequency in the BNC

| sustainable | NOT sustainable | Total | |

|---|---|---|---|

| development | 147 | 31,564 | 31,711 |

| NOT development | 518 | 96,954,478 | 96,954,996 |

| Total | 665 | 96,986,042 | 96,986,707 |

Table 6.1 shows that the collocation sustainable development appears 147 times in the corpus, which contains a total of almost 97 million words. Given the token frequencies of the individual words sustainable (665) and development (31,711), it can be computed how often the two words would be expected to appear together by pure chance. The expected frequency of the collocation is the product of the individual token frequencies divided by the total number of words in the corpus, which in this case yields a value of 0.22. So whereas less than one instance of sustainable development would be expected, almost 150 are observed, which indicates a high degree of mutual association between sustainable and development. Association measures express collocation strength through scores that allow comparisons between different word pairs, so that for example the mutual attraction of sustainable development can be compared to that of sustainable tourism or sustainable seafood.

Whereas the study of collocations has a long tradition that focuses on associations between specific words, the family of methods known as collostructional analysis (Stefanowitsch & Gries Reference Stefanowitsch and Gries2003; Gries & Stefanowitsch Reference Gries and Stefanowitsch2004a, Reference Gries, Stefanowitsch, Achard and Kemmer2004b) applies the concept of collocations to the analysis of mutual associations between a construction and the lexical items that appear within it. Collostructional analysis thus takes into account the grammatical context in which lexical words appear. Stefanowitsch and Gries (Reference Stefanowitsch and Gries2003: 219) illustrate this idea with the English [NOUN waiting to happen] construction. The construction has an initial slot for nouns that is typically filled by semantically negative words such as disaster or accident, as for example It’s a disaster waiting to happen or That was an accident waiting to happen. In order to analyze the mutual association between the construction and the nouns that occur in it, collostructional analysis determines the token frequency of the nouns in the construction, their overall token frequency in the corpus, and the overall token frequency of the construction as such. Table 6.2, taken from Stefanowitsch & Gries (Reference Stefanowitsch and Gries2003: 219), illustrates this for the noun accident. The lower right corner of the table represents the total of clause-level constructions in the British National Corpus. The co-occurrence frequency of accident and waiting to happen is much higher than would be expected.

Table 6.2 A contingency table of accident and the [N waiting to happen] construction

| accident | NOT accident | Total | |

|---|---|---|---|

| [N waiting to happen] | 14 | 21 | 35 |

| NOT [N waiting to happen] | 8,606 | 10,197,659 | 10,206,265 |

| Total | 8,620 | 10,197,680 | 10,206,300 |

The output of a collostructional analysis is a list of lexical elements that are ranked in terms of their strength of association with the construction that is being studied. Association measures that are used to obtain these rankings include the Fisher–Yates exact test and log likelihood (Flach Reference Flach2021). A collostructional analysis can not only determine the lexical elements that are most strongly associated with a construction, but it also serves to identify the elements that occur significantly less often than would be expected by chance. Inspecting the words that are repelled by a construction often yields useful insights into the semantic constraints that characterize its usage.

There is no single collocation measure that could be said to reflect mutual association in the best or most objective way. Rather, measures such as mutual information or log likelihood differ in the relative importance they assign to aspects such as the token frequency of a collocation or the mutual faithfulness of the component words. For example, the collocation unmitigated disaster is much less frequent than sustainable development, but when the adjective unmitigated is observed, it acts as a very strong cue for the upcoming noun disaster. Measures such as Delta P further allow researchers to take the directionality of association into account. For example, the word instead is a strong cue that the following word would be of. This association is not symmetric, since of is frequently preceded by other elements than instead. Conversely, the word vitro is typically preceded by in, but in is not a reliable indicator that the next word will be vitro.

Frequencies of co-occurrence lie at the heart of distributional semantic approaches (Turney & Pantel Reference Turney and Pantel2010), which model the meanings of linguistic units in terms of their collocational profiles. To give a simple example, the meaning of a word such as violin is reflected in its distributional behavior, which is to say that words such as piano, orchestra, play, or sonata are strongly represented in contexts in which the word violin appears. On the basis of a corpus, it is possible to generate a frequency list of all words that appear in the neighborhood of the word violin. With an association measure, that frequency list can be transformed into a vector of values that shows which words are overrepresented or underrepresented, so that a semantic profile of violin emerges. Distributional semantic techniques typically compare many such vectors to establish patterns of semantic similarity or dissimilarity in larger sets of linguistic units. Analyses of that kind are able to determine that, for example, words such as piano, flute, and cello have collocational profiles that are very similar to that of violin, whereas words such as rose, tulip, and daffodil conform to a profile that is altogether different. Perek (Reference Perek2018) uses a distributional semantic approach in a diachronic study of the English way-construction. He uses data from the Corpus of Historical American English (Davies Reference Davies2010) in order to compute semantic vectors for the lexical verbs that appear in the way-construction. By comparing the semantic vectors of verbs that enter the construction across historical periods of time, Perek finds that the verbs of the way-construction occupy an increasingly broader semantic range, specifically in the manner sense of the construction (e.g., John joked his way into the meeting). For the path-creation sense of the construction, there is a development towards increasingly more varied and also more abstract verbs, including groups such as verbs of ingestion (eat, drink, etc.), verbs of commercial transactions (buy, purchase, acquire, etc.), speech act verbs (mumble, whisper, etc.), and others (announce, explain, write, etc.). In general terms, Perek’s study illustrates how co-occurrence frequencies, put into the service of distributional semantic analyses, can reveal how constructions behave with regard to schematicity and abstractness. The elements that co-occur with a construction reflect its meaning, and historical shifts in the co-occurrence patterns between a construction and its lexical collocates can yield insights into the diachronic semantic trajectory of a construction.

Frequency of co-occurrence can be also shown to affect language processing and language use. Relevant evidence is presented by Gries et al. (Reference Gries, Hampe and Schönefeld2005), who devised a sentence completion task in which speakers had to find continuations for sentence fragments. Gries and colleagues used the English as-predicative construction (The idea was considered as a major innovation) as a case study. The participants were exposed to sentence fragments that contained a subject, a passive auxiliary, and a past participle, as in The idea was considered. Their task was to complete the fragment in any way they saw fit. Gries et al. measured how often the participants’ continuations resulted in a complete as-predicative construction. With regard to the verbs that appeared in the fragments, Gries et al. controlled for their token frequency as well as their frequency of co-occurrence with the as-predicative construction. For the latter, the Fisher–Yates exact test was used as an association measure (Gries et al. Reference Gries, Hampe and Schönefeld2005: 647). Verbs such as regard, describe, or see are strongly associated with the construction. The results of the experiment show that participants were more likely to complete a fragment with the as-predicative if the verb in the fragment was strongly attracted to the construction. By contrast, Gries et al. (Reference Gries, Hampe and Schönefeld2005: 659) did not observe an independent main effect of token frequency.

Another effect of co-occurrence frequency is observed by Hilpert and Flach (Reference Hilpert, Flach, Krawczak, Lewandowska-Tomaszczyk and Grygiel2022) in a study of a phenomenon that is called constructional contamination (Pijpops & Van de Velde Reference Pijpops and Van de Velde2016; Pijpops et al. Reference Pijpops, de Smet and Van de Velde2018). The term describes a relation between two constructions of a language, such that usage frequencies of one construction influence patterns of variation in another construction. Hilpert and Flach (Reference Hilpert, Flach, Krawczak, Lewandowska-Tomaszczyk and Grygiel2022) study constructional contamination in the English passive, which exhibits variation with regard to the adverbial modification of participles. In a passive sentence, the past participle can be either preceded by an adverb, as in The government was democratically elected, or it can be followed by an adverb, as in The government was elected democratically. Pijpops and Van de Velde (Reference Pijpops and Van de Velde2016) argue that variation of this kind can potentially be influenced by a construction that features word strings that can also appear in one of the variants. With regard to the English passive, sequences of an adverb and a participle occur in noun phrases such as a democratically elected government. If uses of this kind appear with high token frequency in language use, the prediction is that speakers will favor the adverb-initial variant of the passive. Hilpert and Flach (Reference Hilpert, Flach, Krawczak, Lewandowska-Tomaszczyk and Grygiel2022) test this prediction on the basis of data from the Corpus of Contemporary American English (Davies Reference Davies2008) and they find that the token frequency of adverb–participle combinations in English noun phrase constructions significantly biases speakers towards the use of adverb-initial passives. Beyond this effect of high token frequency, they further observe an effect of co-occurrence frequency. Combinations such as dimly lit or randomly selected are not highly frequent in English noun phrases. Yet, the fact that these collocations have a strong degree of mutual association may bias speakers towards adverb-initial order in the passive construction. Hilpert and Flach controlled for a possible effect of mutual association strength with covarying collexeme analysis (Gries & Stefanowitsch Reference Gries, Stefanowitsch, Achard and Kemmer2004b), which was used to determine degrees of mutual association between adverbs and participles in the English noun phrase construction. The results indicate that frequency of co-occurrence is a significant predictor of speakers’ bias towards adverb-initial order in the English passive construction.

The studies described in this section suggest that language processing and production are shaped by co-occurrence frequencies of linguistic units. The respective effects go beyond effects of high token frequency as such. Degrees of mutual association can impact language use even when the linguistic units in question are relatively low in token frequency.

6.2.5 Dispersion (Burstiness)

The dispersion, or burstiness, of a linguistic unit concerns the regularity with which it appears and reappears in language use. Two linguistic units with the same token frequency may behave very differently with regard to their dispersion, so that their respective tokens are either spaced out evenly and regularly or tightly clustered together. To take an example, consider the words without and system, which appear with roughly the same token frequency in the British National Corpus. Whereas without appears in just about every text that is featured in the corpus, this is not the case for system. Tokens of the word system have a greater chance of following each other in close proximity, but there are also longer stretches in the corpus that do not contain the word system at all. What this means is that without is more evenly dispersed than system.

Various techniques have been proposed to measure dispersion (Gries Reference Gries2008, Reference Gries, Gries, Wulff and Davies2010, Reference Gries2022a). The conceptual basis for most of these techniques is that a corpus is divided into parts. The corpus parts may be defined in terms of a fixed number of running words or by dividing the data into the different text documents that constitute the corpus. Based on such a division, it can be determined how frequently a given linguistic unit appears in each of the parts. The dispersion measure known as ‘range’ simply assesses the percentage of corpus parts in which a linguistic unit is represented (Gries Reference Gries2008: 407). The higher the range, the more even the dispersion. The dispersion measure deviation of proportions (Gries Reference Gries2008: 415) is based on more sophisticated calculations and is computed as follows. In a first step, a corpus is divided into parts and it is determined for each part how much of the corpus it represents. A text of 10,000 words would thus represent 1 percent of a corpus with one million words. To assess the dispersion of a linguistic unit in that corpus, its frequency in the entire corpus and in each corpus part is determined. For example, the word without might appear 400 times in the corpus as a whole, and six times in a first text of 10,000 words. This means that without is overrepresented in that corpus part. Whereas only 1 percent of tokens would be expected, the text in fact contains six tokens which add up to 1.5 percent. Deviation of proportions is calculated in such a way that for all corpus parts the differences between expected percentage and observed percentage are added up, and the sum of differences is divided by two. If the resulting value is close to 0, the analyzed word is very evenly distributed. Values close to 1 indicate a highly uneven dispersion.

An alternative to measuring dispersion on the basis of corpus parts is to observe distances between the tokens of a linguistic unit (Gries Reference Gries2008: 409). For the word without, the maximal distance will be lower than for the word London. If the distances are distributed in such a way that some of them are very large and most are very small, this indicates an uneven dispersion.

It has been argued that certain effects of token frequency are more adequately explained as effects of dispersion. For example, Adelman et al. (Reference Adelman, Brown and Quesada2006: 817) analyzed latencies in six separate data sets from experiments that included word-naming and lexical decision tasks, finding that dispersion (operationalized as range) is a better predictor of word-processing times than token frequency, and that token frequency has no measurable effect that would be independent of dispersion. Baayen (Reference Baayen, Jarema, Libben and Westbury2011: 454) draws a similar conclusion on the basis of data from lexical decision tasks, arguing that any effect of token frequency is minimal once variables such as dispersion, morphological family size, or syntactic entropy are controlled for. It is important to note in this context that most of the established measures of dispersion, including range, are known to correlate strongly with token frequency (Adelman et al. Reference Adelman, Brown and Quesada2006: 815; Baayen Reference Baayen, Jarema, Libben and Westbury2011: 445). Infrequent lexical items will necessarily appear in only a few corpus parts, whereas frequent items stand a better chance of being represented in a large percentage of the parts. In order to address this problem, Gries (Reference Gries2022a) has recently proposed a measure that avoids this correlation and thus allows for a more reliable assessment of the respective effects of token frequency and dispersion. Gries uses deviation of proportions as the basis for a new measure that is labeled DPnofreq. The measure calculates theoretical lower and upper bounds for the dispersion of a linguistic item, given its token frequency. In other words, the measure assesses whether a word could be potentially more even or more uneven in its dispersion. Unlike other dispersion measures, DPnofreq can yield high dispersion values for infrequent linguistic items. Gries (Reference Gries2022a: 29) uses data from lexical decision tasks to show that DPnofreq, in combination with the variables of token frequency and word length, outperforms other dispersion measures in predicting reaction times.

Besides facilitating greater ease of processing, evenness of dispersion also correlates with aspects of linguistic meaning. Using a distance-based measure of dispersion, Pierrehumbert (Reference Pierrehumbert, Santos, Linden and Ng’ang’a2012: 104) shows that linguistic units with more specialized meanings are dispersed less evenly. Specifically, in a comparison of deverbal nouns (evolution, failure, growth) and their verbal bases (evolve, fail, grow), the nouns show a stronger tendency to appear in bursts, whereas the verbs are dispersed more evenly. In a study with a similar outlook, Hilpert and Correia Saavedra (Reference Hilpert and Correia Saavedra2017) analyze a matched set of lexical words and grammatical items that are balanced in terms of their respective token frequencies. They use regression modeling to determine whether even dispersion, measured in terms of deviation of proportions (Gries Reference Gries2008), is predicted more accurately by high token frequency or by semantic generality. The results indicate that both token frequency and semantic generality have significant effects. The semantic effect is, however, considerably weaker than the frequency effect.

In comparison to other measures of frequency, dispersion has received relatively less attention in research that is concerned with constructions and Cognitive Linguistics more generally. The importance of considering dispersion and its effects has been argued forcefully by Gries in a series of papers (Reference Gries2008, Reference Gries, Gries, Wulff and Davies2010, Reference Gries2022a, Reference Gries and Boas2022b). Aside from the issues that have been presented in this section, Gries (Reference Gries and Boas2022b: 62) further suggests that the effects of dispersion on language learning merit further attention.

6.3 Conclusions and Outlook

The discussion in this chapter up to this point allows three broad conclusions. First, the notion of frequency has become increasingly central to research in usage-based Construction Grammar. This development is not only reflected in the definitions that Goldberg (Reference Goldberg1995, Reference Goldberg2006, Reference Goldberg2019) offers for the term construction, but also in a steadily growing number of studies that present their arguments on the basis of corpus-based frequency data. Second, frequency is considered in that work not just as token frequency, but as a range of several different measures. This chapter has reviewed studies drawing on token frequency, type frequency, relative frequency, co-occurrence frequency, and dispersion. Section 6.2 covered the ways in which these aspects of frequency are measured, and to what ends they are being analyzed. The third conclusion is that frequency is intimately related to issues of language processing and production. The discussion has touched on a wide variety of frequency effects, including the relation of high token frequency and entrenchment (Ellis Reference Ellis2002: 152), the inverse-preference effect that links relative frequency and structural priming (Ferreira & Bock Reference Ferreira and Bock2006), and the relation of uneven dispersion and specialized meaning (Pierrehumbert Reference Pierrehumbert, Santos, Linden and Ng’ang’a2012: 104). Understanding how these frequency effects work is important for theoretical work in Construction Grammar, notably with regard to the organization of constructions in a network (Diessel Reference Diessel2019; Schmid Reference Schmid2020) (see also Chapter 9).

It is without dispute that the increasingly thorough engagement with frequency-related issues has yielded useful insights and has thereby advanced Construction Grammar as a field. That said, there are also reasons to maintain a critical view of what has been accomplished and what still remains to be done. The remainder of this section will go over four points that merit attention. First, as Blumenthal-Dramé (Reference Blumenthal-Dramé and Schmid2017: 141) points out, “we are still a long way from fully understanding the intricate relationships among usage frequency, entrenchment, and other factors that might modulate the strength and autonomy of linguistic representations in our minds.” How exactly the experience of a string of words impacts the mental representation of a schematic construction that is instantiated by those words remains to be worked out, especially with regard to the interplay of token frequency, type frequency, and co-occurrence frequency. Second, it is crucial not to attribute effects to frequency without considering alternative explanations. Section 6.2.5 pointed to work that tested systematically whether the effects of token frequency persist if other frequency-related factors such as dispersion are included. Gries (Reference Gries and Boas2022b: 47) cautions against the reliance on token frequency measurements that do not take variability across corpora and corpus parts into account. Variability across and within corpora can be substantial but work that takes this variability into account remains the exception. Third, the aspects of frequency that were discussed in this chapter have not received the same kind of attention. Whereas measurements of token frequency and co-occurrence frequency are routinely applied in constructional analyses, measures of dispersion are rarely taken into account (Gries Reference Gries2008: 403), and in most studies that actually consider dispersion, the measures that are used are strongly confounded with token frequency (Gries Reference Gries2022a). What this means is that more work is necessary in order to realistically assess the effects of dispersion and the interplay between dispersion and other aspects of frequency. The fourth and final point is of a more general nature. Whereas this chapter has laid out (a) a range of different aspects of frequency, (b) measures that are intended to capture these aspects, and (c) effects on processing and production that stem from them, it is important to recognize that any linguistic phenomenon is likely to benefit from an analysis that takes these different aspects into account simultaneously in order to capture how different frequency effects interact. For example, in order to arrive at a comprehensive understanding of an argument structure construction such as the caused-motion construction (Goldberg Reference Goldberg1995), several different frequency measures would be useful. These include token frequencies of the construction as such, token and type frequencies of the elements that appear in its slots, co-occurrence frequencies of the construction and lexical elements, relative frequencies of constructions that are similar in form or function, and dispersion measures that assess the distribution of the construction across different parts of the used corpora. It is clear that while comprehensive analyses of this kind are laborious, they hold the promise of coming to terms with the multi-faceted role of frequency in language use, which is an important goal for the future development of Construction Grammar.

7.1 Data in Linguistics and Corpus Data in Construction Grammar

Data in linguistics can be classified along at least three different dimensions (based on Gries Reference Gries, Hoffmann and Trousdale2013a), each of which could, for simplicity’s sake, be heuristically divided into different points/ranges:

(1) How natural does the speaker perceive his (experimental) setting?

a. most natural, for example, speakers who know each other talk to each other in unprompted authentic dialog;

b. intermediately natural, for example, a speaker describes pictures handed to him by an experimenter;

c. least natural, for example, a speaker lies in an fMRI unit undergoing a brain activity scan while having to press one of three buttons in responses to digitally presented black-and-white pictorial stimuli.

(2) What (linguistic) stimulus does/did the speaker act on?

a. most natural, for example, speakers are presented with natural utterances and turns in authentic dialog;

b. intermediately natural, for example, speakers are presented isolated words by an experimenter in an association task;

c. least natural, for example, speakers are presented with isolated vowel phones.

(3) What (linguistic) units/responses does/did the subject produce?

a. most natural, for example, subjects produce natural and unconstrained responses to questions;

b. intermediately natural, for example, speakers respond with isolated words (e.g., to a definition);

c. least natural, for example, speakers respond with a phone out of context.

The present chapter is concerned with corpus-linguistic approaches in Construction Grammar (CxG), that is, with approaches that tend towards the more/most natural part of each of these dimensions. The notion of a corpus can be considered a prototype category with the prototype being a collection of machine-readable files that contain text and/or transcribed speech that are supposed to be representative of a certain language, dialect, variety, etc. and were produced in a communicative setting. That means that at least the prototypical corpus scores most natural on each of the above three dimensions. Often, corpus files are stored in Unicode encodings (so that all sorts of different orthographies can be appropriately represented) and come with some form of markup (e.g., information about the source of the text) as well as annotation (e.g., linguistic information such as part-of-speech tagging, lemmatization, etc. added to the text, often in the form of XML annotation). However, there are many corpora that differ from the above prototype along one or more of the above dimensions and of course corpora also vary wildly in terms of their size, annotation, ease of access, processability, etc. Accordingly, the prototypical corpus contains data that are a kind of good-news-bad-news situation. The good news is that corpus data often have a very high degree of ecological validity precisely because the production data they contain are not tainted by any artificiality. But that is also the bad news: Data that are not tainted by any artificiality is just another expression for ‘noisy and unbalanced’, which is one major reason why, as we will see below, the analysis of corpus data in CxG has become more and more heavily statistical – simply to deal with the multi-factorial, noisy, and redundant mess that corpus data often are.

Corpus data did not play a big role in CxG historically. It is probably fair to say that CxG is now a little more than thirty years old since the ‘founding’ publications are probably Lakoff (Reference Lakoff1987), Langacker (Reference Langacker1987), and Fillmore et al. (Reference Fillmore, Kay and O’Connor1988), to be followed by Goldberg (Reference Goldberg1991, Reference Goldberg1995) and Kay and Fillmore (Reference Kay and Fillmore1999). But while much of this earliest work was mostly theoretical in nature and did not rely much either on experimental or on observational corpus data, today that situation has drastically changed. To use language from usage-/exemplar-based linguistics: When I ‘grew up academically’ in the mid to late 1990s, learning about Cognitive Linguistics and CxG on the one hand and about corpus linguistics and Pattern Grammar (Hunston & Francis Reference Hunston and Francis2000) on the other, there were very few tokens of studies that, in some multi-dimensional exemplar space, would have scored highly both on the CxG and the corpus-linguistics dimensions; back in the 1990s I certainly did not form a productive category of ‘corpus-based CxG’. But then in the early aughts that all changed and CxG – in particular usage-based/cognitive CxG – has evolved at what seems like a breathtaking pace into a field of study in which we have moved

from works with virtually no corpus data (or that used corpora as a mere repository from which to pick fitting examples) to studies with systematic data retrieval and annotation processes often involving thousands of data points; and

from works that presented isolated examples as evidence for what is possible to studies with complex quantitative methods that show what is likely and that involve, for instance, multi-factorial or multivariate statistical analyses, ‘more traditional’ machine-learning or fancier deep-learning or construction-induction methods, or network analyses.

Μuch of that move really only happened within the last fifteen or so years. In 2013, I published an overview article “Data in Construction Grammar” (in Hoffmann & Trousdale’s Oxford Handbook of Construction Grammar), which had a mere five to six pages on corpus-based and/or computational (machine-learning) studies; this time around, even just sampling papers from leading journals publishing studies relevant to this overview (e.g., Cognitive Linguistics, Constructions and Frames, and Corpus Linguistics and Linguistic Theory) had to be restricted to a small number of recent years so as to avoid drowning in an unmanageable number of interesting and methodologically extremely diverse studies. The purpose of this chapter is to give an overview of the different applications of corpus-linguistic data and methods to linguistic phenomena from a CxG perspective. While the overview is unlikely to be truly representative of the field (along what dimensions anyway?), care was taken to represent studies that differ along a variety of essential parameters, including:

the language(s) studied;

the kind of language(s) studied: L1/native speaker data, L2/FL non-native speaker/learner data, indigenized-variety speaker data, …;

the resolution: individual speakers vs. variation between individuals vs. (dialectal) speech communities, …;

the temporal kind of study: synchronic vs. diachronic/longitudinal;

the (ranges and kinds of) corpora used;

the use to which corpora were put: a collection of examples vs. fine-grained (semi-manual) annotation vs. bottom-up/inductive processing vs. correlation with additional experimental results, …;

the question the study is trying to answer and, related to that, the ‘scientific goal’ of the study: description vs. hypothesis testing vs. exploration, …; and

the statistical methods used for the analysis of the corpus data: none/qualitative only vs. frequencies/probabilities vs. association measures vs. multi-factorial (predictive) modeling vs. exploratory and/or machine-/deep-learning kinds of methods, …

The overview will be structured according to the latter two criteria because (i) the two criteria are of course often very much related to each other and (ii) for many researchers it will be interesting to see which kinds of CxG questions corpus-linguistic data, their (typically) qualitative annotation, and their statistical analysis can help address. Also, it is particularly in the interplay of the last two criteria that corpus-based CxG has maybe most developed. Put differently, while the field is of course still concerned with definitional matters, questions of learnability, abstraction, and/or representation (both mental and formal), corpus-linguistic approaches have been and are now also targeting specific subsets of questions that in turn naturally come with specific degrees of quantitative methods. I will therefore proceed by discussing

raw/normalized frequency-based approaches;

studies involving associations and their strengths between different constructions and/or their parts; and

statistical modeling, machine-learning, and exploratory/inductive bottom-up approaches.

In each of these sections, I will try and highlight topical clusters, that is, areas/questions that appear to be targeted particularly frequently; Section 7.3 will conclude.

7.2 Corpus-Based Applications in CxG

7.2.1 Largely Qualitative Corpus Approaches

As mentioned above, the initial uses of corpus or corpus-like data in CxG papers were largely only presentative in nature and served to make some theoretical point(s) by means of authentic examples, but often without the kind of systematic feature annotation that is characteristic of much contemporary work. This pointing out a lack of multivariate annotation is not meant as a criticism, given the different goals of papers at the time, but what is perhaps a bit more critical is that some such literature often did not clarify whether examples provided were made up or attested (and, if they were attested, what the source was). For example, Fillmore et al. (Reference Fillmore, Kay and O’Connor1988: 519) discuss hundreds of example sentences but usually provide no information on them, let alone on their source. One time they state “we have come across incontrovertible cases of attested utterances of non-negative let alone sentences that seem perfectly natural and which there is no apparent justification to ignore as performance errors” and proceed to discuss their examples (71) and (72) by stipulating (admittedly likely) contexts in which they may have been uttered. Kay and Fillmore (Reference Kay and Fillmore1999) proceed in a similar way: We don’t learn much about where examples are from etc., and the same is true of many other studies such as Smith (Reference Smith1994), Kemmer and Verhagen (Reference Kemmer and Verhagen1994), Dancygier and Sweetser (Reference Dancygier and Sweetser1997), Morgan (Reference Morgan1997), Gutzmann and Henderson (Reference Gutzmann and Henderson2019), and many others, which were all introspection-based and, if they used the word data, typically used it to refer to introspective judgments and/or example sentences.

Crucially, this is not just some complaint from a quantitative corpus linguist who wants corpus examples for the sake of corpus examples; the point is that what seem like clear-cut judgments from native speakers on made-up or even attested examples can look very different once one looks at (larger) quantities of data – as Sinclair (Reference Sinclair1991: 100) said, “Language looks very different when you look at a lot of it at once.” For example, it is likely that traditional linguists would consider a sentence such as He [VP donated [REC her] [PAT transplant money]] ungrammatical, since it is widely held that the verb donate cannot be used ditransitively (even though its meaning is so similar to that of the prototypical ditransitive verb give). However, Stefanowitsch (Reference Stefanowitsch2006: 69) shows that even the British National Corpus – a great but by today’s standards not particularly large corpus – already contains at least one example exactly like this (in a maybe atypical newspaper headline, admittedly), and Stefanowitsch (Reference Stefanowitsch2007: 65) lists ten examples of donate used ditransitively from a variety of internet pages from .uk domains, all of which “do not conform to what we might think of as the default donate frame”; instead, they appear to instantiate a frame that Stefanowitsch describes as follows:

A donor transfers some of his/her money to a recipient. The recipient is an official organization who uses the money to advance some public or charitable cause or to pay for its own expenses in doing so. The donor is an individual who gives the money because s/he believes in the cause, and without expecting to profit personally. There is no personal relationship between the donor and the recipient.

Thus, while linguistics in general and CxG in particular have benefited a lot even from papers that did not feature corpus data or analyses, linguists clearly have no unbiased and axiomatically correct view of what is possible (i.e., what can or cannot be said; see Labov Reference Labov and Austerlitz1975), let alone what is likely. Even theoretical works without any kind of quantification might have turned out a bit different if corpora or corpus-like data had been consulted systematically, and I think it is fair to say that usage-based linguistics and CxG have evolved precisely in this direction. For instance, Hamunen (Reference Hamunen2017) is not the least bit quantitative but still not only bases its diachronic exploration of the Finnish Colorative Construction mostly on 1,741 examples from three different corpora/corpus-like databases (viz. the Finnish Syntax archive, the Digital Morphology Archive, and the Digital Dictionary of Finnish Dialects), but also highlights all made-up examples as such. Beliën (Reference Beliën, Yoon and Gries2016) explicitly points out this methodological turn –

the method is applied to corpus data, because they show what types of structures are actually produced by speakers, and in which contexts. Earlier studies, on the other hand, relied on isolated, constructed sentences, with diverging grammaticality (or acceptability) judgments as a result. The authentic data presented here were collected from the 38 million-word corpus of the Institute for Dutch Lexicology … and from the Internet.

– before discussing the failure of traditional syntactic constituency tests regarding the analysis of Dutch particle constructions. However, it seems to me that most more recent and contemporary studies based on corpus data do involve at least some kind of quantification and I think that there are very few questions, if any, that cannot or should not be studied quantitatively as a matter of principle (but of course, there may be situations where, for example, data sparsity may rule out the use of certain statistical methods); see Jenset and McGillivray (Reference Jenset and McGillivray2017: section 3.7); Gries (Reference Gries2019b: 25–29), or Gries (Reference Gries2021 [2009]: section 1.2) for more on this question. We now turn to the simplest kind of quantification: frequencies of (co-)occurrence and (conditional) probabilities.

7.2.2 Frequencies of (Co-)occurrence and Conditional Probabilities

In spite of the statistical simplicity of frequencies and probabilities, if they are applied in the right kind of research context, they can be instructive, as is evidenced by a variety of studies having to do with issues of frequency as a mechanism driving, affecting, or at least correlating with entrenchment, learning/acquisition, language change, and productivity. For instance, in the area of language acquisition/learning, by now classic studies such as Goldberg’s (Reference Goldberg and MacWhinney1999) analysis of L1 acquisition data from CHILDES (to determine how highly frequent semantically light verbs facilitate the acquisition of semantically similar argument structure constructions) or Ellis and Ferreira-Junior’s (Reference Ellis and Ferreira-Junior2009a, Reference Ellis and Ferreira-Junior2009b) longitudinal study of L2 acquisition of verb-argument constructions in the European Science Foundation corpus were among the first to empirically highlight the importance of frequency of occurrence (of constructions) and frequency of co-occurrence (words in constructional slots) for language acquisition/learning, or for the ubiquity of Zipfian distributions of constructions, or for material within slots of constructions. Another hugely influential application of conditional probabilities – as cue validity – is Goldberg et al. (Reference Goldberg, Casenhiser and Sethuraman2004), which shows that certain patterns (e.g., V-Obj-Loc) have very high cue validities for certain meanings (e.g., caused motion), which reinforces the notion of constructions as pairings of form and meaning reliable enough to facilitate acquisition based on recognizing association patterns and chunking.

Quantitatively similar applications can also be found in other areas. An example of how corpus frequencies can inform theoretical argumentation is Boas (Reference Boas, Achard and Kemmer2004), who challenges a Minimalist Program account of wanna contraction in English. He shows that less than 1 percent of the examples of wanna contraction in the Switchboard corpus are instances within WH-clauses, which is interesting because most analyses put a lot of emphasis on wanna contraction in WH-clauses even though wanna contraction is actually more frequent than want to in relative clauses. As Boas (Reference Boas, Achard and Kemmer2004: 482) argues, if a theory of language claims not only to be descriptively but also explanatorily adequate, the question for Ausín’s (Reference Ausín2001) minimalist program analysis is how it may account for these differences in distribution.

Another study that is based on statistically very down-to-earth percentage data but uses them to make valuable theoretical contributions is Gaeta and Zeldes (Reference Gaeta and Zeldes2017). They use DeWaC, a 1.6 billion-word corpus of web-based German to study -er compounds (with agent noun heads) from a Construction Morphology perspective. On the basis of type, token, and hapax counts, they explore with which frequencies different combinations and orders of compounds are attested and the direction in which prototypical instances are generalized, and argue that Construction Morphology’s flexibility (in terms of permitting different derivational pathways of compounds) makes it an approach that supersedes purely syntactic or purely morphological approaches.

Quantitatively similar work – using type and token frequencies – is also found in Quochi (Reference Quochi, Yoon and Gries2016), a paper on a radial-category family of Italian light-verb constructions and their acquisition in L1 data from the CHILDES database. Approximately 2,100 instances of fare (‘do’) + noun constructions from children and adults are investigated in terms of the nouns/noun categories they occur with and the type–token ratios of verb-related nouns. Tracking new types over time she finds, among other things, that fare + nouns derived from verbs by suffixation appear to be rote-learned rather than instances of creative production. The general time course of acquisition, Quochi observes, is one where children first pick up on the most frequent uses, then develop a more abstract schema, which becomes generalized to intransitive actions, a development that is compatible with usage-based approaches to language acquisition of the kind outlined by Tomasello (Reference Tomasello2003), among others.