10.1 Introduction

Construction Grammar emerged in the 1980s as a response to the increasingly problematic core–periphery distinction in mainstream linguistics (Fillmore 1988; Fillmore et al. 1988; Kay & Fillmore 1999). Linguists were only supposed to care about a ‘core’ of systematic rules, while condemning a large ‘periphery’ of linguistic misfits to scientific purgatory. Construction Grammar proposed a road to salvation by abolishing this distinction and representing all of linguistic knowledge as conventionalized mappings between form and function called constructions, hypothesizing that “a grammatical formalism can be constructed … which is built exclusively on grammatical constructions” (Fillmore 1988: 54).

As can be gleaned from the wealth of contributions to the present book, the concept of a ‘construction’ struck such a chord in the linguistics community that the principles of constructional analysis were rapidly adopted by a wide variety of subdisciplines. Inevitably, Construction Grammar soon became the victim of its own success, and “it is nowadays more accurate to speak of Construction Grammars – plural – than of Construction Grammar” (Van de Velde 2014: 143). The original Construction Grammar (CxG; Fillmore 2013), written with capitals C and G, is therefore often called ‘Berkeley Construction Grammar’ (BCxG), an epithet that distinguishes it more clearly from constructional approaches that emerged later. These younger approaches are not meant to supplant CxG in the way that the Minimalist Program (Chomsky 1995) revised and replaced the theory of Government and Binding (Chomsky 1981) in transformational syntax; rather, they are considered complementary frameworks that emphasize different directions.

While this diversity of constructional approaches is a clear sign of a thriving community, it also means that newcomers to the field can easily get lost. Moreover, when exploring new directions, the community risks losing consensus about what it actually means to engage in a constructional analysis. The goal of this chapter is, therefore, to provide a comparative guide for navigating the constructional landscape. Along the way, this chapter also argues that the existence of different constructional flavors is in fact a healthy and necessary response to the problem of analyzing complex linguistic structures, which requires input from different perspectives. We will first provide some terminological foundations needed for our comparative guide (Section 10.2), after which we will consider how construction grammars handle empirical data (Section 10.3), the connection between meaning and form (Section 10.4), and how constructions can be formalized (Section 10.5). Given the richness of the construction grammar community and the practical limits of space, this chapter focuses exclusively on the following flavors of construction grammar:

(1) (Berkeley) Construction Grammar (CxG; Fillmore 1988; Kay & Fillmore 1999; Fillmore 2013) primarily aims to describe a language user’s knowledge of their language, but its toolbox has turned out to be very versatile, which makes CxG an excellent foundation for any kind of constructional research.

(2) Cognitive Construction Grammar (CCG; Goldberg 1995, 2006, 2019), or the ‘Goldbergian’ flavor of CxG, focuses heavily on psychological plausibility, language usage, and language learning. Whereas CxG offers detailed analyses of constructions, Cognitive Construction Grammar uses informal diagrams for ease of exposition and puts more emphasis on the design of psycholinguistic experiments and corpus studies.

(3) Radical Construction Grammar (RCG; Croft 2001) focuses on typological adequacy and language evolution. Its epithet ‘radical’ comes from the fact that RCG takes the foundational assumption of Construction Grammar to its logical conclusion: If constructions are the basic units of language, then all linguistic categories must be defined relative to the constructions they occur in (as opposed to forming a global set). Moreover, RCG adopts strict empirical methods in order to treat every language in its own right and with as little bias as possible (Croft 2010), which makes RCG suitable both for descriptive linguistics and for modeling linguistic competence.

(4) Sign-Based Construction Grammar (SBCG; Michaelis 2009; Sag 2012) is a constructional reconceptualization of Head-Driven Phrase Structure Grammar (Pollard & Sag 1994) that aims to develop, with mathematical precision, a processing-neutral model of a language user’s linguistic knowledge (Sag & Wasow 2011). SBCG is especially useful for estimating degrees of well-formedness of constructs.

(5) Fluid Construction Grammar (FCG; Steels 2004, 2017) is not a theory but an open-source platform for implementing computational construction grammars and models of constructional language processing (van Trijp et al. 2022). Besides its use for operationalizing constructional analyses, FCG also offers the necessary tools for scaling construction grammars up to large corpora.

10.2 A Babelesque Confusion

The evolutionary biologist Eörs Szathmáry once jokingly said that linguists would rather share each other’s toothbrush than each other’s terminology (p.c.). Unfortunately, construction grammarians are no exception: the definition of a ‘construction’ may vary over time and from approach to approach, and individual construction grammarians may each use different terminology. This section therefore defines the concepts, summarized in Table 10.1, that our comparative guide needs in order to avoid a Babelesque confusion.

Table 10.1 Basic terminology used in this chapter

| Concept name | Collection name | Status |
|---|---|---|
| Construct | Corpus | Empirically observable |
| Constructional analysis | Analysis bank | Theoretical |
| Construction | Constructicon | Theoretical |

10.2.1 Constructs and Corpora

First of all, we need to maintain a distinction between empirical ‘constructs’ on the one hand and theoretical notions such as ‘constructions’ on the other. An (empirical) construct is an actual linguistic expression, such as a word, phrase, or sentence. Constructs can be observed and collected in a ‘corpus’. Example (1) offers a small corpus of constructs from Franz Kafka’s Metamorphosis (translated by Willa and Edwin Muir).

(1) As Gregor Samsa awoke one morning from uneasy dreams he found himself transformed in his bed into a gigantic insect. He was lying on his hard, as it were armor-plated, back and when he lifted his head a little he could see his dome-like brown belly divided into stiff arched segments on top of which the bed quilt could hardly keep in position and was about to slide off completely. His numerous legs, which were pitifully thin compared to the rest of his bulk, waved helplessly before his eyes.

10.2.2 Constructional Analyses and Analysis Banks

Everything beyond constructs “is part of a theory, invented by a linguist” (Dowty 1996: 12). A first theoretical concept is that of a ‘constructional analysis’ (of a construct), which represents a linguist’s model of a construct, much as phonemes represent a linguist’s analysis of actual sound patterns in spoken languages or actual signs in signed languages. For instance, while example (1) contains the actual construct a gigantic insect, a linguist may ‘model’ that construct in a more abstract way in order to identify generalizations about a language, for example by using a phrase structure tree (example [2]). A widely used representation in the constructional literature is the ‘boxes-within-boxes’ notation (Fried & Östman 2004) of example (3), in which the tree structure of example (2) is represented as nested boxes, with the outer box representing a parent node (also called a ‘Mother’ node) and the inner boxes representing its child nodes (also called ‘Daughters’). Each box also has a ‘feature structure’ (consisting of pairs of features and values) for adding more fine-grained linguistic information, such as the features ‘cat’ (category) and ‘lxm’ (lexeme), and values such as ‘np’ (noun phrase) or the lexeme gigantic. As we will see later in this chapter, constructional analyses are often more complex than tree structures, so instead of being collected in a treebank, constructional analyses can be stored in a ‘constructional analysis bank’.

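(2) [Figure: phrase structure tree of the construct a gigantic insect]

(3) [Figure: boxes-within-boxes representation of the construct a gigantic insect]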
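As a rough illustration only, the boxes-within-boxes analysis of a gigantic insect in example (3) can be encoded as nested Python dictionaries. The encoding is an assumption made for this sketch: each box is a dictionary with a hypothetical "features" entry for its feature–value pairs and an optional "daughters" list for its inner boxes, and the category labels 'det', 'adj', and 'n' are illustrative.

```python
# Hypothetical encoding of the boxes-within-boxes analysis of "a gigantic insect".
# Each box is a dict: "features" holds feature-value pairs, "daughters" holds inner boxes.
analysis = {
    "features": {"cat": "np"},  # the Mother box
    "daughters": [              # the Daughter boxes, from left to right
        {"features": {"cat": "det", "lxm": "a"}},
        {"features": {"cat": "adj", "lxm": "gigantic"}},
        {"features": {"cat": "n", "lxm": "insect"}},
    ],
}

def leaves(box):
    """Return the lexemes of the innermost boxes, from left to right."""
    daughters = box.get("daughters", [])
    if not daughters:
        return [box["features"]["lxm"]]
    result = []
    for daughter in daughters:
        result.extend(leaves(daughter))
    return result

print(" ".join(leaves(analysis)))  # prints "a gigantic insect"
```

Reading the lexemes off the innermost boxes recovers the construct itself, while the Mother box carries information ('cat' = 'np') that is not visible in the construct.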

By far the majority of constructional analyses in the literature are ‘pen-and-paper’ analyses carried out by linguists themselves. These analyses are almost never presented in full detail, either because the construction grammarian is using informal diagrams or because the full analysis involves too much information to fit within the space limits of a publication. Alternatively, an analysis can be produced by a computational construction grammar. In this case, a linguist typically uses a computational tool to operationalize constructional knowledge, which is then applied to automatically analyze or produce constructs. The linguist can then verify whether the analysis corresponds to their expectations and use the outcome either to validate their theory or to identify which parts need further refinement. The computational model can then be shared as supplementary material to a publication, allowing other researchers to verify and reproduce its analyses, or be made available as a web demonstration.

The term ‘constructional analysis’ does not really occur in the literature in the sense used in this chapter. In fact, many construction grammarians never clearly distinguish between what they call a ‘construction’, what is called here a ‘constructional analysis’, and what we defined earlier as a ‘construct’. It is therefore common to find statements such as that the constructional analysis of example (3) is ‘an instance of a Determiner-Adjective-Noun construction’, or that the construct she gave her son a present is ‘an instance of the Ditransitive construction’. As we will see later, such phrasing is confusing, and it would be more precise to say that a constructional analysis such as example (3) is accounted for by a combination of several constructions, including not only the Determiner-Adjective-Noun construction but also lexical constructions that contribute information about the words a, gigantic, and insect.

Unsurprisingly, the more formal constructional approaches clearly distinguish between constructions and constructional analyses. In CxG and Sign-Based Construction Grammar (SBCG), a constructional analysis is called a ‘modeling-domain object’ (Kay 2002) or a ‘linguistic object’ (Sag 2012). In Fluid Construction Grammar, a constructional analysis is called a ‘(final) transient structure’ (Steels 2017).

10.2.3 Constructions and Constructicons

Obviously, it is impossible to compile an exhaustive corpus of all the constructs of a language “because all languages allow an indefinitely large number of sentences” (Haspelmath 2009: 290); hence it is equally impossible to develop an exhaustive constructional analysis bank. Descriptive linguistic theories therefore try to describe recurrent patterns of language usage in a way that accurately predicts the linguistic behavior of the members of a linguistic community. Traditionally, such recurrent patterns have been called ‘constructions’. When using the term in this sense, a linguist might say, for instance, that English employs the ‘Progressive Construction’ for expressing ongoing events using the pattern ‘Auxiliary-BE + V-ing’, as exemplified in the sentence I am learning how to develop computer games.

Since the Chomskyan revolution of the 1950s–1960s (Chomsky 1957, 1965), cognitive linguistic theories have emerged that aim to account for the linguistic knowledge that a language user needs for producing and comprehending novel constructs. Chomskyans assume that such knowledge takes the form of a generative device that applies a small set of abstract rules (Chomsky 1981, 1995). In this view, constructions exist only as ephemeral side effects of more fundamental operations and are therefore inconsequential for linguistic theorizing. As a result, there has been a wide gap between descriptive and cognitive linguistic theories. Indeed, the linguist Norbert Hornstein has attempted to rebrand descriptive linguistics as ‘languistics’, keeping the term ‘linguistics’ exclusively for theories that focus on mental models of linguistic competence (Leivada 2014). The major innovation of Construction Grammar, then, was to reconceptualize the traditional notion of a construction as the basic unit of linguistic knowledge (Fillmore et al. 1988). In other words, for language users to ‘know their language’, they simply have to know its constructions (Fried & Östman 2004), an idea that could finally bridge the gap between descriptive and cognitive linguistic theories.

All flavors of construction grammar except SBCG therefore assume that a language user’s linguistic inventory is a structured network that consists exclusively of constructions (Diessel 2019). Since this inventory does not sharply distinguish between lexical and grammatical constructions, it is called a ‘constructicon’. SBCG is the odd one out in distinguishing between ‘listemes’ (which roughly correspond to the ‘lexicon’) and phrasal constructions, though it still offers a uniform way to model the syntax–lexicon continuum (Michaelis 2019). Fluid Construction Grammar, which supports any kind of constructional analysis, assumes a single constructicon by default but allows its users to distinguish lexical from grammatical constructions if they wish to do so.

10.2.4 The Relation between Constructions and Constructional Analyses

The relation between constructions on the one hand, and constructional analyses of constructs on the other, is different depending on the constructional flavor. As a rule of thumb, constructions can be thought of as (partially) schematic pieces of conventionalized linguistic information (or ‘linguistic patterns’), while a constructional analysis is always specific to the construct it represents. For example, the English Determiner-Adjective-Noun pattern in example (4) abstracts away from the specific analysis of example (3) by leaving the lexemes of the inner boxes unspecified. This pattern is therefore not only compatible with the construct a gigantic insect, but also with any other construct that follows the same pattern, such as his numerous legs.

(4) [Figure: schematic Determiner-Adjective-Noun pattern with unspecified lexemes]
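The difference between a schematic pattern and a specific analysis can be sketched in a few lines, assuming a hypothetical dictionary encoding of boxes: a pattern such as example (4) simply omits the 'lxm' feature, and a hypothetical matches() function accepts any analysis that agrees with every feature the pattern does specify. This is a toy compatibility check, not a model of any particular framework.

```python
def box(cat, lxm=None, daughters=None):
    """Build a box; leaving lxm as None leaves the lexeme unspecified."""
    features = {"cat": cat}
    if lxm is not None:
        features["lxm"] = lxm
    return {"features": features, "daughters": daughters or []}

def matches(pattern, analysis):
    """True if the analysis has every feature value the pattern specifies,
    and its daughters match the pattern's daughters one by one, in order."""
    for feature, value in pattern["features"].items():
        if analysis["features"].get(feature) != value:
            return False
    if len(pattern["daughters"]) != len(analysis["daughters"]):
        return False
    return all(matches(p, a) for p, a in zip(pattern["daughters"], analysis["daughters"]))

# Schematic Determiner-Adjective-Noun pattern: categories only, no lexemes.
det_adj_noun = box("np", daughters=[box("det"), box("adj"), box("n")])

gigantic_insect = box("np", daughters=[box("det", "a"), box("adj", "gigantic"), box("n", "insect")])
numerous_legs = box("np", daughters=[box("det", "his"), box("adj", "numerous"), box("n", "legs")])
bare_insect = box("np", daughters=[box("det", "a"), box("n", "insect")])

print(matches(det_adj_noun, gigantic_insect))  # True
print(matches(det_adj_noun, numerous_legs))    # True
print(matches(det_adj_noun, bare_insect))      # False: no adjective daughter
```

The same pattern covers both a gigantic insect and his numerous legs precisely because it says nothing about their lexemes.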

Most construction grammarians will say that constructions can be combined with each other to produce or comprehend utterances, but it is almost never spelled out exactly how constructions combine. In fact, most constructional flavors, including those that subscribe to the tenets of usage-based linguistics (Diessel 2017), in practice uphold some distinction between competence and performance (Chomsky 1965) and focus only on constructions as pieces of linguistic knowledge, without modeling how that knowledge should be processed.

The strongest competence-performance distinction is found in the so-called ‘constraint-based’ construction grammars, particularly SBCG, which aims at processing-neutral competence models (Sag & Wasow 2011; Sag 2012). In a constraint-based grammar, constructions are defined as ‘partial descriptions’ of well-formed structures or, more precisely, as ‘constraints’ on what the grammar will accept as well-formed structures. An intuitive way to think about an SBCG grammar is to compare it to the list of building regulations that a safety inspector must check in order to approve a house, without having to build the house themselves (which is the job of the contractor). Likewise, SBCG constructions are formulated as constraints that can be checked independently of each other in order to estimate the degree of well-formedness of a construct, without having to be combined to actually form the construct (which is the job of the processor).
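The inspector analogy can be made concrete with a toy sketch, under loudly stated assumptions: the analysis is flattened into a single dictionary, each 'construction' is reduced to a named predicate, and the 'degree of well-formedness' is approximated as the share of satisfied constraints. None of this is SBCG's actual formalism; it only illustrates that each constraint is checked on its own and that no constraint builds any structure.

```python
# Hypothetical, flattened analyses of two noun phrases.
good = {"cat": "np", "det": "a", "adj": "gigantic", "noun": "insect", "number": "sg"}
bad = {"cat": "np", "det": "a", "noun": "legs", "number": "pl"}

# Each constraint is an independent predicate; none of them modifies the analysis.
constraints = {
    "np-has-noun": lambda a: "noun" in a,
    "np-has-determiner": lambda a: "det" in a,
    "indefinite-article-is-singular": lambda a: a.get("det") != "a" or a.get("number") == "sg",
}

def check_constraints(analysis, constraints):
    """Check every constraint separately; return violated names and the share satisfied."""
    violated = [name for name, holds in constraints.items() if not holds(analysis)]
    share_satisfied = 1 - len(violated) / len(constraints)
    return violated, share_satisfied

print(check_constraints(good, constraints))  # ([], 1.0)
violated, share = check_constraints(bad, constraints)
print(violated, round(share, 2))  # ['indefinite-article-is-singular'] 0.67
```

Because the constraints never interact, they could be checked in any order, which mirrors the processing-neutral stance described above.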

A second kind of construction grammar is ‘usage-based’ (Diessel 2017), a group that includes, among others, Radical Construction Grammar (Croft 2001), Cognitive Construction Grammar (Goldberg 2006, 2019), and a lot of work in Berkeley Construction Grammar (see further below). Usage-based construction grammarians assume that language learning and usage have a profound impact on linguistic structure and therefore typically direct their attention to questions of language acquisition (Diessel 2004; Goldberg et al. 2004; Lieven 2009), language change (Croft 2000; Fried 2009), and language processing (Goldberg 2019). However, such studies typically offer verbal explanations of the role of constructions in production and comprehension but rarely provide an explicit processing model of how constructions can be combined with each other, as lamented by Bod (2009).

(Berkeley) Construction Grammar offers a versatile approach that can be classified as constraint-based but that has also been fruitfully applied in usage-based studies (e.g., Fried 2009), contrary to the common misunderstanding that CxG is not usage-based (see, e.g., Goldberg 2006; Hoffmann 2022). In fact, CxG is the only theoretical approach in our comparison that proposes a detailed model of how two constructions can be combined with each other: ‘Kay unification’ (Kay & Fillmore 1999; Kay 2002).¹ Unification is a complex concept with many different instantiations (Knight 1989), but for our purposes it suffices to understand it as a way to combine two constructions with each other as long as they do not contain conflicting information. In CxG, a construct is therefore said to be licensed if there exists a set of constructions that can be unified with each other to form the constructional analysis of the construct. However, just like the other constructional flavors, CxG does not propose a processing model for actually computing a constructional analysis.
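The idea of combining constructions ‘as long as they do not contain conflicting information’ can be illustrated with a textbook-style unification of feature structures, encoded here as nested Python dictionaries with atomic values. This is a generic sketch, not an implementation of Kay unification, and the feature names 'cat', 'lxm', 'agr', and 'num' as well as the entries below are hypothetical.

```python
def unify(fs1, fs2):
    """Unify two feature structures (nested dicts with atomic values).

    Returns a new structure containing the information of both, or None
    if they assign conflicting values to the same feature path."""
    result = dict(fs1)
    for feature, value2 in fs2.items():
        if feature not in result:
            result[feature] = value2
            continue
        value1 = result[feature]
        if isinstance(value1, dict) and isinstance(value2, dict):
            merged = unify(value1, value2)
            if merged is None:
                return None
            result[feature] = merged
        elif value1 != value2:
            # Conflicting atomic values (or an atom versus a structure).
            return None
    return result

# Hypothetical requirement that the singular article "a" imposes on its noun.
article_requirement = {"cat": "n", "agr": {"num": "sg"}}
insect = {"cat": "n", "lxm": "insect", "agr": {"num": "sg"}}
legs = {"cat": "n", "lxm": "legs", "agr": {"num": "pl"}}

print(unify(article_requirement, insect))  # {'cat': 'n', 'agr': {'num': 'sg'}, 'lxm': 'insect'}
print(unify(article_requirement, legs))    # None: conflicting number values
```

The inputs are never modified, so the same requirement can be tried against any number of candidate constructions.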

Linguists who are nevertheless interested in developing models of constructional language processing can do so using Fluid Construction Grammar (Steels 2004, 2017), a special-purpose programming language for implementing construction grammars.² FCG can be downloaded either as open-source software or as part of the FCG Editor, an integrated development environment for construction grammars (van Trijp et al. 2022). In FCG, constructions are used for mapping meanings onto constructs (‘production’, or ‘formulation’ in FCG terminology) or, vice versa, for decoding a construct into its underlying meaning (‘comprehension’). Details of processing can be inspected through an interactive web interface and modified with the help of a grammar configurator. In FCG, constructions are not combined directly with each other but operate on a structure called a ‘transient structure’, which contains all the information about a construct, starting from the meaning to express in production or the form to analyze in comprehension. The word ‘transient’ highlights the fact that this structure changes over time as more constructions contribute information, until a ‘final transient structure’ is reached, which corresponds to what this chapter calls a ‘constructional analysis’. We will return to these matters in Section 10.5.
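To convey the general idea of a transient structure that grows as constructions apply, here is a deliberately simplified sketch of comprehension. It is an assumption-laden toy, not FCG: FCG is written in Common Lisp and has its own construction notation and processing machinery, whereas every name below (comprehend, the construction triples, the 'units' entry, the meaning predicates) is hypothetical.

```python
def lexical_cxn(word, meaning):
    """Hypothetical lexical construction: if the word is in the form, add its meaning."""
    def applies(ts):
        return word in ts["form"] and meaning not in ts["meaning"]
    def apply(ts):
        ts["meaning"].append(meaning)
    return (f"{word}-cxn", applies, apply)

def np_applies(ts):
    """Hypothetical phrasal construction: group the words once all three meanings are known."""
    needed = {"indefinite(x)", "gigantic(x)", "insect(x)"}
    return needed <= set(ts["meaning"]) and not ts["units"]

def np_apply(ts):
    ts["units"].append(("np", tuple(ts["form"])))

constructions = [
    lexical_cxn("a", "indefinite(x)"),
    lexical_cxn("gigantic", "gigantic(x)"),
    lexical_cxn("insect", "insect(x)"),
    ("det-adj-noun-cxn", np_applies, np_apply),
]

def comprehend(words, constructions):
    """Start from the form and let constructions add information until none applies."""
    ts = {"form": list(words), "meaning": [], "units": [], "applied": []}
    changed = True
    while changed:
        changed = False
        for name, applies, apply in constructions:
            if applies(ts):
                apply(ts)
                ts["applied"].append(name)
                changed = True
    return ts  # the final transient structure

final = comprehend(["a", "gigantic", "insect"], constructions)
print(final["meaning"])  # ['indefinite(x)', 'gigantic(x)', 'insect(x)']
print(final["applied"])  # ['a-cxn', 'gigantic-cxn', 'insect-cxn', 'det-adj-noun-cxn']
```

Note that the constructions never touch each other: each one only reads and extends the shared transient structure, and processing stops when no construction can contribute anything new.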

10.3 Getting the Empirical Facts Straight

While the earliest work in Construction Grammar in the 1980s–1990s set out an ambitious research agenda and laid the field’s theoretical foundations, most constructional research in the twenty-first century has been concerned with getting the empirical facts straight.

10.3.1 The Distributional Method Revisited

One of construction grammar’s oldest problems has been how to identify a construction based on a corpus of empirical evidence. For a long time, it was popular to posit the existence of a construction as soon as a pattern could be found whose form or meaning could not be reduced to the sum of its parts, or which could not be formed through the composition of other constructions. Goldberg formulated this view as follows:

C is a construction iff_def C is a form–meaning pair ⟨F_i, S_i⟩ such that some aspect of F_i or some aspect of S_i is not strictly predictable from C’s component parts or from any other previously established constructions. (Goldberg 1995: 4)

Such a definition implies that there is no room for redundancy in the constructicon, which was indeed the prevalent view in early Construction Grammar and still holds for SBCG. Nowadays, however, most construction grammarians argue that any pattern that is frequent enough can be stored as a construction, even if doing so would be redundant. That brings us back to the question of how a construction can be identified.

The constructional theory that has been most explicit about how constructions can be identified is Radical Construction Grammar, which grew out of a thorough re-examination of what kind of syntactic argumentation is warranted for describing linguistic structures (Croft 2001, 2005, 2010). Most importantly, Croft formulates his methodological arguments in a way that does not presuppose a constructional approach but approaches the empirical data as neutrally as possible (outlined in more detail in Chapter 17). Too often in the past, linguists have wittingly or unwittingly described languages from the viewpoint of well-studied languages such as Latin or English, or they have applied unwarranted assumptions from a linguistic framework (Haspelmath 2009). To avoid such pitfalls, Croft (2010: 314) argues that the ‘distributional analysis’ remains “the most important and soundest methodology in syntactic analysis,” provided that it is modified to relate utterances to actual usage events. Let us first look at how the distributional analysis can be used for examining the occurrence or non-occurrence of items in the ‘slots’ of a construction, before addressing the question of how to identify the constructions themselves. We will use a couple of simple examples from Croft (2001: 12), repeated here as examples (5)–(8).

(5) a. Jack is cold. b. *Jack colds.

(6) a. Jack is happy. b. *Jack happies.

(7) a. *Jack is dance. b. Jack dances.

(8) a. *Jack is sing. b. Jack sings.

In the (a) sentences in examples (5)–(8), the words cold, happy, dance, and sing follow the inflected copula be. This is acceptable for cold and happy, but not for dance and sing. In the (b) sentences, there is no copula and the four words appear with the suffix -s. These sentences are acceptable for dance and sing, but not for cold and happy. Cold and happy therefore have the same distribution, while dance and sing share a different distribution.
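The reasoning above amounts to grouping words by identical acceptability profiles across frames. A minimal sketch, assuming the judgments of examples (5)–(8) are encoded as Booleans and using hypothetical frame labels 'be X' (Jack is X) and 'X-s' (Jack X-s):

```python
from collections import defaultdict

# Acceptability judgments from examples (5)-(8): True = acceptable.
judgments = {
    "cold":  {"be X": True,  "X-s": False},
    "happy": {"be X": True,  "X-s": False},
    "dance": {"be X": False, "X-s": True},
    "sing":  {"be X": False, "X-s": True},
}

def distribution_classes(judgments):
    """Group words whose acceptability is identical across all frames."""
    classes = defaultdict(list)
    for word, frames in judgments.items():
        signature = tuple(sorted(frames.items()))  # the word's distribution profile
        classes[signature].append(word)
    return sorted(classes.values())

print(distribution_classes(judgments))  # [['cold', 'happy'], ['dance', 'sing']]
```

Adding further frames to the table can only split classes further, never merge them, which is why new data such as examples (11)–(12) can break up a class that looked uniform.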

Now consider examples (9)–(10), in which cold and happy appear after a determiner and before a noun.

(9) a cold winter

(10) a happy person

Again, cold and happy have the same distribution pattern. Traditionally, such data have been used as evidence for the existence of the part-of-speech adjective as an abstract category. However, a group of words that Boyd and Goldberg (2011) call a-adjectives resist occurring in prenominal position, as shown in examples (11)–(12).

(11) a. the boy is afraid b. ??the afraid boy

(12) a. the man is asleep b. ??the asleep man

The empirical record thus shows that words such as afraid and asleep do not share the exact same distribution pattern as cold and happy, which is problematic for traditional approaches that classify all four words as adjectives. As noted by Croft (2001), a common ‘solution’ to such problems is methodological opportunism, whereby the linguist decides in an ad hoc manner to ignore certain data or criteria to fit their analysis, even if it means positing abstract categories that are not borne out by the evidence. Another common way to handle these problems is to introduce additional theoretical apparatus to save the analysis (see also the discussion of Active vs. Passive in Section 10.3.2), for which there are no empirical grounds either.

Croft (2001) argues that the problems of the distributional analysis stem from the fact that most linguistic theories are ‘reductionist’. That is, most linguistic theories try to reduce complex phenomena to ‘atomic’ (i.e., irreducible) building blocks such as parts of speech that are more manageable. Construction grammarians, on the other hand, assume that the ‘primitive’ units of language (constructions) are inherently complex themselves. ‘Primitive’ here means that constructions cannot be explained in terms of their components because the whole construction is greater than the sum of its parts. RCG takes this constructions-as-the-primitives-of-language assumption to its logical conclusion and, therefore, argues that a construction’s components are construction-specific and should be defined in terms of their role within a specific construction.

From a theoretical perspective, it may seem counterintuitive to give up atomic categories for more complex primitives. However, it is well known from other fields that more sophisticated building blocks ultimately lead to simpler solutions for complex and large-scale problems. A good example is architecture: You can easily build a dog house using basic materials such as a sheet of plywood, clamps, and screws, but those materials would never scale to support a house for humans. Likewise, it is easy to account for declarative sentences in English using simple rules such as phrase structure rules, but trying to apply them to more complex structures or typologically different languages rapidly leads to convoluted analyses that require additional mechanisms such as transformations, lexical rules, or global principles – all of which can be discarded when constructions are used (van Trijp 2014, 2015). Indeed, the constructional approach “provides a uniform model of grammatical representation and at the same time captures a broader range of empirical phenomena” (Croft 2001: 17) – all the while using a simpler scientific metalanguage than other approaches.

RCG has the simplest scientific metalanguage of all constructional flavors. All that is needed for describing a language is a taxonomy of constructions, and all that is needed for describing the internal structure of constructions is part–whole relations: “The syntactic structure of constructions consists only of their elements (which may also be complex …) and the roles that they fulfill in the construction” (Croft 2001: 5). Categories are therefore not global pieces of knowledge but construction-specific, so every construction can be described in its own (often quirky) way, which avoids problems such as leaky abstractions. Returning to examples (5)–(12), RCG would therefore not introduce an abstract category such as ‘adjective’, but simply posit separate constructions for constructs such as Jack is happy and for constructs such as a happy person. Each construction has its own slot for elements that can play a modifier role, and each imposes its own conditions on which elements may fill that slot. While the first construction happily allows the so-called a-adjectives to play the modifier role, the second one is more restrictive.
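The RCG alternative can be sketched as follows, assuming that each construction simply lists the elements that may fill its modifier slot. The construction names 'Predicative' (Jack is happy) and 'Attributive' (a happy person) are hypothetical labels, and the ?? judgments of examples (11)–(12) are treated here as exclusions. The point of the sketch is that there is no global 'adjective' category anywhere in the code: slot membership is always relative to a construction.

```python
# Each construction defines its own modifier slot fillers (examples (5)-(12)).
constructions = {
    "Predicative": {"cold", "happy", "afraid", "asleep"},  # Jack is happy; the boy is afraid
    "Attributive": {"cold", "happy"},                      # a happy person; ??the afraid boy
}

def licensing_constructions(word):
    """Return the constructions whose modifier slot accepts the word, alphabetically."""
    return sorted(name for name, fillers in constructions.items() if word in fillers)

print(licensing_constructions("happy"))   # ['Attributive', 'Predicative']
print(licensing_constructions("asleep"))  # ['Predicative']
print(licensing_constructions("dance"))   # []
```

If a word's behavior changes in one construction, only that construction's filler set needs updating; no category shared by other constructions is affected.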

So far we have focused only on the occurrence and non-occurrence of elements in the slots of a construction, but we have not yet answered the question of how constructions themselves can be identified. Croft (2001: 236f.) writes that constructions have a “structural Gestalt” that language users can recognize as a pattern. For example, the English Passive has several unique cues that set it apart from other constructions, such as the combination of auxiliary-be and the past participle, and a by-phrase with the Agent or Source of the action.

Since RCG is a cognitive theory of language just like the other flavors, it offers a theory of the linguistic knowledge that language users need for producing and comprehending constructs. However, its careful treatment of data and its simple scientific metalanguage also make RCG a good starting point for a descriptive theory of a language that makes no claims about cognitive plausibility, and Croft (2001: 59–61) provides a brief overview of what a reference grammar in RCG would look like.

10.3.2 The Collostructional Analysis and Its Extensions

While RCG caters to linguists who need to approach an undescribed language and therefore need to discover in an unbiased way which constructions exist, most empirical studies in the construction grammar community start by positing a construction and then use the ‘collostructional analysis’ to characterize its meaning in a more precise and objective way (Stefanowitsch & Gries 2003; see also Chapters 6 and 7). The name ‘collostructional’ is a combination of ‘construction’ and ‘collocational’, the latter being a term from corpus linguistics that designates a series of forms that co-occur more frequently than would be expected by chance. The collostructional analysis can thus be seen as a kind of collocational analysis that assumes the existence of a construction and then identifies which forms are associated with the slots of the construction. The underlying assumption is that a construction will attract semantically compatible lexemes while repelling others. The collostructional analysis is in principle compatible with any constructional flavor, but has in practice mostly been carried out within the framework of Cognitive Construction Grammar (Goldberg 2006, 2019). Just like RCG, CCG is usage-based, but with a stronger focus on the psychological aspects of language learning (Tomasello 2003; Diessel 2004; Lieven 2009) and processing (Boyd & Goldberg 2011; Goldberg 2019).
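The core computation of a collexeme analysis can be sketched as follows. Stefanowitsch and Gries (2003) measure attraction with the Fisher exact test on a 2×2 table of co-occurrence frequencies; the sketch below computes the one-tailed p-value directly from the hypergeometric distribution using only the Python standard library (math.comb, Python 3.8+). The corpus counts are invented purely for illustration, and reporting strength as -log10(p) is one common convention rather than the only one.

```python
import math

def collexeme_p(a, b, c, d):
    """One-tailed Fisher exact p-value for attraction between a lexeme and a construction.

    a: lexeme in the construction      b: other lexemes in the construction
    c: lexeme elsewhere in the corpus  d: other lexemes elsewhere
    Returns P(cell a >= observed) given the table's fixed margins."""
    in_cxn = a + b            # tokens of the construction
    lexeme_total = a + c      # tokens of the lexeme
    other_total = b + d       # tokens of all other lexemes
    n = a + b + c + d
    upper = min(in_cxn, lexeme_total)
    hits = sum(math.comb(lexeme_total, x) * math.comb(other_total, in_cxn - x)
               for x in range(a, upper + 1))
    return hits / math.comb(n, in_cxn)

# Invented counts: the lexeme occurs 20 times in a 100-token construction
# and 30 times elsewhere in a 10,000-token corpus.
p = collexeme_p(20, 80, 30, 9870)
strength = -math.log10(p)
print(f"p = {p:.3g}, collostruction strength = {strength:.1f}")
```

Exact integer arithmetic is used for the binomial coefficients, so the only rounding happens in the final division.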

The collostructional analysis has been extended in several directions. For instance, diachronic collostructional analysis (Hilpert 2012) tracks changes in collocational patterns over time using temporally ordered corpora. Another popular extension, called ‘distinctive-collexeme analysis’, is geared towards the investigation of pairs of semantically similar constructions, also known as ‘alternations’ (Gries & Stefanowitsch 2004). One of the most famous ‘alternating’ pairs in English is the Active versus Passive voice, as illustrated in examples (13)–(14).

(13)

The boy kicked the ball.
SUBJ Verb OBJ

(14)

The ball was kicked (by the boy).
SUBJ Aux-be Verb-ed (by-Agent)

A distinctive-collexeme analysis can help refine the analysis of both constructions or decide between competing theories. Here we can contrast a ‘lexicalist’ with a ‘constructional’ approach. In lexicalist and other mainstream approaches, the English Passive has typically been treated as a derivational rule, that is, a syntactic operation that turns an active verb into a passive one. In a constructional approach, the Passive Construction is a first-class citizen just like the Active Construction, and therefore has its own semantics.

Gries and Stefanowitsch (2004: 108) note that only a few studies have attempted to characterize the meaning of the Passive construction independently of the Active, but they point to a proposal by Pinker (1989: 91), who defines the meaning of the Passive as “X is in the circumstance … for which Y is responsible,” with X referring to the Passive subject and Y to the agentive by-phrase. Gries and Stefanowitsch (2004: 108) argue that if the Passive is essentially a syntactic derivation (as hypothesized in the lexicalist approach), one would not expect to find any strong differences in which verbs fit the Active or Passive sentence types. If, on the other hand, the Passive Construction has its own semantics (as hypothesized by the constructional approach), one would expect to find many distinctive collexemes.
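Distinctive-collexeme analysis can be sketched with a 2×2 table in which the columns are the two alternating constructions rather than "construction versus everything else". The Python sketch below assumes a one-sided Fisher-Yates exact test in the direction of the construction the verb prefers; the function names are hypothetical and the counts are toy numbers, not Gries and Stefanowitsch's data.

```python
from math import comb


def hypergeom_tail(a: int, b: int, c: int, d: int, upper: bool) -> float:
    """P(X >= a) if upper else P(X <= a) for the 2x2 table [[a, b], [c, d]]."""
    row, col, n = a + b, a + c, a + b + c + d
    lo, hi = max(0, col - (n - row)), min(row, col)
    ks = range(a, hi + 1) if upper else range(lo, a + 1)
    return sum(comb(row, k) * comb(n - row, col - k) for k in ks) / comb(n, col)


def distinctive_collexeme(verb_active: int, verb_passive: int,
                          active_total: int, passive_total: int) -> tuple[str, float]:
    """Return the construction the verb is distinctive for, plus a one-sided p-value.

    Table layout:        Active          Passive
        this verb       verb_active     verb_passive
        other verbs     (rest)          (rest)
    """
    a, b = verb_active, verb_passive
    c, d = active_total - verb_active, passive_total - verb_passive
    expected_active = (a + b) * active_total / (active_total + passive_total)
    if a >= expected_active:
        return "Active", hypergeom_tail(a, b, c, d, upper=True)
    return "Passive", hypergeom_tail(a, b, c, d, upper=False)


# Toy counts (hypothetical): a verb that mostly shows up in the Passive
print(distinctive_collexeme(verb_active=4, verb_passive=36,
                            active_total=9000, passive_total=1000))
```

Under the lexicalist hypothesis, most verbs would be expected to show no strong preference either way; many verbs with very small p-values in one direction would instead favor the constructional view.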

The results of their study show that the constructional approach makes the correct prediction. The most distinctive collexemes for the Active Construction (e.g., have, think, get) are verbs whose direct objects are not easily construed as patients (e.g., stative verbs). The most distinctive collexemes for the Passive Construction (e.g., base, concern, involve) confirm the definition of Pinker (1989: 91) and “overwhelmingly encode processes that cause the patient to come to be in a relatively permanent end state” (Gries & Stefanowitsch 2004: 109). They conclude that “the distinctive-collexeme analysis shows that passive voice is a construction in its own right with its own specific semantics.”

10.4 Constructions and Frames

We now turn from empirically observable forms to the question of how constructions handle the expression of meaning. All flavors of construction grammar assume a Frame Semantics approach to lexical meaning (Fillmore 1976, 1982; Petruck 2011), which is widely considered to be construction grammar’s ‘sister theory’ (see Chapter 1 in this volume). Unfortunately, the phrase ‘Frame Semantics is assumed’ is often repeated as a sort of mantra in the constructional literature without further investigation of how semantics and grammar interact with each other, so it is worthwhile to briefly investigate this relation.

10.4.1 Scenes and Frames

The central slogan of Frame Semantics is that meanings are relativized to scenes (Fillmore 1977; see also Chapter 1). Scenes can be grounded in the real world through our sensorimotor apparatus, or they can be cognitive scenes that are evoked by constructs. For instance, a verb such as to cook activates an entire cooking scene such as the one depicted in Figure 10.1. Cooking is an extremely rich experience, but people can learn to construct and use a ‘semantic frame’ to make sense of what is happening. A semantic frame is a skeletal representation of recurrent experiences with open slots (called ‘frame elements’) that need to be filled in. According to the English FrameNet database (Baker et al. 1998), the Cooking_Creation frame involves at least twelve frame elements, including prominent ones such as Cook and Produced_food, but also roles such as Container, Ingredients, Place, and Recipient. Example (15) provides some constructs that evoke the frame.

(15)

a. [She]Cook is preparing [vegetable soup]Produced_food in [an orange pot]Container.
b. [She]Cook loves cooking in [her new kitchen]Place.
c. [She]Cook loves cooking for [her guests]Recipient.
d. [The vegetable soup]Produced_food is [slowly]Manner cooking.

Figure 10.1 How a Cooking-frame can be used for making sense of the activity in the picture

As can be seen, the relation between a semantic frame and its morphosyntactic encoding in language is indirect: Only a handful of frame elements are actually realized in a construct, none of them is guaranteed to appear, and the same frame element may play a different grammatical role depending on the construction in which it occurs. Semantic frames are also not tied to a single lexical unit (e.g., both preparing and cooking may evoke the cooking frame in example [15]), and vice versa, a lexical item may evoke different frames (e.g., one can also prepare a presentation, which usually involves little to no cooking).
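The indirect relation between a frame and its realizations can be made concrete with a small data structure. The Python sketch below is an illustrative assumption, not FrameNet's actual data format: it lists only the frame elements mentioned in this section rather than the full Cooking_Creation inventory, and it records which of them a construct from example (15) realizes.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Frame:
    name: str
    elements: frozenset  # frame elements (FEs)


# Only the FEs mentioned in the text; FrameNet lists more for this frame.
COOKING_CREATION = Frame("Cooking_Creation", frozenset(
    {"Cook", "Produced_food", "Container", "Ingredients", "Place", "Recipient", "Manner"}))


@dataclass
class Construct:
    text: str
    frame: Frame
    realized: dict = field(default_factory=dict)  # FE -> text span

    def __post_init__(self):
        # A construct may realize only FEs that belong to the evoked frame.
        unknown = set(self.realized) - self.frame.elements
        if unknown:
            raise ValueError(f"not FEs of {self.frame.name}: {sorted(unknown)}")

    def unrealized(self) -> set:
        """FEs of the frame that this construct leaves unexpressed."""
        return set(self.frame.elements) - set(self.realized)


# Example (15c): only two FEs are realized; all others stay implicit.
c = Construct("She loves cooking for her guests.", COOKING_CREATION,
              {"Cook": "She", "Recipient": "her guests"})
print(sorted(c.unrealized()))
```

The frame itself stays constant across constructs; what varies is which subset of its elements a given construct expresses, and with which grammatical role.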

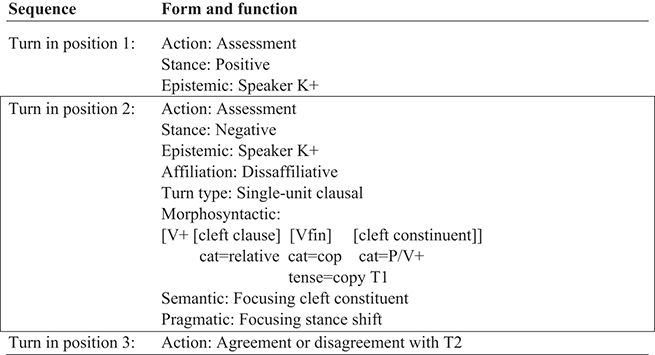

Different constructional flavors provide different solutions for handling the indirect relation between meanings and constructs, which to a large extent depends on how strongly the flavor is embedded in a broader theory of cognition. To compare the different flavors to each other, we will take the diagram in Figure 10.2 as a starting point. This figure, based on Fried (2005), shows the different steps that an analysis (potentially) has to address in order to explain the different ways in which semantic frames can be expressed and understood. The figure comprises four mapping processes, with each mapping adding a layer of abstraction: (i) between scenes and semantic frames, (ii) between the frame elements (FEs) of semantic frames and semantic roles (SRs), (iii) between semantic roles and grammatical functions (GFs), and (iv) between grammatical functions and their surface forms. As stated before, all constructional flavors assume a Frame Semantics approach, so they agree on the first step, in which scenes are conceptualized in terms of semantic frames. We will now go through the different steps for each constructional flavor.

10.4.2 Berkeley Construction Grammar

Mapping between Frame Elements and Semantic Roles

Semantic frames are rich representations of human experiences that are impossible to pass through the filter of language in their entirety. In Construction Grammar, frame elements are therefore mapped onto semantic roles, which can be considered as linguistic abstractions over event-specific roles. For instance, an agent is an abstraction of frame elements such as the Cook of a Cooking_Creation frame, or the Wearer of a Wearing frame. Semantic roles (also called case-, theta-, argument-, or thematic-roles) have a longstanding history which dates back to Pāṇini’s grammar of Sanskrit, but only resurfaced in modern linguistics under the influence of Gruber (1965) and especially Charles Fillmore’s seminal paper “The case for case” (Fillmore 2003, orig. publ. in 1968).

More specifically, CxG stores entire semantic frames in what I will call ‘frame-evoking constructions’, a practice that has been adopted by all of the other constructional flavors as well. Typically, these are lexical constructions, but idiomatic constructions such as the kick the bucket-construction or partially schematic constructions can also store information about the frames they evoke. Besides storing a frame, frame-evoking constructions will also provide information about their ‘minimal valence’, which selects the most salient frame elements from a large set of FEs and maps them onto more abstract semantic roles, as illustrated in example (16), which is a partial representation of the lexical construction cook. The Cooking_Creation frame is listed in the sem-feature (semantics) of the construction. For convenience’s sake, I only list three instead of all twelve frame elements. Each frame element is indexed with a number such as #1 for the FE Cook and #2 for the FE Produced_food. These indices can now be used as pointers in other parts of the construction, as is done here in the val-feature (valence). The val-feature is a set of relations (rel). For example, ‘rel #1’ relates information to the FE with the index #1 (the Cook). This information is a feature structure that specifies the semantic role (using the theta-symbol θ). As can be seen, the FE Cook is mapped onto ‘agt’ (agent) and the FE Produced_food is mapped onto ‘pat’ (patient).

(16)

Besides specifying semantic roles, the valence feature also indicates which of the semantic roles has privileged status and is therefore more likely to become the subject. In Fillmore’s Case Grammar, a predecessor of CxG, semantic roles would be ranked in a ‘thematic hierarchy’ from most agent-like to most patient-like (Fillmore 2003). A language such as English is said to take the most agent-like semantic role as the subject of an Active construction. However, thematic hierarchies have turned out to be incompatible with empirical evidence (Croft 1998; Levin & Rappaport Hovav 2005), since every verb may behave in an idiosyncratic way. CxG, therefore, specifies which semantic role should be considered as the ‘distinguished argument’ (DA) for each predicate. The feature–value pair [DA+] thus indicates which semantic role is in position for becoming the subject, while [DA−] indicates which semantic role does not have privileged status.
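Since the attribute–value matrix of example (16) is not reproduced here, the following Python sketch encodes only what the prose describes: a sem feature with indexed frame elements, and a val feature whose relations point at those indices, assign a semantic role (θ), and mark the distinguished argument. The dictionary layout and the helper function are hypothetical, and only the two frame elements named in the text are listed.

```python
# Hypothetical encoding of part of the lexical construction "cook" (cf. example 16).
COOK = {
    "sem": {
        "frame": "Cooking_Creation",
        "FEs": {1: "Cook", 2: "Produced_food"},   # index -> frame element
    },
    # Minimal valence; a list is used because Python dicts cannot be set members.
    "val": [
        {"rel": 1, "theta": "agt", "DA": True},    # [DA+]: in position to become subject
        {"rel": 2, "theta": "pat", "DA": False},   # [DA-]
    ],
}


def distinguished_argument(cxn: dict):
    """Return (frame element, semantic role) of the [DA+] valence element, if any."""
    fes = cxn["sem"]["FEs"]
    for rel in cxn["val"]:
        if rel["DA"]:
            return fes[rel["rel"]], rel["theta"]
    return None


print(distinguished_argument(COOK))
```

Because [DA+] is stored per predicate, no global thematic hierarchy is consulted: a different verb could simply mark a different role as distinguished.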

Mapping between Semantic Roles and Grammatical Functions

In the next step, semantic roles (if posited) are mapped onto grammatical functions such as subject and object. In (Berkeley) Construction Grammar, this mapping is done by ‘linking constructions’ (Fried & Östman 2004). Linking constructions are functional constructions that do not involve constituent structures but simply connect semantic roles to grammatical functions. Example (17), adapted from Fried and Östman (2004: figure 14), illustrates the Transitive Object Construction, which unifies with active verbs and which specifies that the verb’s non-distinguished argument maps onto the object.

(17)

Linking constructions provide a flexible explanation for the fact that verbs may occur in numerous different patterns, also known as ‘the problem of multiple argument realization’. Multiple argument realization is a ‘problem’ for lexicalist analyses (see Section 10.4.5) that assume that a verb’s argument realization pattern is already specified in the lexicon. In order to handle all of the other patterns in which a verb may occur, lexicalist accounts therefore need mechanisms such as lexical rules or transformations that override the verb’s default constraints. Construction Grammar avoids this problem by letting linking constructions rather than lexical constructions decide on the mapping between semantic roles and grammatical functions. As already discussed in Section 10.3.2, this implies for instance that the English passive is not derived from an active construction but that it exists on a par with other linking constructions and that it has its own semantics and pragmatics, as shown in example (18), adapted from Fried and Östman (2004: figure 17). This linking construction maps the distinguished argument (which would be the subject in an active construction) onto a by-phrase.
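Linking constructions can be sketched as functions that each contribute a partial mapping from semantic roles to grammatical functions, with combination failing on conflicting assignments. This Python sketch rests on several assumptions: the role labels are simplified, the Subject construction used for the active is hypothetical (it is not discussed in the text), and realizing the [DA−] argument as subject in the Passive is a simplification made here.

```python
# Hypothetical minimal valence for "cook": semantic roles with DA marking.
COOK_VAL = [{"theta": "agt", "DA": True}, {"theta": "pat", "DA": False}]


def subject_cxn(val):
    """Hypothetical active Subject construction: [DA+] -> subject."""
    return {r["theta"]: "subj" for r in val if r["DA"]}


def transitive_object_cxn(val):
    """Transitive Object construction: [DA-] -> object."""
    return {r["theta"]: "obj" for r in val if not r["DA"]}


def passive_cxn(val):
    """Passive linking: [DA+] -> by-phrase; [DA-] -> subject (simplified)."""
    return {r["theta"]: ("obl[by]" if r["DA"] else "subj") for r in val}


def unify_links(*cxns, val):
    """Combine the partial mappings of several linking constructions."""
    result = {}
    for cxn in cxns:
        for role, gf in cxn(val).items():
            if result.get(role, gf) != gf:
                raise ValueError(f"clash on {role}: {result[role]} vs {gf}")
            result[role] = gf
    return result


print(unify_links(subject_cxn, transitive_object_cxn, val=COOK_VAL))
print(unify_links(passive_cxn, val=COOK_VAL))
```

The passive mapping is not computed from the active one: both are independent constructions that apply to the same lexical valence, and combining the active Subject construction with the Passive simply fails.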

(18)

Besides providing different linking relations, linking constructions may also add valence elements to compatible predicates (called ‘valence augmentation’). Typical examples are Adjunct Constructions (Kay & Fillmore 1999). For instance, in the construct She prepared vegetable soup in an orange pot, the phrase in an orange pot is not part of the verb’s minimal valence but is added by an Adjunct Construction.
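Valence augmentation can be illustrated in the same spirit: an Adjunct Construction returns an extended valence instead of modifying the verb's lexical entry. In this Python sketch the role label "loc" and the function name are hypothetical simplifications.

```python
# Hypothetical minimal valence of "prepare" in its cooking sense.
PREPARE_VAL = [{"theta": "agt", "DA": True}, {"theta": "pat", "DA": False}]


def adjunct_cxn(val, theta):
    """Return a new valence with an extra, non-distinguished adjunct element.

    The verb's own minimal valence is left untouched.
    """
    if any(r["theta"] == theta for r in val):
        return list(val)
    return list(val) + [{"theta": theta, "DA": False, "adjunct": True}]


# "She prepared vegetable soup in an orange pot": the PP is added by the adjunct
augmented = adjunct_cxn(PREPARE_VAL, theta="loc")
print(len(PREPARE_VAL), len(augmented))
```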

10.4.3 Cognitive Construction Grammar

Mapping between Frame Elements and Semantic Roles

The most popular constructional approach to argument structure is proposed by Cognitive Construction Grammar (Goldberg 1995; Goldberg et al. 2004), which borrows many insights from CxG and is therefore very similar in spirit. Just like CxG, CCG assumes a frame-semantic approach to lexical meaning. A lexical construction such as cook, therefore, evokes the Cooking_Creation frame and its frame elements (or ‘participant roles’ in Goldberg’s terminology). Goldberg uses a more informal representation that includes the most salient frame elements in boldface, as shown in example (19).

(19)

COOK < Cook Recipient Produced_food … >

One important difference between CCG and CxG is that CCG does not include a valence feature in lexical constructions. Instead, CCG posits the existence of more abstract ‘argument structure constructions’ such as the Ditransitive Construction. Crucially, an argument structure construction expresses argument structures in very much the same way as lexical constructions express semantic frames. Goldberg (1995: 51) writes that the semantics of the English ditransitive construction is ‘X CAUSES Y TO RECEIVE Z’, which is expressed as an argument structure frame in example (20). This pattern is also repeated in the diagrammatic representation of the Ditransitive Construction in Figure 10.3.

(20) CAUSE-RECEIVE < agt rec pat >

Figure 10.3 The English Ditransitive Construction

As can be seen in example (20) and on top of Figure 10.3, the CAUSE-RECEIVE frame has three semantic roles (or ‘argument roles’ in Goldberg’s terminology): agent (agt), recipient (rec), and patient (pat). Verbs can combine with argument structure constructions if they can, at a minimum, provide frame elements that can be ‘fused’ with the semantic roles of the Argument Structure Construction that are printed in boldface (Goldberg 1995: 51). Fusion can be compared to the notion of ‘thematic fit’: The FE Cook best fits the agent role, the FE Produced_food best fits the patient role, and so on. The verb may optionally supply frame elements for the other semantic roles in regular font, or the argument structure construction can supply the semantic role itself and thereby augment the verb’s argument realization pattern. This process is called ‘coercion’ (Goldberg 1995) or ‘type shifting’ (Michaelis 2004).
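Fusion and coercion can be sketched as follows. Because the boldface of Figure 10.3 is not reproduced here, the set of roles a verb must fuse with is passed in as a parameter; treating agent and patient as obligatory for cook is an assumption for illustration, as is the small thematic-fit table. The role-to-function mapping follows the description of Figure 10.3 given in this section.

```python
# Ditransitive Construction: argument roles and their grammatical functions (Figure 10.3).
DITRANSITIVE = {"frame": "CAUSE-RECEIVE",
                "roles": {"agt": "SUBJ", "rec": "OBJ1", "pat": "OBJ2"}}

# Hypothetical thematic-fit table: (frame element, argument role) pairs that may fuse.
FITS = {("Cook", "agt"), ("Produced_food", "pat"), ("Recipient", "rec")}


def fuse(verb_fes, cxn, obligatory):
    """Fuse verb frame elements with the construction's argument roles.

    Returns (role -> (filler, grammatical function), roles contributed by the cxn).
    """
    linking, contributed = {}, []
    for role, gf in cxn["roles"].items():
        fe = next((x for x in verb_fes if (x, role) in FITS), None)
        if fe is None and role in obligatory:
            raise ValueError(f"no frame element fuses with obligatory role {role!r}")
        if fe is None:
            contributed.append(role)   # coercion: the construction supplies the role
            fe = "<supplied by construction>"
        linking[role] = (fe, gf)
    return linking, contributed


# "She cooked her guests a soup": cook itself supplies only Cook and Produced_food
linking, added = fuse(["Cook", "Produced_food"], DITRANSITIVE, obligatory={"agt", "pat"})
print(added)
```

The grammatical functions come from the construction, not from the verb, which is why the same verb can surface in different argument realization patterns.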

The choice for specifying semantic roles in argument structure constructions rather than in the valence of lexical constructions is more than simply a notational variant. First of all, CCG’s argument structures are ‘Gestalt structures’ just like constructions are. That is, semantic roles can only be understood in terms of the argument structure patterns they occur in. This means that the fusion of verb-specific frame elements with more abstract semantic roles is only possible if (part of) the verb’s semantic frame can be construed as an instance of the more abstract argument structure frame, which ensures a close semantic alignment between the verb and the argument structure constructions that it may combine with. In Berkeley CxG, on the other hand, semantic roles are somewhat disconnected from actual semantic frames so they could easily be replaced by any formal symbol for linking frame elements to grammatical functions, which makes them almost meaningless. Secondly, CCG’s argument structure constructions and the fusion process make it in principle possible to have construction-specific semantic roles and grammatical functions, while the CxG approach always implies the existence of categories that are shared across multiple constructions. CCG thus incorporates many CxG insights, while its Gestalt structures and possibility for construction-specific categories align it more with RCG.

Mapping between Semantic Roles and Grammatical Functions

The mapping between semantic roles and grammatical functions in CCG is quite straightforwardly a one-to-one mapping that is specified within an argument structure construction. As illustrated in Figure 10.3, the English Ditransitive Construction always maps an agent onto subject, recipient onto indirect object (OBJ1), and patient onto direct object (OBJ2). As in CxG, different mappings are therefore handled by different constructions such as the Passive Construction. In fact, one of the central claims of CCG is that it is more insightful to treat every construction in its own right rather than as ‘alternations’ of each other. This claim is formulated by Goldberg (2002: 329) as the Surface Generalization Hypothesis: “There are typically broader syntactic and semantic generalizations associated with a surface argument structure form than exist between the same surface form and a distinct form that it is hypothesized to be syntactically or semantically derived from.”

Just like the linking constructions of CxG, CCG’s argument structure constructions “do not specify phrase structure trees or word order directly” but “other constructions that they combine with do” (Goldberg 2013: 453). Unfortunately, CCG does not propose an explicit model for the internal structure of argument structure constructions, which has left the analysis vulnerable to criticism (e.g., Müller & Wechsler 2014) and various interpretations. For example, Müller and Wechsler (2014) assume that argument structure constructions resemble the functional structures of Lexical-Functional Grammar (Kaplan & Zaenen 1995); Osborne and Gross (2012) propose to model them as head-dependency trees; and van Trijp (2015) operationalizes them as multi-dimensional structures.

10.4.4 Radical Construction Grammar

RCG is close in spirit to CCG because it involves argument structure constructions as well. However, the RCG approach does not involve abstract semantic roles (or argument roles). Instead, Croft (1998: 31) argues that generalizations across different semantic frames should be based on a ‘causal chain of force-dynamics’. A force-dynamic analysis focuses on the transmission of force relationships between frame elements. Example (21) illustrates the force-dynamic relations between the participants in the construct Bill cut the vegetables with a knife for Carol. This construct describes a scene with the following causal chain of subevents: (i) Bill acts on a knife (he takes it); (ii) the knife acts on the vegetables; and (iii) the vegetables cause an effect in Carol in the sense that she benefits from them being cut for her. As can be seen, the transmission of force (indicated by an arrow) is an asymmetric relation between an ‘initiator’ and an ‘endpoint’.

(21)

The mapping from a causal chain to a construct can be described using a small set of universal (in the Greenbergian sense) linking rules (Croft 2012: 221), which I will explain here in a simplified manner for convenience’s sake. First, verb meaning is represented as ‘profiling a segment’ of the causal chain, which is indicated in example (22) by solid arrows. The initiator of this segment maps onto the subject, while the endpoint (if different from the initiator) maps onto the object. This segment is called the ‘verbal profile’.

(22)

Besides subject and object, there are two types of ‘oblique’ participants: those that precede the object in the causal chain (called ‘antecedent obliques’; knife in the current example) and those that follow the object (called ‘subsequent obliques’; Carol in the current example). Most languages tend to treat the two kinds of obliques differently. For instance, the English preposition with only introduces antecedent obliques, while prepositions such as for and to only occur with subsequent obliques.

As stated before, argument structure constructions in RCG do not involve semantic roles but, instead, they indicate how the verbal profile should be construed. For instance, when the verb cut combines with the Active Transitive Construction, the verbal profile delimits the segment going from Bill to vegetables in example (22). The English Passive Construction, on the other hand, implies a different construal, where the initiator of the verbal profile is vegetables (and therefore maps onto subject), hence Bill becomes an antecedent oblique, leading to constructs such as The vegetables were cut by Bill. The profiled causal chain for the Passive Construction is shown in example (23).
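The simplified linking rules and the two construals described above can be sketched in Python, assuming that a causal chain is an ordered list of participants and that a verbal profile is a pair of positions in that list. The passive function encodes one simplified reading of this account (the profiled endpoint is construed as the subject, and every participant before it becomes an antecedent oblique); it is not Croft's own formalization, and the function names are hypothetical.

```python
def link_active(chain, profile):
    """Initiator of the profile -> subject, endpoint -> object, the rest -> obliques."""
    start, end = profile
    roles = {chain[start]: "SUBJ"}
    if end != start:
        roles[chain[end]] = "OBJ"
    for i, p in enumerate(chain):
        if p not in roles:
            # Antecedent obliques precede the object in the chain; subsequent ones follow it.
            roles[p] = "antecedent OBL" if i < end else "subsequent OBL"
    return roles


def link_passive(chain, profile):
    """Simplified passive construal: the profiled endpoint becomes the subject."""
    _, end = profile
    roles = {chain[end]: "SUBJ"}
    for i, p in enumerate(chain):
        if p not in roles:
            roles[p] = "antecedent OBL" if i < end else "subsequent OBL"
    return roles


# "Bill cut the vegetables with a knife for Carol", cf. examples (21)-(23)
CHAIN = ["Bill", "knife", "vegetables", "Carol"]
PROFILE = (0, 2)   # the verbal profile of cut runs from Bill to the vegetables
print(link_active(CHAIN, PROFILE))
print(link_passive(CHAIN, PROFILE))
```

In the passive construal Bill precedes the subject in the chain and therefore ends up as an antecedent oblique, matching The vegetables were cut by Bill, while Carol remains a subsequent oblique in both construals.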

(23)

In sum, RCG shares several assumptions with CxG and CCG. It assumes a Frame Semantics approach, and argument realization is handled through constructions that exist on an equal footing instead of treating one kind of construction (such as the Passive) as a derivation of another one (such as the Active). RCG also shares with CCG the existence of argument structure constructions that express argument realization patterns in a more holistic way than CxG’s linking constructions do. One important difference, however, is that argument structure constructions do not express more abstract argument structure frames but, rather, the causal chains and verb profiles as construed by language users.

10.4.5 Sign-Based Construction Grammar

Sign-Based Construction Grammar (SBCG) is considered to be the most remote from the other flavors of construction grammar because it proposes a lexicalist rather than a constructional approach to argument realization, which means that argument realization is mostly handled through constraints in the lexicon rather than through the combination of lexical and grammatical constructions. For educational purposes (and for reasons of space), I will explain the SBCG account of Sag (2012) in a simplified way that does not reflect all of the technical details but instead focuses on the essence of the approach.

Just like the other constructional flavors, SBCG includes event-specific frame elements in the definition of lexical constructions. However, frame elements are not mapped onto semantic roles or event profiles but are directly associated with a syntactic structure. For instance, a verb such as to cook is typed as a transitive verb whose default argument realization pattern is directly specified in a feature called ARG-ST (argument structure), as shown in example (24). In other words, this feature states that the verb needs to be combined with two noun phrases to make a well-formed sentence. Just like we saw for CxG before, each argument is indexed (here: the subscript letters i and j) for linking the argument to a frame element (which here would link the index i to Cook and the index j to Produced_food).

(24)

[ARG-ST < NP_i, NP_j >]

The arguments in the feature ARG-ST are ordered according to their rank in the Accessibility Hierarchy of Keenan and Comrie (1977). The first argument therefore maps onto subject, the second onto direct object, and so on. In order to handle multiple argument realization, SBCG must therefore modify the ARG-ST list, which is achieved through ‘lexical rules’ (called ‘lexical constructions’ in SBCG terminology). Lexical rules are templates that take a lexical entry or lexeme as ‘input’ and that create a new lexeme as ‘output’. For instance, the Passive Lexical Rule – illustrated in example (25) – modifies the ARG-ST list of a lexeme by turning the object into the subject and by turning the subject into a by-phrase (or omitting it). Additionally, it changes the morphology of the verb. The newly formed passive lexeme thus requires an NP and optionally a by-phrase in order to make a well-formed sentence.
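The ARG-ST list and a Passive Lexical Rule in the spirit of example (25) can be sketched in Python as an input-lexeme-to-output-lexeme function. The class names, the PP[by] label, and passing the participle form in explicitly are simplifying assumptions; SBCG itself states such rules as typed feature-structure constraints, not as functions.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class Arg:
    cat: str               # syntactic category, e.g. "NP" or "PP[by]"
    index: str             # links the argument to a frame element
    optional: bool = False


@dataclass(frozen=True)
class Lexeme:
    form: str
    arg_st: Tuple[Arg, ...]  # ordered by the Accessibility Hierarchy: subject first


def passive_lexical_rule(lexeme: Lexeme, participle: str) -> Lexeme:
    """Input lexeme -> new passive lexeme (the input is left unchanged)."""
    if len(lexeme.arg_st) < 2:
        raise ValueError("the passive rule needs at least two arguments")
    subj, obj, *rest = lexeme.arg_st
    # The object moves to first position; the subject becomes an optional by-phrase.
    by_phrase = Arg("PP[by]", subj.index, optional=True)
    return Lexeme(participle, (obj, *rest, by_phrase))


COOK = Lexeme("cook", (Arg("NP", "i"), Arg("NP", "j")))   # i -> Cook, j -> Produced_food
COOKED = passive_lexical_rule(COOK, participle="cooked")
print(COOKED)
```

The indices i and j travel with the arguments, so the passive lexeme still links its first argument to Produced_food and its optional by-phrase to Cook.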

(25)

10.5 Handling the Multi-Dimensionality of Constructions

Many empirical and experimental studies in the constructional literature start with a ‘low resolution’ image of a construction by using informal diagrams and verbal exposition. This strategy is justified when the primary concern is analyzing the data in as unbiased a way as possible. However, informal analyses are vulnerable to misinterpretation and may therefore lead to debates in which everyone keeps talking past each other, which may hinder future progress in the field. Moreover, constructions are ‘multi-dimensional structures’ (Fried & Östman 2004; van Trijp 2020) that are too complex to describe adequately without proper formalization, simply because there is only so much that the human mind can handle without the help of adequate tools. This section discusses the most important options that construction grammarians have for formalizing their work, and how to choose between them. But first we must dispel some common myths about formalization.

10.5.1 Common Myths about Formalizing Construction Grammars

Myth 1: Usage-Based Analyses Cannot Be Formalized

The most common myth about formalizing construction grammars is rooted in the harmful ‘functional-versus-formal linguistics’ trope: Since formalization is so strongly associated with Chomskyan linguistics, many cognitive-functional linguists believe that formal approaches are incompatible with the goals of usage-based studies (e.g., Goldberg 2006: 216–217). This trope stems from a failure to distinguish between a theory and the tools used for formalizing it, a failure that started with Chomskyan linguists for whom a formal grammar is a theory of universal grammar. That is, the formal grammar is the generative device that should be capable of generating all (and only) the well-formed constructs of all of the possible human languages. This misconception then spilled over to other linguistic disciplines, and in the construction grammar community it has unfortunately led to Berkeley CxG being incorrectly branded as ‘non-usage-based’ simply because it uses formally precise notations, despite the existence of empirical studies that are compatible with the usage-based perspective (e.g., Michaelis 2004; Fried 2009).

In reality, the same formal tools can be used for different purposes. For instance, SBCG uses feature–value pairs as constraints on well-formed structures. It does so by defining a hierarchy of feature structure types that may occur in a grammar and which feature–value pairs are appropriate for those types (Michaelis 2009; Sag 2012). FCG, on the other hand, uses feature–value pairs for computing constructional analyses in production and comprehension. Features do not need to be defined globally, possible values for features are dynamically computed during processing, and new features can emerge on the fly (Steels 2004, 2017). Both constructional flavors therefore use feature–value pairs, but SBCG employs them in a way that is more suitable for concise mathematical descriptions of a state of a language (though it can be amended for usage-based analyses), while the FCG approach is more adapted for usage-based accounts (though it can also be used for describing the state of a language).
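The contrast can be illustrated with a toy Python example. Neither function reproduces SBCG's type system or FCG's actual processing machinery; the type names and the appropriateness table are hypothetical. The point is only that the same feature–value pairs can either be checked against a global declaration or be combined without one.

```python
# SBCG-style (simplified): a global declaration of the features appropriate for each type.
APPROPRIATE = {"verb": {"form", "arg-st", "sem"}, "noun": {"form", "case", "sem"}}


def well_typed(fs: dict) -> bool:
    """True if every feature of fs is declared for its type."""
    return set(fs) - {"type"} <= APPROPRIATE[fs["type"]]


# Open-ended unification: features need not be declared in advance.
def unify(a: dict, b: dict):
    """Unify two nested feature structures; return None on a value clash."""
    result = dict(a)
    for feat, val in b.items():
        if feat not in result:
            result[feat] = val                      # a new feature is simply added
        elif isinstance(result[feat], dict) and isinstance(val, dict):
            sub = unify(result[feat], val)
            if sub is None:
                return None
            result[feat] = sub
        elif result[feat] != val:
            return None
    return result


verb = {"type": "verb", "form": "cooked"}
extended = unify(verb, {"voice": "passive"})
print(extended, well_typed(verb), well_typed(extended))
```

The undeclared feature voice is accepted by the open unifier but rejected by the typed check, which mirrors the difference in how the two flavors use feature–value pairs.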

Myth 2: A Theory Is Either Formalized or Not Formalized

A second common myth is that formalization is a binary concept. That is, either something is formalized or it is not. Formalization is actually a matter of degree, which in the construction grammar community ranges from hand-drawn diagrams and verbal exposition to mathematical descriptions and computational implementations. Proper formalization is a process that requires continuous revisions and improvements by numerous people, who must often adapt the formalism in support of ever-evolving theories.

Again, it is CxG that has been harmed the most by this myth. CxG’s innovative theoretical ideas required the development of an equally innovative formalism, which is what Paul Kay and colleagues set out to do (Kay & Fillmore 1999; Kay 2002). These efforts were accompanied by discussions with other experts in formalization from the Head-Driven Phrase Structure Grammar community (HPSG; Pollard & Sag 1994), which inspired the HPSG scholar Ivan Sag to work on a ‘constructional’ version of HPSG (e.g., Ginzburg & Sag 2000) but which also led to the identification of some inconsistencies in the CxG formalism (e.g., Müller 2006). Unfortunately, rather than ironing out these inconsistencies, the development of the CxG formalism came to a halt with no one to pick up the mantle. Instead, Paul Kay and others moved on to develop SBCG (Boas & Sag 2012), which is mostly built on the formal foundations of constructional HPSG.

Ivan Sag (2012: 70) acknowledged the close resemblance between SBCG and HPSG but also expressed his “hope that construction grammarians of all stripes will find that SBCG is recognizable as a formalized version of BCG” (emphasis added, RvT). However, calling SBCG a ‘formalized version’ of Berkeley CxG is a fine example of the myth in action because it implies that CxG is not formalized. To use the ‘There is X, and then there is X’-construction: There is formalization, and then there is formalization. Both CxG and SBCG can be considered ‘formal’ because they use highly detailed notations, which we will explore in more detail further down. However, these notations still require some verbal explanation to know how they should be interpreted, so from a purely mathematical perspective, neither CxG nor SBCG is fully formalized. In the case of CxG, a mathematical formalism was designed by Paul Kay (2002) but never completely finished. As for SBCG, one could argue that it is further advanced in its formalization because it builds on the theoretical foundations of HPSG, for which a mathematical formalism called RSRL has been developed (Richter 2004). However, since SBCG introduces concepts that stray away from what is considered to be HPSG’s standard model theory, the RSRL formalism cannot simply be used for SBCG, so the mathematical formalization of SBCG remains an open research question (Richter, p.c.).

In sum, both CxG and SBCG can safely be classified as formally explicit flavors of construction grammar since they use notations that are sufficiently precise to allow other linguists to interpret them correctly in most cases. The development of SBCG offers an important contribution to the construction grammar community by proposing a so-called ‘model-theoretic’ or ‘constraint-based’ approach. However, CxG puts forward novel ideas that are sometimes difficult or even impossible to express in SBCG, which makes it worthwhile to further explore its mathematical formalization as well, and its boxes-within-boxes notation may play an important role in the future development of construction grammars (see Section 10.5.2).

Myth 3: A Formal Analysis Is Automatically Computer-Interpretable

Related to the second myth is the false belief that a formally explicit analysis can simply be entered in a computer program and then be used for processing. In reality, however, only analyses that are directly expressed in a computational tool (e.g., Beuls 2012; van Trijp 2014) can be shown to work, and even then it is not a trivial matter to get the intended analysis correct. Other analyses are amenable to implementation, but this requires interpreting and translating the analysis into a computational platform (e.g., Gromov 2010; Van Eecke & Beuls 2018).

SBCG analyses are the most straightforward to translate into computer-interpretable expressions because they can be reformulated using tools that were developed for implementing HPSG grammars, such as LKB (Copestake 2002) and TRALE (Meurers et al. 2002), or using a computational construction grammar platform such as FCG. Of these options, TRALE most faithfully resembles SBCG/HPSG and therefore requires the least interpretation and translation effort (Gromov 2010).

Berkeley CxG is probably best translated into FCG, both because FCG is able to perform the kind of ‘set unification’ that CxG analyses require but that never worked properly in the CxG formalism (Müller 2006), and because FCG supports multi-dimensional constructional analyses (van Trijp 2014). CxG analyses that only appeal to constituent structure could also be operationalized in tree-based approaches such as Tree-Adjoining Grammar (Lichte & Kallmeyer 2017) or Data-Oriented Parsing (Bod 2009).

Informal constructional accounts can also be implemented, but this requires more interpretive effort on the part of the computational linguist because the theory leaves more of its parts unspecified. For RCG, “the computational model of construction grammar that comes closest … is Fluid Construction Grammar” (Croft 2013: 5). The reason for this affinity between RCG and FCG is that RCG is couched within a broader theory of evolutionary linguistics (Croft 2000), while FCG was originally developed to support experiments on cultural language evolution (Steels 2012a) before it was generalized into a platform for any kind of construction grammar (Steels 2017). There is a similar affinity between CCG and FCG, partly because of FCG’s support for usage-based linguistics and language learning, but also because the FCG formalism makes it straightforward to operationalize CCG’s analysis of ‘coercion’ or ‘constructional mismatches’ (Goldberg 1995), as shown for, among others, argument structure (van Trijp 2015) and linguistic creativity (Van Eecke & Beuls 2018).

10.5.2 The Boxes-within-Boxes Notation

The most recognizable formalization of constructions is the ‘boxes-within-boxes’ notation of Construction Grammar (Fried & Östman 2004), which was briefly introduced in Section 10.2.2. In early CxG, constructions were considered to be similar to the (sub)trees generated by phrase structure grammars but spanning wider ranges than local tree configurations and specifying detailed linguistic information through feature structures. Example (26) shows a constituent tree on the left and the boxes-within-boxes notation on the right.

(26)
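To make the correspondence between the two representations concrete, here is a minimal Python sketch. It assumes a hypothetical Box class (a box's own feature–value pairs plus the boxes nested inside it) and a toy constituent tree for a gigantic insect; it is not Kay's formalism or any published notation, only an illustration that each node of a constituent tree maps onto one box whose inner boxes are its daughters.

```python
from dataclasses import dataclass, field

@dataclass
class Box:
    """One box: its own feature-value pairs plus the boxes nested inside it."""
    features: dict
    parts: list = field(default_factory=list)

def tree_to_box(node):
    """Turn a (category, children) tree into nested boxes, one box per node."""
    category, children = node
    if isinstance(children, str):  # leaf node: (category, word form)
        return Box({"cat": category, "form": children})
    return Box({"cat": category}, [tree_to_box(child) for child in children])

def render(box, depth=0):
    """Return text lines whose indentation mirrors the nesting of the boxes."""
    pad = "  " * depth
    feats = ", ".join(f"{k}: {v}" for k, v in box.features.items())
    lines = [f"{pad}[ {feats}"]
    for part in box.parts:
        lines.extend(render(part, depth + 1))
    lines.append(f"{pad}]")
    return lines

# Hypothetical constituent tree for "a gigantic insect".
tree = ("NP", [("Det", "a"), ("Adj", "gigantic"), ("N", "insect")])
print("\n".join(render(tree_to_box(tree))))
```

Nothing in the Box class itself says "constituent": the nesting only records part–whole relations, which is why the same device can serve other views.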

Due to this original conception of constructions as (sub)trees and Paul Kay’s work on the CxG formalism (Kay 2002), there exists a common misunderstanding, even among construction grammarians, that CxG’s boxes-within-boxes notation always represents phrase-structural relations. In reality, CxG has developed a much more sophisticated notion of a construction as a multi-dimensional structure that does not need to involve phrase-structural relations. As explained by Fried and Östman:

Constructions can represent very simple configurations that could be almost equally well captured by phrase-structure trees. But constructions can also be quite complex, representing much larger and more intricate patterns containing several layers of information … It is particularly the latter kind of constructions that emphasizes the unique character of Construction Grammar as a multi-dimensional framework in which none of the layers is seen as ‘more basic’ than any other; constructions only differ in the extent to which they make use of these resources.

An example of both the versatility of the boxes-within-boxes notation and the misunderstanding of what it is used for can be found in Osborne and Gross (2012), who propose repurposing the notation for representing a sentence’s ‘dependency structure’. As illustrated in a simplified way on the right in example (27), the outer box now represents a syntactic head and the inner boxes represent its dependents. The example also illustrates how each box can be enriched with feature–value pairs. The left of example (27) shows a traditional dependency graph representation.

(27)
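The same nesting mechanism can encode dependencies instead of constituency. The following Python sketch assumes a hypothetical Box class, a toy sentence the insect ate a leaf, and an arcs dictionary mapping each head to its dependents; it only illustrates the idea behind the notation and is not Osborne and Gross’s own formalization.

```python
from dataclasses import dataclass, field

@dataclass
class Box:
    """A box holding a word's features and, nested inside, its dependents."""
    features: dict
    parts: list = field(default_factory=list)

def dependency_boxes(head, arcs, words):
    """Build the box for `head`, nesting one box per dependent recursively.

    `arcs` maps a head to its dependents; words are assumed to be unique keys.
    """
    return Box(words[head], [dependency_boxes(dep, arcs, words) for dep in arcs.get(head, [])])

# Hypothetical sentence "the insect ate a leaf", with the verb as root.
words = {
    "the": {"form": "the", "pos": "det"},
    "insect": {"form": "insect", "pos": "noun", "number": "sg"},
    "ate": {"form": "ate", "pos": "verb", "tense": "past"},
    "a": {"form": "a", "pos": "det"},
    "leaf": {"form": "leaf", "pos": "noun", "number": "sg"},
}
arcs = {"ate": ["insect", "leaf"], "insect": ["the"], "leaf": ["a"]}

root = dependency_boxes("ate", arcs, words)
print([dep.features["form"] for dep in root.parts])  # ['insect', 'leaf']
```

Here the outer box is the head and the inner boxes are its dependents, each carrying its own feature–value pairs, so the notation tracks head–dependent relations without any phrasal nodes.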

Osborne and Gross (2012) present dependency structures as an alternative to constituent structures, but that misses the point of constructions as multi-dimensional structures (Fried & Östman 2004; van Trijp 2020). Instead of arguing that one structure should be preferred over the other, we can consider constituent and dependency structures as alternative views on the same structure. Indeed, when Charles J. Fillmore received the ACL Lifetime Achievement Award, he said that “anyone who looks closely at syntax knows that … you can never represent everything about a sentence in a single diagram” (Fillmore 2012: 708).

The boxes-within-boxes notation can therefore be used to highlight different views, and different languages may require different views. For instance, it is common practice to describe word order in languages such as Dutch and German in terms of ‘field topology’ rather than phrase-structural relations (Haspelmath 2009). As illustrated by Fried and Östman (2004) and van Trijp (2020), such an analysis can be captured using ‘ordering constructions’, which would use the same boxes-within-boxes notation to emphasize the part–whole relations between a clause and its topological fields. The same notation also lends itself to representing morphological part–whole relations (Booij 2010), and so on. The boxes-within-boxes notation is therefore a general way to represent any kind of part–whole structure, which Croft (2001: 5) calls a construction’s ‘meronomic structure’. Any analysis formulated in RCG can therefore straightforwardly be expressed more precisely using CxG’s boxes-within-boxes notation and the feature structures within those boxes (Croft 2001: 48f.).

10.5.3 Fluid Construction Grammar and Its Interactive Web Interface

One inconvenience of the boxes-within-boxes notation is that we can only see one view at a time and that each view treats a particular dimension (e.g., phrase structure) as more basic than other linguistic information, which is represented using feature–value pairs. Fortunately, a solution to this inconvenience exists in the formal approach of FCG (van Trijp 2020). Let us illustrate the approach using the construct a gigantic insect and assume for the sake of exposition that our constructional analysis involves two overlapping dimensions: constituent structure and head-dependency structure. Example (28) shows the head-dependency structure on top and the constituent structure below.

(28)

Example (29) shows a first but inadequate attempt at representing this multi-dimensional structure using the boxes-within-boxes notation. Here, constituent structure is represented using boxes, while the head-dependency structure is described as a feature–value pair. More specifically, the constituents a and gigantic are indexed as #1 and #2, respectively. These indices are then used in the feature ‘dependents’ in the insect box in order to ‘point to’ the noun’s dependents.

(29)

Even though the notation in example (29) succeeds in simultaneously representing the construct’s constituent structure and head-dependency structure, it opts for a nested feature geometry in which constituent structure is treated as the most basic structure. That is, all information that is represented as feature–value pairs can only be accessed through the constituent structure. This goes against the principle of CxG that “none of the layers is seen as ‘more basic’ than any other” (Fried & Östman 2004: 19). The solution in FCG is to describe all dimensions as feature–value pairs and to organize them as a flat list of units, as shown in example (30).

(30)

A ‘unit’ can be considered a region in the multi-dimensional space, much as a city name identifies a region on a map. The names of the units do not matter (they might as well have been indices such as #1, #2, #3) as long as they are unique; here I have used names that are easier for a human linguist to remember and interpret. Each unit is represented as a box of feature–value pairs, and unit names can be used to point to other units. The NP-unit therefore contains a feature called ‘constituents’ that points to the units representing its constituents, while the insect-unit contains a feature called ‘dependents’, which points to the units representing its dependents. Of course, a flat list of units with feature–value pairs is extremely difficult for human readers to interpret. FCG therefore has an interactive web interface (van Trijp et al. 2022) that allows users to switch between different views by specifying which features define a view, as illustrated in Figure 10.4.

Figure 10.4 The same constructional analysis (‘transient structure’ in FCG terminology) shown from the viewpoint of its constituent structure on the left and its head-dependency structure on the right
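The idea of a flat list of units with pointer features, and of deriving a view from a chosen feature, can be sketched in a few lines of Python. This sketch does not use FCG’s actual data structures or API (FCG itself is implemented in Common Lisp); the unit features other than ‘constituents’ and ‘dependents’ are hypothetical, and the view function is only an illustrative stand-in for what the web interface does when a user selects the feature that defines a view.

```python
# Flat "transient structure": unit name -> feature-value pairs.
# Unit names are arbitrary but unique; features other than "constituents"
# and "dependents" are hypothetical.
transient_structure = {
    "np-unit": {"constituents": ["a-unit", "gigantic-unit", "insect-unit"]},
    "a-unit": {"form": "a", "lex-class": "article"},
    "gigantic-unit": {"form": "gigantic", "lex-class": "adjective"},
    "insect-unit": {"form": "insect", "lex-class": "noun",
                    "dependents": ["a-unit", "gigantic-unit"]},
}

def view(structure, feature):
    """Return the hierarchy induced by one pointer feature as nested tuples.

    No unit is privileged: any feature whose value is a list of unit names
    can serve as the backbone of a view. Assumes the pointers form no cycles.
    """
    children = {name: unit.get(feature, []) for name, unit in structure.items()}
    pointed_to = {child for kids in children.values() for child in kids}
    roots = [name for name, kids in children.items() if kids and name not in pointed_to]

    def build(name):
        return (name, [build(child) for child in children[name]])

    return [build(root) for root in roots]

print(view(transient_structure, "constituents"))
# [('np-unit', [('a-unit', []), ('gigantic-unit', []), ('insect-unit', [])])]
print(view(transient_structure, "dependents"))
# [('insect-unit', [('a-unit', []), ('gigantic-unit', [])])]
```

Because both hierarchies are computed from the same flat dictionary, neither is stored as ‘more basic’ than the other; adding a further dimension would only require another pointer feature.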

One important distinction between FCG and other constructional flavors is that in the latter, there is no real difference between the representation of a construction and that of a constructional analysis: A construction is simply more schematic than the analysis. However, this implies a relatively sharp separation between competence (the inventory of constructions) and performance (a processing model that needs to ‘know’ how to use the constructions efficiently for producing and comprehending constructs). In FCG, on the other hand, constructions are informative as to how they should be processed. More specifically, FCG constructions divide their units of feature–value pairs into a ‘conditional pole’ and a ‘contributing pole’. The conditional pole specifies the conditions under which it is appropriate for a construction to apply in either production or comprehension. For instance, the Insect-Construction will require the lexeme insect to be present in a construct during comprehension, while a Goldbergian Ditransitive Construction (cf. Figure 10.3) requires, in production, a predicate that can supply at least two frame elements that can be fused with the semantic roles agent and patient. If these conditions are satisfied, the information in the construction’s contributing pole is released. This information is then either contributed by the construction itself or unified with information that was already contributed by other constructions. For instance, the Insect-Construction may add the meaning of the word insect in comprehension, or the Ditransitive Construction may merge its recipient role with one of the predicate’s frame elements and map it onto the grammatical function indirect object in production. Successful application of a construction leads to a new transient structure that, in turn, may trigger other constructions to apply, until the final constructional analysis is achieved (for a more detailed explanation, see Chapter 21).
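The application cycle just described can be sketched in a deliberately simplified form. The Python below assumes plain feature–value dictionaries, matching by simple equality rather than FCG’s unification with variables, and only the comprehension direction; the constructions insect_cxn and noun_head_cxn and their features are hypothetical illustrations, not FCG’s notation or API.

```python
def matches(conditions, unit):
    """A unit satisfies the conditional pole if it has every required value."""
    return all(unit.get(feature) == value for feature, value in conditions.items())

def merge(unit, contribution):
    """Add contributed features to a unit; return None on a conflicting value."""
    merged = dict(unit)
    for feature, value in contribution.items():
        if feature in merged and merged[feature] != value:
            return None
        merged[feature] = value
    return merged

def apply_construction(cxn, structure):
    """Return a new transient structure, or None if the construction adds nothing."""
    for name, unit in structure.items():
        if matches(cxn["conditional"], unit):
            merged = merge(unit, cxn["contributing"])
            if merged is not None and merged != unit:
                return {**structure, name: merged}
    return None

def comprehend(constructions, structure):
    """Keep applying constructions until none of them changes the structure."""
    changed = True
    while changed:
        changed = False
        for cxn in constructions:
            new_structure = apply_construction(cxn, structure)
            if new_structure is not None:
                structure, changed = new_structure, True
    return structure

# Hypothetical constructions (comprehension direction only).
insect_cxn = {"conditional": {"form": "insect"},
              "contributing": {"meaning": "insect", "lex-class": "noun"}}
noun_head_cxn = {"conditional": {"lex-class": "noun"},
                 "contributing": {"can-head": "NP"}}

initial = {"w1": {"form": "a"}, "w2": {"form": "gigantic"}, "w3": {"form": "insect"}}
final = comprehend([noun_head_cxn, insect_cxn], initial)
print(final["w3"])
# {'form': 'insect', 'meaning': 'insect', 'lex-class': 'noun', 'can-head': 'NP'}
```

The noun-head construction cannot apply to the initial structure; it only fires after the insect construction has contributed lex-class, mirroring how one application can trigger another. Each successful application adds at least one new feature, so the loop terminates; a full processing model would also need to handle competing applications and search, which this sketch ignores.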

FCG’s web interface allows researchers to inspect the interaction between constructions in great detail. The open-source version of FCG is part of a larger software suite called Babel (Loetzsch et al. 2008), which includes a system of monitors that allow the behavior of construction grammars to be traced at larger scales, a multi-agent framework for modeling language as a complex adaptive system (Steels 2000; Beckner et al. 2009), and a learning module for implementing language learning strategies (Van Eecke & Beuls 2017). These modules make FCG the most appropriate computational tool for the study of usage-based approaches to construction grammar.

10.5.4 Static Constraints in Sign-Based Construction Grammar

SBCG shows the greatest promise for studying construction grammars from a purely mathematical perspective: Whereas FCG is strongly concerned with how constructions are processed, SBCG tries to describe construction grammars in as process-neutral a way as possible, in the form of static constraints (Sag & Wasow 2011). The influence of Ivan Sag and construction-based HPSG has resulted in a formal toolset that is fine-tuned for generative construction grammars, which aim to describe all and only the well-formed structures of a language (van Trijp 2013, 2015), but an important research avenue would be to investigate how the SBCG approach can be generalized to also handle usage-based models.

SBCG uses ‘typed’ feature structures, which means that every SBCG grammar has a centralized ‘signature’ in which a ‘type declaration’ must be provided for each kind of feature structure that the grammar recognizes. For instance, a constructional analysis is modeled as a feature structure of type ‘sign’, whose type declaration is shown in example (31). The declaration states that the type sign has to be mapped onto a set of features such as phon, syn, and sem, which are typed themselves (and hence each feature is in turn mapped onto the feature structures that are declared to be appropriate for those types). Types are organized in a multiple-inheritance type hierarchy, so each type inherits the declarations of its supertypes.

(31)
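A typed signature with multiple inheritance can be sketched as a small table of types, each listing its supertypes and the features it introduces. In the Python below, the hierarchy (sign, lex-sign, expression, word, phrase), the arg-st feature, and the value-type names are simplified illustrative assumptions rather than SBCG’s actual signature, and the well-typedness check only verifies that every feature is appropriate for the structure’s type; it does not check the types of the values.

```python
# Hypothetical, simplified signature: type -> supertypes and newly introduced features.
SIGNATURE = {
    "sign": {"supertypes": [], "features": {"phon": "list", "syn": "syn-obj", "sem": "sem-obj"}},
    "lex-sign": {"supertypes": ["sign"], "features": {"arg-st": "list"}},
    "expression": {"supertypes": ["sign"], "features": {}},
    "word": {"supertypes": ["lex-sign", "expression"], "features": {}},  # multiple inheritance
    "phrase": {"supertypes": ["expression"], "features": {}},
}

def appropriate_features(type_name, signature=SIGNATURE):
    """Collect the features a type declares itself or inherits from its supertypes."""
    features = {}
    for parent in signature[type_name]["supertypes"]:
        features.update(appropriate_features(parent, signature))
    features.update(signature[type_name]["features"])
    return features

def is_subtype(sub, sup, signature=SIGNATURE):
    """True if `sub` equals `sup` or inherits from it, directly or indirectly."""
    return sub == sup or any(is_subtype(parent, sup, signature)
                             for parent in signature[sub]["supertypes"])

def well_typed(fs, signature=SIGNATURE):
    """Check that every feature used in `fs` is appropriate for its type.

    Only feature names are checked; value types are not.
    """
    allowed = appropriate_features(fs["type"], signature)
    return all(feature in allowed for feature in fs if feature != "type")

print(sorted(appropriate_features("word")))  # ['arg-st', 'phon', 'sem', 'syn']
```

The phon, syn, and sem declarations are stated once on sign, and word receives them along two inheritance paths plus arg-st from lex-sign; a phrase carrying arg-st would be rejected because that feature is not appropriate for its type in this toy signature.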