7.1 Introduction

Phonetic and phonological characteristics vary greatly across the languages of the world. Infants do not come pre-adapted to the specific language environment they are born into, so they must tune in to the characteristics of native speech during language acquisition. Adults’ speech perception reflects their lifetime of using their specific native language(s), but it is particularly strongly grounded in language experience during infancy and early childhood. While this native-language (L1) attunement is beneficial for recognizing words in the L1, it often leaves listeners with difficulty hearing the difference between words in a foreign language that are distinguished solely by a consonant or vowel distinction that does not occur in their own language(s) (i.e., a non-native contrast). For instance, German lacks the initial consonant /θ/ in the English word “think” as well as the vowel /æ/ in “bat”; thus, for native German listeners the English minimal-word pairs “sink”–“think” and “bat”–“bet” would both involve a non-native speech contrast.

The Perceptual Assimilation Model (PAM; e.g., Best, 1995) was devised to account for how adults’ speech perception has been shaped by their language experience from birth. In its original form, the model focused on monolingual adults and on infants acquiring a native language in a monolingual setting. This was not because monolinguals are more interesting or important to study than bilinguals (they actually might be less interesting than bilinguals in certain respects), but because the task of accounting for how language experience shapes speech perception is already complex when an adult has learned or an infant is learning only one language, let alone when two or more languages are involved.

In this chapter, we will sketch out how speech perception and phonological category acquisition are shaped by early acquisition of more than one language, that is, from infancy or in early childhood, and how they might be accounted for within a PAM framework. We refer to adults who acquired their languages in this way as “early bilinguals.” Our discussion will focus mainly on PAM, but we will touch on the Perceptual Assimilation Model of L2 Speech Learning (PAM-L2; Best & Tyler, 2007) at the end of the chapter. PAM-L2 extends PAM principles to account for L1 perceptual effects on late second language (L2) acquisition by adults, but aspects of the model are also relevant to early bilinguals. For late L2 acquisition, where learning of a second language begins in adolescence or adulthood, we refer readers to the PAM-L2 model presented in Best and Tyler (2007) and updated by Tyler (2019) and Tyler, Ball, and Best (2024).

Before outlining how PAM might account for phonological attunement in early bilinguals, we first need to consider key findings and concepts. In Section 7.2, we will provide an overview of PAM, as it is currently described for monolinguals. An understanding of how PAM might account for language-attuned speech perception in adult early bilinguals also requires an appreciation of the experiential foundation of that attunement: development of speech perception in infants being raised monolingually versus bilingually. That issue will be covered in Section 7.3. In Section 7.4, we will review the empirical findings in early bilingual speech perception that any model needs to account for. In Section 7.5, we will turn to how PAM might account for speech perception in early bilingual adults (henceforth, early bilinguals) before making some suggestions for future research directions in Section 7.6.

7.2 PAM and Monolinguals

To address bilingual speech perception from a PAM perspective, we need to first understand how PAM accounts for language attunement in monolinguals. The clearest evidence for the influence of L1 attunement on speech perception comes from studies in which perception of phonemes from an unfamiliar non-native language is compared across groups of monolinguals with different L1 backgrounds. This type of research is referred to as cross-language speech perception (Bohn, 2017). For example, monolingual English and Greek participants showed different patterns of discrimination accuracy for initial stop voicing contrasts from Ma’di, a language spoken in Uganda and South Sudan (Antoniou, Best, & Tyler, 2013). Both groups had difficulty telling apart the Ma’di contrast between voiced plosive versus implosive coronal (tongue tip) stops /da/–/ɗa/. The Ma’di plosive has a stable larynx position and egressive airflow whereas the implosive has a larynx lowering gesture that causes ingressive airflow, a distinction that neither group had experienced in their L1. The English monolinguals also performed poorly on the Ma’di voiced-voiceless plosive /da/–/ta/ contrast, but the Greek monolinguals discriminated it accurately. Ma’di, English, and Greek all have phonologically equivalent voiced and voiceless stops (in this study, /d/ versus /t/, as in English <toner>–<donor> and Greek <τολμάς> [tolmas] “you dare” – <ντολμάς> [dolmas] “stuffed cabbage roll”; see Footnote 1), but their phonetic realizations are different. The most important phonetic difference is voice onset time (VOT), which represents the time difference between the release of the stop constriction and the onset of vocal fold vibration in stop consonant phonological contrasts such as English /d/–/t/, /b/–/p/, and /ɡ/–/k/. With respect to the Antoniou et al. (2013) study, both Ma’di and Greek /d/ are phonetically prevoiced [d] (i.e., with a negative VOT that indicates the vocal folds begin vibrating well before the constriction release), while /t/ is phonetically voiceless unaspirated [t] (i.e., with a slight positive VOT that indicates voicing begins slightly after the release). Thus, the Ma’di contrast is native-like for Greek listeners. In contrast, when English /d/ and /t/ occur in stressed syllable onset position, as the stimuli did in Antoniou et al. (2013), English /d/ has a slight positive VOT (unaspirated [t]) that is phonetically quite similar to Ma’di and Greek /t/, whereas English /t/ is phonetically unlike Greek and Ma’di /t/, with notably longer positive VOT that results in noisy aspiration (i.e., long-lag aspirated [tʰ]). Thus, both Ma’di /d/ and /t/ are phonetically similar to English /d/ but neither is phonetically similar to English /t/. Based on those observations, discrimination of the non-native Ma’di consonant contrasts was consistent with each monolingual group’s prior experience with the phonetic VOT realizations of their L1 /d/ and/or /t/. It is findings such as these that PAM was devised to explain.
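The VOT reasoning in the Ma’di example can be made concrete with a small sketch. It is only an illustration under stated assumptions: the millisecond boundaries, the example VOT values for the Ma’di stops, and the names `vot_type`, `L1_CATEGORY`, and `perceived_category` are hypothetical and do not come from Antoniou et al. (2013). The mapping from phonetic VOT type to L1 category follows the description above for stressed syllable onsets, and the Greek mapping deliberately omits long-lag stops because the text does not say how Greek listeners hear them.

```python
from typing import Optional

# Illustrative VOT boundaries in milliseconds (assumed, not from the chapter).
PREVOICED_MAX_MS = 0.0
SHORT_LAG_MAX_MS = 30.0


def vot_type(vot_ms: float) -> str:
    """Label a stop token by its voice onset time."""
    if vot_ms < PREVOICED_MAX_MS:
        return "prevoiced"
    if vot_ms < SHORT_LAG_MAX_MS:
        return "short-lag"
    return "long-lag"


# L1 coronal stop category that each phonetic VOT type is heard as,
# following the chapter's description of stressed syllable onsets.
L1_CATEGORY = {
    "English": {"prevoiced": "/d/", "short-lag": "/d/", "long-lag": "/t/"},
    "Greek": {"prevoiced": "/d/", "short-lag": "/t/"},
}


def perceived_category(vot_ms: float, language: str) -> Optional[str]:
    """Return the L1 category for a token, or None if no mapping is given."""
    return L1_CATEGORY[language].get(vot_type(vot_ms))


# Hypothetical VOT values for Ma'di /d/ (prevoiced) and /t/ (short-lag).
MADI_D_MS, MADI_T_MS = -80.0, 15.0

for lang in ("English", "Greek"):
    pair = (perceived_category(MADI_D_MS, lang),
            perceived_category(MADI_T_MS, lang))
    relation = "same category" if pair[0] == pair[1] else "different categories"
    print(lang, pair, relation)
```

Under these assumptions, both Ma’di stops land in English /d/, whereas they land in different Greek categories, which mirrors the poor English and accurate Greek discrimination of Ma’di /da/–/ta/.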

PAM is grounded in the premise that the objects of speech perception are temporally and spatially coordinated patterns of constrictions along the vocal tract, produced by its articulatory organs (lips, tongue tip, tongue body, tongue root, velum, and larynx), which dynamically shape the vocal tract over time during speech in distinct and perceptually discernible ways (Best, 1995; Fowler, 1986; Studdert-Kennedy & Goldstein, 2003). The multimodal output (acoustic signal, facial movements, airflow) of these temporally coordinated vocal tract constriction events provides evidence of the originating articulatory patterns that perceivers detect in speech. For example, English /d/ is typically produced by making a complete closure between the tongue tip and the alveolar ridge, with vocal fold vibration starting around the same time as, or just after, closure release when it occurs in word- or syllable-initial position (i.e., phonetically, it is an unaspirated short-lag VOT [t]). However, the precise articulatory-phonetic details of these articulatory gestures differ by phonetic context, as well as by talker and accent. The resulting variability in constriction location and degree, and in the relative timing of constrictions, is systematic. For example, when English /d/ occurs word-internally between vowels, as in “widen,” it is more likely to be prevoiced (phonetically, [d]), and if the following consonant involves a constriction at the teeth for the fricative [θ], as in the word “width,” its constriction location is more likely to be at the upper front teeth, that is, dental (phonetically, [d̪]).

The systematic relationships among variants of a given consonant or vowel, that is, a phonological segment, are delineated by the articulatory properties that are crucial to that segment’s phonological identity, which we refer to as its phonological category. The core articulatory properties that are shared by the many variants of a phonological category are its higher-order invariants, which are abstracted across the many disparate variants, and which set that phoneme apart from all other phonemes in a given language. To give an English lexical example of the difference between higher- and lower-order invariants, the word “baby” is pronounced differently by speakers of different regional English accents (e.g., as something akin to BAY-bee by Americans, BYE-bee by Australians, or BEE(-a)-bee by Jamaicans). Despite those accent differences, which provide listeners with useful lower-order phonetic information about where the speaker grew up, the three accented forms nonetheless share the higher-order lexical invariant of being the same word, “baby,” which is distinct from all other similar-sounding words in English, for example, from “bobby,” “birdy,” and “buggy.”

Each phonological category contrasts with all other phonological categories in that language. This contrastiveness can signal meaningful differences between words in the language. Contrastive differences among phonological segments are what we refer to as phonological distinctiveness (Best et al., 2009). For example, in English the voiceless alveolar fricative /s/ in “sing” differs from the voiceless dental fricative /θ/ in “thing” by a single gestural difference. The critical gestural difference is the location of the tongue tip constriction for producing fricatives at the alveolar ridge (/s/) versus the upper front teeth (/θ/). The invariants must also be of sufficiently high order, that is, abstract enough, for word recognition to remain robust across context, talker, and accent variations. That is, they must display phonological constancy (Best et al., 2009).

Tuning in to the higher-order invariants of native phonological categories supports rapid and efficient recognition of spoken words. This, in turn, equips native listeners with the flexibility to adapt to phonetic variability across talkers and accents, and to any long-term shifts in their own regional accent. This natively tuned perception leads listeners to automatically perceive speech from any language using the perceptual efficiencies that they developed to support L1 speech perception. In other words, they perceptually assimilate speech to their L1 phonological system.

The effect of L1 attunement on perception can be inferred by observing how listeners categorize and discriminate unfamiliar, minimally contrasting phonemes from a never-before-heard non-native language (i.e., cross-language speech perception, which is distinct from second-language speech perception – perception of a language that the listener is actively learning). If a listener detects higher-order invariant information that is consistent with a single L1 phonological category, then the non-native phoneme is perceptually assimilated as categorized, although its goodness of fit can range from ideal to notably deviant. For example, the monolingual Greek listeners in Antoniou et al. (2013) perceived Ma’di alveolar /d/ as an acceptable exemplar of Greek /d/. They also perceived Ma’di implosive /ɗ/ as Greek /d/, but with a poorer goodness of fit. A non-native phoneme is uncategorized if it is perceived as speech, but not as consistent with any single L1 phonological category, either because it is not primarily similar to any particular L1 category or because the listener perceives (weak) similarity to one or more than one L1 category (for more on uncategorized assimilations, see Faris, Best, & Tyler, 2016, 2018; Tyler, 2021b). Finally, some non-native phonemes may be so unlike any L1 phonological category that they are not perceived as speech, and thus are not assimilated as possible L1 phonological elements. This latter possibility arose from the observation that English monolingual adults often reported hearing nonspeech events (e.g., clap, finger snap, twig cracking) when asked to categorize Zulu click consonants (Best, McRoberts, & Sithole, 1988).
Importantly, native listeners of Zulu and Sesotho (both click languages) predominantly perceived non-native !Xóõ click consonants as speech, in contrast to native English listeners who perceived them mostly as nonspeech or as containing a nonspeech element (Best et al., 2003).

So far, we have outlined the various ways in which a single unfamiliar phoneme from a never-experienced language might be perceived after perception has become natively tuned. PAM also provides general predictions for discrimination accuracy on non-native speech contrasts based on how the contrasting pairs of non-native phonemes are categorized and rated (see Tyler [2021b] for a discussion of the different types of information available for discriminating non-native segments). Let us consider, first, cases where both non-native phonemes are categorized. Either the two contrasting non-native phonemes will be perceived as instances of different L1 phonological categories, or both will be perceived as instances of the same L1 phonological category. When they are perceived as different L1 categories, it is a two-category assimilation. Discrimination of two-category assimilations should be excellent because the listener has detected higher-order invariants in each of the non-native phonemes that would support efficient perception of an L1 contrast (i.e., due to language-specific attunement rather than the universal discriminability of the contrast). When both non-native phonemes are perceived as instances of the same L1 phonological category, this means that the listener has detected the same higher-order invariants in both non-native phonemes (see Footnote 2). The extent to which the listener can perceive a difference between the non-native phonemes depends on the perceived goodness of fit of each one to the L1 category. If the phonetic differences between the non-native phonemes fall within a range of variability that would be expected for the single L1 category (i.e., they display phonological constancy in the L1), then both non-native phonemes will be perceived as having a good fit to the same L1 phoneme.
For this single-category type of assimilation, the perception of higher-order invariants that facilitate L1 perception has a detrimental effect on discrimination, to the extent that non-native listeners may not detect any difference between the contrasting non-native phonemes. For example, in Antoniou et al. (2013), English listeners categorized both Ma’di plosive /d/ and implosive /ɗ/ as English /d/. There was no significant difference in their goodness of fit, so /d/–/ɗ/ was a single-category contrast for these listeners. Their discrimination accuracy was 58 percent, only slightly above the chance level of 50 percent. A category goodness assimilation occurs when both non-native phonemes are assimilated to the same L1 phonological category but there is a perceived difference in goodness of fit. Discrimination accuracy is predicted to fall between that of a two-category and a single-category assimilation. For example, English listeners assimilated both the Zulu voiceless aspirated velar stop /kʰ/ and the velar ejective stop /k’/ to English /k/ (phonetically [kʰ]), but they perceived /k’/ as a notably poorer exemplar than /kʰ/ (Best, McRoberts, & Goodell, 2001). They discriminated the contrast well (~80 percent), though well below ceiling level. Thus, the clear prediction for discrimination accuracy, for these three assimilation types, is two-category > category-goodness > single-category.
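The decision logic for contrasts in which both non-native phonemes are categorized can be sketched as follows. This is a minimal illustration, not a formal statement of PAM: the function name `assimilation_type` and the constant `PREDICTED_ORDER` are hypothetical, and the flag `goodness_differs` stands in for whatever statistical comparison of goodness-of-fit ratings a given study uses.

```python
# Predicted ordering of discrimination accuracy, best first, as stated in the text.
PREDICTED_ORDER = ["two-category", "category-goodness", "single-category"]


def assimilation_type(category_a: str, category_b: str,
                      goodness_differs: bool) -> str:
    """Classify a contrast whose two non-native phonemes are both categorized.

    category_a / category_b: the L1 category each phoneme is assimilated to.
    goodness_differs: True if listeners rate one phoneme as a reliably
    poorer exemplar of the shared L1 category (supplied by the caller).
    """
    if category_a != category_b:
        return "two-category"
    if goodness_differs:
        return "category-goodness"
    return "single-category"


# Examples taken from the findings described above.
examples = {
    "Ma'di /d/-/t/, Greek listeners": ("/d/", "/t/", False),
    "Zulu /kh/-/k'/, English listeners": ("/k/", "/k/", True),
    "Ma'di /d/-/implosive d/, English listeners": ("/d/", "/d/", False),
}

for label, args in examples.items():
    kind = assimilation_type(*args)
    print(f"{label}: {kind} (rank {PREDICTED_ORDER.index(kind) + 1} of 3)")
```

The rank printed for each contrast is only the predicted ordering of accuracy; the model does not predict specific percentages.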

When at least one non-native phoneme in a contrast is perceived as a phoneme but not as a clear exemplar of any specific L1 phonological category (i.e., it is uncategorized), discrimination accuracy depends on the extent to which the listener perceives overlapping L1 phonological information between the contrasting non-native phonemes. For example, Australian English listeners categorized the Danish vowel [œ] as their L1 [ɜː] (the Australian vowel in “bird”), but Danish [o] was uncategorized, with listeners perceiving phonological characteristics that were weakly consistent with L1 Australian English [ʊ] (“foot”), [ɔ] (“lot”), [oː] (“north”), and [əʉ] (“goat”) (Faris et al., 2018; see Cox and Palethorpe [2007] for phonetic descriptions of Australian vowels). Discrimination accuracy for this uncategorized-categorized [o]–[œ] contrast was high (85 percent) because there was no phonological overlap between the sets of L1 vowel categories the English listeners perceived in each of the two non-native Danish vowels. In contrast, Danish [ø] was perceived as weakly consistent with L1 [ʊ], [ɜː], and [ʉː] as in “goose.” The uncategorized-categorized [ø]–[œ] contrast was partially overlapping because the L1 [ɜː] vowel (to which [œ] was categorized) was perceived as being among the set of English vowels that was consistent with Danish [ø]. Discrimination accuracy was poor for [ø]–[œ] (57 percent). The general prediction for discrimination accuracy on contrasts involving at least one uncategorized non-native phoneme is nonoverlapping > partially overlapping > completely overlapping (Bohn et al., 2011; Chen, Antoniou, & Best, 2023; Faris et al., 2018).
Finally, if both non-native phonemes in a contrast are nonassimilable (e.g., English listeners’ perception of Zulu clicks; Best et al., 1988) then discrimination should be good to excellent depending on how they are each perceived as nonspeech sounds (Best et al., 2003; for further discussion, see Tyler, 2021b).
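The overlap-based predictions for contrasts involving an uncategorized phoneme can be illustrated with simple set operations. The sketch below is an assumption-laden simplification: it treats each non-native vowel as the set of L1 categories listeners perceived in it, ignores how strongly each category was selected, and equates “completely overlapping” with identical sets. The function name `overlap_type` is hypothetical; the vowel sets are those reported above from Faris et al. (2018).

```python
def overlap_type(l1_set_a: set, l1_set_b: set) -> str:
    """Classify the overlap between the L1 categories perceived in two phonemes."""
    shared = l1_set_a & l1_set_b
    if not shared:
        return "nonoverlapping"
    if l1_set_a == l1_set_b:
        # Simplifying assumption: complete overlap means identical sets.
        return "completely overlapping"
    return "partially overlapping"


# L1 Australian English vowels perceived in each Danish vowel (from the text).
DANISH_TO_L1 = {
    "œ": {"ɜː"},                    # categorized
    "o": {"ʊ", "ɔ", "oː", "əʉ"},    # uncategorized
    "ø": {"ʊ", "ɜː", "ʉː"},         # uncategorized
}

for a, b in (("o", "œ"), ("ø", "œ")):
    print(f"[{a}]-[{b}]:", overlap_type(DANISH_TO_L1[a], DANISH_TO_L1[b]))
```

With these sets, [o]–[œ] comes out as nonoverlapping and [ø]–[œ] as partially overlapping, in line with the reported high and poor discrimination accuracy, respectively.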

In summary, as a result of perceptual attunement to their native language, adult monolinguals have rapid and efficient perception of phonological contrasts in the L1. However, natively tuned perception is automatic, which may lead to inaccurate perception of unfamiliar phonological contrasts in a non-native language. To consider how PAM might account for early bilinguals’ speech perception, we first need to go back to the beginnings of language-specific perceptual attunement in infancy.

7.3 Back to the Future: First Steps in Infancy

7.3.1 Development of Speech Perception in Monolingual Infants

Most early speech perception studies have examined monolingual infants, that is, with little or no exposure to other languages. This research has overwhelmingly found effects of L1 experience on vowel and consonant perception in the second half-year of life (for reviews, see, e.g., Best, 2017; Best et al., 2016; Werker, Yeung, & Yoshida, 2012). Before then, infants discriminate nearly all segmental phonetic contrasts of the world’s languages, including those lacking in their L1 environment. Thus, monolingual infants under six months do not yet appear to be perceptually attuned to native phonetic contrasts. But discrimination has declined by six months for most non-native vowel contrasts, and by nine to ten months for most non-native consonant contrasts examined. Most native contrasts continue to be discriminated well throughout that period. This general pattern of declining discrimination for most non-native contrasts but maintenance for most native contrasts is widely accepted and interpreted as evidence for perceptual attunement to native speech.

However, different developmental trajectories have been reported in monolingual infants for some consonant contrasts (see Best, 2017; Best et al., 2016; Liu, Peter, & Tyler, 2023; Tyler et al., 2014). Discrimination of some native contrasts is only fair rather than good initially, improving during the second half-year (Kuhl et al., 2006), while for others it is initially poor and shows even more delayed improvement with L1 experience, not evident until four years (Polka, Colantonio, & Sundara, 2001). Conversely, initially good discrimination of some non-native contrasts is maintained across infancy and through to adulthood, rather than showing a decline (e.g., Best et al., 1988; Best & McRoberts, 2003). The disparate developmental patterns for these contrasts appear largely compatible with PAM principles. For example, the Tigrinya ejectives /p’/–/t’/ that English-speaking adults assimilate to native stops /p/–/t/ ([pʰ]–[tʰ], a two-category assimilation) and the Zulu click contrasts that they perceive as nonspeech sounds (nonassimilable) are both discriminated well by English-learning infants from early on and show no decline (Best, 1988; Best et al., 1995; see Best et al., 2016). Meanwhile, the Zulu stop-ejective contrast /k/–/k’/ that English monolingual adults assimilated as good versus deviant exemplars of English /k/ ([kʰ]), and discriminated well (category-goodness assimilation), is discriminated at six to eight months but not at ten to twelve months (Best & McRoberts, 2003).

By tuning in to the higher-order invariant properties of phonological categories, infant speech perception begins to specialize for efficient recognition and learning of meaningful words in the L1. The emergence of phonological constancy and phonological distinctiveness in the second year coincides with the vocabulary spurt, a period of rapid word learning that occurs once an infant’s expressive vocabulary (i.e., words they can produce) reaches around fifty words (Nazzi & Bertoncini, 2003). For monolingual infants, this occurs at around seventeen to eighteen months of age. We suggest that it is the discovery of phonological constancy and distinctiveness, across phonetically variable productions of words (e.g., varying talkers, emotions, accents), that supports this rapid vocabulary expansion (Best, 2015; Best et al., 2009).

7.3.2 Development of Speech Perception in Bilingual Infants

But how does an infant being raised bilingually optimally attune to the phonologies of two languages? It is tempting to ask simply whether bilingual infants show the same trajectories for their multiple languages as monolinguals show for their single L1, the focus of such research to date. But that question implicitly assumes a clear categorical distinction between monolingual versus bilingual infants, which is overly simplistic given the multifaceted differences in language learning contexts between monolingual and bilingual children as well as among individuals on each side of what is actually a rather fuzzy dichotomy (e.g., Johnson, 2018). Even the speech heard on one or the other side of this blurry divide shows individual variation in quantity and quality, in the number of people speaking the language(s), their accents (native regional variants and/or L2), and sociocultural and family differences in spoken communication (Gathercole, 2014; Johnson, 2018).

We also must consider whether bilingual infants acquire their languages simultaneously or sequentially, a fundamental distinction in adult bilingual research and theory. Simultaneous bilingual adults have acquired both languages “from infancy” (two L1s), whereas sequential bilinguals learned one language from birth (their L1) and the other later (their L2), either during the preschool years (early-sequential bilinguals) or later in childhood, or in adolescence/adulthood (late L2 learners). However, these definitions fail to capture the richly varied reality of infant bilingual contexts. While some do learn their languages simultaneously “from birth,” even these children’s circumstances can differ. For example, each parent may speak a different language to them, or both parents may speak both languages to them, or the parents may speak one language and an extended family member who regularly cares for the child from birth may speak another. But many, if not most, bilingual infants encounter their two languages sequentially. For example, they hear a single home language from birth, then begin to experience another one months later when they start spending regular time with people other than the parents, such as a nanny, other family caregivers, or daycare teachers. In such sequential cases the home language is often the parental heritage language, and the later-encountered L2 is used by the broader community/country, but the reverse situation can also occur (e.g., when a nanny or daycare teachers speak in languages other than the home/majority language; see De Houwer, 2021; Gathercole, 2014). Relatedly, one language may dominate, and dominance may shift over time. For example, the home language may be the “dominant” language when an infant is very young, but dominance shifts to the community L2 when the child enters daycare/preschool or interacts with L2-native peers in weekly play groups.

Bilingual infant speech perception studies have generally assumed a quasi-binary distinction that fails to consider either simultaneity-sequentiality or the other contextual differences among bilingual infants. The language background of participants has usually been grouped using arbitrary language exposure thresholds (e.g., those with > 85 percent exposure to a single L1 defined as monolingual, and those with < 65 percent exposure to one language and > 35 percent exposure to another as bilingual; Bosch & Sebastián-Gallés, 2001), but many infants fall between those two extremes. As a result, we still know little about the effects of the factors discussed here on bilingual infants’ perceptual attunement, or the vestiges of that attunement on adult bilingual speech perception.
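To see why threshold-based grouping leaves many infants unclassified, the example thresholds quoted above can be written as a rule. This is only a sketch of that one example: the function name `exposure_group` and the sample exposure profiles are hypothetical, and individual studies apply their own criteria.

```python
def exposure_group(dominant_pct: float, other_pct: float) -> str:
    """Group an infant by percentage exposure to each of two languages.

    Uses the example thresholds quoted in the text: more than 85 percent
    exposure to one language counts as monolingual; less than 65 percent to
    one language and more than 35 percent to another counts as bilingual.
    """
    if dominant_pct > 85:
        return "monolingual"
    if dominant_pct < 65 and other_pct > 35:
        return "bilingual"
    return "unclassified"


# Hypothetical exposure profiles (percent dominant, percent other).
for profile in ((95, 5), (60, 40), (75, 25)):
    print(profile, "->", exposure_group(*profile))
```

An infant with a 75/25 split satisfies neither criterion, which illustrates how infants in the middle of the exposure continuum fall outside both groups.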

7.3.3 Speech Perception in Bilingual Infants

Research on speech perception in bilingual infants is newer and less extensive than that on monolinguals, but has been increasing, along with reviews and theoretical analyses (e.g., Byers-Heinlein, 2018; Curtin, Byers-Heinlein, & Werker, 2011; Höhle, Bijeljac-Babic, & Nazzi, 2020). The first such study examined discrimination of the Catalan-only vowel contrast between mid-high /e/ and mid /ɛ/ by monolingual and bilingual infants learning Spanish and/or Catalan (Bosch & Sebastián-Gallés, 2003). All groups discriminated Catalan /e/–/ɛ/ at four months, but by twelve months only Catalan monolinguals and bilinguals did so, not Spanish monolinguals, indicating perceptual attunement in all groups. At eight months, however, only the Catalan monolinguals discriminated it, indicating that the two monolingual groups were already perceptually attuned to their L1s. Despite their Catalan experience, the bilinguals showed a dip in discrimination at eight months, from which they recovered by twelve months. In a follow-up study, an eight-month dip and twelve-month recovery were also found in bilinguals’ discrimination of a vowel contrast shared by Catalan and Spanish, high versus mid-high back rounded /u/–/o/, which both monolingual groups discriminated at all ages (Sebastián-Gallés & Bosch, 2009). The authors speculated that this indicates a temporary delay in bilinguals’ development of perceptual attunement. However, using a more sensitive anticipatory eye movement task at eight months they found that bilinguals, like Catalan monolinguals, can discriminate Catalan /e/–/ɛ/ whereas Spanish monolinguals failed (Albareda-Castellot, Pons, & Sebastián-Gallés, 2011).
Thus, the bilinguals’ earlier difficulty at this age appears to reflect greater sensitivity to task demands, rather than an actual loss of perceptual ability.

Analogously, English monolingual and Spanish-English bilingual infants discriminated the Midwestern American English /e/–/ɛ/ contrast at both four and eight months (Sundara & Scutellaro, 2011) in a habituation task that was also more sensitive than the task used by Bosch and Sebastián-Gallés (2003). In another study, monolingual Dutch infants and infants being raised bilingually in Dutch and one of a range of languages (e.g., English, German, Spanish, or Turkish) completed the same sensitive habituation task with the Dutch /ɪ/–/i/ contrast, a purely spectral high versus mid-high front distinction lacking from the bilinguals’ other languages (Liu & Kager, 2016). Neither group discriminated it at five to six months, only the bilinguals discriminated it at eight to nine months, and then both groups succeeded at eleven to twelve months. The authors interpreted this pattern as a bilingual lead in perceptual attunement to this initially difficult native vowel contrast. That inference is consistent with monolingual English and bilingual Spanish-English infants’ brain responses when discriminating another difficult purely spectral English contrast, mid-high versus mid front lax /ɪ/–/ɛ/, indicating that bilingual infants may attend more to the distinction than monolinguals (Shafer, Yu, & Datta, 2011; Shafer, Yu, & Garrido-Nag, 2012).

Considering the task and vowel contrast differences, we draw the following inferences about bilingual infants’ perceptual attunement to vowel contrasts: they are neither delayed nor advanced relative to monolinguals in perceptual attunement to vowel contrasts, but instead differ in their attention to them, which is influenced by task demands. This attentional difference may explain the seemingly counterintuitive findings of a bilingual dip/delay at eight months for initially easy (discriminated by all groups at four to six months) native vowel contrasts (Catalan/Spanish /u/–/o/; Catalan /e/–/ɛ/; English /e/–/ɛ/), but a bilingual lead at eight to nine months for initially difficult (not discriminated by any groups at four to six months) native contrasts (Dutch /ɪ/–/i/; English /ɪ/–/ɛ/).

As for consonant contrasts, Canadian English monolingual versus French-English bilingual infants were examined on discrimination of stop consonant voicing distinctions that differ between languages in VOT (Burns et al., 2007). As noted earlier, English uses short-lag versus long-lag aspirated VOTs to distinguish voiced versus voiceless stops, whereas, like Greek and Spanish, French uses near-zero-lag (Canadian) or voicing-lead (European) (Caramazza & Yeni-Komshian, 1974) versus short-lag VOT. The infants were habituated to a language-ambivalent short-lag [p] heard as /p/ by French-speaking but /b/ by English-speaking adults, then heard two test trials that assessed their discrimination of near-zero-lag [b] (Canadian French /b/) and long-lag [pʰ] (English /p/) from the habituation stimulus. At six to eight months both monolingual English and bilingual French-English infants discriminated the English [p]–[pʰ] but not the Canadian French [b]–[p] contrast, which was difficult for both groups. Bilinguals discriminated the French contrast from ten months onward, whereas English monolinguals again failed. This finding provides evidence of perceptual attunement for this consonant contrast by ten months for both groups, consistent with prior research showing perceptual attunement for consonant contrasts in monolinguals (see Liu and Kager [2015] for similar results with monolingual Dutch and bilingual Dutch-{French, Spanish, English, German, or Chinese} infants discriminating Dutch and English VOT contrasts). Analogous results were also found for a place-of-articulation difference between the allophones of /d/ in Canadian French (dental [d̪]) and Canadian English (alveolar [d]) (Sundara, Polka, & Molnar, 2008).
The [d]–[d̪] contrast was successfully discriminated by all three groups at six to eight months: bilingual French-English, monolingual French, and monolingual English. However, only the bilingual and the monolingual English infants discriminated the contrast at ten to twelve months, which is consistent with discrimination of the same contrast by monolingual English and bilingual adults (Sundara & Polka, 2008). Neuroscientific studies on VOT comparisons with English monolingual and English-Spanish bilingual infants largely concur (Garcia-Sierra et al., 2011; Ferjan Ramírez et al., 2017), and further suggest that bilinguals’ brain responses may show enhanced executive functioning and a longer period of “openness” to language experience relative to monolinguals (see also Petitto et al., 2012; Singh, Loh, & Xiao, 2017).

Our inference is that discrimination ability and perceptual attunement per se do not differ between bilinguals and monolinguals early in life. What differs instead is their experience-driven attentional biases to the phonetic details of native and non-native contrasts, which are affected by task demands as well as the other factors we discussed earlier (see also Strange, 2011; Chapter 9, this volume). Thus, the simple “same or different trajectory” question is off target for understanding perceptual attunement in bilingual infants. The insights and inferences we have drawn here from research on the beginnings of language-specific perceptual attunement in monolingual versus bilingual infants provide developmental grounding for returning to consider our core question of how PAM principles might account for cross-language speech perception in adults who had acquired their two languages in the first two years of life, that is, mature early bilinguals.

7.4 Speech Perception in Early Bilingual Adults

While cross-language speech perception studies with bilinguals are rare, we can glean important insights from the limited studies available (see Antoniou et al. [2015] for a thorough review). Antoniou et al. (2013) tested cross-language perception of Ma’di consonants by early Greek-English bilinguals, in addition to the Greek and English monolinguals we discussed earlier in Section 7.2. Participant selection was carefully controlled for the bilinguals, with additional requirements beyond those for the monolingual Greek and English participants. All bilingual participants were born in Sydney, Australia, and acquired Greek in the home environment, then learned English as a second language (L2) before the age of five years; that is, they were early sequential bilinguals. A particular focus of the study was on whether their perception shifted according to the language mode (Grosjean, 2001; see also Antoniou, Tyler, & Best, 2012). Half of the bilinguals completed the experiment in a unilingual Greek mode, interacting with the early sequential bilingual Greek-English experimenter in Greek throughout recruitment and participation in the study. They received written and verbal instructions in Greek and were engaged in conversation about their activities in the Australian Greek community. The other half completed the experiment in a unilingual English mode. They were only ever spoken to in English, and the experimenter carefully avoided topics that might relate to their Greek heritage. When asked to categorize the Ma’di consonants using letters in Greek (e.g., <τ> versus <ντ>) or English orthography (e.g., <t> versus <d>), the bilinguals in the Greek mode condition categorized the Ma’di consonants similarly to Greek monolinguals, and bilinguals in the English mode condition responded similarly to English monolinguals.
This suggests that bilinguals can change their listening mode depending on the language context of the experiment, at least for categorization tasks. In the discrimination tests of this study, however, accuracy did not differ according to language mode. For Ma’di contrasts where discrimination performance differed between monolingual Greek and English participants, the bilinguals’ accuracy was intermediate between the two monolingual groups. Thus, it appears that when the bilinguals performed a task requiring a judgment about category membership, they were able to respond in a way that matched the language mode they were in (language context of the experiment). In contrast, language mode did not affect their discrimination. The language mode effect on categorization but not discrimination is in line with prior findings on Greek-English bilinguals’ categorization versus discrimination of Greek and English coronal stop voicing stimuli (Antoniou et al., 2012). These findings suggest that listeners make language-specific phonological judgments when categorizing the stimuli but rely on phonetic information in a way that reflects their language-specific listening history (i.e., amount of exposure to one or the other or both languages) when discriminating between stimuli.

The only other recent cross-language perception study with early bilinguals that we are aware of is a small-scale study by Melguy (2018), who compared categorization and discrimination of Nepali coronal stop place contrasts by early Spanish-English bilinguals (a mixture of sequential and simultaneous) and English monolinguals living in the USA. There was no language mode manipulation, but the bilinguals were given the option to select their consonant category choice from a set of English or Spanish initial-consonant keywords (e.g., English “pole,” “toll,” “coal” and Spanish “pon,” “ton,” “con”). Nepali has phonological contrasts between dental versus retroflex places of articulation for its voiced and voiceless aspirated coronal (tongue tip) stops, /d̪/–/ɖ/ and /t̪ʰ/–/ʈʰ/, respectively (Khatiwada, 2009), in which the retroflex stops are often realized phonetically as alveolars. The stimuli were produced by two Nepali-English bilinguals living in the USA, as dental versus alveolar phonetic distinctions with prevoiced versus long-lag aspirated VOTs, that is, [d̪]–[d] and [t̪ʰ]–[tʰ], respectively. In Spanish, /d/ and /t/ are generally produced with a dental place of articulation as prevoiced versus voiceless unaspirated [d̪]–[t̪], whereas in English they are generally alveolar voiceless unaspirated versus aspirated [t]–[tʰ]. The main aim of the study was to test whether Spanish-English bilinguals’ categorization and discrimination of the Nepali dental-alveolar phonetic distinctions was more accurate, given their experience with both places of articulation across their two languages (dental in Spanish, alveolar in English), than that of monolingual English speakers.
In the categorization task, the bilinguals tended to choose a Spanish more often than an English consonant label for the Nepali dental consonants, and an English more often than a Spanish consonant label for the phonetically alveolar Nepali consonants (the phonologically retroflex stops). This suggests that they were sensitive to the differences between the Nepali dental (Spanish place of articulation) and alveolar (English place of articulation) phonetic realizations. Conversely, their discrimination of the two Nepali place contrasts did not reflect that sensitivity to the language-specific phonetic difference, as their accuracy was close to chance (and did not differ significantly from the English monolinguals). As there was no monolingual Spanish group, it is not possible to infer any effects of language dominance on discrimination accuracy.

While there are few studies focusing on cross-language speech perception in early bilinguals, there is a large literature on how they perceive consonants and vowels (and lexical tones) in their own two languages (for reviews, see Gonzales, Byers-Heinlein, & Lotto, 2019; Simonet, 2016). Antoniou et al. (2012) found that early sequential Greek-English bilinguals’ categorization of Greek and English bilabial and coronal stops differed according to language mode. The potential perceptual conflict for them is that Greek phonologically voiceless stops /t, p/ and English phonologically voiced stops /d, b/ have very similar phonetically short positive VOTs (i.e., unaspirated voicing lag). Only the bilinguals in Greek mode categorized the Greek stops similarly to Greek monolinguals, and only the bilinguals in English mode categorized the English stops like English monolinguals. Thus, despite the phonetic/phonological mismatches between their two languages, the bilinguals apparently optimized their category judgments to match the language context. Other studies have also shown that bilinguals shift their category judgments according to a language-specific phonetic context (Casillas & Simonet, 2018; Gonzales & Lotto, 2013) and their expectations of which language they will hear (Gonzales et al., 2019). As in Antoniou et al. (2013), Antoniou et al. (2012) failed to find a language mode effect in discrimination.
However, the bilinguals’ discrimination of both Greek and English voicing contrasts aligned with that of monolinguals from their dominant language, English, rather than being intermediate between the two monolingual groups’ discrimination levels, as it was for the unfamiliar non-native Ma’di stimuli (Antoniou et al., 2013).

Sundara and Polka (2008) tested discrimination of Canadian French (dental [d̪]) and Canadian English (alveolar [d]) allophones of /d/ by simultaneous Canadian French-English bilinguals. Their results were compared to early bilinguals who acquired English at home and attended French immersion school from age five to six, monolingual French and English speakers, and native Hindi speakers. The last group were included because Hindi has a dental-retroflex stop contrast /d̪/–/ɖ/ and the authors speculated that English alveolar [d] was likely to assimilate to their L1 retroflex /ɖ/ while French dental [d̪] would assimilate to their /d̪/. The results suggested that, unlike the Spanish-English bilinguals in Melguy (2018), the Canadian French-English bilinguals were sensitive to the phonetic difference between French [d̪] and English [d], as their discrimination was as accurate as the Hindi native speakers’, and generally higher than the French and English monolingual groups’. However, the participants did not complete a categorization task, so it is unclear whether the simultaneous bilinguals were using language-specific phonetic sensitivity to discriminate the contrast.

Another important set of findings is from perception of Catalan-only vowel contrasts by early sequential bilinguals with Catalan versus Spanish as their L1. These studies have often used categorical perception tasks, where participants are presented with synthesized speech tokens that are equally spaced along an acoustic continuum between two contrasting consonants or vowels. Perceivers have to categorize each continuum step as one category or the other and discriminate equally spaced pairs of steps along the continuum (e.g., step 1 versus step 4, step 2 versus step 5, and so on). Perception is considered categorical when the continuum steps are mostly perceived as one category or the other (i.e., there is a sharp boundary between categories), and discrimination is much better across the category boundary than within categories. In Pallier, Bosch, and Sebastián-Gallés (1997), early sequential L1-Catalan and L1-Spanish bilinguals from Barcelona categorized and discriminated tokens from a vowel continuum spanning the Catalan-only /e/–/ɛ/ contrast. Spanish has only /e/, which is acoustically intermediate between these Catalan vowels. The L1-Catalan bilinguals showed a clear categorical boundary for the synthetic /e/–/ɛ/ continuum, but the L1-Spanish bilinguals did not. The authors suggest that the L1-Spanish bilinguals’ early exposure to the Spanish /e/ prevented them from acquiring the Catalan /e/–/ɛ/ contrast because both Catalan vowels overlap acoustically with Spanish /e/.
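The two criteria for categorical perception described above (a sharp identification boundary, and better discrimination across that boundary than within a category) can be illustrated with a minimal Python sketch. The function names, the 50 percent crossover rule, and all numbers are assumptions invented for illustration; they are not the analysis or data of Pallier et al. (1997) or any other study cited here.

```python
# Illustrative sketch only: invented identification and discrimination data
# for a hypothetical 7-step continuum between two categories, A and B.

def category_boundary(prop_a):
    """Locate the 50% crossover on a continuum (steps numbered from 1).

    prop_a[i] is the proportion of 'A' responses at step i + 1.
    Returns a fractional step position found by linear interpolation,
    or None if the responses never cross 50%.
    """
    for i in range(len(prop_a) - 1):
        left, right = prop_a[i], prop_a[i + 1]
        if left >= 0.5 > right:
            return (i + 1) + (left - 0.5) / (left - right)
    return None


def mean_accuracy_by_pair_type(boundary, pair_accuracy):
    """Average discrimination accuracy for cross-boundary vs within-category pairs.

    pair_accuracy maps (step_a, step_b) to proportion correct.
    A pair counts as cross-boundary when the boundary lies between its steps.
    """
    cross, within = [], []
    for (a, b), acc in pair_accuracy.items():
        (cross if a < boundary < b else within).append(acc)

    def mean(values):
        return sum(values) / len(values) if values else float("nan")

    return mean(cross), mean(within)


# Hypothetical proportions of 'A' responses at steps 1..7 (assumed values).
identification = [0.98, 0.95, 0.90, 0.55, 0.10, 0.04, 0.02]
# Hypothetical accuracy for three-step pairs (1 vs 4, 2 vs 5, ...).
discrimination = {(1, 4): 0.60, (2, 5): 0.90, (3, 6): 0.92, (4, 7): 0.85}

boundary = category_boundary(identification)
cross_mean, within_mean = mean_accuracy_by_pair_type(boundary, discrimination)
```

With these invented values the boundary falls just past step 4, and the pairs straddling it are discriminated more accurately than the pair lying within one category, which is the pattern that would be described as categorical. Published studies typically fit psychometric functions rather than interpolating, so this is only a simplified stand-in.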

Contrasting findings were reported by Amengual (2016b), who divided Spanish-Catalan bilinguals in terms of their language dominance rather than order of acquisition. Catalan- versus Spanish-dominant bilinguals in Majorca categorized and discriminated synthetic continua for two Catalan-only vowel contrasts, /e/–/ɛ/ and mid-high versus mid /o/–/ɔ/. They discriminated both vowel contrasts quite categorically, without group differences. They also identified both vowel contrasts categorically, with no group difference on /e/–/ɛ/ but less robust categorization of /ɔ/ (one endpoint of the /o/–/ɔ/ continuum) by the Spanish-dominant than by the Catalan-dominant group. The different outcomes in Amengual (2016b) versus Pallier et al. (1997) might be due to phonetic differences between the Catalan spoken in Majorca versus that in Barcelona, but Amengual pointed out that language input differences may also be a factor. Spanish-Catalan bilinguals raised in Barcelona are more likely to be exposed to Spanish-accented Catalan, in which /e/ and /ɛ/ are merged, than are those raised in Majorca. In another study, the same Majorcan bilinguals completed discrimination tasks using naturally produced tokens of Catalan vowels (Amengual, 2016a). While discrimination accuracy was high for both groups (> 85 percent correct), the Catalan-dominant bilinguals were slightly but significantly more accurate than the Spanish-dominant bilinguals on three of the contrasts: /e/–/ɛ/, /o/–/ɔ/, and /ɛ/–/a/.

Our brief review of perceptual studies shows that early bilinguals can adjust their phonetic sensitivity according to their language context when making category judgments, which could be interpreted as evidence for separate phonological systems. However, the findings with Spanish-Catalan bilinguals seem to be consistent with the idea that bilinguals have a single phonological system for both languages, with attunement to Catalan /e/–/ɛ/ dependent on the phonetic characteristics of the regional accents and the context of language acquisition and use. The reason for the varying findings across these studies is difficult to pinpoint given the multiple differences among them. It could result from one or more of the following factors: different engagement of phonological and phonetic processes in categorization versus discrimination tasks (see Antoniou et al., 2012), stimulus differences (natural tokens versus synthetic continua; consonants versus vowels), language differences (Greek/English versus Catalan/Spanish), and/or different types of bilingual comparisons (language mode, language dominance, or order of acquisition). To make sense of bilingual speech perception, prior research needs to be interpreted within a theory of how phonological categories are acquired and perceived, and future studies should be designed to test theoretical predictions.

7.5 Back to Adult Bilinguals: Implications of, and for, PAM

In order to fully address the question of how PAM might account for speech perception in early bilinguals, we must briefly review some relevant aspects of PAM’s extension to second language learning, PAM-L2 (Best & Tyler, 2007). PAM-L2 was developed to account for the acquisition of phonological categories in late L2 learners (for further detail on PAM-L2, see Best & Tyler, 2007; Tyler, 2019; Tyler et al., 2024). As late L2 learners are already well-practiced in using the natively tuned speech perception they developed early in life when they start learning the L2, they initially perceive L2 segments in terms of their L1 phonological categories (i.e., in the same way as naïve monolinguals in cross-language speech perception studies). PAM-L2 is based on the assumption that late L2 learners incorporate L2 phonological contrasts into a single interlanguage system, and it outlines the ways that L2 categories might develop.

The applicability of PAM-L2 to early bilinguals depends on whether they have a single interlanguage phonological system, as we have proposed for late L2 learners, or separate phonological systems for each language (for discussions on this topic for bilingual language use more generally, see Genesee, 1989; MacSwan, 2017; Otheguy, García, & Reid, 2015). Our review of the infant literature suggests that bilingual infants follow the same developmental sequence as monolinguals, but prior research on speech perception in early bilingual infants and adults does not provide any clear answers. We do not rule out the possibility that some bilinguals might develop separate phonological systems, but we think this would probably require a restricted set of circumstances, for example nonoverlapping contexts of language use and/or languages with markedly different phonological properties, such as bilinguals who speak a tonal and a nontonal language (e.g., Choi, Tong, & Samuel, 2019) or who use a signed and a spoken language (e.g., Blanco-Elorrieta, Emmorey, & Pylkkänen, 2018). We suggest that in most early bilingual language environments, both sequential and simultaneous bilinguals are most likely to develop a single interlanguage phonological system, just as we have proposed in PAM-L2 for adult L2 learners.

According to PAM-L2, when an L2 phonological segment is initially perceived as an instance of an L1 category, that category will become a shared L1-L2 phonological category. For L2 segments that are not acquired as shared L1-L2 categories, the learner may acquire a new L2-only phonological category (compare with the Speech Learning Model [SLM]; Flege, 1995), for example when an L2 segment is initially assimilated as uncategorized or as a nonassimilable nonspeech sound. It is important to note that if an L2 contrast is a single-category assimilation for an L2 learner, then both L2 segments might be incorporated into the same shared L1-L2 phonological category, and the learner would continue to find it difficult to discriminate the L2 contrast (for a more detailed discussion, see Tyler et al., 2024). Although this outcome may seem consistent with the failure of early sequential Spanish-Catalan bilinguals in Barcelona to perceive certain Catalan-only vowel contrasts categorically, we consider this late-L2 account unlikely to apply frequently to early bilinguals. This is because, unlike adult L2 learners, their nascent phonological categories would not be established well enough in one language to prevent them from tuning in to a contrast in their other early-acquired language.

When the phonetic properties differ for the L1 and the L2 versions of shared L1-L2 phonological categories, as is the case for stop voicing in Greek and English or place of articulation for /d/ in French and English, then late L2 learners may tune in to those L2-specific phonetic properties. Best and Tyler (2007) referred to this as a language-specific phonetic category within a shared phonological category. As early bilinguals’ phonological categories develop before any one language is well established, we predict that they are more likely than late L2 learners to acquire sensitivity to language-specific phonetic properties within phonological categories that are shared between their two languages. [Footnote 3] Predictions for contrast discrimination accuracy are the same for PAM-L2 as they are for PAM, except that the segments comprising two-category, category-goodness, or single-category contrasts may be categorized as L1-only phonological categories, L2-only (new) phonological categories, or shared L1-L2 phonological categories.

One clear finding from the adult bilingual speech perception literature is that early bilinguals can make language-appropriate category judgments, even when there is a phonetic-phonological mismatch across languages for a shared phonological category (Antoniou et al., 2012; Casillas & Simonet, 2018; Gonzales et al., 2019). Bilinguals may achieve this by shifting their attention to lower-order invariant phonetic properties that are unique to one language or the other, that is, to their language-specific phonetic specifications within that shared phonological category. Note that shifting attention to lower-order phonetic properties is not unique to bilingual speech perception. Monolinguals also need to shift their attention to lower-order phonetic properties in certain listening situations, such as when rating the goodness of fit of non-native phones to native phonemes. As monolinguals have only a single, unilingual language mode (Grosjean, 2001), they are able to shift attention between higher-order phonological and lower-order phonetic information according to task demands. We propose that bilinguals necessarily shift into a unilingual language mode in one or the other of their languages when they need to focus on lower-order phonetic details. While they may be able to shift between unilingual modes, we reason that they should not be able to attend to the lower-order phonetic details of both of their languages at the same time. For example, the Spanish-English bilinguals in Melguy (2018) showed some differential sensitivity to the dental (Spanish-like) versus the alveolar (English-like) Nepali consonants in categorization, but their discrimination of the contrasts was poor.
They may have been able to switch between unilingual Spanish and English modes as they judged the individual Nepali consonant stimuli because they were presented one by one, but we argue that in the discrimination task they would need to remain in a unilingual mode to detect the lower-order phonetic differences between the three non-native Nepali consonants within a given trial. [Footnote 4]

We posit that bilinguals living in an environment where one language dominates are likely to be more adept at shifting their attention from higher-order phonological to lower-order phonetic properties in the dominant language than in the nondominant language. When perceiving non-native speech, they should be more likely to focus automatically on the lower-order phonetic properties of their dominant language, unless the task requires an explicit focus on the nondominant language (e.g., categorizing non-native segments using orthographic labels from the nondominant language, Antoniou et al., 2013). We speculate, further, that it should require greater effort for them to shift their attention to the nondominant language than it would to the dominant language. That is, we predict a language-dominance-related asymmetry in directing attention to lower-order phonetic details when perceiving speech in their two languages. [Footnote 5]

In summary, the principles that guide a PAM account of speech perception in monolinguals and late L2 learners also apply to early bilinguals. We have suggested that, like late L2 learners (Best & Tyler, 2007; Tyler, 2019; Tyler et al., 2024), early bilinguals are likely to have developed a single interlanguage phonological system, composed of a combination of language-specific and shared phonological categories, the latter of which may also have shared or language-specific phonetic categories. We predict that early bilinguals will perceptually assimilate non-native phonological segments to their interlanguage phonological categories and that they will be sensitive to the higher-order phonological information that sets those categories apart from one another (i.e., two-category assimilations), regardless of language mode. If a non-native contrast cannot be discriminated using higher-order phonological information (e.g., single-category and category-goodness assimilations), the bilingual must shift to a unilingual mode in order to focus on lower-order phonetic details. In this case, if the context does not favor one language over the other, then bilinguals are likely to shift to their dominant language.
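The predictions in this summary can be restated as a simple decision rule. The Python sketch below is only a schematic paraphrase of the paragraph above under stated assumptions: the function name, the string labels, and the treatment of "no favored language" as `None` are hypothetical, and the rule is deterministic where the text says only that bilinguals are "likely" to shift to their dominant language.

```python
# Schematic paraphrase of the summary predictions; all names are hypothetical.
from typing import Optional, Tuple

# Assimilation types the summary treats as discriminable from higher-order
# phonological information versus those requiring lower-order phonetic detail.
PHONOLOGICAL_TYPES = {"two-category"}
PHONETIC_TYPES = {"single-category", "category-goodness"}


def predicted_strategy(
    assimilation_type: str,
    context_language: Optional[str],
    dominant_language: str,
) -> Tuple[str, Optional[str]]:
    """Return (information used, unilingual mode shifted into or None).

    context_language is the language favored by the listening context,
    or None when the context favors neither language.
    """
    if assimilation_type in PHONOLOGICAL_TYPES:
        # Predicted to hold regardless of language mode.
        return ("higher-order phonological", None)
    if assimilation_type in PHONETIC_TYPES:
        # A unilingual mode is needed; default to the dominant language
        # when the context does not favor either language.
        mode = context_language if context_language else dominant_language
        return ("lower-order phonetic", mode)
    raise ValueError(f"assimilation type not covered by this sketch: {assimilation_type}")


example_neutral = predicted_strategy("single-category", None, "English")
example_context = predicted_strategy("category-goodness", "Greek", "English")
example_two_cat = predicted_strategy("two-category", "Greek", "English")
```

Other assimilation types (for example, uncategorized segments) are deliberately left out because the summary does not state a prediction for them.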

7.6 Future Directions and Methodological Considerations

Studies of bilingual speech perception have only begun to scratch the surface of how early bilingual language experience shapes perception. Many studies have compared bilinguals’ perception of segments in their two languages, while very few have tested bilinguals’ cross-language perception using non-native segments from a language that they have never encountered before. For a richer insight into phonological attunement in bilinguals, more studies are needed in which bilinguals’ perception of minimally contrasting non-native segments is compared to perception by monolinguals of each of their two languages. We encourage researchers with access to early bilingual populations to investigate cross-language speech perception, but there are prerequisites that need to be met and methodological considerations, including some issues that are yet to be resolved, as we discuss in the following paragraphs.

To understand how bilingual language experience shapes perception, it is necessary to compare bilinguals and monolinguals. Ideally, there would be two monolingual groups, one for each of the bilingual’s languages (see, e.g., Antoniou et al., 2012, 2013; Sundara & Polka, 2008). PAM makes clear predictions about adult monolinguals’ discrimination accuracy for non-native contrasts because they are raised in linguistically similar language environments. However, perceptual attunement may differ among bilinguals who are exposed to the same two languages because the shape of a bilingual’s interlanguage phonological system depends on the nature of their own individual language experience (compare with the role of input in the revised Speech Learning Model [SLM-r], Flege & Bohn, 2021; and see Otheguy et al. [2015] for the notion of an idiolect). To avoid confounds, the characteristics of a bilingual sample need to be well controlled on the factors that are known to influence bilingual speech perception (e.g., age of acquisition of each language, dominance, and contexts of language use; see Antoniou, 2018). Monolingual groups must also be well controlled, with researchers ensuring that they have not been regularly exposed to other languages in early childhood (i.e., for more than four hours per week), have not lived for an extended period in a second-language environment, and are not advanced learners of an L2. Finding monolingual groups without early exposure to another language may be difficult nowadays, however, as children often also learn English (e.g., in Europe) or Standard Mandarin (in China or some other Asian countries) as an L2 from a young age. In fact, bilinguals from the same language community may acquire those languages as an L3.
This means that the listeners could have acquired L2(/L3) phonological categories that support discrimination of non-native contrasts (see Tyler [2019] for a discussion of category acquisition in a foreign-language classroom setting). At the very least, researchers should ensure that monolinguals and bilinguals have the same history of early L2(/L3) classroom learning, but it would be preferable, where possible, to test bilinguals with language combinations for which both languages are also still being learned monolingually.

Our previous discussion shows that language mode (Antoniou et al., 2012; Grosjean, 2001) is an important factor to take into account, so investigations of bilingual speech perception should ideally include a language-mode manipulation. If that is not feasible, then it is important to control for language mode by ensuring that bilinguals are restricted to and maintained in one language mode or the other when completing the experiment. However, researchers should bear in mind that, even with the most carefully controlled language mode conditions, language mode might shift when the phonetic properties of the non-native stimuli are much more consistent with the bilingual’s other language (Gonzales & Lotto, 2013).

In order to test PAM predictions, participants must complete both categorization and discrimination tasks. Categorization tasks usually have a closed set of response labels that participants can select from (e.g., Antoniou et al., 2013; Melguy, 2018), but it is possible to use an open set, where participants simply write down what they hear (e.g., Best et al., 1988; Bohn et al., 2011). An open-set categorization task has the advantages that participants are not restricted by a closed response set or influenced by the experimenter’s choice of categories. However, responses can be difficult to interpret if participants use novel letter combinations to indicate peculiar phonetic characteristics (e.g., Best et al., 1988). If using an open-set approach, researchers should interview the participants after the experiment to clarify what their labels refer to. A closed-set approach has the advantage of focusing participants’ attention on phonological categories and it avoids the need to interpret any idiosyncratic labels that participants might insert. However, if using a closed-set task, we recommend a whole-system approach (Bundgaard-Nielsen, Best, & Tyler, 2011), where participants are provided with labels for all possible consonant or vowel categories in a given language, rather than assuming which phonological categories are relevant.

An unresolved methodological issue that needs to be considered for categorization tasks with bilinguals is whether to give them the opportunity to select categories from both of their languages. Spanish-English bilinguals in Melguy (2018) were provided with a closed set of both English and Spanish response categories, whereas the Greek-English bilinguals in Antoniou et al. (2012, 2013) selected labels in either Greek or English orthography, according to the language mode condition. Neither approach is ideal. Melguy (2018) found that bilinguals’ responses for the same Nepali consonant were shared between the Spanish and the English labels. He needed to infer their language-specific phonetic sensitivity by analyzing the relative proportions of Spanish versus English labels, which is a nonideal methodological approach. Furthermore, using a whole-system approach for more than one language would result in an overwhelming number of response categories. The alternative approach of providing response options from only one language would also be problematic if the stimulus were perceived as a clear instance of a category in the bilingual’s other language. This is unlikely to have occurred in Antoniou et al. (2012, 2013) because the stimuli were perceived as consonants that are shared between the bilinguals’ two languages. Had they been asked to categorize an Arabic voiceless uvular fricative /χ/, however, which also occurs in Greek, then bilinguals in English mode would not have been able to indicate that the Arabic consonant was a clear instance of their Greek /χ/ category. A possible alternative task is forced category-goodness rating (Tyler, 2021a). For each stimulus item, the participant is provided with a single phonological category label and asked to rate the goodness of fit to the category provided. This task has the advantage that participants provide ratings against phonological categories that they may not have selected in a forced-choice categorization task, but it is very labor-intensive because each stimulus needs to be rated against multiple phonological categories. One compromise that may provide a solution is to use a categorization task with elements of both open- and closed-set approaches (Rallo Fabra, Achichaou, & Tyler, 2022; Rallo Fabra & Tyler, 2023). Participants are provided with a closed set of labels from one language, with an additional button to use when they wish to use a different category. The participant provides a new label, which is then added to the categorization grid for subsequent trials. A survey at the end of the task asks for a description of each label, including the language that the category is from.
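The hybrid open/closed categorization procedure can be pictured as a label grid that grows during the session. The following is only a minimal sketch of that bookkeeping, not the software used in the cited studies: the class name `CategorizationGrid`, its methods, the example labels, and the stimulus names are all hypothetical, and the sketch assumes that the end-of-task survey covers the labels the participant added.

```python
class CategorizationGrid:
    """Closed label set that a participant can extend during the task (hypothetical design)."""

    def __init__(self, closed_labels):
        self.labels = list(closed_labels)   # labels currently shown on the grid
        self.added = []                     # labels supplied by the participant
        self.responses = []                 # (stimulus, label) pairs in trial order

    def respond(self, stimulus, label):
        # A label that is not yet on the grid stands in for pressing the
        # "different category" button: it is added for all subsequent trials.
        if label not in self.labels:
            self.labels.append(label)
            self.added.append(label)
        self.responses.append((stimulus, label))

    def survey_items(self):
        # Assumption: the end-of-task survey asks about participant-added labels.
        return list(self.added)


grid = CategorizationGrid(["p", "t", "k"])   # illustrative closed set
grid.respond("stimulus_01", "t")
grid.respond("stimulus_02", "q")             # new label, added to the grid
grid.respond("stimulus_03", "q")             # now selectable like any other label
print(grid.labels)
print(grid.survey_items())
```

Keeping the participant-added labels in a separate list makes it straightforward to ask, at the end of the session, which language each new category belongs to.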

PAM was developed to account for how native-language experience shapes perception. In this chapter, we outlined how the principles of PAM and PAM-L2 (late bilinguals) also apply to early bilinguals’ speech perception. We have suggested that bilinguals’ speech perception is shaped by their experience with their two languages, and that they have a single shared phonological system for both of their languages. Phonological categories may be unique to one language or shared, and bilinguals may shift their attention to language-specific phonetic categories within a shared phonological category according to language mode. PAM was developed using experimental evidence from cross-language speech perception studies, in which listeners categorize and discriminate speech segments from a language that they have never encountered before. We suggest that carefully controlled cross-language speech perception studies comparing bilingual and monolingual infants and adults will provide important insights into how language experience shapes perception in early bilinguals.

8.1 Introduction

Since its original proposal (Escudero, 2005) and following a revision (van Leussen & Escudero, 2015), the Second Language Linguistic Perception (L2LP) model has received increasing attention as a comprehensive and quantitative model of second language (L2) speech perception. It grew out of and coevolved with the Bidirectional Phonology and Phonetics (BiPhon) framework (Boersma, 1998, 2011), which itself is an extension of Optimality Theory (OT; Prince & Smolensky, [1993] 2002).[Footnote 1] Numerous studies have been conducted within the model’s framework over the last two decades, accumulating evidence for its adequacy in describing, explaining, and predicting L2 learners’ perceptual patterns. Recent works have also extended the model to a wider range of bilingual populations (e.g., simultaneous bilinguals as in Escudero et al., 2016a), to other domains of language acquisition (e.g., word learning [as in Escudero, Mulak, & Vlach, 2016b; Escudero, Smit, & Mulak, 2022], orthography [as in Escudero, 2015; Escudero, Simon, & Mulak, 2014a; Escudero, Smit, & Angwin, 2023], and speech production [as in Liu & Escudero, 2023; Yazawa et al., 2023a]), and to other academic disciplines (e.g., language training and curriculum design [as in Colantoni et al., 2021; Elvin & Escudero, 2019]). This chapter aims to illustrate how L2LP can address a breadth of issues in bilingual phonetics and phonology by reviewing pivotal research conducted with the model. The focus here is on L2LP, but thorough comparisons with other models of L2 and bilingual phonetics and phonology can be found in Colantoni, Steele, and Escudero (2015), Escudero (2005), and Yazawa (2020).

Before we move on, it is important to note that most studies that have previously been conducted within L2LP or other models of non-native speech perception have tended to feature “naïve” listeners and “L2 learners” with different proficiency levels. Given that within this volume the term used to define users of two or more languages is “bilingual,” it seems appropriate to first provide the definitions of a variety of participant groups that have been included in previous and recent L2LP studies.

Most studies within the L2LP framework have used a control group commonly termed “monolingual” listeners of the target language. However, even a term that seems simple and easy to determine has complexities. To clarify the term, Escudero, Sisinni, and Grimaldi (2014b, p. 1578) defined monolingual listeners or functional monolinguals as those who use only their first language (L1) in their everyday life, have not resided in a country or region where another language is spoken for longer than a month, and have received basic classroom L2 instruction (if at all) by L1-accented teachers focusing on reading and grammar. Such monolinguals can be regarded as being in their initial state for learning any subsequent language, that is, at the onset of L2 learning.

Escudero et al. (2022) draw an important distinction among those who use two languages, commonly referred to as bilinguals, based on their age of acquisition for each language. Specifically, the authors distinguish between “simultaneous” and “sequential” bilinguals, with the former being exposed to their languages from birth and the latter acquiring an L2 after their L1. Sequential bilinguals are commonly called L2 learners, with the onset of L2 acquisition occurring during adolescence or adulthood. Although L2 learners can reach an end state that resembles native-like performance, this may not be the case for all components of language proficiency, resulting in different levels of L2 proficiency for sequential bilinguals. In contrast, simultaneous bilinguals commonly acquire full proficiency comparable to monolinguals of the two languages, especially in the domain of phonetics and phonology (Antoniou et al., 2011; Elvin, Tuninetti, & Escudero, 2018a). Later we will see that this distinction between different types of bilinguals yields differential performance, which will be explained using L2LP’s developmental proposal.

The remainder of this chapter is organized as follows. First, we present an overview of L2LP to help familiarize readers with the model’s key constructs (Section 8.2). This section also discusses how computational and statistical methods are utilized to provide greater explanatory adequacy and more specific and testable predictions, since quantification is a crucial property of the model. We then report on a series of new studies to illustrate L2LP’s recent approach to lexical development (Section 8.3). These studies shed light on previously understudied aspects of bilingual phonetics and phonology, including how bilinguals’ linguistic background influences their prelexical perception and lexical development. Finally, we address some remaining questions concerning how the model handles important issues such as the role of orthography, speech production, and applications to language training and curriculum design, including future directions (Section 8.4). The chapter ends with a summary and conclusion (Section 8.5).

8.2 Model Overview

Given that L2LP’s theoretical framework is based on “Linguistic Perception” (LP), we start by outlining the principles of LP in Section 8.2.1, followed by their extension to L2LP in Section 8.2.2. Section 8.2.3 addresses how the model’s theoretical components can be computationally implemented for explanatory adequacy as well as to formulate specific and testable predictions.

8.2.1 Linguistic Perception (LP)

The term “Linguistic Perception” reflects the notion that human speech perception is a language-specific rather than a general auditory process. Escudero (2005, p. 7) defines speech perception as “the act by which listeners map continuous and variable speech onto linguistic targets.” Given that the very purpose of speech communication is to understand and to be understood, the listener’s task is to map the incoming variable acoustic cues (e.g., first formant or F1, second formant or F2, fundamental frequency, and duration) onto discrete and abstract linguistic representations (e.g., distinctive features, segmental categories, and suprasegmental structures) to ultimately extract the meaning intended by the speaker. The mapping patterns are language-specific in nature, since the number of linguistic representations and the use of acoustic cues vary substantially not only across languages but also across varieties or dialects of the same language.

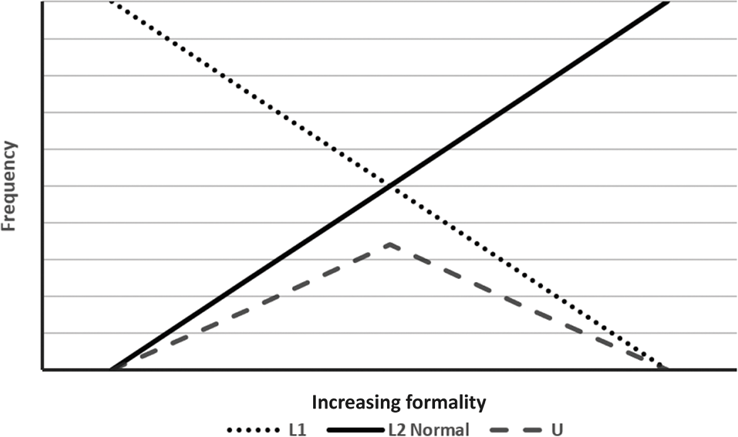

Consider, for example, how the acoustic cues of F1 and F2 can map onto vowel categories. These cues, though physically continuous, should perceptually map to a different number of discrete categories depending on the language. Native English listeners need to make a fine-grained mapping of the two cues onto a dozen vowel categories so that they can identify and distinguish minimal pairs such as “heed,” “hid,” “hayed,” “head,” “had,” “hud,” “hod,” “hawed,” “hoed,” “who’d,” “hood,” and “heard,” although “a dozen” is a very rough approximation because the exact number of categories varies across different dialects of English. The mapping is much less dense for Arabic, which has only three qualitative contrasts (/i/, /a/, and /u/), though again dialectal variations exist. Languages also exhibit divergent mapping patterns even when they have the same number of categories. For example, native listeners of Greek, Hebrew, Czech, Spanish, and Japanese, all of which have a five-vowel system in their standard varieties, show distinct mapping patterns of the F1 and F2 cues per language (Boersma & Chládková, 2011; Escudero et al., 2014b).

The language-specific nature of speech perception is formulated in the LP model as the optimal perception hypothesis,[Footnote 2] which posits that listeners learn the optimal mapping of acoustic cues onto appropriate sound representations that leads to maximum likelihood behavior (Boersma, 1998, p. 337). This means that the probability of correctly perceiving the intended linguistic representation based on the acoustic cues is maximized or, to put it another way, the probability of misperception is minimized. Native listeners’ perception of a language is optimal in that it tries to extract as many linguistic representations as required in the language (e.g., a dozen vowel categories in English, three in Arabic, or five in Greek, Hebrew, Czech, Spanish, and Japanese, with nonnegligible dialectal differences). It is also optimal in that the mapping patterns mirror the acoustic cues in the language (e.g., Japanese /u/ is generally more fronted than Spanish /u/, and so the perceptual usage of the F2 cue differs between the two languages).
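The notion of maximum likelihood behavior can be illustrated with a toy classifier. The sketch below is not the Optimality-Theoretic implementation used in the LP and L2LP literature; it swaps in a plain Gaussian maximum-likelihood classifier purely for illustration. The category means, the standard deviations, the equal-prior assumption, and the names `THREE_VOWEL`, `FIVE_VOWEL`, and `perceive` are all hypothetical placeholders rather than measured values or published parameters.

```python
import math

# Hypothetical category means as (F1, F2) in Hz. These are illustrative
# placeholders, not measurements from any language or study.
THREE_VOWEL = {"i": (300.0, 2300.0), "a": (750.0, 1400.0), "u": (320.0, 800.0)}
FIVE_VOWEL = {
    "i": (300.0, 2300.0),
    "e": (480.0, 2000.0),
    "a": (750.0, 1400.0),
    "o": (500.0, 900.0),
    "u": (320.0, 800.0),
}
SD = (60.0, 200.0)  # assumed standard deviation for F1 and F2, shared by all categories


def log_likelihood(cues, mean, sd=SD):
    """Log density of the cues under independent Gaussians, one per cue."""
    return sum(
        -0.5 * ((x - m) / s) ** 2 - math.log(s * math.sqrt(2.0 * math.pi))
        for x, m, s in zip(cues, mean, sd)
    )


def perceive(cues, categories):
    """Return the category under which the cues are most likely (equal priors assumed)."""
    return max(categories, key=lambda c: log_likelihood(cues, categories[c]))


token = (470.0, 1950.0)              # one acoustic token, (F1, F2) in Hz
print(perceive(token, THREE_VOWEL))  # -> i
print(perceive(token, FIVE_VOWEL))   # -> e
```

With these made-up numbers, the same acoustic token is mapped onto different categories depending on how many categories the listener’s system contains, which is the sense in which the mapping is language-specific.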