The interaction of material, social, and individual dimensions of meaning design is clearly exemplified in the early development of the technology of writing. Writing is thought to have developed roughly 5,000 years ago in four different parts of the world: Mesopotamia, China, the Indus River Valley, and Egypt, with independent development considerably later in Mesoamerica.Footnote 1 Because scholarly documentation is most extensive for early writing in ancient Mesopotamia, this chapter will focus on that region.

Although few statements about the beginnings of writing go uncontested, most scholars agree that writing in Mesopotamia began not as a representation of language at all, but as a way of recording administrative activities in the ancient Near East. At the end of the Neolithic period, social conditions were changing rapidly, with rising wealth, labor specialization, increased circulation of goods, and the emergence of an elite. A more complex political and social infrastructure was essential to manage the expanding economy, and a specific need arose to keep durable records of the production, distribution, and storage of goods. The earliest writing, using an interface of soft clay etched with a pointed stick or reed, was well suited to this need.

But clay etchings and the system that organized them did not spring from nowhere. Archaeological evidence suggests they likely developed over millennia through the invention and use of different systems of administrative record-keeping such as clay tokens, standardized containers, and seals. For example, Schmandt-Besserat (1996) argues that writing evolved from an accounting system of clay tokens used as early as 8000 bce.Footnote 2 The earliest tokens, appearing with the beginning of agriculture, were basic geometric forms such as cones, disks, spheres, and cylinders, which Schmandt-Besserat calls ‘plain’ tokens (Figure 5.1).Footnote 3 With the rise of cities about four millennia later, ‘complex’ tokens arrived on the scene, complementing the plain tokens with new geometric shapes: bent coils, parabolas, quadrangles, as well as miniature representations of animals, fruits, furniture, vessels, and tools (Figure 5.2).Footnote 4 Whereas the plain tokens had a smooth surface, with no markings, the complex tokens often bore patterns, notches, and punctuations made with a stylus. Both types of token served the same overall function: to organize and store information about goods produced or transacted.

Figure 5.1 Plain tokens from Tepe Gawra, present-day Iraq, c. 4000 bce

Figure 5.2 Complex tokens from Tello, ancient Girsu, present-day Iraq, c. 3300 bce

As the administrative need arose to archive transaction records, several storage methods were developed. Plain tokens were enclosed in a bulla – a clay envelope the size and shape of a baseball (Figure 5.3), while complex tokens were often strung on a string attached at both ends to an oblong lump of clay impressed with a seal to endorse the authenticity and legitimacy of the record.Footnote 5 Both methods were presumably designed to impede tampering with records – a particularly important safeguard for agreements to be transacted at a later time.

Figure 5.3 Clay ball and tokens

The problem with storing tokens inside clay balls, however, was that once the tokens were enclosed, they were no longer visible or accessible, so the ball had to be broken if one wanted to verify its contents. The solution was to press the tokens into the soft clay exterior of the envelope just before sealing them inside (Figure 5.4). In that way, the contents could be verified without having to break the hardened clay ball. This storage system effectively made the enclosed tokens obsolete, for the impressions alone provided a sufficient record of transacted goods. And if impressions sufficed, it meant that the hollow clay envelope was no longer needed either – a mere flat surface would be adequate. Thus was born the clay tablet (Figure 5.5).Footnote 6

Figure 5.4 Sealed clay ball impressed with tokens similar to those shown to its right

Figure 5.5 Clay tablet from Susa (Iran) showing an enumeration

In terms of the design process, then, a change in social environment (i.e., greater economic and political complexity in Mesopotamian society) was accompanied by the adaptation of existing accounting technologies to develop new practices with the same basic materials (i.e., clay). But how did these social-technological changes relate to linguistic writing?

This is where the story becomes highly controversial. Archaeological evidence from the ancient city of Uruk (in present-day Iraq) dates the beginning of a pictographic writing system known as proto-cuneiform to around 3200 bce.Footnote 7 Proto-cuneiform included icons representing parts of the body, birds, fish, plants, mountains, stars, and so forth (e.g., ‘bird’ and ‘barley’ were each represented by their own pictographic sign). Schmandt-Besserat (1996) argues that some of these pictographic signs depicted complex tokens she had inventoried – in other words, they were signs of signs, and thus constituted the essential leap necessary to allow the creation of written language.

Critics argue, however, that Schmandt-Besserat's classification scheme is flawed, that complex tokens are too loosely defined, and that there is no reason to assume that a given token consistently meant the same thing over many millennia and across the vast geographic range where tokens were found (from Egypt to Iran to Anatolia). Archaeologist Paul Zimansky (1993) suggests it is more likely that various people at various times used clay tokens to represent whatever they (as individuals) wanted them to represent. Stephen Lieberman (1980) adds that because tokens continued to be used alongside written records, we should not think of them as the specific precursor to writing but rather as a parallel system. He takes the fact that the complex tokens were themselves inscribed with marks as further evidence that there may well have existed a sign system that manifested itself in clay tokens and in clay tablets contemporaneously.

Sumerologist Piotr Michalowski (1993) agrees that many tokens likely developed along with or even later than the first inscribed tablets, and proposes a feedback mechanism “whereby certain tokens were made in the shape of written signs, and the proliferation of symbols reflected the experimentation that was taking place in the first writing system” (p. 997). He argues that while tokens and bullae may well have been resources drawn upon in the invention of writing, they were not alone adequate to account for the development of what was really a completely new semiotic form whose genesis he describes as follows:

It is clear…that multiple forms of communication and visual means of social control were prevalent in the Near East in the periods directly preceding and during the time of rapid urbanization. Different social contexts provided the impetus for differing vehicles. Seals, potters’ marks, painting and craft ornamentation, tokens, bullae, numerical tablets, and other designs – these must be seen as parallel systems of communication. The Uruk IV tablets must be placed beside them, not as an evolutionary descendant but as a new member of the extended family. The inventor(s) of proto-cuneiform undoubtedly drew upon many pre-existing elements to create the new vehicle.

Following Michalowski in light of the metaphor of design, we can think of tokens, bullae, seals, numerical tablets, standardized containers, potters’ marks, painting, and craft ornamentation as available designs that operate within their own social contexts. In the new overarching social context of nascent civilization (rapid urbanization, economic exchange on a scale that demanded record-keeping of past, present, and future transactions), proto-cuneiform may not have evolved specifically from tokens but rather emerged from the vortex of available designs operating in parallel semiotic systems, drawing elements from each system (Figure 5.6).Footnote 8

Figure 5.6 Available designs in the development of proto-cuneiform tablets

It is important to point out that language in the sense of connected speech was not explicitly in the mix at this stage, since proto-cuneiform texts apparently bore no relation to spoken language (Damerow, 2006, p. 4). The transition from proto-cuneiform to a full-fledged cuneiform writing system capable of representing speech is poorly documented by archaeological findings, but specialists hypothesize that at least five centuries probably passed after the early pictograms before the first connected texts (e.g., legal, religious, commemorative) were written.

What is clear, however, is that two important changes took place between proto-cuneiform and cuneiform writing of the Fara period about 500 years later. One was material, having to do with the technique of writing. The other was functional, having to do with how sounds were linked to graphic signs, first in Sumerian and then other languages.

The language connection: phonetic coding and the rebus principle

Archaeologists believe that the use of true cuneiform writing (as opposed to proto-cuneiform) began during the Early Dynastic I period (c. 2950–2750 bce) in Mesopotamia. Two key changes are considered especially important in this development. The first has to do with the material techniques used in writing, which led to increasingly abstract forms. The second, perhaps enabled by this increased abstraction, is the way written signs came to represent speech sounds.

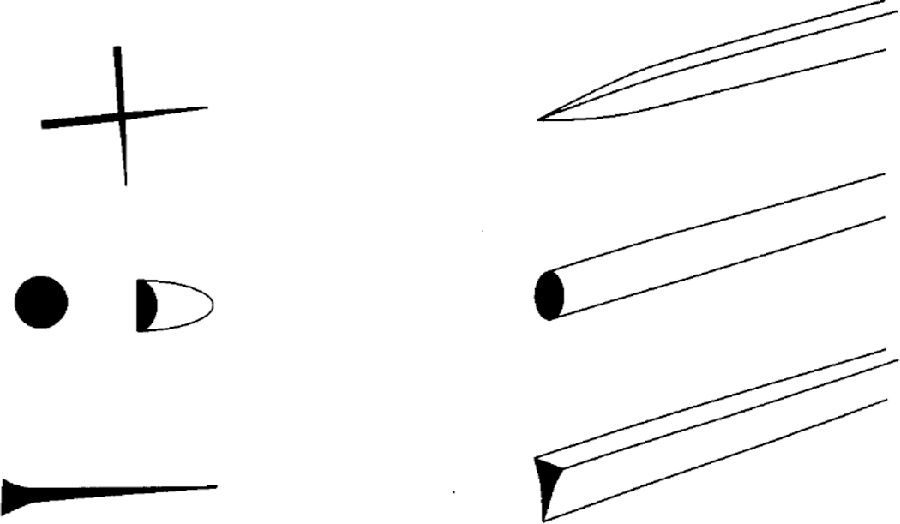

Because wet clay did not preserve curved lines well, and because it dried quickly in the hot, dry climate, lines drawn with a sharp pointed stylus in proto-cuneiform were replaced by short, straight impressions made with a reed stylus with a triangular tip, explaining the characteristic wedge-shaped marks that give cuneiform its name (see Figure 5.7). With this new wedge-shaped stylus, curved lines became straight strokes (Figure 5.8), circles became squares, and fine details were eliminated, all of which increased writing speed (Gaur, 1984, p. 48). This is the first instance of many that we will encounter of changes in written forms brought about by material factors.

Figure 5.7 Stylus shapes and their respective impressions

Figure 5.8 Breaking up of curved lines with change of stylus

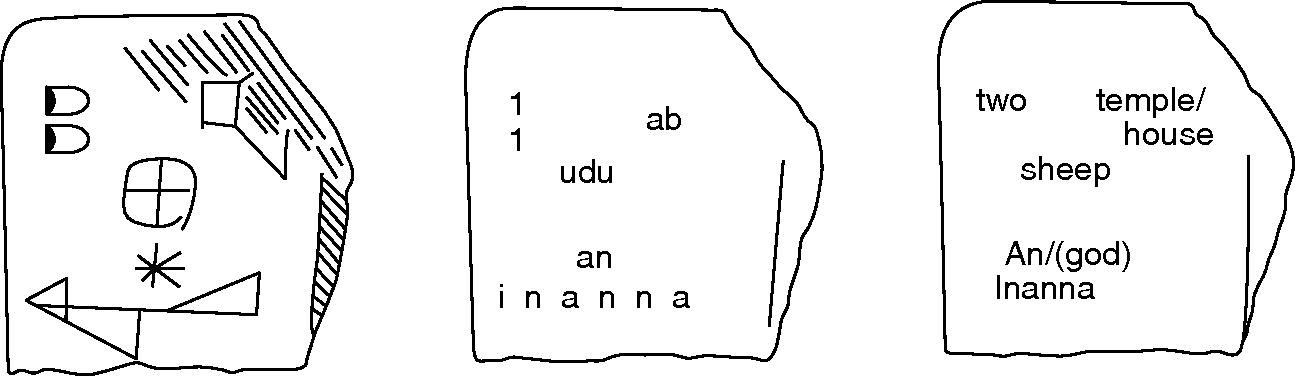

Because early clay tablets were bookkeeping devices, they did not have any discernable syntax and were mostly organized in spatial hierarchies (Damerow, 2006).Footnote 9 For example, names of donors or recipients of a transaction were consistently found below signs indicating the goods transacted, allowing information such as ‘ten sheep (received from) Kurlil’ to be recorded even in the absence of signs to indicate verbs and prepositions (Schmandt-Besserat, 1996, p. 98). While such extreme economy might seem overly ambiguous to the modern reader, it is not entirely unlike today's pared-down text messages, tweets, and emoticons. Nissen (1986) points out that for a long time writing was used “only as a means of producing catchwords for someone who was more or less familiar with the context but needed to be reminded of particular details” (p. 329). Nissen illustrates this point with the example shown in Figure 5.9. While the inscriptions can be interpreted as ‘Two sheep delivered to the temple (or house) of the goddess Inanna,’ they can also be read as ‘Two sheep received from the temple/house of the goddess Inanna.’ Furthermore, because the starlike sign (AN – ‘heaven’) can refer to An, the god of the heavens, or can function as a semantic determinative (marking divinity), it is not clear whether the house or the temple belongs to ‘the goddess Inanna’ or ‘the gods An and Inanna’ (1986, p. 329). Contingency of meaning was thus a feature of written texts right from the start.

Figure 5.9 The problem of relating signs in a proto-cuneiform text fragment

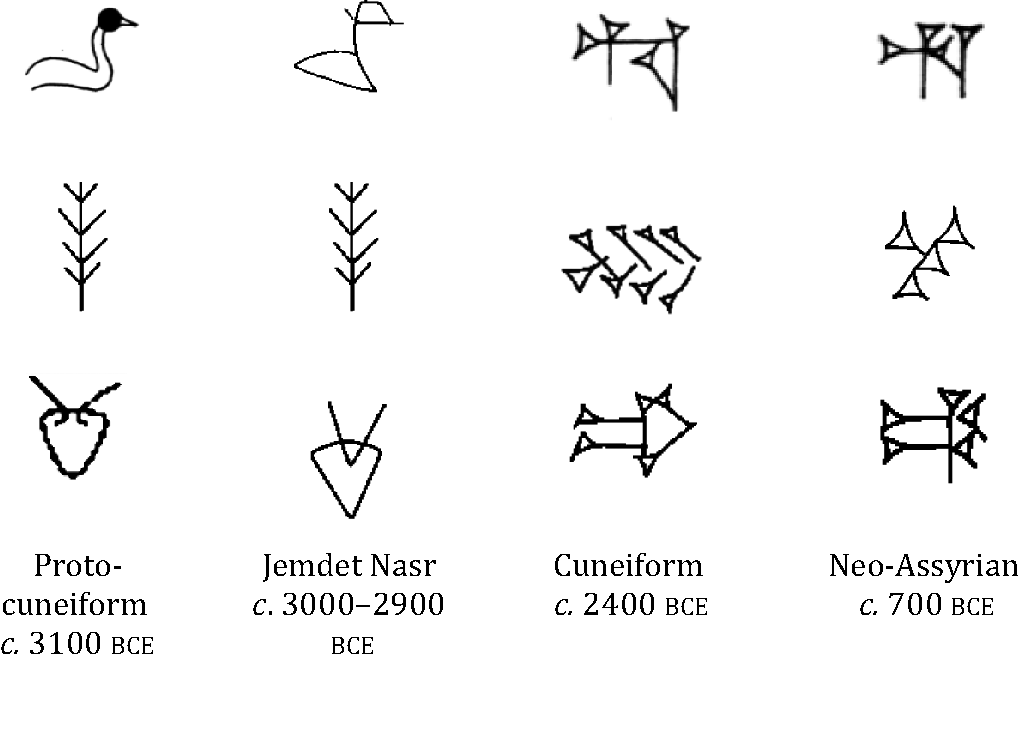

Over a period of centuries, ‘naturalistic’ signs became increasingly abstract, and they continued to change over subsequent millennia as they came to be used not just for Sumerian but also other languages such as Akkadian, Hittite, Elamite, Hurrian, and Urartian. Consider, for example, the transformation of the signs for Sumerian words mušen (‘bird’), še (‘barley’), and gu (‘ox’) from pictograms to cuneiform signs (Figure 5.10). The 90-degree left rotation (shown in the third column of Figure 5.10) occurred sometime during the early third millennium, corresponding to the transition from writing in vertical columns (read from right to left) to writing in rows (read left to right).Footnote 10

The second and crucial change, occurring during the Early Dynastic period (c. 2800 bce), was the linkage of Sumerian writing and speech through phonetic coding. This adaptation changed both the structure and the range of application of proto-cuneiform writing. Although proto-cuneiform was almost entirely logographic, meaning that one graphic sign represented a whole word, the system gradually became more logosyllabic, meaning that signs took on phonetic value through the use of the rebus principle. In a rebus, a picture or symbol is used purely for its sound to represent a spoken word or syllable (see Figure 5.11).

Figure 5.11 Example of a rebus in English. May I see you home, my dear? Escort Card, c. 1865, US

In cuneiform writing, the sign for one word was used to represent another word with the same or similar sound.Footnote 11 For example, the sign for the Sumerian word ti (‘arrow’) was also used to designate the near homophone til (‘life’). Rebuses and homophony not only made it possible to write abstract nouns but also afforded the possibility of writing multisyllabic words and names by representing the sounds of each individual syllable. An analogy in English would be using an icon of the sun not only to represent the words sun or son, or the name Sunn, but also the syllable in sundry, lesson, Anderson, and so on.

But homophony has its limitations as well. Whereas context might clarify meaning in face-to-face speech, in the context-reduced situation of writing, a systematic one-to-one sound–symbol correspondence system could lead to frequent ambiguity. Because some Sumerian words had a large number of homophones, they needed to be distinguished in writing. For example, fourteen homophones of gu were represented by distinct visual signs. The first four are presented below (from Walker, Reference Walker1987, p. 12):

This is similar to how contemporary languages like English and French attribute multiple meanings to the same sound pattern, but differentiate each meaning with a distinct visual sign (e.g., there, their, they're; ver, vers, vert, vair, verre). And this is what allows tongue-in-cheek use of homophony as in the email forwarding tag ‘Sent from my eyePhone.’

To further complicate things, Sumerian was also characterized by homophony's complement, polyphony, meaning that one graphic sign could represent semantically related words that nevertheless had vastly different sounds. For example, the sign for ka ‘mouth,’ originally a pictogram of a head with the mouth drawn on it, is also used for words associated with the mouth, such as gu3 ‘voice,’ zu ‘tooth,’ du11 ‘speak,’ and inim ‘word’ (Walker, 1987). Because of homophony and polyphony, the intended meaning of cuneiform signs could often only be determined by context.
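The two relations just described can be pictured as a many-to-many mapping between signs and readings. What follows is only a minimal sketch under stated assumptions, not a philological tool: the sign labels (`SIGN_MOUTH`, `SIGN_OX`) are hypothetical placeholders invented for illustration, the readings are limited to those cited in this chapter, and the trailing digits are treated as the index numbers that transliteration uses to tell homophones apart.

```python
from collections import defaultdict

# (sign label, transliterated reading, gloss) triples cited in this chapter.
# The sign labels are hypothetical placeholders, not standard sign names.
READINGS = [
    ("SIGN_MOUTH", "ka", "mouth"),
    ("SIGN_MOUTH", "gu3", "voice"),
    ("SIGN_MOUTH", "zu", "tooth"),
    ("SIGN_MOUTH", "du11", "speak"),
    ("SIGN_MOUTH", "inim", "word"),
    ("SIGN_OX", "gu", "ox"),
]


def base_sound(reading):
    """Strip the trailing index digits that distinguish homophones in transliteration."""
    return reading.rstrip("0123456789")


def build_indexes(readings):
    """Index the same data two ways: readings per sign, and signs per sound."""
    by_sign = defaultdict(list)
    by_sound = defaultdict(set)
    for sign, reading, gloss in readings:
        by_sign[sign].append((reading, gloss))
        by_sound[base_sound(reading)].add(sign)
    return by_sign, by_sound


by_sign, by_sound = build_indexes(READINGS)

# Polyphony: one sign carries several readings.
polyphonic = {sign for sign, vals in by_sign.items() if len(vals) > 1}
# Homophony: one sound is written with several different signs.
homophonous = {sound for sound, signs in by_sound.items() if len(signs) > 1}
```

In this toy data the mouth sign is the only polyphonic sign, and gu is the only sound written with more than one sign, which is the situation a reader would have had to resolve from context.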

A partial solution to the one-to-many correspondence problem was the development of determinatives – signs placed before or after other signs to specify the relevant semantic domain (e.g., geography, divinity, metal, wood, and so on). Perhaps the most common determinative in Sumerian was an, the starlike sign seen in the tablet in Figure 5.9 above, which designated a deity. Other markers were phonetic, to indicate how a sign should be pronounced (akin to our use of st, nd, rd in 1st, 2nd, 3rd in English). Because they ‘determine’ how a stretch of writing should be read, these particles are vaguely analogous to emoticons in electronic texts today, which are often intended to clarify the tone of an utterance.

An important structural change resulting from cuneiform's new function of representing speech was the standardization of sign order and linearity. Whereas early accounts and administrative records used formats in which written signs were not necessarily placed in the order in which they would be spoken, by the middle of the third millennium bce, the order of signs usually reflected the syntax of spoken discourse (Veldhuis, 2012, p. 6). This happened at the same time that cuneiform was being used for the first time on a large scale to record lengthy prose and poetic compositions.

This story of the origins of writing still poses intriguing puzzles for scholars. We still do not know the extent to which particular threads of the story interact. As we have seen, for example, two important changes occurred between proto-cuneiform and cuneiform writing: material changes in the technique of writing, and functional changes with the beginnings of phonetic representation. Was there a relationship between these two developments? Here are the facts as we currently know them. First, we have seen that the earliest written signs depicted things in the world. When the wedge-shaped reed stylus was introduced, curved lines became straight impressions, fine details were lost, and signs became more stylized and abstract – a trend that continued over centuries and millennia. Signs lost any resemblance they might have originally had to things in the world. Might it have been this increasing abstraction of signs, grounded in the very materiality of writing practices, that facilitated the development of phonetic coding by distancing signs from their original real-world referents and thus making them more suitable for ‘arbitrary’ and multifunctional use? We will never know. But we do know that as cuneiform writing interfaced more fully with the Sumerian language, the degree of multifunctional use of cuneiform signs increased remarkably. Logograms served additionally as phonograms, and homophony and polyphony led to a complex web of relationships between signs, sounds, and meaning. Certain signs were used as semantic determinatives. Sumerian logograms were loosened from the language and came to be used to write Akkadian and other languages as well. Thus a limited inventory of signs was used to accomplish a maximum of communicative work. 
We can see how this principle still operates today as the Roman alphabet is used to write multiple languages, and when we use letters and numbers to represent words or parts of words, as when we write CU l8r for ‘see you later’ in a text message.

To round out the story, cuneiform became one of the most successful writing systems in human history. Over a period of three millennia, cuneiform writing served multiple needs, purposes, cultures, and languages. Despite its purely administrative origins, cuneiform writing came to preserve and disseminate literature (e.g., the Sumerian/Akkadian Epic of Gilgamesh), law (e.g., the Code of Hammurabi), religion (prayers, hymns, omens, divinations), and scholarship in fields such as astronomy, mathematics, and medicine.

Cuneiform and design

The above description has been somewhat detailed because the specific processes involved in establishing early Mesopotamian writing 5,000 years ago – the use of tokens, seals, tablets, pictograms, and abstract signs that were re-worked in relation to one another – are connected to general processes that have been reiterated throughout the history of literacy, and have direct relevance to our understanding of the relationship of language, literacy, and technology today.

We have seen that writing in Mesopotamia did not begin as a representation of speech but rather was born out of administrative needs in an increasingly complex society, to allow records of transactions to be made and preserved. What thus began as an interface to administrative bureaucracy was subsequently adapted to become a visual interface to language. This sequence is important, as it reminds us that writing is first and foremost a social phenomenon, and that its formal linguistic features follow from its social function as well as material constraints. In previous chapters we have seen this idea play out in diverse examples from handwriting to Greeklish, texting, online chat, and Facebook, and we will see it again in the next chapter when we consider new conventions that developed with the printing press. It will also help us to think about educational issues related to literacy in Part III. In writing classrooms today, for example, it all too often seems that writing is made to be about rules of standard grammar, spelling, and punctuation but without any real exploration of the social and pragmatic underpinnings of those conventions. The case study of cuneiform affirms the importance of conventions, but also highlights their social basis, which is what gives them meaning.

Material dimensions of literacy are also clearly illustrated in the case of cuneiform writing. As we saw in Chapter 2, the physical medium used has important implications for the form of the script, the size of texts, and their relative permanence. The medium of clay and reed stylus lent itself to short, straight impressions rather than round drawn lines. Because most tablets were small enough to fit in a scribe's palm, texts were generally short and required economical use of space. To date, clay has proved to be the most durable of any material used for writing – and its durability was only increased by fires!

Another important point that we can take from the story of cuneiform is that writing co-evolved with Mesopotamia's increasing social, economic, and cultural complexity, but it was not its cause. Technology is often considered an autonomous force that brings about advances in society, but the cases of innovations such as cuneiform writing, the telephone, the printing press, and the computer show us that it is not technology per se, but the interaction of technologies with social life, in the context of particular social conditions, that gives rise to broad social change. As functional needs change, so do material forms of communication, and these changes in turn open up possibilities for new functions, and so on.

The story of cuneiform also reminds us that the repurposing of existing resources depends on the ability to view familiar signs in a new way, drawing on repertoires of symbol manipulation. That ability is facilitated by the abstraction of signs. As users of an alphabet whose origins go back to the Phoenicians, writers of English no more think of an ox head when they write the letter A than they think of a little snake when they write the letter N. And no doubt the Phoenicians didn't either. The letters A and N make meaning when positioned in relation to other letters, but have no intrinsic meaning as letter forms.Footnote 12 The leap made by the Sumerians was to make signs abstract, which was at least partly due to material factors in using clay and stylus. Abstraction allowed signs to designate other signs (e.g., impressions on a bulla of tokens contained within it, which were in turn signs of categories of objects in the world; signs inscribed in clay being used to designate spoken words as well as functions that had no spoken equivalent, such as determinatives). The fact that a sign might refer to a notion of an object in the world, or it might refer to a language sound, or it might be used as a semantic determinative also suggests that extralinguistic context must have played an important role in the Sumerians’ interpretations of texts.

Another significant point illustrated by the case of cuneiform is that technologies of communication don't simply replace one another in a neat succession. Rather, they overlap and inform one another, creating a synergistic panoply of resources for record-keeping, for authenticating, for making and communicating meaning – all operating within a sociocultural ecology (as described in the Introduction and Chapter 3). When writing appeared, it supplanted neither token use nor seals, and it certainly did not supplant the use of speech. Nor did writing likely have just one precursor. As Lieberman, Michalowski, and others have argued, writing emerged from a matrix of existing forms (i.e., available designs) coming from different, but interacting, semiotic systems.Footnote 13 Furthermore, the technology of cuneiform was itself an ‘available design’ in the sense that it went on to influence the development of other scripts, such as Ugaritic and Old Persian. Gaur (2000) likens this (re)invention process to the turning of a kaleidoscope: “at every turn new patterns emerge but the basic components from which these patterns are made remain the same” (p. 6).

Finally, the case of cuneiform illustrates that writing systems are not static but respond dynamically to the ecologies in which they are used: they are variable in extension and adapt to the needs of the reading culture to which they belong. Thus whereas Old Assyrian merchants needed only about one hundred cuneiform signs, Old Babylonian scholars recording the Sumerian literary tradition used hundreds more, many of which had several distinct values (Veldhuis, 2012, p. 8). Yet they were working with a system that had a recognizable quality of ‘sameness’ to it.Footnote 14 By virtue of interfacing with fourteen languages other than Sumerian, cuneiform writing also disabuses us of the notion that writing systems ‘represent’ languages in any essential way. Rather, they have the potential to interface with multiple languages, and are often adopted for political or cultural reasons rather than for any linguistic reasons.

In the history of literacy, the printing press is usually described as the second quantum leap forward after the invention of writing itself. The standard narrative highlights Johannes Gutenberg's invention of the printing press in the mid-fifteenth century in Mainz, Germany. Because books no longer had to be copied individually by hand and could be mechanically mass produced, the press made it possible for many different people, physically dispersed, to view the same texts, images, maps, or diagrams simultaneously (Eisenstein, 1980, p. 53). This meant that texts, literacy, and knowledge could be much more widely disseminated, and that power could be redistributed out of the hands of a small priestly and scholarly elite to a broader democratic base. The standard narrative has it that printing was the catalyst that made possible the Reformation, the Renaissance, and the Enlightenment, along with a wide range of -isms, including individualism, scientism, rationalism, and nationalism (Eisenstein, 1980; McLuhan, 1962).Footnote 1

This standard narrative is, however, technocentric and Eurocentric, focusing on how a material tool revolutionized European society. The printing press as an invented artifact did not constitute a quantum leap forward; rather, it was the felicitous conjuncture of the printing press with just the right social, material, and economic conditions in Europe that made widespread social change possible.Footnote 2

Indeed, in some respects the printing press might even be considered more reactionary than revolutionary (Pattison, 1982). Gutenberg did not introduce a new form of storing narrative and information, but mechanized the production of a familiar form.Footnote 3 For example, the earliest printed books imitated scribal manuscripts down to the finest details, incorporating the same idiosyncratic letterforms, ligatures, and abbreviations. They incorporated the same page layout, and even left blank spaces for artists to add elaborate initial letters by hand and to illuminate the texts individually just as they had done with manuscripts.Footnote 4

In theory, printed texts could eliminate errors through careful editing and typesetting. However, early printed versions actually increased distortions and corruptions of texts, and when errors were produced in print, they were disseminated far more widely than they would have been with manuscript technology.Footnote 5 Besides errors that arose in the process of copy-reading or typesetting, the need to print pages out of sequence on folded sheets of paper also contributed to errors. In his 1492 treatise De laude scriptorum (In Praise of Scribes, 1974), Johannes Trithemius argued that scribes were more careful than printers and that parchment was a far more durable medium than paper.Footnote 6

Nor was the technology itself entirely new. Ceramic movable type had been invented four centuries before Gutenberg in China, and even metal movable type had been developed in Korea two centuries before Gutenberg's time.Footnote 7 Printing was also known in the Muslim world long before being ‘invented’ in Europe.Footnote 8 The technology of the Gutenberg press was drawn from wine presses and cloth presses, which had come to Germany from the Romans. Gutenberg was a goldsmith by trade, and his true invention was to use brass casts to make unlimited uniform copies of letter sorts by pouring molten lead into the casts. His other key innovation was to develop an oil-based ink that would adhere to the metal type, since the traditional water-based ink used in woodblock printing would not stick. Here, he was inspired by Flemish painters, who mixed pigment into a linseed-oil varnish (Steinberg, 1974, p. 25). Gutenberg's genius, then, was in bringing together tools and resources (i.e., available designs) from different spheres of activity – goldsmithery, bookmaking, wine making, painting – and synthesizing them in a novel way to satisfy a rapidly growing need for more efficient production of texts (Figure 6.1).

Figure 6.1 Available designs in the development of the printing press

Print and society

If the technology existed across the Asian continent long before it did in Europe, why didn't printing become widespread there before it did in Europe? Essentially because of different social conditions. In the case of China, printing was under the strict control of its rulers, who limited the number of print copies and restricted their dissemination to an elite. Furthermore, unlike Europe, where printing was immediately realized as a profit-producing industry, China did not have a capitalist economy. In the Muslim world, printing encountered powerful social resistance.Footnote 9 The reasons were partly religious, partly economic, and partly technical. With regard to religion, only the handwritten word was considered sacred. As Ogier Ghiselin de Busbecq, the European ambassador to the Ottoman Empire, wrote from Istanbul in 1560: “the scriptures, their holy letters, once printed would cease to be scriptures” (Káldy-Nagy, Reference Káldy-Nagy and Káldy-Nagy1974, p. 203). Furthermore, the practice of cleaning typesettings with hog-bristle brushes made it all the more inconceivable to print the name of Allah in type (ibid., p. 203).Footnote 10 Another reason was economic: printing would have supposedly put thousands of manuscript copyists out of work.Footnote 11 A third reason for the late adoption of printing had to do with the complexity of typesetting Arabic script. Because Arabic is a cursive script, letters are connected to one another and have different forms when they are in initial, medial, final, and free-standing positions. This means that a complete Arabic font, including vowel marks, can run to over 600 glyphs (Bloom, Reference Bloom2001, p. 218). Typographically, then, Arabic presented more difficulties – and required greater typesetting skill – than languages that could be printed in uniform, discrete characters, like English or Chinese. Ironically, the movable type printing press became widely used in the Islamic world only after the French occupation of Egypt in 1798.Footnote 12
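The positional letter forms that made Arabic typesetting so demanding can still be seen in modern character encoding. As a minimal sketch, assuming Python's standard `unicodedata` module and the legacy 'presentation form' characters that Unicode keeps for compatibility, the following looks up the four shapes of a single letter (beh) and shows that all four reduce to the same underlying letter. The helper name `positional_forms` is hypothetical, chosen for illustration only.

```python
import unicodedata

# The four contextual positions described in the text
# ("free-standing" corresponds to Unicode's "ISOLATED").
POSITIONS = ("ISOLATED", "INITIAL", "MEDIAL", "FINAL")


def positional_forms(letter_name):
    """Return {position: character} for the presentation forms Unicode defines for a letter."""
    forms = {}
    for position in POSITIONS:
        try:
            forms[position] = unicodedata.lookup(f"{letter_name} {position} FORM")
        except KeyError:
            # Some letters do not connect on both sides, so not every form exists.
            pass
    return forms


beh = positional_forms("ARABIC LETTER BEH")
for position, glyph in beh.items():
    # NFKC normalization folds each positional shape back to the abstract letter.
    base = unicodedata.normalize("NFKC", glyph)
    print(f"{position:8} U+{ord(glyph):04X} -> U+{ord(base):04X}")
```

A compositor working with metal type had to stock and select each of these shapes as a separate sort, whereas software today stores one abstract letter and chooses the shape at display time.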

Clearly, the mere existence of the technology of movable type was not sufficient for its extensive use; the right social ecology was needed as well. The comparatively rapid success of printing in Europe depended in particular on three key factors. One was a good supply of readers to make the mass production of books economically feasible. Related to this were the entrepreneurial ambitions of printers, who were motivated by the commercial potential of publishing texts. The third necessary ingredient was an abundant supply of a durable yet inexpensive material on which to print.

Concerning readers, a “vigorous literate culture” (Clanchy, Reference Clanchy1979, p. 8) had been developing in Europe since the late twelfth century, when the growth of universities created an unprecedented demand for written texts, commentaries, and reference works. In cities such as Paris, Bologna, and Oxford, lay scribes worked in guilds to hand copy academic texts long before the arrival of print (Steinberg, Reference Steinberg1974). By the late fifteenth century, a growing population (and growing numbers of readers) combined with an economy on the ascent translated into a viable market for booksellers. The printing press may have amplified the demand for books, but it did not create it in the first place. Like cuneiform writing in Mesopotamia, the print ‘revolution’ co-evolved with, but did not cause, increased societal complexity.

Within the first fifty years following Gutenberg's invention, hundreds of printing presses were established in cities throughout Europe, and Febvre and Martin (Reference Febvre, Martin and Gerard1976) estimate that some twenty million books were printed (p. 248). Compared to the limited capacity of scribal guilds, this was clearly a huge expansion in the scale of production. Printers sought the most lucrative markets – university towns and commercial centers – and developed standardized procedures to maximize speed and productivity.

The third requirement, the plentiful availability of inexpensive material on which to print, was satisfied by paper. Introduced into southern Europe from the Muslim world in the eleventh century, paper was strong, smooth, flexible, and could be produced in uniform formats. Because it was made from recycled linen rags, paper was much cheaper than parchment, and could be produced in virtually unlimited quantities. If early printers had only had parchment to print on, their books would have been almost as expensive as handwritten manuscripts, and printing would not likely have taken hold to the extent that it did.

Although printing presses and books proliferated rapidly in Europe in the first fifty years after Gutenberg printed his first Bible, they did not immediately change the existing power dynamics of literacy. Three-quarters of the books published were in Latin and most readers were clerics or academics, so print began as a product of – and for – a very circumscribed segment of society. Although the printing press was initially welcomed by the Church because it increased dissemination of Bibles and other religious materials, it soon became apparent that the new print era also heralded unwelcome changes. The Church's tight control over the production of books was threatened, as was the Church's very language (since the Bible could now be published in vernacular languages as well as Latin). Mass dissemination of print also threatened communal recitation of prayers by making it possible for individuals to read and contemplate scripture privately. Circulation of the printed writings of Martin Luther, Jean Calvin, and other Protestant reformers so enraged France's King François I that he issued a series of restrictive laws, culminating in a 1535 decree outlawing all printing (by punishment of hanging) and ordering the closure of all book shops.Footnote 13

Nor did the printing press immediately usher in more widespread literacy. Non-literate aristocrats could hire readers and scribes for their literacy needs. Peasants, on the other hand, had little need of or access to writing. It was among the emerging middle classes that literacy held the greatest promise, for in a culture that increasingly demanded written records, the ability to write assured a livelihood. But this demand for written documents preceded the printing press, and for many lay scribes, print was more of a threat than a boon. During the sixteenth and seventeenth centuries, literacy rates in Europe remained low, especially in rural areas, and it wasn't until the late eighteenth century – more than three hundred years after Gutenberg's invention – that literacy rates came to approach even 50 percent among men in France and 60 percent among men in England.Footnote 14

Scholarly practices remained largely unchanged following the arrival of the printing press. Given the still relatively high cost of books, scholars continued to copy texts by hand, including texts that had been produced on a printing press.Footnote 15 Even in the early eighteenth century, access to university libraries was often limited to professors, and teaching practices typically followed the medieval tradition of reading aloud from authoritative texts and commenting upon them (Melton, Reference Melton2001, p. 90).

If print did not suddenly transform an oral society into a literate one, it was nevertheless appropriated in interesting ways into oral culture. Historian Natalie Zemon Davis writes that printing in sixteenth-century France (like the Internet today) established “new networks of communication…new options for the people…new means of controlling the people” (1975, p. 190). She explains how in village veillées in which books were read aloud, ‘reading aloud’ meant translating texts into the local dialect so the villagers could understand. In the case of long works, it also meant editing while reading. Reading was therefore far from a simple vocalization of print – it was a wholesale recreation and performance of texts. Non-literate peasants on the listening end were not passive recipients of information, Davis tells us, but participated in their own way in the new world of printing – not by reading and writing, but by actively interpreting and using the information that was read to them. Orality and literacy therefore suffused and transformed one another.

Similarly, Harold Love points out that even in late sixteenth and early seventeenth century England many key cultural texts were oral, and texts that were written were often intended for oral performance (2002, pp. 100–101). Because people's actual experience of texts often involved a mixture of speech, print, and handwriting, there was no clear-cut transition from ‘oral’ to ‘literate’ cultures, and practices in early modern Britain were better characterized as “intricate negotiations between the media” (ibid., p. 102). In the next chapter we will see that the same holds true today, and that “negotiations between the media” aptly characterizes twenty-first-century literacies as well.

Finally, a common assertion is that the printing press was the key factor in disseminating Luther's and other Protestant reformers’ ideas during the Reformation in Germany. It is undeniable that print played a significant role, but given the low literacy rates in sixteenth-century Germany, Scribner and Dixon (Reference Scribner and Dixon2003) estimate that only 2.3 percent of the German population could have encountered Luther's ideas via print. They argue that most information was passed by word of mouth through personal contacts, and that the real role of print was to inform ‘opinion leaders’ – literate and influential people – who disseminated the new ideas through oral communication, with sermons being the most powerful communication platform (p. 20). What print did, then, was to accelerate and broaden dissemination of information to such opinion leaders, creating more networks and spheres of influence through which new ideas could be spread.Footnote 16

Print and language

Probably the most significant way in which the printing press influenced language was to introduce an ethos of uniformity and standardization. This happened at two levels: at the visual surface of the printed text and more broadly in the standardization of language use and in the determination of what counted as a language. Although manuscripts had been written in standard hands, variability from one scribe to the next was inevitable, and of course any individual scribe's handwriting could vary slightly from one writing session to the next, depending on posture, fatigue, ambient temperature, and so on. As manuscripts were transformed into printed texts, such individual variability was lost, as the same letter forms were used consistently within and across texts produced by a given printer. As Amalia Gnanadesikan puts it, printing shifted the process from creating letters to selecting letters (Reference Gnanadesikan2009, p. 252). This shift was later extended by the typewriter and the computer, which allowed texts to be composed from the outset by means of selecting letters.

On a broader level, the printing press provided the impetus for European vernaculars (which had been mostly spoken) to be written, codified, and thereby legitimized as languages. Prior to the arrival of print in Europe, a diglossic linguistic configuration existed whereby the language of the Church, authority, and literacy was Latin, and the language of daily life in society was the regional vernacular. Although in the early years of printing most texts were published in Latin, by the sixteenth century printers produced increasing numbers of vernacular texts, motivated by the prospect of broadening their market base. The wide circulation of such texts helped consolidate and establish the literary languages of Europe and ultimately contributed to the decline of Latin.

Of course, there were many regional vernaculars. Those chosen for print (i.e., those most closely associated with power) became standard languages, whereas those that remained unprinted took on the status of provincial dialects. Early grammars for the most prestigious vernaculars were developed during the sixteenth century (Nebrija's 1492 Grammatica Castellana being a notably early contribution). These grammars provided a basis for filtering out many of the regionalisms that had marked earlier vernacular publications.

Print also gradually introduced new formatting, spelling, and punctuation conventions. Although many of the earliest printed books followed manuscript tradition in terms of layout and punctuation, new practices came to be established. For example, whereas rubrication (literally ‘red-inking’) had been used to show divisions in a text, printers found this too costly and made use of different fonts for headings, initial words, and main text. Quotations, which in manuscripts had been underlined in red ink, came to be indicated by quotation marks. On the other hand, paragraphs, which had been explicitly marked with symbols (such as the pilcrow, ¶), came to be marked by white space (indentation of the first line, or most recently, a preceding blank line in ‘block’ paragraphing) (Baron, Reference Baron2000, p. 169).

Before print, spelling was highly variable, and abbreviations were commonly used. Producing a manuscript book was expensive, not only in terms of labor, but also in terms of materials (a Bible written on parchment required the skins of hundreds of sheep). Because of this expense, scribes saved space wherever possible and developed elaborate abbreviation schemes (Cappelli's Lexicon Abbreviaturarum lists some 13,000 Latin abbreviations).Footnote 17 Printed books, which at first attempted to reproduce manuscripts as closely as possible, preserved abbreviations along with handwriting style, ligatures, illuminations, and layout conventions. Similarly, in both manuscript and early print books, word spellings were adjusted by adding or subtracting letters to fine-tune the length of each line of text so that a flush right margin could be maintained. Once printed, spellings took on a certain authority, and some of the ‘anomalous’ modified spellings became standard when dictionaries were made. Other techniques scribes and printers used to make text fit were adding or deleting whole words, increasing or decreasing space between words or between letters, substituting words for phrases, or vice versa (N. S. Baron, Reference Baron2000).

Abbreviations gradually came to be less used in printed books because printers did not want to risk losing readers who might not understand what they meant. Moreover, as they shifted from expensive parchment to comparatively cheap paper, printers were less compelled to maximize the efficiency of the texts they printed by using abbreviations.

The history of these early printing practices reminds us that spelling is not ‘natural’ and is often shaped by the technological medium in which it is used. Moreover, the control and standardization of spelling (as well as grammar) is also a matter of social forces whereby the power elite set standards, and ordinary people's access and adherence to those standards largely determines their social mobility.

It is clear that the printing press both reflected and shaped the cultural evolution of Europe. However, as mentioned earlier, print was not a catalyst for social change in Central and East Asia. We will now return to this story to see how another technological innovation – paper – was.

Paper

The invention of paper is commonly attributed to Tsai Lun, a eunuch at the court of the Han emperor He Di in China in 105 ce, although archeological findings from the Xuanquanzhi ruins of Tunhuang in China's northwest Gansu province place the invention of paper some two hundred years earlier (Yi & Lu, Reference Yi, Lu, Allen, Zuzao, Xiaolan and Bos2010). Made from rags, hemp, or tree bark dissolved in water and then sieved through woven cloth stretched over a bamboo frame, paper was from the beginning made of waste products and therefore relatively cheap to produce.Footnote 18 It was also very light in weight. These were substantial advantages over competing writing materials such as bamboo, which was heavy, and silk, which was expensive. Over time, production techniques were perfected and paper was made in various qualities for different purposes. One key use of paper was for printing, which evolved from the ancient use of carved stone or bronze seals to make impressions on clay or silk, and from the practice of making rubbings from stone and bronze reliefs (Bloom, Reference Bloom2001, p. 36).

Papermaking spread to Korea, where the product was refined to new levels of quality, and subsequently to Japan in the early seventh century. Paper was introduced to Central Asia in the eighth century, allegedly by Chinese prisoners taken captive by Arabs during the Battle of Talas, although archeological evidence from Samarkand suggests that paper may have existed there centuries earlier (ibid., pp. 43–45). In any event, with the unification of West Asia under Islam, the practice of papermaking migrated westward to Baghdad, Mecca, Egypt, Morocco, and finally to Spain. According to Bloom (ibid.), Spanish Christians began to use paper well before the year 1000, and their use of paper grew as they came to dominate greater areas of the Iberian peninsula. However, it was only after the arrival of the printing press in the fifteenth century that paper gradually came to replace parchment as the preferred medium for recording European thought.

In Islamic civilization, it was paper, not printing, that made possible huge advances in learning and new ways of thinking between the eighth and the sixteenth centuries. Paper was originally produced as an adjunct to papyrus and parchment to serve the administrative needs of the huge bureaucracy that developed during the Caliphates and the Ottoman Empire.Footnote 19 But its light weight, availability, and relatively low cost made it a catalyst for social, intellectual, and artistic innovation. The Hindu numeral system had spread through the Islamic world by the ninth century, and by the tenth century, the mathematician Abu al-Hasan Ahmad ibn Ibrahim al-Uqlidisi adapted the Hindu system to the affordances of paper and ink, developing the notion of positional decimal fractions and showing how to perform calculations without deletions (ibid.). Scholars collected and codified the oral traditions of Muhammad on paper, Greek bookrolls were translated into Arabic and written on paper, and new forms of literature, such as cookbooks and The Thousand and One Nights, were copied on paper and sold (ibid., p. 12).

Paper provided a convenient means for textualizing not only language, but also artistic designs, architectural plans, genealogy charts, and battle plans, which could be composed in one place and put to use in another (ibid., pp. 215–16). This ability to separate things from their original context of conception and to recontextualize them in new settings, in new mediums, for new purposes, allowed ideas to be not only disseminated, but also transformed:

Drafters could abstract a design from one place and apply it in an entirely different setting. The scale, too, might also change dramatically. An artist could, for example, draw a design observed on a Chinese carved lacquer bowl and put the drawing aside in a portfolio or album. A bookbinder or plasterer might come upon the drawing and transfer the design by means of stencil or pounce to another medium, perhaps a molded-leather book cover or a carved and painted plaster panel many times the size of the original lacquer bowl. The bookbinder or plasterer would never have seen the bowl, and the intermediary drafter might have had no inkling that his drawing would be translated into leather or stucco. Designs were divorced from their original contexts, and this free-floating quality of design became a feature of later Islamic art, particularly the art made for the court.

The idea of divorcing design from its original context (like writing, which divorces language from its original context of utterance) is crucial to understanding relations among language, technology, and literacy. In Chapter 2 we saw how this idea is extended in today's digital texts, whose content is divorced from form (at the level of machine representation) in order to allow those texts to be appropriately scaled across a broad variety of devices.

Paper and language

In the Islamic world, the most important written text was of course the Qur'an. Although the oral literary tradition based on memorization and recitation remained vital, the written Qur'an assumed an increasingly important role when paper became available. Religious scholars were at first reluctant to transcribe the Qur'an on paper, as it was normally copied on parchment. However, given that a single Qur'an required the skins of about three hundred sheep, paper eventually won out. The oldest paper edition dates to the tenth century.

The medium of paper had an effect on the Arabic script. The Kufic script, traditionally used to write the Qur'an on parchment, and characterized by simple geometric shapes, gave way to an angular ‘broken Kufic’ that contrasted thick and thin strokes and was sometimes vertically elongated. This new script was well suited to the characteristics of paper and carbon ink and was more legible and easier to write. It was used for a wide variety of purposes, both secular and religious, and was popular among Christians as well as Muslims. This broken Kufic script led to the development of a more flowing rounded cursive similar to the Arabic script used today (Bloom, Reference Bloom2001, pp. 103–106).

The physical format of the Qur'an also changed with the accepted use of paper. Parchment editions of the Qur'an had been in landscape (horizontal) format, which differentiated them visually from Christian and Jewish scripture, which respectively took the form of codices with vertical orientation and bookrolls with horizontal orientation. Paper editions of the Qur'an, however, were in portrait (vertical) format (ibid., pp. 103–104). In the next chapter on electronically mediated discourse, we will again see that changes in the material medium are accompanied by changes in the form of writing.

The form of paper itself also had great cultural significance. When mainstream production of paper shifted to Europe in the fourteenth century, European paper began to be imported into Syria, Egypt, and North Africa. But the watermarked images of animals and symbols (and Christian crosses) again raised questions of the suitability of paper for religious writing (ibid., p. 13). Once again, material and technological innovations find themselves subject to sociocultural filters when it comes to their adoption.

With respect to literacy and the overlay of written tradition upon oral tradition, the development of paper has been described as the “industrialization of memory” (Debray, Reference Debray1991). And over the centuries, paper has certainly played a key role in the industrialization of society. Yet today, in the face of digitalization, which at least theoretically makes paper records obsolete (Sellen & Harper, Reference Sellen and Harper2002), the paper industry often highlights the ‘human’ face of paper: a medium of sentiment, a medium of personal meaning that binds us to our past and that we bequeath to our loved ones, as one paper company advertises on its website:

Birth certificates. Wedding albums. Autographed books and baseball cards. Paper mementos are among our most treasured possessions. And although digital media is gaining an increasingly important role in today's world, it can never replace the warm, tactile touch of paper. After all, scrapbooks don't require startups or shutdowns. Magazines never crash. And while flash drives are a convenient way to store images, wearing one around your neck just doesn't have the same effect as your grandmother's antique locket. Computers may keep records – but paper leaves a legacy.Footnote 20

Although it is a technology, paper has become naturalized, imbued with human significance both at the level of society and at the level of the individual.

In the next section we turn to a technology of the nineteenth and twentieth centuries that combined paper with print, but was designed for widespread personal use by individuals.

The typewriter: a personal printing press

The origins of the typewriter go back to at least 1714, when an English engineer named Henry Mill applied for a patent for his machine for transcribing letters that would print letters on paper “so neat and exact as not to be distinguished from print” and whose impression would be “deeper and more lasting than any other writing, and not to be erased or counterfeited without manifest discovery” (Bliven, Reference Bliven1954, p. 24). However, it took over 150 years and dozens of other similar inventions before a marketable machine actually named ‘Type-Writer’ arrived on the scene. Attributed to Christopher Latham Sholes, a newspaper editor and politician from Wisconsin, the prototype Type-Writer was inspired by the piano, with alternating black and white keys that triggered typeface hammers that brought an inked cloth ribbon into contact with paper to leave a printed impression. The ribbon had to be hand-inked, the hammers produced only upper case letter forms, and typists couldn't see what they had typed until they had completed an entire line of text. Nevertheless, the arrival of the ‘literary piano,’ as the editors of Scientific American had called an even earlier prototype, signaled the obsolescence of “the laborious and unsatisfactory performance of the pen” and heralded “a revolution as remarkable as that effected in books by the invention of printing.”Footnote 21

After failing to interest Western Union in his machine, Sholes turned to Philo Remington, whose company manufactured firearms and sewing machines. Demand for firearms had been down since the end of the Civil War, and the company had extra production capacity. Remington bought Sholes's patent and marketed the first Remington typewriter in 1874. Sales were slow, given that the Type-Writer cost $125, but one of the early adopters was Mark Twain, who, despite his distaste for the machine, became the first writer to submit a typescript to a publisher.Footnote 22

One particular technical problem that Sholes and his collaborator James Densmore had faced was that the type keys often jammed if they were struck in too quick succession, requiring the typist to stop and pry the type bars apart. The original keyboard displayed the alphabet in sequential order but split across two rows of keys, as follows:

- 3 5 7 9 N O P Q R S T U V W X Y Z

2 4 6 8. A B C D E F G H I J K L M

Densmore suggested reordering letter keys in an unfamiliar pattern to slow down typing. Sholes consulted with his mathematician brother-in-law, asking him to reorganize the key configuration so that the type bars that most often got stuck would be separated. Many calculations and experiments led to the four-row ‘QWERTY’ configuration that is still used on English language keyboards to this day. Sholes claimed that this scientific arrangement of the keys would boost typists’ speed and efficiency. It may have done so to the extent that typists spent less time prying apart stuck type bars, but, keeping in mind that typing was done with only two fingers at the time, the QWERTY layout actually made typists’ movements less efficient because it maximized the distance that their fingers needed to travel in typing most words (Beeching, Reference Beeching1974). Besides reducing the incidence of jams, the QWERTY layout also positioned all of the letters in the word TYPEWRITER in the top row of letter keys, a feature that salesmen used to full advantage when they demonstrated the machine.
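The salesmen's TYPEWRITER demonstration is easy to check. The following is a minimal sketch that compares the letter rows of the modern QWERTY layout (an assumption, since the earliest machines differed in some details) with the letter portions of the original two-row alphabetical keyboard quoted above. The function names are hypothetical and serve only to illustrate the point.

```python
# Letter rows of the modern QWERTY layout (assumed; early machines differed in details).
QWERTY_ROWS = ("QWERTYUIOP", "ASDFGHJKL", "ZXCVBNM")

# Letter portions of the original two-row alphabetical keyboard quoted in the text.
ALPHABETICAL_ROWS = ("NOPQRSTUVWXYZ", "ABCDEFGHIJKLM")


def rows_needed(word, rows):
    """Return the sorted indices of the rows holding at least one letter of `word`."""
    letters = set(word.upper())
    return sorted(i for i, row in enumerate(rows) if letters & set(row))


def fits_on_one_row(word, rows):
    """Return True if every letter of `word` sits on a single row of `rows`."""
    letters = set(word.upper())
    return any(letters <= set(row) for row in rows)


for name, rows in (("QWERTY", QWERTY_ROWS), ("alphabetical", ALPHABETICAL_ROWS)):
    print(name, rows_needed("TYPEWRITER", rows), fits_on_one_row("TYPEWRITER", rows))
```

On the QWERTY rows the word needs only the top row (index 0), whereas on the alphabetical keyboard its letters are split across both rows.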

Although a number of other keyboard layouts have been invented since Sholes's time, and these have been claimed to be more scientific and efficient (most notably the one developed by Dr. August Dvorak in 1932), none has been able to replace the QWERTY standard.Footnote 23 Once again, we see that technological factors may sometimes be an impetus for change, but in many cases they are not, and that what makes the difference is the social context of the time and place. The technical problem that QWERTY targeted no longer exists – it has been irrelevant ever since the development of the typeball and was permanently put to rest with the personal computer. And yet QWERTY has prevailed – not because of any inherent technical superiority, but rather because of social inertia born of comfortable human habit and the consequent economic risk that any manufacturer proposing a new standard would face.

Unlike the technologies of handwriting and print, which do not favor certain scripts over others, the typewriter and the computer keyboard that evolved from it have a clear structural bias toward alphabetic writing. This is not surprising: a limited character set was a precondition for the typewriter; without an alphabet, the typewriter would not even have been conceivable. Attempts were made at adapting the typewriter to other scripts. For example, Underwood developed a Japanese typewriter based on the Katakana syllabary in 1923. However, because it could not include Kanji (Chinese) characters, which were typically used in conjunction with Katakana, the typewriter was not even marketed as a writing tool, and its use was largely limited to the preparation of billing statements in large companies (Gottlieb, Reference Gottlieb2000). Another limitation was that the typewriter imposed horizontal writing, whereas Japanese writing was most commonly formatted vertically. Finally, the typewriter's need to space characters uniformly created an unusual appearance on the page, and typists had to remember to insert word spaces to avoid ambiguities (Gnanadesikan, Reference Gnanadesikan2009, p. 129). A later typewriter designed in the 1960s for the Hiragana syllabary suffered a similar fate. It was not until the late 1970s, when word-processing technology made it possible to input Kana characters and convert them to Kanji, that the potential of the personal printing press was finally realized – but at the staggering cost of $37,000 per machine (ibid., p. 130). Today, Japanese computer keyboards retain the QWERTY keyboard, but they also print kana characters on the keys, facilitating the inputting of multiple scripts.

If the typewriter's design posed problems for languages written in non-alphabetic scripts, it contributed to the global spread of alphabetic writing by influencing decisions about what script to use for newly written languages. As Gnanadesikan points out:

In the newly global economy that came in the wake of colonialism and saw the growth of new multinational corporations, the result was to advantage those nations whose scripts fit easily onto a typewriter keyboard.

In sub-Saharan Africa, for example, the Roman alphabet (sometimes extended with additional letters) has often been deemed the most practical means to transcribe languages that have not traditionally been written “because it looks international, because individuals already educated in the colonial languages (English, French, and Portuguese) already know it…and because of the lasting, arguably pernicious influence of the typewriter” (ibid., p. 261).

With electronics, type keys were freed from singular mechanical connections to typeface characters. Any key could theoretically be programmed to produce any character – in any language or script. This is the principle that allows the QWERTY keyboard to be used in Chinese, for example. Perhaps the most common way to write Chinese characters on computers is to use the standard Roman alphabet keys on a QWERTY keyboard to write in pinyin (Roman phonetic transliteration), which activates a menu of Chinese characters that correspond to that phonetic representation. The user then chooses the appropriate Chinese character from the menu, and this is inserted into the text. An alternative procedure (called Wubi) maps components of Chinese characters (radicals and strokes) onto the QWERTY keyboard. One enters these character components in the same sequence as one would handwrite them on paper. Wubi-configured keyboards are divided into five regions, each designating a different type of stroke: horizontal, vertical, left-falling, right-falling, and hook. For experienced typists, this way of entering characters is far faster than using pinyin. Yet another way of entering Chinese characters is with finger movements on a touchscreen – pattern recognition software converts the hand-traced forms into printed characters.
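The pinyin procedure described above can be sketched in a few lines. This is a toy illustration only, not how any actual input method is implemented: the candidate table is a tiny hand-picked assumption (real systems draw on large dictionaries and rank candidates by context), and the names `CANDIDATES`, `candidate_menu`, and `compose` are hypothetical.

```python
# Toy candidate table: each pinyin syllable maps to a few characters pronounced that way.
# Hand-picked for illustration; a real input method uses a large, ranked dictionary.
CANDIDATES = {
    "han": ["汉", "喊", "含"],
    "zi": ["字", "子", "自"],
    "ma": ["妈", "马", "吗"],
}


def candidate_menu(pinyin):
    """Return the menu of characters offered for a typed pinyin syllable."""
    return list(CANDIDATES.get(pinyin.lower(), []))


def compose(selections):
    """Build text from (pinyin, menu_index) pairs, mimicking type-then-choose input."""
    text = []
    for pinyin, choice in selections:
        menu = candidate_menu(pinyin)
        if not menu:
            raise KeyError(f"no candidates for {pinyin!r}")
        text.append(menu[choice])
    return "".join(text)


print(candidate_menu("zi"))
print(compose([("han", 0), ("zi", 0)]))
```

The same Roman-alphabet keystrokes thus yield different characters depending on the user's choice from the menu, which is what frees the keys from any fixed connection to a single typeface character.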

Today, the accessibility of self-publishing via websites, blogs, social networking sites, and online forums has led to the production of a staggering amount of writing published by non-professional writers. The publishing industry's traditional role in legitimizing text and gatekeeping what can become ‘public writing’ has become to some extent democratized. This significant change has also made possible the sharing of what was once specialized knowledge of professional printers with ordinary individuals. One such important area of knowledge has to do with the semiotics of typeface.

The semiotics of typefaces

Typographers often seek to make type as ‘transparent’ as possible, not distracting the reader's attention from the writer's work (Warde, Reference Warde1956). At the same time, however, there is a very long tradition, from Egyptian hieroglyphs to illuminated manuscripts to advertising today, of texts that purposely call attention to their surface visual form, beckoning the reader to look at them as well as through them (Lanham, Reference Lanham1993). Calligraphy and typography are semiotic modes in their own right, contributing their own nuances to the meanings prompted by words (van Leeuwen, Reference van Leeuwen2005a). Fonts put new faces on words, and in so doing they affect our reading of those words. Consider how font design characteristics add a dimension of coolness, warmth, or cutesiness to a greeting.

Conversely, we can be led to make associations between different words by means of similar typographic design, as in a 2010 Greenpeace campaign against Nestlé, the company that makes KitKat candy bars, for buying palm oil from companies that destroy Indonesian rainforests and push orangutans toward extinction. The typography and red color were designed to lead readers to associate a KitKat bar with the word ‘killer,’ as shown in Figure 6.2.

Figure 6.2 Greenpeace's appropriation of the visual design of the Nestlé KitKat logo

Like language, typeface has meaning potential, but not predetermined meaning as in a code. Typefaces, like other aspects of style, get culturally appropriated and therefore can ‘mean’ different things in different cultural and historical contexts. For example, the Nazis appropriated Gothic script as a symbol of German nationalism; stickers from the early years of the Third Reich displayed slogans like “Feel German, think German, speak German, be German, even in your script” (C. Burke, 1998, p. 148). However, in 1941, when Hitler realized the importance of communicating his case to the wider world, he rejected Gothic script, calling it a Jewish invention (“Schwabacher-Judenlettern”), and decreed that the Antiqua typeface was to be the “normal script” of the German people (Steinberg, 1974, p. 293). In early modern England, on the other hand, Gothic script had been appropriated by the common people because it was thought to be the easiest script to read.

Today, Helvetica is an example of a typeface that is both revered and reviled, and is even the subject of a feature film (footnote 24). Developed in 1957 by Swiss typographer Max Miedinger, Helvetica was designed to be modern, legible, comforting, and ‘neutral’ in the sense that the typeface itself should not convey any meaning and thus be ‘open’ to interpretation. Many cities have adopted Helvetica for their public signs displaying the dos and don'ts of street life. Critics of Helvetica condemn its lack of rhythm and contrast, its boring predictability, and its overuse in society.

Typefaces might be considered expressions of identity – just like clothes, haircuts, eyeglasses, and other visual design accouterments – and in this light it is notable that social networking sites such as Facebook and MySpace allow users to modify typefaces in their personal spaces (the default fonts are Lucida Grande and Verdana respectively). However, in comparison with the many ways in which social networking users are unwittingly being homogenized by algorithms that make them behave in consistent, predictable ways, the typeface choices may be little more than a superficial gimmick to provide an illusion of agency.

Because visual design features such as typeface and layout contribute in significant ways to the meanings people make and take from texts, there is a substantial responsibility to understand the visual pragmatics that underlies their use. Kress and van Leeuwen are two scholars who have contributed much valuable work in this area (Kress & van Leeuwen, 1996, 2001; van Leeuwen, 2005a, 2005b, 2006) and we will consider the pedagogical implications of this topic in Part III.

Conclusion

We have seen in the last two chapters how technologies of literacy have affected humans’ relationship to language. The invention of writing allowed language to be separated from speakers’ bodies and distanced from the original context of utterance. Paper, by providing a cheap, light, foldable medium, offered wider access to writing and drawing, and thus broader use of textualization and recontextualization. Print introduced the mechanization of writing, imposing another degree of spatial and temporal distance between the author and the final form of the work (a distance that had been introduced during the manuscript era by scribes). The typewriter made a simplified version of print accessible to the masses; it made printing personal and portable, but still maintained mechanical intermediaries between writer and written product.

The story of paper and print illustrates how material, social, and individual dimensions interact in producing new designs of knowledge, learning, and social life. The fact that printing did not become widespread for centuries in either China or the Muslim world leads us to the conclusion that it was not the technological innovation of movable type alone that made printing viable. Rather, a favorable social–economic–material ecology had to be in place to allow the technological innovation to take hold. Paper and print influenced the social–economic–material ecology by making it possible to circulate ideas to an unprecedented degree, and, for the first time, to archive texts at a potentially unlimited number of sites. In so doing, paper and print changed both the individual's and society's relationship to written language. These developments were crucial for the development of nation-states, the notion of the public sphere, and the consolidation of academic disciplines, especially the sciences.

Today, digital devices introduce a further stage of transformative separation between humans and language (albeit hidden from the eye), as HTML and other computer codes intervene between the embodied biomechanical movements of writing and the display of signs on screens of various types and sizes. The connections between these devices also make it possible for individuals to disseminate their writing with unprecedented speed and breadth of dispersal. It is to electronically mediated writing that we turn in the next chapter.

Communication is a kind of interaction that actively seeks variety. No matter how firmly custom or instrumentality may appear to organize it and contain it, it carries the seeds of its own subversion.

Written communication in electronically mediated environments involves conventions, for sure, but it also affords the individual an unusual degree of leeway to invent new forms, which in some cases become socially accepted as new conventions. Some see the use of new forms as an illustration of the degradation of language. Others welcome it as a celebration of linguistic creativity. This chapter argues that such debates miss the point – that what is important about the special forms found in electronically mediated discourse is that they provide their users with a special identity and sense of belonging to particular discourse communities.

Electronically mediated writing runs the gamut from the most formal, polished expression to the most spontaneous and improvisational. On the formal end of the spectrum, electronically mediated writing looks very much like the writing found in print media (in fact, print is now almost always derived from electronic copy). When online writing is used for personal communication, on the other hand, it sometimes presents new forms and conventions, as in this texting exchange (to which we will return later):

A: wuz^

B: nmhu?

Non-standard forms and conventions arise partly from the fact that interactive electronic discourse is often space-limited or time-pressured. For example, Twitter has a 140-character limit and text messages have a limit of 160 characters (footnote 1). In real-time interaction environments like chatrooms, people have to understand and respond quickly, as messages may scroll off the screen within a few seconds if there are many participants writing actively. Even if there are only two participants, lags are to be avoided because they disrupt the rhythm of conversation. Consequently, responses tend to be produced quickly and are short in length. Users have developed a plethora of reductions, abbreviations, acronyms, neologisms, emoticons, and amalgams of letters and symbols to deal with these space and time limitations (footnote 2). For example, consider the following tweet relaying a statement by US Secretary of Education Arne Duncan:

RT @actfl: “W/i the brdr ctxt of a qual ed 4 evry stud, intl ed & fl stdy r vital 2…stud's full access to the world.” Sec of Ed Dunc …

This tweet incorporates vowel deletion (e.g., brdr ctxt), truncations (e.g., qual ed), initialisms (fl), numerals used in rebus fashion (4, 2), and letter/symbol abbreviated forms (e.g., w/i). ‘RT’ is a Twitter-specific device signifying that this is a forwarded ‘re-tweet’ and ‘@actfl’ means that the message was originally posted on the American Council for the Teaching of Foreign Languages Twitter site. The extreme abbreviation of the text is necessitated by Twitter's 140-character limit. The original quote from the Secretary of Education ran to 240 characters:

“Within the broader context of ensuring a quality education for every student, international education and foreign language study are vital to giving those students full access to the world around them.” Secretary of Education Arne Duncan

Eleven characters and spaces were required for the re-tweet acknowledgment (RT @actfl, plus the colon and space that follow it), leaving 129 for the message. The writer thus faced the task of cutting the original statement to about 54 percent of its length, a reduction of roughly 46 percent, while maintaining its full content.
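The arithmetic of this character budget, and two of the abbreviation devices noted above, can be checked in a few lines of Python. This is only an illustrative sketch: the variable and function names are hypothetical, the figure of 240 characters is the rounded one given in the text, and the abbreviating functions are a crude mechanical stand-in, not a reconstruction of how the tweet's writer actually worked. Note that 129 of 240 characters is about 54 percent of the original, so roughly 46 percent had to be cut.

```python
import re

LIMIT = 140              # Twitter's per-message limit, as cited in the text
PREFIX = "RT @actfl: "   # re-tweet acknowledgment, colon and space included
ORIGINAL_LENGTH = 240    # the text's rounded figure for the full quotation

budget = LIMIT - len(PREFIX)            # characters left for the message
share_kept = budget / ORIGINAL_LENGTH   # fraction of the original that fits
share_cut = 1 - share_kept              # fraction that has to go

print(len(PREFIX), budget)                     # prints 11 129
print(f"{share_kept:.0%} {share_cut:.0%}")     # prints 54% 46%

# Rebus substitutions seen in the tweet: a numeral or single letter stands
# in for a word that sounds like it.
REBUS = {"for": "4", "to": "2", "are": "r"}


def delete_vowels(word):
    """Keep the first letter, then drop a, e, i, o, u (y counts as a consonant)."""
    return word[0] + re.sub(r"[aeiou]", "", word[1:], flags=re.IGNORECASE)


def abbreviate(text):
    """Apply a rebus substitution where one exists, vowel deletion otherwise."""
    return " ".join(
        REBUS.get(word.lower(), delete_vowels(word)) for word in text.split()
    )


print(abbreviate("study are vital to every student"))
# prints: stdy r vtl 2 evry stdnt
```

Mechanical vowel deletion reproduces some of the tweet's forms (brdr, evry, stdy) but not others: it turns "context" into "cntxt" where the tweet has "ctxt", and "student" into "stdnt" where the tweet truncates to "stud". The writer evidently mixed several devices case by case.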

Besides the formal surface features of this tweet, there is an interesting communicative characteristic related to the fact that this message is being ‘re-tweeted’ by various individuals who did not articulate the abbreviated word forms, much less compose the message. As such texts are recirculated across networks they become farther and farther removed from what Goffman (1981) called the author (the person who composes the words) and the principal (the person whose position and beliefs are represented by the words). In this case, Arne Duncan is the principal and author, and the original tweeter (whose identity is unknown) is what Goffman called the animator, the one who actually articulates the words of the utterance or text. This is not new in the sense that scribes, journalists, and editors have been recasting other people's language for centuries, but it is new in that formal modifications of others’ words can now be made by anyone with a digital device. Although the author and principal, US Secretary of Education Arne Duncan, remains identified, his words have taken a radically new form. Is the meaning the same? Yes and no. Although the substance of Secretary Duncan's remarks has been retained, the surface features of the tweet affect its comprehensibility and what we might call its ‘identity aura.’ The abbreviated language projects a hip, youthful glow that may not normally be associated with Secretary Duncan, and moreover, the message can become associated with the various people who re-tweet it. Twitter scholar Dhiraj Murthy (2013) points out that how much a tweet gets recirculated often has more to do with who is re-tweeting it than who its original author was. If this is true, it raises the question of whether our traditional notions of textual authority are valid in the Twitter world.

To suggest that abbreviations and other modifications in tweets, text messages, and chats are motivated only by space restrictions or a need for quick typing would be misleading. Similar forms are frequently used even when there is no particular space limitation or time pressure, to create a friendly, playful mood or to enact certain identities, as in the instant messaging (IM) exchange between two undergraduates shown in Table 7.1.

Table 7.1 IM exchange between two undergraduates

| Message | Time interval from previous entry (min:sec) |
|---|---|
| 1 A:ohaider | |
| 2 B:ohai2u | 2:48 |
| 3 A:howru | 0:24 |
| 4 A:<33 | 0:02 |
| 5 B:haha i'm ok | 0:06 |
| 6 B:it's been a really lazy Sunday | 0:06 |
| 7 A:gud :-) | 0:09 |
| 8 A:as it should be | 0:01 |
| 9 B:what did you do today | 43:12 |
| 10 A:judged debates | 0:15 |
| 11 A:i'm at the glenbrooks | 0:02 |
| 12 B:oh | 0:02 |
| 13 A:hha | 0:13 |
| 14 A:hot weekend | 0:01 |
| 15 A:i kno | 0:01 |