1. Introduction

Stochastic gradient descent (SGD) is traditionally focused on finding the minimum value of a function, also called the objective function in the optimization literature [Reference Ghadimi and Lan15, Reference Jin, Netrapalli, Ge, Kakade and Jordan21, Reference Mertikopoulos, Hallak, Kavis and Cevher25, Reference Robbins and Monro30]. It has proven to be an extremely effective method in tackling hard problems in multiple fields such as machine learning [Reference Bottou, Curtis and Nocedal3], statistics [Reference Toulis and Airoldi31], and electrical engineering [Reference Golmant, Vemuri, Yao, Feinberg, Gholami, Rothauge, Mahoney and Gonzalez17].

The SGD algorithm and some of its properties are now well known especially in the context of machine learning. The iterative version of the SGD algorithm is given as

where $\gamma_k$ is called the step size (it is possible to choose $\gamma_k=\gamma$ for all k), $g(\! \cdot \!)$ the objective function (the function for which we want the optimum) and $\xi(x_k)_{k+1}$ is the error term that may or may not depend on the current point $x_k$. The SGD algorithm can be thought of as a stochastic generalization of the gradient descent (GD) algorithm, one of the oldest algorithms for optimization. One of the most popular methods for performing SGD in practice is to perform SGD via minibatching [Reference Bottou4], i.e. select a subset of the data available randomly and then perform the iteration step. From this point onward, we refer to this algorithm as minibatch SGD.

Although minibatch SGD was initially proposed to remedy the computational burden of gradient descent, recent studies have shown that it induces an implicit regularization, which prevents over-parametrized models from converging to the minima [Reference Hoffer, Hubara and Soudry19, Reference Zhang, Bengio, Hardt, Recht and Vinyals35]. This phenomenon led to the investigation in [Reference Wu, Hu, Xiong, Huan, Braverman and Zhu33], which introduced a version of SGD with multiplicative noise, called the multiplicative stochastic gradient descent (M-SGD) algorithm, which is the main focus of our research. Iteratively, the algorithm is given as

where $W_k$ is a random weight vector in $\mathbb{R}^n$, $\tilde{z}=(z_1,z_2,\ldots,z_n)$ is the data and

is the gradient matrix with $l(\theta,z)$ serving as the loss function evaluated at each $\theta \in \mathbb{R}^p$ and data point $z \in \mathbb{R}^p$. In the version of the algorithm used in the previous literature the authors mainly consider the case where $z_1,z_2,\ldots,z_n$ are fixed [Reference Wu, Hu, Xiong, Huan, Braverman and Zhu33]. In the context of deep learning, (2) has been extensively applied, and for certain problems it exhibits good performance with simulation evidence using CIFAR data [Reference Wu, Hu, Xiong, Huan, Braverman and Zhu33].

In this work, we study the properties of the online version of the M-SGD algorithm. Informally, the main goal of our work is to ascertain universal behavior of the dynamics of the online M-SGD algorithm, given that the noise has the same mean and variance structure as that of a minibatch SGD algorithm. More specifically, we consider the following iterative algorithm:

\begin{align}x_{k+1}=x_k-\gamma \left(\sum_{i=1}^{n} w_{i,k}\nabla l(x_k,u_{i,k})\right)\!,\quad\textrm{for }k=0,1,2,\ldots\tag{3}\end{align}

where $\gamma$ is the step size, l is the loss function and at each step k we generate/refresh weights $w_{i,k}$ and data $u_{i,k}$. We are interested in whether the ‘M-SGD error’, defined as $\gamma \left(\sum_{i=1}^{n} w_{i,k}\nabla l(x_k,u_{i,k})\right)-\gamma \nabla g(x_k)$, is approximately Gaussian for fixed $x_k$, and whether the algorithm is close, in the order of the step size, to a diffusion with respect to some particular distance metric. Since the general SGD algorithm has been shown to perform with considerable success for convex optimization problems and with some success for non-convex optimization problems [Reference Bottou, Curtis and Nocedal3, Reference Ghadimi and Lan15, Reference Polyak and Juditsky28] and since M-SGD is indeed an SGD algorithm, these questions are of interest as they help us understand the behavior of M-SGD or even SGD as an optimization algorithm. This line of thinking is inspired by the vast literature on Langevin Monte Carlo, where diffusion approximations are a staple for analyzing the algorithm [Reference Dalalyan9, Reference Dalalyan10]. More directly, understanding the M-SGD algorithm as a diffusion allows one to analyze it in much more mathematical depth. In fact, understanding the dynamics of the M-SGD algorithm aids in understanding how the minibatch size influences it in different regimes. It also opens multiple avenues for analyzing the M-SGD algorithm, mainly in terms of large deviations, which may be helpful in analyzing escape times [Reference Hu, Li, Li and Liu20]. Note that in (3) we write $w_{i,k},u_{i,k}$ instead of $w_i$ and $u_i$. This is because we refresh both the random training data and the ‘weights’ at each step of the iteration.

There has been an enormous amount of work for SGD with both fixed step size and variable step size, with different error structures [Reference Dieuleveut, Durmus and Bach13, Reference Yu, Balasubramanian, Volgushev and Erdogdu34]. We primarily focus on the fixed step-size case as we are interested in the dynamics of the exploration of the algorithm. A special case of our setting is when the weights are hypergeometric or Gaussian, which has been studied in the previous literature both empirically and theoretically [Reference Wu, Hu, Xiong, Huan, Braverman and Zhu33]. The hypergeometric case is particularly popular as it is the minibatch SGD algorithm, which is the most popular SGD algorithm used. The Gaussian weights have also been studied and seem to give good performance in certain problems, even beating the minibatch SGD algorithm in those cases [Reference Wu, Hu, Xiong, Huan, Braverman and Zhu33].

Note that the online version of the algorithm captures multiple real-world scenarios of practical importance. Consider the problem where an organization has a continuous stream of data coming in at each instance that cannot be stored in a database. The organization, at a particular time instance, exhibits goods/products to customers whose valuations are independent and identically distributed (i.i.d.) from some population. Each customer generates a profit for the organization, which is a function of this valuation and an intrinsic parameter dependent on the organization in question. The organization wants to maximize this total profit at each particular instance based on the data available at that very moment. This is one of many scenarios that neatly fit into our framework. In addition, note that our framework helps us capture the fixed data case, i.e. where the valuations are fixed deterministic values for the entire duration of the algorithm. For more details we refer the reader to Remark 1 in Section 2.

The main results of our work, presented in Theorems 3–6, show that the M-SGD algorithm is close to a diffusion in the Wasserstein metric, in the order of functions of the step size, under standard and weak assumptions on the random weights and the objective function. This is shown in three different regimes based on the objective function and the weights. We also show in Theorems 1 and 2 that the M-SGD error is asymptotically Gaussian when the minibatch size m goes to infinity. Note that the minibatch size is an artificial minibatch size since there is no minibatch here; but the weights have the same mean and variance as those of a minibatch SGD with the number of data points n and minibatch size m. This result not only holds merit on its own, as it shows that with large minibatch sizes the algorithm is essentially akin to an SGD with Gaussian error, but it also sets the tone for the following results on the dynamics of the algorithm. Note that due to the nature of the weights, the central limit theorem (CLT) is not trivial and indeed requires some care in the analysis. Our results give two very strong intuitions. First, the dynamics of the M-SGD should be independent of the distribution of the weights for large values of m, which is supported by the CLT result for the error and Theorems 3 and 4. Second, the dynamics of the algorithm are close to the diffusion in Theorem 4, in the order of a similar function of the step size, under considerably relaxed conditions on m in the strongly convex regime or when the random weights are positive. To our knowledge, this work has never been attempted before. There has been previous work on approximating SGD by diffusions [Reference Hu, Li, Li and Liu20, Reference Wu, Hu, Xiong, Huan, Braverman and Zhu33]; however, these works do not establish rates with respect to the step size in the Wasserstein distance in different regimes based on the objective function and nature of the error, especially in the online setting.

The rest of the paper is organized as follows. In Section 2, we introduce notation and the algorithms/stochastic processes we consider in the rest of the work. We also introduce our assumptions that we exploit throughout the work. In Section 3, we introduce and discuss our main results. In Section 4, we summarize our main results and comment on the future direction of the work. The technical results and proofs are shared in the Appendix.

2. Preliminaries

2.1. Notation and algorithm

Throughout our work, we denote by $g(\! \cdot \!)$ the objective function, by $l(\cdot,\cdot)$ the loss function and by $\gamma$ the step size/learning rate, with $0<\gamma<1$. We consider the problem in the regime where $T=\gamma K$, where T is fixed. We refer to n as the sample size and to $m(n)\le n$, a function of n, as the minibatch size. Further, we shall assume $m(n)\to \infty$ and $m(n)/n\to \gamma^*$ as $n\to \infty$, with $0\le \gamma^*\le 1$. For ease of notation, we refer to m(n) as m henceforth. Note that m is not an actual minibatch size here. However, m is fundamental to the analysis, as our primary question is the following: do the dynamics of the algorithm remain the same if the mean and variance of the weights are the same as those of a minibatch SGD with minibatch size m, but the distribution is of our choosing? The sample training data $u_1,u_2,\ldots,u_n$ are i.i.d. from some distribution Q, where Q is such that $\mathbb{E}\left( l(\theta,u_i)\right)=g(\theta)$ for all $\theta \in \mathbb{R}^p$, where p, the dimension of the problem, is fixed. The following is the algorithmic representation of online M-SGD.

Online Multiplicative Stochastic Gradient Descent (Online M-SGD).
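As a minimal sketch of the update (3), one may consider a scalar parameter, the quadratic loss $l(x,u)=(x-u)^2/2$ and hypergeometric (minibatch) weights. The function names `online_msgd` and `minibatch_weights`, the choice of loss and data distribution, and all numerical values are illustrative assumptions and are not taken from the algorithm listing.

```python
import random

def online_msgd(grad_loss, sample_data, sample_weights, x0, gamma, n, num_steps, rng):
    """Iterate x_{k+1} = x_k - gamma * sum_i w_{i,k} * grad_loss(x_k, u_{i,k}) for scalar x."""
    x = x0
    for _ in range(num_steps):
        u = [sample_data(rng) for _ in range(n)]   # fresh data u_{i,k} at every step
        w = sample_weights(n, rng)                 # fresh weights w_{i,k} at every step
        x = x - gamma * sum(wi * grad_loss(x, ui) for wi, ui in zip(w, u))
    return x

def minibatch_weights(m):
    """Hypergeometric weights: 1/m on a uniformly chosen subset of size m, 0 elsewhere."""
    def sampler(n, rng):
        chosen = set(rng.sample(range(n), m))
        return [1.0 / m if i in chosen else 0.0 for i in range(n)]
    return sampler

# Illustrative problem (assumption): l(x, u) = (x - u)^2 / 2 with u ~ N(3, 1),
# so that g(x) = E l(x, u) is minimized at x = 3.
rng = random.Random(0)
x_final = online_msgd(
    grad_loss=lambda x, u: x - u,
    sample_data=lambda r: r.gauss(3.0, 1.0),
    sample_weights=minibatch_weights(m=10),
    x0=0.0, gamma=0.1, n=50, num_steps=500, rng=rng,
)
print(x_final)  # fluctuates around the minimizer 3
```

With a fixed step size the iterate does not converge to the minimizer but keeps fluctuating around it, which is the exploration behavior that the diffusion approximation is meant to describe.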

Remark 1. In the case of deterministic training points $x_1,x_2,\ldots,x_t$, defining the objective function, one may think of $u_i$ as being one of the training data values with uniform probability, i.e. $u_i=x_j \ \textrm{with probability} \ t^{-1} \ \textrm{for all }i,j$.

We consider $|\cdot|$ to be the Euclidean norm (note that on $\mathbb{R}^1$ this is just the absolute value) and denote the transpose of any matrix A as $A^{\mathsf{T}}$. We write $X \sim (a,b)$ for a random variable X with $\mathbb{E}(X)=a$ and $\textrm{Var}(X)=b$, and define $\sigma(\! \cdot \!)=\textrm{Var}(\nabla l(\cdot,u))$.

We consider the following algorithms, which shall appear in our analysis repeatedly:

which are respectively the SGD algorithm with scaled Gaussian error (4) and the M-SGD algorithm (5). Here $k=0,1,2,\ldots,K$ and $\gamma$ is the learning rate or the step size. We also consider the following stochastic processes:

where $B_t$ is the standard Brownian motion. In (6)–(8), $t\in (k\gamma,(k+1)\gamma]$ with $k\le K$ and $K\gamma=T$, where T is the time horizon of all the stochastic processes as defined throughout the work. All the initial points defined throughout the work are fixed at a single point denoted by $x_0$. Equations (6) and (8) are continuous versions of (4) and (5), respectively. Our claim is that M-SGD is close to the diffusion as described by (7). By ‘close to’, we mean close in the Wasserstein-2 distance between two probability measures. Recall the definition of the Wasserstein-2 distance as
\begin{align*}W_2(\mu,\nu)=\left(\inf_{\pi\in \Gamma(\mu,\nu)}\int \left|x-y\right|^2\,\textrm{d}\pi(x,y)\right)^{1/2}.\end{align*}

Here $\Gamma(\mu,\nu)$ denotes the set of all couplings of the probability measures $\mu,\nu$. Note that since random variables generate a probability measure on $\mathbb{R}^p$ for any specified p, we can also define the Wasserstein-2 distance with respect to random variables in analogous fashion, i.e. for random variables X, Y, we shall use the notation

2.2. Assumptions

Assumption 1. The loss function $l(\theta,x)$ is continuously twice differentiable in $\theta$ for each $x \in \mathbb{R}^p$. In addition, for all $u \in \mathbb{R}^p$ there exists $h_1(u)>0$ such that for all $\theta_1, \theta_2 \in \mathbb{R}^p$,
\begin{align*}\left|\nabla l(\theta_1,u)-\nabla l(\theta_2,u)\right|\le h_1(u)\left|\theta_1-\theta_2\right|.\end{align*}

In addition, there exists $\theta_0$ such that $\nabla l(\theta_0, u), h_1(u)$ are in $L^3(\Omega,P)$.

Remark 2. Note that the first part of Assumption 1 implies that $\mathbb{E}\left|\nabla l(\theta,u)\right|^3<\infty$ for all $\theta$. This is easily seen since $\left|\nabla l(\theta,u)\right|^3\le 4(|\nabla l(\theta_0,u)|^3+h_1^3(u)|\theta-\theta_0|^3)$.

Remark 3. Note that Assumption 1 implies that for all $\theta$, we have

Indeed one can see this by using the dominated convergence theorem (DCT). A detailed explanation of this is provided in Lemma 1 in Appendix A.

Remark 4. Note that Assumption 1 also implies

for all $\theta$, where $L=\mathbb{E}[h_1(u)]$.

The condition in Remark 4 is a standard assumption in the optimization literature.

Assumption 2. There exists $L_1>0$ such that

where $||\cdot||_2$ is the spectral norm of the operator and $\sigma(\! \cdot \!)=\textrm{Var}(\nabla l(\cdot,u))$.

Remark 5. Note that Assumption 2 also implies

where $||\cdot||_F$ denotes the Frobenius norm of a matrix. This is easy to see since $\left|\left|A\right|\right|^2_F=\sum_{i=1}^{p} e^{\mathsf{T}}_iA^{\mathsf{T}}Ae_i\le \sum_{i=1}^{p} \left|Ae_i\right|^2\le \sum_{i=1}^{p} \left|\left|A\right|\right|^2_2=p \left|\left|A\right|\right|^2_2$, where $e_i$ denotes the ith standard basis vector of $\mathbb{R}^p$. This assumption implies that the covariance structure for the randomness in the training data has some level of linear control.

Remark 6. All our results are valid for any $\sigma(\theta)$ such that $\sigma(\theta)\sigma(\theta)^{\mathsf{T}}=\sigma^2(\theta)$ with $\sigma(\theta)$ Lipschitz in the spectral norm. For the sake of convenience we assume $\sigma(\theta)=\sigma^2(\theta)^{1/2}$ throughout our work.

Assumption 3. Given any n, the weight vectors at each iteration in (5) are i.i.d. $W=(w_1,w_2,w_3,\ldots,w_n)^{\mathsf{T}}$ where W is any random vector with $\mathbb{E}(W)=1/n \,(1,1,\ldots,1)^{\mathsf{T}}$ and the variance covariance matrix is $\Sigma$, i.e.

where $\left(\Sigma\right)_{i,i}=\frac{n-m}{mn^2}$ and $\left(\Sigma\right)_{i,j}=-\frac{n-m}{mn^2(n-1)}$ for $i\neq j$.

An immediate example of such a case is minibatch SGD, which is the most widely used SGD algorithm in practice. In this case $w_{i,k}=1/m$ if the ith data point is included in the sample and is 0 otherwise. Here m denotes the minibatch size. This is the hypergeometric set-up and it is not hard to show that the mean and the variance of the weights are as stated in Assumption 3. Indeed it is easy to see that $\mathbb{E}(w_i)=1/n$, $\textrm{Cov}(w_i,w_j) =-(n-m)/(mn^2(n-1))$ for $i\neq j$ and $\textrm{Var}(w_i)=(n-m)/(mn^2)$ (see [Reference Wu, Hu, Xiong, Huan, Braverman and Zhu33]). One point to note is that the variance matrix for W is not strictly positive definite, which implies that W lies in a lower-dimensional space. Indeed, this is true as one has $\sum_{i=1}^{n}w_{i,k}=1$ almost surely for all k, which is evident from the definition of $w_{i,k}$.
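The moments of the hypergeometric weights can be checked exactly for small n and m by enumerating all minibatches. The following is a minimal sketch; the function name `minibatch_weight_moments` and the values n = 6, m = 2 are illustrative assumptions.

```python
from fractions import Fraction
from itertools import combinations

def minibatch_weight_moments(n, m):
    """Exact mean of w_1, variance of w_1 and covariance of (w_1, w_2) for minibatch weights."""
    subsets = list(combinations(range(n), m))   # every minibatch is equally likely
    prob = Fraction(1, len(subsets))
    weights = [[Fraction(1, m) if i in s else Fraction(0) for i in range(n)] for s in subsets]
    mean0 = sum(prob * w[0] for w in weights)
    mean1 = sum(prob * w[1] for w in weights)
    var0 = sum(prob * (w[0] - mean0) ** 2 for w in weights)
    cov01 = sum(prob * (w[0] - mean0) * (w[1] - mean1) for w in weights)
    return mean0, var0, cov01

n, m = 6, 2
mean0, var0, cov01 = minibatch_weight_moments(n, m)
print(mean0, var0, cov01)
```

Exact rational arithmetic is used so that the comparison with $1/n$, $(n-m)/(mn^2)$ and $-(n-m)/(mn^2(n-1))$ involves no rounding error.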

Remark 7. Note that the covariance of the weights is dependent on a quantity m which is some function of n, as we stated in the beginning of this section. A key feature of m is the fact that it acts like a ‘minibatch’ and can be thought of as a pseudo-minibatch. One way to consider this is that, if we consider hypergeometric weights, then m is very natural. Here, the quantity m appears due to the mean and the variance of the weights, which are the same as that of a minibatch SGD with minibatch size m.

Assumption 4. At each iteration step (k) in (5), $u_{i,k}$ are generated i.i.d. Q, i.e.

where Q denotes the probability measure such that $\mathbb{E}( l(\theta,u_{i,k}))=g(\theta)$ for all $\theta$, i, and k.

This assumption on the training data implies that data of similar type come into consideration at each time point.

Next, further assumptions on W are considered so as to enable us in analyzing the Gaussian nature of the M-SGD error. Define $\tilde{1}=(1,1,\ldots,1)^{\mathsf{T}}$, i.e. the vector of all entries 1. Note that
\begin{align*}\Sigma^{1/2}=\sqrt{\frac{n-m}{mn(n-1)}}\left(I-\frac{1}{n}\tilde{1}\tilde{1}^{\mathsf{T}}\right),\end{align*}

where $\Sigma$ is defined in Assumption 3.

Assumption 5. For each iteration step k in the online M-SGD algorithm, (5), $W=(w_1, w_2,w_3,\ldots,w_n)^{\mathsf{T}}$ is defined as

where $X=(X_1,X_2,\ldots,X_n)$ and $X_1,X_2,\ldots,X_n$ are i.i.d. sub-Gaussian with mean $\mu$ and variance 1.

Note that we omit the index k here as at each iteration step the random variables are i.i.d.

Remark 8. Observe that $X_i$ can have any mean $\mu \in \mathbb{R}$. This is because

\begin{align*} \sqrt{\frac{n-m}{mn(n-1)}}\left(I-\frac{1}{n}\tilde{1}\tilde{1}^{\mathsf{T}}\right)X&=\sqrt{\frac{n-m}{mn(n-1)}}\left(I-\frac{1}{n}\tilde{1}\tilde{1}^{\mathsf{T}}\right)(X-\mu \tilde{1})\\ &\quad \quad +\mu\,\sqrt{\frac{n-m}{mn(n-1)}}\left(I-\frac{1}{n}\tilde{1}\tilde{1}^{\mathsf{T}}\right)\tilde{1}\\ &=\sqrt{\frac{n-m}{mn(n-1)}}\left(I-\frac{1}{n}\tilde{1}\tilde{1}^{\mathsf{T}}\right)(X-\mu \tilde{1}), \end{align*}

where the last equality follows as $\tilde{1}$ is in the null space of $\Sigma^{1/2}$.
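The invariance in Remark 8 can be illustrated numerically. The sketch below assumes that the weights are built as $W=\frac{1}{n}\tilde{1}+\sqrt{\frac{n-m}{mn(n-1)}}\left(I-\frac{1}{n}\tilde{1}\tilde{1}^{\mathsf{T}}\right)X$; this form is an assumption inferred from the computation in Remark 8 and from the mean and covariance required in Assumption 3. The function name `assumption5_weights`, the Gaussian choice for X and the values of n and m are likewise illustrative.

```python
import math
import random

def assumption5_weights(x, m):
    """Assumed construction: W = 1/n + sqrt((n-m)/(m n (n-1))) * (x - mean(x)), coordinatewise."""
    n = len(x)
    scale = math.sqrt((n - m) / (m * n * (n - 1)))
    x_bar = sum(x) / n
    # (I - (1/n) 1 1^T) x equals x with its average subtracted from every coordinate.
    return [1.0 / n + scale * (xi - x_bar) for xi in x]

rng = random.Random(1)
n, m = 50, 5
x = [rng.gauss(0.0, 1.0) for _ in range(n)]
w = assumption5_weights(x, m)
w_shifted = assumption5_weights([xi + 7.0 for xi in x], m)  # same X with its mean moved to 7

print(sum(w))                                         # equals 1 up to rounding
print(max(abs(a - b) for a, b in zip(w, w_shifted)))  # equals 0 up to rounding
```

Under this assumed construction the weights sum to one and do not change when the mean of X is shifted, although individual weights may be negative.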

Remark 9. This assumption enables us to consider a large class of distributions for W. It allows us to include the complicated case when the weights are negative. Owing to both the dependent structure of W and its dependence on m and n, we assume that the weights are generated by a sub-Gaussian vector. Dropping this assumption makes the problem very hard even with moment assumptions. In addition, note that Assumption 5 automatically implies Assumption 3.

To address the case of non-negative weights, we employ another assumption on the weights, which is well known in the literature [Reference Arenal-Gutiérrez and Matrán1].

Assumption 6. The random weights, as defined in Algorithm 1, satisfy the following conditions:

1. $w_i$ are exchangeable;

2. $w_i \ge 0$ for all i with $\sum_{i=1}^{n} w_i=1$;

3. $\max_{1\le i\le n} \sqrt{m}\left|w_i-1/n\right|\overset{P}{\rightarrow}0$ as $n\to \infty$;

4. $m \sum_{i=1}^{n}(w_i-\frac{1}{n})^2 \overset{P}{\rightarrow} c^2$ as $n \to \infty$.
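For the hypergeometric (minibatch) weights, the statistics in conditions 3 and 4 do not depend on which subset is drawn, so they can be evaluated directly. The following is a minimal sketch; the function name `assumption6_statistics` and the values n = 10000, m = 100 are illustrative assumptions.

```python
import math
import random

def assumption6_statistics(w, m):
    """Return max_i sqrt(m)|w_i - 1/n| and m * sum_i (w_i - 1/n)^2 for a weight vector w."""
    n = len(w)
    max_dev = max(math.sqrt(m) * abs(wi - 1.0 / n) for wi in w)
    scaled_ss = m * sum((wi - 1.0 / n) ** 2 for wi in w)
    return max_dev, scaled_ss

rng = random.Random(2)
n, m = 10_000, 100
chosen = set(rng.sample(range(n), m))
w = [1.0 / m if i in chosen else 0.0 for i in range(n)]  # minibatch weights

max_dev, scaled_ss = assumption6_statistics(w, m)
print(max_dev, scaled_ss)
```

A direct computation gives $m\sum_{i=1}^{n}(w_i-1/n)^2=1-m/n$ for these weights, so condition 4 holds with $c^2=1-\gamma^*$, and the maximum in condition 3 equals $(1-m/n)/\sqrt{m}$ whenever $m\le n/2$.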

3. Main results

3.1. The CLT of M-SGD error

In this section we analyze the M-SGD error and investigate whether it exhibits some universal behavior irrespective of the distribution of the weight vectors used in the algorithm. We begin by noting that the update step in Algorithm 1 can also be expressed as

owing to the fact that $\sum_{i=1}^{n} w_{i,k}=1$, which follows from Assumption 3. The term

is called the M-SGD error. We first show that for any $\theta$ a scaled version of this error is approximately Gaussian, i.e. for all $\theta \in \mathbb{R}^p$, we have

The symbol $\overset{d}{\approx}$ means ‘is approximately distributed as’. This approximation holds when n is large. To put it more rigorously,

One can find similar work in the bootstrap literature [Reference Arenal-Gutiérrez and Matrán1]. However, the problem considered in such cases is somewhat different from ours and thus this is a new way of looking at the SGD error.

We claim that if $W_k$ has mean and covariance structure as given in Assumption 3, some key properties of the minibatch SGD are retained. This in some way is a universality of the weights. Based on the asymptotic relation between m, n we divide the first problem into cases ($\gamma^*=1$ and $\gamma^*<1$) and analyze the Gaussian nature of the error term in Algorithm 1. For ease of notation, we shall write $u_{i,k}=u_i$ and $w_{i,k}=w_i$ for this section as the Gaussian property is independent of the iteration k.

We invoke Assumptions 3, 4, and 5 or 4 and 6 to help us obtain the following CLT.

Theorem 1. Consider the regime $m/n \to \gamma^*$ with $0\le \gamma^* < 1$ as $n\to \infty$. In addition, let Assumptions 4 and 5 hold. Then

\[\sqrt{m}\left(\sum_{i=1}^{n}w_i \nabla l(\theta,u_i)-\nabla g(\theta)\right) \xrightarrow{d} N(0,\sigma^2(\theta))\]

as $n\to \infty$.

The proof of this theorem is given in Appendix A.

Remark 10. The CLT still holds if we consider i.i.d. mean zero random variables with finite third moment instead of $(\nabla l(\theta,u_i)-\nabla g(\theta))$. The asymptotic variance is dependent on the distribution of the i.i.d. random variables.

The proof of Theorem 1 provides evidence for Remark 10.

Theorem 2. Let Assumptions 3, 4, and 6 hold. In addition, let $m/n\to 0$ as $n\to \infty$. Then for any $\theta \in \mathbb{R}^p$,

\begin{align*} \sqrt{m}\left(\sum_{i=1}^{n}w_i \nabla l(\theta,u_i)-\nabla g(\theta)\right) \xrightarrow{d} N(0,c^2\,\sigma^2(\theta)) \end{align*}

as $n \to \infty$, where c is defined in Assumption 6.

The proof of Theorem 2 is provided in Appendix B.

Remark 11. Note that $m/n \to 0$ is fundamental to Theorem 2. This is because the results of previous work [Reference Arenal-Gutiérrez and Matrán1], which considers the random variable $\sqrt{m}\sum_{i=1}^{n}(w_i-1/n)X_i$ with $X_i$ i.i.d., cannot be replicated in our setting. The centering term $1/n$ is of key importance in that work; without it the results do not hold. To compensate for this limitation we require the condition $m/n \to 0$.

Remark 12. When the weights are positive, there is previous seminal work in the bootstrap literature [Reference Præstgaard and Wellner29] from which one may try to derive the result of Theorem 2. However, a fundamental work on the topic [Reference Arenal-Gutiérrez and Matrán1] allows us greater comfort in handling the problem. Further note that Theorem 1 cannot be established using either of the two references mentioned, as the weights may be negative.

Example 1 (Example for Theorem 1). An example of weights where this structure is observed is when

![]() $W\sim N(\mu,\Sigma)$

. In this case it is easy to see that

$W\sim N(\mu,\Sigma)$

. In this case it is easy to see that

where

![]() $X\sim N(0,I)$

. We can easily check that the conditions for Assumption 5 are satisfied here. We consider

$X\sim N(0,I)$

. We can easily check that the conditions for Assumption 5 are satisfied here. We consider

![]() $U_i=(U_{i1},U_{i2},\ldots,U_{i6})^{\mathsf{T}}$

where we have each

$U_i=(U_{i1},U_{i2},\ldots,U_{i6})^{\mathsf{T}}$

where we have each

![]() $U_{ij}\sim Unif(\!-1,1)$

i.i.d. as our data. We have

$U_{ij}\sim Unif(\!-1,1)$

i.i.d. as our data. We have

![]() $W\sim N(\mu,\Sigma)$

where

$W\sim N(\mu,\Sigma)$

where

![]() $\mu$

and

$\mu$

and

![]() $\Sigma$

are as defined in Assumption 3. The dimension of the problem is taken to be 6, i.e.

$\Sigma$

are as defined in Assumption 3. The dimension of the problem is taken to be 6, i.e.

![]() $p=6$

with

$p=6$

with

![]() $n=10^4$

,

$n=10^4$

,

![]() $m=2000$

, and

$m=2000$

, and

![]() $10^3$

samples of

$10^3$

samples of

![]() $\sqrt{m}\sum_{i=1}^{n}w_{i,k}U_i$

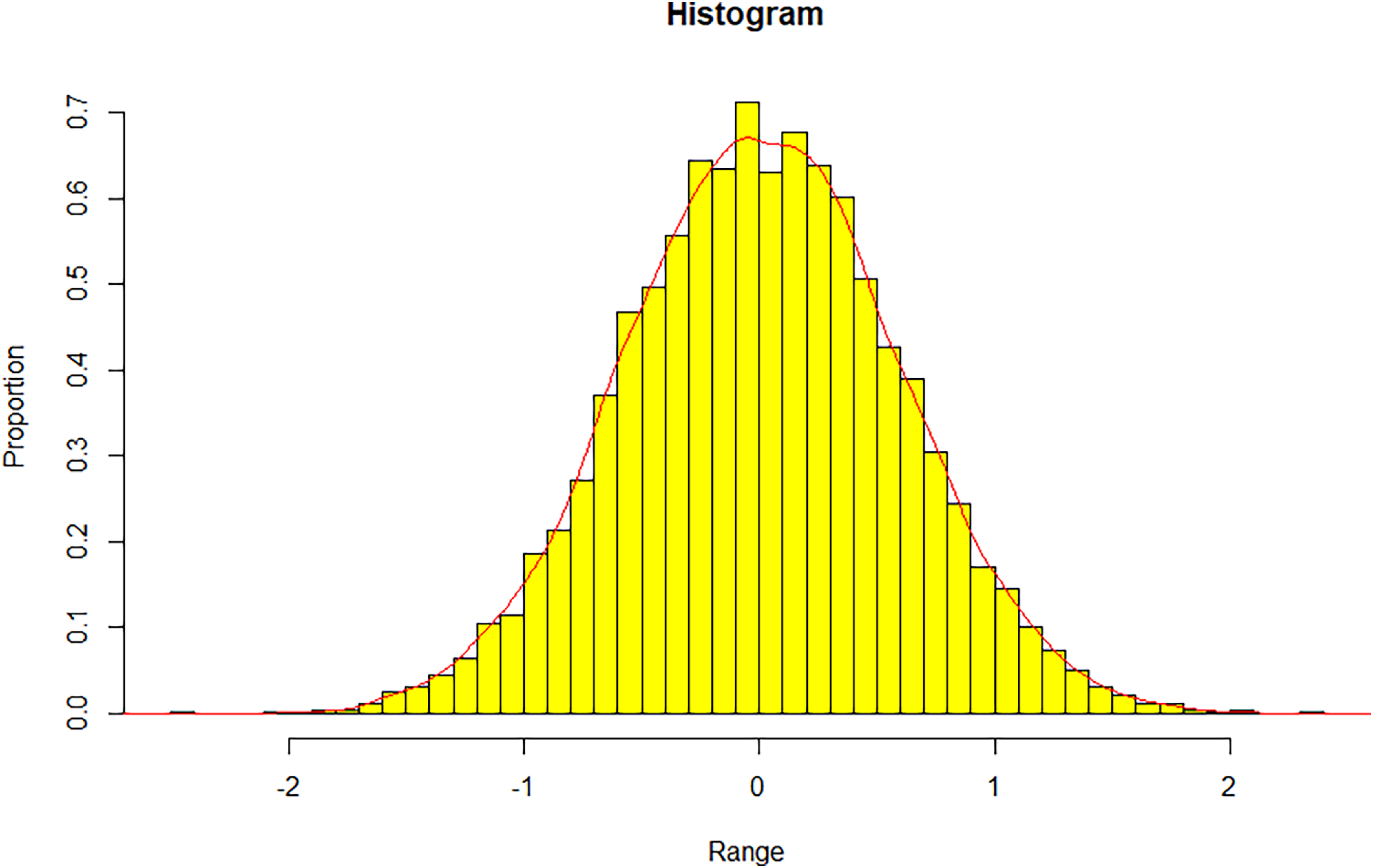

are generated. We observe the distribution of the resultant data. The histogram of the one-dimensional projection of the data along the standard basis is presented in Figure 1.

$\sqrt{m}\sum_{i=1}^{n}w_{i,k}U_i$

are generated. We observe the distribution of the resultant data. The histogram of the one-dimensional projection of the data along the standard basis is presented in Figure 1.

Histogram of the 10,000 samples of $\sqrt{m}\sum_{i=1}^{n}w_{i,k}U_i$. Here $p=6$ with $n=10^4$, $m=2000$. The weight vector $W=(w_1,w_2,\ldots,w_n)^{\mathsf{T}}$ is distributed as per $N(\mu, \Sigma)$ where $\mu$ and $\Sigma$ are as specified in Assumption 3.
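A simulation of the kind described in Example 1 can be sketched as follows. This is an illustration under stated assumptions, not the authors' code: the moments of the weights ($\mathbb{E}(w_i)=1/n$, $\textrm{Var}(w_i)=(n-m)/(mn^2)$, $\textrm{Cov}(w_i,w_j)=-\textrm{Var}(w_i)/(n-1)$) are assumed here and should be checked against Assumption 3, the function names are hypothetical, and smaller values of n, m, and the number of replications are used to keep the run light.

```python
import numpy as np

def gaussian_weights(n, m, reps, rng):
    """Draw `reps` exchangeable Gaussian weight vectors of length n.

    Assumed moments (an assumption, not quoted from the text):
    E[w_i] = 1/n, Var(w_i) = (n - m) / (m n^2),
    Cov(w_i, w_j) = -Var(w_i) / (n - 1) for i != j.
    """
    var = (n - m) / (m * n**2)
    x = rng.standard_normal((reps, n))
    # Centering each row gives covariance I - 11^T / n.
    centered = x - x.mean(axis=1, keepdims=True)
    return 1.0 / n + np.sqrt(var * n / (n - 1)) * centered

def scaled_weighted_sums(n, m, p, reps, rng):
    """Return `reps` draws of sqrt(m) * sum_i w_i U_i with U_ij ~ Unif(-1, 1)."""
    w = gaussian_weights(n, m, reps, rng)           # shape (reps, n)
    u = rng.uniform(-1.0, 1.0, size=(reps, n, p))   # shape (reps, n, p)
    return np.sqrt(m) * np.einsum("rn,rnp->rp", w, u)

rng = np.random.default_rng(0)
samples = scaled_weighted_sums(n=400, m=80, p=6, reps=2000, rng=rng)
print("coordinate means:    ", samples.mean(axis=0))
print("coordinate variances:", samples.var(axis=0))
```

Under the assumed moments each coordinate has mean zero and variance equal to $\textrm{Var}(U_{ij})=1/3$, so a histogram of any column of `samples` can be compared with the $N(0,1/3)$ density.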

Example 2 (Example for Theorem 2). The simplest example for the positive case is the minibatch/hypergeometric random variables, where $w_i=1/m$ if the ith element is selected and $w_i=0$ otherwise. In addition, $\sum_{i=1}^{n}w_i=1$, which implies exactly m indices are selected out of n. In this case it is easy to verify that Assumptions 3 and 6 hold. However, we provide a less trivial example, which is the Dirichlet distribution. Consider a vector

Note that a w that follows the given Dirichlet distribution has the property that the $w_i$ are exchangeable, $w_i \ge 0$ and $\sum_{i=1}^{n} w_i=1$. In addition, some minor calculations will show that

where $\Sigma$ is defined as previously in Assumption 3.
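The moment calculation mentioned above can be sketched as follows. This is a hedged reconstruction rather than the authors' displayed formula: it assumes $w\sim\textrm{Dir}(\alpha,\ldots,\alpha)$ of length n with common parameter $\alpha=(m-1)/(n-m)$, which is the value consistent with the $1999/8000$ used in the numerical example ($n=10^4$, $m=2000$). The standard Dirichlet moment formulas then give

\begin{align*} \mathbb{E}(w_i)&=\frac{1}{n}, \qquad n\alpha+1=\frac{m(n-1)}{n-m},\\ \textrm{Var}(w_i)&=\frac{n-1}{n^2(n\alpha+1)}=\frac{n-m}{mn^2},\\ \textrm{Cov}(w_i,w_j)&=-\frac{1}{n^2(n\alpha+1)}=-\frac{n-m}{mn^2(n-1)}, \quad i\ne j. \end{align*}

These coincide with the moments of the minibatch weights from the first part of Example 2 (where $w_i=1/m$ on a uniformly chosen subset of size m); whether they agree exactly with $\mu$ and $\Sigma$ should be checked against Assumption 3.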

Proposition 1. If

with $m/n \to 0$ as $n\to \infty$, then

\begin{align*} \sqrt{m}\left(\sum_{i=1}^{n}w_i \nabla l(\theta,u_i)-\nabla g(\theta)\right) \xrightarrow{d} N(0,\sigma^2(\theta)) \end{align*}

as $n \to \infty$.

The proof of Proposition 1 is provided in Appendix B.

As a numerical example consider $U_i\sim Unif(-1,1)$ for all i. Note that the dimension of this problem is 1, i.e. $p=1$, with $n=10^4$, $m=2000$. We generate $10^4$ samples of $\sqrt{m}\sum_{i=1}^{n}w_{i}U_i$. In this example, the weight vector $W=(w_1,w_2,\ldots,w_n)^{\mathsf{T}}$ is simulated from $\textrm{Dir}\left(\left(\frac{1999}{8000},\frac{1999}{8000},\ldots,\frac{1999}{8000}\right)\right)$, which is the Dirichlet distribution with parameter vector of length $10^4$, given as $\left(\frac{1999}{8000},\frac{1999}{8000},\ldots,\frac{1999}{8000}\right)^{\mathsf{T}}$. Results are exhibited in Figure 2. From the plot it seems that the samples are distributed as per the normal distribution.

Histogram of the 10,000 samples of $\sqrt{m}\sum_{i=1}^{n}w_{i,k}U_i$. Here we have $p=1$ with $n=10^4$, $m=2000$. The weight vector $W=(w_1,w_2,\ldots,w_n)^{\mathsf{T}}$ is simulated from $\textrm{Dir}\left(\left(\frac{1999}{8000},\frac{1999}{8000},\ldots,\frac{1999}{8000}\right)\right)$. The plot indicates the Gaussian nature of the samples.

Proposition 2. Let Assumption 3 hold. In the regime $m/n\to 1$,

\begin{align*} &\lim_{n\to \infty}\mathbb{E} \left|\sqrt{m}\left(\sum_{i=1}^{n}w_i \nabla l(\theta,u_i) -\nabla g(\theta)\right)-\sqrt{n}\left(\sum_{i=1}^{n}\frac{1}{n}\nabla l(\theta,u_i) -\nabla g(\theta)\right)\right|^2 = 0 \end{align*}

and

\begin{align*} &\lim_{n\to \infty}\mathbb{E}\left|\sqrt{n}\left(\sum_{i=1}^{n}w_i \nabla l(\theta,u_i) -\sum_{i=1}^{n}\frac{1}{n}\nabla l(\theta,u_i)\right)\right|^2 = 0. \end{align*}

The proof is provided in Appendix A. Using the above result, we instantly get the following CLT.

Corollary 1. In the regime $m/n\to 1$ as $n \to \infty$, we have

as $n\to \infty$, where the weights $w_i$ are as in Assumption 3.

The regime $m/n \to 1$ as $n \to \infty$ is much easier both in the intuitive and the technical senses. Proposition 2 considers this case.

Remark 13. Note that the CLT results in Theorems 1 and 2 give intuition that for large values of m, the dynamics of the algorithm should be similar irrespective of the noise class of the weights. This further implies that we may use known settings and noise classes to estimate the escape times of the algorithm from low potential points in non-convex problems.

3.2. Wasserstein bounds for M-SGD

In this section we analyze the dynamics of the M-SGD algorithm in different regimes, irrespective of the distribution of the weight vectors with a fixed mean and variance. Note that we may rewrite the iteration step in Algorithm 1 as

\[x_{k+1}=x_k-\nabla g(x_k)+\frac{\gamma}{\sqrt{m}}\left(\sum_{i=1}^{n}\sqrt{m}w_{i,k}\left(\nabla l(x_k,u_{i,k})-\nabla g(x_k)\right)\right)\!,\]

where the term $\sum_{i=1}^{n}\sqrt{m}w_{i,k}\left(\nabla l(x_k,u_{i,k})-\nabla g(x_k)\right)$ is, according to Theorem 1, approximately normal given $x_k$. Hence, we might intuitively consider this algorithm to be equivalent to that given by $x_{k+1}\approx x_k-\nabla g(x_k)+\frac{\gamma}{\sqrt{m}} \sigma(x_k)Z_{k+1}$, where $Z_{k+1}$ is a standard Gaussian random variable in p dimensions. This provides intuition for the following results, which establish that the dynamics of (4) and (5), as described by their continuous versions (6) and (8), are close to the dynamics of a diffusion as described by (7).
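The heuristic above can be checked numerically on a toy problem. The sketch below is an illustration only and rests on several assumptions that are not taken from the text: the loss $l(x,u)=(x-u)^2/2$ with $u\sim Unif(-1,1)$ is a hypothetical choice (so that $\nabla g(x)=x$ and $\sigma^2=1/3$), the step size $\gamma$ is assumed to multiply the gradient term, the weights are the minibatch weights of Example 2, and all function names are made up for this example.

```python
import numpy as np

def msgd_final(x0, gamma, m, n, steps, reps, rng):
    """Run `reps` independent minibatch-SGD chains and return the final iterates."""
    x = np.full(reps, float(x0))
    for _ in range(steps):
        u = rng.uniform(-1.0, 1.0, size=(reps, n))    # fresh data u_{i,k}
        # Minibatch weights: 1/m on m indices drawn without replacement, 0 elsewhere.
        idx = rng.random((reps, n)).argsort(axis=1)[:, :m]
        batch = np.take_along_axis(u, idx, axis=1)
        grad = x - batch.mean(axis=1)                 # sum_i w_i * grad l(x, u_i)
        x = x - gamma * grad
    return x

def gaussian_final(x0, gamma, m, steps, reps, rng):
    """Run the Gaussian surrogate x <- x - gamma * x + gamma / sqrt(m) * sigma * Z."""
    sigma = np.sqrt(1.0 / 3.0)                        # sd of grad l - grad g = -u
    x = np.full(reps, float(x0))
    for _ in range(steps):
        z = rng.standard_normal(reps)
        x = x - gamma * x + gamma / np.sqrt(m) * sigma * z
    return x

rng = np.random.default_rng(2)
gamma, m = 0.1, 10
x_msgd = msgd_final(1.0, gamma, m, n=100, steps=100, reps=2000, rng=rng)
x_gauss = gaussian_final(1.0, gamma, m, steps=100, reps=2000, rng=rng)
# Stationary variance of the linear recursion v = (1 - gamma)^2 v + gamma^2 / (3 m).
stationary_var = gamma / (3.0 * m * (2.0 - gamma))
print(x_msgd.var(), x_gauss.var(), stationary_var)
```

In this linear toy model the two recursions share the same first two moments by construction, so the comparison only illustrates the matching of variances; the Wasserstein bounds in this section concern the full distributions.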

3.2.1. The general regime:

We establish a non-asymptotic bound between (5) (or (8)) and (7) in the Wasserstein metric at any time point t in the time horizon. We shall consider the convex and non-convex regimes separately as the treatment of the problem is somewhat different in each case.

Theorem 3. Suppose Assumptions 1–4 hold. Recall $D_t$ and $X_t$ as the stochastic processes defined in (6) and (7), respectively. Then for any $t\in (0,T]$ and any $m\ge 1$, we have

where $C_{11},C_{12}$ are constants dependent only on $T,L,L_1,p$.

The proof of Theorem 3 is furnished in Appendix C, where more information on the constants $C_{11},C_{12}$ is also provided. In Theorem 3 we establish that the Wasserstein distance between (6) and (7) is of the order of the square root of the step size. There have been previous works attempting to address this problem [Reference Wu, Hu, Xiong, Huan, Braverman and Zhu33]. These works derive bounds in settings which assume that the loss function is bounded. We do this in our set-up, which assumes that the loss function and the covariance function are Lipschitz in the parameter. Recall that here $W_2$ is the 2-Wasserstein distance, which has been defined in the preliminaries section in (9). Our main aim here is to show that the M-SGD algorithm is close to diffusion (7) as a function of the step size. The way we go about this is to construct a linear version of the algorithm, $Y_{n,t}$, as defined in (8), and then show that this process is close to the diffusion (7). We have shown in Appendix C that $Y_{n,t}$ and $D_t$, as defined by (8) and (6), are close in the Wasserstein distance. This brings us to one of our main results.

Theorem 4. Suppose Assumptions 1–4 hold. Recall $Y_{n,t}$ and $X_t$ as the stochastic processes defined by (8) and (7), respectively. Then for any $t\in (0,T]$ and $\log m\ge \log 3 \,\cdot (T/\gamma)$ we have

where $C_{21},C_{22}$ are constants dependent only on $T,L,L_1,p$.

The proof of Theorem 4 is furnished in Appendix C, where more information on the constants $C_{21},C_{22}$ is also provided. We observe that the M-SGD algorithm is close in distribution to (7) at each point, of the order of the square root of the step size, under very strict conditions. The dependence on m is much weaker in Theorem 3 than in Theorem 4: in Theorem 3, m can take any value $m\ge 1$, whereas in Theorem 4 the minibatch size needs to be exponentially large in terms of the maximum iteration number. This is due to the fact that the distribution of the weights in Theorem 4 is general and hence needs a large sample and minibatch size to establish the same rate. For specific problems, one should be able to relax the condition on m. Next, we consider the case where the weights are non-negative. This case indeed relaxes the restriction on m.

Theorem 5. Suppose Assumptions 1–4 hold. Recall $Y_{n,t}$ and $X_t$ as the stochastic processes defined by (8) and (7), respectively. Then for any $t\in (0,T]$ and $m\ge (T/\gamma)^2$, with $w_{i,k}\ge 0$ for all i,k,

where $C_{21},C_{22}$ are constants dependent only on $T,L,L_1,p$.

The proof is provided in Appendix C. Theorem 5 shows that if we indeed have additional assumptions on the problem, weaker conditions on m are sufficient to obtain key bounds. Note that this setting is widely used in practice and remains the staple of deep learning literature to this day.

Remark 14. The relationship between $\gamma$ and m goes from exponential in the general case to polynomial for positive weights to constant when the error is Gaussian. This does indicate that the nature of the distribution of the error dictates a relationship between the size of the minibatch and the step size.

Remark 15. To the best of our knowledge, there has been no lower bound work of this type and it still remains a hard open problem.

3.2.2. The convex regime:

The next question that naturally arises is what is the dynamic behavior of the M-SGD algorithm in the regime of strong convexity. To do this we first analyze the merits of the M-SGD algorithm as an optimizer and then leverage that knowledge to gain insights on the dynamic behavior of the algorithm. We make the following assumption that we use for the rest of this section.

Assumption 7. The function g is $\lambda$-strongly convex with $\lambda I \le\nabla^2 g (\theta)$ for some $\lambda>0$ and all $\theta \in \mathbb{R}^p$.

Remark 16. Note that this also implies $g(x)\ge g(y)+(x-y)^{\mathsf{T}}\nabla g(y)+\frac{\lambda}{2}|x-y|^2$ for all $x,y \in \mathbb{R}^p$.

Note that the assumption of strong convexity indeed forces $g(\! \cdot \!)$ to have a minimum. In fact, there exists a unique $x^*$ such that $\inf_{x} g(x)=g(x^*)$.

The SGD algorithm as an optimizer has been studied at length [Reference Bottou, Curtis and Nocedal3, Reference Moulines and Bach26], and we apply these ideas in the analysis of M-SGD for the purposes of optimization in the strongly convex regime. However, the difference from previous SGD literature is that the variance of the loss, in our setting, is not fixed but spatially varying and Lipschitz. In addition, note that it is the objective function that is strongly convex here, not the loss function. Invoking the assumption of strong convexity on $g(\! \cdot \!)$, we derive bounds for the convergence of the M-SGD algorithm in squared mean to the optimal point denoted by $x^{*}=\arg\min_{x}g(x)$.

Proposition 3. Taking Assumptions 1–4 and 7 to be true, under the regime $0<\gamma <\min \left(1/L,1\right)$ and

Algorithms (4) and (5) exhibit

\begin{align*} \mathbb{E}\left(g(v_{k+1})-g(x^*)\right) & \le \left[1-\lambda\gamma(2-L\gamma)+\frac{2pLL^2_1\gamma^2}{m\lambda}\right]^{k+1} \left(g(x_{0})-g(x^*)\right)\\ &\quad +\frac{L\gamma}{m\left[\lambda(2-L\gamma)-\frac{2pLL^2_1\gamma}{m\lambda}\right]} \left|\left|\sigma(x^*)\right|\right|^2_F \end{align*}

and

\begin{align*} \mathbb{E}\left|v_{k+1}-x^*\right|^2 &\le \frac{2}{\lambda}\left[1-\lambda\gamma(2-L\gamma)+\frac{2pLL^2_1\gamma^2}{m\lambda}\right]^{k+1} \left(g(x_{0})-g(x^*)\right)\\ &\quad +\frac{2}{\lambda}\left[\frac{L\gamma}{m\left(\lambda(2-L\gamma)-\frac{2pLL^2_1\gamma}{m\lambda}\right)}\right] \left|\left|\sigma(x^*)\right|\right|^2_F, \end{align*}

where $v_{k+1}$ is used to denote the $(k+1)$th iterate of both (4) and (5).

The proof of Proposition 3 is given in Appendix C.1.

Remark 17. Note that the existence of an optimum follows from strong convexity. In addition, note that since $x^{*}$ is the optimal point, one has $g(x_0)-g(x^{*})>0$. This is not random as both $x_0$ and $x^{*}$ are deterministic points.

Remark 18. Proposition 3 exhibits that under strong convexity of the main objective function (and not the loss function), one has geometric convergence of the online M-SGD algorithm and the SGD algorithm with scaled Gaussian error to the optimum.

Remark 19. Note that the conditions on $\gamma$ and m ensure that the rate of convergence is indeed less than 1.
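The contraction factor and the noise-floor coefficient appearing in the bounds of Proposition 3 are simple to evaluate. The sketch below does so; the function names and the numerical values of $\lambda$, $L$, $L_1$, $p$, $\gamma$, and m are hypothetical and chosen only for illustration.

```python
def contraction_rate(lam, L, L1, p, gamma, m):
    """Bracketed factor in Proposition 3:
    1 - lam*gamma*(2 - L*gamma) + 2*p*L*L1^2*gamma^2 / (m*lam)."""
    return 1.0 - lam * gamma * (2.0 - L * gamma) + 2.0 * p * L * L1**2 * gamma**2 / (m * lam)

def noise_floor_coeff(lam, L, L1, p, gamma, m):
    """Coefficient multiplying ||sigma(x*)||_F^2 in the first bound of Proposition 3."""
    inner = lam * (2.0 - L * gamma) - 2.0 * p * L * L1**2 * gamma / (m * lam)
    return L * gamma / (m * inner)

# Hypothetical constants, for illustration only.
params = dict(lam=1.0, L=2.0, L1=1.0, p=6, gamma=0.1)
for m in (1, 10, 100):
    rate = contraction_rate(m=m, **params)
    status = "contracts" if 0.0 < rate < 1.0 else "no contraction"
    print(f"m={m:4d}  rate={rate:.4f}  ({status})")
```

For these illustrative constants the factor exceeds 1 when $m=1$ and drops below 1 for the larger minibatch sizes, which shows why a lower bound on m accompanies the restriction on $\gamma$.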

One key point is that our result assumes that the matrix $\sigma(\! \cdot \!)$ is Lipschitz, which is vital to our proof.

Now we are ready to state the main theorem of this section. Define

\[\rho=\left[1-\lambda\gamma(2-L\gamma)+\frac{2pLL^2_1\gamma^2}{m\lambda}\right].\]

Theorem 6. Take Assumptions 1–4 and 7 as true, under the regime $0<\gamma <\min\left(1/L,1\right)$ and

Let $Y_{n,t}$ and $X_t$ denote the stochastic processes defined by (8) and (7), respectively. Then for any $t\in (0,T]$ we have

for some constants $\tilde{C}^{**}_1$, $\tilde{C}^{**}_2$, and $\tilde{C}^{**}_3$ independent of $\gamma$, $\rho$, and m, with $[\! \cdot \!]$ denoting the floor function.

The proof is provided in Appendix C.1 where more information on the constants can be found.

Remark 20. Note that the condition on m is such that the lower bound is inversely proportional to K. This implies that a larger number of iterations relaxes the size of the minibatch. Thus, for small enough step sizes, any value of m works. However, smaller step sizes imply that the algorithm takes more time to explore. Hence, the practitioner is advised to try multiple step sizes in practice.

Example 3. In this example, we examine our algorithm in the context of the logistic regression problem. Consider $t \in \mathbb{N}$ and data given to us in the form $(y_i,x_i)_{i=1}^{t}\in \mathbb{R}^{p+1}$, where $y_i \in \{0,1\}$ and $x_i \in \mathbb{R}^p$. Our objective function is given as the negative log-likelihood plus an $l_2$-regularization penalty. The objective function is

\begin{align} g(\beta)=\frac{1}{t}\left[-\sum_{i=1}^{t}y_i x^{\mathsf{T}}_i\beta + \sum_{i=1}^{t}\log\left(1+e^{x^{\mathsf{T}}_i\beta}\right)\right]+\kappa \left|\beta\right|^2, \end{align}

where $\kappa>0$ is some constant.

We choose our training data to be random samples of $(y_i,x_i)$ drawn with replacement. That is, for each $u_i \in (u_1,u_2,\ldots,u_n)$, we have $u_i=(y_j,x_j)$ with probability $t^{-1}$ for all i, j. The parameter for the problem is $\beta \in \mathbb{R}^p$. Note that this objective function, as defined in (12), is strongly convex with Lipschitz gradients. Indeed this is easy to see as

\begin{align*} \nabla^2 g(\beta)=\frac{1}{t}\left[\sum_{i=1}^{t}\frac{e^{x^{\mathsf{T}}_i\beta}}{\left(1+e^{x^{\mathsf{T}}_i\beta}\right)^2}x_ix^{\mathsf{T}}_i\right]+2\kappa I. \end{align*}

It is immediate that the above matrix is positive definite with $||\nabla^2 g(\beta)||_2 \ge 2\kappa$. In addition, as the $x_i$ are fixed data points, $\left|\left|\nabla^2 g(\beta)\right|\right|_2 \le 1/t\, \lambda_{\max}(XX^{\mathsf{T}})+2\kappa$, where $X=[x_1,x_2,\ldots,x_t]$ and $\lambda_{\max}(XX^{\mathsf{T}})$ denotes the largest eigenvalue of $XX^{\mathsf{T}}$. Hence $\nabla g(\beta)$ is Lipschitz.
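The two Hessian bounds above can be verified numerically. The sketch below is illustrative and makes its own choices: the function names and the synthetic data are hypothetical, and the data matrix is stored with rows $x_i^{\mathsf{T}}$ (shape $t\times p$), so that the matrix $XX^{\mathsf{T}}$ of the text corresponds to `X.T @ X` in the code.

```python
import numpy as np

def logistic_objective(beta, X, y, kappa):
    """g(beta) from (12); X has rows x_i^T, y has entries in {0, 1}."""
    z = X @ beta
    # logaddexp(0, z) = log(1 + exp(z)), evaluated stably.
    return np.mean(-y * z + np.logaddexp(0.0, z)) + kappa * beta @ beta

def logistic_hessian(beta, X, kappa):
    """Hessian of g: (1/t) * sum_i s_i (1 - s_i) x_i x_i^T + 2 * kappa * I."""
    t, p = X.shape
    s = 0.5 * (1.0 + np.tanh(0.5 * (X @ beta)))   # stable sigmoid of x_i^T beta
    wts = s * (1.0 - s)                           # equals e^z / (1 + e^z)^2
    return (X.T * wts) @ X / t + 2.0 * kappa * np.eye(p)

rng = np.random.default_rng(4)
t, p, kappa = 500, 6, 0.05
X = rng.standard_normal((t, p))
y = rng.integers(0, 2, size=t).astype(float)
beta = rng.standard_normal(p)

eigs = np.linalg.eigvalsh(logistic_hessian(beta, X, kappa))
upper = np.linalg.eigvalsh(X.T @ X).max() / t + 2.0 * kappa
print("objective:", logistic_objective(beta, X, y, kappa))
print("eigenvalue range:", eigs.min(), eigs.max())
print("bounds:", 2.0 * kappa, upper)
```

Since $s(1-s)\le 1/4$, the upper bound in the text is not tight; the check only confirms that every eigenvalue lies between $2\kappa$ and $\lambda_{\max}(XX^{\mathsf{T}})/t+2\kappa$.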

Define $u_i=(v_i,u_{1,i},u_{2,i},\ldots,u_{p,i})^{\mathsf{T}}$ and $\tilde{u}_i=(u_{1,i},u_{2,i},\ldots,u_{p,i})^{\mathsf{T}}$. In addition, define the loss function as

Note that the loss function is also strongly convex and Lipschitz in $\beta$. It can also be easily seen that the loss function is unbiased for the objective function. We need to find a matrix $\sigma(\beta)$ such that $\sigma(\beta)\sigma(\beta)^{\mathsf{T}}=\textrm{Var}(\nabla l(\beta,u))$ and $\sigma(\beta)$ is Lipschitz in $\beta$ in the $||\cdot||_2$ norm. Now,

Define $z_i=(y_i,x_i)$.

\begin{align*} \textrm{Var}(\nabla l(\beta,u))&=\frac{1}{t}\sum_{i=1}^{t}\left(\nabla l(\beta,z_i)-\nabla g(\beta)\right)\left(\nabla l(\beta,z_i)-\nabla g(\beta)\right)^{\mathsf{T}}. \end{align*}

In addition, define

Note that

Hence, for this problem, we may consider

It can now easily be seen that $\sigma(\beta)$ is Lipschitz in the Frobenius norm. We have

\begin{align*} \left|\left|\sigma(\beta_1)-\sigma(\beta_2)\right|\right|_F \le \frac{1}{\sqrt{t}}\sum_{i=1}^{t}\left|\nabla l(\beta_1,z_i)-\nabla l(\beta_2,z_i)\right|+\sqrt{t}\left|\nabla g(\beta_1)-\nabla g(\beta_2)\right|. \end{align*}

As $\nabla l$ and $\nabla g$ are both Lipschitz in $\beta$, we have $\sigma(\beta)$ as a Lipschitz function in $\beta$.

Note that the above argument for $\sigma(\beta)$ being Lipschitz can be applied to a large class of problems with such a variance-covariance matrix. The only two conditions necessary to establish this are that both $\nabla l(\cdot,z)$ and $\nabla g(\! \cdot \!)$ are Lipschitz. In addition, an implicit assumption in this case is that the data is fixed.

We provide simulation examples in Figures 3 and 4 to exhibit convergence of the algorithm. Consider $p=6$ with the number of data points $t=10^4$. The number of samples we choose randomly with replacement is $n=10^3$ and the minibatch size is $m=10$. We consider five values of $\kappa$, namely $(0.2,0.1,0.05,0.01,0.001)$. The data is generated as $y_i \sim \textrm{Ber}(1/2)$ i.i.d. and the $x_i$ are random standard Gaussian. The weights at each step of the iteration are generated as per $W\sim N(\mu,\Sigma)$, where $\mu$ and $\Sigma$ are provided in Assumption 3. Note that the true $\beta=0$. After each iteration is complete, we replicate it, take the squared norm of each replicated $\hat \beta$, and average these values. This gives us an approximation of $E|\beta|^2$. We plot this and show that it converges to 0 at different rates, which depend on $\kappa$.
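The replication procedure described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the code used for Figures 3 and 4: we assume a logistic loss (suggested by the Bernoulli responses, but an assumption here), we use ordinary minibatches drawn uniformly with replacement in place of the Gaussian weights $W\sim N(\mu,\Sigma)$ of Assumption 3, we omit the intermediate subsample of size $n$, and we do not model $\kappa$. The function names, the starting point, and the default sizes are hypothetical.

```python
import math
import random


def sigmoid(s):
    """Numerically stable logistic function."""
    if s >= 0:
        return 1.0 / (1.0 + math.exp(-s))
    e = math.exp(s)
    return e / (1.0 + e)


def logistic_grad(beta, x, y):
    """Gradient in beta of the (assumed) logistic loss log(1 + e^s) - y*s, s = <x, beta>."""
    s = sum(b * xj for b, xj in zip(beta, x))
    r = sigmoid(s) - y
    return [r * xj for xj in x]


def sgd_path(data, p, m, gamma, n_iter, rng):
    """Run minibatch SGD once and return |beta_k|^2 after each iteration."""
    beta = [1.0] * p  # hypothetical starting point
    path = []
    for _ in range(n_iter):
        # Plain minibatch: m data points drawn uniformly with replacement
        # (a stand-in for the Gaussian weights of Assumption 3).
        batch = [data[rng.randrange(len(data))] for _ in range(m)]
        grad = [0.0] * p
        for x, y in batch:
            for j, gj in enumerate(logistic_grad(beta, x, y)):
                grad[j] += gj / m
        beta = [b - gamma * gj for b, gj in zip(beta, grad)]
        path.append(sum(b * b for b in beta))
    return path


def estimate_mse(p=6, t=1000, m=10, gamma=0.5, n_iter=200, n_rep=20, seed=0):
    """Average |beta_k|^2 over n_rep replications on one fixed data set."""
    rng = random.Random(seed)
    # Fixed data: standard Gaussian covariates, Ber(1/2) responses.
    data = [([rng.gauss(0.0, 1.0) for _ in range(p)], int(rng.random() < 0.5))
            for _ in range(t)]
    mse = [0.0] * n_iter
    for _ in range(n_rep):
        for k, v in enumerate(sgd_path(data, p, m, gamma, n_iter, rng)):
            mse[k] += v / n_rep
    return mse
```

Calling `estimate_mse(gamma=0.5)` and `estimate_mse(gamma=0.1)` returns one averaged curve per step size, which can then be plotted against the iteration index. Because the data is fixed, the iterates settle near the empirical minimizer, which is close to but not exactly 0.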

MSE vs iteration with $\gamma=0.5$.

4. Discussion

We have shown that the M-SGD error is approximately Gaussian irrespective of the distribution of the weights used in the problem, as long as the number of samples and the number of minibatches are large. This helps practitioners see that the dynamics of the M-SGD algorithm are very similar to those of the SGD algorithm with a scaled Gaussian error. Our results show that the M-SGD algorithm is close in distribution to a particular diffusion whose dynamics are partially understood. Diffusions generally exhibit the interesting phenomenon of escaping low potential regions [Reference Hu, Li, Li and Liu20]. The next direct question is whether, using our results, we can conduct an analysis to ascertain escape times of the M-SGD algorithm from local minima. Note that this analysis must be carried out with some care, as previous works have pointed out some caveats here [Reference Kushner22].

MSE vs iteration with $\gamma=0.1$.

A few questions naturally arise from our work. The first is whether the Gaussian nature of the M-SGD error is tight. We conjecture that this is indeed the case. The second is whether sharper bounds can be obtained for more specific classes of problems. We believe that this is also possible. The third is whether the M-SGD algorithm can be tweaked so that the dimension dependence and the dependence on the time horizon T improve. Another interesting problem that one may consider is the case where the data generating measure Q changes per iteration, which allows one to include time series data in the analysis. We shall try to address these questions in future research work.

Appendix A. Proofs of Proposition 2 and Theorem 1

We present the following lemma, which is used throughout our work.

Lemma 1. Under Assumption 1,

Proof. We prove this in one dimension, which suffices for the general case because the expectation acts componentwise on the vector. Here $\theta$ is a fixed point at which we differentiate. Note that

for some $\xi$ using the mean value theorem. Note that, since differentiation is a local property, we can force $\theta_1 \in B(\theta,1)$, where $B(\theta,1)$ denotes the ball centered at $\theta$ with radius 1. This also forces $\xi \in B(\theta,1)$. In addition, note,

The last line follows as $\xi \in B(\theta,1)$. This implies

\begin{align*} \frac{l(\theta_1,u)-l(\theta,u)}{\theta_1-\theta} & \le \left|\frac{l(\theta_1,u)-l(\theta,u)}{\theta_1-\theta}\right|\\ &\le \left|\nabla l(\theta,u)\right|+h_1(u). \end{align*}

The last term is independent of $\theta_1$ and is integrable. Hence, the dominated convergence theorem (DCT) applies and we are done.

Proof of Proposition 2. We begin by noting the fact that

\begin{align*} \mathbb{E}\left|\sqrt{m}\left(\sum_{i=1}^{n}w_i \nabla l(\theta,u_i) -\nabla g(\theta)\right)-\sqrt{n}\left(\sum_{i=1}^{n}\frac{1}{n}\nabla l(\theta,u_i) -\nabla g(\theta)\right)\right|^2 & \\ \quad=\mathbb{E}\left|\sum_{i=1}^{n}\left(\sqrt{m}w_i-\frac{1}{\sqrt{n}}\right)\nabla l(\theta,u_i)+\left(\sqrt{n}-\sqrt{m}\right)\nabla g(\theta)\right|^2. \end{align*}
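The rearrangement inside the norm is purely algebraic and holds for any weights and any vectors, so it can be sanity-checked numerically. The sketch below uses random stand-ins for $\nabla l(\theta,u_i)$, $\nabla g(\theta)$, and $w_i$; the function name, the dimensions, and the weight distribution are hypothetical choices made only for this check.

```python
import math
import random


def check_identity(n=8, m=3, p=4, seed=0):
    """Return the largest coordinate-wise gap between the two sides of the identity."""
    rng = random.Random(seed)
    # Random stand-ins: grads[i] plays the role of grad l(theta, u_i),
    # g plays the role of grad g(theta), w holds arbitrary weights.
    grads = [[rng.gauss(0.0, 1.0) for _ in range(p)] for _ in range(n)]
    g = [rng.gauss(0.0, 1.0) for _ in range(p)]
    w = [rng.gauss(1.0 / n, 0.1) for _ in range(n)]

    sqrt_m, sqrt_n = math.sqrt(m), math.sqrt(n)
    gap = 0.0
    for j in range(p):
        weighted = sum(w[i] * grads[i][j] for i in range(n))
        average = sum(grads[i][j] for i in range(n)) / n
        # Left side: sqrt(m) * (weighted sum - g) - sqrt(n) * (average - g).
        lhs = sqrt_m * (weighted - g[j]) - sqrt_n * (average - g[j])
        # Right side: sum of (sqrt(m) w_i - 1/sqrt(n)) grad_i + (sqrt(n) - sqrt(m)) g.
        rhs = sum((sqrt_m * w[i] - 1.0 / sqrt_n) * grads[i][j] for i in range(n))
        rhs += (sqrt_n - sqrt_m) * g[j]
        gap = max(gap, abs(lhs - rhs))
    return gap
```

The returned gap should be at the level of floating-point rounding, reflecting that $\sqrt{n}\cdot\frac{1}{n}=\frac{1}{\sqrt{n}}$ and that the two $\nabla g(\theta)$ terms combine with coefficient $\sqrt{n}-\sqrt{m}$.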

Now, the last term is equal to

\begin{align*} &\mathbb{E}\left\langle\sum_{i=1}^{n}\left(\sqrt{m}w_i-\frac{1}{\sqrt{n}}\right)\nabla l(\theta,u_i),\sum_{i=1}^{n}\left(\sqrt{m}w_i-\frac{1}{\sqrt{n}}\right)\nabla l(\theta,u_i)\right\rangle\\ &+ 2 \ \mathbb{E}\left\langle\sum_{i=1}^{n}\left(\sqrt{m}w_i-\frac{1}{\sqrt{n}}\right)\nabla l(\theta,u_i),\left(\sqrt{n}-\sqrt{m}\right)\nabla g(\theta)\right\rangle + \left(\sqrt{n}-\sqrt{m}\right)^2\left|\nabla g(\theta)\right|^2. \end{align*}

We condition on $w=(w_1,w_2,\ldots,w_n)$ and find that the above expression is equal to

\begin{align*} & \mathbb{E}\left[\sum_{1\le i,j \le n}\left(\sqrt{m}w_i-\frac{1}{\sqrt{n}}\right)\left(\sqrt{m}w_j-\frac{1}{\sqrt{n}}\right)\mathbb{E}_w\left\langle\nabla l(\theta,u_i),\nabla l(\theta,u_j)\right\rangle\right] \\ &+ 2 \ \mathbb{E}\left[\sum_{i=1}^{n}\left(\sqrt{m}w_i-\frac{1}{\sqrt{n}}\right)\left(\sqrt{n}-\sqrt{m}\right)\left|\nabla g(\theta)\right|^2\right]\\ &+\left(\sqrt{n}-\sqrt{m}\right)^2 \ \left|\nabla g(\theta)\right|^2. \end{align*}