1. Introduction

Some of the most fundamental theorems in probability theory (and its applications) are concentration inequalities, which show that certain types of random variables are likely to lie in a small interval around their mean. In the other direction, anticoncentration inequalities give upper bounds on the probability that a random variable falls into a small interval or is equal to a particular value. In this area, one of the most important directions is the (polynomial) Littlewood–Offord problem. Roughly speaking, the problem is as follows [Footnote 1]. Consider an n-variable polynomial $P\in \mathbb R[x_1,\ldots,x_n]$, and consider independent Rademacher random variables $\xi_1,\ldots,\xi_n\in\{-1,1\}$ (meaning that $\mathrm{Pr}[\xi_{i}=-1]=\mathrm{Pr}[\xi_{i}=1]=1/2$ for each i). What upper bounds can we prove on the point probabilities of the form $\mathrm{Pr}[P(\xi_1,\ldots,\xi_n)=z]$? More specifically, how large can the maximum point probability $\sup_{z\in \mathbb{R}} \mathrm{Pr}[P(\xi_1,\ldots,\xi_n)=z]$ be, without making any strong assumptions about the polynomial P?

Historically, the starting point for this problem was the linear case, where P is a degree-one polynomial. Indeed, consider a random variable $X=a_{1}\xi_{1}+\dots+a_{n}\xi_{n}$, where $a_{1},\ldots,a_{n}\in\mathbb{R}$ are nonzero real numbers and $\xi_{1},\ldots,\xi_{n}\in\{-1,1\}$ are independent Rademacher random variables. As part of their study of random polynomials, in 1943 Littlewood and Offord [LO43] proved that

$$ \sup_{z\in\mathbb{R}}\mathrm{Pr}[X=z]=O\left(\frac{\log n}{\sqrt{n}}\right). $$

Littlewood and Offord’s result was famously sharpened in 1945 by Erdős [Erd45], who found a purely combinatorial proof of what is now usually called the Erdős–Littlewood–Offord theorem: under the same assumptions,

$$ \sup_{z\in\mathbb{R}}\mathrm{Pr}[X=z]\le \binom{n}{\lfloor n/2\rfloor}\Big/2^{n}=O\left(\frac{1}{\sqrt{n}}\right). $$

This result is best-possible, as can be seen by considering the case where the coefficients $a_{i}$ are all equal.
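As a sanity check on this extremal example, the following minimal Python sketch enumerates all sign patterns for a small n and compares the exact maximum point probability with the central binomial quantity $\binom{n}{\lfloor n/2\rfloor}/2^{n}$. The function names and the test coefficients are illustrative choices, not notation from the paper, and the brute-force enumeration is only feasible for small n.

```python
from collections import Counter
from fractions import Fraction
from itertools import product
from math import comb


def max_point_probability(coeffs):
    """Exact sup_z Pr[a_1 xi_1 + ... + a_n xi_n = z], by enumerating all 2^n sign vectors."""
    n = len(coeffs)
    counts = Counter(
        sum(a * s for a, s in zip(coeffs, signs))
        for signs in product((-1, 1), repeat=n)
    )
    return Fraction(max(counts.values()), 2 ** n)


def central_binomial_bound(n):
    """The quantity binom(n, floor(n/2)) / 2^n appearing in the Erdos-Littlewood-Offord theorem."""
    return Fraction(comb(n, n // 2), 2 ** n)


# Equal coefficients attain the bound; other nonzero coefficients stay below it.
equal_case = max_point_probability([1] * 10)
spread_case = max_point_probability([1, 2, 3, 4, 5, 6, 7, 8])
```

Integer coefficients and `Fraction` arithmetic are used so that the comparison is exact rather than subject to floating-point rounding.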

Remark 1.1. It is worth noting that the general topic of anticoncentration of sums of independent random variables was first considered in 1936 by Doeblin and Lévy [DL36], and this led to a parallel line of research with many similar results (the two lines of research seem not to have been aware of each other’s existence until quite recently). In particular, in the above setting, the bound $\sup_{z\in\mathbb{R}}\mathrm{Pr}[X=z]\le O(1/\sqrt{n})$ follows from a general result claimed in a 1939 paper of Doeblin [Doe39], preceding Littlewood and Offord by several years. However, this general result is also the subject of a 1958 paper of Kolmogorov [Kol58], which claims that Doeblin’s paper did not provide a full proof.

By now, the linear Littlewood–Offord problem is very well understood, and many variations and strengthenings are available. For example, there are very powerful inverse theorems that relate the maximum concentration probability to the arithmetic structure of the coefficients $a_1,\ldots,a_n$, and these theorems have had a huge impact in random matrix theory (see for example the survey [NV13]). Also, there are versions of the Erdős–Littlewood–Offord theorem for general distributions (i.e., allowing the variables $\xi_{1},\ldots,\xi_{n}$ to take non-Rademacher distributions), assuming that the distributions of the variables $\xi_{i}$ do not themselves concentrate too strongly. (Approximate results along these lines follow directly from the Lévy–Doeblin–Kolmogorov theorem mentioned in Remark 1.1, or can be deduced from the Erdős–Littlewood–Offord theorem; in some sense the Rademacher case is the ‘hardest case’. For exact results see [Jon78, Juš22, LR94].)

After the linear case, the next case to consider is the quadratic case: What bounds can we give on the point concentration of a quadratic polynomial $Q(\xi_{1},\ldots,\xi_{n})$ of independent Rademacher random variables $\xi_{1},\ldots,\xi_{n}$? This question came to the forefront in a 2005 paper by Costello, Tao and Vu [CTV06], when they used such a bound in their proof of Weiss’ conjecture on singularity of random symmetric matrices. Specifically, they proved that if a quadratic polynomial $Q\in\mathbb{R}[x_{1},\ldots,x_{n}]$ has at least $cn^{2}$ nonzero coefficients [Footnote 2] (for some constant $c>0$), then $X=Q(\xi_{1},\ldots,\xi_{n})$ (for independent Rademacher random variables $\xi_{1},\ldots,\xi_{n}$) satisfies

$$ \sup_{z\in\mathbb{R}}\mathrm{Pr}[X=z]\le O(n^{-1/8}). \tag{1.2} $$

Remark 1.2. Parallelling the situation described in Remark 1.1, we remark that Costello, Tao and Vu were actually not the first to prove this inequality: in 1996, Rosiński and Samorodnitsky [RS96] had proved essentially the same result (in fact, a generalisation of it to higher-degree polynomials), in their study of zero-one laws for Lévy chaos (chaoses are polynomials of independent random variables; they are classical and very well-studied objects in probability theory, statistics and applied mathematics). Seemingly unaware of Rosiński and Samorodnitsky’s work, in 2013 Razborov and Viola [RV13] considered a similar higher-degree generalisation (for applications in the theory of Boolean functions).

The authors of [CTV06, RS96] already recognised that Equation (1.2) was likely not optimal. Indeed, just as for the linear Littlewood–Offord problem, one expects a bound of the form $\sup_{z\in\mathbb{R}}\mathrm{Pr}[X=z]\le O(1/\sqrt{n})$ (this bound is attained in the case $Q(x_{1},\ldots,x_{n})=(x_{1}+\dots+x_{n})^{2}$, for example). A conjecture to this effect has been attributed to Nguyen and Vu (see [MNV16, RV13]).
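To see numerically why the square of the sum has maximum point probability of order $1/\sqrt{n}$, one can use the fact that $x_1+\dots+x_n$ takes the value $2k-n$ with probability $\binom{n}{k}/2^{n}$. The sketch below is a minimal illustration under that observation; the function name is an assumption of ours, and the rescaling by $\sqrt{n}$ is only meant to show that the product stays bounded and bounded away from zero.

```python
from collections import defaultdict
from math import comb, sqrt


def max_point_probability_square_of_sum(n):
    """sup_z Pr[(xi_1 + ... + xi_n)^2 = z] for independent Rademacher xi_i."""
    weights = defaultdict(int)
    for k in range(n + 1):        # k = number of variables equal to +1
        s = 2 * k - n             # value of the sum xi_1 + ... + xi_n
        weights[s * s] += comb(n, k)
    return max(weights.values()) / 2 ** n


# sqrt(n) times the maximum point probability, for a few values of n.
rescaled = {n: sqrt(n) * max_point_probability_square_of_sum(n) for n in (100, 400, 1600)}
```

The dictionary `rescaled` can be inspected to confirm that the rescaled values remain of constant order as n grows.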

The first improvement on Equation (1.2) was by Costello and Vu [CV08], who showed how to adapt the arguments in [CTV06] to prove a bound of the form $O(n^{-1/4})$. Introducing several new ideas, Costello [Cos13] then managed to obtain the nearly optimal bound $O(n^{-1/2+\varepsilon})$ (for any constant $\varepsilon>0$). Via a completely different approach, this bound was further refined to $\exp(O((\log\log n)^{2}))/\sqrt{n}$ by Meka, Nguyen and Vu [Footnote 3] (see arXiv version v4 of [MNV16]), before it was observed that the bound $(\log n)^{O(1)}/\sqrt{n}$ follows from a powerful general result of Kane [Kan14] (see the journal version of [MNV16] for more discussion).

In this paper, we finally resolve the quadratic Littlewood–Offord problem (up to constant factors), obtaining an optimal bound of $O(1/\sqrt{n})$. We are also able to make a weaker assumption on Q than in previous work.

Theorem 1.1. Let $Q\in\mathbb{R}[x_{1},\ldots,x_{n}]$ be a polynomial of degree at most two, and let $\xi_{1},\ldots,\xi_{n}\in\{-1,1\}$ be independent Rademacher random variables. For some positive integer m, suppose that $Q(\xi_{1},\ldots,\xi_{n})$ ‘robustly depends on at least m of the variables $\xi_{i}$’ in the sense that the value of $Q(\xi_{1},\ldots,\xi_{n})$ cannot be determined by specifying any outcomes of any $m-1$ of the variables $\xi_{1},\ldots,\xi_n\in\{-1,1\}$. Then,

$$ \sup_{z\in\mathbb{R}}\mathrm{Pr}[Q(\xi_{1},\ldots,\xi_{n})=z]\le \frac{C}{\sqrt{m}} $$

for some absolute constant C.

Remark 1.3. Recall that the Erdős–Littlewood–Offord theorem has an assumption that each linear coefficient $a_i$ is nonzero. Of course, zero coefficients can be ignored, so without such an assumption one immediately obtains a bound of the form $\sup_{z\in \mathbb R}\mathrm{Pr}[a_1\xi_1+\dots+a_n\xi_n=z]\le O(1/\sqrt{m})$, where m is the number of nonzero $a_{i}$. Unfortunately, the situation is not so simple in the quadratic case. One could make the very strong assumption that every degree-two coefficient is nonzero, but this is too strong an assumption for most applications [Footnote 4]. Alternatively, one might wish to consider the very weak assumption that every variable $x_{i}$ features in at least one nonzero term of $Q$, but unfortunately this is too weak to get a sensible bound: indeed, consider for example the case where $Q(x_{1},\ldots,x_{n})=(1+x_{1})(x_{1}+\dots+x_{n})$, for which $Q(\xi_{1},\ldots,\xi_{n})$ is zero whenever $\xi_{1}=-1$, and therefore $\mathrm{Pr}[Q(\xi_{1},\ldots,\xi_{n})=0]\ge 1/2$. More generally, if it is possible to determine the value of $Q(\xi_{1},\ldots,\xi_{n})$ by fixing the outcomes of a small number of variables to certain values, then this automatically leads to a large point probability for $Q(\xi_{1},\ldots,\xi_{n})$. So it is necessary to make an assumption guaranteeing that Q ‘robustly depends on many of the $\xi_{i}$’.
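The degenerate example of Remark 1.3 and the hypothesis of Theorem 1.1 can both be checked by brute force for small n. The following Python sketch is only an illustration: the helper names are hypothetical, and `min_fixing_set_size` is our reading of the hypothesis, namely the smallest number of variables whose outcomes can be fixed so that Q becomes determined (so that the hypothesis holds with m equal to this number when it is positive).

```python
from fractions import Fraction
from itertools import combinations, product


def point_probability(q, n, z):
    """Exact Pr[q(xi_1, ..., xi_n) = z] for independent Rademacher xi_i."""
    hits = sum(1 for xi in product((-1, 1), repeat=n) if q(xi) == z)
    return Fraction(hits, 2 ** n)


def min_fixing_set_size(q, n):
    """Smallest number of variables that can be fixed to some outcomes so that q is determined."""
    for size in range(n + 1):
        for fixed in combinations(range(n), size):
            free = [i for i in range(n) if i not in fixed]
            for outcome in product((-1, 1), repeat=size):
                values = set()
                for rest in product((-1, 1), repeat=len(free)):
                    xi = [0] * n
                    for i, s in zip(fixed, outcome):
                        xi[i] = s
                    for i, s in zip(free, rest):
                        xi[i] = s
                    values.add(q(xi))
                    if len(values) > 1:
                        break  # q still varies, so this outcome does not determine it
                if len(values) == 1:
                    return size
    return n


def degenerate_example(xi):
    # Q = (1 + x_1)(x_1 + ... + x_n), which vanishes whenever x_1 = -1.
    return (1 + xi[0]) * sum(xi)


def square_of_sum(xi):
    # Q = (x_1 + ... + x_n)^2.
    return sum(xi) ** 2
```

For instance, `min_fixing_set_size(degenerate_example, 5)` reports that fixing a single variable already determines the value, which is exactly why no useful anticoncentration bound is available for this polynomial.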

We believe that our assumption in Theorem 1.1 captures this ‘robust dependence on many of the $\xi_{i}$’ in a natural way. To compare with previous work: our assumption is slightly weaker than the assumption in [MNV16] (which says that $Q(x_{1},\ldots,x_{n})$ has many quadratic terms featuring disjoint variables), and is much weaker than the assumptions in [Cos13, CTV06] (which say, in slightly different ways, that $Q(x_{1},\ldots,x_{n})$ has a huge number of nonzero coefficients).

Remark 1.4. Of course, we could ask for the optimal constant factor C in Theorem 1.1. It is not necessarily clear what to expect: one may guess that the polynomial $Q(x_1,\ldots,x_m)=(x_1+\dots+x_m)(x_1+\dots+x_m+2)$ or $Q(x_1,\ldots,x_m)=(x_1+\dots+x_m+1)(x_1+\dots+x_m-1)$ (depending on whether m is odd or even) is the worst case, but recent developments on the so-called Gotsman–Linial conjecture (see § 12) suggest that this might be too naïve. It is also quite possible that the optimal value for the constant factor in the bound on $\sup_{z\in\mathbb{R}}\mathrm{Pr}[Q(\xi_{1},\ldots,\xi_{n})=z]$ is sensitive to the precise assumption one makes on the quadratic polynomial Q (to ensure that it ‘robustly depends on many of the variables $\xi_i$’; see Remark 1.3).
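For concreteness, the maximum point probability of the two candidate polynomials in Remark 1.4 can be computed exactly from the binomial distribution of $x_1+\dots+x_m$. The sketch below assumes our reading of the remark, namely that the first polynomial is meant for odd m and the second for even m; the function name is hypothetical.

```python
from collections import defaultdict
from fractions import Fraction
from math import comb


def max_point_probability_of_candidate(m):
    """Exact sup_z Pr[Q = z] for the candidate polynomial of Remark 1.4 in m variables.

    Assumption: Q = S(S + 2) for odd m and Q = (S + 1)(S - 1) for even m,
    where S = x_1 + ... + x_m.
    """
    weights = defaultdict(int)
    for k in range(m + 1):          # k = number of variables equal to +1
        s = 2 * k - m               # value of S
        q = s * (s + 2) if m % 2 == 1 else (s + 1) * (s - 1)
        weights[q] += comb(m, k)
    return Fraction(max(weights.values()), 2 ** m)
```

Multiplying the returned value by $\sqrt{m}$ for increasing m gives an empirical lower bound on the constant C of Theorem 1.1, under the stated assumption about which polynomial is used for which parity.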

In § 2 we give a brief summary of the methods that had previously been applied to the (quadratic) Littlewood–Offord problem, their limitations and the new ideas in our proof of Theorem 1.1. In particular, our key contribution is a new inductive decoupling scheme: we take the well-known technique of decoupling, usually viewed as a tool to inefficiently ‘reduce from a quadratic problem to a linear problem’, and reinterpret it as a tool to efficiently ‘reduce the relative dimension of the quadratic part of a problem’. We also develop a new way to study the anticoncentration of random vectors, via a technique we call witness counting. We believe both these aspects of our proof may have broader applications.

We also remark that, while the details of the proof of Theorem 1.1 are rather involved, there is a certain special case of Theorem 1.1 (the case where the quadratic part of Q has ‘bounded rank’) which permits a much simpler proof. This case is already interesting, and we present its proof in § 4 to serve as an accessible illustration of our inductive decoupling scheme (and the results of § 4 will also be used later in the paper).

Finally, we remark that, just as for the linear Littlewood–Offord problem, one can deduce a version of Theorem 1.1 in which the variables $\xi_{i}$ are allowed to take essentially any discrete distribution (not just the Rademacher distribution), as follows.

Theorem 1.2. Fix $0<\delta<1$. Let $Q\in\mathbb{R}[x_{1},\ldots,x_{n}]$ be a polynomial of degree at most two, and let $\zeta_{1},\ldots,\zeta_{n}\in \mathbb{R}$ be independent discrete random variables.

For nonempty subsets $R_1,\ldots,R_n$ of the supports of $\zeta_{1},\ldots,\zeta_{n}$, respectively, say that the product $R_1\times \dots\times R_n$ is a fixing box for Q if the polynomial Q is constant on $R_1\times \dots\times R_n$. For some positive integer m, suppose that for any fixing box $R_1\times \dots\times R_n$ there are at least m indices $i\in \{1,\ldots,n\}$ such that $\mathrm{Pr}[\zeta_{i}\in R_i]\le 1-\delta$. Then, we have

$$ \sup_{z\in\mathbb{R}}\mathrm{Pr}[Q(\zeta_{1},\ldots,\zeta_{n})=z]\le \frac{C_\delta}{\sqrt{m}} $$

for some constant $C_\delta$ only depending on $\delta$.

Note that, if $\zeta_1,\ldots,\zeta_n$ are independent uniformly random integers in $\{-B,-(B-1),\ldots,B-1,B\}$, then $\mathrm{Pr}[Q(\zeta_{1},\ldots,\zeta_{n})=z]\cdot (2B+1)^n$ is the number of integer solutions to $Q(x_1,\ldots,x_n)=z$ among integers $x_1,\ldots,x_n$ with absolute value (‘height’) at most B. We remark that quantities of this form have been extensively studied in analytic number theory in the regime where n is constant and B is large (in contrast, our estimates are effective in the regime where B is constant and n is large); see for example [HB02, Pil95] and [BG17, Theorem 1.11].

1.1 An application to edge statistics

Apart from its intrinsic value, Theorem 1.1 of course also enables us to improve bounds wherever quadratic Littlewood–Offord inequalities had previously been applied (see for example [ABE14, CTV06, CV10, CV08, FKSS23, GKSS, KST19]). Here, we highlight one particular application: we can resolve a conjecture of Alon, Hefetz, Krivelevich and Tyomkyn [AHKT20] related to the so-called graph inducibility problem. Specifically, let $\mathcal{G}_{n}$ be the set of n-vertex graphs, and for a graph G let $N_{G}(k,\ell)$ be the number of sets of k vertices of G inducing exactly $\ell$ edges. Then, define the edge inducibility (with parameters k and $\ell$) by

$$ \operatorname{ind}(k,\ell)=\lim_{n\to\infty}\max_{G\in\mathcal{G}_{n}}\frac{N_{G}(k,\ell)}{\binom{n}{k}}. $$

This parameter measures, for large graphs, the maximum possible fraction of k-vertex subsets which induce $\ell$ edges. By considering complete or empty graphs we have

$$ \operatorname{ind}(k,0)=\operatorname{ind}\Big(k,\binom k2\Big)=1, $$

but for $0<\ell<\binom k 2$ we have $\operatorname{ind}(k,\ell)<1$ (this follows easily from Ramsey’s theorem, which says that large graphs must have large complete or empty subgraphs). In fact, for $0<\ell<\binom k 2$, and large k, we now know that $\operatorname{ind}(k,\ell)$ cannot be much larger than $1/e$; this was the content of the Edge-Statistics conjecture, proved in a combination of papers by Kwan, Sudakov and Tran [KST19], Fox and Sauermann [FS20] and Martinsson, Mousset, Noever and Trujić [MMNT19]. One can use Theorem 1.1 to prove the following much stronger bound when $\ell$ is far from zero and far from $\binom{k}{2}$, which was conjectured by Alon, Hefetz, Krivelevich and Tyomkyn (see [AHKT20, Conjecture 6.2]).

Theorem 1.3. For $0<\ell<\binom k 2$ we have

$$ \operatorname{ind}(k,\ell)=O\left(\frac 1{\sqrt{\min\Big(\ell,\binom k2-\ell\Big)/k}}\right). $$

The deduction of Theorem 1.3 from Theorem 1.1 is exactly as in the proof of [KST19, Theorem 1.1] (which uses the weaker quadratic Littlewood–Offord inequality from [MNV16]), so we do not include it here. The idea is that, for any large graph G, the number of edges in a random set of k vertices of G can be interpreted as a quadratic polynomial of independent Rademacher random variables (via a certain coupling).
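To make the quantity $N_{G}(k,\ell)$ concrete, the following Python sketch computes it for every $\ell$ at once by enumerating all k-vertex subsets of a small graph. The function name and the representation of a graph as a vertex count plus an edge list are illustrative assumptions, and the enumeration says nothing about the limit over large graphs in the definition of the edge inducibility.

```python
from itertools import combinations


def induced_edge_statistics(n, edges, k):
    """Map each ell to N_G(k, ell): the number of k-subsets of {0, ..., n-1} inducing exactly ell edges."""
    edge_set = {frozenset(e) for e in edges}
    stats = {}
    for subset in combinations(range(n), k):
        ell = sum(1 for pair in combinations(subset, 2) if frozenset(pair) in edge_set)
        stats[ell] = stats.get(ell, 0) + 1
    return stats


# A complete graph on 6 vertices and a cycle on 5 vertices as small test cases.
complete_6 = list(combinations(range(6), 2))
cycle_5 = [(i, (i + 1) % 5) for i in range(5)]
```

In the complete graph every k-subset induces $\binom k2$ edges, matching the observation that the edge inducibility equals 1 at the two extreme values of $\ell$.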

1.1.1 Notation

As usual, for a nonnegative integer n, we write $[n]:=\{1,\ldots,n\}$ (note that for $n=0$, this means $[n]=\emptyset$). For an $n\times n$ matrix A and subsets $I,J\subseteq [n]$, we write $A[I\times J]$ for the $|I|\times |J|$ submatrix of A consisting of the rows with indices in I and the columns with indices in J. Similarly, for a vector $\vec v\in \mathbb{R}^n$ and a subset $I\subseteq [n]$, we write $\vec v[I]\in \mathbb{R}^{I}$ for the vector obtained from $\vec v$ by only taking the coordinates with indices in I. Finally, for a vector $\vec v\in \mathbb{R}^n$ and $i\in [n]$, we write $\vec v[i]\in \mathbb{R}$ for the ith entry of $\vec v$.

In this paper, we say that $Q\in \mathbb{R}[x_1,\ldots,x_n]$ is a quadratic polynomial if its degree is at most two. For $t>0$, we write $\log(t)$ for the base-two logarithm of t.

2. Key ideas, in comparison with previous work

By now there are several different proofs of the $O(1/\sqrt{n})$ bound in the (linear) Erdős–Littlewood–Offord theorem. As far as we know, they all take advantage of at least one of two very special properties of random variables of the form $X=a_{1}\xi_{1}+\dots+a_{n}\xi_{n}$. First, Erdős’ original proof [Erd45] used the monotonicity of X (for every index i, changing $\xi_{i}$ from $-1$ to 1 will always make the value of X increase, or always make the value of X decrease). Second, one can take advantage of the fact that X is a sum of independent random variables (each with a very simple distribution), so its Fourier transform is very well behaved. (The first Fourier-analytic proof seems to have been by Halász [Hal77]; see also the very simple proof of a $O(1/\sqrt n)$ bound due to Croot [Cro11].)

Unfortunately, in the quadratic setting (where $X=Q(\xi_{1},\ldots,\xi_{n})$ for some quadratic polynomial Q), both of the above properties of X may fail in a very strong way. There are two general approaches that have been most successful so far: Gaussian approximation, and a technique called decoupling. We briefly discuss both these approaches and their limitations, before describing our new ideas.

2.1 Gaussian approximation and combinatorial partitioning

Whether one is interested in the linear or the quadratic cases of the Littlewood–Offord problem, perhaps the most natural starting point is to try to leverage some of the vast literature in probability theory on distributional approximation: if one can approximate the entire distribution of a random variable X, then anticoncentration should be an easy corollary.

This angle of attack is especially compelling in the linear case, since $X=a_{1}\xi_{1}+\dots+a_{n}\xi_{n}$ is a sum of independent random variables; it is tempting to try to apply a central limit theorem. One cannot be too naïve here, as the limiting distribution of X could actually be very far from Gaussian (consider for example the case where $a_{i}=2^{i}$ for each i). However, in their foundational paper, Littlewood and Offord [LO43] were in fact able to prove their $O(\log n/\sqrt{n})$ bound via Gaussian approximation. The key idea was to partition the coefficients $a_{i}$ into $O(\log n)$ ‘buckets’ according to their orders of magnitude, in such a way that Gaussian approximation is effective within each bucket.
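The example $a_{i}=2^{i}$ can be checked directly: by uniqueness of binary expansions, distinct sign patterns give distinct sums, so X is uniform on $2^{n}$ values rather than bell-shaped. The short Python sketch below verifies this for a small n; the helper name and the choice $n=10$ are illustrative.

```python
from collections import Counter
from itertools import product


def distribution_of_signed_sum(coeffs):
    """Counter mapping each value of sum_i a_i xi_i to the number of sign vectors attaining it."""
    return Counter(
        sum(a * s for a, s in zip(coeffs, signs))
        for signs in product((-1, 1), repeat=len(coeffs))
    )


n = 10
powers_of_two = [2 ** i for i in range(1, n + 1)]   # a_i = 2^i for i = 1, ..., n
dist = distribution_of_signed_sum(powers_of_two)
```

Every value in `dist` is attained by exactly one sign pattern, so each point probability equals $2^{-n}$, and the distribution is flat over its support.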

It is far from obvious how to extend this type of strategy to the higher-degree case (when

$X=Q(\xi_{1},\ldots,\xi_{n})$

for a bounded-degree polynomial Q), but this is more or less what was accomplished by Meka, Nguyen and Vu [MNV16] and by Kane [Kan14] in their bounds of the form

$\exp(O((\log\log n)^{2}))/\sqrt{n}$

and

$(\log n)^{O(1)}/\sqrt{n}$

, respectively. Instead of a central limit theorem, one needs a Gaussian invariance principle (which provides sufficient conditions under which one can approximate a polynomial of independent Rademacher random variables by a polynomial of independent Gaussian random variables), and instead of a simple ‘bucketing’ argument one needs a regularity lemma to describe

$Q(\xi_{1},\ldots,\xi_{n})$

in terms of a ‘low-complexity decision tree’.

These types of methods are very powerful and very flexible, but unfortunately it seems that one inevitably needs to ‘pay logarithmic factors’ in order to deconstruct an arbitrary (Footnote 5) quadratic polynomial into ‘well-behaved pieces’ for which Gaussian approximation is effective. Even in the linear case, we are not aware of a way to prove an optimal

$O(1/\sqrt n)$

bound via Gaussian approximation. Perhaps this is not surprising: in some sense the entire philosophy of the Littlewood–Offord problem is that anticoncentration is an extremely robust phenomenon that holds under much weaker assumptions than central limit theorems, and we should not expect to be able to use central limit theorems to prove optimal anticoncentration.

2.2 Decoupling

A completely different technique was employed in the papers of Costello, Tao and Vu [CTV06] and Rosiński and Samorodnitsky [RS96], which first studied the quadratic Littlewood–Offord problem. This technique is now usually called decoupling, following [CTV06]. Roughly speaking, decoupling is a general technique to ‘reduce a quadratic anticoncentration problem to a linear one’ (Footnote 6). Below, we sketch the basic idea, incorporating an improvement due to Costello and Vu [CV08] (Footnote 7).

Let

$Q\in \mathbb{R}[x_1,\ldots,x_n]$

be a quadratic polynomial and let

$\vec{\xi}=(\xi_{1},\ldots,\xi_{n})$

be a sequence of independent Rademacher random variables. Given a partition of the index set

$\{1,\ldots,n\}$

into two subsets I and J, we can break the random vector

$\vec{\xi}$

into two parts

$\vec{\xi}[I]\in\{-1,1\}^{I}$

and

$\vec{\xi}[J]\in\{-1,1\}^{J}$

. Then, a simple application of the Cauchy–Schwarz inequality (see Lemma 3.6) shows that, if

$\vec{\xi}\mkern2mu\vphantom{\xi}'[I]$

is an independent copy of

$\vec{\xi}[I]$

, we have

Now,

$Q(\vec{\xi}[I],\vec{\xi}[J])-Q(\vec{\xi}\mkern2mu\vphantom{\xi}'[I],\vec{\xi}[J])$

can be interpreted as a linear function of

$\vec{\xi}[J]$

, with coefficients that depend on

$(\vec{\xi}[I],\vec{\xi}\mkern2mu\vphantom{\xi}'[I])$

(since the terms that are quadratic in

$\vec \xi[J]$

cancel out). Furthermore, this linear function typically has many nonzero coefficients (since it is unlikely that most of the ‘cross-terms’ between

$\vec \xi[I]$

and

$\vec{\xi}\mkern2mu\vphantom{\xi}'[I]$

cancel out). So, after conditioning on a typical outcome of

$(\vec{\xi}[I],\vec{\xi}\mkern2mu\vphantom{\xi}'[I])$

, one can apply the Erdős–Littlewood–Offord theorem to obtain a bound of the form

$\mathrm{Pr}[Q(\vec \xi)=z]\le (O(1/\sqrt n))^{1/2}=O(n^{-1/4})$

.
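Written out, the chain of inequalities sketched in this paragraph plausibly takes the following form. This is a reconstruction from the surrounding text (the expressions are the ones quoted elsewhere in this section, and the labels are matched to the later references to Equations (2.1) and (2.2)), so it should be read as a sketch rather than as the exact statement of Lemma 3.6.

```latex
\begin{align*}
\mathrm{Pr}[Q(\vec{\xi})=z]
 &\le \mathrm{Pr}\bigl[Q(\vec{\xi}[I],\vec{\xi}[J])=z\text{ and }Q(\vec{\xi}\mkern2mu\vphantom{\xi}'[I],\vec{\xi}[J])=z\bigr]^{1/2} \tag{2.1}\\
 &\le \mathrm{Pr}\bigl[Q(\vec{\xi}[I],\vec{\xi}[J])-Q(\vec{\xi}\mkern2mu\vphantom{\xi}'[I],\vec{\xi}[J])=0\bigr]^{1/2}. \tag{2.2}
\end{align*}
```

The first step is the Cauchy–Schwarz inequality applied after conditioning on $\vec{\xi}[J]$ (given $\vec{\xi}[J]$, the two copies are independent and identically distributed). The second step holds because two quantities that are both equal to z have difference zero.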

The great advantage of decoupling is that the resulting linear anticoncentration problem is much easier: one has access to the much wider range of tools available to study sums of independent random variables. However, the inequality between Equations (2.1) and (2.2) is rather lossy, and one tends to end up with bounds that are at least ‘a square root away’ from best possible (Footnote 8).
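The decoupling inequality and its lossiness can be observed by brute force on a small example. The sketch below is illustrative only: the random quadratic, the split of 8 variables into I (first four) and J (last four), and the helper names `make_quadratic` and `decoupling_terms` are all assumptions made for the example, and the three returned quantities correspond to the left-hand side and the two right-hand sides (before taking square roots) of the decoupling chain as described in the text.

```python
import itertools
import random
from collections import Counter
from fractions import Fraction


def make_quadratic(n, rng):
    """Random multilinear quadratic with small integer coefficients (illustrative)."""
    quad = {(i, j): rng.randint(-2, 2) for i in range(n) for j in range(i + 1, n)}
    lin = [rng.randint(-2, 2) for _ in range(n)]

    def Q(x):
        pairs = sum(c * x[i] * x[j] for (i, j), c in quad.items())
        return pairs + sum(a * xi for a, xi in zip(lin, x))

    return Q


def decoupling_terms(Q, size_i, size_j):
    """Return (Pr[Q=z], Pr[both copies equal z], Pr[difference is 0]) for the likeliest z."""
    cube_i = list(itertools.product((-1, 1), repeat=size_i))
    cube_j = list(itertools.product((-1, 1), repeat=size_j))
    # Tabulate Q(u, x) once for every u in {-1,1}^I and x in {-1,1}^J.
    table = {(u, x): Q(u + x) for u in cube_i for x in cube_j}
    z, hits = Counter(table.values()).most_common(1)[0]
    both = diff = 0
    for u in cube_i:          # plays the role of xi[I]
        for v in cube_i:      # independent copy xi'[I]
            for x in cube_j:  # shared part xi[J]
                qu, qv = table[u, x], table[v, x]
                both += (qu == z and qv == z)
                diff += (qu == qv)
    pairs = len(cube_i) ** 2 * len(cube_j)
    return Fraction(hits, len(table)), Fraction(both, pairs), Fraction(diff, pairs)


rng = random.Random(0)
p, p_both, p_diff = decoupling_terms(make_quadratic(8, rng), 4, 4)
```

For any quadratic one should find `p ** 2 <= p_both <= p_diff`. The gap between `p_both` and `p_diff` is the loss incurred when the two equations are replaced by their difference.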

Costello’s paper [Cos13], proving the first bound of the form

$n^{-1/2+o(1)}$

for the quadratic Littlewood–Offord problem, combined decoupling with a structural dichotomy. Namely, Costello discovered that if the quadratic polynomial Q is in a certain sense ‘robustly irreducible’, then number-theoretic arguments give the stronger bound

$\mathrm{Pr}[Q(\vec{\xi}[I],\vec{\xi}[J])-Q(\vec{\xi}\mkern2mu\vphantom{\xi}'[I],\vec{\xi}[J])=0]\le n^{-1+o(1)}$

, and so, even after the ‘square-root loss’ of decoupling, one has

$\mathrm{Pr}[Q(\vec{\xi}[I],\vec{\xi}[J])=z]\le n^{-1/2+o(1)}$

. He then gave a separate argument to handle the case where Q does not satisfy the robust irreducibility condition (i.e., when Q essentially splits into linear factors), based on the Szemerédi–Trotter theorem from discrete geometry.

We cannot conclusively rule out the possibility that one could prove an optimal

$O(1/\sqrt n)$

bound with a similar kind of case analysis, but this seems to be extremely difficult. In particular, we are not aware of any suitable candidate for a condition on Q which ensures that

$\mathrm{Pr}[Q(\vec{\xi}[I],\vec{\xi}[J])-Q(\vec{\xi}\mkern2mu\vphantom{\xi}'[I],\vec{\xi}[J])=0]\le O(1/n)$

(Costello’s notion of robust irreducibility, and similar notions of ‘robust rank’ or ‘robust sum-of-squares complexity’, are only suitable for an

$n^{-1+o(1)}$

bound on such probabilities). Also, Costello’s Szemerédi–Trotter-based proof for the nearly reducible case does not seem to easily generalise to other simple-looking families of quadratic polynomials (for example, polynomials which can be written as the sum of four squares; see the discussion in [FKS23, Section 10]).

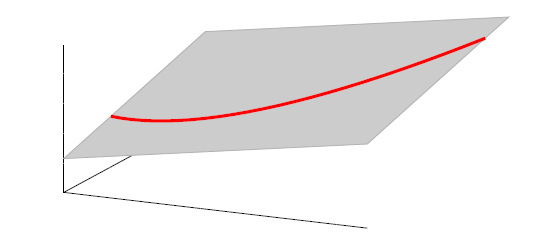

2.3 A geometric point of view, and an inductive decoupling scheme

In this paper, we consider a different perspective on decoupling: instead of using decoupling to immediately reduce from a quadratic problem to a linear one, we reinterpret decoupling as a tool to obtain a problem with a linear and a quadratic part, and to inductively ‘reduce the proportion of our problem that is quadratic’. To explain this, it is helpful to take a geometric perspective.

Specifically, for a quadratic polynomial

$Q\in \mathbb{R}[x_1,\ldots,x_n]$

and a random vector

$\vec{\xi}\in \{1,-1\}^n$

, note that

$\mathrm{Pr}[Q(\vec \xi)=z]$

can be interpreted as the probability that

$\vec \xi$

lies in the quadric (quadratic variety)

$\mathcal Z$

given by

$\mathcal Z=\{\vec x \in \mathbb{R}^n: Q(\vec x)=z\}\subseteq\mathbb{R}^n$

. Similarly, the expression

$\mathrm{Pr}[Q(\vec{\xi}[I],\vec{\xi}[J])=z\text{ and }Q(\vec{\xi}\mkern2mu\vphantom{\xi}'[I],\vec{\xi}[J])=z]$

appearing in Equation (2.1) can be interpreted as the probability that

$\vec \xi[J]$

lies in the variety

where, for

$\vec u\in \mathbb{R}^I$

, we write

$\mathcal Z_{\vec u}$

for the set of all

$\vec x\in \mathbb{R}^J$

with

$(\vec u,\vec x)\in \mathcal Z$

(typically, this is a quadric in

$\mathbb{R}^J$

). Using this language, Equation (2.1) can be restated as

The next step leading to the traditional decoupling inequality, Equation (2.2), can geometrically be phrased as observing that

$\mathcal Z^{(1)}$

lies inside the affine-linear subspace

Indeed, this yields the probability bound

stated in Equation (2.2). One can then forget about

$\mathcal Z^{(1)}$

and restrict one’s attention to the affine-linear subspace

$\mathcal W^{(1)}$

, where the relevant probabilities are easier to analyse (under suitable assumptions on Q, one can show that

$\mathrm{Pr}[\vec \xi[J]\in \mathcal W^{(1)}]\le O(1/\sqrt{n})$

, leading to the bound

$\mathrm{Pr}[Q(\vec \xi)=z]\le O(n^{-1/4})$

described in § 2.2).
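For orientation, the objects and inequalities referred to in the last two paragraphs can be written out as follows. The displays are reconstructed from the verbal descriptions in the text (the definition of $\mathcal Z_{\vec u}$, the restatement of Equation (2.1), and the single linear equation defining $\mathcal W^{(1)}$), so the precise form and the label (2.3) are assumptions.

```latex
\begin{align*}
\mathcal Z^{(1)} &= \mathcal Z_{\vec{\xi}[I]}\cap\mathcal Z_{\vec{\xi}\mkern2mu\vphantom{\xi}'[I]}\subseteq\mathbb{R}^J,\\
\mathrm{Pr}[\vec{\xi}\in\mathcal Z] &\le \mathrm{Pr}[\vec{\xi}[J]\in\mathcal Z^{(1)}]^{1/2}, \tag{2.3}\\
\mathcal W^{(1)} &= \bigl\{\vec x\in\mathbb{R}^J : Q(\vec{\xi}[I],\vec x)-Q(\vec{\xi}\mkern2mu\vphantom{\xi}'[I],\vec x)=0\bigr\},\\
\mathrm{Pr}[\vec{\xi}[J]\in\mathcal Z^{(1)}] &\le \mathrm{Pr}[\vec{\xi}[J]\in\mathcal W^{(1)}].
\end{align*}
```

The last line is simply the containment $\mathcal Z^{(1)}\subseteq\mathcal W^{(1)}$; substituting it into the second line recovers the traditional decoupling inequality.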

However, it turns out that this ‘forgetting’ of

$\mathcal{Z}^{(1)}$

is precisely the cause of the square-root loss usually associated with decoupling. Indeed,

$\mathcal W^{(1)}$

is a variety with codimension 1 (being defined by a single equation), while

$\mathcal{Z}^{(1)}$

is typically (Footnote 9) a variety with codimension (at least) 2 (being defined by two equations), so intuitively we should be much less likely to have

$\vec{\xi}[J]\in\mathcal{Z}^{(1)}$

than

$\vec{\xi}[J]\in\mathcal{W}^{(1)}$

. More specifically, in order to have

$\vec{\xi}[J]\in\mathcal{Z}^{(1)}$

, we need

$\vec{\xi}[J]$

to satisfy two different equations simultaneously, and we might expect each of these to be satisfied with probability

$O(1/\sqrt n)$

. So for typical outcomes of

$\vec \xi[I],\vec{\xi}\mkern2mu\vphantom{\xi}'[I]$

(which determine

$\mathcal Z^{(1)}$

) we might expect

$\mathrm{Pr}[\vec{\xi}[J]\in\mathcal{Z}^{(1)}\,|\,\vec \xi[I],\vec{\xi}\mkern2mu\vphantom{\xi}'[I]]\le(O(1/\sqrt{n}))^{2}$

. If we could prove this, we would be able to deduce Theorem 1.1 (recalling Equation (2.3)).

So, we choose not to ‘forget’

$\mathcal{Z}^{(1)}$

, and our task is to show that

$\mathrm{Pr}[\vec{\xi}[J]\in\mathcal{Z}^{(1)}]\le(O(1/\sqrt{n}))^{2}$

. While this new task may seem harder than the previous one (as

$\mathcal{Z}^{(1)}$

seems like a more complicated object than

$\mathcal{Z}$

), the key observation is that we have ‘reduced the relative proportion of the quadratic part of our problem’. Indeed, at the start we were interested in a quadric

$\mathcal{Z}$

described by a single quadratic equation, but now we are interested in

$\mathcal{Z}^{(1)}$

, which can be interpreted as a quadric inside the affine-linear subspace

$\mathcal{W}^{(1)}\subseteq \mathbb{R}^J$

. That is to say,

$\mathcal{Z}^{(1)}$

is described by one linear and one quadratic equation, so now ‘only half of our problem is quadratic’.

Crucially, it is possible to iterate this entire procedure: we next fix a partition

$I^{(2)}\cup J^{(2)}$

of J, and consider the variety

(where, for

$\vec u\in \mathbb{R}^{I^{(2)}}$

, we write

$\mathcal Z^{(1)}_{\vec u}$

for the set of all

$\vec x\in \mathbb{R}^{J^{(2)}}$

with

$(\vec u,\vec x)\in \mathcal Z^{(1)}$

). Now, decoupling, analogously to the inequality in Equation (2.3), yields

So, it suffices to show that

$\mathrm{Pr}[\vec \xi[J^{(2)}]\in \mathcal Z^{(2)}\,|\,\vec \xi[I],\vec{\xi}\mkern2mu\vphantom{\xi}'[I]]\le (O(1/\sqrt n))^4$

for typical outcomes of

$\vec \xi[I]$

and

$\vec{\xi}\mkern2mu\vphantom{\xi}'[I]$

. Now, for

$\mathcal{Z}^{(2)}$

to be nonempty, it must be the case that

$\mathcal{W}^{(1)}_{\vec \xi[I^{(2)}]}\cap\mathcal{W}^{(1)}_{\vec{\xi}\mkern2mu\vphantom{\xi}'[I^{(2)}]}$

is nonempty, or equivalently that

$\mathcal{W}^{(1)}_{\vec \xi[I^{(2)}]}=\mathcal{W}^{(1)}_{\vec{\xi}\mkern2mu\vphantom{\xi}'[I^{(2)}]}$

(it is not hard to see that

$\mathcal{W}^{(1)}_{\vec \xi[I^{(2)}]}$

and

$\mathcal{W}^{(1)}_{\vec{\xi}\mkern2mu\vphantom{\xi}'[I^{(2)}]}$

are parallel translates of each other). That is to say,

$\vec \xi[I^{(2)}]-\vec{\xi}\mkern2mu\vphantom{\xi}'[I^{(2)}]$

must lie in a certain affine-linear subspace which typically has codimension 1; this happens with probability

$O(1/\sqrt n)$

by the (linear) Erdős–Littlewood–Offord theorem.

If we also condition on outcomes of

$\vec \xi[I^{(2)}]$

and

$\vec{\xi}\mkern2mu\vphantom{\xi}'[I^{(2)}]$

such that

$\mathcal{W}^{(1)}_{\vec \xi[I^{(2)}]}=\mathcal{W}^{(1)}_{\vec{\xi}\mkern2mu\vphantom{\xi}'[I^{(2)}]}$

, it is not hard to see that

$\mathcal Z^{(2)}$

is typically a quadric inside the affine-linear subspace

$\mathcal W^{(2)}$

of

$\mathcal{W}^{(1)}_{\vec \xi[I^{(2)}]}=\mathcal{W}^{(1)}_{\vec{\xi}\mkern2mu\vphantom{\xi}'[I^{(2)}]}\subseteq \mathbb{R}^{J^{(2)}}$

given by the linear equation

$Q(\vec \xi[I],\vec \xi[I^{(2)}],\vec x)-Q(\vec \xi[I],\vec{\xi}\mkern2mu\vphantom{\xi}'[I^{(2)}],\vec x)=0$

(in much the same way that

$\mathcal Z^{(1)}$

is a quadric inside the affine-linear subspace

$\mathcal W^{(1)}$

). That is to say,

$\mathcal Z^{(2)}$

is typically a variety of codimension (at least) 3, described by two linear equations and one quadratic equation. So we might expect that (for typical outcomes of

$\vec \xi[I],\vec{\xi}\mkern2mu\vphantom{\xi}'[I],\vec \xi[I^{(2)}],\vec{\xi}\mkern2mu\vphantom{\xi}'[I^{(2)}]$

for which

$\mathcal{W}^{(1)}_{\vec \xi[I^{(2)}]}=\mathcal{W}^{(1)}_{\vec{\xi}\mkern2mu\vphantom{\xi}'[I^{(2)}]}$

)

If we were able to prove Equation (2.5), we would obtain a bound of the form

which would imply Theorem 1.1, tracing back through our decoupling inequalities Equation (2.3) and Equation (2.4). We have made progress by ‘reducing the proportion of our problem that is quadratic’: if, instead of Equation (2.5), we were only able to prove that

\begin{align*}\mathrm{Pr}[\vec \xi[J^{(2)}]\in\mathcal{Z}^{(2)}\,|\,\vec \xi[I],\vec{\xi}\mkern2mu\vphantom{\xi}'[I],\vec \xi[I^{(2)}],\vec{\xi}\mkern2mu\vphantom{\xi}'[I^{(2)}]] & \le \mathrm{Pr}[\vec \xi[J^{(2)}]\in\mathcal{W}^{(2)}\,|\,\vec \xi[I],\vec{\xi}\mkern2mu\vphantom{\xi}'[I],\vec \xi[I^{(2)}],\vec{\xi}\mkern2mu\vphantom{\xi}'[I^{(2)}]] \\& \le(O(1/\sqrt{n}))^{2} \end{align*}

(‘forgetting’ the quadratic part of the problem and only bounding the probability that

$\vec \xi[J^{(2)}]$

lies in the affine-linear subspace

$\mathcal{W}^{(2)}$

of codimension 2), we would end up with a final bound of the form

$\mathrm{Pr}[\vec \xi\in \mathcal Z]\le O(n^{-3/8})$

, which is much better than the

$O(n^{-1/4})$

bound we obtained with a single decoupling step.
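The exponent 3/8 can be traced through the two decoupling steps as follows. This is a heuristic bookkeeping sketch under the assumptions stated in the text: implied constants are suppressed, the event $\mathcal{W}^{(1)}_{\vec \xi[I^{(2)}]}=\mathcal{W}^{(1)}_{\vec{\xi}\mkern2mu\vphantom{\xi}'[I^{(2)}]}$ is charged $O(1/\sqrt n)$, and each decoupling inequality contributes a square root.

```latex
\begin{align*}
\mathrm{Pr}[\vec \xi[J^{(2)}]\in\mathcal{Z}^{(2)}\,|\,\vec \xi[I],\vec{\xi}\mkern2mu\vphantom{\xi}'[I]]
 &\le O(n^{-1/2})\cdot (O(n^{-1/2}))^{2} = O(n^{-3/2}),\\
\mathrm{Pr}[\vec \xi[J]\in\mathcal{Z}^{(1)}\,|\,\vec \xi[I],\vec{\xi}\mkern2mu\vphantom{\xi}'[I]]
 &\le \bigl(O(n^{-3/2})\bigr)^{1/2} = O(n^{-3/4}),\\
\mathrm{Pr}[\vec \xi\in\mathcal{Z}]
 &\le \bigl(O(n^{-3/4})\bigr)^{1/2} = O(n^{-3/8}).
\end{align*}
```

If the factor $(O(n^{-1/2}))^{2}$ in the first line is replaced by $(O(n^{-1/2}))^{3}$, the three right-hand sides become $O(n^{-2})$, $O(n^{-1})$ and $O(n^{-1/2})$, which is the optimal bound.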

In general, after k steps of this scheme, we will have considered k ‘nested’ partitions of the form

$J^{(i-1)}=I^{(i)}\cup J^{(i)}$

, and defined k varieties

$\mathcal Z^{(1)},\ldots,\mathcal Z^{(k)}$

. We will have applied the decoupling inequality k times, and considered various conditional probabilities that the vectors

$\vec \xi[I^{(i)}]-\vec{\xi}\mkern2mu\vphantom{\xi}'[I^{(i)}]$

lie in certain affine-linear subspaces (to ensure that certain intersections

$\mathcal{W}^{(i-1)}_{\vec \xi[I^{(i)}]}\cap\mathcal{W}^{(i-1)}_{\vec{\xi}\mkern2mu\vphantom{\xi}'[I^{(i)}]}$

are nonempty). After all this, we find ourselves in a position where if we ‘forget the quadratic part of the problem’ we obtain a bound of the form

$\mathrm{Pr}[Q(\vec \xi)=z]\le O(n^{-1/2+1/2^{k+1}})$

. That is to say, if k is at least

$\log \log n$

, the quadratic part of the problem is so insignificant that ‘forgetting’ it only costs us a constant factor in the final bound.
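The exponent $1/2-1/2^{k+1}$ can be reproduced by a short bookkeeping computation. The sketch below rests on a heuristic assumption that goes slightly beyond what is stated above: at level j, the event that the two slices of $\mathcal W^{(j-1)}$ coincide is charged $n^{-(j-1)/2}$ (for j = 2 this is the $O(1/\sqrt n)$ factor from the text), and ‘forgetting’ at level k is charged $n^{-k/2}$. The function names are hypothetical.

```python
from fractions import Fraction


def forgetting_exponent(k):
    """Exponent e such that k decoupling steps followed by 'forgetting' give n^(-e)."""
    # After forgetting, level k only sees a codimension-k affine-linear subspace.
    exponent = Fraction(k, 2)
    for level in range(k, 0, -1):
        # Assumed cost of the coincidence event at this level: n^(-(level-1)/2).
        exponent += Fraction(level - 1, 2)
        # Each application of the decoupling inequality takes a square root.
        exponent /= 2
    return exponent


def loss_factor(n, k):
    """Multiplicative loss n^(1/2^(k+1)) relative to the optimal n^(-1/2)."""
    return n ** (1 / 2 ** (k + 1))


exponents = {k: forgetting_exponent(k) for k in range(1, 7)}
```

Here `exponents[1]` and `exponents[2]` are 1/4 and 3/8, matching the bounds above. For k equal to the base-2 value of $\log\log n$ the loss factor is $n^{1/(2\log_2 n)}=\sqrt 2$, a constant; for example, `loss_factor(2 ** 64, 6)` is approximately 1.414.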

At an extremely high level, this explains our strategy to prove Theorem 1.1. However, we omitted a number of important details in this outline. In particular, our strategy heavily depends on being able to obtain suitable upper bounds on probabilities that certain random vectors fall into certain affine-linear subspaces, and there are crucial ‘robust rank’ nondegeneracy conditions that must be satisfied in order for such bounds to hold. This is not just a technicality; we require significant new ideas to maintain these robust rank properties during our iterative decoupling scheme, which we discuss in the next subsection.

Remark 2.1. In the actual proof, in order to keep everything organized, we phrase our arguments recursively: the key step is to prove a recursive bound for the maximum possible probability that a random vector

$\vec{\xi}\in \{1,-1\}^n$

falls onto some quadric on an affine-linear subspace of a given codimension, satisfying certain nondegeneracy conditions (this main recursive bound is stated in Theorem 5.5).

Remark 2.2. Theorem 1.1 concerns quadratic polynomials, but one may be interested in a generalisation to cubic polynomials, or to polynomials of any fixed degree. Decoupling still makes sense for general polynomials: for example, if

$\mathcal Z$

is a cubic (variety), then decoupling yields an inequality of the form

$\mathrm{Pr}[\vec \xi\in \mathcal Z]\le \mathrm{Pr}[\vec\xi[J]\in \mathcal Z^{(2)}]^{1/2}$

, where

$\mathcal Z^{(2)}$

is the intersection of a quadric and a cubic. As observed by Rosiński and Samorodnitsky [RS96] and Razborov and Viola [RV13], the basic type of decoupling argument described in § 2.2 generalises quite straightforwardly to higher degrees (but the bounds get worse as the degree increases). However, it is less clear how to generalise the inductive decoupling scheme described in this subsection. Roughly speaking, in the degree-d case, instead of affine-linear subspaces (obtained as intersections of affine-linear hyperplanes), one must work with intersections of degree-

$(d-1)$

varieties, which are much more complicated objects. We hope that the relevant complexities can be handled with some kind of multiple-level induction, but so far we were not able to accomplish this.

2.4 High-dimensional anticoncentration inequalities and witness counting

Our proof, as outlined in the previous subsection, relies on bounds on probabilities that random vectors lie in certain affine-linear subspaces. More specifically, for a suitably nondegenerate affine-linear subspace

$\mathcal W\subseteq \mathbb{R}^n$

of codimension k, and a uniformly random vector

$\vec{\xi}\in \{1,-1\}^n$

, we need a probability bound of the form

$\mathrm{Pr}[\vec \xi\in \mathcal W]\le O(n^{-k/2})$

. Intuitively, this is because

$\vec \xi$

needs to simultaneously satisfy k different linear equations, each of which is satisfied with probability roughly

$n^{-1/2}$

. More formally, such a bound follows from a high-dimensional version of the Erdős–Littlewood–Offord theorem.

The first such high-dimensional version was due to Halász [Hal77]. In linear-algebraic language, it can be phrased as follows: for any fixed k, if

$M\in \mathbb R^{k\times n}$

is a matrix that ‘robustly has rank k’ in the sense that (for some fixed

$\delta > 0$

) one cannot delete

$\delta n$

columns of M to obtain a matrix with rank less than k, then for a uniformly random vector

$\vec \xi\in\{-1,1\}^n$

we have

Note that some kind of ‘robust rank-k’ condition is necessary here: for example, if n is even and

$M\in \mathbb{R}^{2\times n}$

has rows

$(1,\ldots,1)\in \mathbb{R}^n$

and

$(1,\ldots,1,0,0)\in \mathbb{R}^n$

, then it is easy to check that

![]() $\mathrm{Pr}[M\vec \xi=\vec 0]$

has order of magnitude

$\mathrm{Pr}[M\vec \xi=\vec 0]$

has order of magnitude

![]() $1/\sqrt n$

.

$1/\sqrt n$

.
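
The order of magnitude in this example can be checked directly. The following is a minimal sketch (the function names are ours, chosen for illustration): the second row forces $\xi_1+\dots+\xi_{n-2}=0$, after which the first row forces $\xi_{n-1}+\xi_n=0$, and these two events are independent. Deleting just the last two columns makes the two rows coincide, so M has rank 2 but not robustly, and the resulting probability is of order $n^{-1/2}$ rather than $n^{-1}$.

```python
from fractions import Fraction
from itertools import product
from math import comb, sqrt


def prob_zero_exact(n: int) -> Fraction:
    """Pr[M xi = 0] for M with rows (1,...,1) and (1,...,1,0,0), n even."""
    if n < 4 or n % 2:
        raise ValueError("n must be even and at least 4")
    # Row 2: the first n-2 signs sum to zero (a central binomial event).
    first_block = Fraction(comb(n - 2, (n - 2) // 2), 2 ** (n - 2))
    # Row 1, given row 2: the last two signs sum to zero.
    last_pair = Fraction(1, 2)
    return first_block * last_pair


def prob_zero_bruteforce(n: int) -> Fraction:
    """The same probability by enumerating all 2^n sign vectors (small n only)."""
    hits = sum(
        1
        for xi in product((-1, 1), repeat=n)
        if sum(xi) == 0 and sum(xi[:-2]) == 0
    )
    return Fraction(hits, 2 ** n)


if __name__ == "__main__":
    for n in (10, 100, 1000, 10000):
        p = prob_zero_exact(n)
        # The last column stabilises, consistent with p being of order 1/sqrt(n).
        print(n, float(p), float(p) * sqrt(n))
```

By Stirling's formula the rescaled value $\sqrt n\cdot\mathrm{Pr}[M\vec \xi=\vec 0]$ tends to $1/\sqrt{2\pi}$, which is what the last printed column approaches.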

Several extensions and variants of Halász’ inequality have since been proved (see for example [FJZ22, FKS21, How00, TV06]); in particular, Ferber, Jain and Zhao [FJZ22] proved a version of Halász’ theorem with a much better dependence on k (allowing k to vary with n, instead of viewing it as a constant). We state (a corollary of) this theorem as Theorem 3.3.

Of course, whenever we want to apply any Halász-type theorem, we need a ‘robust rank’ condition to hold. So, in order to execute the strategy described in the last subsection, at each step of the decoupling scheme we need a ‘robust rank inheritance’ lemma, proving that a robust rank condition is likely to hold for the next step, given that it holds for the current step. The key ingredient for our robust rank inheritance lemma is a new high-dimensional anticoncentration inequality for the probability that a random vector falls in a small ball in the Hamming norm. We believe this inequality (and the techniques in its proof) to be of independent interest; an important special case is as follows.

Lemma 2.1. For any fixed positive integer r, there are constants $C_r > 0$ and $c_r > 0$ only depending on r such that the following holds. Consider a matrix $A\in \mathbb{R} ^{m\times n}$ which has rank at least r after deletion of any t rows and t columns (for some positive integer t). Then for a sequence $\vec{\xi}=(\xi_{1},\ldots,\xi_{n})\in\{-1,1\}^{n}$ of independent Rademacher random variables, we have

$$\mathrm{Pr}\big[A\vec \xi \text{ and } \vec v \text{ differ in fewer than } c_r t \text{ coordinates}\big]\le C_r\, t^{-r/2}\quad\text{for every vector } \vec v\in\mathbb{R}^m.$$

The assumption in Lemma 2.1 says that A robustly has rank at least r, but in a stronger sense than typical Halász-type theorems: the rank needs to remain at least r after row deletion as well as column deletion. As a result, we are able to obtain a stronger conclusion, namely that for any vector $\vec v$, it is unlikely that $A\vec \xi$ agrees with $\vec v$ in almost all its coordinates. (It is not hard to see that for this stronger conclusion, such a row-deletion assumption is indeed necessary.)

We prove Lemma 2.1 (and our more general robust rank inheritance lemma) in § 7 using a witness-counting technique, which we outline here. First, note that the most naïve strategy to prove Lemma 2.1 would be to simply take a union bound over all sets I of $m-c_r t$ coordinates in which $A\vec \xi$ and $\vec v$ could agree. For some specific I, the probability that $A\vec \xi$ and $\vec v$ agree on the coordinates indexed by I can be easily understood using existing tools (i.e., Halász’ inequality and its variants) and is at most of the order of $t^{-r/2}$. However, it is far too wasteful to simply take a union bound summing over all possibilities for I (the number of possibilities is exponential in t).
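
To get a feeling for how wasteful the naïve union bound is, one can compare the number of candidate sets I with the per-set bound $t^{-r/2}$. The sketch below is purely illustrative: the function name, the parameter s (standing in for $c_r t$, the number of coordinates allowed to disagree) and the numerical values m = 2t, s = t/20, r = 3 are our own assumptions, not values taken from the proof.

```python
from math import comb, log10


def log10_union_bound(m: int, s: int, t: int, r: int) -> float:
    """log10 of (number of sets I of size m - s) * t^(-r/2).

    A positive value means the naive union bound exceeds 1 and is useless.
    """
    if not (0 <= s <= m) or t < 1 or r < 1:
        raise ValueError("need 0 <= s <= m, t >= 1 and r >= 1")
    num_sets = comb(m, m - s)  # choices of the agreement set I
    return log10(num_sets) - (r / 2) * log10(t)


if __name__ == "__main__":
    r = 3  # hypothetical rank parameter
    for t in (100, 200, 400, 800):
        m = 2 * t    # hypothetical number of rows
        s = t // 20  # hypothetical stand-in for c_r * t
        print(t, round(log10_union_bound(m, s, t, r), 1))
```

With these sample values the logarithm grows roughly linearly in t, so the count of sets I overwhelms the polynomial gain $t^{-r/2}$; this is the obstruction that the witness-counting argument is designed to circumvent.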

Instead, for each I we consider small ‘witness’ subsets $I'\subseteq I$, such that the submatrix of A consisting of the rows with indices in $I'$ still has (robustly) high rank. Note that for each $I'\subseteq I$, whenever $A\vec \xi$ and $\vec v$ agree on the coordinates indexed by I, they also in particular agree on the coordinates indexed by $I'$. Given the high-rank property, for each ‘witness’ subset $I'$ we can still easily bound the probability that $A\vec \xi$ and $\vec v$ agree on the coordinates indexed by $I'$ (using Halász’ inequality and its variants).

There are still too many possible ‘witness’ subsets $I'$ to be able to simply take a union bound over all possibilities for $I'$, but (roughly speaking) we can show that whenever $A\vec \xi$ and $\vec v$ agree on a large set of coordinates I, then they must agree on the coordinates of many ‘witness’ subsets $I'\subseteq I$. We can show this is unlikely by computing the expected number of ‘witness’ coordinate subsets on which $A\vec \xi$ and $\vec v$ agree, and applying Markov’s inequality.

Remark 2.3. One might be interested in adapting Theorem 1.1 to study small-ball probabilities of the form $\sup_{z\in \mathbb{R}}\mathrm{Pr}[|Q(\xi_{1},\ldots,\xi_{n})-z|\le 1]$ instead of point probabilities $\sup_{z\in \mathbb{R}}\mathrm{Pr}[Q(\xi_{1},\ldots,\xi_{n})=z]$. The robust rank inheritance lemma seems to be the main point of difficulty for such an adaptation; it is not clear how to extend the witness-counting arguments to the small-ball setting (e.g., in the setting of Lemma 2.1, we would be interested in the event that there are fewer than $c_r t$ coordinates i for which $|(A\vec \xi-\vec v)[i]|\le 1$).

Before ending this overview section, we remark that there is a way to sidestep the robust rank inheritance issue in the special case where Q has ‘bounded rank’, meaning that the quadratic part of Q can be written as $\vec x^{\intercal} A\vec x$ for some symmetric matrix A of rank O(1). Indeed, in this case we can reduce our entire problem to a certain bounded-dimensional geometric anticoncentration problem (involving a quadric), where the robust rank conditions for Halász’ inequality are always automatically satisfied when following the strategy in the previous subsection. In § 4, we give a simple self-contained proof of an essentially optimal anticoncentration bound in this setting. We believe this to be a good illustration of the basic principles of our inductive decoupling scheme, with minimal technicalities. Moreover, the result of § 4 is also an ingredient in the full proof of Theorem 5.1: it allows us to restrict our attention to the case where the quadratic part of Q ‘robustly has high rank’, which in turn lets us avoid possible degeneracies at certain points in the proof.

3. Preliminaries

First, as outlined in § 2, we need a ‘high-dimensional’ version of the Erdős–Littlewood–Offord theorem. This will require a robust rank condition, defined as follows.

Definition 3.1. For integers $0\le k\le n$ and a real number $s\ge 0$, let $\mathcal{H}^{k\times n}(s)$ be the set of matrices $M\in\mathbb{R}^{k\times n}$ such that $\operatorname{rank} M[[k]\times J]=k$ for all subsets

![]() $J\subseteq [n]$

of size