1. Introduction

1.1 Background and motivation

Going back to experimental work from the 1990s, the most prominent question concerning random $k$-SAT has been to pinpoint the satisfiability threshold, defined as the largest ratio $m/n$ of the number $m$ of clauses to the number $n$ of variables up to which satisfying assignments likely exist [Reference Achlioptas, Naor and Peres6, Reference Cheeseman, Kanefsky and Taylor18]. Currently, the satisfiability threshold is known precisely in the case of $k=2$ [Reference Chvátal and Reed21, Reference Goerdt41] and for $k\geq k_0$ with $k_0$ an undetermined (large) constant [Reference Ding, Sly and Sun34]. The latter result confirms ‘predictions’ based on an analytic but non-rigorous physics technique called the ‘cavity method’. Indeed, the cavity method predicts the satisfiability threshold for every $k\geq 3$ [Reference Mézard, Parisi and Zecchina52], but random $k$-SAT for ‘small’ $k\geq 3$ appears to be a particularly hard nut to crack. Additionally, according to the cavity method several phase transitions precede the satisfiability threshold and are expected to impact, among other things, the performance of algorithms [Reference Krzakala, Montanari, Ricci-Tersenghi, Semerjian and Zdeborová48]. One of these phase transitions, the Gibbs uniqueness transition, pertains to a spatial mixing property that also plays a pivotal role in the computational complexity of counting and sampling [Reference Sly62].

From a statistical physics viewpoint, the satisfiability threshold is only the second most important quantity associated with random $k$-SAT. The first place firmly belongs to the typical number of satisfying assignments, known as the partition function in physics parlance [Reference Mézard and Montanari51]. All the other predictions, including the location of the satisfiability threshold, ultimately derive from the formula for the number of satisfying assignments or from closely related quantities [Reference Mertens, Mézard and Zecchina50]. Yet there has been little progress on rigorously confirming the physics formula for the number of satisfying assignments.

Three prior contributions stand out. First, a proof technique called the ‘interpolation method’ turns the physics prediction into a rigorous upper bound [Reference Franz and Leone36, Reference Guerra42, Reference Panchenko and Talagrand61].Footnote 1 Second, in the case $k=2$, which is conceptually much simpler than $k\geq 3$, the physics formula has been proved correct [Reference Achlioptas, Coja-Oghlan and Hahn-Klimroth3]. Third, Montanari and Shah [Reference Montanari and Shah57] proved that for $k\geq 3$, too, and for certain clause/variable densities, the ‘replica symmetric solution’ from physics correctly approximates the number of ‘good’ assignments that satisfy all but $o(n)$ clauses. However, it seems difficult to estimate the gap between the number of such ‘good’ assignments and the number of actual satisfying assignments. A rigorous method to this effect would likely imply the existence of uniform satisfiability thresholds for all $k\geq 3$, thereby resolving a long-standing conundrum [Reference Bayati, Gamarnik and Tetali12, Reference Friedgut37]. The proof of Montanari and Shah is based on the aforementioned Gibbs uniqueness property.

The aim of the present paper is to determine the number of actual satisfying assignments of random $k$-SAT formulas for clause/variable densities up to the Gibbs uniqueness threshold. Specifically, we verify that the ‘replica symmetric solution’ from [Reference Monasson and Zecchina55, Reference Monasson and Zecchina56] yields the correct answer for any $k\geq 3$ right up to the Gibbs uniqueness threshold, even though the precise value of this threshold is not currently known. Additionally, we derive a new lower bound on the Gibbs uniqueness threshold. The improvement is particularly significant for ‘small’ $k\geq 3$. Combining these two results, we obtain the first rigorous formula for the number of satisfying assignments of random $k$-SAT formulas for a non-trivial regime of clause/variable densities. Crucially, the result covers meaningful clause/variable densities even for small $k\geq 3$.

1.2 Results

Let $\boldsymbol{\Phi }=\boldsymbol{\Phi }_{d,k}(n)$ be the random $k$-CNF on $n$ Boolean variables $x_1,\ldots ,x_n$ with $\boldsymbol{m}=\boldsymbol{m}_n\sim \textrm {Po}(dn/k)$ clauses $a_1,\ldots ,a_{\boldsymbol{m}}$. The clauses $a_i$ are drawn independently and uniformly from the set of all $2^k \binom {n}{k}$ possible clauses with $k$ distinct variables. Hence, the parameter $d$ prescribes the expected number of clauses in which a given variable appears. Let $S(\boldsymbol{\Phi })$ be the set of satisfying assignments of $\boldsymbol{\Phi }$ and let $Z(\boldsymbol{\Phi })=|S(\boldsymbol{\Phi })|$. We encode the Boolean values ‘true’ and ‘false’ by $+1$ and $-1$, respectively. Since right up to the satisfiability threshold $Z(\boldsymbol{\Phi })$ is of order $\exp (\Theta (n))$ w.h.p. for trivial reasons,Footnote 2 our objective is to study the random variable $n^{-1}\log Z(\boldsymbol{\Phi })$ as $n\to \infty$.
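For very small $n$ the model can be simulated directly. The Python sketch below is our own illustration, not part of the paper: it samples $\boldsymbol{\Phi }_{d,k}(n)$ as just described and computes $Z(\boldsymbol{\Phi })$ by enumerating all $2^n$ assignments in $\{\pm 1\}^n$. The clause encoding (signed integers, with $+i$ for $x_i$ and $-i$ for $\neg x_i$) and the helper names `sample_poisson`, `random_kcnf` and `count_satisfying` are hypothetical choices made for this example.

```python
import itertools
import math
import random


def sample_poisson(lam, rng):
    """Sample Po(lam) by Knuth's multiplication method (adequate for moderate lam)."""
    threshold = math.exp(-lam)
    count, prod = 0, rng.random()
    while prod > threshold:
        count += 1
        prod *= rng.random()
    return count


def random_kcnf(n, d, k, rng):
    """Sample Phi_{d,k}(n): Po(dn/k) clauses, each on k distinct variables with uniform signs.

    Hypothetical encoding: a clause is a tuple of non-zero ints, +i for x_i and -i for not x_i.
    """
    m = sample_poisson(d * n / k, rng)
    clauses = []
    for _ in range(m):
        variables = rng.sample(range(1, n + 1), k)  # k distinct variables, uniformly at random
        clauses.append(tuple(v if rng.random() < 0.5 else -v for v in variables))
    return clauses


def count_satisfying(n, clauses):
    """Z(Phi): number of sigma in {+1,-1}^n satisfying every clause (brute force)."""
    total = 0
    for sigma in itertools.product((1, -1), repeat=n):
        # literal l is true iff the value of x_|l| agrees with the sign of l
        if all(any(sigma[abs(l) - 1] * (1 if l > 0 else -1) == 1 for l in clause)
               for clause in clauses):
            total += 1
    return total


rng = random.Random(1)
phi = random_kcnf(n=12, d=3.0, k=3, rng=rng)
z = count_satisfying(12, phi)
print(len(phi), z, math.log(z) / 12 if z else float("-inf"))
```

Since the enumeration takes $2^n$ steps, this only serves to make the definitions concrete; the value of $n^{-1}\log Z(\boldsymbol{\Phi })$ printed for a single small sample says little about the limit $n\to \infty$.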

1.2.1 The number of satisfying assignments up to the Gibbs uniqueness threshold

The first main result vindicates the ‘replica symmetric solution’ for values of $d$ up to the Gibbs uniqueness threshold of the Galton-Watson tree that mimics the local topology of $\boldsymbol{\Phi }$. Let us define these concepts precisely.

We begin with the Galton-Watson tree $\mathbb{T}=\mathbb{T}_{d,k}$, which is generated by a two-type branching process. The two types are variable nodes and clause nodes. The process starts with a single root variable node $\mathfrak{x}$. The offspring of any variable node is a $\textrm {Po}(d)$ number of clause nodes, while every clause node begets precisely $k-1$ variable nodes. Additionally, for each clause node $a$ and each variable node $x$ that is either a child or the parent of $a$, a sign $\mathrm{sign}(x,a)\in \{\pm 1\}$ is chosen uniformly at random, independently of all other choices. The resulting random tree $\mathbb{T}$ models the local structure of the random formula $\boldsymbol{\Phi }$ in the sense of local weak convergence [Reference Aldous, Steele and Kesten9, Reference Lovász49].Footnote 3
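The branching process is easy to simulate. The following Python sketch, our own illustration using an ad hoc nested-list representation, samples $\mathbb{T}_{d,k}$ truncated after a given number of generations of clause nodes (the truncation $\mathbb{T}^{(\ell )}$ introduced next keeps $\ell$ such generations) and counts the satisfying assignments of the resulting tree formula exactly, working from the leaves towards the root. This leaf-to-root recursion works only because the formula is acyclic. The function names are hypothetical.

```python
import math
import random


def sample_poisson(lam, rng):
    """Sample Po(lam) by Knuth's multiplication method."""
    threshold = math.exp(-lam)
    count, prod = 0, rng.random()
    while prod > threshold:
        count += 1
        prod *= rng.random()
    return count


def sample_tree(d, k, depth, rng):
    """Sample a variable node of T_{d,k}, keeping `depth` further generations of clauses.

    A variable node is a list of child clauses; a clause is a pair
    (sign of the parent variable, [(sign of child, child variable node), ...]).
    """
    if depth == 0:
        return []  # variable at the truncation boundary: no children
    clauses = []
    for _ in range(sample_poisson(d, rng)):  # Po(d) child clauses
        parent_sign = rng.choice((1, -1))
        children = [(rng.choice((1, -1)), sample_tree(d, k, depth - 1, rng))
                    for _ in range(k - 1)]  # exactly k-1 child variables
        clauses.append((parent_sign, children))
    return clauses


def count_given_root(var):
    """Return (Z(+1), Z(-1)): satisfying assignments of the subtree, given the root's value."""
    z = {1: 1, -1: 1}
    for parent_sign, children in var:
        total, all_false = 1, 1
        for child_sign, child in children:
            zp, zm = count_given_root(child)
            total *= zp + zm
            # the child's literal is false iff the child takes the value -child_sign
            all_false *= zm if child_sign == 1 else zp
        for s in (1, -1):
            # parent literal true: clause satisfied regardless of the children;
            # otherwise exclude the assignments in which every child literal is false
            z[s] *= total if s == parent_sign else total - all_false
    return z[1], z[-1]


rng = random.Random(7)
tree = sample_tree(d=2.0, k=3, depth=2, rng=rng)  # nodes within distance 4 of the root
zp, zm = count_given_root(tree)
print("|S(T)| =", zp + zm, " P[root = +1] =", zp / (zp + zm))
```

Python's unbounded integers keep the counts exact; for deeper trees one would normalise the pair $(Z(+1),Z(-1))$ at every node instead, which leads to Belief Propagation messages.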

Next, we define the Gibbs uniqueness property on the tree $\mathbb{T}$. For an integer $\ell \geq 0$ let $\mathbb{T}^{(\ell )}$ be the finite tree obtained by removing all variable and clause nodes at a distance greater than $2\ell$ from the root $\mathfrak{x}$. We identify the finite tree $\mathbb{T}^{(\ell )}$ with a Boolean formula whose variables/clauses are precisely the variable/clause nodes of $\mathbb{T}^{(\ell )}$. Let $S(\mathbb{T}^{(\ell )})$ be the set of satisfying assignments of this formula (we have $S(\mathbb{T}^{(\ell )})\neq \emptyset$ because every clause node has $k-1\geq 1$ children that can be set so as to satisfy it) and let $\boldsymbol{\tau }^{(\ell )}\in S(\mathbb{T}^{(\ell )})$ be a uniformly random satisfying assignment. Moreover, let $\partial ^{2\ell }\mathfrak{x}$ be the set of variable nodes of $\mathbb{T}^{(\ell )}$ at distance precisely $2\ell$ from the root $\mathfrak{x}$. Then for given $d,k$ the tree $\mathbb{T}=\mathbb{T}_{d,k}$ has the Gibbs uniqueness property if

In words, in the limit of large $\ell$ the truth value $\boldsymbol{\tau }^{(\ell )}(\mathfrak{x})$ of the root $\mathfrak{x}$ is asymptotically independent of the truth values $\{\boldsymbol{\tau }^{(\ell )}(x)\}_{x\in \partial ^{2\ell }\mathfrak{x}}$ of the variables at distance $2\ell$ from $\mathfrak{x}$. In light of the above, for any $k \ge 2$ we further define $d_{\mathrm{uniq}}(k)$ as

It is easy to see that $d_{\mathrm{uniq}}(k)$ is strictly positive and finite for any $k\geq 2$. Indeed, in Theorem 1.2 we will derive explicit lower bounds on $d_{\mathrm{uniq}}(k)$. However, the exact value of $d_{\mathrm{uniq}}(k)$ is not currently known for any $k\geq 3$.

As a final preparation we need to spell out the ‘replica symmetric solution’ from [Reference Monasson and Zecchina55]. This prediction comes in terms of a distributional fixed point problem, i.e., a fixed point problem on the space $\mathscr{P}(0,1)$ of probability measures on the open unit interval. Specifically, consider the Belief Propagation operator

\begin{align} \mathrm{BP}_{d,k}:\mathscr{P}(0,1)\to \mathscr{P}(0,1),\qquad \pi \mapsto \hat {\pi }, \end{align}

defined as follows. Let $\boldsymbol{d}^+,\boldsymbol{d}^-\sim \textrm {Po}(d/2)$ be Poisson variables with expectation $d/2$. Moreover, let $(\boldsymbol{\mu }_{\pi ,i,j})_{i,j\geq 1}$ be a family of i.i.d. random variables, each with distribution $\pi$. All these random variables are mutually independent. Further, let

\begin{align} \boldsymbol{\mu }_{\pi ,i}=1-\prod _{j=1}^{k-1}\boldsymbol{\mu }_{\pi ,i,j}\quad \mbox{ for $i\geq 1$,}\quad\mbox{and}\quad \hat {\boldsymbol{\mu }}_{\pi }=\frac {\prod _{i=1}^{\boldsymbol{d}^-}\boldsymbol{\mu }_{\pi ,2i-1}}{\prod _{i=1}^{\boldsymbol{d}^-}\boldsymbol{\mu }_{\pi ,2i-1}+\prod _{i=1}^{\boldsymbol{d}^+}\boldsymbol{\mu }_{\pi ,2i}}. \end{align}

Then $\hat {\pi }$ is the distribution of $\hat {\boldsymbol{\mu }}_{\pi }$. Furthermore, for a probability measure $\pi \in \mathscr{P}(0,1)$ define the Bethe free entropyFootnote 4

\begin{align} \mathfrak B_{d,k}(\pi ) & ={\mathbb E}\left [ {\log \left ({\prod _{i=1}^{\boldsymbol{d}^-}\boldsymbol{\mu }_{\pi ,2i-1}+\prod _{i=1}^{\boldsymbol{d}^+}\boldsymbol{\mu }_{\pi ,2i}}\right )-\frac {d(k-1)}{k}\log \left ({1-\prod _{j=1}^k\boldsymbol{\mu }_{\pi ,1,j}}\right )}\right ] , \end{align}

provided that the expectation on the r.h.s. exists. Finally, let $\delta _{1/2}\in \mathscr{P}(0,1)$ be the atom at $1/2$ and let us write $\mathrm{BP}_{d,k}^\ell$ for the $\ell$-fold application of the operator $\mathrm{BP}_{d,k}$.
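As a quick consistency check of these definitions (a short calculation of our own, not taken from [Reference Monasson and Zecchina55]), take $\pi =\delta _{1/2}$. Then every $\boldsymbol{\mu }_{\pi ,i}$ equals the constant $a_k=1-2^{1-k}$, so $\hat {\boldsymbol{\mu }}_{\pi }=a_k^{\boldsymbol{d}^-}/(a_k^{\boldsymbol{d}^-}+a_k^{\boldsymbol{d}^+})$ depends only on the independent Poisson pair $(\boldsymbol{d}^-,\boldsymbol{d}^+)$, and the Bethe free entropy reduces to a single expectation over that pair:

\begin{align} \mathrm{BP}_{d,k}(\delta _{1/2}) & =\sum _{i,j\geq 0}\frac {e^{-d}(d/2)^{i+j}}{i!\,j!}\,\delta _{a_k^{i}/(a_k^{i}+a_k^{j})},\\ \mathfrak B_{d,k}(\delta _{1/2}) & ={\mathbb E}\left [ {\log \left ({a_k^{\boldsymbol{d}^-}+a_k^{\boldsymbol{d}^+}}\right )}\right ] -\frac {d(k-1)}{k}\log \left ({1-2^{-k}}\right ). \nonumber\end{align}

For $d=0$ both Poisson variables vanish, so $\mathrm{BP}_{0,k}(\delta _{1/2})=\delta _{1/2}$ and $\mathfrak B_{0,k}(\delta _{1/2})=\log 2$, in line with $Z(\boldsymbol{\Phi })=2^n$ for a formula without clauses.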

Theorem 1.1.

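Let $k\geq 3$ and assume that $0\lt d\lt d_{\mathrm{uniq}}(k)$. Then the weak limit

\begin{align} \pi _{d,k}=\lim _{\ell \to \infty }\mathrm{BP}_{d,k}^\ell (\delta _{1/2}) \tag{1.6}\end{align}

exists and

\begin{align} \lim _{n\to \infty }\frac 1n\log Z(\boldsymbol{\Phi })=\mathfrak B_{d,k}(\pi _{d,k})\quad \mbox{in probability.} \tag{1.7}\end{align}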

The formula (1.7) matches the prediction from [Reference Monasson and Zecchina55] precisely. Of course, part of the assertion of Theorem 1.1 is that the Bethe free entropy $\mathfrak B_{d,k}(\pi _{d,k})$ is well defined. Admittedly, the formula (1.7) is not ‘explicit’. But the proof of Theorem 1.1 shows that the convergence (1.6) occurs rapidly. Therefore, a randomised algorithm called ‘population dynamics’ [Reference Mézard and Montanari51] can be used to approximate (1.7) to any desired numerical accuracy.
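The following Python sketch illustrates population dynamics; it is our own minimal version, not the implementation behind Figure 1. A population of numbers in $(0,1)$ stands in for $\pi$, starting from $\delta _{1/2}$. Each application of $\mathrm{BP}_{d,k}$ replaces the population by fresh samples of $\hat {\boldsymbol{\mu }}_{\pi }$, drawing the $\boldsymbol{\mu }_{\pi ,i,j}$ uniformly from the current population, and $\mathfrak B_{d,k}$ is then estimated by the empirical average of the expression inside its defining expectation. The population size, the number of iterations, the seed and all function names are arbitrary assumptions of this sketch, and the output is a Monte Carlo estimate whose accuracy improves with the population size.

```python
import math
import random


def sample_poisson(lam, rng):
    """Sample Po(lam) by Knuth's multiplication method."""
    threshold = math.exp(-lam)
    count, prod = 0, rng.random()
    while prod > threshold:
        count += 1
        prod *= rng.random()
    return count


def clause_message(pop, k, rng):
    """mu_{pi,i} = 1 - prod_{j=1}^{k-1} mu_{pi,i,j}, with each mu_{pi,i,j} drawn from the population."""
    prod = 1.0
    for _ in range(k - 1):
        prod *= rng.choice(pop)
    return 1.0 - prod


def variable_products(pop, d, k, rng):
    """(product over d^- clause messages, product over d^+ clause messages), d^-, d^+ ~ Po(d/2)."""
    neg = math.prod(clause_message(pop, k, rng) for _ in range(sample_poisson(d / 2, rng)))
    pos = math.prod(clause_message(pop, k, rng) for _ in range(sample_poisson(d / 2, rng)))
    return neg, pos


def bp_step(pop, d, k, rng):
    """One application of BP_{d,k}: every new entry is a fresh sample of hat-mu_pi."""
    new = []
    for _ in range(len(pop)):
        neg, pos = variable_products(pop, d, k, rng)
        new.append(neg / (neg + pos))
    return new


def bethe_estimate(pop, d, k, rng, samples):
    """Empirical average of the expression inside the expectation defining B_{d,k}(pi)."""
    total = 0.0
    for _ in range(samples):
        neg, pos = variable_products(pop, d, k, rng)
        clause = 1.0 - math.prod(rng.choice(pop) for _ in range(k))
        total += math.log(neg + pos) - d * (k - 1) / k * math.log(clause)
    return total / samples


def population_dynamics(d, k, size=2000, iterations=25, seed=0):
    """Approximate B_{d,k}(BP^iterations(delta_{1/2})) with a population of the given size."""
    rng = random.Random(seed)
    pop = [0.5] * size  # delta_{1/2}
    for _ in range(iterations):
        pop = bp_step(pop, d, k, rng)
    return bethe_estimate(pop, d, k, rng, samples=size)


print(population_dynamics(d=3.0, k=3))
```

The two parts of the expectation defining $\mathfrak B_{d,k}$ are sampled independently, which is legitimate by linearity of expectation. For large $d$ the products may underflow; a more careful implementation would work with logarithms.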

1.2.2 An improved lower bound on Gibbs uniqueness

The obvious next task is to determine the Gibbs uniqueness threshold $d_{\mathrm{uniq}}(k)$. Currently, its value is known precisely only in the case $k=2$, where $d_{\mathrm{uniq}}(2)=2$ coincides with the random 2-SAT satisfiability threshold [Reference Achlioptas, Coja-Oghlan and Hahn-Klimroth3, Reference Chvátal and Reed21, Reference Goerdt41]. Furthermore, Montanari and Shah [Reference Montanari and Shah57] proved that the pure literal thresholdFootnote 5 $d_{\mathrm{pure}}(k)$ is an upper bound on $d_{\mathrm{uniq}}(k)$ for all $k\geq 2$.Footnote 6 The value of $d_{\mathrm{pure}}(k)$ admits a neat formula [Reference Broder, Frieze and Upfal16, Reference Molloy54]:

Complementing the upper bound (1.8), Montanari and Shah derived a lower bound $d_{\mathrm{MS}}(k)$:

Unfortunately, the bound (1.9) is not tight even in the case $k=2$, where $d_{\mathrm{uniq}}(2)=2$ while $d_{\mathrm{MS}}(2)\approx 1.16$. That said, the lower and upper bounds $d_{\mathrm{MS}}(k)$ and $d_{\mathrm{pure}}(k)$ match asymptotically in the limit of large $k$, as

with $o_k(1)$ hiding a term that vanishes as $k\to \infty$. The following theorem yields an improved lower bound $d_{\mathrm{con}}(k)$ on $d_{\mathrm{uniq}}(k)$.

Theorem 1.2.

For all $k\geq 3$ we have

An easy calculation reveals that

Moreover, it is reassuring that, when evaluated at $k=2$, the formula (1.11) reproduces the correct (previously known) threshold $d_{\mathrm{uniq}}(2)=d_{\mathrm{con}}(2)=d_{\mathrm{pure}}(2)=2$. That said, we have no reason to believe that (1.11) is tight for any $k\geq 3$.

Combining Theorems 1.1 and 1.2, we obtain the following.

Corollary 1.3.

Let $k\geq 3$. If $d\lt d_{\mathrm{con}}(k)$ then (1.7) holds.

Corollary 1.3 constitutes the first rigorous result that determines the limit of $n^{-1}\log Z(\boldsymbol{\Phi })$ for a non-trivial regime of $d$ for any $k\geq 3$. To elaborate, the formula (1.7) is easily seen to hold for $d\lt1/(k-1)$ because for such $d$ the $k$-uniform hypergraph induced by the clauses of $\boldsymbol{\Phi }$ has no giant component and Belief Propagation is exact on acyclic graphical models [Reference Mézard and Montanari51]. But Corollary 1.3 applies to $d$ well beyond this threshold, as displayed in Table 1. In particular, in contrast to much of the prior work on random $k$-SAT, Corollary 1.3 applies to a non-trivial regime of $d$ even for ‘small’ $k\geq 3$.

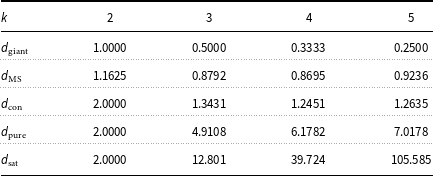

Table 1. The values of $d_{\mathrm{MS}}(k)$, $d_{\mathrm{con}}(k)$ and $d_{\mathrm{pure}}(k)$ for $2\leq k\leq 5$. Additionally, $d_{\mathrm{giant}}(k) = 1/(k-1)$ marks the giant component threshold of the hypergraph induced by the random $k$-CNF formula. Moreover, $d_{\mathrm{sat}}(k)$ is the satisfiability threshold according to physics predictions [Reference Mertens, Mézard and Zecchina50]. It is not hard to show that $d_{\mathrm{giant}}(k) \le d_{\mathrm{MS}}(k) \le d_{\mathrm{con}}(k) \le d_{\mathrm{uniq}}(k) \le d_{\mathrm{pure}}(k) \le d_{\mathrm{sat}}(k)$ for all $k \ge 2$.

Although Table 1 contains the values $d_{\mathrm{MS}}(k)$ from [Reference Montanari and Shah57] for comparison, we emphasise that Montanari and Shah’s result only yields the number of ‘good’ assignments satisfying all but $o(n)$ clauses, rather than the number of actual satisfying assignments. In fact, the best prior rigorous bounds on the number of satisfying assignments for $d\gt 1/(k-1)$ derive from the first and the second moment methods. Specifically, the folklore first moment bound reads

Furthermore, Achlioptas and Peres [Reference Achlioptas and Peres7] perform a second moment argument on the number of balanced satisfying assignments, i.e., satisfying assignments that enjoy a peculiar additional condition required to keep the second moment under control. They show that w.h.p.

\begin{align} \frac 1n & \log Z(\boldsymbol{\Phi })\geq (1-d)\log 2+\frac dk\log \left [ {\left ({\lambda ^{1/2}+\lambda ^{-1/2}}\right )^k-\lambda ^{-k/2}}\right ] +o(1),\quad \mbox{where}\\ & (1-\lambda )(1+\lambda )^{k-1}=1,\,\lambda \gt 0. \nonumber\end{align}
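For concreteness, the following Python sketch (our own illustration; the function names are hypothetical) evaluates the right-hand side of (1.14) without the $o(1)$ term, solving $(1-\lambda )(1+\lambda )^{k-1}=1$ by bisection. For comparison it also evaluates $\log 2+\frac dk\log (1-2^{-k})$, the exponential growth rate of ${\mathbb E}[Z(\boldsymbol{\Phi })\mid \boldsymbol{m}]=2^n(1-2^{-k})^{\boldsymbol{m}}$ at $\boldsymbol{m}\approx dn/k$, from which the first moment bound follows via Markov's inequality (this is our restatement of the standard calculation). The bracket for the bisection uses that $f(\lambda )=(1-\lambda )(1+\lambda )^{k-1}-1$ increases on $(0,(k-2)/k)$ and decreases afterwards, with $f(0)=0$ and $f(1)=-1$, so that for $k\geq 3$ the positive root is unique and lies in $((k-2)/k,1)$.

```python
import math


def ap_lambda(k, tol=1e-13):
    """Positive root of (1 - lam)(1 + lam)^(k-1) = 1 for k >= 3, by bisection."""
    def f(lam):
        return (1 - lam) * (1 + lam) ** (k - 1) - 1

    lo, hi = (k - 2) / k, 1.0  # f(lo) > 0 > f(hi) = -1
    while hi - lo > tol:
        mid = (lo + hi) / 2
        if f(mid) > 0:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2


def ap_lower_bound(d, k):
    """Right-hand side of the balanced second moment bound (1.14), without the o(1) term."""
    lam = ap_lambda(k)
    inner = (lam ** 0.5 + lam ** -0.5) ** k - lam ** (-k / 2)
    return (1 - d) * math.log(2) + d / k * math.log(inner)


def first_moment_rate(d, k):
    """log 2 + (d/k) log(1 - 2^-k): growth rate of E[Z] when there are dn/k clauses."""
    return math.log(2) + d / k * math.log(1 - 2.0 ** -k)


for d in (1.0, 2.0, 3.0, 4.0):
    print(d, round(ap_lower_bound(d, 3), 4), round(first_moment_rate(d, 3), 4))
```

For $k=3$ the equation reduces to $\lambda ^2+\lambda -1=0$, so $\lambda =(\sqrt 5-1)/2$, which gives a convenient check of the bisection.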

Figure 1 illustrates the bounds (1.13)–(1.14) along with (1.7) for $k=3$. As the figure shows, the correct value (1.7) is quite close to the first moment bound. That said, the first moment bound strictly exceeds $\mathfrak B_{d,k}(\pi _{d,k})$ for all $d\gt 0$, $k\geq 3$ [Reference Coja-Oghlan, Kapetanopoulos and Müller24]. On the other hand, Figure 1 demonstrates that the ‘balanced second moment bound’ (1.14) significantly undershoots $\mathfrak B_{d,3}(\pi _{d,3})$. Recall that Figure 1 displays $\frac 1n\log Z(\boldsymbol{\Phi })$; thus, even small differences translate into errors by factors that are exponential in $n$.

Figure 1. Comparison of $\mathfrak B_{d,k}(\pi _{d,k})$ with known bounds for $\lim _{n\to \infty }\frac 1n\log Z(\boldsymbol{\Phi })$ for $k=3$. The red dotted line depicts the first moment upper bound (1.13), while the green dotted line represents the lower bound provided by (1.14). The blue line displays a numerical approximation of $\mathfrak B_{d,3}(\pi _{d,3})$. To obtain these values, we generated $10^{6}$ samples from $\pi \approx \mathrm{BP}^{25}_{d,3}(\delta _{1/2})$ and then evaluated the corresponding empirical average of the expression in (1.5).

1.3 Preliminaries and notation

Let $\Phi$ be a Boolean expression in conjunctive normal form such that no clause contains the same variable twice. We write $V(\Phi )$ for the set of Boolean variables of $\Phi$ and $F(\Phi )$ for the set of clauses. The formula $\Phi$ gives rise to a bipartite graph $G(\Phi )$ on the vertex set $V(\Phi )\cup F(\Phi )$ in which a variable $x$ and a clause $a$ are adjacent iff variable $x$ appears in clause $a$ (either positively or negatively). Let $E(\Phi )$ denote the edge set of the graph $G(\Phi )$. Furthermore, for a vertex $v\in V(\Phi )\cup F(\Phi )$ let $\partial _\Phi v$ be the set of neighbours of $v$; when $\Phi$ is clear from the context, we just write $\partial v$.

The graph $G(\Phi )$ induces a metric on $V(\Phi )\cup F(\Phi )$ by letting $\mathrm{dist}_\Phi (v,w)$ equal the length of the shortest path from $v$ to $w$. For a vertex $v$ and an integer $\ell \geq 0$ let $\partial ^\ell _\Phi v=\partial ^\ell v$ be the set of all vertices $w$ at distance precisely $\ell$ from $v$.
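The definitions of $G(\Phi )$, $\partial v$, $\mathrm{dist}_\Phi$ and $\partial ^\ell v$ can be illustrated with a short Python sketch. It assumes an encoding that is not part of the text. The variables are $x_1,\ldots ,x_n$. Variable $x_i$ is the integer `i` and the literal $\neg x_i$ is `-i` (DIMACS style). A clause is a tuple of literals. Vertices of $G(\Phi )$ are tagged `('v', i)` or `('c', a)`, the latter for the clause with index `a`. All function names are hypothetical.

```python
from collections import deque


def factor_graph(n, clauses):
    """Adjacency sets of G(Phi) on the variables 1..n and the clause indices.

    Variable i and clause a are adjacent iff i or -i occurs in clause a.
    """
    adj = {('v', i): set() for i in range(1, n + 1)}
    for a, clause in enumerate(clauses):
        adj[('c', a)] = set()
        for lit in clause:
            adj[('c', a)].add(('v', abs(lit)))
            adj[('v', abs(lit))].add(('c', a))
    return adj


def distances(adj, v):
    """dist_Phi(v, w) for every vertex w reachable from v (breadth-first search)."""
    dist = {v: 0}
    queue = deque([v])
    while queue:
        u = queue.popleft()
        for w in adj[u]:
            if w not in dist:
                dist[w] = dist[u] + 1
                queue.append(w)
    return dist


def sphere(adj, v, ell):
    """The set of all vertices at distance exactly ell from v."""
    return {w for w, d in distances(adj, v).items() if d == ell}


# Phi = (x1 v x2 v -x3) ^ (-x1 v x4 v x5) on n = 5 variables
adj = factor_graph(5, [(1, 2, -3), (-1, 4, 5)])
print(sorted(adj[('v', 1)]))              # [('c', 0), ('c', 1)]
print(sorted(sphere(adj, ('v', 2), 3)))   # [('c', 1)]
```

Because $G(\Phi )$ is bipartite, the spheres alternate between sets of variables (even $\ell$ when $v$ is a variable) and sets of clauses (odd $\ell$).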

For a clause $a$ and a variable $x\in \partial a$ we define $\mathrm{sign}_\Phi (x,a)=1$ if $a$ contains $x$ as a positive literal, and $\mathrm{sign}_\Phi (x,a)=-1$ if $a$ contains the negation $\neg x$. (This is unambiguous because clause $a$ is not allowed to contain both $x$ and $\neg x$.) For a variable $x\in V(\Phi )$ and $s\in \{\pm 1\}$ we let $\partial ^s_\Phi x=\partial ^sx$ be the set of clauses $a\in \partial _\Phi x$ such that $\mathrm{sign}_\Phi (x,a)=s$. Where convenient we use the shorthand $\partial ^\pm x=\partial ^{\pm 1}x$. We say that a variable $x$ is pure in $\Phi$ if $\mathrm{sign}_\Phi (x,a)=\mathrm{sign}_\Phi (x,b)$ for all $a,b\in \partial x$. More specifically, say that $x$ is a pure literal of $\Phi$ if $\partial ^-x=\emptyset$. Similarly, $\neg x$ is called a pure literal if $\partial ^+x=\emptyset$. A variable or literal that fails to be pure is called mixed.

For a literal $l\in \{x,\neg x\,:\,x\in V(\Phi )\}$ we let $|l|$ denote the underlying variable; thus, $|x|=|\neg x|=x$ for $x\in V(\Phi )$. Moreover, we define $\mathrm{sign}(x)=1$ and $\mathrm{sign}(\neg x)=-1$. Further, for a literal $l$ we define $1\cdot l=l$ and $(-1)\cdot l=\neg l$.
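The signed neighbourhoods $\partial ^\pm x$, the pure and mixed literals and the literal operations $|l|$, $\mathrm{sign}(l)$ and $s\cdot l$ take only a few lines of code. The sketch below assumes the integer encoding of literals (`i` for $x_i$, `-i` for $\neg x_i$), which is not part of the text. Under that encoding, $|l|$ is `abs(l)` and $s\cdot l$ is `s * l`. The helper names are hypothetical.

```python
def signed_neighbourhoods(n, clauses):
    """pos[x] and neg[x] are the clause indices forming partial^+ x and partial^- x."""
    pos = {x: set() for x in range(1, n + 1)}
    neg = {x: set() for x in range(1, n + 1)}
    for a, clause in enumerate(clauses):
        for lit in clause:
            (pos if lit > 0 else neg)[abs(lit)].add(a)
    return pos, neg


def pure_literals(n, clauses):
    """x is a pure literal if partial^- x is empty; -x (i.e. not x) if partial^+ x is empty.

    A variable that occurs in no clause yields both x and -x, exactly as the definition says.
    """
    pos, neg = signed_neighbourhoods(n, clauses)
    return {x for x in pos if not neg[x]} | {-x for x in neg if not pos[x]}


def mixed_variables(n, clauses):
    """Variables that occur both positively and negatively."""
    pos, neg = signed_neighbourhoods(n, clauses)
    return {x for x in pos if pos[x] and neg[x]}


def var(lit):        # |l|, the underlying variable
    return abs(lit)


def sign(lit):       # sign(x) = 1 and sign(not x) = -1
    return 1 if lit > 0 else -1


def scale(s, lit):   # s . l, so that (-1) . l is the negation of l
    return s * lit


phi = [(1, 2, -3), (-1, 2, -3)]
print(sorted(pure_literals(3, phi)), mixed_variables(3, phi))   # [-3, 2] {1}
```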

If $\Phi$ is satisfiable, then $\boldsymbol{\sigma }_\Phi =(\boldsymbol{\sigma }_\Phi (x))_{x\in V(\Phi )}$ denotes a uniformly random satisfying assignment of $\Phi$. Where the reference to $\Phi$ is obvious we just write $\boldsymbol{\sigma }$.
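On toy instances, $\boldsymbol{\sigma }_\Phi$ can be sampled by listing all satisfying assignments and picking one uniformly at random. The sketch below assumes the integer literal encoding (`i` for $x_i$, `-i` for $\neg x_i$). It represents truth values by $\pm 1$, matching the convention $s\in \{\pm 1\}$. Its running time is exponential in $n$, so it only makes the definition concrete. The function names are hypothetical.

```python
import itertools
import random


def satisfying_assignments(n, clauses):
    """All sigma in {+1,-1}^n that satisfy every clause (exhaustive search)."""
    def is_true(sigma, lit):
        # a literal is true iff its sign agrees with the value of its variable
        return sigma[abs(lit) - 1] == (1 if lit > 0 else -1)

    return [sigma for sigma in itertools.product((1, -1), repeat=n)
            if all(any(is_true(sigma, lit) for lit in clause) for clause in clauses)]


def sample_sigma(n, clauses, rng=random):
    """A uniformly random satisfying assignment sigma_Phi."""
    solutions = satisfying_assignments(n, clauses)
    if not solutions:
        raise ValueError("Phi is unsatisfiable, so sigma_Phi is undefined")
    return rng.choice(solutions)


# Phi = (x1 v x2) ^ (-x1 v x3) ^ (-x2 v -x3)
phi = [(1, 2), (-1, 3), (-2, -3)]
print(len(satisfying_assignments(3, phi)))   # 2
print(sample_sigma(3, phi, random.Random(1)))
```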

Let $\mu ,\nu$ be two probability measures on $\mathbb{R}^h$, let $q\geq 1$ and assume that $\int _{\mathbb{R}^h}\|x\|_q^q{\mathrm d}\mu (x),\int _{\mathbb{R}^h}\|x\|_q^q{\mathrm d}\nu (x)\lt \infty$. We recall that the $L_q$-Wasserstein distance of $\mu ,\nu$ is defined as

\begin{align*} W_q(\mu ,\nu )=\inf \left \{{{\mathbb E}\left [ {\|\boldsymbol{\xi }-\boldsymbol{\zeta }\|_q^q}\right ]^{1/q}}\right \}, \end{align*}

where the infimum is taken over all pairs $(\boldsymbol{\xi },\boldsymbol{\zeta })$ of random variables defined on the same probability space $\Omega$ such that $\boldsymbol{\xi }$ has distribution $\mu$ and $\boldsymbol{\zeta }$ has distribution $\nu$. If $\boldsymbol{X},\boldsymbol{Y}$ are random variables with distributions $\mu ,\nu$, it is convenient to use the shorthand $W_q(\boldsymbol{X},\boldsymbol{Y})=W_q(\mu ,\nu )$, provided that ${\mathbb E}[\|\boldsymbol{X}\|_q^q],{\mathbb E}[\|\boldsymbol{Y}\|_q^q]\lt \infty$.
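For $h=1$ the infimum can be evaluated explicitly for empirical measures. Suppose $\mu$ and $\nu$ each put mass $1/N$ on $N$ points of $\mathbb{R}$ and $q\geq 1$. Then matching the order statistics of the two samples is an optimal coupling, a standard fact about monotone rearrangement for convex costs. The following sketch, with a hypothetical function name, computes $W_q$ in this special case only.

```python
def wasserstein_1d(xs, ys, q=1.0):
    """W_q between the empirical measures of two equally long samples on the real line.

    For q >= 1, pairing the i-th smallest x with the i-th smallest y is optimal.
    """
    if len(xs) != len(ys) or not xs:
        raise ValueError("need two non-empty samples of equal length")
    if q < 1:
        raise ValueError("q must be at least 1")
    cost = sum(abs(x - y) ** q for x, y in zip(sorted(xs), sorted(ys)))
    return (cost / len(xs)) ** (1 / q)


print(wasserstein_1d([0, 1], [1, 2]))   # 1.0
```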

For two random variables $\boldsymbol{X},\boldsymbol{Y}$ we write $\boldsymbol{X}\sim \boldsymbol{Y}$ if $\boldsymbol{X},\boldsymbol{Y}$ are identically distributed. Moreover, for a probability distribution $\mu$ and a random variable $\boldsymbol{X}$ we write $\boldsymbol{X}\sim \mu$ if $\boldsymbol{X}$ has distribution $\mu$.

We will make repeated use of the following tail bound for Poisson variables.

Lemma 1.4 (Bennett’s inequality [Reference Boucheron, Lugosi and Massart14, Theorem 2.9]). Suppose that $\boldsymbol{X}\sim \textrm {Po}(\lambda )$ with $\lambda \gt 0$ and let $\varphi (x)=(1+x)\log (1+x)-x$ for $x\gt -1$. Then

\begin{align*} {\mathbb P}\left [ {\boldsymbol{X}\geq \lambda +t}\right ] & \leq \exp \left ({-\lambda \varphi (t/\lambda )}\right ) && \mbox{for all }t\gt 0,\\ {\mathbb P}\left [ {\boldsymbol{X}\leq \lambda -t}\right ] & \leq \exp \left ({-\lambda \varphi (-t/\lambda )}\right ) && \mbox{for all }0\lt t\lt \lambda . \end{align*}
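Lemma 1.4 can be sanity-checked numerically by comparing exact Poisson tails with the two bounds. In this sketch, which uses hypothetical helper names and only the standard library, the probability mass function is evaluated on a log scale. The upper tail is summed directly rather than computed as one minus the distribution function, because the latter would lose all precision for very small tails.

```python
import math


def varphi(x):
    """varphi(x) = (1 + x) log(1 + x) - x for x > -1."""
    return (1 + x) * math.log1p(x) - x


def poisson_pmf(lam, j):
    # log scale avoids overflow in lam**j and j!
    return math.exp(-lam + j * math.log(lam) - math.lgamma(j + 1))


def upper_tail(lam, t, extra=2000):
    """P[X >= lam + t] for X ~ Po(lam); terms beyond `extra` are negligible for moderate lam."""
    start = math.ceil(lam + t)
    return sum(poisson_pmf(lam, j) for j in range(start, start + extra))


def lower_tail(lam, t):
    """P[X <= lam - t] for X ~ Po(lam)."""
    return sum(poisson_pmf(lam, j) for j in range(math.floor(lam - t) + 1))


def bennett_upper(lam, t):     # valid for t > 0
    return math.exp(-lam * varphi(t / lam))


def bennett_lower(lam, t):     # valid for 0 < t < lam
    return math.exp(-lam * varphi(-t / lam))


for lam, t in [(10, 10), (50, 20)]:
    print(lam, t, upper_tail(lam, t), bennett_upper(lam, t))
```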

For reals $a,b$ we write $a\vee b=\max \{a,b\}$ and $a\wedge b=\min \{a,b\}$.

Unless specified otherwise, asymptotic notation $o(\!\cdot \!),\,O(\!\cdot \!)$, etc. is understood to refer to the limit $n\to \infty$. The symbol $\tilde O(\!\cdot \!)$ is understood to swallow $\mathrm{polylog}(n)$ terms. Throughout we tacitly assume that $n$ is sufficiently large so that the various estimates are valid. We use the conventions $\log 0=-\infty$ and $\log \infty =\infty$. Finally, throughout the paper we assume that $k\geq 3$ is a fixed integer.

2. Overview

In this section we survey the proofs of the main results. Subsequently, we discuss further related work. The proof details are deferred to the remaining sections; see Section 2.7 for pointers. We assume throughout that $k\geq 3$.

2.1 Existence of the fixed point and upper bound

As a first step towards the proof of Theorem 1.1 we prove that the limit (1.6) exists for $d\lt d_{\mathrm{uniq}}(k)$. More precisely, we will establish the following statement.

Proposition 2.1.

For every $k \ge 3$ and every $d \lt d_{\mathrm{uniq}}(k)$, the $W_1$-limit $\pi _{d,k}=\lim _{\ell \to \infty }\mathrm{BP}_{d,k}^{\ell }(\delta _{1/2})$ exists and

\begin{align} {\mathbb E}\left [ {\log ^2\boldsymbol{\mu }_{\pi _{d,k},1,1}}\right ] +{\mathbb E}\left |{\log \left ({\prod _{i=1}^{\boldsymbol{d}^-}\boldsymbol{\mu }_{\pi _{d,k},2i}+\prod _{i=1}^{\boldsymbol{d}^+}\boldsymbol{\mu }_{\pi _{d,k},2i-1}}\right )}\right |+{\mathbb E}\left |{\log \left ({1-\prod _{j=1}^k\boldsymbol{\mu }_{\pi _{d,k},1,j}}\right )}\right | & \lt \infty . \end{align}

In addition, $\boldsymbol{\mu }_{\pi _{d,k},1,1}$ and $1-\boldsymbol{\mu }_{\pi _{d,k},1,1}$ are identically distributed.

The existence of the limit $\pi _{d,k}$ is an easy consequence of the Gibbs uniqueness property. As an aside, the limit $\pi _{d,k}=\lim _{\ell \to \infty }\mathrm{BP}_{d,k}^{\ell }(\delta _{1/2})$ is a fixed point of the Belief Propagation operator, i.e.,

\begin{align*} \pi _{d,k}=\mathrm{BP}_{d,k}(\pi _{d,k}). \end{align*}

The proof of the bound (2.1) is a bit more subtle and requires a few preparations, but we will come to that. The upshot of (2.1) is that the Bethe free entropy $\mathfrak B_{d,k}(\pi _{d,k})$ is well defined.

With the fixed point $\pi _{d,k}$ in hand we can bring the ‘interpolation method’ to bear in order to bound the likely value of $\log Z(\boldsymbol{\Phi })$ from above.

Corollary 2.2.

If $d \lt d_{\mathrm{uniq}}(k)$ then w.h.p. we have $\frac 1n\log Z(\boldsymbol{\Phi })\leq \mathfrak B_{d,k}(\pi _{d,k})+o(1).$

The interpolation method is a mainstay of the study of disordered systems in mathematical physics and has also been used to investigate random constraint satisfaction problems. In particular, the variant of the interpolation method from [Reference Panchenko and Talagrand61] (in combination with Proposition 2.1) easily implies that

\begin{align*} \frac 1n{\mathbb E}\left [ {\log (Z(\boldsymbol{\Phi })\vee 1)}\right ] \leq \mathfrak B_{d,k}(\pi _{d,k})+o(1); \end{align*}

taking the logarithm of $Z(\boldsymbol{\Phi })\vee 1$ ensures that the expectation is well defined, as it is possible (albeit unlikely for $d\lt d_{\mathrm{uniq}}(k)$) that $\boldsymbol{\Phi }$ is unsatisfiable. The added value of Corollary 2.2 is that we obtain a bound that holds with high probability, rather than just a bound on the expectation. The interpolation method was used in [Reference Achlioptas, Coja-Oghlan and Hahn-Klimroth3] in a similar fashion to prove a ‘with high probability’ bound on the number of satisfying assignments of random 2-CNFs. The proof of Corollary 2.2 is an adaptation of that argument to $k\geq 3$.

2.2 A matching lower bound

The key step towards Theorem 1.1 is to establish a lower bound on $\log Z(\boldsymbol{\Phi })$ that matches the upper bound from Corollary 2.2. To accomplish this task we employ a coupling argument known as the ‘Aizenman-Sims-Starr scheme’ in mathematical physics. Its original version was intended to estimate the partition function of the Sherrington-Kirkpatrick model, a spin glass model [Reference Aizenman, Sims and Starr8]. But the technique has since been employed in probabilistic combinatorics (e.g., [Reference Coja-Oghlan, Kapetanopoulos and Müller24, Reference Coja-Oghlan, Krzakala, Perkins and Zdeborová25, Reference Coja-Oghlan and Perkins29]). By comparison to the mathematical physics context, the crucial difference is that here our objective is to count actual satisfying assignments where every single clause imposes a hard constraint, whereas in spin glass theory constraints are soft. The same issue occurred in previous work on the random 2-SAT problem [Reference Achlioptas, Coja-Oghlan and Hahn-Klimroth3]. However, in that case a relatively simple percolation argument was sufficient to deal with the ensuing complications. As we will see, for $k\geq 3$ considerably more care is needed.

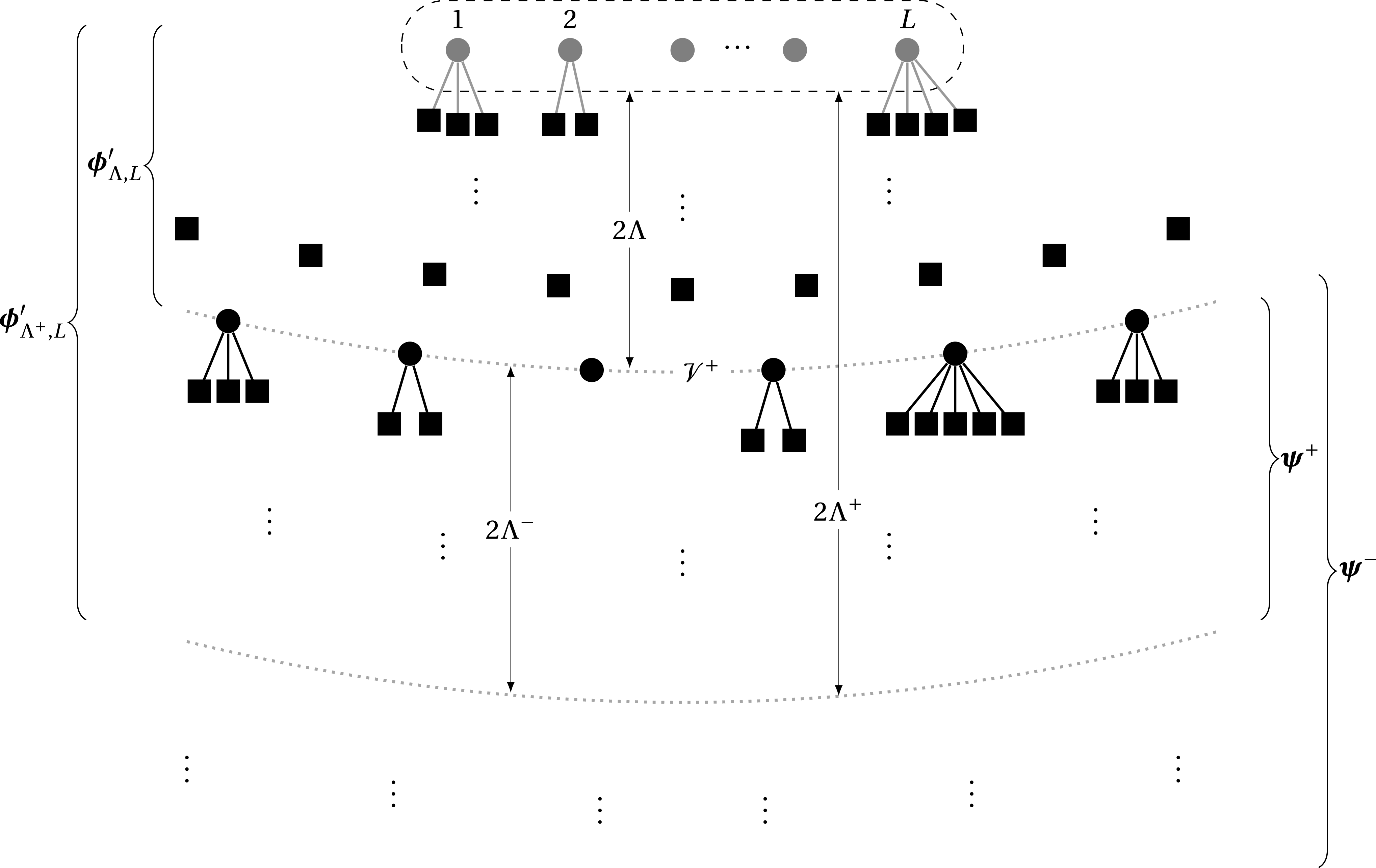

But first things first. The basic idea behind the Aizenman-Sims-Starr argument is to perform a kind of induction. Translated to random $k$-SAT this means that we couple the random $k$-CNF $\boldsymbol{\Phi }_{d,k}(n)$ with $n$ variables with the random $k$-CNF $\boldsymbol{\Phi }_{d,k}(n+1)$ with $n+1$ variables. Recall that $\boldsymbol{\Phi }_{d,k}(n)$ comprises $\boldsymbol{m}_n\sim \textrm {Po}(dn/k)$ independent random clauses. Ultimately Theorem 1.1 is going to be a consequence of Corollary 2.2 and the following statement.

Proposition 2.3.

If $d\lt d_{\mathrm{uniq}}(k)$ then ${\mathbb E}\left [ {\log (Z(\boldsymbol{\Phi }_{d,k}(n+1))\vee 1)}\right ] -{\mathbb E}\left [ {\log (Z(\boldsymbol{\Phi }_{d,k}(n))\vee 1)}\right ] = \mathfrak B_{d,k}(\pi _{d,k})+o(1).$

Once again we work with $Z(\boldsymbol{\Phi }_{d,k}(n))\vee 1$ and $Z(\boldsymbol{\Phi }_{d,k}(n+1))\vee 1$ to ensure that the expectations are well defined.
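To see how Proposition 2.3 translates into a statement about $\frac 1n{\mathbb E}[\log (Z(\boldsymbol{\Phi }_{d,k}(n))\vee 1)]$, one can telescope. This sketch concerns expectations only; as stated above, the text combines Proposition 2.3 with Corollary 2.2 to obtain Theorem 1.1. Fix an integer $N_0\geq 1$ and use $0\leq \log (Z(\boldsymbol{\Phi }_{d,k}(N_0))\vee 1)\leq N_0\log 2$. The $o_N(1)$ terms are the error terms of Proposition 2.3 with $n$ replaced by $N$, and their Cesàro average tends to zero.

```latex
\begin{align*}
\frac 1n{\mathbb E}\left[\log (Z(\boldsymbol{\Phi }_{d,k}(n))\vee 1)\right]
&=\frac 1n{\mathbb E}\left[\log (Z(\boldsymbol{\Phi }_{d,k}(N_0))\vee 1)\right]\\
&\quad+\frac 1n\sum _{N=N_0}^{n-1}\left({\mathbb E}\left[\log (Z(\boldsymbol{\Phi }_{d,k}(N+1))\vee 1)\right]-{\mathbb E}\left[\log (Z(\boldsymbol{\Phi }_{d,k}(N))\vee 1)\right]\right)\\
&=O\left(\frac {N_0}{n}\right)+\frac {n-N_0}{n}\,\mathfrak B_{d,k}(\pi _{d,k})+\frac 1n\sum _{N=N_0}^{n-1}o_N(1)
=\mathfrak B_{d,k}(\pi _{d,k})+o(1).
\end{align*}
```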

To prove Proposition 2.3 we couple the random formulas $\boldsymbol{\Phi }_{d,k}(n+1)$ and $\boldsymbol{\Phi }_{d,k}(n)$ as follows.

- CPL1: Let $\boldsymbol{\Phi }'$ be a random $k$-CNF with variables $x_1,\ldots ,x_n$ and $\boldsymbol{m}'\sim \textrm {Po}(d(n-k+1)/k)$ clauses.
- CPL2: Obtain $\boldsymbol{\Phi }''$ from $\boldsymbol{\Phi }'$ by adding another $\boldsymbol{\Delta }''\sim \textrm {Po}(d(k-1)/k)$ independent random clauses.
- CPL3: Obtain $\boldsymbol{\Phi }'''$ from $\boldsymbol{\Phi }'$ by adding one new variable $x_{n+1}$ and $\boldsymbol{\Delta }'''\sim \textrm {Po}(d)$ independent random clauses that each contain $x_{n+1}$ and $k-1$ other variables from $\{x_1,\ldots ,x_n\}$.

Observe that $\boldsymbol{\Phi }''$ ultimately has variables $x_1,\ldots ,x_n$ and a total of $\boldsymbol{m}'+\boldsymbol{\Delta }''\sim \textrm {Po}(dn/k)$ independent random clauses, because the sum of independent Poisson variables with means $d(n-k+1)/k$ and $d(k-1)/k$ is Poisson with mean $dn/k$. Thus, $\boldsymbol{\Phi }''$ has the same distribution as the random formula $\boldsymbol{\Phi }_{d,k}(n)$. Similarly, $\boldsymbol{\Phi }'''$ has the same distribution as $\boldsymbol{\Phi }_{d,k}(n+1)$. Indeed, each of the $\textrm {Po}(d(n+1)/k)$ clauses of $\boldsymbol{\Phi }_{d,k}(n+1)$ contains $x_{n+1}$ with probability $k/(n+1)$. By Poisson thinning, the numbers of clauses with and without $x_{n+1}$ are therefore independent with distributions $\textrm {Po}(d)$ and $\textrm {Po}(d(n-k+1)/k)$. Consequently, we obtain the following.

Fact 2.4.

For any $d\gt 0$ we have $Z(\boldsymbol{\Phi }_{d,k}(n))\sim Z(\boldsymbol{\Phi }'')$ and $Z(\boldsymbol{\Phi }_{d,k}(n+1))\sim Z(\boldsymbol{\Phi }''')$.
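The coupling CPL1–CPL3 is easy to simulate. The sketch below assumes a specific clause model that is not restated in this section. A random clause consists of $k$ distinct variables drawn uniformly without replacement, and each variable is negated independently with probability $1/2$. Poisson variables are generated with the standard library only, by counting the arrivals of a rate-one Poisson process in $[0,\lambda ]$. All function names are hypothetical.

```python
import random


def poisson(rng, lam):
    """A Po(lam) sample: arrivals of a rate-1 Poisson process in [0, lam]."""
    count, total = 0, rng.expovariate(1.0)
    while total <= lam:
        count += 1
        total += rng.expovariate(1.0)
    return count


def random_clause(rng, variables, k):
    """k distinct variables chosen uniformly, each negated with probability 1/2."""
    return tuple(x * rng.choice((1, -1)) for x in rng.sample(variables, k))


def coupling(n, d, k, rng):
    """Return (Phi', Phi'', Phi''') as clause lists, following CPL1-CPL3."""
    old = range(1, n + 1)
    # CPL1: Po(d(n-k+1)/k) clauses on x_1..x_n
    base = [random_clause(rng, old, k) for _ in range(poisson(rng, d * (n - k + 1) / k))]
    # CPL2: Po(d(k-1)/k) further clauses on x_1..x_n
    phi2 = base + [random_clause(rng, old, k) for _ in range(poisson(rng, d * (k - 1) / k))]
    # CPL3: Po(d) clauses, each containing x_{n+1} and k-1 other variables
    phi3 = base + [random_clause(rng, old, k - 1) + (rng.choice((1, -1)) * (n + 1),)
                   for _ in range(poisson(rng, d))]
    return base, phi2, phi3


rng = random.Random(0)
n, d, k, reps = 30, 2.0, 3, 2000
runs = [coupling(n, d, k, rng) for _ in range(reps)]
print(sum(len(r[1]) for r in runs) / reps, d * n / k)        # clauses of Phi''
print(sum(len(r[2]) for r in runs) / reps, d * (n + 1) / k)  # clauses of Phi'''
```

The averaged clause counts should be close to the Poisson means $dn/k$ and $d(n+1)/k$, as the superposition and thinning argument preceding Fact 2.4 predicts.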

The coupling CPL1–CPL3 reduces the proof of Proposition 2.3 to getting a handle on the differences $\log (Z(\boldsymbol{\Phi }'')\vee 1)-\log (Z(\boldsymbol{\Phi }')\vee 1)$ and $\log (Z(\boldsymbol{\Phi }''')\vee 1)-\log (Z(\boldsymbol{\Phi }')\vee 1)$. More precisely, recalling (1.4)–(1.5), we see that Proposition 2.3 is a consequence of the following two statements.

Proposition 2.5.

If $d\lt d_{\mathrm{uniq}}(k)$ then

\begin{align} {\mathbb E}\left [ {\log \frac {Z(\boldsymbol{\Phi }'')\vee 1}{Z(\boldsymbol{\Phi }')\vee 1}}\right ] & =\frac {d(k-1)}{k}{\mathbb E}\left [ {\log \left ({1-\prod _{j=1}^k\boldsymbol{\mu }_{\pi _{d,k},1,j}}\right )}\right ] +o(1). \end{align}

Proposition 2.6.

If $d\lt d_{\mathrm{uniq}}(k)$ then

\begin{align} {\mathbb E}\left [ {\log \frac {Z(\boldsymbol{\Phi }''')\vee 1}{Z(\boldsymbol{\Phi }')\vee 1}}\right ] & ={\mathbb E}\left [ {\log \left ({\prod _{i=1}^{\boldsymbol{d}^-}\boldsymbol{\mu }_{\pi _{d,k},2i}+\prod _{i=1}^{\boldsymbol{d}^+}\boldsymbol{\mu }_{\pi _{d,k},2i-1}}\right )}\right ] +o(1). \end{align}
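Subtracting the two expansions shows how Propositions 2.5 and 2.6 combine. All expectations involved are finite because $0\leq \log (Z\vee 1)\leq (n+1)\log 2$ for formulas on at most $n+1$ variables:

```latex
\begin{align*}
{\mathbb E}\left[\log \frac {Z(\boldsymbol{\Phi }''')\vee 1}{Z(\boldsymbol{\Phi }'')\vee 1}\right]
&={\mathbb E}\left[\log \frac {Z(\boldsymbol{\Phi }''')\vee 1}{Z(\boldsymbol{\Phi }')\vee 1}\right]-{\mathbb E}\left[\log \frac {Z(\boldsymbol{\Phi }'')\vee 1}{Z(\boldsymbol{\Phi }')\vee 1}\right]\\
&={\mathbb E}\left[\log \left(\prod _{i=1}^{\boldsymbol{d}^-}\boldsymbol{\mu }_{\pi _{d,k},2i}+\prod _{i=1}^{\boldsymbol{d}^+}\boldsymbol{\mu }_{\pi _{d,k},2i-1}\right)\right]
-\frac {d(k-1)}{k}{\mathbb E}\left[\log \left(1-\prod _{j=1}^k\boldsymbol{\mu }_{\pi _{d,k},1,j}\right)\right]+o(1).
\end{align*}
```

By Fact 2.4 the left-hand side equals the difference of expectations in Proposition 2.3. According to the text, (1.4)–(1.5) identify the right-hand side with $\mathfrak B_{d,k}(\pi _{d,k})+o(1)$.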

To prove Propositions 2.5–2.6 we effectively need to trace the impact that local changes have on the number of satisfying assignments. Indeed, under the coupling CPL1–CPL3, the formula $\boldsymbol{\Phi }''$ is obtained from the ‘base formula’ $\boldsymbol{\Phi }'$ by adding just a bounded expected number of random clauses. Thus, suppose that each additional random clause typically reduces the number of satisfying assignments by a constant factor, as both the first moment upper bound (1.13) and the balanced second moment lower bound (1.14) suggest. Then the quantity $|\log (Z(\boldsymbol{\Phi }'')/Z(\boldsymbol{\Phi }'))|$ should be bounded with probability close to one. Similar reasoning applies to $\boldsymbol{\Phi }'''$.

Although with high probability the local changes that turn $\boldsymbol{\Phi }'$ into $\boldsymbol{\Phi }''$ or $\boldsymbol{\Phi }'''$ are indeed benign, the fact that we are dealing with hard constraints entails a non-negligible probability that $\log (Z(\boldsymbol{\Phi }'')/Z(\boldsymbol{\Phi }'))$ or $\log (Z(\boldsymbol{\Phi }''')/Z(\boldsymbol{\Phi }'))$ is large in absolute value. Indeed, a single extra clause might wipe out all satisfying assignments of $\boldsymbol{\Phi }'$, in which case

\begin{align*} \log \frac {Z(\boldsymbol{\Phi }'')\vee 1}{Z(\boldsymbol{\Phi }')\vee 1}=-\log (Z(\boldsymbol{\Phi }')\vee 1). \end{align*}

Hence, we need to argue that such drastic changes are sufficiently rare. The following statement furnishes the necessary tail bound.

Proposition 2.7.

For $d\lt d_{\mathrm{uniq}}(k)$ we have

\begin{align} {\mathbb E}\left [ {\left |\log \frac {Z(\boldsymbol{\Phi }'')\vee 1}{Z(\boldsymbol{\Phi }')\vee 1}\right |^{3/2}+\left |\log \frac {Z(\boldsymbol{\Phi }''')\vee 1}{Z(\boldsymbol{\Phi }')\vee 1}\right |^{3/2}}\right ] =O(1). \end{align}

2.3 Pure literal pursuit

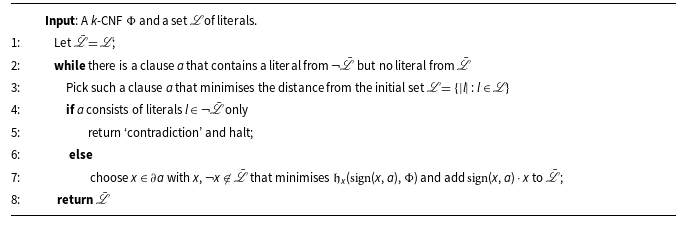

The proof of Proposition 2.7 constitutes the main technical challenge towards the proof of Theorem 1.1. The linchpin of the proof is an algorithm that we call Pure Literal Pursuit (‘$\texttt {PULP}$’). Its purpose is to trace the repercussions of setting a relatively small number of variables to specific truth values. More precisely, $\texttt {PULP}$ will allow us to compare two quantities: the number of satisfying assignments that set a few chosen variables to specific values, and the total number of satisfying assignments.

To this end $\texttt {PULP}$ attempts to solve the following optimisation task. Suppose we are given a $k$-CNF $\Phi$ and a set $\mathscr{L}$ of literals of $\Phi$ that we deem to be set to ‘true’. We would like to identify a superset $\skew9\bar{\mathscr{L}}\supseteq \mathscr{L}$ of literals with the following properties; think of $\skew9\bar{\mathscr{L}}$ as a ‘closure’ of $\mathscr{L}$.

- PULP1: every clause $a$ of $\Phi$ that contains a literal from $\neg \skew9\bar{\mathscr{L}}=\{\neg l\,:\,l\in \skew9\bar{\mathscr{L}}\}$ also contains a literal from $\skew9\bar{\mathscr{L}}$.
- PULP2: there is no literal $l$ such that $l,\neg l\in \skew9\bar{\mathscr{L}}$.

Of course, it may be impossible to satisfy PULP1 and PULP2 simultaneously. In this case we ask $\texttt {PULP}$ to report a ‘contradiction’. But if PULP1–PULP2 can be satisfied, we aim to find a closure $\skew9\bar{\mathscr{L}}$ whose size $|\skew9\bar{\mathscr{L}}|$ is as small as possible.
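On toy instances, the optimisation task can be solved exactly by searching over supersets of $\mathscr{L}$ in order of increasing size. The sketch below does exactly that and takes exponential time. It is not the $\texttt {PULP}$ algorithm, which the text builds on pure literal elimination. It assumes the integer literal encoding (`i` for $x_i$, `-i` for $\neg x_i$) and returns `None` to signal a ‘contradiction’. The function names are hypothetical.

```python
from itertools import combinations


def is_closure(clauses, closure):
    """Check PULP1 and PULP2 for a set of integer-encoded literals."""
    if any(-lit in closure for lit in closure):                   # PULP2
        return False
    for clause in clauses:                                        # PULP1
        hits_negation = any(-lit in closure for lit in clause)
        if hits_negation and not any(lit in closure for lit in clause):
            return False
    return True


def smallest_closure(clauses, L):
    """Exhaustive search for a minimum-size closure of L; None means contradiction."""
    L = set(L)
    literals = {lit for c in clauses for lit in c} | {-lit for c in clauses for lit in c}
    candidates = sorted(literals - L, key=lambda lit: (abs(lit), lit))
    for size in range(len(candidates) + 1):
        for added in combinations(candidates, size):
            if is_closure(clauses, L | set(added)):
                return L | set(added)
    return None


# Phi = (x1 v x2) ^ (-x1 v x3) ^ (-x2 v -x3), L = {x1}
print(smallest_closure([(1, 2), (-1, 3), (-2, -3)], {1}))
```

In this example $\neg x_1$ may not be added (PULP2), so the clause $\neg x_1\vee x_3$ forces $x_3$ into the closure. That in turn forces $\neg x_2$, and the search returns $\{x_1,\neg x_2,x_3\}$.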

The combinatorial idea behind PULP1–PULP2 is as follows. Deeming the literals from the initial set $\mathscr{L}$ ‘true’, our goal is to reconcile this assumption with the formula $\Phi$. To this end we enlarge the set $\mathscr{L}$. Clearly, any clause that contains the negation $\neg l$ of a literal $l$ that we deem true also needs to contain another literal $l'$ that is set to true. This is what PULP1 asks. Furthermore, it would be contradictory to deem both $l$ and its negation $\neg l$ true; this is PULP2.

The size of the closure $\skew9\bar{\mathscr{L}}$ yields a bound on the reduction in the number of satisfying assignments if we indeed insist on all literals $l\in \mathscr{L}$ being set to true. Formally, let $S(\Phi ,\mathscr{L})$ be the set of all satisfying assignments $\sigma \in S(\Phi )$ under which all literals $l\in \mathscr{L}$ evaluate to ‘true’. Also set $Z(\Phi ,\mathscr{L})=|S(\Phi ,\mathscr{L})|$.

Lemma 2.8.

For any $\Phi ,\mathscr{L}$ and any $\skew9\bar{\mathscr{L}} \supseteq \mathscr{L}$ that satisfies PULP1–PULP2 we have $Z(\Phi )\leq 2^{|\skew9\bar{\mathscr{L}}|}Z(\Phi ,\mathscr{L})$.
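Lemma 2.8 is stated here without proof. One natural argument, offered as a sketch, maps each $\sigma \in S(\Phi )$ to the assignment $\sigma '$ that makes every literal of $\skew9\bar{\mathscr{L}}$ true. PULP2 makes $\sigma '$ well defined. PULP1 guarantees that every clause touching a variable of $\skew9\bar{\mathscr{L}}$ contains a literal of $\skew9\bar{\mathscr{L}}$, so $\sigma '$ remains satisfying and lies in $S(\Phi ,\mathscr{L})$. Finally, $\sigma '$ determines $\sigma$ up to the $|\skew9\bar{\mathscr{L}}|$ overwritten variables.

The brute-force check below replays this argument on $\Phi =(x_1\vee x_2)\wedge (\neg x_1\vee x_3)\wedge (\neg x_2\vee \neg x_3)$ with $\mathscr{L}=\{x_1\}$ and $\skew9\bar{\mathscr{L}}=\{x_1,\neg x_2,x_3\}$. It uses hypothetical helper names, the integer literal encoding and truth values $\pm 1$.

```python
from itertools import product


def is_true(sigma, lit):
    """Literal lit is true under sigma, where sigma[i-1] in {+1,-1} is the value of x_i."""
    return sigma[abs(lit) - 1] == (1 if lit > 0 else -1)


def models(n, clauses, forced=()):
    """S(Phi, L) by enumeration; forced=() gives S(Phi)."""
    return [sigma for sigma in product((1, -1), repeat=n)
            if all(is_true(sigma, lit) for lit in forced)
            and all(any(is_true(sigma, lit) for lit in c) for c in clauses)]


def overwrite(sigma, closure):
    """Make every literal of the closure true; well defined thanks to PULP2."""
    tau = list(sigma)
    for lit in closure:
        tau[abs(lit) - 1] = 1 if lit > 0 else -1
    return tuple(tau)


phi = [(1, 2), (-1, 3), (-2, -3)]
L, closure = {1}, {1, -2, 3}              # closure satisfies PULP1-PULP2
S, S_L = models(3, phi), models(3, phi, L)
assert all(overwrite(sigma, closure) in S_L for sigma in S)   # images lie in S(Phi, L)
assert len(S) <= 2 ** len(closure) * len(S_L)                 # Lemma 2.8
print(len(S), len(S_L))                                       # 2 1
```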

In order to identify a ‘small’ closure $\skew9\bar{\mathscr{L}}$ the $\texttt {PULP}$ algorithm resorts to pure literal elimination, a simple trick commonplace in satisfiability algorithms. A variable $x$ is pure in a CNF formula $\Phi$ if $\mathrm{sign}(x,a)=\mathrm{sign}(x,b)$ for any two clauses $a,b\in \partial x$. Clearly, if our objective is to construct a satisfying assignment, we might as well set all pure variables $x$ to the value that satisfies all clauses $a\in \partial x$ and disregard these clauses henceforth. In light of this observation, pure literal elimination repeatedly removes all clauses that contain a pure variable. Naturally, every round of clause removals may create new pure variables, and thus more clauses may be ripe for removal in the next round. For a clause $a$ of the original formula $\Phi$ let $\mathfrak{h}_a(\Phi )\geq 1$ be the number of the round in which pure literal elimination removes $a$. If $a$ is never removed then we set $\mathfrak{h}_a(\Phi )=\infty$.

The $\texttt {PULP}$ algorithm invokes a slightly modified version of pure literal elimination to accommodate the initial set $\mathscr{L}$ of literals. Specifically, for a variable $x$ of a CNF $\Phi$ and $s\in \{\pm 1\}$ let $\Phi [x\mapsto s]$ be the CNF obtained by removing all clauses $a\in \partial x$ with $\mathrm{sign}(x,a)=s$ and removing the literal $-s\cdot x$ from all $a\in \partial x$ with $\mathrm{sign}(x,a)=-s$. The definition reflects that if we set $x$ to the value $s$, all $a\in \partial ^s x$ will be satisfied, while all $a\in \partial ^{-s} x$ will have to be satisfied by one of their other constituent literals. Further, let

\begin{align} \mathfrak{h}_x(s,\Phi ) & = \begin{cases} 0 & \mbox{ if }\partial _\Phi ^{-s} x=\emptyset ,\\ \max \left \{{\mathfrak{h}_a(\Phi [x\mapsto s])\,:\,a\in \partial _\Phi ^{-s} x}\right \} & \mbox{ otherwise.} \end{cases} \qquad \in [0,\infty ]. \end{align}

We refer to $\mathfrak{h}_x(s,\Phi )$ as the height of literal $s\cdot x$ in $\Phi$.
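The operation $\Phi [x\mapsto s]$ and the height $\mathfrak{h}_x(s,\Phi )$ can be sketched in the same hypothetical encoding (variables as positive integers, the literal $s\cdot x$ as `s*x`, a CNF as a list of clause tuples). The helper names `assign` and `literal_height` are ours; the block repeats the pure literal elimination routine so that it runs on its own, and `math.inf` plays the role of $\infty$.

```python
import math
from typing import Dict, List, Sequence, Tuple

Clause = Tuple[int, ...]  # literals as signed ints: +v is variable v, -v its negation


def pure_literal_rounds(clauses: Sequence[Clause]) -> Dict[int, float]:
    """h_a for every clause index a (math.inf if pure literal elimination never removes it)."""
    height = {a: math.inf for a in range(len(clauses))}
    alive = set(range(len(clauses)))
    round_no = 0
    while True:
        round_no += 1
        signs: Dict[int, set] = {}
        for a in alive:
            for lit in clauses[a]:
                signs.setdefault(abs(lit), set()).add(lit > 0)
        pure = {v for v, s in signs.items() if len(s) == 1}
        removed = {a for a in alive if any(abs(lit) in pure for lit in clauses[a])}
        if not removed:
            return height
        for a in removed:
            height[a] = round_no
        alive -= removed


def assign(clauses: Sequence[Clause], x: int, s: int) -> Tuple[List[Clause], List[int]]:
    """Phi[x -> s]: drop clauses with sign(x, a) = s, delete the literal -s*x elsewhere.

    Also returns, for each clause of the new CNF, its index in the original CNF."""
    new_clauses, origin = [], []
    for a, clause in enumerate(clauses):
        if s * x in clause:  # sign(x, a) = s: the clause is satisfied and disappears
            continue
        new_clauses.append(tuple(lit for lit in clause if lit != -s * x))
        origin.append(a)
    return new_clauses, origin


def literal_height(clauses: Sequence[Clause], x: int, s: int) -> float:
    """Height h_x(s, Phi) of the literal s*x, following the case distinction above."""
    opposite = [a for a, clause in enumerate(clauses) if -s * x in clause]  # clauses with sign(x, a) = -s
    if not opposite:
        return 0
    reduced, origin = assign(clauses, x, s)
    h = pure_literal_rounds(reduced)
    position = {a: i for i, a in enumerate(origin)}  # original index -> index in Phi[x -> s]
    return max(h[position[a]] for a in opposite)
```

For $\Phi=(x_1\vee x_2)\wedge(\neg x_1\vee x_3)\wedge(\neg x_3\vee x_4)$ the sketch assigns height 2 to the literal $x_1$: setting $x_1$ true leaves $(x_3)\wedge(\neg x_3\vee x_4)$, and the shortened clause $(x_3)$ is removed only in the second round. The literal $x_4$ has height 0 because no clause contains $\neg x_4$.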

The $\texttt {PULP}$ algorithm, displayed as Algorithm 1, harnesses the heights as follows. In its attempt to bring about PULP1 and PULP2 the algorithm iteratively enlarges the set $\mathscr{L}$ of literals deemed to be ‘true’. For any clause $a$ that violates PULP1 and that contains a literal $l\not \in \neg \mathscr{L}$ the algorithm adds one such literal $l$ of minimum height to $\mathscr{L}$. This choice is intended to keep the ultimate size of the closure small; one could say that $\texttt {PULP}$ uses height as a proxy for ‘size’. If at any point the algorithm encounters a clause $a$ that consists of literals from $\neg \mathscr{L}$ only, the algorithm reports a contradiction and aborts.
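The greedy rule just described can be sketched as a loop. Since PULP1 is not restated here, the sketch takes the violation test as a caller-supplied predicate `violates(a, L)`, a hypothetical placeholder rather than the paper's condition, and it takes the heights as a callable `height(l)`. Two further choices are our own assumptions: only literals outside both $\neg \mathscr{L}$ and $\mathscr{L}$ are eligible (so every iteration adds a new literal and the loop terminates), and ties in height go to the smallest variable index, echoing the numbering convention of Remark 2.9. This is not a transcription of Algorithm 1, whose individual steps are not reproduced in this sketch.

```python
from typing import Callable, Optional, Sequence, Set

Literal = int  # +v is variable v, -v its negation (our encoding, not the paper's)


def pulp_greedy_sketch(
    clauses: Sequence[Sequence[Literal]],
    initial: Set[Literal],
    violates: Callable[[int, Set[Literal]], bool],  # hypothetical stand-in for PULP1
    height: Callable[[Literal], float],
) -> Optional[Set[Literal]]:
    """Grow the set L of literals deemed true; return None if a contradiction occurs."""
    L = set(initial)
    while True:
        neg_L = {-lit for lit in L}
        # Contradiction: some clause consists of literals from neg_L only.
        if any(all(lit in neg_L for lit in clause) for clause in clauses):
            return None
        # Scan clauses by index for one that violates the placeholder test and
        # still offers an eligible literal (outside neg_L and not yet in L).
        for a, clause in enumerate(clauses):
            eligible = [lit for lit in clause if lit not in neg_L and lit not in L]
            if eligible and violates(a, L):
                # Add an eligible literal of minimum height; ties go to the
                # smallest variable index.
                L.add(min(eligible, key=lambda lit: (height(lit), abs(lit))))
                break
        else:
            return L  # no clause left that the rule would act on
```

With the toy predicate "clause $a$ contains no literal of $\mathscr{L}$" (used here only for illustration), the clauses $(x_1\vee x_2)$ and $(\neg x_1\vee x_3)$ and heights $x_1\mapsto 2$, $x_2\mapsto 0$, $\neg x_1\mapsto 1$, $x_3\mapsto 5$, the sketch first adds $x_2$ and then $\neg x_1$.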

Algorithm 1: The $\texttt {PULP}$ algorithm

Remark 2.9. To break ties that may occur in the execution of Steps 3 and 7 of $\texttt {PULP}$ we assume that the variables and clauses of $\Phi$ are numbered so that Steps 3 and 7 can choose the clause/variable with the smallest number that satisfies the respective requirements. In due course we will run $\texttt {PULP}$ on (finite subtrees of) the Galton–Watson tree $\mathbb{T}$. To number the variables and clauses of $\mathbb{T}$ we equip each of them with an independent Gaussian label. Since $\mathbb{T}$ comprises a countable number of clauses/variables, these labels will almost surely be pairwise distinct.
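The labelling convention of Remark 2.9 can be mimicked with random numbers. The following minimal sketch draws an independent Gaussian label per clause/variable with Python's `random.Random.gauss` (we pick the standard normal; the remark does not fix the parameters) and, among the eligible candidates, picks the one with the smallest label. The node names and the helper names `gaussian_labels` and `smallest_label` are hypothetical. With double-precision floats a collision is possible in principle but vanishingly unlikely; the almost-sure distinctness in the remark is a property of the continuous distribution.

```python
import random
from typing import Dict, Hashable, Iterable, Optional


def gaussian_labels(nodes: Iterable[Hashable], seed: Optional[int] = None) -> Dict[Hashable, float]:
    """Attach an independent N(0, 1) label to every clause/variable."""
    rng = random.Random(seed)
    return {node: rng.gauss(0.0, 1.0) for node in nodes}


def smallest_label(candidates: Iterable[Hashable], label: Dict[Hashable, float]) -> Hashable:
    """Tie-break: among the eligible candidates, return the one with the smallest label."""
    return min(candidates, key=label.__getitem__)
```

Sorting all nodes by their labels yields the numbering; calling `smallest_label` on the clauses or variables that meet a step's requirement then implements the "smallest number" choice without materialising the full order.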

From here on we write $\skew9\bar{\mathscr{L}}$ for the set of literals returned by $\texttt {PULP}$ if the algorithm does not encounter a contradiction; in the event of a contradiction we let $\skew9\bar{\mathscr{L}}=\{x,\neg x\,:\,x\in V(\Phi )\}$ be the set of all literals. Where the reference to the formula $\Phi$ is not entirely obvious, we write $\skew9\bar{\mathscr{L}}_{\Phi }$. The analysis of $\texttt {PULP}$ on the random formula $\boldsymbol{\Phi }'$ furnishes the following bound on $|\skew9\bar{\mathscr{L}}|$ in terms of the size of the initial set $\mathscr{L}$. This bound is the key ingredient in the proof of Proposition 2.7.