1. Introduction

To date, the majority of probabilistic state estimation approaches in robotics operate in discrete time. However, such algorithms are usually pushed to their limits when considering high-rate sensor data, such as inertial measurement units (IMUs), or sensors that continuously collect data during motion, such as rolling-shutter cameras or spinning LiDAR and radar sensors. Continuous-time estimation techniques offer a solution to this problem by representing the state as a smooth trajectory over time, allowing inference of the state at any desired query time. Unlike discrete-time methods, which need to include an additional state at every measurement time, continuous-time approaches can incorporate measurements at any time along the trajectory [Reference Furgale, Barfoot and Sibley16].

Our proposed method incorporates known control inputs into a continuous Gaussian process prior formulation, which is fused with noisy state observations. It is applicable to the estimation of both mobile robot continuous-time trajectories and continuum-robot shapes.

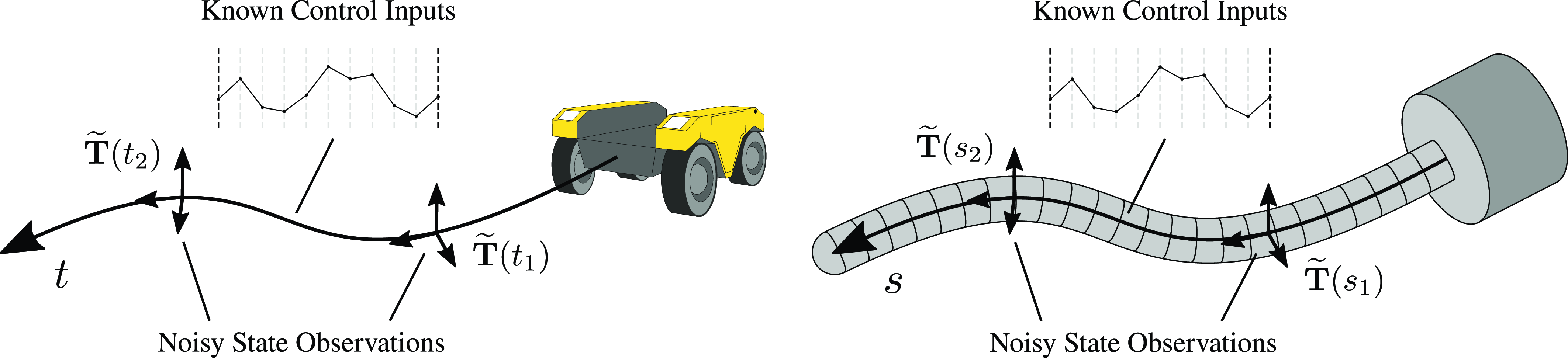

Throughout this manuscript, we are specifically focusing on continuous-time batch state estimation using Gaussian processes (GPs) to represent the robot’s state and uncertainty continuously over time [Reference Tong, Furgale and Barfoot33]. This approach involves formulating a suitable prior distribution of continuous robot trajectories. Current formulations are usually based on simple physics models, making specific assumptions about the motion of the robot. In [Reference Anderson and Barfoot1] a white-noise-on-acceleration (WNOA) prior is employed, assuming robot motion with constant velocity. This approach has been extended to a white-noise-on-jerk (WNOJ) prior in [Reference Tang, Yoon and Barfoot30], which assumes constant acceleration. These formulations have been shown to also be applicable to the continuous estimation of the shape of continuum robots [Reference Ferguson, Rucker and Webster13, Reference Lilge, Barfoot and Burgner-Kahrs22, Reference Lilge, Barfoot and Burgner-Kahrs23], substituting time with arclength and velocity with strain. Figure 1 highlights this analogy.

For mobile robots, we seek to estimate their pose and velocity along a continuous trajectory, where the continuous variable is time. For continuum robots, an analogous estimation problem can be formulated, in which we seek to estimate the robot’s pose and strain along their continuous shape. The continuous variable is now arclength, and instead of estimating a trajectory over time, we are estimating a quasi-static shape in space. In both cases, the robot’s pose and its derivative are estimated along a continuous, smooth curve—representing either a trajectory or shape—by fusing the Gaussian process prior with noisy state observations of various types. For instance, in mobile robotics, noisy landmark observations are commonly used to relate the robot’s pose and orientation to landmarks in the environment. In continuum robotics, electromagnetic tracking sensors might provide noisy direct measurements of the pose.

In many cases, the GP prior formulations represent the mobile robot motions or continuum robot shapes well. However, besides the assumptions of constant velocity (or strain) or acceleration (equivalent to the strain derivative for continuum robots), no additional knowledge about the robot state is incorporated into the prior, such as exogenous control inputs to the system. For mobile robots, these inputs could be known velocity or acceleration commands, while for continuum robots they could consist of known actuation forces and moments acting on their structure. Incorporating such information directly into the GP formulation can lead to more informed prior distributions, representing the mobile robot trajectory or continuum robot shape more accurately. Ultimately, this can mean that less measurements are required to accurately estimate the continuous robot state, when compared to the traditional prior formulations.

In this work, we are extending the existing WNOA Gaussian process prior formulation from [Reference Anderson and Barfoot1] (for mobile robots) and [Reference Lilge, Barfoot and Burgner-Kahrs22] (for continuum robots) to include known exogenous control inputs. We show how this approach can be applied to both mobile robot continuous-time trajectory estimation and continuum-robot shape estimation (see Figure 1).

1.1. Related work

In the following, we review commonly used continuous-time state estimation methods. For a more comprehensive introduction, we refer interested readers to a recent survey on these approaches [Reference Talbot, Nubert, Tuna, Cadena, Dümbgen, Tordesillas, Barfoot and Hutter29].

Initial approaches to infer the continuous state between two discrete states of a batch estimation approach relied on simple linear interpolation. For instance, the works in [Reference Bosse and Zlot6, Reference Bosse, Zlot and Flick7] assume constant velocities over short time periods to linearly interpolate the robot’s pose for LiDAR Simultaneous Localization and Mapping. Similarly, [Reference Hong, Ko and Kim17, Reference Zhang and Singh35] use linear pose interpolations to correct for motion distortion during LiDAR odometry. While linear interpolation schemes are easy to implement and fast to compute, their accuracy is generally limited, as they do not necessarily correspond to the actual robot motion.

As an alternative, first parametric continuous-time state estimation approaches were proposed, using temporal basis functions to represent continuous and smooth robot trajectories [Reference Furgale, Barfoot and Sibley16]. While initial investigations were limited to deal with states expressed in vector spaces, this approach has since been extended to Lie group formulations [Reference Lovegrove, Patron-Perez and Sibley24, Reference Patron-Perez, Lovegrove and Sibley26]. Recent advancements mostly focus on increasing computational efficiency of these approaches, e.g., by deriving analytical Jacobians for Lie group formulations [Reference Li, Cao and Hanebeck21, Reference Sommer, Usenko, Schubert, Demmel and Cremers28, Reference Tirado and Civera31].

A nonparametric approach, relying only on time as its sole parameter, was proposed in [Reference Tong, Anderson, Dong and Barfoot32, Reference Tong, Furgale and Barfoot33], using GPs to represent the continuous state in 2D and 3D. Both of these works allow for a variety of different GP priors featuring relatively large and dense kernel matrices, ultimately limiting computational efficiency. A more efficient GP prior was proposed in [Reference Barfoot, Tong and Särkkä4], which is derived from linear, time-varying stochastic differential equations driven by white noise, leading to an exactly sparse inverse kernel matrix by exploiting the Markov property. The proposed prior employs WNOA for 2D robot trajectories, leading to a constant velocity assumption. The WNOA prior was extended to

![]() $SE(3)$

in [Reference Anderson and Barfoot1], while [Reference Tang, Yoon and Barfoot30] proposed an extension to consider white-noise-on-jerk prior formulations, assuming a constant acceleration instead of constant velocities. In [Reference Wong, Yoon, Schoellig and Barfoot34], the Singer prior is proposed, which introduces an additional parameter to the GP prior, representing latent accelerations. Learning this parameter from data can potentially lead to priors that represent the robot’s motion more accurately than the WNOA and WNOJ priors. Lastly, derivations for formulations of the WNOA GP prior for general matrix Lie groups, beyond just

$SE(3)$

in [Reference Anderson and Barfoot1], while [Reference Tang, Yoon and Barfoot30] proposed an extension to consider white-noise-on-jerk prior formulations, assuming a constant acceleration instead of constant velocities. In [Reference Wong, Yoon, Schoellig and Barfoot34], the Singer prior is proposed, which introduces an additional parameter to the GP prior, representing latent accelerations. Learning this parameter from data can potentially lead to priors that represent the robot’s motion more accurately than the WNOA and WNOJ priors. Lastly, derivations for formulations of the WNOA GP prior for general matrix Lie groups, beyond just

![]() $SE(3)$

, are proposed in [Reference Dong, Boots and Dellaert11, Reference Dong, Mukadam, Boots and Dellaert12]. All of the aforementioned GP priors are relatively simple physically motivated formulations that do not consider exogenous control inputs. Instead, velocity or acceleration inputs are often incorporated as measurements of the state [Reference Burnett, Schoellig and Barfoot8, Reference Burnett, Schoellig and Barfoot9].

$SE(3)$

, are proposed in [Reference Dong, Boots and Dellaert11, Reference Dong, Mukadam, Boots and Dellaert12]. All of the aforementioned GP priors are relatively simple physically motivated formulations that do not consider exogenous control inputs. Instead, velocity or acceleration inputs are often incorporated as measurements of the state [Reference Burnett, Schoellig and Barfoot8, Reference Burnett, Schoellig and Barfoot9].

The continuous-time motion prior for the estimation of mobile robot trajectories was further repurposed to the continuous estimation of continuum-robot shapes. In [Reference Lilge, Barfoot and Burgner-Kahrs22], the WNOA prior was adopted to estimate the quasi-static continuous shape and strain of continuum robots, considering arclength as the continuous parameter instead of time. This approach was extended to coupled robot topologies in [Reference Lilge, Barfoot and Burgner-Kahrs23], while [Reference Ferguson, Rucker and Webster13] adopts a WNOJ prior to additionally estimate continuously applied forces to the continuum body. A recent study compares the GP-based continuous-time estimation approach with parametric spline-based methods, noting that results are similar regarding the achieved accuracies and computation times [Reference Johnson, Mangelson, Barfoot and Beard18].

Another approach to continuous state estimation using GPs is presented in [Reference Gentil, Vidal-Calleja and Huang20] and [Reference Gentil and Vidal-Calleja19]. Here, GPs are used to pre-integrate high-rate IMU measurements, creating pseudo-measurements between two discretely estimated states. The idea of pre-integrating high-rate measurements was first discussed in [Reference Lupton and Sukkarieh25], which uses numerical integration schemes, and is the backbone of many discrete-time batch estimation schemes [Reference Forster, Carlone, Dellaert and Scaramuzza14, Reference Forster, Carlone, Dellaert and Scaramuzza15]. Contrary to these traditional methods, the approach in [Reference Gentil, Vidal-Calleja and Huang20] and [Reference Gentil and Vidal-Calleja19] uses a continuous GP latent space representation fitted to measurements, which is then integrated in closed form (i.e., Bayesian quadrature). This results in a smooth pseudo-measurement, which is then used as a factor between two discrete states in a batch estimation approach. Unlike the continuous-time estimation methods discussed before, this work does not feature an underlying continuous motion prior and carries out estimation purely in discrete time after pre-integration. It is thus unable to estimate the continuous mean and covariance of a robot’s trajectory and could fail if the high-rate measurements between two discrete states drop out. A similar pre-integration approach is presented in [Reference Cioffi, Bauersfeld and Scaramuzza10]. Here, conventional on-manifold pre-integration [Reference Forster, Carlone, Dellaert and Scaramuzza15] is combined with a data-driven dynamics model, to incorporate dynamic motion model factors into the discrete-time batch estimation of drone trajectories.

To conclude, the main limitations of current approaches lie in the simplicity of the physical assumptions underlying most continuous-time state estimation methods, such as constant velocity or constant acceleration. While these assumptions work well if the robot motion is close to these conditions, they fail to adequately represent more complex or varying motion. Pre-integration methods partially offer a solution by capturing robot motion through pre-integrated factors considering high-rate measurements. However, they revert back to a discrete-time estimation problem at runtime, sacrificing the ability to continuously query the state at any desired time.

Consequently, a key question remains open: how can more intricate physics models, especially ones incorporating exogenous control inputs, be integrated into continuous-time state estimation frameworks? Addressing this challenge is crucial for formulating priors that more accurately represent the actual motion of robots. This issue is also identified as an open research question in a recent survey on continuous-time state estimation methods [Reference Talbot, Nubert, Tuna, Cadena, Dümbgen, Tordesillas, Barfoot and Hutter29]. Tackling this problem has the potential to enhance both the accuracy and applicability of continuous-time state estimation methods.

1.2. Contributions

This work presents a direct extension of the established WNOA Gaussian process prior [Reference Anderson and Barfoot1, Reference Lilge, Barfoot and Burgner-Kahrs22] to include exogenous control inputs. The derivations presented throughout this manuscript allow for the inclusion of inputs on both the velocity and acceleration. To the best of the authors’ knowledge, this work presents the first GP-based continuous-time estimation approach that combines a physically motivated motion prior with such control inputs. By combining ideas from the pre-integration literature [Reference Gentil and Vidal-Calleja19, Reference Gentil, Vidal-Calleja and Huang20] with previously proposed continuous-time motion priors [Reference Anderson and Barfoot1, Reference Lilge, Barfoot and Burgner-Kahrs22], a single factor between two discrete robot states is derived, which can then be used in a batch estimation approach.

While our method shares similarities with existing approaches in the current literature, there are some striking differences that set it apart. As discussed in the previous section, continuous-time state estimation methods for robotics are traditionally based on temporal basis functions, such as splines, or GPs [Reference Johnson, Mangelson, Barfoot and Beard18, Reference Talbot, Nubert, Tuna, Cadena, Dümbgen, Tordesillas, Barfoot and Hutter29]. While spline formulations are not directly tied to motion process models, GPs are motivated by the underlying physics of robot motion, theoretically allowing the inclusion of complex process models and control inputs. However, previous GP-based methods have been limited to simple motion models such as constant velocity or constant acceleration [Reference Anderson and Barfoot1, Reference Tang, Yoon and Barfoot30].

Our approach makes contributions to the field by introducing a GP prior capable of representing more complex robot motions defined by exogenous control inputs. This is the first time such an extension has been proposed. Consequently, our priors are more informed, more general, and potentially more accurate. Similar to pre-integration methods [Reference Gentil and Vidal-Calleja19, Reference Gentil, Vidal-Calleja and Huang20], which pre-integrate IMU measurements to compute relative pseudo-measurement factors, our motion prior factors describe the relative motion between discrete states in time. However, unlike pre-integration methods, which estimate the states at discrete times and are unable to query it at arbitrary times after computation of the factors, our GP-based approach allows straightforward interpolation of the mean and covariance of the robot’s state at any query time. This enables the recovery of the local shape and uncertainty of the trajectory between discrete states. Additionally, in the case in which no known inputs are available, the proposed method gracefully falls back to the underlying WNOA motion prior for estimation and interpolation.

In conclusion, this work advances continuous-time state estimation, introducing a GP-based framework that incorporates process models considering exogenous control inputs, potentially paving the way for more versatile continuous-time estimation approaches in robotics. Throughout this manuscript, we derive formulations that consider continuous control inputs in

![]() $SE(3)$

, allowing us to approximate the resulting GP prior in closed form. The proposed approach is applied to both the estimation of continuous mobile robot trajectories and quasi-static continuum-robot shapes, highlighting advantages over the existing GP motion priors. We show that considering control inputs is particularly useful when the available measurement data are sparse.

$SE(3)$

, allowing us to approximate the resulting GP prior in closed form. The proposed approach is applied to both the estimation of continuous mobile robot trajectories and quasi-static continuum-robot shapes, highlighting advantages over the existing GP motion priors. We show that considering control inputs is particularly useful when the available measurement data are sparse.

2. Continuous state estimation using Gaussian process regression with inputs

In the following, we outline derivations for our new GP prior formulation considering control inputs. We further briefly discuss how the resulting prior can be used in a maximum a posteriori (MAP) batch estimation scheme, but refer the reader to [Reference Anderson and Barfoot1, Reference Lilge, Barfoot and Burgner-Kahrs22] for additional details.

We note that the following derivations are written for mobile robot trajectory estimation, featuring time as the continuous independent variable as well as pose and generalized velocity (i.e., twist) as the robot’s state variables. However, analogous formulations can be derived for quasi-static continuum-robot shape estimation problems, replacing time with arclength and velocity with strain (see [Reference Lilge, Barfoot and Burgner-Kahrs22] for details of this analogy).

2.1. Gaussian process prior

Throughout the following, we are using the notation established in [Reference Barfoot3]. We describe the continuous-time mobile robot state with the following time-varying stochastic differential equations:

These equations describe how the robot’s pose

![]() $\mathbf {T}(t) \in SE(3)$

and body-centric velocity

$\mathbf {T}(t) \in SE(3)$

and body-centric velocity

![]() ${\boldsymbol {\varpi }}(t) \in \mathbb {R}^6$

evolve over time. In contrast to the traditional WNOA equations from [Reference Anderson and Barfoot1], we consider two additional inputs terms, one for velocity

${\boldsymbol {\varpi }}(t) \in \mathbb {R}^6$

evolve over time. In contrast to the traditional WNOA equations from [Reference Anderson and Barfoot1], we consider two additional inputs terms, one for velocity

![]() $\mathbf {v}_{\mathrm {in}}(t) \in \mathbb {R}^6$

and one for acceleration inputs

$\mathbf {v}_{\mathrm {in}}(t) \in \mathbb {R}^6$

and one for acceleration inputs

![]() $\mathbf {a}_{\mathrm {in}}(t) \in \mathbb {R}^6$

. The overall robot body-centric velocity

$\mathbf {a}_{\mathrm {in}}(t) \in \mathbb {R}^6$

. The overall robot body-centric velocity

![]() ${\boldsymbol {\varpi }}(t)$

now consists of both the velocity inputs

${\boldsymbol {\varpi }}(t)$

now consists of both the velocity inputs

![]() $\mathbf {v}_{\mathrm {in}}(t) \in \mathbb {R}^6$

and a velocity bias term

$\mathbf {v}_{\mathrm {in}}(t) \in \mathbb {R}^6$

and a velocity bias term

![]() ${\boldsymbol {\varpi }}_{\mathrm {bias}}(t)$

. This bias term describes motion that is not a direct result of the velocity inputs. Its derivative is driven by a white-noise GP

${\boldsymbol {\varpi }}_{\mathrm {bias}}(t)$

. This bias term describes motion that is not a direct result of the velocity inputs. Its derivative is driven by a white-noise GP

![]() $\mathbf {w}(t)$

with positive-definite power-spectral density matrix

$\mathbf {w}(t)$

with positive-definite power-spectral density matrix

![]() $\mathbf {Q}_c$

. In addition, we consider potential acceleration inputs

$\mathbf {Q}_c$

. In addition, we consider potential acceleration inputs

![]() $\mathbf {a}_{\mathrm {in}}(t)$

as part of this derivative.

$\mathbf {a}_{\mathrm {in}}(t)$

as part of this derivative.

Given the stated equations, both inputs

![]() $\mathbf {v}_{\mathrm {in}}(t)$

and

$\mathbf {v}_{\mathrm {in}}(t)$

and

![]() $\mathbf {a}_{\mathrm {in}}(t)$

are independent from each other (i.e.,

$\mathbf {a}_{\mathrm {in}}(t)$

are independent from each other (i.e.,

![]() $\mathbf {a}_{\mathrm {in}}(t)$

is not the derivative of

$\mathbf {a}_{\mathrm {in}}(t)$

is not the derivative of

![]() $\mathbf {v}_{\mathrm {in}}(t)$

). In theory, both inputs can be applied simultaneously, resulting in robot motion described by a superposition of velocity and acceleration inputs. In practice, we expect to use either velocity or acceleration inputs, with either

$\mathbf {v}_{\mathrm {in}}(t)$

). In theory, both inputs can be applied simultaneously, resulting in robot motion described by a superposition of velocity and acceleration inputs. In practice, we expect to use either velocity or acceleration inputs, with either

![]() $\mathbf {v}_{\mathrm {in}}(t)$

or

$\mathbf {v}_{\mathrm {in}}(t)$

or

![]() $\mathbf {a}_{\mathrm {in}}(t)$

set to zero. When both inputs are zero, the equations simplify to the traditional WNOA case discussed in [Reference Anderson and Barfoot1].

$\mathbf {a}_{\mathrm {in}}(t)$

set to zero. When both inputs are zero, the equations simplify to the traditional WNOA case discussed in [Reference Anderson and Barfoot1].

Following our previous work, we use a series of local Gaussian processes to approximate the nonlinear stochastic differential equations above. We discretize the continuous robot state into

![]() $K$

discrete states at times

$K$

discrete states at times

![]() $t_k$

. Between each pair of neighboring estimation times,

$t_k$

. Between each pair of neighboring estimation times,

![]() $t_k$

and

$t_k$

and

![]() $t_{k+1}$

, local pose variables are defined in the Lie algebra

$t_{k+1}$

, local pose variables are defined in the Lie algebra

![]() ${\boldsymbol {\xi }}_k(t) \in \mathfrak {se}(3)$

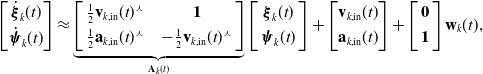

(see Figure 2, top). We can now write

${\boldsymbol {\xi }}_k(t) \in \mathfrak {se}(3)$

(see Figure 2, top). We can now write

where

![]() ${\boldsymbol {\mathcal {J}}}_k\,=\,{\boldsymbol {\mathcal {J}}}(\ln \left (\mathbf {T}(t)\mathbf {T}(t_k)^{-1}\right )^\vee )$

is the left Jacobian of

${\boldsymbol {\mathcal {J}}}_k\,=\,{\boldsymbol {\mathcal {J}}}(\ln \left (\mathbf {T}(t)\mathbf {T}(t_k)^{-1}\right )^\vee )$

is the left Jacobian of

![]() $SE(3)$

[Reference Barfoot3] and inputs

$SE(3)$

[Reference Barfoot3] and inputs

![]() $\mathbf {v}_{k,\mathrm {in}}(t)$

,

$\mathbf {v}_{k,\mathrm {in}}(t)$

,

![]() $\mathbf {a}_{k,\mathrm {in}}(t)$

, as well as noise

$\mathbf {a}_{k,\mathrm {in}}(t)$

, as well as noise

![]() $\mathbf {w}_k(t)$

are now defined on the local time interval. Considering the local Markovian state

$\mathbf {w}_k(t)$

are now defined on the local time interval. Considering the local Markovian state

![]() ${\boldsymbol {\gamma }}_k(t) = {\begin{bmatrix}{\boldsymbol {\xi }}_k(t)^T & {\boldsymbol {\psi }}_k(t)^T\end{bmatrix}}^T$

, we can write the following time-varying stochastic differential equations:

${\boldsymbol {\gamma }}_k(t) = {\begin{bmatrix}{\boldsymbol {\xi }}_k(t)^T & {\boldsymbol {\psi }}_k(t)^T\end{bmatrix}}^T$

, we can write the following time-varying stochastic differential equations:

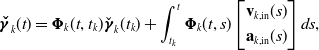

\begin{align} \begin{bmatrix} \dot {{\boldsymbol {\xi }}}_k(t) \\[3pt] {\dot {{\boldsymbol {\psi }}}_k(t)} \end{bmatrix} = \left[\begin{array}{c@{\quad}c} \mathbf {0} & \mathbf {1} \\[3pt] \mathbf {0} & \dot {{\boldsymbol {\mathcal {J}}}}_k^{-1}{\boldsymbol {\mathcal {J}}}_k \end{array}\right] \begin{bmatrix} {\boldsymbol {\xi }}_k(t)\\[3pt] {{\boldsymbol {\psi }}_k(t)} \end{bmatrix} + {\boldsymbol {\mathcal {J}}}_k^{-1}\begin{bmatrix} \mathbf {v}_{k,\mathrm {in}}(t) \\[3pt] \mathbf {a}_{k,\mathrm {in}}(t) \end{bmatrix} + \left[\begin{array}{c} \mathbf {0} \\[3pt] {\boldsymbol {\mathcal {J}}}_k^{-1} \end{array}\right] \mathbf {w}_k(t), \end{align}

\begin{align} \begin{bmatrix} \dot {{\boldsymbol {\xi }}}_k(t) \\[3pt] {\dot {{\boldsymbol {\psi }}}_k(t)} \end{bmatrix} = \left[\begin{array}{c@{\quad}c} \mathbf {0} & \mathbf {1} \\[3pt] \mathbf {0} & \dot {{\boldsymbol {\mathcal {J}}}}_k^{-1}{\boldsymbol {\mathcal {J}}}_k \end{array}\right] \begin{bmatrix} {\boldsymbol {\xi }}_k(t)\\[3pt] {{\boldsymbol {\psi }}_k(t)} \end{bmatrix} + {\boldsymbol {\mathcal {J}}}_k^{-1}\begin{bmatrix} \mathbf {v}_{k,\mathrm {in}}(t) \\[3pt] \mathbf {a}_{k,\mathrm {in}}(t) \end{bmatrix} + \left[\begin{array}{c} \mathbf {0} \\[3pt] {\boldsymbol {\mathcal {J}}}_k^{-1} \end{array}\right] \mathbf {w}_k(t), \end{align}

We can further simplify this expression as

\begin{align} \begin{bmatrix} \dot {{\boldsymbol {\xi }}}_k(t)\\[3pt] {\dot {{\boldsymbol {\psi }}}_k(t)} \end{bmatrix} \approx \underbrace {\left[\begin{array}{l@{\quad}c} \frac {1}{2}\mathbf {v}_{k,\mathrm {in}}(t)^\curlywedge & \mathbf {1} \\[3pt] \frac {1}{2}\mathbf {a}_{k,\mathrm {in}}(t)^\curlywedge & -\frac {1}{2}\mathbf {v}_{k,\mathrm {in}}(t)^\curlywedge \end{array}\right]}_{\mathbf {A}_k(t)} \left[\begin{array}{c} {\boldsymbol {\xi }}_k(t)\\[3pt] {{\boldsymbol {\psi }}_k(t)} \end{array}\right] + \begin{bmatrix} \mathbf {v}_{k,\mathrm {in}}(t) \\[3pt] \mathbf {a}_{k,\mathrm {in}}(t) \end{bmatrix} + \left[\begin{array}{c} \mathbf {0} \\[3pt] \mathbf {1} \end{array}\right] \mathbf {w}_k(t), \end{align}

\begin{align} \begin{bmatrix} \dot {{\boldsymbol {\xi }}}_k(t)\\[3pt] {\dot {{\boldsymbol {\psi }}}_k(t)} \end{bmatrix} \approx \underbrace {\left[\begin{array}{l@{\quad}c} \frac {1}{2}\mathbf {v}_{k,\mathrm {in}}(t)^\curlywedge & \mathbf {1} \\[3pt] \frac {1}{2}\mathbf {a}_{k,\mathrm {in}}(t)^\curlywedge & -\frac {1}{2}\mathbf {v}_{k,\mathrm {in}}(t)^\curlywedge \end{array}\right]}_{\mathbf {A}_k(t)} \left[\begin{array}{c} {\boldsymbol {\xi }}_k(t)\\[3pt] {{\boldsymbol {\psi }}_k(t)} \end{array}\right] + \begin{bmatrix} \mathbf {v}_{k,\mathrm {in}}(t) \\[3pt] \mathbf {a}_{k,\mathrm {in}}(t) \end{bmatrix} + \left[\begin{array}{c} \mathbf {0} \\[3pt] \mathbf {1} \end{array}\right] \mathbf {w}_k(t), \end{align}

while using the approximations

![]() ${\boldsymbol {\mathcal {J}}}_k^{-1} \approx {\boldsymbol {1}} - \frac {1}{2}{\boldsymbol {\xi }}_k(t)^\curlywedge$

and

${\boldsymbol {\mathcal {J}}}_k^{-1} \approx {\boldsymbol {1}} - \frac {1}{2}{\boldsymbol {\xi }}_k(t)^\curlywedge$

and

![]() ${\boldsymbol {\mathcal {J}}}_k \approx {\boldsymbol {1}} + \frac {1}{2}{\boldsymbol {\xi }}_k(t)^\curlywedge$

, and assuming that

${\boldsymbol {\mathcal {J}}}_k \approx {\boldsymbol {1}} + \frac {1}{2}{\boldsymbol {\xi }}_k(t)^\curlywedge$

, and assuming that

![]() ${\boldsymbol {\xi }}_k(t)$

and

${\boldsymbol {\xi }}_k(t)$

and

![]() ${\boldsymbol {\varpi }}_{\mathrm {bias}}(t)$

are small. The above expression can now be integrated stochastically in closed form to obtain a GP prior (i.e., kernel function) with mean

${\boldsymbol {\varpi }}_{\mathrm {bias}}(t)$

are small. The above expression can now be integrated stochastically in closed form to obtain a GP prior (i.e., kernel function) with mean

![]() ${\boldsymbol {\check {\gamma }}}_k(t)$

and covariance

${\boldsymbol {\check {\gamma }}}_k(t)$

and covariance

![]() $\check {\mathbf {P}}(t)$

:

$\check {\mathbf {P}}(t)$

:

\begin{align} {\boldsymbol {\check {\gamma }}}_k(t) &= {\boldsymbol {\Phi }}_k(t,t_k) \check {{\boldsymbol {\gamma }}}_k(t_k) + \int _{t_k}^{t}{\boldsymbol {\Phi }}_k(t,s)\begin{bmatrix} \mathbf {v}_{k,\mathrm {in}}(s) \\[3pt] \mathbf {a}_{k,\mathrm {in}}(s) \end{bmatrix} ds, \\[-2pt] \nonumber\end{align}

\begin{align} {\boldsymbol {\check {\gamma }}}_k(t) &= {\boldsymbol {\Phi }}_k(t,t_k) \check {{\boldsymbol {\gamma }}}_k(t_k) + \int _{t_k}^{t}{\boldsymbol {\Phi }}_k(t,s)\begin{bmatrix} \mathbf {v}_{k,\mathrm {in}}(s) \\[3pt] \mathbf {a}_{k,\mathrm {in}}(s) \end{bmatrix} ds, \\[-2pt] \nonumber\end{align}

Here,

![]() ${{\boldsymbol {\Phi }}}_k(t,t_0)$

is the transition function between two times. It is computed by solving the initial value problem of the differential equation

${{\boldsymbol {\Phi }}}_k(t,t_0)$

is the transition function between two times. It is computed by solving the initial value problem of the differential equation

Additionally,

![]() $\mathbf {Q}(t - t_k)$

is the covariance accumulated between two times, which can be computed as

$\mathbf {Q}(t - t_k)$

is the covariance accumulated between two times, which can be computed as

\begin{align} \mathbf {Q}(t - t_k) = \int _{t_k}^t{{\boldsymbol {\Phi }}}_k(t,s)\begin{bmatrix} \mathbf {0} \\[3pt] \mathbf {1} \end{bmatrix} \mathbf {Q}_c \begin{bmatrix} \mathbf {0} & \mathbf {1} \end{bmatrix}{{\boldsymbol {\Phi }}}_k(t,s)^Tds. \end{align}

\begin{align} \mathbf {Q}(t - t_k) = \int _{t_k}^t{{\boldsymbol {\Phi }}}_k(t,s)\begin{bmatrix} \mathbf {0} \\[3pt] \mathbf {1} \end{bmatrix} \mathbf {Q}_c \begin{bmatrix} \mathbf {0} & \mathbf {1} \end{bmatrix}{{\boldsymbol {\Phi }}}_k(t,s)^Tds. \end{align}

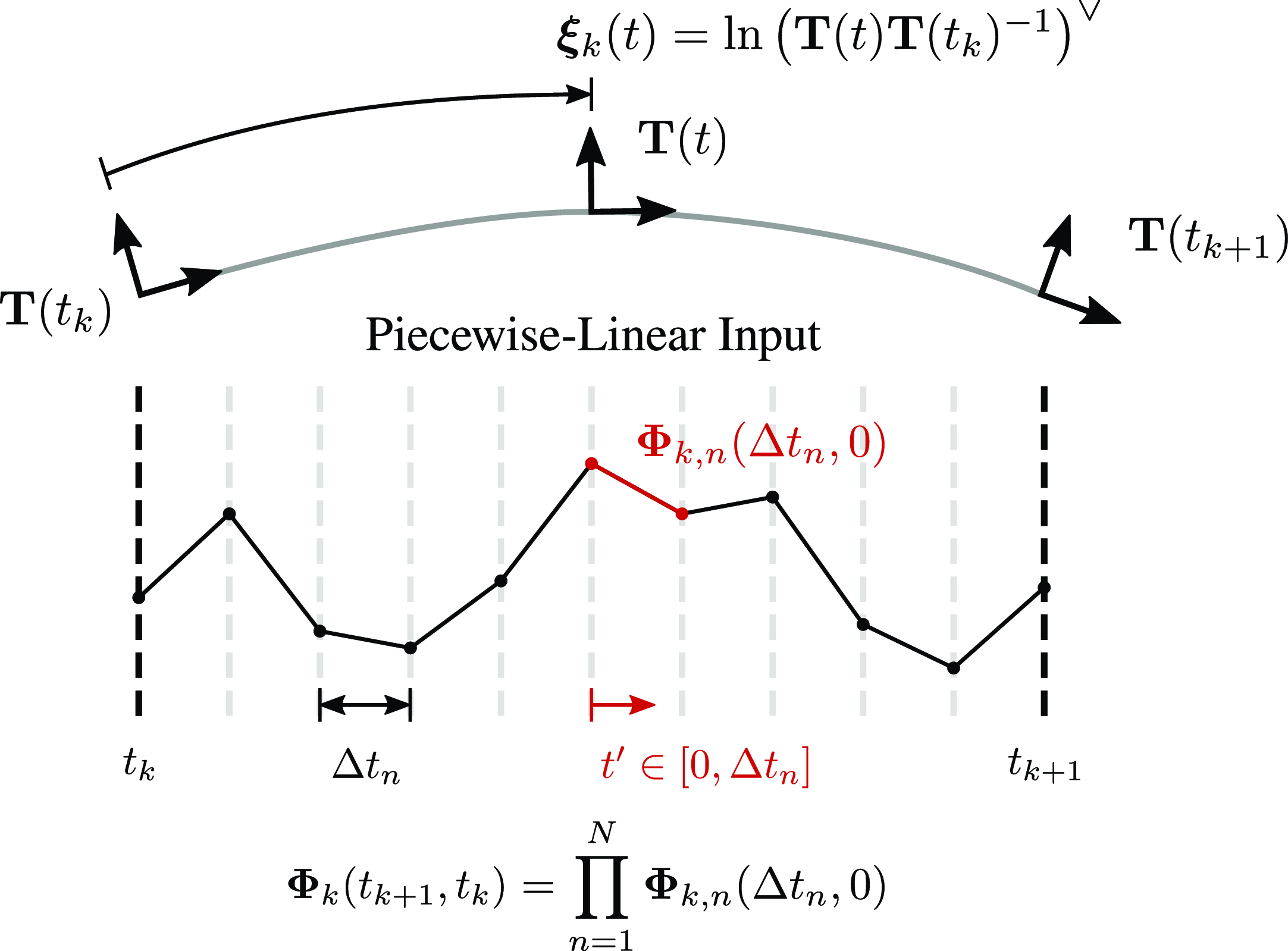

Top: Definition of local pose variables,

![]() ${\boldsymbol {\xi }}_k(t)$

, between two discrete robot states. Bottom: Example of piecewise-linear inputs between two discrete robot states. The overall transition function between the two states

${\boldsymbol {\xi }}_k(t)$

, between two discrete robot states. Bottom: Example of piecewise-linear inputs between two discrete robot states. The overall transition function between the two states

![]() $\boldsymbol{\Phi }_k(t_{k+1},t_k)$

is a product of the individual transition functions for each piecewise-linear segment.

$\boldsymbol{\Phi }_k(t_{k+1},t_k)$

is a product of the individual transition functions for each piecewise-linear segment.

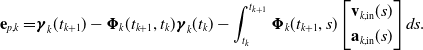

2.2. Prior error term

Next, we derive an error term, evaluating how well a given state aligns with the derived GP prior. The general motion-prior error term for GP regression is

Substituting our expression for the state mean in (7) leads to

\begin{align} \mathbf {e}_{p,k} = &{\boldsymbol {\gamma }}_k(t_{k+1}) - {\boldsymbol {\Phi }}_k(t_{k+1},t_{k}) {\boldsymbol {\gamma }}_k(t_{k}) - \int _{t_{k}}^{t_{k+1}}{\boldsymbol {\Phi }}_k(t_{k+1},s)\begin{bmatrix} \mathbf {v}_{k,\mathrm {in}}(s) \\[3pt] \mathbf {a}_{k,\mathrm {in}}(s) \end{bmatrix} ds. \end{align}

\begin{align} \mathbf {e}_{p,k} = &{\boldsymbol {\gamma }}_k(t_{k+1}) - {\boldsymbol {\Phi }}_k(t_{k+1},t_{k}) {\boldsymbol {\gamma }}_k(t_{k}) - \int _{t_{k}}^{t_{k+1}}{\boldsymbol {\Phi }}_k(t_{k+1},s)\begin{bmatrix} \mathbf {v}_{k,\mathrm {in}}(s) \\[3pt] \mathbf {a}_{k,\mathrm {in}}(s) \end{bmatrix} ds. \end{align}

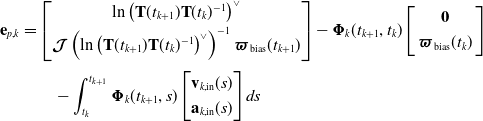

Using (2) and (3), we can further express this error term using our global variables as

\begin{align} \mathbf {e}_{p,k} &= \begin{bmatrix} \ln \left (\mathbf {T}(t_{k+1})\mathbf {T}(t_{k})^{-1}\right )^\vee\\[3pt] {\boldsymbol {\mathcal {J}}}\left (\ln \left (\mathbf {T}(t_{k+1})\mathbf {T}(t_{k})^{-1}\right )^\vee \right )^{-1}{\boldsymbol {\varpi }}_{\mathrm {bias}}(t_{k+1}) \end{bmatrix} - {\boldsymbol {\Phi }}_k(t_{k+1},t_{k}) \left[\begin{array}{c} \mathbf {0}\\[3pt] {\boldsymbol {\varpi }}_{\mathrm {bias}}(t_{k}) \end{array}\right] \\[3pt] & \qquad - \int _{t_{k}}^{t_{k+1}}{\boldsymbol {\Phi }}_k(t_{k+1},s)\begin{bmatrix} \mathbf {v}_{k,\mathrm {in}}(s) \\[3pt] \mathbf {a}_{k,\mathrm {in}}(s) \end{bmatrix} ds \nonumber \end{align}

\begin{align} \mathbf {e}_{p,k} &= \begin{bmatrix} \ln \left (\mathbf {T}(t_{k+1})\mathbf {T}(t_{k})^{-1}\right )^\vee\\[3pt] {\boldsymbol {\mathcal {J}}}\left (\ln \left (\mathbf {T}(t_{k+1})\mathbf {T}(t_{k})^{-1}\right )^\vee \right )^{-1}{\boldsymbol {\varpi }}_{\mathrm {bias}}(t_{k+1}) \end{bmatrix} - {\boldsymbol {\Phi }}_k(t_{k+1},t_{k}) \left[\begin{array}{c} \mathbf {0}\\[3pt] {\boldsymbol {\varpi }}_{\mathrm {bias}}(t_{k}) \end{array}\right] \\[3pt] & \qquad - \int _{t_{k}}^{t_{k+1}}{\boldsymbol {\Phi }}_k(t_{k+1},s)\begin{bmatrix} \mathbf {v}_{k,\mathrm {in}}(s) \\[3pt] \mathbf {a}_{k,\mathrm {in}}(s) \end{bmatrix} ds \nonumber \end{align}

Using this prior error, we can construct the following squared-error cost term to represent its negative log-likelihood:

with

![]() $\mathbf {Q}_{k} = \mathbf {Q}(t_{k+1}-t_{k})$

. Each of the prior cost terms is a binary factor between two discrete states if thought of as a factor-graph representation. It expresses how close the two corresponding states are to the assumption of the motion prior, considering the incorporated control inputs.

$\mathbf {Q}_{k} = \mathbf {Q}(t_{k+1}-t_{k})$

. Each of the prior cost terms is a binary factor between two discrete states if thought of as a factor-graph representation. It expresses how close the two corresponding states are to the assumption of the motion prior, considering the incorporated control inputs.

2.3. Maximum a posteriori batch state estimation

We can use these cost terms in a MAP batch state estimation approach, where they are weighed off against any noisy state observations and measurements. This allows us to obtain a GP posterior

![]() $\hat {{\boldsymbol {\gamma }}}(t_k)$

of the state at discrete times

$\hat {{\boldsymbol {\gamma }}}(t_k)$

of the state at discrete times

![]() $t_k$

. We can again use (2) and (3) to obtain a posterior estimate of our global state variables

$t_k$

. We can again use (2) and (3) to obtain a posterior estimate of our global state variables

![]() $\hat {\mathbf {T}}(t_k)$

and

$\hat {\mathbf {T}}(t_k)$

and

![]() $\hat {{\boldsymbol {\varpi }}}(t_k)$

at these times. We refer to [Reference Anderson and Barfoot1] and [Reference Lilge, Barfoot and Burgner-Kahrs22] for details on the implementation of the MAP batch state estimation approach.

$\hat {{\boldsymbol {\varpi }}}(t_k)$

at these times. We refer to [Reference Anderson and Barfoot1] and [Reference Lilge, Barfoot and Burgner-Kahrs22] for details on the implementation of the MAP batch state estimation approach.

It is noted that during the MAP optimization, the transition function in (9), the accumulated covariance in (10), and the integral term in (13) only have to be computed during the first iteration and can be held constant afterward, as they do not depend on the state variables themselves. This significantly reduces the computational burden of the newly introduced derivations.

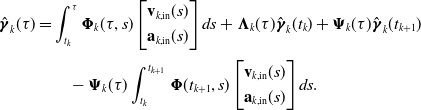

2.4. Gaussian process interpolation

Lastly, we are interested in interpolating the GP posterior at any arbitrary time

![]() $\tau$

along the continuous trajectory. For this, we start with the general interpolation equations for the GP mean stated in [Reference Barfoot3, Sec. 11.3]:

$\tau$

along the continuous trajectory. For this, we start with the general interpolation equations for the GP mean stated in [Reference Barfoot3, Sec. 11.3]:

with

where

![]() $\mathbf {Q}_\tau = \mathbf {Q}(\tau -t_{k})$

. In our case, we can rewrite these equations using the expression for our prior mean in (7) as

$\mathbf {Q}_\tau = \mathbf {Q}(\tau -t_{k})$

. In our case, we can rewrite these equations using the expression for our prior mean in (7) as

\begin{align} \hat {{\boldsymbol {\gamma }}}_k(\tau ) &= \int _{t_k}^{\tau }{\boldsymbol {\Phi }}_k(\tau, s)\begin{bmatrix} \mathbf {v}_{k,\mathrm {in}}(s) \\[3pt] \mathbf {a}_{k,\mathrm {in}}(s) \end{bmatrix} ds + {\boldsymbol {\Lambda }}_k(\tau )\hat {{\boldsymbol {\gamma }}}_k(t_k) + {\boldsymbol {\Psi }}_k(\tau )\hat {{\boldsymbol {\gamma }}}_k(t_{k+1}) \\[3pt] & \qquad - {\boldsymbol {\Psi }}_k(\tau )\int _{t_{k}}^{t_{k+1}}{\boldsymbol {\Phi }}(t_{k+1},s)\begin{bmatrix} \mathbf {v}_{k,\mathrm {in}}(s) \\[3pt] \mathbf {a}_{k,\mathrm {in}}(s)\end{bmatrix} ds. \nonumber \end{align}

\begin{align} \hat {{\boldsymbol {\gamma }}}_k(\tau ) &= \int _{t_k}^{\tau }{\boldsymbol {\Phi }}_k(\tau, s)\begin{bmatrix} \mathbf {v}_{k,\mathrm {in}}(s) \\[3pt] \mathbf {a}_{k,\mathrm {in}}(s) \end{bmatrix} ds + {\boldsymbol {\Lambda }}_k(\tau )\hat {{\boldsymbol {\gamma }}}_k(t_k) + {\boldsymbol {\Psi }}_k(\tau )\hat {{\boldsymbol {\gamma }}}_k(t_{k+1}) \\[3pt] & \qquad - {\boldsymbol {\Psi }}_k(\tau )\int _{t_{k}}^{t_{k+1}}{\boldsymbol {\Phi }}(t_{k+1},s)\begin{bmatrix} \mathbf {v}_{k,\mathrm {in}}(s) \\[3pt] \mathbf {a}_{k,\mathrm {in}}(s)\end{bmatrix} ds. \nonumber \end{align}

Now, we can solve for

![]() $\hat {\mathbf {T}}(\tau )$

and

$\hat {\mathbf {T}}(\tau )$

and

![]() $\hat {{\boldsymbol {\varpi }}}(\tau )$

considering the mapping between global and local pose variables in (2) and (3). The covariance of the posterior GP can be interpolated similarly. Derivations are omitted for the sake of space, but details can be found in [Reference Barfoot3, Sec. 11.3] and [Reference Anderson2]. This interpolation scheme can further be used during the MAP optimization to incorporate measurement terms at query times different from our discrete times

$\hat {{\boldsymbol {\varpi }}}(\tau )$

considering the mapping between global and local pose variables in (2) and (3). The covariance of the posterior GP can be interpolated similarly. Derivations are omitted for the sake of space, but details can be found in [Reference Barfoot3, Sec. 11.3] and [Reference Anderson2]. This interpolation scheme can further be used during the MAP optimization to incorporate measurement terms at query times different from our discrete times

![]() $t_k$

[Reference Burnett, Schoellig and Barfoot8].

$t_k$

[Reference Burnett, Schoellig and Barfoot8].

Although querying the trajectory remains

![]() $O(1)$

, it may be computationally more expensive than in the WNOA case [Reference Anderson and Barfoot1], depending on the inputs

$O(1)$

, it may be computationally more expensive than in the WNOA case [Reference Anderson and Barfoot1], depending on the inputs

![]() $\mathbf {v}_{k,\mathrm {in}}(t)$

and

$\mathbf {v}_{k,\mathrm {in}}(t)$

and

![]() $\mathbf {a}_{k,\mathrm {in}}(t)$

. This increase arises from two factors: (1) the transition function

$\mathbf {a}_{k,\mathrm {in}}(t)$

. This increase arises from two factors: (1) the transition function

![]() ${{\boldsymbol {\Phi }}}_k(t,t_0)$

and accumulated covariance

${{\boldsymbol {\Phi }}}_k(t,t_0)$

and accumulated covariance

![]() $\mathbf {Q}(t-t_0)$

may require more computation, and (2) the integral terms in (18) add additional overhead.

$\mathbf {Q}(t-t_0)$

may require more computation, and (2) the integral terms in (18) add additional overhead.

This completes the general derivations of our GP prior formulation considering inputs. We note that the newly introduced expressions are not analytically solvable in the general case. In the following, we will discuss the particular example of piecewise-linear inputs, for which analytical approximations of all necessary formulations can be derived.

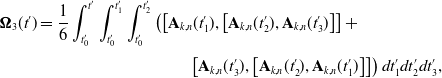

2.5. Example of piecewise-linear inputs

We discretize the inputs between two discrete states at times

![]() $t_k$

and

$t_k$

and

![]() $t_{k+1}$

into

$t_{k+1}$

into

![]() $n\in \left \{1, \dots, N\right \}$

segments, each represented by a piecewise-linear function (see Figure 2, bottom). The inputs over each individual segment are now defined on a local interval

$n\in \left \{1, \dots, N\right \}$

segments, each represented by a piecewise-linear function (see Figure 2, bottom). The inputs over each individual segment are now defined on a local interval

![]() $t^{\prime} \in [0,\Delta t_n]$

as

$t^{\prime} \in [0,\Delta t_n]$

as

where

![]() $\Delta t_n = ({t_{k+1} - t_k})/{N}$

. Given these expressions for our inputs, we can now find the piecewise transition function between two times

$\Delta t_n = ({t_{k+1} - t_k})/{N}$

. Given these expressions for our inputs, we can now find the piecewise transition function between two times

![]() $t^{\prime}_0$

and

$t^{\prime}_0$

and

![]() $t^{\prime}$

for each individual segment. Given linear inputs in the form of (19) and (20), the system matrix in (9) can be written as a linear function itself on the local interval such that

$t^{\prime}$

for each individual segment. Given linear inputs in the form of (19) and (20), the system matrix in (9) can be written as a linear function itself on the local interval such that

with

![]() $\mathbf {A}_{k,n}(t^{\prime}) = \mathbf {B}_{k,n} + \mathbf {C}_{k,n}t^{\prime}$

. Solving this ODE allows us to describe the transition function on the local interval

$\mathbf {A}_{k,n}(t^{\prime}) = \mathbf {B}_{k,n} + \mathbf {C}_{k,n}t^{\prime}$

. Solving this ODE allows us to describe the transition function on the local interval

![]() $t^{\prime} \in [0,\Delta t_n]$

. While there exists no closed-form solution to this differential equation, we can use the Magnus expansion [Reference Blanes, Casas, Oteo and Ros5] to approximate the piecewise transition function for each segment

$t^{\prime} \in [0,\Delta t_n]$

. While there exists no closed-form solution to this differential equation, we can use the Magnus expansion [Reference Blanes, Casas, Oteo and Ros5] to approximate the piecewise transition function for each segment

![]() $n$

. The Magnus expansion expresses the result of a linear ODE, such as the one in (21), as an exponential series:

$n$

. The Magnus expansion expresses the result of a linear ODE, such as the one in (21), as an exponential series:

The first three terms of

![]() ${\boldsymbol {\Omega }}(t)$

are given as

${\boldsymbol {\Omega }}(t)$

are given as

\begin{align} {\boldsymbol {\Omega }}_3(t^{\prime}) &= \frac {1}{6}\int _{t^{\prime}_0}^{t^{\prime}}\int _{t^{\prime}_0}^{t^{\prime}_1}\int _{t^{\prime}_0}^{t^{\prime}_2}\left (\left [\mathbf {A}_{k,n}(t^{\prime}_1),\left [\mathbf {A}_{k,n}(t^{\prime}_2),\mathbf {A}_{k,n}(t^{\prime}_3)\right ]\right ] + \right . \nonumber\\[3pt] & \qquad \qquad \qquad \qquad \quad \left . \left [\mathbf {A}_{k,n}(t^{\prime}_3),\left [\mathbf {A}_{k,n}(t^{\prime}_2),\mathbf {A}_{k,n}(t^{\prime}_1)\right ]\right ]\right )dt^{\prime}_1dt^{\prime}_2dt^{\prime}_3, \end{align}

\begin{align} {\boldsymbol {\Omega }}_3(t^{\prime}) &= \frac {1}{6}\int _{t^{\prime}_0}^{t^{\prime}}\int _{t^{\prime}_0}^{t^{\prime}_1}\int _{t^{\prime}_0}^{t^{\prime}_2}\left (\left [\mathbf {A}_{k,n}(t^{\prime}_1),\left [\mathbf {A}_{k,n}(t^{\prime}_2),\mathbf {A}_{k,n}(t^{\prime}_3)\right ]\right ] + \right . \nonumber\\[3pt] & \qquad \qquad \qquad \qquad \quad \left . \left [\mathbf {A}_{k,n}(t^{\prime}_3),\left [\mathbf {A}_{k,n}(t^{\prime}_2),\mathbf {A}_{k,n}(t^{\prime}_1)\right ]\right ]\right )dt^{\prime}_1dt^{\prime}_2dt^{\prime}_3, \end{align}

where

![]() $\left [\mathbf {A},\mathbf {B}\right ] = \mathbf {AB} - \mathbf {BA}$

is the usual Lie bracket. Because

$\left [\mathbf {A},\mathbf {B}\right ] = \mathbf {AB} - \mathbf {BA}$

is the usual Lie bracket. Because

![]() $\mathbf {A}_{k,n}(t^{\prime})$

is linear, given our piecewise-linear inputs, we can compute these expressions in closed form. Using these first three terms of the Magnus expansion, we now have

$\mathbf {A}_{k,n}(t^{\prime})$

is linear, given our piecewise-linear inputs, we can compute these expressions in closed form. Using these first three terms of the Magnus expansion, we now have

\begin{align} {\boldsymbol {\Phi }}_{k,n}(t^{\prime},t^{\prime}_0) \approx &\exp \Bigl (\mathbf {B}_{k,n}(t^{\prime}-t^{\prime}_0) + \frac {1}{2}\mathbf {C}_{k,n}(t^{\prime 2}-t_0^{\prime 2}) + \frac {1}{12}\left [\mathbf {C}_{k,n},\mathbf {B}_{k,n}\right ](t^{\prime}-t^{\prime}_0)^3 \\[3pt] & \qquad \qquad + \frac {1}{240}\left [\mathbf {C}_{k,n}\left [\mathbf {C}_{k,n},\mathbf {B}_{k,n}\right ]\right ](t^{\prime}-t^{\prime}_0)^5\Bigr ).\nonumber \end{align}

\begin{align} {\boldsymbol {\Phi }}_{k,n}(t^{\prime},t^{\prime}_0) \approx &\exp \Bigl (\mathbf {B}_{k,n}(t^{\prime}-t^{\prime}_0) + \frac {1}{2}\mathbf {C}_{k,n}(t^{\prime 2}-t_0^{\prime 2}) + \frac {1}{12}\left [\mathbf {C}_{k,n},\mathbf {B}_{k,n}\right ](t^{\prime}-t^{\prime}_0)^3 \\[3pt] & \qquad \qquad + \frac {1}{240}\left [\mathbf {C}_{k,n}\left [\mathbf {C}_{k,n},\mathbf {B}_{k,n}\right ]\right ](t^{\prime}-t^{\prime}_0)^5\Bigr ).\nonumber \end{align}

Intuitively, including more terms in the Magnus expansion would improve the approximation of our transition function, but we find the first three terms sufficiently accurate. The total resulting transition function between the two discrete times

![]() $t_k$

and

$t_k$

and

![]() $t_{k+1}$

can now be computed by concatenating the individual piecewise ones such that

$t_{k+1}$

can now be computed by concatenating the individual piecewise ones such that

In order to fully express the prior mean and covariance, we need to compute additional integral terms. For the mean in (7), we compute

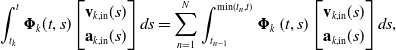

\begin{align} \int _{t_k}^{t}{\boldsymbol {\Phi }}_k(t,s)\begin{bmatrix} \mathbf {v}_{k,\mathrm {in}}(s) \\[3pt] \mathbf {a}_{k,\mathrm {in}}(s) \end{bmatrix} ds &= \sum _{n=1}^{N}\int _{t_{n-1}}^{\mathrm {min}(t_n,t)}\boldsymbol{\Phi }_k\left (t,s\right )\begin{bmatrix} \mathbf {v}_{k,\mathrm {in}}(s) \\[3pt] \mathbf {a}_{k,\mathrm {in}}(s) \end{bmatrix} ds, \end{align}

\begin{align} \int _{t_k}^{t}{\boldsymbol {\Phi }}_k(t,s)\begin{bmatrix} \mathbf {v}_{k,\mathrm {in}}(s) \\[3pt] \mathbf {a}_{k,\mathrm {in}}(s) \end{bmatrix} ds &= \sum _{n=1}^{N}\int _{t_{n-1}}^{\mathrm {min}(t_n,t)}\boldsymbol{\Phi }_k\left (t,s\right )\begin{bmatrix} \mathbf {v}_{k,\mathrm {in}}(s) \\[3pt] \mathbf {a}_{k,\mathrm {in}}(s) \end{bmatrix} ds, \end{align}

\begin{align} &= \sum _{n=1}^{N}\int _{t_{n-1}}^{\mathrm {min}(t_n,t)}\underbrace {\boldsymbol{\Phi }_k\left (t,t_{N-1}\right )\cdots }_{\mathrm {constant}}\boldsymbol{\Phi }_k\left (t_n,s\right )\begin{bmatrix} \mathbf {v}_{k,\mathrm {in}}(s) \\[3pt] \mathbf {a}_{k,\mathrm {in}}(s) \end{bmatrix} ds, \\[6pt] \nonumber \end{align}

\begin{align} &= \sum _{n=1}^{N}\int _{t_{n-1}}^{\mathrm {min}(t_n,t)}\underbrace {\boldsymbol{\Phi }_k\left (t,t_{N-1}\right )\cdots }_{\mathrm {constant}}\boldsymbol{\Phi }_k\left (t_n,s\right )\begin{bmatrix} \mathbf {v}_{k,\mathrm {in}}(s) \\[3pt] \mathbf {a}_{k,\mathrm {in}}(s) \end{bmatrix} ds, \\[6pt] \nonumber \end{align}

with

Here, we are computing the whole integral by splitting it up into several smaller intervals, defined by the discretization of the piecewise-linear input as shown in Figure 2. By writing out the transition function in these integrals as a product of the individual transition functions of each segment, we see that only one of those terms depends on the integration variable

![]() $s$

, allowing us to pull the remaining terms out of the integral. Given the analytical approximation from (27) and the expression for the piecewise-linear inputs in (19) and (20), we can now solve the integral in closed form. We omit the details of this derivation for brevity. We further note that we truncate the computed integral after the fifth-order terms, which we find sufficiently accurate for implementation.

$s$

, allowing us to pull the remaining terms out of the integral. Given the analytical approximation from (27) and the expression for the piecewise-linear inputs in (19) and (20), we can now solve the integral in closed form. We omit the details of this derivation for brevity. We further note that we truncate the computed integral after the fifth-order terms, which we find sufficiently accurate for implementation.

The same strategy can be used to compute the integral for the accumulated covariance

![]() $\mathbf {Q}(t - t_k)$

in (10), which we need for the prior covariance in (8):

$\mathbf {Q}(t - t_k)$

in (10), which we need for the prior covariance in (8):

\begin{align} \mathbf {Q}(t - t_k) &= \int _{t_k}^{t}{\boldsymbol {\Phi }}_k(t,s)\begin{bmatrix} \mathbf {0} \\[3pt] \mathbf {1} \end{bmatrix}\mathbf {Q}_c\begin{bmatrix} \mathbf {0} & \mathbf {1} \end{bmatrix}{\boldsymbol {\Phi }}_k(t,s)^T ds, \end{align}

\begin{align} \mathbf {Q}(t - t_k) &= \int _{t_k}^{t}{\boldsymbol {\Phi }}_k(t,s)\begin{bmatrix} \mathbf {0} \\[3pt] \mathbf {1} \end{bmatrix}\mathbf {Q}_c\begin{bmatrix} \mathbf {0} & \mathbf {1} \end{bmatrix}{\boldsymbol {\Phi }}_k(t,s)^T ds, \end{align}

\begin{align} &= \sum _{n=1}^{N}\int _{t_{n-1}}^{\mathrm {min}(t_n,t)}{\boldsymbol {\Phi }}_k(t,s)\begin{bmatrix} \mathbf {0} \\[3pt] \mathbf {1} \end{bmatrix}\mathbf {Q}_c\begin{bmatrix} \mathbf {0} & \mathbf {1} \end{bmatrix}{\boldsymbol {\Phi }}_k(t,s)^T ds, \end{align}

\begin{align} &= \sum _{n=1}^{N}\int _{t_{n-1}}^{\mathrm {min}(t_n,t)}{\boldsymbol {\Phi }}_k(t,s)\begin{bmatrix} \mathbf {0} \\[3pt] \mathbf {1} \end{bmatrix}\mathbf {Q}_c\begin{bmatrix} \mathbf {0} & \mathbf {1} \end{bmatrix}{\boldsymbol {\Phi }}_k(t,s)^T ds, \end{align}

\begin{align} &= \sum _{n=1}^{N}\int _{t_{n-1}}^{\mathrm {min}(t_n,t)} \underbrace {\boldsymbol{\Phi }_k\left (t,t_{N-1}\right )\cdots }_{\mathrm {constant}}\boldsymbol{\Phi }_k\left (t_n,s\right )\begin{bmatrix} \mathbf {0} \\[3pt] \mathbf {1} \end{bmatrix}\mathbf {Q}_c\begin{bmatrix} \mathbf {0} & \mathbf {1} \end{bmatrix}\boldsymbol{\Phi }_k\left (t_n,s\right )^T \underbrace {\cdots \boldsymbol{\Phi }_k\left (t,t_{N-1}\right )^T}_{\mathrm {constant}} ds. \\[6pt] \nonumber \end{align}

\begin{align} &= \sum _{n=1}^{N}\int _{t_{n-1}}^{\mathrm {min}(t_n,t)} \underbrace {\boldsymbol{\Phi }_k\left (t,t_{N-1}\right )\cdots }_{\mathrm {constant}}\boldsymbol{\Phi }_k\left (t_n,s\right )\begin{bmatrix} \mathbf {0} \\[3pt] \mathbf {1} \end{bmatrix}\mathbf {Q}_c\begin{bmatrix} \mathbf {0} & \mathbf {1} \end{bmatrix}\boldsymbol{\Phi }_k\left (t_n,s\right )^T \underbrace {\cdots \boldsymbol{\Phi }_k\left (t,t_{N-1}\right )^T}_{\mathrm {constant}} ds. \\[6pt] \nonumber \end{align}

Using the same strategy as above, we see that several constant terms can again be pulled out of the integral and we can solve the remaining expression using the analytical approximation from (27). We again omit the detailed steps for solving this integral and truncate the result after the fifth order during implementation, which we find sufficiently accurate.

Given the above derivations, further simplifications can be made if the inputs between two times are constant, in which case

![]() $\mathbf {A}_{k,n}(t^{\prime}) = \mathbf {B}_{k,n}$

, or zero, in which case the derivations will simplify to the constant-velocity prior discussed in [Reference Anderson and Barfoot1].

$\mathbf {A}_{k,n}(t^{\prime}) = \mathbf {B}_{k,n}$

, or zero, in which case the derivations will simplify to the constant-velocity prior discussed in [Reference Anderson and Barfoot1].

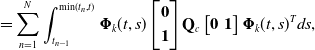

Example scenarios using the proposed GP prior formulation, featuring angular velocity inputs (top) and angular acceleration inputs (bottom). Both scenarios highlight the resulting prior as well as the posterior, when considering an additional position measurement. In each case, the angular velocities as well as the rotation and position are plotted over time, including mean and

![]() $3\sigma$

-covariance envelopes and using red, green, and blue colors for the

$3\sigma$

-covariance envelopes and using red, green, and blue colors for the

![]() $x$

,

$x$

,

![]() $y$

, and

$y$

, and

![]() $z$

components, respectively. Estimation nodes are highlighted with diamonds. Additional renderings of the resulting trajectories are depicted on the right.

$z$

components, respectively. Estimation nodes are highlighted with diamonds. Additional renderings of the resulting trajectories are depicted on the right.

As mentioned previously, the transition function, accumulated covariances, and integrals discussed above generally only have to be computed once. Afterward, they can be kept constant during the MAP optimization, as they do not depend on the state variables themselves. Nevertheless, when querying the posterior GP after convergence at arbitrary times, some transition functions, accumulated covariances, and integrals must be re-evaluated, which is now more computationally expensive than in the WNOA case [Reference Anderson and Barfoot1]. However, by storing most of the intermediate results of the initial computations, some terms can be reused for this interpolation, which leads to an increased efficiency. This is particularly useful if the interpolation times coincide with the piecewise-linear discretization shown in Figure 2.

2.6. Examples

Figure 3 shows two example scenarios using the proposed GP prior formulation with inputs. Both examples feature robot trajectories over three seconds, while considering a known initial pose and forward velocity of 1 m/s.

The prior of the first example uses piecewise-constant angular velocity inputs featuring discontinuities, leading to a prior shape composed of three concatenated constant-curvature arcs. The second example considers a sinusoidal angular acceleration input, approximated via piecewise-linear inputs, leading to the depicted sinusoidal velocity profile. For both examples, we show the employed prior as well as the resulting posterior, when an additional position measurement at the end of the trajectory is considered. It can be seen that after fusing with the measurement, the posterior maintains the local shape of the prior in both cases. Further, the robot’s velocity and pose can be accurately recovered along the continuous trajectory using GP interpolation. Thus, even though both examples consider the same position measurement, vastly different posterior trajectories are achieved. We further note that the posterior is able to maintain the discontinuities in the velocity profile of the first example. This would not be possible when using the conventional WNOA prior, which could consider the velocity inputs as measurements, as it forces smooth and continuous velocity profiles.

3. Mobile robot trajectory estimation

In the following, we are evaluating our proposed method using a mobile robot trajectory estimation experiment.

3.1. Dataset

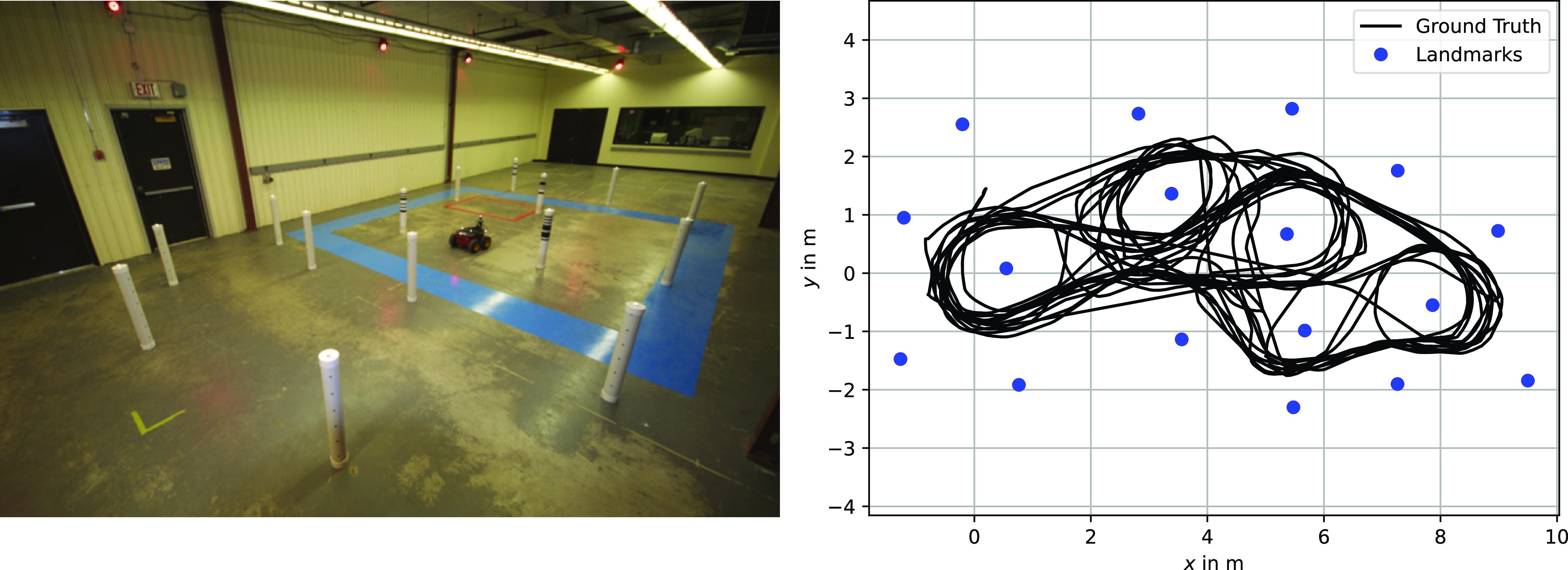

We use a mobile robot dataset similar to the one reported in [Reference Tong, Furgale and Barfoot33], collected in an indoor, planar environment featuring 17 tubular landmarks. Both the robot and the tubes were equipped with reflective markers tracked by a ten-camera Vicon motion capture system. This system provides sub-millimeter accuracy for the position of each reflective marker, which we use as ground truth for the robot’s trajectory. It further allows us to determine the exact location of the tubular landmarks, which we assume to be known during our localization experiment.

The mobile robot was driven around for 21 min. The dataset includes laser rangefinder scans from a Hokuyo URG-04 LX sensor, consisting of 681 range measurements spread over a 240

![]() $^\circ$

horizontal field of view centered straight ahead, logged at 10 Hz. Additionally, wheel odometry readings were recorded at 10 Hz to provide estimates of the robot’s body-centric velocity. The experimental setup and ground-truth data of the recorded trajectory are shown in Figure 4. It can be seen that the robot’s path is quite twisted and looping.

$^\circ$

horizontal field of view centered straight ahead, logged at 10 Hz. Additionally, wheel odometry readings were recorded at 10 Hz to provide estimates of the robot’s body-centric velocity. The experimental setup and ground-truth data of the recorded trajectory are shown in Figure 4. It can be seen that the robot’s path is quite twisted and looping.

Left: Setup of the mobile robot localization experiment. Right: Ground-truth data of the mobile robot trajectory and landmark positions.

3.2. Localization evaluation

For evaluation, we consider the localization problem, where landmark locations are known. We are using the wheel odometry readings as piecewise-linear velocity inputs at a rate of 10 Hz to create a GP motion prior. Landmark distance readings are included as discrete measurements, which are fused with the prior to create a posterior estimate. We employ a MAP estimation scheme that computes the posterior distribution of the entire trajectory in a single batch. We are particularly interested in how the proposed approach performs compared to the conventional WNOA GP prior baseline, in which odometry readings are considered as velocity measurements of the state, instead of as inputs. We believe that this comparison is meaningful, as the WNOA prior is one of the most widely used and well-established methods for continuous-time state estimation [Reference Talbot, Nubert, Tuna, Cadena, Dümbgen, Tordesillas, Barfoot and Hutter29], which our proposed method directly extends. While other methods, such as spline-based techniques, exist, they are fundamentally different from the GP-based methods considered in this work, with detailed comparisons reported in [Reference Johnson, Mangelson, Barfoot and Beard18]. For both our proposed method and the chosen baseline, we optimize the hyperparameters of the prior, namely

![]() $\mathbf {Q}_c$

, on a subset of the available mobile robot driving data. For the WNOA method, we additionally optimize the covariance of the odometry velocity measurements. The covariance for the landmark distance measurements is given. The resulting hyperparameters used throughout the experiment are summarized in Table I. Here, the prior covariances include one translational and one rotational degree of freedom, considering a non-holonomic mobile robot in a two-dimensional environment. Since our derivations are formulated in

$\mathbf {Q}_c$

, on a subset of the available mobile robot driving data. For the WNOA method, we additionally optimize the covariance of the odometry velocity measurements. The covariance for the landmark distance measurements is given. The resulting hyperparameters used throughout the experiment are summarized in Table I. Here, the prior covariances include one translational and one rotational degree of freedom, considering a non-holonomic mobile robot in a two-dimensional environment. Since our derivations are formulated in

![]() $SE(3)$

, the remaining degrees of freedom are locked during optimization. Similarly, the velocity measurement covariance includes two values, as we measure the forward and yaw velocities of the mobile robot using wheel odometry readings.

$SE(3)$

, the remaining degrees of freedom are locked during optimization. Similarly, the velocity measurement covariance includes two values, as we measure the forward and yaw velocities of the mobile robot using wheel odometry readings.

Hyperparameters used for mobile robot experiment.

When estimating the robot’s trajectory, we consider different frequencies in which landmark distance measurements are available to study increasingly sparse measurement availabilities. For the initial evaluation, we include a discrete estimation state at each of the 10 Hz odometry updates into our batch optimization approach. Afterward, evaluation is repeated, while only including estimation states at times at which landmark distance measurements are available, meaning that less states are explicitly estimated as the landmark reading frequency decreases. In these cases, odometry data is still considered at a rate of 10 Hz. For the proposed method, the high-rate odometry information can be considered in a straightforward manner by constructing GP priors using piecewise-linear inputs between two estimation times. For the WNOA GP prior baseline, high-rate velocity measurements are incorporated while relying on GP interpolation at runtime to associate them with the robot’s state at the corresponding time (see [Reference Burnett, Schoellig and Barfoot8] for details).

In each case, the state is evaluated and compared to ground truth at 10 Hz, meaning that we have to rely on GP interpolation to recover the posterior estimates at times between the discrete estimation times. For the proposed approach, this interpolation considers the underlying motion prior with the incorporated inputs, allowing us to recover the local shape of the robot trajectory. For the WNOA GP prior baseline, interpolation is solely based on the motion prior, in which the most likely robot state features a constant velocity. In every case, we compute the root-mean-square errors (RMSE) for position and orientation between the ground-truth and estimated robot states. We further record the computation time of the state estimation, using a C++ implementation of our method on a consumer laptop featuring a 2.4 GHz Intel i5 processor with 16 GB of RAM.

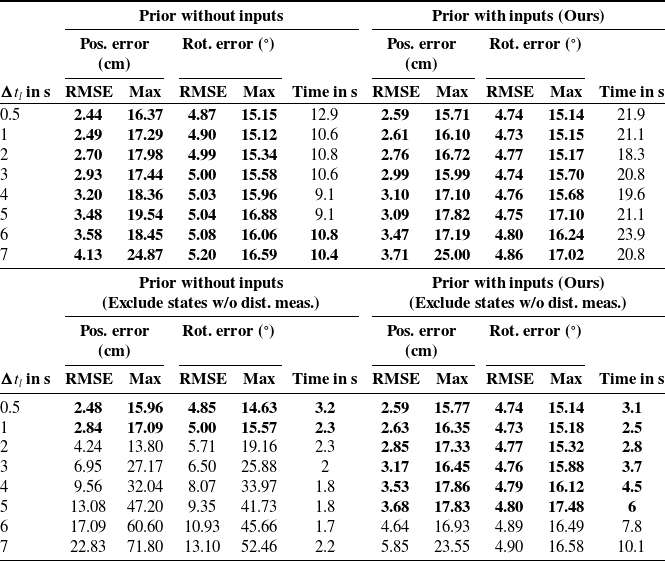

Mobile robot trajectory estimation results considering odometry readings as measurements versus as inputs.

3.3. Results

The results are summarized in Table II, including the estimation errors and computation times for increasing times between landmark distance measurements

![]() $\Delta t_l$

. When including a discrete state at every odometry update, both methods achieve similar results. The resulting accuracies differ only by a few mm and less than half of a degree, while the WNOA GP prior baseline achieves shorter computation times. In each case shown, the 21-minute trajectory can be estimated in just a few seconds. When including discrete states only when landmark distance measurements occur, the proposed prior formulation using inputs significantly outperforms the baseline. While the traditional WNOA GP prior is able to achieve competitive accuracies for short time distances

$\Delta t_l$

. When including a discrete state at every odometry update, both methods achieve similar results. The resulting accuracies differ only by a few mm and less than half of a degree, while the WNOA GP prior baseline achieves shorter computation times. In each case shown, the 21-minute trajectory can be estimated in just a few seconds. When including discrete states only when landmark distance measurements occur, the proposed prior formulation using inputs significantly outperforms the baseline. While the traditional WNOA GP prior is able to achieve competitive accuracies for short time distances

![]() $\Delta t_l$

, it fails to accurately represent the local shape of the trajectory when landmark measurements occur less frequently (

$\Delta t_l$

, it fails to accurately represent the local shape of the trajectory when landmark measurements occur less frequently (

![]() $\Delta t_l \gt 1$

s). The proposed GP prior with inputs is able to maintain the estimation accuracy for longer times. Here the error only starts to significantly increase for

$\Delta t_l \gt 1$

s). The proposed GP prior with inputs is able to maintain the estimation accuracy for longer times. Here the error only starts to significantly increase for

![]() $\Delta t_l \gt 5$

s. Both methods show significantly reduced computation times when excluding states without landmark distance measurements in the batch estimation. However, the computation time increases when using a prior with inputs as

$\Delta t_l \gt 5$

s. Both methods show significantly reduced computation times when excluding states without landmark distance measurements in the batch estimation. However, the computation time increases when using a prior with inputs as

![]() $\Delta t_l$

increases. In these cases, more states have to be recovered using the GP interpolation schemes, which requires additional effort for the proposed method to solve the Magnus expansion and integral terms.

$\Delta t_l$

increases. In these cases, more states have to be recovered using the GP interpolation schemes, which requires additional effort for the proposed method to solve the Magnus expansion and integral terms.

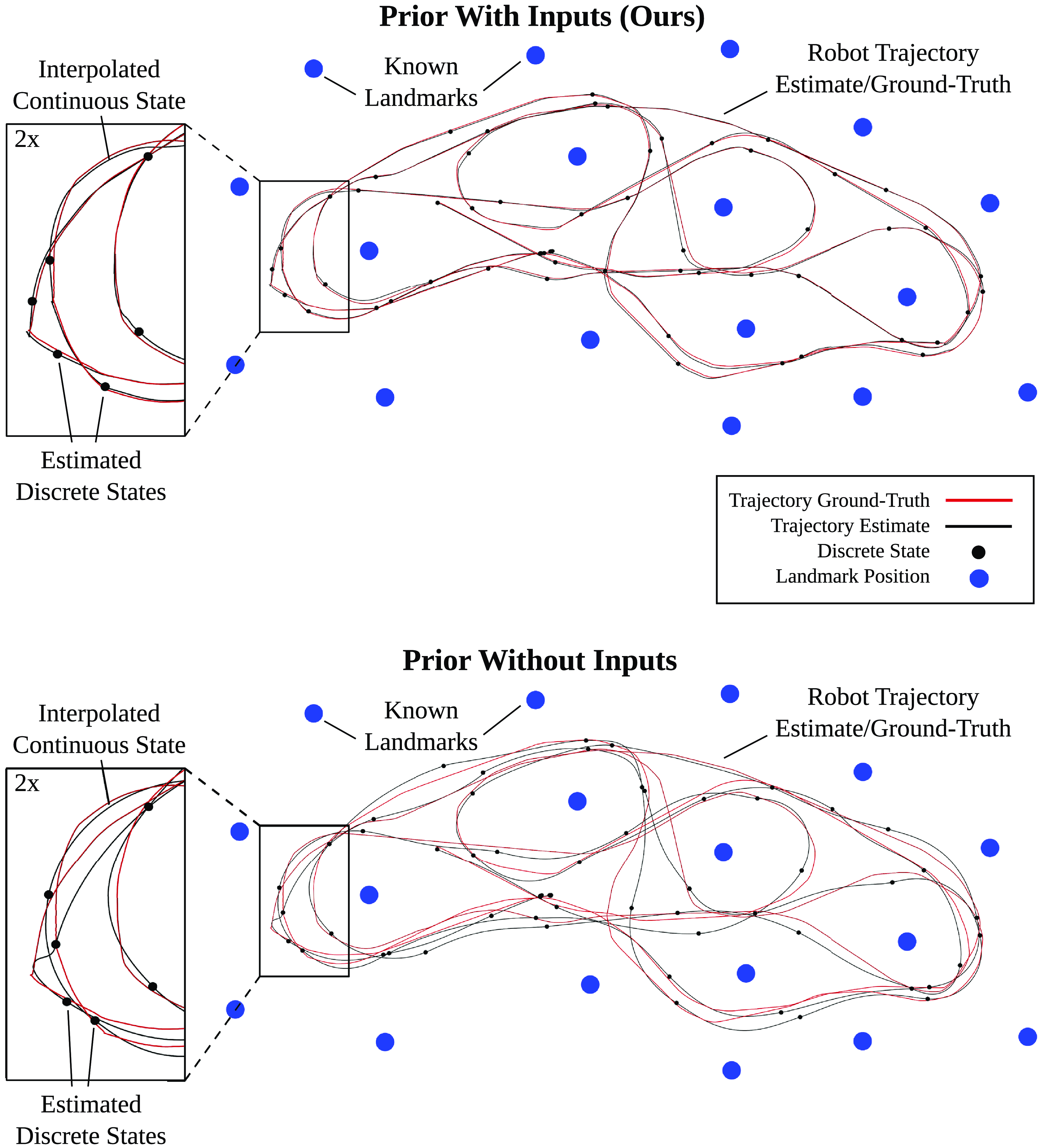

Example state estimation results using the first 25% of the mobile robot dataset. Trajectory estimates are depicted in black with ground truth in red. Landmarks are shown in blue. The discrete robot state is estimated every 5 s, coinciding with landmark distance measurements, while the continuous state relies on interpolation. On the top, the results for the newly proposed state estimation method are shown, using the odometry readings as velocity inputs. On the bottom, the conventional WNOA GP prior is used, considering the odometry as velocity measurements instead.

We conclude that using the proposed GP prior with inputs presents an interesting use case, as it is able to maintain the estimation accuracy, while significantly reducing the computational effort. This is thanks to its ability to maintain the local shape of the odometry over longer time intervals without needing to include discrete states in the batch estimation. We can exploit this advantage, as long as

![]() $\Delta t_l$

does not grow too large, in which case the accuracy and computation times are not competitive with the WNOA GP prior baseline, even without excluding discrete states from the batch estimation. In our case, it is beneficial to use the newly proposed method for

$\Delta t_l$

does not grow too large, in which case the accuracy and computation times are not competitive with the WNOA GP prior baseline, even without excluding discrete states from the batch estimation. In our case, it is beneficial to use the newly proposed method for

![]() $\Delta t_l \leq 5$

s, excluding states without landmark distance measurements. For

$\Delta t_l \leq 5$

s, excluding states without landmark distance measurements. For

![]() $\Delta t_l \gt 5$

s the original WNOA method should be applied, including all discrete states and treating odometry velocities as measurements.

$\Delta t_l \gt 5$

s the original WNOA method should be applied, including all discrete states and treating odometry velocities as measurements.

To further illustrate the differences between the two approaches, Figure 5 shows state estimation results for the first 25% of the mobile robot dataset, while considering landmark distance measurements every

![]() $\Delta t_l = 5$

s and including discrete estimation states only at these measurement times. The proposed method is able to accurately represent the local shape of the trajectory between the discrete states. In contrast, the baseline, which relies on the constant-velocity prior, cannot accurately capture the local shape of the trajectory and instead produces a highly smoothed result. This leads to considerable inaccuracies in the resulting state estimation. This is particularly evident in the zoomed-in area of the trajectory, which includes a sharp turn. Here, the robot stopped and pivoted on the spot between two discrete estimation times, resulting in a non-smooth trajectory. This motion is captured by the prior that considers velocities as inputs, but cannot be represented by the WNOA prior, whose interpolation overly smooths the trajectory, deviating from the ground truth.

$\Delta t_l = 5$

s and including discrete estimation states only at these measurement times. The proposed method is able to accurately represent the local shape of the trajectory between the discrete states. In contrast, the baseline, which relies on the constant-velocity prior, cannot accurately capture the local shape of the trajectory and instead produces a highly smoothed result. This leads to considerable inaccuracies in the resulting state estimation. This is particularly evident in the zoomed-in area of the trajectory, which includes a sharp turn. Here, the robot stopped and pivoted on the spot between two discrete estimation times, resulting in a non-smooth trajectory. This motion is captured by the prior that considers velocities as inputs, but cannot be represented by the WNOA prior, whose interpolation overly smooths the trajectory, deviating from the ground truth.

4. Continuum robot shape estimation

As discussed in detail in [Reference Lilge, Barfoot and Burgner-Kahrs22], the GP batch state estimation approach from [Reference Anderson and Barfoot1] for mobile robot trajectories can also be applied to continuously estimate quasi-static continuum-robot shapes. Consequently, the proposed GP prior that incorporates inputs can be extended to this domain as well. Analogous to (1a), we can describe the continuum-robot shape along its arclength

![]() $s$

with the following stochastic differential equation:

$s$

with the following stochastic differential equation:

\begin{align} \frac {d}{ds}{\mathbf {T}}(s) &= (\underbrace {{\boldsymbol {\varepsilon }}_{\mathrm {bias}}(s) + {\boldsymbol {\varepsilon }}_{\mathrm {in}}(s)}_{{\boldsymbol {\varepsilon }}(s)})^\wedge \mathbf {T}(s),\\[-12pt] \nonumber \end{align}

\begin{align} \frac {d}{ds}{\mathbf {T}}(s) &= (\underbrace {{\boldsymbol {\varepsilon }}_{\mathrm {bias}}(s) + {\boldsymbol {\varepsilon }}_{\mathrm {in}}(s)}_{{\boldsymbol {\varepsilon }}(s)})^\wedge \mathbf {T}(s),\\[-12pt] \nonumber \end{align}

These equations describe the evolution of the robot’s pose

![]() $\mathbf {T}(s) \in SE(3)$

and strain

$\mathbf {T}(s) \in SE(3)$

and strain

![]() ${\boldsymbol {\varepsilon }}(s) \in \mathbb {R}^6$

expressed in the body frame along its arclength. Together, they define the quasi-static shape of the continuum robot. Similar to the mobile robot equations, we consider two potential inputs: an input strain

${\boldsymbol {\varepsilon }}(s) \in \mathbb {R}^6$

expressed in the body frame along its arclength. Together, they define the quasi-static shape of the continuum robot. Similar to the mobile robot equations, we consider two potential inputs: an input strain

![]() ${\boldsymbol {\varepsilon }}_{\mathrm {in}}(s) \in \mathbb {R}^6$

and distributed forces and moments

${\boldsymbol {\varepsilon }}_{\mathrm {in}}(s) \in \mathbb {R}^6$

and distributed forces and moments

![]() ${\boldsymbol{{f}}}_{\mathrm {in}}(s) \in \mathbb {R}^6$

, which can act as an input to the system. These distributed loads are mapped to the strain derivative through the stiffness matrix

${\boldsymbol{{f}}}_{\mathrm {in}}(s) \in \mathbb {R}^6$

, which can act as an input to the system. These distributed loads are mapped to the strain derivative through the stiffness matrix

![]() $\boldsymbol {\mathcal {K}}$

of the continuum robot’s backbone. Lastly,

$\boldsymbol {\mathcal {K}}$

of the continuum robot’s backbone. Lastly,

![]() $\mathbf {w}(s)$

is again a white-noise GP with positive-definite power-spectral density matrix

$\mathbf {w}(s)$

is again a white-noise GP with positive-definite power-spectral density matrix

![]() $\mathbf {Q}_c$

. Using this expression and the previous derivations, we can derive an analogous GP prior with inputs for the continuous estimation of the quasi-static shape of continuum robots.

$\mathbf {Q}_c$

. Using this expression and the previous derivations, we can derive an analogous GP prior with inputs for the continuous estimation of the quasi-static shape of continuum robots.

Throughout this section, we validate this GP prior formulation with additional continuum-robot experiments.

Tendon-driven continuum-robot prototype and experimental setup.

4.1. Experimental setup

For the experiments, we are using the tendon-driven continuum-robot (TDCR) prototype and sensor setup presented in [Reference Lilge, Barfoot and Burgner-Kahrs22] (see Figure 6). This prototype features two controllable segments, with tendons routed parallel to the backbone through spacer disks and attached at the end of each segment. The bending of both segments can be individually actuated by pulling and releasing the tendons. For sensing, we consider a single six-degree-of-freedom pose measurement at the robot’s tip using an electromagnetic tracking sensor (Aurora v3, Northern Digital Inc., Canada). Ground truth is obtained using a coordinate measurement arm with an attached laser probe (FARO Edge with FARO Laser Line Probe HD, FARO Technologies Inc., USA). This measurement arm allows us to capture a detailed laser scan of the robot’s shape in the form of a point cloud, from which the discrete pose of each spacer disk can be reconstructed. Six simple bending configurations of the continuum robot were exclusively recorded to calibrate the sensor and ground-truth frames. Using the same procedure outlined in [Reference Lilge, Barfoot and Burgner-Kahrs22], the remaining calibration error at the tip of the robot results in

![]() $3.3 \pm 1.1$

mm.

$3.3 \pm 1.1$

mm.

4.2. Evaluation

For evaluation, we consider nine different TDCR configurations, with three sets of applied tendon tensions. Each set of tensions is shared across three configurations. In each configuration, an additional unknown force is applied to the robot’s tip to induce further deflection.

For state estimation, we now wish to compare the conventional prior formulation from [Reference Lilge, Barfoot and Burgner-Kahrs22] with the newly proposed one considering inputs. As discussed above, there are two possibilities to incorporate known inputs. One can either consider known input strains

![]() ${\boldsymbol {\varepsilon }}_{\mathrm {in}}(s)$

or known distributed actuation loads

${\boldsymbol {\varepsilon }}_{\mathrm {in}}(s)$

or known distributed actuation loads

![]() ${\boldsymbol{{f}}}_{\mathrm {in}}(s)$

. Either of these possible inputs can, for instance, be provided by another open-loop continuum-robot model.

${\boldsymbol{{f}}}_{\mathrm {in}}(s)$

. Either of these possible inputs can, for instance, be provided by another open-loop continuum-robot model.

We use the second approach and convert the known applied tendon tensions to moments acting on the robot backbone, considering the simplified point-moment model discussed in [Reference Rucker and Webster27]. Using this model, we compute the discrete moments applied to the tendon termination points. We then approximate these discrete moments with piecewise-linear inputs to obtain an expression for

![]() ${\boldsymbol{{f}}}_{\mathrm {in}}(s)$

, which we map to the strain derivative using the known stiffness

${\boldsymbol{{f}}}_{\mathrm {in}}(s)$

, which we map to the strain derivative using the known stiffness

![]() $\boldsymbol {\mathcal {K}}$

of the robot’s Nitinol backbone, based on properties reported by the manufacturer. While this simplified model exhibits inaccuracies in out-of-plane bending scenarios [Reference Rucker and Webster27], we deem it sufficiently accurate to be fused with additional measurements. Alternatively, more complex actuation load distributions could be incorporated, as long as they can be expressed in the robot’s body frame independently from the state variables.

$\boldsymbol {\mathcal {K}}$

of the robot’s Nitinol backbone, based on properties reported by the manufacturer. While this simplified model exhibits inaccuracies in out-of-plane bending scenarios [Reference Rucker and Webster27], we deem it sufficiently accurate to be fused with additional measurements. Alternatively, more complex actuation load distributions could be incorporated, as long as they can be expressed in the robot’s body frame independently from the state variables.

In the mobile robot experiments, velocity inputs were included as measurements in the traditional WNOA method for a fairer comparison. However, we do not include the inputs as measurements for the baseline method in the continuum-robot evaluations. This is because our inputs are defined on the strain derivative, which is not part of the system state, making it difficult to directly include such measurements in a straightforward manner.

Both the traditional prior from [Reference Lilge, Barfoot and Burgner-Kahrs22] as well as the newly proposed prior considering inputs are fused with a single pose measurement at the tip of the robot. As an additional degenerate case, we further consider the scenario in which only the robot tip’s position can be measured. For every case, we compute the RMSE and maximum errors for the robot’s position and orientation along its shape, considering the discrete ground-truth poses at each disk. Hyperparameters are tuned empirically on representative continuum-robot configurations. For both methods, the prior covariance is set to

![]() $\mathbf {Q}_{c}\,=\,\mbox {diag}\left (1\mathrm {e}{-2}\,\mathrm {m}^2\,1\mathrm {e}{-2}\,\mathrm {m}^21\mathrm {e}{-2}\,\mathrm {m}^2\,1\mathrm {e}{3}\,\mathrm {rad}^2\,1\mathrm {e}{3}\,\mathrm {rad}^2\,1\mathrm {e}{3}\,\mathrm {rad}^2\right )$

, while the pose measurement covariance is

$\mathbf {Q}_{c}\,=\,\mbox {diag}\left (1\mathrm {e}{-2}\,\mathrm {m}^2\,1\mathrm {e}{-2}\,\mathrm {m}^21\mathrm {e}{-2}\,\mathrm {m}^2\,1\mathrm {e}{3}\,\mathrm {rad}^2\,1\mathrm {e}{3}\,\mathrm {rad}^2\,1\mathrm {e}{3}\,\mathrm {rad}^2\right )$

, while the pose measurement covariance is

![]() $\mathbf {R}_{\mathrm {pose}}\,=\,\mbox {diag}\left ( 4\mathrm {e}{-7}\,\mathrm {m}^2\,4\mathrm {e}{-7}\,\mathrm {m}^2\,4\mathrm {e}{-7}\,\mathrm {m}^2\,2.5\mathrm {e}{-4}\,\mathrm {rad}^2\,2.5\mathrm {e}{-4}\,\mathrm {rad}^2\,2.5\mathrm {e}{-4}\,\mathrm {rad}^2\right )$

.