With knowledge workers abounding in today’s service-based economy, organizations continue to invest in training and learning opportunities to enhance human capital. According to the Association for Talent Development’s 2014 State of the Industry Report, U.S. organizations spend $1,208 per employee per year on training. Clearly, one of the primary goals of such training investments is to enhance positive transfer, or the degree to which learning from training is applied to the job, and to produce relevant changes in employee and job performance (Burke, Hutchins, & Saks, 2013; Grossman & Salas, 2011). Unfortunately, there is empirical evidence that little of what has been acquired during training is transferred to the workplace (Saks, 2002). Given less-than-ideal transfer rates, identifying means to promote transfer represents a key challenge for training scholars and practitioners alike (Burke, 2001). If trainees fail to transfer new knowledge and skills, training expenditures are ultimately poor investments.

Many studies over the last few decades have examined methods for promoting transfer. For example, research has focused on enhancing transfer through posttraining interventions such as goal-setting and self-management training (e.g., Brown, 2005; Burke & Baldwin, 1999; Richman-Hirsch, 2001; Taylor, Russ-Eft, & Chan, 2005; Tews & Tracey, 2008), transfer climate (e.g., Holton, Bates, & Ruona, 2000; Rouiller & Goldstein, 1993; Tracey & Tews, 2005), and supervisory and peer support (e.g., Cromwell & Kolb, 2004; Hutchins, Burke, & Berthelsen, 2010; Lim & Johnson, 2002). Furthermore, several comprehensive reviews have summarized the existing body of research on transfer of training (Blume et al., 2010; Burke & Hutchins, 2007; Grossman & Salas, 2011). Although there is evidence that transfer is influenced by various trainee characteristics, training design, and different aspects of organizational support, there is less clarity on which antecedents matter most (Hilbert, Preskill, & Russ-Eft, 1997), though some scholars have offered insights on this question (Grossman & Salas, 2011).

Despite advances in transfer research, we contend that, overall, the body of research lacks synthesis (Blume et al., 2010) and remains principally atheoretical. The training and development field has organizing frameworks to help classify transfer elements (e.g., before, during, and after training; Broad, 2005), yet more theoretically grounded guidance would help practitioners design specific transfer strategies for different workplace contexts. Toward this end, the goal of the present chapter is to create an integrative conceptual framework that is theory driven and provides context-relevant implications for stakeholders in training transfer design.

The fundamental premise of this chapter is that organizations may lack accountability for trainees’ application of what they have learned in training on the job. As Kopp (2006) claims, transfer seems to be viewed as “nice-to-have,” and stakeholders are often not held accountable for transfer success in a meaningful way. Drawing on Schlenker and colleagues’ (1994, 1997) model of accountability and Yelon and Ford’s (1999) multidimensional model of transfer, this chapter delineates means to enhance accountability for training transfer in different work contexts. Burke and Saks (2009) recently applied Schlenker’s framework to transfer in general; in this chapter, we go further by considering work context. Specifically, this chapter focuses on means to promote accountability for transfer of open and closed skills performed under either supervised or autonomous working conditions. It is important to consider the nature of the skill and the degree of supervision because these dimensions affect how easily proficiency is acquired, the latitude trainees have in adapting skills, who is responsible for monitoring posttraining behavior, and the level of posttraining support required.

The structure of the chapter is as follows. First, we review previous research related to accountability and transfer of training. Next, we provide an overview of Schlenker and colleagues’ (1994, 1997) model of accountability and Yelon and Ford’s (1999) multidimensional model of transfer. We then synthesize these theoretical perspectives and articulate strategies to promote accountability for transfer in various work contexts.

The Importance of Accountability in Training Transfer

Frink and Klimoski (1998) defined accountability as a perceived need to justify an action to some audience that has reward or sanction power. From a management perspective, when individuals know they will be held accountable for their behaviors and decisions, they are more motivated to focus on achieving specific outcomes, use self-regulatory strategies, exert greater effort, and persist in overcoming obstacles. Organizations have a range of formal and informal mechanisms to influence perceived accountability (Frink & Klimoski, 1998). Formal accountability mechanisms may include performance reviews, promotions or demotions, disciplinary action, and incentives such as merit or bonus pay. Informal mechanisms may include cultural norms, peer influence, and coaching from supervisors. While accountability has been studied in decision-making, selection, and performance appraisal contexts, it has been examined to a lesser extent in the training context (e.g., DeMatteo, Lundby, & Dobbins, 1997). In the training transfer domain, accountability addresses the extent to which transfer is expected from trainees (Brinkerhoff & Montesino, 1995; Burke & Saks, 2009).

We contend that accountability for transfer can be deficient on several fronts. At a general level, training may be perceived as an isolated event, divorced from the natural work environment. In this respect, trainers may be held accountable only for designing and delivering an effective training session, without an eye toward transfer. Furthermore, training practitioners and managers may not be fully aware of the typically limited rates of transfer and may simply assume that transfer occurs spontaneously. Extending these arguments, supervisors may not be cognizant of the transfer problem, or they may view promoting transfer as someone else’s responsibility. Because trainers and supervisors may not focus on transfer, trainees may not prioritize it either and may instead direct their efforts toward demands they perceive as more pressing or more likely to be rewarded. Given these challenges, it is important to strengthen responsibility mechanisms to help ensure that transfer is maximized (Broad & Newstrom, 1992).

The inclusion of accountability in transfer models and measures is surprisingly lacking, despite various studies presenting direct or indirect implications for integrating accountability into transfer interventions (Burke & Saks, 2009). For example, Baldwin and Magjuka (1991) demonstrated that trainees expecting some form of follow-up assessment after training reported stronger intentions to transfer. In commenting on this finding, Tannenbaum and Yukl (1992) stated: “The fact that their supervisor would require them to prepare a post-training report or undergo an assessment meant that they were being held accountable for their own learning and apparently conveyed the message that the training was important” (418). In addition, DeMatteo and colleagues (1997) systematically manipulated training accountability in a lab setting and found that accountability interventions (either a postdiscussion with the trainer or a video critique) produced more note taking, learning, and trainee satisfaction when trainees were notified of such assessments prior to training. Longenecker (2004) also identified enhancing accountability for application, such as requiring posttraining reports from trainees, as a key learning imperative articulated by managers. Saks and Belcourt (2006) subsequently found such accountability mechanisms to be significantly related to transfer. Further support for the role of accountability in transfer can be found in Taylor et al.’s (2005) meta-analysis of 117 behavioral modeling studies, which demonstrated a larger effect on transfer when sanctions and rewards were instituted in trainees’ work environments, such as the incorporation of newly learned skills into performance reviews.

Transfer climate constructs, which encompass a variety of support mechanisms to facilitate transfer, also highlight the need for accountability in directing transfer. Rouiller and Goldstein (1993) conceptualized transfer climate as encompassing two dimensions: situational cues and consequences. Situational cues included goal cues, social cues, and task and structural cues. Consequences included feedback and rewards. Tracey, Tannenbaum, and Kavanagh (1995) found support for the relationship between transfer climate and transfer, and Tracey and Tews (2005) later conceptualized transfer climate as encompassing organizational support, managerial support, and job support. Organizational support refers to policies and reward systems that support training. Managerial support refers to managers encouraging learning and supporting transfer. Finally, job support refers to whether jobs are designed to promote continuous learning. Although such transfer climate constructs do not explicitly identify accountability as a specific factor, they point to the importance of responsibility and consequences associated with transfer.

More recent research further signals the importance of accountability in the context of transfer. In an empirical study, Cheramie and Simmering (2010) found that learners low in conscientiousness exhibited higher levels of learning when they perceived accountability for training outcomes as high, and concluded that “organizations should implement formal controls to increase perceived accountability and improve learning” (44). Lastly, in another empirical investigation, Saks and Burke (2012) provided evidence that training evaluation frequency was related to higher rates of transfer when organizations measured behavioral change and results-oriented criteria after training (as compared to trainee satisfaction or learning).

Schlenker’s Accountability Theory and Training Transfer

Schlenker and colleagues’ (1994, 1997) theory of accountability (i.e., responsibility) provides an overarching theoretical framework that we contend can usefully guide transfer research (Burke & Saks, 2009). Schlenker et al. (1994) argue that accountability is a key mechanism through which social entities control the behavior of members. In particular, accountability reflects “being answerable to audiences for performing up to prescribed standards that are relevant to fulfilling obligations, duties, expectations, and other charges” (Schlenker, 1997: 249). When individuals are held responsible by others for adhering to a course of action, they can be evaluated with respect to a relevant event. Moreover, when individuals perceive themselves as accountable for executing a course of action, they become more motivated to follow prescribed behaviors and achieve goals and objectives. Thus, enhancing responsibility helps ensure that organizational members adhere to performance expectations.

Fundamentally, designing accountability into the transfer process enhances stakeholders’ sense of ownership of and responsibility for skill enhancement, such that trainers, trainees, and managers experience discomfort if they do not follow through on their respective obligations to support the transfer of learning to the job. As Schlenker (1997) claims, gaps between an employee’s behavior and “oughts,” such as “I ought to transfer skills I learn,” “I ought to hold my employees responsible for transfer,” or “I ought to share evidence of my training program’s influence on behavioral change with top managers,” produce a state of incompleteness. To embed accountability in transfer of training, individuals must understand what transfer behaviors are expected of them, how their actions will be measured, and what rewards or sanctions will be imposed for transfer or lack thereof (Santos & Stuart, 2003).

Schlenker et al. (1994) argue that accountability “involves an evaluative reckoning in which individuals are judged” (634). The essential facets of accountability include inquiry, accounting, and verdict. That is, questions are raised about how well a person performs (inquiry), evidence is presented and evaluated (accounting), and the person is rewarded or punished (verdict). All such evaluations, whether by others or by the actor him- or herself, involve information about three key variables: prescriptions, identity, and event. Prescriptions refer to expectations, rules, and standards of conduct to guide an individual’s behavior, which may be formal or informal, explicit or tacit. Identity refers to attributes of the actor, such as his or her roles, values, commitments, and aspirations. The event refers to a focal course of action that is anticipated or has transpired. Promoting accountability to engage in future desired behaviors, such as training transfer, should focus on these variables and the links between them.

Schlenker et al. (1994) connect these three variables to form three links that serve as the glue binding individuals to situations and courses of action. Strengthening these links increases responsibility and, thereby, accountability. The prescription-event link relates to whether a clear set of expectations applies to a given event, resulting in goal and process clarity. In the context of transfer, the prescription-event link reflects expectations for the use and application of trained skills. When the link is strong, individuals have clear goals for transfer and know how to proceed to meet them. The prescription-identity link relates to whether prescriptions are applicable to individuals by virtue of their roles and other personal characteristics, serving to enhance a sense of ownership of a course of action. This link captures the extent to which individuals believe transfer is important because of their role in an organization or their personal sense of obligation. Finally, the identity-event link relates to whether individuals are connected to the event and have control and freedom over their actions. In the context of transfer, this link reflects the extent to which individuals have personal control over their transfer behavior.

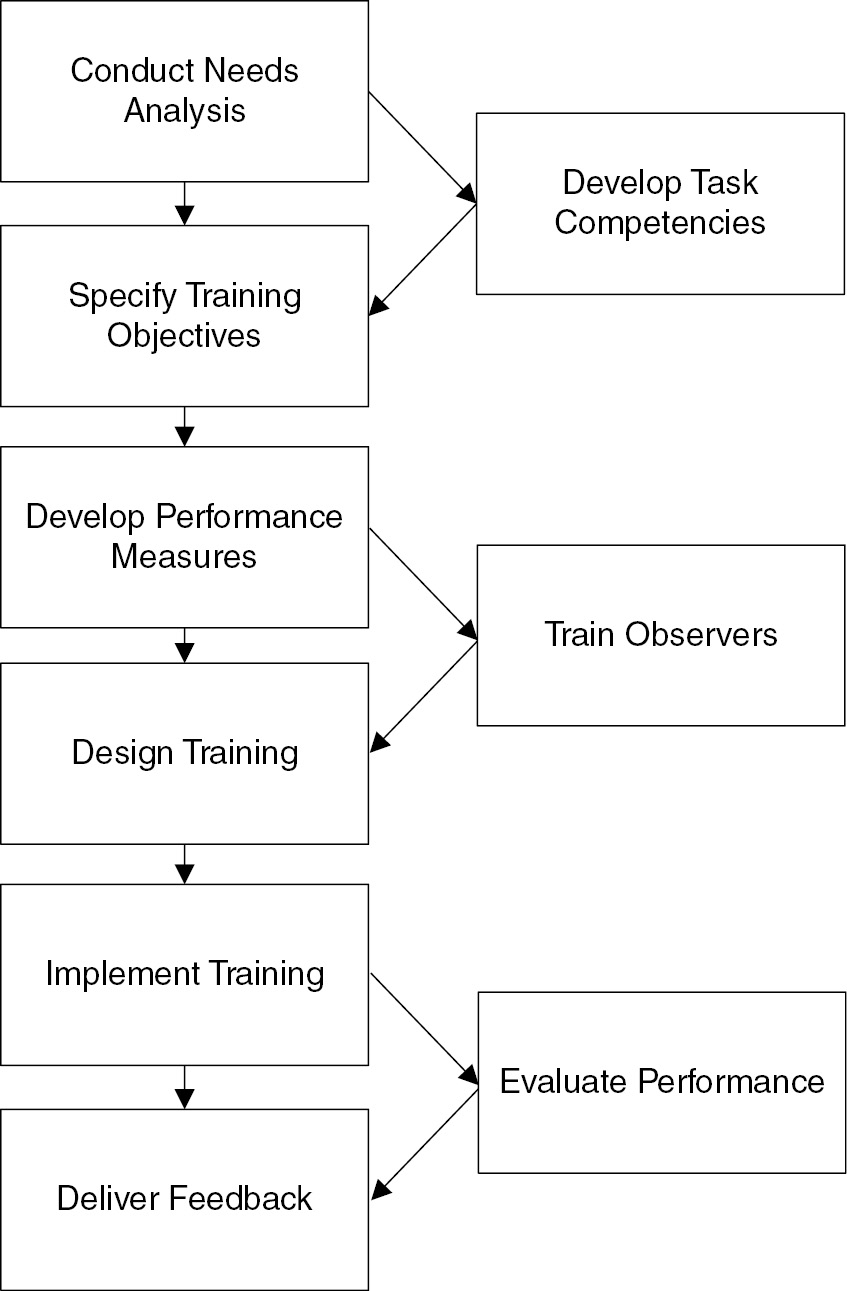

The three variables and their links, known collectively as “the responsibility triangle” (Schlenker et al., 1994: 635), are presented graphically in Figure 9.1. To summarize, a strong prescription-event link requires goal and process clarity; the prescription-identity link necessitates a sense of ownership of the goal and process by individuals; and the identity-event link requires individuals’ perceived control over the event. When these links are strong, individuals’ self-regulatory systems engage, and they become more determined, committed to goals, and “unwavering in pursuing them despite obstacles, distractions, and temptations” (Schlenker, 1997: 268). Moreover, when people feel more personally responsible, they are less apt to make excuses and engage in avoidance strategies (Schlenker, 1997). By overlaying accountability mechanisms across the stakeholders involved in training transfer, a “psychological adhesive” connects these critical parties to common expectations for, and commitment to, transfer outcomes.

Figure 9.1. Accountability mechanisms driving training transfer.

Burke and Saks (2009) recently applied Schlenker and colleagues’ (1994, 1997) model specifically to transfer of training. In particular, they articulated the accountability linkages for trainers, trainees, and supervisors to ensure these stakeholders focus on transfer enhancement. According to Burke and Saks (2009), trainers should have clear expectations for incorporating transfer enhancement in training programs (prescription-event link), a clear sense of duty to include transfer in training content (prescription-identity link), and control over developing training that focuses on focal skills as well as transfer (identity-event link). Trainees should have clear goals for transfer (prescription-event link), a clear sense of obligation to apply what they have learned (prescription-identity link), and personal control over opportunities to transfer (identity-event link). Finally, supervisors should have clear performance expectations to aid their employees in transfer (prescription-event link), an obligation to focus on trainee transfer (prescription-identity link), and personal control to help facilitate trainee transfer (identity-event link) (Burke & Saks, 2009).

Burke and Saks further propose practical strategies to enhance accountability among these different stakeholders. Trainers, for example, can clearly establish transfer expectations prior to training, devote time specifically to transfer enhancement during training sessions, and systematically evaluate the extent to which trainees use new knowledge and skills on the job. Trainees, in turn, can set specific goals for transfer with supervisors before training, commit to transfer during training, and document and share their learning posttraining. Supervisors can discuss transfer objectives with trainees prior to training, prepare a list of posttraining activities they commit to in order to promote transfer among their employees, provide rewards and incentives for transfer, and include transfer in employee performance appraisals. As such, Burke and Saks’s application of Schlenker and colleagues’ (1994, 1997) model provides theoretically derived strategies to enhance the transfer of training, as summarized in Table 9.1.

Table 9.1 Stakeholder accountability mechanisms for transfer

| Stakeholder | Prescription-Event Link | Prescription-Identity Link | Identity-Event Link |
|---|---|---|---|
| Trainer | Should have clear expectations for incorporating transfer enhancement in training programs | Should have a clear sense of duty to include transfer strategies in training | Should have control over developing training that targets focal skills and transfer |
| Trainee | Should have clear goals for transfer | Should have a clear sense of obligation to apply what has been learned | Should have personal control over opportunities to transfer |
| Supervisor | Should have clear performance expectations to aid their employees in transfer | Should have a personal obligation to focus on trainee transfer to the job | Should have personal control to help facilitate trainee transfer |

Notwithstanding the value of their contribution, we contend that accountability strategies should vary based on the work context. Toward this end, we extend Burke and Saks’s (2009) work to promote trainee accountability in various work contexts, specifically in terms of the nature of the trained skill set and the degree of supervision to which the trainee is subject, as originally framed by Yelon and Ford (1999) and described next.

Yelon and Ford’s Multidimensional Framework of Transfer

Most models of training transfer present prescriptions and theoretical relationships presumably applicable to all trained tasks. However, such universalistic models may not be appropriate in all contexts. To better delineate the conditions for successful transfer, Yelon and Ford (1999) developed a multidimensional transfer of training model. By multidimensional, Yelon and Ford refer to two dimensions of the performance context that should be considered when selecting among transfer strategies: the first is task adaptability, and the second is the degree of employee supervision.

The first dimension of the framework, task adaptability, refers to the extent to which the task to be performed ranges from closed to open. Performing a closed task involves responding to predictable situations with standardized responses. In contrast, performing an open task involves responding to variable situations with adaptive, tailored responses. There is one best way to perform closed tasks, whereas there are multiple ways to perform open tasks, contingent on the situation at hand. Examples of closed tasks include preparing food in a fast food restaurant, cleaning a hotel room, filling out a report, and checking in an airline passenger. Examples of open tasks include facilitating discussions in a training session, performing a role in a stage play, motivating employees, and responding to difficult customers.

Yelon and Ford’s (1999) second dimension is the degree of supervision under which trained skills are performed on the job. This dimension ranges from heavy supervision to autonomous working conditions. Under heavily supervised conditions, supervisors can closely monitor employee performance on trained skills, provide positive and constructive feedback, and administer appropriate rewards. Further, under such conditions, employees may be more apt to engage in appropriate behaviors with the knowledge of close observation. Under autonomous working conditions, however, employees have more discretion over whether to engage in trained behaviors and must take more responsibility for ensuring that behaviors are appropriately executed.

Based on these two dimensions (task adaptability and degree of job supervision), Yelon and Ford (1999) developed a corresponding 2 × 2 matrix. The resulting four cells in the Yelon and Ford model include: (1) supervised trainees and closed skills; (2) autonomous trainees and closed skills; (3) autonomous trainees and open skills; and (4) supervised trainees and open skills. For each cell, Yelon and Ford then provide differentiated strategies to enhance transfer, as summarized in Table 9.2.

Table 9.2 Exemplar transfer enhancement strategies from Yelon and Ford’s (1999) multidimensional framework

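| Skill Type | Supervised Trainees | Autonomous Trainees |
|---|---|---|
| Closed Skill | Select detail-oriented candidates willing to follow direction; high-fidelity training in specific, detailed procedures; practice to automaticity; detailed procedural checklists; supervisors manage adherence to standards and provide incentives and rewards | Select learning-oriented candidates; relate the skill to trainees’ personal and job goals; foster favorable attitudes toward seeking support; discuss intentions to use the skill; obstacle assessment and response plan; meta-cognitive practice; avenues for on-the-job guidance |
| Open Skill | Strategies for autonomously performed open skills, plus: foster favorable attitudes toward cooperating with supervisors; train supervisors in the target skills; joint trainee-supervisor obstacle assessment | Select learning-oriented candidates; develop strategic knowledge of when and why to use the skill; build meta-cognitive competence and favorable attitudes toward experimentation; strategies for autonomous trainees as with closed skills |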

Supervised Trainees and Closed Skills

Supervised trainees performing closed skills are required to execute specific standards under the guidance of their supervisors. With respect to employee selection, organizations should choose candidates with a detail orientation and a willingness to follow direction. Training content should focus on declarative and procedural knowledge, as well as on shaping favorable attitudes toward adhering to standards and cooperating with supervisors. Yelon and Ford (1999) also recommend high-fidelity training, training in specific and detailed procedures, practice opportunities to facilitate automaticity, and detailed procedural checklists for trainees. Once trainees are on the job, supervisors should manage adherence to standards and provide appropriate incentives and rewards.

Autonomous Trainees and Closed Skills

Yelon and Ford’s (1999) prescriptions for autonomous trainees and closed skills diverge from those for supervised trainees with closed skills on several fronts. With respect to selection, hiring individuals with a learning orientation is advised, as such individuals persist in the challenging situations that may arise more often when working independently. To help ensure trainees are motivated to perform the closed skill, trainers should relate the skill to trainees’ personal and job goals. Trainers should also engender favorable attitudes toward seeking support to help ensure that trainees perform skills effectively posttraining. Regarding specific instructional strategies, trainees should discuss their intentions to use the closed skill, perform an obstacle assessment and create a response plan, and practice meta-cognitive skills to reflect on their behavior. Once trainees are on the job, there should be avenues for them to obtain any needed guidance.

Autonomous Trainees and Open Skills

Again, the difference between an open skill and a closed skill is that there is not necessarily one best way to perform an open skill. Performing an open skill requires attention to contingency variables to determine a course of action. That is, successful performance requires strategic knowledge: information about when and why to use a particular knowledge or skill on the job (Kraiger, Ford, & Salas, 1993). Accordingly, training for an open skill should build the strategic knowledge trainees need to determine when and how to apply trained skills. To enhance the flexibility required for performing open skills, trainers could also develop meta-cognitive competence and favorable attitudes toward experimentation. Regarding individual differences on which to select employees, those with a learning orientation may also be better able to learn and transfer open skills. Such individuals focus on developing new skills, attempt to understand their tasks, and define success in terms of self-referenced standards (Button, Mathieu, & Zajac, 1996). Given the autonomous nature of the work, the prescriptions for transfer are otherwise similar to those discussed above for autonomous trainees performing closed skills.

Supervised Trainees and Open Skills

For supervised trainees with open skills, training should include many of the strategies advised for open skills performed autonomously. To successfully transfer open skills in a heavily supervised work setting, the following three additional strategies may be appropriate. First, fostering favorable attitudes toward cooperation with supervisors during training may facilitate productive supervisor-subordinate relationships. Second, training supervisors in the target skills may lead to better management of trainees on the job. Third, the trainee and supervisor should jointly perform an obstacle assessment to determine potential barriers to transfer and means to overcome them.

Context-Dependent Transfer Accountability Strategies

Yelon and Ford’s (1999) multidimensional framework and Burke and Saks’s (2009) application of Schlenker and colleagues’ accountability model (1994, 1997) have each independently advanced research on transfer of training. Burke and Saks highlighted the importance of the prescription-event, prescription-identity, and identity-event links to increase accountability and articulated transfer strategies to strengthen these links. That said, Burke and Saks did not address means to strengthen these links in different work contexts. Yelon and Ford’s work is valuable because they emphasized the importance of context for transfer and delineated strategies appropriate for different skills and degrees of supervision, but they did not position their model and corresponding transfer strategies in the context of accountability. Yelon and Ford’s framework thus represents a useful means to extend Burke and Saks’s accountability work. It should be noted that not all of the original transfer strategies proposed by Yelon and Ford necessarily apply to the three accountability links, nor is their set of strategies exhaustive. However, they serve as a valuable guide and foundation. Rather than treat these two theoretical perspectives as independent, we suggest that value is to be gained by integrating them to provide greater theoretical precision on how best to foster accountability for training transfer.

Toward this end, we articulate context-dependent transfer accountability strategies. For each of Schlenker and colleagues’ (1994, 1997) three links, we discuss several strategies for specific contexts. Namely, to strengthen each of the prescription-event, prescription-identity, and identity-event links, we propose how to modify transfer enhancement strategies for closed and open skills and, within each skill type, for supervised and autonomous working conditions. In doing so, we also discuss strategies to overcome challenges that might weaken these links. In practice, human resource practitioners should combine strategies to bolster the prescription-event, prescription-identity, and identity-event links for their specific context.

Bolstering the Prescription-Event Link

In this section, we discuss means to strengthen the prescription-event link for transfer. Fundamentally, the foundation for bolstering this link, and thereby enhancing goal and process clarity, is that trainees know what and how to transfer. The strategies in this section address how to communicate to individuals that they should focus on the transfer of newly acquired skills, as well as the standards to which they should adhere. Using diverse and complementary strategies helps ensure that trainees do not revert to old patterns of behavior, divergent personal preferences, or superordinate prescriptions advocated by irrelevant others (Dose & Klimoski, 1995). We first discuss strategies to explicitly communicate expectations for transfer and then the use of reward systems to indirectly communicate those expectations, including strategies to overcome the challenge of competing job responsibilities.

Communicating Transfer Expectations

The communication of transfer expectations is relatively straightforward for closed skills, given that the successful execution of closed skills requires following standard procedures. Yelon and Ford (1999) advocate high-fidelity training, training in specific and detailed procedures, and providing trainees with detailed procedural checklists, and these strategies will likely serve to enhance the prescription-event link. Because there is one best way to execute a closed skill, there must be a high degree of fidelity between training content and job requirements, which may be obtained through careful needs assessment (Broad, 2005). When closed skills are supervised, supervisors may also require training in the standards so they can manage behavior consistently. When supervisors’ standards differ from training standards, trainees will more likely follow the supervisors’ standards, given the inherent power supervisors possess. Reinforcing closed skills is arguably more challenging with autonomous employees, as supervisors are not present to observe and reinforce the skill. Thus, greater responsibility is placed on organizations to select conscientious workers and on trainers to ensure that skills are fully learned during training. In these situations, accessible on-the-job reference information and trainer follow-ups may also be needed to cement standards.

Communicating expectations for transfer is less straightforward for open skills. A strong prescription-event link requires clear expectations, yet open skills must be adapted to a variety of situations, and this requirement for adaptation can be experienced as ambiguity. It may not always be clear how to execute open skills, nor is it feasible to delineate every possible variation in how an open skill might be adapted. Trainees should therefore be taught general principles that can be applied to different situations (Yelon & Ford, 1999). Trainers should identify a manageable, yet diverse, set of response-by-situation models to help trainees develop skill adaptability. Moreover, we contend that boundaries should be established to ensure that individuals adapt within limits and possess an apt sensitivity to what degree of variability is acceptable. Given that not all possible variations can be prescribed, trainees may also need to be encouraged to seek guidance and support and socialized to comply with general principles and company values.

When employees transfer an open skill under supervision, supervisors can help them refine their ability to adapt the skill to different situations by incorporating feedback from customers, co-workers, and other key constituents where appropriate. In doing so, supervisors further reinforce performance expectations and strengthen the prescription-event link. One challenge is that some supervisors may be tempted to micromanage the open skill, since they likely have expertise and may hold particular ideas about how it should be transferred. That said, appropriately selected and trained supervisors in these situations should allow employees to adapt the skill within the general principles and parameters taught in training. Along the same lines, supervisors need to communicate, through informal coaching or more formal performance appraisal, that exercising discretion and making sound decisions are inherent components of open skills. If supervisors micromanage or employees do not make their own decisions, an open skill may ultimately devolve into a closed skill, which could render employees inflexible and at times ineffective.

When employees transfer an open skill autonomously, posttraining interventions could strengthen the prescription-event link. For example, requiring employees to complete action plans and follow-up reports detailing how they intend to use, or have used, trained skills could be valuable (Tews & Tracey, 2008). Such interventions could signal to trainees that transfer is important and motivate individual effort toward applying new knowledge and skills. In this respect, they direct individuals toward transfer and further skill development rather than toward other demands. Further, such interventions provide a vehicle for reinforcing training content and expectations that may not have been fully cemented during training. Because open skills are often more complex than closed skills, they are less likely to have been fully learned during training, so training content and performance expectations may need to be further learned on the job. One caveat is that these posttraining interventions create additional work for individuals. To ensure that they are used, these interventions should be simple and easy to use, and accountability mechanisms can be built into systems such as performance reviews to help make them part of trainees’ work routines.

Rewarding Transfer

Reward systems could strengthen the prescription-event link by signaling that transfer is important in the organization (Dose & Klimoski, 1995). One challenge of rewarding the transfer of any particular skill is that the skill may represent only one aspect of an employee’s job performance. Rewards are often tied to an employee’s overall job performance rather than to a specific behavior such as transferring one skill. Consequently, trainees may perceive little connection between transfer and the rewards they obtain. Another issue is that the rewards for transfer may not be valuable enough to motivate employee effort. Yet if transfer is not meaningfully rewarded, employees may not transfer and may instead focus on tasks where such recognition is present.

Yelon and Ford (1999) suggest rewarding adherence to standards for closed skills. When such skills are supervised, it may be relatively easy to reward both behavior and results. For a receptionist following a script, for example, a supervisor could reward adherence to the script as well as customer satisfaction ratings. However, when such skills are performed autonomously, rewards may need to focus only on results because results are the only readily accessible source of information (e.g., customer satisfaction ratings). When a closed skill is relatively simple, as is sometimes the case, informal recognition may be more appropriate and feasible than formal rewards. To the extent that a closed skill has less economic value than other skills, managers may need to reward it primarily with informal recognition, feedback, and praise. Of course, the suitability of this strategy depends on the closed skill in question.

With respect to open skills, Yelon and Ford (1999) suggest rewarding experimentation. Rewards should focus on experimentation rather than performance because open skills may not be fully learned in training, given their inherent complexity and the need for adaptation on the job. When open skills are supervised, supervisors could certainly reward trainee experimentation. However, supervisors typically reward maximum performance, which experimentation tends to reduce. Accordingly, it may be unclear what to reward when transfer does not meet expectations, and rewarding effort such as experimentation is fraught with ambiguity. Supervisors may therefore need to allow a “penalty-free” period of experimentation after training that does not affect the reward system.

Rewarding experimentation is more difficult when autonomous employees perform open skills. Managers may need to base rewards on objective measures of experimentation. If none exist, managers and trainees may have no choice but to use outcome measures, such as patient recovery rates or student evaluations of instruction. In such cases, however, employees do not have full control over these measures, and the metrics may decline posttraining while individuals are experimenting. These arguments are not meant to discount the potential value of rewards in promoting transfer, but rather to highlight that, if not properly designed, reward systems could send mixed messages and weaken the prescription-event link.

Managing Other Job Responsibilities

Finally, careful attention needs to be paid to managing the trainee’s total set of job responsibilities to free attention and time for practicing the trained skill. When work demands are high, individuals will focus on core job responsibilities and immediate job performance rather than on transfer (Marx, 1982). Temporarily curtailing job responsibilities is perhaps more relevant for open skills, which often require further learning on the job, than for closed skills, which are relatively straightforward. That said, not all closed skills are simple, and even simple skills may require further learning on the job. Managing job responsibilities to accommodate transfer is relatively straightforward when trained skills are closely supervised, because supervisors directly manage employee workloads. When the trained skill is performed autonomously, trainers bear greater responsibility for helping trainees manage their job responsibilities to accommodate transfer.

Bolstering the Prescription-Identity Link

The prescription-identity link in Schlenker’s framework (Schlenker et al., 1994; Schlenker, 1997) is the extent to which prescriptions apply to individuals by virtue of who they are. In the transfer context, the extent to which a trainee is required to use the trained skill, and perceives it as appropriate, is a function of both his or her formal role and personal attributes. When individuals view prescriptions as consistent with their identities, they are more apt to transfer the trained skill (Burke & Saks, 2009). Strengthening this link requires alignment between transfer expectations and trainees’ perceptions of the appropriateness of those expectations. As Schlenker (1997) argues, when goals are not ennobling and a prescription is perceived to have little value, individuals will avoid it. If trainees’ identities are threatened, they are more likely to engage in avoidance strategies, such as delaying or concealing attempts to follow prescriptions for transfer, distracting others so that they do not notice the trainees’ transfer efforts (or lack thereof), or discrediting those who seek to hold them accountable for transfer. As means to bolster this link, we discuss selecting employees whose individual differences match the skill set and the degree of supervision, and inculcating favorable attitudes toward the skill and the supervision context. In addition, we discuss strategies for managing mismatches between prescriptions and the overall nature of trainees’ jobs.

Selecting Employees

A long-run strategy for maximizing fit between transfer prescriptions and trainees’ identities is selecting employees whose individual differences are congruent with the skill set and degree of supervision. While many individual differences could be positioned within Yelon and Ford’s (1999) framework, here we highlight a few exemplars. Although conscientiousness is important irrespective of context (Colquitt, LePine, & Noe, 2000), the orderliness and dutifulness facets of conscientiousness are likely particularly important for executing closed skills that require adherence to strict standards (Costa, McCrae, & Dye, 1991). General mental ability is also one of the strongest predictors of training success (Colquitt et al., 2000), but it is especially important for open skills that are high in complexity. As Yelon and Ford discuss, a learning orientation is also key for open skills, because they require adaptation, reflection, and problem solving. Noe, Tews, and Marand (2013) recently demonstrated that zest, an approach to life marked by eagerness, energy, and anticipation (Peterson & Seligman, 2004), was a significant predictor of informal learning. Because open skills require informal learning on the job, zest should be relevant to this skill set. Regarding degree of supervision, the compliance facet of agreeableness is especially relevant for supervised trainees (Costa et al., 1991), whereas a proactive personality is especially germane for autonomous trainees, because proactive individuals are relatively unconstrained by situational forces and persevere until they achieve desired results (Parker & Sprigg, 1999).

Promoting Favorable Attitudes

Another strategy is for trainers and supervisors, where appropriate, to inculcate favorable attitudes toward the specific skill and the supervision context (Tracey et al., 1995). Links are weaker when a prescription appears to be arbitrarily imposed or to benefit only the person imposing it (DeHart-Davis, 2009). For closed skills, trainers could focus on strengthening attitudes toward the standards and the process of executing them, noting the adverse consequences of deviations. Imposing nonvalued standards is likely less of an issue for closed skills performed under supervision, given the close working relationship between employee and supervisor. With respect to open skills, trainers could attempt to enhance trainees’ appreciation for creativity, risk taking, problem solving, and the ability to adapt principles to different contexts. When skills will be transferred under close supervision, trainers could develop in trainees positive attitudes toward supervision, toward accepting feedback from others, and toward the benefits of teamwork. Supervisors, in turn, should accept some adaptation and variation and confer with trainees to gain their acceptance of prescriptions. For autonomously performed open skills, trainers could instead emphasize the benefits of working independently, freedom, and self-determination. Extending these arguments, a potentially fruitful strategy is to leverage trainees’ individual differences by linking them to the specific characteristics of the skill to be transferred. For example, for closed skills, trainers could appeal to trainees’ orderliness and dutifulness, and for autonomously performed skills, they could appeal to trainees’ proactive personality.

Limiting Mismatches

One challenge that may weaken the prescription-identity link is a mismatch between the prescriptions for a specific skill set and the overall nature of an individual’s job. That is, the link could be compromised when trained skills are inconsistent with the overall set of responsibilities for a given job and the degree of supervision an individual typically receives at work (Mathieu & Martineau, 1997). The transfer literature appears to assume implicitly that these are congruent, which may not always be the case. For example, an administrative assistant who normally performs closed tasks may be trained in more complex project management skills. As another example, a college professor who normally works autonomously may be evaluated three times a semester on his or her use of a new classroom management technology, a potential affront to autonomy. In such instances, trainees may resist transfer if they are given prescriptions inconsistent with their identity and job.

To overcome this challenge and strengthen the prescription-identity link, practitioners can either limit mismatches or acknowledge them when they are necessary. Furthermore, different strategies should be employed to minimize identity threats depending on whether trainees will be expanding or narrowing the scope of their work. When moving from closed to open skills or from supervised to autonomous working conditions, trainers should seek to explicitly expand the trainee’s identity to encompass the new task. For example, trainers and supervisors could appeal to an individual’s need for growth, development, and autonomy. However, when moving from open to closed skills or from autonomous to supervised working conditions, people may experience threats to their perceived competence because they are being constrained. To limit identity threats in these instances, trainers should appeal to trainees’ willingness and ability to do the task well for the benefit of the organization, and should recognize and reward their sacrifice.

Bolstering the Identity-Event Link

The identity-event link reflects the extent to which the actor has personal control over the event; higher perceived control enhances felt responsibility (Dose & Klimoski, 1995). In the context of transfer, this link is stronger when individuals have confidence in their ability to successfully use new knowledge and skills and to favorably influence the desired outcome of transfer, namely improved job performance. Self-efficacy beliefs, which have been shown to have a positive impact on behavior across a wide range of domains (Bandura, 1986; Judge & Bono, 2001; Stajkovic & Luthans, 1998), are central to strengthening the identity-event link (Schlenker, 1997).

Bandura (1986) defined self-efficacy as individuals’ “judgments of their capabilities to organize and execute courses of action required to attain designated types of performances” (p. 391). Wood and Bandura (1989) contend that self-efficacy beliefs relate to individuals’ perceived capabilities “to mobilize the motivation, cognitive resources, and courses of action to meet given situational demands” (p. 408). Self-efficacy beliefs may include both traitlike individual differences (Chen, Gully, & Eden, 2001; Judge, Erez, & Bono, 1998; Judge, Locke, & Durham, 1997) and task-specific states that can be enhanced through mastery experiences (Bandura, 1986; Stajkovic & Luthans, 1998). As such, self-efficacy, and with it the identity-event link, could be strengthened through careful employee selection and posttraining support (Gist & Mitchell, 1992), including goal-setting and self-management training. A major challenge to strengthening this link is unrealistic expectations. We address each of these topics in turn.

Selecting Employees

Two traits could be used in the selection process to facilitate trainees’ perceived personal control. The first is generalized self-efficacy (GSE), an individual’s enduring belief that he or she is capable of accomplishment irrespective of the situation or task demands (Chen et al., 2001; Judge et al., 1998; Judge et al., 1997). Given that those higher in GSE believe they can succeed in any achievement situation, they will likely have confidence in their ability to transfer. The second, the perceived ability to learn and solve problems (PALS), which relates to self-efficacy in acquiring new knowledge and skills and in solving problems effectively, may have particular relevance for transfer of training (Tews, Michel, & Noe, 2011). Although PALS has not been explicitly examined in the context of learning, Tews and colleagues demonstrated that PALS was significantly related to job performance for managers and entry-level employees. Moreover, PALS explained additional variance in performance beyond general mental ability, personality, and similar constructs related to learning and problem solving. In the context of Yelon and Ford’s (1999) model, GSE and PALS are more relevant for open skills and for autonomously performed skills, as these place greater demands on individuals. GSE and PALS are likely less relevant for closed, supervised work because its standardized nature reduces the importance of human judgment and creativity.

Providing Posttraining Support

A variety of goal-setting interventions may bolster accountability for autonomous employees, particularly those performing open skills. In an early study in this area, Wexley and Nemeroff (1975) demonstrated, in the context of developing managerial and negotiation skills, that trainees who received assigned goals coupled with on-the-job coaching sessions with trainers exhibited superior on-the-job performance compared to trainees who attended classroom training only. Richman-Hirsch (2001) showed that goal-setting training focused on action planning within the formal classroom resulted in better customer service performance for trainees who received this supplement than for those who received classroom training only. Furthermore, Tews and Tracey (2008) demonstrated that a self-coaching program designed to improve the transfer of interpersonal skills for managers resulted in higher posttraining performance and self-efficacy beliefs compared to classroom training only. This intervention involved trainees completing written self-assessments for several weeks after the formal training, in which they reflected on their performance and established learning and performance goals.

Self-management training, which is similar to goal-setting interventions, could also be relevant for transferring autonomously performed skills. Self-management training is delivered in the formal classroom environment and is designed to equip individuals with the skills necessary to support successful transfer (Marx, 1982; Richman-Hirsch, 2001). It typically involves lectures and discussions on self-management strategies, as well as opportunities for trainees to set goals for themselves, identify potential challenges to successful performance, and develop specific strategies to facilitate transfer (Richman-Hirsch, 2001). It should be noted that while accountability mechanisms typically involve an external audience that evaluates an individual’s performance (Frink & Klimoski, 1998), autonomous trainees, by definition, lack such an audience much of the time. Consequently, autonomous trainees must serve as the first line of accountability, making self-management training and related techniques necessary vehicles. Some research has demonstrated a positive impact of self-management training on posttraining performance (Noe, Sears, & Fullenkamp, 1990; Tziner, Haccoun, & Kadish, 1991), but other studies have not (Burke, 1997; Gaudine & Saks, 2004; Richman-Hirsch, 2001; Wexley & Baldwin, 1986). Self-management training may rely too heavily on individuals to manage their own performance on the job. One potential means of improving its effectiveness is to have trainees meet formally with supervisors or trainers, which would increase the degree of accountability and allow for follow-up coaching and advice.

Minimizing Unrealistic Expectations

Unrealistic expectations for a successful event diminish the strength of the identity-event link (Schlenker, 1997), representing a key challenge. Accordingly, successful transfer should not be perceived as too difficult to achieve. Because closed skills are relatively easier to acquire than open skills, initial proficiency may be expected sooner posttraining for closed skills; however, not all closed tasks are simple, so proficiency is not always quickly attained. Supervisors should ensure there is enough time and spare trainee capacity to facilitate transfer. Because open skills are more complex, supervisors need to temper initial proficiency expectations even more than for closed skills and reward progress, both formally and informally. For open skills, supervisors should also place greater emphasis on learning goals, which allow individuals to focus on further skill acquisition, effort, challenge, and errors (Kozlowski et al., 2001). When skills are performed autonomously, trainees bear greater responsibility for setting realistic transfer goals to enhance their personal control. Moreover, for autonomously performed skills, there is a greater need for goal-setting and self-management training to help trainees navigate the transfer process.

Guidelines for Practice

In the preceding sections, we identified several strategies for strengthening the prescription-event, prescription-identity, and identity-event links to enhance accountability and transfer in different contexts. The framework encourages careful consideration of what happens during training and in the broader organization to increase trainees’ role clarity, sense of ownership, and perceived control over transfer. A common theme throughout this chapter has been that one size does not fit all: careful consideration must be given to the nature of the skill and the conditions under which it will be performed. Practitioners should not assume that a transfer strategy that worked well in one context will work equally well in another. Because there is no quick fix, practitioners should be well versed in a broad set of transfer accountability strategies.

The complexity and challenges of transfer highlight the importance of conducting a careful needs assessment to determine the specific context for transfer and the availability of support and accountability mechanisms to facilitate the application of new knowledge and skills. Along the same lines, multiple stakeholders should be involved in the needs assessment process and the implementation of accountability strategies. Transfer is not the responsibility of one, but of many stakeholders – trainers, supervisors, and trainees.

In Tables 9.3–9.6, we summarize the conditions that must be satisfied to bolster Schlenker’s three accountability links (Schlenker et al., 1994; Schlenker, 1997) in Yelon and Ford’s (1999) four contexts. These tables provide a reference for practitioners designing transfer strategies; a sample job and task are provided for each context. In designing transfer strategies, practitioners should strive to bolster all three links. Consider actors developing a character, a supervised open skill. To address the prescription-event link, the actors would require clear outcome goals for delivering a good performance, clear expectations for skill adaptation in developing their characters, and clear expectations that they will be directed rather than wholly autonomous. To address the prescription-identity link, the actors must value skill adaptation, as well as value and be receptive to taking direction. Finally, to address the identity-event link, the actors must perceive that they have the ability to create a well-developed character. We acknowledge that designing accountability strategies may not always be easy, and such strategies may not always be necessary. However, we encourage practitioners to make the attempt for knowledge and skill sets of particular strategic importance.

Table 9.3 Transfer conditions for supervised closed skills

Example job: Fast Food Restaurant Cook. Focal skill to transfer: Making Food to Standards.

| Transfer Requirements | Accountability Link | Trainee Conditions Necessary for Transfer |
|---|---|---|
| Trainees must follow precise standards under closely supervised conditions | Prescription-Event: The extent to which clear and unambiguous expectations exist for transfer | Trainees require precise standards for skill application and following direction from supervisors |
| | Prescription-Identity: The extent to which prescriptions are relevant to trainees by virtue of their role, values, or other personal attributes | Trainees value adhering to precise standards and willingly accept direction |
| | Identity-Event: The extent to which trainees have personal control over their ability to transfer | Trainees have the capacity to adhere to standards and follow direction |

Table 9.4 Transfer conditions for autonomous closed skills

Example job: Hotel Guest Room Attendant. Focal skill to transfer: Cleaning a Guest Room to Standards.

| Transfer Requirements | Accountability Link | Trainee Conditions Necessary for Transfer |
|---|---|---|
| Trainees must follow precise standards under autonomous working conditions | Prescription-Event: The extent to which clear and unambiguous expectations exist for transfer | Trainees require precise standards for skill application and clear expectations for taking responsibility for monitoring standards themselves |
| | Prescription-Identity: The extent to which prescriptions are relevant to trainees by virtue of their role, values, or other personal attributes | Trainees value adhering to precise standards and working independently |
| | Identity-Event: The extent to which trainees have personal control over their ability to transfer | Trainees have the capacity to adhere to standards and work independently |

Table 9.5 Transfer conditions for autonomous open skills

Example job: Supervisor. Focal skill to transfer: Motivating Employees.

| Transfer Requirements | Accountability Link | Trainee Conditions Necessary for Transfer |
|---|---|---|
| Trainees must adapt the skill autonomously | Prescription-Event: The extent to which clear and unambiguous expectations exist for transfer | Trainees require clear outcome goals and clear expectations for skill adaptation and taking responsibility for monitoring their performance |
| | Prescription-Identity: The extent to which prescriptions are relevant to trainees by virtue of their role, values, or other personal attributes | Trainees value skill experimentation and working independently |
| | Identity-Event: The extent to which trainees have personal control over their ability to transfer | Trainees have the capacity to adapt skills and work independently |

Table 9.6 Transfer conditions for supervised open skills

Example job: Actor. Focal skill to transfer: Character Development.

| Transfer Requirements | Accountability Link | Trainee Conditions Necessary for Transfer |
|---|---|---|
| Trainees must adapt the skill under supervised conditions and be receptive to taking direction | Prescription-Event: The extent to which clear and unambiguous expectations exist for transfer | Trainees require clear outcome goals and clear expectations for skill adaptation and taking direction from supervisors |
| | Prescription-Identity: The extent to which prescriptions are relevant to trainees by virtue of their role, values, or other personal attributes | Trainees value skill experimentation and are open to taking direction from others |
| | Identity-Event: The extent to which trainees have personal control over their ability to transfer | Trainees have the capacity to engage in skill experimentation and take direction from others |

Future Research

By integrating the frameworks of Schlenker (Schlenker et al., 1994; Schlenker, 1997) and Yelon and Ford (1999), we have offered a number of strategies to enhance transfer. Some of these propositions are grounded in empirical research, but others remain theoretical. While we have identified several potentially viable transfer enhancement strategies, organizations may be using a host of additional strategies. Descriptive research would therefore be valuable for documenting the accountability strategies already being employed in workplaces. To extend the contribution of this chapter, research is also needed to test the effectiveness of the strategies offered herein. A fundamental tenet of this chapter is that situational specificity matters. As such, when validating these strategies, context must either be experimentally manipulated or measured and modeled in survey research. In addition to assessing the direct influence of the strategies on transfer, research could examine trainees’ perceptions of goal and process clarity, ownership of transfer, and personal control as mediators of strategy-transfer relationships. Such work would test the hypothesized central role of accountability in transfer.

One area in need of research is how best to promote favorable trainee attitudes toward performing open and closed skills under varying degrees of supervision. One obstacle to developing favorable attitudes may be threats to an individual’s identity. For example, those who prefer to perform open skills autonomously may resist performing a closed skill under supervised working conditions. It would be worthwhile to examine whether a discussion-based training format, in which trainees generate the benefits and importance of executing a specific skill, yields more favorable attitudes than an approach in which trainers communicate those benefits. In light of Deci and Ryan’s (2002) self-determination theory, which posits that individuals seek to be self-directed agents, such an approach might yield high returns.

We suggest several specific comparisons to validate our proposed integrated model. One potentially useful comparison is to examine the value of a single strategy across different contexts. For example, research could examine the differential effects of self-management training for open versus closed skills. Given the complexity inherent in open skills, we would hypothesize that self-management training is more effective in facilitating transfer of open skills than of closed skills. Another useful comparison is to assess the effectiveness of different strategies that address a specific link. We argued that both personality characteristics and perceived role breadth influence the prescription-identity link, and research examining which of the two matters more would be worthwhile.

Further, research examining the relative importance of the different links in a particular context would be valuable. While the combination of all links forms the social adhesive that promotes accountability, the links may not always be equally important in specific situations. We believe that the prescription-event link may be more important than the prescription-identity link for supervised closed skills and that the prescription-identity link may be more important than the prescription-event link for autonomous open skills. Testing such relationships is warranted to ascertain whether this greater precision is in fact necessary or whether a more parsimonious set of strategies suffices.

These avenues for research could be addressed either through survey research or through experimental manipulations. A survey of employees could assess training context, training design and delivery, work environment support, individual differences, and transfer, preferably with data collected at multiple points in time. In an ideal design, employees from a large organization or from multiple organizations would be sampled to provide the variability necessary for comparative studies. Although such samples are difficult to secure for training research, the increased availability of online panels, such as those offered through Qualtrics, makes this research more feasible. When transfer is studied across contexts, the nature of the job, performance, and focal training content are likely to differ. Thus, researchers must carefully select dependent variables that apply across contexts and lend themselves to meaningful comparisons. In this regard, measures of transfer should be general (e.g., “I successfully apply material from training on the job”) rather than content specific (e.g., “I successfully apply customer skills from training on the job”).

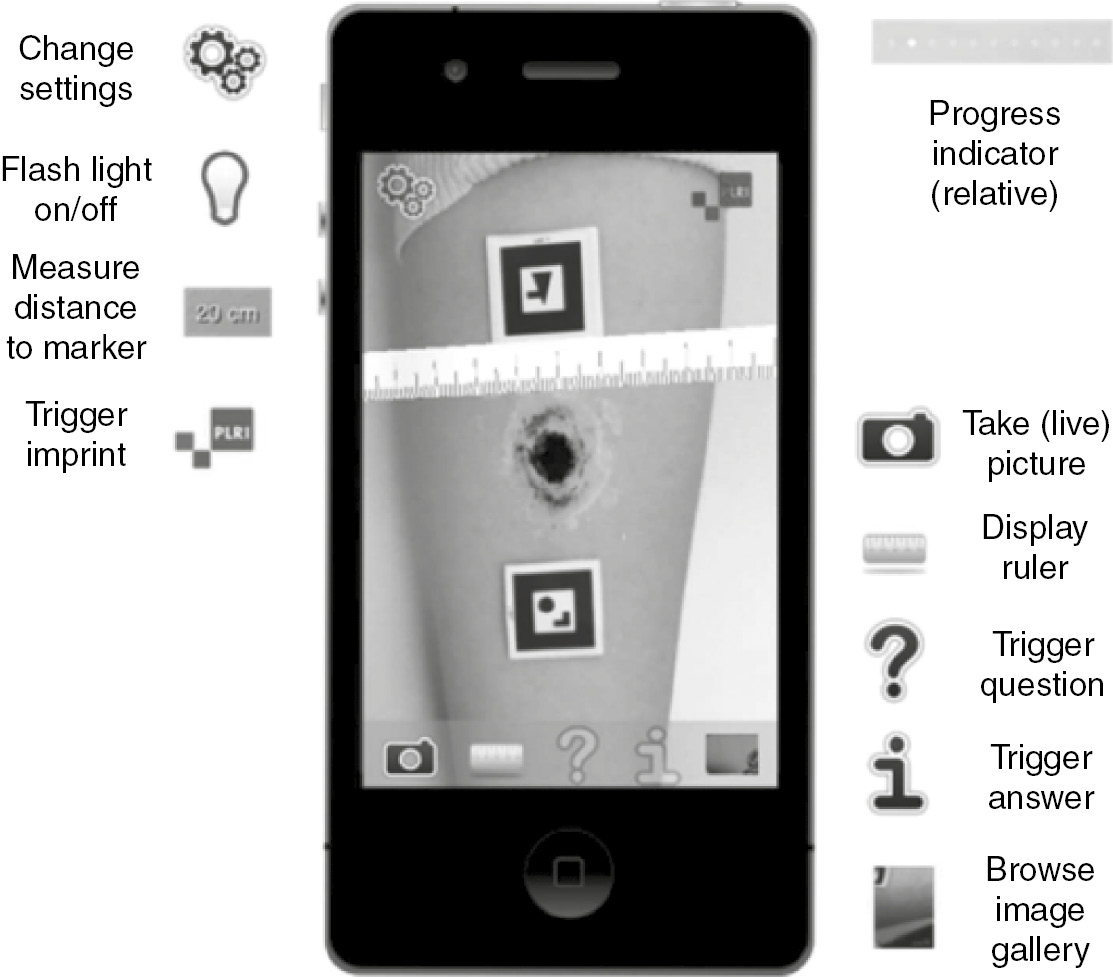

Experimental studies could also be conducted in the field to further substantiate cause-and-effect relationships. Given the challenge of gaining access to organizations, experimental research with samples of working students may also be valuable. The aforementioned lab study by DeMatteo et al. (1997) is a useful exemplar. In their 2 × 2 design, they studied two accountability interventions and their temporal position (before vs. after training) using a student sample, and they measured students’ satisfaction and learning. Although DeMatteo and colleagues did not measure transfer, doing so is now feasible with mobile technologies such as Socrative®, which enable researchers to pose simple questions via phones, tablets, and laptops about how individuals transfer class learning to the workplace.

Conclusion

In this chapter, we provided a synthesized framework for transfer of training that is theory driven, integrative, and context sensitive. By integrating the work of Schlenker and colleagues (1994; 1997) and Yelon and Ford (1999), this chapter has discussed how to enhance trainees’ role clarity, sense of ownership, and perceived control over transfer for open and closed skills performed either under supervised or autonomous working conditions. Promoting transfer of training represents a perennial challenge for scholars and practitioners. Yet, promoting transfer is critical to ensure that employees possess the knowledge and skills to succeed in today’s competitive and dynamic business environments. It is our hope that this chapter has provided a useful framework for understanding accountability issues associated with transfer, guiding future research efforts, and facilitating transfer design in practice.

Any company has the potential to build deep specialization. Think about those job roles that define your company’s competitive advantage. In most, these roles are in research, engineering, or manufacturing. Start here – and ask your business leaders to define what an expert really is. Study these people and use them as models to build deep specialization programs for others. (Bersin, 2009)

Work is becoming more knowledge driven and global in scope, requiring a deeper combination of information, experience, understanding, and problem-solving skills that can be applied to decisions and actions in strategically critical situations (Kraiger & Ford, 2007). This reality highlights the need to enhance the development of deep specialization among functional experts in key or “mission critical” jobs that are important for future growth (Ziebell, 2008). For example, at Intel, 80% of the worldwide staff work in technical positions (Bersin, 2009). This company and others like it depend on the level of knowledge and expertise in specialized areas. Similarly, the nuclear industry sets criteria for job position risk assessment, with the highest level being jobs where: (1) individuals have critical and unique knowledge or skills that can potentially have significant reliability and safety impacts, (2) years of training and experience are required, and (3) no ready replacements are available (International Atomic Energy Agency, 2006).

Understanding and enhancing deep specialization is particularly important today given the imminent retirement of such experts (i.e., the “grey tsunami”) in many strategic business areas, especially in the United States (Moon, Hoffman, & Ziebell, 2009). It is important not only to understand in broad terms the distribution of expertise by job category but also to identify what type of expertise (what tasks, skills, knowledge) is likely to be lost (risk assessment) through expected promotions, turnover, and retirements, and to create processes that address expertise loss and strategically develop talent before critical shortages occur (Ziebell, 2008).

As noted by Schein (1993), an individual’s career can be studied as a series of movements along three different dimensions: (1) moving up in the hierarchy; (2) moving laterally across various subfields; and (3) moving toward the centers of influence in an organization. The concept of deep specialization in core areas that are critical to organizational success is targeted toward this third, and less researched, career movement. As noted by Bersin (2009), it is time to rethink the traditional career pyramid by increasing efforts to develop deep levels of skills and knowledge and hence shorten the time it takes to become an expert in a career field. While organizations rely on experts in critical jobs to achieve strategic goals, they often do not fully appreciate or understand the impact of expertise on day-to-day operations (Borton, 2007; Prietula & Simon, 1989).

Organizations often focus on bringing newcomers up to speed on a job to get immediate value from their investment in recruitment, selection, and initial training (Byham, 2008). Less attention is paid to longer-term developmental strategies that facilitate the move to deep specialization (Lord & Hall, 2005; Ziebell, 2008). Identifying effective strategies for long-term development is a critical step toward speeding up the development cycle. Given that it can take years of training, learning activities, and work experiences to develop deep specialization in a technical career field, it is important to identify key levers to accelerate individual development (Hoffman et al., 2014).

The purpose of this chapter is to examine intentional learning strategies that can be enacted to develop individuals in highly specialized jobs throughout the course of their careers. There have been some attempts to focus not just on training in isolation but on continued development throughout a career (e.g., Caligiuri & Tarique, 2014; Cerasoli et al., 2014). Salas and Rosen (2009) reviewed the literature on the development process as individuals move from novice to expert, provided a framework for understanding the characteristics of expertise, and discussed how expertise can be developed and maintained. Based on this framework, they developed 17 principles for developing expertise at work (e.g., provide variability in learning activities). While the framework and principles have value, only one of the 17 principles dealt explicitly with the different learning strategies needed to support learners as they transition from beginner to intermediate to advanced levels.

The present chapter explores changing developmental needs and effective learning strategies as an individual moves from being a relative newcomer to becoming a valued employee with deep specialization. We first identify three key characteristics that evolve as individuals develop expertise in their jobs, along with the performance indicators associated with this evolution. The chapter then provides a framework for the goals of development over time. The final section provides insights into strategies for building knowledge and skills as a person progresses toward expertise.

The Road to Expertise

The goal of development is the achievement of consistent, superior performance through enhanced mental and physical processes. This goal is pursued by providing employees with experience, training, and on-the-job learning activities that have a “developmental punch” (Ford & Kraiger, 1995; Kraiger & Ford, 2007; Quiñones, Ford, & Teachout, 1995). Researchers have begun to identify the depth of knowledge and skill building that characterizes deep specialization in a career field (Ericsson, Nandagopal, & Roring, 2009; McCall, 2004). This section discusses the shifts that occur as a person moves from relative novice to expert, as well as indicators of expert performance.

Qualitative Shifts That Define Expertise

Dreyfus and Dreyfus (1986) identify five developmental stages: novice, advanced beginner, competent, proficient, and expert. Variants of this stage model exist, adding terms such as naivete, initiate, apprentice, and journeyman (Alexander, 2003; Hoffman, 1996; Hoffman et al., 1995; Kinchin & Cabot, 2010). Research generally supports a stepwise (but not necessarily linear) progression of skill and practice in developing expertise (Dall’Alba & Sandberg, 2006; Kinchin & Cabot, 2010). During the beginning phase, novices focus on learning facts and using deliberate reasoning, and they rely on general strategies across situations. By the competent stage, learners have begun to organize related pieces of information into mental models and have routinized many (but not all) types of processes. By the time learners reach the expert stage, they can deliberately reason about their own intuitions regarding a situation or problem and generate new rules or strategies to use (see Dreyfus & Dreyfus, 1986).