49525 results in Computer Science

Towards capsule endoscope locomotion in large volumes: design, fuzzy modeling, and testing

- Article

- Open access

Contract lenses: Reasoning about bidirectional programs via calculation

- Journal: Journal of Functional Programming / Volume 33 / 2023

- Published online by Cambridge University Press: 06 November 2023, e10

- Article

- Open access

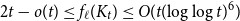

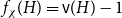

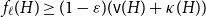

On the choosability of $H$-minor-free graphs

- Journal: Combinatorics, Probability and Computing / Volume 33 / Issue 2 / March 2024

- Published online by Cambridge University Press: 03 November 2023, pp. 129-142

- Article

- Open access

Parametrized polyconvex hyperelasticity with physics-augmented neural networks

- Journal: Data-Centric Engineering / Volume 4 / 2023

- Published online by Cambridge University Press: 03 November 2023, e25

- Article

- Open access

A semantic similarity-based method to support the conversion from EXPRESS to OWL

- Article

- Open access

Embedding data science innovations in organizations: a new workflow approach

- Journal: Data-Centric Engineering / Volume 4 / 2023

- Published online by Cambridge University Press: 03 November 2023, e26

- Article

- Open access

Incomplete to complete multiphysics forecasting: a hybrid approach for learning unknown phenomena

- Journal: Data-Centric Engineering / Volume 4 / 2023

- Published online by Cambridge University Press: 03 November 2023, e27

- Article

- Open access

Material dialogues for first-order logic in constructive type theory: extended version

- Journal: Mathematical Structures in Computer Science / Volume 34 / Issue 7 / August 2024

- Published online by Cambridge University Press: 03 November 2023, pp. 689-709

- Article

- Open access

Security in the Cyber Age: An Introduction to Policy and Technology

- Published online: 02 November 2023

- Print publication: 16 November 2023

2 - Bird's-Eye View

- Book: Virtual Reality

- Published online: 12 October 2023

- Print publication: 02 November 2023, pp. 31-54

- Chapter

6 - Visual Perception

- Book: Virtual Reality

- Published online: 12 October 2023

- Print publication: 02 November 2023, pp. 129-154

- Chapter

10 - Interaction

- Book: Virtual Reality

- Published online: 12 October 2023

- Print publication: 02 November 2023, pp. 245-270

- Chapter

11 - Audio

- Book: Virtual Reality

- Published online: 12 October 2023

- Print publication: 02 November 2023, pp. 271-292

- Chapter

9 - Tracking

- Book: Virtual Reality

- Published online: 12 October 2023

- Print publication: 02 November 2023, pp. 211-244

- Chapter

References

- Book: Virtual Reality

- Published online: 12 October 2023

- Print publication: 02 November 2023, pp. 340-356

- Chapter

3 - The Geometry of Virtual Worlds

- Book: Virtual Reality

- Published online: 12 October 2023

- Print publication: 02 November 2023, pp. 55-81

- Chapter

1 - Introduction

- Book: Virtual Reality

- Published online: 12 October 2023

- Print publication: 02 November 2023, pp. 1-30

- Chapter

7 - Visual Rendering

- Book: Virtual Reality

- Published online: 12 October 2023

- Print publication: 02 November 2023, pp. 155-184

- Chapter

8 - Motion in Real and Virtual Worlds

- Book: Virtual Reality

- Published online: 12 October 2023

- Print publication: 02 November 2023, pp. 185-210

- Chapter