142352 results in Open Access

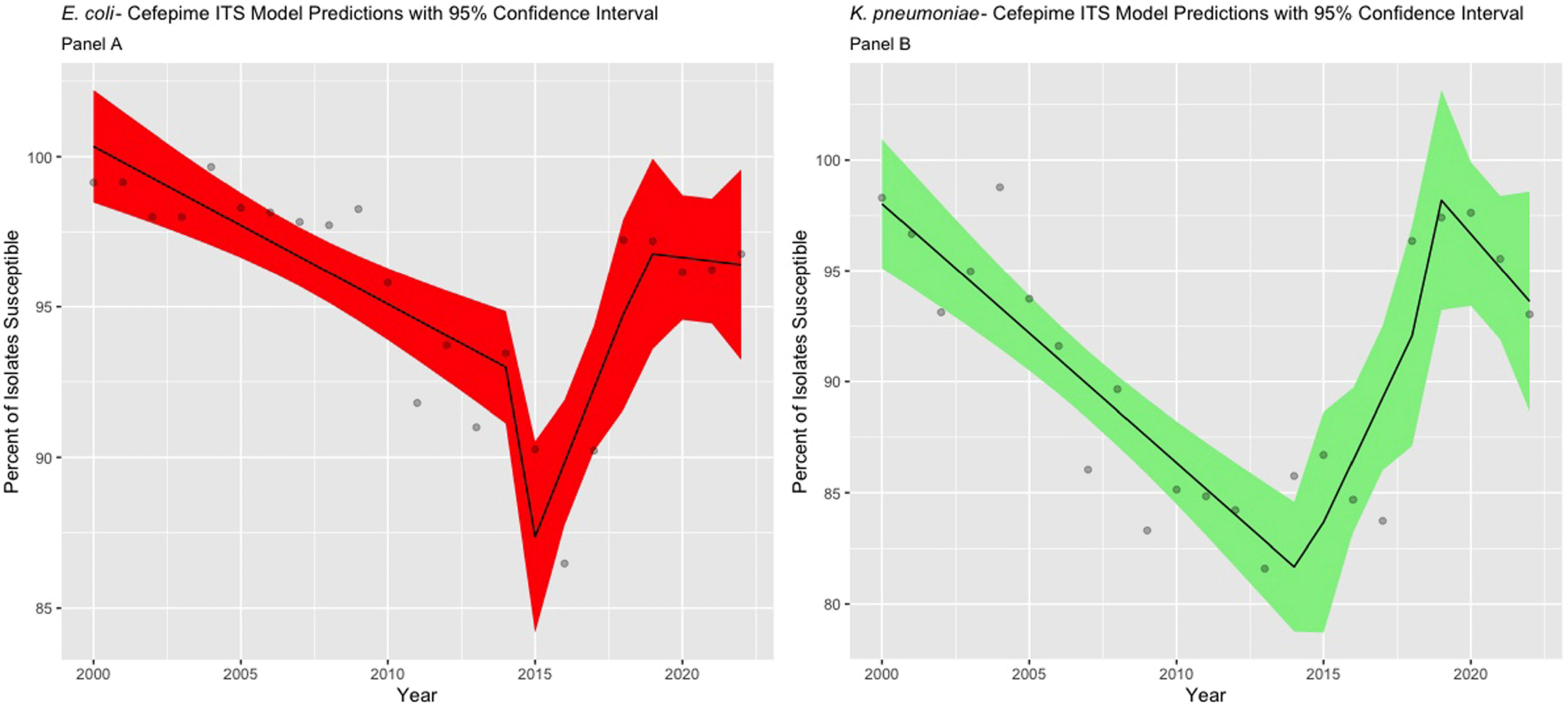

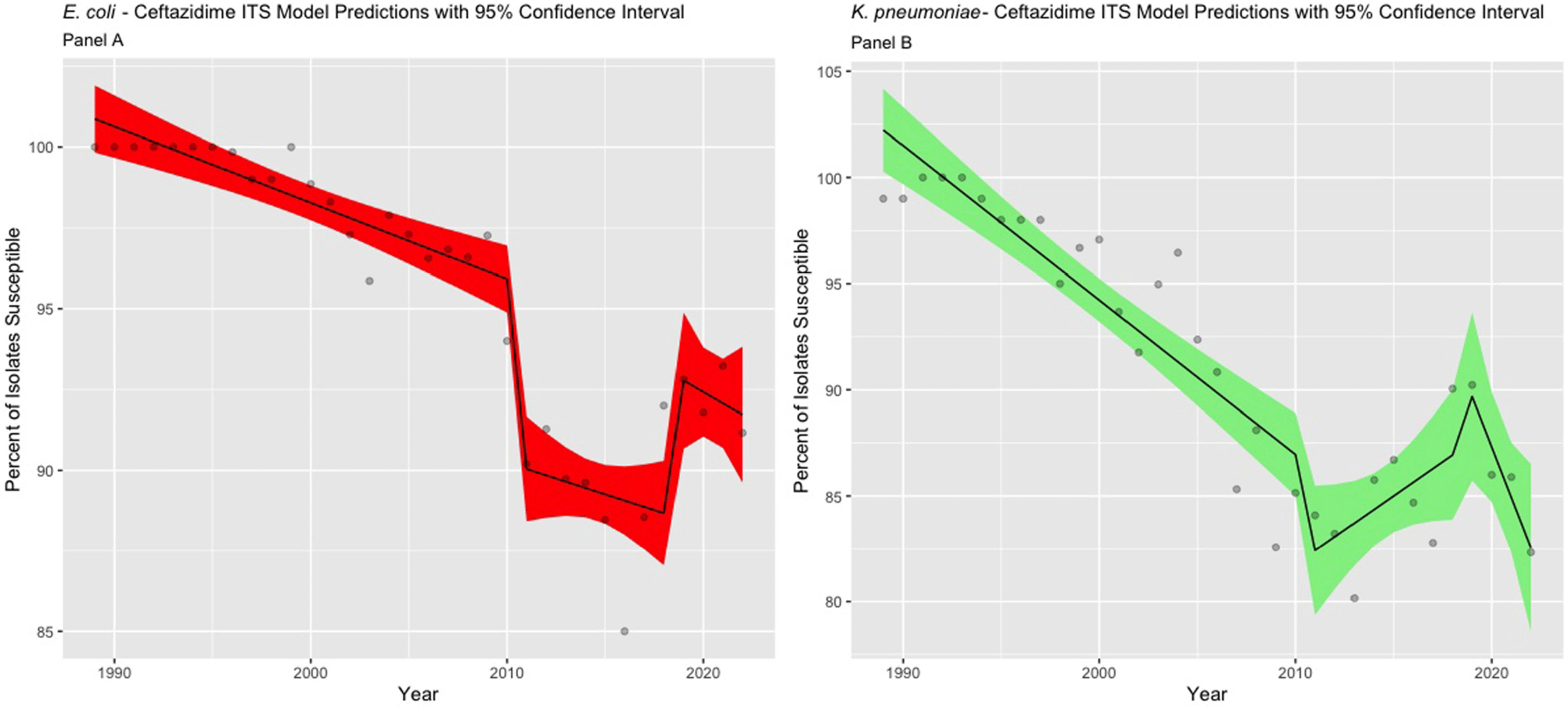

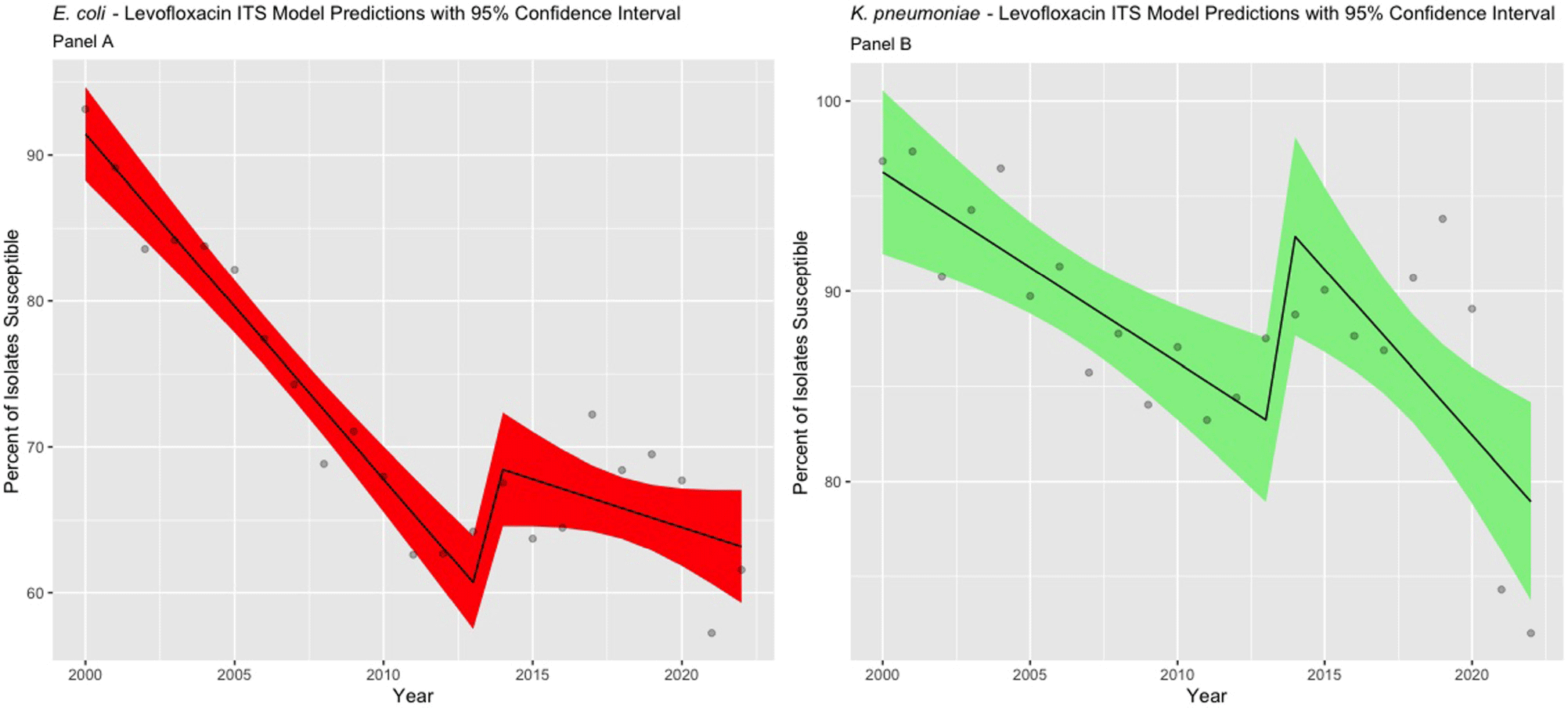

Impact of MIC Breakpoint Changes for Enterobacterales on Trends of Antibiotic Susceptibilities in An Academic Medical Center

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 4 / Issue S1 / July 2024

- Published online by Cambridge University Press: 16 September 2024, pp. s54-s56

- Article

- Open access

A little rain

- Journal: Palliative & Supportive Care / Volume 22 / Issue 5 / October 2024

- Published online by Cambridge University Press: 16 September 2024, p. 1537

- Article

- Open access

Nosocomial Transmission of Mycobacterium tuberculosis in an Oncological Setting

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 4 / Issue S1 / July 2024

- Published online by Cambridge University Press: 16 September 2024, p. s107

- Article

- Open access

Optimal Weight-Based Dosing of Vancomycin to Achieve an Area Under the Curve of 400 to 600 Stratified by Body Mass Index

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 4 / Issue S1 / July 2024

- Published online by Cambridge University Press: 16 September 2024, p. s131

- Article

- Open access

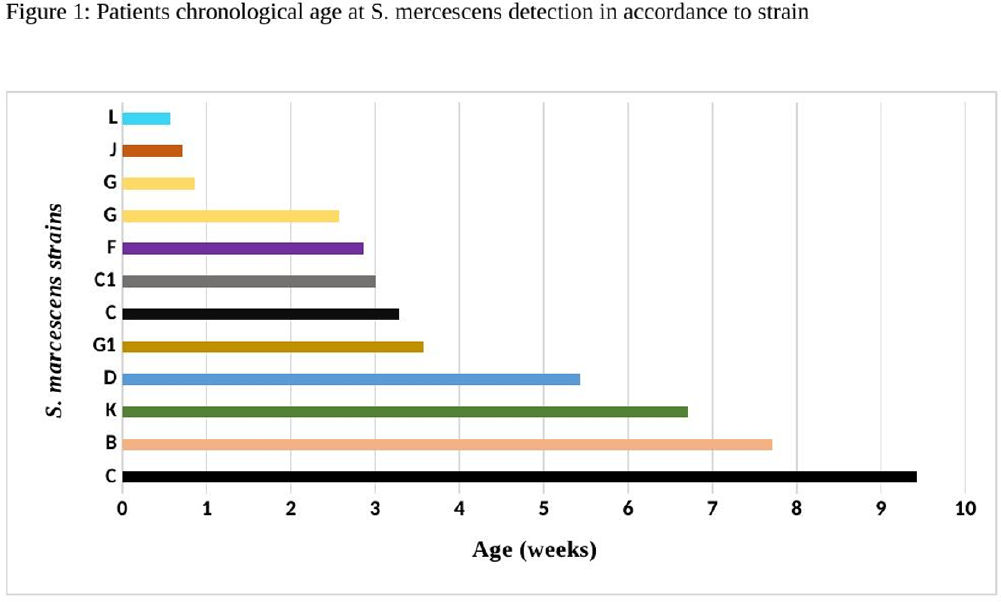

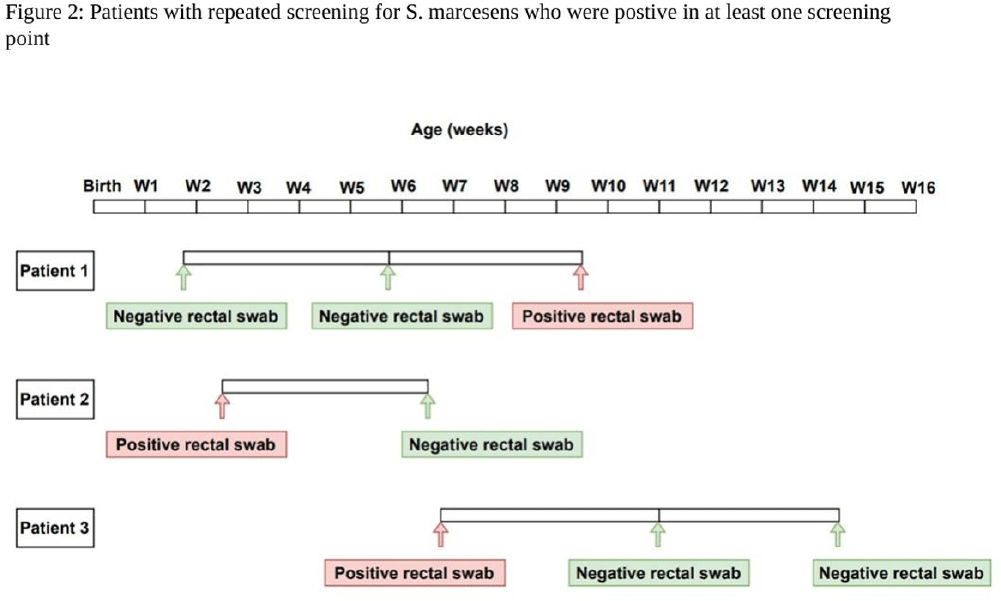

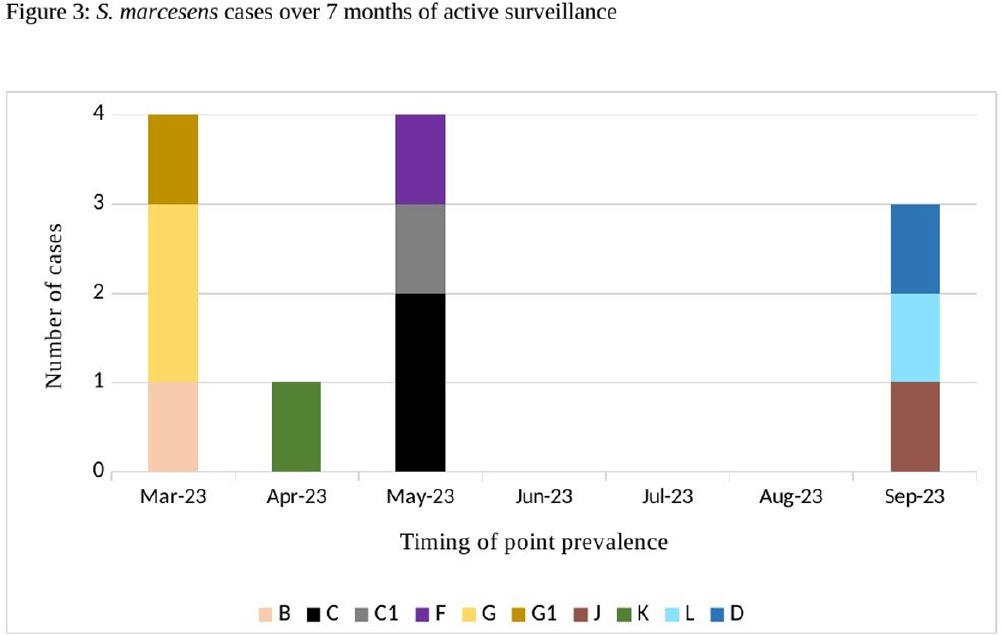

Serratia marcescens Burden in a Neonatal Intensive Care Unit: Colonization Rate, Clinical Infections and Strain Relatedness

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 4 / Issue S1 / July 2024

- Published online by Cambridge University Press: 16 September 2024, pp. s153-s154

- Article

- Open access

Microbiologic Evaluation of Colorectal Surgical Site Infections to Guide Surgical Prophylaxis Recommendations

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 4 / Issue S1 / July 2024

- Published online by Cambridge University Press: 16 September 2024, p. s143

- Article

- Open access

User-Centered Education for Patients/Caregivers about Urinary Tract Infections, Asymptomatic Bacteriuria, and Antibiotics

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 4 / Issue S1 / July 2024

- Published online by Cambridge University Press: 16 September 2024, pp. s5-s6

- Article

- Open access

Practical, effective and safer: Placing traps above ground is an improved capture method for the critically endangered ngwayir (western ringtail possum; Pseudocheirus occidentalis)

- Journal: Animal Welfare / Volume 33 / 2024

- Published online by Cambridge University Press: 16 September 2024, e29

- Article

- Open access

A Stepwise Diagnostic Stewardship Approach to Reduce Unnecessary Urine Cultures, Asymptomatic Bacteriuria, and CAUTI Rate

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 4 / Issue S1 / July 2024

- Published online by Cambridge University Press: 16 September 2024, pp. s79-s80

- Article

- Open access

Comparison of COVID-19 Sentinel Surveillance and COVID-19 School Absentee Surveillance in Japan

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 4 / Issue S1 / July 2024

- Published online by Cambridge University Press: 16 September 2024, pp. s148-s149

- Article

- Open access

Sustainability of Surgical Site Infection (SSI) Prevention Bundle for Pediatric Cardiothoracic Surgery Patients

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 4 / Issue S1 / July 2024

- Published online by Cambridge University Press: 16 September 2024, pp. s145-s146

- Article

- Open access

Clearing the Air, Breathe Easy: Intensive Care Unit Remodeling Unveils Insights into Aspergillosis Prevention

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 4 / Issue S1 / July 2024

- Published online by Cambridge University Press: 16 September 2024, p. s126

- Article

- Open access

An “Epic” Journey to Improve Antimicrobial Stewardship

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 4 / Issue S1 / July 2024

- Published online by Cambridge University Press: 16 September 2024, pp. s157-s159

- Article

- Open access

The Mechanics, Art, and Value of Central Line Stewardship

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 4 / Issue S1 / July 2024

- Published online by Cambridge University Press: 16 September 2024, pp. s71-s72

- Article

- Open access

Improving Consistency and Accuracy: A Novel C. auris Colonization Screening Strategy Using a Nares + Hands Composite Swab

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 4 / Issue S1 / July 2024

- Published online by Cambridge University Press: 16 September 2024, pp. s112-s113

- Article

- Open access

Assessing Mupirocin Resistance in MRSA Isolates in Hospitals in Cleveland, OH and Detroit, MI

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 4 / Issue S1 / July 2024

- Published online by Cambridge University Press: 16 September 2024, p. s117

- Article

- Open access

Viral Kinetics of SARS-CoV-2 in Nursing Home Residents and Staff

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 4 / Issue S1 / July 2024

- Published online by Cambridge University Press: 16 September 2024, pp. s73-s74

- Article

- Open access

Antimicrobial Use in Veterans Affairs Community Living Centers, 2015 - 2019

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 4 / Issue S1 / July 2024

- Published online by Cambridge University Press: 16 September 2024, pp. s6-s7

- Article

- Open access

Epidemiology and Duration of Therapy in Patients with Gram-negative Bloodstream Infections: Retrospective Analysis

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 4 / Issue S1 / July 2024

- Published online by Cambridge University Press: 16 September 2024, p. s43

- Article

- Open access

Hospital-Onset Clostridioides difficile infection in chronic kidney disease patients

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 4 / Issue S1 / July 2024

- Published online by Cambridge University Press: 16 September 2024, pp. s68-s69

- Article

- Open access