142352 results in Open Access

Aerosol optical depth disaggregation: toward global aerosol vertical profiles

- Journal: Environmental Data Science / Volume 3 / 2024
- Published online by Cambridge University Press: 16 September 2024, e16
- Article
- Open access

Occupational percutaneous injuries and exposures at a dental teaching institution from 2017 to 2023

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 4 / Issue S1 / July 2024
- Published online by Cambridge University Press: 16 September 2024, p. s120
- Article
- Open access

Perceptions on Penicillin Allergy Labels among Nurses and Prescribers in Three Pediatric Urgent Care Sites

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 4 / Issue S1 / July 2024
- Published online by Cambridge University Press: 16 September 2024, p. s45
- Article
- Open access

First Detected Transmission of C. auris within a Minnesota Healthcare Facility Following Exposure in the Emergency Department

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 4 / Issue S1 / July 2024
- Published online by Cambridge University Press: 16 September 2024, p. s92
- Article
- Open access

Timesavers: Clinical Decision Support and Automation of MRSA and VRE Deisolation

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 4 / Issue S1 / July 2024
- Published online by Cambridge University Press: 16 September 2024, pp. s114-s117
- Article
- Open access

Bereavement guilt among young adults impacted by caregivers’ cancer: Associations with attachment style, experiential avoidance, and psychological flexibility

- Journal: Palliative & Supportive Care / Volume 22 / Issue 6 / December 2024
- Published online by Cambridge University Press: 16 September 2024, pp. 1998-2006
- Article
- Open access

Understanding the fluvial capture of the Guadix-Baza Basin in SE Spain through its oldest exorheic deposits

- Journal: Quaternary Research / Volume 122 / November 2024
- Published online by Cambridge University Press: 16 September 2024, pp. 106-121
- Article
- Open access

A comparison of the welfare of free-ranging native pony herds on common land with those used for conservation grazing in the UK

- Journal: Animal Welfare / Volume 33 / 2024
- Published online by Cambridge University Press: 16 September 2024, e30
- Article
- Open access

Trends of Early Onset Group B Streptococcus infections and Observed Racial and Geographic Disparities Associated with GBS Infections in Tennessee, 2005-2021

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 4 / Issue S1 / July 2024
- Published online by Cambridge University Press: 16 September 2024, pp. s131-s132
- Article
- Open access

Are there risk factors commonly observed on Australian farms where the welfare of livestock is poor?

- Journal: Animal Welfare / Volume 33 / 2024
- Published online by Cambridge University Press: 16 September 2024, e34
- Article
- Open access

Extended-Spectrum Beta-Lactamase Producing Enterobacterales Infections in the United States, 2012-2021

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 4 / Issue S1 / July 2024
- Published online by Cambridge University Press: 16 September 2024, pp. s32-s33
- Article
- Open access

Informing synthetic passive microwave predictions through Bayesian deep learning with uncertainty decomposition

- Journal: Environmental Data Science / Volume 3 / 2024
- Published online by Cambridge University Press: 16 September 2024, e17
- Article
- Open access

Moving Beyond the Reflex: Effect of a Clinical Decision Support Tool on Urine Culture Ordering Practices

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 4 / Issue S1 / July 2024
- Published online by Cambridge University Press: 16 September 2024, pp. s84-s85
- Article
- Open access

The Collective Responsibilities of Science: Toward a Normative Framework

- Journal: Philosophy of Science / Volume 92 / Issue 1 / January 2025
- Published online by Cambridge University Press: 16 September 2024, pp. 1-18
- Print publication: January 2025
- Article
- Open access

Antimicrobial Stewardship Practice Changes Following a Statewide Educational Conference in Nebraska

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 4 / Issue S1 / July 2024
- Published online by Cambridge University Press: 16 September 2024, pp. s37-s38
- Article
- Open access

In Pursuit of the Analytical Unit. Island Archaeology as a Case Study

- Journal: Cambridge Archaeological Journal / Volume 34 / Issue 4 / November 2024
- Published online by Cambridge University Press: 16 September 2024, pp. 583-600
- Article
- Open access

Can Artificial Intelligence Support Infection Prevention and Control Consultations?

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 4 / Issue S1 / July 2024
- Published online by Cambridge University Press: 16 September 2024, pp. s29-s30
- Article
- Open access

Teachers’ Perceptions of Formative Assessment for Students With Disability: A Case Study From India

- Journal: Australasian Journal of Special and Inclusive Education / Volume 48 / Issue 2 / December 2024
- Published online by Cambridge University Press: 16 September 2024, pp. 122-135
- Article
- Open access

Antibiotic Prescribing by General Dentists in the Outpatient Setting — United States, 2018–2022

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 4 / Issue S1 / July 2024
- Published online by Cambridge University Press: 16 September 2024, pp. s22-s23
- Article
- Open access

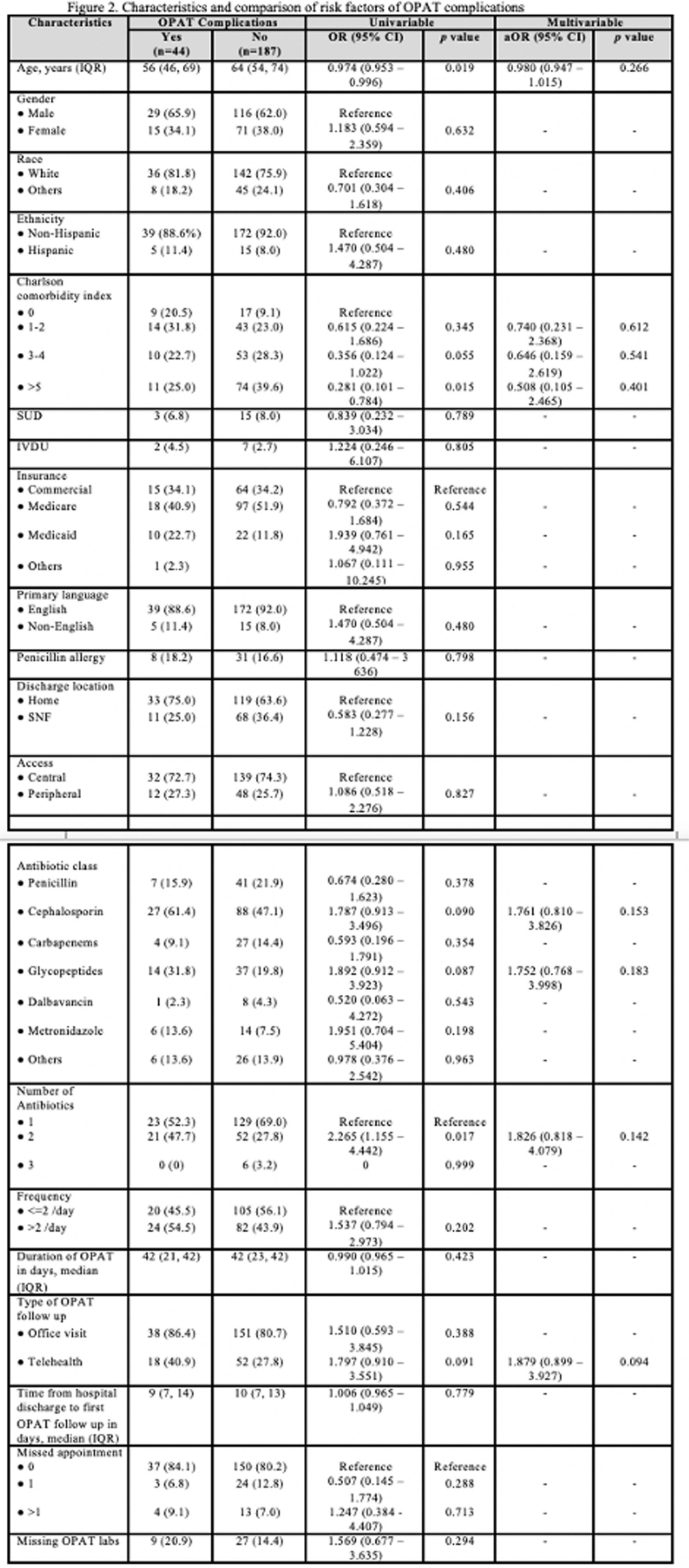

Risk Factors Predicting Complication of OPAT in an Academic Center: A Retrospective Cohort Study

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 4 / Issue S1 / July 2024
- Published online by Cambridge University Press: 16 September 2024, pp. s53-s54
- Article
- Open access